A Comprehensive Framework for Validating Food Intake Wearables: Protocols, Metrics, and Clinical Applications

Abstract

This article provides a structured validation protocol for wearable sensors designed to monitor food intake, addressing a critical need for standardization in digital nutrition science. Aimed at researchers, scientists, and drug development professionals, it synthesizes current evidence and methodologies to establish a robust framework for evaluating sensor technology. The content systematically covers the foundational principles of dietary monitoring wearables, details the application of specific validation methodologies, addresses common challenges and optimization strategies, and presents a comparative analysis of validation approaches. By integrating insights from recent systematic reviews, clinical study protocols, and feasibility trials, this guide aims to enhance the reliability, accuracy, and clinical adoption of these technologies for both research and therapeutic applications.

The Science of Dietary Sensing: Core Principles and Sensor Modalities

Traditional dietary assessment methods, including 24-hour recalls, food diaries, and food frequency questionnaires (FFQs), rely on participant self-reporting and are consequently compromised by significant limitations [1] [2]. These methods are inherently prone to recall bias, inaccuracies in portion size estimation, and social desirability bias, where participants systematically under-report intake of foods perceived as unhealthy [3] [4].

The scale of under-reporting is substantial: food records are estimated to underestimate energy intake by 11–41% [5]. Social desirability bias alone can produce a downward bias of about 450 kcal across the interquartile range of the social desirability scale [3]. This fundamental measurement error compromises the validity of nutritional epidemiology and the effectiveness of dietary interventions [2] [4].

The Promise of Objective Wearable Sensors

Wearable sensing technology offers a promising solution by providing continuous, objective data on dietary intake with minimal user input, thereby reducing recall bias and enhancing convenience [6]. These technologies can capture a wide range of data, from eating behaviors to physiological responses to food intake.

The field has seen a marked increase in research activity since 2020, reflecting growing recognition of its potential [6]. The following table summarizes the primary sensor modalities and their applications in dietary monitoring.

Table 1: Wearable Sensor Modalities for Dietary Assessment

| Sensor Type | Measured Parameters | Detection Capabilities | Example Devices |

|---|---|---|---|

| Inertial (IMU) [1] [5] | Wrist rotation, acceleration | Hand-to-mouth gestures, bite counting | Smartwatches, custom wristbands |

| Acoustic [6] [1] | Sound waves | Chewing, swallowing sounds | Neck-worn microphones |

| Physiological [5] | Heart Rate (HR), Skin Temperature (Tsk), Oxygen Saturation (SpO2) | Postprandial metabolic responses | Custom multi-sensor wristbands |

| Camera-Based [1] [7] | Image data | Food type, portion size, eating environment | Egocentric cameras (eButton, AIM) |

| Bioimpedance [8] | Electrical impedance | Changes in fluid concentration related to glucose absorption | Healbe GoBe2 wristband |

Performance and Validation of Sensor-Based Methods

Quantitative Performance of Selected Modalities

Objective validation is crucial for establishing the credibility of these new technologies. The performance of different sensor-based methods has been evaluated in both laboratory and free-living settings.

Table 2: Performance Metrics of Selected Dietary Assessment Technologies

| Technology/Method | Setting | Key Performance Metric | Result |

|---|---|---|---|

| Bite-Counting (Wrist IMU) [9] | Free-living | Variance in Energy Intake (EI) explained | 23.4% of meal-level EI variance explained by bite count |

| EgoDiet (Wearable Camera) [7] | Laboratory (London) | Mean Absolute Percentage Error (MAPE) for portion size | 31.9% (vs. 40.1% by dietitians) |

| EgoDiet (Wearable Camera) [7] | Field (Ghana) | Mean Absolute Percentage Error (MAPE) for portion size | 28.0% (vs. 32.5% for 24HR) |

| Multi-Sensor Wristband (Healbe GoBe2) [8] | Free-living | Mean bias in kcal/day (Bland-Altman) | -105 kcal/day (SD 660), with wide limits of agreement |

A key finding explaining the viability of some methods is that the amount of food consumed (portion size) often matters more than dietary composition for estimating energy intake. In free-living conditions, bite-based EI estimates accounted for 41.5% of the variance in daily energy intake, while the energy density (ED) of the food accounted for only 0.2% of the variance [9].
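
To make the bite-based approach concrete, the sketch below estimates energy intake as bite count multiplied by a per-bite energy value and then computes the share of variance in a reference intake that the estimate explains (the squared Pearson correlation). The kcal-per-bite values, the function names, and the example data are illustrative assumptions and are not taken from [9].

```python
from statistics import mean

# Placeholder kcal/bite values. The source only states that the figure is
# individualized by sex; these specific numbers are assumptions.
KCAL_PER_BITE = {"female": 11.0, "male": 17.0}


def estimate_energy_intake(bite_count: int, sex: str) -> float:
    """Estimate energy intake (kcal) as bite count times an assumed kcal/bite."""
    return bite_count * KCAL_PER_BITE[sex]


def variance_explained(reference: list[float], predicted: list[float]) -> float:
    """Squared Pearson correlation between reference and predicted intake."""
    mr, mp = mean(reference), mean(predicted)
    cov = sum((r - mr) * (p - mp) for r, p in zip(reference, predicted))
    var_r = sum((r - mr) ** 2 for r in reference)
    var_p = sum((p - mp) ** 2 for p in predicted)
    return cov ** 2 / (var_r * var_p)


# Made-up meals: reference intake (kcal) and bite counts from a wrist sensor.
reference_kcal = [420.0, 610.0, 350.0, 800.0]
bites = [30, 41, 28, 52]
predicted_kcal = [estimate_energy_intake(b, "female") for b in bites]
print(f"R^2 = {variance_explained(reference_kcal, predicted_kcal):.3f}")
```

Because a single kcal-per-bite constant only rescales the bite count, the variance explained here depends entirely on how well bite count tracks intake, which mirrors the point that portion size dominates energy density in these estimates.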

Detailed Experimental Protocol: Multimodal Sensor Validation

The following protocol, adapted from a published study, provides a template for validating a multi-sensor wearable device that integrates motion and physiological sensing [5].

Objective: To investigate the relationship between multimodal physiological/behavioral responses and food intake via a customised wearable monitor.

Study Design:

- Participants: 10 healthy volunteers (BMI 18–30 kg/m²), excluding individuals with chronic conditions affecting metabolism (e.g., diabetes, gastrointestinal diseases).

- Meal Intervention: Randomized crossover design where participants consume pre-defined high-calorie (1052 kcal) and low-calorie (301 kcal) meals on separate visits.

- Sensor Deployment: Participants wear a custom multi-sensor wristband throughout the eating episode and for one hour postprandially.
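
A randomized crossover design needs a reproducible allocation of meal order to participants. The following is a minimal sketch of one way to do this, assuming a balanced allocation (half of the participants start with each meal); the balancing rule, the seed, and the function name are assumptions, since the source protocol does not describe its randomization procedure.

```python
import random

MEALS = ("high_calorie", "low_calorie")


def assign_meal_orders(participant_ids, seed=0):
    """Map each participant to a (visit 1 meal, visit 2 meal) tuple.

    The two possible sequences are repeated so that each occurs equally
    often (for an even number of participants), then shuffled with a
    seeded generator so the allocation can be reproduced.
    """
    rng = random.Random(seed)
    sequences = [MEALS, MEALS[::-1]]
    allocation = [sequences[i % 2] for i in range(len(participant_ids))]
    rng.shuffle(allocation)
    return dict(zip(participant_ids, allocation))


# Ten participants, as in the protocol above.
for pid, order in assign_meal_orders([f"P{i:02d}" for i in range(1, 11)]).items():
    print(pid, order)
```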

Data Acquisition:

- Behavioral Data: An Inertial Measurement Unit (IMU) records wrist movements to detect and analyze hand-to-mouth gestures.

- Physiological Data:

- Heart Rate (HR) & Oxygen Saturation (SpO2): Tracked via an integrated pulse oximeter and Photoplethysmography (PPG) sensor.

- Skin Temperature (Tsk): Monitored continuously by a skin surface temperature sensor.

- Validation Measures:

- Vital Signs: A bedside monitor measures blood pressure and provides validation for HR and SpO₂.

- Blood Biomarkers: Blood is collected via intravenous cannula to measure glucose, insulin, and hormone levels (e.g., ghrelin) at baseline and postprandially.

Data Analysis:

- Primary Analysis: Compare pre- vs. post-meal changes in HR and other physiological parameters between high- and low-calorie meals using methods suited to repeated measures (e.g., paired t-tests or linear mixed models).

- Secondary Analysis: Correlate physiological features with glycemic biomarkers (glucose, insulin) to explore predictive relationships.
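
As a simplified illustration of the primary analysis, the sketch below computes a paired t statistic on each participant's post-meal HR change after the high-calorie versus the low-calorie meal. It is a stand-in for, not a reproduction of, the analysis in [5]; the example values are invented, and the p-value lookup (from a t distribution with the returned degrees of freedom) is left to a statistics package.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_statistic(high, low):
    """Return (t, degrees of freedom) for paired observations.

    `high` and `low` hold one value per participant, in the same order.
    The differences must not all be identical, otherwise the standard
    deviation is zero and the statistic is undefined.
    """
    diffs = [h - l for h, l in zip(high, low)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1


# Invented HR increases (bpm) from fasted baseline, one pair per participant.
hr_rise_high = [9.1, 7.4, 11.0, 8.2, 6.5]
hr_rise_low = [4.0, 3.8, 5.1, 4.9, 2.7]
t, df = paired_t_statistic(hr_rise_high, hr_rise_low)
print(f"t = {t:.2f} with {df} degrees of freedom")
```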

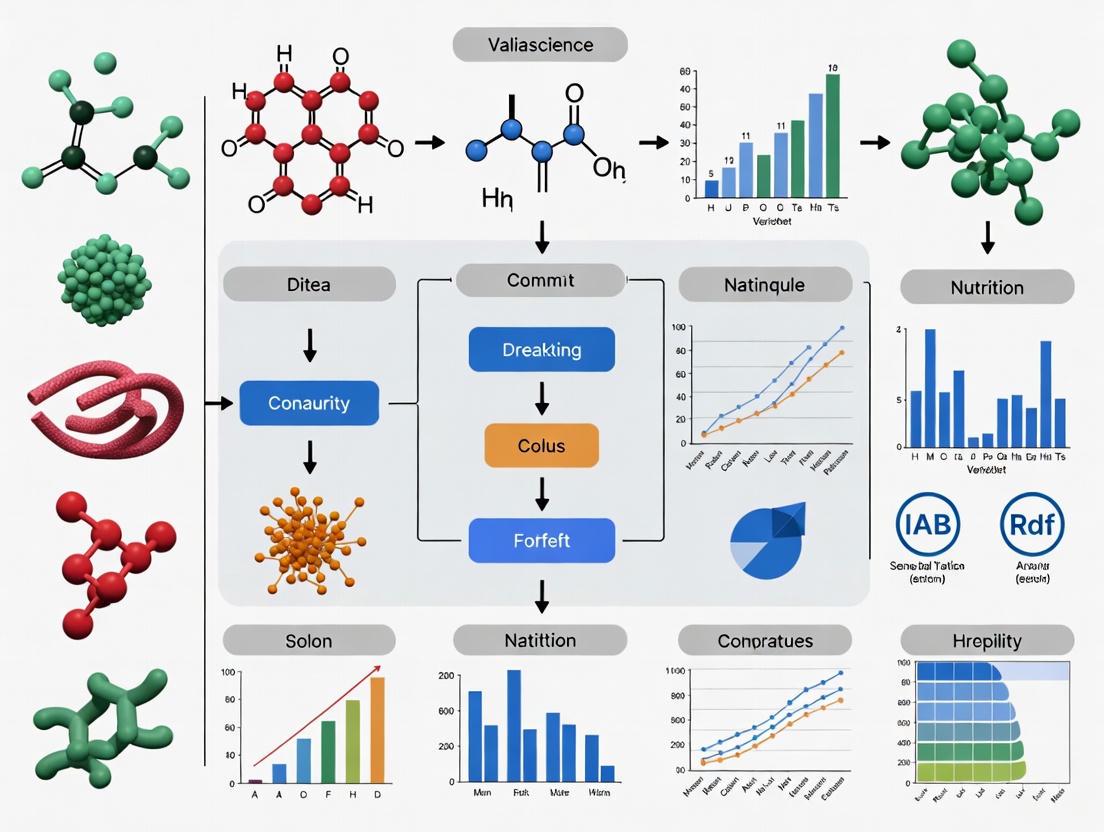

Diagram 1: Sensor validation workflow.

The Researcher's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Dietary Monitoring Studies

| Item / Solution | Primary Function in Research | Example Application / Notes |

|---|---|---|

| Custom Multi-Sensor Wristband [5] | Integrates IMU, PPG, temperature, and oximetry sensors to capture behavioral and physiological responses. | Core device for multimodal data acquisition; requires custom firmware for synchronized data logging. |

| Wearable Egocentric Cameras [7] | Passively captures first-person-view image data for analyzing food type, container, and portion size. | eButton (chest-pin) and AIM (eyeglass-mounted); used as a reference for food intake context. |

| Bite Counting Algorithm [9] | Processes gyroscopic data from wrist-worn sensors to count bites based on characteristic wrist-roll motion. | Enables estimation of energy intake based on bite count and average kcal/bite (individualized by sex). |

| Remote Food Photography Method (RFPM) [9] | Provides a validated ground truth for energy intake; participants photograph food before/after meals. | Images analyzed by trained dietitians; superior to self-report but requires participant adherence. |

| Standardized Meal Kits [5] | Provides controlled energy loads (high/low calorie) for laboratory validation studies. | Essential for testing sensor response to known dietary inputs under controlled conditions. |

| Continuous Glucose Monitor (CGM) [8] | Measures interstitial glucose levels to capture postprandial glycemic response. | Can be used as an objective biomarker to correlate with intake timing and meal composition. |

The move toward objective dietary assessment is critical for advancing nutritional science, improving clinical care, and developing effective public health policies. Wearable sensors—including motion, acoustic, physiological, and camera-based systems—offer a viable path forward by mitigating the biases inherent in self-reporting.

Future research must focus on validating these technologies in diverse, free-living populations, addressing challenges such as user privacy and signal processing robustness [1] [7]. The integration of multiple sensor modalities, as outlined in the provided protocols, represents the most promising approach for developing a comprehensive, accurate, and practical tool for objective dietary assessment.

Diagram 2: Sensor fusion for dietary monitoring.

The accurate assessment of dietary intake is a fundamental challenge in nutritional science, clinical research, and chronic disease management. Traditional methods, such as food diaries and self-reporting, are plagued by inaccuracies, recall bias, and high participant burden, often leading to significant underestimation of energy intake [6] [5]. Within the context of validating novel food intake wearables, a structured framework for evaluating sensor technologies is paramount. This document establishes a taxonomy of wearable sensors—Inertial, Acoustic, Optical, and Physiological—and provides detailed application notes and experimental protocols for their characterization and validation in food intake monitoring research. This taxonomy serves as a critical tool for researchers and drug development professionals to systematically assess the performance, limitations, and appropriate use cases of these emerging technologies.

Sensor Taxonomy and Application Notes

Wearable sensors for food intake monitoring can be categorized based on their underlying sensing modality and the type of data they capture. The following section delineates these categories, their operating principles, and their specific applications in dietary assessment.

Inertial Sensors (Accelerometers, Gyroscopes, Magnetometers)

Operating Principle: Inertial Measurement Units (IMUs) typically combine accelerometers (measuring proper acceleration), gyroscopes (measuring angular velocity), and magnetometers (measuring magnetic field orientation) to track movement and orientation [10]. These sensors are core to detecting and classifying physical activities.

Food Intake Applications: In dietary monitoring, IMUs are primarily used to detect hand-to-mouth gestures and wrist movements characteristic of eating episodes [6] [5]. The temporal sequence, frequency, and magnitude of these movements can be used to identify the onset, duration, and, in some cases, the intensity of an eating event. For example, a custom multi-sensor wristband equipped with an IMU can track "eating behaviours by tracking hand movements" to distinguish eating from other activities [5].

Key Considerations:

- Placement: The wrist is the most common location for capturing eating gestures [5].

- Data Processing: Sophisticated machine learning algorithms are required to classify eating gestures from other daily activities [11].
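
Before any classifier can separate eating gestures from other activities, the raw IMU stream is usually cut into windows and summarized as features. The sketch below shows that preprocessing step on gyroscope samples; the window length, step size, and the three features are assumptions chosen for illustration, not parameters reported in the cited studies.

```python
from statistics import mean, pstdev


def window_features(samples, window_size, step):
    """Summarize gyroscope samples in overlapping windows.

    `samples` is a list of (gx, gy, gz) angular velocities. Each window
    yields the mean, standard deviation, and peak of the rotation
    magnitude, which a downstream classifier could take as input.
    """
    features = []
    for start in range(0, len(samples) - window_size + 1, step):
        window = samples[start:start + window_size]
        magnitude = [(x * x + y * y + z * z) ** 0.5 for x, y, z in window]
        features.append({
            "start_index": start,
            "mean_magnitude": mean(magnitude),
            "std_magnitude": pstdev(magnitude),
            "peak_magnitude": max(magnitude),
        })
    return features


# Synthetic stream: a quiet wrist followed by a burst of rotation.
stream = [(0.0, 0.0, 0.1)] * 8 + [(30.0, 40.0, 0.0)] * 4 + [(0.0, 0.0, 0.1)] * 8
for row in window_features(stream, window_size=8, step=4):
    print(row)
```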

Acoustic Sensors (Microphones)

Operating Principle: Acoustic sensors convert sound vibrations into electrical signals. In wearables, several transduction mechanisms are employed, including:

- Piezoelectric: Use materials like polyvinylidene fluoride (PVDF) that generate an electric charge in response to mechanical stress, suitable for capturing body-conducted sounds [12].

- Capacitive: Measure changes in capacitance between a diaphragm and a backplate caused by sound-induced vibrations, known for high sensitivity [12].

Food Intake Applications: Acoustic sensors are adept at detecting chewing and swallowing sounds [6]. These bio-acoustic signals provide direct evidence of food consumption and can sometimes be used to infer food texture or type based on the acoustic signature. A key advantage is their ability to capture the oral phase of digestion directly.

Key Considerations:

- Noise Immunity: A significant challenge is distinguishing chewing and swallowing sounds from ambient background noise.

- Privacy: Audio recording raises privacy concerns, which must be addressed through on-device processing and data anonymization.

Optical Sensors (Photoplethysmography - PPG)

Operating Principle: PPG is an optical technique that uses a light source and a photodetector on the skin surface to measure blood volume changes in the microvascular bed of tissue. Pulsatile blood flow causes subtle variations in light absorption, which can be used to derive cardiovascular parameters [5] [10].

Food Intake Applications: While not directly detecting eating gestures, PPG sensors are used to monitor physiological responses to food intake. Postprandial (after-meal) changes, such as increases in heart rate (HR) and alterations in Heart Rate Variability (HRV), can serve as indirect correlates of meal consumption and energy load [5]. This modality is often integrated into a multi-sensor system for a more comprehensive assessment.

Key Considerations:

- Motion Artifacts: PPG signals are highly susceptible to corruption from movement, which can be mitigated by fusion with IMU data.

- Indirect Measure: Physiological changes are not specific to eating and can be triggered by exercise, stress, or other factors.
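
One simple way to use IMU data against motion artifacts is to discard PPG-derived samples recorded while the wrist is moving. The sketch below flags heart rate samples whose concurrent accelerometer magnitude deviates from 1 g by more than a threshold. The threshold value and the assumption that both streams are already aligned sample by sample are illustrative; practical systems often use more elaborate adaptive filtering.

```python
def mask_motion_artifacts(hr_samples, accel_samples, threshold_g=0.3):
    """Replace HR samples taken during movement with None.

    `hr_samples` holds heart rate in bpm; `accel_samples` holds
    (ax, ay, az) in units of g, one tuple per HR sample. A resting wrist
    reads about 1 g (gravity only), so a large deviation from 1 g is
    treated as movement.
    """
    cleaned = []
    for hr, (ax, ay, az) in zip(hr_samples, accel_samples):
        magnitude = (ax * ax + ay * ay + az * az) ** 0.5
        moving = abs(magnitude - 1.0) > threshold_g
        cleaned.append(None if moving else hr)
    return cleaned


hr = [60, 90, 62]
accel = [(0.0, 0.0, 1.0), (1.0, 1.0, 1.0), (0.0, 0.0, 1.1)]
print(mask_motion_artifacts(hr, accel))
```

Masking is deliberately conservative: it trades data completeness for cleaner physiological estimates, which matters when small postprandial HR changes are the signal of interest.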

Physiological/Multimodal Sensors

Operating Principle: This category encompasses sensors that track broader physiological states and often represents a fusion of multiple sensing modalities into a single device or platform [5] [13]. This can include the optical (PPG), inertial (IMU), and other sensors mentioned above, plus additional ones like:

- Electrodermal Activity (EDA) Sensors: Measure skin conductance, which varies with sweat gland activity and can be an indicator of sympathetic nervous system arousal.

- Skin Temperature (SKT) Sensors: Monitor peripheral temperature, which can fluctuate with digestion and metabolism [14] [5].

- Bioimpedance Sensors: Measure the electrical impedance of tissue, which can change with hydration and body composition.

Food Intake Applications: Multimodal systems aim to provide a holistic view of the body's response to eating. For instance, a study protocol exists to investigate "physiological responses to energy intake" by simultaneously tracking HR (via PPG), oxygen saturation (SpO2), and skin temperature (Tsk) using a custom wearable band, validated against blood glucose and hormone levels [5]. The fusion of these signals aims to improve the accuracy of eating event detection and energy intake estimation.

Key Considerations:

- Data Fusion Complexity: Integrating signals from disparate sources requires advanced algorithms but can overcome the limitations of any single sensor.

- Power Consumption: Multi-sensor systems typically have higher power demands.
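
To illustrate what signal fusion can look like at its simplest, the sketch below combines behavioral evidence (hand-to-mouth gestures) with physiological evidence (HR or skin temperature rise) using fixed rules. This is a hypothetical late-fusion rule written for this article: the class, the labels, and every threshold are assumptions, and published systems typically learn the combination from data instead.

```python
from dataclasses import dataclass


@dataclass
class WindowEvidence:
    gesture_count: int        # hand-to-mouth gestures detected by the IMU
    hr_rise_bpm: float        # HR increase over fasted baseline (PPG)
    skin_temp_rise_c: float   # skin temperature increase over baseline


def fuse(evidence, min_gestures=3, min_hr_rise=5.0, min_temp_rise=0.1):
    """Label a time window by combining behavioral and physiological cues.

    All thresholds are placeholders, not validated values.
    """
    behavioral = evidence.gesture_count >= min_gestures
    physiological = (evidence.hr_rise_bpm >= min_hr_rise
                     or evidence.skin_temp_rise_c >= min_temp_rise)
    if behavioral and physiological:
        return "eating_likely"
    if behavioral:
        return "gestures_only"
    if physiological:
        return "physiology_only"
    return "no_evidence"


print(fuse(WindowEvidence(gesture_count=12, hr_rise_bpm=7.5, skin_temp_rise_c=0.2)))
```

Keeping the two evidence types separate in the output makes it visible when a physiological change occurs without eating gestures, for example after exercise.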

Table 1: Quantitative Performance Metrics of Wearable Sensors in Dietary Monitoring

| Sensor Type | Primary Measurand | Detected Eating Parameter | Reported Performance (Example) | Key Strengths | Key Limitations |

|---|---|---|---|---|---|

| Inertial (IMU) | Acceleration, Angular Velocity | Hand-to-mouth gestures, Bites | High accuracy in activity recognition (>95% in controlled settings) [5] | Direct capture of eating behavior, Reliable technology | Cannot estimate energy intake alone |

| Acoustic | Sound/Vibration | Chewing, Swallowing | Effective in detecting oral processing events [6] | Direct capture of mastication, Potential for food type identification | Susceptible to ambient noise, Privacy concerns |

| Optical (PPG) | Blood Volume Pulse | Heart Rate (as a postprandial response) | Significant correlation with meal size (r = 0.990; P = 0.008) [5] | Indirect correlate of metabolic response, Common in consumer wearables | Non-specific, Confounded by other factors (e.g., exercise) |

| Physiological (EDA, SKT) | Skin conductance, Temperature | Arousal, Metabolic rate | Changes observed during/post meal [5] | Provides context on physiological state | Highly non-specific to eating, Slow response time |

Experimental Protocols for Sensor Validation

Rigorous validation is required to establish the efficacy and reliability of wearable sensors for dietary monitoring. The following protocols provide a framework for this process.

Protocol for Validating Inertial and Physiological Sensor Fusion

Objective: To evaluate the performance of a multi-sensor wristband (incorporating IMU and PPG) in detecting eating episodes and correlating physiological responses with energy intake.

Materials:

- Custom multi-sensor wristband with IMU and PPG sensors [5].

- Bedside vital sign monitor (for validation of HR, SpO2) [5].

- Intravenous cannula and equipment for blood sampling.

- Pre-defined high-calorie and low-calorie meals [5].

Procedure:

- Participant Preparation: Recruit healthy volunteers meeting inclusion criteria (e.g., age 18-65, BMI 18-30 kg/m²). Obtain informed consent.

- Baseline Measurement: Fit the participant with the wearable sensor and the bedside monitor. Collect fasted baseline data for HR, SpO2, and skin temperature for a minimum of 5 minutes. Collect a fasted blood sample for glucose, insulin, and appetite hormone analysis.

- Intervention: In a randomized order, provide the participant with either a high-calorie (e.g., 1052 kcal) or low-calorie (e.g., 301 kcal) meal. Instruct participants to use standard cutlery.

- Data Acquisition:

- IMU: Record continuous data from the accelerometer and gyroscope throughout the eating episode and for at least 60 minutes post-prandially.

- PPG: Record continuous HR and pulse wave data synchronously with the IMU.

- Vitals: Record HR and SpO2 from the bedside monitor at regular intervals (e.g., every 5-10 minutes).

- Blood Sampling: Collect blood samples at pre-defined intervals post-meal (e.g., 15, 30, 60, 90, 120 minutes) for biomarker analysis.

- Data Analysis:

- Eating Event Detection: Apply machine learning algorithms (e.g., pattern recognition) to the IMU data to identify the start and end times of the eating episode based on hand-to-mouth movements.

- Physiological Response: Calculate the change in HR, HRV, and other parameters from baseline for both meal types.

- Statistical Analysis: Use paired t-tests or ANOVA to compare physiological changes between high- and low-calorie meals. Perform correlation analysis between sensor-derived features (e.g., number of gestures, HR increase) and energy intake or postprandial glycaemic response.
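
The physiological-response and correlation steps above can be sketched as follows: compute each participant's change from fasted baseline, then correlate that change with the energy content of the meal. The data are invented and the helper names are assumptions; with only a handful of points, any correlation obtained this way should be read cautiously.

```python
from statistics import mean


def change_from_baseline(baseline, post_meal):
    """Mean post-meal value minus mean fasted-baseline value."""
    return mean(post_meal) - mean(baseline)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


# Invented example: HR (bpm) at baseline and after meals of known energy.
sessions = [
    {"kcal": 301, "baseline": [61, 62, 60], "post": [64, 65, 66]},
    {"kcal": 301, "baseline": [70, 69, 71], "post": [72, 73, 73]},
    {"kcal": 1052, "baseline": [62, 61, 61], "post": [71, 72, 70]},
    {"kcal": 1052, "baseline": [68, 70, 69], "post": [77, 79, 78]},
]
hr_change = [change_from_baseline(s["baseline"], s["post"]) for s in sessions]
energy = [s["kcal"] for s in sessions]
print(f"r = {pearson_r(energy, hr_change):.3f}")
```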

Protocol for Validating Acoustic Sensor Performance

Objective: To assess the accuracy of a wearable acoustic sensor in detecting chewing and swallowing sounds against a ground truth (e.g., video observation).

Materials:

- Wearable acoustic sensor (e.g., piezoelectric or MEMS-based) [12].

- Synchronized video recording setup.

- A variety of test foods with different textures (e.g., apple, chips, banana, bread).

Procedure:

- Sensor Placement: Affix the acoustic sensor to the skin on the neck or near the temporomandibular joint, as appropriate for the device.

- Controlled Feeding: In a lab setting, participants will consume the test foods one at a time. The entire session is recorded on video.

- Data Collection: Simultaneously record the audio signal from the wearable sensor and the video.

- Ground Truth Annotation: An expert reviewer will annotate the video recording to mark the precise timestamps of each chew and swallow. This serves as the ground truth.

- Data Analysis:

- Signal Processing: Apply band-pass filters to the raw acoustic signal to isolate frequencies characteristic of chewing (e.g., 100-3000 Hz) and swallowing.

- Event Detection: Use an algorithm (e.g., threshold-based or machine learning) to detect putative chewing and swallowing events from the acoustic signal.

- Performance Calculation: Compare the algorithm's output against the video ground truth. Calculate performance metrics including Accuracy, Precision, Recall (Sensitivity), and F1-score for the detection of chewing and swallowing events.
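
The performance calculation depends on how detected events are paired with annotated ones. The sketch below uses greedy one-to-one matching within a time tolerance and derives precision, recall, and F1 from the matches. The 0.5 s tolerance and the matching rule are assumptions made for illustration; the protocol above does not prescribe them.

```python
def score_events(detected, truth, tolerance_s=0.5):
    """Match detected event times to ground-truth times and score them.

    Each ground-truth event can be claimed by at most one detection; a
    detection takes the closest unclaimed ground-truth event that lies
    within `tolerance_s` seconds.
    """
    unclaimed = sorted(truth)
    true_pos = 0
    for t in sorted(detected):
        candidates = [g for g in unclaimed if abs(g - t) <= tolerance_s]
        if candidates:
            unclaimed.remove(min(candidates, key=lambda g: abs(g - t)))
            true_pos += 1
    false_pos = len(detected) - true_pos
    false_neg = len(truth) - true_pos
    precision = true_pos / (true_pos + false_pos) if detected else 0.0
    recall = true_pos / (true_pos + false_neg) if truth else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"tp": true_pos, "fp": false_pos, "fn": false_neg,
            "precision": precision, "recall": recall, "f1": f1}


# Chew timestamps in seconds: algorithm output vs. video annotation.
print(score_events(detected=[1.0, 2.1, 5.0], truth=[1.1, 2.0, 3.0]))
```

Accuracy is not computed here because it requires true negatives, which are only defined once the recording is divided into labelled windows (event present or absent) instead of being scored event by event.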

Table 2: Essential Research Reagent Solutions and Materials

| Item Name | Function/Application | Example/Notes |

|---|---|---|

| Empatica E4 | Consumer-grade wearable for physiological data acquisition. | Records BVP, EDA, SKT, and 3-axis acceleration [14]. |

| Zephyr BioHarness 3 | Consumer-grade wearable for physiological data acquisition. | Records ECG, Respiration, SKT, and acceleration [14]. |

| SensorTile.box (STb) | Programmable IoT module for inertial data acquisition. | Provides high-frequency 3-axis accelerometer and gyroscope data; used in research for high-quality raw data [14]. |

| Custom Multi-sensor Wristband | Integrated platform for concurrent inertial and physiological monitoring. | Often research-built; combines IMU, PPG, temperature sensors for dietary studies [5]. |

| Piezoelectric Polymer (PVDF) | Material for flexible acoustic sensors. | Used in wearable microphones to capture body-conducted sounds like chewing [12]. |

| Ag/AgCl Ink | Material for constructing dry electrodes. | Used in electrodeposition for creating stable reference electrodes in wearable chemical sensors [10]. |

Visualization of Sensor Data Processing Workflows

The following diagrams, generated using DOT language, illustrate the logical flow of data from acquisition to outcome in a multi-sensor dietary monitoring system.

Multi-Sensor Data Fusion and Processing Workflow

Experimental Validation Protocol for a Dietary Wearable

Accurate and objective assessment of dietary intake is a fundamental challenge in nutrition science and health research. Traditional methods, such as food diaries and 24-hour recalls, rely on self-reporting and are prone to inaccuracies, recall bias, and substantial participant burden, often resulting in energy intake underestimations of 11-41% [5]. The rapid advancement of wearable sensing technology presents a promising solution for objective dietary monitoring by reducing recall bias and enhancing user convenience [6]. These technologies enable continuous monitoring of dietary behaviors in naturalistic settings, providing insights previously difficult to obtain. This article defines key eating behavior metrics within the context of validating food intake wearables, providing researchers with structured protocols and reference data to standardize the evaluation of these emerging technologies across clinical and free-living environments.

Core Eating Behavior Metrics and Measurement Performance

Wearable sensors for dietary monitoring capture a hierarchy of behavioral and physiological events, from basic ingestion actions to derived energy estimates. The table below summarizes the key metrics, their definitions, and representative measurement accuracies reported in validation studies.

Table 1: Key Eating Behavior Metrics and Sensor Performance

| Metric Category | Specific Metric | Definition & Significance | Exemplary Performance (from validation studies) |

|---|---|---|---|

| Ingestive Actions | Bite Count | The number of times food is brought to the mouth. A primary marker for eating episode initiation and duration. | High accuracy for detection (>95% in controlled settings) [15]. |

| | Chew Count | The number of mastication cycles. Correlates with food texture and properties. | Used in models for mass intake estimation [15]. |

| | Swallow Count | The number of swallowing events. Indicates food clearance and is related to the amount consumed. | Manual annotation shows high inter-rater reliability (ICC >0.95) [15]. |

| Temporal Patterns | Meal Duration | Total time from the first to the last ingestive action of an eating episode. | Accurately detected via motion sensors [5]. |

| | Eating Rate | Speed of consumption (e.g., grams per minute or bites per minute). A risk factor for overconsumption. | Derived from bite count and meal duration. |

| Energy & Mass Intake | Food Mass Intake | Total weight (grams) of food and beverages consumed during a meal. | Mean Absolute Percentage Error (MAPE) of 25.2% ± 18.9% using sensor and video features [15]. |

| | Energy Intake | Total energy (kilocalories) consumed during a meal. The ultimate goal for many dietary assessments. | Mean Absolute Percentage Error (MAPE) of 30.1% ± 33.8% using sensor and video features [15]. |

| Physiological Responses | Heart Rate (HR) | Increases post-meal due to the thermic effect of food; correlates with meal energy content. | Significant correlation with meal size (r = 0.990) [5]. |

| Skin Temperature (Tsk) | Rises with increased metabolism during digestion. | A potential marker for meal detection, used in multi-parameter models [5]. |
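
The temporal metrics in the table follow directly from the timestamps of detected ingestive actions. As a minimal sketch, assuming bite timestamps in seconds are already available from a detector, meal duration and eating rate in bites per minute can be derived as shown below; the function names and sample values are illustrative.

```python
def meal_duration_s(bite_times):
    """Time in seconds from the first to the last detected bite."""
    return max(bite_times) - min(bite_times)


def eating_rate_bites_per_min(bite_times):
    """Bites per minute over the span of the meal."""
    duration = meal_duration_s(bite_times)
    if duration <= 0:
        raise ValueError("need at least two bites at different times")
    return len(bite_times) / (duration / 60.0)


# Bite timestamps (seconds since the start of the recording).
bites = [12.0, 40.5, 71.0, 103.2, 131.9, 160.4, 192.0]
print(f"duration: {meal_duration_s(bites):.0f} s")
print(f"eating rate: {eating_rate_bites_per_min(bites):.2f} bites/min")
```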

The performance of these metrics varies significantly based on sensor modality, algorithm complexity, and study setting. Subject-independent (group-calibrated) models for mass and energy intake, while practical for broad application, currently exhibit considerable error margins (25-30% MAPE) [15]. This highlights the critical need for standardized validation protocols to benchmark device performance accurately.
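
For reference, MAPE is the mean of the per-meal absolute errors expressed as a percentage of the reference value. The sketch below returns both the mean and the standard deviation of those percentage errors, on the assumption that figures such as "25.2% ± 18.9%" are reported as mean ± SD; the example values are invented.

```python
from statistics import mean, stdev


def mape(reference, estimated):
    """Return (mean, SD) of absolute percentage errors.

    `reference` holds weighed or otherwise trusted values (all non-zero),
    `estimated` the sensor-derived values for the same meals.
    """
    errors = [100.0 * abs(e - r) / abs(r) for r, e in zip(reference, estimated)]
    return mean(errors), stdev(errors)


# Invented meal masses in grams: weighed reference vs. sensor estimate.
weighed = [350.0, 420.0, 280.0, 510.0]
sensor = [300.0, 480.0, 310.0, 450.0]
avg, sd = mape(weighed, sensor)
print(f"MAPE = {avg:.1f}% (SD {sd:.1f}%)")
```

Because each error is scaled by its own reference value, small meals can dominate MAPE, so it is worth reporting alongside an absolute-error or agreement statistic.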

Experimental Protocols for Wearable Validation

A robust validation protocol is essential to establish the accuracy and reliability of wearable devices in measuring the metrics defined above. The following sections detail key methodological components.

Protocol for Multimodal Sensor Validation

This protocol, adapted from a recent study, outlines a comprehensive approach for validating wearable devices that track both physiological and behavioral parameters [5].

- Objective: To investigate the relationship between food intake and physiological/behavioral responses measured by a customized multi-sensor wristband, and to validate these measurements against gold-standard references.

- Study Population:

- Sample Size: 10 healthy participants, sufficient to detect significant physiological changes (e.g., heart rate) with a power of 0.9 [5].

- Inclusion Criteria: Adults (18-65 years) with BMI 18-30 kg/m².

- Exclusion Criteria: Chronic conditions affecting eating or metabolism (e.g., diabetes, eating disorders, cardiovascular disease).

- Experimental Design:

- A randomized crossover design where participants consume pre-defined high-calorie (e.g., 1052 kcal) and low-calorie (e.g., 301 kcal) meals during separate visits in a controlled laboratory setting [5].

- Meals should represent common food choices to enhance ecological validity.

- Data Acquisition and Gold-Standard Comparators:

- Wearable Sensor Data: Participants wear a multi-sensor device equipped with:

- Inertial Measurement Unit (IMU): Captures hand-to-mouth movements and eating gestures.

- Photoplethysmography (PPG) Sensor / Pulse Oximeter: Continuously records Heart Rate (HR) and Oxygen Saturation (SpO2).

- Skin Temperature Sensor: Monitors peripheral temperature changes.

- Validation Data:

- Direct Observation/Video Recording: Serves as the gold standard for annotating bites, chews, and swallows. This method has demonstrated high inter-rater reliability (ICC >0.95) [15].

- Weighed Food Inventory: The mass of each food item is weighed before and after the meal to calculate exact mass and energy intake.

- Bedside Vital Signs Monitor: Provides validated measurements of HR, SpO2, and blood pressure to cross-check the wearable's physiological data.

- Blood Sampling: Serial blood draws via intravenous cannula to measure postprandial glucose, insulin, and appetite-related hormones, linking sensor data to glycaemic response [5].

- Data Analysis:

- Correlate and compare sensor-derived metrics (e.g., number of hand gestures, HR change) with gold-standard measures.

- Use regression models to explore the predictive power of sensor features for estimating mass and energy intake.
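
When comparing a wearable measurement with its reference (for example, wearable HR against the bedside monitor), correlation alone can hide systematic offsets. A Bland-Altman summary, sketched below, reports the mean bias and the 95% limits of agreement computed as bias ± 1.96 SD of the paired differences. The choice to apply it to HR and the sample values are assumptions for illustration.

```python
from statistics import mean, stdev


def bland_altman(wearable, reference):
    """Return (bias, lower limit, upper limit) of agreement.

    Differences are wearable minus reference, so a negative bias means
    the wearable reads lower than the reference on average.
    """
    diffs = [w - r for w, r in zip(wearable, reference)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread


# Invented paired HR readings (bpm) taken at the same time points.
wearable_hr = [72.0, 75.0, 80.0, 68.0, 77.0]
bedside_hr = [70.0, 74.0, 78.0, 69.0, 75.0]
bias, lower, upper = bland_altman(wearable_hr, bedside_hr)
print(f"bias = {bias:.2f} bpm, limits of agreement = [{lower:.2f}, {upper:.2f}]")
```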

Considerations for Free-Living Validation

While laboratory studies are crucial for initial validation, testing in free-living conditions is necessary to assess real-world applicability.

- Design: Deploy the wearable device for an extended period (e.g., 10-14 days) while participants go about their normal lives [16].

- Reference Methods:

- Image-Based Food Records: Use devices like the eButton, worn on the chest to automatically capture meal images, providing data on food type and volume [16].

- Ecological Momentary Assessment (EMA): Prompt participants via smartphone to self-report eating episodes shortly after they occur.

- Key Outcomes:

The Researcher's Toolkit

The following table outlines essential reagents, devices, and software used in the experimental protocols for validating food intake wearables.

Table 2: Key Research Reagent Solutions for Dietary Monitoring Validation

| Item Name | Type | Primary Function in Protocol |

|---|---|---|

| Inertial Measurement Unit (IMU) | Sensor | Embedded in wrist-worn devices to capture 3D acceleration and rotation, enabling detection of hand-to-mouth gestures and eating episodes [5]. |

| Photoplethysmography (PPG) Sensor | Sensor | Uses light to detect blood volume changes at the skin surface, providing continuous measurements of heart rate, a physiological correlate of meal intake [5] [17]. |

| Piezoelectric Strain Sensor | Sensor | Attached below the ear to detect jaw movements, allowing for the counting of chews and characterization of chewing patterns [15]. |

| eButton | Device | A wearable, image-based data collection system worn on the chest. It automatically takes pictures at regular intervals to record food consumption and context in free-living settings [16]. |

| Continuous Glucose Monitor (CGM) | Device | A wearable sensor that measures interstitial glucose levels every few minutes. It serves as an objective biomarker for correlating food intake with glycaemic response [16]. |

| Nutrient Data System for Research (NDS-R) | Software | A comprehensive software system used by nutritionists to calculate the nutrient composition, including energy content, of consumed foods based on type and weight [15]. |

| Video Recording System | Equipment | The gold-standard tool for direct observation of eating episodes, allowing for manual annotation of bites, chews, and swallows with high precision [15]. |

Signaling Pathways and Workflow Diagrams

The following diagram illustrates the logical framework and workflow for validating key eating behavior metrics using a multi-sensor wearable device, from data collection to the derivation of intake estimates.

Diagram 1: Validation Workflow for Food Intake Wearables. This diagram outlines the process of acquiring sensor data, extracting key metrics, and validating them against gold-standard methods to derive estimates of dietary intake.

The field of wearable dietary monitoring is advancing rapidly, moving beyond simple detection of eating episodes toward the estimation of mass and energy intake. The core metrics of bites, chews, swallows, and their associated physiological responses provide a multi-faceted data stream for objective assessment. However, as the performance data indicates, even the most advanced sensor systems currently exhibit significant error margins when estimating energy intake in group-calibrated models [15]. This underscores the critical importance of the rigorous, multi-modal validation protocols detailed in this article. Future work must focus on refining algorithms, particularly through the fusion of behavioral and physiological data, and expanding validation from controlled lab settings to diverse, free-living populations. By adhering to standardized frameworks for defining metrics and validating technology, researchers can accelerate the development of reliable tools that transform dietary assessment in both clinical practice and nutritional research.

Core Physiological Parameters and Food Intake

Wearable devices detect food consumption by monitoring specific physiological and behavioral parameters that change in response to eating and digestion. The table below summarizes the key parameters identified in recent research.

Table 1: Key Physiological and Behavioral Parameters for Dietary Monitoring

| Parameter | Measured By | Response to Food Intake | Significance for Dietary Monitoring |

|---|---|---|---|

| Heart Rate (HR) | Photoplethysmography (PPG), Pulse Oximeter [5] | Increases post-meal; correlated with meal energy content [5] | Primary indicator for detecting eating events and differentiating energy loads. |

| Skin Temperature (Tsk) | Skin surface temperature sensor [5] | Elevates due to increased metabolism during digestion [5] | Supports the detection of an eating event and the postprandial metabolic state. |

| Oxygen Saturation (SpO₂) | Pulse Oximeter [5] | Temporarily decreases, potentially due to intestinal oxygen consumption [5] | A secondary physiological marker that contributes to multi-parameter intake detection. |

| Hand-to-Mouth Movements | Inertial Measurement Unit (IMU: accelerometer, gyroscope) [5] | Characteristic patterns during eating episodes [5] | Provides behavioral context, helping to distinguish eating from other activities that may cause physiological changes (e.g., exercise). |

| Bio-impedance | Electrodes measuring electrical impedance across the body [18] | Variations occur due to dynamic circuits formed between hands, mouth, utensils, and food [18] | Can recognize specific food-intake activities (e.g., cutting, drinking) and classify food types based on electrical properties. |

Experimental Protocol for Validating Wearable Dietary Monitors

This section outlines a detailed experimental protocol adapted from a recent study protocol for objectively validating a wearable dietary monitoring tool [5].

Study Design and Participant Selection

- Objective: To investigate the relationship between multimodal sensor data and food intake.

- Design: Controlled, randomized study visits at a clinical research facility.

- Participants: 10 healthy volunteers [5].

- Inclusion Criteria: Age 18-65 years, BMI 18-30 kg/m², willingness to provide informed consent [5].

- Exclusion Criteria: Chronic medical conditions (e.g., diabetes, obesity, cardiovascular disease), participation in another recent research study [5].

Interventions and Meals

- Meal Types: Each participant consumes pre-defined high-calorie and low-calorie meals in a randomized order [5].

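- Meal Composition: Pre-portioned meals with precisely defined macronutrient and calorie content (e.g., 301 kcal vs. 1052 kcal) [5].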

- Eating Instructions: Participants use cutlery and hands to reflect common real-world eating behaviors [5].

Data Acquisition and Measurements

Data is collected from wearable sensors, clinical-grade devices, and biological samples to ensure comprehensive validation.

Table 2: Data Acquisition Methods and Timing

| Data Type | Measurement Method | Key Metrics | Frequency/Timing |

|---|---|---|---|

| Wearable Sensor Data | Custom multi-sensor wristband [5] | HR, SpO₂, Skin Temperature, 3-axis acceleration/rotation | Worn 5 min pre-meal to 60 min post-meal; continuous. |

| Clinical Vital Signs | Bedside vital sign monitor [5] | Blood Pressure, HR, SpO₂ | Used for validation of wearable sensor readings. |

| Blood Biochemistry | Intravenous cannula and blood draws [5] | Blood Glucose, Insulin, Hormone Levels (e.g., appetite regulators) | Collected at predefined intervals relative to meal consumption. |

Data Analysis Plan

- Primary Analysis: Investigate heart rate changes associated with dietary events (pre- vs. post-meal) and energy loads (high vs. low-calorie) [5].

- Secondary Analysis: Analyze changes in other physiological parameters (skin temperature, SpO₂, blood pressure) and eating behaviors (hand movements) [5].

- Exploratory Analysis: Explore relationships between physiological features from the wearable and glycaemic biomarkers (blood glucose, insulin) [5].
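The primary analysis can be illustrated with a minimal sketch that compares mean heart rate in the 5-minute pre-meal baseline with the 60-minute post-meal window described in Table 2. The function name `hr_change`, its parameters, and the synthetic data are illustrative assumptions, not part of the cited protocol [5].

```python
from statistics import mean

def hr_change(timestamps_s, hr_bpm, meal_start_s, meal_end_s,
              baseline_s=300, post_s=3600):
    """Mean post-meal HR minus mean pre-meal baseline HR, in bpm.

    Window lengths default to 5 min pre-meal and 60 min post-meal.
    """
    pre = [h for t, h in zip(timestamps_s, hr_bpm)
           if meal_start_s - baseline_s <= t < meal_start_s]
    post = [h for t, h in zip(timestamps_s, hr_bpm)
            if meal_end_s <= t < meal_end_s + post_s]
    if not pre or not post:
        raise ValueError("no samples in the baseline or post-meal window")
    return mean(post) - mean(pre)

# Synthetic example: one sample per minute, meal from t=300 s to t=1500 s.
times = list(range(0, 5100, 60))
hr = [65.0 if t < 300 else 72.0 for t in times]
delta = hr_change(times, hr, meal_start_s=300, meal_end_s=1500)
print(f"HR change: {delta:.1f} bpm")
```

Computing this value per participant for both the high- and low-calorie meals would give the paired quantities that the primary analysis compares.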

Signaling Pathways and Experimental Workflow

The following diagrams illustrate the logical relationship between food consumption and physiological signals, and the workflow for the validation protocol.

Diagram 1: From Food Intake to Wearable Detection

Diagram 2: Experimental Validation Protocol Workflow

The Scientist's Toolkit: Key Research Reagents and Materials

The table below details essential materials and their functions for conducting experiments in wearable dietary monitoring.

Table 3: Essential Research Materials for Dietary Monitoring Validation

| Item | Function/Application | Example/Specification |

|---|---|---|

| Multi-Sensor Wristband | The primary data acquisition device for physiological and behavioral parameters. | Custom or commercial device integrating PPG, IMU, skin temperature sensor, and pulse oximeter [5]. |

| Bedside Vital Signs Monitor | Provides gold-standard validation for heart rate, oxygen saturation, and blood pressure readings from the wearable sensor [5]. | Clinical-grade monitor (e.g., Nellcor pulse oximeter) [5] [19]. |

| Intravenous Cannula | Enables repeated blood sampling with minimal discomfort for the participant, crucial for capturing glycemic and hormonal responses [5]. | Standard clinical venous cannula. |

| Assay Kits | Quantify levels of key biomarkers in blood plasma/serum to correlate physiological signals with internal state. | ELISA or similar kits for Glucose, Insulin, Ghrelin, Leptin, etc. |

| Calibrated Meals | Standardized dietary interventions to elicit measurable and comparable physiological responses across participants. | Pre-portioned meals with precisely defined macronutrient and calorie content (e.g., 301 kcal vs. 1052 kcal) [5]. |

| Bioimpedance Sensor Circuit | For research exploring food-type classification and activity recognition via electrical properties. | A system with electrodes (e.g., one on each wrist) to measure dynamic impedance changes during eating [18]. |

Building a Validation Protocol: From Laboratory to Free-Living Settings

Accurate dietary intake assessment is a cornerstone of nutrition research, yet traditional methods like food diaries and 24-hour recalls are plagued by significant limitations, including participant burden, recall bias, and systematic underreporting, with energy intake underestimation estimated at 11–41% [5]. These memory-based methods are inherently non-falsifiable and provide only a perceived rather than true measure of intake [19]. The emergence of wearable sensors and artificial intelligence (AI)-based dietary monitoring technologies promises to revolutionize this field by providing objective, continuous data. However, the validity of these novel tools is entirely dependent on the quality of the reference methods, or "ground truth," against which they are calibrated [19]. Establishing this ground truth requires a multi-faceted approach, ranging from direct weighing of food consumed to the measurement of physiological biomarkers. This document outlines established reference protocols and their application in validating next-generation food intake wearables, providing researchers with a critical framework for ensuring data accuracy and reliability in both controlled and free-living settings.

Foundational Reference Methods for Dietary Validation

Weighed Food Records and Standardized Meals

The Weighed Food Record (WFR) is considered a gold-standard reference method for validating dietary intake in free-living and semi-controlled conditions. In this protocol, participants are provided with digital scales and trained to weigh and record all food and beverages before eating and to weigh any leftovers afterwards [20]. The weight difference, combined with nutrient data from food composition databases, provides a precise measure of actual intake.

For even greater control in laboratory settings, researchers employ standardized meals. These involve providing participants with pre-weighed, calibrated meals of known nutrient composition, often prepared in a metabolic kitchen. The study then measures the intake directly, either via pre- and post-consumption weighing by researchers or through sophisticated monitoring systems.

A recent validation study utilizing this method collected 714 food images from 57 young adults who simultaneously completed 3-day WFRs. The food was purchased from local supermarkets, and the nutritional composition was carefully calculated, providing a robust ground truth for validating AI-based image analysis [20]. Another protocol uses a crossover design where participants consume pre-defined high- and low-calorie meals in a randomized order to test a wearable sensor's ability to detect differential physiological responses to energy load [5].

Key Experimental Protocol: Validating AI with Weighed Food Records

- Objective: To validate the accuracy of an image-based AI dietary assessment tool against the ground truth of WFRs.

- Participants: Recruit a cohort (e.g., n=57) representative of the target population.

- Procedure:

- Train participants on simultaneous WFR and food image capture procedures.

- Participants record all consumed food and beverages for a set period (e.g., 3 consecutive days) using provided digital scales and a smartphone camera.

- For each eating episode, participants weigh the food before eating, capture one or more images, and then weigh any leftovers.

- Data Analysis:

- Calculate actual nutrient intake from WFRs using food composition tables.

- Process food images through the AI system to generate AI-estimated nutrient intake.

- Compare AI estimates to WFR values using Bland-Altman plots to assess agreement and calculate relative errors, correlation coefficients (e.g., Spearman's ρ), and concordance (e.g., Lin's Concordance Correlation Coefficient) [20].
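The agreement statistics named in the data analysis step can be sketched as follows. This is a minimal illustration using only the Python standard library; the function names and the energy values are hypothetical, and the 1.96 multiplier assumes approximately normally distributed differences.

```python
from statistics import mean, stdev

def bland_altman(estimates, references):
    """Return (bias, lower limit, upper limit) of agreement."""
    diffs = [e - r for e, r in zip(estimates, references)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

def lins_ccc(x, y):
    """Lin's concordance correlation coefficient (1/n variance form)."""
    n = len(x)
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x) / n
    syy = sum((b - my) ** 2 for b in y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return 2 * sxy / (sxx + syy + (mx - my) ** 2)

# Hypothetical energy intake per eating episode (kcal).
wfr_kcal = [500.0, 620.0, 710.0, 480.0, 900.0]  # weighed food record
ai_kcal = [520.0, 600.0, 750.0, 470.0, 880.0]   # AI estimate

bias, lower, upper = bland_altman(ai_kcal, wfr_kcal)
ccc = lins_ccc(ai_kcal, wfr_kcal)
print(f"bias={bias:.1f} kcal, limits=({lower:.1f}, {upper:.1f}), CCC={ccc:.3f}")
```

Unlike a plain correlation coefficient, Lin's CCC penalises systematic offsets between methods, which is why it is reported alongside Bland-Altman limits.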

Laboratory-Based Intake Monitoring: The Universal Eating Monitor

For high-resolution monitoring of eating microstructure, Universal Eating Monitors (UEMs) represent a pinnacle of laboratory-based precision. Traditional UEMs are custom-designed tables embedded with scales that continuously record food weight throughout a meal, allowing for the precise tracking of metrics like eating rate, bite size, and meal duration [21].

A recent advancement is the "Feeding Table," a UEM that incorporates multiple scales to simultaneously and independently track the intake of up to 12 different foods. This system addresses a critical limitation of single-scale UEMs by enabling the study of food choice and macronutrient intake patterns during a single meal. Data are typically collected every 2 seconds and transmitted in real-time to a computer, providing an exceptionally detailed ground truth for validating wearable sensors that claim to detect eating gestures or estimate intake rate [21].

Key Experimental Protocol: Feeding Table Validation of Wearable Sensors

- Objective: To validate a wearable sensor's estimation of meal timing, duration, and intake amount against the precise measurements of a Feeding Table.

- Setting: Controlled laboratory environment.

- Procedure:

- Participants are seated at the Feeding Table, which is set with multiple pre-weighed food items.

- Participants wear the sensor(s) to be validated (e.g., wrist-worn IMU, biosensor band).

- Participants consume a meal ad libitum while the Feeding Table continuously records the weight of each food item.

- Synchronized video recording may be used to corroborate food selection and eating gestures.

- Data Analysis:

- Extract meal start/stop times, total intake (g), and eating rate from the Feeding Table data.

- Use the wearable sensor data to algorithmically detect eating episodes and estimate intake metrics.

- Calculate accuracy, precision, and recall for episode detection, and use intra-class correlation coefficients (ICCs) and correlation analyses to compare intake amounts and timing. The Feeding Table has demonstrated high ICCs for energy (0.94) and macronutrient intake in test-retest scenarios, providing a robust benchmark [21].
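As a rough illustration of the first analysis step, the sketch below derives total intake, meal duration, and eating rate from a single scale trace sampled every 2 seconds. The function `meal_summary`, the 1 g drop threshold, and the definition of duration as the time between the first and last detected weight drop are assumptions for illustration; real traces would also need filtering for artefacts such as utensil pressure on the scale.

```python
def meal_summary(weights_g, sample_period_s=2.0, min_drop_g=1.0):
    """Summarise one food item's scale trace recorded at a fixed period."""
    # Indices where the weight fell by at least min_drop_g since the last sample.
    drop_indices = [
        i for i in range(1, len(weights_g))
        if weights_g[i - 1] - weights_g[i] >= min_drop_g
    ]
    intake_g = weights_g[0] - weights_g[-1]
    if not drop_indices:
        return {"intake_g": intake_g, "duration_s": 0.0, "rate_g_per_min": 0.0}
    duration_s = (drop_indices[-1] - drop_indices[0]) * sample_period_s
    rate = intake_g / (duration_s / 60.0) if duration_s > 0 else float("nan")
    return {"intake_g": intake_g, "duration_s": duration_s, "rate_g_per_min": rate}

# Synthetic trace: three 10 g bites taken 60 s apart from a 300 g portion.
trace = [300.0] * 5 + [290.0] * 30 + [280.0] * 30 + [270.0] * 30
summary = meal_summary(trace)
print(summary)
```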

Biomarkers and Physiological Response Monitoring

Beyond direct intake measurement, physiological responses provide an objective, non-self-reported ground truth that can correlate with food consumption. These biomarkers are particularly valuable for validating wearables that claim to detect eating events or metabolic impact based on physiological signals.

Multimodal Physiological and Behavioural Sensing

A cutting-edge approach involves the use of a customized wearable multi-sensor band to track physiological and behavioural responses tied to eating and digestion. These sensors typically include:

- Inertial Measurement Units (IMUs): To capture hand-to-mouth movements and distinguish eating gestures from other activities [5].

- Photoplethysmography (PPG) and Pulse Oximeters: To monitor heart rate (HR) and blood oxygen saturation (SpO₂). Post-meal increases in HR have been correlated with meal size [5].

- Skin Temperature (Tsk) Sensors: To track changes associated with the thermic effect of food [5].

- Continuous Glucose Monitors (CGMs): To measure interstitial glucose responses, which serve as a direct biomarker of carbohydrate intake and metabolic health [22] [23].

Key Experimental Protocol: Validating Physiological Eating Event Detection

- Objective: To determine if a wearable device can accurately detect eating events and distinguish between high- and low-energy meals based on physiological and motion signatures.

- Design: Randomized crossover study.

- Participants: A cohort of healthy volunteers (e.g., n=10) [5].

- Procedure:

- Participants are fitted with the multi-sensor wearable and a reference CGM.

- An intravenous cannula may be inserted for frequent blood sampling to validate glucose, insulin, and hormone levels against CGM and other sensor readings [5].

- In a controlled facility, participants consume pre-defined high- and low-calorie meals in a randomized order, with sensors worn throughout.

- Reference measurements (e.g., blood pressure, HR, SpO₂) are concurrently taken with a traditional bedside monitor [5] [24].

- Data Analysis:

- Synchronize data from all sensors and reference devices.

- Identify physiological feature changes (e.g., HR increase, Tsk fluctuation, SpO₂ dip) and motion patterns associated with known meal times.

- Train and test machine learning models to classify eating events and, if possible, estimate energy load, using the known meal times and compositions as ground truth. One study using CGM and activity data reported 92.3% accuracy in detecting eating moments on training data and 76.8% on test data [22].
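Before any classifier can be trained, the continuous sensor stream has to be cut into windows and labelled from the known meal times. The sketch below shows one possible labelling scheme; the function `label_windows`, the 60-second window, and the 50% overlap rule are illustrative assumptions rather than choices taken from the cited studies.

```python
def label_windows(n_samples, fs_hz, window_s, meal_intervals_s, min_overlap=0.5):
    """Return (start_s, end_s, label) for consecutive non-overlapping windows.

    A window is labelled 1 (eating) when at least `min_overlap` of its
    duration falls inside a ground-truth meal interval, otherwise 0.
    """
    win = int(window_s * fs_hz)
    windows = []
    for start in range(0, n_samples - win + 1, win):
        t0, t1 = start / fs_hz, (start + win) / fs_hz
        overlap = sum(max(0.0, min(t1, m1) - max(t0, m0))
                      for m0, m1 in meal_intervals_s)
        windows.append((t0, t1, 1 if overlap / window_s >= min_overlap else 0))
    return windows

# 10 minutes of 1 Hz data with one annotated meal from 90 s to 300 s.
windows = label_windows(n_samples=600, fs_hz=1, window_s=60,
                        meal_intervals_s=[(90, 300)])
labels = [lab for _, _, lab in windows]
print(labels)
```

The resulting labels pair with per-window features (for example HR change or motion energy) to form the training set.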

Data Analysis and Validation Criteria

The statistical comparison between a novel device and the ground truth reference is a critical step in validation. The following table summarizes key performance metrics and benchmarks from recent studies.

Table 1: Performance Benchmarks for Dietary Assessment Validation

| Validation Method | Metric | Reported Benchmark | Interpretation |

|---|---|---|---|

| AI vs. Weighed Food Records [20] | Relative Error (Energy) | 0.10% to 38.3% [25] | Lower % indicates higher accuracy. Performance is better with single vs. mixed foods. |

| | Concordance (Lin's CCC) | 0.540–0.874 (across nutrients) [20] | Values closer to 1 indicate stronger agreement. |

| Wearable vs. Bedside Monitor [24] | Bland-Altman Limits of Agreement | 94.2% of data within limits (SpO₂, diastolic blood pressure, pulse rate) [24] | High % within limits indicates strong agreement between devices. |

| CGM-based Meal Detection [22] | Prediction Accuracy | 92.3% (Training), 76.8% (Test) [22] | Accuracy of detecting eating moments from glucose/activity data. |

| Feeding Table (UEM) [21] | Intra-class Correlation (Energy) | 0.94 [21] | High ICC (>0.9) indicates excellent test-retest reliability. |

Analytical Workflow for Method Validation

The pathway from raw data collection to validated model involves a structured workflow of synchronization, feature extraction, and statistical analysis to ensure robust conclusions.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Dietary Validation Studies

| Item | Function / Application | Example Use Case |

|---|---|---|

| Metabolic Kitchen | Prepares standardized meals with precise nutrient composition. | Providing high-/low-calorie test meals for controlled intervention studies [5]. |

| Digital Food Scales | Accurately weigh food items pre- and post-consumption. | Core instrument for participants completing Weighed Food Records (WFRs) [20]. |

| Continuous Glucose Monitor (CGM) | Measures interstitial glucose levels continuously. | Serving as a biomarker for carbohydrate intake and validating meal detection algorithms [22] [23]. |

| Multi-Sensor Wearable Band | Tracks physiological (HR, SpO₂, Tsk) and behavioural (IMU) data. | Investigating physiological responses to food intake and detecting eating gestures [5]. |

| Universal Eating Monitor (UEM) | Precisely tracks food weight and eating microstructure in lab settings. | Providing high-resolution ground truth for eating rate and meal duration [21]. |

| Food Composition Database (FCD) | Provides nutritional information for foods identified or weighed. | Converting food identification and portion sizes into nutrient estimates [19] [20]. |

| Bland-Altman Analysis | Statistical method to assess agreement between two measurement techniques. | Quantifying the agreement between a wearable's calorie estimate and WFR data [19] [24] [20]. |

The validation of food intake wearable technologies relies on a core set of performance metrics that quantitatively assess how well these devices detect and characterize eating behavior. Accuracy, precision, sensitivity, and specificity provide the statistical foundation for evaluating algorithmic performance against ground-truth measures. These metrics enable direct comparison between diverse sensing modalities—including inertial sensors, acoustic monitors, bio-impedance sensors, and camera-based systems—across both controlled laboratory and free-living environments. Establishing standardized protocols for calculating these metrics is essential for advancing the field of automated dietary monitoring (ADM) and ensuring reliable measurement of eating behaviors such as food intake episodes, chewing sequences, swallowing events, and food type classification.

Defining Core Performance Metrics

The four core metrics are derived from confusion matrix analysis, which cross-tabulates predicted outcomes from wearable algorithms with actual observed outcomes. The table below provides formal definitions and calculation methods for each metric.

Table 1: Definitions and Calculations of Core Performance Metrics

| Metric | Definition | Calculation | Interpretation in Dietary Monitoring |

|---|---|---|---|

| Accuracy | Overall proportion of correct predictions | (TP + TN) / (TP + TN + FP + FN) | Overall ability to correctly identify both eating and non-eating periods |

| Precision | Proportion of correctly predicted positive events among all predicted positives | TP / (TP + FP) | Reliability of eating event detections; low precision indicates many false eating alerts |

| Sensitivity (Recall) | Proportion of actual positives correctly identified | TP / (TP + FN) | Ability to capture all actual eating events; missed meals are false negatives |

| Specificity | Proportion of actual negatives correctly identified | TN / (TN + FP) | Ability to correctly reject non-eating activities |

TP = True Positive; TN = True Negative; FP = False Positive; FN = False Negative
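The four formulas in the table translate directly into code. The following sketch computes them from binary labels (1 = eating, 0 = not eating); the function names and example labels are illustrative only.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, TN, FP, FN for binary labels (1 = eating, 0 = not eating)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn

def core_metrics(tp, tn, fp, fn):
    """Accuracy, precision, sensitivity and specificity from the counts."""
    def ratio(num, den):
        return num / den if den else float("nan")  # undefined when den == 0
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": ratio(tp, tp + fp),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
    }

# Ten one-minute windows: ground truth vs. algorithm output.
y_true = [1, 1, 1, 0, 0, 0, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1, 0, 0]
metrics = core_metrics(*confusion_counts(y_true, y_pred))
print(metrics)
```

Because non-eating windows usually far outnumber eating windows in free-living data, accuracy alone can look high even when sensitivity is poor, so all four values are worth reporting together.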

Quantitative Performance Across Sensing Modalities

Recent validation studies demonstrate varying performance profiles across different sensing technologies. The following table synthesizes reported metric ranges from published research on wearable dietary monitoring systems.

Table 2: Reported Performance Metrics by Sensor Modality

| Sensor Modality | Target Behavior | Accuracy | Precision | Sensitivity | Specificity | Citation |

|---|---|---|---|---|---|---|

| Inertial (IMU) | Hand-to-Mouth Gestures | 85-97% | 89-95% | 84-93% | 88-96% | [26] [1] |

| Acoustic | Chewing/Swallowing | 78-92% | 81-90% | 75-94% | 80-95% | [1] [18] |

| Bio-impedance (iEat) | Food Intake Activities | - | - | - | - | [18] |

| Camera-Based (EgoDiet) | Food Portion Estimation | - | - | - | - | [7] |

| Multimodal Fusion | Eating Episode Detection | 90-98% | 92-96% | 89-95% | 91-97% | [26] [1] |

Bio-impedance sensing (iEat) achieves an 86.4% macro F1-score (harmonic mean of precision and recall) for recognizing food intake activities like cutting, drinking, and eating with utensils, and 64.2% macro F1-score for classifying seven food types [18]. Camera-based systems (EgoDiet) demonstrate a Mean Absolute Percentage Error (MAPE) of 28.0-31.9% for portion size estimation, outperforming dietitian estimates (40.1% MAPE) and 24-hour recall (32.5% MAPE) [7]. While these studies report high performance in controlled settings, a systematic review notes that performance typically decreases by 10-25% when moving to free-living environments with more confounding factors [1].
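For readers less familiar with the two summary statistics quoted above, the sketch below shows how a macro F1-score and a MAPE are typically computed. The class labels and portion weights are invented for illustration and do not reproduce data from the cited studies.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for lab in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == lab and p == lab)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != lab and p == lab)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == lab and p != lab)
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

def mape(estimates, references):
    """Mean absolute percentage error; references must be non-zero."""
    errors = [abs(e - r) / abs(r) for e, r in zip(estimates, references)]
    return 100.0 * sum(errors) / len(errors)

# Hypothetical activity labels and portion weights (g).
true_act = ["cut", "drink", "eat", "eat", "drink", "cut"]
pred_act = ["cut", "drink", "eat", "drink", "drink", "eat"]
f1 = macro_f1(true_act, pred_act)
portion_error = mape([110.0, 180.0, 300.0], [100.0, 200.0, 300.0])
print(f"macro F1 = {f1:.3f}, MAPE = {portion_error:.1f}%")
```

Macro averaging gives every class the same weight, so a rarely occurring activity affects the score as much as a common one.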

Experimental Protocols for Metric Validation

Protocol for Inertial Sensor Validation

Objective: Validate performance metrics for wrist-worn inertial measurement units (IMUs) in detecting eating gestures.

Participants: Recruit 10-15 healthy volunteers with balanced gender representation and BMI 18-30 kg/m² [26] [5].

Materials:

- Commercial IMU sensors (accelerometer, gyroscope, magnetometer)

- Data acquisition system (Bluetooth or local storage)

- Standardized meal sets (high and low calorie)

- Video recording system for ground truth annotation

Procedure:

- Sensor Placement: Secure IMU on the dominant wrist using an adjustable strap.

- Calibration: Perform sensor calibration in neutral position before meals.

- Meal Protocol:

- Present standardized high-calorie (≈1050 kcal) and low-calorie (≈300 kcal) meals in randomized order [5].

- Include varied eating utensils (hands, fork, spoon) to assess generalizability.

- Data Collection:

- Record continuous IMU data during entire eating episode.

- Synchronize with video recording for ground truth annotation.

- Ground Truth Annotation:

- Annotate start/end times of each eating gesture using video recording.

- Classify gesture type (bite, drink, cut, etc.) with timestamp.

- Data Analysis:

- Extract features from IMU signals (time-domain, frequency-domain).

- Train machine learning classifiers (SVM, Random Forest, CNN) on 70% of data.

- Test on remaining 30% and calculate performance metrics against ground truth.

Validation Notes: Assess cross-participant generalizability using leave-one-subject-out cross-validation. Report confusion matrices for each meal type and eating utensil condition [1].
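Leave-one-subject-out cross-validation holds out every window from one participant per fold, so the test data never contain samples from a person seen in training. A minimal sketch of the split logic is given below; the helper name and participant IDs are hypothetical. scikit-learn provides an equivalent splitter, `LeaveOneGroupOut`, for use in a full pipeline.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) per fold."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test

# One participant ID per feature window (hypothetical IDs).
subject_ids = ["P01", "P01", "P02", "P03", "P03", "P03"]
folds = list(leave_one_subject_out(subject_ids))
for held_out, train_idx, test_idx in folds:
    print(held_out, "train:", train_idx, "test:", test_idx)
```

A random 70/30 split over windows can place data from the same participant on both sides of the split, which is why the subject-wise evaluation is the more conservative estimate of generalizability.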

Protocol for Multimodal Sensor Validation

Objective: Validate performance metrics for combined physiological and motion sensors in detecting eating events and estimating energy intake.

Participants: 10 healthy volunteers meeting inclusion criteria [26] [5].

Materials:

- Custom multi-sensor wristband (PPG, IMU, temperature, oximeter)

- Bedside vital sign monitor for validation

- Intravenous cannula for blood sampling (glucose, insulin, hormones)

- Standardized meal sets

Procedure:

- Sensor Configuration:

- Deploy custom wristband with multiple sensors [5].

- Validate physiological readings against bedside monitor.

- Experimental Protocol:

- Record baseline measurements for 5 minutes pre-meal.

- Serve standardized meal within 20-minute consumption window.

- Continue monitoring for 60 minutes post-meal.

- Collect blood samples at regular intervals for biomarker correlation.

- Data Synchronization:

- Synchronize all sensor streams with common time reference.

- Mark meal events with button press or audio marker.

- Ground Truth Establishment:

- Video record all eating episodes for manual annotation.

- Pre-weigh all food items and weigh leftovers.

- Record timestamps for each bite and food type.
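The data synchronization step in the procedure above usually means bringing streams with different sampling rates onto one time base. The sketch below does this by linear interpolation; the function `resample_linear` and the `offset_s` clock-offset parameter (for example, estimated from a shared button-press marker) are illustrative assumptions, and the source timestamps are assumed to be strictly increasing.

```python
from bisect import bisect_right

def resample_linear(t_src, v_src, t_target, offset_s=0.0):
    """Linearly interpolate (t_src, v_src) onto t_target.

    offset_s shifts the source clock onto the common reference.
    Targets outside the source range are clamped to the end values.
    """
    t_adj = [t + offset_s for t in t_src]
    out = []
    for t in t_target:
        if t <= t_adj[0]:
            out.append(v_src[0])
        elif t >= t_adj[-1]:
            out.append(v_src[-1])
        else:
            j = bisect_right(t_adj, t)  # first source sample strictly after t
            t0, t1 = t_adj[j - 1], t_adj[j]
            w = (t - t0) / (t1 - t0)
            out.append(v_src[j - 1] + w * (v_src[j] - v_src[j - 1]))
    return out

# Heart rate every 10 s, resampled onto a 5 s grid used by another sensor.
hr_t, hr_v = [0.0, 10.0, 20.0], [60.0, 70.0, 80.0]
grid = [0.0, 5.0, 10.0, 15.0, 20.0]
hr_on_grid = resample_linear(hr_t, hr_v, grid)
print(hr_on_grid)
```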

Analysis Method:

- Apply signal processing to extract features from all sensor modalities.

- Use feature fusion techniques to combine physiological and motion data.

- Train ensemble classifiers to detect eating events and estimate energy intake.

- Calculate correlation between sensor features and postprandial biomarker responses [5].
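The final analysis step can be illustrated with a plain Pearson correlation between one wearable-derived feature and one biomarker response. The variable names and values below are synthetic, and Pearson's r is only one option; a rank-based coefficient may be preferable for small or skewed samples.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)

# Synthetic per-meal values: post-meal HR increase (bpm) and
# peak glucose rise above baseline (mmol/L).
hr_delta = [4.0, 6.0, 9.0, 12.0]
glucose_rise = [1.0, 1.5, 2.2, 3.1]
r = pearson_r(hr_delta, glucose_rise)
print(f"r = {r:.3f}")
```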

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials for Wearable Dietary Monitoring Validation

| Category | Item | Specification | Research Function |

|---|---|---|---|

| Sensors | Inertial Measurement Unit (IMU) | 9-DoF (Accel, Gyro, Mag), ≥100Hz sampling | Captures hand-to-mouth gestures and eating motions [1] |

| | Bio-impedance Sensor | 2- or 4-electrode, 10-100 kHz | Detects food-handling via impedance changes [18] |

| | Acoustic Sensor | Miniature microphone, 8-16 kHz range | Monitors chewing and swallowing sounds [1] |

| Data | Vital Sign Monitor | Clinical-grade BP, SpO₂, HR | Validates wearable physiological readings [5] |

| Software | Video Recording System | Time-synchronized, multi-angle | Provides ground truth for eating episodes [1] |

| | Annotation Software | ELAN, ANVIL, or custom solutions | Enables manual labeling of eating events [7] |

| Analysis | Machine Learning Framework | Python Scikit-learn, TensorFlow | Implements classification algorithms [1] |

| Meal | Standardized Food Sets | Pre-weighed, known nutrition | Controls for variability in food properties [5] |

| Support | Laboratory Weighing Scale | 0.1g precision | Measures exact food consumption [7] |

The validation of wearable technology for assessing dietary intake represents a significant frontier in nutritional science and precision health. These technologies promise to overcome the well-documented limitations of traditional self-reported dietary assessment methods, which rely on participant memory and are susceptible to systematic bias and misreporting [19]. A robust validation framework is essential to establish the accuracy, reliability, and utility of these emerging devices. This protocol outlines a structured approach to validation study design, integrating the PICOS framework to formulate precise research questions and incorporating controlled meal challenges as a gold-standard comparator for objective intake measurement. The convergence of these methodologies provides a comprehensive strategy for generating high-quality evidence regarding the performance of food intake wearables in free-living and controlled settings.

Foundational Frameworks: The PICOS Model

The PICOS (Population, Intervention, Comparator, Outcomes, Study design) framework is a critical tool for formulating focused, answerable clinical research questions and structuring study designs [27] [28]. Its application ensures that validation studies for food intake wearables are precisely defined, methodologically sound, and their results interpretable.

PICOS Components and Applications

The table below delineates the core components of the PICOS framework and their specific applications in the context of validating food intake wearables.

Table 1: Application of the PICOS Framework to Food Intake Wearable Validation Studies

| PICOS Element | Description | Application Example |

|---|---|---|

| Population (P) | The specific group of participants being studied. | Adults aged 18-50, free of chronic metabolic disease, with a BMI between 18.5 and 30 kg/m² [19]. |

| Intervention (I) | The technology or method being evaluated. | Use of a specific wearable sensor (e.g., wristband, egocentric camera) for automated tracking of energy and macronutrient intake [19] [7]. |

| Comparator (C) | The reference method against which the intervention is measured. | Controlled meal challenges with weighed food intake [29] or doubly labeled water for energy expenditure [19]. |

| Outcomes (O) | The specific metrics used to determine the technology's performance. | Mean Absolute Percentage Error (MAPE) for energy and macronutrient intake; correlation coefficients (Pearson's r) between device-estimated and reference values [7]. |

| Study Design (S) | The architecture of the research study (e.g., randomized controlled trial, crossover, cohort). | A cross-sectional validation study or a randomized crossover trial comparing the wearable to the reference standard in a free-living or controlled setting [30] [29]. |

Formulating PICOS Questions for Wearable Validation

Translating the PICOS framework into a structured research question is a prerequisite for a successful validation study. The following examples illustrate how to construct these questions:

- Example 1 (Accuracy in a Controlled Setting): In healthy adults (P), does the EgoDiet wearable camera system (I), compared to directly weighed food intake during a controlled meal challenge (C), accurately estimate energy intake with a Mean Absolute Percentage Error of less than 10% (O) in a cross-sectional laboratory study (S)?

- Example 2 (Performance in Free-Living): In free-living adults with type 2 diabetes (P), does a commercial nutrition-tracking wristband (I), compared to the 24-hour dietary recall method (C), demonstrate a higher correlation with urinary nitrogen recovery as a biomarker of protein intake (O) in a prospective cohort study (S)?

Core Experimental Protocols

This section details the specific methodologies required to execute a validation study, focusing on the gold-standard comparator of controlled meal challenges and the subsequent data analysis.

Protocol: Controlled Meal Challenge Design and Execution

Controlled feeding trials serve as a high-precision comparator for validating wearable devices by providing ground-truth data on nutritional intake [29].

3.1.1 Objective

To design and administer a controlled meal challenge that delivers known quantities and compositions of food to participants, enabling the direct comparison of actual intake to the values estimated by the wearable technology.

3.1.2 Materials and Reagents

Table 2: Essential Research Reagents and Materials for a Controlled Feeding Study

| Item | Function/Description |

|---|---|

| Standardized Weighing Scale | Precisely measures food portions to the nearest 0.1g prior to consumption (e.g., Salter Brecknell) [7]. |

| Food Composites for Analysis | Homogenized samples of each meal, stored at -80°C for subsequent proximate nutritional analysis to verify macronutrient content [29]. |

| 24-Hour Urine Collection Kit | Includes containers and instructions for participants. Urinary nitrogen recovery is analyzed to objectively assess adherence to protein intake, serving as a non-self-reported biomarker of compliance [29]. |

| Blinded Menu Sets | Multiple versions of menus (e.g., Dietary Guidelines for Americans vs. Typical American Diet) where similar dishes are used but recipes are modified to meet different nutritional goals, helping to blind participants to the study hypothesis [29]. |

| Dietary Adherence Tools | Daily food checklists, returned container weigh-backs, and a real-time dashboard for monitoring self-reported consumption [29]. |

3.1.3 Step-by-Step Methodology

Menu Development & Rationale:

- Develop a core menu that can be aligned to different dietary patterns (e.g., high-carbohydrate vs. high-fat). This allows for testing the wearable across a range of food types and nutrient distributions [29].

- Base food choices on national consumption surveys (e.g., NHANES What We Eat in America) to ensure realism and generalizability [29].

- Formulate meals using a combination of fresh, frozen, and manufactured foods from consistent vendors to minimize nutrient variability throughout the study duration [29].

Recipe Standardization and Scaling:

- Create detailed recipes with standardized cooking methods.

- Scale all recipes for individual energy requirements, which are calculated based on participants' baseline resting metabolic rate and physical activity level [29].
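The scaling step can be sketched as follows. The source states only that requirements are based on resting metabolic rate and physical activity level; multiplying the two is a common convention and is an assumption here, as are the function names, the 2000 kcal base menu, and the ingredient weights.

```python
def daily_energy_requirement(rmr_kcal, pal):
    """Estimated daily energy requirement (assumed here as RMR x PAL)."""
    return rmr_kcal * pal

def scale_recipe(ingredients_g, base_menu_kcal, target_kcal):
    """Scale ingredient weights so the menu meets the target energy.

    Weights are rounded to 0.1 g to match the kitchen weighing precision.
    """
    factor = target_kcal / base_menu_kcal
    return {name: round(g * factor, 1) for name, g in ingredients_g.items()}

# Hypothetical participant and a menu written for 2000 kcal/day.
target = daily_energy_requirement(rmr_kcal=1600.0, pal=1.5)
scaled = scale_recipe({"pasta": 100.0, "sauce": 150.0},
                      base_menu_kcal=2000.0, target_kcal=target)
print(target, scaled)
```

Scaling every ingredient by the same factor preserves the macronutrient proportions of the menu while changing total energy.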

Food Procurement and Preparation:

- Designate specific vendors for shelf-stable, fresh, and specialty food items to ensure consistent supply and quality.

- Prepare all meals in a dedicated metabolic kitchen. Weigh all raw and cooked ingredients to the nearest 0.1 g for each participant's meal.

Food Delivery and Participant Blinding:

- Package meals in labeled, portion-controlled containers for participant pick-up or delivery.

- Implement blinding strategies by using visually similar dishes across different dietary interventions (e.g., modifying a pasta dish with different sauces to alter sodium or fat content without obvious visual cues) [29].

Adherence Monitoring:

- Utilize multiple methods to verify that participants consume only the provided foods:

  - Weigh-backs: Weigh any returned food containers to calculate the actual amount consumed.

  - Self-report: Provide daily checklists for participants to report consumption and any deviations.

  - Biomarker Analysis: Collect 24-hour urine samples to measure nitrogen recovery, which should correlate highly with the protein intake recorded from the provided food [29].
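The two objective adherence checks reduce to simple arithmetic, sketched below. The function names and example values are hypothetical. Dietary nitrogen is derived from protein with the conventional factor of 6.25 g protein per g nitrogen, which is an assumption here because the source does not state the conversion used in [29].

```python
PROTEIN_G_PER_NITROGEN_G = 6.25  # conventional factor: protein is about 16% nitrogen


def consumed_grams(served_g: float, returned_g: float) -> float:
    """Weigh-back: amount eaten = amount served minus amount returned."""
    if returned_g > served_g:
        raise ValueError("returned weight exceeds served weight; check the record")
    return served_g - returned_g


def nitrogen_recovery_percent(urinary_n_g: float, protein_intake_g: float) -> float:
    """24-hour urinary nitrogen as a percentage of dietary nitrogen intake."""
    dietary_n_g = protein_intake_g / PROTEIN_G_PER_NITROGEN_G
    return 100 * urinary_n_g / dietary_n_g


# Hypothetical day: 350.0 g served, 42.5 g returned; 100 g protein provided,
# 12.8 g nitrogen measured in the 24-hour urine collection.
print(consumed_grams(350.0, 42.5))
print(nitrogen_recovery_percent(12.8, 100.0))
```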

The following workflow diagram summarizes the controlled meal challenge protocol.

Protocol: Validating Wearable Technology Against a Reference

This protocol outlines the parallel process of deploying the wearable technology to be validated alongside the controlled meal challenge.

3.2.1 Objective

To assess the accuracy and precision of a wearable device in estimating nutritional intake by comparing its outputs to the ground-truth data generated from a controlled meal challenge.

3.2.2 Materials

- Wearable device(s) for testing (e.g., wristband, egocentric camera like eButton or AIM) [7].

- Associated software and algorithms for data processing and intake estimation.

- Continuous glucose monitors (CGM) or other ancillary sensors for correlative physiological data (optional) [19].

3.2.3 Step-by-Step Methodology

Participant Recruitment and Screening:

- Recruit participants based on the predefined "Population" criteria from the PICOS framework.

- Exclude individuals with chronic diseases, food allergies, restricted diets, or those taking medications that affect metabolism to reduce confounding variables [19].

- Obtain informed consent and conduct baseline assessments (anthropometrics, fasting blood draw).

Device Deployment and Data Collection:

- Provide participants with the wearable device and standardize its use (e.g., wearing the eButton camera on the chest or the AIM on eyeglasses) [7].

- Instruct participants to wear the device continuously throughout the testing period, which includes the controlled meal challenge and subsequent free-living phases.

- For camera-based systems, ensure continuous capture of eating episodes. For sensor-based wristbands, ensure proper skin contact and charging.

Data Processing and Analysis:

- For Camera Systems (e.g., EgoDiet): Process video footage through a pipeline that includes:

  - Segmentation (EgoDiet:SegNet): Identifies and separates food items and containers in the image [7].

  - 3D Modeling (EgoDiet:3DNet): Estimates camera-to-container distance and reconstructs 3D models of containers to determine scale [7].

  - Feature Extraction (EgoDiet:Feature): Calculates metrics like Food Region Ratio (FRR) and Plate Aspect Ratio (PAR) to infer portion size [7].

  - Portion Estimation (EgoDiet:PortionNet): Uses extracted features to estimate the consumed portion size in weight/volume [7].

- For Sensor-based Systems: Use proprietary algorithms to convert physiological signals (e.g., bioimpedance) into estimates of energy and macronutrient intake [19].

Statistical Comparison to Reference:

- Compare the wearable's estimates of energy (kcal) and macronutrients (g) to the values from the weighed food records.

- Employ statistical methods such as Bland-Altman analysis to assess agreement, estimating the mean bias and the 95% limits of agreement [19].

- Calculate performance metrics like Mean Absolute Percentage Error (MAPE) and correlation coefficients.
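The comparison metrics listed above can be computed with the standard library alone. The sketch below is illustrative: the function name and sample data are hypothetical, and it assumes paired per-day (or per-meal) values, the common 1.96 multiplier for 95% limits of agreement, and a Pearson correlation.

```python
from math import sqrt
from statistics import mean, stdev


def agreement_metrics(estimated: list[float], reference: list[float]) -> dict:
    """Bland-Altman bias and 95% limits of agreement, MAPE, and Pearson r."""
    if len(estimated) != len(reference) or len(estimated) < 2:
        raise ValueError("need two paired sequences with at least two values")
    diffs = [e - r for e, r in zip(estimated, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    mape = 100 * mean([abs(e - r) / r for e, r in zip(estimated, reference)])
    me, mr = mean(estimated), mean(reference)
    cov = sum((e - me) * (r - mr) for e, r in zip(estimated, reference))
    r = cov / sqrt(sum((e - me) ** 2 for e in estimated)
                   * sum((x - mr) ** 2 for x in reference))
    return {
        "bias": bias,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
        "mape_percent": mape,
        "pearson_r": r,
    }


# Hypothetical daily energy values in kcal: weighed reference vs. wearable estimate.
reference = [2000, 2200, 1800, 2500]
estimated = [1900, 2300, 1700, 2400]
print(agreement_metrics(estimated, reference))
```

Reporting bias and limits of agreement alongside the correlation is useful because a device can correlate strongly with the reference while still disagreeing with it by a large, systematic amount.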

The diagram below illustrates the complete validation framework, integrating both the wearable device testing and the reference method.

Data Analysis and Visualization

Rigorous data analysis and clear visualization are paramount for interpreting validation study results and communicating them effectively to the scientific community.

Quantitative Analysis of Validation Data

The primary objective is to quantify the agreement between the wearable device (test method) and the controlled meal challenge (reference method). The table below summarizes key performance metrics and their interpretation, drawing from real-world validation studies.

Table 3: Key Metrics for Quantitative Analysis of Wearable Validation Data

| Metric | Description | Interpretation | Example from Literature |
|---|---|---|---|
| Mean Absolute Percentage Error (MAPE) | The average of the absolute percentage differences between estimated and actual values. | Lower values indicate better accuracy. Reported values are about 28% for a vision-based method and about 32% for 24-hour recall [7]. | EgoDiet camera system: MAPE of 28.0% for portion size vs. 32.5% for 24HR [7]. |
| Bland-Altman Analysis | Plots the difference between two methods against their average, establishing limits of agreement (LoA). | Assesses bias (mean difference) and the range within which 95% of differences lie. A wider LoA indicates poorer agreement [19]. | A wristband study showed a mean bias of -105 kcal/day with 95% LoA between -1400 and 1189 kcal, indicating high variability [19]. |
| Correlation Coefficient (r) | Measures the strength and direction of a linear relationship between two variables. | Values close to +1 or -1 indicate a strong linear relationship. Does not measure agreement. | Used to correlate wearable output with urinary nitrogen as a biomarker of intake [29]. |
| Urinary Nitrogen Recovery | A biomarker for protein intake; the ratio of urinary nitrogen to dietary nitrogen intake. | Values ~80% are typical. | A lack of difference between diet groups supports adherence, validating the reference data [29]. |
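As a worked check of the wristband example in Table 3, the reported limits can be converted back into the spread of the individual differences. This assumes the study used the usual convention of limits of agreement equal to the mean difference plus or minus 1.96 standard deviations; the symbols $\bar{d}$ (mean difference) and $s_d$ (standard deviation of the differences) are introduced here for illustration.

$$
\text{LoA} = \bar{d} \pm 1.96\, s_d
\quad\Rightarrow\quad
s_d = \frac{1189 - (-1400)}{2 \times 1.96} = \frac{2589}{3.92} \approx 660 \text{ kcal/day}
$$

$$
\bar{d} = \frac{1189 + (-1400)}{2} = -105.5 \text{ kcal/day}
$$

The midpoint matches the reported bias of about -105 kcal/day, and the implied standard deviation of roughly 660 kcal/day shows why a small average bias can coexist with large errors for individual days.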

Data Visualization for Comparative Analysis

Effective graphs are essential for exploring and presenting data that compares a quantitative variable (e.g., energy intake error) across different groups (e.g., different wearable devices or dietary conditions) [31].

- Boxplots (Parallel Boxplots): These are well suited to summarizing the distribution of errors or intake values. They display the median, quartiles, and potential outliers, allowing for easy visual comparison of the central tendency and variability between the wearable-estimated and reference values [31].

- Bland-Altman Plots: This specialized plot is the gold standard for visualizing agreement between two measurement techniques. The plot of the differences against the means allows for immediate assessment of any systematic bias (e.g., the device consistently over- or under-estimates) and whether this bias changes with the magnitude of the measurement [19].

- Bar Charts: Useful for comparing the mean values of estimated versus actual intake for different macronutrients (e.g., protein, fat, carbohydrates) across clear, distinct categories [32].
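To make concrete what a parallel boxplot displays, the sketch below computes the underlying summary for each group without any plotting library. It assumes the common Tukey convention (whiskers reach the most extreme points within 1.5 times the interquartile range; points beyond are outliers) and the "inclusive" quantile method; plotting packages may use slightly different quantile definitions. The function name, group names, and error values are hypothetical.

```python
from statistics import quantiles


def boxplot_summary(values: list[float]) -> dict:
    """Median, quartiles, whisker ends, and outliers as a boxplot would show them."""
    q1, med, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence, high_fence = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    inside = [v for v in values if low_fence <= v <= high_fence]
    return {
        "q1": q1,
        "median": med,
        "q3": q3,
        "whisker_low": min(inside),
        "whisker_high": max(inside),
        "outliers": sorted(v for v in values if v < low_fence or v > high_fence),
    }


# Hypothetical energy intake errors (estimate minus reference, kcal/day) per device.
errors_by_device = {
    "wristband": [-300, -150, -100, -50, 0, 50, 100, 900],
    "camera": [-120, -80, -40, 0, 20, 60, 90, 130],
}
for device, errors in errors_by_device.items():
    print(device, boxplot_summary(errors))
```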

Application Note: Key UX Findings in Dietary Wearable Research

This application note synthesizes critical user experience (UX) findings from recent studies on wearable sensors for dietary monitoring, providing researchers with evidence-based insights for device development and validation protocol design.

Table 1: Documented User Experience Metrics and Adherence Patterns in Wearable Dietary Monitoring Studies

| Study & Device Type | Study Duration | Compliance/Adherence Findings | Comfort & Acceptability Issues | Usability Facilitators |
|---|---|---|---|---|
| GoBe2 Wristband (Bioimpedance) [19] | Two 14-day periods | 304 input cases of daily intake collected from participants; transient signal loss identified as major error source | Not explicitly reported, but signal loss may relate to wearability | Algorithm converts bioimpedance signals to nutrient intake estimates |
| Multi-Sensor Wristband (IMU, PPG, Temp) [5] | Single visit (5-min pre-meal to 1-hr post-meal) | Protocol designed for controlled settings; real-world adherence requires further study | Device fit monitored via flexible force sensor; skin contact ensured for PPG | Integrates inertial, physiological sensors; validates with bedside monitors |
| eButton (Camera + CGM) [16] | 10 days (eButton), 14 days (CGM) | Feasible for Chinese Americans with T2D to use over study period; structured support is essential | CGM: sensor fell off, trapped in clothes, caused skin sensitivity. eButton: privacy concerns, positioning difficulty | Facilitators: Device ease of use, increased mindfulness, sense of control, comfort. Aided portion control |
| Bite Counter (Wrist-worn IMU) [9] | 14 days | Data from 82 participants with ≥10 eating occasions each; demonstrates field feasibility | Minimal burden enables sustained use; form factor resembles common smartwatches | Passive capture of wrist roll motions; accurate EI estimation from bite count alone |

Key qualitative insights reveal that usability facilitators are crucial for adoption. Participants across studies reported that devices that were easy to use, increased mindfulness of eating, and provided a greater sense of control over dietary choices were more likely to be used consistently [16]. Device comfort and form factor directly influence compliance, with common wearables such as wristbands generally causing fewer issues than body-worn cameras or adhered sensors [16].

Experimental Protocols for UX Evaluation

This section outlines standardized protocols for evaluating compliance, comfort, and usability of food intake wearables, supporting the generation of comparable, high-quality evidence.

Protocol 1: Mixed-Methods Field Evaluation for Chronic Disease Populations

Objective: To evaluate the feasibility, acceptability, and UX of wearable dietary sensors in specific chronic disease populations over a multi-week period in free-living conditions [16].

Population: Target population groups (e.g., patients with Type 2 Diabetes, chronic kidney disease) with consideration for cultural and literacy factors [33] [16]. Sample size ~10-30 participants.

Intervention:

- Participants wear one or more dietary monitoring devices (e.g., eButton, CGM, wrist-worn sensor) for 10-14 days [16].

- Devices are used in conjunction with companion tools (e.g., paper diaries, smartphone apps) as required by the system.

Primary UX Metrics:

- Quantitative:

  - Device-Based Compliance: Percentage of valid data days collected versus total possible days.

  - System Usability Scale (SUS): Administered post-study to quantify perceived usability [33].

  - Usage Logs: Frequency of app interactions (if applicable) and manual entries.

- Qualitative:

  - Semi-Structured Interviews: Conducted post-study to explore barriers, facilitators, comfort, privacy concerns, and perceived usefulness [16].

  - Thematic Analysis: Transcribed interviews are coded and analyzed to identify key UX themes.
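The two main quantitative metrics can be scored as sketched below. The SUS calculation follows the standard scoring rule for the ten-item questionnaire (odd items contribute the rating minus 1, even items contribute 5 minus the rating, and the sum is multiplied by 2.5). The function names are hypothetical, and what counts as a "valid" data day (for example, a minimum wear time) is a study-specific definition that the source does not fix.

```python
def compliance_percent(valid_days: int, total_days: int) -> float:
    """Device-based compliance: valid data days as a percentage of possible days."""
    if total_days <= 0 or not 0 <= valid_days <= total_days:
        raise ValueError("expected 0 <= valid_days <= total_days and total_days > 0")
    return 100 * valid_days / total_days


def sus_score(responses: list[int]) -> float:
    """System Usability Scale score (0-100) from ten 1-5 ratings in item order."""
    if len(responses) != 10 or any(not 1 <= r <= 5 for r in responses):
        raise ValueError("SUS needs exactly ten ratings between 1 and 5")
    odd_items = sum(r - 1 for r in responses[0::2])   # items 1, 3, 5, 7, 9
    even_items = sum(5 - r for r in responses[1::2])  # items 2, 4, 6, 8, 10
    return 2.5 * (odd_items + even_items)


# Hypothetical participant: 12 valid days out of a 14-day wear period.
print(round(compliance_percent(12, 14), 1))
print(sus_score([4, 2, 5, 1, 4, 2, 4, 2, 5, 1]))
```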

Table 2: Key Materials and Reagents for Dietary Wearable Research

| Category / Item Name | Function / Purpose in Research | Example Use Case |
|---|---|---|
| Inertial Measurement Unit (IMU) | Tracks hand-to-mouth gestures via accelerometer, gyroscope, and magnetometer to detect bites and eating episodes [5] [1]. | Wrist-worn bite counting [9]. |
| Photoplethysmography (PPG) Sensor | Monitors physiological responses (e.g., Heart Rate) to food intake by measuring blood volume changes [5]. | Investigating postprandial heart rate changes [5]. |
| Continuous Glucose Monitor (CGM) | Measures interstitial glucose levels to track glycemic response to meals; can be paired with dietary sensors [16]. | Visualizing food intake-glycemic response relationship in T2D [16]. |
| Skin Temperature Sensor | Monitors skin temperature (Tsk) variations, a potential physiological indicator of food intake and digestion [5]. | Detecting post-meal metabolic changes [5]. |
| Pulse Oximeter Module | Tracks blood oxygen saturation (SpO2), which may decrease slightly following a meal due to digestive processes [5]. | Multi-parameter physiological response monitoring [5]. |