A Systematic Review of Dietary Intake Biomarkers: From Discovery to Clinical Application in Precision Nutrition

Abstract

This systematic review synthesizes current evidence on biomarkers of dietary intake, addressing a critical need for objective assessment tools in nutritional research and clinical practice. We explore the foundational landscape of biomarkers discovered through metabolomics, evaluate methodological approaches for their application, identify key challenges in validation and implementation, and compare their performance against traditional dietary assessment methods. Targeted at researchers, scientists, and drug development professionals, this review highlights how dietary biomarkers can overcome limitations of self-reported data, enhance compliance monitoring in clinical trials, and advance precision nutrition. The findings underscore the potential of biomarker panels to capture complex dietary patterns while addressing current limitations in specificity and validation.

The Biomarker Landscape: Discovering Objective Measures of Dietary Exposure

Accurate assessment of dietary intake is a fundamental challenge in nutritional science and epidemiology. Current dietary assessment tools, such as food frequency questionnaires (FFQs) and 24-hour recalls, rely on self-reporting and are susceptible to significant measurement errors, including misclassification bias, recall bias, and misreporting [1]. These limitations can compromise the efficiency and efficacy of dietary interventions and obscure true diet-disease relationships. Objective biomarkers of dietary intake provide a complementary methodology for improving assessment accuracy in free-living populations by offering a more direct, biological measure of consumption [1].

Dietary biomarkers are generally classified into two primary categories: exposure/recovery biomarkers and outcome/concentration biomarkers [1]. Exposure or recovery biomarkers are directly related to dietary intake, while outcome or concentration biomarkers can be impacted by an individual's inherent characteristics, such as genetics, metabolism, or pre-existing health conditions, and thus provide an indirect assessment of diet. The development and validation of these biomarkers, particularly through advanced metabolomic technologies, represent a key step toward strengthening research data validity and accurately measuring outcomes in chronic disease management [1] [2].

Table 1: Core Categories of Dietary Biomarkers

| Biomarker Category | Definition | Key Characteristics | Examples |
|---|---|---|---|
| Exposure/Recovery Biomarkers | Directly measure the biological presence of a food or its metabolites [1]. | Directly related to dietary intake; not substantially influenced by endogenous metabolism. | Doubly labeled water for energy intake; Urinary nitrogen for protein intake [1]. |
| Outcome/Concentration Biomarkers | Measure biological states or compounds that can be indirectly affected by diet [1]. | Influenced by individual physiology (e.g., genetics, health status); an indirect assessment of diet. | Serum carotenoids for fruit/vegetable intake; Erythrocyte membrane fatty acids for fat intake [3]. |

This technical guide elaborates on the critical distinction between exposure/recovery biomarkers and outcome/concentration biomarkers, detailing their applications, discovery methodologies, and validation processes within the context of modern precision nutrition research.

Biomarker Classification and Definitions

A biomarker is defined as a measurable biological component or state of a component that is indicative of a specific biological or disease state [4]. In the context of diet, a dietary biomarker is a feature that is indicative of dietary intake, while a biosignature refers to a collection of features that together define a biomarker [4].

Exposure and Recovery Biomarkers

Exposure and recovery biomarkers are directly derived from the consumption of food and are not substantially influenced by the body's endogenous metabolic processes. Recovery biomarkers in particular are considered the gold standard for the objective assessment of dietary intake.

- Recovery Biomarkers: This subtype is based on the principle of recovering a known fraction of a nutrient or its metabolite in urine over a specific period. Their quantitative nature is their greatest strength. The most rigorously validated examples are doubly labeled water for measuring total energy expenditure (and thus energy intake under steady-state conditions) and urinary nitrogen for estimating protein intake [1] [3]. These biomarkers are used to calibrate self-reported intake data in epidemiological studies.

- Exposure Biomarkers: These biomarkers indicate recent exposure to a specific food or food component but do not necessarily permit precise quantitative estimation of the amount consumed. They often reflect the presence of food-specific compounds or their unique metabolites in biological fluids. Examples include sulfurous compounds from cruciferous vegetables or galactose derivatives from dairy products found in urine [1].
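The arithmetic behind the two recovery biomarkers named above can be sketched in a few lines. This is a minimal illustration, not a validated protocol, and it rests on several assumptions. It uses the conventional factor of 6.25 g protein per g nitrogen and an assumed recovery of about 81% of dietary nitrogen in a complete 24-hour urine collection. For doubly labeled water, it assumes that CO2 production has already been derived from the isotope elimination rates. That value is converted to energy with the abbreviated Weir equation, using an assumed food quotient of 0.85. The function names are hypothetical.

```python
# Minimal sketch of two recovery-biomarker calculations.
# All constants below are stated assumptions, not values from the cited studies.

NITROGEN_TO_PROTEIN = 6.25   # assumption: conventional g protein per g nitrogen
URINARY_N_RECOVERY = 0.81    # assumption: fraction of dietary N recovered in 24-h urine


def protein_intake_from_urinary_nitrogen(urinary_n_g_per_day: float) -> float:
    """Estimate dietary protein (g/day) from 24-h urinary nitrogen (g/day)."""
    dietary_n = urinary_n_g_per_day / URINARY_N_RECOVERY  # scale up for non-urinary N losses
    return dietary_n * NITROGEN_TO_PROTEIN


def tee_from_co2_production(rco2_l_per_day: float, food_quotient: float = 0.85) -> float:
    """Total energy expenditure (kcal/day) from DLW-derived CO2 production (L/day).

    Abbreviated Weir equation: EE = 3.941 * VO2 + 1.106 * VCO2, with
    VO2 = VCO2 / RQ and RQ approximated by the diet's food quotient.
    """
    vo2 = rco2_l_per_day / food_quotient
    return 3.941 * vo2 + 1.106 * rco2_l_per_day
```

Under these assumptions, 12 g/day of urinary nitrogen corresponds to roughly 93 g/day of protein. Under energy balance, the TEE value is taken as the estimate of energy intake.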

Outcome/Concentration Biomarkers and Other Types

In contrast to exposure biomarkers, outcome or concentration biomarkers are influenced by an individual's innate characteristics and provide an indirect link to diet.

- Outcome/Concentration Biomarkers: These biomarkers represent a biological state that is modulated by dietary intake but is also affected by individual factors such as genetics, metabolism, gut microbiome composition, and health status [1]. For instance, the concentration of carotenoids in serum is a commonly used biomarker for fruit and vegetable intake, but its levels can be influenced by factors like fat absorption efficiency and metabolic rate [3].

- Other Biomarker Classifications: Beyond the scope of nutritional exposure, biomarkers are also categorized in medical research by their clinical application. These include risk biomarkers (identify likelihood of developing a disease), diagnostic biomarkers (detect an early disease state or subtype), and prognostic biomarkers (predict disease progression or recurrence) [4].

Applications in Research and Clinical Practice

Objective dietary biomarkers are transformative tools with wide-ranging applications that enhance the scientific rigor of nutrition research and its translation into clinical practice.

- Mitigating Measurement Error in Research: The primary application is to complement and correct for measurement errors inherent in self-reported dietary assessment methods like FFQs. By providing an objective measure, biomarkers help mitigate misclassification bias, thereby strengthening the validity of associations between diet and health outcomes in observational studies [1] [2].

- Validation of New Assessment Tools: Biomarkers serve as objective reference measures for validating novel dietary assessment methodologies. For example, the Experience Sampling-based Dietary Assessment Method (ESDAM) is being validated against doubly labeled water (for energy intake), urinary nitrogen (for protein intake), and serum carotenoids (for fruit and vegetable intake) [3].

- Precision Nutrition and Phenotyping: Biomarkers are central to the NIH's vision for precision nutrition. They enable nutrition phenotyping—identifying the integrated set of observable measurements that represent an individual's overall metabolic response to diet. This facilitates the development of personalized dietary recommendations [1].

- Monitoring Compliance in Interventions: In controlled feeding trials and clinical settings, biomarkers can objectively verify participant adherence to a prescribed dietary regimen, moving beyond self-reported compliance [2].
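The measurement-error correction described above is often implemented as regression calibration. The sketch below illustrates that technique under stated assumptions; it does not reproduce any cited study's method. It assumes a calibration sub-study in which each participant has both a self-reported intake and a recovery biomarker value. It also assumes the biomarker carries only random error that is independent of the self-report error. Function names and data are hypothetical.

```python
"""Minimal regression-calibration sketch (hypothetical helper names)."""


def _mean(values):
    return sum(values) / len(values)


def calibration_slope(self_report, biomarker):
    """Least-squares slope (lambda) from regressing the biomarker on self-report."""
    mx, my = _mean(self_report), _mean(biomarker)
    sxy = sum((x - mx) * (y - my) for x, y in zip(self_report, biomarker))
    sxx = sum((x - mx) ** 2 for x in self_report)
    return sxy / sxx


def calibrated_intake(self_report, biomarker):
    """Predicted true intake E[T | Q] = alpha + lambda * Q for each participant."""
    lam = calibration_slope(self_report, biomarker)
    alpha = _mean(biomarker) - lam * _mean(self_report)
    return [alpha + lam * q for q in self_report]


def deattenuate(beta_observed, lam):
    """Correct a diet-disease coefficient that was estimated from self-reported intake."""
    return beta_observed / lam
```

A slope below 1 indicates attenuation. Dividing an observed diet-disease coefficient by the slope gives the de-attenuated estimate. In practice, the confidence interval must also account for uncertainty in the slope, for example by bootstrapping.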

Current State of Validated Biomarkers

Despite the recognized need, the number of fully validated dietary biomarkers remains limited. A systematic review focusing on urinary metabolites identified numerous candidate biomarkers but highlighted that most are better at describing intake of broad food groups rather than distinguishing individual foods [1].

Table 2: Examples of Food-Associated Biomarkers from Recent Research

| Food Group | Reported Biomarker Matrix | Candidate Biomarkers / Characteristics |
|---|---|---|
| Fruits & Vegetables | Urine | Polyphenols and their metabolites; Sulfurous compounds (cruciferous); Proline betaine (citrus) [1]. |
| Soy Foods | Urine | Isoflavones such as daidzein and genistein [1]. |
| Coffee/Cocoa/Tea | Urine | Methylxanthines (e.g., caffeine, theobromine); various polyphenol metabolites [1]. |
| Dairy | Urine | Galactose derivatives; other innate milk components [1]. |
| Whole Grains | Urine | Alkylresorcinols and their metabolites [1]. |
| Alcohol | Urine | Ethyl glucuronide, ethyl sulfate [1]. |

The systematic review concluded that urinary biomarkers have strong utility for monitoring changes in intake of broad categories like citrus fruits, cruciferous vegetables, whole grains, and soy foods, but often lack the specificity to identify individual food items within these groups [1]. This underscores a significant gap in the field.

Discovery and Validation Frameworks

The process of discovering and validating a novel dietary biomarker is complex and requires a systematic, multi-phase approach. The Dietary Biomarkers Development Consortium (DBDC) exemplifies a rigorous framework for this purpose [2] [5] [6].

The DBDC Three-Phase Approach

The DBDC is a major initiative to discover and validate biomarkers for foods commonly consumed in the United States diet. Its structured approach is designed to ensure that candidate biomarkers are both sensitive and specific [2].

- Phase 1: Discovery and Pharmacokinetics: Controlled feeding trials are conducted where healthy participants consume pre-specified amounts of test foods. Blood and urine specimens are collected at multiple timepoints and subjected to metabolomic profiling to identify candidate compounds. This phase characterizes the pharmacokinetic parameters (time-to-peak, half-life) of the candidate biomarkers [2] [6].

- Phase 2: Evaluation in Complex Diets: The ability of candidate biomarkers to identify consumption of their associated foods is tested within the context of various controlled dietary patterns. This determines if the biomarker remains specific when the test food is consumed as part of a mixed diet [2].

- Phase 3: Validation in Free-Living Populations: The final phase evaluates the validity of candidate biomarkers to predict recent and habitual consumption in independent observational studies of free-living individuals. This is the critical test of a biomarker's real-world utility [2].
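The Phase 1 pharmacokinetic parameters can be derived from a single participant's time-concentration curve. The sketch below is minimal and assumes that the last few samples fall in a mono-exponential elimination phase after the peak. The function name, the default of three terminal points, and the example values are hypothetical; dedicated PK software would normally be used.

```python
import math


def pk_summary(times_h, conc, n_terminal=3):
    """Cmax, Tmax, and terminal half-life from one time-concentration curve.

    Assumes the last ``n_terminal`` samples lie in a mono-exponential
    elimination phase after the peak (an assumption that must be checked).
    """
    if len(times_h) != len(conc):
        raise ValueError("times and concentrations must have equal length")
    i_max = max(range(len(conc)), key=lambda i: conc[i])
    cmax, tmax = conc[i_max], times_h[i_max]

    t_term = times_h[-n_terminal:]
    c_term = conc[-n_terminal:]
    if t_term[0] <= tmax or min(c_term) <= 0:
        raise ValueError("terminal points must follow the peak and be positive")

    # Log-linear least squares on the terminal phase: ln C = a - k_el * t
    log_c = [math.log(c) for c in c_term]
    mt = sum(t_term) / n_terminal
    ml = sum(log_c) / n_terminal
    slope = sum((t - mt) * (lc - ml) for t, lc in zip(t_term, log_c)) / sum(
        (t - mt) ** 2 for t in t_term
    )
    k_el = -slope
    if k_el <= 0:
        raise ValueError("no elimination detected in the terminal phase")
    return {"cmax": cmax, "tmax_h": tmax, "k_el_per_h": k_el,
            "half_life_h": math.log(2) / k_el}
```

For example, `pk_summary([0, 1, 2, 4, 6, 10, 14], [0.0, 6.0, 9.0, 10.0, 8.0, 4.0, 2.0])` reports a Cmax of 10.0 at 4 h and a terminal half-life of 4.0 h.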

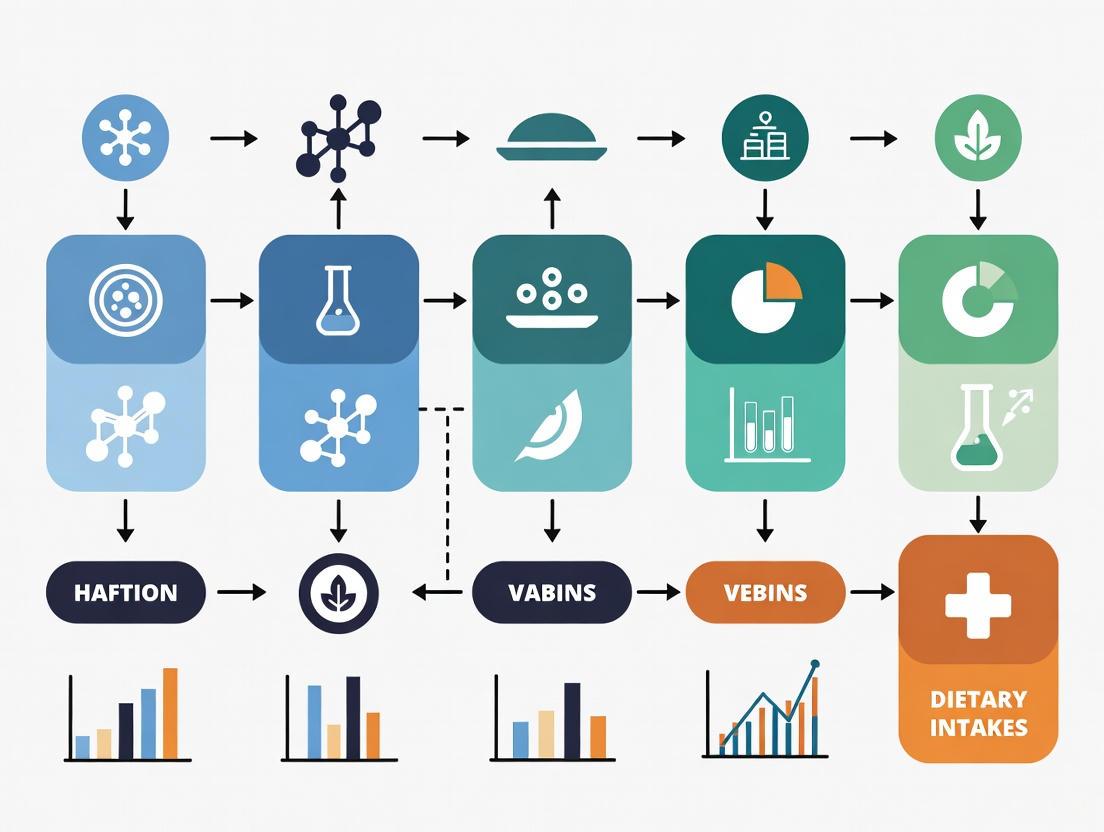

Diagram 1: DBDC Biomarker Validation Workflow. This diagram outlines the three-phase framework used by the Dietary Biomarkers Development Consortium for the systematic discovery and validation of dietary biomarkers. PK: Pharmacokinetics; DR: Dose-Response.

Key Considerations for Validation

For a metabolite to be considered a valid biomarker of food intake, it should meet several criteria proposed by experts in the field, including plausibility (a biologically reasonable link to the food), dose-response, time-response, robustness, and reliability in free-living populations [6]. A major challenge has been that most dietary biomarker studies have not fully examined these pharmacokinetic and dose-response relationships [6].
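The dose-response criterion can be screened with simple summaries before formal modeling. The sketch assumes a feeding trial with several fixed doses of the test food, in hypothetical units such as grams, and one biomarker value per participant and dose. It reports per-dose means, whether those means increase monotonically, a least-squares slope, and a Pearson correlation. A linear fit is itself an assumption, since some biomarkers saturate at higher intakes. All names and data are illustrative.

```python
import math


def dose_response_summary(doses, responses):
    """Per-dose means, a monotonic-increase flag, least-squares slope, and Pearson r."""
    groups = {}
    for d, y in zip(doses, responses):
        groups.setdefault(d, []).append(y)
    levels = sorted(groups)
    group_means = [sum(groups[d]) / len(groups[d]) for d in levels]
    monotonic = all(a < b for a, b in zip(group_means, group_means[1:]))

    n = len(doses)
    mx = sum(doses) / n
    my = sum(responses) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(doses, responses))
    sxx = sum((x - mx) ** 2 for x in doses)
    syy = sum((y - my) ** 2 for y in responses)
    return {
        "dose_levels": levels,
        "group_means": group_means,
        "monotonic_increase": monotonic,
        "slope": sxy / sxx,
        "pearson_r": sxy / math.sqrt(sxx * syy),
    }
```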

Experimental Protocols and Methodologies

The discovery and validation of dietary biomarkers rely on a combination of controlled study designs, precise biological sampling, and advanced analytical techniques.

Controlled Feeding Trials

These studies are the cornerstone of biomarker discovery (Phase 1). As implemented by the DBDC, they involve administering specific test foods in known amounts to healthy participants [2] [6]. The design allows researchers to directly link the consumption of a food to the appearance of metabolites in biological fluids, establishing a clear cause-and-effect relationship.

Biospecimen Collection and Handling

Standardized protocols for collecting, processing, and storing biospecimens are critical for data quality and reproducibility.

- Urine Collection: Often collected as 24-hour urine to quantify total daily excretion of nutrients like nitrogen (for protein) or food-specific metabolites. For pharmacokinetic studies, multiple spot or timed urine samples are collected postprandially [1] [3].

- Blood Collection: Used to isolate serum, plasma, or specific components like erythrocytes. For example, erythrocyte membrane fatty acids are a longer-term biomarker of fatty acid intake compared to plasma levels [3].

- Storage: Samples are typically aliquoted and stored at -80°C to preserve metabolite stability until analysis [6].
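The text distinguishes complete 24-hour collections from spot or timed urine samples. The minimal sketch below shows how each is commonly expressed. Adjusting spot-urine values for creatinine, to offset differences in dilution, is a widely used convention assumed here; the cited protocols do not specify it. The function names are hypothetical.

```python
def daily_excretion(concentration_per_l, volume_l):
    """Total 24-hour excretion = concentration x total collected volume."""
    if volume_l <= 0:
        raise ValueError("urine volume must be positive")
    return concentration_per_l * volume_l


def creatinine_ratio(metabolite_umol_per_l, creatinine_mmol_per_l):
    """Spot-urine metabolite expressed per mmol creatinine (umol/mmol)."""
    if creatinine_mmol_per_l <= 0:
        raise ValueError("creatinine concentration must be positive")
    return metabolite_umol_per_l / creatinine_mmol_per_l
```

For example, a nitrogen concentration of 6.0 g/L in a 2.0 L collection gives 12.0 g/day of excreted nitrogen.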

Metabolomic Profiling

Advanced metabolomics is the primary technology for biomarker discovery. The typical workflow involves:

- Sample Preparation: Proteins are precipitated, and metabolites are extracted using solvents.

- Chromatographic Separation: Using techniques like Ultra-High-Performance Liquid Chromatography (UHPLC) to separate complex mixtures of metabolites.

- Mass Spectrometry (MS) Detection: Liquid chromatography-MS (LC-MS) is the workhorse platform, typically using reversed-phase separation complemented by hydrophilic-interaction liquid chromatography (HILIC) to capture a wide range of polar metabolites [2] [6]. These platforms provide high sensitivity and specificity and can detect and quantify thousands of metabolite features in a single analysis.

- Data Analysis: High-dimensional bioinformatics analyses, including multivariate statistics and machine learning, are used to identify metabolite patterns associated with the consumption of specific test foods [2] [4].
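Untargeted profiling tests thousands of features at once, so a multiple-testing correction usually accompanies the multivariate analyses described above. The sketch shows the Benjamini-Hochberg false discovery rate adjustment applied to per-feature p-values, for instance from paired comparisons of post-consumption and baseline samples. It is one common choice, offered as an illustration rather than the DBDC's documented procedure. The feature names and p-values used to test it are made up.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so q-values stay monotonic in rank.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def significant_features(names, p_values, fdr=0.05):
    """Names of features whose q-value falls below the chosen FDR."""
    q_values = benjamini_hochberg(p_values)
    return [name for name, q in zip(names, q_values) if q < fdr]
```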

Validation Study Design

An example of a comprehensive validation protocol is outlined in a study validating the Experience Sampling-based Dietary Assessment Method (ESDAM) [3]. This prospective observational study assesses the validity of a new dietary tool against both self-reported (24-hour recalls) and objective biomarkers over a four-week period. The primary outcomes are energy intake (vs. doubly labeled water) and protein intake (vs. urinary nitrogen), with secondary outcomes including fruit/vegetable intake (vs. serum carotenoids) and fatty acid intake (vs. erythrocyte membrane fatty acids) [3].
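The agreement analysis in such a validation study can be illustrated with two common statistics. The first is a correlation coefficient between tool-reported energy intake and DLW-measured TEE. The second is the Bland-Altman mean bias with 95% limits of agreement. This is a sketch with invented example values; the actual ESDAM analysis plan may use different or additional methods.

```python
import math
from statistics import mean, stdev


def pearson_r(x, y):
    """Pearson correlation between two paired measurement series."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def bland_altman(test, reference):
    """Mean bias and 95% limits of agreement (bias +/- 1.96 SD of differences)."""
    diffs = [t - r for t, r in zip(test, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd
```

Correlation and agreement answer different questions. A tool can rank participants well, giving a high r, while still systematically under-reporting intake, which appears as a negative bias.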

Table 3: Research Reagent Solutions for Dietary Biomarker Studies

| Reagent / Material | Function / Application | Example Use Case |
|---|---|---|
| Doubly Labeled Water (²H₂¹⁸O) | Gold-standard measure of total energy expenditure in free-living individuals [3]. | Validation of energy intake assessment methods like ESDAM [3]. |
| LC-MS/MS Systems | High-sensitivity platform for identifying and quantifying unknown and known metabolites in biospecimens [2] [6]. | Discovery of novel food-specific metabolites in plasma and urine from feeding trials. |
| HILIC Columns | Liquid chromatography columns designed for the separation of polar metabolites, complementing reverse-phase LC [2]. | Expanding the coverage of the metabolome during profiling of urine samples. |
| Stable Isotope-Labeled Standards | Internal standards for mass spectrometry that correct for variability in sample preparation and ionization [6]. | Accurate quantification of specific candidate biomarker compounds. |
| Automated 24-Hour Dietary Recall Systems | Structured, interviewer-administered tool for collecting self-reported dietary intake as a comparison method [3]. | Assessing convergent validity of new dietary assessment methods like ESDAM. |
| Continuous Glucose Monitors (CGM) | Objective method for detecting eating episodes and assessing compliance with dietary reporting prompts [3]. | Monitoring participant adherence in real-time during validation studies. |

Diagram 2: Experimental Biomarker Discovery Workflow. This diagram visualizes the key steps and materials involved in a typical controlled feeding study for dietary biomarker discovery. PK: Pharmacokinetics; DR: Dose-Response.

Challenges and Future Directions

The field of dietary biomarker research faces several significant challenges. The complexity of diet, with its high degree of intercorrelation between nutrients and foods, complicates the identification of specific markers [6]. Furthermore, the influence of inter-individual variability (e.g., genetics, gut microbiome) on metabolite production and kinetics means that a single biomarker may not be universally applicable [1] [4]. As of 2022, a systematic review concluded that while biomarkers for broad food groups show promise, the ability to distinguish individual foods is still limited [1].

Future efforts will focus on expanding the number of validated biomarkers through consortia like the DBDC. The DBDC aims to create a publicly accessible database of its findings, which will serve as a vital resource for the global research community [2] [6]. There is also a growing emphasis on using biomarkers not just for validation but as integral components of dietary assessment in precision nutrition, ultimately aiming to develop robust biosignatures that can accurately characterize an individual's dietary pattern and metabolic phenotype.

Metabolomics has emerged as a pivotal tool in nutritional science, enabling the objective identification of dietary intake biomarkers that address the significant limitations of self-reported data. Through targeted and untargeted analytical approaches, researchers have identified putative biomarkers for a diverse range of food groups, including fruits, vegetables, high-fiber grains, meats, seafood, and coffee. This technical guide synthesizes current methodologies, validated biomarkers, and experimental protocols central to metabolomics-driven discovery in the context of systematic dietary biomarker research. It further outlines the critical validation criteria necessary to transition putative biomarkers into robust tools for assessing dietary exposure, monitoring intervention compliance, and advancing precision nutrition initiatives.

Current Landscape of Validated Food Intake Biomarkers

The application of metabolomics has led to the discovery of numerous metabolites associated with the consumption of specific foods and complex dietary patterns. These biomarkers are broadly classified as exposure biomarkers, which are food-derived compounds or their metabolites, and effect biomarkers, which reflect endogenous metabolic shifts in response to dietary intake [7]. The table below summarizes some of the most well-characterized putative biomarkers for key food groups, as identified through systematic reviews and intervention studies.

Table 1: Putative Biomarkers of Food Intake Across Major Food Groups

| Food Group | Putative Biomarkers | Biological Sample | Level of Evidence |
|---|---|---|---|
| Citrus Fruits | Proline betaine | Plasma, Urine | Good [7] [8] |
| Cruciferous Vegetables | Sulfur-containing metabolites (e.g., S-methyl-L-cysteine sulfoxide) | Urine | Fair [1] |
| Whole Grains & High-Fiber Foods | Alkylresorcinols, Enterolactones, Short-chain fatty acids (SCFAs) | Plasma, Urine | Good for alkylresorcinols [9] [10] |
| Red Meat & Seafood | Carnitine, Acetylcarnitine, Trimethylamine N-oxide (TMAO) | Plasma, Serum | Good [9] [10] |
| Fish | Omega-3 fatty acids (EPA, DHA) | Serum, Plasma | Good [10] |
| Coffee | Trigonelline, Nicotinic acid | Urine, Plasma | Good [9] [10] |
| Soy Foods | Isoflavones (Daidzein, Genistein) | Urine | Good [1] |
| Dairy | Galactose derivatives, Dihydroferulic acid | Urine | Fair [1] |

A comprehensive review of 244 studies identified 69 metabolites as good candidate biomarkers of food intake, establishing a foundational resource for the field [9]. However, it is crucial to note that many identified biomarkers require further validation against established criteria before they can be widely implemented in research and clinical practice.

Experimental Methodologies for Biomarker Discovery

Study Designs for Discovery and Validation

Robust experimental design is paramount for the discovery of reliable biomarkers. The preferred designs include:

- Acute Controlled Intervention Trials: Participants consume a single dose of the food of interest, and biological samples (blood, urine) are collected at multiple time points post-consumption (e.g., 0, 2, 4, 6, 8, 24 hours). This design helps establish a causal link between intake and metabolite appearance and defines the kinetic profile (time-response) of the biomarker [7]. A control arm is essential to ensure biomarker specificity.

- Short-to-Medium Term Interventions: These studies involve providing participants with the food of interest over days or weeks. This approach is effective for identifying biomarkers of habitual intake and for assessing the dose-response relationship, which is a key validation criterion [7].

- Observational Studies with Dietary Assessment: Large cohort studies with self-reported dietary data (e.g., FFQs, 24-h recalls) can be used to correlate metabolite levels with reported food intake. While useful for confirming findings from interventions, this design carries a higher risk of confounding due to correlated food consumption patterns [11] [8].

Analytical Techniques and Platforms

Metabolomic profiling relies on two primary analytical techniques, often used in complementary fashion:

- Mass Spectrometry (MS):

  - Liquid Chromatography-MS (LC-MS): The most frequently employed platform in nutritional metabolomics due to its high sensitivity and broad coverage of metabolites [12] [11]. It is well suited to semi-polar and polar compounds, a category that includes most food-derived metabolites.

  - Gas Chromatography-MS (GC-MS): Excellent for the separation and identification of volatile compounds or those made volatile through derivatization, such as organic acids and sugars [9].

  - MS-based approaches can be either untargeted (hypothesis-generating, measuring thousands of unknown features) or targeted (hypothesis-driven, quantifying a predefined set of metabolites with high precision) [9] [11].

- Nuclear Magnetic Resonance (NMR) Spectroscopy: While less sensitive than MS, NMR is highly reproducible, requires minimal sample preparation, and provides structural information [9] [11]. It is often used for high-throughput screening and absolute quantification.

The Biomarker Validation Pathway

The discovery of a metabolite association is merely the first step. For a biomarker to be considered robust, it must be rigorously validated. The FoodBall consortium and other expert groups have proposed a set of validation criteria [7] [8]:

- Plausibility: The biomarker must be chemically present in the food or be a biologically plausible metabolite of a food component.

- Dose-Response: Biomarker concentration should change in a consistent, dose-dependent manner with the amount of food consumed.

- Time-Response: The kinetics of appearance, peak concentration, and clearance in biological fluids should be characterized.

- Robustness: The biomarker should perform consistently across different population groups (varying in age, sex, BMI, health status).

- Reliability: The biomarker measurement should show good agreement with other assessment methods, though perfect correlation with error-prone self-report data is not always expected.

- Stability & Analytical Performance: The biomarker must be chemically stable in the chosen biofluid, and the analytical method must be validated for precision, accuracy, and sensitivity.

Diagram 1: The biomarker validation pathway, outlining key sequential criteria.

Experimental Workflow: From Sample to Biomarker

The standard workflow for a nutritional metabolomics study involves several critical stages, from initial study design to final biological interpretation. The following diagram and subsequent breakdown detail this process.

Diagram 2: End-to-end experimental workflow for metabolomic biomarker discovery.

Phase 1: Experimental Design & Sample Collection

- Intervention Design: For a study on citrus fruit biomarkers, an acute crossover trial would be ideal. Participants would consume a controlled dose of citrus (e.g., orange juice) after a washout period, with a control arm receiving a citrus-free meal [7].

- Sample Collection: Blood (plasma/serum) and urine samples are collected at baseline and at pre-defined post-prandial intervals (e.g., 2h, 4h, 6h, 8h, 24h). Plasma captures metabolically active compounds, while urine often shows a higher concentration of food-derived compounds and is useful for acute markers [11]. Samples are immediately processed and stored at -80°C.

Phase 2: Metabolite Profiling & Data Generation

- Sample Preparation: Proteins are precipitated from plasma using cold organic solvents like methanol or acetonitrile. Urine samples may be diluted or subjected to solid-phase extraction.

- Instrumental Analysis: Prepared samples are analyzed using LC-MS in untargeted mode to capture a wide array of metabolites. For citrus studies, LC-MS is particularly suitable for detecting polar compounds like proline betaine [12].

- Quality Control: Pooled quality control (QC) samples are injected at regular intervals throughout the analytical sequence to monitor instrument stability and assure data quality.
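Pooled QC injections are typically used to drop features that the instrument cannot measure reproducibly. A minimal sketch follows. The 30% coefficient-of-variation cutoff is an assumption, a commonly used threshold in untargeted LC-MS, and is not a value from the cited studies. The feature names and intensities are hypothetical.

```python
from statistics import mean, stdev


def qc_cv_filter(qc_intensities, max_cv=0.30):
    """Keep features whose coefficient of variation across pooled QC injections is <= max_cv.

    qc_intensities maps feature name -> list of intensities from repeated QC injections.
    Returns a dict of retained feature -> CV.
    """
    kept = {}
    for feature, values in qc_intensities.items():
        m = mean(values)
        if m <= 0:
            continue  # feature not reliably detected in the QC pool
        cv = stdev(values) / m
        if cv <= max_cv:
            kept[feature] = cv
    return kept
```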

Phase 3: Data Processing & Statistical Analysis

- Data Preprocessing: Raw data are converted into a peak table containing metabolite features (mass/retention time pairs) and their intensities. This involves peak picking, alignment, and normalization to correct for technical variation [11].

- Statistical Analysis:

  - Unsupervised Methods: Principal Component Analysis (PCA) is used to visualize inherent data clustering and identify outliers.

  - Supervised Methods: Partial Least Squares-Discriminant Analysis (PLS-DA) is applied to maximize the separation between groups (e.g., post-consumption vs. baseline) and identify the most significant metabolite features driving this separation.
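To show what PCA computes in this step, the sketch below extracts only the first principal component. It uses power iteration on the feature covariance matrix and needs nothing beyond the Python standard library. It is a teaching sketch under simplifying assumptions: the features are already preprocessed and scaled, and the first component is dominant. In practice, a library implementation such as scikit-learn's `PCA` would be used. PLS-DA is not shown.

```python
import math


def first_principal_component(X, n_iter=500, tol=1e-12):
    """Return (loading vector, sample scores, explained-variance ratio) for PC1.

    X is a list of samples, each a list of feature values.
    """
    n, p = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(p)]
    Xc = [[row[j] - means[j] for j in range(p)] for row in X]  # mean-centre each feature
    cov = [[sum(r[a] * r[b] for r in Xc) / (n - 1) for b in range(p)] for a in range(p)]

    # Non-uniform start vector, normalized, to avoid trivial orthogonality to PC1.
    v = [float(j + 1) for j in range(p)]
    start_norm = math.sqrt(sum(x * x for x in v))
    v = [x / start_norm for x in v]

    for _ in range(n_iter):
        w = [sum(cov[a][b] * v[b] for b in range(p)) for a in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            raise ValueError("data have zero variance")
        w = [x / norm for x in w]
        converged = max(abs(x - y) for x, y in zip(w, v)) < tol
        v = w
        if converged:
            break

    scores = [sum(r[j] * v[j] for j in range(p)) for r in Xc]  # sample coordinates on PC1
    eigenvalue = sum(v[a] * sum(cov[a][b] * v[b] for b in range(p)) for a in range(p))
    total_variance = sum(cov[j][j] for j in range(p))
    return v, scores, eigenvalue / total_variance
```

Samples whose scores lie far from the rest on PC1 are candidate outliers. A real analysis would also inspect later components.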

Phase 4: Metabolite Identification & Validation

- Metabolite Identification: Significant features are identified by matching their accurate mass and fragmentation spectrum (MS/MS) against metabolomic databases such as the Human Metabolome Database (HMDB) or FooDB [9].

- Validation: The identity of a key biomarker like proline betaine is confirmed using a chemically synthesized standard analyzed with the same LC-MS method. Subsequent targeted quantitative assays are often developed for validated biomarkers.
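The accurate-mass step of identification can be sketched as two operations. First, a theoretical m/z is computed from a molecular formula. Second, candidates whose m/z lies within a ppm tolerance of the observed value are accepted. The two-entry reference table, the [M+H]+ adduct assumption, and the 5 ppm window are all illustrative assumptions. Real workflows query HMDB or FooDB and, as described above, still require MS/MS and authentic-standard confirmation.

```python
# Monoisotopic atomic masses (u) and proton mass used to build [M+H]+ ions.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.99491461956}
PROTON = 1.007276466812

# Hypothetical mini reference table: neutral molecular formulas.
REFERENCE = {
    "proline betaine": {"C": 7, "H": 13, "N": 1, "O": 2},
    "trigonelline": {"C": 7, "H": 7, "N": 1, "O": 2},
}


def monoisotopic_mass(formula: dict[str, int]) -> float:
    """Sum of monoisotopic atomic masses for a neutral formula."""
    return sum(MONOISOTOPIC[element] * count for element, count in formula.items())


def ppm_error(observed: float, theoretical: float) -> float:
    return (observed - theoretical) / theoretical * 1e6


def match_mz(observed_mz: float, tolerance_ppm: float = 5.0):
    """Candidate names (with ppm error) whose [M+H]+ m/z lies within tolerance."""
    hits = []
    for name, formula in REFERENCE.items():
        theoretical = monoisotopic_mass(formula) + PROTON  # assumes [M+H]+ adduct
        err = ppm_error(observed_mz, theoretical)
        if abs(err) <= tolerance_ppm:
            hits.append((name, round(err, 2)))
    return hits
```

A feature observed at m/z 144.1019 matches proline betaine's theoretical [M+H]+ of about 144.1019 within a fraction of a ppm. An MS1 match alone does not distinguish isomers, which is why fragmentation and standards remain necessary.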

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful execution of a nutritional metabolomics study requires a suite of specialized reagents, kits, and analytical platforms.

Table 2: Essential Research Reagents and Platforms for Nutritional Metabolomics

| Item / Solution | Function / Application | Example Use Case |
|---|---|---|
| AbsoluteIDQ p180 Kit (Biocrates) | Targeted metabolomics kit for simultaneous quantification of up to 188 metabolites (acylcarnitines, amino acids, lipids, etc.). | High-throughput phenotyping in cohort studies; validating discoveries from untargeted analyses [13]. |
| LC-MS/MS System | High-sensitivity platform for untargeted and targeted metabolite profiling and quantification. | Discovery of novel biomarkers and subsequent validation in large sample sets [12] [11]. |
| Volumetric Absorptive Microsampling (VAMS) devices (e.g., Mitra) | Standardized collection of small-volume blood samples from a finger-prick; samples are stable at ambient temperature. | Enabling scalable and remote sample collection for consumer-grade tests or large-scale field studies [10]. |
| Human Metabolome Database (HMDB) | Curated database of human metabolites; early releases held >6800 entries, and current releases contain far more. | Reference for metabolite identification based on mass and spectral matching [9] [11]. |
| FooDB | Comprehensive database of >70,000 food components and constituents. | Identifying potential food origins of metabolites discovered in biological samples [9]. |
| Stable Isotope-Labeled Standards | Internal standards (e.g., 13C- or 2H-labeled compounds) added to samples prior to analysis. | Correcting for matrix effects and losses during sample preparation, ensuring accurate quantification [11]. |

Metabolomics has fundamentally advanced our capacity to discover putative biomarkers of food intake, moving the field beyond reliance on error-prone self-reported data. The systematic application of controlled interventions, advanced mass spectrometry, and rigorous validation pathways has yielded a growing repository of biomarkers for major food groups. These biomarkers are already being applied to monitor compliance in dietary intervention trials and to calibrate self-reported intake in epidemiological studies [7] [8]. The future of this field lies in the continued validation of existing candidate biomarkers, the development of standardized, high-throughput analytical methods, and the integration of metabolomic data with other omics layers to power precision nutrition and deepen our understanding of the complex interplay between diet, metabolism, and human health.

Accurate assessment of dietary intake is paramount for understanding diet-disease relationships, yet traditional tools like food frequency questionnaires (FFQs) are susceptible to misreporting and measurement error [14]. Biomarkers of dietary intake offer a complementary, objective approach to characterize exposure to specific foods and nutrients. This technical guide provides an in-depth examination of biomarkers for plant-based foods, focusing on polyphenols, sulfurous compounds, and broader metabolite profiles, framed within the context of systematic reviews of dietary intake biomarker research. For researchers and drug development professionals, this whitepaper details the core biomarkers, their biological matrices, quantitative data, and associated methodologies required for their analysis in clinical and research settings.

Core Biomarker Classes and Quantitative Data

Biomarkers of plant-based food intake can be broadly categorized by their chemical nature and the food groups they represent. The following sections and tables summarize the primary biomarkers, their sources, and their detection levels in biological samples.

Polyphenols and Carotenoids as Biomarkers

Polyphenols are a diverse class of bioactive compounds abundantly found in plant-based foods such as fruits, vegetables, tea, coffee, and soy. They are frequently represented in urinary metabolite profiles [14].

- Isoflavones: Found predominantly in soy-based foods, these are among the most robust biomarkers for soy intake. Daidzein and genistein, and their metabolites (e.g., equol, O-desmethylangolensin), are commonly measured in urine.

- Flavanones: Hesperetin and naringenin are specific biomarkers for citrus fruit consumption.

- Enterolactone: This lignan is produced by the gut microbiota from precursors found in seeds (e.g., flaxseed), whole grains, and some vegetables, serving as a biomarker for high-fiber plant food intake.

- Total Carotenoids: Although they are not polyphenols, carotenoids (e.g., α-carotene, β-carotene, lutein, lycopene) measured in plasma are strong biomarkers for fruit and vegetable consumption.

Table 1: Key Polyphenol and Carotenoid Biomarkers for Plant-Based Foods

| Biomarker Class | Specific Biomarker(s) | Primary Food Sources | Biological Matrix | Relative Abundance in Vegetarian vs. Non-Vegetarian Diets* |
|---|---|---|---|---|
| Isoflavones | Daidzein, Genistein, Equol | Soybeans, Tofu, Soy Milk | Urine | 6-fold higher in Vegans [15] |
| Lignans | Enterolactone | Flaxseed, Whole Grains, Seeds | Urine | 4.4-fold higher in Vegans [15] |
| Carotenoids | α-Carotene, β-Carotene, Lutein | Fruits & Vegetables (e.g., carrots, leafy greens) | Plasma | 1.6-fold higher in Vegans [15] |
| Flavanones | Hesperetin, Naringenin | Citrus Fruits (oranges, grapefruit) | Urine | Associated with citrus fruit intake [14] |
| Polyphenols (General) | Various Hippuric Acids | Tea, Coffee, Fruits | Urine | Associated with tea/coffee and fruit intake [14] |

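*Fold differences (vegans vs. non-vegetarians) are from the Adventist Health Study-2 (AHS-2) cohort [15]; the flavanone and hippuric acid entries are qualitative associations from [14].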

Sulfurous Compounds and Other Food-Specific Biomarkers

Certain plant-based foods contain unique compounds that give rise to specific metabolites, allowing for precise identification of intake.

- Sulfurous Compounds: Cruciferous vegetables (e.g., broccoli, cabbage, kale) are rich in glucosinolates. Upon consumption, these are hydrolyzed to isothiocyanates (e.g., sulforaphane) and other metabolites, such as mercapturic acids, which are detectable in urine and serve as highly specific biomarkers [14].

- Alkylresorcinols: These phenolic lipids are found almost exclusively in the bran layer of whole-grain wheat and rye, making them excellent biomarkers for whole-grain cereal intake.

- Fatty Acid Profiles: Adipose tissue and plasma fatty acid composition can reflect dietary patterns. Vegans and vegetarians show distinct profiles, including higher levels of linoleic acid (18:2ω-6) and total ω-3 fatty acids (primarily α-linolenic acid, ALA) compared to non-vegetarians [15].

Table 2: Other Specific Biomarkers and Fatty Acid Profiles

| Biomarker/Fatty Acid | Food Source | Biological Matrix | Key Findings |
|---|---|---|---|
| Isothiocyanates | Cruciferous Vegetables | Urine | Specific sulfur-containing biomarkers for broccoli, cabbage, etc. [14] |
| Alkylresorcinols | Whole Grains (wheat, rye) | Plasma, Urine | Correlate with whole-grain cereal intake [14] |
| 1-Methylhistidine | Meat (Muscle protein) | Urine | 92% lower in vegans, validating low meat intake [15] |
| Linoleic Acid (18:2ω-6) | Plant Oils, Nuts, Seeds | Adipose Tissue, Plasma | 23.3% in Vegans vs. 19.1% in Non-Vegetarians [15] |
| Total ω-3 Fatty Acids | Flaxseed, Walnuts, Chia Seeds | Adipose Tissue, Plasma | 2.1% in Vegans vs. 1.6% in Non-Vegetarians [15] |
| Saturated Fatty Acids | Animal Fats, Dairy | Adipose Tissue, Plasma | Significantly lower relative abundance in vegans [15] |

Experimental Protocols for Biomarker Analysis

Robust methodologies are critical for the accurate identification and quantification of dietary biomarkers. The following protocols outline standardized approaches for sample collection, processing, and analysis.

Protocol 1: Urinary Metabolite Profiling for Polyphenols and Sulfurous Compounds

This protocol is adapted from methodologies described in systematic reviews and cohort studies [14] [15].

- 1. Sample Collection: Collect spot urine samples or, preferably, 24-hour urine collections. Stabilize samples with an antioxidant (e.g., ascorbic acid) and acidify if necessary. Store immediately at -80°C.

- 2. Sample Preparation:

- Thaw samples on ice and vortex.

- Aliquot 500 µL of urine into a microcentrifuge tube.

- Add an internal standard (e.g., daidzein-d4 for polyphenols).

- Enzymatic deconjugation: Incubate with β-glucuronidase/sulfatase (e.g., from Helix pomatia) in a buffered solution (e.g., sodium acetate buffer, pH 5.0) for 2-4 hours at 37°C.

- Perform solid-phase extraction (SPE) using C18 or mixed-mode cartridges. Elute analytes with methanol.

- Evaporate eluent to dryness under a gentle stream of nitrogen and reconstitute in mobile phase (e.g., water/methanol) for LC-MS analysis.

- 3. Instrumental Analysis - LC-MS/MS:

- Chromatography: Use a reverse-phase C18 column (e.g., 2.1 x 100 mm, 1.8 µm) maintained at 40°C. The mobile phase consists of (A) 0.1% formic acid in water and (B) 0.1% formic acid in acetonitrile. Apply a gradient elution from 5% B to 95% B over 10-15 minutes.

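- Mass Spectrometry: Operate an electrospray ionization (ESI) source in negative and/or positive mode. Use multiple reaction monitoring (MRM) for sensitive and specific quantification. Example transitions include daidzein (m/z 253→132, [M-H]⁻, negative mode), enterolactone (m/z 297→107, [M-H]⁻, negative mode), and free sulforaphane (m/z 178→114, [M+H]⁺, positive mode). Conjugated sulforaphane metabolites such as the mercapturic acid have a higher precursor mass and require their own transitions.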

- 4. Data Analysis: Quantify metabolites using calibration curves of authentic standards. Normalize data to creatinine concentration to account for urine dilution.
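Step 4 can be illustrated with a minimal Python sketch. It assumes a linear, unweighted calibration of the analyte-to-internal-standard peak-area ratio (for example, daidzein against daidzein-d4) and creatinine reported in mmol/L. The class and function names and all numbers are hypothetical. A validated method would also need to check linearity, weighting and limits of quantification.

```python
"""Sketch: internal-standard calibration plus creatinine normalization.

Assumptions (not from the protocol): a linear, unweighted calibration of the
analyte/internal-standard area ratio, and creatinine reported in mmol/L.
"""
from __future__ import annotations

from dataclasses import dataclass


def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


@dataclass
class Calibration:
    slope: float
    intercept: float

    @classmethod
    def from_standards(cls, conc, analyte_area, is_area) -> "Calibration":
        # Ratio to the isotope-labeled internal standard compensates for
        # extraction losses and ion suppression in the urine matrix.
        ratios = [a / s for a, s in zip(analyte_area, is_area)]
        return cls(*fit_line(conc, ratios))

    def concentration(self, analyte_area: float, is_area: float) -> float:
        """Back-calculate a sample concentration from its area ratio."""
        return (analyte_area / is_area - self.intercept) / self.slope


def per_creatinine(conc_nmol_l: float, creatinine_mmol_l: float) -> float:
    """Express a urinary concentration per mmol creatinine (nmol/mmol)."""
    return conc_nmol_l / creatinine_mmol_l


if __name__ == "__main__":
    cal = Calibration.from_standards(
        conc=[0, 50, 100, 200, 400],               # hypothetical standards, nmol/L
        analyte_area=[0, 1000, 2000, 4000, 8000],
        is_area=[10000] * 5,                       # constant internal-standard spike
    )
    daidzein = cal.concentration(analyte_area=3000, is_area=10000)  # about 150 nmol/L
    print(per_creatinine(daidzein, creatinine_mmol_l=10.0))          # about 15 nmol/mmol
```

Dividing by creatinine removes most of the spot-to-spot variation caused by urine dilution. With complete 24-hour collections, total daily excretion (concentration × volume) may be the more natural unit.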

Protocol 2: Analysis of Plasma Carotenoids and Adipose Tissue Fatty Acids

This protocol is based on lipid profiling methods used in large cohort studies like AHS-2 [15].

- 1. Sample Collection:

- Plasma: Collect fasting blood samples in EDTA tubes. Centrifuge to isolate plasma and store at -80°C, protected from light.

- Adipose Tissue: Obtain subcutaneous adipose tissue biopsies via a standardized procedure (e.g., from the buttock or abdomen). Snap-freeze in liquid nitrogen and store at -80°C.

- 2. Sample Preparation - Carotenoids (Plasma):

- Thaw plasma samples on ice in a dark environment.

- Aliquot 200 µL of plasma and add an internal standard (e.g., tocopheryl acetate).

- Precipitate proteins with ethanol containing butylated hydroxytoluene (BHT) as an antioxidant.

- Extract carotenoids (and other lipophilic compounds) with hexane.

- Evaporate the hexane layer and reconstitute in a suitable solvent (e.g., ethanol:dichloromethane, 50:50) for HPLC analysis.

- 3. Sample Preparation - Fatty Acids (Adipose Tissue):

- Weigh ~10-50 mg of adipose tissue.

- Extract total lipids using a chloroform:methanol mixture (e.g., 2:1 v/v) via the Folch method.

- Transesterify the extracted lipids to fatty acid methyl esters (FAMEs) using methanolic boron trifluoride (BF3) or acid-catalyzed methylation.

- Extract FAMEs with hexane for GC analysis.

- 4. Instrumental Analysis:

- HPLC for Carotenoids: Use a C30 carotenoid column with a gradient mobile phase of methanol, methyl-tert-butyl ether (MTBE), and water. Detect with a photodiode array (PDA) detector at the absorption maxima of the target carotenoids (e.g., 450 nm for β-carotene and lutein).

- GC-FID/MS for FAMEs: Use a high-polarity capillary GC column (e.g., CP-Sil 88, 100 m x 0.25 mm) with a temperature gradient program. Identify FAMEs by comparing retention times (and, with MS detection, mass spectra) against those of authentic FAME standards, and quantify them with a flame ionization detector (FID) or mass spectrometer (MS).
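Fatty acid results such as those in Table 2 are expressed as percentages, so the final GC step amounts to normalizing peak areas to their total. The sketch below is illustrative only. It assumes integrated FID peak areas keyed by fatty acid shorthand and optional response-correction factors that default to 1.0. The area values are invented.

```python
"""Sketch: GC-FID peak areas to % of total fatty acids.

Assumptions: integrated peak areas keyed by fatty acid shorthand, and optional
FID response-correction factors (default 1.0) derived from a FAME reference mix.
"""
from __future__ import annotations


def relative_percent(
    peak_areas: dict[str, float],
    response_factors: dict[str, float] | None = None,
) -> dict[str, float]:
    """Return each fatty acid as a percentage of total corrected FAME area."""
    rf = response_factors or {}
    corrected = {fa: area * rf.get(fa, 1.0) for fa, area in peak_areas.items()}
    total = sum(corrected.values())
    if total <= 0:
        raise ValueError("total peak area must be positive")
    return {fa: 100.0 * area / total for fa, area in corrected.items()}


if __name__ == "__main__":
    # Hypothetical adipose-tissue chromatogram (arbitrary area units).
    areas = {"16:0": 2200.0, "18:0": 400.0, "18:1n-9": 4500.0,
             "18:2n-6": 2300.0, "18:3n-3": 200.0, "other": 400.0}
    pct = relative_percent(areas)
    print(f"18:2n-6 = {pct['18:2n-6']:.1f}% of total fatty acids")  # 23.0%
```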

Biomarker Discovery and Validation Workflow

The process of identifying and validating a dietary biomarker follows a structured pipeline from discovery to application. The diagram below illustrates this multi-stage workflow.

Biomarker Discovery and Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials, reagents, and instruments required for conducting research on biomarkers of plant-based food intake.

Table 3: Essential Research Reagents and Materials for Dietary Biomarker Analysis

| Item | Function/Application | Example Specifications |
|---|---|---|
| β-Glucuronidase/Sulfatase | Enzymatic deconjugation of phase II metabolites (glucuronides, sulfates) in urine to free aglycones for analysis. | From Helix pomatia; ≥100,000 units/mL; in sodium acetate buffer. |
| Solid-Phase Extraction (SPE) Cartridges | Clean-up and concentration of analytes from complex biological matrices like urine and plasma. | Reverse-phase C18 (e.g., 60 mg/3 mL); Mixed-mode (C18/SCX). |
| LC-MS/MS Grade Solvents | Mobile phase preparation for liquid chromatography to ensure high sensitivity and minimal background noise. | Acetonitrile, Methanol, Water (with 0.1% Formic Acid). |
| Authentic Chemical Standards | Identification and quantification of target biomarkers by creating calibration curves. | Daidzein (≥98%), Genistein (≥98%), Enterolactone (≥98%), Sulforaphane (≥95%). |
| Stable Isotope-Labeled Internal Standards | Correction for analyte loss during sample preparation and matrix effects in mass spectrometry. | Daidzein-d4, Genistein-d4, ¹³C-Enterolactone. |
| FAME Mix Reference Standard | Identification and quantification of individual fatty acids in gas chromatography. | 37-component FAME mix (e.g., from Supelco), suitable for CP-Sil 88 columns. |
| UPLC/HPLC System with PDA Detector | High-resolution separation and UV/Vis detection of compounds like carotenoids and polyphenols. | Acquity UPLC H-Class (Waters) or equivalent; C18 or C30 analytical columns. |
| Triple Quadrupole Mass Spectrometer | Sensitive and specific detection and quantification of biomarkers using Multiple Reaction Monitoring (MRM). | API 4000 (Sciex) or Xevo TQ-S (Waters) coupled with an ESI source. |
| Gas Chromatograph with FID/MS | Separation, identification, and quantification of volatile compounds, particularly fatty acid methyl esters (FAMEs). | Agilent 8890 GC System with a CP-Sil 88 column and FID/MS detector. |

Biomarkers such as polyphenols, sulfurous compounds, and specific metabolite profiles provide an objective and powerful means to assess intake of plant-based foods, overcoming limitations inherent in self-reported dietary data. The quantitative data and detailed methodologies presented in this whitepaper provide a foundation for researchers to robustly measure these biomarkers. Their application in systematic reviews and large-scale studies is crucial for validating dietary patterns, understanding diet-disease relationships, and advancing the field of precision nutrition. Future research should focus on the discovery of novel biomarkers, particularly for under-represented plant foods, and the standardization of methods to enable comparability across studies.

Accurate dietary assessment is fundamental to understanding diet-disease relationships, yet traditional reliance on self-reported data from tools like food frequency questionnaires (FFQs) and 24-hour recalls introduces significant measurement error, misreporting bias, and misclassification [1]. Objective dietary biomarkers, measurable biological indicators of food intake, provide a powerful alternative for quantifying exposure to specific foods, nutrients, and dietary patterns, thereby strengthening the scientific rigor of nutritional epidemiology and precision nutrition research [16] [2].

This technical guide synthesizes current evidence on biomarkers for two major food categories: animal-based foods and ultra-processed foods (UPFs). The rapid global rise in UPF consumption, now exceeding 50% of energy intake in countries like the USA and UK, and ongoing debates regarding the health impacts of animal versus plant-based proteins underscore the urgent need for objective measurement tools [17] [18]. We focus on metabolomic approaches, which comprehensively measure small-molecule metabolites in biofluids like blood and urine, offering a detailed snapshot of dietary exposure and metabolic response [19] [20]. This review is structured to provide researchers with a clear overview of validated and candidate biomarkers, detailed experimental methodologies, and critical research gaps to inform future studies.

Biomarkers for Animal-Based Foods

Current Evidence and Candidate Biomarkers

Biomarkers for animal-based foods often arise from their unique nutrient profile, including specific proteins, saturated fats, and micronutrients not readily available from plant-based sources. The following table summarizes key candidate biomarkers and their detection in biological samples.

Table 1: Candidate Biomarkers for Animal-Based Foods

| Food Category | Candidate Biomarker(s) | Biological Sample | Key Characteristics/Notes |
|---|---|---|---|
| General Animal Protein | Urinary Nitrogen [1] | Urine | A long-established recovery biomarker for total protein intake; it reflects protein from all sources, not animal protein specifically. |
| Meat | Specific metabolites from sulfurous compounds, creatine, creatinine [1] | Urine | Potential to distinguish between red meat, poultry, and processed meat varieties. |
| Dairy | Galactose derivatives, odd-chain saturated fatty acids (e.g., 15:0, 17:0) [1] | Urine, Blood | Odd-chain fatty acids are considered robust biomarkers for dairy fat intake. |
| Fish & Seafood | Omega-3 Fatty Acids (DHA, EPA) [21] | Blood (serum/plasma) | Highly specific for fatty fish intake; DHA is critical for brain health. |

The geometric framework for nutrition (GFN) analysis of global data suggests that the health associations of animal-based protein (ABP) are complex and age-dependent. Ecological studies indicate that higher ABP supplies at a national level are associated with improved early-life survivorship (measured as proportion of a cohort alive at age 5), while later-life survival (proportion alive at age 60) benefits more from plant-based protein (PBP) supplies [18]. This highlights the context-dependent nature of dietary exposure and the need for biomarkers to move beyond mere intake quantification to understanding metabolic health impacts.

Research Gaps

Substantial gaps remain in the biomarker research for animal-based foods. A primary challenge is the lack of specificity; many current biomarkers indicate intake of a broad category (e.g., "meat") but cannot reliably distinguish between specific types such as unprocessed red meat, poultry, or processed meats [1]. Furthermore, the interaction between diet and an individual's unique physiology—including genetics, gut microbiome composition, and baseline health—creates significant inter-individual variability in metabolic response that current biomarkers do not fully capture [16]. Validated biomarkers for specific animal-based foods, like different types of meat and dairy products, remain limited and are a priority for the developing field of precision nutrition [2].

Biomarkers for Ultra-Processed Foods (UPFs)

The Poly-Metabolite Score: A Novel Approach

A significant recent advancement is the development of a poly-metabolite score for UPF intake. In a landmark study, NIH researchers used metabolomic data from both an observational study (n=718) and a controlled feeding trial (n=20) to identify hundreds of metabolites in blood and urine that correlated with the percentage of energy derived from UPFs [19] [22]. Using machine learning, they distilled these metabolites into predictive patterns, creating a composite poly-metabolite score that could accurately differentiate between high-UPF (80% of energy) and zero-UPF diets in the feeding trial [19]. This objective tool has the potential to reduce reliance on self-reported data in large population studies.
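The description above says only that machine learning distilled many metabolites into a composite score. One common way to build such a score is L1-penalized (LASSO) linear regression, which shrinks most metabolite weights to exactly zero and leaves a sparse, weighted panel. That choice is an assumption here, not a description of the NIH analysis. The sketch fits LASSO by cyclic coordinate descent in NumPy on simulated data. The function names, the penalty value and the simulated effect sizes are all hypothetical.

```python
"""Sketch of a poly-metabolite score as a sparse linear model.

Assumption: LASSO (L1-penalized least squares) fitted by coordinate descent;
the NIH study's exact algorithm and settings are not reproduced here.
"""
import numpy as np


def standardize(X: np.ndarray):
    """Center and scale each metabolite column to mean 0, variance 1."""
    mu, sd = X.mean(axis=0), X.std(axis=0)
    return (X - mu) / sd, mu, sd


def lasso_cd(X: np.ndarray, y: np.ndarray, lam: float, n_iter: int = 200) -> np.ndarray:
    """Minimize (1/2n)||y - Xb||^2 + lam * ||b||_1 by cyclic coordinate descent.

    Expects standardized columns in X and a centered outcome y.
    """
    n, p = X.shape
    b = np.zeros(p)
    r = y.astype(float).copy()            # current residual y - Xb
    for _ in range(n_iter):
        for j in range(p):
            r += X[:, j] * b[j]           # partial residual without metabolite j
            rho = X[:, j] @ r / n
            b[j] = np.sign(rho) * max(abs(rho) - lam, 0.0)  # soft-thresholding
            r -= X[:, j] * b[j]
    return b


def poly_metabolite_score(X_new, mu, sd, weights, intercept):
    """Predicted % energy from UPF for new samples using the fitted weights."""
    return intercept + ((X_new - mu) / sd) @ weights


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(200, 10))        # 10 simulated metabolites, 200 people
    upf = 50 + 8 * X[:, 0] - 5 * X[:, 1] + rng.normal(scale=2, size=200)
    Xs, mu, sd = standardize(X)
    w = lasso_cd(Xs, upf - upf.mean(), lam=1.0)
    print("retained metabolites:", np.flatnonzero(w))
    score = poly_metabolite_score(X, mu, sd, w, upf.mean())
```

The L1 penalty is what turns hundreds of correlated metabolites into a compact panel. A larger `lam` keeps fewer metabolites, and in practice it would typically be chosen by cross-validation rather than fixed by hand.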

Categorization of UPF Biomarkers

The identified metabolites associated with UPF intake can be categorized into several chemical classes, which may reflect both the composition of UPFs and the body's biological response to them. The diagram below illustrates the workflow for biomarker discovery and the major classes of UPF-associated metabolites.

Figure 1: UPF Biomarker Discovery Workflow and Metabolite Classes. The poly-metabolite score was developed by integrating data from controlled and observational studies, followed by machine learning analysis that identified key classes of discriminatory metabolites [19] [20] [22].

These metabolite classes provide insights into potential biological mechanisms. For instance, xenobiotics may directly reflect exposure to additives like artificial sweeteners, colors, and emulsifiers used in UPF manufacturing [20]. Shifts in lipids and amino acids could indicate broader metabolic disturbances, such as alterations in energy metabolism or inflammation, linked to high UPF consumption [19] [17].

Health Context and Validation

The drive to develop UPF biomarkers is underscored by robust evidence linking their consumption to adverse health outcomes. A systematic review of 104 long-term studies found that 92 showed higher risks for at least one chronic disease, with meta-analyses identifying significant associations with 12 health conditions, including obesity, type 2 diabetes, cardiovascular disease, and depression [17]. A recent 8-week randomized controlled crossover feeding trial (n=55) provided direct experimental evidence: even when both diets were matched to national dietary guidelines, an ad libitum UPF diet resulted in significantly less weight loss and a smaller reduction in fat mass than a minimally processed food (MPF) diet [23]. This trial also found differential effects on cardiometabolic risk factors, such as triglycerides, which decreased more on the MPF diet [23].

Methodological Framework for Biomarker Discovery

The discovery and validation of dietary biomarkers require a rigorous, multi-phase approach, as championed by initiatives like the Dietary Biomarkers Development Consortium (DBDC) [2].

Experimental Designs and Protocols

A combination of study designs is essential for robust biomarker development.

- Controlled Feeding Trials: These are the gold standard for discovery. The DBDC employs designs where test foods are administered in prespecified amounts to healthy participants. This allows for characterizing the pharmacokinetic profile of candidate biomarkers, including their appearance, peak concentration, and clearance in blood and urine [2]. The NIH feeding study that informed the UPF poly-metabolite score is a prime example, where participants consumed 0% and 80% UPF diets in a randomized crossover design [19] [22].

- Observational Studies: Large cohorts with stored biospecimens and detailed dietary records are used to identify metabolite-diet associations in free-living populations and to validate findings from controlled studies. The IDATA study, which provided data for 718 participants, served this purpose for the UPF biomarker research [19] [22].

- Analytical Techniques: Metabolomics, primarily using liquid chromatography-mass spectrometry (LC-MS), is the dominant technology for high-throughput profiling of the hundreds to thousands of small molecules in biospecimens [2]. Subsequent bioinformatics and machine learning are critical for parsing these complex datasets to identify discriminatory metabolite patterns.
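The appearance, peak and clearance characteristics measured in controlled feeding trials are commonly summarized as Cmax, Tmax, area under the curve (AUC) and elimination half-life. The following sketch computes these non-compartmentally from one participant's time course. Several choices here are assumptions for illustration, not the DBDC's documented analysis: the linear trapezoidal rule, treating the last three samples as the log-linear terminal phase, and the example concentrations.

```python
"""Sketch: non-compartmental summaries for one participant's biomarker
time course after a test food. Times and concentrations are hypothetical."""
from __future__ import annotations

import math


def pk_summary(times_h: list[float], conc: list[float],
               n_terminal: int = 3) -> dict[str, float]:
    """Return Cmax, Tmax, AUC(0-tlast) and terminal elimination half-life.

    AUC uses the linear trapezoidal rule. The half-life comes from a
    least-squares fit of ln(concentration) against time over the last
    `n_terminal` samples, assumed to lie in the log-linear elimination phase.
    """
    if n_terminal < 2 or len(times_h) != len(conc) or len(conc) < n_terminal:
        raise ValueError("need matched times/concentrations and >= 2 terminal points")
    i_max = max(range(len(conc)), key=conc.__getitem__)
    auc = sum((t1 - t0) * (c0 + c1) / 2
              for t0, t1, c0, c1 in zip(times_h, times_h[1:], conc, conc[1:]))
    t = times_h[-n_terminal:]
    y = [math.log(c) for c in conc[-n_terminal:]]
    mt, my = sum(t) / len(t), sum(y) / len(y)
    slope = (sum((a - mt) * (b - my) for a, b in zip(t, y))
             / sum((a - mt) ** 2 for a in t))
    if slope >= 0:
        raise ValueError("terminal phase is not declining")
    return {"cmax": conc[i_max], "tmax_h": times_h[i_max],
            "auc_0_tlast": auc, "half_life_h": math.log(2) / -slope}


if __name__ == "__main__":
    times = [0, 1, 2, 4, 8, 12]                  # hours after the test meal
    plasma = [0.0, 40.0, 80.0, 40.0, 10.0, 2.5]  # hypothetical nmol/L
    print(pk_summary(times, plasma))
```

A short half-life suggests the marker reflects only the last day or so of intake. A longer one suggests it integrates habitual consumption, which bears directly on how it should be used in observational studies.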

The Scientist's Toolkit: Key Research Reagents and Materials

Table 2: Essential Research Materials for Dietary Biomarker Studies

| Item/Category | Function in Research |
|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) | The core analytical platform for untargeted and targeted metabolomic profiling of blood (plasma/serum) and urine samples [2]. |
| Stable Isotope-Labeled Standards | Used in mass spectrometry for absolute quantification of specific candidate biomarkers, correcting for analytical variation. |
| Controlled Test Foods/Meals | Precisely formulated foods administered in feeding trials to establish a direct, dose-response link between intake and biomarker levels [2]. |
| Biospecimen Repositories | Collections of well-annotated blood and urine samples from large observational cohorts and clinical trials, essential for validation [19] [2]. |
| Bioinformatics Pipelines | Software and statistical packages for processing raw metabolomic data, performing feature identification, and applying machine learning algorithms [19]. |

The following diagram outlines the key stages of the multi-phase validation pathway for dietary biomarkers.

Figure 2: The Dietary Biomarker Validation Pathway. This multi-stage framework, as implemented by consortia like the DBDC, ensures biomarkers are rigorously tested from initial discovery to real-world application [2].

The field of dietary biomarkers is advancing rapidly, moving beyond single nutrients to embrace complex dietary patterns and food processing levels. The development of a poly-metabolite score for UPFs represents a paradigm shift, demonstrating the power of machine learning applied to metabolomic data to create objective measures of complex exposures [19] [22]. For animal-based foods, the challenge remains to develop more specific biomarkers that can distinguish between food subtypes and account for inter-individual metabolic variability.

Critical gaps and future directions include:

- Specificity for Animal-Based Subtypes: A pressing need exists for biomarkers that can differentiate between processed and unprocessed meats, lean and fatty cuts, and varied farming practices [1] [16].

- Mechanistic Insights: Future research should focus on linking dietary biomarkers not only to intake but also to underlying physiological mechanisms and health outcomes, such as inflammation, metabolic dysregulation, and gut health [20] [16].

- Standardization and Accessibility: Widespread adoption of biomarkers requires standardized analytical protocols, shared databases, and the validation of accessible biofluids like urine to reduce the burden of collection [1] [2].

- Integration with Other 'Omics': A precision nutrition future demands the integration of dietary biomarkers with genomic, proteomic, and microbiome data to fully understand individual responses to diet [16].

As global dietary patterns continue to evolve, with UPF consumption rising and the debate over protein sources intensifying, the role of objective biomarkers becomes ever more critical. They are indispensable tools for refining dietary guidance, informing public health policy, and ultimately advancing the goal of precision nutrition to improve human health.

Accurate assessment of dietary intake is a fundamental challenge in nutritional epidemiology. Traditional tools, such as food frequency questionnaires (FFQs) and 24-hour recalls, are susceptible to measurement error and misreporting bias, which can compromise the validity of diet-disease relationship studies. [1] The field is increasingly turning to objective biochemical measures—dietary biomarkers—to complement and enhance self-reported data. These biomarkers, measurable in biological samples like blood or urine, provide a more reliable indicator of food intake by reflecting the actual physiological exposure to food-derived compounds. [1]

This whitepaper provides a technical guide to food group-specific biomarkers, focusing on four key groups: citrus fruits, cruciferous vegetables, whole grains, and soy. Framed within the context of a broader thesis on systematic reviews of dietary intake biomarkers, this document is intended for researchers, scientists, and drug development professionals. It synthesizes current evidence, presents quantitative data in structured tables, details experimental protocols, and visualizes key concepts to support advanced research in precision nutrition.

Biomarker Fundamentals and Classification

Dietary biomarkers are generally classified into two groups: exposure or recovery biomarkers, which are directly related to dietary intake, and concentration biomarkers, which can also be influenced by individual characteristics such as genetics and health status. [1] Urinary biomarkers are particularly attractive for large-scale studies because sample collection is non-invasive. [1] The utility of a biomarker is determined by its specificity to a food or food group, the dose-response relationship with intake, and its kinetic profile in the body.

For plant-based foods, biomarkers are often represented by specific phytochemicals or their metabolites. For instance, polyphenols are common markers for fruits, while sulfurous compounds distinguish cruciferous vegetables. [1] The following sections delve into the specific biomarkers for each food group, their validation, and their application in research.

Food Group Specific Biomarkers

Citrus Fruits

Primary Biomarkers and Health Context

Citrus fruit intake is commonly assessed through urinary flavanone metabolites, specifically naringenin and hesperetin. [1] A systematic review of urinary biomarkers categorized citrus fruits among the plant-based foods effectively represented by their unique polyphenol profiles. [1] Furthermore, higher fruit intake and associated biomarkers, such as serum vitamin C, have been linked to improved health outcomes, including a lower risk of all-cause mortality among cancer survivors. [24]

Quantitative Data on Citrus Fruit Biomarkers

Table 1: Biomarkers Associated with Citrus Fruit Intake

| Biomarker Name | Biological Matrix | Associated Health Outcome | Key Findings |
|---|---|---|---|
| Flavanone Metabolites (Naringenin, Hesperetin) | Urine [1] | Not Specified | Identified as key biomarkers for characterizing citrus fruit intake. [1] |
| Serum Vitamin C | Blood/Serum [24] | All-cause and Cancer-specific Mortality | Inversely associated with all-cause mortality (HR=0.73) and cancer-specific mortality (HR=0.55) in cancer survivors. [24] |
| Composite Biomarker Score (incl. Vitamin C, Carotenoids) | Blood/Serum [24] | All-cause Mortality | Inversely associated with all-cause mortality (HR=0.73) in cancer survivors. [24] |

Cruciferous Vegetables

Primary Biomarkers and Health Context

Cruciferous vegetables (CV) such as broccoli, cabbage, and Brussels sprouts are characterized by their high content of glucosinolates. Upon plant cell disruption, glucosinolates are hydrolyzed by the enzyme myrosinase into bioactive isothiocyanates. [25] These isothiocyanates and their metabolites serve as specific biomarkers for CV intake. [1] A recent meta-analysis of 17 studies confirmed a significant inverse association between CV consumption and the risk of colon cancer (OR=0.80). [25]

Quantitative Data on Cruciferous Vegetable Biomarkers

Table 2: Biomarkers and Health Associations for Cruciferous Vegetables

| Biomarker/Food | Biological Matrix | Associated Health Outcome | Key Findings |
|---|---|---|---|
| Isothiocyanates & Metabolites | Urine [1] | Not Specified | Serve as specific biomarkers for cruciferous vegetable intake. [1] |
| Cruciferous Vegetables (Dietary Intake) | N/A | Colon Cancer Risk | Pooled analysis shows inverse association with colon cancer risk (OR=0.80; 95% CI: 0.72-0.90). [25] |
| Cruciferous Vegetables (Dose-Response) | N/A | Colon Cancer Risk | Non-linear dose-response analysis shows progressive risk decrease with higher consumption levels. [25] |

Whole Grains

Primary Biomarkers and Health Context

Whole grain (WG) intake can be objectively measured using plasma alkylresorcinols, which are phenolic lipids almost exclusively found in the bran layer of wheat and rye. [26] A prospective cohort study demonstrated that higher plasma alkylresorcinol concentrations were inversely associated with weight gain in adulthood, providing objective biomarker evidence supporting the role of whole grains in weight management. [26] An umbrella review further confirmed that WG consumption improves key aspects of metabolic health, including glycemic control and lipid metabolism. [27]

Quantitative Data on Whole Grain Biomarkers

Table 3: Biomarkers and Health Associations for Whole Grains

| Biomarker/Food | Biological Matrix | Associated Health Outcome | Key Findings |
|---|---|---|---|
| Alkylresorcinols | Plasma [26] | Weight Change | Inversely associated with weight gain over 20 years (-0.004 kg per nmol/L; 95% CI: -0.007, -0.002). [26] |
| Whole Grain (Dietary Intake) | N/A | Weight Change | Borderline inverse association with weight gain (-0.013 kg per g whole grain/day; 95% CI: -0.026, 0.000); the upper confidence limit reaches zero. [26] |
| Whole Grain (Dietary Intake) | N/A | Metabolic Health | Umbrella review confirms benefits for diabetes management, hyperlipidemia, and inflammation. [27] |
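Per-unit coefficients such as the alkylresorcinol estimate in the table above are easier to read when scaled to a concrete contrast. The snippet below multiplies the reported coefficient and its confidence limits by a hypothetical 100 nmol/L difference in plasma alkylresorcinols. It assumes the association is linear over that range and is meant as an interpretive aid, not a result from the cited study.

```python
# Worked example (assumes the association is linear across the contrast).
beta = -0.004               # kg weight change per nmol/L plasma alkylresorcinols
ci = (-0.007, -0.002)       # reported 95% CI for that coefficient
delta_ar = 100.0            # hypothetical contrast in plasma alkylresorcinols, nmol/L

diff = beta * delta_ar
diff_ci = tuple(b * delta_ar for b in ci)
print(f"{diff:.1f} kg over 20 years (95% CI {diff_ci[0]:.1f} to {diff_ci[1]:.1f})")
# prints: -0.4 kg over 20 years (95% CI -0.7 to -0.2)
```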

Soy

Primary Biomarkers and Health Context

Soy isoflavones, such as daidzein and genistein, are well-established biomarkers for soy food intake. Their levels in urine, plasma, or serum are positively correlated with soy consumption across different populations. [28] [1] The development of sophisticated detection methods, such as packed-nanofiber solid-phase extraction combined with ultraviolet spectrophotometry, has improved the accuracy of quantifying these biomarkers in complex matrices like urine. [28] Prospective studies have linked higher intake of specific soy foods, such as natto (fermented soybeans), and their components, like vitamin K, with a reduced risk of atrial fibrillation in women. [29]

Quantitative Data on Soy Biomarkers

Table 4: Biomarkers and Health Associations for Soy

| Biomarker/Food | Biological Matrix | Associated Health Outcome | Key Findings |
|---|---|---|---|
| Soy Isoflavones (Daidzein, Genistein) | Urine, Plasma, Serum [28] [1] | Not Specified | Positively correlated with soy intake; used as objective biomarkers. [28] |
| Natto (Fermented Soy) | N/A | Atrial Fibrillation (AF) Risk | In women, highest intake tertile associated with decreased AF risk (HR=0.44; 95% CI: 0.24–0.80). [29] |
| Vitamin K (from Soy) | N/A | Atrial Fibrillation (AF) Risk | In women, highest intake tertile associated with decreased AF risk (HR=0.67; 95% CI: 0.48–0.94). [29] |

Detailed Experimental Protocols

Protocol for Soy Isoflavone Detection in Urine

This protocol outlines a modern method using packed-fiber solid-phase extraction (PFSPE) for sample pretreatment, followed by analysis with an ultraviolet (UV) spectrophotometer. [28]

1. Materials and Reagents

- Chemicals: Soybean isoflavone standard (purity ≥98%), methanol, acetonitrile (chromatographic grade), hydrochloric acid, sodium chloride, tetrahydrofuran (THF), N,N-dimethylformamide (DMF), polystyrene (PS, Mw = 192,000 g/mol).

- Equipment: High-voltage DC power supply, syringe pump, scanning electron microscope, UV-visible spectrophotometer, pH meter.

- SPE Columns: Homemade packed-nanofiber solid-phase extraction columns.

2. Preparation of Electrospun Nanofiber Sorbent

- Polymer Solution Preparation: Dissolve 1 g of polystyrene (PS) in a mixture of 6 mL THF and 4 mL DMF (6:4, v/v). Stir at 20°C for 12 hours to obtain a uniform 10% (w/v) polymer solution.

- Electrospinning: Load the PS solution into a 10 mL syringe equipped with a 23-gauge stainless steel needle. Apply a high voltage (specific kV to be optimized) with the needle as the positive terminal and an aluminum foil collector as the negative terminal. The flow rate and distance between the needle and collector are controlled to produce consistent nanofibers.

- Fiber Characterization: Analyze the morphology of the electrospun PS nanofibers using scanning electron microscopy (SEM) to ensure a high surface area and porous structure.

3. Sample Pretreatment with PFSPE

- Column Packing: Pack the prepared electrospun nanofibers into a solid-phase extraction cartridge.

- Conditioning: Condition the PFSPE column with a suitable organic solvent (e.g., methanol) followed by an aqueous buffer.

- Sample Loading: Acidify the urine sample and load it onto the conditioned PFSPE column.

- Washing: Remove interfering impurities from the urine matrix (e.g., urea, salts) by washing with a suitable solvent.

- Elution: Elute the purified and concentrated soybean isoflavones from the PFSPE column using an organic solvent like methanol or acetonitrile.

4. Instrumental Analysis

- UV Spectrophotometry: Quantitatively analyze the eluted soybean isoflavones using a UV-visible spectrophotometer. Isoflavones share a 3-phenylchromen-4-one (3-phenyl-1,4-benzopyrone) skeleton and absorb strongly in the ultraviolet at characteristic wavelengths.

- Quantification: Determine the concentration of soybean isoflavones in the original urine sample by comparing the absorbance to a standard curve prepared with known concentrations of the isoflavone standard.
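Because the PFSPE step concentrates the analytes, the eluate concentration read from the standard curve must be scaled back to the original urine volume. A minimal sketch of that calculation follows. It assumes a linear standard curve and complete recovery through the cartridge. The volumes, standards and absorbances are hypothetical.

```python
"""Sketch: back-calculate urinary isoflavones from UV absorbance of the
PFSPE eluate. Assumptions: a linear standard curve within the working range,
complete SPE recovery, and hypothetical volumes and absorbances."""
from __future__ import annotations


def linear_fit(x: list[float], y: list[float]) -> tuple[float, float]:
    """Least-squares slope and intercept of absorbance vs. concentration."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx


def urine_isoflavones(absorbance: float, std_conc: list[float], std_abs: list[float],
                      urine_ml: float, eluate_ml: float) -> float:
    """Isoflavone concentration in the original urine (units of std_conc).

    The standard curve gives the eluate concentration; multiplying by
    eluate_ml / urine_ml reverses the enrichment achieved by the SPE step.
    """
    slope, intercept = linear_fit(std_conc, std_abs)
    eluate_conc = (absorbance - intercept) / slope
    return eluate_conc * eluate_ml / urine_ml


if __name__ == "__main__":
    conc = urine_isoflavones(
        absorbance=0.30,
        std_conc=[1.0, 2.0, 5.0, 10.0],     # µg/mL isoflavone standard
        std_abs=[0.05, 0.10, 0.25, 0.50],
        urine_ml=5.0, eluate_ml=0.5,        # tenfold enrichment
    )
    print(f"{conc:.2f} µg/mL in urine")     # 0.60 µg/mL
```

In a validated method, the result would also be divided by the measured extraction recovery. Any sample whose absorbance falls outside the calibrated range would be diluted and re-read.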

Protocol for a Dose-Response Meta-Analysis of Cruciferous Vegetable Intake and Colon Cancer Risk

This protocol details the statistical methodology used in a recent dose-response meta-analysis on cruciferous vegetable intake and colon cancer risk. [25]

1. Literature Search and Study Selection

- Databases: Search multiple electronic databases (e.g., Embase, Scopus, Web of Science, PubMed, Cochrane Library) from inception to the current date (e.g., June 28, 2025).

- Search Strategy: Use a predetermined strategy combining keywords and Medical Subject Headings (MeSH) terms such as "Cruciferous Vegetable," "Colonic Neoplasms," and "Colon Cancer."

- Inclusion/Exclusion Criteria: Include observational studies (cohort and case-control) with adults, quantified CV intake, and reported odds ratios (OR) or relative risks (RR) with 95% confidence intervals (CI). Exclude animal studies, reviews, and studies without extractable effect estimates.

2. Data Extraction and Quality Assessment

- Standardized Extraction: Two independent reviewers extract data using a piloted form. Data points include first author, publication year, study design, population characteristics, CV intake levels, and fully adjusted effect estimates (OR/RR with 95% CI).

- Quality Assessment: Assess the methodological quality of included studies using the Newcastle-Ottawa Scale (NOS), which scores studies on selection, comparability, and exposure/outcome assessment.

3. Statistical Analysis and Meta-Analysis

- Pooled Estimate: Calculate a summary odds ratio using a random-effects model, which accounts for between-study heterogeneity. Quantify statistical heterogeneity with the I² statistic.

- Dose-Response Analysis: Evaluate the dose-response relationship using restricted cubic spline models. Standardize all CV intake to grams per day (e.g., one serving = 80 g) for consistency.

- Sensitivity and Bias Analysis: Perform leave-one-out sensitivity analysis to evaluate the influence of individual studies. Assess publication bias using Egger's test and the trim-and-fill method.
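The pooling, heterogeneity, sensitivity, and small-study steps above can be sketched in a few functions. This is a minimal illustration, not the code used in [25]: it assumes the DerSimonian-Laird estimator for the between-study variance (τ²), works on the log scale, recovers standard errors from reported 95% CIs, and reports Egger's regression intercept with its standard error rather than a p-value (which would require a t-distribution with k − 2 degrees of freedom). Restricted cubic spline dose-response modelling and trim-and-fill are not shown. All function names and study values are hypothetical.

```python
import math

Z95 = 1.959964  # two-sided 95% standard normal quantile


def log_se_from_ci(lower, upper, z=Z95):
    """Standard error of ln(OR or RR) recovered from a reported 95% CI."""
    return (math.log(upper) - math.log(lower)) / (2 * z)


def dersimonian_laird(y, se):
    """Random-effects pooling of log effect estimates (DerSimonian-Laird tau^2)."""
    k = len(y)
    w = [1 / s ** 2 for s in se]                       # inverse-variance weights
    sw = sum(w)
    y_fixed = sum(wi * yi for wi, yi in zip(w, y)) / sw
    q = sum(wi * (yi - y_fixed) ** 2 for wi, yi in zip(w, y))  # Cochran's Q
    df = k - 1
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    w_re = [1 / (s ** 2 + tau2) for s in se]            # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    pooled_se = math.sqrt(1 / sum(w_re))
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0  # I^2 in percent
    return {"log_effect": pooled, "se": pooled_se, "tau2": tau2, "I2": i2}


def leave_one_out(y, se):
    """Pooled log effect after omitting each study in turn."""
    return [dersimonian_laird(y[:i] + y[i + 1:], se[:i] + se[i + 1:])["log_effect"]
            for i in range(len(y))]


def egger_intercept(y, se):
    """Egger's regression of y/se on 1/se; returns (intercept, SE). Needs >= 3 studies."""
    x = [1 / s for s in se]                             # precision
    z = [yi / s for yi, s in zip(y, se)]                # standardized effect
    n = len(x)
    xbar, zbar = sum(x) / n, sum(z) / n
    sxx = sum((xi - xbar) ** 2 for xi in x)
    slope = sum((xi - xbar) * (zi - zbar) for xi, zi in zip(x, z)) / sxx
    intercept = zbar - slope * xbar
    resid = [zi - (intercept + slope * xi) for xi, zi in zip(x, z)]
    s2 = sum(r ** 2 for r in resid) / (n - 2)
    se_int = math.sqrt(s2 * (1 / n + xbar ** 2 / sxx))
    return intercept, se_int


if __name__ == "__main__":
    # Hypothetical (OR, lower 95% CI, upper 95% CI) per study; not data from [25]
    studies = [(0.80, 0.65, 0.98), (0.90, 0.75, 1.08), (0.70, 0.52, 0.94), (1.02, 0.85, 1.22)]
    y = [math.log(or_) for or_, _, _ in studies]
    se = [log_se_from_ci(lo, hi) for _, lo, hi in studies]
    res = dersimonian_laird(y, se)
    lo = math.exp(res["log_effect"] - Z95 * res["se"])
    hi = math.exp(res["log_effect"] + Z95 * res["se"])
    print(f"Pooled OR {math.exp(res['log_effect']):.2f} ({lo:.2f}-{hi:.2f}), I2={res['I2']:.1f}%")
    print("Leave-one-out ORs:", [round(math.exp(v), 2) for v in leave_one_out(y, se)])
    print("Egger intercept (estimate, SE):", egger_intercept(y, se))
```

Pooling on the log scale keeps ratio measures symmetric; results are exponentiated only for reporting. Treating ORs and RRs as interchangeable is itself an approximation that is most defensible when the outcome is rare, as is generally the case for colon cancer.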

Visualizations and Workflows

Biomarker Validation and Application Workflow

Diagram Title: Biomarker Workflow from Intake to Application

Soy Isoflavone Detection Workflow

Diagram Title: Soy Isoflavone Detection via PFSPE-UV

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Reagents and Materials for Dietary Biomarker Research

| Item Name | Function/Application | Specific Example from Research |
|---|---|---|
| Electrospun Nanofibers | Solid-phase extraction (SPE) adsorbent for sample pretreatment. | Polystyrene nanofibers used to purify and concentrate soybean isoflavones from urine, removing matrix interferences. [28] |
| Packed-Fiber SPE (PFSPE) Columns | Sample preparation device for enrichment and purification of analytes from complex biological matrices. | Homemade PFSPE columns used for the extraction of isoflavones prior to UV analysis, improving detection accuracy. [28] |
| UV-Visible Spectrophotometer | Quantitative analytical instrument for detecting compounds that absorb UV or visible light. | Used for the rapid detection and quantification of soybean isoflavones after PFSPE purification. [28] |
| Alkylresorcinol Standards | Reference compounds for quantifying whole grain intake biomarkers in biological fluids. | Used as calibration standards in chromatographic methods to measure alkylresorcinol levels in plasma, reflecting whole grain wheat/rye intake. [26] |
| Isothiocyanate Metabolite Assays | Kits or methods for detecting and quantifying cruciferous vegetable-derived compounds. | Used to measure specific metabolites in urine, serving as exposure biomarkers for cruciferous vegetable intake. [1] |
| Restricted Cubic Spline Models | Statistical tool for evaluating non-linear dose-response relationships in meta-analyses. | Applied in meta-analysis to model the relationship between cruciferous vegetable intake (g/d) and colon cancer risk. [25] |

Food group-specific biomarkers are a powerful tool for moving nutritional epidemiology toward greater precision and objectivity. As detailed in this review, well-studied biomarkers exist for citrus fruits (flavanones, vitamin C), cruciferous vegetables (isothiocyanates), whole grains (alkylresorcinols), and soy (isoflavones). Integrating advanced analytical techniques, such as nanofiber-based SPE, with rigorous statistical methods, such as dose-response meta-analysis, strengthens the evidence base linking dietary patterns to health outcomes.

The inverse associations reported between higher intake of these food groups and lower risks of chronic disease underscore the public health importance of promoting their consumption. For researchers, the ongoing development and validation of biomarkers are critical for enhancing dietary assessment, understanding diet-disease mechanisms, and evaluating the efficacy of nutritional interventions. Future work should focus on discovering novel biomarkers, validating existing ones across diverse populations, and integrating multi-omics approaches to build a more comprehensive picture of the diet-health relationship.

From Laboratory to Practice: Methodological Approaches and Real-World Applications

The selection of appropriate biological specimens is a foundational step in the design of robust biomarker studies, particularly within nutritional epidemiology and dietary intake assessment. Biomarkers, defined as objectively measured characteristics evaluated as indicators of normal biological or pathogenic processes, have become indispensable tools for complementing and validating traditional self-reported dietary assessment methods [30]. The choice between blood-based matrices (plasma/serum) and urine is a critical methodological decision, as each matrix offers distinct advantages and limitations. This section compares urinary and plasma biomarkers systematically in the context of dietary biomarker research, to support evidence-based specimen selection by researchers, scientists, and drug development professionals.

Fundamental Characteristics of Biomarker Specimens

Biomarkers can be classified by their temporal relationship to disease processes and by their application in clinical investigation. Antecedent biomarkers identify predisposition or risk, screening biomarkers detect subclinical disease, diagnostic biomarkers establish the presence of disease, and prognostic biomarkers predict its course [30]. Understanding this classification is essential for appropriate specimen selection.

Table 1: Classification and Applications of Biomarker Types

| Biomarker Type | Temporal Relationship | Primary Applications | Example in Nutrition |
|---|---|---|---|
| Antecedent | Pre-disease | Risk prediction, susceptibility assessment | Genetic polymorphisms affecting nutrient metabolism |
| Screening | Early disease phase | Population screening, early detection | Urinary sugars for diabetes risk screening |
| Diagnostic | Active disease | Disease classification, confirmation | Plasma lipids for cardiovascular disease diagnosis |
| Prognostic | Post-diagnosis | Disease course prediction, monitoring | Urinary prostaglandins for inflammation monitoring |

Biological Matrices Compared

Plasma and serum, the liquid fractions of blood, provide a comprehensive snapshot of systemic physiology. These matrices contain circulating nutrients, metabolites, proteins, and other analytes reflecting real-time metabolic status. Blood collection, while standardized, is invasive, requires trained personnel, and may limit frequent sampling in free-living populations [31] [32].

Urine is produced by the kidneys through glomerular ultrafiltration of blood followed by tubular reabsorption and secretion, and it contains metabolic waste products, excreted nutrients, and other biomarkers. Its collection is non-invasive, painless, and suitable for frequent sampling without professional supervision. Because urine from healthy individuals contains far less protein than blood, it presents fewer interfering high-abundance proteins, which can simplify analytical protocols [31] [32].

Comparative Analysis: Urinary vs. Plasma Biomarkers

Advantages and Limitations

Table 2: Comprehensive Comparison of Urine and Plasma/Serum Biomarkers

| Characteristic | Urine Biomarkers | Plasma/Serum Biomarkers |
|---|---|---|
| Collection Method | Non-invasive, self-administered | Invasive, requires trained phlebotomist |
| Collection Frequency | High frequency, longitudinal sampling feasible | Limited by invasiveness and participant burden |
| Patient Compliance | Generally high | May be lower for repeated measures |
| Sample Stability | Variable; may require specific preservation | Generally good with proper processing |
| Risk of Contamination | Higher potential during collection | Lower with aseptic technique |
| Volume Obtainable | Large volumes typically available | Limited by safety considerations |
| Analytical Interference | Fewer interfering proteins | Complex matrix with abundant proteins |
| Cost of Collection | Lower (no clinical setting required) | Higher (requires clinical resources) |
| Reflects | Recent exposure, excretion patterns | Real-time systemic concentrations |
| Home Monitoring | Well-suited for point-of-care devices | Limited outside clinical settings |
| Concentration Factors | Influenced by hydration status, urine flow | Relatively stable within physiological ranges |

Performance in Specific Applications

Dietary Intake Assessment

Urinary biomarkers are particularly useful in nutritional assessment. A small set of urinary measures, such as 24-hour urinary nitrogen, potassium, and sodium, act as recovery biomarkers, meaning that a known and relatively constant fraction of intake is excreted; many other urinary metabolites reflect recent intake of specific foods without this quantitative relationship. Systematic reviews have identified urinary metabolites associated with intake of fruits, vegetables, grains, dairy, soy, coffee, tea, and alcohol [1]. Plant-based foods are frequently represented by polyphenol metabolites, while other food groups are distinguishable by innate compositional characteristics, such as sulfurous compounds in cruciferous vegetables or galactose derivatives in dairy [1].

The Dietary Biomarkers Development Consortium (DBDC) represents a major initiative to systematically discover and validate dietary biomarkers using controlled feeding trials and metabolomic profiling of both blood and urine specimens [2]. This effort highlights the complementary nature of these matrices for advancing precision nutrition.

Disease Diagnosis and Monitoring

In clinical contexts, urine biomarkers can outperform serum biomarkers for certain conditions, particularly those affecting the urinary system or characterized by excreted metabolites [33]. For acute kidney injury (AKI), studies directly comparing biomarker performance in plasma and urine have found that urinary biomarkers may offer higher specificity for kidney damage, as they originate directly from the affected organ [32].

Research on central nervous system (CNS) diseases, including brain tumors and cerebrovascular conditions, has reported that urine contains disease-specific biomarker "fingerprints" capable of distinguishing different pathological states with high sensitivity and specificity [34]. This finding suggests that urine may carry systemic biomarkers of disease processes in distant organs.

Methodological Considerations and Protocols

Experimental Workflows

The following diagram illustrates a standardized workflow for comparative biomarker studies, incorporating both urinary and plasma matrices:

Diagram Title: Biomarker Analysis Workflow

Specimen Collection Protocols

Urine Collection Protocol

For urinary biomarker studies, first-morning void samples are often collected as they represent concentrated urine following overnight fasting. For 24-hour collections, participants receive detailed instructions and containers, often with preservatives for unstable analytes [1] [32]. Key considerations include:

- Timing: Document collection time and duration precisely

- Preservation: Immediate refrigeration or chemical preservatives for unstable analytes

- Processing: Vortexing to homogenize, centrifugation (e.g., 1500 rpm for 5 minutes), aliquoting, and storage at -80°C [34]

- Normalization: Creatinine adjustment to account for dilution/concentration effects

Plasma/Serum Collection Protocol

Blood collection follows standardized phlebotomy procedures with specific tube types:

- Plasma: Collected in anticoagulant tubes (EDTA, heparin, citrate)

- Serum: Collected in tubes without anticoagulant, allowed to clot

- Processing: Centrifugation (e.g., 3000 rpm for 10 minutes for EDTA-plasma), aliquoting, and storage at -80°C [32]

- Timing: Document collection time relative to meals, interventions, or circadian rhythm
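The centrifugation settings in both protocols are given in rpm, but the force applied to a sample depends on the rotor radius, so the same rpm produces different relative centrifugal force (RCF, × g) on different centrifuges. The sketch below uses the standard conversion RCF = 1.118 × 10⁻⁵ × r × rpm², with r in centimetres; the 10 cm radius is a hypothetical value, and the actual radius should be taken from the rotor manufacturer's specifications.

```python
import math

# (2*pi/60)^2 / g, with the rotor radius expressed in centimetres
RCF_CONST = 1.118e-5


def rcf_from_rpm(rpm, radius_cm):
    """Relative centrifugal force (x g) for a given speed and rotor radius."""
    return RCF_CONST * radius_cm * rpm ** 2


def rpm_from_rcf(rcf, radius_cm):
    """Speed (rpm) needed to reach a target RCF on a rotor of the given radius."""
    return math.sqrt(rcf / (RCF_CONST * radius_cm))


if __name__ == "__main__":
    radius_cm = 10.0  # hypothetical rotor radius; replace with the real value
    for rpm in (1500, 3000):  # urine and EDTA-plasma settings quoted above
        print(f"{rpm} rpm -> {rcf_from_rpm(rpm, radius_cm):.0f} x g")
```

With a 10 cm radius, the urine setting corresponds to roughly 250 × g and the plasma setting to roughly 1000 × g. Reporting RCF alongside rpm makes processing protocols reproducible across laboratories with different rotors.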

Analytical Considerations

Normalization Strategies

Urinary biomarker concentrations require normalization to account for variations in hydration status:

- Creatinine adjustment: Most common method (analyte/creatinine ratio)

- Specific gravity normalization: Alternative to creatinine

- 24-hour excretion: Gold standard but burdensome for participants
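The three urinary normalization approaches listed above reduce to simple arithmetic. The sketch below is illustrative: the specific-gravity correction follows the commonly used form C × (SG_ref − 1) / (SG_sample − 1), and the reference specific gravity of 1.020 is a hypothetical default (many studies use the median of their own population instead). Function names and units (µmol/L analyte, mmol/L creatinine) are assumptions for illustration.

```python
def creatinine_normalized(analyte_umol_l, creatinine_mmol_l):
    """Analyte per unit creatinine (umol analyte per mmol creatinine)."""
    return analyte_umol_l / creatinine_mmol_l


def sg_normalized(analyte_umol_l, sg_sample, sg_reference=1.020):
    """Specific-gravity correction to a reference SG (1.020 is an assumed default)."""
    return analyte_umol_l * (sg_reference - 1) / (sg_sample - 1)


def daily_excretion(analyte_umol_l, volume_24h_l):
    """Total excretion (umol per 24 h) from a complete 24-hour collection."""
    return analyte_umol_l * volume_24h_l


if __name__ == "__main__":
    # Hypothetical spot-urine values
    print(creatinine_normalized(50.0, 10.0))  # umol/mmol creatinine
    print(sg_normalized(10.0, 1.010))         # dilute sample scaled up to SG 1.020
    print(daily_excretion(10.0, 1.5))         # umol/24 h
```

Creatinine excretion itself varies with muscle mass, age, sex, and meat intake, so creatinine-adjusted values can be biased when these factors differ between groups; specific gravity or complete 24-hour collections are alternatives in that situation.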

Plasma biomarkers may be adjusted for:

- Lipid levels: For fat-soluble compounds

- Albumin: For protein-bound analytes

- Hematocrit: For blood-based measurements
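Lipid adjustment of fat-soluble analytes is typically expressed as a ratio to circulating lipids, because these compounds are transported in lipoproteins and their plasma concentrations rise and fall with lipid levels. The sketch below assumes one convention used for α-tocopherol, dividing the analyte by the sum of total cholesterol and triglycerides (both in mmol/L); other studies divide by cholesterol alone or by an estimated total lipid value, so the choice should follow the biomarker's validation literature. The function name and example values are hypothetical.

```python
def lipid_standardized(analyte_umol_l, total_chol_mmol_l, triglycerides_mmol_l):
    """Fat-soluble analyte per mmol of (total cholesterol + triglycerides)."""
    total_lipid = total_chol_mmol_l + triglycerides_mmol_l
    if total_lipid <= 0:
        raise ValueError("lipid concentrations must be positive")
    return analyte_umol_l / total_lipid


if __name__ == "__main__":
    # Hypothetical plasma values: analyte 25 umol/L, cholesterol 5.0 and triglycerides 1.5 mmol/L
    print(round(lipid_standardized(25.0, 5.0, 1.5), 2))  # umol per mmol lipid
```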

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Reagents and Materials for Biomarker Studies

| Reagent/Material | Function | Application Notes |
|---|---|---|
| EDTA Blood Collection Tubes | Anticoagulant for plasma separation | Preserves protein integrity; requires mixing after collection |
| Serum Separator Tubes | Facilitates serum clot formation and separation | Must stand vertically for 30+ minutes before centrifugation |
| Sterile Urine Collection Cups | Non-invasive urine collection | Must be non-cytotoxic for cell-based analyses |
| Protease Inhibitor Cocktails | Inhibits protein degradation in urine | Added immediately after collection for protein biomarkers |
| Cryogenic Vials | Long-term sample storage at -80°C | Must be leak-proof for biobanking |
| Bradford/Lowry Assay Kits | Total protein quantification | Essential for urine normalization [34] |
| Creatinine Assay Kits | Urinary dilution normalization | Enzymatic methods preferred over Jaffe for accuracy [32] |
| Multiplex Immunoassay Panels | High-throughput protein biomarker quantification | Luminex-based platforms commonly used [32] [34] |