Addressing Measurement Error in Dietary Pattern Studies: Methods, Impacts, and Solutions for Research Validity


Abstract

This article provides a comprehensive examination of measurement error in dietary pattern research, a critical methodological challenge that can distort dietary patterns and attenuate disease associations. Covering foundational concepts through advanced applications, we explore the spectrum from classical measurement error models to innovative pattern recognition technologies and network analysis approaches. The content addresses practical strategies for minimizing error through study design, statistical adjustment methods, and validation protocols, with specific consideration for diverse populations and clinical contexts. Aimed at researchers, scientists, and drug development professionals, this resource synthesizes current evidence and methodologies to enhance the reliability and validity of nutritional epidemiology and its applications in biomedical research.

Understanding Measurement Error: Foundational Concepts and Impacts on Dietary Pattern Research

Frequently Asked Questions (FAQs)

FAQ 1: What are the fundamental types of measurement error in dietary assessment? Measurement error in dietary assessment is broadly categorized into two types: systematic error (bias) and within-person random error (day-to-day variation) [1]. Systematic error results in measurements that consistently depart from the true value in the same direction and cannot be reduced by taking repeated measures. It includes intake-related bias (e.g., the "flattened-slope" phenomenon where high-intake individuals under-report and low-intake individuals over-report) and person-specific bias (related to individual characteristics like social desirability) [1]. Within-person random error represents the difference between an individual's reported intake on a specific day and their long-term average intake, which can be addressed through statistical modeling with repeated measures [1].

FAQ 2: How does measurement error impact diet-disease association studies? Measurement error creates three primary problems in diet-disease association studies: (1) bias in estimated relative risks, typically attenuating them toward the null; (2) loss of statistical power to detect true diet-disease relationships; and (3) potential invalidity of conventional statistical tests, particularly in multivariable models [2]. The attenuation can be substantial - for example, a true relative risk of 2.0 might be estimated as 1.03-1.06 for energy intake and 1.10-1.12 for protein intake when using food frequency questionnaires [2]. To compensate for this power loss, sample sizes may need to be 5-100 times larger depending on the nutrient [2].

FAQ 3: What dietary assessment methods are available and how do their error profiles differ? Different dietary assessment methods have distinct error profiles and are suitable for different research contexts [3]:

Table: Comparison of Dietary Assessment Methods and Their Error Profiles

| Method | Time Frame | Primary Error Type | Key Advantages | Key Limitations |

|---|---|---|---|---|

| 24-Hour Recall | Short-term | Random error [3] | Low participant burden; does not require literacy; captures wide variety of foods | Relies on memory; requires multiple administrations to estimate usual intake |

| Food Record | Short-term | Random error [4] | Does not rely on memory; detailed data | High participant burden; reactivity (changing diet for recording) |

| Food Frequency Questionnaire (FFQ) | Long-term | Systematic error [2] [4] | Cost-effective for large samples; designed to capture usual intake | Limited food list; portion size estimation challenges; systematic biases by BMI, age |

FAQ 4: What statistical methods are available to correct for measurement error? Several statistical approaches can correct for measurement error effects [4] [5]:

- Regression calibration: Replaces the unobserved true exposure with its expected value given the error-prone measurement and other covariates

- Multiple imputation: Imputes multiple plausible values for the true exposure based on error-prone measurements and reference data

- Moment reconstruction: Creates a new variable with the same mean and variance as the true exposure

Regression calibration is the most commonly used method, but it requires careful attention to its assumptions, particularly the classical measurement error model [4]. Multiple imputation and moment reconstruction can handle more complex error structures, including differential measurement error [4] [5].

FAQ 5: What reference instruments are available for assessing measurement error? Reference instruments for dietary assessment include [2] [5]:

- Recovery biomarkers: Objective measures with known quantitative relationships between intake and recovery (e.g., doubly labeled water for energy intake, 24-hour urinary nitrogen for protein intake)

- Alloyed gold standards: The best-performing practical instruments (e.g., multiple 24-hour recalls or food records)

- Concentration biomarkers: Measured concentrations in blood or tissues (e.g., serum carotenoids)

Recovery biomarkers are considered the gold standard but exist for only a few nutrients (energy, protein, potassium) and are expensive to implement [2].

Troubleshooting Guides

Problem: Attenuated effect estimates in diet-disease associations

Solution: Implement measurement error correction methods using validation study data [2] [4]:

- Conduct an internal validation study where a subset of participants completes both the main instrument (e.g., FFQ) and a reference instrument (e.g., multiple 24-hour recalls or biomarkers)

- Estimate the relationship between the main instrument and true intake using the validation data

- Apply regression calibration to adjust effect estimates in the main study

- Report both adjusted and unadjusted estimates with confidence intervals
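The steps above can be sketched with a small simulation. This is a minimal illustration, not a definitive implementation: it assumes a continuous outcome analyzed by linear regression, an unbiased reference instrument, and hypothetical parameter values (`beta_true`, the FFQ intercept and slope, the error standard deviations) that do not come from any cited study.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical simulated cohort; every parameter value here is an assumption.
n_main, n_val = 20000, 1000
beta_true = 0.5
x_true = rng.normal(0.0, 1.0, n_main)                        # unobserved usual intake
q_ffq = 0.3 + 0.6 * x_true + rng.normal(0.0, 1.0, n_main)    # error-prone FFQ value
y = beta_true * x_true + rng.normal(0.0, 1.0, n_main)        # continuous outcome

# Internal validation subset: a reference measure assumed unbiased for true intake.
val_idx = rng.choice(n_main, size=n_val, replace=False)
reference = x_true[val_idx] + rng.normal(0.0, 0.5, n_val)

# Step 1: fit the calibration model E[reference | FFQ] in the validation subset.
slope_cal, intercept_cal = np.polyfit(q_ffq[val_idx], reference, 1)

# Step 2: replace the FFQ value with its calibrated prediction for the whole cohort.
x_calibrated = intercept_cal + slope_cal * q_ffq

# Step 3: compare the unadjusted and the calibrated effect estimates.
naive_beta = np.polyfit(q_ffq, y, 1)[0]
corrected_beta = np.polyfit(x_calibrated, y, 1)[0]

print(f"true={beta_true:.2f} naive={naive_beta:.3f} corrected={corrected_beta:.3f}")
```

In practice the confidence interval for the corrected estimate should also reflect uncertainty in the calibration slope, for example by bootstrapping both steps together.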

Problem: Inadequate statistical power due to measurement error

Solution: Increase sample size and optimize study design [2]:

- Calculate the necessary sample size inflation factor based on the attenuation factor (λ) for your specific nutrient: \(n_{\text{adjusted}} = n_{\text{original}} / \lambda\)

- Consider collaborative studies or meta-analyses to achieve sufficient sample size

- Use multiple dietary assessments per participant to reduce within-person random error

- Focus on energy-adjusted nutrients (densities or residuals) which typically have less attenuation than absolute intakes [2]
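The sample size adjustment in the first step can be written as a short helper. This is a sketch that simply applies the inflation formula stated above; the function name `inflated_sample_size` and the example starting size of 1,000 are illustrative assumptions.

```python
import math


def inflated_sample_size(n_original: int, attenuation: float) -> int:
    """Return n_original / attenuation, rounded up to a whole participant."""
    if not 0.0 < attenuation <= 1.0:
        raise ValueError("attenuation factor must lie in (0, 1]")
    return math.ceil(n_original / attenuation)


# Illustrative use with attenuation factors of the size reported for FFQs.
for nutrient, lam in [("protein density", 0.40), ("potassium", 0.29), ("energy", 0.08)]:
    print(nutrient, inflated_sample_size(1000, lam))
```

Smaller attenuation factors translate directly into larger required samples, which is why energy-adjusted nutrients are usually the more tractable exposures.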

Problem: Differential measurement error in case-control studies

Solution: Use methods robust to differential error [4] [5]:

- Consider moment reconstruction or multiple imputation approaches instead of regression calibration

- In prospective designs, collect dietary data before disease diagnosis to minimize differential recall

- For case-control studies, use biomarkers as objective reference measures when feasible

- Conduct sensitivity analyses to assess potential impact of differential error

Experimental Protocols

Protocol 1: Internal Validation Study Design

Purpose: To collect data necessary for quantifying and correcting measurement error in the main study instrument [5].

Materials:

- Main dietary assessment instrument (e.g., FFQ)

- Reference instrument (e.g., multiple 24-hour recalls, food records, or biomarkers)

- Trained interviewers (if using interviewer-administered instruments)

- Data management system

Procedure:

- Randomly select a subset of participants from the main study cohort (typically 100-500 participants)

- Administer both the main instrument and reference instrument to these participants

- For reference instruments requiring multiple administrations (e.g., 24-hour recalls), schedule them on non-consecutive days covering different seasons and days of the week

- Ensure the time frame reference periods align between instruments (e.g., same previous year for FFQ and 24-hour recalls)

- Collect additional relevant covariates (age, BMI, sex, education) that may relate to measurement error

- Process and nutrient-code all dietary data using standardized procedures

- Estimate measurement error model parameters relating main instrument to reference instrument

- Apply calibration equations to the entire cohort for measurement error correction

Protocol 2: Biomarker-Based Validation Study

Purpose: To validate self-report instruments using objective recovery biomarkers [2] [6].

Materials:

- Doubly labeled water for energy expenditure assessment

- Protocols for 24-hour urine collection for nitrogen (protein) and potassium

- Stable isotope analysis facilities

- Dietary self-report instruments

Procedure:

- Recruit weight-stable participants in energy balance

- Administer doubly labeled water and collect urine samples over 10-14 days for energy assessment

- Conduct multiple 24-hour urine collections for protein and potassium assessment

- Administer self-report dietary instruments concurrently

- Analyze biomarker data following established protocols

- Calculate intake from biomarkers: energy intake = energy expenditure (assuming weight stability), protein intake = 24h urinary nitrogen × 6.25

- Assess relationships between self-report and biomarker measures

- Develop calibration equations accounting for systematic biases by BMI, age, and other relevant factors
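The biomarker conversion and the comparison with self-report can be sketched as follows. The 6.25 factor is the one given in the procedure above; the `recovery_fraction` parameter is an assumption added for protocols that adjust for incomplete urinary recovery of nitrogen, and it defaults to 1.0 so that the function reproduces the formula as written. The function names are hypothetical.

```python
NITROGEN_TO_PROTEIN = 6.25  # grams of protein per gram of nitrogen


def protein_intake_g(urinary_nitrogen_g: float, recovery_fraction: float = 1.0) -> float:
    """Estimate daily protein intake (g) from 24-hour urinary nitrogen (g)."""
    if not 0.0 < recovery_fraction <= 1.0:
        raise ValueError("recovery_fraction must lie in (0, 1]")
    return NITROGEN_TO_PROTEIN * urinary_nitrogen_g / recovery_fraction


def relative_reporting_bias(self_report: float, biomarker: float) -> float:
    """Return (self-report - biomarker) / biomarker; negative values mean under-reporting."""
    return (self_report - biomarker) / biomarker


biomarker_protein = protein_intake_g(12.0)
print(biomarker_protein, relative_reporting_bias(60.0, biomarker_protein))
```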

Table: Attenuation Factors for Common Nutrients from the OPEN Biomarker Study [2]

| Nutrient | Attenuation Factor (Men) | Attenuation Factor (Women) | True RR=2.0 Becomes |

|---|---|---|---|

| Energy | 0.08 | 0.04 | 1.03-1.06 |

| Protein | 0.16 | 0.14 | 1.10-1.12 |

| Potassium | 0.29 | 0.23 | 1.17-1.22 |

| Protein Density | 0.40 | 0.32 | 1.25-1.32 |

| Potassium Density | 0.49 | 0.57 | 1.40-1.48 |
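The last column of the table can be reproduced from the attenuation factors. The sketch below assumes the usual log-linear relationship, in which the observed log relative risk equals the attenuation factor times the true log relative risk; the function name is illustrative.

```python
def apparent_relative_risk(true_rr: float, attenuation: float) -> float:
    """Observed RR when the log relative risk is multiplied by the attenuation factor."""
    return true_rr ** attenuation


# Attenuation factors (men, women) as listed in the table above.
factors = {
    "Energy": (0.08, 0.04),
    "Protein": (0.16, 0.14),
    "Potassium": (0.29, 0.23),
    "Protein Density": (0.40, 0.32),
    "Potassium Density": (0.49, 0.57),
}

for nutrient, (men, women) in factors.items():
    rr_men = apparent_relative_risk(2.0, men)
    rr_women = apparent_relative_risk(2.0, women)
    print(f"{nutrient}: men {rr_men:.2f}, women {rr_women:.2f}")
```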

Research Reagent Solutions

Table: Essential Research Materials for Measurement Error Studies

| Reagent/Instrument | Function | Key Features | Application Context |

|---|---|---|---|

| Doubly Labeled Water | Recovery biomarker for total energy expenditure | Objective measure; quantitative relationship with energy output | Validation against energy intake; requires specialized lab analysis |

| 24-Hour Urinary Nitrogen | Recovery biomarker for protein intake | Direct measure of protein metabolism | Protein intake validation; requires complete urine collection |

| Automated Multiple-Pass Method (AMPM) | Standardized 24-hour recall methodology | Structured interviewing technique to enhance completeness | Reference instrument in validation studies; used in NHANES |

| ASA24 (Automated Self-Administered 24-Hour Recall) | Self-administered 24-hour recall system | Automated multiple-pass method; reduces interviewer burden | Large-scale validation studies; cost-effective reference instrument |

| GloboDiet (formerly EPIC-SOFT) | Computer-assisted 24-hour recall method | Standardized across countries and cultures | International studies; standardized dietary assessment |

Measurement Error Classification and Impact

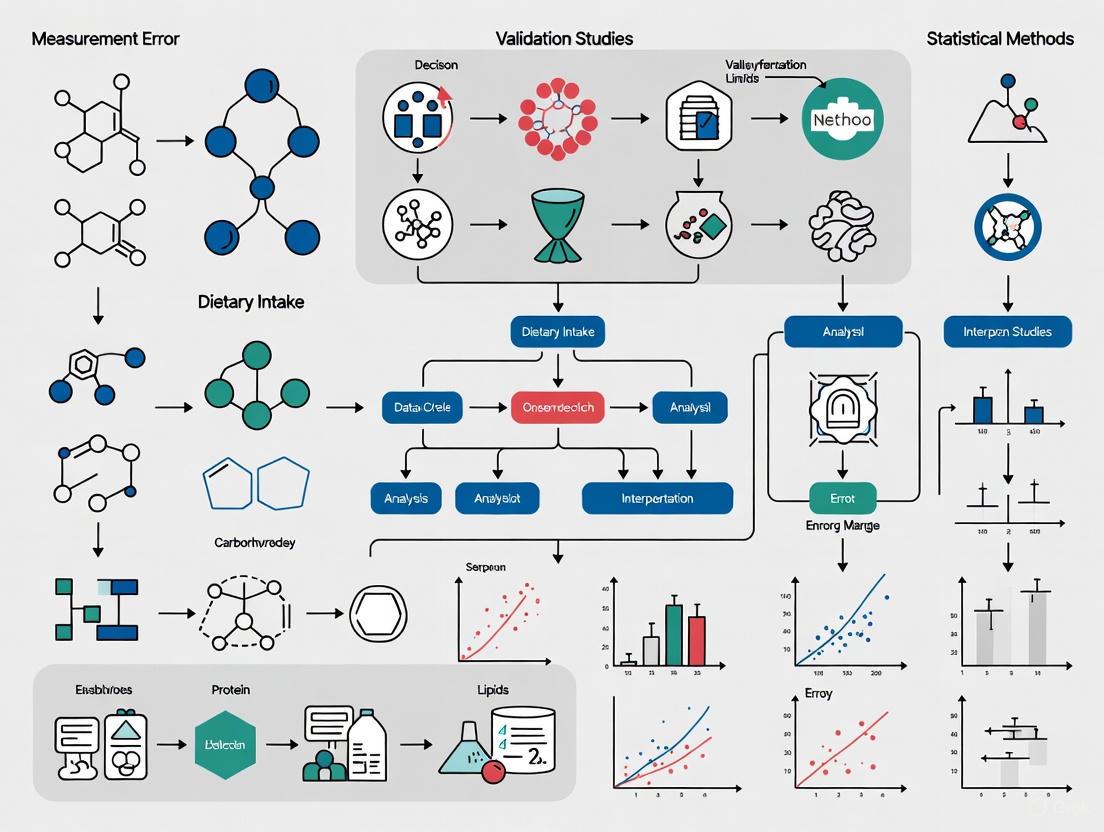

Measurement Error Correction Workflow

In scientific research, particularly in fields like nutritional epidemiology and environmental health, measurement error refers to the difference between the true value of a variable and its observed value [7]. These errors are ubiquitous across all types of studies and can significantly impact research findings, leading to biased conclusions, reduced statistical power, and distorted relationships between variables [8] [9]. Understanding the different types of measurement error is crucial for proper study design, analysis, and interpretation of results.

This guide provides researchers with a comprehensive troubleshooting framework for identifying, understanding, and addressing measurement error in their experiments, with special emphasis on dietary pattern studies.

Understanding Core Measurement Error Models

FAQ: What are the fundamental types of measurement error models I'm likely to encounter?

Answer: Researchers commonly encounter three primary measurement error models, each with distinct characteristics and implications for data analysis:

- Classical Error Model: Assumes the measured value varies randomly around the true value

- Linear Error Model: Extends the classical model to include both random error and systematic bias

- Berkson Error Model: Posits that the true value varies randomly around the measured value

Comparative Analysis of Error Models

Table 1: Characteristics of Core Measurement Error Models

| Model Type | Mathematical Formulation | Key Assumptions | Impact on Estimates | Common Applications |

|---|---|---|---|---|

| Classical | \(X^* = X + e\) | Error \(e\) has mean zero, independent of \(X\) | Attenuation (bias toward null); loss of power | Laboratory measurements; instrument imprecision [8] [9] |

| Linear | \(X^* = \alpha_0 + \alpha_X X + e\) | Error \(e\) has mean zero, independent of \(X\) | Can cause bias in varying directions | Self-reported data; dietary assessment [9] |

| Berkson | \(X = X^* + e\) | Error \(e\) has mean zero, independent of \(X^*\) | Increased imprecision; unbiased effect estimates in linear models [10] | Environmental studies; aggregated exposure data [11] [12] |
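The "Impact on Estimates" column follows from a short derivation. The block below is a sketch under stated assumptions: a simple linear outcome model \(Y = \beta_0 + \beta X + \varepsilon\), with \(\varepsilon\) independent of both \(X\) and \(e\). The symbols \(\beta\), \(\sigma_X^2\), \(\sigma_e^2\), and \(\lambda\) are introduced here for illustration and do not appear in the table.

```latex
% Classical model: X^* = X + e, with e independent of X
\beta^{*}_{\text{classical}}
  = \frac{\operatorname{Cov}(Y, X^*)}{\operatorname{Var}(X^*)}
  = \frac{\beta\,\sigma_X^2}{\sigma_X^2 + \sigma_e^2}
  = \lambda\,\beta,
\qquad 0 < \lambda \le 1

% Berkson model: X = X^* + e, with e independent of X^*
\beta^{*}_{\text{Berkson}}
  = \frac{\operatorname{Cov}(Y, X^*)}{\operatorname{Var}(X^*)}
  = \frac{\beta\,\operatorname{Var}(X^*)}{\operatorname{Var}(X^*)}
  = \beta
```

Under the linear model with \(\alpha_X \neq 1\), the same calculation gives \(\beta\,\alpha_X \sigma_X^2 / (\alpha_X^2 \sigma_X^2 + \sigma_e^2)\), which can be smaller or larger than \(\beta\); this is why the direction of bias is not fixed in that row.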

Visual Guide to Measurement Error Models

Diagram 1: Structural relationships between true values, measured values, and error components in the three primary measurement error models.

Troubleshooting Guide: Identifying Measurement Error in Dietary Studies

FAQ: How does measurement error specifically impact dietary pattern research?

Answer: In dietary pattern studies, measurement errors can substantially distort the derived patterns and attenuate diet-disease associations. The impact varies depending on the dietary assessment method and the type of measurement error present [13].

Table 2: Impact of Measurement Error on Dietary Pattern Analysis

| Error Type | Impact on Principal Component Factor Analysis | Impact on K-means Cluster Analysis | Effect on Diet-Disease Associations |

|---|---|---|---|

| Systematic Error | Consistency rates: 67.5% to 100% | Consistency rates: 13.4% to 88.4% | Attenuation of coefficients; harmful associations (true coefficient: 0.5) estimated as 0.295 to 0.449 with PCFA and -0.003 to 0.373 with KCA |

| Random Error | Greater distortion with larger errors | Greater distortion with larger errors | Attenuation of coefficients; beneficial associations (true coefficient: -0.5) estimated as -0.287 to -0.450 with PCFA and -0.231 to -0.394 with KCA [13] |

FAQ: What are the main sources of measurement error in self-reported dietary intake data?

Answer: Dietary intake data are affected by multiple sources of error arising from the complex cognitive process of reporting food consumption [7]:

- Recall bias: Participants may forget certain foods consumed (omissions) or report foods not actually consumed (intrusions)

- Social desirability bias: Systematic under-reporting of "unhealthy" foods and over-reporting of "healthy" foods

- Portion size estimation errors: Difficulty in accurately estimating and reporting amounts consumed

- Interviewer effects: Variations in how different interviewers probe for information

- Coding errors: Mistakes in converting reported foods to nutrient data

- Limitations in food composition databases: Incomplete or inaccurate nutrient data for specific foods [7] [3]

Methodological Protocols for Addressing Measurement Error

Experimental Protocol: Designing Validation Studies for Dietary Assessment Tools

Purpose: To quantify and characterize measurement error in dietary assessment instruments through comparison with objective biomarkers.

Materials and Reagents:

- 24-hour urinary sodium, potassium, and nitrogen as recovery biomarkers

- Automated Self-Administered 24-hour Dietary Assessment Tool (ASA24)

- Interviewer-administered Automated Multiple-Pass Method (AMPM) protocols

- Food composition database (e.g., USDA Standard Reference)

Procedure:

- Recruit participants representative of your target population

- Collect true intake data through 24-hour urine collections for sodium, potassium, and protein (nitrogen × 6.25)

- Administer self-reported dietary assessment tools (24-hour recalls, food frequency questionnaires, or food records)

- Code and process self-reported dietary data using standardized protocols

- Calculate nutrient intakes from self-reported data using appropriate food composition databases

- Statistically compare self-reported intake values with biomarker values to quantify measurement error structure

- Assess whether measurement error differs by time, treatment group, or participant characteristics [14]

Troubleshooting Tip: If implementing full biomarker collection is not feasible, consider a reproducibility study with repeated administrations of the dietary assessment tool to estimate random error components.
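A reproducibility study of this kind yields within-person and between-person variance components. The sketch below uses a one-way ANOVA (method of moments) estimator on a balanced participants-by-repeats array; the simulated values (mean 70, between-person SD 10, within-person SD 20) are hypothetical, and the function names are illustrative.

```python
import numpy as np


def variance_components(repeats):
    """Estimate (between-person, within-person) variance from an n x k array."""
    repeats = np.asarray(repeats, dtype=float)
    n, k = repeats.shape
    person_means = repeats.mean(axis=1)
    # Pooled within-person variance (mean square within).
    within_var = ((repeats - person_means[:, None]) ** 2).sum() / (n * (k - 1))
    # Mean square between has expectation within_var + k * between_var.
    ms_between = k * person_means.var(ddof=1)
    between_var = max((ms_between - within_var) / k, 0.0)
    return between_var, within_var


def reliability_of_mean(between_var, within_var, k):
    """Share of variance in a k-repeat mean that reflects usual intake."""
    return between_var / (between_var + within_var / k)


# Hypothetical data: 2000 participants, 4 repeated 24-hour recalls each.
rng = np.random.default_rng(42)
usual = rng.normal(70.0, 10.0, size=(2000, 1))
recalls = usual + rng.normal(0.0, 20.0, size=(2000, 4))

between_hat, within_hat = variance_components(recalls)
for k in (1, 2, 4, 8):
    print(k, round(reliability_of_mean(between_hat, within_hat, k), 2))
```

The reliability values show how quickly additional repeats reduce random error, which helps decide how many administrations per participant are worth the burden.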

Experimental Protocol: Implementing Bias-Correction Methods

Purpose: To correct for the biasing effects of measurement error in statistical analyses.

Materials:

- Primary study data (exposure, outcome, covariates)

- Validation data or reliability data

- Statistical software with measurement error correction capabilities (R, SAS, Stata)

Procedure:

- Characterize the measurement error using validation data to estimate error model parameters

- Select appropriate correction method based on error type and study design:

  - Regression calibration: Replace error-prone measurements with calibrated values

  - Simulation-extrapolation (SIMEX): Simulate increasing error levels and extrapolate back to no error

  - Multiple imputation for measurement error (MIME): Impute true values based on error model

- Implement correction method using appropriate software tools

- Assess sensitivity of results to different assumptions about the error structure [8]

Troubleshooting Tip: When external validation data are unavailable, conduct sensitivity analyses to evaluate how different magnitudes of measurement error might affect your conclusions.
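Of the correction methods listed in this protocol, SIMEX is the easiest to sketch from scratch. The example below is a minimal illustration for a single linear regression slope; it assumes classical error with a known error standard deviation and uses a quadratic extrapolant, and the names `ols_slope` and `simex_slope` and all simulated values are hypothetical. Dedicated packages in R, SAS, or Stata would normally be preferred for real analyses.

```python
import numpy as np


def ols_slope(x, y):
    """Slope from a simple linear regression of y on x."""
    return np.cov(x, y)[0, 1] / np.var(x, ddof=1)


def simex_slope(w, y, error_sd, lambdas=(0.0, 0.5, 1.0, 1.5, 2.0), n_rep=50, seed=0):
    """SIMEX estimate of the slope using a quadratic extrapolation to lambda = -1."""
    rng = np.random.default_rng(seed)
    mean_slopes = []
    for lam in lambdas:
        # Simulation step: add extra error with variance lam * error_sd**2.
        slopes = [
            ols_slope(w + np.sqrt(lam) * error_sd * rng.normal(size=w.size), y)
            for _ in range(n_rep)
        ]
        mean_slopes.append(np.mean(slopes))
    # Extrapolation step: fit slope as a quadratic in lambda, evaluate at -1 (no error).
    coeffs = np.polyfit(lambdas, mean_slopes, 2)
    return float(np.polyval(coeffs, -1.0))


# Hypothetical data: true slope 1.0, classical error with SD 0.7.
rng = np.random.default_rng(7)
x = rng.normal(0.0, 1.0, 20000)
w = x + rng.normal(0.0, 0.7, 20000)
y = 1.0 * x + rng.normal(0.0, 1.0, 20000)

naive = ols_slope(w, y)
corrected = simex_slope(w, y, error_sd=0.7)
print(f"naive={naive:.3f} simex={corrected:.3f}")
```

The quadratic extrapolant typically removes most but not all of the attenuation, so the SIMEX estimate tends to sit between the naive and the true value. Rerunning with different `error_sd` values is one way to carry out the sensitivity analysis suggested in the tip above.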

Essential Research Reagent Solutions

Table 3: Key Methodological Tools for Addressing Measurement Error

| Tool Category | Specific Solution | Primary Function | Considerations for Use |

|---|---|---|---|

| Dietary Assessment Methods | 24-hour Recalls (ASA24, AMPM) | Capture short-term dietary intake | Multiple non-consecutive days needed to estimate usual intake; requires literacy for self-administered versions [3] |

| Dietary Assessment Methods | Food Frequency Questionnaires (FFQ) | Assess habitual dietary patterns over extended periods | Limited food lists; better for ranking individuals than estimating absolute intake [3] |

| Dietary Assessment Methods | Food Records | Comprehensive recording of all foods/beverages consumed | High participant burden; potential for reactivity (changing diet for ease of recording) [3] |

| Biomarkers | Recovery Biomarkers (doubly labeled water, urinary nitrogen) | Objective measures of energy and protein intake | Considered gold standard but expensive and burdensome [14] |

| Statistical Methods | Regression Calibration | Correct for bias in estimated associations | Requires validation data; assumes non-differential error [8] |

| Statistical Methods | Simulation-Extrapolation (SIMEX) | Correct for measurement error through simulation | Does not require full validation data; computationally intensive [8] |

Advanced Considerations for Specific Research Contexts

FAQ: How does measurement error differ in longitudinal intervention studies?

Answer: In longitudinal interventions, particularly those involving lifestyle changes, measurement error can become differential—changing over time and/or differing between treatment groups [15]. This creates unique challenges:

- Intervention group participants may alter reporting behavior to appear compliant with study recommendations

- Reporting accuracy may improve due to training or worsen due to participant fatigue

- Time-varying error structure can introduce complex biases in treatment effect estimates

- Sample size requirements often need to be increased to maintain statistical power [15]

Protocol Adjustment: For longitudinal studies, collect validation data at multiple time points across all treatment groups to characterize how measurement error changes throughout the study period [14].

FAQ: When should I be concerned about Berkson versus classical error?

Answer: The distinction becomes critical when selecting appropriate correction methods and interpreting results:

- Berkson error typically arises when group-level exposure assignments are applied to individuals (e.g., environmental exposure based on residential location) [11] [12]

- Classical error is more common with instrument imprecision and biological variability [8]

- Key differentiator: In linear models, Berkson error does not bias effect estimates (only increases imprecision), while classical error causes attenuation toward the null [10] [12]

Diagnostic Approach: Examine your measurement process—if individual measurements are assigned based on group averages, Berkson error likely predominates. If individual measurements are taken with imprecise instruments, classical error may be more relevant.
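The contrast between the two error types can be checked with a short simulation. This is an illustrative sketch with hypothetical values (true slope 2.0, unit variances), assuming a linear outcome model.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 100_000
beta = 2.0

# Classical error: the measurement scatters around the true value.
x_true = rng.normal(0.0, 1.0, n)
x_measured = x_true + rng.normal(0.0, 1.0, n)
y_classical = beta * x_true + rng.normal(0.0, 1.0, n)
slope_classical = np.polyfit(x_measured, y_classical, 1)[0]

# Berkson error: the true value scatters around the assigned (e.g., group-level) value.
x_assigned = rng.normal(0.0, 1.0, n)
x_individual = x_assigned + rng.normal(0.0, 1.0, n)
y_berkson = beta * x_individual + rng.normal(0.0, 1.0, n)
slope_berkson = np.polyfit(x_assigned, y_berkson, 1)[0]

print(f"classical: {slope_classical:.2f}  Berkson: {slope_berkson:.2f}")
```

With equal true and error variances, the classical slope is roughly halved, while the Berkson slope stays close to the true value but is estimated with more residual noise.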

Decision Framework for Selecting Appropriate Methods

Diagram 2: Decision framework for selecting appropriate measurement error assessment and correction methods based on study design, resources, and error type.

How Measurement Error Distorts Dietary Patterns and Attenuates Disease Associations

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the primary types of measurement error in dietary assessment? Measurement error in nutritional epidemiology is typically categorized into two main types: systematic error (bias) and random error [14]. Systematic error refers to consistent, directional departures from true intake, such as constant over-reporting or under-reporting. Random error creates variability in measurements without a consistent pattern, reducing precision [14]. These errors can be further described using specific measurement error models: the classical model (purely random error), linear measurement error model (both systematic and random error), and Berkson error model (where true values vary around measured values) [9].

Q2: How does measurement error specifically distort identified dietary patterns? Measurement error causes significant distortion in derived dietary patterns, with the severity increasing with error magnitude. Simulation studies based on the China Multi-Ethnic Cohort demonstrate that consistency rates for dietary patterns derived via principal component factor analysis (PCFA) range from 67.5% to 100%, while consistency rates for K-means cluster analysis (KCA) range from 13.4% to 88.4% under measurement error conditions [16]. Patterns derived through PCFA with low discrepancy in factor loadings and patterns from KCA with small cluster sizes are particularly vulnerable to distortion [16].

Q3: Why do we observe attenuation in diet-disease association estimates? Measurement error in nutritional exposures attenuates estimated association coefficients toward the null, effectively diluting the observed strength of relationships between dietary patterns and health outcomes [16]. For a beneficial association with a true coefficient of -0.5, estimated coefficients under measurement error range from -0.287 to -0.450 for PCFA and from -0.231 to -0.394 for KCA [16]. Similarly, for harmful associations (true coefficient 0.5), estimates range from 0.295 to 0.449 for PCFA and from -0.003 to 0.373 for KCA [16].

Q4: Can measurement error structure change during longitudinal interventions? Yes, emerging evidence suggests measurement error can be differential in longitudinal randomized trials. In studies of sodium intake interventions, the relationship between self-reported intake and biomarker values varied by both time and treatment condition [14]. Participants in intervention groups may alter reporting behaviors due to increased nutritional awareness or social desirability bias, while all participants may experience reporting fatigue or improved accuracy with repeated assessments [14].

Q5: What advanced statistical methods can correct for measurement error in food substitution analysis? Compositional data analysis (CoDA) provides a promising framework for correcting measurement errors in food substitution studies [17]. This approach respects the inherent sum-to-one constraint in dietary data (where all components must sum to 100%) and can model multivariate nutrient intakes while correcting for both random and systematic errors [17]. Extension of these models to longitudinal data allows researchers to account for temporal changes in dietary patterns and measurement errors across multiple time points [17].
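The sum-to-one constraint is usually handled by moving to log-ratio coordinates before modeling. The sketch below shows closure and the centered log-ratio (clr) transform, one common CoDA transformation; it assumes strictly positive intakes (zeros need separate handling), and the three-component example diet is hypothetical. It does not implement the measurement error correction itself.

```python
import numpy as np


def closure(intakes):
    """Rescale each row so that its components sum to one."""
    intakes = np.asarray(intakes, dtype=float)
    return intakes / intakes.sum(axis=-1, keepdims=True)


def clr(intakes):
    """Centered log-ratio transform; requires strictly positive components."""
    log_parts = np.log(closure(intakes))
    return log_parts - log_parts.mean(axis=-1, keepdims=True)


# Hypothetical energy shares from three food groups for two participants.
diet = np.array([[50.0, 30.0, 20.0],
                 [40.0, 40.0, 20.0]])
print(closure(diet))
print(clr(diet))
```

Because clr coordinates depend only on ratios between components, rescaling total intake leaves them unchanged, which is what makes substitution effects interpretable.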

Quantitative Impact of Measurement Error on Dietary Pattern Analysis

Table 1: Impact of Measurement Error on Dietary Pattern Consistency Rates

| Analysis Method | Error Type | Consistency Rate Range | Most Vulnerable Patterns |

|---|---|---|---|

| Principal Component Factor Analysis (PCFA) | Systematic & Random | 67.5% - 100% | Patterns with factor loadings of low discrepancies |

| K-means Cluster Analysis (KCA) | Systematic & Random | 13.4% - 88.4% | Patterns with small cluster sample sizes |

Table 2: Attenuation of Diet-Disease Associations Under Measurement Error Conditions

| True Association Coefficient | Analysis Method | Estimated Coefficient Range | Degree of Attenuation |

|---|---|---|---|

| Beneficial (-0.5) | PCFA | -0.287 to -0.450 | 10% - 42.6% |

| Beneficial (-0.5) | KCA | -0.231 to -0.394 | 21.2% - 53.8% |

| Harmful (0.5) | PCFA | 0.295 to 0.449 | 10.2% - 41% |

| Harmful (0.5) | KCA | -0.003 to 0.373 | 25.4% - 100.6% |

Experimental Protocols for Measurement Error Characterization

Protocol 1: Longitudinal Measurement Error Assessment Using Biomarkers

Purpose: To characterize measurement error structure in self-reported dietary data across time and intervention groups using biomarker reference measurements [14].

Materials:

- 24-hour dietary recall instruments

- Biological sample collection kits (urine, blood, or other appropriate matrices)

- Nutrient analysis database (e.g., USDA Standard Reference)

- Laboratory equipment for biomarker quantification

Procedure:

- Collect parallel measurements of self-reported intake (24-hour recall) and biomarker reference (24-hour urine sodium) at baseline and follow-up time points (e.g., 6 and 18 months)

- For each participant and time point, calculate the difference between self-reported values and biomarker measurements

- Build mixed effects regression models with flexible variance-covariance structure

- Test interactions between time, treatment condition, and self-reported intake using backward selection approaches

- Determine whether measurement error differs significantly across time or treatment conditions

Validation: This protocol was successfully implemented in the Trials of Hypertension Prevention (TOHP) and PREMIER studies, demonstrating differential measurement error by time and treatment group [14].

Protocol 2: Triads Method for Measurement Error Correction Using Biomarkers

Purpose: To obtain unbiased estimates of the relationship between true intake and surrogate measurements using three different assessment methods [18].

Materials:

- Food frequency questionnaire (FFQ)

- Reference instrument (diet records or 24-hour recalls)

- Biomarker measurements (e.g., plasma vitamin C, urinary nitrogen)

- Statistical software capable of structural equation modeling

Procedure:

- Collect replicate measurements using FFQ (Q), reference instrument (R), and biomarker (M) on the same subjects at multiple time points

- Apply the model: \(Q_{ij} = \mu_{qj} + \alpha_q + q_i + \beta_q T_i + e_{Qij}\); \(R_{ij} = \mu_{rj} + \alpha_r + r_i + \beta_r T_i + e_{Rij}\); \(M_{ij} = \mu_{mj} + T_i + e_{Mij}\)

- Estimate parameters accounting for shift-bias (α) and scale-bias (β) factors

- Calculate the regression coefficient of true intake (T) on surrogate measurement (Q) as \(\lambda_{TQ} = \operatorname{Cov}(Q_{ij}, T_i) / \operatorname{Var}(Q_{ij})\)

- Use method of moments approaches to obtain unbiased estimates even with correlated systematic error

Validation: This approach has been applied in the EPIC-Norfolk study using FFQ, 7-day diet records, and plasma vitamin C measurements collected 4 years apart [18].
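For orientation, the classic method of triads can be sketched in a few lines. Note the simplification: this version estimates the validity coefficient of the FFQ (its correlation with true intake) from the three pairwise correlations and assumes the errors of the three instruments are mutually independent. It is not the full replicate-measure model with shift and scale bias described in the procedure above. All simulated values and the function name are hypothetical.

```python
import numpy as np


def triads_validity(q, r, m):
    """Validity coefficient of Q: sqrt(r_QR * r_QM / r_RM), assuming independent errors."""
    corr = np.corrcoef([q, r, m])
    r_qr, r_qm, r_rm = corr[0, 1], corr[0, 2], corr[1, 2]
    return float(np.sqrt(r_qr * r_qm / r_rm))


# Hypothetical data with a known true intake, so the estimate can be checked.
rng = np.random.default_rng(3)
n = 50_000
t = rng.normal(0.0, 1.0, n)                      # true intake (unobserved in practice)
q = 0.5 + 0.8 * t + rng.normal(0.0, 0.8, n)      # FFQ with shift and scale bias
r = t + rng.normal(0.0, 0.6, n)                  # reference instrument
m = 2.0 + 0.5 * t + rng.normal(0.0, 0.5, n)      # biomarker

estimated = triads_validity(q, r, m)
actual = float(np.corrcoef(q, t)[0, 1])
print(f"triads estimate={estimated:.3f} actual correlation={actual:.3f}")
```

In small samples the product of correlations can exceed the denominator or turn negative, so estimates above 1 or undefined values are possible and are usually handled with bootstrap intervals.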

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Measurement Error Research

| Research Tool | Primary Function | Key Applications | Considerations |

|---|---|---|---|

| Food Frequency Questionnaire (FFQ) | Assess habitual dietary intake | Large epidemiological studies | Subject to both systematic and random error [18] |

| 24-Hour Dietary Recall | Detailed intake assessment over previous day | Validation studies | Multiple recalls improve accuracy [14] |

| Biomarkers (Urinary, Blood) | Objective intake measurement | Reference standard in validation studies [14] | Invasive and expensive |

| Compositional Data Analysis (CoDA) | Model dietary data with sum-to-one constraint | Food substitution analysis [17] | Respects multivariate nature of diet |

| Regression Calibration | Correct association estimates for measurement error | Primary analysis correction [9] | Requires validation data |

| Simulation Extrapolation (SIMEX) | Correct for measurement error via simulation | When error structure is known [14] | Computationally intensive |

Visualizing Measurement Error Concepts and Workflows

Diagram: Measurement Error Sources in Dietary Assessment

Diagram: Measurement Error Correction Workflow

The Challenge of Usual Exposure Assessment in Long-Term Dietary Studies

Accurate assessment of usual dietary intake is fundamental to nutritional epidemiology, yet it remains one of the field's most significant methodological challenges [19]. Measurement error—the difference between reported intake and true consumption—systematically distorts research findings, potentially obscuring genuine diet-disease relationships and compromising scientific evidence [7]. Understanding these errors is essential for researchers interpreting nutritional studies or designing investigations into dietary patterns and health outcomes.

Dietary measurement errors are broadly categorized into two types: systematic error (bias) and within-person random error [1]. Systematic error results in measurements that consistently depart from true values in the same direction and cannot be eliminated through repeated measures. Within-person random error represents day-to-day variation in an individual's diet and measurement inaccuracies that occur randomly [1].

Core Concepts & Definitions FAQ

Q1: What is "usual dietary intake" and why is it important in long-term studies? Usual intake refers to the long-term average consumption of foods or nutrients for an individual [9]. Since most chronic diseases develop over extended periods, usual intake rather than short-term consumption represents the relevant exposure for understanding diet-disease relationships [3].

Q2: What are the main types of measurement error in dietary assessment?

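- Systematic Error (Bias): Consistent, non-random error where reported values systematically differ from true intake. This includes intake-related bias (e.g., the "flattened-slope" phenomenon, where high-intake individuals under-report and low-intake individuals over-report) and person-specific bias (related to individual characteristics such as social desirability) [1].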

- Within-Person Random Error: Day-to-day variation in diet and random measurement errors that cause reported intake to fluctuate around the usual intake [1].

Q3: How does measurement error differ between FFQs and 24-hour recalls? Food Frequency Questionnaires (FFQs) are primarily affected by systematic error and rely on generic memory, while 24-hour recalls (24HRs) are mainly subject to within-person random error and rely on specific memory of recent intake [3]. This distinction significantly impacts how data from these instruments must be analyzed and interpreted.

Diagram: Classification of Dietary Measurement Error Types and Their Primary Associations with Common Assessment Instruments

Troubleshooting Common Experimental Problems

Problem 1: Attenuated Associations in Diet-Disease Relationships

Symptoms: Observed effect sizes are weaker than expected; relative risk estimates are biased toward the null (closer to 1.0); statistically significant associations are difficult to detect even with large sample sizes.

Root Cause: Non-differential measurement error in dietary exposure variables causes attenuation (flattening) of true dose-response relationships [2]. The OPEN study demonstrated severe attenuation factors for nutrients assessed by FFQ: energy (0.04-0.08), protein (0.14-0.16), and potassium (0.23-0.29) [2]. This means a true relative risk of 2.0 could appear as 1.03-1.06 for energy, 1.10-1.12 for protein, and 1.17-1.22 for potassium.
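The apparent relative risks quoted above can be reproduced under the usual log-linear assumption that the observed log relative risk is roughly the true log relative risk multiplied by the attenuation factor. The sketch below assumes that model; the function name `apparent_rr` is illustrative and does not come from the OPEN study.

```python
import math

def apparent_rr(true_rr: float, attenuation: float) -> float:
    """Observed RR under log-linear attenuation: RR_obs = RR_true ** lambda."""
    return math.exp(attenuation * math.log(true_rr))

# Attenuation factor ranges quoted in the text for FFQ-assessed intake.
factors = {
    "energy": (0.04, 0.08),
    "protein": (0.14, 0.16),
    "potassium": (0.23, 0.29),
}

for nutrient, (low, high) in factors.items():
    print(f"{nutrient}: {apparent_rr(2.0, low):.2f}-{apparent_rr(2.0, high):.2f}")
```

Because the attenuation factor acts on the log scale, the loss is not proportional: a factor of 0.08 leaves only about 6% excess risk out of a true 100%.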

Solutions:

- Use energy adjustment methods (density or residual approaches) to reduce attenuation [2].

- Implement regression calibration using validation study data to correct relative risk estimates [2].

- Increase sample size substantially—the OPEN study indicated needs for 5-100 times larger samples depending on the nutrient [2].

- Consider using dietary biomarkers where available to improve exposure assessment.
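The two energy adjustment approaches named in the first solution can be sketched as follows. This is a minimal illustration on made-up intake values, assuming a simple linear regression of nutrient on energy for the residual method; the function names and data are hypothetical.

```python
from statistics import mean

def energy_adjust_residual(nutrient, energy):
    """Residual method: regress nutrient on energy, then add each residual
    to the nutrient intake predicted at the mean energy intake."""
    e_bar, n_bar = mean(energy), mean(nutrient)
    sxx = sum((e - e_bar) ** 2 for e in energy)
    sxy = sum((e - e_bar) * (n - n_bar) for e, n in zip(energy, nutrient))
    slope = sxy / sxx
    intercept = n_bar - slope * e_bar
    expected_at_mean = intercept + slope * e_bar
    return [n - (intercept + slope * e) + expected_at_mean
            for n, e in zip(nutrient, energy)]

def nutrient_density(nutrient_g, energy_kcal, kcal_per_g):
    """Density method: percent of total energy supplied by the nutrient."""
    return [100 * g * kcal_per_g / e for g, e in zip(nutrient_g, energy_kcal)]

# Hypothetical reported intakes: protein (g/day) and energy (kcal/day).
protein = [60.0, 75.0, 90.0, 110.0, 80.0]
energy = [1600.0, 2000.0, 2400.0, 3000.0, 2000.0]

adjusted = energy_adjust_residual(protein, energy)
density = nutrient_density(protein, energy, kcal_per_g=4.0)
```

The residual-adjusted values are uncorrelated with reported energy by construction, which is why errors shared between the nutrient and energy reports partly cancel.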

Table 1: Quantifying Attenuation in Diet-Disease Associations from the OPEN Study

| Dietary Component | Attenuation Factor (Men) | Attenuation Factor (Women) | Apparent RR if True RR = 2.0 (Men-Women) |

|---|---|---|---|

| Energy | 0.08 | 0.04 | 1.06-1.03 |

| Protein | 0.16 | 0.14 | 1.12-1.10 |

| Potassium | 0.29 | 0.23 | 1.22-1.17 |

| Protein Density | 0.40 | 0.32 | 1.32-1.25 |

| Potassium Density | 0.49 | 0.57 | 1.40-1.48 |

Problem 2: Inaccurate Estimation of Population Usual Intake

Symptoms: Inability to accurately rank individuals by consumption levels; distorted estimates of population percentiles; incorrect identification of groups exceeding or falling below dietary recommendations.

Root Cause: Within-person random variation (day-to-day diet changes) obscures true long-term usual intake when using short-term assessment methods like 24-hour recalls [1]. Single-day assessments particularly struggle to characterize intake of episodically consumed foods.

Solutions:

- Collect multiple non-consecutive days of dietary data (at least 2-3 days per participant) [3].

- Use statistical modeling (e.g., National Cancer Institute method) to remove within-person variation and estimate usual intake distributions [1].

- For episodically consumed foods, implement two-part models accounting for consumption probability and amount consumed.
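The idea behind removing within-person variation can be illustrated with a simple variance-components calculation. This is a deliberately simplified sketch, not the National Cancer Institute method: it assumes the same number of recalls per person, roughly normal intakes, and no covariates, and all names and data are hypothetical.

```python
from statistics import mean

def estimate_usual_intake(recalls):
    """recalls: one list of daily intakes per person (equal length, >= 2 days).
    Returns shrunken person-level estimates plus the two variance components."""
    k = len(recalls[0])
    n = len(recalls)
    person_means = [mean(r) for r in recalls]
    grand_mean = mean(person_means)
    # Pooled day-to-day variance around each person's own mean.
    s2_within = sum((x - m) ** 2
                    for r, m in zip(recalls, person_means) for x in r) / (n * (k - 1))
    # Variance of person means = between-person variance + within-person variance / k.
    s2_means = sum((m - grand_mean) ** 2 for m in person_means) / (n - 1)
    s2_between = max(s2_means - s2_within / k, 0.0)
    # Pull each mean toward the grand mean so the spread matches s2_between.
    shrink = (s2_between / s2_means) ** 0.5 if s2_means > 0 else 0.0
    usual = [grand_mean + shrink * (m - grand_mean) for m in person_means]
    return usual, s2_between, s2_within

# Hypothetical energy intakes (kcal) from two non-consecutive recalls per person.
recalls_kcal = [[1800, 2200], [2500, 2100], [1500, 1700], [3000, 2600]]
usual, s2_between, s2_within = estimate_usual_intake(recalls_kcal)
```

The shrunken distribution is narrower than the distribution of raw person means, so estimated tail percentiles (for example, the share of people below a recommendation) move toward the center.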

Problem 3: Participant Misreporting Bias

Symptoms: Systematic under-reporting of energy intake, particularly for specific food categories; differential reporting by participant characteristics (e.g., BMI, gender); social desirability bias affecting reported consumption of "healthy" and "unhealthy" foods.

Root Cause: Cognitive and psychological factors including memory limitations, social desirability bias, and characteristics influencing self-presentation [7]. Heavier individuals and women tend to underreport intake to a greater degree [20].

Solutions:

- Use multiple-pass interview techniques with standardized prompts to enhance completeness [7].

- Incorporate biomarkers (doubly labeled water for energy, urinary nitrogen for protein) to identify and correct for misreporting [21].

- Consider using technology-assisted methods (digital food records) to reduce memory reliance.

- Account for participant characteristics known to affect reporting accuracy in analyses.

Research Reagent Solutions

Table 2: Essential Methodological Tools for Addressing Dietary Measurement Error

| Research Tool | Primary Function | Key Applications | Technical Considerations |

|---|---|---|---|

| Recovery Biomarkers (Doubly labeled water, Urinary nitrogen) | Provide objective, unbiased measures of intake for specific nutrients | FFQ validation; Calibration equations; Misreporting assessment | Limited to energy, protein, potassium; Expensive; Complex implementation [2] [21] |

| Concentration Biomarkers (Blood carotenoids, Adipose tissue fatty acids) | Correlate with dietary intake, though affected by metabolism | Ranking individuals by intake; Assessing associations with health outcomes | Influenced by individual metabolism and characteristics; Not measures of absolute intake [21] |

| Multiple 24-Hour Recalls | Capture short-term intake with less systematic bias than FFQs | Usual intake estimation; Surveillance studies; Reference method in validation | Requires multiple administrations (≥2); Statistical modeling needed for usual intake [19] [3] |

| Web-Based Assessment Tools (ASA24, Intake24) | Automated self-administered 24-hour recall systems | Large-scale studies; Reduced cost compared to interviewer-administered recalls | Requires literate population with computer access; May need adaptation for target population [7] |

| Statistical Modeling (Regression calibration, Measurement error models) | Correct for measurement error in diet-disease associations | Improving risk estimation; Accounting for instrument imperfection | Requires validation study data; Model assumptions must be verified [2] [9] |

Experimental Protocol Guide

Protocol 1: Designing an Internal Validation Study

Purpose: To collect data necessary for quantifying and correcting measurement error in your main dietary assessment instrument.

Methodology:

- Subsample Selection: Randomly select a representative subset (typically 100-500 participants) from your main cohort [9].

- Reference Instrument Administration:

- Temporal Sequencing: Administer reference instruments close in time to the main instrument (FFQ) but not so close that participants remember specific answers.

- Data Collection: Ensure identical nutrient database and processing methods for both main and reference instruments.

Analysis Approach:

- Calculate de-attenuation factors for nutrients of interest [2].

- Develop calibration equations to correct main study data [9].

- For multivariate models, use multivariate regression calibration [2].
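The analysis steps above can be sketched for a single nutrient as follows. This is a minimal univariate illustration, assuming the reference measure is unbiased for true intake and that its errors are independent of the FFQ's errors; the data, the observed relative risk, and the helper name `ols` are all hypothetical.

```python
import math
from statistics import mean

def ols(x, y):
    """Simple least-squares fit y = a + b * x; returns (a, b)."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

# Hypothetical validation subsample: FFQ value and reference measurement (same units).
ffq = [50, 60, 70, 80, 90, 100]
ref = [62, 64, 71, 73, 80, 82]

# Regressing the reference on the FFQ gives the calibration equation;
# its slope estimates the attenuation factor.
intercept, attenuation = ols(ffq, ref)

def calibrated_intake(ffq_value):
    """Predicted true intake given an FFQ report (the calibration equation)."""
    return intercept + attenuation * ffq_value

# De-attenuate a hypothetical log relative risk estimated with the raw FFQ.
observed_log_rr = math.log(1.10)
corrected_rr = math.exp(observed_log_rr / attenuation)
```

Dividing by the attenuation factor widens the confidence interval as well as moving the point estimate, so correction restores validity of the estimate but not the statistical power lost to measurement error.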

Protocol 2: Implementing Multiple 24-Hour Recalls for Usual Intake Assessment

Purpose: To estimate population usual intake distributions while accounting for within-person variation.

Methodology:

- Study Design: Administer at least two non-consecutive 24-hour recalls per participant [3].

- Sampling Framework: Use random sampling of days (including weekends and weekdays) across different seasons if possible.

- Administration Method:

- Data Processing: Convert food consumption to nutrients using standardized food composition database.

Analysis Approach:

- Use the National Cancer Institute method or equivalent to estimate usual intake distributions [1].

- For episodically consumed foods, implement two-part models accounting for probability of consumption and consumption-day amount.

Diagram: Comprehensive Workflow for Dietary Studies with Integrated Measurement Error Addressing

Advanced Methodological FAQ

Q4: When should I use biomarkers versus self-report instruments in dietary pattern studies? Biomarkers and self-report instruments serve complementary roles. Recovery biomarkers (doubly labeled water, urinary nitrogen) are optimal for validating total energy and specific nutrient intake but are expensive and limited to few dietary components [21]. Concentration biomarkers (blood carotenoids, adipose tissue fatty acids) work well for ranking individuals by intake of related foods but don't measure absolute intake. Self-report instruments remain essential for capturing comprehensive dietary patterns, food combinations, and culturally meaningful eating behaviors [21]. The most robust studies combine both approaches.

Q5: How can I address measurement error when studying dietary patterns rather than single nutrients? Dietary pattern research introduces additional complexity because multiple correlated components are measured with error. In this situation:

- Use principal component analysis or similar methods that account for measurement error in multiple variables simultaneously.

- Consider using reduced rank regression which can incorporate biomarker data to identify patterns most predictive of biological intermediates.

- Apply multivariate regression calibration when correcting diet-disease associations [2].

- Acknowledge that patterns heavily weighted toward well-measured components (those with good biomarkers) will have better measurement characteristics.

Q6: What emerging technologies show promise for improving dietary assessment? Several innovative approaches are developing:

- Omics technologies: Metabolomics can identify novel intake biomarkers; genomics can use genetic variants as proxies for intake in Mendelian randomization [21].

- Mobile technology: Smartphone apps with image-based food records and natural language processing reduce participant burden and may improve accuracy [20].

- Integration approaches: Combining traditional methods with digital tools and biomarkers in adaptive designs [19].

- Standardized automated systems: Tools like ASA24, Intake24, and GloboDiet improve standardization across studies [7].

Addressing measurement error is not merely a statistical exercise but a fundamental requirement for generating valid evidence in nutritional epidemiology. The strategies outlined in this technical support guide—appropriate instrument selection, validation study implementation, statistical correction methods, and biomarker integration—provide researchers with practical approaches to mitigate these challenges. As methodological research continues to advance, incorporating these error-addressing strategies into study designs will remain essential for producing reliable evidence about diet-health relationships that can inform public health recommendations and clinical practice.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between differential and non-differential measurement error?

A1: The fundamental difference lies in whether the error is related to the study outcome or group assignment.

- Non-differential error occurs when the measurement inaccuracy is similar across all study groups. The error is unrelated to disease status or intervention group. In statistical terms, the measured exposure X* is conditionally independent of the outcome Y given the true exposure X and other covariates Z [9].

- Differential error occurs when the magnitude or direction of measurement error differs between study groups (e.g., between cases and controls, or between intervention and control groups). The error provides extra information about the outcome beyond the true exposure [22] [9].
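The two definitions above can be written compactly in terms of conditional distributions. This is a standard way of stating the distinction; the density notation f(· | ·) is an assumption of this sketch rather than notation taken from the cited sources.

```latex
\begin{aligned}
\text{Non-differential:}\quad & f(X^{*} \mid X, Y, Z) = f(X^{*} \mid X, Z) \\
\text{Differential:}\quad & f(X^{*} \mid X, Y, Z) \neq f(X^{*} \mid X, Z)
\end{aligned}
```

In the first case, knowing the outcome Y tells you nothing more about the reported exposure once true exposure and covariates are known; in the second, it does.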

Q2: How does each error type affect risk estimates in nutritional studies?

A2: The effects differ significantly between error types, as summarized in the table below.

| Error Type | Effect on Risk Estimate | Common Causes in Dietary Research |

|---|---|---|

| Non-differential | Generally biases estimates toward the null (attenuation); reduces statistical power [2]. | Random recall lapses; within-person variation; portion size estimation difficulties [7] [23]. |

| Differential | Unpredictable direction of bias; can create or mask apparent associations [22]. | Social desirability bias (systematic under-reporting in intervention group); recall bias in case-control studies [22] [7]. |

For example, in the OPEN study, non-differential error from food frequency questionnaires attenuated relative risks so severely that a true relative risk of 2.0 would be estimated as only 1.03-1.06 for energy intake and 1.10-1.12 for protein intake [2].

Q3: What study designs are most vulnerable to differential measurement error?

A3: Intervention studies and case-control studies face the highest risks.

- Lifestyle intervention trials: Participants may modify reporting to appear compliant, creating differential error between intervention and control groups [22]. Simulation studies show this can require 25-100% larger sample sizes to maintain statistical power [22] [2].

- Case-control studies: Recall bias occurs when cases remember or report exposures differently than controls [9].

- Longitudinal studies with repeated measures: Reporting accuracy may improve over time due to training or deteriorate due to respondent burden [22].

Q4: What methodologies can prevent or correct for measurement errors?

A4: Researchers have several tools available, ranging from study design to statistical analysis.

Prevention Strategies:

- Incorporate multiple dietary assessments (e.g., more than one 24-hour recall) to reduce random error [23]

- Use standardized assessment protocols with quality control procedures [23]

- Include memory aids and prompts in dietary recalls to reduce omissions [7]

- Consider technology-based assessments (e.g., Automated Self-Administered 24-hour recall) to standardize data collection [7]

Correction Methods:

- Conduct internal validation studies using recovery biomarkers (e.g., doubly labeled water) or detailed 24-hour recalls [2]

- Apply regression calibration to adjust risk estimates using validation study data [2]

- Use energy adjustment methods (densities or residuals) to reduce attenuation [2]

- Increase sample sizes to compensate for reduced statistical power [22]

Q5: How does measurement error specifically impact dietary pattern research?

A5: Measurement error presents unique challenges in dietary pattern analysis.

- Distortion of patterns: Both systematic and random errors can alter the identification of dietary patterns. Studies show consistency rates for pattern identification can drop to 67.5% with principal component factor analysis and as low as 13.4% with K-means cluster analysis under significant measurement error [16].

- Attenuation of associations: Larger measurement errors cause greater attenuation of diet-disease associations. For a true beneficial association (coefficient = -0.5), estimated coefficients ranged from -0.287 to -0.450 in factor analysis and -0.231 to -0.394 in cluster analysis [16].

- Pattern vulnerability: Dietary patterns derived with factor loadings of low discrepancies or with small cluster sample sizes are more vulnerable to measurement error [16].

Experimental Protocols for Characterizing Measurement Error

Protocol 1: Internal Validation Study Using Recovery Biomarkers

Objective: Quantify measurement error parameters for correction in main study analysis.

Materials: Food frequency questionnaires (FFQs), 24-hour recall protocols, doubly labeled water for energy expenditure measurement, 24-hour urine collection kits for nitrogen and potassium.

Procedure:

- Recruit a subsample (15-20%) from your main cohort study population [2]

- Administer the main dietary instrument (FFQ) to all participants

- Collect reference measurements within a comparable time frame:

- Estimate measurement error model parameters by regressing reference measurements on FFQ values

- Apply these parameters to correct relative risk estimates in main analysis using regression calibration [2]

Protocol 2: Assessing Differential Error in Intervention Trials

Objective: Detect and quantify differential measurement error between intervention and control groups.

Materials: Self-reported dietary data, recovery biomarkers, psychological measures of social desirability.

Procedure:

- Collect baseline dietary data using standardized methods before randomization [22]

- Implement intervention and control conditions

- Collect follow-up dietary data at predetermined intervals

- Include a biomarker substudy comparing reported energy intake to energy expenditure measured by doubly labeled water in both groups [23]

- Test for differential reporting using analysis of covariance:

- Adjust sample size calculations to account for identified differential error [22]
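One way to set up the analysis-of-covariance step is to model each participant's reporting error (reported energy intake minus doubly labeled water expenditure) as a function of study arm, adjusting for a baseline covariate. The sketch below assumes BMI as that covariate and uses made-up data; it returns only the adjusted group difference, and a real analysis would use a statistical package to obtain standard errors and a test.

```python
from statistics import mean

def ancova_group_effect(y, group, covariate):
    """Coefficient on `group` in the least-squares fit y ~ 1 + group + covariate."""
    y_bar, g_bar, x_bar = mean(y), mean(group), mean(covariate)
    sgg = sum((g - g_bar) ** 2 for g in group)
    sxx = sum((x - x_bar) ** 2 for x in covariate)
    sgx = sum((g - g_bar) * (x - x_bar) for g, x in zip(group, covariate))
    sgy = sum((g - g_bar) * (v - y_bar) for g, v in zip(group, y))
    sxy = sum((x - x_bar) * (v - y_bar) for x, v in zip(covariate, y))
    return (sgy * sxx - sxy * sgx) / (sgg * sxx - sgx ** 2)

# Hypothetical follow-up data (kcal/day); group 0 = control, 1 = intervention.
group = [0, 0, 0, 0, 1, 1, 1, 1]
bmi = [22, 26, 30, 34, 22, 26, 30, 34]
reported = [2300, 2350, 2450, 2600, 2100, 2100, 2250, 2350]
dlw_tee = [2400, 2500, 2700, 2900, 2400, 2500, 2700, 2900]

reporting_error = [r - t for r, t in zip(reported, dlw_tee)]
effect = ancova_group_effect(reporting_error, group, bmi)
print(f"Adjusted difference in reporting error: {effect:.0f} kcal/day")
```

A clearly non-zero adjusted difference indicates that the intervention arm misreports differently from the control arm, which is the signature of differential error.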

The Scientist's Toolkit: Research Reagent Solutions

| Tool | Function | Application Context |

|---|---|---|

| Doubly Labeled Water (DLW) | Measures energy expenditure through isotope elimination; serves as objective recovery biomarker for energy intake validation [2]. | Gold standard for validating energy intake assessments in observational studies and trials. |

| 24-Hour Urinary Nitrogen | Recovers approximately 85% of dietary protein intake; objective biomarker for protein validation [2]. | Validation reference for protein intake measurements. |

| Automated Multiple-Pass 24-Hour Recall | Standardized interview method with multiple passes to enhance complete dietary reporting [7]. | Reference instrument in validation studies; primary dietary assessment in large surveys. |

| Social Desirability Scales | Assesses tendency to respond in socially acceptable manner; identifies participants likely to under-report certain foods [7]. | Understanding psychological sources of systematic measurement error. |

| Regression Calibration | Statistical method that uses validation study data to correct attenuated relative risks [2]. | Correcting measurement error in main study analyses when validation data available. |

Measurement Error Pathways and Impacts

Figure 1: Sources and Consequences of Dietary Measurement Error

Statistical Characterization of Error Types

Figure 2: Statistical Models for Measurement Error

Advanced Methods and Statistical Approaches for Error Mitigation in Dietary Assessment

Traditional Dietary Assessment Methods and Their Inherent Limitations

Troubleshooting Guides

Troubleshooting Guide: Addressing Underreporting in Food Frequency Questionnaires (FFQs)

Problem: Suspected systematic underreporting of energy and specific nutrients, particularly among individuals with higher Body Mass Index (BMI).

Explanation: Underreporting is not random; it is a systematic error where participants consistently report consuming less food than they actually do. This is often linked to social desirability bias (the desire to report "healthier" intake) and is more prevalent for foods perceived as unhealthy [24] [25]. This error attenuates diet-disease relationships, making true associations harder to detect [2] [25].

Solutions:

- Statistical Correction: Apply regression calibration using data from a validation sub-study where participants completed both the FFQ and a more accurate tool like multiple 24-hour recalls or a biomarker like doubly labeled water [2] [5].

- Machine Learning Adjustment: For specific underreported foods (e.g., high-fat items), use a predictive model. Train a random forest classifier on a "healthy" subpopulation with objective data (e.g., blood lipids, BMI) to predict accurate consumption levels, then adjust reports from the broader cohort accordingly [24].

- Use Biomarkers: Where possible, integrate recovery biomarkers (doubly labeled water for energy, urinary nitrogen for protein) to quantify and correct for the scale of underreporting at the group level [3] [5].

Troubleshooting Guide: Managing Day-to-Day Variation in 24-Hour Recalls

Problem: A single 24-hour recall provides a "snapshot" of intake that does not represent an individual's "usual" or long-term diet due to large within-person variation.

Explanation: Individuals do not eat the same foods every day. A single day of intake, especially for nutrients like cholesterol or vitamin A, can be highly variable and misleading for classifying an individual's habitual intake [3] [26]. This random error reduces the statistical power to detect true diet-disease associations.

Solutions:

- Multiple Recalls: Collect multiple non-consecutive 24-hour recalls per participant. The number needed depends on the nutrient of interest and study objectives, but often 2-3 recalls are a minimum, with more required for rarely consumed foods or highly variable nutrients [3] [26].

- Statistical Modeling: Use specialized methods like the National Cancer Institute (NCI) method or the Multiple Source Method (MSM) to estimate "usual intake" distributions from short-term recall data, accounting for within- and between-person variation [26] [27].

- Blended Approach: Combine a small number of 24-hour recalls with a Food Frequency Questionnaire (FFQ). The FFQ provides data on consumption frequency of rarely eaten foods, which improves the accuracy of the usual intake estimation derived from the recalls [27].

Troubleshooting Guide: Mitigating the "Flattened-Slope" Phenomenon

Problem: Regression dilution, where the observed association between a dietary exposure and a health outcome is biased toward the null (attenuated), making effects appear smaller than they truly are.

Explanation: This is a classic consequence of measurement error in exposures. In nutritional epidemiology, the error structure is often non-classical. Individuals with high true intake tend to underreport, while those with low true intake tend to overreport, "flattening" the observed dose-response relationship [2]. For example, an FFQ might attenuate a true relative risk of 2.0 down to an observed value of 1.1-1.2 for protein intake [2].

Solutions:

- Energy Adjustment: Analyze nutrients using densities (e.g., percent of energy from fat) or residuals, as these energy-adjusted intakes often have less severe attenuation than absolute intakes [2].

- Regression Calibration: Replace the error-prone exposure value in your statistical model with the expected value of the true (but unobserved) exposure given the error-prone measurement and covariates, estimated from a calibration study. This requires validation data [2] [5].

- Increase Sample Size: To compensate for the loss of statistical power caused by attenuation, a larger sample size is needed. Studies using FFQs may require samples 5 to over 10 times larger than if intake were measured perfectly [2].

Frequently Asked Questions (FAQs)

FAQ: What is the single biggest limitation of self-reported dietary data? The most pervasive limitation is systematic misreporting, particularly the underreporting of energy intake. This error is not random; it is correlated with participant characteristics like BMI and is more severe for foods perceived as unhealthy. This bias threatens the validity of both absolute intake estimates and observed diet-disease relationships [25] [3] [2].

FAQ: When should I use an FFQ versus multiple 24-hour recalls? The choice depends on your research question and resources.

- Use an FFQ in large epidemiological studies (n > 1,000) where the goal is to rank individuals by their long-term habitual intake to investigate associations with disease, and when resource constraints preclude more intensive methods [3].

- Use multiple 24-hour recalls when you need a more accurate estimate of absolute intake for a group or individual, or for studies of smaller sample size where greater accuracy is required. The 24-hour recall is generally considered less biased for estimating current diet and energy intake at the group level [3] [26].

FAQ: How can I correct for measurement error if I don't have biomarker data? A robust method is regression calibration using a reference instrument within an internal validation sub-study. Have a subset of your participants (e.g., 100-500) complete both your main instrument (e.g., FFQ) and a more detailed reference method (e.g., multiple 24-hour recalls or food records). The data from this sub-study is used to model the relationship between the error-prone and more accurate measures, and this model is then applied to correct the data for the entire cohort [2] [5].

FAQ: Why are diet-disease associations from observational studies sometimes unreliable? Many reported associations are unreliable due to a combination of measurement error, residual confounding, and collinearity between nutrients.

- Measurement error attenuates relative risks toward the null.

- Residual confounding occurs when unmeasured or imperfectly measured factors (e.g., socioeconomic status, overall health consciousness) influence both reported diet and the disease outcome.

- Collinearity makes it difficult to disentangle the effect of a single nutrient from the complex food matrix and overall dietary pattern in which it is consumed [2] [28] [29].

Data Presentation: Comparison of Traditional Dietary Assessment Methods

Table 1: Key Characteristics and Limitations of Major Dietary Assessment Methods

| Method | Time Frame | Primary Use | Main Strengths | Inherent Limitations & Primary Error Type |

|---|---|---|---|---|

| Food Frequency Questionnaire (FFQ) | Long-term (months to years) | Habitual diet; ranking individuals in large studies | Low cost and participant burden for large samples; captures rare foods. | Systematic under-reporting (esp. energy, unhealthy foods); portion size estimation error; memory reliant [3] [2] [25]. |

| 24-Hour Dietary Recall (24HR) | Short-term (previous 24 hours) | Current diet; estimating group means with multiple recalls | Does not require literacy; less reactivity than records; multiple recalls improve accuracy. | Large within-person variation; relies on memory; single recall not representative of usual intake; requires multiple administrations to estimate habitual intake [3] [26]. |

| Food Record / Diary | Short-term (typically 3-7 days) | Current diet; detailed nutrient analysis | Does not rely on memory if filled concurrently; high detail for specific nutrients. | High participant burden and literacy required; reactivity (subjects change diet); recording fatigue reduces accuracy over days [3] [25]. |

| Screening / Brief Tool | Varies (often short-term) | Rapid assessment of specific food groups/nutrients | Very low burden; targeted to research question. | Limited scope; not for total diet assessment; must be validated for specific population [3]. |

Table 2: Impact and Mitigation of Different Types of Measurement Error

| Type of Error | Impact on Diet-Disease Association | Recommended Correction Strategies |

|---|---|---|

| Random Within-Person | Attenuates relative risks toward the null; reduces statistical power. | Collect repeated measurements (e.g., multiple 24HRs); use statistical models (e.g., NCI method) to estimate usual intake [26] [5]. |

| Systematic (e.g., Under-reporting) | Can cause attenuation or, in multi-variable models, unpredictable bias (e.g., residual confounding). | Use recovery biomarkers (e.g., doubly labeled water) for calibration; apply regression calibration or machine learning adjustment methods [2] [24] [5]. |

| Differential (e.g., Recall Bias) | Severe bias in any direction; most common in case-control studies. | Use prospective study designs where diet is reported before disease diagnosis [2] [9]. |

Experimental Protocols

Protocol 1: Machine Learning-Based Correction for Underreporting in an FFQ

Purpose: To correct for systematic underreporting of specific food items in an FFQ dataset using a supervised machine learning model and objectively measured physiological data [24].

Methodology:

- Input Data: Utilize an existing dataset containing FFQ responses and objective measures such as BMI, body fat percentage, blood lipids (LDL, total cholesterol), and fasting glucose [24].

- Data Splitting: Split the study population into two groups based on health risk criteria (e.g., body fat percentage, age, sex). The "healthy" group is assumed to report their dietary intake more accurately and is used as a training set [24].

- Model Training: Train a Random Forest (RF) classification model on the "healthy" group. The model uses the objective measures (BMI, lipids, etc.) as predictors to classify the frequency and quantity of consumption of the target food items (e.g., bacon, fried chicken) from the FFQ. Hyperparameters are tuned via cross-validation [24].

- Prediction and Adjustment: Apply the trained RF model to the "unhealthy" group. The model predicts the most probable consumption category for each individual. If the originally reported FFQ value for an unhealthy food is lower than the model's prediction, it is replaced with the predicted value to correct for underreporting [24].
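The final adjustment rule can be sketched independently of the classifier. In the example below, `predicted` stands in for the output of the trained random forest, and the category labels and their ordering are hypothetical coding choices rather than the scheme used in [24].

```python
# Ordered FFQ frequency categories, lowest to highest (hypothetical coding).
CATEGORIES = ["never", "monthly", "weekly", "daily"]
RANK = {category: i for i, category in enumerate(CATEGORIES)}

def adjust_underreports(reported, predicted):
    """Keep each reported category unless the model predicts a higher one."""
    return [p if RANK[p] > RANK[r] else r for r, p in zip(reported, predicted)]

# Reported intake of one "unhealthy" food item for four participants.
reported = ["never", "weekly", "monthly", "daily"]
# Stand-in for classifier predictions for the same participants.
predicted = ["weekly", "monthly", "monthly", "weekly"]

adjusted = adjust_underreports(reported, predicted)
print(adjusted)
```

The rule is one-directional: reports are only ever raised, never lowered, which reflects the assumption that misreporting of these foods is under-reporting.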

Protocol 2: The Blended Approach for Usual Dietary Intake Estimation

Purpose: To estimate an individual's usual food intake by combining the strengths of repeated 24-Hour Food Lists (24HFLs) and a Food Frequency Questionnaire (FFQ), thereby mitigating the limitations of each instrument when used alone [27].

Methodology:

- Data Collection: Participants complete at least two non-consecutive 24HFLs, which are simplified, web-based checklists of foods consumed in the last 24 hours (without portion sizes). They also complete a traditional FFQ covering the past year [27].

- Two-Part Statistical Model:

- Part 1 - Consumption Probability: A logistic mixed model is applied to the 24HFL data to estimate the probability that an individual consumes a specific food on any given day. A key feature is the inclusion of the FFQ consumption frequency data as a covariate in this model, which improves the estimate, especially for irregularly consumed foods [27].

- Part 2 - Consumption Amount: The average amount of the food consumed on a "consumption day" is estimated. This can be derived from the same study if portion data is available, or it can be imported from a more detailed external reference population survey (e.g., a survey with weighed food records) [27].

- Calculate Usual Intake: The usual intake for each individual and food group is calculated by multiplying the estimated consumption probability (from Part 1) by the estimated consumption amount (from Part 2) [27].
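The final multiplication step can be sketched as follows. The probabilities and amounts here are made-up inputs standing in for the outputs of Part 1 and Part 2; the logistic mixed model itself is not shown, and all names are hypothetical.

```python
def usual_intake(consumption_probability: float, amount_on_consumption_day: float) -> float:
    """Usual daily intake = probability of consuming on a given day x amount when consumed."""
    return consumption_probability * amount_on_consumption_day

# Hypothetical per-person inputs for one food group:
# (estimated daily consumption probability, grams eaten on a consumption day).
participants = {
    "P001": (0.25, 120.0),
    "P002": (0.50, 80.0),
    "P003": (0.0, 100.0),   # never-consumer: usual intake is zero
}

usual = {pid: usual_intake(p, amount) for pid, (p, amount) in participants.items()}
print(usual)
```

Separating probability from amount is what lets the approach handle episodically consumed foods, where most recall days record zero intake.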

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Instruments and Biomarkers for Dietary Assessment and Validation

| Tool / Reagent | Function in Dietary Research | Key Utility and Notes |

|---|---|---|

| Doubly Labeled Water (DLW) | Recovery biomarker for measuring total energy expenditure. | Serves as an objective reference for validating self-reported energy intake. Considered a "gold standard" but is costly and technically complex [25] [5]. |

| 24-Hour Urinary Nitrogen | Recovery biomarker for protein intake. | Provides an objective measure of absolute protein intake over a 24-hour period for validating protein reports from FFQs or recalls [3] [5]. |

| Food Frequency Questionnaire (FFQ) | Primary instrument for assessing habitual diet in large cohorts. | The workhorse of nutritional epidemiology. Must be selected or developed for the specific population and nutrients of interest. Critical to understand its error structure via validation [3] [2]. |

| Automated Self-Administered 24-Hour Recall (ASA24) | Web-based, automated 24-hour recall system. | Reduces cost and interviewer burden of 24-hour recalls. Allows for standardized collection of multiple recalls, facilitating usual intake estimation [3]. |

| Regression Calibration Software | Statistical programs to correct relative risk estimates for measurement error. | Essential for implementing methods like regression calibration. Software and guidance are available from sources like the National Cancer Institute's Dietary Assessment Primer [2] [9] [5]. |

| Multiple Source Method (MSM) Web Tool | Free, web-based tool for estimating usual food and nutrient intake. | A user-friendly implementation of the two-part statistical model. Allows researchers to combine 24-hour recall and FFQ data to derive usual intake distributions for their study population [27]. |

Technical Support Center: Troubleshooting & FAQs

This technical support center is designed for researchers and scientists working at the intersection of nutritional epidemiology and machine learning. It provides targeted troubleshooting for two innovative pattern recognition technologies—Diet ID and Deep Q-Networks (DQN)—within the critical context of mitigating measurement error in dietary pattern research.

Frequently Asked Questions (FAQs)

Q1: What are the primary sources of measurement error in dietary pattern analysis, and how do these technologies address them?

- A1: In traditional dietary assessment (e.g., questionnaires, 24-hour recalls), measurement errors can be systematic or random. These errors distort derived dietary patterns and attenuate diet-disease association coefficients [16] [13]. Diet ID addresses this by using a visual pattern recognition approach, which avoids the recall and logging burden associated with traditional methods, thereby reducing a major source of random error [30]. DQN systems, used in simulation environments, contend with error in their Q-value estimates. This is mitigated through techniques like target networks and Double Q-learning, which prevent feedback loops of overestimation error and lead to more stable and reliable policy learning [31].

Q2: During DQN training, my agent's performance degrades over time, or it gets stuck preferring a single action. What is the cause and solution?

- A2: This is a classic symptom of two common DQN failures.

- Cause 1: Moving Target. The Q-network is learning to match a target that it is simultaneously changing, leading to a destructive feedback cycle [31].

- Solution: Implement a slowly updating target network. Instead of using the latest Q-network weights to calculate the target, use an older copy. Update this target network periodically, either by copying the weights directly every N steps or, more smoothly, by using an exponential moving average (EMA) of the main network's weights [31].

- Cause 2: Overly Optimistic Q-Values. The max operation in the standard Q-learning update inherently leads to overestimation of Q-values, causing the agent to be overconfident in suboptimal actions [31].

- Solution: Implement Double DQN. Train two independent Q-networks and use the minimum of their estimates to compute the target Q-value (a variant often called clipped double Q-learning). This simple change introduces pessimism, which counters the overestimation bias and leads to more stable training [31].
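
The two fixes described in A2 can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation from [31]: the function names `ema_update` and `pessimistic_target` are hypothetical, network weights are represented as flat lists of floats, and the `done` flag for terminal states is an added assumption.

```python
def ema_update(target_weights, online_weights, beta=0.99):
    """Move target weights slowly toward the online weights.

    target <- beta * target + (1 - beta) * online
    Weights are plain lists of floats here (an illustrative assumption).
    """
    return [beta * t + (1.0 - beta) * o
            for t, o in zip(target_weights, online_weights)]


def pessimistic_target(reward, next_q_a, next_q_b, gamma=0.9, done=False):
    """TD target built from the element-wise minimum of two Q-estimates.

    next_q_a / next_q_b: per-action Q-values for the next state from two
    independently trained networks.
    """
    if done:
        return reward  # no bootstrapping past a terminal state
    min_q = [min(a, b) for a, b in zip(next_q_a, next_q_b)]
    return reward + gamma * max(min_q)


# Three EMA steps with beta=0.9 move the target only part of the way.
target = [0.0, 0.0]
online = [1.0, -2.0]
for _ in range(3):
    target = ema_update(target, online, beta=0.9)
print(target)

# A single network would bootstrap from its own optimistic value;
# the minimum across two networks tempers that.
print(pessimistic_target(1.0, [2.0, 5.0], [3.0, 1.0], gamma=0.9))
```

A larger `beta` makes the target network change more slowly, which trades responsiveness for stability.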

Q3: How does the Diet ID assessment ensure its dietary pattern images are scientifically valid and not a source of systematic error?

- A3: The diet patterns and images in Diet ID are not arbitrary. They are engineered from scientific research literature to capture the eating patterns of over 90% of the population. Each pattern is analyzed for over 150 nutrients and scored for quality using the Healthy Eating Index (HEI) 2015, a validated diet scoring system. The visuals are then created by a culinary cartography team to accurately represent these scientifically derived patterns [30]. This rigorous process minimizes systematic error in pattern classification.

Q4: What are the technical requirements for integrating the Diet ID assessment into a research workflow?

- A4:

- Browsers: Use Google Chrome, Safari, or Mozilla Firefox. Internet Explorer is not supported [30].

- Administrative Access: Researchers need to be set up as an "ADMIN" by Diet ID to access the dashboard and population data [30].

- Authentication: Login requires an email, password, and a 6-digit One-Time Password (OTP) sent via email [30].

- Data Collection: The assessment can be completed by participants via a custom link before a visit, or administered live via screen-sharing during a video call [30].

Troubleshooting Guides

Guide 1: Addressing DQN Training Instability

| Symptom | Likely Cause | Solution | Underlying Principle |

|---|---|---|---|

| Performance collapses after initial improvement; high loss values. | Moving target in Q-learning update. | Implement a target network updated via EMA. | Decouples the target prediction from the rapidly changing online network, stabilizing the learning signal [31]. |

| Agent persistently chooses one action; performance plateaus at a low level. | Overestimation bias from the max operator. | Implement Double Q-learning. | Using the minimum Q-value from two networks reduces optimistic bias, leading to more accurate value estimates [31]. |

| Poor performance from the start; no learning. | Insufficient exploration or high learning rate. | Ensure epsilon-greedy strategy is used (e.g., start epsilon=1, decay slowly). Reduce the optimizer's learning rate (e.g., from 0.001 to 0.0001). | Ensures adequate state-action space exploration and prevents the network from overreacting to early, noisy updates [32]. |
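
The exploration fix in the last row can be sketched as follows. This is an illustrative example only: the linear schedule, the floor value `eps_end=0.05`, and the function names `epsilon_at` and `select_action` are assumptions, not details taken from [32].

```python
import random


def epsilon_at(step, eps_start=1.0, eps_end=0.05, decay_steps=1000):
    """Linearly anneal epsilon from eps_start to eps_end over decay_steps."""
    frac = min(step / decay_steps, 1.0)
    return eps_start + frac * (eps_end - eps_start)


def select_action(q_values, epsilon, rng=random):
    """Epsilon-greedy: explore with probability epsilon, otherwise exploit."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # uniform random action
    # Greedy action: index of the largest Q-value
    return max(range(len(q_values)), key=lambda a: q_values[a])


# Early in training the agent acts almost entirely at random;
# after decay_steps it is mostly greedy.
for step in (0, 500, 1000):
    print(step, epsilon_at(step))
```

If the agent never improves, checking that epsilon actually starts near 1 and has not already collapsed to its floor is a quick first diagnostic.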

Experimental Protocol for Stable DQN Training:

- Network Architecture: Use a simple multi-layer perceptron (e.g., 4→32→16→8→4→2 for CartPole) with ReLU activations [32].

- Experience Replay: Store experiences (state, action, reward, next_state) in a replay buffer. Sample small batches (e.g., 10) randomly for training to break correlated data [32].

- Target Network: Initialize a separate target network with identical architecture to the main Q-network. Update the target network's weights as an EMA of the main network's weights (e.g., beta=0.99) [31].

- Double Q-Learning: Modify the target calculation. For a batch of samples, use the main network to select the best action for the next state, but the target network to evaluate the Q-value for that action. This can be combined with taking the minimum across two networks for further stability [31].

- Hyperparameters: Use a discount factor (gamma) of 0.9, a learning rate of 0.001, and an epsilon decay schedule that covers a sufficient number of steps (e.g., 1000) [32].
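
The replay and Double Q-learning steps of the protocol above can be sketched without any deep learning framework. The class `ReplayBuffer` and function `double_dqn_target` are hypothetical names, Q-values are passed in as plain lists, and the `done` flag is an addition to the (state, action, reward, next_state) tuple described in the protocol.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of past transitions, sampled uniformly at random."""

    def __init__(self, capacity=100_000, seed=None):
        self.buffer = deque(maxlen=capacity)  # oldest entries drop off first
        self.rng = random.Random(seed)

    def push(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size=10):
        # Random sampling breaks the temporal correlation of consecutive steps
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def double_dqn_target(reward, done, next_q_online, next_q_target, gamma=0.9):
    """Select the next action with the online net, evaluate it with the target net."""
    if done:
        return reward
    best_action = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    return reward + gamma * next_q_target[best_action]


# The online network prefers action 1; the target network scores that action 3.0,
# so the bootstrap term uses 3.0 rather than the target network's own maximum (5.0).
print(double_dqn_target(1.0, False, [0.2, 0.8], [5.0, 3.0]))
```

Decoupling selection from evaluation in this way means a single network's optimistic error on one action is less likely to be both chosen and trusted.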

Guide 2: Mitigating Measurement Error in Diet ID Implementation

| Symptom | Potential Source of Error | Solution | Impact on Research Data |

|---|---|---|---|

| Inconsistent dietary patterns across repeated assessments for the same participant. | Random error from participant misinterpretation of images or transient dietary changes. | Standardize administration: Provide clear, uniform instructions and conduct assessments in a consistent setting (e.g., before the visit, in a quiet room) [30]. | Reduces within-subject variability, enhancing the signal-to-noise ratio for detecting true changes in dietary patterns. |

| Derived dietary patterns do not align with patterns from other assessment tools (e.g., FFQs). | Systematic error in the visual pattern mapping or cohort misrepresentation. | Understand the tool's basis: Diet ID patterns are based on the HEI and Dietary Guidelines for Americans. Cross-validate with a brief food list in a subsample to calibrate [30]. | Helps characterize and account for systematic differences between tools, preventing misinterpretation of pattern labels. |

| Attenuated or non-significant diet-disease associations in analysis. | General measurement error, which biases association coefficients toward zero. | Acknowledge inherent limitation: Use statistical methods like regression calibration or simulation to quantify and correct for the potential attenuation effect [16] [13]. | Allows for a more accurate estimation of the true effect size between a dietary pattern and a health outcome. |
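
To make the "within-subject variability" in the first row concrete, the sketch below estimates between-person and within-person variance from repeated assessments and converts them into a reliability coefficient. It assumes a balanced design (the same number of repeats per participant) and uses made-up HEI scores; the function names are hypothetical.

```python
from statistics import mean, variance


def variance_components(scores_by_person):
    """Between- and within-person variance for balanced repeated measures."""
    k = len(scores_by_person[0])  # repeats per participant
    within = mean(variance(person) for person in scores_by_person)
    person_means = [mean(person) for person in scores_by_person]
    # The variance of person means contains within/k of noise; subtract it out.
    between = max(variance(person_means) - within / k, 0.0)
    return between, within


def reliability(between, within, k=1):
    """Share of variance in a k-repeat average that reflects true differences."""
    return between / (between + within / k)


# Hypothetical HEI scores: three participants, two assessments each
scores = [[60.0, 64.0], [70.0, 74.0], [80.0, 84.0]]
between, within = variance_components(scores)
print(between, within)
print(reliability(between, within, k=1), reliability(between, within, k=2))
```

Averaging more repeats raises reliability because the within-person term is divided by the number of assessments.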

Experimental Protocol for Validating Diet ID in a Research Cohort:

- Cohort Setup: Recruit a sample representative of your study population. Obtain ADMIN access from Diet ID [30].

- Standardized Administration: Provide each participant with a custom assessment link via email and instruct them to complete it in a single session before their baseline visit [30].

- Data Collection: The system automatically collects data on diet pattern, HEI score, BMI (from entered height/weight), and health goals [30].

- Data Access & Export: Log into the Admin Dashboard using your credentials and OTP. Access individual reports or population summary stats. Export data via the shareable report URL or PDF [30].

- Validation Sub-Study: In a random subsample (e.g., 10-20%), administer a second dietary assessment method (e.g., 24-hour recall) closely following the Diet ID assessment. This allows for direct comparison and quantification of measurement error [16].
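
One way the validation sub-study can feed into analysis is a simple regression calibration. The simulation below is a sketch under strong assumptions: a continuous outcome, classical (additive, independent) error in both instruments, and a reference measure whose error is unrelated to the main instrument's error. All names and parameter values are hypothetical.

```python
import random


def ols_slope(x, y):
    """Slope of the least-squares line of y on x."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def simulate_calibration(n=5000, true_beta=-0.5, validation_frac=0.2, seed=42):
    rng = random.Random(seed)
    truth = [rng.gauss(0, 1) for _ in range(n)]        # unobserved true intake score
    main = [t + rng.gauss(0, 1) for t in truth]        # error-prone main instrument
    outcome = [true_beta * t + rng.gauss(0, 1) for t in truth]

    naive = ols_slope(main, outcome)                   # attenuated toward zero

    # Validation subsample: records are i.i.d., so the first m act as a random subset.
    m = int(n * validation_frac)
    reference = [truth[i] + rng.gauss(0, 0.5) for i in range(m)]
    lam = ols_slope(main[:m], reference)               # calibration (attenuation) factor

    return naive, lam, naive / lam                     # corrected = naive / lambda


naive, lam, corrected = simulate_calibration()
print(f"naive={naive:.3f}  lambda={lam:.3f}  corrected={corrected:.3f}")
```

In this setup the calibration factor estimates how much of the main instrument's variance reflects true intake, and dividing the naive slope by it moves the estimate back toward the true coefficient at the cost of a wider confidence interval.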

Table 1: Impact of Measurement Error on Dietary Pattern-Disease Associations [16] [13]

This table shows how increasing measurement error attenuates (weakens) the observed association between a dietary pattern and a disease outcome in statistical models.

| True Association Coefficient | Type of Measurement Error | Analysis Method | Resulting Estimated Coefficient (Range) | Attenuation Effect |

|---|---|---|---|---|

| -0.5 (Beneficial) | Systematic & Random | Principal Component Factor Analysis (PCFA) | -0.287 to -0.450 | 10.0% to 42.6% |

| -0.5 (Beneficial) | Systematic & Random | K-means Cluster Analysis (KCA) | -0.231 to -0.394 | 21.2% to 53.8% |

| 0.5 (Harmful) | Systematic & Random | Principal Component Factor Analysis (PCFA) | 0.295 to 0.449 | 10.2% to 41.0% |

| 0.5 (Harmful) | Systematic & Random | K-means Cluster Analysis (KCA) | -0.003 to 0.373 | 25.4% to 100.6%* |

*An estimated coefficient of -0.003 means the harmful association was fully attenuated and its sign marginally reversed.
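
The attenuation column can be reproduced from the coefficients with one line of arithmetic. The helper below is an illustrative sketch (the name `attenuation_pct` is hypothetical) that expresses attenuation as the percentage of the true coefficient that is lost.

```python
def attenuation_pct(true_coef, estimated_coef):
    """Percent of the true coefficient lost: 100 * (1 - estimated / true)."""
    return round(100.0 * (1.0 - estimated_coef / true_coef), 1)


# Harmful pattern (true coefficient 0.5), K-means cluster analysis row of Table 1
print(attenuation_pct(0.5, 0.373))   # 25.4
print(attenuation_pct(0.5, -0.003))  # 100.6
```

A value above 100% indicates that the estimate has crossed zero, which is why the starred entry corresponds to a sign change.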

Table 2: DQN Troubleshooting Solutions & Their Technical Specifications

| Solution | Key Hyperparameter | Technical Function | Empirical Result |

|---|---|---|---|

| Target Network (EMA) | EMA beta (e.g., 0.99, 0.995) | Slowly blends target network weights with online weights, stabilizing the training target. | Prevents feedback loops and dramatic performance collapses during training [31]. |

| Double Q-Learning | (None - an algorithm change) | Uses two networks and takes the minimum Q-value estimate to compute the target, reducing overestimation. | Leads to more conservative and reliable value estimates, improving policy performance [31]. |

| Experience Replay | Replay Buffer Size (e.g., 100,000), Batch Size (e.g., 32, 64) | Breaks temporal correlation in data by sampling random batches from a memory store. | Smooths and stabilizes the training process, improving data efficiency [32]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Dietary Pattern & Reinforcement Learning Research

| Item / Solution | Function in Research | Application Context |

|---|---|---|

| Diet ID Platform | Provides a rapid, visual-based assessment of dietary patterns, outputting a diet quality score (HEI) and pattern classification to reduce measurement error [30]. | Nutritional Epidemiology, Cohort Studies, Clinical Trials. |

| Healthy Eating Index (HEI) 2015 | A validated metric to score diet quality based on adherence to the Dietary Guidelines for Americans; used by Diet ID to quantify pattern quality [30]. | Diet Pattern Validation, Public Health Monitoring. |

| Target Q-Network | A slowly updating copy of the main Q-network used to generate stable target values during DQN training, preventing divergence [31]. | Deep Reinforcement Learning, Agent-Based Simulation. |

| Double Q-Learning Algorithm | A modification to the DQN algorithm that reduces the overestimation of Q-values by decoupling action selection from evaluation [31]. | Stable RL Policy Optimization. |

| Experience Replay Buffer | A memory store of past agent experiences (state, action, reward, next state) that allows for batch sampling to decorrelate sequential data [32]. | Efficient DQN Training. |

Experimental Workflow Visualizations

[Figure: Diet ID Assessment Workflow]

[Figure: Stable DQN Training with Error Mitigation]

Frequently Asked Questions (FAQs)

What is a Gaussian Graphical Model (GGM) and how does it differ from traditional dietary pattern analysis methods?

GGM is a novel graphical method that shows the pairwise conditional correlation between food groups, independent of the effects of other food groups [33]. Unlike traditional methods like Principal Component Analysis (PCA) that create uncorrelated linear combinations, GGM identifies dietary networks representing the underlying structure of how food groups are consumed in relation to one another [33]. This approach reveals the conditional independence structure in the dataset without requiring prior knowledge of relationships between variables [33].
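
As a hedged illustration of what "pairwise conditional correlation" means here: under the usual multivariate Gaussian assumption, the edge between food groups i and j can be read off the inverse covariance (precision) matrix. The symbols Σ (covariance of food-group intakes), Θ (its inverse), and ρ (partial correlation) are notation introduced for this sketch, not taken from [33].

```latex
\Theta = \Sigma^{-1}, \qquad
\rho_{ij \mid \text{rest}} = -\frac{\theta_{ij}}{\sqrt{\theta_{ii}\,\theta_{jj}}}, \qquad
\theta_{ij} = 0 \iff X_i \perp X_j \mid X_{\text{rest}}
```

An edge is drawn between two food groups only when their partial correlation is non-zero, which is why the network reflects conditional rather than marginal relationships.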

How does measurement error specifically impact GGM-derived dietary patterns?

Measurement errors can distort derived dietary patterns and attenuate dietary pattern-disease associations [16]. In simulation studies, larger measurement errors caused more serious distortion of dietary patterns, with consistency rates declining significantly [16]. Both systematic and random errors can affect the stability of identified patterns, with the impact varying depending on the derivation method and pattern characteristics [16].
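
A small worked example can show one mechanism by which error distorts a network. It is an illustration built on textbook formulas, not a reproduction of the simulations in [16]: it assumes classical random error, uses three hypothetical food groups, and applies the standard attenuation formula for correlations together with the three-variable partial correlation.

```python
import math


def partial_corr(r_xy, r_xz, r_yz):
    """Correlation between x and y after conditioning on z."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


def observed_corr(true_r, rel_a, rel_b):
    """Correlation after classical error, given each variable's reliability."""
    return true_r * math.sqrt(rel_a * rel_b)


# True structure: x and y are related only through z (no direct edge).
r_xy, r_xz, r_yz = 0.48, 0.6, 0.8
print(partial_corr(r_xy, r_xz, r_yz))  # approximately 0

# Suppose z is measured with reliability 0.5 while x and y are error-free.
rel_x, rel_y, rel_z = 1.0, 1.0, 0.5
obs = partial_corr(
    observed_corr(r_xy, rel_x, rel_y),
    observed_corr(r_xz, rel_x, rel_z),
    observed_corr(r_yz, rel_y, rel_z),
)
print(obs)  # clearly non-zero: a spurious x-y edge appears
```

Because the noisy version of z no longer fully accounts for the shared variation in x and y, conditioning on it leaves a residual correlation, so error in one food group can create or remove edges elsewhere in the network.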

What are the central food items typically identified in GGM dietary networks?

Research has consistently identified several central food items across different dietary networks [34] [33]:

- Healthy/Vegetable Network: Raw vegetables, cooked vegetables [34] [33]

- Grain Network: Various grains [34]

- Unhealthy Network: Processed meats [33]

- Saturated Fats Network: Butter, margarine [34] [33]

- Other Networks: Fresh fruits, snacks, red meat [34]

What statistical software can I use to implement GGM for dietary pattern analysis?

GGM analysis can be performed in R (version 3.4.3 or higher) using specific packages [33]:

- glasso (graphical lasso) for estimating sparse inverse covariance matrices [33]

- linkcomm for detecting nested and overlapping communities in networks [33]
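
For orientation, the graphical lasso is usually stated as a penalized likelihood problem. The form below is the standard textbook objective rather than a description of any specific package's defaults; S denotes the sample covariance matrix of the food-group intakes and λ the sparsity penalty, both notation introduced here.

```latex
\hat{\Theta} = \arg\max_{\Theta \succ 0} \;
\log\det\Theta \;-\; \operatorname{tr}(S\,\Theta) \;-\; \lambda \lVert \Theta \rVert_{1}
```

Larger values of λ force more entries of the estimated precision matrix to zero and therefore yield sparser dietary networks; whether the diagonal entries are included in the penalty depends on the implementation's settings.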

Troubleshooting Guides

Issue: Unstable or Poorly Defined Dietary Networks