Addressing Within-Person Variation in Biomarker Measurements: A Comprehensive Guide for Robust Research and Clinical Application

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to understand, quantify, and mitigate the effects of within-person biomarker variation.

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to understand, quantify, and mitigate the effects of within-person biomarker variation. Covering foundational concepts to advanced applications, it explores the biological and technical sources of variability, presents statistical methods like repeat-measure error models and variance component partitioning, addresses critical pitfalls such as identity confounding in machine learning, and compares validation approaches including joint modeling versus two-stage methods. The content synthesizes current evidence to guide the development of reliable, reproducible biomarkers for precision medicine, emphasizing rigorous study design and analytical techniques to enhance biomarker utility in both research and clinical decision-making.

Understanding the Sources and Impact of Biomarker Variability

Frequently Asked Questions (FAQs)

What are the main components of variance in biomarker measurements?

The total variance in biomarker measurements is typically divided into three main components:

- Within-Person Variance: This refers to the fluctuation in biomarker levels within the same individual over time. It can be caused by factors like diet, hydration, time of day, and short-term physiological changes [1] [2].

- Between-Person Variance: This represents the differences in average biomarker levels between different individuals. It is influenced by factors such as genetics, age, body mass index (BMI), long-term health status, and lifestyle [1] [2].

- Methodological Variance: Also known as technical variability, this component arises from the measurement process itself. It includes variation due to sample collection, handling, storage, and the analytical technique (e.g., mass spectrometry) [3] [4].

Why is it critical to distinguish between within-person and between-person variance?

Accurately separating these variances is essential for the design and interpretation of research. Mistaking within-person fluctuation for a true between-person difference can lead to incorrect conclusions [5].

- Exposure-Response Relationships: In epidemiological studies, high within-person variability can attenuate (bias toward zero) the observed strength of the relationship between a biomarker of exposure and a health outcome. Using a biomarker with lower within-person variance relative to between-person variance provides a less biased estimate of this relationship [1].

- Study Power and Design: Understanding these components helps researchers determine the optimal number of participants and the number of repeated samples per participant needed to reliably detect a true effect [1] [2].
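The effect of repeated sampling on attenuation can be sketched numerically. The snippet below assumes the classical measurement error model, in which the observed slope equals the true slope multiplied by 1 / (1 + λ/k), where λ is the within- to between-person variance ratio and k is the number of repeated samples averaged per participant. The function names and the example value λ = 2 are illustrative and are not taken from the cited studies.

```python
def attenuation_factor(lam, k):
    """Expected observed/true slope ratio when k repeats per person are averaged.

    lam is the within- to between-person variance ratio (sigma2_w / sigma2_b).
    Assumes classical (random, independent) within-person error.
    """
    return 1.0 / (1.0 + lam / k)


def repeats_needed(lam, target, max_k=1000):
    """Smallest k whose attenuation factor reaches `target` (0 < target < 1)."""
    for k in range(1, max_k + 1):
        if attenuation_factor(lam, k) >= target:
            return k
    raise ValueError("target not reachable within max_k repeats")


lam = 2.0  # hypothetical variance ratio
for k in (1, 2, 4, 8):
    print(k, round(attenuation_factor(lam, k), 3))
print("repeats needed to keep at least 70% of the true slope:", repeats_needed(lam, 0.7))
```

With λ = 2, a single sample per person recovers about one third of the true slope, while averaging four samples recovers about two thirds.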

What is the typical contribution of biological vs. methodological variance?

In well-controlled laboratory settings, the biological component (within- and between-person) is often the dominant source of variability. One analysis of volunteer studies found that the median variability attributed to biological differences was substantial even in highly homogeneous groups [2] [6]. However, in practice, methodological variance can be a significant contributor if not tightly controlled, sometimes leading to the failure of a biomarker to be clinically useful [3] [7].

The table below summarizes the sources and impact of the different variance components based on data from volunteer and occupational studies [1] [2] [6].

| Variance Component | Key Influencing Factors | Potential Impact on Research |
|---|---|---|
| Within-Person | Time of sample collection, hydration, recent diet, physical activity [1] [2] | Attenuates exposure-response effect estimates; requires repeated measurements per person [1] |
| Between-Person | Age, genetics, BMI, smoking status, long-term health [1] [2] | Defines true differences between population subgroups; crucial for identifying predictive biomarkers [1] |
| Methodological | Sample processing, storage conditions, instrument calibration, operator skill [3] [4] | Introduces non-biological noise, can lead to irreproducible results and biomarker failure [3] [7] |

Troubleshooting Guides

Issue: High Within-Person Variance is Obscuring Group Differences

Problem: Your data shows large fluctuations in biomarker levels within the same participant, making it difficult to detect consistent differences between your study groups (e.g., exposed vs. non-exposed).

Solution:

- Increase Repeated Measurements: Collect multiple samples from each participant over time. This allows you to better estimate each individual's long-term average level [1].

- Standardize Sampling Protocols: Control for known influencers by collecting samples at the same time of day, under standardized conditions (e.g., fasting), and account for hydration levels by measuring and adjusting for creatinine in urine samples [1].

- Use Advanced Statistical Models: Apply linear mixed-effects models. These models are specifically designed to separate and estimate within-person and between-person variance components, providing a clearer view of the underlying group differences [1] [8].

Issue: Suspected Technical Artifacts are Skewing Results

Problem: Uncontrolled methodological variance is suspected, leading to unreliable data and an inability to reproduce findings.

Solution:

- Implement Rigorous Quality Control (QC): Use standardized protocols for sample collection, processing, and storage. Employ automated systems where possible to reduce human error and cross-contamination [3].

- Use Technical Replicates: Run multiple analytical measurements on the same sample to quantify and account for the pure technical variability of your assay [4].

- Apply Variance Component Analysis (VCA): For mass spectrometry data, use specialized VCA methods on balanced or unbalanced experimental designs to distinguish variability from sample preparation, digestion, injection, and other technical steps from the biological signal [4].

Experimental Protocols for Variance Component Analysis

Protocol 1: Estimating Variance Components Using Linear Mixed-Effects Models

This protocol is ideal for studies with repeated biomarker measurements from the same individuals [1].

1. Study Design:

- Participants: Recruit a cohort of participants (e.g., 20 road-paving workers for a PAH exposure study) [1].

- Sampling: Collect repeated biological samples from each participant (e.g., up to 9 urine samples per worker over multiple days) [1].

2. Laboratory Analysis:

- Analyze samples for your target biomarker(s) using a validated method (e.g., GC-MS or LC-MS/MS for urinary PAH metabolites) [1].

- Measure and record covariates for each sample (e.g., creatinine for hydration, time of day, smoking status, age, BMI) [1].

3. Data Analysis:

- Model Fitting: Use a linear mixed-effects model with a random intercept for each subject. For a log-transformed biomarker value, the model can be specified in R-style formula notation as `Biomarker_Value ~ Fixed_Effects_Covariates + (1 | Subject_ID)`.

- Variance Extraction: The model will output two variance components:

- σ²_b (Between-Subject Variance): The variance of the random intercepts for `Subject_ID`.

- σ²_w (Within-Subject Variance): The residual variance.

- Bias Calculation: Calculate the variance ratio λ = σ²_w / σ²_b. Use this ratio in the attenuation formula to understand the potential bias in exposure-response slopes [1].
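As a rough cross-check on mixed-model output, the variance components for a balanced design without covariates can also be estimated with the one-way ANOVA (method-of-moments) estimator. The sketch below uses that simpler estimator rather than the REML fit a mixed-model routine would normally perform. The data values and function name are hypothetical, and the attenuation line assumes classical within-person error.

```python
from statistics import mean


def variance_components(groups):
    """One-way ANOVA estimates of (sigma2_b, sigma2_w).

    groups: one list of log-transformed values per subject, all of length k.
    """
    n, k = len(groups), len(groups[0])
    if any(len(g) != k for g in groups):
        raise ValueError("this sketch assumes a balanced design")
    subject_means = [mean(g) for g in groups]
    grand_mean = mean(subject_means)  # valid because the design is balanced
    ms_between = k * sum((m - grand_mean) ** 2 for m in subject_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for g, m in zip(groups, subject_means) for x in g
    ) / (n * (k - 1))
    sigma2_w = ms_within
    sigma2_b = max((ms_between - ms_within) / k, 0.0)  # truncate negative estimates
    return sigma2_b, sigma2_w


# Hypothetical log-concentrations: 4 workers, 3 urine samples each.
log_values = [
    [1.2, 1.5, 1.1],
    [2.0, 2.4, 2.1],
    [0.8, 1.0, 0.7],
    [1.6, 1.9, 1.8],
]
sigma2_b, sigma2_w = variance_components(log_values)
lam = sigma2_w / sigma2_b if sigma2_b > 0 else float("inf")
k = len(log_values[0])
attenuation = 1.0 / (1.0 + lam / k)  # attenuation for the mean of k samples
print(f"sigma2_b={sigma2_b:.3f} sigma2_w={sigma2_w:.3f} "
      f"lambda={lam:.2f} attenuation={attenuation:.2f}")
```

For unbalanced data or models with fixed-effect covariates, this shortcut no longer applies and a mixed-model fit is the appropriate tool.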

Protocol 2: Assessing Technical Variability in Mass Spectrometry-Based Biomarkers

This protocol is designed to pinpoint sources of technical noise in analytical pipelines, such as Selected Reaction Monitoring (SRM) [4].

1. Experimental Design:

- Samples: Create a dilution series of target proteins spiked into a control matrix (e.g., human serum) to simulate a known "biological" variance [4].

- Replication: Process multiple aliquots of each sample through the entire pipeline—digestion, injection—with technical replicates at each stage [4].

2. Data Acquisition:

- Run the samples in a randomized order across multiple days and using different chromatographic columns to capture these sources of variability [4].

3. Data Analysis:

- Variance Component Analysis (VCA): Use an extended VCA method, such as the adjusted sum of squares approach, which is robust for unbalanced data. This model quantifies the variance contributed by:

- Dilution (the "true" signal)

- Sample-to-sample preparation

- Digestion efficiency

- Injection/reading

- Day-to-day operation [4]

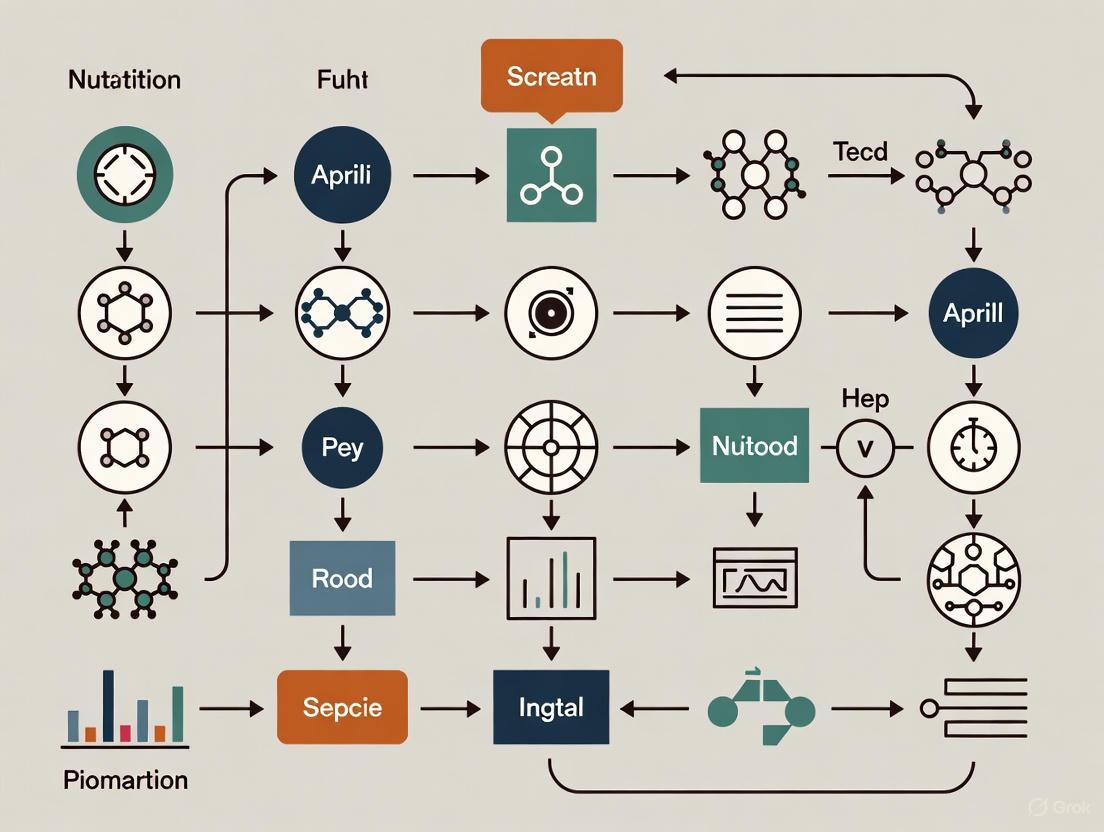

Visualizing Variance Components and Workflows

Diagram: Mixed-Model Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Biomarker Variance Research |
|---|---|
| Linear Mixed-Effects Models | A statistical software procedure (e.g., in R or SAS) used to decompose total variance into within-person and between-person components [1] [8]. |
| Automated Homogenizer (e.g., Omni LH 96) | Standardizes sample preparation, reduces cross-contamination, and minimizes variability introduced during tissue or biofluid processing [3]. |
| AQUA Internal Standards | Labeled peptide standards spiked into samples before mass spectrometry analysis to correct for variability in sample digestion and instrument response [4]. |
| Creatinine Assay Kits | Used to measure creatinine in urine samples, allowing for the adjustment of biomarker concentrations for hydration level (a major source of within-person variance) [1]. |
| Variance Component Analysis (VCA) Software | Specialized statistical tools for complex designs (e.g., in mass spectrometry) to quantify technical variance from digestion, injection, and day-to-day operation [4]. |

Core Concepts: Understanding Longitudinal Biomarker Stability

What is the primary challenge of within-person variation in longitudinal biomarker studies?

The core challenge is distinguishing true, disease-initiated biological changes from natural, background fluctuations inherent to healthy individuals. A biomarker with high natural variation requires a larger disease-induced change to be detectable, reducing its sensitivity for early disease detection [9].

Which statistical parameters are used to quantify a biomarker's longitudinal stability?

Stability is typically assessed using Coefficients of Variation (CV). The within-person CV measures how much a biomarker fluctuates over time in a single individual, while the between-person CV measures how much the biomarker's baseline level differs across a population. An ideal diagnostic biomarker has low within-person variation but high between-person variation [9].

Can you provide real-world data on biomarker variation?

The table below summarizes the within-person and between-person coefficients of variation for a panel of ovarian cancer biomarkers, calculated from healthy controls [9].

| Biomarker | Within-Person CV | Between-Person CV |
|---|---|---|
| CA-125 | 15% | 49% |
| HE4 | 25% | 20% |
| MMP-7 | 25% | 35% |
| CA72-4 | 21% | 84% |
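Within-person and between-person CVs of this kind can be estimated from serial measurements. The sketch below uses one simple convention: the within-person CV is the average of each person's SD divided by their own mean, and the between-person CV is the SD of the person-level means divided by their overall mean. The cited study may have used a different estimator, and the values shown are hypothetical.

```python
from statistics import mean, stdev


def cv_components(series_by_person):
    """Return (within_person_cv, between_person_cv) as fractions.

    series_by_person: one list of serial measurements per person (>= 2 each).
    """
    person_means = [mean(s) for s in series_by_person]
    within = mean([stdev(s) / m for s, m in zip(series_by_person, person_means)])
    between = stdev(person_means) / mean(person_means)
    return within, between


# Hypothetical serial values for three healthy controls.
serial = [
    [12.0, 14.0, 13.0],
    [30.0, 27.0, 33.0],
    [18.0, 20.0, 19.0],
]
within_cv, between_cv = cv_components(serial)
print(f"within-person CV = {within_cv:.0%}, between-person CV = {between_cv:.0%}")
```

Note that the SD of person-level means still contains some within-person noise, so with few samples per person this simple between-person CV is slightly inflated.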

Statistical Methodologies and Analysis

What are the main statistical approaches for analyzing longitudinal biomarker data?

There are two primary frameworks. Two-stage methods first calculate summary statistics (e.g., mean, variance) for each subject's longitudinal data, then use these as covariates in a prediction model. Joint models simultaneously analyze the longitudinal and clinical outcome data, which can provide less biased estimates [10].
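The first stage of a two-stage analysis can be sketched as below: each subject's serial measurements are collapsed into a mean and a standard deviation, which would then enter a separate outcome model as covariates. The record layout and function name are hypothetical, and the second-stage model is left out.

```python
from collections import defaultdict
from statistics import mean, stdev


def stage_one_summaries(records):
    """Collapse (subject_id, value) records into per-subject mean and SD."""
    by_subject = defaultdict(list)
    for subject_id, value in records:
        by_subject[subject_id].append(value)
    summaries = {}
    for subject_id, values in by_subject.items():
        sd = stdev(values) if len(values) > 1 else None  # SD undefined for one value
        summaries[subject_id] = {"mean": mean(values), "sd": sd, "n": len(values)}
    return summaries


# Hypothetical long-format data: (subject_id, biomarker value).
records = [
    ("s1", 4.0), ("s1", 6.0),
    ("s2", 10.0), ("s2", 10.0), ("s2", 13.0),
    ("s3", 7.0),
]
for subject_id, summary in stage_one_summaries(records).items():
    print(subject_id, summary)
```

These summaries are themselves estimated with error, especially when a subject has only a few measurements, which is one reason treating them as known covariates can bias the second stage.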

When should I use a joint model instead of a simpler two-stage method?

Joint models are preferred when the number of longitudinal measurements per subject is limited, when the effect size of the biomarker is modest, or when the goal is to assess the prognostic effect of biomarker variability itself on a time-to-event clinical outcome [10].

How can I handle outcome-dependent sampling in my study design?

When the probability of taking a biomarker measurement is related to an auxiliary variable (e.g., using a fertility monitor to time serum draws for detecting an LH surge), specialized methods are needed. The approach of Schildcrout et al. uses a joint estimating equation to correct for potential bias, while inverse probability weighted generalized estimating equations (IPW-GEE) are a less efficient but more broadly applicable alternative [11].

Experimental Protocols and Best Practices

What is a proven protocol for assessing the longitudinal stability of a novel biomarker?

A standard protocol, as used for plasma miRNAs, involves [12]:

- Sample Collection: Collect serial blood samples (e.g., biweekly) from participants over an extended period (e.g., 3 months).

- Sample Processing: Isolate the biomarker (e.g., total RNA for miRNAs) from plasma.

- Quantification: Perform quantitative measurements (e.g., qPCR). Include a spike-in control (e.g., cel-miR-39-3p) to calibrate for technical variance from RNA isolation and assay efficiency.

- Data Normalization: Normalize the data using stable endogenous control biomarkers to adjust for biological variance.

- Stability Analysis: Filter biomarkers based on reliable detection, then calculate a quantitative stability index (e.g., test-retest standard deviation).
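The calibration and stability steps above can be sketched as follows. This is a simplified illustration, not the cited study's pipeline: it assumes qPCR Ct values, shifts each sample's Ct by the deviation of its spike-in Ct from a reference spike-in Ct, and summarizes stability as a pooled within-subject standard deviation. All names and numbers are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def calibrate_ct(target_ct, spike_ct, reference_spike_ct):
    """Remove per-sample technical shifts estimated from the spike-in control.

    A sample whose spike-in Ct sits above the reference is assumed to have lost
    signal during isolation or assay, so its target Ct is lowered by that amount.
    """
    return [t - (s - reference_spike_ct) for t, s in zip(target_ct, spike_ct)]


def pooled_within_sd(values_by_subject):
    """Root mean of per-subject variances across serial samples (>= 2 each)."""
    return sqrt(mean([variance(v) for v in values_by_subject]))


# Hypothetical Ct values: 2 subjects x 3 serial samples for one miRNA.
target = {"s1": [24.8, 25.6, 25.1], "s2": [27.0, 27.9, 27.2]}
spike = {"s1": [20.0, 20.7, 20.2], "s2": [20.1, 20.9, 20.3]}

reference = mean([ct for cts in spike.values() for ct in cts])
calibrated = {s: calibrate_ct(target[s], spike[s], reference) for s in target}

raw_sd = pooled_within_sd(list(target.values()))
cal_sd = pooled_within_sd(list(calibrated.values()))
print(f"pooled within-subject SD: raw={raw_sd:.2f} Ct, calibrated={cal_sd:.2f} Ct")
```

In this invented example most of the visit-to-visit scatter tracks the spike-in, so calibration shrinks the within-subject SD; real data would also need the endogenous-control normalization step.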

The following diagram illustrates this workflow:

What are key considerations for designing a longitudinal biomarker study?

- Sample Size: Ensure a sufficient number of participants and serial samples per participant. For variance estimation, more participants are often better than more samples per participant [10].

- Frequency and Duration: The sampling frequency and total study duration should be chosen based on the biological rhythm of interest (e.g., circadian, monthly) [9] [12].

- Confounding Factors: Account for and document potential confounders like hemolysis, tobacco use, fasting status, and medication use, as these can significantly impact biomarker levels and variance [12].

Troubleshooting Common Experimental Issues

I have detected high within-person variation in my biomarker. What could be the cause?

High variation can stem from:

- Biological Rhythms: Unaccounted-for circadian, ultradian, or infradian rhythms [13].

- Technical Noise: High assay coefficient of variation (CV). Always use calibrators (e.g., cel-miR-39-3p for qPCR) to correct for technical variance from sample processing and assay runs [12].

- Pre-analytical Variables: Inconsistent sample collection, handling, or storage protocols.

- Confounding Factors: Unmeasured lifestyle or environmental factors (e.g., sleep disruption, diet) [13] [12].

My multi-marker panel performs well in a single-time-point test but fails in a longitudinal algorithm. Why?

This often occurs because the individual biomarkers in the panel lack a stable, well-defined baseline in healthy individuals. For longitudinal algorithm development, each marker must have low within-person variance relative to its disease-initiated change [9].

How can I visually diagnose issues in my longitudinal data?

Plot individual biomarker trajectories over time. Healthy individuals should show stable baselines with minimal drift, while technical batch effects may appear as synchronized spikes across multiple participants at specific visits [12]. The following diagram outlines a logical troubleshooting flow:

Research Reagent Solutions

The table below lists key reagents and their functions for establishing a longitudinal biomarker stability study, as applied in cited research [9] [12].

| Research Reagent | Function in Experiment |
|---|---|
| Pre-treatment Sera | Biological matrix for biomarker measurement; requires standardized collection and storage at -80°C [9]. |
| Roche Elecsys Immunoassays | Automated, quantitative measurement of protein biomarkers (e.g., CA-125, CA72-4) with low assay CV [9]. |
| ELISA Kits (e.g., R&D Systems) | Quantification of specific protein biomarkers (e.g., HE4, MMP-7) via standard immunoassay [9]. |
| qPCR Assays | Quantification of RNA biomarkers (e.g., miRNAs); requires robust normalization [12]. |
| cel-miR-39-3p Spike-in Control | Synthetic RNA added during RNA isolation to calibrate and correct for technical variance in sample processing and qPCR efficiency [12]. |
| Endogenous Control miRNAs (e.g., miR-16-5p) | Stable, endogenous biomarkers used for normalization to adjust for biological variance between samples [12]. |

In biomarker research, understanding and quantifying variability is fundamental to ensuring that measurements are reliable, reproducible, and meaningful. Two statistical metrics are cornerstones of this process: the Coefficient of Variation (CV) and the Intraclass Correlation Coefficient (ICC). The CV is a standardized measure of dispersion that describes the variability in a set of measurements relative to the mean. It is particularly useful for assessing the precision of an assay or instrument [14]. In contrast, the ICC measures reliability by quantifying how strongly units in the same group resemble each other. It is especially valuable for assessing the agreement between different raters, instruments, or repeated measurements over time (test-retest reliability) [15] [16].

The proper application of these metrics allows researchers to dissect the different sources of variability inherent in biomarker data. This includes analytical variability (from the measurement process itself), within-subject biological variability (natural fluctuation in a biomarker within an individual over time), and between-subject variability (differences in the biomarker across a population) [17] [10]. Accurately characterizing these components is critical for developing robust biomarkers, as high levels of unaccounted-for variability can obscure true biological signals, lead to biased estimates in association studies, and ultimately result in failed experiments or unreliable diagnostic tools [18]. This guide provides troubleshooting advice and methodological protocols to help you correctly implement and interpret CV and ICC in your research.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My ICC value is lower than expected. What are the potential causes and how can I investigate them?

A low ICC value generally indicates poor reliability among your measurements or raters. The following flowchart outlines a systematic troubleshooting approach to diagnose the root cause.

Beyond the common issues illustrated above, consider the study design itself. If the time interval between test and retest measurements is too long, the underlying biomarker may have genuinely changed, artificially lowering reliability. Conversely, if the interval is too short, memory effects can inflate agreement. Furthermore, ensure that the measurement instrument itself has sufficient precision for your biomarker; an assay with high analytical CV will inherently limit the maximum achievable ICC [3].

Q2: When should I use ICC versus CV to report the reliability of my biomarker assay?

The choice between ICC and CV depends on the specific aspect of reliability you wish to capture and the design of your study. The table below summarizes the key distinctions and appropriate use cases.

| Metric | Primary Use Case | Best for Assessing | Underlying Question |
|---|---|---|---|
| Coefficient of Variation (CV) | Quantifying precision and dispersion of repeated measurements from a single instrument or assay [14]. | Analytical variability (e.g., intra-assay precision, inter-assay precision). | "How much does a single measurement result vary around its true value?" |
| Intraclass Correlation Coefficient (ICC) | Quantifying agreement and consistency between multiple raters, instruments, or time points [15] [16]. | Reliability (e.g., inter-rater, test-retest, intra-rater). | "Can different raters/methods/time points be used interchangeably?" |

In practice, these metrics are often complementary. For a full validation of a new biomarker assay, you should report both. A low CV demonstrates that your measurement technique is precise, while a high ICC shows that it can reliably distinguish between different subjects despite the inherent biological and measurement noise [19]. ICC is generally the preferred metric for clinical reliability because it accounts for between-subject variability, making it more generalizable [16]. CV is most informative when applied to data measured on a ratio scale with a meaningful zero point [14].

Q3: My biomarker's CV is very high. What steps can I take to reduce excessive variability?

A high CV indicates that dispersion is large relative to your mean value, which can mask true biological effects. The first step is to systematically investigate the source of the variability. The following diagram maps the primary areas to investigate and corresponding mitigation strategies.

If high variability persists after investigating these areas, the issue may be high within-subject biological variability, which is an inherent property of the biomarker. In this case, mitigation involves changing the study design, such as increasing the number of repeated measurements per subject to better estimate the subject's true long-term average [17] [18].

Q4: How do I select the correct form of the ICC for my study, and how should I report it?

Selecting the appropriate ICC model is critical, as using an incorrect form can lead to misleading conclusions. Your choice hinges on three key factors, which can be determined by answering the questions in the workflow below.

When reporting ICC in your manuscripts, transparency is key. You should always specify the software used, the model type (e.g., two-way random), the unit (single or average), and the type of agreement (absolute agreement or consistency). Additionally, always report the ICC estimate alongside its 95% confidence interval to convey the precision of your estimate [16].

Experimental Protocols

Protocol 1: Calculating ICC for Rater Reliability in a Biomarker Study

This protocol provides a step-by-step guide for assessing inter-rater reliability when multiple raters are evaluating a set of subjects, a common scenario in imaging or histology studies.

1. Problem: A research team is developing a new histological scoring system for a liver biomarker. Four pathologists have scored the same 10 biopsy samples. The team needs to determine the reliability of this scoring system before deploying it in a larger study.

2. Experimental Design & Data Collection:

- Subjects: 10 unique biopsy samples.

- Raters: 4 pathologists.

- Procedure: Each pathologist independently scores each of the 10 samples on a continuous scale (e.g., 0-100). The data is arranged in a table where rows represent subjects and columns represent raters.

3. Analysis Steps (Using R Statistical Software):

- Step 1: Load the required library and input the data.

- Step 2: Select the ICC model. In this case, the raters are a fixed set of experts, and we want to know the agreement of a single rater for future use. We choose a Two-Way Mixed-Effects Model, focusing on Absolute Agreement, for a Single Rater.

- Step 3: Run the ICC calculation.

- Step 4: Interpret the output. The output will provide the ICC estimate and its 95% confidence interval [15]. As an example, under the commonly used Koo & Li interpretation guidelines, an ICC of 0.782 would indicate "Good" reliability [15] [16].
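Steps 1 to 3 can be sketched in Python using only the standard library. The function below computes the two-way, absolute-agreement, single-rater ICC from ANOVA mean squares (the McGraw and Wong ICC(A,1) formula). The scores are invented for illustration and are not expected to reproduce any value quoted in this protocol, and no confidence interval is computed. In R, the `irr` package's `icc()` function called with `model = "twoway"`, `type = "agreement"`, `unit = "single"` should perform the corresponding calculation and also report the interval.

```python
from statistics import mean


def icc_a1(scores):
    """Two-way, absolute-agreement, single-rater ICC.

    scores: n x k table as a list of rows (rows = subjects, columns = raters).
    """
    n, k = len(scores), len(scores[0])
    grand = mean([x for row in scores for x in row])
    row_means = [mean(row) for row in scores]
    col_means = [mean([row[j] for row in scores]) for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ms_rows = ss_rows / (n - 1)                                   # subjects
    ms_cols = ss_cols / (k - 1)                                   # raters
    ms_err = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))  # residual
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


# Hypothetical scores: 10 biopsy samples (rows) x 4 pathologists (columns).
scores = [
    [62, 65, 60, 66],
    [45, 48, 44, 50],
    [80, 78, 82, 85],
    [30, 35, 28, 33],
    [55, 58, 54, 60],
    [70, 72, 69, 75],
    [25, 27, 22, 30],
    [90, 88, 91, 93],
    [50, 53, 49, 55],
    [40, 42, 38, 45],
]
icc = icc_a1(scores)
print(f"ICC(A,1) = {icc:.3f}")
```

Because absolute agreement penalizes systematic offsets between raters, a pathologist who consistently scores higher than the others lowers this ICC even when the ranking of samples is identical.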

Protocol 2: Estimating Within-Person Biomarker Variability using ICC and CV

This protocol is designed for studies where a biomarker is measured repeatedly over time in the same individuals to understand its natural fluctuation, which is crucial for determining the number of measurements needed for accurate classification.

1. Problem: Investigators want to understand the within-person variability of urinary nitrogen, a biomarker for protein intake, over a 16-month period to inform the design of a future nutritional epidemiology study [17].

2. Experimental Design & Data Collection:

- Subjects: 52 adults.

- Procedure: The urinary nitrogen biomarker is measured twice for each subject, with the two measurements taken approximately 16 months apart (e.g., in 2002 and 2003).

3. Analysis Steps:

- Step 1: Calculate the Coefficient of Variation (CV) to assess dispersion. The CV is calculated as \( CV = \frac{\sigma}{\mu} \), where \( \sigma \) is the standard deviation and \( \mu \) is the mean [14]. For log-normally distributed biomarker data, a more accurate estimate is \( \widehat{cv}_{\text{raw}} = \sqrt{\mathrm{e}^{s_{\ln}^{2}} - 1} \), where \( s_{\ln} \) is the standard deviation of the log-transformed data [14].

- Step 2: Calculate the Intraclass Correlation Coefficient (ICC) to assess reliability over time. A One-Way Random Effects Model is often appropriate here, as the focus is on the reliability of the measurement across time itself (a random factor) [15]. The ICC is calculated as the ratio of between-subject variance to total variance (between-subject + within-subject variance).

- Step 3: Interpret the results. In the referenced study, the ICC for protein density (using urinary nitrogen) was 0.54 [17]. This falls into the "Moderate" reliability category. This ICC value can be used in sample size planning for future studies to account for the attenuation bias caused by measurement error.
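The two CV formulas in Step 1 can be compared directly. The sketch below applies both to a hypothetical, right-skewed set of biomarker values; the function names are illustrative.

```python
from math import exp, log, sqrt
from statistics import mean, stdev


def cv_plain(values):
    """Sample SD divided by the mean (CV = sigma / mu)."""
    return stdev(values) / mean(values)


def cv_lognormal(values):
    """CV implied by a log-normal model: sqrt(exp(s_ln^2) - 1)."""
    s_ln = stdev([log(v) for v in values])  # SD of the natural-log values
    return sqrt(exp(s_ln ** 2) - 1.0)


# Hypothetical right-skewed measurements (arbitrary units, all > 0).
values = [8.1, 9.4, 10.2, 11.0, 12.5, 14.8, 21.3]
print(f"plain CV      = {cv_plain(values):.3f}")
print(f"log-normal CV = {cv_lognormal(values):.3f}")
```

The log-based estimate is less sensitive to a single large value, which is why it is often preferred for skewed biomarker distributions.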

The Scientist's Toolkit: Key Research Reagents & Software

The following table lists essential materials and computational tools used in the protocols above for quantifying variability in biomarker research.

| Item Name | Function / Application | Example Use Case |
|---|---|---|
| irr Package (R) | A library in R specifically designed for calculating various inter-rater reliability statistics [15]. | Used in Protocol 1 to compute the ICC for the pathologists' scores. |
| Pingouin Package (Python) | An open-source statistical package in Python based on Pandas that includes functions for calculating ICC [16]. | An alternative to R for researchers working in the Python ecosystem. |
| Quality Control (QC) Samples | Pooled biological samples with a stable analyte concentration, run repeatedly across multiple assays [19]. | Used to monitor assay performance and calculate inter-assay CV over time. |
| Automated Homogenizer (e.g., Omni LH 96) | Standardizes sample preparation by automating the disruption and homogenization of tissue or biofluid samples [3]. | Reduces pre-analytical variability (a major source of high CV) by ensuring consistent processing. |
| Standard Operating Procedures (SOPs) | Detailed, written instructions to achieve uniformity in the performance of a specific function [19]. | Critical for minimizing pre-analytical variability in sample collection, processing, and storage. |

Reference Tables

ICC Interpretation Guidelines

Use the following table, based on the work of Koo & Li, to interpret the practical significance of your calculated ICC value [15] [16].

| ICC Value | Interpretation of Reliability | Implication for Practice |
|---|---|---|
| < 0.50 | Poor | The measurement tool has low reliability. It is not suitable for clinical use and requires refinement. |
| 0.50 - 0.75 | Moderate | The tool has acceptable reliability for group-level comparisons or research. |
| 0.75 - 0.90 | Good | The tool has good reliability and may be suitable for some clinical applications, like tracking groups of patients. |
| > 0.90 | Excellent | The tool has high reliability and is suitable for making clinical decisions about individuals. |

Comparing ICC Model Types

The table below summarizes the three primary statistical models for ICC and their appropriate applications [15] [16].

| Model | When to Use | Key Assumption |
|---|---|---|
| One-Way Random Effects | Each subject is rated by a different, random set of raters. (Rarely used in practice) | Raters are a random factor; no rater-specific effects are modeled. |
| Two-Way Random Effects | A random sample of raters is used, and you want to generalize findings to any similar rater from the population. | Both subjects and raters are considered random factors. |
| Two-Way Mixed Effects | The specific raters in your study are the only raters of interest (e.g., a fixed team of experts). | Subjects are a random factor, but raters are a fixed factor. |

Technical Support Center

Troubleshooting Guides & FAQs

This section addresses common experimental challenges in biomarker research, providing targeted solutions to ensure data reliability and reproducibility.

FAQ: Understanding and Addressing Biomarker Variability

What are the primary sources of variability in biomarker measurements?

Biomarker variability arises from three main sources: within-individual biological variability (CVI; fluctuations within a person over time), between-individual variability (CVG; differences between people), and methodological variability (CVP+A; pre-analytical, analytical, and post-analytical errors) [20]. Failure to account for within-person variation, which can be substantial, may exaggerate other correlated errors in your analysis [21].

How can I determine if my biomarker data is affected by high within-person variation?

Calculate the Index of Individuality (II) using the formula II = (CVI + CVP+A) / CVG, where CVP+A is the combined methodological CV [20]. A low II (e.g., less than 0.6) indicates that within-person variation is small compared to the differences between individuals. This suggests that a single measurement may reasonably represent an individual's status. Conversely, a high II means within-person variation is large, and multiple measurements over time are needed to reliably classify an individual's status [20].
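The calculation can be sketched as below, following the additive formula given above. The CV values in the example are hypothetical. Some references instead combine the biological and analytical CVs in quadrature, so the formula should be matched to the source being followed.

```python
def index_of_individuality(cv_i, cv_pa, cv_g):
    """II = (CVI + CVP+A) / CVG, with all CVs in the same units (e.g., percent)."""
    if cv_g <= 0:
        raise ValueError("between-individual CV must be positive")
    return (cv_i + cv_pa) / cv_g


def is_low_ii(ii, threshold=0.6):
    """True when II falls below the 0.6 cut-off quoted in the text."""
    return ii < threshold


# Hypothetical CVs in percent.
ii = index_of_individuality(cv_i=6.0, cv_pa=1.5, cv_g=20.0)
print(f"II = {ii:.2f}, low II: {is_low_ii(ii)}")
```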

We are seeing inconsistent results between assays. What are the first things we should check?

Inconsistent assay-to-assay results are often linked to procedural inconsistencies [3].

- Temperature & Handling: Ensure consistent incubation temperatures and that all reagents are at room temperature before starting the assay. Be aware of environmental fluctuations [22] [3].

- Washing Steps: Insufficient washing is a common cause of high background and poor replicate data. Adhere strictly to washing procedures, ensuring complete drainage between steps [22].

- Reagent Preparation: Double-check pipetting technique and calculations for all dilutions. Use fresh plate sealers for each incubation step to prevent contamination and evaporation [22].

Our biomarker study involves nutritional neuroscience. What is a key methodological consideration?

Utilize repeat-measure biomarker error models. These models account for systematic correlated within-person error and random within-person variation in biomarkers. They are essential for calculating accurate deattenuation factors (λ) and correlation coefficients (ρ) used in measurement error correction, preventing exaggerated correlation estimates between different assessment tools (e.g., food frequency questionnaires and 24-hour recalls) [21].

What is a fundamental step to reduce pre-analytical variability in biomarker studies?

Implement and rigorously follow Standard Operating Procedures (SOPs) for sample collection, processing, and storage. Studies show that labs using robust SOP frameworks have significantly lower error rates [3]. For instance, standardizing protocols for blood drawing, centrifuging, freezing, and shipping is critical, as pre-analytical errors can account for a large proportion of laboratory diagnostic mistakes [20].

Troubleshooting Guide: Common ELISA Problems

The table below outlines frequent issues encountered during ELISA procedures, their potential causes, and recommended solutions [22].

Table: Common ELISA Issues and Solutions

| Problem | Possible Cause | Solution |
|---|---|---|
| Weak or No Signal | Reagents not at room temperature; expired reagents; insufficient detector antibody; scratched wells. | Allow all reagents to warm up for 15-20 minutes; confirm expiration dates; follow recommended antibody dilutions; use caution when pipetting [22]. |
| High Background | Insufficient washing; substrate exposed to light; prolonged incubation times. | Ensure complete drainage during wash steps; store substrate in the dark; adhere to recommended incubation times [22]. |
| Poor Replicate Data | Inconsistent washing; capture antibody not properly bound; cross-contamination between wells. | Follow standardized washing procedures; ensure correct plate coating and blocking; use fresh plate sealers [22]. |
| Poor Standard Curve | Incorrect serial dilutions; issues with capture antibody binding. | Verify pipetting technique and calculations; ensure an ELISA plate (not tissue culture plate) is used with the correct coating protocol [22]. |

Quantitative Data on Biomarker Variability

Understanding the expected scale of variability for different classes of biomarkers is crucial for study design and data interpretation. The following table summarizes key variability metrics for a range of biomarkers, based on research from the Hispanic Community Health Study/Study of Latinos (HCHS/SOL) [20].

Table: Analytical and Biological Variability of Selected Biomarkers [20]

| Biomarker | Within-Individual Variability (CVI) | Between-Individual Variability (CVG) | Index of Individuality (II) |

|---|---|---|---|

| Fasting Glucose | 5.8% | 20.2% | 0.34 |

| Fasting Insulin | 23.1% | 52.9% | 0.51 |

| Total Cholesterol | 5.2% | 12.6% | 0.48 |

| Triglycerides | 21.6% | 50.5% | 0.50 |

| C-reactive Protein (hsCRP) | 52.9% | 107.6% | 0.57 |

| Hemoglobin | 3.5% | 5.8% | 0.69 |

| Ferritin | 19.6% | 62.8% | 0.36 |

| ALT (Liver Enzyme) | 15.8% | 33.3% | 0.54 |

| Creatinine | 5.6% | 19.7% | 0.33 |

| Cystatin C | 5.6% | 15.8% | 0.42 |

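Key Insight: Every biomarker in this table has a relatively low Index of Individuality (II below about 0.7), meaning that differences between people dominate over fluctuation within a person. For the lowest-II markers, such as fasting glucose and creatinine, a single measurement ranks an individual reliably relative to others, but each person's values occupy only a narrow slice of the population reference range, so a meaningful change within that person can go undetected by population reference limits; comparison against the person's own earlier values is more informative. Biomarkers with large within-person variability (CVI), such as C-reactive protein, fluctuate substantially from one occasion to the next, making multiple measurements essential for an accurate personal baseline [20].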

Experimental Protocols & Workflows

Detailed Protocol: Assessing Biomarker Variability in a Cohort

This protocol is designed to estimate the different components of biomarker variability within a study population, as demonstrated in HCHS/SOL [20].

1. Study Design:

- Main Cohort: Collect baseline biospecimens (e.g., fasting blood, urine) from all participants following a standardized venipuncture and processing protocol.

- Within-Individual Variation Substudy: Select a random subset of participants (e.g., n=58) for a second, identical biospecimen collection. The time between collections (e.g., weeks or months) should be chosen based on the biological rhythm of the biomarkers of interest.

- Duplicate Measurements: For a larger subset of participants (e.g., n=761-929), include duplicate or blinded replicate samples (e.g., an extra 5% of samples) to assess methodological variability.

2. Sample Collection & Handling:

- Standardization: Implement a strict SOP for all pre-analytical steps: blood drawing, field center processing (centrifuging, aliquoting), shipping, and storage (e.g., -80°C).

- Quality Control: Use barcoding systems to minimize mislabeling. Document all procedures meticulously.

3. Laboratory Analysis:

- Analyze all samples, including replicates, in a randomized order to avoid batch effects.

- Use validated and precise laboratory assays. The coefficient of analytical variation (CVA) should be less than the desirable imprecision for the specific analyte.

4. Statistical Analysis:

- Screen Data: Use scatterplots and Bland-Altman plots to check for linearity, constant variance, and identify outliers (e.g., differences from mean > 3SD).

- Log-Transform: Apply log-transformation to biomarkers with skewed distributions before analysis.

- Calculate Variability Components:

- Within-Individual Variability (CVI): Derived from the repeated measures in the substudy.

- Between-Individual Variability (CVG): Calculated from the baseline measurements of the main cohort.

- Process & Analytical Variability (CVP+A): Estimated from the duplicate measurements.

- Compute Index of Individuality (II): Use the formula II = (CVI + CVP+A) / CVG.
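The statistical steps above can be sketched in a few lines. This is a minimal illustration under simplifying assumptions, not the HCHS/SOL analysis itself: the function names and toy numbers are hypothetical, the data are assumed to need no log-transformation, and the repeat-visit CV is used as-is even though it also contains some methodological variance.

```python
import math

def paired_cv(pairs):
    """Percent CV from paired results: within-pair SD divided by the grand mean."""
    n = len(pairs)
    # For pairs, within-pair variance = sum of squared differences / (2n)
    within_var = sum((a - b) ** 2 for a, b in pairs) / (2 * n)
    grand_mean = sum(a + b for a, b in pairs) / (2 * n)
    return 100 * math.sqrt(within_var) / grand_mean

def between_cv(values):
    """Percent CV of single baseline values across participants."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    return 100 * math.sqrt(variance) / mean

def index_of_individuality(cvi, cvpa, cvg):
    """II = (CVI + CVP+A) / CVG, as written in the protocol above."""
    return (cvi + cvpa) / cvg

# Hypothetical toy values, not HCHS/SOL data
repeat_visits = [(90, 96), (102, 98), (85, 91), (110, 104)]      # visit 1, visit 2
blind_duplicates = [(90, 91), (102, 101), (85, 85), (110, 112)]  # same sample, measured twice
baseline = [90, 102, 85, 110, 120, 78, 95, 130]

cvi = paired_cv(repeat_visits)
cvpa = paired_cv(blind_duplicates)
cvg = between_cv(baseline)
ii = index_of_individuality(cvi, cvpa, cvg)
print(f"CVI={cvi:.1f}%  CVP+A={cvpa:.1f}%  CVG={cvg:.1f}%  II={ii:.2f}")
```

In a real analysis the components would normally come from a variance components model fitted to all data at once; the pairwise shortcut here is only meant to make the definitions concrete.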

The Scientist's Toolkit: Essential Research Reagents & Materials

This table details key materials and their functions for ensuring high-quality biomarker research, based on common requirements across the cited studies.

Table: Essential Research Reagent Solutions for Biomarker Studies

| Item | Function & Importance |

|---|---|

| Validated Assay Kits (e.g., ELISA) | Pre-optimized and validated kits (stored at 2–8°C) provide reliability and reproducibility for quantifying specific proteins. Using expired kits is a common source of assay failure [22]. |

| Automated Homogenization System | Platforms like the Omni LH 96 automate sample preparation, drastically reducing cross-contamination risks and ensuring uniform processing, which is critical for high-throughput biomarker studies [3]. |

| CLIA-Certified / CAP-Accredited Laboratory | Utilizing accredited labs ensures standardized, quality-controlled testing environments, which is a foundational requirement for generating clinically relevant data [23]. |

| Barcode Sample Tracking System | Implementing a barcoding system for biospecimens can reduce mislabeling incidents by over 85%, directly addressing a major source of pre-analytical error [3]. |

| Standardized Buffers (e.g., PBS) | Correctly prepared phosphate-buffered saline (PBS) is essential for diluting antibodies and coating plates when developing "in-house" ELISAs [22]. |

| Single-Use Consumables (e.g., Omni Tips) | Using single-use tips and tubes with automated systems eliminates direct human contact with samples, minimizing the risk of cross-sample contamination and environmental exposure [3]. |

Visualization of Key Concepts

The Cycle of Biomarker Variability & Confounding

This diagram illustrates the self-perpetuating cycle of key biological determinants that confound Alzheimer's disease blood-based biomarker levels, integrating nutrition, inflammation, and metabolism [24].

Data Fusion in Nutritional Cognitive Neuroscience

This workflow depicts the innovative data fusion approach used to integrate multimodal data and identify phenotypes of healthy brain aging, as seen in recent nutritional cognitive neuroscience research [25] [26].

Troubleshooting Guides and FAQs

How does within-person variation in biomarkers affect my study's results?

Unaccounted within-person variation is a major source of measurement error that can severely distort your findings. This variation arises from fluctuations in an individual's biomarker levels across multiple measurements, even under the same conditions.

Failure to address this leads to three critical problems:

- Bias: It can exaggerate correlated errors between different measurement tools, such as food frequency questionnaires (FFQs) and 24-hour diet recalls [21].

- Attenuation: It weakens (attenuates) the observed correlation between your measurements and the true biological value. This can make real associations appear weaker than they are.

- False Conclusions: Ultimately, this can lead to both Type I errors (false positives) and Type II errors (false negatives), undermining the validity of your research conclusions [21] [27].

My correlation coefficients are weaker than expected. Could within-person variation be the cause?

Yes, this is a classic symptom. Within-person variation introduces "noise" that obscures the true "signal" of the relationship you are studying. To identify and correct for this, you should use a repeat-measure biomarker measurement error model.

This specialized statistical model helps to:

- Account for systematic, correlated within-person error.

- Separate random within-person variation from the true biomarker value.

- Calculate a deattenuation factor (λ), which is used to correct the observed correlation coefficients, providing a more accurate estimate of the true relationship [21].

The table below summarizes key quantitative evidence of this impact from real validation studies:

| Study Name | Biomarker | Intraclass Correlation Coefficient (ICC) | Deattenuated Correlation (FFQ vs. Biomarker) |

|---|---|---|---|

| Automated Multiple-Pass Method Validation Study (n=471) | Energy (Doubly Labeled Water) | 0.43 | - |

| Automated Multiple-Pass Method Validation Study (n=471) | Protein Density (Urinary Nitrogen/DLW) | 0.54 | 0.49 |

Quantitative data from a real validation study showing substantial within-person variation (reflected by ICCs less than 1.0) and the subsequent correction via deattenuation [21].
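To make the idea of deattenuation concrete, the sketch below applies the classical correction for attenuation, in which an observed correlation is divided by the square root of the reliability (ICC) of the error-prone measure. This is a simplified stand-in, not the repeat-measure model from [21], which additionally handles correlated errors. The function name and the observed correlation of 0.30 are hypothetical; only the ICC of 0.54 is taken from the table.

```python
import math

def deattenuate(r_observed, icc_biomarker, icc_instrument=1.0):
    """Classical correction: r_true is approximately r_obs / sqrt(reliability product)."""
    reliability = icc_biomarker * icc_instrument
    if not 0 < reliability <= 1:
        raise ValueError("reliabilities must lie in (0, 1]")
    return r_observed / math.sqrt(reliability)

r_obs = 0.30   # hypothetical observed FFQ-vs-biomarker correlation
icc = 0.54     # ICC reported for protein density in the table above
print(round(deattenuate(r_obs, icc), 3))
```

The lower the ICC, the larger the correction, which is why a reliable ICC estimate from repeated biomarker measurements matters so much.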

What is the step-by-step method to implement a repeat-measurement error model?

Implementing this approach requires careful planning in both study design and analysis.

Experimental Protocol:

- Study Design: Incorporate repeated biomarker measurements for a subset of your participants. The number of repeats depends on the biomarker's stability and logistical constraints.

- Time Interval: Choose an appropriate time interval between repeats. For example, the Automated Multiple-Pass Method Validation Study collected doubly labeled water and urinary nitrogen measurements twice in 52 adults, approximately 16 months apart [21].

- Data Collection: Consistently collect your main exposure data (e.g., FFQs) alongside the biomarker measurements.
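Once repeated biomarker measurements are in hand, a first analysis step is usually to estimate how much of the variance is within-person. The sketch below uses the one-way ANOVA estimators for a balanced design (every participant has the same number of repeats). The function name and data are hypothetical, and a full measurement error model would go further than this.

```python
def icc_oneway(data):
    """data: list of per-subject lists, each holding the same number k of repeats.

    Returns (between-person variance, within-person variance, ICC).
    """
    n = len(data)
    k = len(data[0])
    if any(len(row) != k for row in data):
        raise ValueError("this sketch assumes a balanced design")
    subject_means = [sum(row) / k for row in data]
    grand_mean = sum(subject_means) / n
    # Mean square between subjects and mean square within subjects
    msb = k * sum((m - grand_mean) ** 2 for m in subject_means) / (n - 1)
    msw = sum((x - m) ** 2 for row, m in zip(data, subject_means) for x in row) / (n * (k - 1))
    var_within = msw
    var_between = max((msb - msw) / k, 0.0)  # truncate negative estimates at zero
    icc = var_between / (var_between + var_within)
    return var_between, var_within, icc

# Hypothetical biomarker values: three participants, two repeats each
repeats = [[1.0, 3.0], [5.0, 7.0], [9.0, 11.0]]
print(icc_oneway(repeats))
```

For unbalanced data or when covariates are needed, a mixed-effects model is the more appropriate tool.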

Analysis Workflow: The following diagram outlines the logical flow of the statistical modeling process to account for within-person variation.

What is the difference between within-person variation and common method variance (CMV)?

It is crucial to distinguish these two sources of error, as they require different mitigation strategies.

- Within-Person Variation: This is the natural biological fluctuation of a metric within a single individual over time. It is a source of error in the measurement of a variable and is addressed through repeated measures and specific statistical models in biomarker research [21].

- Common Method Variance (CMV): This is systematic error variance shared among variables in a dataset that arises from the method used to collect the data [28]. For example, it can stem from using a single self-report survey for all variables, item wording, or respondent tendencies like acquiescence bias.

The table below clarifies the key differences:

| Aspect | Within-Person Variation | Common Method Variance (CMV) |

|---|---|---|

| Source | Biological fluctuation; random measurement error | Measurement method (e.g., self-report survey) |

| Scope | Affects a single variable | Artificially inflates relationships between variables |

| Primary Mitigation | Repeat-measure biomarker models; study design | Procedural remedies (e.g., temporal separation); complex statistical models (e.g., SEM) |

| Impact | Attenuates correlations; reduces measured effect sizes | Can inflate or deflate correlations, potentially causing false positives |

How can I proactively design my study to minimize these issues?

Proactive design is the most effective strategy. Here are key considerations:

- Incorporate Repeated Measurements: Plan for and budget the collection of repeated biomarker assessments from a representative subset of your study population. This is non-negotiable for reliably estimating and correcting for within-person variation [21].

- Use Additional Variables: In multivariate models, adding well-chosen independent variables can help mitigate the influence of other errors, like CMV. Research indicates that including four or more additional variables in structural equation models can notably reduce error and bias [28].

- Validate Your Measures: Conduct local validation studies for your key tools (e.g., FFQs) against objective biomarkers to understand the specific measurement error structure in your population [21].

- Formal Troubleshooting: Adopt a structured troubleshooting protocol. When an experiment fails, systematically:

- Repeat the experiment to rule out simple mistakes.

- Check your controls (positive and negative) to confirm the experiment itself is sound.

- Isolate variables one at a time to diagnose the root cause [29].

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Context |

|---|---|

| Doubly Labeled Water (DLW) | A gold-standard biomarker for measuring total energy expenditure in free-living individuals over a period of 1-2 weeks. |

| Urinary Nitrogen (UN) | A biomarker used to estimate dietary protein intake based on the excretion of nitrogen in urine. |

| Food Frequency Questionnaire (FFQ) | A self-report tool designed to capture an individual's usual dietary intake over a long period (e.g., the past year). |

| 24-Hour Diet Recall | A structured interview to capture detailed dietary intake over the previous 24 hours, often used as a more precise (though still imperfect) reference method. |

| Intraclass Correlation Coefficient (ICC) | A statistical measure of reliability that quantifies the proportion of total variance due to between-person differences. Lower ICCs indicate higher within-person variation. |

| Deattenuation Factor (λ) | A correction factor, derived from reliability studies, applied to an observed correlation coefficient to account for attenuation caused by measurement error. |

Statistical Models and Study Designs to Quantify and Control Variation

Implementing Repeat-Measure Biomarker Error Models for Validation Studies

Frequently Asked Questions (FAQs)

1. Why is accounting for within-person variation critical in biomarker validation studies? Biomarkers are subject to both biological fluctuations and measurement error. Within-person variation, if unaccounted for, can exaggerate correlated errors between different measurement instruments and lead to biased estimates of association. Using models that incorporate repeated biomarker measurements allows for the estimation and correction of this variation, leading to more reliable validation study results [21].

2. What is the consequence of ignoring measurement error in biomarker data? Ignoring measurement error can lead to several issues:

- Attenuation bias in regression analysis, where the estimated effect of the biomarker on an outcome is biased towards zero [18].

- Biased estimation of diagnostic accuracy measures, such as the Area Under the Curve (AUC), typically causing an under-estimation of a biomarker's true efficacy [18].

- Invalid p-values and confidence intervals in analyses that rely on repeated measures, due to violation of the independence assumption [30] [31].
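The attenuation effect is easy to see in a small simulation. The sketch below is illustrative only: all parameter values are hypothetical and the error is assumed to be classical (random, independent of the true value). With equal within-person and between-person variance, the naive slope is expected to be about half the true slope.

```python
import random

def ols_slope(x, y):
    """Slope of the simple least-squares regression of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

def simulate(n=20000, beta=2.0, sd_between=1.0, sd_within=1.0, seed=1):
    rng = random.Random(seed)
    true_x = [rng.gauss(0, sd_between) for _ in range(n)]        # long-term level
    observed = [t + rng.gauss(0, sd_within) for t in true_x]     # single noisy measurement
    outcome = [beta * t + rng.gauss(0, 1) for t in true_x]       # outcome depends on true level
    return ols_slope(true_x, outcome), ols_slope(observed, outcome)

slope_true, slope_naive = simulate()
print(f"slope using true level:        {slope_true:.2f}")
print(f"slope using single measurement: {slope_naive:.2f}")
```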

3. My study has missing biomarker measurements at some time points. What is the best analytical approach? Mixed-effects models are the most flexible and recommended approach for handling missing data in repeated measures studies. Unlike repeated-measures ANOVA, which typically excludes subjects with any missing data (complete case analysis), mixed-effects models can include all available data points, even if the number and timing of measurements vary across subjects. Provided the missingness depends only on observed data (missing at random), this maximizes statistical power and reduces bias [31].

4. When should I use a repeated-measures ANOVA versus a mixed-effects model? The choice depends on your data structure and assumptions:

- Repeated-measures ANOVA requires a balanced design (same number of measurements for all subjects), strict sphericity (constant variance of differences between time points), and handles time as a categorical variable. It is less flexible with missing data [30] [31].

- Mixed-effects models are more flexible. They can handle unbalanced data and missing measurements, model time as either continuous or categorical, and do not require the sphericity assumption. They are generally preferred for complex longitudinal data in biomedical research [30] [31].

5. What is a "summary statistic approach" and when is it appropriate? This approach involves condensing the repeated measurements from each subject into a single, clinically relevant summary number (e.g., the mean, slope, or area under the curve). This summary statistic is then used in standard statistical tests. It is a simple and valid method that eliminates the problem of correlated data, but its major drawback is the loss of information about within-subject change over time [30].
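As a rough illustration of the summary statistic approach, the sketch below reduces each participant's series to a mean, a least-squares slope, and a trapezoidal area under the curve. The helper names and the data are hypothetical; which summary is clinically relevant depends on the study question.

```python
def least_squares_slope(times, values):
    """Per-subject rate of change from a simple linear fit."""
    n = len(times)
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    num = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    den = sum((t - t_mean) ** 2 for t in times)
    return num / den

def trapezoid_auc(times, values):
    """Area under the measured curve by the trapezoidal rule."""
    return sum((t1 - t0) * (v0 + v1) / 2
               for t0, t1, v0, v1 in zip(times, times[1:], values, values[1:]))

def summarize(times, values):
    return {
        "mean": sum(values) / len(values),
        "slope": least_squares_slope(times, values),
        "auc": trapezoid_auc(times, values),
    }

# Hypothetical data: visit week and biomarker value per participant
subjects = {
    "S1": ([0, 4, 8, 12], [10.0, 11.0, 12.5, 13.0]),
    "S2": ([0, 4, 12], [8.0, 8.5, 9.5]),  # one visit missing
}
for subject_id, (weeks, values) in subjects.items():
    print(subject_id, summarize(weeks, values))
```

Each participant then contributes one number per summary, which can be compared between groups with a standard test. Note that AUC values are not directly comparable when follow-up lengths differ.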

Troubleshooting Guides

Problem 1: Low Statistical Power to Detect a Treatment Effect

Potential Cause: High within-individual (intra-individual) variability in your biomarker measurements is obscuring the true signal of a treatment effect.

Solutions:

- Increase the number of repeated measurements per subject. Research on Alzheimer's disease plasma biomarkers shows that intra-individual variability is often lower than inter-individual variability. Collecting multiple measurements during baseline and post-treatment periods can improve precision and power [32] [33].

- Consider a specialized trial design. Implement a Single-arm Lead-In with Multiple Measures (SLIM) design. This involves taking several biomarker measurements during a placebo lead-in phase and again during the active treatment phase. By comparing within-subject changes, this design minimizes the confounding effect of large between-subject variability, which is common in diseases like Alzheimer's, and can substantially reduce the required sample size [32].

- Use the correct analytical model. Ensure you are using a statistical method like a mixed-effects model that properly accounts for the correlation between repeated measures and uses all available data, thereby increasing power [31].

Problem 2: Attenuated Effect Estimate in Regression Analysis

Potential Cause: The biomarker measurement is acting as a surrogate for the true underlying exposure and is measured with error.

Solutions:

- Apply a measurement error correction model. Use a repeat-measure biomarker error model to estimate the deattenuation factor (λ) and the correlation coefficient (ρ). These parameters can be used to correct the biased (attenuated) effect estimate obtained from a naive analysis [21].

- Collect replication data. The correction models require data on the short-term variability of the biomarker. This often involves collecting repeated biomarker measurements on a subsample of your study participants to reliably estimate the within-person variation [21].

Problem 3: Inappropriate Dichotomization of a Continuous Biomarker

Potential Cause: A common bad practice in biomarker research is to split a continuous variable into two groups (e.g., "high" vs. "low") using an arbitrary cut-point.

Solutions:

- Avoid dichotomization. "Dichotomania" discards valuable information, reduces statistical power, and assumes a discontinuous relationship that rarely exists in nature. It also makes findings highly dependent on the chosen cut-point, harming reproducibility [5].

- Use the full information in the data. Analyze the biomarker as a continuous variable. Use flexible modeling techniques like regression splines within your mixed model to capture potential nonlinear relationships without losing information [5].
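The information lost by dichotomizing can be demonstrated with a short simulation. For two normally distributed variables, splitting one at its median is expected to shrink the correlation by a factor of about sqrt(2/π) ≈ 0.80. The sketch below checks this numerically; the sample size, true correlation, and seed are arbitrary hypothetical choices.

```python
import math
import random

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def simulate_median_split(n=20000, rho=0.5, seed=7):
    rng = random.Random(seed)
    biomarker = [rng.gauss(0, 1) for _ in range(n)]
    outcome = [rho * b + math.sqrt(1 - rho ** 2) * rng.gauss(0, 1) for b in biomarker]
    cut = sorted(biomarker)[n // 2]                      # median cut-point
    high_low = [1.0 if b > cut else 0.0 for b in biomarker]
    return pearson(biomarker, outcome), pearson(high_low, outcome)

r_continuous, r_split = simulate_median_split()
print(f"continuous biomarker: r = {r_continuous:.2f}")
print(f"median split:         r = {r_split:.2f}")
```

A weaker correlation translates directly into lower power, so a dichotomized analysis needs a larger sample to detect the same association.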

Key Experimental Protocols

Protocol 1: Estimating Short-Term Variability for a Biomarker

This protocol is designed to collect the necessary data for calculating within- and between-subject variability, which is foundational for measurement error correction.

Objective: To determine the intra- and inter-individual variability of a specific biomarker in the target population.

Methodology (Based on an Alzheimer's Disease Biomarker Study [33]):

- Participant Recruitment: Recruit a consecutive sample from your target population (e.g., memory clinic patients).

- Blood Sampling: Collect blood samples from each participant on three separate days.

- Time Interval: Space the visits within a short period (e.g., 4-36 days) to capture short-term biological variation rather than long-term disease progression.

- Sample Processing: Process all samples using a standardized, pre-specified protocol (e.g., centrifuge within 30-120 minutes, aliquot, and store at -80°C) to minimize pre-analytical variability.

- Blinded Analysis: Analyze all samples in a single batch, or in random order, by personnel blinded to the participant's identity and visit number to minimize assay variability and bias.

Key Measurements: Biomarker concentration at each of the three visits for every participant.

Protocol 2: Implementing the SLIM Trial Design

This protocol outlines an innovative early-phase trial design that uses repeated measures to enhance power [32].

Objective: To efficiently evaluate the biological efficacy of a treatment by assessing pre-post changes in a plasma biomarker within the same individuals.

Workflow: The following diagram illustrates the structure of the SLIM trial design.

Methodology [32]:

- Design: Single-arm trial (all participants receive the intervention).

- Placebo Lead-In Phase: A baseline period where all participants receive a placebo. During this phase, collect repeated biomarker measurements (e.g., monthly for 3 months).

- Active Treatment Phase: Immediately after the lead-in, all participants transition to the active treatment. During this phase, collect the same number of repeated biomarker measurements with the same frequency.

- Analysis: Use a mixed-effects model to compare the aggregated biomarker measurements from the active treatment phase against those from the placebo lead-in phase. The model treats each participant as their own control, thereby minimizing the impact of large between-subject variability.
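The logic of using each participant as their own control can be shown with a simplified stand-in for the mixed-effects analysis: average each participant's measurements within a phase, take the within-person difference, and test whether the mean difference departs from zero. This sketch assumes complete data and no time trend; the function name and values are hypothetical and it is not the analysis specified in [32].

```python
import math
from statistics import mean, stdev

def slim_paired_analysis(lead_in, treatment):
    """lead_in / treatment: dicts mapping participant id -> list of measurements.

    Returns (mean within-person change, standard error, t statistic, degrees of freedom).
    """
    diffs = [mean(treatment[pid]) - mean(lead_in[pid]) for pid in lead_in]
    n = len(diffs)
    mean_change = mean(diffs)
    std_error = stdev(diffs) / math.sqrt(n)
    return mean_change, std_error, mean_change / std_error, n - 1

# Hypothetical plasma biomarker values, three monthly samples per phase
placebo_phase = {"P1": [10.2, 9.8, 10.0], "P2": [20.5, 19.5, 20.0], "P3": [30.0, 29.0, 31.0]}
active_phase = {"P1": [9.1, 8.9, 9.0], "P2": [18.2, 17.8, 18.0], "P3": [26.5, 27.5, 27.0]}

change, se, t_stat, dof = slim_paired_analysis(placebo_phase, active_phase)
print(f"mean change = {change:.2f}, SE = {se:.2f}, t = {t_stat:.2f} on {dof} df")
```

Because between-person differences cancel in the within-person subtraction, the standard error depends only on within-person and analytical variation, which is what makes the design efficient.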

Quantitative Data on Biomarker Variability

The following table summarizes real-world data on the short-term variability of Alzheimer's disease plasma biomarkers, providing a reference for expected variability levels in a memory clinic cohort [33].

Table 1: Short-Term Variability of Alzheimer's Disease Plasma Biomarkers

| Biomarker | Intra-Individual Variability (CV%) | Inter-Individual Variability (CV%) | Reference Change Value (RCV%) |

|---|---|---|---|

| Aβ42/40 ratio | ~3% | ~7% | -15% / +17% |

| GFAP | ~5% | ~18% | -15% / +18% |

| p-tau217 | ~6% | ~16% | -18% / +22% |

| p-tau181 | ~8% | ~20% | -30% / +42% |

| NfL | ~9% | ~39% | -26% / +35% |

| T-tau | ~12% | ~22% | -35% / +53% |

Key Definitions:

- Intra-Individual Variability: The variation of a biomarker's concentration around an individual's homeostatic set-point.

- Inter-Individual Variability: The variation of homeostatic set-points across individuals in a group.

- Reference Change Value (RCV): The minimum percentage change between two consecutive measurements required to be considered statistically significant, accounting for analytical and intra-individual biological variation.
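Two commonly used RCV formulas are sketched below: the symmetric form, RCV = √2 · Z · √(CVA² + CVI²), and a log-normal form that yields asymmetric limits like those in the table. Whether [33] used exactly these formulas is an assumption, and the input CVs in the example are hypothetical rather than taken from the table.

```python
import math

def rcv_symmetric(cv_analytical, cv_intra, z=1.96):
    """Symmetric RCV in percent; z = 1.96 corresponds to a two-sided 95% level."""
    return math.sqrt(2) * z * math.sqrt(cv_analytical ** 2 + cv_intra ** 2)

def rcv_lognormal(cv_analytical, cv_intra, z=1.96):
    """Asymmetric RCV (decrease %, increase %) assuming log-normal variation."""
    cv_total = math.sqrt(cv_analytical ** 2 + cv_intra ** 2) / 100
    sigma_log = math.sqrt(math.log(cv_total ** 2 + 1))   # SD on the log scale
    delta = math.sqrt(2) * z * sigma_log
    return 100 * (math.exp(-delta) - 1), 100 * (math.exp(delta) - 1)

# Hypothetical inputs: 4% analytical CV, 9% intra-individual CV
print(f"symmetric RCV: +/-{rcv_symmetric(4, 9):.1f}%")
down, up = rcv_lognormal(4, 9)
print(f"log-normal RCV: {down:.1f}% / +{up:.1f}%")
```

The log-normal limits are always wider on the upside than on the downside, which matches the pattern of the reported values.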

Research Reagent Solutions

Table 2: Essential Materials for Repeat-Measure Biomarker Studies

| Item | Function | Example from Literature |

|---|---|---|

| EDTA-Plasma Tubes | Standardized blood collection tubes containing anticoagulant to ensure sample stability. | Used for collecting plasma samples for Alzheimer's biomarker analysis [33]. |

| Simoa HD-X Analyzer | An ultra-sensitive immunoassay platform for quantifying very low concentrations of biomarkers in blood. | Used to measure plasma Aβ40, Aβ42, p-tau181, p-tau217, NfL, and GFAP [33]. |

| Doubly Labeled Water (DLW) | A biomarker for total energy expenditure, used as an objective reference measure in dietary validation studies. | Served as a validation standard for energy intake in the OPEN and NBS studies [21]. |

| Urinary Nitrogen (UN) | A biomarker for protein intake, used as an objective reference in dietary validation studies. | Used to validate protein intake measurements from food frequency questionnaires [21]. |

| Multiplex Assays | Kits that allow simultaneous measurement of multiple biomarkers from a single sample, conserving precious sample volume. | Neurology 4-plex E assay used for simultaneous measurement of Aβ42, Aβ40, NfL, and GFAP [33]. |

Frequently Asked Questions

1. What is the purpose of partitioning variance in biomarker studies? Partitioning variance helps quantify different sources of variability in your biomarker measurements. This is crucial for determining whether a biomarker is a reliable surrogate for exposure or disease risk. By estimating within-individual (σ²I), between-individual (σ²G), and methodological (σ²P+A) variances, you can assess the biomarker's repeatability and its potential to bias exposure-response relationships in epidemiological studies [34] [1].

2. How do I know if my variance component estimates are reliable?

The reliability of your estimates depends on your study design. Ensure you have collected sufficient repeated measurements from individuals to robustly estimate within-person variability. Using linear mixed models with restricted maximum likelihood (REML) estimation is a standard and reliable approach. Furthermore, you can use parametric bootstrapping, as implemented in packages like partR2, to quantify confidence intervals for your variance estimates [35] [34].
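The idea behind a parametric bootstrap can be sketched without any specialized package: estimate the variance components, simulate many new datasets from the fitted model, re-estimate on each, and read confidence limits off the resulting distribution. The sketch below is not partR2 and does not use REML; it substitutes ANOVA estimators for a balanced design, and all names and data are hypothetical.

```python
import random
from statistics import mean

def variance_components(data):
    """ANOVA estimates (grand mean, between-person var, within-person var), balanced data."""
    n, k = len(data), len(data[0])
    subject_means = [mean(row) for row in data]
    grand = mean(subject_means)
    msb = k * sum((m - grand) ** 2 for m in subject_means) / (n - 1)
    msw = sum((x - m) ** 2 for row, m in zip(data, subject_means) for x in row) / (n * (k - 1))
    return grand, max((msb - msw) / k, 0.0), msw

def parametric_bootstrap_ci(data, n_boot=1000, alpha=0.05, seed=0):
    rng = random.Random(seed)
    n, k = len(data), len(data[0])
    mu, var_g, var_i = variance_components(data)
    sd_g, sd_i = var_g ** 0.5, var_i ** 0.5
    boot_g, boot_i = [], []
    for _ in range(n_boot):
        simulated = []
        for _ in range(n):
            person_level = rng.gauss(mu, sd_g)             # simulated long-term level
            simulated.append([person_level + rng.gauss(0, sd_i) for _ in range(k)])
        _, g, i = variance_components(simulated)
        boot_g.append(g)
        boot_i.append(i)

    def percentile_interval(values):
        values = sorted(values)
        last = len(values) - 1
        return values[int(alpha / 2 * last)], values[int((1 - alpha / 2) * last)]

    return {"between": (var_g, percentile_interval(boot_g)),
            "within": (var_i, percentile_interval(boot_i))}

# Hypothetical log-transformed biomarker values: 6 participants, 3 repeats each
data = [[2.1, 2.3, 2.2], [3.0, 2.8, 3.1], [1.5, 1.7, 1.4],
        [2.6, 2.5, 2.9], [3.4, 3.3, 3.6], [1.9, 2.2, 2.0]]
print(parametric_bootstrap_ci(data, n_boot=500))
```

Wide intervals, especially for the within-person component, are a sign that more participants or more repeats per participant are needed.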

3. My within-individual variance is very high. What could be the cause? High within-individual variance (σ²I) can be caused by several factors:

- True biological fluctuations: Diet, sleep, and physical activity can cause biomarker levels to vary naturally.

- Pre-analytical factors: Inconsistent procedures for blood drawing, processing, shipping, or sample storage can introduce significant variability [34].

- Inadequate covariate adjustment: Failing to account for factors like time of sample collection, hydration status, smoking, or BMI can leave their effects in the "unexplained" within-individual variance [1]. Always review and standardize your protocols.

4. What is a high or low Index of Individuality (II), and why does it matter? The Index of Individuality is calculated as II = (CVI + CVP+A) / CVG. A low II (e.g., < 0.6) indicates high between-subject variability relative to within-subject variability. This suggests that a single measurement is not very useful for classifying an individual within a population reference range, and serial measurements are needed. A high II (e.g., > 1.4) implies that population-based reference values may be more effective [34].
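The thresholds above can be wrapped in a small helper for screening a panel of biomarkers. The function name and the wording for the intermediate range are assumptions; the cut-offs of 0.6 and 1.4 are the ones quoted in the answer.

```python
def interpret_ii(ii, low=0.6, high=1.4):
    """Map an Index of Individuality to the interpretation described above."""
    if ii < low:
        return "low II: favor subject-based reference values and serial measurements"
    if ii > high:
        return "high II: population-based reference values are more effective"
    return "intermediate II: neither approach is clearly preferable"

# Hypothetical II values for two biomarkers
for name, value in {"marker A": 0.34, "marker B": 1.6}.items():
    print(name, "->", interpret_ii(value))
```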

5. How can I handle highly skewed biomarker data in a linear mixed model? It is common for biomarker data to have skewed distributions. A standard practice is to log-transform the biomarker values before analysis. This helps meet the model's assumption of normally distributed residuals. Always check the distribution of your residuals after fitting the model to validate this assumption [34].

6. What software can I use to implement these methods?

- R is highly capable for this analysis. You can use the lmer function from the lme4 package to fit linear mixed models [36].

- The partR2 package provides additional functionality for partitioning variance in fixed effects and estimating confidence intervals via bootstrapping [35].

- The MCMCglmm package offers a Bayesian approach to fitting generalized linear mixed models, which can be particularly useful for complex data structures [36].

Troubleshooting Guides

Issue 1: High Methodological Variance (σ²P+A)

Problem: A large proportion of the total variance is attributed to methodological sources (process and analytical variability), masking true biological signals.

Solutions:

- Review Pre-analytical Protocols: Standardize every step, from biospecimen collection (e.g., venipuncture protocol, tourniquet time) to processing (e.g., consistent centrifugation speed, time, and temperature) and storage (e.g., immediate freezing at -80°C) [34]. The HCHS/SOL study used a rigorous, standardized protocol for this purpose.

- Implement Duplicate Measurements: Introduce a QC system with blinded duplicate samples, as done in the HCHS/SOL Sample Handling study. This directly allows you to monitor and quantify methodological variance over time [34].

- Use Quality Control Metrics: Employ data type-specific quality metrics and software (e.g., arrayQualityMetrics for microarray data, fastQC for NGS data) to identify and filter out poor-quality samples or technical outliers [37].

Issue 2: Unrealistic or Non-converging Model

Problem: The linear mixed model fails to converge or produces variance component estimates that are negative or unrealistically large.

Solutions:

- Check for Outliers: Use scatterplots and Bland-Altman plots to visually identify outliers (e.g., values >3 standard deviations from the mean). Exclude these outliers from the analysis and document the decision [34].

- Simplify the Model: Start with a simple random intercepts model. If the model is too complex for your data (e.g., too many random effects with limited data), it may fail to converge. Gradually build complexity.

- Verify Distributional Assumptions: Check the normality of the residuals. If the data are skewed, apply a log-transformation to the biomarker values before fitting the model [34].

- Consider Alternative Estimators: If using REML with the lmer function is problematic, consider using a Bayesian approach with the MCMCglmm package, which can be more stable for complex models [36].
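The outlier check in the first solution can be automated before any model is fitted. The sketch below computes Bland-Altman statistics for paired measurements and flags pairs whose difference lies more than three standard deviations from the mean difference. The function name and data are hypothetical, and flagged pairs should be reviewed rather than dropped automatically.

```python
from statistics import mean, stdev

def bland_altman_outliers(pairs, n_sd=3.0):
    """Return (mean difference, 95% limits of agreement, indices of flagged pairs)."""
    diffs = [a - b for a, b in pairs]
    bias = mean(diffs)
    sd = stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    flagged = [i for i, d in enumerate(diffs) if abs(d - bias) > n_sd * sd]
    return bias, limits, flagged

# Hypothetical duplicates: 20 well-behaved pairs plus one gross discrepancy
pairs = [(100 + (1 if i % 2 == 0 else -1), 100) for i in range(20)] + [(130, 100)]
bias, limits, flagged = bland_altman_outliers(pairs)
print(f"bias = {bias:.2f}, limits = ({limits[0]:.2f}, {limits[1]:.2f}), flagged = {flagged}")
```

Plotting the differences against the pair averages, as in a standard Bland-Altman plot, additionally reveals whether the spread grows with concentration, which would argue for a log-transformation.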

Issue 3: Weak or Attenuated Exposure-Response Relationship

Problem: The association between the biomarker and a health outcome is weak, potentially due to high within-individual variability in the biomarker.

Solutions:

- Select a Less Noisy Biomarker: Calculate the variance ratio λ = σ²w / σ²b for your candidate biomarkers, where σ²w is the within-person variance and σ²b is the between-person variance (this λ is a different quantity from the deattenuation factor that measurement error models also denote λ). Biomarkers with a lower λ will cause less attenuation bias in exposure-response relationships [1].

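- Increase Repeated Measurements: Attenuation bias shrinks as the number of repeated measurements per person (ni) grows. Under a classical measurement error model, the observed slope equals the true slope multiplied by 1 / (1 + λ/ni), so averaging more repeats moves this factor toward 1. If possible, design studies with more repeated measures to improve the precision of estimating an individual's long-term average exposure [1].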

- Adjust for Key Covariates: Include covariates like time of sample collection, hydration status, smoking, and BMI in your model. In the road-paving study, these factors explained a substantial portion (63-82%) of the variance in some biomarkers, reducing unexplained noise [1].
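The trade-off between the variance ratio and the number of repeats can be explored numerically. Under a classical measurement error model, using the mean of n repeats per person multiplies the true slope by 1 / (1 + λ/n). The sketch below tabulates this factor and finds the smallest n that reaches a target; the function names and the example λ values are hypothetical.

```python
def attenuation_factor(variance_ratio, n_repeats):
    """Expected observed/true slope ratio when averaging n repeats per person."""
    return 1.0 / (1.0 + variance_ratio / n_repeats)

def repeats_needed(variance_ratio, target_factor, max_repeats=1000):
    """Smallest number of repeats whose attenuation factor reaches the target."""
    if not 0 < target_factor < 1:
        raise ValueError("target_factor must lie strictly between 0 and 1")
    for n in range(1, max_repeats + 1):
        # Small tolerance guards against floating-point rounding at the boundary
        if attenuation_factor(variance_ratio, n) >= target_factor - 1e-12:
            return n
    raise ValueError("target not reachable within max_repeats")

# Hypothetical variance ratios for two candidate biomarkers
for lam in (0.5, 2.0):
    factors = [round(attenuation_factor(lam, n), 2) for n in (1, 2, 4, 8)]
    print(f"lambda={lam}: factor at n=1,2,4,8 -> {factors}; "
          f"repeats for 80% of true slope: {repeats_needed(lam, 0.8)}")
```

A biomarker with a high λ may need an impractical number of repeats, which is the quantitative argument for choosing a less noisy biomarker where one exists.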

Key Variance Components and Their Interpretation

Table 1: Glossary of Key Variance Components and Metrics

| Symbol | Name | Interpretation |

|---|---|---|

| σ²I | Within-individual variance | Measure of how much a person's biomarker levels vary over time due to biology and unmeasured short-term factors. |

| σ²G | Between-individual variance | Measure of the variability in long-term average biomarker levels between different people in your population. |

| σ²P+A | Methodological variance | Variability introduced by the entire measurement process, from sample collection and processing to the laboratory assay itself. |

| II | Index of Individuality | Ratio (CVI + CVP+A) / CVG. Indicates whether population reference ranges (high II) or individual reference values (low II) are more appropriate. |

| λ | Variance Ratio | Ratio σ²w / σ²b. Used in bias calculation; a lower λ means the biomarker is a less-biasing surrogate for exposure in dose-response models [1]. |

Table 2: Example Variance Components from Real-World Studies

| Study Context | Biomarker Example | Key Variance Findings | Implication |
|---|---|---|---|
| Road-Paving Workers (PAH Exposure) [1] | Urinary 1-hydroxypyrene (1-OH-Pyr) | Lower within- to between-person variance ratio (λ) compared to other PAH biomarkers. | Less-biasing surrogate for modeling exposure-response relationships. |
| HCHS/SOL (Hispanic Population) [34] | Fasting Glucose, Triglycerides | Higher between-individual variability (CVG) and lower Index of Individuality (II) than previously published studies in other populations. | Population-specific estimates are critical; a single measurement may be less useful for clinical classification in this group. |
| General Biomarker Research [1] | Urinary Metabolites | Covariates (time, hydration, smoking, BMI) explained 63-82% of variance for some metabolites. | Adjusting for covariates is essential to reduce noise and improve signal detection. |

Essential Research Reagent Solutions

Table 3: Key Materials and Reagents for Biomarker Variance Studies

| Item / Solution | Critical Function | Considerations for Variance Reduction |
|---|---|---|
| Standardized Blood Collection Tubes | Consistent sample acquisition. | Use the same type (e.g., serum, EDTA, citrate) and brand across the study to minimize pre-analytical variance [34]. |
| Controlled Temperature Storage (-80°C) | Preserves biomarker integrity. | Minimize freeze-thaw cycles. Use a monitored freezer to prevent degradation that contributes to σ²P+A [34]. |
| Automated Clinical Chemistry Analyzers | Measures biomarker concentrations. | Use the same platform and calibrated instruments for all samples. Include control samples in every batch to monitor analytical variance [34]. |
| Creatinine Assay Kits | Normalizes for urine dilution. | Essential for urinary biomarkers. A colorimetric assay is a standard method to account for hydration status, a key covariate [1]. |
| Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) | Quantifies specific metabolites. | A high-precision method used for measuring hydroxylated PAH metabolites and other specific analytes [1]. |
| Headspace-SPME/GC-MS | Measures volatile organics. | The method used for unmetabolized urinary naphthalene and phenanthrene (U-Nap, U-Phe) [1]. |

Experimental Workflow for Variance Partitioning

The following diagram illustrates the core workflow for designing a study to estimate variance components, based on established methodologies from the cited literature [34] [1].

Study Workflow for Variance Partitioning

Statistical Modeling and Analysis Protocol

The core of the analysis involves using linear mixed models to partition the total variance. The model structure, as applied in the HCHS/SOL study, can be represented as follows [34]:

Model for Within-Individual Variation Study: This model estimates the total within-individual variance (σ²I), which includes both biological and methodological variation.

$$y_{ij} = \beta_0 + u_i + \varepsilon_{ij}$$

Where:

- y_ij is the biomarker value for individual i at time j.
- β0 is the overall fixed intercept.
- u_i is the random intercept for individual i, assumed to be normally distributed, u_i ~ N(0, σ²G).
- ε_ij is the residual error representing within-individual variation, ε_ij ~ N(0, σ²I).

Model for Sample Handling (Duplicate) Study: This model is used to estimate the methodological variance (σ²P+A) separately.

$$y_{ik} = \beta_0 + u_i + \varepsilon_{ik}$$

Where:

- y_ik is the biomarker value for the k-th duplicate measurement from individual i.
- The variance of ε_ik now represents the methodological variance, σ²P+A.

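Variance Component Calculation: The between-individual variance (σ²G) is estimated as the variance of the random intercepts in the model. If σ²I is taken as the biological within-individual component (the residual variance from the within-individual variation model minus σ²P+A from the duplicate study), the total variance is the sum of the three components: σ²Total = σ²G + σ²I + σ²P+A. If the residual variance from the first model is used as is, it already contains σ²P+A, which should not be added a second time. These components are then used to calculate the Index of Individuality (II) and the variance ratio (λ) for bias assessment [34] [1].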
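As a rough sketch of the partitioning step, the code below estimates between- and within-person variance from balanced repeated measurements using one-way random-effects ANOVA (method-of-moments) estimators. This is a stand-in for the linear mixed model described above, not the HCHS/SOL implementation. The function name and the example values are hypothetical, and unbalanced data or covariates would call for a mixed-model routine in R, SAS, or Stata.

```python
from statistics import mean


def variance_components(data):
    """Method-of-moments (one-way random-effects ANOVA) estimates.

    data : list of per-person lists, each holding the same number of
           repeated measurements (balanced design).
    Returns (between_var, within_var); within_var is the total
    within-person variance (biological plus methodological).
    """
    k = len(data)       # number of individuals
    n = len(data[0])    # repeats per individual
    if k < 2 or n < 2 or any(len(row) != n for row in data):
        raise ValueError("need a balanced design with >= 2 people and >= 2 repeats")

    person_means = [mean(row) for row in data]
    grand_mean = mean(person_means)

    # Mean square within: pooled variability around each person's own mean
    ss_within = sum((y - m) ** 2 for row, m in zip(data, person_means) for y in row)
    ms_within = ss_within / (k * (n - 1))

    # Mean square between: variability of person means around the grand mean
    ms_between = n * sum((m - grand_mean) ** 2 for m in person_means) / (k - 1)

    within_var = ms_within
    between_var = max((ms_between - ms_within) / n, 0.0)  # truncate at zero
    return between_var, within_var


# Hypothetical data: 4 people, 3 repeated measurements each
example = [
    [5.1, 4.9, 5.3],
    [6.2, 6.0, 6.4],
    [4.0, 4.3, 3.9],
    [5.6, 5.5, 5.9],
]
between_var, within_var = variance_components(example)
if between_var > 0:
    lam = within_var / between_var                   # variance ratio (lambda)
    icc = between_var / (between_var + within_var)   # reliability of one measurement
    print(f"between={between_var:.3f} within={within_var:.3f} "
          f"lambda={lam:.3f} ICC={icc:.3f}")
```

Truncating a negative between-person estimate at zero is a common convention for this estimator; a likelihood-based mixed model handles that boundary case more gracefully.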

Visualizing Variance Components and Their Impact

The following diagram illustrates how the different variance components contribute to the total variability observed in a dataset and how they are used to derive key metrics for biomarker evaluation.

Variance Components and Derived Metrics

Key Studies Reporting Deattenuation Factors and Validity Coefficients

The following table summarizes empirical findings on deattenuation factors (λ) and validity coefficients from major methodological studies.

Table 1: Deattenuation Factors and Validity Coefficients from Validation Studies

| Study Name | Biomarker/Dietary Component | Validation Sample Size | Deattenuation Factor (λ) | Validity Coefficient (VC) | Reference |
|---|---|---|---|---|---|
| Automated Multiple-Pass Method Validation Study | Energy (Doubly Labeled Water) | 52 adults with repeat measures | Not explicitly stated | Intraclass Correlation: 0.43 (energy) | [21] |
| Automated Multiple-Pass Method Validation Study | Protein Density (Urinary Nitrogen/DLW) | 52 adults with repeat measures | Calculated for models | Intraclass Correlation: 0.54 (protein density) | [21] |
| Observing Protein and Energy Nutrition (OPEN) Study | Energy and Protein | 261 men, 223 women | Not explicitly stated | Correlation between FFQ and biomarker: 0.49 (protein density) | [21] [18] |
| Dietary Evaluation and Attenuation of Relative Risk (DEARR) Study | Protein | 161 participants | Not explicitly stated | VC(FFQ): 0.77, VC(24HR): 0.68, VC(Urinary Nitrogen): 0.44 | [38] |
| Dietary Evaluation and Attenuation of Relative Risk (DEARR) Study | β-carotene | 161 participants | Not explicitly stated | VC(FFQ): 0.65, VC(24HR): 0.60, VC(Serum): 0.65 | [38] |
| Dietary Evaluation and Attenuation of Relative Risk (DEARR) Study | Folic Acid | 161 participants | Not explicitly stated | VC(FFQ): 0.72, VC(24HR): 0.39, VC(Serum): 0.65 | [38] |

Impact of Measurement Error on Diagnostic Performance

The following table summarizes the effects of measurement error on key diagnostic metrics and common correction approaches.

Table 2: Effects of Measurement Error and Correction Methods in Diagnostic Studies

| Diagnostic Metric | Effect of Measurement Error | Common Correction Methods | Key References |
|---|---|---|---|
| Area Under the Curve (AUC) | Attenuation (underestimation of efficacy) | Non-parametric kernel smoothers; Probitshift models; Skew-normal distribution methods | [18] [39] |
| Sensitivity & Specificity | Bias in estimation | Methods for normal biomarkers; Skew-normal methods for non-normal data | [18] [39] |
| Relative Risk / Hazard Ratio | Attenuation towards the null; Can also inflate estimates in multivariate settings | Method of triads; Regression calibration; Bayesian methods with validation data | [38] [40] |
| Correlation Coefficients | Attenuation (toward zero) | Deattenuation using reliability ratios or intraclass correlations | [21] |
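For the last row, a minimal sketch of correlation deattenuation is shown below. It uses the classical correction that divides the observed correlation by the square root of the product of the two variables' reliabilities (for example, intraclass correlations from repeat measures), which assumes random, mutually independent errors. The function name and the numbers are hypothetical.

```python
import math


def deattenuate_correlation(r_obs, icc_x, icc_y=1.0):
    """Correct an observed correlation for random measurement error.

    r_obs : observed correlation between the two error-prone variables
    icc_x : reliability (e.g., intraclass correlation) of the first variable
    icc_y : reliability of the second variable (1.0 if measured without error)
    """
    for icc in (icc_x, icc_y):
        if not 0.0 < icc <= 1.0:
            raise ValueError("reliabilities must lie in (0, 1]")
    r_corrected = r_obs / math.sqrt(icc_x * icc_y)
    # Sampling noise can push the corrected value past +/-1; clamp for reporting
    return max(-1.0, min(1.0, r_corrected))


# Hypothetical inputs: observed r = 0.30, biomarker ICC = 0.50, self-report ICC = 0.70
print(round(deattenuate_correlation(0.30, 0.50, 0.70), 3))
```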

Experimental Protocols

Protocol: Applying the Repeat-Measure Biomarker Error Model

This protocol is based on methodologies used in the OPEN Study and Automated Multiple-Pass Method Validation Study [21].

Purpose: To estimate the deattenuation factor (λ) and correct for within-person variation in biomarker measurements using a repeat-measure biomarker measurement error model.

Materials and Reagents:

Table 3: Essential Research Reagents and Materials for Biomarker Validation Studies

| Item | Specification / Example | Primary Function |
|---|---|---|
| Doubly Labeled Water (DLW) | ²H₂¹⁸O isotopic water | Objective biomarker for total energy expenditure assessment [18] |
| Urinary Nitrogen Analysis | Urine collection kits; Chemoanalytic equipment | Objective biomarker for protein intake validation [21] [18] |
| Serum Carotenoid Analysis | Blood collection tubes; HPLC equipment | Objective biomarker for fruit and vegetable intake validation [38] |
| Food Frequency Questionnaire (FFQ) | Validated instrument (e.g., 100+ items) | Self-reported assessment of long-term dietary intake [21] [38] |
| 24-Hour Dietary Recall (24HR) | Automated Multiple-Pass Method or equivalent | Short-term dietary recall as reference instrument [21] |
| Statistical Software | R, SAS, or Stata with measurement error packages | Implementation of repeat-measure error models and deattenuation calculations [41] |

Procedure:

- Study Design: Recruit a representative sample of participants (typically 150-500) from the target population. The OPEN Study, for example, included 261 men and 223 women [18].

- Biomarker Collection: Collect repeated biomarker measurements from each participant at multiple time points. The Automated Multiple-Pass Method Validation Study collected doubly labeled water and urinary nitrogen measurements twice in 52 adults approximately 16 months apart [21].

- Dietary Assessment: Administer the dietary assessment instruments (FFQ and 24-hour recalls) to all participants according to a standardized protocol.

- Correlation Analysis: Calculate the three key pairwise correlations: (a) between the FFQ and the biomarker, (b) between the FFQ and the 24-hour recall, and (c) between the 24-hour recall and the biomarker.

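- Method of Triads Application: Apply the method of triads to estimate the validity coefficient (VC) between each instrument and the "true" unobserved intake. Each VC is the square root of the product of the two correlations involving that instrument, divided by the correlation between the other two instruments; for the FFQ, VC(FFQ) = √(r(FFQ, 24HR) × r(FFQ, biomarker) / r(24HR, biomarker)) [38].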

- Deattenuation Factor Calculation: Use the validity coefficients or intraclass correlation coefficients from the repeat biomarker measures to calculate the deattenuation factor (λ). This model accounts for systematic correlated within-person error and random within-person variation in the biomarkers [21].

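- Association Adjustment: Apply the deattenuation factor to correct biased relative risk estimates in diet-disease association analyses by dividing the observed log relative risk (the regression coefficient) by λ, which is equivalent to raising the observed relative risk to the power 1/λ [38].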
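The correlation and method-of-triads steps can be sketched as follows. The sketch uses the standard triads formula, in which the validity coefficient of one instrument is the square root of the product of its two correlations with the other instruments, divided by the correlation between those two instruments. It assumes the errors of the three instruments are mutually independent. Function and variable names and the example correlations are hypothetical.

```python
import math


def method_of_triads(r_qr, r_qm, r_rm):
    """Validity coefficients for questionnaire (Q), reference (R), biomarker (M).

    r_qr, r_qm, r_rm : pairwise sample correlations Q-R, Q-M and R-M.
    Assumes mutually independent errors and three positive correlations.
    """
    if min(r_qr, r_qm, r_rm) <= 0:
        raise ValueError("method of triads needs three positive correlations")
    vc_q = math.sqrt(r_qr * r_qm / r_rm)  # FFQ vs. true intake
    vc_r = math.sqrt(r_qr * r_rm / r_qm)  # 24-hour recall vs. true intake
    vc_m = math.sqrt(r_qm * r_rm / r_qr)  # biomarker vs. true intake
    return {"Q": vc_q, "R": vc_r, "M": vc_m}


# Hypothetical pairwise correlations from a validation sample
vcs = method_of_triads(r_qr=0.50, r_qm=0.40, r_rm=0.45)
for instrument, vc in vcs.items():
    print(f"VC({instrument}) = {vc:.2f}")
```

Estimates above 1 (Heywood cases) can arise from sampling variation or violated assumptions; this sketch returns them unchanged so that they remain visible.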

Protocol: Correcting for Measurement Error in Multivariate Analyses with Confounders

This protocol addresses the more complex scenario where multiple variables, including confounders, are measured with error, based on methods applied in the EPIC study [40].

Purpose: To adjust for bias in diet-disease associations when both the dietary exposure and confounders (e.g., smoking) are measured with error, using external validation data.

Procedure:

- Model Specification: Define a measurement error model that relates the observed questionnaire values (Q_1, Q_2) to the true unobserved intakes (T_1, T_2) for both the dietary exposure and confounder. For example: Q_i = α_0i + α_1i T_i + ε_Qi, where i = 1 for dietary intake and i = 2 for the confounder [40].

- External Validation Data Incorporation: Gather prior information on the validity of the self-report instruments from external validation studies. This includes estimates of attenuation factors and validity coefficients for both the dietary exposure and confounder.

- Bayesian Implementation: Implement a Markov Chain Monte Carlo (MCMC) sampling approach to estimate the adjusted log hazard ratios. This method combines prior information on instrument validity with the observed study data.

- Sensitivity Analysis: Conduct sensitivity analyses by varying the assumptions about the magnitude of measurement errors and the correlations between these errors. This assesses the robustness of the findings to different measurement error structures.

- Result Interpretation: Compare the measurement-error-adjusted hazard ratios with the naive unadjusted estimates to quantify the direction and magnitude of bias.

Frequently Asked Questions (FAQs) & Troubleshooting

Conceptual and Methodological Questions

Q1: What is the fundamental purpose of the deattenuation factor (λ) in epidemiological research? The deattenuation factor (λ) is used to correct relative risk estimates for the bias introduced by measurement error in exposure assessments. When a variable is measured with error, the observed association with a health outcome is typically biased toward the null (attenuated). The deattenuation factor quantifies this bias and is used to rescale the observed log relative risk, moving the estimate away from the null to better approximate the true association [21] [38].
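A minimal sketch of that correction, assuming a single error-prone exposure and a known attenuation factor λ between 0 and 1: the log relative risk is divided by λ, which is the same as raising the observed relative risk to the power 1/λ. The function name and the example values are hypothetical.

```python
import math


def deattenuate_relative_risk(rr_observed, lam):
    """Correct a relative risk for attenuation on the log scale.

    rr_observed : relative risk estimated with the error-prone exposure
    lam         : attenuation (deattenuation) factor, expected in (0, 1]
    """
    if rr_observed <= 0 or not 0.0 < lam <= 1.0:
        raise ValueError("need rr_observed > 0 and 0 < lam <= 1")
    return math.exp(math.log(rr_observed) / lam)


# Hypothetical example: observed RR of 1.20 with lambda = 0.40
print(round(deattenuate_relative_risk(1.20, 0.40), 2))
```

Confidence limits can be rescaled the same way, although doing so ignores the uncertainty in λ itself.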

Q2: In what scenarios does measurement error not cause simple attenuation of risk estimates? While attenuation is common, measurement error can also inflate risk estimates in specific situations, particularly in multivariate analyses. When confounders are also measured with error, the errors can compound, potentially making a dietary intake with no true effect appear to have a sizable effect on disease risk. This is especially pronounced when there are strong correlations between the measurement errors of different variables [40].
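One simple mechanism behind this can be worked through analytically. The sketch below is not the EPIC analysis in [40]. It assumes a linear model, an exposure measured without error that has no true effect, and a correlated confounder measured with classical error, and it returns the large-sample least-squares coefficients. All names and values are hypothetical.

```python
def limiting_coefficients(beta_confounder, rho, error_var):
    """Large-sample OLS coefficients when only the confounder is mismeasured.

    Setup (all hypothetical): exposure T1 and confounder T2 have unit variance
    and correlation rho; the outcome depends on T2 only (slope beta_confounder);
    the model is fitted with T1 and Q2 = T2 + U, where Var(U) = error_var.
    Returns (coef_exposure, coef_confounder).
    """
    if not -1.0 < rho < 1.0 or error_var < 0:
        raise ValueError("need -1 < rho < 1 and error_var >= 0")
    # Determinant of the predictor covariance matrix [[1, rho], [rho, 1 + error_var]]
    det = 1.0 + error_var - rho ** 2
    coef_exposure = beta_confounder * rho * error_var / det
    coef_confounder = beta_confounder * (1.0 - rho ** 2) / det
    return coef_exposure, coef_confounder


for error_var in (0.0, 0.5, 1.0):
    b_exp, b_conf = limiting_coefficients(beta_confounder=1.0, rho=0.5, error_var=error_var)
    print(f"error_var={error_var}: exposure coef={b_exp:.3f}, confounder coef={b_conf:.3f}")
```

With no error in the confounder the exposure coefficient is zero, as it should be. As the error variance grows, part of the confounder's effect is attributed to the exposure.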

Q3: How does the "method of triads" relate to deattenuation? The method of triads is a specific approach used to estimate the validity coefficient of a dietary assessment method. It uses the three pairwise correlations between: (1) the FFQ, (2) a reference instrument (e.g., 24-hour recall), and (3) a biomarker. For each instrument, the square root of the product of its two correlations with the other methods, divided by the correlation between those two other methods, estimates the validity coefficient between that instrument and the "true" intake. This validity coefficient is directly used in the calculation of deattenuation factors for correcting relative risks [38].

Practical Implementation and Troubleshooting