Advanced Literature Search Strategies for Biomarker Discovery: A Multi-Omics and AI-Driven Guide for Researchers


Abstract

This article provides a comprehensive framework for conducting effective literature searches in the rapidly evolving field of biomarker discovery. Tailored for researchers, scientists, and drug development professionals, it outlines strategic approaches to navigate the vast and complex biomedical literature. The guide covers foundational multi-omics concepts, methodological applications of AI and spatial biology, troubleshooting for irreproducibility, and rigorous validation frameworks. By synthesizing current trends and technologies, including high-throughput multi-omics and machine learning, this resource aims to equip scientists with the tools to efficiently identify credible biomarker candidates, optimize discovery pipelines, and accelerate the translation of findings into clinically actionable diagnostics and personalized therapies.

Laying the Groundwork: Core Concepts and Multi-Omics Resources for Biomarker Exploration

Biomarkers, defined as objectively measurable indicators of biological processes, pathogenic processes, or responses to an exposure or intervention, serve as critical tools in modern healthcare and drug development [1]. These molecular, histologic, radiographic, or physiologic characteristics provide a window into human biology, enabling researchers and clinicians to move beyond symptomatic treatment toward precision medicine approaches [2]. The U.S. Food and Drug Administration (FDA) and National Institutes of Health (NIH) have jointly established a standardized terminology system through their Biomarkers, EndpointS, and other Tools (BEST) resource, creating a common framework for biomarker classification and application [1]. This classification system is particularly valuable for researchers developing literature search strategies, as it provides structured terminology for effective information retrieval across scientific databases.

The clinical significance of biomarkers continues to grow with technological advances. Digital technology and artificial intelligence have transformed predictive modeling based on clinical data. This enables proactive health management, a shift from traditional models of diagnosing and treating disease toward maintaining health through prediction and prevention [3]. This shift aligns with strategic health initiatives worldwide and addresses the demographic challenge of rising chronic disease prevalence in aging populations [3]. For researchers conducting systematic reviews or meta-analyses, understanding these biomarker categories enables precise search syntax and accurate filtering of relevant studies by biomarker application rather than by molecular characteristics alone.

Table 1: Fundamental Biomarker Categories as Defined by FDA-NIH BEST Resource

| Biomarker Category | Primary Function | Representative Examples |
|---|---|---|
| Diagnostic | Detects or confirms presence of a disease or condition | Prostate-specific antigen (PSA), C-reactive protein (CRP) [4] |
| Prognostic | Predicts disease outcome or progression independent of treatment | Ki-67 (MKI67), BRAF mutations [4] |
| Predictive | Predicts response to a specific therapeutic intervention | HER2/neu status, EGFR mutation status [4] |
| Monitoring | Tracks disease status or therapy response over time | Hemoglobin A1c (HbA1c), brain natriuretic peptide (BNP) [4] |
| Safety | Indicates potential toxicity or adverse effects | Liver function tests, creatinine clearance [4] |
| Pharmacodynamic/Response | Shows biological response to a drug treatment | LDL cholesterol reduction in response to statins [4] |
| Susceptibility/Risk | Indicates genetic predisposition or elevated disease risk | BRCA1/BRCA2 mutations [4] |
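
As a minimal sketch of turning the categories in Table 1 into search syntax, the Python functions below assemble a PubMed-style Boolean query. Synonyms within one concept are joined with OR, and concepts are joined with AND. The synonym lists, the helper names (`or_block`, `build_query`, `CATEGORY_TERMS`) and the use of the `[tiab]` title/abstract field tag are illustrative assumptions rather than a validated search filter. Adapt them to the database and review protocol in use.

```python
def or_block(terms, field="tiab"):
    """Join synonyms with OR, quoting multi-word phrases and adding a field tag."""
    parts = []
    for term in terms:
        quoted = f'"{term}"' if " " in term else term
        parts.append(f"{quoted}[{field}]")
    return "(" + " OR ".join(parts) + ")"


# Hypothetical synonym lists keyed by BEST category; extend or replace as needed.
CATEGORY_TERMS = {
    "diagnostic": ["diagnostic biomarker", "diagnostic accuracy", "ROC curve"],
    "prognostic": ["prognostic biomarker", "prognostic factor", "survival analysis"],
    "predictive": ["predictive biomarker", "treatment response", "companion diagnostic"],
}


def build_query(disease_terms, category, extra_terms=()):
    """AND together the disease concept, the biomarker-category concept and optional extras."""
    blocks = [or_block(disease_terms), or_block(CATEGORY_TERMS[category])]
    if extra_terms:
        blocks.append(or_block(extra_terms))
    return " AND ".join(blocks)


query = build_query(["breast neoplasms", "breast cancer"], "predictive", ["HER2", "ERBB2"])
print(query)
```

Swapping the category key (for example, `"prognostic"`) changes only the middle block. This makes it easy to run parallel searches for each biomarker application and compare their yields.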

Biomarker Types: Definitions, Applications, and Methodologies

Diagnostic Biomarkers

Diagnostic biomarkers are used to detect or confirm the presence of a disease or medical condition, and can also provide information about disease characteristics [4]. They enable early intervention, often before symptoms appear, and are particularly valuable for diseases in which early detection substantially improves outcomes. Validating a diagnostic biomarker requires rigorous assessment of its sensitivity and specificity, typically through receiver operating characteristic (ROC) curves. ROC analysis supports a rational evaluation even though a historical standard for defining disease presence or absence is frequently lacking [1].
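
To make the ROC idea concrete, the sketch below sweeps every observed threshold of a continuous marker. At each threshold it records the false-positive rate (1 − specificity) and the sensitivity. It then integrates the resulting curve with the trapezoidal rule to obtain the area under the curve (AUC). The marker values are made-up illustrative numbers, and higher values are assumed to indicate disease.

```python
def roc_points(scores, labels):
    """Return ROC points (false-positive rate, sensitivity), sweeping thresholds high to low.

    labels: 1 = disease present, 0 = absent; a score >= threshold is called positive.
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]  # threshold above every score: nobody is called positive
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def auc(points):
    """Area under the ROC curve by the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Illustrative marker values for 4 diseased (1) and 4 non-diseased (0) subjects.
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 0, 1, 0, 0]
pts = roc_points(scores, labels)
print(f"AUC = {auc(pts):.4f}")  # AUC = 0.8125
```

Each point on the curve corresponds to one candidate cutoff. Choosing an operating point along it is how the false-positive and false-negative trade-off discussed next is made explicit.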

The clinical application of diagnostic biomarkers requires careful consideration of the context of use. For low-prevalence diseases such as pancreatic or ovarian cancer where a new diagnosis is psychologically devastating or would require invasive evaluation, a biomarker must have a very low false-positive rate [1]. Conversely, for common diseases such as hypertension or hyperlipidemia where repeated assessments carry minimal risk, higher false-positive rates may be acceptable, with greater focus on minimizing false-negative results [1]. This contextual understanding is essential for researchers designing clinical validation studies for novel diagnostic biomarkers.
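
The prevalence argument follows from Bayes' theorem. The positive predictive value (PPV) of a test depends on disease prevalence as well as on sensitivity and specificity. The sketch below uses illustrative figures (90% sensitivity, 95% specificity). These are assumptions, not values for any particular biomarker.

```python
def ppv(sensitivity, specificity, prevalence):
    """Probability that a positive result is a true positive (Bayes' theorem)."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def npv(sensitivity, specificity, prevalence):
    """Probability that a negative result is a true negative."""
    true_neg = specificity * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    return true_neg / (true_neg + false_neg)


for prevalence in (0.001, 0.01, 0.30):
    print(f"prevalence {prevalence:>6.3f}: PPV = {ppv(0.90, 0.95, prevalence):.3f}, "
          f"NPV = {npv(0.90, 0.95, prevalence):.4f}")
```

With these figures, fewer than 2% of positive results are true positives at 0.1% prevalence, whereas most are at 30% prevalence. This is why rare diseases with serious consequences call for a very low false-positive rate.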

Prostate-specific antigen (PSA) illustrates both the utility and the complexity of diagnostic biomarkers. Elevated PSA levels can indicate prostate cancer, but clinicians must interpret the result alongside other clinical data to reach an accurate diagnosis [5]. Similarly, C-reactive protein (CRP) is a key biomarker for assessing inflammation in the body. Elevated levels are associated with various inflammatory diseases, including rheumatoid arthritis, lupus, and cardiovascular diseases [4]. Emerging technologies such as liquid biopsies offer non-invasive detection methods that are changing patient monitoring and were projected to become standard practice by 2025 [5].

Prognostic Biomarkers

Prognostic biomarkers provide critical information about the likely disease course and outcome independent of therapeutic interventions [6]. These biomarkers help clinicians understand how aggressive a disease is, enabling appropriate treatment planning and patient counseling [4]. Unlike predictive biomarkers, prognostic biomarkers provide information about natural disease progression regardless of specific treatments, making them valuable for patient stratification in clinical trials and understanding disease biology.

The application of prognostic biomarkers is particularly advanced in oncology. Ki-67 (MKI67), a protein marker of cell proliferation, serves as a prognostic biomarker in breast cancer, prostate cancer, and other cancers [4]. High levels of Ki-67 are associated with more aggressive tumors and worse outcomes, providing clinicians with valuable information for treatment planning [4]. Similarly, BRAF mutations in melanoma and other cancers can help predict disease course, although this prognostic application must be distinguished from their predictive value for targeted therapies [4].

The evaluation of prognostic biomarkers requires longitudinal cohort studies that capture markers' dynamic changes over time [3]. Studies demonstrate that marker trajectories generally provide more comprehensive predictive information than single time-point measurements, offering vital information about disease natural history [3]. For researchers, this underscores the importance of seeking out studies with extended follow-up periods when evaluating the strength of prognostic biomarker evidence.
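
One simple way to summarize a marker trajectory, rather than a single baseline value, is the per-patient least-squares slope of the marker over time. The sketch below is illustrative only. The patient identifiers, time units and values are hypothetical, and published trajectory analyses often use mixed-effects or joint models instead of per-patient slopes.

```python
from collections import defaultdict


def ols_slope(times, values):
    """Ordinary least-squares slope of values regressed on times."""
    n = len(times)
    if n < 2:
        raise ValueError("need at least two measurements to estimate a slope")
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("all measurements share one time point")
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    return sxy / sxx


def trajectory_features(measurements):
    """measurements: iterable of (patient_id, time, value) -> {patient: (baseline, slope)}."""
    by_patient = defaultdict(list)
    for pid, t, v in measurements:
        by_patient[pid].append((t, v))
    features = {}
    for pid, rows in by_patient.items():
        rows.sort()  # order each patient's measurements by time
        times, values = zip(*rows)
        features[pid] = (values[0], ols_slope(times, values))
    return features


# Hypothetical data: two patients with the same baseline but different trajectories.
data = [("P1", 0, 10.0), ("P1", 6, 10.5), ("P1", 12, 11.0),
        ("P2", 0, 10.0), ("P2", 6, 14.0), ("P2", 12, 18.0)]
print(trajectory_features(data))
```

Both patients look identical at baseline, but their slopes separate them. That is the information a single time-point measurement discards.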

Predictive Biomarkers

Predictive biomarkers represent a cornerstone of personalized medicine, enabling clinicians to match patients with optimal treatments based on their unique biological profiles [5]. These biomarkers predict whether a patient will respond favorably or unfavorably to a specific therapy, creating a direct link between biomarker measurement and treatment decisions [4]. This category is particularly critical in oncology, where targeted therapies often come with significant side effects and costs, making pretreatment response prediction invaluable.

The development of predictive biomarkers requires a distinct validation approach focused on treatment interaction. Unlike prognostic biomarkers that correlate with disease outcomes regardless of treatment, predictive biomarkers must demonstrate that the treatment effect differs based on the biomarker status [4]. This typically requires randomized clinical trials where biomarker status is measured prior to treatment assignment, with analysis plans that specifically test for treatment-by-biomarker interactions.
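
A minimal sketch of a treatment-by-biomarker interaction test for a binary outcome, assuming a randomized trial summarized as response counts per arm within biomarker-positive and biomarker-negative strata. It compares the treatment effect (risk difference) between strata using a Wald z-test. The counts are hypothetical. A real analysis plan would usually prespecify a regression model instead, for example logistic regression with a treatment × biomarker term.

```python
import math


def risk_difference(resp_trt, n_trt, resp_ctl, n_ctl):
    """Treatment effect as a difference in response proportions, with its variance."""
    p1, p0 = resp_trt / n_trt, resp_ctl / n_ctl
    var = p1 * (1 - p1) / n_trt + p0 * (1 - p0) / n_ctl
    return p1 - p0, var


def interaction_test(pos_stratum, neg_stratum):
    """Each stratum is (resp_trt, n_trt, resp_ctl, n_ctl); returns (difference, z, two-sided p)."""
    d_pos, v_pos = risk_difference(*pos_stratum)
    d_neg, v_neg = risk_difference(*neg_stratum)
    diff = d_pos - d_neg  # how much larger the treatment effect is in biomarker-positive patients
    z = diff / math.sqrt(v_pos + v_neg)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value from the standard normal
    return diff, z, p


# Hypothetical trial: treatment helps biomarker-positive patients much more.
diff, z, p = interaction_test(pos_stratum=(60, 100, 30, 100), neg_stratum=(32, 100, 30, 100))
print(f"difference in treatment effects = {diff:.2f}, z = {z:.2f}, p = {p:.2g}")
```

A significant difference in treatment effects, rather than a good outcome in biomarker-positive patients alone, is what distinguishes a predictive biomarker from a prognostic one.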

HER2/neu status in breast cancer exemplifies the transformative potential of predictive biomarkers. Testing for HER2/neu status helps predict response to targeted therapies such as trastuzumab (Herceptin), enabling clinicians to identify patients who may benefit from this specific treatment [4]. Similarly, EGFR mutation status in non-small cell lung cancer predicts response to targeted therapies such as gefitinib (Iressa) and erlotinib (Tarceva) [4]. The clinical impact of these biomarkers is substantial, with biomarker-driven approaches dramatically improving treatment efficacy and patient outcomes across various therapeutic areas [5].

Table 2: Comparative Analysis of Diagnostic, Prognostic, and Predictive Biomarkers

| Characteristic | Diagnostic Biomarkers | Prognostic Biomarkers | Predictive Biomarkers |
|---|---|---|---|
| Primary Question Answered | Is the disease present? | How will the disease progress? | Will this treatment work? |
| Clinical Utility | Disease identification and classification | Informing treatment intensity and monitoring frequency | Selecting appropriate therapy |
| Measurement Timing | At time of diagnosis | At time of diagnosis | Before treatment initiation |
| Dependence on Treatment | Independent | Independent | Dependent on specific treatment |
| Representative Examples | PSA for prostate cancer, CRP for inflammation | Ki-67 in cancer, BRAF mutations in melanoma | HER2 status for trastuzumab, EGFR mutations for TKIs |
| Evidence Requirements | Sensitivity/specificity against reference standard | Association with clinical outcomes in untreated populations | Interaction with treatment effect in randomized trials |

Experimental Approaches and Workflows in Biomarker Research

Multi-Omics Integration Strategies

Contemporary biomarker discovery has been revolutionized by multi-omics strategies that integrate genomics, transcriptomics, proteomics, metabolomics, and epigenomics data [7]. This integrated approach provides a comprehensive understanding of cellular dynamics, facilitating biomarker identification that captures the complexity of biological systems [7]. Landmark projects such as The Cancer Genome Atlas (TCGA) Pan-Cancer Atlas, the Pan-Cancer Analysis of Whole Genomes (PCAWG), MSK-IMPACT, and the Clinical Proteomic Tumor Analysis Consortium (CPTAC) have demonstrated the utility of multi-omics in uncovering disease biology and clinically actionable biomarkers [7].

The workflow for multi-omics biomarker discovery typically involves several coordinated steps. Genomics investigates alterations at the DNA level using advanced sequencing technologies such as whole exome sequencing (WES) and whole genome sequencing (WGS) to identify copy number variations, genetic mutations, and single nucleotide polymorphisms [7]. Transcriptomics explores RNA expression using probe-based microarrays and next-generation RNA sequencing, encompassing the study of mRNAs and noncoding RNAs [7]. Proteomics investigates protein abundance, modifications, and interactions using high-throughput methods including mass spectrometry, while metabolomics examines cellular metabolites through techniques like liquid chromatography–tandem mass spectrometry [7].

The integration of these diverse data types presents significant computational challenges. The exponential growth of multi-omics data, driven by rapid advances in next-generation sequencing technologies, has created substantial challenges in data management and analysis [7]. Sophisticated computational approaches are required for meaningful biological inference from these complex datasets [3]. Researchers must develop specialized search strategies to navigate the rapidly evolving landscape of multi-omics databases and analytical tools, including actively maintained resources such as DriverDBv4, GliomaDB, and HCCDBv2 that integrate multiple omics data types [7].
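
Because these resources evolve quickly, scripting searches with a publication-date window makes them easy to rerun. The sketch below only builds a request URL for NCBI's E-utilities ESearch endpoint and does not send it. The query string, date range and `retmax` value are illustrative assumptions. NCBI's usage policies (rate limits, identifying `tool`/`email` parameters, optional API key) apply once requests are actually sent.

```python
from urllib.parse import urlencode

ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def esearch_url(term, mindate=None, maxdate=None, retmax=100):
    """Build (but do not send) an ESearch request URL for PubMed."""
    params = {"db": "pubmed", "term": term, "retmax": retmax, "retmode": "json"}
    if mindate and maxdate:
        # Restrict by publication date; dates are given as YYYY, YYYY/MM or YYYY/MM/DD.
        params.update({"datetype": "pdat", "mindate": mindate, "maxdate": maxdate})
    return f"{ESEARCH}?{urlencode(params)}"


term = '("DriverDBv4" OR "GliomaDB" OR "HCCDBv2") AND multi-omics'
url = esearch_url(term, mindate="2020", maxdate="2025")
print(url)
```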

Computational and Machine Learning Approaches

Artificial intelligence and machine learning have emerged as transformative forces in biomarker research, introducing advanced tools for medical data analysis [3]. Deep learning algorithms, with their advanced feature learning capabilities, have enhanced the efficiency of analyzing high-dimensional heterogeneous data, enabling researchers to systematically identify complex biomarker-disease associations that traditional statistical methods often overlook [3]. These computational approaches enable more granular risk stratification and support the development of sophisticated predictive models.

The MarkerPredict framework exemplifies the application of machine learning to predictive biomarker discovery in oncology [8]. This hypothesis-generating framework integrates network motifs and protein disorder to explore their contribution to predictive biomarker discovery [8]. Random Forest and XGBoost models were trained on literature evidence-based sets of target-interacting protein pairs from three signaling networks. With these models, MarkerPredict classified thousands of target-neighbor pairs with leave-one-out cross-validation (LOOCV) accuracies of 0.7–0.96 [8]. The methodology defined a Biomarker Probability Score (BPS) as a normalized summative rank of the models and used it to identify numerous potential predictive biomarkers for targeted cancer therapeutics [8].

Computational biomarker discovery relies on specialized databases, algorithms, and analytical tools. The following table details essential components of the computational biomarker researcher's toolkit:

Table 3: Research Reagent Solutions for Computational Biomarker Discovery

| Tool Category | Specific Tools/Platforms | Function in Biomarker Research |
|---|---|---|
| Multi-Omics Databases | DriverDBv4, GliomaDB, HCCDBv2 [7] | Provide integrated genomic, transcriptomic, proteomic data from patient cohorts |
| IDP Resources | DisProt, AlphaFold, IUPred [8] | Characterize intrinsically disordered proteins with potential biomarker function |
| Network Analysis | Human Cancer Signaling Network, SIGNOR, ReactomeFI [8] | Enable topological studies of protein interactions and regulatory relationships |
| Machine Learning Algorithms | Random Forest, XGBoost [8] | Perform binary classification of potential biomarker-target pairs |
| Validation Resources | CIViCmine text-mining database [8] | Annotate biomarker properties using literature evidence |

Emerging Trends and Future Directions in Biomarker Research

Technological Innovations

The biomarker landscape is being transformed by collaborative innovation and technological advances [5]. Advanced analytical methods, including next-generation sequencing, proteomics, and metabolomics, have become cornerstone technologies in research laboratories, enabling teams to identify and validate biomarkers with greater precision [5]. Artificial intelligence and machine learning have become a major force in the field, accelerating discovery and deepening understanding by processing complex datasets efficiently [5].

Single-cell multi-omics and spatial multi-omics technologies represent particularly promising frontiers in biomarker discovery [7]. These approaches provide unprecedented resolution in characterizing cellular states, activities, and spatial relationships within tissues [7]. Single-cell technologies enable the identification of biomarker expression patterns in rare cell populations that may be masked in bulk tissue analyses, while spatial methodologies preserve critical contextual information about cellular microenvironment and tissue organization that is lost in dissociated cell analyses [7].

The emergence of digital biomarkers derived from sensors and mobile technologies is reshaping the development of diagnostic and therapeutic technologies [1]. These biomarkers capture behavioral characteristics, physiological fluctuations, and molecular signals through wearable devices, mobile applications, and Internet of Things (IoT) sensors. They make continuous physiological monitoring possible, combined with dynamic risk assessment [3]. This evolution supports the shift toward proactive health management, which aims to maintain functional capacity through preventive intervention rather than episodic responses to established disease [3].

Challenges in Biomarker Translation

Despite rapid technological advances, significant challenges persist in translating biomarker discoveries into clinical practice. Data heterogeneity, inconsistent standardization protocols, limited generalizability across populations, high implementation costs, and substantial barriers to clinical translation collectively hinder biomarker implementation [3]. Overcoming these barriers, from data heterogeneity through to clinical adoption, calls for systematic approaches that prioritize multimodal data fusion, standardized governance protocols, and greater interpretability [3].

The regulatory qualification process for biomarkers involves rigorous evaluation to ensure reliability for specific interpretations and applications in medical product development [2]. The FDA's Biomarker Qualification Program follows a collaborative, multi-stage submission process that includes a Letter of Intent, Qualification Plan, and Full Qualification Package [2]. This process emphasizes that a biomarker is qualified for a specific context of use, not that the measurement method itself is qualified, highlighting the importance of precisely defining the intended application [2].

Validation rigor remains a critical challenge in biomarker development. The process requires specific, interdependent steps of analytical validation, qualification using an evidentiary assessment, and utilization, with each step being specific to each condition of use for the biomarker [1]. For researchers, this underscores the importance of considering the ultimate regulatory pathway during early biomarker discovery, as mistaken concepts about future use can lead to diversion of funding and scientific effort toward biomarker development programs that are destined to yield inaccurate estimates of effects on animal or human health [1].

The systematic classification of biomarkers into diagnostic, prognostic, and predictive categories provides an essential framework for both research and clinical application. Understanding the distinct roles and validation requirements for each biomarker type enables more precise literature search strategies, more targeted research approaches, and more effective clinical implementation. As biomarker science continues to evolve, maintaining clear distinctions between these categories while recognizing their potential overlaps will be essential for advancing personalized medicine and improving patient outcomes.

The future of biomarker research lies in successfully addressing the translational challenges that currently limit clinical adoption while leveraging technological innovations in multi-omics integration, single-cell analysis, spatial technologies, and artificial intelligence. By developing structured approaches to biomarker qualification that prioritize analytical rigor, clinical relevance, and regulatory science, researchers can bridge the gap between biomarker discovery and clinical utility. This systematic approach will ultimately enhance early disease screening accuracy while supporting risk stratification and precision diagnosis across therapeutic areas, particularly in oncology and chronic diseases where biomarker applications have demonstrated significant impact.

The study of biological systems has been revolutionized by the development of high-throughput technologies that allow for the comprehensive characterization of molecules at various levels of cellular organization. These technologies, collectively known as "omics," provide unique insights into different layers of a biological system [9]. The fundamental premise of multi-omics is that biological functions arise from complex interactions between numerous molecular components across these different layers. By integrating data from multiple omics fields, researchers can achieve a more holistic understanding of biological processes, bridging the gap between genotype and phenotype [10].

Multi-omics strategies have particularly revolutionized biomarker discovery and enabled novel applications in personalized oncology and other medical fields [11]. The integration of these diverse data types helps researchers identify complex patterns and interactions that might be missed by single-omics analyses [9]. This approach has become increasingly important in bioinformatics and biomedical research, facilitating the identification of biomarkers and therapeutic targets for various diseases [9]. As technological advances continue to make these methods more accessible, multi-omics approaches are transforming how researchers investigate biological systems, from basic cellular processes to complex disease mechanisms.

Core Omics Technologies

Defining the Omics Landscape

Biological systems can be understood through multiple molecular layers, each providing distinct but complementary information. The four primary omics technologies form a continuum from genetic blueprint to functional outcomes.

Table 1: Core Omics Technologies and Their Characteristics

| Omics Field | Molecule Studied | Scope of Analysis | Key Technologies | Biological Insight Provided |
|---|---|---|---|---|
| Genomics | DNA (genes) | Complete set of genes/genome | Next-generation sequencing, Sanger sequencing | Genetic instructions, variants, and mutations [10] |
| Transcriptomics | RNA (transcripts) | Complete set of RNA transcripts/transcriptome | RNA sequencing, microarrays | Gene expression patterns, regulation [9] [10] |
| Proteomics | Proteins | Complete set of proteins/proteome | Mass spectrometry, protein arrays | Protein expression, modifications, interactions [9] [10] |
| Metabolomics | Metabolites (<1.5 kDa) | Complete set of small molecules/metabolome | NMR, mass spectrometry | Metabolic activity, physiological status [9] [10] |

Functional Relationships Between Omics Layers

The relationship between these omics layers follows the central dogma of molecular biology and extends it to metabolic outcomes. Genomics characterizes the fundamental blueprint encoded in DNA. This genetic information is transcribed into RNA, which transcriptomics measures, and RNA is translated into proteins, which proteomics measures. Finally, metabolomics captures the downstream functional readout of cellular processes through small-molecule metabolites [10] [12]. This flow of biological information provides a comprehensive framework for understanding how genetic potential manifests as observable traits, or phenotypes.

Metabolomics deserves special emphasis as it sits closest to the phenotype. As low molecular weight compounds, metabolites represent the substrates and by-products of enzymatic reactions and have a direct effect on the phenotype of the cell [10]. While genomics and proteomics provide extensive information about the genotype, they convey limited information about phenotype, making metabolomics a crucial component for understanding the functional state of a biological system [10].

Multi-Omics Integration Strategies

Methodological Approaches for Data Integration

Integrating multiple omics datasets is a challenging but necessary task to fully understand complex biological systems [9]. Several methodological approaches have been developed for this purpose, which can be broadly categorized into three main strategies:

Combined omics integration approaches analyze what occurs within each omics data type and interpret the results in an integrated manner, while each dataset is generated and kept independently. This approach maintains the integrity of each omics dataset while allowing comparative analysis across molecular layers.

Correlation-based integration strategies compute statistical correlations between different types of omics data and represent these relationships in data structures such as networks [9]. These methods include:

- Gene co-expression analysis integrated with metabolomics data: Identifying gene modules that are co-expressed and linking them to metabolites to identify metabolic pathways that are co-regulated with the identified gene modules [9].

- Gene-metabolite network analysis: Creating a visualization of interactions between genes and metabolites in a biological system using correlation analysis and network visualization tools such as Cytoscape [9].

- Similarity Network Fusion: Building a similarity network for each omics data type separately, then merging all networks while highlighting edges with high associations in each omics network [9].
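
A minimal sketch of correlation-based gene-metabolite network construction. It computes a Pearson correlation between every gene profile and every metabolite profile measured on the same samples, and keeps pairs whose absolute correlation passes a threshold as network edges. The profiles and the 0.8 cutoff are illustrative assumptions. Real analyses would add multiple-testing control and would typically export the edge list to a tool such as Cytoscape.

```python
import math
from itertools import product


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (NaN if either has no variance)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / math.sqrt(sxx * syy)


def correlation_edges(genes, metabolites, threshold=0.8):
    """Return (gene, metabolite, r) edges with |r| >= threshold.

    genes, metabolites: {name: [value per sample]}, with samples in the same order.
    """
    edges = []
    for (g, g_vals), (m, m_vals) in product(genes.items(), metabolites.items()):
        r = pearson(g_vals, m_vals)
        if abs(r) >= threshold:  # NaN comparisons are False, so undefined r is skipped
            edges.append((g, m, round(r, 3)))
    return edges


# Hypothetical expression and metabolite profiles across five samples.
genes = {"GENE_A": [1.0, 2.0, 3.0, 4.0, 5.0], "GENE_B": [2.0, 1.0, 2.0, 1.0, 2.0]}
metabolites = {"MET_X": [2.1, 3.9, 6.2, 8.1, 9.8], "MET_Y": [5.0, 4.1, 2.9, 2.2, 1.0]}
edges = correlation_edges(genes, metabolites)
for edge in edges:
    print(edge)
```

The sign of `r` is kept on each edge so that positive (co-regulated) and negative (inversely related) gene-metabolite associations can be styled differently in the network view.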

Machine learning integrative approaches use one or more types of omics data, optionally combined with additional information inherent to these datasets, to model responses through classification and regression [9]. These methods are particularly valuable for handling the high dimensionality of omics data and for identifying complex, non-linear relationships.

Data Integration Workflow

A typical multi-omics integration workflow involves several standardized steps that transform raw data into biological insights. The process begins with data generation from each omics platform, followed by quality control and preprocessing specific to each data type. The subsequent integration phase applies the methodologies described above, culminating in biological interpretation and validation.
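
The integration step can be sketched for the simplest case, often called early (concatenation-based) integration. The sketch keeps only samples present in every omics block and z-scores each feature within its block so that differently scaled platforms become comparable. It then concatenates the blocks into one feature vector per sample. The block and feature names are hypothetical, and the sketch assumes quality control and preprocessing have already been done.

```python
import statistics


def zscore_block(block):
    """block: {sample: {feature: value}} -> same shape with each feature z-scored across samples.

    Assumes every sample in the block has the same set of features.
    """
    samples = list(block)
    features = list(next(iter(block.values())))
    out = {s: {} for s in samples}
    for f in features:
        values = [block[s][f] for s in samples]
        mean, sd = statistics.mean(values), statistics.pstdev(values)
        for s in samples:
            out[s][f] = 0.0 if sd == 0 else (block[s][f] - mean) / sd
    return out


def early_integration(blocks):
    """blocks: {omics_name: {sample: {feature: value}}} -> {sample: {"omics:feature": z}}."""
    shared = set.intersection(*(set(b) for b in blocks.values()))
    merged = {s: {} for s in sorted(shared)}
    for omics, block in blocks.items():
        scaled = zscore_block({s: block[s] for s in shared})
        for s in shared:
            for f, z in scaled[s].items():
                merged[s][f"{omics}:{f}"] = z  # prefix avoids name clashes across layers
    return merged


blocks = {
    "rna": {"S1": {"GENE_A": 5.0}, "S2": {"GENE_A": 7.0}, "S3": {"GENE_A": 9.0}},
    "prot": {"S1": {"PROT_P": 100.0}, "S2": {"PROT_P": 300.0}, "S4": {"PROT_P": 250.0}},
}
merged = early_integration(blocks)
print(merged)
```

Samples S3 and S4 are dropped because each appears in only one block. Handling such missingness, rather than discarding samples, is one of the main reasons more elaborate integration methods exist.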

Multi-Omics in Biomarker Discovery

Biomarker Categories and Applications

Biomarkers have various applications in medical research and clinical practice, including risk estimation, disease screening and detection, diagnosis, estimation of prognosis, prediction of benefit from therapy, and disease monitoring [13]. The U.S. Food and Drug Administration (FDA) categorizes biomarkers into several types based on their intended use [14]:

Table 2: Biomarker Categories and Applications

| Biomarker Category | Primary Use | Example |
|---|---|---|
| Susceptibility/Risk | Identify individuals with increased disease risk | BRCA1 and BRCA2 genetic mutations for breast and ovarian cancer [14] |
| Diagnostic | Detect or confirm presence of a disease | Hemoglobin A1c for diabetes mellitus [14] |
| Prognostic | Identify likelihood of disease progression or recurrence | Total kidney volume for autosomal dominant polycystic kidney disease [14] |
| Monitoring | Assess disease status or response to treatment | HCV RNA viral load for Hepatitis C infection [14] |
| Predictive | Identify individuals more likely to respond to specific therapy | EGFR mutation status in non-small cell lung cancer [14] |
| Pharmacodynamic/Response | Show biological response to therapeutic intervention | HIV RNA (viral load) in HIV treatment [14] |
| Safety | Monitor potential adverse effects of treatments | Serum creatinine for acute kidney injury [14] |

Multi-Omics Biomarker Discovery Workflow

The journey from biomarker discovery to clinical implementation follows a structured pathway with distinct stages. Multi-omics approaches have significantly enhanced the early discovery and validation phases of this process by providing comprehensive molecular profiling.

The biomarker development pipeline begins with discovery, where multi-omics strategies identify potential biomarker candidates through integrated analysis of genomic, transcriptomic, proteomic, and metabolomic data [11]. This is followed by analytical validation, which assesses the performance characteristics of the biomarker measurement tool, including accuracy, precision, analytical sensitivity, and specificity [14]. The next stage involves clinical validation, demonstrating that the biomarker accurately identifies or predicts the clinical outcome of interest in the intended population [14]. Finally, regulatory acceptance and implementation into clinical practice complete the pathway, often facilitated by programs like the FDA's Biomarker Qualification Program [14].
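
Two of the analytical-validation quantities named above, precision and accuracy, are often summarized as the coefficient of variation (CV) of replicate measurements and the percent bias against a reference value. The sketch below computes both for a single control sample. The replicate values, the reference concentration and any acceptance limit you compare against are hypothetical and depend on the assay and the guideline in use.

```python
import statistics


def analytical_summary(replicates, reference_value):
    """Return (CV %, bias %) for replicate measurements of one sample with a known reference."""
    mean = statistics.mean(replicates)
    sd = statistics.stdev(replicates)  # sample standard deviation (n - 1 denominator)
    cv_percent = 100 * sd / mean
    bias_percent = 100 * (mean - reference_value) / reference_value
    return cv_percent, bias_percent


# Hypothetical: five replicate ELISA readings (ng/mL) of a control spiked at 50 ng/mL.
cv, bias = analytical_summary([48.0, 51.0, 49.5, 50.5, 52.0], reference_value=50.0)
print(f"CV = {cv:.1f}%, bias = {bias:+.1f}%")
```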

Multi-omics approaches are particularly powerful in the discovery phase because they can yield promising biomarker panels at the single-molecule, multi-molecule, and cross-omics levels, supporting cancer diagnosis, prognosis, and therapeutic decision-making [11]. The integration of these diverse data types helps identify robust biomarkers that might be missed when examining single molecular layers in isolation.

Experimental Design and Best Practices

Statistical Considerations for Biomarker Discovery

Robust biomarker discovery requires careful attention to statistical principles throughout the research process. Several key considerations help ensure the validity and reproducibility of findings:

Proper study design is foundational to successful biomarker research. This includes clearly defining scientific objectives and scope, selecting appropriate experimental conditions, implementing adequate sample size determination methods, and applying proper blocking and measurement designs to account for technical variability [15]. Studies aiming to assess intervention effects should include potential confounders as covariates, while purely predictive studies should focus on variables that increase predictive performance [15].
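
As one concrete instance of sample size determination, a standard normal-approximation formula sizes a diagnostic accuracy study so that sensitivity is estimated within a margin of error d. The number of cases is z² · Se(1 − Se) / d², and total enrolment is that number divided by the expected prevalence. The sketch below applies it. The expected sensitivity, margin and prevalence are illustrative assumptions, and comparative or AUC-based designs need different calculations.

```python
import math
from statistics import NormalDist


def n_for_sensitivity(expected_sens, margin, prevalence, confidence=0.95):
    """Cases needed to estimate sensitivity within +/- margin, and total subjects to enrol."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95% confidence
    n_cases = math.ceil(z ** 2 * expected_sens * (1 - expected_sens) / margin ** 2)
    n_total = math.ceil(n_cases / prevalence)
    return n_cases, n_total


cases, total = n_for_sensitivity(expected_sens=0.85, margin=0.05, prevalence=0.20)
print(f"{cases} cases needed; enrol about {total} participants")
```

The same calculation with expected specificity and the number of non-diseased participants sizes the study for specificity. The larger of the two totals is usually the one to use.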

Bias mitigation through randomization and blinding represents another critical aspect. Randomization in biomarker discovery should control for non-biological experimental effects due to changes in reagents, technicians, or machine drift that can result in batch effects [13]. Specimens from controls and cases should be randomly assigned to testing platforms, ensuring equal distribution of cases, controls, and specimen age [13]. Blinding prevents bias by keeping individuals who generate biomarker data from knowing clinical outcomes, thus preventing unequal assessment of biomarker results [13].
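
A minimal sketch of the randomization described above. Cases and controls are shuffled separately and then dealt in turn across plates (or batches), so every plate receives a near-equal share of each group, and the run order within each plate is also randomized. The plate count, specimen IDs and fixed seed are hypothetical. Balancing specimen age as well would require stratifying on age bands in the same way.

```python
import random


def assign_to_plates(cases, controls, n_plates, seed=2024):
    """Stratified random assignment of specimens to plates with balanced case/control counts."""
    rng = random.Random(seed)  # fixed seed makes the allocation reproducible and auditable
    plates = [[] for _ in range(n_plates)]
    offset = 0
    for group in (cases, controls):
        shuffled = list(group)
        rng.shuffle(shuffled)
        for i, specimen in enumerate(shuffled):
            plates[(i + offset) % n_plates].append(specimen)
        offset += len(shuffled)  # continue dealing where the previous group stopped
    for plate in plates:
        rng.shuffle(plate)  # randomize run order within each plate
    return plates


cases = [f"case{i:02d}" for i in range(10)]
controls = [f"ctrl{i:02d}" for i in range(10)]
plates = assign_to_plates(cases, controls, n_plates=3)
for k, plate in enumerate(plates, start=1):
    n_case = sum(s.startswith("case") for s in plate)
    print(f"plate {k}: {len(plate)} specimens, {n_case} cases")
```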

Data quality assurance involves comprehensive quality control and filtering analyses, data curation, annotation, and standardization [15]. Relevant quality controls typically include statistical outlier checks and computing data type-specific quality metrics using established software packages for different omics technologies [15]. Quality checks should be applied both before and after preprocessing of raw data to ensure all quality issues have been resolved without introducing artificial patterns.
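
One common form of statistical outlier check is a robust z-score built from the median and the median absolute deviation (MAD). It is applied to a per-sample quality metric such as total read count or median intensity. The 3.5 cutoff and the 1.4826 scaling constant follow a widely used convention, but the metric values and sample names below are hypothetical.

```python
import statistics


def robust_outliers(metric_by_sample, cutoff=3.5):
    """Flag samples whose robust z-score |x - median| / (1.4826 * MAD) exceeds the cutoff."""
    values = list(metric_by_sample.values())
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread: robust z-scores are undefined
    scale = 1.4826 * mad  # makes the MAD consistent with the SD under normality
    return [s for s, v in metric_by_sample.items() if abs(v - med) / scale > cutoff]


# Hypothetical per-sample median intensities; S5 looks like a failed run.
qc = {"S1": 10.2, "S2": 9.8, "S3": 10.5, "S4": 10.0, "S5": 4.1, "S6": 9.9}
print(robust_outliers(qc))
```

Unlike a mean-and-SD rule, the median and MAD are barely moved by the failed sample itself, so a single extreme run cannot mask its own detection.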

The Biomarker Toolkit: A Framework for Success

The Biomarker Toolkit provides a validated checklist with literature-reported attributes linked to successful biomarker implementation [16]. This framework groups critical attributes into four main categories:

- Rationale: The fundamental scientific basis and justification for the biomarker

- Analytical validity: Assessment of the biomarker test's performance characteristics (51 attributes, 39.54%)

- Clinical validity: Evidence that the biomarker accurately identifies or predicts the clinical condition (49 attributes, 37.98%)

- Clinical utility: Demonstration that using the biomarker improves patient outcomes and provides value over existing approaches (25 attributes, 19.38%) [16]

Quantitative validation of this toolkit showed that total scores based on these attributes were significantly associated with biomarker success in the cancer types evaluated (breast and colorectal cancer) [16]. The framework can therefore help researchers identify the biomarkers with the highest clinical potential and inform how biomarker studies are designed and performed.
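To make the scoring idea concrete, the sketch below tallies how many checklist attributes a candidate biomarker's publications report, per category and in total. The attribute names are hypothetical placeholders, and every attribute is weighted equally. The actual checklist items and scoring rules are defined in [16].

```python
CATEGORIES = ("rationale", "analytical_validity", "clinical_validity", "clinical_utility")


def toolkit_scores(checklist, reported):
    """Score one biomarker candidate against a checklist.

    checklist: dict category -> list of attribute names (hypothetical subset).
    reported: set of attribute names that the candidate's literature documents.
    Returns (fraction of attributes reported per category, total attributes reported).
    """
    per_category = {}
    total = 0
    for category in CATEGORIES:
        attributes = checklist.get(category, [])
        hits = sum(1 for attribute in attributes if attribute in reported)
        per_category[category] = hits / len(attributes) if attributes else 0.0
        total += hits
    return per_category, total


# Hypothetical checklist and candidate
checklist = {
    "rationale": ["unmet_need_stated"],
    "analytical_validity": ["precision_reported", "sample_stability", "cutoff_defined"],
    "clinical_validity": ["independent_validation", "sensitivity_specificity"],
    "clinical_utility": ["outcome_improvement"],
}
scores, total = toolkit_scores(
    checklist,
    {"unmet_need_stated", "precision_reported", "cutoff_defined", "independent_validation"},
)
print(total)  # 4
```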

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Tools for Multi-Omics Biomarker Discovery

| Tool Category | Specific Examples | Function and Application |
|---|---|---|
| Automation-Ready Instrumentation | SpectraMax multi-mode microplate readers, AquaMax microplate washer | Enable high-throughput screening with walkaway operation and GxP-compliant data capture [17] |
| Validated Assay Kits | Abcam SimpleStep ELISA kits | Provide automation-compatible immunoassays with single-wash, 90-minute protocols for improved reproducibility [17] |
| Data Analysis Software | SoftMax Pro Software, Cytoscape, igraph | Facilitate data processing, curve fitting, compliance reporting, and network visualization [9] [17] |
| Quality Control Tools | fastQC/FQC (NGS data), arrayQualityMetrics (microarray data), pseudoQC/MeTaQuaC/Normalyzer (proteomics/metabolomics) | Compute data type-specific quality metrics and perform statistical outlier checks [15] |
| Single-Cell Technologies | Single-cell RNA-seq (scRNA-seq) platforms | Enable detection of cellular heterogeneity and cell-to-cell communication at single-cell resolution [11] [9] |
| Spatial Multi-Omics Technologies | Spatial transcriptomics, proteomics, and metabolomics platforms | Allow mapping of molecular distributions within tissue architecture while preserving spatial context [11] |

Case Study: Multi-Omics in Liver Injury Research

A compelling example of multi-omics application in biomarker research comes from a study investigating hepatic ischemia-reperfusion injury (IRI) [12]. Researchers employed an integrated transcriptomics, proteomics, and metabolomics approach to elucidate the role of Gp78, an E3 ligase, in liver IRI during liver transplantation.

The experimental protocol involved generating hepatocyte-specific Gp78 knockout (HKO) and overexpressing (OE) mouse models subjected to hepatic IRI. Multi-omics analysis revealed that Gp78 overexpression disturbed lipid homeostasis by remodeling polyunsaturated fatty acid (PUFA) metabolism, promoting ACSL4 expression and thereby causing accumulation of oxidized lipids and ferroptosis [12]. This mechanistic insight depended on integrating multiple molecular layers, illustrating how multi-omics approaches can uncover complex regulatory networks.

The methodology included:

- Animal modeling: Creation of hepatocyte-specific Gp78 knockout and overexpression mice

- Injury model: Subjecting mice to 70% hepatic ischemia-reperfusion

- Multi-omics profiling: Comprehensive transcriptomic, proteomic, and metabolomic analysis

- Mechanistic validation: Chemical inhibition of ferroptosis or ACSL4 to abrogate Gp78 effects

- Network analysis: Integration of omics data to construct regulatory networks

This case study exemplifies how multi-omics strategies can identify novel biomarker candidates (Gp78-ACSL4 axis) and provide insights into disease mechanisms that inform potential therapeutic targets [12].

Multi-omics technologies represent a transformative approach to biological research and biomarker discovery. By integrating data from genomics, transcriptomics, proteomics, and metabolomics, researchers can achieve a comprehensive understanding of biological systems that transcends the limitations of single-omics approaches. The continued development of analytical methods, computational tools, and experimental protocols for multi-omics integration promises to further accelerate biomarker discovery and validation.

As these technologies mature and become more accessible, they are poised to revolutionize personalized medicine by enabling more precise diagnosis, prognosis, and treatment selection. However, realizing this potential requires careful attention to study design, statistical rigor, and validation standards throughout the biomarker development pipeline. Frameworks like the Biomarker Toolkit provide valuable guidance for navigating this complex landscape and maximizing the clinical impact of multi-omics research.

In biomarker discovery and cancer research, large-scale public data repositories are a cornerstone of modern scientific investigation. These resources provide the genomic, transcriptomic, proteomic, and clinical data needed to identify molecular patterns, validate hypotheses, and develop novel therapeutic strategies. This technical guide examines four pivotal data resources in the context of literature search strategies for biomarker discovery: The Cancer Genome Atlas (TCGA), the Clinical Proteomic Tumor Analysis Consortium (CPTAC), the Gene Expression Omnibus (GEO), and the Chinese Glioma Genome Atlas (CGGA). For biomedical researchers and drug development professionals, familiarity with these platforms and their integrative applications makes the research workflow more efficient and its findings more robust.

The following table summarizes the core characteristics, data types, and primary applications of these four key repositories for biomarker discovery research.

Table 1: Core Characteristics of Key Biomedical Data Repositories

| Repository Name | Primary Focus | Key Data Types | Access Method | Notable Features |
|---|---|---|---|---|
| The Cancer Genome Atlas (TCGA) [18] | Comprehensive cancer genomics | Genomic, epigenomic, transcriptomic, proteomic | Genomic Data Commons (GDC) Data Portal | Over 20,000 primary cancer and matched normal samples across 33 cancer types; >2.5 petabytes of data |
| Clinical Proteomic Tumor Analysis Consortium (CPTAC) [19] | Cancer proteogenomics | Proteomic, genomic (WGS, WXS, RNA-Seq) | GDC Data Portal (genomic), CPTAC Data Portal, Proteomic Data Commons (PDC) | Integrates proteomic and genomic data to link genomic alterations to protein function |
| Gene Expression Omnibus (GEO) [20] [21] | Functional genomics data archive | Gene expression (microarray, RNA-seq), count matrices | GEO website, GEOexplorer webserver | User-submitted data; NCBI-generated RNA-seq count matrices for standardized re-analysis |
| Chinese Glioma Genome Atlas (CGGA) [22] [23] | Glioma-focused genomics | mRNA sequencing, clinical data | CGGA website (http://www.cgga.org.cn) | Complementary to TCGA; includes distinct patient cohorts like mRNAseq325 and mRNAseq693 |

Detailed Repository Profiles

The Cancer Genome Atlas (TCGA)

TCGA is a landmark cancer genomics program that molecularly characterized over 20,000 primary cancer and matched normal samples spanning 33 cancer types [18]. This joint effort between the National Cancer Institute (NCI) and the National Human Genome Research Institute (NHGRI) began in 2006. The project generated over 2.5 petabytes of genomic, epigenomic, transcriptomic, and proteomic data, which have led to improvements in cancer diagnosis, treatment, and prevention [18]. The data remain available through the Genomic Data Commons (GDC) Data Portal, which also provides web-based analysis and visualization tools. Open-access data can be downloaded freely, whereas controlled-access data (such as germline variants and raw sequencing reads) require dbGaP authorization.
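Programmatic access to TCGA files goes through the GDC API. The sketch below only assembles request parameters for the `files` endpoint, restricted here to open-access gene expression files for one project. The project ID, data type, and workflow values are examples and should be checked against the GDC documentation for the current data release before use.

```python
import json
from urllib.parse import urlencode

GDC_FILES_ENDPOINT = "https://api.gdc.cancer.gov/files"


def gdc_files_params(project_id, data_type, workflow_type, size=100):
    """Build query parameters for the GDC files endpoint.

    Clauses are combined with "and"; each "in" clause matches any listed value.
    """
    clauses = [
        ("cases.project.project_id", [project_id]),
        ("data_type", [data_type]),
        ("analysis.workflow_type", [workflow_type]),
        ("access", ["open"]),  # controlled-access files would need dbGaP authorization
    ]
    filters = {
        "op": "and",
        "content": [
            {"op": "in", "content": {"field": field, "value": values}}
            for field, values in clauses
        ],
    }
    return {
        "filters": json.dumps(filters),
        "fields": "file_id,file_name,cases.submitter_id",
        "format": "JSON",
        "size": str(size),
    }


def gdc_files_url(params):
    return f"{GDC_FILES_ENDPOINT}?{urlencode(params)}"


params = gdc_files_params("TCGA-GBM", "Gene Expression Quantification", "STAR - Counts")
url = gdc_files_url(params)
# Fetching is left to the caller, e.g. urllib.request.urlopen(url)
```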

Clinical Proteomic Tumor Analysis Consortium (CPTAC)

CPTAC is a national effort to accelerate the understanding of the molecular basis of cancer through the application of large-scale proteome and genome analysis, or proteogenomics [19]. The consortium has contributed genomic data from over 1,500 cancer patients across diverse disease types including endometrial, renal, lung, breast, colon, ovarian, brain, head and neck, and pancreatic cancers [19]. A key feature is that CPTAC genomic data is harmonized and available in the GDC, while proteomic data processed through the CPTAC Common Data Analysis Pipeline (CDAP) is available via the CPTAC Data Portal and the Proteomic Data Commons (PDC). Access to protected data requires authorization through dbGaP [19].

Gene Expression Omnibus (GEO)

GEO is a database repository hosting a substantial proportion of publicly available high-throughput gene expression data [21]. A major feature is the NCBI-generated RNA-seq count data, which provides precomputed RNA-seq gene expression counts for human and mouse data submitted to GEO [20]. The pipeline produces both raw counts matrices (suitable for differential expression tools such as DESeq2 and edgeR) and normalized counts matrices (FPKM/RPKM and TPM), along with comprehensive gene annotation tables [20]. For researchers without programming proficiency, the GEOexplorer webserver provides a user-friendly interface for interactive and reproducible gene expression analysis and visualization of GEO datasets [21].
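The normalized matrices follow standard formulas. The sketch below computes TPM and FPKM for one sample from raw gene counts and gene lengths. It illustrates the textbook definitions and is not a reimplementation of the NCBI pipeline, whose choice of gene lengths and library size may differ. As noted above, differential expression tools such as DESeq2 and edgeR expect the raw counts, not these values.

```python
def tpm(counts, lengths_bp):
    """Transcripts per million: length-normalize first, then scale to 1e6."""
    rates = [count / (length / 1_000) for count, length in zip(counts, lengths_bp)]
    total_rate = sum(rates)
    return [rate / total_rate * 1_000_000 for rate in rates]


def fpkm(counts, lengths_bp):
    """Fragments per kilobase of feature per million mapped fragments.

    Here the library size is approximated by the sum of gene-level counts.
    """
    library_size = sum(counts)
    return [count * 1e9 / (length * library_size) for count, length in zip(counts, lengths_bp)]
```

Because TPM values within a sample always sum to one million, they are easier to compare across samples than FPKM values.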

Chinese Glioma Genome Atlas (CGGA)

The CGGA is a focused resource that provides genomic data specifically for glioma research. It contains large-scale mRNA sequencing data integrated with detailed clinical information and serves as a valuable validation cohort that complements other large projects such as TCGA [22] [23]. Specific datasets within CGGA include mRNAseq325 and mRNAseq693; integrated analyses drawing 139 and 249 GBM patients, respectively, from these datasets have been used to identify and validate prognostic gene signatures in glioblastoma [22].

Experimental Protocols for Integrative Analysis

The power of these repositories is maximized when used in combination. The following workflow, derived from recent literature, details a protocol for identifying and validating a biomarker signature for glioblastoma using bulk and single-cell RNA sequencing data from multiple repositories.

Protocol: Identification and Validation of a Prognostic Gene Signature

This protocol is adapted from the methodology used to identify a Macrophage-Associated Prognostic Signature (MAPS) in glioblastoma [22].

Data Acquisition and Preprocessing

- Data Sources: Obtain gene expression and clinical data for glioblastoma patients from TCGA, CGGA (mRNAseq325 and mRNAseq693), and GEO (GSE108476) [22] [23].

- Single-Cell Data: Source single-cell RNA-sequencing data from GEO (e.g., GSE131928, GSE103224) [22] [23].

- Quality Control: For single-cell data, filter out low-quality cells (e.g., those with <200 detected genes). Normalize gene expression for each cell [23].
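The single-cell quality-control step can be sketched as follows: drop cells with fewer than 200 detected genes, then scale each remaining cell's counts to a common total and log-transform them. The normalization mirrors the default "LogNormalize" behaviour of Seurat's `NormalizeData` (scale factor 10,000, natural log of 1 + x). The dictionary-based data layout is an assumption for readability; real analyses would use sparse matrices.

```python
import math


def filter_and_normalize(cells, min_genes=200, scale_factor=10_000):
    """Filter low-quality cells and log-normalize the rest.

    cells: dict cell_id -> dict gene -> raw UMI count.
    Returns dict cell_id -> dict gene -> log1p(count / total * scale_factor).
    """
    normalized = {}
    for cell_id, counts in cells.items():
        detected = sum(1 for value in counts.values() if value > 0)
        if detected < min_genes:
            continue  # too few detected genes often indicates an empty droplet or damaged cell
        total = sum(counts.values())
        normalized[cell_id] = {
            gene: math.log1p(value / total * scale_factor) for gene, value in counts.items()
        }
    return normalized
```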

Identification of Survival-Associated Genes

- Stratification: For each gene in each dataset, stratify patients into high- and low-expression groups based on median expression.

- Survival Analysis: Perform log-rank tests to identify genes significantly associated (p < 0.05) with overall survival.

- Intersection: Identify the overlapping survival-associated genes across all datasets (e.g., 41 genes were found in the MAPS study) [22].
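The screening steps above can be sketched in plain Python: a median split per gene, a two-group log-rank test, and an intersection of significant genes across datasets. This minimal illustration assumes per-dataset lists of survival times, event indicators (1 = death, 0 = censored), and per-gene expression values. It is not the cited study's code; in practice a validated survival package would be used, and a screen of thousands of genes would also need to account for multiple testing.

```python
import math
import statistics


def median_split(expression):
    """1 = high expression (above the median), 0 = low (at or below)."""
    med = statistics.median(expression)
    return [1 if value > med else 0 for value in expression]


def logrank_test(times, events, groups):
    """Two-group log-rank test; returns (chi-square statistic with 1 df, p-value)."""
    event_times = sorted({t for t, e in zip(times, events) if e})
    observed_1 = expected_1 = variance = 0.0
    for t in event_times:
        at_risk = [g for ti, g in zip(times, groups) if ti >= t]
        n, n1 = len(at_risk), sum(at_risk)
        d = sum(1 for ti, e in zip(times, events) if ti == t and e)
        d1 = sum(1 for ti, e, g in zip(times, events, groups) if ti == t and e and g == 1)
        observed_1 += d1
        expected_1 += d * n1 / n
        if n > 1:
            variance += d * (n1 / n) * (1 - n1 / n) * (n - d) / (n - 1)
    if variance == 0:
        return 0.0, 1.0  # groups not distinguishable (e.g. one group is empty)
    chi2 = (observed_1 - expected_1) ** 2 / variance
    # Survival function of a chi-square with 1 df: P(X > x) = erfc(sqrt(x / 2))
    return chi2, math.erfc(math.sqrt(chi2 / 2))


def survival_genes(dataset, alpha=0.05):
    """dataset: dict with "times", "events", and "expression" (gene -> values per patient)."""
    hits = set()
    for gene, values in dataset["expression"].items():
        _, p_value = logrank_test(dataset["times"], dataset["events"], median_split(values))
        if p_value < alpha:
            hits.add(gene)
    return hits


def shared_survival_genes(datasets, alpha=0.05):
    """Genes significant in every dataset (e.g. TCGA, CGGA cohorts, a GEO series)."""
    return set.intersection(*(survival_genes(d, alpha) for d in datasets))
```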

Network and Functional Analysis

- Protein Interactions: Analyze the interactions of the survival-associated genes using the STRING database (https://string-db.org).

- Network Visualization: Use GeneMANIA (https://genemania.org) to visualize co-expression, physical interactions, and shared protein domains [22].
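STRING can also be queried programmatically. The sketch below only builds a request URL for STRING's network endpoint for a gene list. The `caller_identity` value is a placeholder, `required_score=400` corresponds to STRING's medium-confidence threshold, and the parameter names should be confirmed against the current STRING API documentation.

```python
from urllib.parse import urlencode

STRING_API = "https://string-db.org/api"


def string_network_url(genes, species=9606, required_score=400, output_format="tsv"):
    """URL for STRING interactions among the given genes.

    species: NCBI taxonomy ID (9606 = Homo sapiens).
    required_score: minimum combined score on STRING's 0-1000 scale.
    """
    params = {
        "identifiers": "\r".join(genes),  # identifiers separated by carriage returns (%0D)
        "species": species,
        "required_score": required_score,
        "caller_identity": "biomarker_review_example",  # placeholder identifier
    }
    return f"{STRING_API}/{output_format}/network?{urlencode(params)}"


url = string_network_url(["TP53", "EGFR"])  # arbitrary example genes
```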

Risk Model Construction

- Algorithm: Use ridge regression with a Cox proportional hazards framework (e.g., via the "glmnet" R package) to construct a risk model.

- Risk Score: Calculate a risk score for each patient using the formula:

$$\text{Riskscore} = \sum_{i=1}^{n} \beta_i x_i$$

where $\beta_i$ is the regression coefficient and $x_i$ is the expression value of gene $i$ among the $n$ signature genes [22].

- Stratification: Determine the optimal cutoff point for risk scores (e.g., using the surv_cutpoint function) to classify patients into high- and low-risk groups.
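Once coefficients are available, applying the risk score formula and the cutoff is straightforward. In the sketch below the coefficients are hypothetical stand-ins for those a penalized Cox model (such as the glmnet ridge fit above) would produce, and the cutoff is passed in directly. In the R workflow, `surv_cutpoint` from the survminer package would choose it using maximally selected rank statistics.

```python
def risk_scores(coefficients, expression):
    """Risk score = sum_i beta_i * x_i for each patient.

    coefficients: gene -> regression coefficient (beta_i).
    expression: patient_id -> gene -> expression value (x_i).
    """
    return {
        patient: sum(beta * genes[gene] for gene, beta in coefficients.items())
        for patient, genes in expression.items()
    }


def stratify(scores, cutoff):
    """Assign patients above the cutoff to the high-risk group."""
    return {patient: "high" if score > cutoff else "low" for patient, score in scores.items()}


coefficients = {"GENE_A": 0.5, "GENE_B": -0.2}  # hypothetical genes and coefficients
expression = {"p1": {"GENE_A": 2.0, "GENE_B": 1.0}, "p2": {"GENE_A": 0.0, "GENE_B": 3.0}}
groups = stratify(risk_scores(coefficients, expression), cutoff=0.0)
```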

Single-Cell Validation

- Cell Type Annotation: Process single-cell data using Seurat. Identify major cell clusters (e.g., Malignant cells, Endothelial cells, Tumor-Associated Macrophages) using canonical marker genes [23].

- Signature Localization: Examine the expression of the identified gene signature across cell types to identify the specific cellular compartments driving the prognostic signal (e.g., high expression in tumor-associated macrophages) [22].
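Signature localization amounts to comparing signature expression across annotated cell types. The sketch below averages the normalized expression of the signature genes per cell and then per cell type. It is a simplified stand-in for module-scoring functions such as Seurat's `AddModuleScore`, which additionally subtracts the expression of randomly drawn control genes. The cell labels and gene names are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def signature_by_cell_type(cell_types, expression, signature):
    """Mean signature expression per cell type.

    cell_types: cell_id -> annotated cell type.
    expression: cell_id -> gene -> normalized expression.
    signature: list of signature genes (genes missing from a cell count as 0).
    """
    per_type = defaultdict(list)
    for cell_id, cell_type in cell_types.items():
        genes = expression[cell_id]
        per_type[cell_type].append(mean(genes.get(gene, 0.0) for gene in signature))
    return {cell_type: mean(values) for cell_type, values in per_type.items()}


cell_types = {"c1": "TAM", "c2": "TAM", "c3": "Malignant", "c4": "Endothelial"}
expression = {
    "c1": {"SIG1": 3.0, "SIG2": 2.0},
    "c2": {"SIG1": 2.5, "SIG2": 2.5},
    "c3": {"SIG1": 0.5},
    "c4": {"SIG2": 0.2},
}
scores = signature_by_cell_type(cell_types, expression, ["SIG1", "SIG2"])
top_cell_type = max(scores, key=scores.get)  # cell type driving the signal
```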

Drug Sensitivity Analysis

- Database Query: Identify drugs that can regulate the transcriptional expression of the signature genes using the Comparative Toxicogenomics Database (CTD; https://ctdbase.org).

- Sensitivity Correlation: Analyze correlations between signature expression and drug sensitivity using specialized resources (e.g., https://guolab.wchscu.cn/GSCA) [23].
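For the sensitivity-correlation step, a rank-based measure such as Spearman's correlation between a per-sample signature score and a drug-response measure (for example, IC50 across cell lines) is a common choice. The sketch below implements it from scratch, with average ranks for ties. The input values are hypothetical, and the specific statistic used by the cited resource is not stated here.

```python
import math


def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared_rank
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks of x and y."""
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in rx))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in ry))
    if sd_x == 0 or sd_y == 0:
        return math.nan  # undefined when either variable is constant
    return cov / (sd_x * sd_y)


# Hypothetical cell lines: a positive rho means higher signature scores go with
# higher IC50 values, i.e. lower drug sensitivity
signature_score = [0.2, 0.5, 0.9, 1.4, 2.0]
ic50_um = [1.1, 0.9, 2.5, 3.0, 4.2]
rho = spearman(signature_score, ic50_um)
```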

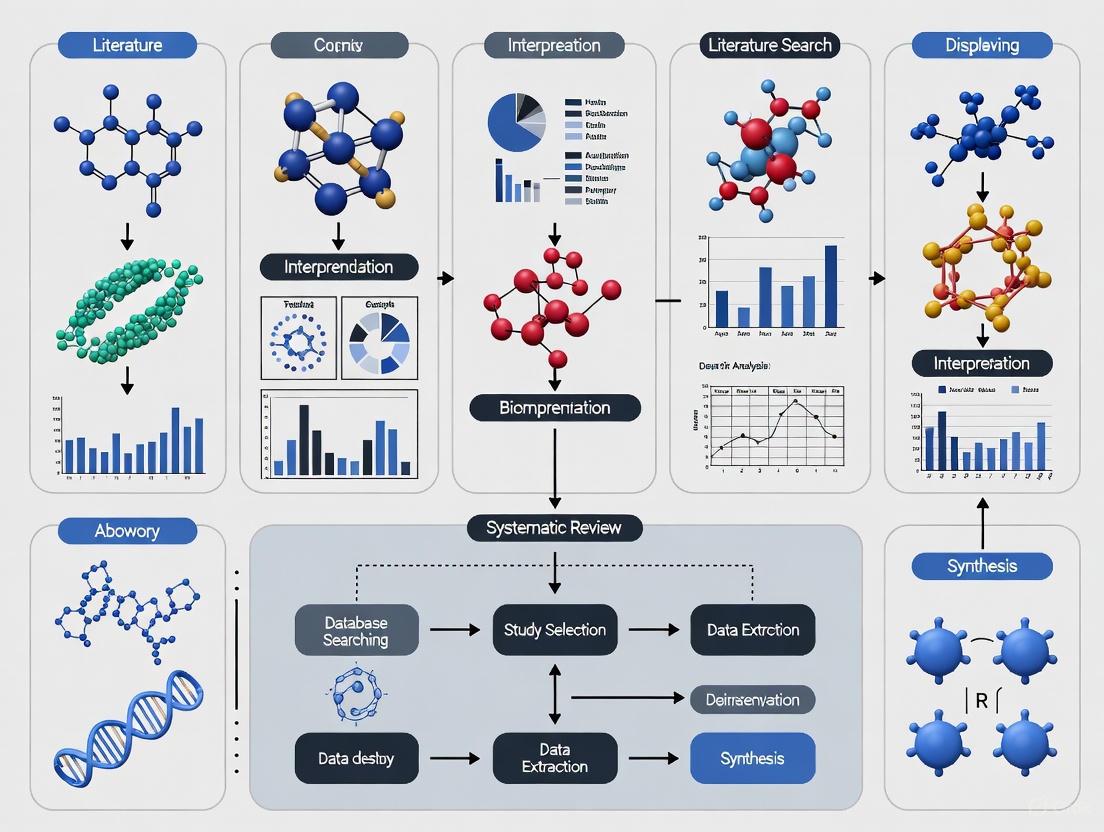

Diagram 1: Biomarker discovery workflow integrating multiple data repositories.

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Successful biomarker discovery requires both computational tools and experimental reagents. The following table details key resources referenced in the experimental protocols.

Table 2: Essential Research Reagent Solutions for Biomarker Discovery

| Tool/Reagent | Category | Primary Function | Application Example |
|---|---|---|---|
| HISAT2 | Computational Tool | Alignment of RNA-seq reads to reference genome | Used in NCBI pipeline to align human RNA-seq reads to GRCh38 [20] |
| featureCounts (Subread) | Computational Tool | Quantification of gene-level counts from aligned reads | Generates raw count files for each SRA run in NCBI pipeline [20] |
| DESeq2 / edgeR / limma | Computational Tool | Differential expression analysis | Analyze raw counts matrices for identifying significantly dysregulated genes [20] |
| Seurat (R package) | Computational Tool | Single-cell RNA-seq data analysis | Processing, normalization, and clustering of single-cell data [22] [23] |
| Harmony | Computational Tool | Batch effect correction | Integration of single-cell datasets from different sources/studies [23] |
| infercnv | Computational Tool | Copy number variation analysis | Distinguishing malignant cells from normal cells in single-cell data [23] |
| CIBERSORTx | Computational Tool | Cell type proportion estimation | Deconvoluting bulk RNA-seq data to estimate cell-type abundances [23] |
| GEOexplorer | Computational Tool | Web-based GEO data analysis | Interactive analysis of GEO datasets without programming proficiency [21] |
| A172 & SKMG1 Cell Lines | Biological Reagent | In vitro glioma models | Functional validation of biomarker genes (e.g., GADD45G) in glioma cell invasion [23] |

Data Integration and Analysis Pathways

The integration of data from multiple repositories follows a logical pathway that moves from data acquisition through validation. The following diagram illustrates this integrative process and the role of each repository within the broader research strategy.

Diagram 2: Data integration pathway across repositories for biomarker validation.

The strategic integration of data from TCGA, CPTAC, GEO, and CGGA provides a powerful framework for advancing biomarker discovery research. TCGA offers comprehensive multi-omics data across cancer types, CPTAC adds the crucial proteomic dimension, GEO provides extensive functional genomics data and user-friendly analysis tools, while CGGA delivers focused glioma datasets for validation. By leveraging the experimental protocols and computational tools outlined in this guide, researchers can systematically navigate these resources to identify, validate, and characterize novel biomarkers with prognostic and therapeutic significance. This integrative approach maximizes the value of public data repositories and accelerates the translation of genomic discoveries into clinical applications.

Semantic Enrichment and AI-Powered Tools for Initial Literature Triage

The field of biomarker discovery is characterized by a rapidly expanding body of scientific literature, which makes it difficult for researchers to stay current while identifying novel research pathways. The volume of new publications exceeds human capacity for comprehensive review, necessitating more efficient approaches to literature management. This challenge is particularly acute in biomarker research, where clinical translation remains exceptionally low: only a small fraction of discovered biomarkers progress to clinical application despite substantial research investment [16]. This translational gap represents both a problem and an opportunity for improved literature management strategies.

Semantic enrichment and AI-powered triage have emerged as transformative solutions to these challenges. By moving beyond simple keyword matching to understand contextual meaning and relationships within scientific text, these technologies enable researchers to process vast document collections with unprecedented efficiency. When properly implemented, these approaches can identify cross-disciplinary connections, assess clinical relevance, and flag novel concepts that might otherwise escape notice in traditional literature reviews. For biomarker researchers operating in a highly competitive and resource-intensive field, these capabilities are shifting from luxury to necessity.

Foundations of Semantic Enrichment in Scientific Literature Analysis

Core Principles and Methodologies

Semantic enrichment represents a fundamental advancement beyond traditional text-mining approaches by incorporating computational linguistics and domain knowledge to extract meaning from scientific text. This process transforms unstructured text into structured knowledge that can be queried, connected, and analyzed systematically. The core methodology involves multiple stages of text processing, beginning with Named Entity Recognition (NER) to identify and classify biomedical concepts such as genes, proteins, diseases, and biomarkers within documents [24].

Following entity extraction, relationship extraction algorithms identify contextual connections between these entities, such as drug-target interactions or biomarker-disease associations. Contemporary approaches employ transformer-based models that utilize self-attention mechanisms to weigh the importance of different words and phrases within their context, similar to strategies used in large language models like BERT [25]. This capability is particularly valuable for biomarker research, where the significance of a biological molecule may depend entirely on its contextual relationship to specific disease states or therapeutic interventions.
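A much simpler baseline than the transformer models described above helps show what these two stages produce. The sketch below tags entities by dictionary lookup and treats any two entities mentioned in the same sentence as a candidate relationship. The toy lexicon is hypothetical (its terms are borrowed from examples elsewhere in this article). Sentence co-occurrence yields candidate pairs only, without the direction or type of relationship that trained models aim to recover.

```python
import re
from itertools import combinations

# Hypothetical toy lexicon: surface term -> entity type
LEXICON = {
    "GADD45G": "gene",
    "ACSL4": "gene",
    "glioblastoma": "disease",
    "ferroptosis": "process",
}


def tag_entities(sentence, lexicon=LEXICON):
    """Dictionary-based NER: return (term, type) for each lexicon term found."""
    found = []
    for term, entity_type in lexicon.items():
        if re.search(rf"\b{re.escape(term)}\b", sentence, flags=re.IGNORECASE):
            found.append((term, entity_type))
    return found


def cooccurrence_relations(text, lexicon=LEXICON):
    """Candidate relations: every pair of entities sharing a sentence."""
    pairs = set()
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        entities = sorted(tag_entities(sentence, lexicon))
        for (term_a, _), (term_b, _) in combinations(entities, 2):
            pairs.add((term_a, term_b))
    return pairs
```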

The final stage involves knowledge graph construction, which integrates extracted entities and relationships into a structured network that represents the scientific domain. This network enables sophisticated querying and reasoning capabilities that form the foundation for effective literature triage. For biomarker discovery, these knowledge graphs can incorporate specialized biological ontologies and pathway databases to ensure biological plausibility and enhance discovery relevance [24].
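Once relationships are stored as a graph, queries such as "how is entity A connected to entity B?" become path searches. The sketch below stores (head, relation, tail) triples in an adjacency map and finds a shortest connecting path with breadth-first search. The triples paraphrase the Gp78 liver-injury case study described earlier and are illustrative, not a curated knowledge graph.

```python
from collections import defaultdict, deque


def build_graph(triples):
    """Adjacency map: entity -> set of (relation, neighbor); edges are traversable both ways."""
    graph = defaultdict(set)
    for head, relation, tail in triples:
        graph[head].add((relation, tail))
        graph[tail].add((relation, head))
    return graph


def shortest_path(graph, source, target):
    """Breadth-first search for the shortest chain of entities linking source to target."""
    queue = deque([[source]])
    seen = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for _, neighbor in sorted(graph.get(node, ())):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None  # no connection in the graph


triples = [
    ("Gp78", "promotes_expression_of", "ACSL4"),
    ("ACSL4", "promotes", "ferroptosis"),
    ("ferroptosis", "contributes_to", "hepatic IRI"),
]
graph = build_graph(triples)
path = shortest_path(graph, "Gp78", "hepatic IRI")
```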

Domain-Specific Applications in Biomarker Research

In biomarker discovery, semantic enrichment has been specifically adapted to address domain-specific challenges. The Biomarker Toolkit provides a validated framework of attributes associated with successful biomarker implementation, offering a structured approach for assessing the clinical potential of biomarker candidates identified in literature [16]. This toolkit groups 129 critical attributes into four main categories: rationale, clinical utility, analytical validity, and clinical validity, providing a systematic approach for evaluating biomarker candidates discovered through literature mining.

Specialized semantic models have also been developed for specific biomarker types. For antibody and nucleic acid biomarkers, frameworks like BioGraphAI employ hierarchical graph attention mechanisms tailored to capture interactions across genomic, transcriptomic, and proteomic modalities [24]. These interactions are guided by biological priors derived from curated pathway databases such as KEGG and Reactome, ensuring that extracted relationships reflect established biological knowledge while identifying novel connections.

Table 1: Key Semantic Enrichment Techniques for Biomarker Literature Triage

| Technique | Function | Biomarker Application |
|---|---|---|
| Named Entity Recognition | Identifies and classifies biomedical concepts | Extraction of gene, protein, and metabolite mentions |
| Relationship Extraction | Identifies contextual connections between entities | Mapping biomarker-disease and biomarker-treatment associations |
| Knowledge Graph Construction | Integrates entities and relationships into structured networks | Identifying cross-disciplinary connections and novel biomarker pathways |
| Ontology Alignment | Maps concepts to standardized biomedical ontologies | Ensuring consistent terminology across studies and domains |
| Semantic Similarity Analysis | Quantifies conceptual relatedness between documents | Identifying literature with similar biomarker signatures despite different terminology |
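Semantic similarity can be made robust to terminology differences by first mapping surface forms to shared concept identifiers, as ontology alignment does, and then comparing documents in concept space. In the sketch below the synonym table is a hypothetical stand-in for a real ontology or thesaurus mapping, and cosine similarity over concept counts stands in for the embedding-based similarity that production systems typically use. The example relies on PD-L1 and CD274 naming the same gene.

```python
import math
import re
from collections import Counter

# Hypothetical synonym table: lower-cased surface form -> concept identifier
SYNONYMS = {
    "pd-l1": "CD274",
    "cd274": "CD274",
    "programmed death-ligand 1": "CD274",
    "nsclc": "NSCLC",
    "non-small cell lung cancer": "NSCLC",
}


def concept_vector(text, synonyms=SYNONYMS):
    """Count mentions of each concept, whichever synonym the text uses."""
    lowered = text.lower()
    counts = Counter()
    for surface, concept in synonyms.items():
        pattern = rf"(?<![\w-]){re.escape(surface)}(?![\w-])"
        counts[concept] += len(re.findall(pattern, lowered))
    return +counts  # unary plus drops concepts with zero mentions


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0
```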

AI-Powered Tools and Frameworks for Literature Triage

Emerging Architectures and Capabilities

Artificial intelligence is increasingly used for literature triage, with frameworks that process complex scientific text at a scale and speed well beyond manual review. Related transformer architectures are also applied to biomarker data directly. The Clinical Transformer, for example, is a deep neural-network framework based on the transformer architecture that dynamically adjusts the influence of various disease biomarkers within the context of all available clinical and molecular data [25]. It borrows the contextual processing that made transformers dominant in natural language processing but applies it to patient-level clinical and molecular data rather than to text.

These AI frameworks employ multiple learning strategies to remain effective with the small datasets common in biomedicine. Transfer learning allows models to be pretrained on large-scale biological datasets such as The Cancer Genome Atlas (TCGA), then fine-tuned for specific downstream tasks [25]. Gradual learning first trains models with self-supervised masked feature prediction before fine-tuning them for a specific prediction task. These strategies enable effective performance even with the limited cohort sizes typical of specialized biomarker domains.

For biomarker discovery, these capabilities are particularly valuable in assessing the clinical relevance and novelty of reported findings. The TriAgent framework exemplifies this application, employing LLM-based multi-agent collaboration to couple automated biomarker discovery with deep research grounding for literature validation and novelty assessment [26]. This system uses a supervisor research agent to generate research topics and delegate targeted queries to specialized sub-agents for evidence retrieval from various data sources, with findings synthesized to classify biomarkers as either grounded in existing knowledge or flagged as novel candidates.

Implementation and Workflow Integration

Effective implementation of AI-powered literature triage requires integration into researcher workflows with appropriate interfaces and output formats. The typical workflow begins with document ingestion from multiple sources including published literature, preprints, clinical trial reports, and proprietary databases. The AI system then processes these documents through a multi-stage filtering pipeline that prioritizes based on relevance, quality, and novelty [15].
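The multi-stage filtering described here can be sketched as a relevance gate followed by a weighted ranking. The per-document relevance, quality, and novelty scores (assumed to lie in [0, 1]) would come from upstream models. The threshold and weights are arbitrary illustrative choices, not values from [15].

```python
def triage(documents, min_relevance=0.5, weights=(0.5, 0.3, 0.2)):
    """Two-stage triage: drop documents below a relevance threshold, then rank the rest.

    documents: list of dicts with "id", "relevance", "quality", "novelty" scores in [0, 1].
    weights: relative importance of relevance, quality, and novelty in the final ranking.
    """
    w_relevance, w_quality, w_novelty = weights
    # Stage 1: relevance gate
    candidates = [doc for doc in documents if doc["relevance"] >= min_relevance]

    # Stage 2: weighted priority score
    def priority(doc):
        return w_relevance * doc["relevance"] + w_quality * doc["quality"] + w_novelty * doc["novelty"]

    return [doc["id"] for doc in sorted(candidates, key=priority, reverse=True)]


documents = [
    {"id": "A", "relevance": 0.9, "quality": 0.8, "novelty": 0.1},
    {"id": "B", "relevance": 0.4, "quality": 0.9, "novelty": 0.9},
    {"id": "C", "relevance": 0.6, "quality": 0.9, "novelty": 0.9},
]
print(triage(documents))  # ['C', 'A']
```

Document B is excluded despite its high quality and novelty scores because it fails the relevance gate; whether a hard gate or a purely weighted score suits a given review is a design choice.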

Critical to implementation success is explainability, the ability of AI systems to provide transparent justifications for their triage decisions. Modern frameworks incorporate attention mechanisms that highlight the specific text passages and evidence contributing to classification decisions [25]. This capability not only builds researcher trust but also accelerates the assessment process by directing attention to the most salient sections of documents.

The output of these systems typically includes ranked literature lists, structured summaries of key findings, and visualizations of relationships between concepts across the literature landscape. For biomarker researchers, this structured output enables rapid assessment of the evidentiary support for potential biomarkers while identifying gaps and contradictions in the existing knowledge base.

Experimental Protocols for AI-Powered Literature Triage

Framework Evaluation and Validation

Rigorous evaluation of AI-powered literature triage systems requires standardized protocols that assess both technical performance and practical utility. For the biomarker-assessment component, the Biomarker Toolkit offers a validated reference: quantitative assessment showed that total scores based on its attribute checklist significantly predict biomarker implementation success (breast cancer [BC]: p<0.0001, 95.0% CI: 0.869–0.935; colorectal cancer [CRC]: p<0.0001, 95.0% CI: 0.918–0.954) [16]. The toolkit enables systematic scoring of biomarker candidates identified through literature mining based on their reported attributes across the analytical validity, clinical validity, and clinical utility categories.

Performance benchmarks for AI triage systems should include standard information retrieval metrics such as precision, recall, and F1 score, calculated against expert-curated literature sets. In published evaluations, the TriAgent framework achieved a topic adherence F1 score of 55.7 ± 5.0%, surpassing the CoT-ReAct agent by over 10%, and a faithfulness score of 0.42 ± 0.39, exceeding all baselines by more than 50% [26]. Together, these metrics quantify both the relevance and the reliability of triage systems.
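Against an expert-curated set, these metrics reduce to set arithmetic over document identifiers. The sketch below computes them for one query. The F1 reported for TriAgent is a topic-adherence measure whose exact definition is given in [26] and may differ from this document-level version.

```python
def retrieval_metrics(retrieved, relevant):
    """Precision, recall, and F1 of a retrieved document set against an expert-curated set."""
    retrieved, relevant = set(retrieved), set(relevant)
    true_positives = len(retrieved & relevant)
    precision = true_positives / len(retrieved) if retrieved else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical PubMed IDs: 2 of 4 retrieved documents are in the curated set of 3
p, r, f1 = retrieval_metrics(["101", "102", "103", "104"], ["102", "104", "105"])
```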

Additional validation should assess clinical relevance through domain expert evaluation of system outputs. This typically involves blinded assessment of AI-triage results compared to traditional search results, with scoring based on criteria such as clinical applicability, novelty, and actionability. For biomarker research, this assessment should specifically evaluate the system's ability to identify biomarkers with strong clinical utility and analytical validity based on established frameworks [16].

Implementation Workflow

The following diagram illustrates the complete experimental workflow for implementing AI-powered literature triage in biomarker discovery:

Diagram 1: AI-Powered Literature Triage Workflow

The experimental implementation begins with comprehensive document collection from diverse sources including PubMed, specialized databases, and trial registries. The semantic enrichment phase then processes these documents through named entity recognition, relationship extraction, and knowledge graph construction. AI-powered classification applies specialized models to categorize documents by relevance, biomarker type, and clinical application.

Biomarker-specific evaluation employs frameworks like the Biomarker Toolkit to assess candidates against established criteria for successful implementation [16]. Finally, novelty and clinical impact assessment identifies biomarkers with potential for significant advancement, often through comparison to existing knowledge bases and assessment of evidentiary strength. The output consists of prioritized literature with structured summaries that highlight key information for researcher assessment.

Research Reagent Solutions

Successful implementation of semantic enrichment and AI-powered triage requires both computational resources and domain-specific knowledge bases. The following table details essential components for establishing an effective literature triage pipeline for biomarker discovery:

Table 2: Essential Research Reagents for AI-Powered Literature Triage

| Resource Category | Specific Examples | Function in Literature Triage |
|---|---|---|
| Biomedical Ontologies | Gene Ontology, Disease Ontology, MEDIC | Standardized vocabularies for entity recognition and normalization |
| Knowledge Bases | KEGG, Reactome, STRING | Biological pathway context for relationship validation |
| Pre-trained Models | BioBERT, Clinical Transformer, BioGraphAI | Domain-adapted AI models for biomedical text and multi-omics data |
| Biomarker Evaluation Frameworks | Biomarker Toolkit, REMARK, STARD | Structured criteria for assessing biomarker quality and clinical potential |
| Specialized Databases | TCGA, GENIE, ClinicalTrials.gov | Source data for validation and contextualization of literature findings |

Implementation Considerations

Beyond specific tools, successful implementation requires attention to several practical considerations. Data quality and standardization are fundamental, as semantic enrichment performance depends heavily on consistent annotation and curation [15]. This includes adherence to standardized reporting guidelines such as MIAME for microarray data and MINSEQE for sequencing experiments [15].

Computational infrastructure must be adequate for processing large document collections, with particular attention to scalability for knowledge graph construction and querying. For organizations with limited resources, cloud-based solutions can provide the necessary computational power, while federated learning approaches allow models to be trained across institutions without pooling raw data, preserving data privacy [27].

Finally, domain expertise remains essential for validating system outputs and interpreting results in appropriate biological and clinical context. The most successful implementations maintain human-in-the-loop workflows where AI systems handle volume processing while domain experts focus on high-value assessment and decision-making based on triaged results.

Semantic enrichment and AI-powered literature triage represent transformative technologies for addressing the information overload challenges in biomarker discovery. By implementing systematic approaches based on the frameworks and protocols outlined in this guide, researchers can significantly accelerate the literature review process while improving the identification of promising biomarker candidates with strong clinical potential.

The field continues to evolve rapidly, with emerging developments in multimodal AI that integrate textual information with molecular structures and clinical imaging, and federated learning approaches that enable collaborative model training while preserving data privacy [27]. These advancements promise even more powerful literature triage capabilities in the near future, potentially further closing the gap between biomarker discovery and clinical application.

For biomarker researchers, the adoption of these technologies is shifting from competitive advantage to necessity. The increasing volume and complexity of scientific literature, combined with growing pressure to improve translational outcomes, creates an environment where AI-powered triage is becoming essential infrastructure for cutting-edge research. By implementing these approaches systematically and rigorously, the biomarker research community can potentially accelerate progress toward the promised benefits of precision medicine.

Establishing a Foundational Search Vocabulary and Ontologies

The exponential growth of scientific data presents both an opportunity and a challenge for researchers in biomarker discovery. While high-throughput technologies like single-cell next-generation sequencing and liquid biopsies produce enormous volumes of data, the ability to effectively search, integrate, and interpret this information determines research efficiency and success [13]. The transition from biomarker discovery to clinical implementation remains hampered by translational gaps, with many candidates failing to reach clinical practice despite significant resource allocation [28] [16]. A systematic approach to literature mining and vocabulary standardization addresses this challenge by enabling researchers to build upon existing knowledge, avoid redundant efforts, and identify the most promising biomarker candidates with higher potential for clinical translation.

Effective literature search strategies in biomarker research require understanding both the biological and computational aspects of the field. Biomarkers are defined as "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or biological responses to an exposure or intervention" [13]. They serve various applications including risk estimation, disease screening and detection, diagnosis, estimation of prognosis, prediction of benefit from therapy, and disease monitoring [13]. The complexity of biomarker research necessitates a structured approach to vocabulary development and ontology utilization, ensuring that search strategies capture relevant concepts across disciplinary boundaries and data types.

Foundational Vocabulary for Biomarker Discovery

Core Biomarker Terminology and Categories

Establishing a consistent vocabulary is fundamental to effective literature searching in biomarker research. The terminology encompasses both the biological entities and the methodological approaches specific to the field. Table 1 summarizes the essential categories and terminology that form the foundation of systematic search strategies in biomarker discovery.

Table 1: Core Biomarker Categories and Applications

| Category | Definition | Key Search Terms | Primary Applications |
|---|---|---|---|
| Prognostic Biomarkers | Provide information about overall expected clinical outcomes regardless of therapy [13] | "prognostic biomarker," "clinical outcome," "overall survival," "disease progression" | Patient stratification, treatment planning, clinical trial design |
| Predictive Biomarkers | Inform expected clinical outcome based on treatment decisions in biomarker-defined patients [13] | "predictive biomarker," "treatment response," "therapy selection," "pharmacodynamic" | Therapy selection, clinical decision-making, personalized medicine |
| Diagnostic Biomarkers | Detect the presence of disease or specific disease subtypes [13] | "diagnostic biomarker," "disease detection," "screening," "early detection" | Disease diagnosis, screening programs, disease subtyping |
| Risk Stratification Biomarkers | Identify patients at higher than usual risk of disease [13] | "risk biomarker," "susceptibility," "genetic predisposition," "family history" | Preventive medicine, targeted screening, lifestyle interventions |
| Monitoring Biomarkers | Assess disease status or treatment response over time [13] | "monitoring biomarker," "treatment response," "disease monitoring," "longitudinal" | Treatment efficacy assessment, disease recurrence monitoring |

Beyond these categorical distinctions, biomarker research uses specific methodological terminology that guides search strategy development. Key concepts include analytical validity (accuracy of biomarker measurement), clinical validity (ability to predict clinical outcomes), and clinical utility (ability to improve patient outcomes) [28]. Other essential terms are sensitivity and specificity. Sensitivity is the proportion of patients with the condition whom the biomarker correctly classifies as positive. Specificity is the proportion of patients without the condition whom it correctly classifies as negative. Receiver operating characteristic (ROC) curves, summarized by the area under the curve (AUC), serve as discrimination metrics [13].
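These definitions translate directly into code. The sketch below uses hypothetical biomarker levels and an arbitrary cutoff of 3.5. It computes sensitivity and specificity at that threshold. It also computes the ROC AUC through its rank interpretation: the probability that a randomly chosen case scores higher than a randomly chosen control, with ties counted as one half.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = condition present)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn

def sensitivity_specificity(y_true, scores, threshold):
    """Classify score >= threshold as positive, then compute TP/(TP+FN) and TN/(TN+FP)."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    return tp / (tp + fn), tn / (tn + fp)

def roc_auc(y_true, scores):
    """AUC as the fraction of case/control pairs in which the case scores higher (ties = 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if ps > ns else 0.5 if ps == ns else 0.0 for ps in pos for ns in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical biomarker levels: four cases (label 1) and four controls (label 0)
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [8.1, 6.4, 5.9, 3.2, 4.0, 2.5, 1.8, 1.1]
sens, spec = sensitivity_specificity(y_true, scores, threshold=3.5)
auc = roc_auc(y_true, scores)
print(f"sensitivity={sens:.2f} specificity={spec:.2f} AUC={auc:.4f}")
# prints "sensitivity=0.75 specificity=0.75 AUC=0.9375"
```

Sensitivity and specificity change with the chosen threshold. The AUC summarizes discrimination across all thresholds, which is why studies often report both.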

Biomarker Performance Metrics and Statistical Terminology

Quantitative assessment of biomarker performance requires understanding specific statistical measures and their implications for search vocabulary. The Biomarker Toolkit initiative identified 129 attributes associated with clinically useful biomarkers, grouped into four main categories: rationale, clinical utility, analytical validity, and clinical validity [28] [16]. These attributes provide a structured framework for developing comprehensive search strategies that address all aspects of biomarker evaluation.

Search vocabulary should incorporate specific statistical terms used in biomarker validation, including hazard ratios (HR) for time-to-event outcomes, confidence intervals (CI), p-values for hypothesis testing, and false discovery rates (FDR) for multiple comparison adjustments in high-dimensional data [13]. For multivariate biomarker panels, terms such as variable selection, shrinkage methods, and overfitting become crucial for retrieving methodologically sound studies [13].
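False discovery rate adjustment is easiest to understand by applying it. The sketch below implements the Benjamini-Hochberg procedure, a widely used FDR-controlling method, in plain Python. The p-values are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices by ascending p-value
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so the adjusted values stay monotone
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        q = min(running_min, p_values[i] * m / rank)
        running_min = q
        adjusted[i] = q
    return adjusted

# Hypothetical p-values from testing eight candidate biomarkers
p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205]
q = benjamini_hochberg(p)
significant = [i for i, qi in enumerate(q) if qi <= 0.05]
print(significant)  # prints [0, 1]
```

Five of these candidates have unadjusted p-values below 0.05. Only two remain significant at a 5% FDR. High-dimensional studies that omit this step often report many candidates that fail to replicate.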

Ontologies and Standardized Vocabularies

Key Ontologies in Biomarker Research

Ontologies provide structured, standardized frameworks for representing knowledge domains through defined terms and their interrelationships. In biomarker research, they enable integration of heterogeneous data sources, facilitate accurate annotation of experiments, and support sophisticated querying across distributed databases [29]. Table 2 outlines the primary ontologies relevant to biomarker discovery and their specific applications.

Table 2: Essential Ontologies for Biomarker Research

| Ontology or Resource | Scope and Coverage | Primary Applications | Implementation Examples |
|---|---|---|---|
| Quantitative Imaging Biomarker Ontology (QIBO) | 488 terms spanning experimental subject, biological intervention, imaging agent, imaging instrument, and biomarker application [29] | Annotation of imaging experiments, hypothesis generation for biomarker-disease associations, standardized terminology for image retrieval | Annotation of [18F]-FDG PET experiments measuring standardized uptake value (SUV) for tumor response assessment [29] |
| Gene Ontology (GO) | Cellular component, molecular function, and biological process [29] | Functional annotation of genomic biomarkers, pathway analysis, enrichment studies | Annotating biomarker roles in biological processes like apoptosis, angiogenesis, or immune response |
| Molecular Imaging and Contrast Agent Database (MICAD) | Molecular imaging agents, including radioactive labeled small molecules, nanoparticles, antibodies, and labeled cells [29] | Standardizing imaging agent terminology, target annotation, biological application classification | Annotation of imaging agents for specific molecular targets like integrins, growth factors, or stem cells |

The value of ontologies extends beyond terminology standardization: they also enable knowledge discovery through semantic reasoning. For example, QIBO facilitates the generation of novel biomarker-disease associations by formally representing complex relationships between imaging procedures, biological targets, and clinical applications [29]. This structure lets researchers move logically through related concepts and identify valuable connections that keyword-based searches might miss.
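One concrete form of this reasoning is query expansion, in which a search for a concept also retrieves its narrower terms. The sketch below assumes a small, hand-written hierarchy loosely inspired by Gene Ontology labels. Its relations are simplified for illustration and are not taken from the ontology itself.

```python
from collections import deque

# Hypothetical, simplified hierarchy (narrower term -> broader terms); NOT the real GO graph
CHILD_OF = {
    "intrinsic apoptotic signaling pathway": ["apoptotic signaling pathway"],
    "extrinsic apoptotic signaling pathway": ["apoptotic signaling pathway"],
    "apoptotic signaling pathway": ["apoptotic process"],
    "execution phase of apoptosis": ["apoptotic process"],
    "apoptotic process": ["programmed cell death"],
}

def descendants(term, child_of):
    """Breadth-first search over the inverted hierarchy to collect every narrower term."""
    children = {}
    for child, parents in child_of.items():
        for parent in parents:
            children.setdefault(parent, []).append(child)
    found, seen, queue = [], {term}, deque([term])
    while queue:
        current = queue.popleft()
        for child in children.get(current, []):
            if child not in seen:
                seen.add(child)
                found.append(child)
                queue.append(child)
    return found

def expand_query(term, child_of):
    """OR the term together with all of its descendants, quoting each phrase."""
    terms = [term] + descendants(term, child_of)
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"

print(expand_query("apoptotic process", CHILD_OF))
```

The expansion runs downward only. Searching for "apoptotic process" picks up its subtypes but not the broader "programmed cell death", which keeps precision from collapsing.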

Implementing Ontologies in Search Strategies

Effective implementation of ontologies in literature search requires understanding both their structure and application methods. The Entity-Attribute-Value (EAV) model provides flexibility for representing diverse biomarker data types, accommodating the broad scope and rapidly changing nature of measurements captured in clinical trials and experimental studies [30]. This approach supports the integration of clinical parameters with high-dimensionality genotyping and expression data, addressing a critical need in biomarker research.
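A minimal sketch of the EAV idea follows, in plain Python. The patient identifiers, attribute names, and values are hypothetical. Each measurement is stored as an (entity, attribute, value) triple, so recording a new biomarker requires no schema change. A wide patient-by-attribute view can still be produced on demand.

```python
from collections import defaultdict

# Hypothetical EAV triples: (entity, attribute, value); attributes vary by patient
eav_rows = [
    ("patient_01", "age", 63),
    ("patient_01", "CEA_ng_per_mL", 4.2),
    ("patient_01", "KRAS_status", "mutant"),
    ("patient_02", "age", 58),
    ("patient_02", "CEA_ng_per_mL", 11.7),
    ("patient_03", "KRAS_status", "wild-type"),
]

def pivot_eav(rows):
    """Group triples into one attribute dict per entity; unmeasured attributes stay absent."""
    wide = defaultdict(dict)
    for entity, attribute, value in rows:
        wide[entity][attribute] = value
    return dict(wide)

def attributes(rows):
    """All attribute names present in the store, sorted for a stable column order."""
    return sorted({attribute for _, attribute, _ in rows})

table = pivot_eav(eav_rows)
cols = attributes(eav_rows)
for entity, attrs in sorted(table.items()):
    print(entity, [attrs.get(c) for c in cols])  # None marks an unmeasured attribute
```

The sparse storage is what makes EAV suit heterogeneous trial data. The cost is that every analysis must first pivot the triples back into a tabular form, as `pivot_eav` does here.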

Practical ontology implementation involves mapping research questions to ontology classes and properties. For example, a search for "quantitative imaging biomarkers of apoptosis in lung cancer" would leverage QIBO terms for imaging modalities (e.g., "PET"), biological targets (e.g., "annexin V" for apoptosis measurement), and biomarker applications (e.g., "treatment monitoring") [29]. Simultaneously, Gene Ontology would provide standardized terms for apoptotic processes, while disease ontologies would ensure consistent representation of lung cancer subtypes and stages.
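The step from mapped concepts to a database query can be sketched as follows. Synonyms within one concept are combined with OR, and concepts are combined with AND. The concept groups are illustrative. The `[tiab]` (title/abstract) field tag assumes PubMed syntax; other databases use different field codes. In practice, controlled-vocabulary terms such as MeSH headings would be added to each group.

```python
# Illustrative concept groups for the lung-cancer apoptosis-imaging example (assumed, not exhaustive)
concepts = {
    "imaging modality": ["PET", "positron emission tomography"],
    "apoptosis target": ["annexin V", "apoptosis", "apoptotic process"],
    "application": ["treatment monitoring", "treatment response"],
    "disease": ["lung cancer", "non-small cell lung cancer", "NSCLC"],
}

def build_query(groups, field="tiab"):
    """OR synonyms within each concept group, then AND the groups (PubMed-style field tags assumed)."""
    clauses = []
    for synonyms in groups.values():
        ors = " OR ".join(f'"{s}"[{field}]' for s in synonyms)
        clauses.append(f"({ors})")
    return " AND ".join(clauses)

query = build_query(concepts)
print(query)
```

Keeping each concept in its own group makes the search easy to refine. A group can be widened or dropped without rewriting the whole query string.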

Structured Search Methodology

Search Strategy Development Framework

Developing effective literature search strategies for biomarker discovery requires a systematic approach that integrates foundational vocabulary with ontological frameworks. The process begins with clearly defining the research objective and scope, including specific biomarker applications (diagnostic, prognostic, predictive), disease contexts, and analytical methodologies [15]. This precise formulation guides the selection of appropriate terminologies and ontologies, ensuring comprehensive coverage of relevant concepts.

A structured workflow for search strategy development incorporates both vocabulary selection and ontological alignment, as illustrated in the following diagram:

Diagram: Structured Workflow for Search Strategy Development

The iterative nature of search strategy development requires multiple refinement cycles, beginning with broad searches that are progressively narrowed based on initial results [31]. This process leverages both exact matching of specific terms and fuzzy matching of related concepts to balance recall and precision. For biomarker discovery, particular attention should be paid to covariate inclusion in searches, distinguishing between studies aiming at causal inference (which require specific confounder consideration) and purely predictive studies (where covariate selection focuses on performance optimization) [15].

Advanced Technical Implementation

Technical implementation of sophisticated search strategies employs both traditional database queries and natural language processing approaches. The finite state machine (FSM) method provides a structured framework for identifying biomarker-disease relationships in text mining applications, processing literature through defined states that recognize entities (e.g., gene/protein names), interactions, and contextual relationships [31]. This method combines exact matching for disease terms, fuzzy matching for molecular entities, and list-member matching for interaction networks.
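The FSM approach can be illustrated with a deliberately simplified sketch. It is not the implementation described in [31]. The three states, the mini-lexicons (`GENES`, `INTERACTIONS`, `DISEASES`), and the 0.8 similarity cutoff are hypothetical choices for illustration. Python's standard `difflib.get_close_matches` stands in for a production fuzzy matcher.

```python
import difflib
import re

# Hypothetical mini-lexicons; a real system would draw these from curated resources
GENES = ["egfr", "her2", "kras", "alk"]
INTERACTIONS = {"predicts", "associated", "correlates", "overexpressed", "upregulated"}
DISEASES = [("non-small", "cell", "lung", "cancer"), ("breast", "cancer"), ("colorectal", "cancer")]

def tokenize(sentence):
    """Lowercase word tokens, keeping internal hyphens (e.g. 'her-2', 'non-small')."""
    return re.findall(r"[a-z0-9-]+", sentence.lower())

def match_disease(tokens, i):
    """Exact matching: return the disease phrase that starts at tokens[i], if any."""
    for phrase in DISEASES:
        if tuple(tokens[i:i + len(phrase)]) == phrase:
            return " ".join(phrase)
    return None

def extract_relations(sentence):
    """Walk the tokens through SEEK_ENTITY -> SEEK_INTERACTION -> SEEK_DISEASE states."""
    tokens = tokenize(sentence)
    state, entity, relations, i = "SEEK_ENTITY", None, [], 0
    while i < len(tokens):
        tok = tokens[i]
        if state == "SEEK_ENTITY":
            hit = difflib.get_close_matches(tok, GENES, n=1, cutoff=0.8)  # fuzzy matching
            if hit:
                entity, state = hit[0], "SEEK_INTERACTION"
        elif state == "SEEK_INTERACTION":
            if tok in INTERACTIONS:  # list-member matching
                state = "SEEK_DISEASE"
        elif state == "SEEK_DISEASE":
            disease = match_disease(tokens, i)  # exact matching
            if disease:
                relations.append((entity, disease))
                state, entity = "SEEK_ENTITY", None
                i += len(disease.split())
                continue
        i += 1
    return relations

print(extract_relations("HER-2 overexpression is associated with poor prognosis in breast cancer."))
# prints [('her2', 'breast cancer')]
```

Fuzzy matching maps the variant spelling "HER-2" onto the lexicon entry "her2". Exact matching keeps disease phrases strict, so the trade-off between recall and precision is set separately for each entity type.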

Advanced search methodologies must address the "p >> n problem" common in biomarker research, where the number of potential features (p) far exceeds the number of available samples (n) [15]. Search strategies should incorporate terms related to dimensionality reduction, feature selection methods, and multiple testing corrections to identify studies employing appropriate statistical methods for high-dimensional data. Additionally, integration of clinical and omics data requires vocabulary that spans both domains, addressing challenges of semantic heterogeneity and scale [30].
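The p >> n problem is easy to reproduce. This sketch assumes NumPy, and all of its data are simulated noise. With 1,000 features and 20 samples, a least-squares fit reproduces the training outcomes essentially exactly, even though no feature carries any signal. Performance on freshly simulated data exposes the overfitting.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 20, 1000                      # far more features (p) than samples (n)
X = rng.standard_normal((n, p))      # simulated noise "omics" features
y = rng.standard_normal(n)           # outcome unrelated to X

# Minimum-norm least-squares fit: with p > n it can interpolate the training data exactly
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
train_residual = np.max(np.abs(X @ beta - y))

# New samples from the same null process: any apparent skill should vanish
X_new = rng.standard_normal((n, p))
y_new = rng.standard_normal(n)
test_r = np.corrcoef(X_new @ beta, y_new)[0, 1]

print(f"max training residual: {train_residual:.2e}")
print(f"correlation on new data: {test_r:.2f}")
```

A perfect training fit in this regime says nothing about real signal. That is why feature selection, regularization, and validation on independent data are prerequisites for credible high-dimensional biomarker results.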

Experimental Protocols and Data Integration

Biomarker Validation Methodologies

Robust biomarker validation requires specific methodological approaches that should be reflected in literature search strategies. For prognostic biomarker identification, searches should target properly conducted retrospective studies using biospecimens collected from cohorts representing the target population [13]. The validation process typically involves testing associations between the biomarker and clinical outcomes through main effect tests in statistical models, with subsequent validation in external datasets [13].

For predictive biomarkers, search strategies must focus on studies involving randomized clinical trials, with specific attention to interaction tests between treatment and biomarker status in statistical models [13]. The IPASS study of EGFR mutations in non-small cell lung cancer provides a classic example, where a highly significant interaction (P<0.001) demonstrated that gefitinib provided superior progression-free survival compared to carboplatin plus paclitaxel in EGFR mutation-positive patients, but inferior outcomes in wild-type patients [13]. Searches should include terms such as "treatment-biomarker interaction," "randomized clinical trial," and "predictive validation."
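The interaction test can be sketched with a linear model on simulated data. This is a simplification with invented effect sizes and a continuous outcome. IPASS analyzed progression-free survival, where the analogous treatment-by-biomarker term would enter a time-to-event model such as Cox regression. The p-value uses a normal approximation so that only NumPy and the standard library are needed.

```python
import math
import numpy as np

rng = np.random.default_rng(42)
n = 400
treat = rng.integers(0, 2, n)    # 1 = experimental therapy (simulated randomization)
marker = rng.integers(0, 2, n)   # 1 = biomarker-positive
# Hypothetical outcome: therapy helps only biomarker-positive patients (true interaction = 2.0)
y = 1.0 + 0.5 * marker + 2.0 * treat * marker + rng.standard_normal(n)

# Columns: intercept, treatment, biomarker, treatment x biomarker interaction
X = np.column_stack([np.ones(n), treat, marker, treat * marker])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
resid = y - X @ beta
sigma2 = resid @ resid / (n - X.shape[1])                  # residual variance estimate
se = np.sqrt(sigma2 * np.diag(np.linalg.inv(X.T @ X)))     # coefficient standard errors

t_inter = beta[3] / se[3]
p_inter = math.erfc(abs(t_inter) / math.sqrt(2))  # two-sided, normal approximation
print(f"interaction estimate={beta[3]:.2f}, t={t_inter:.1f}, p={p_inter:.1e}")
```

A prognostic analysis would instead test the biomarker's main effect, typically without the interaction column. The interaction coefficient directly answers the predictive question of whether the treatment effect differs by biomarker status.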

Analytical methods for biomarker discovery and validation should be pre-specified in study protocols to avoid data-driven results that are less likely to be reproducible [13]. Search strategies should prioritize studies that document pre-planned analytical approaches, control for multiple comparisons, and report standardized performance metrics including sensitivity, specificity, positive and negative predictive values, and discrimination measures (ROC AUC) [13].
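Unlike sensitivity and specificity, positive and negative predictive values depend on disease prevalence. This matters when judging whether a reported biomarker will perform in a different clinical setting. The sketch below applies Bayes' theorem and assumes a sensitivity and specificity of 0.90.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Compute PPV and NPV from sensitivity, specificity, and prevalence (Bayes' theorem)."""
    tp = sensitivity * prevalence              # expected true-positive fraction
    fp = (1 - specificity) * (1 - prevalence)  # expected false-positive fraction
    tn = specificity * (1 - prevalence)        # expected true-negative fraction
    fn = (1 - sensitivity) * prevalence        # expected false-negative fraction
    return tp / (tp + fp), tn / (tn + fn)

for prev in (0.30, 0.01):
    ppv, npv = predictive_values(0.90, 0.90, prev)
    print(f"prevalence={prev:.2f}: PPV={ppv:.3f}, NPV={npv:.3f}")
```

With these assumed values, PPV falls from about 0.79 at 30% prevalence to about 0.08 at 1% prevalence, while NPV rises from about 0.95 to above 0.99. Studies evaluated in enriched cohorts can therefore overstate a biomarker's usefulness for population screening.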

Data Integration Approaches

Biomarker research increasingly requires integration of diverse data types, from high-throughput omics measurements to clinical outcome data. Search strategies should incorporate terminology related to three primary data integration approaches [15]:

Early Integration: Methods like canonical correlation analysis (CCA) that extract common features from several data modalities before applying conventional machine learning algorithms.

Intermediate Integration: Approaches that model different data types separately while allowing interaction during the analysis process.

Late Integration: Algorithms that first learn separate models for different data types and subsequently combine their predictions.
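Late integration is the simplest of the three to sketch. Below, a least-squares scorer, standing in for any per-modality model, is fitted separately to each simulated modality. The two predictions are then averaged with equal weights. The modality names, effect sizes, and equal weighting are assumptions for illustration.

```python
import numpy as np

def fit_linear_scorer(X, y):
    """Least-squares scorer with an intercept (a stand-in for any per-modality model)."""
    Xb = np.column_stack([np.ones(len(X)), X])
    w, *_ = np.linalg.lstsq(Xb, y, rcond=None)
    return lambda Z: np.column_stack([np.ones(len(Z)), Z]) @ w

def late_integration(modalities, y, weights=None):
    """Fit one model per modality, then combine their predictions by weighted averaging."""
    models = {name: fit_linear_scorer(X, y) for name, X in modalities.items()}
    weights = weights or {name: 1.0 / len(models) for name in models}
    def predict(new_modalities):
        return sum(weights[name] * models[name](new_modalities[name]) for name in models)
    return predict

rng = np.random.default_rng(1)
n = 200
y = rng.integers(0, 2, n).astype(float)                         # hypothetical case/control labels
transcriptome = rng.standard_normal((n, 5)) + 0.8 * y[:, None]  # simulated modality 1
proteome = rng.standard_normal((n, 3)) + 0.5 * y[:, None]       # simulated modality 2
modalities = {"transcriptome": transcriptome, "proteome": proteome}

predict = late_integration(modalities, y)
scores = predict(modalities)
accuracy = np.mean((scores >= 0.5) == (y == 1))  # training accuracy, for illustration only
print(f"training accuracy: {accuracy:.2f}")
```

Because each modality keeps its own model, a missing or noisy modality can be down-weighted or dropped at prediction time. The cost is that interactions between modalities are never modeled, which is what early and intermediate integration aim to capture.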

The integration of clinical and biological data presents particular challenges due to differences in structure, scale, and semantics [30]. Effective search strategies should include terms related to data harmonization, ontological alignment, and integration frameworks such as the Entity-Attribute-Value (EAV) model, which provides flexibility for representing diverse clinical and biomarker data within unified repositories [30].

The Scientist's Toolkit

Research Reagent Solutions and Essential Materials

Successful implementation of biomarker discovery and validation strategies requires specific research tools and resources. Table 3 catalogues essential materials and their functions, drawn from the established methodologies cited in this guide.

Table 3: Essential Research Reagents and Resources for Biomarker Discovery

| Resource Category | Specific Tools/Resources | Function and Application |
|---|---|---|
| Data Repositories | The Cancer Imaging Archive (TCIA), National Biomedical Imaging Archive (NBIA) [29] | Provide access to large-scale imaging datasets for biomarker development and validation |