Advancing Dietary Assessment: Strategies for Improving Portion Size Estimation Accuracy in Clinical and Research Recalls


Abstract

Accurate portion size estimation is a critical yet challenging component of dietary assessment, with direct implications for nutritional science, public health research, and clinical trial outcomes. This article provides a comprehensive resource for researchers and drug development professionals, synthesizing current evidence on the foundational principles, methodological applications, optimization strategies, and validation frameworks for portion size estimation. It explores the transition from traditional aids to AI-powered digital tools, addresses systematic errors across food types, and presents comparative data on method accuracy to guide the selection and implementation of robust dietary assessment protocols in biomedical research.

The Core Challenge: Understanding Errors and Biases in Portion Size Estimation

The Impact of Inaccurate Portion Sizing on Dietary Data Quality and Research Outcomes

Technical Support Center: Troubleshooting Portion Size Estimation

Frequently Asked Questions (FAQs)

1. What are the most common sources of error in portion size estimation during dietary recalls? Inaccurate portion sizing stems from several key sources. Memory decay can lead to recall inaccuracies, although one controlled study found no significant difference in reported portion sizes between recalls completed 2 hours and 24 hours after a meal [1]. The type of food strongly influences error rates: single-unit foods (e.g., bread slices) are typically reported more accurately than amorphous foods (e.g., pasta, scrambled eggs) or liquids [1]. The estimation method itself also introduces variability, with textual descriptions often outperforming image-based aids for many food types [1]. A pervasive issue is the flat-slope phenomenon, in which large portions are systematically underestimated and small portions are overestimated [1].

2. Which portion size estimation aid (PSEA) provides more accurate data: text-based or image-based tools? Evidence indicates that text-based (TB-PSE) methods generally offer superior accuracy. A 2021 study comparing the two methods found that TB-PSE had a median relative error of 0% compared to 6% for image-based (IB-PSE) methods [1]. Furthermore, TB-PSE demonstrated significantly better performance in capturing portion sizes close to true intake, with 50% of estimates within 25% of true intake versus 35% for IB-PSE [1]. Bland-Altman analysis also showed higher agreement between reported and true intake for TB-PSE [1].

3. How can I validate a new portion size estimation tool in a study? A robust validation protocol involves comparison against a reference method such as Weighed Food Records (WFR) [2]. For quantitative equivalence testing, use statistical methods like the paired two one-sided t-test (TOST) with a pre-specified equivalence margin (e.g., 2.5 points on a diet quality score) [2]. To assess agreement in classification (e.g., risk of poor diet quality), calculate the Kappa coefficient [2]. A 2025 validation study successfully employed this design, using a repeated measures approach where participants completed WFR and then used the novel tool (e.g., cubes or playdough with an app) for the same reference period [2].

4. Are there simplified PSEAs valid for use in field settings with limited resources? Yes, recent research has validated accessible alternatives. The GDQS app, used with simple 3D printed cubes of pre-defined sizes, has been shown equivalent to WFR in assessing diet quality [2]. Playdough has also been validated as a flexible, low-cost alternative to cubes for food group-level portion estimation with the GDQS app, showing no statistical difference in performance [2]. For assessing perceived portion size norms, online image-series tools with 8 successive portion size options have demonstrated good agreement (ICC = 0.85) with equivalent real food options [3].

5. What emerging technologies show promise for improving portion size estimation? Artificial intelligence, particularly Multimodal Large Language Models (MLLMs) combined with Retrieval-Augmented Generation (RAG), represents the cutting edge. The DietAI24 framework uses this technology to recognize foods from images and ground nutrient estimation in authoritative databases like FNDDS, achieving a 63% reduction in mean absolute error for food weight and key nutrients compared to existing methods [4]. This approach enables zero-shot estimation of 65 distinct nutrients and food components, far surpassing the basic macronutrient profiles of traditional computer vision systems [4].
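FAQ 3 above recommends the Kappa coefficient for assessing agreement in classification. The following is a minimal standard-library Python sketch of Cohen's kappa for two methods that classify the same participants. The function name and the example risk labels are illustrative assumptions and do not come from [2].

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two paired sequences of categorical labels.

    kappa = (p_obs - p_exp) / (1 - p_exp), where p_obs is the observed
    agreement and p_exp is the agreement expected by chance from the
    marginal category frequencies of each method.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(labels_a)
    p_obs = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_exp = sum(freq_a[c] * freq_b[c] for c in freq_a) / n**2
    if p_exp == 1:
        return 1.0  # both methods used one identical category for everyone
    return (p_obs - p_exp) / (1 - p_exp)


# Hypothetical diet-quality risk categories for six participants.
wfr_risk = ["high", "high", "low", "moderate", "low", "high"]
app_risk = ["high", "moderate", "low", "moderate", "low", "high"]
print(round(cohens_kappa(wfr_risk, app_risk), 2))  # 0.75
```

This is unweighted kappa. A weighted kappa, which gives partial credit for near-misses between adjacent ordered categories, would also need a weight matrix and is not shown.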

Experimental Protocols for Portion Size Method Validation

Protocol 1: Validation of Novel PSEAs Against Weighed Food Records

- Objective: To assess whether a novel portion size estimation method provides data equivalent to weighed food records (WFR) for the same 24-hour reference period.

- Design: Repeated measures design [2].

- Participants: Convenience sample of approximately 170 participants, stratified by sex and age. Participants must be fluent in the study language and able to complete WFR [2].

- Procedures:

- Day 1 (Training): Conduct in-person group training (40-60 minutes) on using a digital dietary scale and completing WFR forms. Provide participants with a calibrated scale and data collection forms [2].

- Day 2 (Data Collection): Participants weigh and record all foods, beverages, and ingredients consumed during a 24-hour period [2].

- Day 3 (Comparison Method): Participants return to the lab and use the novel PSEA (e.g., mobile app with cubes or playdough) to estimate intake for the same 24-hour period recorded in the WFR. The order of PSEA administration should be randomized [2].

- Data Analysis:

- Use the paired two one-sided t-test (TOST) to test for equivalence between the WFR-derived and PSEA-derived diet metrics, with a pre-specified equivalence margin (e.g., 2.5 points for GDQS) [2].

- Calculate Kappa coefficients to assess agreement in risk categorization between methods [2].

- For individual food groups, report cross-classification percentages (e.g., same or adjacent portion size) and Kappa statistics [3].
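The paired TOST described above can be sketched in standard-library Python as follows. This is a minimal illustration that assumes the paired differences are roughly normally distributed. The hand-written t-distribution CDF (Simpson's rule integration of the density) stands in for a statistics library; `scipy.stats.t.cdf` would normally be used instead. The function names and example scores are hypothetical, and the 2.5-point margin follows the GDQS example in [2].

```python
import math
import statistics


def t_cdf(x, df, steps=2000):
    """Student's t CDF, integrating the density from 0 to |x| with Simpson's rule."""
    coef = math.exp(math.lgamma((df + 1) / 2) - math.lgamma(df / 2)) / math.sqrt(df * math.pi)

    def pdf(t):
        return coef * (1 + t * t / df) ** (-(df + 1) / 2)

    a = abs(x)
    h = a / steps  # steps must be even for Simpson's rule
    area = pdf(0) + pdf(a)
    for i in range(1, steps):
        area += (4 if i % 2 else 2) * pdf(i * h)
    area *= h / 3
    return 0.5 + area if x >= 0 else 0.5 - area


def paired_tost(reference, comparison, margin):
    """Paired two one-sided t-tests for equivalence within +/- margin.

    Returns the mean difference (comparison - reference) and the TOST p-value,
    i.e. the larger of the two one-sided p-values. Equivalence is concluded
    when this p-value is below alpha (e.g. 0.05).
    """
    if len(reference) != len(comparison) or len(reference) < 2:
        raise ValueError("need two paired sequences with at least 2 pairs")
    diffs = [c - r for r, c in zip(reference, comparison)]
    n = len(diffs)
    mean_d = statistics.mean(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n)
    if se == 0:
        raise ValueError("differences have zero variance")
    t_low = (mean_d + margin) / se   # H0a: mean_d <= -margin
    t_high = (mean_d - margin) / se  # H0b: mean_d >= +margin
    p_low = 1 - t_cdf(t_low, n - 1)
    p_high = t_cdf(t_high, n - 1)
    return mean_d, max(p_low, p_high)


# Hypothetical GDQS scores from WFR and from the app for eight participants.
wfr = [20.5, 18.0, 25.0, 22.5, 15.0, 19.5, 23.0, 21.0]
app = [21.0, 17.5, 24.0, 23.5, 15.5, 20.0, 22.0, 21.5]
mean_diff, p_tost = paired_tost(wfr, app, margin=2.5)
print(f"mean difference = {mean_diff:.3f}, TOST p = {p_tost:.2e}")
```

Taking the larger of the two one-sided p-values is equivalent to checking whether the 90% confidence interval of the mean difference lies entirely inside (-margin, +margin).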

Protocol 2: Comparing Accuracy of Text-Based vs. Image-Based PSEAs

- Objective: To compare the accuracy of text-based (TB-PSE) and image-based (IB-PSE) portion size estimation aids under controlled conditions.

- Design: Randomized cross-over study [1].

- Participants: Approximately 40 participants, stratified by sex and age, who are not visually impaired or professionally trained in nutrition [1].

- Procedures:

- Controlled Meal: Provide participants with a pre-weighed, ad libitum lunch comprising a variety of food types (amorphous, liquids, single-units, spreads). Use a variety of tableware to minimize its influence on estimation [1].

- Post-Meal Estimation: At 2 and 24 hours after the meal, participants self-report portion sizes consumed using both TB-PSE and IB-PSE via a digital questionnaire. The order of the two PSEAs should be randomized across participants [1].

- True Intake Calculation: Weigh plate waste and calculate true intake as True intake (g) = Pre-weighed food (g) - Plate waste (g) [1].

- Data Analysis:

- Compare mean true intakes to reported intakes using non-parametric tests (e.g., Wilcoxon's tests) [1].

- Calculate the proportion of reported portion sizes within 10% and 25% of the true intake for each method [1].

- Use an adapted Bland-Altman approach to assess agreement between true and reported portion sizes for all foods combined and by food type [1].
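The accuracy metrics above can be computed as in the following standard-library Python sketch. It assumes paired true and reported weights in grams for each food and computes relative error as (reported - true) / true × 100. It reports the classic Bland-Altman bias and 95% limits of agreement (bias ± 1.96 SD). The adapted Bland-Altman approach used in [1] may differ in detail. The Wilcoxon tests are left to a statistics package such as `scipy.stats.wilcoxon`. Function names and data are hypothetical.

```python
import statistics


def true_intake(pre_weighed_g, plate_waste_g):
    """True intake (g) = pre-weighed food (g) - plate waste (g)."""
    return pre_weighed_g - plate_waste_g


def accuracy_summary(true_g, reported_g):
    """Relative-error and Bland-Altman summaries for paired portion sizes in grams."""
    rel_err = [(r - t) / t * 100 for t, r in zip(true_g, reported_g)]
    diffs = [r - t for t, r in zip(true_g, reported_g)]
    n = len(rel_err)
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return {
        "median_relative_error_pct": statistics.median(rel_err),
        "within_10_pct": 100 * sum(abs(e) <= 10 for e in rel_err) / n,
        "within_25_pct": 100 * sum(abs(e) <= 25 for e in rel_err) / n,
        "bias_g": bias,
        "limits_of_agreement_g": (bias - 1.96 * sd, bias + 1.96 * sd),
    }


# Hypothetical data for four foods: served weights, plate waste, reported weights.
served = [200, 150, 50, 300]
waste = [40, 0, 10, 100]
truth = [true_intake(s, w) for s, w in zip(served, waste)]  # [160, 150, 40, 200]
reported = [160, 160, 46, 140]
summary = accuracy_summary(truth, reported)
print(summary)
```

To break results down by food type, call `accuracy_summary` separately on the subset of foods in each category.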

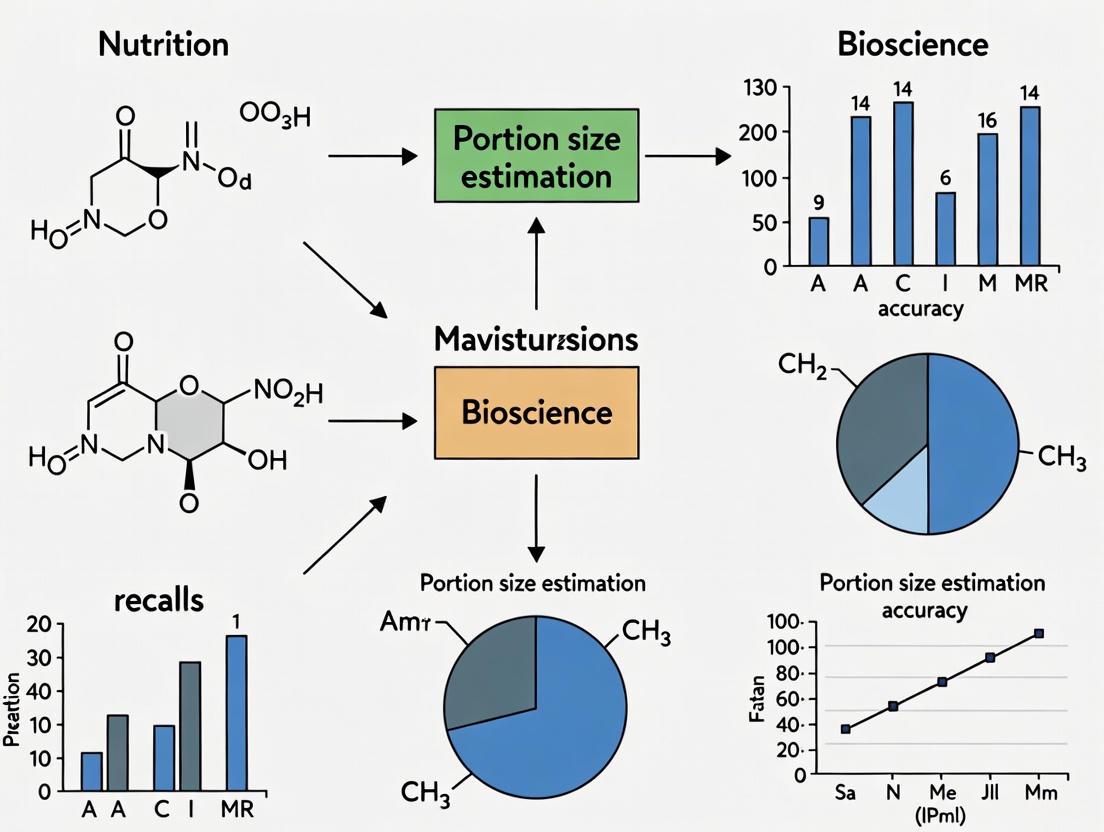

Workflow Visualization

PSEA Validation Workflow

PSEA Selection Guide

Research Reagent Solutions

Table 1: Essential Materials for Portion Size Estimation Research

| Item | Function/Application | Key Features |
|---|---|---|
| Calibrated Digital Scales [2] | Weighed Food Records (reference method) | Capacity: ~7 kg, Accuracy: 1 g (e.g., KD-7000) |
| 3D Printed Cubes [2] | Food group-level volume estimation for GDQS app | Pre-defined sizes based on food group gram cut-offs and densities |
| Playdough [2] | Flexible, low-cost alternative to cubes for volume estimation | Interactive modeling for amorphous and mixed foods |
| Online Image-Series Tools [3] | Assess perceived portion size norms via sliding image scales | 8 successive portion sizes, randomized presentation |
| ASA24 Picture Book [1] | Standardized image-based PSEA | 3-8 portion size images per food item, freely available |
| DietAI24 Framework [4] | AI-powered comprehensive nutrient estimation | MLLM + RAG technology, estimates 65 nutrients from FNDDS |

Table 2: Performance Comparison of Portion Size Estimation Methods

| Method | Overall Accuracy Metric | % within 10% of True Intake | % within 25% of True Intake | Key Advantages |
|---|---|---|---|---|
| Text-Based (TB-PSE) [1] | Median relative error: 0% | 31% | 50% | Superior accuracy for most foods, better agreement with true intake |
| Image-Based (IB-PSE) [1] | Median relative error: 6% | 13% | 35% | Lower participant burden, visual cues |
| Cubes with GDQS App [2] | Equivalent to WFR* | N/A | N/A | Validated for diet quality score, good for field use |
| Playdough with GDQS App [2] | Equivalent to WFR* | N/A | N/A | Flexible, low-cost, valid for food group-level estimation |
| DietAI24 (AI) [4] | 63% MAE reduction^ | N/A | N/A | Comprehensive (65 nutrients), high accuracy for mixed dishes |

*Equivalence tested via TOST with a 2.5-point margin for the GDQS score [2].

^Mean absolute error (MAE) reduction for food weight and four key nutrients vs. existing methods [4].

Troubleshooting Guide: Common Cognitive Errors & Solutions

Problem: Inaccurate or incomplete food recall during a 24-hour dietary recall (24HR). Solution: This guide helps you identify and mitigate common cognitively-driven errors to improve data quality in your portion size estimation research.

FAQ 1: Why do participants frequently omit certain foods, like condiments or ingredients in mixed dishes?

- Explanation: This is a classic recall bias issue. Memory is hierarchical; core meal components are better remembered than peripheral additions. Furthermore, visualizing and decomposing complex, multi-ingredient foods (e.g., sandwiches, salads) places high demands on visual imagery and working memory [5] [6].

- Evidence: Validation studies comparing recall to observed intake show high omission rates for items like tomatoes, mustard, lettuce, cheese, and mayonnaise [5].

- Mitigation Strategy: Implement a multiple-pass method with specific, standardized probes for additions, condiments, and ingredients in mixed dishes. Using visual aids (e.g., images of common mixed dishes with their components labeled) can assist memory and conceptualization [5].
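The standardized-probe idea can be sketched as a small lookup that generates follow-up questions for reported mixed dishes. This is a hypothetical Python illustration. The probe bank, the question wording, and the function name are assumptions. The probed items are taken from the commonly omitted foods listed above (tomatoes, mustard, lettuce, cheese, mayonnaise). Validated instruments define their own probe wording.

```python
# Hypothetical probe bank: which additions to ask about for each mixed dish.
# Items are drawn from foods often omitted in validation studies; the
# dish-to-item mapping itself is illustrative only.
ADDITION_PROBES = {
    "sandwich": ["tomato", "lettuce", "cheese", "mustard", "mayonnaise"],
    "salad": ["tomato", "cheese"],
}

GENERIC_PROBE = "Was anything added to the {food}, such as a sauce, spread, or condiment?"


def addition_probes(reported_foods):
    """Return standardized follow-up questions, in order, for each reported food."""
    questions = []
    for food in reported_foods:
        # Specific probes first, for dishes with frequently omitted components.
        for item in ADDITION_PROBES.get(food, []):
            questions.append(f"Did the {food} contain any {item}?")
        # Every food also gets the same generic condiment probe.
        questions.append(GENERIC_PROBE.format(food=food))
    return questions


for question in addition_probes(["sandwich", "apple"]):
    print(question)
```

Keeping the probes in a fixed, data-driven list means every participant hears the same prompts in the same order, which is the point of standardization.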

FAQ 2: Why does portion size estimation vary so significantly between individuals?

- Explanation: Accurate portion size estimation is a complex cognitive task requiring visuospatial skills, working memory to hold and manipulate images, and executive function to map memories to measurement tools. Individual differences in these cognitive domains lead to high variability in estimation error [7].

- Evidence: Research shows that poorer performance on tasks of visual attention and executive function (e.g., the Trail Making Test) is associated with greater error in energy intake estimation [7].

- Mitigation Strategy: Provide a variety of portion size estimation aids tailored to your population (e.g., digital images of foods in different sized plates/bowls, household measuring kits, food models). Training participants on how to use these aids before the main recall can improve proficiency.

FAQ 3: How does a participant's cognitive function specifically impact their reporting accuracy?

- Explanation: Completing a 24HR is a neurocognitively demanding process that relies on several key functions [7]:

- Visual Attention & Executive Function: For navigating the recall process and filtering relevant memories.

- Memory (Working & Visual): To encode, retain, and retrieve details of consumption.

- Cognitive Flexibility: To switch between different food items and meal occasions.

- Evidence: Research using controlled feeding designs has quantified that slower performance on the Trail Making Test (indicating weaker visual attention/executive function) is significantly associated with greater error in self-administered 24HR tools like ASA24 and Intake24. Regression models indicate these cognitive factors can explain ~14-16% of the variance in energy estimation error [7].

- Mitigation Strategy: For studies in older populations or where cognitive decline is a concern, consider an interviewer-administered 24HR. A trained interviewer can provide cues and support that mitigate the impact of individual cognitive limitations [7] [8].

FAQ 4: What is the impact of the retention interval (time between eating and recall) on reporting?

- Explanation: Memory decay occurs over time. A longer retention interval between the eating occasion and the recall attempt increases the likelihood of omissions and intrusions (reporting foods not consumed) [5].

- Evidence: Research in children has demonstrated that a shorter retention interval significantly improves reporting accuracy. Evidence in adults is more limited, but the same principles of memory retention are expected to apply [5].

- Mitigation Strategy: Schedule 24HR interviews as close to the target period as possible (e.g., the following morning for the previous day's intake). For self-administered tools, remind participants to complete the recall as soon as possible after the reference day.

Experimental Protocols for Investigating Cognitive Demands

Protocol 1: Quantifying the Association Between Cognitive Function and Recall Error

This protocol is derived from a controlled feeding study designed to isolate the effect of neurocognitive processes on dietary reporting error [7].

1. Objective: To determine whether variation in performance on standardized cognitive tasks predicts the magnitude of error in self-reported energy and nutrient intake using 24HR methods.

2. Materials:

- See "The Scientist's Toolkit" below for key reagents and cognitive tasks.

3. Participant Population:

- Convenience sample of adults (e.g., university staff and students), excluding individuals with conditions that severely impact diet or cognition [7].

4. Study Design:

- Controlled Feeding: Provide all meals and snacks to participants on designated study days. This establishes the "true" intake against which reported intake will be compared [7].

- Cross-Over Design: Each participant completes multiple 24HR methods (e.g., ASA24, Intake24, Interviewer-Administered 24HR) in a randomized order on separate occasions to control for order effects [7].

5. Procedure:

- Baseline Assessment:

- Administer demographic questionnaire (age, sex, education level).

- Administer a battery of computerized cognitive tasks (in order):

- Trail Making Test (TMT): Assesses visual attention and executive function. The outcome measure is time to completion [7].

- Wisconsin Card Sorting Test (WCST): Assesses cognitive flexibility. The outcome is the percentage of accurate trials [7].

- Visual Digit Span (Forwards/Backwards): Assesses working memory. The outcome is the maximum correct digit span [7].

- Vividness of Visual Imagery Questionnaire (VVIQ): Assesses the strength of visual imagery. The outcome is a self-reported vividness score [7].

- Dietary Reporting:

- On the day after each controlled feeding day, participants complete the assigned 24HR method.

- Data Processing:

- Calculate the "true" energy and nutrient intake from the controlled feeding data.

- Calculate the percentage error for each participant and each 24HR method: Percentage error = ((Reported intake - True intake) / True intake) × 100.

- Use the absolute percentage error in statistical models to assess the magnitude of error regardless of direction [7].

6. Statistical Analysis:

- Use linear regression models to assess the association between each cognitive task score (independent variable) and the absolute percentage error in energy intake (dependent variable).

- Adjust models for potential confounders such as age, sex, and education level.

- The proportion of variance (R²) explained by the cognitive scores indicates their relative importance in driving error [7].
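The following is a minimal standard-library Python sketch of this analysis. It computes the absolute percentage error for each participant, regresses it on Trail Making Test time and covariates by ordinary least squares, and reports R². The function names and example values are hypothetical. In practice a statistics package (e.g., statsmodels) would normally be used, because it also provides the standard errors and confidence intervals for B that this sketch omits. Categorical confounders such as sex must be coded numerically (e.g., 0/1).

```python
import statistics


def abs_percent_error(reported, true):
    """Absolute percentage error: |(reported - true) / true| * 100."""
    return abs((reported - true) / true) * 100


def ols_fit(predictors, outcome):
    """OLS via the normal equations (X'X) b = X'y, solved by Gauss-Jordan elimination.

    predictors: one list of predictor values per participant (no intercept column).
    Assumes predictors are not collinear. Returns (coefficients with the
    intercept first, R squared).
    """
    X = [[1.0] + list(row) for row in predictors]
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(x[i] * x[j] for x in X) for j in range(k)]
        + [sum(x[i] * y for x, y in zip(X, outcome))]
        for i in range(k)
    ]
    for col in range(k):
        # Partial pivoting for numerical stability.
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * p for a, p in zip(A[r], A[col])]
    beta = [A[i][k] / A[i][i] for i in range(k)]
    fitted = [sum(b * v for b, v in zip(beta, x)) for x in X]
    mean_y = statistics.mean(outcome)
    ss_res = sum((y - f) ** 2 for y, f in zip(outcome, fitted))
    ss_tot = sum((y - mean_y) ** 2 for y in outcome)
    return beta, 1 - ss_res / ss_tot


# Hypothetical participants: (reported kcal, true kcal, TMT s, age y, sex 0/1).
participants = [
    (2300, 2000, 35, 24, 0),
    (1650, 2100, 62, 45, 1),
    (1900, 1950, 30, 31, 1),
    (2600, 2200, 71, 52, 0),
    (1800, 2050, 48, 28, 1),
    (2150, 2000, 40, 38, 0),
]
apes = [abs_percent_error(rep, true) for rep, true, *_ in participants]
covariates = [[tmt, age, sex] for _, _, tmt, age, sex in participants]
beta, r2 = ols_fit(covariates, apes)
print(f"B(TMT) = {beta[1]:.3f} per second, R^2 = {r2:.2f}")
```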

Protocol 2: Validating a Novel Portion Size Estimation Aid

1. Objective: To evaluate the effectiveness of a new portion size estimation aid (e.g., a digital interface with interactive 3D food models) against a traditional method (e.g., printed food photographs).

2. Materials:

- Standardized meals of known weights.

- The novel portion size estimation aid (e.g., tablet app).

- The traditional portion size estimation aid (e.g., booklet of 2D images).

- Food scales.

3. Participant Population:

- Recruit participants representative of the target population for the main study.

4. Study Design:

- Randomized controlled trial. Participants are randomly assigned to use either the novel aid or the traditional aid.

5. Procedure:

- Participants consume a standardized meal where they serve themselves.

- Immediately after the meal, participants use their assigned aid to estimate the portion sizes of each food item they consumed.

- Researchers weigh all food remains to calculate the actual consumed portion size with high accuracy.

6. Data Analysis:

- Calculate the difference between estimated and actual portion sizes for each food item.

- Compare the mean absolute error and bias (systematic over- or under-estimation) between the two intervention groups using t-tests or non-parametric equivalents.
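The comparison can be sketched in standard-library Python as below. The sketch computes per-item errors, mean absolute error, and mean bias for each group, then Welch's t statistic on the absolute errors. The function names and data are hypothetical. `scipy.stats.ttest_ind(a, b, equal_var=False)` would return the same Welch statistic together with a p-value, and `scipy.stats.mannwhitneyu` provides a non-parametric alternative.

```python
import math
import statistics


def error_summary(estimated_g, actual_g):
    """Per-item errors (estimated - actual, g), mean absolute error, and mean bias."""
    errors = [e - a for e, a in zip(estimated_g, actual_g)]
    return {
        "errors": errors,
        "mae": statistics.mean(abs(err) for err in errors),
        "bias": statistics.mean(errors),  # > 0 overestimation, < 0 underestimation
    }


def welch_t(sample_a, sample_b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = statistics.variance(sample_a) / len(sample_a)
    vb = statistics.variance(sample_b) / len(sample_b)
    t = (statistics.mean(sample_a) - statistics.mean(sample_b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va**2 / (len(sample_a) - 1) + vb**2 / (len(sample_b) - 1))
    return t, df


# Hypothetical food items from the two randomized groups (grams).
novel = error_summary(estimated_g=[105, 48, 210], actual_g=[100, 50, 200])
traditional = error_summary(estimated_g=[140, 30, 250], actual_g=[110, 45, 190])
t, df = welch_t(
    [abs(err) for err in novel["errors"]],
    [abs(err) for err in traditional["errors"]],
)
print(novel["mae"], traditional["mae"], round(t, 2), round(df, 1))
```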

Quantitative Data on Cognitive Factors & Reporting Error

The following table summarizes key quantitative findings from a controlled feeding study that linked cognitive performance to dietary recall error [7].

Table 1: Association Between Cognitive Task Performance and Error in Self-Administered 24-Hour Recalls

| Cognitive Task | Cognitive Domain Measured | Dietary Assessment Tool | Association with Reporting Error (Absolute % Error in Energy) | Variance Explained (R²) |
|---|---|---|---|---|
| Trail Making Test (Longer time = poorer performance) | Visual Attention, Executive Function | ASA24 | B = 0.13 (95% CI: 0.04, 0.21) | 13.6% |
| Trail Making Test (Longer time = poorer performance) | Visual Attention, Executive Function | Intake24 | B = 0.10 (95% CI: 0.02, 0.19) | 15.8% |
| Wisconsin Card Sorting Test | Cognitive Flexibility | ASA24, Intake24 | No significant association | Not Significant |
| Visual Digit Span | Working Memory | ASA24, Intake24 | No significant association | Not Significant |
| Vividness of Visual Imagery | Visual Imagery Strength | ASA24, Intake24 | No significant association | Not Significant |

Note: The B coefficient represents the change in absolute percentage error (in percentage points) for each one-second increase in Trail Making Test completion time. These data were derived from a study with 139 participants [7].
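The following is a worked illustration of the coefficient. It assumes the association is linear over the observed range and uses a 30-second difference as an arbitrary example. Under those assumptions, the ASA24 estimate implies that a participant who took 30 seconds longer to complete the Trail Making Test would be expected to show roughly 3.9 percentage points more absolute error in reported energy intake:

$$
\Delta\,\text{APE} = B \times \Delta t_{\text{TMT}} = 0.13 \times 30 = 3.9 \text{ percentage points} \quad \left(95\%\ \text{CI: } 0.04 \times 30 = 1.2 \text{ to } 0.21 \times 30 = 6.3\right)
$$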

Experimental Workflow: Linking Cognition to Recall Accuracy

The diagram below outlines the logical flow and key components of a study designed to investigate how cognitive demands lead to reporting errors in dietary recall.

The Scientist's Toolkit

Table 2: Key Research Reagents and Cognitive Tasks for Investigating Cognitive Demands in Dietary Recall

| Item Name | Function / What It Measures | Application in Dietary Recall Research |
|---|---|---|
| Controlled Feeding Study Design | Provides the "gold standard" reference for true dietary intake against which self-reports are compared [7]. | Essential for quantifying the magnitude and direction of reporting error, allowing for direct validation of recall data. |
| Trail Making Test (TMT) | Assesses visual attention, processing speed, and executive function. Outcome: Time to completion [7]. | Identifies participants who may struggle with the complex, sequential navigation required in a 24HR, leading to greater error [7]. |
| Wisconsin Card Sorting Test (WCST) | Assesses cognitive flexibility and the ability to adapt to changing rules. Outcome: % perseverative errors [7]. | Measures the ability to switch between different food categories and meal occasions during the recall process. |
| Visual Digit Span Task | Assesses working memory capacity. Outcome: Maximum digit span recalled correctly [7]. | Gauges the ability to hold and manipulate food-related information (e.g., portion sizes, ingredients) in mind while formulating a response. |
| Vividness of Visual Imagery Questionnaire (VVIQ) | Self-report measure of the clarity and vividness of voluntary visual imagery [7]. | Evaluates the role of mentally "picturing" a past meal in accurately recalling and describing consumed foods. |
| Automated Multiple-Pass Method (AMPM) | A structured 24HR interview protocol with multiple passes/prompts to minimize memory lapses [5]. | The standard method used in many national surveys (e.g., NHANES) to reduce omissions and improve detail. Serves as a benchmark for testing new tools. |
| ASA24 (Automated Self-Administered 24HR) | A self-administered, web-based 24HR tool based on the AMPM [7] [5]. | Allows for high-throughput data collection. Useful for studying how cognitive factors impact performance in an unassisted, automated environment [7]. |

FAQs: Troubleshooting Portion Size Estimation

Q1: What is the "flat-slope phenomenon" in portion size estimation?

The flat-slope phenomenon is a well-documented pattern in dietary assessment where respondents tend to overestimate small portion sizes and underestimate large portion sizes [9]. This compression of reported values toward a central tendency distorts the true range of consumption and can attenuate observed diet-disease relationships in research [10].
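One way to make the flat-slope phenomenon concrete is to regress reported portion size on true portion size. A slope below 1 with a positive intercept means small portions are over-reported and large ones under-reported. The fitted line crosses the line of perfect agreement at intercept / (1 - slope). The following is a minimal Python sketch with hypothetical data; the function name is illustrative.

```python
import statistics


def calibration_line(true_g, reported_g):
    """Least-squares fit of reported = intercept + slope * true (grams)."""
    mean_t = statistics.mean(true_g)
    mean_r = statistics.mean(reported_g)
    sxy = sum((t - mean_t) * (r - mean_r) for t, r in zip(true_g, reported_g))
    sxx = sum((t - mean_t) ** 2 for t in true_g)
    slope = sxy / sxx
    return slope, mean_r - slope * mean_t


# Hypothetical portions: small ones over-reported, large ones under-reported.
true_g = [50, 100, 150, 200, 250]
reported_g = [70, 110, 150, 180, 210]
slope, intercept = calibration_line(true_g, reported_g)
crossover_g = intercept / (1 - slope)  # true size at which reporting is unbiased
print(f"slope = {slope:.2f}, intercept = {intercept:.1f} g, crossover = {crossover_g:.0f} g")
# slope = 0.70, intercept = 39.0 g, crossover = 130 g
```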

Q2: Why are amorphous foods particularly challenging to estimate?

Amorphous foods—items without a defined shape, such as mashed potatoes, rice, or casseroles—are consistently reported with less accuracy than other food types [11]. The primary challenge is the lack of a stable, recognizable unit or form. This makes it difficult for individuals to conceptualize the amount consumed and to map that mental picture accurately to a portion size aid, leading to greater measurement error [11] [12].

Q3: Does the type of portion size image affect estimation accuracy?

Research indicates that the number of images may be more critical than the angle of the image. Studies for tools like the ASA24 (Automated Self-Administered 24-hour recall) found that using eight images, as opposed to four, led to more accurate estimations [11]. Furthermore, participants showed a strong preference for seeing all portion options (simultaneous presentation) on one screen rather than having them appear sequentially [11].

Q4: How do systematic errors vary by food type?

The direction and magnitude of error are not uniform across all foods. The table below summarizes common error patterns by food category, as identified in controlled feeding studies [11] [12].

Table 1: Systematic Errors in Portion Size Estimation by Food Category

| Food Category | Examples | Common Error Pattern | Notes |
|---|---|---|---|
| Amorphous/Soft Foods | Mashed potatoes, scrambled eggs, salad | Overestimation [11] [12] | Among the most challenging for accurate estimation. |
| Small Pieces | Peas, corn, nuts | Overestimation [12] | - |
| Shaped Foods | Fish sticks, cookies | Overestimation [12] | - |
| Single-Unit Foods | Apple, slice of bread, banana | Underestimation [12] | - |
| Spreads | Butter, jam, cream cheese | High error rate, less accurate reporting [11] | Often consumed in small quantities, leading to high relative error. |

Experimental Protocols for Validation

Validating portion size estimation tools requires study designs that isolate and measure different cognitive processes. The following protocols are commonly used in the field.

Protocol 1: Evaluating Perception with Pre-Weighed Portions

This method directly tests a respondent's ability to match a real-life portion to a photograph.

- Objective: To evaluate the accuracy of a digital food atlas in terms of how well respondents perceive the amount of food displayed in a picture [9].

- Design:

- Pre-weighed, actual portions of food are presented to participants.

- For each food series, multiple quantities are prepared: one matching a reference photo (Q2), one slightly smaller (Q1), and one slightly larger (Q3) [9].

- Participants are shown the actual portion and the corresponding series of food photographs on a computer screen.

- They are then asked to select the photograph that best represents the portion in front of them.

- Key Metrics: The percentage of times the correct or adjacent image is selected; the systematic tendency to over- or under-estimate across different portion sizes [9].
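The key metrics above can be computed as in this Python sketch. It assumes each presented portion is coded by the index of the photo in the series that matches it (or is closest to it), and each response by the index of the photo chosen. The coding scheme and function name are illustrative assumptions. To see whether errors differ by size, the function can be run separately for the Q1, Q2, and Q3 portions.

```python
def perception_metrics(target_idx, chosen_idx):
    """Match rates and systematic bias for a photo-matching perception task.

    Indices are positions in the photo series (0 = smallest portion).
    """
    if len(target_idx) != len(chosen_idx) or not target_idx:
        raise ValueError("need two non-empty sequences of equal length")
    steps = [c - t for t, c in zip(target_idx, chosen_idx)]
    n = len(steps)
    return {
        "pct_correct": 100 * sum(s == 0 for s in steps) / n,
        "pct_correct_or_adjacent": 100 * sum(abs(s) <= 1 for s in steps) / n,
        # Positive: photos larger than the real portion were chosen (overestimation).
        "mean_step_bias": sum(steps) / n,
    }


print(perception_metrics([2, 2, 2, 4, 4], [2, 3, 1, 4, 6]))
# {'pct_correct': 40.0, 'pct_correct_or_adjacent': 80.0, 'mean_step_bias': 0.4}
```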

Protocol 2: Assessing Conceptualization and Memory with Observational Feeding

This more comprehensive protocol tests the entire reporting process, from memory to portion size selection.

- Objective: To assess the accuracy of portion-size estimates incorporating both conceptualization and memory, mimicking a real dietary recall scenario [11].

- Design:

- Day 1 (Feeding): Participants select and consume foods for meals (e.g., breakfast and lunch) in a buffet-style setting. The foods represent various categories (amorphous, pieces, spreads, etc.). Serving containers and plate waste are weighed unobtrusively to determine the exact amount consumed [11].

- Day 2 (Recall): The following day, participants complete an unannounced 24-hour dietary recall using a computer tool. They use digital images to report the portion sizes of the foods they consumed the previous day [11] [12].

- Key Metrics: The absolute difference between the actual weight consumed and the reported weight, analyzed by food category and participant characteristics [11] [12].
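A minimal Python sketch of the per-category analysis follows. It assumes each eaten food is recorded as a (category, actual grams, reported grams) tuple. The function name and data are hypothetical. The same grouping can be keyed on a participant characteristic (e.g., sex or age group) instead of food category.

```python
from collections import defaultdict
import statistics


def error_by_category(records):
    """Mean absolute and mean signed error (reported - actual, g) per food category.

    records: iterable of (category, actual_g, reported_g) tuples.
    """
    diffs = defaultdict(list)
    for category, actual_g, reported_g in records:
        diffs[category].append(reported_g - actual_g)
    return {
        cat: {
            "mean_abs_g": statistics.mean(abs(d) for d in ds),
            "mean_signed_g": statistics.mean(ds),
        }
        for cat, ds in diffs.items()
    }


records = [
    ("amorphous", 150, 200),
    ("amorphous", 100, 130),
    ("single-unit", 120, 100),
]
for category, stats in error_by_category(records).items():
    print(category, stats)
```

Reporting the signed mean alongside the absolute mean shows the direction of error (e.g., overestimation of amorphous foods) as well as its size.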

Research Reagent Solutions

The table below details key tools and methods used in the development and validation of portion size estimation aids.

Table 2: Essential Materials for Portion Size Estimation Research

| Item / Solution | Function in Research |
|---|---|
| Digital Food Photography Atlas | A standardized set of food portion photographs, developed using population-based consumption data (e.g., 5th to 95th percentiles), used as the primary visual aid during dietary recalls [9] [11]. |
| Pre-Weighed Food Portions | Serve as the "gold standard" for validating perception in controlled studies. Portions are carefully weighed and presented to participants to test their ability to match reality to a 2D image [9]. |
| Unobtrusive Digital Scales | Used in feeding studies to determine true intake by weighing serving containers before and after participants self-serve, and again to measure plate waste [11] [12]. |
| Web-Based 24-Hour Recall Tool (e.g., ASA24) | An automated, self-administered dietary recall system that guides participants through a multiple-pass interview and uses integrated digital food images for portion size estimation [11] [5]. |
| Density Factors | Used to apply the portion size data from a photographed food to a similar, non-photographed food by converting between volume and weight, thereby expanding the utility of a finite photo atlas [9]. |
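The density-factor conversion can be sketched in two steps. First, convert a photographed portion's weight to volume using the photographed food's density. Then convert that volume to the weight of the similar food using its own density. The densities and function name below are hypothetical values chosen for illustration, not published density factors.

```python
def transfer_portion_series(photo_weights_g, photo_density_g_per_ml, target_density_g_per_ml):
    """Re-express a photographed food's portion series as weights of a similar food.

    weight_target = (weight_photo / density_photo) * density_target
    """
    return [
        w / photo_density_g_per_ml * target_density_g_per_ml
        for w in photo_weights_g
    ]


# Hypothetical: a photo series for one cooked grain (0.8 g/mL) reused for a
# similar but lighter food (0.6 g/mL); both densities are made-up examples.
series_g = [100, 200, 300]
print([round(g) for g in transfer_portion_series(series_g, 0.8, 0.6)])  # [75, 150, 225]
```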

Visualization of Workflows

Portion Size Error Pathways

Portion Aid Validation Workflow

The Influence of Participant Demographics on Estimation Accuracy

Frequently Asked Questions

1. How does a participant's BMI influence their reporting accuracy? Research consistently shows that a higher BMI is correlated with a lower likelihood of providing accurate reports of energy intake. This is often due to a higher degree of under-reporting. One study found that for every unit increase in BMI, the odds of a participant providing a plausible intake record decreased by 19% [13].

2. Are there racial or ethnic differences in dietary reporting accuracy? Yes, significant differences exist. Studies have found that the agreement between different dietary assessment tools (like Food Frequency Questionnaires and 24-hour recalls) can vary considerably by race. For instance, the correlation between instruments was markedly lower for Black women (rho=0.23) compared to White women (rho=0.46), suggesting that standard tools may not perform equally well across all demographic groups [14].

3. Which food types are most often misreported, regardless of demographics? A systematic review identified that some food groups are consistently prone to specific errors [15]:

- Omissions: Vegetables (2–85% of the time) and condiments (1–80%) are frequently omitted entirely from reports.

- Portion Misestimation: Both under- and overestimation occur for most food types, but for items such as sweets and confectionery, portion size errors account for the vast majority of the total error.

4. Does the level of social desirability affect a participant's food diary? Yes. Participants with a greater need for social approval are less likely to provide plausible records of their food intake. One study reported that a higher score on the Social Desirability Scale was associated with 69% lower odds of having a plausible food record [13].
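The odds ratios in FAQs 1 and 4 are easier to communicate as percentage changes in odds. The following is a worked illustration. It assumes the underlying logistic model is linear in BMI, so that odds ratios multiply across units, and it uses a 5-unit BMI difference as an arbitrary example:

$$
\%\Delta_{\text{odds}} = (\mathrm{OR} - 1) \times 100
$$

$$
\text{BMI (per unit): } (0.81 - 1) \times 100 = -19\%, \qquad \text{BMI (5 units): } 0.81^{5} \approx 0.35 \;\Rightarrow\; \approx 65\% \text{ lower odds}
$$

$$
\text{Social desirability: } (0.31 - 1) \times 100 = -69\%
$$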

Troubleshooting Guides

Problem: Systematic under-reporting of energy intake in your study cohort.

- Potential Cause: The cohort has a high average BMI or contains individuals with high cognitive restraint or concern about social judgment [13].

- Solutions:

- Consider using image-based dietary records where portion size estimation is handled by trained analysts or AI, rather than relying on participant self-estimation [13].

- Use statistical adjustments to account for systematic bias related to BMI.

- Anonymize the data collection process as much as possible to reduce the effect of social desirability.

Problem: Low accuracy for specific food groups, leading to nutrient miscalculation.

- Potential Cause: Certain foods, like amorphous vegetables, small pieces, and spreads, are inherently harder for participants to conceptualize and estimate [11] [12] [15].

- Solutions:

- For amorphous foods (mashed potatoes, rice): Use portion size aids that show mounds or household measures, which can be as accurate as food photographs and are more cost-effective [11].

- For all problematic foods: Ensure your portion size tool shows 8 images rather than 4, as this has been shown to improve accuracy. Present the images simultaneously on one screen rather than sequentially [11] [16].

Problem: A large portion size estimation error across all food types.

- Potential Cause: The portion size aids (e.g., photographs) are not presented optimally, or the tool is not user-friendly [11] [17].

- Solutions:

- Optimize the visual presentation of portion sizes. For solid foods, a 45° angle is generally most accurate, while for beverages, a 70° angle is better. Combining multiple viewing angles can further improve accuracy [18].

- Validate your image-series with a perception study before full deployment to ensure participants can correctly match images to real food portions [16].

- For online studies, use simplified portion selection tasks with images on a slider or as multiple-choice options, which have shown good agreement with more complex laboratory tasks [17].

Problem: Low participant compliance or reactive reporting (changing diet because it's being measured).

- Potential Cause: The dietary recording burden is too high, or participants are reacting to the observation effect [13].

- Solutions:

- Utilize prospective, image-based methods like the mobile food record (mFR) that reduce memory burden and participant effort for portion size estimation [13].

- Be aware that reactivity is common; one study found participants decreased their reported energy intake by 17% per day over a 4-day recording period. A history of significant weight loss is a key correlate of this behavior [13].

- Keep recording periods as short as possible to minimize this effect.

Table 1: Impact of Demographic and Psychosocial Factors on Reporting Accuracy

| Factor | Impact on Accuracy | Key Statistic | Source |
|---|---|---|---|
| Body Mass Index (BMI) | Higher BMI associated with less plausible energy intake reports. | OR 0.81 (95% CI: 0.72, 0.92) per unit increase in BMI [13]. | [13] |
| Race | Self-administered FFQ had lower correlation with 24HR in Black vs. White older women. | Mean correlation (rho) was 0.46 for Whites vs. 0.23 for Blacks [14]. | [14] |
| Social Desirability | Greater need for social approval linked to implausible intake reporting. | OR 0.31 (95% CI: 0.10, 0.96) [13]. | [13] |
| Sex | Females may estimate portion sizes more accurately than males from images. | Significant difference (P = 0.019) in one validation study [16]. | [16] |

Table 2: Portion Size Estimation Accuracy by Food Category (from ASA24 Recalls)

| Food Category | Typical Estimation Trend | Examples of Misestimation | Source |
|---|---|---|---|
| Small Pieces & Shaped Foods | Overestimation | Candy, pasta, cookies [12]. | [12] |
| Amorphous/Soft Foods | Overestimation (especially with assisted recall) | Mashed potatoes, rice, oatmeal [12]. | [11] [12] |
| Single-Unit Foods | Underestimation | An apple, a slice of bread, a piece of chicken [12]. | [12] |
| Beverages | Lower omission rates, but portion size can be variable. | Orange juice, soft drinks [18] [15]. | [18] [15] |
| Vegetables & Condiments | High Omission Rates | Seasonings, sauces, leafy greens [15]. | [15] |

Experimental Protocols for Key Studies

Protocol 1: Validating Portion Size Image-Series (Perception Study)

This method tests the validity of image-based portion aids without relying on participant memory [16].

- Food Preparation: Prepare multiple servings of a test food, each pre-weighed to a specific gram weight.

- Study Setup: In a lab kitchen, present these pre-weighed food portions to participants one at a time.

- Task: Ask participants to observe the real food portion and then select the image from a series (e.g., 7 images labeled A-G) that they perceive to be the closest match.

- Data Collection: Record the participant's image choice for each presented food item.

- Analysis: Classify responses as Correct (exact match), Adjacent (one portion size off), or Misclassified. Calculate the mean weight discrepancy between the chosen image and the correct image.
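The analysis step of Protocol 1 can be sketched in Python as below. This is a minimal illustration under stated assumptions, not the cited study's code. It assumes each image is identified by its position in the series (A = 0, B = 1, and so on), that every image has a known gram weight, and the function names are hypothetical. The discrepancy is computed as a signed mean (chosen minus correct weight) so the direction of error is visible. An absolute mean would be the alternative if only magnitude matters.

```python
from statistics import mean


def classify_choice(chosen_idx, correct_idx):
    """Classify one response by its distance from the correct image."""
    distance = abs(chosen_idx - correct_idx)
    if distance == 0:
        return "Correct"
    if distance == 1:
        return "Adjacent"  # one portion size off, in either direction
    return "Misclassified"


def summarize(responses, image_weights_g):
    """Summarize (chosen_idx, correct_idx) pairs for one image series.

    Returns the share of responses in each class and the mean signed weight
    discrepancy in grams (chosen image weight minus correct image weight).
    """
    counts = {"Correct": 0, "Adjacent": 0, "Misclassified": 0}
    discrepancies = []
    for chosen, correct in responses:
        counts[classify_choice(chosen, correct)] += 1
        discrepancies.append(image_weights_g[chosen] - image_weights_g[correct])
    n = len(responses)
    shares = {label: count / n for label, count in counts.items()}
    return shares, mean(discrepancies)


# Hypothetical 7-image series (A-G) with known gram weights.
weights = [50, 100, 150, 200, 250, 300, 350]
shares, discrepancy_g = summarize([(3, 3), (4, 3), (0, 3), (2, 2)], weights)
```

In this example, half of the responses are exact matches, and the mean discrepancy is -25 g. That negative value comes from the single response that chose image A for a portion shown as image D.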

Protocol 2: Observational Feeding Study (Conceptualization & Memory)

This protocol assesses accuracy in a real-world recall scenario involving memory [11].

- Recruitment & Consent: Recruit a diverse sample of participants and obtain informed consent without revealing the true focus on portion sizes.

- Controlled Feeding: On day one, provide participants with buffet-style meals (e.g., breakfast and lunch). Unobtrusively weigh all serving containers before and after participants serve themselves to determine the exact amount taken.

- Plate Waste Weighing: Weigh any food left on plates after the meal to determine the exact amount consumed.

- Dietary Recall: On day two, ask participants to complete a dietary recall using a computer application. They will report the foods consumed and estimate portion sizes using the digital image aids being tested.

- Data Analysis: Calculate the absolute difference between the actual consumed weight and the reported portion size estimate for each food.
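The weighing arithmetic in Protocol 2 comes down to two subtractions. The amount taken is the drop in serving-container weight, and the amount consumed is the amount taken minus plate waste. The sketch below assumes all weights are in grams and that each record covers one food for one participant. The class and field names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class FoodObservation:
    """Weighed and reported data for one food eaten by one participant (grams)."""
    food: str
    container_before_g: float
    container_after_g: float
    plate_waste_g: float
    reported_g: float  # portion size estimated in the day-two recall

    @property
    def consumed_g(self) -> float:
        taken = self.container_before_g - self.container_after_g
        return taken - self.plate_waste_g

    @property
    def absolute_error_g(self) -> float:
        return abs(self.reported_g - self.consumed_g)


def mean_absolute_error_g(observations):
    """Average absolute difference between reported and consumed weight."""
    return mean(obs.absolute_error_g for obs in observations)
```

For example, `FoodObservation("rice", 1500.0, 1280.0, 20.0, 250.0)` describes a participant who took 220 g, consumed 200 g and reported 250 g, which is an absolute error of 50 g.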

Research Reagent Solutions

Table 3: Essential Tools for Portion Size Estimation Research

| Tool / Reagent | Function in Research | Example / Specification |
|---|---|---|
| Digital Food Scales | To obtain objective, gold-standard measurements of food weight served and wasted during controlled feeding studies. | UltraShip UL-35 scale (accurate to 2g) [11]. |
| Standardized Portion Image-Series | Digital aids to help participants conceptualize and report portion sizes in recalls [11] [16]. | Typically 7-8 images showing increasing portion sizes [11] [16]. |
| Fiducial Marker | An object of known size, shape, and color placed in food photos to provide a scale reference for automated portion size estimation or analyst review [13]. | A checkerboard card or a colored cube of known dimensions. |
| Multimodal LLM with RAG | An AI framework for automated food recognition and nutrient estimation from food images, grounded in authoritative nutrition databases to improve accuracy [4]. | DietAI24 framework using GPT Vision and FNDDS database [4]. |
| Food & Nutrient Database | A standardized database linking food items to their nutritional content, essential for converting reported food intake into nutrient data. | Food and Nutrient Database for Dietary Studies (FNDDS) [4]. |
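The fiducial-marker entry works by giving each photograph a known physical scale. Below is a minimal sketch of that conversion. It assumes a square marker whose side length is known in centimetres and has already been measured in pixels (marker detection is not shown). It also assumes that segmentation has already produced the food's area in pixels. The function names are hypothetical, and the sketch ignores perspective distortion. It is therefore only reasonable for near top-down photos where the food lies roughly in the marker's plane. Turning area into volume or weight would also need height and density information, which is not covered here.

```python
def cm_per_pixel(marker_side_cm, marker_side_px):
    """Physical length represented by one pixel, from a marker of known size."""
    if marker_side_px <= 0:
        raise ValueError("marker side in pixels must be positive")
    return marker_side_cm / marker_side_px


def region_area_cm2(region_px, scale_cm_per_px):
    """Convert a segmented region's pixel count to cm^2.

    The scale applies along each image axis, so area scales with its square.
    """
    return region_px * scale_cm_per_px ** 2


# A 5 cm marker spanning 200 px gives 0.025 cm per pixel, so a
# 40,000-pixel food region covers roughly 25 cm^2.
scale = cm_per_pixel(5.0, 200.0)
area = region_area_cm2(40_000, scale)
```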

Experimental Workflow Diagram

The diagram below illustrates the logical workflow and key factors influencing accuracy in a portion size estimation study, from participant recruitment to data analysis.

From Traditional Aids to AI: A Toolkit of Portion Size Estimation Methods

FAQs: Troubleshooting Common Portion Size Estimation Aid (PSEA) Experimental Challenges

1. Why do my participants consistently overestimate small portions and underestimate large ones, and how can I mitigate this? This is a well-documented phenomenon known as the flat-slope syndrome [1]. It is a common cognitive bias where participants struggle to accurately estimate portions at the extremes of the size spectrum.

- Solution: Implement training sessions before data collection. Use pre-weighed food samples representing small, medium, and large portions to calibrate participants' visual estimation skills. Furthermore, ensure the PSEA you select offers a wide range of sizes that cover the expected consumption amounts in your study population.
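You can check pilot data for the flat-slope pattern before and after training. One way is to group items by their true (weighed) portion size and compare the mean signed error in each group. This is a minimal sketch that assumes paired lists of weighed and reported grams. The tertile split and the function name are illustrative choices, not a standard definition.

```python
from statistics import mean


def error_by_size_tertile(actual_g, reported_g):
    """Mean signed error (reported - actual) for small, medium and large portions.

    Items are ranked by their weighed (actual) size and split into thirds.
    A flat slope shows up as a positive mean error in the small group and a
    negative mean error in the large group.
    """
    pairs = sorted(zip(actual_g, reported_g))
    n = len(pairs)
    if n < 3:
        raise ValueError("need at least three paired observations")
    cut1, cut2 = n // 3, 2 * n // 3
    groups = {
        "small": pairs[:cut1],
        "medium": pairs[cut1:cut2],
        "large": pairs[cut2:],
    }
    return {name: mean(r - a for a, r in grp) for name, grp in groups.items()}
```

If training works, the small-group and large-group means should move toward zero between the pre-training and post-training rounds.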

2. For which food types are traditional PSEAs most and least accurate? The accuracy of PSEAs is highly dependent on food form [1]. The table below summarizes typical performance across food categories.

Table 1: PSEA Accuracy by Food Type

| Food Category | Typical Estimation Accuracy | Common Challenges |
|---|---|---|
| Single-Unit Foods (e.g., bread slices, fruits) | Highest | Fewer challenges; easily conceptualized as discrete units. |
| Spreads (e.g., butter, jam) | High | Small portions are often estimated well, though precise amounts can be tricky. |
| Amorphous Foods (e.g., pasta, rice, scrambled eggs) | Lower | Lack of a defined shape leads to high variability in estimation. |
| Liquids (e.g., milk, juice) | Lower | Transparency and container type can significantly influence perception. |

3. How does memory decay affect the use of PSEAs in 24-hour recalls, and what interview techniques can help? Memory lapses are a major source of error in recall-based methods, leading to the omission of items (especially additions like condiments or ingredients in mixed dishes) and errors in detail [5]. The retention interval between consumption and recall is critical.

- Solution: Utilize a multiple-pass interviewing technique [5]. This method uses standardized probes and prompts to minimize omissions and standardize the level of detail. Techniques include:

- Quick List: The participant rapidly lists all foods and beverages consumed.

- Forgotten Foods Probe: Specifically asking about commonly missed items like fruits, vegetables, sweets, and snacks.

- Detail Pass: Collecting detailed information about each item, including the portion size using the PSEA.

- Final Review: A final opportunity to remember any additional items.

4. We are designing a new dietary assessment tool. Should we choose text-based descriptions (TB-PSE) or image-based aids (IB-PSE)? Validation studies directly comparing these methods suggest that text-based descriptions (TB-PSE) may yield more accurate results [1]. In one study, TB-PSE combined household measures with standard portion sizes. It placed a larger share of reported portion sizes within 10% and 25% of true intake than image-based assessment (IB-PSE) did [1].

- Recommendation: If high precision is critical, TB-PSE is preferable. However, ensure that all textual descriptions (e.g., "cup," "spoon") are clearly defined and relevant to your target population's cultural context to avoid ambiguity [19].

Technical Specifications & Validation Data

The following table summarizes key performance data from recent validation studies for different PSEA methods.

Table 2: Validation Metrics for Selected PSEA Methods

| PSEA Method | Study Design | Key Validation Metric | Result | Reference |
|---|---|---|---|---|
| 3D Cubes (for GDQS) | Comparison against Weighed Food Records (n=170) | Equivalence margin of 2.5 points on GDQS score | Equivalent (p=0.006) | [2] |
| Playdough (for GDQS) | Comparison against Weighed Food Records (n=170) | Equivalence margin of 2.5 points on GDQS score | Equivalent (p<0.001) | [2] |
| Text-Based (TB-PSE) | Comparison against true intake at lunch (n=40) | Median relative error vs. true intake | 0% error | [1] |
| Image-Based (IB-PSE) | Comparison against true intake at lunch (n=40) | Median relative error vs. true intake | 6% error | [1] |
| Image-Based (IB-PSE) | Comparison against true intake at lunch (n=40) | % of estimates within 10% of true intake | 13% | [1] |
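You can compute relative-error metrics like those above from paired reported and true intakes. This sketch makes two assumptions. First, relative error is taken as (reported - true) / true. Second, "within 10%" is taken to mean an absolute relative error of at most 0.10. The cited study's exact definitions may differ, and the function names are hypothetical. True intakes must be positive.

```python
from statistics import median


def relative_errors(reported, true):
    """Signed relative error for each paired estimate (true values must be > 0)."""
    return [(r - t) / t for r, t in zip(reported, true)]


def validation_metrics(reported, true, thresholds=(0.10, 0.25)):
    """Median relative error and the share of estimates within each threshold."""
    errors = relative_errors(reported, true)
    if not errors:
        raise ValueError("no paired estimates supplied")
    metrics = {"median_relative_error": median(errors)}
    for thr in thresholds:
        share = sum(abs(e) <= thr for e in errors) / len(errors)
        metrics[f"within_{round(thr * 100)}pct"] = share
    return metrics
```

For reported intakes of 100, 105, 120 and 150 g against a true 100 g each, the median relative error is 12.5%. Half of the estimates fall within 10%, and three quarters fall within 25%.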

Detailed Experimental Protocol: Validating a New PSEA Against Weighed Food Records

This protocol is adapted from a 2025 validation study for the GDQS app using cubes and playdough [2].

Objective: To assess whether a candidate PSEA provides equivalent diet quality data to the gold-standard Weighed Food Record (WFR) for the same 24-hour reference period.

Day 1: Training and Setup

- Participants attend an in-person training session in small groups.

- Trainers provide a calibrated digital dietary scale (accurate to 1 g), WFR data collection forms, and a detailed guide.

- Participants receive hands-on instruction on weighing foods, beverages, and individual ingredients in mixed dishes.

Day 2: Weighed Food Record (WFR) Execution

- Participants carry out a 24-hour WFR, weighing and recording all consumed items and any leftovers.

- Technical support is available via email or phone to resolve issues in real-time.

Day 3: PSEA Testing

- Participants return to the lab to submit their completed WFR.

- A face-to-face interview is conducted using the dietary assessment tool (e.g., the GDQS app) integrated with the candidate PSEA.

- The order of PSEA methods (e.g., cubes vs. playdough) should be randomized to control for order effects.

- Participants estimate their previous day's consumption using the PSEA.

- Feedback on the usability of the PSEA is collected.

Data Analysis:

- Equivalence Testing: Use the paired two one-sided tests (TOST) procedure to determine whether the diet metric (e.g., GDQS score) from the PSEA is equivalent to the WFR value within a pre-specified margin (e.g., ±2.5 points).

- Agreement Analysis: Calculate Kappa coefficients to assess agreement in classifying individuals into risk categories (e.g., poor diet quality) between the PSEA and WFR.
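Both analyses in the bullets above can run without a statistics package. This is an illustration, not the GDQS study's code, and it covers two pieces. The first is a paired TOST on per-participant differences (PSEA minus WFR). It uses the Student's t upper-tail probability, computed from the regularized incomplete beta function with the standard continued-fraction (Lentz) evaluation. The second is Cohen's kappa for two categorical classifications. Using Cohen's (unweighted) kappa is an assumption; a weighted kappa may suit ordered risk categories better. The function names are hypothetical, and a maintained library routine is preferable in production.

```python
import math
from collections import Counter
from statistics import mean, stdev


def _betacf(a, b, x, max_iter=300, eps=3e-14, tiny=1e-300):
    """Continued fraction for the incomplete beta function (modified Lentz)."""
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        even = m * (b - m) * x / ((qam + m2) * (a + m2))
        odd = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))
        for aa in (even, odd):
            d = 1.0 + aa * d
            d = 1.0 / (d if abs(d) > tiny else tiny)
            c = 1.0 + aa / c
            c = c if abs(c) > tiny else tiny
            delta = d * c
            h *= delta
        if abs(delta - 1.0) < eps:  # converged on the odd step
            break
    return h


def _betainc(a, b, x):
    """Regularized incomplete beta function I_x(a, b) for 0 <= x <= 1."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                     + a * math.log(x) + b * math.log1p(-x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _betacf(a, b, x) / a
    return 1.0 - front * _betacf(b, a, 1.0 - x) / b


def t_sf(t, df):
    """Upper-tail probability P(T > t) of Student's t with df degrees of freedom."""
    tail = 0.5 * _betainc(df / 2.0, 0.5, df / (df + t * t))
    return tail if t >= 0 else 1.0 - tail


def paired_tost(method, reference, margin, alpha=0.05):
    """Paired two one-sided tests for equivalence within +/- margin.

    Returns (mean difference, TOST p-value, equivalent?). Needs at least two
    pairs whose differences are not all identical (otherwise se is zero).
    """
    diffs = [m - r for m, r in zip(method, reference)]
    n = len(diffs)
    d_bar = mean(diffs)
    se = stdev(diffs) / math.sqrt(n)
    p_lower = t_sf((d_bar + margin) / se, n - 1)  # H0: mean difference <= -margin
    p_upper = t_sf((margin - d_bar) / se, n - 1)  # H0: mean difference >= +margin
    p_value = max(p_lower, p_upper)
    return d_bar, p_value, p_value < alpha


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: chance-corrected agreement of two classifications."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    return (observed - expected) / (1.0 - expected)
```

With a 2.5-point margin, equivalence is declared only when both one-sided p-values fall below alpha, which is the same as requiring the larger of the two to be below alpha.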

Experimental Workflow for PSEA Validation

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Essential Materials for PSEA Research

| Item / Reagent | Technical Specification | Primary Function in Experiment |
|---|---|---|
| Calibrated Digital Scale | Capacity: ~7 kg, Accuracy: 1 g (e.g., KD-7000) [2] | To obtain the gold-standard measurement of true food intake in validation studies. |
| 3D Printed Cubes (Pre-defined) | Set of 10 cubes of varying volumes, sizes based on food group gram cut-offs and density data [2]. | To standardize portion size estimation at the food group level in dietary assessment interviews. |
| Non-toxic Playdough | Standard modeling compound, various colors [2]. | A flexible, interactive PSEA allowing participants to mold the volume of consumed food items. |
| Food Image Atlas | Standardized images (e.g., ASA24 picture book), 3-8 portion size images per item with known gram weights [1]. | To serve as a visual PSEA; participants select the image that best matches their consumed portion. |
| Structured Data Collection Forms | Paper or digital forms for Weighed Food Records, including food and recipe forms [2]. | To systematically record detailed information on foods, ingredients, and weights during the gold-standard assessment. |

Accurate portion size estimation is fundamental to reliable dietary intake surveys, which in turn provide essential data for nutritional interventions, public health policies, and clinical research. Traditional methods like 24-hour recall and food diaries are often plagued by recall difficulties and underreporting, especially as portion sizes increase [18]. Image-based dietary assessment has emerged as a powerful alternative, simplifying the process and improving accuracy over manual record-keeping [20]. However, the validity of this method depends heavily on the quality and perspective of the photographs used. This technical support guide provides evidence-based protocols for optimizing photograph angles to maximize portion estimation accuracy for different food types, a critical consideration for researchers and professionals in nutrition and drug development.

Experimental Protocols: Establishing Optimal Angles

The following methodology is adapted from a validated study designed to evaluate the accuracy of food quantity estimation using multi-angle photographs [18] [21].

Study Design and Participant Recruitment

- Participants: The referenced study involved 82 healthy adults (41 males and 41 females) aged 20-50 years. Participants had no visual impairments and no history of conditions affecting appetite.

- Ethical Approval: The study was approved by an institutional review board, and all participants provided written informed consent.

- Food Selection: Six types of food were selected based on high consumption frequency in the target population: cooked rice, soup, grilled fish, seasoned vegetables, kimchi, and a beverage [18].

Meal Observation and Portion Selection Procedure

- Meal Setup: Experimental meals were arranged to simulate an actual meal setting. Three different meal types (A, B, and C) were prepared, each featuring a different combination of three portion sizes for the six food items, as detailed in Table 1.

- Observation: Participants observed a meal for 3 minutes.

- Recall Test: After a short distraction, participants were shown a series of photographs for each food item. Each series contained five images depicting different portion sizes, all taken from the same angle. Participants selected the photograph they believed matched the portion they had observed.

- Angle Validation: This selection process was repeated using image sets captured from different angles (0°, 45°, and 70° for solid foods; 45°, 60°, and 70° for beverages). Accuracy was calculated as the percentage of correct matches.
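You can tabulate the per-angle accuracy described above from individual trials. This sketch assumes each trial is recorded as (food, angle in degrees, chosen level, shown level), where the levels are positions 0-4 in the five-image series. That encoding and the function names are hypothetical. The source does not specify how the combined-angle accuracy was computed, so that step is not reproduced here.

```python
from collections import defaultdict


def accuracy_by_angle(trials):
    """Percent of correct matches for each (food, angle) combination."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for food, angle, chosen, shown in trials:
        totals[(food, angle)] += 1
        hits[(food, angle)] += int(chosen == shown)
    return {key: 100.0 * hits[key] / totals[key] for key in totals}


def best_single_angle(accuracy, food):
    """Angle with the highest accuracy for one food (first one wins on ties)."""
    per_angle = {angle: acc for (f, angle), acc in accuracy.items() if f == food}
    return max(per_angle, key=per_angle.get)
```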

Table 1: Example Experimental Meal Portion Sizes

| Food Item | Type A | Type B | Type C |
|---|---|---|---|
| Cooked Rice (mL) | 200 | 250 | 300 |
| Soup (mL) | 250 | 150 | 200 |
| Grilled Fish (mL) | 40 | 80 | 55 |
| Cooked Vegetable (mL) | 50 | 100 | 35 |
| Kimchi (g) | 60 | 25 | 40 |
| Beverage (mL) | 200 | 275 | 125 |

Photographic Setup

- Portion Size Levels: Five portion-size levels for each food were determined based on national consumption percentiles (e.g., 10th, 30th, 50th, 70th, and 90th) [18].
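You can derive five percentile-based levels from consumption data with Python's standard library (Python 3.8+). This is a simplified sketch. It treats the input as an unweighted sample, whereas national survey percentiles are usually computed with survey weights. It also uses the default "exclusive" interpolation method of `statistics.quantiles`. The function name is hypothetical.

```python
from statistics import quantiles


def portion_levels(consumption_g):
    """Portion sizes at the 10th, 30th, 50th, 70th and 90th percentiles.

    quantiles(..., n=10) returns the nine decile cut points (10th ... 90th);
    indices 0, 2, 4, 6 and 8 pick the five levels used for the photographs.
    """
    deciles = quantiles(consumption_g, n=10)  # default method="exclusive"
    return [deciles[i] for i in (0, 2, 4, 6, 8)]
```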

- Camera Angles: Photographs were taken from standardized angles. For solid foods, the angles were 0° (top-down), 45° (angled view), and 70° (side view). For beverages, the angles were 45°, 60°, and 70° [18] [21].

Results and Data Presentation: Optimizing Angles by Food Type

The accuracy of portion size estimation varied significantly depending on both the food type and the camera angle. The following table summarizes the key quantitative findings from the study, highlighting the most effective single angle and the benefit of using combined angles for each food category [18] [21].

Table 2: Food Portion Estimation Accuracy by Photographic Angle

| Food Type | Highest Accuracy Angle (Single) | Accuracy at Best Angle | Accuracy with Combined Angles | Notes |
|---|---|---|---|---|
| Cooked Rice | 45° | 74.4% | 85.4% | Significant improvement with combined angles (P < 0.001). |
| Soup | Varies (Lower overall) | - | - | Consistently low accuracy across all angles; high overestimation rates. |
| Grilled Fish | No significant difference | - | Slight improvement | Accuracy improved slightly when angles were combined. |
| Vegetables | Varies | - | 53.7% | Combined angles significantly improved accuracy (P < 0.05). |
| Kimchi | 45° | 52.4% | - | 45° provided the most accurate single view. |
| Beverages | 70° | 73.2% | - | The near side-on 70° angle was most effective for beverages. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why is a 45-degree angle generally recommended for solid foods like rice and kimchi? A1: A 45-degree angle corresponds to the average visual perspective of a person seated at a table looking down at their food [18]. This familiar vantage point provides a more comprehensive view of the food's volume and surface area compared to a top-down (0°) view, which can obscure depth, or a side (70°) view, which may not fully capture the surface area.

Q2: For liquid items like soup and beverages, why is a 70-degree (near side-on) angle more effective? A2: A 70-degree angle offers a better line of sight into the bowl or glass. This lets the viewer see the meniscus (the curved surface of the liquid) and judge the fill level more accurately [18] [21]. Top-down angles are less effective for liquids because they show only the surface and provide no depth information. In the referenced study, however, this advantage held mainly for beverages; soup accuracy remained low at every angle tested.

Q3: The data shows low accuracy for soup estimation. How can this be improved in a research setting? A3: Soup presents a consistent challenge, likely due to its heterogeneous composition and the difficulty in judging volume in a bowl. To mitigate this, researchers should:

- Combine Multiple Angles: Use a protocol that includes both 45° and 70° images.

- Use a Standardized Vessel: Always serve soup in a bowl of known, standardized size and shape.

- Consider Weight: If feasible, supplement photographic data with weighed food records for the most challenging items [18].

Q4: What are the key technical settings to avoid common photography problems that could compromise data quality? A4: To ensure consistent, analyzable images:

- Avoid Blur: Use a tripod and a shutter speed fast enough to prevent motion blur (e.g., 1/60 s or faster) [22] [23].

- Ensure Proper Exposure: Check that images are neither overexposed (too bright, losing highlight detail) nor underexposed (too dark, losing shadow detail). Use spot metering for accurate results [22].

- Set Correct White Balance: Avoid unnatural color casts by setting the white balance manually or using a custom setting based on the lighting conditions, rather than relying solely on Auto White Balance [23].

- Use High Resolution: Always shoot at the highest resolution possible to allow for detailed analysis and cropping without quality loss [22].

Workflow Visualization

The following diagram illustrates the optimal workflow for capturing food images for portion size estimation, integrating the findings on camera angles.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for Image-Based Dietary Assessment

| Item | Function in Research |
|---|---|
| Standardized Tableware | Bowls, plates, and glasses of known dimensions are critical for controlling variables that affect volume perception. |
| Tripod | Ensures camera stability, eliminates blur, and allows for precise, repeatable angle positioning (e.g., 45°, 70°) across all shots [22]. |
| Color Calibration Card | Used to set custom white balance, ensuring accurate and consistent color reproduction across different lighting conditions [23]. |
| Digital Camera / Smartphone | The primary data capture tool. Must be capable of capturing high-resolution images. |
| Photographic Portion-Size Estimation Aids (PSEA) | A validated library of images showing each food type at multiple portion sizes, used as a reference during participant recall [18] [24]. |
| Lighting Equipment | Consistent, neutral artificial lighting (e.g., softboxes) minimizes shadows and color casts, creating uniform image conditions. |

Frequently Asked Questions (FAQs) for Researchers

This section addresses common operational and methodological questions for researchers using automated dietary recall tools.

| Category | FAQ | Answer |
|---|---|---|
| Study Design & Setup | What is the recommended sample size and concurrent user capacity? | There is no limit on total respondents for a single study, and the system supports up to 800 concurrent users. For large studies, phased scheduling is recommended [25]. |
| | Can the tool be used offline or in interviewer-administered mode? | No offline capability; an internet connection is required. The tool can be interviewer-administered for low-literacy populations, though self-administered use is ideal [25]. |
| | How can I test the system before launching my study? | Use the public ASA24 Respondent Demonstration version, or create a dedicated test study with test accounts via the researcher website [25]. |
| Respondent Management | What is the average completion time for a 24-hour recall? | Average completion time is 24 minutes; the first recall typically takes 2-3 minutes longer [25]. |
| | Do respondents require training to use the tool? | No formal training is required. Instructional videos and guides are available for respondent support [25] [26]. |
| | What should I do if a respondent forgets their password? | Researchers manage accounts and must reset passwords via the ASA24 researcher website [25]. |
| Data & Output | How does the system handle sodium/salt intake estimation? | Sodium estimates are considered valid; they assume salt is added during preparation. Most dietary sodium comes from processed foods [25]. |
| | What feedback do respondents receive? | Respondents can receive a Respondent Nutrition Report comparing their intake with dietary guidelines, either immediately or via the researcher [25]. |

Troubleshooting Known Issues & Data Cleaning Guides

This section details specific known issues within ASA24 and provides methodologies for identifying and correcting associated data errors.

Troubleshooting Common Data Issues

| Issue Name | Affected Tool Version | Short Description | Suggested Researcher Action |
|---|---|---|---|
| Errant Supplement Nutrient Value [27] | ASA24-2014 | For supplement "Benefiber 100% Natural Chewable," sodium value is 1000 times too high. | (1) In the INS file, find records with SupplCode=1000616400. (2) Divide the SODI (sodium) field value by 1000. (3) Recalculate TS and TNS file totals for affected users/dates. |
| Incorrect Fruit Portion [27] | ASA24-2014 | "Raisins" reported with "More than 1 fruit" had portion calculated incorrectly. | (1) In the MS file, find FoodListTerm=Raisins and FruitPortionWhole="More than 1 fruit". (2) In the INF file, for affected records, multiply HowMany, FoodAmt, and all nutrients by 0.0167. |
| Incorrect Spread Calculation [27] | ASA24-2014 | Relish/hot sauce amounts on hamburgers were incorrectly calculated. | (1) In the MS file, find records with SandSpreadKind="Relish" or "Hot Sauce" on a burger. (2) In the INF file, multiply corresponding nutrient values by 0.0625 (relish) or 0.0208 (hot sauce). |
| Ambiguous Bread Reporting [27] | All Versions | Respondents may report total bread slices for multiple sandwiches instead of per sandwich. | Manually review the MS (Multi-Summary) file for this type of logical error and apply corrections outside the system [27]. |

Experimental Protocol for Data Cleaning and Validation

This protocol outlines the methodology for identifying and correcting the "Incorrect Fruit Portion" error related to raisins, serving as a model for handling similar data issues [27].

Objective

To systematically identify and correct erroneous gram weight and nutrient values for "Raisins" reported with the "More than 1 fruit" option in ASA24-2014 data.

Materials and Reagents

- ASA24 Analysis Files: Specifically the MS (Multi-Summary) file and the INF/INFMYPHEI (Individual Food/MyPyramid Equivalents) file.

- Data Processing Software: Statistical software (e.g., R, SAS, Stata, Python with pandas) capable of merging, filtering, and performing mathematical operations on datasets.

- Correction Multiplier Table:

| FoodListTerm | FruitPortionWhole | Foodcode | Multiplier |
|---|---|---|---|
| Raisins | More than 1 fruit | 62125100 | 0.0167 |

Step-by-Step Methodology

Step 1: Case Identification in MS File

- Load the MS file into your analytical environment.

- Filter records where FoodListTerm is "Raisins" and FruitPortionWhole is "More than 1 fruit".

- From these records, note the Username, ReportingDate, FoodNum, and the value in SpinDial (the number of raisins reported).

Step 2: Record Location in INF/INFMYPHEI File

- Load the INF/INFMYPHEI file.

- Merge or filter this file using the Username, ReportingDate, and FoodNum identified in Step 1.

- Confirm the affected record by checking that the HowMany value in the INF file matches the SpinDial value from the MS file.

Step 3: Application of Data Correction

- For the affected records identified in Step 2, multiply the values in the following fields by the multiplier (0.0167): HowMany, FoodAmt, and all nutrient and component value columns (e.g., energy, carbohydrates, vitamins).

- Replace the original values in the INF/INFMYPHEI file with these newly calculated values.

Step 4: Recalculation of Daily Totals

- Update TN/TNMYPHEI File: For each affected Username and ReportingDate, sum the nutrient/component values of all INF/INFMYPHEI records for that day, including the corrected ones. Replace the original daily totals in the TN/TNMYPHEI file with these sums.

- Update TNS File: Where applicable, recalculate total nutrient intake including supplements. Sum the values in the corrected TN file and the TS (Total from Supplements) file for each affected Username and ReportingDate.
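The identification, record-location, correction and daily-total steps above can be sketched in plain Python. This is a hypothetical illustration, not NCI code. Rows are represented as dictionaries keyed by the ASA24 column names used in this protocol. Only two nutrient columns are carried, and the names KCAL and CARB are placeholders (a real INF file has many more). The TNS update is omitted. The same logic maps directly onto a data-frame merge in R, SAS, Stata or pandas.

```python
from collections import defaultdict

MULTIPLIER = 0.0167  # from the correction multiplier table (Foodcode 62125100)
KEY_FIELDS = ("Username", "ReportingDate", "FoodNum")
NUTRIENT_FIELDS = ("KCAL", "CARB")  # placeholder subset of nutrient columns


def find_affected_keys(ms_rows):
    """Identify MS records for Raisins + 'More than 1 fruit'; map key -> SpinDial."""
    return {
        tuple(row[k] for k in KEY_FIELDS): row["SpinDial"]
        for row in ms_rows
        if row["FoodListTerm"] == "Raisins"
        and row["FruitPortionWhole"] == "More than 1 fruit"
    }


def correct_inf(inf_rows, affected):
    """Locate matching INF records and scale HowMany, FoodAmt and nutrients."""
    corrected = []
    for row in inf_rows:
        row = dict(row)  # copy so the original data stay untouched
        key = tuple(row[k] for k in KEY_FIELDS)
        # Confirm the match: HowMany must equal the SpinDial count from MS.
        if key in affected and row["HowMany"] == affected[key]:
            for field in ("HowMany", "FoodAmt", *NUTRIENT_FIELDS):
                row[field] = row[field] * MULTIPLIER
        corrected.append(row)
    return corrected


def daily_totals(inf_rows):
    """Rebuild TN-style totals: sum every food per Username and ReportingDate."""
    totals = defaultdict(lambda: dict.fromkeys(NUTRIENT_FIELDS, 0.0))
    for row in inf_rows:
        day = totals[(row["Username"], row["ReportingDate"])]
        for field in NUTRIENT_FIELDS:
            day[field] += row[field]
    return dict(totals)
```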

The Scientist's Toolkit: Research Reagent Solutions

Essential digital materials and their functions for conducting research with automated 24-hour recall tools.

| Item Name | Category | Function in Research |
|---|---|---|
| ASA24 Researcher Website | Study Management Platform | Web portal for creating studies, managing respondent accounts, tracking completion progress, and requesting dietary intake analyses [25]. |
| Food & Nutrient Database for Dietary Studies (FNDDS) | Nutrient Database | Underlying USDA database providing the food codes, gram weights, and nutrient values used to auto-code dietary intake in ASA24 [28]. |
| ASA24 Interview Database | Instrument Database | Contains the logic of the dietary recall, including over 1,100 food probes and millions of possible food pathways from food selection to final code assignment [28]. |
| Portion Size Image Database | Estimation Aid | A library of over 10,000 food images depicting up to 8 portion sizes, sourced from Baylor College of Medicine, to improve the accuracy of self-reported portion sizes [27] [28]. |
| MyPyramid Equivalents Database (MPED) | Food Group Analysis | Allows researchers to convert FNDDS food codes into food group equivalents (e.g., cup equivalents of fruits) for analyzing diet quality against guidelines [28]. |
| ASA24 Sleep Module | Supplementary Module | An optional set of questions activated by the researcher to collect data on sleep timing, quantity, and quality for analysis alongside dietary intake data [25]. |

Quantitative System Performance Data

Key quantitative metrics for planning and evaluating studies using automated dietary recall tools.

| Metric | Value | Context / Note |
|---|---|---|
| Average Recall Completion Time | 24 minutes | Independent of enabled modules; based on ASA24-2016 & 2018 data [25]. |
| Typical Completion Time Range | 17 - 34 minutes | For most respondents [25]. |
| Concurrent User Capacity | 800 respondents | Maximum number of simultaneous users entering data [25]. |
| Unique Detailed Probe Questions | > 2,824 questions | In the respondent system [25]. |
| Unique Food Pathways | > 13 million | Possible sequences of questions and answers [25]. |
| Food Portion Photographs | ~10,000 images | Up to 8 portion sizes per food item [28]. |

Workflow for Portion Size Estimation

The diagram below illustrates the core logical pathway a respondent follows when estimating a portion size for a single food item within tools like ASA24 and Intake24.

Welcome to the MLLM & RAG Technical Support Center

This resource provides troubleshooting guides and FAQs for researchers developing AI systems to improve portion size estimation accuracy in dietary recalls. The content addresses specific technical issues encountered when implementing Multimodal Large Language Models (MLLMs) with Retrieval-Augmented Generation (RAG) for nutritional analysis.

Frequently Asked Questions & Troubleshooting Guides

Core Concept FAQs

Q1: Why should I use RAG with MLLMs for portion size estimation instead of a standalone MLLM?

Standalone MLLMs often generate unreliable nutrient values because they lack access to authoritative nutrition databases during inference. This "hallucination problem" is critical in dietary assessment where incorrect values could compromise health research. RAG addresses this by augmenting MLLMs with external knowledge bases, transforming unreliable nutrient generation into structured retrieval from validated sources like the Food and Nutrient Database for Dietary Studies (FNDDS) [4].

Q2: What are the main architectural approaches for building a multimodal RAG pipeline?

There are three primary approaches [29]:

- Embed all modalities into the same vector space: Use models like CLIP to encode both text and images in the same vector space

- Ground all modalities into one primary modality: Process images by creating text descriptions and metadata during preprocessing

- Separate stores for different modalities: Maintain separate vector stores for each modality and use a multimodal re-ranker to identify the most relevant chunks
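The first approach (a shared vector space) reduces retrieval to nearest-neighbour search over embeddings. The sketch below uses tiny hand-made vectors as stand-ins for the output of a multimodal encoder such as CLIP. The food descriptions, vectors and function names are hypothetical. A real system would also use a vector index rather than a linear scan.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=2):
    """Rank stored entries by similarity to the query embedding."""
    scored = [(desc, cosine(query_vec, vec)) for desc, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical 3-d "embeddings"; a real encoder produces hundreds of dimensions,
# and image and text vectors would come from the same multimodal model.
INDEX = {
    "Rice, white, cooked": [0.9, 0.1, 0.0],
    "Oatmeal, cooked": [0.6, 0.6, 0.1],
    "Orange juice": [0.0, 0.1, 0.95],
}
```

Here an image embedding close to `[0.85, 0.15, 0.0]` retrieves the cooked-rice entry first. The retrieved database text is what would then be passed to the MLLM as grounding context.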

Q3: How does the DietAI24 framework specifically improve portion size estimation accuracy?

DietAI24 implements a RAG framework that reduces mean absolute error (MAE) for nutrition content estimation by 63% compared to existing approaches. It enables zero-shot estimation of 65 distinct nutrients and food components without requiring food-specific training data by grounding MLLM responses in the authoritative FNDDS database [4].

Implementation & Troubleshooting

Q4: My system consistently underestimates larger portion sizes. How can I address this systematic bias?

This is a documented challenge. In one evaluation, all models tested exhibited systematic underestimation that increased with portion size, with bias slopes ranging from -0.23 to -0.50 [30]. To mitigate this:

- Implement reference-based scaling: Include standardized reference objects (cutlery, plates of known dimensions) in all images and explicitly prompt models to use these references [30]

- Calibrate for portion ranges: Develop size-specific correction factors based on validation studies

- Use multiclass classification: Frame portion size estimation as multiclass selection from standardized descriptors rather than regression to match nutritional database structures [4]

Q5: What are the optimal prompting strategies for food recognition and portion size estimation?

Effective prompts should [30] [4]:

- Explicitly instruct the model to use visual references: "estimate volume based on size in relation to other objects in the image"

- Request structured output: "Assemble findings in a table with weight, energy, carbohydrates, fat and protein as columns"

- Chain specialized prompts: Use separate, optimized prompts for food recognition vs. portion estimation tasks
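A minimal sketch of chaining a recognition prompt and a portion prompt, then parsing the requested table. It makes no model API calls; `build_recognition_prompt`, `build_portion_prompt`, and `parse_nutrient_table` are hypothetical helpers, the prompt wording is adapted from the quoted instructions above (with an added food column), and the model reply is made up.

```python
"""Chained recognition and portion-estimation prompts (illustrative sketch, no API calls)."""


def build_recognition_prompt() -> str:
    # Step 1: ask only for food identities, not quantities.
    return (
        "List every distinct food item visible in the image, one per line. "
        "Do not estimate amounts."
    )


def build_portion_prompt(foods: list[str]) -> str:
    # Step 2: estimate portions for the foods found in step 1,
    # explicitly anchored to the reference objects in the image.
    items = "\n".join(f"- {food}" for food in foods)
    return (
        "For each food below, estimate volume based on size in relation to other "
        "objects in the image, such as the plate, fork, and knife.\n"
        f"{items}\n"
        "Assemble findings in a table with food, weight, energy, carbohydrates, "
        "fat and protein as columns."
    )


def _cells(line: str) -> list[str]:
    # Split one pipe-delimited row into trimmed cell strings.
    return [cell.strip() for cell in line.strip().strip("|").split("|")]


def parse_nutrient_table(reply: str) -> list[dict[str, str]]:
    """Parse a pipe-delimited table reply into one dict per data row."""
    rows = [line for line in reply.splitlines() if line.strip().startswith("|")]
    if len(rows) < 2:
        return []
    header = _cells(rows[0])
    records = []
    for line in rows[1:]:
        cells = _cells(line)
        if all(set(cell) <= set("-: ") for cell in cells):
            continue  # skip the |---|---| separator row
        records.append(dict(zip(header, cells)))
    return records


# A made-up model reply in the requested format.
reply = """
| food | weight | energy | carbohydrates | fat | protein |
|---|---|---|---|---|---|
| rice | 150 g | 195 kcal | 42 g | 0.4 g | 4 g |
"""
records = parse_nutrient_table(reply)
```

Keeping the two steps separate lets each prompt be tuned and validated on its own, and the parsed records can be checked for missing columns before any values are used.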

Q6: My retrieval system returns nutritionally similar but visually different foods. How can I improve relevance?

This indicates a modality alignment issue. Solutions include [29]:

- Implement hybrid retrieval: Combine dense vectors for semantic recall with sparse/keyword fallback for exact terms

- Add re-ranking: Implement a dedicated multimodal re-ranker to re-sort initial results

- Metadata filtering: Filter by food categories, preparation methods, and other attributes at query time

- Query expansion: Decompose complex queries into sub-queries for different food components
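Hybrid retrieval with a metadata filter can be sketched without external libraries, assuming each food description already has a dense vector. The code fuses a cosine-similarity ranking with a keyword-overlap ranking using reciprocal rank fusion (RRF). RRF, the constant k = 60, the toy three-dimensional vectors, and the record fields are illustrative choices, not details from the cited sources; a production system would use a proper sparse scorer such as BM25.

```python
"""Hybrid dense + keyword retrieval with metadata filtering (illustrative sketch)."""
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class FoodRecord:
    code: str            # hypothetical identifier, not a real FNDDS code
    description: str
    category: str        # metadata used for query-time filtering
    vector: list[float]  # precomputed dense embedding (toy values)


def cosine(u: list[float], v: list[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> int:
    # Crude sparse signal: count of shared lowercase tokens (a stand-in for BM25).
    return len(set(query.lower().split()) & set(text.lower().split()))


def hybrid_search(query: str, query_vec: list[float], records: list[FoodRecord],
                  category: Optional[str] = None, k: int = 60,
                  top_n: int = 3) -> list[FoodRecord]:
    # 1. Metadata filter applied before ranking.
    pool = [r for r in records if category is None or r.category == category]
    # 2. Two independent rankings: dense (semantic) and sparse (exact terms).
    dense = sorted(pool, key=lambda r: cosine(query_vec, r.vector), reverse=True)
    sparse = sorted(pool, key=lambda r: keyword_score(query, r.description), reverse=True)
    # 3. Reciprocal rank fusion: each ranking contributes 1 / (k + rank).
    fused: dict[str, float] = {}
    for ranking in (dense, sparse):
        for rank, rec in enumerate(ranking, start=1):
            fused[rec.code] = fused.get(rec.code, 0.0) + 1.0 / (k + rank)
    by_code = {r.code: r for r in pool}
    best = sorted(fused, key=fused.get, reverse=True)[:top_n]
    return [by_code[c] for c in best]


records = [
    FoodRecord("F1", "rice white cooked", "grain", [0.9, 0.1, 0.0]),
    FoodRecord("F2", "rice brown cooked", "grain", [0.8, 0.2, 0.1]),
    FoodRecord("F3", "mashed potato", "vegetable", [0.85, 0.1, 0.05]),
    FoodRecord("F4", "pasta cooked", "grain", [0.7, 0.3, 0.0]),
]
results = hybrid_search("white rice", [0.88, 0.12, 0.0], records, category="grain")
```

Here the mashed potato record has a vector close to rice but is excluded by the category filter; the fused list would then be passed to a multimodal re-ranker for final ordering.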

Performance Data & Validation

Quantitative Performance Comparison of MLLMs for Dietary Assessment

| Model | Weight Estimation MAPE | Energy Estimation MAPE | Correlation with Reference (Weight) | Systematic Bias Trend |
|---|---|---|---|---|
| ChatGPT-4o | 36.3% | 35.8% | 0.65-0.81 | Underestimation increases with portion size |
| Claude 3.5 Sonnet | 37.3% | 35.8% | 0.65-0.81 | Underestimation increases with portion size |
| Gemini 1.5 Pro | 64.2%-109.9% | 64.2%-109.9% | 0.58-0.73 | Underestimation increases with portion size |
| DietAI24 (RAG Framework) | 63% reduction in MAE vs. baselines | 63% reduction in MAE vs. baselines | Significant improvement | Not reported |

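Data synthesized from multiple validation studies [30] [4]. MAPE = Mean Absolute Percentage Error. The DietAI24 row reports a relative reduction in mean absolute error (MAE) against baseline approaches, not a MAPE value.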

DietAI24 Framework Performance on Nutrient Estimation

| Nutrient Category | Number of Components | Performance Improvement | Key Application |
|---|---|---|---|
| Macronutrients | 5-7 components | 63% MAE reduction | Basic nutrition assessment |
| Micronutrients | 40+ components | Comprehensive profiling enabled | Clinical research, deficiency studies |
| Food Components | 15+ components | Zero-shot estimation | Dietary pattern analysis |
| Total Coverage | 65 distinct nutrients/components | Far exceeds standard solutions | Epidemiological studies |

Experimental Protocols

Standardized Food Photography Protocol for Validation Studies

Purpose: Ensure consistent, comparable image data for evaluating portion size estimation algorithms [30].

Materials:

- Calibrated digital scale

- Standardized tableware (white porcelain plate, 24.3 cm diameter)

- Reference objects (19 cm fork, 20.5 cm knife)

- Neutral background (beige linen tablecloth)

- Smartphone with dual camera system (e.g., iPhone 13)

Procedure:

- Prepare food items according to standardized recipes

- Weigh each component using the calibrated digital scale

- Arrange the components in distinct sections of the plate

- Position vegetables closest to the camera for optimal visibility

- Place the reference cutlery 1.5 cm from the plate edge

- Capture each image at a 42° angle, with the camera positioned 20.2 cm above and 20 cm from the plate edge

- Photograph small (50%), medium (100%), and large (150%) portions of the starchy components

- Use fresh portions for each photograph rather than reusing items
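The three starchy portion sizes can be planned before a session. This sketch computes target weights for the small, medium, and large portions from a medium (100%) reference weight and flags weighed portions that miss their target. The 180 g medium portion and the ±5% tolerance are hypothetical values, not part of the cited protocol.

```python
"""Target weights for the 50% / 100% / 150% portion series (illustrative sketch)."""

PORTION_LEVELS = {"small": 0.50, "medium": 1.00, "large": 1.50}


def target_weights(medium_g: float) -> dict[str, float]:
    """Target weight per portion level, scaled from the medium (100%) portion."""
    return {level: round(medium_g * factor, 1) for level, factor in PORTION_LEVELS.items()}


def within_tolerance(weighed_g: float, target_g: float, tol: float = 0.05) -> bool:
    """True if the weighed portion is within +/- tol (as a fraction) of its target."""
    return abs(weighed_g - target_g) <= tol * target_g


targets = target_weights(180.0)  # hypothetical medium portion of cooked rice
weighed = {"small": 92.0, "medium": 181.5, "large": 255.0}  # made-up scale readings
checks = {level: within_tolerance(w, targets[level]) for level, w in weighed.items()}
```

A failed check (here the large portion) signals that the portion should be adjusted and re-weighed before it is photographed.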

DietAI24 Framework Implementation Protocol

Phase 1: Database Indexing [4]

- Obtain an authoritative nutritional database (FNDDS, 5,624 food items)

- Convert the food descriptions into embeddings using text-embedding-3-large

- Store the embeddings in a vector database for efficient similarity-based retrieval

Phase 2: Retrieval-Augmented Generation

- Use an MLLM (GPT-4V) for initial food recognition from the image

- Generate a retrieval query from the visual analysis

- Retrieve the relevant food descriptions from the vector database

- Augment the MLLM prompt with the retrieved nutritional information

- Generate final nutrient estimates grounded in the authoritative database
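The two phases can be outlined as a small, self-contained program. This is not the DietAI24 implementation: the bag-of-words `embed` function stands in for text-embedding-3-large, a Python list stands in for the vector database, `recognize_foods` is a hypothetical stub for the GPT-4V recognition call, and the food codes and energy values are illustrative placeholders rather than FNDDS data.

```python
"""Two-phase RAG outline for nutrient estimation (illustrative sketch, no API calls)."""
import math
from collections import Counter

# Phase 1: index food descriptions (placeholder records, not FNDDS data).
FOOD_DB = [
    {"code": "R1", "description": "rice white cooked", "kcal_per_100g": 130},
    {"code": "R2", "description": "chicken breast grilled", "kcal_per_100g": 165},
    {"code": "R3", "description": "broccoli steamed", "kcal_per_100g": 35},
]


def embed(text: str) -> Counter:
    # Stand-in for a real embedding model: bag-of-words token counts.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


INDEX = [(embed(rec["description"]), rec) for rec in FOOD_DB]


# Phase 2: retrieval-augmented generation.
def recognize_foods(image_path: str) -> list[str]:
    # Hypothetical stub: a real system would send the image to the MLLM here.
    return ["grilled chicken breast", "white rice"]


def retrieve(query: str, top_k: int = 1) -> list[dict]:
    q = embed(query)
    ranked = sorted(INDEX, key=lambda pair: cosine(q, pair[0]), reverse=True)
    return [rec for _, rec in ranked[:top_k]]


def build_augmented_prompt(image_path: str) -> str:
    # Ground each recognized food in its best-matching database entry.
    lines = []
    for food in recognize_foods(image_path):
        for rec in retrieve(food):
            lines.append(f"{food} -> {rec['code']} {rec['description']} "
                         f"({rec['kcal_per_100g']} kcal/100 g)")
    context = "\n".join(lines)
    return (
        "Use only the database entries below for nutrient values.\n"
        f"{context}\n"
        "Estimate the portion of each food and report its energy."
    )


prompt = build_augmented_prompt("meal.jpg")
```

The design point the sketch illustrates is that nutrient values enter the final prompt from the indexed database, so the model is asked to estimate portions rather than to recall composition data.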

Validation: Compare estimates against reference values from direct weighing and nutritional database analysis using Mean Absolute Percentage Error (MAPE) and correlation coefficients [30].
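The validation metrics can be computed in plain Python. In this sketch, MAPE averages |estimate − reference| / reference over items and reports it as a percentage, Pearson's r measures linear agreement, and the relative MAE reduction (the form in which the 63% DietAI24 figure is reported) compares a new method's MAE with a baseline's. All example values are made up.

```python
"""Validation metrics for portion and nutrient estimates (illustrative sketch)."""
import math


def mape(estimates, references):
    """Mean absolute percentage error, in percent. References must be non-zero."""
    return 100.0 * sum(abs(e - r) / r for e, r in zip(estimates, references)) / len(references)


def mae(estimates, references):
    """Mean absolute error, in the units of the inputs (e.g., grams or kcal)."""
    return sum(abs(e - r) for e, r in zip(estimates, references)) / len(references)


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def relative_reduction(baseline_mae, new_mae):
    """Fractional MAE reduction relative to a baseline (0.63 would mean 63%)."""
    return (baseline_mae - new_mae) / baseline_mae


reference_g = [100.0, 200.0, 400.0]  # weighed reference values (made-up)
model_g = [80.0, 150.0, 300.0]       # model estimates (made-up)
```

Because MAPE divides by the reference value, very small reference portions can dominate it, which is one reason to report it alongside MAE and correlation.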

Experimental Workflow Visualization

Figure: DietAI24 RAG Framework for Nutrition Estimation

Figure: Multimodal RAG Pipeline Approaches

The Scientist's Toolkit: Research Reagent Solutions

Essential Components for MLLM RAG Nutrition Research

| Research Component | Function | Implementation Examples |
|---|---|---|
| Multimodal LLMs | Visual understanding and reasoning from food images | GPT-4V, Claude 3.5 Sonnet, Gemini 1.5 Pro [30] |
| Embedding Models | Convert text descriptions to vector representations | text-embedding-3-large, CLIP for multimodal embedding [31] [4] |
| Vector Databases | Store and retrieve nutritional information efficiently | AstraDB, Chroma, Pinecone [31] |
| Nutritional Databases | Authoritative source of food composition data | FNDDS, USDA National Nutrient Database [30] [4] |
| Document Processing | Extract and structure information from research papers | Unstructured library for PDF partitioning [31] |
| Validation Datasets | Benchmark algorithm performance | ASA24, Nutrition5k datasets [4] |
| Reference Objects | Provide scale reference in food images | Standardized cutlery, plates of known dimensions [30] |

Specialized Models for Nutritional Analysis

| Model Type | Specific Function | Examples |
|---|---|---|
| Chart Interpretation | Extract data from nutritional charts and graphs | DePlot, Pix2Struct [29] |
| Food-Specific MLLMs | Specialized in food recognition and analysis | FoodSky, DietAI24 integrated models [4] |
| Portion Estimation | Convert 2D images to 3D volume estimates | Custom-trained models with reference objects [30] |

Optimizing Protocols and Mitigating Error in Real-World Settings

Frequently Asked Questions (FAQs)

Q1: Why is shortening the reference period an effective way to improve recall accuracy? A shorter reference period reduces telescoping errors, where participants incorrectly remember when an event occurred. Over longer periods, people tend to make more errors in dating events. One study found that participants asked to recall home repairs over a six-month period reported 32% fewer repairs than those recalling over just one month, suggesting longer periods lead to greater inaccuracy [32].

Q2: What types of personal landmarks are most effective for improving recall? Landmarks associated with strong emotions or significant life events are most effective. This includes birthdays, anniversaries, weddings, the birth of a child, graduations, or major public events [32]. For example, framing a reference period around a significant event like a volcanic eruption was shown to reduce forward telescoping [32].

Q3: How does the "decompose the question" technique work? This technique involves breaking down a broad question (e.g., "How much did you spend on groceries?") into smaller, more concrete questions (e.g., "How much did you spend on fruit, vegetables, meat, and dairy?"). This reduces the cognitive load on the participant, making it easier to recall specific details, and is conceptually similar to shortening the chronological reference period [32].

Q4: What is the difference between recall limitation and recall bias? Recall limitation refers to the natural human tendency to forget or distort information over time. Recall bias is a conscious or unconscious influence on recollection, such as when a participant's current beliefs, emotions, or external factors shape how they remember past events [33].

Q5: How can visual aids improve portion size estimation in dietary recalls? Visual aids, like digital photographs of food portions, help participants overcome challenges with perception, conceptualization, and memory. Research indicates that using eight images to represent different portion sizes is more accurate than using four. Presenting all images simultaneously, rather than sequentially, is also preferred by participants and supports more accurate estimation [11].

Troubleshooting Guides

Problem: Inaccurate Portion Size Estimation in Self-Administered Recalls

Issue: Participants consistently overestimate or underestimate the amounts of food they consumed.

Solution:

- Use Aerial Photographs: Implement digital aerial photographs of food portions as estimation aids. Research shows these are as accurate as other image types and are a cost-effective standard [11].

- Optimize Image Presentation: Present multiple portion images (e.g., eight options) simultaneously on a screen, rather than sequentially, to facilitate easier comparison [11].

- Understand Food-Specific Biases: Be aware that accuracy varies by food type. Studies show a tendency to overestimate small pieces, shaped foods, and amorphous/soft foods, and to underestimate single-unit foods [34].

Problem: High Rate of Recall Bias in Retrospective Studies

Issue: Participant memories of past events or exposures are distorted, often systematically differing between study groups (e.g., cases vs. controls).

Solution:

- Shorten the Reference Period: Use the shortest reference period (e.g., last week vs. last year) that is consistent with your research goals to minimize memory decay and telescoping [32].

- Provide Retrieval Cues: Use personal landmarks (birthdays, holidays) or concrete cues in question introductions to provide a mental scaffold for memory retrieval [32].

- Consider a Reverse Chronological Order: In interviews, ask participants to recall the most recent event first and work backward, as this order can sometimes aid memory [32].

- Allow Ample Time: Give participants plenty of time to search their memories during surveys or interviews [32].

Problem: Lack of Contextual Detail in Social Media Use Research

Issue: Self-reported data on frequency and duration of technology use is unreliable and lacks detail on the "why" and "how."

Solution:

- Implement a Stimulated Recall Paradigm:

  - Collect Objective Data: Use video footage or in-app data logs to capture actual user behavior [35].

  - Conduct a Structured Interview: Review the objective data with the participant in a "co-research" session to facilitate detailed recall of their motivations, interactions, and feelings at the time of use [35].

  - Visualize the Data: Use charts or timelines during the interview to map out behaviors, contexts, and subjective experiences [35].

The table below summarizes key quantitative findings from research on recall and portion size estimation.

Table 1: Summary of Key Research Findings on Recall and Estimation

| Research Focus | Key Finding | Magnitude/Effect | Source |
|---|---|---|---|
| Reference Period Length | Fewer events reported in a long vs. short reference period | 32% fewer home repairs reported in a 6-month vs. a 1-month period | [32] |
| Portion Size Image Number | Accuracy of portion size estimation with more images | Using 8 images was more accurate than using 4 images | [11] |
| Portion Size Estimation (Overall) | Average overestimation of consumed foods/beverages | Reported portions were ~7 g higher than observed portions | [34] |
| Serial Position Effect | Memory advantage in a sequence | Clear primacy and recency effects for landmarks on a learned route | [36] |

Experimental Protocols

Protocol 1: Implementing Shortened Reference Periods and Landmarks in a Survey

Objective: To assess the effect of a dietary intervention on fruit and vegetable consumption over the past week.

Methodology:

- Design:

  - Reference Period: Frame the question to cover "the last 7 days" instead of "the last month" to reduce telescoping [32].

  - Landmark Introduction: Introduce the question with: "Thinking about the last week, since last [Day of the Week, e.g., Monday], including the weekend..." [32].

  - Decompose the Question: Instead of one question on "fruits and vegetables," ask separate questions for "fruit," "leafy greens," "other vegetables," etc. [32].

- Procedure:

  - Administer the survey to participants.

  - Clearly instruct them to think backward from today to one week ago [32].

  - Allow sufficient time for participants to answer each decomposed question.
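For a web-administered survey, the landmark wording and the decomposed items can be generated from the administration date so that "last [Day of the Week]" always matches the 7-day window. In this sketch, `build_items` is a hypothetical helper, the food groups mirror the examples in the protocol, and the "on how many days" question stem is an illustrative assumption rather than validated wording.

```python
"""Landmark-anchored, decomposed survey items for a 7-day window (illustrative sketch)."""
from datetime import date, timedelta

# Fixed English names avoid locale-dependent strftime("%A") output.
WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]
FOOD_GROUPS = ["fruit", "leafy greens", "other vegetables"]  # decomposed question targets


def build_items(administered_on: date, days: int = 7) -> list[str]:
    """One question per food group, each opened with a landmark-based reference period."""
    start = administered_on - timedelta(days=days)
    landmark = WEEKDAYS[start.weekday()]
    intro = (
        f"Thinking about the last week, since last {landmark} "
        f"({start.isoformat()}), including the weekend, "
        "and working backward from today:"
    )
    return [f"{intro} on how many days did you eat {group}?" for group in FOOD_GROUPS]


items = build_items(date(2024, 3, 11))  # a Monday administration date
```

Showing the calendar date next to the weekday gives participants a second concrete cue for the start of the reference period.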

Protocol 2: Validating Portion Size Estimation Using Digital Images

Objective: To validate the accuracy of portion size reports using digital aerial photographs in an online 24-hour dietary recall tool [11] [34].

Methodology:

- Design (Feeding Study):

- Procedure (Recall):

  - The following day, participants complete an unannounced, self-administered 24-hour recall using a tool with integrated digital portion images.

  - Present 8 aerial photographs for each food, showing a range of portion sizes from small to large, displayed simultaneously on the screen [11].
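Scoring the recall against the feeding-study record can be sketched as follows, assuming each of the eight aerial images for a food is linked to a known portion weight and that the weight actually consumed was recorded on the feeding day. The image weights, foods, participant IDs, and observed intakes are all made-up values. A positive mean signed difference (reported minus observed) indicates overestimation, the direction summarized in Table 1 [34].

```python
"""Scoring image-based portion reports against observed intake (illustrative sketch)."""
from statistics import mean

# Eight aerial images per food, each linked to a portion weight in grams (hypothetical).
IMAGE_WEIGHTS_G = {
    "pasta": [50, 100, 150, 200, 250, 300, 350, 400],
    "green beans": [20, 40, 60, 80, 100, 120, 140, 160],
}


def reported_weight(food: str, image_index: int) -> float:
    """Weight implied by the image (index 0-7) the participant selected."""
    return float(IMAGE_WEIGHTS_G[food][image_index])


def signed_errors(selections: list[tuple[str, str, int]],
                  observed_g: dict[tuple[str, str], float]) -> list[float]:
    """Reported minus observed weight for each (participant, food, image) selection."""
    return [reported_weight(food, idx) - observed_g[(pid, food)]
            for pid, food, idx in selections]


selections = [("P01", "pasta", 3), ("P01", "green beans", 2), ("P02", "pasta", 5)]
observed_g = {("P01", "pasta"): 185.0, ("P01", "green beans"): 70.0, ("P02", "pasta"): 290.0}

errors = signed_errors(selections, observed_g)
mean_error = mean(errors)  # > 0 means overestimation on average
```

Grouping the signed errors by food type (for example amorphous versus single-unit foods) would show whether the food-specific biases described earlier appear in a given study.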