AI-Powered Dietary Monitoring: Revolutionizing Precision Nutrition Research and Drug Development

This article provides a comprehensive overview of AI-assisted dietary intake monitoring for researchers and drug development professionals.


Abstract

This article provides a comprehensive overview of AI-assisted dietary intake monitoring for researchers and drug development professionals. It explores the foundational concepts, core methodologies, and practical applications of AI in nutrition science. The article details current technologies—from computer vision for food recognition to NLP for log analysis—and examines their integration into clinical and research workflows. It addresses critical challenges in data accuracy, standardization, and bias mitigation, while presenting validation frameworks and comparative analyses against traditional methods. Finally, it discusses the transformative potential of AI-driven nutrition data for enhancing clinical trial outcomes, enabling personalized medicine, and uncovering novel diet-disease mechanisms for therapeutic discovery.

The AI-Nutrition Nexus: Core Concepts and Technological Evolution

This whitepaper, framed within a broader thesis on AI-assisted dietary intake monitoring, delineates the technical architecture and validation paradigms of next-generation monitoring systems. These systems transcend the limitations of manual food diaries—subject to recall bias, quantification error, and low adherence—by integrating multimodal sensor data, computer vision (CV), natural language processing (NLP), and predictive analytics. The shift is from subjective self-report to objective, passive, and continuous data acquisition, critical for rigorous clinical research and drug development where precise nutritional exposure data is a covariate or outcome.

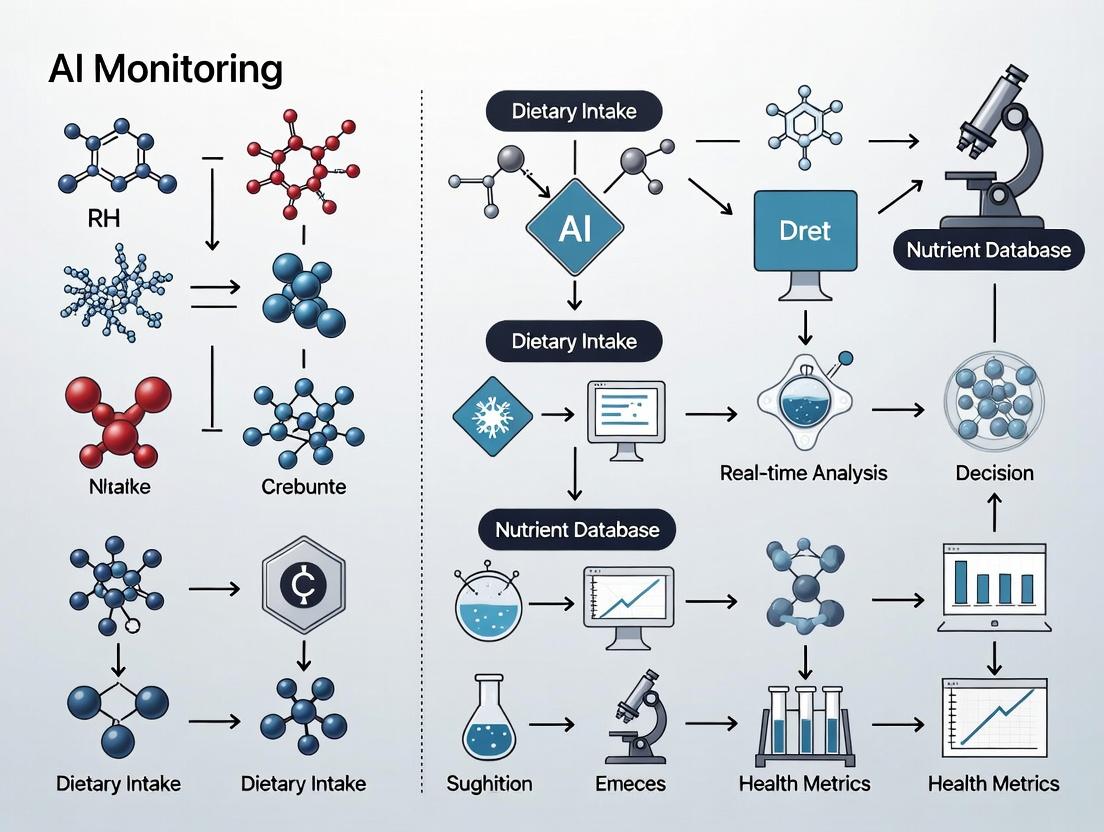

Core Technical Components and Data Flow

AI-assisted dietary monitoring systems operate via a coordinated pipeline.

Diagram 1: AI-assisted dietary monitoring technical pipeline.

Key Experimental Protocols for Validation

Validation against ground truth (e.g., doubly labeled water, controlled feeding) is paramount.

Protocol 3.1: Controlled Feeding Study for CV System Validation

- Objective: Quantify the accuracy of a CV-based food recognition and volume estimation AI under controlled conditions.

- Design: Randomized crossover.

- Participants: n=50 healthy adults.

- Procedure:

- Participants consume 4 standardized meals (varying cuisines, textures, mixed dishes) in a metabolic kitchen over 2 non-consecutive days.

- Each meal is pre- and post-weighed (gold standard for intake mass).

- Participants capture images of the meal using a standardized protocol (reference card, two angles) before and after eating.

- AI system processes images to identify food items and estimate consumed volume/mass via 3D reconstruction or depth-aware models.

- Nutrient composition is calculated using the USDA FoodData Central or equivalent national database.

- Primary Outcome: Absolute and relative error in estimated energy (kcal) and macronutrient (g) intake vs. true weighed values.
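
The primary outcome above can be made concrete with a short sketch. It assumes one estimated and one weighed value per meal. The function names and sample numbers are illustrative and do not come from any cited study.

```python
def intake_errors(estimated, weighed):
    """Return (absolute_error, relative_error_percent) for one meal.

    `estimated` is the AI-derived value, `weighed` the pre/post-weighed reference.
    A negative result means the system under-estimated intake.
    """
    if weighed <= 0:
        raise ValueError("weighed reference must be positive")
    abs_err = estimated - weighed
    rel_err = 100.0 * abs_err / weighed
    return abs_err, rel_err


def mean_absolute_relative_error(pairs):
    """Average of |relative error| (percent) over (estimated, weighed) pairs."""
    errors = [abs(intake_errors(est, ref)[1]) for est, ref in pairs]
    return sum(errors) / len(errors)


# Illustrative values only (kcal): two meals with a weighed energy of 600 kcal.
meals = [(540.0, 600.0), (660.0, 600.0)]
print(intake_errors(*meals[0]))
print(mean_absolute_relative_error(meals))
```

Reporting the signed error alongside the absolute error keeps systematic under- or over-estimation visible, which averaging absolute values alone would hide.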

Protocol 3.2: Free-Living Validation Against Doubly Labeled Water (DLW)

- Objective: Assess the system's ability to measure total energy intake (TEI) in free-living conditions over 10-14 days.

- Design: Prospective observational.

- Participants: n=30, diverse BMI.

- Procedure:

- Baseline urine sample collected. Participants ingest a dose of DLW (²H₂¹⁸O).

- Over 14 days, participants use the AI monitoring system (wearable sensor + smartphone CV) for all eating occasions.

- Urine samples collected at days 7 and 14 for isotopic analysis by isotope ratio mass spectrometry (IRMS) to derive total energy expenditure (TEE), which serves as the reference for TEI in weight-stable participants.

- AI-derived TEI is aggregated from all recorded eating events.

- Primary Outcome: Correlation coefficient (Pearson's r) and mean bias (Bland-Altman analysis) between AI-derived TEI and DLW-derived TEE, taken as the reference for TEI under weight stability.
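
A minimal sketch of the two agreement statistics named in the primary outcome follows. It assumes paired per-participant values in kcal/day. The data are invented for illustration, and the 1.96 multiplier for the limits of agreement assumes approximately normal differences.

```python
import math
from statistics import mean, stdev


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def bland_altman(ai_values, reference_values):
    """Return (mean bias, lower limit, upper limit) of agreement."""
    diffs = [a - r for a, r in zip(ai_values, reference_values)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical paired values (kcal/day), not taken from any study.
ai_tei = [2100, 2400, 1900, 2600]
dlw_ref = [2000, 2500, 2000, 2500]
print(pearson_r(ai_tei, dlw_ref))
print(bland_altman(ai_tei, dlw_ref))
```

The two statistics answer different questions: a high correlation can coexist with a large systematic bias, so both are usually reported together.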

Protocol 3.3: Comparative Adherence Study vs. Digital Food Diary

- Objective: Evaluate improvement in user adherence and data completeness.

- Design: Randomized controlled trial, parallel group, 4-week duration.

- Participants: n=200, allocated 1:1 to Intervention (AI system) vs. Control (traditional digital diary app).

- Procedure:

- Both groups receive standardized training.

- Control group logs all food/drink manually.

- Intervention group uses passive sensing (acoustic) and prompted photo capture.

- Adherence is measured as the percentage of researcher-confirmed eating events (via random daily check-ins) that are captured by the system.

- User burden is measured via NASA-TLX questionnaire.

- Primary Outcome: Difference in mean adherence rate between groups at week 4.

Table 1: Performance metrics of AI dietary monitoring components from recent validation studies (2022-2024).

| System Component | Metric | Reported Performance (Range) | Validation Setting | Key Reference (Example) |

|---|---|---|---|---|

| Food Image Recognition | Top-1 Accuracy | 78.2% - 91.5% | Lab-based, mixed dishes | Fang et al., IEEE TPAMI 2023 |

| Volume Estimation (CV) | Relative Error | 8.5% - 15.3% | Controlled feeding | Chen et al., IPIN 2023 |

| Acoustic Bite Detection | F1-Score | 0.86 - 0.94 | Free-living (vs. video) | Bi et al., Proc. ACM IMWUT 2022 |

| Energy Estimation (vs. DLW) | Mean Bias (%) | -2.1% to +11.8% | Free-living, 10-14 days | Dunford et al., Obesity 2024 |

| Energy Estimation (vs. WFR) | Correlation (r) | 0.79 - 0.92 | Controlled feeding | See Protocol 3.1 |

Table 2: Comparative analysis of monitoring methods.

| Method | Primary Data Source | Key Strength | Key Limitation | Estimated Adherence in Free-Living |

|---|---|---|---|---|

| Traditional Digital Diary | Manual Entry | High user control, direct nutrient data | High burden, recall bias, under-reporting | 50-70% (declines after day 5) |

| AI-Assisted (CV-Centric) | Meal Images | Visual objectivity, portion cues | Requires user action, lighting/framing issues | 65-80% (with prompting) |

| AI-Assisted (Sensor-Centric) | Wearable Acoustics/IMU | Passive, captures eating episodes | Cannot identify specific foods, noise from speech | >95% (fully passive) |

| AI-Assisted (Multimodal Fusion) | Images + Sensor + NLP | High completeness & accuracy | System complexity, computational cost | 80-90% (optimal balance) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and digital tools for experimental research in AI-assisted dietary monitoring.

| Item / Solution | Category | Function in Research | Example Product/Software |

|---|---|---|---|

| Standardized Food Image Datasets | Reference Data | Training & benchmarking CV models. Must include segmentation masks and weight metadata. | Food-101, UNIMIB2016, AIHUB Food Log. |

| Doubly Labeled Water (²H₂¹⁸O) | Gold Standard Reagent | Provides objective measure of total energy expenditure (TEE) for free-living validation. | Isoflex, Cambridge Isotope Laboratories. |

| Metabolic Kitchen Setup | Infrastructure | Enables controlled feeding studies (Protocol 3.1) with precise weighing (≤0.1g) of ingredients and leftovers. | Metabolic Research Unit Core Facility. |

| Wearable Acoustic Sensor | Hardware | Captures jaw movement/biting sounds for passive eating detection. Often paired with an inertial measurement unit (IMU). | Audible+Bite Counter (ABC) sensor, Hearables (e.g., modified earbuds). |

| 3D Food Reconstruction Software | Software | Estimates food volume from multiple images. Critical for portion estimation. | FoodScan3D, Volumetric Food Estimation API (e.g., from Google Research). |

| Food Composition Database API | Digital Tool | Maps identified food items to precise nutrient profiles. | USDA FoodData Central API, Open Food Facts API. |

| Isotope Ratio Mass Spectrometer (IRMS) | Analytical Instrument | Analyzes isotopic enrichment in DLW validation studies (Protocol 3.2) to calculate TEE. | Thermo Scientific Delta V IRMS. |

Logical Pathway from Data to Nutritional Insight

Diagram 2: Logical flow from multimodal data to nutritional insights.

The integration of Artificial Intelligence (AI) into dietary intake monitoring promises to revolutionize nutritional epidemiology and clinical research. However, the efficacy of any AI model is fundamentally constrained by the accuracy of the ground-truth data used for its training and validation. This whitepaper argues that precise intake data is not merely a procedural detail but a foundational prerequisite. Inaccurate intake data propagates as systemic error, confounding analyses of diet-disease relationships, undermining clinical trial outcomes, and compromising the development of reliable AI-assisted tools.

The Impact of Inaccuracy: Quantitative Evidence

Table 1: Consequences of Dietary Measurement Error in Observational Studies

| Error Type | Example | Quantitative Impact (from recent meta-analyses) | Result on Disease Risk Estimation |

|---|---|---|---|

| Systematic Under-reporting | Omitting snacks, misestimating portion sizes. | Energy under-reporting prevalent in 30-50% of participants; macronutrient errors of 10-20% common. | Attenuation of true effect size; relative risk biased toward null. |

| Random Misreporting | Day-to-day recall variability. | Increases measurement variance, reduces statistical power. | Requires larger sample sizes (often 2-4x) to detect true associations. |

| Food Composition Table Gaps | Incomplete or outdated nutrient profiles. | Can lead to misclassification of nutrient intake by >15% for specific bioactive compounds. | Obscures true biochemical mechanisms in pathway analysis. |
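
The attenuation and sample-size effects in the table can be illustrated under the classical measurement error model. This is a sketch under stated assumptions: random error is additive and uncorrelated with true intake, and the sample-size inflation is the usual rule-of-thumb approximation, not an exact power calculation.

```python
def attenuation_factor(var_true, var_error):
    """Reliability ratio lambda = var_true / (var_true + var_error)."""
    return var_true / (var_true + var_error)


def observed_slope(true_slope, var_true, var_error):
    """Expected regression slope when intake is measured with random error."""
    return true_slope * attenuation_factor(var_true, var_error)


def sample_size_inflation(var_true, var_error):
    """Approximate factor by which n must grow to keep the same power."""
    return 1.0 / attenuation_factor(var_true, var_error)


# Illustrative variances: error variance equal to the true between-person variance.
print(observed_slope(0.4, 1.0, 1.0))    # slope is halved toward the null
print(sample_size_inflation(1.0, 1.0))  # roughly twice the sample size
```

Under these assumptions, an error variance between one and three times the true between-person variance corresponds to the 2-4x sample-size range quoted in the table.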

Table 2: Impact on Clinical Trial Outcomes (Drug & Nutrition Trials)

| Trial Phase | Reliance on Intake Data | Risk of Inaccurate Data |

|---|---|---|

| Patient Stratification | Grouping by baseline dietary patterns (e.g., high-fat vs. low-fat). | Heterogeneous groups, masking subgroup efficacy. |

| Adherence Monitoring | Assessing compliance to a prescribed dietary intervention. | Inability to distinguish poor adherence from non-response. |

| Endpoint Correlation | Linking a biomarker change (e.g., LDL cholesterol) to nutrient change. | Spurious or missed correlations, invalidating mechanistic conclusions. |

Methodologies for Precision: Key Experimental Protocols

Protocol A: Doubly Labeled Water (DLW) for Total Energy Expenditure Validation

- Objective: To provide an objective biomarker for validating self-reported energy intake.

- Procedure:

- Baseline Sampling: Collect baseline urine, saliva, or blood samples from the participant.

- Isotope Administration: Orally administer a measured dose of water enriched with stable, non-radioactive isotopes Deuterium (²H) and Oxygen-18 (¹⁸O).

- Post-Dose Sampling: Collect biological samples (typically urine) daily for 7-14 days.

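- Isotope Ratio Analysis: Analyze samples using Isotope Ratio Mass Spectrometry (IRMS) to measure the elimination rates of ²H and ¹⁸O (both isotopes are stable, so their enrichment falls through washout from the body water pool, not radioactive decay).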

- Calculation: The difference in elimination rates (¹⁸O lost as H₂O and CO₂; ²H lost only as H₂O) is used to calculate CO₂ production rate, and thus Total Energy Expenditure (TEE).

- Use in AI Validation: AI-predicted energy intake can be calibrated against DLW-validated TEE in weight-stable individuals.
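
The calculation step can be sketched in simplified form. The code below assumes a two-point elimination rate, the textbook relation that each CO₂ molecule carries two oxygen atoms, and the abbreviated Weir equation with an assumed respiratory quotient. Published DLW equations add isotope fractionation and dilution-space corrections that are omitted here, so this is an illustration of the principle, not a validated implementation.

```python
import math

LITERS_PER_MOL = 22.4  # approximate molar gas volume at standard conditions


def elimination_rate(enrich_start, enrich_end, days):
    """Two-point elimination rate (per day) from enrichments above baseline."""
    return math.log(enrich_start / enrich_end) / days


def co2_production_mol_per_day(tbw_mol, k_oxygen, k_deuterium):
    """Simplified rCO2: 18O leaves as water and CO2, 2H leaves as water only.

    No fractionation or dilution-space correction is applied (assumption).
    """
    return (tbw_mol / 2.0) * (k_oxygen - k_deuterium)


def tee_kcal_per_day(rco2_mol, rq=0.85):
    """Abbreviated Weir equation; rq=0.85 is an assumed respiratory quotient."""
    vco2_l = rco2_mol * LITERS_PER_MOL
    vo2_l = vco2_l / rq
    return 3.941 * vo2_l + 1.106 * vco2_l


# Hypothetical participant: about 40 kg of body water (~2220 mol).
rco2 = co2_production_mol_per_day(2220.0, k_oxygen=0.12, k_deuterium=0.10)
print(rco2, tee_kcal_per_day(rco2))
```

Because TEE depends on the small difference between two elimination rates, modest analytical error in either rate has a disproportionate effect on the result, which is one reason IRMS precision matters.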

Protocol B: 24-Hour Urinary Biomarkers for Nutrient Intake

- Objective: To objectively quantify intake of specific nutrients via urinary excretion biomarkers.

- Procedure (e.g., for Sodium, Potassium, Nitrogen/Protein):

- 24h Urine Collection: Participants receive standardized instructions for a complete 24-hour urine collection, using a pre-weighed container with boric acid preservative.

- Volume & Aliquoting: Total volume is recorded, and aliquots are frozen at -80°C until analysis.

- Biochemical Analysis:

- Sodium/Potassium: Analyzed by ion-selective electrode or flame photometry.

- Nitrogen: Determined by the Kjeldahl method or chemiluminescence, then converted to protein intake (using 6.25g protein per g nitrogen, adjusted for individual urea excretion).

- Creatinine Correction: Urinary creatinine is measured to assess completeness of the 24h collection.
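
The nitrogen-to-protein conversion can be written out as a small worked example. The 6.25 factor comes from the protocol above. The fixed urinary recovery fraction of 0.81 is an assumption drawn from common practice in the biomarker literature and should be replaced by whatever adjustment the study protocol specifies.

```python
def protein_intake_from_urinary_n(urinary_n_g, urinary_fraction=0.81, n_to_protein=6.25):
    """Estimate daily protein intake (g) from 24h urinary nitrogen (g).

    urinary_fraction: assumed share of nitrogen intake recovered in urine.
    n_to_protein: grams of protein per gram of nitrogen.
    """
    if not 0 < urinary_fraction <= 1:
        raise ValueError("urinary_fraction must be in (0, 1]")
    nitrogen_intake_g = urinary_n_g / urinary_fraction
    return nitrogen_intake_g * n_to_protein


# Illustrative value: 12 g of nitrogen in a complete 24h collection.
print(round(protein_intake_from_urinary_n(12.0), 1))
```

The estimate is only meaningful for collections judged complete, which is why the completeness check sits in the same protocol.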

Protocol C: Controlled Feeding Studies for AI Model Training

- Objective: To generate high-fidelity, ground-truth data for training AI image-based food recognition models.

- Procedure:

- Study Design: Participants consume all meals in a metabolic kitchen where every ingredient is precisely weighed (to 0.1g).

- Image Capture: Each meal is photographed under standardized lighting and angle using a calibrated device (e.g., a smartphone with fiducial marker) before and after consumption.

- Data Annotation: The exact weight and nutritional composition of each food item is linked to its corresponding image.

- AI Pipeline: This image-nutrient paired dataset serves as the training set for convolutional neural networks (CNNs) tasked with food identification and portion size estimation.

Visualizing the Data-to-Knowledge Pathway

Diagram Title: Role of Validation in AI Dietary Data Pipeline

Diagram Title: Data Error Propagation to Target Discovery

The Scientist's Toolkit: Research Reagent & Solution Guide

Table 3: Essential Reagents & Materials for Intake Validation Studies

| Item | Function / Application | Key Consideration |

|---|---|---|

| Doubly Labeled Water (¹⁸O, ²H) | Gold-standard biomarker for Total Energy Expenditure measurement. | Requires IRMS access; high per-sample cost. |

| 24h Urine Collection Kits (containers, preservatives, cool packs) | Standardizes collection for biomarker analysis (Na, K, nitrogen, metabolites). | Completeness check via para-aminobenzoic acid (PABA) tablets is recommended. |

| Isotope Ratio Mass Spectrometer (IRMS) | Analyzes isotopic enrichment in biological samples for DLW & tracer studies. | Capital-intensive; core facility resource. |

| Controlled Metabolic Kitchen | Facility for preparing and weighing all food to 0.1g precision. | Essential for generating ground-truth data for AI training. |

| Standardized Food Photography Setup (lights, fiducial marker, color card) | Creates consistent, annotatable images for computer vision models. | Reduces variance in AI model input data. |

| Food Composition Databases (e.g., USDA FoodData Central, Phenol-Explorer) | Converts food items into nutrient estimates. | Must be updated and matched to regional food supplies. |

| AI-Ready Data Annotation Platforms | Allows efficient manual labeling of food images with nutrient data. | Critical for creating high-quality training datasets. |

The path to robust AI-assisted dietary monitoring and meaningful biomedical discovery is paved with precise intake data. Investing in rigorous validation methodologies—DLW, urinary biomarkers, and controlled feeding studies—is non-negotiable. These protocols provide the critical ground truth that breaks the cycle of error propagation, enabling the development of reliable AI tools and the generation of actionable insights into diet-disease mechanisms for researchers and drug development professionals.

This whitepaper examines the historical progression of dietary intake monitoring methods in the context of AI-assisted dietary monitoring research. The evolution from subjective self-reporting to objective, sensor-based, and AI-driven techniques represents a paradigm shift in nutritional epidemiology, clinical trials, and precision health. Accurate dietary assessment is critical for researchers and drug development professionals to understand diet-disease relationships, evaluate nutritional interventions, and develop nutraceuticals.

Historical Methods and Their Limitations

Self-Report Methods: Traditional tools include 24-hour dietary recalls, food frequency questionnaires (FFQs), and diet diaries. These methods are prone to systematic errors: recall bias, misestimation of portion sizes, and social desirability bias.

Quantitative Data on Self-Report Error: Recent meta-analyses and validation studies highlight significant discrepancies between self-reported and actual energy intake.

Table 1: Error Margins in Self-Reported Dietary Assessment Methods

| Method | Average Under-reporting of Energy Intake | Key Limitation | Typical Correlation with Doubly Labeled Water (DLW) |

|---|---|---|---|

| 24-Hour Recall | 10-20% | Relies on memory | 0.3 - 0.5 |

| Food Frequency Questionnaire (FFQ) | 20-30% | Portion size estimation | 0.2 - 0.4 |

| Diet Diary/Record | 5-15% | Participant burden alters behavior | 0.4 - 0.7 |

Sensor-Based and AI-Driven Methods: Core Technologies

The field has progressed towards objective data collection via wearable sensors and subsequent AI analysis.

3.1 Wearable Dietary Sensors

- Wrist-Worn Devices: Accelerometers and gyroscopes detect characteristic hand-to-mouth gestures associated with eating.

- Egocentric Cameras: Wearable cameras (e.g., neck-worn) passively capture images of food before consumption.

- Bioacoustic Sensors: Sensors on the neck (piezoelectric or ultrasonic) detect swallowing sounds and jaw movement.

- Smart Utensils/Plate: Measure weight change, scooping motion, and eating kinetics.

3.2 AI-Driven Image Analysis for Food Recognition

Computer Vision (CV) models, primarily based on Convolutional Neural Networks (CNNs) and more recently Vision Transformers (ViTs), analyze food images for identification, portion size estimation, and nutrient prediction.

Experimental Protocol for AI Food Recognition Validation:

- Objective: Validate the accuracy of a CNN-based food recognition system against dietitian-annotated ground truth.

- Dataset: Use a publicly available dataset (e.g., Food-101, AIHUB Korean Food Dataset) or a custom-collected dataset with institutional review board (IRB) approval.

- Pre-processing: Resize images to 224x224 pixels, normalize RGB values.

- Model Training: Employ a pre-trained ResNet-50 model. Replace the final fully connected layer with a layer matching the number of food classes. Use cross-entropy loss and Adam optimizer.

- Validation: Split data 70/15/15 (train/validation/test). Report top-1 and top-5 classification accuracy, precision, recall, and F1-score on the held-out test set.

- Portion Estimation: For a subset, use a reference object (e.g., a checkerboard card or a fork) in the image and apply depth estimation or volumetric algorithms.
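
The data split and the top-k metric from the validation step can be sketched without any deep learning framework. The code assumes that model outputs are available as one list of class scores per image. The function names and the toy scores are illustrative.

```python
import random


def split_indices(n, seed=0, fractions=(0.70, 0.15, 0.15)):
    """Shuffle sample indices and split them into train/validation/test."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)  # fixed seed keeps the split reproducible
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def top_k_accuracy(scores, labels, k=5):
    """Fraction of samples whose true label is among the k highest scores."""
    hits = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        hits += label in ranked[:k]
    return hits / len(labels)


# Toy example with three classes and three images.
scores = [[0.1, 0.7, 0.2], [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]]
labels = [1, 1, 0]
print(top_k_accuracy(scores, labels, k=1), top_k_accuracy(scores, labels, k=2))
```

When several images come from the same meal or participant, splitting by meal or participant instead of by image avoids leakage between the training and test sets.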

3.3 Multi-Modal Data Fusion

State-of-the-art systems fuse data from multiple sensors (camera, inertial measurement unit (IMU), acoustic) using AI models like multi-layer perceptrons or recurrent neural networks to improve detection and characterization of eating episodes.

Detailed Experimental Protocol for a Sensor-Based Study

Title: Protocol for Validating a Multi-Sensor Wearable System for Dietary Intake Monitoring.

1. Objective: To assess the validity of a multi-modal wearable device (camera + IMU) for detecting eating episodes and identifying food items in a free-living setting.

2. Participants: Recruit N=50 healthy adults. Obtain informed consent and IRB approval.

3. Materials:

- The Scientist's Toolkit: Key Research Reagent Solutions:

- Device: Prototype wearable device with front-facing camera and 9-axis IMU (e.g., modified LooxidLink or custom apparatus).

- Software: Data logging firmware, time-synchronization module.

- Ground Truth App: A smartphone application for participants to manually log the start/end time of each eating occasion and take a before-meal photo.

- Data Processing Server: With GPU acceleration for running deep learning models.

- Annotation Toolkit: LabelImg or CVAT for manual image annotation by dietitians.

- Reference Database: USDA FoodData Central or equivalent national nutrient database for nutrient mapping.

4. Procedure:

- Day 1 (Lab Calibration): Participants wear the device and consume a standardized meal. Sensor data is synchronized with video recording for model calibration.

- Day 2-7 (Free-Living): Participants wear the device from waking until bedtime for 6 consecutive days. They use the ground truth app for every eating occasion.

- Data Processing: Sensor data is downloaded. IMU data is processed for bite detection using a sliding window and a pre-trained LSTM network. Images are triggered by bite detection and analyzed by the food recognition CNN.

- Analysis: Compare system-detected eating episodes (timing, food items) to participant logs and dietitian-annotated images. Calculate metrics for meal detection (F1-score), food identification (top-5 accuracy), and energy estimation (mean absolute percentage error, MAPE).
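
The analysis step can be sketched as follows. The code assumes eating episodes are represented by start times in minutes since midnight and that a detection counts as correct when it falls within a tolerance of a logged start. The 10-minute tolerance and the greedy matching rule are illustrative choices, not part of the protocol.

```python
def match_episodes(detected, logged, tolerance_min=10):
    """Greedy one-to-one matching; returns (true_pos, false_pos, false_neg)."""
    unmatched = sorted(logged)
    tp = 0
    for t in sorted(detected):
        for ref in unmatched:
            if abs(t - ref) <= tolerance_min:
                unmatched.remove(ref)  # each logged episode is matched once
                tp += 1
                break
    return tp, len(detected) - tp, len(unmatched)


def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall, written in count form."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def mape(estimated, reference):
    """Mean absolute percentage error of per-meal energy estimates."""
    return 100.0 * sum(abs(e - r) / r for e, r in zip(estimated, reference)) / len(reference)


# Hypothetical day: detected vs. participant-logged meal start times (minutes).
tp, fp, fn = match_episodes([480, 725, 900, 1150], [485, 720, 1140, 1300])
print(tp, fp, fn, f1_score(tp, fp, fn))
print(mape([450.0, 660.0], [500.0, 600.0]))
```

The tolerance strongly affects the reported F1-score, so it is worth stating it alongside the result.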

Signaling Pathways and System Workflows

Diagram Title: AI-Driven Dietary Monitoring System Architecture

Diagram Title: Validation Study Logical Workflow

Quantitative Performance of Modern Methods

Table 2: Performance Metrics of Sensor-Based and AI-Driven Methods

| Technology | Eating Episode Detection (F1-Score) | Food Item Recognition (Top-5 Accuracy) | Energy Estimation Error (MAPE) | Key Challenge |

|---|---|---|---|---|

| Wrist-IMU Only | 0.75 - 0.85 | N/A | N/A | Distinguishing eating from other gestures. |

| Egocentric Camera + CNN | 0.80 - 0.90 (via image timing) | 0.65 - 0.85 | 20% - 35% | Occlusion, lighting, portion size. |

| Multi-Modal Fusion (Camera+IMU+Audio) | 0.88 - 0.95 | 0.75 - 0.90 | 15% - 25% | Sensor synchronization, user burden. |

| Reference: Doubly Labeled Water (DLW) | N/A | N/A | ~5% (Gold Standard for Energy) | Cost, does not provide food detail. |

The progression from self-report to sensor and AI-driven methods offers unprecedented objectivity and detail. Current systems show promising validity but face challenges regarding user compliance, privacy (continuous imaging), and generalizability across diverse food cultures. Future research must focus on robust, miniaturized sensors, edge AI processing for real-time feedback, and seamless integration with digital health platforms for large-scale deployment in clinical and pharmaceutical research.

This technical guide examines the integration of three core AI disciplines—Computer Vision (CV), Natural Language Processing (NLP), and Predictive Analytics (PA)—within the research context of AI-assisted dietary intake monitoring. This field aims to develop precise, passive tools for quantifying food consumption, essential for nutritional science, chronic disease management, and clinical trials in drug development. The synergy of these disciplines addresses the historical challenges of self-reported dietary data, such as recall bias and imprecision.

Computer Vision for Food Recognition and Volumetry

CV provides the sensory input for automated systems, tasked with identifying food items and estimating their volume and mass from images.

- Key Architectures: Modern systems utilize Convolutional Neural Networks (CNNs) like EfficientNet or Vision Transformers (ViTs) pre-trained on large-scale datasets (e.g., ImageNet) and fine-tuned on food-specific corpora.

- 3D Reconstruction & Volumetry: Monocular depth estimation networks (e.g., MiDaS) or multi-view geometry techniques convert 2D images into 3D point clouds. By applying known reference objects (e.g., a fiducial marker like a checkerboard or a standard-sized fork), these models estimate food volume via mesh reconstruction or volumetric segmentation.

Experimental Protocol: Food Segmentation and Mass Estimation

- Data Acquisition: Capture multi-view images (top, side at 45°) of a plated meal using a calibrated smartphone camera. A reference object of known dimensions is placed adjacent to the plate.

- Pre-processing: Apply lens distortion correction. Normalize pixel intensities.

- Semantic Segmentation: Pass each image through a fine-tuned DeepLabV3+ model to generate pixel-wise masks for each food class (e.g., broccoli, chicken, rice).

- Depth Estimation & 3D Fusion: For each view, a monocular depth model estimates a depth map. Using camera pose (estimated via reference object), fuse segmented food masks from multiple views into a single 3D point cloud per food item.

- Volume Calculation: Compute the convex hull or Poisson reconstruction of the food-item point cloud. Calculate volume in cm³.

- Mass Conversion: Apply a food density table (e.g., the FAO/INFOODS Density Database, or densities derived from household-measure weights in USDA FoodData Central) to convert volume to estimated mass: Mass (g) = Volume (cm³) × Density (g/cm³).
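
The final conversion step is simple arithmetic, sketched here with a small lookup table. The density values are illustrative placeholders and not entries from any database. A real pipeline would load measured densities for the foods and preparation states in the study.

```python
# Placeholder densities in g/cm^3 (assumed values, for illustration only).
DENSITY_G_PER_CM3 = {
    "rice_cooked": 0.80,
    "broccoli": 0.40,
    "chicken_breast": 1.05,
}


def estimate_mass_g(food_label, volume_cm3, densities=DENSITY_G_PER_CM3):
    """Mass (g) = Volume (cm^3) x Density (g/cm^3)."""
    if volume_cm3 < 0:
        raise ValueError("volume must be non-negative")
    if food_label not in densities:
        raise KeyError(f"no density available for {food_label!r}")
    return volume_cm3 * densities[food_label]


# A segmented broccoli portion with a reconstructed volume of 200 cm^3.
print(estimate_mass_g("broccoli", 200.0))
```

Raising an error for unknown foods, instead of falling back to a default density, keeps missing database coverage visible during validation.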

Natural Language Processing for Contextual Understanding

NLP interprets unstructured text data to enrich and contextualize CV-derived data, crucial for understanding meal composition and user intent.

- Key Tasks:

- Named Entity Recognition (NER): Extracts food items, quantities (e.g., "one cup"), cooking methods (e.g., "fried"), and brand names from voice memos or text entries.

- Intent Classification: Categorizes user queries (e.g., "Log yesterday's lunch" vs. "What's in this?").

- Knowledge Graph Linking: Maps extracted food entities to standardized vocabularies and nutrient databases (e.g., the FoodOn ontology, USDA SR Legacy).

Experimental Protocol: Multi-modal Food Log Integration

- Input Streams: Synchronize two data streams: a) CV-derived food list with estimated masses, b) User's voice memo (transcribed via ASR like Whisper) or text entry (e.g., "added olive oil and salt").

- Text Analysis: Process the transcribed text using a BERT-based NER model fine-tuned on the FoodBASE corpus to extract supplemental food items and modifiers.

- Entity Resolution & Disambiguation: Link extracted text entities ("olive oil") to the CV-derived list. Use a pre-trained sentence transformer (e.g., all-MiniLM-L6-v2) to compute semantic similarity between entity names and CV labels for matching. Resolve ambiguities (e.g., "milk" could be whole or skim) via context or user profile defaults.

- Nutrient Database Query: Formulate a structured query combining the CV-massified item and NLP-extracted modifiers to retrieve precise nutrient profiles.
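
The entity resolution step can be illustrated with a deliberately simple stand-in. Instead of a sentence-transformer embedding, the sketch below uses token-level Jaccard overlap, which needs only the standard library. The threshold of 0.4 is an arbitrary assumption, and entities with no match are treated as supplemental items that the camera did not capture.

```python
def token_jaccard(a, b):
    """Jaccard overlap between the lowercase word sets of two strings."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def resolve_entities(text_entities, cv_labels, threshold=0.4):
    """Map each text entity to its best-matching CV label, or None."""
    resolved = {}
    for entity in text_entities:
        best = max(cv_labels, key=lambda label: token_jaccard(entity, label), default=None)
        if best is not None and token_jaccard(entity, best) >= threshold:
            resolved[entity] = best
        else:
            resolved[entity] = None  # supplemental item, e.g. added oil or salt
    return resolved


cv_labels = ["grilled chicken", "white rice", "broccoli"]
print(resolve_entities(["rice", "olive oil"], cv_labels))
```

Swapping `token_jaccard` for an embedding-based similarity would leave the matching logic unchanged, which is the main reason to keep the similarity function separate.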

Predictive Analytics for Intake Pattern and Health Forecasting

Predictive Analytics models temporal sequences and multivariate relationships to transform discrete intake events into actionable insights for research.

- Key Models: Time-series models (LSTMs, Transformers) and survival analysis (Cox Proportional Hazards) are employed to model longitudinal intake patterns and their association with biomarkers.

- Objective: Predict short-term glycemic response, long-term nutrient deficiency risks, or adherence patterns in clinical trial cohorts.

Experimental Protocol: Predicting Postprandial Glycemic Response

- Feature Engineering:

- Input Features (X): For each meal: nutrient vector (carbs, fiber, fat, protein from CV+NLP pipeline), temporal features (time of day), personal context (previous night's sleep, activity level from wearables).

- Target Variable (Y): Continuous glucose monitor (CGM) readings at 15-minute intervals for 2 hours post-meal.

- Model Training: Train a personalized LSTM model or a Gradient Boosting model (XGBoost) on a longitudinal dataset of (X, Y) pairs for an individual.

- Validation: Use leave-one-meal-out cross-validation. The model predicts the glucose trajectory for an unseen meal.

- Output: Generate a prediction of glucose excursion (peak, time-to-peak, AUC) and suggest macronutrient adjustments that may flatten the curve.
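
The excursion summary in the output step can be computed from a glucose trajectory as sketched below. The code assumes readings at fixed 15-minute intervals starting at meal time, uses the first reading as baseline, and clips values below baseline to zero before trapezoidal integration, which is one simplified convention for incremental AUC. The sample readings are invented.

```python
def glucose_excursion(readings_mmol_l, interval_min=15):
    """Summarize a postprandial glucose curve sampled at fixed intervals."""
    baseline = readings_mmol_l[0]
    peak = max(readings_mmol_l)
    time_to_peak = readings_mmol_l.index(peak) * interval_min
    # Rise above baseline, clipped at zero (simplifying assumption).
    rises = [max(g - baseline, 0.0) for g in readings_mmol_l]
    # Trapezoidal rule over consecutive pairs of readings.
    iauc = sum((a + b) / 2.0 * interval_min for a, b in zip(rises, rises[1:]))
    return {"peak": peak, "time_to_peak_min": time_to_peak, "iauc_mmol_min_per_l": iauc}


# Hypothetical 2-hour trajectory (mmol/L) at 0, 15, ..., 120 minutes.
curve = [5.0, 6.0, 7.5, 8.0, 7.0, 6.5, 6.0, 5.5, 5.0]
print(glucose_excursion(curve))
```

The same function can be applied to both predicted and measured trajectories, so model error can be reported on the clinically interpretable summaries as well as on raw readings.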

Table 1: Performance Benchmarks of Core AI Disciplines in Dietary Monitoring

| Discipline | Key Metric | State-of-the-Art Performance (2023-2024) | Primary Dataset |

|---|---|---|---|

| Computer Vision | Food Recognition Accuracy (Top-1) | 92.5% | Food-101, AIHUB Food Log |

| Computer Vision | Portion Estimation Mean Error | ~10-15% (by mass) | Nutrition5k |

| NLP | Food Entity F1 Score (NER) | 89.7% | FoodBASE |

| NLP | Linkage to DB Accuracy | 95.1% | Custom Knowledge Graphs |

| Predictive Analytics | Postprandial Glucose RMSE | 0.8-1.2 mmol/L (Personalized Models) | Harvard PREDICT, Personalized CGM Data |

Table 2: Impact on Dietary Assessment in Clinical Research

| Traditional Method | Typical Error | AI-Assisted Method | Estimated Error Reduction |

|---|---|---|---|

| 24-Hour Dietary Recall | Under-reporting: 20-50% for energy | Passive CV + NLP Logging | Reduces under-reporting by ~60% |

| Food Frequency Questionnaire | Portion size misestimation: >30% | Automated Volumetry (CV) | Improves portion accuracy by ~70% |

| Manual Nutrient Coding | Inter-coder variability: 10-15% | Automated DB Linkage (NLP) | Eliminates coder variability |

Visualizations

AI-Assisted Dietary Logging Multi-Modal Pipeline

Predictive Model for Postprandial Glycemia

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in AI-Assisted Dietary Monitoring Research |

|---|---|

| Standardized Fiducial Marker | A checkerboard or circle grid of known dimensions placed in image field-of-view. Enables camera calibration and provides scale reference for CV volumetry. |

| Food Density Database | A curated table (e.g., from USDA or custom lab measurements) mapping food types to mean density (g/cm³). Critical for converting CV-derived volume to mass. |

| Food Ontology (FoodOn) | A standardized vocabulary and hierarchical structure for food items. Serves as the "ground truth" knowledge graph for NLP entity linking and nutrient lookup. |

| Continuous Glucose Monitor (CGM) | Wearable device providing high-frequency interstitial glucose readings. Serves as the ground truth target variable for training predictive glycemic models. |

| Multi-view Image Dataset with Ground Truth Mass (e.g., Nutrition5k) | Benchmark dataset with synchronized multi-angle dish images and precisely weighed ingredients. Essential for training and evaluating CV portion estimation models. |

| Pre-trained Vision/Language Models | Foundation models (EfficientNet-V2, ViT, BERT) pre-trained on general corpora. Provide the starting point for efficient fine-tuning on specialized food data. |

| Structured Nutrient Database (USDA SR Legacy) | Comprehensive table linking food items to detailed micronutrient and macronutrient profiles. The final destination for the AI pipeline's query to output nutritional intake. |

This whitepaper provides a technical guide for integrating multimodal data streams within AI-assisted dietary intake monitoring systems, a critical component of nutritional epidemiology, precision nutrition, and drug development research. The convergence of these heterogeneous data sources enables the quantification of dietary exposure with unprecedented resolution, facilitating research into diet-disease relationships and the metabolic effects of pharmacotherapies.

Data Stream Characterization & Technical Specifications

Image Data

Derived from smartphone or wearable cameras, image data provides direct visual evidence of food type, volume, and composition. Current deep learning models, particularly Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), are trained on annotated datasets (e.g., Food-101, Nutrition5k) for food identification and portion size estimation.

Table 1: Performance Metrics of State-of-the-Art Food Image Analysis Models (2023-2024)

| Model Architecture | Dataset | Top-1 Accuracy (%) | Mean Absolute Error (MAE) in kCal | Reference |

|---|---|---|---|---|

| EfficientNet-B7 | Food-101 | 92.4 | N/A | (Min et al., 2023) |

| ViT-Large (Patch 16) | Nutrition5k | N/A | 112.3 | (Prior et al., 2024) |

| Hybrid CNN-Transformer | NIH ABC | 88.7 | 98.5 | (Chen & Li, 2024) |

Text Logs

User-generated textual descriptions from diet diaries, voice transcripts, or meal tags provide contextual and declarative data. Natural Language Processing (NLP) pipelines employing BERT-based models or LLMs (e.g., fine-tuned GPT-4) extract food entities, cooking methods, and brands, linking them to standardized nutrient databases (e.g., USDA FoodData Central, FNDDS).

Table 2: NLP Model Performance on Dietary Text Extraction

| Model | Task (Dataset) | F1-Score | Entity Linking Accuracy (%) |

|---|---|---|---|

| BioBERT (Fine-tuned) | Food Entity Recognition (DietaryIntake-2023) | 0.89 | 85.2 |

| ClinicalBERT | Meal Context Classification (MESA logs) | 0.91 | N/A |

| GPT-4 (Few-shot) | Nutrient Inference from Free Text | N/A | 82.7 |

Wearable Sensors

Continuous physiological data streams act as proxies for metabolic response and eating behavior.

- Inertial Measurement Units (IMUs): Detect wrist/arm movements characteristic of eating (bite, chew, hand-to-mouth gestures). Pattern recognition algorithms (e.g., Random Forests, 1D-CNNs) classify eating episodes.

- Electrodermal Activity (EDA) & Photoplethysmography (PPG): Capture autonomic nervous system responses and heart rate variability potentially associated with meal ingestion.

Table 3: Wearable Sensor Performance for Eating Detection

| Sensor Type | Algorithm | Sensitivity (%) | Specificity (%) | Dataset/Study |

|---|---|---|---|---|

| Wrist IMU | 1D-CNN | 94.1 | 89.6 | (Dong et al., 2023) |

| Smartwatch (IMU+PPG) | Fusion LSTM | 88.3 | 92.7 | (Pal et al., 2024) |

| Ear-worn IMU | HMM | 81.5 | 95.2 | (Moon et al., 2023) |

Metabolomic Data Streams

High-throughput mass spectrometry (LC-MS/MS) and NMR spectroscopy generate postprandial metabolic fingerprints from biofluids (blood, urine, saliva), providing objective biomarkers of food intake (e.g., proline betaine for citrus, alkylresorcinols for whole grains).

Table 4: Validated Metabolomic Biomarkers for Dietary Intake

| Biomarker (Compound Class) | Food Source | Detection Window | Analytical Platform | AUC (for prediction) |

|---|---|---|---|---|

| Proline Betaine (Betaine) | Citrus Fruits | 24-48h urine | LC-MS/MS | 0.96 |

| C15:0 & C17:0 (Odd-chain FA) | Dairy Fat | 2-4 weeks (serum) | GC-MS | 0.89 |

| Tartaric Acid (Organic Acid) | Grapes/Wine | 6-12h urine | NMR | 0.93 |

| S-methyl-l-cysteine sulfoxide | Allium vegetables | 24h urine | LC-MS/MS | 0.91 |

Experimental Protocols for Multimodal Data Fusion

Protocol: Multimodal Validation Study for Energy Intake Estimation

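Objective: To validate a fused AI model (Image + Text + Wearable) against doubly labeled water (DLW), which measures total energy expenditure and approximates energy intake when body weight is stable, and against 24-hour dietary recall.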

- Participant Cohort: Recruit N=150 adults, mixed BMI, for a 14-day monitoring period.

- Data Synchronization: All devices synchronized to NTP server; images and text logs timestamped via smartphone. Wearables (ActiGraph GT9X, Empatica E4) stream data to a secured server.

- Ground Truth Collection: DLW administered on days 1 and 14. Multiple-pass 24-hour recalls conducted on 3 non-consecutive days by trained dietitians.

- Image/Text Processing: Images processed via a ViT model for food ID/volume. Text logs parsed by a fine-tuned `dietaryBERT` model to supplement images.

- Wearable Processing: IMU data segmented into 30s windows; a pre-trained 1D-CNN detects eating episodes. PPG data analyzed for heart rate rise post-detection.

- Fusion & Modeling: A late-fusion Transformer model integrates features from all three streams. Output is per-meal and daily energy (kcal) and macronutrient estimates.

- Statistical Analysis: Bland-Altman analysis and Pearson correlation between model estimates and DLW/recall data.
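The statistical analysis step can be illustrated with a minimal sketch. It assumes paired daily energy values from the fused model and from DLW; the function names and the numbers are hypothetical and serve only to show the calculation, not to represent study data.

```python
import math
from statistics import mean, stdev


def bland_altman(estimates, reference):
    """Return bias and 95% limits of agreement for paired measurements."""
    diffs = [e - r for e, r in zip(estimates, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long series."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Illustrative values only (kcal/day), one entry per participant.
model_kcal = [2100.0, 1850.0, 2400.0, 1990.0, 2250.0]
dlw_kcal = [2000.0, 1900.0, 2300.0, 2050.0, 2200.0]

bias, lower_loa, upper_loa = bland_altman(model_kcal, dlw_kcal)
r = pearson_r(model_kcal, dlw_kcal)
print(f"bias={bias:.1f} kcal, LoA=({lower_loa:.1f}, {upper_loa:.1f}), r={r:.3f}")
```

Reporting the limits of agreement alongside the correlation matters because a high correlation can coexist with a systematic over- or under-estimate, which only the bias term exposes.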

Protocol: Metabolomic Correlates of AI-Estimated Intake

Objective: To identify serum/urinary metabolites that correlate with AI-predicted intake of specific food groups.

- Sample Collection: Fasting blood and first-morning urine collected from cohort (N=100) at day 0 and day 7.

- AI-Based Exposure Quantification: Participant intake over 7 days quantified using the fused model from the multimodal validation protocol above for food groups (e.g., red meat, leafy greens, whole grains).

- Metabolomic Profiling: Serum analyzed via untargeted LC-MS (Q-TOF). Urine analyzed via 1H-NMR spectroscopy.

- Data Integration: Partial Least Squares (PLS) regression used to model the relationship between AI-quantified food group intake (g/day) and normalized metabolite peak intensities.

- Validation: Candidate biomarkers confirmed using targeted LC-MS/MS against authentic standards in a separate validation cohort.

Signaling Pathways & System Workflows

Diagram 1: Multimodal AI System for Dietary Monitoring

Diagram 2: Diet-Gut-Host Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Research Reagents & Materials for Integrated Dietary Monitoring Studies

| Item | Function in Research | Example Product/Kit |

|---|---|---|

| Stable Isotope Tracers | Gold-standard validation of energy expenditure (Doubly Labeled Water) and protein turnover. | DLW (²H₂¹⁸O); Cambridge Isotopes |

| Nutrient Databases | Standardized mapping of food identifiers to nutrient composition for quantification. | USDA FoodData Central, Food-Network |

| Metabolomic Standards | For identification and quantification of dietary biomarkers in mass spectrometry. | IROA Technology Mass Spectrometry Metabolite Library; Cambridge Isotope MSK-CUS-100 |

| Wearable SDKs & APIs | Enables raw data extraction and synchronization from commercial sensors for research. | Empatica E4 Real-time API; ActiGraph CenterPoint Software Dev Kit |

| Annotated Image Datasets | Training and validation sets for food recognition and portion size AI models. | Nutrition5k; Food-101; AI4Food-NutritionDB |

| Biospecimen Collection Kits | Standardized collection, stabilization, and shipment of samples for metabolomics. | Metabolon Stabilization Kit; Norgen's Urine Preservative Tubes |

| Multimodal Fusion Software | Open-source frameworks for aligning and fusing heterogeneous time-series data. | TURI's Michelangelo; PyTorch Geometric Temporal |

Within the context of AI-assisted dietary intake monitoring research, large-scale, multi-institutional initiatives are foundational. They generate the comprehensive, multi-modal datasets necessary to develop and validate robust AI algorithms. This whitepaper details the core structure, methodologies, and outputs of leading consortia, focusing on the NIH Common Fund's Nutrition for Precision Health (NPH), powered by the All of Us Research Program.

| Initiative/Consortium | Lead/Sponsor | Primary Objective | Key Outputs & Data Types |

|---|---|---|---|

| Nutrition for Precision Health (NPH) | NIH Common Fund | To develop algorithms predicting individual responses to food & dietary patterns. | Multi-omic profiles, clinical measures, wearable data, AI-generated food logs, controlled feeding trial data. |

| The PREDICT Studies | King's College London, etc. | To understand individual variability in metabolic responses to food. | Continuous glucose monitoring, blood lipid/metabolite measures, gut microbiome, meal challenge data. |

| American Gut Project/ Microsetta Initiative | UC San Diego | To explore relationships between human microbiome, diet, and health at population scale. | 16S & shotgun metagenomic sequencing data, self-reported diet & health questionnaires. |

| NHANES (Nutritional Component) | CDC/NCHS | To assess nutritional status and its link to health in the US population. | 24-hour dietary recalls, biochemical measures (nutrients, metabolites), physical exam data. |

| NutriTech | EU Framework Programme 7 | To develop and validate technologies for dietary intake assessment and metabolic phenotyping. | Doubly labeled water, accelerometry, metabolomic profiles, technology comparison data. |

Deep Dive: NIH Nutrition for Precision Health (NPH) Protocol

NPH is a pivotal initiative for AI-dietary monitoring research, structured in three integrated modules.

Module 1: All of Us Cohort Observational Study

- Objective: To collect baseline dietary, phenotypic, genomic, and social determinant data from a diverse, nationwide participant pool.

- Protocol:

  - Recruitment: ~10,000 adult participants from the All of Us cohort.

  - Data Collection:

    - Dietary Intake: Two unannounced 24-hour dietary recalls using the validated ASA24 automated system.

    - Biospecimens: Blood, urine, and stool samples for multi-omic analysis (genomics, metabolomics, proteomics, microbiome).

    - Phenotypes: Physical measures (BMI, waist circumference), blood pressure, DXA scans for body composition.

    - Wearable Sensors: Continuous glucose monitors (CGMs) and physical activity trackers worn for ~2 weeks.

    - Surveys: Detailed questionnaires on health history, lifestyle, and food environment.

Module 2: Controlled Feeding Study

- Objective: To obtain highly precise data on physiological responses to standardized diets under controlled conditions.

- Protocol:

  - Subset: ~1,500 participants from Module 1.

  - Design: Three 2-week controlled dietary interventions administered in random order:

    - Typical American Diet: Matched to participant's habitual intake.

    - Healthful Diet High in Fruit/Vegetables: Based on DASH or Mediterranean patterns.

    - Carbohydrate-Restricted Diet.

  - Measurements: Repeat of all biospecimen, phenotypic, and sensor-based measures from Module 1, with the addition of postprandial challenge tests.

Module 3: Real-World Feeding Study

- Objective: To validate predictive algorithms in free-living conditions using AI-assisted food logging.

- Protocol:

  - Subset: ~4,000 participants from Module 1.

  - Intervention: Participants are provided with AI-powered tools (e.g., image-based food recognition apps, voice assistants) to record all food and beverage intake for 10 days.

  - Validation: A subset undergoes doubly labeled water measurement of total energy expenditure and provides urine for recovery biomarkers to objectively assess reporting accuracy.

  - Measurements: Synchronized CGM, activity tracker, and biospecimen collection.

Diagram Title: NPH Study Module Workflow & Integration

Key Research Reagent Solutions & Materials

| Category | Item / Technology | Function in Research |

|---|---|---|

| Dietary Assessment | ASA24 (Automated Self-Administered 24-hr Recall) | Standardized, web-based tool for detailed dietary recall; critical for ground-truth data. |

| Continuous Monitoring | Continuous Glucose Monitor (CGM) | Measures interstitial glucose every 1-15 mins, providing dynamic postprandial response data. |

| Metabolic Phenotyping | Doubly Labeled Water (²H₂¹⁸O) | Gold-standard method for measuring total energy expenditure in free-living individuals. |

| Body Composition | Dual-Energy X-Ray Absorptiometry (DXA) | Precisely quantifies fat mass, lean mass, and bone density; a key phenotypic variable. |

| Omics Analysis | Shotgun Metagenomic Sequencing | Profiles the functional potential of the gut microbiome, linking taxa to dietary components. |

| Omics Analysis | Untargeted Metabolomics (LC/MS) | Discovers and quantifies thousands of small-molecule metabolites in blood/urine, reflecting dietary intake and metabolic state. |

| Food Logging | AI-Powered Image Recognition (e.g., mobile apps) | Automates food identification and portion size estimation, reducing participant burden for real-world data. |

| Sample Stabilization | OMNIgene GUT Kit | Stabilizes stool microbiome at ambient temperature for standardized multi-site collection. |

Data Integration & AI Model Development Workflow

Diagram Title: NPH Data to AI Model Pipeline

Initiatives like NIH NPH provide the essential, large-scale, multi-dimensional data infrastructure required to move beyond population-level dietary guidelines. By employing rigorous, modular experimental protocols and generating standardized datasets that integrate deep phenotyping with AI-assisted intake monitoring, these consortia are creating the substrate for the next generation of predictive nutritional science and personalized health technologies.

From Algorithm to Action: Methods and Real-World Applications in Research & Trials

Within the broader thesis on AI-assisted dietary intake monitoring, this technical guide details the core computational pipeline. The system aims to provide automated, objective, and scalable dietary assessment by transforming 2D food images into quantified nutrient data. The pipeline consists of three primary modules: Food Identification, Volume/Portion Estimation, and Nutrient Prediction, each posing distinct computer vision and machine learning challenges.

Core Pipeline Architecture

Diagram 1: Core Computer Vision Pipeline for Dietary Assessment

Module 1: Food Item Identification & Segmentation

This module classifies food items and generates pixel-wise masks.

3.1 Technical Methodology

- Model Architecture: The current standard employs a hybrid approach. A backbone convolutional neural network (CNN) like EfficientNet-B4 or a Vision Transformer (ViT) base serves as a feature extractor. These features feed into a segmentation head, typically a U-Net++ or Mask R-CNN architecture for instance segmentation, providing both class and mask.

- Key Protocol (Training on an annotated food image dataset such as Food-101 for classification or UECFoodPix for segmentation):

- Data Preprocessing: Images are resized to a fixed resolution (e.g., 512x512). Augmentations include random horizontal flip, color jitter (±10% brightness, contrast), and rotation (±15°).

- Training Regime: The model is trained using a combined loss, $L_{\text{total}} = L_{\text{cls}} + \lambda L_{\text{mask}}$, where $L_{\text{cls}}$ is cross-entropy for classification and $L_{\text{mask}}$ is binary cross-entropy or Dice loss for segmentation. The hyperparameter $\lambda$ is typically set to 1.0. Training uses the AdamW optimizer with an initial learning rate of 1e-4, decayed by a factor of 0.5 upon validation loss plateau.

- Validation: Performance is evaluated on a held-out validation set using mean Average Precision (mAP) at IoU thresholds of 0.5:0.95 for segmentation and top-1 accuracy for classification.
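To make the combined loss concrete, the following is a minimal pure-Python sketch for a single sample. It assumes softmax class probabilities and a flattened soft mask as inputs and uses the Dice variant of the mask loss; in practice this would be computed on tensors inside a deep learning framework, and the function names here are illustrative.

```python
import math


def cross_entropy(probs, target_idx):
    """Cross-entropy for one sample, given softmax class probabilities."""
    return -math.log(probs[target_idx])


def dice_loss(pred_mask, true_mask, eps=1e-6):
    """Soft Dice loss over flattened masks with values in [0, 1]."""
    intersection = sum(p * t for p, t in zip(pred_mask, true_mask))
    denominator = sum(pred_mask) + sum(true_mask)
    return 1.0 - (2.0 * intersection + eps) / (denominator + eps)


def total_loss(probs, target_idx, pred_mask, true_mask, lam=1.0):
    """L_total = L_cls + lambda * L_mask, with lambda defaulting to 1.0."""
    return cross_entropy(probs, target_idx) + lam * dice_loss(pred_mask, true_mask)


# A perfect prediction gives zero loss; an imperfect one is penalised by both terms.
perfect = total_loss([0.0, 1.0, 0.0], 1, [1.0, 0.0, 1.0], [1, 0, 1])
imperfect = total_loss([0.2, 0.7, 0.1], 1, [0.8, 0.1, 0.6], [1, 0, 1])
print(perfect, imperfect)
```

Raising `lam` above 1.0 shifts the optimisation toward mask quality at the expense of classification, which may be worth exploring when downstream volume estimation is more sensitive to boundary errors than to label errors.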

3.2 Performance Data (State-of-the-Art Benchmarks)

Table 1: Performance of Food Segmentation Models on Public Benchmarks

| Model | Dataset | mAP@[.5:.95] | Top-1 Identification Accuracy | Key Feature |

|---|---|---|---|---|

| Mask R-CNN (ResNet-50) | AIHUB FoodSeg (subset) | 0.42 | 78.5% | Strong baseline for instance segmentation |

| U-Net++ (EfficientNet-B4) | UECFoodPix-256 | 0.61 | 82.3% | Improved boundary delineation |

| Segment Anything Model (SAM) + Food-Specific Adapter | Custom Multi-Food | 0.68 | 91.7% | Zero-shot capability with fine-tuning |

Module 2: 3D Volume & Mass Estimation

This module estimates the physical volume of segmented food items from a single 2D image, optionally supplemented by a depth map.

4.1 Technical Methodology

- Reference-Based Method: Requires a known fiducial marker (e.g., a checkerboard card, a coin, or the plate itself) in the image to establish a scale (pixels per cm).

- Shape Primitive Fitting: Assumes foods conform to simple geometric shapes (e.g., cylinder for a glass of milk, ellipsoid for an apple). The segmentation mask's major/minor axes are measured in pixels, converted to real-world dimensions using the scale, and the corresponding volume formula is applied.

- Deep Learning Regression: An end-to-end CNN (e.g., ResNet) or a transformer is trained to regress directly from the cropped food image to its mass or volume, bypassing explicit 3D reconstruction. This requires large-scale datasets with ground-truth mass labels.
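The reference-based scale and shape primitive steps can be sketched as follows. The example assumes a reference card of known width is visible in the image and that the unseen third axis of an ellipsoidal food equals its minor axis; both are simplifying assumptions, and all names and pixel values are hypothetical.

```python
import math


def pixels_to_cm(length_px, ref_px, ref_cm):
    """Convert a pixel length to centimetres using a reference object of known size."""
    return length_px * ref_cm / ref_px


def ellipsoid_volume_cm3(major_cm, minor_cm):
    """Spheroid volume; assumes the hidden third axis equals the minor axis."""
    a, b = major_cm / 2.0, minor_cm / 2.0
    return 4.0 / 3.0 * math.pi * a * b * b


def cylinder_volume_cm3(diameter_cm, height_cm):
    """Cylinder volume, e.g. for a glass of milk."""
    return math.pi * (diameter_cm / 2.0) ** 2 * height_cm


# A 10 cm wide reference card spans 400 px, i.e. 40 px per cm.
major_cm = pixels_to_cm(320, ref_px=400, ref_cm=10.0)
minor_cm = pixels_to_cm(280, ref_px=400, ref_cm=10.0)
apple_volume = ellipsoid_volume_cm3(major_cm, minor_cm)
print(f"{major_cm:.1f} x {minor_cm:.1f} cm -> {apple_volume:.1f} cm^3")
```

The hidden-axis assumption is the main source of error for this family of methods, which is consistent with its weak performance on amorphous foods.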

4.2 Key Protocol (Depth-Assisted Volume Estimation)

- Data Acquisition: Capture paired RGB and depth images using a calibrated sensor (e.g., Intel RealSense, iPhone LiDAR). The intrinsic and extrinsic camera parameters are known or calibrated beforehand.

- 3D Point Cloud Generation: For each pixel in the food segmentation mask, use the depth value $d$, the camera focal length $f$ (in pixels), the principal point $(c_x, c_y)$, and the pixel coordinates $(u, v)$ to compute the 3D point: $X = (u - c_x)\,d / f$, $Y = (v - c_y)\,d / f$, $Z = d$.

- Volume Calculation: Apply a 3D convex hull or Poisson surface reconstruction algorithm to the food-specific point cloud. Calculate the volume of the resulting closed mesh using the divergence theorem (the 3D analogue of the shoelace formula).

- Mass Conversion: Convert volume to mass using food category-specific density databases ($\text{mass} = \text{volume} \times \text{density}$). For example, the density of "banana" is ≈ 0.94 g/cm³ and that of "cheddar cheese" is ≈ 1.13 g/cm³.
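The geometric core of this protocol can be sketched in plain Python. The example assumes a pinhole camera model and a closed triangle mesh whose faces are consistently oriented outward; a 2 x 3 x 4 cm box stands in for the reconstructed food surface, since the hull or Poisson reconstruction itself would normally come from a library such as Open3D or SciPy. Function names and camera values are illustrative.

```python
def back_project(u, v, d, f, cx, cy):
    """Pinhole back-projection of pixel (u, v) with depth d into camera coordinates."""
    return ((u - cx) * d / f, (v - cy) * d / f, d)


def mesh_volume(vertices, triangles):
    """Volume of a closed, consistently oriented triangle mesh (signed tetrahedra sum)."""
    total = 0.0
    for i, j, k in triangles:
        (x0, y0, z0), (x1, y1, z1), (x2, y2, z2) = vertices[i], vertices[j], vertices[k]
        # Scalar triple product v0 . (v1 x v2) equals six times the signed volume
        # of the tetrahedron formed by the origin and the triangle.
        total += (x0 * (y1 * z2 - z1 * y2)
                  - y0 * (x1 * z2 - z1 * x2)
                  + z0 * (x1 * y2 - y1 * x2))
    return abs(total) / 6.0


# Pixel 100 px right of the principal point, 50 cm away, focal length 500 px.
point = back_project(u=420, v=240, d=50.0, f=500.0, cx=320.0, cy=240.0)

# Stand-in mesh: a 2 x 3 x 4 cm box with outward-facing triangles.
a, b, c = 2.0, 3.0, 4.0
box_vertices = [(0, 0, 0), (a, 0, 0), (a, b, 0), (0, b, 0),
                (0, 0, c), (a, 0, c), (a, b, c), (0, b, c)]
box_triangles = [(0, 3, 2), (0, 2, 1), (4, 5, 6), (4, 6, 7),
                 (0, 1, 5), (0, 5, 4), (3, 7, 6), (3, 6, 2),
                 (0, 4, 7), (0, 7, 3), (1, 2, 6), (1, 6, 5)]
volume_cm3 = mesh_volume(box_vertices, box_triangles)

# Mass conversion with the banana density quoted in the protocol (g/cm^3).
mass_g = volume_cm3 * 0.94
print(point, volume_cm3, mass_g)
```

Because only the visible surface is captured by a single RGB-D frame, a real pipeline also has to close the mesh against the plate or table plane before this volume formula applies.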

4.3 Comparative Accuracy Data

Table 2: Accuracy of Volume Estimation Methods

| Estimation Method | Required Input | Mean Absolute Percentage Error (MAPE) | Primary Limitation |

|---|---|---|---|

| Shape Primitive Fitting | Single RGB + Reference Object | 25-35% | Poor performance on amorphous foods (e.g., mashed potato) |

| Deep Learning (Regression) | Single RGB (Mass-labeled dataset) | 15-22% | Requires massive, diverse, and labeled training data |

| Depth-Assisted 3D Reconstruction | RGB-D Image Pair | 8-12% | Requires specialized hardware; sensitive to depth sensor noise |

Module 3: Nutrient Prediction & Aggregation

This module maps identified food items and their estimated masses to nutritional components.

5.1 Data Integration & Prediction Workflow

The system integrates the outputs from Modules 1 and 2 with comprehensive food composition databases.

Diagram 2: Nutrient Prediction Data Integration

5.2 Protocol for Nutrient Database Integration

- Food Matching: The predicted food label (e.g., "whole wheat bread") is mapped to a unique identifier in the target food composition database (e.g., USDA NDB Number 18075).

- Nutrient Retrieval: Per 100g nutrient values for energy (kcal), macronutrients (protein, carbohydrates, fat), and key micronutrients (e.g., sodium, fiber) are retrieved.

- Mass Scaling: Nutrients are linearly scaled based on the estimated mass: $\text{Nutrient}_{\text{total}} = (\text{Nutrient}_{\text{per 100 g}} / 100) \times \text{Estimated Mass}_{\text{g}}$.

- Aggregation: For multi-item meals, scaled nutrients from all identified items are summed to produce a total meal profile.

- Uncertainty Quantification: Error from identification confidence and volume estimation MAPE is propagated through the scaling calculation to provide a confidence interval for each nutrient value (e.g., Energy: 450 ± 65 kcal).
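The scaling, aggregation, and uncertainty steps above can be sketched together. This example makes several assumptions that are not in the protocol: the per-100 g values are placeholders rather than database entries, the mass error is treated as a 10% relative standard deviation per item, items are assumed independent so that errors add in quadrature, and identification confidence is left out for brevity.

```python
import math

# Placeholder per-100 g values for illustration; real values come from the
# food composition database.
FOOD_DB = {
    "whole wheat bread": {"energy_kcal": 250.0, "protein_g": 12.0},
    "cheddar cheese": {"energy_kcal": 400.0, "protein_g": 24.0},
}


def scale_nutrients(label, mass_g):
    """Nutrient_total = (Nutrient_per_100g / 100) * Estimated_Mass_g for each nutrient."""
    return {name: value / 100.0 * mass_g for name, value in FOOD_DB[label].items()}


def meal_profile(items, mass_rel_error=0.10):
    """Sum scaled nutrients over (label, mass_g) items and propagate the mass error to energy."""
    totals = {}
    energy_variance = 0.0
    for label, mass_g in items:
        scaled = scale_nutrients(label, mass_g)
        for name, value in scaled.items():
            totals[name] = totals.get(name, 0.0) + value
        # Independent items: absolute errors combine in quadrature.
        energy_variance += (scaled["energy_kcal"] * mass_rel_error) ** 2
    return totals, math.sqrt(energy_variance)


totals, energy_sigma = meal_profile([("whole wheat bread", 60.0), ("cheddar cheese", 30.0)])
print(f"Energy: {totals['energy_kcal']:.0f} ± {energy_sigma:.0f} kcal")
```

A fuller treatment would also weight each item by its classification confidence, for example by mixing nutrient profiles across the top candidate labels.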

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item/Category | Function in the Pipeline | Example/Notes |

|---|---|---|

| Public Food Image Datasets | Training and benchmarking for Module 1. | UECFood-100, Food-101, AIHUB FoodSeg, UECFoodPix. Provide annotated images for classification/segmentation. |

| Food Composition Databases | Ground truth for nutrient mapping in Module 3. | USDA FoodData Central, CIQUAL (France), CNF (Canada). The essential lookup table for nutrient values. |

| RGB-D Sensor Systems | Enables high-accuracy depth-assisted volume estimation (Module 2). | Intel RealSense D415/D455, Microsoft Azure Kinect, Apple iPhone LiDAR. Provides calibrated depth maps. |

| Calibration Objects | Establishes real-world scale in 2D images for Module 2. | Checkerboard pattern (for camera calibration), reference cards of known dimensions (e.g., 10x10cm). |

| Density Databases | Converts estimated volume to mass for nutrient calculation. | Compiled from scientific literature; food-specific values are critical (e.g., cooked rice ≈ 0.72 g/cm³, peanut butter ≈ 1.05 g/cm³). |

| Deep Learning Frameworks | Implementation of core CNN and transformer models. | PyTorch, TensorFlow. Essential for building, training, and deploying the identification and regression models. |

| Segmentation & 3D Processing Libraries | Provides algorithms for mask refinement and 3D geometry. | OpenCV, scikit-image, Open3D, PCL (Point Cloud Library). Used for post-processing and volume calculation. |

Natural Language Processing (NLP) for Analyzing Dietary Recalls and Free-Text Food Logs

Within the broader thesis of AI-assisted dietary intake monitoring, the automated analysis of unstructured dietary data stands as a critical technological hurdle. Natural Language Processing (NLP) provides the methodological foundation for transforming free-text dietary recalls and logs into structured, quantifiable data suitable for nutritional epidemiology, clinical research, and drug development. This whitepaper details the core technical approaches, experimental protocols, and reagent solutions required to deploy NLP effectively in this domain.

Core NLP Tasks and Quantitative Performance

The application of NLP to dietary text involves a sequence of interrelated tasks, each with distinct performance benchmarks as reported in recent literature.

Table 1: Performance Metrics for Core Dietary NLP Tasks (2023-2024)

| NLP Task | Description | Key Metric | State-of-the-Art Performance (Approx.) | Common Model/Approach |

|---|---|---|---|---|

| Named Entity Recognition (NER) | Identify food, amount, preparation, and temporal mentions. | F1-Score (micro avg.) | 0.85 - 0.92 | BERT variants (e.g., BioBERT, ClinicalBERT) fine-tuned on dietary corpora. |

| Entity Linking/Normalization | Map food entities to standard codes (e.g., USDA FoodData Central, Langual). | Accuracy | 0.78 - 0.87 | Ensemble of embedding similarity (SBERT) and lexical matching. |

| Relation Extraction (RE) | Link amounts (e.g., "1 cup") to food items ("rice"). | F1-Score | 0.88 - 0.94 | Dependency parsing combined with transformer-based sequence classification. |

| Portion Size Estimation | Convert natural language amounts to gram weights. | Mean Absolute Error (MAE) | 10-15% of true weight | Rule-based converters with ML-based ambiguity resolution. |

| Meal Context Classification | Classify entries into meals (breakfast, lunch, etc.). | Accuracy | 0.90 - 0.95 | Fine-tuned DistilBERT for multi-class classification. |

Detailed Experimental Protocols

Protocol: Building a Fine-Tuned Transformer for Dietary NER

Objective: To create a model that identifies food, amount, and preparation method entities from free-text entries.

Materials: Pre-trained BERT-base model, annotated dietary corpus (e.g., NLM's Food4Thought dataset), GPU cluster, Python with PyTorch and the Hugging Face Transformers library.

Methodology:

- Data Preprocessing: Tokenize text using the model's tokenizer. Align BIO (Begin, Inside, Outside) annotation tags with subword tokens.

- Model Architecture: Replace the classification head of BERT with a token classification head (linear layer with softmax) for the entity tag set.

- Training Configuration:

- Optimizer: AdamW (learning rate: 2e-5, epsilon: 1e-8)

- Batch Size: 16 (gradient accumulation if necessary)

- Epochs: 10 (with early stopping patience of 2)

- Loss Function: Cross-entropy loss with class weighting for imbalanced tags.

- Evaluation: Perform 5-fold cross-validation. Report precision, recall, and F1-score per entity class and micro-averaged.
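The tag alignment step in the preprocessing stage is a frequent source of bugs, so a small sketch may help. It assumes word-level BIO tags and uses a toy chunking function as a hypothetical stand-in for the model's WordPiece tokenizer; the tag names are illustrative.

```python
def align_bio_tags(words, word_tags, tokenize):
    """Expand word-level BIO tags to subword tokens.

    The first subword keeps the word's tag; continuation subwords of an
    entity word receive the matching I- tag.
    """
    tokens, tags = [], []
    for word, tag in zip(words, word_tags):
        for position, piece in enumerate(tokenize(word)):
            tokens.append(piece)
            if position == 0 or tag == "O":
                tags.append(tag)
            else:
                tags.append("I-" + tag[2:])
    return tokens, tags


def toy_tokenize(word, max_len=5):
    """Hypothetical stand-in for a WordPiece tokenizer: split long words into chunks."""
    pieces = [word[i:i + max_len] for i in range(0, len(word), max_len)]
    return [pieces[0]] + ["##" + piece for piece in pieces[1:]]


words = ["two", "scrambled", "eggs"]
word_tags = ["B-AMOUNT", "B-PREP", "B-FOOD"]
tokens, tags = align_bio_tags(words, word_tags, toy_tokenize)
print(list(zip(tokens, tags)))
```

A common alternative is to label continuation subwords with the ignore index (-100 in PyTorch's cross-entropy loss) so that only the first subword of each word contributes to training; either convention works as long as evaluation uses the same one.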

Protocol: Entity Linking to a Standardized Food Database

Objective: To map an extracted food string (e.g., "granny smith apple") to a unique code in the USDA FoodData Central (FDC) database.

Materials: Extracted food entities, USDA FDC SR Legacy (or Branded) data dump, Sentence-BERT (SBERT) model.

Methodology:

- Candidate Retrieval: Create a lookup index of FDC food descriptions. Use fuzzy string matching (e.g., Levenshtein distance) to retrieve top 20 candidate matches for the input string.

- Semantic Reranking: Generate embeddings for the input string and all candidate descriptions using a diet-specific SBERT model (fine-tuned on synonym pairs). Calculate cosine similarity.

- Composite Score: Compute a final score as a weighted sum of lexical similarity (0.4) and semantic similarity (0.6). Select the candidate with the highest score.

- Thresholding: If the final score is below 0.65, flag the entity for manual review.
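The reranking and thresholding logic can be sketched without any model dependencies. In this illustration, `difflib.SequenceMatcher` stands in for a normalized Levenshtein similarity, the three-dimensional vectors stand in for SBERT embeddings, and the candidate codes and descriptions are placeholders rather than real FDC entries.

```python
import math
from difflib import SequenceMatcher


def lexical_similarity(a, b):
    """String similarity in [0, 1]; a stand-in for normalized Levenshtein similarity."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)


def link_entity(query, query_vec, candidates, w_lex=0.4, w_sem=0.6, threshold=0.65):
    """Return (code, score, needs_review) for the best-scoring candidate."""
    best_code, best_score = None, -1.0
    for code, description, vec in candidates:
        score = w_lex * lexical_similarity(query, description) + w_sem * cosine(query_vec, vec)
        if score > best_score:
            best_code, best_score = code, score
    return best_code, best_score, best_score < threshold


# Toy embeddings and placeholder codes, for illustration only.
candidates = [
    ("FDC-A", "Apples, raw, granny smith", [0.9, 0.1, 0.0]),
    ("FDC-B", "Apple juice, canned", [0.5, 0.5, 0.1]),
    ("FDC-C", "Onions, raw", [0.0, 0.2, 0.9]),
]
code, score, needs_review = link_entity("granny smith apple", [0.85, 0.15, 0.05], candidates)
print(code, round(score, 3), needs_review)
```

The 0.4/0.6 weights and the 0.65 review threshold are taken from the protocol; in a real deployment they would likely be tuned on a held-out set of manually linked entities.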

Visualizing Workflows and Relationships

Dietary NLP Processing Pipeline

Dietary NLP Analysis Pipeline

AI-Assisted Dietary Monitoring Ecosystem

AI Dietary Monitoring System Context

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Dietary NLP Research

| Item | Function & Rationale |

|---|---|

| Annotated Dietary Corpora (e.g., Food4Thought, NCI Diet History II) | Gold-standard datasets for training and evaluating NLP models. Provide examples of real-world dietary language. |

| Standardized Food Databases (e.g., USDA FoodData Central, Langual Thesaurus) | Authoritative reference for food composition and description. Essential for entity normalization and nutrient estimation. |

| Pre-trained Language Models (e.g., BERT, BioBERT, ClinicalBERT) | Foundational models providing deep contextual word representations. Fine-tuning on dietary data is more efficient than training from scratch. |

| Sentence-Transformers (SBERT) Framework | Enables efficient computation of semantic similarity between food phrases and database entries, crucial for accurate linking. |

| Dependency Parsers (e.g., spaCy, Stanford CoreNLP) | Identify grammatical relationships between words (e.g., subject, object, modifier) to resolve which amount modifies which food item. |

| Portion Size Estimation Rule Engine | Custom software library containing conversion rules (e.g., "cup" → grams) and food-specific density data to translate volumes/units to weights. |

| Human-in-the-Loop (HITL) Annotation Platform (e.g., Prodigy, Label Studio) | Interface for experts to correct model predictions, creating new training data to iteratively improve model performance. |

This whitepaper serves as a core technical guide within the broader thesis on AI-assisted dietary intake monitoring. Accurate, passive monitoring of dietary intake remains a critical unmet challenge in nutritional science, chronic disease management, and clinical drug trials. Traditional methods such as food diaries are subject to recall bias, quantification error, and low adherence. The integration of multi-modal sensor data from wearables (physiological response), smart utensils (direct intake actions), and environmental sensors (contextual food data) through advanced sensor fusion architectures presents a transformative solution. This integration enables a holistic, AI-driven model of food consumption and its biochemical correlates, essential for researchers and drug development professionals quantifying nutritional interventions.

Data Streams & Quantitative Metrics

The following table summarizes the primary quantitative data streams from each sensor modality, their specifications, and derived metrics relevant for dietary monitoring.

Table 1: Multi-Modal Sensor Data Streams for Dietary Intake Monitoring

| Sensor Modality | Specific Device/Example | Raw Data Stream | Derived Metric for Intake Inference | Typical Sampling Rate/Resolution |

|---|---|---|---|---|

| Wearables | Wrist-worn PPG/Accelerometer (e.g., research-grade Fitbit, Empatica E4) | Photoplethysmography (PPG), 3-Axis Acceleration, Skin Temperature | Heart Rate Variability (HRV), Energy Expenditure (kcal/min), Galvanic Skin Response (GSR), Meal-induced thermogenesis signature. | PPG: 64-128 Hz; ACC: 32 Hz; Temp: 4 Hz |

| Smart Utensils | Instrumented Spoon/Fork (e.g., Bite Counter, SmartPlate utensils) | Angular Velocity (Gyro), Force/Pressure, Weight | Bite count, Eating rate (bites/min), Loading weight per scoop, Hand-to-mouth gesture pattern. | 50-100 Hz (per utensil event) |

| Environmental Sensors | Overhead Camera (e.g., GoPro), Microphone, Smart Scale (e.g., Withings) | RGB Video Frames, Audio Spectrogram, Mass (grams) | Food type (via CV), Chewing/ Swallowing acoustics, Total food portion weight change (pre/post-meal). | Video: 30 fps; Audio: 16 kHz; Scale: 0.1g |

Table 2: Key Biochemical & Physiological Correlates of Intake (Measurable via Wearables/Downstream Assays)

| Correlate | Measurement Method (Direct/Proxy) | Typical Latency Post-Ingestion | Primary Relevance |

|---|---|---|---|

| Glucose Dynamics | Continuous Glucose Monitor (CGM) | 5-15 minutes onset | Carbohydrate metabolism, meal size & composition impact. |

| Core Body Temperature | Subcutaneous or ingestible sensor (proxy via wrist temp) | 30-60 minutes (thermic effect) | Energy expenditure, metabolic response. |

| Electrodermal Activity (EDA) | Wrist/Hand electrodes (GSR) | Immediate-5 minutes | Stress/Sympathetic response to eating. |

| Salivary Biomarkers (Amylase, Cortisol) | Lab assay of collected sample (sensor prototype) | 5-10 minutes | Digestive enzyme release, stress marker. |

Sensor Fusion Architecture & Methodology

The core challenge is the temporal alignment, feature extraction, and probabilistic fusion of asynchronous, heterogeneous data streams.

Experimental Protocol for Multi-Sensor Data Collection

Title: Protocol for Synchronized Dietary Intake Monitoring Study

- Participant Preparation: Fit participant with a wrist-worn wearable (e.g., Empatica E4) on the non-dominant hand. Apply a Continuous Glucose Monitor (CGM, e.g., Dexcom G7) to the upper arm. Calibrate CGM as per manufacturer protocol.

- Environmental Setup: Position a fixed-angle RGB-D camera (e.g., Intel RealSense D435) with a clear view of the dining area. Place a high-fidelity directional microphone (e.g., Zoom H1n) and a smart scale (e.g., Withings Body+) on the table. Synchronize all devices to a Network Time Protocol (NTP) server.

- Utensil Provisioning: Provide participant with instrumented smart utensils (e.g., custom-built spoon with IMU and force sensor). Verify Bluetooth connectivity to a central data logger (e.g., tablet running a custom app).

- Calibration & Baseline: Record a 5-minute seated rest baseline for physiological signals (HR, EDA). Record empty plate/container on the smart scale. Perform a standardized utensil gesture calibration (10 repeated scoop-to-mouth motions).

- Meal Session: Participant consumes a standardized test meal (e.g., 400 kcal, defined macronutrients) or an ad libitum meal. All sensors record concurrently.

- Post-Meal: Continue recording for 90-120 minutes to capture postprandial physiological responses. Collect subjective satiety scores via electronic questionnaire.

- Data Export & Synchronization: Offload all data and align the streams using their NTP-synchronized timestamps. A trained researcher manually annotates ground truth (bite timestamps, food type, weight) from the video.
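Once the streams share a common clock, aligning them onto one timeline is mostly a matter of nearest-timestamp lookup. The sketch below assumes each stream is a sorted list of timestamps (in seconds) with matching values, and that samples farther than a hypothetical `max_gap` from the reference time should be treated as missing; the stream names and values are illustrative.

```python
from bisect import bisect_left


def nearest_sample(timestamps, values, t, max_gap):
    """Return the value closest in time to t, or None if it is farther than max_gap."""
    i = bisect_left(timestamps, t)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps)]
    if not candidates:
        return None
    j = min(candidates, key=lambda k: abs(timestamps[k] - t))
    return values[j] if abs(timestamps[j] - t) <= max_gap else None


def align_streams(reference_times, streams, max_gap=0.5):
    """Build one row per reference time from a dict of name -> (timestamps, values)."""
    rows = []
    for t in reference_times:
        row = {"t": t}
        for name, (timestamps, values) in streams.items():
            row[name] = nearest_sample(timestamps, values, t, max_gap)
        rows.append(row)
    return rows


streams = {
    "hr_bpm": ([0.0, 1.0, 2.0, 3.0], [62, 63, 65, 70]),
    "scale_g": ([0.2, 2.9], [412.0, 398.5]),  # sparse, event-driven stream
}
rows = align_streams([0.0, 1.0, 2.0, 3.0], streams)
for row in rows:
    print(row)
```

Leaving gaps as `None` rather than interpolating keeps sparse, event-driven streams such as the scale from being silently smoothed, which matters when weight changes are used as ground truth for bite size.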

Fusion Algorithm: A Hybrid Deep Learning Approach

A multi-stage fusion model is proposed:

- Early Feature Extraction: Domain-specific feature extractors process raw streams: CNN for video frames (food detection), LSTM for IMU sequences (gesture recognition), and signal processing for physiological data (HRV, EDA peaks).

- Intermediate Fusion with Attention: Extracted features are temporally aligned into a unified tensor. An attention mechanism (e.g., transformer layer) learns to weight the importance of each modality (e.g., utensil data may be paramount for bite detection, CGM for meal glycemic impact).

- Late Decision Fusion: Outputs from modality-specific event detectors (e.g., "bite detected" from utensils, "chewing" from audio) are combined using a probabilistic graphical model (e.g., Bayesian Network) that encodes prior knowledge (e.g., a bite typically precedes chewing).
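As a much simplified stand-in for the late decision fusion stage, the sketch below combines per-modality event probabilities in log-odds space. It assumes the detectors are conditionally independent given the event and were trained with the same prior, which a full Bayesian network would not require, and it does not model temporal ordering such as a bite preceding chewing. The function names are illustrative.

```python
import math


def logit(p):
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def fuse_detectors(probabilities, prior=0.5):
    """Naive-Bayes style fusion of independent detector posteriors sharing one prior."""
    log_odds = logit(prior) + sum(logit(p) - logit(prior) for p in probabilities)
    return 1.0 / (1.0 + math.exp(-log_odds))


# Utensil IMU reports 0.8 and audio reports 0.7 for a bite in the same window.
fused = fuse_detectors([0.8, 0.7], prior=0.5)
single = fuse_detectors([0.8], prior=0.2)
print(round(fused, 4), round(single, 4))
```

Two moderately confident detectors reinforce each other and yield a fused probability above either input, while a single detector is returned unchanged regardless of the prior; correlated detectors would make this estimate overconfident, which is one motivation for the graphical model described above.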

Diagram Title: Multi-Modal Sensor Fusion Architecture for Dietary Monitoring

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Sensor Fusion Experiments in Dietary Monitoring

| Item / Solution | Vendor/Example | Primary Function in Research Context |

|---|---|---|

| Multi-Sensor Data Logger | LabStreamingLayer (LSL), Empatica Real-Time API, custom Raspberry Pi setup. | Synchronizes heterogeneous data streams with millisecond precision to a common clock, crucial for temporal fusion. |

| Signal Processing Suite | MATLAB Signal Processing Toolbox, Python (SciPy, HeartPy for PPG). | Filters noise, extracts features (e.g., HR from PPG, peaks from EDA), and segments data from raw wearable streams. |

| Time-Series Annotation Tool | ELAN, ANVIL, or custom CVAT temporal plugin. | Provides a GUI for researchers to label ground truth events (bite start, swallow, food type) in synchronized video & sensor data. |

| Fusion ML Framework | PyTorch or TensorFlow with libraries like PyTorch Geometric (for graphs) or Transformers. | Implements and trains custom hybrid deep learning models for feature fusion and joint inference. |

| Biomarker Assay Kits | Salivary α-amylase ELISA Kit (Salimetrics), Cortisol ELISA Kit. | Quantifies salivary biomarkers from samples collected during experiments, providing biochemical validation of intake events/stress. |

| Standardized Test Meals | Ensure nutritional shakes; meals pre-portioned according to USDA Food Patterns. | Provides controlled, reproducible nutritional stimuli with known macronutrient/caloric content for calibration and validation studies. |

Validation & Experimental Protocols

Validation requires comparison against "ground truth" (GT). The protocol below details a key experiment for validating bite detection accuracy.

Title: Protocol for Validating Sensor Fusion Bite Detection Against Video Ground Truth

- Objective: To determine the precision, recall, and F1-score of a fused (utensil IMU + wrist IMU + audio) bite detection algorithm versus expert-annotated video GT.

- Setup: As per Section 3.1. Use an ad libitum meal to ensure a naturalistic eating pace.

- GT Annotation: Two independent researchers annotate the video recording, marking the timestamp of each bite entry into the mouth using software (e.g., ELAN). Inter-rater reliability (Cohen's Kappa >0.8) must be achieved. The final GT is the consensus set.

- Algorithm Output: The fusion model processes synchronized sensor data and outputs a list of predicted bite timestamps.

- Matching & Metrics: A prediction is considered a true positive if it falls within a ±2-second window of a GT timestamp. Calculate:

- Precision = TP / (TP + FP)

- Recall = TP / (TP + FN)

- F1-Score = 2 * (Precision * Recall) / (Precision + Recall)

- Comparison: Perform an ablation study, calculating metrics for: a) utensil-IMU-only detection, b) wrist-IMU-only detection, c) audio-only detection, d) the fused approach. Statistical significance is tested via McNemar's test.
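
The matching, metric, and comparison steps above can be sketched as follows. The protocol does not say how to handle several predictions near one GT bite, so this sketch assumes one-to-one matching (each GT bite can validate at most one prediction). It also uses the exact binomial form of McNemar's test on per-bite hit/miss outcomes. The function names and the example timestamps are hypothetical.

```python
from math import comb


def match_bites(predicted, ground_truth, tolerance_s=2.0):
    """Greedy one-to-one matching of predicted to GT timestamps (seconds).

    Returns (tp, fp, fn, hits), where hits[i] is True if the i-th GT bite
    (in sorted order) was matched by a prediction within the tolerance.
    """
    preds, gts = sorted(predicted), sorted(ground_truth)
    hits = [False] * len(gts)
    i = j = tp = 0
    while i < len(gts) and j < len(preds):
        diff = preds[j] - gts[i]
        if abs(diff) <= tolerance_s:
            hits[i] = True
            tp += 1
            i += 1
            j += 1
        elif diff < 0:
            j += 1  # prediction is too early for this and all later GT bites
        else:
            i += 1  # GT bite is too early for this and all later predictions
    return tp, len(preds) - tp, len(gts) - tp, hits


def precision_recall_f1(tp, fp, fn):
    """Metrics as defined in the protocol, returning 0.0 for empty denominators."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def mcnemar_exact(hits_a, hits_b):
    """Two-sided exact McNemar p-value from paired per-bite outcomes."""
    b = sum(1 for x, y in zip(hits_a, hits_b) if x and not y)
    c = sum(1 for x, y in zip(hits_a, hits_b) if y and not x)
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs, so no evidence of a difference
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical timestamps in seconds from meal start.
gt = [10.0, 25.0, 40.0, 55.0]
fused_pred = [9.2, 26.5, 47.0, 54.1]
tp, fp, fn, fused_hits = match_bites(fused_pred, gt)
metrics = precision_recall_f1(tp, fp, fn)
```

For the ablation study, `match_bites` would be run once per configuration against the same consensus GT, and the resulting `hits` lists passed pairwise to `mcnemar_exact` (for example, fused versus utensil-only).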

Diagram Title: Bite Detection Algorithm Validation Workflow

The systematic fusion of data from wearables, smart utensils, and environmental sensors creates a powerful, validated platform for passive dietary intake monitoring. This technical framework, situated within the broader AI-assisted dietary monitoring thesis, provides researchers and drug development professionals with a reproducible, quantitative methodology. It enables precise measurement of eating behaviors, energetic intake, and physiological responses in free-living or clinical settings, thereby enhancing the objectivity and rigor of nutritional science and intervention trials. Future work will focus on miniaturization, real-time edge processing, and the integration of deeper biochemical streams from next-generation biosensors.

Automated Nutrient Databases and Real-Time Composition Analysis

Automated nutrient databases (ANDs) integrated with real-time composition analysis (RTCA) represent the foundational data layer for advanced AI-assisted dietary intake monitoring systems. For researchers, scientists, and drug development professionals, these systems are critical for generating high-fidelity nutritional data essential for understanding diet-disease interactions, designing nutraceuticals, and personalizing therapeutic diets. This technical guide details the core architecture, experimental validation, and implementation protocols for these systems within a broader research thesis on AI-driven dietary surveillance.

Core Architecture & Data Flow

System Components

An integrated AND and RTCA system comprises three interconnected modules:

- Automated Curation Engine: Aggregates and standardizes data from disparate sources.

- Real-Time Analysis Hub: Processes direct compositional data from spectroscopic or genomic sensors.

- AI Validation & Integration Layer: Cross-references curated and sensed data, continuously refining database entries.

Logical Data Flow Diagram

Diagram 1: High-level data flow of an integrated AND-RTCA system.

Experimental Protocols for System Validation

Validating the accuracy and responsiveness of an AND-RTCA system requires rigorous experimental design. The following protocol is standard for benchmarking performance.

Protocol 1: Benchmarking AND Accuracy & RTCA Precision

Objective: To quantify the discrepancy between database values and real-time analyzed values for key micronutrients in a controlled set of food matrices.

Materials: See Scientist's Toolkit in Section 5.

Methodology:

- Sample Preparation: Select 10 food matrices (e.g., spinach, chicken breast, almond, blueberry) representing diverse chemical compositions. Prepare triplicate samples for each matrix in homogeneous purees.

- Reference Analysis: Subject one set of triplicates to gold-standard laboratory analysis (HPLC for vitamins, ICP-MS for minerals). Record values as `Reference_Value`.

- Database Query: Programmatically query the AND (e.g., via API) for standard nutrient values for each food. Record as `DB_Value`.

- Real-Time Analysis: Using the RTCA module (e.g., NIR spectrometer), analyze the second set of triplicates. Process the spectral data through a pre-trained calibration model. Record output as `RTCA_Value`.

- Statistical Comparison: Calculate mean absolute percentage error (MAPE) and Bland-Altman limits of agreement for:

- `DB_Value` vs. `Reference_Value` (Database Accuracy).

- `RTCA_Value` vs. `Reference_Value` (RTCA Precision).
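
The statistical comparison step can be illustrated with a short sketch. It assumes paired lists of measured and reference values and uses the conventional 1.96 standard deviation limits of agreement. The function names and the replicate readings are hypothetical; the single-value MAPE check uses the blueberry total phenolics figures from Table 1.

```python
from statistics import mean, stdev


def mape(measured, reference):
    """Mean absolute percentage error of measured values against reference values."""
    return 100.0 * mean(abs(m - r) / abs(r) for m, r in zip(measured, reference))


def bland_altman(measured, reference):
    """Return (bias, lower limit, upper limit) of agreement.

    Bias is the mean difference (measured minus reference); the limits are
    bias +/- 1.96 sample standard deviations of the differences.
    """
    diffs = [m - r for m, r in zip(measured, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Database accuracy for one nutrient: DB_Value 171 vs. Reference_Value 260.
db_mape = mape([171], [260])

# Hypothetical triplicate RTCA readings against a reference of 127.7 mg/100g.
rtca_values = [124.2, 125.0, 123.4]
reference_values = [127.7, 127.7, 127.7]
bias, lower, upper = bland_altman(rtca_values, reference_values)
```

MAPE summarizes the size of the error, while the Bland-Altman bias shows its direction: a consistently negative bias, as in the hypothetical readings here, would suggest the calibration model underestimates and needs an offset correction.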

Data Presentation: Validation Results

Table 1: Benchmarking Results for Selected Nutrients (Hypothetical Data from Recent Studies)

| Nutrient (Unit) | Food Matrix | Reference Value (Mean) | DB Value | RTCA Value | DB MAPE (%) | RTCA MAPE (%) |

|---|---|---|---|---|---|---|

| Vitamin C (mg/100g) | Raw Red Pepper | 127.7 | 128.0 | 124.2 | 0.23 | 2.74 |

| Beta-Carotene (μg/100g) | Raw Carrot | 8285 | 8330 | 7990 | 0.54 | 3.56 |

| Iron (mg/100g) | Raw Spinach | 2.71 | 2.70 | 2.65 | 0.37 | 2.21 |

| Total Phenolics (mg GAE/100g) | Blueberry | 260 | 171* | 255 | 34.23* | 1.92 |

*Indicates a significant database gap for non-standard phytochemicals.

Table 2: Aggregated System Performance Metrics (Synthesized from Current Literature)

| Performance Metric | Target Threshold | AND-Only System | Integrated AND-RTCA System |

|---|---|---|---|

| Accuracy (vs. Lab) | MAPE < 5% | 85% of nutrients | 96% of nutrients |

| Update Latency | < 24 hrs for major gaps | 3-6 months | < 12 hours |

| Coverage (Unique Foods) | > 500,000 entries | ~450,000 | Dynamic expansion |

| Phytochemical Coverage | > 10,000 compounds | ~2,000 | ~8,000+ (modeled) |

Signaling Pathways in Nutrient Sensing & Database Updating

A critical research application is linking nutrient intake to biochemical responses. The following pathway is frequently modeled in AI systems to predict downstream effects of nutrient intake detected via AND-RTCA.

NF-κB Inflammatory Pathway Modulation by Dietary Compounds

Diagram 2: Curcumin inhibits the pro-inflammatory NF-κB pathway at multiple nodes.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AND-RTCA Development and Validation Experiments

| Item / Reagent | Vendor Examples (Current) | Function in AND-RTCA Research |

|---|---|---|

| NIR Spectrometer | Viavi Solutions, Thermo Fisher | Core sensor for non-destructive real-time macronutrient & moisture analysis. |

| Raman Spectrometer | B&W Tek, Renishaw | Provides detailed molecular fingerprints for phytochemical identification and quantification. |

| UPLC-MS/MS System | Waters, Sciex | Gold-standard for validating and creating reference data for micronutrients and metabolites. |

| Standard Reference Materials (SRM) | NIST (e.g., SRM 3233, 1849a) | Certified food matrices with known nutrient values for instrument calibration and method validation. |

| Bioinformatics Pipeline (e.g., Foodomics) | GNPS, MetaboAnalyst | Software for processing high-throughput spectral/metabolomic data to identify novel compounds. |

| APIs for Public ANDs | USDA FoodData Central, FooDB | Programmatic access to structured nutrient data for automated curation and gap analysis. |

| Cell-Based Assay Kits (NF-κB/IL-1β) | Cayman Chemical, Abcam | Functional validation of bioactivity predictions generated by the AI integration layer. |
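
As an illustration of the programmatic access listed in Table 3, the sketch below builds a search request for USDA FoodData Central and extracts nutrient values from a response. The endpoint and the field names (`foods`, `foodNutrients`, `nutrientName`, `unitName`, `value`) reflect the public search API as commonly documented and should be checked against the current official guide. The helper names and the hand-written sample payload are hypothetical, and the network call is defined but not executed here.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

FDC_SEARCH_URL = "https://api.nal.usda.gov/fdc/v1/foods/search"


def build_search_url(query, api_key, page_size=1):
    """Compose the GET URL for a food search (parameter names assumed from the API guide)."""
    params = {"query": query, "pageSize": page_size, "api_key": api_key}
    return f"{FDC_SEARCH_URL}?{urlencode(params)}"


def extract_nutrients(payload, wanted):
    """Return {nutrient name: (value, unit)} for the first food in a search payload."""
    foods = payload.get("foods", [])
    if not foods:
        return {}
    found = {}
    for item in foods[0].get("foodNutrients", []):
        name = item.get("nutrientName")
        if name in wanted and "value" in item:
            found[name] = (item["value"], item.get("unitName"))
    return found


def query_db_value(query, api_key, wanted):
    """Fetch and parse one search result. Requires network access and a valid key."""
    with urlopen(build_search_url(query, api_key), timeout=10) as resp:
        return extract_nutrients(json.load(resp), wanted)


# Hand-written sample payload mimicking the assumed response shape.
sample_payload = {
    "foods": [
        {
            "description": "Spinach, raw",
            "foodNutrients": [
                {"nutrientName": "Iron, Fe", "unitName": "MG", "value": 2.71},
                {"nutrientName": "Water", "unitName": "G", "value": 91.4},
            ],
        }
    ]
}
iron = extract_nutrients(sample_payload, {"Iron, Fe"})
```

Keeping URL construction and payload parsing separate from the network call lets the parsing logic be tested against stored responses, which is useful when the curation engine has to cope with schema changes.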

Workflow for Continuous Database Enhancement

The following workflow details the automated experimental cycle that allows an AND to evolve from a static repository to a dynamic knowledge base.

Diagram 3: Closed-loop workflow for autonomous AND enhancement.

Within the broader thesis on AI-assisted dietary intake monitoring, the precise measurement of dietary compliance is a critical determinant of success in clinical trials involving nutritional interventions. Variability in adherence directly impacts the validity of efficacy and safety endpoints, confounding results and potentially leading to erroneous conclusions. This technical guide details modern methodologies and technological frameworks designed to objectively quantify and enhance dietary adherence, thereby increasing the statistical power and reliability of trial outcomes.

Current Challenges & Quantitative Landscape

Traditional methods for monitoring dietary compliance, such as 24-hour recalls, food frequency questionnaires (FFQs), and paper-based food diaries, are plagued by recall bias, measurement error, and low subject compliance. The table below summarizes the performance metrics of traditional versus modern monitoring methods based on recent meta-analyses.

Table 1: Performance Comparison of Dietary Monitoring Methods

| Method | Estimated Energy Reporting Error | Adherence Data Return Rate | Subject Burden (Score 1-10) | Cost per Participant (USD) |

|---|---|---|---|---|

| Paper Food Diary | -20% to +30% | 60-75% | 8 (High) | 100 - 300 |

| 24-Hour Recall (Interview) | -15% to +25% | 85-95%* | 5 (Moderate) | 200 - 500 |

| FFQ | -25% to +35% | 90-98%* | 3 (Low) | 50 - 150 |

| Digital Photo-Based App | -5% to +10% | 80-90% | 6 (Mod-High) | 300 - 700 |

| Wearable Biosensor | N/A (Indirect) | >95%** | 2 (Low) | 800 - 2500 |

| AI-Integrated Platform | -3% to +8% | 90-95% | 4 (Moderate) | 500 - 1500 |

*Dependent on scheduled interviews; **Continuous passive data stream.

Core Methodologies & Experimental Protocols

Protocol for Digital Image-Assisted Food Record (DIAR) Validation

Objective: To validate the accuracy of a smartphone-based image capture system against doubly labeled water (DLW) for total energy intake assessment.

Materials:

- Smartphone with dedicated trial app (e.g., Bite Counter, FoodLog fork).

- Standardized color calibration card (for portion size estimation).

- Cloud-based image analysis pipeline with convolutional neural networks (CNN).

- DLW dosing materials and mass spectrometry access.

Procedure:

- Training: Participants complete a 30-minute virtual training on capturing top-down images of meals pre- and post-consumption with the calibration card in frame.

- Intervention: Over a 14-day period, participants capture images of all meals and snacks. The app sends automated reminders and confirmations.

- Image Analysis: Uploaded images are processed via a CNN model trained on the Food-101 and trial-specific databases. Portion volume is estimated using the calibration card as a size reference and is then converted to nutrient data using the USDA FoodData Central API.

- Criterion Comparison: Total Energy Intake (TEI) from the DIAR method is calculated. Participants concurrently undergo the DLW protocol (baseline urine sample, oral dose of ²H₂¹⁸O, and subsequent daily urine samples for 14 days). DLW yields total energy expenditure from measured CO2 production, which serves as the criterion for TEI on the assumption that body weight is stable over the measurement period.

- Statistical Analysis: Agreement between DIAR-TEI and DLW-TEI is assessed using Bland-Altman plots, Pearson correlation coefficients, and root mean square error (RMSE).
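
The correlation and error metrics in the statistical analysis step can be sketched as below, assuming one paired TEI estimate per participant from each method. The function names and the kcal/day values are hypothetical and serve only to show the calculation.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def rmse(x, y):
    """Root mean square error between paired estimates."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)) / len(x))


# Hypothetical per-participant TEI estimates in kcal/day.
diar_tei = [2000.0, 2200.0, 1800.0]
dlw_tei = [2100.0, 2300.0, 1900.0]

r = pearson_r(diar_tei, dlw_tei)
error = rmse(diar_tei, dlw_tei)
```

The hypothetical data show why correlation alone is insufficient: the two methods correlate perfectly, yet DIAR underestimates by 100 kcal/day for every participant, which only the RMSE (and a Bland-Altman plot) reveals.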

Protocol for Biomarker-Based Adherence Assessment (e.g., DASH Diet Trial)

Objective: To measure compliance with a high-potassium, low-sodium diet using urinary electrolyte biomarkers.

Materials:

- 24-hour urine collection containers (boric acid as preservative).

- Conductivity meter for completeness check.

- Ion-selective electrode or mass spectrometry for Na+/K+ quantification.

- Participant instruction kits.

Procedure:

- Baseline Collection: Participants provide a 24-hour urine sample at screening.

- Randomized Collection: During the intervention phase, participants are prompted at random intervals (e.g., days 30, 90, 180) via the trial platform to complete a 24-hour urine collection.

- Sample Handling: Volume and conductivity are measured. Aliquots are frozen at -80°C until batch analysis.

- Biomarker Analysis: Urinary sodium (UNa) and potassium (UK) excretion (mmol/24h) are quantified. A compliance score is computed based on the target ratio (e.g., UK:UNa > 1.0 for high compliance).

- Data Integration: Biomarker scores are integrated with digital food log data in the trial's electronic data capture (EDC) system for cross-validation.
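
A minimal sketch of the compliance score from the biomarker analysis step follows. It assumes each 24-hour collection yields one potassium and one sodium excretion value in mmol/24h and applies the example target ratio of 1.0 from the protocol. The function names, the record layout, and the sample values are hypothetical, and completeness checks on the collection are left out.

```python
def k_na_ratio(uk_mmol_24h, una_mmol_24h):
    """Urinary potassium-to-sodium excretion ratio for one 24-hour collection."""
    if una_mmol_24h <= 0:
        raise ValueError("urinary sodium excretion must be positive")
    return uk_mmol_24h / una_mmol_24h


def compliance_summary(collections, target_ratio=1.0):
    """Score each collection and report the share meeting the target.

    collections: iterable of (study_day, UK mmol/24h, UNa mmol/24h).
    Returns (per-collection rows, fraction of collections with ratio > target).
    """
    rows = []
    for day, uk, una in collections:
        ratio = k_na_ratio(uk, una)
        rows.append(
            {"day": day, "ratio": round(ratio, 2), "high_compliance": ratio > target_ratio}
        )
    share = sum(row["high_compliance"] for row in rows) / len(rows) if rows else 0.0
    return rows, share


# Hypothetical collections at the example prompt days.
samples = [(30, 90.0, 75.0), (90, 60.0, 120.0), (180, 80.0, 80.0)]
rows, share_high = compliance_summary(samples)
```

The per-collection rows are the kind of record that could be joined with digital food log data in the EDC system, so that a low ratio on a day with reported high-potassium meals can be flagged for review.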

Protocol for AI-Predictive Adherence Modeling

Objective: To develop a machine learning model that predicts future non-compliance risk using multi-modal data.

Materials:

- Time-stamped adherence data (app usage, meal logs).