Assessing Accuracy: A Systematic Review of Sensitivity and Specificity in Food Intake Wearables for Clinical Research

Abstract

This article provides a comprehensive analysis of the sensitivity, specificity, and overall performance metrics of wearable sensors for monitoring food intake, tailored for researchers and drug development professionals. It explores the technological foundations of various sensor modalities—including acoustic, motion, inertial, and camera-based systems—and their methodological applications in capturing eating behaviors. The review critically examines validation study designs, compares device performance across laboratory and free-living settings, and addresses key challenges such as signal interference and user compliance. By synthesizing current evidence and validation frameworks, this work aims to inform the selection and development of robust digital endpoints for nutritional research and clinical trials.

The Technological Foundation: Sensor Modalities and Core Performance Metrics for Dietary Monitoring

For researchers and professionals in drug development and nutritional science, the adoption of wearable sensors for dietary monitoring presents a significant opportunity to overcome the limitations of traditional, self-reported dietary assessment methods. The accurate evaluation of these technologies hinges on a critical analysis of standard performance metrics—sensitivity, specificity, accuracy, and precision. These key performance indicators (KPIs) provide the quantitative foundation for validating wearable devices, from research-grade prototypes to emerging commercial products. This guide objectively compares the performance of various dietary monitoring sensor technologies by synthesizing current experimental data and detailing the methodologies used to obtain it, providing a framework for evidence-based evaluation within the field.

Performance Metrics at a Glance

The table below defines the core metrics used to evaluate the performance of dietary monitoring wearables.

| Metric | Definition | Importance in Dietary Monitoring |
|---|---|---|
| Sensitivity (Recall) | Proportion of actual eating episodes correctly identified [1] | Measures the device's ability to avoid missing meals or bites; low sensitivity leads to under-reporting. |
| Specificity | Proportion of non-eating activities correctly identified as such [1] | Measures the device's ability to reject confounding activities (e.g., talking, walking); low specificity leads to false positives. |
| Accuracy | Proportion of total predictions (both eating and non-eating) that are correct [2] | Provides a general overview of device performance, though can be misleading with imbalanced data. |
| Precision | Proportion of predicted eating episodes that are actual eating episodes [1] | Indicates the reliability of the device's alerts; high precision means most detected events are true eating events. |
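
As a minimal sketch of how these four metrics relate to one another, the example below computes them from a single confusion matrix. The class name, function name, and window counts are hypothetical and chosen only to illustrate the imbalance caveat noted for accuracy; they are not taken from any cited study.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    tp: int  # eating windows correctly flagged as eating
    fp: int  # non-eating windows wrongly flagged as eating
    tn: int  # non-eating windows correctly left unflagged
    fn: int  # eating windows the device missed


def detection_metrics(c: ConfusionCounts) -> dict:
    """Return sensitivity, specificity, precision, accuracy, and F1.

    Assumes every denominator is non-zero (at least one eating window,
    one non-eating window, and one positive prediction).
    """
    sensitivity = c.tp / (c.tp + c.fn)
    specificity = c.tn / (c.tn + c.fp)
    precision = c.tp / (c.tp + c.fp)
    accuracy = (c.tp + c.tn) / (c.tp + c.fp + c.tn + c.fn)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "accuracy": accuracy,
        "f1": f1,
    }


# Hypothetical free-living day: 1,000 one-minute windows, 50 of them eating.
counts = ConfusionCounts(tp=30, fp=20, tn=930, fn=20)
metrics = detection_metrics(counts)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these made-up counts, accuracy is 0.96 even though sensitivity and precision are both only 0.60, because non-eating windows dominate the day. This is why accuracy alone can flatter a detector evaluated on imbalanced free-living data.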

Comparative Performance of Wearable Sensor Technologies

Different sensor modalities, from motion tracking to egocentric cameras, offer distinct advantages and challenges. Their performance varies significantly based on the technology used and the environment in which it is tested.

Table 1: Performance Metrics of Different Wearable Sensor Types for Dietary Monitoring

| Sensor Technology / Study | Primary Function | Reported Performance Metrics | Key Findings & Context |
|---|---|---|---|
| Multi-Sensor Systems (Inertial/Acoustic) | Detect eating events via hand-to-mouth gestures, chewing sounds [2] | Accuracy: ranged from 73% to 95% (across 12 studies) [2]; F1-score: varied widely across studies [2] | Dominant approach; performance is context-dependent. F1-score, which balances precision and recall, is a common but highly variable metric [2]. |
| Wristband (GoBe2) | Estimate energy intake via bioimpedance (fluid shifts) [3] | Mean bias: -105 kcal/day vs. reference [3]; 95% limits of agreement: -1400 to 1189 kcal/day [3] | Showed high variability in estimating daily caloric intake, highlighting challenges in energy estimation versus mere event detection [3]. |
| AI-Wearable Camera (EgoDiet) | Estimate food portion size via computer vision [4] | Mean Absolute Percentage Error (MAPE): 28.0% (in Ghana) vs. 32.5% for 24HR [4] | A passive method that outperformed traditional 24-hour dietary recall for portion size estimation in field studies [4]. |
| Wearable Camera (SenseCam) | Augment food diary for energy intake estimation [5] | Under-reporting correction: identified 10.1% to 17.7% more kcal vs. diary alone [5] | Used as a ground-truth tool to reveal significant under-reporting in self-reported food diaries across different populations [5]. |

Experimental Protocols for Validating Dietary Wearables

A critical understanding of the performance data requires insight into the experimental methodologies used for validation. The following are detailed protocols from key studies.

Protocol 1: Validation of a Sensor Wristband for Energy Intake

This protocol assessed the accuracy of the GoBe2 wristband in estimating daily energy intake in free-living conditions [3].

- Objective: To validate the wristband's estimation of daily nutritional intake against a controlled reference method [3].

- Participants: 25 free-living adults [3].

- Intervention: Participants used the wristband and its accompanying mobile app for two separate 14-day test periods [3].

- Reference Method: A highly controlled reference was developed in collaboration with a university dining facility. Researchers prepared and served calibrated study meals and precisely recorded the energy and macronutrient intake of each participant under direct observation [3].

- Data Analysis: The energy intake (kcal/day) measured by the wristband was compared to the reference method using Bland-Altman analysis to assess bias and limits of agreement [3].
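
The Bland-Altman step in this protocol can be sketched as follows. This is an illustrative implementation under the common assumption that the limits of agreement are the mean difference plus or minus 1.96 sample standard deviations; the function name and the six paired kcal/day values are hypothetical and are not data from [3].

```python
from statistics import mean, stdev


def bland_altman(device, reference):
    """Return (bias, lower_loa, upper_loa) for paired measurements.

    bias is the mean of (device - reference); the 95% limits of agreement
    are taken as bias +/- 1.96 * sample SD of the differences.
    """
    if len(device) != len(reference):
        raise ValueError("device and reference must be paired")
    diffs = [d - r for d, r in zip(device, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation (n - 1 denominator)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical daily energy intake values in kcal/day.
wristband = [2100, 1850, 2600, 1500, 2300, 1950]
reference = [2250, 1700, 2400, 2100, 2350, 2150]

bias, lower, upper = bland_altman(wristband, reference)
print(f"bias = {bias:.1f} kcal/day, LoA = [{lower:.1f}, {upper:.1f}]")
```

By construction the limits sit symmetrically around the bias. The figures reported for the wristband fit that pattern: the midpoint of -1400 and 1189 kcal is -105.5, which matches the reported mean bias of -105 kcal/day after rounding.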

Protocol 2: In-Field Eating Detection with Multi-Sensor Systems

A scoping review summarized how wearable devices that automatically detect eating activity have been evaluated in free-living settings [2].

- Objective: To catalog wearable devices that automatically detect eating activity in non-lab settings and identify their evaluation metrics [2].

- Sensor Systems: The majority of included studies (65%) used multi-sensor systems (e.g., combining accelerometers and acoustic sensors) worn on the wrist or head [2].

- Ground-Truth Validation: All studies used either self-report (e.g., food diaries) or objective methods (e.g., video observation) to validate the sensor-inferred eating activity [2].

- Performance Calculation: The most frequently reported metrics were Accuracy and the F1-score, which were calculated based on the number of true positives, false positives, and false negatives when comparing sensor data to the ground truth [2].
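
To make the performance calculation concrete, the sketch below counts true positives, false positives, and false negatives at the episode level and derives an F1-score from them. The matching rule (any temporal overlap, greedy one-to-one) and the episode times are assumptions for illustration; the studies in [2] differ in how they match detections to ground truth.

```python
def match_episodes(detected, truth):
    """Greedy one-to-one matching of (start, end) intervals.

    A detection counts as a true positive if it overlaps a ground-truth
    episode that has not already been matched. Returns (tp, fp, fn).
    """
    unmatched_truth = list(truth)
    tp = 0
    for d_start, d_end in detected:
        for episode in unmatched_truth:
            t_start, t_end = episode
            if d_start < t_end and t_start < d_end:  # intervals overlap
                unmatched_truth.remove(episode)
                tp += 1
                break
    fp = len(detected) - tp
    fn = len(unmatched_truth)
    return tp, fp, fn


def f1_score(tp, fp, fn):
    """F1 = 2TP / (2TP + FP + FN); defined as 0.0 when there is nothing to score."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical episodes, in minutes since midnight.
truth = [(450, 470), (750, 790), (1140, 1180)]      # breakfast, lunch, dinner
detected = [(455, 468), (600, 605), (1150, 1175)]   # lunch missed, one false alarm

tp, fp, fn = match_episodes(detected, truth)
f1 = f1_score(tp, fp, fn)
print(tp, fp, fn, round(f1, 3))
```

Note that F1 does not use true negatives, which is convenient for episode-level evaluation where "non-eating episodes" are not naturally countable.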

Protocol 3: AI-Wearable Camera for Portion Size Estimation

This study evaluated a passive, vision-based pipeline called EgoDiet for dietary assessment in African populations [4].

- Objective: To evaluate the functionality of EgoDiet for portion size estimation in comparison to dietitian assessments and 24-hour dietary recall (24HR) [4].

- Device & Data Collection: Participants wore one of two customized wearable cameras (AIM or eButton) positioned at eye-level or chest-level to capture images of their meals in London and Ghana [4].

- Reference Method: In a feasibility study, the weight of food items was measured using a standardized weighing scale (Salter Brecknell) to establish a ground truth for portion size [4].

- Data Analysis: The EgoDiet pipeline used several AI modules (EgoDiet:SegNet for food segmentation, EgoDiet:3DNet for depth estimation) to extract features and estimate portion size. Performance was reported as Mean Absolute Percentage Error (MAPE) when compared to the ground truth and to 24HR [4].
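
For reference, MAPE can be computed as in the short sketch below. The portion weights are invented for illustration and do not come from the EgoDiet study [4]; the function name is likewise hypothetical.

```python
def mape(estimates, truths):
    """Mean Absolute Percentage Error, in percent.

    Each absolute error is divided by the corresponding ground-truth value,
    so ground-truth values must be non-zero.
    """
    if len(estimates) != len(truths) or not truths:
        raise ValueError("inputs must be non-empty and the same length")
    total = sum(abs(e - t) / t for e, t in zip(estimates, truths))
    return 100.0 * total / len(truths)


# Hypothetical portion sizes in grams: weighed ground truth vs. estimates.
weighed_g = [200.0, 150.0, 80.0, 320.0]
estimated_g = [230.0, 120.0, 100.0, 300.0]

error_pct = mape(estimated_g, weighed_g)
print(f"MAPE = {error_pct:.2f}%")
```

Because each error is scaled by the true portion, a 20 g error on an 80 g item weighs four times as much as the same error on a 320 g item, which is worth keeping in mind when comparing MAPE across meals with very different portion sizes.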

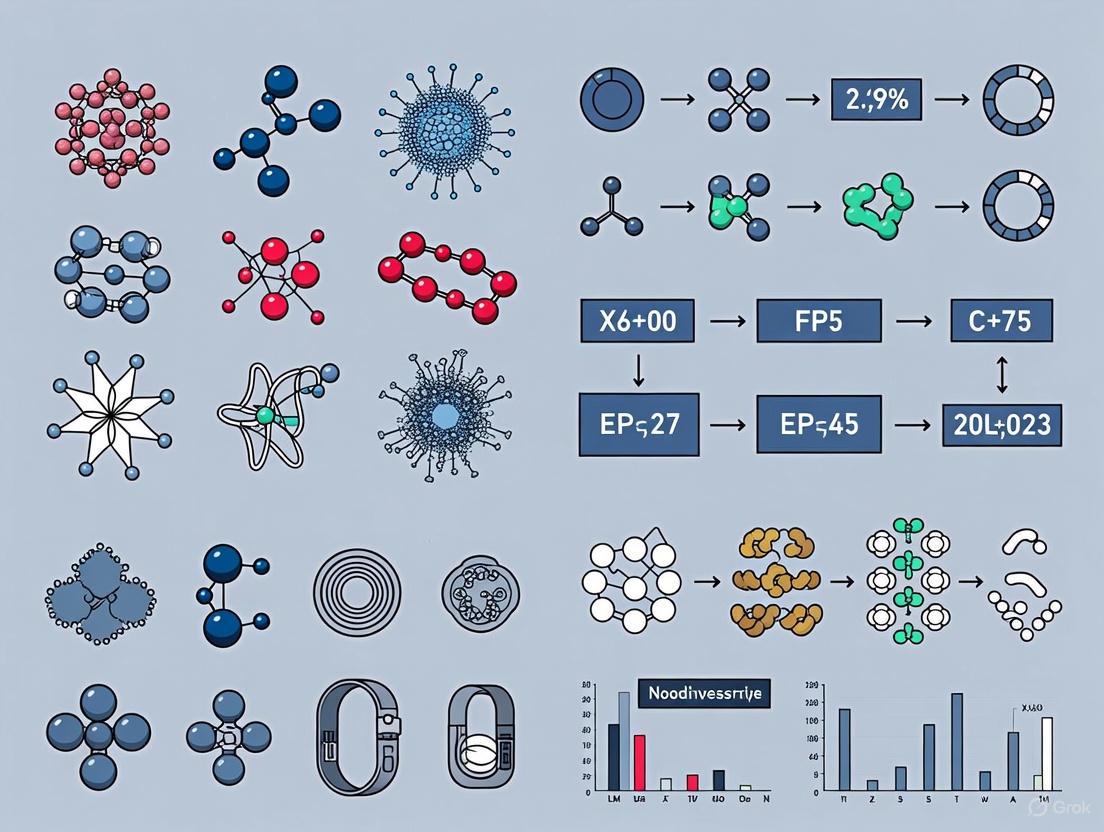

Visualizing a Systematic Validation Workflow

The following diagram illustrates a generalized experimental workflow for validating a wearable dietary monitoring device, integrating elements from the cited protocols.

Experimental Validation Workflow

The Researcher's Toolkit: Key Reagents & Materials

The table below lists essential tools and materials used in the development and validation of wearable dietary monitoring technologies, as featured in the cited research.

Table 2: Essential Research Reagents and Materials for Dietary Monitoring Studies

| Item | Function in Research | Example from Literature |
|---|---|---|
| Automatic Ingestion Monitor (AIM-2) | A research-grade wearable device that combines a camera, resistance, and inertial sensors for objective dietary data collection [1] [4]. | Used in studies to reduce the labour-intensive burden of dietary monitoring and validate sensor performance [1]. |
| eButton | A wearable, chest-pin-like camera that automatically captures images for food identification and portion size estimation [4] [6]. | Deployed in feasibility studies for passive dietary assessment in both the US and Ghana [4] [6]. |
| Continuous Glucose Monitor (CGM) | Measures interstitial glucose levels to provide context on the physiological response to food intake, used to assess adherence and meal impact [3] [6]. | Paired with the eButton to help users visualize the relationship between food intake and glycemic response [6]. |
| Bland-Altman Analysis | A statistical method used to assess the agreement between two different measurement techniques, plotting the difference between methods against their average [3]. | Key for validating the energy intake estimates of the GoBe2 wristband against a reference method, revealing bias and limits of agreement [3]. |
| Standardized Weighing Scale | Provides the ground-truth measurement of food weight for calibrating and validating portion size estimation algorithms [4]. | A Salter Brecknell scale was used to pre-weigh food items in the EgoDiet validation study [4]. |

The landscape of wearable dietary monitoring is diverse, with technologies ranging from motion and acoustic sensors to AI-powered cameras, each demonstrating distinct performance profiles. The KPIs of sensitivity, specificity, accuracy, and precision are essential for a rigorous, cross-platform comparison. Current data indicates that while multi-sensor systems can detect eating events with high accuracy in some contexts, the estimation of actual energy and nutrient intake remains a significant challenge, as evidenced by the substantial error margins in validation studies. The evolution of this field relies on standardized validation protocols, such as those detailed herein, and transparent reporting of all performance metrics. For researchers and drug development professionals, this objective comparison provides a critical foundation for selecting appropriate technologies and interpreting their data, ultimately guiding the integration of wearable sensors into high-quality nutritional and clinical research.

The accurate and objective measurement of food intake is a cornerstone of nutritional science, chronic disease management, and pharmaceutical interventions. Traditional methods, such as food diaries and 24-hour recalls, are plagued by inaccuracies due to reliance on memory and subjective reporting [7]. Wearable sensor technology presents a transformative solution by enabling continuous, objective monitoring of eating behavior in real-world environments. For researchers and drug development professionals, understanding the sensitivity and specificity of these tools is paramount for selecting appropriate endpoints in clinical trials and nutritional studies. This guide provides a systematic comparison of four principal wearable sensor modalities—Acoustic, Inertial Measurement Units (IMU), Strain, and Camera-Based systems—framed within the critical context of their performance in detecting and characterizing food intake.

Sensor Taxonomy and Technological Foundations

Wearable dietary monitoring systems are characterized by their underlying sensing technology, each capturing distinct physiological or behavioral correlates of eating. The following table summarizes the core operational principles and measured parameters of the four sensor classes.

Table 1: Fundamental Classification of Wearable Dietary Monitoring Sensors

| Sensor Type | Primary Measured Parameter | Common Placement Location | Key Detected Eating Metrics |
|---|---|---|---|
| Acoustic | Sound waves from chewing and swallowing [7] | Neck (e.g., sternum), Ear [1] | Chewing count & rate, Swallowing frequency, Food texture characterization [7] |
| Inertial (IMU) | Acceleration, rotational velocity (via accelerometers, gyroscopes) [8] [9] | Wrist, Head [7] | Hand-to-mouth gestures, Bite count, Eating duration, General activity context [7] |
| Strain | Deformation or force from mandibular movement [7] | Jaw/Chin, Neck [7] | Chewing cycles, Bite force, Eating episode onset/offset |
| Camera-Based | Visual data of food and eating environment [7] | Eyeglasses, Chest [1] | Food type identification, Portion size estimation, Eating environment context [7] |

Beyond these established modalities, novel sensing approaches are emerging. Bio-impedance sensing, as exemplified by the iEat system, measures variations in electrical impedance between two wrist-worn electrodes. These variations form unique patterns caused by dynamic circuit changes when the hands interact with food and utensils, enabling the recognition of specific food intake activities and, to a degree, food types [10].

Performance Comparison: Sensitivity and Specificity in Food Intake Monitoring

The utility of a sensor in research is determined by its ability to correctly identify eating events (sensitivity) and reject non-eating activities (specificity). Performance varies significantly across modalities and is highly dependent on the experimental setting.

Table 2: Comparative Performance Metrics of Dietary Wearable Sensors

| Sensor Type | Reported Performance (Typical Range) | Key Strengths (Sensitivity) | Key Limitations (Specificity Risks) |
|---|---|---|---|
| Acoustic | High accuracy (e.g., 84.9% for 7 food types [8]) | Direct detection of ingestive sounds (chewing, swallowing); Can differentiate food textures [7] | Vulnerable to ambient noise (speech, TV); Requires skin contact for optimal signal [7] |
| Inertial (IMU) | F1-scores for bite detection vary widely (e.g., 60%-90%+) [7] | Excellent for detecting stereotypical hand-to-mouth gestures; Ubiquitous in consumer devices [7] | Cannot distinguish eating from similar gestures (e.g., face-touching, smoking); Confounded by whole-body motion [7] |
| Strain | High accuracy for chew counting (>90% in lab settings) [7] | Direct measurement of jaw movement; Highly resistant to external environmental noise | Less effective for liquid intake; Can be uncomfortable for long-term wear; Sensitive to sensor placement |
| Camera-Based | High accuracy for food identification (>90% in controlled settings) [7] | Direct visual evidence of food type and portion size; Rich contextual data [7] | Major privacy concerns; Lighting and occlusion challenges; High computational load [7] |
| Bio-Impedance (iEat) | Macro F1: 86.4% (activities), 64.2% (food types) [10] | Recognizes specific activities (cutting, drinking) with standard utensils; User-independent models [10] | Performance is food-type dependent; Limited evaluation across diverse cuisines and eating styles [10] |

A critical consideration for researchers is the trade-off between sensitivity (detecting true eating events) and specificity (ignoring non-eating activities). For instance, while an IMU on the wrist is highly sensitive to arm movements, its specificity for eating is lower because it cannot differentiate a bite from scratching one's face. Acoustic sensors offer high specificity for ingestive sounds but are less sensitive in noisy environments where those sounds are masked [7]. The most robust research protocols often involve sensor fusion, combining complementary modalities to overcome the limitations of any single one.
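
The trade-off can be illustrated with a toy example in which two imperfect binary detectors are combined by simple decision-level rules. The window labels below are fabricated for illustration, and the AND/OR rules are a deliberately simple stand-in: published fusion systems typically train a classifier on combined features rather than applying logical rules.

```python
def sens_spec(pred, truth):
    """Return (sensitivity, specificity) for paired binary window labels."""
    pairs = list(zip(pred, truth))
    tp = sum(1 for p, t in pairs if p and t)
    fn = sum(1 for p, t in pairs if not p and t)
    tn = sum(1 for p, t in pairs if not p and not t)
    fp = sum(1 for p, t in pairs if p and not t)
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical labels for ten time windows (1 = eating, 0 = not eating).
truth    = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
imu      = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]  # false alarms on face-touching
acoustic = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0]  # one masked chew, one noise false alarm

# Decision-level fusion: require both sensors (AND) or either sensor (OR).
fused_and = [int(a and b) for a, b in zip(imu, acoustic)]
fused_or = [int(a or b) for a, b in zip(imu, acoustic)]

for name, pred in [("IMU", imu), ("Acoustic", acoustic),
                   ("AND fusion", fused_and), ("OR fusion", fused_or)]:
    sens, spec = sens_spec(pred, truth)
    print(f"{name}: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

In this toy data, AND fusion removes every false alarm (specificity 1.00) at the cost of missed eating windows (sensitivity 0.50), while OR fusion does the reverse (sensitivity 1.00, specificity 0.50). Which operating point is preferable depends on whether missed meals or false detections are more costly for the study endpoint.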

Experimental Protocols and Validation Methodologies

To ensure the validity of data collected from wearable sensors, rigorous experimental protocols are employed, often comparing new sensor systems against a ground truth.

Protocol for Validating IMU-Based Systems

In human movement research, a common protocol validates a single IMU placed at the 5th lumbar vertebra (L5)—a proxy for whole-body center of mass (CoM)—against a gold-standard camera-based motion capture system [8].

- Participants: Typically, healthy adults without gait-affecting conditions [8].

- Sensor Placement: An IMU sensor is firmly secured at the L5 location [8].

- Ground Truth System: A multi-camera system (e.g., 12 cameras) tracks retroreflective markers placed on bony landmarks across the body. The true 3D CoM position is calculated using a biomechanical model [8].

- Data Collection: Participants perform activities like walking at a self-selected speed. Data from both systems are collected simultaneously [8].

- Data Processing & Analysis: CoM acceleration is derived from the camera system. IMU acceleration is processed with filters. Time-series data are synchronized based on gait events. Statistical analyses like Pearson Correlation and Bland-Altman Limits of Agreement assess the agreement between the two systems [8].

This methodology reveals that while correlations can be strong, significant differences in acceleration magnitudes can occur during specific gait phases, highlighting the importance of such validation [8].
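
The point that strong correlation does not guarantee agreement in magnitude can be shown with a small sketch. The acceleration samples are invented, and the IMU signal is deliberately constructed as a scaled and offset copy of the reference so that the correlation is perfect while the magnitudes differ; neither the data nor the function name comes from [8].

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired sequences with at least 2 samples")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical synchronized vertical acceleration samples (m/s^2).
mocap = [0.2, 1.1, 2.3, 1.4, 0.1, -0.9, -1.8, -0.7]
imu = [2 * v + 0.5 for v in mocap]  # same shape, different scale and offset

r = pearson_r(imu, mocap)
mean_diff = sum(i - m for i, m in zip(imu, mocap)) / len(mocap)
print(f"r = {r:.3f}, mean difference = {mean_diff:.3f} m/s^2")
```

Here the correlation is essentially 1.0, yet the IMU readings differ from the reference by about 0.71 m/s^2 on average. This is why agreement statistics such as Bland-Altman limits are reported alongside correlation in validation studies.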

Protocol for Dietary Monitoring with Bio-Impedance

The development of the iEat system provides a template for evaluating a novel wearable sensor in a dietary context [10].

- Apparatus: A wearable device with one bio-impedance electrode on each wrist [10].

- Participants & Setting: Experiments are conducted in a realistic table-dining environment with multiple volunteers, each completing several meals [10].

- Experimental Tasks: Participants perform defined food-intake activities (e.g., cutting with knife/fork, eating with hand, drinking) with various food types [10].

- Data Annotation: The experiment is video-recorded to provide a ground truth for labeling sensor data [10].

- Signal Processing & Modeling: Impedance signal patterns are analyzed. A machine learning model (e.g., a lightweight neural network) is trained for activity and food type recognition. Performance is evaluated using metrics like macro F1-score [10].
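
As an illustration of the evaluation metric named in the last step, the sketch below computes a macro F1-score, meaning the unweighted mean of per-class F1 values. The activity labels and predictions are hypothetical and are not iEat results [10].

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over all labels seen in either list."""
    labels = sorted(set(y_true) | set(y_pred))
    pairs = list(zip(y_true, y_pred))
    scores = []
    for label in labels:
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Hypothetical video-derived labels vs. model predictions for six segments.
y_true = ["cut", "cut", "drink", "drink", "eat_hand", "eat_hand"]
y_pred = ["cut", "drink", "drink", "drink", "eat_hand", "cut"]

score = macro_f1(y_true, y_pred)
print(f"macro F1 = {score:.3f}")
```

Because every class contributes equally regardless of how often it occurs, macro F1 penalizes a model that performs well only on the most frequent activities, which suits multi-class tasks with uneven class frequencies.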

Experimental Workflow for Validating Dietary Wearables

The Researcher's Toolkit: Essential Reagents and Materials

Successful deployment of wearable sensor systems in dietary research requires specific materials and tools. The following table details key components and their functions.

Table 3: Essential Research Reagents and Solutions for Dietary Monitoring Studies

| Item/Reagent | Primary Function in Research Context | Exemplar Use-Case |
|---|---|---|
| High-Fidelity Acoustic Sensor | Captures raw audio signals of chewing and swallowing sounds [8] | Used in neck-worn systems like AutoDietary for solid/liquid food recognition [8] |
| Multi-sensor IMU (Accelerometer, Gyroscope) | Tracks motion and orientation of body segments [8] [9] | Placed on wrist for bite detection via hand-to-mouth gesture analysis [7] |
| Bio-Impedance Sensor & Electrodes | Measures electrical impedance variation across the body [10] | Deployed on both wrists in iEat system to detect food-related activities via circuit changes [10] |
| Gold-Standard Motion Capture System | Provides reference data for validating wearable sensor accuracy [8] | Camera-based system with force plates for synchronizing gait events in IMU validation studies [8] |
| Strain Gauge or Force Sensor | Measures mechanical deformation from jaw movement [7] | Integrated into a chin-worn device for counting chewing cycles [7] |
| Wearable Camera | Captures first-person-view images of food and environment [7] | Mounted on eyeglasses for passive food logging and environment context analysis [1] |

Bio-Impedance Sensing Principle for Dietary Monitoring

The evolving taxonomy of wearable sensors—acoustic, IMU, strain, camera-based, and emerging modalities like bio-impedance—provides a rich toolkit for objective dietary monitoring. Each sensor type offers a unique balance of sensitivity and specificity for different aspects of eating behavior, from detecting ingestion sounds and gestures to identifying food itself. For researchers and drug development professionals, the selection of a sensor must be guided by the specific eating metrics of interest, the target population, and the required level of objectivity. The future of this field lies in the intelligent fusion of multiple sensors, the development of more robust and private algorithms, and the execution of large-scale validation studies in real-world settings to firmly establish the clinical and scientific utility of these devices.

Accurate and objective assessment of dietary intake represents a significant challenge in nutritional science, epidemiology, and chronic disease management. Traditional methods such as food diaries, 24-hour recalls, and food frequency questionnaires rely on self-report, making them susceptible to substantial errors including underreporting, portion size miscalculation, and recall bias [11] [7]. The emergence of wearable sensor technology offers a promising paradigm shift, enabling objective measurement of eating behaviors including bite count, chewing rate, and swallowing frequency. These behavioral metrics serve as valuable proxies for estimating energy intake with greater accuracy and reliability than self-report methods [7]. This guide provides a comparative analysis of technological approaches for measuring eating behaviors, evaluating their underlying methodologies, accuracy metrics, and applicability for research and clinical applications.

Comparative Analysis of Monitoring Technologies

The table below summarizes the performance characteristics of different wearable sensor approaches for monitoring eating behaviors and estimating energy intake.

Table 1: Performance Comparison of Eating Behavior Monitoring Technologies

| Technology Approach | Primary Metrics | Estimated Energy Intake Error | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Bite Count (Wrist Motion) | Bite count via wrist motion | Outperformed human estimation (with/without calorie info) [11] | Non-invasive, integrates with common wearables (watches/bands) | Requires individual calibration (age, gender) [11] |
| Chew & Swallow Count (Acoustic/Strain Sensors) | Counts of chews and swallows (CCS) | Reporting errors not different from diary/photographic method [12] | Direct measurement of ingestive behavior | More obtrusive sensors on head/neck [12] |
| Video Observation (Gold Standard) | Bites, chews, swallows via annotation | Used as reference for sensor validation [13] | High accuracy for behavioral microstructure | Laboratory setting only, resource-intensive [13] |
| Facial Movement Sensing (OCOsense Glasses) | Chew count via facial muscle movements | Strong agreement with video (r=0.955) [14] | Non-invasive, integrates into everyday eyewear | Limited validation across diverse food types [14] |

Experimental Protocols for Eating Behavior Assessment

Bite Count Validation Protocol

The bite count validation study involved 280 participants who ate ad libitum in a cafeteria setting [11] [15]. The experimental methodology followed these key steps:

- Participant Preparation: Participants were instrumented with a tethered Bite Counter on their dominant wrist. Height, weight, age, gender, and waist-to-hip ratio were measured prior to eating [11].

- Meal Consumption: Participants selected food freely from a dining hall with a variety of options, consuming as much as they wanted across multiple courses. Meals were videotaped for subsequent validation [11].

- Data Processing: Video recordings were analyzed to establish true bite count and actual calorie intake based on food selection and weight [11] [15].

- Model Development and Validation: The participant cohort was randomly split into training and test groups. A multiple regression model predicting calories-per-bite using age and gender was developed on the training group and validated on the test group [11] [15].

- Comparison with Human Estimation: After eating, participants estimated their calorie consumption, either with or without aid of a calorie-labeled menu. These estimates were compared against the bite-based model predictions [11].

The bite-based model significantly outperformed human estimation with and without calorie information, demonstrating the utility of bite count as an objective proxy for energy intake [11] [15].
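
The structure of a bite-based estimate can be sketched as a per-bite energy value, predicted from age and gender, multiplied by the sensor-derived bite count. The linear form, the class name, and every coefficient below are placeholders chosen for illustration; they are not the fitted model or values reported in [11] or [15].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KcalPerBiteModel:
    """Hypothetical linear model: kcal/bite = intercept + age term + gender term."""
    intercept: float
    age_coef: float
    male_coef: float

    def kcal_per_bite(self, age_years: float, is_male: bool) -> float:
        gender_term = self.male_coef if is_male else 0.0
        return self.intercept + self.age_coef * age_years + gender_term

    def estimate_energy(self, bite_count: int, age_years: float, is_male: bool) -> float:
        """Estimated meal energy (kcal) = bite count x predicted kcal per bite."""
        return bite_count * self.kcal_per_bite(age_years, is_male)


# Placeholder coefficients for illustration only; NOT values from [11] or [15].
model = KcalPerBiteModel(intercept=12.0, age_coef=-0.05, male_coef=4.0)

meal_kcal = model.estimate_energy(bite_count=40, age_years=30, is_male=True)
print(f"Estimated intake: {meal_kcal:.0f} kcal")
```

In a study following the protocol above, the coefficients would be fitted on the training group and the resulting estimates compared with video-derived intake in the held-out test group.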

Chew and Swallow-Based Estimation Protocol

The chew and swallow-based energy intake estimation study involved 30 participants consuming four laboratory meals [12] [13]:

- Sensor Instrumentation: Participants wore a throat microphone to detect swallowing sounds and a piezoelectric strain sensor below the earlobe to monitor jaw motion during chewing [12].

- Meal Protocol: Each participant consumed three identical training meals and one validation meal with different content. Food selection was personalized, and meals were consumed in a laboratory setting with video recording [12].

- Video Annotation and Data Extraction: A human rater used custom software to annotate video recordings, marking food intake periods, bites, chewing sequences, and swallows. Counts of chews and swallows (CCS) were extracted [12].

- Energy Intake Modeling: Individualized CCS models were developed to estimate energy intake by combining mass estimations (from CCS) with caloric densities of consumed foods obtained through nutritional analysis software [12].

- Validation: The CCS model estimates were compared against weighed food records (gold standard), diet diaries, and photographic food records [12].

Results demonstrated that CCS models presented lower reporting bias and error compared to diet diaries for training meals, with performance for the validation meal being comparable to diary or photographic methods [12].

Technical Workflows for Sensor-Based Monitoring

The following diagram illustrates the generalized technical workflow for estimating energy intake from wearable sensor data, integrating common elements from bite-count and chew-swallow methodologies.

Diagram 1: Technical workflow for sensor-based energy intake estimation

Workflow for Bite Count to Energy Estimation

The diagram below details the specific signal pathway for transforming raw wrist motion data into an energy intake estimate using the bite count method.

Diagram 2: Bite count to energy estimation pathway

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Materials for Eating Behavior Studies

| Device/Software | Primary Function | Research Application |
|---|---|---|
| Bite Counter | Tracks wrist motion to count bites | Validated for free-living and lab studies; measures eating activity via bites [11] |
| Piezoelectric Strain Sensor | Monitors jaw movement during chewing | Placed below earlobe to detect chewing instances and patterns [12] [13] |
| Throat Microphone | Captures swallowing sounds | Detects and counts swallows via acoustic signals from laryngopharynx [12] |
| OCOsense Glasses | Detects facial muscle movements | Monitors chewing behavior through sensors integrated into eyewear [14] |
| Video Recording System | Captures eating episodes for annotation | Gold standard for validating sensor data and manual behavior coding [11] [13] |
| Nutrient Data System for Research (NDS-R) | Nutritional analysis software | Calculates energy intake from food types and weights for ground truth [12] [13] |

Wearable sensors for monitoring eating behaviors represent a significant advancement over traditional self-report methods, offering researchers objective, quantifiable metrics such as bite count, chewing rate, and swallowing frequency. The current evidence demonstrates that bite-based estimation outperforms human calorie estimation, while chew-and-swallow models provide comparable accuracy to dietary records. Key considerations for researchers include the trade-off between sensor obtrusiveness and measurement precision, the importance of individual calibration factors, and the need for validation against gold-standard measures like video annotation. As these technologies evolve toward greater integration with common wearables and improved algorithmic performance, they hold substantial promise for transforming dietary assessment in both research and clinical applications.

The rapid expansion of wearable technology for monitoring food intake and physical behavior in free-living conditions presents a critical methodological challenge: establishing a definitive "ground truth" against which these devices can be validated. Unlike controlled laboratory settings, free-living environments introduce immense complexity, variability, and unpredictability, making traditional validation approaches insufficient. This gold standard problem represents a fundamental bottleneck in advancing the sensitivity and specificity of food intake wearables research.

Recent systematic reviews highlight the severity of this issue. A comprehensive evaluation of free-living validation studies for physical behavior wearables revealed that 72.9% (173/237) of studies were classified as high risk of bias, while only 4.6% (11/237) were classified as low risk [16] [17]. This methodological crisis stems from large variability in validation design, inconsistent selection of criterion measures, and inadequate data synchronization protocols. For food intake monitoring specifically, the challenges are even more pronounced due to the complex, multimodal nature of eating behavior that encompasses physiological, behavioral, and contextual dimensions [1] [7].

The absence of standardized validation frameworks directly impacts the quality of evidence generated for researchers, clinicians, and drug development professionals who rely on these technologies for nutritional assessment, intervention monitoring, and clinical endpoint validation. This article examines current approaches to establishing ground truth in free-living studies, compares validation methodologies across wearable platforms, and provides experimental protocols for improving validation quality in food intake research.

Methodological Frameworks for Validation in Free-Living Conditions

The Multi-Stage Validation Framework

The scientific community has responded to the gold standard problem by proposing structured validation frameworks with increasing levels of ecological validity. Keadle et al. introduced a staged framework that outlines five sequential validation phases [16]:

- Phase 0 (Mechanical Testing): Basic sensor functionality verification

- Phase 1 (Calibration Testing): Laboratory-based calibration against reference standards

- Phase 2 (Laboratory Evaluation): Structured activities in controlled environments

- Phase 3 (Free-Living Evaluation): Validation in real-world conditions with criterion measures

- Phase 4 (Health Application): Deployment in health research studies

This framework emphasizes that devices should pass through all preceding stages before deployment in health research (Phase 4). The critical distinction between laboratory (Phase 2) and free-living (Phase 3) validation is particularly important, as studies have demonstrated non-negligible differences in error rates between these conditions [16]. Free-living validation is essential because laboratory protocols can elicit unnaturally performed activities: under the Hawthorne effect, for example, participants modify their behavior because they know they are being observed [16].

Criterion Measure Selection for Food Intake Monitoring

Establishing ground truth for food intake wearables requires careful selection of appropriate criterion measures based on the target metric. The table below summarizes the primary criterion measures used in validation studies for different aspects of eating behavior:

Table 1: Criterion Measures for Food Intake Validation

| Target Metric | Criterion Measure | Applications | Limitations |
|---|---|---|---|
| Eating Events | Video Observation (Direct/First-Person) [7] [18] | Detection of bites, chews, swallows | Privacy concerns, obtrusiveness |
| Food Type | Image-Assisted Recall [6] | Food identification, portion size | Relies on participant compliance |
| Temporal Patterns | Video Annotation with Defined Taxonomies [7] | Meal duration, eating rate | Requires standardized definitions |
| Energy Intake | Doubly Labeled Water [16] | Total energy expenditure, used as a proxy for energy intake when body weight is stable | Does not capture meal patterns |
| Dietary Adherence | Self-Report Diaries [6] | Contextual food choices | Recall bias, inaccuracies |

The selection of an appropriate criterion measure depends on the specific research question and target metric. For detecting eating episodes and micro-level behaviors (bites, chews, swallows), video observation currently represents the most comprehensive approach, though it raises significant privacy concerns that may affect participant behavior and compliance [7].

Standardized Definitions and Annotation Protocols

A critical advancement in addressing the gold standard problem has been the development of validated definition sets for activity annotation. One study established precise definitions for identifying the initiation and termination of physical activities in older adults, achieving excellent inter-rater reliability with Krippendorff's alpha and Fleiss' kappa all above 0.84 and percentage agreement above 88% [18]. Similar approaches are needed for eating behavior taxonomy, including standardized definitions for bites, chewing sequences, swallows, and meal boundaries.

These definition sets enable independent researchers to consistently annotate high-frequency video footage (25 fps) in both free-living and laboratory settings. When the annotations are synchronized with body-worn sensor data, they support the development and validation of classification algorithms at a higher temporal resolution than previously possible [18]. The same principles apply to food intake monitoring, where standardized operational definitions of eating microstructure are urgently needed.

Comparative Validation of Wearable Technologies

Current State of Device Validation

The wearable technology landscape encompasses both research-grade and consumer-grade devices, with varying levels of validation evidence. A systematic review identified 163 different wearables in validation studies, with 58.9% (96/163) validated only once [16]. This fragmentation complicates cross-study comparisons and evidence synthesis. The most frequently validated devices were ActiGraph GT3X/GT3X+ (22.1%), Fitbit Flex (12.3%), and ActivPAL (7.4%), though these focus primarily on physical activity rather than food intake [16].

The distribution of validation studies across behavioral domains reveals significant research gaps. Most studies (64.6%) validated intensity measures such as energy expenditure, while only 19.8% focused on biological state (sleep/awake) and 15.6% on posture or activity-type outcomes [16] [17]. This imbalance is particularly problematic for food intake monitoring, which requires integration across multiple domains.

Performance Metrics for Food Intake Detection

Validation of food intake wearables employs standardized performance metrics adapted from diagnostic accuracy studies. The following table summarizes reported performance metrics across different sensing modalities:

Table 2: Performance Metrics for Food Intake Wearables

| Sensing Modality | Primary Metrics | Reported Performance | Reference Standard |
|---|---|---|---|
| Acoustic Sensors | Accuracy, F1-score [1] | Varies by algorithm | Video observation |
| Inertial Sensors | Sensitivity, Specificity [7] | Wrist: 70-90% detection | Video observation |
| Camera-Based | Food recognition accuracy [7] | 70-85% for common foods | Manual food records |
| Multimodal Fusion | Correlation, Agreement [7] | Improved over single modality | Combined criteria |

Recent research has focused on multimodal sensing approaches that combine complementary data streams. For example, the Automatic Ingestion Monitor V.2 (AIM-2) integrates camera, resistance, and inertial sensors to improve detection accuracy while reducing participant burden [1]. These systems demonstrate the potential of sensor fusion but introduce additional complexity to the validation process.

Specialized Validation for Clinical Populations

Emerging research emphasizes the importance of population-specific validation, particularly for clinical groups that may exhibit different movement patterns or behaviors. One ongoing study is validating wearable activity monitors in patients with lung cancer, who often experience unique mobility challenges and gait impairments that affect device accuracy [19]. This protocol incorporates both laboratory and free-living components, with video recording as the criterion measure for laboratory validation [19].

Similar considerations apply to food intake monitoring in specific populations. For example, a study exploring the use of the eButton and continuous glucose monitor (CGM) in Chinese Americans with type 2 diabetes found that cultural dietary patterns and food preparation methods may require adaptation of validation protocols [6]. These population-specific factors highlight the need for tailored validation approaches rather than one-size-fits-all solutions.

Experimental Protocols for Free-Living Validation

Laboratory vs. Free-Living Protocols

Comprehensive validation requires both laboratory and free-living components to assess device performance across different conditions. Laboratory protocols provide controlled assessment against gold standards, while free-living protocols evaluate ecological validity.

A proposed protocol for validating wearable activity monitors in patients with lung cancer includes the following laboratory components [19]:

- Structured activities (sitting, standing, posture changes)

- Variable-time walking trials at different speeds

- Gait speed assessments

- Video recording for criterion validation

For the free-living component, participants wear devices continuously for 7 days during normal activities, with exclusion only during water-based activities [19]. Similar protocols can be adapted for food intake monitoring, including standardized eating tasks in laboratory settings and extended monitoring in free-living conditions.

The Video Annotation Protocol

Video observation serves as a cornerstone for ground truth establishment in free-living studies. A validated protocol for video annotation includes the following stages [18]:

- Definition Development: Preliminary activity definitions created through literature review and expert consultation

- Iterative Refinement: Definitions improved through rater consensus during annotation practice

- Reliability Testing: Multiple raters annotate the same video footage using the definitions

- Statistical Analysis: Inter-rater reliability assessed using Krippendorff's alpha, Fleiss' kappa, and intraclass correlation coefficients

This protocol achieved excellent reliability for physical activity identification, with ICC values all above 0.9 for activity quantity and duration [18]. Applying similar methodology to eating behavior requires developing standardized definitions for eating-related actions (bites, chews, swallows) and temporal boundaries (meal start/end).
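As a rough illustration of the reliability-testing stage, the sketch below computes Fleiss' kappa for video segments that several raters have labelled independently. The function name, the label vocabulary ("bite", "chew", "none"), and the example ratings are hypothetical and are not taken from the cited protocol; the sketch only assumes that every segment is rated by the same number of raters.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of items, each a list of labels (one per rater).

    Every item must carry the same number of ratings (at least two).
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("each item needs the same number (>= 2) of ratings")

    categories = sorted({label for item in ratings for label in item})
    counts = [Counter(item) for item in ratings]

    # Per-item agreement: share of rater pairs that chose the same label.
    p_items = [
        (sum(c[cat] ** 2 for cat in categories) - n_raters)
        / (n_raters * (n_raters - 1))
        for c in counts
    ]
    p_observed = sum(p_items) / n_items

    # Chance agreement derived from the overall label proportions.
    total = n_items * n_raters
    p_expected = sum(
        (sum(c[cat] for c in counts) / total) ** 2 for cat in categories
    )

    if p_expected == 1.0:
        return 1.0  # only one label was ever used, so agreement is trivial
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical example: four video segments, three raters each.
segments = [
    ["bite", "bite", "bite"],
    ["chew", "chew", "bite"],
    ["none", "none", "none"],
    ["chew", "chew", "chew"],
]
kappa = fleiss_kappa(segments)
```

Krippendorff's alpha and intraclass correlation coefficients, which the protocol also reports, need separate estimators and are not covered by this sketch.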

Multimodal Sensor Validation Framework

The complexity of food intake behavior necessitates multimodal sensing approaches, which in turn require sophisticated validation frameworks. The following diagram illustrates an integrated validation workflow for food intake wearables:

[Figure: Integrated Validation Workflow for Food Intake Wearables]

This workflow emphasizes the iterative nature of validation, where algorithm development informs refinement of ground truth measures, and vice versa. Each sensor modality requires validation against appropriate criterion measures, with multimodal fusion presenting additional complexity.

The Researcher's Toolkit: Essential Methods and Materials

Reference Standards and Criterion Measures

Establishing ground truth in free-living studies requires access to appropriate reference standards. The following table lists the essential tools and reference measures for food intake validation:

Table 3: Research Reagents for Food Intake Validation

| Tool Category | Specific Tools | Function | Implementation Considerations |
|---|---|---|---|
| Video Recording | Body-worn cameras, Fixed cameras [18] | Capture eating behavior for annotation | Privacy protection, camera positioning |
| Annotation Software | Video annotation tools | Behavioral coding with timestamps | Compatibility with synchronization protocols |
| Synchronization | Timestamps, Event markers [16] | Temporal alignment of multimodal data | Millisecond precision requirements |
| Reference Sensors | Research-grade accelerometers [19] | Comparison with consumer devices | Placement, sampling frequency |
| Dietary Assessment | eButton, Food diaries [6] | Food identification and portion size | Participant burden, compliance |

Standardized Definition Sets

The development and use of standardized definition sets represents a critical methodological tool for improving validation quality. These definition sets should include:

- Temporal Boundaries: Precise criteria for meal initiation and termination

- Microstructure Definitions: Operational definitions of bites, chews, and swallows

- Food Categorization: Taxonomy for food type classification

- Contextual Factors: Environmental and social context of eating

Adoption of common definition sets enables meta-analysis across studies and facilitates comparison of different algorithms and sensing approaches. The excellent inter-rater reliability achieved in physical activity annotation (Krippendorff's alpha >0.84) demonstrates the feasibility of this approach [18].

Statistical Framework for Validation

Robust validation requires appropriate statistical methods that account for the hierarchical structure of free-living data and the multi-dimensional nature of eating behavior. Key components include:

- Agreement Statistics: Intraclass correlation coefficients (ICC) for continuous measures

- Classification Metrics: Sensitivity, specificity, F1-scores for event detection

- Time-Series Analysis: Dynamic time warping for temporal alignment

- Multilevel Modeling: Accounting for nested data (bites within meals within participants)

The consistent application of these statistical methods across studies would significantly improve the comparability of validation evidence and facilitate evidence synthesis.
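To make the classification metrics listed above concrete, the following minimal sketch derives sensitivity, specificity, precision, and F1-score from window-level labels. It assumes that the reference annotation and the device output have already been aligned to the same fixed-length windows and coded as 1 (eating) or 0 (not eating); the function name and the example labels are illustrative only.

```python
def detection_metrics(reference, predicted):
    """Window-level metrics from two equal-length sequences of 0/1 labels."""
    if len(reference) != len(predicted):
        raise ValueError("reference and predicted must have the same length")

    pairs = list(zip(reference, predicted))
    tp = sum(1 for r, p in pairs if r == 1 and p == 1)  # eating, detected
    tn = sum(1 for r, p in pairs if r == 0 and p == 0)  # non-eating, rejected
    fp = sum(1 for r, p in pairs if r == 0 and p == 1)  # false alarm
    fn = sum(1 for r, p in pairs if r == 1 and p == 0)  # missed eating

    def ratio(num, den):
        # Undefined ratios (e.g. no eating windows at all) are reported as NaN.
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "precision": ratio(tp, tp + fp),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
    }


# Hypothetical ten-window example (1 = eating according to video annotation).
reference = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
predicted = [1, 1, 0, 0, 0, 1, 0, 0, 1, 0]
metrics = detection_metrics(reference, predicted)
```

Because non-eating windows usually dominate free-living recordings, specificity can look high even when false alarms are frequent, which is one reason precision and F1-score are often reported alongside it.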

The gold standard problem in free-living studies represents both a significant challenge and an opportunity for methodological innovation in food intake wearable research. Current evidence indicates a validation crisis, with most studies exhibiting high risk of bias and limited comparability due to heterogeneous protocols. Addressing this problem requires coordinated effort across multiple domains: developing standardized definition sets for eating behavior, implementing multimodal validation frameworks, adopting robust statistical methods, and creating specialized protocols for clinical populations.

The establishment of reliable ground truth measures is particularly critical for enhancing the sensitivity and specificity of food intake detection. Sensitivity (correct identification of true eating events) and specificity (correct rejection of non-eating events) depend fundamentally on the quality of the reference standard against which devices are validated. Progress in this area will enable researchers, clinicians, and drug development professionals to confidently deploy wearable technologies for dietary monitoring, nutritional intervention assessment, and clinical endpoint measurement in free-living conditions.

Future directions should include the development of open-source validation datasets with high-quality ground truth, consensus standards for food intake validation protocols, and specialized frameworks for different clinical populations. Through collaborative efforts to address the gold standard problem, the field can advance toward more valid, reliable, and ecologically meaningful monitoring of eating behavior in natural environments.

The accurate monitoring of dietary intake is a cornerstone of nutritional science and the management of chronic diseases. Traditional methods, such as food diaries, are prone to inaccuracies and recall bias, with studies indicating they can cause an 11–41% underestimation of energy intake [20]. Wearable sensor technology has emerged as a promising solution, offering objective and continuous data collection. The field is undergoing a significant paradigm shift, moving from reliance on single-sensor systems to sophisticated multi-modal sensor fusion approaches. This evolution is primarily driven by the need to improve the sensitivity and specificity of food intake detection, reducing false positives from confounding activities like talking or scratching one's neck [21]. This guide objectively compares the performance of single-sensor and multi-modal wearable devices, providing researchers and drug development professionals with a detailed analysis of supporting experimental data and methodologies.

Performance Comparison: Single-Sensor vs. Multi-Modal Approaches

The core advantage of multi-modal fusion lies in its ability to leverage complementary data sources, leading to significant gains in detection accuracy. The table below summarizes performance metrics from key studies, illustrating this performance differential.

Table 1: Performance Comparison of Sensor Approaches for Intake Detection

| Study & Approach | Sensors Used | Fusion Method | Key Performance Metric | Result |
|---|---|---|---|---|
| Unimodal IMU (Motion) [21] | Wrist-worn Inertial Measurement Unit (IMU) | Not Applicable (Single Modality) | F1-Score for Drinking Activity | 83.9% |
| Unimodal Acoustic [21] | In-ear Microphone | Not Applicable (Single Modality) | F1-Score for Drinking Activity | 72.1% |
| Multi-Modal (Motion + Acoustic) [21] | Wrist-worn IMU + In-ear Microphone | Feature-level fusion with SVM/XGBoost | F1-Score for Drinking Activity | 96.5% (Event-based) |
| Unimodal IMU [22] | Wrist-worn IMU | Not Applicable (Single Modality) | Segmental F1-Score for Intake Gestures | Baseline (Unimodal-IMU) |
| Unimodal Radar [22] | Contactless FMCW Radar | Not Applicable (Single Modality) | Segmental F1-Score for Intake Gestures | Baseline +4.3% vs. IMU |
| Multi-Modal (IMU + Radar) [22] | Wrist-worn IMU + Contactless Radar | MM-TCN-CMA Framework | Segmental F1-Score for Intake Gestures | +5.2% vs. Unimodal-IMU |

Beyond detection F1-scores, multi-modal systems provide a richer, more contextual understanding of intake events. Single-sensor systems, such as a clinical-grade Actiwatch, excel in a specific niche—using actigraphy (motion and light) for long-term sleep-wake pattern monitoring with high clinical validation [23]. However, they offer low context, meaning they can detect movement but not the specific activity causing it [23]. In contrast, consumer multimodal devices (e.g., Apple Watch, Oura Ring) and research systems fuse data from accelerometers, photoplethysmography (PPG), electrodermal activity (EDA), and temperature sensors to provide high-context data, correlating heart rate with activity to distinguish exercise from stress [23].

Detailed Experimental Protocols and Methodologies

Deep Learning-Based Covariance Fusion for Food Intake Detection

Objective: To develop a computationally efficient data fusion technique that transforms high-dimensional multi-sensor data into a lower-dimensional representation for accurate activity classification [24] [25].

Methodology: This technique is based on the hypothesis that data from different sensors during a specific activity are statistically correlated, and this unique correlation pattern can be visualized and classified [25].

- Data Collection: Researchers used an Empatica E4 wristband to record data from a 3-axis accelerometer, photoplethysmograph (BVP), electrodermal activity (EDA) sensor, temperature sensor, and heart rate monitor [25].

- Covariance Matrix Calculation: Data from all sensors over a 500-sample window formed an observation matrix. The covariance between each pair of signals was calculated to create a covariance matrix, representing the inter-modality correlation patterns [24] [25].

- 2D Contour Plot Creation: The covariance matrix was visualized as a filled 2D contour plot, transforming the multi-sensor time-series data into a single image where color and isoline patterns correspond to the activity type [25].

- Deep Learning Classification: The generated 2D contour plots were used as input to a deep residual network (comprising 2D convolution, batch normalization, ReLU, and max pooling layers) to learn and classify patterns associated with specific activities like eating, sleeping, or working [25].
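The covariance step of this pipeline can be sketched in a few lines. The example below is an approximation under stated assumptions rather than the authors' implementation: it assumes all channels have already been resampled to a common rate so that each row holds one value per channel, it uses non-overlapping windows (the step size is not given in the text), and the function names are hypothetical. Pure Python is used to keep the sketch dependency-free; a numerical library would normally compute the same matrix.

```python
def covariance_matrix(window):
    """Sample covariance between channels.

    `window` is a list of rows, one per time sample; each row holds one
    value per sensor channel.
    """
    n_samples = len(window)
    if n_samples < 2:
        raise ValueError("at least two samples are needed")
    n_channels = len(window[0])
    means = [sum(row[c] for row in window) / n_samples for c in range(n_channels)]

    cov = [[0.0] * n_channels for _ in range(n_channels)]
    for i in range(n_channels):
        for j in range(i, n_channels):
            s = sum((row[i] - means[i]) * (row[j] - means[j]) for row in window)
            cov[i][j] = cov[j][i] = s / (n_samples - 1)  # symmetric matrix
    return cov


def sliding_covariances(samples, window_size=500, step=500):
    """Yield one covariance matrix per window of `window_size` samples.

    The 500-sample window follows the text; `step` is an assumption.
    """
    for start in range(0, len(samples) - window_size + 1, step):
        yield covariance_matrix(samples[start:start + window_size])
```

Each resulting matrix is what the study then renders as a filled contour plot before classification; the plotting and network stages are outside the scope of this sketch.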

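[Figure: Workflow of the covariance-based fusion pipeline, from data collection through covariance matrix calculation and 2D contour plot creation to deep learning classification]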

Robust Multi-Modal Learning for Handling Missing Modalities

Objective: To create a fusion framework for intake gesture detection that maintains robust performance even when data from one sensor modality is missing during inference [22].

Methodology:

- Sensor Setup: Data was collected simultaneously from a wrist-worn Inertial Measurement Unit (IMU) and a contactless Frequency-Modulated Continuous Wave (FMCW) radar sensor across 52 meal sessions [22].

- Fusion Architecture: The proposed Multimodal Temporal Convolutional Network with Cross-Modal Attention (MM-TCN-CMA) was designed to efficiently integrate features from both IMU and radar data streams [22].

- Robustness Mechanism: A key feature of the framework is its integrated missing modality handling mechanism. This allows the model to be trained on complete data but still perform effectively during inference with only IMU or only radar data, a common occurrence in real-world deployments [22].

- Validation: The model was evaluated under three conditions: full multimodal data, missing radar data, and missing IMU data. The results showed that the robust fusion framework not only outperformed unimodal baselines with full data but also maintained performance gains when a modality was missing [22].

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers aiming to replicate or build upon these multi-modal fusion studies, the following table details key hardware, software, and datasets used in the featured experiments.

Table 2: Key Research Materials for Multi-Modal Sensor Fusion Studies

| Item Name | Type | Primary Function in Research | Example/Reference |
|---|---|---|---|
| Inertial Measurement Unit (IMU) | Hardware (Wearable) | Captures fine-grained motion data (acceleration, angular velocity) of wrist and arm gestures during eating. | Opal Sensors (APDM) [21], Empatica E4 [25] |
| FMCW Radar | Hardware (Ambient) | Contactless sensing of global spatial and velocity information of body movements; privacy-preserving. | Millimeter-wave Radar [22] |
| In-Ear Microphone | Hardware (Wearable) | Captures acoustic signals of swallowing and chewing for differentiating intake from other activities. | Condenser Microphone [21] |
| Photoplethysmography (PPG) Sensor | Hardware (Wearable) | Monitors physiological responses (heart rate, HRV) to intake by measuring blood volume changes. | Custom multi-sensor wristband [20] |
| Multi-Sensor Wristband | Hardware Platform | Customizable platform for co-locating multiple sensors (PPG, IMU, temperature, oximeter). | Custom wristband [20] |
| Public Radar-IMU Dataset | Dataset | Provides labeled, synchronized data from radar and IMU sensors for training and validating fusion models. | Radar-IMU Multimodal Dataset [22] |
| Deep Learning Frameworks (e.g., CNN, LSTM, TCN) | Software/Algorithm | Used for automatic feature extraction, time-series analysis, and classification from complex sensor data. | Deep Residual Network [25], MM-TCN-CMA [22] |

The evidence from recent studies consistently indicates that multi-modal sensor fusion is the most promising route to high-fidelity dietary monitoring. The transition from single-sensor systems to integrated multi-modal approaches directly addresses the critical need for high sensitivity and specificity in food intake wearables. By fusing complementary data sources such as motion, acoustics, radar, and physiology, these systems can more accurately distinguish true intake gestures from confounding activities, providing a richer, more contextual dataset for researchers. Challenges remain, including computational efficiency and real-world robustness, but the experimental data reviewed here support multi-modal fusion as a key enabler of objective, precise, and reliable monitoring of eating behavior in both clinical and free-living settings [24] [21] [22].

From Data to Insight: Methodologies for Deploying and Applying Wearable Sensors in Research

The accurate detection of food intake is a cornerstone of nutritional science, chronic disease management, and behavioral health research. The selection of optimal sensor placement on the human body represents a critical trade-off between the sensitivity (ability to correctly identify true eating events) and specificity (ability to correctly reject non-eating activities) of monitoring systems [1]. Different anatomical positions provide access to distinct physiological and behavioral signals, each with characteristic strengths and limitations for capturing specific aspects of eating behavior. As wearable sensing technology evolves beyond traditional self-reporting methods, understanding these placement-specific performance characteristics becomes essential for researchers designing studies, interpreting data, and developing interventions [2] [7].

This guide systematically compares the performance characteristics of wrist, neck, and head-mounted wearable sensors, providing researchers with evidence-based insights for selecting appropriate modalities based on specific dietary monitoring objectives.

Comparative Performance of Sensor Placements

The table below summarizes the key performance metrics, target behaviors, and technological considerations for the three primary sensor placement categories based on current research findings.

Table 1: Performance Comparison of Wearable Sensor Placements for Dietary Monitoring

| Sensor Placement | Primary Detection Method | Key Performance Metrics | Target Behaviors/Context | Advantages | Limitations |
|---|---|---|---|---|---|
| Wrist-mounted (e.g., smartwatches, wristbands) | Hand-to-mouth gestures via inertial sensors (accelerometer/gyroscope) [2] [7] | Accuracy: Varies; F1-score: Commonly reported [2] | Bite counting, meal timing, eating duration [7] | High user compliance, socially acceptable, captures hand gestures | Prone to false positives from similar gestures (e.g., face touching, smoking) [26] |
| Neck-mounted (e.g., NeckSense) | Acoustic (chewing/swallowing sounds), bio-impedance (iEat, whose electrodes are wrist-worn), piezoelectric sensors [27] [7] [10] | Bite detection: >80% accuracy; Chew detection: High sensitivity; Food classification: 64.2% F1-score (iEat, wrist-worn electrodes) [27] [10] | Chewing rate, swallowing frequency, food type classification, meal microstructure [7] [10] | Direct capture of ingestive sounds, detects food properties, high specificity for eating events | Social acceptability concerns, potential discomfort during long-term wear |
| Head-mounted (e.g., AIM-2, eyeglass-based systems) | Egocentric cameras, accelerometers (jaw movement), proximity sensors [28] [29] | Eating episode detection: 94.59% sensitivity, 70.47% precision (AIM-2 with sensor-image fusion) [28] | Food type recognition, portion size estimation, social context, eating environment [26] [28] [29] | Visual confirmation of food, contextual data capture, multi-modal sensing | Significant privacy concerns, higher power consumption, obtrusiveness |

Experimental Protocols and Methodologies

Multi-Sensor Systems for Pattern Recognition

Northwestern University's Multi-Sensor Protocol: A comprehensive study deployed three synchronized sensors to capture complementary behavioral data [27]:

- NeckSense Necklace: Precisely recorded eating behaviors including chewing speed, bite count, and hand-to-mouth movements using a combination of sensors

- HabitSense Body Camera: An activity-oriented thermal camera that initiated recording only when food entered the field of view, addressing privacy concerns while capturing meal context

- Wrist-worn Activity Tracker: Monitored general activity patterns and contextual movements

This multi-modal approach enabled researchers to identify five distinct overeating patterns through semi-supervised learning, demonstrating how complementary sensor placements can reveal complex behavioral phenotypes that single-sensor systems might miss [27] [30].

Sensor-Image Fusion for Enhanced Specificity

AIM-2 (Automatic Ingestion Monitor v2) Protocol: The integrated head-mounted system combined multiple sensing modalities to improve detection accuracy [28]:

- Continuous Image Capture: Egocentric images collected every 15 seconds provided visual confirmation of food consumption

- Accelerometer Data: 3-axis accelerometer sampled at 128 Hz captured jaw movements and head motion indicative of chewing

- Hierarchical Classification: A machine learning framework combined confidence scores from both image and sensor classifiers to reduce false positives

This fusion approach achieved a significant 8% improvement in sensitivity compared to either method alone, demonstrating the value of multi-modal detection systems [28].
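The idea of combining classifier confidences can be illustrated with a simple score-level fusion rule. The sketch below is not the AIM-2 hierarchical classifier, whose details are not given here; it swaps in a weighted average followed by a threshold, and the function names, weight, and threshold are hypothetical placeholders chosen only for illustration.

```python
def fuse_confidences(image_conf, sensor_conf, image_weight=0.5, threshold=0.5):
    """Return (fused_score, is_eating) for one time window.

    Both inputs are classifier confidences in [0, 1]. The weight and the
    threshold are illustrative values, not parameters from the AIM-2 study.
    """
    if not (0.0 <= image_conf <= 1.0 and 0.0 <= sensor_conf <= 1.0):
        raise ValueError("confidences must lie in [0, 1]")
    fused = image_weight * image_conf + (1.0 - image_weight) * sensor_conf
    return fused, fused >= threshold


def detect_windows(image_scores, sensor_scores, **kwargs):
    """Apply the fusion rule window by window and return 0/1 decisions."""
    return [
        int(fuse_confidences(img, sen, **kwargs)[1])
        for img, sen in zip(image_scores, sensor_scores)
    ]


# Hypothetical confidences for three consecutive windows.
decisions = detect_windows([0.9, 0.1, 0.8], [0.2, 0.3, 0.9])
```

In a rule of this kind, a window is flagged only when the combined evidence is strong enough, so a confident detection from one classifier can be tempered by a low score from the other. Raising the threshold trades sensitivity for fewer false positives.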

Bio-Impedance Sensing for Activity Recognition

iEat Wrist-based Protocol: This innovative approach utilized an atypical sensing methodology for dietary monitoring [10]:

- Two-Electrode Configuration: Electrodes placed on each wrist measured electrical impedance across the body

- Dynamic Circuit Monitoring: Tracked impedance variations caused by formation of new circuit paths during food handling and consumption

- Activity Classification: A lightweight neural network classified four food intake-related activities with 86.4% macro F1-score

This protocol demonstrates how novel sensing modalities can leverage alternative physiological principles to detect eating behaviors, potentially overcoming limitations of traditional motion-based detection [10].
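Because the iEat result is reported as a macro F1-score, it may help to see how that figure differs from plain accuracy: the F1-score is computed separately for each activity class and then averaged with equal weight, so rare activities count as much as frequent ones. The sketch below shows the calculation; the function name and the class labels "a", "b", and "c" are placeholders, not the activity classes used in the study.

```python
def macro_f1(reference, predicted):
    """Unweighted mean of per-class F1-scores for two equal-length label lists."""
    if len(reference) != len(predicted):
        raise ValueError("reference and predicted must have the same length")

    labels = sorted(set(reference) | set(predicted))
    pairs = list(zip(reference, predicted))
    scores = []
    for label in labels:
        tp = sum(1 for r, p in pairs if r == label and p == label)
        fp = sum(1 for r, p in pairs if r != label and p == label)
        fn = sum(1 for r, p in pairs if r == label and p != label)
        # Every label occurs at least once, so the denominator is never zero.
        scores.append(2 * tp / (2 * tp + fp + fn))
    return sum(scores) / len(scores)


# Placeholder labels standing in for activity classes.
reference = ["a", "a", "b", "b", "c", "c"]
predicted = ["a", "b", "b", "b", "c", "a"]
score = macro_f1(reference, predicted)
```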

Detection Workflows

The diagram below illustrates the integrated workflow for multi-sensor eating detection, showing how complementary data streams fuse to improve detection accuracy.

Diagram 1: Multi-modal sensing architecture showing how complementary data streams fuse to improve detection accuracy.

The Researcher's Toolkit: Essential Research Reagents and Solutions

Table 2: Essential Research Materials for Wearable Dietary Monitoring Studies

| Tool/Technology | Function/Purpose | Example Implementations |
|---|---|---|
| Inertial Measurement Units (IMUs) | Capture motion data for gesture recognition (bite detection via hand-to-mouth movements) [2] [29] | Wrist-worn accelerometers/gyroscopes; Head-mounted sensors for jaw movement [7] [28] |
| Acoustic Sensors | Detect chewing and swallowing sounds through bone conduction or airborne capture [7] [10] | Neck-mounted microphones; Piezoelectric sensors [7] |
| Bio-Impedance Sensors | Measure electrical impedance changes caused by food-handling interactions and circuit formation [10] | iEat wrist-worn electrodes; Necklace-based impedance sensors [10] |
| Wearable Cameras | Provide visual confirmation of food intake and contextual information [26] [28] [29] | AIM-2 egocentric camera; HabitSense activity-oriented camera; DietGlance smart glasses [27] [28] [29] |
| Thermal Sensors | Trigger recording when food enters the field of view while preserving privacy [27] | HabitSense thermal-triggered camera; IR sensors for activity detection [27] [26] |
| Ground Truth Validation Tools | Establish reference data for algorithm training and validation [28] [30] | Foot pedal markers (AIM-2); Ecological Momentary Assessment (EMA); Video annotation [28] [30] |

The optimal sensor placement for dietary monitoring depends fundamentally on the specific research questions and behavioral constructs of interest. Head-mounted systems provide the highest specificity through visual confirmation but present significant privacy and usability challenges. Neck-mounted sensors offer excellent detection of core eating behaviors like chewing and swallowing with high temporal resolution. Wrist-worn devices benefit from superior wearability and social acceptance but struggle with gesture discrimination.

Future research directions point toward heterogeneous multi-sensor systems that strategically combine complementary placements to maximize both sensitivity and specificity while addressing the practical constraints of longitudinal studies. The emerging paradigm emphasizes sensor fusion approaches that leverage the distinct advantages of each anatomical position to create comprehensive digital phenotypes of eating behavior [27] [28] [30].

The objective monitoring of dietary intake is a critical challenge in nutritional science, chronic disease management, and pharmacological research. Food intake wearables represent a promising technological solution, moving beyond traditional self-reporting methods prone to inaccuracies and recall bias [1]. The sensitivity and specificity of these devices hinge fundamentally on the machine learning pipelines that process raw sensor data into detectable eating events. This guide provides a systematic comparison of the feature extraction methods and classification algorithms that underpin the performance of modern eating event detection systems, with a focused analysis on their operational characteristics within the broader context of wearable sensor research.

Comparative Performance of Detection Approaches

The performance of eating event detection systems varies significantly based on the sensing modality, feature extraction techniques, and classification algorithms employed. The table below summarizes the experimental outcomes from recent seminal studies.

Table 1: Performance Comparison of Eating Event Detection Approaches

| Study & System | Sensing Modality | ML Pipeline Components | Key Performance Metrics | Testing Context |
|---|---|---|---|---|
| Acoustic Food Recognition [31] | In-ear microphone (chewing sounds) | Feature Extraction: Spectrograms, MFCCs, spectral rolloff & bandwidth; Classification: GRU, LSTM, Hybrid models | GRU: Accuracy 99.28%, F1-score: N/R; Bidirectional LSTM+GRU: Precision 97.7%, Recall 97.3%; RNN+Bidirectional LSTM: Recall 97.45% | Lab-controlled conditions with 20 food items |
| ByteTrack (Video) [32] | Wall-mounted camera (meal videos) | Feature Extraction: Face detection (Faster R-CNN & YOLOv7); Classification: EfficientNet CNN + LSTM-RNN | Average Precision: 79.4%; Recall: 67.9%; F1-score: 70.6%; Intraclass Correlation: 0.66 (range 0.16-0.99) | Laboratory meals with children (ages 7-9) |
| EarBit (Inertial) [33] | Head-mounted IMU (jaw motion) | Feature Extraction: Jaw movement patterns; Classification: Unspecified ML model | Accuracy: 93.0%; F1-score: 80.1%; Episode Detection: All but one eating episode correctly identified | Real-world, unconstrained environments |
| Multimodal Fusion [24] | Empatica E4 wristband (ACC, BVP, EDA, TEMP) | Feature Extraction: 2D covariance representations; Classification: Deep Residual Network | Precision: 0.803 (from LOSO cross-validation) | Free-living conditions with multiple activities |

Detailed Experimental Protocols

Acoustic-Based Food Recognition

Data Collection & Preprocessing: The acoustic-based system collected 1,200 audio files covering 20 distinct food items [31]. Signal processing was then used to extract features: spectrograms (a visual time-frequency representation of the signal), mel-frequency cepstral coefficients (MFCCs), which capture the timbral and textural character of the sound, spectral rolloff (the frequency below which a fixed fraction of the spectral energy lies, a measure of spectral shape), and spectral bandwidth (the spread of frequencies around the spectral centroid) [31].
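To make two of these features concrete, the following is a minimal sketch of spectral rolloff and spectral bandwidth. It uses only the Python standard library and a naive DFT. The function names, the 85% rolloff fraction, and the synthetic two-tone test signal are illustrative assumptions, not details taken from [31]; a real pipeline would more likely use an FFT from a signal-processing library on short overlapping frames.

```python
import cmath
import math

def magnitude_spectrum(samples, sample_rate):
    """Naive DFT: return (frequencies_hz, magnitudes) for bins 0..N/2."""
    n = len(samples)
    freqs, mags = [], []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                  for t, x in enumerate(samples))
        freqs.append(k * sample_rate / n)
        mags.append(abs(acc))
    return freqs, mags

def spectral_rolloff(freqs, mags, fraction=0.85):
    """Lowest frequency at which the cumulative magnitude reaches
    `fraction` of the total (0.85 is an assumed, commonly used default)."""
    threshold = fraction * sum(mags)
    running = 0.0
    for f, m in zip(freqs, mags):
        running += m
        if running >= threshold:
            return f
    return freqs[-1]

def spectral_bandwidth(freqs, mags):
    """Magnitude-weighted standard deviation of frequency around the centroid."""
    total = sum(mags)
    centroid = sum(f * m for f, m in zip(freqs, mags)) / total
    variance = sum(m * (f - centroid) ** 2 for f, m in zip(freqs, mags)) / total
    return math.sqrt(variance)

# Synthetic stand-in for one audio frame: equal-amplitude tones at 100 Hz and 300 Hz.
SAMPLE_RATE = 800
frame = [math.sin(2 * math.pi * 100 * t / SAMPLE_RATE)
         + math.sin(2 * math.pi * 300 * t / SAMPLE_RATE)
         for t in range(64)]
freqs, mags = magnitude_spectrum(frame, SAMPLE_RATE)
rolloff_hz = spectral_rolloff(freqs, mags)
bandwidth_hz = spectral_bandwidth(freqs, mags)
```

For this synthetic frame the centroid sits midway between the two tones, so the bandwidth is close to 100 Hz and the rolloff falls on the upper tone.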

Model Training & Evaluation: The study trained multiple deep learning models, including Gated Recurrent Units (GRU), Long Short-Term Memory networks (LSTM), a customized Convolutional Neural Network (CNN), InceptionResNetV2, and several hybrid models (Bidirectional LSTM + GRU, RNN + Bidirectional LSTM, RNN + Bidirectional GRU) [31]. The models were designed to learn both spectral and temporal patterns in the audio signals. Evaluation was performed using standard metrics including accuracy, precision, recall, and F1-score, with GRU achieving the highest accuracy at 99.28% [31].
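The evaluation metrics named above can be computed directly from true and predicted labels. The sketch below treats one class as "positive" (one-vs-rest), which is one common way to report per-class figures in a multi-class task such as 20-food recognition; the function name and the toy labels are assumptions for illustration and do not reproduce the study's data or code.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for one class treated as positive."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    tn = len(y_true) - tp - fp - fn

    accuracy = (tp + tn) / len(y_true) if y_true else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

# Toy labels (hypothetical): 1 = target food class, 0 = any other class.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
metrics = binary_metrics(y_true, y_pred)
```

Reporting precision and recall alongside accuracy matters because accuracy alone can look strong when the positive class is rare.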

ByteTrack for Automated Bite Detection

Data Collection: The study involved 242 videos (1,440 minutes) of 94 children (ages 7-9) consuming four laboratory meals with identical foods served in varying amounts [32]. Videos were recorded at 30 frames per second using an Axis M3004-V network camera positioned outside the children's line of sight to minimize observer effects [32].

Model Architecture: ByteTrack employs a two-stage pipeline [32]:

- Face Detection & Tracking: A hybrid Faster R-CNN and YOLOv7 pipeline detects and tracks faces, reducing noise and preparing data for classification.

- Bite Classification: An EfficientNet Convolutional Neural Network combined with a Long Short-Term Memory (LSTM) Recurrent Network classifies movements as bites versus other actions like talking or gesturing.
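The control flow of such a two-stage design can be sketched structurally as follows. This is not the ByteTrack implementation: the detector and classifier are passed in as placeholder callables standing in for the Faster R-CNN/YOLOv7 and EfficientNet + LSTM components, frames are plain nested lists, and the fixed clip length is an assumption made only to keep the example small.

```python
def crop(frame, box):
    """Crop a 2D frame (list of rows) to box = (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = box
    return [row[x0:x1] for row in frame[y0:y1]]

def run_two_stage(frames, detect_face, classify_clip, clip_len=30):
    """Stage 1: keep only the face region of each frame (frames without a
    detected face are dropped). Stage 2: label consecutive fixed-length
    clips of face crops, e.g. as 'bite' or 'other'."""
    crops = []
    for frame in frames:
        box = detect_face(frame)          # hypothetical detector: box or None
        if box is not None:
            crops.append(crop(frame, box))

    labels = []
    for start in range(0, len(crops) - clip_len + 1, clip_len):
        clip = crops[start:start + clip_len]
        labels.append(classify_clip(clip))  # hypothetical sequence classifier
    return labels

# Stand-in components so the sketch runs end to end.
def fake_detector(frame):
    return (0, 0, 2, 2)

def fake_classifier(clip):
    total = sum(sum(map(sum, c)) for c in clip)
    return "bite" if total > 0 else "other"

bright = [[1.0] * 4 for _ in range(4)]
dark = [[0.0] * 4 for _ in range(4)]
clip_labels = run_two_stage([bright] * 3 + [dark] * 3,
                            fake_detector, fake_classifier, clip_len=3)
```

Separating the stages this way means the classifier only ever sees the face region, which is the noise-reduction role the first stage plays in the description above.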

Performance Challenges: The system demonstrated lower reliability in videos with extensive movement or occlusions, highlighting the challenges of real-world deployment [32].

Sensor Fusion for Eating Episode Detection

Methodology: This approach addresses the challenge of high-dimensional data from multiple sensors by transforming multi-sensor time-series data into a single 2D covariance representation [24]. The core hypothesis is that data from different sensors are statistically correlated, and this correlation has a unique distribution for each type of activity.

Implementation: The algorithm creates a filled contour plot from the covariance matrix of all sensor measurements, which is then fed into a deep residual network with three 2D convolution layers for classification [24]. This approach significantly reduces computational complexity while maintaining important activity discrimination patterns.
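The first step of this idea, summarizing a window of multi-sensor data as a covariance matrix, can be sketched as below. The sketch assumes all channels have already been resampled to a common rate and cut to the same window length; the channel values are made up, and the contour-plot rendering and residual network described in [24] are not reproduced.

```python
def covariance_matrix(channels):
    """Sample covariance between every pair of sensor channels.

    channels: dict mapping channel name -> list of samples (equal lengths).
    Returns (names, matrix) where matrix[i][j] = cov(names[i], names[j]).
    """
    names = list(channels)
    n = len(channels[names[0]])
    if n < 2 or any(len(channels[name]) != n for name in names):
        raise ValueError("channels must share one length of at least 2 samples")

    means = {name: sum(channels[name]) / n for name in names}
    matrix = []
    for a in names:
        row = []
        for b in names:
            cov = sum((x - means[a]) * (y - means[b])
                      for x, y in zip(channels[a], channels[b])) / (n - 1)
            row.append(cov)
        matrix.append(row)
    return names, matrix

# Hypothetical 4-sample window from three wristband channels.
window = {
    "ACC": [1.0, 2.0, 3.0, 4.0],
    "BVP": [2.0, 4.0, 6.0, 8.0],
    "EDA": [4.0, 3.0, 2.0, 1.0],
}
names, cov = covariance_matrix(window)
```

Whatever the window length, the result is a fixed-size square matrix (one row and column per channel), which is what makes it usable as a compact 2D input for a convolutional classifier.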

Visualizing Machine Learning Pipelines

Generalized ML Pipeline for Eating Event Detection

The following diagram illustrates the common workflow for machine learning-based eating event detection, from data acquisition to model evaluation.

Figure 1: Generalized machine learning pipeline for eating event detection, showing the flow from data acquisition through feature extraction, classification, and performance evaluation.

ByteTrack Architecture for Bite Detection

The ByteTrack system implements a specialized pipeline for detecting bites from video data, particularly designed to handle challenges in pediatric populations.

Figure 2: ByteTrack's two-stage pipeline for automated bite detection from video, combining face detection with spatiotemporal classification.

The Researcher's Toolkit: Essential Research Reagents & Materials

Successful development and validation of eating event detection systems requires specific technical components and validation methodologies. The table below details key solutions used across the featured studies.

Table 2: Essential Research Reagents & Solutions for Eating Event Detection Research

| Research Reagent | Function/Purpose | Example Implementations |
|---|---|---|
| Acoustic Sensors | Capture chewing and swallowing sounds for audio-based detection | In-ear microphones [31] [33]; Neck-worn piezoelectric microphones [33] |
| Inertial Measurement Units (IMUs) | Detect jaw motion and hand-to-mouth gestures via accelerometers/gyroscopes | Head-mounted IMUs for jaw motion [33]; Wrist-worn accelerometers (Empatica E4) [24] |
| Wearable Cameras | Capture first-person visual data for food identification and intake monitoring | eButton (chest-pin camera) [4] [6]; AIM (eyeglass-mounted camera) [4] |
| Deep Learning Frameworks | Provide infrastructure for developing complex neural network models | GRU, LSTM, CNN architectures [31]; EfficientNet + LSTM hybrids [32]; Deep Residual Networks [24] |
| Signal Processing Libraries | Extract meaningful features from raw sensor data | Spectrogram generation; MFCC extraction; Spectral rolloff & bandwidth calculation [31] |
| Video Annotation Systems | Generate ground truth data for model training and validation | Manual observational coding (gold standard) [32]; Semi-automated video analysis tools |

Discussion & Performance Analysis

The comparative analysis reveals significant trade-offs between different sensing modalities and their corresponding machine learning pipelines. Acoustic-based approaches demonstrate remarkable performance in laboratory settings (up to 99.28% accuracy) but face challenges with environmental noise in real-world conditions [31] [33]. Video-based systems like ByteTrack offer rich behavioral data but raise privacy concerns and require substantial computational resources [32]. Inertial sensing systems provide a balance between performance and practicality, with EarBit achieving 93% accuracy in unconstrained environments [33].

The sensitivity and specificity of these systems are influenced by multiple factors: the quality of feature extraction, the appropriateness of classification algorithms for temporal data, and the diversity of training datasets. Multimodal approaches that combine complementary sensing modalities show particular promise for enhancing both sensitivity and specificity while reducing false positives from confounding activities [24].
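Because eating occupies a small share of a free-living day, sensitivity and specificity can diverge sharply from overall accuracy. The sketch below computes all three from confusion-matrix counts; the counts are hypothetical and chosen only to illustrate the effect of class imbalance, not drawn from any of the cited studies.

```python
def sensitivity_specificity(tp, fn, fp, tn):
    """Sensitivity (true positive rate), specificity (true negative rate)
    and overall accuracy from confusion-matrix counts."""
    positives = tp + fn
    negatives = tn + fp
    total = positives + negatives
    return {
        "sensitivity": tp / positives if positives else 0.0,
        "specificity": tn / negatives if negatives else 0.0,
        "accuracy": (tp + tn) / total if total else 0.0,
    }

# Hypothetical free-living day: 1,000 analysis windows, only 50 contain eating.
# The detector finds 30 of them and raises 10 false alarms.
result = sensitivity_specificity(tp=30, fn=20, fp=10, tn=940)
```

In this illustrative case accuracy is 97% even though 40% of eating windows are missed, which is why accuracy figures from imbalanced free-living data are best read together with sensitivity, specificity, or F1.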

Future directions in this field include developing more robust hybrid models, improving personalization through transfer learning, addressing privacy concerns through edge computing, and enhancing generalizability across diverse populations and real-world conditions.

The growing global burden of chronic diseases has catalyzed the development of innovative digital health technologies capable of transforming care from episodic to continuous, proactive management. Artificial intelligence (AI)-integrated wearable devices represent a paradigm shift in how we approach diabetes, obesity, and cardiovascular diseases (CVD), enabling real-time physiological monitoring, personalized interventions, and decentralized care delivery. These technologies address critical limitations of traditional healthcare models, particularly for conditions requiring constant monitoring and timely intervention. The convergence of advanced sensors—capturing data from electrocardiography (ECG), photoplethysmography (PPG), accelerometry, and glucose monitoring—with sophisticated AI algorithms has created unprecedented opportunities for detecting subtle disease patterns, predicting adverse events, and supporting clinical decision-making [34] [35] [36]. This review systematically compares the performance of various wearable technologies across major chronic disease domains, with particular attention to their emerging role in monitoring dietary behaviors and food intake, a crucial yet challenging component of metabolic health management.

Performance Comparison of Wearable Technologies Across Chronic Diseases

Table 1: Performance Metrics of Wearable Devices in Diabetes Management

| Device Type | Key Measured Parameters | AI Integration & Capabilities | Reported Performance/Accuracy | Supporting Evidence |
|---|---|---|---|---|
| Continuous Glucose Monitors (CGMs) | Interstitial glucose levels | Prediction of glucose changes 1-2 hours in advance; personalized guidance | RMSE: 14.7-23.5 mg/dL for glucose prediction | 60 studies reviewed; AI-enhanced CGMs provide data every few minutes [35] [37] |
| Smartwatches with PPG/ECG | Heart rate, heart rate variability, physical activity | Integration of multimodal data (sleep, activity) for metabolic state assessment | High diagnostic accuracy for arrhythmia detection | Pattern recognition for glucose fluctuations; transformer models for data integration [34] [37] |
| Multi-sensor Systems | Physiological parameters for stress classification | AI-based stress classification in T2D patients | Classifies stress levels using physiological indicators | System developed using dataset of 128 diabetic patients [35] |
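The RMSE figures reported for glucose prediction summarize the typical size of the prediction error in the same unit as the measurement (mg/dL). A minimal sketch of the calculation follows; the glucose values are invented for illustration.

```python
import math

def rmse(observed, predicted):
    """Root mean squared error between paired observed and predicted values."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical CGM readings vs. model predictions, in mg/dL.
observed_mgdl = [100.0, 120.0, 140.0]
predicted_mgdl = [110.0, 115.0, 150.0]
error_mgdl = rmse(observed_mgdl, predicted_mgdl)
```

Because errors are squared before averaging, a few large misses raise RMSE more than many small ones, which is relevant when the clinical concern is missed hypo- or hyperglycemic excursions.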

Table 2: Performance Metrics of Wearable Devices in Cardiovascular Disease Management

| Device Type | Key Measured Parameters | AI Integration & Capabilities | Reported Performance/Accuracy | Supporting Evidence |
|---|---|---|---|---|
| Smartwatches with ECG | Single-lead ECG, heart rhythm | Arrhythmia detection (e.g., atrial fibrillation) | 98.3% sensitivity, 99.6% specificity for AF detection in FDA-cleared devices [34] | High diagnostic accuracy demonstrated in controlled studies [34] |
| PPG-based Wearables | Heart rate, HR variability, blood pressure estimation | AI-enhanced preprocessing (CycleGAN, RLS adaptive filtering) | Motion artefacts reduced by 49%; BP error margins: ±4.5 mmHg (DBP), ±5.8 mmHg (SBP) [34] | Real-world implementation reports [34] |
| Activity Trackers in Cardiac Rehabilitation | Steps per day, physical activity levels, exercise capacity | Gamification strategies, behavior change support | 1060 steps/day increase; 13.06 m improvement in 6-min walk test; RR 0.70 for rehospitalizations [38] | 23 RCTs meta-analyzed; significant effects on physical activity and prognosis [38] |
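Sensitivity and specificity alone do not determine how often a positive alert is correct; that also depends on how common the condition is in the monitored population. The sketch below applies Bayes' rule to the AF sensitivity and specificity reported in the table. The two prevalence values are assumptions chosen purely for illustration.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Probability that a positive result is a true positive (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    denominator = true_pos + false_pos
    return true_pos / denominator if denominator else 0.0

SENSITIVITY = 0.983   # from the table above [34]
SPECIFICITY = 0.996   # from the table above [34]

# Assumed prevalences: 2% (e.g., an older cohort) and 0.1% (a young cohort).
ppv_at_2_percent = positive_predictive_value(SENSITIVITY, SPECIFICITY, 0.02)
ppv_at_0_1_percent = positive_predictive_value(SENSITIVITY, SPECIFICITY, 0.001)
```

Under these assumed prevalences the positive predictive value is roughly 0.83 at 2% prevalence but only about 0.20 at 0.1%, so the same device can generate mostly false alerts in a low-risk population.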

Table 3: Performance Metrics of Wearable Devices in Obesity Management

| Device Type | Key Measured Parameters | AI Integration & Capabilities | Reported Performance/Accuracy | Supporting Evidence |
|---|---|---|---|---|
| Multi-sensor System (Necklace, Wristband, Body Camera) | Eating behaviors, chewing speed, bite count, hand-to-mouth movements | Identification of overeating patterns; personalized behavior-change programs | Identified 5 distinct overeating patterns with precise behavior detection [39] | Study of 60 adults with obesity; real-world eating behavior captured [39] |
| Smartphone Apps (without additional devices) | Self-reported diet, weight, physical activity | Diet and exercise monitoring; basic goal setting | SMD -0.33 for body weight; MD -0.76 for BMI at 4-6 months [40] | 11 RCTs with 1717 participants; modest but significant effects [40] |
| Bioimpedance Sensors | Calorie intake, hydration levels | Automated tracking without manual logging | Advertised as automatic calorie intake tracking | Limited independent validation; proprietary algorithms [41] |
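The standardized mean difference (SMD) reported for smartphone apps expresses a between-group difference in units of pooled standard deviation, which lets trials with different scales be combined. The sketch below computes a single-trial SMD in the Cohen's d form; the group summaries are hypothetical, and meta-analyses such as [40] may apply a small-sample correction (Hedges' g) and weighting across trials that are not shown here.

```python
import math

def standardized_mean_difference(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Cohen's d: difference in means divided by the pooled standard deviation."""
    pooled_var = (((n_a - 1) * sd_a ** 2 + (n_b - 1) * sd_b ** 2)
                  / (n_a + n_b - 2))
    return (mean_a - mean_b) / math.sqrt(pooled_var)

# Hypothetical weight change (kg) in one trial: app group vs. control group.
smd = standardized_mean_difference(mean_a=-3.0, sd_a=4.0, n_a=50,
                                   mean_b=-1.5, sd_b=5.0, n_b=50)
```

A negative value here means the app group lost more weight than the control group; magnitudes around 0.3 are conventionally described as small to moderate effects.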

Experimental Protocols and Methodologies in Wearable Research

Protocol for Multi-Sensor Eating Behavior Monitoring

The Northwestern University study on obesity management exemplifies a comprehensive approach to monitoring dietary behaviors using multiple wearable sensors [39]. The experimental protocol involved:

Participant Selection and Device Configuration: 60 adults with obesity were recruited and fitted with three distinct wearable sensors: a specialized necklace (NeckSense), a wrist-worn activity tracker, and a body camera (HabitSense). The study duration was two weeks of continuous monitoring during waking hours.

Sensor Data Acquisition and Synchronization: The NeckSense device was configured to passively record multiple eating behaviors, including chewing rate, bite count, and hand-to-mouth movements. The wrist-worn tracker collected physiological data such as heart rate and gross motor activity. The HabitSense body camera, designed with privacy-preserving features, used thermal sensing to trigger recording only when food entered the camera's field of view.

Contextual Data Collection: Participants used a smartphone app to record meal-related mood states and contextual information (e.g., social environment, location) throughout the study period. Combined with the sensor streams, this yielded thousands of hours of multimodal data for analysis.

Pattern Identification Algorithm: AI algorithms processed the synchronized sensor data to identify characteristic patterns in eating behaviors. The analysis revealed five distinct overeating patterns: take-out feasting, evening restaurant reveling, evening craving, uncontrolled pleasure eating, and stress-driven evening nibbling.

This protocol demonstrates the potential of multi-sensor systems to capture complex behavioral patterns in real-world settings, providing a foundation for highly personalized interventions.

Protocol for Cardiac Rehabilitation with Wearable Trackers

A comprehensive meta-analysis of 23 randomized controlled trials established a standardized protocol for implementing wearable devices in cardiac rehabilitation [38]: