Automated 24-Hour Dietary Recalls: A Comprehensive Analysis of Accuracy for Research and Clinical Applications



Abstract

This article provides a critical evaluation of automated 24-hour dietary recall systems, a key methodology for capturing dietary intake data in biomedical research and drug development. It explores the foundational principles of dietary assessment and the inherent challenges of measurement error. The analysis covers the operational mechanisms of major automated platforms like ASA24® and INTAKE24, examines common sources of inaccuracy and strategies for mitigation, and synthesizes current validation evidence comparing these systems to traditional methods and emerging AI-driven tools. Aimed at researchers and clinical professionals, this review offers evidence-based insights to guide the selection, implementation, and optimization of automated dietary assessment for generating reliable nutritional data in scientific studies.

The Critical Foundation: Understanding Dietary Assessment and Measurement Error

The Essential Role of Accurate Dietary Data in Biomedical Research

Accurate dietary data is a cornerstone of robust biomedical research, influencing studies on disease etiology, the effectiveness of nutritional interventions, and public health policy. The choice of dietary assessment method can significantly impact the validity of a study's findings. This section compares the performance of major dietary assessment tools (automated 24-hour recalls, food records, and food-frequency questionnaires, or FFQs) against objective recovery biomarkers, so that researchers can select the method best suited to their work.

Methodologies for Validating Dietary Assessment Tools

To objectively compare the accuracy of dietary assessment tools, researchers employ rigorous validation studies that pit self-reported data against objective, non-self-reported measures known as recovery biomarkers. These biomarkers provide a near-truth measure of intake for specific nutrients over a short-term period [1] [2].

The core experimental protocols for these validation studies are as follows:

- Doubly Labeled Water (DLW): This method is the gold standard for measuring total energy expenditure (TEE). Participants ingest water containing non-radioactive isotopes of hydrogen and oxygen. The difference in elimination rates of these isotopes from the body is used to calculate carbon dioxide production, from which TEE is derived. In weight-stable individuals, TEE is equivalent to energy intake [3] [4] [5].

- Urinary Nitrogen: Protein intake is objectively estimated by measuring the nitrogen content in a complete 24-hour urine collection. The formula used is: Protein (g/day) = 6.25 × 24-hour urinary nitrogen (g/day) ÷ 0.81, where 6.25 is the conventional nitrogen-to-protein conversion factor and 0.81 represents the average recovery rate of dietary nitrogen in urine [3] [4].

- Study Design: In a typical study, such as the Interactive Diet and Activity Tracking in AARP (IDATA) study, participants complete multiple self-reported dietary assessments over a period (e.g., 12 months). This includes several automated 24-hour recalls (e.g., ASA24), food records (e.g., 4-day food records), and FFQs. These are compared to biomarker data collected during the same timeframe, including DLW for energy and one or more 24-hour urine collections for protein, potassium, and sodium [4] [5].
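The two biomarker calculations above can be sketched in a few lines. This is a minimal illustration, not code from the cited studies: the function names and the example participant are assumptions, and the energy comparison assumes a weight-stable individual so that TEE from DLW can stand in for true energy intake.

```python
# Illustrative helpers; names and example values are assumptions.

NITROGEN_TO_PROTEIN = 6.25  # g protein per g nitrogen
URINARY_RECOVERY = 0.81     # average fraction of dietary nitrogen recovered in urine


def protein_from_urinary_nitrogen(urinary_n_g_per_day: float) -> float:
    """Biomarker-based protein intake (g/day) from 24-hour urinary nitrogen (g/day)."""
    return NITROGEN_TO_PROTEIN * urinary_n_g_per_day / URINARY_RECOVERY


def percent_underreporting(reported: float, biomarker: float) -> float:
    """Positive values mean the self-report falls below the biomarker value."""
    return 100.0 * (biomarker - reported) / biomarker


# Hypothetical participant: 12 g urinary nitrogen/day, TEE of 2,500 kcal/day by DLW,
# and a self-reported energy intake of 2,100 kcal/day.
protein_biomarker = protein_from_urinary_nitrogen(12.0)
energy_gap = percent_underreporting(reported=2100.0, biomarker=2500.0)
print(f"Biomarker protein intake: {protein_biomarker:.1f} g/day")  # 92.6 g/day
print(f"Energy underreporting: {energy_gap:.1f}%")                 # 16.0%
```

The same `percent_underreporting` helper applies to any nutrient with a recovery biomarker, provided reported and biomarker values share the same units.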

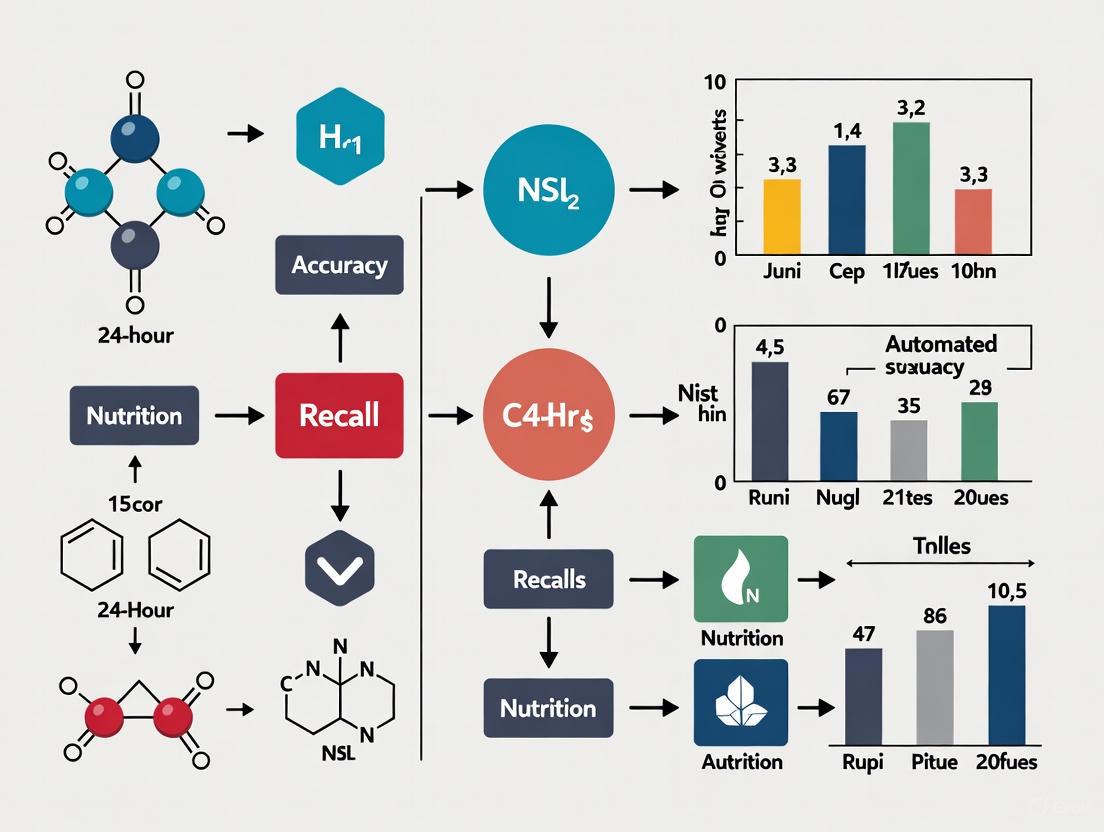

The following diagram illustrates the workflow of a comprehensive validation study, integrating both self-report tools and objective biomarkers.

Quantitative Comparison of Tool Performance

The following tables synthesize key findings from major validation studies, comparing the performance of different dietary assessment tools against recovery biomarkers.

Table 1: Absolute Intake Estimates Compared to Biomarkers

This table shows the extent to which each method underestimates actual intake for key nutrients on average, a phenomenon known as underreporting [4].

| Nutrient | Automated 24-h Recalls | 4-Day Food Records | Food Frequency Questionnaires (FFQs) |
|---|---|---|---|
| Energy | 15-17% underreporting | 18-21% underreporting | 29-34% underreporting |
| Protein | Closer to biomarkers for women [5] | 22.6% of biomarker variation explained [3] (a ranking metric, repeated in Table 2) | 8.4% of biomarker variation explained [3] (a ranking metric, repeated in Table 2) |
| Potassium | Reported intakes closer to biomarkers for women [5] | N/A | N/A |
| Sodium | Reported intakes closer to biomarkers for women [5] | N/A | N/A |

Notes: Data synthesized from the IDATA study [4] and the Women's Health Initiative Nutrient Biomarker Study [3]. Underreporting is more prevalent among obese individuals and is greater for energy than for other nutrients [4].

Table 2: Statistical Correlation with Biomarkers and Completion Metrics

This table shows how well the variation in reported intake from each tool tracks with the variation in biomarker values, indicating its ability to rank individuals correctly within a group [3] [5].

| Performance Metric | Automated 24-h Recalls | 4-Day Food Records | Food Frequency Questionnaires (FFQs) |
|---|---|---|---|
| Correlation with Energy Biomarker | 3.8% of variation explained [3] | 7.8% of variation explained [3] | 2.8% of variation explained [3] |
| Correlation with Protein Biomarker | 16.2% of variation explained [3] | 22.6% of variation explained [3] | 8.4% of variation explained [3] |
| Typical Completion Rate | ~75% complete ≥5 recalls [5] | Requires completion of 2 records [5] | Requires completion of 2 questionnaires [5] |
| Median Completion Time | 41-58 minutes (declines with practice) [5] | N/A | N/A |

Notes: The correlation (i.e., "variation explained") is substantially improved for all methods using calibration equations that adjust for factors like body mass index, age, and ethnicity [3].
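For readers unfamiliar with the "variation explained" metric, the sketch below computes it as the squared Pearson correlation between reported and biomarker values. This assumes a simple linear fit on the raw scale; the cited study may have worked with transformed values and covariates, so the numbers in Table 2 are not reproducible from this snippet. The function names and data are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def variation_explained(reported, biomarker):
    """Percent of biomarker variance explained by a linear fit on reported intake."""
    return 100.0 * pearson_r(reported, biomarker) ** 2


# Hypothetical protein intakes (g/day) for four participants.
reported = [60.0, 75.0, 90.0, 105.0]
biomarker = [70.0, 100.0, 85.0, 115.0]
print(f"{variation_explained(reported, biomarker):.1f}% of variation explained")
```

A low percentage means the tool ranks individuals poorly relative to the biomarker, even if its group mean happens to be close.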

Successful dietary assessment, particularly in validation studies, relies on a suite of specialized tools and resources.

Table 3: Essential Research Reagents and Solutions

| Item | Function in Dietary Research |
|---|---|
| Doubly Labeled Water (DLW) | Serves as an objective recovery biomarker for total energy expenditure, providing a reference measure to validate self-reported energy intake. |
| Para-Aminobenzoic Acid (PABA) | Used to validate the completeness of 24-hour urine collections by checking recovery rates; incomplete samples can be excluded from analysis. |
| Automated 24-h Recall Systems (e.g., ASA24) | Self-administered, web-based tools that use a multiple-pass method to guide participants through recalling the previous day's intake, automating data coding. |
| Food Composition Databases (e.g., CoFID) | Databases that link reported food consumption to nutrient composition, enabling the calculation of nutrient intakes from food intake data. |
| Life Cycle Assessment (LCA) Databases | Used in emerging research to estimate the environmental impact (e.g., greenhouse gas emissions) of individuals' reported diets. |

Implications for Research and Tool Selection

The experimental data lead to several key conclusions for biomedical researchers:

- Hierarchy of Accuracy: For estimating absolute intakes of energy and protein, multiple automated 24-hour recalls (ASA24) and 4-day food records provide the best estimates and significantly outperform FFQs [4]. FFQs are particularly prone to substantial energy underreporting.

- Strength of FFQs: Despite limitations for absolute intake, FFQs remain a practical tool for ranking individuals by their intake (assessing relative intake) in very large epidemiological studies and for capturing long-term, habitual diet [2] [6].

- Mitigating Error: Measurement error is pervasive in all self-report tools. Research indicates that using calibration equations—which adjust intake estimates using biomarkers and subject characteristics like BMI and age—can substantially improve the accuracy of consumption estimates for epidemiological studies [3].

- Feasibility of Automated Tools: Tools like ASA24 are highly feasible for large-scale studies. Completion rates are high, and the time burden decreases as participants become familiar with the system, making multiple administrations practical [5].
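The calibration idea in the list above can be sketched as an ordinary least-squares fit of the biomarker on self-reported intake plus subject characteristics, fitted in a biomarker substudy and then applied to participants who only have self-report data. This is a simplified illustration under stated assumptions: the data are invented, the model is fit on the raw scale, and published calibration equations may use transformed intakes and additional covariates. `fit_ols` and `calibrate` are hypothetical helper names.

```python
# Hypothetical calibration sketch; names and data are illustrative assumptions.


def fit_ols(rows, y):
    """Least-squares coefficients (intercept first) via the normal equations."""
    X = [[1.0] + [float(v) for v in r] for r in rows]
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(x[i] * x[j] for x in X) for j in range(k)]
        + [sum(x[i] * t for x, t in zip(X, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


def calibrate(coefs, row):
    """Apply a fitted calibration equation to one participant's covariates."""
    return coefs[0] + sum(c * v for c, v in zip(coefs[1:], row))


# Biomarker substudy: (self-reported energy in kcal, BMI, age) with DLW-based TEE.
substudy = [(1800, 22, 45), (2100, 31, 60), (1650, 27, 52),
            (2400, 24, 38), (1950, 35, 66), (2250, 29, 41)]
tee_kcal = [2250, 2700, 2300, 2650, 2800, 2700]

coefs = fit_ols(substudy, tee_kcal)

# Calibrated energy estimate for a participant outside the substudy.
estimate = calibrate(coefs, (1900, 33, 58))
print(f"Calibrated energy intake: {estimate:.0f} kcal/day")
```

Solving the normal equations directly keeps the example dependency-free; a real analysis would normally use a statistics package that also reports standard errors.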

The selection of a dietary assessment tool involves a trade-off between accuracy, participant burden, cost, and study objectives. Automated 24-hour recall systems have emerged as a powerful solution, balancing strong accuracy against biomarkers with the feasibility required for large-scale research, thereby strengthening the foundation of diet-related biomedical science.

Self-reported dietary data is a cornerstone of nutritional epidemiology and clinical research, yet it is inherently susceptible to measurement errors that can significantly impact data quality and subsequent findings. These errors are broadly categorized into random errors, which reduce precision and statistical power, and systematic errors (bias), which compromise accuracy and can lead to erroneous conclusions regarding diet-health relationships [1]. Understanding these error typologies is particularly crucial when evaluating automated 24-hour recall systems, which are increasingly deployed in large-scale studies for their feasibility and cost-effectiveness.
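A small simulation can make the distinction between the two error types concrete. The sketch below is illustrative only: the intake distribution, the noise level, and the 15% underreporting factor are assumptions chosen for the example, not estimates from any study.

```python
import random
from math import sqrt
from statistics import mean


def correlation(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


rng = random.Random(0)  # fixed seed so the run is repeatable
true_intake = [rng.gauss(2200, 300) for _ in range(5000)]  # kcal/day

# Random error: zero-mean noise added to each report.
with_random_error = [t + rng.gauss(0, 300) for t in true_intake]
# Systematic error: every report is 15% too low.
with_systematic_error = [0.85 * t for t in true_intake]

for label, observed in [("random", with_random_error),
                        ("systematic", with_systematic_error)]:
    bias = mean(observed) - mean(true_intake)
    r = correlation(true_intake, observed)
    print(f"{label:>10}: mean bias = {bias:7.1f} kcal, r with truth = {r:.2f}")
```

In this setup the random-error reports keep roughly the right group mean but track individuals less closely, while the systematically biased reports rank individuals perfectly yet misstate the mean.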

The process of dietary intake measurement involves multiple stages, each presenting opportunities for error introduction: (1) initial data collection on food intakes, (2) conversion of food intake data to nutrients using food-composition databases, and (3) statistical adjustment of observed intakes to estimate "usual intakes" for evaluating nutrient adequacy or health outcomes [1]. The nature, direction, and magnitude of these errors vary depending on the recall protocol used, study population, setting, and nutrients of interest, making the choice of dietary assessment tool a critical methodological consideration.

Comparative Analysis of Automated 24-Hour Recall Systems

Performance Metrics and Validation Evidence

Automated self-administered dietary assessment tools have emerged as viable alternatives to interviewer-administered methods, offering substantial cost savings and logistical advantages. The table below summarizes key performance metrics from controlled studies comparing these methodologies.

Table 1: Performance Comparison of Dietary Recall Methods in Controlled Feeding Studies

| Performance Metric | ASA24 (Automated Self-Administered) | Interviewer-Administered AMPM | Research Context |
|---|---|---|---|
| Item Match Rate | 80% of items consumed reported [7] | 83% of items consumed reported [7] | Criterion validation against known true intake [7] |
| Intrusion Rate | Significantly higher (P < 0.01) [7] | Lower number of intrusions [7] | Items reported but not consumed [7] |
| Energy/Nutrient Estimate Gap | Little evidence of difference from true intake [7] | Little evidence of difference from true intake [7] | Comparison with true intake from weighed foods [7] |
| Omission Patterns | Higher omissions for additions/ingredients in multi-component foods [7] [8] | Similar omission patterns for complex foods [7] [8] | Consistent pattern across self-report methods [8] |
| Feasibility for Large Samples | High potential for substantial cost savings [7] | Higher resource requirements for interviewers [7] | Research aimed at describing population diets [7] |

Controlled feeding studies, where true intake is known, provide the highest quality evidence for validating self-report methods. One such study randomly assigned participants to complete either the Automated Self-Administered 24-hour Recall (ASA24) or an interviewer-administered Automated Multiple-Pass Method (AMPM) after consuming meals from a buffet where intake was inconspicuously weighed [7]. The findings revealed that while the interviewer-administered method performed somewhat better for match rates and intrusions, the ASA24 system performed well overall, with little evidence of differences between the modes in the accuracy of energy, nutrient, or food group estimates [7].

Error Patterns Across Food Groups

The accuracy of self-reported intake varies considerably across different types of foods and beverages. A systematic review examining contributors to misestimation found that omissions and portion size misestimations were the most frequently reported errors [8]. The review further identified distinct patterns of omission across food groups:

- Frequently Omitted Items: Vegetables (2–85% of the time) and condiments (1–80%) were omitted more frequently than other items [8]. Additions or ingredients in multi-component foods (e.g., toppings, sauces) are also particularly vulnerable to being forgotten [7] [8].

- Less Frequently Omitted Items: Beverages were omitted less frequently (0–32% of the time) [8].

Both under- and over-estimation of portion sizes occur for most food and beverage items within study samples and across most food groups, indicating that portion size misestimation is a pervasive and non-directional challenge [8].

Methodological Protocols for Error Assessment and Mitigation

Experimental Protocols for Validation Studies

To assess the criterion validity of dietary assessment tools, researchers employ rigorous experimental designs. The following workflow outlines a standard protocol for a comparative validation study against a measured true intake.

Diagram 1: Workflow for Dietary Recall Validation

Detailed Methodology:

- Participant Recruitment and Randomization: Recruit a sample of participants representative of the target population. Randomly assign them to different dietary assessment tool groups (e.g., ASA24 vs. interviewer-administered AMPM) to control for confounding variables [7].

- True Intake Measurement: Conduct a controlled feeding session in a laboratory or buffet setting.

  - Pre-Consumption Weighing: Inconspicuously weigh all foods and beverages offered to participants before service [7].

  - Post-Consumption Weighing: Collect and weigh all plate waste after the participant has finished eating. The true intake is calculated as the difference between the pre- and post-consumption weights [7].

- Dietary Recall Completion: On the following day, participants complete the dietary recall using their assigned method (e.g., ASA24 or AMPM). This time lag reflects real-world recall conditions [7].

- Data Analysis: Compare the self-reported data to the true intake values. Key metrics for analysis include:

  - Match Rate: The proportion of truly consumed items that were correctly reported [7].

  - Intrusion Rate: The number of items reported but not consumed [7].

  - Omission Rate: The number of consumed items not reported [8].

  - Portion Size Accuracy: The difference between reported and actual portion sizes for matched items [8].

  - Energy and Nutrient Estimation Gap: The correlation and mean difference between reported and true energy/nutrient intakes [7].
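The item-level metrics in the list above can be computed with simple set operations once weighed and reported items share a common coding. The sketch below is a hypothetical illustration: `compare_recall`, the food names, and the gram weights are assumptions, and matching on exact item names is a simplification of the food-matching rules a real validation study would need.

```python
def compare_recall(consumed: dict, reported: dict) -> dict:
    """Compare weighed intake with a recall; both map item name -> grams."""
    matched = consumed.keys() & reported.keys()
    omissions = consumed.keys() - reported.keys()   # eaten but not reported
    intrusions = reported.keys() - consumed.keys()  # reported but not eaten
    return {
        "match_rate": len(matched) / len(consumed),
        "omission_rate": len(omissions) / len(consumed),
        "omissions": sorted(omissions),
        "intrusions": sorted(intrusions),
        # Positive values mean the portion was overestimated.
        "portion_diff_g": {item: reported[item] - consumed[item] for item in sorted(matched)},
    }


# Hypothetical meal: true intake from pre/post weighing versus next-day recall.
consumed = {"pasta": 220, "tomato sauce": 90, "salad": 60, "dressing": 15, "water": 250}
reported = {"pasta": 250, "tomato sauce": 90, "water": 250, "bread": 40}

result = compare_recall(consumed, reported)
print(result["match_rate"])      # 0.6
print(result["omissions"])       # ['dressing', 'salad']
print(result["intrusions"])      # ['bread']
print(result["portion_diff_g"])  # {'pasta': 30, 'tomato sauce': 0, 'water': 0}
```

Note that the omitted items in this invented example are a vegetable and an addition, which mirrors the omission pattern the review describes.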

The Researcher's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Dietary Assessment Research

| Item | Function/Description | Example Use Case |
|---|---|---|
| ASA24 (Automated Self-Administered 24-hr Recall) | A free, web-based tool that uses the Automated Multiple-Pass Method to conduct 24-hour diet recalls and food records automatically [9]. | Collecting dietary intake data from large-scale epidemiologic studies or interventions where interviewer costs are prohibitive [9] [7]. |
| AMPM (Automated Multiple-Pass Method) | A structured, interviewer-administered 24-hour recall protocol developed by the USDA. Serves as a benchmark in validation studies [1] [7]. | Used as a comparator method in validation studies for new automated tools or as a gold-standard method in national surveys [7]. |
| Doubly Labeled Water (DLW) | A biochemical reference method that measures total energy expenditure in free-living individuals over 1-2 weeks. It is considered the gold standard for validating energy intake reporting [1]. | Detecting systematic errors like energy underreporting in self-reported dietary data by comparing reported energy intake to measured energy expenditure [1]. |
| Biomarkers (e.g., Urinary Nitrogen) | Objective biological measurements that correlate with intake of specific nutrients. Urinary nitrogen is a validated biomarker for protein intake [1]. | Providing an objective, non-self-report measure to validate intake of specific nutrients and correct for systematic measurement error [1]. |
| Voice-Based Recall Tools (e.g., DataBoard) | Emerging tools that use speech input and AI for dietary reporting, potentially improving usability in populations with low literacy or digital skills [10]. | Pilot studies exploring more accessible dietary assessment methods, particularly for older adults or other populations challenged by screen-based interfaces [10]. |

Advanced Analytical Approaches for Error Correction

Beyond study design and tool selection, statistical and computational methods are being developed to correct for measurement errors in existing data. One innovative approach leverages the relationship between diet and the gut microbiome.

Diagram 2: Microbiome-Based Error Correction Logic

The METRIC Protocol:

METRIC (Microbiome-based nutrient profile corrector) is a deep-learning approach designed to correct random errors in nutrient profiles derived from self-reported data [11].

- Input Data:

  - Assessed Nutrient Profiles: Nutrient intakes calculated from self-reported dietary assessments (e.g., ASA24, 24-hour recalls) which contain random measurement errors.

  - Gut Microbial Compositions: 16S rRNA or shotgun metagenomic sequencing data from participant stool samples, which serve as an objective biomarker influenced by diet [11].

- Model Training: The model is trained in a self-supervised manner, without needing clean "true" intake data. It learns to map intentionally corrupted nutrient profiles (input) back to the original assessed profiles (output). This process forces the model to learn the underlying structure of the data and how to remove random noise [11].

- Prediction and Correction: Once trained, the model takes the original error-containing nutrient profile and the microbiome data as input and outputs a corrected nutrient profile with reduced random error. This method has shown promise particularly for nutrients that are metabolized by gut bacteria [11].
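To illustrate only the self-supervised setup described above, the sketch below builds training pairs in which a deliberately corrupted nutrient profile, concatenated with microbiome features, is the input and the original assessed profile is the target. It is not the METRIC implementation: the multiplicative Gaussian noise, the function names, and the toy data are all assumptions, and the neural network that would be trained on these pairs is omitted.

```python
import random


def corrupt(profile, noise_sd, rng):
    """Copy a nutrient profile with multiplicative Gaussian noise (assumed noise model)."""
    return [max(0.0, value * (1.0 + rng.gauss(0.0, noise_sd))) for value in profile]


def build_training_pairs(nutrient_profiles, microbiome_profiles,
                         copies=3, noise_sd=0.2, seed=0):
    """Return (input, target) pairs for a denoising model.

    input  = corrupted nutrient profile + microbiome features
    target = the original assessed nutrient profile (no clean "truth" needed)
    """
    rng = random.Random(seed)
    pairs = []
    for nutrients, microbes in zip(nutrient_profiles, microbiome_profiles):
        for _ in range(copies):
            model_input = corrupt(nutrients, noise_sd, rng) + list(microbes)
            pairs.append((model_input, list(nutrients)))
    return pairs


# Toy data: two participants, nutrients = [energy kcal, protein g],
# microbiome = relative abundances of two hypothetical taxa.
nutrients = [[2000.0, 80.0], [2600.0, 95.0]]
microbiome = [[0.6, 0.4], [0.3, 0.7]]

pairs = build_training_pairs(nutrients, microbiome)
print(len(pairs))   # 6 pairs: 2 participants x 3 corrupted copies
print(pairs[0][1])  # [2000.0, 80.0], the uncorrupted target
```

At prediction time the trained model would receive the assessed (uncorrupted) profile plus microbiome features in the same input layout.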

The evidence indicates that automated 24-hour recall systems like ASA24 present a favorable trade-off, offering performance comparable to interviewer-administered methods for many nutrients while providing substantial cost advantages [7]. However, certain error patterns, such as the omission of specific food items like vegetables and condiments, persist across self-report methods [8].

For researchers and drug development professionals, the selection of a dietary assessment tool must be guided by the specific research question, the nutrients and food groups of primary interest, and the resources available for data collection and validation. Mitigating the inherent challenges of self-reported data requires a multi-faceted strategy: employing robust tools like ASA24 for large-scale data collection, incorporating structured validation protocols using objective measures like doubly labeled water where feasible, and leveraging emerging computational techniques like METRIC for error correction in downstream analyses [1] [11]. This comprehensive approach strengthens the reliability of dietary data and enhances the validity of findings in nutritional epidemiology and clinical research.

The Evolution from Manual to Automated 24-Hour Recalls

The 24-hour dietary recall (24HR) has long been a cornerstone method for collecting detailed dietary intake data in nutritional epidemiology, clinical research, and national surveillance studies. Traditionally, this method relied heavily on interviewer administration, requiring trained personnel to guide participants through structured interviews using the Automated Multiple-Pass Method (AMPM) [12]. This labor-intensive approach created significant barriers to large-scale data collection due to high costs, time commitments, and coding complexities [12].

The evolution toward automated, self-administered 24-hour recalls represents a fundamental transformation in dietary assessment methodology. Pioneering tools like the Automated Self-Administered 24-hour Dietary Assessment Tool (ASA24), developed by the National Cancer Institute, have revolutionized the field by enabling automated coding of dietary data while maintaining the rigorous structure of the AMPM [9] [12]. This transition has not only addressed cost constraints but has also opened new possibilities for standardized data collection across diverse populations and research settings, making large-scale dietary surveillance studies more feasible than ever before.

Comparative Analysis of Automated 24-Hour Recall Systems

Table 1: Key Automated 24-Hour Dietary Recall Platforms and Characteristics

| Platform Name | Developer/Origin | Primary Features | Target Users | Language Availability |
|---|---|---|---|---|
| ASA24 | National Cancer Institute (USA) | Adaptation of AMPM, extensive food database, portion size images | Researchers, healthcare providers | English, Spanish, French (Canadian version) |
| DataBoard (SurveyLex) | Voice-based system | Speech input for dietary reporting, cloud-based response storage | Older adults, populations with digital literacy challenges | English |
| Intake24 | Newcastle University (UK) | Open-source system, portion size images, recipe creation | National surveys, research institutions | Multiple, including customized versions |
| Foodbook24 | University College Dublin | Food list based on national consumption data, portion images | Irish population, diverse ethnic groups | English, Polish, Brazilian Portuguese |
| SER-24H | University of Chile | Culturally specific food database, local recipes | Chilean and Latin American populations | Spanish |
| mFR24 | Purdue University | Image-assisted recall, before/after photos with fiducial marker | General population, technology-adoptive users | English |

Performance Metrics and Validation Evidence

Table 2: Comparative Performance Data of Automated vs. Traditional 24-Hour Recalls

| Assessment Metric | ASA24 Performance | Interviewer-Administered AMPM | Voice-Based (DataBoard) | Statistical Significance |
|---|---|---|---|---|
| Energy Intake Reporting | 2,425 kcal (men); 1,876 kcal (women) | 2,374 kcal (men); 1,906 kcal (women) | Not specified | Equivalent for 87% of nutrients |
| Item Match Rate | 80% (vs. observed intake) | 83% (vs. observed intake) | Not assessed | P = 0.07 |
| Intrusion Rate | Higher than AMPM | Lower than ASA24 | Not assessed | P < 0.01 |
| User Preference | 70% preferred ASA24 | 30% preferred AMPM | 7.2/10 preference rating | Significant preference for ASA24 |
| Feasibility Rating | Not specified | Not specified | 7.95/10 | Not applicable |
| Participant Burden | Lower attrition | Higher attrition | Rated easier than ASA24 (6.7/10) | Significant |

Experimental Protocols for Validation Studies

Controlled Feeding Study Design

The most rigorous approach for validating automated 24-hour recall systems involves controlled feeding studies with unobtrusive measurement of true intake. In a landmark study conducted by Kirkpatrick et al., researchers implemented a protocol where 81 adults were provided with meals from a buffet, with foods and beverages inconspicuously weighed before and after each participant served themselves to establish true consumption [7]. Participants were then randomly assigned to complete either an ASA24 or an interviewer-administered AMPM recall the following day.

The primary outcomes measured included: (1) proportion of matches (items consumed and reported), (2) exclusions (items consumed but not reported, referred to elsewhere in this article as omissions), (3) intrusions (items reported but not consumed), and (4) differences between reported and true intakes for energy, nutrients, food groups, and portion sizes [7]. Statistical analyses employed linear and Poisson regression models to examine associations between recall mode and reporting accuracy, providing a comprehensive assessment of each method's performance relative to known intake.

Field Trial Methodology

Large-scale field trials offer complementary evidence of feasibility and comparability in real-world settings. The Food Reporting Comparison Study (FORCS) employed a quota-sampling design to recruit 1,081 adults from three integrated health systems across the United States, ensuring diversity in age, sex, and race/ethnicity [12]. Participants were randomly assigned to one of four protocols: two ASA24 recalls, two AMPM recalls, ASA24 followed by AMPM, or AMPM followed by ASA24.

This design enabled researchers to assess comparability of reported nutrient and food intakes between methods, completion and attrition rates for each protocol, and participant preferences between recall modes [12]. The use of unannounced recall days throughout the 2-month study period helped minimize reactivity (changes in diet due to monitoring), while standardized incentives ($52 maximum) maintained participation across groups.

Usability Testing for Special Populations

Recent studies have adapted methodologies to assess usability and acceptability among specific population groups. A 2025 pilot study focusing on older adults (mean age 70.5) employed a randomized crossover design comparing the voice-based DataBoard tool with ASA24 [10]. Participants completed both tools in randomized order during a single Zoom session, followed by semi-structured interviews and quantitative ratings on a 1-10 scale for usability and acceptability.

This approach combined descriptive statistics for quantitative ratings with qualitative coding of interview transcripts using Dedoose software, providing insights into user preferences, challenges, and perceived usability [10]. The inclusion of both quantitative and qualitative measures offered a comprehensive understanding of the user experience beyond mere accuracy metrics.

Technological Workflow and System Architecture

International Adaptations and Cultural Customization

The implementation of automated 24-hour recall systems across diverse international contexts has demonstrated the critical importance of cultural and culinary customization. Successful adaptations require meticulous attention to several key factors:

- Culturally Representative Food Lists: The development of Intake24-New Zealand involved creating a food list of 2,618 items specifically tailored to reflect foods consumed by Māori, Pacific, and Asian communities [13]. This process required identifying culturally significant foods and differentiating between fortified and non-fortified products where relevant to public health monitoring.

- Local Nutrient Databases: Chile's SER-24H system incorporates over 7,000 food items and 1,400 culturally based recipes linked primarily to USDA nutrient data but supplemented with local composition information [14]. This hybrid approach balances comprehensive coverage with practical constraints on local database development.

- Multilingual Interfaces: Foodbook24's expansion for use in Ireland addressed linguistic diversity by translating interfaces into Polish and Brazilian Portuguese, while adding 546 food items commonly consumed by these populations [15] [16]. Validation studies revealed differences in reporting accuracy across ethnic groups, with Brazilian participants omitting 24% of foods in self-administered recalls compared to 13% among Irish participants [16].

Table 3: Key Research Reagents and Tools for Dietary Recall Validation

| Tool/Resource | Function | Application Context |
|---|---|---|
| ASA24 | Self-administered 24-hour recall | Large-scale studies, population surveillance |
| AMPM | Interviewer-administered recall | Gold standard comparison, validation studies |
| Doubly Labeled Water | Objective energy expenditure measure | Criterion validation for energy intake |
| Weighed Food Protocol | Controlled feeding study | Establishing true intake for validation |
| Food Composition Databases | Nutrient calculation | All automated recall systems (e.g., USDA FNDDS, CoFID) |
| Portion Size Estimation Aids | Visual guides for amount consumed | Image-assisted recalls, portion size estimation |
| Social Desirability Scales | Assessment of reporting bias | Understanding psychosocial factors in misreporting |

The evolution from manual to automated 24-hour recalls represents significant progress in dietary assessment methodology, achieving a balance between measurement accuracy and practical feasibility. Current evidence indicates that while automated systems like ASA24 perform slightly less well than interviewer-administered methods on some metrics (e.g., intrusion rates), they offer substantial advantages in cost-effectiveness, scalability, and user preference [12] [7].

Future developments in this field are likely to focus on integration of artificial intelligence and image-assisted technologies to further reduce participant burden and improve accuracy [17]. The mFR24 system, which incorporates before and after meal images with fiducial markers, represents one such innovation currently under validation [17]. Additionally, ongoing efforts to adapt and validate these tools for diverse populations, including older adults and ethnic minorities, will be crucial for ensuring equitable representation in nutrition research [10] [15] [16].

As these technologies continue to evolve, they hold the promise of providing more accurate, timely, and comprehensive dietary data to inform public health policies, clinical practice, and our understanding of diet-disease relationships.

Core Components of a Standardized 24-Hour Recall Protocol

The 24-hour dietary recall (24HR) is a structured interview or self-administered tool designed to capture detailed information about all foods and beverages consumed by a respondent in the past 24 hours, typically from midnight to midnight of the previous day [18]. As a cornerstone of nutritional epidemiology and population surveillance, this method provides a snapshot of short-term dietary intake and, when administered multiple times, can be used to estimate usual dietary intake distributions for groups [18]. A key feature of a well-conducted 24HR is its open-ended response structure, which prompts respondents to provide a comprehensive and detailed report, including descriptors such as preparation methods, time of day, and food sources [18].

The utility of 24HR data is broad. It can be used to assess total dietary intake, specific aspects of the diet, meal and snack patterns, and the consumption of particular food groups [18]. When linked to a nutrient composition database, it allows for the determination of nutrient intake from foods and beverages [18]. Furthermore, 24HRs are employed to examine relationships between diet and health, to validate other dietary assessment instruments like Food Frequency Questionnaires (FFQs), and to evaluate the effectiveness of nutritional interventions [18]. The evolution of technology has led to the development of automated, self-administered 24HR systems, which are becoming increasingly prevalent in large-scale studies [18] [9].

Core Components of a Standardized Protocol

A standardized 24-hour recall protocol is built upon several key components that work in concert to minimize measurement error and enhance the validity and reliability of the collected data. The following table summarizes these essential elements.

Table 1: Core Components of a Standardized 24-Hour Recall Protocol

| Component | Description | Purpose |
|---|---|---|
| Structured Interview Passes | A multi-pass approach (e.g., Automated Multiple-Pass Method) guiding from quick list to final review [17]. | Enhances memory retrieval, reduces food omission, standardizes probing. |
| Portion Size Estimation Aids | Utilization of food models, photographs, or image-assisted methods to quantify amounts consumed [18] [17]. | Improves accuracy of portion size estimation, a major source of measurement error. |
| Comprehensive Food List & Database | A pre-defined, culturally relevant list of foods and beverages, often with nutrient composition data [15] [19]. | Ensures consistent coding, accommodates diverse dietary habits, and enables nutrient analysis. |
| Contextual & Descriptive Probes | Questions about time, location, meal occasion, preparation methods, and brand names [18] [19]. | Provides rich detail for accurate food identification and understanding of eating contexts. |
| Trained Interviewers or Automated Systems | Administration by personnel trained in neutral probing or via automated, self-administered software [18] [9]. | Reduces interviewer bias, improves standardization, and increases scalability. |
| Multiple, Non-Consecutive Administrations | Collection of more than one recall per participant, spread over different days of the week [18] [1]. | Allows for estimation of "usual intake" by accounting for day-to-day variation. |

The logical application of these components within a research workflow ensures the systematic collection of high-quality dietary data.

[Figure: 24-hour recall research workflow, from participant engagement to data output]

Comparative Accuracy of Automated 24HR Systems

With the advent of technology-assisted dietary assessment, several automated 24HR systems have been developed. Their accuracy is paramount for their adoption in research and surveillance. Controlled feeding studies, where true intake is known, provide the highest quality evidence for comparing the accuracy of these methods.

Key Automated Systems in Comparison

- ASA24 (Automated Self-Administered 24-Hour Dietary Assessment Tool): A free, web-based tool developed by the National Cancer Institute (NCI) that adapts the USDA's Automated Multiple-Pass Method (AMPM) for self-administration [9] [17]. It is widely used in epidemiologic and clinical research.

- Intake24: A web-based, self-administered 24-hour recall system developed in the UK, which has undergone multiple cycles of user testing to refine its interface and reduce respondent burden [17].

- Image-Assisted Methods (e.g., mFR): These methods, such as the mobile Food Record (mFR), involve participants capturing images of their foods and beverages before and after consumption. The analysis can be conducted by a trained analyst (mFR-TA) or via automated image analysis [20] [17].

- Interviewer-Administered 24HR: The traditional method, often considered a gold standard in practice, where a trained interviewer conducts the recall, typically using a method like AMPM [18] [20].

Experimental Data on Accuracy

A recent randomized crossover feeding study compared the accuracy of four technology-assisted dietary assessment methods against objectively measured true intake [20]. The results for energy intake estimation are summarized below.

Table 2: Accuracy of Energy Intake Estimation in a Controlled Feeding Study [20]

| Dietary Assessment Method | Mean Difference from True Intake (% of True Intake) | 95% Confidence Interval | Intake Distribution Accurately Estimated? |
|---|---|---|---|
| ASA24 | +5.4% | (+0.6%, +10.2%) | No |
| Intake24 | +1.7% | (-2.9%, +6.3%) | Yes (for energy and protein) |
| mFR-Trained Analyst (mFR-TA) | +1.3% | (-1.1%, +3.8%) | No |
| Image-Assisted Interviewer-Administered (IA-24HR) | +15.0% | (+11.6%, +18.3%) | No |

The study concluded that under controlled conditions, Intake24, ASA24, and mFR-TA estimated average energy and nutrient intakes with reasonable validity [20]. However, a critical finding was that the overall intake distribution was accurately estimated by Intake24 only, for both energy and protein. The IA-24HR method showed a significant overestimation of intake in this controlled setting [20].
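One way to read the confidence intervals in Table 2 is to check whether each interval excludes zero, which would indicate a mean bias distinguishable from no bias at the 95% level. The sketch below uses the values transcribed from the table; the helper name `ci_excludes_zero` is an illustrative assumption, not part of any cited analysis.

```python
# Values transcribed from Table 2 [20]:
# method -> (mean % difference, CI lower bound, CI upper bound)
ENERGY_DIFF = {
    "ASA24": (5.4, 0.6, 10.2),
    "Intake24": (1.7, -2.9, 6.3),
    "mFR-TA": (1.3, -1.1, 3.8),
    "IA-24HR": (15.0, 11.6, 18.3),
}


def ci_excludes_zero(lower, upper):
    """True when the interval lies entirely above or entirely below zero."""
    return lower > 0 or upper < 0


flags = {
    method: ci_excludes_zero(lower, upper)
    for method, (_, lower, upper) in ENERGY_DIFF.items()
}

for method, biased in flags.items():
    label = "interval excludes zero" if biased else "interval includes zero"
    print(f"{method}: {label}")
```

Under this reading, the intervals for Intake24 and mFR-TA include zero, while those for ASA24 and IA-24HR lie entirely above it. This concerns mean bias only and says nothing about how well the intake distribution is captured.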

Another study protocol highlights the importance of evaluating not only accuracy but also omission (failing to report a consumed food) and intrusion (reporting a food not consumed) rates, which are key indicators of memory-related error [17].
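The omission and intrusion concepts can be sketched as simple set operations on the foods truly consumed and the foods reported. The food names are invented, and the choice of denominators (consumed items for matches and omissions, reported items for intrusions) is an assumption; individual studies may define these rates differently.

```python
def reporting_rates(consumed, reported):
    """Compare consumed and reported food items using set arithmetic.

    Denominators are an assumption of this sketch: matches and omissions
    are expressed relative to items consumed, intrusions relative to
    items reported.
    """
    consumed, reported = set(consumed), set(reported)
    if not consumed or not reported:
        raise ValueError("both item sets must be non-empty")
    matches = consumed & reported      # consumed and reported
    omissions = consumed - reported    # consumed but not reported
    intrusions = reported - consumed   # reported but not consumed
    return {
        "match_rate": len(matches) / len(consumed),
        "omission_rate": len(omissions) / len(consumed),
        "intrusion_rate": len(intrusions) / len(reported),
    }


# Hypothetical example: butter is omitted, a cookie is an intrusion.
consumed = ["toast", "butter", "coffee", "apple", "chicken"]
reported = ["toast", "coffee", "apple", "chicken", "cookie"]
rates = reporting_rates(consumed, reported)
print(rates)
```

In practice, matching reported items to consumed items requires coding rules for near matches (for example, a different brand of the same food), which this sketch does not attempt.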

Detailed Experimental Protocols for Validation

The accuracy data presented above is derived from rigorous experimental designs. The following details the methodology employed in key validation studies.

Controlled Feeding Study Design

The most robust protocol for validating dietary assessment methods is the controlled feeding study with a crossover design [20] [17]. The workflow involves tightly controlled conditions and direct comparison of reported intake to known consumption.

Key Methodological Steps [20] [17]:

- Participant Recruitment: Typically involves healthy adults across a range of ages and body mass indices to ensure generalizability.

- Randomized Crossover Design: Each participant is exposed to all dietary assessment methods being tested, with the order randomized. This controls for individual-specific characteristics that might affect reporting.

- Controlled Feeding: Participants attend a research center on separate feeding days and consume three main meals (breakfast, lunch, dinner) on each day. All foods and beverages are prepared and weighed unobtrusively to establish a "true intake" value without influencing participant behavior (avoiding reactivity).

- 24-Hour Recall Administration: The day after each feeding day, participants complete the 24-hour recall using one of the assigned methods (e.g., ASA24, Intake24, mFR). For image-assisted methods, participants may be instructed to take before-and-after photos of their meals.

- Data Analysis: True and estimated intakes for energy, nutrients, and food groups are compared. Statistical analyses, such as linear mixed models, are used to assess differences between methods, accounting for fixed effects like method order.
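The randomization step above can be sketched as follows. This is a minimal illustration that gives each participant an independent random ordering of the methods; the participant IDs, the seed, and the `assign_orders` helper are hypothetical, and a real trial might instead use a balanced design such as a Latin square.

```python
import random

METHODS = ["ASA24", "Intake24", "mFR-TA", "IA-24HR"]


def assign_orders(participant_ids, methods=METHODS, seed=0):
    """Give each participant a random order of all methods.

    A fixed seed makes the allocation reproducible for auditing.
    """
    rng = random.Random(seed)
    orders = {}
    for pid in participant_ids:
        order = list(methods)  # copy so the shared list is not mutated
        rng.shuffle(order)
        orders[pid] = order
    return orders


orders = assign_orders(["P01", "P02", "P03"])
for pid, order in orders.items():
    print(pid, order)
```

Simple shuffling does not guarantee that each method appears equally often in each position, which is why method order is typically retained as a fixed effect in the analysis model.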

Biomarker Validation Studies

An alternative or complementary protocol involves the use of recovery biomarkers to validate energy and nutrient intake.

- Principle: Compare reported energy intake from the 24HR with total energy expenditure measured by the doubly labeled water (DLW) technique. Similarly, reported protein intake can be compared with urinary nitrogen excretion [1] [17].

- Utility: This method is particularly effective for identifying systematic errors like under-reporting of energy intake, which is a common bias in self-reported dietary data [1]. While ideal for validating energy and specific nutrients, it does not provide food-level detail like a feeding study.
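The energy comparison described above reduces to a ratio of reported energy intake to DLW-measured total energy expenditure. The sketch below assumes the participant is in energy balance, so that expenditure approximates intake; the example values and function names are hypothetical.

```python
def reporting_ratio(reported_ei_kcal, tee_kcal):
    """Ratio of reported energy intake to measured total energy expenditure.

    Assumes energy balance, so that TEE approximates true intake.
    Values below 1 suggest under-reporting; values above 1, over-reporting.
    """
    if tee_kcal <= 0:
        raise ValueError("total energy expenditure must be positive")
    return reported_ei_kcal / tee_kcal


def percent_misreporting(reported_ei_kcal, tee_kcal):
    """Signed percentage difference between reported intake and TEE."""
    return (reporting_ratio(reported_ei_kcal, tee_kcal) - 1.0) * 100.0


# Hypothetical participant: reports 2000 kcal, DLW indicates 2500 kcal.
ratio = reporting_ratio(2000.0, 2500.0)
pct = percent_misreporting(2000.0, 2500.0)
print(f"EI:TEE ratio = {ratio:.2f}, misreporting = {pct:.1f}%")
```

Any cut-off for classifying an individual as an under-reporter would be study-specific and is deliberately left out of this sketch.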

The Researcher's Toolkit: Essential Materials & Reagents

Successful implementation of a standardized 24-hour recall protocol, particularly for validation purposes, requires specific tools and resources. The following table catalogs key solutions for researchers in this field.

Table 3: Essential Research Reagent Solutions for 24HR Validation Studies

| Item | Function/Description | Application in Protocol |
|---|---|---|
| Doubly Labeled Water (DLW) | A gold-standard recovery biomarker for measuring total energy expenditure in free-living individuals [1] [17]. | Serves as an objective reference to validate the accuracy of reported energy intake from 24HRs. |
| Standardized Food Composition Database | A database detailing the nutrient content of foods (e.g., UK's CoFID, USDA's FNDDS). Crucial for converting reported food intake into nutrient data [1] [15]. | Backend processing of all 24HR data; must be comprehensive and kept up-to-date with relevant foods. |
| Portion Size Estimation Aids | A set of standardized, 2D or 3D aids such as food model booklets, photographs, or digital images with known portion sizes [18] [17]. | Provided to participants during the recall to improve the accuracy of portion size estimations. |
| Visual Aids for Food Identification | Image libraries or interactive software features that help participants identify and describe mixed dishes, specific brands, and preparation methods. | Integrated into automated systems like ASA24 and Foodbook24 to assist in the accurate reporting of food items [15] [9]. |
| Structured Interview Scripts/Software | Automated or manual protocols that guide the recall process through multiple passes (e.g., AMPM, EPIC-SOFT/GloboDiet) [18] [19] [17]. | Ensures standardization and completeness of the dietary interview, reducing interviewer-induced bias. |

A standardized 24-hour recall protocol is a sophisticated instrument built on core components designed to mitigate inherent measurement errors. The structured multi-pass interview, supported by visual aids for portion estimation and a comprehensive food database, forms the foundation for collecting high-quality dietary data.

The emergence of automated, self-administered systems like ASA24 and Intake24 represents a significant advancement, offering scalability and reduced cost while maintaining reasonable accuracy for estimating average intakes, as evidenced by controlled feeding studies [20]. The choice of instrument, however, must be guided by research objectives. For instance, while several tools performed well in estimating mean intake, Intake24 demonstrated a distinct advantage in accurately capturing population-level intake distributions in one study [20].

Future research should continue to refine these tools, particularly in enhancing image-assisted and voice-based technologies to reduce user burden and improve accuracy across diverse populations, including older adults and those with low literacy [10]. The ongoing expansion and cultural adaptation of food databases will also be critical for ensuring equitable and accurate dietary monitoring in an increasingly globalized world [15].

Inside Automated Systems: Platforms, Protocols, and Real-World Implementation

Automated, web-based 24-hour dietary recall (24HR) systems have transformed the collection of dietary intake data in large-scale research and surveillance. These tools eliminate the need for trained interviewers, reduce study costs, and facilitate the automated coding of food consumption information [21] [9]. Among the leading platforms are ASA24 (United States), INTAKE24 (United Kingdom), and Foodbook24 (Ireland). Each has been developed with public funding to meet national nutritional surveillance needs and has undergone rigorous scientific evaluation. This guide provides a detailed, evidence-based comparison of their performance, drawing from controlled feeding studies, biomarker research, and methodological comparisons to inform tool selection by researchers and scientists.

The table below summarizes the core characteristics and key performance metrics of the three automated platforms based on current validation evidence.

Table 1: Platform Overview and Performance Summary

| Feature | ASA24 | INTAKE24 | Foodbook24 |
|---|---|---|---|
| Country of Origin | United States [9] | United Kingdom [22] | Ireland [15] [23] |
| Primary Funding/Developer | National Cancer Institute (NCI) & other NIH Institutes [9] | Newcastle University [22] | University College Dublin & University College Cork [15] [23] |
| Core Methodology | Adapted USDA Automated Multiple-Pass Method (AMPM) [21] [9] | Multiple-pass method informed by user testing [22] | Multiple-pass recall model based on European Food Safety Authority guidelines [15] [23] |
| Reported Energy Accuracy vs. True Intake | ~5.4% overestimation [20] | ~1.7% overestimation [20] | Not assessed against true intake; no significant difference from a 4-day semi-weighed food diary for energy or macronutrients (except protein) [23] |
| Food Reporting Match Rate | 80% of items consumed [7] | Information not specifically reported | 85% overall match rate vs. interviewer-led recall [24] |
| Key Strength | Extensive validation against biomarkers and true intake; wide global adoption [25] [7] | High accuracy for energy and nutrient distribution in a controlled study [20] | Validated with biomarkers; expanded for diverse populations and languages [15] [23] |

Accuracy and Validation in Controlled Studies

Validation against objective measures is critical for assessing the performance of dietary assessment tools. The most robust evidence comes from controlled feeding studies, where true intake is known, and biomarker studies, which provide an objective measure of nutrient consumption.

Evidence from Controlled Feeding Studies

Controlled feeding studies, where the actual foods and amounts consumed are weighed and measured, provide the highest standard for validating self-reported dietary data.

Table 2: Performance in Controlled Feeding Studies vs. True Intake

| Metric | ASA24 | INTAKE24 | Image-Assisted Recall (mFR-TA) | Image-Assisted Interviewer-Administered (IA-24HR) |
|---|---|---|---|---|
| Mean Energy Difference (% of True Intake) | +5.4% [20] | +1.7% [20] | +1.3% [20] | +15.0% [20] |
| Energy Intake Variance | Statistically different from true intake (P < 0.01) [20] | Not statistically different (P = 0.1) [20] | Statistically different from true intake (P < 0.01) [20] | Statistically different from true intake (P < 0.01) [20] |
| Food Item Reporting (Match Rate) | 80% of items consumed [7] | Information not specifically reported | Information not specifically reported | 83% of items consumed (interviewer-administered AMPM, separate study) [7] |
| Intrusions (Items Reported but Not Consumed) | Significantly higher than interviewer-administered recall (P < 0.01) [7] | Information not specifically reported | Information not specifically reported | Fewer intrusions than ASA24 (interviewer-administered AMPM, separate study) [7] |

A direct comparison in a randomized crossover feeding study found that ASA24, INTAKE24, and mFR-TA each estimated average energy intake with reasonable validity, whereas the image-assisted interviewer-administered recall (IA-24HR) overestimated energy intake by about 15% [20]. INTAKE24 was the only tool that accurately estimated the distribution of energy and protein intakes in addition to the mean [20].

Another feeding study comparing ASA24 to the interviewer-administered AMPM found its performance was comparable, with ASA24 respondents reporting 80% of items truly consumed versus 83% for AMPM, a non-significant difference (P=0.07) [7]. Both methods showed similar gaps between true and reported energy, nutrient, and food group intakes [7].

Validation with Recovery Biomarkers

Recovery biomarkers, such as doubly labeled water for total energy expenditure and urinary nitrogen for protein intake, provide objective reference measures for specific nutrients. They are not error-free, but their errors are independent of the errors in self-reported intake.

ASA24: In the IDATA study, energy intakes from multiple ASA24 recalls were lower than total energy expenditure measured by doubly labeled water [25]. Reported intakes for protein, potassium, and sodium were closer to urinary recovery biomarkers for women than for men [25]. The study concluded that ASA24 is a feasible tool for large-scale studies, with less bias than Food Frequency Questionnaires (FFQs) [25].

Foodbook24: In its validation, mean intakes of energy and macronutrients (except for protein) showed no significant differences between Foodbook24 and a 4-day semi-weighed food diary [23]. Correlations with urinary and plasma biomarkers for nutrient and food group intake were similar for both methods, providing objective evidence for its validity [23].

Methodological Protocols and Technical Specifications

Understanding the underlying protocols and technical features of each platform is essential for evaluating their suitability for specific research populations and study designs.

Core Dietary Recall Methodology

All three platforms are based on a multi-pass recall methodology designed to enhance memory and reduce forgetting.

- ASA24: This tool is a direct adaptation of the USDA's Automated Multiple-Pass Method (AMPM), a highly standardized five-pass system used in the National Health and Nutrition Examination Survey (NHANES) [21] [9]. The primary distinction is that the user interface guides participants, removing the need for an interviewer [21].

- INTAKE24: Its development involved multiple cycles of user testing with target populations, leading to modifications after each cycle to improve usability and engagement, particularly for younger demographics [22].

- Foodbook24: Its design was informed by European Food Safety Authority guidelines and includes "completeness of collection mechanisms" such as probe questions and linked food options to help users fully describe their intake [15] [23].

Technical Features and Adaptability

Table 3: Technical Specifications and Adaptability

| Specification | ASA24 | INTAKE24 | Foodbook24 |
|---|---|---|---|
| Primary Language(s) | English, Spanish (US); Canadian version: English, French [9] | English (UK) | English, Brazilian Portuguese, Polish [15] |
| Underlying Food Composition Database | USDA Food and Nutrient Database for Dietary Studies (FNDDS) [9] | UK Composition of Foods Integrated Dataset (CoFID) | UK Composition of Foods Integrated Dataset, with additions from Brazilian and Polish databases [15] |
| Portion Size Estimation | Food model images; standard household measures [9] | Portion size photographs [22] | Portion size photographs [15] [23] |
| Dietary Supplement Assessment | Included; coded to NHANES Dietary Supplement Database [21] | Information not specifically reported | Included; users are queried about supplement intake [23] |
| Feasibility & Completion Time | Median time: 55 mins (1st recall) to 41 mins (subsequent recalls) [25]; High completion rates (>70% for ≥5 recalls) [25] | Information not specifically reported | Well-received; 67.8% of users preferred it over traditional methods [23] |

A key strength of Foodbook24 is its recent expansion to serve diverse populations. The food list was expanded with 546 items commonly consumed by Brazilian and Polish adults living in Ireland, and the interface was fully translated into Portuguese and Polish, demonstrating its adaptability for multicultural studies [15].

Implementation Considerations for Researchers

- For Highest Accuracy of Mean Intakes: Both INTAKE24 and ASA24 demonstrated strong performance in estimating average energy and nutrient intake in a controlled setting [20]. INTAKE24 was particularly notable for also accurately capturing intake distributions [20].

- For Multinational or Multicultural Studies: Foodbook24, with its integrated multilingual support and culturally tailored food lists, offers a significant advantage for research involving diverse ethnic groups [15].

- For Large-Scale Cohort Studies in the US Context: ASA24 has a strong track record, with demonstrated feasibility for collecting multiple recalls over time with low participant burden and high completion rates [25].

- For Cost-Effectiveness: All three self-administered tools offer substantial cost savings over interviewer-administered recalls by eliminating staffing needs [21] [22]. The free availability of these tools for researchers further enhances their cost-effectiveness.

The Scientist's Toolkit: Key Research Reagents

In the context of validating dietary assessment tools, the following "research reagents" and methodologies are essential.

Table 4: Essential Methodologies for Dietary Tool Validation

| Methodology / Reagent | Function in Validation | Application in Cited Studies |
|---|---|---|
| Controlled Feeding Study | Provides a "gold standard" measure of true intake by weighing all foods and beverages offered and wasted. | Used to compare ASA24, INTAKE24, and other methods against known intake [20] [7]. |
| Doubly Labeled Water (DLW) | A recovery biomarker for total energy expenditure, serving as an objective measure of energy intake in energy-balanced individuals. | Used in the IDATA study to validate energy intake from ASA24 [25]. |
| 24-Hour Urinary Collection | Provides recovery biomarkers for nutrients like protein (urinary nitrogen), sodium, and potassium. | Used to validate reported intakes of protein, sodium, and potassium in both the IDATA (ASA24) and Foodbook24 studies [25] [23]. |
| Interviewer-Administered 24HR (AMPM) | Serves as a reference method against which new self-administered tools are compared in relative validity studies. | Used as a benchmark for comparing ASA24-2011 and Foodbook24 [21] [24]. |
| Blood Plasma/Sera Analysis | Can provide concentration biomarkers for certain nutrients (e.g., vitamin C, carotenoids) and food intake. | Used in the validation of Foodbook24 as an objective measure of nutrient and food group intake [23]. |

The Automated Multiple-Pass Method (AMPM) is a research-based, computerized approach for collecting interviewer-administered 24-hour dietary recalls, conducted either in person or by telephone. Developed by the USDA, this method employs a structured five-step process specifically designed to enhance complete and accurate food recall while simultaneously reducing respondent burden. The AMPM serves as the foundational methodology for What We Eat in America, the dietary interview component of the National Health and Nutrition Examination Survey (NHANES), and has been widely adopted for various research studies requiring precise dietary assessment [26].

The imperative to address critical nutritional challenges, such as the national obesity epidemic, has stimulated efforts to develop accurate dietary assessment methods suitable for large-scale applications. The AMPM represents a significant advancement in this field, providing researchers with a standardized tool for collecting high-quality dietary intake data that supports epidemiological investigations, clinical studies, and public health monitoring initiatives [27].

AMPM Methodology: Core Components and Workflow

The Five-Pass Structure

The AMPM utilizes a sophisticated multi-pass approach that guides respondents through several distinct stages of memory retrieval to enhance recall completeness and accuracy. This structured methodology systematically probes different aspects of dietary intake, significantly reducing the likelihood of omissions or inaccuracies that commonly plague simpler recall methods [26].

[Figure: Sequential workflow of the AMPM five-pass system]

Detailed Methodology Breakdown

Each pass in the AMPM workflow serves a distinct psychological and methodological purpose in enhancing memory retrieval and reporting accuracy:

Pass 1: Quick List - Respondents provide an uninterrupted list of all foods and beverages consumed the previous day, without interviewer probing. This free-recall approach captures readily accessible memories without contamination by leading questions [12].

Pass 2: Forgotten Foods - The interviewer probes for foods commonly omitted from recalls, including specific categories such as sweets, snacks, water, and alcoholic beverages. This pass employs category-based cueing to access less accessible memories [12].

Pass 3: Time and Occasion - Respondents assign each reported food to specific eating occasions and provide approximate consumption times. This temporal structuring helps create a chronological framework for further memory retrieval [12].

Pass 4: Detail Cycle - For each food reported, the interviewer collects comprehensive details including preparation methods, portion sizes (aided by measurement guides), and additions such as fats, sauces, or condiments. This pass utilizes visual aids including portion size images, measuring cups, spoons, rulers, and food model booklets to enhance accuracy [12] [28].

Pass 5: Final Review - The interviewer systematically reviews all reported foods and eating occasions, providing a final opportunity for respondents to recall additional items or correct previously reported information [12].
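The fixed ordering of the five passes can be represented as a small ordered data structure. The sketch below is only an illustration of the sequence described above; the class and function names are hypothetical and do not reflect the actual USDA software.

```python
from enum import IntEnum


class Pass(IntEnum):
    """The five AMPM passes, in the order described in the text."""
    QUICK_LIST = 1
    FORGOTTEN_FOODS = 2
    TIME_AND_OCCASION = 3
    DETAIL_CYCLE = 4
    FINAL_REVIEW = 5


def next_pass(current):
    """Return the pass that follows `current`, or None after the final review."""
    if current is Pass.FINAL_REVIEW:
        return None
    return Pass(current + 1)


def run_recall():
    """Walk through the passes in order and return their names."""
    sequence = []
    current = Pass.QUICK_LIST
    while current is not None:
        sequence.append(current.name)
        current = next_pass(current)
    return sequence


print(run_recall())
```

Encoding the passes as an ordered enumeration makes it straightforward for a self-administered interface to enforce that respondents cannot skip ahead to the detail cycle before the earlier memory-cueing passes are complete.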

Experimental Validation of AMPM Accuracy

Validation Against Doubly Labeled Water

The most rigorous validation of the AMPM comes from studies comparing reported energy intake against total energy expenditure measured using the doubly labeled water (DLW) technique, considered the gold standard for energy expenditure measurement in free-living individuals.

A landmark 2006 study published in The Journal of Nutrition examined the performance of AMPM in 20 highly motivated, normal-weight-stable, premenopausal women. Participants completed two unannounced AMPM recalls while simultaneously undergoing DLW measurement. The results demonstrated that AMPM accurately estimated group total energy intake without significant difference from DLW-measured total energy expenditure [27] [29].

Table 1: AMPM Validation Against Doubly Labeled Water (DLW)

| Assessment Method | Mean Energy Intake/Expenditure (kJ) | Standard Deviation | P-value vs. DLW | Correlation with DLW (r) |
|---|---|---|---|---|
| AMPM | 8982 | ±2625 | Not Significant | 0.53 (P=0.02) |
| DLW (Criterion) | 8905 | ±1881 | - | - |
| Food Records | 8416 | ±2217 | Not Significant | 0.41 (P=0.07) |
| Block FFQ | 6365 | ±2193 | <0.0001 | 0.25 (P=0.29) |
| Diet History Q | 6215 | ±1976 | <0.0001 | 0.15 (P=0.53) |

The data revealed that AMPM not only provided accurate group-level energy intake estimates but also showed a stronger correlation with DLW measurements (r=0.53, P=0.02) than the two food frequency questionnaires, which underestimated energy intake by approximately 28% (Block FFQ) and 30% (Diet History Q) relative to DLW (P<0.0001 for both) [27] [29].
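The size of each method's deviation from the DLW criterion can be recomputed from the group means in Table 1. The sketch below uses only the tabulated means; the function name is an illustrative choice.

```python
# Group means in kJ, transcribed from Table 1 [27] [29].
DLW_TEE_KJ = 8905
REPORTED_KJ = {
    "AMPM": 8982,
    "Food Records": 8416,
    "Block FFQ": 6365,
    "Diet History Q": 6215,
}


def percent_difference(reported, criterion):
    """Signed difference of a reported mean from the criterion, in percent."""
    return (reported - criterion) / criterion * 100


diffs = {
    method: round(percent_difference(mean_kj, DLW_TEE_KJ), 1)
    for method, mean_kj in REPORTED_KJ.items()
}

for method, diff in diffs.items():
    print(f"{method}: {diff:+.1f}% vs. DLW")
```

Because these are differences of group means, they describe average bias only; the modest correlations in the table show that individual-level agreement with DLW is considerably weaker.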

Validation in Field Trials

Further evidence comes from a large field trial rather than a feeding study. True intake was not known, so the comparison addresses agreement between methods rather than accuracy against actual consumption. The 2015 Food Reporting Comparison Study (FORCS) evaluated AMPM alongside ASA24 across diverse populations. This study involved 1,081 adults from three integrated health systems in different geographic regions, with quota sampling ensuring diversity by sex, age, and race/ethnicity [12].

The study design incorporated rigorous methodology, with participants randomly assigned to one of four protocols differing by recall type (AMPM vs. ASA24) and administration order. All dietary recalls were conducted without prior notification to avoid changes in diet on the reporting day, a critical methodological consideration for reducing reactivity bias [12].

Table 2: AMPM Performance in Controlled Field Trials

| Participant Group | AMPM Mean Energy (kcal) | ASA24 Mean Energy (kcal) | Equivalent Nutrients/Food Groups |
|---|---|---|---|
| Men | 2,425 | 2,374 | 87% of 20 analyzed |
| Women | 1,876 | 1,906 | 87% of 20 analyzed |

The FORCS study demonstrated that for energy intake, the differences between AMPM and its self-administered counterpart (ASA24) were minimal, with mean intakes of 2,425 versus 2,374 kcal for men and 1,876 versus 1,906 kcal for women by AMPM and ASA24, respectively. Importantly, 87% of 20 analyzed nutrients and food groups were statistically equivalent at the 20% bound, controlling for false discovery rate [12].
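To make the "20% bound" concrete, the sketch below checks whether the ratio of ASA24 to AMPM mean energy intake falls within ±20% of unity. This is a simplified point-estimate check under an assumed definition of the bound; the published analysis tested statistical equivalence with false discovery rate control, which requires interval estimates not reproduced here.

```python
# Mean energy intakes in kcal, as reported for FORCS [12].
MEAN_ENERGY_KCAL = {
    "Men": {"AMPM": 2425, "ASA24": 2374},
    "Women": {"AMPM": 1876, "ASA24": 1906},
}


def within_bound(test_mean, reference_mean, bound=0.20):
    """Check whether test/reference lies within 1 +/- bound.

    The symmetric definition of the bound is an assumption of this sketch.
    """
    ratio = test_mean / reference_mean
    return (1 - bound) <= ratio <= (1 + bound)


results = {
    group: within_bound(means["ASA24"], means["AMPM"])
    for group, means in MEAN_ENERGY_KCAL.items()
}

for group, means in MEAN_ENERGY_KCAL.items():
    ratio = means["ASA24"] / means["AMPM"]
    print(f"{group}: ASA24/AMPM = {ratio:.3f}, within bound: {results[group]}")
```

For both men and women the ratio is within a few percent of 1, well inside the assumed bound, which is consistent with the minimal differences described above.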

Comparative Analysis: AMPM vs. Alternative Dietary Assessment Methods

Comparison with Self-Administered Automated Systems

The Automated Self-Administered 24-Hour Recall (ASA24) represents the self-administered counterpart to the interviewer-administered AMPM. Developed by the National Cancer Institute with funding from multiple NIH institutes, ASA24 was directly modeled after the USDA's AMPM and adapts the same multiple-pass approach for self-administration [9].

A critical distinction between the two systems lies in their administration: AMPM requires trained interviewers who actively guide respondents through the recall process, while ASA24 utilizes a web-based interface that enables respondents to complete recalls independently. This fundamental difference has implications for data quality, participant burden, and implementation costs [12].

Table 3: AMPM vs. ASA24 Comparative Analysis

| Feature | AMPM | ASA24 |
|---|---|---|
| Administration | Interviewer-administered | Self-administered |
| Personnel Requirements | Trained interviewers needed | No interviewers needed |
| Cost Structure | Higher personnel costs | Lower operational costs |
| Participant Preference | Preferred by 30% in comparative studies | Preferred by 70% in comparative studies |
| Completion Rates | Higher attrition in some studies | Lower attrition in some studies |
| Supplement Reporting | 43% reported use | 46% reported use (equivalent) |
| Ideal Population | Broad inclusion including low-literacy | Computer-literate with adequate health literacy |

The FORCS study revealed that 70% of respondents preferred ASA24 over AMPM, citing greater convenience and control over reporting timing. Additionally, attrition was lower in groups assigned to ASA24, suggesting potentially higher compliance with self-administered approaches in certain populations [12].

Comparison with Traditional Methods

When compared to traditional dietary assessment methods, AMPM demonstrates significant advantages in accuracy and practicality:

Food Frequency Questionnaires (FFQ): In the DLW validation study, the two FFQs tested underestimated energy intake by roughly 28% to 30%, whereas AMPM provided an accurate estimate of absolute energy intake at the group level [27].

Food Records: While food records can provide accurate data, they impose substantial respondent burden and may alter usual eating patterns due to the requirement for simultaneous recording. AMPM eliminates this reactivity by collecting retrospective recalls without advance notification [12].

Traditional 24-Hour Recalls: AMPM represents a substantial improvement over simple single-pass recalls through its structured multi-pass approach, which systematically addresses common memory lapses and portion size estimation errors [26] [12].

Global Adaptations and Methodological Influence

The effectiveness of the AMPM methodology has inspired the development of similar automated recall systems worldwide, with several countries creating culturally adapted versions:

Chile: Researchers developed the SER-24H software, containing over 7,000 food items and 1,400 culturally based recipes specific to the Chilean population. This system maintains the core multiple-pass structure while incorporating local foods and dietary patterns [14].

New Zealand: The Intake24-NZ system was adapted with a food list containing 2,618 foods specifically selected to reflect the New Zealand diet, including indigenous Māori foods and common Pacific and Asian dishes. The system differentiates between fortified and non-fortified products where nutritionally relevant [13].

United Kingdom: The Intake24 system has been used in the UK National Diet and Nutrition Survey, demonstrating the international transferability of the automated multiple-pass approach when appropriately adapted to local food supplies [13].

These international adaptations highlight both the robustness of the core AMPM methodology and the importance of cultural customization for accurate dietary assessment across different populations and food environments.

Successful implementation of AMPM in research settings requires specific tools and resources:

Table 4: Essential Research Reagents and Resources for AMPM Implementation

| Resource | Function/Application | Source/Example |
|---|---|---|
| USDA Food and Nutrient Database for Dietary Studies | Provides nutrient profiles for foods reported by respondents; essential for converting food intake to nutrient intakes | USDA FNDDS (Linked to AMPM) [12] |
| MyPyramid Equivalents Database | Allows conversion of reported foods to food group equivalents for dietary pattern analysis | USDA Database [12] |
| Standardized Portion Size Aids | Enhances accuracy of portion size estimation through visual and physical reference materials | Measuring cups, spoons, rulers, food model booklets [12] |
| NHANES Dietary Supplement Database | Facilitates coding of dietary supplement intake for comprehensive nutrient assessment | NHANES Database [28] |
| Trained Interviewers | Administers AMPM recalls following standardized protocols to ensure data quality and consistency | Study-specific training using What We Eat in America protocol [12] |
| Quality Control Procedures | Monitors and maintains data quality throughout data collection period | Recording reviews, interviewer supervision, data checks [12] |

These resources collectively support the comprehensive implementation of AMPM in research settings, ensuring standardized data collection, accurate nutrient analysis, and high-quality dietary information suitable for addressing complex research questions in nutritional epidemiology and public health.
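The role of a nutrient database in this pipeline can be illustrated with a minimal sketch. It assumes a small in-memory table of nutrient values per 100 g; the food codes, the values, and the function name `nutrient_totals` are hypothetical placeholders and are not taken from FNDDS or from the AMPM software.

```python
# Hypothetical nutrient values per 100 g of food. A real study would look
# these up in a food composition database such as FNDDS.
NUTRIENTS_PER_100G = {
    "apple_raw": {"energy_kcal": 52.0, "protein_g": 0.3},
    "bread_white": {"energy_kcal": 265.0, "protein_g": 9.0},
}


def nutrient_totals(reported_foods):
    """Sum nutrient intakes over (food_code, grams) pairs from one recall."""
    totals = {}
    for food_code, grams in reported_foods:
        profile = NUTRIENTS_PER_100G[food_code]
        for nutrient, per_100g in profile.items():
            # Scale the per-100 g value to the reported portion weight.
            totals[nutrient] = totals.get(nutrient, 0.0) + per_100g * grams / 100.0
    return totals


# Illustrative recall: 150 g of apple and 60 g of bread.
recall = [("apple_raw", 150.0), ("bread_white", 60.0)]
totals = nutrient_totals(recall)
print(totals)
```

The same lookup-and-scale pattern would apply to food group equivalents, with the per-100 g nutrient profile replaced by per-100 g food group amounts.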

The USDA Automated Multiple-Pass Method represents a significant advancement in dietary assessment methodology, providing researchers with a validated tool for collecting high-quality dietary intake data. Through its structured five-pass approach, AMPM effectively addresses common cognitive challenges in dietary recall, resulting in more complete and accurate reporting compared to traditional methods.

Validation studies demonstrate that AMPM accurately estimates group-level energy and nutrient intakes when compared against objective criteria such as doubly labeled water measurements. While self-administered systems like ASA24 offer advantages in cost and participant preference, AMPM maintains particular value in studies involving diverse populations, including those with lower literacy or limited computer proficiency.

The global adaptation of AMPM methodology across multiple countries underscores its robustness and flexibility, while maintaining core methodological principles that ensure data quality and comparability. As dietary assessment continues to evolve, the AMPM remains a foundational tool for research requiring precise measurement of food and nutrient intakes in population studies and clinical research.

A critical challenge in nutritional epidemiology is ensuring that automated 24-hour dietary recall (24HR) systems perform equitably across diverse population groups. The comparative accuracy of these tools hinges on deliberate design choices, primarily the adaptation of food lists and language interfaces to reflect varied cultural, linguistic, and culinary practices. This guide objectively compares the performance of several prominent systems based on recent validation studies, providing researchers with the experimental data necessary to select appropriate tools for diverse studies.

Comparative Performance of Adapted Dietary Recall Systems

The table below summarizes key performance metrics from recent studies on automated 24HR tools that have been adapted for specific populations.

| Tool Name | Adapted Population/Language | Key Adaptation | Performance Metric | Result | Reference Study Design |
|---|---|---|---|---|---|
| ASA24 [7] [9] | General US (English, Spanish); Canadian (English, French); Australian | Based on USDA AMPM; not specifically adapted for diverse ethnic groups in primary design. | Item Match Rate (vs. True Intake) | 80% of items reported [7] | Criterion validity study vs. true intake from feeding study [7] |
| Foodbook24 [15] | Brazilian & Polish populations in Ireland | Added 546 foods; translated interface to Brazilian Portuguese and Polish. | Food List Coverage | 86.5% (302/349) of consumed foods found [15] | Acceptability study comparing participant-listed foods to tool's database [15] |
| Intake24 (South Asia) [30] | Bangladesh, India, Pakistan, Sri Lanka | Developed a new food database with 2,283 commonly consumed items. | Recall Completion Time | Median: 13 minutes [30] | Performance evaluation within the large South Asia Biobank study [30] |
| myfood24 [31] | Danish population | Adapted UK version for Denmark, including underlying food composition databases. | Correlation (ρ) with Biomarkers | Protein: 0.45; Potassium: 0.42; Energy: 0.38 [31] | Validity study comparing tool against biomarkers in urine and blood [31] |

Detailed Experimental Protocols and Methodologies

The comparative data in the table above is derived from rigorous experimental protocols. Understanding these methodologies is crucial for interpreting the results.

Criterion Validity Study against True Intakes (ASA24)

This study design provides strong evidence of criterion validity because reported intake is compared directly with actual, known consumption [7].

- Objective: To assess the criterion validity of ASA24 relative to a measure of true intakes and an interviewer-administered 24-h recall (AMPM).
- Protocol:
  - True Intake Measurement: For 81 adults, all foods and beverages offered from a buffet were inconspicuously weighed before and after each participant served themselves. Plate waste was also weighed to establish a "true intake" value for each individual.
  - Randomization: Participants were randomly assigned to complete either the ASA24 or an interviewer-led AMPM recall the following day.
- Data Analysis: Researchers used linear and Poisson regression to analyze:
  - Matches: The proportion of truly consumed items that were correctly reported.
  - Omissions: Items that were consumed but not reported.
  - Intrusions: Items that were reported but not consumed.
  - Nutrient & Energy Estimation: The difference between reported and true values for energy, nutrients, and food groups.
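The match, omission, and intrusion definitions above can be sketched as simple set operations. This is a minimal illustration, not the study's analysis code: it assumes foods have already been harmonized to comparable item names, and the function name `classify_reports` and the example items are hypothetical.

```python
def classify_reports(consumed, reported):
    """Compare truly consumed items with reported items for one participant."""
    consumed, reported = set(consumed), set(reported)
    matches = consumed & reported        # consumed and reported
    omissions = consumed - reported      # consumed but not reported
    intrusions = reported - consumed     # reported but not consumed
    match_rate = len(matches) / len(consumed) if consumed else 0.0
    return {
        "matches": sorted(matches),
        "omissions": sorted(omissions),
        "intrusions": sorted(intrusions),
        "match_rate": match_rate,
    }


# Illustrative data only: five items observed at the buffet, five items recalled.
consumed_items = ["chicken", "rice", "salad", "dressing", "cola"]
reported_items = ["chicken", "rice", "salad", "cola", "bread"]
result = classify_reports(consumed_items, reported_items)
print(result)
```

In practice, per-participant counts like these would feed the regression models described above rather than being reported directly.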

Tool Expansion and Relative Validity Study (Foodbook24)

This methodology focuses on the process of adapting a tool and then testing its usability and accuracy [15].

- Objective: To examine the suitability of expanding Foodbook24 for use among Brazilian, Polish, and Irish populations in Ireland.
- Protocol:
  - Expansion Phase: National survey data from Brazil and Poland were reviewed to identify commonly consumed foods. A total of 546 food items were added to the existing food list, and the entire interface was translated into Polish and Brazilian Portuguese.
  - Acceptability Study: Using a qualitative approach, participants provided a visual record of their habitual diet, listing 349 foods in total. Researchers then calculated the percentage of these foods that were available in the updated Foodbook24 food list.
  - Comparison Study: Participants completed one 24HR using the self-administered Foodbook24 and one interviewer-led recall on the same day, repeated after two weeks.
- Data Analysis: Dietary intake data from both methods were compared using Spearman rank correlations for food groups and nutrient intakes.
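The food list coverage metric from the acceptability study is a simple proportion, and a short sketch makes the calculation explicit. The name matching here (case-insensitive, whitespace-trimmed) is an assumed simplification, and the function name and example foods are hypothetical; only the 302 of 349 figure comes from the study [15].

```python
def food_list_coverage(listed_foods, tool_food_list):
    """Return (percent of listed foods found in the tool, foods not found)."""
    available = {name.strip().lower() for name in tool_food_list}
    missing = [f for f in listed_foods if f.strip().lower() not in available]
    found = len(listed_foods) - len(missing)
    percent = 100.0 * found / len(listed_foods) if listed_foods else 0.0
    return percent, missing


# Illustrative data only.
listed = ["Feijoada", "pierogi", "Pão de queijo", "soda bread"]
tool_list = ["feijoada", "pierogi", "soda bread"]
coverage, not_found = food_list_coverage(listed, tool_list)
print(coverage, not_found)

# The reported result: 302 of 349 listed foods were available in the tool.
reported_coverage = round(100.0 * 302 / 349, 1)
print(reported_coverage)
```

Foods returned in the not-found list are natural candidates for the next round of food list expansion.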

Biomarker Validation Study (myfood24)

This protocol validates a dietary tool against objective biological markers, which are not subject to the same recall biases as self-reported data [31].

- Objective: To assess the validity and reproducibility of the myfood24 tool against dietary intake biomarkers in healthy Danish adults.
- Protocol:
  - Dietary Assessment: Participants (n=71) were instructed to complete a 7-day weighed food record using the adapted myfood24 tool at baseline and again 4 weeks later.
  - Biomarker Collection:
    - Energy Metabolism: Resting energy expenditure was measured by indirect calorimetry. Total energy expenditure was estimated and compared to reported energy intake.
    - Blood Sample: Fasting blood was drawn to measure serum folate levels.
    - Urine Sample: A 24-hour urine sample was collected to measure urea (a biomarker for protein intake) and potassium.
- Data Analysis: Validity was assessed by calculating Spearman's rank correlations (ρ) between the nutrient intake estimates from myfood24 and their corresponding biomarker measures.
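Spearman's rank correlation, as used in the data analysis step above, is the Pearson correlation computed on ranks. The following is a minimal standard-library sketch with average ranks for ties; the intake and biomarker values are invented for illustration and are not data from the myfood24 study.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, assigning tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical values: reported protein intake and a urinary biomarker.
protein_intake = [62, 75, 88, 54, 97, 70]
urinary_marker = [310, 340, 420, 300, 400, 365]
rho = spearman_rho(protein_intake, urinary_marker)
print(round(rho, 3))
```

Because the statistic depends only on ranks, it tolerates the differing units and non-linear relationships that are typical when self-reported intake is compared with a biomarker.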

Workflow for Adapting a Dietary Recall Tool

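Adapting an automated 24-hour recall tool for a new population follows a systematic, multi-stage workflow, as demonstrated by several studies [15] [30]: identify the foods commonly consumed by the target population, expand the food list and the underlying food composition data, translate the interface where needed, and then evaluate food list coverage, usability, and agreement with a reference method.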

This table details key tools and resources referenced in the comparative studies, which are essential for conducting research in this field.

| Tool / Resource | Primary Function | Relevance to Diverse Populations |
|---|---|---|
| ASA24 (Automated Self-Administered 24-h recall) [9] | A free, web-based tool for collecting multiple, automatically coded 24-hour diet recalls and food records. | The primary US, Canadian, and Australian versions exist, but adaptation for other specific populations requires researcher-led effort [7] [9]. |
| Intake24 [30] | An open-source, digital 24-h dietary recall tool. | Its open-source nature facilitates adaptation, as demonstrated by the creation of a bespoke 2,283-item food database for South Asian populations [30]. |
| Food Composition Database (FCDB) [15] | A database providing the nutrient profile for individual food items. | Critical for accuracy. Adapted tools may need to integrate local FCDBs (e.g., from Brazil or Poland) for culturally specific foods not in primary databases [15]. |
| Biomarkers (e.g., Urinary Nitrogen, Serum Folate) [31] | Objective biological measures used to validate self-reported intake of specific nutrients. | Provide a culture- and language-free method for validating dietary assessment tools, thus serving as a key reference for tools used in any population [31]. |
| Doubly Labeled Water (DLW) | The gold-standard method for measuring total energy expenditure in free-living individuals. | Serves as a reference for validating total energy intake reporting, though not used in the cited studies due to high cost and complexity [32]. |

The pursuit of comparative accuracy in automated 24-hour recall systems is fundamentally linked to inclusive design. Evidence shows that tools like Foodbook24 and Intake24, which undergo rigorous, population-specific adaptation of their food lists and languages, demonstrate strong usability and accuracy within their target groups [15] [30]. While universal tools like ASA24 provide a solid foundation, their performance in capturing the full dietary spectrum of ethnically diverse populations may be limited without similar customization efforts [7] [32]. For researchers, the choice of tool must be guided by the specific population of interest, with a commitment to employing and further developing methodologies that ensure equitable and accurate dietary assessment for all.

Automated 24-hour dietary recall systems represent a transformative advancement in nutritional assessment, offering a viable alternative to traditional interviewer-administered methods. The Automated Self-Administered 24-Hour Recall (ASA24) system, developed by the National Cancer Institute (NCI), is a web-based tool that enables the collection of high-quality dietary intake data at a lower cost than traditional methods [12] [9]. Modeled after the USDA's Automated Multiple-Pass Method (AMPM) used in the National Health and Nutrition Examination Survey (NHANES), ASA24 automates the multiple-pass interview process, guiding respondents through meal-based quick listing, detail passes for food preparation and portion size, and final review [12]. This automation eliminates the need for trained interviewers and manual coding of reported foods, significantly reducing the financial and administrative burdens associated with large-scale dietary assessment [12].

These automated systems are now integrated into a range of research workflows, from epidemiological studies investigating diet-disease relationships across populations to clinical trials requiring precise monitoring of participant adherence to nutritional interventions. As of June 2025, researchers have collected more than 1,140,328 recall or record days using ASA24, with approximately 673 studies per month utilizing the system [9]. This widespread adoption reflects growing recognition of the methodological advantages offered by automated systems, including standardized data collection, reduced administrative costs, and the ability to capture multiple days of intake to account for day-to-day variation [12] [33].

Comparative Performance Data: Automated vs. Traditional Methods

Energy and Nutrient Intake Comparisons

Quantitative comparisons between automated and interviewer-administered recall systems demonstrate generally equivalent performance for most nutrients, with some variation by specific nutrient and population.

Table 1: Mean Energy and Nutrient Intake Comparisons between AMPM and ASA24 [12]

| Nutrient | AMPM Mean | ASA24 Mean | Equivalence Judgment |
|---|---|---|---|
| Energy (Men) | 2,425 kcal | 2,374 kcal | Equivalent |
| Energy (Women) | 1,876 kcal | 1,906 kcal | Equivalent |
| Nutrients/food groups judged equivalent | - | - | 87% (of 20 analyzed) |

The Food Reporting Comparison Study (FORCS), a large field trial conducted in 2010-2011 with 1,081 adults from three integrated health systems, found that mean energy intakes reported via ASA24 were comparable to those collected via interviewer-administered AMPM recalls [12]. Of the 20 nutrients and food groups analyzed, 87% were judged equivalent at the 20% bound after controlling for false discovery rate [12]. This high rate of equivalence indicates that ASA24 produces quantitatively similar intake estimates to the established interviewer-administered method for most nutritional parameters.
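To make the "20% bound" concrete, the sketch below checks whether the ratio of the ASA24 mean to the AMPM mean falls within ±20% of 1, using the energy means from Table 1. This is a deliberately simplified illustration: it looks only at point estimates, assumes a symmetric bound of 0.80 to 1.20, and ignores the sampling variability and false discovery rate control that the study's formal equivalence testing accounted for. The function name `within_bound` is hypothetical.

```python
def within_bound(reference_mean, test_mean, bound=0.20):
    """Return (ratio, flag) where flag is True if ratio lies within 1 ± bound."""
    ratio = test_mean / reference_mean
    return ratio, (1 - bound) <= ratio <= (1 + bound)


# Mean energy intakes (kcal) from Table 1: (AMPM, ASA24).
table_1_energy = {
    "Energy (Men)": (2425, 2374),
    "Energy (Women)": (1876, 1906),
}

results = {
    label: within_bound(ampm, asa24)
    for label, (ampm, asa24) in table_1_energy.items()
}
for label, (ratio, ok) in results.items():
    print(f"{label}: ratio={ratio:.3f}, within 20% bound={ok}")
```

For both sexes the ratio differs from 1 by only about 2%, well inside the bound, which is consistent with the "Equivalent" judgments in Table 1.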

Methodological Advantages and Participant Engagement

Beyond numerical equivalence, automated systems offer several operational advantages that impact their integration into research workflows.

Table 2: Participant Engagement and Preference Metrics [12]

| Metric | AMPM | ASA24 | Implications |

|---|---|---|---|

| Participant Preference | 30% | 70% | Lower participant burden |

| Attrition (AMPM/AMPM protocol) | Higher | - | Higher retention with automation |

| Attrition (ASA24/ASA24 protocol) | - | Lower | Higher retention with automation |

| Cost per Recall | Higher (interviewer costs) | Lower (automated) | Scalability for large studies |