Automating Eating Detection: Sensor Technologies and AI for Real-World Clinical and Research Applications


Abstract

This article provides a comprehensive overview of the current state of automatic eating detection in free-living settings, a field poised to revolutionize nutritional epidemiology, chronic disease management, and behavioral health research. We explore the foundational principles driving the shift from error-prone self-reporting to objective, sensor-based measures. The review details the wide array of methodological approaches, from wearable motion sensors and acoustic devices to computer vision and AI-driven analysis. We critically examine the key challenges in troubleshooting these systems for real-world deployment, including confounding behaviors and data variability. Finally, we assess the validation metrics and comparative performance of existing technologies, highlighting their readiness for integration into clinical trials and public health interventions. This synthesis is tailored for researchers, scientists, and drug development professionals seeking to implement these tools in their work.

The Paradigm Shift: Why Free-Living Eating Detection is Transforming Public Health Research

Traditional self-report methods, such as 24-hour dietary recalls, food frequency questionnaires (FFQs), and food records, have long been the cornerstone of dietary assessment in both research and clinical practice [1] [2]. These tools are widely used to understand the relationships between diet, health, and disease. However, when the research objective is to accurately detect eating episodes in free-living settings—a critical aim for applications in chronic disease management and drug development—the fundamental limitations of these methods become major impediments to scientific progress. This technical guide details how recall bias, significant participant burden, and substantial measurement error inherent in self-reports necessitate a paradigm shift towards automated, sensor-based detection technologies.

Core Limitations of Self-Report Methods

The use of self-report for assessing dietary intake is fraught with challenges that affect the validity and reliability of the collected data. The table below summarizes the primary limitations and their impacts on dietary data.

Table 1: Core Limitations of Traditional Self-Report Dietary Assessment Methods

| Limitation | Underlying Causes | Impact on Data Quality |
|---|---|---|
| Recall Bias [3] | Reliance on memory; length of recall period; characteristics of the disease or event being recalled. | Leads to systematic misreporting (under- or over-estimation); distorts observed associations between diet and health outcomes. |
| Participant Burden [4] [2] | High cognitive effort to remember intake; time-consuming nature of detailed logging; complexity of portion size estimation. | Leads to reduced participant compliance, task aversion, and premature study dropout, potentially biasing the study sample. |
| Measurement Error [5] [3] | Social desirability bias; imprecise portion size estimation; use of generic food composition databases; limitations of the instrument itself. | Introduces non-random noise; obscures true diet-disease relationships; reduces statistical power to detect significant effects. |
| Lack of Temporal Resolution [4] | Methods like FFQs assess long-term intake; 24-hour recalls and records are often aggregated to daily totals. | Fails to capture micro-level eating patterns (e.g., eating rate, meal duration) crucial for understanding behavioral phenotypes. |

Recall Bias

Recall bias is a form of information bias originating from participants' inaccurate recollection of their past dietary intake [3]. Its effects are particularly pronounced in case-control studies, where participants with a disease (cases) may recall their past diet differently than healthy controls [3].

- Mechanisms: The accuracy of recall is influenced by the length of the recall period, with longer periods leading to greater error [3]. The nature of the health outcome can also affect memory; individuals may scrutinize their past habits more closely after a diagnosis.

- Quantitative Evidence: A systematic review comparing self-reported and direct measures of physical activity, a closely related self-report problem, found correlations that were generally low-to-moderate but ranged from -0.71 to 0.96 [5]. This wide range highlights how unpredictable, and often how poor, the validity of self-reported data can be. In dietary assessment, recall error can lead to underestimation of the association between a dietary factor and disease risk [3].

Participant Burden

Participant burden refers to the demands placed on individuals by the data collection process, which can negatively impact compliance and data quality.

- Cognitive and Time Demands: The multiple-pass 24-hour recall, while designed to aid memory, requires a trained interviewer and can take 20-30 minutes to administer [2]. Weighed food records, often considered the most detailed self-report method, are exceptionally burdensome, leading to participant fatigue and reduced accuracy over time [1].

- Impact on Compliance: High-burden methods are poorly suited for long-term monitoring, which is essential for understanding habitual intake in free-living conditions. This burden is a key driver for developing passive monitoring technologies that minimize user interaction [4] [6].

Measurement Error

Measurement error, or misclassification, is a pervasive issue where the reported intake systematically deviates from the true intake.

- Social Desirability Bias: Participants may report what they believe is socially acceptable rather than what they actually consumed. For example, intake of foods perceived as unhealthy is often under-reported, while intake of healthy foods may be over-reported [3].

- Portion Size Estimation: A significant source of error is the difficulty individuals face in estimating portion sizes, even with the aid of photographs or household measures [2].

- Instrument Limitations: Self-report measures are often unable to capture the absolute level of physical activity or dietary intake, and they are fraught with issues of recall and response bias [5].

The following diagram illustrates how these limitations are interconnected and collectively degrade data quality in free-living research.

The Imperative for Automated Eating Detection

The limitations of self-report are not merely theoretical but have tangible consequences for research validity, particularly in studies requiring precise detection of eating episodes in free-living environments.

The Gold Standard Problem

A fundamental challenge in dietary assessment is the lack of a practical "gold standard" for validating self-report in free-living conditions [5]. While methods like doubly labeled water exist for energy expenditure, they do not provide information on meal timing or composition. This makes it difficult to quantify the exact degree of error in self-reported dietary data [5] [7].

Consequences for Free-Living Research

In the context of automatic eating detection, reliance on self-report for ground truth is problematic. Studies using food diaries as a reference standard are inherently compromised by the same biases they seek to validate against [8] [4]. This can lead to misleading estimates of an automated system's performance. Furthermore, self-reports lack the temporal resolution to capture micro-level eating activities, such as chewing frequency and eating rate, which are emerging as important behavioral markers for conditions like obesity and diabetes [4].

Sensor-Based Approaches: Mitigating Traditional Limitations

Technological advancements offer a pathway to overcome the constraints of self-report. Wearable sensors and integrated systems can passively and objectively monitor eating behavior, thereby minimizing recall bias, reducing participant burden, and improving measurement accuracy.

Table 2: Comparison of Traditional vs. Sensor-Based Dietary Assessment Methods in Free-Living Settings

| Characteristic | Traditional Self-Report | Sensor-Based Automated Detection |
|---|---|---|
| Recall Bias | High - Relies on memory [3] | Minimal - Passive data collection [6] |
| Participant Burden | High - Requires active user engagement [1] [2] | Low - Minimal user interaction required [4] [6] |
| Measurement Error | High - Social desirability, portion estimation [3] | Lower - Objective data from sensors (e.g., motion, acoustics) [8] [9] |
| Temporal Resolution | Low (Hourly/Daily) [4] | High (Continuous, near real-time) [4] |
| Suitability for Long-Term Free-Living Monitoring | Poor due to high burden and poor compliance [4] | Good - Designed for continuous use [8] [6] |
| Contextual Data (e.g., food type) | Can be detailed but relies on user description [2] | Possible via image capture or sensor fusion, but privacy concerns exist [9] |

Experimental Protocols for Validation

To validate these novel sensor-based systems, researchers have developed rigorous protocols that often combine multiple data streams to establish a more reliable ground truth.

- Protocol for Wearable Sensor Validation (JMIR, 2022): A study using Apple Watches collected accelerometer and gyroscope data from participants in a free-living environment [8]. Ground truth was established via a digital food diary implemented on the watch itself, which participants used to log eating events with a simple tap. This design allowed for the collection of 3,828 hours of data. The study developed both population-wide and personalized deep learning models, with the latter achieving an Area Under the Curve (AUC) of 0.872 for eating detection, demonstrating the potential of this approach [8].

- Protocol for Multi-Sensor Fusion (Scientific Reports, 2024): This study employed the Automatic Ingestion Monitor v2 (AIM-2), a wearable device that includes a camera and a 3D accelerometer [9]. During pseudo-free-living days, participants used a foot pedal to mark the precise moment of food ingestion, providing a high-temporal-resolution ground truth. In free-living days, images captured by the device were manually reviewed to annotate eating episodes. By integrating confidence scores from both image-based food recognition and accelerometer-based chewing detection, the system achieved a 94.59% sensitivity and an 80.77% F1-score, significantly outperforming either method used in isolation [9].

The Scientist's Toolkit: Research Reagent Solutions

The transition to automated eating detection relies on a new set of research tools and reagents. The following table details key components used in cutting-edge research.

Table 3: Key Research Tools for Automated Eating Detection

| Tool / Reagent | Type/Model | Primary Function in Research |
|---|---|---|
| Inertial Measurement Unit (IMU) | Accelerometer & Gyroscope (e.g., in Apple Watch Series 4 [8]) | Captures hand-to-mouth gestures and other distinctive motion patterns associated with eating to detect intake events passively. |
| Egocentric Camera | Camera in AIM-2 system [9] | Automatically captures images from the user's point of view for visual food recognition and context validation, reducing reliance on memory. |
| Acoustic Sensor | Microphone [4] [6] | Captures chewing and swallowing sounds as proxies for eating events; often used in multi-sensor systems to improve accuracy. |
| Data Streaming & Logging Platform | Custom iOS/WatchOS App [8], AIM-2 SD Card [9] | Enables passive collection of sensor data and user-triggered event logs (diary), facilitating large-scale, free-living data collection for model training. |
| Deep Learning Model | Convolutional Neural Networks (CNN) [9], Personalized Models [8] | Classifies sensor data (images, motion signals) to detect eating episodes; personalization adapts to individual patterns, boosting performance (AUC up to 0.872 [8]). |

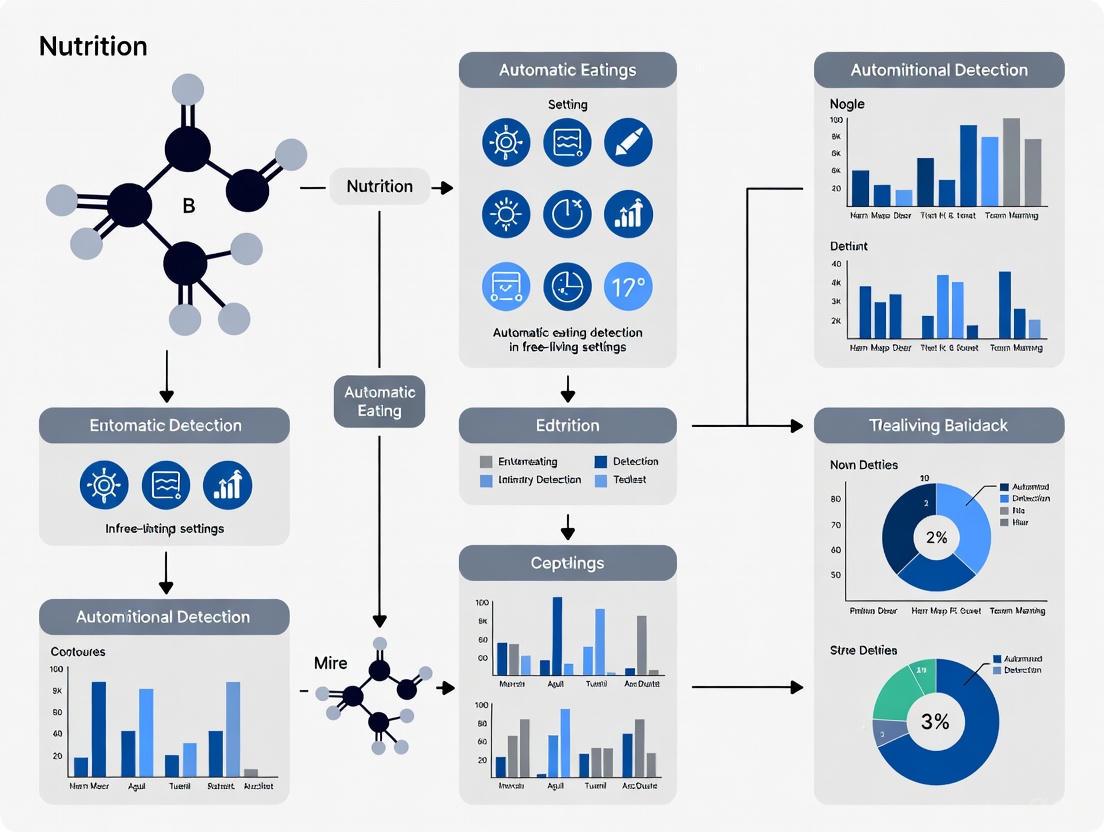

The workflow for developing and validating an automated eating detection system integrates these tools into a multi-stage process, as visualized below.

The limitations of traditional self-report methods—recall bias, participant burden, and measurement error—pose significant challenges to advancing research in automatic eating detection within free-living environments. These biases systematically distort data, impairing our ability to establish valid relationships between dietary behaviors and health outcomes. For researchers and drug development professionals, this represents a critical methodological bottleneck. The emergence of wearable sensor technologies and sophisticated machine learning models offers a compelling alternative, enabling objective, passive, and continuous monitoring of eating behavior. While challenges remain in standardizing outcomes and ensuring user privacy, the integration of multi-sensor data represents the future of dietary assessment, promising to unlock novel insights into diet and health that were previously obscured by the limitations of self-report.

Passive data collection technologies are revolutionizing research in automatic eating detection within free-living settings. These methodologies leverage wearable sensors and mobile devices to capture objective, high-fidelity data on eating behaviors, overcoming the profound limitations of traditional self-reporting tools. The integration of this passively gathered data with active reporting methods like Ecological Momentary Assessment (EMA), and its subsequent analysis through advanced machine learning models, enables the identification of nuanced behavioral phenotypes and paves the way for personalized, adaptive interventions. This technical guide details the core components, methodologies, and applications of these systems, providing researchers and drug development professionals with a framework for their implementation.

Research into eating behaviors has historically relied on self-reporting tools such as food diaries, 24-hour recalls, and food frequency questionnaires. Despite their widespread use, these methods are plagued by significant limitations, including participant burden, recall bias, and under- or over-reporting, which can skew research findings and limit their validity [4]. The emergence of passive data collection technologies offers a transformative alternative, enabling the continuous, objective measurement of behavior in a participant's natural, or "free-living," environment [10] [11].

This paradigm is particularly powerful when passive sensing is combined with active data collection. In this model, passive sensing (e.g., using accelerometers to detect bites) continuously collects objective data without requiring user engagement, while active sensing (e.g., EMA) involves participant-initiated reports, often serving as subjective ground-truth labels [10]. The confluence of these data streams creates rich, multimodal datasets that machine learning (ML) models can use to learn complex patterns, with the ultimate goal of using passive data alone to predict health outcomes and reduce participant burden [10].
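To make the pairing of passive and active streams concrete, the sketch below labels fixed-length windows of a passive recording using participant-reported eating events. It is only an illustration: the function name `label_windows`, the 60-second window length, and the representation of events as `(start, end)` pairs in seconds are assumptions made here, not details taken from the cited studies.

```python
from typing import List, Tuple


def label_windows(
    recording_start: float,
    recording_end: float,
    reported_events: List[Tuple[float, float]],
    window_s: float = 60.0,
) -> List[Tuple[float, float, int]]:
    """Split a recording into fixed windows and label each 1 (eating) or 0.

    A window is labeled 1 if it overlaps any self-reported eating event.
    All times are seconds since an arbitrary reference point.
    """
    windows = []
    t = recording_start
    while t < recording_end:
        w_end = min(t + window_s, recording_end)
        # Two intervals overlap when each starts before the other ends.
        overlaps = any(
            ev_start < w_end and ev_end > t for ev_start, ev_end in reported_events
        )
        windows.append((t, w_end, int(overlaps)))
        t = w_end
    return windows


# Toy example (invented data): a 5-minute recording with one reported
# eating event between 70 s and 130 s.
labels = label_windows(0.0, 300.0, reported_events=[(70.0, 130.0)])
window_labels = [lab for _, _, lab in labels]
print(window_labels)
```

Windows labeled this way can serve as training targets for a supervised model; the quality of those targets still depends on how accurately participants report event times.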

Core Technologies and Research Reagents

The implementation of a passive sensing system for automatic eating detection requires a suite of hardware and software components. The table below catalogs the essential "Research Reagent Solutions" and their functions in this field.

Table 1: Essential Research Reagents for Passive Eating Detection Research

| Reagent Category | Specific Examples | Primary Function in Research |
|---|---|---|
| Wearable Sensors | Wrist-worn accelerometers (e.g., in smartwatches), wearable cameras, acoustic sensors | To passively capture micromovements (bites, chews) and visual context of eating episodes in a free-living environment [4] [12]. |
| Mobile Data Collection Platforms | Smartphone applications with embedded sensors (microphone, accelerometer) | To serve as a hub for collecting passive data, administering EMAs, and providing a user interface for participants [10]. |
| Active Data Collection Tools | Ecological Momentary Assessment (EMA) via mobile apps, dietitian-administered 24-hour dietary recalls | To collect subjective, self-reported ground-truth data on eating events, context, and psychological state (e.g., hunger, cravings) [10] [12]. |
| Data Processing & Machine Learning Algorithms | Signal processing algorithms for feature extraction (e.g., chew rate, bite count); Supervised (XGBoost, SVM) and semi-supervised ML models | To process raw sensor data into meaningful features and build predictive models for eating detection and phenotype classification [12]. |

Technical Challenges and Mitigation Strategies

Deploying these systems in free-living settings presents significant logistical and technical hurdles. A scoping review of mobile health sensing identified key challenges in both active and passive data collection [10].

Table 2: Key Data Collection Challenges and Corresponding Mitigation Strategies

| Data Collection Type | Primary Challenges | Evidence-Based Mitigation Strategies |
|---|---|---|
| Active Data Collection | Participant compliance and burden, leading to lower data volume and potential bias [10]. | Use ML to optimize prompt timing and minimize frequency; deploy simplified interfaces (e.g., smartwatch prompts); auto-fill responses where possible [10]. |
| Passive Data Collection | Data consistency (e.g., incomplete sessions), rapid battery drain, and operating system-level authorization issues [10]. | Optimize sensor recording times to preserve battery life; employ motivational techniques to encourage proper device use; select cross-platform development tools [10]. |

A prominent challenge across studies is the heterogeneity of outcome measures and evaluation metrics, which complicates the comparison of different sensors and multi-sensor systems [4]. There is a clear need for standardized reporting to foster comparability and multidisciplinary collaboration.

Experimental Protocols and Workflows

A robust experimental protocol for automatic eating detection integrates multiple data streams. The following workflow, exemplified by studies like the SenseWhy project, provides a template for in-field research [12].

Detailed Methodology from the SenseWhy Study

The SenseWhy study provides a concrete example of a comprehensive experimental protocol for identifying overeating patterns [12].

- Participant Cohort: The study monitored 65 individuals with obesity in free-living conditions, resulting in 2,302 meal-level observations (an average of 48 per participant retained after accounting for dropouts and data quality checks) [12].

- Technology Stack: The system utilized an activity-oriented wearable camera, a companion mobile application, and dietitian-administered 24-hour dietary recalls to establish ground truth [12].

- Data Annotation: From 6,343 hours of video footage spanning 657 days, researchers manually labeled micromovements such as bites and chews, creating a high-quality dataset for model training [12].

- Contextual Data Integration: Psychological and contextual data were gathered immediately before and after meals through EMAs delivered via the mobile app, capturing factors like biological hunger, cravings, and meal context [12].

Data Analysis and Model Performance

The analysis of collected data typically involves a two-stage process: supervised detection of target events (like overeating) followed by unsupervised or semi-supervised discovery of behavioral phenotypes.

Supervised Detection of Overeating

In the SenseWhy study, researchers compared model performance using different feature sets, with XGBoost emerging as the most effective algorithm [12].

Table 3: Model Performance in Predicting Overeating Episodes (SenseWhy Study)

| Feature Set | Best Model | AUROC (SD) | AUPRC (SD) | Brier Score Loss (SD) | Top Predictive Features |
|---|---|---|---|---|---|
| EMA-only | XGBoost | 0.83 (0.02) | 0.81 (0.02) | 0.13 (0.01) | Light refreshment (-), Pre-meal hunger (+), Perceived overeating (+) [12] |
| Passive Sensing-only | XGBoost | 0.69 (0.04) | 0.69 (0.05) | 0.18 (0.02) | Number of chews (+), Chew interval (-), Chew-bite ratio (-) [12] |
| Feature-complete (Combined) | XGBoost | 0.86 (0.04) | 0.84 (0.04) | 0.11 (0.02) | Perceived overeating (+), Number of chews (+), Light refreshment (-) [12] |

The superior performance of the feature-complete model underscores the synergistic value of integrating subjective contextual data from EMAs with objective behavioral data from passive sensing.
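For readers less familiar with the metrics in Table 3, the following sketch shows how AUROC and the Brier score are defined, using plain Python and invented toy predictions. It is not the SenseWhy evaluation code; the function names and the data are assumptions for illustration, and AUPRC is omitted for brevity.

```python
from typing import Sequence


def auroc(y_true: Sequence[int], y_score: Sequence[float]) -> float:
    """Probability that a random positive outscores a random negative.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg
    )
    return wins / (len(pos) * len(neg))


def brier_score(y_true: Sequence[int], y_prob: Sequence[float]) -> float:
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


# Invented toy data: 1 = overeating episode, 0 = other meal.
y_true = [1, 0, 1, 0, 0, 1]
y_prob = [0.9, 0.2, 0.6, 0.7, 0.1, 0.8]

toy_auroc = auroc(y_true, y_prob)
toy_brier = brier_score(y_true, y_prob)
print(round(toy_auroc, 3), round(toy_brier, 3))
```

Higher AUROC indicates better ranking of overeating episodes above other meals, while a lower Brier score indicates better-calibrated probabilities, which is why the two columns in Table 3 move in opposite directions.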

Phenotype Discovery via Semi-Supervised Clustering

Following detection, semi-supervised learning can be applied to discover distinct behavioral phenotypes. The SenseWhy study analyzed 2,246 meals, identifying 369 (16.4%) as overeating episodes. The pipeline, applied to the entire dataset, identified five distinct overeating phenotypes with a cluster purity of 81.4% and a silhouette score of 0.59, confirming their coherence and distinctiveness [12].
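Cluster purity, one of the two quality measures quoted above, can be illustrated with a short sketch. This uses the standard textbook definition (the share of items that belong to the majority class of their cluster); how the SenseWhy pipeline computed it is not described here, and the cluster assignments and class labels below are invented.

```python
from collections import Counter, defaultdict
from typing import Hashable, Sequence


def cluster_purity(
    cluster_ids: Sequence[Hashable], class_labels: Sequence[Hashable]
) -> float:
    """Fraction of items whose class matches their cluster's majority class."""
    if len(cluster_ids) != len(class_labels) or not cluster_ids:
        raise ValueError("inputs must be non-empty and of equal length")
    by_cluster = defaultdict(list)
    for cluster, label in zip(cluster_ids, class_labels):
        by_cluster[cluster].append(label)
    # For each cluster, count how many members carry its most common label.
    majority_total = sum(
        Counter(labels).most_common(1)[0][1] for labels in by_cluster.values()
    )
    return majority_total / len(cluster_ids)


# Invented toy data: ten meals in three clusters with reference labels.
clusters = [0, 0, 0, 1, 1, 1, 1, 2, 2, 2]
reference = ["a", "a", "b", "b", "b", "b", "a", "c", "c", "c"]
purity = cluster_purity(clusters, reference)
print(purity)
```

Purity rewards clusters that are internally homogeneous but does not penalize splitting one class across many clusters, which is one reason it is usually reported alongside a separation measure such as the silhouette score.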

The following diagram illustrates the logical relationship between data inputs, the analytical process, and the resulting phenotypes.

Application in Clinical Trials and Drug Development

The emergence of digital endpoints—health measures derived from sensor-generated data collected outside clinical settings—is of paramount importance to drug development professionals [11]. These endpoints offer a more authentic assessment of a patient's daily experience and can reveal the direct impact of a therapeutic intervention on function and quality of life.

For instance, in conditions like obesity and its related comorbidities, traditional endpoints (e.g., weight, BMI) provide only a coarse snapshot. Passive data collection can generate digital endpoints that capture nuanced changes in eating behavior microstructure, such as reduced bite count or slower eating rate, which may serve as early indicators of a drug's efficacy [11] [12]. This continuous, objective measurement in a patient's free-living environment can significantly improve the sensitivity of clinical trials, potentially reducing costs and time by providing more efficient and accurate analyses of a treatment's effect [11].

Regulatory bodies recognize this potential. The FDA and European regulators have established guidance for the use of real-world data, which includes data from mobile devices and wearables, facilitating the incorporation of these novel endpoints into clinical trial design [11].

The automatic detection of eating behaviors in free-living settings represents a paradigm shift in nutritional science, obesity research, and therapeutic development. Traditional assessment methods like 24-hour recalls and food diaries are plagued by inaccuracies due to reliance on memory and susceptibility to reporting biases [4]. The emergence of sophisticated sensor technologies and computational methods now enables researchers to objectively capture the dynamic process of eating, from microscopic bite-level actions to the broader contextual landscape in which consumption occurs. This technical guide provides a comprehensive framework for understanding key eating behaviors and metrics, with particular emphasis on their relevance to developing and validating automated detection systems for use in real-world environments.

The study of eating behavior operates across two interconnected domains: meal microstructure and contextual cues. Meal microstructure refers to the precise temporal patterns of eating within a single bout, including metrics like bite rate and chewing duration [13]. Contextual cues encompass the environmental and behavioral circumstances surrounding eating episodes, such as location, social setting, and concurrent activities [14]. Together, these domains provide a holistic understanding of dietary patterns that can inform interventions for conditions ranging from obesity to eating disorders.

Foundational Concepts: Meal Microstructure

The term "meal microstructure" originated from animal studies investigating the behavioral and physiological mechanisms of food intake control [13]. In humans, it encompasses the detailed characterization of eating dynamics through specific, quantifiable behaviors. Research has consistently revealed that particular microstructural patterns correlate with consumption volume and obesity risk, forming what has been termed an 'obesogenic' eating style [13].

Core Microstructure Metrics

Table 1: Core Meal Microstructure Metrics and Definitions

| Metric | Technical Definition | Measurement Unit | Relevance to Free-Living Detection |
|---|---|---|---|
| Bite Count | Number of discrete instances of food entering the mouth [13] | Count per episode | Primary target for automated detection; can be inferred from jaw motion, hand gestures, or visual analysis [15] [9] |
| Bite Rate | Speed of biting activity | Bites per minute | Indicator of eating pace; linked to obesity risk; derivable from bite count and meal duration [15] [13] |
| Chewing Cycles | Number of masticatory sequences per food bolus | Count per bite | Proxied by jaw motion (accelerometers) or acoustic signals; relates to food texture and satiation [9] |
| Swallowing | The action of conveying food from the mouth to the esophagus | Count per minute | Detectable via throat microphone (acoustic) or strain sensors; marks intake completion [9] |
| Meal Duration | Total time from first to last bite | Minutes | Easily derived from detected eating episode start and end times [14] [13] |
| Eating Rate | Amount of food consumed per unit time | Grams or kcal per minute | Requires combined sensing (intake detection + portion estimation) [13] |
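Several of these metrics follow arithmetically from a list of detected bite timestamps. The sketch below derives bite count, meal duration, bite rate, and (when a portion estimate is available) eating rate. The names `MealSummary` and `summarize_meal`, and the example timestamps and gram amount, are hypothetical and chosen only to illustrate the definitions in the table.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class MealSummary:
    bite_count: int
    meal_duration_min: float
    bite_rate_per_min: float
    eating_rate_g_per_min: Optional[float]


def summarize_meal(
    bite_times_s: List[float], grams_consumed: Optional[float] = None
) -> MealSummary:
    """Derive microstructure metrics from bite timestamps given in seconds."""
    if len(bite_times_s) < 2:
        raise ValueError("need at least two bites to define a meal duration")
    times = sorted(bite_times_s)
    # Meal duration: time from first to last bite, converted to minutes.
    duration_min = (times[-1] - times[0]) / 60.0
    if duration_min <= 0:
        raise ValueError("bite timestamps must span a positive duration")
    bite_rate = len(times) / duration_min
    # Eating rate needs a portion estimate from another source (e.g., images).
    eating_rate = (
        grams_consumed / duration_min if grams_consumed is not None else None
    )
    return MealSummary(len(times), duration_min, bite_rate, eating_rate)


# Invented example: eight bites over ten minutes, 350 g consumed.
meal = summarize_meal([0, 30, 75, 120, 200, 300, 420, 600], grams_consumed=350.0)
print(meal)
```

The optional `grams_consumed` argument reflects the point made in the table: eating rate cannot be computed from bite detection alone and depends on a separate portion estimate.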

Behavioral Definitions and Coding Challenges

A significant challenge in the field is the lack of standardization in defining microstructure behaviors. For instance, a "bite" has been variably defined as any food touching the mouth versus food that is chewed and swallowed [13]. These definitional inconsistencies complicate the comparison of findings across studies and highlight the need for precise, algorithm-friendly definitions in automated detection research. Furthermore, certain behaviors like chews and swallows can be difficult to distinguish in video data but may be more readily discernible through inertial or acoustic sensors [9]. The gold standard for training and validating automated systems remains manual observational coding of video-recorded meals, which achieves high accuracy but is prohibitively time-consuming and labor-intensive for large-scale studies [15].

Technological Modalities for Automated Detection

Automated eating detection systems leverage a variety of wearable and ambient sensors to capture meal microstructure and contextual data. The following diagram illustrates the primary sensor modalities and the specific behaviors they detect.

Comparative Analysis of Detection Technologies

Table 2: Sensor Technologies for Automated Eating Detection in Free-Living Conditions

| Technology | Primary Sensing Modality | Detected Behaviors/Metrics | Strengths | Limitations |
|---|---|---|---|---|
| Inertial Sensors [14] [16] | Wrist-worn accelerometer/gyroscope | Hand-to-mouth gestures, coarse chewing | High user compliance, comfortable, long battery life | Prone to false positives from non-eating gestures (e.g., talking, face-touching) |
| Acoustic Sensors [9] [16] | Microphone (neck- or ear-worn) | Chewing and swallowing sounds | High accuracy for solid food intake | Background noise interference, privacy concerns, ineffective for soft foods |
| Image-Based Systems [15] [9] | First-person (egocentric) camera | Bite count, food type, portion size (pre-/post-meal) | Provides rich contextual and food identity data | Major privacy issues, high computational load for analysis, limited by field of view |
| Strain Sensors [9] | Jaw- or throat-mounted strain gauge | Chewing and swallowing | High accuracy for specific behaviors | Intrusive, low user acceptance for long-term free-living use |
| Multi-Sensor Systems (AIM-2) [9] | Accelerometer + Camera | Fused data for chewing, bites, and food presence | Reduces false positives via sensor fusion; higher overall accuracy [9] | More complex system design and data integration |

The Role of Multi-Sensor Fusion

Integrating multiple sensing modalities is a powerful strategy to overcome the limitations of individual sensors. For example, the Automatic Ingestion Monitor v2 (AIM-2) combines an accelerometer for detecting chewing motions with a camera that captures egocentric images periodically [9]. A hierarchical classifier can then fuse confidence scores from both sensor streams. This approach has demonstrated a significant improvement in performance, achieving 94.59% sensitivity, 70.47% precision, and an 80.77% F1-score in free-living conditions, which is approximately 8% higher in sensitivity than using either method alone [9]. This fusion effectively reduces false positives by, for instance, disregarding chewing motions (e.g., from gum) that are not accompanied by the visual presence of food in the images.
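The gum-chewing example can be made concrete with a deliberately simple score-level fusion rule. This is not the hierarchical classifier used with AIM-2, whose internals are not described here; it is a weighted average of two confidence scores followed by a threshold, with the function name, weight, and threshold all assumed for illustration.

```python
def fuse_scores(
    chew_conf: float,
    food_image_conf: float,
    w_chew: float = 0.5,
    threshold: float = 0.5,
) -> bool:
    """Score-level fusion: weighted mean of two confidences, then a threshold.

    chew_conf:        accelerometer-based chewing confidence in [0, 1]
    food_image_conf:  image-based food-presence confidence in [0, 1]
    """
    for name, value in (("chew_conf", chew_conf), ("food_image_conf", food_image_conf)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    fused = w_chew * chew_conf + (1.0 - w_chew) * food_image_conf
    return fused >= threshold


# Invented scores. Gum chewing: strong chewing signal, no food in view.
gum = fuse_scores(chew_conf=0.9, food_image_conf=0.05)
# Meal: both sensors agree that eating is likely.
meal_detected = fuse_scores(chew_conf=0.8, food_image_conf=0.7)
print(gum, meal_detected)
```

With equal weights, a high chewing score alone is not enough to cross the threshold, which mirrors how fusion suppresses false positives from chewing that occurs without visible food. In practice the weights and threshold would be tuned on labeled data rather than fixed by hand.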

Capturing Contextual Cues in Free-Living Settings

Beyond the microstructure of how people eat, understanding why they eat requires capturing the context of eating episodes. Contextual cues are critical for interpreting dietary patterns and designing effective, context-aware interventions.

Key Contextual Dimensions

Social Context: This refers to whether an individual is eating alone or with others. Research has shown that social eating can influence both the amount and type of food consumed [14]. In a deployment of a smartwatch-based detection system among college students, over half (54.01%) of detected meals were consumed alone [14].

Location and Activity: The environment (e.g., home, workplace, car) and concurrent activities (e.g., watching TV, working) are significant influencers. The same study found that over 99% of meals were consumed with distractions, a behavior associated with overeating and uncontrolled weight gain [14].

Temporal Patterns: The time of day and regularity of eating episodes are important metabolic cues. Automated detection allows for the unobtrusive monitoring of meal timing and frequency across extended periods [4] [14].

Ecological Momentary Assessment (EMA) for Context Capture

A powerful method for capturing subjective contextual data is Ecological Momentary Assessment (EMA). EMA involves prompting users with short questionnaires on their mobile devices at specific moments—ideally, triggered automatically by a passive eating detection system [14]. When an eating episode is detected, a system can prompt the user to report contextual information such as mood, social company, and perceived healthfulness of the food. This method minimizes recall bias by collecting data in real-time and provides rich, ground-truthed contextual data that can be linked to the objectively sensed microstructure metrics.
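A detection-triggered EMA needs some rule for deciding when to prompt. One plausible rule, sketched below, fires a prompt after several consecutive high-probability windows and then enforces a cooldown so the participant is not prompted repeatedly during one meal. The function name, the threshold of 0.8, the three-window requirement, and the one-hour cooldown are all assumptions made for this example, not parameters from the cited deployment.

```python
from typing import List, Optional, Sequence


def ema_prompt_times(
    window_times_s: Sequence[float],
    window_probs: Sequence[float],
    threshold: float = 0.8,
    min_consecutive: int = 3,
    cooldown_s: float = 3600.0,
) -> List[float]:
    """Return the times at which an EMA prompt would be sent.

    A prompt fires once `min_consecutive` windows in a row reach `threshold`,
    provided at least `cooldown_s` seconds have passed since the last prompt.
    """
    prompts: List[float] = []
    streak = 0
    last_prompt: Optional[float] = None
    for t, p in zip(window_times_s, window_probs):
        streak = streak + 1 if p >= threshold else 0
        cooled_down = last_prompt is None or (t - last_prompt) >= cooldown_s
        if streak >= min_consecutive and cooled_down:
            prompts.append(t)
            last_prompt = t
            streak = 0
    return prompts


# Invented detector output: one window per minute, eating starts at 60 s.
times = [0, 60, 120, 180, 240, 300, 360]
probs = [0.1, 0.9, 0.85, 0.95, 0.9, 0.9, 0.9]
prompts = ema_prompt_times(times, probs)
print(prompts)
```

Requiring consecutive windows trades a short delay for fewer spurious prompts, and the cooldown limits participant burden, the same compliance concern that motivates passive sensing in the first place.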

Experimental Protocols and Validation Frameworks

Rigorous experimental protocols are essential for developing and validating automated eating detection systems. The transition from controlled lab settings to free-living conditions presents unique challenges and requirements.

Protocol Design for Algorithm Development

Data Collection Paradigms:

- Laboratory Meals: Controlled studies where participants consume pre-defined meals in a lab setting. This allows for precise ground truth collection using methods like video recording and manual annotation [15]. The ByteTrack study, for instance, used 242 lab meal videos from 94 children for initial model training [15].

- Pseudo-Free-Living: Participants wear sensors and consume prescribed meals in a lab but are otherwise unrestricted during the day. This serves as an intermediate step for algorithm tuning [9].

- Free-Living Validation: The ultimate test where participants go about their normal lives while wearing sensors. Ground truth is typically collected via a combination of EMAs, self-reported food logs, and periodic image review [4] [14] [9].

Ground Truth Annotation: For video data, manual coding by trained annotators using specialized software is the gold standard. Behaviors are annotated frame-by-frame or event-by-event to create labels for supervised machine learning [15] [13]. For sensor data in free-living studies, ground truth can be established using user-activated markers (e.g., a button press) or through intensive self-reporting tools like time-activated EMAs [14] [9].

Performance Metrics and Validation

The performance of detection systems is evaluated using standard classification metrics computed at the episode or gesture level:

- Precision: The proportion of detected eating episodes that are true meals. High precision means few false positives.

- Recall (Sensitivity): The proportion of actual meals that are correctly detected. High recall means few false negatives.

- F1-Score: The harmonic mean of precision and recall, providing a single metric for overall performance [15] [14] [9].

Agreement with ground truth is also frequently assessed using the Intraclass Correlation Coefficient (ICC) for continuous measures like bite count or meal duration [15].
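As an illustration of episode-level scoring, the sketch below computes precision, recall, and F1 from lists of detected and ground-truth episodes. It assumes one simple matching rule, namely that any temporal overlap counts as a match; published studies use a variety of matching criteria, so this rule and the function names are assumptions for the example.

```python
from typing import List, Tuple

Episode = Tuple[float, float]  # (start_s, end_s)


def overlaps(a: Episode, b: Episode) -> bool:
    """True if the two time intervals share any duration."""
    return a[0] < b[1] and b[0] < a[1]


def episode_metrics(detected: List[Episode], truth: List[Episode]) -> Tuple[float, float, float]:
    """Episode-level precision, recall, and F1 under an any-overlap matching rule."""
    # Detected episodes that overlap at least one true episode
    matched_detections = sum(any(overlaps(d, t) for t in truth) for d in detected)
    # True episodes that are overlapped by at least one detection
    found_truth = sum(any(overlaps(t, d) for d in detected) for t in truth)
    precision = matched_detections / len(detected) if detected else 0.0
    recall = found_truth / len(truth) if truth else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


truth = [(100, 400), (1000, 1300), (2000, 2200)]
detected = [(120, 380), (600, 650), (1050, 1200), (3000, 3100)]
p, r, f1 = episode_metrics(detected, truth)
print(f"precision={p:.2f} recall={r:.2f} f1={f1:.2f}")  # precision=0.50 recall=0.67 f1=0.57
```

Two of the four detections overlap a true meal, and two of the three true meals are found, which gives the values printed above.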

Table 3: Essential Research Reagents and Solutions for Automated Eating Detection Studies

| Item | Function/Description | Example in Research Use |

|---|---|---|

| Sensor Platform | A wearable or fixed device that captures raw sensor data (e.g., acceleration, sound, images). | Automatic Ingestion Monitor v2 (AIM-2) [9], commercial smartwatches [14], Axis network cameras (fixed, for lab meal video) [15] |

| Annotation Software | Software for manually labeling and timestamping eating behaviors in video or sensor data. | MATLAB Image Labeler [9], ELAN, Noldus Observer XT |

| Ground Truth Logging Tool | A method for participants to mark eating events or provide context in free-living studies. | Smartphone-based EMA apps [14], Foot pedal data logger [9] |

| Pre-Annotated Datasets | Publicly available datasets of sensor data with corresponding eating behavior labels for algorithm training. | Wild-7 dataset (accelerometer data for eating/not-eating) [14] |

| Machine Learning Libraries | Software libraries (e.g., Python's scikit-learn, TensorFlow, PyTorch) for building and deploying detection models. | Used to implement models like Random Forests, CNNs, and LSTMs for bite classification [15] [14] |

The automated detection of key eating behaviors and contextual cues is a rapidly advancing field poised to transform nutritional science and clinical practice. The convergence of sophisticated sensing technologies, robust machine learning algorithms, and rigorous experimental protocols enables the objective, high-resolution measurement of meal microstructure and eating context in naturalistic environments. Current systems demonstrate promising performance, with multi-sensor fusion approaches effectively reducing false positives.

Future work must focus on several key areas: improving the robustness of algorithms to handle the vast diversity of eating styles and food types across different populations; enhancing user comfort and social acceptability of sensors to facilitate long-term deployment; and developing standardized evaluation frameworks and public datasets to enable direct comparison of different methodologies. As these technologies mature, they will unlock unprecedented opportunities for large-scale, longitudinal studies of eating behavior, paving the way for highly personalized, just-in-time interventions for obesity and related chronic diseases.

The accurate monitoring of dietary intake is a cornerstone in understanding and managing chronic diseases such as type 2 diabetes, cardiovascular disease, and obesity [17] [18]. Despite this critical need, traditional assessment methods like 24-hour recalls and food diaries are plagued by significant limitations, including substantial participant burden and pervasive recall bias, which lead to under- or over-reporting of energy intake [17]. These inaccuracies obstruct effective clinical management and high-quality research.

The field is now transitioning toward objective, technology-driven solutions. Research is increasingly framed within the context of developing automatic eating detection in free-living settings, aiming to passively capture eating behavior with minimal user interaction [17]. The integration of wearable sensors and artificial intelligence presents a transformative opportunity to improve chronic disease care. These technologies enable the continuous, objective collection of data on dietary intake, facilitating personalized interventions and providing previously unattainable insights into eating behaviors [6] [18]. This whitepaper explores the current state of these technologies, their validation, and their practical application for researchers and drug development professionals.

Technological Foundations of Automatic Eating Detection

Automatic eating detection systems primarily rely on two data sources: signals from wearable sensors (motion, acoustic, and strain) and images from cameras. The former detect proxies of eating activity, such as chewing, swallowing, and hand-to-mouth gestures, while the latter identify food directly.

Wearable Sensor Modalities

Wearable sensors offer a passive and unobtrusive method for monitoring eating episodes. A scoping review highlighted that 65% of studies used multi-sensor systems, with accelerometers being the most prevalent sensor type (62.5%) [17]. The table below summarizes the primary sensor types and their applications.

Table 1: Wearable Sensor Modalities for Eating Detection

| Sensor Type | Measured Proxy | Common Form Factor | Key Strengths |

|---|---|---|---|

| Accelerometer/Gyroscope | Hand-to-mouth gestures, head movement [9] [8] | Wristwatch (e.g., Apple Watch), eyeglasses [8] | High user compliance; convenient to use [9] |

| Acoustic Sensor | Chewing and swallowing sounds [15] [9] | Neck-mounted pendant [15] | Direct detection of ingestion-related sounds |

| Piezoelectric Strain Sensor | Jaw movement during chewing [19] | Patch on the temple or jaw [19] | High accuracy for solid food detection [19] |

| Image Sensor (Camera) | Direct visual identification of food and beverages [9] | Egocentric camera on eyeglasses (e.g., AIM-2) [9] | Provides contextual data on food type |

The Role of Artificial Intelligence and Computer Vision

Machine learning, particularly deep learning, is the cornerstone of modern eating detection systems. These models learn complex patterns from sensor data to distinguish eating from other activities.

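- Sensor Data Analysis: Deep learning models analyze motion sensor data to infer eating behavior. One large-scale study using Apple Watches achieved an Area Under the Curve (AUC) of 0.951 for detecting entire meal events by aggregating data over time [8]. The same study reported an AUC of 0.872 for personalized models fine-tuned to an individual's data [8]. Because this value is lower than the meal-level AUC, the two figures presumably refer to different evaluation granularities and should not be compared directly. The personalized result is best read as evidence for the value of individual-specific adaptation relative to a non-personalized model evaluated at the same granularity.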

- Computer Vision for Video Analysis: In controlled settings, deep learning models can automate the analysis of meal videos. The ByteTrack system, which uses a hybrid Faster R-CNN and YOLOv7 pipeline for face detection followed by a convolutional and recurrent neural network for bite classification, achieved an F1-score of 70.6% for detecting bites in children [15]. This demonstrates feasibility but also the challenge of occlusions and high movement.

- Data Fusion: The most robust systems integrate multiple data streams. A study using the AIM-2 device fused image-based food recognition with accelerometer-based chewing detection, achieving a 94.59% sensitivity and 80.77% F1-score in a free-living environment. This integrated approach significantly reduced false positives compared to either method alone [9].
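The F1-score reported for the AIM-2 fusion approach can be cross-checked from the sensitivity given above and the 70.47% precision listed for the same system elsewhere in this article. The helper below is a plain implementation of the harmonic mean; the function name is chosen for illustration only.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported for the AIM-2 system: 70.47% precision, 94.59% sensitivity
f1 = f1_score(0.7047, 0.9459)
print(f"{100 * f1:.2f}%")  # 80.77%
```

The result matches the reported 80.77%. It also shows that the F1-score is pulled toward the lower of the two values, so the system's residual false positives, rather than missed meals, limit its overall score.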

Experimental Protocols and Validation in Free-Living Conditions

Validating that these systems perform reliably in real-world settings is a critical step for their adoption in clinical research and care.

Core Methodological Components

Several key components are consistent across rigorous validation studies:

- Ground Truth Annotation: Establishing a reliable benchmark is essential. Video observation is often used as a ground truth, but requires assessing inter-rater reliability. One study reported an average kappa of 0.74 for activity annotation and 0.82 for food intake annotation across trained human raters [19]. Participant-activated foot pedals are also used to mark the start and end of bites in lab settings [9].

- Data Collection Protocols: Studies often progress from controlled to free-living conditions.

- Performance Metrics: A range of metrics are used to evaluate performance, including Accuracy, Sensitivity (Recall), Precision, F1-score, and Area Under the Curve (AUC) [17] [8]. The choice of metric depends on the clinical or research application.
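To make the inter-rater kappa values quoted above concrete, the following sketch computes Cohen's kappa for two raters labeling the same segments. The source does not state which kappa variant was used, so Cohen's two-rater form is an assumption here, and the labels are invented for illustration.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(rater_a: Sequence[str], rater_b: Sequence[str]) -> float:
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of segments")
    n = len(rater_a)
    # Observed agreement: fraction of segments with identical labels
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


rater_1 = ["eat", "eat", "eat", "eat", "no", "no", "no", "no", "no", "no"]
rater_2 = ["eat", "eat", "eat", "no", "no", "no", "no", "no", "no", "eat"]
print(round(cohens_kappa(rater_1, rater_2), 3))  # 0.583
```

In this example the raters agree on 80% of segments, but because 52% agreement would be expected by chance, kappa is only 0.583. This is why kappa is preferred over raw agreement for imbalanced eating/not-eating labels.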

Protocol Example: Integrated Image and Sensor Validation

A 2019 study detailed a protocol for validating the AIM-2 sensor in an unconstrained environment [19].

- Facility: A 4-bedroom, 3-bathroom apartment with six HD cameras installed in common areas.

- Participants: 40 participants were monitored in small groups over three non-consecutive days.

- Procedure: Participants self-applied the jaw sensor and wore the AIM-2 system. The kitchen was stocked with a wide variety of foods (189 items). Participants could move freely and socialize, but were asked to only eat in camera-monitored rooms. This setup allowed for the simultaneous collection of sensor data and multi-angle video ground truth.

- Outcome: The AIM-2 sensor data matched human video-annotated food intake with a kappa of 0.77-0.78, close to the agreement reported between the trained human raters themselves (kappa of 0.74 to 0.82) [19].

Diagram 1: Experimental validation workflow for integrated detection systems.

Performance Data and Application in Chronic Diseases

The performance of automatic detection systems has reached a level where deployment in clinical research and management is feasible.

Table 2: Performance of Selected Automatic Eating Detection Systems

| Technology / System | Study Setting | Key Performance Metrics | Relevance to Chronic Disease |

|---|---|---|---|

| Wrist-worn Accelerometer (Apple Watch) | Free-living, 3828 hours [8] | AUC: 0.951 (meal-level) | Diabetes management (passive meal detection for insulin dosing) [8] |

| Integrated Sensor (AIM-2) | Free-living, 30 participants [9] | Sensitivity: 94.59%, F1-score: 80.77% | Obesity research (reduced false positives for accurate intake monitoring) [9] |

| Video Analysis (ByteTrack) | Laboratory meals, 94 children [15] | F1-score: 70.6% (bite-level) | Pediatric obesity (analysis of meal microstructure) [15] |

| Neck-Worn Sensor (AIM v1.0) | Pseudo-free-living, 40 participants [19] | Kappa vs. Video: 0.77 | General dietary assessment for cardiometabolic health [19] |

Application in Specific Disease Contexts

- Diabetes Management: Passive monitoring of eating episodes can be integrated into a connected care ecosystem. For patients on insulin, detecting the start and duration of a meal can inform closed-loop insulin delivery systems or prompt for carbohydrate logging, addressing the issue of insulin omission [8].

- Obesity and Heart Disease: These technologies enable the objective measurement of eating patterns and behaviors linked to overconsumption, such as eating rate and meal duration. This data is vital for behavioral interventions and for evaluating the efficacy of new pharmaceuticals or medical devices aimed at modifying eating behavior [17] [18]. Furthermore, comprehensive telehealth interventions that include dietary monitoring have been shown to improve dietary outcomes, such as fruit and vegetable intake and sodium reduction, which are critical for managing hypertension and cardiovascular disease [20].

The Scientist's Toolkit: Research Reagent Solutions

For researchers embarking on studies in this field, the following table outlines essential tools and their functions as derived from the cited literature.

Table 3: Essential Research Tools for Automatic Eating Detection Studies

| Tool / Solution | Function in Research | Exemplar from Literature |

|---|---|---|

| Wrist-Worn Motion Sensor | Captures accelerometer and gyroscope data for detecting eating-related gestures and movements [8]. | Apple Watch Series 4 [8] |

| Multi-Sensor Wearable Platform | Integrates multiple sensing modalities (e.g., camera, accelerometer) for a holistic view of eating activity [9]. | Automatic Ingestion Monitor v2 (AIM-2) [9] |

| Piezoelectric Strain Sensor | Precisely monitors jaw movement (chewing) by detecting strain on the skin [19]. | LDT0-028K sensor (Measurement Specialties) [19] |

| Camera (egocentric or fixed) | Captures images for food identification and context, either from the user's point of view or from a fixed position in the lab [9] [15]. | AIM-2 egocentric camera [9]; Axis M3004-V network camera (fixed) [15] |

| Multi-Angle Video Recording System | Provides comprehensive ground truth for algorithm training and validation in semi-naturalistic environments [19]. | GW-2061IP HD cameras [19] |

| Deep Learning Architectures | Provide the model structure for developing and training custom models for bite detection, food recognition, and activity inference [15] [8]. | Convolutional Neural Networks (CNN), Long Short-Term Memory (LSTM) networks [15] |

| Pre-Annotated Image Datasets | Serves as a benchmark for training and validating computer vision models for food detection and classification [21]. | University of Toronto Food Label Information and Price (FLIP) database [21] |

Diagram 2: Data pipeline from raw inputs to research outputs.

The Technology Toolkit: Sensors, Algorithms, and Systems for Real-World Deployment

The automatic detection of eating behavior in free-living settings is a critical challenge in health research, with implications for managing obesity, diabetes, and other chronic conditions. Wearable sensor technologies have emerged as powerful tools to overcome the limitations of self-reporting methods, which are prone to inaccuracies due to recall bias and participant burden [22] [23]. These sensors enable the passive, objective monitoring of eating episodes and the detailed capture of in-meal microstructures—such as chewing, swallowing, and bite timing—that were previously difficult to measure outside laboratory environments [22] [24]. The selection of appropriate sensor modalities is therefore fundamental to developing effective dietary monitoring systems that can function reliably in real-world conditions.

This technical guide provides an in-depth analysis of four primary wearable sensor modalities used in eating behavior research: Inertial Measurement Units (IMUs), Acoustic sensors, Piezoelectric sensors, and Optical sensors. Each modality offers distinct mechanisms for capturing physiological and behavioral signals associated with eating, with varying strengths and limitations for deployment in free-living studies. By examining the operating principles, implementation considerations, and performance characteristics of these sensors, researchers can make informed decisions when designing studies or developing interventions for automatic eating detection.

Sensor Modality Analysis: Technical Principles and Implementation

Inertial Measurement Units (IMUs)

Technical Principles and Sensing Mechanism: Inertial Measurement Units (IMUs) are microelectromechanical systems that typically combine accelerometers and gyroscopes to measure linear acceleration and angular velocity, respectively. In eating detection research, IMUs capture body movements associated with eating activities, most notably hand-to-mouth gestures during food intake [23] [25]. These sensors operate by detecting changes in capacitance between microscopic structures that move in response to external acceleration or rotation. When deployed in wrist-worn devices like smartwatches, IMUs can identify characteristic motion patterns that occur when individuals bring food or utensils to their mouths [23]. Additional movements such as head tilts during swallowing or forward leans during eating episodes can also be detected when sensors are positioned on the head or torso [9] [26].

Implementation Considerations:

- Sampling Rates: Typical sampling rates range from 15 to 128 Hz, depending on the specific eating behavior being monitored [9] [25].

- Data Processing: Raw IMU signals require preprocessing including filtering to remove noise unrelated to eating movements, segmentation to identify potential eating gestures, and feature extraction for classification.

- Sensor Placement: Optimal placement includes the wrist (for hand-to-mouth gestures), head (for jaw movements and chewing detection), and chest (for body posture during eating) [9] [26].
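The filtering and segmentation steps listed above can be sketched as follows. This is a simplified illustration: a centered moving average stands in for whatever noise filter a given study uses, and the 20 Hz sampling rate, 5 s window, and 2.5 s step are hypothetical values chosen within the range quoted above.

```python
from typing import List


def moving_average(signal: List[float], width: int) -> List[float]:
    """Centered moving-average smoother; windows shrink at the signal edges."""
    half = width // 2
    smoothed = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        smoothed.append(sum(signal[lo:hi]) / (hi - lo))
    return smoothed


def sliding_windows(signal: List[float], fs_hz: float,
                    window_s: float, step_s: float) -> List[List[float]]:
    """Split a signal into fixed-length, possibly overlapping windows."""
    size = int(round(fs_hz * window_s))
    step = int(round(fs_hz * step_s))
    if size <= 0 or step <= 0:
        raise ValueError("window and step must span at least one sample")
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, step)]


# 10 s of a placeholder accelerometer axis sampled at 20 Hz
raw = [float(i % 7) for i in range(200)]
windows = sliding_windows(moving_average(raw, width=5), fs_hz=20, window_s=5, step_s=2.5)
print(len(windows), len(windows[0]))  # 3 100
```

Each window would then be passed to feature extraction and classification. Overlapping windows reduce the chance that a hand-to-mouth gesture is split across a window boundary.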

Table 1: Performance Characteristics of IMU-Based Eating Detection Systems

| Study Reference | Sensor Placement | Primary Detection Target | Reported Performance | Study Environment |

|---|---|---|---|---|

| Kong et al. [23] | Wrist (smartwatch) | Eating episodes | High precision and recall | Free-living |

| AIM-2 System [9] | Head (glasses) | Chewing and head movement | Significant detection improvement | Free-living |

| Dénes-Fazakas et al. [25] | Wrist (IMU) | Carbohydrate intake gestures | F1-score: 0.99 | Controlled lab |

Acoustic Sensors

Technical Principles and Sensing Mechanism: Acoustic sensors, typically implemented as microphones, capture sound waves generated during the eating process. The primary acoustic signatures of eating include chewing sounds produced by food crushing between teeth, swallowing sounds, and even biting sounds [22] [23]. These sensors convert mechanical sound waves into electrical signals through changes in capacitance or piezoelectric effects. The resulting audio signals contain characteristic frequency and temporal patterns that can distinguish eating sounds from speech or environmental noise. When positioned near the mouth (e.g., in earbuds or neck-worn devices), these sensors can capture high-fidelity audio signatures of mastication and swallowing with minimal interference from external noise [23].

Implementation Considerations:

- Signal Acquisition: Requires careful gain setting and filtering to capture relevant frequencies (typically 100-4000 Hz for chewing sounds) while minimizing environmental noise.

- Privacy Protection: Essential to implement processing techniques that filter out speech and other non-eating sounds to address privacy concerns [22].

- Sensor Placement: Optimal placement includes in-ear microphones, neck-mounted sensors, or submandibular positions to capture swallowing sounds [23].

Table 2: Performance Characteristics of Acoustic-Based Eating Detection Systems

| Study Reference | Sensor Placement | Primary Detection Target | Key Performance Metrics | Study Environment |

|---|---|---|---|---|

| Kyritsis et al. [23] | In-ear microphone | Chewing sounds | High accuracy for chew detection | Free-living |

| AIM-2 System [9] | Not specified | Eating episodes | 94.59% sensitivity, 70.47% precision | Free-living |

| Amft et al. [22] | Neck-worn | Chewing and swallowing | Differentiation of food types | Laboratory |

Piezoelectric Sensors

Technical Principles and Sensing Mechanism: Piezoelectric sensors generate an electrical charge in response to mechanical stress or vibration. In eating detection applications, these sensors are typically positioned on the neck or jaw to capture vibrations from swallowing, chewing, and laryngeal movements [26]. The piezoelectric effect occurs due to the displacement of dipoles within crystalline materials when subjected to mechanical deformation. This property makes them exceptionally sensitive to the high-frequency vibrations generated during food consumption while being relatively insensitive to slower body movements. When embedded in necklaces or patches that maintain snug contact with the skin, piezoelectric sensors can detect even subtle swallowing vibrations and differentiate between solids and liquids based on vibration patterns [26].

Implementation Considerations:

- Contact Requirements: Require consistent skin contact for optimal signal acquisition, which can present challenges during long-term wear.

- Environmental Sensitivity: Susceptible to motion artifacts and temperature variations that may require compensation algorithms.

- Placement Specificity: Optimal positioning over the larynx for swallowing detection or on the jawline for chewing monitoring [26].

Optical Sensors

Technical Principles and Sensing Mechanism: Optical sensing modalities for eating detection encompass two primary approaches: camera-based food recognition and optomyography for muscle movement detection. Camera systems capture images of food for type and volume estimation, while optomyography sensors (e.g., OCO sensors) measure skin surface movements resulting from underlying muscle activity during chewing [27] [28]. These optical surface tracking sensors use light patterns to detect minute skin displacements in the X and Y dimensions caused by activation of temporalis and masseter muscles during mastication [28]. Unlike traditional cameras that raise privacy concerns, optomyography sensors capture only movement patterns without identifiable visual information, making them more suitable for continuous monitoring in free-living conditions [28].

Implementation Considerations:

- Sensing Distance: Operates without direct skin contact at a range of 4-30 mm [28].

- Sensor Configuration: Multiple sensors typically positioned on glasses frames to monitor temple (temporalis muscle) and cheek (masseter muscle) regions [27] [28].

- Signal Processing: Requires sophisticated algorithms to distinguish chewing from confounding facial movements like speaking, smiling, or teeth clenching [28].

Table 3: Performance Characteristics of Optical Sensor-Based Eating Detection Systems

| Study Reference | Sensor Type | Primary Detection Target | Key Performance Metrics | Study Environment |

|---|---|---|---|---|

| OCOsense Validation [27] | Optical muscle sensing | Chewing behavior | Strong agreement with video (r=0.955) | Laboratory |

| Stankoski et al. [28] | OCO optical sensors | Chewing segments | F1-score: 0.91 (lab), 95% precision (free-living) | Lab and free-living |

| AIM-2 System [29] | Camera + sensor fusion | Eating episodes and environment | Comprehensive environment classification | Free-living |

Experimental Design and Methodologies

Data Collection Protocols

Robust experimental protocols are essential for validating eating detection systems across different sensor modalities. Laboratory studies typically involve controlled feeding sessions where participants consume standardized meals while researchers collect sensor data alongside ground truth measurements through video recording, manual annotation, or participant-initiated event markers [27] [26]. These controlled environments enable precise algorithm development and initial validation. For example, in the OCOsense glasses validation study, 47 adults participated in a lab-based breakfast session where chewing behavior was simultaneously recorded by the sensors and manually annotated from video recordings by trained researchers [27].

Free-living studies introduce additional complexity but provide greater ecological validity. In these deployments, participants wear sensors during their normal daily activities while ground truth is collected through complementary methods such as wearable cameras, food diaries, or ecological momentary assessments [9] [29]. The AIM-2 system study employed a comprehensive approach where 30 participants wore the device for two days (one pseudo-free-living and one free-living), with ground truth collected via foot pedal markers during lab meals and manual image review during free-living periods [9]. This multi-method ground truth approach enables robust validation across different environmental contexts.

Signal Processing and Machine Learning Approaches

The raw signals from eating detection sensors require sophisticated processing pipelines to accurately identify eating behaviors. A typical processing workflow includes:

- Preprocessing: Filtering to remove noise, normalization to account for inter-participant differences, and segmentation to isolate potential eating events.

- Feature Extraction: Calculation of time-domain (e.g., mean, variance, zero-crossing rate) and frequency-domain (e.g., spectral entropy, band power) features from sensor signals.

- Classification: Application of machine learning models to distinguish eating from non-eating activities.
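The feature extraction step above can be illustrated with a small, dependency-free sketch that computes the time-domain and frequency-domain features named in the list. The naive DFT is used only to keep the example self-contained and is practical only for short windows. The 0.5-3 Hz band is a hypothetical choice, and band power is expressed as a fraction of total spectral power, which is one of several possible normalizations.

```python
import cmath
import math
from typing import Dict, List, Tuple


def power_spectrum(x: List[float]) -> List[float]:
    """One-sided power spectrum from a naive DFT (suitable for short windows only)."""
    n = len(x)
    return [
        abs(sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))) ** 2 / n
        for k in range(n // 2 + 1)
    ]


def extract_features(x: List[float], fs_hz: float,
                     band_hz: Tuple[float, float] = (0.5, 3.0)) -> Dict[str, float]:
    """Mean, variance, zero-crossing rate, band-power fraction, spectral entropy."""
    n = len(x)
    mean = sum(x) / n
    centered = [v - mean for v in x]
    variance = sum(v * v for v in centered) / n
    # Zero-crossing rate of the mean-removed signal
    crossings = sum((a < 0) != (b < 0) for a, b in zip(centered, centered[1:]))
    spectrum = power_spectrum(centered)
    total = sum(spectrum)
    freqs = [k * fs_hz / n for k in range(len(spectrum))]
    in_band = sum(p for f, p in zip(freqs, spectrum) if band_hz[0] <= f <= band_hz[1])
    # Spectral entropy: Shannon entropy of the normalized power spectrum
    probs = [p / total for p in spectrum if p > 0] if total > 0 else []
    entropy = -sum(p * math.log2(p) for p in probs) if probs else 0.0
    return {
        "mean": mean,
        "variance": variance,
        "zero_crossing_rate": crossings / (n - 1),
        "band_power_fraction": in_band / total if total > 0 else 0.0,
        "spectral_entropy": entropy,
    }


# A 2 Hz sinusoid sampled at 32 Hz for 2 s, standing in for rhythmic chewing motion
window = [math.sin(2 * math.pi * 2 * t / 32) for t in range(64)]
print(extract_features(window, fs_hz=32))
```

For a clean periodic signal such as this one, nearly all power falls inside the band and the spectral entropy is close to zero. Irregular, non-eating motion spreads power across frequencies and raises the entropy, which is what makes these features useful to a classifier.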

Multiple algorithmic approaches have demonstrated effectiveness for eating detection, ranging from traditional machine learning (Linear Discriminant Analysis, Support Vector Machines) to deep learning models (Convolutional Neural Networks, Recurrent Neural Networks) [9] [25] [28]. For complex temporal patterns in eating behavior, hybrid architectures like Convolutional Long Short-Term Memory networks have emerged as particularly effective [28]. Sensor fusion techniques that combine multiple modalities (e.g., inertial and acoustic) have shown improved performance over single-modality approaches by providing complementary information about eating events [9].
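A minimal sketch of why fusion can reduce false positives is given below. It uses a simple AND rule over per-window scores from two modalities. This is an assumed decision-level rule for illustration, not the fusion method of any cited system, and the scores and thresholds are invented.

```python
from typing import List


def fuse_and(scores_a: List[float], scores_b: List[float],
             thr_a: float = 0.5, thr_b: float = 0.5) -> List[bool]:
    """Declare eating only when both modalities exceed their thresholds."""
    if len(scores_a) != len(scores_b):
        raise ValueError("both modalities must score the same windows")
    return [a >= thr_a and b >= thr_b for a, b in zip(scores_a, scores_b)]


def false_positives(predicted: List[bool], truth: List[bool]) -> int:
    """Number of windows flagged as eating that are not eating."""
    return sum(p and not t for p, t in zip(predicted, truth))


truth = [True, True, False, False, False, False]
scores_a = [0.9, 0.8, 0.7, 0.2, 0.1, 0.6]   # e.g., hypothetical image-based food score
scores_b = [0.8, 0.9, 0.1, 0.7, 0.2, 0.3]   # e.g., hypothetical chewing-detector score

only_a = [s >= 0.5 for s in scores_a]
only_b = [s >= 0.5 for s in scores_b]
fused = fuse_and(scores_a, scores_b)
print(false_positives(only_a, truth), false_positives(only_b, truth),
      false_positives(fused, truth))  # 2 1 0
```

Each modality alone is fooled by different windows, so requiring agreement removes those errors. The trade-off is that an AND rule can lower recall when one modality misses a true eating event, which is why practical systems often combine scores more flexibly.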

Research Reagents and Experimental Tools

Table 4: Essential Research Tools for Wearable Eating Detection Studies

| Tool/Platform | Type | Primary Function | Example Use Case |

|---|---|---|---|

| AIM-2 (Automatic Ingestion Monitor v2) | Multi-sensor wearable device | Combines camera and accelerometer for eating detection | Free-living eating environment classification [9] [29] |

| OCOsense Smart Glasses | Optical sensor platform | Monitors facial muscle activations via optomyography | Chewing detection and counting in lab and free-living [27] [28] |

| Custom Necklace Platform | Piezoelectric sensor system | Detects swallowing vibrations via piezoelectric sensors | Swallowing detection for solid vs. liquid differentiation [26] |

| Commercial Smartwatches | IMU-based platform | Captures hand-to-mouth gestures via accelerometer/gyroscope | Eating episode detection in free-living conditions [23] [25] |

| In-Ear Microphones | Acoustic sensor platform | Captures chewing sounds in ear canal | Chewing detection and characterization [23] |

Implementation Challenges and Future Directions

Despite significant advances in wearable sensor technologies for eating detection, several challenges remain for widespread deployment in free-living settings. Sensor placement and wearability present practical obstacles, as devices must balance detection accuracy with user comfort and social acceptability for long-term use [26]. Power consumption and battery life are critical constraints for continuous monitoring, particularly for computation-intensive sensors like cameras. Privacy concerns are especially relevant for audio and video-based modalities, necessitating the development of privacy-preserving approaches such as on-device processing and filtering of non-food-related sounds or images [22].

Future research directions focus on addressing these limitations through several promising avenues. Multi-modal sensor fusion combines complementary strengths of different modalities to improve overall system accuracy and robustness in diverse free-living conditions [9]. Personalized algorithm adaptation tailors detection models to individual chewing patterns and eating styles to enhance performance across diverse populations [25]. The development of less obtrusive form factors that integrate sensing into everyday objects like standard eyeglasses or jewelry aims to improve user compliance and social acceptability [27] [28]. Finally, real-time feedback systems represent a growing frontier, where sensor data not only monitors but also modulates eating behavior through just-in-time interventions, creating closed-loop systems for health management [24].

Wearable sensor modalities offer diverse and complementary approaches for automatic eating detection in free-living settings. IMUs excel at capturing macroscopic eating gestures, acoustic sensors provide detailed information on chewing and swallowing sounds, piezoelectric sensors detect throat vibrations with high sensitivity, and optical sensors enable visual food recognition and muscle movement monitoring. The optimal sensor selection depends on specific research objectives, target behaviors, and practical constraints related to user acceptance and battery life. Future advancements will likely focus on multi-modal approaches that combine complementary sensing technologies while addressing challenges related to power optimization, privacy preservation, and seamless integration into everyday life. As these technologies mature, they hold significant promise for transforming dietary assessment in both research and clinical applications.

The accurate detection of eating episodes in free-living conditions is a cornerstone for advancing research in nutrition, obesity, and chronic disease management. Traditional methods, such as food diaries and 24-hour recalls, are hampered by user burden and significant recall bias [23]. The emergence of automated dietary monitoring (ADM) systems promises to overcome these limitations by providing objective, passive, and granular data on eating behavior. The effectiveness of any ADM system is profoundly influenced by a critical design choice: the form factor and placement of the sensing device. This whitepaper provides an in-depth technical examination of the primary wearable form factors—neck-worn, wrist-worn, eyeglass-based, and in-ear systems—framed within the context of free-living research. We synthesize performance data, detail experimental methodologies, and analyze the trade-offs between obtrusiveness and signal fidelity that researchers must navigate to select the optimal platform for their specific investigative goals.

Comparative Analysis of Form Factors

The selection of a device form factor involves balancing sensor modality, user comfort, battery life, and performance across diverse populations. The table below summarizes the key characteristics and performance metrics of the four primary form factors as established in recent literature.

Table 1: Performance and Characteristics of Eating Detection Form Factors

| Form Factor | Primary Sensor Modalities | Target Signal / Activity | Reported Performance (F1-Score/Accuracy) | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| Neck-worn [30] | Proximity, IMU, Ambient Light | Chin proximity (chewing), Lean Forward Angle | 81.6% (episode detection, semi-free-living) | Validated on diverse BMI populations; long battery life (15.8 hrs) | May be perceived as obtrusive; potential stigma |

| Eyeglass-based [31] [28] | Optical Myography (OCO), IMU | Facial muscle activation (temporalis, zygomaticus) | 89-91% (event detection, free-living) [31] | High granularity (chew-level); non-invasive sensing | Limited to glasses-wearers; privacy concerns with cameras |

| In-Ear [32] [23] | Inertial, Acoustic | Jaw motion, Chewing sounds | 80.1% (chewing detection, free-living) [32] | Discreet form factor; leverages commercial earbuds | Acoustic sensing is sensitive to ambient noise; ear canal fit issues |

| Wrist-worn [25] [23] | IMU (Accelerometer, Gyroscope) | Hand-to-mouth gestures | >90% (gesture detection, personalized models) [25] | High user acceptance; leverages commercial smartwatches | Cannot detect chewing directly; confounded by similar gestures |

Detailed Experimental Protocols and Methodologies

Neck-worn Systems (NeckSense)

The NeckSense platform exemplifies a multi-sensor, necklace-based approach designed for all-day monitoring in free-living conditions [30].

- Hardware Configuration: The custom-built necklace integrates a proximity sensor to measure the distance to the chin for jaw movement detection, an Inertial Measurement Unit (IMU) to capture the "Lean Forward Angle" and body movement, and an ambient light sensor to provide contextual information.

- Signal Processing and Feature Extraction:

  - Proximity Signal: A longest periodic subsequence algorithm is applied to identify rhythmic chewing sequences from the jaw movements.

  - Sensor Fusion: Features from the proximity, IMU, and ambient light sensors are fused. The IMU's lean-forward angle helps distinguish eating postures, while ambient light aids in identifying environmental context.

  - Classification and Clustering: A classifier first identifies individual chewing sequences at a fine-grained (per-second) level. These sequences are then clustered temporally to define distinct eating episodes.

- Validation Protocol: The system was tested in two studies: an exploratory semi-free-living study and a full free-living study. Over 470 hours of data were collected from 20 participants, including individuals with and without obesity. Ground truth was established using video footage and clinical standard labeling, allowing for the calculation of per-second and per-episode F1-scores [30].
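The final step above, grouping per-second chewing detections into eating episodes, can be illustrated with a minimal sketch. It uses simple gap-based grouping, which is not necessarily the clustering method used in [30]. The function name `cluster_episodes` and the thresholds `max_gap_s` and `min_chew_s` are hypothetical values chosen only for illustration.

```python
from typing import List, Tuple


def cluster_episodes(
    chew_seconds: List[int],
    max_gap_s: int = 60,   # assumed: gaps longer than this split episodes
    min_chew_s: int = 10,  # assumed: groups with fewer chewing seconds are dropped
) -> List[Tuple[int, int]]:
    """Group timestamps (in seconds) flagged as chewing into (start, end) episodes."""
    episodes: List[Tuple[int, int]] = []
    current: List[int] = []
    for t in sorted(chew_seconds):
        # A long pause since the last chewing second closes the current group.
        if current and t - current[-1] > max_gap_s:
            if len(current) >= min_chew_s:
                episodes.append((current[0], current[-1]))
            current = []
        current.append(t)
    # Close the final group.
    if len(current) >= min_chew_s:
        episodes.append((current[0], current[-1]))
    return episodes


if __name__ == "__main__":
    # Two real meals plus a two-second false positive at t=900.
    chew = list(range(100, 130)) + list(range(150, 170)) + [900, 901] + list(range(2000, 2015))
    print(cluster_episodes(chew))
```

In this toy input the short pause between seconds 129 and 150 is bridged into one episode, while the isolated two-second detection is discarded as too short to count as a meal.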

Eyeglass-based Systems (OCOsense)

The OCOsense smart glasses utilize a novel optical sensing technology to monitor eating through facial muscle activations [31] [28].

- Hardware Configuration: The glasses are equipped with optical tracking (OCO) sensors based on optomyography. These are non-contact sensors that measure 2D skin surface movements. Key sensors are positioned at the temple (to monitor the temporalis muscle for jaw movement) and the cheek (to monitor the zygomaticus muscles activated during chewing).

- Signal Processing and Workflow:

  - The OCO sensors capture skin displacement data along the X and Y axes resulting from underlying myogenic activity.

  - A Convolutional Long Short-Term Memory (ConvLSTM) deep learning model analyzes the temporal patterns from the sensor data to distinguish chewing from other facial activities like speaking, clenching, or smiling.

  - A Hidden Markov Model (HMM) is often integrated as a post-processing step to model the temporal dependencies between consecutive chewing events, refining the output of the deep learning model.

- Validation Protocol: A three-week home-based study was conducted with 23 participants. Week one established a baseline, week two involved participant annotation of eating events and food photography for detection accuracy validation, and week three introduced real-time haptic feedback for behavior modification. Performance was evaluated based on the F1-score for eating event detection against the self-reported and photographed ground truth [31].
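To make the HMM post-processing idea concrete, the sketch below applies Viterbi decoding with a two-state model (not chewing, chewing) to per-window chewing probabilities. It is an illustration under stated assumptions, not the OCOsense implementation: the function name `viterbi_smooth`, the self-transition probability `p_stay`, the uniform prior, and the use of classifier probabilities as emission scores are all hypothetical choices.

```python
import math
from typing import List, Sequence


def viterbi_smooth(chew_probs: Sequence[float], p_stay: float = 0.9) -> List[int]:
    """Return the most likely 0/1 state sequence (1 = chewing) for noisy probabilities."""
    if not chew_probs:
        return []
    eps = 1e-9
    log_stay = math.log(p_stay)
    log_switch = math.log(1.0 - p_stay)

    def emit(p: float, state: int) -> float:
        # Assumption: the classifier output is used directly as an emission score.
        p = min(max(p, eps), 1.0 - eps)
        return math.log(p if state == 1 else 1.0 - p)

    # Initialise with a uniform prior over the two states.
    score = [math.log(0.5) + emit(chew_probs[0], s) for s in (0, 1)]
    back: List[List[int]] = []
    for p in chew_probs[1:]:
        new_score: List[float] = []
        pointers: List[int] = []
        for s in (0, 1):
            cands = [score[prev] + (log_stay if prev == s else log_switch) for prev in (0, 1)]
            best_prev = 0 if cands[0] >= cands[1] else 1
            pointers.append(best_prev)
            new_score.append(cands[best_prev] + emit(p, s))
        score = new_score
        back.append(pointers)

    # Backtrack from the best final state.
    state = 0 if score[0] >= score[1] else 1
    path = [state]
    for pointers in reversed(back):
        state = pointers[state]
        path.append(state)
    path.reverse()
    return path


if __name__ == "__main__":
    probs = [0.05, 0.2, 0.9, 0.8, 0.3, 0.9, 0.85, 0.1, 0.05]
    print(viterbi_smooth(probs))
```

With these example probabilities, a plain 0.5 threshold would split the chewing bout at the 0.3 dip, whereas the decoded sequence keeps it as one continuous bout because two extra state switches cost more than one weak observation.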

In-Ear Systems (EarBit)

The EarBit platform is an "earable" system that leverages the ear's proximity to the jaw and mouth for sensing [32].

- Hardware Configuration: As an experimental platform, EarBit incorporated multiple sensing modalities: an inertial sensor placed behind the ear to capture jaw motion, an acoustic sensor (microphone) to capture chewing sounds, and an optical sensor. The inertial sensor behind the ear proved most effective and comfortable.

- Signal Processing and Classification:

  - The inertial sensor data (accelerometer/gyroscope) from the behind-the-ear unit is processed to isolate the characteristic periodic signals of chewing.

  - Machine learning models (e.g., Support Vector Machines, Random Forests) are trained on features extracted from this inertial data to classify one-second intervals as "chewing" or "not chewing."

  - These fine-grained chewing inferences are then aggregated over time to detect the start and end of full eating episodes.

- Validation Protocol: A key aspect of EarBit's development was its two-stage validation. Models were first trained on data collected in a semi-controlled "home-like" lab environment. These models were then tested on a completely new dataset collected from 10 participants in a fully unconstrained "outside-the-lab" setting, with ground truth provided by video recordings. This protocol rigorously tests generalizability to real-world conditions [32].
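One simple way to quantify the periodicity that chewing adds to an inertial signal is the peak of the normalized autocorrelation at lags matching plausible chewing rates. The sketch below illustrates that idea only; it is not EarBit's feature set. The function name `chewing_periodicity` and the 0.8 to 2.5 Hz band are assumptions made for the example.

```python
import math
from typing import Sequence


def chewing_periodicity(
    signal: Sequence[float],
    fs_hz: float,
    min_hz: float = 0.8,  # assumed lower bound of the chewing rate
    max_hz: float = 2.5,  # assumed upper bound of the chewing rate
) -> float:
    """Peak normalized autocorrelation over lags in the assumed chewing band."""
    n = len(signal)
    if n < 2:
        return 0.0
    mean = sum(signal) / n
    centered = [x - mean for x in signal]
    energy = sum(x * x for x in centered)
    if energy == 0:
        return 0.0  # a flat signal carries no periodicity
    min_lag = max(1, int(fs_hz / max_hz))
    max_lag = min(n - 1, int(fs_hz / min_hz))
    best = 0.0
    for lag in range(min_lag, max_lag + 1):
        acf = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / energy
        best = max(best, acf)
    return best


if __name__ == "__main__":
    fs = 50.0
    # Synthetic 4-second window with a 1.5 Hz rhythm standing in for jaw motion.
    chew_like = [math.sin(2 * math.pi * 1.5 * i / fs) for i in range(200)]
    print(chewing_periodicity(chew_like, fs))
```

A value near 1 indicates a strongly rhythmic window, while values near 0 indicate no rhythm in the band. In practice such a score would be one feature among several fed to the classifier, since slow drift and other rhythmic motion such as walking can also raise it.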

Wrist-worn Systems

Wrist-worn systems, typically using commercial smartwatches, take an indirect approach to eating detection by monitoring arm movements [25] [23].

- Hardware Configuration: These systems utilize the built-in IMU of a smartwatch, which includes a tri-axial accelerometer and gyroscope.

- Signal Processing and Workflow:

  - The core principle is to detect characteristic hand-to-mouth gestures that serve as a proxy for bites.

  - Time-series data from the accelerometer and gyroscope are segmented into windows.

  - Deep learning models, such as Long Short-Term Memory (LSTM) networks, are trained to learn the unique pattern of these feeding gestures. Research shows that personalized models, trained on data from a specific individual, can achieve very high accuracy (>90%), significantly outperforming generalized models [25].

- Validation Protocol: Studies often involve participants wearing a smartwatch while eating in laboratory or semi-controlled settings. Video recordings are used to annotate the exact timing of each bite or eating episode, providing the ground truth for training and evaluating the gesture detection algorithms. Some studies also conduct free-living trials to assess real-world viability [23].
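The windowing step in the workflow above can be sketched as follows. This is a minimal illustration, not the preprocessing used in the cited studies: the function name `sliding_windows`, the six-channel `Sample` layout, and the window and step sizes in the usage example are assumptions.

```python
from typing import List, Sequence, Tuple

# Assumed sample layout: (ax, ay, az, gx, gy, gz) from a tri-axial accelerometer and gyroscope.
Sample = Tuple[float, float, float, float, float, float]


def sliding_windows(
    samples: Sequence[Sample], window_len: int, step: int
) -> List[Sequence[Sample]]:
    """Split an IMU stream into fixed-length, possibly overlapping windows."""
    if window_len <= 0 or step <= 0:
        raise ValueError("window_len and step must be positive")
    # Only full windows are kept; a trailing partial window is dropped.
    return [
        samples[start:start + window_len]
        for start in range(0, len(samples) - window_len + 1, step)
    ]


if __name__ == "__main__":
    fs_hz = 50            # hypothetical sampling rate
    window_len = 2 * fs_hz  # 2-second windows
    step = fs_hz            # 50% overlap
    stream = [(0.0, 0.0, 9.8, 0.0, 0.0, 0.0)] * (10 * fs_hz)  # 10 s of dummy data
    print(len(sliding_windows(stream, window_len, step)))
```

Each window would then be passed to the gesture model as one input sequence. Overlapping windows reduce the chance that a hand-to-mouth gesture is cut in half at a window boundary, at the cost of more inference calls.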

Visualizing Sensor Data Processing Workflows

The following diagrams illustrate the typical data processing and decision pathways for two distinct form factors.

[Diagram: Multi-Sensor Fusion in a Neck-worn System]

[Diagram: Optical Sensing and Deep Learning in Smart Glasses]

The Researcher's Toolkit: Essential Research Reagents

This section details the key hardware and software components, or "research reagents," essential for developing and testing eating detection systems across different form factors.

Table 2: Essential Research Reagents for Eating Detection Studies

| Reagent / Tool | Primary Function | Example Implementation in Research |
|---|---|---|
| Inertial Measurement Unit (IMU) | Captures motion and orientation data. | Used in neck-worn (posture), wrist-worn (gestures), and in-ear (jaw motion) systems [30] [32] [25]. |
| Optical Myography (OMG) Sensor | Measures skin surface movement from muscle activity. | The core sensor in OCOsense smart glasses for detecting temporalis and cheek muscle activations [28]. |
| Proximity Sensor | Measures distance to a target. | Used in neck-worn NeckSense to track chin movement for chewing cycle detection [30]. |
| Acoustic Sensor (Microphone) | Captures audio signals of chewing and swallowing. | Deployed in in-ear systems and custom earbuds for analyzing chewing sounds [32] [23]. |
| Bio-impedance Sensor | Measures electrical impedance across body tissues. | Used in systems like iEat to detect circuit variations formed during hand-mouth-food interactions [33]. |
| Convolutional LSTM Network | A deep learning model for spatiotemporal pattern recognition. | Effectively used to classify time-series data from optical and inertial sensors in eyeglass-based systems [28]. |
| Hidden Markov Model (HMM) | A statistical model for representing temporal sequences of states. | Used as a post-processing step to model the sequence of chewing events and improve detection robustness [28]. |
| Video Recording System | Provides ground truth data for algorithm training and validation. | A critical tool in the cited neck-worn and in-ear studies for manually annotating the timing of eating episodes, bites, and chews [30] [32]. |

The choice of device form factor and placement is a fundamental determinant in the success of automatic eating detection research in free-living settings. Each platform presents a unique set of trade-offs. Neck-worn systems offer robust, multi-sensor fusion validated across diverse populations. Eyeglass-based approaches provide unparalleled granularity at the level of individual chews using non-invasive optical sensing. In-ear devices balance discretion with direct access to jaw-motion signals, while wrist-worn smartwatches leverage high user acceptance and commercial availability, albeit with less direct sensing of ingestion. There is no universally optimal solution; the selection must be guided by the specific research question, target population, and required granularity of data. Future research directions will likely involve the fusion of data from multiple, complementary form factors and a stronger emphasis on personalized, adaptive algorithms to further enhance detection accuracy and clinical utility in the complex, unstructured environments of real life.

The increasing global prevalence of obesity and diet-related chronic diseases has intensified the need for accurate dietary assessment methods. Traditional approaches, such as food diaries and 24-hour recalls, are hampered by significant limitations including recall bias, participant burden, and substantial under- or over-reporting [34] [4] [35]. Research conducted in free-living settings is particularly vulnerable to these inaccuracies, as eating behaviors are influenced by complex, dynamic contextual factors that are difficult to capture retrospectively.

Artificial intelligence (AI), particularly machine learning (ML) and deep learning (DL), presents a paradigm shift for objectively inferring eating behaviors in naturalistic environments. These technologies enable the development of systems that can automatically detect eating activities, recognize consumed foods, and characterize contextual eating patterns with minimal user interaction [34] [4]. This technical guide examines the core AI methodologies advancing the field of automatic eating detection, focusing on their operational principles, implementation protocols, and performance metrics relevant to researchers and scientists working in free-living research contexts.

Core AI Applications in Eating Behavior Inference

AI applications for eating behavior inference can be categorized into three primary domains based on their function and technological approach. The table below summarizes the key paradigms, their data sources, and primary outputs.

Table 1: Core AI Paradigms in Eating Behavior Inference

| AI Application Domain | Data Sources | Primary Outputs | Key Strengths |
|---|---|---|---|
| Machine Perception for Activity Detection [34] [4] [35] | Wearable sensors (accelerometers, gyroscopes), acoustic sensors, physiological sensors | Detection of eating episodes, chewing sequences, swallows, hand-to-mouth gestures | Passive, continuous monitoring; captures micro-level eating metrics |
| Image-Based Food Recognition [36] [37] [38] | Smartphone cameras, wearable cameras, passive imaging systems | Food type identification, portion size estimation, calorie content prediction | Direct identification of food items; rich visual data |
| Predictive Analytics for Context & Lapse [34] [39] | Contextual sensor data, self-reported EMA, historical behavior patterns | Prediction of dietary lapses, emotional eating episodes, overall diet quality | Moves beyond detection to prediction; enables proactive interventions |

Machine Perception for Eating Activity Detection

Machine perception systems use data from wearable sensors to detect the physical acts of eating. A 2021 scoping review identified this as the most prevalent AI application in weight loss, focusing on recognizing food items, eating behaviors, and physical activities [34].

Common Sensing Modalities:

- Inertial Measurement Units (IMUs): Wrist-worn accelerometers are widely used to detect hand-to-mouth gestures as a proxy for bites. A real-time system using a smartwatch accelerometer achieved a precision of 80%, recall of 96%, and an F1-score of 87.3% in detecting meal episodes [40].

- Acoustic Sensors: Sensors placed on the neck capture chewing and swallowing sounds. These signals are processed to identify characteristic audio frequencies and patterns associated with food consumption [35].

- Multi-Sensor Systems: Combining multiple sensors (e.g., accelerometer and gyroscope) often improves accuracy by providing complementary data streams [4].
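The smartwatch figures quoted above are mutually consistent, which can be checked from the definition of the F1-score as the harmonic mean of precision and recall. The short sketch below reproduces that arithmetic; the helper name `f1_score` is introduced only for this example.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (both expressed as fractions)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Precision 80% and recall 96%, as reported for the smartwatch system [40].
    print(round(100 * f1_score(0.80, 0.96), 1))  # 87.3
```

Because the harmonic mean is pulled toward the lower of the two values, the 87.3% F1-score sits closer to the 80% precision than to the 96% recall, which indicates that false positives rather than missed meals are the main error source for this system.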

Image-Based Food Recognition and Dietary Assessment

Deep learning, particularly Convolutional Neural Networks (CNNs), has dramatically advanced automated food recognition. These systems analyze food images to identify items, estimate volume, and calculate nutritional content [37].

Performance Metrics: A 2025 study utilizing the EfficientNetB7 model with the Lion optimizer demonstrated the state of the art, achieving 100% accuracy in identifying 16 food classes and 99% accuracy for 32 food classes, with a mean absolute error (MAE) of 0.0079 [36]. In applied settings, an automatic image recognition (AIR) app correctly identified 86% of dishes in a multi-dish meal, significantly outperforming voice-input methods [38].

Predictive Analytics for Behavioral Context and Lapses

Beyond detection, ML models predict future eating behaviors by analyzing contextual factors. These models identify complex, non-linear relationships between environment, person-level traits, and eating outcomes [34] [39].

Key Predictive Factors: A 2025 study using gradient-boosted decision trees predicted food consumption at eating occasions with high accuracy (MAE below half a serving for most food groups). For overall daily diet quality, the model's predictions deviated by 11.86 points from the actual Dietary Guideline Index score. The most influential factors for diet quality included cooking confidence, self-efficacy, food availability, perceived time scarcity, and activity during consumption [39].

Detailed Experimental Protocols and Methodologies

Protocol for Wearable Sensor-Based Eating Detection