Beyond Accuracy: Mastering F1-Score Evaluation for Robust Eating Detection in Biomedical Research

This article provides a comprehensive guide to the F1-score for researchers and professionals developing and validating automated eating detection systems.

Abstract

This article provides a comprehensive guide to the F1-score for researchers and professionals developing and validating automated eating detection systems. It covers foundational concepts of precision and recall, explores the application of the F1-score across diverse methodologies from wearable sensors to video-based deep learning, addresses troubleshooting for common challenges like class imbalance and confounding gestures, and establishes rigorous validation and comparative analysis frameworks. The insights are tailored to support the development of reliable dietary monitoring tools for clinical trials and public health research.

Why F1-Score? Foundational Metrics for Eating Detection

The Limitations of Accuracy in Imbalanced Dietary Datasets

In the pursuit of automated dietary monitoring (ADM), the accuracy of eating detection systems is paramount for applications ranging from clinical nutrition to chronic disease management. However, the performance of these systems, often quantified using metrics like the F1-score, is fundamentally constrained by a pervasive challenge: imbalanced dietary datasets. Such imbalance occurs when the data collected to train machine learning models overrepresent certain food types, eating activities, or dietary contexts while underrepresenting others. In real-world settings, this skew mirrors natural consumption biases—for instance, "imperfect" food products might be rarer in a production line than "good" ones, or specific eating gestures may occur less frequently than others [1] [2]. When models are trained on these non-uniform datasets, they often develop a predictive bias toward the majority classes, achieving high overall accuracy at the cost of poor performance on underrepresented categories. This limitation critically undermines the real-world reliability of dietary assessment systems. Using the F1-score, which balances precision and recall, provides a more truthful evaluation of model robustness than accuracy alone, especially for minority classes. This article explores how data imbalance affects system performance by comparing experimental data across diverse sensing modalities and highlighting the consequent limitations in achieved F1-scores.

Comparative Performance of Dietary Monitoring Systems

The performance of a dietary monitoring system is a direct reflection of the data it was trained on. Systems developed on more balanced and robust datasets typically demonstrate superior and more generalizable F1-scores. The following table summarizes the performance of various dietary monitoring approaches as reported in recent studies.

Table 1: Performance Comparison of Selected Dietary Monitoring Systems

| System / Study Focus | Sensing Modality | Key Classes / Imbalance Context | Reported Performance (F1-Score or Accuracy) |

|---|---|---|---|

| Smartwatch-Based Eating Detection [2] | Inertial (Accelerometer) | Eating vs. Non-eating gestures | F1-score: 87.3% |

| iEat Wearable [3] | Bio-impedance (Wrist-worn) | 4 food intake activities | Macro F1-score: 86.4% |

| iEat Wearable [3] | Bio-impedance (Wrist-worn) | 7 food types | Macro F1-score: 64.2% |

| Turkish Cuisine Classification [4] | Image (CNN) | 6 food groups | Accuracy: Up to 80% |

| YOLO-based Food Detection [5] | Image (YOLOv8) | 42 food classes | Precision: 82.4% |

| Poultry Product Quality [1] | Image (YOLO12) | "Good" vs. "Imperfect" products | mAP50-95: 0.936 |

| Chewable Food Sound Recognition [6] | Acoustic (Eating Sounds) | 20 food items | Accuracy: 99.28% (GRU Model) |

| Personalized IMU Model [7] | Inertial (IMU) | Carbohydrate intake gestures | Median F1-score: 0.99 |

The variance in performance across these systems can often be attributed to dataset characteristics. For instance, the iEat system demonstrates a notable drop in the macro F1-score when moving from activity recognition (86.4%) to food type classification (64.2%), highlighting the added complexity and potential data imbalance associated with a larger number of food classes [3]. Similarly, in computer vision tasks, the performance can be influenced by class representation; one study on poultry products noted that the model learned the "imperfect product" class better, likely due to its higher representation in the training data, underscoring the profound impact of dataset balance on model learning [1].

The F1-Score as a Critical Metric in Imbalanced Scenarios

In the context of imbalanced dietary datasets, traditional accuracy metrics can be profoundly misleading. A model might achieve 95% accuracy by simply always predicting the majority class ("non-eating" or "common food"), thereby failing completely to identify the critical minority classes ("eating" or "rare food"). The F1-score, calculated as the harmonic mean of precision and recall (F1 = 2 * (Precision * Recall) / (Precision + Recall)), provides a more nuanced and reliable measure.
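
The 95% figure above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the 100 windows and the 5% eating rate are assumed values, not data from any cited study, and the helper names are hypothetical.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count TP, FP, FN, TN for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def accuracy(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return (tp + tn) / len(y_true)


def f1(y_true, y_pred):
    tp, fp, fn, _ = confusion_counts(y_true, y_pred)
    denom = 2 * tp + fp + fn
    # By convention here, F1 is 0 when there are no positives to score.
    return 2 * tp / denom if denom else 0.0


# Assumed toy data: 5 eating windows (1) among 100, the rest non-eating (0).
y_true = [1] * 5 + [0] * 95
# A degenerate model that always predicts the majority class.
y_pred = [0] * 100

acc = accuracy(y_true, y_pred)
f1_value = f1(y_true, y_pred)
print(f"accuracy = {acc:.2f}, F1 = {f1_value:.2f}")
```

The degenerate model scores well on accuracy while its F1 for the eating class is zero, which is the failure mode the paragraph describes.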

Its utility is evident in eating detection research. For example, a smartwatch-based system achieved an overall high F1-score of 87.3%, which synthesizes its ability to correctly identify eating episodes (precision) and capture all actual eating episodes (recall) [2]. Conversely, the iEat wearable's macro F1-score of 64.2% for food type classification reveals a significant limitation, likely stemming from an inability to equally recognize all seven food types due to inherent data imbalance [3]. The macro-averaging method used here is particularly revealing, as it computes the metric independently for each class before averaging, ensuring that minority classes contribute equally to the final score. This prevents the model's performance on prevalent classes from masking its failures on rarer ones.

Detailed Experimental Protocols and Data Imbalance Challenges

Image-Based Food Classification with Data Augmentation

Objective: To develop a deep learning system for classifying food groups and estimating portion sizes from images, specifically for Turkish cuisine dishes [4].

Methods:

- Dataset: The study used a relatively small dataset of 679 original food images from Turkish cuisine, categorized into 6 food groups (e.g., G1: Dairy, G4: Vegetables) and 5 portion size ranges [4].

- Data Preprocessing and Augmentation: To counteract the limited dataset size and potential imbalance, the researchers employed data augmentation (DA), a technique to artificially expand the dataset. The original 679 images were quadrupled to 2,716 images after augmentation. They also used an 80/20 training-test split and applied five-fold cross-validation to enhance reliability and reduce overfitting [4].

- Models: The study leveraged transfer learning with six pre-trained CNN architectures, including NasNet-Mobile, SqueezeNet, and MobileNet-v2 [4].

Key Findings Related to Imbalance:

- The model achieved up to 80% accuracy for food group classification and 80.47% for portion estimation with the inclusion of data augmentation [4].

- This underscores the role of DA as a critical technique for mitigating the challenges of small and potentially imbalanced datasets, directly contributing to the model's achievable accuracy.
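
As a rough illustration of how a fourfold expansion can arise, the sketch below keeps each original image and adds three transformed copies. The specific transforms (horizontal flip, vertical flip, 180-degree rotation) are an assumption made for this example; the source does not state which augmentations the study used. Images are represented as nested lists so the example needs no external libraries.

```python
def hflip(img):
    """Mirror an image (list of rows) left to right."""
    return [row[::-1] for row in img]


def vflip(img):
    """Mirror an image top to bottom."""
    return [row[:] for row in img[::-1]]


def rot180(img):
    """Rotate by 180 degrees, equivalent to both flips combined."""
    return hflip(vflip(img))


def augment(dataset):
    """Return the originals plus three transformed copies of each image."""
    out = []
    for img, label in dataset:
        out.append((img, label))
        for transform in (hflip, vflip, rot180):
            out.append((transform(img), label))
    return out


# Hypothetical stand-in for 679 labelled images (tiny 2x2 placeholders).
dataset = [([[1, 2], [3, 4]], "G1") for _ in range(679)]
augmented = augment(dataset)
print(len(augmented))  # 679 * 4
```

In practice, augmentation is usually applied to the training split only, so that transformed copies of a test image cannot leak into training.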

Wearable Dietary Monitoring with Bio-Impedance Sensing

Objective: To design a wearable system (iEat) for automatic dietary activity and food type monitoring using bio-impedance sensing between two wrists [3].

Methods:

- Sensing Principle: The system measures dynamic changes in the electrical impedance between wrist-worn electrodes. Different dietary activities (e.g., cutting, drinking) and food types create distinct parallel circuit paths through the body, hands, utensils, and food, resulting in characteristic impedance variations [3].

- Dataset and Protocol: The study involved 10 volunteers performing 40 meals in an everyday dining environment. The data was used to recognize four activities (cutting, drinking, eating with hand, eating with fork) and classify seven types of food [3].

- Model: A lightweight, user-independent neural network model was trained on the collected sensor data [3].

Key Findings Related to Imbalance:

- The system showed a strong macro F1-score of 86.4% for activity recognition but a significantly lower 64.2% for food type classification [3].

- This performance gap highlights a classic imbalance challenge: distinguishing between many food classes (7 types) is inherently more complex and prone to data distribution issues than recognizing broader activity patterns (4 activities). The lower F1-score indicates that the model struggled to achieve high precision and recall consistently across all food classes.

Visualizing the Impact of Imbalanced Data on Model Evaluation

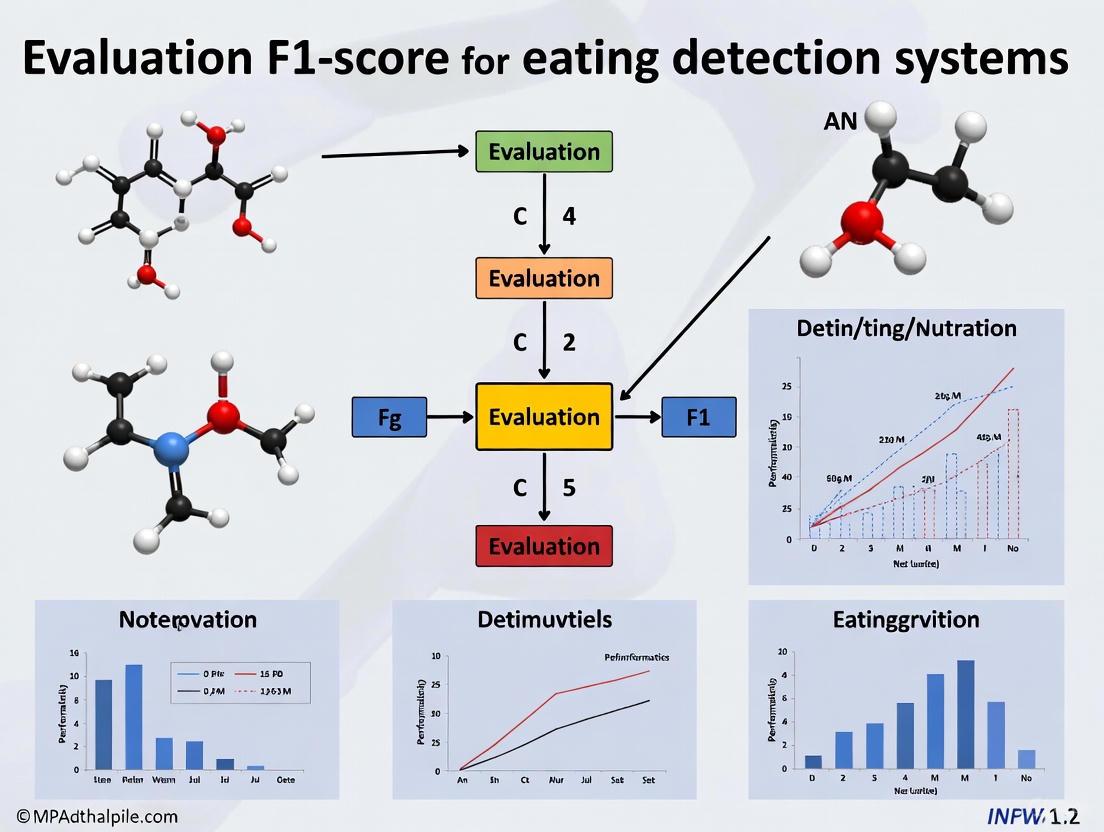

The following diagram illustrates the workflow of a dietary monitoring system and how data imbalance at the input stage propagates through the pipeline, ultimately leading to a biased performance evaluation if only global accuracy is considered.

Research Reagent Solutions for Robust Dietary Monitoring

To combat the limitations imposed by imbalanced data, researchers can employ a suite of methodological and technical solutions. The following table details key reagents and approaches essential for developing more accurate and generalizable eating detection systems.

Table 2: Essential Research Reagents and Methods for Addressing Data Imbalance

| Reagent / Method | Function in Dietary Monitoring Research | Example Application |

|---|---|---|

| Data Augmentation (DA) | Artificially expands training datasets by creating modified versions of existing images/signals, improving model generalization and robustness to class imbalance. | Quadrupling a dataset of 679 food images to 2,716 images to improve portion estimation accuracy [4]. |

| Synthetic Minority Over-sampling Technique (SMOTE) | Generates synthetic samples for minority classes in feature space to balance class distribution, mitigating model bias toward majority classes. | Used in chemistry and material science to balance datasets for property prediction; applicable to dietary sensor data [8]. |

| You Only Look Once (YOLO) Models | A family of efficient, single-pass deep learning models for real-time object detection in images, useful for creating large, annotated food datasets. | Evaluating YOLOv8 for food component detection and portion estimation based on the Swedish plate model [5]. |

| Bio-impedance Sensor | A wearable sensing modality that measures changes in electrical impedance to detect dietary activities and, potentially, food types based on conductivity. | Used in the iEat wearable to recognize food intake activities and classify food types via wrist-worn electrodes [3]. |

| Convolutional Neural Networks (CNNs) | Deep learning architectures highly effective for image-based tasks like food classification and portion size estimation from food photographs. | Classifying Turkish cuisine dishes into food groups using pre-trained models like ResNet-18 and GoogLeNet [4]. |

| Macro F1-Score | A performance metric that calculates the unweighted mean of per-class F1-scores, ensuring minority classes have equal influence in the overall assessment. | Used to evaluate the iEat system's performance across different food types and activities, revealing gaps in classification [3]. |

The pursuit of high accuracy in dietary monitoring systems is inextricably linked to the challenge of imbalanced datasets. As the comparative data and experimental protocols show, even advanced deep learning models exhibit significant performance limitations, particularly for minority classes, when trained on non-uniform data. The F1-score emerges as an indispensable metric for a truthful evaluation, cutting through the illusion of high accuracy to reveal a model's weaknesses. Techniques like data augmentation and sophisticated sensing modalities offer pathways to mitigate these issues. Future progress in the field hinges on a concerted effort to create larger, more balanced, and culturally diverse dietary datasets. Without this foundation, the real-world applicability of eating detection systems in critical areas like personalized nutrition and clinical drug development will remain fundamentally constrained.

Contents

- Introduction

- Defining the Core Metrics

- The Precision-Recall Trade-off

- The F1-Score: A Unified Metric

- Evaluation in Eating Detection Research

- Experimental Protocols in Eating Detection

- Essential Research Toolkit

In the development of automated eating detection systems, the performance of machine learning models has a direct impact on the reliability of dietary monitoring and the efficacy of subsequent health interventions. Evaluating these models requires moving beyond simple accuracy to metrics that reflect real-world operational challenges. Precision, Recall, and the F1-score form the cornerstone of this evaluation, providing a nuanced view of a model's capabilities and limitations [9] [10]. This guide objectively compares these core metrics and illustrates their critical trade-offs within the context of eating detection research, a field where the cost of both false alarms and missed detections is significant [11].

Defining the Core Metrics

Precision and Recall are derived from the confusion matrix, which categorizes a model's predictions into True Positives (TP), False Positives (FP), True Negatives (TN), and False Negatives (FN) [12] [10]. In the context of eating detection, a "Positive" indicates the system has identified an eating event.

The formulas and interpretations for these metrics are as follows:

Precision answers the question: "Of all the eating episodes the system detected, how many were actual eating episodes?" [12] [13].

- Formula: ( \text{Precision} = \frac{TP}{TP + FP} )

- A high precision means the system has few false alarms; when it triggers an alert for eating, you can be confident it is correct [10].

Recall answers the question: "Of all the actual eating episodes that occurred, how many did the system successfully detect?" [12] [13].

- Formula: ( \text{Recall} = \frac{TP}{TP + FN} )

- A high recall means the system misses very few true eating episodes; it is comprehensive in its detection [10].
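
The two formulas translate directly into code. The counts below are made-up values for a worked example, not results from any cited study.

```python
def precision(tp, fp):
    """TP / (TP + FP); defined as 0.0 when nothing was predicted positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """TP / (TP + FN); defined as 0.0 when there are no actual positives."""
    return tp / (tp + fn) if (tp + fn) else 0.0


# Assumed counts: 40 eating episodes detected correctly, 10 false alarms,
# 20 real episodes missed.
tp, fp, fn = 40, 10, 20
p = precision(tp, fp)
r = recall(tp, fn)
print(f"precision = {p:.3f}, recall = {r:.3f}")
```

With these counts, 40 of the 50 alerts were correct (precision 0.8), while 40 of the 60 real episodes were caught (recall about 0.667).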

The following diagram visualizes the logical relationship between the confusion matrix, precision, and recall.

Logical relationship between core metrics and the confusion matrix

The Precision-Recall Trade-off

In practice, it is challenging for a model to achieve perfect precision and perfect recall simultaneously. Efforts to improve one often come at the expense of the other, creating a critical trade-off [12] [10].

This trade-off is often managed by adjusting the model's decision threshold—the level of confidence required for the model to predict a "positive" (eating event) [9] [10].

- Increasing the threshold makes the model more conservative. It only predicts an eating event when it is very confident. This reduces false positives (improving precision) but increases the risk of missing true events (lowering recall).

- Decreasing the threshold makes the model more liberal. It predicts an eating event even with lower confidence. This helps catch more true events (improving recall) but increases false alarms (lowering precision).
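
The effect of moving the threshold can be seen on a small, invented set of model confidence scores. The scores, labels, and the function name `precision_recall_at` are assumptions for illustration only.

```python
def precision_recall_at(scores, labels, threshold):
    """Precision and recall when predicting 'eating' for score >= threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Assumed toy data: model confidence per window and the true label (1 = eating).
scores = [0.95, 0.85, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 1, 0, 0, 0]

for threshold in (0.8, 0.5, 0.25):
    p, r = precision_recall_at(scores, labels, threshold)
    print(f"threshold={threshold:.2f}  precision={p:.2f}  recall={r:.2f}")
```

On this toy data, lowering the threshold from 0.8 to 0.25 raises recall from 0.5 to 1.0 while precision falls from 1.0 to about 0.67.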

The optimal balance is dictated by the application. For instance, in a smoke detector, high recall is prioritized to catch all real fires, even at the cost of many false alarms. Conversely, in a criminal justice system, high precision is valued to avoid convicting the innocent, even if some guilty people go free [12]. In eating detection, the priority might shift based on the end goal—whether for rigorous scientific measurement (requiring high precision) or for initiating real-time health interventions (requiring high recall) [11].

The fundamental trade-off between precision and recall

The F1-Score: A Unified Metric

The F1-score addresses the need for a single metric that balances both precision and recall. It is defined as the harmonic mean of precision and recall, providing a more conservative average than a simple arithmetic mean [9] [14].

- Formula: ( F1 = 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} )
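
A short numerical comparison shows why the harmonic mean is the more conservative choice. The precision and recall pairs are arbitrary example values.

```python
def f1_from(precision, recall):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def arithmetic_mean(precision, recall):
    return (precision + recall) / 2


# Example pairs: a balanced model and a badly lopsided one.
for p, r in [(0.8, 0.8), (0.9, 0.1)]:
    print(f"P={p}, R={r}: arithmetic={arithmetic_mean(p, r):.2f}, "
          f"F1={f1_from(p, r):.2f}")
```

Both pairs have similar arithmetic means (0.8 and 0.5), but the lopsided pair's F1 drops to 0.18, because the harmonic mean is pulled toward the smaller of the two values.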

The F1-score is particularly valuable when working with imbalanced datasets, which are common in eating detection (where eating events are far less frequent than non-eating activities) [9] [13] [14]. A model that simply always predicts "not eating" would have high accuracy but be useless; the F1-score reveals its failure by penalizing poor performance on the positive class [9].

For multi-class problems, such as distinguishing between different food types, several variants exist:

- Macro F1: Calculates F1 for each class independently and then averages them, treating all classes equally regardless of their size [14].

- Weighted F1: Averages the F1-scores of each class, weighted by the number of true instances for each class. This reflects class prevalence, but on imbalanced datasets the majority classes dominate the result, so it is best reported alongside Macro F1 rather than in place of it [14].

- Fβ-Score: A generalization that allows assigning more importance to either precision (with β < 1) or recall (with β > 1) based on specific application needs [14].
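
The variants above can be compared on a toy multi-class example. The food labels and predictions are invented, and the function names are hypothetical; the sketch shows how the two averages can diverge when a minority class is never predicted, and how β shifts the Fβ-score.

```python
from collections import Counter


def per_class_f1(y_true, y_pred):
    """F1 for each class, using F1 = 2TP / (2TP + FP + FN)."""
    scores = {}
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn
        scores[c] = 2 * tp / denom if denom else 0.0
    return scores


def macro_f1(y_true, y_pred):
    scores = per_class_f1(y_true, y_pred)
    return sum(scores.values()) / len(scores)


def weighted_f1(y_true, y_pred):
    scores = per_class_f1(y_true, y_pred)
    support = Counter(y_true)  # number of true instances per class
    return sum(scores[c] * support[c] for c in scores) / len(y_true)


def fbeta(precision, recall, beta):
    """F-beta: beta > 1 favours recall, beta < 1 favours precision."""
    b2 = beta * beta
    denom = b2 * precision + recall
    return (1 + b2) * precision * recall / denom if denom else 0.0


# Assumed toy data: 8 "rice" samples, 2 "soup" samples; the model always says "rice".
y_true = ["rice"] * 8 + ["soup"] * 2
y_pred = ["rice"] * 10

print(f"macro F1    = {macro_f1(y_true, y_pred):.3f}")
print(f"weighted F1 = {weighted_f1(y_true, y_pred):.3f}")
print(f"F2   (P=0.5, R=1.0) = {fbeta(0.5, 1.0, 2):.3f}")
print(f"F0.5 (P=0.5, R=1.0) = {fbeta(0.5, 1.0, 0.5):.3f}")
```

Here the "soup" class has an F1 of zero. Macro F1 falls to about 0.44, while weighted F1 stays near 0.71 because the majority class carries most of the weight.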

The F1-score as a harmonic mean of precision and recall

Evaluation in Eating Detection Research

The following tables consolidate quantitative results from recent studies in automated eating detection, showcasing how different approaches perform against the core metrics.

Table 1: Performance of Deep Learning Models on Chewable Food Audio Recognition [6]

This study used a dataset of 1,200 audio files across 20 food items. Features like spectrograms and MFCCs were extracted and used to train various models.

| Model Type | Model Name | Accuracy | Precision | Recall | F1-Score |

|---|---|---|---|---|---|

| Single Model | GRU | 99.28% | - | - | - |

| Single Model | LSTM | 95.57% | - | - | - |

| Single Model | Custom CNN | 95.96% | - | - | - |

| Hybrid Model | Bidirectional LSTM + GRU | 98.27% | 97.7% | - | - |

| Hybrid Model | RNN + Bidirectional LSTM | - | - | 97.45% | - |

| Hybrid Model | RNN + Bidirectional GRU | 97.48% | - | - | - |

Table 2: Performance of a Vision-based Real-time Eating Gesture Detector [11]

This study evaluated a wearable system that uses hand and object-in-hand detection on 36 participants in free-living environments.

| System Description | Key Metric | Performance |

|---|---|---|

| Real-time, hand-object-based method (detecting first 1.5 minutes or 10 gestures) | F1-Score | 89.0% |

| Baseline method (using only hand presence) | F1-Score | ~55.0% (approx. 34 percentage points lower) |

Experimental Protocols in Eating Detection

To ensure the validity and reproducibility of results, studies in this field adhere to rigorous experimental protocols.

1. Protocol for Audio-based Food Recognition [6]

- Data Collection & Preprocessing: Gather audio recordings of chewing sounds. Preprocess the data using signal processing techniques, including the generation of spectrograms (for visual signal strength), calculation of spectral rolloff and bandwidth (for signal shape), and extraction of Mel-Frequency Cepstral Coefficients (MFCCs) to capture timbral and textural aspects of the sound.

- Model Training & Evaluation: Split the data into training, validation, and test sets. Train various deep learning models (e.g., GRU, LSTM, CNN, and hybrid models) to learn the spectral and temporal patterns in the audio features. Finally, evaluate the models on the held-out test set to compute accuracy, precision, recall, and F1-score.

2. Protocol for Vision-based Eating Detection [11]

- Data Collection: Use a wearable device with an RGB camera and a low-power thermal sensor. Participants wear the device during waking hours, collecting thousands of hours of video data in free-living conditions. Frames are labeled for "feeding gesture," "smoking gesture," or "other."

- Gesture & Episode Detection: Train a lightweight object detection model (e.g., YOLOX) to detect the wearer's hand and an object-in-hand in real-time. Apply a clustering algorithm (e.g., DBSCAN) to group consecutive positive frames into "gestures." A second clustering step groups these gestures into longer "eating episodes."

- Evaluation & Trade-off Analysis: Evaluate the overlap between predicted and ground-truth gestures/episodes. Systematically analyze the trade-off between the number of gestures required to trigger an episode detection and the resulting F1-score to determine an optimal operating point.
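
The frame-to-gesture clustering step can be sketched with a minimal one-dimensional DBSCAN over the indices of positive frames. This is a simplified illustration, not the study's implementation: the `eps` and `min_samples` values and the frame indices are assumed, and a production system would more likely use an established library implementation.

```python
def dbscan_1d(points, eps, min_samples):
    """Minimal DBSCAN on 1-D values. Returns one label per point (-1 = noise)."""
    n = len(points)
    # Neighbourhood of each point, including the point itself.
    neighbors = [
        [j for j in range(n) if abs(points[i] - points[j]) <= eps]
        for i in range(n)
    ]
    labels = [None] * n
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_samples:
            labels[i] = -1  # provisional noise; may become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise reached from a core point: border
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbors[j]) >= min_samples:
                queue.extend(neighbors[j])  # j is a core point: keep expanding
    return labels


# Assumed indices of frames in which a hand and an object-in-hand were detected.
positive_frames = [0, 1, 2, 3, 50, 100, 101, 102]
gesture_labels = dbscan_1d(positive_frames, eps=1, min_samples=3)
print(gesture_labels)
```

Runs of nearby positive frames form gestures, while the isolated detection at frame 50 is labelled as noise. The same function could be applied a second time to gesture timestamps, with a larger `eps`, to form episodes.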

Essential Research Toolkit

The following table details key reagents, technologies, and software solutions used in the featured experiments and the broader field of machine learning-based food analysis.

Table 3: Key Research Reagents and Solutions

| Item Name | Type | Function in Research |

|---|---|---|

| Mel-Frequency Cepstral Coefficients (MFCCs) | Software/Feature | Extracts timbral and textural features from audio signals by converting sound from the time to the frequency domain, critical for analyzing chewing sounds. [6] |

| Spectrogram Generator | Software/Feature | Creates a visual representation of the signal strength of an audio file over time and frequency, used for visualizing and analyzing eating sounds. [6] |

| GRU/LSTM Networks | Software/Model | Types of recurrent neural network (RNN) effective at learning long-term temporal patterns, making them suitable for sequential data like audio and video of eating events. [6] |

| YOLOX-nano | Software/Model | A lightweight, real-time object detection model backbone used for detecting hands and objects-in-hand on low-power wearable devices. [11] |

| DBSCAN | Software/Algorithm | A clustering algorithm used to group consecutive video frames or detected gestures into coherent eating episodes based on density and timing. [11] |

| Thermal Sensor (e.g., MLX90640) | Hardware | A low-power sensor that captures thermal signatures, used alongside RGB cameras to distinguish confounding gestures like smoking from eating. [11] |

| Near-Infrared Spectroscopy (NIRS) | Technology | A non-destructive food testing technology used to analyze chemical composition and quality, often combined with ML for food adulteration detection. [15] |

| Electronic Nose/Tongue | Technology | Sensor systems that detect flavors and odors, used in machine learning-assisted food quality and flavor component analysis. [15] |

In the field of automated dietary monitoring (ADM), the accurate detection of eating episodes is paramount for health interventions related to obesity, diabetes, and other diet-related conditions. Evaluating the performance of these detection systems presents a unique challenge: algorithms must correctly identify true eating events (minimizing false negatives) while avoiding incorrect detections from confounding activities like speaking or face-touching (minimizing false positives). This guide objectively compares the performance of various eating detection systems, with a focus on the F1-score as a critical harmonic mean of precision and recall. We synthesize experimental data from recent studies, providing structured comparisons of methodologies and outcomes to inform researchers and developers in the selection and optimization of ADM technologies.

Automated eating detection systems leverage a variety of sensing modalities, from wearable cameras and inertial sensors to bio-impedance and acoustic sensors. The deployment environment for these systems is inherently "in-the-wild," filled with activities that can be mistaken for eating, such as smoking, talking, or gesturing near the face [11]. In this context, relying on a single performance metric like accuracy can be misleading, especially if the dataset is imbalanced with long periods of non-eating activity.

The F1-score resolves this by providing a single metric that balances two crucial aspects:

- Precision: The quality of the positive predictions. A high precision means that when the system triggers an eating event, it is very likely to be correct. Low precision leads to false alarms, causing user frustration and "alert fatigue" [16].

- Recall: The ability to correctly identify actual eating events. A high recall means the system captures most genuine eating episodes. Low recall means meals are missed, compromising the data's utility for health assessment [16].

For eating detection, both types of errors are costly. A system with high recall but low precision would overwhelm a user with false notifications. Conversely, a system with high precision but low recall would fail to log significant portions of a meal, undermining dietary assessment. The F1-score, as the harmonic mean of precision and recall, is high only when both metrics are high, which makes it a particularly relevant indicator of a robust eating detection system in real-world conditions [16].

Comparative Performance of Eating Detection Modalities

The following table summarizes the performance of various eating detection approaches as reported in recent scientific literature. The F1-score is highlighted as the key comparative metric.

Table 1: Performance Comparison of Eating Detection Systems

| Sensing Modality | Reported Performance (F1-Score) | Key Strengths | Key Limitations |

|---|---|---|---|

| Wearable Camera & Thermal Sensor [11] | 89.0% (Eating Episode) | High accuracy in free-living; distinguishes eating from smoking via thermal data. | Privacy concerns; confirmation delay for short meals. |

| Smart Glasses (Optical Sensors) [17] | 0.91 (Chewing Detection, Lab); Precision: 0.95, Recall: 0.82 (Real-Life) | Non-invasive; granular chewing detection; high precision. | Requires wearing specific glasses; performance may vary with facial structure. |

| Wrist-Worn Inertial Sensors (Smartwatch) [18] | 0.82 (Eating Segment) | High user acceptability; uses common, commodity device. | Sensitive to confounding hand-to-head gestures. |

| Bio-Impedance Wearable (iEat) [3] | 86.4% (Activity Recognition) | Uses normal utensils; recognizes specific food intake activities. | Limited food type classification performance (64.2% F1). |

| Neck-Worn Acoustic Sensor [11] | 84.9% (Accuracy reported) | Directly captures chewing and swallowing sounds. | Privacy concerns with audio; sensitive to ambient noise. |

Detailed Experimental Protocols and Methodologies

This section details the experimental setups and methodologies from key studies cited in this guide, providing context for the performance data.

Vision-Based Eating Detection with When2Trigger

Objective: To develop a real-time, vision-based eating detection algorithm that balances the trade-off between false positives and detection delay [11].

- Sensors Used: A custom wearable device with an RGB camera and a low-power thermal sensor.

- Data Collection: 36 participants wore the device for up to 14 days in free-living conditions, resulting in 2,797 hours of data. RGB and thermal videos were captured at 5 frames per second [11].

- Algorithm Workflow:

- Gesture Detection: A lightweight YOLOX-nano model detected the presence of a hand and an object-in-hand in each frame.

- Gesture Clustering: Frames with detected hand-object interactions were clustered into distinct "gestures" using the DBSCAN algorithm.

- Episode Detection: Gestures were further clustered into eating "episodes" using a second DBSCAN pass.

- Trade-off Analysis: The study identified that using an average of 10 gestures or the first 1.5 minutes of an episode provided the optimal balance for triggering a detection with an F1-score of 89.0% [11].

The integration of a thermal sensor was crucial for distinguishing smoking gestures from eating, thereby reducing false positives.

Chewing Detection with Smart Glasses (Optical Sensors)

Objective: To accurately monitor eating behavior by detecting chewing segments using optical sensors embedded in smart glasses frames [17].

- Sensors Used: OCOsense smart glasses with optical tracking (OCO) sensors that measure 2D skin movement (optomyography) on the cheeks and temples.

- Data Collection: Data was collected in both controlled laboratory settings and real-life (in-the-wild) scenarios. The sensors captured muscle activations from the temporalis and zygomaticus muscles during chewing [17].

- Algorithm Workflow:

- Signal Acquisition: The OCO sensors recorded skin movement data from the cheek and temple areas during various activities (chewing, speaking, clenching teeth).

- Deep Learning Model: A Convolutional Long Short-Term Memory (ConvLSTM) neural network was trained to distinguish chewing from other facial activities.

- Temporal Modeling: A Hidden Markov Model was integrated to model the temporal dependencies between consecutive chewing events.

- Performance: The system achieved an F1-score of 0.91 in lab settings and high precision (0.95) in real-life, demonstrating effective granular chewing detection [17].
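
The temporal modeling step can be illustrated with a two-state Viterbi decoder that smooths per-window classifier outputs. This is a sketch under stated assumptions, not the study's model: the `stay` probability, the uniform prior, and the use of classifier probabilities as emission scores are all choices made for this example.

```python
import math


def viterbi_smooth(probs, stay=0.9):
    """Smooth per-window P(chewing) into a 0/1 sequence with a 2-state HMM.

    Assumptions: uniform initial prior, symmetric transitions with
    probability `stay` of remaining in the same state, and classifier
    probabilities used as a proxy for emission likelihoods.
    """
    if not probs:
        return []
    tiny = 1e-9

    def emit(p, state):
        return math.log(max(p if state == 1 else 1.0 - p, tiny))

    def trans(prev, cur):
        return math.log(stay if prev == cur else 1.0 - stay)

    score = [emit(probs[0], s) + math.log(0.5) for s in (0, 1)]
    backpointers = []
    for p in probs[1:]:
        new_score, pointers = [], []
        for cur in (0, 1):
            candidates = [score[prev] + trans(prev, cur) for prev in (0, 1)]
            best_prev = 0 if candidates[0] >= candidates[1] else 1
            new_score.append(candidates[best_prev] + emit(p, cur))
            pointers.append(best_prev)
        score = new_score
        backpointers.append(pointers)

    # Trace back the most likely state sequence.
    state = 0 if score[0] >= score[1] else 1
    path = [state]
    for pointers in reversed(backpointers):
        state = pointers[state]
        path.append(state)
    return path[::-1]


# Assumed classifier outputs: one low-confidence window inside a chewing bout.
window_probs = [0.9, 0.9, 0.2, 0.9, 0.9]
smoothed = viterbi_smooth(window_probs)
print(smoothed)
```

A plain 0.5 threshold would split this bout into two segments at the third window. The transition penalty makes a single continuous chewing segment the more likely explanation.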

Dietary Monitoring with Bio-Impedance (iEat)

Objective: To explore an atypical use of bio-impedance sensing for recognizing food intake activities and food types via a wrist-worn device [3].

- Sensors Used: A custom wearable (iEat) using a two-electrode configuration to measure bio-impedance across two wrists.

- Experimental Principle: During dining activities, dynamic circuit loops are formed through the hands, mouth, utensils, and food. These interactions cause unique variations in the measured impedance signal, which serve as features for classification [3].

- Data Collection: Ten volunteers performed 40 meals in an everyday table-dining environment. The system recorded impedance data during activities like cutting, drinking, and eating with a hand or fork.

- Algorithm and Performance: A lightweight, user-independent neural network was used for classification. The system detected four food-intake activities with a macro F1-score of 86.4% and classified seven food types with a macro F1-score of 64.2% [3].

Visualizing the F1-Score's Role in Eating Detection

The following diagram illustrates the logical relationship between precision, recall, and the F1-score, and how this evaluation framework is applied to the workflow of an eating detection system.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key hardware, software, and algorithmic "reagents" essential for conducting research in the field of automated eating detection.

Table 2: Key Research Reagents for Eating Detection Systems

| Reagent / Solution | Type | Function in Research | Exemplar Use Case |

|---|---|---|---|

| YOLOX-nano Object Detector [11] | Algorithm | Lightweight, real-time detection of hand and object-in-hand for gesture recognition. | Vision-based eating detection on edge devices. |

| Convolutional LSTM (ConvLSTM) [17] | Algorithm | Analyzes spatiotemporal patterns in sensor data; ideal for sequential data like facial movements. | Chewing detection from optical sensor data streams. |

| OCO Optical Sensor [17] | Hardware | Measures 2D skin movement (optomyography) non-invasively via smart glasses. | Monitoring activations of temporalis and cheek muscles. |

| Bio-Impedance Sensor (Two-Electrode) [3] | Hardware | Measures electrical impedance across the body; detects circuit changes from human-food interaction. | Recognizing food intake activities with normal utensils. |

| DBSCAN Clustering [11] | Algorithm | Clusters time-series events (frames, gestures) into episodes without pre-defining the number of clusters. | Forming eating episodes from a series of detected feeding gestures. |

| Hidden Markov Model (HMM) [17] | Algorithm | Models temporal dependencies between discrete states in a sequence. | Post-processing DL outputs to refine chewing segment detection. |

This comparison guide demonstrates that the F1-score is an indispensable metric for evaluating and comparing eating detection systems, given the critical need to balance precision and recall in real-world applications. The experimental data reveals a trade-off between system obtrusiveness and performance. While vision-based methods currently achieve high F1-scores (e.g., 0.89 [11]), they raise privacy concerns. Conversely, more discreet modalities like smartwatch inertial sensors offer high usability but face challenges with confounding gestures, reflected in a lower F1-score of 0.82 [18]. Emerging technologies like optical sensing in smart glasses and bio-impedance on the wrist show promising, balanced performance while mitigating privacy issues. The choice of technology ultimately depends on the specific application requirements, but the F1-score remains the universal standard for objective, comparable, and meaningful performance assessment in this field.

The F1-score is a critical performance metric in machine learning, especially for classification tasks where data may be imbalanced. It provides a single measure that balances two competing objectives: precision (the accuracy of positive predictions) and recall (the ability to find all positive instances) [16] [19]. This guide explores the interpretation of F1-scores within the specific context of eating detection systems research, offering a framework for evaluating model performance from poor to excellent.

The Fundamentals of F1-Score

The F1-score is the harmonic mean of precision and recall, providing a balanced assessment of a model's performance. The harmonic mean, unlike a simple arithmetic average, penalizes large differences between precision and recall, making the F1-score a more conservative and reliable metric when both metrics are important [19] [20].

- Precision: Measures the model's ability to avoid false alarms (False Positives). It is calculated as True Positives / (True Positives + False Positives) [16] [21].

- Recall: Measures the model's ability to find all relevant cases (True Positives) and avoid missing them (False Negatives). It is calculated as True Positives / (True Positives + False Negatives) [16] [21].

The formula for the F1-score is: F1 Score = 2 * (Precision * Recall) / (Precision + Recall) [16] [19] [20]

It can also be expressed directly in terms of True Positives (TP), False Positives (FP), and False Negatives (FN): F1 Score = (2 * TP) / (2 * TP + FP + FN) [19]
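
As a small worked example, the sketch below computes precision, recall, and the F1-score from raw counts and checks that the two formulas above agree. The counts are hypothetical, chosen only to make the arithmetic easy to follow.

```python
def precision_recall_f1(tp, fp, fn):
    """Return (precision, recall, F1) from confusion counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts: 80 eating gestures detected correctly,
# 20 false alarms, 40 gestures missed.
tp, fp, fn = 80, 20, 40
precision, recall, f1 = precision_recall_f1(tp, fp, fn)
f1_direct = 2 * tp / (2 * tp + fp + fn)

print(round(precision, 4), round(recall, 4))  # 0.8 0.6667
print(round(f1, 4), round(f1_direct, 4))      # 0.7273 0.7273

# The harmonic mean sits below the arithmetic mean when the two values differ.
arithmetic_mean = (precision + recall) / 2
print(round(arithmetic_mean, 4))              # 0.7333
```

The gap between the harmonic and arithmetic means grows as precision and recall diverge; for example, a precision of 0.95 with a recall of 0.30 gives an arithmetic mean of 0.625 but an F1-score of about 0.456.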

The following diagram illustrates the logical relationship between the core components that constitute the F1-score.

Interpreting F1-Score Performance Tiers

There is no universal standard for F1-score ranges, as a "good" score is highly dependent on the complexity of the task and the consequences of errors [22]. However, general guidelines exist. The table below provides a conceptual framework for interpreting F1-score values, with a specific emphasis on the context of eating detection research.

Table 1: General Interpretation Guide for F1-Score

| F1-Score Range | Performance Tier | Interpretation in Eating Detection Research |

|---|---|---|

| 0.90 – 1.00 | Excellent | Model is highly reliable. Indicates robust detection of eating gestures with minimal false positives/negatives, suitable for clinical or intervention applications [7]. |

| 0.80 – 0.89 | Good | Model is solid and effective. Represents a high-accuracy system that reliably detects meals, though with some room for refinement [2] [11]. |

| 0.70 – 0.79 | Decent | Model has moderate performance. May be sufficient for initial research or applications where some error is acceptable, but not ideal for precise monitoring [22]. |

| 0.50 – 0.69 | Poor to Fair | Model performance is weak. May be outperformed by a random or naive classifier in balanced datasets; requires significant improvement [22]. |

| < 0.50 | Very Poor | Model has failed to learn the task effectively. Predictions are largely unreliable for eating detection purposes [22]. |
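
For consistent reporting, the tiers in Table 1 can be encoded as a simple lookup. This is only a sketch: the function name is hypothetical, and treating each lower bound as inclusive (so that, for example, 0.895 falls in "Good") is an assumption, since the table does not say how values between the listed ranges should be handled.

```python
def f1_tier(f1):
    """Map an F1-score in [0, 1] to the performance tier named in Table 1."""
    if not 0.0 <= f1 <= 1.0:
        raise ValueError("F1-score must lie between 0 and 1")
    if f1 >= 0.90:
        return "Excellent"
    if f1 >= 0.80:
        return "Good"
    if f1 >= 0.70:
        return "Decent"
    if f1 >= 0.50:
        return "Poor to Fair"
    return "Very Poor"


for score in (0.99, 0.873, 0.706, 0.45):
    print(score, f1_tier(score))
```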

Contextual Performance in Eating Detection Systems

In eating detection research, performance must be evaluated against the specific challenge. The following table summarizes the F1-scores achieved by various state-of-the-art sensing methodologies, providing a benchmark for what constitutes excellent performance in this domain.

Table 2: F1-Score Performance of Select Eating Detection Systems

| Sensing Modality | System / Study Description | Reported F1-Score | Performance Tier |

|---|---|---|---|

| Inertial (IMU) & Deep Learning | Personalized deep learning model for carbohydrate intake detection in diabetic patients [7]. | 0.99 (Median) | Excellent |

| Vision & Thermal Sensing | Real-time, hand-object-based method for eating/drinking gesture detection [11]. | 0.890 | Good |

| Smartwatch (Accelerometer) | Real-time meal detection system using smartwatch-based hand movements [2]. | 0.873 | Good |

| Bio-Impedance Sensing | iEat wearable device for food intake activity recognition (e.g., cutting, drinking) [3]. | 0.864 (Macro) | Good |

Essential Protocols in Eating Detection Research

To ensure the validity and reproducibility of F1-scores, rigorous experimental protocols are essential. The methodologies from key studies provide a template for robust evaluation.

Protocol: Real-Time Eating Detection via Hand Gestures

This protocol, adapted from a smartwatch-based study, focuses on detecting eating episodes based on dominant hand movements [2].

- Sensing Modality: A commercial smartwatch with a three-axis accelerometer, worn on the dominant hand.

- Data Collection: Accelerometer data is collected at a fixed sampling rate (e.g., 15 Hz). Studies often use pre-existing datasets (e.g., Lab-21, Wild-7) collected in laboratory and semi-controlled settings for initial model training [2].

- Feature Extraction: A sliding window (e.g., 6 seconds with 50% overlap) is used to extract statistical features (mean, variance, skewness, kurtosis, root mean square) from the accelerometer data along each axis [2].

- Model Training & Real-Time Detection: A machine learning classifier (e.g., Random Forest) is trained offline on the extracted features. The best-performing model is then ported to a smartphone to run in real-time. An eating episode is typically confirmed, and an Ecological Momentary Assessment (EMA) is triggered, when a specific number of eating gestures (e.g., 20) are detected within a defined time span (e.g., 15 minutes) [2].
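
The feature-extraction step in this protocol can be sketched as follows, using the example values given above (15 Hz sampling, 6-second windows, 50% overlap). The function names are hypothetical, and the use of population variance and non-excess kurtosis is an assumption; the cited study may define these statistics differently.

```python
import math


def window_features(values):
    """Mean, variance, skewness, kurtosis, and RMS of one axis within one window."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n  # population variance (assumed)
    std = math.sqrt(var)
    if std == 0:
        skew = kurt = 0.0  # flat signal: higher moments are undefined, report 0
    else:
        skew = sum((v - mean) ** 3 for v in values) / (n * std ** 3)
        kurt = sum((v - mean) ** 4 for v in values) / (n * std ** 4)  # non-excess
    rms = math.sqrt(sum(v * v for v in values) / n)
    return [mean, var, skew, kurt, rms]


def sliding_windows(samples, rate_hz=15, window_s=6, overlap=0.5):
    """Yield fixed-length windows; incomplete trailing windows are dropped."""
    size = int(rate_hz * window_s)
    step = max(1, int(size * (1 - overlap)))
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def extract_features(xyz_samples, rate_hz=15, window_s=6, overlap=0.5):
    """Turn a list of (x, y, z) samples into one 15-value feature row per window."""
    rows = []
    for window in sliding_windows(xyz_samples, rate_hz, window_s, overlap):
        row = []
        for axis in range(3):
            row.extend(window_features([sample[axis] for sample in window]))
        rows.append(row)
    return rows


# Synthetic 30-second recording at 15 Hz (illustrative signal, not study data).
samples = [(math.sin(i / 5), 0.0, 1.0) for i in range(450)]
rows = extract_features(samples)
print(len(rows), len(rows[0]))  # 9 15
```

Each row would then be passed to the classifier (a Random Forest in the cited protocol); that step is omitted here to keep the sketch free of external dependencies.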

The workflow for this protocol is summarized below.

Protocol: Vision-Based Detection with Hand-Object Interaction

This protocol uses wearable cameras and thermal sensors to reduce false positives from confounding gestures like face touching or smoking [11].

- Sensing Modality: A wearable device with an RGB camera and a low-power thermal sensor (e.g., MLX90640), capturing data at a rate of 5 frames per second [11].

- Hand-Object Detection: Each frame is processed by a lightweight object detection model (e.g., YOLOX-nano) trained with a custom loss function to detect the wearer's hand and an object-in-hand simultaneously [11].

- Gesture & Episode Clustering: Frames where both a hand and an object are detected form a binary sequence. The DBSCAN clustering algorithm is applied to this sequence to group detections into distinct "feeding gestures." These gestures are further clustered into eating "episodes" using a second DBSCAN pass with a larger time window (e.g., eps=5 minutes) [11].

- Performance Trade-off Analysis: Researchers systematically vary the minimum number of gestures required to confirm an eating episode. This allows them to analyze the trade-off between detection delay (how quickly a meal is detected) and the F1-score, identifying the optimal threshold for intervention [11].
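
The two-pass clustering step can be illustrated with a small, dependency-free DBSCAN over detection timestamps. This is a sketch under several assumptions: the cited study applies DBSCAN to a binary frame sequence, whereas this example clusters the timestamps of positive frames; only the 5-minute `eps` for the episode pass comes from the protocol above, while the gesture-level `eps`, both `min_samples` values, the use of gesture midpoints, and all function names are hypothetical.

```python
def dbscan_1d(times, eps, min_samples):
    """Minimal DBSCAN for 1-D points. Returns a cluster id per point, or -1 for noise."""
    n = len(times)
    neighbors = [[j for j in range(n) if abs(times[i] - times[j]) <= eps]
                 for i in range(n)]
    labels = [None] * n
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_samples:
            labels[i] = -1  # provisional noise; may later become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # border point: joins the cluster, not expanded
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbors[j]) >= min_samples:
                queue.extend(neighbors[j])  # core point: keep expanding
    return labels


def cluster_spans(times, labels):
    """Return (first, last) time for each cluster, ignoring noise points."""
    spans = {}
    for t, label in zip(times, labels):
        if label == -1:
            continue
        lo, hi = spans.get(label, (t, t))
        spans[label] = (min(lo, t), max(hi, t))
    return [spans[key] for key in sorted(spans)]


# Hypothetical timestamps (seconds) of frames with both hand and object detected.
detections = [10.0, 10.2, 10.4, 10.6, 70.0, 70.2, 70.4,
              130.0, 130.2, 130.4, 130.6, 500.0, 2000.0, 2000.2, 2000.4]

# Pass 1: group frames into feeding gestures (eps and min_samples assumed).
gesture_labels = dbscan_1d(detections, eps=1.0, min_samples=3)
gestures = cluster_spans(detections, gesture_labels)

# Pass 2: group gesture midpoints into episodes (eps = 5 minutes, as in the protocol).
midpoints = [(start + end) / 2 for start, end in gestures]
episode_labels = dbscan_1d(midpoints, eps=5 * 60, min_samples=2)
episodes = cluster_spans(midpoints, episode_labels)

print(len(gestures), len(episodes))  # 4 1
```

In this toy data the single detection at 500 s is discarded as noise in the first pass, and the isolated gesture near 2000 s is discarded in the second, which illustrates how clustering can suppress transient false positives.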

The Scientist's Toolkit: Research Reagent Solutions

Building and evaluating an eating detection system requires a suite of methodological "reagents." The following table outlines essential components and their functions in this field of research.

Table 3: Essential Research Toolkit for Eating Detection Systems

| Research Reagent | Function & Application |

|---|---|

| Inertial Measurement Unit (IMU) | A sensor package (accelerometer, gyroscope) that captures hand-to-mouth movement dynamics. It is the core of smartwatch-based detection systems [2] [7]. |

| Wearable Camera | Provides visual confirmation of eating activity. Used for validating automated systems and for training hand-object detection models [11]. |

| Low-Power Thermal Sensor | Helps distinguish eating gestures from visually similar activities (e.g., smoking) by detecting thermal signatures, thereby reducing false positives [11]. |

| Bio-Impedance Sensor | Measures electrical impedance across the body. Systems like iEat leverage impedance variations caused by dynamic circuits formed during hand-food-mouth interactions to recognize dietary activities [3]. |

| Ecological Momentary Assessment (EMA) | A method for real-time, in-situ data collection. Short questionnaires are triggered automatically upon detection of an eating episode to gather ground-truth contextual data (e.g., food type, company) [2]. |

| Clustering Algorithm (DBSCAN) | A machine learning method used to group sporadic detections of hand-object interactions into coherent gestures and longer eating episodes, mitigating the impact of transient false positives [11]. |

| Personalized Deep Learning Model | A model (e.g., LSTM networks) tailored to an individual's unique eating patterns, which can achieve exceptionally high F1-scores by accounting for personal biometrics and behaviors [7]. |

Interpreting the F1-score requires a nuanced understanding that goes beyond a single number. In eating detection research, an F1-score of 0.87 to 0.89 represents a Good and highly effective system, as demonstrated by real-world deployments [2] [11]. Scores in the Excellent tier (≥0.90) are often achieved with personalized models or specific sensing modalities but may face challenges in generalizability [7]. Ultimately, the definition of an "excellent" F1-score is contingent on the specific application's requirements, the chosen sensing technology, and the acceptable trade-off between precision and recall. Researchers must contextualize this metric within their experimental design and intended use case.

F1-Score in Action: Evaluating Diverse Eating Detection Methodologies

In the research landscape of automated eating behavior analysis, the F1-score has emerged as a critical metric for evaluating system performance. This harmonic mean of precision and recall offers a balanced assessment, especially vital in detecting subtle behavioral markers like bite events where both false positives (mislabeling other actions as bites) and false negatives (missing actual bites) can significantly skew scientific outcomes [23]. The development of deep learning approaches for bite detection represents a paradigm shift from traditional manual coding and wearable sensors, aiming to provide scalable, non-intrusive solutions for long-term eating behavior studies [24]. Within this context, ByteTrack establishes itself as a specialized deep learning system designed specifically for automated bite count and bite-rate detection from video-recorded child meals, framing its performance within the crucial F1-score evaluation framework that balances precision and recall for eating detection systems research [24] [25].

Experimental Protocols: ByteTrack Methodology Unveiled

Data Collection and Study Design

The ByteTrack model was developed and validated using video data from the Food and Brain Study, a prospective investigation examining neural and cognitive risk factors for obesity development in middle childhood [24] [26]. The dataset comprised 1,440 minutes from 242 videos of 94 children aged 7-9 years consuming four laboratory meals spaced one week apart. Each meal consisted of identical foods common to US children (macaroni and cheese, chicken nuggets, grapes, and broccoli) served in varying amounts, with children eating ad libitum until comfortably full during 30-minute sessions [24] [26]. Meals were video-recorded at 30 frames per second using Axis M3004-V network cameras positioned outside children's direct line of sight to minimize observer effects, with approximately 80% of recordings including additional people to simulate natural mealtime environments [24] [27].

The ByteTrack Architecture: A Two-Stage Pipeline

ByteTrack employs a sophisticated two-stage deep learning pipeline specifically engineered to handle challenges in pediatric eating behavior analysis, including blur, low light, camera shake, and occlusions from hands or utensils blocking the mouth [24] [25].

Stage 1: Hybrid Face Detection - This initial stage combines Faster R-CNN and YOLOv7 architectures in a hybrid pipeline to detect and track children's faces throughout meal videos. This dual approach balances rapid face recognition with robust detection in challenging scenarios where faces may be partially blocked, ensuring the system focuses on the target child while ignoring irrelevant objects or individuals [24] [27].

Stage 2: Bite Classification - The identified face regions are analyzed using an EfficientNet convolutional neural network (CNN) combined with a long short-term memory (LSTM) recurrent network. This architecture leverages EfficientNet's efficient feature extraction capabilities while utilizing LSTM's strength in analyzing temporal sequences to distinguish true bite actions from other facial movements and gestures [24] [25].

The following workflow illustrates ByteTrack's architectural pipeline and evaluation process:

Performance Evaluation Framework

Model performance was quantified using standard object detection metrics [23] [28], with comparisons against manual observational coding as the gold standard. Key metrics included:

- Precision: Measures accuracy in identifying true bites, calculated as True Positives/(True Positives + False Positives) [23]

- Recall: Assesses capability to detect all actual bites, calculated as True Positives/(True Positives + False Negatives) [23]

- F1-Score: Harmonic mean of precision and recall, providing balanced performance assessment [23] [28]

- Intraclass Correlation Coefficient (ICC): Statistical measure of agreement with human coders [24] [25]

The evaluation process employed a rigorous train-test split, with the model trained on 242 videos and tested on a separate set of 51 videos to ensure unbiased performance assessment [24] [29].

Performance Comparison: ByteTrack Versus Alternative Approaches

ByteTrack's Quantitative Performance Profile

On the test set of 51 videos, ByteTrack demonstrated a distinct performance profile across evaluation metrics [24] [25]:

Table 1: ByteTrack Performance Metrics on Pediatric Meal Videos

| Metric | Performance | Interpretation |

|---|---|---|

| Precision | 79.4% | Proportion of correctly identified bites out of all detected bite events |

| Recall | 67.9% | Proportion of actual bites successfully detected by the system |

| F1-Score | 70.6% | Balanced measure combining precision and recall |

| ICC Agreement | 0.66 (range: 0.16-0.99) | Reliability compared to manual observational coding |

The performance variability reflected in the wide ICC range (0.16-0.99) stemmed primarily from challenging conditions including extensive child movement, utensils or hands blocking the mouth, and behavioral factors like chewing on spoons or playing with food, particularly common toward meal endings [24] [27] [29].
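
One detail worth checking when reading Table 1 is that the harmonic mean of the reported precision and recall is not the reported F1-score. The sketch below shows the arithmetic and one possible explanation: if metrics are computed per video and then averaged, the mean of per-video F1-scores can differ from the F1 of the averaged precision and recall. The source does not state how the averages were formed, so this is an assumption, and the per-video values below are hypothetical.

```python
def harmonic_f1(precision, recall):
    """F1 as the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values reported in Table 1.
reported_precision, reported_recall, reported_f1 = 0.794, 0.679, 0.706
print(round(harmonic_f1(reported_precision, reported_recall), 3))  # 0.732

# Hypothetical per-video (precision, recall) pairs, for illustration only.
per_video = [(0.95, 0.95), (0.80, 0.70), (0.60, 0.30)]
mean_p = sum(p for p, _ in per_video) / len(per_video)
mean_r = sum(r for _, r in per_video) / len(per_video)

f1_of_means = harmonic_f1(mean_p, mean_r)
mean_of_f1s = sum(harmonic_f1(p, r) for p, r in per_video) / len(per_video)
print(round(f1_of_means, 3), round(mean_of_f1s, 3))  # 0.71 0.699
```

When comparing systems, it therefore helps to note whether a reported F1-score was pooled over all events or averaged across recordings or participants.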

Comparative Analysis with Alternative Methodologies

When evaluated against existing approaches for eating behavior analysis, ByteTrack occupies a distinct position in the methodological ecosystem:

Table 2: Methodological Comparison for Bite Detection Systems

| Methodology | Key Features | Advantages | Limitations |

|---|---|---|---|

| ByteTrack (Video-Based DL) | Two-stage pipeline: hybrid face detection + CNN-LSTM bite classification | Non-intrusive, scalable, preserves natural eating context | Performance decreases with occlusions and high movement [24] [25] |

| Manual Observational Coding | Frame-by-frame human video annotation | High accuracy, current gold standard | Labor-intensive, time-consuming, costly, not scalable [24] [26] |

| Wearable Sensors | Accelerometers, acoustic sensors, pre-defined motion thresholds | Portable, usable outside laboratory settings | Disrupt natural eating, false positives from gestures, struggles with utensil variability [24] [30] |

| Facial Landmark Approaches | Hand proximity, mouth opening criteria | Effective in controlled environments | Prone to false positives from talking, gestures, facial expressions [24] [26] |

| Optical Flow Methods | Motion tracking between consecutive frames | Adaptable to different eating styles | Difficulties distinguishing bites from fidgeting or gesturing [24] [26] |

ByteTrack's architectural approach demonstrates particular advantages in handling real-world variability in pediatric eating environments while maintaining moderate reliability, though it faces ongoing challenges with visual occlusions common in naturalistic feeding scenarios [24] [27].

The Scientist's Toolkit: Research Reagent Solutions

Implementing and evaluating deep learning systems like ByteTrack requires specific computational tools and frameworks essential for reproducible research:

Table 3: Essential Research Reagents for Eating Detection Systems

| Research Reagent | Function in Development/Evaluation | Application in ByteTrack |

|---|---|---|

| Faster R-CNN | Region-based object detection network | Initial face detection in hybrid pipeline [24] |

| YOLOv7 | Real-time object detection system | Complementary face detection for challenging conditions [24] |

| EfficientNet CNN | Convolutional neural network with optimized scaling | Feature extraction from facial regions for bite analysis [24] [25] |

| LSTM Network | Recurrent neural network for sequence modeling | Temporal analysis of movement patterns for bite classification [24] [25] |

| Intersection over Union (IoU) | Evaluation metric for object detection accuracy | Measures overlap between predicted and ground truth bounding boxes [23] [28] |

| COCO Evaluation Metrics | Standardized object detection evaluation framework | Provides precision-recall curves and average precision calculations [28] |
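
To make the Intersection over Union entry in the table concrete, here is a minimal sketch for axis-aligned bounding boxes. The `(x_min, y_min, x_max, y_max)` box format and the function name are assumptions for illustration; they are not taken from the ByteTrack implementation.

```python
def iou(box_a, box_b):
    """Intersection over Union for two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


predicted = (0, 0, 10, 10)
ground_truth = (5, 5, 15, 15)
print(round(iou(predicted, ground_truth), 3))  # 0.143
```

A detection is usually counted as a true positive when its IoU with a ground-truth box meets a chosen threshold (0.5 is a common choice), and those counts then feed the precision, recall, and F1 calculations.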

These research reagents form the foundational infrastructure for developing, training, and evaluating automated eating detection systems, with the specific implementation in ByteTrack highlighting their practical application in pediatric nutrition research.

ByteTrack represents a significant methodological advancement in automated eating behavior analysis, demonstrating the feasibility of deep learning approaches for scalable bite detection in pediatric populations. With an F1-score of 70.6%, it establishes a benchmark for video-based systems, though there remains substantial opportunity for improvement, particularly in handling visual occlusions and high-movement scenarios common in child meals [24] [25] [27].

The system's performance profile offers valuable insights for the broader field of eating detection systems research. The precision-recall tradeoff embodied in ByteTrack's F1-score highlights both the progress made and challenges remaining in replacing labor-intensive manual coding with automated solutions. Future research directions likely include integrating multi-modal data streams, expanding model training across diverse populations and eating contexts, and enhancing robustness to occlusion through advanced computer vision techniques [24] [27] [29].

As obesity prevention research increasingly focuses on meal microstructure biomarkers like bite rate, technologies like ByteTrack provide essential methodological infrastructure for large-scale studies examining how eating behaviors influence obesity risk across development. The system's F1-score evaluation framework offers researchers a standardized approach for assessing future methodological innovations in this emerging field at the intersection of computer vision, nutritional science, and behavioral medicine [24] [25] [29].

Inertial sensor-based systems using smartwatches have emerged as a socially acceptable and practical method for the passive detection of eating gestures. A core challenge in this field is the objective evaluation and comparison of these systems, for which the F1-score has become a critical metric. This guide provides a systematic comparison of the performance and methodologies of key inertial sensing systems for eating detection, serving as a reference for researchers and professionals developing or selecting technologies for dietary monitoring and health intervention studies.

Performance Comparison of Inertial Sensing Systems

The following table synthesizes performance data from key studies that utilized smartwatch inertial sensors for eating gesture or meal detection. The F1-score, which harmonizes precision and recall into a single metric, is the primary basis for comparison.

Table 1: Performance Comparison of Inertial Sensor-Based Eating Detection Systems

| Study Reference | Primary Sensor | Detection Target | F1-Score (%) | Precision (%) | Recall (%) | Testing Context |

|---|---|---|---|---|---|---|

| Personalized Deep Learning Model [7] | IMU (Accelerometer & Gyroscope) | Food Consumption | 99 (Median) | - | - | In-the-Wild |

| Real-Time Smartwatch System [2] | Smartwatch Accelerometer | Meal Episodes | 87.3 | 80.0 | 96.0 | Free-Living (3-Week Deployment) |

| Thomaz et al. (7 Participants) [31] | Smartwatch Accelerometer | Eating Moments | 76.1 | 66.7 | 88.8 | Free-Living (1 Day) |

| Thomaz et al. (1 Participant) [31] | Smartwatch Accelerometer | Eating Moments | 71.3 | 65.2 | 78.6 | Free-Living (31 Days) |

Key Performance Insights

- High-Performance Range: The highest performance is demonstrated by a personalized deep learning model, achieving a median F1-score of 99% [7]. This indicates the potential for extremely high accuracy with user-specific model training.

- Robust Real-World Performance: A system designed for real-world deployment over three weeks achieved a robust F1-score of 87.3%, balancing high recall with good precision [2].

- Generalizable Models: Earlier foundational work by Thomaz et al. established the feasibility of the approach with F1-scores of 71.3% and 76.1% in free-living conditions, demonstrating generalizable, person-independent models [31].

Detailed Experimental Protocols

Understanding the methodology behind these performance metrics is crucial for critical evaluation and replication. This section details the experimental protocols from two pivotal studies.

Real-Time Smartwatch-Based Meal Detection System

This study aimed to develop a system for real-time meal detection to trigger Ecological Momentary Assessments (EMAs) [2].

- Objective: To detect meal episodes based on dominant hand movements and capture contextual eating data.

- Sensing Modality: A commercial smartwatch's three-axis accelerometer.

- Data Source & Algorithm: The system was built upon a dataset of eating and non-eating hand movements. The baseline classifier used a 50% overlapping 6-second sliding window to extract statistical features (mean, variance, skewness, kurtosis, and root mean square) from each accelerometer axis. A Random Forest classifier was trained and ported to a smartphone for real-time inference [2].

- Deployment & Validation: The system was deployed in a 3-week free-living study with 28 college students. Ground truth was established through participant self-reports. An EMA was triggered when 20 eating gestures were detected within a 15-minute span [2].
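
The trigger rule in this protocol (20 gestures within 15 minutes) can be sketched as a rolling count over gesture timestamps. The function name is hypothetical, and clearing the buffer after each prompt is an assumption; the cited study may handle repeated prompts differently.

```python
from collections import deque


def ema_trigger_times(gesture_times, min_gestures=20, window_s=15 * 60):
    """Return the timestamps (seconds) at which an EMA prompt would fire."""
    recent = deque()
    triggers = []
    for t in sorted(gesture_times):
        recent.append(t)
        # Drop gestures that fall outside the rolling window ending at t.
        while t - recent[0] > window_s:
            recent.popleft()
        if len(recent) >= min_gestures:
            triggers.append(t)
            recent.clear()  # assumption: start counting afresh after a prompt
    return triggers


# Hypothetical meal: one detected gesture every 30 seconds.
dense = [i * 30 for i in range(25)]
print(ema_trigger_times(dense))   # [570]

# Hypothetical sporadic gestures: one per minute never reaches 20 in 15 minutes.
sparse = [i * 60 for i in range(40)]
print(ema_trigger_times(sparse))  # []
```

Raising `min_gestures` would tend to reduce false prompts at the cost of later detection, which is the precision-recall trade-off the F1-score summarizes.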

A Practical Approach for Recognizing Eating Moments

This earlier work was pivotal in demonstrating the practicality of using a single off-the-shelf smartwatch for eating detection [31].

- Objective: To infer eating moments (including sit-down meals and snacks) from smartwatch accelerometer data.

- Methodology: The approach consisted of a two-step process:

  - Food Intake Gesture Spotting: Identifying individual eating-related gestures from the continuous stream of inertial sensor data.

  - Temporal Clustering: Aggregating these spotted gestures across the time dimension to infer distinct eating moments.

- Validation: The model was trained on data from a semi-controlled laboratory study with 20 subjects. It was then evaluated in two free-living condition studies: one with 7 participants over one day, and a longitudinal case study with one participant over 31 days, with performance tested on its ability to recognize eating moments within 60-minute intervals [31].

Workflow of a Smartwatch-Based Eating Detection System

The diagram below illustrates the standard end-to-end workflow for an inertial sensor-based eating detection system, from data acquisition to final output.

The Researcher's Toolkit: Essential Components

Successful implementation of an inertial sensor-based eating detection system relies on several key components, each with a specific function.

Table 2: Essential Research Components for Inertial Eating Detection

| Component | Function & Role in the System |

|---|---|

| Commercial Smartwatch | Provides a socially acceptable, stable hardware platform with a built-in 3-axis accelerometer and/or IMU for data collection [2] [31]. |

| Activity Recognition Pipeline | A structured workflow for processing sensor data, encompassing preprocessing, feature extraction, and model inference [2]. |

| Statistical Features (Time-Domain) | Features like mean, variance, skewness, and kurtosis calculated from accelerometer axes, serving as input for classical machine learning models [2]. |

| Random Forest Classifier | A commonly used and effective machine learning algorithm for classifying eating gestures from extracted features [2]. |

| Sliding Window Protocol | A data segmentation technique (e.g., 6-second windows with 50% overlap) used to frame the continuous sensor stream for feature extraction [2]. |

| Ecological Momentary Assessment (EMA) | A ground-truthing method where short, in-situ questionnaires are triggered by the detection system to validate predictions and capture context [2]. |

| Free-Living Validation Study | A study conducted in participants' natural environments, which is critical for assessing the real-world performance and robustness of the system [31] [32]. |

System Architecture for Real-Time Detection and Feedback

For systems designed to provide real-time intervention, the architecture extends beyond simple detection. The following diagram details the components required for a closed-loop sensing and feedback system.

Multi-modal data fusion represents a paradigm shift in human activity recognition, particularly for complex tasks like eating detection. While unimodal systems often face limitations due to their restricted information dimensionality, integrating complementary data streams from images and sensors creates synergistic effects that significantly enhance detection accuracy [33]. This guide provides an objective comparison of performance between single-modal and multi-modal approaches, with a specific focus on F1-score improvements achieved through strategic data fusion. The analysis is framed within the broader context of F1-score evaluation for eating detection systems research, providing researchers and product developers with evidence-based insights for selecting optimal architectural strategies.

The fundamental hypothesis driving multi-modal fusion is that food intake episodes generate correlated signatures across visual, inertial, and acoustic domains. By strategically combining these heterogeneous data sources, systems can overcome the inherent limitations of single-source approaches and achieve robust performance metrics essential for scientific and clinical applications [34]. The following sections present quantitative performance comparisons, detailed experimental methodologies, and technical implementation frameworks that demonstrate how intelligently designed fusion architectures can substantially boost F1-scores in eating detection systems.

Performance Comparison: Single-Modal vs. Multi-Modal Approaches

Quantitative F1-Score Comparison

Experimental evidence suggests that multi-modal fusion can improve F1-scores over single-modal approaches, most clearly when confounding activities are present. The table below summarizes key performance metrics from studies on drinking and eating activity detection; because the rows differ in test conditions and evaluation granularity, the scores are not directly comparable across rows.

Table 1: F1-Score Performance Comparison Across Modalities

| Detection Task | Modality | Fusion Strategy | F1-Score (%) | Research Context |

|---|---|---|---|---|

| Drinking Activity | Wrist IMU only (single-modal) | - | 97.2 [35] | Laboratory setting with limited confounding activities |

| Drinking Activity | Container IMU only (single-modal) | - | 97.1 [35] | Laboratory setting with limited confounding activities |

| Drinking Activity | In-ear microphone only (single-modal) | - | 72.1 [35] | Swallowing sound recognition in controlled conditions |

| Drinking Activity | Wrist IMU + Container IMU + Microphone | Late Fusion (SVM) | 96.5 (event-based) [35] | Includes challenging non-drinking activities (eating, pushing glasses, scratching neck) |

| Drinking Activity | Wrist IMU + Container IMU + Microphone | Late Fusion (XGBoost) | 83.9 (sample-based) [35] | Includes challenging non-drinking activities (eating, pushing glasses, scratching neck) |

| Food Intake Detection | Inertial sensors + Acoustic signals | Feature-level fusion | 82.0 [34] | Free-living conditions with real-world confounding factors |

| Food Type Recognition | Chewing sounds only (single-modal) | - | 80.0 [6] | 20 food items using transfer learning (EfficientNetB0) |

| Food Type Recognition | Chewing sounds (GRU model) | Deep learning on enhanced features | 99.3 [6] | 20 food items using spectrograms, spectral rolloff, and MFCCs |

Contextual Performance Analysis

The performance advantages of multi-modal fusion become particularly pronounced in real-world scenarios with diverse confounding activities. In drinking detection studies, single-modal approaches achieved high F1-scores in controlled settings with limited activity types [35]. However, when researchers introduced challenging non-drinking activities such as eating, pushing glasses, and scratching necks, multi-modal fusion maintained robust performance while single-modal systems experienced significant degradation [35].

The granularity of evaluation also significantly affects reported metrics. For drinking activity identification, the event-based F1-score (96.5%, with an SVM) was higher than the sample-based F1-score (83.9%, with XGBoost) for the same sensor combination [35]. This discrepancy highlights the importance of evaluating detection systems using metrics aligned with their intended application context.
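
The difference between the two evaluation granularities can be seen in a small sketch. Sample-based scoring compares labels window by window, while event-based scoring first merges consecutive positive windows into events. The matching rule used here (an event counts as detected if any predicted event overlaps it) is an assumption; the cited study may use a different criterion, and the label sequences are hypothetical.

```python
def f1(tp, fp, fn):
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def sample_f1(y_true, y_pred):
    """F1 computed over individual samples (windows)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    return f1(tp, fp, fn)


def runs(labels):
    """(start, end) index pairs, end exclusive, for each run of consecutive 1s."""
    out, start = [], None
    for i, value in enumerate(labels):
        if value == 1 and start is None:
            start = i
        elif value != 1 and start is not None:
            out.append((start, i))
            start = None
    if start is not None:
        out.append((start, len(labels)))
    return out


def overlaps(a, b):
    return a[0] < b[1] and b[0] < a[1]


def event_f1(y_true, y_pred):
    """F1 computed over events, matched by any overlap (assumed rule)."""
    true_events, pred_events = runs(y_true), runs(y_pred)
    tp = sum(any(overlaps(t, p) for p in pred_events) for t in true_events)
    fn = len(true_events) - tp
    fp = sum(not any(overlaps(p, t) for t in true_events) for p in pred_events)
    return f1(tp, fp, fn)


# Hypothetical window labels: two drinking events, each only partly detected.
y_true = [0, 1, 1, 1, 1, 0, 0, 1, 1, 1, 1, 0]
y_pred = [0, 0, 1, 1, 0, 0, 0, 0, 0, 1, 1, 0]
print(round(sample_f1(y_true, y_pred), 3), event_f1(y_true, y_pred))  # 0.667 1.0
```

Both events are found, so the event-based score is perfect, while the missed windows at the event boundaries lower the sample-based score.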

Experimental Protocols and Methodologies

Multi-Sensor Drinking Activity Identification Protocol

A comprehensive study on drinking activity identification implemented a rigorous experimental protocol to validate multi-modal fusion benefits [35]:

Subject Cohort and Sensor Configuration:

- Twenty participants (10 male, 10 female, age: 22.91 ± 1.64 years)

- Three inertial measurement units (IMUs) with triaxial accelerometers and gyroscopes:

  - Two Opal sensors, one worn on each wrist

  - One Opal sensor attached to a 3D-printed container

- Condenser in-ear microphone placed in the right ear for acoustic data

Experimental Design:

- Eight distinct drinking scenarios varying by posture (standing/sitting), hand dominance (left/right), and sip volume (small/large)

- Seventeen carefully designed non-drinking activities including eating, pushing glasses, combing hair, and scratching neck

- Four identical trials with interleaved drinking and non-drinking activities

Data Processing Pipeline:

- Motion signals: Euclidean norm calculation for acceleration and angular velocity vectors

- Acoustic signals: Spectral analysis for swallowing detection

- Sliding window approach for temporal segmentation

- Feature extraction and normalization for machine learning compatibility

- Post-processing to transform window-based predictions to event-based sequences
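The first steps of this pipeline can be sketched as follows. This is a minimal illustration under stated assumptions: the window length, step, and the four summary features are placeholders chosen for the example, not the values or feature set used in [35], and all function names are hypothetical.

```python
import math


def euclidean_norm(samples):
    """Collapse triaxial samples [(x, y, z), ...] into one magnitude each."""
    return [math.sqrt(x * x + y * y + z * z) for x, y, z in samples]


def sliding_windows(signal, size, step):
    """Yield fixed-length windows; a trailing partial window is dropped."""
    for start in range(0, len(signal) - size + 1, step):
        yield signal[start:start + size]


def window_features(window):
    """Simple per-window statistics (an assumed, illustrative feature set)."""
    mean = sum(window) / len(window)
    variance = sum((v - mean) ** 2 for v in window) / len(window)
    return {
        "mean": mean,
        "std": math.sqrt(variance),
        "min": min(window),
        "max": max(window),
    }


# Toy accelerometer trace: five (x, y, z) samples.
accel = [(3, 4, 0), (0, 0, 1), (1, 2, 2), (0, 0, 0), (6, 8, 0)]
magnitude = euclidean_norm(accel)  # [5.0, 1.0, 3.0, 0.0, 10.0]
features = [window_features(w) for w in sliding_windows(magnitude, size=3, step=2)]
print(features[0]["mean"])  # 3.0
```

Taking the norm makes the signal independent of sensor orientation, which is one plausible reason to prefer it over per-axis features when the wrist pose varies between participants.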

Classification Framework:

- Comparison of multiple machine learning algorithms (SVM, XGBoost)

- Separate evaluation of single-modal and multi-modal configurations

- Dual evaluation metrics: sample-based and event-based F1-scores

Covariance-Based Multi-Sensor Fusion Protocol

An alternative fusion methodology transformed multi-sensor data into 2D covariance representations for eating episode detection [34]:

Data Acquisition:

- Multi-sensor data collection from wearable devices (Empatica E4 wristband)

- Modalities included: 3-axis accelerometer, photoplethysmograph, electrodermal activity, temperature, heart rate

Covariance Representation:

- Formation of observation matrix from all sensor streams

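- Pairwise covariance calculation between signals across all samples:

  - Covariance matrix computation: $C_{ij} = \operatorname{cov}(H(:, i), H(:, j))$

  - Coefficient calculation: $\operatorname{cov}(S_i, S_j) = \frac{1}{n-1}\sum_{k=1}^{n}(S_{ik}-\mu_i)(S_{jk}-\mu_j)$, where $n$ is the number of samples and $\mu_i$ is the mean of signal $S_i$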

- Creation of filled contour plots from covariance matrices

- Encoding of joint variability information as 2D color representations
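The covariance step can be sketched in a few lines. The example below assumes the observation matrix is stored as a list of rows (one row per sample, one column per sensor stream) and uses the sample covariance with the 1/(n-1) normalization. The function name is hypothetical, and rendering the matrix as a filled contour image is omitted.

```python
def covariance_matrix(H):
    """Sample covariance between every pair of columns of H.

    H is a list of n observations (rows); each row holds one value per
    sensor stream (columns). Returns a d x d nested list.
    """
    n = len(H)
    d = len(H[0])
    means = [sum(row[i] for row in H) / n for i in range(d)]
    C = [[0.0] * d for _ in range(d)]
    for i in range(d):
        for j in range(d):
            C[i][j] = sum(
                (row[i] - means[i]) * (row[j] - means[j]) for row in H
            ) / (n - 1)
    return C


# Two toy streams where the second is exactly twice the first.
H = [[1, 2], [2, 4], [3, 6]]
print(covariance_matrix(H))  # [[1.0, 2.0], [2.0, 4.0]]
```

The diagonal holds each stream's variance and the off-diagonal entries hold the joint variability between streams, which is the information the 2D representation encodes.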

Deep Learning Architecture:

- Deep residual network with 2D convolution layers

- Architecture: Three 2D convolution layers with batch normalization, ReLU, max pooling

- Output: Categorical response via softmax and classification layers

- Validation: Five-fold cross-validation on multi-day recording dataset

Technical Implementation Frameworks

Multi-Modal Fusion Workflow

The following diagram illustrates the complete technical workflow for multi-modal data fusion in eating/drinking detection systems:

Multi-Modal Fusion Technical Workflow

Experimental Setup for Drinking Detection

The specific experimental configuration for multi-modal drinking detection is detailed below:

Drinking Detection Experimental Setup

Research Reagent Solutions

Implementing effective multi-modal fusion systems requires specific technical components and analytical tools. The table below details essential "research reagents" for developing eating detection systems with enhanced F1-scores.

Table 2: Essential Research Reagents for Multi-Modal Eating Detection

| Component Category | Specific Solution | Function | Example Implementation |
|---|---|---|---|
| Motion Sensing | Inertial Measurement Units (IMUs) | Capture hand-to-mouth gestures and drinking kinematics | Opal sensors with triaxial accelerometers (±16g) and gyroscopes (±2000°/s) [35] |
| Acoustic Sensing | In-ear microphones | Detect swallowing sounds and chewing audio signatures | Condenser microphones (44.1 kHz sampling) placed in ear canal [35] [6] |
| Signal Processing | Feature extraction algorithms | Convert raw sensor data to discriminative features | Spectrograms, MFCCs, spectral rolloff, spectral bandwidth [6] |
| Fusion Architectures | Late fusion frameworks | Combine decisions from single-modal classifiers | Support Vector Machine (SVM) or XGBoost for decision integration [35] |
| Evaluation Metrics | F1-score calculation | Balance precision and recall for imbalanced activity detection | Sample-based and event-based F1-score implementations [35] |
| Data Annotation | Experimental protocols | Standardize activity scenarios for comparative evaluation | Designed drinking sessions with postural variations and confounding activities [35] |

The empirical evidence consistently demonstrates that strategically integrated multi-modal fusion significantly enhances F1-scores in eating and drinking detection systems compared to single-modal approaches. The performance advantage is particularly pronounced in real-world scenarios with diverse confounding activities, where multi-modal systems maintained F1-scores up to 96.5% while single-modal systems experienced significant degradation [35].

The choice of fusion strategy—whether early, intermediate, or late fusion—depends on specific application constraints and data characteristics. Late fusion approaches have demonstrated particular effectiveness for drinking activity detection, achieving high F1-scores while accommodating modality-specific processing requirements [35] [36]. For researchers and product developers, implementing the experimental protocols and technical frameworks outlined in this guide provides a validated pathway for developing robust eating detection systems with optimized F1-score performance.
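As a rough illustration of the late fusion idea, the sketch below combines per-modality probabilities for a single window into one decision. It is a deliberately simplified stand-in: a weighted average replaces the trained SVM or XGBoost decision integrator, and the modality names, weights, and threshold are all hypothetical.

```python
def late_fusion(probabilities, weights=None, threshold=0.5):
    """Fuse per-modality probabilities for one window.

    probabilities: dict mapping modality name to P(drinking) from that
    modality's own classifier. weights: optional dict of non-negative
    weights (equal weighting if omitted). Returns (score, decision).
    """
    names = sorted(probabilities)
    if weights is None:
        weights = {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    score = sum(weights[name] * probabilities[name] for name in names) / total
    return score, score >= threshold


# The motion classifier is confident, the acoustic classifier is not.
window = {"motion": 0.9, "audio": 0.2}
print(late_fusion(window))                                   # equal weights
print(late_fusion(window, weights={"motion": 1.0, "audio": 3.0}))
```

Because each modality keeps its own classifier, a sensor-specific preprocessing chain can change without retraining the others. A learned integrator can additionally capture interactions between modalities that a fixed weighted average cannot.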

Future directions in multi-modal fusion for eating detection will likely focus on adaptive fusion strategies that can handle real-world challenges including sensor failure, data loss, and variable environmental conditions. Additionally, personalization approaches that tune fusion parameters to individual user behaviors represent a promising avenue for further enhancing detection accuracy in diverse populations.

In the development of real-time detection systems, particularly for applications like automated eating behavior monitoring, two performance metrics are paramount: the F1-score and detection latency. The F1-score, the harmonic mean of precision and recall, provides a balanced assessment of a model's classification performance, which is especially important for the imbalanced datasets common in behavioral research [37] [38]. Detection latency (the time delay between data input and model prediction) determines the system's capability for timely intervention. For systems that aim to provide just-in-time feedback on eating behaviors, balancing these competing metrics is the fundamental challenge in model design and deployment.

The trade-off arises because models with complex architectures often achieve higher F1-scores but require more computational time, increasing latency to impractical levels for real-time use. Conversely, overly simplified models may deliver instantaneous results but fail to detect behaviors accurately. This guide objectively compares the performance of various detection approaches and methodologies, providing researchers with a framework for evaluating systems suitable for eating detection research.

Quantitative Performance Comparison Across Domains

The following table summarizes the performance of various real-time detection systems documented in recent literature, highlighting the achievable balance between F1-Score and latency across different application domains.

Table 1: Performance Comparison of Real-Time Detection Systems

| Application Domain | Model/System Name | Reported F1-Score | Detection Latency | Key Hardware/Platform |
|---|---|---|---|---|
| Eating Detection | Hand-Object + YOLOX-nano [11] | 89.0% (Episode) | 1.5 minutes (Episode Delay) | Wearable Device (STM32L4 SoC) |
| Cybercrime Detection | Gradient Boosting [39] | 1.00 | ~0.6 ms | CPU (UNSW-NB15 dataset) |
| Cybercrime Detection | Random Forest [39] | 0.936 | ~55 ms | CPU (UNSW-NB15 dataset) |
| Network Intrusion | Temporal Graph Networks with XAI [40] | ~96.8% (Accuracy) | 1.45 s (for 50k packets) | Not Specified |
| Structural Inspection | Lite-V2 CNN [41] | 0.928 | 11 ms | Raspberry Pi 4 |
| Structural Inspection | AutoCrackNet [41] | 0.9598 | ~34 ms (29 FPS) | Jetson Xavier NX |

As evidenced in Table 1, different domains prioritize these metrics differently. For instance, the Gradient Boosting cybercrime detection model achieves a perfect F1-score with sub-millisecond latency [39], whereas the eating detection system accepts a longer episode delay of 1.5 minutes to achieve a reliable F1-score of 89.0% [11]. This illustrates that the definition of "real-time" is context-dependent. For eating detection, where an "episode" unfolds over minutes, latency can be measured in minutes rather than milliseconds, with the focus on accurate episode identification rather than instant gesture classification.

Detailed Experimental Protocols and Methodologies

Protocol for Real-Time Eating Detection

The evaluation of the wearable eating detection system provides a highly relevant protocol for researchers in the field of behavioral monitoring [11].

- System Design: The data was collected using a custom wearable device built around an STM32L4 SoC, featuring a Cortex M4 processor running at 80 MHz. The device incorporated an OV2640 camera for RGB video and an MLX90640 thermal sensor array, capturing data at 5 frames per second to balance detail with power efficiency.

- Data Collection: The study involved 36 participants who wore the device during waking hours, resulting in a total of 2,797 hours of data. The dataset included RGB and thermal video recordings, with frames labeled for feeding gestures, smoking gestures, and other activities.

- Gesture & Episode Detection: The model, based on a lightweight YOLOX-nano backbone (0.91M parameters), was trained to detect hands and objects-in-hand. A custom loss function helped determine the spatial relationship between hands and objects. Detected frames were clustered into gestures using DBSCAN (eps=21 seconds, minpoints=3). These gestures were then further clustered into eating episodes using a second DBSCAN step (eps=5 minutes, minpoints=4), with clusters shorter than 1 minute being excluded to reduce false positives.

- Performance Evaluation: The system's performance was evaluated based on its ability to detect entire eating episodes. The primary trade-off investigated was between the number of gestures required to confirm an episode and the resulting F1-score. The study found that using approximately 10 gestures to trigger detection achieved an optimal balance, yielding the reported F1-score of 89.0% with an average detection delay of 1.5 minutes into the episode.
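The two-stage clustering described above can be sketched with a small one-dimensional DBSCAN over timestamps. This is an illustrative reimplementation, not the code from [11]. The eps and minimum-point values and the 1-minute cut-off come from the protocol; everything else is an assumption: each gesture is represented by its mean timestamp, episode duration is measured between the first and last gesture, the neighbor count includes the point itself, and the function names are hypothetical.

```python
def dbscan_1d(times, eps, min_pts):
    """Minimal 1-D DBSCAN. Returns clusters as sorted lists; noise is dropped."""
    pts = sorted(times)
    neighbors = [[j for j, q in enumerate(pts) if abs(q - p) <= eps] for p in pts]
    core = [len(nb) >= min_pts for nb in neighbors]  # count includes the point
    labels = [None] * len(pts)
    cluster_id = 0
    for i in range(len(pts)):
        if labels[i] is not None or not core[i]:
            continue
        labels[i] = cluster_id
        stack = [i]  # only core points are ever pushed
        while stack:
            k = stack.pop()
            for j in neighbors[k]:
                if labels[j] is None:
                    labels[j] = cluster_id
                    if core[j]:
                        stack.append(j)
        cluster_id += 1
    clusters = [[] for _ in range(cluster_id)]
    for p, label in zip(pts, labels):
        if label is not None:
            clusters[label].append(p)
    return clusters


def detect_episodes(frame_times_s):
    """Frames -> gestures -> episodes, all in seconds."""
    gestures = dbscan_1d(frame_times_s, eps=21, min_pts=3)
    gesture_times = [sum(g) / len(g) for g in gestures]  # assumed representation
    episodes = dbscan_1d(gesture_times, eps=5 * 60, min_pts=4)
    # Drop clusters shorter than 1 minute to reduce false positives.
    return [(e[0], e[-1]) for e in episodes if e[-1] - e[0] >= 60]


# Five gestures of three frames each, two stray frames, one isolated gesture.
frames = [t + k for t in (0, 40, 80, 120, 160) for k in (0, 1, 2)]
frames += [1000, 1001]
frames += [2000, 2001, 2002]
print(detect_episodes(frames))  # [(1.0, 161.0)]
```

The stray frames never form a gesture, and the isolated gesture never forms an episode, so only the dense run of five gestures survives. This is the false-positive filtering the two clustering stages are meant to provide.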

Protocol for Benchmarking Cybercrime Detection

A separate study on cybercrime detection provides a clear framework for evaluating the latency and accuracy of machine learning models in a real-time streaming context [39].

- Experimental Setup: The researchers evaluated two models, Random Forest and Gradient Boosting, on the standard UNSW-NB15 network intrusion dataset.

- Performance Metrics and Load Testing: The core of the protocol was measuring two metrics simultaneously: the F1-score and the median inference latency. Models were tested under simulated streaming loads of 1,000 and 5,000 samples per second to mimic realistic network traffic.

- Success Criteria: A model was deemed suitable for real-time detection only if it could maintain an F1-score ≥ 0.90 while keeping the median inference latency ≤ 1.0 ms under both traffic loads. This strict criterion ensured that high accuracy did not come at the cost of practical usability.

- Results and Conclusion: Gradient Boosting met all criteria, achieving a perfect F1-score of 1.0 and a latency of approximately 0.6 ms even under load. In contrast, Random Forest, while achieving a high F1-score of 0.936, failed the latency test with a median latency of around 55 ms, making it unsuitable for this high-throughput real-time application [39].
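The success criteria above reduce to a simple joint check. The sketch below applies it to the reported figures and shows one way to measure median per-sample latency. It is an illustration only: the function names are hypothetical, the timing loop is single-threaded and does not reproduce the 1,000 and 5,000 samples-per-second streaming loads, and `predict` stands in for any model's inference call.

```python
import statistics
import time


def median_latency_ms(predict, samples):
    """Median wall-clock time of predict(sample), in milliseconds."""
    latencies = []
    for sample in samples:
        start = time.perf_counter()
        predict(sample)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(latencies)


def meets_realtime_criteria(f1, latency_ms, min_f1=0.90, max_latency_ms=1.0):
    """True only if both the F1 floor and the latency ceiling are satisfied."""
    return f1 >= min_f1 and latency_ms <= max_latency_ms


# Figures reported in [39].
print(meets_realtime_criteria(1.0, 0.6))     # Gradient Boosting -> True
print(meets_realtime_criteria(0.936, 55.0))  # Random Forest -> False

# Timing a trivial stand-in model; real values depend on hardware and load.
latency = median_latency_ms(lambda x: x * 2, range(1000))
```

Using the median rather than the mean keeps occasional scheduling spikes from dominating the measurement, although a deployment with strict deadlines may also want to track a high percentile.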

System Workflows and Logical Diagrams

Workflow for a Wearable Eating Detection System

The following diagram illustrates the multi-stage pipeline for detecting eating episodes from sensor data, as described in the research [11].

Diagram 1: Eating Detection Workflow

This workflow highlights the sequential data processing stages, from raw sensor input to high-level behavioral episode detection. The clustering steps are critical for aggregating discrete frame-level detections into meaningful behavioral units (gestures and episodes), which is a common requirement in activity recognition systems.

General Logic of the F1-Score vs. Latency Trade-off

The relationship between model complexity, F1-score, and latency is a fundamental concept across all real-time detection domains. The diagram below visualizes this core trade-off.

Diagram 2: Core Performance Trade-off

This logical diagram shows the central challenge in designing real-time detection systems. Increasing model complexity generally improves the F1-score by enabling the model to capture more intricate patterns in the data. However, this complexity simultaneously increases computational demand, which in turn raises detection latency. The dashed line indicates the direct conflict between the goal of a high F1-score and the requirement for low latency.

Research Reagent Solutions and Essential Tools

For researchers developing and testing real-time eating detection systems, the following tools and "reagents" form the essential toolkit for experimental work.

Table 2: Essential Research Toolkit for Real-Time Eating Detection

| Tool / Solution | Function in Research | Exemplar in Literature |
|---|---|---|
| Lightweight CNN Models (e.g., YOLOX-nano, Lite-V2) | Enable accurate object and gesture detection on resource-constrained hardware. | YOLOX-nano (0.91M params) for gesture detection [11]. |
| Multi-Modal Sensors (RGB Camera, Thermal Sensor) | Provide complementary data streams to improve detection accuracy and reduce false positives. | Fusion of OV2640 RGB camera and MLX90640 thermal sensor [11]. |
| Edge Computing Platforms (e.g., STM32L4, Raspberry Pi, Jetson) | Serve as the hardware backbone for prototyping and deploying real-time systems. | STM32L4 SoC with Cortex M4 for wearable eating monitor [11]. |