Beyond the Bite: Advanced Methods for Differentiating Hand-to-Mouth Gestures from Eating Behavior in Clinical Research

Abstract

This article provides a comprehensive overview for researchers and drug development professionals on the critical challenge of accurately differentiating hand-to-mouth gestures from actual eating events in sensor-based monitoring. It explores the foundational neuroscience linking hand and mouth movements, reviews state-of-the-art sensor technologies and machine learning methodologies, addresses key optimization challenges for real-world application, and establishes validation frameworks for assessing system performance. By synthesizing current research and emerging trends, this resource aims to support the development of robust, clinically viable tools for objective eating behavior assessment in therapeutic development and precision health.

The Neural and Kinematic Basis of Hand-to-Mouth Actions: From Shared Motor Programs to Distinct Behavioral Signatures

Troubleshooting Guide: Common Experimental Challenges

This guide addresses specific issues you might encounter during experiments on hand-to-mouth coordination.

Problem 1: Inconsistent Kinematic Signatures in Grasp-to-Eat vs. Grasp-to-Place Tasks

- Symptoms: High variability in Maximum Grip Aperture (MGA) measurements within the same experimental condition (e.g., grasp-to-eat), making it difficult to detect the significant MGA reduction that characterizes the grasp-to-eat movement.

- Solution:

- Control for Mouth Movement: Ensure the participant's mouth movement is consistent. The kinematic signature (smaller MGA) for hand-to-mouth movements is dependent on the concurrent opening of the mouth to accept the target, not just the goal of eating. Run conditions where participants must open their mouths during the transport phase, even for inedible targets or "place" tasks, to isolate this variable [1].

- Verify Target Type: The smaller MGA for hand-to-mouth movements is present even with inedible targets. If you are not seeing the effect, confirm that the target's properties (size, shape, slipperiness) are not forcing a different grip strategy that overrides the natural kinematic signature [1].

- Diagnostic Test: Run a simple internal check. Compare the MGAs for a "Grasp Edible to Eat" condition (which should show the smallest MGA) against a "Grasp Edible to Place" condition (which should show a larger MGA). If this expected pattern is absent from your pilot data, your motion capture system likely needs recalibration or your task instructions need revision.

Problem 2: Difficulty Isolating Neural Circuits for Specific Coordinated Movements

- Symptoms: Non-specific labeling when using neural tracers, making it impossible to determine if a single premotor neuron projects to multiple motor neuron pools controlling different muscles (e.g., hand and jaw).

- Solution:

- Employ Monosynaptic Tracing: Use a modified monosynaptic rabies virus-based transsynaptic tracing strategy. This method allows for the specific labeling of premotor neurons that form direct synaptic connections with motoneurons innervating a specific muscle, such as the masseter (jaw) or hand muscles, while avoiding labeling of passing fibers [2].

- Use Multiple Fluorescent Reporters: Inject different colored tracers (e.g., ΔG-RV-EGFP and ΔG-RV-mCherry) into two different muscles of interest. The presence of premotor neurons containing both colors indicates shared neural substrates that coordinate those muscles [2].

- Diagnostic Test: In a pilot animal, inject a single tracer and confirm that the labeling is confined to the expected motor nucleus and its premotor inputs before proceeding with a more complex dual-color experiment.

Problem 3: Interpreting Ambiguous Results from Functional Magnetic Resonance Imaging (fMRI)

- Symptoms: Widespread, overlapping activation patterns in the motor cortex during different hand-to-mouth tasks, making functional specialization difficult to pinpoint.

- Solution:

- Acknowledge Distributed Processing: Recognize that the human motor cortex contains a distributed, overlapping pattern of hand movement representation. Unlike a simple somatotopic map, finger and wrist movements activate a wide expanse of the precentral gyrus, and their representations overlap [3].

- Refine Your Experimental Design: Instead of looking for entirely separate brain areas, design your fMRI study to look for differences in the strength of activation within this shared network during grasp-to-eat versus grasp-to-place actions. Focus on areas like the ventral premotor cortex (PMVr/F5) and inferior parietal cortex, which are implicated in goal-oriented action [1] [4].

Problem 4: Confusion in Interpreting Arrow Symbols in Neural Pathway Diagrams

- Symptoms: Misunderstanding the meaning of arrows in biological schematics, such as mistaking a process arrow for a chemical reaction or directional flow.

- Solution:

- Establish a Lab Standard: Create and consistently use a legend for all diagrams and figures. Define what each arrow style (e.g., solid, dashed, double-lined) represents in your context [5].

- Add Explicit Labels: Do not rely on arrow style alone to convey meaning. Directly label the process that the arrow represents (e.g., "neural excitation," "synaptic connection," "information flow") [5].

Frequently Asked Questions (FAQs)

Q1: What is the key kinematic evidence that humans have distinct neural pathways for hand-to-mouth actions? A1: The primary evidence is a consistent reduction in the Maximum Grip Aperture (MGA) when reaching to grasp an item with the intent to bring it to the mouth (grasp-to-eat), compared to grasping the same item to place it elsewhere (grasp-to-place). This kinematic signature is specific to the right hand in right-handed individuals, suggesting left-hemisphere lateralization for this coordinated movement [1].

Q2: Is the "grasp-to-eat" kinematic signature triggered by the food itself? A2: No. Research shows that the smaller MGA is present even when transporting unmistakably inedible objects to the mouth. The signature is linked to the goal of the hand-to-mouth action itself, not the edibility of the target [1].

Q3: How exactly do premotor neurons coordinate bilateral movements, like symmetric jaw motion? A3: Monosynaptic circuit tracing reveals that some individual premotor neurons project to and connect with motoneurons on both the left and right sides of the brainstem. This shared premotor architecture provides a simple and effective neural solution for ensuring bilaterally symmetric muscle activity, which is essential for coordinated jaw movement [2].

Q4: What is the functional role of the ventral premotor cortex (PMVr or area F5) in coordination? A4: The ventral premotor cortex is crucial for shaping the hand during grasping and for orchestrating interactions between the hand and mouth. Electrical stimulation of this area can evoke complex, coordinated movements where the hand forms a grip and moves to the mouth, which simultaneously opens [4]. This region also contains "mirror neurons," which are active both when performing an action and when observing another individual perform the same action [4].

Q5: Why is the monosynaptic rabies virus tracing method superior to older neural tracer techniques for this research? A5: Traditional tracers suffer from limitations like labeling non-specific passing fibers or entire nuclei, making it difficult to confirm if a single premotor neuron controls multiple muscles. The modified monosynaptic rabies virus method specifically labels only the premotor neurons that form direct synaptic connections with the motoneurons of a defined muscle, allowing for precise mapping of functional circuits [2].

Table 1: Key Kinematic Findings from Hand-to-Mouth Action Studies

| Experimental Condition | Target Object | Mouth State During Transport | Observed Effect on Maximum Grip Aperture (MGA) |
|---|---|---|---|
| Grasp-to-Eat | Edible (e.g., Cheerio) | Open | Smaller MGA [1] |
| Grasp-to-Place | Edible (e.g., Cheerio) | Closed | Larger MGA [1] |
| Grasp-to-Mouth | Inedible (e.g., Hex Nut) | Open | Smaller MGA [1] |
| Grasp-to-Place | Inedible (e.g., Hex Nut) | Closed | Larger MGA [1] |
| Grasp-to-Mouth (any goal) | Any | Closed | Effect is diminished or absent [1] |

Table 2: Distribution of Premotor Neurons for Jaw-Closing Masseter Muscle (P1→P8 Mouse Model) [2]

| Brain Region | Function/Implication | Relative Abundance of Premotor Neurons |
|---|---|---|
| Brainstem Reticular Nuclei (IRt, PCRt, MdRt) | Rhythmogenesis, motor control | High (Bilateral) |
| Trigeminal Mesencephalic Nucleus (MesV) | Proprioception | High |
| Region Surrounding MoV | Local motor control | High |
| Cerebellar Deep Nuclei (e.g., Fastigial) | Motor coordination | Moderate |
| Red Nucleus (RN) | Descending motor control | Moderate |
| Midbrain Reticular Formation (dMRf) | Motor control | Moderate |

Detailed Experimental Protocols

Protocol 1: Kinematic Analysis of Goal-Differentiated Grasping

This protocol is adapted from methods used to isolate the hand-to-mouth kinematic signature [1].

- Participant Setup: Place three infrared light-emitting diodes (IREDs) on the participant's right hand: on the thumbnail, index fingernail, and the wrist (styloid process of the radius).

- Motion Capture: Use an optoelectronic system (e.g., Optotrak Certus) to record IRED positions at 200 Hz. Participants should wear liquid-crystal glasses that can be electronically occluded between trials to block vision.

- Task Design:

- Implement blocks of trials for different conditions (e.g., Grasp-to-Eat vs. Grasp-to-Place). For the "eat" condition, instruct participants to grasp the item and consume it. For the "place" condition, instruct them to grasp the item and release it into a container positioned near the mouth.

- Counterbalance the order of conditions across participants.

- Data Analysis: Calculate the Maximum Grip Aperture (MGA) as the maximum 3D distance between the thumb and index finger markers during the reach-to-grasp phase, before object contact. Perform statistical comparisons (e.g., repeated-measures ANOVA) of MGA between the different goal conditions.
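The MGA calculation described in the Data Analysis step can be sketched in a few lines. This is a minimal illustration, not the published analysis code: the function names, the `contact_frame` argument, and the toy marker coordinates are all assumptions made for the example, and marker positions are assumed to be in millimetres.

```python
import math

def grip_aperture(thumb, index):
    """3D Euclidean distance between two (x, y, z) marker positions, in mm."""
    return math.dist(thumb, index)

def max_grip_aperture(thumb_track, index_track, contact_frame):
    """Return (MGA in mm, frame index of MGA) using only frames before object contact.

    contact_frame is a hypothetical, externally determined frame index at which
    the fingers first touch the object; frames from that index onward are ignored.
    """
    apertures = [
        grip_aperture(t, i)
        for t, i in zip(thumb_track[:contact_frame], index_track[:contact_frame])
    ]
    mga = max(apertures)
    return mga, apertures.index(mga)

# Toy trial (made-up coordinates): thumb marker held at the origin,
# index marker opening and then closing around the target.
thumb = [(0.0, 0.0, 0.0)] * 5
index = [
    (20.0, 0.0, 0.0),
    (40.0, 0.0, 0.0),
    (30.0, 40.0, 0.0),
    (35.0, 0.0, 0.0),
    (15.0, 0.0, 0.0),  # frame 4: object contact, excluded from the search
]
mga, frame = max_grip_aperture(thumb, index, contact_frame=4)
time_of_mga_s = frame / 200.0  # assumes the 200 Hz sampling rate from the protocol
```

Per-trial MGA values computed this way would then be averaged per participant and condition before the statistical comparison.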

Protocol 2: Mapping Shared Premotor Circuits with Monosynaptic Rabies Tracing

This protocol outlines the core methodology for defining neural substrates that coordinate multiple muscles [2].

- Viral Constructs: Use a glycoprotein-deleted rabies virus (ΔG-RV) that expresses a fluorescent reporter (e.g., EGFP or mCherry). Because the viral glycoprotein gene is deleted, the virus cannot spread beyond directly connected presynaptic neurons.

- Animal Model: Use a transgenic mouse line (e.g., Chat::Cre; RΦGT) that allows for Cre-dependent, specific targeting of motoneurons.

- Stereotaxic or Intramuscular Injection: Inject the ΔG-RV into the muscle(s) of interest (e.g., the jaw-closing masseter muscle and/or a hand muscle). The virus is taken up by motor nerve terminals and transported retrogradely to the motoneurons in the brainstem or spinal cord.

- Transsynaptic Spread: Within the motoneurons, the virus replicates and crosses synapses to label the premotor neurons that provide direct input. For dual-muscle tracing, inject two differently colored ΔG-RVs.

- Tissue Processing and Imaging: After a defined survival period (e.g., 7 days), perfuse the animal, serially section the brain and spinal cord, and image the sections using fluorescence microscopy.

- Analysis: Identify and count all labeled premotor neurons in different brain regions. Neurons labeled with both fluorescent reporters indicate shared premotor neurons that coordinate the two injected muscles.
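The counting step in the Analysis bullet can be illustrated with a small tally of single- and dual-labeled neurons per region. This is only a sketch under assumed inputs: the record format (a region name plus the set of reporters detected in each cell), the function name, and the example counts are hypothetical and do not come from the cited study.

```python
from collections import Counter

def dual_label_summary(neurons, reporters=("EGFP", "mCherry")):
    """Summarize shared premotor neurons per brain region.

    neurons: iterable of (region, set_of_reporters_detected) records,
             one record per labeled cell (hypothetical input format).
    Returns {region: (dual_labeled_count, total_count, dual_fraction)}.
    """
    required = set(reporters)
    totals, shared = Counter(), Counter()
    for region, detected in neurons:
        totals[region] += 1
        if required <= set(detected):  # cell carries both reporters
            shared[region] += 1
    return {r: (shared[r], totals[r], shared[r] / totals[r]) for r in totals}

# Made-up cell records for illustration only.
cells = [
    ("IRt", {"EGFP"}),
    ("IRt", {"mCherry"}),
    ("IRt", {"EGFP", "mCherry"}),
    ("IRt", {"EGFP"}),
    ("MesV", {"EGFP"}),
    ("MesV", {"mCherry"}),
]
summary = dual_label_summary(cells)
```

Reporting the dual-labeled fraction alongside the raw counts makes it easier to compare regions that differ widely in total labeling.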

Signaling Pathways and Neural Workflows

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Investigating Neural Coordination of Movement

| Reagent / Tool | Function / Application | Key Characteristic |
|---|---|---|
| Glycoprotein-deleted Rabies Virus (ΔG-RV) | A modified virus for monosynaptic retrograde tracing; labels only neurons that form direct synaptic connections with the starter motor neurons, enabling precise circuit mapping [2]. | High specificity for direct inputs. |
| Optoelectronic Motion Capture (e.g., Optotrak) | Records the 3D position of infrared markers placed on the hand and fingers at high frequencies (e.g., 200 Hz) to quantify kinematics like Maximum Grip Aperture (MGA) [1]. | High spatial and temporal resolution. |
| Chat::Cre; RΦGT Transgenic Mouse Line | A genetically engineered animal model that enables Cre-dependent, specific infection of motoneurons by the modified rabies virus, which is essential for the monosynaptic tracing technique [2]. | Enables cell-type-specific starter population. |
| Liquid-Crystal Occlusion Glasses (e.g., Plato Glasses) | Glasses that can be electronically switched between transparent and opaque states; used to control visual input between trials in kinematic studies, preventing preview and standardizing testing conditions [1]. | Precise control of visual feedback. |

Frequently Asked Questions (FAQs)

Q1: What are the core kinematic components of prehension movements? A1: Prehension movements are traditionally broken down into two core components: the transport component and the grip component. The transport component involves the movement of the arm and hand toward the target object's location, while the grip component involves the preshaping of the hand (aperture between finger and thumb) to match the object's intrinsic properties, such as its size and shape [6].

Q2: Are the motor plans for grasping and feeding actions fundamentally the same? A2: Early research suggested strong similarities, but more recent, direct comparisons indicate significant differences. While both actions involve transport and grip/aperture elements, key kinematic measures such as oversizing (how much the hand or mouth opens beyond the object's size) and movement times differ, suggesting they may not be controlled by an identical motor plan [6].

Q3: How does the intent of an action (e.g., eating vs. placing) influence its kinematics? A3: The end goal of an action significantly influences its kinematics. Research shows that during a grasp-to-eat movement, the maximum grip aperture (MGA) of the hand is significantly smaller compared to a grasp-to-place movement. This indicates greater precision when the ultimate goal is consumption, an effect that is more pronounced in the right hand [7].

Q4: What are the main methodological challenges when comparing grasping and feeding kinematics? A4: Key challenges include ensuring task equivalence and accurate measurement. Early feeding studies used utensils, which alter the movement's kinematics. Furthermore, measuring mouth aperture based on the lips versus the teeth can yield different results. Direct comparisons require the hand to be used for both the grasping and feeding actions to isolate the kinematic components accurately [6].

Q5: How does tool use (like a fork) affect the kinematics of a feeding action? A5: Using a tool modifies the kinematics. Total movement times are longer when using a fork compared to using the hand, particularly during the transport phase of bringing the food to the mouth [6].

Troubleshooting Common Experimental Issues

Problem 1: Inconsistent or Noisy Kinematic Data

Symptoms: High variance in trajectory, velocity, or aperture measurements across trials for the same condition; data appears "jittery."

Potential Causes and Solutions:

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Marker Placement | Verify marker security and positioning on anatomical landmarks at the start of each session. | Ensure markers are firmly attached to the distal phalanges of the thumb and index finger, and on the wrist [7]. |
| Environmental Noise | Check for sources of infrared interference in the lab. | Shield the experiment area from extraneous IR sources and ensure the motion capture system is properly calibrated. |
| Participant Instruction | Review instructions for clarity and consistency. | Standardize verbal instructions and ensure the participant understands the task goal (e.g., "grasp naturally to eat" vs. "grasp quickly") [7]. |

Problem 2: Failure to Replicate Differences Between Grasping and Feeding

Symptoms: Hand and mouth aperture profiles appear similar, with no significant difference in oversizing scaling.

Potential Causes and Solutions:

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Food Size Range | Check if the food items used are of sufficient size variation. | Use at least three distinct food sizes to elicit a range of aperture scaling (e.g., 10-mm, 20-mm, and 30-mm cubes) [6]. |
| Mouth Aperture Measurement | Review how mouth aperture is quantified. | Place markers to estimate the aperture between the teeth (e.g., on the forehead and chin) rather than the more elastic lips for a more consistent kinematic measure [6]. |
| Task Design | Ensure the feeding task is a direct "hand-to-mouth" movement. | Have participants grasp food with their fingers and bring it directly to the mouth to bite, avoiding the use of utensils which confound the kinematic comparison [6]. |

Problem 3: High Variability in Neural Data During Feeding Experiments

Symptoms: Unstable single-unit recordings or difficulty mapping neural population activity to chewing kinematics.

Potential Causes and Solutions:

| Potential Cause | Diagnostic Check | Solution |
|---|---|---|
| Uncontrolled Food Types | Check if food texture and toughness are documented. | Use a consistent set of foods and record their properties, as jaw kinematics and muscle activity vary with food type [8]. |
| Complex Neural Population Dynamics | Manually inspect single-unit recordings for rhythmic patterns. | Employ a Bayesian nonparametric latent variable model to uncover the latent structure of population activity and account for time-warping during rhythmic chewing [8]. |
| Behavioral Stage Identification | Verify accurate segmentation of the feeding sequence. | Divide the feeding sequence into distinct stages (ingestion, stage 1 transport, manipulation, chewing, swallowing) based on jaw gape cycles for more precise neural analysis [8]. |

Experimental Protocols

Protocol 1: Direct Comparison of Hand and Mouth Kinematics

Objective: To directly compare the kinematics of the transport and grip/aperture components during grasping and feeding actions under equivalent conditions [6].

Materials:

- Motion capture system (e.g., Optotrak Certus)

- Infrared markers

- Food items of different sizes (e.g., 10-mm, 20-mm, 30-mm cheese cubes)

Procedure:

- Participant Setup: Place markers on the participant's index finger, thumb, and wrist. Place additional markers on the forehead and chin to estimate mouth (jaw) aperture.

- Task Conditions:

- Grasping (Hand-to-Food): Instruct the participant to reach out, grasp a food item with a precision grip, and hold it.

- Feeding (Hand-to-Mouth): Instruct the participant to reach out, grasp a food item, and bring it to the mouth to bite.

- Data Collection: For each trial, record the 3D position of all markers. Ensure multiple trials are collected for each food size and condition.

- Kinematic Measures:

- Transport Component: Analyze the trajectory and velocity profile of the wrist marker.

- Grip/Aperture Component: For the hand, calculate the distance between the index finger and thumb markers to derive Maximum Grip Aperture (MGA). For the mouth, calculate the distance between the forehead and chin markers to derive Maximum Mouth Aperture (MMA).
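For the transport component, one common summary is the tangential speed profile of the wrist marker and its peak. The sketch below assumes positions in millimetres sampled at a fixed rate; the function names and the toy trajectory are illustrative assumptions, and a real analysis would normally filter the position data before differentiating.

```python
import math

def tangential_speed(positions, rate_hz):
    """Frame-to-frame speed in mm/s from a list of (x, y, z) positions in mm."""
    return [math.dist(a, b) * rate_hz for a, b in zip(positions, positions[1:])]

def peak_speed(positions, rate_hz):
    """Return (peak speed in mm/s, index of the frame interval where it occurs)."""
    speeds = tangential_speed(positions, rate_hz)
    peak = max(speeds)
    return peak, speeds.index(peak)

# Toy wrist trajectory along x (made-up values): accelerate, then decelerate.
wrist = [(x, 0.0, 0.0) for x in (0.0, 1.0, 3.0, 6.0, 8.0, 9.0)]
peak, interval = peak_speed(wrist, rate_hz=200.0)
```

The same frame-wise distance calculation applied to the forehead and chin markers gives the mouth aperture trace from which MMA is taken.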

Protocol 2: Investigating the Effect of Action Intent (Grasp-to-Eat vs. Grasp-to-Place)

Objective: To determine if the kinematics of a reach-to-grasp movement are influenced by the ultimate goal of the action (eating vs. placing) [7].

Materials:

- Motion capture system (e.g., Optotrak Certus)

- Infrared markers for thumb, index finger, and wrist.

- Small food items (e.g., Cheerios, Froot Loops).

- A bib or small container.

Procedure:

- Participant Setup: Place markers on the distal phalanges of the thumb and index finger, and on the wrist.

- Task Conditions (Blocked Design):

- Grasp-to-Eat: Participants grasp a food item and bring it to their mouth to eat.

- Grasp-to-Place: Participants grasp a food item and place it into a bib worn just beneath the chin.

- Data Collection: Record hand kinematics while participants perform each task with both their right and left hands, using different food sizes.

- Kinematic Analysis: The key dependent variable is the Maximum Grip Aperture (MGA). The hypothesis is that a task (EAT/PLACE) by hand (LEFT/RIGHT) interaction will be observed, with a smaller MGA for the right hand specifically during the grasp-to-eat condition.
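In a 2 × 2 within-subject design, the task-by-hand interaction can be expressed as a per-participant "difference of differences", and a one-sample t statistic on that contrast is equivalent to the interaction term of the repeated-measures ANOVA (F = t²). The sketch below uses only the standard library; the data layout and the MGA values are made up for illustration, and obtaining a p-value would additionally require a t distribution from a statistics package.

```python
import math
import statistics

def interaction_contrast(per_participant):
    """Task x hand interaction as a difference of differences.

    per_participant: list of dicts mapping (task, hand) -> mean MGA in mm,
                     with task in {"eat", "place"} and hand in {"left", "right"}.
    Returns (mean contrast in mm, t statistic, degrees of freedom).
    """
    diffs = [
        (p[("place", "right")] - p[("eat", "right")])
        - (p[("place", "left")] - p[("eat", "left")])
        for p in per_participant
    ]
    n = len(diffs)
    mean_d = statistics.mean(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n)  # standard error of the mean contrast
    return mean_d, mean_d / se, n - 1

# Hypothetical per-participant means (mm), not data from the cited study.
data = [
    {("eat", "right"): 22.0, ("place", "right"): 25.0, ("eat", "left"): 24.0, ("place", "left"): 25.0},
    {("eat", "right"): 20.0, ("place", "right"): 24.0, ("eat", "left"): 23.0, ("place", "left"): 24.0},
    {("eat", "right"): 23.0, ("place", "right"): 25.0, ("eat", "left"): 24.0, ("place", "left"): 25.0},
    {("eat", "right"): 21.0, ("place", "right"): 24.0, ("eat", "left"): 23.0, ("place", "left"): 24.0},
]
mean_d, t_stat, df = interaction_contrast(data)
```

A positive mean contrast here means the eat-versus-place MGA reduction is larger for the right hand than for the left, which is the pattern the hypothesis predicts.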

Table 1: Comparison of Key Kinematic Measures in Grasping vs. Feeding

| Kinematic Measure | Grasping (Hand with Food) | Feeding (Mouth with Food) | Key Implication |
|---|---|---|---|
| Aperture Oversizing | Hand opens considerably wider than the object (~11–27 mm of oversizing), and the oversizing scales with food size [6]. | Mouth opens only slightly wider than the object (~4–11 mm of oversizing), and the oversizing does not scale with food size [6]. | Different control strategies for hand vs. mouth, possibly due to grip stability needs for the hand. |
| Movement Time | Shorter total movement time [6]. | Longer total movement time, especially when using a tool (fork) [6]. | Feeding actions, particularly with tools, may require more fine motor control and deceleration. |
| Aperture Timing | Hand opens more rapidly relative to the reach [6]. | Mouth opens more slowly relative to the reach [6]. | Reflects the different precision demands and neural control of the two effectors. |
| Influence of Intent | Maximum Grip Aperture (MGA) is larger for grasp-to-place than for grasp-to-eat [7]. | Not Applicable | The end goal of an action fundamentally alters the kinematics of the grasp component. |
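The oversizing comparison in the table can be checked on your own data by subtracting object size from maximum aperture and fitting a least-squares slope of oversizing against object size. The following is a sketch with invented numbers chosen only to fall inside the ranges quoted above; the function names are hypothetical, and the mouth apertures are assumed to be already corrected for the closed-mouth forehead-to-chin distance.

```python
def oversizing(max_apertures_mm, object_sizes_mm):
    """Amount by which the effector opens beyond the object size, per object."""
    return [a - s for a, s in zip(max_apertures_mm, object_sizes_mm)]

def least_squares_slope(x, y):
    """Slope of the ordinary least-squares line of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    num = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    den = sum((a - mean_x) ** 2 for a in x)
    return num / den

# Illustrative condition means (mm), not values from the cited study.
sizes = [10.0, 20.0, 30.0]
hand_mga = [30.0, 42.0, 54.0]    # hand oversizing grows with food size
mouth_mma = [16.0, 26.0, 36.0]   # mouth oversizing stays constant

hand_over = oversizing(hand_mga, sizes)
mouth_over = oversizing(mouth_mma, sizes)
hand_slope = least_squares_slope(sizes, hand_over)
mouth_slope = least_squares_slope(sizes, mouth_over)
```

A clearly positive slope for the hand together with a slope near zero for the mouth would reproduce the dissociation summarized in the Aperture Oversizing row.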

Table 2: Research Reagent Solutions & Essential Materials

| Item | Function/Description | Example from Research |
|---|---|---|
| Optotrak Certus | A motion capture system that records the position of infrared markers at high frequencies (e.g., 200 Hz) to precisely track hand, arm, and jaw kinematics [7] [8]. | Used to track markers on the finger, thumb, and wrist to calculate grip aperture and transport velocity [7]. |
| Infrared Emitting Diodes (IREDs) | Markers placed on anatomical landmarks (e.g., fingers, wrist, chin) that are tracked by the motion capture system to quantify movement [7]. | Placed on the distal phalanges of the thumb and index finger to measure grip aperture [7]. |
| Plato Liquid Crystal Goggles | Goggles that can be programmed to become opaque between trials. This controls visual input, preventing participants from pre-planning the next movement and ensuring each trial starts with a consistent visual state [7]. | Worn by participants to block vision after each trial is completed and until the next trial begins [7]. |
| Digital Videoradiography | A videofluoroscopic system used to capture 2D kinematics of internal orofacial structures (like the tongue and jaw) during naturalistic feeding by tracking implanted tantalum markers [8]. | Used to record jaw gape cycles and tongue movements at 100 Hz during feeding sequences in non-human primates [8]. |
| Micro-electrode Arrays | Chronically implanted arrays of electrodes used to simultaneously record the activity of ensembles of neurons in specific brain regions, such as the orofacial primary motor cortex (MIo) [8]. | Used to record spiking activity from the MIo of macaques during naturalistic feeding to study neural population dynamics [8]. |

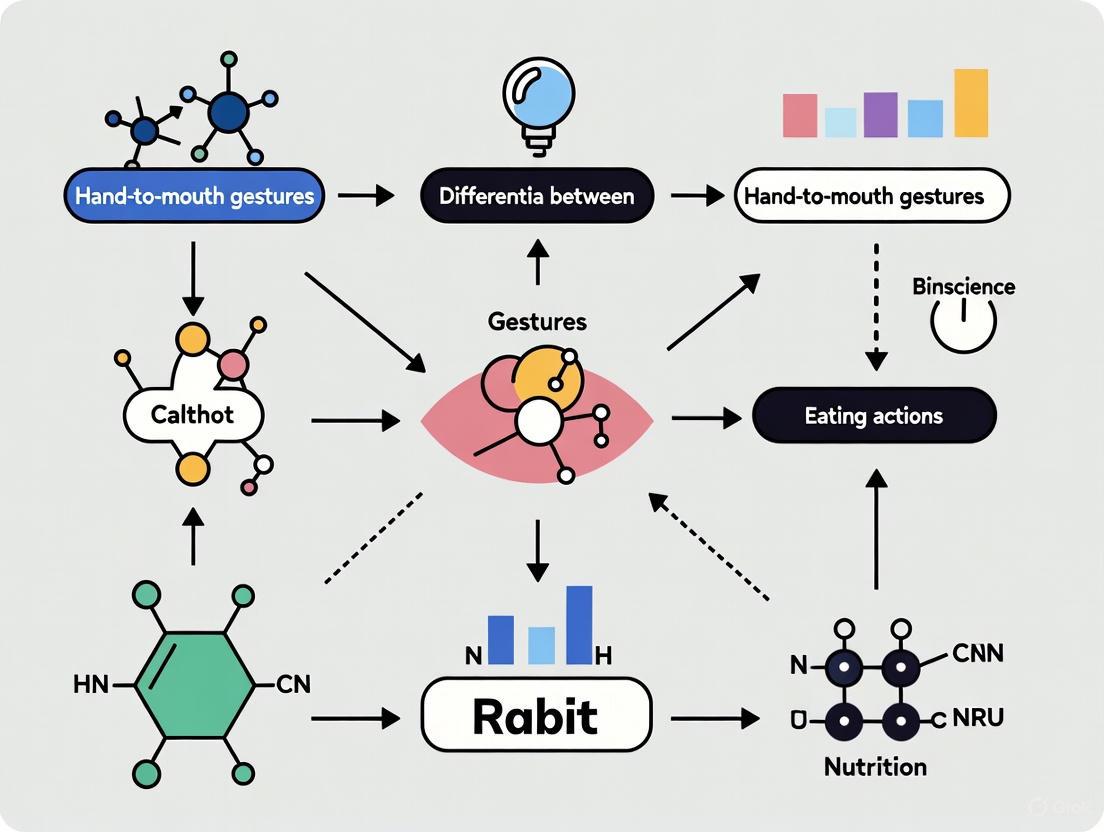

Experimental & Analytical Workflow Diagrams

Diagram 1: Kinematic Comparison Experimental Workflow

Diagram 2: Neural Data Analysis Pathway for Feeding

This technical support center provides resources for researchers working on the differentiation of hand-to-mouth gestures, a critical component in automated eating behavior analysis. The content is framed within a broader thesis on developing robust methods to distinguish eating from other activities using movement periodicity patterns. The following guides and protocols are designed to assist scientists, engineers, and drug development professionals in implementing, validating, and troubleshooting experimental setups for this specialized field of research.

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind using movement regularity to distinguish eating from other activities?

A1: The core principle is that repetitive hand-to-mouth gestures during an eating episode exhibit a more stable and periodic pattern compared to other arm and hand movements [9]. While activities like drinking or face-touching may involve similar trajectories, the continuous cycle of food acquisition, transport to the mouth, and return creates a distinctive rhythmic signature in the motion data that can be detected using inertial sensors and analyzed for its periodicity [9].

Q2: Which fingers' motion is most critical to monitor for eating activity analysis?

A2: Research indicates that the bending motion of the index finger and thumb is most critical, as it varies significantly with different food characteristics and the type of cutlery used (e.g., spoon vs. fork) [10]. In contrast, the motion of the middle finger has been shown to remain largely unaffected by these variables and shows the least correlation with fingertip forces, making it less discriminatory for this purpose [10].

Q3: What are the advantages of sensor-based methods over self-reporting for eating behavior studies?

A3: Sensor-based methods provide objective, high-granularity data on the temporal patterns of eating behavior, such as bite rate, chewing frequency, and hand-to-mouth periodicity [9]. They overcome the limitations of self-reporting methods like food diaries or 24-hour recalls, which are prone to recall bias and lack the precision to capture subconscious, repetitive eating actions [9].

Q4: We are getting poor classification accuracy when differentiating eating from face-touching gestures. What contextual factors should we consider?

A4: Your model may be lacking key contextual variables. Consider collecting and incorporating the following data:

- Temporal Context: The time of day, as eating often occurs at conventional mealtimes [11].

- Environmental Context: The location of the activity (e.g., kitchen, dining room, office desk) [11].

- Social Context: Whether the participant is alone or in the company of others during the activity [11].

- Object Presence: The use of cutlery, which introduces specific grip and finger motion patterns that can be detected [10].

Troubleshooting Guides

Issue 1: Low Accuracy in Detecting Eating Episodes

Problem: Your model fails to reliably identify the start and end of an eating episode, confusing it with other arm movements.

| Possible Cause | Diagnostic Steps | Proposed Solution |
|---|---|---|
| Insufficient Signal Features | Calculate the periodicity (e.g., using FFT) of hand-to-mouth movements from your motion sensor data. Eating should show stronger periodicity. | Extract and use time-domain (e.g., mean, variance) and frequency-domain (e.g., spectral power) features to capture rhythmic patterns [9]. |
| Poor Sensor Placement | Review the placement of your inertial measurement unit (IMU). | Ensure the sensor is securely placed on the wrist of the dominant hand to accurately capture wrist flexion/extension and forearm pronation/supination during eating [9]. |
| Lack of Contextual Data | Check if your data includes only motion and no other contextual cues. | Fuse motion data with other sensor modalities, such as audio from a microphone to detect chewing sounds, to improve detection specificity [9]. |

Issue 2: Data Artifacts and Sensor Noise Corrupting Motion Signals

Problem: The collected motion data is noisy, making it difficult to identify clear movement patterns.

| Possible Cause | Diagnostic Steps | Proposed Solution |
|---|---|---|
| Loose Sensor Attachment | Visually inspect the sensor attachment to the participant. | Use adjustable straps to ensure a snug but comfortable fit, minimizing movement artifacts [10]. |
| Unfiltered Raw Data | Plot the raw accelerometer and gyroscope signals to observe the noise level. | During pre-processing, apply a low-pass filter with a cutoff suited to the movement of interest (e.g., 5–10 Hz) to remove high-frequency noise unrelated to gross arm movements [9]. |
| Participant Non-Compliance | Check for data gaps or irregular timestamps in the data log. | Provide clear instructions to participants and, if possible, use a system that can prompt participants to re-attach sensors or log compliance. |
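The low-pass filtering step can be illustrated with a first-order recursive filter written in plain Python. This is a deliberately simple stand-in, not the filter used in the cited work: offline kinematic pipelines often use a higher-order, zero-phase filter from a signal-processing library instead, and this first-order version introduces some phase lag. The function name and the test signal are assumptions for the example.

```python
import math

def low_pass(samples, rate_hz, cutoff_hz):
    """First-order (single-pole) low-pass filter applied sample by sample."""
    if not samples:
        return []
    dt = 1.0 / rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)  # time constant for the chosen cutoff
    alpha = dt / (rc + dt)                  # smoothing factor between 0 and 1
    out = [samples[0]]
    for x in samples[1:]:
        out.append(out[-1] + alpha * (x - out[-1]))
    return out

# Synthetic check: a 25 Hz component sampled at 100 Hz should be strongly
# attenuated by a 5 Hz cutoff, while a constant signal passes unchanged.
high = [math.sin(2.0 * math.pi * 25.0 * n / 100.0) for n in range(200)]
filtered = low_pass(high, rate_hz=100.0, cutoff_hz=5.0)
constant = low_pass([2.0] * 10, rate_hz=100.0, cutoff_hz=5.0)
```

Plotting the raw and filtered traces side by side is a quick way to confirm that the chosen cutoff removes jitter without flattening the hand-to-mouth movement itself.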

Experimental Protocols & Data Presentation

Protocol 1: Instrumented Glove for Hand Motion Analysis

This methodology is adapted from studies analyzing finger motion and force during eating with different foods and cutlery [10].

1. Objective: To capture and analyze the bending motion of fingers and the forces exerted by the thumb and index finger during eating activities.

2. Materials and Setup:

- Prototype Glove: A glove fitted with flexible bend sensors (e.g., Spectra Symbol, 4.5 inches) placed over the interphalangeal joints of the thumb, index, and middle fingers.

- Force Sensors: Thin force sensors (e.g., FlexiForce A201) attached to the tips of the index finger and thumb.

- Data Acquisition System: A microcontroller (e.g., Arduino or Teensy) with analog-to-digital converters to read the sensor values.

- Calibration: Calibrate bend sensors against known angles and force sensors against known weights.

3. Procedure:

- Participants don the instrumented glove.

- Participants are asked to eat a variety of foods with different physical properties (e.g., liquid like yogurt, solid like bread) using standard cutlery (spoon and fork).

- Sensor data (finger bending and fingertip force) is recorded at a sufficient sampling rate (e.g., 50-100 Hz) throughout the eating task.

- Data is synchronized with video recording for ground truth annotation.

4. Data Analysis:

- Use the Pearson correlation coefficient to analyze the relationship between finger bending and exerted force.

- Perform Analysis of Variance (ANOVA) and independent samples t-tests to determine if motion and force vary significantly with food type and cutlery.
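The correlation step in the Data Analysis list can be sketched as follows. The implementation is the textbook Pearson formula in plain Python; the paired bend-angle and force samples are invented for illustration and assume both sensors have already been calibrated and time-aligned.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical paired samples: index-finger bend angle (degrees) and
# fingertip force (newtons) taken at the same time points.
bend_deg = [10.0, 20.0, 30.0, 40.0, 50.0]
force_n = [0.6, 0.9, 1.6, 1.9, 2.6]
r_index = pearson_r(bend_deg, force_n)

# A perfectly linear relationship gives r = 1 (up to rounding error).
r_linear = pearson_r(bend_deg, [0.5, 1.0, 1.5, 2.0, 2.5])
```

Running the same calculation per finger allows the thumb, index, and middle finger coefficients to be compared directly.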

Table 1: Summary of Key Findings from Hand Motion Analysis During Eating

| Metric | Thumb | Index Finger | Middle Finger |
|---|---|---|---|
| Variation with Food & Cutlery | Varies significantly | Varies significantly | Remains unaffected [10] |
| Correlation with Fingertip Force | Significant linear relationship | Significant linear relationship | Least positive correlation [10] |
| Key Role in Eating | Force exertion & object manipulation | Force exertion & object manipulation | Stabilization [10] |

Protocol 2: Wrist-Worn IMU for Periodicity Analysis of Hand-to-Mouth Gestures

This protocol leverages the periodicity of eating gestures for detection [9].

1. Objective: To use a wrist-worn inertial sensor to capture the rhythmic pattern of hand-to-mouth movements during eating and differentiate it from non-eating activities.

2. Materials and Setup:

- Inertial Measurement Unit (IMU): A device containing a 3-axis accelerometer and a 3-axis gyroscope.

- Secure Mounting: A wristband to firmly attach the IMU to the participant's dominant wrist.

- Data Logger: A smartphone or dedicated logging device to store the IMU data.

3. Procedure:

- Calibrate the IMU sensors according to the manufacturer's instructions.

- Participants perform a series of activities:

- Eating: Consume a meal using a spoon or fork.

- Control Activities: Drink water, type on a keyboard, touch their face.

- Data is recorded and labeled for each activity.

4. Data Analysis:

- Pre-process the data (filtering, gravity removal).

- Segment the data to isolate individual gestures.

- Extract features from the accelerometer and gyroscope signals, focusing on those that capture periodicity and movement dynamics.

- Train a machine learning classifier (e.g., Random Forest, Support Vector Machine) to distinguish between eating and non-eating gestures based on the extracted features.
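One possible periodicity feature for the extraction step above is the strongest autocorrelation peak within a plausible range of bite-to-bite intervals. The sketch below is an assumption about how such a feature could be computed, not the method of [9]; the function names, the sampling rate, and the search range are hypothetical.

```python
import math


def autocorrelation(signal, lag):
    """Normalized (biased) autocorrelation of a sequence at a positive lag."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]
    denom = sum(c * c for c in centered)
    if denom == 0:
        return 0.0
    num = sum(centered[i] * centered[i + lag] for i in range(n - lag))
    return num / denom


def dominant_period(signal, fs_hz, min_period_s, max_period_s):
    """Return (period_s, strength) of the strongest autocorrelation peak
    found between min_period_s and max_period_s."""
    min_lag = max(1, int(min_period_s * fs_hz))
    max_lag = min(len(signal) - 1, int(max_period_s * fs_hz))
    best_lag = max(range(min_lag, max_lag + 1),
                   key=lambda k: autocorrelation(signal, k))
    return best_lag / fs_hz, autocorrelation(signal, best_lag)


# Hypothetical example: a wrist signal that repeats every 5 s, sampled at 10 Hz.
fs = 10.0
wrist = [math.sin(2 * math.pi * i / 50) for i in range(300)]
period_s, strength = dominant_period(wrist, fs, min_period_s=2.0, max_period_s=10.0)
```

A high peak strength suggests a rhythmic movement, while erratic gestures such as face touching would be expected to give a weak peak. Both values can be passed to the classifier as features.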

Table 2: Quantitative Performance of Sensor-Based Eating Behavior Monitoring

| Eating Metric | Sensor Modality | Typical Performance / Accuracy | Key Challenge |

|---|---|---|---|

| Bite/Hand-to-Mouth Detection | Wrist-worn IMU (Accelerometer/Gyroscope) | High accuracy in lab settings; lower in free-living [9] | Differentiation from similar gestures (e.g., face touching) [9]. |

| Chewing Detection | Acoustic (Microphone) / Strain or EMG | High accuracy for counting chews [9] | Privacy concerns (audio); sensitivity to sensor placement [9]. |

| Food Type Recognition | Camera (Computer Vision) | Increasingly high accuracy with deep learning [12] | Varying lighting conditions and food presentation [12]. |

Research Reagent Solutions

Table 3: Essential Materials for Hand-to-Mouth Gesture Research

| Item | Function in Research |

|---|---|

| Flexible Bend Sensors | Measure the angular deflection of finger joints during cutlery grip and food manipulation [10]. |

| Force-Sensitive Resistors (FSR) | Quantify the contact force exerted by the thumb and fingertip when gripping a spoon or fork [10]. |

| Inertial Measurement Unit (IMU) | Captures the acceleration and rotational velocity of the wrist, enabling the analysis of movement trajectory and periodicity [9]. |

| Data Glove | An integrated glove system with multiple sensors to capture hand kinematics (bend, force) in a single form factor [10]. |

| Wearable Microphone | Captures acoustic signals of chewing and swallowing, providing a secondary modality to confirm eating activity and analyze chewing cycles [9]. |

| Machine Learning Algorithms | Classify motion data into activities (eat/drink/non-eat) and detect patterns from multiple sensor streams [9] [12]. |

Experimental Workflow Visualization

[Figure: Experimental Workflow for Eating Gesture Analysis]

[Figure: Sensor Data Analysis Pipeline]

The Impact of Tools and Food Properties on Gesture Kinematics and Dynamics

Experimental Protocols and Methodologies

This section details the core experimental methods used to investigate how tools and food properties influence hand kinematics and dynamics.

Protocol 1: Instrumented Glove for Finger Motion and Force Analysis

This methodology is designed to capture the motion of and forces exerted by the thumb, index, and middle fingers during eating activities [10].

- Objective: To analyze the bending motion and contact forces of the thumb, index, and middle finger with respect to different food characteristics (liquid, solid) and cutlery (fork, spoon) [10].

- Key Equipment:

- Prototype Glove: Instrumented with three flexible bend sensors (Spectra Symbol, 4.5 inches) to measure the angles of the index finger, middle finger, and thumb [10].

- Force Sensors: FlexiForce A201 sensors attached to the tips of the index finger and thumb to measure contact force during utensil holding and use [10].

- Data Acquisition System: A system to record and process resistance changes from the bend sensors and force data from the fingertip sensors [10].

- Procedure:

- Participants don the instrumented glove.

- Participants perform eating tasks using five different food types and two types of cutlery (fork and spoon).

- Data on finger bending (via resistance change in bend sensors) and fingertip force is continuously recorded.

- The Pearson correlation coefficient is used to analyze the relationship between finger bending and exerted force.

- Analysis of variance (ANOVA) and independent samples t-tests are performed to determine the influence of food type and cutlery on motion and force [10].

Protocol 2: Whole-Body Inertial Motion Capture for Eating Kinematics

This protocol uses a full-body sensor suit to quantify the kinematics of the entire body during a realistic eating scenario [13].

- Objective: To quantify whole-body three-dimensional kinematics—including upper limb, hip, neck, and trunk joint angles—during defined phases of eating real food with the dominant hand in a seated position [13].

- Key Equipment:

- Inertial Motion System: Xsens MVN system with 17 inertial sensor units (ISUs) and two Xbus Masters, capturing data at 120 Hz [13].

- Software: Xsens MVN Studio 3.1 for calculating kinematic parameters from raw ISU data [13].

- Utensils and Food: A standard spoon and bowl containing yogurt to represent a common eating behavior [13].

- Procedure:

- Participants don a Lycra suit with attached ISU sensors. The system is calibrated, and body dimensions are defined.

- Participants sit comfortably on a 40-cm high seat behind a table, with feet fully on the floor. The bowl's center is aligned with their body midline.

- Participants are instructed to eat three spoons of yogurt without a break using habitual movements, while the left hand rests on the thigh.

- Whole-body kinematics are captured throughout the task.

- The eating cycle is visually partitioned into four distinct phases for analysis:

- Reaching: Moving the spoon to the bowl.

- Spooning: Getting food into the spoon.

- Transport: Moving the spoon from the bowl to the mouth.

- Mouth: Placing the food into the mouth [13].

- Mean joint angles are compared among the phases using Friedman’s analysis of variance.

Troubleshooting Guide: Common Experimental Challenges

This guide addresses specific issues you might encounter during experiments on hand-to-mouth kinematics.

Problem: Inconsistent finger force data is recorded across participants using the instrumented glove.

- Possible Cause: Variations in individual grip strength or glove fit.

- Solution:

- Ensure the glove is snug but not restrictive for each participant.

- Perform a calibration routine before data collection where participants apply a known, gentle force to a calibrated load cell.

- In analysis, normalize force data relative to each participant's maximum voluntary contraction (MVC) for the key fingers.
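The normalization step above amounts to a simple rescaling. A minimal sketch, assuming the MVC force of each finger has been measured once per participant (the function name and units are hypothetical):

```python
def normalize_to_mvc(force_samples, mvc_force):
    """Express raw force samples as a percentage of the participant's
    maximum voluntary contraction (MVC) force for the same finger."""
    if mvc_force <= 0:
        raise ValueError("mvc_force must be positive")
    return [100.0 * f / mvc_force for f in force_samples]


# Hypothetical thumb-tip forces in newtons, with an MVC of 5 N.
thumb_pct_mvc = normalize_to_mvc([1.0, 2.5], mvc_force=5.0)
```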

Problem: Motion capture data from the full-body suit appears noisy or includes drift during the eating task.

- Possible Cause: Magnetic interference in the lab environment or improper sensor calibration.

- Solution:

- Conduct the experiment in an environment with minimal metal and electromagnetic interference.

- Strictly follow the manufacturer's calibration protocol before every recording session, ensuring the participant remains still during the calibration process.

- Use the system's software to perform a "sensor-to-segment" calibration for improved accuracy.

Problem: Difficulty in visually identifying and separating the four distinct eating phases (Reaching, Spooning, Transport, Mouth) from the continuous data stream.

- Possible Cause: The transitions between phases can be fluid and subjective.

- Solution:

- Record a synchronized, high-frame-rate video of each trial alongside the kinematic data.

- Have at least two independent researchers annotate the start and end of each phase based on the video.

- Calculate the inter-rater reliability (e.g., using Cohen's Kappa) to ensure consistent phase identification before proceeding with data analysis [13].
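Cohen's Kappa for two annotators can be computed directly from their labels. The sketch below assumes each annotator's phase boundaries have first been converted into one phase label per video frame; that conversion and the label names are assumptions, not part of the protocol in [13].

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    # Chance agreement from each annotator's marginal label frequencies.
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical per-frame labels from two annotators.
rater_1 = ["reach", "reach", "spoon", "spoon"]
rater_2 = ["reach", "reach", "spoon", "reach"]
kappa = cohens_kappa(rater_1, rater_2)
```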

Problem: The bending sensor resistance values do not linearly correspond to finger joint angles.

- Possible Cause: Sensor non-linearity or hysteresis.

- Solution:

- Characterize each bend sensor prior to integration by mounting it on a goniometer and recording resistance values at known angles.

- Create a sensor-specific calibration curve (angle vs. resistance) and apply this transformation to all recorded data during processing [10].
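One simple way to apply such a calibration curve is piecewise linear interpolation between the goniometer measurements. This is a sketch under that assumption; the calibration points below are hypothetical, and the approach does not correct for hysteresis.

```python
from bisect import bisect_left


def make_angle_lookup(calibration_points):
    """Build a resistance -> angle function from (resistance_ohm, angle_deg)
    pairs using piecewise linear interpolation."""
    pts = sorted(calibration_points)
    res = [p[0] for p in pts]
    ang = [p[1] for p in pts]

    def to_angle(resistance):
        # Clamp readings outside the calibrated range to the nearest endpoint.
        if resistance <= res[0]:
            return ang[0]
        if resistance >= res[-1]:
            return ang[-1]
        i = bisect_left(res, resistance)
        r0, r1 = res[i - 1], res[i]
        a0, a1 = ang[i - 1], ang[i]
        return a0 + (a1 - a0) * (resistance - r0) / (r1 - r0)

    return to_angle


# Hypothetical calibration of one bend sensor at 0, 45 and 90 degrees.
index_sensor = make_angle_lookup([(10000.0, 0.0), (14000.0, 45.0), (20000.0, 90.0)])
angle = index_sensor(12000.0)
```

Each sensor gets its own lookup, so sensor-to-sensor variation is absorbed by the calibration data and not by a shared formula.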

Frequently Asked Questions (FAQs)

Q1: Which fingers are most critical for monitoring during utensil-based eating, and what parameters should I measure? The thumb, index, and middle fingers are most critical. Research shows that the bending motion of the index finger and thumb varies significantly with food type and cutlery. You should measure both the bending motion (kinematics) of these fingers and the contact force (dynamics) exerted by the thumb tip and index fingertip, as their relationship is key to understanding grip control [10].

Q2: How does food texture influence whole-body kinematics during eating? Direct evidence is limited, because the whole-body study cited here used a single food (yogurt eaten with a spoon). What it does show is that joint angles change characteristically between the phases of the eating cycle (Reaching, Spooning, Transport, Mouth). For example, shoulder, elbow, and hip flexion are largest in the mouth phase, while neck flexion is largest during the spooning phase. Food textures that demand more or less postural stability or precision would likely alter these patterns [13].

Q3: What are the primary sensor modalities used for measuring eating behavior in research? A systematic review of the field establishes a taxonomy of sensors including:

- Acoustic Sensors: For detecting chewing and swallowing sounds.

- Motion Sensors (Inertial Measurement Units - IMUs): For tracking hand-to-mouth gestures, arm and body kinematics.

- Strain Sensors: Often integrated into gloves to measure finger bending.

- Force Sensors: For measuring grip force and utensil interaction.

- Cameras: For computer vision-based analysis of food intake and gesture tracking [9].

Q4: My analysis shows that middle finger motion has a low correlation with fingertip force. Is this an error? No, this is an expected finding. Research specifically indicates that the middle finger motion shows the least positive correlation with index fingertip and thumb-tip force, irrespective of food characteristics or cutlery used. This suggests the middle finger may play a more stabilizing role rather than a primary force-application role in utensil use [10].

The tables below consolidate key quantitative findings from research on the kinematics and dynamics of eating gestures.

Table 1: Maximum Joint Angles Observed During a Complete Eating Cycle [13]

| Joint & Motion | Maximum Angle (Degrees) |

|---|---|

| Elbow Flexion | 129.0° |

| Wrist Extension | 32.4° |

| Hip Flexion | 50.4° |

| Hip Abduction | 6.8° |

| Hip Rotation | 0.2° |

Table 2: Statistical Outcomes from Finger Motion and Force Analysis [10]

| Analysis Type | Key Finding |

|---|---|

| Pearson Correlation | A significant linear relationship exists between finger bending motion and forces exerted during eating. |

| Pearson Correlation | The middle finger motion showed the least positive correlation with index and thumb tip forces. |

| ANOVA / t-test | Bending motion of the index finger and thumb varies significantly with differing food characteristics and type of cutlery (fork/spoon). |

| ANOVA / t-test | Bending motion of the middle finger remains unaffected by food type or cutlery. |

| ANOVA / t-test | Contact forces exerted by the thumb tip and index fingertip remain unaffected by food type or cutlery. |

Research Reagent Solutions

This table lists essential materials and their functions for setting up experiments in hand-to-mouth gesture analysis.

Table 3: Key Research Materials and Equipment

| Item | Function / Application |

|---|---|

| Flexible Bend Sensors | Measure angular displacement of finger joints (e.g., index, middle, thumb) during utensil gripping and manipulation [10]. |

| Force Sensing Resistors (FSRs) | Measure contact force exerted by fingertips (e.g., thumb and index finger) on utensils during eating tasks [10]. |

| Inertial Measurement Unit (IMU) System | Capture full-body or upper-limb kinematics (joint angles, trajectories) during the entire eating motion in laboratory or free-living settings [13] [9]. |

| Data Glove | A unified platform (often custom-built) integrating multiple bend and force sensors to simultaneously capture hand kinematics and dynamics [10]. |

| Acoustic Sensors | Detect and monitor chewing and swallowing events as part of a multi-modal eating behavior analysis system [9]. |

Sensor Technologies and Analytical Frameworks for Automated Gesture Classification

Troubleshooting Guides

IMU Sensor Calibration and Data Accuracy

Problem: My IMU-derived joint angle measurements are inaccurate during dynamic movements. Inertial Measurement Units (IMUs) require sensor-to-segment calibration to align the sensor's internal coordinate system with the anatomical coordinate system of the body segment. Incorrect calibration leads to significant errors in measuring angles during sports-related or eating gesture tasks [14].

Solution:

- Select an Appropriate Calibration Method: For dynamic movements involved in eating research, functional calibration methods are generally more effective than simple static poses [14].

- Perform Dynamic Calibration Movements: Execute a series of predefined movements that involve significant motion in the sagittal plane while minimizing motion in other planes. Effective movements include [14]:

- Slow, Normal, and Fast Gait

- Tilted to Stand (from a seated, leaned-back position to standing)

- Extension to Stand (from seated with bent knees to standing)

- Calf Raise to Squat

- Validate Against Gold Standard: Where possible, validate your IMU system's output against an optical motion capture system to quantify and correct for measurement error [14].

Problem: My wrist-mounted IMU data is too noisy to reliably detect eating gestures. Raw sensor data often contains noise from various sources, including environmental interference and sensor artifacts, which can blur the target signal [15].

Solution: Implement a Multi-Step Joint Noise Reduction Method. This approach, adapted from acoustic sensing, effectively suppresses noise without requiring complex hardware changes or large labeled datasets [15].

- Step 1: Moving Average (MA): Apply a moving average filter to smooth the raw data in the temporal domain.

- Step 2: Wavelet Packet Transform (WPT): Use WPT to decompose the signal for more detailed analysis and denoising.

- Step 3: Bandpass Filtering (BPF): Apply a bandpass filter to isolate the frequency range characteristic of hand-to-mouth gestures.

- Step 4: Envelope Extraction (EE): Extract the signal envelope to analyze the amplitude variations related to activity.
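Steps 1 and 4 can be illustrated without external libraries. The sketch below covers only those two steps: the wavelet packet transform and the bandpass filter need a signal-processing library and are omitted. The rectify-and-smooth envelope is an assumption, since [15] may extract the envelope differently.

```python
def moving_average(signal, window):
    """Centered moving average; the window shrinks at the edges so the
    output has the same length as the input."""
    half = window // 2
    out = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        out.append(sum(signal[lo:hi]) / (hi - lo))
    return out


def envelope(signal, window):
    """Amplitude envelope: rectify around the mean, then smooth."""
    mean = sum(signal) / len(signal)
    rectified = [abs(s - mean) for s in signal]
    return moving_average(rectified, window)


# Hypothetical accelerometer samples containing a single spike.
smoothed = moving_average([0, 0, 3, 0, 0], window=3)
```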

EMG Sensor Setup and Signal Acquisition

Problem: My EMG sensor outputs a constant maximum reading (e.g., 1023) with no signal variation. This issue typically occurs when the sensor's amplification is set too high, causing the output voltage to saturate at the maximum level your microcontroller (e.g., Arduino) can read [16].

Solution:

- Check Electrode Connection: Ensure electrodes are properly attached to the skin with good contact to reduce signal impedance.

- Adjust the Onboard Potentiometer: The sensor module likely has a potentiometer to adjust the gain.

- Carefully tweak the potentiometer while the sensor is connected and the serial plotter is open.

- Make small adjustments and observe the signal. The goal is to reduce the gain so that the signal varies within a readable range (e.g., 0-5V for Arduino) instead of pegging at the maximum value [16].

- Verify Signal with Muscle Contraction: Once the signal is no longer saturated, test by flexing the muscle. You should observe clear signal spikes corresponding to your muscle activity.
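While adjusting the gain, it can help to quantify how much of a recording is clipped instead of judging the plot by eye. A minimal sketch, assuming a 10-bit ADC with a 5 V reference (which matches the 1023 and 0-5V figures above); the function names and the margin are hypothetical.

```python
def saturation_fraction(adc_samples, adc_max=1023, margin=2):
    """Fraction of samples within `margin` counts of the ADC full-scale value."""
    if not adc_samples:
        raise ValueError("no samples")
    clipped = sum(1 for s in adc_samples if s >= adc_max - margin)
    return clipped / len(adc_samples)


def counts_to_volts(count, vref=5.0, adc_max=1023):
    """Convert a raw ADC count to volts for the assumed reference voltage."""
    return count * vref / adc_max


# Hypothetical readings captured from the serial port.
readings = [1023, 1023, 500, 1022]
clipped_share = saturation_fraction(readings)
```

A share close to 1.0 points to saturation from excessive gain; after the potentiometer is adjusted, the share should fall toward zero at rest.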

Power Management for Long-Term Studies

Problem: The battery life of my wearable device is too short for all-day eating behavior monitoring. Continuous sensing, wireless connectivity, and data processing are significant power drains that can limit a device's operational time, disrupting data collection and user compliance [17] [18].

Solution:

- Implement Dynamic Power Scaling: Adjust the processor's voltage and clock frequency based on the current task requirements [17].

- Use Sleep Modes and Duty Cycling: Program the microcontroller and sensors to enter deep sleep states when not actively taking measurements, waking up intermittently to capture data [17].

- Employ Efficient Communication Protocols: Use Bluetooth Low Energy (BLE) instead of classic Bluetooth or Wi-Fi for data transmission [17] [18].

- Utilize Power Management ICs (PMICs): Select PMICs that efficiently handle multiple power rails, battery charging, and safety features, reducing the overall power footprint [17].

- Consider Adaptive Sampling: Dynamically adjust the sensor data collection frequency based on user activity to reduce unnecessary power consumption [18].
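The effect of duty cycling on battery life can be estimated with simple arithmetic before any firmware is written. All figures in this sketch are hypothetical; real currents should be taken from the datasheets of the chosen components.

```python
def average_current_ma(active_ma, sleep_ma, duty_cycle):
    """Time-weighted average current for a device that is active for a
    fraction `duty_cycle` of the time and asleep otherwise."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma


def battery_life_hours(capacity_mah, avg_current_ma):
    """Idealized runtime; ignores self-discharge and converter losses."""
    return capacity_mah / avg_current_ma


# Hypothetical wearable: 10 mA while sensing, 0.5 mA asleep, 210 mAh cell.
always_on = battery_life_hours(210.0, average_current_ma(10.0, 0.5, 1.0))
half_duty = battery_life_hours(210.0, average_current_ma(10.0, 0.5, 0.5))
```

In this idealized model, halving the active time roughly doubles the runtime. The gain shrinks as the sleep current becomes a larger share of the total.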

Algorithmic and Data Processing Challenges

Problem: My model fails to distinguish eating gestures from other similar arm movements. Detectors that rely solely on individual "bite" gestures in short time windows are easily confused by other activities. A broader contextual approach often yields better results [19].

Solution: Adopt a Top-Down, Context-Aware Machine Learning Approach.

- Use Longer Data Windows: Instead of analyzing 1-5 second windows for individual bites, analyze longer windows (e.g., 4 to 15 minutes). This allows the model to learn the context of eating, including food preparation gestures and resting periods between bites [19].

- Apply a Convolutional Neural Network (CNN): Use a CNN to process these large windows of raw or pre-processed IMU data (accelerometer and gyroscope) in an end-to-end fashion [19].

- Implement a Hysteresis Algorithm: For detecting eating episodes of arbitrary length, use a dual-threshold hysteresis algorithm. Start an episode when the model's probability score exceeds a higher threshold ($T_S$) and end it only when the probability falls below a lower threshold ($T_E$). This smooths the detections and reduces false positives [19].
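The dual-threshold rule described above can be sketched as a single pass over the per-window probabilities. The function name and the threshold values are hypothetical and are not taken from [19].

```python
def detect_episodes(probabilities, t_start, t_end):
    """Return (start_index, end_index) pairs, end exclusive, using
    dual-threshold hysteresis over a sequence of eating probabilities."""
    if t_end > t_start:
        raise ValueError("t_end should not exceed t_start")
    episodes = []
    start = None
    for i, p in enumerate(probabilities):
        if start is None and p > t_start:
            start = i  # probability rose above the start threshold
        elif start is not None and p < t_end:
            episodes.append((start, i))  # fell below the end threshold
            start = None
    if start is not None:
        # The recording ended while an episode was still open.
        episodes.append((start, len(probabilities)))
    return episodes


# Hypothetical model outputs, one probability per sliding window.
probs = [0.1, 0.5, 0.9, 0.6, 0.5, 0.2, 0.1, 0.85, 0.9]
episodes = detect_episodes(probs, t_start=0.8, t_end=0.3)
```

The dip to 0.5 and 0.6 inside the first episode does not end it, because the probability stays above the lower threshold. This is how hysteresis suppresses fragmented detections.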

Frequently Asked Questions (FAQs)

Q1: Which sensor modality is most socially acceptable for continuous eating monitoring in free-living conditions? Research indicates that wrist-worn devices like smartwatches or fitness bands are perceived as more socially acceptable than necklaces, earpieces, headsets, or sensors mounted on the head or neck. Their widespread consumer use makes them unobtrusive for long-term studies [20] [19].

Q2: What machine learning models are most effective for detecting eating from wrist motion? The best model depends on the approach:

- For detecting individual bites (bottom-up): Models that consider the sequential context of data, such as Hidden Markov Models (HMM) and Deep Learning models (e.g., CNNs combined with LSTMs), show promising results [20].

- For detecting entire eating episodes (top-down): Convolutional Neural Networks (CNNs) analyzing long time windows (minutes) have demonstrated state-of-the-art performance on public datasets, as they can learn the broader context of eating activity [19].

Q3: What are the key considerations for sensor placement when studying hand-to-mouth gestures? The most common placement in the literature is the dominant wrist (e.g., the right wrist for right-handed individuals) [20] [19], because most hand-to-mouth gestures for eating are performed with the dominant hand. The sensor should be securely fastened to minimize noise from skin movement artifacts [14].

Q4: How can I improve the robustness of my gesture recognition system in noisy clinical or home environments? For radar-based systems, advanced signal processing techniques are key. Implement dynamic clutter suppression and multi-path cancellation algorithms optimized for complex environments. Using an L-shaped antenna array with Digital Beamforming (DBF) can also help by efficiently fusing range, velocity, and angle-of-arrival information to improve spatial resolution and noise resilience [21].

Experimental Protocol: IMU Sensor-to-Segment Calibration

- Participant Preparation: Place IMU sensors securely on the body segments of interest (e.g., sacrum, thighs, shanks, feet) using elastic wrap and athletic tape to minimize movement artifact.

- Static Calibration: Have the participant assume two static poses:

- Standing Static: Neutral standing position, feet flat, toes forward.

- Seated Static: Seated in a leaned-back position, legs extended straight, toes pointing up.

- Functional Calibration: Guide the participant through a series of dynamic movements, each performed twice:

- Slow Gait

- Normal Gait

- Fast Gait

- Tilted to Stand

- Extension to Stand

- Calf Raise to Squat

- Data Collection: Collect synchronized data from the IMUs and, if available, a gold-standard optical motion capture system during these calibration trials and subsequent test movements.

Performance of Eating Detection Algorithms on Wrist Motion Data

| Metric / Algorithm | Bottom-Up Approach (Bite Detection) | Top-Down CNN (6-min window) |

|---|---|---|

| Dataset | Various (Lab & Free-living) | Clemson All-Day (CAD) [19] |

| Detection Basis | Individual hand-to-mouth gestures | Context of entire eating episode |

| Key Methodology | HMM, SVM, Random Forest [20] | Convolutional Neural Network [19] |

| Episode Detection Rate | Varies by study | 89% of eating episodes detected [19] |

| False Positive Rate | Varies by study | 1.7 False Positives per True Positive [19] |

Accuracy of IMU Sensor-to-Segment Calibration Methods [14]

| Calibration Method | Typical Absolute Mean Error (vs. Motion Capture) | Notes / Best For |

|---|---|---|

| Static Poses | Varies across joints and tasks | Found to be less accurate for dynamic sports tasks. |

| Functional Calibrations | <0.1° to 24.1° | Accuracy is joint and task-dependent. |

| Slow/Normal/Fast Gait | Lower error in gait analysis | Suitable for studies involving walking. |

| Tilted to Stand | Lower error at the pelvis and hip | Recommended for tasks involving sit-to-stand motions. |

| Calf Raise to Squat | Lower error at knee and ankle | Recommended for squats and jumps. |

Research Reagent Solutions: Essential Materials

| Item | Function / Application in Research |

|---|---|

| Inertial Measurement Unit (IMU) | Contains accelerometer, gyroscope, and sometimes magnetometer. Measures linear acceleration, angular velocity, and orientation. The primary sensor for capturing wrist motion and gesture dynamics [20] [14] [19]. |

| Electromyography (EMG) Sensor | Measures electrical activity produced by skeletal muscles. Used to detect and analyze muscle activation patterns during gesture execution [16]. |

| Power Management IC (PMIC) | Integrated circuit that manages power flow from the battery to different components. Crucial for extending battery life in wearable devices by efficiently regulating multiple power rails [17]. |

| Bluetooth Low Energy (BLE) Module | A low-power wireless communication module. Enables data transmission from the wearable sensor to a nearby device (e.g., smartphone) without excessive battery drain [17] [18]. |

| Frequency-Modulated Continuous Wave (FMCW) Radar | A contactless sensor that uses radio waves to detect gestures. Ideal for hygienic, vision-free interaction in clinical settings and robust to lighting conditions [21]. |

Workflow Diagrams

[Figure: Top-Down Eating Episode Detection Workflow]

[Figure: Multi-Step Sensor Data Noise Reduction Process]

Frequently Asked Questions (FAQ)

Q1: My model performs well in the lab but fails to generalize to real-world meal sessions. What could be wrong? This is often caused by data leakage or poor data distribution [22]. If your training data contains information that wouldn't be available in a real deployment (e.g., specific background patterns, consistent lighting), the model learns these shortcuts instead of the actual gesture. Ensure your training and test sets are strictly separated by participant and environment. Also, collect data across diverse meal sessions with varying food types and lighting conditions to mimic real-world variability [23].
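A participant-wise split of the kind recommended above can be sketched as follows. The record layout (a dict with a "participant" key) and the function name are assumptions for illustration. The same idea extends to splitting by environment or meal session.

```python
import random


def split_by_participant(samples, test_fraction=0.2, seed=0):
    """Split samples so that no participant appears in both sets."""
    participants = sorted({s["participant"] for s in samples})
    rng = random.Random(seed)
    rng.shuffle(participants)
    n_test = max(1, round(len(participants) * test_fraction))
    test_ids = set(participants[:n_test])
    train = [s for s in samples if s["participant"] not in test_ids]
    test = [s for s in samples if s["participant"] in test_ids]
    return train, test


# Hypothetical dataset: five participants with three gesture windows each.
samples = [{"participant": p, "window": i}
           for p in ["P1", "P2", "P3", "P4", "P5"] for i in range(3)]
train, test = split_by_participant(samples, test_fraction=0.2, seed=1)
```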

Q2: How can I improve the accuracy of my gesture segmentation in continuous data streams? Adopt a temporal convolutional network with an attention mechanism. This architecture is specifically designed for continuous fine-grained gesture detection, like those in meal sessions, by focusing on relevant parts of the sequence and modeling long-range dependencies effectively [23].

Q3: My vision-based system is unreliable in low-light conditions or when the hand is occluded. What are my options? Consider switching to or fusing with a low-power radar or ultrasonic sensor array. Millimeter-wave FMCW radar and ultrasonic sensors are impervious to lighting conditions and can often detect motion through minor obstructions, making them robust for clinical or home monitoring environments [21] [24].

Q4: I am getting high validation accuracy, but the model's predictions seem random on new user data. The issue likely stems from inconsistent labeling during dataset creation [22]. If multiple annotators label the same gesture differently, the model cannot learn a consistent signal. Implement an annotation protocol with clear guidelines and measure inter-annotator agreement to ensure label consistency.

Q5: What is a simple way to check if my data contains a learnable signal before building a complex model? Always start with a baseline model, such as a simple linear model or a shallow CNN. If a simple model performs nearly as well as a complex one, it indicates that your complex architecture might be over-engineering the solution. Conversely, poor baseline performance can flag fundamental data issues early on [22].

Troubleshooting Guides

Problem: Poor Classification Accuracy for Specific Eating Styles

Possible Causes & Solutions:

- Cause 1: Class Imbalance in Training Data. Your dataset might have significantly more examples of one eating style (e.g., spoon) than others (e.g., chopsticks).

- Solution: Apply data-level techniques such as oversampling minority classes or undersampling majority classes. You can also generate synthetic data for underrepresented styles using algorithms like Generative Adversarial Networks (GANs) [25].

- Cause 2: Inadequate Feature Representation. The model may not be capturing the unique spatial-temporal patterns of different eating styles.

- Solution: Implement a Multi-Feature Fusion (MFF) framework. Fuse different types of features, such as range, velocity, and angle-of-arrival (AoA) information, to create a richer representation of each gesture. This has been shown to significantly boost accuracy [21].

- Cause 3: Confirmation Bias in Search Strategy.

- Solution: Analysis protocols should account for an innate confirmation bias, where researchers might preferentially look for expected patterns. Ensure evaluation metrics and validation sets are designed to objectively measure performance across all classes without preconceived templates [26].
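For the class-imbalance case (Cause 1 above), random oversampling is the simplest data-level technique. The sketch below duplicates minority-class samples until every class matches the largest one. The function name and labels are hypothetical, and it should be applied to the training set only, after the train/test split.

```python
import random
from collections import Counter, defaultdict


def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen minority-class samples until all classes
    have as many samples as the largest class."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for s, y in zip(samples, labels):
        by_label[y].append(s)
    target = max(len(items) for items in by_label.values())
    out_samples, out_labels = [], []
    for y, items in by_label.items():
        extra = [rng.choice(items) for _ in range(target - len(items))]
        for s in items + extra:
            out_samples.append(s)
            out_labels.append(y)
    return out_samples, out_labels


# Hypothetical training set: four spoon gestures and one chopsticks gesture.
x = ["s1", "s2", "s3", "s4", "c1"]
y = ["spoon", "spoon", "spoon", "spoon", "chopsticks"]
x_bal, y_bal = random_oversample(x, y)
balanced_counts = Counter(y_bal)
```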

Problem: System Fails in Real-Time Due to High Latency

Possible Causes & Solutions:

- Cause 1: Computational Complexity of Model.

- Solution: Optimize your model for edge deployment. This can involve model pruning, quantization, or using lightweight architectures like 3D-TCN or optimized CNNs. Research demonstrates that models can be redesigned to run efficiently on resource-constrained hardware like a Raspberry Pi while maintaining high frame rates [21] [27].

- Cause 2: Suboptimal GPU Utilization.

- Solution: Profile your code to check for poor GPU utilization. Common fixes include increasing the batch size (within memory limits), using mixed-precision training, and ensuring that data loading pipelines are asynchronous to prevent the GPU from idling [25].

Problem: Low Signal-to-Noise Ratio in Sensor Data

Possible Causes & Solutions:

- Cause 1: Environmental Clutter and Multi-Path Reflections.

- Solution: For radar-based systems, implement dynamic clutter suppression and multi-path cancellation algorithms specifically tuned for complex environments like clinics or homes [21].

- Cause 2: Attenuation of Signal Over Distance.

- Solution: This is common in ultrasonic systems. The signal strength $A$ at distance $d$ is given by $A = A_0 e^{-\alpha d}$, where $A_0$ is the initial strength and $\alpha$ is an attenuation factor [24]. Use hardware solutions like signal amplifiers and array-based sensors to boost the received signal and maintain fidelity across the expected working range [24].
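The exponential attenuation model above also gives a rough estimate of how much amplification is needed at a given working distance. A minimal sketch, assuming the attenuation factor is expressed per meter; the numeric values are hypothetical.

```python
import math


def received_amplitude(a0, alpha_per_m, distance_m):
    """Signal strength after attenuation: A = A0 * exp(-alpha * d)."""
    return a0 * math.exp(-alpha_per_m * distance_m)


def gain_to_restore(alpha_per_m, distance_m):
    """Linear gain that brings the attenuated amplitude back to A0."""
    return math.exp(alpha_per_m * distance_m)


# Hypothetical values: unit source amplitude, alpha = 1.2 per meter, 0.5 m range.
a_received = received_amplitude(1.0, alpha_per_m=1.2, distance_m=0.5)
gain = gain_to_restore(alpha_per_m=1.2, distance_m=0.5)
```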

Performance Data of Sensing Modalities

Table 1: Quantitative comparison of different gesture recognition technologies for hand-to-mouth monitoring.

| Technology | Reported Accuracy | Key Advantages | Key Limitations | Suitable Eating Styles |

|---|---|---|---|---|

| FMCW Radar [21] [23] | 93.87% - 98% (Classification); 0.896 F1 (Eating Gesture) | Illumination independence, preserves privacy, contactless, robust to occlusion [21]. | Computational complexity for high resolution, requires specialized hardware [21]. | Fork & Knife, Chopsticks, Spoon, Hand [23] |

| Ultrasonic Array [24] | >98% (Classification) | Low-cost, compact, unaffected by lighting, high power efficiency [24]. | Wide beamwidth (poor angular resolution), signal attenuates with distance [24]. | Not Specified |

| Multi-Modal (RGB + Thermal) [28] | 97.05% (Accuracy) | Robust to lighting changes, reduces background ambiguity [28]. | Privacy concerns (RGB), higher computational load for two streams [28]. | Not Specified |

| Piezoresistive Armband (FSR) [27] | 96% (Mean Accuracy) | Low-power, directly measures muscle activity, easy to wear [27]. | Physical contact required (not sterile), signal varies with band tightness [27]. | Not Specified |

Experimental Protocol: Radar-Based Gesture Segmentation

Objective: To detect and segment fine-grained eating and drinking gestures from continuous radar data [23].

Materials:

- FMCW Radar Sensor: A 60 GHz radar with an L-shaped antenna array (e.g., Infineon) is recommended for its cm-scale range resolution and ability to capture spatial information in multiple planes [21].

- Embedded Processor: An ESP32 or similar microcontroller for real-time signal processing and data acquisition [21].

- Software: A 3D Temporal Convolutional Network with Self-Attention (3D-TCN-Att) for processing the Range-Doppler Cube (RD Cube) [23].

Procedure:

- Data Collection: Collect continuous radar data from participants during entire meal sessions. The dataset should include a variety of eating styles (fork & knife, chopsticks, spoon, hand) and drinking gestures [23].

- Signal Processing: The radar's analog signals are converted and processed into RD Cubes, which provide a time-series of 2D range-velocity profiles [21] [23].

- Gesture Detection & Segmentation:

- Stage 1 - Detection: Use an adaptive energy thresholding detector to localize potential gesture segments within the continuous data stream [21].

- Stage 2 - Classification: Process the segmented RD Cube through the 3D-TCN-Att model. The self-attention mechanism helps the model focus on the most informative parts of the gesture [23].

- Validation: Apply a cross-validation method (e.g., seven-fold) on session data to evaluate the segmental F1-score for eating and drinking gestures independently [23].
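The Stage 1 detector can be illustrated with a threshold that adapts to the noise floor of each recording. The sketch below sets the threshold at the median frame energy plus a multiple of the median absolute deviation (MAD). This rule, the parameter values, and the function name are assumptions, since the exact detector used in [21] is not described here.

```python
from statistics import median


def detect_segments(frame_energy, k=3.0, min_len=2):
    """Return (start, end) frame index pairs, end exclusive, where the
    energy exceeds median + k * MAD for at least `min_len` frames."""
    med = median(frame_energy)
    mad = median(abs(e - med) for e in frame_energy)
    threshold = med + k * mad
    segments = []
    start = None
    for i, e in enumerate(frame_energy):
        if e > threshold and start is None:
            start = i
        elif e <= threshold and start is not None:
            if i - start >= min_len:
                segments.append((start, i))
            start = None
    if start is not None and len(frame_energy) - start >= min_len:
        segments.append((start, len(frame_energy)))
    return segments


# Hypothetical per-frame energies, e.g. summed magnitude of each RD frame.
energy = [1.0, 1.1, 0.9, 1.0, 5.0, 6.0, 5.5, 1.0, 0.9, 1.1, 1.0, 1.0]
segments = detect_segments(energy)
```

Each returned segment would then be cut from the RD Cube and passed to the Stage 2 classifier.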

[Figure: Radar Gesture Analysis Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential materials and sensors for hand-to-mouth gesture recognition research.

| Item Name | Function & Application in Research |
|---|---|
| FMCW Radar Sensor (60 GHz) [21] | The core sensor for contactless gesture tracking. It transmits frequency-modulated waves and processes reflected signals to extract target range, velocity, and angle information, ideal for sterile environments. |
| Ultrasonic Transducer Array [24] | A low-cost alternative for gesture sensing. A circular array of transmitting transducers with a central receiver can form a wide beam area to track 3D hand movement. |
| ESP32 Microcontroller [21] [24] | A low-cost, low-power embedded microcontroller operated as a peripheral unit. Used for real-time signal acquisition from radar or ultrasonic sensors over an SPI interface and for initial data processing. |
| Piezoresistive FSR Armband [27] | An array of Force-Sensitive Resistors mounted on a forearm armband. It detects muscle swelling during contraction to classify hand gestures, useful for non-visual confirmation. |
| Multi-Modal (RGB-Thermal) Dataset [28] | A curated dataset containing synchronized RGB and thermal image streams of gestures. Used to train and validate models that are robust to lighting variations and background complexity. |
| 3D Temporal Convolutional Network (3D-TCN) [23] | A deep learning model architecture designed for processing sequential data like video or radar cubes. It effectively captures temporal dependencies for accurate gesture segmentation and classification. |

Figure: Multi-Modal Fusion Pathway

FAQs: Addressing Common Experimental Challenges

FAQ 1: What are the most informative types of features for differentiating hand-to-mouth gestures from other daily activities?

Research indicates that a multi-domain approach is most effective. Key feature categories include:

- Temporal and Statistical Features: Simple features like the mean, standard deviation, and range of acceleration and gyroscopic signals are foundational. Rolling statistics (e.g., moving average, rolling standard deviation) can help capture short-term trends and volatility in the motion data [29].

- Spectral Features: Transforming the time-series signal into the frequency domain using techniques like the Fast Fourier Transform (FFT) reveals the power distribution across different frequencies. This is crucial for identifying the unique rhythmic patterns of eating gestures [29].

- Regularity-Based Features: Measures of signal regularity and periodicity can help distinguish repetitive eating motions from more erratic, non-eating movements. These can be derived from both time and frequency domains.
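The three feature families above can be sketched on a single sensor axis as follows. This is a minimal illustration rather than the feature set of any cited study: the function names, the 0.5 s rolling window, and the use of the autocorrelation peak as a regularity measure are assumptions.

```python
import numpy as np

def rolling_mean_std(x, window):
    """Rolling mean and standard deviation (only fully covered positions)."""
    x = np.asarray(x, dtype=float)
    views = np.lib.stride_tricks.sliding_window_view(x, window)
    return views.mean(axis=1), views.std(axis=1)

def dominant_frequency(x, fs):
    """Frequency in Hz of the largest non-DC peak of the FFT magnitude."""
    x = np.asarray(x, dtype=float)
    spectrum = np.abs(np.fft.rfft(x - x.mean()))
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    return float(freqs[1:][np.argmax(spectrum[1:])])

def periodicity(x):
    """Largest normalized autocorrelation value beyond the main lobe.
    Values near 1 indicate a strongly repetitive signal."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    ac = np.correlate(x, x, mode="full")[len(x) - 1:]
    if ac[0] == 0:
        return 0.0                      # constant signal: no periodicity
    ac = ac / ac[0]
    negative = np.where(ac < 0)[0]      # end of the main lobe around lag 0
    if len(negative) == 0:
        return 0.0
    return float(ac[negative[0]:].max())

# Example: 10 s of a 0.5 Hz oscillation sampled at 50 Hz.
fs = 50
t = np.arange(10 * fs) / fs
axis_signal = np.sin(2 * np.pi * 0.5 * t)
roll_mean, roll_std = rolling_mean_std(axis_signal, window=fs // 2)
```

In practice these functions would be applied per axis of the accelerometer and gyroscope, and the outputs concatenated into one feature vector per window.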

FAQ 2: My model is overfitting despite a large feature set. What is the likely cause and solution?

A large number of features relative to the number of training samples is a common cause of overfitting. High dimensionality also increases computational cost and can reduce performance on unseen data.

- Cause: The feature set likely contains redundant, noisy, or irrelevant features that do not contribute to differentiating the gesture [30].

- Solution: Implement a rigorous feature selection process. One effective method is using an Extra Trees Classifier to rank and select the most predictive features, which has been shown to significantly improve model accuracy while mitigating overfitting [31]. Dimensionality reduction techniques like Principal Component Analysis (PCA) can also be employed [30].
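A minimal sketch of importance-based feature ranking with an Extra Trees classifier is shown below, using scikit-learn. It is an illustration under stated assumptions, not the procedure from [31]: the function name `select_top_features`, the number of trees, and the choice of `k` are hypothetical.

```python
import numpy as np
from sklearn.ensemble import ExtraTreesClassifier

def select_top_features(X, y, k, seed=0):
    """Rank features by Extra Trees impurity importance and keep the top k.
    Returns the sorted column indices to keep and the full importance vector."""
    model = ExtraTreesClassifier(n_estimators=200, random_state=seed)
    model.fit(X, y)
    importances = model.feature_importances_
    ranked = np.argsort(importances)[::-1]   # most important first
    keep = np.sort(ranked[:k])
    return keep, importances
```

To avoid information leakage, the ranking should be fitted on the training folds only and the resulting column indices then applied to the held-out data, for example `X_test[:, keep]`.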

FAQ 3: How does the choice of cutlery or food type impact hand motion, and how can my model be robust to these variations?

Studies confirm that food characteristics and cutlery type do influence hand kinematics.

- Experimental Evidence: Analysis of Variance (ANOVA) and t-tests have shown that the bending motion of the index finger and thumb varies significantly when using a spoon versus a fork or when handling foods with different physical properties (e.g., liquid vs. solid) [10].

- Path to Robustness: To build a robust model, your training dataset must include data collected across these variations. Ensure your data encompasses different cutlery, food types, and eating styles. Feature engineering should focus on higher-level patterns of the hand-to-mouth trajectory and wrist rotation that are more invariant to the specific object being held.

FAQ 4: For detecting the timing of a gesture, which machine learning architectures are most suitable?

Models that can understand the sequential context of data across time are superior for this task.

- Recommended Architectures: Hidden Markov Models (HMMs) and Deep Learning models, particularly those using Long Short-Term Memory (LSTM) networks, show promising results for eating activity detection because they model temporal dependencies [20]. Bidirectional LSTM (BiLSTM) models are especially powerful for gesture recognition from sequential data [30].
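To illustrate how a temporal model uses sequential context, the sketch below applies a two-state HMM (non-eating, eating) with Viterbi decoding to per-window eating probabilities from any frame-level classifier. It is a simplified illustration, not a model from [20] or [30]: treating classifier probabilities as emission scores is a common heuristic, and the self-transition probability `p_stay` is a hypothetical value that would need tuning.

```python
import numpy as np

def viterbi_smooth(p_eat, p_stay=0.95):
    """Most likely state sequence (0 = non-eating, 1 = eating) given
    per-window eating probabilities and a 'sticky' transition model."""
    p_eat = np.clip(np.asarray(p_eat, dtype=float), 1e-6, 1 - 1e-6)
    emit = np.log(np.stack([1 - p_eat, p_eat], axis=1))        # shape (T, 2)
    trans = np.log(np.array([[p_stay, 1 - p_stay],
                             [1 - p_stay, p_stay]]))           # trans[i, j]: i -> j
    T = len(p_eat)
    score = np.zeros((T, 2))
    back = np.zeros((T, 2), dtype=int)
    score[0] = np.log(0.5) + emit[0]                           # uniform prior
    for t in range(1, T):
        cand = score[t - 1][:, None] + trans                   # all i -> j moves
        back[t] = cand.argmax(axis=0)
        score[t] = cand.max(axis=0) + emit[t]
    states = np.zeros(T, dtype=int)
    states[-1] = score[-1].argmax()
    for t in range(T - 2, -1, -1):                             # backtrack
        states[t] = back[t + 1, states[t + 1]]
    return states
```

With `p_stay=0.95`, an isolated high-probability window surrounded by low ones is suppressed, and a brief dip inside a run of high-probability windows is bridged, which is the kind of temporal consistency that frame-by-frame classifiers lack.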

Protocol: Inertial Sensor-Based Hand-to-Mouth Gesture Capture

This protocol outlines the methodology for using wrist-mounted inertial sensors to capture data for eating behavior research [20].

1. Sensor Configuration:

- Device: Use a commercial smartwatch/fitness band or a professional-grade inertial measurement unit (IMU).

- Sensors: The device must contain, at a minimum, a tri-axial accelerometer and a tri-axial gyroscope.

- Placement: Mount the device securely on the participant's wrist. The dominant wrist is the most common placement in published studies, but the non-dominant wrist can also be used.

- Sampling Rate: Set a consistent sampling rate, typically 50 Hz or higher, sufficient to capture the dynamics of hand gestures.

2. Data Collection Procedure:

- Lab Setting: Conduct controlled sessions where participants perform specific activities, including eating with different foods/utensils and non-eating activities (e.g., typing, gesturing).

- Free-Living Setting: For more naturalistic data, participants wear the sensor during daily life.

- Ground Truth Annotation: Synchronize sensor data with a ground-truth source. This can be video recording, a self-report push button held in the other hand, or a researcher manually labeling the data.

3. Data Preprocessing & Feature Extraction:

- Preprocessing: Filter the raw sensor data to remove high-frequency noise.

- Segmentation: Split the continuous data stream into windows (e.g., 5-10 seconds) containing potential gesture events.

- Feature Extraction: From each data window, extract a comprehensive set of features from the following domains for each sensor axis:

- Temporal/Statistical: Mean, standard deviation, variance, kurtosis, skewness.

- Spectral: Spectral centroid, peak frequencies, spectral density [29].

- Regularity-Based: Signal entropy, zero-crossing rate.
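The segmentation and feature extraction steps can be sketched as below. This is a minimal example under stated assumptions: the function names, the 5-second window with 50% overlap, and the use of spectral entropy as the "signal entropy" measure are illustrative choices, not the configuration of a specific cited study. Noise filtering is assumed to have been applied beforehand.

```python
import numpy as np

def window_features(x, fs):
    """Temporal, spectral, and regularity features for one axis of one window."""
    x = np.asarray(x, dtype=float)
    mu, sd = x.mean(), x.std()
    centered = x - mu
    z = centered / sd if sd > 0 else np.zeros_like(x)
    power = np.abs(np.fft.rfft(centered)) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    total = power.sum()
    # Normalized power spectrum (uniform if the window is constant).
    p = power / total if total > 0 else np.full_like(power, 1.0 / len(power))
    nonzero = p[p > 0]
    sign_change = np.signbit(centered[:-1]) != np.signbit(centered[1:])
    return {
        "mean": mu,
        "std": sd,
        "variance": sd ** 2,
        "skewness": np.mean(z ** 3),
        "kurtosis": np.mean(z ** 4) - 3.0,          # excess kurtosis
        "spectral_centroid": np.sum(freqs * p),
        "peak_frequency": freqs[np.argmax(power)],
        "spectral_entropy": -np.sum(nonzero * np.log2(nonzero)),
        "zero_crossing_rate": np.mean(sign_change),
    }

def extract_features(signal, fs, window_s=5.0, overlap=0.5):
    """signal: array of shape (n_samples, n_axes).
    Returns an array of shape (n_windows, n_axes * n_features)."""
    signal = np.asarray(signal, dtype=float)
    win = int(window_s * fs)
    step = max(1, int(win * (1 - overlap)))
    rows = []
    for start in range(0, len(signal) - win + 1, step):
        segment = signal[start:start + win]
        row = []
        for axis in range(segment.shape[1]):
            row.extend(window_features(segment[:, axis], fs).values())
        rows.append(row)
    return np.array(rows)
```

For a six-axis recording (tri-axial accelerometer plus tri-axial gyroscope) this yields 54 features per window, which is the kind of feature set that typically benefits from a subsequent selection step.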

Table 1: Summary of Sensor Modalities and Performance in Eating Detection Studies [20]

| Sensor Modality | Common Device Location | Key Measured Parameters | Reported High-Accuracy Models or Analysis Methods |
|---|---|---|---|
| Accelerometer & Gyroscope | Wrist, Lower Arm | Linear acceleration, Rotational velocity | Support Vector Machine (SVM), Random Forest |
| Commercial Smartwatch | Wrist | Integrated acceleration and rotation | Deep Learning (LSTM, CNN), Hidden Markov Model (HMM) |
| Bend & Force Sensors | Fingers (via Data Glove) | Finger flexion, Grasp force | Analysis of Variance (ANOVA), Correlation Analysis |

Table 2: Correlation Between Finger Motion and Force During Eating [10]

| Finger Motion | Correlation with Index Fingertip Force | Correlation with Thumb-tip Force | Influenced by Food Type/Cutlery? |
|---|---|---|---|
| Index Finger Bending | Strong positive correlation | Strong positive correlation | Yes |
| Middle Finger Bending | Weakest positive correlation | Weakest positive correlation | No (motion remains unaffected) |
| Thumb Bending | Strong positive correlation | Strong positive correlation | Yes |

Research Reagent Solutions: Essential Materials for Hand-to-Mouth Gesture Experiments

Table 3: Key Research Tools and Their Functions

| Item / Tool Name | Primary Function in Research |
|---|---|
| Inertial Measurement Unit (IMU) | The core sensor for capturing wrist and arm kinematics. Typically combines an accelerometer (measures linear acceleration) and a gyroscope (measures angular velocity) [20]. |
| Commercial Smartwatch/Fitness Band | A commercially available, user-friendly platform containing IMUs. Ideal for large-scale or free-living studies due to high acceptance and wireless operation [20]. |
| Data Glove with Bend Sensors | A glove instrumented with flexible bend sensors to measure the angular motion of individual finger joints during fine-motor tasks like holding cutlery [10]. |
| FlexiForce Pressure Sensors | Thin, flexible force sensors used to measure the contact forces exerted by the fingertips, e.g., the grip force on a spoon or fork [10]. |
| MediaPipe Framework | An open-source framework for pipeline-based data processing. Its "Hands" solution provides real-time hand landmark (21 points) detection from video, useful for ground truthing or vision-based studies [32]. |
| Leap Motion Controller | A device that uses infrared sensors to track hand and finger positions with high precision, providing detailed spatial data for gesture analysis [30]. |

Figure: Hand-to-Mouth Gesture Analysis Workflow

Machine Learning and Deep Learning Architectures for Real-Time Gesture Recognition

Troubleshooting Guides & FAQs

This technical support center provides solutions for researchers and scientists working on real-time hand gesture recognition, with a specific focus on differentiating hand-to-mouth gestures in eating behavior studies.

Frequently Asked Questions

Q1: How can I improve my model's accuracy in distinguishing eating gestures from similar confounding gestures like face-touching or smoking?

A: This is a common challenge in free-living datasets. We recommend a multi-modal sensing approach.

- Solution 1: Incorporate Object-in-Hand Detection. A model that detects not just the hand but also the object being held can significantly reduce false positives. For example, a gesture involving a utensil or food item is a stronger indicator of eating than an empty hand moving toward the mouth. A method using a custom loss function with a lightweight YOLOX-nano backbone has been successfully employed for this purpose [33].

- Solution 2: Fuse Thermal Sensor Data. Supplementing an RGB camera with a low-power thermal sensor (e.g., MLX90640) can help filter out non-eating gestures. The thermal signature of a cigarette tip, for instance, is distinct from most food items, improving the differentiation of smoking sessions [33].

- Solution 3: Implement Temporal Clustering. Use a clustering algorithm like DBSCAN to group detected gestures into episodes. Feeding gestures typically occur in consecutive intervals, while confounding gestures are more sporadic. Optimal parameters found in one study were `eps = 21 seconds` and `min_points = 3` for gesture clustering [33].
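The temporal clustering idea can be sketched with scikit-learn's `DBSCAN` applied to one-dimensional gesture timestamps, using the 21-second radius and 3-point minimum reported in [33] as defaults. The function name and return format are hypothetical, and it is an assumption that the study's `min_points` counts the point itself in the same way scikit-learn's `min_samples` does.

```python
import numpy as np
from sklearn.cluster import DBSCAN

def cluster_gestures(timestamps_s, eps_s=21.0, min_points=3):
    """Group gesture timestamps (seconds) into candidate eating episodes.
    Returns per-gesture labels (-1 = sporadic/noise) and a list of
    (start_s, end_s, n_gestures) tuples, one per episode."""
    t = np.asarray(timestamps_s, dtype=float).reshape(-1, 1)
    labels = DBSCAN(eps=eps_s, min_samples=min_points).fit_predict(t)
    episodes = []
    for lab in np.unique(labels[labels >= 0]):
        members = t[labels == lab, 0]
        episodes.append((float(members.min()), float(members.max()), len(members)))
    return labels, episodes

# Example: a burst of four gestures, a burst of three, and two isolated gestures.
labels, episodes = cluster_gestures([0, 10, 25, 40, 300, 600, 610, 620, 1000])
```

In this example the isolated gestures at 300 s and 1000 s are labeled as noise, which is how sporadic confounders such as a single face touch would be filtered out.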

Q2: My gesture recognition model is too slow for real-time inference on consumer-grade hardware. What optimization strategies can I use?

A: Achieving low latency on resource-constrained devices requires architectural optimizations.

- Solution 1: Model Pruning. Apply structured pruning techniques to remove less important neurons or connections from the network. The LAMP (Layer-Adaptive Magnitude-based Pruning) strategy has been used to reduce the parameter count of a YOLOv8-based model by 76.1% and its GFLOPs by 66.7%, with negligible accuracy loss [34].

- Solution 2: Leverage Skeleton-Based Models. Instead of processing dense RGB or depth maps, use a skeleton-based representation of the hand. This reduces the problem to processing low-dimensional skeletal data, which is computationally lighter. The skeletal sequences can be transformed into 2D spatiotemporal images for efficient CNN-based classification [35].

- Solution 3: Use TensorRT Acceleration. For deployment on edge devices like the Jetson Orin Nano, convert and optimize your trained model using NVIDIA's TensorRT. This can significantly accelerate inference speed, as demonstrated with the pruned YOLOv8-GR model achieving 24.7 FPS [34].
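The layer-adaptive scoring behind LAMP can be sketched in NumPy as follows. This is an illustration only: it shows the element-wise form of the score, in which each squared weight is divided by the sum of squared weights in the same layer that are at least as large, followed by a single global threshold. The structured variant and the exact pipeline used in [34] are not reproduced here, and the function names are hypothetical.

```python
import numpy as np

def lamp_scores(weights):
    """LAMP-style score per weight: w^2 divided by the sum of squares of all
    weights in the same layer with equal or larger magnitude."""
    flat = np.asarray(weights, dtype=float).ravel()
    order = np.argsort(np.abs(flat))              # ascending magnitude
    squared = flat[order] ** 2
    suffix = np.cumsum(squared[::-1])[::-1]       # sum over this and larger weights
    safe = np.where(suffix > 0, suffix, 1.0)      # guard against all-zero layers
    scores = np.empty_like(squared)
    scores[order] = squared / safe
    return scores.reshape(np.shape(weights))

def lamp_prune_masks(layers, sparsity):
    """Boolean keep-masks that prune the globally lowest-scoring weights."""
    scores = [lamp_scores(w) for w in layers]
    all_scores = np.concatenate([s.ravel() for s in scores])
    k = int(sparsity * all_scores.size)           # number of weights to prune
    if k == 0:
        return [np.ones(np.shape(w), dtype=bool) for w in layers]
    threshold = np.partition(all_scores, k - 1)[k - 1]
    return [s > threshold for s in scores]
```

Because the largest weight in every layer receives a score of 1, a global threshold below 1 never removes an entire layer, which is the property that makes the per-layer sparsity adaptive rather than uniform.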

Q3: What is the trade-off between detection speed and accuracy when triggering meal episode notifications?

A: This is a key design consideration for real-time intervention systems. The goal is to find the minimum number of gestures needed to confirm an eating episode reliably.

- Evidence: Research shows that waiting to confirm an episode using approximately 10 gestures (or within the first 1.5 minutes of an eating episode) can achieve a high F1-score of 89.0% [33].

- Trade-off Analysis: Triggering a notification based on fewer gestures reduces detection delay but increases the risk of false positives from confounding gestures. Requiring more gestures improves confidence but may miss very short eating bouts. You should calibrate this threshold based on the specific requirements of your study.
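The threshold calibration described above can be explored with a small sketch that reports when a notification would first fire for a given gesture-count requirement. The function name, the sliding-window formulation, and the 90-second window are assumptions made for illustration; only the figure of roughly 10 gestures comes from [33].

```python
from collections import deque

def first_trigger_time(gesture_times_s, min_gestures=10, window_s=90.0):
    """Return the time (seconds) at which `min_gestures` detected gestures
    have accumulated within a sliding window of `window_s`, or None."""
    recent = deque()
    for t in sorted(gesture_times_s):
        recent.append(t)
        # Drop gestures that have fallen out of the sliding window.
        while recent and t - recent[0] > window_s:
            recent.popleft()
        if len(recent) >= min_gestures:
            return t
    return None

# A steady meal: one bite every 8 seconds for 2 minutes.
meal = [8 * i for i in range(15)]
delay_strict = first_trigger_time(meal, min_gestures=10)
delay_fast = first_trigger_time(meal, min_gestures=5)
```

Running this over annotated sessions with several values of `min_gestures` gives the detection delay for each setting, which can then be plotted against the false-positive rate measured on non-eating periods.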

Experimental Protocols & Methodologies

This section details the experimental setup and workflows from key cited studies to serve as a reference for your own experiments.

Protocol 1: YOLOv8-GR for Gesture Recognition on Edge Devices [34]

This protocol outlines the enhancements made to the YOLOv8 architecture for robust gesture recognition and its deployment on an edge device.

1. Model Architecture Enhancements: