Biomarker Robustness in Habitual Diet Contexts: Validation, Challenges, and Applications in Biomedical Research


Abstract

This article provides a comprehensive analysis of the robustness of dietary biomarkers within habitual diet contexts, addressing a critical need for objective dietary assessment in biomedical research. It explores the foundational principles of biomarker discovery, highlighting major initiatives like the Dietary Biomarkers Development Consortium (DBDC) that are systematically working to validate biomarkers for commonly consumed foods. The manuscript covers methodological applications of biomarker panels for monitoring dietary adherence and patterns in clinical trials and free-living populations. It critically examines key challenges, including the confounding effects of background diet, inter-individual variability, and analytical validation requirements. Furthermore, the article presents validation frameworks and comparative analyses of biomarker performance against traditional self-report methods. Designed for researchers, scientists, and drug development professionals, this resource offers evidence-based strategies for implementing robust dietary biomarker assessment in research protocols and clinical applications.

Foundations of Dietary Biomarkers: From Discovery to Validation in Complex Diets

The Critical Need for Objective Dietary Assessment in Biomedical Research

Accurate dietary assessment is a foundational element of nutrition research, chronic disease epidemiology, and the development of evidence-based public health policies. However, traditional methods for assessing habitual dietary intake, including food frequency questionnaires (FFQs), 24-hour dietary recalls, and food diaries, rely almost exclusively on self-reporting. These methods are prone to substantial measurement error, including recall bias, selective reporting, and difficulties in estimating portion sizes, which severely limits the validity and reliability of nutritional science [1]. The Global Burden of Disease project identifies suboptimal diet as a leading risk factor for premature death globally, highlighting the urgent need for more accurate dietary exposure assessment to inform effective interventions [2]. This article examines the transformative potential of objective biomarkers as alternatives to self-reported dietary assessment, with a specific focus on their application in evaluating complex dietary patterns rather than single nutrients.

Current Limitations of Self-Reported Dietary Data

Self-reported dietary assessment tools have been the mainstay of nutritional epidemiology for decades, despite well-documented limitations that introduce significant uncertainty into research findings and policy decisions.

Systematic Measurement Error: All self-report methods contain inherent measurement errors due to their subjective nature. Participants frequently underreport energy intake and selectively underreport consumption of foods perceived as "unhealthy" while overreporting "healthy" food items [1] [2].

Recall Challenges: FFQs require individuals to recall habitual intake over extended periods, which is cognitively demanding and imprecise. While multiple 24-hour recalls using multipass methods are increasingly considered more accurate, they still suffer from random and systematic errors in portion size estimation and forgotten consumption episodes [1].

Insufficient Capture of Dietary Complexity: Dietary patterns represent complex combinations of foods with synergistic and antagonistic nutrient interactions. Self-report methods struggle to capture these complexities, including food matrix effects and nutrient bioavailability [1].

The persistence of these methodological challenges has created a critical bottleneck in nutritional science, limiting our ability to establish robust connections between diet and health outcomes, and hampering the development of effective nutritional interventions and policies.

Biomarkers of Dietary Intake: Toward Objective Assessment

Dietary biomarkers offer a promising solution to the limitations of self-reported data by providing objective, quantifiable measures of dietary exposure and nutritional status. Defined as measurable biological indicators of dietary intake, these biomarkers can be categorized as either direct biomarkers of dietary exposure (measures of consumed nutrients) or biomarkers of nutritional status (indicators influenced by metabolism and nutrient interactions) [1].

The emergence of high-throughput metabolomics has transformed dietary biomarker discovery by enabling comprehensive profiling of metabolites in biological specimens. Metabolomics captures the complex biochemical responses to dietary intake, providing a sensitive measure of an organism's phenotype at a particular time [1] [2]. Unlike traditional nutritional biomarkers that target specific nutrients, metabolomic approaches can identify multi-metabolite signatures of overall dietary patterns, making them particularly valuable for assessing adherence to patterns such as the Mediterranean or Prudent diet [1] [3].

Types of Dietary Biomarkers

Table 1: Categories of Dietary Biomarkers and Their Applications

| Biomarker Category | Definition | Examples | Primary Applications |
|---|---|---|---|
| Recovery Biomarkers | Measures proportional to nutrient intake over specific periods | Doubly labeled water for energy expenditure; 24-hour urinary nitrogen for protein intake | Validation of self-report instruments; calibration of intake measurements |
| Concentration Biomarkers | Circulating or tissue levels reflecting nutritional status | Serum carotenoids, vitamin D, fatty acid profiles | Assessment of nutritional status; evaluation of diet-disease relationships |
| Predictive Biomarkers | Metabolites associated with specific food intake | Proline betaine (citrus fruits), alkylresorcinols (whole grains), 3-methylhistidine (meat) | Objective verification of specific food consumption; dietary pattern adherence |
| Metabolomic Pattern Biomarkers | Multiple metabolite profiles reflecting overall dietary patterns | NMR or MS-based metabolite signatures | Assessment of complex dietary patterns; classification of individuals by diet quality |

Methodological Approaches for Dietary Biomarker Discovery

The discovery and validation of robust dietary biomarkers requires sophisticated analytical platforms and carefully designed experimental protocols. The following section outlines key methodologies currently employed in the field.

Analytical Technologies for Metabolite Profiling

Nuclear Magnetic Resonance (NMR) Spectroscopy: NMR provides a high-throughput method for quantifying a broad range of metabolites in biological samples with excellent reproducibility. It requires minimal sample preparation and is particularly strong for identifying lipids and small molecules. However, it has lower sensitivity compared to mass spectrometry and may miss important low-abundance metabolites [2].

Mass Spectrometry (MS): MS-based platforms, especially when coupled with liquid or gas chromatography (LC-MS/GC-MS), offer high sensitivity and specificity for detecting thousands of metabolites simultaneously. These platforms can measure diverse chemical classes with wide dynamic ranges, making them ideal for discovery-phase research [4].

Multiplatform Approaches: Combining NMR and MS technologies provides complementary coverage of the metabolome, enhancing the breadth of metabolite detection and strengthening the validity of biomarker identification [3].

Experimental Workflow for Biomarker Discovery and Validation

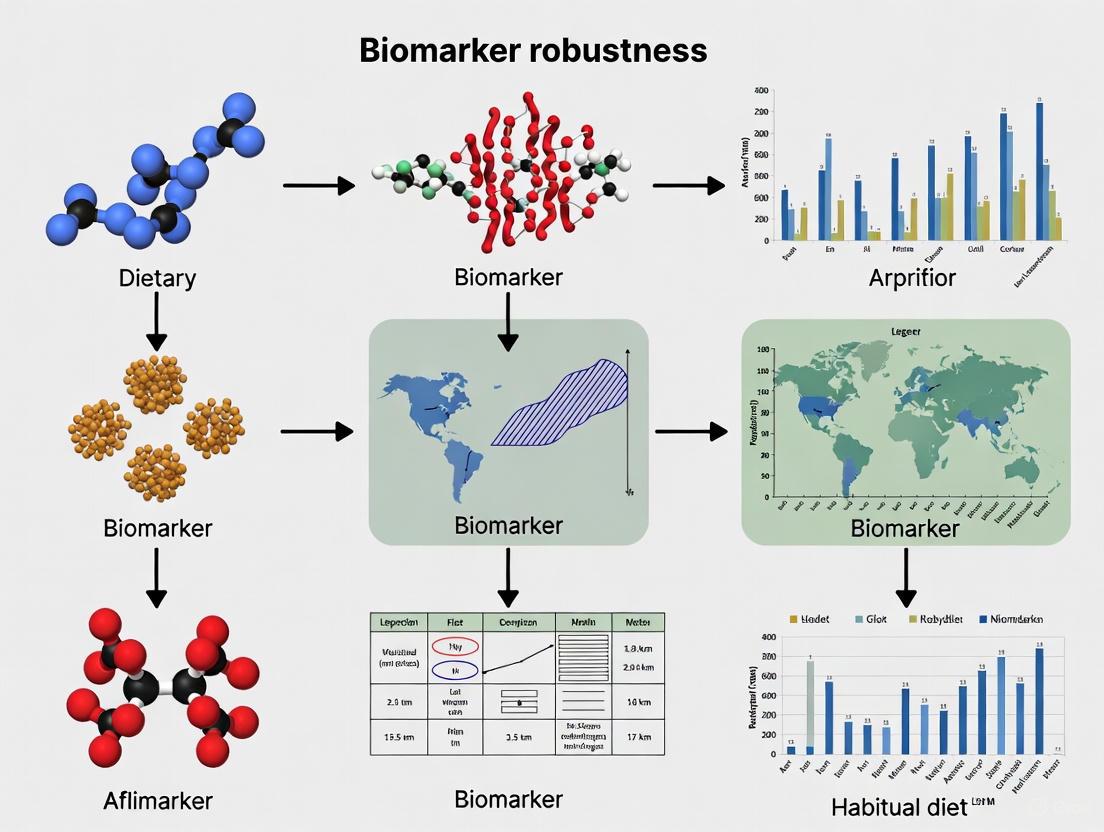

The following diagram illustrates the comprehensive workflow for dietary biomarker discovery and validation, from study design through to clinical application:

Experimental Protocol: Controlled Feeding Study for Biomarker Discovery

The Diet and Gene Intervention Study (DIGEST) provides an exemplary protocol for dietary biomarker discovery [3]:

Study Design: A two-arm, parallel randomized clinical trial comparing Prudent versus Western diets over a two-week intervention period.

Participant Selection:

- Healthy adults without serious metabolic disease

- Willingness to consume only provided foods during intervention

- Exclusion criteria: pre-existing cardiometabolic conditions, medications affecting metabolism

Dietary Interventions:

- Prudent Diet: Emphasizes minimally processed foods, lean protein, whole grains, and high amounts of fresh fruits and vegetables.

- Western Diet: Reflects typical North American profile with higher processed foods, red meat, and sweetened beverages.

Sample Collection and Processing:

- Fasting blood samples collected at baseline and post-intervention

- Single-spot urine specimens collected concurrently

- Plasma separation via centrifugation and storage at -80°C

- Urine aliquoting with creatinine normalization

Metabolomic Analysis:

- Multiplexed assay platforms (NMR, LC-MS, GC-MS)

- Stringent quality control with technical replicates

- Identification of unknown metabolites via high-resolution MS/MS

- Confirmation using authentic chemical standards

Statistical Analysis:

- Mixed-effects models adjusting for age, sex, and BMI

- False discovery rate correction for multiple testing

- Correlation analysis with self-reported nutrient intake

- Multivariate pattern recognition techniques
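
The false discovery rate step can be illustrated with the Benjamini-Hochberg procedure, one standard way to control the FDR when many metabolites are tested at once. The source does not state which FDR method DIGEST applied, so treat this as a generic, minimal sketch; the function name and the p-values are hypothetical.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return booleans marking which hypotheses are rejected under the
    Benjamini-Hochberg step-up procedure at FDR level alpha."""
    m = len(p_values)
    # Indices of the tests ordered by ascending p-value
    order = sorted(range(m), key=lambda i: p_values[i])
    # Largest rank k such that p_(k) <= (k / m) * alpha
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            k_max = rank
    # Reject every hypothesis whose rank is at or below k_max
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= k_max:
            rejected[idx] = True
    return rejected


# Hypothetical p-values from five metabolite-by-diet comparisons
p = [0.001, 0.008, 0.039, 0.041, 0.27]
print(benjamini_hochberg(p))
```

Note that 0.039 and 0.041 would pass an unadjusted 0.05 cutoff but are not rejected here, which is the point of the correction.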

Comparative Performance of Dietary Assessment Methods

Table 2: Method Comparison for Dietary Pattern Assessment

| Assessment Method | Key Strengths | Key Limitations | Correlation with Biomarkers | Ideal Application Context |
|---|---|---|---|---|
| Food Frequency Questionnaire (FFQ) | Captures habitual intake; practical for large studies | Recall bias; portion size estimation errors; culture-specific | Weak to moderate for specific nutrients | Large epidemiological studies; population surveillance |
| 24-Hour Dietary Recall | Reduced recall period; multiple passes enhance accuracy | Intra-individual variability; requires multiple collections | Moderate for specific foods | Research requiring quantitative nutrient estimates |
| Dietary Records/Diaries | Real-time recording; detailed food descriptions | Participant burden; reactivity (diet change) | Moderate for specific food groups | Metabolic studies; validation research |
| Metabolomic Biomarker Panels | Objective measurement; captures bioavailability | Cost; complex analysis; evolving validation standards | N/A (reference method) | Intervention studies; validation of self-report |

Key Research Reagent Solutions for Dietary Biomarker Studies

Table 3: Essential Research Reagents and Platforms for Dietary Biomarker Research

| Reagent/Platform | Function | Specific Application Example |
|---|---|---|
| Bruker 600 MHz NMR Spectrometer with IVDr | Quantitative metabolite profiling | Standardized plasma metabolite quantification in population studies [2] |
| LC-MS/MS Systems with HILIC/RP Chromatography | Broad metabolite detection | Identification of polar and non-polar food-related metabolites [3] |
| Chenomx NMR Suite 8.3 | Metabolite identification and quantification | Annotation of discriminating metabolites in dietary pattern analysis [2] |
| Food Processor Nutrition Analysis Software | Nutrient calculation from diet records | Linking self-reported intake to metabolite patterns [3] |
| Human Metabolome Database | Metabolite reference database | Structural identification of food-derived metabolites [2] |
| Stable Isotope-Labeled Standards | Quantitative precision in MS | Absolute quantification of candidate biomarker compounds [4] |
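
To illustrate how stable isotope-labeled standards support absolute quantification, the sketch below fits a calibration line of analyte-to-internal-standard peak-area ratio against known standard concentrations, then inverts that line for an unknown sample. It assumes a linear response over the calibrated range; the function names, concentrations, and peak areas are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def quantify(analyte_area, is_area, intercept, slope):
    """Convert a sample's analyte / internal-standard area ratio into a
    concentration by inverting the calibration line."""
    ratio = analyte_area / is_area
    return (ratio - intercept) / slope


# Hypothetical calibrators (µM), each spiked with the same amount of
# isotope-labeled internal standard, and their measured area ratios
conc = [0.5, 1.0, 2.0, 5.0, 10.0]
ratio = [0.05, 0.10, 0.20, 0.50, 1.00]
b0, b1 = fit_line(conc, ratio)
print(round(quantify(30000, 100000, b0, b1), 3))
```

Because the labeled standard co-elutes with the analyte and experiences the same matrix effects, working with the area ratio rather than the raw analyte area is what makes the estimate robust to run-to-run signal drift.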

Biomarker Panels for Dietary Patterns: Experimental Evidence

Research has identified several robust biomarkers sensitive to short-term changes in habitual diet. The DIGEST study revealed distinct metabolic trajectories in participants following contrasting Prudent and Western diets [3]:

Prudent Diet Biomarkers

- Plasma and Urine Increases: 3-methylhistidine, proline betaine

- Urinary Increases (creatinine-normalized): Imidazole propionate, hydroxypipecolic acid, dihydroxybenzoic acid, enterolactone glucuronide

- Plasma Increases: Ketoleucine, ketovaline

Western Diet Biomarkers

- Plasma Increases: Myristic acid, linoelaidic acid, linoleic acid, α-linoleic acid, pentadecanoic acid, alanine, proline, carnitine, deoxycarnitine

- Urinary Increases: Acesulfame K (artificial sweetener)

These biomarkers not only confirmed good adherence to the assigned food provisions but also correlated (|r| > 0.30, p < 0.05) with changes in the average intake of specific nutrients from self-reported diet records [3].
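
The correlation criterion can be sketched as a Pearson correlation between each participant's change in a biomarker and their change in a self-reported nutrient, flagged when |r| > 0.30. This is a minimal sketch: the source does not say whether Pearson or rank correlation was used, the accompanying p-value test is omitted, and all data below are hypothetical.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def deltas(before, after):
    """Per-participant change from baseline to post-intervention."""
    return [a - b for b, a in zip(before, after)]


# Hypothetical values for six participants
biomarker_pre = [1.0, 1.2, 0.9, 1.1, 1.0, 0.8]
biomarker_post = [1.8, 1.3, 1.6, 1.2, 2.0, 0.9]
intake_pre = [2.0, 2.5, 1.8, 2.2, 2.1, 1.9]  # e.g. servings/day
intake_post = [4.1, 2.7, 3.5, 2.4, 4.6, 2.0]

r = pearson_r(deltas(biomarker_pre, biomarker_post),
              deltas(intake_pre, intake_post))
print(f"r = {r:.2f}, passes |r| > 0.30: {abs(r) > 0.30}")
```

Correlating changes rather than absolute levels removes stable between-person differences, which matches the before/after design of the feeding study.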

Challenges and Future Directions

Despite promising advances, significant challenges remain in the development and implementation of dietary biomarkers for routine research use.

Current Limitations

- Lack of Specificity: Most metabolites are not unique to specific foods and may be influenced by non-dietary factors including genetics, gut microbiome composition, and metabolic state [2].

- Validation Gaps: Few dietary biomarkers have been adequately validated as quantitative measures of habitual food intake in diverse populations [3].

- Technical Complexity: Metabolomics platforms require specialized expertise and infrastructure, limiting widespread adoption [4].

- Population Diversity: Most biomarker studies have been conducted in limited demographic groups, raising questions about generalizability [1].

Integration Framework for Biomarker Applications

The following diagram illustrates the conceptual pathway from biomarker discovery to public health application, highlighting key integration points and validation requirements:

Promising Avenues for Future Research

- Multi-Omic Integration: Combining metabolomic data with genomic, proteomic, and microbiome data to better understand inter-individual variability in response to diet [4].

- Point-of-Care Technology: Developing simplified devices for rapid biomarker assessment in clinical and community settings [5].

- Advanced Study Designs: Implementing larger controlled feeding studies testing a variety of foods and dietary patterns across diverse populations [4].

- Standardized Reporting: Establishing common ontologies and reporting standards for dietary biomarker literature to enhance reproducibility and comparability across studies [4].

- Biomarker Panels: Moving beyond single biomarkers to develop comprehensive panels that capture the complexity of dietary patterns through multiple complementary metabolites [1].

Objective dietary assessment through biomarker research represents a paradigm shift in nutritional science, offering an escape from the limitations of self-reported data. While current biomarkers show promise, particularly for assessing specific dietary patterns like the Prudent and Western diets, no single biomarker or biomarker profile can yet comprehensively identify the specific dietary pattern consumed by an individual. The future lies in validated biomarker panels that capture the complexity of whole diets, integrated with traditional assessment methods in a hybrid measurement error model approach. As the field advances, these objective measures will strengthen the evidence base for dietary guidelines, improve monitoring of nutrition interventions, and ultimately enhance our ability to connect diet to health outcomes across diverse populations.

The accurate assessment of diet, a complex exposure with significant implications for chronic disease risk, remains a formidable challenge in nutritional epidemiology [6] [7]. Traditional reliance on self-reported dietary data from food frequency questionnaires (FFQs) and dietary recalls introduces substantial measurement error due to systematic and random biases, including selective reporting and imprecise portion size estimation [1] [3]. For decades, nutritional science has been constrained by a limited toolkit of objective biomarkers, with only a handful like doubly labeled water for energy expenditure and 24-hour urinary nitrogen for protein intake meeting rigorous validation standards [8]. This methodological gap fundamentally impedes research into diet-disease associations and evidence-based public health policy development [4] [3].

The Dietary Biomarkers Development Consortium (DBDC) represents a pioneering, systematic initiative to address these limitations through the discovery and validation of food-based biomarkers using advanced metabolomic technologies [6] [7]. Established in 2021 through funding from the National Institute of Diabetes and Digestive and Kidney Diseases (NIDDK) and the USDA-National Institute of Food and Agriculture (USDA-NIFA), the DBDC coordinates multidisciplinary expertise across multiple academic institutions to significantly expand the list of validated biomarkers for foods commonly consumed in the United States diet [7]. This systematic framework marks a transformative approach to dietary assessment, moving beyond traditional nutrients to focus on food-specific biomarkers that can provide objective measures of dietary exposure in free-living populations [6].

The DBDC Organizational Structure and Strategic Approach

Consortium Infrastructure and Governance

The DBDC operates through a coordinated infrastructure designed to maximize scientific rigor, operational efficiency, and data harmonization across participating institutions. The consortium comprises three academic study centers—Harvard University (in collaboration with the Broad Institute), Fred Hutchinson Cancer Center (in collaboration with the University of Washington), and University of California Davis (in collaboration with the USDA Agricultural Research Service)—each with specialized cores for dietary interventions, metabolomic profiling, data analysis, and administration [7]. A central Data Coordinating Center (DCC) at Duke University manages data quality control, analysis, and repository functions, while standing committees and working groups provide scientific oversight and operational coordination [7].

This organizational structure enables the DBDC to implement standardized protocols across sites while maintaining specialized expertise. The Dietary Intervention Working Group harmonizes feeding study protocols and data collection procedures; the Metabolomics Working Group coordinates analytical methods for biomarker identification; and the Data Analysis/Harmonization Working Group develops consistent data dictionaries and analysis plans [7]. This integrated approach ensures that biomarker discovery efforts follow consistent methodologies across different foods and population groups, facilitating the creation of a comprehensive biomarker database for the research community [7].

Comparative Framework: DBDC Versus Traditional Biomarker Development

Table 1: Comparison of Biomarker Development Approaches

| Development Characteristic | Traditional Approach | DBDC Framework |
|---|---|---|
| Study Design | Often observational or cross-sectional | Controlled feeding trials with prescribed food amounts [6] |
| Analytical Scope | Targeted analysis of limited metabolites | Untargeted and targeted metabolomic profiling [6] [7] |
| Validation Rigor | Limited pharmacokinetic data | Comprehensive dose-response and time-response characterization [7] |
| Biomarker Specificity | Focus on single nutrients | Food-specific and dietary pattern biomarkers [6] [1] |
| Data Sharing | Limited accessibility | Publicly accessible database through NIDDK repository [6] [7] |
| Population Relevance | Variable population representation | Diverse United States populations across multiple sites [7] |

The DBDC framework represents a paradigm shift from traditional biomarker development through its systematic, phased approach to discovery and validation. Unlike earlier efforts that often relied on observational studies with inherent dietary measurement error, the DBDC implements controlled feeding studies where participants consume prescribed amounts of test foods, enabling precise characterization of the relationship between food intake and metabolite patterns [6] [7]. This methodological rigor addresses critical gaps in previous research, including insufficient assessment of pharmacokinetic parameters, dose-response relationships, and biomarker specificity [7].

The consortium's approach also contrasts with traditional methods through its application of advanced metabolomic technologies that allow for comprehensive profiling of blood and urine specimens rather than targeted analysis of limited metabolites [6]. By systematically testing a variety of foods across diverse populations and implementing standardized validation criteria, the DBDC aims to produce biomarkers that meet proposed validity criteria including plausibility, dose-response, time-response, analytical performance, stability, and reliability in free-living populations [7].

Experimental Framework and Methodological Protocols

The Three-Phase Biomarker Development Pipeline

The DBDC implements a rigorous three-phase biomarker development pipeline designed to systematically progress from initial discovery to real-world validation [6] [7]. This structured approach ensures that only biomarkers demonstrating robust performance across multiple validation stages advance toward application in nutritional research.

Table 2: DBDC Three-Phase Biomarker Development Pipeline

| Phase | Primary Objective | Study Design | Key Measurements | Outcome |
|---|---|---|---|---|
| Phase 1: Discovery | Identify candidate biomarkers associated with specific foods | Controlled feeding of test foods in prespecified amounts [6] | Metabolomic profiling of blood/urine; pharmacokinetic parameters [6] [7] | Candidate compounds with characteristic postprandial signatures [6] |
| Phase 2: Evaluation | Assess ability to identify consumption in mixed diets | Controlled feeding studies of various dietary patterns [6] | Specificity and sensitivity in detecting food intake against complex dietary background [6] | Biomarker performance metrics in controlled dietary patterns [6] |
| Phase 3: Validation | Validate predictive value in free-living populations | Independent observational studies [6] | Prediction of recent and habitual food consumption [6] | Validated biomarkers for use in epidemiological settings [6] |
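
Phase 2 asks how well a candidate biomarker detects intake of a test food against a mixed-diet background. The sketch below computes sensitivity and specificity for a single concentration cutoff, assuming consumption status is known from the controlled feeding menus. The threshold, function name, and values are hypothetical; a fuller evaluation would scan many cutoffs (for example, to build an ROC curve).

```python
def sensitivity_specificity(levels, consumed, threshold):
    """Call a sample 'consumed' when level >= threshold and compare with the
    known intake; return (sensitivity, specificity)."""
    tp = fn = tn = fp = 0
    for level, truth in zip(levels, consumed):
        predicted = level >= threshold
        if truth and predicted:
            tp += 1
        elif truth:
            fn += 1
        elif predicted:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical creatinine-normalized levels; True = test food was on the menu
levels = [5.2, 4.8, 0.9, 3.9, 1.1, 0.7, 2.6, 0.4]
consumed = [True, True, True, True, False, False, False, False]
sens, spec = sensitivity_specificity(levels, consumed, threshold=2.0)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```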

Experimental Protocols for Biomarker Discovery

Controlled Feeding Trial Designs

The DBDC employs controlled feeding trials as the foundation for biomarker discovery in Phase 1. These trials administer test foods in prespecified amounts to healthy participants under carefully monitored conditions [6]. Test foods are selected based on USDA MyPlate Guidelines to represent commonly consumed foods in the United States diet, ensuring population relevance [7]. The feeding studies implement weight-maintaining menu plans designed by dietitians, with energy intake calibrated to individual participants using equations like the Harris-Benedict formula plus an activity factor [3]. Participants receive all foods prepared for consumption or as provisions for home preparation, with strict protocols for documenting adherence and any deviations from prescribed intake [3].
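
The energy calibration step can be sketched with the original Harris-Benedict equations (weight in kg, height in cm, age in years, using their commonly cited rounded coefficients), multiplied by an activity factor. The source does not specify which version of the equations or which activity factors the feeding studies used. The value 1.5 below is purely illustrative, and the function names are hypothetical.

```python
def harris_benedict_bmr(weight_kg, height_cm, age_y, sex):
    """Basal metabolic rate (kcal/day) from the original Harris-Benedict
    equations, commonly cited rounded coefficients."""
    if sex == "male":
        return 66.5 + 13.75 * weight_kg + 5.003 * height_cm - 6.755 * age_y
    if sex == "female":
        return 655.1 + 9.563 * weight_kg + 1.850 * height_cm - 4.676 * age_y
    raise ValueError("sex must be 'male' or 'female'")


def energy_target(weight_kg, height_cm, age_y, sex, activity_factor):
    """Weight-maintaining energy target (kcal/day) = BMR x activity factor."""
    return harris_benedict_bmr(weight_kg, height_cm, age_y, sex) * activity_factor


# Hypothetical participant; activity factor of 1.5 is an assumption
print(round(energy_target(70, 175, 30, "male", 1.5)))
```

In practice, the calculated target is only a starting point: menus are typically adjusted if body weight drifts during the feeding period.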

Biospecimen Collection and Processing

The consortium implements standardized protocols for biospecimen collection, processing, and storage across all study sites to ensure data comparability. Matched single-spot urine and plasma specimens are collected in the fasting state and at multiple time points after test food consumption to characterize postprandial metabolite kinetics [6] [3]. For urine specimens, refractive index targets guide screening and dilution procedures, and creatinine normalization is applied to account for variation in urine concentration [7] [3]. All biospecimens undergo quality control before metabolomic analysis, with clinical and laboratory measurement protocols aligned across participating centers [7].
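
Creatinine normalization divides each urinary metabolite concentration by the creatinine concentration in the same spot sample, so that samples collected at different hydration levels become comparable. Here is a minimal sketch, assuming metabolite concentrations in µmol/L and creatinine in mmol/L (giving µmol per mmol creatinine). The function name and values are hypothetical.

```python
def creatinine_normalize(metabolites_uM, creatinine_mM):
    """Express each urinary metabolite as µmol per mmol creatinine.

    metabolites_uM: dict mapping metabolite name -> concentration (µmol/L)
    creatinine_mM: creatinine concentration of the same spot sample (mmol/L)
    """
    if creatinine_mM <= 0:
        raise ValueError("creatinine concentration must be positive")
    return {name: conc / creatinine_mM for name, conc in metabolites_uM.items()}


# Two hypothetical spot samples from one participant: the second is three
# times more dilute, yet the normalized values agree.
concentrated = creatinine_normalize({"proline betaine": 120.0}, creatinine_mM=12.0)
dilute = creatinine_normalize({"proline betaine": 40.0}, creatinine_mM=4.0)
print(concentrated, dilute)
```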

Metabolomic Profiling Methodologies

The DBDC employs complementary metabolomic platforms to achieve broad coverage of the food metabolome. The core analytical approach uses liquid chromatography-mass spectrometry (LC-MS) with both reverse-phase and hydrophilic-interaction liquid chromatography (HILIC) separations to capture metabolites with diverse chemical properties [6] [7]. As an example of the quality control standards applied in this field, the DIGEST study retained only metabolites measured with a coefficient of variation (CV) < 30% in the majority (>75%) of participants [3].

The metabolomic workflow incorporates both targeted and untargeted approaches. Targeted analysis focuses on predetermined metabolites of interest, while untargeted profiling enables discovery of novel biomarkers without prior hypothesis [3]. Unknown metabolites associated with specific dietary patterns are identified using high-resolution MS/MS fragmentation patterns and confirmed through co-elution with authentic chemical standards when available [3]. This dual approach balances comprehensive discovery with rigorous confirmation, enhancing the reliability of candidate biomarkers.

Diagram 1: DBDC Biomarker Discovery Workflow. This diagram illustrates the sequential process from controlled feeding studies to public database deposition, highlighting the three-phase validation structure.

Key Research Outputs and Biomarker Performance Data

Established Dietary Pattern Biomarkers

While the DBDC continues its systematic discovery efforts, previous research has identified several robust biomarkers associated with broader dietary patterns. These biomarkers demonstrate the potential of metabolomic approaches to objectively characterize dietary intake beyond single foods or nutrients.

Table 3: Established Biomarkers of Dietary Patterns

| Biomarker | Biological Matrix | Associated Dietary Pattern | Direction of Association | Performance Characteristics |
|---|---|---|---|---|
| Proline Betaine | Plasma and Urine [9] [3] | Prudent Diet (high fruits/vegetables) [9] [3] | Increase with Prudent diet [9] [3] | Sensitive to citrus fruit intake [9] |
| 3-Methylhistidine | Plasma and Urine [9] [3] | Prudent Diet [9] [3] | Increase with Prudent diet [9] [3] | Marker for lean meat and fish [9] |
| Enterolactone Glucuronide | Urine [3] | Prudent Diet [3] | Increase with Prudent diet [3] | Whole grain and fiber intake [3] |
| Myristic Acid | Plasma [9] [3] | Western Diet [9] [3] | Increase with Western diet [9] [3] | Saturated fat biomarker [9] |
| Linoleic Acid | Plasma [9] [3] | Western Diet [9] [3] | Increase with Western diet [9] [3] | Processed food and vegetable oil intake [9] |
| Acesulfame K | Urine [3] | Western Diet [3] | Increase with Western diet [3] | Artificial sweetener biomarker [3] |

The biomarkers identified in previous studies illustrate several important principles in dietary biomarker research. First, few metabolites are specific to single foods; instead, they often represent broader food groups or processing methods [1]. Second, combination biomarker panels typically provide better characterization of dietary patterns than individual metabolites [1]. Third, the direction and magnitude of biomarker response can help distinguish between contrasting dietary patterns, such as Prudent versus Western diets [9] [3].
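
A simple way to combine a panel that exploits the direction of response is a signed composite z-score. Each metabolite is standardized across the cohort, then the mean z-score of Western-associated markers is subtracted from the mean z-score of Prudent-associated markers. This is a hypothetical scoring scheme for illustration only, not a method reported by DIGEST or the DBDC. The marker names come from the table above, but the values are invented.

```python
import statistics


def zscores(values):
    """Standardize values to mean 0 and sample standard deviation 1."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [(v - mean) / sd for v in values]


def panel_score(data, prudent_markers, western_markers):
    """Hypothetical composite per participant: mean z of Prudent-associated
    markers minus mean z of Western-associated markers."""
    z = {name: zscores(vals) for name, vals in data.items()}
    n = len(next(iter(data.values())))
    scores = []
    for i in range(n):
        prudent = statistics.mean(z[m][i] for m in prudent_markers)
        western = statistics.mean(z[m][i] for m in western_markers)
        scores.append(prudent - western)
    return scores


# Invented values for four participants (arbitrary units)
data = {
    "proline betaine": [8.0, 2.0, 7.5, 1.5],
    "enterolactone glucuronide": [6.0, 1.0, 5.0, 2.0],
    "myristic acid": [1.0, 4.0, 1.5, 3.5],
    "acesulfame K": [0.1, 2.0, 0.2, 1.8],
}
scores = panel_score(data,
                     ["proline betaine", "enterolactone glucuronide"],
                     ["myristic acid", "acesulfame K"])
print([round(s, 2) for s in scores])
```

Positive scores point toward a Prudent-like profile and negative scores toward a Western-like one. Averaging across markers damps the noise of any single metabolite, which is the intuition behind preferring panels.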

Analytical Performance of Metabolomic Platforms

The technical performance of metabolomic platforms fundamentally determines the quality and reliability of biomarker data. Rigorous validation studies have established performance metrics for the analytical methods employed in dietary biomarker research.

In the DIGEST pilot study, which employed metabolomic profiling to identify biomarkers of Prudent and Western diets, researchers reliably measured 80 plasma metabolites and 84 creatinine-normalized urinary metabolites in the majority of participants (>75%) with a coefficient of variation (CV) < 30% across three complementary analytical platforms [3]. This level of analytical precision enables confident detection of metabolite differences associated with dietary interventions.
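
The DIGEST retention rule (detected in more than 75% of participants with CV < 30%) can be expressed as a simple filter. The sketch assumes the CV is computed from repeated measurements of a pooled quality-control sample and that non-detects are stored as None. The source does not specify exactly how the CV was derived, so treat both as assumptions. Metabolite names and values are hypothetical.

```python
import statistics


def cv_percent(replicates):
    """Coefficient of variation (%) = sample SD / mean x 100."""
    return statistics.stdev(replicates) / statistics.mean(replicates) * 100


def passes_qc(sample_values, qc_replicates, max_cv=30.0, min_detect=0.75):
    """Keep a metabolite if it is detected (not None) in more than
    min_detect of participants and its QC-replicate CV is below max_cv."""
    detected = sum(v is not None for v in sample_values) / len(sample_values)
    return detected > min_detect and cv_percent(qc_replicates) < max_cv


# Hypothetical metabolites: (participant values, pooled-QC replicates)
metabolites = {
    "ketoleucine": ([1.2, 0.9, 1.1, 1.3, None, 1.0, 0.8, 1.2],
                    [1.00, 1.05, 0.95, 1.02]),
    "unknown_m/z_301": ([0.3, None, None, 0.2, None, 0.4, None, 0.1],
                        [0.30, 0.20, 0.45, 0.25]),
}
kept = [name for name, (vals, qc) in metabolites.items() if passes_qc(vals, qc)]
print(kept)
```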

Method validation typically includes assessment of precision (through replicate analysis), accuracy (using reference materials when available), linearity, limit of detection, and stability under various storage conditions [3]. For biomarker quantification, normalization strategies such as creatinine adjustment for urine specimens and quality control pooling strategies help account for analytical variation and ensure data quality across large sample sets [3].
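
Linearity and detection limits are often estimated from a calibration curve. One widely used convention, from the ICH Q2 analytical validation guideline, sets LOD = 3.3σ/S and LOQ = 10σ/S, where S is the calibration slope and σ the residual standard deviation of the regression. The source does not say which convention the cited studies followed, so this is an illustrative sketch with a hypothetical function name and calibration data.

```python
import math


def calibration_limits(conc, response):
    """Fit response = a + b*conc by least squares and return
    (slope, r_squared, LOD, LOQ), with LOD = 3.3*sigma/slope,
    LOQ = 10*sigma/slope and sigma the residual standard deviation."""
    n = len(conc)
    mx, my = sum(conc) / n, sum(response) / n
    sxx = sum((x - mx) ** 2 for x in conc)
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [y - (intercept + slope * x) for x, y in zip(conc, response)]
    ss_res = sum(e ** 2 for e in residuals)
    ss_tot = sum((y - my) ** 2 for y in response)
    sigma = math.sqrt(ss_res / (n - 2))  # n - 2 degrees of freedom
    return slope, 1 - ss_res / ss_tot, 3.3 * sigma / slope, 10 * sigma / slope


# Hypothetical calibration series (concentration in µM, detector response)
conc = [0.5, 1.0, 2.0, 5.0, 10.0]
resp = [52.0, 98.0, 205.0, 497.0, 1003.0]
slope, r2, lod, loq = calibration_limits(conc, resp)
print(f"slope={slope:.1f}, R^2={r2:.4f}, LOD={lod:.2f} µM, LOQ={loq:.2f} µM")
```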

The Scientist's Toolkit: Essential Research Reagents and Methodologies

Table 4: Essential Research Reagents and Methodologies for Dietary Biomarker Research

| Tool Category | Specific Examples | Function in Biomarker Research | Technical Considerations |
|---|---|---|---|
| Analytical Instrumentation | LC-MS (Liquid Chromatography-Mass Spectrometry) [6] [7] | Separation and detection of metabolites in biospecimens | Requires HILIC and reverse-phase columns for metabolite coverage [6] |
| Reference Standards | Authentic chemical standards [3] | Confirmation of metabolite identity through co-elution | Limited availability for food-derived metabolites [4] |
| Biospecimen Collection Materials | Urine collection containers, EDTA blood tubes [7] | Standardized collection of biological samples | Strict protocols for fasting status and postprandial sampling times [7] |
| Data Analysis Tools | High-dimensional bioinformatics pipelines [6] [7] | Processing raw metabolomic data and identifying patterns | Must account for multiple comparisons and batch effects [6] |
| Dietary Assessment Software | Automated Self-Administered 24-h Dietary Assessment Tool (ASA-24) [6] | Collection of self-reported dietary data for comparison | Used alongside biomarkers for validation [6] |
| Nutrient Databases | Nutrition Data System for Research (NDS-R) [10] | Conversion of food intake to nutrient composition | Essential for controlled diet formulation [10] |

Biomarker Validation Framework and Application Pathways

Validation Criteria and Biomarker Qualification

The DBDC employs rigorous criteria for biomarker validation based on established frameworks proposed by Dragsted et al. [7]. These criteria ensure that only biomarkers with robust performance characteristics advance toward application in research settings.

Diagram 2: Biomarker Validation Pathway. This diagram illustrates the sequential criteria that candidate biomarkers must satisfy before achieving validated status for use in nutritional research.

The validation pathway begins with assessment of biological plausibility, establishing a mechanistic link between food consumption and biomarker appearance [7]. Next, dose-response relationships characterize how biomarker levels change with varying intake amounts, while time-response profiles define the pharmacokinetic parameters including absorption, peak concentration, and elimination half-life [7]. Analytical performance validation establishes precision, accuracy, and detection limits across relevant concentration ranges [3]. Stability testing evaluates biomarker integrity under various storage conditions, and temporal reliability assessment determines consistency of measurements over time in free-living populations [7].
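
One time-response parameter, the elimination half-life, can be estimated from post-peak samples. This sketch assumes first-order (mono-exponential) elimination: ln(concentration) is regressed on time, the negative slope gives the rate constant k, and t½ = ln 2 / k. The function name, sampling times, and concentrations are hypothetical.

```python
import math


def elimination_half_life(times_h, concs):
    """Estimate t1/2 (hours) from post-peak samples assuming first-order
    elimination: fit ln(C) = ln(C0) - k*t by least squares, t1/2 = ln2 / k."""
    logs = [math.log(c) for c in concs]
    n = len(times_h)
    mt, my = sum(times_h) / n, sum(logs) / n
    stt = sum((t - mt) ** 2 for t in times_h)
    sty = sum((t - mt) * (y - my) for t, y in zip(times_h, logs))
    k = -sty / stt  # elimination rate constant (1/h)
    return math.log(2) / k


# Hypothetical post-peak plasma levels that halve every 4 h
times = [2.0, 6.0, 10.0, 14.0]
levels = [8.0, 4.0, 2.0, 1.0]
print(round(elimination_half_life(times, levels), 2))
```

The half-life helps determine the window a biomarker can report on. A metabolite cleared within hours mainly reflects recent intake, whereas a slowly eliminated one is better suited to habitual intake.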

Application in Nutritional Epidemiology and Clinical Research

Validated dietary biomarkers serve multiple critical functions in nutritional research and public health. In nutritional epidemiology, they enable objective assessment of dietary exposures, complementing or correcting self-reported data that suffer from systematic measurement error [8] [10]. The Women's Health Initiative has pioneered methods using biomarker-calibrated dietary intake to address systematic bias in self-reported data, particularly the substantial energy underestimation among overweight and obese participants [8].
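
Biomarker calibration of this kind can be sketched as regression calibration. In a subsample with both a recovery biomarker (for example, doubly labeled water for energy) and self-reported intake, the biomarker value is regressed on self-report and participant characteristics such as BMI. The fitted equation then predicts calibrated intake for cohort members who have only self-report. This is a deliberately simplified sketch with one covariate, untransformed values, and ordinary least squares via the normal equations. It is not the Women's Health Initiative's actual model, which the source does not detail, and all function names and numbers are hypothetical.

```python
def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting
    (a is a small square list-of-lists, b a list)."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_calibration(self_report, bmi, biomarker):
    """OLS fit of biomarker = b0 + b1*self_report + b2*bmi (normal equations)."""
    X = [[1.0, s, z] for s, z in zip(self_report, bmi)]
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(3)] for i in range(3)]
    xty = [sum(row[i] * y for row, y in zip(X, biomarker)) for i in range(3)]
    return solve(xtx, xty)


def calibrated_intake(coef, self_report, bmi):
    """Predicted (calibrated) intake for a participant without a biomarker."""
    b0, b1, b2 = coef
    return b0 + b1 * self_report + b2 * bmi


# Invented calibration subsample: energy in kcal/day, BMI in kg/m^2
self_report = [1800, 2000, 1600, 2200, 1700, 1900]
bmi = [22, 30, 27, 24, 33, 25]
biomarker = [2380, 2700, 2320, 2740, 2520, 2520]
coef = fit_calibration(self_report, bmi, biomarker)
print(round(calibrated_intake(coef, 1750, 31)))
```

A positive BMI coefficient in such a model is one way the systematic underreporting of energy by participants with higher BMI becomes visible and correctable.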

Biomarkers also play crucial roles in intervention studies by objectively monitoring adherence to prescribed dietary regimens [9] [3]. In the DIGEST study, metabolite trajectories confirmed good adherence to assigned food provisions and were correlated with changes in nutrient intake from diet records [3]. This application provides an objective compliance measure that strengthens inferences about intervention efficacy.

Additionally, dietary biomarkers contribute to understanding diet-disease mechanisms by identifying metabolic pathways linking dietary exposures to health outcomes [4]. As Ross Prentice notes, "The use of intake biomarkers for diet and chronic disease association studies is still infrequent in nutritional epidemiology research" [8], highlighting the need for further development and application of these objective measures.

The Dietary Biomarkers Development Consortium represents a transformative, systematic approach to addressing fundamental methodological challenges in nutritional science. Through its coordinated infrastructure, rigorous three-phase validation pipeline, and application of advanced metabolomic technologies, the DBDC framework significantly advances the field beyond traditional biomarker development approaches. The consortium's focus on food-specific biomarkers, diverse population representation, and data sharing through public repositories promises to generate a comprehensive resource for objective dietary assessment.

As the field progresses, the integration of dietary biomarkers with other omics technologies and the development of standardized statistical methods for biomarker application will further strengthen nutritional epidemiology [4]. The systematic framework established by the DBDC provides a model for future biomarker discovery efforts that can ultimately enhance our understanding of diet-health relationships and support evidence-based public health recommendations for chronic disease prevention.

Controlled Feeding Trials for Identifying Candidate Biomarkers

In nutritional science, the accurate assessment of diet is fundamental to understanding its relationship with health and disease. Self-reported dietary intake methods, such as food frequency questionnaires and 24-hour recalls, are plagued by significant measurement errors, including systematic underreporting and recall bias [7] [11]. Objective dietary biomarkers, measurable indicators in biological samples, are therefore critical tools for moving the field toward precision nutrition. Among the various methods for discovering and validating these biomarkers, controlled feeding trials are considered the gold standard. These trials involve providing participants with known amounts and types of food, thereby creating a definitive link between intake and subsequent changes in the metabolome. This guide compares the experimental designs, applications, and outputs of different controlled feeding trial approaches used to identify candidate biomarkers, with a specific focus on their utility for assessing habitual diet in free-living populations.

Comparison of Controlled Feeding Trial Designs

Controlled feeding trials are not a monolithic approach; they vary in design based on the research question, from tightly controlled clinical studies to more flexible, real-world interventions. The table below summarizes the core characteristics of three primary designs.

Table 1: Comparison of Controlled Feeding Trial Designs for Biomarker Discovery

| Trial Design Feature | Classical Controlled Feeding Study | Habitual Diet-Mimicking Study | Large-Scale RCT with Biomarkers |
|---|---|---|---|
| Primary Objective | Identify novel candidate biomarkers and establish pharmacokinetics [7]. | Evaluate biomarker performance amid complex, variable diets [11]. | Validate biomarkers and objectively measure adherence/background diet in trials [12]. |
| Diet Control | Full control; all food provided in prespecified amounts [7]. | Partial control; diet is tailored to mimic each participant's reported habitual intake [11]. | No direct control; relies on supplement intervention with background diet monitored via biomarkers [12]. |
| Key Strength | High internal validity; establishes direct cause-effect relationships [7]. | Preserves real-world variation in nutrient intake; tests biomarker specificity [11]. | High external validity; demonstrates utility in large, free-living cohorts [12]. |
| Key Limitation | Low external validity; may not reflect complex dietary patterns [7]. | Relies on accuracy of self-reported data for menu design [11]. | Does not establish novel biomarkers; applies validated ones [12]. |
| Example | Dietary Biomarkers Development Consortium (DBDC) Phase 1 [7]. | Nutrition and Physical Activity Assessment Study Feeding Study (NPAAS-FS) [11]. | COcoa Supplement and Multivitamin Outcomes Study (COSMOS) subcohort analysis [12]. |

The workflow from discovery to validation, as undertaken by consortia like the DBDC, is a multi-stage process. The following diagram illustrates this pathway and the role of different trial designs within it.

Experimental Protocols and Data Outputs

Detailed Methodologies

The protocols for conducting these trials are rigorous and designed to ensure high-quality data collection.

Classical Controlled Feeding Protocol (DBDC Phase 1): Healthy participants are administered a single test food or a simplified diet in prespecified amounts. Biological specimens (blood and urine) are collected at multiple, tightly controlled time points post-consumption to characterize the pharmacokinetic profile of potential biomarkers. This includes establishing parameters like time to peak concentration and elimination half-life. Metabolomic profiling of these samples using technologies like liquid chromatography-mass spectrometry (LC-MS) is then performed to identify candidate compounds that track with intake [7].

Habitual Diet-Mimicking Protocol (NPAAS-FS): This design begins with participants completing a 4-day food record and an in-depth interview about their food preferences and patterns. Researchers then use this data to design an individualized 2-week controlled diet that approximates each participant's habitual intake, adjusting for estimated energy requirements. Established recovery biomarkers, such as doubly labeled water for energy and 24-hour urinary nitrogen for protein, are used to verify intake. Serum or urine concentrations of candidate biomarkers (e.g., carotenoids, folate) are measured at the beginning and end of the feeding period and regressed against actual consumed nutrients to evaluate their performance [11].

Large-Scale RCT Biomarker Application (COSMOS): In this model, a validated nutritional biomarker is used as an objective tool within a larger trial. For example, in the COSMOS trial, spot urine samples were collected at baseline and follow-up. Urinary flavanol metabolites (gVLMB and SREMB) were quantified using validated LC-MS methods. Pre-defined biomarker concentration thresholds were used to classify participants into groups based on their background flavanol intake and their adherence to the cocoa extract intervention, independent of self-report [12].
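The threshold-based classification described above can be sketched as a small rule set. The cut-off values, their units, and the two-arm logic below are hypothetical placeholders chosen for illustration; they are not the published COSMOS thresholds, which the text does not report.

```python
from dataclasses import dataclass

# HYPOTHETICAL cut-offs for urinary gVLMB (units assumed); not the COSMOS values
LOW_BACKGROUND_MAX = 10.0  # baseline at or below this -> "low" background flavanol intake
ADHERENT_MIN = 30.0        # follow-up at or above this (cocoa arm) -> "adherent"


@dataclass
class Participant:
    pid: str
    arm: str               # "cocoa" or "placebo"
    gvlmb_baseline: float  # spot-urine biomarker at baseline
    gvlmb_followup: float  # spot-urine biomarker at follow-up


def classify(p: Participant) -> dict[str, str]:
    """Assign background-intake and adherence groups from biomarker levels alone."""
    background = "low" if p.gvlmb_baseline <= LOW_BACKGROUND_MAX else "high"
    if p.arm == "cocoa":
        adherence = "adherent" if p.gvlmb_followup >= ADHERENT_MIN else "non-adherent"
    else:
        # In the placebo arm, a high follow-up level reflects diet, not the intervention
        adherence = "not applicable"
    return {"pid": p.pid, "background": background, "adherence": adherence}


print(classify(Participant("P001", "cocoa", 5.0, 40.0)))
```

Keeping the thresholds as named constants makes it easy to swap in validated, assay-specific cut-offs.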

Quantitative Biomarker Performance Data

The effectiveness of a biomarker is quantified by how well its concentration explains the variation in actual intake. The table below presents performance data (R² values) for several biomarkers from a habitual diet-mimicking study, using established recovery biomarkers as a benchmark.

Table 2: Performance of Candidate Biomarkers in a Controlled Feeding Study (NPAAS-FS). Data are presented as R² values from linear regression of ln-transformed consumed nutrients on ln-transformed biomarker concentrations [11].

| Biomarker | Performance (R²) | Classification |
|---|---|---|
| Urinary Nitrogen (Protein Intake) | 0.43 | Established Recovery Biomarker |
| Doubly Labeled Water (Energy Intake) | 0.53 | Established Recovery Biomarker |
| Serum Vitamin B-12 | 0.51 | Candidate Concentration Biomarker |
| Serum Folate | 0.49 | Candidate Concentration Biomarker |
| Serum α-Carotene | 0.53 | Candidate Concentration Biomarker |
| Serum β-Carotene | 0.39 | Candidate Concentration Biomarker |
| Serum Lutein + Zeaxanthin | 0.46 | Candidate Concentration Biomarker |
| Serum Lycopene | 0.32 | Candidate Concentration Biomarker |
| Serum α-Tocopherol | 0.47 | Candidate Concentration Biomarker |
| PLFA % Polyunsaturated | 0.27 | Candidate Concentration Biomarker |
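The R² values in Table 2 come from regressing ln-transformed consumed intake on ln-transformed biomarker concentration. The sketch below is a minimal single-predictor version using ordinary least squares. It omits any participant covariates the original analysis may have included, and the test data are hypothetical.

```python
import math


def loglog_r2(biomarker: list[float], intake: list[float]) -> float:
    """R² from simple OLS regression of ln(intake) on ln(biomarker)."""
    x = [math.log(v) for v in biomarker]  # predictor: ln biomarker concentration
    y = [math.log(v) for v in intake]     # outcome: ln consumed nutrient
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    # Proportion of variance in ln(intake) explained by the fitted line
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return 1 - ss_res / ss_tot
```

The log-log form turns a power-law relationship (intake proportional to biomarker raised to some exponent) into a straight line, which is why a perfect power law yields R² = 1.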

The impact of using these objective biomarkers in research is significant. The following diagram contrasts the traditional trial analysis with the biomarker-informed approach, highlighting how the latter refines the assessment of intervention effects.

The Scientist's Toolkit: Essential Reagents and Materials

Successful execution of controlled feeding trials and subsequent biomarker analysis requires a suite of specialized reagents and tools.

Table 3: Essential Research Reagents and Materials for Feeding Trials and Biomarker Analysis

| Item Name | Function/Application | Specific Examples & Notes |
|---|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) | High-resolution metabolomic profiling for discovery and quantification of candidate biomarkers in blood and urine [7]. | Often coupled with HILIC (hydrophilic-interaction liquid chromatography) to identify a wider range of molecules [7]. |
| Validated Biomarker Assays | Quantifying specific, pre-validated intake biomarkers in biological samples. | e.g., LC-MS assays for urinary flavanol metabolites (gVLMB, SREMB) [12]. |
| Doubly Labeled Water (DLW) | The gold-standard recovery biomarker for measuring total energy expenditure in free-living conditions, used to validate energy intake [11]. | Water enriched with the stable isotopes ¹⁸O and ²H (deuterium). |
| 24-Hour Urinary Nitrogen | A recovery biomarker used to objectively assess protein intake [11]. | Requires complete 24-hour urine collection from participants. |
| Diet Formulation Software | Designing controlled diets, creating menus and production sheets, and recording nutrient intake data. | ProNutra software [11]. |
| Standardized Food Composition Databases | Accurate nutrient analysis of food records and formulation of study diets. | Nutrition Data System for Research (NDS-R) [11]. |
| Stable Isotope-Labeled Compounds | Internal standards for mass spectrometry that enable precise quantification of metabolites [7]. | e.g., deuterated or ¹³C-labeled analogs of target metabolites. |
| Biospecimen Collection Kits | Standardized collection, processing, and storage of blood, urine, and other samples for biobanking. | Includes tubes, stabilizers, and protocols for consistent handling across sites [7]. |

Controlled feeding trials are indispensable for building a robust pipeline of dietary biomarkers, from initial discovery in highly controlled settings to validation in complex, real-world environments. As data from studies like COSMOS demonstrate, applying validated biomarkers can substantially improve the precision of nutrition research by objectively accounting for background diet and adherence [12]. This moves the field beyond the limitations of self-report and enables more accurate estimates of true effect sizes in diet-disease relationships. The ongoing work of consortia like the DBDC promises to expand the toolkit of validated biomarkers considerably, advancing precision nutrition and giving researchers, scientists, and drug development professionals more reliable data for their work.

Pharmacokinetic Parameters and Dose-Response Relationships

The robustness of biomarkers, especially in the context of habitual diet research, hinges on a fundamental understanding of two interconnected disciplines: pharmacokinetics (PK) and dose-response relationships. Pharmacokinetics describes what the body does to a substance, quantitatively tracing its journey from administration to elimination through the processes of absorption, distribution, metabolism, and excretion (ADME) [13] [14]. Conversely, dose-response characterization describes the quantitative effect a substance elicits on the body, typically measured through physiological outcomes or biomarker changes [15] [16]. For researchers investigating habitual diet, where long-term, low-level exposure is the norm, integrating these disciplines is paramount. It allows scientists to move beyond merely detecting a biomarker to robustly interpreting its meaning—differentiating between recent intake, habitual consumption, and individual metabolic variability [17]. This guide provides a comparative framework of key pharmacokinetic parameters and dose-response methodologies, equipping scientists with the data and protocols necessary to validate biomarkers and interpret their fluctuations within complex, free-living populations.

Foundational Pharmacokinetic Parameters: A Comparative Guide

Pharmacokinetic parameters provide the numerical backbone for understanding systemic exposure to a compound. The following table summarizes the core parameters used to characterize drug disposition. These parameters are essential for designing dosing regimens that maintain drug concentrations within a therapeutic window, balancing efficacy with safety [13] [18].

Table 1: Core Pharmacokinetic Parameters and Their Definitions

| Parameter | Symbol | Unit | Definition | Clinical/Research Significance |
|---|---|---|---|---|
| Bioavailability | F | % or fraction | The fraction of an administered dose that reaches the systemic circulation unchanged [13]. | Determines the efficiency of drug delivery; critical for translating from intravenous to oral dosing [19]. |
| Area Under the Curve | AUC | conc. × time | The integral of the drug concentration-time curve in plasma [18]. | A direct measure of total systemic drug exposure over time [13]. |
| Maximum Concentration | C~max~ | concentration | The peak observed concentration in plasma following drug administration. | Often related to the intensity of pharmacodynamic effects, including toxicity [18]. |
| Time to Maximum Concentration | T~max~ | time | The time taken to reach the peak plasma concentration (C~max~). | Reflects the rate of absorption; useful for comparing formulations [18]. |
| Volume of Distribution | V~d~ | volume (e.g., L) | The apparent theoretical volume required to contain the total amount of drug at the same concentration observed in plasma [13] [18]. | Indicates the extent of drug distribution outside the plasma compartment. A high V~d~ suggests extensive tissue distribution [13]. |
| Clearance | CL | volume/time (e.g., L/h) | The volume of plasma from which the drug is completely removed per unit time [18]. | The primary parameter describing the body's efficiency in eliminating a drug; independent of administration route [19]. |
| Half-Life | t~1/2~ | time | The time required for the plasma concentration to decrease by 50% [13]. | Determines the time to reach steady state and the dosing frequency. Calculated as (0.693 × V~d~) / CL [19] [18]. |
| Elimination Rate Constant | K~e~ | 1/time | The fraction of drug in the body eliminated per unit time [19]. | Equal to the negative of the slope of the terminal elimination phase on a natural-log concentration vs. time graph [18]. |

These parameters are intrinsically linked, as exemplified by the equation for half-life, which is a function of both volume of distribution (V~d~) and clearance (CL) [18]. This relationship means that a drug can have a long half-life either because it is widely distributed in tissues (high V~d~) or because it is cleared very slowly (low CL), with vastly different implications for its dosing and accumulation.
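The relationships above can be checked numerically. This sketch assumes a one-compartment model with first-order elimination, where K~e~ = CL / V~d~ and t~1/2~ = ln 2 × V~d~ / CL. The compounds and values in the example are hypothetical. The steady-state helper assumes constant-rate (or frequent, regular) intake, which is the situation that habitual consumption approximates.

```python
import math


def elimination_rate_constant(cl: float, vd: float) -> float:
    """K_e = CL / V_d (units: 1/time), one-compartment assumption."""
    return cl / vd


def half_life(cl: float, vd: float) -> float:
    """t_1/2 = ln(2) * V_d / CL, where ln(2) is approximately 0.693."""
    return math.log(2) * vd / cl


def fraction_of_steady_state(n_half_lives: float) -> float:
    """Fraction of the steady-state level reached after n half-lives of constant-rate input."""
    return 1 - 0.5 ** n_half_lives


# Hypothetical compounds with the same half-life for different reasons:
# A is widely distributed (high V_d), B is cleared slowly (low CL).
t_a = half_life(cl=10.0, vd=200.0)  # L/h, L
t_b = half_life(cl=1.0, vd=20.0)
print(round(t_a, 2), round(t_b, 2))
```

Both hypothetical compounds give about 13.86 h. About five half-lives of regular intake bring levels to roughly 97% of steady state, which is one reason long-half-life biomarkers tend to reflect habitual rather than recent intake.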

Experimental Protocols for Pharmacokinetic and Dose-Response Characterization

Robust biomarker research requires rigorously designed experiments. The protocols below are foundational for generating high-quality PK and dose-response data.

Protocol: A Single-Dose PK Study with Multiple Food Matrices

This design is critical for assessing the bioavailability and pharmacokinetics of a dietary biomarker from different food sources, directly informing on matrix effects [17].

- Objective: To characterize and compare the pharmacokinetic parameters of avenanthramides (AVAs) and avenacosides (AVEs) as biomarkers of oat intake from solid (oat flakes) and liquid (oat drink) matrices.

- Study Design: A non-blinded, randomized, two-way crossover study [17].

- Participants: 21 healthy participants.

- Intervention, Phase I (Single Dose): After an overnight fast, participants consumed a single dose of either a solid (62 g oat flakes) or liquid (196 mL oat drink) oat product. A washout period of at least 8 days separated the two interventions.

- Blood Sampling: Serial blood samples were collected at 0 h (pre-dose), 0.25 h, 0.5 h, 0.75 h, 1 h, 1.5 h, 2 h, 3 h, 4 h, 5 h, 6 h, 7 h, 8 h, and 24 h post-consumption.

- Bioanalysis: Plasma concentrations of multiple AVAs (2p, 2c, 2f, 2fd, 2pd) and AVEs (A, B) were quantified using a validated analytical method (e.g., LC-MS/MS).

- Data Analysis: Non-compartmental analysis (NCA) was performed on the concentration-time data for each participant and product to determine key PK parameters: AUC, C~max~, T~max~, and t~1/2~ [17].
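A minimal non-compartmental analysis for one participant and one product might look like the sketch below. It assumes the linear trapezoidal rule for AUC from the first to the last sample and a log-linear fit to the last three samples for the terminal rate constant. Real NCA software offers alternatives (log-linear trapezoid, automatic terminal-point selection, extrapolation to infinity) that the source does not specify.

```python
import math


def nca(times: list[float], conc: list[float], n_terminal: int = 3) -> dict[str, float]:
    """Minimal NCA: Cmax, Tmax, AUC over sampled interval, terminal lambda_z and t1/2.

    Assumes the last n_terminal concentrations are > 0 and lie in the log-linear
    terminal elimination phase.
    """
    # Observed peak and the first time at which it occurs
    cmax = max(conc)
    tmax = times[conc.index(cmax)]
    # AUC from first to last sample, linear trapezoidal rule
    auc = sum((t2 - t1) * (c1 + c2) / 2
              for t1, t2, c1, c2 in zip(times, times[1:], conc, conc[1:]))
    # Terminal rate constant: negative OLS slope of ln(conc) vs time on the last points
    tt = times[-n_terminal:]
    lc = [math.log(c) for c in conc[-n_terminal:]]
    mt, ml = sum(tt) / n_terminal, sum(lc) / n_terminal
    slope = (sum((a - mt) * (b - ml) for a, b in zip(tt, lc))
             / sum((a - mt) ** 2 for a in tt))
    lambda_z = -slope
    return {"cmax": cmax, "tmax": tmax, "auc_0_t": auc,
            "lambda_z": lambda_z, "t_half": math.log(2) / lambda_z}
```

With the oat study's sampling grid (0 to 24 h), `times` would hold those 14 time points and `conc` the matching plasma concentrations of one avenanthramide or avenacoside.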

Protocol: A Caloric Dose-Response Study of a High-Fat Meal

This protocol exemplifies how a dose-response strategy can reveal differences in postprandial metabolism based on health status, which is vital for understanding how biomarkers respond to varying dietary loads [15].

- Objective: To investigate the dose-dependent effect of a high-fat (HF) meal on postprandial metabolic and inflammatory biomarkers in normal-weight versus obese participants.

- Study Design: A randomized crossover study.

- Participants: 19 normal-weight (BMI: 20–25 kg/m²) and 18 obese (BMI: >30 kg/m²) men, age-matched.

- Intervention: Each participant consumed three different caloric doses of a HF meal (500, 1000, and 1500 kcal) in random order, with a washout period of at least one week. The macronutrient composition was identical (61% fat, 21% carbohydrate, 18% protein).

- Blood Sampling: Blood was collected after an overnight fast (t=0) and at 1, 2, 4, and 6 hours postprandially.

- Biomarker Analysis: Plasma/serum was analyzed for a range of biomarkers, including glucose, lipids (triglycerides), insulin, and inflammatory markers like interleukin-6 (IL-6).

- Data Analysis: The postprandial response was calculated as the net incremental area under the curve (iAUC) for each biomarker. Statistical models (e.g., ANOVA) were used to compare iAUC and peak responses across doses and between participant groups.
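The net incremental AUC can be computed with the trapezoidal rule applied to the change from the fasting (t = 0) value. In this sketch, area below baseline counts as negative, which is what "net" usually denotes. Definitions of iAUC vary between studies, so this is one common convention rather than the exact method of the cited study. The example values are hypothetical.

```python
def net_iauc(times: list[float], values: list[float]) -> float:
    """Net incremental AUC: trapezoidal area of (value - fasting baseline).

    Segments below baseline contribute negative area.
    """
    baseline = values[0]  # fasting sample at t = 0
    inc = [v - baseline for v in values]
    return sum((t2 - t1) * (d1 + d2) / 2
               for t1, t2, d1, d2 in zip(times, times[1:], inc, inc[1:]))


# Sampling times from the protocol (hours); triglyceride values are hypothetical (mmol/L)
hours = [0, 1, 2, 4, 6]
triglycerides = [1.2, 1.6, 2.1, 2.4, 1.8]
print(net_iauc(hours, triglycerides))
```

Computing iAUC per participant and dose gives the per-condition values that the ANOVA-type models then compare across doses and weight groups.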

Visualizing the Workflow: From Dose to Biomarker Response

The following diagram synthesizes the experimental and conceptual pathway from substance administration to biomarker interpretation, integrating both PK and dose-response principles.

The Scientist's Toolkit: Essential Reagents and Materials

Successful execution of pharmacokinetic and dose-response studies relies on a suite of specialized reagents and analytical tools. The following table details key solutions required for the featured experiments.

Table 2: Key Research Reagent Solutions for PK and Dose-Response Studies

| Item | Function & Application | Example from Featured Research |
|---|---|---|
| Validated Bioanalytical Assays | To accurately quantify drug/nutrient and biomarker concentrations in biological fluids (e.g., plasma, serum). | LC-MS/MS assay for quantifying avenanthramides and avenacosides in human plasma [17]. |
| Standardized Test Meals | To provide a consistent and controlled dietary intervention for dose-response studies, ensuring reproducibility. | High-fat meals with fixed macronutrient ratios (61% fat) and caloric doses (500, 1000, 1500 kcal) [15]. |
| Stable Isotope-Labeled Compounds | To serve as internal standards in mass spectrometry, improving quantification accuracy, or to trace metabolic pathways. | Not described in the featured studies; as general best practice, deuterated or ¹³C-labeled internal standards support avenanthramide quantification via LC-MS/MS. |
| Clinical Laboratory Services | For standardized analysis of routine clinical chemistry and hematology biomarkers in CLIA-certified laboratories. | Analysis of glucose, lipids, insulin, and inflammatory markers (IL-6, CRP) by third-party clinical labs [16]. |
| Enzyme Kits (CYP450, UGT) | To investigate specific metabolic pathways in in vitro systems, predicting potential for drug-drug or drug-nutrient interactions. | Investigation of Phase I (CYP450) and Phase II (UGT) metabolism of drug compounds [13] [14]. |
| Protein Binding Assays | To determine the extent of drug binding to plasma proteins like albumin, which influences free (active) drug concentration. | Assessment of protein binding for highly bound drugs like phenytoin to interpret therapeutic drug monitoring results [19]. |

The integration of robust pharmacokinetic characterization with precise dose-response analysis forms the bedrock of reliable biomarker research, particularly in the challenging context of habitual diet. The comparative data and standardized protocols presented in this guide provide a framework for researchers to objectively evaluate the performance of potential biomarkers. By applying these principles, scientists can better decipher the complex narrative told by biomarker concentrations, differentiating signal from noise and ultimately strengthening the scientific basis for dietary recommendations and personalized nutrition strategies.

The accurate assessment of habitual diet represents a fundamental challenge in nutritional epidemiology, because traditional self-reported methods such as food frequency questionnaires and dietary records are prone to significant bias and selective reporting [3]. Metabolomics, the comprehensive study of small molecules in biological systems, has emerged as a powerful tool for identifying objective biomarkers that reflect true dietary intake and metabolic responses [20]. Within this field, liquid chromatography-mass spectrometry (LC-MS) with hydrophilic interaction liquid chromatography (HILIC) separation has become instrumental for measuring the broad spectrum of polar metabolites that often serve as sensitive indicators of food consumption [21] [22]. The robustness of dietary biomarkers is particularly important for understanding diet-disease relationships, as metabolites provide insights into biologically relevant components of food and their metabolic effects, which are influenced by nutrient availability, gut microbiome, genetics, and individual nutrient status [23]. This guide examines the performance characteristics of key LC-MS and HILIC methodologies, providing experimental data and protocols to inform their application in nutritional biomarker discovery.

Technology Comparison: LC-MS Platforms and HILIC Configurations

Performance Characteristics of Chromatographic Systems

Table 1: Comparison of HILIC Stationary Phases for Polar Metabolite Separation

| Stationary Phase Type | Retention Mechanism | Optimal pH Range | Key Applications | Strengths | Limitations |
|---|---|---|---|---|---|
| Bare Silica [24] | Hydrophilic partitioning, hydrogen bonding, ion-exchange | 3-7 (Type B) | Carbohydrates, organic acids, polar pharmaceuticals | Simple mechanism, widely available | Acidic silanols can cause peak tailing for basic compounds |
| Amide [21] [24] | Hydrophilic partitioning, hydrogen bonding | 3-8 | Energy metabolites, amino acids, sugar phosphates | High stability, reproducible retention | Limited ion-exchange capacity |
| Zwitterionic (ZIC-HILIC) [22] [24] | Hydrophilic partitioning, weak ion-exchange | 3-9 | Polar and ionic metabolites, complex biological extracts | Balanced electrostatic interactions, reduced matrix effects | Higher cost, requires specific buffer conditions |
| Amino [24] | Hydrophilic partitioning, ion-exchange, Schiff base formation | 3-9 | Carbohydrates, glycosylated compounds | Strong retention for very polar compounds | Chemically unstable, irreversible adsorption possible |

Table 2: Analytical Performance of Recent HILIC-MS Platforms in Metabolomics

| Platform Configuration | Metabolite Coverage | Sensitivity Gain vs. RP-LC | Retention Time Stability | Annotation Rate / Matrix Notes | Key Evidence |
|---|---|---|---|---|---|
| Novel Z-HILIC Orbitrap [22] | 707/990 chemical standards (71%) | Not quantified | High | 79.1% annotation in cell extracts | Improved resolution and RT distribution vs. ZIC-pHILIC |
| ZIC-pHILIC Orbitrap [22] | 543/990 standards (55%) | Not quantified | Moderate | 66.6% annotation in cell extracts | Good for polar metabolites but lower coverage than Z-HILIC |
| Dual-column (RP/HILIC) [25] | Expanded polar/nonpolar range | Not quantified | System-dependent | Varies with connector choice | Complementary coverage, orthogonal separations |
| Conventional HILIC-MS [26] | Targeted polar metabolites | ~10x with ESI-MS | Method-dependent | Phospholipids more retained | Higher organic mobile phase improves ESI sensitivity |

Detection Systems and Data Acquisition Methods

High-resolution mass spectrometry, particularly Orbitrap technology, provides the accurate mass measurements necessary for confident metabolite identification in complex biological samples [21] [22]. The implementation of deep-scan data-dependent acquisition (DDA) has demonstrated significant improvements in metabolite identification, increasing the number of confidently identified metabolites by more than 80% compared to standard DDA approaches [22]. This enhanced capability is particularly valuable in nutritional biomarker discovery, where comprehensive metabolite coverage is essential for identifying subtle metabolic responses to dietary interventions.
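Accurate-mass matching is usually expressed as a mass error in parts per million (ppm). The sketch below computes the [M+H]⁺ m/z from a neutral monoisotopic mass and checks an observed peak against it. The 5 ppm tolerance is an assumed value for illustration, not a setting reported in the cited studies. L-phenylalanine (C₉H₁₁NO₂, monoisotopic mass 165.078979 Da) serves as the example.

```python
PROTON_MASS = 1.007276  # Da


def mz_protonated(neutral_monoisotopic_mass: float) -> float:
    """m/z of the singly charged [M+H]+ ion."""
    return neutral_monoisotopic_mass + PROTON_MASS


def ppm_error(observed_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def matches(observed_mz: float, theoretical_mz: float, tol_ppm: float = 5.0) -> bool:
    """True if the observed peak lies within +/- tol_ppm of the theoretical m/z (assumed tolerance)."""
    return abs(ppm_error(observed_mz, theoretical_mz)) <= tol_ppm


phe_mh = mz_protonated(165.078979)  # L-phenylalanine [M+H]+
print(round(phe_mh, 6), round(ppm_error(166.0866, phe_mh), 2))
```

Mass accuracy alone rarely identifies a metabolite confidently. Retention time and MS/MS spectra matched against authentic standards provide the additional evidence.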

The orthogonal separation approach achieved through dual-column systems that combine reversed-phase (RP) and HILIC chromatography within a single analytical workflow significantly expands metabolite coverage in complex biological matrices [25]. These systems enable concurrent analysis of both polar and nonpolar metabolites, thereby reducing analytical blind spots and improving data integration for nutritional studies where metabolites span a wide polarity range [25].

Experimental Protocols for Nutritional Biomarker Discovery

Standardized HILIC-MS Metabolomics Workflow

Figure 1: Comprehensive HILIC-MS workflow for nutritional biomarker discovery, covering sample preparation, instrumental analysis, and data processing stages.

Detailed Sample Preparation and Metabolite Extraction

The quality of metabolomic data heavily depends on proper sample collection and preparation. For nutritional studies focusing on habitual diet, both plasma and urine specimens offer valuable but complementary information [23]. Urine contains higher numbers of unique food-associated metabolites, while serum provides insight into systemic metabolic responses [23]. The following protocol details a robust extraction method for polar metabolites from biofluids:

Materials and Reagents:

- Extraction solvent: Acetonitrile:methanol:formic acid (74.9:24.9:0.2, v/v/v) [21]

- Internal standards: Stable isotope-labeled compounds (e.g., l-Phenylalanine-d8 and l-Valine-d8) [21]

- LC aqueous mobile phase A: 0.1% formic acid, 10 mM ammonium formate in LC/MS-grade water [21]

- LC organic mobile phase B: 0.1% formic acid in LC/MS-grade acetonitrile [21]

Procedure:

- Sample Quenching: Add 100 μL of biofluid (plasma/urine) to 400 μL of chilled extraction solvent (-20°C) to rapidly quench metabolic activity [20].

- Internal Standard Addition: Spike with isotope-labeled internal standards (0.1 μg/mL of l-Phenylalanine-d8 and 0.2 μg/mL of l-Valine-d8) for quality control and quantification [21].

- Protein Precipitation: Vortex vigorously for 30 seconds, then incubate at -20°C for 60 minutes to precipitate proteins.

- Centrifugation: Centrifuge at 14,000 × g for 15 minutes at 4°C to pellet insoluble material.

- Sample Recovery: Transfer 400 μL of supernatant to a new vial and evaporate to dryness under nitrogen stream.

- Reconstitution: Reconstitute dried extract in 100 μL of initial mobile phase (high organic content) for LC-MS analysis [21].
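The protocol's volumes determine how concentrated the final extract is relative to the original biofluid, which matters when converting measured concentrations back to sample levels. The sketch below assumes the volumes are additive and the protein pellet is negligible. Under those assumptions, 100 µL of biofluid in 500 µL total gives 80 µL of sample equivalents in the 400 µL transferred. Reconstituting that in 100 µL yields a factor of 0.8.

```python
def concentration_factor(sample_ul: float, solvent_ul: float,
                         supernatant_ul: float, reconstitution_ul: float) -> float:
    """Sample-equivalent concentration of the final extract relative to raw biofluid.

    Assumes additive volumes and a negligible pellet volume.
    """
    sample_fraction = sample_ul / (sample_ul + solvent_ul)  # share of biofluid in the mixture
    sample_equiv_ul = supernatant_ul * sample_fraction      # biofluid carried over after transfer
    return sample_equiv_ul / reconstitution_ul              # relative to the reconstitution volume


# Volumes from the protocol above (µL)
print(concentration_factor(100, 400, 400, 100))
```

A factor below 1 means the extract is slightly more dilute than the starting biofluid, which is worth checking against the assay's lower limit of quantification.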

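This extraction protocol efficiently recovers polar metabolites while precipitating most proteins. Organic-solvent protein precipitation leaves much of the phospholipid content in the supernatant, however, so phospholipid-related interference with HILIC separation and MS detection still has to be managed chromatographically or with a dedicated clean-up step.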

HILIC-MS Instrumental Parameters and Method Optimization

Chromatographic Conditions:

- Column: Zwitterionic HILIC (e.g., ZIC-HILIC) or amide-based stationary phase [22]

- Mobile Phase: A: 0.1% formic acid, 10 mM ammonium formate in water; B: 0.1% formic acid in acetonitrile [21]

- Gradient Program: Start at 95% B and decrease to 60% B over 15-20 minutes, progressively increasing the aqueous (eluting) component [26]

- Flow Rate: 0.4-0.6 mL/min [21]

- Column Temperature: 35-45°C [21]

- Injection Volume: 5-10 μL [21]
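The gradient described above can be expressed as a simple time program. This sketch assumes a single linear segment lasting 18 min (within the stated 15-20 min range) and a hold at the final composition. Re-equilibration back to high organic, which a real method needs between injections, is deliberately left out. In HILIC, water is the strong eluent, so %B (acetonitrile) falls over the run.

```python
def percent_b(t_min: float, start_b: float = 95.0, end_b: float = 60.0,
              gradient_min: float = 18.0) -> float:
    """%B (acetonitrile) at time t for one linear HILIC gradient segment.

    Held at start_b before t = 0 and at end_b after the gradient ends
    (assumed 18 min; re-equilibration not modelled).
    """
    if t_min <= 0:
        return start_b
    if t_min >= gradient_min:
        return end_b
    return start_b + (end_b - start_b) * t_min / gradient_min


# Tabulate the programme every 3 min, e.g. to cross-check an instrument method table
for t in range(0, 22, 3):
    print(t, round(percent_b(t), 1))
```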

Mass Spectrometry Parameters:

- Ionization: Electrospray ionization (ESI) in positive and negative modes [22]

- Resolution: >70,000 (full width at half maximum, FWHM) [22]

- Mass Range: m/z 70-1050 [22]

- Data Acquisition: Data-dependent MS/MS with dynamic exclusion [22]

Method development should consider that HILIC retention mechanisms combine hydrophilic partitioning, hydrogen bonding, and ion-exchange interactions [24]. The high organic mobile phase (>70% acetonitrile) enhances ESI-MS sensitivity approximately 10-fold compared to reversed-phase LC [26]. However, biological matrices like plasma contain phospholipids that are strongly retained in HILIC, potentially causing matrix effects that must be addressed through optimal sample preparation and chromatographic conditions [26].

Applications in Dietary Biomarker Research

Biomarker Discovery for Dietary Patterns

Table 3: Metabolite Biomarkers Associated with Contrasting Dietary Patterns

| Dietary Pattern | Biofluid | Key Metabolite Biomarkers | Direction of Change | Correlation with Self-Report | Study Design |
|---|---|---|---|---|---|
| Prudent Diet [3] | Plasma | 3-Methylhistidine, Proline Betaine | Increased | \|r\| > 0.30, p < 0.05 | Randomized controlled trial (2-week intervention) |
| Prudent Diet [3] | Urine | Imidazole propionate, Hydroxypipecolic acid, Dihydroxybenzoic acid, Enterolactone glucuronide | Increased | \|r\| > 0.30, p < 0.05 | Randomized controlled trial (2-week intervention) |
| Western Diet [3] | Plasma | Myristic acid, Linoelaidic acid, Linoleic acid, Alanine, Proline | Increased | \|r\| > 0.30, p < 0.05 | Randomized controlled trial (2-week intervention) |
| Western Diet [3] | Urine | Acesulfame K (artificial sweetener) | Increased | \|r\| > 0.30, p < 0.05 | Randomized controlled trial (2-week intervention) |

Controlled feeding studies have been instrumental for identifying robust biomarkers of dietary intake. The Diet and Gene Intervention (DIGEST) pilot study provided complete diets to participants for two weeks, revealing distinctive metabolic trajectories associated with Prudent versus Western dietary patterns [3]. This research demonstrated that urinary metabolites offer a valid alternative or complement to serum for metabolite biomarkers of diet in large-scale clinical or epidemiologic studies [23].
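The correlation criterion in Table 3 (|r| > 0.30) can be applied as a simple screen across many metabolites. This sketch assumes Pearson correlation between each metabolite's change and the change in self-reported intake. It does not compute the p-value that the table also requires, and the source does not state which correlation coefficient was used. The metabolite names and values are hypothetical toy data.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def screen(metabolites: dict[str, list[float]], intake: list[float],
           min_abs_r: float = 0.30) -> list[str]:
    """Names of metabolites whose |r| with intake exceeds min_abs_r (no significance test)."""
    return [name for name, values in metabolites.items()
            if abs(pearson_r(values, intake)) > min_abs_r]


# Hypothetical changes from baseline for five participants
intake_change = [1.0, 2.0, 3.0, 4.0, 5.0]
candidates = {
    "metabolite_a": [2.0, 4.0, 6.0, 8.0, 10.0],  # rises with intake
    "metabolite_b": [2.0, 5.0, 1.0, 4.0, 3.0],   # weak association
    "metabolite_c": [10.0, 8.0, 6.0, 4.0, 2.0],  # falls with intake
}
print(screen(candidates, intake_change))
```

Using the absolute value keeps inverse associations, which can be just as informative as positive ones for biomarker discovery.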

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents for HILIC-Based Metabolomics

| Reagent/Material | Specifications | Function in Workflow | Example Application |
|---|---|---|---|
| HILIC Columns [22] [24] | Zwitterionic, amide, or bare silica; 2.1-4.6 mm ID, 1.7-5 μm particle size | Polar metabolite separation | ZIC-HILIC for comprehensive polar metabolome coverage |
| Mobile Phase Additives [21] [26] | LC/MS-grade ammonium formate/acetate (10-20 mM), formic acid (0.1%) | Modulate retention and ionization | 10 mM ammonium formate + 0.1% formic acid for optimal peak shape |
| Isotope-Labeled Internal Standards [21] [20] | Stable isotope-labeled metabolites (e.g., l-Phenylalanine-d8, l-Valine-d8) | Quality control, quantification normalization | Monitoring extraction efficiency and instrument performance |
| Metabolite Extraction Solvents [21] [20] | LC/MS-grade acetonitrile, methanol, chloroform; specific ratios for polarity coverage | Protein precipitation, metabolite extraction | ACN:MeOH:formic acid (74.9:24.9:0.2) for polar metabolites |
| Chemical Standard Libraries [22] | Authentic metabolite standards (>900 compounds) | Metabolite identification and annotation | Rigorous metabolite identification using RT, m/z and MS/MS matching |
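
The mobile-phase and extraction-solvent entries in Table 4 translate into bench quantities with simple arithmetic. The sketch below assumes a molar mass of about 63.06 g/mol for ammonium formate (HCOONH4), treats "0.1% formic acid" and the 74.9:24.9:0.2 ratio as volume/volume, and ignores the small volume change that occurs when acetonitrile and methanol are mixed. The function names are hypothetical.

```python
AMMONIUM_FORMATE_MW = 63.06  # g/mol for HCOONH4 (assumed value)


def salt_mass_g(conc_mM, volume_L, molar_mass):
    """Mass of salt (g) needed for a given millimolar concentration and volume."""
    return conc_mM / 1000.0 * volume_L * molar_mass


def percent_vv_mL(percent, total_mL):
    """Volume (mL) of an additive specified as % v/v of the final volume."""
    return percent / 100.0 * total_mL


def split_by_ratio(total_mL, ratio):
    """Split a total volume according to a volume ratio such as 74.9:24.9:0.2."""
    parts = sum(ratio.values())
    return {name: total_mL * share / parts for name, share in ratio.items()}


# 1 L of 10 mM ammonium formate with 0.1% (v/v) formic acid
print(round(salt_mass_g(10, 1.0, AMMONIUM_FORMATE_MW), 4), "g ammonium formate")
print(percent_vv_mL(0.1, 1000), "mL formic acid")

# 50 mL of ACN:MeOH:formic acid (74.9:24.9:0.2)
extraction = split_by_ratio(
    50, {"acetonitrile": 74.9, "methanol": 24.9, "formic acid": 0.2}
)
for solvent, vol in extraction.items():
    print(solvent, round(vol, 2), "mL")
```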

LC-MS coupled with HILIC separation provides a powerful platform for discovering robust biomarkers of habitual diet. Performance comparisons indicate that zwitterionic HILIC phases provide broader metabolite coverage and higher sensitivity than traditional HILIC chemistries, while dual-column systems that combine orthogonal separation mechanisms further expand metabolome coverage [25] [22]. Rigorous experimental protocols, including proper sample preparation, optimized chromatographic conditions, and high-resolution mass spectrometry, enable the identification of metabolite biomarkers that correlate strongly with dietary intake patterns [3]. These technological advances support the development of objective assessment tools that help overcome the limitations of self-reported dietary data, ultimately strengthening nutritional epidemiology and evidence-based public health policies for chronic disease prevention.
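
Table 4 lists stable isotope-labeled internal standards for quantification normalization and for monitoring extraction efficiency and instrument performance. A minimal sketch of both uses, assuming a single internal standard (for example l-Phenylalanine-d8) is spiked at the same amount into every sample before extraction; the peak areas and the 30% deviation threshold are hypothetical.

```python
import statistics


def normalize_to_internal_standard(analyte_areas, is_areas):
    """Divide each analyte peak area by the internal-standard area of the same injection."""
    return [a / s for a, s in zip(analyte_areas, is_areas)]


def cv_percent(values):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def flag_injections(is_areas, max_rel_dev=0.30):
    """Indices of injections whose internal-standard area deviates from the
    run median by more than max_rel_dev (a hypothetical QC threshold)."""
    median = statistics.median(is_areas)
    return [i for i, a in enumerate(is_areas) if abs(a - median) / median > max_rel_dev]


# Hypothetical repeated injections of one pooled QC sample.
is_areas = [1.00e6, 0.98e6, 1.02e6, 0.55e6, 1.01e6]
analyte_areas = [2.00e6, 1.96e6, 2.04e6, 1.10e6, 2.02e6]

ratios = normalize_to_internal_standard(analyte_areas, is_areas)
print("raw CV %:", round(cv_percent(analyte_areas), 1))
print("IS-normalized CV %:", round(cv_percent(ratios), 1))
print("injections to inspect:", flag_injections(is_areas))
```

Here the low fourth injection affects analyte and internal standard equally, so the ratio corrects it; a large internal-standard drop is nonetheless worth investigating rather than silently normalizing away.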

The objective assessment of dietary intake represents a fundamental challenge in nutritional epidemiology and clinical research. Self-reported dietary data from questionnaires or diaries are prone to significant bias and measurement error, limiting their reliability for establishing robust diet-disease relationships [27] [1]. Biomarkers of food intake (BFIs) have emerged as promising objective tools to overcome these limitations, providing direct biological measurements of food consumption independent of participant memory, perception, or reporting accuracy. However, to be clinically and scientifically useful, these biomarkers must undergo rigorous validation to ensure they accurately reflect intake of specific foods or dietary patterns.

Within the context of habitual diet research, biomarker robustness is paramount. Unlike biomarkers characterized in single-dose pharmacokinetic studies, biomarkers of habitual intake must account for varied consumption patterns, food matrix effects, inter-individual differences in metabolism, and long-term stability [27] [8]. This section examines the three fundamental criteria (plausibility, specificity, and temporal reliability) that form the foundation of biomarker validation, with particular emphasis on their application in studies of habitual dietary patterns.

Core Validation Criteria Framework

The validation of dietary biomarkers extends beyond analytical performance to encompass biological relevance and practical utility in free-living populations. Based on consensus criteria developed through systematic review and expert deliberation, eight key characteristics define comprehensive biomarker validation [27] [28]. While all criteria are interconnected, plausibility, specificity, and temporal reliability form the essential triad for establishing fundamental biomarker validity.

Table 1: Comprehensive Biomarker Validation Criteria

| Validation Criterion | Key Evaluation Factors | Primary Application Context |
|---|---|---|
| Plausibility | Biological origin from food component; Mechanistic understanding | All biomarker applications |
| Specificity | Ability to distinguish target food from other dietary components | Food-specific intake assessment |
| Temporal Reliability | Half-life; Kinetics; Optimal sampling time | Defining exposure timeframes |
| Dose-Response | Relationship between intake amount and biomarker level | Quantitative intake assessment |
| Robustness | Performance across diverse populations and diets | Habitual diet studies |
| Reliability | Correlation with reference methods | Method validation |
| Stability | Sample processing and storage integrity | Biobanking and retrospective studies |
| Analytical Performance | Precision, accuracy, detection limits | Laboratory measurement |
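
The ordering described in this section, in which plausibility, specificity, and temporal reliability act as gates before the remaining five criteria in Table 1 are assessed, can be expressed as a small status check. This is an illustrative sketch only: the criterion keys, status labels, and candidate are hypothetical, and real validation decisions rest on the evidence behind each criterion rather than on a checklist.

```python
from dataclasses import dataclass, field

# Criteria from Table 1; the first three are treated as gating prerequisites.
FOUNDATIONAL = ("plausibility", "specificity", "temporal_reliability")
DOWNSTREAM = (
    "dose_response",
    "robustness",
    "reliability",
    "stability",
    "analytical_performance",
)


@dataclass
class CandidateBiomarker:
    name: str
    passed: set = field(default_factory=set)  # criteria with satisfactory evidence


def validation_status(candidate):
    """Return (status, outstanding criteria) for a candidate biomarker."""
    missing_core = [c for c in FOUNDATIONAL if c not in candidate.passed]
    if missing_core:
        # Downstream criteria are not evaluated until every gate is passed.
        return "blocked", missing_core
    missing_rest = [c for c in DOWNSTREAM if c not in candidate.passed]
    if missing_rest:
        return "in_progress", missing_rest
    return "validated", []


# Hypothetical candidate with specificity not yet established.
candidate = CandidateBiomarker(
    "candidate_x",
    {"plausibility", "temporal_reliability", "dose_response"},
)
print(validation_status(candidate))
```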

The following diagram illustrates the interconnected validation workflow for dietary biomarkers, highlighting how plausibility, specificity, and temporal reliability serve as foundational gates that must be passed before addressing more complex validation aspects:

Plausibility: Establishing Biological Rationale

Definition and Significance

Plausibility refers to the fundamental requirement that a candidate biomarker has a verifiable biological connection to the food or food component of interest. This criterion demands that the biomarker either originates directly from the food itself or represents a consistent metabolite derived from the food through known metabolic pathways [27] [28]. Without established plausibility, even strong statistical associations between a biomarker and dietary intake remain suspect, as they may represent epiphenomena or confounding factors rather than true intake indicators.

The biological rationale for a biomarker must be grounded in food chemistry and metabolic understanding. For a biomarker to be considered plausible, there should be a demonstrated pathway from food consumption to biomarker appearance in biological samples, accounting for absorption, distribution, metabolism, and excretion processes [27]. This mechanistic understanding differentiates true intake biomarkers from correlative markers that may be influenced by non-dietary factors.

Experimental Approaches for Establishing Plausibility

Controlled feeding studies represent the gold standard for establishing biomarker plausibility. These studies involve administering specific test foods to participants under tightly monitored conditions and measuring subsequent appearance and kinetics of candidate biomarkers in biological samples [9] [6]. The Dietary Biomarkers Development Consortium (DBDC) has implemented a systematic three-phase approach that begins with controlled feeding trials where participants consume prespecified amounts of test foods, followed by comprehensive metabolomic profiling of blood and urine specimens [6].
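
Appearance kinetics from a frequently sampled feeding study are often summarized as peak concentration (Cmax), time to peak (Tmax), and area under the concentration-time curve. A minimal sketch, assuming sampling times in hours and hypothetical concentrations in arbitrary units; the incremental AUC here subtracts the pre-dose value and clips negative values at zero, which is one of several incremental-AUC conventions.

```python
def kinetic_summary(times_h, conc):
    """Cmax, Tmax, and baseline-corrected incremental AUC (trapezoidal rule)."""
    if len(times_h) != len(conc) or len(times_h) < 2:
        raise ValueError("need matching time and concentration series of length >= 2")
    if any(t1 <= t0 for t0, t1 in zip(times_h, times_h[1:])):
        raise ValueError("sampling times must be strictly increasing")
    i_max = max(range(len(conc)), key=conc.__getitem__)
    baseline = conc[0]  # pre-dose sample
    above = [max(c - baseline, 0.0) for c in conc]
    iauc = sum(
        (t1 - t0) * (c0 + c1) / 2
        for t0, t1, c0, c1 in zip(times_h, times_h[1:], above, above[1:])
    )
    return {"cmax": conc[i_max], "tmax_h": times_h[i_max], "iauc": iauc}


# Hypothetical time course after a single test-food dose.
times_h = [0, 1, 2, 4, 8, 24]
conc = [0.5, 4.0, 6.0, 3.0, 1.0, 0.5]
print(kinetic_summary(times_h, conc))
```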

Table 2: Experimental Designs for Plausibility Assessment

| Study Design | Key Features | Outcomes Measured | Example Findings |
|---|---|---|---|
| Acute Feeding Studies | Single dose of test food; Frequent sampling over short period | Rapid appearance of food-derived compounds; Precursor-product relationships | Proline betaine appearance in urine after citrus consumption [9] |
| Medium-term Controlled Diets | Fully controlled diets over days to weeks; Elimination of confounding foods | Steady-state biomarker levels; Elimination kinetics after diet cessation | 3-methylhistidine increase during Prudent diet intervention [9] |
| Stable Isotope Tracer Studies | Administration of isotopically labeled food compounds | Direct tracking of labeled compounds through metabolic pathways | Validation of flavonoid metabolism pathways [27] |

Advanced analytical techniques are crucial for establishing plausibility. Metabolomic approaches using liquid chromatography-mass spectrometry (LC-MS) enable simultaneous detection of thousands of metabolites in biological samples, facilitating the discovery of novel biomarker candidates [9] [3]. For example, in a study of Prudent versus Western diets, targeted and nontargeted metabolite profiling across three analytical platforms identified 80 plasma metabolites and 84 urinary metabolites that changed significantly in response to dietary interventions [9]. High-resolution MS/MS and comparison with authentic chemical standards were then used to identify unknown metabolites associated with each dietary pattern, strengthening the plausibility of these candidate biomarkers [9].
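
Comparison of unknown features with authentic chemical standards commonly starts with accurate-mass and retention-time matching before MS/MS spectra are compared. The sketch below covers only those first two checks. The [M+H]+ m/z values are calculated from the elemental formulas; the retention times, the 5 ppm and 0.2 min tolerances, and the function names are hypothetical. 3-Methylhistidine and 1-methylhistidine share the formula C7H11N3O2, so m/z alone cannot tell them apart.

```python
def ppm_error(observed_mz, reference_mz):
    """Mass error in parts per million."""
    return (observed_mz - reference_mz) / reference_mz * 1e6


def match_feature(mz, rt_min, library, ppm_tol=5.0, rt_tol_min=0.2):
    """Return library entries within both the m/z and retention-time
    tolerances, sorted by absolute mass error."""
    hits = []
    for name, (ref_mz, ref_rt) in library.items():
        err = ppm_error(mz, ref_mz)
        if abs(err) <= ppm_tol and abs(rt_min - ref_rt) <= rt_tol_min:
            hits.append((name, round(err, 2)))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# [M+H]+ m/z from elemental formulas; retention times (min) are hypothetical.
STANDARDS = {
    "proline betaine": (144.1019, 4.10),
    "3-methylhistidine": (170.0924, 6.30),
    "1-methylhistidine": (170.0924, 6.80),
}

print(match_feature(144.1022, 4.15, STANDARDS))  # m/z and RT agree
print(match_feature(170.0925, 6.35, STANDARDS))  # RT resolves the isomers
print(match_feature(144.1022, 5.00, STANDARDS))  # m/z agrees, RT does not
```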

Case Example: Mediterranean Diet Biomarker Score

The development of a biomarker score for the Mediterranean diet exemplifies rigorous plausibility assessment. Researchers derived a multi-component biomarker score based on 5 circulating carotenoids and 24 fatty acids that collectively discriminated between the Mediterranean and habitual diet arms of a randomized controlled trial [29]. Each component of this score was selected based on established biological pathways: carotenoids reflecting high fruit and vegetable consumption, and specific fatty acid patterns reflecting olive oil intake and reduced saturated fat consumption. The resulting biomarker score showed a substantially stronger inverse association with type 2 diabetes incidence than self-reported Mediterranean diet adherence, supporting the biological plausibility of the selected biomarkers [29].
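
The general idea of a multi-component biomarker score can be sketched by standardizing each biomarker and summing the z-scores with signed weights. This does not reproduce the cited score [29], whose derivation method and weights are not given here; the three biomarkers, their equal-magnitude weights, and the values below are hypothetical, chosen only to echo the carotenoid and fatty acid rationale above.

```python
import statistics


def zscores(values):
    """Standardize a list of values to mean 0 and sample SD 1."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [(v - mean) / sd for v in values]


def biomarker_score(panel, weights):
    """Weighted sum of z-scored biomarkers for each participant.

    panel: biomarker name -> list of values (one per participant)
    weights: biomarker name -> signed weight (+ for markers expected to rise
    with adherence, - for markers expected to fall)
    """
    n = len(next(iter(panel.values())))
    if any(len(values) != n for values in panel.values()):
        raise ValueError("all biomarkers need one value per participant")
    standardized = {name: zscores(values) for name, values in panel.items()}
    return [
        sum(w * standardized[name][i] for name, w in weights.items())
        for i in range(n)
    ]


# Hypothetical panel for four participants.
panel = {
    "beta_carotene": [0.2, 0.4, 0.6, 0.8],
    "oleic_acid": [18.0, 20.0, 22.0, 24.0],
    "palmitic_acid": [28.0, 26.0, 24.0, 22.0],
}
weights = {"beta_carotene": 1.0, "oleic_acid": 1.0, "palmitic_acid": -1.0}
scores = biomarker_score(panel, weights)
print([round(s, 2) for s in scores])
```

Standardizing first keeps biomarkers measured on very different scales from dominating the sum purely because of their units.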

Specificity: Determining Unique Association with Target Food

Definition and Hierarchical Specificity

Specificity refers to a biomarker's ability to uniquely identify intake of a particular food or food group while remaining unaffected by consumption of other dietary components [27] [28]. In practice, perfect specificity to a single food is rare, and biomarker specificity is often conceptualized hierarchically, ranging from food-group specific to food-specific markers. For instance, proline betaine demonstrates high specificity for citrus fruits among commonly consumed foods, while 3-methylhistidine may reflect overall meat intake rather than specific meat types [9].

The level of specificity required depends on the intended application. For assessing compliance with dietary patterns like the Mediterranean diet, food-group specificity may be sufficient, whereas for monitoring intake of specific functional foods or allergens, higher specificity is necessary [1] [29]. The validation process must therefore clearly establish the limits of a biomarker's specificity and the conditions under which it remains a reliable intake indicator.

Methodologies for Specificity Testing

Cross-feeding studies represent the primary methodology for evaluating biomarker specificity. These investigations examine biomarker responses to consumption of various foods beyond the target food, identifying potential confounding sources [27] [6]. In phase 2 of its validation pipeline, the DBDC uses study designs that expose participants to various dietary patterns containing the target food in different contexts, allowing researchers to determine whether candidate biomarkers remain specific to the target food across varying dietary backgrounds [6].
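
One way to summarize a cross-feeding experiment is to compare the biomarker response to the target food with its response to each other test food. A minimal sketch, assuming responses are expressed as mean fold change from baseline and that a two-fold rise counts as a response; the foods and numbers are hypothetical. In this example the candidate behaves as a citrus-level rather than an orange-level marker, the hierarchical specificity discussed earlier in this section.

```python
def cross_feeding_check(fold_changes, target, threshold=2.0):
    """Report whether a biomarker responds to the target food and which
    non-target foods also raise it to or above the threshold."""
    responds = fold_changes.get(target, 0.0) >= threshold
    confounders = sorted(
        food
        for food, fc in fold_changes.items()
        if food != target and fc >= threshold
    )
    return {"responds_to_target": responds, "confounding_foods": confounders}


# Hypothetical mean fold changes of one candidate biomarker after each test food.
fold_changes = {
    "orange": 8.5,
    "grapefruit": 6.0,
    "apple": 1.1,
    "wholemeal bread": 0.9,
}
print(cross_feeding_check(fold_changes, "orange"))
```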

Metabolomic workflows for specificity assessment involve rigorous statistical validation using orthogonal partial least squares-discriminant analysis (OPLS-DA) and other multivariate methods to identify metabolite patterns that specifically discriminate between consumers and non-consumers of target foods [9] [3]. In the DIGEST study, for example, both univariate and multivariate statistical models were employed to identify metabolites with distinctive trajectories specifically associated with Prudent or Western diets, with confirmation through high-resolution MS/MS identification [9].

Table 3: Biomarker Specificity Classification and Examples

| Specificity Level | Definition | Representative Biomarkers | Limitations/Confounders |
|---|---|---|---|
| Food-Specific | Unique to single food or closely related food group | Proline betaine (citrus fruits); Allicin metabolites (garlic) | Limited by food variety and processing methods |
| Food-Group Specific | Identifies broader food category | Urinary enterolactone (whole grains); Plasma n-3 fatty acids (fatty fish) | Cannot distinguish individual foods within group |
| Dietary Pattern-Specific | Reflects complex dietary combination | Combined carotenoid and fatty acid score (Mediterranean diet) [29] | Pattern-specific but not component-specific |
| Nutrient-Specific | Tracks specific nutrient regardless of food source | Urinary nitrogen (protein); Doubly labeled water (energy) [8] | Multiple food sources possible |
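
For nutrient-specific biomarkers such as urinary nitrogen, the measured value is converted to an intake estimate through a recovery assumption. A commonly used convention assumes that a complete 24-hour urine collection recovers about 81% of dietary nitrogen and that protein is about 16% nitrogen (conversion factor 6.25). The sketch applies that convention; the input value is hypothetical, and other recovery factors or corrections for extrarenal losses are also in use.

```python
NITROGEN_TO_PROTEIN = 6.25  # g protein per g nitrogen (protein ~16% N)
URINARY_RECOVERY = 0.81     # assumed fraction of dietary N excreted in 24-h urine


def protein_intake_g(urinary_n_g_per_day, recovery=URINARY_RECOVERY):
    """Estimate protein intake (g/day) from 24-hour urinary nitrogen (g/day)."""
    if not 0 < recovery <= 1:
        raise ValueError("recovery must be a fraction in (0, 1]")
    dietary_n = urinary_n_g_per_day / recovery  # scale up for incomplete recovery
    return dietary_n * NITROGEN_TO_PROTEIN


# Hypothetical participant excreting 12 g nitrogen per day.
print(round(protein_intake_g(12.0), 1), "g protein/day")
```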

Addressing Specificity Challenges in Habitual Diet Research

In free-living populations, dietary complexity presents significant challenges for biomarker specificity. The same biomarker may appear in multiple foods, and food matrix effects can alter bioavailability and metabolism [27] [1]. To address these challenges, researchers are increasingly developing biomarker panels that collectively provide specific identification of target foods or dietary patterns. For example, no single biomarker can specifically identify adherence to a Mediterranean diet, but a panel of carotenoids, fatty acids, and polyphenol metabolites can provide a specific signature of this dietary pattern [1] [29].

Statistical approaches for enhancing specificity include machine learning algorithms that identify multi-biomarker panels with superior specificity compared to individual biomarkers. These approaches weight each biomarker according to its specificity and variance, creating composite scores that more accurately reflect intake of complex dietary patterns [1] [29]. Validation of such panels requires testing in multiple independent populations with varying habitual diets to ensure specificity is maintained across different dietary contexts.
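
Testing a panel in an independent population typically means applying the already-derived score without refitting it and asking how well it separates groups of known intake. A minimal sketch using the area under the ROC curve, computed through its Mann-Whitney interpretation (the probability that a randomly chosen high-intake participant scores higher than a randomly chosen low-intake one, with ties counted as one half). AUC is one common metric, chosen here for illustration, and the scores are hypothetical.

```python
def auc_mann_whitney(scores_pos, scores_neg):
    """ROC AUC as the probability that a positive outscores a negative (ties = 0.5)."""
    if not scores_pos or not scores_neg:
        raise ValueError("both groups need at least one score")
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical panel scores in an independent cohort.
adherent = [2.1, 1.5, 0.8, 1.9]
non_adherent = [-0.5, 0.9, -1.2, 0.1]
print(auc_mann_whitney(adherent, non_adherent))
```

The pairwise loop is quadratic in sample size; for large cohorts, a rank-based computation gives the same value more efficiently.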

Temporal Reliability: Establishing Time-Response Relationships

Kinetic Parameters and Their Significance