Biomarkers of Habitual Food Intake: From Discovery to Application in Precision Nutrition and Biomedical Research

Abstract

This article provides a comprehensive overview of the current landscape and future directions of dietary intake biomarkers for researchers and drug development professionals. It explores the foundational need for objective biomarkers to overcome the limitations of self-reported dietary data, detailing advanced methodological approaches like multi-biomarker panels and controlled feeding studies. The content addresses key challenges in biomarker validation, including specificity, dose-response relationships, and inter-individual variability, while also examining comparative applications for calibrating self-report instruments and monitoring dietary adherence in clinical trials. Synthesizing insights from major initiatives like the Dietary Biomarkers Development Consortium, this resource aims to equip scientists with the knowledge to leverage these robust tools for strengthening diet-disease association studies and advancing precision nutrition.

The Critical Need for Objective Dietary Biomarkers: Moving Beyond Self-Report in Nutritional Science

Accurate dietary assessment is a cornerstone of nutritional epidemiology, essential for understanding the relationships between diet, health, and disease. Self-reported instruments, including 24-hour recalls, food frequency questionnaires (FFQs), and dietary records, have served as the primary methods for capturing dietary intake in large-scale studies. However, when evaluated against objective biomarkers of intake, these methods demonstrate systematic measurement errors that substantially distort diet-disease relationships and compromise the validity of research findings [1] [2].

The persistent finding across validation studies is that self-reported dietary data are characterized by both random errors that reduce precision and systematic biases that compromise accuracy. These errors are not merely statistical nuisances; they have profound implications for public health recommendations, clinical guidelines, and nutritional policy. This analysis examines the nature, magnitude, and consequences of these measurement limitations within the context of biomarker-validated research, providing researchers with a critical framework for interpreting dietary data and designing robust nutritional studies.

Classification of Measurement Errors

Measurement error in dietary assessment can be categorized according to its nature and direction of bias. The table below summarizes the primary types of errors affecting self-reported instruments.

Table 1: Classification of Measurement Errors in Dietary Assessment

| Error Type | Definition | Primary Impact | Common Examples |
|---|---|---|---|
| Systematic Error (Bias) | Non-random error that deviates in a consistent direction from true intake | Reduces accuracy; creates directional bias in estimates | Energy underreporting; social desirability bias |
| Random Error | Unpredictable, non-directional fluctuations around true values | Reduces precision; attenuates correlation coefficients | Day-to-day intake variation; temporary memory lapses |
| Differential Error | Measurement error that differs based on outcome or participant characteristics | Biases effect estimates in unpredictable directions | Recall bias in case-control studies |
| Non-Differential Error | Measurement error unrelated to outcome status | Typically attenuates relationships toward null | General underreporting across a cohort |

The process of reporting dietary intake involves complex cognitive steps, each vulnerable to distinct error mechanisms [3]. Respondents must first encode consumption events into memory, then retain this information over time, retrieve it when completing an assessment, and finally estimate and report quantities and details. Failures can occur at each stage:

- Recall Bias: Imperfect memory leads to omissions of consumed items (errors of omission) or inclusion of items not consumed (errors of commission or intrusions) [4] [3]. Foods that constitute the main components of meals are better remembered than additions such as condiments, dressings, and ingredients in mixed dishes [3].

- Social Desirability Bias: Respondents frequently alter their reports to align with perceived social norms or researcher expectations [1]. This often manifests as underreporting of foods deemed "unhealthy" and overreporting of "healthy" options.

- Reactivity: The act of monitoring and recording intake can itself alter normal eating patterns, a phenomenon particularly relevant to food records [3].

- Portion Size Misestimation: Individuals struggle to accurately estimate and convert consumed amounts into quantifiable units, with both under- and overestimation occurring across different food groups [4].

Quantitative Evidence: Biomarker Comparisons Reveal Substantial Misreporting

Magnitude of Energy and Nutrient Underreporting

The development of objective biomarker methods, particularly the doubly labeled water (DLW) technique for measuring energy expenditure and urinary nitrogen for protein intake, has enabled rigorous quantification of reporting error. The evidence consistently reveals substantial underreporting across all major self-reported instruments.

Table 2: Biomarker-Based Validation of Self-Reported Dietary Instruments (Adapted from [2])

| Assessment Method | Energy Underreporting (%) | Protein Underreporting (%) | Potassium Underreporting (%) | Sodium Underreporting (%) |
|---|---|---|---|---|
| Automated 24-Hour Recalls (ASA24) | 15-17% | Lower than energy | Lower than energy | Lower than energy |
| 4-Day Food Records | 18-21% | Lower than energy | Lower than energy | Lower than energy |
| Food Frequency Questionnaires (FFQ) | 29-34% | Lower than energy | Overestimation (density-based) | Lower than energy |

The Interactive Diet and Activity Tracking in AARP (IDATA) study, which included 530 men and 545 women aged 50-74 years, provided direct comparison of multiple assessment tools against recovery biomarkers [2]. Participants completed six Automated Self-Administered 24-h recalls (ASA24s), two unweighed 4-day food records (4DFRs), two FFQs, two 24-hour urine collections, and one doubly labeled water administration. The findings demonstrated that absolute intakes of energy, protein, potassium, and sodium from all self-reported instruments were systematically lower than biomarker values, with underreporting most pronounced for energy.
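The underreporting percentages in comparisons of this kind are relative differences between reported intake and the biomarker value. A minimal sketch of that arithmetic follows, assuming weight-stable participants so that total energy expenditure from doubly labeled water approximates energy intake. The function name and the example values are hypothetical and are not taken from the IDATA study.

```python
def percent_misreporting(reported, biomarker):
    """Relative difference (%) between self-report and biomarker.

    Negative values indicate underreporting, positive values overreporting.
    """
    if biomarker <= 0:
        raise ValueError("biomarker value must be positive")
    return (reported - biomarker) / biomarker * 100.0


# Hypothetical participant; values invented for illustration only.
reported_kcal = 2000.0  # self-reported energy intake (kcal/day)
tee_kcal = 2500.0       # total energy expenditure from DLW (kcal/day)

print(round(percent_misreporting(reported_kcal, tee_kcal), 1))  # -20.0
```

The same function applies to protein, potassium, or sodium once the urinary biomarker has been converted to an intake estimate on the same scale as the self-report.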

Differential Misreporting Across Population Subgroups

The extent of misreporting is not uniform across populations. Research consistently finds that underreporting increases with body mass index (BMI) [1]. Early studies using doubly labeled water found that obese women underreported energy intake by approximately 34%, whereas lean women showed no significant difference between reported intake and measured expenditure [1]. This pattern is consistent with weight-related concerns contributing to systematic underreporting, although a BMI gradient alone cannot separate such concerns from weight status itself.

Additional factors influencing misreporting patterns include:

- Age and sex, with systematic differences in reporting patterns across demographic groups [5]

- Educational attainment and socioeconomic status

- Day of the week, with higher energy, carbohydrate, and alcohol intake reported on weekends [5]

- Specific food groups, with vegetables (2-85% omission) and condiments (1-80% omission) omitted more frequently than beverages (0-32% omission) [4]

Methodological Implications for Research Design

Impact on Diet-Disease Relationships

Measurement error in dietary exposure data has profound consequences for epidemiological research:

- Attenuation of Effect Estimates: Non-differential misclassification of exposure typically biases correlation and relative risk estimates toward the null, potentially obscuring genuine diet-disease relationships [6].

- Reduced Statistical Power: Random error in exposure measurement increases variance, diminishing the ability to detect significant associations even when they exist [6].

- Partially Preserved Ranking Ability: Although self-report instruments may capture absolute intake inadequately, some (particularly multiple 24-hour recalls) remain useful for ranking individuals by intake, which is valuable in cohort studies focused on relative comparisons [7].
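The attenuation described in the first point can be illustrated with a small simulation. This is a sketch under the classical measurement error model, which is an assumption and not something the cited studies specify: reported intake equals true intake plus independent random error. Under that model the observed regression slope is expected to shrink by the factor var(true) / (var(true) + var(error)). All names and parameter values are hypothetical.

```python
import random


def ols_slope(x, y):
    """Ordinary least squares slope of y regressed on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def simulate_attenuation(n=20000, true_slope=1.0, sd_true=1.0, sd_error=1.0, seed=0):
    """Return (slope using true intake, slope using error-prone reported intake)."""
    rng = random.Random(seed)
    true_intake = [rng.gauss(0.0, sd_true) for _ in range(n)]
    # Outcome depends on TRUE intake plus unrelated noise.
    outcome = [true_slope * t + rng.gauss(0.0, 1.0) for t in true_intake]
    # Reported intake = true intake + non-differential random error.
    reported = [t + rng.gauss(0.0, sd_error) for t in true_intake]
    return ols_slope(true_intake, outcome), ols_slope(reported, outcome)


slope_true, slope_reported = simulate_attenuation()
expected_factor = 1.0 ** 2 / (1.0 ** 2 + 1.0 ** 2)  # 0.5 for equal variances
print(slope_true, slope_reported, expected_factor)
```

With equal true and error variances, the slope estimated from reported intake should come out near half the slope estimated from true intake. Systematic or differential error is not covered by this sketch and can bias estimates in either direction.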

Optimizing Assessment Protocols

Research has identified several strategies to mitigate measurement error:

- Multiple Dietary Assessments: Collecting multiple 24-hour recalls or food records reduces the impact of day-to-day variation. Recent evidence suggests 3-4 days of dietary data collection, ideally non-consecutive and including at least one weekend day, are sufficient for reliable estimation of most nutrients [5].

- Biomarker Substudies: Incorporating objective biomarkers in validation subsamples enables quantification and correction for measurement error [6].

- Standardized Methodology: Using automated multiple-pass methods with standardized probing questions (e.g., USDA's Automated Multiple-Pass Method) reduces omissions and improves portion estimation [3].
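The benefit of repeated assessments can be sketched with the standard reliability formula for the mean of n independent days: between-person variance divided by the sum of between-person variance and within-person variance over n. The variance values below are hypothetical placeholders. Real within- to between-person variance ratios differ by nutrient and population, so this illustrates the principle and does not reproduce the 3-4 day finding cited above.

```python
def reliability_of_mean(var_between, var_within, n_days):
    """Reliability of an n-day mean intake as a measure of usual intake.

    Assumes independent days with only random day-to-day variation.
    """
    if n_days < 1:
        raise ValueError("n_days must be at least 1")
    return var_between / (var_between + var_within / n_days)


# Hypothetical case: within-person variance equals between-person variance.
for n_days in (1, 2, 4, 8):
    print(n_days, round(reliability_of_mean(1.0, 1.0, n_days), 2))
```

Under these placeholder variances, reliability rises from 0.5 for a single day to 0.8 for four days, with diminishing returns after that.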

The following diagram illustrates the decision pathway for selecting appropriate dietary assessment methods based on research objectives and resources:

Essential Research Reagents and Tools for Dietary Validation

Table 3: Key Research Reagents for Biomarker-Validated Dietary Assessment

| Reagent/Tool | Primary Function | Application Context | Key References |
|---|---|---|---|
| Doubly Labeled Water (DLW) | Objective measure of total energy expenditure through isotope elimination kinetics | Criterion method for validating energy intake assessment | [1] [2] |
| 24-Hour Urinary Nitrogen | Recovery biomarker for protein intake quantification | Validation of protein intake estimates from self-report | [7] [2] |
| 24-Hour Urinary Potassium | Recovery biomarker for potassium intake assessment | Validation of fruit and vegetable intake estimates | [7] [2] |
| Serum/Plasma Folate | Concentration biomarker for folate status | Validation of fruit and vegetable intake, especially leafy greens | [7] |
| Automated Self-Administered 24-h Recall (ASA24) | Web-based system for collecting multiple 24-hour dietary recalls | Reduced interviewer bias; standardized data collection | [3] [2] |
| Myfood24 | Fully automated online dietary assessment tool with nutrient database | Self- and interviewer-administered dietary assessment across populations | [7] |
| GloboDiet (formerly EPIC-SOFT) | Computer-assisted 24-hour recall method with standardized probing | International standardization of dietary recall methodology | [3] |

The evidence from biomarker validation studies unequivocally demonstrates that self-reported dietary assessment methods are plagued by substantial systematic errors, particularly underreporting of energy intake that varies by participant characteristics and instrument type. These limitations necessitate cautious interpretation of dietary data in research and policy contexts.

Future directions for strengthening nutritional epidemiology include:

- Routine incorporation of biomarker validation in dietary studies to quantify and correct for measurement error

- Development of integrated assessment systems that combine self-report with emerging technologies like image-assisted methods and barcode scanning

- Standardized reporting of measurement error parameters to facilitate appropriate interpretation and cross-study comparison

- Methodological research to better understand the cognitive processes underlying dietary reporting and develop improved assessment strategies

While self-reported dietary instruments remain necessary tools for large-scale nutritional research, acknowledging their limitations and implementing strategies to mitigate systematic error is essential for advancing our understanding of diet-health relationships.

Within nutritional science, accurately measuring what people eat remains a fundamental challenge. Self-reported dietary data, from tools like food frequency questionnaires and 24-hour recalls, are hampered by limitations including under-reporting, recall errors, and poor estimation of portion sizes [8]. Dietary biomarkers, as objective indicators of food intake, are critical for advancing the field. This guide compares three key classes of biomarkers—recovery, concentration, and predictive biomarkers—within the context of establishing a correlation with habitual food intake, a core objective for researchers and drug development professionals.

Biomarker Classes at a Glance

The following table defines and compares the primary classes of dietary biomarkers.

| Biomarker Class | Core Definition & Function | Key Characteristics | Relationship to Habitual Intake |
|---|---|---|---|
| Recovery Biomarkers | Compounds quantitatively excreted in urine, allowing intake to be calculated based on excretion levels [8]. | Considered the "gold standard" for objective intake measurement; often based on 24-hour urine collections [8]. | A single 24-hour urine sample reflects short-term intake. Multiple samples over time (e.g., 3 non-consecutive days within 2-6 weeks) are needed to estimate habitual intake [8]. |
| Concentration Biomarkers | Food-derived compounds measured in blood, urine, or other biofluids, whose levels correlate with consumption [8]. | Reflect short-term intake; levels are influenced by pharmacokinetics (absorption, distribution, metabolism, excretion) [9] [8]. | Like recovery biomarkers, repeated measures from multiple biological samples over a timeframe are essential to assess habitual dietary patterns [8]. |
| Predictive Biomarkers | A single compound or a multi-metabolite signature (poly-metabolite score) identified via metabolomics and machine learning to predict intake [10] [11]. | Can objectively classify individuals based on dietary patterns (e.g., high vs. low consumption) with no reliance on self-reported data [10] [8]. | Poly-metabolite scores derived from blood or urine show high potential for classifying individuals based on their level of consumption of specific food types, such as ultra-processed foods [10] [11]. |
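To make the recovery-biomarker row concrete, the sketch below estimates habitual protein intake from repeated 24-hour urinary nitrogen collections. The two constants are conventional values that are not stated in this article and are labeled as assumptions: about 81% of dietary nitrogen is taken to be recovered in urine, and nitrogen is converted to protein with a factor of 6.25. The collection values are invented.

```python
from statistics import mean

# Assumptions not taken from this article; conventional values in the literature.
NITROGEN_TO_PROTEIN = 6.25  # g protein per g nitrogen
URINARY_N_RECOVERY = 0.81   # fraction of dietary nitrogen recovered in urine


def protein_intake_from_urinary_n(urinary_n_g_per_day):
    """Estimate habitual protein intake (g/day) from repeated 24-h urinary N (g/day)."""
    if not urinary_n_g_per_day:
        raise ValueError("at least one complete 24-hour collection is required")
    mean_n = mean(urinary_n_g_per_day)  # averaging damps day-to-day variation
    return NITROGEN_TO_PROTEIN * mean_n / URINARY_N_RECOVERY


# Hypothetical: three non-consecutive complete collections (g N/day).
collections = [12.0, 13.0, 11.0]
estimated_protein = protein_intake_from_urinary_n(collections)
print(round(estimated_protein, 1))  # 92.6
```

Averaging several collections before converting reflects the point in the table that a single 24-hour sample captures only short-term intake.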

Experimental Protocols in Biomarker Research

The discovery and validation of dietary biomarkers rely on rigorous, complementary study designs.

Controlled Feeding Trials for Biomarker Discovery

This is the preferred approach for identifying candidate biomarkers [8]. A common protocol involves:

- Study Design: Participants are administered a specific test food or diet in a prespecified amount [9].

- Sample Collection: Blood and urine specimens are collected at multiple time points post-consumption, sometimes up to 24-48 hours, to characterize pharmacokinetic parameters and the half-life of candidate compounds [9] [8].

- Metabolomic Profiling: Biospecimens are analyzed using techniques like liquid chromatography-mass spectrometry (LC-MS) to identify compounds that change in response to the test food [9].

- Control Arm: The study includes a control arm where participants consume a similar diet without the test food to ensure identified biomarkers are specific [8].
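The pharmacokinetic step above can be sketched as a log-linear fit to post-consumption concentrations. This is a minimal illustration that assumes first-order (mono-exponential) elimination and samples taken after the absorption peak. Real candidate biomarkers may need more elaborate models. The function names and the synthetic data are hypothetical.

```python
import math


def fit_line(x, y):
    """Least-squares slope and intercept of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def elimination_half_life(times_h, concentrations):
    """Half-life (hours) from ln(concentration) regressed on time."""
    log_conc = [math.log(c) for c in concentrations]
    slope, _ = fit_line(times_h, log_conc)
    k = -slope  # first-order elimination rate constant (per hour)
    if k <= 0:
        raise ValueError("concentrations do not decline over time")
    return math.log(2) / k


# Synthetic example: a compound eliminated at 0.1 per hour, sampled up to 24 h.
times_h = [2, 4, 8, 12, 24]
concentrations = [100.0 * math.exp(-0.1 * t) for t in times_h]
half_life_h = elimination_half_life(times_h, concentrations)
print(round(half_life_h, 2))  # 6.93
```

A short half-life such as this one would suggest that a single sample reflects only recent intake, which is why repeated sampling matters for habitual intake.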

Validation Studies

After discovery, candidate biomarkers must be validated [8]. The Dietary Biomarkers Development Consortium (DBDC) employs a multi-phase approach:

- Phase 1: Implement controlled feeding trials to identify candidate compounds and their pharmacokinetics [9].

- Phase 2: Evaluate the ability of candidate biomarkers to identify individuals consuming the associated foods using controlled studies of various dietary patterns [9].

- Phase 3: Validate the candidate biomarkers' ability to predict recent and habitual consumption in independent observational settings [9].

Development of Predictive Poly-Metabolite Scores

This methodology was used to develop a biomarker for ultra-processed food (UPF) intake [10] [11].

- Observational Data: Researchers used data from 718 older adults who provided biospecimens and detailed dietary information over 12 months [10] [11].

- Experimental Data: A domiciled feeding study was conducted with 20 adults at the NIH Clinical Center. Participants consumed a diet high in UPF (80% of energy) and a diet with no UPF (0% of energy) for two weeks each in random order [10] [11].

- Metabolite Identification & Machine Learning: Hundreds of metabolites correlated with UPF intake were identified. Machine learning was then used to identify patterns of metabolites in blood and urine predictive of high UPF intake, which were used to calculate poly-metabolite scores [10] [11].

- Validation: The scores accurately distinguished the highly processed diet phase from the unprocessed diet phase within individual trial participants [10] [11].
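The scoring step above can be sketched as a weighted sum of standardized metabolite levels. This is an illustration only. The metabolite names, weights, and concentrations are invented, and in practice the weights would come from a model fitted to observational data. The specific algorithm used in the cited work is not reproduced here.

```python
from statistics import mean, stdev


def standardize(values):
    """Convert raw values to z-scores using the sample mean and SD."""
    m, s = mean(values), stdev(values)
    if s == 0:
        raise ValueError("cannot standardize a constant metabolite")
    return [(v - m) / s for v in values]


def poly_metabolite_scores(samples, weights):
    """Weighted sum of z-scored metabolites for each sample.

    samples: list of dicts mapping metabolite name -> concentration
    weights: dict mapping metabolite name -> weight (hypothetical here)
    """
    z_by_name = {
        name: standardize([sample[name] for sample in samples]) for name in weights
    }
    return [
        sum(weights[name] * z_by_name[name][i] for name in weights)
        for i in range(len(samples))
    ]


# Invented data: first two samples from a high-UPF phase, last two from a no-UPF phase.
samples = [
    {"metabolite_a": 10.0, "metabolite_b": 1.0},
    {"metabolite_a": 12.0, "metabolite_b": 2.0},
    {"metabolite_a": 4.0, "metabolite_b": 5.0},
    {"metabolite_a": 2.0, "metabolite_b": 6.0},
]
weights = {"metabolite_a": 1.0, "metabolite_b": -1.0}  # placeholder weights
scores = poly_metabolite_scores(samples, weights)
print([round(s, 2) for s in scores])
```

In this toy data the high-UPF samples receive positive scores and the no-UPF samples negative ones, mirroring the within-participant contrast used for validation.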

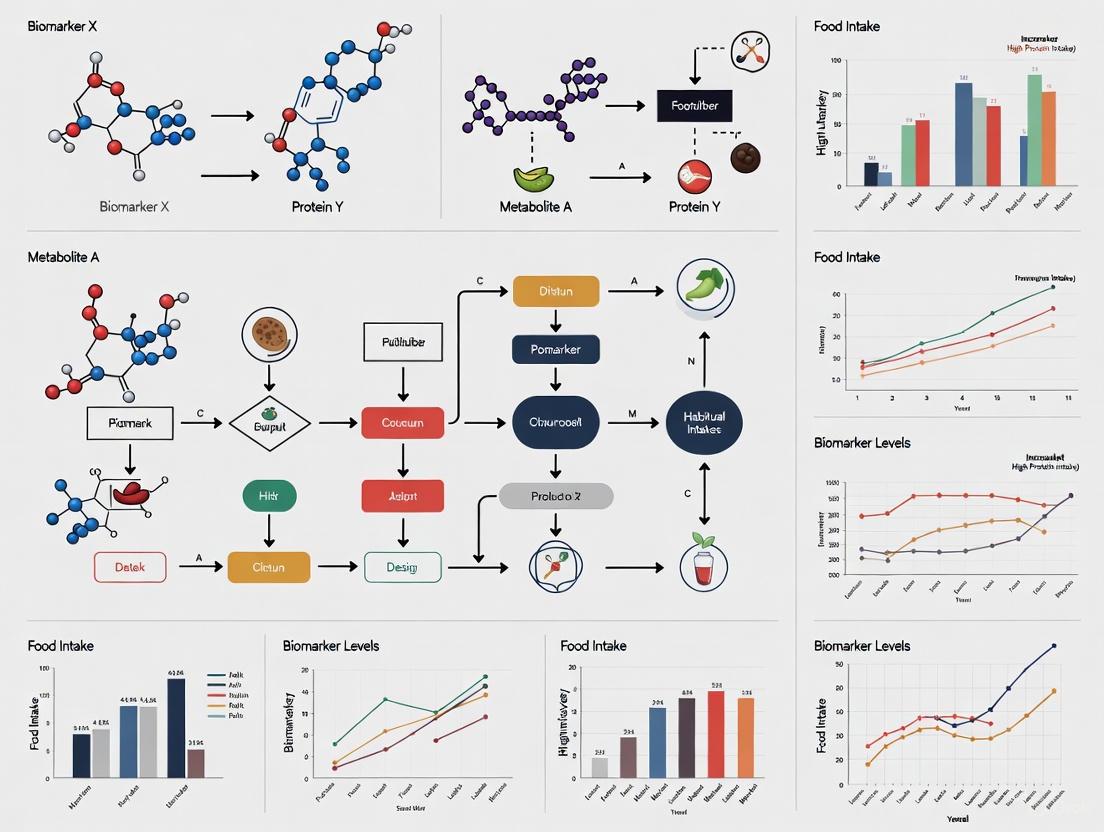

Visualizing the Biomarker Validation Pathway

The journey from biomarker discovery to application involves a rigorous, multi-stage process, as visualized below.

Biomarker Validation Workflow: This diagram outlines the key stages for validating a dietary biomarker, from initial discovery to real-world application.

The Researcher's Toolkit: Essential Reagents & Materials

Successful biomarker research requires specific reagents, databases, and analytical tools.

| Tool / Reagent | Function in Biomarker Research |
|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) | The primary analytical platform for metabolomic profiling of blood and urine to discover and quantify food-derived metabolites [9]. |
| Stable Isotope-Labeled Standards | Internal standards used in mass spectrometry to ensure accurate quantification of biomarkers by accounting for analytical variability [8]. |
| Food Composition Databases | Databases that link foods to their chemical components, crucial for identifying the origin of putative biomarkers. A current challenge is the lack of comprehensive databases for food-derived metabolites [8]. |
| 24-Hour Urine Collection Kits | Standardized kits for the complete collection of urine over 24 hours, which is essential for the validation and use of recovery biomarkers [8]. |
| Biobanked Samples from Cohort Studies | Archived biospecimens from large observational studies, used for the validation of candidate biomarkers in phase 3 studies and for developing predictive models [9] [10]. |

The evolution from traditional recovery biomarkers to sophisticated predictive poly-metabolite scores marks a significant advancement toward objectively measuring habitual food intake. While recovery biomarkers remain the gold standard for specific nutrients, the future lies in the discovery and rigorous validation of concentration and predictive biomarkers for a wide range of foods. These tools are indispensable for refining our understanding of the links between diet and health, calibrating self-reported data in large studies, and ultimately strengthening the evidence base for nutritional recommendations and therapeutic development.

The food metabolome, defined as the portion of the human metabolome directly derived from the digestion and biotransformation of foods and their constituents, represents a complex yet powerful resource for understanding dietary exposure [12] [13]. Comprising over 25,000 distinct compounds found in various foods, the food metabolome varies dramatically according to dietary intake and provides a detailed, objective snapshot of an individual's nutritional status [12]. For researchers and drug development professionals, this biological reflection of dietary intake offers a promising alternative to traditional self-reporting methods, which are often plagued by recall bias and inaccuracies [11]. The systematic exploration of the food metabolome has gained significant momentum with advances in analytical technologies, particularly mass spectrometry and nuclear magnetic resonance (NMR) spectroscopy, enabling more comprehensive detection and quantification of dietary biomarkers [14].

Understanding the relationship between habitual food intake and metabolic profiles is crucial for developing objective measures of dietary exposure. This field moves beyond hypothesis-driven approaches to embrace agnostic, data-rich investigations that can uncover novel biomarkers and bioactive molecules associated with health and disease [12]. The implications extend across nutritional science, therapeutic development, and public health, offering new avenues for understanding how diet influences metabolic pathways and disease risk [15]. This guide examines current methodologies, key findings, and emerging applications in food metabolome research, with particular emphasis on the correlation between biomarkers and habitual intake.

Analytical Approaches in Food Metabolomics

Core Analytical Technologies

Metabolomics employs several complementary analytical platforms to characterize the food metabolome, each with distinct strengths and applications. Mass spectrometry (MS) coupled with separation techniques like liquid chromatography (LC-MS) or gas chromatography (GC-MS) offers high sensitivity and the ability to detect metabolites at very low concentrations [14]. These platforms are particularly valuable for comprehensive profiling of complex biological samples. Nuclear magnetic resonance (NMR) spectroscopy, while generally less sensitive than MS, provides highly reproducible results with minimal sample preparation and non-destructive analysis [14]. NMR is especially useful for structural elucidation and quantifying known metabolites. Recent technological advances have enhanced the capabilities of these platforms, including ultra-performance liquid chromatography (UPLC) for improved separation efficiency, cryogenically-cooled probes for increased NMR sensitivity, and hybrid systems like LC-SPE-NMR for complex sample analysis [14].

Targeted vs. Untargeted Strategies

Food metabolomics approaches generally fall into two categories: targeted and untargeted analyses. Targeted metabolomics focuses on the precise identification and quantification of a predefined set of metabolites, typically those involved in specific metabolic pathways or known to be associated with certain food intakes [14]. This hypothesis-driven approach provides highly accurate quantitative data for validating potential biomarkers. In contrast, untargeted metabolomics aims to comprehensively profile all measurable metabolites in a sample without prior selection, making it ideal for discovery-phase research [14]. Untargeted approaches can be further divided into fingerprinting (rapid classification of samples based on spectral patterns) and profiling (more detailed analysis with some metabolite identification) [16]. The choice between these strategies depends on research goals, with many studies now incorporating both approaches in a complementary manner.

Table 1: Comparison of Major Analytical Platforms in Food Metabolomics

| Analytical Platform | Key Strengths | Common Applications | Sample Types |
|---|---|---|---|
| LC-MS/MS | High sensitivity, broad dynamic range, quantitative capability | Biomarker discovery and validation, pathway analysis | Plasma, urine, tissue, food extracts |
| GC-MS | Excellent separation efficiency, robust compound libraries | Volatile compounds, metabolic profiling | Serum, plant materials, fermented foods |
| NMR Spectroscopy | Non-destructive, highly reproducible, minimal sample prep | Structural elucidation, quality control, metabolic phenotyping | Intact tissues, biofluids, food products |
| CE-MS | High resolution for polar/ionic compounds | Amino acid analysis, nucleotide profiling | Cellular extracts, biofluids |

Key Experimental Findings on Biomarkers and Habitual Intake

Challenges in Reflecting Habitual Intake

The relationship between habitual food intake and metabolic profiles presents significant methodological challenges. A 2022 cohort study of adolescents and young adults found that single metabolites reflected habitual food group intake only to a limited extent [17]. The researchers applied both orthogonal projection to latent structures (oPLS) and random forests analyses to data from 228 participants with yearly repeated 3-day food records. Only six urinary metabolites showed consistent associations across both statistical methods, and no blood metabolites met the criteria for agreement [17]. These findings suggest that single biomarkers may have limited utility for assessing long-term dietary patterns, necessitating more complex multi-biomarker approaches.

Advancements in Multi-Metabolite Biomarker Panels

Recent large-scale studies have demonstrated the superior performance of multi-metabolite panels over single biomarkers. A 2025 study of 8,391 multi-ethnic Asian individuals analyzed 1,055 plasma metabolites and their associations with 169 foods and beverages [15]. Using machine learning approaches, the researchers developed multi-biomarker panels and composite scores for key dietary components and overall diet quality. These panels explained more of the variance in intake than single biomarkers did and showed reproducible associations over time [15]. Importantly, these objective measures improved prediction of clinical outcomes including insulin resistance, diabetes, BMI, and hypertension compared to self-reported dietary data [15].

Similar advances were reported in research on ultra-processed food consumption, where researchers identified patterns of hundreds of metabolites in blood and urine that correlated with the percentage of energy from ultra-processed foods [11]. Through machine learning, they developed poly-metabolite scores that could accurately differentiate between highly processed and unprocessed diet conditions in a controlled feeding study [11]. This approach demonstrates how metabolomic signatures can provide more nuanced and objective measures of dietary patterns than traditional assessment methods.

Table 2: Key Food-Metabolite Associations from Recent Studies

| Food Category | Associated Metabolites | Biological Sample | Study Population |
|---|---|---|---|
| Processed/Other Meat | Vanillylmandelate | Urine | European adolescents/young adults [17] |
| Eggs | Indole-3-acetamide, N6-methyladenosine | Urine | European adolescents/young adults [17] |
| Vegetables | Hippurate, citraconate/glutaconate, X-12111 | Urine | European adolescents/young adults [17] |
| Ultra-processed Foods | Poly-metabolite scores (multiple compounds) | Blood and Urine | IDATA Study & NIH Clinical Center [11] |
| Multi-ethnic Asian Diet | 1,055 metabolites analyzed, multi-biomarker panels | Plasma | 8,391 Asian participants [15] |

Experimental Protocols for Diet-Metabolite Association Studies

Well-designed experimental protocols are essential for robust diet-metabolite association research. The 2022 cohort study on adolescents and young adults provides an exemplary methodology [17]. The research employed yearly repeated 3-day food records to establish habitual intake patterns across 23 food groups during adolescence. The analytical approach included untargeted metabolomics that quantified 2,638 metabolites in plasma and 1,407 metabolites in urine. To ensure rigorous statistical analysis, researchers applied two complementary methods: orthogonal projection to latent structures (oPLS) and random forests, with findings considered significant only when both methods agreed [17]. This stringent approach minimized false discoveries and highlighted the most robust associations.
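The two-method agreement rule described above amounts to keeping only metabolites flagged by both analyses. A minimal sketch follows. The importance scores and cutoffs are hypothetical placeholders, because the study's actual selection criteria are not given here.

```python
def consensus_metabolites(opls_scores, rf_scores, opls_cutoff, rf_cutoff):
    """Return metabolites whose score meets the cutoff in BOTH methods."""
    opls_hits = {name for name, score in opls_scores.items() if score >= opls_cutoff}
    rf_hits = {name for name, score in rf_scores.items() if score >= rf_cutoff}
    return sorted(opls_hits & rf_hits)


# Invented importance scores for three metabolites under each method.
opls_scores = {"metabolite_a": 1.8, "metabolite_b": 0.4, "metabolite_c": 1.2}
rf_scores = {"metabolite_a": 0.09, "metabolite_b": 0.07, "metabolite_c": 0.01}

selected = consensus_metabolites(opls_scores, rf_scores, opls_cutoff=1.0, rf_cutoff=0.05)
print(selected)  # ['metabolite_a']
```

Requiring agreement trades sensitivity for specificity: metabolites flagged by only one method are dropped, which reduces false discoveries at the cost of possibly missing weaker true associations.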

Controlled feeding studies represent another powerful methodological approach, as demonstrated in ultra-processed food research [11]. The experimental design included both observational data from 718 participants in the IDATA study and a domiciled feeding study with 20 subjects admitted to the NIH Clinical Center. In the controlled feeding component, participants were randomized to either a diet high in ultra-processed foods (80% of calories) or a diet with zero ultra-processed foods for two weeks, immediately followed by the alternate diet [11]. This crossover design allowed for within-subject comparisons under highly controlled conditions, strengthening the causal inference between dietary exposure and metabolic changes.

Essential Research Tools and Reagents

The advancement of food metabolome research relies on specialized reagents, databases, and analytical tools. Key resources include comprehensive metabolite databases such as the Human Metabolome Database (HMDB) and nutrition-specific databases like the Nutritional Phenotype Database (dbNP) [12]. For sample preparation, extraction kits designed for different sample types (plasma, urine, tissues) are essential, with protocols often optimized for either polar or non-polar metabolites. Chemical libraries for the food metabolome containing standard compounds are crucial for accurate metabolite identification and quantification [12].

Commercial platforms have emerged to support food metabolomics research, offering standardized databases and analytical packages. For instance, GC/MS databases such as the Smart Metabolites Database include hundreds of registered compounds including organic acids, fatty acids, amino acids, and sugars, with methods for both scan and MRM (Multiple Reaction Monitoring) analysis [18]. Similarly, LC/MS/MS Method Packages provide targeted analysis for metabolites in major metabolic pathways, with specific methods optimized for different column chemistries [18]. These standardized approaches facilitate reproducibility across laboratories and enable more efficient biomarker validation.

Table 3: Essential Research Reagent Solutions for Food Metabolomics

| Research Tool | Function/Application | Example Specifications |
|---|---|---|
| GC-MS/MS with Database | Quantitative analysis of primary metabolites | Smart Metabolites Database: 568 compounds registered for scan, 475 for MRM [18] |
| LC-MS/MS Method Packages | Targeted analysis of key metabolic pathways | Method Package Ver. 2: 55 metabolites with ion pair reagent, 97 with PFPP columns [18] |
| CE-MS & LC-MS Platforms | Measurement of polar metabolites in food networks | Quantification of 100+ polar metabolites with calibration; good separation of structural isomers [19] |
| NMR Solvent Systems | Metabolic profiling of diverse food samples | Optimization for different food matrices (juice, pulp, dry powder) and compound classes [14] |
| Protein Assay Kits | Sample preparation and quantification | BCA protein assay for proteomic workflows [20] |

Methodological Workflows and Metabolic Pathways

The experimental workflow in food metabolomics involves multiple stages, from study design through data interpretation. The following diagram illustrates the key steps in a comprehensive diet-metabolite association study:

Diagram 1: Experimental Workflow in Diet-Metabolite Association Studies

The relationship between dietary exposure and biological response involves complex metabolic pathways that transform food components into measurable metabolites. The following diagram illustrates key metabolic processes linking diet to the food metabolome:

Diagram 2: Metabolic Pathways Linking Diet to Measurable Metabolites

Applications in Nutritional Research and Drug Development

The food metabolome has significant applications across multiple research domains. In nutritional epidemiology, metabolomic approaches enhance dietary assessment accuracy by providing objective biomarkers that complement traditional questionnaires [15]. This is particularly valuable for studying diet-disease relationships, where recall bias can substantially impact findings. In functional food development, metabolomics facilitates the identification of bioactive compounds and assessment of their physiological effects [14] [19]. For instance, researchers have used metabolomic profiling to analyze metabolic changes after ingestion of specific foods or to explore components that improve gut health [19].

For drug development professionals, understanding food metabolome interactions is crucial for several reasons. First, dietary factors can significantly influence metabolic pathways targeted by pharmaceuticals, potentially modifying drug efficacy or toxicity profiles [20]. Second, the food metabolome can reveal important interactions between nutrition and drug metabolism, informing clinical trial design and personalized medicine approaches. Finally, the identification of dietary patterns associated with disease risk through metabolomic profiling can reveal novel therapeutic targets for preventive interventions [15].

Food metabolomics also plays an increasingly important role in food quality and safety assessment. Proteomic and metabolomic analyses help monitor meat quality, detect adulteration, and evaluate processing effects [16]. For example, LC-MS/MS technologies have identified species-specific heat-stable peptide markers in processed meat products, enabling accurate authentication and quality control [16]. Similarly, NMR-based approaches have been used to determine the geographical origin of honey, characterize metabolites in different wine varieties, and evaluate the quality of green tea and ginseng products [14].

The food metabolome represents a rich source of biological information that reflects habitual dietary intake with unprecedented detail. While early research focused on identifying single biomarkers for specific foods, recent studies demonstrate that multi-metabolite panels and machine learning approaches provide more accurate assessment of dietary patterns [15] [11]. These advances address fundamental limitations of self-reported dietary data and offer new opportunities for objective exposure assessment in nutritional research and drug development.

Future progress in this field requires coordinated efforts to address several challenges. There remains a need for larger, more diverse population studies to identify culturally-specific biomarkers and understand ethnic variations in diet-metabolite relationships [15]. Development of standardized protocols and shared repositories for metabolomic data will enhance reproducibility and facilitate meta-analyses [12]. Additionally, the integration of food metabolome data with other omics technologies (proteomics, genomics) will provide more comprehensive understanding of how diet influences health at the systems biology level.

For researchers and drug development professionals, the rapidly evolving science of the food metabolome offers powerful tools to elucidate complex relationships between diet, metabolism, and health outcomes. As analytical technologies continue to advance and computational methods become more sophisticated, the food metabolome is likely to play an increasingly central role in personalized nutrition, preventive medicine, and therapeutic development.

Accurately measuring dietary intake is a fundamental challenge in nutritional epidemiology. For decades, research has relied heavily on self-reported methods such as food frequency questionnaires and 24-hour recalls, which are susceptible to systematic and random measurement errors [21] [22]. These limitations have spurred international efforts to discover and validate objective biomarkers of food intake. Among the most prominent initiatives are the Dietary Biomarkers Development Consortium (DBDC) in the United States and the Food Biomarker Alliance (FoodBAll) in Europe. These consortia aim to identify metabolomic signatures in biofluids like blood and urine that can provide a more reliable, objective measure of habitual food consumption, thereby strengthening research on the links between diet and chronic diseases [22] [21].

The DBDC and FoodBAll share a common goal but differ in their organizational structure and regional focus.

Dietary Biomarkers Development Consortium (DBDC)

The DBDC was established in 2021 following a call from the National Institute of Diabetes and Digestive and Kidney Diseases (NIDDK) and the USDA National Institute of Food and Agriculture (USDA-NIFA) [22]. It represents the first major U.S. effort to systematically discover and validate biomarkers for foods commonly consumed in the American diet. Its stated mission is to "discover objective measures, biomarkers, that can inform individual dietary patterns and advance nutritional epidemiology" [23]. The consortium is structured around three primary study centers at leading U.S. institutions: Harvard University (in collaboration with the Broad Institute of MIT and Harvard), the Fred Hutchinson Cancer Center (in collaboration with the University of Washington), and the University of California Davis (in collaboration with the USDA Agricultural Research Service) [22]. A Data Coordinating Center at Duke University manages administrative activities and data quality control, and will eventually submit data to public repositories such as the NIDDK Central Repository and the Metabolomics Workbench [22].

Food Biomarker Alliance (FoodBAll)

FoodBAll was a European consortium that explored markers of food intake across different populations in Europe [22]. The cited source gives less structural detail for FoodBAll than for the DBDC, but describes it as a systematic and concerted effort that contributed significantly to the field of dietary biomarker discovery. Its work helped establish foundational criteria for validating food intake biomarkers, including plausibility, dose-response, time-response, and robustness in free-living populations [22].

Table 1: Structural and Regional Comparison of the DBDC and FoodBAll

| Feature | Dietary Biomarkers Development Consortium (DBDC) | Food Biomarker Alliance (FoodBAll) |

|---|---|---|

| Primary Region | United States [22] | Europe [22] |

| Leading Agencies | National Institutes of Health (NIDDK), USDA-NIFA [22] | Not specified in the cited source |

| Organizational Structure | Three study centers, a Data Coordinating Center, and governing committees (Steering, Executive) [22] | Not specified in the cited source |

| Public Data Access | Data will be archived in NIDDK Repository and Metabolomics Workbench [22] | Not specified in the cited source |

| Key Dietary Focus | Foods commonly consumed in the U.S. diet, guided by USDA MyPlate [22] [24] | Exploration across different European populations [22] |

Experimental Protocols and Methodologies

Both consortia employ controlled feeding studies and advanced metabolomics to identify candidate biomarkers, with the DBDC implementing a highly structured, multi-phase protocol.

The DBDC's Three-Phase Approach

The DBDC has implemented a rigorous, multi-phase strategy to move biomarkers from discovery to validation [22]:

- Phase 1: Candidate Biomarker Identification. This initial phase involves controlled feeding trials where healthy participants consume pre-specified amounts of test foods. Blood and urine specimens are collected at multiple time points and subjected to extensive metabolomic profiling. This design allows researchers to characterize the pharmacokinetic parameters of candidate biomarkers, such as their appearance and clearance rates in the body [22].

- Phase 2: Evaluation in Varied Dietary Patterns. In this phase, the ability of the candidate biomarkers to identify individuals consuming the associated foods is tested within the context of different controlled dietary patterns. This helps determine the specificity and robustness of the biomarkers [22].

- Phase 3: Validation in Observational Settings. The final validation step assesses the performance of the candidate biomarkers in independent, free-living populations. This tests whether the biomarkers can predict recent and habitual consumption of specific foods in a real-world setting, outside the controlled environment of a feeding study [22].
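The pharmacokinetic characterization described for Phase 1 can be illustrated with a minimal sketch. It assumes a single participant's concentration-time curve after a test meal and summarizes it with peak concentration, time to peak, and area under the curve by the linear trapezoidal rule. The function name `pk_summary` and all values are hypothetical and are not taken from the DBDC protocol.

```python
from typing import Dict, Sequence


def pk_summary(times_h: Sequence[float], conc: Sequence[float]) -> Dict[str, float]:
    """Summarize one concentration-time curve: Cmax, Tmax and trapezoidal AUC."""
    times = list(times_h)
    values = list(conc)
    if len(times) != len(values) or len(times) < 2:
        raise ValueError("need at least two matched time/concentration points")
    # Peak concentration and the sampling time at which it was observed
    cmax = max(values)
    tmax = times[values.index(cmax)]
    # Linear trapezoidal rule over consecutive sampling intervals
    auc = sum(
        (t1 - t0) * (c0 + c1) / 2.0
        for t0, t1, c0, c1 in zip(times, times[1:], values, values[1:])
    )
    return {"cmax": cmax, "tmax_h": tmax, "auc": auc}


# Hypothetical sampling schedule (hours after the test meal) and
# hypothetical metabolite concentrations in arbitrary units
times = [0, 1, 2, 4, 8, 24]
conc = [0.0, 4.0, 6.0, 5.0, 2.0, 0.0]
summary = pk_summary(times, conc)
print(summary)
```

A summary of this kind indicates how soon after intake a candidate biomarker appears and how long it remains detectable, which in turn informs the choice of sample collection times.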

Analytical and Statistical Methods

The core analytical methodology for the DBDC relies on metabolomic profiling using mass spectrometry. Specific techniques include liquid chromatography-mass spectrometry (LC-MS) and hydrophilic-interaction liquid chromatography (HILIC) to measure a wide array of small molecules in blood and urine [22]. The data analysis is complex, given the expected high inter-individual variability due to genetics, gut microbiome, and other factors. Statistical approaches range from generalized linear models (GLMs) to Bayesian regression, all aimed at ranking compounds for their ability to discriminate between food groups and identify optimal sample collection times [24].
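To make the idea of ranking compounds by discriminating ability concrete, the following sketch uses a deliberately simpler stand-in for the GLM and Bayesian approaches named above: the area under the ROC curve, computed for each metabolite from pairwise comparisons between consumers and non-consumers. The metabolite names and values are hypothetical.

```python
def roc_auc(pos, neg):
    """Probability that a consumer's value exceeds a non-consumer's (ties count 0.5)."""
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def rank_metabolites(consumers, non_consumers):
    """Rank metabolites by how far their AUC lies from 0.5 (no discrimination)."""
    scores = {
        name: roc_auc(consumers[name], non_consumers[name]) for name in consumers
    }
    return sorted(scores.items(), key=lambda kv: abs(kv[1] - 0.5), reverse=True)


# Hypothetical metabolite intensities after a test food (consumers)
# and after a control diet (non-consumers)
consumers = {
    "metabolite_a": [5.1, 6.0, 5.7, 6.3],
    "metabolite_b": [2.0, 2.4, 1.9, 2.2],
}
non_consumers = {
    "metabolite_a": [1.0, 1.4, 0.9, 1.2],
    "metabolite_b": [2.1, 2.3, 1.8, 2.5],
}
ranking = rank_metabolites(consumers, non_consumers)
print(ranking)
```

In this toy data set the first metabolite separates the two groups completely, while the second is close to uninformative. A real analysis would also need to account for repeated measures and multiple testing.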

Diagram: Sequential Workflow of the DBDC's Three-Phase Biomarker Development Pipeline

Key Research Outputs and Applications

The research conducted by these consortia is generating tangible outputs and demonstrating practical applications for dietary biomarkers in public health and clinical research.

Expansion of the Biomarker Repository

A primary output of these initiatives is the significant expansion of the library of validated intake biomarkers. The DBDC, for instance, is systematically working to add biomarkers for specific foods and food groups, moving beyond the traditional handful of biomarkers for total energy or protein [21]. For example, the UC Davis center is specifically focused on discovering biomarkers linked to the consumption of fruits and vegetables, while other centers are investigating biomarkers for proteins, carbohydrates, and dairy [25]. This expansion is crucial for building a more complete objective picture of dietary patterns.

Application in Nutritional Epidemiology

Intake biomarkers are increasingly being applied to correct for measurement errors in dietary studies. In the Women's Health Initiative (WHI) cohorts, for example, the use of the doubly labeled water (DLW) method as an objective biomarker for total energy expenditure revealed substantial underestimation of energy intake in self-reported data, particularly among overweight and obese participants [21]. These objective data were then used to "calibrate" self-reported intake, which dramatically altered the observed associations between energy intake and major disease outcomes such as cancer and cardiovascular disease [21].
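The calibration step can be sketched as a simple regression. The example assumes a calibration sub-study in which both a biomarker-based and a self-reported energy value are available for each participant. The fitted line is then used to convert self-reported values into calibrated ones. The numbers are hypothetical, and the sketch omits participant characteristics that a real calibration equation would likely include as covariates.

```python
def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def calibrate(self_reported, intercept, slope):
    """Predict biomarker-scale intake from a self-reported value."""
    return intercept + slope * self_reported


# Hypothetical calibration sub-study (kcal/day): self-report vs. biomarker
self_report = [1500.0, 1700.0, 1900.0, 2100.0]
biomarker = [2100.0, 2200.0, 2300.0, 2400.0]
intercept, slope = fit_line(self_report, biomarker)

# Apply the equation to a participant who has self-report data only
calibrated = calibrate(1600.0, intercept, slope)
print(intercept, slope, calibrated)
```

The calibrated values, rather than the raw self-reports, would then enter the disease association model.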

Development of Complex Biomarker Signatures

Beyond biomarkers for single foods, research is advancing towards poly-metabolite scores that reflect complex dietary patterns. A notable example is the development of a metabolite signature to measure consumption of ultra-processed foods (UPF). One study used machine learning on metabolomic data from both observational and controlled feeding studies to identify patterns of metabolites in blood and urine that could accurately differentiate individuals with high versus zero intake of ultra-processed foods [11]. This type of score represents a powerful new tool for objectively studying the health impacts of complex modern diets.
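A poly-metabolite score can be thought of as a weighted combination of several metabolite levels, with the weights learned from data. The sketch below is a toy illustration, not the method of the cited study: it fits a plain logistic regression by gradient descent on two hypothetical standardized metabolites and uses the fitted probability as the score.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.1, epochs=2000):
    """Fit logistic regression weights by batch gradient descent."""
    n, n_features = len(X), len(X[0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def poly_metabolite_score(x, w, b):
    """Score between 0 and 1; higher means more consistent with high intake."""
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, x)))


# Hypothetical standardized levels of two metabolites per participant
high_intake = [[1.2, -0.8], [0.9, -1.1], [1.5, -0.5]]
low_intake = [[-1.0, 0.9], [-1.3, 1.2], [-0.7, 0.6]]
X = high_intake + low_intake
y = [1, 1, 1, 0, 0, 0]

w, b = fit_logistic(X, y)
scores = [poly_metabolite_score(x, w, b) for x in X]
print(w, b, scores)
```

Published scores are built from far more metabolites and are evaluated on data not used for fitting. This sketch only shows the mechanics of turning several metabolite values into one number.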

Table 2: Examples of Dietary Biomarkers and Their Research Applications

| Biomarker Type | Specific Example(s) | Research Application and Finding |

|---|---|---|

| Energy Intake | Doubly Labeled Water (DLW) as a biomarker for total energy expenditure [21] | Revealed 30-40% energy intake underestimation in overweight/obese postmenopausal women using FFQs; calibrated intake showed strong positive associations with disease risk [21]. |

| Food Group | Biomarkers for fruits and vegetables (under development) [24] [25] | Aims to provide objective verification of consumption for these key food groups, moving beyond error-prone self-report [24]. |

| Dietary Pattern | Poly-metabolite score for Ultra-Processed Foods (UPF) [11] | Machine learning identified metabolite patterns that could accurately differentiate between high- and zero-UPF diets in a controlled feeding study [11]. |

The Scientist's Toolkit: Essential Reagents and Technologies

The experimental work of dietary biomarker discovery and validation relies on a suite of sophisticated reagents, technologies, and methodologies.

Table 3: Essential Research Reagents and Solutions for Dietary Biomarker Studies

| Tool / Reagent | Function and Application | Specific Examples from Research |

|---|---|---|

| Controlled Test Meals | Administered in precise amounts during feeding trials to create a known dietary exposure for identifying candidate biomarkers. | DBDC studies use test meals with varying servings of fruits and vegetables according to USDA MyPlate guidelines [22] [24]. |

| Liquid Chromatography-Mass Spectrometry (LC-MS) | Primary analytical platform for untargeted and targeted metabolomic profiling of biofluids to detect and quantify small molecule metabolites. | Used by all DBDC sites for analyzing plasma and urine specimens; often coupled with HILIC for better separation of polar compounds [22] [24]. |

| Immunoassays | Used for targeted, quantitative measurement of specific protein biomarkers in biofluids. | Used in other biomarker fields (e.g., traumatic brain injury) to measure proteins like GFAP and UCH-L1 in sweat [26]; analogous to targeted nutrient biomarkers. |

| Doubly Labeled Water (DLW) | An objective biomarker for total energy expenditure, used as a reference method to validate self-reported energy intake. | Used in the Women's Health Initiative to reveal systematic bias in self-reported energy data and to calibrate intake for disease association studies [21]. |

| Stable Isotope-Labeled Standards | Added to samples during mass spectrometry analysis for precise quantification of metabolites, correcting for analytical variability. | Implied in high-resolution MS/MS methods for identifying and quantifying unknown food-associated compounds [24]. |

| Biofluid Collection Kits | Standardized collection and preservation of biological specimens (e.g., blood, urine, sweat) for consistent metabolomic analysis. | DBDC harmonizes protocols for urine and blood collection; other studies use specialized sweat patches for non-invasive biomarker sampling [22] [26]. |

The concerted efforts of the DBDC and FoodBAll are fundamentally advancing the science of nutritional assessment. By moving from error-prone self-report to objective biomarkers based on metabolomic signatures, these consortia are addressing a long-standing critical limitation in nutritional epidemiology. The DBDC's structured, multi-phase approach in the U.S. complements the foundational work of FoodBAll in Europe. Their collective output—a growing repository of validated biomarkers, sophisticated poly-metabolite scores, and methodologies for error correction—is providing the scientific community with powerful new tools. These tools are crucial for more precisely defining the correlations between habitual food intake and health, ultimately leading to more reliable dietary guidelines and public health interventions.

Key Gaps in Current Biomarker Research for Habitual Intake Assessment

Accurate assessment of habitual dietary intake represents a fundamental challenge in nutritional science, epidemiology, and public health. Traditional reliance on self-reported methods such as food frequency questionnaires, 24-hour recalls, and food diaries is plagued by well-documented limitations including under-reporting, poor estimation of portion sizes, recall errors, and intentional misreporting [27] [28] [29]. Biomarkers of food intake (BFIs) offer a promising alternative as objective indicators of dietary exposure that can bypass the biases inherent in self-reported data. These biomarkers are typically food-derived metabolites present in biological samples that can be distinguished from endogenous metabolites [30] [8]. Despite significant advances in the field, critical gaps persist in our ability to use biomarkers for reliable assessment of long-term, habitual intake patterns. This review systematically examines these research gaps, compares biomarker performance across studies, and outlines methodological frameworks for advancing the field toward more accurate dietary assessment.

Current Limitations in Biomarker Reproducibility and Variability

Poor Long-Term Reproducibility

A fundamental limitation in using biomarkers for habitual intake assessment is their questionable reproducibility over extended timeframes. A recent large-scale study examining urinary biomarkers in European children and adolescents revealed poor to moderate reproducibility over 2- to 4-year periods. The study reported median intraclass correlation coefficients (ICCs) of just 0.27 (range: 0.11-0.54) and 0.28 (range: 0.15-0.51) over 2- and 4-year intervals, respectively [31]. These low values indicate substantial variability in biomarker measurements over time, raising questions about their reliability for assessing long-term dietary patterns.
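For readers less familiar with the intraclass correlation coefficient, the sketch below computes a one-way random-effects ICC from repeated measurements per participant. The source does not state which ICC variant the study used, so the formula choice here is an assumption, and the data are hypothetical.

```python
def icc_oneway(measurements):
    """One-way random-effects ICC for n participants with k repeats each."""
    n = len(measurements)
    k = len(measurements[0])
    grand_mean = sum(sum(row) for row in measurements) / (n * k)
    row_means = [sum(row) / k for row in measurements]
    # Between-participant and within-participant sums of squares
    ss_between = k * sum((m - grand_mean) ** 2 for m in row_means)
    ss_within = sum(
        (v - m) ** 2 for row, m in zip(measurements, row_means) for v in row
    )
    ms_between = ss_between / (n - 1)
    ms_within = ss_within / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical biomarker levels at baseline and follow-up for four participants
repeated = [[1.0, 2.0], [2.0, 1.0], [3.0, 4.0], [4.0, 3.0]]
icc = icc_oneway(repeated)

# Perfectly reproducible measurements give an ICC of 1
icc_perfect = icc_oneway([[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]])
print(icc, icc_perfect)
```

An ICC near 1 means that most of the variance lies between participants rather than within them over time. Values around 0.27, as reported above, mean that a single measurement ranks individuals poorly.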

The same investigation sought to identify factors explaining biomarker variance, with country of residence explaining the largest proportion (median: 5% for 2-year interval, 4.5% for 4-year interval). Surprisingly, actual dietary intake explained only minimal variation—0.7% and 0.6% for the 2- and 4-year intervals, respectively [31]. This suggests that non-dietary factors account for the majority of biomarker variability, complicating their interpretation as straightforward indicators of food consumption.

Table 1: Sources of Variation in Urinary Biomarkers of Food Intake

| Source of Variation | Proportion of Variance Explained (2-year interval) | Proportion of Variance Explained (4-year interval) |

|---|---|---|

| Country of residence | 5.0% (median) | 4.5% (median) |

| Dietary intake | 0.7% (range: 0.0-1.5) | 0.6% (range: 0.0-1.1) |

| Other factors (cumulative) | 17.0% (median) | 14.6% (median) |

Critical Research Gaps in Biomarker Validation

Insufficient Validation Against Established Criteria

The validation of proposed biomarkers has significantly lagged behind their discovery. While metabolomic approaches have identified numerous putative biomarkers, most lack comprehensive validation against established criteria [30] [8]. The European FoodBAll project proposed a validation framework encompassing eight key criteria: plausibility, dose-response, time-response, robustness, reliability, stability, analytical performance, and inter-laboratory reproducibility [32] [8]. A recent assessment of these criteria revealed that many foods still lack well-validated biomarkers, with only a limited number (e.g., proline betaine for citrus intake) having undergone extensive validation [8].

Incomplete Understanding of Biomarker Kinetics

Many candidate biomarkers lack characterization of their pharmacokinetic parameters, including absorption, distribution, metabolism, and excretion patterns. Without understanding the time-response relationship and half-life of biomarkers, it is difficult to determine the appropriate sampling frequency needed to capture habitual intake [32] [8]. The emerging Dietary Biomarkers Development Consortium (DBDC) has recognized this gap and is implementing controlled feeding trials to characterize pharmacokinetic parameters of candidate biomarkers [9].
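The link between half-life and sampling frequency can be made concrete with a small sketch. It assumes first-order elimination, so that log concentration falls linearly with time, and estimates the half-life from the slope of a log-linear fit. The time points and concentrations are hypothetical.

```python
import math


def elimination_half_life(times_h, conc):
    """Estimate half-life (hours) from a log-linear fit of the elimination phase."""
    logs = [math.log(c) for c in conc]
    n = len(times_h)
    mean_t = sum(times_h) / n
    mean_l = sum(logs) / n
    slope = sum(
        (t - mean_t) * (l - mean_l) for t, l in zip(times_h, logs)
    ) / sum((t - mean_t) ** 2 for t in times_h)
    if slope >= 0:
        raise ValueError("concentrations do not decline; no elimination phase")
    # For first-order elimination, slope = -k and half-life = ln(2) / k
    return math.log(2) / -slope


# Hypothetical post-peak concentrations that halve every two hours
times_h = [2.0, 4.0, 6.0, 8.0]
conc = [8.0, 4.0, 2.0, 1.0]
half_life = elimination_half_life(times_h, conc)
print(half_life)
```

A biomarker with a half-life of a few hours reflects only the most recent meals, so habitual intake would have to be inferred from repeated samples or from a marker that clears more slowly.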

Table 2: Validation Status of Selected Dietary Biomarkers

| Biomarker Category | Example Biomarker | Validation Level | Key Gaps |

|---|---|---|---|

| Citrus fruits | Proline betaine | High (extensively validated) | Limited data on inter-individual variability |

| Whole grains | Alkylresorcinols | Moderate | Dose-response in diverse populations |

| Cruciferous vegetables | Sulfur-containing metabolites | Moderate | Effect of cooking methods |

| Red meat | Unknown | Low | Specific biomarkers lacking |

| Soy foods | Isoflavones | Moderate | Impact of food processing |

Methodological and Analytical Challenges

Complex Nature of Food and Dietary Patterns

Nutrition interventions fundamentally differ from pharmaceutical trials in their complexity. Foods consist of heterogeneous mixtures of nutrients and bioactive components that interact in synergistic or antagonistic ways [33]. This complexity creates challenges for identifying specific biomarkers and establishing clear dose-response relationships. Additionally, high collinearity between dietary components—where consumption of one food often correlates with consumption of others—makes it difficult to isolate biomarkers specific to individual foods [33].

Impact of Food Processing and Preparation

Food processing, cooking methods, and storage conditions can significantly alter the chemical composition of foods and their resulting metabolites [34] [33]. For example, different cooking methods for meat or processing techniques for grains may generate different metabolite profiles, potentially confounding biomarker measurements [34]. The MAIN Study specifically addressed this challenge by testing biomarker performance with different food formulations and processing methods involving meat, wholegrain, fruits, and vegetables [34].

Interindividual Variability in Biomarker Response

Multiple factors contribute to substantial interindividual variability in biomarker response, including genetic polymorphisms, gut microbiota composition, lifestyle factors, and physiological states [32] [33]. This variability reduces the robustness of biomarkers across diverse population groups. A validation framework has recently been expanded to include assessment of intra- and inter-individual variability in biomarker levels as an additional criterion [8].

Experimental Approaches and Methodological Frameworks

Controlled Feeding Studies for Biomarker Discovery

The preferred approach for identifying biomarkers of food intake involves human intervention studies with controlled feeding. In these studies, participants typically consume specific foods, and biological samples are collected in the postprandial state [8]. The MAIN Study implemented an innovative design using free-living individuals preparing meals at home, thus bridging the gap between highly controlled laboratory studies and real-world conditions [34]. This approach allowed researchers to test methodology in an environment that mimicked annual eating patterns using commonly consumed foods.

Analytical Considerations for Biomarker Measurement

The analytical workflow for biomarker discovery and validation requires careful consideration of multiple factors. The MAIN Study demonstrated that spot urine samples, normalized by refractive index to account for differences in fluid intake, could be effectively used for dietary exposure monitoring in large epidemiological studies [34]. This approach offers practical advantages over more burdensome 24-hour urine collections.
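The purpose of normalizing spot urine can be shown with a short sketch. It uses creatinine, which this section lists alongside refractive index as a normalization option, rather than the refractive index procedure of the MAIN Study, whose details are not given here. The function name, units, and values are hypothetical.

```python
def creatinine_normalize(metabolite_umol_per_l, creatinine_mmol_per_l):
    """Express a urinary metabolite per mmol of creatinine to adjust for dilution."""
    if creatinine_mmol_per_l <= 0:
        raise ValueError("creatinine concentration must be positive")
    return metabolite_umol_per_l / creatinine_mmol_per_l


# Hypothetical spot samples from the same person: the second is twice as dilute
concentrated = creatinine_normalize(120.0, 8.0)
dilute = creatinine_normalize(60.0, 4.0)
print(concentrated, dilute)
```

Both samples yield the same normalized value even though the raw concentrations differ by a factor of two, which is the behavior any dilution correction for spot urine is meant to achieve.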

Table 3: Essential Research Reagents and Analytical Tools

| Research Tool | Function/Purpose | Examples/Alternatives |

|---|---|---|

| LC-MS (Liquid Chromatography-Mass Spectrometry) | Metabolite separation and identification | UHPLC-MS, HPLC-MS |

| GC-MS (Gas Chromatography-Mass Spectrometry) | Volatile compound analysis | GC-IRMS for stable isotopes |

| NMR (Nuclear Magnetic Resonance) | Metabolic fingerprinting | 1H-NMR, 13C-NMR |

| Stable isotope analyzers | Detection of 13C isotopes for added sugars | CF-SIRMS, NA-SIMS |

| Biobanking systems | Long-term sample storage | -80°C freezers, LN2 storage |

| Normalization methods | Accounting for fluid intake variations | Creatinine, refractive index |

Future Directions and Research Priorities

Expanding Biomarker Coverage and Specificity

Current biomarker panels cover only a limited range of commonly consumed foods. Systematic reviews have identified biomarkers for categories including fruits, vegetables, aromatics, grains, dairy, soy, coffee, tea, cocoa, alcohol, meat, proteins, nuts, seeds, and sweeteners [29]. However, many specific foods within these categories lack robust biomarkers. Plant-based foods are often represented by polyphenols, while others are distinguishable by innate food composition, such as sulfurous compounds in cruciferous vegetables or galactose derivatives in dairy [29]. Future research should prioritize foods of high public health relevance that are currently underrepresented in biomarker panels.

Integrating Multiple Biomarkers and Statistical Approaches

Single biomarkers rarely capture the complexity of food intake. Future approaches should focus on developing multi-metabolite biomarker panels that may provide more reliable estimation of dietary exposure than single-biomarker approaches [34]. Additionally, new statistical methods are needed to handle multiple biomarkers for single foods and to account for the complex covariance structure of dietary intake [30] [8].

Addressing Real-World Complexity

For biomarkers to have significant utility in public health, their performance must be demonstrated in real-world environments where foods are consumed as part of complex meals rather than in isolation [34]. Future studies should explore shorter time intervals between measurements and investigate other sources of variation, including the influence of the gut microbiome and genetic factors [31].

Significant gaps remain in the development and application of biomarkers for habitual dietary intake assessment. The poor long-term reproducibility of current biomarkers, incomplete validation against established criteria, and insufficient understanding of the factors contributing to biomarker variability represent the most pressing challenges. Future research should prioritize comprehensive validation of candidate biomarkers against the eight established criteria, expansion of biomarker coverage to include foods of high public health relevance, and development of statistical approaches to integrate multiple biomarkers into panels that better reflect the complexity of dietary intake. Addressing these gaps will require collaborative efforts, such as those undertaken by the Dietary Biomarkers Development Consortium, and methodological innovations that bridge the divide between highly controlled feeding studies and real-world dietary patterns. Only through such comprehensive approaches can biomarkers fulfill their potential as objective tools for assessing habitual dietary intake in nutrition research and public health monitoring.

Methodological Approaches for Biomarker Discovery and Application in Research Settings

In nutritional science, establishing a reliable correlation between biomarker levels and habitual food intake is fundamental for developing objective dietary assessment tools. Unlike traditional self-report methods, which are prone to significant measurement error and bias, dietary biomarkers offer a more accurate means of linking dietary patterns to health outcomes. The discovery and validation of these biomarkers rely on two primary research approaches: controlled feeding trials and observational studies. This guide examines the methodological frameworks, applications, and comparative strengths of these designs, providing researchers with a structured overview for planning biomarker discovery research.

Table 1: Key Characteristics of Discovery Study Designs

| Feature | Controlled Feeding Trials | Observational Studies |

|---|---|---|

| Primary Objective | Identify candidate biomarkers and establish causal intake-biomarker relationships [9] [35] | Validate biomarkers in free-living populations and assess habitual intake [9] [21] |

| Study Environment | Highly controlled (e.g., metabolic ward, provided diets) [36] [35] | Free-living, real-world settings [37] |

| Dietary Control | Complete control; diets are known and provided [35] | No direct control; relies on self-report (FFQ, 24-h recall) [21] [38] |

| Key Strengths | Controls for confounding; establishes pharmacokinetics; high internal validity [9] [39] | Assesses generalizability; suitable for long-term intake; high external validity [9] [21] |

| Main Limitations | High cost and participant burden; short duration; limited generalizability [39] [35] | Cannot prove causality; residual confounding; self-report dietary errors [21] [38] |

| Ideal Use Case | Initial biomarker discovery and dose-response characterization [9] [37] | Biomarker validation and application in epidemiological cohorts [9] [38] |

Experimental Protocols and Workflows

Controlled Feeding Trials

These studies are the gold standard for the initial discovery phase of biomarker development.

- Protocol Design: Participants are provided with all meals and snacks for a defined period, typically ranging from two weeks to several months [36] [35]. Diets can be designed to test a single specific food, a nutrient, or a complex dietary pattern.

- Diet Formulation: Two common approaches exist: 1) Standardized menus, where all participants consume the same diet to reduce variability [36], and 2) Personalized menus, which are formulated to approximate each participant's habitual diet based on pre-study food records, thereby preserving real-world variation in nutrient intake for biomarker evaluation [35].

- Biospecimen Collection: Blood and urine samples are collected at precise time points before, during, and after the intervention. This allows researchers to characterize the pharmacokinetic profile of candidate biomarkers, including their appearance, peak concentration, and clearance [9] [37].

- Analytical Methods: Metabolomic profiling of biospecimens is performed primarily using liquid chromatography-mass spectrometry (LC-MS) or nuclear magnetic resonance (NMR) to identify food-specific metabolites [21] [39] [40].

Diagram: Typical Workflow for a Controlled Feeding Trial

Observational Studies

This design is critical for validating the performance of candidate biomarkers in larger, free-living populations.

- Protocol Design: Researchers recruit a cohort of participants who consume their habitual, self-selected diets. Dietary intake is assessed using tools like Food Frequency Questionnaires (FFQs), 24-hour recalls, or food diaries [21] [38].

- Biospecimen Collection: Participants typically provide one or more biospecimens (e.g., blood, urine) at a single time point or longitudinally. A key challenge is ensuring that a single sample can reflect habitual intake [21] [40].

- Data Analysis: Statistical models, primarily linear regression, are used to correlate the concentrations of candidate biomarkers in biospecimens with self-reported dietary intake data. This assesses how well the biomarker predicts reported consumption [21] [38].

- Advanced Applications: Machine learning techniques are increasingly used to develop poly-metabolite scores—predictive models based on multiple metabolites. For example, NIH researchers have developed such scores to objectively measure consumption of ultra-processed foods [10] [11].
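The data analysis step above can be illustrated with a minimal sketch that computes the Pearson correlation between self-reported intake and a candidate biomarker. The servings and concentrations are hypothetical, and a real analysis would usually adjust for covariates and transform skewed metabolite data first.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical FFQ-reported servings per day and biomarker concentrations
reported_servings = [1.0, 2.0, 3.0, 4.0, 5.0]
biomarker_level = [2.0, 4.0, 5.0, 4.0, 5.0]
r = pearson_r(reported_servings, biomarker_level)
print(round(r, 3))
```

Because the self-reported values themselves contain error, a modest correlation in this setting does not necessarily mean the biomarker is poor, which is one reason controlled feeding data are used alongside observational data.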

Diagram: Key Stages of an Observational Study for Biomarker Validation

Integrated Frameworks for Biomarker Development

Leading research initiatives now recognize that a sequential, multi-phase approach integrating both trial and observational designs is the most robust path from biomarker discovery to application. The Dietary Biomarkers Development Consortium (DBDC), for instance, employs a structured three-phase framework [9]:

- Phase 1: Discovery. Controlled feeding trials are used to identify candidate biomarkers and characterize their pharmacokinetics.

- Phase 2: Evaluation. Controlled studies with varied dietary patterns test the ability of candidate biomarkers to classify individuals based on their intake of target foods.

- Phase 3: Validation. Independent observational studies evaluate the performance of biomarkers in predicting food intake in free-living populations.

This integrated framework ensures that biomarkers are not only biologically sound but also practically useful in epidemiological research.

Table 2: Key Reagent Solutions for Biomarker Research

| Research Reagent | Function & Application in Biomarker Studies |
|---|---|
| Doubly Labeled Water (DLW) | Objective biomarker for total energy expenditure; used as a reference to validate self-reported energy intake and calibrate other biomarkers [21] [35]. |
| 24-hour Urinary Nitrogen | Recovery biomarker for protein intake; serves as a high-quality objective measure for calibrating self-reported protein data [21] [38] [35]. |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Primary analytical platform for targeted and untargeted metabolomics; identifies and quantifies food-derived metabolites in blood and urine [9] [39] [40]. |
| Stable Isotope Labels | Used in controlled trials to track the metabolic fate of specific food components, helping to distinguish dietary origins of metabolites [39]. |
| Automated Dietary Assessment Tools (e.g., ASA24) | Self-report tools used in observational cohorts to collect dietary data for correlation with biomarker levels; subject to measurement error but necessary for scale [9] [38]. |
| Biobanked Serum/Plasma & Urine Samples | Archived samples from large cohorts used in validation phases; enable testing of candidate biomarkers against health outcomes in nested case-control studies [21] [40]. |

Controlled feeding trials and observational studies serve distinct yet complementary roles in the lifecycle of a dietary biomarker. Feeding trials provide the causal evidence and pharmacokinetic precision necessary for initial discovery, while observational studies offer the real-world validation and generalizability required for application in public health and epidemiology. The most successful biomarker development pipelines, such as that employed by the DBDC, strategically integrate both methodologies. As the field advances with technologies like machine learning and complex poly-metabolite scores, this synergistic use of rigorous controlled experiments and large-scale observational data will continue to be the cornerstone of objective dietary assessment.

Objective assessment of habitual food intake remains a significant challenge in nutritional epidemiology. Traditional methods, such as food diaries and frequency questionnaires, are prone to recall bias and measurement error, limiting their reliability for establishing precise diet-disease relationships [41] [42]. Dietary biomarkers, objectively measured from biological samples, offer a promising alternative by providing a more accurate representation of actual food consumption and metabolic response [39]. The discovery and validation of these biomarkers depend heavily on advanced analytical platforms capable of detecting and quantifying thousands of metabolites simultaneously.

Metabolomic profiling has emerged as a powerful approach for identifying biomarker patterns reflective of dietary intake. Among the available technologies, Liquid Chromatography-Mass Spectrometry (LC-MS), including methods that use Hydrophilic Interaction Liquid Chromatography (HILIC) for the separation step, and Nuclear Magnetic Resonance (NMR) spectroscopy are the most widely used platforms in nutritional metabolomics [42] [43]. Each platform has distinct strengths and limitations in coverage, sensitivity, reproducibility, and throughput, which makes them complementary rather than competitive for comprehensive biomarker research. This guide provides an objective comparison of these analytical platforms, supported by experimental data and methodological protocols relevant to habitual food intake studies.

The selection of an appropriate analytical platform depends heavily on the specific research objectives, required sensitivity, metabolite coverage, and available resources. The table below summarizes the key technical characteristics and performance metrics of LC-MS, HILIC-LC-MS, and NMR platforms based on recent applications in nutritional metabolomics.

Table 1: Performance Comparison of Major Analytical Platforms in Metabolomic Profiling

| Platform Characteristic | LC-MS (Reversed-Phase) | HILIC-LC-MS | NMR Spectroscopy |
|---|---|---|---|
| Analytical Principle | Separation by hydrophobicity; mass-based detection | Separation by polarity; mass-based detection | Magnetic properties of atomic nuclei |
| Optimal Metabolite Coverage | Mid- to non-polar metabolites (lipids, acyl carnitines) [44] | Polar metabolites (amino acids, sugars, organic acids) [45] [46] | Abundant, mainly polar metabolites (lipoproteins, organic acids) [42] |
| Typical Sensitivity | fmol-µmol (high sensitivity) [47] [46] | fmol-µmol (high sensitivity) [47] [46] | µmol-mmol (lower sensitivity) [48] |
| Analytical Repeatability (CV) | Excellent (<20% for most features) [45] | Excellent (median CV ~5%) [45] | High (dependent on metabolite concentration) |
| Sample Throughput | Medium | Medium | High (rapid, minimal preparation) [42] |
| Quantitative Capability | Excellent (wide dynamic range) [46] | Excellent (wide dynamic range) [47] [46] | Good (directly proportional) |
| Structural Information | Moderate (fragmentation patterns) | Moderate (fragmentation patterns) | High (definitive structural elucidation) |
| Sample Preparation | Moderate complexity | Moderate complexity | Minimal preparation |
| Destructive Analysis | Yes | Yes | No |
| Key Applications in Food Intake Research | Lipid-soluble vitamins, meat biomarkers (carnosine) [41], UPF signature lipids [43] | Plant food biomarkers (alkylresorcinols, flavonoids) [41], amino acids, bile acids [45] | Habitual intake associations (proline betaine, hippurate) [42], lipoprotein profiling [43] |

The data reveal clear complementarity between platforms. HILIC-LC-MS excels where reversed-phase LC-MS falls short: in retaining and sensitively analyzing highly polar metabolites central to energy metabolism, such as amino acids and sugars [45] [46]. A direct comparison of narrow-bore and capillary HILIC systems showed that capillary systems (CapHILIC) can increase signal areas for polar metabolites by up to 18-fold in tissue extracts and 80-fold in bile acid standards, although they cover a narrower range of metabolites [45]. NMR, by contrast, is less sensitive but highly reproducible and non-destructive. It supports high-throughput analysis and absolute quantification without compound-specific calibration, which makes it well suited to large-scale epidemiological studies such as the MEIA study, which investigated associations between habitual diet and urinary metabolites in nearly 500 participants [42].

Experimental Protocols for Platform Evaluation

HILIC-LC-MS/MS for Simultaneous Quantification of Dietary Metabolites

Objective: To develop a precise, efficient HILIC-LC-MS/MS method for simultaneously quantifying 28 diet-related metabolites in human serum, including acylcarnitines, amino acids, ceramides, and lysophosphatidylcholines, which are potential biomarkers for multiple myeloma and nutritional status [46].

Sample Preparation:

- Extraction: 50 µL of serum is mixed with 200 µL of ice-cold isopropanol containing 0.1% acetic acid and a mixture of isotopically labeled internal standards.

- Protein Precipitation: The mixture is vortexed vigorously and centrifuged at 13,000 × g for 10 minutes at 4°C.

- Supernatant Collection: The clear supernatant is transferred to a new vial for LC-MS/MS analysis [46].

LC Conditions:

- Column: HILIC column (e.g., 2.1 × 100 mm, 1.7 µm).

- Mobile Phase: A) 10 mM ammonium acetate in water (pH 9.0), B) 10 mM ammonium acetate in 90% acetonitrile/10% water.

- Gradient: Linear gradient from 90% B to 50% B over 10 minutes, followed by re-equilibration.

- Flow Rate: 0.4 mL/min.

- Column Temperature: 40°C.

- Injection Volume: 5 µL [46].
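
The gradient above can be expressed as a small function that returns the mobile phase composition at a given time. This is a sketch under stated assumptions: the protocol does not specify how re-equilibration is performed, so the code assumes an immediate return to 90% B held until a 15-minute run end, and the `percent_b` name and its parameters are hypothetical.

```python
def percent_b(t_min, start_b=90.0, end_b=50.0, gradient_min=10.0, run_min=15.0):
    """Percent mobile phase B at time t_min for a linear gradient.

    Assumption: after the gradient, the column returns immediately to the
    starting composition and is held there until the end of the run.
    """
    if not 0.0 <= t_min <= run_min:
        raise ValueError("time is outside the run")
    if t_min <= gradient_min:
        # Linear interpolation between start and end composition.
        return start_b + (end_b - start_b) * t_min / gradient_min
    return start_b  # assumed re-equilibration at starting conditions


for t in (0, 2.5, 5, 10, 12):
    print(f"t = {t:>4} min: {percent_b(t):.1f}% B")
```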

MS Conditions:

- Instrument: Triple quadrupole mass spectrometer.

- Ionization: Positive electrospray ionization (ESI+).

- Detection Mode: Multiple Reaction Monitoring (MRM).

- Data Acquisition: Full separation and quantification of 28 metabolites achieved within 15 minutes [46].

Performance Metrics:

- Linearity: Coefficients of determination (R²) > 0.9984 for all analytes.

- Precision: Intra-run CVs: 1.1–5.9%; Total CVs: 2.0–9.6%.

- Accuracy: Analytical recoveries ranged from 91.3% to 106.3% (average 99.5%) [46].
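
The precision and accuracy metrics listed above follow standard definitions, sketched below. The replicate values are hypothetical, and the pooled CV shown here is only a simplified stand-in for a total CV, which is more commonly estimated from within-run and between-run variance components.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation: sample SD as a percentage of the mean."""
    return 100.0 * stdev(values) / mean(values)


def recovery_percent(measured, spiked, endogenous=0.0):
    """Percentage of a known spiked amount that the assay recovers."""
    return 100.0 * (measured - endogenous) / spiked


# Hypothetical QC replicates (µmol/L) for one analyte: three runs of three.
runs = [
    [10.1, 9.9, 10.2],
    [10.4, 10.3, 10.6],
    [9.8, 9.7, 10.0],
]

intra_run_cvs = [cv_percent(run) for run in runs]
pooled_cv = cv_percent([value for run in runs for value in run])

print("intra-run CVs (%):", [round(cv, 2) for cv in intra_run_cvs])
print("pooled CV (%):", round(pooled_cv, 2))
print("recovery (%):", round(recovery_percent(14.9, spiked=10.0, endogenous=5.0), 1))
```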

NMR Metabolomics for Habitual Dietary Intake Assessment

Objective: To identify associations between habitual dietary intake and urinary metabolite profiles in a large, population-based cohort (MEIA study, n=496) [42].

Study Design:

- Participants: 496 adults from the general population.

- Dietary Assessment: Multiple 24-hour dietary recalls using the myfood24 online tool, capturing habitual intake as a weighted mean over weekdays and weekends.

- Sample Collection: Fasting spot urine samples collected and stored at -80°C until analysis [42].

NMR Analysis:

- Platform: High-throughput NMR spectroscopy (Nightingale Health platform).

- Sample Preparation: Centrifugation and aliquoting of urine samples.

- Analysis: Quantification of 49 urinary metabolites.

- Data Processing: Metabolite concentrations were expressed relative to urinary creatinine (mmol/L) to correct for urine dilution, and the resulting ratios were scaled by 100 [42].
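
The creatinine normalization step can be illustrated as follows. This is a sketch that assumes "scaled by 100" means the metabolite-to-creatinine ratio is multiplied by 100; the function name and sample values are hypothetical.

```python
def normalize_to_creatinine(metabolite_mmol_l, creatinine_mmol_l, scale=100.0):
    """Express a urinary metabolite relative to creatinine, times `scale`.

    Assumption: the scaling is a multiplication of the ratio by 100.
    """
    if creatinine_mmol_l <= 0:
        raise ValueError("creatinine concentration must be positive")
    return scale * metabolite_mmol_l / creatinine_mmol_l


# Two hypothetical spot urine samples from the same person: the second is
# twice as dilute, so both raw concentrations are halved.
concentrated = normalize_to_creatinine(0.5, 10.0)
dilute = normalize_to_creatinine(0.25, 5.0)
print(concentrated, dilute)
```

Both samples yield the same normalized value, which is the purpose of the correction: differences in hydration no longer masquerade as differences in metabolite excretion.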

Statistical Analysis:

- Association Testing: Linear and median regression models examined diet-metabolite associations, adjusted for age, sex, BMI, physical activity, smoking, and alcohol consumption.

- Clustering: K-means clustering identified urinary metabolite clusters, with multinomial regression used to analyze associations with food intake [42].
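
The clustering step can be sketched with a small pure-Python K-means (Lloyd's algorithm). This is an illustration only: the two-metabolite profiles are hypothetical, initialization simply takes the first k points as centroids, and the subsequent multinomial regression on food intake is not shown.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def kmeans(points, k, max_iter=100):
    """Lloyd's algorithm with deterministic init (first k points as centroids)."""
    centroids = [list(p) for p in points[:k]]
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: sq_dist(p, centroids[j])) for p in points
        ]
        if new_labels == labels:
            break  # assignments stable, so the algorithm has converged
        labels = new_labels
        # Update step: each centroid moves to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids


# Hypothetical standardized values of two urinary metabolites per participant.
profiles = [
    (1.0, 1.2), (5.0, 5.1), (0.9, 1.0),
    (5.2, 4.9), (1.1, 0.8), (4.8, 5.3),
]
labels, centroids = kmeans(profiles, k=2)
print(labels)
print(centroids)
```

In practice, metabolite values would be standardized before clustering, and multiple random initializations would be used rather than the fixed one assumed here.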

Key Findings:

- Replicated known associations (e.g., citrus intake with proline betaine, fiber with hippurate).

- Identified novel associations (e.g., poultry intake with taurine, indoxyl sulfate, and TMAO).

- Demonstrated the utility of NMR-based metabolomics for objective dietary assessment in free-living populations [42].

Cross-Platform Comparison for Biomarker Discovery

Objective: To compare the performance of UHPLC-High-Resolution MS (HRMS) and Fourier Transform Infrared (FTIR) spectroscopy for serum metabolome analysis and prediction of clinical outcomes in critically ill patients [48].

Experimental Design:

- Cohorts: Three patient groups (n=8 each) with different clinical outcomes.

- Sample Analysis: Same serum samples analyzed by both UHPLC-HRMS and FTIR spectroscopy.

Platform Performance:

- UHPLC-HRMS: Showed 8-17% higher accuracies (≥83%) when comparing homogeneous patient groups, enabling more robust prediction models and better understanding of metabolic mechanisms.

- FTIR Spectroscopy: More suitable for unbalanced populations, with advantages in simplicity, speed, cost-effectiveness, and high-throughput operation.

Conclusion: UHPLC-HRMS is superior for detailed mechanistic studies, while FTIR offers practical advantages for large-scale screening and clinical translation in complex populations [48].

Workflow Visualization for Analytical Platforms

The following diagram illustrates the generalized experimental workflow for metabolomic profiling in dietary biomarker research, highlighting the parallel and complementary paths for LC-MS/HILIC and NMR platforms.

Diagram 1: Experimental workflow for dietary biomarker discovery using LC-MS/HILIC and NMR platforms. The workflow begins with study population recruitment and dietary assessment, followed by biospecimen collection. Platform selection determines the sample preparation and analysis path, with data streams converging for integrated statistical analysis and biomarker validation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of metabolomic platforms requires specific reagents, standards, and materials optimized for each analytical approach. The following table details essential components for dietary biomarker research.

Table 2: Essential Research Reagents and Materials for Dietary Metabolomics

| Item | Function/Application | Platform | Specific Examples from Literature |
|---|---|---|---|
| HILIC Columns | Separation of polar metabolites | HILIC-LC-MS | Alkaline HILIC for central carbon metabolites [47]; HILIC separation of amino acids, acylcarnitines, ceramides, and lysophosphatidylcholines [46] |
| Isotopically Labeled Standards | Internal standards for quantification | LC-MS/HILIC-MS | Deuterated amino acids (Val-D8, Leu-D2), carnitines, and lipids for precise quantification [46] |
| Biocrates AbsoluteIDQ p180 Kit | Targeted metabolomics profiling | LC-MS | Quantification of 186 metabolites including acylcarnitines, amino acids, biogenic amines, and lipids [44] |
| NMR Buffer Solutions | Standardized pH for reproducible spectroscopy | NMR | Standardized buffer systems used in high-throughput NMR platforms (e.g., Nightingale Health) [42] |
| Protein Precipitation Solvents | Metabolite extraction and protein removal | LC-MS/HILIC-MS | Ice-cold isopropanol with 0.1% acetic acid for serum metabolite extraction [46] |
| Quality Control Materials | Monitoring analytical performance | LC-MS/NMR | Pooled quality control samples analyzed throughout batch sequences to assess reproducibility |

The objective comparison of LC-MS, HILIC-LC-MS, and NMR platforms reveals a clear case for platform complementarity in dietary biomarker research. LC-MS and HILIC-LC-MS offer superior sensitivity and coverage for targeted analysis of specific food biomarkers, with HILIC extending capabilities to polar metabolites that are poorly retained in reversed-phase chromatography [45] [46]. NMR spectroscopy provides robust, high-throughput analysis suitable for large-scale epidemiological studies investigating habitual dietary patterns [42].