Bland-Altman Analysis for Wearable Nutrition Data: A Comprehensive Guide for Validation in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the application of Bland-Altman analysis for validating wearable technology in nutrition monitoring. It covers foundational statistical principles, practical methodological applications across diverse wearable platforms (including BIA smartwatches, dietary wristbands, and AI-enabled cameras), troubleshooting for common analytical challenges, and frameworks for comparative device validation. By synthesizing recent validation studies, this resource aims to equip scientists with robust methodologies to assess the agreement, bias, and clinical utility of emerging wearable nutrition technologies, ultimately supporting rigorous evaluation in precision health and clinical trial contexts.

Understanding Bland-Altman Analysis: A Foundational Tool for Wearable Nutrition Validation

Bland-Altman analysis provides a fundamental methodological framework for assessing agreement between two measurement techniques, offering a more appropriate approach for method comparison studies than correlation analysis alone [1]. In nutritional research, this methodology is particularly valuable for evaluating wearable devices and dietary assessment tools against established reference methods. The core output of this analysis is the calculation of limits of agreement (LoA), which define an interval within which 95% of the differences between two measurement methods are expected to lie [2] [1]. Unlike correlation coefficients, which measure the strength of relationship between variables, Bland-Altman analysis directly quantifies the agreement by examining the differences between paired measurements, making it well suited for validating new nutritional assessment methodologies against established standards [1] [3]. This approach has been applied across nutritional science, from harmonizing laboratory assays to validating smartphone-based dietary intake assessment tools [4].

Core Statistical Components and Their Interpretation

Key Parameters and Their Clinical Meaning

Table 1: Core Components of Bland-Altman Analysis and Their Interpretation

| Component | Calculation | Interpretation in Nutrition Research |
|---|---|---|
| Mean Difference (Bias) | Average of differences between paired measurements | Systematic over- or under-estimation by one method; indicates constant bias requiring adjustment |
| Limits of Agreement | Mean difference ± 1.96 × SD of differences | Range expected to contain 95% of differences between methods; must be compared against pre-defined clinical agreement limits |
| 95% Confidence Intervals | Interval estimates for mean difference and LoA | Precision of bias and LoA estimates; narrower intervals indicate more reliable estimates |
| Proportional Error | Slope in regression of differences on averages | Systematic change in bias across measurement magnitudes; violates constant bias assumption |

The mean difference, or bias, represents the systematic discrepancy between two measurement methods [1]. In nutritional research, this might manifest as a consistent overestimation of caloric intake by a new wearable device compared to dietitian-weighed food records [5]. The limits of agreement (LoA) are calculated as the mean difference plus and minus 1.96 times the standard deviation of the differences, establishing the range within which most differences between methods will fall [2] [1]. Proper interpretation requires comparing these statistical limits to pre-defined clinical agreement limits based on biological relevance or clinical requirements [2] [1]. For instance, researchers must determine whether observed differences in nutrient intake measurements would meaningfully impact dietary recommendations or clinical outcomes.

Advanced Methodological Variations

Table 2: Bland-Altman Methodologies for Different Data Types in Nutrition Research

| Method Type | Application Context | Key Assumptions | Nutrition Research Example |
|---|---|---|---|
| Parametric (Conventional) | Constant bias and variance (homoscedasticity) | Differences are normally distributed | Comparing nutrient analysis methods with consistent precision |
| Non-Parametric | Non-normally distributed differences | No distributional assumptions | Ranked comparison of dietary assessment tools |
| Regression-Based | Non-constant variance (heteroscedasticity) | Bias and precision vary with magnitude | Energy intake measurement where variability increases with intake level |
| Percentage Differences | Increasing variability with measurement magnitude | Proportional error structure | Nutrient biomarkers with concentration-dependent variability |

The conventional parametric approach assumes constant bias and variance across the measurement range (homoscedasticity) [2]. However, nutritional data often exhibits heteroscedasticity, where variability increases with measurement magnitude [2]. For such cases, the regression-based method models both bias and LoA as functions of measurement magnitude, providing more accurate agreement intervals across different intake levels [2]. Alternative approaches include plotting differences as percentages or using ratios instead of absolute differences, which can be particularly valuable when comparing nutritional measurements across wide concentration ranges [2] [3].
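To make the percentage-difference idea concrete, the following minimal sketch uses made-up biomarker concentrations (the values and the helper name `percent_differences` are illustrative assumptions, not taken from any cited study). It shows a test assay that reads 10% high: the absolute differences grow with concentration, while the percentage differences stay roughly constant, so limits of agreement computed on the percentage scale describe the whole range.

```python
from statistics import mean, stdev

def percent_differences(test, reference):
    """Express each difference as a percentage of the pair's average."""
    return [100 * (t - r) / ((t + r) / 2) for t, r in zip(test, reference)]

# Hypothetical biomarker concentrations; the test assay reads 10% high throughout
reference = [5.0, 10.0, 20.0, 40.0, 80.0]
test = [1.1 * r for r in reference]

abs_diffs = [t - r for t, r in zip(test, reference)]  # grows with concentration
pct_diffs = percent_differences(test, reference)      # roughly constant

# Limits of agreement on the percentage scale
pct_bias = mean(pct_diffs)
pct_sd = stdev(pct_diffs)
pct_loa = (pct_bias - 1.96 * pct_sd, pct_bias + 1.96 * pct_sd)
```

With real data the percentage differences will scatter, and the same normality and pattern checks apply to them as to absolute differences.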

Detection and Handling of Proportional Error

Identifying Proportional Error

Proportional error occurs when the differences between methods systematically change as the magnitude of measurement increases [2]. This pattern is frequently encountered in nutritional research, such as when wearable devices demonstrate greater variability at higher energy intake levels or when biomarker assays show concentration-dependent performance [2]. Detection involves both visual inspection of Bland-Altman plots and statistical validation through regression analysis of differences against averages [2] [1].

[Figure: workflow for detecting and addressing proportional error]

Statistical Approaches for Proportional Error

When proportional error is detected, the regression-based Bland-Altman method provides the most appropriate analytical approach [2]. This method involves:

- Regression of differences on averages: \( \hat{D} = b_0 + b_1 A \), where \( D \) represents differences and \( A \) represents averages of paired measurements [2]

- Regression of absolute residuals on averages: \( \hat{R} = c_0 + c_1 A \), modeling how variability changes with measurement magnitude [2]

- Calculation of regression-based LoA: \( b_0 + b_1 A \pm 2.46\,(c_0 + c_1 A) \), which provides sloping limits of agreement that more accurately capture method agreement across the measurement range [2]

The resulting LoA are not horizontal lines but sloping lines that diverge or converge across the measurement continuum, providing a more realistic representation of agreement when proportional error is present [2]. This approach is particularly valuable for nutritional biomarkers and intake measurements that naturally exhibit increased variability at higher concentrations or intake levels.
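The multiplier 2.46 in the regression-based limits can be traced to a standard property of the normal distribution. As a brief sketch, assume the residuals at a given average are normally distributed with mean zero and standard deviation \( \sigma \). Their expected absolute value is then smaller than \( \sigma \) by a fixed factor, so the fitted absolute residual has to be scaled up before the usual 1.96 multiplier is applied:

\[
E|R| = \sigma \sqrt{2/\pi}
\quad\Longrightarrow\quad
\sigma = \sqrt{\pi/2}\; E|R| \approx 1.2533\, E|R|,
\qquad
1.96 \times \sqrt{\pi/2} \approx 2.46 .
\]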

Experimental Protocols for Nutrition Data Applications

Protocol 1: Basic Bland-Altman Analysis for Dietary Assessment Tools

Purpose: To evaluate agreement between a new dietary assessment method (e.g., wearable sensor, smartphone app) and an established reference method (e.g., weighed food record, 24-hour recall) [1] [5].

Materials and Equipment:

- Paired measurements from both methods (minimum sample size: 40-50 pairs recommended)

- Statistical software with Bland-Altman capabilities (e.g., MedCalc, R, SPSS)

- Pre-defined clinical agreement limits based on biological relevance

Procedure:

- Collect paired measurements using both methods on the same subjects/samples

- Calculate differences between methods (new method minus reference method)

- Calculate averages of paired measurements [(method A + method B)/2]

- Create scatter plot with differences on Y-axis and averages on X-axis

- Compute mean difference (bias) and standard deviation of differences

- Calculate limits of agreement: mean difference ± 1.96 × standard deviation

- Draw horizontal lines on plot for mean difference and both limits of agreement

- Assess normality of differences using Shapiro-Wilk test or normal Q-Q plot

- Calculate 95% confidence intervals for bias and limits of agreement

- Compare limits of agreement to pre-defined clinical agreement limits

Interpretation: The two methods can be considered interchangeable if the limits of agreement fall within clinically acceptable difference ranges and no systematic patterns are evident in the plot [2] [1].
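The computational core of this protocol can be sketched in a few lines of standard-library Python. The function name `bland_altman`, the intake values, and the clinical limit `delta` are hypothetical and chosen only for illustration. Normality checks and confidence intervals are left out of this sketch.

```python
from statistics import mean, stdev

def bland_altman(test, reference):
    """Return differences, averages, bias, SD of differences, and 95% LoA."""
    if len(test) != len(reference) or len(test) < 2:
        raise ValueError("need at least two paired measurements of equal length")
    diffs = [t - r for t, r in zip(test, reference)]       # new method minus reference
    avgs = [(t + r) / 2 for t, r in zip(test, reference)]  # x-axis of the plot
    bias = mean(diffs)
    sd = stdev(diffs)                                      # sample SD (n - 1 denominator)
    return {
        "diffs": diffs,
        "avgs": avgs,
        "bias": bias,
        "sd": sd,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
    }

# Hypothetical energy intakes (kcal/day): wearable vs. weighed food record
wearable = [1850, 2100, 2400, 1700, 2950, 2250]
weighed = [1900, 2050, 2500, 1650, 3100, 2300]
result = bland_altman(wearable, weighed)

# Hypothetical a priori clinical agreement limit (kcal/day)
delta = 250
within = -delta <= result["loa_lower"] and result["loa_upper"] <= delta
```

Six pairs are far too few for a real study; the short lists only keep the arithmetic easy to follow. The final comparison mirrors the last step of the procedure, where the LoA are judged against limits fixed before data collection.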

Protocol 2: Regression-Based Analysis for Proportional Error Detection

Purpose: To assess agreement when variability between methods changes with measurement magnitude, common in nutrient biomarkers and intake assessments [2].

Materials and Equipment:

- Paired measurements covering wide concentration/intake range

- Statistical software with regression capabilities

- Graphical output for visualizing sloping limits of agreement

Procedure:

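- Follow the first four steps of Protocol 1 (collect paired measurements, calculate differences and averages, and create the scatter plot)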

- Perform linear regression of differences on averages: Differences = b₀ + b₁ × Averages

- Test statistical significance of slope (b₁) using t-test (α = 0.05)

- If significant slope exists, calculate absolute residuals from this regression

- Perform second regression of absolute residuals on averages: |Residuals| = c₀ + c₁ × Averages

- Calculate regression-based limits of agreement:

  - Lower LoA = (b₀ - 2.46 × c₀) + (b₁ - 2.46 × c₁) × Averages

  - Upper LoA = (b₀ + 2.46 × c₀) + (b₁ + 2.46 × c₁) × Averages

- Plot the sloping limits of agreement on the Bland-Altman plot

- Calculate LoA area (LoAA) as summary measure of disagreement across measurement interval

Interpretation: Significant slope indicates proportional error; the regression-based LoA provide more accurate agreement intervals across the measurement range than conventional horizontal LoA [2].
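The two regressions in this protocol can be sketched with plain least squares. The helper names `ols` and `regression_loa` and the intake values are illustrative assumptions. The significance test of the slope and the LoA area are omitted here, since a p-value needs a t distribution from a statistics package.

```python
from statistics import mean

def ols(x, y):
    """Ordinary least squares fit y = intercept + slope * x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx, slope

def regression_loa(test, reference):
    """Fit bias and spread as linear functions of the pair averages."""
    diffs = [t - r for t, r in zip(test, reference)]
    avgs = [(t + r) / 2 for t, r in zip(test, reference)]
    b0, b1 = ols(avgs, diffs)                       # differences on averages
    abs_resid = [abs(d - (b0 + b1 * a)) for d, a in zip(diffs, avgs)]
    c0, c1 = ols(avgs, abs_resid)                   # absolute residuals on averages

    def limits(a):
        """Lower and upper LoA at average value a."""
        centre = b0 + b1 * a
        half_width = 2.46 * (c0 + c1 * a)
        return centre - half_width, centre + half_width

    return (b0, b1), (c0, c1), limits

# Hypothetical energy intakes (kcal/day)
reference = [1500.0, 2000.0, 2500.0, 3000.0, 3500.0, 4000.0]
test = [1600.0, 2050.0, 2400.0, 3000.0, 3200.0, 3900.0]
(b0, b1), (c0, c1), limits = regression_loa(test, reference)
low, high = limits(2500.0)
```

Because the spread is modelled as a straight line, the fitted half-width can turn negative near the edge of a narrow data range; it is worth checking that `c0 + c1 * a` stays positive over the interval being reported.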

Table 3: Key Analytical Components for Bland-Altman Analysis in Nutrition Research

| Component | Function/Purpose | Implementation Considerations |
|---|---|---|
| Paired Measurements | Provides matched data points from both methods | Ensure measurements are truly paired (same subject, same time) |
| Clinical Agreement Limits (Δ) | Defines clinically acceptable difference range | Should be established a priori based on biological or clinical requirements [2] |
| 95% Confidence Intervals | Quantifies precision of bias and LoA estimates | Essential for proper interpretation; should be reported routinely [2] |
| Normality Assessment | Validates assumption for parametric LoA calculation | Shapiro-Wilk test or Q-Q plot; if violated, use non-parametric approach [2] [3] |
| Regression Analysis | Detects and quantifies proportional error | Test significance of slope in differences vs. averages regression [2] |
| Log Transformation | Handles multiplicative error structures | Alternative approach for heteroscedastic data; equivalent to analyzing ratios [2] |

Methodological Considerations for Nutritional Data

Special Challenges in Nutrition Research

Nutritional data presents unique challenges for method comparison studies. Dietary intake measurements often exhibit substantial within-person variation and systematic reporting errors that complicate agreement assessments [6]. The absence of perfect reference methods for many nutritional exposures necessitates careful consideration of which method serves as the benchmark in comparisons [6]. Furthermore, nutritional biomarkers frequently demonstrate heteroscedasticity, where measurement variability increases with concentration, making the detection and handling of proportional error particularly important [2].

Recent methodological advancements have demonstrated the effectiveness of Bland-Altman based harmonization algorithms for nutritional biomarkers and assessment tools [4]. These approaches can adjust for both mean differences and distributional patterns in method comparisons, providing more effective harmonization than regression-based approaches alone in certain applications [4].

Limitations and Appropriate Applications

While powerful, Bland-Altman methods have specific limitations in nutritional research. The approach is not recommended when one measurement method has negligible measurement errors compared to the other, as this violates the method's underlying assumptions [5]. In such cases, simple regression of the measurements (or differences) on the reference method may provide more appropriate analysis [5].

Additionally, Bland-Altman analysis defines intervals of agreement but does not determine whether those limits are clinically acceptable [1]. Researchers must incorporate external criteria based on clinical requirements, biological variation, or analytical quality specifications to determine whether observed levels of disagreement preclude method interchangeability [2] [1]. This is particularly crucial in nutritional research where the clinical impact of measurement differences may vary across nutrients, populations, and applications.

Why Bland-Altman Outperforms Correlation for Method Comparison in Nutrition Monitoring

In nutrition monitoring research, the validation of new dietary assessment methods—ranging from wearable sensors and image-based smartphone applications to automated food photography analysis—against established standards is a fundamental practice [7] [8] [9]. Traditionally, many researchers have relied on correlation coefficients to assess the relationship between two measurement methods. However, this approach is statistically flawed for method comparison as it measures the strength of association between variables rather than their actual agreement [1] [10]. A high correlation can mask significant biases between methods, potentially leading researchers to conclude that methods agree when they do not [1]. The Bland-Altman analysis, developed in 1983 and popularized in 1986, addresses this critical limitation by quantifying agreement through the assessment of mean differences and limits of agreement, providing researchers with a clear understanding of both the magnitude and pattern of discrepancies between methods [1] [10] [11].

Theoretical Foundations: How Bland-Altman Analysis Works

Core Components and Interpretation

The Bland-Altman method plots the differences between two measurements against their average values for each subject or sample [1] [11]. This visualization reveals several key aspects of methodological agreement that correlation analysis cannot detect. The plot includes three horizontal lines: the mean difference (indicating systematic bias) and the upper and lower limits of agreement (defined as the mean difference ± 1.96 standard deviations of the differences) [1]. These limits represent the range within which 95% of the differences between the two measurement methods are expected to fall [10].

Interpretation of the Bland-Altman plot focuses on three critical elements: first, the magnitude of the mean difference reveals any consistent bias between methods; second, the width of the agreement intervals indicates the expected variability between measurements; and third, the pattern of differences across measurement ranges can identify proportional bias [1] [11]. Importantly, the clinical acceptability of these limits of agreement depends on predefined criteria based on biological or clinical requirements, not statistical significance [1] [10].

Fundamental Limitations of Correlation Analysis

Correlation analysis suffers from several critical drawbacks when used for method comparison. The correlation coefficient (r) reflects how well measurements from two methods maintain their relative positioning across subjects, but does not indicate whether the methods produce identical values [1]. A high correlation coefficient can be misleadingly reassuring even when substantial systematic differences exist between methods [1]. Furthermore, correlation is influenced by the range of values in the sample—wider ranges tend to produce higher correlations—making it unreliable for assessing agreement across the measurement spectrum [1]. Perhaps most importantly, correlation analysis cannot quantify the actual magnitude of discrepancies between methods, which is essential for determining clinical relevance in nutrition monitoring applications [10].
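A small constructed example makes the point concrete. Assume a hypothetical device that reads exactly 300 kcal/day above the reference for every participant (the values are invented for illustration). The correlation is perfect, yet the two methods never agree.

```python
from math import sqrt
from statistics import mean

def pearson_r(x, y):
    """Pearson correlation coefficient computed from first principles."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

# Hypothetical daily energy intakes (kcal/day)
reference = [1500, 1800, 2100, 2400, 2700, 3000]
device = [r + 300 for r in reference]  # constant over-reading of 300 kcal/day

r = pearson_r(device, reference)                          # perfect association
bias = mean(d - ref for d, ref in zip(device, reference)) # mean difference
```

Here `r` equals 1 while `bias` is 300 kcal/day, which is exactly the situation in which a correlation coefficient alone would suggest agreement that does not exist.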

Comparative Analysis in Nutrition Monitoring Applications

Quantitative Evidence from Nutrition Research

Table 1: Bland-Altman Analysis in Nutrition Monitoring Validation Studies

| Study & Technology | Comparison | Mean Bias | Limits of Agreement | Key Findings |
|---|---|---|---|---|
| DialBetics (Photo vs. WFR) [7] | Energy intake per dish | 6 kcal/dish | -198 to 210 kcal/dish | Random differences, no systematic bias detected |
| Wearable Wristband [8] | Daily energy intake | -105 kcal/day | -1400 to 1189 kcal/day | Overestimation at lower intake, underestimation at higher intake |
| Nutrition Apps [9] | Energy intake per item | -2 to -5.4 kcal/item | Not reported | Systematic underestimation of energy and lipids |

Table 2: Correlation vs. Bland-Altman in Detecting Methodological Issues

| Analysis Method | Detection of Systematic Bias | Quantification of Measurement Error | Clinical Relevance Assessment | Identification of Proportional Bias |
|---|---|---|---|---|
| Correlation Analysis | Poor | None | Not possible | Limited |
| Bland-Altman Analysis | Excellent (via mean difference) | Direct (via limits of agreement) | Directly enables | Excellent (via pattern inspection) |

The comparative performance of these statistical approaches becomes evident in real nutrition monitoring applications. In the validation of the DialBetics system, which uses smartphone photos of meals to assess dietary intake, correlation analysis showed strong relationships for nutrients (ICC=0.93 for carbohydrates) [7]. However, only Bland-Altman analysis revealed the random nature of differences and the actual expected variability (-198 to 210 kcal per dish), providing clinically meaningful information for implementation decisions [7].

Similarly, in validating wearable nutrition monitoring technology, Bland-Altman analysis uncovered a significant proportional bias (regression equation: Y=-0.3401X+1963, P<0.001) where the device overestimated low energy intake and underestimated high intake [8]. This critical pattern would have remained undetected using correlation analysis alone and has profound implications for the appropriate use contexts of the technology.

Practical Consequences of Method Choice

The choice between statistical approaches directly impacts research conclusions and practical applications. When popular nutrition applications were evaluated against standard methods, correlation coefficients might have suggested reasonable relationships, but Bland-Altman analysis revealed systematic underestimation of energy and lipid intake across multiple platforms [9]. These biases have direct implications for clinical applications, particularly for patients managing conditions like diabetes or obesity where accurate intake tracking is essential [7] [9].

In body composition assessment, another critical aspect of nutrition monitoring, Bland-Altman plots demonstrated proportional bias in wearable bioelectrical impedance devices, with increasing underestimation of body fat percentage at higher adiposity levels [12]. This pattern, invisible to correlation analysis (which showed strong relationships: r=0.93), is essential for appropriate clinical interpretation and device usage guidelines.

Experimental Protocols for Nutrition Monitoring Validation

Protocol 1: Validating Image-Based Dietary Assessment Methods

Purpose: To validate smartphone image-based dietary intake methods against weighed food records (WFR) using Bland-Altman analysis [7] [13].

Materials and Reagents:

- Digital cooking scale (e.g., Shimadzu PZ-2000): For precise measurement of food components [7]

- Standardized food composition database (country-specific): For nutrient calculation (e.g., USDA Database, BDA Italy) [9]

- Smartphone with camera: For image capture of test meals

- Fiducial marker (reference object): For portion size estimation in images [13]

- Nutrition analysis software: For nutrient calculation from WFR (e.g., Excel-Eiyokun) [7]

Procedure:

- Test Meal Preparation: Prepare a representative range of dishes (typically 50-60) covering various food groups and cooking methods common to the target population [7]

- Gold Standard Measurement: Precisely weigh all ingredients (including seasonings and oils) using digital scales to establish WFR values [7]

- Image Capture: Photograph each dish using standardized conditions (45-60° angle, consistent lighting, fiducial marker visible) [7] [13]

- Blinded Evaluation: Trained dietitians estimate nutrient intake from images using the test method's database and protocols [7]

- Data Analysis: Calculate differences between image-based estimates and WFR values for energy and nutrients

- Statistical Analysis: Perform Bland-Altman analysis including mean bias, limits of agreement, and assessment for proportional bias [7] [1]

Protocol 2: Validating Wearable Nutrition Monitoring Sensors

Purpose: To assess agreement between wearable nutrient intake sensors and controlled reference methods using Bland-Altman analysis [8].

Materials and Reagents:

- Wearable sensor device: e.g., wristband with nutritional intake tracking (GoBe2) [8]

- Controlled meal facility: University dining facility with calibrated meal preparation [8]

- Continuous glucose monitoring system: Optional for adherence monitoring [8]

- Food composition database: For reference method nutrient calculation [8]

- Data collection software: For synchronized data acquisition

Procedure:

- Participant Screening: Recruit free-living participants meeting inclusion/exclusion criteria [8]

- Controlled Meal Provision: Prepare and serve calibrated study meals with precise nutrient documentation [8]

- Parallel Monitoring: Participants use wearable sensors while consuming documented meals under observation [8]

- Data Collection: Collect daily nutritional intake estimates from both wearable sensor and reference method for 14-day test periods [8]

- Difference Calculation: Compute daily differences between sensor estimates and reference values

- Bland-Altman Analysis: Construct plots with mean bias and limits of agreement; perform regression analysis to identify proportional bias [8]

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Essential Research Materials for Nutrition Monitoring Validation Studies

| Category | Specific Items | Function in Validation | Considerations |
|---|---|---|---|
| Reference Standards | Digital cooking scales, measuring spoons/cups | Provide gold-standard measurement for validation | Precision to 0.1 g required; regular calibration essential [7] |
| Food Composition Databases | USDA Food Composition Database, BDA Italy, country-specific databases | Enable nutrient calculation from food records | Country-specific databases crucial for accurate local food representation [9] |
| Image Capture Tools | Smartphones with cameras, fiducial markers, angle guides | Standardize food photography for assessment | 45° angle with reference object optimizes portion size estimation [13] |
| Wearable Sensors | Bioelectrical impedance devices, nutritional intake wristbands | Test novel monitoring approaches | Independent validation critical due to proprietary algorithms [8] [12] |
| Statistical Software | R (blandPower package), MedCalc, jamovi | Perform Bland-Altman analysis with confidence intervals | Sample size estimation capabilities essential for adequate power [11] |

Implementation Guidelines and Best Practices

Sample Size Considerations

Adequate sample size is critical for reliable Bland-Altman analysis. Early recommendations suggested minimum samples of 100-200 observations, but contemporary approaches using the methods of Lu et al. (2016) enable formal power calculations [11]. Researchers should aim for sufficient samples to achieve narrow confidence intervals around limits of agreement, typically requiring at least 100 paired measurements for nutrition monitoring studies [11]. The R package blandPower and commercial software like MedCalc provide specialized tools for sample size estimation in method comparison studies [11].
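As a rough planning aid, the precision of the limits of agreement can be related to sample size with a widely used approximation in which the standard error of each limit is about √(3/n) times the SD of the differences. The sketch below uses that approximation only; it is not the formal power procedure cited above, and the function names are hypothetical.

```python
from math import ceil, sqrt

def loa_ci_halfwidth(n, z=1.96):
    """Approximate 95% CI half-width of one limit of agreement, in units of the SD of differences."""
    return z * sqrt(3 / n)

def pairs_needed(target_halfwidth_sd, z=1.96):
    """Smallest n whose approximate half-width is at most the target (in SD units)."""
    return ceil(3 * (z / target_halfwidth_sd) ** 2)

halfwidth_50 = loa_ci_halfwidth(50)     # precision with 50 pairs
halfwidth_100 = loa_ci_halfwidth(100)   # precision with 100 pairs
n_for_third_sd = pairs_needed(0.34)     # pairs for a half-width of about one third of an SD
```

Under this approximation, about 100 pairs are needed before each limit is known to within roughly a third of a standard deviation. Smaller studies leave the limits considerably less certain.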

Handling Non-Normal Data and Proportional Bias

When differences between methods do not follow a normal distribution, Bland and Altman recommend using percentile-based limits of agreement rather than standard deviation-based intervals [11]. For data exhibiting proportional bias (where differences increase with measurement magnitude), log transformation before analysis or the use of percentage difference plots is recommended [1] [11]. These adaptations ensure robust analysis across the diverse measurement scenarios encountered in nutrition monitoring research.
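Both adaptations can be sketched with the Python standard library. The function names are hypothetical. The percentile version takes the 2.5th and 97.5th percentiles of the differences directly; the log version computes the limits on the log scale and back-transforms them, so they are read as ratios of the test method to the reference.

```python
from math import exp, log
from statistics import mean, quantiles, stdev

def percentile_loa(diffs):
    """Nonparametric limits: 2.5th and 97.5th percentiles of the differences."""
    cuts = quantiles(diffs, n=40, method="inclusive")  # 39 cut points in 2.5% steps
    return cuts[0], cuts[-1]

def ratio_loa(test, reference):
    """LoA on the log scale, back-transformed to test/reference ratios.

    Requires strictly positive measurements.
    """
    log_diffs = [log(t) - log(r) for t, r in zip(test, reference)]
    bias, sd = mean(log_diffs), stdev(log_diffs)
    return exp(bias - 1.96 * sd), exp(bias), exp(bias + 1.96 * sd)
```

A back-transformed result of, say, (0.8, 1.0, 1.25) would mean the test method is expected to read between 80% and 125% of the reference for most individuals. Percentile limits need a reasonably large sample, because the extreme percentiles rest on only a few observations.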

Defining Clinically Acceptable Limits

A crucial step in Bland-Altman analysis is establishing clinically acceptable limits of agreement before conducting the study [1] [10]. In nutrition monitoring, these limits might be based on clinical outcomes (e.g., glycemic impact of carbohydrate estimation errors), practical considerations (e.g., weight management applications), or biological variability [7] [8]. The decision regarding agreement acceptability should be grounded in these predefined criteria rather than statistical significance alone.

Bland-Altman analysis provides nutrition researchers with a robust framework for method comparison that directly quantifies agreement in clinically meaningful terms. Unlike correlation analysis, which can misleadingly suggest agreement where none exists, Bland-Altman analysis detects and characterizes both fixed and proportional biases, enables evidence-based decisions about method equivalence, and provides explicit estimates of measurement error that directly inform clinical and research applications [1] [10]. As nutrition monitoring technologies continue to evolve—from image-based apps to wearable sensors—the proper application of Bland-Altman methodology will remain essential for validating these tools and advancing nutritional science.

In the evolving field of precision nutrition, wearable sensors and devices present novel methods for quantifying dietary intake and energy expenditure. The Bland-Altman analysis provides an essential statistical framework for validating these emerging technologies against established reference methods [1] [11]. Unlike correlation analyses that measure the strength of relationship between two variables, Bland-Altman analysis specifically quantifies agreement by focusing on the differences between paired measurements [1]. This methodology is particularly valuable for researchers and drug development professionals who require rigorous assessment of measurement agreement before deploying wearable technologies in clinical trials or nutritional interventions. As the field advances beyond population-level dietary guidelines toward personalized nutrition, establishing the validity and limits of agreement for wearable devices becomes paramount for generating reliable, actionable data [8].

Core Metrics and Their Interpretation

The Bland-Altman plot visualizes agreement between two measurement methods through a scatter plot where the Y-axis represents the differences between paired measurements (Method A - Method B) and the X-axis represents the average of these two measurements ((A+B)/2) [1] [11]. Three key quantitative metrics form the foundation for interpreting this plot: the mean difference, standard deviation of differences, and 95% limits of agreement.

Table 1: Core Metrics in Bland-Altman Analysis

| Metric | Calculation | Interpretation | Clinical Significance |
|---|---|---|---|
| Mean Difference (Bias) | Σ(Method A - Method B) / n | Systematic average difference between methods | Positive value: Method A higher than B on average; Negative value: Method A lower than B on average |
| Standard Deviation of Differences | √[Σ(difference - mean difference)² / (n-1)] | Spread of the differences around the mean | Quantifies random variation between methods; larger SD indicates greater dispersion |
| 95% Limits of Agreement | Mean difference ± 1.96 × SD | Range containing 95% of differences between methods | Defines the interval where most differences between measurement methods will lie |

The mean difference, or bias, represents the systematic discrepancy between two measurement methods [14]. For example, in a study validating a wearable device (GoBe2) for tracking caloric intake, researchers observed a mean bias of -105 kcal/day, indicating that the wearable generally underestimated energy intake compared to the reference method [8]. The standard deviation of the differences characterizes the random variation around this bias, with larger values indicating greater dispersion and inconsistency between methods [14]. The 95% limits of agreement (LoA) combine these metrics to create an interval (bias ± 1.96 × SD) within which 95% of the differences between the two methods are expected to fall [1] [2]. In the wearable nutrition study, the LoA ranged from -1400 to 1189 kcal/day, highlighting substantial variability in the device's performance across participants [8].
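Because the limits are symmetric around the bias, published LoA can be unpacked back into their components. The short calculation below does this for the wristband limits quoted above, assuming they were computed as bias ± 1.96 × SD.

```python
# Reported limits of agreement for the wristband (kcal/day) [8]
loa_lower, loa_upper = -1400.0, 1189.0

# The midpoint of the limits recovers the bias
implied_bias = (loa_lower + loa_upper) / 2

# Half the width of the interval, divided by 1.96, recovers the SD of differences
implied_sd = (loa_upper - loa_lower) / (2 * 1.96)
```

The midpoint comes out at -105.5 kcal/day, consistent with the reported bias of -105 kcal/day after rounding, and the implied SD of the daily differences is about 660 kcal/day.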

Advanced Considerations for Metric Interpretation

Beyond the basic calculations, several advanced considerations affect how these metrics should be interpreted:

Proportional Bias: When the differences between methods change systematically as the magnitude of measurement increases, this indicates a proportional bias [11] [2]. For example, in body composition validation research, wearable BIA devices demonstrated proportional bias, particularly in individuals with higher body fat percentages [12]. This relationship can be detected visually when the data points in a Bland-Altman plot show a sloping pattern or statistically through regression analysis of differences against averages [14] [2].

Heteroscedasticity: This occurs when the variability of differences changes across the measurement range, often appearing as a funnel-shaped pattern on the Bland-Altman plot [11]. In such cases, the standard deviation and limits of agreement calculated for the entire dataset may be misleading. Transformation of data (logarithmic or ratio) or regression-based LoA that vary across the measurement range may be more appropriate [11] [2].

Confidence Intervals for LoA: Especially with smaller sample sizes, the calculated limits of agreement are estimates with inherent uncertainty [14] [2]. Reporting 95% confidence intervals for the LoA provides a more realistic interpretation of the expected range of differences between methods [2]. Narrow confidence intervals increase confidence in the estimated LoA, while wide intervals indicate substantial uncertainty.
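A minimal sketch of these intervals follows, using the common approximation that the standard error of each limit is about √(3/n) times the SD of the differences. Two simplifications are assumptions of this sketch: a normal quantile stands in for the t quantile (which gives wider intervals in small samples), and the summary values in the example are invented.

```python
from math import sqrt
from statistics import NormalDist

def loa_confidence_intervals(bias, sd, n, level=0.95):
    """Approximate CIs for the bias and for each limit of agreement."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # large-sample quantile
    se_bias = sd / sqrt(n)
    se_loa = sd * sqrt(3 / n)                  # approximate SE of each limit
    lower, upper = bias - 1.96 * sd, bias + 1.96 * sd
    return {
        "bias": (bias - z * se_bias, bias + z * se_bias),
        "loa_lower": (lower - z * se_loa, lower + z * se_loa),
        "loa_upper": (upper - z * se_loa, upper + z * se_loa),
    }

# Hypothetical summary statistics (kcal/day) from 100 paired measurements
ci = loa_confidence_intervals(bias=-50.0, sd=200.0, n=100)
```

The intervals around the limits are noticeably wider than the interval around the bias. For this reason, comparing only the point estimates of the LoA against clinical limits can overstate agreement in small studies.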

Step-by-Step Protocol for Analysis and Interpretation

Data Collection and Preparation

Protocol 3.1.1: Paired Measurements Collection

- Participant Recruitment: Recruit a representative sample of participants covering the expected measurement range of interest. For nutrition wearables, include participants with diverse body compositions, ages, and activity levels [8] [12].

- Paired Measurements: For each participant, obtain simultaneous or near-simultaneous measurements using both the test method (wearable device) and reference method. In nutrition research, this may involve comparing wearable calorie estimates against controlled meal consumption measured by dietitians [8].

- Data Recording: Record measurements in a structured format with participant identifiers, values from both methods, and relevant covariates (e.g., time of day, physiological status).

Protocol 3.1.2: Preliminary Data Assessment

- Normality Check: Assess the distribution of differences using statistical tests (Shapiro-Wilk) or visual inspection (Q-Q plot). Non-normal distributions may require data transformation or non-parametric approaches [11] [2].

- Outlier Identification: Identify potential outliers that may disproportionately influence the mean difference or standard deviation. Investigate whether outliers represent measurement errors or true biological variation.

Calculation of Key Metrics

Protocol 3.2.1: Computational Steps

- Calculate Differences: For each paired measurement, compute the difference (Test Method - Reference Method).

- Calculate Averages: For each pair, compute the average of the two measurements ((Test Method + Reference Method)/2).

- Compute Mean Difference: Calculate the arithmetic mean of all differences.

- Compute Standard Deviation: Calculate the standard deviation of the differences.

- Determine Limits of Agreement: Calculate the upper and lower limits as: Mean difference ± (1.96 × Standard deviation of differences) [1] [14].
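The computational steps above can be sketched in a few lines of standard-library Python. The function name `bland_altman_stats` and the example values are hypothetical, chosen only to illustrate the calculation.

```python
import statistics


def bland_altman_stats(test_values, reference_values, z=1.96):
    """Compute differences, averages, bias, SD, and 95% limits of agreement."""
    if len(test_values) != len(reference_values):
        raise ValueError("measurements must be paired")
    # Step 1: differences (test - reference); Step 2: pairwise averages
    differences = [t - r for t, r in zip(test_values, reference_values)]
    averages = [(t + r) / 2.0 for t, r in zip(test_values, reference_values)]
    # Steps 3-4: mean and sample SD of the differences
    bias = statistics.mean(differences)
    sd = statistics.stdev(differences)
    # Step 5: limits of agreement
    return {
        "differences": differences,
        "averages": averages,
        "bias": bias,
        "sd": sd,
        "lower_loa": bias - z * sd,
        "upper_loa": bias + z * sd,
    }


# Hypothetical daily energy intake (kcal/day) for five participants
wearable = [2100.0, 1850.0, 2400.0, 1990.0, 2250.0]
reference = [2200.0, 1900.0, 2350.0, 2100.0, 2300.0]
result = bland_altman_stats(wearable, reference)
print(f"bias = {result['bias']:.1f}, SD = {result['sd']:.1f}")
print(f"LoA = {result['lower_loa']:.1f} to {result['upper_loa']:.1f}")
```

The sign convention (test minus reference) matters for interpretation: with this ordering, a negative bias means the wearable reads lower than the reference.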

Protocol 3.2.2: Visualization Steps

- Create Scatter Plot: Plot the differences (Y-axis) against the averages (X-axis).

- Add Reference Lines: Draw horizontal lines at the mean difference and at the upper and lower limits of agreement.

- Optional Enhancements: Add confidence interval bands for the mean difference and limits of agreement, particularly when sample size is limited [2].
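One possible way to draw the plot described in these steps is sketched below, assuming matplotlib is available. The function names, axis labels, and output path are illustrative choices; confidence bands are omitted to keep the sketch short.

```python
import statistics


def bland_altman_plot_data(test_values, reference_values, z=1.96):
    """Return plot coordinates and the three horizontal reference lines."""
    differences = [t - r for t, r in zip(test_values, reference_values)]
    averages = [(t + r) / 2.0 for t, r in zip(test_values, reference_values)]
    bias = statistics.mean(differences)
    sd = statistics.stdev(differences)
    lines = {"Lower LoA": bias - z * sd, "Bias": bias, "Upper LoA": bias + z * sd}
    return averages, differences, lines


def draw_bland_altman(test_values, reference_values, path="bland_altman.png"):
    """Save a basic Bland-Altman plot to `path` (hypothetical file name)."""
    # Imported here so the numeric helper above works without matplotlib.
    import matplotlib
    matplotlib.use("Agg")  # non-interactive backend; writes to file only
    import matplotlib.pyplot as plt

    averages, differences, lines = bland_altman_plot_data(test_values, reference_values)
    fig, ax = plt.subplots(figsize=(6, 4))
    ax.scatter(averages, differences, alpha=0.7)      # differences vs. averages
    for label, y in lines.items():
        style = "-" if label == "Bias" else "--"      # solid bias, dashed limits
        ax.axhline(y, linestyle=style, color="black", label=f"{label}: {y:.1f}")
    ax.set_xlabel("Mean of test and reference")
    ax.set_ylabel("Difference (test - reference)")
    ax.legend(loc="best")
    fig.tight_layout()
    fig.savefig(path, dpi=150)
    plt.close(fig)
```

Calling `draw_bland_altman(wearable, reference)` with two equal-length lists of paired measurements would write the figure to disk; keeping the numeric helper separate makes the reference lines easy to check independently of the drawing code.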

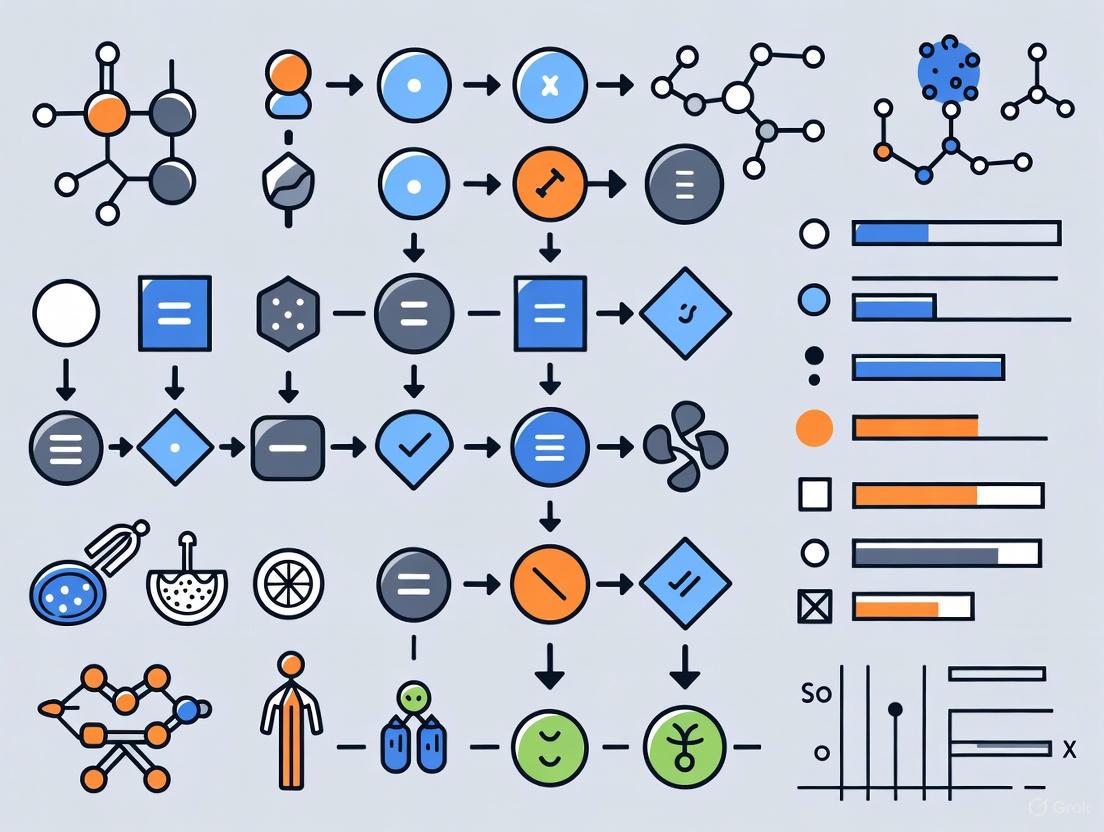

Figure 1: Bland-Altman Analysis Workflow. This diagram illustrates the sequential process for conducting Bland-Altman analysis, from data collection through interpretation.

Interpretation Framework

Protocol 3.3.1: Systematic Assessment

- Evaluate Bias Significance: Determine if the mean difference is statistically significantly different from zero using a one-sample t-test or by examining whether the confidence interval for the mean difference includes zero [14].

- Assess LoA Width: Judge whether the limits of agreement are clinically acceptable based on predetermined criteria. For example, in nutritional intake monitoring, researchers might consider whether the LoA width would impact dietary recommendations [8] [14].

- Check for Patterns: Visually inspect the plot for proportional bias, heteroscedasticity, or other systematic patterns that might affect interpretation [14] [11].

Protocol 3.3.2: Clinical Relevance Decision Matrix

- Define Acceptable Difference: Prior to analysis, establish the maximum clinically acceptable difference (Δ) between methods based on biological relevance, clinical requirements, or analytical performance specifications [2].

- Compare LoA to Δ: If the limits of agreement fall entirely within the range -Δ to +Δ, the two methods may be used interchangeably [2].

- Consider Confidence Intervals: For more conservative interpretation, ensure that the confidence intervals for the limits of agreement also fall within the acceptable difference range [2].
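The decision logic in this matrix can be written down as a small helper. This is a sketch under stated assumptions: the function name, the return labels, and the Δ of 500 kcal/day in the example are all hypothetical, and an appropriate Δ has to come from the clinical context, not from the code.

```python
def agreement_decision(lower_loa, upper_loa, delta, lower_ci=None, upper_ci=None):
    """Compare limits of agreement with a pre-specified acceptable difference.

    lower_ci: lower confidence bound of the lower LoA (optional)
    upper_ci: upper confidence bound of the upper LoA (optional)
    """
    if delta <= 0:
        raise ValueError("delta must be positive")
    # Point estimates of the LoA must lie entirely within [-delta, +delta]
    if not (-delta <= lower_loa and upper_loa <= delta):
        return "not interchangeable"
    if lower_ci is None or upper_ci is None:
        return "interchangeable (point estimates only)"
    # Conservative check: outer confidence bounds must also lie within the range
    if -delta <= lower_ci and upper_ci <= delta:
        return "interchangeable (conservative)"
    return "inconclusive"


# Reported LoA from the wristband study against a hypothetical delta of 500 kcal/day
print(agreement_decision(-1400.0, 1189.0, delta=500.0))
```

Fixing Δ before looking at the data, as the protocol recommends, keeps the helper from being tuned after the fact to produce a favorable label.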

Application to Wearable Nutrition Research

Case Study: Validating Wearable Calorie Tracking Devices

In a study validating the GoBe2 wearable device for nutritional intake monitoring, researchers employed Bland-Altman analysis to compare the device's calorie estimates against a reference method where participants consumed calibrated meals at a university dining facility [8]. The analysis revealed a mean bias of -105 kcal/day, indicating the wearable tended to underestimate calorie intake. More notably, the 95% limits of agreement ranged from -1400 to 1189 kcal/day, demonstrating substantial variability in the device's performance [8]. The regression equation of the Bland-Altman plot (Y = -0.3401X + 1963) indicated a proportional bias where the device overestimated at lower calorie intakes and underestimated at higher intakes [8]. These findings highlight the importance of not relying solely on correlation coefficients, which were likely high given the wide range of calorie intakes, but rather examining the agreement metrics that reveal systematic and random errors.

Table 2: Research Reagent Solutions for Wearable Nutrition Validation Studies

| Research Tool | Function/Application | Example from Literature |

|---|---|---|

| Controlled Meal Provision | Provides reference method for dietary intake validation | University dining facility preparing and serving calibrated study meals [8] |

| Clinical BIA Device | Reference method for body composition assessment | InBody 770 used as clinical comparator for wearable BIA devices [12] |

| Dual-Energy X-Ray Absorptiometry (DXA) | Criterion method for body composition measurement | Lunar iDXA used as gold standard for validating wearable BIA devices [12] |

| Continuous Glucose Monitors | Objective measure of metabolic response to food intake | Used to assess adherence to dietary reporting protocols [8] |

| AI-Enabled Wearable Cameras | Passive assessment of dietary intake | EgoDiet system using egocentric vision to estimate food portion sizes [15] |

Special Considerations for Nutrition and Wearable Data

Protocol 4.2.1: Addressing Proportional Bias in Nutritional Data

- Detection Method: Plot differences against averages and fit a regression line. A statistically significant slope indicates proportional bias [14] [2].

- Transformation Approach: Apply logarithmic transformation to the original measurements before analysis, which converts proportional differences to constant differences [11].

- Alternative Visualization: Express differences as percentages of the average values when variability increases with measurement magnitude [2].

Protocol 4.2.2: Sample Size Considerations

- Power Analysis: Conduct formal sample size calculations using methodologies that account for the expected variability and desired precision of the limits of agreement [11].

- Practical Guidance: While larger samples (n > 100) provide more precise estimates of LoA, smaller samples (n = 40-100) may be sufficient for initial validation studies, particularly when complemented with confidence intervals [11].
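To make the trade-off between sample size and precision concrete, the sketch below expresses the approximate 95% CI half-width of each limit of agreement in units of the SD of differences. It relies on the large-sample approximation SE(LoA) ≈ sqrt(3 × SD² / n) and a normal quantile, so it is a rough planning aid, not a formal power analysis; the function names are illustrative.

```python
import math


def loa_ci_half_width_in_sd(n, z=1.96):
    """Approximate 95% CI half-width of a limit of agreement, in SD units."""
    return z * math.sqrt(3.0 / n)


def n_for_loa_ci_half_width(half_width_in_sd, z=1.96):
    """Smallest n giving a CI half-width no larger than the requested value."""
    return math.ceil(3.0 * (z / half_width_in_sd) ** 2)


for n in (40, 100, 200):
    print(f"n = {n}: half-width ≈ {loa_ci_half_width_in_sd(n):.2f} SD")
print("n needed for ±0.5 SD:", n_for_loa_ci_half_width(0.5))
```

Under this approximation the half-width is about 0.54 SD at n = 40, 0.34 SD at n = 100, and 0.24 SD at n = 200, which illustrates why small validation studies should always report confidence intervals alongside the LoA.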

Figure 2: Nutrition Device Validation Framework. This diagram shows the relationship between reference methods, wearable devices, and Bland-Altman analysis in validation studies.

The Bland-Altman analysis provides an essential methodological framework for validating wearable technologies in nutrition research. Proper interpretation of the mean difference, standard deviation of differences, and 95% limits of agreement enables researchers to make informed decisions about the clinical utility and limitations of emerging measurement devices. As the field of precision nutrition advances, rigorous method comparison studies will be crucial for establishing which technologies are sufficiently valid for research and clinical applications. By following the protocols and interpretation guidelines outlined in this document, researchers can standardize their validation approaches and generate comparable evidence across studies, ultimately accelerating the development of reliable wearable solutions for nutritional assessment.

The adoption of wearable technology for monitoring nutritional parameters, such as body composition and dietary intake, represents a paradigm shift in nutritional science and personalized health. However, the accurate validation of these technologies is paramount for their reliable application in research and clinical practice. The Bland-Altman analysis has emerged as a fundamental statistical methodology for assessing the agreement between new wearable technologies and established reference or "criterion" methods [1] [2]. This application note details the scope, protocols, and analytical frameworks for validating wearable devices that estimate body composition and energy intake, providing researchers with standardized approaches for method comparison studies.

The complexity of validating wearable devices stems from the multifaceted nature of nutritional biomarkers. Unlike many clinical chemistry measurements, nutrition-related parameters like body fat percentage and energy intake present unique challenges due to biological variability, methodological constraints of reference methods, and the influence of human behavior on measurements. This document provides a comprehensive framework for the validation of these technologies, with all quantitative data synthesized into structured tables and all methodological workflows visualized through standardized diagrams to enhance reproducibility and clarity.

Wearable Technology for Body Composition Analysis

Recent advancements have integrated bioelectrical impedance analysis (BIA) into consumer wearable devices, such as smartwatches, offering unprecedented accessibility for tracking body composition measures outside clinical settings [12]. These devices operate by measuring the resistance of body tissues to a low-level electrical current, estimating components like body fat percentage (BF%) and skeletal muscle mass percentage (SM%) through proprietary algorithms [12]. The validation of these technologies typically employs dual-energy x-ray absorptiometry (DXA) as the criterion method due to its high accuracy and reliability [12].

Table 1: Key Validation Metrics for Body Composition Wearables (vs. DXA)

| Measurement | Device Type | Correlation (r) | Concordance (CCC) | Mean Absolute Percentage Error (MAPE) |

|---|---|---|---|---|

| Body Fat % | Wearable BIA | 0.93 | 0.91 | 14.3% |

| Body Fat % | Clinical BIA | 0.96 | 0.86 | 21.1% |

| Skeletal Muscle % | Wearable BIA | 0.92 | 0.45 | 20.3% |

| Skeletal Muscle % | Clinical BIA | 0.89 | 0.25 | 36.1% |

The data in Table 1, derived from a study of 108 physically active participants, demonstrate that wearable BIA devices can achieve very strong correlations with DXA for body fat percentage (r=0.93), with agreement (CCC=0.91) that may even exceed that of the clinical BIA comparator (CCC=0.86) [12]. However, the validation data also reveal important limitations: wider limits of agreement and higher error rates were observed in individuals with higher body fat percentages, indicating proportional bias, and skeletal muscle mass estimates showed notably weaker agreement despite strong correlations [12]. This discrepancy highlights why correlation coefficients alone are insufficient for method comparison and why Bland-Altman analysis is essential.

Experimental Protocol: Body Composition Validation

The following protocol outlines the methodology for validating wearable body composition devices against criterion methods:

Participant Preparation and Eligibility:

- Recruit participants representing the target population (e.g., 56 females, 52 males in the reference study) [12].

- Establish inclusion/exclusion criteria: age range (18-80 years), physical activity level (≥3 days/week moderate-vigorous activity), and absence of contraindications (cardiovascular disease, pregnancy, significant musculoskeletal impairments) [12].

- Standardize pre-test conditions: 3-hour fast from food, caffeine, and other drinks; 24-hour abstinence from alcohol, smoking, and heavy exercise [12].

Testing Procedure:

- Conduct all assessments during a single visit with participants wearing lightweight athletic clothing.

- Perform measurements in sequence:

  - Criterion Method: Conduct total body DXA scan (e.g., Lunar iDXA) following manufacturer protocols [12].

  - Wearable Device: Utilize wearable BIA device (e.g., Samsung Galaxy Watch5) with participants placing middle and ring fingers on the metal knobs for 30-60 seconds as per device instructions [12].

  - Clinical Reference: Administer clinical BIA (e.g., InBody 770) with participants positioned according to device instructions for hand-to-foot analysis [12].

- Ensure all devices report output metrics directly in consistent units (kg for mass, percentage for composition) for subsequent analysis.

Data Collection and Management:

- Record fat mass, skeletal muscle mass, body fat percentage, and skeletal muscle percentage from all devices.

- Utilize structured data management systems (e.g., REDCap) for data integrity [12].

- Export data for statistical analysis in appropriate software packages (e.g., jamovi, R) [12].

Wearable Technology for Energy Intake Estimation

The accurate estimation of energy intake represents a more significant challenge for wearable technologies compared to body composition. Emerging approaches include wrist-worn devices that claim to automatically track energy intake through various sensing mechanisms, including bioimpedance signals interpreted by computational algorithms that detect patterns associated with nutrient absorption [8]. The validation of these technologies requires sophisticated reference methods, often involving controlled feeding studies or objective biomarkers like the doubly labeled water (DLW) method for total energy expenditure [16] [17].

Table 2: Energy Intake Estimation Methods and Validation Approaches

| Method Category | Specific Method | Key Characteristics | Validation Challenges |

|---|---|---|---|

| Wearable Sensors | Wristband Technology (e.g., GoBe2) | Uses bioimpedance to estimate calorie intake from fluid shifts; claims automatic tracking | High variability (Bland-Altman LoA: -1400 to 1189 kcal/day); signal loss issues [8] |

| Digital Dietary Assessment | Experience Sampling Method (ESDAM) | App-based prompts for 2-hour recalls over 2 weeks; reduces recall bias | Convergent validity against 24-HDR; objective biomarkers needed [16] |

| Objective Biomarkers | Doubly Labeled Water (DLW) | Criterion for total energy expenditure; based on isotopic elimination | High cost, specialized expertise required, reflects expenditure not intake [16] [17] |

| Traditional Recall | 24-Hour Dietary Recall | Structured interview using AMPM method; nutrient analysis via USDA database | Memory dependency, misreporting, non-falsifiable [18] |

Validation studies of energy intake wearables have revealed substantial challenges. One study of a commercial wristband (GoBe2) found a mean bias of -105 kcal/day compared to controlled reference meals, but with 95% limits of agreement ranging from -1400 to 1189 kcal/day, indicating considerable variability at the individual level [8]. The regression equation of the Bland-Altman plot (Y = -0.3401X + 1963) demonstrated a tendency for the device to overestimate at lower calorie intakes and underestimate at higher intakes [8]. Researchers identified transient signal loss from the sensor technology as a major source of error in computing dietary intake.

Experimental Protocol: Energy Intake Validation

Reference Method Development (Controlled Feeding):

- Collaborate with a metabolic kitchen or dining facility to prepare and serve calibrated study meals [8].

- Precisely record energy and macronutrient composition of all meals using established food composition databases (e.g., USDA FoodData Central) [19].

- Observe participants during consumption to verify adherence to protocol and complete intake recording.

Wearable Device Testing:

- Instruct participants to wear the test device (e.g., nutrition tracking wristband) consistently throughout the study period (e.g., two 14-day test periods) [8].

- Ensure proper device placement and function according to manufacturer specifications.

- Synchronize device data collection with reference method timeframes.

Biomarker Validation (For Method Comparison):

- Implement the doubly labeled water (DLW) method for total energy expenditure assessment as a reference for energy intake under weight-stable conditions [16].

- Collect urinary nitrogen excretion measurements as a biomarker for protein intake validation [16].

- Analyze serum carotenoids as biomarkers for fruit and vegetable consumption [16].

- Examine erythrocyte membrane fatty acid composition as a biomarker for dietary fat intake [16].

Data Analysis:

- Extract daily energy intake estimates (kcal/day) from both the wearable device and reference methods.

- Perform Bland-Altman analysis to assess agreement, calculating mean bias and 95% limits of agreement [8] [1].

- Conduct correlation analyses (Pearson or Spearman) to evaluate the strength of relationship between methods.

Bland-Altman Analysis: A Critical Methodological Framework

Fundamentals and Interpretation

The Bland-Altman plot, also known as the difference plot, is a graphical method specifically designed to assess the agreement between two measurement techniques [1] [2]. Unlike correlation coefficients that measure the strength of relationship but not agreement, Bland-Altman analysis quantifies the actual differences between methods, making it ideally suited for wearable technology validation.

The methodology involves plotting the differences between two measurements against their averages for each subject [1]. Key elements of the plot include:

- Mean Difference: The average of all differences (measurement A - measurement B), representing the systematic bias between methods.

- Limits of Agreement (LoA): Defined as the mean difference ± 1.96 times the standard deviation of the differences, representing the range within which 95% of differences between the two methods are expected to fall [2].

- Clinical Agreement Limits: Pre-defined acceptable differences based on clinical requirements or biological considerations [2].

Table 3: Key Statistical Measures in Bland-Altman Analysis

| Statistical Measure | Calculation | Interpretation | Acceptance Criteria |

|---|---|---|---|

| Mean Difference (Bias) | Σ(Method A - Method B)/n | Systematic difference between methods; positive value indicates A > B | Ideally zero; clinical relevance determines acceptability |

| Standard Deviation of Differences | √[Σ(d - d̄)²/(n-1)] | Spread of differences around the mean | Smaller values indicate better precision |

| 95% Limits of Agreement | d̄ ± 1.96×SD | Range containing 95% of differences between methods | Should fall within pre-defined clinical agreement limits |

| Confidence Intervals for LoA | Statistical estimation | Precision of the limits of agreement estimates | Narrower intervals indicate more reliable LoA estimates |

Application to Wearable Nutrition Data

In the context of wearable nutrition monitoring, Bland-Altman analysis provides critical insights that correlation analysis alone cannot reveal. For example, in body composition validation, while a wearable BIA device might show strong correlation with DXA (r=0.93 for BF%) [12], the Bland-Altman analysis can reveal:

- Proportional Bias: Systematic overestimation or underestimation that changes across the measurement range (e.g., higher errors in individuals with elevated body fat) [12].

- Heteroscedasticity: Non-constant variance of differences across the measurement range, requiring data transformation or regression-based approaches [2].

- Clinical Significance: Whether the observed differences are large enough to impact interpretation at the individual level.

For energy intake estimation, where absolute accuracy is challenging, Bland-Altman analysis helps quantify the practical utility of wearable devices. The wide limits of agreement observed in validation studies (-1400 to 1189 kcal/day) [8] demonstrate that while these devices might show reasonable accuracy at the group level (mean bias -105 kcal/day), their individual-level precision remains insufficient for many clinical or research applications.

Essential Research Toolkit

Table 4: Research Reagent Solutions for Wearable Nutrition Validation

| Category | Essential Item | Function/Application | Examples/Specifications |

|---|---|---|---|

| Criterion Methods | DXA Scanner | Gold-standard body composition assessment via tissue density differentiation | Lunar iDXA (GE) with enCORE software [12] |

| Criterion Methods | Doubly Labeled Water Kit | Isotopic method for measuring total energy expenditure in free-living conditions | ²H₂¹⁸O isotopes with mass spectrometry analysis [16] [17] |

| Reference Devices | Clinical BIA Analyzer | Established bioelectrical impedance method for body composition | InBody 770 (hand-to-foot configuration) [12] |

| Reference Devices | Metabolic Chamber | Controlled environment for precise energy expenditure measurement | Whole-room calorimeter with respiratory gas analysis [17] |

| Biomarker Analysis | Urinary Nitrogen Assay | Biomarker validation for protein intake assessment | Kjeldahl method or chemiluminescence detection [16] |

| Biomarker Analysis | Serum Carotenoids Analysis | Biomarker for fruit and vegetable consumption validation | HPLC with UV-Vis or mass spectrometry detection [16] |

| Data Resources | Food Composition Database | Nutrient analysis for reference diet creation and validation | USDA FoodData Central [19], NHANES dietary data [18] |

| Data Resources | Dietary Assessment Platform | Digital tools for comparative dietary intake measurement | Automated Multiple-Pass Method (AMPM) for 24-hour recall [18] |

| Statistical Tools | Bland-Altman Analysis Software | Method comparison and agreement statistics | MedCalc, R Statistical Software, jamovi [12] [2] |

The validation of wearable technologies for nutrition monitoring requires sophisticated methodological approaches that properly account for both random and systematic errors. Bland-Altman analysis provides an essential framework for quantifying agreement between emerging wearable devices and established reference methods, offering advantages over simple correlation analyses by highlighting bias patterns and limits of agreement that determine practical utility.

Current evidence suggests that wearable BIA devices show promise for body composition assessment, particularly for body fat percentage in female populations, while technologies for automated energy intake estimation remain in development with significant accuracy limitations. Researchers should implement the standardized protocols and analytical approaches outlined in this application note to ensure rigorous validation of wearable nutrition monitoring technologies across diverse populations and use cases.

The continued development and validation of these technologies represent a critical pathway toward more precise, personalized nutrition monitoring, with potential applications in clinical practice, public health, and pharmaceutical development.

Practical Application: Implementing Bland-Altman Analysis Across Wearable Nutrition Technologies

This case study investigates the validity of smartwatch-based bioelectrical impedance analysis (BIA) for estimating body composition, using dual-energy X-ray absorptiometry (DXA) as the criterion method. The analysis is framed within a broader research thesis utilizing Bland-Altman analysis to assess the agreement between wearable nutrition data and clinical gold standards. Data from a study of 108 physically active participants demonstrates that a consumer smartwatch (Samsung Galaxy Watch5) can provide body fat percentage (BF%) estimates with very strong correlation (r = 0.93) and agreement (Lin's CCC = 0.91) to DXA. However, the agreement for skeletal muscle mass percentage (SM%) was weaker (Lin's CCC = 0.45), and proportional bias was observed in individuals with higher BF%. The findings support the cautious use of wearable BIA for general body composition monitoring in environments where laboratory-based methods are unavailable, while highlighting the critical role of Bland-Altman analysis in quantifying measurement bias and limits of agreement for wearable data [12].

Body composition, including body fat percentage (BF%) and skeletal muscle mass percentage (SM%), is a critical measure for understanding metabolic health, physical performance, and nutritional status. Unlike simple body mass index (BMI), body composition differentiates between fat and lean tissue, providing a nuanced view of health that is valuable for researchers, clinicians, and individuals managing their fitness [12]. Dual-energy X-ray absorptiometry (DXA) is widely considered a criterion method for body composition assessment due to its high accuracy and reliability [12] [20]. However, DXA is expensive, requires specialized facilities, and is not suitable for frequent monitoring.

Recent technological advancements have integrated bioelectrical impedance analysis (BIA) into commercially available wearable devices, such as smartwatches. These wearables offer a non-invasive, quick, and accessible solution for frequent body composition tracking, enabling measurements in diverse settings like homes and training centers [12] [21]. Despite their potential, the validity of these consumer devices against criterion methods like DXA requires rigorous, independent evaluation. This case study examines the accuracy of a wrist-worn wearable BIA device, employing Bland-Altman analysis—a key statistical method for assessing agreement between two measurement techniques—to quantify bias and limits of agreement, thereby providing a framework for interpreting wearable-derived nutrition and body composition data in research and clinical applications [12].

The following tables summarize the key quantitative findings from the validation study, comparing the wearable smartwatch BIA (Samsung Galaxy Watch5) and a clinical BIA device (InBody 770) against DXA [12].

Table 1: Overall Agreement with DXA in Body Composition Estimates (n=108)

| Metric | Device | Pearson's r | Lin's CCC | MAPE | MAE | Clinical Interpretation |

|---|---|---|---|---|---|---|

| Body Fat % (BF%) | Wearable-BIA | 0.93 | 0.91 | 14.3% | - | Very strong correlation and agreement [12] |

| Body Fat % (BF%) | Clinical-BIA | 0.96 | 0.86 | 21.1% | - | Very strong correlation, good agreement [12] |

| Skeletal Muscle % (SM%) | Wearable-BIA | 0.92 | 0.45 | 20.3% | - | Strong correlation, weak agreement [12] |

| Skeletal Muscle % (SM%) | Clinical-BIA | 0.89 | 0.25 | 36.1% | - | Strong correlation, weak agreement [12] |

Table 2: Sex-Stratified Accuracy of the Wearable Smartwatch for BF%

| Participant Group | Lin's CCC | MAPE | Equivalence to DXA |

|---|---|---|---|

| Females (n=56) | 0.91 | 9.19% | Supported |

| Males (n=52) | Not reported | Not reported | Not reported |

Table 3: Key Findings from a Supplementary Validation Study

A supplementary study of 75 participants further assessed the precision of wearable BIA for Fat-Free Mass (FFM) [21] [22].

| Metric | Method | Test-Retest Precision (CV) | RMSE | Concordance with DXA (Lin's CCC) |

|---|---|---|---|---|

| Fat-Free Mass (FFM) | DXA (Criterion) | 0.7% | 0.4 kg | - |

| Fat-Free Mass (FFM) | Wearable-BIA | 1.3% | 0.7 kg | 0.97 (after systematic correction) [21] [22] |

Experimental Protocols

Participant Preparation and Pre-Testing Protocol

Standardized pre-test conditions are crucial for obtaining reliable BIA measurements, as hydration, food intake, and exercise can significantly alter results [12] [20].

- Fasting: Participants are instructed to refrain from food, caffeine, and other drinks for 3 hours prior to testing.

- Hydration: Participants are to consume water as they normally would.

- Activity & Substances: Avoid alcohol, smoking, and heavy exercise for 24 hours prior to the assessment.

- Clothing: Participants should wear lightweight athletic clothing during testing.

Device Measurement Procedures

Body composition is assessed using three devices in a single session. The following workflow outlines the sequential testing procedure.

DXA Scan Procedure

- Device: Lunar iDXA (General Electric) with enCORE v18 software.

- Positioning: The participant lies supine on the scanning bed for the duration of the whole-body scan as per manufacturer and laboratory protocols [12].

- Output: The software directly reports values for fat mass, skeletal muscle mass, BF%, and SM%.

Wearable Smartwatch BIA Procedure

- Device: Samsung Galaxy Watch5.

- Setup: Demographic information (age, height, weight) is input into the device. The watch is secured on the participant's left wrist.

- Measurement: The participant places the middle and ring fingers of their right hand on the two metal electrode knobs on the side of the watch. The reading takes 30 seconds to 1 minute to complete [12] [21].

- Output: The device directly reports BF% and other body composition metrics via its proprietary algorithm.

Clinical BIA Analyzer Procedure

- Device: InBody 770.

- Positioning: The participant stands barefoot on the device's foot electrodes and grips the hand electrodes, assuming a standing hand-to-foot position as per device instructions [12].

- Output: The device provides estimates for BF%, SM%, and other parameters.

Data Analysis Protocol

- Statistical Agreement: Use Bland-Altman plots to visualize the mean difference (bias) between the BIA devices and DXA, and to calculate the 95% Limits of Agreement (LoA). This identifies proportional bias and helps determine the clinical acceptability of the devices [12].

- Correlation and Concordance: Calculate Pearson's r for linearity and Lin's Concordance Correlation Coefficient (CCC) to assess agreement, which is more informative than correlation alone [12].

- Error Metrics: Compute Mean Absolute Percentage Error (MAPE) and Mean Absolute Error (MAE) to quantify the magnitude of error relative to the criterion standard [12].

- Equivalence Testing: Employ tests like the Two One-Sided Tests (TOST) procedure to determine if the measurements from the new device are statistically equivalent to the criterion within a pre-specified margin [12].
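The error metrics named in this protocol can be sketched as follows, using the criterion value as the denominator for the percentage error. The function names and example values are hypothetical; equivalence testing (TOST) is not shown because it needs t-distribution quantiles that the standard library does not provide.

```python
import statistics


def mean_absolute_error(test_values, reference_values):
    """Average absolute difference between test and criterion values."""
    return statistics.mean(abs(t - r) for t, r in zip(test_values, reference_values))


def mean_absolute_percentage_error(test_values, reference_values):
    """Average absolute difference as a percentage of the criterion value."""
    if any(r == 0 for r in reference_values):
        raise ValueError("criterion values must be non-zero for MAPE")
    return 100.0 * statistics.mean(
        abs(t - r) / abs(r) for t, r in zip(test_values, reference_values)
    )


# Hypothetical body fat percentages: wearable BIA vs. DXA
dxa = [20.0, 30.0, 25.0, 35.0]
wearable = [22.0, 27.0, 26.0, 31.0]
print(f"MAE  = {mean_absolute_error(wearable, dxa):.2f} percentage points")
print(f"MAPE = {mean_absolute_percentage_error(wearable, dxa):.1f}%")
```

Both metrics discard the sign of the error, so they complement the Bland-Altman bias but cannot replace it: a device with a large MAE may still have a bias close to zero if its over- and underestimates cancel.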

Visualizing Bland-Altman Analysis for Wearable Data

Bland-Altman analysis is the recommended method for assessing the agreement between a new measurement technique (wearable BIA) and a gold standard (DXA). The following diagram illustrates the key components of the plot and their interpretation for a body fat percentage dataset, where a proportional bias is often observed.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials and Equipment for BIA Validation Studies

| Item | Function & Application in Validation |

|---|---|

| Dual-Energy X-Ray Absorptiometry (DXA) | Criterion method for body composition. Provides high-accuracy benchmarks for fat mass, lean mass, and bone mass against which wearable devices are validated [12] [20]. |

| Wearable BIA Device (e.g., Samsung Galaxy Watch) | Device Under Test (DUT). Uses a low-level electrical current passed through the upper body via wrist and hand electrodes to estimate body composition via proprietary algorithms [12] [21]. |

| Clinical BIA Analyzer (e.g., InBody 770) | Established clinical comparator. A standing hand-to-foot BIA device used as an intermediate standard to contextualize the performance of the wearable device [12]. |

| Bioelectrical Impedance Raw Parameters (R, Xc, PhA) | Foundational electrical measurements. Resistance (R) and Reactance (Xc) are used to calculate the Phase Angle (PhA), an indicator of cellular health. Access to these raw data is essential for applying population-specific predictive equations and improving result accuracy [20]. |

| Standard Operating Procedure (SOP) Protocol | A detailed, step-by-step manual ensuring measurement consistency. It covers participant preparation, device operation sequence, and environmental controls, which are critical for minimizing variability and ensuring reproducible results in a validation study [12]. |

The accurate measurement of energy intake (EI) is a cornerstone of nutritional science, critical for research on energy balance, obesity, and metabolic diseases. Traditional methods, such as food diaries and 24-hour recalls, are plagued by significant reporting biases and inaccuracies [23]. The emergence of wearable technology promises a paradigm shift towards objective, automatic dietary monitoring (ADM). This case study critically evaluates the validation of a commercial dietary wristband, focusing on the application of Bland-Altman analysis to assess its agreement with a controlled reference method for measuring energy intake in free-living adults. This analysis is situated within a broader thesis on the use of Bland-Altman methodology for validating wearable nutrition data, providing a framework for researchers and drug development professionals to appraise the real-world performance of such devices.

Background: The Challenge of Dietary Assessment

Precision nutrition requires moving beyond population-level dietary guidelines to personalized interventions, a transition made possible by modern tools that provide dynamic, individual-specific assessments of dietary intake [8]. However, the accurate quantification of food intake remains a fundamental challenge. Traditional memory-based dietary assessments are non-falsifiable and reflect perceived rather than true intake, while even more advanced methods like remote food photography are limited by an inability to record in true real time and difficulties in estimating portion sizes [8]. Wearable sensors offer a potential solution by directly measuring physiological responses to food intake, thus bypassing the reliance on user memory and cooperation.

Materials and Experimental Protocol

Technology Under Investigation

The device evaluated in this case study was the GoBe2 wristband (Healbe Corp.). This wearable technology employs bioimpedance spectroscopy, utilizing computational algorithms to convert bioimpedance signals into patterns of extracellular and intracellular fluid shifts associated with nutrient influx. It automatically estimates daily energy intake (calories) and macronutrient intake (grams of protein, fat, and carbohydrates) by tracking these physiological fluctuations [8].

Reference Method Development

A robust reference method was established to validate the wristband's estimates [8]:

- Collaboration with Dining Facility: The research team collaborated with a university dining facility to prepare and serve calibrated study meals.

- Controlled Consumption: The energy and macronutrient intake of each participant was precisely recorded through direct observation by a trained research team during meal consumption.

- Dietary Recording: Participants used an accompanying mobile app to log their dietary intake, consistent with the device's intended use case.

Participant Recruitment and Study Design

- Participants: 25 free-living adults were recruited (age range: 18-50 years). Exclusion criteria were strict, encompassing chronic diseases, current dieting, restricted dietary habits, pregnancy, smoking, and use of medications affecting digestion or metabolism [8].

- Study Protocol: The study consisted of two 14-day test periods. During these periods, participants were required to use the wristband and its mobile app consistently. Their dietary intake was simultaneously measured using the reference method described above [24] [8].

Key Research Reagents and Solutions

Table 1: Essential Research Materials and Their Functions in the Validation Study

| Item / Solution | Function in the Experimental Protocol |

|---|---|

| GoBe2 Wristband (Healbe Corp.) | The test device; uses bioimpedance signals to automatically estimate energy and macronutrient intake. |

| Custom-Calibrated Study Meals | Served as the reference for true energy/macronutrient intake; prepared in a metabolic kitchen. |

| Mobile Application | Accompanied the wristband; used by participants for dietary logging as part of the device's ecosystem. |

| Continuous Glucose Monitor (CGM) | Used to measure adherence to dietary reporting protocols (data not reported in primary outcomes). |

| Bland-Altman Statistical Method | The primary statistical analysis for assessing agreement between the wristband and reference method. |

The following workflow diagram illustrates the sequential structure of the experimental protocol.

Data Analysis: Application of Bland-Altman Method

Rationale for Bland-Altman Analysis

The Bland-Altman analysis is the preferred method for assessing agreement between two measurement techniques, as it quantifies the bias (mean difference) and the limits of agreement (LOA) within which 95% of the differences between the two methods are expected to fall. This is more informative than simple correlation, which measures association but not agreement [25].
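The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the study's analysis code: the function name `bland_altman` and the sample values are hypothetical, and the sketch assumes the conventional definition of the 95% limits as bias ± 1.96 × SD of the differences, with each pair treated as independent. Repeated daily measurements from the same participant would normally call for a repeated-measures variant of the method.

```python
import numpy as np

def bland_altman(test, reference):
    """Return bias, SD of differences, and 95% limits of agreement.

    Differences are computed as test minus reference, matching the
    sign convention used for the mean bias in this case study.
    """
    test = np.asarray(test, dtype=float)
    reference = np.asarray(reference, dtype=float)
    diff = test - reference
    bias = diff.mean()
    sd = diff.std(ddof=1)  # sample standard deviation of the differences
    return {
        "bias": bias,
        "sd": sd,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
    }

# Hypothetical illustrative values in kcal/day (not study data)
reference = [2000, 2500, 1800, 3000, 2200]
test = [2100, 2300, 1900, 2600, 2250]
result = bland_altman(test, reference)
print(result)
```

A negative `bias` in this convention means the test device reads lower than the reference on average.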

The study collected 304 paired cases of daily dietary intake (kcal/day) from the reference method and the wristband [24] [8].

Table 2: Bland-Altman Analysis Results for Energy Intake (kcal/day) [24] [8]

| Parameter | Value |

|---|---|

| Mean Bias (Test - Reference) | -105 kcal/day |

| Standard Deviation (SD) of Differences | 660 kcal/day |

| 95% Limits of Agreement (LOA) | -1400 to 1189 kcal/day |

| Regression Equation (Bias vs. Average) | Y = -0.3401X + 1963 |

| Statistical Significance of Regression | P < 0.001 |
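As a quick arithmetic check, the reported limits of agreement can be approximately reproduced from the mean bias and SD in Table 2. The sketch assumes the conventional bias ± 1.96 × SD definition; the source does not state which multiplier or rounding was applied.

```python
# Values taken from Table 2 (kcal/day)
bias = -105.0
sd = 660.0

# Assumed conventional 95% multiplier for normally distributed differences
half_width = 1.96 * sd
loa_lower = bias - half_width
loa_upper = bias + half_width

print(round(loa_lower, 1), round(loa_upper, 1))
```

The computed limits come out at roughly -1399 and 1189 kcal/day, which agrees with the reported -1400 to 1189 kcal/day to within rounding.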

Interpretation of Findings and Clinical Relevance

Analysis of Bias and Limits of Agreement

The mean bias of -105 kcal/day indicates a slight average underestimation of energy intake by the wristband compared to the reference method. However, the device's practical usefulness is determined less by this average than by the very wide limits of agreement (LOA). The 95% LOA of -1400 to 1189 kcal/day mean that, on any given day, the wristband's estimate could plausibly fall as much as 1400 kcal below or 1189 kcal above the reference value. This range is unacceptably large for most clinical or research applications where precise energy intake measurement is required.

Proportional Bias and Its Implications

The significant regression equation (Y = -0.3401X + 1963, P < 0.001) reveals a systematic proportional bias [24] [8]. This indicates that the device's performance is not consistent across the range of intake:

- It has a tendency to overestimate energy intake at lower levels of consumption.

- It has a tendency to underestimate energy intake at higher levels of consumption.

This type of bias is a critical flaw: the device's error is not random but depends systematically on the user's intake level, so a single constant offset cannot correct it.
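Proportional bias of this kind is typically checked by regressing the paired differences on the paired averages. The sketch below illustrates the idea on synthetic data. The function name `proportional_bias` and the simulated values are hypothetical, and the sketch fits the line with NumPy only. A significance test for the slope, as reported in the study, would need an additional routine such as `scipy.stats.linregress`.

```python
import numpy as np

def proportional_bias(test, reference):
    """Fit difference = slope * average + intercept by least squares."""
    test = np.asarray(test, dtype=float)
    reference = np.asarray(reference, dtype=float)
    diff = test - reference           # y-axis of the Bland-Altman plot
    avg = (test + reference) / 2.0    # x-axis of the Bland-Altman plot
    slope, intercept = np.polyfit(avg, diff, 1)
    return slope, intercept

# Synthetic data: a simulated device that compresses the intake range,
# reading high at low intakes and low at high intakes (not study data)
rng = np.random.default_rng(0)
reference = rng.uniform(1500, 3500, size=200)
test = 0.7 * reference + 700 + rng.normal(0, 100, size=200)

slope, intercept = proportional_bias(test, reference)
print(f"slope={slope:.3f}, intercept={intercept:.0f}")
```

A slope near zero would indicate that the bias is roughly constant across the measurement range. A clearly negative slope, as in this simulation, corresponds to the pattern of overestimation at low intakes and underestimation at high intakes.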

The researchers identified transient signal loss from the wristband's sensor technology as a major source of error in computing dietary intake [24] [8]. This highlights a common technical challenge in wearable devices: maintaining consistent, high-quality signal acquisition in free-living conditions.

Comparative Landscape of Wearable Dietary Technologies

The field of Automatic Dietary Monitoring (ADM) is rapidly evolving, with the bioimpedance approach being one of several technological pathways.

Table 3: Comparison of Emerging Wearable Technologies for Dietary Monitoring

| Technology | Principle | Example Device | Key Advantages/Challenges |

|---|---|---|---|

| Bioimpedance Sensing | Measures fluid shifts via electrical impedance to estimate nutrient influx. | GoBe2 Wristband [8], iEat [26] | Advantage: Fully automatic, estimates macros. Challenge: Signal loss, variable accuracy shown in validation. |

| Wearable Cameras + AI | Uses egocentric cameras and computer vision to passively capture and analyze food. | EgoDiet System [15] | Advantage: Passive, provides rich contextual data (food type, sequence). Challenge: Privacy concerns, computational complexity for portion size. |

| Accelerometry (Intake-Balance) | Uses wrist-worn accelerometers to estimate Energy Expenditure (EE), then calculates EI as EI = EE + ΔES. | ActiGraph with Open-Source Algorithms [27] | Advantage: Based on energy balance principle, uses research-grade devices. Challenge: Error propagation from both EE and body composition measures. |

| Acoustic Sensing | Uses a neck-borne microphone to detect and analyze chewing and swallowing sounds. | AutoDietary [26] | Advantage: Direct detection of ingestion events. Challenge: Susceptible to ambient noise, classifies food type with limited accuracy. |
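The intake-balance relation in Table 3, EI = EE + ΔES, can be illustrated with a short sketch. Everything beyond that relation is an assumption made for illustration: the function name, the way ΔES is derived from changes in fat mass and fat-free mass, and in particular the default energy-density coefficients, which are placeholders and should be replaced with the values specified by the method being followed.

```python
def energy_intake_intake_balance(
    ee_kcal_per_day,
    delta_fm_kg,
    delta_ffm_kg,
    days,
    fm_kcal_per_kg=9500.0,   # assumed placeholder energy density of fat mass
    ffm_kcal_per_kg=1000.0,  # assumed placeholder energy density of fat-free mass
):
    """Estimate mean daily energy intake as EI = EE + delta ES.

    delta ES is the average daily change in body energy stores over the
    measurement period, here derived from body composition changes.
    """
    delta_es = (fm_kcal_per_kg * delta_fm_kg
                + ffm_kcal_per_kg * delta_ffm_kg) / days
    return ee_kcal_per_day + delta_es

# Hypothetical participant: 2500 kcal/day expenditure, small losses in
# fat mass and fat-free mass over a 14-day period
ei = energy_intake_intake_balance(2500.0, -0.5, -0.1, 14)
print(round(ei, 1))
```

Because EE and both body composition changes enter the estimate directly, measurement error in any of them carries through to the estimated intake, which is the error-propagation challenge noted in the table.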

The following diagram maps the logical decision process for selecting a dietary monitoring technology based on research objectives and constraints.

This case study demonstrates a rigorous application of Bland-Altman analysis to validate a wearable dietary device. The key conclusion is that while the tested wristband showed a small average bias, its high individual-level variability and significant proportional bias limit its utility for applications requiring precise measurement of energy intake at the individual level [24] [8]. The study underscores the immense challenge of automatically tracking nutritional intake with high accuracy in free-living conditions.

For future research and validation studies in this domain, the following protocols are recommended:

- Incorporate Objective Reference Methods: Where possible, use controlled feeding studies with calibrated meals or the intake-balance method (using Doubly Labeled Water and DXA) as a criterion standard to avoid the biases of self-report [27] [28].

- Conduct Longer Validation Studies: Extend testing beyond short periods to assess device reliability, user compliance, and performance over time.

- Report Comprehensive Metrics: Beyond Bland-Altman analysis, include metrics like Mean Absolute Error (MAE) and Mean Absolute Percentage Error (MAPE) to provide a fuller picture of device accuracy [27].

- Test in Diverse Populations: Ensure validation studies include participants with varying body compositions, ages, and health statuses to evaluate generalizability.

- Rigorously Assess Macronutrient Tracking: Extend validation beyond total energy to the critical endpoints of protein, carbohydrate, and fat intake, which are essential for many clinical and research applications.
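The supplementary error metrics recommended above, MAE and MAPE, are straightforward to compute alongside a Bland-Altman analysis. The following is a minimal sketch with a hypothetical function name and illustrative values. It assumes no reference value is zero, since MAPE is undefined in that case.

```python
import numpy as np

def mae_mape(test, reference):
    """Return (MAE, MAPE in percent) of test values against a reference."""
    test = np.asarray(test, dtype=float)
    reference = np.asarray(reference, dtype=float)
    abs_err = np.abs(test - reference)
    mae = abs_err.mean()
    mape = (abs_err / np.abs(reference)).mean() * 100.0
    return mae, mape

# Hypothetical daily energy intakes in kcal/day (not study data)
reference = [2000, 2500, 1600]
test = [2200, 2250, 1600]
mae, mape = mae_mape(test, reference)
print(f"MAE={mae:.1f} kcal/day, MAPE={mape:.2f}%")
```

Unlike the mean bias, both metrics use absolute errors, so overestimates and underestimates do not cancel each other out.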

Accurate dietary assessment is fundamental to nutrition research, public health monitoring, and chronic disease management. Traditional methods, primarily based on self-report (e.g., 24-hour dietary recalls, food diaries), are labor-intensive and prone to significant error and bias, including systematic under-reporting of energy intake [29] [30]. These limitations distort the understanding of diet-disease relationships and hinder effective intervention strategies.

The emergence of passive wearable cameras, coupled with Artificial Intelligence (AI), presents a transformative approach. These systems automatically capture images of food consumption, minimizing user burden and reporting bias. This case study examines the development, validation, and application of these technologies, with a specific focus on the statistical validation of their performance, a critical consideration for their adoption in rigorous scientific research and drug development.

A key step in validating new measurement tools against an established reference is assessing their agreement. The Bland-Altman analysis is a fundamental statistical method used for this purpose, providing insights into the bias and limits of agreement between two measurement techniques [8]. The following tables summarize the quantitative performance of AI-enabled wearable cameras against traditional dietary assessment methods, with metrics relevant to agreement studies.

Table 1: Performance Comparison of Dietary Assessment Methods

| Assessment Method | Study/Context | Key Performance Metric | Value | Implied Bias vs. Reference |

|---|---|---|---|---|

| EgoDiet (AI) | Study A (London vs. Dietitian) | Mean Absolute Percentage Error (MAPE) | 31.9% | Lower error than human expert |

| Dietitian's Assessment | Study A (Reference) | Mean Absolute Percentage Error (MAPE) | 40.1% | Reference for AI comparison |

| EgoDiet (AI) | Study B (Ghana vs. 24HR) | Mean Absolute Percentage Error (MAPE) | 28.0% | Lower error than self-report |

| 24-Hour Dietary Recall (24HR) | Study B (Reference) | Mean Absolute Percentage Error (MAPE) | 32.5% | Reference for AI comparison |

| Camera-Assisted 24HR | Northern Ireland Study | Mean Energy Intake (kJ/d) | 9677.8 ± 2708.0 | Systematically higher intake vs. recall alone |

| 24-Hour Recall Alone | Northern Ireland Study | Mean Energy Intake (kJ/d) | 9304.6 ± 2588.5 | Reference for camera-assisted method |

Table 1 Note: MAPE measures the average absolute percentage error, where a lower value indicates higher accuracy. The consistent reduction in MAPE and the increased energy intake reported with camera assistance suggest that the AI method reduces the systematic under-reporting bias inherent in traditional methods [15] [31].

Table 2: Validation Metrics from Related Wearable Technology

| Device / Technology | Measurement Target | Comparison Method | Agreement / Accuracy Metric | Value |

|---|---|---|---|---|

| Wearable BIA Smartwatch | Body Fat % (BF%) | Dual-energy X-ray Absorptiometry (DXA) | Lin's Concordance Correlation Coefficient (CCC) | 0.91 |

| Wearable BIA Smartwatch | Body Fat % (BF%) | Dual-energy X-ray Absorptiometry (DXA) | Mean Absolute Percentage Error (MAPE) | 14.3% |

| Clinical BIA Device | Body Fat % (BF%) | Dual-energy X-ray Absorptiometry (DXA) | Lin's Concordance Correlation Coefficient (CCC) | 0.86 |

| Clinical BIA Device | Body Fat % (BF%) | Dual-energy X-ray Absorptiometry (DXA) | Mean Absolute Percentage Error (MAPE) | 21.1% |

| Nutrition Tracking Wristband | Daily Energy Intake (kcal/day) | Controlled Reference Meal Method | Mean Bias (Bland-Altman) | -105 kcal/day |

| Nutrition Tracking Wristband | Daily Energy Intake (kcal/day) | Controlled Reference Meal Method | 95% Limits of Agreement | -1400 to 1189 kcal/day |
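Lin's concordance correlation coefficient, reported in Table 2, penalizes both poor correlation and systematic offset between two methods. The sketch below is a minimal illustration with a hypothetical function name and made-up body fat values. It uses population (1/n) variances and covariance, which is an assumption about the estimator, since some implementations use n - 1 instead.

```python
import numpy as np

def lins_ccc(x, y):
    """Lin's concordance correlation coefficient for paired measurements."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    mx, my = x.mean(), y.mean()
    vx, vy = x.var(), y.var()              # population variances (ddof=0)
    cov = np.mean((x - mx) * (y - my))     # population covariance
    return 2.0 * cov / (vx + vy + (mx - my) ** 2)

# Hypothetical body fat percentages (not study data): the second method
# reads exactly 5 points higher than the first for every participant
method_a = [20.0, 25.0, 30.0, 35.0]
method_b = [25.0, 30.0, 35.0, 40.0]
ccc = lins_ccc(method_a, method_b)
print(round(ccc, 3))
```

In this example the two methods are perfectly correlated, yet the CCC is well below 1 because of the constant offset. This is why the CCC is a more suitable companion to Bland-Altman analysis than a correlation coefficient alone.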