Cluster Randomized Trials in Nutrition: A Comprehensive Guide to Design, Implementation, and Analysis for Researchers



Abstract

Cluster randomized trials (CRTs) are essential for evaluating group-based nutritional interventions, from public health programs to clinical practice changes. This article provides a comprehensive guide for researchers and clinical trial professionals on the foundational principles, methodological design, and analytical strategies for CRTs in nutrition. It explores the rationale for cluster randomization, including preventing contamination and assessing interventions applied at a group level. The guide details practical aspects like randomization schemes, ethical considerations, and sample size calculation, while also addressing common pitfalls and advanced optimization techniques like adaptive designs. Furthermore, it examines real-world case studies and evidence of impact, synthesizing key takeaways to inform the future of robust, efficient nutritional research.

Understanding Cluster Randomized Trials: The Why and When for Nutrition Research

A cluster randomized trial (CRT) is a study design in which intact groups of individuals, rather than the individuals themselves, are randomized to receive different interventions [1]. These units of randomization, or clusters, can be diverse, including clinics, hospitals, worksites, schools, or entire communities [1]. This design has been increasingly adopted by public health and medical researchers over recent decades, particularly when the nature of an intervention makes individual randomization impractical or scientifically inappropriate [2].

The primary rationale for moving beyond individual randomization often lies in the intervention itself. Some interventions are logistically applied at a group level, such as health education programs delivered via mass media or organizational changes in healthcare settings [1] [2]. Furthermore, cluster randomization reduces the risk of experimental contamination, where individuals in the control group are inadvertently exposed to the intervention, which is a significant concern in close-knit groups like communities or clinical practices [1] [2]. For instance, in a trial evaluating the effect of safety advice provided by general practitioners to families, randomizing by family (cluster) was more appropriate than randomizing individual family members [1].

Key Comparisons: Cluster vs. Individual Randomization

The choice between a cluster randomized design and an individually randomized design has profound implications for a study's methodology, ethical considerations, and statistical power. The table below summarizes the core distinctions.

Table 1: Fundamental Differences Between Cluster and Individual Randomized Trials

| Aspect | Cluster Randomized Trial | Individually Randomized Trial |

|---|---|---|

| Unit of Randomization | Intact groups (clusters) such as communities, schools, or clinics [1]. | Individual participants [1]. |

| Primary Rationale | Intervention is applied at group level; to prevent contamination; to assess herd immunity [1] [2]. | Feasible to apply intervention to individuals; no high risk of contamination between groups. |

| Unit of Inference | Can be the individual or the cluster, a fundamental choice that affects design and analysis [1]. | Typically the individual. |

| Statistical Analysis | Must account for intra-cluster correlation; standard methods are invalid [1] [2]. | Standard statistical procedures (e.g., t-tests, chi-square) are valid. |

| Sample Size Requirement | Requires a larger sample size for equivalent power due to the design effect [2]. | Standard sample size calculations apply. |

| Informed Consent | More complex; may involve cluster leaders as surrogates; participants may be enrolled after randomization [1]. | Typically requires individual informed consent before randomization. |

Statistical Implications and the Design Effect

The most critical statistical consequence of cluster randomization is that responses from individuals within the same cluster cannot be assumed to be independent. Patients within one general practice, for example, are likely to have more similar outcomes than patients across different practices due to shared environmental factors and care providers [2]. This intra-cluster correlation invalidates standard statistical procedures that assume independence of observations [1].

To account for this, sample size calculations for CRTs must incorporate a design effect (also known as the variance inflation factor). The formula for the design effect is:

Design Effect = 1 + (m̄ − 1)ρ

Where:

- m̄ = the average cluster size

- ρ (rho) = the intracluster correlation coefficient (ICC), interpretable as the correlation between any two responses in the same cluster or the proportion of overall variation accounted for by between-cluster variation [1]

The impact on the required sample size is substantial. The total number of participants needed is the sample size calculated for an individual randomized trial multiplied by the design effect [2].

Table 2: Example Sample Size Impact of Cluster Design

| Trial Design | Scenario | Required Sample Size | Notes |

|---|---|---|---|

| Individual Randomization | Detect change from 40% to 60% in appropriate management [2]. | 194 patients | Assumes 80% power and 5% significance. |

| Cluster Randomization | Same change, with moderate ICC and 10 patients per cluster [2]. | 380 patients (38 clusters) | Sample size nearly doubles due to the design effect. |
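The figures in Table 2 can be approximately reproduced with a short calculation. The sketch below assumes the standard normal-approximation formula for comparing two proportions (the source does not say which formula was used) and an ICC of 0.1, which is purely illustrative because the table says only "moderate". The function and variable names are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_arm_two_proportions(p1, p2, alpha=0.05, power=0.80):
    """Per-arm sample size for an individually randomized comparison of two
    proportions (two-sided test, normal approximation, pooled null variance)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p1 - p2) ** 2)


def design_effect(mean_cluster_size, icc):
    """Design effect = 1 + (m - 1) * rho."""
    return 1 + (mean_cluster_size - 1) * icc


n_individual = n_per_arm_two_proportions(0.40, 0.60)  # patients per arm
assumed_icc = 0.1   # ASSUMPTION: illustrative value, not taken from the source
cluster_size = 10   # patients per cluster, as in Table 2
deff = design_effect(cluster_size, assumed_icc)
# Round up to whole clusters in each arm.
clusters_per_arm = math.ceil(n_individual * deff / cluster_size)

print("Individual design, total patients:", 2 * n_individual)
print("Design effect:", round(deff, 2))
print("Cluster design, total clusters:", 2 * clusters_per_arm)
print("Cluster design, total patients:", 2 * clusters_per_arm * cluster_size)
```

Under these assumptions the calculation gives 194 patients for the individual design and 38 clusters (380 patients) for the cluster design. A different ICC would change the cluster figures, so the value should come from prior data whenever possible.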

Failure to account for this design effect during the analysis phase leads to artificially extreme P-values and over-narrow confidence intervals, increasing the risk of spuriously significant findings [2]. Analytical approaches must model the hierarchical nature of the data, using techniques such as mixed-effects models or generalized estimating equations, unless the analysis is aggregated to the cluster level [2].
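As one illustration of the cluster-level option mentioned above, the sketch below collapses individual outcomes to one mean per cluster and compares arms with a two-sample t statistic on those means. The data and the function name are hypothetical, and this is a minimal sketch rather than a substitute for a mixed-effects or GEE analysis.

```python
import math
from statistics import mean, variance


def cluster_level_t(intervention_clusters, control_clusters):
    """Two-sample t statistic (pooled variance) computed on cluster means.

    Each argument is a list of clusters; each cluster is a list of
    individual outcomes. The cluster, not the individual, is the unit of
    analysis, so the degrees of freedom depend on the number of clusters.
    """
    means_i = [mean(c) for c in intervention_clusters]
    means_c = [mean(c) for c in control_clusters]
    k_i, k_c = len(means_i), len(means_c)
    pooled_var = (
        (k_i - 1) * variance(means_i) + (k_c - 1) * variance(means_c)
    ) / (k_i + k_c - 2)
    se = math.sqrt(pooled_var * (1 / k_i + 1 / k_c))
    diff = mean(means_i) - mean(means_c)
    return diff, diff / se, k_i + k_c - 2


# Hypothetical data: daily fruit and vegetable servings, 3 clusters per arm.
intervention = [[4, 5, 6], [5, 5, 5], [6, 7, 8]]
control = [[3, 4, 5], [4, 4, 4], [2, 3, 4]]

diff, t_stat, df = cluster_level_t(intervention, control)
print(f"difference in cluster means = {diff:.2f}, t = {t_stat:.2f}, df = {df}")
```

Because each cluster contributes a single summary value, the observations entering the test are independent, which is why standard methods become valid again at this level. The cost is that the test has only as many data points as there are clusters.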

Ethical and Informed Consent Considerations

The ethical framework for CRTs, particularly concerning informed consent, requires careful adaptation from principles developed for individually randomized trials. A key challenge is that in trials with large clusters (e.g., entire communities), it may be logistically impossible to obtain informed consent from all individuals before random assignment [1].

Ethical guidelines suggest a tiered approach:

- Community-Level Agreement: Permission from key decision-makers or community leaders can act as a surrogate for pre-randomization consent, especially for public health interventions [1]. The choice of representative should be consistent with the community's traditions and political philosophy [1].

- Individual-Level Consent: The refusal of an individual to participate in a study must be respected, even if a leader has agreed on behalf of the community [1]. Individuals should, where possible, be given the opportunity to avoid the inherent risks of the intervention or to provide consent for data collection, especially in Zelen-designed trials where patients are enrolled after random assignment [1].

Editors often require reports of CRTs to state that institutional review board approval was obtained and to describe how participant consent was addressed [1].

Experimental Protocols for a Nutrition Intervention CRT

This section outlines a detailed methodology for a hypothetical cluster randomized trial evaluating a group-based nutrition intervention.

Protocol: Community-Based Trial of a Nutritional Education Program

1. Research Question and Hypothesis:

- Does a structured, group-based nutritional education program, compared to usual care, increase fruit and vegetable consumption among adults in participating communities?

2. Cluster Identification and Selection:

- Clusters: Define communities (e.g., towns, neighborhoods) as the unit of randomization.

- Eligibility Criteria: Select communities based on size, demographic stability, and presence of key facilities (e.g., community centers, supermarkets).

- Recruitment: Obtain permission from community gatekeepers (e.g., local government, health authorities) for the community's participation [1].

3. Randomization and Blinding:

- Unit of Randomization: Community (cluster).

- Procedure: After baseline data collection, an independent statistician uses a computer-generated sequence to randomize communities to either the intervention or control group. Stratified randomization can be used to balance known prognostic factors (e.g., socioeconomic status).

- Blinding: While participants and educators cannot be blinded to the intervention, outcome assessors and data analysts should be kept blinded to group assignment.
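The stratified allocation described in step 3 could be generated along the following lines. This is a minimal sketch: the community names, the stratum labels, the seed, and the function name are all hypothetical, and a real trial would have the sequence produced and held by the independent statistician.

```python
import random
from collections import defaultdict


def stratified_cluster_randomization(clusters, seed):
    """Allocate clusters to arms within strata.

    `clusters` maps a cluster name to its stratum label. Within each
    stratum the clusters are shuffled and assigned alternately, so the arm
    sizes in a stratum differ by at most one.
    """
    rng = random.Random(seed)  # a fixed seed makes the sequence reproducible
    by_stratum = defaultdict(list)
    for name, stratum in sorted(clusters.items()):
        by_stratum[stratum].append(name)

    allocation = {}
    for stratum in sorted(by_stratum):
        names = by_stratum[stratum]
        rng.shuffle(names)
        # Randomize which arm gets the extra cluster in odd-sized strata.
        arms = ["intervention", "control"]
        rng.shuffle(arms)
        for index, name in enumerate(names):
            allocation[name] = arms[index % 2]
    return allocation


# Hypothetical communities, stratified by socioeconomic status (SES).
communities = {
    "Town A": "low SES", "Town B": "low SES",
    "Town C": "low SES", "Town D": "low SES",
    "Town E": "high SES", "Town F": "high SES",
    "Town G": "high SES", "Town H": "high SES",
}

allocation = stratified_cluster_randomization(communities, seed=2024)
for name, arm in sorted(allocation.items()):
    print(name, "->", arm)
```

Recording the seed alongside the allocation list lets an auditor regenerate the sequence and confirm that it was not altered after baseline data collection.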

4. Interventions:

- Intervention Group: Receives a 12-week, theory-based nutritional education program delivered in weekly group sessions at local community centers. The program includes interactive workshops, cooking demonstrations, and goal-setting activities.

- Control Group: Continues with usual care and receives standard, publicly available health information pamphlets.

5. Outcomes and Data Collection:

- Primary Outcome: Change in self-reported daily servings of fruits and vegetables, validated using a food frequency questionnaire, from baseline to 12 months.

- Secondary Outcomes: Changes in body mass index (BMI), knowledge about nutrition, and biomarkers of fruit/vegetable intake (e.g., blood carotenoids) in a sub-sample.

- Data Collection Points: Baseline, immediately post-intervention (3 months), and at 12 months for follow-up.

6. Sample Size Calculation:

- Assumptions: Based on prior studies, assume an ICC of 0.02, an average of 50 participants per community, and a design effect of 1 + (50-1)*0.02 = 1.98.

- Calculation: For an individually randomized trial, 200 participants per group are needed. Accounting for the design effect: 200 * 1.98 = 396 participants per group. Therefore, approximately 8 communities per arm (396/50 ≈ 8 clusters) are required, for a total of 16 communities and ~800 participants.
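The arithmetic in step 6 can be laid out line by line. The symbols n_arm, k_arm, and N_total are introduced here only for illustration and do not come from the protocol itself.

```latex
\begin{align*}
\text{DEFF} &= 1 + (\bar{m} - 1)\rho = 1 + (50 - 1)(0.02) = 1.98 \\
n_{\text{arm}} &= 200 \times 1.98 = 396 \\
k_{\text{arm}} &= \left\lceil 396 / 50 \right\rceil = 8 \\
N_{\text{total}} &= 2 \times 8 \times 50 = 800
\end{align*}
```

Rounding up to whole communities is the reason the total of 800 slightly exceeds 2 × 396 = 792.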

7. Statistical Analysis Plan:

- Primary Analysis: A mixed-effects linear regression model will be used to assess the difference in the change of fruit and vegetable consumption between groups. The model will include a fixed effect for treatment group and random intercepts for communities to account for clustering.

- Software: Analysis will be performed using statistical software capable of multilevel modeling (e.g., R, Stata, SAS).
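One way to write the primary analysis model in step 7 is shown below. It is a sketch under stated assumptions: the change score ΔY_ij and the treatment indicator T_j are notation introduced here, and any stratification factors or baseline covariates that a full analysis plan would add are omitted.

```latex
\begin{align*}
\Delta Y_{ij} &= \beta_0 + \beta_1 T_j + u_j + e_{ij}, \\
u_j &\sim N(0, \sigma_b^2), \qquad e_{ij} \sim N(0, \sigma_w^2)
\end{align*}
```

Here ΔY_ij is the change in fruit and vegetable servings for individual i in community j, T_j equals 1 for intervention communities and 0 for control communities, β_1 is the intervention effect of interest, and u_j is the random intercept that captures clustering within communities.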

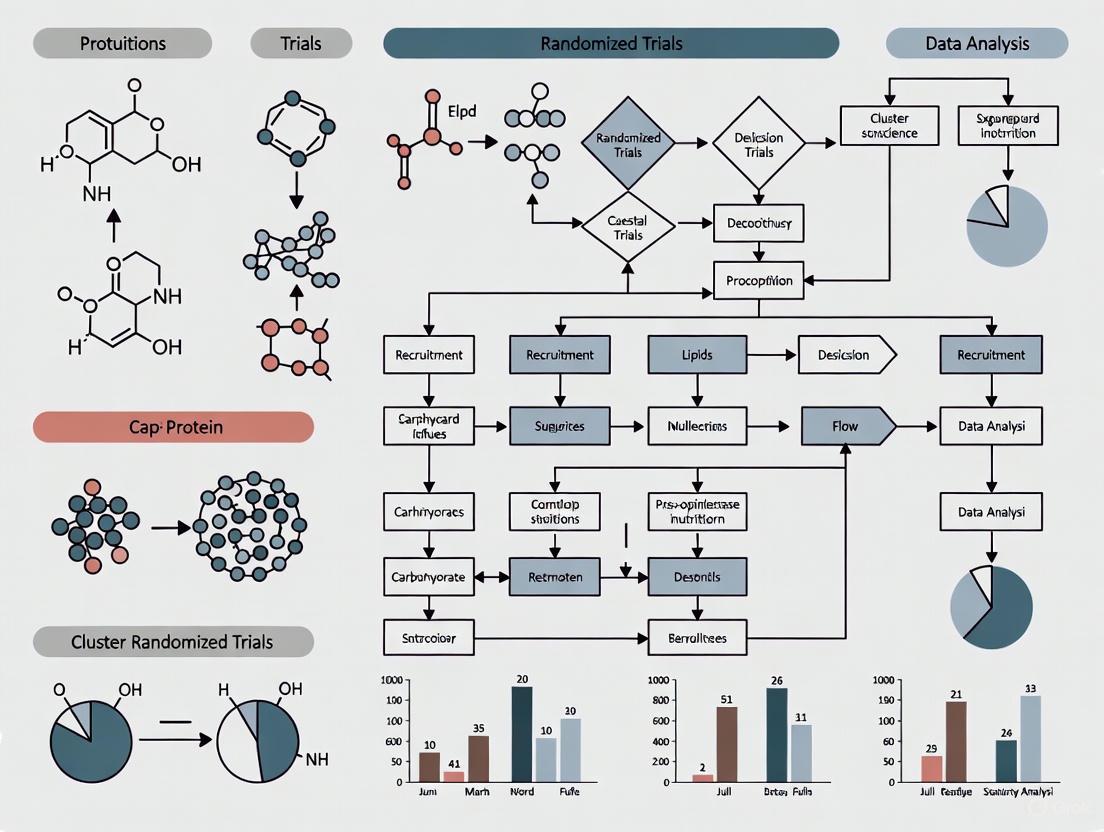

Experimental Workflow Visualization

The following diagram illustrates the high-level workflow for the described cluster randomized trial.

The Researcher's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for a Nutrition CRT

| Item | Function in the Experiment |

|---|---|

| Validated Food Frequency Questionnaire (FFQ) | A standardized tool to assess participants' habitual dietary intake, specifically fruit and vegetable consumption, as the primary outcome measure. |

| Biomarker Assay Kits (e.g., for blood carotenoids) | Provides an objective, biochemical validation of self-reported fruit and vegetable intake in a sub-sample of participants. |

| Educational Program Materials | Structured curriculum, lesson plans, and participant handbooks for the group-based nutritional education intervention to ensure standardized delivery. |

| Data Collection and Management Platform | Secure, centralized software (e.g., REDCap) for storing and managing participant data, ensuring data integrity and facilitating blinded analysis. |

| Statistical Software with Multilevel Modeling Capability | Software such as R or Stata is essential for performing the correct statistical analyses that account for the hierarchical (clustered) nature of the data [2]. |

Cluster randomized controlled trials (cRCTs) are multilevel experiments where groups, rather than individuals, are randomly assigned to intervention or control conditions. This design is paramount in nutritional intervention research for two core reasons: to prevent the contamination of the control group and to accurately evaluate interventions that are naturally delivered at a group level. When individual randomization is used for community-based interventions, information or behavioral changes can spread from the intervention to the control group, blurring the true effect of the intervention. [3] cRCTs preserve the integrity of the comparison by keeping the intervention and control groups separate. Furthermore, many public health and nutritional policies, educational programs, and environmental changes are implemented at the level of a school, community, or clinic, making the cluster the appropriate unit for both delivery and evaluation. [4] [5]

Experimental Designs and Protocols in Nutrition cRCTs

Nutritional research employs various cRCT designs, each with distinct methodologies tailored to the research question and context. The table below summarizes key designs and their specific applications as demonstrated in recent trials.

Table 1: Overview of Cluster Randomized Trial Designs in Nutrition Research

| Trial Design | Research Objective | Clusters & Population | Key Methodological Features for Contamination Control |

|---|---|---|---|

| Parallel cRCT [6] [7] [5] | To evaluate the effect of a Nutritional Behavioral Change Communication (NBCC) intervention on dietary practices of pregnant adolescents. [6] | 28 clusters (kebeles); 426 pregnant adolescents. [6] | Clusters were non-adjacent, and buffer zones (non-selected clusters) were placed between intervention and control clusters to prevent information sharing. [6] |

| Factorial cRCT [4] | To test the individual and combined impact of three implementation strategies (additional resources, mentoring, enhanced engagement) on a school nutrition program. [4] | 2 cohorts of 8 public elementary schools each (24 total). [4] | The Multiphase Optimization STrategy (MOST) framework uses a full factorial design to efficiently test multiple strategy components without the need for separate, potentially contaminating, trials for each. [4] |

| Stepped-Wedge cRCT [8] | To test a digital nutrition education intervention for older adults at congregate meal sites. [8] | 398 older adults at 12 congregate meal sites. [8] | Clusters are randomly assigned to sequences in which they cross over from control to intervention. All clusters eventually receive the intervention, and each cluster serves as its own control, reducing reliance on between-cluster comparisons. [8] |
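The full factorial layout listed in the table above can be enumerated in a few lines. The sketch assumes each of the three strategies is either absent or present (two levels), which is the usual reading of a full factorial but is an assumption here, as is the way conditions are labeled.

```python
from itertools import product

# Strategy names as given in the table above.
strategies = ["additional resources", "mentoring", "enhanced engagement"]

# ASSUMPTION: each strategy is either off (0) or on (1).
# A full factorial crosses every level of every strategy.
conditions = list(product([0, 1], repeat=len(strategies)))

for number, levels in enumerate(conditions, start=1):
    active = [name for name, on in zip(strategies, levels) if on]
    print(number, ", ".join(active) if active else "no added strategy")
```

Three two-level strategies give 2 × 2 × 2 = 8 experimental conditions. How individual schools were assigned to those conditions in the cited trial is not described here.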

Detailed Experimental Protocol: Parallel cRCT for Nutritional Behavioral Change

The following protocol from a trial in Ethiopia provides a clear example of a rigorously designed parallel cRCT. [6]

- Intervention Design: The intervention group received a community-based Nutritional Behavioral Change Communication (NBCC) package, grounded in the Health Belief Model. This included food preparation demonstrations and four counseling sessions for pregnant adolescents and their husbands, delivered by Alliances for Development (AFDs). The control group received standard nutritional counseling. [6]

- Randomization and Blinding: The unit of randomization was the kebele (the smallest administrative unit). A cluster sampling technique was used, and clusters were allocated to intervention or control arms using a lottery method (simple random sampling) within districts. Due to the nature of the behavioral intervention, blinding of participants was not possible. [6]

- Primary Outcomes and Measurement: The primary outcome was appropriate dietary practice, a binary measure. Secondary outcomes included nutritional knowledge. Data were collected at baseline and post-intervention, and the net treatment effect was estimated using generalized estimating equations and the difference-in-differences method to account for the clustered design. [6]
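The difference-in-differences logic mentioned in the protocol reduces to simple arithmetic on arm-level summaries. The sketch below uses hypothetical proportions, not data from the trial, and it deliberately omits the cluster-adjusted standard errors that a GEE model would supply.

```python
def difference_in_differences(pre_int, post_int, pre_ctrl, post_ctrl):
    """Net effect = change in intervention arm minus change in control arm."""
    return (post_int - pre_int) - (post_ctrl - pre_ctrl)


# Hypothetical proportions with appropriate dietary practice (not trial data).
net_effect = difference_in_differences(
    pre_int=0.30, post_int=0.55, pre_ctrl=0.32, post_ctrl=0.40
)
print(f"Net change attributable to the intervention: {net_effect:.2f}")
```

Subtracting the control arm's change removes any trend that affected both arms, such as seasonal food availability, so that only the additional change in the intervention arm is attributed to the program.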

Visualizing the Logic of cRCT Design Selection

The diagram below illustrates the key decision points that lead researchers to select a cRCT design, with the central goal of preventing contamination.

The Scientist's Toolkit: Essential Reagents for cRCTs

Successfully conducting a cRCT requires specific "research reagents" and methodological components. The following table details these essential elements and their functions in the context of nutrition research.

Table 2: Key Research Reagents and Methodological Components for Nutrition cRCTs

| Tool / Reagent | Function in cRCT | Exemplar Use in Nutrition Research |

|---|---|---|

| Implementation Strategies [4] | Methods to enhance the adoption of a bundled evidence-based practice. | In a school-based trial, strategies included additional resources, school-to-school mentoring, and enhanced engagement to support program delivery. [4] |

| Validated Behavioral Surveys [6] [8] | To quantitatively measure primary outcomes like dietary practices, nutrition knowledge, and food security. | Surveys assessed nutritional knowledge and dietary practices in pregnant adolescents [6] and food security in older adults. [8] |

| Objective Biomarkers [4] | To provide objective, physical measures of intervention effectiveness, supplementing self-reported data. | A school trial used dermal carotenoids (Veggie Meter) to estimate fruit/vegetable intake and measured cardiovascular fitness via the Progressive Aerobic Cardiovascular Endurance Run. [4] |

| Generalized Linear Mixed Models (GLMM) [7] [5] | A statistical framework that accounts for the correlation of outcomes within clusters, which is essential for valid analysis. | Used to analyze changes in body weight and mealtime behaviors in persons with dementia [7] and food safety behaviors in the MaaCiwara study. [5] |

| Reporting Guidelines (CONSORT/SPIRIT) [9] | Checklists to ensure transparent and complete reporting of trial design and results, which is critical for replication. | A review found 75.3% of nutrition RCT journals endorsed CONSORT, but only 27.8% of protocols mentioned using it, highlighting a need for greater adherence. [9] |

Cluster randomized trials (CRTs) are a powerful research design for evaluating interventions that are naturally delivered to groups or are expected to have effects that extend beyond the individual. This guide compares the performance of CRTs against alternative methodologies, providing a detailed overview of their application in group-based nutrition intervention research.

Experimental Design and Methodological Comparison

A cluster randomized trial is a study in which intact social units or groups—rather than individual participants—are randomly assigned to intervention or control conditions [1]. This design is particularly suited for evaluating complex public health and nutritional interventions.

Head-to-Head Comparison: CRTs vs. Alternative Trial Designs

The table below objectively compares CRT against two common alternative designs: individually randomized controlled trials (RCTs) and non-randomized observational studies.

Table 1: Performance Comparison of Cluster Randomized Trials vs. Alternative Research Designs

| Design Feature | Cluster Randomized Trial (CRT) | Individually Randomized Controlled Trial (RCT) | Non-Randomized Observational Study |

|---|---|---|---|

| Unit of Randomization | Cluster (e.g., community, school, clinic) [1] | Individual participant | No randomization |

| Control for Contamination | High protection; reduces risk of intervention spillover between groups [1] | Lower protection; risk of contamination between individuals in same setting | Not applicable |

| Administrative Efficiency | High; often easier to implement group-level interventions [1] | Lower; can be logistically challenging for group-based delivery | Variable |

| Statistical Power | Lower for a given number of participants; the sample size must be inflated and the analysis must account for intra-cluster correlation [1] | Higher for a given sample size | Variable |

| Ethical Considerations | Complex; may involve multiple levels of consent [1] | More straightforward individual consent | Typically involves standard consent |

| Best Application | Group-level interventions, policy evaluations, and when contamination is a primary concern [1] | Individual-level therapies and interventions | Rare outcomes, long-term effects, or when RCTs are infeasible [10] |

| Certainty of Evidence (Initial GRADE) | High (as an RCT variant) [11] | High [11] | Low (but can be upgraded under specific conditions) [11] |

Quantitative Outcomes from Nutrition-Focused CRTs

The following table summarizes key performance data from real-world cluster randomized trials that investigated nutritional interventions, demonstrating the range of outcomes this design can measure.

Table 2: Experimental Outcomes from Nutrition-Based Cluster Randomized Trials

| Trial Name / Location | Intervention | Primary Outcome Measure | Key Quantitative Finding | Sample Size & Design |

|---|---|---|---|---|

| Create Healthy Futures (Pennsylvania, USA) [12] | Web-based nutrition education for early care providers | Diet Quality (AHEI-2010 score) | No significant within-or-between-group changes in AHEI-2010 scores. | 186 providers in 12 centers (Cluster RCT) |

| MAHAY Study (Madagascar) [13] | Home-visiting & lipid-based nutrient supplementation (LNS) | Linear growth (Height-for-age z-scores) | In Malawi, a similar LNS intervention reduced severe stunting to 3.5% vs. 12.5% in controls [13]. | 125 communities (Multi-arm Cluster RCT) |

| Ethiopia Elderly Nutrition (Southwest Ethiopia) [14] | Theory-based nutritional education | Dietary Diversity Score (DDS) | Mean DDS increased significantly (p<.001). The intervention group had about 7.7 times the odds of consuming a diverse diet (AOR=7.746, 95% CI: 5.012, 11.973). | 720 older persons (Cluster RCT) |

| PRET Substudy (Niger) [15] | Mass azithromycin distributions | Prevalence of wasting (Weight-for-height z-score) | No statistically significant difference in wasting between annual and biannual treatment arms (OR=0.75, 95% CI: 0.46–1.23). | 1,030 children in 24 communities (Cluster RCT) |

Detailed Experimental Protocols

To ensure methodological rigor and reproducibility, this section outlines the core protocols employed in the cited CRTs.

The MAHAY study employs a multi-arm CRT design to test the effects and cost-effectiveness of combined interventions to address chronic malnutrition and poor child development.

- Arm T0 (Control): Receives the existing national program, which includes monthly growth monitoring and nutritional/hygiene education.

- Arm T1: Receives T0 plus home visits for intensive nutrition counseling within a behavior change framework.

- Arm T2: Receives T1 plus lipid-based nutrient supplementation (LNS) for children 6–18 months old.

- Arm T3: Receives T2 plus LNS supplementation for pregnant and lactating women.

- Arm T4: Receives T1 plus an intensive home visiting program to support child development.

Methodology: The trial randomizes 125 communities (clusters), with an anticipated enrollment of 1,250 pregnant women, 1,250 children aged 0-6 months, and 1,250 children aged 6-18 months. Primary outcomes include linear growth (length/height-for-age z-scores) and child development scores (mental, motor, and social). The analysis will estimate both unadjusted and adjusted intention-to-treat effects.

The Ethiopia Elderly Nutrition trial [14] assessed the impact of a theory-based educational intervention on the nutritional status of older people.

- Intervention Group: Received a nutritional education intervention guided by Social Cognitive Theory (SCT). This approach focuses on improving self-efficacy, outcome expectations, and using social support to facilitate behavior change.

- Control Group: Received usual care or a minimal intervention for comparison.

Methodology: The study was a CRT conducted from December 2021 to May 2022 among 782 older persons randomly selected from multiple urban and semi-urban areas. Data were collected using interviewer-administered questionnaires. Nutritional status was assessed with the Mini Nutritional Assessment (MNA) tool, and dietary diversity was evaluated using a qualitative 24-hour dietary recall. The intervention effect was analyzed using Difference-in-Difference and Generalized Estimating Equation (GEE) models to account for the cluster design.

Logical Workflows and Pathway Visualizations

The following diagrams illustrate the core logical relationships and decision pathways in designing and appraising evidence from cluster randomized trials.

CRT Design and Inference Logic

Evidence Certainty Assessment (GRADE) Pathway

The GRADE framework provides a systematic approach for rating the certainty of evidence in systematic reviews and health technology assessments, including those incorporating CRT data.

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers designing a cluster randomized trial in nutrition, the following tools and methodologies are essential for ensuring rigor and validity.

Table 3: Key Reagents and Methodologies for Nutrition-Focused CRTs

| Tool / Methodology | Function in CRT Research | Application Example |

|---|---|---|

| Intraclass Correlation Coefficient (ICC) | Quantifies the degree of similarity among responses from individuals within the same cluster; critical for accurate sample size calculation [1]. | Used in the Niger azithromycin trial to inform power calculations, assuming an ICC of 0.015 from a previous trial in the same region [15]. |

| GRADE (Grading of Recommendations, Assessment, Development, and Evaluations) Framework | Systematically rates the certainty of a body of evidence from studies, including CRTs, to inform guidelines and policies [11] [16]. | Used by health bodies like the CDC's ACIP to assess evidence and make vaccination recommendations, transparently grading it as High, Moderate, Low, or Very Low [11]. |

| Social Cognitive Theory (SCT) | A theoretical framework for designing behavioral interventions, focusing on self-efficacy, observational learning, and environmental factors. | Guided the nutritional education intervention in the Ethiopia Elderly Nutrition trial to successfully improve dietary diversity [14]. |

| Lipid-Based Nutrient Supplements (LNS) | A ready-to-use supplemental food designed to prevent undernutrition by providing essential micronutrients and calories. | Used in the MAHAY study in Madagascar, providing LNS to children and/or pregnant women to test its impact on linear growth and development [13]. |

| Generalized Estimating Equations (GEE) | A statistical method that accounts for the correlation of outcomes within clusters when analyzing data from a CRT. | Used in the Ethiopia Elderly Nutrition trial to correctly model the effect of the intervention while adjusting for the cluster design [14]. |

Core Conceptual Framework

In the field of cluster randomized trials (CRTs) for nutrition intervention research, understanding three interconnected concepts—clusters, intraclass correlation coefficient (ICC), and design effect—is fundamental to designing robust, properly powered studies that yield valid conclusions.

Clusters are the pre-existing groups (e.g., primary care clinics, schools, villages, or families) that are randomly assigned to different intervention arms, rather than individual participants [17] [18]. This design is often adopted when the intervention is naturally delivered at a group level, to prevent "contamination" between treatment arms, or for administrative ease [19] [20]. A key consequence of this design is that individuals within the same cluster tend to have more similar outcomes than individuals from different clusters due to shared environmental, social, or provider-specific factors [18].

The Intraclass Correlation Coefficient (ICC), denoted by the Greek letter ρ (rho), is the statistical measure that quantifies this similarity or dependence within clusters [17] [20]. It is defined as the proportion of the total variance in the outcome that is attributable to the variation between clusters: ρ = σ_b² / (σ_b² + σ_w²), where σ_b² is the between-cluster variance and σ_w² is the within-cluster variance [20]. An ICC of 0 indicates no within-cluster correlation (outcomes are independent), while an ICC of 1 signifies perfect correlation (all individuals within a cluster have identical outcomes) [19]. In practice, ICCs in public health and nutrition research are typically small but influential, often ranging from 0.01 to 0.05 [20] [21].

The Design Effect (DEFF) is a factor that measures how much the sampling variance of an estimator (like a mean or proportion) is increased due to the clustered nature of the data, compared to a simple random sample [19] [22]. The fundamental formula for the design effect is DEFF = 1 + (n - 1) * ρ, where n is the average cluster size and ρ is the ICC [19] [20]. This DEFF is directly used to inflate the sample size required for a CRT to achieve statistical power equivalent to an individually randomized trial. The total sample size for a CRT is the sample size calculated for an individual randomized trial multiplied by the DEFF [19] [22].

Table 1: Summary of Key Terminology in Cluster Randomized Trials

| Term | Definition | Role in CRT Design & Analysis | Common Symbols |

|---|---|---|---|

| Cluster | A group of individuals (e.g., clinic, school) randomly assigned intact to an intervention arm [17] [18]. | The unit of randomization; creates the dependency in data that must be accounted for. | - |

| Intraclass Correlation Coefficient (ICC) | Measures the degree of similarity or correlation of outcomes among individuals within the same cluster [17] [20]. | Quantifies the clustering effect; a key parameter for sample size calculation and analysis. | ρ (rho) |

| Design Effect (DEFF) | The factor by which the sample size needs to be increased to account for the clustered design [19] [22]. | Informs sample size calculation to ensure the trial has adequate statistical power. | DEFF |

Quantitative Data and Comparisons

The following tables summarize empirical data on ICC values and design effects from various contexts, providing a reference for researchers planning group-based nutrition interventions.

Table 2: Empirical ICC Values from Health-Focused Cluster Randomized Trials

| Study Context / Outcome | Reported ICC Values | Notes & Implications |

|---|---|---|

| School-Based Health Interventions (Median) [21] | School-level: 0.031 (IQR: 0.011-0.08); Class-level: 0.063 (IQR: 0.024-0.1) | Demonstrates that clustering at a more granular level (class) can produce a larger ICC. |

| PROPEL Weight Loss Trial (Primary Care Clinics) [20] | Baseline measures: median 0.019 (range: 0 to 0.055) | ICCs for change outcomes were often higher and varied over the follow-up period. |

| PROPEL Trial: Total Cholesterol [20] | Baseline ICC: 0.055 | One of the highest baseline ICCs in the study, indicating greater between-cluster variability for this biomarker. |

Table 3: Impact of Design Effect on Sample Size Requirements

| Average Cluster Size (n) | Assumed ICC (ρ) | Design Effect (DEFF) | Implied Sample Size Inflation |

|---|---|---|---|

| 25 | 0.01 | 1 + (25-1)*0.01 = 1.24 | Sample size must be increased by 24% |

| 50 | 0.01 | 1 + (50-1)*0.01 = 1.49 | Sample size must be increased by 49% |

| 25 | 0.05 | 1 + (25-1)*0.05 = 2.20 | Sample size must be increased by 120% |
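The rows of Table 3 follow directly from the DEFF formula, as the short sketch below shows. The function name is hypothetical, and the final scenario (n = 50, ρ = 0.05) is an extra illustrative row that does not appear in the table.

```python
def design_effect(cluster_size, icc):
    """DEFF = 1 + (n - 1) * rho."""
    return 1 + (cluster_size - 1) * icc


scenarios = [
    (25, 0.01),
    (50, 0.01),
    (25, 0.05),
    (50, 0.05),  # extra illustrative row, not in Table 3
]

for n, rho in scenarios:
    deff = design_effect(n, rho)
    inflation = (deff - 1) * 100  # percentage increase in sample size
    print(f"n={n:>3}  rho={rho:.2f}  DEFF={deff:.2f}  inflation={inflation:.0f}%")
```

The comparison shows that cluster size and ICC multiply each other: doubling the cluster size at a fixed ICC roughly doubles the inflation, which is why adding more clusters is usually more efficient than enlarging existing ones.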

Experimental Protocols and Methodologies

Protocol for Calculating the ICC from a Cluster Randomized Trial

The following workflow outlines the standard methodology for deriving the ICC, which is essential for both planning future studies and analyzing completed trials [17] [20].

[Figure: ICC Calculation Workflow]

Detailed Methodology:

Data Collection and Model Specification: After conducting the CRT, individual-level outcome data is collected. A linear mixed-effects model (hierarchical or multilevel model) is then fitted to this data [20] [18]. This model must include a random intercept for the cluster unit (e.g., clinic ID) to partition the variance into between-cluster and within-cluster components. Covariates (e.g., age, sex, baseline values) can be included as fixed effects to explain some of the variability and potentially produce an adjusted, often smaller, ICC [17].

- Model Equation: Y_ij = β0 + β1 * X_ij + u_j + e_ij, where Y_ij is the outcome for individual i in cluster j, u_j is the random cluster effect (u_j ~ N(0, σ_b²)), and e_ij is the individual error (e_ij ~ N(0, σ_w²)) [18].

Variance Component Extraction: The fitted model provides estimates of the two key variance components: σ_b² (the between-cluster variance) and σ_w² (the within-cluster variance) [20].

ICC Calculation: The point estimate of the ICC (ρ) is calculated by placing the variance component estimates into the formula: ρ = σ_b² / (σ_b² + σ_w²) [20].

Precision Estimation: It is crucial to report the precision of the ICC estimate. This is often done by calculating its standard error (SE) or a confidence interval. The SE can be approximated using the formula: SE(ICC) = sqrt( 2*(1-ICC)² * [1+(n-1)*ICC]² / (n(n-1)k) ), where n is the average cluster size and k is the number of clusters [20].

Comprehensive Reporting: Following survey-based guidelines, researchers should report the ICC alongside a description of the dataset and outcome, the method and software used for calculation, and the measure of precision [17].
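The variance-component, ICC, and precision steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the procedure used in the cited studies: to stay dependency-free it replaces the mixed-effects model with the classical one-way ANOVA estimator of σ_b² and σ_w², which assumes equal cluster sizes and no covariates. The ICC and SE formulas are applied as written above. All function names and the toy data are hypothetical.

```python
import math
from statistics import mean


def anova_variance_components(clusters):
    """Return (sigma_b2, sigma_w2) from a balanced one-way ANOVA.

    Assumption: every cluster has the same size n. This stands in for the
    random-intercept mixed model described in the text.
    """
    k = len(clusters)
    n = len(clusters[0])
    if any(len(c) != n for c in clusters):
        raise ValueError("this sketch assumes equal cluster sizes")
    grand_mean = mean(y for c in clusters for y in c)
    cluster_means = [mean(c) for c in clusters]
    # Mean square between clusters and mean square within clusters
    msb = n * sum((m - grand_mean) ** 2 for m in cluster_means) / (k - 1)
    msw = sum(
        (y - m) ** 2 for c, m in zip(clusters, cluster_means) for y in c
    ) / (k * (n - 1))
    sigma_b2 = max((msb - msw) / n, 0.0)  # truncate negative estimates at 0
    return sigma_b2, msw


def icc(sigma_b2, sigma_w2):
    """rho = sigma_b2 / (sigma_b2 + sigma_w2)."""
    return sigma_b2 / (sigma_b2 + sigma_w2)


def icc_standard_error(rho, n, k):
    """Approximate SE of the ICC, using the formula quoted in the text."""
    return math.sqrt(
        2 * (1 - rho) ** 2 * (1 + (n - 1) * rho) ** 2 / (n * (n - 1) * k)
    )


# Toy data: 3 clinics with 3 participants each (hypothetical values)
clinics = [[1, 2, 3], [2, 3, 4], [4, 5, 6]]
sigma_b2, sigma_w2 = anova_variance_components(clinics)
rho = icc(sigma_b2, sigma_w2)
se = icc_standard_error(rho, n=3, k=3)
print(f"sigma_b2={sigma_b2:.3f} sigma_w2={sigma_w2:.3f} ICC={rho:.3f} SE={se:.3f}")
```

With unequal cluster sizes or covariate adjustment, the variance components would instead come from a fitted mixed model in one of the packages listed in the toolkit table, and the last two functions would be applied to those estimates.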

Protocol for Designing a CRT Using the Design Effect

This protocol is applied during the planning stage of a trial to determine the required sample size.

Detailed Methodology:

Determine Individual-Randomized Sample Size: First, calculate the sample size (N_indiv) required for an equivalent individually randomized trial using standard formulas, specifying the desired power, significance level, and effect size [19].

Obtain an ICC Estimate: Identify a plausible ICC (ρ) value for the primary outcome from previous studies in a similar context (e.g., from tables like Table 2 above) or from pilot data [20] [21]. This is often the most challenging step.

Define Cluster Size and Count: Decide upon the anticipated average number of participants per cluster (n) and the number of clusters (k) available or feasible for the study.

Calculate the Design Effect: Apply the formula: DEFF = 1 + (n - 1) * ρ [19] [20].

Inflate the Sample Size: Calculate the total sample size required for the CRT: N_CRT = N_indiv * DEFF [22].

Calculate Individuals per Arm and Clusters per Arm: The number of individuals needed per intervention arm is N_CRT / 2. The number of clusters required per arm is (N_CRT / 2) / n [19].
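The planning steps above reduce to a short calculation. The sketch below is a minimal illustration; the function names, the rounding choices, and the input values (N_indiv = 400 in total across two arms, n = 25, ρ = 0.05) are assumptions made for the example rather than values from the cited sources.

```python
import math


def design_effect(n, rho):
    """DEFF = 1 + (n - 1) * rho for average cluster size n and ICC rho."""
    return 1 + (n - 1) * rho


def crt_sample_size(n_indiv, n, rho):
    """Inflate a two-arm individually randomized total sample size.

    n_indiv is assumed to be the TOTAL across both arms, matching the
    protocol step that divides N_CRT by 2 to get the per-arm figure.
    """
    deff = design_effect(n, rho)
    # round() removes floating-point noise before rounding up to whole people
    n_crt = math.ceil(round(n_indiv * deff, 6))
    per_arm = math.ceil(n_crt / 2)
    clusters_per_arm = math.ceil(per_arm / n)  # whole clusters only
    return {
        "deff": deff,
        "n_crt": n_crt,
        "per_arm": per_arm,
        "clusters_per_arm": clusters_per_arm,
    }


plan = crt_sample_size(n_indiv=400, n=25, rho=0.05)
print(plan)
```

Because clusters are recruited whole, the number of clusters per arm is rounded up, so the enrolled total (clusters per arm × n × 2) can slightly exceed N_CRT.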

Logical and Conceptual Relationships

The relationship between clusters, ICC, and DEFF forms the logical backbone of a CRT's statistical considerations. The following diagram illustrates how these concepts interact from the design phase through to the analysis and interpretation of results.

Title: CRT Conceptual Flow

The Scientist's Toolkit: Essential Reagents and Materials

For researchers implementing and analyzing a cluster randomized trial in nutrition, the following "tools" are indispensable.

Table 4: Essential Reagents and Materials for Cluster Randomized Trials

| Tool / Reagent | Function in CRT Research |

|---|---|

| ICC Estimate from Prior Literature | Informs the sample size calculation during the design phase; provides a plausible value for ρ to be used in the DEFF formula [19] [21]. |

| Sample Size & Power Calculation Software | Software with CRT capabilities (e.g., PASS, SAS PROC POWER, R CRTSize package, Stata sampsi) is used to compute the number of clusters and individuals needed, incorporating the DEFF and ICC [19]. |

| Statistical Software for Mixed Models | Software like R (lme4), Stata (mixed), or SAS (PROC MIXED, PROC GLIMMIX) is required to fit the multilevel models that correctly account for clustering in the final analysis [18]. |

| Linear Mixed-Effects Model | The primary statistical model used to analyze continuous outcomes from a CRT. It explicitly includes random effects for clusters to provide valid estimates and inference [18]. |

| Generalized Estimating Equations (GEE) | An alternative, "marginal" method for analyzing CRT data (especially for non-normal outcomes) that accounts for within-cluster correlation using a "working correlation matrix" [19] [18]. |

| Detailed Protocol for ICC Reporting | A guideline ensuring that when an ICC is reported, it includes a description of the dataset, the calculation method, and its precision, thus making it useful to other scientists [17]. |

Cluster Randomized Trials (CRTs) are essential for evaluating group-based interventions in public health, health services research, and nutritional science. Unlike individually randomized trials, CRTs randomly assign intact groups—or clusters—such as hospitals, schools, communities, or care homes to different study arms [23]. This design is particularly suited for interventions that are naturally delivered at a group level, such as nutrition education programs for entire schools or dietary policy implementations within healthcare systems. However, the unique structure of CRTs, where the units of allocation, intervention, and outcome measurement can differ, raises distinct ethical challenges not adequately addressed by standard research ethics guidelines developed for individual-focused trials [23] [24].

The Ottawa Statement on the Ethical Design and Conduct of Cluster Randomized Trials, published in 2012, was developed to provide specific guidance for researchers and Research Ethics Committees (RECs) facing these complex issues [23]. It represents the first internationally recognized ethics guideline developed specifically for CRTs and is the product of a five-year mixed-methods research project that included empirical studies, ethical analyses, and a formal consensus process involving a multidisciplinary expert panel [23]. This article examines the foundations of the Ottawa Statement, with particular focus on its recommendations regarding informed consent, and explores its application and limitations within the context of group-based nutrition intervention research.

Core Ethical Framework of the Ottawa Statement

The Fifteen Recommendations

The Ottawa Statement provides 15 key recommendations organized across seven ethical domains critical to the ethical conduct of CRTs [23]. These recommendations were developed through a systematic consensus process involving ethicists, trialists, consumer representatives, REC members, policy makers, funding agencies, and journal editors [23]. The table below summarizes these core recommendations and their primary applications in nutrition research.

Table 1: The Ottawa Statement's 15 Recommendations and Applications to Nutrition Research

| Ethical Domain | Recommendation Number | Key Principle | Application in Nutrition Research |

|---|---|---|---|

| Justifying the CRT Design | 1 | Provide clear rationale for cluster randomization and appropriate statistical methods. | Justify why individual randomization is unsuitable (e.g., intervention contamination in school feeding programs). |

| REC Review | 2 | Submit CRT for REC approval before commencement. | Ensure specialized ethics review of cluster-specific issues in community nutrition trials. |

| Identifying Research Participants | 3 | Clearly identify all research participants using specific criteria. | Identify recipients of interventions (e.g., children), targets of environmental manipulations (e.g., cafeteria changes), and those providing data. |

| Obtaining Informed Consent | 4 | Obtain informed consent from research participants unless waiver granted. | Seek consent for data collection procedures and personal interventions within cluster-randomized nutrition studies. |

| | 5 | Seek consent as soon as possible after cluster randomization when pre-randomization not feasible. | Approach patients or students after their clinic/school is randomized but before data collection. |

| | 6 | RECs may waive or alter consent when research is infeasible without waiver and procedures pose minimal risk. | Potential application for low-risk educational interventions where pre-consent would undermine trial validity. |

| | 7 | Obtain consent from professionals or service providers who are research participants. | Secure consent from dietitians, teachers, or cafeteria staff implementing nutritional interventions. |

| Gatekeepers | 8 | Gatekeepers cannot provide proxy consent for individuals. | Principals cannot consent on behalf of students; parents must provide consent for children. |

| | 9 | Obtain gatekeeper permission when cluster interests are substantially affected. | Seek school district approval for school-wide nutrition policy changes. |

| | 10 | Protect cluster interests through cluster consultation on design, conduct, and reporting. | Engage community representatives in designing culturally appropriate dietary interventions. |

| Assessing Benefits and Harms | 11 | Adequately justify study interventions; benefits/harms must align with competent practice. | Ensure nutritional supplements or dietary restrictions are consistent with evidence-based practice. |

| | 12 | Adequately justify control conditions; control arm should not be deprived of effective care. | Control groups in malnutrition trials should receive standard nutritional support, not no support. |

| | 13 | Justify data collection procedures; risks must be minimized and reasonable relative to knowledge gained. | Balance burden of dietary recalls or blood draws with potential benefits of knowledge gained. |

| Protecting Vulnerable Participants | 14 | Implement additional protections when clusters contain vulnerable participants. | Provide special safeguards for care home residents with dementia in nutritional studies [25]. |

| | 15 | Pay special attention to consent procedures for those potentially coerced due to organizational hierarchy. | Ensure junior staff in healthcare settings feel free to decline participation in implementation trials. |

Identifying Research Participants in CRTs

A fundamental challenge in CRTs is identifying exactly who constitutes a research participant. The Ottawa Statement provides crucial clarity through Recommendation 3, defining a research participant as "an individual whose interests may be affected as a result of study interventions or data collection procedures" [23]. Specifically, this includes individuals who are:

- Intended recipients of an experimental (or control) intervention

- Direct targets of an experimental manipulation of their environment

- Those with whom investigators interact to collect data

- Those about whom investigators obtain identifiable private information for data collection [23]

This definition is particularly relevant in nutrition research, where interventions often operate at multiple levels. For example, in a school-based nutrition trial, participants might include students (receiving modified meals), parents (providing dietary information), teachers (implementing educational components), and cafeteria staff (altering food preparation). Each category may have different consent requirements based on their role and level of involvement.

Table 2: Research Participant Identification in Different Nutrition CRT Contexts

| CRT Context | Intervention Target | Research Participants | Non-Participants Affected |

|---|---|---|---|

| School Meal Program | School food environment | Students (data collection), Parents (surveys), Food service staff (training) | Siblings eating leftover food, Teachers receiving same meals |

| Care Home Nutritional Supplement | Care home procedures | Residents (supplements, measurements), Staff (implementation) | Visitors, Family members involved in care |

| Community Nutrition Education | Community health services | Community health workers (training), Residents (education, data) | All community members exposed to educational materials |

Diagram 1: Decision Pathway for Identifying Research Participants in CRTs. This flowchart illustrates the application of the Ottawa Statement's definition to determine who qualifies as a research participant based on the nature of their interaction with the study.

Informed Consent in Cluster Randomized Trials

The Consent Framework

Informed consent represents one of the most challenging ethical domains in CRTs. The Ottawa Statement addresses this through four specific recommendations (4-7) that acknowledge the practical realities of cluster randomization while upholding the fundamental ethical principle of respect for autonomy [23].

Recommendation 4 establishes the default position that researchers must obtain informed consent from human research participants in a CRT, unless a waiver is granted by a REC under specific circumstances [23]. This aligns with the universal understanding of informed consent as a cornerstone of ethical research, ensuring patients or participants understand the procedures, potential risks, benefits, and alternatives before agreeing to participate [26].

Recommendation 5 addresses the common CRT scenario where identifying and recruiting participants before cluster randomization is not feasible. It stipulates that when informed consent is required but pre-randomization recruitment is impossible, researchers must seek consent as soon as possible after cluster randomization—specifically, before the participant undergoes any study interventions or data collection procedures [23]. This approach balances scientific validity (avoiding post-randomization bias) with ethical requirements.

Recommendation 6 provides for exceptions, allowing RECs to approve a waiver or alteration of consent requirements when (1) the research is not feasible without the waiver or alteration, and (2) the study interventions and data collection procedures pose no more than minimal risk [23]. This is particularly relevant for low-risk public health interventions where seeking individual consent might undermine the trial's validity.

Recommendation 7 specifically addresses professionals or service providers who function as research participants, requiring their informed consent unless conditions for waiver are met [23]. This recognizes that in many nutrition CRTs, healthcare providers, teachers, or other professionals may be implementing interventions or providing data as part of the study.

Practical Application in Nutrition Research

The application of these consent principles can be illustrated through real nutrition CRTs. In a cluster-randomized feasibility trial evaluating nutritional interventions in care homes, the REC approved consent and randomization at the care home level, but required individual consent from residents with capacity for participant-reported outcome measures [25]. This hybrid approach recognized the cluster-level nature of the intervention while protecting individual autonomy for more personal data collection.

In the MAHAY study in Madagascar, a multi-arm CRT testing nutritional supplementation and responsive parenting promotion, the study protocols received approval from both the Malagasy Ethics Committee and the institutional review board at the University of California, Davis [13]. The consent procedures would have needed to account for multiple levels of intervention—including supplementation for pregnant/lactating women and children, plus home visits—across 125 communities.

Diagram 2: Consent Framework for Nutrition CRTs. This diagram visualizes the multi-layered consent approach required in cluster randomized trials, encompassing cluster-level permissions, individual-level consent, and potential waivers under specific conditions.

Experimental Evidence and Case Studies

Methodologies from Nutrition CRTs

Several cluster randomized trials in nutrition research provide insight into how Ottawa Statement principles are implemented in practice. The following table summarizes key methodological features and consent approaches from relevant studies.

Table 3: Methodological Approaches and Consent Strategies in Nutrition CRTs

| Trial | Clusters & Participants | Intervention | Consent Procedures | Ethical Considerations Applied |

|---|---|---|---|---|

| Care Home Nutritional Feasibility Trial [25] | 6 care homes; 110 residents at risk of malnutrition | Food-based intervention vs. oral nutritional supplements vs. standard care | Cluster-level randomization and intervention; individual consent for PROMs from residents with capacity | REC oversight; special protections for vulnerable care home residents; balance of cluster and individual rights |

| Create Healthy Futures Study [27] | 12 Head Start programs; 186 early care and education providers | Web-based nutrition intervention to improve diet quality and behaviors | Cluster randomization of centers; individual consent from providers for data collection | Justification of CRT design; professional participants; assessment of benefits/harms |

| MAHAY Study [13] | 125 communities; 1,250 pregnant women; 1,250 children 0-6mo; 1,250 children 6-18mo | Multi-arm: behavior change communication +/- lipid-based supplementation for children +/- supplementation for pregnant women | Community-level randomization; individual consent procedures for interventions and data collection | Complex multi-level participant identification; justification for cluster design; engagement with national ethics committees |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Materials for Nutrition CRTs

| Tool/Resource | Function in Nutrition CRT | Ethical Considerations |

|---|---|---|

| Malnutrition Universal Screening Tool ('MUST') [25] | Identifies participants at risk of malnutrition for eligibility assessment | Requires individual consent for screening unless waived; privacy of health information |

| Lipid-Based Nutrient Supplements (LNS) [13] | Provides balanced nutritional supplementation in food-insecure populations | Justification of intervention; assessment of benefits/harms; appropriate control conditions |

| 24-Hour Dietary Recall Methodology [28] | Gold-standard dietary assessment in "What We Eat in America" component of NHANES | Minimizes burden of data collection; stands in reasonable relation to knowledge gained |

| Alternative Healthy Eating Index (AHEI-2010) [27] | Validated measure of diet quality aligning with dietary guidelines | Justification as appropriate outcome measure; consistency with competent practice |

| Digital Platform for Intervention Delivery [27] | Enables scalable delivery of nutritional education components | Privacy and confidentiality of participant data; equitable access to intervention |

Current Gaps and Evolving Guidance

Despite its comprehensive nature, the Ottawa Statement requires updating to address evolving research methodologies and identified limitations. A 2025 citation analysis identified 24 distinct gaps in the original guidance, revealing areas where additional ethical direction is needed [24] [29].

Key gaps relevant to nutrition research include:

Emerging Trial Designs: The rise of stepped-wedge CRTs, where all clusters begin in the control condition and cross over to the intervention at randomly assigned timepoints, raises new ethical questions, particularly when evidence has accumulated concerning an intervention's efficacy [24] [29].

Waiver of Consent: There is ongoing debate about whether waivers of consent are appropriate in CRTs to increase pragmatism, especially in the context of minimal-risk implementation research [24] [29].

Equity Considerations: The original Statement lacks sufficient guidance on addressing equity-related issues in CRTs, particularly relevant for nutrition research involving vulnerable or resource-limited populations [29].

Benefit-Harm Assessment: Six distinct gaps were identified regarding assessment of benefits and harms, including how to evaluate cluster-level benefits and harms, and how to address uncertainties in interventions with complex effect pathways [29].

These gaps are being addressed through an official update process to the Ottawa Statement, which will incorporate ongoing empirical work and engagement with patient and public partners [24] [29]. Additionally, setting-specific implementation guidance has been developed, such as specialized recommendations for CRTs in the hemodialysis setting, demonstrating how the core principles can be adapted to specific research contexts with unique ethical challenges [30].

For nutrition researchers, these developments highlight the importance of maintaining awareness of evolving ethical standards while applying the fundamental principles of the Ottawa Statement to ensure the ethical design and conduct of cluster randomized trials in the field.

Designing and Executing Robust Nutrition CRTs: From Protocol to Practice

In cluster-randomized trials (CRTs), where groups rather than individuals are randomized to intervention arms, the choice of a randomization scheme is a critical design decision that directly impacts the validity and interpretability of trial results. CRTs are particularly relevant for group-based nutrition interventions, where the intervention is naturally applied at a cluster level (e.g., schools, communities, or healthcare centers) [31]. Unlike individually randomized trials, CRTs face unique complexities, including cluster-level correlation in outcomes and the frequent limitation of having a small number of available clusters. This guide objectively compares simple, block, and stratified randomization methods within this context, providing researchers with the data and methodologies needed to inform their selection.

Understanding Randomization in Cluster-Randomized Trials

Randomization serves to create comparable treatment and control arms, balanced on both measured and unmeasured factors, allowing observed differences to be given a causal interpretation [31]. In CRTs, the unit of randomization is the cluster. However, a key consideration is the unit of inference—whether the analysis aims to draw conclusions about clusters or individuals. When the goal is to make inferences about individuals, imbalance in individual-level characteristics across arms can introduce confounding, a risk exacerbated when not all individuals within a cluster are enrolled or when patients with multiple chronic conditions are unevenly distributed across clusters [31].

Randomization methods can be broadly categorized as simultaneous or sequential. Simultaneous randomization, where all clusters are randomized prior to enrollment, is easier to operationalize but cannot be modified later. Sequential randomization, where clusters are randomized over time as they are included in the study, offers flexibility but poses different logistical challenges [31].

Comparative Analysis of Randomization Methods

The table below summarizes the core characteristics, advantages, and disadvantages of simple, block, and stratified randomization methods in the context of CRTs.

Table 1: Comparison of Randomization Methods for Cluster-Randomized Trials

| Method | Description | Key Advantages | Key Disadvantages |

|---|---|---|---|

| Simple Randomization | Unrestricted technique based on a single sequence of random assignments; all possible allocations are permissible [31]. | Simple and easy to implement; balances covariates with a large number of randomized units [31]. | High probability of imbalance on key covariates when the number of clusters is small (a common feature of CRTs) [31]. |

| Block Randomization | A restricted technique (a type of "matching") where a smaller set of all possible allocations is selected based on balance criteria; randomization then occurs within these blocks or pairs [31]. | Effectively reduces imbalance between treatment groups, especially on specific cluster-level risk factors [31]. | Requires identifying well-matched pairs of clusters, which is often not feasible; balance can be undermined if subsets of individuals are enrolled post-randomization [31]. |

| Stratified Randomization | A restricted technique where strata are created based on combinations of important covariates; clusters are then randomly assigned to treatment arms within each stratum [31]. | Directly reduces imbalance between groups on preselected, important covariates [31]. | The number of strata increases rapidly with the number of covariates, making it impractical to control for many factors; requires categorization of continuous variables [31]. |
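Stratified randomization of clusters, as described in the table above, can be sketched as follows. This is a minimal illustration and not an implementation from the cited source: the function name, the arm labels, the school identifiers, and the urban/rural strata are all hypothetical, and a real trial would normally use a concealed, independently generated allocation list.

```python
import random
from collections import defaultdict


def stratified_cluster_randomization(stratum_of, arms=("intervention", "control"), seed=None):
    """Allocate clusters to arms separately within each stratum.

    stratum_of maps a cluster id to its stratum label. Within a stratum the
    clusters are shuffled and dealt to arms in rotation, so arm sizes differ
    by at most one cluster per stratum.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for cluster, stratum in stratum_of.items():
        strata[stratum].append(cluster)

    allocation = {}
    for stratum in sorted(strata):
        members = sorted(strata[stratum])  # fixed order before shuffling, for reproducibility
        rng.shuffle(members)
        # Random starting arm, so an odd-sized stratum does not always favour the first arm
        offset = rng.randrange(len(arms))
        for position, cluster in enumerate(members):
            allocation[cluster] = arms[(position + offset) % len(arms)]
    return allocation


# Hypothetical example: 8 schools stratified by setting
schools = {
    "S1": "urban", "S2": "urban", "S3": "urban", "S4": "urban",
    "S5": "rural", "S6": "rural", "S7": "rural", "S8": "rural",
}
allocation = stratified_cluster_randomization(schools, seed=2024)
for school in sorted(allocation):
    print(school, schools[school], allocation[school])
```

Dealing shuffled clusters to arms in rotation guarantees balance on the stratifying factor itself; it does nothing for covariates that were not used to form the strata, which is the limitation noted in the table.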

Experimental Protocols and Data Presentation

The quantitative data supporting the comparison of these methods often comes from simulation studies or re-analyses of real CRTs. These studies typically assess performance metrics such as covariate balance, Type I error rate, and statistical power under different randomization schemes.

Table 2: Summary of Key Experimental Findings from Methodological Studies

| Study Focus | Experimental Protocol | Key Metric | Simple | Block | Stratified |

|---|---|---|---|---|---|

| Covariate Balance | Methodology: Simulate a CRT with a fixed number of clusters. Predefine cluster-level covariates (e.g., cluster size, baseline morbidity rate). Apply each randomization method 10,000 times and measure the standardized difference in means for each covariate between arms. | Mean Absolute Covariate Balance | Higher imbalance, especially with fewer clusters (<20) | Lower imbalance within matched pairs | Lower imbalance within each defined stratum |

| Statistical Power | Methodology: Using the same simulations, for each allocation, analyze the outcome using a mixed model. Calculate the proportion of simulations that correctly reject the null hypothesis (power) for a predefined treatment effect. | Achieved Power (%) | Can be substantially reduced due to imbalance | Better maintained due to improved balance | Better maintained, contingent on strata being predictive of outcome |

| Handling of Multiple Covariates | Methodology: Evaluate the ability of each method to simultaneously balance more than one covariate. | Probability of Global Balance | Low | Good for the matched factors, but may not balance others | Becomes computationally difficult and inefficient with many covariates |
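The covariate-balance protocol in the table above can be illustrated with a small Monte Carlo sketch. Everything here is an assumption made for illustration: the twelve baseline rates are invented, simple randomization is modelled as an independent coin flip per cluster (redrawn if one arm is empty), stratification uses two strata split at the median of the covariate, and 2,000 replicates are used instead of 10,000 to keep the run short.

```python
import random
from statistics import mean, pstdev


def abs_std_diff(x, arm):
    """Absolute difference in arm means, divided by the overall SD of x."""
    treated = [xi for xi, a in zip(x, arm) if a == 1]
    control = [xi for xi, a in zip(x, arm) if a == 0]
    return abs(mean(treated) - mean(control)) / pstdev(x)


def simple_allocation(x, rng):
    """Independent coin flip per cluster; redraw if either arm is empty."""
    while True:
        arm = [rng.randint(0, 1) for _ in x]
        if 0 < sum(arm) < len(x):
            return arm


def stratified_allocation(x, rng):
    """Two strata split at the median of x; half of each stratum is treated."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    half = len(x) // 2
    arm = [0] * len(x)
    for stratum in (order[:half], order[half:]):
        members = list(stratum)
        rng.shuffle(members)
        for i in members[: len(members) // 2]:
            arm[i] = 1
    return arm


def mean_imbalance(x, allocate, reps=2000, seed=1):
    """Average absolute standardized difference over repeated allocations."""
    rng = random.Random(seed)
    return mean(abs_std_diff(x, allocate(x, rng)) for _ in range(reps))


# Hypothetical cluster-level baseline morbidity rates for 12 clusters
baseline_rate = [0.08, 0.11, 0.13, 0.15, 0.18, 0.21,
                 0.22, 0.26, 0.29, 0.33, 0.37, 0.41]
simple = mean_imbalance(baseline_rate, simple_allocation)
stratified = mean_imbalance(baseline_rate, stratified_allocation)
print(f"simple: {simple:.3f}  stratified: {stratified:.3f}")
```

The comparison only shows balance on the stratifying covariate. Extending it to power, as in the second row of the table, would additionally require simulating outcomes and fitting an analysis model for each allocation.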

Workflow for Selecting a Randomization Scheme

The following diagram outlines a logical decision pathway for selecting an appropriate randomization method for a cluster-randomized trial, based on trial characteristics and constraints.

Diagram 1: Randomization Method Decision Pathway

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key methodological components and their functions in the design and analysis of randomization schemes for CRTs.

Table 3: Research Reagent Solutions for Randomization in CRTs

| Item | Function in Randomization & Analysis |

|---|---|

| Covariate Balance Metrics | Quantitative tools (e.g., standardized differences, p-values from balance tests) used to assess the success of a randomization method in creating comparable groups before analysis [31]. |

| Restricted Randomization Algorithm | Software algorithms that implement block, stratified, or covariate-constrained methods by randomly selecting from a subset of allocations that meet pre-specified balance criteria [31]. |

| Statistical Software (e.g., R, SAS) | Platforms used to generate the randomization sequence, simulate trial designs to compare methods, and perform the subsequent mixed-model or cluster-level analyses that account for intra-cluster correlation [31]. |

| Cluster-Level Covariate Data | Pre-existing data on potential effect modifiers (e.g., cluster size, geographic location, baseline health status) crucial for planning stratified or constrained randomization [31]. |

The selection of a randomization scheme in cluster-randomized trials is a trade-off between operational simplicity and statistical robustness. While simple randomization is straightforward, its tendency for imbalance makes it risky for trials with a limited number of clusters. Block randomization (matching) is highly effective for ensuring balance on a few key factors when well-matched pairs can be identified. Stratified randomization provides direct control over specific covariates but becomes unwieldy with multiple factors. For group-based nutrition interventions, where clusters like schools or communities may be few and heterogeneous, restricted methods like block or stratified randomization are generally recommended to ensure valid and reliable causal conclusions.

In evaluative health care research, cluster randomized trials (cRCTs) represent a critical design where groups of individuals (clusters), rather than individuals themselves, are randomized to different interventions [17]. This approach is particularly prevalent in group-based nutrition interventions, where randomizing intact units such as communities, schools, or healthcare facilities helps prevent treatment contamination across experimental conditions and aligns with the natural implementation of public health programs [17] [32]. However, this design introduces a key methodological complexity: outcomes for individuals within the same cluster are often correlated because they share common environmental influences, social networks, or service providers [17] [32].

The intracluster correlation coefficient (ICC) quantifies this phenomenon by measuring the degree of similarity among responses within the same cluster [17]. Statistically, the ICC (denoted as ρ) represents the proportion of the total variance in the outcome that can be attributed to the variation between clusters [17]. Understanding and accurately estimating the ICC is paramount for appropriate trial design, as it directly impacts sample size requirements, statistical power, and the validity of analytical approaches [17] [32]. This article provides a comprehensive comparison of methodologies for incorporating ICC into power and sample size calculations for nutrition intervention research, supporting the broader thesis that robust cRCT design necessitates specialized statistical approaches distinct from individually randomized trials.

The Statistical Foundation of ICC and Design Effects

Conceptual and Mathematical Definition of ICC

The intracluster correlation coefficient operates on the principle that observations within clusters are more similar than observations between clusters. This clustering effect violates the fundamental assumption of independence underlying many standard statistical tests, necessitating specialized approaches to both sample size calculation and data analysis [17]. The ICC can be conceptualized as the correlation between any two randomly selected individuals within the same cluster, with values typically ranging from less than 0.001 to over 0.8 depending on the intervention, population, and outcome being investigated [32].

The mathematical consequence of this clustering is quantified through the design effect (DEFF), also known as the variance inflation factor [17]. This multiplier adjusts the sample size required for an individually randomized trial to account for the reduced effective sample size in a cRCT. The design effect is calculated as:

\[ \mathrm{DEFF} = 1 + (m - 1)\rho \]

where \( m \) represents the average cluster size and \( \rho \) is the ICC [17]. This formula demonstrates that both larger cluster sizes and higher ICC values substantially increase the required sample size. For example, with an ICC of 0.05 and cluster size of 20, the design effect would be 1.95, essentially doubling the sample size needed compared to an individually randomized design [32].

Impact of ICC on Statistical Power and Error Rates

Statistical power, defined as the probability of correctly rejecting a false null hypothesis (1-β), is profoundly influenced by the ICC in cluster randomized designs [33] [34]. The interrelated concepts of power, effect size, sample size, and significance level form a closed system where fixing any three parameters determines the fourth [34]. When the ICC is ignored or underestimated, the effective sample size is smaller than the design assumes, so the achieved power falls below its nominal level and the risk of Type II errors (failing to detect a true effect) increases [33] [32].
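To make the loss of power concrete, the sketch below uses a normal approximation for a two-arm comparison of means with a standardized effect size. The function name `crt_power` and the example numbers (340 participants per arm, clusters of 20, d = 0.25) are assumptions chosen for illustration, not values from the cited sources.

```python
from math import sqrt
from statistics import NormalDist


def crt_power(n_per_arm, cluster_size, icc, effect_size, alpha=0.05):
    """Approximate power of a two-arm cRCT (normal approximation, two-sided test)."""
    z = NormalDist()
    deff = 1.0 + (cluster_size - 1.0) * icc   # design effect
    n_eff = n_per_arm / deff                  # effective participants per arm
    z_crit = z.inv_cdf(1.0 - alpha / 2.0)
    # Probability that the test statistic exceeds the critical value
    # (the negligible opposite tail is ignored).
    return z.cdf(effect_size * sqrt(n_eff / 2.0) - z_crit)


# Hypothetical design sized as if participants were independent (ICC = 0).
planned = crt_power(n_per_arm=340, cluster_size=20, icc=0.0, effect_size=0.25)
# Same design when the true ICC is 0.05.
actual = crt_power(n_per_arm=340, cluster_size=20, icc=0.05, effect_size=0.25)
print(f"planned power: {planned:.2f}, achieved power: {actual:.2f}")
```

Under these assumptions, a design that appears to have about 90% power delivers roughly 65% once a modest ICC of 0.05 is taken into account.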

The relationship between ICC, cluster size, and required sample size for a continuous outcome in a two-armed cRCT can be expressed as:

\[ n = \frac{2\,(Z_{1-\alpha/2} + Z_{1-\beta})^2\,\sigma^2\,\bigl(1 + (m_f - 1)\hat{\rho}\bigr)}{(\mu_1 - \mu_2)^2} \]

where \( n \) is the required participants per arm, \( Z_{1-\alpha/2} \) and \( Z_{1-\beta} \) are standard normal distribution values, \( \sigma^2 \) is the variance, \( \mu_1 \) and \( \mu_2 \) are the group means, \( \hat{\rho} \) is the estimated ICC, and \( m_f \) is the desired cluster size for the main trial [32]. This formula highlights the direct relationship between ICC and required sample size, illustrating why precise ICC estimation is crucial for adequate trial planning.
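The sample size formula translates directly into code. The sketch below is one possible implementation using only the Python standard library; the function name `participants_per_arm` and the example inputs are illustrative assumptions.

```python
from math import ceil
from statistics import NormalDist


def participants_per_arm(delta, sigma, cluster_size, icc, alpha=0.05, power=0.90):
    """Participants per arm for a two-arm cRCT with a continuous outcome.

    delta: difference in group means (mu_1 - mu_2)
    sigma: outcome standard deviation
    cluster_size: planned cluster size in the main trial (m_f)
    icc: estimated intracluster correlation coefficient
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1.0 - alpha / 2.0)
    z_beta = z.inv_cdf(power)
    deff = 1.0 + (cluster_size - 1.0) * icc
    return 2.0 * (z_alpha + z_beta) ** 2 * sigma ** 2 * deff / delta ** 2


# Example: standardized effect of 0.25 (delta = 0.25, sigma = 1), m_f = 20, ICC = 0.05.
n = participants_per_arm(delta=0.25, sigma=1.0, cluster_size=20, icc=0.05)
clusters = ceil(n / 20)
print(ceil(n), clusters)  # 656 participants, 33 clusters per arm
```

For this scenario the result agrees with the corresponding entry in Table 1 (33 clusters per arm). Other table entries can differ by a cluster or two from this plain formula, presumably because the source applies further adjustments.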

Table 1: Sample Size Requirements (Clusters per Arm) for Cluster-Randomized Trials with 90% Power and α=0.05

| Estimated ICC (ρ) | d = 0.1, m = 10 | d = 0.1, m = 20 | d = 0.1, m = 30 | d = 0.25, m = 10 | d = 0.25, m = 20 | d = 0.25, m = 30 | d = 0.5, m = 10 | d = 0.5, m = 20 | d = 0.5, m = 30 |
|---|---|---|---|---|---|---|---|---|---|
| 0.01 | 231 | 126 | 91 | 37 | 21 | 15 | 10 | 6 | 4 |
| 0.05 | 307 | 206 | 173 | 50 | 33 | 28 | 13 | 9 | 7 |
| 0.10 | 402 | 307 | 275 | 65 | 50 | 44 | 17 | 13 | 11 |
| 0.20 | 592 | 508 | 479 | 95 | 82 | 77 | 24 | 21 | 20 |

d = standardized effect size; m = cluster size.

Adapted from sample size calculations for cluster-randomised trials with continuous outcomes [32]

Methodological Approaches to ICC Estimation

Framework for Reporting ICCs

Comprehensive reporting of ICCs is essential for both interpreting trial results and planning future studies. A survey of researchers specializing in cRCTs identified three critical dimensions for appropriate ICC reporting [17]:

Description of the Dataset and Outcome: This includes demographic distributions within and between clusters, complete characterization of the outcome (binary or continuous, underlying prevalence, measurement method), and detailed description of the intervention. Outcomes measured subjectively (e.g., physician assessment) typically demonstrate higher ICCs than objectively measured outcomes (e.g., laboratory results) [17].

Method of ICC Calculation: Researchers should specify the statistical method used (e.g., ANOVA, maximum likelihood), software implementation, source data (control only, pre-intervention, or post-intervention), and whether covariates were adjusted for in the calculation, as covariate adjustment generally reduces ICC values by explaining between-cluster variation [17].

Precision of the ICC Estimate: Reporting confidence intervals, number of clusters, average cluster size, and range of cluster sizes provides crucial information about the reliability of the ICC estimate [17].
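As an illustration of one of the calculation methods named above, the sketch below implements the one-way ANOVA estimator of the ICC for a continuous outcome, allowing unequal cluster sizes. The function name `anova_icc` and the example data are hypothetical; real analyses would normally rely on established statistical software.

```python
from statistics import mean


def anova_icc(clusters):
    """One-way ANOVA estimator of the ICC.

    clusters: list of lists, one inner list of outcome values per cluster.
    """
    k = len(clusters)
    sizes = [len(c) for c in clusters]
    n_total = sum(sizes)
    if k < 2 or n_total <= k:
        raise ValueError("need at least two clusters and some within-cluster replication")

    grand_mean = sum(sum(c) for c in clusters) / n_total
    cluster_means = [mean(c) for c in clusters]

    ss_between = sum(m_i * (cm - grand_mean) ** 2 for m_i, cm in zip(sizes, cluster_means))
    ss_within = sum((y - cm) ** 2 for c, cm in zip(clusters, cluster_means) for y in c)

    msb = ss_between / (k - 1)        # between-cluster mean square
    msw = ss_within / (n_total - k)   # within-cluster mean square
    # Adjusted average cluster size for unequal clusters.
    m0 = (n_total - sum(s * s for s in sizes) / n_total) / (k - 1)
    return (msb - msw) / (msb + (m0 - 1) * msw)


# Hypothetical outcome values for four clusters of unequal size.
example = [[4.1, 4.5, 3.9], [5.2, 5.0, 5.6, 5.1], [3.2, 3.8, 3.5], [4.9, 4.4, 4.6, 4.8]]
print(round(anova_icc(example), 3))
```

The ANOVA estimator can return negative values when clusters are no more alike than chance would predict; in practice such estimates are often truncated at zero, and that choice should be reported along with the method.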

Accounting for Uncertainty in ICC Estimates

ICC estimates derived from pilot studies often contain substantial uncertainty that must be incorporated into sample size calculations [32]. Utilizing a single point estimate without considering its precision can lead to seriously underpowered or overpowered main trials [32]. Common approaches to address this uncertainty include:

Upper Confidence Limit Method: Using the upper confidence limit of the ICC estimate rather than the point estimate, though this often results in overpowered trials and inefficient resource allocation [32].

Numerical Integration Adjustment: A more sophisticated method that integrates the sample size formula across the plausible distribution of ICC values, providing an "average" sample size that more appropriately accounts for estimation uncertainty [32].

Incorporating Multiple Information Sources: Researchers are advised to consult collections of ICC estimates from multiple studies or databases rather than relying solely on a single pilot estimate [32].

Several statistical methods exist for estimating uncertainty in ICC estimates, including Swiger's variance (based on large sample approximations), Searle's method (using the variance ratio statistic), and Fisher's transformation (applying a normalizing transformation to the ICC) [32]. The choice among these methods depends on the distributional properties of the data and the desired balance between computational complexity and accuracy.
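The upper confidence limit method can be sketched as follows. The variance expression is the large-sample form commonly attributed to Swiger for equal cluster sizes; it is an assumption here and should be checked against [32] before use. The pilot values (10 clusters of 20, ICC of 0.05), the normal-approximation interval, and the function names are likewise illustrative.

```python
from math import ceil, sqrt
from statistics import NormalDist


def swiger_se(icc, n_clusters, cluster_size):
    """Large-sample standard error of an ICC estimate (assumed Swiger form, equal cluster sizes)."""
    n_total = n_clusters * cluster_size
    variance = (
        2.0 * (n_total - 1) * (1.0 - icc) ** 2 * (1.0 + (cluster_size - 1) * icc) ** 2
        / (cluster_size ** 2 * (n_total - n_clusters) * (n_clusters - 1))
    )
    return sqrt(variance)


def clusters_per_arm(effect_size, cluster_size, icc, alpha=0.05, power=0.90):
    """Clusters per arm for a standardized effect size, via the design effect."""
    z = NormalDist()
    n_individual = 2.0 * (z.inv_cdf(1.0 - alpha / 2.0) + z.inv_cdf(power)) ** 2 / effect_size ** 2
    deff = 1.0 + (cluster_size - 1.0) * icc
    return ceil(n_individual * deff / cluster_size)


# Hypothetical pilot: 10 clusters of 20 participants, estimated ICC of 0.05.
pilot_icc, pilot_k, pilot_m = 0.05, 10, 20
se = swiger_se(pilot_icc, pilot_k, pilot_m)
upper_icc = min(1.0, pilot_icc + NormalDist().inv_cdf(0.975) * se)

point_design = clusters_per_arm(0.25, 20, pilot_icc)
upper_design = clusters_per_arm(0.25, 20, upper_icc)
print(f"SE = {se:.3f}, upper 95% limit = {upper_icc:.3f}")
print(f"clusters per arm: {point_design} (point estimate) vs {upper_design} (upper limit)")
```

In this hypothetical pilot the standard error is almost as large as the estimate itself, so planning on the upper limit nearly doubles the number of clusters. This illustrates why the method tends to produce overpowered trials.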

Diagram 1: Accounting for Uncertainty in ICC Estimation for Sample Size Calculation. This workflow illustrates the process from pilot data collection through main trial design, highlighting alternative methods for quantifying uncertainty in ICC estimates.

Experimental Protocols for ICC Determination in Nutrition Research

Case Study: The MaaCiwara Cluster Randomized Trial

The MaaCiwara study, a cRCT evaluating a community-level complementary food safety, hygiene, and nutrition intervention in Mali, provides a practical example of ICC implementation in nutrition research [5]. This trial randomized 120 urban and rural clusters to either a behavior change intervention or control group, with mother-child pairs as participants [5]. The study incorporated ICC considerations throughout its design:

Primary Outcomes: The trial specified three primary outcomes with different measurement characteristics: (1) water and food safety behavior observations (binomial), (2) food and water E. coli contamination (count), and (3) diarrhoea prevalence (dichotomous) [5].

Sample Size Justification: The design recruited 120 communities with 27 mother-child pairs per cluster-period, distributed across baseline, midline (4 months), and endline (15 months) assessments [5]. Power calculations assumed an ICC of 0.02 and a cluster autocorrelation coefficient (CAC) of 0.8, with sensitivity analyses considering a range of plausible ICC values [5].

Analytical Approach: The statistical analysis plan specified generalized linear mixed models at the individual level, accounting for cluster effects and rural/urban stratification to estimate intervention effects [5].
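As a rough illustration only, inserting the stated assumptions (27 mother-child pairs per cluster-period, ICC of 0.02) into the simple design effect formula for a single cross-section gives:

\[ \mathrm{DEFF} = 1 + (27 - 1)(0.02) = 1.52 \]

This back-of-envelope figure ignores the cluster autocorrelation of 0.8 and the repeated assessments, both of which enter the trial's own power calculation, so it should not be read as reproducing that calculation.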

Power Analysis Protocol for cRCTs

Conducting an appropriate power analysis for cluster randomized trials requires careful attention to both conventional power considerations and cluster-specific parameters [33] [35] [34]. The following protocol provides a structured approach:

Define Hypothesis and Parameters: Formulate null and alternative hypotheses, select significance level (α, typically 0.05), determine power (1-β, ideally ≥0.8), and specify the minimum detectable effect size clinically relevant to nutrition interventions [33] [34].

Identify ICC Source: Obtain ICC estimates for primary outcomes from previous studies in similar populations or conduct pilot studies. When using external estimates, ensure compatibility in outcome measures, cluster characteristics, and population demographics [17] [32].

Calculate Design Effect: Incorporate the ICC and anticipated cluster size into the variance inflation factor: DEFF = 1 + (m - 1)ρ [17].

Determine Required Sample Size: Calculate the sample size needed for an individually randomized trial and multiply by the design effect. Alternatively, use specialized sample size formulas for cRCTs that directly incorporate ICC [32].

Account for Uncertainty: Perform sensitivity analyses across a plausible range of ICC values to understand how variations affect power and sample size requirements [32].

Consider Practical Constraints: Balance statistical ideals with logistical realities, including budget, recruitment feasibility, and ethical considerations [33] [35].
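The design effect, sample size, and sensitivity analysis steps of the protocol above can be combined into a small sensitivity table. This is a sketch under simplifying assumptions (standardized effect size, equal cluster sizes, normal approximation); the function name `sensitivity_table` and the example inputs are illustrative.

```python
from math import ceil
from statistics import NormalDist


def sensitivity_table(effect_size, cluster_size, icc_values, alpha=0.05, power=0.80):
    """Return (icc, design effect, participants per arm, clusters per arm) for each ICC."""
    z = NormalDist()
    # Sample size for an individually randomized trial with the same inputs.
    n_individual = 2.0 * (z.inv_cdf(1.0 - alpha / 2.0) + z.inv_cdf(power)) ** 2 / effect_size ** 2
    rows = []
    for icc in icc_values:
        # Inflate by the design effect for this ICC.
        deff = 1.0 + (cluster_size - 1.0) * icc
        n_arm = n_individual * deff
        rows.append((icc, deff, ceil(n_arm), ceil(n_arm / cluster_size)))
    return rows


# Sensitivity analysis across a plausible ICC range (hypothetical inputs).
rows = sensitivity_table(effect_size=0.25, cluster_size=20,
                         icc_values=[0.01, 0.05, 0.10, 0.20], power=0.90)
for icc, deff, n_arm, k_arm in rows:
    print(f"ICC={icc:.2f}  DEFF={deff:.2f}  n/arm={n_arm}  clusters/arm={k_arm}")
```

Reading the output row by row shows how quickly recruitment targets grow with the ICC, which helps when weighing the statistical ideal against budget and feasibility.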

Table 2: Essential Research Reagents for ICC Determination and Power Analysis in Cluster Randomized Trials

| Research Reagent | Type/Category | Function in cRCT Design |
|---|---|---|
| Statistical Software | Analysis Tool | Calculates ICC estimates, performs power analysis, and conducts appropriate clustered data analyses |
| Pilot Trial Data | Data Source | Provides preliminary estimates of ICC and variance parameters for main trial sample size calculation |
| ICC Repository/Database | Reference Data | Offers historical ICC values for similar interventions, outcomes, and cluster types to inform power calculations |
| Sample Size Calculator | Specialized Tool | Computes required participants and clusters incorporating design effects for various cRCT designs |
| Mixed Effects Models | Analytical Framework | Accounts for hierarchical data structure in both planning and analysis phases |

Comparative Analysis of ICC Impact Across Trial Scenarios

The influence of ICC on sample size requirements varies substantially across different trial parameters. The relationship between ICC, effect size, and cluster size demonstrates several key patterns essential for nutrition intervention research:

Effect Size Modulation: Smaller effect sizes make the absolute cost of ICC inflation far larger. For an effect size of d=0.1 with cluster size 20, increasing ICC from 0.01 to 0.20 raises required clusters per arm from 126 to 508, a 303% increase (roughly a fourfold rise, or 382 additional clusters). The same ICC change for a larger effect size (d=0.5) increases clusters from 6 to 21, a 250% increase (15 additional clusters) [32].

Cluster Size Interaction: The impact of ICC intensifies with larger cluster sizes. With ICC=0.05 and effect size d=0.25, increasing cluster size from 10 to 30 raises total participants required per arm from 500 to 840, while the number of clusters decreases from 50 to 28 [32]. This demonstrates the diminishing returns of increasing cluster size in the presence of non-zero ICC.

Nutrition-Specific Considerations: In nutrition interventions, process outcomes (e.g., behavioral observations) typically demonstrate higher ICCs than physiological outcomes (e.g., biomarker measurements) [17]. The MaaCiwara trial acknowledged this by specifying different ICC assumptions for its diverse primary outcomes [5].
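The arithmetic behind these patterns can be reproduced from the Table 1 values. The short sketch below uses only figures quoted in the table; the variable and function names are illustrative.

```python
# Clusters per arm from Table 1 for ICC = 0.05 and d = 0.25, keyed by cluster size.
clusters_needed = {10: 50, 20: 33, 30: 28}

# Total participants per arm = clusters per arm x cluster size.
participants = {m: m * k for m, k in clusters_needed.items()}
print(participants)  # {10: 500, 20: 660, 30: 840}


def fold_change(old, new):
    """Ratio of the new requirement to the old one."""
    return new / old


# Effect of raising the ICC from 0.01 to 0.20 at cluster size 20 (Table 1).
print(round(fold_change(126, 508), 2))  # d = 0.1: 4.03
print(round(fold_change(6, 21), 2))     # d = 0.5: 3.5
```

Tripling the cluster size from 10 to 30 cuts the number of clusters by less than half while raising total enrolment per arm by 68%. This is the diminishing return described above.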

Diagram 2: Interrelationships Between ICC, Design Effect, and Statistical Power. This diagram illustrates the cascading effect of ICC values through the trial design process, ultimately determining the statistical power and required resources.

The intracluster correlation coefficient represents a fundamental parameter in the design and interpretation of cluster randomized trials for nutrition interventions. Appropriate attention to ICC estimation and incorporation into power calculations protects against underpowered studies that waste resources and fail to detect genuine intervention effects. The comparative analysis presented demonstrates that ICC magnitude, interacting with effect size and cluster size, dramatically influences sample size requirements across diverse trial scenarios.

Nutrition intervention researchers should adopt comprehensive approaches to ICC handling, including thorough reporting standards, incorporation of uncertainty in estimation, and sensitivity analyses across plausible ICC ranges. The methodological framework presented supports the broader thesis that valid cluster randomized trial design necessitates specialized statistical approaches distinct from individually randomized trials. By implementing these practices, researchers can enhance the scientific rigor, efficiency, and translational impact of group-based nutrition interventions.

In public health nutrition, the choice of intervention type strongly shapes the success of both research and program implementation. Scientists designing cluster randomized trials (cRCTs) for group-based nutrition interventions therefore need to understand the distinct characteristics, applications, and methodological considerations of each approach. This guide provides a systematic comparison of four principal intervention types (behavioral, fortification, supplementation, and regulatory), focusing on their operational frameworks, experimental evidence, and implementation protocols. The content is framed around the design of nutrition intervention studies, giving researchers practical tools and comparative data for rigorous trial design and evaluation.

Comparative Analysis of Nutrition Intervention Types

The table below provides a systematic comparison of the four primary nutrition intervention types, highlighting their defining characteristics, targets, and applications.

| Intervention Type | Definition & Core Mechanism | Primary Targets & Vehicles | Typical Implementation Context & Scale |

|---|---|---|---|

| Behavioral | Aims to modify dietary habits and patterns through education, counseling, and motivation [36]. | Targets individual food choices, portion sizes, meal timing, and physical activity levels [36]. | Community, clinical, or school-based settings; often implemented in cRCTs to manage obesity and chronic disease [36]. |

| Fortification | Adds essential micronutrients to widely consumed staple foods or condiments during processing to prevent deficiencies at a population level [37]. | Vehicles: Salt, flour, oil, sugar, rice [37]. Nutrients: Iodine, iron, vitamin A, folic acid, zinc [37]. | Large-scale, population-level programs, often mandated by governments (mandatory fortification) or initiated by industry (voluntary fortification) [37]. |

| Supplementation | Provides essential nutrients in pharmaceutical forms (pills, powders, syrups) to correct or prevent specific nutrient deficiencies [38]. | Targets specific high-risk groups (e.g., children, pregnant women) or individuals with diagnosed deficiencies [38]. | Clinical settings or targeted public health programs; often used for rapid response to deficiency [38]. |