Controlling for Non-Food Determinants of Biomarker Levels: A Strategic Framework for Researchers and Drug Developers

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to identify, understand, and control for the critical non-food factors that confound biomarker levels. Covering foundational concepts to advanced applications, it details the biological sources of variability—from inflammation and metabolic status to genetic background—and offers robust methodological strategies for accounting for these confounders in study design and data analysis. The content further addresses troubleshooting common pitfalls, optimizing biomarker panels, and outlines rigorous validation pathways to ensure biomarkers are reliable for use in clinical trials, nutritional epidemiology, and the advancement of precision medicine.

Understanding the Sources of Variability: Key Non-Food Determinants of Biomarker Levels

Understanding Confounding Factors in Biomarker Research

What are confounding factors in the context of biomarker levels?

Confounding factors are variables that are associated with both the primary exposure (e.g., a drug or a nutrient) and the biomarker level without lying on the causal pathway between them. They distort the apparent relationship between exposure and biomarker. If not properly controlled for, they introduce bias that can make an association appear where none exists, or hide one that does [1].

Why is distinguishing between fixed and modifiable determinants critical for research validity?

Distinguishing between these types is fundamental to study design and statistical analysis. Fixed factors (such as age or genetics) cannot be altered by the investigator and are typically handled through statistical adjustment or stratification of the study population. Modifiable factors (such as inflammation or metabolic health), in contrast, may be intervention targets themselves or may require standardized measurement conditions [2]. Either type, if left unaccounted for, adds biological variability to biomarker levels that complicates the interpretation of results for disease diagnosis, prognosis, and treatment monitoring [2].

Fixed Determinants

Fixed determinants are intrinsic characteristics of an individual that cannot be modified by intervention and that can systematically influence biomarker levels.

What are the key fixed determinants?

The table below summarizes the primary fixed determinants, their impact on biomarkers, and corresponding control strategies.

| Determinant | Impact on Biomarkers | Control Strategies |
|---|---|---|
| Age [2] | Age-related changes can alter concentrations of proteins like Aβ and tau, independent of disease state [2]. | Stratify study population by age groups; Use age-adjusted reference ranges in analysis. |
| Sex [2] | Biological sex can influence hormone levels, metabolism, and baseline values of various biomarkers. | Include sex as a covariate in statistical models; Conduct sex-stratified analyses. |
| APOE-ε4 Genotype [2] | Carriers of this allele have a higher risk for Alzheimer's disease, which can influence levels of AD-related biomarkers like Aβ and p-tau [2]. | Genotype participants and include genotype as a factor in the analysis; Recruit based on genetic status for targeted studies. |
| Genetic Makeup [2] | Broad genetic background beyond single alleles can affect an individual's baseline risk and biomarker expression. | Utilize family-based study designs or genome-wide association studies (GWAS) to account for polygenic effects. |

Modifiable Determinants

Modifiable determinants are potentially changeable biological states or lifestyle factors that can cause significant variability in biomarker measurements.

What are the key modifiable determinants?

The table below outlines major modifiable factors, their effects, and how to mitigate their impact.

| Determinant | Impact on Biomarkers | Control Strategies |
|---|---|---|
| Systemic Inflammation [2] | Chronic inflammation, marked by cytokines (IL-6, TNF-α) or CRP, can alter levels of key biomarkers like Aβ and p-tau, independent of the primary disease process [2]. | Measure and adjust for inflammatory markers (e.g., hs-CRP) in statistical models; Exclude individuals with acute infections. |
| Metabolic Health [2] | States like insulin resistance and dyslipidemia can alter the levels, and increase the variability, of biomarkers including metabolites and proteins related to neurodegeneration [2]. | Standardize fasting conditions before blood draws; Assess and control for metabolic markers (e.g., fasting insulin, HOMA-IR). |
| Hormonal Changes [2] | Fluctuations in hormones like cortisol (stress) or thyroid hormones can influence biomarker levels related to energy metabolism and cellular function [2]. | Record time of day for sample collection to account for circadian rhythms; Document medication use and menstrual cycle phase. |
| Nutritional Status [2] | Deficiencies in vitamins E, D, B12, and antioxidants can contribute to oxidative stress and neuroinflammation, subsequently changing biomarker levels [2]. | Assess nutritional status via questionnaires or blood tests; Consider supplementation studies to control for deficiencies. |
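The HOMA-IR covariate listed for metabolic health is derived from the same fasting blood draw. The sketch below uses the original HOMA approximation, fasting glucose (mmol/L) × fasting insulin (µU/mL) / 22.5. The function names are hypothetical, the units are an assumption about how the laboratory reports results, and the computer-based HOMA2 model is a different, nonlinear calculation that this sketch does not reproduce.

```python
def homa_ir(fasting_glucose_mmol_l: float, fasting_insulin_uU_ml: float) -> float:
    """Original HOMA-IR approximation: glucose (mmol/L) x insulin (uU/mL) / 22.5.

    Assumes both values come from the same fasting sample.
    """
    if fasting_glucose_mmol_l <= 0 or fasting_insulin_uU_ml <= 0:
        raise ValueError("fasting glucose and insulin must be positive")
    return fasting_glucose_mmol_l * fasting_insulin_uU_ml / 22.5


def glucose_mg_dl_to_mmol_l(glucose_mg_dl: float) -> float:
    """Approximate unit conversion for glucose: 1 mmol/L is about 18 mg/dL."""
    return glucose_mg_dl / 18.0


# Example: 90 mg/dL glucose and 9 uU/mL insulin
glucose = glucose_mg_dl_to_mmol_l(90.0)   # 5.0 mmol/L
print(round(homa_ir(glucose, 9.0), 2))    # 2.0
```

The resulting value can then enter the regression models as a continuous covariate alongside the inflammatory markers.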

Experimental Protocols for Controlling Confounders

How do I design a study to control for fixed and modifiable confounders?

Protocol: Study Design and Pre-Data Collection Control

- Define Target Trial Protocol: Specify the hypothetical ideal randomized trial (the "target trial") including eligibility criteria, treatment strategies, and outcomes. This helps identify potential sources of confounding at the design stage [3].

- Stratified Sampling: Recruit participants by stratifying based on key fixed factors (e.g., age groups, sex, APOE-ε4 status) to ensure balanced representation across subgroups [2].

- Standardize Procedures: Define and document protocols for sample collection (e.g., time of day, fasting status), processing, and storage to minimize variability introduced by modifiable factors [4].
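The stratified sampling step can be sketched as equal allocation across strata defined by fixed factors. This is a minimal illustration, assuming screening records are dicts with hypothetical fields `age_group`, `sex`, and `apoe4`; proportional allocation (sampling each stratum in proportion to its population share) is a common alternative and is not shown.

```python
import random
from collections import defaultdict


def stratified_sample(candidates, strata_keys, n_per_stratum, seed=0):
    """Draw up to n_per_stratum participants from every stratum (equal allocation).

    candidates: list of dicts (hypothetical screening records)
    strata_keys: fields that define a stratum, e.g. ("age_group", "sex", "apoe4")
    Returns the selected records and the number drawn per stratum, so that
    under-filled strata are visible rather than silently ignored.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for person in candidates:
        strata[tuple(person[k] for k in strata_keys)].append(person)

    selected, counts = [], {}
    for stratum in sorted(strata):
        members = strata[stratum]
        chosen = rng.sample(members, min(n_per_stratum, len(members)))
        selected.extend(chosen)
        counts[stratum] = len(chosen)
    return selected, counts


# Hypothetical pool: 8 strata (2 age groups x 2 sexes x APOE-e4 status), 5 people each
combos = [(ag, sx, e4) for ag in ("50-64", "65+") for sx in ("F", "M") for e4 in (False, True)]
pool = [
    {"id": i, "age_group": ag, "sex": sx, "apoe4": e4}
    for i, (ag, sx, e4) in enumerate(combos * 5)
]
selected, counts = stratified_sample(pool, ("age_group", "sex", "apoe4"), n_per_stratum=3)
print(len(selected))  # 24
```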

What statistical methods can I use to adjust for confounders during analysis?

Protocol: Statistical Analysis and Post-Hoc Control

- Corrected Score Functions: Use these methods to correct simultaneously for bias from confounding and from measurement error in a continuous exposure [1].

- Regression Adjustment: Include confounding variables as covariates in multivariable regression models to statistically control for their effects.

- Inverse Probability Weighting (IPW): Weight participants based on their probability of exposure given their confounders, creating a pseudo-population where confounders are balanced across exposure groups [1].

- Doubly-Robust Estimation: Combine regression and IPW methods; this approach provides consistent effect estimates if either the outcome model or the exposure model is correctly specified, offering protection against model misspecification [1].
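The regression, IPW, and doubly robust strategies above can be compared on simulated data. The sketch below is a minimal illustration, assuming a single measured confounder `c` (for example, a standardized log hs-CRP value), a binary exposure `a`, correctly specified models, and numpy; the helper names `fit_logistic` and `estimate_effects` are hypothetical. It omits standard errors (often obtained by bootstrapping in practice) and does not cover corrected score functions.

```python
import numpy as np


def fit_logistic(X, y, n_iter=25):
    """Logistic regression via Newton-Raphson; X must contain an intercept column."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-X @ beta))
        hessian = X.T @ (X * (p * (1.0 - p))[:, None])
        beta = beta + np.linalg.solve(hessian, X.T @ (y - p))
    return beta


def estimate_effects(y, a, c):
    """Naive, regression-adjusted, IPW and doubly robust (AIPW) estimates of the
    average effect of a binary exposure `a` on biomarker `y`, confounded by `c`."""
    n = len(y)
    ones, zeros = np.ones(n), np.zeros(n)

    # Exposure (propensity score) model: P(a = 1 | c)
    Xe = np.column_stack([ones, c])
    e = 1.0 / (1.0 + np.exp(-Xe @ fit_logistic(Xe, a)))

    # Outcome model: y ~ a + c by ordinary least squares
    gamma, *_ = np.linalg.lstsq(np.column_stack([ones, a, c]), y, rcond=None)
    m1 = np.column_stack([ones, ones, c]) @ gamma    # predicted y if everyone exposed
    m0 = np.column_stack([ones, zeros, c]) @ gamma   # predicted y if no one exposed

    w1, w0 = a / e, (1 - a) / (1 - e)
    return {
        "naive": y[a == 1].mean() - y[a == 0].mean(),
        "regression": gamma[1],
        # Normalized (Hajek) inverse probability weighting
        "ipw": (w1 * y).sum() / w1.sum() - (w0 * y).sum() / w0.sum(),
        # Augmented IPW: consistent if either model above is correctly specified
        "aipw": np.mean(m1 - m0 + w1 * (y - m1) - w0 * (y - m0)),
    }


# Hypothetical simulated data; the true exposure effect is 1.0
rng = np.random.default_rng(42)
n = 5000
c = rng.normal(size=n)  # confounder, e.g. standardized log hs-CRP
a = rng.binomial(1, 1.0 / (1.0 + np.exp(-0.8 * c))).astype(float)
y = 1.0 * a + 2.0 * c + rng.normal(size=n)
for name, value in estimate_effects(y, a, c).items():
    print(f"{name:>10}: {value:.2f}")
```

Because `c` raises both the probability of exposure and the biomarker, the naive difference in means is biased upward in this setup, while the three adjusted estimates should land near the true value of 1.0.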

Troubleshooting Common Experimental Issues

What should I do if my biomarker levels show high biological variability despite controlling for common confounders?

- Investigate Unmeasured Confounding: Consider that an important confounder may not have been measured. Use sensitivity analyses to assess how robust your results are to potential unmeasured confounding [3].

- Apply Advanced Modeling: Explore integrative approaches like multi-omics or AI modeling to account for the intricate, synergistic interactions between multiple biological determinants [2].

- Check Assay Precision: Verify that the variability is biological and not technical by reviewing the coefficient of variation (CV) for your biomarker assay from quality control data.
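One way to judge whether variability is biological or technical is to compare the analytical CV (CV_A, from repeated runs of QC material) with the total within-subject CV (CV_T, from repeated samples of the same participants). A common decomposition in biological-variation work is CV_I = sqrt(CV_T^2 - CV_A^2), which assumes analytical and biological variation are independent. This is a minimal sketch; the values and helper names are hypothetical.

```python
import statistics


def cv_percent(values):
    """Coefficient of variation (%) = sample SD / mean x 100."""
    return statistics.stdev(values) / statistics.fmean(values) * 100.0


def within_subject_biological_cv(cv_total, cv_analytical):
    """Remove analytical noise from the total within-subject CV:
    CV_I = sqrt(CV_T^2 - CV_A^2). Returns 0.0 if assay noise explains it all."""
    return max(cv_total**2 - cv_analytical**2, 0.0) ** 0.5


qc_runs = [10.2, 9.8, 10.1, 9.9, 10.0]  # hypothetical QC results, one lot, several runs
cv_a = cv_percent(qc_runs)
cv_t = 12.0  # hypothetical total within-subject CV (%) from repeat participant samples
print(f"CV_A = {cv_a:.1f}%, CV_I = {within_subject_biological_cv(cv_t, cv_a):.1f}%")
# CV_A = 1.6%, CV_I = 11.9%
```

Here the assay contributes little, so most of the observed variation would be attributed to genuine within-person biology.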

How can I handle time-varying confounding in longitudinal studies of biomarker levels?

- Use Appropriate Longitudinal Methods: Employ methods like marginal structural models or G-estimation, which are specifically designed to handle confounders that change over time and may be affected by prior exposure [3].

- Align Follow-up Time: Start follow-up at the point where eligibility criteria are met and treatment is assigned, so that these time points coincide and immortal time bias is avoided [3].
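For marginal structural models, each participant's stabilized inverse probability of treatment weight is a product over visits of P(observed treatment | treatment history) divided by P(observed treatment | treatment history, time-varying confounders). The sketch below assumes these per-visit probabilities have already been estimated (for example, from pooled logistic models) and uses hypothetical field names; the resulting weights would then be used in a weighted regression of the biomarker on treatment history.

```python
from math import prod


def prob_of_observed(p_treated, treated):
    """Probability of the treatment actually received at one visit."""
    return p_treated if treated else 1.0 - p_treated


def stabilized_weight(visits):
    """Stabilized inverse probability of treatment weight for one participant.

    visits: list of dicts, one per visit (hypothetical field names):
      treated -- observed treatment at that visit (bool)
      p_num   -- P(treated | past treatment)
      p_den   -- P(treated | past treatment, time-varying confounders such as hs-CRP)
    """
    numerator = prod(prob_of_observed(v["p_num"], v["treated"]) for v in visits)
    denominator = prod(prob_of_observed(v["p_den"], v["treated"]) for v in visits)
    return numerator / denominator


# Hypothetical participant treated at both visits; a rising hs-CRP made
# treatment at visit 2 more likely than treatment history alone predicts
example = [
    {"treated": True, "p_num": 0.5, "p_den": 0.4},
    {"treated": True, "p_num": 0.6, "p_den": 0.8},
]
print(round(stabilized_weight(example), 4))  # 0.9375
```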

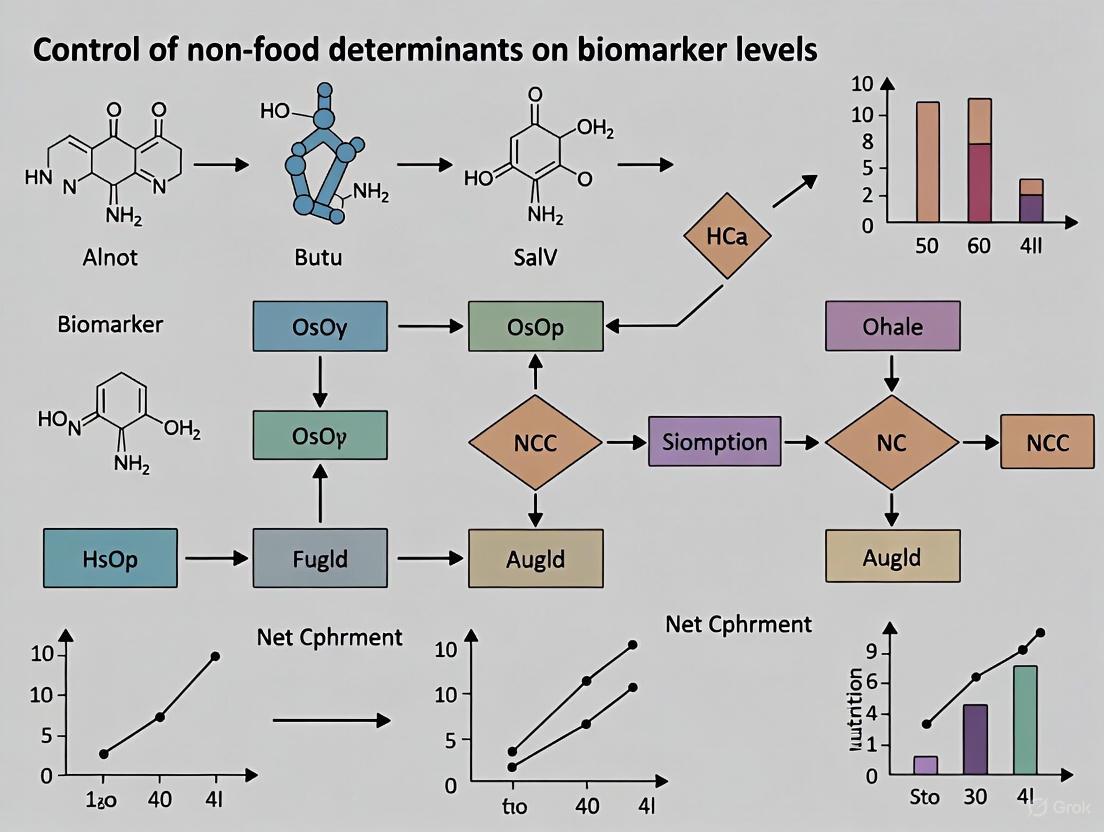

Visualizing the Workflow for Addressing Confounders

Research Reagent Solutions

The table below lists key reagents and materials essential for investigating and controlling for confounding factors.

| Reagent/Material | Function in Research |
|---|---|
| ELISA Kits [2] | Quantify protein biomarkers (e.g., cytokines for inflammation, Aβ, p-tau) from blood or CSF samples. |
| PCR Assays [2] | Genotype participants for fixed determinants like the APOE-ε4 allele and other genetic variants. |
| Mass Spectrometry [2] | Precisely measure small molecules, metabolites, and proteins with high specificity, reducing measurement error. |
| High-Sensitivity CRP (hs-CRP) Assay [4] | Accurately measure low-grade chronic inflammation, a key modifiable confounder. |
| Certified Reference Materials | Standardize assays across batches and laboratories to ensure measurement accuracy and reproducibility. |

The Impact of Systemic Inflammation and Metabolic Health on Biomarker Expression

Frequently Asked Questions

FAQ 1: Why is it crucial to account for metabolic health in nutritional biomarker research? Metabolic health conditions, such as obesity and insulin resistance, are characterized by a state of chronic low-grade inflammation [5]. This inflammation can directly alter the levels of various molecules in the blood, independent of dietary intake. For instance, inflammatory cytokines can change the production, release, or clearance of biomarkers. If unaccounted for, this can lead to a false conclusion that a biomarker level is due to a specific food consumed, when it is actually driven by the individual's underlying metabolic state [6] [7].

FAQ 2: What are some common non-food determinants that can confound biomarker levels? Several factors beyond diet can influence biomarker expression. Key among them are:

- Systemic Inflammation: Conditions like metabolic syndrome can elevate inflammatory markers such as hs-CRP, which may correlate with or influence other biomarkers [6] [5].

- Medications: Common drugs (e.g., statins, metformin) can alter metabolic pathways and biomarker kinetics.

- Age, Sex, and Genetics: These intrinsic factors cause significant inter-individual variation in how biomarkers are metabolized and expressed [8] [7].

- Kidney and Liver Function: These organs are critical for the metabolism and excretion of many biomarkers; their impaired function can lead to biomarker accumulation.

FAQ 3: Which specific inflammatory biomarkers should I consider measuring in my studies? You should consider a combination of established and emerging biomarkers. The table below summarizes key options [6] [5]:

| Biomarker Name | Full Name | Biological Matrix | Key Characteristics |
|---|---|---|---|
| hs-CRP | High-sensitivity C-reactive protein | Plasma/Serum | An established, robust marker of systemic inflammation; strongly associated with obesity phenotypes [6]. |
| SII | Systemic Immune-Inflammatory Index | Calculated from blood cell counts | A composite index (Platelets × Neutrophils / Lymphocytes). Emerging prognostic value for cardiovascular mortality [5]. |
| SIRI | Systemic Inflammatory Response Index | Calculated from blood cell counts | A composite index (Monocytes × Neutrophils / Lymphocytes). Shows superior predictive performance for mortality risk in some studies [5]. |
| IL-6 | Interleukin-6 | Plasma/Serum | A pro-inflammatory cytokine that plays a mechanistic role in chronic low-grade inflammation [5]. |
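SII and SIRI follow directly from the formulas in the table. A minimal sketch, assuming all counts are reported in the same unit (10^9 cells/L here), since published cut-offs depend on the unit used; the function names are hypothetical.

```python
def sii(platelets, neutrophils, lymphocytes):
    """SII = platelets x neutrophils / lymphocytes (all counts in the same unit)."""
    if lymphocytes <= 0:
        raise ValueError("lymphocyte count must be positive")
    return platelets * neutrophils / lymphocytes


def siri(monocytes, neutrophils, lymphocytes):
    """SIRI = monocytes x neutrophils / lymphocytes (all counts in the same unit)."""
    if lymphocytes <= 0:
        raise ValueError("lymphocyte count must be positive")
    return monocytes * neutrophils / lymphocytes


# Hypothetical complete blood count, all values in 10^9 cells/L
cbc = {"platelets": 250.0, "neutrophils": 4.0, "lymphocytes": 2.0, "monocytes": 0.5}
print(sii(cbc["platelets"], cbc["neutrophils"], cbc["lymphocytes"]))   # 500.0
print(siri(cbc["monocytes"], cbc["neutrophils"], cbc["lymphocytes"]))  # 1.0
```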

FAQ 4: My experiment yielded a biomarker with poor reproducibility. What could have gone wrong? Poor reproducibility often stems from methodological pitfalls. Common issues include:

- Inadequate Sample Size: A study that is underpowered may identify biomarkers that do not hold up in validation [9] [10].

- Overfitting the Data: Using complex models on small datasets can produce biomarkers that are not generalizable [10].

- Dichotomania: Artificially dichotomizing continuous biomarker data (e.g., into "high" and "low" groups) discards valuable information, reduces statistical power, and is a major source of non-reproducible findings [9].

- Insufficient Validation: Failing to validate a candidate biomarker in an independent cohort or using a different analytical method [11] [10].
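The cost of dichotomizing can be shown with a short simulation. Under a bivariate normal assumption, a median split shrinks the correlation with the outcome by a factor of about sqrt(2/π) ≈ 0.80, so detecting a small association needs roughly π/2 ≈ 1.6 times as many participants. This sketch assumes numpy and uses hypothetical simulated data.

```python
import numpy as np

# Hypothetical simulation: a continuous biomarker correlated (rho = 0.5) with an outcome
rng = np.random.default_rng(1)
n, rho = 200_000, 0.5
biomarker = rng.normal(size=n)
outcome = rho * biomarker + np.sqrt(1 - rho**2) * rng.normal(size=n)

r_continuous = np.corrcoef(biomarker, outcome)[0, 1]

# Median split into "high" (1) and "low" (0) groups
high = (biomarker > np.median(biomarker)).astype(float)
r_split = np.corrcoef(high, outcome)[0, 1]

print(f"continuous r   = {r_continuous:.3f}")
print(f"median-split r = {r_split:.3f}")
print(f"ratio = {r_split / r_continuous:.3f}; theory for normal data: {np.sqrt(2 / np.pi):.3f}")
```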

Troubleshooting Guides

Problem: Inconsistent correlation between a dietary biomarker and food intake records. Solution: Follow this systematic workflow to identify and control for confounding factors.

Recommended Actions for Each Step:

- Assess Inflammatory Status: Measure hs-CRP and calculate SII/SIRI from complete blood count (CBC) data in all participants [6] [5].

- Stratify Analysis: Re-analyze the correlation between your dietary biomarker and food intake after splitting your cohort into groups based on their inflammatory status (e.g., high vs. low SIRI) or metabolic health phenotype (e.g., metabolically healthy vs. unhealthy) [6].

- Statistical Adjustment: In your regression models, include inflammatory biomarkers (e.g., log-transformed hs-CRP, SII) and metabolic health indicators (e.g., HOMA-IR for insulin resistance, BMI) as covariates to control for their effects [8] [6].

- Re-evaluate Correlation: Check if the correlation between the dietary biomarker and food intake becomes stronger and more significant after accounting for these non-food determinants.

- Expand Confounder Screening: If the issue persists, investigate other potential confounders like medication use (e.g., metformin, statins), renal function (e.g., estimated glomerular filtration rate), or genetic polymorphisms via genotyping.
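The first, second, and fourth steps can be sketched as follows, assuming numpy and hypothetical simulated data. The 3 mg/L hs-CRP cut-off roughly mirrors the conventional cardiovascular "high risk" category and is only an example stratifier. Because splitting on a cut-off discards information, this comparison is best treated as a diagnostic, with continuous covariate adjustment kept for the main analysis.

```python
import numpy as np


def stratified_correlations(biomarker, intake, stratifier, cutoff):
    """Pearson r between a dietary biomarker and reported intake, overall and
    within the strata stratifier >= cutoff ("high") and < cutoff ("low")."""
    biomarker = np.asarray(biomarker, dtype=float)
    intake = np.asarray(intake, dtype=float)
    high = np.asarray(stratifier, dtype=float) >= cutoff
    result = {"overall": np.corrcoef(biomarker, intake)[0, 1]}
    for label, mask in (("high", high), ("low", ~high)):
        # At least three observations are needed for a meaningful correlation
        if mask.sum() >= 3:
            result[label] = np.corrcoef(biomarker[mask], intake[mask])[0, 1]
        else:
            result[label] = float("nan")
    return result


# Hypothetical data: inflammation is lower with higher intake but raises the biomarker
rng = np.random.default_rng(7)
n = 2000
intake = rng.normal(size=n)                            # standardized FFQ intake
crp = np.exp(0.3 - 0.4 * intake + rng.normal(size=n))  # hs-CRP in mg/L
biomarker = 0.6 * intake + 0.8 * np.log(crp) + rng.normal(scale=0.5, size=n)
r = stratified_correlations(biomarker, intake, crp, cutoff=3.0)
print({k: round(float(v), 2) for k, v in r.items()})
```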

Problem: Selecting a statistical model for biomarker discovery from high-dimensional omics data. Solution: Choose a model that avoids overfitting and is suited for high-dimensional data. The table below compares common algorithms [10]:

| Algorithm | Full Name | Best Use Case | Key Advantage |
|---|---|---|---|
| sPLS | Sparse Partial Least Squares | Integrating two data types (e.g., transcriptomics & proteomics) | Simultaneously performs dimension reduction and variable selection [10]. |
| XGBoost | eXtreme Gradient Boosting | Prediction and classification with complex relationships | High predictive accuracy; handles mixed data types well [10]. |
| Random Forest | Random Forest | Identifying robust feature importance | Reduces overfitting by building many decision trees; provides stability [10]. |
| Glmnet | Lasso and elastic-net regularized generalized linear models | Building predictive models with many features | Uses regularized regression to prevent overfitting in high-dimensional datasets [10]. |

Recommended Action: For robust discovery, do not rely on a single model. Use a combination of these algorithms (e.g., in an ensemble method) and prioritize features that are consistently identified as important across multiple methods [10]. Always validate the final model on a completely independent dataset.
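The rule of prioritizing features that are consistently important across methods can be made explicit with a rank-based vote. This is a minimal sketch, assuming the per-method importance scores (e.g., absolute glmnet coefficients, random forest importances, XGBoost gains) have already been computed elsewhere; the feature names and scores are hypothetical. Ranks are used instead of raw scores because importance scales differ between algorithms.

```python
from collections import Counter


def consensus_features(importances, top_k, min_methods):
    """Keep features ranked in the top_k of at least min_methods models.

    importances: {method_name: {feature_name: importance_score}}, where larger
    scores mean more important.
    """
    votes = Counter()
    for scores in importances.values():
        top = sorted(scores, key=scores.get, reverse=True)[:top_k]
        votes.update(top)
    return sorted(f for f, count in votes.items() if count >= min_methods)


# Hypothetical importance scores from three models
importances = {
    "glmnet": {"f1": 0.90, "f2": 0.60, "f3": 0.00, "f4": 0.20},
    "random_forest": {"f1": 0.30, "f2": 0.26, "f3": 0.25, "f4": 0.05},
    "xgboost": {"f1": 40.0, "f2": 22.0, "f3": 5.0, "f4": 30.0},
}
print(consensus_features(importances, top_k=2, min_methods=2))  # ['f1', 'f2']
```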

Experimental Protocols

Protocol 1: Validating a Candidate Dietary Biomarker Against Habitual Intake

This protocol outlines the key steps for establishing a correlation between a candidate biomarker and long-term dietary intake in a free-living population, while controlling for metabolic inflammation.

Objective: To assess the validity of a candidate biomarker (e.g., alkylresorcinols for whole-grain intake) by correlating its concentration in a biological matrix with habitual food intake estimated from a Food Frequency Questionnaire (FFQ), while adjusting for inflammatory confounders.

Materials:

- Cohort: Free-living participants with stored biospecimens (e.g., plasma, urine).

- Dietary Assessment: Validated FFQ or multiple 24-hour recalls.

- Biospecimens: Fasting plasma or 24-hour urine samples.

- Analytical Instrumentation: High-performance liquid chromatography-mass spectrometry (LC-MS) or gas chromatography-mass spectrometry (GC-MS) for biomarker quantification [8].

- Clinical Biochemistry Analyzer: For measuring hs-CRP and a complete blood count (CBC) to calculate SII and SIRI.

Procedure:

- Biomarker Quantification: Analyze the concentration of the candidate dietary biomarker in the biospecimens using a validated LC-MS/MS or GC-MS method. Ensure the assay meets precision and accuracy standards [8].

- Inflammatory Marker Assessment: Measure hs-CRP levels and obtain a CBC from the same blood draw. Calculate SII as (Platelets × Neutrophils / Lymphocytes) and SIRI as (Monocytes × Neutrophils / Lymphocytes) [5].

- Data Analysis:

- Calculate the correlation coefficient (e.g., Pearson's r) between the biomarker level and the corresponding food intake from the FFQ.

- Perform multiple linear regression with the biomarker level as the dependent variable. Include food intake as the primary independent variable, and add hs-CRP, SII, age, sex, and BMI as covariates.

- Interpret the correlation as follows: r < 0.2 (weak), r = 0.2–0.5 (moderate), r > 0.5 (strong) [8].
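The regression and interpretation steps can be sketched with numpy on hypothetical simulated data. The helper names are illustrative, SII is omitted for brevity, and the covariates follow the protocol (log-transformed hs-CRP, age, sex, BMI). Treating the boundary values 0.2 and 0.5 as "moderate" is an assumption, since the thresholds above leave them unspecified.

```python
import numpy as np


def interpret_r(r):
    """Protocol categories: r < 0.2 weak, 0.2-0.5 moderate, > 0.5 strong.
    Boundary values 0.2 and 0.5 are treated as moderate (an assumption)."""
    if r < 0.2:
        return "weak"
    return "moderate" if r <= 0.5 else "strong"


def adjusted_intake_coefficient(biomarker, intake, covariates):
    """OLS of the biomarker on intake plus covariates; returns the intake coefficient."""
    X = np.column_stack([np.ones(len(intake)), intake, covariates])
    beta, *_ = np.linalg.lstsq(X, biomarker, rcond=None)
    return beta[1]


# Hypothetical simulated cohort
rng = np.random.default_rng(3)
n = 1000
age = rng.uniform(40, 75, n)
sex = rng.integers(0, 2, n)
bmi = rng.normal(27, 4, n)
log_crp = 0.08 * (bmi - 27) + rng.normal(size=n)
intake = rng.normal(size=n) - 0.3 * log_crp
biomarker = 0.5 * intake + 0.4 * log_crp + 0.01 * age + rng.normal(scale=0.5, size=n)

r = np.corrcoef(biomarker, intake)[0, 1]
coef = adjusted_intake_coefficient(biomarker, intake, np.column_stack([log_crp, age, sex, bmi]))
print(f"crude r = {r:.2f} ({interpret_r(r)}); adjusted intake coefficient = {coef:.2f}")
```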

Protocol 2: Assessing Biomarker Reproducibility Over Time

This protocol is critical for determining whether a single biomarker measurement can reliably represent long-term exposure.

Objective: To determine the intraclass correlation coefficient (ICC) of a candidate biomarker from repeated measures over time.

Materials:

- Study Design: Longitudinal cohort with biospecimens collected from the same individuals at multiple time points (e.g., baseline, 1-year, 3-years).

- Statistical Software: R, SPSS, or SAS with procedures for calculating ICC.

Procedure:

- Sample Collection: Collect and analyze biospecimens following a standardized protocol at each time point.

- Statistical Calculation: Use a mixed-effects model to calculate the ICC. The ICC represents the ratio of between-person variance to the total variance (between-person + within-person variance) [8].

- Interpretation: Classify reproducibility as: ICC < 0.4 (poor), ICC = 0.4–0.6 (fair), ICC = 0.60–0.75 (good), ICC > 0.75 (excellent) [8]. A biomarker with good-to-excellent reproducibility is more suitable for use in epidemiological studies with single measurements.
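As a dependency-free illustration, the sketch below swaps in the one-way random-effects ANOVA estimator, ICC(1), for the mixed-effects model named above. In a balanced design (every participant measured the same number of times) it estimates the same ratio of between-person to total variance; unbalanced data or additional covariates call for the mixed model itself (for example, `MixedLM` in Python's statsmodels, or the procedures in the software listed above). The data and function names are hypothetical.

```python
from statistics import fmean


def icc_oneway(measurements):
    """ICC(1) from a one-way random-effects ANOVA.

    measurements: list of per-participant lists, each holding the same number k
    of repeated biomarker values (balanced design).
    ICC = (MSB - MSW) / (MSB + (k - 1) * MSW), an estimate of
    between-person variance / (between-person + within-person variance).
    """
    n, k = len(measurements), len(measurements[0])
    if n < 2 or k < 2 or any(len(m) != k for m in measurements):
        raise ValueError("need >= 2 participants with the same k >= 2 measurements")
    grand = fmean(v for m in measurements for v in m)
    means = [fmean(m) for m in measurements]
    ss_between = k * sum((mu - grand) ** 2 for mu in means)
    ss_within = sum((v - mu) ** 2 for m, mu in zip(measurements, means) for v in m)
    msb = ss_between / (n - 1)
    msw = ss_within / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


def classify_icc(icc):
    """Reproducibility categories from this protocol."""
    if icc < 0.4:
        return "poor"
    if icc < 0.6:
        return "fair"
    if icc <= 0.75:
        return "good"
    return "excellent"


# Hypothetical data: three participants, two time points each
icc = icc_oneway([[1, 2], [3, 4], [5, 6]])
print(round(icc, 3), classify_icc(icc))  # 0.882 excellent
```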

Conceptual Diagram: Inflammation as a Confounder

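This diagram illustrates the conceptual framework of how systemic inflammation acts as a confounder in the relationship between dietary intake and biomarker levels. Metabolic conditions such as obesity and insulin resistance are accompanied by chronic low-grade inflammation, which can change the production, release, or clearance of a biomarker independently of intake. When the same conditions are also associated with dietary habits, the observed diet-biomarker correlation mixes the effect of food with the effect of the underlying metabolic state.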

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Research | Example Application |
|---|---|---|
| High-Sensitivity CRP (hs-CRP) Assay | Precisely quantifies low levels of C-reactive protein in serum/plasma to assess chronic low-grade inflammation. | Stratifying participants by inflammatory status in a cohort study [6]. |
| Liquid Chromatography-Mass Spectrometry (LC-MS/MS) | A highly specific and sensitive platform for identifying and quantifying low-abundance dietary biomarkers in complex biological samples. | Measuring alkylresorcinols (whole grains) or proline betaine (citrus) in plasma [8] [7]. |
| Complete Blood Count (CBC) Analyzer | Provides absolute counts of neutrophils, lymphocytes, monocytes, and platelets required to calculate SII and SIRI. | Calculating novel inflammatory indices for prognostic risk assessment [5]. |
| Enzyme-Linked Immunosorbent Assay (ELISA) Kits | Allows for the quantification of specific protein biomarkers (e.g., IL-6, adiponectin) in a high-throughput manner. | Measuring inflammatory cytokines in a large number of patient samples. |
| Stable Isotope-Labeled Standards | Internal standards used in mass spectrometry to correct for sample loss and matrix effects, ensuring accurate biomarker quantification. | Adding d₅-alkylresorcinol to a sample before extraction to precisely quantify native alkylresorcinols [8]. |

The rising prevalence of complex diseases such as obesity, type 2 diabetes, cardiovascular disease, and Alzheimer's disease has paralleled the global shift from traditional, nutritionally dense diets to energy-dense Western-pattern diets and more sedentary lifestyles [12]. However, considerable individual diversity exists in response to these environmental pressures, suggesting that genetic and epigenetic factors significantly modulate disease susceptibility. Understanding gene-diet interactions offers profound potential for personalizing nutritional strategies and improving public health outcomes [12].

Genetic background alone often provides an incomplete picture of disease risk. The "thrifty genotype" hypothesis proposes that genetic variations selected for efficient energy storage during periods of famine have become maladaptive in modern environments with constant food availability [12]. Furthermore, epigenetic mechanisms—heritable changes in gene expression that do not alter the DNA sequence—respond to dietary and other environmental exposures, creating a dynamic interface between fixed genetic risk and modifiable lifestyle factors [13] [14]. This technical support guide provides researchers with methodologies and troubleshooting approaches for investigating these complex relationships, with particular emphasis on controlling for non-food determinants in biomarker research.

Fundamental Concepts: Genetic Risk and Epigenetic Regulation

Key Genetic Risk Factors

APOE Genotypes and Alzheimer's Disease Risk

The apolipoprotein E (APOE) gene represents a well-characterized example of genetic risk modulation. Its three common variants differentially influence Alzheimer's disease susceptibility [15]:

- APOE ε2: The least common variant; associated with reduced Alzheimer's risk

- APOE ε3: The most common variant; considered neutral for Alzheimer's risk

- APOE ε4: Present in up to 15% of people; increases Alzheimer's risk significantly

Individuals with one APOE ε4 variant have approximately 2-3 times increased risk of developing Alzheimer's disease, while those with two copies face 8-12 times higher risk [15]. However, APOE ε4 is neither deterministic nor the sole factor in disease development, highlighting the importance of gene-environment interactions.

Obesity and Cardiovascular Disease Genetics

Genome-wide association studies (GWAS) have identified numerous genetic variants associated with obesity, type 2 diabetes, and cardiovascular disease [12]. The fat mass and obesity-associated gene (FTO) represents one of the strongest genetic predictors for obesity, while chromosome 9p21 variants are significantly linked to coronary heart disease risk [12]. These genetic discoveries provide the foundation for investigating how dietary factors modulate inherent genetic susceptibility.

Epigenetic Mechanisms

Epigenetic regulation occurs through three primary systems that can interact to silence or activate genes [13] [14]:

DNA Methylation

This process involves adding a methyl group to cytosine nucleotides, mainly at CpG dinucleotides; methylation of the CpG islands found in many promoter regions is the best-characterized regulatory context [13] [14]. Hypermethylation of CpG islands typically silences gene expression by preventing transcription factor binding and promoting chromatin condensation. In cancer, tumor suppressor genes often undergo promoter hypermethylation, while global hypomethylation can activate oncogenes [14].

Histone Modification

Histone proteins package DNA into nucleosomes, and post-translational modifications (acetylation, methylation, phosphorylation, ubiquitylation) alter chromatin structure [13]. Acetylation generally loosens chromatin and facilitates transcription, while methylation can either activate or repress genes depending on the specific residue modified [13] [14].

Non-coding RNA-Associated Silencing

Non-coding RNAs (including miRNA, siRNA, and lncRNA) regulate gene expression by directing chromatin modifications or interfering with mRNA translation [13]. These molecules have emerged as crucial epigenetic regulators with roles in development, cellular differentiation, and disease pathogenesis.

Dietary Biomarkers: Validation and Analytical Considerations

Biomarker Classification and Validation Criteria

Accurate assessment of dietary exposure is fundamental to gene-diet interaction studies. Dietary biomarkers provide objective measures that complement self-reported intake data [8]. The table below outlines key validation criteria for dietary biomarkers in epidemiological research:

Table 1: Validation Criteria for Dietary Biomarkers in Epidemiological Studies

| Validation Criterion | Description | Application in Research |
|---|---|---|
| Nature and Specificity | Whether biomarker is a parent compound or metabolite; specificity to food of interest | Determines biological plausibility and interpretive value |
| Biospecimen Type | Presence in plasma, urine, adipose tissue, hair, or nails | Informs collection protocols and stability requirements |
| Analytical Method | LC, GC, NMR, or other detection methods | Affects sensitivity, specificity, and reproducibility |
| Correlation with Habitual Intake | Correlation coefficient (r) with dietary assessment tools | r < 0.2 (weak); r = 0.2-0.5 (moderate); r > 0.5 (strong) |
| Time Response | Temporal relationship with intake based on pharmacokinetics | Determines appropriate sampling timing |
| Reproducibility Over Time | Intraclass correlation coefficient (ICC) of repeated measures | ICC < 0.4 (poor); 0.4-0.6 (fair); 0.60-0.75 (good); >0.75 (excellent) |
| Dose Response | Concentration changes with sequential intake increases | Establishes quantitative relationship with exposure |

Promising dietary biomarkers have been identified for various food groups including alcohol, coffee, dairy, fruits, vegetables, meats, seafood, and cereals [8]. However, many candidate biomarkers still require rigorous validation against these criteria, particularly regarding dose response, correlation with habitual intake, and long-term reproducibility.

Controlling for Non-Food Determinants of Biomarker Levels

Non-food factors significantly influence biomarker levels and must be controlled in study design and analysis:

Biological Variability

- Age: Epigenetic patterns change throughout lifespan [13]

- Sex: Hormonal differences affect metabolism and biomarker kinetics

- Ethnicity: Genetic ancestry influences metabolic pathways

- Disease Status: Pathological conditions alter biomarker metabolism [8]

Lifestyle Factors

- Smoking: Induces cytochrome P450 enzymes and affects metabolism [13]

- Physical Activity: Alters energy metabolism and inflammatory markers

- Sleep Patterns: Affect hormonal regulation and metabolic processes [16]

- Medications: Drug interactions can modify biomarker levels

Technical Considerations

- Sample Collection Timing: Circadian rhythms influence biomarker levels

- Sample Processing: Delay in processing affects labile biomarkers

- Storage Conditions: Long-term stability varies among biomarkers [8]

- Analytical Batch Effects: Technical variation across analysis runs
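A simple correction for analytical batch effects rescales each batch using pooled QC samples run alongside the study samples. This minimal sketch assumes every batch contains QC aliquots from the same pool and that drift is multiplicative; the record fields are hypothetical. More elaborate approaches (for example, ComBat, or regression on injection order) exist and are not shown.

```python
from collections import defaultdict
from statistics import median


def qc_median_normalize(samples):
    """Scale each analytical batch so its pooled-QC median matches the
    overall pooled-QC median.

    samples: list of dicts with keys "batch", "is_qc" (bool) and "value".
    Returns a new list of dicts with an added "value_norm" key.
    """
    qc_by_batch = defaultdict(list)
    for s in samples:
        if s["is_qc"]:
            qc_by_batch[s["batch"]].append(s["value"])
    missing = {s["batch"] for s in samples} - set(qc_by_batch)
    if missing:
        raise ValueError(f"no QC samples in batch(es): {sorted(missing)}")
    overall = median(v for vals in qc_by_batch.values() for v in vals)
    factor = {b: overall / median(vals) for b, vals in qc_by_batch.items()}
    return [{**s, "value_norm": s["value"] * factor[s["batch"]]} for s in samples]


# Hypothetical run: batch 2 reads about 20% higher than batch 1
samples = [
    {"batch": 1, "is_qc": True, "value": 10.0},
    {"batch": 1, "is_qc": True, "value": 10.0},
    {"batch": 1, "is_qc": False, "value": 20.0},
    {"batch": 2, "is_qc": True, "value": 12.0},
    {"batch": 2, "is_qc": True, "value": 12.0},
    {"batch": 2, "is_qc": False, "value": 24.0},
]
normalized = qc_median_normalize(samples)
print([round(s["value_norm"], 2) for s in normalized if not s["is_qc"]])  # [22.0, 22.0]
```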

Experimental Protocols for Gene-Diet Interaction Research

Study Design Considerations

Observational Studies

Large-scale prospective cohorts with replicated dietary assessments and biological sampling provide valuable platforms for gene-diet interaction research [12] [17]. Key considerations include:

- Sample size adequacy for detecting interactions (often requiring larger samples than main effects)

- Population diversity to ensure generalizability

- Repeated biomarker measurements to account for within-person variation

- Comprehensive covariate data to control for confounding

Randomized Controlled Trials

Dietary intervention studies with genetic stratification offer the strongest evidence for causal gene-diet interactions [12] [18]. The PRISMA extension for network meta-analyses (PRISMA-NMA) provides reporting guidelines for systematic reviews that compare multiple dietary patterns through network meta-analysis [18].

Methodological Workflow for Gene-Diet Interaction Analysis

Protocol: Assessing Gene-Diet Interactions in Cardiovascular Disease

Objective: To investigate interactions between genetic risk scores and dietary patterns on cardiovascular disease biomarkers.

Materials:

- Participants: Minimum 1,000 adults (aged 40-75), free of clinical CVD

- Biological samples: Fasting blood, urine, DNA from buccal swabs or blood

- Dietary assessment: Validated food frequency questionnaire, 24-hour recalls

- Covariate data: Demographics, medical history, lifestyle factors, medications

Procedures:

Genotyping:

- Select established genetic variants for CVD (e.g., chromosome 9p21, APOE, PCSK9)

- Calculate polygenic risk scores using weighted methods

- Verify Hardy-Weinberg equilibrium and genotype quality control

Dietary Pattern Assessment:

- Administer validated food frequency questionnaire

- Calculate dietary pattern scores (e.g., Mediterranean, DASH, Western)

- Collect potential dietary biomarkers (e.g., plasma fatty acids, urinary sodium)

Biomarker Measurement:

- Lipid profile: LDL-C, HDL-C, triglycerides, apolipoprotein B

- Glycemic biomarkers: Fasting glucose, insulin, HOMA-IR

- Inflammatory markers: CRP, IL-6, TNF-α

- Follow standardized laboratory protocols with quality controls

Statistical Analysis:

- Test gene-diet interactions using multiplicative terms in regression models

- Adjust for multiple testing using false discovery rate or Bonferroni correction

- Stratify analysis by genetic risk categories to examine effect modification

- Conduct sensitivity analyses excluding participants with extreme values
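The polygenic risk score and interaction steps can be sketched as follows. This assumes numpy, per-allele effect weights taken from an external GWAS, risk-allele dosages coded 0 to 2, and a standardized diet score; all data and names are hypothetical. The product term `prs * diet` is the multiplicative interaction term named in the protocol, and its t statistic would feed into the multiple-testing step.

```python
import numpy as np


def weighted_prs(dosages, weights):
    """Weighted polygenic risk score: sum over variants of effect weight x
    risk-allele dosage (0, 1 or 2; imputed dosages may be fractional)."""
    return np.asarray(dosages, dtype=float) @ np.asarray(weights, dtype=float)


def interaction_test(y, prs, diet, covariates):
    """OLS of y on PRS, diet score, PRS x diet and covariates.
    Returns the interaction coefficient and its t statistic."""
    X = np.column_stack([np.ones(len(y)), prs, diet, prs * diet, covariates])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (X.shape[0] - X.shape[1])  # residual variance
    se = np.sqrt(sigma2 * np.linalg.inv(X.T @ X)[3, 3])  # SE of the product-term coefficient
    return beta[3], beta[3] / se


# Hypothetical data: 2,000 participants, 10 variants, LDL-C in mmol/L
rng = np.random.default_rng(11)
n, m = 2000, 10
dosages = rng.binomial(2, rng.uniform(0.1, 0.5, m), size=(n, m))
weights = rng.normal(0, 0.1, m)       # stand-in for GWAS per-allele effects
prs = weighted_prs(dosages, weights)
prs = (prs - prs.mean()) / prs.std()  # standardize before forming the product term
diet = rng.normal(size=n)             # e.g. standardized Mediterranean diet score
age, sex = rng.uniform(40, 75, n), rng.integers(0, 2, n)
ldl = 3.0 + 0.2 * prs - 0.1 * diet - 0.15 * prs * diet + 0.01 * age + rng.normal(0, 0.6, n)

coef, t = interaction_test(ldl, prs, diet, np.column_stack([age, sex]))
print(f"PRS x diet coefficient = {coef:.3f}, t = {t:.1f}")
```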

Troubleshooting Guides and FAQs

Common Methodological Challenges and Solutions

Table 2: Troubleshooting Guide for Gene-Diet Interaction Studies

| Problem | Potential Causes | Solutions |
|---|---|---|
| Non-replication of significant gene-diet interactions | Underpowered sample size; population stratification; measurement error in dietary assessment; confounding | Increase sample size; validate dietary biomarkers; control for genetic ancestry; replicate in independent population |
| High within-person variability in biomarker levels | Biological variation; timing of sample collection; acute dietary influences; assay variability | Collect repeated measures; standardize sampling conditions; use biomarkers with better reproducibility; average multiple measurements |
| Inconsistent dietary pattern effects across studies | Different definitions of dietary patterns; population-specific food choices; varying adjustment for confounders | Use standardized dietary pattern definitions; consider cultural adaptations; adjust for consistent covariate sets; perform individual-level meta-analysis |
| Missing genetic data affecting analysis | Sample quality; genotyping failure; imputation inaccuracies | Implement rigorous DNA quality control; use high-quality imputation reference panels; conduct sensitivity analyses |
| Confounding by non-food factors | Incomplete measurement of lifestyle factors; residual confounding; population stratification | Comprehensively measure potential confounders; use directed acyclic graphs to identify minimal sufficient adjustment sets; employ family-based designs |

Frequently Asked Questions

Q: How can we distinguish between statistical and biological interaction in gene-diet studies?

A: Statistical interaction refers to a departure from the joint effect expected on the scale of a statistical model (additive or multiplicative), whereas biological interaction implies that two factors participate in the same causal mechanism [17]. Because statistical interaction depends on the model and its scale, assessing biological interaction requires understanding the underlying pathways through functional studies and mechanistic experiments.

Q: What are the key considerations for selecting dietary biomarkers in large epidemiological studies?

A: Prioritize biomarkers with established validity, good reproducibility over time (ICC > 0.4), correlation with habitual intake (r > 0.2), and practical measurement in stored samples [8]. Consider cost-effectiveness, with panels of biomarkers sometimes providing better predictive value than single markers.

Q: How should researchers address multiple testing in gene-diet interaction studies?

A: Correction for multiple testing is essential but often overlooked [17]. Approaches include false discovery rate control for exploratory analyses, Bonferroni correction for hypothesis-driven studies with limited tests, and split-sample discovery-replication designs. Pre-specifying primary hypotheses minimizes concerns about data dredging.
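As an illustration of how the two corrections differ, a minimal pure-Python sketch of the Benjamini-Hochberg step-up procedure and the Bonferroni correction follows. The function names and p-values are hypothetical; in practice, `statsmodels.stats.multitest.multipletests` (with `method='fdr_bh'` or `method='bonferroni'`) provides tested implementations.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Return booleans marking hypotheses rejected at false discovery rate q.

    Step-up procedure: sort p-values, find the largest rank i with
    p_(i) <= (i / m) * q, and reject every hypothesis up to that rank.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            cutoff_rank = rank
    rejected = [False] * m
    for idx in order[:cutoff_rank]:
        rejected[idx] = True
    return rejected


def bonferroni(p_values, alpha=0.05):
    """Reject hypotheses whose p-value is at most alpha / m."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


# Hypothetical p-values from ten gene-diet interaction tests.
p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
print(sum(benjamini_hochberg(p)), sum(bonferroni(p)))
```

With these inputs the FDR procedure retains two findings while Bonferroni retains one, reflecting its stricter control of the family-wise error rate.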

Q: What explains the variable responsiveness to dietary interventions among individuals with the same genetic risk profile?

A: Beyond measured genetic variants, epigenetic modifications, gut microbiota composition, lifelong dietary habits, and other environmental exposures contribute to interindividual variability [13] [14]. Comprehensive phenotyping and consideration of these additional factors improve prediction of dietary responsiveness.

Q: How can we improve the clinical translation of gene-diet interaction findings?

A: Focus on interactions with substantial effect sizes, replicate findings across diverse populations, demonstrate clinical utility through randomized trials, and develop user-friendly tools for healthcare providers [12] [16]. Implementation science approaches can address barriers to integrating genetics into nutritional guidance.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Gene-Diet Interaction Studies

| Reagent/Material | Function/Application | Key Considerations |
|---|---|---|
| DNA extraction kits | Isolation of high-quality DNA from blood, saliva, or tissue | Yield, purity, compatibility with downstream genotyping platforms |
| Genotyping arrays | Genome-wide variant profiling; targeted variant analysis | Coverage of relevant populations; inclusion of nutritionally relevant variants |
| Methylation arrays | Epigenome-wide association studies; DNA methylation quantification | Coverage of CpG islands; regulatory regions; reproducibility |
| Mass spectrometry platforms | Targeted and untargeted metabolomics; dietary biomarker quantification | Sensitivity, specificity, throughput; capacity for absolute quantification |
| ELISA kits | Quantification of protein biomarkers (inflammatory markers, adipokines) | Validation in study matrix; cross-reactivity; dynamic range |
| Stable isotope tracers | Metabolic pathway analysis; nutrient kinetics studies | Safety considerations; analytical requirements; cost |
| Biobanking supplies | Long-term sample storage at ultra-low temperatures | Temperature monitoring; sample tracking; preservation of analyte integrity |
| Dietary assessment software | Analysis of food frequency questionnaires; 24-hour recalls | Food composition database quality; cultural appropriateness |

Investigating genetic and epigenetic influences on dietary responses requires sophisticated methodological approaches that account for both biological complexity and practical research constraints. By implementing rigorous biomarker validation, controlling for non-food determinants, employing robust statistical methods, and troubleshooting common methodological challenges, researchers can advance our understanding of gene-diet interactions and move toward personalized nutrition strategies. The continued refinement of experimental protocols and analytical frameworks will enhance the reproducibility and translational potential of this promising field.

In nutritional epidemiology and biomarker research, a "confounder" is a variable that is associated with both the exposure (e.g., diet) and the outcome (e.g., biomarker level), is not itself a consequence of the exposure, and can therefore distort the true relationship between them. Demographic and lifestyle factors often act as such confounders. For instance, an apparent relationship between a dietary intake biomarker and a health outcome might actually be driven by underlying factors such as age, physical activity level, or existing health conditions. Failure to account for these non-food determinants can produce spurious associations, sometimes extreme ones; outside nutrition research, a study of Facebook interests found that demographic confounding was responsible for the most extreme cases of apparent "lifestyle politics" [19]. This technical support center provides protocols and guidelines for researchers to identify, assess, and control for these critical confounders.

Troubleshooting Guides

Troubleshooting Guide 1: Suspected Demographic Confounding

Problem: An observed association between a dietary biomarker and an outcome of interest is suspected to be driven by underlying demographic factors such as age, sex, or race/ethnicity.

Symptoms:

- A strong, unexpected association disappears or drastically weakens after statistical adjustment for demographic variables [20].

- The distribution of the biomarker differs significantly across demographic subgroups (e.g., higher levels in older participants).

- The results of a stratified analysis (e.g., analyzing males and females separately) are inconsistent with the overall finding.

Diagnosis and Resolution:

- Gather Evidence: Collect data on key demographic variables (age, sex, race/ethnicity) for all participants. This is a fundamental requirement for any study in this field [20].

- Run Diagnostic Models:

  - Model 1: Run a regression model with only the biomarker predicting the outcome. Note the effect size and significance.

  - Model 2: Run the same model, but add the demographic variables as covariates.

- Compare Models: If the biomarker's effect in Model 2 is substantially weaker (attenuated) than in Model 1, or loses statistical significance, this is consistent with demographic confounding, provided the added covariates are confounders rather than mediators. A study on prediabetes and mortality showed that an initial hazard ratio (HR) of 1.58 fell to a non-significant 1.04 after full adjustment for demographics, lifestyle, and comorbidities [20].

- Final Resolution: The adjusted model (Model 2) gives an estimate of the biomarker's effect that is less distorted by the measured demographic factors, although residual confounding by unmeasured or imprecisely measured variables can remain. Report it as the primary result, alongside the unadjusted estimate for transparency.
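The two-model comparison can be rehearsed on simulated data before applying it to a real cohort. Below is a minimal NumPy sketch, assuming a hypothetical data-generating process in which age drives both the biomarker and the outcome while the biomarker has no effect of its own. The percent change in the biomarker coefficient is the quantity used by the common "change-in-estimate" heuristic (often with a 10% threshold); all names and coefficients are illustrative.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 5000

# Hypothetical data-generating process: age raises both the biomarker and the
# outcome, while the biomarker itself has NO effect on the outcome.
age = rng.normal(55, 10, n)
biomarker = 0.05 * age + rng.normal(0, 1, n)
outcome = 0.10 * age + rng.normal(0, 1, n)


def ols_coefs(y, *predictors):
    """Ordinary least squares with an intercept; returns the coefficient vector."""
    X = np.column_stack([np.ones_like(y), *predictors])
    coefs, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coefs


beta_crude = ols_coefs(outcome, biomarker)[1]      # Model 1: biomarker only
beta_adj = ols_coefs(outcome, biomarker, age)[1]   # Model 2: biomarker + age
change = 100 * (beta_crude - beta_adj) / beta_crude

print(f"crude={beta_crude:.3f} adjusted={beta_adj:.3f} change={change:.0f}%")
```

The crude coefficient is clearly positive even though the simulated biomarker has no causal effect, and it shrinks toward zero once age enters the model.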

Troubleshooting Guide 2: Assessing Physical Activity and Comorbidity as Confounders

Problem: The level of a nutritional biomarker is influenced by a participant's physical activity level or their underlying health status and comorbidities, rather than, or in addition to, their diet.

Symptoms:

- Biomarker levels are correlated with physical activity metrics (e.g., IPAQ scores) or comorbidity indices (e.g., Charlson Comorbidity Index, CCI) [21].

- Participants with specific conditions (e.g., chronic kidney disease) show systematically different biomarker profiles, independent of diet [21].

- The association between a dietary pattern and a biomarker is different in healthy populations compared to populations with chronic diseases.

Diagnosis and Resolution:

- Standardized Assessment:

  - Physical Activity: Use a validated tool such as the short form of the International Physical Activity Questionnaire (IPAQ) to quantify activity levels in MET-min/week [21] [22]. Categorize participants into low, moderate, and high activity groups.

  - Comorbidities: Use a standardized index such as the Charlson Comorbidity Index (CCI) to quantify the overall burden of comorbid conditions [21].

- Check Correlations: Perform correlation analysis (e.g., Spearman's rho) between your biomarker levels and the IPAQ scores and CCI.

- Statistical Control: If the IPAQ score or CCI is associated with the biomarker and plausibly with the dietary exposure, include it as a covariate in your statistical models alongside the demographic variables. Research in CKD G5D patients has shown that both the physical component summary of quality of life and IPAQ scores are significantly predicted by sex, age, CCI, and dialysis vintage [21].

- Stratified Analysis: For a more nuanced view, consider stratifying your analysis by comorbidity status (e.g., patients with vs. without diabetes) or activity level to see if the associations hold within each subgroup.
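For the correlation check above, Spearman's rho is the Pearson correlation of ranks. Here is a dependency-free sketch in which ties receive average ranks and no p-value is computed; `scipy.stats.spearmanr` returns the same coefficient plus a p-value if SciPy is available. Function names and example values are hypothetical.

```python
def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical biomarker values and IPAQ totals (MET-min/week).
biomarker = [2.1, 3.4, 2.8, 4.0, 3.9, 1.7]
ipaq_total = [480, 990, 1386, 3000, 2400, 396]
print(round(spearman_rho(biomarker, ipaq_total), 3))
```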

Frequently Asked Questions (FAQs)

Q1: Why can't I just match study groups on key demographics like age and sex instead of statistically adjusting for them?

A1: Matching is a valid strategy, but it is often impractical in observational studies and can control for only a limited number of variables. Statistical adjustment (e.g., regression modeling) lets you control simultaneously for a wider range of potential confounders, including continuous variables such as age, which makes it the more flexible and more commonly used approach.

Q2: What is the minimum set of demographic and lifestyle variables I should collect and control for?

A2: At a minimum, collect and consider adjusting for age (as a continuous variable), sex (male/female), race and ethnicity (as self-reported categories), and socioeconomic status (often proxied by educational attainment or income). The studies summarized in Table 1 show that these can be strong confounders [19] [20]. Lifestyle factors should include, at a minimum, physical activity and smoking status.

Q3: How do I handle a situation where a potential confounder is also on the causal pathway?

A3: This is a central problem in causal inference. If a variable is a mediator (part of the causal pathway), controlling for it will block part of the effect you are trying to measure. Careful causal reasoning using Directed Acyclic Graphs (DAGs) is required to distinguish between confounders (which must be controlled) and mediators (which generally should not be controlled for when estimating the total effect).
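A small simulation shows why adjusting for a mediator changes the question being answered. The sketch assumes a hypothetical linear structure (exposure to mediator to outcome, plus a direct exposure-to-outcome path) with known coefficients; adjusting for the mediator recovers the direct effect rather than the total effect. Names and coefficients are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(7)
n = 20000

# Hypothetical structure: exposure -> mediator -> outcome, plus a direct path.
# True total effect = 0.3 (direct) + 0.5 * 0.4 (via mediator) = 0.5.
exposure = rng.normal(0, 1, n)
mediator = 0.5 * exposure + rng.normal(0, 1, n)
outcome = 0.3 * exposure + 0.4 * mediator + rng.normal(0, 1, n)


def slope(y, *predictors):
    """OLS coefficient of the first predictor, fitted with an intercept."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    return np.linalg.lstsq(X, y, rcond=None)[0][1]


total = slope(outcome, exposure)             # close to 0.5: total effect
direct = slope(outcome, exposure, mediator)  # close to 0.3: mediated part blocked
print(f"total={total:.2f} direct={direct:.2f}")
```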

Q4: I have a limited sample size. How many confounders can I adjust for without overfitting my model?

A4: A common rule of thumb for logistic and Cox models is to have at least 10-15 outcome events per variable (EPV), counting the less frequent outcome category and each estimated parameter (a categorical covariate with c levels uses c - 1 parameters). For a continuous outcome, the analogous rule of thumb is roughly 10-15 participants per estimated parameter. With limited samples, prioritize confounders based on the strength of their known association with both the exposure and the outcome. Consider penalized regression methods (e.g., Lasso) if you have many potential confounders.
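The arithmetic behind this rule of thumb is easy to script. A minimal sketch, assuming the 10 (or 15) per-parameter threshold quoted above; the function names and cohort figures are hypothetical.

```python
def limiting_count(n: int, risk: float) -> int:
    """For a binary outcome, EPV counts the less frequent outcome category."""
    events = round(n * risk)
    return min(events, n - events)


def max_parameters(effective_n: int, per_parameter: int = 10) -> int:
    """Rule-of-thumb cap on the number of estimated covariate parameters.

    effective_n: the limiting outcome count for binary or survival outcomes,
    or the total sample size for a continuous outcome. A categorical
    covariate with c levels uses c - 1 parameters.
    """
    return effective_n // per_parameter


# Hypothetical cohort: 800 participants with 12% incidence.
events = limiting_count(800, 0.12)
print(events, max_parameters(events), max_parameters(events, 15))
```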

The following table summarizes key quantitative findings from recent studies on the impact of adjusting for demographic and lifestyle confounders.

Table 1: Impact of Adjusting for Confounders on Reported Associations

| Study Focus | Unadjusted Association | Adjusted for Demographics | Fully Adjusted (Demographics, Lifestyle, Comorbidities) | Key Confounders Identified |
|---|---|---|---|---|
| Prediabetes & Mortality [20] | HR = 1.58 (1.43-1.74) | HR = 0.88 (0.80-0.98) | HR = 1.04 (0.92-1.18) | Age, Race/Ethnicity, Smoking, Comorbidities (CCI) |
| Lifestyle Politics on Facebook [19] | Extreme political alignment of interests | --- | Alignment decreased by 27.36% after demographic deconfounding | Race/Ethnicity, Education, Age |
| Physical Activity (PA) in CKD G5D [21] | Mean IPAQ: 1163 MET-min/week (vs. higher in controls) | --- | PA predicted by: Age (β=-0.303), HD Vintage (β=0.275), PCS (β=0.343) | Age, Dialysis Vintage, Physical Health |
| Hypertension-Diabetes Comorbidity [22] | Prevalence: 58.3% (Low PA) vs. 45.4% (High PA) | --- | Odds Ratio (OR) for Female vs. Male: 1.194 (1.122-1.271) | Sex, Education, Occupation, Income, PA Level |

HR = Hazard Ratio; OR = Odds Ratio; β = Standardized Regression Coefficient; CCI = Charlson Comorbidity Index; PCS = Physical Component Summary (of HRQoL); HD Vintage = Hemodialysis Vintage.

Experimental Protocols for Confounder Control

Protocol 1: Measuring Physical Activity with the International Physical Activity Questionnaire (IPAQ) - Short Form

Application: To quantify participants' self-reported physical activity levels for use as a covariate or stratification variable. Methodology:

- Administration: The questionnaire is administered via a structured interview or self-report. It asks about the frequency (days/week) and duration (minutes/day) of physical activity during the last 7 days in three intensity categories: vigorous-intensity activity, moderate-intensity activity, and walking [22].

- Scoring:

  - Calculate the MET-min/week for each intensity category using the predefined Metabolic Equivalent (MET) weights: 8.0 for vigorous, 4.0 for moderate, and 3.3 for walking.

  - Formula: MET-min/week = MET value × minutes of activity per day × days per week. Total MET-min/week is the sum across the three categories.

- Categorization: Participants are categorized as follows:

  - High: Vigorous activity on ≥3 days achieving a total of ≥1500 MET-min/week, OR ≥7 days of any combination of walking, moderate, or vigorous activity achieving a total of ≥3000 MET-min/week.

  - Moderate: ≥3 days of vigorous activity for ≥20 min/day, OR ≥5 days of moderate activity and/or walking for ≥30 min/day, OR ≥5 days of any combination achieving ≥600 MET-min/week.

  - Low: Those not meeting the above criteria [22].
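The scoring and categorization rules can be expressed as a small function. This is a simplified sketch: it reads "any combination" as the sum of reported days across the three intensity categories, counts moderate or walking days toward the 30-min criterion only when that category reaches 30 min/day, and omits the official IPAQ data-cleaning and truncation rules. The class and field names are hypothetical.

```python
from dataclasses import dataclass

# MET weights as given in the scoring step above.
MET_VIGOROUS = 8.0
MET_MODERATE = 4.0
MET_WALKING = 3.3


@dataclass
class IpaqShortForm:
    """Self-reported last-7-day activity (hypothetical field names)."""
    vig_days: int
    vig_min_per_day: float
    mod_days: int
    mod_min_per_day: float
    walk_days: int
    walk_min_per_day: float


def met_minutes(r: IpaqShortForm) -> dict:
    """MET-min/week = MET value x minutes/day x days/week, per category."""
    vig = MET_VIGOROUS * r.vig_min_per_day * r.vig_days
    mod = MET_MODERATE * r.mod_min_per_day * r.mod_days
    walk = MET_WALKING * r.walk_min_per_day * r.walk_days
    return {"vigorous": vig, "moderate": mod, "walking": walk,
            "total": vig + mod + walk}


def ipaq_category(r: IpaqShortForm) -> str:
    """Simplified low/moderate/high category (no truncation or cleaning rules)."""
    total = met_minutes(r)["total"]
    combo_days = r.vig_days + r.mod_days + r.walk_days  # "any combination" as summed days

    if (r.vig_days >= 3 and total >= 1500) or (combo_days >= 7 and total >= 3000):
        return "high"

    # Days of moderate activity or walking lasting at least 30 min/day.
    mod_walk_30 = (r.mod_days if r.mod_min_per_day >= 30 else 0) + (
        r.walk_days if r.walk_min_per_day >= 30 else 0)
    if ((r.vig_days >= 3 and r.vig_min_per_day >= 20)
            or mod_walk_30 >= 5
            or (combo_days >= 5 and total >= 600)):
        return "moderate"
    return "low"


# Hypothetical participant: brisk walking 30 min on 5 days, nothing else.
walker = IpaqShortForm(0, 0, 0, 0, 5, 30)
print(met_minutes(walker)["total"], ipaq_category(walker))
```

The continuous total can be used directly as a covariate, while the category supports the stratified analyses described in Troubleshooting Guide 2.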

Protocol 2: Quantifying Comorbidity Burden with the Charlson Comorbidity Index (CCI)

Application: To assign a single, weighted score that captures the burden of comorbid disease, which can be used for adjustment in statistical models. Methodology:

- Data Collection: Collect data on the presence or absence of 19 specific conditions (e.g., myocardial infarction, congestive heart failure, diabetes with complications, any malignancy, moderate or severe renal disease) from medical records or participant self-report.

- Scoring: Each condition is assigned a weight of 1, 2, 3, or 6, based on its associated risk of one-year mortality.

- Calculation: Sum the weights for all conditions present for a participant to calculate their raw CCI score.

- Age-Adjustment (Optional): For an Age-adjusted CCI (ACCI), add one point for each decade over the age of 40 (e.g., 1 point for 41-50, 2 for 51-60, etc.) [22].
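To make the scoring steps concrete, here is a minimal sketch. It includes only an illustrative subset of Charlson weights (the full index has 19 conditions), uses hypothetical condition labels rather than any coding standard, and does not enforce the index's hierarchy rules (for example, counting only the more severe of two diabetes categories). The age points follow the rule stated in the protocol above; published variants band age differently.

```python
from typing import Iterable

# Illustrative subset of Charlson weights; labels are hypothetical, not a coding standard.
CHARLSON_WEIGHTS = {
    "myocardial_infarction": 1,
    "congestive_heart_failure": 1,
    "diabetes_uncomplicated": 1,
    "diabetes_with_end_organ_damage": 2,
    "moderate_severe_renal_disease": 2,
    "any_malignancy": 2,
    "moderate_severe_liver_disease": 3,
    "metastatic_solid_tumor": 6,
}


def charlson_score(conditions: Iterable[str]) -> int:
    """Sum the weights of conditions present; unknown labels raise KeyError.

    Hierarchy rules of the full index are NOT applied here.
    """
    return sum(CHARLSON_WEIGHTS[c] for c in set(conditions))


def age_points(age: int) -> int:
    """One point per decade over 40 as stated above: 41-50 -> 1, 51-60 -> 2, ..."""
    return 0 if age <= 40 else (age - 40 + 9) // 10


def age_adjusted_cci(conditions: Iterable[str], age: int) -> int:
    """Raw CCI plus age points (ACCI)."""
    return charlson_score(conditions) + age_points(age)


# Hypothetical participant: age 55 with heart failure and moderate renal disease.
print(age_adjusted_cci(["congestive_heart_failure", "moderate_severe_renal_disease"], 55))
```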

Research Reagent Solutions

Table 2: Essential Tools for Assessing and Controlling for Confounders

| Item | Function in Research | Example Application |
|---|---|---|
| International Physical Activity Questionnaire (IPAQ) | A validated self-report tool to estimate habitual physical activity levels across different domains (work, transport, leisure) [21] [22]. | Quantifying physical activity as a continuous (MET-min/week) or categorical (low/moderate/high) variable for use as a covariate. |
| Charlson Comorbidity Index (CCI) | A method of classifying prognostic comorbidity to quantify the burden of concomitant diseases from medical record or self-report data [21]. | Generating a comorbidity score to adjust for disease burden's effect on biomarker levels or health outcomes. |
| Structured Demographic Questionnaire | A standardized tool to collect core demographic data (age, sex, gender, race/ethnicity, education, income) [19] [20]. | Ensuring consistent collection of essential confounder data across all study participants. |
| Statistical Software (e.g., R, Stata, SAS) | Software platforms capable of performing multivariable regression analysis, which is the primary method for statistically controlling for multiple confounders simultaneously. | Running models to assess the independent effect of a dietary biomarker after adjusting for age, sex, CCI, and IPAQ score. |

| Statistical Software (e.g., R, Stata, SAS) | Software platforms capable of performing multivariable regression analysis, which is the primary method for statistically controlling for multiple confounders simultaneously. | Running models to assess the independent effect of a dietary biomarker after adjusting for age, sex, CCI, and IPAQ score. |

FAQs: Understanding Biomarker Variability

FAQ 1: What are the main categories of determinants that affect biomarker levels? Biomarker variability is influenced by a complex interplay of factors that can be categorized as follows:

- Fixed Factors: These are intrinsic, non-modifiable characteristics such as age, sex, genetics (e.g., APOE-ε4 genotype in Alzheimer's disease), and ethnicity [23] [24].

- Modifiable Factors: These include nutritional status, systemic inflammation, metabolic health (e.g., insulin resistance, dyslipidemia), physical activity, and lifestyle choices such as smoking [23] [24].

- Technical & Analytical Factors: These encompass pre-analytical sample handling, assay accuracy, precision, sensitivity, and the stability of the biomarker in storage [25] [24].

- Temporal & Biological Rhythms: Diurnal (circadian) rhythms, the timing of sampling relative to exposure or activity events, and hormonal cycles can cause significant intra-individual variation [25] [26].

FAQ 2: How do non-food determinants like activity and inflammation specifically alter biomarker concentrations?

- Physical Activity: Even light, non-exertional activity can significantly increase serum concentrations of various biomarkers. For example, in osteoarthritis, serum COMP, hyaluronan, and keratan sulfate levels rise after activity. Conversely, activity can decrease the concentration of some urinary biomarkers due to changes in glomerular filtration rate [26].

- Systemic Inflammation: Chronic inflammation, characterized by elevated cytokines such as IL-6, IL-1β, and TNF-α, may directly influence the pathobiology of diseases such as Alzheimer's disease. It is thought to promote the formation of amyloid plaques and neurofibrillary tangles, thereby altering the levels of key blood-based biomarkers such as Aβ, p-tau, and neurofilament light chain (NfL) [23].

- Metabolic State: Conditions like insulin resistance and thyroid imbalances can alter biomarker variability by affecting underlying metabolic pathways [23].

FAQ 3: What are the best practices for controlling non-food determinants in study design? Controlling for variability requires a strategic approach from study design through sample collection and analysis.

- Standardize Sampling Protocols: Collect samples at a standardized time of day to account for diurnal variation. For certain biomarkers, a first-morning urine sample or fasting blood draw may be optimal [24] [26].

- Record Contextual Data: Meticulously document participant-related factors at the time of sampling. This includes time of day, recent physical activity, medication and supplement use, health status, and, for female participants, hormonal status [24].

- Multiple Sampling: Where possible, collect multiple samples from the same individual over time to better estimate and account for intra-individual variation [25] [24].

- Statistical Adjustment: Measure and statistically adjust for confounding factors. For instance, measure inflammatory markers like C-reactive protein (CRP) and alpha-1-acid glycoprotein (AGP) to adjust for the influence of inflammation on nutritional biomarkers [24].
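One way to act on the CRP/AGP adjustment above is regression correction. The sketch below is loosely modeled on the BRINDA regression-correction approach: it regresses the log biomarker on log CRP and log AGP and removes only the inflammation-related excess above a low-inflammation reference. Taking the reference as the 10th percentile of each log marker, and applying one set of coefficients to everyone, are simplifying assumptions; check the published BRINDA guidance before using this on real data. The function name is hypothetical.

```python
import numpy as np


def inflammation_adjust(biomarker, crp, agp, ref_quantile=0.10):
    """Regression-correct a nutritional biomarker for inflammation (sketch).

    All inputs must be positive and of equal length. The reference for CRP and
    AGP is assumed to be the given quantile of each marker's log value.
    Returns the adjusted biomarker values and the fitted (CRP, AGP) slopes.
    """
    ln_b = np.log(np.asarray(biomarker, dtype=float))
    ln_crp = np.log(np.asarray(crp, dtype=float))
    ln_agp = np.log(np.asarray(agp, dtype=float))

    # Fit ln(biomarker) = b0 + b1*ln(CRP) + b2*ln(AGP) by ordinary least squares.
    X = np.column_stack([np.ones_like(ln_b), ln_crp, ln_agp])
    (_, b1, b2), *_ = np.linalg.lstsq(X, ln_b, rcond=None)

    # Low-inflammation reference values on the log scale.
    ref_crp = np.quantile(ln_crp, ref_quantile)
    ref_agp = np.quantile(ln_agp, ref_quantile)

    # Subtract only the excess above each reference; values at or below a
    # reference are not corrected for that marker.
    excess_crp = np.maximum(ln_crp - ref_crp, 0.0)
    excess_agp = np.maximum(ln_agp - ref_agp, 0.0)
    adjusted = np.exp(ln_b - b1 * excess_crp - b2 * excess_agp)
    return adjusted, (float(b1), float(b2))
```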

Troubleshooting Guides

Problem: High Unexplained Variability in Biomarker Measurements Within a Cohort.

- Potential Cause 1: Diurnal variation and recent participant activity were not controlled.

- Solution: Implement and enforce strict, standardized sampling protocols. For example, schedule all blood draws for the morning after a period of fasting and minimal physical activity. Use accelerometers to objectively monitor and control for activity levels before sampling [26].

- Potential Cause 2: Subclinical inflammation or common comorbidities (e.g., obesity, diabetes) were not accounted for.

- Solution: Incorporate specific biomarker panels to detect confounders. Routinely measure and adjust for CRP and AGP to account for inflammation. Classify participants based on health status and medication use collected via detailed questionnaires [23] [24].

Problem: Biomarker Fails to Replicate in a Validation Study or Distinguish Between Disease States.

- Potential Cause 1: Over-reliance on dichotomization of continuous biomarker data or use of arbitrary cut-points, which discards statistical information and power.

- Solution: Use the full, continuous information in the data during statistical analysis. Employ models like proportional odds ordinal logistic models that do not require artificially categorizing outcomes [9].

- Potential Cause 2: Inadequate sample size for the complexity of the analysis, leading to findings that are not reproducible.

- Solution: Ensure the sample size is sufficient for the intended analysis. Use bootstrap methods to validate the stability and reliability of biomarker rankings, especially when searching for a single "winner" from a large set of candidates [9].

- Potential Cause 3: The biomarker is influenced by a synergistic interplay of factors not considered in the model.

- Solution: Move beyond single-biomarker approaches. Develop integrative models that simultaneously consider nutrition, metabolism, and inflammation. Use multi-omics approaches and AI modeling to refine biomarker interpretation within a complex biological context [23].
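For the bootstrap check on biomarker rankings, here is a minimal sketch. It assumes a continuous outcome and ranks candidates by absolute Pearson correlation, which stands in for whatever discrimination metric a study actually uses; the function name and any data passed to it are hypothetical. Wide rank intervals indicate that a single "winner" is not reliably identified.

```python
import numpy as np


def bootstrap_rank_intervals(X, y, n_boot=1000, seed=0):
    """Bootstrap each candidate biomarker's rank by |Pearson r| with y.

    X: (n_samples, n_candidates) array; y: outcome of length n_samples.
    Returns the 2.5th and 97.5th percentiles of each candidate's rank (1 = best).
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    rng = np.random.default_rng(seed)
    ranks = np.empty((n_boot, p))
    for b in range(n_boot):
        idx = rng.integers(0, n, n)  # resample participants with replacement
        xc = X[idx] - X[idx].mean(axis=0)
        yc = y[idx] - y[idx].mean()
        r = (xc * yc[:, None]).sum(axis=0) / np.sqrt(
            (xc ** 2).sum(axis=0) * (yc ** 2).sum())
        # argsort of argsort turns a descending-|r| ordering into 0-based ranks.
        ranks[b] = np.argsort(np.argsort(-np.abs(r))) + 1
    return np.percentile(ranks, [2.5, 97.5], axis=0)
```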

Table 1: Impact of Controlled Activity and Food Intake on Osteoarthritis Biomarkers

Data derived from a study of 20 participants with knee OA, showing the direction of change relative to baseline (T0) [26].

| Biomarker | After 1 h Activity (T1a) | After Food Post-Activity (T1b) | Notes |
|---|---|---|---|
| sCOMP | Increased | Returned to near baseline | Positively correlated with activity level measured by accelerometer. |
| sHA (Hyaluronan) | Increased | Returned to near baseline | Previously linked to food-stimulated lymphatic clearance. |
| sKS-5D4 (Keratan Sulfate) | Increased | Returned to near baseline | - |
| uCTX-II | Decreased | - | Showed a true circadian rhythm (peak in morning, nadir in evening). |

Table 2: Categories of Determinants Affecting Biomarker Variability

Synthesized from multiple sources on biomarker and nutritional research [23] [25] [24].

| Category | Specific Examples | Primary Influence |
|---|---|---|
| Fixed Factors | Age, Sex, Genetics (e.g., APOE-ε4), Ethnicity | Inter-individual variation, baseline setting |
| Modifiable Biological Factors | Inflammation (Cytokines IL-6, TNF-α), Metabolic Health (Insulin resistance), Hormonal Status | Intra- & inter-individual variation, disease linkage |
| Lifestyle & Environment | Physical Activity, Smoking, Recent Diet, Medication/Supplement Use | Intra-individual variation, confounding |
| Temporal & Sampling | Diurnal/Circadian Rhythm, Time since last meal/activity, Season | Intra-individual variation, measurement noise |
| Technical & Analytical | Assay precision & accuracy, sample handling & storage, hemolysis | Measurement noise, validity |

Detailed Experimental Protocol: Diurnal and Activity Variation

Objective: To evaluate the variation in serum and urinary biomarkers due to physical activity and food consumption, independent of disease progression.

Methodology Summary from Osteoarthritis Study [26]:

- Participants: 20 individuals with symptomatic and radiographic knee osteoarthritis.

- Study Setting: Controlled inpatient setting (Clinical Research Unit).

- Sampling Timeline:

  - T3 (Evening, ~6-8 PM): Baseline sample after dinner, participant upright.

  - T0 (Morning, 8 AM): Baseline sample collected after overnight fast, while supine, immediately upon arising. First-morning urine collected.

  - T1a (9 AM, Post-Activity): After 1 hour of monitored, light, normal morning activities. An accelerometer (RT3) is worn to objectively quantify activity levels.

  - T1b (10 AM, Post-Food): 1 hour after consuming a standardized breakfast while seated.

- Sample Processing: Sera separated and frozen at -20°C within 2 hours, then transferred to -80°C. Urine centrifuged and supernatant aliquoted for analysis.

- Data Analysis: Normalized biomarker concentrations to the individual's mean across all time points. Analyzed using non-parametric Friedman's test with Dunn's post-hoc test. Correlation between activity (accelerometer counts/kcal) and biomarker change was assessed.

Signaling Pathways and Experimental Workflows

Diagram 1: Determinants of Biomarker Variability Interplay

Diagram 2: Controlled Activity & Food Biomarker Study

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomarker Variability Research

Key items and their functions for conducting controlled biomarker studies [24] [26].

| Item / Reagent | Function / Application |
|---|---|
| Accelerometer (e.g., RT3) | Objectively monitors and quantifies participant physical activity in three dimensions to ensure protocol compliance and correlate activity intensity with biomarker changes. |
| Standardized Meal Kits | Controls for the confounding effects of food composition and intake on biomarker levels (e.g., food-induced changes in glomerular filtration rate or lymphatic clearance). |
| Cryogenic Vials & -80°C Freezer | Ensures the stability of biomarker analytes in serum, plasma, and urine samples after processing and during long-term storage. |
| High-Sensitivity Immunoassays (ELISA) | Quantifies specific, low-concentration protein biomarkers (e.g., p-tau, cytokines, COMP) in blood and other biological fluids. |
| Creatinine Assay Kit | Normalizes the concentration of urinary biomarkers to account for variations in urine dilution and flow rate. |
| Inflammation Panel (CRP, AGP) | Measures acute-phase proteins to identify and statistically adjust for the confounding effects of subclinical inflammation on other biomarkers of interest. |
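Creatinine normalization of a urinary biomarker is essentially a unit conversion. A minimal sketch, assuming the analyte is reported in ng/mL and creatinine in mg/dL (common but not universal units; creatinine reported in mmol/L needs a different conversion). The function name and example values are hypothetical.

```python
def creatinine_normalized(analyte_ng_per_ml: float, creatinine_mg_per_dl: float) -> float:
    """Express a urinary analyte per mg of creatinine (ng/mg creatinine).

    Creatinine in mg/dL is converted to mg/mL (divide by 100) so that the
    volume units cancel: (ng/mL) / (mg/mL) = ng/mg.
    """
    if creatinine_mg_per_dl <= 0:
        raise ValueError("creatinine concentration must be positive")
    creatinine_mg_per_ml = creatinine_mg_per_dl / 100.0
    return analyte_ng_per_ml / creatinine_mg_per_ml


# Hypothetical spot urine: analyte 250 ng/mL, creatinine 125 mg/dL.
print(creatinine_normalized(250.0, 125.0))
```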

Strategic Control and Integration: Methodologies for Isolating Dietary Signals

Troubleshooting Guide: Common Experimental Issues & Solutions

This guide addresses frequent challenges researchers face when working with controlled feeding trials and longitudinal observational studies to control for non-food determinants of biomarker levels.

FAQ 1: How can I distinguish biomarker changes from dietary intake versus other biological factors?

- Issue: Biomarker levels fluctuate due to non-food determinants like systemic inflammation, metabolic status, or circadian rhythms, creating confounding signals [2].

- Solution:

- Measure and Adjust for Confounders: Actively measure and statistically adjust for key biological determinants. The table below summarizes critical non-food factors to measure [2]:

| Biological Determinant | Impact on Biomarkers | Examples |
|---|---|---|
| Systemic Inflammation | Can alter levels of key biomarkers (e.g., plasma p-tau181, Aβ42/40) by 20-30%, independent of diet [2]. | C-reactive protein (CRP), cytokines (IL-6, TNF-α) [2]. |
| Metabolic Disorders | Insulin resistance and dyslipidemia can significantly change biomarker variability [2]. | HbA1c, fasting glucose, insulin, lipid panels [27]. |
| Hepatic & Renal Function | Affects biomarker metabolism, excretion, and clearance rates [28]. | ALT, AST, GGT (liver); creatinine, eGFR (kidney) [28]. |

- Utilize Controlled Feeding Designs: Use controlled feeding trials to establish a baseline "dose-response" relationship, which helps clarify the specific effect of a food component isolated from other factors [8] [29].

FAQ 2: What are the primary sources of pre-analytical variability in biomarker levels, and how can they be minimized?

- Issue: Inconsistent sample handling introduces "noise" that can obscure true biological signals, leading to unreliable data [30].

- Solution: Implement a standardized protocol across all collection sites and time points. Key factors to control include [30]:

  - Time of Collection: Account for circadian rhythms in hormones like cortisol [28].

  - Fasting Status: Ensure consistent participant fasting (or non-fasting) state before blood draws to reduce variability in metabolites like glucose and triglycerides [28].

  - Sample Processing: Use standardized, automated protocols for sample processing and homogenization to prevent degradation and cross-contamination [30].

  - Storage Conditions: Maintain consistent cold chain logistics and storage temperatures to preserve sample integrity [30].

FAQ 3: How do I validate that a candidate molecule is a robust biomarker of food intake?

- Issue: Many discovered compounds lack validation for real-world use, limiting their application in epidemiological studies [8].

- Solution: Evaluate candidates against a systematic validation framework. The most promising biomarkers are food-specific, have defined parent compounds, and are unaffected by non-food determinants [8]. These criteria have been organized into a stepwise validation workflow adapted for epidemiological studies [8].

FAQ 4: In longitudinal studies, how can a single biomarker measurement reflect long-term habitual intake?

- Issue: A single biomarker measurement may not accurately represent habitual intake due to day-to-day variations in diet and metabolism.

- Solution:

  - Select biomarkers with favorable kinetic properties. A longer elimination half-life is often indicative of a biomarker that reflects intake over a longer period [8].

  - Assess the reproducibility over time, often represented by the intraclass correlation coefficient (ICC). The table below interprets ICC values for a single measurement [8]:

| ICC Range | Interpretation for a Single Measurement |
|---|---|
| < 0.40 | Poor reproducibility |
| 0.40-0.59 | Fair reproducibility |
| 0.60-0.74 | Good reproducibility |
| ≥ 0.75 | Excellent reproducibility |
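To estimate the ICC from repeated samples and judge how many repeats are needed, here is a minimal NumPy sketch: a one-way random-effects ICC (ICC(1,1)) for a balanced design with the same number of repeats per participant, plus the Spearman-Brown formula for the reliability of an average of m measurements. The balanced-design assumption and the function names are assumptions of this sketch.

```python
import numpy as np


def icc_oneway(x):
    """One-way random-effects ICC(1,1) for an (n_subjects, k_repeats) array."""
    x = np.asarray(x, dtype=float)
    n, k = x.shape
    grand = x.mean()
    subj_means = x.mean(axis=1)
    msb = k * np.sum((subj_means - grand) ** 2) / (n - 1)          # between-subject MS
    msw = np.sum((x - subj_means[:, None]) ** 2) / (n * (k - 1))   # within-subject MS
    return (msb - msw) / (msb + (k - 1) * msw)


def reliability_of_mean(icc, m):
    """Spearman-Brown: reliability of the average of m repeated measurements."""
    return m * icc / (1 + (m - 1) * icc)


def repeats_needed(icc, target):
    """Smallest number of repeats whose average reaches the target reliability."""
    if icc <= 0:
        raise ValueError("ICC must be positive")
    if not 0 < target < 1:
        raise ValueError("target reliability must lie in (0, 1)")
    m = 1
    while reliability_of_mean(icc, m) < target:
        m += 1
    return m
```

For example, a biomarker with a single-measurement ICC of 0.4 (the lower bound of "fair") would need the average of five samples to reach a reliability of 0.75 under this formula.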

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and methodologies for conducting research in this field.

| Item / Methodology | Function & Application |
|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) | High-precision analytical method for identifying and quantifying unknown biomarker compounds in blood and urine samples; key for metabolomic discovery [8] [29]. |
| Nuclear Magnetic Resonance (NMR) Spectroscopy | Used for high-throughput metabolic profiling and quantification of known metabolites in biofluids; less sensitive but highly reproducible [8]. |
| Controlled Feeding Trials | Study design where participants consume pre-defined diets; essential for establishing causal dose-response relationships and discovering candidate biomarkers under controlled conditions [8] [29]. |
| Automated Homogenization Systems | Standardizes sample preparation (e.g., of tissue or complex biofluids), reducing human error and cross-contamination to ensure data reproducibility [30]. |
| High-Sensitivity Immunoassays | Used for precise quantification of low-abundance proteins and metabolic markers in blood (e.g., inflammatory cytokines like IL-6, hs-CRP) [2]. |
| Food Frequency Questionnaires (FFQs) & 24-Hour Recalls | Self-report tools used in observational studies to estimate dietary intake; used alongside biomarkers to validate and correlate findings [8]. |

Experimental Protocols for Key Methodologies

Protocol 1: Conducting a Controlled Feeding Trial for Biomarker Discovery (Adapted from the DBDC Protocol [29])

- Study Design: Implement a crossover or parallel-arm design where healthy participants are administered specific test foods or a whole-diet pattern in prespecified amounts.

- Biospecimen Collection: Collect serial blood (plasma/serum) and urine samples from participants at baseline, during, and after the feeding period.

- Sample Processing: Process all samples using standardized, automated protocols. Immediately flash-freeze samples and store at -80°C to preserve biomarker integrity [30].

- Metabolomic Profiling: Analyze biospecimens using LC-MS and/or NMR-based platforms to capture a wide array of metabolites.

- Data Analysis: Use high-dimensional bioinformatics and statistical analyses (e.g., ANOVA) to identify candidate compounds that significantly change in response to the test food compared to control diets.

- Pharmacokinetic Characterization: Model the time response and elimination half-life of candidate biomarkers from the serial sample data.
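A minimal sketch of the half-life step, assuming first-order (mono-exponential) elimination and that the samples passed in have already been chosen from the terminal log-linear phase. The function name, sampling times, and concentrations are hypothetical.

```python
import math

import numpy as np


def elimination_half_life(times_h, conc):
    """Estimate t1/2 from terminal-phase samples assuming first-order elimination.

    Fits ln(C) = a - k*t by least squares; t1/2 = ln(2) / k.
    """
    times_h = np.asarray(times_h, dtype=float)
    log_c = np.log(np.asarray(conc, dtype=float))
    slope, _intercept = np.polyfit(times_h, log_c, 1)
    k = -slope
    if k <= 0:
        raise ValueError("no decline in the supplied phase; cannot estimate t1/2")
    return math.log(2) / k


# Hypothetical serial urine or plasma concentrations after a test food.
times = [4, 8, 12, 24]
concentrations = [67.0, 45.0, 30.1, 9.1]
print(round(elimination_half_life(times, concentrations), 1))
```

A longer estimated half-life suggests the biomarker integrates intake over a longer window, which is the kinetic property highlighted in FAQ 4.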

Protocol 2: Validating a Biomarker in a Longitudinal Observational Setting

- Cohort Selection: Recruit a large, independent cohort from a free-living population with diverse dietary habits.

- Data Collection:

  - Biomarker Assay: Measure the candidate biomarker in the biospecimens using a validated, quality-controlled laboratory method [30].

- Statistical Validation:

  - Calculate correlation coefficients (e.g., Spearman's) between biomarker levels and reported food intake.

  - Evaluate the biomarker's ability to predict food intake and its reproducibility over time (ICC) in participants with repeated samples [8].

  - Use multivariate models to adjust for non-food determinants and confirm the biomarker's specific link to the food of interest.
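For the multivariable step, one option is a Spearman-type partial correlation: rank-transform the biomarker and the reported intake, regress each on the non-food covariates, and correlate the residuals. This is one common variant (another also rank-transforms the covariates), and the sketch below, with hypothetical function names, is not a substitute for a full regression model.

```python
import numpy as np


def average_ranks(x):
    """Ranks starting at 1, with tied values given the mean of their positions."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x))
    ranks[order] = np.arange(1, len(x) + 1)
    for value in np.unique(x):
        tied = x == value
        if tied.sum() > 1:
            ranks[tied] = ranks[tied].mean()
    return ranks


def partial_spearman(biomarker, intake, covariates):
    """Spearman-type partial correlation of biomarker and intake given covariates.

    Rank-transforms biomarker and intake, regresses each on the covariates
    (plus an intercept) by OLS, and returns the correlation of the residuals.
    """
    Z = np.column_stack([np.ones(len(biomarker)), np.asarray(covariates, dtype=float)])
    residuals = []
    for v in (biomarker, intake):
        r = average_ranks(v)
        beta, *_ = np.linalg.lstsq(Z, r, rcond=None)
        residuals.append(r - Z @ beta)
    return float(np.corrcoef(residuals[0], residuals[1])[0, 1])
```

If the crude rank correlation is substantial but the partial correlation is near zero, the apparent biomarker-intake link is likely carried by the covariates rather than by the food itself.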

The relationships between non-food determinants, dietary intake, and resulting biomarker levels form a complex network that study designs must control for.

Leveraging Multi-Omics and AI for Comprehensive Biomarker Panels and Data Integration

Frequently Asked Questions (FAQs)

Core Concepts and Integration Strategies

What is multi-omics integration and why is it crucial for biomarker discovery? Multi-omics integration refers to the combined analysis of different omics datasets, such as genomics, transcriptomics, proteomics, and metabolomics, to provide a more comprehensive understanding of biological systems [31]. This approach is crucial because it allows researchers to examine how various biological layers interact and contribute to the overall phenotype or biological response, enabling the identification of robust biomarker signatures that reflect disease complexity [32] [33]. For research on non-food determinants of biomarker levels, multi-omics helps disentangle complex interactions by capturing molecular cascades from genetic variation to functional outcomes.

What are the main architectural approaches to multi-omics data integration? There are two primary architectural paradigms for multi-omics integration [34]:

Table: Multi-Omics Integration Architectures

| Integration Type | Description | Primary Application |
|---|---|---|
| Horizontal Integration | Combines comparable datasets (e.g., transcriptomes from multiple cohorts) for meta-analysis | Strengthens statistical power and generalizability across populations |
| Vertical Integration | Links distinct omics layers from the same biological samples | Uncovers causal relationships and molecular cascades across regulatory layers |

What emerging technologies are enhancing multi-omics integration? Several cutting-edge technologies are advancing multi-omics capabilities [32] [35]:

- Single-cell multi-omics: Provides unprecedented resolution in characterizing cellular states and activities

- Spatial multi-omics: Enables spatially resolved molecular data, preserving tissue architecture context

- AI and machine learning: Deep learning architectures extract nonlinear relationships across omics layers

- Liquid biopsy technologies: Facilitate non-invasive, real-time monitoring of biomarker levels

Data Quality and Preprocessing

How should I preprocess different omics data types for joint analysis? Effective preprocessing requires type-specific normalization methods to account for technical variations while preserving biological signals [31]:

Table: Omics-Specific Normalization Methods

| Omics Data Type | Recommended Normalization | Purpose |
|---|---|---|
| Metabolomics | Log transformation, total ion current normalization | Stabilizes variance and accounts for sample concentration differences |
| Transcriptomics | Quantile normalization | Ensures consistent distribution of expression levels across samples |
| Proteomics | Quantile normalization, variance stabilization | Handles abundance distribution challenges and technical noise |
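Two of the methods in the table can be illustrated with NumPy alone: total ion current (TIC) normalization followed by a log transform, and quantile normalization. This is a minimal sketch under three assumptions:

- the input is a samples × features matrix;
- there are no missing values;
- ties in quantile normalization are broken by order of appearance.

The function names and the pseudocount of 1 are illustrative choices. Production pipelines usually rely on dedicated packages.

```python
import numpy as np

def tic_normalize(x):
    """Scale each sample (row) so its total ion current equals the median TIC."""
    x = np.asarray(x, dtype=float)
    tic = x.sum(axis=1, keepdims=True)
    return x / tic * np.median(tic)

def quantile_normalize(x):
    """Give every sample (row) the same value distribution; ties broken by position."""
    x = np.asarray(x, dtype=float)
    ranks = np.argsort(np.argsort(x, axis=1), axis=1)   # rank of each value within its row
    reference = np.sort(x, axis=1).mean(axis=0)         # mean value at each rank across samples
    return reference[ranks]

# Hypothetical metabolomics intensities: sample 2 was injected at twice the concentration
metab = np.array([[100.0, 200.0, 700.0],
                  [200.0, 400.0, 1400.0],
                  [150.0, 250.0, 600.0]])
metab_norm = np.log2(tic_normalize(metab) + 1.0)   # TIC first, then log2 to stabilize variance

# Hypothetical expression values (rows = samples)
expr = np.array([[5.0, 2.0, 3.0],
                 [4.0, 1.0, 4.5],
                 [3.0, 4.0, 6.0]])
expr_qn = quantile_normalize(expr)
```

After TIC scaling, the first two metabolomics samples become identical, because they differ only in overall concentration.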

What are common sample data errors and how can they be detected? Sample-labeling errors including sample swapping and mis-labeling are common in large multi-omics datasets [36]. These can be detected using probabilistic matching procedures like proMODMatcher that identify biological cis-associations (e.g., cis-eQTLs) between different omics data types from the same sample to verify correct sample pairing. These errors should be corrected before integrative analysis as they can dampen true biological signals and lead to incorrect scientific conclusions.
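The idea behind cis-association-based sample matching can be shown with a deliberately simplified sketch. It is not the proMODMatcher algorithm, which is probabilistic. Instead, it assumes a set of known cis-eQTL pairs with positive effect direction and works in three steps:

1. Z-score genotype dosage and expression for each pair.
2. Score every genotype/expression sample combination by the correlation of those profiles.
3. Flag samples whose best match is not their labeled partner.

All data are simulated, and the function names are hypothetical.

```python
import numpy as np

def zscore_columns(m):
    m = np.asarray(m, dtype=float)
    return (m - m.mean(axis=0)) / m.std(axis=0)

def flag_mismatched_samples(genotypes, expression):
    """Return row indices whose best-matching expression profile is not the labeled one.

    genotypes:  (n_samples, n_pairs) allele dosages for cis-eQTL SNPs
    expression: (n_samples, n_pairs) expression of each SNP's cis gene,
                with rows assumed to carry the same sample labels as genotypes
    """
    g = zscore_columns(genotypes)
    e = zscore_columns(expression)
    n_pairs = g.shape[1]
    # Row-standardize so that a dot product divided by n_pairs is a Pearson correlation
    g = (g - g.mean(axis=1, keepdims=True)) / g.std(axis=1, keepdims=True)
    e = (e - e.mean(axis=1, keepdims=True)) / e.std(axis=1, keepdims=True)
    similarity = g @ e.T / n_pairs            # similarity[i, j]: genotype row i vs expression row j
    best_match = similarity.argmax(axis=1)
    return np.flatnonzero(best_match != np.arange(len(best_match))), similarity

rng = np.random.default_rng(0)
n_samples, n_pairs = 30, 300
genotypes = rng.binomial(2, 0.4, size=(n_samples, n_pairs)).astype(float)
expression = genotypes + rng.normal(0, 0.7, size=(n_samples, n_pairs))  # strong cis effects
expression[[3, 7]] = expression[[7, 3]]      # simulate a label swap between rows 3 and 7

flagged, sim = flag_mismatched_samples(genotypes, expression)
print(flagged)  # expected: [3 7]
```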

How do I handle different data scales and dimensionality in multi-omics datasets? To handle different data scales, apply scaling methods such as z-score normalization to standardize data to a common scale, allowing better comparison across different omics layers [31]. For high dimensionality, employ feature selection methods including univariate filtering (t-tests, ANOVA) or machine learning algorithms (Lasso regression, Random Forest) to identify the most informative variables while penalizing irrelevant ones.
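The scaling and univariate-filtering steps can be sketched for two omics layers measured on the same samples with a binary grouping:

1. Z-score each feature within its layer so the layers share a common scale.
2. Concatenate the layers.
3. Screen features with Welch's t-test, then apply Benjamini-Hochberg false discovery rate control.

The layer sizes, simulated shift, and 0.05 threshold are illustrative. The t-test itself is unaffected by z-scoring. Scaling matters for downstream joint models such as penalized regression.

```python
import numpy as np
from scipy.stats import ttest_ind

def zscore(x):
    """Center and scale each feature (column) to mean 0, SD 1."""
    return (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)

def benjamini_hochberg(pvals):
    """Return BH-adjusted p-values (q-values) in the original order."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working down from the largest p-value
    q_sorted = np.minimum.accumulate(scaled[::-1])[::-1]
    q = np.empty(m)
    q[order] = np.minimum(q_sorted, 1.0)
    return q

rng = np.random.default_rng(1)
n = 60
group = np.repeat([0, 1], n // 2)                         # e.g., exposed vs unexposed
metabolome = rng.lognormal(mean=3.0, sigma=0.5, size=(n, 40))
proteome = rng.normal(loc=10.0, scale=2.0, size=(n, 25))
metabolome[group == 1, :3] *= 2.0                         # three truly shifted metabolites

# Scale each layer on its own, then concatenate into one feature matrix
X = np.hstack([zscore(np.log(metabolome)), zscore(proteome)])
t_stat, pvals = ttest_ind(X[group == 1], X[group == 0], equal_var=False)
qvals = benjamini_hochberg(pvals)
selected = np.flatnonzero(qvals < 0.05)
```

When the selected features feed a predictive model, refit the filter inside each training fold rather than on the full dataset. Otherwise performance estimates are optimistic.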

AI and Computational Approaches

What AI approaches are most effective for multi-omics biomarker discovery? Machine learning and deep learning approaches are revolutionizing multi-omics data interpretation [32] [33]:

Table: AI Approaches for Multi-Omics Integration

| AI Method | Application | Benefit |
|---|---|---|
| Multi-omics Factor Analysis (MOFA) | Dimensionality reduction across omics layers | Identifies latent factors driving variation across datasets |
| Deep Learning Architectures (Autoencoders, Graph Neural Networks) | Nonlinear relationship extraction | Reveals latent biological structures traditional models miss |
| Multimodal ML Models | Simultaneous analysis of genomics, proteomics, and imaging data | Predicts patient responses and therapeutic outcomes |

How can I assess the reproducibility of multi-omics findings? Assess reproducibility through technical replicates during sample preparation and analysis to evaluate intra-experiment variability, followed by independent validation studies with separate cohorts to confirm robustness of findings [31]. Statistical metrics like coefficient of variation (CV) or concordance correlation coefficient (CCC) can quantify reproducibility across different omics layers.
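Both reproducibility metrics are short formulas. The sketch assumes two kinds of input:

- replicate injections of one pooled QC sample, for the CV;
- paired measurements of the same samples from two runs, for the CCC.

Lin's CCC uses population (biased) moments, as in its original definition. Unlike Pearson's r, it also penalizes a constant offset between runs. The values are hypothetical.

```python
import numpy as np

def coefficient_of_variation(replicates):
    """CV (%) = SD / mean * 100, using the sample SD (ddof=1)."""
    r = np.asarray(replicates, dtype=float)
    return r.std(ddof=1) / r.mean() * 100.0

def lins_ccc(x, y):
    """Lin's concordance correlation coefficient between two paired runs."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    mx, my = x.mean(), y.mean()
    cov = np.mean((x - mx) * (y - my))          # population covariance
    return 2 * cov / (x.var() + y.var() + (mx - my) ** 2)

qc_injections = [98.0, 102.0, 100.0, 101.0, 99.0]   # hypothetical pooled-QC peak areas
run1 = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
run2 = run1 + 0.5                                    # perfectly correlated but offset run

print(round(coefficient_of_variation(qc_injections), 2))   # 1.58
print(lins_ccc(run1, run1), round(lins_ccc(run1, run2), 3))  # 1.0 0.941
```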

What computational tools are available for multi-omics data integration? Multiple computational tools support different integration objectives [37]:

- MOFA: For factor analysis across multiple omics datasets

- Cytoscape: For network visualization and analysis

- COSMOS: For integrated analysis of signaling and metabolic networks

Public databases like The Cancer Genome Atlas (TCGA), Answer ALS, and jMorp provide curated multi-omics data resources for analysis [37].

Troubleshooting Guides

Data Quality Issues

Problem: Discrepancies between transcriptomics, proteomics, and metabolomics results

Solution: Follow this systematic troubleshooting workflow:

- Verify data quality from each omics layer, checking for consistency in sample processing and ensuring appropriate statistical analyses were applied [31]

- Consider biological mechanisms that might explain differences:

- High transcript levels don't always yield equivalent protein abundance due to translation efficiency or protein stability issues

- Post-translational modifications (phosphorylation, acetylation, ubiquitination) can alter protein function without transcript-level changes [32]

- Metabolic feedback inhibition can regulate metabolite concentrations independently of upstream molecular changes

- Perform integrative pathway analysis using databases like KEGG, Reactome, or MetaCyc to identify common biological pathways that might reconcile observed differences [31]

- Explore regulatory mechanisms including miRNA regulation, epigenetic modifications, or protein degradation pathways that might explain discordant findings
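Over-representation analysis is one common form of pathway analysis, and it can be sketched with the hypergeometric distribution. Given N measured features, of which K belong to a pathway, it asks how surprising it is to see k pathway members among n hits. Running the same test separately on each layer's hits shows whether discordant layers converge on a shared pathway.

The sketch assumes hits from each layer are already mapped to a common identifier space. The gene sets are hypothetical placeholders, not real KEGG, Reactome, or MetaCyc content.

```python
from math import comb

def hypergeom_enrichment_p(n_universe, n_pathway, n_hits, n_overlap):
    """P(X >= n_overlap) for X ~ Hypergeometric(N=n_universe, K=n_pathway, n=n_hits)."""
    upper = min(n_pathway, n_hits)
    total = comb(n_universe, n_hits)
    return sum(
        comb(n_pathway, i) * comb(n_universe - n_pathway, n_hits - i)
        for i in range(n_overlap, upper + 1)
    ) / total

def enrich(hits, pathways, universe):
    """Return {pathway: (overlap, p-value)} for each pathway set."""
    hits = set(hits) & universe
    results = {}
    for name, members in pathways.items():
        members = set(members) & universe
        overlap = len(hits & members)
        results[name] = (overlap, hypergeom_enrichment_p(len(universe), len(members), len(hits), overlap))
    return results

universe = {f"G{i}" for i in range(100)}              # all measured features (hypothetical)
pathways = {
    "pathway_A": {f"G{i}" for i in range(10)},         # hypothetical pathway definitions
    "pathway_B": {f"G{i}" for i in range(50, 60)},
}
transcript_hits = {"G0", "G1", "G2", "G3", "G70"}
protein_hits = {"G1", "G4", "G5", "G88"}

for layer, hits in [("transcripts", transcript_hits), ("proteins", protein_hits)]:
    for name, (k, p) in enrich(hits, pathways, universe).items():
        print(f"{layer:12s} {name}: overlap={k}, p={p:.2e}")
```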

Problem: Poor reproducibility across multi-omics experiments

Solution:

- Implement comprehensive Laboratory Information Management Systems (LIMS) to ensure sample and data traceability throughout the experimental lifecycle [34]

- Enforce metadata standardization using controlled vocabularies and ontologies across all omics datasets

- Establish automated data capture systems integrated with instrumentation to minimize transcription errors

- Maintain complete version histories and audit trails for all data transformations and analytical workflows

- Utilize blockchain-based systems for enhanced data provenance in clinical research settings [34]
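The audit-trail and provenance recommendations rest on a simple idea that the standard library can demonstrate. Each entry in an append-only log stores the SHA-256 hash of the previous entry, so any retroactive edit breaks the chain. This is a minimal illustration of hash chaining, the tamper-evidence mechanism that blockchain ledgers build on. It is not a LIMS or a distributed blockchain, and the field names are hypothetical.

```python
import hashlib
import json

def _entry_hash(entry):
    # Canonical JSON (sorted keys) so identical content always hashes identically
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

class AuditTrail:
    """Append-only, hash-chained log of data transformations."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def record(self, sample_id, action, params):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"sample_id": sample_id, "action": action,
                "params": params, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _entry_hash(body)})

    def verify(self):
        """Return True if no recorded entry has been altered or reordered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _entry_hash(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

trail = AuditTrail()
trail.record("S001", "tic_normalize", {"reference": "median"})
trail.record("S001", "log_transform", {"base": 2, "pseudocount": 1})
ok_before = trail.verify()
trail.entries[0]["params"]["reference"] = "mean"   # simulate a silent retroactive edit
ok_after = trail.verify()
print(ok_before, ok_after)  # True False
```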

AI and Computational Challenges

Problem: Overfitting in machine learning models with high-dimensional multi-omics data

Solution:

- Apply rigorous feature selection methods before model training:

- Univariate filtering with false discovery rate correction

- Regularization techniques (Lasso, Elastic Net)

- Tree-based importance scoring (Random Forest)

- Utilize dimensionality reduction techniques:

- Principal component analysis (PCA) for linear relationships

- Autoencoders for nonlinear dimensionality reduction

- Implement robust cross-validation strategies:

- Nested cross-validation for hyperparameter tuning and performance estimation

- Group-based cross-validation when dealing with correlated samples

- Validate findings in independent cohorts to ensure generalizability
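The feature-selection and cross-validation advice above can be combined in one sketch. It assumes repeated visits per participant, so participants act as groups. A correlation filter and closed-form ridge regression stand in for the filtering and regularization methods listed. Every data-dependent step (scaling, filtering, fitting) stays inside the training folds.

The hand-written `group_kfold` plays the role of scikit-learn's `GroupKFold`. It assigns groups to folds in sorted order, which should be shuffled in real use. All data are simulated.

```python
import numpy as np

def group_kfold(groups, n_splits):
    """Yield (train_idx, test_idx) so that no group appears in both sets."""
    for fold_groups in np.array_split(np.unique(groups), n_splits):
        test = np.isin(groups, fold_groups)
        yield np.flatnonzero(~test), np.flatnonzero(test)

def fit_predict(X_tr, y_tr, X_te, alpha, k):
    """Scale, filter, and fit ridge on training data only; predict the test rows."""
    mu, sd = X_tr.mean(axis=0), X_tr.std(axis=0) + 1e-12
    Z_tr, Z_te = (X_tr - mu) / sd, (X_te - mu) / sd
    yc = y_tr - y_tr.mean()
    keep = np.argsort(-np.abs(Z_tr.T @ yc))[:k]        # k features most associated with y
    A = Z_tr[:, keep]
    beta = np.linalg.solve(A.T @ A + alpha * np.eye(k), A.T @ yc)
    return Z_te[:, keep] @ beta + y_tr.mean()

def r2(y, pred):
    return 1 - np.sum((y - pred) ** 2) / np.sum((y - y.mean()) ** 2)

def nested_cv(X, y, groups, alphas=(0.1, 1.0, 10.0, 100.0), k=10, outer=5, inner=4):
    outer_scores = []
    for tr, te in group_kfold(groups, outer):
        # Inner loop: tune alpha using only the outer-training participants
        inner_means = []
        for a in alphas:
            scores = [r2(y[tr][i_te], fit_predict(X[tr][i_tr], y[tr][i_tr], X[tr][i_te], a, k))
                      for i_tr, i_te in group_kfold(groups[tr], inner)]
            inner_means.append(np.mean(scores))
        best_alpha = alphas[int(np.argmax(inner_means))]
        outer_scores.append(r2(y[te], fit_predict(X[tr], y[tr], X[te], best_alpha, k)))
    return float(np.mean(outer_scores))

rng = np.random.default_rng(7)
n_participants, visits, p = 100, 2, 200
groups = np.repeat(np.arange(n_participants), visits)
# Visits from the same participant share a baseline profile, so they are correlated
baseline = rng.normal(size=(n_participants, p))
X = np.repeat(baseline, visits, axis=0) + 0.3 * rng.normal(size=(n_participants * visits, p))
y_signal = X[:, :5].sum(axis=1) + rng.normal(size=len(groups))
y_noise = rng.normal(size=len(groups))

signal_r2 = nested_cv(X, y_signal, groups)
noise_r2 = nested_cv(X, y_noise, groups)
print(f"signal R2 = {signal_r2:.2f}, noise R2 = {noise_r2:.2f}")  # noise R2 expected near or below 0
```

If the filter were fitted on the full dataset before cross-validation, outcome information would leak into the test folds and inflate the apparent R² for the pure-noise outcome. The nested structure prevents that leakage.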

Problem: Difficulty interpreting AI-derived biomarker signatures

Solution:

- Employ explainable AI (XAI) techniques:

- SHAP (SHapley Additive exPlanations) values for feature importance

- LIME (Local Interpretable Model-agnostic Explanations) for local explanations

- Conduct pathway enrichment analysis to map identified biomarkers to biological processes

- Integrate prior knowledge from curated databases to contextualize findings

- Validate functional relationships through experimental follow-up in model systems
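SHAP and LIME each require their own libraries. Permutation importance is a dependency-free, model-agnostic alternative, and a different technique from either. It measures how much a fitted model's error increases when one feature's values are shuffled, which breaks that feature's link to the outcome.

The sketch assumes an ordinary least-squares model on simulated data; any fitted predictor could be substituted. In practice, importance should be computed on held-out data.

```python
import numpy as np

def permutation_importance(predict, X, y, n_repeats=20, seed=0):
    """Mean increase in MSE when each column of X is independently shuffled."""
    rng = np.random.default_rng(seed)
    base_mse = np.mean((y - predict(X)) ** 2)
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        increases = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            increases.append(np.mean((y - predict(X_perm)) ** 2) - base_mse)
        importances[j] = np.mean(increases)
    return importances

rng = np.random.default_rng(3)
X = rng.normal(size=(300, 4))
y = 3.0 * X[:, 0] + 1.0 * X[:, 1] + rng.normal(0, 0.5, size=300)   # features 2-3 are irrelevant

# Fit ordinary least squares with an intercept column
design = np.column_stack([np.ones(len(X)), X])
coef, *_ = np.linalg.lstsq(design, y, rcond=None)

def predict(M):
    return np.column_stack([np.ones(len(M)), M]) @ coef

imp = permutation_importance(predict, X, y)
print(np.round(imp, 2))  # feature 0 should dominate; features 2-3 should be near zero
```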

Experimental Design Issues

Problem: Controlling for non-food determinants in biomarker level studies

Solution: Implement controlled experimental designs that systematically account for confounding variables:

Stratified sampling across key non-food determinants:

- Demographic factors (age, sex, ethnicity)

- Physiological parameters (BMI, body composition)

- Genetic background (key polymorphisms)

- Gut microbiome composition

- Lifestyle factors (physical activity, sleep patterns)

Standardized measurement of potential confounding variables:

- Genotyping for genetic variants known to influence metabolic pathways

- 16S rRNA or shotgun metagenomics for microbiome characterization

- Physical activity monitoring using accelerometers or validated questionnaires

Statistical modeling with appropriate covariate adjustment:

- Mixed-effects models to account for repeated measures

- Principal component analysis to control for population stratification

- Mediation analysis to disentangle direct and indirect effects

Independent validation in diverse cohorts to confirm biomarker specificity to the target exposure
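Covariate adjustment, the core of this solution, can be illustrated with a small simulation under a linear model. In the invented scenario, a non-food determinant (a standardized inflammation score) both raises the biomarker and is correlated with intake. The crude regression slope is therefore biased, while adding the determinant as a covariate recovers the simulated intake effect. All coefficients are hypothetical. Mixed-effects and mediation models extend the same idea.

```python
import numpy as np

def ols(y, *covariates):
    """Ordinary least squares with an intercept; returns slopes in input order."""
    X = np.column_stack([np.ones(len(y)), *covariates])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[1:]

rng = np.random.default_rng(11)
n = 5000
inflammation = rng.normal(size=n)                     # hypothetical non-food determinant
intake = 0.6 * inflammation + rng.normal(size=n)      # intake correlated with the determinant
age = rng.normal(size=n)                              # independent covariate
biomarker = 0.5 * intake + 0.8 * inflammation + 0.3 * age + rng.normal(size=n)

crude_slope = ols(biomarker, intake)[0]               # about 0.85 in expectation: confounded
adjusted_slope = ols(biomarker, intake, inflammation, age)[0]
print(f"crude = {crude_slope:.2f}, adjusted = {adjusted_slope:.2f} (simulated truth 0.50)")
```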

Experimental Protocols

Protocol 1: Multi-Omics Data Quality Control and Validation

Objective: Ensure data quality and sample integrity across multiple omics platforms

Materials:

- Quality control samples (reference standards, pooled quality control samples)

- Laboratory Information Management System (LIMS) for sample tracking

- proMODMatcher or similar sample verification tool [36]

Procedure:

Pre-analytical processing:

- Assign unique digital identifiers to all samples and aliquots

- Record comprehensive metadata using controlled vocabularies

- Implement randomized processing order to minimize batch effects

Platform-specific QC:

- Genomics/Transcriptomics: Assess RNA integrity numbers (RIN > 7), DNA quality metrics

- Proteomics: Monitor retention time stability, intensity distributions, reference standard signals

- Metabolomics: Evaluate peak shapes and internal standard recoveries, and apply batch correction

Sample identity verification:

- Identify biological cis-associations between different omics data types (e.g., cis-eQTLs between genotype and expression data)

- Apply probabilistic matching to verify sample pairs across platforms

- Correct any sample mis-identifications before proceeding with integrated analysis

Data normalization and transformation:

- Apply platform-specific normalization methods (see Preprocessing FAQ)

- Transform data to common scales where appropriate

- Document all transformations for reproducibility
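Pooled QC samples make a simple batch correction possible. The sketch assumes every batch contains several injections of the same pooled QC material. It rescales each feature so that the batch's QC median matches the overall QC median.

This median-ratio approach is one basic option. Methods that also model within-batch drift against injection order are common in practice. Data are simulated, with a purely multiplicative batch effect.

```python
import numpy as np

def qc_median_batch_correct(intensities, batch, is_qc):
    """Per feature, scale each batch so its pooled-QC median equals the global QC median."""
    x = np.asarray(intensities, dtype=float).copy()
    batch = np.asarray(batch)
    is_qc = np.asarray(is_qc, dtype=bool)
    global_qc_median = np.median(x[is_qc], axis=0)
    for b in np.unique(batch):
        in_batch = batch == b
        batch_qc_median = np.median(x[in_batch & is_qc], axis=0)
        x[in_batch] *= global_qc_median / batch_qc_median
    return x

rng = np.random.default_rng(5)
n_per_batch, n_features = 20, 8
batch = np.repeat([0, 1, 2], n_per_batch)
is_qc = np.tile(np.arange(n_per_batch) % 5 == 0, 3)        # every 5th injection is a pooled QC
true_levels = rng.lognormal(mean=5.0, sigma=0.3, size=(len(batch), n_features))
true_levels[is_qc] = np.exp(5.0)                            # identical QC material in every batch
batch_effect = np.array([1.0, 1.4, 0.7])[batch][:, None]   # multiplicative shift per batch
observed = true_levels * batch_effect

corrected = qc_median_batch_correct(observed, batch, is_qc)
```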

Protocol 2: Controlled Feeding Study for Biomarker Discovery

Objective: Identify and validate dietary biomarkers while controlling for non-food determinants [29]

Materials:

- Standardized test foods or controlled diets

- Biological sample collection kits (blood, urine)

- Multi-omics profiling platforms (metabolomics, proteomics, transcriptomics)

- Covariate assessment tools (genetic, microbiome, lifestyle)

Procedure:

Study design phase:

- Implement randomized crossover or parallel arm designs

- Stratify participants by key non-food determinants (genetics, microbiome, demographics)

- Include washout periods between interventions where appropriate

Controlled feeding period:

- Administer test foods or diets in prespecified amounts

- Maintain strict dietary control to minimize confounding

- Collect biological samples at multiple timepoints for pharmacokinetic analysis

Multi-omics profiling:

- Process samples using standardized multi-omics platforms

- Include quality control samples in each batch

- Apply normalization and batch correction algorithms

Data integration and analysis:

- Identify candidate biomarkers associated with test food intake

- Adjust for non-food determinants using statistical models

- Validate findings in independent observational settings
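Stratifying participants by non-food determinants is usually paired with randomizing treatment order within each stratum. The sketch assumes three things:

- a two-period AB/BA crossover;
- permuted blocks of size 2 within each stratum;
- strata defined by two hypothetical determinants, sex and a BMI category.

The stratum fields, block size, and seed are illustrative.

```python
import random
from collections import defaultdict

def stratified_crossover_allocation(participants, strata_keys, seed=2024):
    """Assign AB/BA sequences using permuted blocks of size 2 within each stratum.

    participants: list of dicts with an 'id' plus the stratification fields.
    Returns {participant_id: sequence}.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for p in participants:
        strata[tuple(p[k] for k in strata_keys)].append(p["id"])
    allocation = {}
    for stratum in sorted(strata):
        ids = strata[stratum]
        for start in range(0, len(ids), 2):
            block = ["AB", "BA"]
            rng.shuffle(block)                 # random order within each block of two
            for pid, seq in zip(ids[start:start + 2], block):
                allocation[pid] = seq
    return allocation

participants = [
    {"id": f"P{i:02d}", "sex": "F" if i % 2 else "M",
     "bmi_cat": "high" if i % 3 == 0 else "normal"}
    for i in range(24)
]
alloc = stratified_crossover_allocation(participants, ["sex", "bmi_cat"])
```

Permuted blocks keep the AB and BA counts within one of each other in every stratum, so sequence effects are not confounded with the stratifying determinants.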

The Scientist's Toolkit

Table: Essential Research Reagent Solutions for Multi-Omics Biomarker Studies

| Reagent/Resource | Function | Application Notes |