Correcting Systematic Error in FFQ Data: Advanced Strategies for Reliable Diet-Disease Research


Abstract

Systematic measurement error in Food Frequency Questionnaire (FFQ) data presents a significant challenge in nutritional epidemiology and drug development research, potentially distorting diet-disease associations and reducing statistical power. This comprehensive review explores the sources and impacts of these errors while presenting advanced mitigation strategies. We examine foundational concepts of recall bias, social desirability bias, and misclassification inherent in self-reported dietary data. The article details innovative methodological approaches including machine learning correction algorithms, regression calibration techniques, and biomarker validation. We provide practical troubleshooting guidance for improving data quality and compare validation frameworks using 24-hour dietary recalls, recovery biomarkers, and repeated administrations. This resource equips researchers and drug development professionals with evidence-based strategies to enhance FFQ data reliability for more accurate nutritional assessment and strengthened epidemiological findings.

Understanding Systematic Error in FFQ Data: Sources, Impacts, and Cognitive Challenges

Defining Systematic Measurement Error in Nutritional Epidemiology

Systematic measurement error, unlike random variation, is a bias that does not average to zero over repeated measurements and can consistently distort data in a particular direction [1]. In nutritional epidemiology, it complicates the accurate measurement of dietary exposure, particularly with self-report instruments such as Food Frequency Questionnaires (FFQs) [2] [3]. Correcting it starts with a precise understanding of its definition, origins, and quantitative impact. This document details protocols for quantifying this error and outlines methods for adjusting for it, giving researchers tools to mitigate bias in diet-disease association studies.

Quantitative Characterization of Systematic Error

The following tables summarize the core components and quantitative impacts of systematic measurement error, as revealed by validation studies.

Table 1: Components and Proportional Impact of Systematic Error

| Component of Error | Description | Quantitative Impact | Source Study |
|---|---|---|---|
| Systematic Error in FFQ | Persistent bias (e.g., intake-related, person-specific) that remains after accounting for random error. | Accounted for >50% of the total measurement error variance. | [2] |
| Systematic Error in 24HR | Persistent bias in repeated 24-hour recalls. | Accounted for >22% of the total measurement error variance. | [2] |
| Correlated Errors | Person-specific bias creating non-independent errors between FFQ and 24HR. | Leads to overcorrection when using 24HR for calibration; confirmed for protein and energy. | [3] |
| Intake-Related Bias | Error whose magnitude or direction depends on the level of true intake. | Present in FFQ and 24HR data; hampers de-attenuation methods. | [3] |

Table 2: Impact of Measurement Error on Diet-Disease Association Estimates

| Scenario / Condition | True Effect (RR or coefficient) | Observed Effect (Attenuated) | Analysis / Correction Method |
|---|---|---|---|
| Uncorrected FFQ Error [3] | 2.0 | 1.4 (for protein) | None |
| Uncorrected FFQ Error [3] | 2.0 | 1.5 (for potassium) | None |
| Dietary Pattern Analysis (Simulation) [4] | -0.5 (Beneficial) | -0.231 to -0.394 | K-means Cluster Analysis (KCA) |
| Dietary Pattern Analysis (Simulation) [4] | 0.5 (Harmful) | -0.003 to 0.373 | Principal Component Factor Analysis (PCFA) |

Methodological Protocols for Error Assessment and Correction

Core Measurement Error Model Protocol

This protocol outlines the statistical modeling of systematic error using data from multiple dietary assessment methods [2].

- Objective: To estimate the validity, systematic error, and reliability of self-report dietary assessment methods (e.g., FFQ, 24-hour recalls) against a conceptual "true" intake.

- Materials:

- Dietary intake data from FFQs and repeated 24-hour recalls.

- Biomarker data (e.g., plasma carotenoids, urinary nitrogen).

- Data on covariates (e.g., total plasma cholesterol, body mass index, smoking status).

- Procedure:

- Data Collection: Collect parallel data on the same participants using an FFQ, multiple 24-hour recalls, and a biomarker. Ensure the sample size is sufficient (e.g., n > 1,000) for precise estimation [2].

- Model Specification: Apply a measurement error model in which the observed intake for participant i at time j via method k is defined as:

Y_ijk = α_k + β_k * Z_i + ε_ijk

Here, Z_i is the unobservable "true" habitual intake, α_k is the method-specific intercept (location bias), β_k is the method-specific scale parameter, and ε_ijk is the random error [2].

- Parameter Estimation: Use statistical software to estimate the model parameters. The validity coefficient (the correlation between self-report and true intake) is a key output.

- Error Quantification: Calculate the proportion of total error variance attributed to systematic error for each method [2].
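To make the two key outputs of this protocol concrete, the following is a minimal sketch, not the estimation procedure used in [2]. It assumes the error term is independent of true intake and, as an illustrative assumption not spelled out in the model above, that the error splits into a person-specific (systematic) part and a within-person random part. The function and parameter names are hypothetical.

```python
import math


def validity_coefficient(beta_k, var_true, var_error):
    """Correlation between one method's report Y and true intake Z.

    Assumes Y = alpha_k + beta_k * Z + error, with the error independent of Z.
    """
    signal = beta_k ** 2 * var_true
    rho = math.sqrt(signal / (signal + var_error))
    return math.copysign(rho, beta_k)  # the sign follows the scale parameter


def systematic_share(var_person_bias, var_within_person):
    """Share of total error variance due to person-specific (systematic) error.

    Assumption: total error variance = person-specific + within-person parts.
    """
    return var_person_bias / (var_person_bias + var_within_person)


if __name__ == "__main__":
    # Hypothetical variance components, for illustration only.
    print(validity_coefficient(beta_k=1.0, var_true=1.0, var_error=1.0))
    print(systematic_share(var_person_bias=1.0, var_within_person=1.0))
```

In a real analysis these variance components are not known in advance; they would be estimated from the repeated-measures and biomarker data described in the procedure.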

Protocol for Correction via Regression Calibration

Regression calibration is a widely used method to correct diet-disease associations for measurement error [3] [1].

- Objective: To obtain corrected (de-attenuated) estimates of relative risks or regression coefficients in diet-disease analyses.

- Materials:

- Main study data: Disease outcome and FFQ-derived exposure.

- Calibration study data: A subset of the main study population with data from a superior reference method (e.g., recovery biomarker, multiple 24-hour recalls).

- Procedure:

- Calibration Study: In a subset of the cohort, regress the reference method values (Ref) on the FFQ values (Q) and other covariates: Ref = γ_0 + γ_1 * Q + ...

- Estimate Calibration Factor: The key parameter is the calibration factor γ_1 (also denoted b_RefQ).

- Apply Correction: In the main study, replace the error-prone FFQ values with their calibrated values, or directly correct the observed diet-disease association. For a relative risk (RR_observed), the corrected RR is: RR_corrected = RR_observed^(1/b_RefQ) [3].

- Limitations: This method's validity is highly dependent on the reference method. Using an "alloyed gold standard" like 24-hour recalls can be compromised by correlated errors between the FFQ and the reference instrument [3] [1].
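The calibration and correction steps of this protocol can be sketched as follows. This is a simplified illustration that omits the covariates mentioned in the procedure and uses made-up calibration data; the function names are hypothetical, and the slope is the ordinary least-squares coefficient of Ref on Q.

```python
def calibration_slope(ffq_values, ref_values):
    """OLS slope of reference values on FFQ values (the calibration factor)."""
    n = len(ffq_values)
    mean_q = sum(ffq_values) / n
    mean_ref = sum(ref_values) / n
    cov = sum((q - mean_q) * (r - mean_ref)
              for q, r in zip(ffq_values, ref_values))
    var_q = sum((q - mean_q) ** 2 for q in ffq_values)
    return cov / var_q


def corrected_rr(rr_observed, slope):
    """De-attenuate a relative risk: RR_corrected = RR_observed ** (1 / slope)."""
    return rr_observed ** (1.0 / slope)


if __name__ == "__main__":
    # Hypothetical calibration sub-study (e.g., protein in g/day).
    ffq = [50, 60, 70, 80, 90]
    ref = [62, 67, 72, 77, 82]
    slope = calibration_slope(ffq, ref)
    print(slope, corrected_rr(1.4, slope))
```

With these illustrative numbers the slope is 0.5, so an observed RR of 1.4 is corrected to 1.4 squared, or 1.96. The correction is only as trustworthy as the reference instrument, as the limitation above notes.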

Protocol for a Triad Validity Analysis

The triad method estimates the validity coefficient of an instrument when no single gold standard is available [3] [1].

- Objective: To estimate the validity coefficient (correlation with true intake) for an FFQ using two other, imperfect measures.

- Materials: Data from three different methods (e.g., FFQ, a biomarker, and 24-hour recalls) collected from the same subjects.

- Procedure:

- Calculate Correlations: Compute the pairwise correlation coefficients between the three methods (r_QBiom, r_Q24hR, r_Biom24hR).

- Estimate Validity: The validity coefficient (ρ_QT) for the FFQ is estimated as: ρ_QT = √( (r_QBiom * r_Q24hR) / r_Biom24hR ) [1].

- Limitations: This approach is sensitive to violations of the assumption that errors between the three methods are independent. The presence of correlated errors between the FFQ and 24-hour recalls will bias the estimate [3].
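The triad formula is simple enough to compute directly. The sketch below is a hypothetical helper, with illustrative correlation values rather than results from any cited study. It makes no attempt to handle estimates above 1, which can arise when the independence assumption is violated or from sampling variation.

```python
import math


def triad_validity(r_q_biom, r_q_24hr, r_biom_24hr):
    """Validity coefficient of the FFQ from three pairwise correlations.

    Implements rho_QT = sqrt((r_QBiom * r_Q24hR) / r_Biom24hR).
    """
    if r_biom_24hr == 0:
        raise ValueError("r_Biom24hR must be non-zero")
    ratio = (r_q_biom * r_q_24hr) / r_biom_24hr
    if ratio < 0:
        raise ValueError("negative ratio: the triad estimate is undefined")
    return math.sqrt(ratio)


if __name__ == "__main__":
    # Illustrative correlations only.
    print(round(triad_validity(0.3, 0.4, 0.5), 3))
```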

Machine Learning Protocol for Error Adjustment

A novel, supervised machine learning approach can be used to identify and correct for systematic misreporting in FFQ data [5].

- Objective: To reclassify underreported or overreported entries in an FFQ dataset using objectively measured health variables.

- Materials:

- FFQ data.

- Objective health measures (e.g., blood lipids, glucose, body fat percentage, BMI).

- Demographic data (age, sex).

- Procedure:

- Define a "Healthy" Cohort: Split the dataset, defining a "healthy" group based on objective health risk cut-offs (e.g., body fat percentage, age, sex). This group is assumed to report dietary intake more accurately [5].

- Train Predictive Model: Using only the "healthy" group data, train a Random Forest classifier. The objective health measures (LDL, total cholesterol, etc.) are the predictors, and the FFQ responses for specific foods (e.g., frequency of bacon consumption) are the outcomes.

- Predict and Adjust: Apply the trained model to the remaining "unhealthy" group. For unhealthy foods, if the originally reported FFQ value is lower than the model's prediction, replace it with the predicted value to correct for presumed underreporting [5].

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Reagents and Instruments for Measurement Error Research

| Item / Instrument | Function / Rationale | Example & Key Features |

|---|---|---|

| Recovery Biomarkers | Gold standard reference; provides unbiased estimate of absolute intake for specific nutrients. | Doubly Labelled Water (energy), Urinary Nitrogen (protein), Urinary Potassium (K). Requires sample collection (urine) and lab analysis. [3] [1] |

| Concentration Biomarkers | Alloyed gold standard; correlates with dietary intake but influenced by metabolism. | Plasma Carotenoids (fruit/vegetable intake), Vitamin C, Vitamin E. Requires blood draw and high-performance liquid chromatography (HPLC). [2] [1] |

| 24-Hour Dietary Recalls (24HR) | Alloyed gold standard reference method; detailed short-term intake. | Multiple, non-consecutive recalls collected by trained interviewers using software (e.g., EPIC-Soft). Used for calibration. [3] [1] |

| Food Frequency Questionnaire (FFQ) | Primary exposure instrument in main studies; assesses habitual long-term intake. | Semi-quantitative, multi-item FFQ (e.g., Block 2005, Arizona FFQ). Cost-effective but prone to systematic error. [2] [5] |

| Food Diaries/Records | Potential reference instrument; prospective recording reduces recall bias. | Multi-day weighed or estimated food records. High participant burden but considered more accurate than FFQs. [1] |

Food Frequency Questionnaires (FFQs) are widely used in nutritional epidemiology to assess habitual dietary intake and investigate diet-disease relationships due to their cost-effectiveness and feasibility in large cohort studies [5]. However, as self-reported instruments, FFQs are susceptible to substantial systematic measurement errors that can compromise the validity of research findings. These errors introduce bias that obscures true diet-disease relationships and leads to misinterpretation of epidemiological data. Within the broader context of correcting systematic measurement error in FFQ research, understanding three major sources of bias—recall bias, social desirability bias, and misclassification—is fundamental to developing effective correction methodologies. These biases manifest consistently across populations and study designs, producing predictable patterns of error that can be quantified and adjusted statistically [6] [3].

The presence of these biases has profound implications for nutritional epidemiology. Measurement error in FFQs can weaken observed relative risks, with true relative risks of 2.0 potentially attenuated to approximately 1.4-1.5 in observed data [3]. Furthermore, systematic error may account for over 50% of measurement error variance in FFQ data [2], substantially impacting the accuracy of diet-disease association studies. This document provides researchers with a comprehensive analysis of these bias sources, along with protocols for their quantification and correction, to enhance the validity of nutritional research.

The table below summarizes the characteristics, impact, and detection methods for the three major bias sources in FFQ research.

Table 1: Major Sources of Bias in Food Frequency Questionnaire Data

| Bias Type | Definition | Primary Impact | Detection Methods | Typical Magnitude |

|---|---|---|---|---|

| Recall Bias | Inaccurate memory of past dietary consumption | Under/over-reporting of specific food items | Comparison with 24-hour recalls; Biomarker studies | Correlation coefficients: 0.23-0.46 between FFQ and 24HR [7] |

| Social Desirability Bias | Tendency to report socially acceptable rather than true intake | Systematic under-reporting of "unhealthy" foods; Over-reporting of "healthy" foods | Social Desirability Scales; Comparison with recovery biomarkers | ~50 kcal/point on social desirability scale (~450 kcal over interquartile range) [8] |

| Misclassification | Incorrect categorization of participants into intake quantiles | Attenuation of risk estimates; Loss of statistical power | Triad method (FFQ, 24HR, biomarker); Cross-classification analysis | 50% of Black participants classified as eating unhealthy based on 24HR vs. 2.6% based on FFQ [7] |

The impact of these biases varies across population subgroups. For example, one study found that correlations between FFQ and 24-hour recall measurements were substantially lower for Black women (mean rho = 0.23) compared to White women (mean rho = 0.46) [7]. Similarly, using a cutoff of 40% of the maximum Alternative Healthy Eating Index (AHEI) score, 50% of Black participants were classified as eating unhealthy based on 24-hour recalls, versus only 2.6% based on FFQ data, indicating significant differential misclassification by race [7].

Social desirability bias demonstrates gender variations, with the effect being approximately twice as large for women as for men [8]. This bias predominantly affects reporting of foods with strong health perceptions, with under-reporting of high-fat foods being particularly common [5] [8]. The bias is more pronounced in individuals with higher body mass index and those who have higher true intake of less healthy foods [8].

Methodological Protocols for Bias Assessment

Protocol for Assessing Social Desirability Bias

Objective: To quantify the effect of social desirability bias on nutrient intake estimates from FFQ data.

Materials:

- Food Frequency Questionnaire (180-item semi-quantitative FFQ recommended)

- Social Desirability Scale (e.g., Marlowe-Crowne Scale)

- Multiple 24-hour dietary recalls (minimum of 3 non-consecutive days)

- Statistical software (R, SAS, or SPSS)

Procedure:

- Administer the social desirability scale and FFQ to participants

- Collect multiple 24-hour dietary recalls as a reference method

- Calculate nutrient intakes from both FFQ and 24-hour recalls

- Perform linear regression analysis with the difference between FFQ and 24-hour recall estimates as the dependent variable and social desirability score as the independent variable

- Calculate the bias magnitude as the regression coefficient for social desirability score

Analysis:

The statistical model should be specified as follows:

Δ = β0 + β1(SDS) + ε

Where Δ = (FFQ intake - 24HR intake), SDS is the social desirability score, β0 is the intercept, β1 is the bias magnitude per unit of social desirability score, and ε is the residual error.

Interpretation: A significant β1 indicates presence of social desirability bias. In one study, social desirability score produced a large downward bias equaling about 50 kcal/point on the social desirability scale or about 450 kcal over its interquartile range [8].
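The regression in this protocol can be sketched with a plain least-squares fit. The example below uses made-up data constructed to have a slope of -50 kcal per scale point, echoing the magnitude reported in [8]; the function name is hypothetical, and the sketch does not compute standard errors or p-values, which a statistics package would provide.

```python
def fit_sds_bias(delta_kcal, sds_scores):
    """Least-squares fit of delta = beta0 + beta1 * SDS; returns (beta0, beta1)."""
    n = len(sds_scores)
    mean_x = sum(sds_scores) / n
    mean_y = sum(delta_kcal) / n
    sxx = sum((x - mean_x) ** 2 for x in sds_scores)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(sds_scores, delta_kcal))
    beta1 = sxy / sxx
    beta0 = mean_y - beta1 * mean_x
    return beta0, beta1


if __name__ == "__main__":
    # Hypothetical participants: SDS score and (FFQ - 24HR) energy difference.
    sds = [10, 14, 18, 22, 26]
    delta = [100 - 50 * s for s in sds]
    beta0, beta1 = fit_sds_bias(delta, sds)
    print(beta0, beta1)
```

A negative β1 here means that higher social desirability scores go with larger under-reporting on the FFQ relative to the recalls.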

Protocol for Assessing Misclassification Error

Objective: To evaluate the extent and impact of misclassification in FFQ-based dietary intake assessment.

Materials:

- Food Frequency Questionnaire

- Multiple 24-hour dietary recalls (minimum of 4 days recommended)

- Biomarkers of intake where available (e.g., urinary nitrogen for protein, carotenoids for fruit/vegetable intake)

- Statistical software with capabilities for cross-classification analysis

Procedure:

- Collect FFQ and reference method data (24-hour recalls and/or biomarkers) from the same participants

- Categorize participants into quantiles (quartiles or quintiles) of intake based on each method

- Perform cross-classification analysis comparing quantile assignment between methods

- Calculate the proportion of participants classified into the same, adjacent, and opposite quantiles

- Compute weighted kappa statistics to assess agreement beyond chance

Analysis: For a sample cross-classification analysis:

- Create contingency tables of quantile assignments (FFQ vs reference method)

- Calculate percentage of participants in the same and adjacent quartiles (acceptable: >70%)

- Calculate percentage of participants grossly misclassified (opposite quartiles; acceptable: <10%)

- Compute weighted kappa statistic (values >0.2 indicate acceptable agreement)

Interpretation: In validation studies, the proportion of participants classified into the same and adjacent quartiles typically ranges from 64.3% to 83.9%, with gross misclassification ranging from 3.7% to 12.2% [9]. Weighted kappa values generally range from 0.02 to 0.36, with most exceeding 0.2, which indicates fair agreement [9].
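The cross-classification steps can be sketched in plain Python as shown below. This is an illustrative implementation under several assumptions: quartiles are assigned by rank with ties broken by input order, "opposite" means the lowest versus the highest quartile, and the kappa uses linear weights (the source does not state which weighting scheme was used). All function names are hypothetical.

```python
def assign_quartiles(values):
    """Rank-based quartile codes 0-3; ties are broken by input order."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    codes = [0] * n
    for rank, idx in enumerate(order):
        codes[idx] = rank * 4 // n
    return codes


def weighted_kappa(codes_a, codes_b, k=4):
    """Linearly weighted kappa for two lists of category codes 0..k-1."""
    n = len(codes_a)

    def weight(i, j):
        return 1 - abs(i - j) / (k - 1)

    observed = sum(weight(a, b) for a, b in zip(codes_a, codes_b)) / n
    p_a = [codes_a.count(c) / n for c in range(k)]
    p_b = [codes_b.count(c) / n for c in range(k)]
    expected = sum(p_a[i] * p_b[j] * weight(i, j)
                   for i in range(k) for j in range(k))
    return (observed - expected) / (1 - expected)


def cross_classification(ffq, ref):
    """Percent in same, adjacent, and opposite quartiles, plus weighted kappa."""
    q_ffq, q_ref = assign_quartiles(ffq), assign_quartiles(ref)
    n = len(q_ffq)
    gaps = [abs(a - b) for a, b in zip(q_ffq, q_ref)]
    return {
        "same_pct": 100 * sum(g == 0 for g in gaps) / n,
        "adjacent_pct": 100 * sum(g == 1 for g in gaps) / n,
        "opposite_pct": 100 * sum(g == 3 for g in gaps) / n,
        "weighted_kappa": weighted_kappa(q_ffq, q_ref),
    }


if __name__ == "__main__":
    # Hypothetical intakes for eight participants.
    ffq = [1, 2, 3, 4, 5, 6, 7, 8]
    ref = [2, 1, 3, 5, 4, 6, 8, 7]
    print(cross_classification(ffq, ref))
```

The returned percentages can be compared directly against the acceptability thresholds listed in the Analysis step.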

Protocol for Correcting Measurement Error Using Biomarkers

Objective: To correct intake-health associations for measurement error using recovery biomarkers as reference instruments.

Materials:

- Food Frequency Questionnaire data

- Recovery biomarkers (e.g., urinary nitrogen for protein, urinary potassium for potassium intake, doubly labeled water for energy)

- 24-hour dietary recall data (optional)

- Health outcome data

Procedure:

- Collect duplicate recovery biomarkers and FFQ data from participants

- For each participant, approximate true intake from the biomarker using established conversion formulas (e.g., protein intake = 6.25 × (urinary N / 0.81))

- Perform linear regression of biomarker-based intake values against FFQ-based intake values to obtain calibration factor

- Use the calibration factor to correct observed intake-health associations

Analysis:

The measurement error model can be specified as:

Y_ijk = α_k + β_k * Z_i + ε_ijk

Where Y_ijk is the observed intake for participant i at time j using method k, Z_i is the true unobservable usual intake, α_k is the method-specific intercept, β_k is the scale parameter, and ε_ijk is the measurement error [2].

The correction for relative risk estimates follows:

True RR = Observed RR^(1/λ)

Where λ is the attenuation factor obtained from the calibration study.

Interpretation: Calibration to recovery biomarkers is the preferred approach for correcting intake-health associations because it directly addresses the measurement error structure. In practice, a true relative risk of 2.0 that was attenuated to an observed 1.4-1.5 has been restored to approximately 2.0 with this method [3].
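As a worked illustration of the two formulas in this protocol, the sketch below converts urinary nitrogen to an approximate protein intake and back-calculates the attenuation factor λ implied by the reported relative risks. The function names are hypothetical. The reading of 6.25 as a nitrogen-to-protein factor and 0.81 as the assumed urinary recovery fraction is the common interpretation of this formula, not something the source states.

```python
import math


def protein_intake_from_urinary_n(urinary_n_g_per_day):
    """Approximate protein intake (g/day) as 6.25 * (urinary N / 0.81)."""
    return 6.25 * (urinary_n_g_per_day / 0.81)


def implied_attenuation(rr_observed, rr_true):
    """Attenuation factor lambda such that rr_true == rr_observed ** (1 / lambda)."""
    return math.log(rr_observed) / math.log(rr_true)


def deattenuate_rr(rr_observed, lam):
    """True RR = Observed RR ** (1 / lambda)."""
    return rr_observed ** (1.0 / lam)


if __name__ == "__main__":
    print(round(protein_intake_from_urinary_n(12.96), 1))  # hypothetical 12.96 g N/day
    for rr_obs in (1.4, 1.5):
        lam = implied_attenuation(rr_obs, 2.0)
        print(rr_obs, round(lam, 3), round(deattenuate_rr(rr_obs, lam), 3))
```

Under this formula, observed relative risks of 1.4 and 1.5 against a true value of 2.0 correspond to attenuation factors of roughly 0.49 and 0.58.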

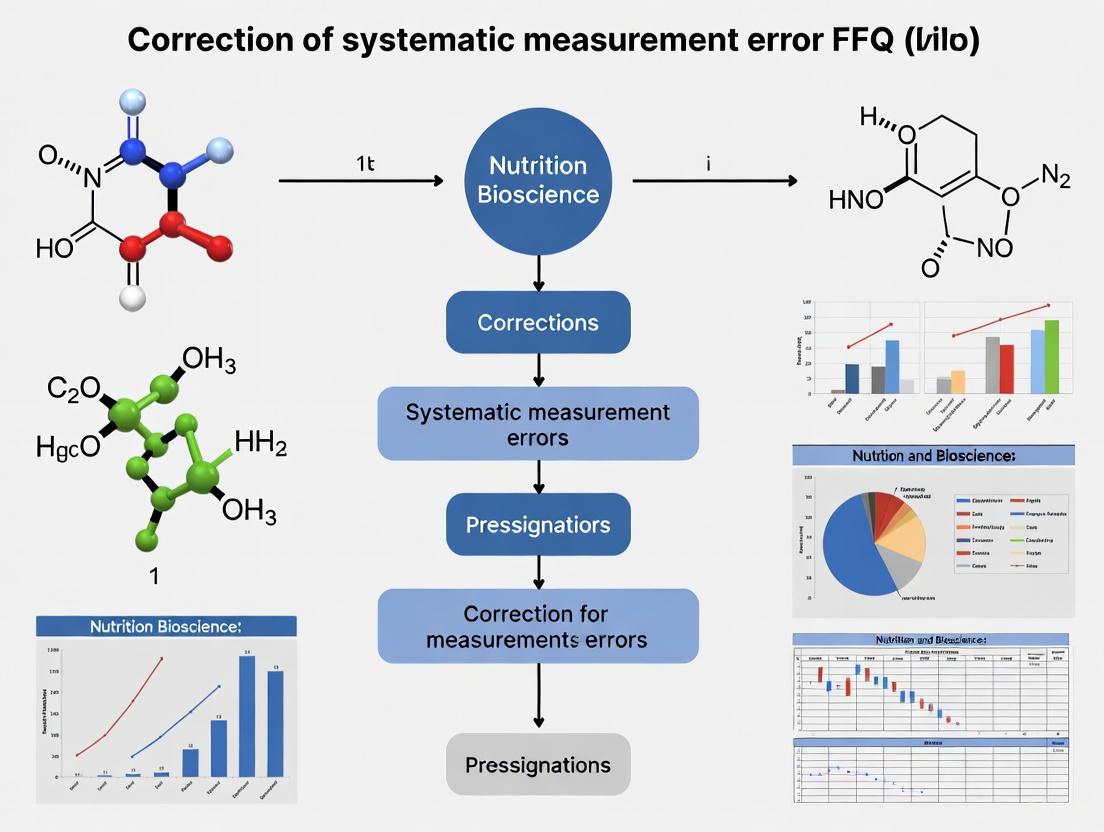

Visualizing Bias Assessment and Correction Workflows

Diagram 1: Comprehensive Workflow for FFQ Bias Assessment and Correction

Advanced Correction Methodologies

Machine Learning Approaches for Bias Correction

Objective: To implement a supervised machine learning method for correcting underreporting error in FFQ data.

Materials:

- FFQ data with suspected underreporting

- Objective biomarkers (LDL cholesterol, total cholesterol, blood glucose)

- Anthropometric measures (body fat percentage, BMI)

- Demographic data (age, sex)

- Programming environment with machine learning capabilities (Python/R)

Procedure:

- Split dataset into healthy and unhealthy participants using established health risk classifications

- Train a random forest classifier using the healthy participant data to model relationships between objective measures and food consumption

- Apply the trained model to predict food frequency variables for the unhealthy group based on their objective measures

- Compare predicted values with originally reported FFQ values

- Replace underreported values with predictions using a defined algorithm

Analysis: For each response with L categories C(1), C(2), ..., C(L) with corresponding probabilities P(1), P(2), ..., P(L):

- For unhealthy foods where underreporting is suspected: if original FFQ response < predicted value, replace with predicted value

- For healthy foods where overreporting is suspected: replace FFQ response with category lower than reported value that has the largest probability

Interpretation: This method has demonstrated high model accuracies ranging from 78% to 92% in participant-collected data and 88% in simulated data [5]. The random forest approach is particularly advantageous due to its capability to capture nonlinear relationships, robustness to overfitting, and ability to rank importance of predictors.
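The replacement rules in the Analysis step can be sketched independently of the classifier. The example below takes the predicted category probabilities as given (in practice they would come from the trained random forest) and codes response categories as ordered integers starting at 0. The function names are hypothetical. One assumption goes beyond the text: the healthy-food rule is applied only when the model's most likely category is lower than the reported one, since the source does not state a trigger condition.

```python
def most_likely_category(probabilities):
    """Index of the category with the largest predicted probability."""
    return max(range(len(probabilities)), key=lambda c: probabilities[c])


def adjust_unhealthy_food(reported, probabilities):
    """Suspected underreporting: raise the response to the prediction if lower."""
    predicted = most_likely_category(probabilities)
    return predicted if reported < predicted else reported


def adjust_healthy_food(reported, probabilities):
    """Suspected overreporting: move to the most probable lower category.

    Assumption: only applied when the predicted category is below the report.
    """
    predicted = most_likely_category(probabilities)
    if reported == 0 or predicted >= reported:
        return reported  # nothing lower to move to, or no sign of overreporting
    return max(range(reported), key=lambda c: probabilities[c])


if __name__ == "__main__":
    # Hypothetical probabilities over four ordered frequency categories.
    probs = [0.1, 0.5, 0.3, 0.1]
    print(adjust_unhealthy_food(0, probs))
    print(adjust_healthy_food(3, probs))
```

Keeping the rule separate from the model makes it easy to audit how many responses were changed and in which direction.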

Measurement Error Modeling for Diet-Disease Associations

Objective: To estimate validity coefficients and systematic error components in dietary assessment methods.

Materials:

- Multiple dietary assessment methods (FFQ, 24-hour recalls, biomarkers)

- Statistical software for measurement error modeling

Procedure:

- Collect data using multiple dietary assessment methods in the same participants

- Specify a measurement error model that relates observed intakes to true usual intake

- Estimate validity coefficients (correlations between observed and true intake)

- Quantify the proportion of measurement error variance due to systematic error

- Use these estimates to correct observed diet-disease associations

Analysis:

The measurement error model takes the form:

Y_ijk = α_k + β_k * Z_i + ε_ijk

Where Y_ijk is the observed intake for participant i at time j using method k, Z_i is the true unobservable usual intake, α_k and β_k are method-specific parameters, and ε_ijk is measurement error.

Interpretation: Studies applying this methodology have found validity coefficients of approximately 0.44 for 24-hour recalls and 0.39 for FFQs [2]. Systematic error can account for over 22% and 50% of measurement error variance for 24-hour recalls and FFQs, respectively [2].

Table 2: Comparison of Correction Approaches for FFQ Measurement Error

| Correction Approach | Procedure | Required Resources | Corrected Errors | Limitations |

|---|---|---|---|---|

| Calibration to Recovery Biomarkers | Regression of biomarker values vs. FFQ values | Duplicate recovery biomarkers (urinary N, K) | Random error, Person-specific bias | Limited biomarkers available; Costly |

| Triad Method with Biomarker and 24HR | Estimate validity coefficient using biomarker, FFQ, and 24HR | Single biomarker + 24HR data | Random error | Effect of intake-related bias and correlated errors |

| Calibration to 24HR | Regression of 24HR values vs. FFQ values | Multiple 24HR administrations | Random error | Correlated errors between methods not addressed |

| Machine Learning Correction | Random forest prediction using objective measures | Biomarkers, Anthropometric data | Under/over-reporting based on health status | Requires healthy subset for training |

The Researcher's Toolkit: Essential Reagent Solutions

Table 3: Essential Research Materials for FFQ Bias Assessment and Correction

| Research Tool | Specifications | Application | Key Considerations |

|---|---|---|---|

| Food Frequency Questionnaire | 164-180 item semi-quantitative; Frequency (never to 6-7 days/week) and portion size assessment | Assessment of habitual dietary intake | Include culture-specific food items; Validate for target population |

| 24-Hour Dietary Recalls | Multiple pass method; Minimum 3 non-consecutive days (including weekend); EPIC-Soft software recommended | Reference method for validation studies | Trained interviewers; Multiple days to account for day-to-day variation |

| Recovery Biomarkers | Urinary nitrogen (protein); Urinary potassium (potassium); Doubly labeled water (energy) | Gold standard for specific nutrients | PABA check for urine completeness; Adjust for recovery rates |

| Social Desirability Scales | Marlowe-Crowne Social Desirability Scale; 33-item questionnaire | Quantification of social desirability bias | Administer concurrently with FFQ |

| Biochemical Analyzers | High-performance liquid chromatography (carotenoids); Kodak Ektachem Analyzer (cholesterol) | Objective biomarker measurement | Participate in quality assurance programs |

| Statistical Software Packages | R, SAS, SPSS with measurement error modeling capabilities | Data analysis and correction modeling | Custom programming for complex error models |

The selection of appropriate research tools depends on study objectives, population characteristics, and available resources. For comprehensive bias assessment, multiple complementary tools should be employed. For example, the combination of 24-hour recalls and recovery biomarkers provides a more complete assessment of measurement error structure than either method alone [3].

When implementing correction approaches, researchers should consider the specific limitations of each method. Calibration to 24-hour recalls only partially corrects measurement error due to correlated errors between the instruments and intake-related bias in the 24-hour recalls themselves [3]. In contrast, calibration to recovery biomarkers provides more complete error correction but is limited to the few nutrients with available biomarkers.

For nutrients without recovery biomarkers, the triad method—using a combination of FFQ, 24-hour recall, and concentration biomarker (e.g., plasma carotenoids)—provides a reasonable alternative for estimating validity coefficients, though this approach is still affected by intake-related bias and correlated errors between methods [3].

Cognitive Processes in Dietary Recall and Their Failure Points

Recalling dietary intake is a central part of population nutrition surveillance conducted to inform public health nutrition policy and interventions [10]. The 24-hour dietary recall (24HR) method is a standard method in nutrition surveillance, during which participants receive temporal and content cues to retrieve memories and are subsequently required to recall, describe, and quantify all consumed foods and beverages from the previous 24 hours [10]. Despite methodological improvements, measurement error remains a significant issue, with 24HR underestimating energy intake by 8–30% [10]. This error may be related to the cognitive challenges involved in completing a 24HR, as the act of recalling, describing, and quantifying involves several complex neurocognitive processes [10]. Understanding these processes and their potential failure points is crucial for researchers seeking to correct systematic measurement error in food frequency questionnaire (FFQ) data research.

Neurocognitive Processes in Dietary Recall

The completion of a dietary recall engages multiple interdependent cognitive functions. Errors in dietary reporting can occur in the encoding and/or retrieval of memories and in the mapping of those memories into a response [10].

- Encoding: The initial processing of dietary information is influenced by attention, perception, interpretation, organization, and retention [10]. Paying attention during encoding results in better subsequent recall, while divided attention during encoding is associated with large reductions in subsequent recall [10].

- Retrieval and Conceptualization: Once memories are encoded, various processes are involved in their retrieval and the formulation of responses. This includes cognitive flexibility, which allows an individual to switch cognitive strategies and consider multiple aspects of a complex situation simultaneously [10]. The strength of visual imagery also predicts memory capacity, affecting how well a participant can conceptualize visual memory [10].

- Working Memory and Response Formulation: Working memory, the ability to hold information in mind and manipulate it, is crucial for remembering and quantifying food items [10]. Finally, the formulation of a response requires integrating retrieved information into a coherent and quantifiable report.

Quantifying Cognitive Performance and Its Impact on Recall Error

Recent controlled feeding studies have quantitatively investigated how variation in neurocognitive processes predicts variation in 24HR error [10]. Participants completed cognitive tasks and technology-assisted 24HRs during which true energy intake was known.

Table 1: Cognitive Tasks Used to Assess Functions Relevant to Dietary Recall

| Cognitive Task | Primary Cognitive Function Assessed | Measurement Outcome | Association with 24HR Error |

|---|---|---|---|

| Trail Making Test [10] | Visual attention, executive function, processing speed | Time to complete the task | Longer completion time associated with greater error in energy estimation in self-administered tools (ASA24, Intake24) [10] |

| Wisconsin Card Sorting Test [10] | Cognitive flexibility, executive function | Number of accurate trials as a percentage of total trials | No significant association with error in interviewer-administered recall [10] |

| Visual Digit Span [10] | Working memory | Last digit span correctly recalled before consecutive errors | Not all cognitive tasks showed associations, highlighting the specific role of visual attention [10] |

| Vividness of Visual Imagery Questionnaire [10] | Visual imagery strength | Self-rated vividness of imagined scenes | Research on visual imagery's role is mixed; some studies find it predicts memory capacity, others do not [10] |

Table 2: Impact of Cognitive Function on Dietary Reporting Error in a Controlled Feeding Study

| Cognitive Measure | Dietary Assessment Tool | Statistical Association (B Coefficient) | Variance Explained (R²) |

|---|---|---|---|

| Trail Making Test (time) | ASA24 (Self-Administered) | B 0.13 (95% CI 0.04, 0.21) [10] | 13.6% [10] |

| Trail Making Test (time) | Intake24 (Self-Administered) | B 0.10 (95% CI 0.02, 0.19) [10] | 15.8% [10] |

| Trail Making Test (time) | IA-24HR (Interviewer-Administered) | Not Significant [10] | Not Reported |

Experimental Protocols for Investigating Cognition-Dietary Error Relationships

Protocol 1: Controlled Feeding Study with Cognitive Assessment

This protocol outlines a method for directly quantifying the relationship between cognitive function and dietary reporting error [10].

1. Objective: To investigate whether variation in neurocognitive processes, measured using cognitive tasks, is associated with variation in measurement error of 24-hour dietary recalls.

2. Materials and Equipment:

- Controlled feeding laboratory or pre-portioned meals

- Technology-assisted 24HR platforms (e.g., ASA24, Intake24)

- Computerized cognitive task battery

- Demographic and health questionnaire

3. Procedure:

- Recruitment: Recruit a convenience sample (target n=150) of adults, excluding those with serious illnesses, pregnancy, or special dietary restrictions [10].

- Baseline Assessment:

- Administer demographic questionnaire (age, sex, education).

- Conduct computer-based cognitive assessment in the following order [10]:

- Trail Making Test

- Wisconsin Card Sorting Test

- Visual Digit Span (forward and backward)

- Vividness of Visual Imagery Questionnaire

- Controlled Feeding Phase:

- Use a crossover design where participants attend multiple feeding days (e.g., 3 days, 1 week apart) [10].

- Provide all foods and beverages to participants, recording true energy and nutrient intake.

- Dietary Recall Phase:

- On the day following each feeding day, participants complete a 24HR using the assigned method (e.g., ASA24, Intake24, Interviewer-Administered). The order of methods is randomized [10].

- Data Analysis:

- Calculate the percentage error between reported and true energy intakes: (Reported - True) / True * 100.

- Use linear regression to assess the association between cognitive task scores and the absolute percentage error in estimated energy intake, adjusting for covariates like age, sex, and education [10].
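
The data analysis step of Protocol 1 can be sketched as follows. This is a minimal illustration, not the analysis code of the cited study: the five participants, their values, and the helper names `percent_error` and `fit_ols` are all hypothetical, and only one covariate (age) is included to keep the example small.

```python
import numpy as np

def percent_error(reported, true):
    """Signed percentage error: (Reported - True) / True * 100."""
    reported = np.asarray(reported, dtype=float)
    true = np.asarray(true, dtype=float)
    return (reported - true) / true * 100.0

def fit_ols(y, covariates):
    """Ordinary least squares; returns [intercept, slope_1, slope_2, ...]."""
    X = np.column_stack([np.ones(len(y))] + list(covariates))
    coef, *_ = np.linalg.lstsq(X, np.asarray(y, dtype=float), rcond=None)
    return coef

# Hypothetical data for five participants (not taken from the cited study).
true_kcal = np.array([2100.0, 1850.0, 2400.0, 2000.0, 2250.0])
reported_kcal = np.array([1900.0, 1900.0, 2000.0, 1750.0, 2300.0])
tmt_seconds = np.array([42.0, 30.0, 65.0, 55.0, 28.0])  # Trail Making Test time
age_years = np.array([34.0, 29.0, 61.0, 48.0, 25.0])    # example covariate

# Outcome: absolute percentage error in reported energy intake.
abs_pct_error = np.abs(percent_error(reported_kcal, true_kcal))

# coef[1] is the adjusted association between TMT time and reporting error.
coef = fit_ols(abs_pct_error, [tmt_seconds, age_years])
```

In a real analysis, sex and education would enter as further columns, and a statistics package would be used to obtain confidence intervals for the coefficients.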

Protocol 2: Biomarker-Based Validation of Self-Report Instruments

This protocol uses recovery biomarkers to evaluate the measurement error structure of self-report dietary instruments, an essential step for understanding systematic error [11].

1. Objective: To assess the validity, systematic error, and reliability of self-report dietary assessment methods (24HR and FFQ) using recovery biomarkers.

2. Materials and Equipment:

- Doubly labeled water (DLW) for energy intake validation

- Urinary nitrogen for protein intake validation

- Urinary potassium and sodium for respective intake validation

- 24-hour dietary recall interview protocol or software

- Food frequency questionnaire

- High-performance liquid chromatography (HPLC) for biomarker analysis

3. Procedure:

- Recruitment: Recruit a subsample (e.g., n=100-1000) from a larger cohort study [2] [11].

- Biomarker Measurement:

- Dietary Assessment:

- Statistical Modeling:

- Apply a measurement error model (e.g., Y_ijk = α_k + β_k * Z_i + ε_ijk), where Y is the observed intake from method k, Z is the unobservable true intake, and ε is the measurement error [2].

- Estimate the validity (correlation between self-report and true intake) and the proportion of measurement error variance attributable to systematic error for each method [2] [11].
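
To make the role of α_k, β_k, and ε concrete, the short simulation below generates reported intake from the model above and compares the empirical validity coefficient with its closed-form value. It is a sketch under simplifying assumptions: a single method and a single report per person, errors independent of true intake, and parameter values chosen arbitrarily for illustration. In a real study Z is unobserved and must be approximated with recovery biomarkers.

```python
import numpy as np

rng = np.random.default_rng(0)

n = 20000
sigma_z, sigma_eps = 1.0, 1.5   # hypothetical SDs of true intake and of the error term
alpha_k, beta_k = 0.5, 0.6      # hypothetical constant bias and intake-related bias

z = rng.normal(0.0, sigma_z, n)                            # true intake Z_i
y = alpha_k + beta_k * z + rng.normal(0.0, sigma_eps, n)   # reported intake Y

# Validity: correlation between reported and true intake.
empirical_validity = np.corrcoef(y, z)[0, 1]

# Closed form under this simplified model: beta*sd(Z) / sqrt(beta^2*var(Z) + var(eps)).
theoretical_validity = beta_k * sigma_z / np.sqrt(beta_k**2 * sigma_z**2 + sigma_eps**2)
```

Note that α_k shifts the mean of the reports but does not change the correlation, whereas β_k and the error variance both do.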

Diagram 1: Experimental workflow for cognition-dietary error research.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Dietary Validation and Cognitive Research

| Tool / Reagent | Function / Application | Specification / Example |

|---|---|---|

| Recovery Biomarkers [11] | Objective validation of self-reported intake for specific nutrients; considered the gold standard for estimating systematic error. | Doubly Labeled Water (energy), Urinary Nitrogen (protein), Urinary Sodium, Urinary Potassium [11]. |

| Automated 24HR Tools [10] [12] | Standardized, self-administered dietary data collection; reduces interviewer burden and cost. | ASA24 (Automated Self-Administered 24-Hour Recall), Intake24 [10] [12]. |

| Cognitive Task Batteries [10] | Quantitative assessment of specific neurocognitive functions implicated in the dietary recall process. | Trail Making Test (visual attention/executive function), Wisconsin Card Sorting Test (cognitive flexibility), Visual Digit Span (working memory) [10]. |

| Statistical Error Models [2] [11] | Modeling the structure of measurement error (random vs. systematic) in dietary data, enabling correction in diet-disease analyses. | Measurement error model: Y = α + β*Z + ε, where Z is true intake and Y is reported intake [2]. Regression calibration techniques [11]. |

Implications for Correcting Systematic Error in FFQ Research

Food Frequency Questionnaires are particularly susceptible to systematic error due to their reliance on long-term memory and complex cognitive tasks [11]. The findings on cognitive processes directly inform strategies for correcting systematic measurement error in FFQ-based research:

- Covariate Adjustment in Calibration: In regression calibration, where FFQ data are adjusted using data from more accurate 24HRs, cognitive performance scores (e.g., from the Trail Making Test) could be included as covariates in the calibration model. This would account for the systematic bias introduced by differences in participants' cognitive abilities [10] [11].

- Stratified Sampling and Analysis: For studies targeting populations where cognitive function may vary systematically (e.g., older adults), researchers could stratify sampling or analysis by cognitive performance levels. This approach helps to quantify and control for the bias introduced by cognitive factors on FFQ reporting [13].

- Instrument Selection and Design: Understanding that interviewer-administered recalls appear less affected by certain cognitive deficits (like poor visual attention) suggests that using interviewer-led 24HRs for calibration, rather than self-administered tools, may provide a more robust reference for correcting FFQs in cognitively diverse samples [10].

- Targeted Instrument Improvement: FFQs could be redesigned to mitigate specific cognitive failure points. For instance, improving the visual layout and reducing cognitive load could help individuals with weaker executive function, thereby reducing one source of systematic error at the data collection stage.

Diagram 2: From cognitive failures to correction strategies in FFQ research.

Impact of Measurement Error on Diet-Disease Association Studies

Diet-disease association studies are foundational to understanding how nutrition influences chronic disease risk. However, the field of nutritional epidemiology faces a significant challenge: measurement error in dietary intake assessment. Food Frequency Questionnaires (FFQs) are widely used in large-scale studies due to their cost-effectiveness and ability to assess habitual diet, but they are susceptible to both random and systematic measurement errors [14]. These errors arise from various sources including recall bias, social desirability bias, misclassification, and the difficulty of accurately estimating portion sizes and consumption frequencies [5]. The presence of measurement error substantially impacts the validity of observed diet-disease relationships, typically attenuating relative risk estimates toward the null and reducing statistical power to detect true associations [14]. For instance, a true relative risk of 2.0 may be estimated as only 1.03-1.06 for energy intake, 1.10-1.12 for protein intake, and 1.17-1.22 for potassium intake when using FFQ data with measurement error [14]. This document provides application notes and experimental protocols for understanding, quantifying, and correcting measurement error in FFQ-based research, with particular emphasis on addressing systematic error.

Quantifying the Impact of Measurement Error

Statistical Consequences of Measurement Error

Measurement error in FFQ data creates three primary problems for diet-disease association studies: (1) bias in estimated relative risks, typically attenuating them toward the null value; (2) loss of statistical power to detect true diet-disease relationships; and (3) potential invalidity of conventional statistical tests in multivariable models containing multiple error-prone exposures [14]. The table below summarizes the attenuation factors for different nutrients derived from the Observing Protein and Energy Nutrition (OPEN) Study:

Table 1: Attenuation Factors for Different Nutrients from the OPEN Study [14]

| Nutrient | Attenuation Factor (Men) | Attenuation Factor (Women) | True RR=2.0 Becomes |

|---|---|---|---|

| Energy | 0.08 | 0.04 | 1.03-1.06 |

| Protein | 0.16 | 0.14 | 1.10-1.12 |

| Potassium | 0.29 | 0.23 | 1.17-1.22 |

| Protein Density | 0.40 | 0.32 | 1.25-1.32 |

| Potassium Density | 0.49 | 0.57 | 1.40-1.48 |

The severe attenuation demonstrated in Table 1 necessitates enormous sample sizes to compensate for lost statistical power. To maintain power when studying energy intake, sample sizes may need to be 25-100 times larger; for protein, 10-12 times larger; and for protein density, 5-8 times larger [14].
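
The "True RR=2.0 Becomes" column of Table 1 is consistent with the attenuation factor acting multiplicatively on the log relative risk, so that the observed relative risk equals the true relative risk raised to the power of the attenuation factor. The sketch below reproduces the column under that assumption; the function name `observed_rr` is illustrative.

```python
def observed_rr(true_rr, attenuation_factor):
    """Expected observed RR when log(RR) is multiplied by the attenuation factor."""
    return true_rr ** attenuation_factor

# Attenuation factors (men, women) as listed in Table 1.
attenuation = {
    "Energy": (0.08, 0.04),
    "Protein": (0.16, 0.14),
    "Potassium": (0.29, 0.23),
    "Protein Density": (0.40, 0.32),
    "Potassium Density": (0.49, 0.57),
}

attenuated = {
    nutrient: tuple(round(observed_rr(2.0, af), 2) for af in factors)
    for nutrient, factors in attenuation.items()
}

for nutrient, (men, women) in attenuated.items():
    print(f"{nutrient}: men {men:.2f}, women {women:.2f}")
```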

Impact on Dietary Pattern Analyses

Measurement errors also distort dietary patterns derived from FFQ data. Research examining principal component factor analysis (PCFA) and K-means cluster analysis (KCA) has demonstrated that larger measurement errors cause more serious distortion of derived dietary patterns [4]. Consistency rates for dietary patterns under measurement error ranged from 67.5% to 100% for PCFA and from 13.4% to 88.4% for KCA, with larger errors leading to greater attenuation effects on association coefficients between dietary patterns and disease outcomes [4].

Methodological Approaches for Error Correction

Several statistical and computational methods have been developed to address measurement error in FFQ data. The table below summarizes the primary approaches, their methodologies, and applications:

Table 2: Methods for Correcting Measurement Error in FFQ Data

| Method | Description | Applications | Key Findings |

|---|---|---|---|

| Regression Calibration (RC) | Regression of superior reference method (e.g., biomarker, 24hR) vs. FFQ to obtain calibration factor [15] | Correcting intake-health associations | Reduced bias for protein (AF: 1.14) and potassium (AF: 1.28) [15] |

| Enhanced Regression Calibration (ERC) | Extension of RC adding individual random effects to incorporate all available information [15] | Combining FFQ and 24hR data | Further reduced bias for protein (AF: 0.95) with more power than RC [15] |

| Microbiome-Based Correction (METRIC) | Deep learning approach leveraging gut microbial composition to correct random errors [16] | Nutrient profile correction | Effectively minimized simulated random errors, particularly for microbiome-metabolized nutrients [16] |

| Mixed-Effects Model (MEM) | Mixed-effects modeling approach to measurement error correction [17] | Assessing choline-CHD association | Generally outperformed SIMEX in bias reduction except when σX > σU [17] |

| Simulation-Extrapolation (SIMEX) | Simulation-based method that estimates effect of measurement error and extrapolates to no error scenario [17] | Assessing choline-CHD association | Effectively reduced bias but generally performed worse than MEM [17] |

| Machine Learning Correction | Random Forest classifier to identify and correct misreported entries [5] | Addressing underreporting in FFQ | Achieved 78%-92% accuracy in correcting underreported entries [5] |

Biomarker-Based Correction Approaches

Recovery biomarkers serve as gold standard reference instruments for validating and correcting self-reported dietary data [3]. These include doubly labeled water for energy intake assessment, 24-hour urinary nitrogen for protein intake, and 24-hour urinary potassium for potassium intake [14]. The preferred approach for correcting intake-health associations involves calibration to duplicate recovery biomarkers, which effectively removes both random and systematic errors [3]. When using the validity coefficient from a duplicate biomarker without calibration, overcorrected associations can result due to intake-related bias in the FFQ [3]. Similarly, triad methods using biomarkers combined with 24-hour recalls may be hampered by intake-related bias and correlated errors between instruments [3].

Experimental Protocols for Error Correction

Protocol 1: Regression Calibration Using Biomarkers

Purpose: To correct measurement error in FFQ-based nutrient intake estimates using recovery biomarkers as reference instruments.

Materials and Reagents:

- 24-hour urinary collection kits (containers, preservatives, instructions)

- P-aminobenzoic acid (PABA) tablets (80 mg) for completeness verification

- Doubly labeled water for energy intake validation (optional)

- Food composition database for nutrient calculation

- Statistical software (R, SAS, or Stata)

Procedure:

- Participant Recruitment: Recruit a representative subsample from the main cohort (minimum n=100-200).

- Dietary Assessment:

- Administer the FFQ to all participants to assess habitual dietary intake.

- Collect at least two 24-hour urine samples from each participant for biomarker assessment.

- Biomarker Processing:

- Analyze urine samples for nitrogen and potassium concentrations.

- Adjust for completeness using PABA recovery (exclude samples <50% recovery, adjust proportionally for 50-85% recovery).

- Calculate protein intake as [6.25 × (urinary N/0.81)] and potassium intake as (urinary K/0.77) [3].

- Statistical Calibration:

- Perform linear regression with biomarker-based intake as the dependent variable and FFQ-based intake as the independent variable: Biomarker_i = β₀ + β₁ × FFQ_i + ε_i

- Obtain the calibration factor (β₁) and its standard error.

- Application to Main Study:

- Apply the calibration factor to all FFQ values in the main study: Corrected intake_i = β₀ + β₁ × FFQ_i

- Use calibrated values in diet-disease association models.

Validation: Compare attenuation factors before and after correction by examining the association between calibrated intake values and health outcomes.
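
The biomarker conversion and calibration steps of this protocol can be sketched as below. The recovery constants (0.81 for nitrogen, 0.77 for potassium) and the 6.25 conversion factor come from the protocol text; the function names and all data values are hypothetical, and PABA-based completeness adjustment is left out.

```python
import numpy as np

NITROGEN_TO_PROTEIN = 6.25   # g protein per g nitrogen
NITROGEN_RECOVERY = 0.81     # assumed fraction of nitrogen intake recovered in urine
POTASSIUM_RECOVERY = 0.77    # assumed fraction of potassium intake recovered in urine

def protein_from_urinary_n(urinary_n_g):
    """Protein intake (g/day) from 24-hour urinary nitrogen (g/day)."""
    return NITROGEN_TO_PROTEIN * (np.asarray(urinary_n_g, dtype=float) / NITROGEN_RECOVERY)

def potassium_from_urinary_k(urinary_k_mg):
    """Potassium intake (mg/day) from 24-hour urinary potassium (mg/day)."""
    return np.asarray(urinary_k_mg, dtype=float) / POTASSIUM_RECOVERY

def fit_calibration(ffq, biomarker):
    """Regress biomarker-based intake on FFQ intake; returns (beta0, beta1)."""
    beta1, beta0 = np.polyfit(ffq, biomarker, deg=1)  # polyfit returns slope first
    return beta0, beta1

def apply_calibration(ffq, beta0, beta1):
    """Corrected intake_i = beta0 + beta1 * FFQ_i."""
    return beta0 + beta1 * np.asarray(ffq, dtype=float)

# Hypothetical calibration subsample: two 24-hour urine collections per person.
urinary_n = np.array([[11.0, 12.0], [14.5, 13.5], [9.0, 10.0], [16.0, 15.0], [12.5, 12.5]])
ffq_protein = np.array([70.0, 95.0, 60.0, 120.0, 85.0])  # g/day from the FFQ

biomarker_protein = protein_from_urinary_n(urinary_n.mean(axis=1))
beta0, beta1 = fit_calibration(ffq_protein, biomarker_protein)

# Hypothetical main-study FFQ values, calibrated with the fitted equation.
main_study_ffq = np.array([55.0, 80.0, 110.0])
calibrated = apply_calibration(main_study_ffq, beta0, beta1)
```

Averaging the duplicate collections before fitting is one simple choice; the calibrated values then replace the raw FFQ values in the diet-disease model.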

Protocol 2: Machine Learning-Based Error Adjustment

Purpose: To identify and correct for systematic underreporting in FFQ data using supervised machine learning.

Materials and Reagents:

- FFQ data with demographic and clinical variables

- Objective health measures: LDL cholesterol, total cholesterol, blood glucose, body fat percentage (DXA), BMI

- Statistical software with machine learning capabilities (Python with scikit-learn or R)

Procedure:

- Data Preparation:

- Select FFQ variables known to be susceptible to underreporting (e.g., high-fat foods like bacon, fried chicken).

- Compile objective health measures and demographic data (age, sex).

- Health Status Classification:

- Divide participants into "healthy" and "unhealthy" groups using established cutoffs for body fat percentage, blood lipids, and glucose.

- Use the "healthy" group (n=384 in original study) as the reference dataset with assumed more accurate reporting [5].

- Model Training:

- Train a Random Forest classifier using healthy group data:

- Features: LDL, total cholesterol, glucose, body fat %, BMI, age, sex

- Target: FFQ response categories for specific foods

- Tune hyperparameters using cross-validation to optimize performance.

- Prediction and Adjustment:

- Apply the trained model to predict expected FFQ responses for the unhealthy group.

- Compare predictions with actual FFQ responses.

- If original response < predicted value for unhealthy foods, replace with prediction.

- For healthy foods where overreporting is suspected, apply reverse adjustment.

- Validation:

- Assess model accuracy (target: >80% based on original study results).

- Compare distributions of adjusted vs. unadjusted data.

- Evaluate correlation between adjusted FFQ data and objective health measures.

Implementation Note: This method achieved 78%-92% accuracy in correcting underreported entries in validation studies [5].
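
A minimal sketch of the training, prediction, and adjustment steps follows, using scikit-learn. Everything about the data is an assumption made for illustration: features are simulated as standardized values, FFQ responses are coded as ordinal categories 0 to 3, and underreporting is simulated by lowering some responses by one category. The hyperparameter grid is deliberately tiny.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV

rng = np.random.default_rng(0)
N_FEATURES = 7  # LDL, total cholesterol, glucose, body fat %, BMI, age, sex

def simulate_group(n):
    """Hypothetical standardized features and an FFQ response category (0-3)."""
    X = rng.normal(size=(n, N_FEATURES))
    latent = 1.5 + X[:, 3] + rng.normal(0.0, 0.5, n)  # driven by body fat % in this toy setup
    y = np.clip(np.round(latent), 0, 3).astype(int)
    return X, y

# Reference ("healthy") group: responses assumed to be reported more accurately.
X_healthy, y_healthy = simulate_group(300)

# "Unhealthy" group: simulate underreporting of an unhealthy food.
X_unhealthy, y_true = simulate_group(200)
reported = np.clip(y_true - rng.integers(0, 2, size=200), 0, 3)

# Train on the healthy group, tuning hyperparameters by cross-validation.
search = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid={"n_estimators": [50, 100], "max_depth": [3, None]},
    cv=3,
)
search.fit(X_healthy, y_healthy)

# Predict expected responses and replace only those reported below the prediction.
predicted = search.predict(X_unhealthy)
adjusted = np.where(reported < predicted, predicted, reported)
```

For foods where overreporting is suspected, the comparison in the last line would be reversed. The rule never lowers a reported value for an unhealthy food, which is a design choice that should be checked against the study's own assumptions.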

Protocol 3: Microbiome-Based Error Correction (METRIC)

Purpose: To correct random errors in nutrient profiles using gut microbiome data.

Materials and Reagents:

- Fecal sample collection kits (DNA stabilization buffers, containers)

- DNA extraction kits for microbial genomic DNA

- 16S rRNA or shotgun metagenomic sequencing reagents

- Bioinformatics pipeline for microbial composition analysis

- Deep learning framework (Python with TensorFlow/PyTorch)

Procedure:

- Sample Collection:

- Collect fecal samples from all participants.

- Extract and sequence microbial DNA.

- Process sequencing data to obtain microbial abundance profiles.

- Dietary Assessment:

- Obtain nutrient profiles from FFQ or 24-hour recalls.

- Standardize nutrient values (z-scores or log-transformation).

- Model Architecture:

- Implement a neural network with:

- Input layer: nutrient profiles + microbial compositions

- Three hidden layers (256 units each)

- Skip connection adding input directly to output

- Xavier initialization for weights

- Use mean squared error as loss function.

- Training Procedure:

- Split data into training (80%) and test (20%) sets.

- Generate corrupted nutrient profiles by adding random noise to assessed profiles.

- Train model to predict assessed profiles from corrupted profiles + microbiome.

- Use Adam optimizer with learning rate 0.001.

- Application:

- Apply trained model to full dataset to obtain corrected nutrient profiles.

- Validate using correlation with known biomarkers or in simulated error datasets.

Performance Metrics: The method demonstrated improved Pearson correlation coefficients between predicted and true nutrient concentrations, particularly for nutrients metabolized by gut bacteria [16].
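
The architecture described in this protocol can be illustrated with a forward pass written in NumPy. This is a sketch only, not the published METRIC implementation: the ReLU activation, the interpretation of the skip connection as adding the input nutrient profile to the network output, the noise level, and all array sizes are assumptions. Training with the Adam optimizer would require an automatic differentiation framework such as PyTorch or TensorFlow and is not shown.

```python
import numpy as np

rng = np.random.default_rng(0)

def xavier_uniform(fan_in, fan_out):
    """Xavier (Glorot) uniform initialization for a weight matrix."""
    limit = np.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-limit, limit, size=(fan_in, fan_out))

def init_params(n_nutrients, n_taxa, hidden=256, n_hidden_layers=3):
    """Weights and biases: input -> three hidden layers -> nutrient-sized output."""
    sizes = [n_nutrients + n_taxa] + [hidden] * n_hidden_layers + [n_nutrients]
    return [(xavier_uniform(a, b), np.zeros(b)) for a, b in zip(sizes[:-1], sizes[1:])]

def forward(params, nutrients, microbiome):
    """Predict corrected nutrient profiles from noisy nutrients plus microbiome."""
    h = np.concatenate([nutrients, microbiome], axis=1)
    for w, b in params[:-1]:
        h = np.maximum(h @ w + b, 0.0)       # ReLU activation (assumed)
    w_out, b_out = params[-1]
    return nutrients + (h @ w_out + b_out)   # skip connection (assumed form)

def mse(pred, target):
    """Mean squared error loss."""
    return float(np.mean((pred - target) ** 2))

# Hypothetical sizes and data.
n_samples, n_nutrients, n_taxa = 32, 10, 50
assessed = rng.normal(size=(n_samples, n_nutrients))           # standardized nutrient profiles
microbiome = rng.dirichlet(np.ones(n_taxa), size=n_samples)    # relative abundances
corrupted = assessed + rng.normal(0.0, 0.5, size=assessed.shape)  # added random noise

params = init_params(n_nutrients, n_taxa)
pred = forward(params, corrupted, microbiome)
loss = mse(pred, assessed)   # quantity a training loop would minimize
```

With this form of skip connection the network only has to learn the correction to the noisy profile rather than the full profile.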

Visualization of Method Workflows

Diagram: METRIC workflow (microbiome-based error correction).

Diagram: Measurement error correction decision framework.

Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Measurement Error Correction Studies

| Reagent/Material | Function | Application Examples | Specifications |

|---|---|---|---|

| 24-Hour Urine Collection Kit | Recovery biomarker assessment for protein and potassium intake | Validation of self-reported protein and potassium intake [3] | Includes containers, preservatives, PABA tablets for completeness verification |

| Doubly Labeled Water | Gold standard for energy expenditure measurement | Validation of self-reported energy intake [14] | ²H₂¹⁸O mixture, mass spectrometry analysis |

| Fecal DNA Extraction Kit | Isolation of microbial genomic DNA from stool samples | Microbiome-based error correction methods [16] | Stable at room temperature, inhibitor removal |

| 16S rRNA Sequencing Reagents | Amplification and sequencing of bacterial genes | Gut microbiome composition analysis [16] | Primers targeting V4 region, high-fidelity polymerase |

| Food Composition Database | Nutrient calculation from food intake data | All dietary assessment methods | Country-specific, regularly updated (e.g., Dutch food composition table 2011) [15] |

| Web-Based 24-Hour Recall System | Reference method for dietary assessment | Regression calibration studies [15] | Automated Self-Administered 24-hour Dietary Assessment Tool (ASA24) |

| Statistical Software Packages | Implementation of error correction methods | All statistical analyses | R (mime, simex packages), SAS, Stata, Python with scikit-learn |

Measurement error presents a substantial challenge in nutritional epidemiology, potentially obscuring true diet-disease relationships and leading to erroneous conclusions. The methods described herein provide researchers with multiple approaches for addressing this challenge, ranging from traditional biomarker-based calibration to innovative machine learning and microbiome-based techniques. Implementation should be guided by available resources, study objectives, and the specific nature of measurement error in the target population. When applying these methods, researchers should consider that correlation between errors in different dietary assessment instruments, intake-related bias, and person-specific bias can complicate correction efforts [3]. For optimal results, a combination of methods may be necessary, and validation using objective biomarkers should be pursued whenever possible. As the field advances, integration of multiple data sources including omics technologies and objective physical activity measures will further enhance our ability to accurately characterize diet-disease relationships.

The Consequences for Drug Development and Clinical Research

The accurate measurement of dietary intake is a cornerstone of nutritional epidemiology, which in turn plays a critical role in understanding the dietary determinants of disease and developing nutritional interventions in clinical research. The food frequency questionnaire (FFQ) is the most frequently used method to assess dietary intake in large-scale epidemiological studies investigating diet-disease relationships due to its practicality, low cost, and ability to capture long-term habitual intake [18]. However, FFQs are prone to both random and systematic measurement errors that can significantly distort research findings [1]. Systematic errors, or biases, are particularly problematic as they do not average out to the true value even with repeated measurements and can introduce directional biases in observed associations [1]. In the context of drug development and clinical research, where decisions about therapeutic targets and intervention strategies are based on observed associations, uncorrected systematic errors in FFQ data can lead to flawed conclusions about diet-disease relationships, misallocation of research resources, and ultimately, compromised clinical recommendations.

The validation of FFQs typically involves comparison with reference instruments such as multiple 24-hour dietary recalls (24HRs), dietary records, or biomarkers [19]. Studies consistently demonstrate systematic discrepancies between FFQs and reference methods. For instance, validation studies show that FFQs tend to overestimate absolute intake levels for many nutrients compared to 24-hour dietary recalls [18]. This overestimation represents a systematic error that, if unaddressed, can lead to incorrect classifications of nutrient adequacy or excess in population studies. Furthermore, correlation coefficients between FFQs and reference methods, while often statistically significant, typically range from weak to moderate (e.g., 0.16 to 0.65 for unadjusted values), indicating substantial measurement error [18]. The persistence of these discrepancies across different populations and FFQ designs highlights the fundamental challenge of systematic error in nutritional assessment and its potential consequences for interpreting research outcomes.

Quantifying Measurement Error in Dietary Assessment

Typology of Measurement Errors in FFQs

Measurement errors in dietary assessment using FFQs can be categorized into two broad types: random errors and systematic errors. Random errors are chance fluctuations in reported intake that average out to the true value when many repeats are taken, following the classical measurement error model [1]. In contrast, systematic errors (also called biases) do not average out to the true value even with repeated measurements and can introduce directional bias in observed associations [1]. These errors operate at different levels - within individuals (affecting repeatability) and between individuals (affecting accuracy) - creating at least four possible combinations of error types that can coexist in FFQ data [1].

Common sources of systematic error in FFQs include:

- Omission of commonly eaten foods when the food list is insufficient or not culturally specific, leading to underestimation of absolute consumption levels [19]

- Overestimation of consumption when the food list includes too many items (particularly over 100 items) [19]

- Unintentional underreporting due to difficulties in recalling intake or estimating portion sizes [19]

- Intentional misreporting of overall intake or specific foods perceived as socially undesirable [19]

- Systematic overestimation of nutrient intakes compared to multiple 24-hour dietary recalls [18]

Statistical Evidence of Systematic Error in FFQ Validation Studies

Validation studies across diverse populations consistently reveal patterns of systematic error in FFQ data. The table below summarizes key quantitative findings from recent FFQ validation studies, demonstrating the nature and magnitude of systematic errors observed.

Table 1: Quantitative Evidence of Systematic Error from FFQ Validation Studies

| Population Study | Reference Method | Sample Size | Correlation Coefficients | Key Evidence of Systematic Error |

|---|---|---|---|---|

| Lebanese Adults [18] | Six 24-hour recalls | 238 participants | 0.16-0.65 (Pearson); Two-thirds >0.3 | Systematic overestimation of most nutrients compared to 24HR; Mean percent difference decreased after energy adjustment |

| Emirati Adults [19] | Three 24-hour recalls | 60 participants | Not specified | Discussion of systematic biases including omission of foods and portion size estimation errors |

| Fujian, China Adults [20] | Three 24-hour recalls | 142 participants | 0.40-0.72 for food groups; 0.40-0.70 for nutrients | Proportion classified into same/adjacent tertile: 78.8-95.1%; Evidence of systematic misclassification |

| Women with Osteoporosis [21] | 3-day food record | 30 participants | Statistically significant Pearson correlations for all nutrients | Significant differences for carbohydrate and magnesium; Bland-Altman showed disagreement increases with intake magnitude |

The consistency of these findings across different populations and FFQ designs underscores the pervasive nature of systematic error in FFQ-based dietary assessment and highlights the critical need for appropriate statistical correction methods in research settings.

Consequences for Drug Development and Clinical Research

Impact on Diet-Disease Association Studies

Systematic measurement error in FFQ data has profound implications for observational studies investigating diet-disease relationships, which often form the foundation for hypothesis generation in drug development. In the classical measurement error model, where errors are random and independent of true exposure, the effect is attenuation of estimated effect sizes toward the null [1]. This attenuation reduces statistical power and can lead to false negative conclusions about potentially important diet-disease relationships. For example, if a nutrient truly reduces disease risk, measurement error might obscure this protective effect, causing researchers to abandon a promising therapeutic target.

The situation becomes more complex when systematic errors are present or when multiple correlated exposures are measured with error. In these scenarios, which are common in nutritional epidemiology, effect estimates can be biased in any direction - not just toward the null [1]. This can lead to false positive findings where null or even protective associations appear as risk factors. In drug development, such errors could direct substantial resources toward pursuing false leads based on erroneously identified diet-disease relationships.

The problem is compounded when covariates in disease models are also imprecisely measured, leading to residual confounding that further distorts the apparent relationship between the dietary exposure and health outcome [1]. The resulting biased effect estimates undermine the evidence base used to prioritize targets for pharmaceutical development and design clinical trials for nutritional interventions.

Implications for Clinical Trial Design and Interpretation

In clinical research, systematic error in FFQ data can compromise multiple aspects of trial design and interpretation:

Subject Selection Bias: If FFQs are used to identify eligible participants based on dietary patterns (e.g., low fruit and vegetable consumers), systematic measurement error could lead to inclusion of misclassified individuals, reducing the contrast between intervention groups and diluting observed intervention effects.

Stratification and Adjustment Issues: When FFQ data are used for stratification or statistical adjustment in randomized trials, systematic error can introduce residual confounding and reduce the efficiency of the randomization.

Biomarker Validation Challenges: Discrepancies between FFQ-based intake estimates and nutritional biomarkers may reflect systematic error in FFQs rather than limitations of the biomarkers, leading to incorrect conclusions about the utility of each approach.

Intervention Efficacy Assessment: In nutritional intervention trials where FFQs are used as outcome measures, systematic error can either exaggerate or minimize apparent intervention effects, potentially leading to incorrect conclusions about intervention efficacy.

Table 2: Consequences of Uncorrected Systematic Error in Different Research Contexts

| Research Context | Primary Consequence | Impact on Drug Development/Clinical Research |

|---|---|---|

| Target Identification | Attenuated or biased diet-disease associations | Pursuit of false targets or abandonment of valid targets |

| Biomarker Development | Discrepancies between reported intake and biomarker levels | Misinterpretation of biomarker validity and utility |

| Clinical Trial Stratification | Misclassification of participants by dietary patterns | Reduced statistical power and biased effect estimates |

| Nutritional Intervention Trials | Systematic over/under-estimation of dietary changes | Incorrect conclusions about intervention efficacy |

| Diet-Disease Mechanisms | Distorted relationships between multiple nutrients | Flawed understanding of biological mechanisms |

Statistical Protocols for Quantifying and Correcting Systematic Error

Experimental Design for FFQ Validation Studies

Proper validation of FFQs requires carefully designed studies that compare FFQ results with appropriate reference methods. The following protocol outlines key methodological considerations for designing FFQ validation studies:

Participant Selection and Sample Size

- Recruit participants representative of the target population in terms of age, sex, socioeconomic status, and health status [18] [19]

- Target sample sizes typically range from 30 to over 200 participants, depending on the precision required and expected correlation with reference method [18] [21]

- Apply exclusion criteria for implausible energy intakes (e.g., <500 or >3500 kcal/day for women; <800 or >4000 kcal/day for men) to minimize the impact of gross reporting errors [18]

Reference Method Selection and Administration

- Use multiple 24-hour dietary recalls (typically 3-6 non-consecutive days) as reference method, covering both weekdays and weekend days to account for intra-individual variation [18] [19] [20]

- Employ trained interviewers using standardized multiple-pass methods to enhance accuracy of recalls [18]

- Administer reference method recalls over a period that reflects the seasonal variation in dietary intake (e.g., 1 month) [19]

- Space FFQ and reference method administrations appropriately (e.g., 1 month apart) to minimize recall bias while ensuring they reference the same time period [19]

Data Collection and Management

- Use appropriate food composition databases that reflect local food options and preparation methods [18]

- Implement standardized procedures for converting household measures to nutrients

- Apply quality control checks throughout data collection and processing

Statistical Methods for Quantifying Measurement Error

Several statistical approaches are available to quantify the relationship between FFQ measurements and "true intake" in validation studies:

Correlation Analysis

- Calculate Pearson or Spearman correlation coefficients between nutrient intakes from FFQ and reference method [18] [20]

- Interpret the magnitude of correlations: values >0.5 generally indicate good agreement, and a validation in which at least two-thirds of coefficients exceed 0.3 may be acceptable for epidemiological studies [18]

- Calculate energy-adjusted and deattenuated correlations to account for within-person variation in reference method [18]
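
Deattenuation is commonly done by inflating the observed correlation according to the ratio of within-person to between-person variance in the reference method and the number of replicate reference days. The sketch below assumes that standard correction; the function and argument names are illustrative.

```python
import math

def deattenuated_correlation(r_observed, within_var, between_var, n_replicates):
    """r_true = r_observed * sqrt(1 + (within_var / between_var) / n_replicates)."""
    variance_ratio = within_var / between_var
    return r_observed * math.sqrt(1.0 + variance_ratio / n_replicates)

# Hypothetical example: observed r = 0.40, variance ratio = 3, three 24-hour recalls.
r_corrected = deattenuated_correlation(0.40, within_var=3.0, between_var=1.0, n_replicates=3)
```

The correction shrinks toward 1 as the number of replicate days grows, which is one reason multiple non-consecutive recalls are preferred.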

Method of Triads

- Use structural equation modeling with three different dietary assessment methods (e.g., FFQ, 24HR, biomarker) to estimate correlation with true intake [1]

- Particularly useful when no perfect reference method is available

- Provides estimates of validity coefficients for each method
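
When the three pairwise correlations are available, the validity coefficients can also be computed in closed form rather than through a full structural equation model. The sketch below uses that closed-form version and assumes the errors of the three methods are mutually independent; the input correlations are hypothetical.

```python
import math

def triad_validity(r_qr, r_qb, r_rb):
    """Validity coefficients from pairwise correlations.

    r_qr: FFQ vs reference method (e.g., 24HR)
    r_qb: FFQ vs biomarker
    r_rb: reference method vs biomarker
    """
    return {
        "ffq": math.sqrt(r_qr * r_qb / r_rb),
        "reference": math.sqrt(r_qr * r_rb / r_qb),
        "biomarker": math.sqrt(r_qb * r_rb / r_qr),
    }

# Hypothetical pairwise correlations.
validity = triad_validity(r_qr=0.40, r_qb=0.30, r_rb=0.50)
```

The function does not guard against estimates above 1 or negative products under the square root, both of which can occur with sampling variation or correlated errors and need separate handling.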

Cross-Classification Analysis

- Classify participants into quartiles or tertiles based on nutrient intakes from both FFQ and reference method [18] [20] [21]

- Calculate proportion classified into same, adjacent, or extreme opposite categories

- Acceptable classification when >50% in same/adjacent quartile and <10% in opposite quartile [18] [21]
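
Cross-classification can be computed with a few lines of NumPy, as sketched below. Quantile cut points are taken separately within each method, which is an assumption about how categories are formed; the helper names and the toy data are hypothetical.

```python
import numpy as np

def quantile_category(values, n_groups=4):
    """Assign each value to a quantile group 0..n_groups-1 within its own distribution."""
    values = np.asarray(values, dtype=float)
    cuts = np.quantile(values, np.linspace(0.0, 1.0, n_groups + 1)[1:-1])
    return np.searchsorted(cuts, values, side="right")

def cross_classification(ffq, reference, n_groups=4):
    """Proportions in the same, same-or-adjacent, and extreme opposite categories."""
    diff = np.abs(quantile_category(ffq, n_groups) - quantile_category(reference, n_groups))
    return {
        "same": float(np.mean(diff == 0)),
        "same_or_adjacent": float(np.mean(diff <= 1)),
        "opposite": float(np.mean(diff == n_groups - 1)),
    }

# Toy data: perfect agreement versus a fully reversed ranking.
intake = np.arange(1.0, 9.0)
agree = cross_classification(intake, intake)
reverse = cross_classification(intake, intake[::-1])
```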

Bland-Altman Analysis

- Plot differences between methods against their means to visualize agreement and systematic bias [18] [20] [21]

- Identify proportional bias (where differences change with magnitude of intake)

- Establish limits of agreement (mean difference ± 1.96 SD of the differences) [18]
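A minimal numerical sketch of the Bland-Altman quantities follows (plotting omitted). It takes the limits of agreement as the mean difference ± 1.96 SD of the differences and uses the slope of the differences regressed on the pairwise means as a simple check for proportional bias. The data and the function name `bland_altman` are hypothetical.

```python
from statistics import mean, stdev

def bland_altman(ffq, ref):
    """Return mean bias, limits of agreement, and slope of difference on mean."""
    diffs = [q - r for q, r in zip(ffq, ref)]
    means = [(q + r) / 2 for q, r in zip(ffq, ref)]
    bias = mean(diffs)
    sd = stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    # Proportional bias: least-squares slope of the differences on the means
    mm = mean(means)
    slope = sum((m - mm) * (d - bias) for m, d in zip(means, diffs)) / sum(
        (m - mm) ** 2 for m in means
    )
    return bias, limits, slope

# Hypothetical energy-adjusted protein intakes (g/day)
ffq = [82, 95, 70, 110, 88, 101]
ref = [75, 85, 68, 92, 80, 90]
bias, loa, slope = bland_altman(ffq, ref)
print(f"bias = {bias:.2f}, limits = ({loa[0]:.2f}, {loa[1]:.2f}), slope = {slope:.3f}")
```

A positive bias here means the FFQ reads higher than the reference on average, and a clearly non-zero slope suggests the disagreement grows with intake level.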

Intraclass Correlation Coefficients (ICC)

- Assess reproducibility by administering the FFQ twice within a short interval [18] [20]

- Interpret values: <0.5 poor, 0.5-0.75 moderate, 0.75-0.9 good, >0.9 excellent reliability [18] [20]
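The text does not specify which ICC form is intended. As one possible choice, the sketch below computes the one-way random-effects ICC for single measurements, often written ICC(1,1), from repeated FFQ administrations. The data and the function name `icc_oneway` are hypothetical.

```python
from statistics import mean

def icc_oneway(measurements):
    """One-way random-effects ICC(1,1).

    measurements -- one list per person, each holding k repeated measurements
    """
    n = len(measurements)
    k = len(measurements[0])
    grand = mean(x for row in measurements for x in row)
    person_means = [mean(row) for row in measurements]
    # Between-person and within-person mean squares from a one-way ANOVA
    ms_between = k * sum((m - grand) ** 2 for m in person_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(measurements, person_means) for x in row
    ) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)

# Hypothetical intakes from two FFQ administrations for six participants
repeats = [[60, 64], [75, 70], [82, 85], [90, 94], [68, 66], [101, 97]]
icc = icc_oneway(repeats)
print(f"ICC(1,1) = {icc:.3f}")
```

Two-way models, which separate a systematic shift between administrations from random disagreement, may be preferable when the second administration is expected to differ in level from the first.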

Statistical Methods for Correcting Systematic Error

Several statistical approaches can correct for measurement error in diet-disease associations:

Regression Calibration

- Most common approach to correct for measurement error in nutritional epidemiology [1]

- Replaces mismeasured exposure with expected value given reference measurements

- Requires non-differential error in the FFQ and a reference instrument whose error follows the classical error model

- Can be extended to multivariate settings with multiple mismeasured exposures
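The idea can be illustrated with a small simulation. This is a sketch under stated assumptions rather than a recommended implementation: all parameter values, the continuous outcome, and the internal calibration substudy of 2,000 participants are invented for the example. True intake is known only because the data are simulated.

```python
import random
from statistics import mean

def slope_intercept(x, y):
    """Least-squares slope and intercept of y regressed on x."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return slope, my - slope * mx

rng = random.Random(42)
n_main, n_calib = 6000, 2000
true_beta = 0.5  # assumed true diet-outcome slope

T = [rng.gauss(80, 15) for _ in range(n_main)]        # unobserved true intake
Q = [20 + 0.6 * t + rng.gauss(0, 12) for t in T]      # FFQ with location and scale bias
Y = [true_beta * t + rng.gauss(0, 5) for t in T]      # continuous outcome
R = [t + rng.gauss(0, 10) for t in T[:n_calib]]       # 24hR, calibration substudy only

# Naive analysis: outcome regressed directly on the FFQ
naive_beta, _ = slope_intercept(Q, Y)

# Calibration model: reference regressed on FFQ within the substudy
lam, intercept = slope_intercept(Q[:n_calib], R)

# Replace each FFQ value with its predicted true intake, then refit the outcome model
calibrated = [intercept + lam * q for q in Q]
corrected_beta, _ = slope_intercept(calibrated, Y)

print(f"naive = {naive_beta:.3f}, calibration slope = {lam:.3f}, "
      f"corrected = {corrected_beta:.3f}")
```

For a linear outcome model the corrected slope equals the naive slope divided by the calibration slope. Standard errors from the second regression ignore the uncertainty in the calibration slope, so intervals are normally obtained by bootstrap or the delta method.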

Method of Triads for Correction

- Uses three complementary measurements to estimate true exposure

- Particularly valuable when no gold standard reference is available

- Can provide corrected effect estimates in diet-disease associations

Multiple Imputation

- Can handle differential measurement error where error structure differs between study subgroups [1]

- Imputes multiple plausible values for true exposure based on reference measurements

- Accounts for uncertainty in the imputation process

Moment Reconstruction

- Alternative method for dealing with differential measurement error [1]

- Reconstructs moments of true exposure distribution from mismeasured data and error model

- Less computationally intensive than multiple imputation

Research Reagent Solutions for FFQ Validation and Error Correction

Table 3: Essential Research Reagents and Tools for FFQ Validation Studies

| Reagent/Tool | Function | Implementation Considerations |
|---|---|---|
| 24-Hour Dietary Recalls (24HR) | Reference method for validation; Multiple non-consecutive days (3-6) recommended [18] [19] | Should include weekdays and weekend days; Use multiple-pass method; Train interviewers thoroughly |
| Food Composition Databases (FCDB) | Convert food consumption to nutrient intakes [18] | Should reflect local foods and preparation methods; Combine sources if necessary (e.g., local and USDA databases) |
| Statistical Software (R, SAS, Stata) | Implement error correction methods and validation statistics | Specific packages available for measurement error correction (e.g., R's 'mecor') |
| Biomarkers of Nutrient Intake | Objective reference measures for specific nutrients [1] | Doubly labeled water for energy; Urinary nitrogen for protein; Serum carotenoids for fruit/vegetable intake |
| Standardized Portion Size Aids | Improve portion size estimation in FFQs | Use photographs, household measures, or food models; Culturally appropriate |
| Quality Control Protocols | Ensure consistency in data collection and processing | Standard operating procedures for interviewers; Data cleaning protocols; Range checks for nutrient values |

Systematic measurement error in FFQ data represents a significant methodological challenge with far-reaching consequences for drug development and clinical research. The evidence consistently shows that FFQs are subject to various systematic errors that can distort observed diet-disease relationships, compromise clinical trial integrity, and lead to incorrect conclusions about nutritional interventions. The statistical protocols outlined in this document provide researchers with practical approaches to quantify, correct, and account for these errors in their analyses.

Moving forward, the field would benefit from greater standardization in FFQ validation protocols, increased utilization of appropriate statistical correction methods, and clearer communication of measurement error limitations in research publications. By implementing robust error correction strategies, researchers can enhance the validity of their findings, make more efficient use of research resources, and contribute to a more reliable evidence base for dietary recommendations and therapeutic development.

Advanced Correction Methods: From Traditional Calibration to Machine Learning

Regression Calibration Using 24-Hour Dietary Recalls as Reference

In nutritional epidemiology, the food frequency questionnaire (FFQ) is a primary tool for assessing habitual dietary intake in large-scale studies. However, data obtained from FFQs are prone to substantial measurement error, both random and systematic, which can attenuate or bias estimated diet-disease associations [15] [1]. Regression calibration (RC) is a statistical method that corrects for this measurement error bias by using intake estimates from a more accurate reference instrument, such as 24-hour dietary recalls (24hR), to calibrate the error-prone FFQ measurements [22]. This application note details the protocols for implementing regression calibration where 24hR data serve as the reference, framed within the broader objective of correcting systematic measurement error in FFQ-based research.

Methodological Framework

Theoretical Basis of Regression Calibration

Regression calibration is a widely used method to correct point and interval estimates in regression models for bias introduced by measurement error in continuous exposures [22] [1]. The core concept involves replacing the error-prone exposure measurement in the analysis model with the expected value of the true exposure given the error-prone measurement (and any covariates), as estimated from a calibration model [23].

In the context of dietary data, let \( Q \) represent the nutrient intake measured by the FFQ, and let \( T \) represent the unobservable "true" habitual intake. The standard RC approach assumes a measurement error model relating the FFQ to true intake. A common model is the classical error model, \( Q = T + \varepsilon_Q \), where \( \varepsilon_Q \) is random error with mean zero and independent of \( T \). However, for self-reported dietary data, a more flexible linear measurement error model is often more appropriate [23]:

\[ Q = \alpha_0 + \alpha_T T + \varepsilon_Q \]

Here, \( \alpha_0 \) represents constant (location) bias and \( \alpha_T \) represents proportional (scale) bias. When a reference instrument like the 24hR (\( R \)) is available, it is assumed to measure true intake with error: \( R = T + \varepsilon_R \), where \( \varepsilon_R \) is random error independent of \( T \) and \( \varepsilon_Q \).
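To see why a reference instrument with classical error is enough for calibration, the short derivation below may help. It assumes that true intake and the two error terms are mutually independent. The symbols for the variance of true intake, the variance of the FFQ error, the calibration slope \( \lambda \), and the naive and true outcome slopes \( \beta^{*} \) and \( \beta \) are introduced here purely for illustration, with the outcome assumed to depend linearly on true intake and the FFQ error assumed non-differential.

```latex
\begin{align*}
\operatorname{Cov}(R, Q)
  &= \operatorname{Cov}(T + \varepsilon_R,\; \alpha_0 + \alpha_T T + \varepsilon_Q)
   = \alpha_T \sigma_T^2
   = \operatorname{Cov}(T, Q), \\
\operatorname{Var}(Q)
  &= \alpha_T^2 \sigma_T^2 + \sigma_{\varepsilon_Q}^2, \\
\lambda
  &= \frac{\operatorname{Cov}(R, Q)}{\operatorname{Var}(Q)}
   = \frac{\alpha_T \sigma_T^2}{\alpha_T^2 \sigma_T^2 + \sigma_{\varepsilon_Q}^2}, \\
\beta^{*}
  &= \lambda \beta
  \quad\Longrightarrow\quad
  \beta = \beta^{*} / \lambda .
\end{align*}
```

The slope from regressing the 24hR on the FFQ therefore equals the slope that would be obtained by regressing true intake on the FFQ, even though true intake is never observed. With no scale bias (the scale coefficient equal to 1), \( \lambda \) reduces to the familiar attenuation factor of the classical error model.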

Workflow for Regression Calibration

[Figure not reproduced: logical workflow and data relationships for implementing regression calibration in a study where all participants have both FFQ and 24hR data.]

Enhanced Regression Calibration (ERC)

When both FFQ and 24hR data are available for all study participants, an enhanced regression calibration (ERC) approach can be employed. This method incorporates individual-level information from the 24hR measurement directly into the calibrated value, rather than using it only to fit the model [15]. The model can be formulated as:

\[ T_i^{*} = E(T_i \mid Q_i, R_i) = \gamma_0 + \gamma_Q Q_i + \gamma_R R_i \]

where \( T_i^{*} \) is the calibrated intake for individual \( i \), and \( Q_i \) and \( R_i \) are their FFQ and 24hR intakes, respectively. This approach utilizes all available information and can yield more precise and less biased estimates compared to standard RC [15].
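One way such a model could be fitted is sketched below on simulated data. The source does not describe the fitting procedure, so the key step is an assumption made for this example: the coefficients are estimated by regressing a second, independent 24hR on the FFQ and the first 24hR. All parameter values and helper names are hypothetical. Simulated true intake stands in for the recovery biomarker when checking the attenuation factor.

```python
import random
from statistics import mean, stdev

def cov(x, y):
    """Sample covariance (cov(x, x) is the sample variance)."""
    mx, my = mean(x), mean(y)
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / (len(x) - 1)

def ols1(x, y):
    """Intercept and slope of y regressed on one predictor."""
    slope = cov(x, y) / cov(x, x)
    return mean(y) - slope * mean(x), slope

def ols2(x1, x2, y):
    """Intercept and two slopes of y regressed on x1 and x2 (normal equations)."""
    s11, s22, s12 = cov(x1, x1), cov(x2, x2), cov(x1, x2)
    s1y, s2y = cov(x1, y), cov(x2, y)
    det = s11 * s22 - s12 ** 2
    b1 = (s22 * s1y - s12 * s2y) / det
    b2 = (s11 * s2y - s12 * s1y) / det
    return mean(y) - b1 * mean(x1) - b2 * mean(x2), b1, b2

rng = random.Random(7)
n = 4000
T = [rng.gauss(80, 15) for _ in range(n)]          # true intake (simulation only)
Q = [20 + 0.6 * t + rng.gauss(0, 12) for t in T]   # FFQ with location and scale bias
R1 = [t + rng.gauss(0, 10) for t in T]             # first 24hR
R2 = [t + rng.gauss(0, 10) for t in T]             # second, independent 24hR

# Standard RC: E(T | Q), fitted by regressing a recall on the FFQ
a0, a1 = ols1(Q, R2)
rc = [a0 + a1 * q for q in Q]

# ERC (assumed fitting strategy): E(T | Q, R1), with the second recall as response
g0, g_q, g_r = ols2(Q, R1, R2)
erc = [g0 + g_q * q + g_r * r for q, r in zip(Q, R1)]

# Attenuation factors: slope of true intake on each calibrated estimate
af_rc = ols1(rc, T)[1]
af_erc = ols1(erc, T)[1]
sd_rc, sd_erc = stdev(rc), stdev(erc)
print(f"AF: RC = {af_rc:.3f}, ERC = {af_erc:.3f}; SD: RC = {sd_rc:.1f}, ERC = {sd_erc:.1f}")
```

In this setup both calibrated estimates give attenuation factors near 1, while the ERC values have the wider spread because they carry individual-level information from the recall in addition to the FFQ.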

Application Example: Protein and Potassium Intake

Experimental Protocol

A study utilizing data from the Dutch National Dietary Assessment Reference Database (NDARD) compared five approaches for estimating self-reported dietary intakes of protein and potassium [15].

Research Reagent Solutions

| Research Reagent | Function in the Experimental Protocol |
|---|---|
| 180-item FFQ | A semi-quantitative food frequency questionnaire assessed habitual intake over the past month, using natural portions and household measures [15]. |
| Telephone 24hR | Two unannounced 24-hour dietary recalls conducted by trained dietitians using a standardized protocol based on the five-step multiple-pass method [15]. |
| 24-hour Urine Collection | Served as an unbiased recovery biomarker for protein and potassium intake to validate the self-report methods; completeness was checked with PABA tablets [15]. |
| Dutch Food Composition Table (2011) | The standardized database used to convert reported food consumption from both the FFQ and 24hR into nutrient intakes (e.g., grams of protein) [15]. |
| Urinary Nitrogen & Potassium | Laboratory measurements from the 24-hour urine collection, providing an objective measure of true intake for validation (reference instrument) [15]. |

Methodology:

- Population: 236 adults from the NDARD database with complete data for two 24hRs, a baseline FFQ, and biomarker data for protein and potassium [15].

- Dietary Assessment: The FFQ was self-administered, while the 24hRs were conducted by trained dietitians via unannounced telephone calls. Nutrient intakes were calculated using the 2011 Dutch food composition table [15].

- Biomarker Assessment: Participants completed a 24-hour urine collection, which was aliquoted and stored at -20°C until analysis for nitrogen (for protein) and potassium [15].

- Comparison of Methods: The following five intake estimates for protein and potassium were compared:

  - Uncorrected FFQ intake (FFQ)

  - Uncorrected average of two 24hRs (24hR)

  - Simple average of the FFQ and 24hR estimates (Average)

  - Intake estimated by standard regression calibration (RC) of 24hR on FFQ

  - Intake estimated by enhanced regression calibration (ERC)

- Validation: The empirical attenuation factor (AF) was derived by regressing the urinary biomarker measurement on each of the five intake estimates. An AF closer to 1.0 indicates less bias in the diet-disease association [15].

Data Presentation: Performance Comparison

The following table summarizes the key quantitative results from the study, demonstrating the impact of different correction methods on the bias for protein and potassium intake estimates.

Table 1: Comparison of Attenuation Factors (AF) for Protein and Potassium Intake Estimates Using Different Methods (Adapted from [15])

| Method for Intake Estimation | Attenuation Factor (Protein) | Attenuation Factor (Potassium) |
|---|---|---|
| Uncorrected FFQ (Q) | Not Reported | Not Reported |
| Uncorrected 24hR (R) | Not Reported | Not Reported |
| Average of Q and R | Not Reported | Not Reported |
| Regression Calibration (RC) | 1.14 | 1.28 |
| Enhanced Regression Calibration (ERC) | 0.95 | 1.34 |

Interpretation of Results: The AF for protein was closest to 1.0 (indicating minimal bias) when using the ERC method (AF=0.95), whereas RC showed slight overcorrection (AF=1.14) [15]. For potassium, both RC and ERC resulted in AFs greater than 1 (1.28 and 1.34, respectively), suggesting possible overcorrection for this nutrient [15]. The authors noted that ERC generally provided more statistical power, as evidenced by larger standard deviations of the calibrated intakes and narrower confidence intervals for the AF compared with standard RC [15].

Implementation and Research Toolkit

Software and Data Requirements

The implementation of regression calibration requires specific data structures and can be performed using standard statistical software.

Software: Regression calibration can be implemented using common statistical software packages. SAS macros are specifically mentioned in the literature for performing these corrections [22], but the models can also be fitted in R, Stata, or other environments capable of linear regression.

Data Structure: The ideal data structure involves a calibration study, which can be internal (a sub-sample of the main study) or external (conducted on a separate but similar population) [1] [23]. For enhanced methods like ERC, the 24hR data must be available for every participant in the main study [15].

Considerations for Research Design

- Choice of Reference Instrument: The 24hR is often considered an "alloyed gold standard" – it is superior to the FFQ but not a perfect instrument, as it also contains random error and may be subject to biases like reliance on memory [1]. The validity of RC hinges on the assumption that the 24hR is unbiased for true intake, which may not hold perfectly.

- Model Assumptions: Researchers must verify that the relationship between the 24hR and FFQ is approximately linear and that the errors in the two instruments are independent. Violations of these assumptions can lead to residual bias in the corrected estimates [1] [23].

- Handling Covariates: The basic RC model can be extended to include covariates (e.g., age, sex, energy intake) that are associated with true intake or the measurement error process, which can improve the calibration [23].