Dietary Adherence Scoring Systems: A Comprehensive Guide for Clinical Research and Drug Development


Abstract

Accurately measuring participant adherence to dietary interventions is a critical yet complex challenge in clinical research and drug development. This article provides a systematic analysis of dietary adherence scoring systems, exploring their foundational principles, methodological applications, common pitfalls, and validation strategies. We examine established indices like HEI-2020, aMED, DASH, and DII alongside novel computational and behavioral approaches. Targeted at researchers and pharmaceutical professionals, this review synthesizes current evidence to guide the selection, implementation, and optimization of adherence metrics, ultimately enhancing the reliability and interpretability of nutrition-focused clinical trials and therapeutic development.

Core Principles and Landscape of Dietary Adherence Metrics

Accurately defining and measuring adherence is a fundamental challenge in nutritional science and intervention research. Moving beyond the simplistic concept of compliance, contemporary adherence science recognizes the multifaceted nature of how individuals follow dietary recommendations. The World Health Organization defines adherence as "the extent to which a person's behavior – taking medication, following a diet, and/or executing lifestyle changes – corresponds with the agreed recommendations from a healthcare provider" [1]. This definition covers not merely whether a diet is followed, but how closely its implementation matches the prescribed parameters across multiple dimensions.

In dietary intervention research, the selection of adherence assessment methodology significantly influences study outcomes and interpretations. Variations in operational definitions, measurement timeframes, and scoring algorithms can produce substantially different adherence estimates from the same underlying behavior [2]. This comparison guide examines the leading dietary adherence scoring systems, their experimental applications, methodological considerations, and performance characteristics to inform researcher selection and implementation.

Comparative Analysis of Dietary Adherence Scoring Systems

Several validated scoring systems have been developed to quantify adherence to evidence-based dietary patterns. These indices transform complex dietary behaviors into quantifiable metrics suitable for statistical analysis and intervention monitoring.

Table 1: Major Dietary Adherence Scoring Systems

| Scoring System | Dietary Pattern Assessed | Components Evaluated | Scoring Range | Primary Application Context |
|---|---|---|---|---|
| HEI-2020 [3] | Dietary Guidelines for Americans | 13 components: vegetables, fruits, whole grains, dairy, protein foods, fat intake | 0-100 points | Comprehensive dietary quality assessment |
| aMED [3] | Mediterranean Diet | 9 categories: vegetables, fruits, nuts, whole grains, legumes, fish, MUFA:SFA ratio, red/processed meats, alcohol | 0-9 points | Mediterranean diet adherence |
| DASH [4] [3] | DASH Diet | 9 nutrient targets: saturated fat, total fat, protein, cholesterol, fiber, magnesium, calcium, potassium, sodium | 0-9 points | Hypertension-focused dietary patterns |
| DII [3] | Diet Inflammatory Potential | Multiple food parameters with inflammatory/anti-inflammatory properties | Continuous scale | Inflammatory potential of dietary patterns |
| EAT-Lancet Index [5] | Planetary Health Diet | Food group consumption aligned with EAT-Lancet recommendations | Varies by implementation | Sustainable and healthy dietary patterns |
| WISH 2.0 [5] | Planetary Health Diet | Original WISH categories plus processed meat and alcoholic beverages | Varies by implementation | Sustainability and health-focused diets |

Performance Comparison in Research Settings

Different scoring systems demonstrate varying sensitivities and specificities when examining associations with health outcomes. Recent research has directly compared these indices to evaluate their performance characteristics.

Table 2: Performance Comparison of Dietary Indices in Health Outcomes Research

| Scoring System | Association with Periodontitis (OR)* [3] | Discriminatory Capacity | Regional Pattern Detection | Key Strengths |
|---|---|---|---|---|
| HEI-2020 | Not significant in fully adjusted models | Moderate | Limited | Comprehensive nutritional assessment |
| aMED | 1.147 (95% CI: 1.002-1.313) | Moderate | Strong for Mediterranean regions | Cultural specificity |
| DASH | 1.310 (95% CI: 1.139-1.507) | High | Moderate | Strong clinical outcome associations |
| DII | 0.675 (95% CI: 0.597-0.763) | High | Limited | Inflammatory pathway specificity |
| EAT-Lancet Index [5] | Not assessed in periodontitis study | Moderate | Limited | Environmental sustainability integration |
| WISH 2.0 [5] | Not assessed in periodontitis study | High | Strong for European patterns | Enhanced reflection of actual consumption |

*Note: OR = odds ratio from fully adjusted models comparing the fourth to the first quartile of adherence. The DII OR is interpreted in reverse because of its inverse scoring (higher DII values indicate a more pro-inflammatory diet).

In a direct comparison of four indices examining periodontitis risk, DASH and DII showed the most robust associations after full adjustment for covariates; the aMED association was only marginally significant (lower confidence bound of 1.002), and HEI-2020 was not significant. This suggests that DASH and DII may capture the dietary aspects most relevant to inflammatory oral health outcomes [3]. The EAT-Lancet index and WISH 2.0 were evaluated in a separate study of European dietary patterns, where WISH 2.0 demonstrated superior capacity to distinguish between different national dietary patterns and better alignment with actual food consumption data [5].

Experimental Protocols for Adherence Assessment

Dietary Data Collection Methodologies

The foundation of accurate adherence assessment lies in rigorous dietary data collection. The most common methodologies include:

24-Hour Dietary Recall

- Protocol: Structured interviews using multiple passes (quick list, detailed description, review) to capture all foods and beverages consumed in the previous 24 hours [4] [3]

- Tools: Standardized measuring aids, food models, picture references, and digital interfaces

- Administration: Typically conducted by trained interviewers, with at least two recalls (one in-person and one telephone) to account for day-to-day variation [3]

- Advantages: Minimal respondent burden, high immediate recall accuracy

- Limitations: Relies on memory, potential under-reporting of certain foods

Food Frequency Questionnaires (FFQ)

- Protocol: Self-administered questionnaires assessing frequency of consumption of specific foods over extended periods (typically 3-12 months)

- Tools: Validated food lists with culturally appropriate portion size estimation

- Administration: Can be completed independently or with interviewer assistance

- Advantages: Captures habitual intake, efficient for large studies

- Limitations: Memory bias, portion size estimation errors, fixed food lists may miss relevant items

Adherence Scoring Implementation

The transformation of raw dietary data into adherence metrics follows standardized computational procedures:

DASH Score Calculation Protocol [4] [3]

- Nutrient Quantification: Calculate daily intake of 9 target nutrients from dietary recall data

- Energy Adjustment: Express nutrient values per 1,000 kcal to account for varying energy requirements

- Scoring Application:

  - Award 1 point for achieving target for each nutrient

  - Award 0.5 points for intermediate achievement

  - Sum points across all 9 nutrients for total score (maximum 9 points)

- Adherence Classification: Define adherence threshold (typically ≥4.5 points for DASH accordant) [4]
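The scoring steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the protocol used in [4] or [3]: the nutrient keys, the `TARGETS` table, and every threshold in it are placeholder values chosen for the example and should be replaced with the targets from the study protocol. All thresholds are assumed to be expressed per 1,000 kcal.

```python
from typing import Dict, Tuple

# nutrient -> (target, intermediate cut-off, direction), all per 1,000 kcal.
# PLACEHOLDER values for illustration only; substitute the protocol's targets.
TARGETS: Dict[str, Tuple[float, float, str]] = {
    "saturated_fat_g": (6.7, 12.2, "below"),
    "total_fat_g": (30.0, 35.6, "below"),
    "protein_g": (45.0, 41.3, "above"),
    "cholesterol_mg": (71.4, 107.1, "below"),
    "fiber_g": (14.8, 9.5, "above"),
    "magnesium_mg": (238.0, 158.0, "above"),
    "calcium_mg": (590.0, 402.0, "above"),
    "potassium_mg": (2238.0, 1534.0, "above"),
    "sodium_mg": (1143.0, 1286.0, "below"),
}


def per_1000_kcal(amount: float, kcal: float) -> float:
    """Energy adjustment: express a daily amount per 1,000 kcal."""
    if kcal <= 0:
        raise ValueError("energy intake must be positive")
    return amount / kcal * 1000.0


def component_points(value: float, target: float, intermediate: float,
                     direction: str) -> float:
    """1 point for meeting the target, 0.5 for the intermediate level, else 0."""
    if direction == "below":
        if value <= target:
            return 1.0
        return 0.5 if value <= intermediate else 0.0
    if value >= target:
        return 1.0
    return 0.5 if value >= intermediate else 0.0


def dash_score(daily_intake: Dict[str, float], kcal: float) -> float:
    """Sum the component points across all nine nutrients (maximum 9)."""
    total = 0.0
    for nutrient, (target, intermediate, direction) in TARGETS.items():
        density = per_1000_kcal(daily_intake[nutrient], kcal)
        total += component_points(density, target, intermediate, direction)
    return total


def is_dash_accordant(score: float, threshold: float = 4.5) -> bool:
    """Classify adherence using the threshold cited in the text."""
    return score >= threshold
```

Keeping the targets in a single table makes the operational definition explicit and easy to report alongside the results.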

Planetary Health Diet Indices Protocol [5]

- Food Group Categorization: Classify consumed foods into predefined food groups aligned with EAT-Lancet recommendations

- Consumption Assessment: Compare actual consumption to recommended intake ranges for each food group

- Scoring Application:

  - EAT-Lancet Index: Uses ordinal scoring based on degree of alignment with targets

  - WISH 2.0: Applies continuous scoring with additional points for processed meat and alcohol moderation

- Normalization: Normalize scores to enable cross-population comparisons

Methodological Considerations in Adherence Measurement

Operational Definitions and Their Impact

The specific operational definition of adherence significantly influences measured adherence rates. Research across chronic conditions demonstrates that varying calculation methods produce substantially different results:

Table 3: Impact of Calculation Method on Adherence Rates [2]

| Calculation Method | Definition | Reported Adherence Range | Key Considerations |
|---|---|---|---|
| PILLCOUNT | Number of administrations ÷ number prescribed, regardless of timing | 89%-92% | Overestimates adherence by ignoring timing |
| DAILY | Days with correct number of administrations ÷ total days | 79%-85% | Accounts for missed days but not dosing intervals |
| TIMING | Administrations within prescribed dosing intervals ÷ total opportunities | 62%-68% | Most stringent, accounts for timing accuracy |

In a study of diabetes and hypertension medications, these different calculation methods produced adherence estimates varying by approximately 30 percentage points, highlighting the critical importance of methodological transparency [2].
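To make the difference between the three definitions in Table 3 concrete, the sketch below computes all of them from one list of timestamped administrations. It is an illustration only: the function name, the twice-daily schedule (`scheduled_hours`), and the two-hour tolerance (`window_hours`) are assumptions introduced here, not parameters reported in [2].

```python
from collections import Counter
from datetime import date, datetime, time, timedelta
from typing import Dict, List, Sequence


def adherence_rates(
    events: List[datetime],
    start: date,
    n_days: int,
    scheduled_hours: Sequence[int] = (8, 20),  # hypothetical twice-daily regimen
    window_hours: float = 2.0,                 # hypothetical tolerance per slot
) -> Dict[str, float]:
    """Compute PILLCOUNT, DAILY and TIMING adherence from the same events."""
    if n_days <= 0:
        raise ValueError("n_days must be positive")
    days = [start + timedelta(days=i) for i in range(n_days)]
    in_period = [e for e in events if days[0] <= e.date() <= days[-1]]
    prescribed = n_days * len(scheduled_hours)

    # PILLCOUNT: administrations / prescribed, regardless of timing.
    pillcount = len(in_period) / prescribed

    # DAILY: days with exactly the prescribed number of administrations.
    per_day = Counter(e.date() for e in in_period)
    daily = sum(per_day[d] == len(scheduled_hours) for d in days) / n_days

    # TIMING: scheduled slots with an administration inside the window.
    tolerance = window_hours * 3600
    on_time = 0
    for d in days:
        for hour in scheduled_hours:
            slot = datetime.combine(d, time(hour=hour))
            if any(abs((e - slot).total_seconds()) <= tolerance for e in in_period):
                on_time += 1
    timing = on_time / prescribed

    return {"PILLCOUNT": pillcount, "DAILY": daily, "TIMING": timing}


# Three monitored days with one late dose, one missed dose and one early dose.
example_events = [
    datetime(2024, 1, 1, 8, 10), datetime(2024, 1, 1, 23, 0),
    datetime(2024, 1, 2, 8, 0),
    datetime(2024, 1, 3, 7, 30), datetime(2024, 1, 3, 16, 0),
]
example_rates = adherence_rates(example_events, date(2024, 1, 1), 3)
```

For this example the same behavior yields roughly 83% under PILLCOUNT, 67% under DAILY, and 50% under TIMING, mirroring the ordering reported in the table.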

Measurement Frequency and Variability

Dietary adherence demonstrates significant within-person variability over time, necessitating repeated assessments for accurate characterization. Electronic monitoring research reveals that adherence patterns fluctuate daily and weekly, influenced by lifestyle factors, day of week, and seasonal variations [6]. Visual analytics of dense adherence data captured through digital monitoring systems can reveal longitudinal patterns not apparent in summary statistics, including time-of-day effects, clustering of missed doses, and relationships between physiological measures and adherence behaviors [6].

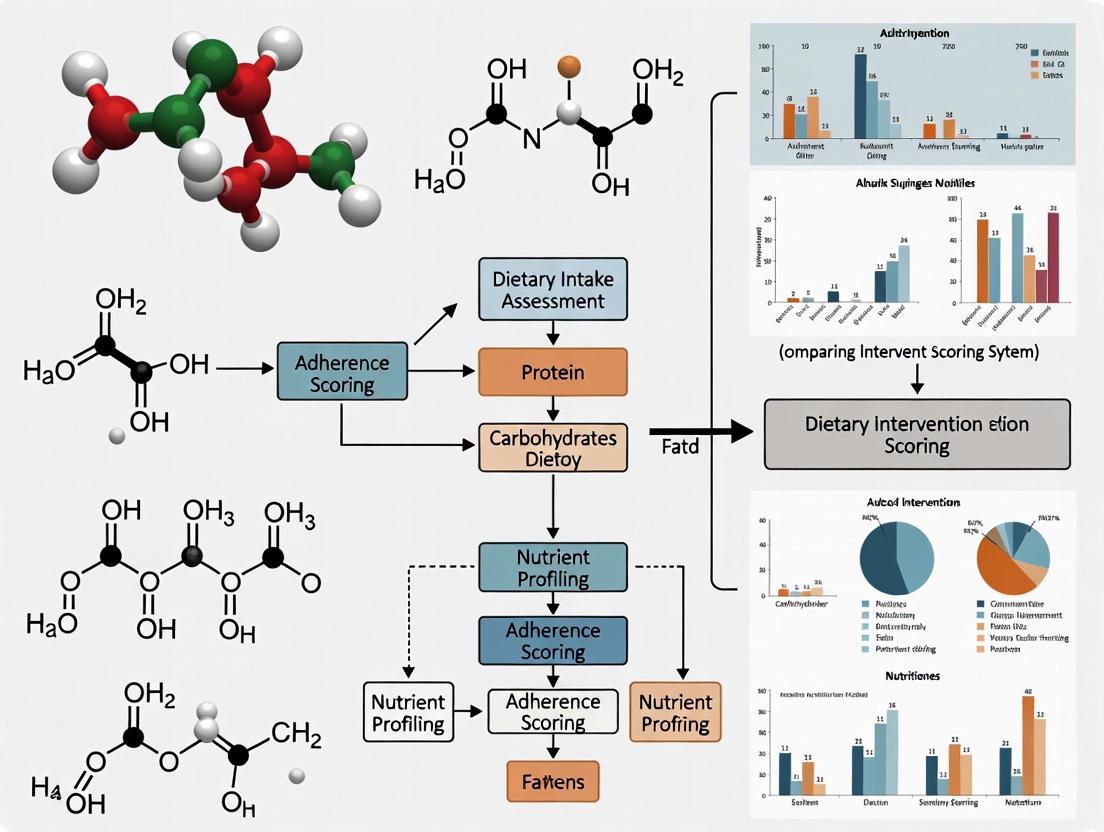

Visualization of Adherence Assessment Methodologies

Adherence Assessment Methodology Framework

This diagram illustrates the comprehensive framework for dietary adherence assessment, encompassing the three critical dimensions: data collection methods, scoring systems, and calculation approaches. The integration of these components enables researchers to select methodologically aligned assessment strategies tailored to specific research questions and dietary patterns.

Table 4: Essential Research Reagents and Tools for Dietary Adherence Studies

| Tool/Resource | Function | Application Example | Key Features |
|---|---|---|---|
| EFSA Comprehensive European Food Consumption Database [5] | Reference food consumption data | Cross-national dietary pattern comparisons | Standardized EU menu methodology |
| USDA Food and Nutrient Database | Food composition data | Nutrient intake calculations | Comprehensive nutrient profiles |
| Tzameret Software [4] | Dietary intake calculation | Israeli National Health and Nutrition Survey | Integrated with local food database |
| Medication Event Monitoring System (MEMS) [2] | Electronic medication adherence monitoring | Timing and frequency of medication administration | Objective dosing data |
| Digital Health Feedback System (DHFS) [6] | Actual medication ingestion detection | Correlation between adherence and physiological measures | Edible sensor technology |
| Health-ITUES Survey [7] | Usability assessment of data visualization tools | Evaluating clinician comprehension of adherence reports | Validated usability metrics |

The precise definition and measurement of adherence represent a critical methodological frontier in nutritional intervention research. Rather than a unitary construct, adherence encompasses multiple dimensions, including frequency, timing, quantity, and persistence. The choice of assessment methodology, from data collection instruments through scoring algorithms and calculation methods, significantly influences study outcomes and interpretations.

Current evidence suggests that no single adherence metric serves all research purposes equally. The DASH score demonstrates robust associations with clinical outcomes including periodontitis [3], while WISH 2.0 offers enhanced capacity to detect national dietary patterns for sustainability-focused research [5]. Methodological transparency, including explicit reporting of operational definitions and calculation methods, is essential for cross-study comparisons and evidence synthesis.

Future directions in adherence science include the integration of digital monitoring technologies that capture dense longitudinal data [6], advanced visualization techniques to identify temporal patterns [7] [6], and standardized reporting frameworks that account for the multidimensional nature of dietary adherence behaviors. Through methodological rigor and appropriate tool selection, researchers can advance our understanding of how dietary adherence influences health outcomes across diverse populations and intervention contexts.

Dietary pattern indices are essential tools in nutritional epidemiology, allowing researchers to quantify the complexity of overall diet and examine its relationship with health outcomes. Unlike approaches focused on single nutrients or foods, these indices evaluate the cumulative and synergistic effects of diverse dietary components, providing a more holistic assessment of diet quality. This guide compares four prominent dietary pattern indices: the Healthy Eating Index-2020 (HEI-2020), the alternative Mediterranean Diet Score (aMED), Dietary Approaches to Stop Hypertension (DASH), and the Dietary Inflammatory Index (DII). Designed for researchers, scientists, and drug development professionals, the comparison covers each index's conceptual foundation, methodological approach, scoring system, and association with health outcomes, with a specific focus on their use in scoring adherence to dietary interventions.

Index Profiles and Scoring Methodologies

The following table summarizes the core characteristics, components, and scoring methodologies of the four dietary indices.

Table 1: Core Characteristics and Scoring of Major Dietary Indices

| Feature | HEI-2020 | aMED | DASH | DII |
|---|---|---|---|---|
| Primary Focus | Adherence to U.S. Dietary Guidelines [8] | Adherence to Mediterranean diet principles [9] | Blood pressure control; diet pattern from NHLBI trials [10] | Inflammatory potential of the overall diet [11] |
| Theoretical Basis | Dietary Guidelines for Americans (DGA) [12] | Traditional Mediterranean diet [9] | DASH clinical trials [10] | Empirical literature linking diet to inflammation [11] |
| Number of Components | 13 [9] | 9 [9] | Varies (commonly 7-9 food groups/nutrients) [10] | 45 food parameters (including nutrients and flavonoids) [11] |
| Component Types | Food groups & nutrients (e.g., fruits, vegetables, added sugars) [8] | Food groups & ratio of fats [9] | Food groups & nutrients (e.g., fruits, vegetables, sodium) [10] | Nutrients, bioactive compounds, and food ingredients [11] |
| Scoring Range | 0 to 100 [9] | 0 to 9 [9] | Varies by method (e.g., 8-40 for Fung method) [10] | Theoretical range from -∞ (anti-inflammatory) to +∞ (pro-inflammatory); typically ~ -8 to +8 in practice [11] |
| Scoring Basis | Density-based standards per 1,000 calories [8] | Median intake of cohort for each component [9] | Quintile-based intake of cohort for each component [10] | Global database of mean intakes for each parameter [11] |
| Key Strengths | Directly aligned with U.S. federal nutrition policy; useful for surveillance [12] | Captures a well-studied, culturally specific dietary pattern associated with longevity [13] | Based on a dietary pattern with proven efficacy in clinical trials [10] | Uniquely designed to specifically quantify diet's effect on chronic inflammation [14] |

Experimental Comparison: Protocol and Findings from a Cross-Sectional Analysis

A cross-sectional study offers a direct, empirical comparison of the HEI-2020, aMED, DASH, and DII in relation to a specific health outcome, periodontitis. The following workflow diagram outlines the key stages of this research.

Diagram 1: Experimental workflow for the comparative analysis of dietary indices and periodontitis, based on an NHANES study [9].

Detailed Experimental Protocol

The comparative analysis was designed as a cross-sectional study using data from the National Health and Nutrition Examination Survey (NHANES) collected between 2009 and 2014 [9].

- Study Population: The initial sample included 30,486 participants. After applying exclusion criteria (age <30 years, incomplete demographic or dietary data, and invalid periodontal assessments), the final analytical sample consisted of 8,571 adults aged 30 years and older [9].

- Dietary Assessment and Index Calculation: Dietary intake was assessed using two non-consecutive 24-hour dietary recalls. The data from these recalls were used to calculate scores for the four indices:

  - HEI-2020: Comprises 13 components (e.g., fruits, vegetables, whole grains) scored based on density per 1,000 calories, summing to a maximum total score of 100 [9].

  - aMED: Comprises 9 components (e.g., fruits, vegetables, fish, ratio of monounsaturated to saturated fats). Participants scored one point for each component where their intake was above the sex-specific median for healthy components or below it for unhealthy components, creating a total score from 0 to 9 [9].

  - DASH: Based on the Fung method, which includes 8 components (fruits, vegetables, nuts and legumes, whole grains, low-fat dairy, sodium, red/processed meats, and sweetened beverages). Intakes are ranked by quintiles, with scores from 1 to 5 assigned for each component, resulting in a total score range of 8 to 40 [9].

  - DII: Calculated based on intake of 45 food parameters compared to a global reference database. The scoring algorithm produces a score where more negative values indicate an anti-inflammatory diet, and more positive values indicate a pro-inflammatory diet [11].

- Outcome Assessment: Periodontitis was diagnosed through a full-mouth periodontal examination conducted by trained dentists. Assessment included clinical attachment loss (CAL) and probing depth (PD) at six sites per tooth [9].

- Statistical Analysis: The association between each dietary index and periodontitis was examined using multivariable logistic regression models, adjusted for confounders including age, sex, ethnicity, smoking status, and comorbidities. The analysis was conducted in three ways:

  - Single exposure model: Each index was evaluated independently.

  - Double exposure model: Pairs of indices were included to assess robustness.

  - Overall model: All indices were included simultaneously [9].

- Additional analyses included ROC (Receiver Operating Characteristic) curves to compare the contribution of dietary indices to periodontitis relative to other factors, and Restricted Cubic Splines (RCS) to explore potential non-linear associations [9].
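The cohort-relative scoring rules described for aMED (sex-specific medians) and the Fung DASH score (quintile ranks) can be sketched as follows. This is a simplified illustration of the rules as summarized above, not the code used in the NHANES analysis [9]: the function names, the data layout (one dictionary of intakes per participant), and the tie-handling in `quintile_points` are assumptions, and published implementations may treat individual components differently.

```python
from statistics import median
from typing import Dict, List, Sequence

Person = Dict[str, float]  # component name -> intake (hypothetical layout)


def amed_scores(cohort: List[Person], sexes: List[str],
                healthy: Sequence[str], unhealthy: Sequence[str]) -> List[int]:
    """One point per component, judged against the sex-specific cohort median."""
    scores = [0] * len(cohort)
    for sex in set(sexes):
        idx = [i for i, s in enumerate(sexes) if s == sex]
        for comp in list(healthy) + list(unhealthy):
            med = median(cohort[i][comp] for i in idx)
            for i in idx:
                value = cohort[i][comp]
                if comp in healthy and value > med:
                    scores[i] += 1
                elif comp in unhealthy and value < med:
                    scores[i] += 1
    return scores


def quintile_points(values: Sequence[float], reverse: bool = False) -> List[int]:
    """Assign 1-5 points by cohort quintile; reverse for components to limit."""
    n = len(values)
    points = []
    for v in values:
        rank = sum(1 for other in values if other < v)  # ties share a quintile
        quintile = rank * 5 // n + 1
        points.append(6 - quintile if reverse else quintile)
    return points


def fung_dash_scores(cohort: List[Person], healthy: Sequence[str],
                     unhealthy: Sequence[str]) -> List[int]:
    """Sum quintile points over all components (8 components give 8-40)."""
    totals = [0] * len(cohort)
    for comp in list(healthy) + list(unhealthy):
        pts = quintile_points([p[comp] for p in cohort], reverse=comp in unhealthy)
        totals = [t + p for t, p in zip(totals, pts)]
    return totals
```

Because both scores depend on the cohort's own intake distribution, the same participant can receive a different score in a different study population, which matters when comparing results across trials.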

Key Findings and Comparative Performance

The study yielded clear findings on the relative performance of the four indices in relation to periodontitis risk.

Table 2: Association between Dietary Indices and Periodontitis from NHANES Analysis [9]

| Dietary Index | Performance in Single Exposure Model | Performance in Overall Model (Adjusted for all indices) | Odds Ratio (OR) for Periodontitis in Overall Model (95% CI) | Nature of Association |
|---|---|---|---|---|
| HEI-2020 | Significant association | Not significant | Not reported (did not remain significant) | Not applicable |
| aMED | Significant association | Significant | 1.147 (1.002, 1.313) | Positive (poor adherence linked to higher risk) |
| DASH | Significant association | Significant | 1.310 (1.139, 1.507) | Positive (poor adherence linked to higher risk) |
| DII | Significant association | Significant | 0.675 (0.597, 0.763) | Negative (pro-inflammatory diet linked to higher risk) |

- Model Robustness: While all four indices showed significant associations with periodontitis when assessed individually, only DASH and DII remained significant throughout the more rigorous double and overall exposure models, indicating that their associations are more robust when accounting for other dietary patterns [9].

- Contribution to Outcome: The ROC analysis revealed that the collective contribution of these dietary indices to periodontitis was second only to demographic factors like sex and ethnicity, underscoring the importance of diet as a modifiable risk factor [9].

- Linearity of Association: Tests for non-linearity showed an approximately linear association for HEI-2020, aMED, and DASH with periodontitis. In contrast, the DII exhibited a significant non-linear association (p=0.024), suggesting a more complex relationship between inflammatory potential and disease risk [9].

- Conclusion: The study concluded that, among the four indices evaluated, poor adherence to the DASH pattern was most robustly linked to the occurrence of periodontitis [9].

Association with Health Outcomes Across Studies

Beyond periodontitis, these indices have been extensively studied in relation to major chronic diseases and overall health status.

All-Cause and Cause-Specific Mortality

- DASH Diet: A comprehensive dose-response meta-analysis of 17 prospective studies found that higher adherence to the DASH diet was linearly associated with a lower risk of mortality. For each 5-point increment in the DASH score (on a scale from 8 to 40), the summary hazard ratios (HRs) were 0.95 for all-cause mortality, 0.96 for cardiovascular disease (CVD) mortality, and 0.97 for cancer mortality. Evidence of a non-linear association was also found, with the risk reduction becoming more pronounced when adherence scores exceeded 20 points [10].

- aMED Diet: Studies have consistently linked the aMED with reduced mortality. In the Multiethnic Cohort (MEC), higher scores for both the raw (aMED) and energy-standardized (aMED-e) versions were associated with a lower risk of all-cause, CVD, and cancer mortality. The correlation between the two versions was lower among individuals with a higher BMI, though both yielded similar risk reductions for mortality [15].
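As a worked illustration of the DASH dose-response estimates above, the per-5-point hazard ratios can be rescaled to other increments if one assumes a purely log-linear relationship. That assumption is introduced here for the arithmetic only; the meta-analysis also reported evidence of non-linearity, so these figures are not results from [10].

```latex
\begin{align*}
\mathrm{HR}(\Delta) &= \mathrm{HR}_{5}^{\,\Delta/5}
  = \exp\!\left(\tfrac{\Delta}{5}\,\ln \mathrm{HR}_{5}\right) \\
\text{All-cause mortality, } \Delta = 10:&\quad 0.95^{2} = 0.9025 \\
\text{CVD mortality, } \Delta = 10:&\quad 0.96^{2} = 0.9216 \\
\text{Cancer mortality, } \Delta = 10:&\quad 0.97^{2} = 0.9409
\end{align*}
```

Under this assumption, a 10-point higher DASH score would correspond to roughly a 10% lower hazard of all-cause mortality.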

Healthy Aging

A landmark study using data from the Nurses' Health Study and the Health Professionals Follow-Up Study (n=105,015) examined the association between long-term adherence to various dietary patterns, including aMED, DASH, and the related Alternative Healthy Eating Index (AHEI), with "healthy aging." Healthy aging was defined as surviving to age 70 years or older with intact cognitive, physical, and mental health, and without major chronic diseases. The study found that higher adherence to all dietary patterns was associated with significantly greater odds of healthy aging after 30 years of follow-up. The AHEI showed the strongest association, followed by empirically derived indices for insulinemia and inflammation. The aMED and DASH diets also showed strong, significant associations with greater odds of healthy aging [13].

Colorectal Cancer

A comparison of four different DASH indexes within the large NIH-AARP Diet and Health Study (n=491,841) found that higher scores were generally associated with a reduced incidence of colorectal cancer. In men, all four DASH indexes showed a significant inverse association. In women, three of the four indexes (Mellen, Fung, and Günther) showed a significant protective association [16]. This highlights that while different operationalizations of the same dietary pattern can affect results, the underlying construct consistently predicts disease risk.

Table 3: Key Databases, Tools, and Metrics for Dietary Pattern Research

| Resource | Type | Primary Function & Application |
|---|---|---|
| NHANES Database | Public Database | Provides nationally representative, publicly available data on diet, health, and examination metrics for the U.S. population; ideal for validation and population-level studies [9]. |
| MyPyramid Equivalents Database (MPED) | Food Group Database | A standardized system that disaggregates mixed foods into their constituent food groups and ingredients; essential for calculating food group-based scores like aMED and DASH [15]. |
| Global Dietary Intake Database (for DII) | Reference Database | A composite database of means and standard deviations for 45 food parameters from 11 countries worldwide; serves as the reference for calculating individual DII scores [11]. |
| ROC (Receiver Operating Characteristic) Analysis | Statistical Method | Evaluates the predictive performance and contribution of a variable (e.g., a diet score) to a specific outcome relative to other factors [9]. |
| Restricted Cubic Splines (RCS) | Statistical Method | A tool used in regression models to visually and statistically explore potential non-linear relationships between an exposure (diet score) and an outcome (disease) [9]. |
| Energy Standardization Methods | Methodological Consideration | Techniques (e.g., using density per 1000 kcal or nutrient residuals) to account for total energy intake, a critical step that can influence index scores and their interpretation [15]. |
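The way a reference database of means and standard deviations feeds into a DII-style score can be sketched as below. The text above states only that intakes are compared with the global reference; the specific steps used here (z-score, conversion to a centered percentile, weighting by a per-parameter inflammatory effect score) follow the commonly described approach and should be read as an assumption. The `REFERENCE` table holds made-up numbers for two parameters, not values from [11].

```python
from statistics import NormalDist
from typing import Dict, Tuple

# parameter -> (reference mean, reference SD, inflammatory effect score)
# HYPOTHETICAL values for illustration; a real calculation needs the
# published reference table for all available food parameters.
REFERENCE: Dict[str, Tuple[float, float, float]] = {
    "fiber_g": (18.0, 5.0, -0.6),
    "saturated_fat_g": (28.0, 8.0, 0.4),
}


def dii_style_score(intake: Dict[str, float],
                    reference: Dict[str, Tuple[float, float, float]] = REFERENCE
                    ) -> float:
    """Sum of effect-weighted, centered percentiles across parameters."""
    std_normal = NormalDist()
    total = 0.0
    for param, (mean, sd, effect) in reference.items():
        if param not in intake:
            continue  # parameters without intake data are skipped
        z = (intake[param] - mean) / sd
        centered = 2.0 * std_normal.cdf(z) - 1.0  # maps to the range -1..+1
        total += centered * effect
    return total
```

With these placeholder weights, an intake equal to the reference means scores 0, a high-fiber and low-saturated-fat intake scores below 0 (anti-inflammatory), and the opposite pattern scores above 0 (pro-inflammatory).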

This comparison reveals that the choice of a dietary index should be strategically aligned with the specific research question and the biological pathways of interest.

- For research focused on general diet quality and adherence to national guidelines, the HEI-2020 is the most appropriate tool [8] [12].

- For studies on chronic inflammation as a primary mechanism, the DII is specifically designed for this purpose and has been robustly validated [11] [14].

- For outcomes like cardiovascular health, hypertension, or periodontitis, the DASH index has demonstrated particularly strong and consistent associations [9] [10].

- For investigations into healthy aging and chronic disease prevention, the aMED and related indices like the AHEI are well-supported by longitudinal evidence [13] [15].

The finding that DASH was most robustly associated with periodontitis in a direct comparison underscores that the performance of an index can be outcome-dependent. This highlights the importance of selecting an index whose underlying dietary pattern is biologically relevant to the health outcome under investigation. Future research should continue to employ comparative studies across diverse populations to further refine the application of these indices in predictive and interventional research.

The Critical Link Between Adherence Scoring and Clinical Trial Outcomes

In clinical research, the bridge between a theoretically effective intervention and a proven successful outcome is participant adherence. For chronic conditions, medication adherence averages only 50% in developed countries, presenting a significant public health challenge that leads to poor health outcomes and increased healthcare costs [17]. The accurate measurement of adherence is therefore not merely a methodological detail but a critical determinant of a trial's validity and the real-world applicability of its findings. Without robust adherence scoring, researchers cannot distinguish between intervention failure and implementation failure, potentially leading to the erroneous dismissal of effective treatments [17]. This article provides a comprehensive comparison of adherence scoring methodologies across clinical and dietary intervention research, examining their measurement properties, applications, and relationships with trial outcomes.

Comparative Analysis of Adherence Measurement Approaches

Adherence measures are broadly categorized into subjective and objective methods, each with distinct strengths, limitations, and optimal use cases. The World Health Organization emphasizes that no single measure serves as a perfect "gold standard," recommending a multi-measure approach for the most accurate assessment [17].

Table 1: Comparative Overview of Adherence Measurement Methodologies

| Method Category | Specific Measures | Key Advantages | Principal Limitations | Optimal Use Cases |
|---|---|---|---|---|
| Subjective Measures | Self-report questionnaires, Healthcare professional assessments [17] | Identifies reasons for non-adherence, Low cost, Easy to implement [17] | Patient underreporting of non-adherence, Recall bias [17] | Initial adherence screening, Understanding behavioral determinants |
| Objective - Pharmacy Records | Proportion of Days Covered (PDC), Medication Possession Ratio (MPR) [18] | Suitable for large populations, Allows multidrug adherence assessment [17] | Assumes medication taken as prescribed, Cannot detect partial adherence [17] | Long-term chronic medication studies, Health services research |
| Objective - Electronic Monitoring | Electronic Medication Packaging (EMP) devices [17] | Precise recording of dosing patterns, Captures timing of administration [17] | Higher cost, "White coat adherence" effect [17] | Detailed dosing pattern analysis, Complex regimens |
| Objective - Direct Measures | Drug/metabolite concentration in blood/urine, Biological markers [17] | Physical evidence of medication ingestion, Highly accurate for recent doses [17] | Intrusive, Expensive, Influenced by metabolic variability [17] | Single-dose therapy, Hospitalized patients |
| Dietary Adherence Algorithms | SAVoReD score, PrimeScreen-adapted FFQ, 3-day diet records [19] [20] | Captures behavioral complexity, Can combine multiple compliance aspects [19] | Self-report limitations, Requires validation for each diet type [19] | Dietary intervention trials, Lifestyle modification studies |

Advanced Adherence Trajectories and Clinical Outcomes

Modern adherence research has evolved beyond static measurements to capture dynamic patterns over time. Group-based trajectory modeling represents a significant methodological advancement, identifying distinct adherence pathways that powerfully predict clinical outcomes.

Cardiovascular Disease Adherence Trajectories and Outcomes

A recent meta-analysis of nine cohorts comprising 226,203 cardiovascular disease patients with a mean age of 66.1 years identified four distinct medication adherence trajectories over a maximum follow-up of five years [21]. The study utilized Proportion of Days Covered (PDC) as the primary adherence assessment method in eight of the nine studies [21].

Table 2: Clinical Outcomes by Medication Adherence Trajectory in Cardiovascular Disease

| Adherence Trajectory | All-Cause Mortality Risk (HR) | Major Adverse Cardiovascular Events (MACE) Risk (HR) | Other Clinical Outcomes |
|---|---|---|---|
| Persistent Adherence | Reference (1.0) | Reference (1.0) | Reference for all outcomes |
| Persistent Nonadherence | Significantly higher risk [21] | Significantly higher risk [21] | Nearly 3 times higher recurrent venous thromboembolism risk [21] |
| Gradually Increasing Adherence | 26% higher risk [21] | 22% increased risk [21] | - |
| Gradually Declining Adherence | Not specified | 24% increased risk [21] | 43% decreased major bleeding risk [21] |

This evidence underscores that maintaining persistent adherence provides the most substantial clinical benefits, while even improving adherence after periods of non-adherence does not fully eliminate excess risk [21]. The findings highlight the critical importance of early and sustained adherence interventions in cardiovascular disease management.

Dietary Intervention Adherence Methodologies

Dietary adherence measurement presents unique challenges compared to medication adherence, requiring specialized approaches that account for multidimensional eating behaviors.

The SAVoReD Scoring System

Scoring Adherence to Voluntary Restriction Diets (SAVoReD) is a metric designed to quantify and compare adherence across different food-group-restricting diets [20]. When applied to popular diets, including whole food plant-based (WFPB), vegan, vegetarian, and Paleo diets, higher adherence to WFPB and vegan diets was significantly associated with lower BMI, whereas no such association was observed for vegetarian or Paleo diet followers [20]. This shows how adherence metrics can reveal important differences between seemingly similar dietary patterns.

Composite Adherence Algorithms

The Be Healthy in Pregnancy (BHIP) trial developed a novel adherence algorithm combining compliance data for prescribed protein and energy intakes with daily step counts [19]. This approach recognized that adherence is multidimensional, particularly in complex lifestyle interventions. The study found that adherence scores significantly increased from early to mid-pregnancy but declined toward late pregnancy, primarily due to reduced physical activity [19]. This pattern illustrates the dynamic nature of adherence even within relatively short trial durations and highlights the importance of repeated measurements throughout the study period.

Experimental Protocols for Adherence Assessment

Protocol 1: Pharmacotherapy Adherence Using Proportion of Days Covered (PDC)

The Proportion of Days Covered is the preferred methodology for measuring medication adherence in chronic drug therapies, endorsed by the Pharmacy Quality Alliance (PQA) with a standard threshold of 80% for optimal clinical benefit (or 90% for antiretroviral medications) [18].

Methodology:

- Data Collection: Extract prescription claims data from electronic health records or pharmacy databases for the therapeutic class of interest over the measurement period (typically one year) [18].

- Calculation Method:

  - Identify the first prescription fill date during the measurement period (the index date)

  - Count the days on which the patient had medication on hand, based on the fill dates and the days' supply (derived from dosage and quantity supplied), counting each calendar day at most once

  - Divide the number of days "covered" by the medication by the number of days from the index date to the end of the measurement period

  - For multiple medications in the same class, apply the same methodology, taking care that overlapping coverage is not double-counted [18]

- Analysis: Determine the proportion of patients achieving PDC ≥80% threshold, with lower adherence indicating need for intervention [18].
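The calculation steps in Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not the PQA specification: the function name `proportion_of_days_covered`, the `(fill_date, days_supply)` input format, the choice to shift early refills forward, and the use of the first fill date as the start of the denominator are all assumptions made for the example, and the refill history is invented.

```python
from datetime import date, timedelta


def proportion_of_days_covered(fills, period_start, period_end):
    """Return PDC for one medication (or class) as a fraction in [0, 1].

    fills: list of (fill_date, days_supply) tuples (hypothetical format).
    Returns None when no fill falls inside the measurement period.
    """
    in_period = sorted(f for f in fills if period_start <= f[0] <= period_end)
    if not in_period:
        return None

    index_date = in_period[0][0]      # first fill in the measurement period
    covered = set()                   # each calendar day is counted once
    next_free = index_date            # first day not yet covered by supply

    for fill_date, days_supply in in_period:
        # Assumption: an early refill starts after the previous supply ends.
        start = max(fill_date, next_free)
        for offset in range(days_supply):
            day = start + timedelta(days=offset)
            if day > period_end:
                break
            covered.add(day)
        next_free = start + timedelta(days=days_supply)

    denominator = (period_end - index_date).days + 1
    return len(covered) / denominator


# Invented refill history for one patient over a four-month window.
example_fills = [
    (date(2023, 1, 1), 30),
    (date(2023, 1, 25), 30),  # early refill, shifted to begin on Jan 31
    (date(2023, 4, 1), 30),
]
pdc = proportion_of_days_covered(
    example_fills, date(2023, 1, 1), date(2023, 4, 30)
)
is_adherent = pdc >= 0.80  # 80% threshold cited in the protocol
```

In this example 90 of 120 days are covered, so the patient falls below the 80% threshold even though three 30-day fills were dispensed, because of the gap in March.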

Protocol 2: Dietary Intervention Adherence Scoring

The PREDITION trial implemented a comprehensive adherence monitoring system for a 10-week dietary intervention comparing flexitarian and vegetarian diets [22].

Methodology:

- Scoring Framework: Develop a 100-point adherence score based on:

  - Consumption of allocated foods (red meat or plant-based meat alternatives)

  - Abstention from non-provided animal-based foods

  - Continuous monitoring through food diaries and regular check-ins [22]

- Support Structure: Implement a behavioral support framework including:

  - Household pair recruitment to encourage mutual accountability

  - Regular nutritionist consultations

  - Provision of key intervention foods [22]

- Assessment: Calculate total adherence scores at the conclusion of the intervention; the trial achieved a high overall mean adherence score of 91.5/100 [22].

Protocol 3: Group-Based Trajectory Modeling for Longitudinal Adherence

This advanced statistical approach identifies distinctive adherence patterns over time, moving beyond static measures.

Methodology:

- Data Collection: Gather repeated adherence measures (e.g., monthly PDC) over the study period [21].

- Model Selection: Apply group-based trajectory modeling to identify distinctive adherence patterns:

  - Persistent adherence

  - Persistent nonadherence

  - Gradually increasing adherence

  - Gradually declining adherence [21]

- Outcome Analysis: Link trajectory groups to clinical endpoints using multivariate Cox proportional hazards models, adjusting for relevant covariates [21].

- Validation: Conduct sensitivity analyses to confirm model robustness and account for potential reverse causation [21].

Visualizing Adherence Measurement Workflows

Figure: Adherence Measurement Decision Workflow

Table 3: Essential Research Resources for Adherence Measurement

| Resource Category | Specific Tools & Methods | Research Application | Key Considerations |
|---|---|---|---|
| Medication Adherence Measures | Proportion of Days Covered (PDC), Medication Possession Ratio (MPR), Electronic Medication Packaging [17] [18] | Chronic disease medication trials, Health services research | PQA recommends PDC with 80% threshold for most chronic therapies [18] |
| Dietary Adherence Instruments | SAVoReD score, PrimeScreen-adapted FFQ, 3-day diet records, Adherence algorithms [19] [20] | Nutritional intervention studies, Lifestyle modification trials | Combine objective biomarkers with self-report for validation [19] |
| Statistical Analysis Tools | Group-based trajectory modeling, Random effects models, Cox proportional hazards regression [21] | Longitudinal adherence pattern analysis, Clinical outcome prediction | Identifies distinct adherence trajectories (persistent, declining, increasing) [21] |
| Behavioral Assessment Tools | Positive Eating Scale, Purpose-designed exit surveys, Experience measures [22] | Understanding psychological factors, Intervention refinement | Higher satisfaction correlates with better adherence [22] |

Robust adherence measurement is not merely a methodological consideration but a fundamental component of meaningful clinical trials. The evidence consistently indicates that adherence levels and trajectories significantly influence clinical outcomes across therapeutic domains [21] [20] [22]. Researchers should select adherence measures based on their specific intervention type, resources, and research questions, recognizing that multi-method approaches typically provide the most comprehensive assessment [17]. Future trial design should integrate adherence tracking as a primary component rather than a secondary consideration, with pre-specified analyses examining the relationship between adherence patterns and clinical outcomes. Only through such rigorous attention to adherence measurement can researchers distinguish an intervention that is ineffective from one that was simply not followed.

In the field of nutritional science, diet quality indices are essential tools for quantifying how closely a population's dietary patterns align with recommended guidelines. For researchers and drug development professionals, understanding the nuances of different scoring methodologies is critical for interpreting study results and selecting appropriate metrics for clinical trials or public health interventions. This guide objectively compares two prominent frameworks used to assess adherence to the Planetary Health Diet (PHD): the EAT-Lancet Index and the World Index for Sustainability and Health (WISH) 2.0 [5].

The following table summarizes the core characteristics and performance data of the two indices based on a recent study across 11 European countries [5].

| Feature | EAT-Lancet Index | WISH 2.0 |
|---|---|---|
| Core Reference | EAT-Lancet Commission's PHD [5] | EAT-Lancet Commission's PHD [5] |
| Scoring System | Ordinal | Continuous |
| Number of Food Categories | 14 | 15 (includes processed meat & alcoholic beverages) [5] |
| Key Differentiator | Original, widely-cited framework | Expanded framework with enhanced public health relevance [5] |
| Discriminatory Capacity | Standard | Higher; more accurately reflects national dietary patterns [5] |
| Alignment with Consumption Data | Good | Better [5] |
| Sample Mean Normalized Score | Higher average scores achieved | Effectively distinguishes between dietary patterns [5] |

Experimental Protocols and Data

The comparative analysis is based on a study conducted within the European PLAN’EAT project, which aimed to provide data and recommendations to transform food systems toward healthier and more sustainable dietary behaviors [5].

Detailed Methodology

- Data Source: Food consumption data was retrieved from the EFSA Comprehensive European Food Consumption Database. This database uses a homogeneous, standardized methodology (the EU menu methodology) for data collection across countries [5].

- Population: The study analyzed mean individual consumption data from the adult population (18–64 years) across 11 European countries [5].

- Index Application: The three dietary quality indices—EAT-Lancet, original WISH, and WISH 2.0—were applied to the consumption data. Scores were calculated and normalized to carry out descriptive and comparative analyses [5].

- Statistical Analysis: The researchers performed descriptive and comparative analyses of the scores. Cluster analyses were also conducted to examine dietary pattern differences by country and gender [5].

Key Experimental Findings

- Overall Adherence: The study found low adherence to the Planetary Health Diet across all 11 European countries, indicating a substantial gap between current dietary patterns and recommendations [5].

- Geographical Patterns: Southern European countries such as Italy, Greece, and Spain showed comparatively higher adherence. This highlights the cultural specificity of dietary behaviors and the similarity between the PHD and the traditional Mediterranean diet [5].

- Gender Differences: Gender-based analyses revealed that women exhibited dietary behaviors more aligned with the PHD recommendations than men, consistent with existing literature on health-conscious dietary behaviors [5].

A related study on Italian dietary trends provided longitudinal data using the WISH 2.0 score, noting a 5.1% decrease in adherence among adults between 2005-2006 and 2018-2020, which illustrates the index's sensitivity to temporal trends [23].

Index Application Workflow

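The logical workflow for applying and comparing these dietary adherence indices in a research context follows the methodology above: retrieve standardized consumption data, apply each index, normalize the scores, run descriptive and comparative analyses, and then cluster the results by country and gender [5].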

The Researcher's Toolkit

The table below details key resources and their functions for conducting research on dietary adherence scoring systems.

| Research Reagent / Resource | Function / Application in Research |
|---|---|
| EFSA Comprehensive European Food Consumption Database | Provides standardized, population-level food consumption data expressed in grams per day, essential for calculating and comparing dietary indices across Europe [5] [23]. |
| EU Menu Methodology | A standardized dietary survey methodology that ensures data homogeneity and comparability across different European countries [5]. |
| Planetary Health Diet (PHD) Framework | The reference dietary pattern against which adherence is measured; it integrates public health and environmental sustainability goals [5]. |
| NOVA Food Classification System | A tool for categorizing foods by level of industrial processing, used in parallel with adherence indices to assess diet quality (e.g., ultra-processed food consumption) [23]. |
| Statistical Software (e.g., R, SPSS, Python) | Used for applying scoring algorithms, performing descriptive and inferential statistics (cluster analysis, cross-tabulation), and generating visualizations [24]. |

Performance Analysis and Researcher Insights

The experimental data demonstrates that while both indices are valuable, WISH 2.0 offers enhanced practical utility for certain research applications. Its inclusion of processed meat and alcoholic beverages, two categories with significant public health and environmental relevance, makes it a more comprehensive tool for contemporary dietary studies [5].

The continuous scoring system of WISH 2.0, compared to the ordinal system of the EAT-Lancet index, likely contributes to its greater discriminatory capacity. This allows researchers to detect more subtle variations and trends in dietary patterns across populations and over time [5] [23]. For studies requiring high sensitivity to demographic or temporal differences, WISH 2.0 may be the superior instrument.
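The link between scoring granularity and discriminatory capacity can be illustrated with a small Python sketch for a single food group that should be limited. The band edges, point ranges, target value, and function names below are illustrative assumptions chosen for the example; they are not the published EAT-Lancet Index or WISH 2.0 scoring rules.

```python
def ordinal_score(intake_g, target_g):
    """Ordinal 0-3 points for a food to limit (illustrative bands only)."""
    ratio = intake_g / target_g
    if ratio <= 1.0:
        return 3
    if ratio <= 1.5:
        return 2
    if ratio <= 2.0:
        return 1
    return 0


def continuous_score(intake_g, target_g, max_points=10.0):
    """Continuous score: full points at or below the target, falling
    linearly to zero at twice the target (illustrative rule only)."""
    ratio = intake_g / target_g
    if ratio <= 1.0:
        return max_points
    if ratio >= 2.0:
        return 0.0
    return max_points * (2.0 - ratio)


# Two hypothetical populations with different mean intakes of the same food.
target = 30.0                      # hypothetical upper target in g/day
intake_a, intake_b = 33.0, 44.0    # hypothetical mean intakes in g/day

ordinal_a = ordinal_score(intake_a, target)
ordinal_b = ordinal_score(intake_b, target)
continuous_a = continuous_score(intake_a, target)
continuous_b = continuous_score(intake_b, target)
```

Both intakes fall in the same ordinal band and receive identical ordinal points, while the continuous rule assigns the higher intake a visibly lower score. Differences of this kind, accumulated over many food groups, are one plausible reason a continuous index separates populations more finely.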

Implementing Adherence Scoring in Research and Clinical Settings

In both scientific research and professional practice, structured scoring systems provide an essential methodology for transforming complex, multi-faceted qualitative assessments into quantifiable, comparable, and objective data. These systems enable researchers and clinicians to standardize evaluations across diverse domains, from dietary adherence and healthcare competency to emergency supply management. The fundamental purpose of these systems is to mitigate subjective bias, enhance reproducibility, and facilitate data-driven decision-making. As the volume and complexity of data in health and management sciences continue to grow, the sophistication of these scoring methodologies has evolved correspondingly, incorporating advanced mathematical frameworks to handle uncertainty and competing criteria.

This guide focuses on two distinct categories of scoring systems: those designed for evaluating adherence to dietary patterns and those based on the multi-criteria decision-making framework known as Evaluation based on Distance from Average Solution (EDAS). While applied in different domains, both share a common foundation in systematically quantifying performance against established benchmarks. The accurate assessment of dietary adherence, for instance, is crucial for understanding the real-world effectiveness of nutritional interventions, as the theoretical benefits of a diet can only be realized if participants follow it appropriately [20]. Similarly, EDAS provides a powerful tool for ranking alternatives in complex decision-making environments where multiple, often conflicting, criteria must be considered simultaneously [25].

Understanding the Scoring System Landscape

Dietary Adherence Scoring Systems

Dietary adherence scoring systems are designed to measure how closely individuals follow prescribed or voluntary dietary patterns. These systems typically convert complex dietary intake data into simplified numerical scores that can be statistically analyzed against health outcomes.

- Function and Purpose: They serve as objective metrics in nutritional epidemiology and clinical trials, enabling researchers to quantify exposure to dietary patterns rather than isolated nutrients [20] [23] [4]. This is crucial for establishing valid relationships between diet and health outcomes.

- Methodological Approach: Most systems operationalize adherence by assessing consumption of target food groups or nutrients, often based on dietary recalls, food frequency questionnaires, or food diaries. Points are allocated based on meeting specific intake targets, with total scores reflecting overall adherence levels [23] [4].

- Key Applications: These scores are used to investigate associations between dietary patterns and body mass index (BMI), chronic disease risk, nutrient adequacy, and sustainability metrics [20] [23].

The EDAS (Evaluation Based on Distance from Average Solution) Method

EDAS is a multi-criteria decision-making (MCDM) method that ranks alternatives based on their distance from the average solution across all evaluated criteria [26] [25].

- Core Mechanism: Unlike simpler weighted-sum approaches, EDAS calculates two separate measures for each alternative: positive distance from average (PDA) and negative distance from average (NDA). The two are combined to rank the alternatives, so the preferred alternative is the one with high PDA and low NDA values [25].

- Advantages Over Traditional Methods: Conventional weighted-sum scoring systems often incorrectly penalize poor performance on unimportant criteria while failing to sufficiently penalize poor performance on critical criteria. EDAS and its advanced variants address this logical flaw through their dual-distance calculation approach [27].

- Evolution and Extensions: The basic EDAS method has been extended to operate in various uncertain environments, including fuzzy, intuitionistic fuzzy, spherical fuzzy, and hesitant fuzzy sets, enhancing its ability to handle real-world decision-making ambiguity [26] [25].

Table 1: Comparison of Scoring System Categories

| Feature | Dietary Adherence Systems | EDAS-Based Systems |
|---|---|---|
| Primary Purpose | Quantify compliance with dietary patterns | Rank alternatives in multi-criteria decisions |
| Key Output | Adherence score (continuous or categorical) | Ranking order of alternatives |
| Methodological Basis | Nutrient/food group intake vs. targets | Distance from average solution across criteria |
| Common Applications | Nutritional epidemiology, clinical trials | Supply chain, emergency management, healthcare |
| Handling Uncertainty | Statistical confidence intervals | Fuzzy sets, hesitant fuzzy models |

Comparative Analysis of Dietary Adherence Scoring Systems

System Methodologies and Scoring Protocols

Various dietary adherence scoring systems have been developed, each with distinct methodological approaches and applications:

SAVoReD (Scoring Adherence to Voluntary Restriction Diets): This metric quantifies and compares adherence across food-group-restricting diets like Paleo, vegan, vegetarian, and whole-food plant-based (WFPB). It examines associations between adherence and diet quality (Healthy Eating Index), BMI, and diet duration. In application, higher adherence to WFPB and vegan diets was significantly associated with lower BMI, but no association was observed for vegetarian or Paleo diet followers [20].

AIDGI (Adherence to Italian Dietary Guidelines Indicator) and WISH (World Index for Sustainability and Health): These complementary indices assess diet quality using different reference standards. AIDGI measures alignment with national Italian dietary recommendations, while WISH assesses adherence to the Planetary Health Diet, integrating both health and environmental sustainability criteria. When applied to Italian consumption data, these indices revealed scores around 50% of theoretical maximums, indicating substantial room for improvement in dietary quality [23].

DASH (Dietary Approaches to Stop Hypertension) Scoring Algorithm: This system assesses adherence based on nine target nutrients: saturated fatty acids (≤6% of energy), total fat (≤27% of energy), protein (≥18% of energy), cholesterol (≤71.4 mg/1,000 kcal), dietary fiber (≥14.8 g/1,000 kcal), magnesium (≥238 mg/1,000 kcal), calcium (≥590 mg/1,000 kcal), potassium (≥2,238 mg/1,000 kcal), and sodium (≤1,143 mg/1,000 kcal). Participants receive one point for each nutrient goal they meet and 0.5 points for each nutrient on which they reach only an intermediate target, for a maximum score of 9. A score ≥4.5 typically classifies participants as "DASH accordant" [4].
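The DASH scoring rule described above can be sketched in Python as follows. The nine goals are taken from the text; the intermediate targets are not given there, so the sketch accepts them as a parameter and fills it with an arbitrary placeholder (25% looser than each goal) purely so the example runs. The function and key names are hypothetical, and real analyses should substitute the intermediate targets of the scoring scheme they follow.

```python
# Nutrient goals from the text: (goal, direction). "max" means intake should
# be at or below the goal, "min" means at or above it.
DASH_GOALS = {
    "saturated_fat_pct_energy": (6.0, "max"),
    "total_fat_pct_energy": (27.0, "max"),
    "protein_pct_energy": (18.0, "min"),
    "cholesterol_mg_per_1000kcal": (71.4, "max"),
    "fiber_g_per_1000kcal": (14.8, "min"),
    "magnesium_mg_per_1000kcal": (238.0, "min"),
    "calcium_mg_per_1000kcal": (590.0, "min"),
    "potassium_mg_per_1000kcal": (2238.0, "min"),
    "sodium_mg_per_1000kcal": (1143.0, "max"),
}

# PLACEHOLDER intermediate targets (assumption, not from the source):
# 25% above the goal for nutrients to limit, 25% below for nutrients to reach.
PLACEHOLDER_INTERMEDIATE = {
    name: goal * 1.25 if direction == "max" else goal * 0.75
    for name, (goal, direction) in DASH_GOALS.items()
}


def dash_score(intake, intermediate):
    """Sum 1 point per goal met and 0.5 per intermediate target met."""
    score = 0.0
    for name, (goal, direction) in DASH_GOALS.items():
        value = intake[name]
        if direction == "max":
            meets_goal = value <= goal
            meets_mid = value <= intermediate[name]
        else:
            meets_goal = value >= goal
            meets_mid = value >= intermediate[name]
        if meets_goal:
            score += 1.0
        elif meets_mid:
            score += 0.5
    return score


# Invented energy-adjusted intake for one participant.
example_intake = {
    "saturated_fat_pct_energy": 5.0,
    "total_fat_pct_energy": 30.0,
    "protein_pct_energy": 19.0,
    "cholesterol_mg_per_1000kcal": 150.0,
    "fiber_g_per_1000kcal": 15.0,
    "magnesium_mg_per_1000kcal": 100.0,
    "calcium_mg_per_1000kcal": 600.0,
    "potassium_mg_per_1000kcal": 1000.0,
    "sodium_mg_per_1000kcal": 2000.0,
}
score = dash_score(example_intake, PLACEHOLDER_INTERMEDIATE)
dash_accordant = score >= 4.5  # accordance cut-off stated in the text
```

Keeping the goals in a single table and passing the intermediate targets in separately makes it straightforward to swap in a different reference scheme without touching the scoring loop.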

Experimental Data and Performance Comparisons

Table 2: Performance Metrics of Dietary Adherence Scoring Systems in Research Applications

| Scoring System | Study Population | Key Findings | Associations with Health Outcomes |
|---|---|---|---|
| SAVoReD | Followers of WFPB, vegan, vegetarian, Paleo diets | Higher adherence to WFPB/vegan diets associated with lower BMI; association strongest in those following diet ≥2 years | No significant BMI association for vegetarian/Paleo diets; WFPB/vegan had healthiest HEI scores/BMI |
| AIDGI/WISH | Italian adults (2005-2020) | AIDGI: +5.6% in elderly, -5.9% in adults; WISH: +2.8% in elderly, -5.1% in adults | UPFs contributed 23% of energy despite being only 6% of consumption by weight |
| DASH Score | Israeli adults (n=2,579); nutrition facts label (NFL) users vs. non-users | 32.1% of NFL users were DASH accordant vs. 20.6% of non-users | NFL users had higher odds of meeting protein, fiber, magnesium, calcium, potassium targets |
| Checklist vs. Global Rating | Medical students in OSCEs | Higher pass rates with global rating vs. checklist scoring | Combined approaches provide more comprehensive assessment |

Experimental Protocols for Dietary Adherence Assessment

Protocol 1: SAVoReD Application in ADAPT Study

- Data Collection: Dietary intake assessed using the Diet History Questionnaire II (DHQ-II). Additional data included anthropometric measurements (BMI) and demographic information [20].

- Scoring Implementation: The SAVoReD metric was applied to quantify adherence across four diet types (WFPB, vegan, vegetarian, Paleo). Each dietary pattern had specific food-group restrictions that defined adherence criteria [20].

- Statistical Analysis: Multiple regression models examined associations between SAVoReD adherence scores and outcome measures (HEI, BMI), adjusting for potential confounders. Interaction effects between adherence and diet duration were tested [20].

- Sample Characteristics: The study included sufficient sample sizes across diet categories to enable comparative analysis between groups with different restriction patterns [20].

Protocol 2: AIDGI and WISH Application to Italian Dietary Trends

- Data Source: Food consumption data from the EFSA Comprehensive European Food Consumption Database for 2005-2006 and 2018-2020, including 2,313 adults and 290 elderly participants in the initial survey and 726 adults and 156 elderly participants in the follow-up [23].

- Index Calculation: AIDGI scoring based on adherence to Italian dietary guidelines across food groups. WISH scoring based on alignment with Planetary Health Diet reference values [23].

- UPF Classification: Application of NOVA classification to identify ultra-processed foods and quantify their contribution to total energy and nutrient intake [23].

- Trend Analysis: Statistical comparison of scores between time periods, with stratification by age group (adults 18-64 years vs. elderly 65-74 years) and gender [23].

Protocol 3: DASH Accordance and Nutrition Facts Label (NFL) Use Study

- Study Design: Cross-sectional analysis of Israeli National Health and Nutrition Survey (2014-2016) data from 2,579 participants aged 21-64 years [4].

- DASH Scoring: Single 24-hour dietary recall used to calculate DASH score based on 9 nutrient targets. Participants categorized as DASH accordant (score ≥4.5) or non-accordant [4].

- NFL Use Assessment: Participants categorized as NFL users if they reported "always or often" checking nutrition facts on food labels [4].

- Statistical Modeling: Multivariable logistic regression estimated odds ratios for DASH accordance among NFL users versus non-users, adjusting for age, sex, education, SES, physical activity, smoking, and BMI [4].

The EDAS Method: Foundations and Advancements

Core Methodology and Mathematical Framework

The EDAS method operates through a structured sequence of calculations to rank alternatives in multi-criteria decision-making environments. The process begins with the construction of a decision matrix where rows represent alternatives and columns represent criteria. After determining criterion weights, the method calculates the average solution for each criterion across all alternatives. The key differentiator of EDAS is the subsequent calculation of positive and negative distances from this average solution [25].

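The EDAS method's logical decision workflow proceeds as follows: construct the decision matrix, determine the criterion weights, compute the average solution for each criterion, calculate the positive and negative distances from that average, aggregate the weighted distances for each alternative, and rank the alternatives by the resulting appraisal score [25].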
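The calculation sequence described above can be sketched in plain Python. The source does not spell out the formulas, so this sketch follows the commonly published crisp EDAS formulation (weighted sums of PDA and NDA, each normalized by its maximum, then averaged into an appraisal score); that choice, the function name `edas_scores`, and the example data are assumptions. It also assumes every criterion has a positive average.

```python
def edas_scores(matrix, weights, benefit):
    """Return one appraisal score per alternative (higher is better).

    matrix:  rows are alternatives, columns are criteria (crisp numbers).
    weights: one weight per criterion, summing to 1.
    benefit: True where larger values are better, False for cost criteria.
    """
    n_alt = len(matrix)
    n_crit = len(weights)

    # Average solution for each criterion across all alternatives.
    avg = [sum(row[j] for row in matrix) / n_alt for j in range(n_crit)]

    sp, sn = [], []  # weighted sums of positive / negative distances
    for row in matrix:
        pos = neg = 0.0
        for j in range(n_crit):
            diff = (row[j] - avg[j]) / avg[j]
            if not benefit[j]:
                diff = -diff              # for cost criteria, lower is better
            pos += weights[j] * max(0.0, diff)    # PDA contribution
            neg += weights[j] * max(0.0, -diff)   # NDA contribution
        sp.append(pos)
        sn.append(neg)

    # Normalize; fall back to 1.0 when all distances are zero.
    max_sp = max(sp) or 1.0
    max_sn = max(sn) or 1.0
    return [
        ((p / max_sp) + (1.0 - n / max_sn)) / 2.0
        for p, n in zip(sp, sn)
    ]


# Invented example: three suppliers scored on quality (benefit) and cost.
decision_matrix = [
    [8.0, 2.0],
    [6.0, 4.0],
    [4.0, 6.0],
]
scores = edas_scores(decision_matrix, [0.5, 0.5], [True, False])
ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
```

In this example the first alternative is above average on both criteria and ranks first, the second sits exactly at the average, and the third is below average on both and ranks last.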

Extensions and Applications in Complex Environments

The basic EDAS framework has been extended to handle various types of uncertain and imprecise information common in real-world decision scenarios:

Spherical Hesitant Fuzzy Soft EDAS: This advanced extension integrates three powerful mathematical concepts: spherical fuzzy sets (which consider membership, non-membership, and neutral membership functions), hesitant fuzzy sets (accommodating multiple possible membership values), and soft sets (parameterization tool). This integrated approach provides exceptional flexibility in capturing complex uncertainty in emergency decision-making contexts, such as post-flood relief supply management [26].

Domain Applications: EDAS and its extensions have been successfully applied across diverse fields including healthcare management, supply chain optimization, energy resource allocation, manufacturing process selection, and transportation planning. In healthcare, it has been used for medical supplier selection and treatment option evaluation [25].

Table 3: EDAS Method Variations and Their Applications

| EDAS Variant | Uncertainty Handling Capability | Sample Application | Key Advantage |
|---|---|---|---|
| Conventional EDAS | Crisp, numerical data | Business management, manufacturing | Simple implementation with clear interpretation |
| Fuzzy EDAS | Linguistic assessments, vague information | Healthcare management, supplier selection | Accommodates qualitative expert judgments |
| Spherical Hesitant Fuzzy Soft EDAS | Multi-dimensional uncertainty with parameterization | Emergency supply management | Handles membership, neutral membership, and non-membership simultaneously |
| Intuitionistic Fuzzy EDAS | Degree of membership and non-membership | Energy project selection | Captures support and opposition dimensions |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Methodological Components for Scoring System Implementation

| Research Component | Function/Purpose | Example Implementation |
|---|---|---|
| 24-Hour Dietary Recall | Captures detailed recent food intake | Israeli National Health Survey used single 24-hour recall with visual aids for portion estimation [4] |
| Food Consumption Database | Standardized food composition and consumption data | EFSA Comprehensive European Food Consumption Database provided Italian consumption data [23] |
| Healthy Eating Index (HEI) | Measures diet quality against Dietary Guidelines | Used as outcome measure in SAVoReD validation [20] |
| NOVA Classification System | Categorizes foods by processing level | Applied to identify ultra-processed foods in Italian diet analysis [23] |
| Spherical Hesitant Fuzzy Soft Aggregation Operators | Transforms complex uncertain data into decision scores | Enabled emergency supply decision-making in post-flood scenarios [26] |
| Checklist and Global Rating Scales | Standardized performance assessment in OSCEs | Compared for evaluating medical student clinical competencies [28] |

The comparative analysis presented in this guide demonstrates that structured scoring systems serve as indispensable tools across research and practice domains, but their effectiveness depends on appropriate selection and implementation. Dietary adherence systems like SAVoReD, AIDGI, WISH, and DASH provide validated methodologies for quantifying compliance with nutritional patterns, each with distinct strengths and applications. Meanwhile, EDAS and its advanced extensions offer robust solutions for complex multi-criteria decision environments characterized by uncertainty and competing priorities.

Critical considerations for researchers and practitioners include:

- Domain-Specific Requirements: Dietary research demands different scoring properties (sensitivity to food group consumption, nutrient adequacy) compared to emergency management decisions (handling uncertain information, rapid ranking of alternatives).

- Uncertainty Handling: The complexity of real-world data often necessitates advanced mathematical frameworks like spherical hesitant fuzzy sets to adequately capture decision ambiguity.

- Validation and Reliability: As demonstrated by the comparison of checklist and global rating systems in medical assessment, combining complementary scoring approaches often yields more comprehensive evaluation than reliance on a single method [28].

The evolution of scoring systems continues to address limitations of conventional approaches, particularly through negative scoring mechanisms that more logically penalize poor performance on important criteria [27] and through hybrid models that integrate multiple mathematical frameworks to better capture real-world complexity [26]. These advancements promise enhanced decision support capabilities across scientific research, clinical practice, and organizational management.

This guide provides an objective comparison of different algorithmic approaches for creating composite adherence scores, a common challenge in clinical and intervention research. It compares the performance, methodological underpinnings, and applications of various scoring algorithms, with a specific focus on evidence from dietary intervention and medication adherence studies.

Comparative Analysis of Composite Adherence Algorithms

Adherence measurement moves beyond tracking single behaviors to creating composite scores that reflect overall adherence to multi-faceted interventions, such as complex medication regimens or dietary patterns. The table below compares the core algorithmic approaches identified in the literature.

Table 1: Core Algorithmic Approaches for Composite Adherence Measurement

| Algorithm Name | Core Computational Logic | Key Performance Characteristics | Best-Suited Applications |
|---|---|---|---|
| "All" (Concurrent) [29] [30] | Participant is considered adherent only if adherence to each individual component meets the threshold (e.g., PDC ≥80% for every medication). | Stringency: most conservative measure; flags non-adherence to any component [29]. Predictive power: significantly predicts hazards of healthcare utilization (e.g., ER visits) [30]. | Scenarios where adherence to all components is critical for efficacy or safety (e.g., multi-drug chemotherapy). |
| "At Least One" [29] [30] | Participant is considered adherent if adherence to any one of the components meets the threshold. | Sensitivity: identifies more patients as persistent; slowest decline in adherence over time [29]. Predictive power: significantly predicts hazards of healthcare utilization [30]. | Initial screening to identify a pool of patients with some level of engagement. |
| "Both" (Joint Coverage) [29] | Calculates the proportion of days covered by all required medications simultaneously. | Stringency: falls between "All" and "At Least One" [29]. Classification: can misclassify if a patient is highly adherent to one medication but not others. | Regimens where medications are intended to be taken concurrently for a synergistic effect. |
| "Average" [29] [30] | Computes the mean adherence value across all individual component adherence scores. | Ease of use: simple to calculate and interpret [30]. Predictive power: significantly predicts hazards of all-cause and diabetes-related ER visits [30]. | Providing a single, summary metric of overall adherence behavior across a regimen. |
| Weighted Scoring [31] | Creates a composite score from multiple inputs, each multiplied by a pre-defined weight reflecting its relative importance. | Flexibility: can incorporate both static (e.g., patient risk factors) and dynamic (e.g., recent behavior) data [31]. Context-rich: offers a more nuanced and holistic risk profile [31]. | Complex interventions where different components have varying levels of importance or for real-time, dynamic risk assessment. |
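The first four estimators in the table can be sketched in Python from per-medication sets of covered days. This is a simplified illustration under stated assumptions: every medication shares one observation window of `n_days`, covered days are supplied as sets of day indices, and the function name `composite_adherence` and the example data are invented for the sketch.

```python
def composite_adherence(covered_days, n_days, threshold=0.8):
    """Classify one patient as adherent or not under four composite rules.

    covered_days: dict mapping medication name to a set of covered day
                  indices (0 .. n_days - 1) within a shared window.
    Returns a dict of booleans keyed by estimator name.
    """
    # Per-medication Proportion of Days Covered.
    pdc = {drug: len(days) / n_days for drug, days in covered_days.items()}

    # Days on which every medication was on hand at the same time.
    joint_days = set.intersection(*covered_days.values())

    return {
        "all": all(value >= threshold for value in pdc.values()),
        "at_least_one": any(value >= threshold for value in pdc.values()),
        "both": len(joint_days) / n_days >= threshold,
        "average": sum(pdc.values()) / len(pdc) >= threshold,
    }


# Invented example: two medications over a 100-day window.
example_coverage = {
    "drug_a": set(range(0, 90)),    # covered on days 0-89   (PDC 0.90)
    "drug_b": set(range(20, 100)),  # covered on days 20-99  (PDC 0.80)
}
result = composite_adherence(example_coverage, n_days=100)
```

Here the patient is classified as adherent by "all", "at least one", and "average", but not by "both", because only 70 days are covered by the two drugs together. In this simplified shared-window setup, joint coverage can never exceed the lowest single-medication PDC; studies that define observation windows differently may see a different ordering of the estimators.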

Experimental Protocols for Adherence Measurement

The following section details the methodologies from key studies that have implemented and validated these algorithms, providing a blueprint for experimental design.

Protocol: Composite Medication Adherence in Diabetes

This retrospective cohort study provides a template for comparing composite adherence estimators and linking them to clinical outcomes [29] [30].

- Data Source: Commercial claims database (MarketScan 2002–2003).

- Cohort: 6,043 non-elderly patients with separate prescriptions for two classes of diabetes medications (sulfonylurea and thiazolidinedione).

- Intervention/Exposure: N/A (observational study of prescription refills).

- Adherence Measurement:

  - Metric: Proportion of Days Covered (PDC).

  - Timeframes: Measured over 90-day periods (8 quarters) and cumulatively over a 2-year study period.

  - Algorithms: The four composite measures ("All", "At Least One", "Both", "Average") were calculated. Adherence was dichotomized using an 80% threshold.

- Outcome Measurement: The primary outcomes were all-cause and diabetes-related emergency room (ER) visits. Statistical analyses included Cox proportional hazards models and concordance statistics to evaluate the predictive power of each algorithm.

- Key Findings: All composite measures predicted hazards of ER visits, with no single algorithm showing clear superiority. The optimal dichotomization cut-point for predicting outcomes was not consistently 80% [30].
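The composite measures in this protocol can be illustrated with a short calculation. The sketch below is a minimal illustration, not the study's implementation. It covers only the two composites whose definitions appear in the comparison table ("Both" as days covered by all medications simultaneously, "Average" as the mean of per-drug PDC values). The function names, the day-index representation of coverage, and the sample patient are all assumptions made for the example.

```python
from statistics import mean


def pdc(covered_days: set[int], period_days: int) -> float:
    """Proportion of days in [0, period_days) with medication on hand."""
    if period_days <= 0:
        raise ValueError("period_days must be positive")
    in_period = {d for d in covered_days if 0 <= d < period_days}
    return len(in_period) / period_days


def composite_pdc(coverage: dict[str, set[int]], period_days: int) -> dict[str, float]:
    """Return the "Both" and "Average" composite measures for one patient."""
    if not coverage:
        raise ValueError("coverage must contain at least one medication")
    per_drug = [pdc(days, period_days) for days in coverage.values()]
    # "Both": only days on which every medication is available count as covered.
    joint_days = set.intersection(*coverage.values())
    return {"both": pdc(joint_days, period_days), "average": mean(per_drug)}


def is_adherent(score: float, threshold: float = 0.80) -> bool:
    """Dichotomize a PDC-type score; 0.80 is the threshold used in the protocol."""
    return score >= threshold


# Hypothetical patient over one 90-day quarter (day indices 0..89).
coverage = {
    "sulfonylurea": set(range(0, 81)),        # 81 covered days, PDC 0.90
    "thiazolidinedione": set(range(18, 90)),  # 72 covered days, PDC 0.80
}
scores = composite_pdc(coverage, period_days=90)
# "both" counts only the 63 overlapping days (0.70); "average" is about 0.85.
```

With the 80% threshold, this invented patient is classified as nonadherent by "Both" and adherent by "Average", which shows how the choice of composite can change classification for the same refill behavior.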

Protocol: Dietary Pattern Adherence and Periodontitis

This cross-sectional study demonstrates the application of composite scoring in nutritional epidemiology and its association with health outcomes [3].

- Data Source: Publicly available data from the National Health and Nutrition Examination Survey (NHANES), 2009–2014.

- Cohort: 8,571 adults aged 30 years and older.

- Intervention/Exposure: Adherence to four dietary patterns, calculated from two 24-hour dietary recalls and operationalized as continuous index scores:

- Healthy Eating Index-2020 (HEI-2020)

- alternative Mediterranean Diet Score (aMED)

- Dietary Approaches to Stop Hypertension (DASH)

- Dietary Inflammatory Index (DII)

- Adherence Measurement:

- Metric: Standardized dietary pattern scores.

- Modeling: Dietary indices were entered into logistic regression models individually (single), in pairs (double), and all together (overall) to explore their association with periodontitis.

- Outcome Measurement: Periodontitis, defined via standardized full-mouth periodontal examinations measuring clinical attachment loss and probing depth.

- Key Findings: While all indices showed a significant effect in single models, only DASH and DII retained significance in the overall model. The contribution of dietary indices to periodontitis, determined by ROC analysis, was second only to demographic factors like sex and ethnicity [3].
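Placing indices with different ranges on a common scale is what allows them to share one regression model. The sketch below assumes that "standardized" means z-score standardization (subtract the sample mean, divide by the sample standard deviation); the protocol summary does not specify the method, and the function name and sample values are hypothetical.

```python
from statistics import mean, stdev


def standardize(scores: list[float]) -> list[float]:
    """Convert raw index scores to z-scores using the sample standard deviation."""
    if len(scores) < 2:
        raise ValueError("need at least two scores to standardize")
    mu = mean(scores)
    sd = stdev(scores)
    if sd == 0:
        raise ValueError("scores have zero variance")
    return [(s - mu) / sd for s in scores]


# Hypothetical HEI-2020 totals for three participants (0-100 scale).
hei_raw = [40.0, 50.0, 60.0]
hei_z = standardize(hei_raw)
```

After standardization, a one-unit change means one standard deviation for every index. Direction still differs: higher HEI-2020, aMED, and DASH scores indicate better diet quality, whereas higher DII scores indicate a more pro-inflammatory diet.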

Workflow Visualization of Composite Score Development

Figure (not reproduced in this text): generalized workflow for developing and validating a composite adherence score, synthesizing the methodologies from the cited research.

The table below lists the essential materials and tools for research on dietary intervention adherence, as used in the experimental protocols above.

Table 2: Research Reagents and Solutions for Adherence Studies

| Item Name | Function in Research | Example from Literature |

|---|---|---|

| NHANES Dietary Data | Provides large-scale, publicly available demographic, dietary, and health examination data for observational studies. | Used as the primary data source to calculate dietary indices (HEI-2020, aMED, DASH, DII) and link them to periodontitis status [3]. |

| 24-Hour Dietary Recall | A structured interview method to quantitatively assess an individual's food and beverage intake over the previous 24 hours. | Used in NHANES and the DG3D trial to collect detailed dietary intake data for calculating adherence scores [3] [32]. |

| Validated Dietary Pattern Scores (HEI, aMED, DASH, DII) | Standardized algorithms to convert complex dietary intake data into a single quantitative measure of diet quality or inflammatory potential. | These indices were the core "composite scores" tested for their association with chronic disease outcomes in large cohort studies [3] [13]. |

| Pharmacy Claims Databases | Provides objective, longitudinal data on medication prescription fills for calculating refill-based adherence metrics. | Used in studies of multiple medication adherence to calculate PDC and its composite variants ("All", "Average", etc.) [29] [30]. |

| Proportion of Days Covered (PDC) | The primary metric for measuring medication adherence using pharmacy refill data; represents the proportion of days a patient has medication available. | Served as the fundamental adherence metric from which composite scores ("All", "At Least One", "Average") were built in diabetes medication studies [29] [30]. |

| Video-Based Monitoring System (VSMS) | A digital tool using asynchronous video uploads to directly observe and verify self-administration of interventions in near-real-time. | Used in repeated-dose clinical trials to obtain dosing information with accuracy comparable to direct observation, validating participant adherence [33]. |

This guide compares GPS tracking technologies on performance, reliability, and suitability for research scenarios that require precise location and movement data, such as dietary intervention adherence studies involving field researchers or participants.

The table below summarizes the core performance characteristics of major GPS technology categories, highlighting key differentiators for research applications. [34] [35]

| Technology Type | Representative Examples | Tracking Accuracy | Battery Performance | Connectivity Requirements | Best-Suited Research Application |

|---|---|---|---|---|---|

| Dedicated GPS Tracking Devices | PAJ GPS, Samsara, GPS Insight | High (within meters via satellite) [34] | Long-lasting (days/weeks); hardwired options [34] | Satellite + Cellular; independent operation [34] | Long-term asset/fleet tracking; remote area studies [34] [36] |

| Smartphone Navigation Apps | Google Maps, Apple Maps, Waze [37] | Variable (uses GPS, Wi-Fi, cell triangulation) [34] | High drain on phone battery [34] [35] | Requires consistent cellular/Wi-Fi [34] | Urban field navigation; short-term, casual location sharing [34] |

| Professional GPS Software Platforms | SafetyCulture, Rhino Fleet, Quartix [36] | High (dependent on device hardware) [36] | Varies by connected device [36] | Cellular/Wi-Fi for data transmission [36] | Fleet management; logistics; large-scale field team coordination [36] |

Detailed Technology Comparison and Experimental Data

Dedicated GPS Tracking Devices

Dedicated devices are purpose-built hardware for continuous, reliable location monitoring.

- Experimental Protocol for Accuracy Testing: To evaluate a GPS device's accuracy, a controlled route is established with known geocoordinate checkpoints. The device is mounted on a vehicle that traverses the route in varied environments (urban canyons, open highways, rural areas). The reported coordinates from the device are logged and compared against the known checkpoint coordinates. The average deviation in meters across all checkpoints quantifies accuracy. [34]

- Key Performance Data: These devices connect directly to satellites, providing consistent, real-time accuracy typically within a few meters. They operate independently of smartphones, ensuring tracking continues even if a phone is turned off. Many devices offer extended battery life from days to weeks or can be hardwired to a vehicle for permanent power. [34] [35]
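The accuracy protocol above reduces to a distance calculation at each checkpoint followed by an average. The sketch below is one way to compute it, assuming great-circle (haversine) distance on a spherical Earth with a mean radius of 6,371 km. The source does not state which distance formula is used, and the function names are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters (spherical approximation)


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def mean_deviation_m(
    checkpoints: list[tuple[float, float]],
    reported: list[tuple[float, float]],
) -> float:
    """Average distance between known checkpoints and device-reported positions."""
    if not checkpoints or len(checkpoints) != len(reported):
        raise ValueError("need equal, non-empty lists of (lat, lon) pairs")
    total = sum(haversine_m(*known, *seen) for known, seen in zip(checkpoints, reported))
    return total / len(checkpoints)
```

Reporting the mean separately for each environment (urban canyon, open highway, rural) keeps a poor urban result from being masked by good open-sky readings.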

Smartphone-Based GPS and Navigation Apps

These apps utilize the smartphone's built-in GPS receiver and are characterized by their convenience and rich feature sets.

- Experimental Protocol for Urban Navigation: Researchers can compare apps by simulating common field tasks. For example, multiple smartphones running different apps (e.g., Google Maps, Apple Maps, Waze) are used simultaneously to navigate identical urban routes during peak and off-peak hours. Metrics such as route calculation time, adherence to the fastest route despite traffic, ETA accuracy, and battery consumption are measured and compared. [37] [38]

- Key Performance Data: Accuracy can fluctuate as apps often augment satellite GPS data with less precise Wi-Fi and cellular tower triangulation. Continuous tracking rapidly depletes the phone's battery. Functionality is entirely dependent on the phone's power and an active data connection, making it less reliable for uninterrupted, long-term data collection. [34] [35]

Professional GPS Tracking Software

These are comprehensive software platforms that utilize data from dedicated devices or smartphones to provide advanced analytics and management features.

- Experimental Protocol for Fleet Efficiency: In a study involving a field research team, vehicles are equipped with a software platform like Samsara or Quartix. Over a defined period, researchers monitor key metrics provided by the software, including idle time, route deviation from pre-defined plans, and fuel consumption. The data is used to analyze operational efficiency and identify bottlenecks. [36]

- Key Performance Data: These platforms offer features critical for managing mobile resources: real-time tracking, geofencing (virtual boundaries that trigger alerts), historical route replay, and detailed reporting on driver behavior and vehicle health. [36]

Visual Workflow: Selecting a GPS Tool for Research

Figure (not reproduced in this text): decision-making process for selecting the appropriate GPS technology based on research requirements.

The Researcher's Toolkit: Essential GPS Technology Solutions

The table below catalogs key technology solutions and their primary functions in a research context.

| Tool Name | Technology Type | Primary Research Function |

|---|---|---|

| PAJ GPS Trackers [35] | Dedicated Hardware | Discreet, long-term asset and vehicle monitoring with high accuracy. |

| Google Maps Platform [37] | Smartphone App / API | Urban navigation, route planning, and location data integration into apps. |

| Samsara [36] | Professional Software | Centralized fleet management with AI-driven insights and operational data. |

| Rhino Fleet Management [36] | Professional Software | Real-time vehicle tracking, geofencing, and driver behavior monitoring. |

| SafetyCulture (iAuditor) [36] | Professional Software | GPS-enabled asset tracking and mobile data collection for field audits. |

| Waze [37] | Smartphone App | Real-time, crowdsourced traffic data for optimizing field routes. |

This guide compares dietary intervention adherence scoring systems by examining their experimental implementation and performance data across three clinical domains: non-alcoholic fatty liver disease (NAFLD), pregnancy, and cardiometabolic conditions.

Comparative Performance of Adherence Scoring Systems

Table 1: Quantitative Performance Comparison of Adherence Scoring Systems Across Clinical Domains

| Clinical Domain | Scoring System Name | System Type & Components | Key Performance Data | Reference |

|---|---|---|---|---|

| NAFLD | Exercise and Diet Adherence Scale (EDAS) | 33-item questionnaire across 6 dimensions (e.g., understanding, belief, self-control); 165-point total score. | Sensitivity/Specificity: score ≥116 (Good), 100%/75.8% for predicting >500 kcal/d reduction; score <97 (Poor), 89.5%/44.4% for predicting daily exercise. Clinical Outcomes: Significant correlation with daily calorie reduction (P<0.05) and ALT reduction (P=0.02). | [39] [40] |

| Pregnancy | BHIP Adherence Algorithm | Combined score from prescribed protein/energy intakes and daily step counts. | Adherence Change: Significant increase from early (1.52±0.70) to mid-pregnancy (1.89±0.82), declining to 1.55±0.78 in late pregnancy (P<0.0005). Diet Quality: Intervention group significantly improved and maintained diet scores (18.7±7.6 to 22.9±6.1, P<0.001). | [19] |

| Cardiometabolic | Proportion of Days Covered (PDC) with IMB Model | Algorithmic identification of PDC<80% plus Information-Motivation-Behavioral Skills model. | Adherence Improvement: Adjusted odds ratio of 1.29 (95% CI: 1.06-1.56) for BP medications versus usual care. Risk Factor Control: No overall improvement in HbA1c, systolic BP, or LDL-C; subgroup with pharmacist outreach showed improved HbA1c (-0.4%, 95% CI: -0.8% to -0.1%). | [41] |

| NAFLD | Modified Alternate-Day Calorie Restriction (MACR) Adherence | Direct monitoring of 70% calorie restriction on fasting days. | Adherence Rate: Maintained 75-83% throughout 8-week trial. Clinical Outcomes: Significant reductions in BMI (P=0.02), ALT (P=0.02), liver steatosis and fibrosis scores (both P<0.01) versus control. | [42] |
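The EDAS cut-offs in Table 1 and the sensitivity/specificity figures reported for them can be expressed in a few lines. The sketch below is a minimal illustration rather than the scale authors' implementation. The function names and the "intermediate" label for scores between the two published cut-offs are assumptions.

```python
def classify_edas(score: int) -> str:
    """Map an EDAS total (maximum 165) to an adherence category."""
    if not 0 <= score <= 165:
        raise ValueError("EDAS total must be between 0 and 165")
    if score >= 116:
        return "good"
    if score < 97:
        return "poor"
    return "intermediate"  # assumed label; the table names only the two cut-offs


def sensitivity_specificity(predicted: list[bool], actual: list[bool]) -> tuple[float, float]:
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    tp = sum(p and a for p, a in zip(predicted, actual))
    fn = sum((not p) and a for p, a in zip(predicted, actual))
    tn = sum((not p) and (not a) for p, a in zip(predicted, actual))
    fp = sum(p and (not a) for p, a in zip(predicted, actual))
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative case")
    return tp / (tp + fn), tn / (tn + fp)
```

To evaluate the "good" cut-off against an outcome such as a >500 kcal/d reduction, `predicted` would hold `classify_edas(score) == "good"` for each patient and `actual` would hold whether that patient achieved the reduction.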

Detailed Experimental Protocols and Methodologies

Case Study 1: NAFLD and the Exercise and Diet Adherence Scale (EDAS)

Objective: To develop and validate a scale for rapidly assessing adherence to lifestyle interventions in NAFLD patients, for whom lifestyle correction is the primary treatment [39].

Methodology:

- Item Pool Establishment: Medical professionals conducted face-to-face interviews with 20 patients with typical NAFLD to record the factors affecting their exercise and diet adherence [39].

- Scale Development: An initial pool of 36 items across five dimensions was refined using the Delphi method with five NAFLD research professors and one psychology professor. After expert consultation and statistical analysis, the final EDAS contained 33 items across six dimensions: Understanding and valuing, Belief, Self-control of diet, Strengthen exercise self-control, Control dietary conditions, and Strengthen conditions for exercise [39].

- Validation Cohort: NAFLD patients aged 18-70 years were enrolled from a single hospital, excluding those with other liver diseases, excessive alcohol consumption, or serious systemic diseases. Sample size was calculated using a factor analysis approach [39].

- Intervention Protocol: Patients received 6-month lifestyle interventions including moderate aerobic exercise (>4 times/week, 150-250 min cumulative) and dietary recommendations (500-1000 kcal daily reduction, balanced low-sugar, low-fat diet) [39].