Equivalence Trials in Nutritional Science: A Comprehensive Guide to Design, Methodology, and Application for Researchers

Abstract

This article provides a comprehensive framework for designing and implementing equivalence trials in nutritional intervention research. Aimed at researchers, scientists, and drug development professionals, it explores the foundational concepts distinguishing equivalence from superiority and non-inferiority designs. The content details specific methodological considerations for nutritional trials, including control group selection, blinding challenges, and sample size calculation. It addresses common troubleshooting scenarios such as managing complex food matrices and adherence issues, while highlighting validation techniques and comparative analysis frameworks. By synthesizing current methodologies and evidence, this guide aims to enhance the quality and clinical relevance of nutritional equivalence research for robust evidence-based practice.

Understanding Equivalence Trials: Foundational Principles and Their Role in Nutritional Science

Equivalence trials are a specific type of clinical study designed to demonstrate that the effect of a new intervention is similar to that of an established comparator within a pre-specified margin [1]. In the context of nutritional intervention research, these trials answer the question: "Is the effect of intervention A equivalent to that of intervention B?" rather than seeking to prove superiority [1]. This design is particularly valuable when comparing a novel nutritional approach—which might be less expensive, easier to implement, or have fewer side effects—to a current standard, with the goal of establishing that it provides comparable health benefits [1].

The fundamental rationale for these trials stems from a limitation of traditional null hypothesis testing. In standard superiority trials, a non-significant result (p ≥ 0.05) does not prove equivalence; it may simply indicate insufficient statistical power [1]. Equivalence trials address this problem by introducing a pre-defined equivalence margin (Δ), which represents the largest difference in effect between two interventions that would still be considered clinically acceptable [1] [2]. The trial then uses confidence intervals to determine if the true effect difference likely lies within this margin.

Core Concepts and Methodological Framework

Distinguishing Between Trial Objectives

Understanding the distinctions between superiority, equivalence, and non-inferiority trials is fundamental to selecting the appropriate design. The following table summarizes their key characteristics:

Table 1: Comparison of Clinical Trial Primary Objectives

| Trial Objective | Primary Research Question | Interpretation of a Positive Result | Common Context in Nutrition Research |
|---|---|---|---|
| Superiority | Is Intervention A more effective than Intervention B? | Intervention A is statistically significantly better than B. | Comparing a new supplement to a placebo. |
| Non-Inferiority | Is Intervention A not unacceptably worse than Intervention B? | Intervention A preserves a pre-specified fraction of B's effect; it is not worse by a clinically important margin [2]. | Comparing a simplified dietary regimen to a complex standard one. |
| Equivalence | Is the effect of Intervention A similar to that of Intervention B? | The effects of A and B do not differ by more than a pre-defined equivalence margin in either direction [1]. | Demonstrating that a plant-based protein source is as effective as whey protein for muscle synthesis. |

The Equivalence Margin (Δ) and Statistical Analysis

The equivalence margin (Δ) is the most critical element in designing an equivalence trial. This pre-specified value represents the largest difference between interventions that is considered clinically irrelevant [1]. The choice of Δ should be justified by a combination of clinical judgment and empirical evidence, such as historical data on the minimal clinically important difference (MCID) for a key outcome [1].

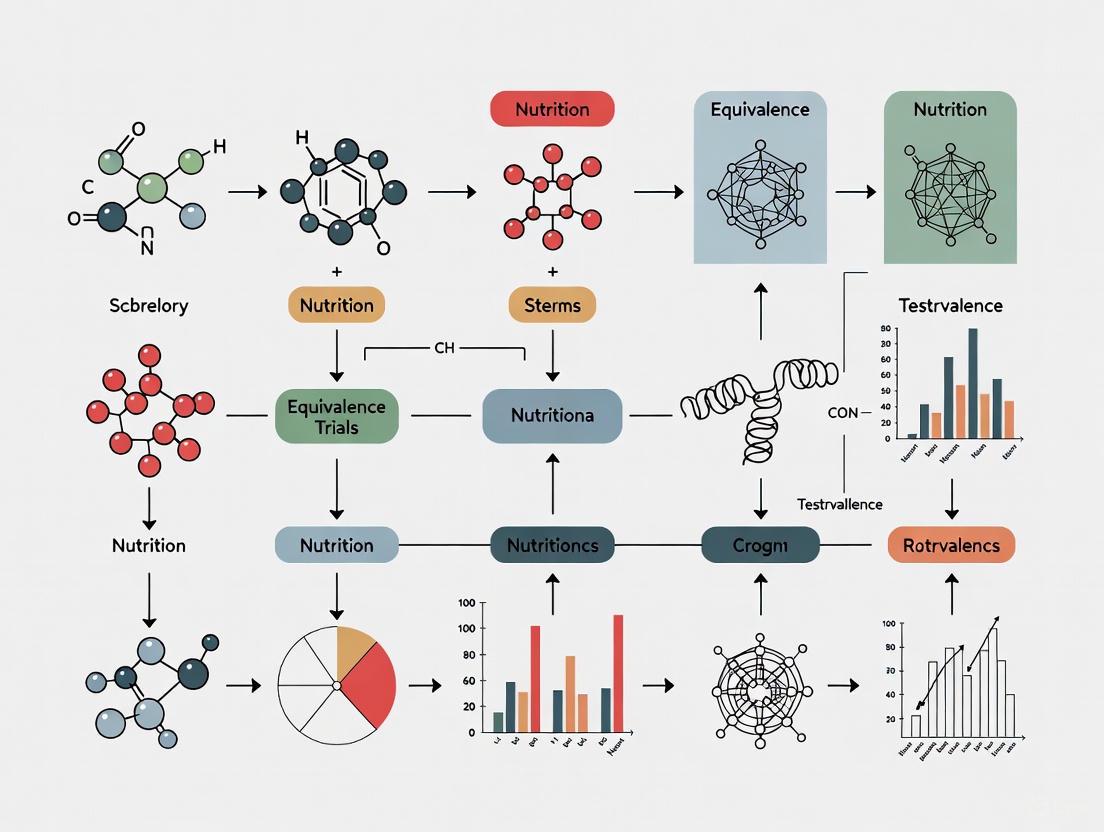

The statistical analysis is typically performed using a two-sided 95% confidence interval (CI) for the true difference between interventions [2]. The result is declared equivalent if the entire confidence interval lies within the range of –Δ to +Δ [2]. The following diagram illustrates the workflow for designing an equivalence trial and interpreting its results.

Diagram 1: Equivalence Trial Workflow and Interpretation
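The CI decision rule can be sketched in a few lines of Python. This is a minimal sketch, not a validated analysis routine: it assumes a continuous outcome, two independent groups summarized by mean, SD and n, and a large-sample normal approximation (a t-based interval would be slightly wider in small trials). The function name `equivalence_by_ci` and the lean-mass numbers are hypothetical.

```python
import math
from statistics import NormalDist


def equivalence_by_ci(mean_new, sd_new, n_new, mean_ref, sd_ref, n_ref,
                      margin, level=0.95):
    """Return (lower, upper, equivalent) for the difference mean_new - mean_ref.

    Large-sample normal approximation with unpooled standard errors.
    """
    diff = mean_new - mean_ref
    se = math.sqrt(sd_new ** 2 / n_new + sd_ref ** 2 / n_ref)
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)  # about 1.96 for a 95% CI
    lower, upper = diff - z * se, diff + z * se
    # Equivalence is claimed only if the whole interval lies inside (-margin, +margin)
    equivalent = -margin < lower and upper < margin
    return lower, upper, equivalent


# Hypothetical lean-mass changes (kg) with a margin of 0.5 kg
print(equivalence_by_ci(1.10, 1.0, 100, 1.20, 1.0, 100, margin=0.5))  # CI about (-0.38, 0.18): equivalent
print(equivalence_by_ci(1.10, 1.0, 10, 1.20, 1.0, 10, margin=0.5))    # CI about (-0.98, 0.78): not shown
```

The second call illustrates the limitation of null hypothesis testing described earlier: its interval includes zero (a non-significant difference) yet extends beyond both margins, so it supports neither superiority nor equivalence.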

Regulatory Context and Guidelines

Regulatory bodies like the European Medicines Agency (EMA) provide specific guidance on the design and interpretation of equivalence trials. A core focus of modern regulations is ensuring that these complex trials are designed with a high degree of rigor to avoid false conclusions of equivalence.

The Estimands Framework (ICH E9(R1))

A significant recent development is the mandatory incorporation of the Estimands Framework following the ICH E9(R1) addendum [3] [2]. An estimand provides a structured definition of the treatment effect being measured, specifically addressing how post-randomization events, known as intercurrent events (e.g., participants discontinuing the dietary intervention, starting a rescue medication, or dying), are handled [4] [5]. This framework brings clarity and alignment between the trial's scientific question and its statistical analysis.

Regulators note that for equivalence trials, a single estimand is often insufficient. The EMA frequently recommends defining two co-primary estimands to thoroughly assess the impact of intercurrent events [4] [5]. For example, one estimand might use a "treatment policy" strategy (incorporating all data regardless of events), while another uses a "hypothetical" strategy (addressing what would have happened in the absence of the event) [5].
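When two co-primary estimands are defined, the usual reading of "co-primary" is that equivalence is claimed only if it is shown for each of them. The sketch below encodes that rule under that assumption; the estimand labels and interval values are hypothetical, and in practice each interval would come from an analysis matched to its strategy (for example, treatment policy versus hypothetical).

```python
def coprimary_equivalence(intervals, margin):
    """intervals maps an estimand label to the (lower, upper) CI for new - reference.

    Assumes "co-primary" means every estimand must meet the equivalence criterion.
    """
    verdicts = {label: -margin < lo and hi < margin
                for label, (lo, hi) in intervals.items()}
    return all(verdicts.values()), verdicts


ok, per_estimand = coprimary_equivalence(
    {"treatment_policy": (-0.30, 0.20), "hypothetical": (-0.45, 0.55)},  # hypothetical CIs (kg)
    margin=0.5,
)
print(ok, per_estimand)  # False {'treatment_policy': True, 'hypothetical': False}
```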

Key Regulatory Requirements

The EMA draft guideline emphasizes several requirements for robust equivalence trials [2]:

- Assay Sensitivity and Constancy: The trial must be capable of detecting a difference between interventions if one truly exists. This requires justification that the performance of the active comparator in the current trial is consistent with its historically established effect.

- Robust Justification of Margin: The equivalence margin (Δ) cannot be chosen based solely on statistical convenience or to reduce sample size. It must be clinically justified and reflect a difference that is acceptable to patients, clinicians, and regulators [2].

- Prohibitions on Post-Hoc Switching: A superiority trial cannot be re-defined as an equivalence trial after results are known, as this undermines the trial's credibility and introduces bias [2].

Application in Nutritional Intervention Research

The principles of equivalence trials are highly relevant to advancing the field of nutritional science. As research moves beyond simple placebo comparisons, directly comparing active interventions becomes necessary to establish optimal, practical, and sustainable dietary strategies.

Sample Experimental Protocol

A published scoping review on nutritional interventions provides a template for how these concepts can be applied in practice [6]. The following protocol outlines a hypothetical equivalence trial comparing two dietary strategies.

Table 2: Sample Protocol for a Nutritional Equivalence Trial

| Protocol Element | Description | Application Example |
|---|---|---|
| Objective | To test the equivalence of a novel, low-cost plant-based protein blend versus standard whey protein on muscle mass in older adults. | Primary: change in appendicular lean mass (kg). |
| Design | Randomized, controlled, parallel-group equivalence trial. | Participants are randomized to one of two active interventions. |
| Participants | Healthy older adults, aged ≥60 years [7]. | Community-dwelling, free of major chronic diseases affecting muscle metabolism. |
| Interventions | Group A: novel plant-based protein blend, 30 g/day; Group B: whey protein isolate, 30 g/day; both combined with standardized resistance training [7]. | Supplements are isocaloric and matched for appearance and taste. |
| Equivalence Margin | Δ = 0.5 kg for change in lean mass. | Based on the established Minimal Clinically Important Difference (MCID) for lean mass in sarcopenia. |
| Primary Estimand | Strategy: treatment policy; Endpoint: change in lean mass from baseline to 6 months; Handling of intercurrent events: use of non-protocol exercises is measured as a covariate, and discontinuation of the supplement is handled as a missing data problem. | Analysis follows the intention-to-treat principle. |

The Researcher's Toolkit for Nutritional Equivalence Trials

Successfully conducting an equivalence trial in nutrition requires careful consideration of methodological tools.

Table 3: Essential Methodological Tools for Nutritional Equivalence Trials

| Tool / Concept | Function & Importance |
|---|---|
| Equivalence Margin (Δ) | The cornerstone of the trial. Defines the threshold for clinical irrelevance. Its rigorous justification is paramount for regulatory and scientific acceptance [1] [2]. |
| Confidence Interval (CI) | The primary statistical tool for interpretation. A two-sided 95% CI for the difference between groups must lie entirely within -Δ to +Δ to claim equivalence [2]. |
| Estimand Framework | A structured plan that pre-defines how to handle intercurrent events (e.g., non-adherence to the diet, use of concomitant therapies), ensuring the estimated treatment effect answers a clear scientific question [4] [5]. |
| Standard Protocol Items (SPIRIT) | A reporting guideline for clinical trial protocols. Its use promotes transparency and completeness in protocol design, which is critical for complex equivalence trials [8]. |
| Historical Evidence Meta-Analysis | Used to justify the equivalence margin and the constancy assumption. It involves a systematic review and meta-analysis of previous trials of the active comparator to reliably estimate its effect size [2]. |
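The historical meta-analysis row can be made concrete with an approach commonly described for non-inferiority margins, which can also inform the lower equivalence limit: pool the comparator's effect versus placebo, take the conservative confidence bound of that pooled effect (often called M1), and allow the new intervention to lose at most a pre-specified fraction of it (the resulting margin is often called M2). The sketch assumes a fixed-effect inverse-variance meta-analysis, effects oriented so that positive values favour the comparator, and 50% preservation; these choices and the trial estimates below are illustrative, and a random-effects model may be more appropriate when studies are heterogeneous.

```python
import math
from statistics import NormalDist


def pooled_effect(effects, ses):
    """Fixed-effect inverse-variance pooled estimate and its standard error."""
    weights = [1 / se ** 2 for se in ses]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    return pooled, math.sqrt(1 / sum(weights))


def margin_from_history(effects, ses, fraction_preserved=0.5, level=0.95):
    """Return (M1, M2): conservative comparator effect and the derived margin."""
    pooled, se = pooled_effect(effects, ses)
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    m1 = pooled - z * se  # lower confidence bound of the comparator's effect vs placebo
    if m1 <= 0:
        raise ValueError("historical trials do not establish a comparator effect")
    m2 = (1 - fraction_preserved) * m1  # largest loss that still preserves the fraction
    return m1, m2


# Hypothetical placebo-controlled trials of the comparator: (effect, standard error)
m1, m2 = margin_from_history([1.0, 1.2, 0.8], [0.20, 0.25, 0.30])
print(round(m1, 2), round(m2, 2))  # roughly 0.75 and 0.37
```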

Equivalence trials provide a powerful and methodologically rigorous framework for demonstrating that two nutritional interventions produce clinically similar effects. Their successful execution depends on a clear understanding of their distinct logic, centered on the pre-specified equivalence margin and the use of confidence intervals for interpretation. The modern regulatory landscape, guided by the ICH E9(R1) estimands framework, demands heightened rigor in their design, particularly in the handling of intercurrent events and the justification of the margin. For researchers in nutritional science, mastering these core concepts is essential for generating robust evidence to compare active interventions and advance the field toward more effective, accessible, and personalized dietary strategies.

In the field of clinical research, particularly in nutritional science, the strategic selection of a trial objective is a cornerstone of a valid and informative study. The choice fundamentally shapes the trial's design, statistical analysis, and ultimate interpretation. While the gold standard for establishing the efficacy of a new intervention is the randomized clinical trial (RCT), specifying the correct hypothesis remains a challenging task for many researchers [9].

This guide provides a structured comparison of the three primary trial objectives: superiority, non-inferiority, and equivalence. For researchers designing trials on nutritional interventions—which can range from behavioral changes and fortification to supplementation—understanding these distinctions is critical to generating high-quality, actionable evidence [10]. A well-chosen design ensures that the trial is adequately powered to answer the right clinical question, thereby strengthening the evidence base for nutritional guidelines.

Core Concepts and Statistical Hypotheses

At their heart, these three trial types are defined by their unique statistical hypotheses, which are formulated around a pre-specified margin of clinical significance (Δ). This margin (delta) is the smallest difference in effect between two interventions that is considered clinically important [9] [1].

The following table summarizes the key characteristics of each trial type.

Table 1: Fundamental Comparison of Superiority, Non-Inferiority, and Equivalence Trials

| Feature | Superiority Trial | Non-Inferiority Trial | Equivalence Trial |
|---|---|---|---|
| Primary Objective | To demonstrate that a new intervention is superior to (better than) a comparator [9] [11]. | To demonstrate that a new intervention is not unacceptably worse than a comparator [9] [1]. | To demonstrate that a new intervention is neither superior nor inferior to a comparator, within a set margin [9] [1]. |
| Typical Context | Comparing a new intervention against a placebo or a standard of care to prove greater efficacy [11]. | Comparing a new intervention that has secondary advantages (e.g., lower cost, fewer side effects, less invasive) against an effective standard [9] [1]. | Demonstrating that two interventions are clinically interchangeable; often used for generic drugs or formulations [11]. |
| Statistical Hypotheses | H₀: μ₁ - μ₀ ≤ Δ; H₁: μ₁ - μ₀ > Δ [9] | H₀: μ₁ - μ₀ ≤ -Δ; H₁: μ₁ - μ₀ > -Δ [9] | H₀: μ₁ - μ₀ ≤ -Δ or μ₁ - μ₀ ≥ Δ; H₁: -Δ < μ₁ - μ₀ < Δ [9] |
| Interpretation of Result | Rejecting the null hypothesis (H₀) provides evidence that the new treatment is superior. | Rejecting the null hypothesis (H₀) provides evidence that the new treatment is not inferior. | Rejecting the null hypothesis (H₀) provides evidence that the treatments are equivalent. |
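The three sets of hypotheses in Table 1 translate into simple one-sided tests. The sketch below assumes an estimated difference `diff` (μ₁ - μ₀, new minus comparator, with larger values better), its standard error `se`, and a normal approximation; the function names are hypothetical. The equivalence test is the two one-sided tests (TOST) procedure: equivalence is supported only when both one-sided nulls are rejected, so its p-value is the larger of the two. Rejecting each one-sided null at the 2.5% level gives the same decision as requiring the two-sided 95% CI to lie within -Δ to +Δ.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def superiority_p(diff, se, delta=0.0):
    """One-sided p-value for H0: diff <= delta versus H1: diff > delta."""
    return 1 - _STD_NORMAL.cdf((diff - delta) / se)


def noninferiority_p(diff, se, margin):
    """One-sided p-value for H0: diff <= -margin versus H1: diff > -margin."""
    return 1 - _STD_NORMAL.cdf((diff + margin) / se)


def equivalence_p(diff, se, margin):
    """TOST p-value for H0: |diff| >= margin versus H1: |diff| < margin."""
    p_lower = 1 - _STD_NORMAL.cdf((diff + margin) / se)  # H0: diff <= -margin
    p_upper = _STD_NORMAL.cdf((diff - margin) / se)      # H0: diff >= +margin
    return max(p_lower, p_upper)


# Hypothetical estimate: diff = -0.10 kg, se = 0.14 kg, margin = 0.5 kg
for name, p in [("superiority", superiority_p(-0.10, 0.14)),
                ("non-inferiority", noninferiority_p(-0.10, 0.14, 0.5)),
                ("equivalence", equivalence_p(-0.10, 0.14, 0.5))]:
    print(f"{name}: p = {p:.4f}")
```

With these inputs the superiority test is far from significant while both the non-inferiority and TOST p-values fall below 0.025, which is exactly the reversal of roles between null and alternative hypotheses that these designs rely on.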

The Margin of Clinical Significance (Δ)

The choice of the margin (Δ) is a critical and nuanced decision, requiring both clinical judgment and empirical evidence [1]. It should be informed by asking: "What is the smallest difference between these interventions that would warrant disregarding the novel intervention in favour of the criterion standard?" [1]. This margin can sometimes be informed by the Minimal Clinically Important Difference (MCID), which can be estimated from patient or clinician input, expert consensus, or assumptions about standardized effect sizes [1]. For a superiority trial, a large Δ makes it harder to reject the null hypothesis, while in a non-inferiority or equivalence trial, a larger Δ makes it easier to claim non-inferiority or equivalence [9].

Methodological and Analytical Considerations

The different objectives of superiority, non-inferiority, and equivalence trials necessitate specific approaches to their design and analysis.

Analytical Populations: Intention-to-Treat vs. Per-Protocol

The choice of analysis population can significantly impact the results, especially in non-inferiority and equivalence trials.

- Intention-to-Treat (ITT) Analysis: Includes all randomized participants in the groups to which they were originally assigned. It is the primary analysis for superiority trials as it preserves the benefits of randomization and reflects real-world conditions where not everyone adheres to treatment [12].

- Per-Protocol (PP) Analysis: Includes only participants who completed the study without major protocol violations. Historically, non-inferiority trials placed greater emphasis on PP analysis because including non-adherent patients can dilute the observed difference between groups, artificially making them look more similar and increasing the chance of falsely claiming non-inferiority [12]. However, there is increasing skepticism about PP analyses as they subvert randomization, and their definition can be subjective. The trend is moving towards using ITT as the primary analysis, supplemented by sensitivity analyses to assess the impact of non-adherence [12].
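The two analysis populations differ only in who is included, which the following sketch makes explicit. It assumes each participant record carries the arm allocated at randomization, a flag for a major protocol deviation (whose definition the protocol must pre-specify), and an observed outcome; the `Participant` class and the toy data are hypothetical, and missing outcomes are not handled.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Participant:
    pid: int
    assigned_arm: str       # arm allocated at randomization, never changed afterwards
    major_deviation: bool   # e.g. stopped the supplement early (protocol-specific)
    outcome: float


def arm_means(participants):
    """Mean outcome per allocated arm."""
    by_arm = {}
    for p in participants:
        by_arm.setdefault(p.assigned_arm, []).append(p.outcome)
    return {arm: mean(values) for arm, values in by_arm.items()}


def itt_means(participants):
    # Intention-to-treat: everyone randomized, analysed in the allocated arm
    return arm_means(participants)


def pp_means(participants):
    # Per-protocol: only participants without a major deviation
    return arm_means([p for p in participants if not p.major_deviation])


trial = [Participant(1, "A", False, 1.0), Participant(2, "A", True, 0.0),
         Participant(3, "B", False, 1.0), Participant(4, "B", False, 0.8)]
print(itt_means(trial))  # {'A': 0.5, 'B': 0.9}
print(pp_means(trial))   # {'A': 1.0, 'B': 0.9}
```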

Sample Size Calculation

The sample size formulae for these trials are mathematically related but are based on different assumptions.

- In a superiority trial, the calculation is based on achieving adequate power to demonstrate that the confidence interval for the difference between treatments excludes zero, assuming the new treatment is superior by a given amount (δ) [9] [12].

- In a non-inferiority trial, the calculation is based on achieving adequate power to demonstrate that the confidence interval excludes the non-inferiority margin (-Δ), assuming the two treatments are equally effective [9] [12].

For continuous outcomes, the sample size formulae for superiority and non-inferiority are identical when using two-sided confidence intervals, given their respective assumptions [12]. A common misconception is that non-inferiority trials must be much larger; however, their size depends entirely on the chosen margin and the assumption of equal efficacy [12].
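A per-arm sample size sketch for a continuous outcome is shown below. It assumes equal allocation, a common SD, a two-sided (1 - α) confidence interval and a normal approximation; superiority assumes a true difference equal to `difference`, while non-inferiority and equivalence assume the true difference is zero. For equivalence the power term uses z at 1 - β/2 because both margins must be excluded. The SD of 1.0 kg is an assumption, not a value from the sample protocol, and the figures are before any allowance for attrition.

```python
import math
from statistics import NormalDist


def n_per_group(sd, difference, design, alpha=0.05, power=0.80):
    """Approximate participants per arm for a continuous outcome.

    `difference` is the assumed true difference (superiority) or the margin
    (non-inferiority, equivalence).
    """
    z = NormalDist().inv_cdf
    beta = 1 - power
    if design in ("superiority", "non-inferiority"):
        z_power = z(1 - beta)
    elif design == "equivalence":
        # Both margins must be excluded; assumes the true difference is zero
        z_power = z(1 - beta / 2)
    else:
        raise ValueError(f"unknown design: {design}")
    return math.ceil(2 * sd ** 2 * (z(1 - alpha / 2) + z_power) ** 2 / difference ** 2)


# Assumed SD of 1.0 kg with the 0.5 kg difference/margin from the sample protocol
for design in ("superiority", "non-inferiority", "equivalence"):
    print(design, n_per_group(sd=1.0, difference=0.5, design=design))
# superiority 63, non-inferiority 63, equivalence 85
```

The identical superiority and non-inferiority figures mirror the point made above, and equivalence needs more participants because both limits must be cleared. Because n scales with 1/Δ², halving the margin roughly quadruples every figure, which is why an overly strict margin quickly becomes impractical.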

Interpreting Results with Confidence Intervals

The interpretation of results is most intuitively understood through confidence intervals (CIs).

Practical Application in Nutritional Intervention Research

Nutritional interventions present unique methodological challenges, including the difficulty of blinding, ensuring adherence to dietary regimens, and selecting appropriate control groups [10]. The choice between superiority, non-inferiority, and equivalence designs is therefore crucial.

Selecting the Right Design: A Decision Framework

The following flowchart outlines a logical process for selecting the most appropriate trial objective for a nutritional intervention study.
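The selection logic can also be summarized as a small function. It encodes only the criteria this guide states in prose (superiority to show a clear improvement, non-inferiority when a new option with practical advantages need only be not unacceptably worse, equivalence when interchangeability is the aim); the function and its yes/no inputs are a hypothetical simplification, and real choices also depend on a credible comparator and a defensible margin.

```python
def choose_design(show_improvement: bool,
                  new_has_practical_advantages: bool,
                  need_interchangeability: bool) -> str:
    """Hypothetical encoding of the design-selection criteria described in this guide."""
    if need_interchangeability:
        # Differences in either direction matter: two-sided margin
        return "equivalence"
    if show_improvement:
        return "superiority"
    if new_has_practical_advantages:
        # Cheaper or easier option that must not be unacceptably worse: one-sided margin
        return "non-inferiority"
    return "clarify the research question first"


print(choose_design(show_improvement=False,
                    new_has_practical_advantages=True,
                    need_interchangeability=False))  # non-inferiority
```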

The Researcher's Toolkit for Nutritional Trials

Successfully implementing a nutritional trial requires careful consideration of several methodological components. The CONSORT (Consolidated Standards of Reporting Trials) statement provides a baseline for reporting, and specific extensions are highly recommended for nutritional studies [10].

Table 2: Essential Methodological Toolkit for Nutritional Intervention Trials

| Component | Description & Application in Nutrition Research |
|---|---|
| CONSORT Extensions | Guidelines to improve reporting quality. Key extensions for nutrition research include: Non-Pharmacologic Treatment, Herbal Interventions (if using herbal supplements), Non-Inferiority and Equivalence Trials, and Cluster Trials (if intervening at a group level) [10]. |
| Randomization Techniques | A fundamental process to eliminate selection bias. Common types include: • Simple randomization: best for large samples (>200) [10]. • Block randomization: ensures equal group sizes throughout the trial, ideal for slow recruitment [10] [13]. • Stratified randomization: balances groups for key prognostic factors (e.g., age, BMI, disease severity) [10] [13]. |
| Control Group Design | The choice of control is pivotal for interpreting results. Options include: • Placebo control: an inert substance matching the active intervention's look and taste (e.g., a placebo supplement) [13]. • Active control: the current standard of care or dietary recommendation [13]. • Attention control: provides a similar level of participant contact as the intervention group without the active component [10]. |
| Blinding (Masking) | Reduces performance and detection bias. Although blinding is challenging in behavioral nutrition, it is crucial in supplement trials; when two active interventions differ in form or administration route, a double-dummy design can be used to maintain it [13]. |
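Block and stratified randomization can be sketched with Python's standard `random` module. This is an illustration only, assuming two arms, a fixed block size of 4 and strata supplied as tuples such as (age band, BMI category); the names `block_sequence` and `StratifiedRandomizer` are hypothetical. In a real trial the list would be generated and concealed centrally, and fixed small blocks can let staff predict the next allocation, which is why varying block sizes are often used.

```python
import random


def block_sequence(n_blocks, block_size=4, arms=("A", "B"), rng=None):
    """Permuted-block allocation list: each block contains every arm equally often."""
    if block_size % len(arms):
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = rng if rng is not None else random.Random()
    sequence = []
    for _ in range(n_blocks):
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)  # random order within each block
        sequence.extend(block)
    return sequence


class StratifiedRandomizer:
    """Keeps a separate permuted-block list for each stratum."""

    def __init__(self, block_size=4, arms=("A", "B"), seed=None):
        self.block_size = block_size
        self.arms = arms
        self.rng = random.Random(seed)
        self.pending = {}  # stratum -> allocations not yet used

    def assign(self, stratum):
        queue = self.pending.setdefault(stratum, [])
        if not queue:  # start a new block when the previous one is used up
            queue.extend(block_sequence(1, self.block_size, self.arms, self.rng))
        return queue.pop(0)


randomizer = StratifiedRandomizer(seed=2024)
for stratum in [("60-69", "BMI<30"), ("70+", "BMI>=30"), ("60-69", "BMI<30")]:
    print(stratum, randomizer.assign(stratum))
```

Balance is guaranteed within each stratum at the end of every completed block, which is what keeps prognostic factors evenly spread across arms even when recruitment is slow.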

The decision to frame a clinical trial question in terms of superiority, non-inferiority, or equivalence is a foundational one that dictates the study's entire architecture. For researchers in nutritional science, where interventions are often complex and compared against existing standards, this choice is particularly salient.

A superiority trial is the design of choice when the objective is to demonstrate a clear improvement in efficacy. In contrast, a non-inferiority trial is a powerful design when evaluating a new intervention that offers practical advantages over an established effective treatment, and the goal is to demonstrate that its efficacy is not unacceptably worse. An equivalence trial is appropriate when the goal is to show that two interventions are clinically interchangeable.

Moving beyond a rigid classification, the most robust approach is to pre-specify the hypothesis and margin based on sound clinical reasoning and to focus on the estimation of the treatment effect with its confidence interval, allowing for a nuanced interpretation of the results [1] [12]. By carefully selecting and applying the correct trial objective, nutritional researchers can generate higher-quality evidence that more effectively informs clinical practice and public health policy.

The Growing Importance of Equivalence Designs in Nutritional Research

Randomized clinical trials (RCTs) have traditionally been the gold standard for establishing the efficacy of new interventions in nutrition research. Historically, superiority trials dominated this landscape, designed to determine if one intervention was statistically better than another, often a placebo or control condition [14] [15]. However, as the field has matured, effective nutritional interventions have been established for many conditions, prompting new research questions. Investigators now often need to determine not if a novel intervention is better, but if it is as effective as an existing standard—particularly when the new option offers practical advantages such as lower cost, greater accessibility, improved sustainability, or better acceptability [1] [12].

This shift has driven the growing importance of equivalence and non-inferiority designs in nutritional research. These trials address a fundamentally different question from superiority trials. An equivalence trial is designed to show that the response to a novel intervention is neither better nor worse than a standard intervention by more than a pre-specified, clinically unimportant margin [14] [1]. A non-inferiority trial, its close relative, is a one-sided test aiming to show that a new intervention is not worse than the standard by more than that margin [14] [11]. The adoption of these designs allows the field to advance by validating new options that may be practically superior while being clinically "as good as" established standards, thereby expanding the toolkit available to clinicians, policymakers, and consumers.

Comparative Framework: Superiority, Non-inferiority, and Equivalence Trials

Understanding the distinction between trial types is fundamental to appropriate methodological selection. The following table summarizes the core hypotheses, interpretations, and common scenarios for each design.

Table 1: Key Characteristics of Superiority, Non-Inferiority, and Equivalence Trial Designs

| Trial Design | Primary Objective | Null Hypothesis (H₀) | Alternative Hypothesis (H₁) | Typical Application in Nutrition |
|---|---|---|---|---|
| Superiority | To demonstrate that a new intervention is superior to a control (placebo or active). | The new intervention is not superior to the control. | The new intervention is superior to the control [9]. | Testing a new probiotic against a placebo for improving gut health markers. |
| Non-Inferiority | To demonstrate that a new intervention is not worse than an active control by more than a pre-specified margin (Δ). | The new intervention is inferior to the active control by at least Δ [15]. | The new intervention is not inferior to the active control (i.e., the difference is less than Δ) [14]. | Comparing a less expensive, plant-based protein source to whey protein for muscle synthesis. |
| Equivalence | To demonstrate that a new intervention is neither superior nor inferior to an active control by more than a pre-specified margin. | The effects of the two interventions differ by Δ or more [1]. | The effects of the two interventions differ by less than Δ [9]. | Demonstrating the nutritional equivalence of a new fortified food product to a standard supplement. |

The logic of these designs diverges significantly from traditional null hypothesis significance testing. In a superiority trial, failing to reject the null hypothesis typically leads to an inconclusive result. In contrast, equivalence and non-inferiority trials are structured so that rejecting the null hypothesis provides evidence in support of the desired conclusion—that the two interventions are equivalent or that the new one is not inferior [14]. This reversal of the customary roles of the null and alternative hypotheses is a key conceptual shift for researchers adopting these methods.

Methodological Considerations for Equivalence Designs

Foundational Parameters and Design Choices

Successfully implementing an equivalence or non-inferiority design hinges on several critical methodological choices made during the planning phase.

The Choice of a Credible Criterion Standard: The entire logic of an equivalence trial presupposes a meaningful, well-established standard intervention for comparison [1]. For instance, when comparing a novel, more sustainable protein source to a standard one, the standard must have robust evidence supporting its efficacy. Establishing equivalence to a weakly effective standard is of little scientific or clinical value. Furthermore, researchers must guard against "biocreep"—a phenomenon where sequential trials with new interventions, each shown to be non-inferior to the previous generation, could gradually lead to the acceptance of progressively less effective treatments [1].

Defining the Equivalence Margin (Δ): The equivalence margin is the cornerstone of the design. It represents the largest difference in effect between the two interventions that would still be considered clinically or practically irrelevant [1] [15]. Choosing Δ is a challenging exercise that blends clinical judgement, empirical evidence, and stakeholder input. It should often be informed by the Minimal Clinically Important Difference (MCID) for a particular outcome [1]. This margin must be specified a priori in the trial protocol. An overly large Δ makes it too easy to claim equivalence for a potentially inferior intervention, while an overly small Δ may demand an impractically large sample size [15].

Randomization and Blinding: As with any RCT, rigorous methodology is essential to minimize bias. For nutritional interventions, which are often non-pharmacological, this can be challenging. Stratified randomization may be necessary if factors like age, BMI, or genetic predispositions are known to modify the response to the intervention [10]. Blinding can be difficult when comparing distinct dietary patterns (e.g., Mediterranean diet vs. plant-based diet) but should be implemented to the greatest extent possible, particularly for outcome assessors and data analysts [10].

The following diagram illustrates the logical pathway and key decision points in designing a robust equivalence or non-inferiority trial in nutritional research.

Analytical Approaches and Interpretation of Results

The analysis of equivalence and non-inferiority trials typically relies on confidence interval (CI) analysis rather than traditional p-value significance testing [1] [12].

- For an equivalence trial, researchers calculate a two-sided CI (commonly 95%) for the true difference between the interventions. If the entire CI lies within the pre-specified equivalence margin, -Δ to +Δ, equivalence is concluded.

- For a non-inferiority trial, a one-sided CI (commonly 97.5%) is used. If its lower limit lies above -Δ, non-inferiority is concluded. If the lower limit also lies above zero, the new intervention can be declared superior to the standard.
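The decision rules in the two bullets can be combined into one function. It assumes the interval is for the new intervention minus the standard, with larger values better, and a positive margin Δ; the function name is hypothetical. The lower limit of a one-sided 97.5% CI coincides with the lower limit of a two-sided 95% CI, so one computed interval can serve both checks.

```python
def interpret_ci(lower, upper, margin):
    """Classify a CI for (new - standard) against the margin; larger values are better."""
    if lower > upper or margin <= 0:
        raise ValueError("need lower <= upper and a positive margin")
    return {
        "non_inferior": lower > -margin,                   # lower limit above -margin
        "superior": lower > 0,                             # lower limit also above zero
        "equivalent": -margin < lower and upper < margin,  # whole CI inside (-margin, +margin)
    }


print(interpret_ci(0.10, 0.60, 0.5))    # non-inferior and superior, not equivalent
print(interpret_ci(-0.38, 0.18, 0.5))   # non-inferior and equivalent, not superior
print(interpret_ci(-0.98, 0.78, 0.5))   # none of the three: inconclusive
```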

A contentious issue has been the choice between Intention-to-Treat (ITT) and Per-Protocol (PP) analyses. ITT analysis includes all randomized participants and preserves the benefits of randomization, making it the preferred primary analysis for superiority trials. Historically, non-inferiority trials emphasized PP analysis (which excludes participants with major protocol violations) to avoid dilution of the treatment effect that could make it easier to claim non-inferiority [12]. However, there is increasing skepticism about PP analyses as they can subvert randomization and introduce bias [12]. Current best practice is to conduct both ITT and PP analyses, with ITT as the primary analysis, and to check that conclusions are consistent across both [12].

Case Study: Equivalence Design in Food Labeling Research

A mixed-method study from Iran provides a robust example of an equivalence design applied to a nutritional intervention, comparing a new Physical Activity Calorie Equivalent (PACE) food label with the mandatory Traffic Light Label (TLL) [16].

Table 2: Experimental Protocol for the PACE vs. TLL Equivalence Trial

| Aspect | Protocol Details |
|---|---|
| Objective | To determine if the newly designed PACE label is as effective as the TLL in helping mothers select lower-calorie food products. |
| Study Population | 496 mothers of school-aged children (6-12 years) were recruited and randomly assigned to one of five groups [16]. |
| Intervention Groups | 1. No nutrition label (control); 2. Current TLL only; 3. TLL + educational brochure; 4. PACE label only; 5. PACE label + brochure [16] |
| Experimental Procedure | Mothers were presented with samples of dairy products, beverages, cakes, and biscuits from their assigned group and asked to make selections. The primary outcome was the total calories of the selected products [16]. |
| Key Findings | The mean calories selected were lowest in the TLL + brochure group and highest in the PACE-only group. The PACE label, despite being designed with stakeholder input, did not lead to significantly lower caloric choices compared to the TLL, and equivalence with the TLL for reducing selected calories was not demonstrated [16]. |

This case highlights the practical application of an equivalence framework. The novel PACE intervention offered a potential advantage by integrating physical activity information. However, the experimental data did not show it to be equivalent to the established TLL for the key outcome of caloric choice, providing crucial evidence for policymakers. The study also underscores the importance of rigorous experimental testing, even for interventions developed with extensive qualitative input from target users and experts.

The Researcher's Toolkit for Nutritional Equivalence Trials

Table 3: Essential Methodological and Reagent Solutions for Nutritional RCTs

| Tool Category | Specific Example / Solution | Function & Importance in Equivalence Trials |
|---|---|---|
| Database & Methodology | Food Patterns Equivalents Database (FPED) | Converts foods consumed in dietary studies into USDA Food Pattern components, allowing researchers to assess and ensure equivalence in dietary interventions based on the Dietary Guidelines for Americans [17]. |
| Reporting Guideline | CONSORT Extension for Non-Inferiority Trials | Provides a structured checklist to ensure transparent and complete reporting of non-inferiority and equivalence trials, which is critical for judging the validity and interpretability of results [10]. |
| Dietary Control | Standardized Clinical Recipes with Herbs/Spices | Using precisely defined recipes, including specific types and amounts of herbs and spices, improves the acceptability of healthier study diets. This enhances dietary adherence and the reproducibility of the nutritional intervention, which is vital for demonstrating equivalence [18]. |
| Randomization Technique | Stratified Randomization | Ensures balanced distribution of key prognostic factors (e.g., baseline BMI, age, metabolic status) across intervention groups. This reduces variability and potential bias, increasing the study's power to demonstrate equivalence when it truly exists [10]. |

Equivalence and non-inferiority designs represent a maturing of nutritional science, reflecting a shift from simply establishing efficacy to optimizing practical implementation. These designs are indispensable for evaluating novel nutritional interventions that trade a marginal degree of efficacy for substantial gains in cost, accessibility, sustainability, or cultural acceptability. Their proper application demands rigorous methodology, including the careful a priori specification of a clinically justifiable equivalence margin, robust randomization and blinding procedures, and appropriate statistical analysis centered on confidence intervals. As the field continues to evolve, these trial designs will play an increasingly critical role in generating the evidence needed to refine dietary guidelines, inform public health policy, and provide a wider array of effective, practical nutritional strategies for diverse populations.

In clinical research, particularly when comparing therapeutic interventions, clearly defining the objective of a trial is paramount. This objective directly dictates the statistical framework used to analyze the data, specifically how the concepts of the Margin of Clinical Significance (Δ) and Tolerance Ranges are applied. While often related, these terms have distinct meanings: Δ (delta) is the predefined, single value representing the largest clinically acceptable difference, while a tolerance range typically defines the upper and lower bounds within which results are considered equivalent [19] [9] [1].

The three primary trial designs for comparing interventions are superiority, non-inferiority, and equivalence. The choice between them hinges on the research question—whether the goal is to demonstrate that a new treatment is better, not unacceptably worse, or practically the same as a comparator [15] [19]. The following table summarizes the core characteristics of each design.

Table 1: Comparison of Superiority, Non-Inferiority, and Equivalence Trial Designs

| Feature | Superiority Trial | Non-Inferiority Trial | Equivalence Trial |
|---|---|---|---|
| Primary Research Question | Is the new intervention better than the control? | Is the new intervention not worse than the control by a clinically important margin? | Is the new intervention neither superior nor inferior to the control? |
| Typical Comparator | Placebo or no treatment [19] | Active control (standard treatment) [20] [15] | Active control (standard treatment) [1] |
| Key Statistical Parameter | Target difference (δ) [19] | Non-inferiority Margin (Δ) [20] | Equivalence Margin (Δ) [1] |
| Interpretation of Margin (Δ) | The smallest difference considered clinically beneficial [9] | The largest loss of effect considered clinically acceptable [20] | The largest difference in either direction considered clinically irrelevant [9] [1] |
| Application of Margin | Not used in hypothesis; used in sample size and result interpretation [9] | Used to define the null hypothesis; the confidence interval must lie above -Δ [20] [19] | Used to define the null hypothesis; the confidence interval must lie between -Δ and +Δ [19] [9] |

Defining the Margin of Clinical Significance (Δ)

The Role of Delta (Δ) in Non-Inferiority and Equivalence

The Margin of Clinical Significance (Δ) is a pre-specified, critical value in non-inferiority and equivalence trials. It is not a statistical artifact but a clinically and statistically reasoned threshold that represents the maximum loss of effect stakeholders are willing to accept in exchange for the new intervention's secondary benefits (e.g., fewer side effects, lower cost, easier administration) [20] [15].

In a non-inferiority trial, the new treatment is declared "non-inferior" if it is shown to be no more than Δ worse than the active comparator, that is, if the lower bound of the confidence interval for the difference lies above -Δ. In an equivalence trial, if the confidence interval for the difference lies entirely within the range of -Δ to +Δ, the treatments are considered "equivalent" for practical purposes [9] [1].

Methodological Framework for Determining Delta

Establishing a justifiable Δ is one of the most challenging steps in designing a non-inferiority or equivalence trial [20]. Regulatory guidelines recommend a process that integrates both statistical evidence and clinical judgment [20].

A common approach is the two-step method for defining Δ:

- Step 1 - Establish M1: Summarize the historical evidence of the active comparator's effect over placebo. This is often done by pooling effect estimates from previous placebo-controlled trials. The value M1 is typically based on the lower limit of the confidence interval of this pooled estimate (the limit closest to no effect), which is the most conservative estimate of the comparator's effect [20].

- Step 2 - Define M2 (Δ) by applying the "Preserved Fraction": Clinical judgment is used to decide what proportion (or fraction) of the active comparator's effect (M1) must be preserved by the new intervention. The margin Δ (also called M2) is the remaining fraction of M1. For example, if it is decided that 50% of the effect must be preserved, then Δ = (1 - 0.5) × M1 [20].

The choice of the preserved fraction is not arbitrary. It depends on factors such as the seriousness of the disease, the benefit-risk profile of the new treatment, and the need to account for a potential diminished effect of the active comparator over time (a violation of the "constancy assumption") [20]. While a 50% preserved fraction is common in some fields like cardiology, stricter fractions (e.g., 80-90%) are required in others, such as antibiotics [20].

Table 2: Key Considerations and Common Methods for Setting the Margin (Δ)

| Consideration | Description | Example/Common Practice |
|---|---|---|
| Clinical Judgement | Involves defining the largest difference patients and clinicians would find acceptable in light of the new treatment's other benefits [1]. | A slightly less effective drug might be acceptable if it has a drastically improved safety profile. |
| Historical Evidence | Relies on meta-analyses of previous trials to quantify the effect of the active comparator versus placebo [20]. | Pooled data from RCTs showing the active comparator reduces event rates by 20% (95% CI: 15% to 25%) compared to placebo. |
| Preserved Fraction | The percentage of the active comparator's effect that the new treatment must retain [20]. | A 50% preserved fraction is frequently used, but this can vary. |
| Constancy Assumption | The assumption that the effect of the active comparator in the current trial is the same as in the historical trials [20]. | If the standard of care has improved, the effect of the active comparator may be smaller, making a fixed Δ from historical data potentially too large. |
| Fixed Margin Method | A conservative method where Δ is defined based on the lower confidence limit of the historical effect (M1) [20]. | Recommended by regulators like the FDA as it accounts for uncertainty in the historical estimate. |

Analytical Methods and Visualization

Analytical Workflows for Non-Inferiority and Equivalence

Once Δ is defined, the analysis of non-inferiority and equivalence trials typically involves comparing the confidence interval (CI) for the treatment effect from the current trial against the predefined margin [20] [1]. The following diagram illustrates the primary analytical workflow and decision logic for interpreting these results.

Figure 1: Analytical workflow for declaring non-inferiority or equivalence based on confidence intervals (CIs).

The Scientist's Toolkit: Reagents and Materials

The following table details key methodological "reagents" and conceptual tools essential for designing and interpreting trials involving margins and tolerance ranges.

Table 3: Essential Methodological Tools for Clinical Trial Design

| Tool Name | Function/Description | Application Context |
|---|---|---|
| Fixed-Margin Method | A statistical method to define Δ conservatively using the lower confidence limit of the historical effect of the active comparator [20]. | Recommended by regulators for non-inferiority trials to protect against bias from violated assumptions. |
| Synthesis Method | A statistical method that combines the variability of the current trial data with the variability of the historical estimate of the active comparator's effect [20]. | An alternative to the fixed-margin method; can be used to test the fraction of the active control's effect retained. |
| Confidence Interval (CI) | An estimated range of values that is likely to include the true treatment effect [15]. | The primary tool for analysis; compared against Δ to conclude non-inferiority or equivalence. |
| Constancy Assumption | The key assumption that the effect of the active comparator in the current trial is the same as its effect in the historical placebo-controlled trials [20]. | Critical for the validity of non-inferiority trials. If violated, the chosen Δ may be invalid. |
| Consolidated Standards of Reporting Trials (CONSORT) | A set of guidelines for reporting trials, including extensions for non-inferiority and equivalence designs [20] [10]. | Ensures transparent and complete reporting of trial methods and results, including the justification for Δ. |

Experimental Protocols and Case Studies

Protocol for Defining a Non-Inferiority Margin

This protocol outlines the steps for defining the non-inferiority margin (Δ) using the fixed-margin method, as recommended by regulatory agencies.

- Objective: To establish a statistically sound and clinically justified non-inferiority margin for a clinical trial comparing a new nutritional intervention (Test, T) against a standard nutritional therapy (Active Control, A).

- Background: The new intervention offers potential benefits in cost and palatability but may have slightly different efficacy. The goal is to demonstrate that any loss of efficacy is not clinically unacceptable.

- Materials: Historical data from at least two well-conducted, randomized, placebo-controlled trials of the active control (A) versus placebo (P).

- Procedure:

- Systematic Literature Review: Identify all relevant, high-quality, historical RCTs of A vs. P. The study designs and populations should be similar to the planned non-inferiority trial.

- Meta-Analysis: Pool the effect estimates of A vs. P for the primary endpoint. Perform both a fixed-effect and a random-effects meta-analysis to obtain a pooled point estimate and its 95% confidence interval.

- Determine M1: Select the lower bound of the 95% CI from the meta-analysis as M1. This represents a conservative estimate of the smallest effect A is likely to have [20].

- Define Preservation Fraction: Convene a panel of clinical experts, statisticians, and patient representatives. Based on the severity of the outcome, the benefits of T, and the risk of constancy assumption violation, decide on the fraction of M1 that must be preserved (e.g., 50%). This is a clinical judgement [20].

- Calculate Δ (M2): Apply the formula: Δ = (1 - Preservation Fraction) × M1. For a 50% preservation, Δ = 0.5 × M1 [20].

- Document Rationale: Justify and document all choices, including the selected trials, meta-analysis results, and the reasoning behind the chosen preservation fraction, in the study protocol.
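The pooling and margin steps of this protocol can be sketched in Python as below. This is a minimal illustration, not a validated meta-analysis tool: it assumes the historical effects are on an additive scale where larger values favor the active control (e.g., mean differences, or log ratios oriented that way), uses inverse-variance fixed-effect pooling and the DerSimonian-Laird estimator for the random-effects model (one common choice among several), and all function names and example numbers are hypothetical.

```python
import math
from statistics import NormalDist


def pool_fixed(effects, ses):
    """Inverse-variance fixed-effect pooled estimate and its standard error."""
    weights = [1.0 / se**2 for se in ses]
    estimate = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    return estimate, math.sqrt(1.0 / sum(weights))


def pool_random_dl(effects, ses):
    """DerSimonian-Laird random-effects pooled estimate and its standard error."""
    if len(effects) < 2:
        raise ValueError("At least two historical trials are needed.")
    weights = [1.0 / se**2 for se in ses]
    fixed_estimate, _ = pool_fixed(effects, ses)
    # Cochran's Q: dispersion of trial effects around the fixed-effect estimate
    q = sum(w * (y - fixed_estimate) ** 2 for w, y in zip(weights, effects))
    c = sum(weights) - sum(w**2 for w in weights) / sum(weights)
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)  # between-trial variance, floored at 0
    re_weights = [1.0 / (se**2 + tau2) for se in ses]
    estimate = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    return estimate, math.sqrt(1.0 / sum(re_weights))


def fixed_margin(pooled_estimate, pooled_se, preserved_fraction, conf=0.95):
    """Return (M1, Delta): M1 is the CI limit closest to no effect, Delta = (1 - f) * M1."""
    z = NormalDist().inv_cdf(1 - (1 - conf) / 2)
    m1 = pooled_estimate - z * pooled_se  # assumes larger values favor A over P
    if m1 <= 0:
        raise ValueError("Historical CI includes no effect; a positive M1 cannot be set.")
    return m1, (1 - preserved_fraction) * m1


if __name__ == "__main__":
    historical_effects = [4.0, 5.0, 6.0]  # hypothetical A-vs-P effects
    historical_ses = [1.0, 1.0, 1.0]
    est, se = pool_random_dl(historical_effects, historical_ses)
    for f in (0.5, 0.67):
        m1, delta = fixed_margin(est, se, f)
        print(f"preserved fraction {f}: M1 = {m1:.2f}, Delta = {delta:.2f}")
```

Raising the preserved fraction from 0.5 to 0.67 shrinks Δ from half to roughly a third of M1, which is why that choice should be justified and documented in the protocol.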

Case Study: Impact of Different Preservation Fractions

The importance of the chosen preservation fraction was demonstrated in a case study of novel oral anticoagulants. Researchers re-analyzed 16 non-inferiority comparisons using two different preservation fractions [20].

- Finding: When a 50% preserved fraction was used, all 16 comparisons concluded non-inferiority. However, when a stricter 67% preserved fraction was applied, two of the 16 comparisons failed to demonstrate non-inferiority [20].

- Implication: The choice of the preservation fraction (and thus Δ) directly impacts the conclusion of a trial. A less stringent margin makes it easier to claim non-inferiority, but may allow a less effective treatment to be deemed acceptable.

The Margin of Clinical Significance (Δ) and Tolerance Ranges are foundational concepts in the design and interpretation of non-inferiority and equivalence trials. Properly defining Δ is a rigorous process that synthesizes historical evidence and clinical judgment, most commonly through the fixed-margin method and the concept of effect preservation. Analytical conclusions hinge on the relationship between the confidence interval of the treatment effect and this predefined margin. As demonstrated, the choice of Δ is not merely statistical but has direct and profound implications for clinical practice, determining whether a new intervention with secondary advantages can be considered a viable alternative to standard care.

In clinical research, particularly in nutritional intervention studies, equivalence trials are designed to demonstrate that a new intervention is not unacceptably different from an existing standard in terms of efficacy [21]. This approach is fundamentally distinct from traditional superiority trials and requires specific hypothesis framing. When comparing nutritional intervention approaches, researchers aim to show that a novel nutritional strategy (such as a new supplementation protocol, dietary counseling method, or fortified food product) produces outcomes that are "equivalent" to an established standard within a pre-specified margin [15] [21].

The core premise of equivalence testing reverses the conventional logic of hypothesis testing. Rather than attempting to reject a hypothesis of no difference, researchers seek to reject a hypothesis of a clinically important difference [1]. This methodological approach is particularly valuable in nutritional research when a new intervention offers potential advantages such as lower cost, improved palatability, easier administration, or fewer gastrointestinal side effects, while maintaining similar therapeutic efficacy to the current standard [15].

Fundamental Hypothesis Structure

Core Mathematical Formulation

In equivalence trials, the null and alternative hypotheses are formulated around a predetermined equivalence margin (Δ or Ψ), which represents the largest clinically acceptable difference between interventions [22] [21].

The hypotheses are structured as follows:

- Null Hypothesis (H₀): The absolute difference between the experimental and control interventions is greater than or equal to the equivalence margin

- Alternative Hypothesis (H₁): The absolute difference between the interventions is less than the equivalence margin

Mathematically, this is expressed as:

- H₀: |μₑ - μₐ| ≥ Δ

- H₁: |μₑ - μₐ| < Δ

Where μₑ represents the mean outcome of the experimental nutritional intervention, μₐ represents the mean outcome of the active control intervention, and Δ represents the pre-specified equivalence margin [22].

Two One-Sided Tests (TOST) Procedure

The statistical implementation of equivalence testing typically employs the Two One-Sided Tests (TOST) procedure, which decomposes the equivalence hypothesis into two separate one-sided tests [22]:

1. Non-inferiority component:

- H₀₁: μₑ - μₐ ≤ -Δ

- H₁₁: μₑ - μₐ > -Δ

2. Non-superiority component:

- H₀₂: μₑ - μₐ ≥ Δ

- H₁₂: μₑ - μₐ < Δ

The overall null hypothesis of non-equivalence is rejected only if both the non-inferiority and non-superiority null hypotheses are rejected [22].

Table 1: Hypothesis Structures Across Trial Types

| Trial Type | Null Hypothesis (H₀) | Alternative Hypothesis (H₁) | Primary Objective |
|---|---|---|---|
| Equivalence | The interventions are not equivalent (difference ≥ Δ) | The interventions are equivalent (difference < Δ) | Show similarity within margin Δ |
| Non-Inferiority | The new intervention is inferior (difference ≤ -Δ) | The new intervention is not inferior (difference > -Δ) | Show not unacceptably worse |
| Superiority | There is no difference between interventions | The interventions are different | Show statistically significant difference |

Comparison with Other Trial Hypotheses

Distinction from Non-Inferiority and Superiority Trials

Understanding the distinction between equivalence, non-inferiority, and superiority hypotheses is crucial for appropriate trial design [15]:

Superiority Trials follow traditional hypothesis testing framework:

- H₀: μₑ - μₐ = 0

- H₁: μₑ - μₐ ≠ 0

After rejecting H₀, researchers determine if the difference favors the experimental intervention and if the magnitude is clinically meaningful [15].

Non-Inferiority Trials employ a one-sided hypothesis test:

- H₀: μₑ - μₐ ≤ -Δ

- H₁: μₑ - μₐ > -Δ

This tests whether the new intervention is not worse than the control by more than the margin Δ, without evaluating potential superiority [15] [21].

Type I and Type II Errors in Different Trial Designs

The interpretation of statistical errors varies significantly across trial designs [15]:

Table 2: Statistical Errors Across Trial Types

| Trial Type | Type I Error (α) | Type II Error (β) |
|---|---|---|
| Equivalence | Falsely concluding equivalence when interventions are not equivalent | Failing to conclude equivalence when interventions are equivalent |
| Non-Inferiority | Falsely concluding non-inferiority when the intervention is inferior | Failing to conclude non-inferiority when the intervention is non-inferior |
| Superiority | Falsely concluding superiority when there is no superiority | Failing to conclude superiority when superiority exists |

Practical Implementation in Nutritional Research

Defining the Equivalence Margin (Δ)

The equivalence margin (Δ) is the most critical design parameter in equivalence trials and must be specified before commencing the study [21]. This margin represents the largest difference between interventions that would still be considered clinically irrelevant [1].

In nutritional research, Δ should be determined through:

- Clinical judgement of what constitutes a nutritionally irrelevant difference

- Previous research on minimal clinically important differences (MCID) for the outcome

- Regulatory guidelines when applicable

- Practical considerations of nutritional impact

For example, in a trial comparing two dietary counseling approaches for weight loss, Δ might be set at ±1.5 kg, representing a weight difference considered nutritionally insignificant in long-term weight management [21].

Statistical Testing Procedures

For continuous outcomes commonly measured in nutritional research (e.g., BMI, biomarker levels, nutrient intake measures), the TOST procedure uses the following test statistics [22]:

Test for non-inferiority:

$$t_{\text{inf}} = \frac{\bar{Y}_e - \bar{Y}_a + \Delta}{s\sqrt{1/n_e + 1/n_a}}$$

Test for non-superiority:

$$t_{\text{sup}} = \frac{\bar{Y}_e - \bar{Y}_a - \Delta}{s\sqrt{1/n_e + 1/n_a}}$$

Where Ȳₑ and Ȳₐ are sample means, nₑ and nₐ are sample sizes, and s is the pooled standard deviation calculated as:

$$s^2 = \frac{\sum_{i}(Y_{ei} - \bar{Y}_e)^2 + \sum_{j}(Y_{aj} - \bar{Y}_a)^2}{n_e + n_a - 2}$$

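Each test is conducted at significance level α (typically 0.05) against a t distribution with nₑ + nₐ − 2 degrees of freedom: H₀₁ is rejected when the non-inferiority statistic exceeds the critical value t₁₋α, and H₀₂ is rejected when the non-superiority statistic falls below −t₁₋α. Equivalence is established only if both null hypotheses are rejected [22].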
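A minimal TOST sketch for two independent groups, following the formulas above, is shown below. It assumes equal variances (the pooled SD used in the text), relies on SciPy's `scipy.stats.t` distribution functions, and uses hypothetical weight-change data with the ±1.5 kg margin mentioned for dietary counseling; the function name is illustrative.

```python
import math

from scipy import stats


def tost_two_sample(y_e, y_a, delta, alpha=0.05):
    """Two one-sided t-tests for equivalence of two means, using a pooled SD."""
    n_e, n_a = len(y_e), len(y_a)
    mean_e, mean_a = sum(y_e) / n_e, sum(y_a) / n_a
    df = n_e + n_a - 2
    ss = sum((y - mean_e) ** 2 for y in y_e) + sum((y - mean_a) ** 2 for y in y_a)
    se = math.sqrt(ss / df) * math.sqrt(1 / n_e + 1 / n_a)
    diff = mean_e - mean_a
    t_inf = (diff + delta) / se  # tests H01: diff <= -delta
    t_sup = (diff - delta) / se  # tests H02: diff >= +delta
    p_inf = stats.t.sf(t_inf, df)   # upper-tail p-value for H01
    p_sup = stats.t.cdf(t_sup, df)  # lower-tail p-value for H02
    t_crit = stats.t.ppf(1 - alpha, df)
    ci = (diff - t_crit * se, diff + t_crit * se)  # two-sided (1 - 2*alpha) CI
    return {
        "diff": diff, "t_inf": t_inf, "t_sup": t_sup,
        "p_inf": p_inf, "p_sup": p_sup, "ci": ci,
        "equivalent": bool(p_inf < alpha and p_sup < alpha),
    }


if __name__ == "__main__":
    # Hypothetical weight change (kg) under two dietary counseling approaches
    new_approach = [-4.1, -3.5, -5.0, -2.8, -4.4, -3.9]
    standard = [-4.0, -3.2, -4.6, -3.1, -4.2, -3.7]
    print(tost_two_sample(new_approach, standard, delta=1.5))
```

Because each one-sided test runs at α, the returned interval is the two-sided (1 − 2α) confidence interval, and a TOST conclusion of equivalence coincides with that interval lying strictly inside (−Δ, +Δ).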

Experimental Protocols for Nutritional Equivalence Trials

Methodological Considerations for Nutritional Interventions

Nutritional interventions present unique methodological challenges that must be addressed in equivalence trial design [10]:

Intervention Types in Nutritional Research:

- Behavioral interventions (dietary counseling, education)

- Fortification (adding nutrients to foods)

- Supplementation (administering specific nutrients)

- Regulatory interventions (policy changes) [10]

Control Group Selection: Equivalence trials in nutrition require an active control (established effective intervention) rather than placebo, as equivalence to an ineffective intervention provides no useful evidence [21]. The control intervention must have established efficacy under similar conditions to support trial validity.

Randomization and Blinding Procedures

Proper randomization is essential to minimize bias in nutritional equivalence trials [10]:

Randomization Techniques:

- Simple randomization: Appropriate for large samples (>200 participants)

- Block randomization: Ensures equal group sizes throughout recruitment

- Stratified randomization: Controls for prognostic factors (age, BMI, disease status) that may affect nutritional outcomes [10]
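Stratified and block randomization are often combined. The sketch below draws allocations from shuffled permuted blocks within each stratum, so the arms stay balanced within every stratum as recruitment proceeds. The stratum function, block size, arm labels, and seed are hypothetical choices; in a real trial the sequence would be generated in advance and concealed from recruiting staff.

```python
import random
from collections import defaultdict


def stratified_block_randomization(participant_ids, stratum_of, arms=("A", "B"),
                                   block_size=4, seed=2024):
    """Assign each participant to an arm using permuted blocks within strata."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)  # fixed seed keeps the sequence reproducible and auditable
    open_blocks = defaultdict(list)  # unused assignments in each stratum's current block
    allocation = {}
    for pid in participant_ids:
        stratum = stratum_of(pid)
        if not open_blocks[stratum]:
            # Start a new block with equal numbers of each arm, in random order
            block = list(arms) * (block_size // len(arms))
            rng.shuffle(block)
            open_blocks[stratum] = block
        allocation[pid] = open_blocks[stratum].pop()
    return allocation


if __name__ == "__main__":
    # Hypothetical baseline BMI categories used as the stratification factor
    bmi_category = {1: "<30", 2: ">=30", 3: "<30", 4: "<30",
                    5: ">=30", 6: "<30", 7: ">=30", 8: ">=30"}
    print(stratified_block_randomization(bmi_category, bmi_category.get))
```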

Blinding procedures, while challenging in behavioral nutritional interventions, should be implemented whenever possible for outcome assessors and statisticians to maintain objectivity [10].

Sample Size Considerations

Power Calculations for Equivalence Trials

Equivalence trials typically require larger sample sizes than superiority trials, because the equivalence margin Δ is usually smaller than the difference a superiority trial is powered to detect [21]. The sample size depends on:

- Equivalence margin (Δ)

- Type I error (α), typically 0.05 for each one-sided test

- Type II error (β), typically 0.1-0.2 (power 80-90%)

- Expected variability in the primary outcome

- Expected difference between interventions

For binary outcomes common in nutritional research (e.g., achievement of nutritional targets), sample size per group can be calculated as [15]:

$$n = \frac{\left[P_1(100 - P_1) + P_2(100 - P_2)\right]\left(Z_{1-\alpha} + Z_{1-\beta/2}\right)^2}{\left(\Delta - |P_1 - P_2|\right)^2}$$

Where P₁ and P₂ are expected percentages in each group, Z represents critical values from standard normal distribution, α is type I error, β is type II error, and Δ is the equivalence margin.
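The formula can be evaluated directly with Python's standard library, using `statistics.NormalDist` for the normal quantiles. This sketch implements the expression exactly as given above, with percentages on the 0 to 100 scale, rounds up to whole participants, and applies no inflation for dropout; the input values in the usage line are hypothetical.

```python
import math
from statistics import NormalDist


def equivalence_n_per_group(p1, p2, delta, alpha=0.05, beta=0.20):
    """Per-group sample size for a binary outcome; p1, p2 and delta in percentage points."""
    if delta <= abs(p1 - p2):
        raise ValueError("delta must exceed the expected difference |p1 - p2|")
    z = NormalDist().inv_cdf  # standard normal quantile function
    variance_term = p1 * (100 - p1) + p2 * (100 - p2)
    z_term = (z(1 - alpha) + z(1 - beta / 2)) ** 2
    n = variance_term * z_term / (delta - abs(p1 - p2)) ** 2
    return math.ceil(n)  # round up to whole participants


if __name__ == "__main__":
    # Hypothetical: 80% reach a nutrient target in both arms, margin of 10 points
    print(equivalence_n_per_group(80, 80, 10))  # prints 275
```

Widening the margin to 15 points in the same scenario drops the requirement to 122 per group, illustrating how strongly n depends on Δ.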

Reporting and Interpretation

Confidence Interval Approach

Equivalence is typically demonstrated using confidence intervals rather than p-values alone [21]. Commonly, the 95% confidence interval for the difference between interventions must fall entirely within the range -Δ to +Δ to establish equivalence [21].

A 90% confidence interval, with the entire interval needing to fall within the equivalence margins, is also used [21]. This 90% interval corresponds to the TOST procedure with α = 0.05 for each one-sided test; it is less conservative than the 95% interval, which corresponds to TOST with α = 0.025 per side.

Interpretation of Results

Proper interpretation of equivalence trial results requires considering both statistical and clinical significance [1]. Key considerations include:

- Evidence of Equivalence: When the confidence interval lies entirely within -Δ to +Δ

- Inconclusive Results: When the confidence interval includes values both within and outside the equivalence margin

- Non-Equivalence: When the confidence interval lies entirely outside the equivalence margin [21]
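These three interpretation rules can be written as a small decision function. It is a sketch: the function name is hypothetical, the interval passed in should be whichever confidence interval the protocol pre-specifies (90% or 95%), and the equivalence check uses strict inequalities because the null hypothesis includes a difference exactly equal to Δ.

```python
def classify_equivalence(ci_low, ci_high, delta):
    """Classify a confidence interval for the difference against the margins -delta/+delta."""
    if ci_low > ci_high:
        raise ValueError("ci_low must not exceed ci_high")
    if -delta < ci_low and ci_high < delta:
        return "equivalent"  # whole interval inside the margins
    if ci_low >= delta or ci_high <= -delta:
        return "non-equivalent"  # whole interval outside the margins
    return "inconclusive"  # interval straddles at least one margin


if __name__ == "__main__":
    for ci in [(-0.5, 0.8), (-0.5, 2.0), (1.6, 2.5)]:
        print(ci, classify_equivalence(*ci, delta=1.5))
```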

It is crucial to recognize that failure to demonstrate equivalence does not prove non-equivalence, just as a non-significant result in a superiority trial does not prove equivalence [21].

Research Reagent Solutions for Nutritional Equivalence Trials

Table 3: Essential Methodological Components for Nutritional Equivalence Trials

| Component | Function in Nutritional Research | Implementation Considerations |
|---|---|---|
| Validated Dietary Assessment Tools | Measure nutritional intake and adherence | FFQs, 24-hour recalls, food diaries validated for target population |
| Biomarker Assays | Objective measures of nutritional status | Select biomarkers with established responsiveness to intervention |
| Randomization Systems | Ensure unbiased allocation to interventions | Computer-generated sequences with allocation concealment |
| Blinding Procedures | Minimize assessment bias | Use blinded outcome assessors when participant blinding impossible |
| Equivalence Margin (Δ) | Define clinically irrelevant difference | Based on MCID, previous research, and clinical expertise |
| CONSORT Extension for Non-Pharmacological Trials | Reporting guidelines for nutritional interventions | Improves transparency and quality of trial reporting [10] |

Equivalence Hypothesis Testing Flow

Common Methodological Challenges and Solutions

Threats to Validity in Nutritional Equivalence Trials

Nutritional equivalence trials face several methodological challenges that can threaten validity [1]:

Intervention Fidelity: Ensuring consistent delivery of nutritional interventions across participants and over time is particularly challenging. Solutions include:

- Standardized intervention protocols

- Training and monitoring of intervention staff

- Regular assessment of adherence

Assay Sensitivity: The trial must be capable of detecting differences should they exist. This requires:

- Validated and responsive outcome measures

- Appropriate statistical power

- Minimized missing data

Choice of Active Control: The control intervention must be well-established with proven efficacy under similar conditions to support meaningful equivalence conclusions [21].

Regulatory and Reporting Considerations

Proper reporting of nutritional equivalence trials should follow CONSORT extensions appropriate for nutritional interventions [10] [8]:

- CONSORT for Non-Pharmacological Treatment Interventions

- CONSORT for Non-Inferiority and Equivalence Trials

- Template for Intervention Description and Replication (TIDieR) for detailed intervention description

Both intention-to-treat and per-protocol analyses should be presented, as they provide complementary information in equivalence trials [21].

Methodological Framework: Designing Robust Nutritional Equivalence Trials

Non-inferiority (NI) trials are a critical study design used to demonstrate that a new intervention is not unacceptably worse than an active comparator by a predefined margin. In nutritional science, this approach is particularly valuable when comparing novel nutritional interventions—such as dietary patterns, fortified foods, or supplements—against established standard care or other active interventions. These trials are essential when the new intervention offers potential advantages such as improved cost-effectiveness, enhanced palatability, better adherence, fewer side effects, or easier implementation, while its efficacy is expected to be similar, though possibly slightly reduced, compared to the standard intervention [20] [23].

The fundamental question an NI trial seeks to answer is whether the effect of a new intervention is "not much worse than" the active comparator, which differs from superiority trials that aim to prove one intervention is better than another [23] [24]. This design is especially relevant in nutritional research, where placebo-controlled trials may be unethical because they would deny participants an effective nutritional intervention, and where practical considerations like cost and adherence are paramount [20] [10]. The core of a valid NI trial lies in the appropriate determination and application of the non-inferiority margin (Δ), which represents the largest clinically acceptable difference by which the new intervention can be worse than the comparator while still being considered non-inferior [20] [25].

Defining the Non-Inferiority Margin (Δ)

Clinical and Statistical Foundations

The non-inferiority margin (Δ) is a predefined threshold that represents the maximum clinically acceptable loss of efficacy that stakeholders (including clinicians, patients, and regulators) are willing to accept in exchange for the potential benefits of the new intervention [20] [25]. This margin must be specified a priori based on both clinical judgment and statistical reasoning [20] [26]. The determination of Δ is arguably the most challenging and critically important aspect of NI trial design, as an overly generous margin might lead to the acceptance of ineffective interventions, while an overly strict margin might reject potentially useful ones [20] [25].

Regulatory agencies such as the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA) recommend that the margin should be defined based on historical evidence of the active comparator's effect, typically derived from placebo-controlled trials [20]. This process involves two key steps: first, summarizing the historical evidence to establish the effect of the active comparator versus placebo (often denoted as M1); and second, applying clinical judgment to determine what fraction of this effect must be preserved by the new intervention [20]. The remaining fraction of M1 then constitutes the noninferiority margin (M2).

The Constancy Assumption

A fundamental assumption underlying NI trials is the constancy assumption—the premise that the effect of the active comparator in the current NI trial is the same as its effect in the historical studies used to define M1 [20]. Violations of this assumption can seriously compromise the validity of NI conclusions. For example, if the standard of care has improved over time, the actual effect of the active comparator versus placebo in the current setting might be smaller than historically observed. If this diminished effect is not accounted for, a new intervention might demonstrate noninferiority while actually being less effective than placebo in the current clinical context [20].

Table 1: Key Considerations for Defining the Non-Inferiority Margin

| Consideration | Description | Impact on Margin Selection |
|---|---|---|
| Seriousness of Outcome | Whether the endpoint involves irreversible morbidity or mortality | Smaller margins for more serious outcomes [25] |
| Effect Size of Active Comparator | Magnitude of the established treatment effect | Larger absolute margins may be acceptable with larger treatment effects [20] |
| Risk-Benefit Profile | Balance between potential benefits and risks of the new intervention | Wider margins may be acceptable for interventions with substantial safety advantages [25] |
| Constancy of Effect | Whether the comparator's effect has remained stable over time | May require margin adjustment if effect has diminished [20] |
| Stakeholder Perspectives | Input from patients, clinicians, and researchers | Ensures the margin reflects clinically meaningful differences [25] |

Methodological Approaches to Determining Δ

Statistical Framework and Effect Preservation

The statistical foundation for determining Δ typically begins with a meta-analysis of historical randomized controlled trials that compared the active comparator against placebo [20]. This analysis yields an estimate of the comparator's effect size (M1), which can be defined either as the pooled point estimate or as the lower confidence interval limit closest to the null effect, depending on the chosen method [20].

The next step involves determining the preserved fraction—the proportion of the active comparator's effect that the new intervention must retain. This is a clinical decision that reflects stakeholder willingness to exchange efficacy for other benefits. The noninferiority margin (M2) is then calculated as: M2 = (1 - preserved fraction) × M1 [20]. For example, if stakeholders decide that 75% of the active comparator's effect must be preserved, then M2 would be 25% of M1.

In practice, preserved fractions of 50% have been common in many fields, particularly for cardiovascular outcomes and irreversible morbidity or mortality [20]. However, stricter fractions are sometimes employed, such as 90% preservation in antibiotic trials [20]. The choice of preserved fraction significantly impacts trial conclusions; research on novel oral anticoagulants found that changing from a 50% to a 67% preserved fraction caused two of 16 comparisons that had met the noninferiority criterion to fail it [20].

Analytical Methods for Non-Inferiority Testing

Three primary statistical methods are used to analyze NI trials, each applying the noninferiority margin differently:

Fixed-Margin Method (95%-95% Method): This approach, recommended by regulators like the FDA, defines the margin (M2) conservatively based on the lower limit of the confidence interval of the pooled point estimate from historical trials (the limit closest to the null effect) [20]. This incorporates an additional discount of the active comparator's effect to account for uncertainty in historical estimates and to protect against potential violations of the constancy assumption.

Point-Estimate Method: This method determines the margin based directly on the pooled point estimate of the active comparator's effect from historical trials, assuming constant variability in these estimates [20].

Synthesis Method: This approach adjusts the confidence interval from the NI trial to account for variability in the estimates of the active comparator's effect from historical trials [20]. It can also be implemented through a test statistic that evaluates whether the new intervention retained a prespecified fraction of the active comparator's effect.
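The three approaches can be contrasted in a short sketch. It relies on normal approximations and a specific scale convention. The historical estimate `hist_effect` (standard error `hist_se`) is positive when the active comparator beats placebo. The NI-trial estimate `diff` (standard error `diff_se`) is new minus comparator. All names and numbers are illustrative. The synthesis check uses the commonly described statistic Z = (diff + (1 − f) × hist_effect) / √(diff_se² + (1 − f)² × hist_se²), where f is the preserved fraction, compared with a one-sided critical value of about 1.96.

```python
import math
from statistics import NormalDist

Z = NormalDist().inv_cdf(0.975)  # two-sided 95% CI, i.e. one-sided 2.5% per bound


def fixed_margin(diff, diff_se, hist_effect, hist_se, preserved):
    """95%-95%: M1 from the historical 95% CI limit; the NI-trial 95% CI must clear -M2."""
    m1 = hist_effect - Z * hist_se
    m2 = (1 - preserved) * m1
    return diff - Z * diff_se > -m2


def point_estimate(diff, diff_se, hist_effect, preserved):
    """M1 is the historical point estimate itself (less conservative)."""
    m2 = (1 - preserved) * hist_effect
    return diff - Z * diff_se > -m2


def synthesis(diff, diff_se, hist_effect, hist_se, preserved):
    """Tests whether the new intervention retains the preserved fraction of the comparator effect."""
    retained = 1 - preserved
    z_stat = (diff + retained * hist_effect) / math.sqrt(
        diff_se ** 2 + (retained * hist_se) ** 2
    )
    return z_stat > Z


# Hypothetical inputs
inputs = dict(diff=-0.5, diff_se=0.4, hist_effect=4.0, hist_se=1.0, preserved=0.5)
point_inputs = {k: v for k, v in inputs.items() if k != "hist_se"}

for name, ok in [
    ("fixed margin", fixed_margin(**inputs)),
    ("point estimate", point_estimate(**point_inputs)),
    ("synthesis", synthesis(**inputs)),
]:
    print(f"{name:15s} noninferior: {ok}")
```

With these invented inputs the fixed-margin check fails while the point-estimate and synthesis checks pass, consistent with the fixed-margin method being the most conservative of the three. Other inputs can order the last two differently.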

Table 2: Comparison of Analytical Methods for Non-Inferiority Trials

| Method | Basis for Margin | Key Features | Regulatory Perspective |
|---|---|---|---|
| Fixed-Margin | Lower confidence limit of historical effect | Conservative; accounts for uncertainty in historical estimates; recommended by FDA [20] | Preferred method [20] |
| Point-Estimate | Pooled point estimate of historical effect | Less conservative; assumes constant variability [20] | Less favored due to potential bias [20] |
| Synthesis | Adjusts for variability in historical estimates | Can test preserved fraction directly; can assess superiority to putative placebo [20] | Accepted alternative with specific applications [20] |

Experimental Protocols and Methodological Standards

Trial Design Considerations

Proper design of NI trials requires careful attention to several methodological aspects beyond margin determination. The CONSORT (Consolidated Standards of Reporting Trials) statement has an extension specifically for noninferiority and equivalence trials that provides reporting guidelines [10]. This guidance recommends including a figure showing where the confidence interval lies in relation to the noninferiority margin, which enhances transparency and interpretability [20].

Randomization remains a fundamental requirement, with appropriate methods (simple, block, or stratified randomization) selected based on study characteristics and sample size considerations [10]. For nutritional interventions, which often have heterogeneous implementation, the "Non-Pharmacologic Treatment Interventions" extension of CONSORT provides particularly relevant guidance [10].
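The sketch below illustrates block and stratified randomization. It generates a permuted-block allocation list, with an independent sequence for each stratum. It is a hypothetical helper, not part of any trial software. The block size of 4, the arm labels, the BMI strata, and the seeded pseudo-random generator are all assumptions chosen for demonstration. In practice the sequence would be generated and concealed by someone independent of recruitment, and varying block sizes are often used to reduce predictability.

```python
import random


def permuted_block_allocation(n_participants, arms=("A", "B"), block_size=4, seed=None):
    """Permuted-block randomization: each complete block contains every arm equally often."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)
    allocation = []
    while len(allocation) < n_participants:
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)
        allocation.extend(block)
    return allocation[:n_participants]  # the final block may be truncated


# Stratified randomization: one independent block sequence per (hypothetical) stratum
strata = {"BMI<30": 10, "BMI>=30": 6}
schedule = {
    name: permuted_block_allocation(n, seed=i) for i, (name, n) in enumerate(strata.items())
}
for name, seq in schedule.items():
    print(name, "".join(seq))
```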

In superiority trials, intention-to-treat (ITT) analysis is generally conservative; in NI trials, however, it may be anti-conservative, because protocol deviations tend to make treatment groups more similar [25]. Therefore, both ITT and per-protocol analyses should typically be conducted, with noninferiority ideally demonstrated in both populations to support a robust conclusion [25].
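A toy calculation shows why ITT can be anti-conservative. It assumes, purely for illustration, that a fraction of participants in each arm do not adhere and that non-adherers have the same expected outcome in both arms. Under that assumption the expected ITT difference shrinks toward zero by a factor of (1 − non-adherence). The margin, the true difference, and the confidence-interval half-width (assumed equal in both analyses) are all hypothetical. Real protocol deviations rarely follow such a clean model.

```python
def diluted_itt_difference(true_diff, nonadherence):
    """Expected ITT difference if non-adherers respond identically in both arms."""
    return (1 - nonadherence) * true_diff


def noninferior(diff, half_width, margin):
    """Difference is new minus comparator; NI if the lower CI limit lies above -margin."""
    return diff - half_width > -margin


margin = 1.0       # hypothetical NI margin
true_diff = -0.9   # hypothetical: the new intervention is truly worse among adherers
half_width = 0.4   # hypothetical 95% CI half-width, assumed equal in both analyses

itt = diluted_itt_difference(true_diff, nonadherence=0.4)
itt_ok = noninferior(itt, half_width, margin)
pp_ok = noninferior(true_diff, half_width, margin)
print(f"ITT diff {itt:.2f}: NI={itt_ok}; per-protocol diff {true_diff:.2f}: NI={pp_ok}")
print("Robust conclusion (NI in both):", itt_ok and pp_ok)
```

Here the diluted ITT result meets the margin while the per-protocol result does not, so requiring noninferiority in both populations prevents a misleading conclusion.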

Specific Considerations for Nutritional Interventions

Nutritional interventions present unique methodological challenges for NI trials. They are often complex and heterogeneous, ranging from nutrient administration and food fortification to behavioral interventions and nutritional education programs [10]. This complexity necessitates careful description of intervention components, including "the types and amounts of specific foods included within nutrition interventions in combination with preparation methods and study recipes" to ensure reproducibility and translatability [18].

Acceptability and adherence present particular challenges in nutritional trials. As noted in recent perspectives, "adherence to healthier dietary patterns is typically low because of many factors, including reduced taste, flavor, and familiarity to the study foods" [18]. This highlights the importance of designing culturally appropriate interventions and considering strategies such as incorporating herbs and spices to maintain acceptability while meeting nutritional targets [18].

Potential Pitfalls and Interpretive Challenges

Threats to Validity

NI trials face several unique threats to validity that require careful consideration:

Biocreep: This phenomenon occurs when successive generations of interventions are each shown to be noninferior to the immediately preceding standard, potentially leading to gradual erosion of treatment effectiveness over time [23] [1]. To prevent biocreep, regulators recommend comparing new interventions against the gold-standard therapy rather than the most recently approved treatment [23].
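A simple worst-case illustration shows how quickly biocreep can erode effectiveness. It assumes that each new generation retains exactly the preserved fraction of its immediate predecessor's effect versus placebo; the starting effect and fraction are hypothetical. After k generations only (preserved fraction)^k of the original effect remains.

```python
def worst_case_effect(original_effect, preserved_fraction, generations):
    """Effect vs. placebo if each generation keeps only `preserved_fraction` of its predecessor's."""
    return original_effect * preserved_fraction ** generations


original = 8.0  # hypothetical effect of the first-generation standard (e.g. mmHg)
for k in range(4):
    print(f"generation {k}: {worst_case_effect(original, 0.5, k):.2f}")
```

With a 50% preserved fraction, the third generation could in the worst case retain only one eighth of the original effect. Comparing each new intervention with the original gold standard keeps the reference effect fixed.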

Poor Trial Conduct: Ironically, methodological shortcomings such as poor compliance, inadequate blinding, or protocol deviations can make it easier to demonstrate noninferiority by increasing similarity between treatment groups [23]. This contrasts with superiority trials, where such issues typically make it harder to demonstrate differences.

Inappropriate Margin Selection: Perhaps the most significant threat comes from selecting margins that are too wide, potentially allowing interventions with questionable efficacy to be deemed noninferior [26]. This risk underscores the importance of rigorous, predefined margin determination that accounts for both statistical and clinical considerations.

Complex Interpretations

The interpretation of NI trials can be counterintuitive. A treatment can be statistically inferior to the active comparator in a conventional analysis (with a confidence interval excluding zero but favoring the comparator) while simultaneously meeting the criteria for noninferiority if the entire confidence interval remains above the noninferiority margin [25]. This highlights the distinction between statistical and clinical significance in NI trials.
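The two judgments can be separated explicitly. In this sketch, the confidence interval is for new minus comparator, so negative values favor the comparator. The hypothetical result below is statistically inferior (the whole interval is below zero) yet noninferior (the whole interval is above −margin).

```python
def interpret(lower, upper, margin):
    """Classify a CI for (new - comparator); negative values favor the comparator."""
    statistically_worse = upper < 0  # CI excludes zero on the comparator's side
    noninferior = lower > -margin    # CI lies entirely above -margin
    return statistically_worse, noninferior


# Hypothetical result: difference -1.0 with 95% CI (-1.8, -0.2) and a margin of 2.0
worse, ni = interpret(-1.8, -0.2, margin=2.0)
print(f"statistically inferior: {worse}, noninferior: {ni}")  # both True
```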

Additionally, demonstrating noninferiority does not automatically establish efficacy compared to placebo, particularly when the point estimate favors the comparator [25]. This necessitates complementary analyses to indirectly assess efficacy versus a putative placebo, especially when the new intervention shows slightly reduced efficacy compared to the active comparator [25].
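One way to sketch this indirect assessment relies on the constancy assumption: that the comparator's historical effect versus placebo also holds in the NI trial. The approach adds the NI-trial difference (new minus comparator) to the historical comparator effect and combines their standard errors as if the two sources were independent. The function and all inputs are hypothetical. Positive values favor the active treatment over placebo.

```python
import math
from statistics import NormalDist


def effect_vs_putative_placebo(diff, diff_se, hist_effect, hist_se, level=0.95):
    """Indirect estimate of new-vs-placebo = (new - comparator) + (comparator - placebo)."""
    estimate = diff + hist_effect
    se = math.sqrt(diff_se ** 2 + hist_se ** 2)  # independence of the two sources assumed
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return estimate, (estimate - z * se, estimate + z * se)


est, (lo, hi) = effect_vs_putative_placebo(diff=-0.8, diff_se=0.5, hist_effect=4.0, hist_se=1.0)
print(f"new vs putative placebo: {est:.2f} (95% CI {lo:.2f} to {hi:.2f})")
```

A lower limit above zero, as in this example, suggests the new intervention would have beaten placebo. That inference holds only as far as the constancy assumption does.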

Table 3: Essential Research Reagents for Nutritional Non-Inferiority Trials

| Research Reagent | Function/Application | Considerations for Nutritional Trials |
|---|---|---|
| Validated Dietary Assessment Tools | Quantify dietary intake and adherence | Must be validated for specific study population and dietary components [10] |
| Biomarkers of Nutritional Status | Objective measures of nutrient exposure and status | Strengthens validity when self-report may be unreliable [10] |
| Standardized Recipe Database | Ensure consistency in dietary interventions | Critical for reproducibility; should include specific ingredients and preparation methods [18] |
| Culturally Appropriate Food Options | Enhance intervention acceptability and adherence | Improves ecological validity and participant retention [18] |
| Blinding Materials | Maintain study blinding when possible | May include placebo foods/supplements with similar sensory properties [10] |

The determination of the non-inferiority margin Δ represents a critical intersection of statistical rigor and clinical judgment in the design of nutritional intervention trials. Proper margin setting requires synthesizing historical evidence of the active comparator's effect, determining a clinically acceptable preserved fraction, and selecting an appropriate analytical method. The fixed-margin approach, which conservatively uses the confidence interval limit from historical data, provides robust protection against various biases and is recommended by regulatory agencies.

Nutritional NI trials present unique methodological challenges related to intervention complexity, adherence, and acceptability that necessitate careful attention to trial design and implementation. Researchers must remain vigilant against threats to validity such as biocreep and poor trial conduct, while recognizing the complex interpretations that NI outcomes sometimes require. By adhering to established methodological standards and transparently reporting both design decisions and results, nutritional researchers can generate reliable evidence regarding interventions that may offer practical advantages while maintaining sufficient efficacy compared to established standards.

Sample Size Calculation Formulas for Equivalence Trials with Continuous and Binary Outcomes

In clinical research, particularly in nutritional intervention studies, equivalence trials are designed to demonstrate that a new intervention does not differ from an existing standard intervention by more than a clinically important margin [9]. Superiority trials aim to prove that one treatment is better than another, and non-inferiority trials seek to confirm that a new treatment is not meaningfully worse than an existing one. Equivalence trials, by contrast, test whether a new treatment is neither meaningfully better nor meaningfully worse than a comparator [27] [28]. This study design is particularly valuable in nutritional science when researchers want to show that a novel nutritional formulation, delivery method, or dietary approach produces equivalent health outcomes to established standards while potentially offering other benefits such as lower cost, improved palatability, easier administration, or reduced side effects.

The fundamental statistical approach for equivalence trials involves testing whether the entire confidence interval for the difference between treatments lies within a predetermined equivalence margin (Δ) [9]. When each of the two one-sided tests is performed at α = 0.05, this is typically the two-sided 90% confidence interval. The margin represents the maximum clinically acceptable difference that would still allow the treatments to be considered functionally equivalent. Proper determination of this margin requires both clinical judgment and statistical consideration. Setting it too wide might declare clearly different treatments equivalent, while setting it too narrow might make it impractical to demonstrate equivalence without prohibitively large sample sizes [28]. In nutritional research, these margins might be based on biologically meaningful differences in outcomes such as biomarker changes, anthropometric measurements, or clinical endpoint rates.
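A minimal sketch of this decision rule for two means is shown below. It assumes a normal approximation, equal standard deviations and group sizes, and the conventional pairing of a 90% two-sided confidence interval with α = 0.05 for each one-sided test. The function name and summary statistics are hypothetical.

```python
import math
from statistics import NormalDist


def equivalence_by_ci(mean_new, mean_std, sd, n_per_group, margin, alpha=0.05):
    """Declare equivalence if the (1 - 2*alpha) CI for the difference lies inside (-margin, margin)."""
    diff = mean_new - mean_std
    se = sd * math.sqrt(2 / n_per_group)  # equal SDs and group sizes assumed
    z = NormalDist().inv_cdf(1 - alpha)
    lower, upper = diff - z * se, diff + z * se
    return (lower, upper), (-margin < lower and upper < margin)


# Hypothetical: mean systolic BP reduction (mmHg) with two formulations
ci, equivalent = equivalence_by_ci(mean_new=5.3, mean_std=5.0, sd=2.0, n_per_group=90, margin=1.0)
print(f"90% CI: ({ci[0]:.2f}, {ci[1]:.2f}); equivalent: {equivalent}")
```

For small samples, a t-based interval would usually replace the normal quantile used here.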

Key Statistical Concepts and Parameters

Fundamental Hypotheses and Error Control

Equivalence testing employs a unique hypothesis framework that reverses the conventional null and alternative hypotheses [29]. The null hypothesis (H₀) states that the treatments differ by more than the equivalence margin, while the alternative hypothesis (Hₐ) states that the treatments differ by less than this margin. For a continuous outcome comparing two means (μ₁ and μ₂), the hypotheses are formally expressed as:

- H₀: |μ₁ - μ₂| > Δ

- Hₐ: |μ₁ - μ₂| ≤ Δ [9]
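These hypotheses are commonly tested with two one-sided tests (TOST). The null hypothesis is split into "difference ≤ −Δ" and "difference ≥ +Δ", and both parts must be rejected. The sketch below uses z-tests with a known standard error for simplicity; that is an assumption, and a t-based version would usually be preferred for small samples. Inputs are hypothetical.

```python
from statistics import NormalDist


def tost_z(diff, se, margin):
    """Two one-sided z-tests; equivalence is claimed if the larger p-value is below alpha."""
    nd = NormalDist()
    p_lower = 1 - nd.cdf((diff + margin) / se)  # tests H0: difference <= -margin
    p_upper = nd.cdf((diff - margin) / se)      # tests H0: difference >= +margin
    return p_lower, p_upper, max(p_lower, p_upper)


p_lo, p_hi, p_tost = tost_z(diff=0.3, se=0.3, margin=1.0)
print(f"p(lower)={p_lo:.4f}, p(upper)={p_hi:.4f}, TOST p={p_tost:.4f}")
```

Rejecting both one-sided nulls at α = 0.05 is equivalent to the 90% confidence interval lying inside (−Δ, Δ).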

This framework requires careful control of two types of statistical errors. The Type I error (α), typically set at 0.05, represents the probability of incorrectly concluding equivalence when the treatments are actually different [27]. The Type II error (β), often set at 0.1 or 0.2, represents the probability of failing to conclude equivalence when the treatments are truly equivalent [29]. The power (1-β) of an equivalence trial, commonly set at 80% or 90%, is the probability of correctly concluding equivalence when the treatments are indeed equivalent [27].

Determining the Equivalence Margin

The equivalence margin (Δ) is a clinically determined value that represents the maximum difference between treatments considered clinically irrelevant [28]. This margin should be established based on clinical expertise, prior research evidence, and regulatory guidance when applicable. For nutritional interventions, this might involve determining what difference in blood pressure (for hypertension studies), HbA1c (for diabetes studies), or body composition changes (for weight management studies) would be considered unimportant in clinical practice. The margin must be established before trial initiation and should not be changed based on observed results [28].

Sample Size Calculation for Continuous Outcomes

Formulas and Parameters

For equivalence trials with continuous outcomes (e.g., blood pressure, cholesterol levels, body weight), the sample size calculation depends on several key parameters [27]. The formula for the sample size per group (n) is:

n = f(α, β/2) × 2 × σ² / Δ² [30]

Where:

- σ = standard deviation of the outcome measure

- Δ = equivalence margin (the maximum clinically acceptable difference)

- α = Type I error rate (typically 0.05)

- β = Type II error rate (typically 0.1 or 0.2)

- f(α, β) = [Φ⁻¹(α) + Φ⁻¹(β)]², where Φ⁻¹ is the inverse cumulative standard normal distribution

This formula assumes that the data are normally distributed, the variances are equal between groups, the study uses a two-arm parallel design with equal allocation, and the true difference between interventions is zero [27] [30].
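The formula translates directly into a small function. This is a sketch under the same assumptions stated above (normal outcomes, equal variances, 1:1 allocation, true difference of zero). Quantiles come from Python's standard `statistics.NormalDist`, and the result is rounded up to a whole participant. The function name is illustrative.

```python
import math
from statistics import NormalDist


def equivalence_n_per_group(sd, margin, alpha=0.05, power=0.80):
    """n = 2 * sd^2 * [z(1 - alpha) + z(1 - beta/2)]^2 / margin^2, rounded up."""
    beta = 1 - power
    z = NormalDist().inv_cdf
    f = (z(1 - alpha) + z(1 - beta / 2)) ** 2
    return math.ceil(2 * sd ** 2 * f / margin ** 2)


for sd, margin in [(0.5, 0.2), (1.0, 0.5), (2.0, 1.0)]:
    print(sd, margin, equivalence_n_per_group(sd, margin))
print("90% power, sd=2.0, margin=1.0:", equivalence_n_per_group(2.0, 1.0, power=0.90))
```

Under these assumptions the function returns 108, 69 and 69 participants per group for the three (σ, Δ) pairs at 80% power, and 87 per group for σ = 2.0, Δ = 1.0 at 90% power. Because n depends only on the ratio σ/Δ, designs that share this ratio require the same sample size.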

Application in Nutritional Research

Table 1: Sample Size Requirements for Continuous Outcomes in Nutritional Equivalence Trials (α=0.05, Power=80%; σ and Δ in the outcome's units; n from the formula above, rounded up)

| Standard Deviation (σ) | Equivalence Margin (Δ) | Sample Size per Group | Nutritional Research Example |
|---|---|---|---|
| 0.5 | 0.2 | 108 | Micronutrient level changes |
| 1.0 | 0.5 | 69 | Blood glucose measurements |
| 1.5 | 0.75 | 69 | Body composition changes |
| 2.0 | 1.0 | 69 | Blood pressure measurements |
| 2.5 | 1.25 | 69 | Weight change (kg) |

Consider a trial comparing two nutritional formulations for their effect on systolic blood pressure reduction, where the standard deviation is 2.0 mmHg and the equivalence margin is set at 1.0 mmHg [31]. Using the formula with α=0.05 and β=0.1 (90% power), the sample size per group would be:

n = f(0.05, 0.1/2) × 2 × (2.0)² / (1.0)² = (1.645 + 1.645)² × 8 ≈ 86.6, which rounds up to 87 participants per group [30] [29]

Experimental Protocol for Continuous Outcomes

The following workflow diagram illustrates the key steps in designing an equivalence trial with continuous outcomes:

Key considerations for continuous outcomes in nutritional equivalence trials include:

- Measurement precision: Ensure outcome measures have sufficient precision and reliability to detect differences within the equivalence margin.

- Follow-up duration: Allow sufficient time for nutritional interventions to demonstrate their full effect.