From Lab to Clinic: Developing Robust Dietary Biomarkers Through Controlled Feeding Studies

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the process of discovering and validating dietary biomarkers using data from controlled feeding studies. It covers the foundational principles of study design, explores advanced methodological applications like machine learning and multi-omics integration, addresses common troubleshooting and optimization challenges, and outlines rigorous validation frameworks. By synthesizing current methodologies and emerging trends, this resource aims to advance the field of precision nutrition and enhance the objective measurement of dietary intake in clinical and public health research.

Laying the Groundwork: The Critical Role of Controlled Feeding Studies in Dietary Biomarker Discovery

The Role of Dietary Biomarkers in Modern Nutrition Research

Diet is a complex exposure that significantly affects health across the lifespan, yet accurately assessing dietary intake in free-living populations remains a substantial challenge in nutrition research [1]. Current dietary assessment approaches rely heavily on self-reported methodologies such as food frequency questionnaires (FFQs), multiple-day food diaries, and 24-hour recalls, which are often distorted by various systematic and random measurement errors [1]. Objective dietary biomarkers measured in biological specimens provide a crucial solution to this problem by offering reliable, unbiased measures of food intake that represent the true "bioavailable" dose of dietary exposure [1].

The emergence of precision nutrition as a field has accelerated the need for validated dietary biomarkers that can account for individual variations in metabolism and response to dietary interventions. These biomarkers serve multiple critical functions: they complement and validate self-reported dietary assessment methods, help quantify and calibrate measurement errors, and enable researchers to establish robust associations between diet and health outcomes with greater confidence [1] [2]. Furthermore, advances in metabolomic technologies have created unprecedented opportunities for discovering sensitive and specific biomarkers for a wide range of foods and nutrients [3] [1].

Current Biomarker Development Initiatives: The Dietary Biomarkers Development Consortium

The Dietary Biomarkers Development Consortium (DBDC) represents the first major systematic effort to improve dietary assessment through the discovery and validation of biomarkers for foods commonly consumed in the United States diet [3] [1]. Established in 2021 through collaboration between the National Institute of Diabetes and Digestive and Kidney Diseases (NIDDK) and the USDA-National Institute of Food and Agriculture (USDA-NIFA), the DBDC employs a structured, multi-phase approach to biomarker development [1].

Table 1: DBDC Three-Phase Biomarker Development Approach

| Phase | Primary Objective | Study Design | Key Outputs |
|---|---|---|---|
| Phase 1: Discovery | Identify candidate biomarker compounds | Controlled feeding trials with test foods in prespecified amounts; metabolomic profiling of blood and urine [3] | Characterization of pharmacokinetic parameters; candidate biomarker compounds [3] |
| Phase 2: Evaluation | Assess ability to identify consumers of biomarker-associated foods | Controlled feeding studies of various dietary patterns [1] | Evaluation of biomarker sensitivity and specificity across different dietary contexts [1] |
| Phase 3: Validation | Validate predictive value for recent and habitual consumption | Independent observational studies [3] | Validated biomarkers suitable for use in free-living populations [3] |

The DBDC operates through three academic study centers (Harvard University, Fred Hutchinson Cancer Center/University of Washington, and University of California Davis/USDA-ARS) coordinated by a Data Coordinating Center at Duke University [1]. This infrastructure ensures rigorous scientific standards through specialized working groups focused on dietary interventions, metabolomics, and data harmonization [1]. All data generated through the DBDC will be archived in publicly accessible databases as a resource for the broader research community [3] [1].

Experimental Protocols for Biomarker Discovery and Validation

Controlled Feeding Study Design

Controlled human feeding studies provide the foundation for robust nutritional biomarker development and validation [2]. The DBDC implements several controlled feeding trial designs where participants receive test foods in prespecified amounts, followed by comprehensive metabolomic profiling of serial blood and urine specimens [3]. These studies are designed to characterize the pharmacokinetic parameters of candidate biomarkers, including their appearance, peak concentration, and clearance patterns in relation to food intake [1].
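To make these pharmacokinetic parameters concrete, the sketch below computes peak concentration (Cmax), time to peak (Tmax), area under the curve by the linear trapezoidal rule, and an apparent elimination half-life from a log-linear fit to the last few samples. It is a minimal non-compartmental sketch, not the DBDC's analysis pipeline; the function name `pk_parameters`, the use of three terminal points, and the assumption that concentrations are already baseline-corrected are all illustrative choices.

```python
import numpy as np


def pk_parameters(times_h, conc, n_terminal=3):
    """Basic non-compartmental parameters for one biomarker time course.

    times_h: sampling times in hours after the test meal (ascending).
    conc: baseline-corrected concentrations at those times (assumed > 0
          over the terminal phase so they can be log-transformed).
    n_terminal: number of final samples used for the elimination fit (assumed).
    """
    t = np.asarray(times_h, dtype=float)
    c = np.asarray(conc, dtype=float)

    # Peak concentration and the time at which it was observed.
    i_max = int(np.argmax(c))
    cmax, tmax = c[i_max], t[i_max]

    # Linear trapezoidal rule over the observed sampling window.
    auc = float(np.sum((c[1:] + c[:-1]) / 2.0 * np.diff(t)))

    # Elimination rate constant: negative slope of ln(conc) vs time
    # over the terminal (post-peak) samples.
    slope, _ = np.polyfit(t[-n_terminal:], np.log(c[-n_terminal:]), 1)
    k_el = -slope
    half_life = np.log(2) / k_el if k_el > 0 else float("nan")

    return {"cmax": cmax, "tmax": tmax, "auc": auc, "half_life_h": half_life}
```

For a hypothetical time course sampled at 0, 1, 2, 4, 8, and 12 h with concentrations 0, 50, 100, 80, 40, and 20, this returns Cmax = 100 at Tmax = 2 h, an AUC of 640 concentration-hours, and a half-life of 4 h. Real studies would typically need more terminal samples and a check that the fitted phase is truly post-absorptive.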

Previous research, such as the Nutrition and Physical Activity Assessment Study Feeding Study (NPAAS-FS), has demonstrated the effectiveness of designing individual menu plans that approximate each participant's habitual food intake [2]. This approach minimizes perturbation of blood and urine measures that might otherwise be slow to equilibrate over a short feeding period while preserving the normal variation in nutrient and food consumption present in the study population [2].

Metabolomic Profiling and Analysis

The DBDC employs advanced metabolomic technologies to identify food-associated metabolite patterns [3] [1]. Each study center uses liquid chromatography-mass spectrometry (LC-MS), including hydrophilic-interaction liquid chromatography (HILIC) separations, to analyze biospecimens, which increases the likelihood of detecting similar molecules and molecule classes across sites [1]. The Metabolomics Working Group within the DBDC coordinates strategies for identifying sensitive and specific food biomarkers and works to harmonize metabolite identifications across analytical platforms based on MS/MS ion patterns and retention times [1].
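Full harmonization relies on MS/MS spectra, but a common first pass is to pair features from two sites whose precursor masses agree within a parts-per-million (ppm) window and whose retention times fall within a tolerance. The sketch below shows only that simpler step (no MS/MS comparison). The function names, the 5 ppm and 0.2 min tolerances, and the `(feature_id, mz, rt_min)` tuple layout are hypothetical, and it assumes both sites used comparable chromatography so retention times can be compared directly.

```python
def ppm_error(mz_obs, mz_ref):
    """Mass error in parts per million relative to the reference m/z."""
    return (mz_obs - mz_ref) / mz_ref * 1e6


def match_features(site_a, site_b, ppm_tol=5.0, rt_tol_min=0.2):
    """Pair features from two sites by precursor m/z and retention time.

    Each site is a list of (feature_id, mz, rt_min) tuples. For every
    site_a feature, the site_b feature with the smallest mass error that
    satisfies both tolerances is kept; unmatched features are omitted.
    """
    matches = []
    for fid_a, mz_a, rt_a in site_a:
        best = None  # (site_b feature id, absolute ppm error)
        for fid_b, mz_b, rt_b in site_b:
            err = abs(ppm_error(mz_b, mz_a))
            if err <= ppm_tol and abs(rt_b - rt_a) <= rt_tol_min:
                if best is None or err < best[1]:
                    best = (fid_b, err)
        if best is not None:
            matches.append((fid_a, best[0], round(best[1], 2)))
    return matches
```

With made-up features, `("A1", 181.0707, 1.50)` pairs with `("B7", 181.0712, 1.58)` at about 2.76 ppm, while a candidate with identical mass but a retention time 0.9 min away is rejected. Cross-platform work often needs retention-time alignment before a fixed tolerance like this is meaningful.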

Statistical Considerations and Validation

Appropriate statistical approaches are critical for biomarker development. Linear regression of consumed nutrients on potential biomarkers has been used to evaluate the performance of serum concentration biomarkers for various vitamins and carotenoids [2]. Established urinary recovery biomarkers of total energy intake (from doubly labeled water) and total protein intake (from 24-hour urinary nitrogen) serve as benchmarks for evaluating new biomarker candidates [2].
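The regression approach can be sketched as an ordinary least-squares fit of consumed intake on biomarker concentration, reporting R² as the fraction of intake variation the biomarker explains. This is a minimal sketch under stated assumptions: the log transformation of both variables and the single-predictor model are illustrative, and feeding-study calibration models may also include participant characteristics (for example age or BMI) as covariates, which are omitted here. The function name `calibration_r2` is hypothetical.

```python
import numpy as np


def calibration_r2(intake, biomarker):
    """OLS regression of ln(intake) on ln(biomarker).

    Returns (coefficients [intercept, slope], R^2).
    """
    y = np.log(np.asarray(intake, dtype=float))
    x = np.log(np.asarray(biomarker, dtype=float))
    X = np.column_stack([np.ones_like(x), x])  # intercept column + predictor
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    ss_res = float(resid @ resid)
    ss_tot = float(((y - y.mean()) ** 2).sum())
    return beta, 1.0 - ss_res / ss_tot
```

Read against Table 2, an R² near 0.5 indicates that the model explains roughly half of the between-person variation in intake in the feeding study; values of that size are what place a candidate in the same range as the energy and protein recovery benchmarks.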

Table 2: Performance Characteristics of Selected Nutritional Biomarkers

| Biomarker | Biological Matrix | Regression R² | Performance Assessment |
|---|---|---|---|
| Vitamin B-12 | Serum | 0.51 [2] | Suitable for application in postmenopausal women [2] |
| Folate | Serum | 0.49 [2] | Performs similarly to established energy and protein biomarkers [2] |
| α-Carotene | Serum | 0.53 [2] | Represents nutrient intake variation effectively [2] |
| β-Carotene | Serum | 0.39 [2] | Acceptable for measuring intake variation [2] |
| Lutein + Zeaxanthin | Serum | 0.46 [2] | Suitable for application in postmenopausal women [2] |
| Energy Intake | Urine (Doubly Labeled Water) | 0.53 [2] | Established recovery biomarker used as benchmark [2] |
| Protein Intake | Urine (24-hour Nitrogen) | 0.43 [2] | Established recovery biomarker used as benchmark [2] |

Essential Research Reagents and Materials

Successful dietary biomarker research requires carefully selected reagents and analytical materials. The following table details key components of the research toolkit for dietary biomarker studies:

Table 3: Essential Research Reagents for Dietary Biomarker Studies

| Reagent/Material | Function/Application | Specifications |
|---|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) Systems | Metabolomic profiling of biospecimens; identification and quantification of candidate biomarker compounds [1] | Ultra-high performance LC (UHPLC) systems coupled with high-resolution mass spectrometers [3] |
| Hydrophilic-Interaction Liquid Chromatography (HILIC) Columns | Separation of polar metabolites in biological samples [1] | Standardized column chemistry across sites to enhance comparability [1] |
| Stable Isotope-Labeled Internal Standards | Quantification of metabolites; correction for analytical variation [1] | Isotopically labeled compounds identical to target analytes |
| Standard Reference Materials | Quality control and method validation [1] | Certified reference materials for targeted metabolites |
| Biospecimen Collection Supplies | Standardized collection, processing, and storage of blood and urine samples [3] [2] | EDTA tubes for plasma; sterile containers for urine; standardized processing protocols [1] |
| Dietary Control Materials | Preparation of controlled diets in feeding studies [2] | Precisely weighed food ingredients; standardized recipes |

Experimental Workflow and Biomarker Development Pathway

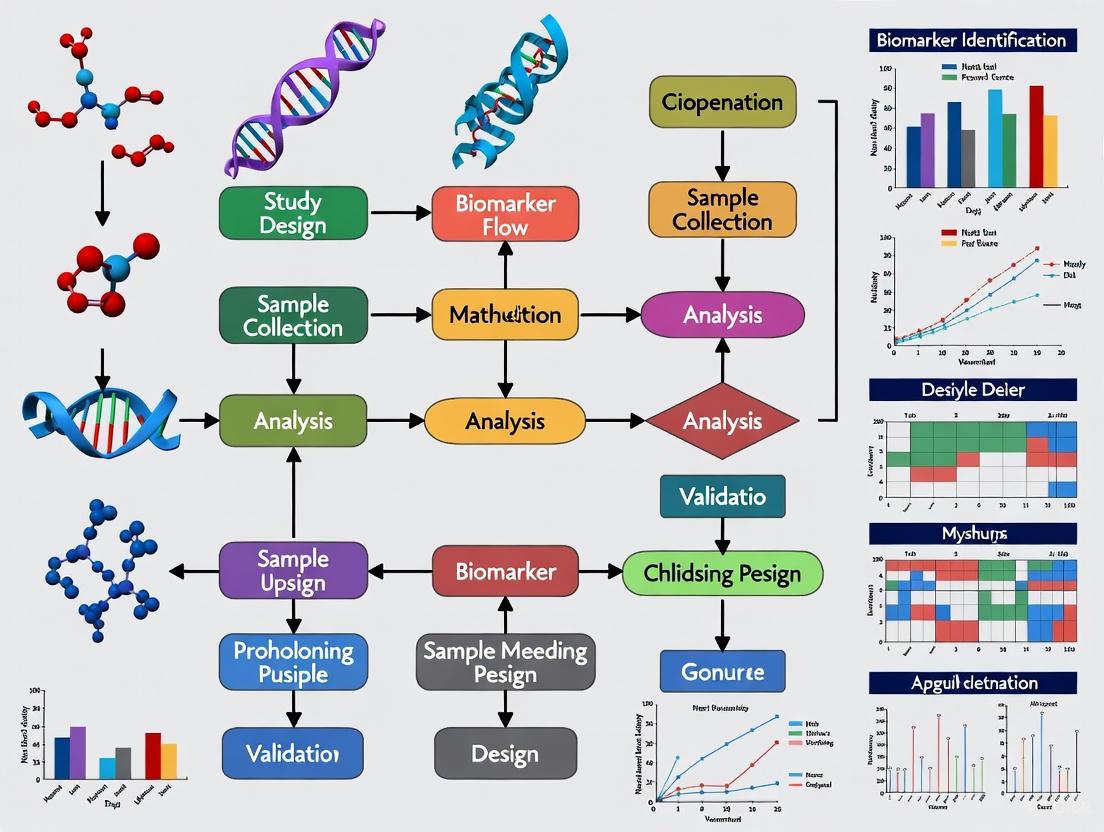

The following diagram illustrates the comprehensive workflow for dietary biomarker development from controlled feeding studies:

Applications in Precision Nutrition and Drug Development

The development of validated dietary biomarkers has far-reaching implications for precision nutrition and pharmaceutical research. In precision nutrition, these biomarkers enable researchers to move beyond one-size-fits-all dietary recommendations toward personalized nutrition approaches that account for individual metabolic variability [3]. The DBDC specifically aims to expand the list of validated biomarkers for foods consumed in the United States diet, which will advance understanding of how diet influences human health and disease risk [3] [1].

In drug development, dietary biomarkers provide crucial tools for assessing dietary exposures in clinical trials, particularly for nutrition-related conditions such as metabolic disorders, cardiovascular disease, and certain cancers [2]. Objective biomarkers help ensure accurate assessment of dietary compliance and can elucidate mechanisms by which diet modifies drug efficacy or toxicity. Furthermore, the three-phase validation approach employed by the DBDC ensures that biomarkers meet rigorous criteria for sensitivity, specificity, and reliability before implementation in research or clinical settings [3] [1].

The integration of dietary biomarkers with other molecular profiling data (genomic, proteomic, metabolomic) creates powerful multidimensional datasets for understanding complex diet-health interactions. As the field advances, these biomarkers will play an increasingly important role in developing targeted nutritional interventions, validating dietary assessment tools, and informing public health policy [3] [1].

Accurate dietary assessment is fundamental to nutrition research, yet traditional self-reported methods, such as food frequency questionnaires and dietary recalls, are plagued by significant measurement error, including substantial underreporting, especially among overweight and obese individuals [2]. Controlled human feeding studies provide a robust alternative by delivering known quantities of specific foods or entire diets, thereby creating a rigorous framework for discovering and validating objective biomarkers of food intake (BFIs) [2] [4]. These biomarkers, measured in accessible biospecimens like blood and urine, offer a pathway to objectively quantify dietary exposure, overcoming the biases inherent in self-report [5] [6]. The central design challenge lies in balancing experimental control with ecological validity. This article details the core methodologies for designing controlled feeding trials, focusing on two principal approaches: the use of standardized menus for all participants and the creation of individualized menus that mimic habitual intake, with a specific focus on their application in dietary biomarker development.

Core Methodologies for Dietary Control

The choice between a standardized or a mimicked habitual diet design is pivotal and depends on the primary research objective. The table below summarizes the key characteristics of each approach.

Table 1: Comparison of Controlled Feeding Study Designs for Biomarker Research

| Feature | Standardized Diet Design | Mimicked Habitual Diet Design |
|---|---|---|
| Primary Objective | To control and isolate the effect of a specific nutrient or food; ideal for mechanistic studies and validating known biomarkers [5]. | To preserve the natural variation in a population's diet; ideal for discovering novel biomarkers across a wide range of foods and for calibration [2] [4]. |
| Diet Composition | Identical menus for all participants, often with a high percentage of energy from a target food (e.g., 80% from ultra-processed foods) [5]. | Unique menus for each participant, designed to approximate their usual food intake as estimated from pre-study dietary records [2]. |
| Key Advantage | High internal validity; reduces inter-individual variance from different food types, preparation, and processing [2]. | Maintains real-world dietary variation, making findings more generalizable to free-living populations [2] [4]. |
| Key Challenge | May be unrepresentative of habitual diets, potentially affecting biomarker metabolism and participant compliance [2]. | Complex and resource-intensive to design and implement; requires extensive dietary interviewing and menu customization [2]. |
| Example Application | NIH clinical trial comparing an 80% ultra-processed food diet to a 0% ultra-processed food diet [5]. | Women's Health Initiative Feeding Study (NPAAS-FS) and the MAIN Study [2] [4]. |

Protocol: Implementing a Mimicked Habitual Diet Design

The following workflow outlines the key steps for implementing a mimicked habitual diet, a complex but powerful design for biomarker discovery.

Figure 1: Workflow for a mimicked habitual diet feeding study.

1. Participant Recruitment and Screening: Recruit participants based on specific inclusion/exclusion criteria. The MAIN Study, for example, excluded individuals with conditions, procedures, or medications that could alter normal food metabolism, such as diabetes, kidney disease, or cholecystectomy, and enrolled only non-vegetarians [4]. Sample size calculations should be based on the expected variation in biomarker levels; the NPAAS-FS targeted 150 participants to have high power (>88%) to detect a biomarker with an R² ≥ 0.5 [2].

2. Baseline Dietary Assessment and Interview: Participants complete a detailed dietary assessment, such as a 4-day food record (4DFR). A critical subsequent step is a standardized, in-depth interview conducted by a study dietitian to assess usual food choices, brands, meal patterns, recipes, and food likes/dislikes not fully captured in the record [2]. This qualitative data is essential for menu personalization.

3. Menu Formulation and Energy Adjustment: Using data from the 4DFR and interview, individualized menus are designed. Energy needs are typically adjusted beyond self-reported intake to prevent non-compliance. In the NPAAS-FS, for 73% of women whose recorded intake was below estimated needs, calories were proportionally increased by an average of 335 ± 220 kcal/day [2]. Software like the Nutrition Data System for Research (NDS-R) and ProNutra is used for nutrient analysis and menu creation [2].

4. Food Provision and Compliance Monitoring: All foods and beverages are provided from a central kitchen. Participants are instructed to consume only the provided foods and to return any uneaten items, allowing for precise calculation of actual intake [2] [4].
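Steps 3 and 4 rest on two simple calculations: proportionally scaling a menu to an energy target, and computing what was actually eaten from provided and returned food weights. The sketch below illustrates both under simplifying assumptions; it is not the NDS-R or ProNutra workflow. The function names, the dictionary layout, and the choice to scale every item by the same factor are hypothetical (real menu adjustment may add or change specific foods instead).

```python
def scale_menu(menu_g, kcal_per_g, target_kcal):
    """Scale every item's gram weight by one factor so the menu reaches target_kcal.

    menu_g: {food: grams}; kcal_per_g: {food: energy density in kcal/g}.
    Returns (scaled menu, scaling factor).
    """
    current = sum(grams * kcal_per_g[food] for food, grams in menu_g.items())
    factor = target_kcal / current
    return {food: grams * factor for food, grams in menu_g.items()}, factor


def consumed_nutrient(provided_g, returned_g, nutrient_per_g):
    """Nutrient actually consumed: sum over foods of (provided - returned) x density."""
    total = 0.0
    for food, grams in provided_g.items():
        eaten = grams - returned_g.get(food, 0.0)  # uneaten portion weighed back
        total += max(eaten, 0.0) * nutrient_per_g[food]
    return total
```

For a toy menu of 100 g of food "a" (2 kcal/g) and 200 g of food "b" (1 kcal/g), raising the target from 400 to 500 kcal gives a factor of 1.25; if 50 g of "b" is returned, consumed energy is 450 kcal. In the NPAAS-FS the average upward adjustment was 335 kcal/day, so the same arithmetic applies at a larger scale.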

Protocol: Implementing a Crossover Trial with Standardized Diets

For investigating the specific effects of a dietary component, a randomized controlled crossover trial is a particularly efficient design, because each participant serves as their own control.

1. Diet Formulation: Develop two or more tightly controlled diets. A notable example is the NIH study that used a diet comprising 80% of energy from ultra-processed foods versus a diet with 0% ultra-processed foods [5].

2. Randomization and Washout: Participants are randomly assigned to the sequence of diets. Each dietary period is followed by a washout period to allow biomarkers to return to baseline before the next intervention.

3. Controlled Feeding and Biomarker Collection: Participants consume all meals under supervision (e.g., at a clinical center) or as provided take-away meals. Biospecimens are collected at defined time points during each diet phase to capture the metabolic response [5].
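Random allocation to diet sequences in step 2 is often done with permuted blocks, so that the two sequences stay balanced as enrollment proceeds. The sketch below assumes a two-diet, two-period design (sequences "AB" and "BA") and a block size of 4; the function name, labels, block size, and seed are hypothetical.

```python
import random


def crossover_schedule(n_participants, block_size=4, seed=2024):
    """Assign AB/BA diet sequences using permuted blocks.

    Each block contains equal numbers of AB and BA in random order,
    so allocation stays balanced throughout enrollment.
    """
    if block_size % 2:
        raise ValueError("block_size must be even for a two-sequence design")
    rng = random.Random(seed)  # fixed seed makes the allocation list reproducible
    schedule = []
    while len(schedule) < n_participants:
        block = ["AB", "BA"] * (block_size // 2)
        rng.shuffle(block)
        schedule.extend(block)
    return schedule[:n_participants]
```

An allocation list like this is typically generated before enrollment and concealed from recruiting staff. The washout length between periods is a separate design decision and should be long enough for the candidate biomarkers to return to baseline.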

The Scientist's Toolkit: Key Reagents and Materials

Successful execution of a controlled feeding study requires meticulous planning and a suite of specialized tools and materials. The following table details essential components of the research toolkit.

Table 2: Research Reagent Solutions for Controlled Feeding Trials

| Tool/Reagent | Function/Description | Example Use in Protocol |
|---|---|---|
| Dietary Analysis Software | Software platforms for nutrient analysis and menu creation. | The NPAAS-FS used NDS-R for analysis and ProNutra for creating menus, recipes, and production sheets [2]. |
| Biospecimen Collection Kits | Standardized kits for the collection, preservation, and transport of biological samples from free-living participants. | The MAIN Study provided participants with kits for home collection of urine samples, demonstrating high compliance and data quality [4]. |
| Doubly Labeled Water (DLW) | A gold-standard recovery biomarker of total energy expenditure, which approximates energy intake in weight-stable individuals. | Used in the NPAAS-FS as an objective measure to validate energy intake [2]. |
| Urinary Nitrogen | A recovery biomarker for estimating total protein intake. | Measured from 24-hour urine collections in the NPAAS-FS to objectively assess protein consumption [2]. |
| Mass Spectrometry | An analytical platform for metabolomic analysis to identify and quantify metabolite patterns in biospecimens. | Used by NIH researchers to find hundreds of metabolites correlated with ultra-processed food intake and to develop poly-metabolite scores [5]. |

Biomarker Discovery and Validation Workflow

The ultimate goal of many feeding studies is to develop robust biomarkers. The process from biospecimen collection to biomarker validation is multi-staged.

Figure 2: Biomarker discovery and validation pipeline.

Metabolomic Profiling and Machine Learning: As demonstrated in recent NIH research, biospecimens are analyzed using metabolomics to identify metabolites that correlate with dietary intake. Machine learning algorithms can then be employed to identify complex metabolic patterns and calculate a poly-metabolite score—a composite, objective measure of intake that reduces reliance on self-report [5]. This score must subsequently be validated in independent populations with different dietary habits and evaluated for its association with disease outcomes [5].
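As an illustration of how a poly-metabolite score can be built, the sketch below fits a LASSO regression (L1-penalized least squares, solved by cyclic coordinate descent) of intake on standardized metabolite levels and uses the fitted linear combination as the score. LASSO is one common way to select a sparse metabolite panel; whether it matches the NIH team's exact procedure, and the penalty `alpha`, the iteration count, and the function names here, are assumptions. In practice the penalty would be tuned by cross-validation and the score then tested in independent data, as the text notes.

```python
import numpy as np


def soft_threshold(z, gamma):
    """Shrink z toward zero by gamma (the L1 proximal operator)."""
    return np.sign(z) * max(abs(z) - gamma, 0.0)


def lasso_cd(X, y, alpha, n_iter=200):
    """LASSO by cyclic coordinate descent on standardized X and centered y.

    Minimizes (1 / (2n)) * ||y - X b||^2 + alpha * ||b||_1.
    """
    n, p = X.shape
    b = np.zeros(p)
    r = y.copy()  # residual y - X b, with b starting at zero
    col_sq = (X ** 2).sum(axis=0) / n
    for _ in range(n_iter):
        for j in range(p):
            r += X[:, j] * b[j]  # remove feature j's current contribution
            rho = X[:, j] @ r / n
            b[j] = soft_threshold(rho, alpha) / col_sq[j]
            r -= X[:, j] * b[j]  # restore it with the updated coefficient
    return b


def poly_metabolite_score(metabolites, intake, alpha=0.1):
    """Fit a LASSO-based composite score; returns (score function, coefficients).

    metabolites: (n_samples, n_metabolites) array, e.g. log intensities (assumed).
    intake: measured intake per sample from the feeding study.
    """
    M = np.asarray(metabolites, dtype=float)
    y = np.asarray(intake, dtype=float)
    mu, sd = M.mean(axis=0), M.std(axis=0)
    y_mean = y.mean()
    coef = lasso_cd((M - mu) / sd, y - y_mean, alpha)

    def score(new_metabolites):
        # Apply the training-set standardization to new samples.
        z = (np.asarray(new_metabolites, dtype=float) - mu) / sd
        return y_mean + z @ coef

    return score, coef
```

On simulated data where intake depends on three of twenty metabolites, the largest coefficients land on those three and the score tracks intake closely; most of the remaining coefficients are shrunk to exactly zero, which is what makes the resulting panel compact.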

The strategic design of controlled feeding trials is instrumental in advancing the field of dietary biomarker development. The choice between a standardized menu and a mimicked habitual diet hinges on the research question, with the former offering precision for testing specific hypotheses and the latter providing a realistic variation necessary for discovering and calibrating biomarkers applicable to free-living populations. By adhering to rigorous protocols for diet design, participant management, and biospecimen collection, researchers can generate high-quality data to identify objective biomarkers, ultimately strengthening our understanding of the links between diet and health.

In the field of metabolomics, blood and urine stand as the two most accessible and information-rich biological specimens for discovering and validating dietary biomarkers. Their metabolic profiles provide a functional read-out of the body's physiological state, capturing the complex interplay between diet, metabolism, and health outcomes [7] [8]. For research based on controlled feeding study data, these biofluids are indispensable. Blood metabolomics offers a snapshot of systemic metabolic processes, while urine provides a cumulative record of waste and intermediate products excreted by the kidneys [9]. The non-invasive nature of urine collection and the clinical routine of blood drawing make them ideal for repeated sampling in longitudinal studies, a common feature of feeding trials [10] [9]. The systematic discovery of food intake biomarkers, as championed by initiatives like the Dietary Biomarkers Development Consortium (DBDC), relies on controlled feeding studies coupled with advanced metabolomic profiling of these specimens to identify compounds that are sensitive and specific to dietary exposures [1].

Comparative Analysis of Blood and Urine Specimens

The choice between blood and urine for metabolomic profiling depends on the research question, with each matrix offering distinct advantages and reflecting different biological information. The following table provides a structured comparison for easy reference.

Table 1: Comparative characteristics of blood and urine as specimens for metabolomic profiling.

| Characteristic | Blood (Serum/Plasma) | Urine |
|---|---|---|
| Biological Insight | Snapshot of real-time, systemic metabolism [7] | Cumulative record of metabolic waste and clearance over several hours [9] |
| Invasiveness | Invasive collection | Non-invasive collection [10] [9] |
| Collection Volume | Typically 1-10 mL | Typically 0.25-50 mL [9] |
| Metabolite Stability | Requires rapid processing to prevent glycolysis; highly sensitive to pre-analytical variables [8] | Generally more stable; less sensitive to time-dependent pre-analytical changes post-collection [9] |
| Key Advantages | Captures both endogenous and exogenous metabolites; rich in lipid species; standard for clinical chemistry | High concentration of polar metabolites; ideal for monitoring diurnal variation and long-term exposure [9] |
| Primary Applications | Diagnostic and prognostic biomarker discovery; pathophysiological mechanism investigation [11] [7] | Biomarker discovery for renal and urological diseases; monitoring nutritional interventions and toxic exposures [10] [9] |

Metabolite Classes and Analytical Targets

The metabolome encompassed in blood and urine consists of a diverse range of small molecule metabolites with a molecular mass typically less than 1500 Da [7]. These can be broadly categorized for the purpose of dietary biomarker research.

Table 2: Key classes of metabolites targeted in blood and urine for dietary biomarker discovery.

| Metabolite Class | Representative Members | Primary Biofluid | Role as Dietary Biomarkers |
|---|---|---|---|
| Amino Acids & Derivatives | Branched-chain amino acids, taurine, histidine [11] [7] | Blood, Urine | Markers of protein intake and energy metabolism; disrupted in conditions like colorectal cancer [11] |
| Lipids & Fatty Acids | Glycerophospholipids, sphingolipids, short-chain fatty acids [7] | Blood | Reflect fat intake and energy storage; indicators of cardiovascular health [8] |
| Organic Acids | Citrate, succinate, hippurate [7] | Urine | Products of energy cycles (TCA cycle) and gut microbiota metabolism; sensitive to diet changes [10] |
| Carbohydrates & Derivatives | Glucose, galactose, sugar alcohols | Blood, Urine | Direct markers of sugar and carbohydrate intake [8] |
| Secondary Plant Metabolites | Polyphenols, flavonoids, alkaloids | Urine, Blood | Highly specific biomarkers for intake of fruits, vegetables, and other plant-based foods [1] |

Detailed Experimental Protocols for Specimen Handling

Standardized protocols are critical to ensure the integrity of metabolomic data and the validity of discovered biomarkers. The following sections detail protocols for the collection, processing, and storage of blood and urine specimens in the context of controlled feeding studies.

Blood Collection and Serum/Plasma Processing

This protocol is adapted from methodologies used in large-scale biomarker studies [11].

- Patient Preparation: Participants should fast for 8-16 hours (ideally 12-14 hours) prior to blood collection to minimize the influence of recent dietary intake on the metabolome [11].

- Blood Draw: Collect blood via venipuncture into appropriate collection tubes (e.g., serum separator tubes or EDTA/K2-EDTA tubes for plasma).

- Clotting (for Serum): If serum is required, allow blood to clot at room temperature for 30 minutes.

- Initial Centrifugation: Centrifuge samples at 3000 rpm for 10 minutes at room temperature to separate cells from the liquid fraction.

- Supernatant Transfer & High-Speed Centrifugation: Transfer the supernatant (serum or plasma) to a clean centrifuge tube. Centrifuge again at 14,000 rpm for 10 minutes at 4°C to remove any remaining cellular debris or platelets [11].

- Aliquoting and Storage: Aliquot the clarified serum/plasma into cryovials and immediately freeze at -80°C until analysis. Avoid multiple freeze-thaw cycles.
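The spin speeds in these protocols are given in rpm, but the force a sample experiences depends on the rotor radius, so settings transferred between centrifuges are safer expressed as relative centrifugal force (RCF, × g). The standard conversion is RCF = 1.118 × 10⁻⁵ × r × rpm², with r the rotor radius in cm. The sketch below implements it; the radii used in the example are hypothetical, and the radius of the actual rotor should be substituted.

```python
import math


def rpm_to_rcf(rpm, radius_cm):
    """Relative centrifugal force (x g) for a given speed and rotor radius in cm."""
    return 1.118e-5 * radius_cm * rpm ** 2


def rcf_to_rpm(rcf, radius_cm):
    """Speed (rpm) needed to reach a target RCF on a rotor of the given radius."""
    return math.sqrt(rcf / (1.118e-5 * radius_cm))
```

On an assumed 15 cm benchtop rotor, 3000 rpm corresponds to about 1,509 × g; on an assumed 8 cm microcentrifuge rotor, 14,000 rpm corresponds to about 17,530 × g. Reporting × g values alongside rpm makes the blood and urine protocols reproducible across instruments.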

Urine Collection and Processing

This protocol synthesizes standard practices from clinical metabolomic studies [10] [9].

- Collection: Collect 0.25 to 1 mL of mid-stream urine into a sterile polypropylene tube [9]. Note the fasting status of the participant, if applicable.

- Immediate Storage: Immediately place the specimen into a freezer (≤ -20°C) after collection.

- Centrifugation: Thawed urine samples must be centrifuged at 13,000 rpm for 10 minutes at 4°C to remove any solid debris [10].

- Filtration: Filter the supernatant through a 0.22 μm syringe filter to ensure removal of particulates.

- Long-term Storage: Store the processed urine samples frozen, preferably at -80°C, until ready for shipment and analysis. Ship frozen specimens on dry ice to maintain temperature [9].

Analytical Workflow for Metabolomic Profiling

The journey from a collected biofluid to biomarker discovery involves a multi-step process that integrates laboratory techniques and advanced data analysis. The following diagram illustrates the core workflow.

Diagram 1: Metabolomics biomarker discovery workflow.

Untargeted Metabolomics via Liquid Chromatography-Mass Spectrometry (LC-MS)

The core analytical platform for discovering novel dietary biomarkers is untargeted LC-MS, which allows for the unbiased profiling of thousands of metabolites in a single sample [10] [11] [8].

- Chromatographic Conditions:

  - Column: ACQUITY UPLC BEH C18 (e.g., 2.1 mm × 100 mm, 1.7 μm) or HSS T3 column for polar metabolites [10] [11].

  - Mobile Phase: Phase A: Water with 0.1% formic acid; Phase B: Acetonitrile with 0.1% formic acid [10] [11].

  - Gradient: A typical reverse-phase gradient runs from 1% to 99% organic phase (B) over 10-15 minutes, followed by a re-equilibration step [10].

  - Flow Rate: 0.40 mL/min [10].

  - Injection Volume: 2-5 μL of processed sample [11].

- Mass Spectrometric Conditions:

  - Ionization: Electrospray Ionization (ESI) in both positive and negative ion modes to maximize metabolite coverage [10] [11].

  - Mass Analyzer: Quadrupole Time-of-Flight (Q-TOF) or similar high-resolution mass spectrometer for accurate mass measurement [10] [11].

  - Mass Range: Full scan from m/z 50 to 1000 [10].

  - Capillary Voltage: 2.0 - 3.2 kV [10] [11].

  - Source Temperature: 100°C - 110°C; Desolvation Temperature: 200°C - 350°C [10] [11].

- Quality Control: A pooled Quality Control (QC) sample, created by combining a small aliquot of every sample in the study, is analyzed repeatedly throughout the batch to monitor instrument stability and for data normalization [10] [11].
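One common way pooled QC injections are used for normalization is to model each feature's intensity drift against injection order from the QC runs alone, divide every injection by that trend, and then flag features whose QC variability remains high. The sketch below uses linear interpolation between QC injections (LOESS or spline fits are frequent alternatives) and reports the QC relative standard deviation; the function names, array layout, and any RSD cutoff applied afterwards (30% is a frequently used value) are assumptions rather than a prescribed standard.

```python
import numpy as np


def qc_drift_correct(intensity, order, is_qc):
    """Correct within-batch drift for one feature using pooled QC injections.

    intensity: peak areas for every injection of this feature.
    order: injection order of each value.
    is_qc: boolean mask marking pooled QC injections.
    Injections before the first or after the last QC use the nearest QC value.
    """
    x = np.asarray(intensity, dtype=float)
    t = np.asarray(order, dtype=float)
    qc = np.asarray(is_qc, dtype=bool)
    idx = np.argsort(t[qc])  # np.interp requires increasing x-coordinates
    trend = np.interp(t, t[qc][idx], x[qc][idx])
    # Divide out the drift, then rescale to the median QC intensity.
    return x / trend * np.median(x[qc])


def qc_rsd_percent(intensity, is_qc):
    """Relative standard deviation (%) of a feature across QC injections."""
    q = np.asarray(intensity, dtype=float)[np.asarray(is_qc, dtype=bool)]
    return 100.0 * q.std(ddof=1) / q.mean()
```

With a simulated 3% per-injection signal loss, the raw QC RSD is about 13%, and after correction it falls to essentially zero because the simulated drift is exactly linear; real drift is noisier, which is why a residual RSD threshold is still applied.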

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful metabolomic profiling requires carefully selected reagents and materials to ensure analytical robustness and reproducibility.

Table 3: Essential research reagents and materials for blood and urine metabolomics.

| Item | Function/Application | Example Specifications |
|---|---|---|
| LC-MS Grade Solvents | Used as mobile phases and for sample extraction/reconstitution to minimize background noise and ion suppression. | Acetonitrile, Methanol, Water (all HPLC-MS grade) [10] [11] |
| Acid Additives | Modifies pH of mobile phase to improve chromatographic separation and ionization efficiency in ESI-MS. | Formic Acid (Optima LC/MS grade) [10] [11] |
| Internal Standards | Added to each sample to correct for variability during sample preparation and instrument analysis. | Stable Isotope-Labeled Compound Mixtures (e.g., for targeted analysis) [8] |
| Collection Tubes | For biological specimen collection and initial storage. | Polypropylene Tubes (e.g., Eppendorf Cat #022363204) [9]; EDTA tubes for plasma; serum separator tubes |
| Syringe Filters | Removal of particulate matter from processed urine or protein-precipitated serum samples prior to LC-MS injection. | 0.22 μm, Nylon or PVDF membrane [10] |
| Chromatography Columns | Separation of complex metabolite mixtures based on hydrophobicity before they enter the mass spectrometer. | ACQUITY UPLC BEH C18, 1.7 μm, 2.1x100mm [10] [11] |
| Metabolomics Databases | Annotation and identification of unknown metabolites based on accurate mass, MS/MS fragments, and retention time. | Human Metabolome Database (HMDB), Kyoto Encyclopedia of Genes and Genomes (KEGG) [11] |

Data Analysis and Integration with Feeding Study Data

The raw data generated from LC-MS must be processed to extract meaningful biological information and correlated with dietary intake data from controlled feeding studies.

- Raw Data Conversion: Convert raw mass spectrometer files to an open format (e.g., mzXML) using software like MSConvert [11].

- Peak Processing: Use computational tools like XCMS in R for peak picking, retention time alignment, and feature grouping across all samples. Key parameters include peak width, mass accuracy (ppm), and signal-to-noise threshold [11].

- Multivariate Statistical Analysis: Apply unsupervised (e.g., Principal Component Analysis, PCA) and supervised (e.g., Orthogonal Projections to Latent Structures-Discriminant Analysis, OPLS-DA) methods to identify metabolic features that discriminate between different dietary groups [10] [11].

- Biomarker Identification and Validation: Statistically significant features are identified by querying their accurate mass and MS/MS spectra against metabolomic databases (HMDB, KEGG) [11]. The performance of these candidate biomarkers is then validated in independent sample sets or observational cohorts [1].

- Pathway Analysis: Enrichment analysis tools (e.g., MetaboAnalyst) map the dysregulated metabolites onto biochemical pathways (e.g., primary bile acid biosynthesis, taurine metabolism) to elucidate the biological impact of the dietary intervention [11].
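Over-representation analysis, one of the enrichment approaches offered by tools such as MetaboAnalyst, asks whether a pathway contains more of the significant metabolites than chance would predict, typically using a one-sided hypergeometric test. The sketch below computes that p-value from first principles with the Python standard library; the function name and the pathway sizes in the example are hypothetical, and a real analysis would also correct for testing many pathways at once.

```python
from math import comb


def ora_pvalue(n_background, n_pathway, n_hits, n_hits_in_pathway):
    """One-sided hypergeometric p-value, P(X >= n_hits_in_pathway).

    n_background:      annotated metabolites in the reference set (N)
    n_pathway:         background metabolites belonging to the pathway (K)
    n_hits:            significant metabolites submitted for analysis (n)
    n_hits_in_pathway: significant metabolites that fall in the pathway (k)
    """
    total = comb(n_background, n_hits)
    upper = min(n_pathway, n_hits)
    # Sum the probabilities of observing k or more pathway members by chance.
    tail = sum(
        comb(n_pathway, i) * comb(n_background - n_pathway, n_hits - i)
        for i in range(n_hits_in_pathway, upper + 1)
    )
    return tail / total
```

For example, with 500 background metabolites, a 20-member pathway, and 40 significant features, about 1.6 pathway hits are expected by chance; observing 6 gives a p-value well below 0.05, suggesting the pathway is over-represented among the diet-responsive metabolites.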

Blood and urine are foundational pillars in the metabolomic assessment of dietary intake. Their complementary nature provides a powerful, multi-faceted view of the metabolic phenotype. The rigorous application of standardized protocols for collection, processing, and analysis, as detailed in these application notes, is paramount for generating high-quality, reproducible data. When integrated with the controlled conditions of feeding studies, metabolomic profiling of these biofluids moves beyond simple correlation to establish causal relationships between diet and metabolic response. This approach is dramatically expanding the list of validated dietary biomarkers, thereby enhancing our ability to objectively assess diet and understand its precise role in health and disease.

Diet is a major modifiable risk factor for chronic diseases, yet accurately assessing dietary intake in free-living populations remains a significant challenge in nutrition research [1]. Current methods, such as food frequency questionnaires and 24-hour recalls, rely on self-reporting and are susceptible to systematic and random measurement errors [1]. To address these limitations, the Dietary Biomarkers Development Consortium (DBDC) was established in 2021 as the first major initiative to systematically discover and validate objective biomarkers for foods commonly consumed in the United States diet [1] [3].

This case study examines the DBDC's Phase 1 approach, which implements controlled feeding trials to identify candidate biomarkers using metabolomic technologies. The consortium's work aims to significantly expand the list of validated dietary biomarkers, thereby enhancing the precision of nutritional science and improving our understanding of diet-health relationships [1] [12].

DBDC Organizational Structure and Governance

The DBDC operates through a coordinated network of research centers and committees overseen by the National Institute of Diabetes and Digestive and Kidney Diseases (NIDDK) and the USDA-National Institute of Food and Agriculture (USDA-NIFA) [1]. The organizational structure ensures rigorous scientific discovery and validation of dietary biomarkers.

Table: DBDC Organizational Structure and Responsibilities

| Component | Institution | Primary Responsibilities |
|---|---|---|
| Study Centers | Harvard University (with Broad Institute), Fred Hutchinson Cancer Center (with University of Washington), University of California Davis (with USDA-ARS) | Conduct controlled feeding trials; collect and process biospecimens; perform metabolomic analyses [1] |
| Data Coordinating Center (DCC) | Duke University | Administrative coordination; data quality control; data analysis for reports; data submission to repositories [1] |
| Steering Committee | Principal investigators from study centers, DCC, NIDDK, and USDA-NIFA | Governing body making strategic scientific and administrative decisions [1] |
| Data Safety Monitoring Board | Independent experts | Regular review of progress, participant safety, data integrity, and scientific rigor [1] |

Three specialized working groups support the consortium's operations: the Dietary Intervention Working Group harmonizes feeding study protocols, the Metabolomics Working Group coordinates analytical methods for biomarker identification, and the Data Analysis/Harmonization Working Group standardizes data collection and analysis plans [1].

Phase 1 Study Designs and Objectives

DBDC Phase 1 employs controlled feeding trials to identify candidate biomarkers and characterize their pharmacokinetic parameters. The three study centers implement complementary research protocols focused on different food groups [1] [13].

Table: DBDC Phase 1 Study Characteristics

| Research Center | Primary Food Focus | Study Status | Key Objectives |
|---|---|---|---|
| UC Davis Dietary Biomarker Development Center | Fruits and vegetables [13] [14] | Recruiting [13] | Identify biomarkers linked to specific fruits and vegetables; determine dose and time responses of metabolites [15] |
| Dietary Biomarker Intervention Core (Harvard) | Proteins (chicken, beef, salmon, soybeans), carbohydrates (whole wheat, potatoes, corn, oats), and dairy (yogurt, cheese) [13] [14] | Recruiting [13] | Conduct tightly controlled pharmacokinetic and dose-response feeding studies across a range of food items [13] |
| Seattle Dietary Biomarker Development Center (Fred Hutch) | USDA MyPlate foods, food groups, and dietary patterns [13] [16] | Recruiting [13] | Discover biomarkers of MyPlate food groups/subgroups; determine half-lives and dynamic range [17] |

The overarching goal of Phase 1 is to identify sensitive and specific candidate biomarkers by administering test foods in prespecified amounts to healthy participants and conducting metabolomic profiling of blood and urine specimens collected during feeding trials [1]. Data from these studies will characterize the pharmacokinetic parameters of candidate biomarkers associated with specific foods [1].

Experimental Protocols and Methodologies

Participant Recruitment and Controlled Feeding

Each DBDC center recruits healthy adult participants for controlled feeding studies [13]. Prior to intervention, habitual diet is assessed using food frequency questionnaires (FFQs) and recent intake is evaluated through automated 24-hour dietary recalls (ASA-24) [15]. The feeding studies employ standardized protocols:

- Test Meals: Participants consume test foods in prespecified amounts following randomized controlled dietary intervention designs [15]. The UC Davis center, for example, administers different servings of fruit and vegetable mixtures in an inverse dosing gradient (e.g., 1 fruit/3 vegetables, 2 fruits/2 vegetables, 3 fruits/1 vegetable) within a standard mixed meal setting [15].

- Dietary Control: Participants are provided with standardized meals and snacks low in the target food groups to prevent interference with biomarker detection [15].

- Washout Periods: Multiple dosing interventions are conducted with at least 48-hour washout periods between test sessions [15].

Biospecimen Collection and Processing

Comprehensive biospecimen collection is critical for metabolomic analysis in Phase 1 studies:

- Blood Collection: Fasting blood samples are collected initially, followed by postprandial samples at 1, 2, 4, 6, and 8 hours after test meal consumption. A final fasting sample is collected at 24 hours [15].

- Urine Collection: Urine is pooled sequentially between 0-2, 2-4, 4-6, and 6-8 hours, with a final collection from 8-24 hours [15].

- Sample Processing: All biospecimens are processed, tracked, and stored according to standardized protocols across centers to maintain sample integrity [1] [17].
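Timed, pooled urine collections such as the 0-2, 2-4, 4-6, 6-8, and 8-24 h pools above are usually summarized as the amount excreted per pool (concentration × pooled volume) and as a running total. The mean excretion rate of each pool, plotted at the interval midpoint, is the input to the classical urinary excretion-rate method for estimating elimination kinetics. The sketch below is minimal and assumes that both the concentration and the total volume of each pool were recorded. All numbers are hypothetical, and `urinary_excretion` is an illustrative helper, not a DBDC tool.

```python
def urinary_excretion(intervals, concentrations, volumes):
    """Per-pool excretion summary (illustrative sketch).

    intervals: list of (start_h, end_h) collection windows
    concentrations: analyte concentration in each pool (e.g. umol/L)
    volumes: total pooled urine volume for each window (L)
    Returns a list of (midpoint_h, rate_per_h, cumulative_amount).
    """
    rows, cumulative = [], 0.0
    for (start, end), conc, vol in zip(intervals, concentrations, volumes):
        amount = conc * vol                 # umol excreted in this pool
        cumulative += amount                # running total excreted so far
        rate = amount / (end - start)       # mean excretion rate over the window (umol/h)
        rows.append(((start + end) / 2, rate, cumulative))
    return rows


# Hypothetical values for the five collection windows
intervals = [(0, 2), (2, 4), (4, 6), (6, 8), (8, 24)]
conc = [12.0, 30.0, 18.0, 9.0, 1.5]     # umol/L (hypothetical)
vol = [0.25, 0.20, 0.30, 0.25, 1.20]    # L (hypothetical)
rows = urinary_excretion(intervals, conc, vol)
for mid, rate, total in rows:
    print(f"{mid:5.1f} h  rate={rate:7.3f} umol/h  cumulative={total:6.2f} umol")
```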

Diagram Title: DBDC Phase 1 Experimental Workflow

Metabolomic Profiling and Biomarker Discovery

Phase 1 utilizes advanced metabolomic technologies to identify candidate biomarkers:

- Analytical Platforms: Liquid chromatography-mass spectrometry (LC-MS) is employed for comprehensive metabolite profiling, including hydrophilic-interaction liquid chromatography (HILIC) separations for polar metabolites [1]. Untargeted approaches are used to discover novel biomarkers and targeted approaches to quantify known compounds [15] [17].

- Metabolite Identification: Unknown metabolites are characterized by high-resolution MS/MS with ramped collision energies and by SWATH-based LC-TripleTOF MS. These methods record retention times and accurate masses that support confident identification [15].

- Quality Assurance: Extensive QA/QC strategies are implemented to ensure analytical precision and stability, including analysis of blinded duplicate samples [15] [17].

Data Analysis and Statistical Approaches

The DBDC employs sophisticated statistical methods to identify and validate dietary biomarkers:

- Kinetic Modeling: Data analysis cores characterize the appearance and clearance kinetics of metabolites in blood and urine to determine optimal sampling times and stratify markers for acute or habitual intake [15].

- Generalized Linear Models: Several model families (Gaussian, log-link Gaussian, log-normal, etc.) are fitted with adjustment for subject metadata and with participants as random effects (that is, generalized linear mixed models). These models evaluate intervention-associated changes in biomarkers [15].

- Bayesian Methods: Effect sizes are estimated using Bayesian regression with credible intervals >95% to account for interindividual variability stemming from genetics, lifestyle, gut microbiome, and ADME profiles [15].

- Multiple Comparison Correction: The false discovery rate is controlled using the Benjamini-Hochberg method to ensure statistical rigor in biomarker identification [1].
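The Benjamini-Hochberg step-up procedure mentioned above can be written in a few lines. This minimal sketch returns rejection flags and BH-adjusted p-values (q-values) in the original feature order. The function name and the example p-values are illustrative only. In practice, well-tested routines such as `statsmodels.stats.multitest.multipletests(method="fdr_bh")` are usually preferred.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Benjamini-Hochberg FDR control (illustrative sketch).

    Returns (reject flags, adjusted p-values), both in the input order.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices by ascending p
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    reject = [q <= alpha for q in adjusted]
    return reject, adjusted


# Hypothetical p-values for five candidate features
p = [0.010, 0.045, 0.020, 0.004, 0.300]
reject, q = benjamini_hochberg(p)
print(reject)
```

Note that the feature with p = 0.045 is nominally significant but is not retained after FDR control at 5%.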

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Materials and Analytical Tools for Dietary Biomarker Studies

| Item/Category | Function/Application | Examples/Specifications |
|---|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Primary platform for metabolomic profiling; separates and detects small molecules in biospecimens [1] | Ultra-HPLC (UHPLC) systems coupled to high-resolution mass spectrometers [1] |
| Hydrophilic-Interaction Liquid Chromatography (HILIC) | Complementary separation mechanism for polar metabolites; enhances coverage of metabolome [1] | HILIC columns with MS-compatible mobile phases [1] |
| Chemical Libraries & Standards | Metabolite identification; quantification; method development and validation [1] | Commercially available metabolite standards; in-house generated spectral libraries [15] |
| Stable Isotope-Labeled Compounds | Tracking metabolite fate; distinguishing dietary compounds from endogenous metabolites [15] | ¹³C, ¹⁵N-labeled analogs of suspected biomarkers |
| Quality Control Materials | Monitoring analytical performance; ensuring data quality across batches and sites [15] [17] | Pooled reference samples; blinded duplicates; standard reference materials [17] |

Significance and Future Directions

The DBDC Phase 1 approach represents a transformative advancement in nutritional science by applying rigorous metabolomic technologies to the challenge of dietary assessment. The discovery and validation of objective food biomarkers will address critical limitations of self-reported dietary data and enhance the precision of nutrition research [1] [14].

Following Phase 1, the DBDC will progress to Phase 2, where candidate biomarkers will be evaluated for their ability to identify individuals consuming biomarker-associated foods using controlled feeding studies of various dietary patterns [1]. Phase 3 will validate the most promising biomarkers in independent observational settings to predict recent and habitual consumption of specific test foods [1].

All data generated throughout the DBDC study phases will be archived in publicly accessible databases, including the NIDDK Central Repository and Metabolomics Workbench, serving as a valuable resource for the broader research community [1]. This systematic approach promises to significantly expand the repertoire of validated dietary biomarkers, ultimately advancing our understanding of how diet influences human health and disease.

Establishing Pharmacokinetic Parameters and Dose-Response Relationships

Within the framework of biomarker development from controlled feeding studies, the precise establishment of pharmacokinetic (PK) parameters and dose-response relationships is fundamental. These quantitative assessments form the critical link between dietary exposure and biological effect, allowing researchers to move from simple observational associations to a mechanistic understanding of how foods and nutrients influence health. PK parameters describe the body's processing of a compound—its absorption, distribution, metabolism, and excretion (ADME)—while dose-response modeling quantifies the relationship between the exposure level and the magnitude of a biological response [18]. In the specific context of the Dietary Biomarkers Development Consortium (DBDC), the goal is to discover and validate objective biomarkers for foods consumed in the U.S. diet, a process that relies heavily on controlled feeding trials and subsequent metabolomic profiling to identify candidate compounds that reliably reflect intake [3]. This document outlines detailed protocols and applications for determining these essential parameters to advance the field of precision nutrition.

Core Concepts and Definitions

Fundamental Pharmacokinetic Parameters

Pharmacokinetics describes "what the body does to a drug"—or, in a nutritional context, a bioactive food component. The key parameters are summarized in the table below. These parameters are typically assessed by monitoring the concentration-time profile of a compound or its metabolites in accessible biological fluids like plasma or urine [19].

Table 1: Key Pharmacokinetic Parameters and Their Definitions

| Parameter | Symbol | Definition | Significance |
|---|---|---|---|
| Area Under the Curve | AUC | Total exposure to a compound over time | Surrogate for total drug exposure; used to calculate bioavailability [19] |
| Maximum Concentration | C~max~ | Peak plasma concentration after administration | Indicates the intensity of exposure [19] |
| Time to C~max~ | T~max~ | Time taken to reach peak concentration | Reflects the rate of absorption [19] |
| Elimination Half-Life | t~1/2~ | Time for plasma concentration to decrease by 50% | Determines dosing frequency and time to steady state [20] [19] |
| Clearance | CL | Volume of plasma cleared of the compound per unit time | Represents the body's efficiency in eliminating the compound [19] |
| Volume of Distribution | V~d~ | Apparent volume in which a compound distributes | Indicates extent of distribution outside the plasma compartment [19] |
| Bioavailability | F | Fraction of administered dose that reaches systemic circulation | Critical for evaluating efficacy of extravascular routes (e.g., oral) [20] [19] |

Principles of Dose-Response Modeling

The dose-response relationship, a cornerstone of toxicology and pharmacology, describes the magnitude of a biological response as a function of exposure level [21]. Dose-response modeling quantitatively assesses this relationship to identify which exposure doses are safe, hazardous, or beneficial [22]. These relationships are typically visualized through dose-response curves, which are often sigmoidal in shape when the dose is plotted on a logarithmic scale [21].

Key metrics derived from these models include:

- Potency: Often represented by the EC~50~ (half maximal effective concentration) or ED~50~ (half maximal effective dose), which is the dose required to produce 50% of the maximum response [21].

- Efficacy: Represented by E~max~, the maximum possible effect a compound can elicit [21].

- Benchmark Dose (BMD): The dose that produces a predetermined, measurable change in response rate (Benchmark Response, or BMR), often a 5% or 10% change from background. The BMDL is the lower confidence bound of the BMD and is often used as a Point of Departure (POD) for risk assessment [22] [23].

Experimental Protocols

Protocol 1: Determining PK Parameters from a Controlled Feeding Study

This protocol outlines the methodology for characterizing the pharmacokinetics of a dietary biomarker following a controlled dose, aligned with the controlled feeding trials described by the DBDC [3].

1. Study Design and Dosing:

- Subjects: Recruit healthy participants. The study protocol must be approved by an Institutional Review Board (IRB) for human studies, or by an Institutional Animal Care and Use Committee (IACUC) for preclinical animal studies [18].

- Administration: Administer a precise, pre-specified amount of the test food or nutrient. The route should mimic typical consumption (e.g., oral). An intravenous dose of a purified compound may be co-administered in a separate phase to determine absolute bioavailability [18].

- Controls: Implement appropriate control diets to account for background metabolic interference.

2. Sample Collection:

- Collect serial blood samples (e.g., plasma, serum) at predetermined time points: pre-dose, and at multiple time points post-dose (e.g., 0.5, 1, 2, 4, 8, 12, 24 hours) to fully characterize the absorption and elimination phases [18].

- Collect urine over timed intervals (e.g., 0-4h, 4-8h, 8-12h, 12-24h) to determine renal excretion.

- Immediately process samples (e.g., centrifugation for plasma) and store at -80°C until analysis.

3. Bioanalytical Analysis:

- Use targeted or untargeted metabolomic approaches, such as Liquid Chromatography-Mass Spectrometry (LC-MS), to quantify the candidate biomarker and its potential metabolites in the biological samples [3].

- Ensure method validation for accuracy, precision, and sensitivity.

4. Data Analysis and Parameter Calculation:

- Plot the plasma concentration-time curve for each subject.

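- Use non-compartmental analysis (NCA) to calculate PK parameters [19]:

  - AUC: Calculate AUC~0-t~ over the observed time points using the trapezoidal rule, then extrapolate to infinity by adding \( C_{last} / K \) to obtain AUC~0-∞~.

  - C~max~ and T~max~: Observed directly from the data.

  - Elimination Rate Constant (K): Determine by linear regression on the log-linear (terminal) portion of the concentration-time curve, where K is the negative slope of ln(concentration) versus time [24]. The half-life is then calculated as \( t_{1/2} = \ln 2 / K \approx 0.693 / K \) [24].

  - Clearance (CL): For intravenous dosing, \( CL = \mathrm{Dose} / AUC_{0-\infty} \). For oral dosing, \( CL = F \cdot \mathrm{Dose} / AUC_{0-\infty} \), where F is bioavailability. When F is unknown, only the apparent clearance CL/F can be estimated.

  - Volume of Distribution (V~d~): \( V_d = CL / K \) [24].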
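The NCA calculations above can be collected into one function. This is a minimal sketch under several stated assumptions:

- A single oral dose with unknown F, so the sketch returns the apparent values CL/F and V~d~/F.
- Concentrations already corrected for any pre-dose background.
- The last `n_terminal` samples (an illustrative parameter) lying on the log-linear elimination phase.

It uses the linear trapezoidal rule. Dedicated PK software also offers log-trapezoidal options for declining segments. The profile below is hypothetical.

```python
import math


def nca(times, conc, dose, n_terminal=3):
    """Non-compartmental analysis of one oral concentration-time profile (sketch)."""
    # AUC(0-t) by the linear trapezoidal rule
    auc_t = sum((t2 - t1) * (c1 + c2) / 2
                for t1, t2, c1, c2 in zip(times, times[1:], conc, conc[1:]))
    cmax = max(conc)
    tmax = times[conc.index(cmax)]
    # K = negative least-squares slope of ln(C) vs t over the terminal points
    t_term = times[-n_terminal:]
    ln_c = [math.log(c) for c in conc[-n_terminal:]]
    t_bar = sum(t_term) / n_terminal
    y_bar = sum(ln_c) / n_terminal
    slope = (sum((t - t_bar) * (y - y_bar) for t, y in zip(t_term, ln_c))
             / sum((t - t_bar) ** 2 for t in t_term))
    k = -slope
    half_life = math.log(2) / k
    auc_inf = auc_t + conc[-1] / k   # extrapolate the tail beyond the last sample
    cl_f = dose / auc_inf            # apparent clearance CL/F
    vd_f = cl_f / k                  # apparent volume of distribution Vd/F
    return {"cmax": cmax, "tmax": tmax, "auc_t": auc_t, "auc_inf": auc_inf,
            "k": k, "half_life": half_life, "cl_f": cl_f, "vd_f": vd_f}


# Hypothetical plasma profile (h, umol/L) after a 100 umol oral dose
times = [0.0, 0.5, 1.0, 2.0, 4.0, 8.0, 12.0, 24.0]
conc = [0.0, 3.0, 6.0, 8.0, 7.0, 4.0, 2.0, 0.25]
result = nca(times, conc, dose=100.0)
print({name: round(value, 3) for name, value in result.items()})
```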

The following diagram illustrates the workflow for this protocol:

Protocol 2: Establishing a Dose-Response Relationship

This protocol describes the steps for modeling the relationship between the dose of a nutrient and a measurable health outcome, which is central to risk-benefit assessment (RBA) [25].

1. Experimental Design:

- Dose Groups: Assign subjects or animals to multiple groups receiving different doses of the nutrient or food of interest, including a control (zero-dose) group. A wide range of doses is preferable.

- Endpoint Measurement: Define and measure a relevant quantitative endpoint. This could be a continuous outcome (e.g., blood pressure, biomarker concentration) or a quantal outcome (e.g., incidence of tumors) [22] [23].

2. Data Plotting and Model Selection:

- Plot the raw data with dose on the x-axis and response on the y-axis.

- Test several mathematical models to find the best fit for the data. Common models include:

  - Hill Equation: \( E = E_{0} + \frac{[A]^n \times E_{max}}{[A]^n + EC_{50}^n} \), where E is the effect, E₀ is the baseline effect, [A] is the dose, E~max~ is the maximum effect, EC~50~ is the half-maximally effective dose, and n is the Hill coefficient that determines steepness [21] [26].

  - Weibull Model

  - Logistic Model

3. Model Fitting and Evaluation:

- Fit the candidate models to the data using nonlinear regression techniques.

- Evaluate the goodness-of-fit using statistical criteria such as Akaike's Information Criterion (AIC) or the Bayesian Information Criterion (BIC). Visually inspect the curve fit.

4. Derivation of Benchmark Doses (BMD):

- Define a Benchmark Response (BMR), which is a predetermined change in response (e.g., 10% increase over background).

- Using the best-fitting model, calculate the BMD—the dose corresponding to the BMR.

- Calculate the BMDL, the lower confidence bound of the BMD (e.g., the 95% lower confidence limit) [22] [23].
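For a fitted Hill model, the BMD has a closed form. Let Δ denote the absolute change in effect that defines the BMR. Setting \( E_{max}[A]^n / ([A]^n + EC_{50}^n) = \Delta \) and solving gives \( BMD = EC_{50} \, (\Delta / (E_{max} - \Delta))^{1/n} \). The minimal sketch below assumes an increasing response with a positive background E₀. It also assumes the BMR is defined as a relative change from background (Δ = BMR × E₀), which is one of several BMR definitions used for continuous endpoints. The parameter values are hypothetical. The BMDL is not computed here, because it requires a confidence procedure such as profile likelihood or bootstrapping on the fitted model.

```python
def hill(dose, e0, emax, ec50, n):
    """Hill model: baseline plus a saturating, sigmoidal increase."""
    return e0 + emax * dose**n / (dose**n + ec50**n)


def hill_bmd(e0, emax, ec50, n, bmr=0.10):
    """Dose giving a relative change `bmr` from background e0 (illustrative sketch)."""
    delta = bmr * e0                       # absolute effect change that defines the BMR
    if not 0 < delta < emax:
        raise ValueError("BMR must be positive and below the modelled maximum effect")
    return ec50 * (delta / (emax - delta)) ** (1.0 / n)


# Hypothetical fitted parameters: E0 = 100, Emax = 50, EC50 = 10 dose units, n = 2
bmd = hill_bmd(e0=100.0, emax=50.0, ec50=10.0, n=2.0, bmr=0.10)
print(bmd, hill(bmd, 100.0, 50.0, 10.0, 2.0))  # BMD and the modelled effect at that dose
```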

Table 2: Common Dose-Response Model Functions

| Model Name | Function | Typical Application |
|---|---|---|
| Hill Equation | \( E = E_{0} + \frac{[A]^n \times E_{max}}{[A]^n + EC_{50}^n} \) | Standard model for efficacy and potency; widely used in pharmacology [21] [26] |
| Linear Model | \( E = E_{0} + k \cdot [A] \) | Simple linear relationships; often used for low-dose extrapolation |
| Weibull Model | \( E = E_{0} + E_{max} \left(1 - e^{-([A]/k)^m}\right) \) | Flexible model for toxicological data with a threshold-like shape |
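The model functions in Table 2 and the AIC/BIC comparison from Protocol 2 can be sketched as follows. This minimal sketch assumes that parameters have already been estimated by nonlinear least squares, for example with `scipy.optimize.curve_fit`, and that residuals are approximately Gaussian. Under that assumption, \( AIC = n \ln(RSS/n) + 2k \) and \( BIC = n \ln(RSS/n) + k \ln n \), up to an additive constant that cancels when models are compared on the same data. The doses, responses, and parameter values are hypothetical.

```python
import math


def hill(a, e0, emax, ec50, n):
    return e0 + emax * a**n / (a**n + ec50**n)


def linear(a, e0, k):
    return e0 + k * a


def weibull(a, e0, emax, k, m):
    return e0 + emax * (1 - math.exp(-((a / k) ** m)))


def rss(observed, predicted):
    """Residual sum of squares."""
    return sum((o - p) ** 2 for o, p in zip(observed, predicted))


def aic_ls(rss_value, n_obs, n_params):
    """Least-squares AIC with Gaussian errors (additive constant dropped)."""
    return n_obs * math.log(rss_value / n_obs) + 2 * n_params


def bic_ls(rss_value, n_obs, n_params):
    """Least-squares BIC with Gaussian errors (additive constant dropped)."""
    return n_obs * math.log(rss_value / n_obs) + n_params * math.log(n_obs)


# Hypothetical dose-response data and previously fitted parameters
doses = [0, 1, 2, 4, 8, 16]
observed = [10.3, 12.0, 15.5, 19.6, 24.9, 27.5]
fits = {
    "Hill": ([hill(d, 10.0, 20.0, 4.0, 1.5) for d in doses], 4),
    "Linear": ([linear(d, 12.0, 1.0) for d in doses], 2),
}
for name, (pred, k) in fits.items():
    r = rss(observed, pred)
    print(name, round(aic_ls(r, len(doses), k), 2), round(bic_ls(r, len(doses), k), 2))
```

Lower AIC or BIC favours a model. The penalty terms stop a more flexible model, such as the four-parameter Hill equation, from winning simply because it has more parameters.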

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for PK and Dose-Response Studies

| Item | Function/Application |
|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS/MS) | High-sensitivity quantification and identification of biomarkers and metabolites in complex biological matrices like plasma and urine [3]. |
| Stable Isotope-Labeled Standards | Internal standards for mass spectrometry that correct for matrix effects and recovery losses, ensuring analytical accuracy and precision. |
| Biomarker Discovery Panels | Multiplexed assays for broad-spectrum metabolomic or proteomic profiling to identify novel candidate biomarkers in controlled feeding studies [3]. |
| Pharmacokinetic Modeling Software | Software platforms for calculating PK parameters, e.g., Phoenix WinNonlin for non-compartmental and compartmental analysis and NONMEM for population (nonlinear mixed-effects) modeling [26]. |
| Benchmark Dose Software (BMDS) | US EPA-developed software for conducting dose-response modeling and deriving benchmark doses (BMD/BMDL) for risk assessment [23]. |
| Controlled Diet Formulations | Precisely formulated diets for feeding studies, ensuring consistent and reproducible nutrient exposure for all participants or animals [3]. |

Integrated Workflow: From Feeding Study to Biomarker Validation

The integration of PK and dose-response analysis is critical for biomarker development. The DBDC outlines a three-phase approach that encapsulates this integration [3]:

- Phase 1: Discovery: Controlled feeding trials with test foods are conducted, and biospecimens are analyzed using metabolomics to identify candidate biomarker compounds and characterize their pharmacokinetic parameters.

- Phase 2: Evaluation: The ability of candidate biomarkers to classify consumers vs. non-consumers is tested using controlled feeding studies of various dietary patterns.

- Phase 3: Validation: The validity of candidate biomarkers to predict habitual intake is evaluated in independent observational cohorts.

The relationship between PK/PD modeling and the broader goal of biomarker development can be visualized as follows, showing how different modeling approaches feed into the validation pipeline:

Application in Nutritional Science: A Case Study on Fibre and Calcium

Quantitative dose-response relationships are increasingly used in food risk-benefit assessment (RBA). A recent synthesis of meta-analyses revealed specific, quantifiable relationships between nutrient intake and health outcomes [25]:

- Dietary Fibre: A dose-response relationship shows a protective effect against colorectal cancer, with cereal fibre being the most beneficial source. The relationship can be quantified as a specific percentage reduction in risk per gram of fibre consumed.

- Calcium: Inverse associations were found with several cancers. However, the relationship is complex, as high dairy intake (a primary calcium source) may be associated with an increased risk of prostate cancer, highlighting the importance of considering the nutrient source.

- Zinc: Exhibits a potential U-shaped relationship with colorectal cancer risk, indicating that both deficiency and excessive intake may be harmful.

These findings underscore the power of dose-response modeling to move beyond qualitative advice ("eat more fibre") to quantitative, evidence-based dietary recommendations.
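Meta-analytic dose-response estimates such as the per-gram fibre relationship are often reported as a relative risk (RR) per fixed intake increment, under a log-linear model where \( RR(x) = RR_{unit}^{\,x / unit} \). The minimal sketch below rescales such an estimate to a different intake increment. The RR of 0.90 per 10 g/day is a purely hypothetical placeholder and is not a value from the cited synthesis. A U-shaped relationship such as the one reported for zinc cannot be represented by a single per-unit RR and would need a nonlinear term, for example a quadratic or spline.

```python
def relative_risk(intake_increment, rr_per_unit, unit):
    """Rescale a log-linear RR estimate to another intake increment (sketch)."""
    return rr_per_unit ** (intake_increment / unit)


# Hypothetical: RR = 0.90 per 10 g/day of fibre
rr_20g = relative_risk(20, 0.90, 10)
print(f"RR for +20 g/day: {rr_20g:.2f} ({(1 - rr_20g) * 100:.0f}% lower risk)")
# prints: RR for +20 g/day: 0.81 (19% lower risk)
```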

Advanced Techniques and Analytical Approaches for Biomarker Identification

The pursuit of objective biomarkers for dietary intake represents a significant frontier in nutritional epidemiology and precision health. Diet is a complex exposure that profoundly affects health across the lifespan, yet accurately assessing dietary intake through self-reported methods remains challenging. Objective biomarkers that can reliably reflect intake of specific nutrients, foods, and dietary patterns are therefore critically needed to strengthen research on diet-health relationships [3]. Within this context, liquid chromatography-mass spectrometry (LC-MS) coupled with hydrophilic interaction liquid chromatography (HILIC) has emerged as a powerful analytical platform for discovering and validating dietary biomarkers. These technologies enable comprehensive profiling of the complex metabolome present in biological samples, capturing the subtle metabolic changes induced by specific dietary components.

The Dietary Biomarkers Development Consortium (DBDC) exemplifies the systematic approach required for this endeavor, implementing a 3-phase framework for biomarker discovery and validation that spans controlled feeding trials to independent observational studies [3]. Success in this domain requires not only advanced instrumentation but also rigorous experimental protocols, optimized chromatographic separations, and sophisticated data analysis pipelines. This application note provides detailed methodologies for leveraging LC-MS and HILIC platforms to advance compound identification in metabolomics studies, with particular emphasis on applications within controlled feeding studies for biomarker development.

Analytical Workflow for Metabolite Identification

The complete workflow for metabolite identification in biomarker development studies encompasses multiple stages from sample preparation through data interpretation. The following diagram illustrates this integrated process:

Figure 1: Integrated workflow for metabolite identification in biomarker development studies

Materials and Reagents

Research Reagent Solutions

Table 1: Essential research reagents and materials for LC-MS/HILIC metabolomics

| Reagent/Material | Specifications | Function in Workflow |
|---|---|---|
| Mobile Phase A | 10 mM ammonium formate/acetate in water, pH 3.0 (Ultra LC-MS grade) | Aqueous component for HILIC separation; volatile buffer enhances ionization |
| Mobile Phase B | Acetonitrile with 0.1% formic acid (Ultra LC-MS grade) | Organic component for HILIC separation; maintains compound retention |
| Protein Precipitation Solvent | 80% methanol in water (LC-MS grade) | Deproteinization of plasma/serum samples; metabolite extraction |
| Reference Standards | >95% purity, 1.0 mg/mL in 80% methanol (MetaSci, Sigma-Aldrich) | Compound identification and retention time calibration |
| HILIC Column | Sulfobetaine-based Atlantis Premier BEH Z-HILIC (2.1 × 100 mm, 1.7 µm) | Separation of polar metabolites; minimal analyte adsorption |
| Quality Control | Pooled plasma sample from study cohort | Monitoring instrument performance; data normalization |

Instrumentation Specifications

Modern metabolomics relies on complementary instrumental configurations to balance comprehensive coverage with sensitive quantification. The EMBL-MCF 2.0 method utilizes two complementary platforms [27]:

- Untargeted Discovery Platform: Biocompatible Vanquish Horizon UHPLC system (MP35N-based) coupled to Orbitrap Exploris 240 mass spectrometer

- Targeted Validation Platform: Exion LC AD system coupled to QTRAP 6500+ mass spectrometer

The utilization of low-adsorption LC hardware (MP35N, PEEK, titanium) is critical for minimizing loss of metabolites containing common functional groups such as phosphates and carboxylates that exhibit non-specific adsorption to metal and stainless-steel surfaces [27].

Experimental Protocols

Sample Preparation Protocol

Proper sample preparation is fundamental to achieving reproducible results in metabolomics. The following protocol is optimized for plasma/serum samples from controlled feeding studies:

- Thawing: Slowly thaw plasma samples on ice for 30-60 minutes.

- Aliquoting: Transfer 100 µL of plasma to a low-adsorption microcentrifuge tube.

- Protein Precipitation: Add 400 µL of pre-chilled 80% methanol (-20°C) to the plasma.

- Vortexing and Incubation: Vortex thoroughly for 30 seconds, then incubate at -20°C for 20 minutes.

- Centrifugation: Centrifuge at 14,000 × g for 15 minutes at 4°C.

- Collection: Carefully transfer 400 µL of supernatant to a new low-adsorption vial without disturbing the protein pellet.

- Storage: Store extracts at -80°C until LC-MS analysis (typically within 48 hours).

- Quality Control: Prepare a pooled QC sample by combining 50 µL aliquots from each processed sample [27].
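Quantitative results measured in the extract must be scaled back to the original plasma. With 100 µL plasma and 400 µL precipitation solvent, the nominal dilution factor is (100 + 400) / 100 = 5. The minimal sketch below assumes additive volumes, complete extraction recovery, and a negligible pellet volume. In practice, stable isotope-labeled internal standards are used to correct for incomplete recovery and matrix effects. The function name is illustrative.

```python
def plasma_concentration(extract_conc, plasma_ul=100.0, solvent_ul=400.0):
    """Back-calculate plasma concentration from an extract measurement (sketch).

    Assumes additive volumes, full recovery and negligible pellet volume,
    so the dilution factor is (plasma + solvent) / plasma.
    """
    dilution = (plasma_ul + solvent_ul) / plasma_ul   # 5-fold for 100 + 400 uL
    return extract_conc * dilution


print(plasma_concentration(2.0))  # 2.0 uM in the extract -> 10.0 uM in plasma
```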

HILIC Chromatography Method

HILIC separation is particularly valuable for retaining highly polar metabolites that elute near the void volume in reversed-phase chromatography. The following method provides robust retention and separation of polar compounds:

Table 2: HILIC chromatographic conditions for polar metabolite separation

| Parameter | Specification | Notes |
|---|---|---|
| Column | Atlantis Premier BEH Z-HILIC (2.1 × 100 mm, 1.7 µm) | Sulfobetaine-based chemistry; excellent for acids and bases |
| Column Temperature | 40°C | Enhanced reproducibility and peak shape |
| Flow Rate | 0.4 mL/min | Optimal for MS sensitivity and separation |
| Injection Volume | 3 µL | Compromise between sensitivity and matrix effects |
| Autosampler Temperature | 4°C | Maintains sample integrity |

Gradient timetable (Mobile Phase B: acetonitrile with 0.1% formic acid):

| Time (min) | % Mobile Phase B (ACN) |
|---|---|
| 0.0 | 85% |
| 1.0 | 85% |
| 10.0 | 20% |
| 11.0 | 20% |
| 11.5 | 85% |
| 15.0 | 85% |

This method employs a decreasing organic gradient to elute compounds based on increasing hydrophilicity, with the initial high organic content (85% acetonitrile) ensuring proper retention on the HILIC stationary phase [27].
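The gradient timetable can be read as a piecewise-linear program, assuming linear segments between timetable nodes (instruments may offer other curve shapes). Under that assumption, %B at any time follows from interpolation. This is useful, for example, for estimating the eluent composition at which a biomarker elutes. The sketch is minimal, and `GRADIENT` and `percent_b` are illustrative names.

```python
# (time in min, % mobile phase B) nodes from the HILIC timetable above
GRADIENT = [(0.0, 85.0), (1.0, 85.0), (10.0, 20.0),
            (11.0, 20.0), (11.5, 85.0), (15.0, 85.0)]


def percent_b(t_min, program=GRADIENT):
    """%B at time t, assuming linear segments between timetable nodes."""
    if t_min <= program[0][0]:
        return program[0][1]
    for (t1, b1), (t2, b2) in zip(program, program[1:]):
        if t1 <= t_min <= t2:
            return b1 + (b2 - b1) * (t_min - t1) / (t2 - t1)
    return program[-1][1]          # hold the final composition after the program ends


print(percent_b(5.5))  # 52.5: halfway down the 1-10 min ramp from 85% to 20% B
```

Over the 1-10 min ramp, the organic content falls by 65 percentage points in 9 min (about 7.2 %B/min). The 11-15 min segment returns the column to 85% B for re-equilibration before the next injection.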

Mass Spectrometry Acquisition Parameters

Data acquisition in biomarker discovery studies typically employs both high-resolution full-scan and targeted MS/MS modes:

Table 3: Mass spectrometry parameters for untargeted and targeted analysis

| Parameter | Untargeted (Orbitrap) | Targeted (QTRAP) |
|---|---|---|
| Ionization Mode | Electrospray ionization (ESI) positive/negative switching | ESI positive or negative mode |
| Spray Voltage | ±3.5 kV | ±4.5 kV |
| Sheath Gas | 50 arb | 50 arb |
| Aux Gas | 10 arb | 10 arb |
| Capillary (ion transfer tube) / Source Temperature | 320°C | 500°C |
| MS1 Resolution | 120,000 @ m/z 200 | Unit resolution (Q1) |
| Scan Range | m/z 70-1050 | MRM transitions |
| MS2 Acquisition | Data-dependent acquisition (top 10) | Optimized collision energies |
| Collision Energy | Stepped (20, 35, 50 eV) | Compound-specific |
| Sampling Across Chromatographic Peak | ≥ 4 scans/peak | ≥ 12 data points/peak |

The dual-platform approach enables comprehensive metabolite profiling in discovery phase (Orbitrap) followed by sensitive and quantitative validation of candidate biomarkers (QTRAP) [27].
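Accurate-mass annotation on the high-resolution platform rests on comparing an observed m/z with a theoretical ion m/z, expressed as an error in parts per million (ppm). The minimal sketch below computes monoisotopic masses from an elemental formula and the resulting [M+H]⁺ and [M−H]⁻ ions, using standard monoisotopic isotope masses and the proton mass. Caffeine is used only as a familiar example, and the observed m/z value is hypothetical.

```python
# Monoisotopic masses (u) of the most abundant isotopes
MONO = {"C": 12.0, "H": 1.00782503223, "N": 14.00307400443,
        "O": 15.99491461957, "S": 31.9720711744, "P": 30.97376199842}
PROTON = 1.007276466621  # proton mass (u)


def monoisotopic_mass(formula):
    """Neutral monoisotopic mass from an element -> count mapping."""
    return sum(MONO[element] * count for element, count in formula.items())


def ppm_error(observed_mz, theoretical_mz):
    """Mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


caffeine = {"C": 8, "H": 10, "N": 4, "O": 2}
m = monoisotopic_mass(caffeine)
mz_pos = m + PROTON          # [M+H]+
mz_neg = m - PROTON          # [M-H]-
err = ppm_error(195.0880, mz_pos)   # hypothetical observed m/z
print(round(m, 4), round(mz_pos, 4), round(err, 2))
```

A candidate is typically retained only if its error falls within a preset tolerance, such as the ppm window set during peak processing. Retention time and MS/MS evidence are then used to confirm the identity.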

Data Analysis and Compound Identification

Statistical Approaches for Biomarker Discovery

The analysis of metabolomics data requires careful consideration of statistical methods, particularly as the number of metabolites increases. Comparative studies have demonstrated that:

- With small sample sizes (N < 200) and targeted metabolomics (∼200 metabolites), traditional univariate methods with false discovery rate (FDR) correction perform adequately.

- With larger sample sizes (N > 1000) and nontargeted metabolomics (∼2000 metabolites), sparse multivariate methods such as Sparse Partial Least Squares (SPLS) and LASSO regression demonstrate superior performance with higher positive predictive value and fewer false positives [28].

The improved performance of multivariate methods in high-dimensional data stems from their ability to model the complex correlation structure between metabolites, reducing spurious associations that may arise due to intercorrelation with true positive metabolites [28].

Advanced Annotation with Network Analysis

Global network optimization approaches have revolutionized compound identification in untargeted metabolomics. The NetID algorithm exemplifies this strategy by:

- Connecting ion peaks based on mass differences reflecting adduct formation, fragmentation, isotopes, or feasible biochemical transformations

- Applying integer linear programming to achieve global optimization of network annotations

- Differentiating biochemical connections from mass spectrometry phenomena based on chromatographic co-elution

- Scoring candidate annotations based on mass accuracy, retention time alignment, and MS/MS spectral similarity [29]

This approach generates a single consistent network linking most observed ion peaks, substantially improving annotation coverage and accuracy compared to individual peak annotation strategies. The network-based methodology is particularly valuable for identifying previously unrecognized metabolites, such as thiamine derivatives and N-glucosyl-taurine, through their biochemical relationships to known metabolites [29].
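The first step of network-based annotation (connecting peaks whose m/z difference matches a known transformation) can be illustrated with a simplified sketch. This is not the NetID implementation and omits its integer-linear-programming optimization. It only generates candidate edges, using a short illustrative list of mass differences. To mirror the distinction described above, adduct edges additionally require chromatographic co-elution. The 5 ppm and 0.05 min tolerances, the transformation list, and the peak values are all hypothetical.

```python
from itertools import combinations

# Mass differences (u) computed from monoisotopic masses; illustrative subsets
BIOCHEMICAL = {"+CH2 (methylation)": 14.015650, "+O (oxidation)": 15.994915,
               "+H2O (hydration)": 18.010565, "+SO3 (sulfation)": 79.956815,
               "+C6H8O6 (glucuronidation)": 176.032088}
MS_ADDUCT = {"[M+Na]+ vs [M+H]+": 21.981944}


def candidate_edges(peaks, ppm_tol=5.0, rt_tol=0.05):
    """peaks: list of (peak_id, mz, rt_min). Returns (lighter_id, heavier_id, label)."""
    edges = []
    for a, b in combinations(peaks, 2):
        (id1, mz1, rt1), (id2, mz2, rt2) = sorted((a, b), key=lambda p: p[1])
        diff = mz2 - mz1
        for label, delta in BIOCHEMICAL.items():      # no co-elution required
            if abs(diff - delta) / mz2 * 1e6 <= ppm_tol:
                edges.append((id1, id2, label))
        for label, delta in MS_ADDUCT.items():        # adducts must co-elute
            if abs(diff - delta) / mz2 * 1e6 <= ppm_tol and abs(rt2 - rt1) <= rt_tol:
                edges.append((id1, id2, label))
    return edges


# Hypothetical peaks: a parent [M+H]+, its Na adduct, and a demethylated metabolite
peaks = [("p1", 195.087652, 3.10), ("p2", 217.069596, 3.11), ("p3", 181.072002, 2.80)]
edges = candidate_edges(peaks)
print(edges)
```

A full approach would then score these competing edges and choose a globally consistent set of annotations.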

The following diagram illustrates the network-based annotation process:

Figure 2: Network-based annotation workflow for metabolite identification

Application in Biomarker Development

Integration with Controlled Feeding Studies

The Dietary Biomarkers Development Consortium (DBDC) has established a systematic 3-phase framework for biomarker development that integrates controlled feeding studies with advanced metabolomics:

- Phase 1 - Discovery: Controlled feeding of test foods in prespecified amounts to healthy participants followed by metabolomic profiling to identify candidate compounds and characterize their pharmacokinetic parameters [3].

- Phase 2 - Evaluation: Assessment of candidate biomarkers' ability to identify individuals consuming specific foods using controlled feeding studies of various dietary patterns [3].

- Phase 3 - Validation: Evaluation of candidate biomarkers' predictive validity for recent and habitual consumption in independent observational settings [3].

This phased approach ensures that biomarkers progress through increasingly rigorous testing before implementation in epidemiological studies.

Biological Interpretation Tools

Specialized computational tools have been developed to facilitate biological interpretation of metabolomics data in specific domains. The Immunometabolic Atlas (IMA) exemplifies such tools by:

- Inferring associations between metabolites and immune processes through protein-metabolite network analysis

- Leveraging Gene Ontology annotations and protein-metabolite interaction databases

- Allowing metabolites to inherit immune process associations from the proteins they interact with [30]

Similar approaches can be adapted for nutritional metabolomics by creating networks that connect metabolites to specific dietary exposures through biochemical pathways.
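The inheritance idea can be sketched in a few lines: a metabolite is associated with every process annotated to any protein it interacts with. The sketch below does not reproduce the IMA's databases or scoring; counting supporting proteins is an illustrative choice, `inherit_processes` is a hypothetical helper, and all identifiers are placeholders. For nutritional use, the process labels could be replaced by pathway or dietary-exposure labels.

```python
from collections import defaultdict
from typing import Dict, Set


def inherit_processes(
    metabolite_to_proteins: Dict[str, Set[str]],
    protein_to_processes: Dict[str, Set[str]],
) -> Dict[str, Dict[str, int]]:
    """Hypothetical helper: per metabolite, count interacting proteins supporting each process."""
    result: Dict[str, Dict[str, int]] = {}
    for metabolite, proteins in metabolite_to_proteins.items():
        support: Dict[str, int] = defaultdict(int)
        for protein in proteins:
            # The metabolite inherits every process annotated to an interacting protein.
            for process in protein_to_processes.get(protein, set()):
                support[process] += 1
        result[metabolite] = dict(support)
    return result


if __name__ == "__main__":
    # Placeholder toy network (not real annotations).
    m2p = {"metabolite_1": {"protein_A", "protein_B"}, "metabolite_2": {"protein_C"}}
    p2proc = {
        "protein_A": {"process_X", "process_Y"},
        "protein_B": {"process_X"},
        "protein_C": set(),
    }
    print(inherit_processes(m2p, p2proc))
```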

The integration of LC-MS/HILIC platforms with robust experimental protocols and advanced computational methods provides a powerful framework for compound identification in biomarker development research. The methodologies detailed in this section, from sample preparation through network-based annotation, enable researchers to confidently identify metabolites associated with specific dietary exposures in controlled feeding studies. As the field moves toward standardized biomarker development pipelines, these protocols offer a foundation for generating reproducible, high-quality metabolomics data that can advance precision nutrition and deepen our understanding of diet-health relationships.

Machine Learning and AI Algorithms for Pattern Recognition in Complex Datasets

The discovery and validation of dietary biomarkers represent a significant challenge in nutritional science and precision medicine. Objective biomarkers are crucial for accurately assessing associations between diet and health outcomes, as traditional self-reported dietary measures are often limited in reliability and validity [3]. Machine learning (ML) and artificial intelligence (AI) algorithms have emerged as powerful tools for identifying subtle patterns in complex biological datasets generated from controlled feeding studies. These algorithms can analyze high-dimensional data from metabolomics, metagenomics, and other profiling technologies to identify compounds and biological features that serve as sensitive and specific biomarkers of dietary exposures [3] [31].

Applying ML to biomarker development marks a shift from traditional statistical approaches. ML algorithms excel at identifying complex, non-linear relationships in high-dimensional data that conventional analyses may miss. For researchers and drug development professionals, this capability is particularly valuable for understanding how dietary components influence physiological processes and disease risk, ultimately supporting the development of targeted nutritional interventions and therapies.

Key Machine Learning Algorithms for Pattern Recognition

Algorithm Classification and Applications

Machine learning algorithms can be categorized based on their learning approach, each with distinct strengths for biomarker discovery applications.

Supervised learning algorithms learn from labeled training data, where both input data and corresponding output labels are provided [32]. This approach is analogous to a teacher providing examples with answers, enabling the algorithm to later make predictions on new, unlabeled data [32]. In the context of biomarker development, supervised learning is particularly valuable for classification tasks, such as determining whether specific dietary exposures have occurred based on biological samples.

Unsupervised learning algorithms identify inherent patterns, structures, or groupings within data without pre-existing labels [32]. This approach is likened to organizing a messy closet without instructions, making it valuable for discovering previously unknown subtypes or patterns in biological data that may represent novel biomarker signatures [32].

Ensemble methods combine multiple models to improve predictive performance and robustness. These methods are particularly effective for complex biomarker discovery tasks where multiple weak predictors can be combined to form a stronger overall model.
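As a sketch of the unsupervised case, the example below clusters simulated metabolic response profiles with k-means in scikit-learn and, because the simulated group labels happen to be known, checks how well the clusters recover them. The simulated data, the choice of two clusters, and the use of the adjusted Rand index are illustrative assumptions; in real discovery work the labels would be unknown and the number of clusters would itself have to be chosen.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import adjusted_rand_score
from sklearn.preprocessing import StandardScaler

# Simulated data: 120 participants x 30 metabolite features drawn from two
# well-separated response patterns (hypothetical "responder" groups).
X, true_groups = make_blobs(
    n_samples=120, n_features=30, centers=2, cluster_std=1.0, random_state=0
)

# Scale features so no single metabolite dominates the distance metric.
X_scaled = StandardScaler().fit_transform(X)

# Fit k-means without looking at the labels.
kmeans = KMeans(n_clusters=2, n_init=10, random_state=0)
clusters = kmeans.fit_predict(X_scaled)

# Compare discovered clusters with the simulated groups (1.0 = perfect agreement).
ari = adjusted_rand_score(true_groups, clusters)
print(f"Adjusted Rand index: {ari:.2f}")
```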

Table 1: Machine Learning Algorithms for Biomarker Development

| Algorithm | Type | Primary Use in Biomarker Research | Key Advantages |
|---|---|---|---|
| Random Forest | Supervised | Classification of dietary intake based on metagenomic features [33] [31] | Handles high-dimensional data well; reduces overfitting through ensemble approach [33] |
| Logistic Regression | Supervised | Binary classification of dietary exposure [33] [32] | Provides probability estimates; efficient with smaller datasets [33] |
| K-nearest neighbor (KNN) | Supervised | Pattern recognition in metabolic profiles [33] | Simple implementation; effective for multi-class problems [33] |
| Support Vector Machine (SVM) | Supervised | Classification in high-dimensional biomarker data [33] | Effective with small sample sizes; reliable performance [33] |
| K-means | Unsupervised | Clustering of similar metabolic response patterns [33] | Identifies natural groupings in data without pre-defined labels [33] |
| Gradient Boosting | Supervised | Creating strong predictive models from weak learners [33] | High predictive accuracy; handles complex patterns well [33] |
| Naive Bayes | Supervised | Probabilistic classification of dietary patterns [33] | Works well with high-dimensional data; computationally efficient [33] |
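A practical way to use the supervised rows of this table is to compare the algorithms under one shared cross-validation scheme. The sketch below does this with scikit-learn on simulated data; the dataset, hyperparameters, and 5-fold stratified scheme are assumptions chosen for illustration, and the printed accuracies say nothing about performance on real metabolomic or metagenomic data.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.naive_bayes import GaussianNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Simulated binary "consumed test food vs. not" data (hypothetical, not from any study).
X, y = make_classification(
    n_samples=200, n_features=20, n_informative=8, n_redundant=0,
    n_clusters_per_class=1, class_sep=2.0, random_state=42,
)

# Distance- and margin-based models get feature scaling inside the pipeline,
# so scaling is re-fit on each training fold only.
models = {
    "Logistic Regression": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    "KNN": make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5)),
    "SVM": make_pipeline(StandardScaler(), SVC(kernel="rbf")),
    "Random Forest": RandomForestClassifier(n_estimators=200, random_state=0),
    "Gradient Boosting": GradientBoostingClassifier(random_state=0),
    "Naive Bayes": GaussianNB(),
}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = {}
for name, model in models.items():
    acc = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
    scores[name] = acc.mean()
    print(f"{name:20s} {acc.mean():.2f} +/- {acc.std():.2f}")
```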

Algorithm Selection Considerations

Selecting appropriate machine learning algorithms for biomarker development requires weighing several factors. Dataset dimensionality is a primary concern: high-dimensional omics data may benefit from algorithms such as random forest that handle many features natively [33] [31]. Sample size also matters, with support vector machines performing reliably even with smaller samples [33]. The specific research question further guides selection, since classification problems call for different approaches than clustering or pattern discovery tasks. For dietary biomarker development, random forest has shown particular utility, classifying intake of individual foods (almonds, broccoli, walnuts) from fecal metagenomic features with 80-87% accuracy in controlled feeding studies [31].

Experimental Protocols for Biomarker Discovery

Controlled Feeding Study Design

The Dietary Biomarkers Development Consortium (DBDC) has established a rigorous 3-phase approach for biomarker discovery and validation that integrates machine learning at multiple stages [3].

Phase 1: Candidate Biomarker Identification

- Participant Administration: Healthy participants receive test foods in prespecified amounts under controlled conditions [3]

- Sample Collection: Blood and urine specimens are collected at predetermined timepoints following dietary administration [3]

- Metabolomic Profiling: Advanced analytical techniques including liquid chromatography-mass spectrometry (LC-MS) are employed to generate comprehensive metabolic profiles [3]

- Pharmacokinetic Characterization: Data from feeding trials characterize pharmacokinetic parameters of candidate compounds associated with specific foods [3]
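Pharmacokinetic characterization usually starts by summarizing each candidate compound's concentration-time curve. A minimal sketch, assuming concentrations measured at known timepoints after a test meal, computes three standard non-compartmental summaries: peak concentration (Cmax), time to peak (Tmax), and area under the curve by the linear trapezoidal rule (AUC). Which parameters the DBDC actually reports is not specified here; `pk_summary` is a hypothetical helper and the example values are made up.

```python
from typing import Dict, Sequence


def pk_summary(times_h: Sequence[float], conc: Sequence[float]) -> Dict[str, float]:
    """Hypothetical helper: non-compartmental summary of one concentration-time curve.

    times_h: sampling times in hours after the test meal (strictly increasing).
    conc: measured concentrations at those times (any consistent unit).
    """
    if len(times_h) != len(conc) or len(times_h) < 2:
        raise ValueError("need matching times and concentrations (at least 2 points)")
    conc = list(conc)
    # Peak concentration and the first sampling time at which it occurs.
    c_max = max(conc)
    t_max = times_h[conc.index(c_max)]
    # Linear trapezoidal AUC over the sampled interval (no extrapolation to infinity).
    auc = sum(
        (times_h[i + 1] - times_h[i]) * (conc[i] + conc[i + 1]) / 2
        for i in range(len(times_h) - 1)
    )
    return {"c_max": c_max, "t_max": t_max, "auc": auc}


if __name__ == "__main__":
    # Made-up profile: samples at 0, 1, 2, 4, 8 and 24 h after the test food.
    print(pk_summary([0, 1, 2, 4, 8, 24], [0, 4, 10, 6, 2, 0]))
    # {'c_max': 10, 't_max': 2, 'auc': 57.0}
```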

Phase 2: Biomarker Evaluation

- Controlled feeding studies utilizing various dietary patterns assess the ability of candidate biomarkers to identify individuals consuming biomarker-associated foods [3]

- Machine learning models are trained to classify dietary exposure based on candidate biomarker profiles

- Algorithm performance is evaluated using cross-validation techniques to ensure robustness
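When each participant contributes several samples (for example, one after the test diet and one after a control diet), cross-validation should keep all of a participant's samples in the same fold; otherwise a model can score well by recognizing individuals rather than dietary exposure. The sketch below uses scikit-learn's `GroupKFold` for this. Participant-level splitting is an assumption here rather than a documented DBDC procedure, and the simulated data and logistic regression model are placeholders.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GroupKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_participants, n_features = 30, 15

# Simulated design: each participant has one control-diet sample (label 0)
# and one test-food sample (label 1).
participant_ids = np.repeat(np.arange(n_participants), 2)
y = np.tile([0, 1], n_participants)

# Participant-specific baseline plus noise, with a shift in the first
# 3 features after the test food (purely hypothetical effect).
baseline = rng.normal(size=(n_participants, n_features))
X = baseline[participant_ids] + rng.normal(scale=0.5, size=(2 * n_participants, n_features))
X[y == 1, :3] += 1.5

model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
cv = GroupKFold(n_splits=5)

# Check that no participant appears in both the training and the test fold.
for train_idx, test_idx in cv.split(X, y, groups=participant_ids):
    assert set(participant_ids[train_idx]).isdisjoint(participant_ids[test_idx])

scores = cross_val_score(model, X, y, cv=cv, groups=participant_ids)
print(f"Grouped CV accuracy: {scores.mean():.2f}")
```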

Phase 3: Biomarker Validation

- Candidate biomarkers are evaluated in independent observational settings [3]

- Models predict recent and habitual consumption of specific test foods [3]

- Validation against traditional dietary assessment methods establishes real-world utility [3]
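Validation asks how well a candidate biomarker separates consumers from non-consumers in an independent cohort. One common summary, assumed here rather than specified in the DBDC description, is the area under the ROC curve: the probability that a randomly chosen consumer has a higher biomarker level than a randomly chosen non-consumer, with ties counting as one half. The pure-Python sketch below computes it directly from that definition; `roc_auc` is a hypothetical helper and the levels and labels are made up.

```python
from typing import Sequence


def roc_auc(levels: Sequence[float], consumed: Sequence[int]) -> float:
    """Hypothetical helper: AUC from pairwise consumer (1) vs. non-consumer (0) comparisons."""
    pos = [x for x, c in zip(levels, consumed) if c == 1]
    neg = [x for x, c in zip(levels, consumed) if c == 0]
    if not pos or not neg:
        raise ValueError("need at least one consumer and one non-consumer")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties contribute half a win
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    levels = [0.10, 0.40, 0.35, 0.80]  # biomarker levels (made up)
    consumed = [0, 0, 1, 1]            # 1 = consumed the test food
    print(roc_auc(levels, consumed))   # 0.75
```

The pairwise loop is quadratic in cohort size; for large cohorts the equivalent rank-based (Mann-Whitney) formula is more efficient.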

Metagenomic Biomarker Discovery Protocol

Recent research has demonstrated the utility of fecal metagenomics for developing objective biomarkers of food intake. The following protocol outlines key experimental steps:

Sample Processing and DNA Sequencing

- Fecal samples are collected at pre- and post-intervention timepoints in controlled feeding studies [31]

- DNA extraction is performed using standardized protocols to ensure sample integrity

- Shotgun genomic sequencing generates comprehensive metagenomic data [31]

Data Preprocessing and Functional Annotation

- Raw sequencing data undergoes quality control and preprocessing to remove artifacts and ensure data quality [31]

- Reads are aligned to reference databases using specialized tools such as Double Index AlignMent Of Next-generation sequencing Data (DIAMOND, v2.0.11.149) [31]

- Functional annotation is performed using platforms like MEtaGenome ANalyzer (MEGAN, v6.12.2) to identify genes and metabolic pathways [31]

Differential Abundance Analysis

- Normalized counts are transformed into log fold changes between pre- and post-intervention samples [31]

- Differential abundance analysis identifies Kyoto Encyclopedia of Genes and Genomes (KEGG) Orthology categories that significantly change with specific food intake [31]

- Statistical thresholds (e.g., q < 0.20) control for false discovery in high-dimensional data [31]
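The normalization and log fold change steps can be sketched for one participant's paired samples. Counts-per-million scaling, a pseudocount of 1, and log base 2 are assumptions chosen for illustration, since the exact normalization and log base are not stated above; `cpm` and `log2_fold_change` are hypothetical helpers, and the KO labels and counts are placeholders.

```python
import math
from typing import Dict


def cpm(counts: Dict[str, int]) -> Dict[str, float]:
    """Hypothetical helper: scale raw read counts per KO to counts per million."""
    total = sum(counts.values())
    return {ko: c / total * 1e6 for ko, c in counts.items()}


def log2_fold_change(
    pre: Dict[str, int], post: Dict[str, int], pseudocount: float = 1.0
) -> Dict[str, float]:
    """Hypothetical helper: per-KO log2(post / pre) on CPM values; the pseudocount avoids log(0)."""
    pre_n, post_n = cpm(pre), cpm(post)
    kos = sorted(set(pre_n) | set(post_n))
    return {
        ko: math.log2((post_n.get(ko, 0.0) + pseudocount) / (pre_n.get(ko, 0.0) + pseudocount))
        for ko in kos
    }


if __name__ == "__main__":
    # Placeholder KO labels and counts for one participant (not real data).
    pre = {"KO_1": 100, "KO_2": 300, "KO_3": 600}
    post = {"KO_1": 400, "KO_2": 300, "KO_3": 300}
    for ko, lfc in log2_fold_change(pre, post).items():
        print(ko, round(lfc, 3))
```

Testing these per-participant changes across the cohort, and applying the FDR threshold to the resulting p-values, would follow and is not shown.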

Machine Learning Model Development

- Differentially abundant features are used as input for random forest classification models [31]

- Both single-food and multi-food models are developed to test classification accuracy [31]

- Model performance is evaluated using appropriate validation techniques to ensure generalizability
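The multi-food modelling step can be sketched with scikit-learn's `RandomForestClassifier`. The simulated feature matrix stands in for differentially abundant KO features, the food names are used only as class labels, and the hyperparameters and 5-fold cross-validation are illustrative assumptions; the printed accuracy is not comparable to published results.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

FOODS = np.array(["almond", "broccoli", "walnut"])

# Simulated stand-in for differentially abundant KO features (not real data).
X, y_idx = make_classification(
    n_samples=150, n_features=40, n_informative=10, n_redundant=0,
    n_classes=3, n_clusters_per_class=1, class_sep=1.5, random_state=1,
)
y = FOODS[y_idx]

rf = RandomForestClassifier(n_estimators=300, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# cross_val_score fits clones of rf, so rf itself stays unfitted here.
acc = cross_val_score(rf, X, y, cv=cv)
print(f"Multi-food CV accuracy: {acc.mean():.2f}")

# Refit on all samples to rank features by impurity-based importance.
rf.fit(X, y)
top = np.argsort(rf.feature_importances_)[::-1][:5]
print("Top-ranked feature indices:", top.tolist())
```

Impurity-based importances can favour some feature types, so permutation importance on held-out data is a common cross-check before treating top-ranked features as candidate biomarkers.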

Table 2: Performance of Metagenomic Biomarker Classification

| Food Item | Number of Significant KEGG Orthologies | Classification Accuracy | Model Type |
|---|---|---|---|
| Almond | 54 | 80% | Random Forest [31] |
| Broccoli | 2,474 | 87% | Random Forest [31] |
| Walnut | 732 | 86% | Random Forest [31] |
| Mixed Food Model | Combined Features | 81% | Random Forest [31] |

Data Visualization and Workflow

Biomarker Discovery Workflow - This diagram illustrates the comprehensive workflow from controlled feeding studies to biomarker validation, highlighting the integration of machine learning at key analytical stages.

Random Forest Classification - This visualization shows the ensemble approach of random forest algorithms used to classify food intake based on metagenomic features, with multiple decision trees contributing to a final classification through majority voting.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for ML-Driven Biomarker Discovery

| Reagent/Platform | Function | Application in Biomarker Research |
|---|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Separation and detection of metabolic compounds | Profiling of blood and urine specimens for candidate biomarker identification [3] |
| Shotgun Genomic Sequencing Platform | Comprehensive analysis of genetic material in samples | Characterizing microbial community structure and functional potential in fecal samples [31] |
| Double Index AlignMent Of Next-generation sequencing Data (DIAMOND) | Sequence alignment for metagenomic data | Aligning sequencing reads to reference databases for functional annotation [31] |
| MEtaGenome ANalyzer (MEGAN) | Functional analysis of metagenomic sequences | Taxonomic and functional assignment of sequencing reads; identification of KEGG orthologies [31] |
| Automated Self-Administered 24-h Dietary Assessment Tool (ASA-24) | Self-reported dietary intake assessment | Collection of complementary dietary data for correlation with biomarker profiles [3] |
| scikit-learn (Python ML Library) | Implementation of machine learning algorithms | Building random forest classifiers for food intake prediction [33] [32] |
| Controlled Feeding Study Diets | Standardized dietary interventions | Administration of test foods in prespecified amounts for biomarker discovery [3] [31] |