From Lab to Life: A Research Framework for Deploying In-Field Eating Detection Systems

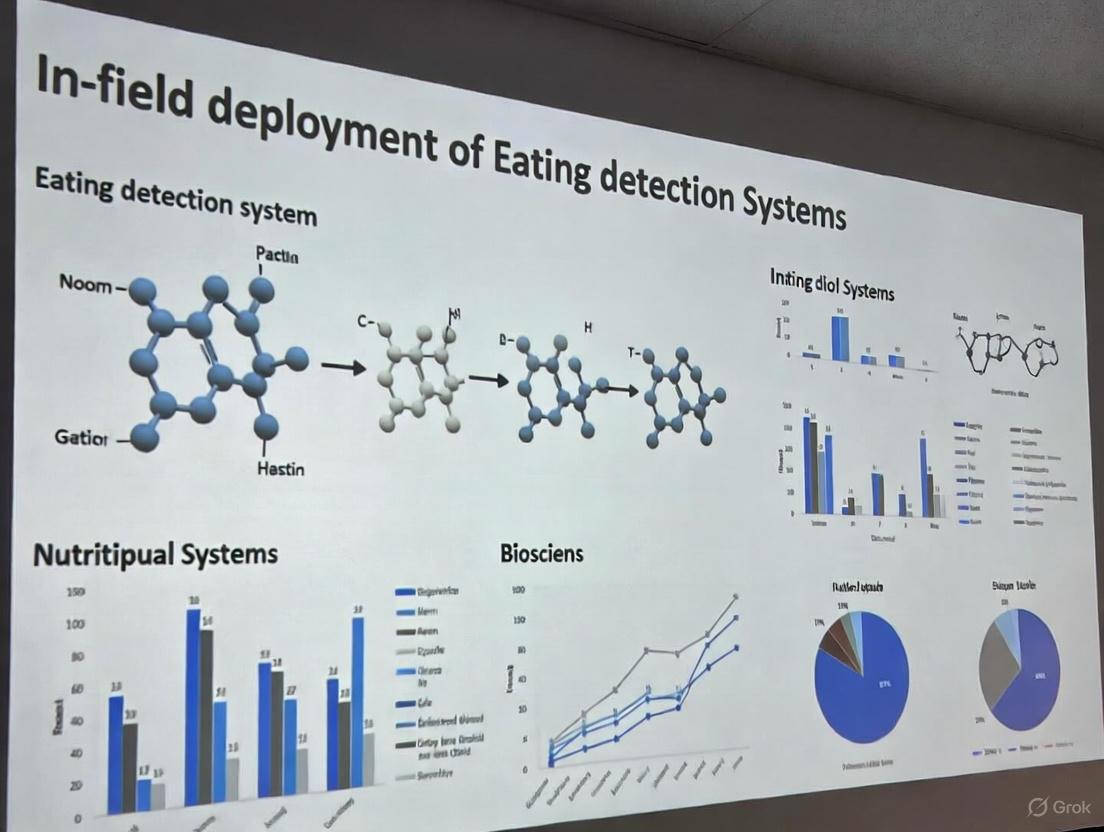


Abstract

This article provides a comprehensive framework for the development and real-world deployment of sensor-based eating detection systems, tailored for biomedical research and clinical applications. It explores the foundational principles of eating behavior metrics and the sensor technologies that capture them, details the application of machine learning and AI for data analysis, addresses critical challenges in privacy and real-world performance, and establishes rigorous methodologies for system validation. Aimed at researchers, scientists, and drug development professionals, this review synthesizes current advancements and future directions to bridge the gap between technological innovation and reliable, ethical deployment in free-living environments.

Understanding Eating Behavior: Core Metrics and Sensor Modalities for Foundational Research

The accurate measurement of eating behavior is pivotal for advancing research in nutrition, obesity, and metabolic health. Moving beyond traditional self-report methods, which are often prone to bias and inaccuracy, the field is increasingly adopting sensor-based technologies that capture both macroscopic intake and micro-behaviors with high precision. This shift enables a more nuanced understanding of the dietary microstructure—the fine-grained, temporal patterns of eating within a single episode. These quantifiable metrics are essential for the in-field deployment of robust eating detection systems, providing the objective data needed to develop personalized interventions and understand the complex interplay between diet and health [1] [2].

A Taxonomy of Quantifiable Eating Metrics

Eating behavior can be deconstructed into a hierarchy of metrics, from broad dietary patterns to minute actions. The following table categorizes these quantifiable metrics, aligning them with the relevant sensing technologies as identified in a recent systematic review [1].

Table 1: Taxonomy of Eating Metrics and Associated Measurement Technologies

| Metric Category | Specific Metric | Description | Example Sensing Modalities |
|---|---|---|---|
| Macroscopic Intake | Energy & Macronutrient Intake | Total calories, grams of protein, fat, carbohydrates consumed. | Camera-based systems (pre/post meal), Universal Eating Monitor (UEM) [2] |
| Macroscopic Intake | Food Item Recognition | Identification of the specific type(s) of food consumed. | Food image analysis (active/passive cameras), computer vision [1] |
| Macroscopic Intake | Portion Size | The amount of each food item consumed. | Pre- and post-meal weighing, image-based estimation [1] |
| Meal Microstructure | Meal/Eating Duration | Total time taken for an eating episode. | Acoustic sensors, motion sensors, UEM [1] [2] |
| Meal Microstructure | Eating Rate/Speed | Average amount of food consumed per unit of time (e.g., g/min). | UEM, combined sensor systems [2] |
| Meal Microstructure | Bite Rate/Frequency | Number of bites taken per minute. | Wrist-worn inertial sensors (hand-to-mouth gestures), acoustic sensors [1] |
| Micro-behaviors | Chewing | Number of chews, chewing rate/frequency. | Acoustic sensors, strain sensors, neck-worn sensors [1] |
| Micro-behaviors | Swallowing | Swallowing rate/frequency. | Acoustic sensors, neck-worn sensors [1] |
| Contextual Factors | Eating Environment | Location, social context (e.g., alone, with others). | Wearable cameras, smartphone app self-report [3] [1] |
| Contextual Factors | Emotional & Behavioral State | Mood, stress, or pleasure associated with eating. | Smartphone app self-report (e.g., ecological momentary assessment) [3] |

Experimental Protocols for In-Field and Laboratory Deployment

A multi-method approach is critical for capturing the full spectrum of eating metrics. The following protocols detail methodologies for laboratory-based validation and in-field data collection.

Protocol A: Laboratory-Based Validation with a Multi-Food Universal Eating Monitor (UEM)

Objective: To achieve high-resolution, quantitative monitoring of eating microstructure and macronutrient intake from multiple foods simultaneously under standardized conditions [2].

Materials:

- Feeding Table: A custom table integrated with multiple high-precision balances (e.g., 5 balances monitoring up to 12 different food items) [2].

- Data Acquisition System: Computer with software for continuous weight data recording (e.g., every 2 seconds).

- Auxiliary Sensors: Standard video camera for process recording and a thermal imaging camera (optional for additional physiological metrics).

- Standardized Foods: A selection of foods representing different macronutrient profiles.

Procedure:

- Participant Preparation: Obtain informed consent at enrollment. Ask participants to arrive for the test meal in a fasted state (e.g., after an overnight fast).

- Setup: Place pre-weighed food items in standardized containers on the designated balances of the Feeding Table.

- Data Recording:

  - Initiate continuous weight data logging via the balances' software.

  - Start video recording to capture the eating process and link hand gestures to specific food items.

- Meal Initiation: Instruct the participant to eat until comfortably full.

- Data Processing:

  - Food Intake: Calculate intake (g) for each food from the weight change recorded by each balance.

  - Macronutrient & Energy Intake: Convert food weight to energy (kcal) and macronutrient content using a food composition database.

  - Microstructure Metrics: Derive metrics from the continuous weight data:

    - Eating Rate: The derivative of the cumulative intake curve (g/s or g/min).

    - Meal Duration: Total time from first to last bite.

- Validation: Compare the UEM data with video recordings to validate food choices and intake patterns.

Validation Notes: This system has demonstrated high day-to-day repeatability for energy intake (r = 0.82) and no significant positional bias for food selection, making it a robust tool for laboratory studies [2].
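The data processing steps of Protocol A can be sketched in a few lines. The example below is a minimal illustration, not the software used in [2]: it assumes one balance sampled every 2 seconds, treats any weight drop of at least `min_bite_g` grams as a bite, and uses made-up readings. The function names and the `min_bite_g` threshold are hypothetical.

```python
from typing import List


def cumulative_intake(weights_g: List[float]) -> List[float]:
    """Cumulative intake (g): initial balance reading minus each later reading."""
    start = weights_g[0]
    return [start - w for w in weights_g]


def eating_rate_g_per_min(weights_g: List[float], sample_period_s: float = 2.0) -> List[float]:
    """Finite-difference derivative of the cumulative intake curve, in g/min."""
    intake = cumulative_intake(weights_g)
    return [(b - a) / sample_period_s * 60.0 for a, b in zip(intake, intake[1:])]


def meal_duration_s(weights_g: List[float], sample_period_s: float = 2.0,
                    min_bite_g: float = 0.5) -> float:
    """Time between the first and last detected weight drop (a proxy for bites)."""
    # Index of the sample at which each drop is first visible.
    drops = [i + 1 for i, (a, b) in enumerate(zip(weights_g, weights_g[1:]))
             if a - b >= min_bite_g]
    if not drops:
        return 0.0
    return (drops[-1] - drops[0]) * sample_period_s


# Hypothetical balance readings (g), one sample every 2 s.
readings = [300.0, 300.0, 290.0, 290.0, 290.0, 282.0, 282.0, 275.0]
total_intake = cumulative_intake(readings)[-1]
rates = eating_rate_g_per_min(readings)
duration = meal_duration_s(readings)
print(total_intake, rates, duration)
```

Real balance data is noisy (hand pressure on the plate, utensil contact), so a practical pipeline would smooth or debounce the weight signal before differentiating it.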

Protocol B: In-Field Eating Behavior Capture via Multi-Sensor Wearables

Objective: To passively capture real-world eating episodes, including micro-behaviors and contextual data, for profiling individualized overeating patterns [3].

Materials:

- Wearable Sensors:

  - Neck-worn Sensor (e.g., NeckSense): Precisely detects eating episodes, bite count, chewing rate, and hand-to-mouth movements [3].

  - Wrist-worn Activity Tracker: Monitors gross motor activity and can serve as a proxy for bite detection via hand gestures [3] [1].

  - Activity-Oriented Camera (e.g., HabitSense): A bodycam that uses thermal sensing to record only when food is in the field of view, preserving bystander privacy [3].

- Smartphone App: For self-reported ecological momentary assessment (EMA) of mood, context, and subjective states.

Procedure:

- Sensor Deployment: Equip participants with the three sensors (necklace, wristband, bodycam) for a continuous period (e.g., two weeks).

- In-Field Data Collection:

  - Sensors passively and continuously collect data.

  - The smartphone app prompts participants to report their mood, social context, and activity at meal times or random intervals.

- Data Integration and Analysis:

  - Synchronize data streams from all sensors and the smartphone app using timestamps.

  - Apply machine learning algorithms to sensor data to detect and classify eating episodes and micro-behaviors.

  - Correlate detected eating patterns with self-reported contextual and emotional data to identify behavioral phenotypes (e.g., "stress-driven evening nibbling" or "uncontrolled pleasure eating") [3].

Key Consideration: This protocol emphasizes privacy-by-design, particularly through the use of the Activity-Oriented Camera, which is critical for ethical in-field deployment [3].
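As an illustration of the timestamp-based integration step in Protocol B, the sketch below attaches the nearest EMA report to each detected eating episode. It is a simplified assumption of how such alignment might be done, not the pipeline from [3]: timestamps are plain seconds on a shared clock, and the function names and the 15-minute `tolerance_s` are hypothetical.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple


def nearest_index(sorted_ts: List[float], t: float) -> int:
    """Index of the timestamp in sorted_ts that is closest to t."""
    i = bisect_left(sorted_ts, t)
    if i == 0:
        return 0
    if i == len(sorted_ts):
        return len(sorted_ts) - 1
    return i if sorted_ts[i] - t < t - sorted_ts[i - 1] else i - 1


def align_ema_to_episodes(
    episodes: List[Tuple[float, float]],
    ema_reports: List[Tuple[float, str]],
    tolerance_s: float = 900.0,
) -> List[Tuple[float, float, Optional[str]]]:
    """Attach to each (start, end) episode the EMA report nearest to its start.

    ema_reports must be sorted by timestamp. Episodes with no report within
    tolerance_s of their start get None.
    """
    ema_ts = [ts for ts, _ in ema_reports]
    aligned = []
    for start, end in episodes:
        label = None
        if ema_ts:
            j = nearest_index(ema_ts, start)
            if abs(ema_ts[j] - start) <= tolerance_s:
                label = ema_reports[j][1]
        aligned.append((start, end, label))
    return aligned


# Hypothetical detected episodes and EMA reports (seconds on a shared clock).
episodes = [(1000.0, 1600.0), (20000.0, 20900.0)]
ema = [(1120.0, "stressed, alone"), (9000.0, "calm, with family")]
result = align_ema_to_episodes(episodes, ema)
print(result)
```

In practice, device clocks drift, so the streams usually need to be corrected to a common time base before nearest-neighbor matching of this kind is meaningful.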

Visualization of Research Workflows

The following diagrams illustrate the logical flow of the experimental protocols and the relationship between different metric levels.

Diagram 1: Experimental workflows for laboratory and in-field eating behavior research.

Diagram 2: The hierarchy of quantifiable eating metrics, from broad intake to fine-grained behaviors.

The Scientist's Toolkit: Research Reagent Solutions

For researchers deploying eating detection systems, a suite of validated tools and technologies is available. The following table details essential materials and their functions.

Table 2: Essential Tools for Eating Behavior Research

| Tool / Technology | Category | Primary Function | Key Considerations |
|---|---|---|---|
| Universal Eating Monitor (UEM) / Feeding Table [2] | Laboratory Hardware | Precisely tracks continuous food weight and eating microstructure for multiple foods in real-time. | Gold standard for lab validation; high repeatability for energy intake (ICC: 0.94). |
| Neck-worn Sensor (e.g., NeckSense) [3] | Wearable Sensor | Passively detects eating episodes, chewing rate, bite count, and hand-to-mouth gestures. | High precision for micro-behavior capture in the field. |
| Wrist-worn Inertial Sensor [3] [1] | Wearable Sensor | Detects hand-to-mouth gestures as a proxy for bites; monitors general physical activity. | Common form-factor (e.g., Fitbit, Apple Watch); good for bite estimation. |
| Activity-Oriented Camera (AOC) [3] | Wearable Camera | Records activity using thermal sensing triggered by food, preserving privacy. | Critical for ethical in-field video capture and ground-truth validation. |
| Acoustic Sensors [1] | Wearable Sensor | Detects chewing and swallowing sounds for counting and rate analysis. | Can be integrated into neck- or head-worn devices. |
| Computer Vision / Image Analysis [1] | Software Algorithm | Recognizes food items and estimates portion size from images (active or passive capture). | Accuracy depends on image quality, database, and algorithms; active capture requires user burden. |
| Ecological Momentary Assessment (EMA) App [3] | Software / Protocol | Captures self-reported contextual data (mood, environment) in real-time via smartphone. | Provides essential qualitative context for quantitative sensor data. |
| Data Integration & ML Platform | Software / Analysis | Synchronizes multi-modal data streams and applies machine learning for pattern detection. | Required for analyzing complex datasets from in-field deployments [3]. |

The in-field deployment of automated eating detection systems represents a paradigm shift in dietary behavior research, offering a solution to the limitations of traditional self-reporting methods like questionnaires and food diaries, which are often prone to recall bias and participant burden [4] [5] [6]. These sensor-based technologies enable the passive, objective, and high-resolution measurement of eating behavior in free-living conditions, capturing everything from micro-level gestures like bites and chews to broader contextual factors [7] [5]. This document establishes a taxonomy of sensor technologies—acoustic, motion, visual, and physiological—and provides detailed application notes and experimental protocols for their deployment within public health research and clinical drug trials. The goal is to furnish researchers and scientists with the practical framework needed to implement these technologies for robust, in-field data collection on eating behavior.

Sensor Technology Taxonomy and Performance Comparison

The following table summarizes the primary sensor modalities used in eating behavior research, their measured parameters, and their performance characteristics as reported in the literature.

Table 1: Taxonomy and Performance of Sensors for Eating Behavior Detection

| Sensor Modality | Specific Sensor Types | Measured Eating Parameters | Reported Performance | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Acoustic | Microphone (body-worn/ambient), Acoustic Sensor [8] [6] | Chewing, swallowing, biting, food texture identification [6] | High accuracy for chewing detection in controlled settings [6] | Directly captures mastication sounds; can identify food texture [6] | Susceptible to ambient noise; privacy concerns; may be considered intrusive [6] |
| Motion | Accelerometer, Gyroscope, Inertial Measurement Unit (IMU) [7] [9] [5] | Hand-to-mouth gestures (as bite proxy), eating episodes, meal duration [7] [6] | F1-score of 87.3% for meal detection [7]; ~99% accuracy for carbohydrate intake gesture detection [9] | High user compliance; leverages commercial smartwatches; well-suited for long-term, in-field use [7] [5] | Cannot directly detect food type or intake; confounded by non-eating gestures (e.g., face-touching) [6] |
| Visual | Camera (wearable/static), Smartphone Camera [6] | Food type, portion size, food recognition, energy intake estimation [6] | High accuracy for food item recognition in controlled studies [6] | Provides rich visual data on food type and quantity [6] | Major privacy concerns; limited use in private settings; lighting and angle affect accuracy [6] |
| Physiological | Photoplethysmography (PPG), Electroencephalography (EEG), Strain Sensor [8] [6] | Swallowing, heart rate variability, pulse wave (indirect correlates) [6] | Varies by specific metric and sensor; used to capture correlates of eating and metabolism [8] [6] | Can capture autonomic nervous system responses during eating [6] | Often indirect measure of eating; signals can be weak and confounded by other physiological processes [6] |

Detailed Experimental Protocols for In-Field Deployment

Protocol for Motion-Based Eating Detection Using a Smartwatch

This protocol outlines the methodology for deploying a smartwatch-based system to detect eating episodes in free-living conditions, based on validated approaches [7].

1. Objective: To passively detect eating episodes and capture contextual eating data in free-living settings using a commercial smartwatch.

2. Research Reagent Solutions: Table 2: Essential Materials for Motion-Based Eating Detection

| Item | Specification/Example | Function |
|---|---|---|
| Smartwatch | Commercial device (e.g., Pebble, Android Wear) with a 3-axis accelerometer | Data acquisition platform for capturing dominant hand movements. |
| Companion Smartphone | Android or iOS device with custom data collection app | Receives and processes sensor data from the watch via Bluetooth; runs the detection algorithm. |
| Machine Learning Classifier | Random Forest model (e.g., ported using sklearn-porter) [7] | Classifies accelerometer data streams into "eating" or "non-eating" gestures in real-time. |
| Ecological Momentary Assessment (EMA) System | Short questionnaires deployed via the smartphone app [7] | Validates detected eating episodes and captures subjective contextual data (e.g., company, location, mood). |

3. Procedure:

- Participant Setup: Fit the participant with a smartwatch on their dominant wrist. Ensure the companion smartphone app is installed, paired, and functioning.

- Data Collection & Real-Time Processing: The smartwatch's accelerometer continuously streams data to the smartphone. The pre-trained machine learning classifier analyzes the data for patterns indicative of hand-to-mouth eating gestures.

- Eating Episode Trigger & Validation: Upon detecting a threshold of eating gestures (e.g., 20 gestures within a 15-minute window), the system automatically triggers an EMA on the smartphone [7].

- Contextual Data Capture: The participant completes the EMA, which typically includes questions about the meal type, food consumed, social context, and location. This provides ground-truth validation and rich contextual data.

- Data Aggregation & Analysis: Confirmed eating episodes are logged with timestamps. Data analysis focuses on meal detection accuracy (precision, recall, F1-score), meal timing, duration, and contextual patterns.
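The trigger rule in the procedure above (for example, 20 eating gestures within a 15-minute window) can be expressed as a sliding-window counter. The following is a minimal sketch under that assumption, not the implementation from [7]; the class name `EatingEpisodeTrigger` and its reset-after-firing behavior are hypothetical design choices.

```python
from collections import deque


class EatingEpisodeTrigger:
    """Fires when enough eating gestures fall inside a sliding time window."""

    def __init__(self, min_gestures: int = 20, window_s: float = 15 * 60):
        self.min_gestures = min_gestures
        self.window_s = window_s
        self._recent = deque()  # timestamps (s) of gestures still inside the window

    def add_gesture(self, timestamp_s: float) -> bool:
        """Record one classified eating gesture; return True if an EMA should fire."""
        self._recent.append(timestamp_s)
        # Drop gestures that are older than the window.
        while self._recent and timestamp_s - self._recent[0] > self.window_s:
            self._recent.popleft()
        if len(self._recent) >= self.min_gestures:
            # Assumption: clear the buffer so one meal does not prompt repeatedly.
            self._recent.clear()
            return True
        return False


# Hypothetical stream: one detected gesture every 30 s.
trigger = EatingEpisodeTrigger()
fired = [trigger.add_gesture(i * 30.0) for i in range(20)]
print(fired.index(True))  # position of the gesture that triggers the EMA
```

Clearing the buffer after firing is one simple way to limit repeated prompts during a long meal; a cooldown timer would be an alternative.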

[Figure: Workflow of the smartwatch-based eating detection protocol]

Protocol for Multi-Sensor Eating Detection System Deployment

For comprehensive eating behavior analysis, integrating multiple sensors is often necessary [5] [6]. This protocol describes the deployment of a multi-sensor system.

1. Objective: To synergistically use multiple sensor modalities to improve the accuracy and richness of in-field eating behavior measurement.

2. Research Reagent Solutions: Table 3: Essential Materials for a Multi-Sensor System

| Item | Specification/Example | Function |
|---|---|---|
| Head-Worn Sensors | Acoustic sensor (e.g., microphone) or strain sensor [6] | Directly captures chewing and swallowing sounds/vibrations. |
| Wrist-Worn IMU | Smartwatch or custom band with accelerometer and gyroscope [9] [6] | Tracks hand-to-mouth gestures and arm movement patterns. |
| Data Synchronization Unit | Custom microcontroller or smartphone with precise timekeeping | Synchronizes data streams from all sensors to a common timeline. |
| Multi-Modal Fusion Algorithm | Machine learning model (e.g., LSTM, transformer) [9] | Integrates data from all sensors to make a final eating activity prediction. |

3. Procedure:

- Sensor Calibration & Synchronization: Calibrate all sensors according to manufacturer specifications. Implement a synchronization protocol (e.g., a shared start timestamp) across all devices to align data streams.

- Multi-Modal Data Acquisition: Participants wear all sensors during the study period. Data is collected continuously or in bursts triggered by initial detection from a primary sensor (e.g., the wrist IMU).

- Data Pre-processing & Feature Extraction: Each sensor's raw data is pre-processed (filtered, normalized). Relevant features (e.g., frequency features from audio, statistical features from accelerometer) are extracted.

- Sensor Fusion & Classification: The extracted features from all modalities are fed into a multi-modal fusion algorithm. This model learns to weigh the inputs from different sensors to classify eating activities with higher accuracy than a single-modality system.

- Ground-Truth Annotation & Model Validation: Use simultaneous EMAs, food diaries, or video recording (in controlled segments of the study) to provide ground-truth labels for model training and validation.
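The pre-processing and feature extraction step can be illustrated for the accelerometer stream. The sketch below computes simple statistical features over fixed-length windows of 3-axis samples. The window length, step, and feature set are illustrative assumptions, not values prescribed by the cited studies.

```python
import math
from statistics import mean, pstdev
from typing import Dict, Iterator, List, Tuple

Sample = Tuple[float, float, float]  # (x, y, z) acceleration


def magnitude(sample: Sample) -> float:
    """Euclidean norm of one 3-axis sample."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def sliding_windows(signal: List[Sample], window_len: int, step: int) -> Iterator[List[Sample]]:
    """Yield consecutive windows of window_len samples, advancing by step."""
    for start in range(0, len(signal) - window_len + 1, step):
        yield signal[start:start + window_len]


def window_features(window: List[Sample]) -> Dict[str, float]:
    """Statistical features of the acceleration magnitude within one window."""
    mags = [magnitude(s) for s in window]
    return {
        "mean": mean(mags),
        "std": pstdev(mags),
        "min": min(mags),
        "max": max(mags),
        "range": max(mags) - min(mags),
    }


# Hypothetical signal: four samples at rest, then four during arm movement.
samples = [(0.0, 0.0, 1.0)] * 4 + [(3.0, 4.0, 0.0)] * 4
features = [window_features(w) for w in sliding_windows(samples, window_len=4, step=4)]
print(features)
```

Feature vectors like these would then be passed, together with features from the other modalities, to the fusion model described in the next step.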

[Figure: Flow of data and decisions in a multi-sensor eating detection system]

Application Notes for In-Field Research

- Addressing Privacy Concerns: For acoustic and visual sensors, which raise significant privacy issues, implement privacy-preserving techniques. These include on-device processing that discards raw data after feature extraction, filtering algorithms to remove non-food-related sounds or images, and using low-fidelity data sufficient for analysis but not for identifying individuals or conversations [6].

- Ensuring Ecological Validity: The key advantage of these systems is deployment in free-living settings. To maximize ecological validity, minimize participant burden. This involves using comfortable, commercially available wearables where possible, ensuring long battery life, and designing EMAs to be brief and infrequent to avoid alert fatigue [7] [5].

- Data Management and Analysis: In-field studies generate large, complex datasets. Establish a robust data pipeline for storage, synchronization, and cleaning. Employ machine learning pipelines, such as those using Recurrent Neural Networks (RNNs) like LSTMs for temporal gesture data, to analyze the data [9]. The choice of evaluation metrics (e.g., F1-score, precision, recall) should be consistent and reported thoroughly to allow for cross-study comparisons [5].

- Sensor Selection Guidance: The choice of sensor depends on the research question. Motion sensors are ideal for long-term, unobtrusive monitoring of eating episodes and patterns. Acoustic sensors provide granular detail on eating microstructure but are more intrusive. Visual sensors are best for identifying food type and quantity but have limited applicability. Physiological sensors can offer insights into the metabolic or autonomic correlates of eating [6]. A multi-sensor approach often provides the most comprehensive picture [5].

The Shift from Self-Report to Objective Sensor-Based Measurement

Historically, dietary intake and eating behavior assessment have relied predominantly on self-report methods such as food diaries, 24-hour recalls, and food frequency questionnaires. However, a growing body of evidence reveals significant limitations in these approaches due to inherent biases, including misreporting and an inability to capture the subconscious, repetitive nature of eating actions [1]. The transition to sensor-based measurement addresses these critical gaps by providing objective, high-fidelity data on eating microstructure—including chewing, biting, swallowing, and eating speed—that self-report cannot reliably capture.

This paradigm shift is particularly crucial for in-field deployment of eating detection systems, where accurate, passive monitoring in free-living conditions is essential for understanding real-world behavior. Evidence from related areas of behavioral measurement illustrates the problem. Self-report measures underestimate sedentary time by approximately 1.74 hours per day compared to device-based measures [10]. Similarly, studies of upper limb activity reveal a "high degree of variability" between self-reported and sensor-derived measurements, with most participants unable to self-report their activity levels accurately [11]. These findings underscore the reliability challenges of subjective reporting and highlight the need for objective sensor-based approaches in scientific research and clinical assessment.

Current Sensor-Based Technologies for Eating Behavior Monitoring

The landscape of sensor technologies for monitoring eating behavior has diversified significantly, enabling researchers to select modalities based on specific research questions, target metrics, and practical constraints related to field deployment.

Table 1: Taxonomy of Sensor Technologies for Eating Behavior Monitoring

| Sensor Modality | Measured Eating Metrics | Technology Examples | Key Advantages | Reported Performance/Accuracy |
|---|---|---|---|---|
| Acoustic Sensors [1] [12] | Chewing, swallowing, bite count | Microphones (e.g., on neck-worn devices) | Non-invasive detection of eating sounds | High accuracy for solid food detection; susceptible to ambient noise |
| Motion Sensors (Inertial) [1] [12] | Hand-to-mouth gestures, head movement, bite count | Wrist/head-worn accelerometers, gyroscopes (e.g., AIM-2) | Convenient, no direct skin contact needed | False detection rate of 9-30% for gestures [12] |
| Image Sensors (Camera) [1] [12] | Food type, portion size, eating environment | Wearable cameras (e.g., AIM-2, HabitSense), smartphones | Provides contextual and food identification data | 86.4% food intake detection accuracy; ~13% false positives [12] |
| Strain/Pressure Sensors [1] | Jaw movement, swallowing | Piezoelectric sensors, flex sensors on head/neck | Direct measurement of mandibular movement | High accuracy for chewing detection; requires skin contact |
| Thermal Sensors [13] | Food presence detection | Activity-Oriented Cameras (AOC) | Preserves privacy by triggering recording only with food | Enables pattern analysis without full video recording |
| Multi-Sensor Systems [13] [12] | Comprehensive eating episode data (context + behavior) | NeckSense + AIM-2 + HabitSense bodycam | Data fusion improves overall accuracy | 94.59% sensitivity, 70.47% precision when integrated [12] |

Multi-Sensor Fusion for Enhanced Accuracy

A prominent trend in field-deployable systems is the integration of multiple sensor modalities to overcome the limitations of individual sensors. One study achieved 94.59% sensitivity, 70.47% precision, and an 80.77% F1-score in detecting eating episodes in free-living conditions by integrating accelerometer-based chewing detection with image-based food recognition, outperforming either method used in isolation [12]. This hierarchical classification approach reduces the false positives common in single-sensor systems.

Another innovative system utilizes three synchronized wearable sensors—a necklace (NeckSense), a wristband, and a privacy-aware body camera (HabitSense)—to capture behavioral and contextual data simultaneously [13]. This multi-modal approach has successfully identified five distinct, real-world overeating patterns, demonstrating the power of comprehensive sensor systems to reveal complex behavior phenotypes that are impossible to discern through self-report.

Experimental Protocols for In-Field Data Collection and Validation

Deploying sensor systems for eating detection in free-living conditions requires meticulous experimental protocols to ensure data quality, participant compliance, and ethical integrity.

Protocol for Multi-Sensor Data Collection in Free-Living Conditions

Title: Multi-Sensor Free-Living Data Collection Workflow

Procedure Details:

Participant Recruitment and Ethics: Secure IRB approval and obtain informed consent. Recruit approximately 30 participants, a sample size used in prior validation studies [12], to provide enough data for algorithm development. Clearly explain the privacy safeguards of any imaging technology.

Sensor Deployment:

- Devices: Utilize a combination of wearable sensors. The AIM-2 (worn on eyeglasses) can provide simultaneous accelerometer data and egocentric images [12]. The NeckSense necklace can precisely capture chewing rate, bite count, and hand-to-mouth movements [13]. The HabitSense body camera, which uses thermal sensing to record only when food is present, adds contextual data while mitigating privacy concerns [13].

- Fitting: Calibrate and fit all sensors according to manufacturer specifications. For the AIM-2, ensure proper positioning on the participant's own eyeglasses. For NeckSense, ensure snug but comfortable contact.

Data Collection in Pseudo-Free-Living and Free-Living Conditions:

- Pseudo-Free-Living Day: Conduct the first study day in a lab environment where participants consume prescribed meals but are otherwise unrestricted. Use a foot pedal connected to a data logger for participants to manually mark the start and end of each bite as ground truth for model training [12].

- Free-Living Day: Participants wear the sensor system for 24 hours in their natural environment with no restrictions on food intake or activities. The device should passively collect data (e.g., images every 15 seconds, continuous accelerometer data) [12].

Contextual Data Capture: Supplement sensor data with Ecological Momentary Assessments (EMA) delivered via a smartphone app. Prompt participants to report meal-related mood, social context (who they are with), and activity [13].

Protocol for Ground Truth Annotation and Validation

Title: Ground Truth Annotation and Validation Process

Procedure Details:

Image Annotation for Food Detection: Manually review all images captured by the wearable camera. Annotate images using a tool like the MATLAB Image Labeler application [12].

- Positive Samples: Draw bounding boxes around all food and beverage objects.

- Negative Samples: Identify images containing no consumables.

- Context Filtering: Exclude images from contexts where detected food was not consumed by the participant (e.g., during food preparation, shopping, or social eating where food belongs to others) [12].

Eating Episode Annotation: Manually review the continuous image stream to identify the start and end times of all eating episodes during the free-living period. This serves as the primary ground truth for validating detection algorithms [12].

Algorithm Training and Validation: Use the annotated dataset to train and test detection models (e.g., for solid food and beverage recognition from images, and for chewing detection from accelerometer data). Employ a leave-one-subject-out cross-validation approach to ensure generalizability and avoid overfitting [12].
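Leave-one-subject-out cross-validation holds out all data from one participant per fold, so the model is always tested on a person it has never seen. The sketch below generates such folds from a list of per-sample participant labels. The function name and the participant IDs are hypothetical.

```python
from typing import Iterator, List, Tuple


def leave_one_subject_out(subject_ids: List[str]) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_subject, train_indices, test_indices) for each subject.

    subject_ids[i] is the participant who produced sample i.
    """
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test


# Hypothetical dataset: six samples from three participants.
subject_ids = ["P01", "P01", "P02", "P03", "P03", "P03"]
folds = list(leave_one_subject_out(subject_ids))
for held_out, train_idx, test_idx in folds:
    print(held_out, train_idx, test_idx)
```

Splitting by participant rather than by sample avoids leaking a person's idiosyncratic chewing or gesture patterns from the training set into the test set.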

Performance Metrics: Evaluate system performance using standard metrics: Sensitivity (ability to detect true eating episodes), Precision (ability to avoid false positives), and the F1-Score (harmonic mean of precision and sensitivity) [12].
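These metrics follow directly from counts of true positives (TP), false positives (FP), and false negatives (FN). The sketch below defines them and, as a consistency check, recomputes the F1-score from the 94.59% sensitivity and 70.47% precision reported in this article for the integrated system [12]. The episode counts in the second example are made up for illustration.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Fraction of true eating episodes that were detected (also called recall)."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def precision(tp: int, fp: int) -> float:
    """Fraction of detected episodes that were true eating episodes."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def f1_score(prec: float, sens: float) -> float:
    """Harmonic mean of precision and sensitivity."""
    return 2 * prec * sens / (prec + sens) if (prec + sens) else 0.0


# Check against the figures reported for the integrated system [12].
reported_f1 = f1_score(prec=0.7047, sens=0.9459)
print(round(reported_f1 * 100, 2))

# Hypothetical counts from an annotated free-living day.
tp, fp, fn = 8, 2, 2
example_f1 = f1_score(precision(tp, fp), sensitivity(tp, fn))
print(round(example_f1, 3))
```

Because the F1-score is a harmonic mean, it is pulled toward the lower of the two values, which is why high sensitivity paired with moderate precision gives an F1-score of about 81%.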

The Researcher's Toolkit: Essential Reagents and Materials

Table 2: Essential Research Toolkit for In-Field Eating Detection Studies

| Tool Category | Specific Item / Solution | Primary Function in Research | Key Considerations |
|---|---|---|---|
| Wearable Sensor Systems | Automatic Ingestion Monitor v2 (AIM-2) [12] | Integrated device capturing egocentric images (every 15s) and 3D accelerometer data (128 Hz) for head movement. | Worn on participant's own eyeglasses; enables correlation of images with sensor data. |
| Neck-Worn Sensors | NeckSense [13] | Precisely and passively records eating microstructure: chewing speed, bite count, and hand-to-mouth gestures. | Provides high-temporal-resolution behavioral data complementary to images. |
| Context-Aware Cameras | HabitSense Bodycam [13] | An Activity-Oriented Camera (AOC) that uses thermal sensing to record only when food is present, preserving privacy. | Critical for capturing eating context while addressing ethical concerns of continuous recording. |
| Ground Truth Tools | USB Foot Pedal Logger [12] | Provides precise ground truth in lab settings; participant presses and holds pedal to mark the duration of each bite/swallow. | Creates accurate labels for training sensor-based detection algorithms. |
| Data Annotation Software | MATLAB Image Labeler App [12] | Software application for manually drawing bounding boxes around food/beverage objects in image datasets. | Creates labeled datasets necessary for training and validating computer vision models. |
| Contextual Data Capture | Smartphone EMA App [13] | Delivers prompts for participants to report mood, social context, and activity in real-time during free-living. | Links objective sensor data with subjective experience and environmental context. |

Data Analysis and Validation Approaches

The analysis of multi-modal sensor data requires sophisticated computational methods to transform raw signals into meaningful behavioral insights.

Hierarchical Classification for Data Fusion

As validated in recent studies, a hierarchical classification framework that combines confidence scores from both image-based and sensor-based classifiers significantly enhances detection accuracy [12]. This data fusion approach mitigates the weaknesses of individual modalities—such as false positives from gum chewing (sensors) or images of food not consumed (camera)—by requiring consensus or high-probability signals from both channels to confirm an eating episode.
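The idea of requiring agreement between the two channels can be sketched as a simple decision rule. This is an illustrative simplification, not the hierarchical classifier from [12]: the function name, the averaging of confidence scores, and both thresholds are assumptions chosen for the example.

```python
def confirm_episode(image_conf: float, sensor_conf: float,
                    min_each: float = 0.3, min_combined: float = 0.6) -> bool:
    """Confirm an eating episode only when both channels support it.

    image_conf:  confidence (0-1) that food or drink is visible in the images.
    sensor_conf: confidence (0-1) that chewing is present in the sensor signal.
    Both thresholds are hypothetical and would need tuning on annotated data.
    """
    combined = (image_conf + sensor_conf) / 2
    return (image_conf >= min_each
            and sensor_conf >= min_each
            and combined >= min_combined)


# Hypothetical cases matching the failure modes described above.
print(confirm_episode(image_conf=0.05, sensor_conf=0.95))  # gum chewing, no food in view
print(confirm_episode(image_conf=0.90, sensor_conf=0.10))  # food in view but not eaten
print(confirm_episode(image_conf=0.80, sensor_conf=0.70))  # both channels agree
```

A rule like this trades some sensitivity for precision, since an episode missed by either channel is rejected. A learned second-stage classifier over the two confidence scores is a common alternative to fixed thresholds.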

Identifying Behavioral Phenotypes

Advanced pattern recognition techniques applied to the rich, longitudinal data from systems like NeckSense and HabitSense can identify distinct overeating patterns. Research has revealed five clinically relevant phenotypes [13]:

- Take-out Feasting

- Evening Restaurant Reveling

- Evening Craving

- Uncontrolled Pleasure Eating

- Stress-Driven Evening Nibbling

The identification of these patterns provides a foundation for developing precisely targeted, personalized interventions that address the specific environmental, emotional, and behavioral triggers of each individual.

This document provides application notes and experimental protocols for the in-field deployment of eating behavior detection systems, framed within a broader thesis on translating technological innovations into real-world health research. The systematic monitoring of eating behavior has emerged as a critical component for understanding and intervening in chronic diseases and eating disorders. Recent technological advances in sensor-based monitoring and artificial intelligence now enable researchers to capture granular, objective data on eating metrics that were previously inaccessible through traditional self-report methods [6]. This document outlines standardized protocols for deploying these systems, summarizes key quantitative relationships between eating behavior and health outcomes, and provides essential toolkits for researchers and drug development professionals working at the intersection of nutritional science, behavioral health, and computational sensing.

Eating Behavior and Chronic Disease Risk

The relationship between specific eating behaviors and the development of non-communicable diseases (NCDs) is well established. Research has identified several modifiable behavioral factors, including chewing thoroughness, eating speed, and meal context, that significantly influence cardiovascular health, metabolic regulation, and obesity risk.

Quantitative Relationships Between Eating Behavior and Chronic Disease

Table 1: Eating Behavior Metrics and Their Documented Impact on Chronic Disease Risk

| Eating Behavior Metric | Health Outcome | Quantitative Relationship | Proposed Mechanism |

|---|---|---|---|

| Chewing Thoroughness | Food Consumption Volume | Doubling chews per bite reduces food volume by ≈14.8% [14] | Extended eating time allows satiety signals to develop [14] |

| Chewing Ability | Cardiovascular Disease (CVD) Risk | Impaired chewing increases CVD risk by a factor of 3.5 with age [14] | Limited chewing capacity associated with poor dietary choices [14] |

| Eating Speed | Caloric Intake | Fast eaters experience greater post-meal hunger; slow eaters require 42% more chews [14] | Rapid intake disrupts appetite hormone signaling [14] |

| Meal Context | Eating Distraction | >99% of detected meals consumed with distractions [7] | Distracted eating leads to overconsumption and poor food choices [7] |

| Food Texture | Caloric Intake | Altering texture reduces intake by prolonging chewing [14] | Increased oro-sensory exposure promotes satiety [14] |

Experimental Protocol: Monitoring Chewing Behavior for Cardiovascular Health Research

Objective: To quantify the relationship between chewing metrics and cardiovascular health biomarkers in free-living conditions.

Materials:

- Inertial measurement unit (IMU) sensors or surface electromyography (sEMG) for jaw movement detection

- Signal processing unit (microcontroller)

- Data storage/transmission module

- Validated food diary application

- Portable blood pressure monitor and point-of-care lipid testing kit

Procedure:

- Sensor Deployment: Deploy a biomechatronic monitoring system incorporating EMG sensors for muscle activity detection and inertial sensors for jaw movement tracking [14]. Secure sensors in positions to capture masseter and temporalis muscle activity.

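- Signal Acquisition: Collect raw inertial data at a sampling frequency of ≥100 Hz. Because the EMG passband extends to 500 Hz, sample the EMG channel above 1 kHz (more than twice the upper cutoff) to satisfy the Nyquist criterion. Apply bandpass filtering (0.1-10 Hz for inertial sensors; 10-500 Hz for EMG) to remove movement artifacts and noise [14].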

- Feature Extraction: For each 30-second epoch with 50% overlap, extract: (1) number of chewing cycles, (2) chewing frequency, (3) chewing duration, (4) chewing power spectral density.

- Meal Detection: Implement a threshold-based algorithm to identify eating episodes when chewing rate exceeds 0.5 Hz for >2 minutes [14].

- Validation: Correlate sensor-derived chewing counts with manually counted chewing during one controlled meal per day.

- Health Biomarker Assessment: Measure blood pressure, lipid profiles, and HbA1c at baseline, 2 weeks, and 4 weeks.

- Data Analysis: Use multivariate regression to model the relationship between chewing metrics (independent variables) and cardiovascular biomarkers (dependent variables), controlling for age, sex, and BMI.

Deployment Considerations: The system must distinguish eating from speaking via AI classification, with regular model updates to maintain accuracy >85% in free-living conditions [14].
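The threshold rule in the Meal Detection step can be sketched as follows. This is a minimal illustration, assuming the per-epoch chewing frequency has already been extracted from 30-second epochs with 50% overlap; the function name `detect_meals` and the way epoch runs are converted into start and end times are assumptions, not details from [14].

```python
def detect_meals(chew_rates_hz, epoch_s=30.0, overlap=0.5,
                 rate_thresh_hz=0.5, min_duration_s=120.0):
    """Return (start_s, end_s) spans where chewing rate stays above threshold.

    chew_rates_hz holds one chewing frequency per epoch; consecutive epochs
    start every epoch_s * (1 - overlap) seconds.
    """
    hop_s = epoch_s * (1.0 - overlap)
    episodes = []
    run_start = None
    # A trailing sentinel below the threshold closes any run still open.
    for i, rate in enumerate(list(chew_rates_hz) + [float("-inf")]):
        if rate > rate_thresh_hz:
            if run_start is None:
                run_start = i
        elif run_start is not None:
            start_s = run_start * hop_s
            end_s = (i - 1) * hop_s + epoch_s  # end of the last epoch in the run
            if end_s - start_s > min_duration_s:
                episodes.append((start_s, end_s))
            run_start = None
    return episodes


# Ten consecutive high-rate epochs form a meal; two isolated ones do not.
rates = [0.1] * 4 + [1.2] * 10 + [0.2] * 3 + [1.0] * 2 + [0.1]
print(detect_meals(rates))
```

Short bursts of chewing-like activity (for example, a brief snack or a false trigger from speech) are discarded by the duration criterion, which is the main purpose of the two-minute requirement.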

Eating Behavior and Eating Disorders

Eating disorders represent complex psychophysiological conditions where behavioral monitoring can provide critical insights for diagnosis, treatment personalization, and outcome assessment.

Psychological and Behavioral Correlates of Disordered Eating

Table 2: Documented Psychological and Behavioral Factors in Eating Disorders

| Factor Category | Specific Metric | Quantitative Association with ED Risk | Study Details |

|---|---|---|---|

| Psychological Distress | Anxiety | OR=1.27 (95% CI: 1.20-1.34) for food addiction [15] | Strongest direct predictor in cross-sectional study (n=985) [15] |

| Self-Control Capacity | BSCS Score | Mean 37.1±4.3 vs 40.2±4.3 in food addiction vs controls (p<0.001) [15] | Lower self-control mediates stress-food addiction pathway [15] |

| Sustainable Eating | Healthy Eating Score | Mean 15.0±3.9 vs 17.6±4.7 in food addiction vs controls (p<0.001) [15] | Mediates relationship between psychological distress and addictive eating [15] |

| Emotion Regulation | Rumination | Positive association with diet quality (B=0.34, p<0.001) [16] | Counterintuitive association in Czech young adults (n=1,027) [16] |

| Social Media Content | ED-related Posts | AI detection feasibility established [17] | <20% of individuals with EDs receive treatment [17] |

Experimental Protocol: Multi-Modal Assessment of Eating Disorders in Free-Living Conditions

Objective: To capture behavioral, contextual, and psychological markers of eating disorders using integrated sensor systems and ecological momentary assessment (EMA).

Materials:

- Wrist-worn inertial measurement unit (commercial smartwatch or research-grade device)

- Smartphone application for EMA delivery

- Bio-impedance sensor system (optional for advanced monitoring)

- Validated psychological assessment scales (DASS-21, BSCS, YFAS)

Procedure:

- System Configuration: Implement a real-time eating detection system using a smartwatch accelerometer to capture dominant hand movements [7]. Set detection threshold to trigger EMA prompts after 20 eating gestures within 15 minutes.

- EMA Design: Develop brief (<30 second) EMA questions to capture: (1) meal context (alone/with others), (2) location, (3) perceived food healthiness, (4) current mood state, (5) presence of distraction during eating [7].

- Psychological Assessment: Administer validated scales (DASS-21, BSCS, YFAS) at baseline, 2 weeks, and 4 weeks to measure depression, anxiety, stress, self-control, and food addiction symptoms [15].

- Bio-Impedance Supplementation (Optional): For detailed food type monitoring, deploy iEat system with wrist-worn electrodes measuring impedance variations during food interactions [18]. Classify four food intake activities (cutting, drinking, eating with hand, eating with fork) and seven food types.

- Social Media Monitoring (With Consent): Implement natural language processing algorithms to identify ED-related content in social media posts, focusing on keywords, sentiment, and topic patterns associated with disordered eating [17].

- Data Integration: Synchronize sensor-derived eating metrics, EMA responses, psychological scores, and social media patterns to create comprehensive behavioral profiles.

- Analysis: Use structural equation modeling to test direct and indirect effects between psychological distress, self-control, eating behaviors, and disorder symptoms [15].

Deployment Considerations: System should achieve >80% precision and >96% recall for meal detection [7]. EMA compliance should be monitored with protocols for missed prompts.
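The EMA trigger rule from the System Configuration step (20 eating gestures within 15 minutes) can be sketched with a sliding time window. The class name `EmaTrigger` and the choice to clear the buffer after a prompt are assumptions made for illustration; the deployed system in [7] may handle repeated prompts differently.

```python
from collections import deque


class EmaTrigger:
    """Fire an EMA prompt once enough eating gestures fall in a time window."""

    def __init__(self, min_gestures=20, window_s=15 * 60):
        self.min_gestures = min_gestures
        self.window_s = window_s
        self._times = deque()

    def add_gesture(self, t_s):
        """Register a detected gesture at time t_s (seconds); return True to prompt."""
        self._times.append(t_s)
        # Drop gestures that are older than the window.
        while self._times and t_s - self._times[0] > self.window_s:
            self._times.popleft()
        if len(self._times) >= self.min_gestures:
            # Assumed behavior: reset so one meal produces a single prompt.
            self._times.clear()
            return True
        return False


trigger = EmaTrigger()
# One gesture every 30 seconds: the 20th gesture arrives at t = 570 s.
fired_at = [t for t in range(0, 600, 30) if trigger.add_gesture(t)]
print(fired_at)
```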

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Eating Behavior Monitoring Systems

| Tool Category | Specific Solution | Technical Specifications | Research Application |

|---|---|---|---|

| Inertial Sensing System | Wrist-worn Accelerometer | 3-axis, ≥50 Hz sampling, 50% overlapping 6-second windows [7] | Detection of hand-to-mouth gestures as eating episode proxy [7] |

| Biomechatronic Monitoring | EMG + Inertial Sensor Array | sEMG (10-500 Hz), IMU (0.1-10 Hz), real-time processing [14] | Chewing thoroughness assessment and eating speed quantification [14] |

| Bio-Impedance Device | iEat Wearable System | Two-electrode configuration, measures dynamic impedance variation [18] | Food-type classification and intake activity recognition [18] |

| Ecological Momentary Assessment | Smartphone-based EMA | Triggered by detected eating, <30-second completion time [7] | Capturing contextual factors (company, location, mood) [7] |

| AI Classification | Random Forest Algorithm | Python scikit-learn, ported to mobile platforms [7] | Distinguishing eating from non-eating activities with >80% precision [7] |

| Social Media Analysis | NLP Content Analysis | Topic modeling, keyword detection, sentiment analysis [17] | Identifying ED symptoms from publicly available content [17] |

| Psychological Assessment | DASS-21, BSCS, YFAS | Validated scales, cross-culturally adapted versions [15] | Quantifying depression, anxiety, stress, self-control, food addiction [15] |

Implementation Framework for In-Field Deployment

Successful deployment of eating detection systems in research settings requires careful attention to technical validation, participant engagement, and ethical considerations.

Performance Metrics for Eating Detection Systems

Technical Validation Protocol:

- Laboratory Calibration: Conduct controlled eating sessions to establish baseline accuracy for each sensor modality. For inertial sensing, validate against video-recorded chewing counts. For bio-impedance, verify circuit models with known food types [18].

- Free-Living Validation: Compare system-detected eating episodes with participant-initiated meal markers and 24-hour dietary recalls. Target performance metrics of >80% precision and >90% recall for meal detection [7].

- Algorithm Training: Utilize leave-one-subject-out cross-validation to ensure user-independent performance. Regularly update models to address concept drift in free-living conditions.

- Multi-Modal Fusion: Implement sensor fusion algorithms to combine complementary data streams (e.g., inertial + bio-impedance + acoustic) for improved specificity in noisy environments.
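Leave-one-subject-out cross-validation, named in the Algorithm Training step, can be sketched in a few lines. The helper `leave_one_subject_out` is a hypothetical name; in practice a library splitter that groups samples by participant serves the same purpose.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) per subject.

    subject_ids[i] is the participant who produced sample i, so no
    participant ever appears in both the training and the test fold.
    """
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test


ids = ["p1", "p1", "p2", "p3", "p3"]
for subject, train_idx, test_idx in leave_one_subject_out(ids):
    print(subject, train_idx, test_idx)
```

Averaging the metric over folds gives an estimate of how the model performs on a participant it has never seen, which is the relevant quantity for user-independent deployment.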

Participant Engagement and Compliance Strategies

Adherence Enhancement Protocol:

- Burden Minimization: Limit EMA prompts to essential questions with intuitive interfaces. Automate sensor data collection to require minimal participant intervention.

- Feedback Provision: Develop secure data visualization dashboards that provide participants with meaningful insights about their eating patterns while maintaining research blinding where appropriate.

- Compensation Structure: Implement tiered compensation systems that reward consistent participation without coercing engagement.

- Technical Support: Establish responsive helpdesk systems to address sensor malfunctions, connectivity issues, and usability concerns within 24 hours.

Ethical Implementation Framework

Ethical Safeguards Protocol:

- Privacy Protection: Implement end-to-end encryption for all data transmission and storage. For social media monitoring, obtain explicit consent for data scraping and analysis [17].

- Data Anonymization: De-identify data at point of collection where possible. Establish secure procedures for re-identification keys where necessary for longitudinal analysis.

- Risk Mitigation: Develop protocols for responding to detected eating disorder behaviors or psychological distress, including referral pathways to clinical services.

- Transparent AI: Maintain documentation of algorithm limitations and potential biases. Implement regular audits of classification performance across demographic subgroups.
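One common way to de-identify records at the point of collection is keyed hashing of the participant identifier, sketched below. This is an illustrative assumption rather than a procedure from the cited studies: the function name `pseudonymize`, the 16-character truncation, and the key handling are all hypothetical, and the secret key would need to be stored separately under access control because it acts as the re-identification key.

```python
import hashlib
import hmac


def pseudonymize(participant_id, secret_key):
    """Map a participant ID to a stable pseudonym using HMAC-SHA256.

    The same ID and key always give the same pseudonym, which keeps
    longitudinal records linkable without storing the raw identifier.
    """
    digest = hmac.new(secret_key, participant_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncation length is an arbitrary choice


key = b"example-key-held-by-the-data-custodian"  # placeholder, not a real key
print(pseudonymize("participant-007", key))
```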

This framework provides researchers with standardized methodologies for deploying eating behavior monitoring systems in diverse research contexts, from observational studies to clinical trials. The integration of objective sensor data with psychological assessments and contextual measures enables comprehensive investigation of the complex relationships between eating behavior and health outcomes.

Building the System: AI, Sensor Fusion, and Methodological Approaches for Real-World Application

Machine Learning and AI Algorithms for Pattern Recognition in Eating Episodes

The automatic detection of eating episodes represents a critical frontier in digital health, with significant implications for obesity management, diabetes care, and nutritional psychiatry [19] [20]. Traditional dietary assessment methods, such as food diaries and 24-hour recalls, are hampered by recall bias, under-reporting, and significant participant burden [19] [21]. The emergence of wearable sensors and advanced machine learning algorithms has enabled the development of passive monitoring systems that can detect eating episodes with increasing accuracy in free-living conditions. These systems leverage diverse data modalities including wrist motion, chewing sounds, and contextual self-reports to identify eating patterns. This document provides a comprehensive technical framework for implementing machine learning-based eating detection systems, with specific protocols for data acquisition, model development, and performance evaluation tailored for research deployment in real-world settings.

Core Sensing Modalities and Data Acquisition

Eating detection systems utilize multiple sensing approaches, each capturing different aspects of eating behavior with distinct technical requirements.

Inertial Sensing for Hand-to-Mouth Gestures

Wrist-worn inertial measurement units (IMUs) detect characteristic hand-to-mouth motions during eating episodes. The Clemson All-Day (CAD) dataset exemplifies this approach, containing 354 day-length recordings from 351 participants using accelerometers and gyroscopes sampled at 15 Hz [20]. Data acquisition involves collecting tri-axial accelerometer and gyroscope data from commercial smartwatches or research-grade sensors, with careful attention to sensor orientation consistency and sampling rate stability. Preprocessing typically includes noise filtering, gravity compensation, and normalization to account for inter-participant variability in motion patterns.
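Gravity compensation can be approximated with a simple low-pass filter, as in the sketch below. The exponential filter and the smoothing factor `alpha=0.9` are assumptions chosen for illustration; the CAD pipeline in [20] may use a different method.

```python
def remove_gravity(samples, alpha=0.9):
    """Subtract a slowly varying gravity estimate from accelerometer data.

    samples is a list of (ax, ay, az) tuples in m/s^2. Gravity is tracked
    with an exponential low-pass filter and removed from each sample.
    """
    if not samples:
        return []
    gravity = list(samples[0])  # initialize the estimate from the first reading
    linear = []
    for sample in samples:
        gravity = [alpha * g + (1.0 - alpha) * a for g, a in zip(gravity, sample)]
        linear.append(tuple(a - g for a, g in zip(sample, gravity)))
    return linear


# A wrist at rest should give near-zero linear acceleration on every axis.
at_rest = [(0.0, 0.0, 9.81)] * 50
print(remove_gravity(at_rest)[-1])
```

A larger `alpha` tracks gravity more slowly and passes more of the wrist motion through, at the cost of a longer settling time after the wrist changes orientation.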

Acoustic Sensing for Mastication Analysis

Acoustic sensors capture chewing and swallowing sounds that provide direct evidence of food consumption. Microphones can be positioned in various locations including the outer ear canal, neck, or integrated into handheld utensils [22]. The SenseWhy study utilized a wearable camera with audio capabilities, collecting 6,343 hours of footage from which micromovements like bites and chews were manually labeled [19]. Acoustic data requires specialized preprocessing including spectral noise reduction, amplitude normalization, and filtering to isolate frequencies relevant to mastication (typically 100-4000 Hz). Time-frequency representations like spectrograms and Mel-Frequency Cepstral Coefficients (MFCCs) are then extracted for model input [22].

Contextual Sensing via Ecological Momentary Assessment (EMA)

Ecological Momentary Assessment captures subjective and contextual factors surrounding eating episodes through brief, in-the-moment surveys triggered automatically or at scheduled times [19] [7]. EMA protocols typically gather data on hunger levels, emotional state, food type, social context, and location. In the SenseWhy study, EMAs administered before and after meals collected psychological and contextual information that significantly improved overeating prediction accuracy when combined with passive sensing [19].

Table 1: Comparative Analysis of Primary Sensing Modalities for Eating Detection

| Sensing Modality | Primary Signals | Sample Rate | Key Features | Implementation Challenges |

|---|---|---|---|---|

| Wrist IMU | Accelerometer, Gyroscope | 15-30 Hz | Number of bites, chew rate, gesture patterns | Distinguishing eating from similar gestures (e.g., tooth brushing) |

| Acoustic | Audio waveforms | 8-44.1 kHz | Chews, swallows, food texture sounds | Ambient noise interference, privacy concerns |

| Camera-Based | Video frames | 0.1-1 Hz | Food type, portion size, eating environment | Privacy issues, computational load, limited battery life |

| EMA | Self-report ratings | 3-10 prompts/day | Hunger, emotion, context, food cravings | Participant burden, response fatigue |

Machine Learning Architectures and Implementation

Temporal Pattern Recognition with Deep Learning

Recurrent neural network architectures have demonstrated particular efficacy for modeling the temporal sequences characteristic of eating behaviors:

Bidirectional LSTM Networks process sensor data in both forward and backward directions, capturing contextual dependencies throughout eating episodes. Implementation typically involves 2-3 LSTM layers with 64-128 units, followed by fully connected layers for classification [9] [23]. These networks effectively model the sequential nature of wrist motions during eating, where each bite consists of approach, consumption, and retraction phases.

Gated Recurrent Units (GRUs) provide similar capabilities to LSTMs with reduced computational complexity. In acoustic-based food recognition, GRUs have achieved 99.28% accuracy by modeling temporal patterns in chewing sounds [22]. The simpler gating mechanism in GRUs (using update and reset gates instead of three separate gates in LSTMs) makes them suitable for deployment on resource-constrained mobile devices.

Hybrid Architectures combine convolutional layers for spatial feature extraction with recurrent layers for temporal modeling. For example, a 1D-CNN can first extract local patterns from IMU data, followed by LSTM layers to model longer-term dependencies. The self-explaining neural network described in [23] integrates specialized attention mechanisms with temporal modules, achieving 94.1% accuracy on food recognition while maintaining interpretability through attention-based concept encoders.
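The computational saving of GRUs relative to LSTMs noted above can be made concrete by counting trainable parameters per layer. The sketch assumes the textbook formulation with one bias vector per weight block; specific frameworks may add extra bias terms, so absolute counts can differ slightly while the 3:4 ratio holds.

```python
def recurrent_layer_params(input_size, hidden_size, n_weight_blocks):
    """Parameters in one recurrent layer with the given number of weight blocks.

    Each block has input weights, recurrent weights, and one bias vector.
    """
    per_block = hidden_size * (input_size + hidden_size) + hidden_size
    return n_weight_blocks * per_block


def lstm_params(input_size, hidden_size):
    # Input, forget, and output gates plus the candidate cell state.
    return recurrent_layer_params(input_size, hidden_size, 4)


def gru_params(input_size, hidden_size):
    # Update and reset gates plus the candidate hidden state.
    return recurrent_layer_params(input_size, hidden_size, 3)


# Six IMU channels (3-axis accelerometer + 3-axis gyroscope), 64 hidden units.
print(lstm_params(6, 64), gru_params(6, 64))
```

For the same input and hidden sizes, a GRU layer therefore carries three quarters of the parameters of an LSTM layer.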

Two-Stage Detection Frameworks

Recent advances have introduced hierarchical approaches that leverage diurnal patterns to improve detection accuracy:

Diagram: Two-stage detection framework.

The two-stage framework addresses the "needle in a haystack" problem of identifying brief eating gestures within continuous day-length data streams [20]. In implementation, the first-stage model can utilize previously developed window-based classifiers, while the second-stage model requires approximately 1K parameters, making it suitable for deployment on wearable devices with limited computational resources.

Semi-Supervised Phenotype Discovery

Beyond simple detection, semi-supervised learning approaches can identify distinct overeating phenotypes from unlabeled behavioral data. The SenseWhy study applied this methodology to EMA-derived features, discovering five clinically relevant overeating patterns with a cluster separability silhouette score of 0.59 [19]:

- Take-out Feasting: Restaurant-sourced meals in social settings

- Evening Restaurant Reveling: Pleasure-driven dine-in meals in evenings

- Evening Craving: Self-prepared meals for hunger relief in evenings

- Uncontrolled Pleasure Eating: Hedonic eating with loss of control

- Stress-driven Evening Nibbling: Stress and loneliness-induced eating

This approach enables personalized interventions tailored to specific behavioral patterns rather than applying one-size-fits-all strategies.
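The silhouette score quoted for the phenotype clusters measures how much closer each observation lies to its own cluster than to the nearest other cluster. A minimal sketch of the computation follows, assuming Euclidean distance; the toy points are invented for illustration and are not SenseWhy data.

```python
import math


def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (Euclidean distance)."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)
    if len(clusters) < 2:
        raise ValueError("silhouette needs at least two clusters")
    scores = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # convention for singleton clusters
            continue
        # a: mean distance to the other members of the same cluster.
        a = sum(math.dist(point, q) for q in own) / (len(own) - 1)
        # b: mean distance to the nearest other cluster.
        b = min(
            sum(math.dist(point, q) for q in other) / len(other)
            for key, other in clusters.items() if key != label
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


toy_points = [(0, 0), (0, 1), (10, 0), (10, 1)]
toy_labels = [0, 0, 1, 1]
print(round(silhouette_score(toy_points, toy_labels), 3))
```

Values range from -1 to 1, with higher values indicating better-separated clusters.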

Experimental Protocols and Validation Frameworks

Dataset Construction and Annotation

Robust eating detection requires carefully annotated datasets representing diverse eating behaviors:

Participant Recruitment: Recruit 50+ participants representing target demographics (age, BMI, cultural background). The SenseWhy study monitored 65 individuals with obesity, collecting 2,302 meal-level observations [19].

Sensor Configuration: Deploy multiple synchronized sensors including wrist-worn IMU (sampling at ≥15 Hz), acoustic sensors if applicable, and smartphones for EMA collection.

Ground Truth Annotation: Implement precise meal annotation using one of two approaches:

- Manual Annotation: Research staff label meal start/end times based on first/last bite, validated with video recording when ethically permissible.

- Self-Report: Participants log meal times via mobile application, though this introduces potential recall bias.

Protocol Duration: Minimum 7-day monitoring period to capture variability in eating patterns, with some studies extending to 30+ days for longitudinal analysis.

Model Training and Evaluation

Implement rigorous evaluation protocols to ensure model generalizability:

Data Partitioning: Use participant-independent split (train/test sets contain different individuals) to avoid inflated performance from person-specific patterns.

Performance Metrics: Comprehensive evaluation beyond accuracy:

- Time-Weighted Accuracy: Accounts for temporal alignment between predictions and ground truth [20]

- Episode True Positive Rate (TPR): Proportion of actual eating episodes correctly detected

- False Positives per True Positive (FP/TP): Number of false detections for each correctly detected episode; lower values correspond to higher precision

- Brier Score Loss: Measures probability calibration quality [19]
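The episode-level metrics and the Brier score can be computed as in the sketch below. The overlap rule (a true episode counts as detected if any predicted interval overlaps it) is an assumption made for illustration; published benchmarks such as [20] define their own matching criteria.

```python
def overlaps(a, b):
    """True when intervals a = (start, end) and b = (start, end) intersect."""
    return a[0] < b[1] and b[0] < a[1]


def episode_metrics(true_episodes, predicted_episodes):
    """Return (episode TPR, false positives per true positive)."""
    tp = sum(any(overlaps(t, p) for p in predicted_episodes) for t in true_episodes)
    fp = sum(not any(overlaps(p, t) for t in true_episodes) for p in predicted_episodes)
    tpr = tp / len(true_episodes) if true_episodes else float("nan")
    fp_per_tp = fp / tp if tp else float("inf")
    return tpr, fp_per_tp


def brier_score(probabilities, outcomes):
    """Mean squared difference between predicted probability and 0/1 outcome."""
    return sum((p - y) ** 2 for p, y in zip(probabilities, outcomes)) / len(probabilities)


truth = [(0, 10), (20, 30), (50, 60)]       # meal intervals in minutes
predicted = [(5, 12), (40, 45), (55, 58)]   # one miss, one false alarm
print(episode_metrics(truth, predicted))
print(brier_score([0.5, 0.5], [1, 0]))
```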

Comparative Benchmarking: Evaluate against multiple baseline approaches including:

- Random Forest Classifiers: For feature-based models

- SVM and Naïve Bayes: As performance baselines [19]

- State-of-the-Art Methods: Compare against published results on benchmark datasets like Clemson All-Day (CAD)

Table 2: Performance Benchmarks Across Detection Modalities

| Algorithm | Sensing Modality | Accuracy/Precision | Key Performance Metrics | Dataset/Validation |

|---|---|---|---|---|

| XGBoost (Feature-Complete) | Multi-modal (IMU + EMA) | AUROC: 0.86, AUPRC: 0.84 | Brier Score: 0.11 | SenseWhy (n=48, 2302 meals) [19] |

| Two-Stage Framework | Wrist IMU | Episode TPR: 89%, Time Accuracy: 84% | FP/TP: 1.4 | CAD Dataset (354 days) [20] |

| GRU Network | Acoustic | Accuracy: 99.28% | F1-Score: 0.99 | 20 Food Items (1200 audio files) [22] |

| LSTM (Personalized) | Wrist IMU | Median F1: 0.99 | Prediction Latency: 5.5s | IMU Public Dataset [9] |

| Bidirectional LSTM+GRU | Acoustic | Precision: 97.7%, Recall: 97.3% | F1-Score: 97.7% | 20 Food Items [22] |

Implementation Considerations for Real-World Deployment

Successful in-field deployment requires addressing practical constraints:

Computational Efficiency: Optimize models for mobile deployment through quantization, pruning, and efficient architecture design. The self-explaining network in [23] achieved 63.3% parameter reduction compared to baseline transformers while maintaining 94.1% accuracy.

Power Consumption: Balance sensing frequency and model complexity to enable all-day monitoring without excessive battery drain.

Privacy Protection: Implement on-device processing for sensitive data (especially audio and video), with explicit user consent protocols.

Personalization: Develop adaptive models that tune to individual eating patterns over time, as demonstrated by the personalized deep learning model for diabetics that achieved median F1 score of 0.99 [9].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Eating Detection Systems

| Research Tool | Function | Example Implementation |

|---|---|---|

| Commercial Smartwatches | Wrist motion data collection | Pebble smartwatch with 3-axis accelerometer (Thomaz et al. dataset) [7] |

| Wearable Cameras | Ground truth validation, context capture | SenseWhy wearable camera (6343 hours of footage) [19] |

| EMA Platforms | Contextual data collection, self-report | Mobile apps with triggered surveys pre/post meals [19] [7] |

| Annotation Software | Manual labeling of eating episodes | Video annotation tools for meal start/end time labeling [19] |

| Public Datasets | Algorithm benchmarking | Clemson All-Day (CAD) dataset (354 day-length recordings) [20] |

| Deep Learning Frameworks | Model development and training | TensorFlow, PyTorch for LSTM/GRU implementation [9] [22] |

Visualization and Interpretability Methods

Model interpretability is crucial for clinical adoption and scientific validation:

Attention Visualization: Highlight temporal regions most influential in eating episode classification, particularly valuable in self-explaining networks [23].

Feature Importance Analysis: Use SHAP (SHapley Additive exPlanations) values to identify top predictive features (e.g., number of chews, perceived overeating, evening timing) [19].

Cluster Visualization: Project high-dimensional behavioral data into 2D space using UMAP to visualize distinct overeating phenotypes [19].

Diagram: Multi-modal pattern recognition pipeline.

Ethical Considerations and Clinical Translation

Deploying eating detection systems requires careful attention to ethical and practical concerns:

Privacy Protection: Implement strict data governance for sensitive behavioral data, particularly when using audio or video recording [24].

Algorithmic Bias: Evaluate model performance across diverse demographics to ensure equitable accuracy [21].

Clinical Integration: Develop interfaces that present insights in clinically actionable formats, balancing automation with professional oversight [21].

User Autonomy: Maintain transparency about data collection and processing, allowing users control over their personal information [24].

The field of AI-assisted eating behavior analysis continues to evolve rapidly, with future directions including multi-modal fusion architectures, self-supervised learning to reduce annotation burden, and personalized adaptive interventions that respond to individual behavioral patterns in real-time.

The deployment of robust eating detection systems in real-world settings presents a significant challenge, requiring resilience against environmental variability, user diversity, and motion artifacts. Multi-sensor fusion has emerged as a cornerstone methodology to address these challenges, enabling perception models to integrate complementary cues from disparate data sources such as accelerometers, gyroscopes, acoustic sensors, and optical detectors [25] [26]. By leveraging the statistical dependencies between these modalities, fusion algorithms can synthesize a more comprehensive and reliable representation of eating episodes than is possible with any single sensor, thereby enhancing detection accuracy and system robustness for in-field deployment [26].

The core principle underpinning this approach is the hypothesis that data streams captured by various sensors during a specific activity, such as eating, are statistically associated with one another. The joint variability patterns embedded within these multi-sensory signals form a unique signature that can be discriminatively modeled against other confounding activities [26]. This article provides a structured overview of recent advances in fusion methodologies, details practical experimental protocols, and outlines essential tools for developing and validating the next generation of eating detection systems.

Experimental Protocols for Multi-Modal Data Fusion

This section delineates two distinct experimental protocols for acquiring and fusing multi-modal data to detect eating episodes. The first protocol is based on wearable sensor data, while the second utilizes a specialized laboratory apparatus.

Protocol 1: Wearable Sensor-Based Fusion for Activity Recognition

This protocol describes a method to transform multi-sensor time-series data from a wearable device into a single 2D image representation that facilitates classification using deep learning [26].

- Aim: To detect eating episodes by fusing data from accelerometer (ACC), photoplethysmography-derived blood volume pulse (BVP), electrodermal activity (EDA), and temperature (TEMP) sensors embedded in a wrist-worn device.

- Hypothesis: Data from various sensors are statistically correlated, and the covariance matrix of these signals has a unique distribution for eating activities that can be encoded into a discriminative 2D contour plot [26].

Equipment and Reagents:

- Empatica E4 wristband or equivalent multi-sensor wearable device.

- Data preprocessing and analysis software (e.g., Python with NumPy, SciPy).

- Deep learning framework (e.g., PyTorch, TensorFlow).

Procedure:

- Data Acquisition and Preprocessing: Collect raw data from the ACC, BVP, EDA, and TEMP sensors. Resample all signals to a uniform sampling frequency (e.g., 64 Hz) to ensure temporal alignment [26].

- Segmentation: Segment the synchronized data stream into non-overlapping temporal windows. A window size of 500 samples (~7.8 seconds at 64 Hz) has been used effectively, but this parameter should be optimized for the specific activity and sensor characteristics [26].

- Covariance Matrix Calculation: For each window, form an observation matrix $H$ where each column represents a different sensor's signal. Calculate the covariance matrix $C$ of $H$, which measures the pairwise covariance between each pair of sensor signals [26]:

$$C_{ij} = \operatorname{cov}\big(H(:, i),\, H(:, j)\big) = \frac{1}{m-1} \sum_{k=1}^{m} (S_{ik} - \mu_i)(S_{jk} - \mu_j)$$

Here, $S_i$ and $S_j$ are the i-th and j-th columns of $H$ (representing different sensors), $\mu_i$ and $\mu_j$ are their respective means, and $m$ is the number of samples in the window.

- 2D Contour Representation: Generate a filled contour plot from the covariance matrix $C$. This plot transforms the covariance coefficients into a 2D color image where the spatial patterns and colors correspond to the strength and distribution of the inter-sensor correlations [26].

- Deep Learning Classification: Utilize a deep residual network (ResNet) to learn the specific patterns within the 2D contour representations associated with eating episodes. The network architecture, as implemented in the original study, should include [26]:

- An input layer for the contour image.

- Multiple 2D convolutional layers for feature extraction.

- Batch normalization and ReLU activation functions.

- Skip connections to facilitate training of deeper networks.

- A final softmax and classification layer for categorical output (e.g., "eating" vs. "non-eating").

- Validation: Employ a leave-one-subject-out cross-validation strategy to evaluate model performance and ensure generalizability across users. Precision, recall, and F1-score should be reported.
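The segmentation and covariance steps of the procedure can be sketched in plain Python. This is an illustration under stated assumptions, not the implementation from [26]: the function names are hypothetical, each sample is a row of already resampled sensor readings, and the contour plotting and ResNet stages are omitted.

```python
def non_overlapping_windows(samples, window_size):
    """Split a list of multi-sensor samples into full, non-overlapping windows."""
    last_start = len(samples) - window_size
    return [samples[i:i + window_size] for i in range(0, last_start + 1, window_size)]


def covariance_matrix(window):
    """Sample covariance between sensor columns of one window.

    window is a list of m rows; each row holds one reading per sensor.
    Uses the 1 / (m - 1) normalization of the sample covariance.
    """
    m = len(window)
    n_sensors = len(window[0])
    means = [sum(row[i] for row in window) / m for i in range(n_sensors)]
    return [
        [
            sum((row[i] - means[i]) * (row[j] - means[j]) for row in window) / (m - 1)
            for j in range(n_sensors)
        ]
        for i in range(n_sensors)
    ]


# Two perfectly correlated toy channels; real input would be ACC, BVP, EDA, TEMP.
toy_stream = [[1, 2], [2, 4], [3, 6], [4, 8], [5, 10], [6, 12], [7, 14]]
for window in non_overlapping_windows(toy_stream, 3):
    print(covariance_matrix(window))
```

With four sensors and 500-sample windows, each window yields a symmetric 4×4 matrix, which is the input to the contour rendering step.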

Protocol 2: Laboratory-Based Universal Eating Monitor (UEM)

This protocol leverages a specialized "Feeding Table" to achieve high-resolution, multi-food monitoring in a controlled laboratory setting, providing ground truth data for validating wearable-based systems [2].

- Aim: To simultaneously track the intake of multiple foods with high temporal resolution to study eating microstructure, including eating rates and food choices.

- Equipment and Reagents:

- The "Feeding Table": A custom table integrated with multiple high-precision balances (e.g., 5 balances capable of monitoring up to 12 different foods) [2].

- Standardized food items.

- Data recording system with software for real-time weight capture.

- Video camera for recording the eating process.

- Procedure:

- Setup: Position up to 12 different food items in dishes distributed across the multiple balances embedded in the Feeding Table. Calibrate all instruments prior to the experiment [2].

- Data Acquisition: Instruct the participant to consume a meal normally. The system records the weight from each balance at a high frequency (e.g., every 2 seconds), transmitting data in real-time to a computer [2].

- Synchronization: Simultaneously record video of the eating session. The video feed is used to identify which food item was taken from each balance, linking weight changes to specific food types [2].

- Data Processing: Calculate key metrics of eating microstructure from the weight-time data, including:

- Total energy and macronutrient intake per food and for the entire meal.

- Eating rate (grams per minute or kcal per minute).

- Meal duration.

- Changes in eating rate throughout the meal.

- Validation: Assess the system's repeatability by conducting test-retest studies on consecutive days. High intra-class correlation coefficients (ICCs > 0.90 for energy and macronutrients) demonstrate excellent reliability [2].
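The Data Processing step can be sketched for a single balance as follows. This is a simplified illustration: the function name `eating_metrics` is hypothetical, meal duration is assumed to run from the first to the last recorded weight decrease, and real balance data would need noise filtering before this logic is applied.

```python
def eating_metrics(weights_g, sample_interval_s=2.0):
    """Derive intake, duration, and eating rate from one balance's readings.

    weights_g is the dish weight in grams, sampled every sample_interval_s.
    """
    if len(weights_g) < 2:
        raise ValueError("need at least two weight readings")
    # Indices where the dish got lighter, i.e. food was removed.
    drops = [i for i in range(1, len(weights_g)) if weights_g[i] < weights_g[i - 1]]
    intake_g = weights_g[0] - weights_g[-1]
    if len(drops) < 2:
        return {"intake_g": intake_g, "duration_min": 0.0, "rate_g_per_min": None}
    duration_min = (drops[-1] - drops[0]) * sample_interval_s / 60.0
    return {
        "intake_g": intake_g,
        "duration_min": duration_min,
        "rate_g_per_min": intake_g / duration_min,
    }


# Toy series: four 10 g bites, one every 30 seconds, sampled every 2 seconds.
series, weight = [], 400.0
for _ in range(4):
    series += [weight] * 15
    weight -= 10.0
series += [weight] * 15
print(eating_metrics(series))
```

Multiplying the gram values by each food's energy density would give the kcal-based equivalents of the same metrics.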

Quantitative Performance Data

The following tables summarize key performance metrics and methodological details from the cited research, providing a benchmark for evaluating eating detection systems.

Table 1: Performance Metrics of Multi-Modal Fusion for Activity Recognition

| Metric | Value | Experimental Context |
|---|---|---|
| Precision | 0.803 | Leave-one-subject-out cross-validation on a data set of 10 participants performing activities of daily living [26]. |
| Temporal Window Size | 500 samples | Data resampled to 64 Hz (~7.8 seconds per window) [26]. |
| Deep Learning Architecture | Deep Residual Network (ResNet) | Includes 2D convolution, batch normalization, ReLU, and skip connections [26]. |
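The window length in Table 1 follows from simple arithmetic: 500 samples at 64 Hz span 500 / 64 ≈ 7.8 seconds. The sketch below shows one way to cut a resampled signal into such windows. The non-overlapping step and the function name are assumptions, since the overlap used in [26] is not stated here.

```python
def split_windows(signal, window=500, step=500):
    """Return consecutive windows of `window` samples, advancing by `step`.

    A trailing remainder shorter than `window` is dropped.
    """
    if window <= 0 or step <= 0:
        raise ValueError("window and step must be positive")
    return [signal[i:i + window]
            for i in range(0, len(signal) - window + 1, step)]


SAMPLE_RATE_HZ = 64
one_minute = list(range(SAMPLE_RATE_HZ * 60))  # placeholder signal, 3840 samples
windows = split_windows(one_minute)
window_seconds = 500 / SAMPLE_RATE_HZ          # duration of each window
```

Setting `step` below `window` would produce overlapping windows, a common choice when more training examples are needed from short recordings.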

Table 2: Performance and Reliability of the Universal Eating Monitor (UEM)

| Metric | Value | Interpretation |
|---|---|---|
| Energy Intake Repeatability (r) | 0.82 | High day-to-day correlation for energy intake in standard meal tests [2]. |
| Macronutrient Intake Repeatability (r) | 0.86 (Fat), 0.86 (Carb), 0.58 (Protein) | High repeatability for fat and carbohydrates, moderate for protein [2]. |
| Intra-class Correlation (ICC) for Energy | 0.94 | Excellent reliability across four repeated intake measurements [2]. |
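Reliability statistics of the kind shown in Table 2 are typically computed from a participants-by-sessions matrix of intake values. The sketch below computes a two-way consistency ICC for single measurements, often written ICC(3,1). The source does not say which ICC form was used, so this choice, the function name, and the toy data are all assumptions.

```python
def icc_consistency(data):
    """Two-way consistency ICC for single measurements, ICC(3,1).

    data -- list of rows, one per participant, each holding k repeated
            measurements (one per session).
    """
    n, k = len(data), len(data[0])
    grand = sum(sum(row) for row in data) / (n * k)
    row_means = [sum(row) / k for row in data]
    col_means = [sum(row[j] for row in data) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between participants
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between sessions
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_rows - ss_cols                  # residual

    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)


# Hypothetical energy intakes (kcal) for 3 participants on 2 test days.
# Day 2 is uniformly 50 kcal higher, which a consistency ICC ignores.
intakes = [[600.0, 650.0], [800.0, 850.0], [1000.0, 1050.0]]
icc = icc_consistency(intakes)
```

An absolute-agreement form would penalize the systematic 50 kcal shift between days, so the ICC variant should always be reported alongside the value.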

Workflow Visualization

The diagram below illustrates the logical workflow and data fusion process for the wearable sensor-based eating detection protocol (Protocol 1).

Diagram 1: Workflow for wearable sensor-based eating detection.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table catalogs key hardware, software, and datasets essential for conducting research in multi-modal eating detection.

Table 3: Key Research Reagents and Materials for Eating Detection Research

| Item Name | Type | Function & Application |
|---|---|---|
| Empatica E4 Wristband | Wearable Sensor | A research-grade wearable device that captures accelerometry, photoplethysmography (PPG), electrodermal activity (EDA), and skin temperature data, ideal for unobtrusive monitoring [26]. |
| Universal Eating Monitor (UEM) / "Feeding Table" | Laboratory Apparatus | A table integrated with multiple high-precision scales to provide ground truth data on food intake weight with high temporal resolution, enabling detailed study of eating microstructure [2]. |
| RADIal Dataset | Dataset | A public dataset containing synchronized camera, radar, and lidar data; while focused on automotive applications, it provides a benchmark for developing and testing multi-sensor fusion architectures [27]. |
| Deep Residual Network (ResNet) | Algorithm | A deep learning architecture that uses skip connections to mitigate vanishing gradients, enabling the training of very deep networks for complex pattern recognition in image-like data (e.g., 2D contour plots) [26]. |
| XGBoost Algorithm | Algorithm | A decision tree-based machine learning method using gradient boosting, effective for ranking the importance of input features (e.g., biomarkers, dietary factors) in complex, multimodal datasets [28]. |

Computer Vision for Food Recognition, Portion Size Estimation, and Challenges

The in-field deployment of automated dietary assessment systems is a critical frontier in health research and chronic disease management. Traditional methods, such as 24-hour dietary recalls, are plagued by participant burden, recall bias, and significant inaccuracies in self-reporting [29] [30]. The emergence of computer vision (CV) technologies offers a promising pathway to objective, real-time measurement of dietary intake. These systems primarily address two core challenges: food recognition (identifying what food is being consumed) and portion size estimation (determining how much is being consumed). However, the transition from controlled laboratory settings to robust in-field deployment presents substantial technical and practical challenges, including large intra-class variations, complex 3D geometry of foods, and diverse real-world eating environments [31] [32]. This document provides detailed application notes and experimental protocols to guide researchers in developing and validating these systems for rigorous scientific use.

Food Recognition: Methods, Datasets, and Performance

Food recognition is a fine-grained image classification task. The primary challenge lies in the high visual similarity between different food items (inter-class similarity) and the significant variation in appearance for the same food due to ingredients, preparation, and presentation (intra-class variation) [31] [32].

Dominant Methodologies and Models

Early approaches relied on handcrafted features, but the field has been revolutionized by deep learning, particularly Convolutional Neural Networks (CNNs). The choice of model often involves a trade-off between accuracy and computational efficiency, which is crucial for real-time, in-field applications on mobile devices.

- Lightweight Models (e.g., MobileNetV2): Designed for efficiency, these models are ideal for mobile and embedded systems. MobileNetV2 uses depthwise separable convolutions to reduce computational cost and parameters. One study achieved 92.97% accuracy on the Food-11 dataset (16,643 images) using a pre-trained MobileNetV2, demonstrating that high accuracy is possible with efficient architectures [33] [34].

- Dense and Deep Models (e.g., VGG, ResNet): These models typically offer higher accuracy at the cost of greater computational requirements. For instance, a VGG-like model custom-built for food recognition achieved 98.8% accuracy on a specific dataset [33]. ResNet variants have also exceeded 99% accuracy on related image classification tasks such as crop disease identification [33].

- Multimodal Large Language Models (MLLMs) with RAG: A recent framework, DietAI24, integrates MLLMs with Retrieval-Augmented Generation (RAG). This approach uses a model like GPT-Vision for food item recognition but grounds its nutritional estimation in authoritative databases like the Food and Nutrient Database for Dietary Studies (FNDDS), mitigating the "hallucination" problem of LLMs. This enables zero-shot estimation of 65 distinct nutrients [30].
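To make the efficiency argument for lightweight models concrete, the sketch below compares the weight count of a standard convolution with that of a depthwise separable convolution (a per-channel spatial filter followed by a 1x1 pointwise convolution). The layer sizes are illustrative assumptions rather than MobileNetV2's actual configuration, and bias terms are ignored.

```python
def standard_conv_params(kernel, c_in, c_out):
    """Weights in a standard k x k convolution (no bias)."""
    return kernel * kernel * c_in * c_out


def depthwise_separable_params(kernel, c_in, c_out):
    """Weights in a depthwise k x k conv plus a 1x1 pointwise conv (no bias)."""
    depthwise = kernel * kernel * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out             # 1x1 conv that mixes channels
    return depthwise + pointwise


# Hypothetical layer: 3x3 kernel, 32 input channels, 64 output channels.
standard = standard_conv_params(3, 32, 64)
separable = depthwise_separable_params(3, 32, 64)
ratio = separable / standard             # equals 1/c_out + 1/kernel**2
```

For this layer the separable form needs roughly an eighth of the weights, which is the kind of saving that makes on-device inference practical.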

Key Datasets and Their Limitations

The performance of food recognition models is heavily dependent on the training data. Table 1 summarizes widely used datasets. A significant limitation is the cultural bias in mainstream datasets, which are predominantly composed of Western dishes, with under-representation of Asian, African, and other cuisines [31]. Other challenges include coarse annotation granularity (lacking ingredient-level labels) and a lack of images from real-world, in-the-wild conditions [31].

Table 1: Summary of Key Public Food Image Datasets

| Dataset Name | Scale | Number of Images/Items | Key Characteristics and Limitations |
|---|---|---|---|
| ETHZ Food-101 [31] | Large-scale | 101,000 images (101 classes) | First large-scale Western dish dataset; widely used as a benchmark; ~30% Asian dishes, ~1% African dishes. |
| PFID [31] | Small-scale | 4,545 images + other media | First fast food dataset; includes still images, stereo pairs, and videos. |
| Food-11 [33] [34] | Medium-scale | 16,643 images | Used for evaluating models like MobileNetV2. |
| Nutrition5k [35] | - | ~3,000 images with depth maps | Contains top-view images with associated depth maps; limited camera poses. |
| SimpleFood45 [35] | Small-scale | 45 food items | Newly introduced; includes images from various camera poses, ground-truth volume, weight, and energy. |
| FNDDS [30] | Database | 5,624 food items | Not an image dataset. A nutritional database used by DietAI24, providing standardized nutrient values for 65 components. |

Portion Size and Volume Estimation: From 2D to 3D

Accurately estimating food volume from 2D images is a more complex challenge than recognition, as it involves reconstructing 3D information from a 2D projection. Table 2 compares the primary technological approaches.

Table 2: Comparison of Food Portion Size Estimation Methods

| Methodology | Key Principle | Example Performance | Pros and Cons for In-Field Deployment |
|---|---|---|---|
| Fiducial-Marker-Free Smartphone Imaging [36] | Uses smartphone's known physical length and motion sensors to calibrate the camera. Relies on a specific picture-taking strategy (e.g., phone bottom on table). | Pilot study with 69 participants and 15 foods showed significant improvement with training (p<0.05 for all but one food). | Pro: Eliminates need to carry an external reference object, improving convenience. Con: Requires user compliance with a specific picture-taking protocol. |
| 3D Object Scaling [35] | Estimates camera pose and food pose from a 2D image. A 3D model of the food is rendered, scaled based on area differences, and its known volume is used for estimation. | Achieved 17.67% average error (31.10 kcal) on the SimpleFood45 dataset, outperforming existing methods. | Pro: Leverages available 3D data; not reliant on large neural networks for volume, making it more explainable. Con: Requires a pre-existing 3D model for each food type. |
| RGB-D Camera Fusion [37] | Combines RGB data (for segmentation) with depth data from a stereo camera (e.g., Luxonis OAK-D Lite) to directly calculate food volume. Weight is then estimated using food-specific density models. | Validation on rice and chicken yielded error margins of 5.07% and 3.75% for weight, respectively. | Pro: Direct volume measurement can be highly accurate. Con: Requires specialized depth-sensing hardware, which rules out deployment on standard smartphones. |
| Wireframe Model Fitting [36] | The user fits a predefined 3D wireframe shape (e.g., cuboid, wedge) to the food in the image. The volume of the scaled wireframe is calculated. | High accuracy when food and wireframe shapes match well. Error can be large if shapes are mismatched. | Pro: Intuitive and can be implemented without complex hardware. Con: User-dependent, time-consuming, and ineffective for amorphous or mixed foods. |
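The RGB-D approach in Table 2 can be illustrated with a deliberately simplified sketch: integrate the food's height above the plate over the segmented pixels to get a volume, then multiply by a food-specific density. The function names, the constant pixel footprint, and the density value are assumptions for illustration; they are not taken from [37], where the pixel footprint would depend on camera geometry and depth.

```python
def volume_ml(height_cm, mask, pixel_area_cm2):
    """Integrate food height over the segmented region.

    height_cm      -- 2D list of food height above the plate plane, in cm
    mask           -- 2D list of booleans, True where the pixel is food
    pixel_area_cm2 -- assumed constant footprint of one pixel on the plate
    Returns volume in millilitres (1 cm^3 == 1 ml).
    """
    return sum(h * pixel_area_cm2
               for h_row, m_row in zip(height_cm, mask)
               for h, m in zip(h_row, m_row)
               if m and h > 0)


def weight_g(volume, density_g_per_ml):
    """Convert volume to weight with a food-specific density."""
    return volume * density_g_per_ml


# Hypothetical 2x2 depth patch: three food pixels, each 2 cm tall.
heights = [[2.0, 2.0], [2.0, 0.0]]
food_mask = [[True, True], [True, False]]
vol = volume_ml(heights, food_mask, pixel_area_cm2=0.25)
grams = weight_g(vol, density_g_per_ml=0.8)  # 0.8 g/ml is a placeholder density
```

The density step is where food-specific models enter, which is why weight errors can differ between foods even when the volume estimate is equally good.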

Experimental Protocols for System Validation

For in-field deployment, robust validation is essential. The following protocols outline key experiments.

Protocol: In-Field Food Recognition Accuracy

Objective: To evaluate the performance of a food recognition model in a real-world, free-living environment.

Materials: Smartphone with study app; pre-trained food recognition model; central server for data logging.

Procedure:

- Participant Recruitment: Recruit a cohort representative of the target population (e.g., 28+ participants [38]).

- Data Collection: Participants use the study app to capture images of all meals and snacks over a designated period (e.g., 3 weeks [38]). No restrictions are placed on what, where, or how they eat.

- Ground-Truth Annotation: Establish ground truth for each image. This can be done via:

- Self-Report: Participants label their food immediately after image capture [29].

- Expert Annotation: Researchers annotate images based on participant descriptions or additional context.

- Performance Metrics Calculation: Compare model predictions against ground truth to calculate:

Protocol: Portion Estimation Accuracy Using a Reference Dataset

Objective: To quantitatively validate the accuracy of a portion estimation system against ground-truth measurements.

Materials:

- Test Dataset: A dataset with ground-truth volume/weight, such as the SimpleFood45 dataset [35] or a custom-created set.

- System Under Test (SUT): The portion estimation algorithm (e.g., 3D scaling, RGB-D fusion).

- Evaluation Framework: Software to run the SUT on the test dataset and compare outputs to ground truth.

Procedure:

- Dataset Acquisition/Creation: Procure or create a dataset where each food image is paired with precisely measured volume (ml) and weight (g). The SimpleFood45 dataset uses a checkerboard for physical reference and is captured with a smartphone to simulate real conditions [35].

- System Execution: Process all images in the dataset through the SUT to obtain estimated volumes.

- Error Analysis: For each image, calculate the absolute and relative error between the estimated and true volume/weight.

- Performance Reporting: Report the Mean Absolute Error (MAE), Mean Absolute Percentage Error (MAPE), and relative error for key nutrients (e.g., DietAI24 achieved a 63% reduction in MAE for food weight and four key nutrients [30]).
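The error-analysis and reporting steps reduce to a few lines of arithmetic. The sketch below computes MAE and MAPE for paired ground-truth and estimated values; the function names and sample numbers are illustrative assumptions, and MAPE is undefined when a true value is zero.

```python
def mean_absolute_error(true_vals, est_vals):
    """Average absolute difference, in the unit of the inputs (ml or g)."""
    if len(true_vals) != len(est_vals) or not true_vals:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(e - t) for t, e in zip(true_vals, est_vals)) / len(true_vals)


def mean_absolute_percentage_error(true_vals, est_vals):
    """Average absolute error relative to the true value, in percent."""
    if len(true_vals) != len(est_vals) or not true_vals:
        raise ValueError("inputs must be non-empty and of equal length")
    if any(t == 0 for t in true_vals):
        raise ValueError("MAPE is undefined for a true value of zero")
    return 100.0 * sum(abs(e - t) / abs(t)
                       for t, e in zip(true_vals, est_vals)) / len(true_vals)


# Hypothetical ground-truth and estimated volumes (ml) for three images.
true_ml = [100.0, 200.0, 400.0]
est_ml = [110.0, 180.0, 400.0]
mae = mean_absolute_error(true_ml, est_ml)              # 10.0 ml
mape = mean_absolute_percentage_error(true_ml, est_ml)  # about 6.67 %
```

Reporting both is useful because MAE is dominated by large portions while MAPE weights small portions more heavily.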

Protocol: Integrated Eating Detection and Contextual Analysis

Objective: To deploy a passive eating detection system that triggers Ecological Momentary Assessments (EMAs) to capture eating context.

Materials: Commercial smartwatch (e.g., Pebble, Apple Watch); companion smartphone app; EMA system [38].

Procedure:

- Sensor Deployment: Participants wear a smartwatch on the wrist of their dominant hand to capture accelerometer data [38].

- Eating Event Detection: A real-time classifier on the smartphone processes the accelerometer data to detect eating gestures (e.g., based on hand-to-mouth movements). An eating episode is inferred upon detecting a threshold of gestures within a time window (e.g., 20 gestures in 15 minutes [38]).
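The gesture-count rule in the eating event detection step can be sketched as a sliding-window counter over detected gesture timestamps. The function name and the re-arming behavior (one trigger per continuous episode) are assumptions for illustration; the defaults mirror the example threshold of 20 gestures in 15 minutes [38], and the gesture classifier itself is outside the scope of this sketch.

```python
from collections import deque


def detect_episode_triggers(gesture_times_s, min_gestures=20, window_s=15 * 60):
    """Return the times at which an eating episode is first inferred.

    gesture_times_s -- timestamps (seconds) of detected eating gestures
    An episode triggers when at least `min_gestures` gestures fall within
    the trailing `window_s` seconds. It re-arms once the count drops back
    below the threshold.
    """
    recent = deque()
    triggers = []
    in_episode = False
    for t in sorted(gesture_times_s):
        recent.append(t)
        # Drop gestures that have fallen out of the trailing window.
        while t - recent[0] > window_s:
            recent.popleft()
        if len(recent) >= min_gestures:
            if not in_episode:
                triggers.append(t)
                in_episode = True
        else:
            in_episode = False
    return triggers


# Hypothetical streams: one gesture every 30 s versus one every 60 s.
dense = [i * 30 for i in range(20)]
sparse = [i * 60 for i in range(19)]
dense_triggers = detect_episode_triggers(dense)
sparse_triggers = detect_episode_triggers(sparse)
```

In a deployment, each trigger time would be the point at which the EMA prompt is sent to the participant.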