Inertial Sensing for Bite Detection: A Comprehensive Review for Biomedical Research and Clinical Applications


Abstract

This article provides a systematic analysis of inertial sensor technology for automated bite detection, a critical component in objective dietary monitoring. Tailored for researchers and drug development professionals, it explores the foundational principles, sensor placement, and data processing methodologies. The review covers machine learning applications for gesture recognition, performance optimization strategies, and validation in both laboratory and free-living settings. A comparative evaluation of different sensing modalities and wearable platforms is presented, highlighting accuracy, feasibility, and practical implementation challenges. The synthesis aims to inform the development of robust, non-invasive tools for clinical trials and chronic disease management where precise eating behavior quantification is essential.

Foundations of Inertial Bite Detection: Principles and Sensor Taxonomy

The quantitative analysis of eating behavior is a critical frontier in health research, particularly for understanding conditions like obesity and eating disorders. This field spans from the macro-level analysis of complete eating episodes down to the micro-level detection of individual bites and chews, and further to the quantification of micro-gestures like wrist movements during food gathering. Research methodologies have diversified, primarily branching into wearable sensor-based systems and video-based analysis. Wearable approaches often leverage commercial hardware, such as Inertial Measurement Unit (IMU) sensors in smartwatches, to capture motion data, while video-based methods employ deep learning for automated behavioral analysis. This guide objectively compares the performance, experimental protocols, and technological foundations of these predominant approaches, providing researchers with a clear framework for selecting appropriate methodologies for specific investigative scopes.

Comparative Performance of Detection Modalities

The following tables summarize the quantitative performance and key characteristics of different eating behavior monitoring technologies, based on recent experimental findings.

Table 1: Performance Metrics of Bite and Chewing Detection Systems

| Detection Modality | Reported Performance | Primary Metric | Study Context |
|---|---|---|---|
| Smartwatch IMU (Personalized Model) | Median F1-score of 0.99 [1] | Carbohydrate intake detection | Diabetic participants, using recurrent networks (LSTM) |
| Video Analysis (ByteTrack) | Average precision of 79.4%, F1-score of 70.6% [2] | Bite count and bite-rate detection | Children (ages 7-9) consuming lab meals |
| Wearable Chewing Sensors | Significant effect of food hardness on signal (p < 0.001) [3] | Chewing strength estimation | Adults consuming foods of different hardness (carrot, apple, banana) |
| Haptic Feedback Glasses (OCOsense) | Significant reduction in chewing rate (p < 0.001) [4] | Chewing rate manipulation | Pilot intervention to encourage slower chewing |

Table 2: Key Characteristics and Applicability of Monitoring Approaches

| Technology | Key Strength | Primary Limitation | Best Suited For |
|---|---|---|---|
| Wearable IMU Sensors | High adherence (commercial smartwatches), non-invasive, suitable for free-living [5] [6] | Limited granularity for food type identification | Long-term, objective monitoring of eating episodes and micro-gestures in real-world settings |
| Video-Based Analysis | Rich contextual data, direct observation of eating mechanics [2] [6] | Privacy concerns, labor-intensive coding, sensitive to occlusion and lighting [2] | Controlled laboratory studies focusing on detailed meal microstructure and validation |
| Specialized Wearables | High accuracy for specific metrics (e.g., chewing rate) [3] [4] | Requires specialized hardware, potentially lower user adherence | Targeted clinical interventions or studies focusing on a specific behavioral metric |

Experimental Protocols and Methodologies

Inertial Sensor-Based Bite Weight Estimation

A novel approach for estimating the weight of individual bites uses inertial signals from a commercial smartwatch, establishing a direct link between micro-gestures and consumption quantity [5] [7].

- Data Collection & Preprocessing: Researchers collected synchronized 3D accelerometer and gyroscope streams from a smartwatch during eating sessions. The data was resampled to a constant 100 Hz sampling rate. The gravitational component was removed from the accelerometer data using a high-pass FIR filter, and a median filter was applied to reduce noise [5].

- Feature Engineering: The method combines behavioral features and statistical features from the inertial signals.

  - Behavioral Features: These are extracted using a pre-existing micromovement classification model. They include 1) Food gathering duration: the time required to load the utensil with food, and 2) Stillness score: a measure of movement stability during food transport to the mouth, which correlates with utensil load [5].

  - Statistical Features: These are derived from the raw inertial signals during the identified bite events.

- Modeling & Validation: The features serve as input to a Support Vector Regression (SVR) model to estimate bite weights. The model was evaluated under a leave-one-subject-out cross-validation (LOSO CV) scheme on a dataset of 342 bites, achieving a mean absolute error (MAE) of 3.99 grams per bite [5] [7].
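The preprocessing steps described above can be sketched in plain Python. This is only an illustration under stated assumptions: the source names a high-pass FIR filter and a median filter but gives no coefficients or window lengths, so the moving-average subtraction (one simple FIR high-pass), the window sizes, and the function names `resample_linear`, `remove_gravity`, and `median_filter` are all hypothetical choices rather than the authors' implementation.

```python
from statistics import median

def resample_linear(times, values, rate_hz):
    """Linearly interpolate irregularly timed samples onto a uniform grid."""
    step = 1.0 / rate_hz
    n_out = int((times[-1] - times[0]) * rate_hz + 1e-9) + 1
    out, j = [], 0
    for i in range(n_out):
        t = times[0] + i * step
        # Advance to the input interval [times[j], times[j + 1]] that contains t.
        while j < len(times) - 2 and times[j + 1] < t:
            j += 1
        t0, t1 = times[j], times[j + 1]
        w = (t - t0) / (t1 - t0)
        out.append(values[j] + w * (values[j + 1] - values[j]))
    return out

def remove_gravity(values, window):
    """FIR high-pass: subtract a centered moving average (the slow gravity estimate)."""
    half = window // 2
    out = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        out.append(values[i] - sum(values[lo:hi]) / (hi - lo))
    return out

def median_filter(values, window):
    """Replace each sample by the median of its neighborhood to suppress spikes."""
    half = window // 2
    return [median(values[max(0, i - half): i + half + 1]) for i in range(len(values))]

# Made-up example: one accelerometer axis reading a constant 9.81 m/s^2
# at irregular timestamps (seconds). Window lengths are assumptions.
times = [0.0, 0.013, 0.019, 0.031, 0.04, 0.052]
raw = [9.81] * len(times)
uniform = resample_linear(times, raw, rate_hz=100)
linear = remove_gravity(uniform, window=5)
clean = median_filter(linear, window=3)
```

In practice each of the six channels (three accelerometer axes, three gyroscope axes) would be resampled the same way, with gravity removal applied only to the accelerometer channels.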

Video-Based Bite Detection with ByteTrack

The ByteTrack system was developed to automate bite detection from video recordings, specifically addressing challenges in pediatric populations [2].

- Data Collection: The model was trained on 242 video recordings (1,440 minutes) of children (ages 7-9) consuming laboratory meals. Videos were recorded at 30 frames per second [2].

- Model Architecture: ByteTrack is a two-stage deep learning pipeline:

  - Face Detection: A hybrid model combining Faster R-CNN and YOLOv7 locates the participant's face in the video frame.

  - Bite Classification: Sequences of frames are processed by an EfficientNet convolutional neural network (CNN) to extract spatial features, which are then analyzed by a Long Short-Term Memory (LSTM) recurrent network to model temporal dependencies and classify bite events [2].

- Performance: On a test set of 51 videos, ByteTrack achieved an average precision of 79.4% and an F1-score of 70.6% when compared to manual coding. Performance decreased with extensive head movement or occlusions (e.g., hands or utensils blocking the mouth) [2].
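Comparing automated detections against manual coding usually means pairing detected bite timestamps with annotated ones. The sketch below shows one common way to do that and to derive precision, recall, and F1 from the pairing. The greedy matching rule, the 1-second tolerance, and the timestamps are assumptions for illustration; the source does not describe ByteTrack's exact matching procedure, and the reported "average precision" may be computed differently from the plain precision shown here.

```python
def match_events(predicted, reference, tolerance_s):
    """Greedily pair each reference bite with the nearest unused prediction."""
    unused = sorted(predicted)
    tp = 0
    for ref in sorted(reference):
        candidates = [p for p in unused if abs(p - ref) <= tolerance_s]
        if candidates:
            best = min(candidates, key=lambda p: abs(p - ref))
            unused.remove(best)  # each prediction can match at most one bite
            tp += 1
    fp = len(unused)             # predictions with no annotated bite nearby
    fn = len(reference) - tp     # annotated bites that were missed
    return tp, fp, fn

def precision_recall_f1(tp, fp, fn):
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Made-up bite times in seconds, not data from the study.
manual = [5.0, 12.0, 20.0, 31.0]
detected = [5.4, 12.9, 18.0, 25.0, 31.2]
tp, fp, fn = match_events(detected, manual, tolerance_s=1.0)
precision, recall, f1 = precision_recall_f1(tp, fp, fn)
```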

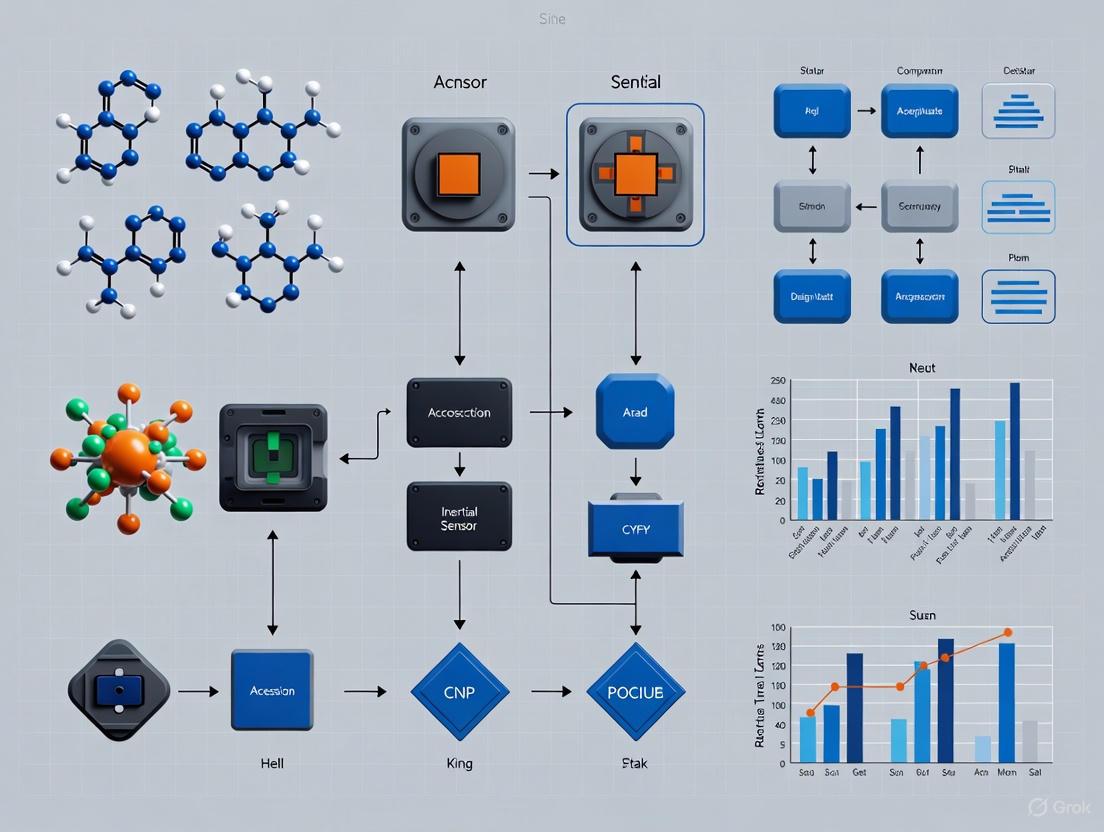

Conceptual Workflow of a Multi-Modal Analysis System

The following diagram illustrates the logical flow and integration points for the key technologies discussed, from data capture to behavioral insight.

The Researcher's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagents and Solutions for Inertial Sensor-Based Studies

| Reagent / Material | Function / Relevance | Exemplar in Research |
|---|---|---|
| Commercial Smartwatch | Provides the Inertial Measurement Unit (IMU) platform; contains 3-axis accelerometer and gyroscope for data capture [5]. | Used as the primary data collection device in smartwatch-based bite weight estimation studies [5] [7]. |
| Publicly-Available Datasets | Serves as a benchmark for training and validating machine learning models, enabling reproducible research. | The dataset collected by Levi et al., containing smartwatch inertial data synchronized with bite weights [5]. |
| Support Vector Regression (SVR) | A machine learning model used for estimating continuous values, such as the weight of a bite. | The core regression model in the bite weight estimation method, chosen for its effectiveness with the engineered features [5]. |
| Long Short-Term Memory (LSTM) | A type of Recurrent Neural Network (RNN) ideal for processing sequential data and capturing temporal dependencies. | Used in both IMU-based food intake detection [1] and video-based ByteTrack system [2] for modeling time-series data. |
| Faster R-CNN / YOLOv7 | Deep learning object detection models used to locate and track objects of interest within video frames. | Formed the hybrid face-detection pipeline in the ByteTrack system to initially locate the subject's face [2]. |

Core Physics and Signal Characteristics of Inertial Measurement Units (IMUs)

An Inertial Measurement Unit (IMU) is a sophisticated electronic device that measures and reports a body's specific force, angular rate, and sometimes its orientation in space [8]. By combining multiple sensors, IMUs provide crucial motion data without relying on external references, making them indispensable in applications from consumer electronics to advanced scientific research [9] [10]. The core physics of IMUs revolves around the precise measurement of fundamental physical properties including acceleration, rotational velocity, and magnetic fields, which can be processed to derive orientation, velocity, and even position through dead reckoning [8].

The historical evolution of IMUs traces back to the mid-19th century, when Léon Foucault built his gyroscope in 1852 to demonstrate Earth's rotation [11]. Significant development occurred during and after World War II with inertial guidance and navigation systems for rockets, submarines, and aircraft, including the German V-2 rocket guidance system [11]. The 1960s-1970s saw miniaturization efforts for Apollo missions, followed by the revolutionary development of Microelectromechanical Systems (MEMS) in the 1980s-1990s that enabled mass production of tiny, inexpensive sensors [11]. Today, IMUs have become ubiquitous components in navigation systems, robotics, consumer devices, and specialized research applications including bite detection and eating behavior monitoring [12] [1].

Core Components and Physical Principles

IMU Sensor Architecture

IMUs integrate multiple sensors in typical configurations of 6-axis (accelerometer + gyroscope) or 9-axis (accelerometer + gyroscope + magnetometer) [9]. Each sensor is a triad aligned with three mutually orthogonal axes, and rotations about those axes are commonly described as roll, pitch, and yaw, so the unit provides comprehensive data on an object's motion and orientation in three-dimensional space [8]. The following diagram illustrates the relationship between these core components and the physical properties they measure:

Accelerometers: Principles of Linear Acceleration Measurement

Accelerometers measure linear acceleration (the rate of change of velocity) along one or more axes [9] [10]. In MEMS accelerometers, the most common type found in commercial and research applications, this measurement follows Hooke's law and Newton's second law of motion through a spring-mass system [9]. The core physics principle involves a tiny proof mass connected to a reference frame by a spring. When acceleration occurs, the mass deflects proportionally to the applied force (F = ma), and this deflection is measured capacitively through changes in capacitance between fixed and moving plates [9]. The accelerometer establishes a baseline capacitance when stationary, with any acceleration causing measurable changes to this capacitance, which are then electronically processed to determine acceleration magnitude and direction [9].
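The spring-mass relationship can be written out explicitly. The symbols below are introduced here for illustration and are not taken from the cited source: $m$ is the proof mass, $k$ the spring stiffness, $x$ the deflection, $a$ the acceleration along the sensing axis, and, assuming a differential parallel-plate readout, $\varepsilon$ the permittivity, $A$ the plate area, and $d$ the resting gap.

$$
kx = ma \quad\Longrightarrow\quad x = \frac{m}{k}\,a
$$

$$
C_1 - C_2 = \frac{\varepsilon A}{d - x} - \frac{\varepsilon A}{d + x} = \frac{2\varepsilon A\,x}{d^2 - x^2} \approx \frac{2\varepsilon A}{d^2}\,x \quad (x \ll d)
$$

Under these assumptions the capacitance difference is approximately proportional to the deflection, and therefore to the acceleration, which is why the readout can be treated as linear for small displacements.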

Gyroscopes: Principles of Angular Velocity Measurement

Gyroscopes measure angular velocity—how fast an object is rotating around its axes [9] [10]. MEMS gyroscopes, commonly used in modern IMUs, operate based on the Coriolis effect, which describes the apparent force on a mass moving in a rotating reference frame [9]. In a typical Coriolis MEMS gyroscope, a vibrating proof mass is attached to a reference frame. When the sensor rotates, the Coriolis effect induces a secondary vibration perpendicular to both the drive axis and the axis of rotation [9]. This secondary vibration is sensed through changes in capacitance, producing a signal proportional to the Coriolis force and thus the rate of rotation [9]. This principle allows MEMS gyroscopes to precisely measure rotational motion without the large moving parts of traditional mechanical gyroscopes.
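For reference, the Coriolis force on a proof mass $m$ moving with velocity $\mathbf{v}$ in a frame rotating at angular velocity $\boldsymbol{\Omega}$ is given below. The choice of axes is an illustrative assumption, not a description of any particular device.

$$
\mathbf{F}_c = -2m\,(\boldsymbol{\Omega} \times \mathbf{v})
$$

If the mass is driven along $\hat{x}$ with velocity $v$ and the sensor rotates about $\hat{z}$ at rate $\Omega$, then

$$
\mathbf{F}_c = -2m\,\Omega\,v\,\hat{y},
$$

so the induced motion is along the axis perpendicular to both the drive and rotation axes, and its amplitude scales linearly with the rotation rate $\Omega$.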

Magnetometers: Principles of Magnetic Field Measurement

Magnetometers measure the strength and orientation of magnetic fields, typically used in IMUs to determine heading relative to Earth's magnetic field [9]. Different physical principles can be employed in magnetometers, with Hall effect magnetometers being common in IMU applications [9]. The Hall effect is the generation of a voltage difference (Hall voltage) across a conductor when a magnetic field is applied perpendicular to the current flow [9]. In Hall effect magnetometers, current passes through a semiconducting material; a perpendicular magnetic field deflects the charge carriers toward one side of the material, producing a Hall voltage proportional to magnetic field strength [9]. Other magnetometer types include Magneto-Induction magnetometers, which assess how magnetized a material becomes when exposed to external magnetic fields, and Magneto-Resistance magnetometers, which leverage the anisotropic magneto-resistance (AMR) of ferromagnets whose electrical resistance changes when exposed to magnetic fields [9].

Key Performance Metrics

The performance of IMUs varies significantly across different grades and technologies, with specific metrics determining their suitability for various applications, including research-grade bite detection. The table below summarizes the critical performance parameters and their implications for sensor selection:

Table 1: Key IMU Performance Metrics and Specifications

| Performance Metric | Description | Impact on Measurement | Typical Ranges |
|---|---|---|---|
| Bias Instability | Drift in sensor output when no motion is present | Determines long-term stability; affects orientation accuracy | Varies from >1000 μg to <1 μg for accelerometers [9] |
| Noise Density | Inherent random variation in sensor output | Limits resolution of small motions; critical for detecting subtle gestures | Higher in consumer vs. tactical grade IMUs |
| Scale Factor | Ratio of sensor output to actual input | Non-linearity causes proportional errors in measured motion | Specified as % deviation from ideal response |
| Sample Rate | Frequency at which data is acquired | Must exceed Nyquist rate for target motions; bite detection typically requires ≥15 Hz [1] | 15 Hz to >200 Hz depending on application [13] [1] |
| Range | Maximum measurable acceleration/rotation | Must accommodate fastest expected motions without saturation | ±16 g commonly used for rapid arm movements [13] |
| Resolution | Smallest detectable change in motion | Determines ability to detect minute movements | Higher resolution needed for subtle eating gestures |

Error Characteristics and Drift

All IMUs suffer from inherent errors that accumulate over time, a fundamental challenge in inertial navigation [8]. The primary error sources include offset error (bias), scale factor error, misalignment error, cross-axis sensitivity, noise, and environmental sensitivity (particularly to thermal gradients) [8]. Due to the mathematical integration process used to derive position and velocity from acceleration measurements, these errors accumulate in characteristic ways: a constant error in acceleration results in a linear error growth in velocity and a quadratic error growth in position, while a constant error in attitude rate (gyro) results in a quadratic error growth in velocity and a cubic error growth in position [8]. This drift phenomenon necessitates regular calibration and sensor fusion techniques, especially for applications requiring prolonged measurement periods.
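The growth rates stated above can be checked numerically. The following sketch Euler-integrates a constant accelerometer bias and a constant gyroscope bias and compares the errors after 10 s and 20 s. It assumes a nominally level, stationary sensor and a small-angle model in which tilt error misprojects gravity into the horizontal channel; the bias values and the function name `integrate_bias` are illustrative assumptions.

```python
def integrate_bias(accel_bias, gyro_bias_rad_s, duration_s, dt=0.001, g=9.81):
    """Return (vel_err, pos_err) from accel bias and (vel_err, pos_err) from gyro bias."""
    steps = int(round(duration_s / dt))
    va = pa = 0.0          # errors driven by the accelerometer bias
    tilt = vg = pg = 0.0   # errors driven by the gyroscope bias
    for _ in range(steps):
        va += accel_bias * dt          # velocity error grows linearly
        pa += va * dt                  # position error grows quadratically
        tilt += gyro_bias_rad_s * dt   # attitude error grows linearly
        vg += g * tilt * dt            # misprojected gravity: quadratic velocity error
        pg += vg * dt                  # cubic position error
    return va, pa, vg, pg

# Hypothetical biases: 0.01 m/s^2 and 0.001 rad/s.
short = integrate_bias(0.01, 0.001, duration_s=10)
long_ = integrate_bias(0.01, 0.001, duration_s=20)

# Doubling the time should scale the errors by about 2, 4, 4, and 8.
ratios = [b / a for a, b in zip(short, long_)]
```

The ratios make the practical point concrete: position derived from a wrist-worn consumer IMU degrades quickly, which is one reason bite detection pipelines tend to work on short windows of acceleration and angular rate rather than on integrated trajectories.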

IMU Technologies and Comparative Performance

IMU Technology Categories

IMU technologies span multiple performance grades and operating principles, each with distinct advantages and limitations for research applications:

Silicon MEMS IMUs: Utilize miniaturized sensors measuring mass deflection or the force required to hold a mass in place [9]. While traditionally exhibiting higher noise, vibration sensitivity, and instability compared to higher-grade technologies, ongoing advancements have steadily improved their precision [9]. Their compact size, lighter weight, and cost-effectiveness make them suitable for consumer electronics, automotive applications, and research prototypes [9].

Quartz MEMS IMUs: Feature a one-piece inertial sensing element crafted from quartz, driven by an oscillator to vibrate precisely [9]. Known for high reliability and stability over temperature, tactical-grade quartz MEMS IMUs compete with FOG and RLG technologies in SWaP-C (size, weight, power, and cost) metrics [9]. These are used in industrial automation, UAVs, and medical equipment [9].

Fiber Optic Gyro (FOG) IMUs: Employ solid-state technology where beams of light traverse through a coiled optical fiber [9]. They are less sensitive to shock and vibration, offer excellent thermal stability, and deliver high performance in critical parameters [9]. While larger and more expensive than MEMS-based counterparts, FOG IMUs excel in mission-critical applications demanding exceptionally precise navigation [9].

Ring Laser Gyro (RLG) IMUs: Use laser beams traveling in opposite directions around a closed path to measure rotation through interference patterns [9]. RLGs have in-run bias stabilities ranging from 1 °/hour to less than 0.001 °/hour, suitable for tactical and navigation grades [9]. They offer high accuracy but at increased cost and size.

Performance Comparison of IMU Types

The selection of appropriate IMU technology depends heavily on the specific requirements of the research application, particularly in bite detection studies where accuracy must be balanced with practical wearability considerations:

Table 2: Comparative Analysis of IMU Technologies for Research Applications

| IMU Type | Accuracy Range | Power Consumption | Cost | Size/Weight | Suitable Research Applications |
|---|---|---|---|---|---|
| Consumer MEMS | Medium (Accel: >100 mg, Gyro: >0.1°/s) [8] | Low | $1-$10 [14] | Very Small | Basic gesture recognition, consumer wearables |
| Tactical MEMS | Medium-High (Accel: 100 mg to 1 mg, Gyro: 0.1°/s to 0.001°/s) [8] | Low-Medium | $10-$100 | Small | Biomedical research, bite detection [12] [1] |
| FOG | High (Gyro: <0.001 °/h bias stability) [9] | Medium-High | $100-$10,000 | Medium | Laboratory motion capture, clinical studies |
| RLG | Very High (Gyro: 1 °/h to <0.001 °/h bias stability) [9] | High | $10,000+ | Large | High-precision biomechanics, validation systems |

Experimental Methodologies for IMU Performance Evaluation

Standardized Testing Protocols

Rigorous experimental methodologies are essential for characterizing IMU performance in research contexts. The following workflow outlines a comprehensive testing approach suitable for validating IMUs for bite detection applications:

The hand tapping test represents a gold standard for measuring rapid hand movement kinematics and has been successfully employed with IMU-based systems [13]. This protocol involves lateral alternating hand movement between two markers positioned at a standardized distance (typically 50 cm) while wearing an IMU sensor on the dominant hand [13]. Participants perform maximally fast movements after familiarization trials, with the best result used for statistical processing [13]. This methodology has demonstrated excellent discriminative power between athlete groups and controls, with temporal variables (time elapsed between movement onset and first/second tap) showing particularly high sensitivity [13].

Sensor Fusion and Data Processing

Raw IMU data requires sophisticated processing to extract meaningful information. A typical processing pipeline involves several stages. First, raw signals from accelerometers, gyroscopes, and magnetometers are acquired at appropriate sampling frequencies (e.g., 200 Hz for detailed motion analysis [13] or 15 Hz for eating behavior monitoring [1]). The data then undergoes filtering, commonly using low-pass Butterworth filters (e.g., order = 5, cutoff frequency = 40 Hz) to reduce noise while preserving motion signatures [13]. Feature extraction follows, identifying relevant kinematic variables such as maximal acceleration (A1), maximal deceleration (A2), acceleration gradients (GA1, GA2), and temporal characteristics (t1, t2) [13]. For orientation estimation, sensor fusion algorithms such as Kalman filters combine data from multiple sensors to estimate attitude, correct for drift, and transform measurements into appropriate reference frames [8]. Finally, machine learning approaches, including temporal convolutional networks with multi-head attention (TCN-MHA) or recurrent neural networks with LSTM layers, can be applied for specific detection tasks like bite recognition [12] [1].
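To make the sensor fusion step concrete, here is a deliberately simplified scalar Kalman filter that fuses a gyroscope rate with an accelerometer-derived tilt angle for a single axis. It is a sketch under stated assumptions, not the filter used in any cited study: the noise parameters `q` and `r`, the function name `kalman_tilt`, and the synthetic data are hypothetical, and a production filter would normally also estimate the gyroscope bias as part of its state.

```python
def kalman_tilt(gyro_rates, accel_angles, dt, q=1e-3, r=1.0):
    """Scalar Kalman filter: predict tilt from gyro rate, correct with accel tilt."""
    angle, p = accel_angles[0], 1.0   # initial estimate and its variance
    estimates = []
    for rate, measured in zip(gyro_rates, accel_angles):
        # Predict: integrate the angular rate; uncertainty grows by process noise q.
        angle += rate * dt
        p += q
        # Update: blend in the accelerometer-derived angle using gain k.
        k = p / (p + r)
        angle += k * (measured - angle)
        p *= (1.0 - k)
        estimates.append(angle)
    return estimates

# Synthetic case: true tilt is a constant 10 degrees, the gyroscope reports a
# pure bias of 0.5 deg/s, and the accelerometer angle is noise-free.
dt, n = 0.01, 6000                    # 60 s at 100 Hz
gyro = [0.5] * n
accel = [10.0] * n
fused = kalman_tilt(gyro, accel, dt)
gyro_only = 10.0 + sum(rate * dt for rate in gyro)  # drifts by about 30 degrees
```

With these settings the gyroscope-only estimate drifts steadily, while the fused estimate stays close to the accelerometer reference with only a small steady-state offset caused by the unmodeled bias.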

The Researcher's Toolkit for IMU-Based Bite Detection

Successful implementation of IMU-based bite detection requires careful selection of hardware, software, and methodological components. The following table summarizes the essential research reagents and solutions for this specialized application:

Table 3: Essential Research Toolkit for IMU-Based Bite Detection Studies

| Component | Specification | Research Function | Example Models/References |
|---|---|---|---|
| IMU Sensors | 6-axis or 9-axis MEMS IMU, ±16 g range, sampling ≥15 Hz | Captures raw accelerometer and gyroscope data of wrist movements | LSM6DS33 [13], ICM-45686 [14] |
| Data Acquisition System | Wireless transmission capability, timestamp synchronization | Enables continuous monitoring in free-living environments | Custom Wi-Fi modules [13], Commercial IMU platforms |
| Signal Processing Software | Digital filtering, feature extraction algorithms | Removes noise, isolates bite-related signals | Low-pass Butterworth filters [13], Custom LabVIEW applications [13] |
| Machine Learning Models | Temporal pattern recognition networks | Detects and classifies intake gestures from IMU data | TCN-MHA [12], CNN-LSTM hybrids [12], Personalized LSTM networks [1] |
| Validation Protocols | Standardized eating tasks, video recording | Ground truth establishment for algorithm training | Hand tapping tests [13], Controlled meal sessions [12] |
| Calibration Equipment | Multi-axis turntables, climatic chambers | Characterizes and compensates for sensor errors | Factory calibration systems [8] |

Application in Bite Detection Research

IMU Sensor Data Characteristics in Eating Monitoring

In bite detection applications, IMUs are typically mounted on the wrist, where they capture distinctive motion patterns associated with eating gestures [12]. An eating gesture is defined as the action of raising the hand to the mouth with cutlery or a water container until the hand is moved away from the mouth [12]. The inertial signals characteristic of biting motions include specific acceleration profiles during the hand-to-mouth movement, distinct rotational velocities as the wrist orients utensils toward the mouth, and periodic patterns corresponding to repetitive biting sequences [12]. Research has demonstrated that these motion signatures can be identified within continuous data streams using appropriate detection algorithms, with high accuracy in controlled environments (F1 scores up to 0.99 in personalized models [1]) and acceptable performance in free-living conditions (MAPE of 0.110-0.146 for eating speed measurement [12]).
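For readers less familiar with the error metric quoted for eating speed, the mean absolute percentage error (MAPE) can be computed as follows. The eating-speed values (taken here to be bites per minute) are made up for illustration and the unit is an assumption; a MAPE of 0.110 corresponds to an average deviation of 11% from the reference value.

```python
def mape(actual, predicted):
    """Mean absolute percentage error, expressed as a fraction (0.10 means 10%)."""
    return sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)

# Hypothetical per-meal eating speeds in bites per minute.
reference_speed = [4.0, 5.0, 2.5]
estimated_speed = [4.4, 4.5, 2.75]
error = mape(reference_speed, estimated_speed)
```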

Performance Comparison in Eating Behavior Research

The effectiveness of IMU-based bite detection systems varies with sensor quality, algorithm selection, and implementation methodology. Current research indicates that wrist-worn IMU sensors can detect bites with high accuracy in structured meal sessions using models such as CNN-LSTM hybrids [12]. In the more challenging free-living scenario (full-day monitoring), TCN-MHA models have measured eating speed with mean absolute percentage errors of 0.110-0.146 [12]. Personalized deep learning models, particularly those using LSTM networks, have reported a median F1 score of 0.99, which highlights the importance of individual variability in eating kinematics [1]. The temporal characteristics of intake gestures, particularly the timing between movement onset and key events, are likely to be informative features; this is consistent with rapid hand movement research, which identified temporal variables as having the greatest discriminative potential between participant groups [13].

Inertial Measurement Units represent a powerful technology for capturing detailed motion data across diverse research applications, particularly in the growing field of automated eating behavior monitoring. The core physics of IMUs—based on measuring specific force through accelerometers, angular velocity through gyroscopes, and magnetic fields through magnetometers—enables precise tracking of movement kinematics when properly implemented. The selection of appropriate IMU technology must balance performance specifications with practical constraints, where MEMS-based systems typically offer the best compromise for wearable bite detection research. Critical to success are rigorous experimental methodologies, comprehensive sensor characterization, and sophisticated data processing pipelines that address inherent IMU limitations such as drift and noise. As research in this field advances, the integration of higher-performance sensors with increasingly sophisticated machine learning algorithms promises to enhance the accuracy and applicability of IMU-based monitoring systems, potentially enabling new insights into eating behaviors and their relationship to health outcomes.

This guide objectively compares the performance of inertial sensors across three prominent wearable form factors—wrist, head, and earable platforms—for bite detection and eating behavior monitoring, a critical area of research for nutritional science and chronic disease management.

Performance Comparison of Wearable Form Factors

The table below summarizes the key performance metrics and characteristics of the three primary wearable form factors as evidenced by recent research.

| Form Factor / Study | Primary Sensor Type | Key Performance Metrics | Strengths | Limitations / Intrusiveness |
|---|---|---|---|---|
| Wrist (Smartwatch) [5] | IMU (Accelerometer, Gyroscope) | Bite weight estimation: MAE of 3.99 grams/bite (SVR model) [5]. Food consumption detection: F1 score up to 0.99 (personalized LSTM model) [1]. | High usability & strong user adherence; leverages commercial devices [5]. | Indirect measurement (infers bite from arm movement); less fine-grained for chewing mechanics [6]. |
| Head (Glasses/Headband) [15] [16] | IMU (Accelerometer, Gyroscope), Contact Microphone | Chewing side detection: 84.8% accuracy [16]. Bite timing for robotics: Performed on par or better than manual methods in user control and understanding [15]. | Direct measurement of jaw movement & head kinematics; high detail for mechanistic studies [15] [16]. | Higher intrusiveness; form factor may not be suitable for all-day wear [16]. |
| Earable (In-Ear/Behind-Ear) [17] | IMU (Accelerometer), Acoustic (Microphone) | Chewing instance detection: 93% accuracy, 80.1% F1-score in unconstrained environments [17]. Eating episode recognition: Correctly identified all but one episode in free-living study [17]. | Good balance between robustness (resilient to noise) and discretion; suitable for free-living studies [17]. | May be affected by ambient noise if using acoustics; placement can vary user-to-user [17]. |

Detailed Experimental Protocols

To critically assess the data in the comparison table, an understanding of the underlying experimental methodologies is essential. Below are the protocols for the key studies cited.

The wrist-worn smartwatch study [5] focused on estimating the weight of individual bites using only a commercial smartwatch's Inertial Measurement Unit (IMU).

- Objective: To estimate the weight of a bite (in grams) from inertial sensor data.

- Data Collection: A dataset was created from ten participants using a commercial smartwatch. Inertial data (3D accelerometer and gyroscope) were synchronized with a smart scale that recorded the weight of each bite. The start and end times of bites were manually annotated from video.

- Preprocessing: Sensor data was resampled to 100 Hz, gravity was removed from the accelerometer signal using a high-pass filter, and a median filter was applied for noise reduction.

- Feature Engineering: A combination of behavioral features (e.g., food gathering duration, movement stillness during transport) and statistical features from the IMU signals were extracted.

- Model & Validation: A Support Vector Regression (SVR) model was trained on these features. Performance was evaluated using Leave-One-Subject-Out Cross-Validation (LOSO CV), resulting in a mean absolute error (MAE) of 3.99 grams per bite.
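The leave-one-subject-out scheme in the protocol above can be illustrated without any machine learning library. In this sketch a trivial mean predictor stands in for the SVR model, which is a deliberate substitution to keep the example self-contained; the subject labels, bite weights, and the helper names `loso_splits` and `loso_mae` are hypothetical. Whether the study pooled errors over all bites or averaged per subject is not stated in the source; this version pools them.

```python
from collections import defaultdict

def loso_splits(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for each subject."""
    by_subject = defaultdict(list)
    for idx, sid in enumerate(subject_ids):
        by_subject[sid].append(idx)
    for sid, test_idx in by_subject.items():
        train_idx = [i for i, s in enumerate(subject_ids) if s != sid]
        yield sid, train_idx, test_idx

def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def loso_mae(subject_ids, weights, fit, predict):
    """Pool absolute errors over every held-out bite and return the MAE."""
    y_true, y_pred = [], []
    for _, train_idx, test_idx in loso_splits(subject_ids):
        model = fit(train_idx)            # train without the held-out subject
        for i in test_idx:
            y_true.append(weights[i])
            y_pred.append(predict(model, i))
    return mean_absolute_error(y_true, y_pred)

# Made-up data: two bites from each of three subjects, weights in grams.
subjects = ["a", "a", "b", "b", "c", "c"]
bite_weights = [10.0, 12.0, 8.0, 6.0, 14.0, 16.0]

# Placeholder model: predict the mean training weight (stand-in for SVR).
fit_mean = lambda train_idx: sum(bite_weights[i] for i in train_idx) / len(train_idx)
predict_mean = lambda model, i: model

mae = loso_mae(subjects, bite_weights, fit_mean, predict_mean)
```

The key property is that no bite from the held-out participant ever reaches the training step, so the resulting error reflects performance on unseen individuals.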

The head-worn chewing-side study [16] aimed to detect whether a person is chewing on the left or right side of their mouth using motion sensors.

- Objective: To classify individual chews as "left side" or "right side."

- Sensor Placement: Two motion-sensing devices (containing an accelerometer and gyroscope) were deployed on the left and right temporalis muscles via a headband.

- Data Collection: Data from eight human subjects eating eight different food types was collected to create a real-world evaluation dataset.

- Signal Processing: A heuristic-rules based method was used to exclude non-chewing data (like biting and swallowing) and to accurately segment the sensor data for each individual chew. The relative difference series between the left and right sensors was calculated to characterize the asymmetry in muscle bulge and skull vibration.

- Model & Validation: A two-class classifier using a Long Short-Term Memory (LSTM) neural network was trained on the processed data segments. The model achieved an average detection accuracy of 84.8% across all subjects and food types.
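The source does not give the formula behind the relative difference series, so the following is only one plausible reading: a normalized left-minus-right difference computed sample by sample on a signal magnitude from each sensor. The normalization, the `eps` guard, and the function name `relative_difference` are assumptions made for illustration.

```python
def relative_difference(left, right, eps=1e-9):
    """Normalized left-right asymmetry per sample, bounded within [-1, 1]."""
    return [(a - b) / (abs(a) + abs(b) + eps) for a, b in zip(left, right)]

# Made-up magnitudes from the left and right temporalis sensors during one chew.
left_signal = [2.0, 1.0, 0.5]
right_signal = [1.0, 1.0, 1.5]
asymmetry = relative_difference(left_signal, right_signal)
```

Under this reading, positive values indicate stronger activity on the left sensor and negative values stronger activity on the right; a series like this would be the per-chew input to the classifier rather than a decision in itself.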

The EarBit study [17] was designed to detect eating episodes in unconstrained, real-world environments using a combination of sensors.

- Objective: To detect chewing instances and aggregate them into eating episodes in free-living conditions.

- Sensor Placement & Modalities: As an experimental platform, EarBit incorporated inertial, optical, and acoustic sensors in a head-mounted form factor. The final optimized model used an IMU placed behind the ear to detect jaw motion.

- Data Collection: The model was first trained on data from a semi-controlled "home-like" lab environment. It was then tested on a separate, fully unconstrained "outside-the-lab" dataset, where 10 participants used the prototype for 45 hours in their own environments. Video footage was used as ground truth.

- Model & Validation: A machine learning model (specific algorithm not detailed) was trained on the inertial data from the semi-controlled study. When tested on the real-world data, it detected chewing instances at a 1-second resolution with 93% accuracy and an 80.1% F1-score. By aggregating these chewing inferences, the system recognized all but one of the recorded eating episodes.
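Aggregating per-second chewing inferences into eating episodes, as described in the last step, can be sketched as a simple gap-merging pass. The source does not specify the aggregation rule, so the maximum gap, the minimum episode duration, and the function name `chewing_to_episodes` are hypothetical choices.

```python
def chewing_to_episodes(per_second_labels, max_gap_s=60, min_duration_s=30):
    """Merge 1-second chewing detections into (start, end) episodes in seconds."""
    episodes = []
    start = last = None
    for t, is_chewing in enumerate(per_second_labels):
        if not is_chewing:
            continue
        if start is None:
            start = last = t
        elif t - last <= max_gap_s:
            last = t                              # short pause: same episode
        else:
            episodes.append((start, last + 1))    # long pause: close the episode
            start = last = t
    if start is not None:
        episodes.append((start, last + 1))
    # Drop isolated detections that are too short to be a plausible meal.
    return [(s, e) for s, e in episodes if e - s >= min_duration_s]

# Toy sequence with small thresholds so the behavior is easy to follow:
# two chewing bursts separated by a 3 s pause, then a 2 s false positive.
labels = [0] * 5 + [1] * 10 + [0] * 3 + [1] * 10 + [0] * 20 + [1] * 2 + [0] * 5
episodes = chewing_to_episodes(labels, max_gap_s=5, min_duration_s=10)
```

Merging across short pauses and discarding very short runs is one reason episode-level recognition can be more forgiving than second-level chewing detection, since isolated misclassified seconds tend to be absorbed or filtered out.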

The Researcher's Toolkit

The table below lists key hardware and software solutions used in the featured experiments, providing a starting point for developing a research pipeline.

| Research Reagent / Solution | Function in Experiment |
|---|---|
| Commercial Smartwatch (IMU) [5] | Provides a source of inertial data (accelerometer, gyroscope) from the wrist; enables research with commercially available, user-acceptable hardware. |
| Custom Headband with IMUs [16] | Enables precise placement of motion sensors on the temporalis muscles to capture detailed jaw movement and muscle activity for fine-grained analysis. |
| Behind-the-Ear Inertial Sensor [17] | Detects jaw motion as a proxy for chewing with a form factor that is more robust to environmental noise than acoustic sensors and less obtrusive than head-worn kits. |
| Support Vector Regression (SVR) [5] | A machine learning model used for solving regression tasks, such as estimating continuous variables like bite weight from sensor features. |
| Long Short-Term Memory (LSTM) [16] [1] | A type of recurrent neural network (RNN) ideal for classifying and modeling time-series data, such as sequential sensor data from chewing or gestures. |

Experimental Workflow for Wearable Bite Detection

The following diagram illustrates the common data processing and modeling pipeline used in bite detection research, from data collection to model output.

Key Research Implications

The body of research demonstrates a clear trade-off between the richness of mechanistic data and practical usability for long-term monitoring. Head-worn and earable platforms provide more direct, high-frequency signals related to the oral phase of eating (chewing, swallowing), making them indispensable for detailed behavioral analysis. Wrist-worn devices, while more indirect in their measurement, leverage a highly adoptable form factor, enabling larger-scale and longer-duration studies with implications for population health and chronic disease management. The choice of platform should be dictated by the specific research question, prioritizing data granularity for mechanistic studies and user adherence for interventional or long-term observational studies.

The Role of Accelerometers and Gyroscopes in Capturing Hand-to-Mouth Motions

Inertial sensors, particularly accelerometers and gyroscopes, have become fundamental tools in the objective monitoring of eating behavior. Their ability to capture the distinct kinematic signatures of hand-to-mouth motions makes them invaluable for automated dietary monitoring (ADM) and bite detection research [6] [18]. This guide provides a comparative analysis of their performance, detailing the experimental protocols and data outputs that define their application in both laboratory and free-living settings. For researchers in fields ranging from nutritional science to drug development, where precise adherence monitoring is critical, understanding the capabilities and limitations of these sensors is essential.

Performance Comparison of Inertial Sensing Systems

The performance of systems using accelerometers and gyroscopes for eating detection varies based on sensor configuration, placement, and the analytical models employed. The table below summarizes key performance metrics from recent studies.

Table 1: Performance Comparison of Inertial Sensor-Based Eating Detection Systems

| Study Description | Sensor Type & Placement | Key Performance Metrics | Experimental Context |

|---|---|---|---|

| Wrist-worn IMU (Multi-Sensor Fusion) [19] | Accelerometer, Gyroscope, Piezoelectric sensor, RIP sensor (Wrist, Jaw, Torso) | F1-scores: Eating Gestures (0.82), Chewing (0.94), Swallowing (0.58) | Controlled lab setting with 6 subjects |

| Smartwatch-based Model (Free-Living) [20] | Accelerometer & Gyroscope (Apple Watch, Wrist) | Meal-level Detection AUC: 0.951; Personalized Model AUC: 0.872 | Large-scale free-living study; 3828 hours of data from 34 participants |

| Commercial Smartwatch (Eating Gesture Detection) [18] | Accelerometer & Gyroscope (Commercial Wrist-worn Device) | F1-score: ~0.79 for eating gestures | Review of 69 studies; mix of lab and free-living settings |

| Head-Mounted Motion Sensors (Chewing Side Detection) [16] | Accelerometer & Gyroscope (Temporalis Muscles) | Average Chewing Side Detection Accuracy: 84.8% | Lab study with 8 subjects and 8 food types |

Experimental Protocols for Hand-to-Mouth Motion Capture

The following experimental workflows are standardized methodologies for collecting and analyzing inertial sensor data related to eating behavior.

Protocol for Wrist-Worn Inertial Sensor Data Collection

This protocol is designed to capture the kinematics of eating gestures using common wearable devices [18] [20].

- Sensor Configuration: A smartwatch or research-grade inertial measurement unit (IMU) containing a tri-axial accelerometer and a tri-axial gyroscope is used. The device is securely fastened to the wrist of the dominant hand used for eating.

- Data Collection: Sensors are programmed to sample data at frequencies typically between 50 and 100 Hz [19] [20]. Raw accelerometer (in units of g) and gyroscope (in radians/second) data are streamed to a paired smartphone or written to local storage.

- Ground Truth Labeling: In laboratory settings, simultaneous video recording is used to manually annotate the start and end times of each eating gesture [19]. In free-living studies, participants may use a push-button on the smartwatch or a smartphone app to self-report the beginning and end of meals [20].

- Data Processing: The continuous data stream is segmented into windows (e.g., 5-minute windows with a moving step) for analysis [20]. Features such as mean, variance, and spectral energy are extracted from the raw sensor signals within each window.

- Model Training and Validation: Machine learning models, including Support Vector Machines (SVM), Random Forests, or Deep Learning networks, are trained on the extracted features to classify windows as "eating" or "non-eating" [18] [20]. Performance is validated using metrics like F1-score and Area Under the Curve (AUC).
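
The windowing and feature-extraction step in this protocol can be sketched for a single sensor axis as below. The function name and the window and step lengths are assumptions for illustration; the mean squared amplitude is used here as a simple time-domain stand-in for spectral energy, which the cited studies may compute differently.

```python
from statistics import fmean, pvariance


def window_features(samples, fs_hz, window_s, step_s):
    """Slide a fixed-length window over one sensor axis and extract features."""
    win = int(window_s * fs_hz)
    step = int(step_s * fs_hz)
    features = []
    for start in range(0, len(samples) - win + 1, step):
        w = samples[start:start + win]
        features.append({
            "start_s": start / fs_hz,
            "mean": fmean(w),
            "variance": pvariance(w),
            # Mean squared amplitude; a time-domain proxy for signal energy.
            "energy": fmean([v * v for v in w]),
        })
    return features


# Toy signal at 1 Hz, 4-second windows with a 2-second step.
for row in window_features([0, 1, 2, 3, 4, 5, 6, 7], fs_hz=1, window_s=4, step_s=2):
    print(row)
```

In practice the same function would be applied to each accelerometer and gyroscope axis, and the per-axis feature dictionaries concatenated into one vector per window.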

Figure 1: Experimental workflow for wrist-worn sensor data collection and analysis.

Protocol for Multi-Sensor Fusion Approach

This methodology leverages data from sensors on multiple body parts to improve detection accuracy by capturing different phases of food consumption [19].

- Multi-Sensor Instrumentation:

- Wrist: A smartwatch with an accelerometer and gyroscope detects hand-to-mouth gestures.

- Jaw: A piezoelectric sensor is attached to the mandible to detect chewing motions.

- Torso: A Respiratory Inductance Plethysmographic (RIP) sensor with two belts around the chest and abdomen detects swallowing through changes in breathing patterns.

- Synchronized Data Collection: All sensors record data simultaneously. A common timestamp or a synchronization signal is used to align data streams from all devices.

- Activity Protocol: Participants perform specific tasks, including eating with utensils (e.g., spoon, fork) and hands (e.g., croissant), as well as non-eating activities that mimic eating gestures (e.g., scratching the head, talking) [19].

- Feature-Level Fusion: Features are extracted from each sensor's data stream. These diverse feature sets are then combined into a single feature vector for each time window.

- Integrated Classification: A unified classifier (e.g., SVM) is trained on the combined feature set to distinguish eating events from non-eating activities more robustly than any single sensor could [19].
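
Feature-level fusion, as described in this protocol, amounts to concatenating per-window features from each sensor once the streams share a common time base. The sketch below assumes each stream has already been reduced to a dictionary keyed by window start time; the function and stream names are hypothetical.

```python
def fuse_by_window(streams):
    """Concatenate per-sensor feature lists for windows present in every stream.

    streams maps a sensor name to {window_start_s: [feature, ...]}.
    Returns the sensor order used and {window_start_s: fused_vector}.
    """
    names = sorted(streams)  # fixed order so every fused vector has the same layout
    common = set.intersection(*(set(streams[n]) for n in names))
    fused = {}
    for t in sorted(common):
        vector = []
        for n in names:
            vector.extend(streams[n][t])
        fused[t] = vector
    return names, fused


streams = {
    "wrist": {0: [1.0, 2.0], 5: [3.0, 4.0]},
    "jaw": {0: [9.0], 5: [8.0], 10: [7.0]},   # window 10 has no wrist/torso data
    "torso": {0: [0.5], 5: [0.6]},
}
order, fused = fuse_by_window(streams)
print(order)
print(fused)
```

Windows missing from any one sensor are dropped here; imputing the missing features instead is an alternative design choice that keeps more data at the cost of added noise.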

Figure 2: Multi-sensor fusion workflow for robust eating activity detection.

The Researcher's Toolkit: Essential Reagents and Materials

Successful implementation of inertial sensing for bite detection requires a suite of hardware and software components.

Table 2: Essential Research Toolkit for Inertial Sensor-Based Bite Detection

| Category | Item | Specification / Example | Primary Function in Research |
|---|---|---|---|
| Hardware | Inertial Measurement Unit (IMU) | Tri-axial accelerometer & gyroscope; often MEMS-based [21] | Captures linear acceleration and angular rotation of limbs. |
| Hardware | Wearable Platform | Commercial smartwatch (e.g., Apple Watch [20]) or research-grade sensor node [18] | Houses IMU, provides power, data storage/streaming. |
| Hardware | Supplementary Sensors | Piezoelectric sensor (jaw) [19], RIP sensor belts (torso) [19] | Captures chewing and swallowing for multi-modal fusion. |
| Software & Data | Data Acquisition App | Custom smartphone app (e.g., iOS/Android application) [20] | Streams sensor data, collects ground truth (e.g., button presses). |
| Software & Data | Machine Learning Library | Scikit-learn (SVM, Random Forest), TensorFlow/PyTorch (Deep Learning) [18] | Trains and deploys models for activity classification. |
| Experimental Materials | Ground Truth Tools | Video recording system, electronic food diary [20] | Provides annotated data for training and validating models. |
| Experimental Materials | Calibration Equipment | Precision turntable (for gyroscopes), tilt station (for accelerometers) | Characterizes and corrects for sensor bias and scaling errors. |

Accelerometers and gyroscopes are proven, effective tools for capturing hand-to-mouth motions, with systems achieving high accuracy in controlled environments. The current research demonstrates a clear trend towards leveraging commercial smartwatches and sophisticated machine learning models to move from laboratory validation to large-scale, free-living application [18] [20]. The integration of multiple sensor modalities presents a promising path to overcome the challenge of distinguishing eating from similar non-eating activities, thereby increasing robustness and reliability. For researchers in clinical and pharmaceutical settings, these technologies offer a powerful means to objectively monitor dietary adherence and eating behaviors, which are critical endpoints in many therapeutic areas.

Distinguishing Bites from Other Activities of Daily Living

Automatic detection of eating moments is a cornerstone of modern dietary monitoring, with applications spanning health research and clinical care. A primary technical challenge within this domain is accurately distinguishing bites from other daily activities using non-intrusive sensors. This guide objectively compares the performance of different sensing modalities, with a particular focus on the role of inertial sensors in this evolving field.

The Core Challenge in Bite Detection

The act of taking a bite is a complex activity that can be captured through various physiological and motion signatures. The key challenge lies in isolating these bite-specific signals from the vast array of other daily movements and activities, often referred to as the "NULL class" in activity recognition research [22]. This requires sensing technologies that can detect subtle patterns with high temporal resolution while being socially acceptable and practical for long-term use. Current approaches primarily leverage three core aspects of dietary activity: characteristic arm and hand movements associated with bringing food to the mouth, jaw movements during chewing, and acoustic signals from chewing and swallowing [22] [6].

Comparison of Sensing Modalities for Bite Detection

The following table summarizes the performance, strengths, and limitations of the primary sensor technologies used for distinguishing bites from other activities.

| Sensing Modality | Key Measurable Actions | Reported Performance (Precision/Recall/F-score) | Key Advantages | Major Limitations |

|---|---|---|---|---|

| Wrist-Worn Inertial Sensors (Smartwatch) [23] [5] | Food intake gestures (arm movements), bite weight estimation | F-score: 71.3%-76.1% (Precision: 65.2%-66.7%, Recall: 78.6%-88.8%) for eating moments [23] | High practicality, uses commercial devices, suitable for long-term free-living monitoring [23] | Performance can be affected by high variability in individual eating styles and concurrent activities |

| Multi-Sensor Body Array [22] | Combined arm movements, chewing, swallowing | Arm Movements: Recall: 80-90%, Precision: 50-64% [22] | High accuracy by fusing multiple data sources, captures comprehensive intake cycle [22] | Low social acceptability, intrusive, requires multiple specialized sensors [22] [23] |

| Acoustic Sensors [22] | Chewing sounds, swallowing | Chewing: Recall: 80-90%, Precision: 50-64%; Swallowing: Recall: 68%, Precision: 20% [22] | Directly captures sounds of mastication and swallowing | Privacy concerns, vulnerable to ambient noise, lower precision for swallowing [22] [6] |

| Jaw-Mounted Inertial Sensors [24] | Jaw movements during mastication (vertical, lateral, protrusion) | No statistically significant difference from clinical ground truth in measured jaw features (paired t-test, 0.05 significance level) [24] | Directly measures the act of chewing, high accuracy for jaw movement kinematics [24] | Specialized form factor, lower social acceptability for continuous daily use [24] |
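
The F-scores in the table are the harmonic mean of precision and recall, so the reported ranges can be cross-checked. The short sketch below assumes that the lower precision and recall values for the wrist-worn system [23] belong to one evaluation and the upper values to another; that pairing is an assumption, not something the table states.

```python
def f_score(precision, recall):
    """Harmonic mean of precision and recall (F1)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Wrist-worn smartwatch values from the table, expressed as fractions.
print(round(f_score(0.652, 0.786), 3))
print(round(f_score(0.667, 0.888), 3))
```

The first pair reproduces the reported 71.3%; the second comes out within about 0.1 percentage point of the reported 76.1%, which is consistent with the inputs having been rounded. The same formula shows why the acoustic swallowing result (recall 68%, precision 20%) is weak overall: the harmonic mean is dominated by the smaller of the two values.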

Detailed Experimental Protocols and Methodologies

Protocol 1: Wrist-Worn Inertial Sensing for Eating Moment Recognition

This protocol outlines the methodology for using a commercial smartwatch to detect eating episodes, representing a practical approach for free-living monitoring [23].

- Sensor Configuration: A single off-the-shelf smartwatch equipped with a 3-axis accelerometer is worn on the wrist. Data is typically sampled at frequencies between 50-100 Hz [23] [5].

- Data Collection & Preprocessing: In a semi-controlled lab setting, participants perform eating activities and other daily tasks. The raw accelerometer data is preprocessed to remove noise and gravitational components, often using high-pass filtering [5].

- Feature Extraction & Model Training: The core of the method involves a two-step learning process [23]:

- Food Intake Gesture Spotting: A classifier identifies short, repetitive gestures characteristic of eating (e.g., hand-to-mouth movements) from the continuous stream of sensor data.

- Temporal Clustering: These spotted gestures are grouped across time to infer distinct "eating moments." The models, often classical (non-deep) machine learning methods, are trained on lab-collected data and then validated in free-living conditions [23] [25].

Protocol 2: Multi-Sensor Fusion for Comprehensive Dietary Activity Recognition

This protocol employs a multi-modal approach to capture the full sequence of eating activities, from arm movement to swallowing [22].

- Multi-Sensor Setup: The system uses an array of sensors positioned on the body [22]:

- Inertial Sensors on the lower/upper arms and upper back to capture intake gestures.

- Ear Microphone to capture chewing sounds from food breakdown.

- Sensor Collar with surface EMG electrodes and a stethoscope microphone to detect swallowing activity.

- Event Recognition Procedure: The method employs a sensitive search to spot potential activity events in the continuous data, followed by a selective refinement stage. This stage uses information fusion schemes to combine evidence from the different sensing modalities, improving the overall robustness of detection [22].

- Performance Metrics: Performance is evaluated separately for each activity domain (movements, chewing, swallowing) in terms of recall (ability to find all true events) and precision (ability to avoid false positives) [22].

Protocol 3: Jaw Movement Analysis with a Reference Inertial System

This protocol provides a high-accuracy method for analyzing mastication, which is crucial for validating other, less direct sensing methods [24].

- Experimental Setup: Two commercial MEMS inertial sensors (e.g., MPU-6050) are used. One sensor is fixed on the jaw (S_jaw) and another, serving as a dynamic reference, is fixed on the forehead (S_forehead). This configuration cancels out head movement artifacts [24].

- Jaw-Movement Feature Extraction: The system measures angular data to extract specific kinematic features of each chewing cycle [24]:

- Vertical Amplitude: Total vertical aperture of the jaw.

- Cycle Lapsed Time: Duration of a single chew cycle.

- Laterality Coefficient: Indicates chewing side preference (left or right).

- Clinical Validation: The extracted features are compared against clinical assessments (often video-recorded and analyzed by professionals) using statistical tests (e.g., paired Student's t-test) to confirm no significant difference, establishing the method's validity [24].
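
The two-sensor idea in this protocol can be sketched as follows: subtracting the forehead reading from the jaw reading leaves the jaw motion relative to the head, from which per-cycle amplitude and duration follow. This is a simplified illustration that assumes a single pitch-like angle per sensor in degrees and segments cycles at upward threshold crossings; a full implementation would typically work with relative 3D orientation, and the function names and threshold are hypothetical.

```python
import math


def relative_angle(jaw_deg, forehead_deg):
    """Jaw angle relative to the head: removes motion shared by both sensors."""
    return [j - f for j, f in zip(jaw_deg, forehead_deg)]


def chew_cycles(rel_deg, fs_hz, threshold_deg):
    """Split the relative angle into cycles at upward threshold crossings."""
    crossings = [i for i in range(1, len(rel_deg))
                 if rel_deg[i - 1] < threshold_deg <= rel_deg[i]]
    cycles = []
    for a, b in zip(crossings, crossings[1:]):
        segment = rel_deg[a:b]
        cycles.append({
            "amplitude_deg": max(segment) - min(segment),  # vertical amplitude
            "duration_s": (b - a) / fs_hz,                 # cycle elapsed time
        })
    return cycles


# Synthetic data: 1 Hz chewing (0-10 degrees) on top of a slow head drift.
fs = 50
head = [0.1 * i for i in range(175)]
jaw = [h + 5 * (1 - math.cos(2 * math.pi * i / fs)) for i, h in enumerate(head)]
for cycle in chew_cycles(relative_angle(jaw, head), fs, threshold_deg=5.0):
    print(cycle)
```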

The Scientist's Toolkit: Research Reagent Solutions

Essential hardware and software components for inertial sensor-based bite detection research.

| Item Name | Type/Model Example | Primary Function in Research |

|---|---|---|

| Inertial Measurement Unit (IMU) | MPU-6050 (3-axis accelerometer + 3-axis gyroscope) [24] | Captures linear acceleration and angular velocity of limb or jaw movements. |

| Microcontroller Platform | Arduino UNO [24] | Acquires data from sensors and handles initial preprocessing or wireless transmission. |

| Commercial Smartwatch | WearOS or watchOS devices with IMU [23] [5] | Provides a practical, consumer-grade sensing platform for free-living studies. |

| Signal Processing Toolbox | MATLAB, Python (SciPy) | Implements filters (e.g., high-pass FIR, median filter) to remove noise and gravity components [5]. |

| Machine Learning Library | Python (scikit-learn), TensorFlow/PyTorch | Enables development of classification (e.g., SVM) and clustering models for gesture spotting and eating moment recognition [23] [25] [5]. |

Experimental Workflow and Logical Relationships

The following diagram illustrates the standard workflow for developing a Human Activity Recognition (HAR) system for bite detection, from device selection to model evaluation [25].

The field is moving toward solutions that balance high accuracy with user adherence. Inertial sensors in commercial smartwatches are a promising candidate due to their practicality, though methods fusing them with other privacy-preserving modalities may offer the next leap in performance. Future work must address the high variability in eating styles and the challenge of differentiating bites from semantically similar gestures (e.g., drinking, face-touching) in completely unconstrained environments.

Methodologies and Real-World Implementation of Bite Detection Algorithms

Inertial Measurement Unit (IMU) sensors have emerged as a cornerstone technology for objective monitoring of eating behavior, offering a non-invasive and practical method for bite detection research. For scientists and drug development professionals, the reliability of this data is paramount, and it is heavily influenced by the initial stages of data acquisition and preprocessing. This guide provides a detailed, evidence-based comparison of methodologies for handling sampling rates, signal filtering, and sensor orientation correction, synthesizing current experimental data to inform robust research design.

Sampling Rate Selection for Optimal Performance

The sampling rate of an IMU is a critical parameter that balances accuracy with practical constraints like power consumption and computational load. Selecting an inappropriate rate can lead to aliasing artifacts or loss of critical movement information.

Evidence-Based Recommendations by Movement Type

The required sampling rate is directly influenced by the speed of the movement being analyzed. Research investigating human movement analysis provides clear guidance on sufficient sampling rates [26]:

- Walking (at 1.2 m/s): A sufficient sampling rate of 100 Hz is recommended.

- Running (at 2.2 m/s): For faster movements like running, a higher rate of 200 Hz is necessary.

- High-Speed Cyclic Movements (up to 3.0 Hz): To accurately capture very fast cyclic motions, a sampling rate of 400 Hz is advised.

For the specific, relatively slow gestures associated with eating (such as hand-to-mouth motions), studies have successfully used lower rates. Research utilizing the public Clemson all-day dataset, which contains smartwatch inertial data, employed a sampling rate of 15 Hz [27]. Another study on bite weight estimation resampled raw data to a constant 100 Hz for consistent processing [5].

Table 1: Recommended IMU Sampling Rates for Different Activities

| Activity Type | Recommended Sampling Rate | Supporting Experimental Context |

|---|---|---|

| Bite Detection / Eating Episodes | 15 - 100 Hz | Successfully used in free-living and semi-controlled eating detection studies [27] [5]. |

| Walking | 100 Hz | Determined as sufficient for accurate orientation estimation during walking at 1.2 m/s [26]. |

| Running | 200 Hz | Required for accurate orientation estimation during running at 2.2 m/s [26]. |

| Spine Orientation (Low Power) | 13 - 35 Hz | Varies by task (sitting, walking, jogging); sufficient for accurate motion estimates with optimized filters [28]. |

The Critical Role of Gyroscope Sampling

Evidence indicates that the gyroscope's sampling rate is more critical for orientation estimation than that of the accelerometer. One study found that accelerometer sampling rates exceeding 100 Hz could even decrease accuracy, as "excessive orientation updates using distorted accelerations and angular velocity introduced more error than merely using angular velocity" [26]. This underscores the importance of prioritizing gyroscope performance in system design for dynamic movement tracking.

Sensor Fusion Algorithms and Filtering Techniques

Raw IMU data is noisy and must be filtered and fused to yield a reliable estimate of sensor orientation—a prerequisite for accurate movement analysis.

Comparison of Sensor Fusion Algorithms

Different algorithms combine data from the accelerometer, gyroscope, and magnetometer to compensate for the weaknesses of each individual sensor.

Table 2: Comparison of Common Inertial Sensor Fusion Algorithms

| Algorithm / Filter | Sensors Used | Key Advantages | Key Limitations / Considerations |
|---|---|---|---|
| AHRS (e.g., ahrsfilter) | Accelerometer, Gyroscope, Magnetometer | Correctly estimates magnetic north; removes gyroscope bias; robust to mild magnetic jamming [29]. | Performance degrades in magnetically distorted environments. |
| IMU Filter (e.g., imufilter) | Accelerometer, Gyroscope | Removes gyroscope bias noise; does not require a magnetometer [29]. | Does not correctly estimate the direction of north (assumes initial orientation). |
| Extended Complementary Filter | Accelerometer, Gyroscope, Magnetometer | Computationally efficient; extensively adopted in literature [26]. | Accuracy can be limited during highly dynamic movement [26]. |
| VQF (Versatile Quaternion-based Filter) | Accelerometer, Gyroscope, Magnetometer | Incorporates gyro-bias estimation and magnetic disturbance rejection [26]. | - |
| Kalman Filter Variants | Varies | Powerful for state estimation; can incorporate multiple sensor models. | Higher computational complexity; consumes about 29% more energy than simpler quaternion filters [26]. |

Preprocessing Pipelines for Bite Detection

For the specific application of dietary monitoring, research papers outline tailored preprocessing workflows. A common pipeline includes [5]:

- Resampling: Standardizing the sampling rate (e.g., to 100 Hz via linear interpolation).

- Gravity Removal: Using a high-pass filter (e.g., a 1 Hz cutoff FIR filter) to separate gravitational acceleration from dynamic wrist movement acceleration.

- Noise Reduction: Applying a median filter (e.g., 5th-order) to attenuate transient signal fluctuations while preserving motion patterns.

- Axis Standardization: Mirroring data from the left wrist to match right-wrist orientation for dataset consistency.
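
The four steps above can be sketched with NumPy as shown below. This is an illustrative pipeline under stated assumptions, not the implementation from [5]: the FIR length (201 taps), the Hamming window, and the choice of which axes flip when mirroring are all assumptions, and the mirroring signs in particular depend on how a given watch defines its axes.

```python
import numpy as np


def resample_linear(t, x, fs_out=100.0):
    """Resample to a constant rate by linear interpolation."""
    t_new = np.arange(t[0], t[-1], 1.0 / fs_out)
    return t_new, np.interp(t_new, t, x)


def highpass_fir(x, fs, cutoff_hz=1.0, numtaps=201):
    """Windowed-sinc high-pass FIR (spectral inversion of a low-pass).

    Expects len(x) >= numtaps; output near both ends contains edge transients.
    """
    n = np.arange(numtaps) - (numtaps - 1) / 2
    fc = cutoff_hz / fs  # cutoff in cycles per sample
    lowpass = 2 * fc * np.sinc(2 * fc * n) * np.hamming(numtaps)
    lowpass /= lowpass.sum()            # unity gain at DC
    highpass = -lowpass
    highpass[(numtaps - 1) // 2] += 1.0  # delta minus low-pass: zero gain at DC
    return np.convolve(x, highpass, mode="same")


def median_filter(x, kernel=5):
    """Sliding median with edge padding; kernel must be odd."""
    pad = kernel // 2
    xp = np.pad(x, pad, mode="edge")
    shifted = np.stack([xp[i:i + len(x)] for i in range(kernel)])
    return np.median(shifted, axis=0)


def mirror_left_wrist(acc, gyro):
    """Mirror (n, 3) left-wrist data across the device YZ plane (assumed axes).

    Acceleration flips along x; angular velocity flips about y and z.
    """
    return acc * np.array([-1, 1, 1]), gyro * np.array([1, -1, -1])


# Example: 80 Hz input with a 1 g gravity offset and a 3 Hz wrist motion.
t = np.arange(0, 20, 1 / 80)
x = 1.0 + 0.5 * np.sin(2 * np.pi * 3 * t)
t100, x100 = resample_linear(t, x, 100.0)
clean = median_filter(highpass_fir(x100, 100.0), kernel=5)
print(clean[300:-300].mean())  # gravity offset removed away from the edges
```

The first and last half-filter-length samples are distorted by the convolution boundary, so in practice recordings are padded or trimmed before features are extracted.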

The following diagram illustrates a generalized preprocessing workflow for inertial data in bite detection research.

Orientation Estimation and Correction

Accurate orientation estimation is foundational for interpreting IMU data, as errors in this step propagate to all subsequent analyses, such as identifying specific gestures [26].

The Impact of Sampling Rate on Orientation Error

The relationship between sampling rate and orientation error is not linear. A study on spine orientation found that error depends exponentially on the sampling frequency [28]. This means that as the sampling rate is reduced below a certain task-dependent threshold, the error in the orientation estimate begins to increase dramatically. This model provides a quantitative basis for selecting the minimum viable sampling rate for a given application.

Calibrating for Magnetic Distortions

When using magnetometer-inclusive fusion algorithms (AHRS), compensating for magnetic distortions is essential. The process involves [29]:

- Hard Iron Distortion: Caused by permanent magnetic fields, corrected by a constant bias vector (b).

- Soft Iron Distortion: Caused by materials that distort the magnetic field, corrected by a 3x3 matrix (A).

- Calibration Method: The sensor is rotated through multiple 360-degree arcs along each axis. The collected magnetometer data is processed using a calibration function (e.g., magcal in MATLAB) to derive the A matrix and b vector correction factors.
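
Once A and b are known, applying the correction is a single affine operation. The sketch below uses the row-vector form (D - b) A, which is the convention the MATLAB magcal documentation describes for its outputs. The hard-iron estimator shown is a deliberately simple min/max midpoint, swapped in for illustration; it is not the ellipsoid fit that a calibration function such as magcal performs, and both function names are hypothetical.

```python
import numpy as np


def estimate_hard_iron(mag):
    """Rough hard-iron bias: midpoint of per-axis min and max of (n, 3) data.

    Only reasonable when the sensor was rotated through all orientations.
    """
    return (mag.max(axis=0) + mag.min(axis=0)) / 2.0


def apply_mag_calibration(mag, A, b):
    """Correct raw magnetometer rows: subtract bias b, then apply matrix A."""
    return (mag - b) @ A


# Synthetic readings: a 50 uT field seen along +/- each axis, offset by a bias.
true_bias = np.array([10.0, -5.0, 20.0])
directions = np.vstack([np.eye(3), -np.eye(3)])
raw = true_bias + 50.0 * directions

b = estimate_hard_iron(raw)
corrected = apply_mag_calibration(raw, np.eye(3), b)  # identity A: no soft iron
print(b)
print(np.linalg.norm(corrected, axis=1))
```

After a good calibration, the corrected samples should lie on a sphere centred at the origin, so the spread of their norms is a convenient quality check.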

Axis Alignment with a Reference Coordinate System

For sensor fusion algorithms to function correctly, the axes of all sensors (accelerometer, gyroscope, magnetometer) must be aligned with each other and with a defined reference coordinate system, such as North-East-Down (NED) [29]. This often requires:

- Defining the device axes in accordance with the NED convention.

- Swapping and/or inverting accelerometer and gyroscope readings to match the magnetometer axis.

- Verifying polarity by placing the sensor in known orientations and checking the accelerometer reading against gravity.
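
The swap-and-invert step and the polarity check can be sketched as below. The function names are hypothetical, and the expected sign of the gravity reading is deliberately left to the caller, because it depends on the sign convention of the sensor and the fusion library in use.

```python
def remap_axes(sample, order, signs):
    """Reorder and invert a 3-axis reading.

    Output axis i takes input axis order[i], multiplied by signs[i].
    """
    return tuple(signs[i] * sample[order[i]] for i in range(3))


def dominant_axis(acc_g):
    """Return (axis_index, sign) of the largest-magnitude accelerometer axis.

    With the sensor at rest, this is the axis aligned with gravity.
    """
    idx = max(range(3), key=lambda i: abs(acc_g[i]))
    return idx, (1 if acc_g[idx] >= 0 else -1)


# Swap x and y and invert z so the accelerometer matches the magnetometer frame.
print(remap_axes((1.0, 2.0, 3.0), order=(1, 0, 2), signs=(1, 1, -1)))

# Resting reading in g: gravity lies along the y axis with negative sign.
print(dominant_axis((0.02, -0.98, 0.05)))
```

Repeating the resting check with each device axis pointing down in turn confirms all three polarities before any fusion filter is run.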

The Scientist's Toolkit

This table details key resources and methodologies used in the featured research, providing a quick reference for experimental design.

Table 3: Research Reagent Solutions for IMU-Based Bite Detection

| Tool / Solution | Function / Description | Example in Research Context |

|---|---|---|

| Public Datasets (e.g., CAD) | Provides benchmark data for algorithm development and validation. | The Clemson all-day (CAD) dataset: 354 days of 6-axis wrist motion data (1063 eating episodes) sampled at 15 Hz [27]. |

| Sensor Fusion Toolboxes | Software libraries providing implemented orientation estimation algorithms. | MATLAB's Sensor Fusion and Tracking Toolbox, featuring ahrsfilter and imufilter objects [29]. |

| Commercial Smartwatches | Off-the-shelf wearable platforms with embedded IMUs for data collection. | Used in studies for in-the-wild data collection, providing accelerometer and gyroscope data [30]. |

| High-Precision IMUs (e.g., XSENS MTi-630) | Research-grade sensors used for method validation and high-frequency data collection. | Employed to investigate the influence of sampling rate on orientation estimation (gyro: 1600 Hz, accelerometer: 1000 Hz) [26]. |

| Optical Motion Capture (OMC) | Gold-standard reference system for validating IMU-based orientation and movement data. | Used as a benchmark (e.g., ±0.15 mm marker position accuracy) to quantify the error of IMU orientation estimates [26]. |

| Deep Learning Frameworks | Enables end-to-end learning from raw sensor data for activity recognition. | Convolutional Neural Networks (CNNs) analyzing long time windows (0.5-15 min) for top-down eating episode detection [27]. |

Experimental Protocols in Practice

To illustrate how these elements converge, here are methodologies from key studies:

Protocol for Sampling Rate Investigation [26]: Seventeen healthy subjects wore IMUs on the thigh, shank, and foot while walking and running on a treadmill. IMU data was collected at high rates (gyroscope: 1600 Hz) and then downsampled. Orientation was computed at various frequencies (10–1600 Hz) using four sensor fusion algorithms and compared against optical motion capture to determine accuracy.

Protocol for Bite Detection with Long Windows [27]: This approach used the Clemson all-day dataset (15 Hz). A sliding window of 0.5–15 minutes was passed through a day's worth of 6-axis motion data. A pre-trained Convolutional Neural Network (CNN) processed each window to determine the probability of eating. A hysteresis algorithm with start and end thresholds was then applied to detect eating episodes of arbitrary length.
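
The hysteresis step in the long-window protocol can be sketched as follows. An episode opens when the eating probability rises to a start threshold and closes only when it falls below a lower end threshold, which prevents brief dips from splitting one meal. The threshold values 0.8 and 0.4 and the function name are assumptions for illustration, not values from [27].

```python
def detect_episodes(probs, t_start=0.8, t_end=0.4):
    """Hysteresis thresholding over a sequence of per-window eating probabilities.

    Returns (start_index, end_index) pairs with end_index exclusive.
    """
    episodes = []
    start = None
    for i, p in enumerate(probs):
        if start is None and p >= t_start:
            start = i                      # probability high enough: open episode
        elif start is not None and p < t_end:
            episodes.append((start, i))    # dropped below end threshold: close it
            start = None
    if start is not None:
        episodes.append((start, len(probs)))  # still eating at end of recording
    return episodes


probs = [0.1, 0.5, 0.85, 0.6, 0.45, 0.7, 0.3, 0.2, 0.9, 0.95]
print(detect_episodes(probs))
```

In this example the dip to 0.45 stays above the end threshold, so the first episode continues until the probability falls to 0.3.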

Protocol for Orientation at Low Sampling Rates [28]: Twelve participants were measured with IMUs across tasks (sitting, walking, jogging). Orientation was reconstructed using several filters, including a novel one for low-frequency performance. By benchmarking against optical motion capture, the researchers modeled the exponential relationship between sampling frequency and error.

The acquisition and preprocessing of IMU data form the critical foundation for any reliable bite detection research pipeline. Experimental evidence indicates that sampling rate must be chosen based on movement dynamics, with 15-100 Hz often sufficient for eating gestures but higher rates needed for validation or broader activity contexts. The choice of sensor fusion algorithm involves a trade-off between accuracy, computational cost, and environmental robustness, with AHRS filters often preferred when magnetometer data is reliable. Finally, rigorous orientation correction through axis alignment and magnetic calibration is non-negotiable for ensuring data integrity. By adhering to these data-driven practices, researchers can ensure the quality and validity of their inertial data, thereby enabling the development of more effective digital biomarkers and interventions for dietary health.

Feature engineering is a foundational step in developing robust automated dietary monitoring (ADM) systems, particularly for the complex task of bite identification. The process involves creating informative descriptors from raw sensor data to characterize the unique motion patterns associated with eating gestures. Within the specific context of inertial measurement unit (IMU) sensors, features are broadly categorized into temporal descriptors, which capture the timing, sequence, and dynamics of movements, and statistical descriptors, which quantify the distribution and properties of the sensor signals [6] [5]. The choice and quality of these descriptors directly determine the performance of machine learning models in distinguishing bites from other daily activities. This guide provides a comparative analysis of the experimental protocols, performance outcomes, and technical reagents used in contemporary research on inertial sensor-based bite detection.

Experimental Protocols for Bite Identification

Research in bite identification employs varied yet methodologically sound experimental protocols to collect data and validate models. The following are detailed methodologies from key studies in the field.

Deep Learning with Inertial Measurement Unit (IMU) Sensors

- Objective: To develop a personalized deep learning model that detects carbohydrate intake for diabetes management using IMU data [1].

- Sensor Configuration: A single IMU sensor was used, providing 3-axis accelerometer and 3-axis gyroscope data sampled at a rate of 15 Hz.

- Data Collection: The study utilized a publicly available dataset. The data underwent preprocessing to be formatted for a recurrent neural network model.

- Model and Features: The core architecture was a Recurrent Neural Network (RNN) with Long Short-Term Memory (LSTM) layers. LSTM networks are inherently designed to model temporal sequences, meaning the "feature engineering" is largely automated by the network, which learns relevant temporal descriptors directly from the preprocessed sensor data streams [1].

- Validation: Model performance was evaluated on a per-subject basis, reporting a median F1-score of 0.99, indicating high personalization and accuracy.

Support Vector Regression with Engineered Features for Bite Weight

Objective: To estimate the weight of individual bites using only the inertial signals from a commercial smartwatch [5].

Sensor Configuration: A commercial smartwatch was worn on the wrist, streaming 3-axis accelerometer and 3-axis gyroscope data.

Data Collection: Ten participants ate meals in semi-controlled conditions. Bite events were manually annotated from video, and their weights were recorded in real-time using a smart scale, resulting in 342 annotated bites. Inertial data were resampled to 100 Hz, and gravity was removed from the accelerometer signals using a high-pass filter.

Feature Engineering: This study explicitly engineered a hybrid set of six features:

- Behavioral Features (Temporal Descriptors):

f1: Food gathering duration, quantified by analyzing the temporal sequence of a micromovement classification model's predictions prior to a bite.

f2: Stillness score, characterizing movement stability during food transport by combining normalized signal variance and movement duration.

- Statistical Features: Four additional statistical features (f3 to f6) were extracted from the IMU signals, though the specific metrics are not detailed in the excerpt [5].

Model and Validation: A Support Vector Regression (SVR) model was trained on these features to estimate bite weight. The model was evaluated using Leave-One-Subject-Out Cross-Validation (LOSO CV), achieving a Mean Absolute Error (MAE) of 3.99 grams per bite.
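The LOSO CV procedure can be sketched in a few lines. This is a minimal illustration, not the study's code: the helper names are hypothetical, a predict-the-training-mean baseline stands in for the SVR so that the example runs with the standard library alone, and pooling absolute errors across all held-out bites is an assumption (the excerpt does not say whether MAE was pooled or averaged per subject).

```python
from statistics import fmean
from typing import Callable, Iterator, List, Sequence, Tuple

Predictor = Callable[[Sequence[float]], float]


def loso_splits(subject_ids: Sequence[str]) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_subject, train_indices, test_indices), one subject at a time."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test


def fit_mean_baseline(train_x, train_y) -> Predictor:
    """Stand-in for the SVR: always predicts the mean training bite weight."""
    mean_weight = fmean(train_y)
    return lambda _features: mean_weight


def loso_mae(features, weights, subject_ids, fit=fit_mean_baseline) -> float:
    """Pooled mean absolute error (grams) over all held-out bites."""
    abs_errors: List[float] = []
    for _, train_idx, test_idx in loso_splits(subject_ids):
        predict = fit([features[i] for i in train_idx],
                      [weights[i] for i in train_idx])
        abs_errors.extend(abs(weights[i] - predict(features[i])) for i in test_idx)
    return fmean(abs_errors)


# Toy data: three hypothetical subjects with two bites each (weights in grams).
toy_subjects = ["a", "a", "b", "b", "c", "c"]
toy_weights = [4.0, 6.0, 10.0, 12.0, 7.0, 9.0]
toy_features = [[0.0] * 6 for _ in toy_weights]  # placeholders for f1..f6
toy_mae = loso_mae(toy_features, toy_weights, toy_subjects)
```

Because every bite of the held-out participant is excluded from training, the resulting error reflects performance on an unseen person rather than on unseen bites from a known person.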

Video-Based Deep Learning for Bite Detection in Children

Objective: To develop a fully automated system for bite count and bite rate detection from video-recorded meals in children [31] [32].

Sensor Configuration: Meals were recorded at 30 frames per second using a fixed network camera.

Data Collection: The dataset comprised 242 videos of 94 children (ages 7-9) consuming four laboratory meals.

Model and Features: The "ByteTrack" system uses a two-stage deep learning pipeline:

- Face Detection: A hybrid model (Faster R-CNN and YOLOv7) locates and tracks the child's face.

- Bite Classification: An EfficientNet (a Convolutional Neural Network) extracts spatial features from video frames, which are then modeled temporally by an LSTM network to classify bites. This end-to-end system learns its own spatio-temporal descriptors [31].

Validation: On a test set of 51 videos, ByteTrack achieved an average precision of 79.4%, recall of 67.9%, and an F1-score of 70.6%. Performance decreased with extensive head movement or hand/utensil occlusions of the mouth.
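The relationship between the three reported metrics can be made concrete with a short sketch. The harmonic mean of 79.4% precision and 67.9% recall is about 73.2%, not 70.6%, which would be consistent with each metric being averaged per video; the excerpt does not state the averaging scheme, so this is an assumption. The counts below are invented purely for illustration.

```python
from typing import Iterable, Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Event-level metrics from true positives, false positives, false negatives."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def macro_average(per_video_counts: Iterable[Tuple[int, int, int]]) -> Tuple[float, float, float]:
    """Average each metric over videos (every video weighted equally)."""
    scores = [precision_recall_f1(*counts) for counts in per_video_counts]
    n = len(scores)
    return tuple(sum(s[k] for s in scores) / n for k in range(3))


# Hypothetical (tp, fp, fn) counts for two videos, not taken from the study.
counts = [(8, 2, 2), (3, 1, 6)]
avg_p, avg_r, avg_f1 = macro_average(counts)

# F1 recomputed from the averaged precision and recall differs from the
# average of the per-video F1 scores.
f1_from_averages = 2 * avg_p * avg_r / (avg_p + avg_r)
```

With these toy counts the per-video average F1 is lower than the F1 recomputed from averaged precision and recall, the same direction as the gap in the reported figures.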

Performance Comparison of Sensing Modalities

The following table summarizes the quantitative performance of different sensing modalities and algorithmic approaches for bite and eating-related event detection, providing a clear basis for comparison.

Table 1: Performance Comparison of Bite and Eating Event Detection Modalities

| Sensing Modality | Primary Features | Algorithm | Performance | Key Challenge |
|---|---|---|---|---|
| Wrist-worn IMU [5] | Engineered Hybrid (Behavioral & Statistical) | Support Vector Regression (SVR) | MAE: 3.99 g per bite (Weight Estimation) | Generalization across diverse foods and users |
| Head-worn Motion Sensors [16] | Relative difference series from bilateral sensors | Long Short-Term Memory (LSTM) | Avg. Accuracy: 84.8% (Chewing Side Detection) | Sensor placement consistency |
| Eyeglasses with EMG [33] | Chewing cycle density | Bottom-up algorithm | F1-score: 99.2% (Eating Event Detection) | Intrusiveness for some users |
| Multi-Sensor Fusion [19] | NA (Raw sensor data) | Support Vector Machine (SVM) | F1-scores: 0.82 (Gesture), 0.94 (Chewing), 0.58 (Swallowing) | System complexity and user burden |
| Video (ByteTrack) [31] | Learned Spatio-temporal | CNN + LSTM | F1-score: 70.6% (Bite Detection) | Occlusions and variable lighting |

Research Reagent Solutions for Inertial Sensor-Based Bite Detection

A standardized set of research reagents is essential for the experimental investigation of feature engineering for bite identification.

Table 2: Essential Research Reagents for Bite Identification Experiments

| Reagent / Solution | Specification / Function | Exemplar Use Case |
|---|---|---|
| IMU Sensor | 3-axis accelerometer & gyroscope; ≥50 Hz sampling rate | Captures raw wrist and arm kinematics during eating gestures [1] [5] [19]. |
| Data Preprocessing Pipeline | Gravity filter, resampling, signal smoothing | Isolates movement-induced acceleration and standardizes signal frequency for analysis [5]. |
| Temporal Descriptor Set | Movement duration, stillness periods, micromovement sequence | Quantifies the behavioral characteristics of food gathering and transport to the mouth [5]. |
| Statistical Descriptor Set | Mean, variance, peak magnitude, spectral features | Characterizes the distribution and energy of inertial signals during a bite event [6] [5]. |
| LSTM Network | RNN architecture for sequence modeling | Models temporal dependencies in sensor data for bite classification [1] [31] [16]. |
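Two of the rows above, the gravity filter and the statistical descriptor set, lend themselves to a short sketch. The cited work only states that a high-pass filter was used; the first-order filter, the 0.5 Hz cutoff, and the function names below are assumptions for illustration. Spectral features are omitted to keep the example dependency-free.

```python
import math
from statistics import fmean, pvariance
from typing import Dict, List, Sequence


def high_pass(signal: Sequence[float], rate_hz: float = 100.0,
              cutoff_hz: float = 0.5) -> List[float]:
    """First-order high-pass filter that suppresses the slow gravity component.

    Implements y[n] = alpha * (y[n-1] + x[n] - x[n-1]), with alpha derived
    from the cutoff frequency (an assumed value, not from the source).
    """
    if not signal:
        return []
    dt = 1.0 / rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = rc / (rc + dt)
    out = [0.0]
    for n in range(1, len(signal)):
        out.append(alpha * (out[-1] + signal[n] - signal[n - 1]))
    return out


def statistical_descriptors(ax: Sequence[float], ay: Sequence[float],
                            az: Sequence[float]) -> Dict[str, float]:
    """Mean, variance, and peak of the acceleration magnitude over one window."""
    magnitude = [math.sqrt(x * x + y * y + z * z) for x, y, z in zip(ax, ay, az)]
    return {
        "mean": fmean(magnitude),
        "variance": pvariance(magnitude),
        "peak": max(magnitude),
    }


# A motionless sensor reads a constant 9.81 m/s^2 on one axis; the filter
# removes that offset entirely.
resting = high_pass([9.81] * 50)
features = statistical_descriptors([3.0, 0.0], [4.0, 0.0], [0.0, 0.0])
```

In practice the filter would be applied to each accelerometer axis before descriptors are computed over the window surrounding a candidate bite.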

Logical Workflow in Bite Identification Research

The following diagram illustrates the standard workflow from data acquisition to model output in an inertial sensor-based bite identification system.

Diagram 1: Workflow for IMU-based Bite Identification

The comparative analysis indicates a fundamental trade-off between model interpretability and performance. Explicit feature engineering, as demonstrated in the SVR approach for bite weight estimation [5], provides a high level of interpretability, allowing researchers to understand which temporal and statistical descriptors contribute most to the model's decision. In contrast, end-to-end deep learning models, such as LSTMs and CNN-LSTMs, learn features directly from the data, often achieving superior performance by capturing complex, non-linear patterns that may be missed by manual engineering [1] [31]. The choice between these paradigms depends on the research goals: engineered features are advantageous for mechanistic understanding and hypothesis testing, while learned features are often better suited for maximizing predictive accuracy in complex, real-world scenarios. Future work will likely focus on hybrid approaches and improving the robustness of these systems across diverse populations and unconstrained environments.

The field of behavioral analysis, particularly in specialized domains like bite detection for nutritional research, has witnessed a rapid evolution in the machine learning models employed. The journey spans from traditional machine learning workhorses like Support Vector Machines (SVMs) to sophisticated deep neural networks, each offering distinct advantages for pattern recognition tasks [34] [35]. This progression is driven by the need to handle increasingly complex data types, from structured clinical readings and sensor outputs to high-dimensional video and image data. The choice of model fundamentally shapes the capabilities of a system, influencing its accuracy, robustness, and applicability to real-world, personalized healthcare solutions. In the specific context of detecting and analyzing eating behaviors, this evolution has enabled a shift from intrusive sensor-based methods to less obtrusive, vision-based approaches that can capture rich behavioral data in naturalistic settings [31] [2].

The following diagram illustrates the typical workflow for developing a video-based detection system, integrating both data processing and model training stages.

Model Archetypes: A Comparative Framework

Support Vector Machines (SVMs) and Traditional Machine Learning

Support Vector Machines represent a class of powerful, discriminative classifiers that have proven effective in various biomedical and clinical applications. Their strength lies in finding the optimal hyperplane that separates classes in a high-dimensional feature space [35]. For instance, in a study aimed at predicting dengue PCR results using routine clinical and demographic data, SVM was the best-performing model among several traditional ML algorithms, achieving an accuracy of 71.4%, a recall of 97.4%, and a precision of 71.6%. After hyperparameter tuning, the model's recall reached a perfect 100% [35]. This demonstrates SVM's particular strength in scenarios with structured, tabular data and where feature relationships are critical. Similarly, SVMs have been successfully integrated into hybrid deep learning models, such as in a system for COVID-19 pattern identification from chest X-rays and CT scans, where they served as the final classification layer acting upon features selected by a ReliefF algorithm from a deep neural network [34].

Deep Neural Networks and Complex Pattern Recognition

Deep Neural Networks, particularly Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), excel at automatically learning hierarchical features directly from raw, high-dimensional data like images, signals, and video [31] [36]. A prime example of a modern, specialized deep learning system is ByteTrack, designed for automated bite count and bite-rate detection from video-recorded child meals [31] [32]. ByteTrack employs a two-stage deep learning pipeline: first, a hybrid of Faster R-CNN and YOLOv7 for face detection, and second, a combination of an EfficientNet CNN with a Long Short-Term Memory (LSTM) network for bite classification [31] [2]. This architecture allows the model to handle temporal sequences and spatial features simultaneously. It was trained to tolerate challenges like blur, low light, and occlusions, although reported performance still decreased with extensive head movement or hand/utensil occlusion of the mouth [31].

Another compelling application is in PTSD diagnosis using ECG signals. Research has shown that deep learning models like AlexNet, GoogLeNet, and ResNet50, when fed with time-frequency images of ECG signals generated via Continuous Wavelet Transform (CWT), can achieve remarkable accuracy. In one study, ResNet50 achieved the highest classification accuracy of 94.92%, significantly outperforming traditional machine learning approaches [36]. This underscores a key advantage of DL: its superior ability to model complex, non-stationary data structures with minimal manual feature engineering.

Comparative Performance Analysis

The table below summarizes the performance metrics of various machine learning models as reported in recent research, highlighting their applicability across different domains and data types.

Table 1: Performance Comparison of Machine Learning Models Across Applications

| Application Domain | Model(s) Used | Key Performance Metrics | Data Type & Context |
|---|---|---|---|
| Bite Detection [31] [32] [2] | ByteTrack (EfficientNet + LSTM) | Precision: 79.4%, Recall: 67.9%, F1-Score: 70.6%, ICC: 0.66 | Video data of children eating; lab environment with occlusions. |
| Dengue PCR Prediction [35] | Support Vector Machine (SVM) | Accuracy: 71.4%, Recall: 97.4%, Precision: 71.6% (100% recall post-tuning) | Structured clinical & demographic data from 300 patients. |
| PTSD Diagnosis [36] | ResNet50 (CNN) | Accuracy: 94.92%, AUC: 0.99 | ECG signals converted to 2D scalogram images. |
| COVID-19 Detection [34] | Hybrid SVM-RLF-DNN | Test Accuracy: 98.48% (2-class X-ray), 87.9% (3-class X-ray) | Chest X-ray and CT scan images. |

Experimental Protocols and Methodologies

Protocol for Video-Based Bite Detection (ByteTrack)

The development and validation of the ByteTrack system provide a detailed template for creating a deep learning-based behavioral analysis tool [31] [2].

- Data Collection: The model was trained on a substantial dataset comprising 1,440 minutes (24 hours) from 242 videos of 94 children (ages 7–9 years). Each child consumed four identical meals spaced one week apart, with meals recorded at 30 frames per second using a network camera positioned outside the child's direct line of sight to minimize the observer effect [31] [2].

- Data Preprocessing and Augmentation: The pipeline's first stage involved face detection using a hybrid Faster R-CNN and YOLOv7 pipeline to isolate the child's face and reduce background noise. The data was augmented to introduce real-world variations, training the model to handle blur, low light, camera shake, and occlusions from hands or utensils [31] [37].

- Model Training and Evaluation: The second stage focused on bite classification using an EfficientNet CNN for spatial feature extraction combined with an LSTM network to model temporal dependencies across video frames. The model's performance was compared against manual observational coding (the gold standard) on a test set of 51 videos, with metrics including precision, recall, F1-score, and Intraclass Correlation Coefficient (ICC) [31] [32].

Protocol for ECG-Based PTSD Diagnosis

This protocol highlights the process for applying deep learning to physiological signal classification [36].

- Data Acquisition and Preprocessing: ECG signals were obtained from 20 individuals with PTSD and 20 healthy controls. The raw signals underwent preprocessing, including normalization and baseline drift correction, to remove noise and artifacts.

- Signal Transformation and Segmentation: The cleaned ECG signals were transformed into 2D time-frequency images (scalograms) using Continuous Wavelet Transform (CWT). This step is crucial for capturing the signal's complex temporal and spectral patterns. The signals were segmented into different lengths (5s, 10s, 15s, and 20s) to analyze the impact of segment duration on performance.

- Model Training with Cross-Validation: Pre-trained CNN models (AlexNet, GoogLeNet, ResNet50) were used for classification via transfer learning. The scalograms were used as input to these models. A 5-fold cross-validation approach was employed for training and evaluation to ensure model robustness and generalizability, with the best performance observed using 5-second segments [36].
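The segmentation and cross-validation steps of this protocol can be sketched as follows. This is an illustration under stated assumptions: the ECG sampling rate is not given in the excerpt (250 Hz is assumed here), the function names are hypothetical, and the excerpt does not say whether folds were formed over segments or over subjects. The CWT and CNN stages are omitted.

```python
from typing import Iterator, List, Sequence, Tuple


def segment(signal: Sequence[float], rate_hz: float, segment_s: float) -> List[Sequence[float]]:
    """Split a signal into consecutive, non-overlapping segments of segment_s seconds.

    A trailing remainder shorter than one full segment is discarded.
    """
    n = int(round(rate_hz * segment_s))
    if n <= 0:
        raise ValueError("segment must cover at least one sample")
    return [signal[i:i + n] for i in range(0, len(signal) - n + 1, n)]


def k_fold_indices(n_items: int, k: int = 5) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, test_indices) so each item is tested exactly once."""
    folds = [list(range(i, n_items, k)) for i in range(k)]
    for held_out in range(k):
        train = [i for f in range(k) if f != held_out for i in folds[f]]
        yield train, folds[held_out]


RATE_HZ = 250.0                        # assumed sampling rate, not from the source
recording = [0.0] * int(60 * RATE_HZ)  # one minute of placeholder ECG samples

# Segment counts for the four durations compared in the study.
segments_by_length = {s: segment(recording, RATE_HZ, s) for s in (5, 10, 15, 20)}
folds = list(k_fold_indices(len(segments_by_length[5]), k=5))
```

If segments from the same person land in both training and test folds, accuracy can look better than it would on a new subject, so splitting by subject is the more conservative choice when the goal is generalization.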

The Researcher's Toolkit: Essential Research Reagents and Materials

Successful implementation of machine learning models, especially in biomedical domains, relies on a suite of key resources. The following table details essential "research reagents" for developing systems like ByteTrack.

Table 2: Essential Research Reagents and Materials for ML-Driven Detection Systems

| Item / Solution | Function in Research Context | Example from Cited Studies |

|---|---|---|