Leveraging Machine Learning to Decode Dietary Patterns: Advanced Methods for Research and Drug Development


Abstract

This article explores the transformative role of machine learning (ML) in characterizing complex dietary patterns, a critical frontier for nutritional epidemiology, public health, and drug development. As diet is a leading risk factor for chronic diseases, moving beyond single-nutrient analysis to capture the totality of dietary intake is essential. We review the foundational shift from traditional a priori and a posteriori methods to novel ML approaches that can model dietary synergy, multidimensionality, and dynamism. The scope encompasses a detailed examination of specific ML algorithms—from unsupervised learning for pattern discovery to supervised models for predicting health outcomes—and their practical applications in precision nutrition and disease research. We also address crucial methodological challenges, including data quality, model interpretability, and overfitting, while providing a framework for validation and comparison with traditional statistical techniques. This resource is tailored for researchers, scientists, and drug development professionals seeking to harness ML for more robust, data-driven dietary insights.

From Traditional Scores to AI-Driven Patterns: The New Paradigm in Dietary Analysis

The Limitations of Traditional Dietary Pattern Analysis (A priori and A posteriori Methods)

Dietary pattern analysis has become a cornerstone of nutritional epidemiology, shifting the focus from individual nutrients to the complex combinations of foods and beverages that people actually consume. This holistic approach is crucial because humans do not consume nutrients in isolation but within the context of a broader dietary pattern, where synergistic and antagonistic relationships between multiple dietary components influence health [1] [2]. For decades, research has relied predominantly on two traditional methodological approaches: a priori (investigator-driven) and a posteriori (data-driven) methods [3]. While these approaches have contributed significantly to our understanding of diet-disease relationships, they possess inherent limitations in capturing the true complexity and multidimensionality of dietary intake. Within the evolving landscape of nutritional research, particularly with the emergence of machine learning applications, a critical examination of these traditional methods is essential for advancing the field and improving our ability to characterize dietary patterns in relation to health outcomes.

Each of the two categories encompasses several specific techniques with distinct characteristics and applications, summarized in Table 1.

Table 1: Characteristics of Traditional Dietary Pattern Analysis Methods

| Method Type | Specific Methods | Underlying Principle | Key Output |
|---|---|---|---|
| A priori (Investigator-driven) | Healthy Eating Index (HEI), Mediterranean Diet Score (MDS), Dietary Approaches to Stop Hypertension (DASH) | Pre-defined based on existing nutritional knowledge or dietary guidelines | Composite scores reflecting adherence to pre-specified dietary patterns |
| A posteriori (Data-driven) | Principal Component Analysis (PCA), Factor Analysis, Cluster Analysis (k-means, Ward's method) | Statistical derivation from dietary intake data without pre-defined hypotheses | Patterns derived from population data (factors, components, clusters) |

A Priori (Investigator-Driven) Methods

A priori approaches are based on pre-defined dietary guidelines or existing nutritional knowledge about health-promoting diets [3]. These methods involve constructing scores or indices that measure adherence to specific dietary patterns aligned with current scientific evidence. Common examples include the Healthy Eating Index (HEI), which assesses conformity to the Dietary Guidelines for Americans; the Mediterranean Diet Score (MDS), which evaluates adherence to traditional Mediterranean eating patterns; and the Dietary Approaches to Stop Hypertension (DASH) score, which measures alignment with the DASH diet [3] [4]. These indices typically assign points based on consumption levels of recommended food groups or nutrients, with total scores representing overall diet quality. The fundamental characteristic of a priori methods is that they are hypothesis-driven, relying on prior assumptions about what constitutes a healthy dietary pattern based on existing evidence [3].
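The scoring logic described above can be sketched as follows. This is a minimal illustration, assuming a simplified Mediterranean-style score with sample-median cut-offs; the component lists, the beneficial/detrimental split, and the intake values are hypothetical and do not reproduce the published MDS, HEI, or DASH definitions.

```python
from statistics import median

# Hypothetical component lists; published indices define these precisely.
BENEFICIAL = ["vegetables", "fruits", "legumes", "fish"]
DETRIMENTAL = ["red_meat", "sweets"]


def median_cutoff_score(intakes):
    """Score each person using sample-median cut-offs.

    intakes: list of dicts mapping component name -> daily intake (g/day).
    A beneficial component scores 1 when intake is at or above the median;
    a detrimental component scores 1 when intake is below the median.
    """
    components = BENEFICIAL + DETRIMENTAL
    cutoffs = {c: median(p[c] for p in intakes) for c in components}
    scores = []
    for person in intakes:
        s = sum(person[c] >= cutoffs[c] for c in BENEFICIAL)
        s += sum(person[c] < cutoffs[c] for c in DETRIMENTAL)
        scores.append(s)
    return scores


# Illustrative intakes only (not real data).
people = [
    {"vegetables": 300, "fruits": 250, "legumes": 40, "fish": 30, "red_meat": 50, "sweets": 20},
    {"vegetables": 100, "fruits": 80, "legumes": 10, "fish": 5, "red_meat": 150, "sweets": 90},
    {"vegetables": 200, "fruits": 150, "legumes": 20, "fish": 15, "red_meat": 100, "sweets": 50},
]
print(median_cutoff_score(people))  # [6, 0, 4]
```

Because the cut-offs here are sample medians, the same intake can earn a different score in a different cohort, which is one reason scores built this way are hard to compare across studies.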

A Posteriori (Data-Driven) Methods

A posteriori approaches derive dietary patterns empirically from dietary intake data, without pre-defined nutritional hypotheses [1] [3]. They use multivariate statistical techniques to identify common consumption patterns within a study population. The most commonly applied are Principal Component Analysis (PCA) and Factor Analysis, which reduce the dimensionality of dietary data by identifying groups of intercorrelated foods [3] [4]; the resulting components or factors are linear combinations of food groups that account for as much of the variation in intake as possible. Cluster Analysis is another a posteriori approach. It classifies individuals into mutually exclusive groups with similar dietary habits, using algorithms such as k-means or Ward's method [1] [4]. Unlike a priori methods, a posteriori approaches let patterns emerge from the dietary data rather than from a pre-specified definition of a healthy diet, although the analyst still makes choices that shape the result.
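As a rough sketch of the PCA step, the snippet below derives two patterns from synthetic food-group intakes using only NumPy. The data, the number of food groups, and the function name `pca_patterns` are assumptions made for illustration; a real analysis would typically use an established statistics package and add rotation and interpretation steps.

```python
import numpy as np


def pca_patterns(X, n_patterns):
    """PCA on standardized food-group intakes.

    X: (n_people, n_food_groups) array.
    Returns component weights, per-person scores, and explained-variance shares.
    """
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)  # z-score each food group
    R = (Z.T @ Z) / (len(Z) - 1)                      # correlation matrix
    eigvals, eigvecs = np.linalg.eigh(R)              # eigh returns ascending order
    order = np.argsort(eigvals)[::-1][:n_patterns]    # keep the largest components
    weights = eigvecs[:, order]                       # unit-length eigenvectors
    scores = Z @ weights                              # pattern score per person
    explained = eigvals[order] / eigvals.sum()
    return weights, scores, explained


rng = np.random.default_rng(0)
# Synthetic intakes: two latent habits drive six food groups (illustrative only).
latent = rng.normal(size=(200, 2))
mixing = np.array([[1.0, 0.9, 0.8, 0.0, 0.0, 0.0],
                   [0.0, 0.0, 0.0, 1.0, 0.9, 0.8]])
X = latent @ mixing + 0.5 * rng.normal(size=(200, 6))

weights, scores, explained = pca_patterns(X, n_patterns=2)
print(np.round(explained, 2))  # share of total variance per retained pattern
```

Each column of `weights` shows which food groups move together, and each column of `scores` is the per-person pattern score that is later related to health outcomes.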

Key Limitations of Traditional Methods

Dimensionality Reduction and Oversimplification

Both a priori and a posteriori methods suffer from significant limitations related to dimensionality reduction, which oversimplifies the complex, multidimensional nature of dietary intake.

A priori methods compress multidimensional dietary data into a single unidimensional score, collapsing the rich variability of food consumption into a simplified metric that fails to capture important nuances and interactions between dietary components [1] [2]. For instance, the Healthy Eating Index-2020 and similar indices condense multiple dietary components into a single score reflecting overall diet quality, thereby losing information about pattern specificity and food combinations [1].

Similarly, a posteriori methods like Principal Component Analysis and Factor Analysis reduce dietary components to key food groupings typically expressed as single scores, limiting their ability to explain the wide variation in dietary intakes across populations [1] [2]. By focusing on maximizing explained variance, these methods prioritize common patterns at the expense of less prevalent but potentially important dietary combinations that may still significantly impact health outcomes.

Table 2: Key Limitations of Traditional Dietary Pattern Analysis Methods

| Limitation Category | A Priori Methods | A Posteriori Methods |
|---|---|---|
| Dimensionality Reduction | Compression to unidimensional scores | Loss of dietary variation through factor extraction |
| Subjectivity | Subjective selection of components and cut-off points | Subjective decisions on food grouping, pattern naming, and number retention |
| Synergistic Effects | Inability to capture food-nutrient interactions | Limited capacity to model complex dietary synergies |
| Pattern Stability | Fixed structure regardless of population | Population-specific patterns limit generalizability |
| Temporal Dynamics | Static assessment unable to capture meal-to-meal or day-to-day variation | Typically based on average intake, missing temporal sequences |

Subjectivity in Methodological Application

Both approaches involve considerable subjective decision-making throughout their application, introducing potential biases and affecting the reproducibility of findings.

In a priori methods, researchers must make subjective determinations about which dietary components to include, how to define dietary diversity, and how to interpret dietary guidelines when constructing scores [3]. The selection of cut-off points for scoring adherence is particularly subjective and can significantly influence results [4]. For example, application of Mediterranean diet indices has been shown to vary considerably across studies in terms of the nature of dietary components (foods only versus foods and nutrients) and the rationale behind cut-off points (absolute and/or data driven) [4].

A posteriori methods require multiple subjective analytical choices, including decisions about food group aggregation, the number of factors or clusters to retain, rotational techniques, and the interpretation and naming of derived patterns [4]. The criteria for determining the number of dietary patterns to retain vary across studies, with some using eigenvalues greater than one, others using scree plots, and some using interpretable variance percentage, leading to inconsistent applications and results [3] [4].
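To illustrate how the choice of retention criterion changes the answer, the sketch below applies two of the rules mentioned above to the same set of eigenvalues. The eigenvalues and the 75% variance threshold are hypothetical values chosen for the example.

```python
import numpy as np


def n_retained(eigvals, rule, threshold=None):
    """Number of components kept under a retention rule.

    rule="kaiser":   keep eigenvalues > 1 (correlation-matrix eigenvalues).
    rule="variance": smallest k whose cumulative variance share >= threshold.
    """
    ev = np.sort(np.asarray(eigvals, dtype=float))[::-1]
    if rule == "kaiser":
        return int(np.sum(ev > 1.0))
    if rule == "variance":
        cum = np.cumsum(ev) / ev.sum()
        return int(np.searchsorted(cum, threshold) + 1)
    raise ValueError(f"unknown rule: {rule}")


# Hypothetical eigenvalues of a 6-food-group correlation matrix (they sum to 6).
eigvals = [2.4, 1.3, 0.9, 0.7, 0.4, 0.3]
print(n_retained(eigvals, "kaiser"))                    # 2
print(n_retained(eigvals, "variance", threshold=0.75))  # 3
```

With these inputs the eigenvalue rule keeps two patterns and the variance rule keeps three, so two teams analyzing identical data could report different numbers of dietary patterns.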

Inability to Capture Dietary Synergy and Complexity

Traditional methods are limited in their capacity to capture the complex synergistic and antagonistic relationships between different dietary components that likely influence health outcomes.

A priori methods cannot adequately account for food-nutrient interactions because they focus on selected aspects of diet and do not consider the correlation between different dietary components [3]. The comprehensive scores generated do not provide specific information on multiple foods, often leading to unclear interpretation of intermediate scores, where individuals with similar scores may have markedly different nutritional compositions and dietary patterns [3].

A posteriori methods, while capturing some correlations between food groups, typically model linear relationships and miss potential non-linear interactions and threshold effects that may be important in diet-disease relationships [1]. These methods do not allow for explorations of dietary patterns in their totality because they miss potential synergistic or antagonistic associations among dietary components [1] [2].

Limited Generalizability and Population Specificity

A significant limitation of a posteriori methods is their population specificity, as derived patterns are dependent on the specific dietary data from which they were generated, limiting comparability across different populations and studies [4]. This has been evidenced by systematic reviews showing that similarly named dietary patterns (e.g., "Western" or "Prudent") across different studies often contain substantially different food combinations, making synthesis of evidence challenging [4].

While a priori methods are theoretically more generalizable, their fixed structure may not adequately capture culturally specific dietary patterns or adapt to evolving nutritional science without significant modification [3]. This limitation is particularly relevant for diverse populations, as demonstrated by research indicating that standard dietary guidelines may require cultural adaptations to enhance relevance and adoption [5].

Inadequate Handling of Dietary Dynamics

Traditional methods typically provide static representations of dietary intake and cannot adequately capture the dynamic nature of eating patterns that change from meal to meal, day to day, and across the life course [1]. Most methods rely on average consumption data, failing to account for temporal sequences, meal timing, and seasonal variations in dietary intake that may independently influence health outcomes. This limitation is particularly relevant given growing evidence about the importance of chrononutrition and eating patterns throughout the day.

Experimental Protocols for Methodological Comparison

Protocol 1: Comparative Analysis of Classification Accuracy

This protocol evaluates the predictive performance of a priori and a posteriori dietary patterns for health outcomes using various classification algorithms, based on methodologies from comparative studies [6].

Materials and Reagents:

- Dietary intake assessment tools (validated food frequency questionnaire, 24-hour recalls, or food records)

- Clinical assessment equipment for outcome verification

- Statistical software (R, Python, or SAS with appropriate packages)

Procedure:

- Participant Recruitment: Enroll sufficient participants (e.g., 1000) including both cases (with the health outcome of interest) and controls (population-based and matched for age and sex)

- Dietary Assessment: Collect dietary intake data using validated methods (e.g., food frequency questionnaires with portion size estimation)

- A Priori Pattern Derivation: Calculate dietary pattern scores using pre-defined indices (e.g., MedDietScore, HEI, DASH)

- A Posteriori Pattern Derivation: Perform Principal Component Analysis or Factor Analysis to derive empirical dietary patterns from the dietary intake data

- Model Development: Apply multiple classification algorithms to both dietary pattern approaches:
  - Multiple logistic regression (MLR)
  - Naïve Bayes classifier
  - Decision trees (C4.5)
  - Rule-based classifiers (RIPPER)
  - Artificial neural networks (multilayer perceptron)
  - Support vector machines (SVM)

- Model Evaluation: Assess classification accuracy using the C-statistic (area under the ROC curve) through cross-validation techniques

- Statistical Comparison: Compare performance metrics between a priori and a posteriori approaches across all classification algorithms
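The model evaluation step in this protocol can be sketched as below: a cross-validated C-statistic for a logistic regression fitted on a single dietary pattern score. Everything here is an assumption made for illustration, including the synthetic data, the plain gradient-descent fit, and the unstratified folds; the comparative studies cited above would have used established statistical software.

```python
import numpy as np


def c_statistic(y_true, y_score):
    """Area under the ROC curve: P(score of a case > score of a control)."""
    pos = y_score[y_true == 1]
    neg = y_score[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    return float((np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / (len(pos) * len(neg)))


def fit_logistic(X, y, lr=0.1, n_iter=2000):
    """Logistic regression by batch gradient descent (no regularization)."""
    Xb = np.column_stack([np.ones(len(X)), X])
    w = np.zeros(Xb.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        w -= lr * Xb.T @ (p - y) / len(y)
    return w


def predict_proba(w, X):
    Xb = np.column_stack([np.ones(len(X)), X])
    return 1.0 / (1.0 + np.exp(-Xb @ w))


def cv_c_statistic(X, y, k=5, seed=0):
    """Mean C-statistic over k folds (assumes every fold contains both classes)."""
    idx = np.random.default_rng(seed).permutation(len(y))
    folds = np.array_split(idx, k)
    aucs = []
    for i in range(k):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        w = fit_logistic(X[train], y[train])
        aucs.append(c_statistic(y[test], predict_proba(w, X[test])))
    return float(np.mean(aucs))


# Synthetic example: one informative pattern score and one pure-noise score.
rng = np.random.default_rng(1)
n = 400
pattern_score = rng.normal(size=n)
noise_score = rng.normal(size=n)
y = (rng.random(n) < 1 / (1 + np.exp(-(-0.2 + 1.0 * pattern_score)))).astype(int)

auc_pattern = cv_c_statistic(pattern_score.reshape(-1, 1), y)
auc_noise = cv_c_statistic(noise_score.reshape(-1, 1), y)
print(round(auc_pattern, 2), round(auc_noise, 2))
```

The same `cv_c_statistic` call can be repeated with a priori scores and with a posteriori scores as predictors, which is the comparison the protocol asks for.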

Protocol 2: Assessment of Methodological Subjectivity and Variability

This protocol systematically evaluates the impact of researcher subjectivity on dietary pattern derivation and characterization.

Materials and Reagents:

- Dietary intake datasets (multiple 24-hour recalls or food records)

- Food grouping schema templates

- Statistical software with factor and cluster analysis capabilities

Procedure:

- Food Grouping Variability:
  - Have multiple independent research teams create food grouping schema from the same raw dietary data
  - Compare the number, composition, and aggregation level of food groups across teams
  - Quantify variability using concordance metrics
- Pattern Retention Subjectivity:
  - Apply multiple retention criteria (eigenvalue >1, scree plot, interpretable variance) to the same dataset
  - Compare the number and composition of patterns derived from each criterion
  - Assess stability using split-sample validation
- Pattern Naming Consistency:
  - Provide derived dietary patterns (without names) to multiple nutritional epidemiologists
  - Document the proposed names and rationale for each pattern
  - Analyze naming consistency using inter-rater reliability statistics
- Cut-point Determination for A Priori Methods:
  - Apply different cut-point methodologies (percentiles, medians, clinical values) to the same a priori index
  - Compare score distributions and classifications across methods
  - Assess impact on health outcome predictions
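One possible inter-rater reliability statistic for the pattern-naming step is Cohen's kappa, sketched below for two raters. The pattern names and ratings are invented for the example, and the protocol does not specify which statistic to use; with more than two raters, a multi-rater statistic such as Fleiss' kappa would be the usual choice.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning one label per item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement from each rater's marginal label frequencies.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical names given by two epidemiologists to the same six patterns.
rater_1 = ["Western", "Prudent", "Western", "Traditional", "Prudent", "Western"]
rater_2 = ["Western", "Prudent", "Prudent", "Traditional", "Prudent", "Western"]
print(round(cohens_kappa(rater_1, rater_2), 3))  # 0.739
```

Here the raters agree on five of six patterns, and kappa discounts the agreement expected by chance from how often each rater uses each name.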

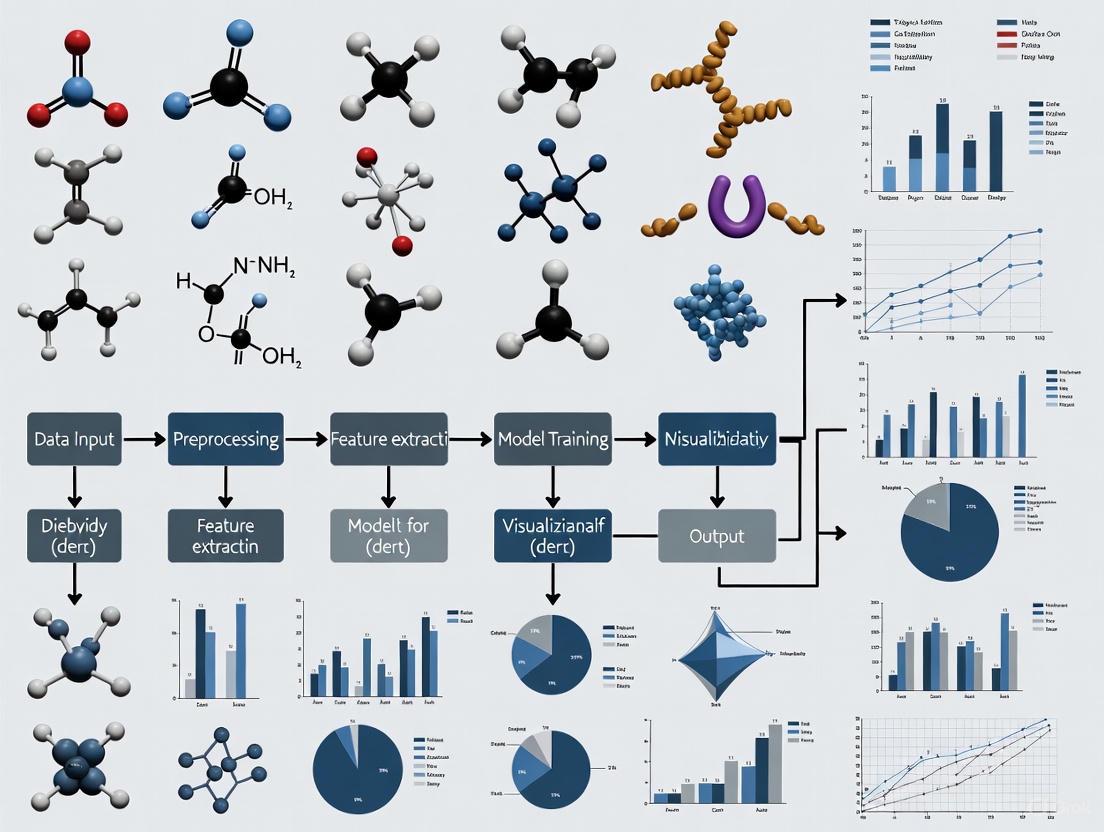

Visualization of Methodological Limitations

Figure 1: Methodological limitations of traditional dietary pattern analysis and machine learning solutions. Traditional approaches suffer from dimensionality reduction, subjectivity, inability to capture dietary synergy, limited generalizability, and static assessment. Machine learning methods offer potential solutions to these limitations.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Dietary Pattern Analysis

| Tool/Reagent | Function/Application | Implementation Considerations |
|---|---|---|
| Gaussian Graphical Models (GGMs) | Network models depicting conditional correlations between food groups after controlling for all other foods [7] | Requires sufficient sample size; implemented in R with qgraph or bootnet packages |
| LASSO (Least Absolute Shrinkage and Selection Operator) | Regularized regression that performs variable selection to enhance prediction accuracy and interpretability [1] [3] | Effective for high-dimensional data; available in most statistical software (glmnet in R) |
| Latent Class Analysis (LCA) | Model-based approach to identify unobserved subgroups (classes) within population with similar dietary patterns [1] [2] | Provides probability of class membership; implemented in Mplus or R poLCA package |
| Treelet Transform (TT) | Combines principal component analysis and clustering in a one-step process to identify stable patterns [3] | Useful for correlated data; available in specialized R packages |
| Compositional Data Analysis (CODA) | Accounts for relative nature of dietary data by transforming intake into log-ratios [3] | Appropriate for nutrient and food composition data; requires specialized packages |
| 24-Hour Dietary Recall Instruments | Gold standard for dietary assessment providing detailed intake data [7] [5] | Automated self-administered instruments (ASA24) reduce burden and enhance accuracy |
| Food Grouping Standardization Protocols | Systematic approaches for aggregating individual foods into meaningful groups [4] | Critical for reproducibility; should be documented and justified in methods |
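The conditional-correlation idea behind the Gaussian graphical models listed in Table 3 can be sketched with NumPy: partial correlations are read off the inverse of the covariance matrix. This is an unregularized toy version on simulated data with hypothetical variable roles; published GGM analyses usually add regularization (for example the graphical lasso) and are run with dedicated packages.

```python
import numpy as np


def partial_correlations(X):
    """Partial correlation matrix from the inverse of the sample covariance."""
    S = np.cov(X, rowvar=False)
    P = np.linalg.inv(S)                  # precision matrix
    d = np.sqrt(np.diag(P))
    pcor = -P / np.outer(d, d)            # rho_ij = -P_ij / sqrt(P_ii * P_jj)
    np.fill_diagonal(pcor, 1.0)
    return pcor


# Simulated chain a -> b -> c: a and c are related only through b.
rng = np.random.default_rng(0)
n = 5000
a = rng.normal(size=n)
b = 0.8 * a + rng.normal(size=n)
c = 0.8 * b + rng.normal(size=n)
X = np.column_stack([a, b, c])

marginal = np.corrcoef(X, rowvar=False)
partial = partial_correlations(X)
print(round(marginal[0, 2], 2), round(partial[0, 2], 2))
```

In this simulation the first and third variables are clearly correlated marginally, but their partial correlation is close to zero once the middle variable is controlled for, so a network built on partial correlations would draw no edge between them.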

Traditional a priori and a posteriori methods for dietary pattern analysis have provided valuable insights into diet-disease relationships but face significant limitations in capturing the complexity, multidimensionality, and dynamic nature of dietary intake. The dimensionality reduction inherent in both approaches oversimplifies dietary exposure, while subjective methodological decisions threaten reproducibility and comparability across studies. The inability of traditional methods to adequately model dietary synergies and their population specificity further constrain their utility for advancing nutritional epidemiology. These limitations highlight the need for more sophisticated analytical approaches, including machine learning algorithms such as Gaussian graphical models, latent class analysis, and regularized regression techniques, which offer promising avenues for capturing the complex realities of dietary patterns and their relationship with health outcomes. As the field evolves, researchers should consider these limitations when selecting analytical methods and interpreting findings from traditional dietary pattern analyses.

Why Diet is a Complex, Multidimensional Exposure for Chronic Disease

Diet represents one of the most complex exposures in chronic disease research, characterized by multidimensionality, dynamic nature, and intricate component interactions. Unlike single nutrient studies, dietary pattern analysis captures the totality of diet, recognizing that humans consume foods and beverages in complex combinations with potential synergistic and antagonistic relationships that collectively influence health [1]. This complexity presents significant methodological challenges for traditional analytical approaches, creating opportunities for machine learning to advance dietary pattern characterization and its relationship to chronic disease risk.

The shift from single-nutrient to dietary pattern-focused research reflects the growing recognition that the synergistic effects of multiple dietary components likely exert greater influence on health outcomes than individual nutrients or foods [8]. Dietary patterns are dynamic constructs that change across meals, days, and the life course, while being shaped by cultural, social, and environmental factors [1]. This complexity necessitates advanced analytical approaches capable of capturing non-linear relationships and high-dimensional interactions within dietary data.

The Multidimensional Nature of Dietary Exposure

Key Dimensions of Dietary Complexity

Dietary complexity manifests across several interconnected dimensions that traditional methods struggle to capture comprehensively. The table below summarizes these core dimensions and their implications for chronic disease research.

Table 1: Key Dimensions of Dietary Complexity in Chronic Disease Research

| Dimension | Description | Research Implications |
|---|---|---|
| Multidimensionality | Simultaneous consumption of numerous foods and nutrients with potential interactive effects [1] | Cannot isolate single components; requires holistic analysis of combinations |
| Dynamism | Dietary patterns change from meal to meal, day to day, and across the life course [1] | Requires longitudinal assessment; single timepoint measurements are insufficient |
| Contextual Influence | Diet shaped by culture, social position, economics, and environment [1] | Must account for socio-demographic factors as determinants of dietary patterns |
| Synergistic Effects | Components may interact antagonistically or synergistically to influence health outcomes [8] | Simple additive models may miss critical biological interactions |

Methodological Challenges in Dietary Assessment

Accurate dietary assessment faces significant challenges that contribute to measurement complexity:

Assessment Limitations: Traditional methods include food records, 24-hour recalls, and food frequency questionnaires (FFQs), each with distinct strengths and limitations [9]. FFQs assess usual intake over extended periods but limit food items queried, while 24-hour recalls provide more detailed recent intake but require multiple administrations to estimate habitual intake.

Measurement Error: Self-reported dietary data are subject to both random and systematic measurement error, including energy underreporting and recall bias [9]. Recovery biomarkers (for energy, protein, sodium, and potassium) enable validation but exist for only a few dietary components.

Temporal Considerations: Dietary assessments must distinguish between short-term fluctuations and long-term habitual patterns, as most chronic diseases develop over extended periods [9].
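The need for repeated 24-hour recalls can be made concrete with a small calculation. The sketch below assumes a classical measurement error model, in which day-to-day variation is random and unbiased; it does not address systematic errors such as underreporting. The variance values are hypothetical.

```python
import math


def correlation_with_usual_intake(n_days, within_var, between_var):
    """Correlation between the mean of n_days recalls and true usual intake,
    assuming purely random within-person (day-to-day) variation."""
    ratio = within_var / between_var
    return math.sqrt(n_days / (n_days + ratio))


def days_needed(target_r, within_var, between_var):
    """Smallest number of recall days that reaches the target correlation."""
    ratio = within_var / between_var
    return math.ceil(target_r ** 2 / (1 - target_r ** 2) * ratio)


# Hypothetical nutrient whose within-person variance is twice the between-person variance.
print(round(correlation_with_usual_intake(1, 2.0, 1.0), 2))  # 0.58
print(days_needed(0.9, 2.0, 1.0))                            # 9
```

Under these assumptions a single recall correlates only modestly with habitual intake, and nine days would be needed to reach a correlation of 0.9.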

Analytical Approaches for Dietary Pattern Characterization

Traditional Methodological Frameworks

Traditional approaches to dietary pattern analysis fall into two primary categories:

A Priori (Investigator-Driven) Methods: These approaches use predefined dietary indices based on nutritional knowledge or guidelines, such as the Healthy Eating Index (HEI) or Mediterranean Diet Score [3]. They measure adherence to recommended dietary patterns but are limited by subjective construction and inability to capture novel patterns in population data.

A Posteriori (Data-Driven) Methods: These include principal component analysis (PCA), factor analysis, and cluster analysis, which identify patterns based on statistical relationships within dietary data [1] [3]. While valuable for exploring population patterns, these methods often reduce dietary dimensionality to simplified scores, potentially missing synergistic relationships.

Machine Learning Approaches

Machine learning offers powerful alternatives to address limitations of traditional methods:

Unsupervised Learning: Algorithms including k-means, k-medoids, and hierarchical clustering identify groups of individuals with similar dietary patterns without prior hypotheses [8]. These can reveal novel dietary patterns but may suffer from stability problems without careful validation.

Supervised Approaches: Methods like random forests, gradient boosting, and neural networks can model complex relationships between dietary components and health outcomes, capturing non-linearities and interactions [8] [10].

Hybrid Methods: Techniques like stacked generalization combine multiple machine learning algorithms to improve predictive performance and account for potential synergies [8].
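As a minimal sketch of the unsupervised case, the snippet below implements k-means with NumPy and applies it to two synthetic groups of eaters. The two intake dimensions, the group means, and the single random start are assumptions for illustration; given the stability concerns noted above, real analyses would use multiple restarts and validation on resampled data.

```python
import numpy as np


def kmeans(X, k, n_iter=100, seed=0):
    """Plain k-means (Lloyd's algorithm) with a single random initialization."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        # Distance from every person to every cluster center.
        dists = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        new_centers = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers


# Synthetic servings per day of (vegetables, snacks) for two groups of 50 people.
rng = np.random.default_rng(42)
group_a = rng.normal(loc=[5.0, 1.0], scale=0.5, size=(50, 2))
group_b = rng.normal(loc=[1.0, 5.0], scale=0.5, size=(50, 2))
X = np.vstack([group_a, group_b])

labels, centers = kmeans(X, k=2)
print(np.round(centers, 1))
```

Each person is assigned to exactly one cluster, which mirrors the mutually exclusive grouping produced by traditional cluster analysis.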

Table 2: Comparison of Analytical Approaches for Dietary Pattern Analysis

| Method Category | Examples | Advantages | Limitations |
|---|---|---|---|
| A Priori | Healthy Eating Index, Mediterranean Diet Score | Simple interpretation, based on existing evidence | Subjective weighting, may miss novel patterns |
| Traditional Data-Driven | Principal Component Analysis, Factor Analysis, Cluster Analysis | Identifies population patterns without prior hypotheses | Dimensionality reduction, may miss synergies |
| Machine Learning | Random Forests, Neural Networks, Causal Forests | Captures complex interactions, handles high-dimensional data | Computational intensity, requires large samples |
| Hybrid | Stacked Generalization, LASSO | Combines strengths of multiple approaches | Implementation complexity, interpretation challenges |

Experimental Protocols for Dietary Pattern Analysis

Protocol 1: Traditional Factor Analysis for Dietary Pattern Identification

Application Notes: This protocol outlines the step-by-step process for deriving dietary patterns using exploratory factor analysis, based on methodology from the Shandong Province chronic disease study [11].

Materials:

- Food Frequency Questionnaire (FFQ) data

- Statistical software (SAS, R, or STATA)

- Demographic and health outcome data

Procedure:

- Dietary Data Collection: Administer a validated FFQ capturing frequency and portion size of food items over the preceding 12 months [11].

- Food Grouping: Aggregate individual food items into logical food groups (e.g., grains, fruits, vegetables, dairy, meat) [11].

- Factorability Assessment: Conduct Bartlett's test of sphericity and the Kaiser-Meyer-Olkin (KMO) test to confirm that the data are suitable for factor analysis (KMO ≥0.5; Bartlett's test p<0.05) [11].

- Factor Extraction: Retain factors with eigenvalues >1, supplemented by scree plot interpretation and cumulative variance consideration [11].

- Rotation: Apply orthogonal rotation (e.g., varimax) to achieve simpler factor structure [11].

- Pattern Interpretation: Name factors based on the food groups with the highest absolute factor loadings (typically |loading| >0.25) [11].

- Pattern Scoring: Calculate factor scores for each participant representing adherence to each identified pattern [11].

- Outcome Analysis: Use multivariate logistic regression to assess associations between pattern scores and health outcomes, adjusting for confounders [11].
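The factorability assessment in this protocol can be sketched as follows. The functions compute the Bartlett test statistic with its degrees of freedom and the overall KMO measure from a data matrix; the synthetic data and function names are assumptions, and the cited study would have relied on its statistical package's built-in routines.

```python
import numpy as np


def bartlett_sphericity(X):
    """Return (chi-square statistic, degrees of freedom) for Bartlett's test."""
    n, p = X.shape
    R = np.corrcoef(X, rowvar=False)
    _, logdet = np.linalg.slogdet(R)
    chi2 = -(n - 1 - (2 * p + 5) / 6.0) * logdet
    dof = p * (p - 1) // 2
    # The p-value is the upper tail of a chi-square distribution with dof
    # degrees of freedom, e.g. scipy.stats.chi2.sf(chi2, dof).
    return float(chi2), dof


def kmo(X):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    R = np.corrcoef(X, rowvar=False)
    P = np.linalg.inv(R)
    d = np.sqrt(np.diag(P))
    partial = -P / np.outer(d, d)               # partial correlations
    off = ~np.eye(R.shape[0], dtype=bool)       # off-diagonal mask
    r2 = np.sum(R[off] ** 2)
    q2 = np.sum(partial[off] ** 2)
    return float(r2 / (r2 + q2))


# Synthetic data: one shared eating habit drives five food groups.
rng = np.random.default_rng(0)
habit = rng.normal(size=(300, 1))
X = 0.8 * habit + 0.6 * rng.normal(size=(300, 5))

chi2, dof = bartlett_sphericity(X)
kmo_value = kmo(X)
print(round(chi2, 1), dof, round(kmo_value, 2))
```

A KMO value at or above the 0.5 threshold, together with a significant Bartlett statistic, indicates that the food groups share enough correlation for factor extraction to be meaningful.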

Protocol 2: Machine Learning Approach for Dietary Pattern Analysis with Biomarkers

Application Notes: This protocol describes a machine learning framework for identifying dietary patterns from food photographs and biomarker data, adapted from USDA-funded research [10].

Materials:

- Food image dataset with associated nutritional information

- Biomarker data from controlled feeding studies

- Python/R with scikit-learn, tensorflow, or similar ML libraries

- High-performance computing resources for deep learning models

Procedure:

- Image Preprocessing
- Feature Extraction
- Biomarker Data Preparation
- Feature Selection: Apply feature-selection algorithms (e.g., BoostARoota) to exclude superfluous variables [10].
- Model Development
- Model Validation

Visualization of Analytical Workflows

Figure 1: Integrated Workflow for Dietary Pattern Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Resources for Dietary Pattern Analysis

| Resource Category | Specific Tools/Databases | Function/Application |
|---|---|---|
| National Dietary Data Sets | NHANES, FoodAPS, CSFII [12] | Provide nationally representative dietary consumption data for analysis |
| Dietary Assessment Tools | ASA-24, FFQ, 24-hour Recalls [9] | Standardized methods for collecting individual-level dietary intake data |
| Biomarker Resources | Recovery Biomarkers (Energy, Protein), Concentration Biomarkers [9] | Objective measures to validate self-reported dietary data |
| Analytical Software | R, Python, SAS, STATA [3] | Statistical computing platforms for implementing analytical methods |
| Machine Learning Libraries | Scikit-learn, TensorFlow, PyTorch [10] | Specialized tools for implementing ML algorithms for dietary analysis |
| Dietary Quality Indices | Healthy Eating Index, AHEI, DASH Scores [3] | Predefined dietary quality scores for a priori pattern analysis |

Diet represents a fundamentally complex, multidimensional exposure in chronic disease research, requiring sophisticated analytical approaches that move beyond traditional methods. Machine learning offers promising avenues for capturing the synergistic relationships, high-dimensional interactions, and dynamic nature of dietary patterns that influence chronic disease risk. As these advanced methodologies continue to evolve, they hold substantial potential to enhance our understanding of diet-disease relationships and inform evidence-based dietary guidelines for chronic disease prevention.

Defining what populations should eat to optimize health is challenging because diet is profoundly complex. Foods are eaten in combinations, with potential antagonistic and synergistic interactions that may affect long-term health [8]. The conceptually relevant exposure for health outcomes is therefore the totality of the diet, typically conceptualized as the multidimensional and dynamic construct of 'dietary patterns' [8]. Conventional analytical approaches in nutritional epidemiology, however, typically assume no dietary synergy, which can bias estimates when that assumption is wrong [13]. To relax it, investigators must rely on background knowledge to manually code every relevant interaction a priori, a near-impossible task given the vast number of possible interactions in the diet and how little is known about their effects on health outcomes [8] [13]. Machine learning (ML) offers a set of flexible algorithms that model these complex relations directly from data, accounting for potential synergies through automated, data-adaptive strategies [8] [13]. This document outlines application notes and experimental protocols for leveraging ML to capture dietary synergy and high-dimensional interactions, providing a framework for advanced dietary pattern characterization.
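A small simulation illustrates what is lost when synergy is present but the model is purely additive. The variables, effect sizes, and outcome below are invented for the example. The interaction term is coded by hand here only to show the gap; the point of the ML approaches discussed in this section is to find such terms without manual specification.

```python
import numpy as np


def r_squared(design, y):
    """R^2 of an ordinary least-squares fit with the given design matrix."""
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ coef
    return float(1.0 - resid.var() / y.var())


rng = np.random.default_rng(0)
n = 2000
fiber = rng.normal(size=n)   # hypothetical standardized intakes
sugar = rng.normal(size=n)
# Simulated outcome in which the effect of sugar depends on fiber intake.
outcome = 0.3 * fiber + 0.3 * sugar - 0.8 * fiber * sugar + rng.normal(size=n)

ones = np.ones(n)
additive = np.column_stack([ones, fiber, sugar])
with_interaction = np.column_stack([ones, fiber, sugar, fiber * sugar])

r2_additive = r_squared(additive, outcome)
r2_interaction = r_squared(with_interaction, outcome)
print(round(r2_additive, 2), round(r2_interaction, 2))
```

With only two foods a single product term is enough, but the number of candidate interactions grows rapidly with the number of dietary components, which is why manual specification does not scale.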

Core ML Approaches and Quantitative Evidence

Machine learning mitigates the challenges of dietary pattern analysis by addressing underlying heterogeneity and interaction without heavy reliance on parametric assumptions. The table below summarizes key ML approaches and their applications in nutritional research.

Table 1: Machine Learning Approaches for Dietary Synergy and Interaction Analysis

| ML Approach | Primary Function | Key Application in Nutrition | Reported Performance/Outcome |
|---|---|---|---|
| Super Learner with TMLE [13] | Ensemble algorithm that combines several ML models for robust causal inference. | Estimating association between fruit/vegetable intake and pregnancy outcomes. | Revealed significant associations with preterm birth, SGA, and pre-eclampsia not detected by logistic regression [13]. |
| Causal Forests [8] | Quantifies heterogeneity in a causal effect of interest across many variables. | Estimating how the effect of a vegetable-rich diet varies across population subgroups. | Identifies variables that explain the largest degree of heterogeneity in a treatment effect [8]. |
| Gaussian Graphical Models (GGMs) with Louvain Algorithm [7] | Identifies networks of food groups based on conditional correlations, clustering co-consumed items. | Deriving empirical dietary pattern networks and associating them with CVD risk. | Identified an "ultraprocessed sweets and snacks" network associated with a 32% greater CVD risk (HR: 1.32; 95% CI: 1.11, 1.57) [7]. |
| Gradient Boosted Decision Trees / Random Forests [14] | Handles non-linear associations and interactions automatically to predict consumption. | Predicting food group consumption (servings) at eating occasions based on contextual factors. | Robust predictions for various food groups (e.g., MAE of 0.3 servings for vegetables, 0.75 for fruit) [14]. |
| Stacked Generalisation [8] | Combines multiple algorithms (e.g., GLMs, random forests) into one to avoid misspecification bias. | Quantifying the confounder-adjusted causal effect of a diet pattern on health outcomes. | Mitigates bias from heterogeneous associations that vary by factors like fruit intake or smoking status [8]. |

The quantitative evidence underscores ML's value. For instance, one study applying Super Learner with Targeted Maximum Likelihood Estimation (TMLE) found that high fruit and vegetable densities were associated with 4.0 and 3.7 fewer cases of preterm birth per 100 births, respectively—associations that conventional logistic regression completely missed [13]. Similarly, ML models have demonstrated high predictive accuracy for food intake at the eating occasion level, with mean absolute errors below half a serving for several food groups, enabling precise investigation of dietary behaviors [14].

Application Notes & Experimental Protocols

Protocol: Predicting Food Consumption with Contextual Factors

This protocol is adapted from a study that used ML to predict food consumption at eating occasions (EOs) and daily diet quality [14].

1. Objective: To predict the consumption (in servings) of key food groups at each EO and overall daily diet quality using person-level and EO-level contextual factors.

2. Data Collection & Preprocessing:

- Dietary Assessment: Collect at least 3-4 non-consecutive days of food intake data per participant using a validated smartphone food diary app. Record all foods and beverages consumed simultaneously with a minimum energy of 210 kJ as one EO.

- Contextual Factors:

- Person-Level (via survey): Demographics, cooking confidence, self-efficacy, food availability at home, perceived time scarcity.

- EO-Level (via app): Time of day, eating location, social context, activity during consumption, food source.

- Food Group Classification: Code all foods into predefined groups (e.g., vegetables, fruits, grains, discretionary foods) and calculate servings per EO based on national dietary guidelines.

- Diet Quality Index: Calculate a daily diet quality score (e.g., Dietary Guideline Index) for each participant.

3. Modeling & Analysis:

- Algorithm Selection: Employ tree-based ensemble methods like Gradient Boosted Decision Trees and Random Forests.

- Model Training & Validation: Split data into training and test sets. Use k-fold cross-validation on the training set to tune hyperparameters.

- Performance Metric: Select the final model based on the lowest Mean Absolute Error (MAE) for food group serving prediction and for the diet quality score.

- Interpretation: Use SHapley Additive exPlanations (SHAP) values to interpret the impact and direction of each contextual factor on the predictions.

4. Expected Outputs:

- Predictive models for food group consumption per EO with MAEs for each group (e.g., 0.3 for vegetables, 0.75 for fruit) [14].

- A ranked list of the most influential contextual factors for each food group and for overall diet quality.
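The train, tune, and select steps of this protocol can be sketched with the Python standard library alone. This is a minimal illustration under stated assumptions, not the cited study's pipeline: the `servings` values are invented, and two constant baseline predictors stand in for Gradient Boosted Decision Trees and Random Forests so that the cross-validated MAE comparison stays easy to follow. SHAP-based interpretation is not shown.

```python
import random
import statistics


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted servings."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def k_fold_indices(n, k, seed=0):
    """Shuffle row indices and deal them into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def cross_validated_mae(y, fit, k=5, seed=0):
    """Mean MAE over k held-out folds; `fit` maps training targets to one prediction."""
    fold_maes = []
    for fold in k_fold_indices(len(y), k, seed):
        held_out = set(fold)
        train = [y[i] for i in range(len(y)) if i not in held_out]
        prediction = fit(train)
        observed = [y[i] for i in fold]
        fold_maes.append(mean_absolute_error(observed, [prediction] * len(fold)))
    return sum(fold_maes) / k


# Hypothetical vegetable servings per eating occasion (illustrative values only).
servings = [0.0, 0.5, 0.5, 1.0, 0.0, 0.5, 1.5, 0.5, 0.0, 3.0, 0.5, 1.0, 0.5, 0.0, 0.5]

# Stand-in candidate models; real candidates would be tree ensembles.
candidates = {"mean": statistics.mean, "median": statistics.median}
cv_scores = {name: cross_validated_mae(servings, fit) for name, fit in candidates.items()}
best_model = min(cv_scores, key=cv_scores.get)
print(cv_scores, best_model)
```

The same loop applies when the candidates are real learners: each is fitted on the training folds, scored on the held-out fold, and the model with the lowest average MAE is kept.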

Protocol: Identifying Dietary Pattern Networks and CVD Risk

This protocol details the use of GGMs and community detection to derive data-driven dietary patterns and link them to health outcomes [7].

1. Objective: To identify distinct dietary pattern networks from food group consumption data and investigate their associations with cardiovascular disease (CVD) incidence.

2. Data Preparation:

- Cohort: Utilize a large, prospective cohort with validated dietary and health event data.

- Dietary Data: Assess usual intake with at least two 24-hour dietary records. Classify all items into a sufficient number of pre-defined food groups (e.g., ~40 groups).

- Covariates: Collect data on energy intake, age, sex, physical activity, smoking status, and other relevant confounders.

3. Dietary Pattern Network Derivation:

- Model Fitting: Employ Gaussian Graphical Models (GGMs) to estimate conditional dependence networks among the food groups. This reveals which food groups are consumed together, conditional on all others.

- Community Detection: Apply the Louvain algorithm to the constructed GGM to identify non-overlapping clusters (communities) of highly interconnected food groups. These clusters represent the empirical dietary pattern networks.

4. Statistical Analysis of Health Association:

- Exposure Definition: For each identified DP network, calculate an individual's exposure level (e.g., energy-adjusted intake quintiles for the foods in that network).

- Association Model: Use Cox proportional hazards models to evaluate the relationship between DP network exposure and incident CVD.

- Model Adjustment: Adjust models for energy intake, socio-demographic factors, lifestyle, and crucially, for overall diet quality to test for independence.

5. Expected Outputs:

- A set of distinct DP networks (e.g., "plant-based foods," "ultraprocessed sweets and snacks").

- Hazard ratios and confidence intervals for the association between each DP network and CVD risk.
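The conditional dependence idea behind the GGM step can be illustrated by turning a covariance matrix into partial correlations through its inverse (the precision matrix). The sketch below is a toy example under stated assumptions: the 3x3 covariance matrix is invented, the inversion is a plain Gauss-Jordan routine, and no sparsity penalty is applied, whereas applied GGM work often uses a regularized estimator. Community detection is not shown.

```python
import math


def invert(matrix):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(matrix)
    aug = [list(row) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def partial_correlations(cov):
    """Partial correlation of i and j given all others: -P_ij / sqrt(P_ii * P_jj)."""
    precision = invert(cov)
    n = len(cov)
    return [[1.0 if i == j
             else -precision[i][j] / math.sqrt(precision[i][i] * precision[j][j])
             for j in range(n)] for i in range(n)]


# Hypothetical covariance of three standardized food groups (A, B, C).
# A and C are correlated only through B, so their partial correlation should vanish.
cov = [[1.00, 0.50, 0.25],
       [0.50, 1.00, 0.50],
       [0.25, 0.50, 1.00]]
pcor = partial_correlations(cov)
print([[round(v, 3) for v in row] for row in pcor])
```

In a network drawn from these values, an edge would connect A with B and B with C, but not A with C, even though A and C are marginally correlated.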

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Software for ML-Driven Nutrition Research

| Tool Category | Specific Tool / Software | Function & Application |

|---|---|---|

| Statistical & ML Programming | R Programming [15] [16] | A language and environment for statistical computing and graphics; extensive packages for ML and data visualization (e.g., ggplot2). |

| | Python (Pandas, Scikit-learn, Matplotlib) [15] [16] | A general-purpose language with powerful libraries for data manipulation, machine learning, and creating static and interactive visualizations. |

| Specialized ML & Version Control | MLflow [17] | An open-source platform for managing the end-to-end machine learning lifecycle, including experiment tracking and model packaging. |

| | DVC (Data Version Control) [17] | An open-source version control system for machine learning projects, designed to handle large files, datasets, and model versions. |

| Data Visualization | Tableau [15] [16] | An interactive data visualization tool useful for creating dashboards and exploring data patterns quickly. |

| | ggplot2 (R) [16] | A powerful and widely used data visualization package in R based on the "grammar of graphics." |

| | Matplotlib / Seaborn (Python) [16] | Comprehensive Python libraries for creating static, animated, and interactive visualizations. |

| Color Palette Selection | Color Brewer [16] | A web-based tool designed specifically to help select appropriate color schemes for data maps and charts. |

In dietary pattern characterization research, selecting the appropriate machine learning (ML) approach is fundamental. The choice between supervised and unsupervised learning is dictated by the research question, the nature of the available data, and the desired outcome [18] [19].

Supervised learning involves training a model on a labeled dataset. Here, "label" means that the outcome or target variable is already known for the training data. The model learns the relationship between input features (e.g., nutrient intake, demographic factors) and this known output, allowing it to predict outcomes for new, unseen data [18]. This approach is ideal for classification (e.g., predicting stunting status) and regression (e.g., predicting future body mass index) tasks.

Unsupervised learning, in contrast, is used with data that has no pre-existing labels. The goal is to uncover inherent structures, patterns, or groupings within the data itself [18] [19]. This is particularly powerful in nutritional epidemiology for discovering novel dietary patterns or segmenting populations into distinct subgroups based on their food intake without prior hypotheses.

Table 1: Fundamental Differences Between Supervised and Unsupervised Learning

| Feature | Supervised Learning | Unsupervised Learning |

|---|---|---|

| Data Requirements | Uses labeled data (input-output pairs) [18] | Uses unlabeled data (inputs only) [18] |

| Primary Goal | Predict outcomes for new data [19] | Discover hidden patterns or intrinsic structures in data [19] |

| Common Tasks | Classification, Regression [18] | Clustering, Association, Dimensionality Reduction [18] |

| Model Output | A predictive function | A description of data structure (e.g., clusters, rules) |

| Expert Intervention | Required for labeling data [18] | Required for interpreting and validating found patterns [18] |

| Example in Nutrition | Predicting stunting based on nutritional status and wealth index [20] | Identifying distinct dietary patterns using K-means clustering [21] |

Application Notes and Experimental Protocols

Supervised Learning for Predictive Modeling in Nutrition

Supervised learning models are increasingly deployed to predict specific nutritional and public health outcomes, enabling targeted interventions.

Protocol 1: Predicting Child Stunting Using Gradient Boosting

This protocol outlines the application of the Gradient Boosting machine learning classifier to predict stunting among children under five, as demonstrated in a study using Egyptian Demographic and Health Surveys (DHS) data [20].

- Aim: To classify and predict stunting (Height-for-Age Z-score < -2) and identify key risk factors.

- Dataset:

- Source: Egypt DHS data (2005, 2008, 2014) [20].

- Sample Size: Nationally representative sample of children under five.

- Features: Child nutritional status (e.g., Weight-for-Age Z-score), maternal education, birth size, wealth index, place of residence (rural/urban), and other socio-demographic covariates [20].

- Data Preprocessing:

- Cleaning: Merge datasets from different years. Handle missing values and remove rows with >50% null values.

- Feature Engineering: Calculate Height-for-Age Z-score (HAZ) and Weight-for-Age Z-score (WAZ) using WHO references. Derive the target variable "stunted" (HAZ < -2) [20].

- Class Imbalance Handling: Address class imbalance using techniques such as stratified sampling [20].

- Model Training & Evaluation:

- Algorithms: Train and compare multiple classifiers including Gradient Boosting, Random Forest, XGBoost, Logistic Regression, and K-Nearest Neighbors.

- Validation: Use 10-fold stratified cross-validation to ensure robustness [20].

- Performance Metrics: Evaluate models based on Accuracy, Precision, Recall, F1-Score, and ROC-AUC [20].

- Key Findings: Gradient Boosting and Random Forest achieved the highest predictive performance (Accuracy >90%, ROC-AUC >0.96). Significant predictors included the child's nutritional status, maternal education, and wealth index [20].
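The label derivation, stratified fold assignment, and evaluation metrics in this protocol can be sketched as follows. All HAZ values and predictions are invented for illustration, the helper names are hypothetical, and no classifier is trained here; the point is only to show how the target and the reported metrics are computed.

```python
def is_stunted(haz):
    """Stunting is defined in the protocol as a Height-for-Age Z-score below -2."""
    return 1 if haz < -2 else 0


def stratified_folds(labels, k):
    """Assign a fold id to every row so each fold keeps a similar class mix."""
    folds = [0] * len(labels)
    for cls in sorted(set(labels)):
        members = [i for i, y in enumerate(labels) if y == cls]
        for position, i in enumerate(members):
            folds[i] = position % k
    return folds


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for a binary outcome coded 0/1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    accuracy = sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical HAZ values; a score of exactly -2.0 is not below the cut-off.
haz_scores = [-3.1, -0.4, -2.5, 0.2, -1.9, -2.0, 1.1, -2.2]
labels = [is_stunted(h) for h in haz_scores]
folds = stratified_folds(labels, k=2)

# Hypothetical model output, used only to exercise the metric calculations.
predictions = [1, 0, 0, 0, 0, 1, 0, 1]
metrics = classification_metrics(labels, predictions)
print(labels, folds, metrics)
```

Stratifying the folds keeps stunted children represented in every validation split, which matters when the positive class is the minority.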

Protocol 2: Classifying Food Ingredients as Healthy or Unhealthy

This protocol details a binary classification task for food ingredients using nutritional and biochemical data, a foundational step for intelligent food recommendation systems [22].

- Aim: To classify food ingredients into "healthy" or "unhealthy" categories.

- Dataset:

- Source: "Indian Food Classification" dataset from Kaggle (177 records) [22].

- Features: Nutritional and biochemical characteristics of ingredients, flavor profile, course, diet.

- Target: Binary label (healthy/unhealthy) based on nutrient profile and ingredient composition (e.g., high sugar/fat = unhealthy) [22].

- Data Preprocessing:

- Cleaning: Remove irrelevant attributes (e.g., food_name, region). Handle missing values.

- Feature Engineering:

- Categorical Encoding: Apply one-hot encoding to variables like flavor_profile and diet.

- Unhealthy Ratio: Compute a novel feature: the fraction of ingredients in a dish classified as unhealthy (e.g., sugar, oil) [22].

- Class Imbalance: Apply Random Over Sampling (ROS) to balance the class distribution (70% unhealthy vs. 30% healthy in original data) [22].

- Model Training & Evaluation:

- Algorithms: Implement and compare Decision Tree, Random Forest, SVM, Logistic Regression, K-Nearest Neighbors, and XGBoost [22].

- Metrics: Assess using Accuracy, Precision, Recall, and F1-Score.

- Key Findings: XGBoost demonstrated superior performance (94% accuracy), with unhealthy_ratio and ingredient_percentage being the most important features for classification [22].
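The preprocessing steps of this protocol (the unhealthy-ratio feature, one-hot encoding, and random oversampling) can be sketched in a few lines. Everything concrete below is an assumption for illustration: the `UNHEALTHY` ingredient set, the helper names, and the toy rows are invented, and the cited study's exact definitions may differ.

```python
import random

# Hypothetical list of ingredients treated as unhealthy (assumption, not from the study).
UNHEALTHY = {"sugar", "oil", "ghee"}


def unhealthy_ratio(ingredients):
    """Fraction of a dish's ingredients that appear in the unhealthy set."""
    return sum(1 for item in ingredients if item in UNHEALTHY) / len(ingredients)


def one_hot(values):
    """Encode a categorical column as one 0/1 indicator per category."""
    categories = sorted(set(values))
    return [{f"is_{c}": int(v == c) for c in categories} for v in values]


def random_oversample(rows, labels, seed=0):
    """Resample minority-class rows with replacement until all classes are equal in size."""
    rng = random.Random(seed)
    by_class = {}
    for row, label in zip(rows, labels):
        by_class.setdefault(label, []).append(row)
    target = max(len(members) for members in by_class.values())
    out_rows, out_labels = [], []
    for label, members in by_class.items():
        extra = [rng.choice(members) for _ in range(target - len(members))]
        for row in members + extra:
            out_rows.append(row)
            out_labels.append(label)
    return out_rows, out_labels


# Toy data with the 70/30 class split described in the protocol.
toy_rows = [[0.6], [0.5], [0.7], [0.4], [0.8], [0.5], [0.6], [0.1], [0.0], [0.2]]
toy_labels = ["unhealthy"] * 7 + ["healthy"] * 3
balanced_rows, balanced_labels = random_oversample(toy_rows, toy_labels)

print(unhealthy_ratio(["sugar", "milk", "flour", "oil"]))
print(one_hot(["sweet", "spicy"]))
print(balanced_labels.count("healthy"), balanced_labels.count("unhealthy"))
```

A common precaution is to oversample only the training split, so that duplicated minority rows never appear in the data used for evaluation.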

Unsupervised Learning for Dietary Pattern Discovery

Unsupervised methods are pivotal for generating hypotheses and understanding the complex structure of dietary intake without predefined outcomes.

Protocol 3: Identifying Dietary Patterns with K-means Clustering

This protocol describes the use of K-means clustering to identify population-level dietary patterns and investigate their association with chronic kidney disease (CKD) onset, as applied in a Korean cohort study [21].

- Aim: To identify distinct dietary patterns and assess their association with new-onset CKD.

- Dataset:

- Source: Food frequency questionnaire (FFQ) data from the KoGES cohort [21].

- Data Preprocessing:

- Food Grouping: Classify 106 food items into 21 food groups (e.g., rice, vegetables, red meat, sweets) [21].

- Covariates: Collect data on age, sex, BMI, comorbidities (hypertension, diabetes), and lifestyle factors (smoking, alcohol) for subsequent analysis.

- Clustering Analysis:

- Algorithm: K-means clustering.

- Determining Clusters (k): The optimal number of clusters is determined through internal validation using metrics like within-cluster sum of squares (Within SS), statistical significance of differences in CKD incidence among clusters, and clinical interpretability. A three-cluster solution was selected [21].

- Association Analysis:

- Epidemiological Method: Use Cox regression models to evaluate the association between cluster membership and CKD incidence, adjusted for confounders (age, sex, BMI, comorbidities) [21].

- Key Findings: Three distinct dietary clusters were identified. The "low intake, high carbohydrate" cluster was independently associated with a 59% increased risk of CKD compared to the "high vegetables and fish" cluster [21].

Protocol 4: Deriving Dietary Pattern Networks with Gaussian Graphical Models

This protocol employs advanced network analysis methods to understand how food groups co-occur in a diet, providing insights beyond traditional clustering [7].

- Aim: To identify dietary pattern (DP) networks and investigate their association with cardiovascular disease (CVD) risk.

- Dataset:

- Source: NutriNet-Santé cohort (n=99,362) [7].

- Features: Dietary intakes assessed via 24-hour dietary records, classified into 42 food groups (g/day).

- Methodology:

- Network Modeling: Use Gaussian Graphical Models (GGMs) to create DP networks. GGMs are network models that depict relationships between many food groups based on conditional correlation matrices, showing how food groups are consumed together after accounting for all other foods [7].

- Community Detection: Apply the Louvain algorithm to extract non-overlapping communities (i.e., dietary patterns) from the large GGM network [7].

- Association Analysis: Use Cox models to relate DP network adherence to CVD incidence, adjusting for energy intake and confounders.

- Key Findings: Analysis revealed five distinct DP networks (e.g., "plant-based foods," "ultraprocessed sweets and snacks"). The "ultraprocessed sweets and snacks" network was associated with a 32% greater CVD risk, independent of overall diet quality [7].
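The Louvain algorithm searches for the partition of a network that maximizes modularity, so computing modularity for a candidate partition shows what "highly interconnected" means in practice. The sketch below evaluates that objective only; it is not an implementation of Louvain itself. The six-node food network is invented, and edges are unweighted for simplicity, whereas a GGM network would carry partial-correlation weights.

```python
def modularity(edges, community):
    """Newman modularity Q of a partition of an unweighted, undirected graph."""
    m = len(edges)
    degree, internal = {}, {}
    for u, v in edges:
        degree[u] = degree.get(u, 0) + 1
        degree[v] = degree.get(v, 0) + 1
        if community[u] == community[v]:
            internal[community[u]] = internal.get(community[u], 0) + 1
    total_degree = {}
    for node, d in degree.items():
        c = community[node]
        total_degree[c] = total_degree.get(c, 0) + d
    # Q = sum over communities of (share of internal edges) - (share of degree)^2
    return sum(internal.get(c, 0) / m - (total_degree[c] / (2 * m)) ** 2
               for c in total_degree)


# Hypothetical co-consumption network: two triangles joined by a single bridge.
edges = [("vegetables", "fruit"), ("fruit", "legumes"), ("vegetables", "legumes"),
         ("sweets", "snacks"), ("snacks", "soda"), ("sweets", "soda"),
         ("fruit", "sweets")]

two_patterns = {"vegetables": 0, "fruit": 0, "legumes": 0,
                "sweets": 1, "snacks": 1, "soda": 1}
one_pattern = {node: 0 for node in two_patterns}

q_two = modularity(edges, two_patterns)
q_one = modularity(edges, one_pattern)
print(round(q_two, 4), round(q_one, 4))
```

Splitting the network into the two triangles scores higher than lumping all foods together, which is the kind of comparison a community detection algorithm automates across many candidate partitions.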

Table 2: Summary of Key Machine Learning Applications in Dietary Pattern Research

| ML Approach | Specific Task | Protocol / Study | Key Outcome |

|---|---|---|---|

| Supervised | Classification (Stunting) | Predicting Child Stunting [20] | Gradient Boosting achieved >90% accuracy; identified key socioeconomic and nutritional predictors. |

| Supervised | Classification (Food Healthiness) | Classifying Food Ingredients [22] | XGBoost achieved 94% accuracy; "unhealthy ratio" was a key predictive feature. |

| Unsupervised | Clustering (Diet Patterns) | K-means for CKD Risk [21] | Identified a "low intake, high carbohydrate" cluster with 59% higher CKD risk. |

| Unsupervised | Network Analysis (Food Co-consumption) | GGM for CVD Risk [7] | Identified an "ultraprocessed sweets and snacks" network with 32% higher CVD risk. |
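The percentage figures in this table are restatements of hazard ratios: an HR of 1.59 corresponds to a 59% higher hazard, and an HR of 1.32 to a 32% higher hazard. The short sketch below shows that conversion and, using the interval reported for the "ultraprocessed sweets and snacks" network (HR: 1.32; 95% CI: 1.11, 1.57) [7], how a standard error on the log scale can be recovered from a published interval. It assumes a symmetric Wald interval on the log-hazard scale, which is a common convention and not something the source states.

```python
import math


def percent_change(hazard_ratio):
    """Express a hazard ratio as a percent difference in hazard versus the reference."""
    return (hazard_ratio - 1.0) * 100.0


def log_hr_standard_error(lower, upper, z=1.96):
    """Recover the SE of log(HR) from a 95% CI, assuming symmetry on the log scale."""
    return (math.log(upper) - math.log(lower)) / (2 * z)


hr, lower, upper = 1.32, 1.11, 1.57  # values reported in [7]
se = log_hr_standard_error(lower, upper)

# Rebuild the interval from the point estimate and the recovered SE as a consistency check.
recovered_ci = (math.exp(math.log(hr) - 1.96 * se),
                math.exp(math.log(hr) + 1.96 * se))

print(round(percent_change(hr)), round(percent_change(1.59)))
print(round(se, 4), tuple(round(v, 2) for v in recovered_ci))
```

The rebuilt interval matches the published one to two decimals, which is a quick way to check that a reported HR and its interval are mutually consistent.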

Visualization of Experimental Workflows

The following diagrams illustrate the logical workflows for the core machine learning tasks described in the application notes.

Supervised Learning Workflow

Unsupervised Learning Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Data and Computational Tools for ML in Nutrition Research

| Tool / Resource | Type | Function in Research | Example from Context |

|---|---|---|---|

| Demographic and Health Surveys (DHS) | Data Source | Provides nationally representative, standardized data on health, nutrition, and demographics for predictive modeling. | Used to predict child stunting with socio-economic features [20]. |

| Food Frequency Questionnaire (FFQ) Data | Data Source | Captures habitual dietary intake over time; the foundational data for deriving dietary patterns. | Used in KoGES study for K-means clustering analysis [21]. |

| 24-Hour Dietary Recalls | Data Source | Provides detailed, quantitative dietary intake data for a specific period, often used for high-resolution pattern analysis. | Used in NutriNet-Santé cohort for GGM network analysis [7]. |

| Gradient Boosting Machines (e.g., XGBoost) | Algorithm | A powerful supervised learning algorithm that combines multiple weak models to create a highly accurate predictor. | Achieved top performance in stunting prediction [20] and food ingredient classification [22]. |

| K-means Clustering | Algorithm | An unsupervised learning algorithm that partitions data into 'k' distinct clusters based on feature similarity. | Used to identify dietary patterns associated with CKD risk [21]. |

| Gaussian Graphical Models (GGM) | Algorithm | An unsupervised method that models the conditional dependence structure between variables to form a network. | Used to identify networks of co-consumed food groups [7]. |

| Python/R libraries (e.g., scikit-learn, TensorFlow, PyTorch) | Software Library | Open-source programming libraries that provide implementations of a wide array of ML algorithms and data processing tools. | Essential for data cleaning, model training, and evaluation across all protocols [20] [22]. |

A Machine Learning Toolkit for Dietary Pattern Discovery and Health Prediction

In nutritional epidemiology, the analysis of entire dietary patterns, rather than isolated nutrients, provides a more holistic understanding of the relationship between diet and health [1] [23]. Unsupervised learning is a branch of machine learning ideal for this task, as it identifies hidden structures within complex, high-dimensional dietary data without pre-existing labels or hypotheses [1]. These data-driven, or a posteriori, methods allow researchers to discover prevalent dietary habits within populations, which can then be investigated for associations with various health outcomes [24].

This article details the application of three foundational unsupervised learning techniques—k-means clustering, Latent Class Analysis (LCA), and Principal Component Analysis (PCA)—for dietary pattern discovery. Aimed at researchers and scientists, these protocols provide a framework for implementing these methods to characterize robust and interpretable dietary patterns.

Methodological Protocols

K-Means Clustering

K-means clustering is a partitioning algorithm that groups individuals into k distinct, non-overlapping clusters based on the similarity of their dietary intake [25] [21]. The goal is to identify homogenous subgroups of individuals with comparable dietary patterns.

Experimental Protocol

- Objective: To segment a study population into distinct, mutually exclusive subgroups based on their food and nutrient intake profiles.

- Preprocessing:

- Data Collection: Collect dietary intake data, typically using a Food Frequency Questionnaire (FFQ) or 24-hour recalls, resulting in data on dozens to hundreds of food items [21].

- Standardization: Normalize all intake variables (e.g., grams per day) to a common scale (e.g., z-scores) to prevent variables with larger scales from dominating the clustering process.

- Variable Selection: Inputs can include the quantities of individual foods, consolidated food groups, and/or nutrient intakes (e.g., energy, macronutrients, vitamins) [25] [21].

- Execution:

- Determine Optimal Clusters (k): Use an internal validation process. Specify the number of clusters (k) a priori and employ criteria such as the Within-Cluster Sum of Squares (Within SS) to evaluate cluster cohesion. Combine this with clinical interpretability to select the final k [21]. A sensitivity analysis across different k values is recommended.

- Initialize Centroids: Randomly select k data points as initial cluster centers (centroids).

- Assign and Update: Iterate between (a) assigning each individual to the cluster with the nearest centroid, and (b) recalculating the centroids as the mean of all points in the cluster. Continue until cluster assignments stabilize [21].

- Validation: Characterize the resulting clusters by comparing the mean intake of key foods and nutrients across clusters. The clinical meaningfulness of these profiles is paramount [25] [21].

- Application Note: A study on chronic kidney disease (CKD) used k-means on 106 foods and 22 nutrients from 57,213 participants. A three-cluster solution revealed a "low-intake, high-carbohydrate" pattern associated with a 1.59-fold increased risk of new-onset CKD compared to a "vegetable and fish" pattern [25] [21].
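The standardize, initialize, assign, and update steps of this protocol can be sketched in plain Python. The intake values below are invented (two food variables, eight people), and the implementation is a teaching sketch under those assumptions; a library routine such as scikit-learn's `KMeans` would normally be used on real data.

```python
import random


def standardize(rows):
    """Convert each column to z-scores so no variable dominates the distances."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    sds = [(sum((v - m) ** 2 for v in c) / len(c)) ** 0.5 or 1.0
           for c, m in zip(cols, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def k_means(rows, k, seed=0, max_iter=100):
    """Return cluster labels, centroids, and the within-cluster sum of squares."""
    rng = random.Random(seed)
    centroids = [list(r) for r in rng.sample(rows, k)]  # random initial centroids
    labels = None
    for _ in range(max_iter):
        new_labels = [min(range(k), key=lambda c: squared_distance(row, centroids[c]))
                      for row in rows]
        if new_labels == labels:  # assignments have stabilized
            break
        labels = new_labels
        for c in range(k):
            members = [row for row, lab in zip(rows, labels) if lab == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    wss = sum(squared_distance(row, centroids[lab]) for row, lab in zip(rows, labels))
    return labels, centroids, wss


# Hypothetical intakes in g/day: [vegetables, rice].
intakes = [[300, 150], [320, 140], [280, 160], [310, 155],
           [80, 400], [90, 420], [70, 390], [85, 410]]
z = standardize(intakes)

labels, centroids, wss_k2 = k_means(z, k=2)
_, _, wss_k1 = k_means(z, k=1)
print(labels, round(wss_k1, 2), round(wss_k2, 2))
```

Comparing the within-cluster sum of squares across values of k, as done here for k = 1 and k = 2, is the basis of the elbow-style inspection described in the protocol; interpretability still has to be judged separately.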

Research Reagent Solutions

| Item | Function in Protocol |

|---|---|

| Food Frequency Questionnaire (FFQ) | A validated tool to collect habitual intake data on a wide range of food items over a specified period [21]. |

| Dietary Data Database (e.g., FCT) | Provides nutritional composition (energy, nutrients) for consumed foods to calculate nutrient intake values [26]. |

| Statistical Software (e.g., R, Python) | Provides the computational environment and libraries (e.g., scikit-learn in Python) to perform k-means clustering and validation. |

Latent Class Analysis (LCA)

LCA is a model-based probabilistic method that identifies unobserved (latent) categorical variables, or "classes," from observed multivariate data. It assumes that the population is composed of distinct subgroups, each with a characteristic pattern of responses to the observed dietary variables [1] [23].

Experimental Protocol

- Objective: To identify underlying, mutually exclusive latent classes of individuals who share similar probabilistic profiles of dietary intake.

- Preprocessing:

- Data Collection: Use data from FFQs or dietary recalls.

- Categorization: Unlike k-means, LCA often requires categorical input variables. Continuous food intake data may need to be categorized into percentiles (e.g., tertiles, quartiles) or levels (e.g., low, medium, high) [23].

- Execution:

- Model Estimation: Use maximum likelihood estimation to fit a series of models with an increasing number of latent classes (e.g., 1-class through 5-class).

- Model Selection: Determine the optimal number of classes using fit statistics such as the Bayesian Information Criterion (BIC) and Akaike Information Criterion (AIC), where lower values indicate a better balance of fit and parsimony. The interpretability of the classes is also a critical factor [1] [23].

- Interpretation: Examine the item-response probabilities for each food variable within each class. These probabilities indicate the likelihood of a specific level of consumption for a food, given membership in a particular latent class.

- Validation: Assess the clarity of class separation using entropy statistics (values closer to 1.0 indicate better separation) and validate the class solution by examining demographic, socioeconomic, or health outcome differences across the classes.

- Application Note: A scoping review on novel methods for dietary patterns found LCA to be an emerging technique that may capture complex synergies between dietary components that are missed by traditional methods [1] [23].
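The model selection and validation statistics named in this protocol follow standard formulas, sketched below. The log-likelihood values are invented to illustrate the comparison, and the scenario (1,000 participants, eight food groups coded as tertiles) is hypothetical; the relative entropy shown is one widely used normalized form, which may differ in detail from what a given LCA package reports.

```python
import math


def lca_parameter_count(n_classes, categories_per_item):
    """Free parameters: class proportions plus item-response probabilities."""
    return (n_classes - 1) + n_classes * sum(c - 1 for c in categories_per_item)


def aic(log_likelihood, n_params):
    return 2 * n_params - 2 * log_likelihood


def bic(log_likelihood, n_params, n_obs):
    return n_params * math.log(n_obs) - 2 * log_likelihood


def relative_entropy(posteriors):
    """1 means perfectly separated classes, 0 means uninformative class assignment."""
    n, k = len(posteriors), len(posteriors[0])
    uncertainty = -sum(p * math.log(p) for row in posteriors for p in row if p > 0)
    return 1 - uncertainty / (n * math.log(k))


# Hypothetical fitted log-likelihoods for 1- to 4-class models.
n_obs = 1000
items = [3] * 8  # eight food groups, each categorized into tertiles
log_likelihoods = {1: -5200.0, 2: -5000.0, 3: -4950.0, 4: -4940.0}

fit_table = {}
for n_classes, ll in log_likelihoods.items():
    p = lca_parameter_count(n_classes, items)
    fit_table[n_classes] = {"params": p, "aic": aic(ll, p), "bic": bic(ll, p, n_obs)}

best_by_aic = min(fit_table, key=lambda c: fit_table[c]["aic"])
best_by_bic = min(fit_table, key=lambda c: fit_table[c]["bic"])
print(best_by_aic, best_by_bic)
```

In this invented example AIC favors three classes while BIC favors two, because BIC penalizes additional parameters more heavily at this sample size; such disagreements are one reason interpretability is weighed alongside the fit statistics.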

Principal Component Analysis (PCA)

PCA is a dimensionality reduction technique that transforms the original, correlated dietary variables into a new, smaller set of uncorrelated variables called principal components. These components are linear combinations of the original foods that explain the maximum possible variance in the data [27] [24].

Experimental Protocol

- Objective: To reduce the dimensionality of dietary data and derive continuous dietary patterns that explain the maximum variance in food consumption habits.

- Preprocessing:

- Data Collection: Input data is typically continuous (e.g., grams of food groups consumed per day).

- Standardization: Correlation matrix-based PCA requires variables to be standardized (mean-centered and scaled to unit variance) to avoid dominance by high-variance food items [27].

- Execution:

- Component Extraction: The PCA algorithm calculates components sequentially. The first component (PC1) accounts for the largest possible variance, the second (PC2) for the next largest variance while being uncorrelated to the first, and so on.

- Determine Component Retention: Use criteria such as the Kaiser rule (eigenvalue > 1), scree plot analysis, and the total variance explained (often aiming for 70-80% cumulative variance) to decide how many components to retain [27] [24].

- Interpretation via Factor Loadings: Rotate the retained components (using Varimax is common) to simplify their structure and enhance interpretability. Interpret each pattern by examining the factor loadings, which are correlations between the original food groups and the component. Food groups with high absolute loadings (e.g., |loading| > 0.2 or 0.3) define the pattern [27] [24].

- Validation: Calculate a component score for each individual for each retained pattern. These scores can then be used in regression models to investigate associations with health outcomes.

- Application Note: An Irish study used PCA on FFQ data from 957 adults and identified five patterns, including a "vegetable-focused" pattern associated with a 1.90 times higher odds of having a healthy BMI, and a "meat-focused" pattern linked to higher obesity odds [27].
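Component extraction and retention can be illustrated on a small correlation matrix. The sketch below is a simplified stand-in for a library eigen-solver: it uses power iteration with deflation, the 4x4 correlation matrix is invented, and Varimax rotation is not applied, so the loadings shown are unrotated.

```python
import math


def eigenpairs(matrix, n_iter=2000):
    """Eigenvalues and eigenvectors of a symmetric matrix, largest eigenvalue first."""
    n = len(matrix)
    work = [row[:] for row in matrix]
    pairs = []
    for _ in range(n):
        vec = [float(i + 1) for i in range(n)]
        norm = math.sqrt(sum(v * v for v in vec))
        vec = [v / norm for v in vec]
        for _ in range(n_iter):
            nxt = [sum(work[i][j] * vec[j] for j in range(n)) for i in range(n)]
            norm = math.sqrt(sum(v * v for v in nxt))
            if norm == 0:
                break
            vec = [v / norm for v in nxt]
        value = sum(vec[i] * work[i][j] * vec[j] for i in range(n) for j in range(n))
        pairs.append((value, vec))
        # Deflation: remove the component just found before searching for the next one.
        work = [[work[i][j] - value * vec[i] * vec[j] for j in range(n)]
                for i in range(n)]
    return pairs


# Hypothetical correlations among vegetables, fruit, red meat, processed meat.
corr = [[1.0, 0.8, 0.0, 0.0],
        [0.8, 1.0, 0.0, 0.0],
        [0.0, 0.0, 1.0, 0.5],
        [0.0, 0.0, 0.5, 1.0]]

pairs = eigenpairs(corr)
eigenvalues = [value for value, _ in pairs]

# Kaiser rule: keep components whose eigenvalue exceeds 1.
retained = [(value, vec) for value, vec in pairs if value > 1]
explained = sum(value for value, _ in retained) / len(corr)

# Unrotated loadings: eigenvector entries scaled by the square root of the eigenvalue.
loadings = [[v * math.sqrt(value) for v in vec] for value, vec in retained]
print([round(v, 3) for v in eigenvalues], len(retained), round(explained, 3))
```

Here two components pass the Kaiser rule and together explain 82.5% of the variance; the first loads on vegetables and fruit, the second on the two meat groups.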

The following workflow diagram illustrates the application of these three methods in a nutritional epidemiology study.

Comparative Analysis and Advanced Considerations

Method Selection and Comparison

The choice of method depends on the research question, data characteristics, and desired output. The table below summarizes the key features of each approach.

Table 1: Comparative Summary of Unsupervised Learning Methods for Dietary Pattern Discovery

| Feature | K-Means Clustering | Latent Class Analysis (LCA) | Principal Component Analysis (PCA) |

|---|---|---|---|

| Core Objective | Segment individuals into distinct groups | Identify probabilistic subpopulations | Reduce data dimensionality; create continuous scores |

| Nature of Output | Categorical (cluster membership) | Categorical (probabilistic class membership) | Continuous (pattern scores for each individual) |

| Key Output | Cluster centroids (mean intake profiles) | Item-response probabilities | Factor loadings; component scores |

| Data Input | Continuous (often standardized) | Typically categorical/ordinal | Continuous (standardized) |

| Interpretation Focus | Comparing mean intake between clusters | Interpreting probability of food consumption per class | Interpreting food loadings on each component |

| Primary Strength | Creates clear, distinct patient/diet subgroups | Model-based; provides probability of class membership | Captures major gradients of variation in the diet |

| Example Health Finding | "Low-intake, high-carb" cluster had 59% higher CKD risk [25] [21] | Emerging method for capturing dietary complexity [1] [23] | "Prudent" pattern associated with 32% lower stroke risk [24] |

Advanced and Emerging Techniques

The field of dietary pattern analysis is evolving beyond these traditional a posteriori methods. Researchers should be aware of several advanced and emerging techniques:

- Compositional Data Analysis (CoDA): This approach acknowledges that dietary data are inherently compositional—the intake of one food is relative to others. Methods like Compositional PCA (CPCA) and Principal Balances Analysis (PBA) use log-ratio transformations to account for this, potentially offering more robust patterns [26]. A study on hyperuricemia found that CPCA, PBA, and traditional PCA all consistently identified a "traditional southern Chinese" diet high in rice and animal-based foods as a risk factor, demonstrating the utility of CoDA [26].

- Hybrid and Machine Learning Approaches: There is growing interest in applying a broader suite of machine learning algorithms, including random forests, neural networks, and probabilistic graphical models, to capture the complex, synergistic relationships within dietary data [1] [28] [29].

- Methodological Comparisons: Studies that directly compare methods are invaluable. For instance, one study found that both PCA and k-means identified similar "prudent" and "western" patterns, and both showed the "prudent" pattern was associated with a reduced risk of coronary heart disease and stroke [24]. Another comparison suggested that Confirmatory Factor Analysis (CFA) might offer more stable patterns than PCA in smaller sample sizes [30].
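The log-ratio idea behind compositional data analysis can be shown with the centered log-ratio (CLR) transform, one common choice among several. Whether the cited hyperuricemia study used CLR specifically is an assumption here, and the intake values are invented. Zero intakes would need a replacement strategy before taking logs, which this sketch does not cover.

```python
import math


def closure(parts):
    """Rescale intakes so they sum to 1, expressing each food as a share of the total."""
    total = sum(parts)
    return [p / total for p in parts]


def clr(parts):
    """Centered log-ratio: log of each part relative to the geometric mean of all parts."""
    logs = [math.log(p) for p in parts]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]


# Hypothetical daily intakes in grams: rice, vegetables, meat, fruit.
intake_g = [400.0, 250.0, 150.0, 200.0]

clr_raw = clr(intake_g)
clr_shares = clr(closure(intake_g))
print([round(v, 3) for v in clr_raw])
```

The transform returns the same values for raw grams and for shares of the total, which reflects the compositional view that only the relative amounts of foods carry information.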

K-means clustering, LCA, and PCA are powerful, foundational tools for discovering meaningful dietary patterns in complex nutritional data. K-means excels at partitioning populations into discrete subgroups, LCA at identifying probabilistic latent classes, and PCA at defining continuous dietary gradients that explain maximum variance. The choice of method shapes the nature of the patterns discovered and their subsequent interpretation. As the field advances, integrating these methods with compositional data techniques and a wider array of machine learning algorithms will further enhance our ability to decipher the intricate links between diet and health, ultimately informing more effective public health and clinical interventions.

In dietary pattern characterization research, moving from generic recommendations to precise, data-driven predictions is paramount. Supervised learning algorithms, including Random Forests, Gradient Boosting, and Neural Networks, have emerged as powerful tools for predicting health outcomes, classifying dietary patterns, and personalizing nutritional interventions. These models can identify complex, non-linear relationships within high-dimensional data derived from dietary surveys, biomarkers, and lifestyle factors, offering insights that traditional statistical methods may overlook [8]. This document provides application notes and detailed experimental protocols for implementing these algorithms in nutrition research, framed within a broader thesis on machine learning applications in this field.

Algorithm Comparison and Performance

The selection of an appropriate algorithm depends on the specific research question, data structure, and desired outcome. The following table summarizes the key characteristics and empirical performance of Random Forests, Gradient Boosting, and Neural Networks in recent nutritional studies.

Table 1: Comparative Performance of Supervised Learning Algorithms in Nutrition Research

| Algorithm | Reported Accuracy/Metrics | Dataset & Task Description | Key Advantages for Nutrition Research |

|---|---|---|---|

| Random Forest | Lowest MAE: 0.78 ms (testing) for predicting cognitive performance (reaction time) [31]. AUC > 0.96 for classifying food processing degree (NOVA classes) [32]. | 374 adults; features: demographics, anthropometrics, dietary indices, blood pressure [31]. USDA FNDDS database; nutrient profiles as features [32]. | Handles mixed data types well; robust to outliers; provides native feature importance scores [31] [32]. |

| Gradient Boosting (XGBoost, LightGBM) | ~97% Accuracy for obesity susceptibility prediction when ensembled with other models [33]. MAE < 0.5 servings for predicting food group consumption per eating occasion [14]. | Lifestyle and physical characteristic data from UCI repository [33]. 675 young adults; contextual factors to predict food group servings [14]. | High predictive accuracy on structured/tabular data; efficient handling of large datasets [34] [33]. |

| Neural Networks | >90% Accuracy for food image classification and nutrient detection [35]. Forms base for novel probabilistic frameworks (Neural-NGBoost) [36]. | Image datasets for dietary assessment [35]. Various datasets for probabilistic estimation tasks [36]. | Superior with unstructured data (images, text); models highly complex, non-linear interactions [36] [35]. |

Experimental Protocols

Protocol: Predicting Health Outcomes from Dietary and Lifestyle Data

This protocol outlines the steps for using ensemble tree methods to predict a continuous or categorical health outcome, such as cognitive performance or obesity risk [31] [33].

1. Research Question Formulation: Define the target variable (e.g., reaction time on a cognitive test, obesity status) and the scope of predictors (e.g., dietary indices, BMI, age, blood pressure).

2. Data Preprocessing (Preprocessing Stage - PS):

- Handling Missing Data: Impute null values using appropriate methods (e.g., median for continuous, mode for categorical) [33].

- Feature Encoding: Convert categorical variables (e.g., gender, transportation mode) into numerical format using one-hot or label encoding [33].

- Outlier Treatment: Identify and remove outliers using statistical methods (e.g., IQR rule) to reduce noise [33].

- Data Normalization: Scale numerical features (e.g., age, height) to a standard range (e.g., 0-1) to ensure stable model training [33].

3. Feature Selection (Feature Stage - FS):

- Objective: Reduce dimensionality and mitigate overfitting by selecting the most informative features.

- Methodology: Employ advanced feature selection algorithms. For instance, the Entropy-controlled Quantum Bat Algorithm (EC-QBA) has been shown to effectively identify key predictors like physical activity frequency and consumption of vegetables for obesity risk prediction [33].

- Validation: Compare the performance of models trained with and without the feature selection step to validate its impact.

4. Model Training and Validation (Obesity Risk Prediction - ORP):

- Algorithm Selection: Choose one or multiple algorithms (e.g., Random Forest, LightGBM, XGBoost).

- Data Splitting: Split the dataset into training (e.g., 80%) and testing (e.g., 20%) sets [31].

- Hyperparameter Tuning: Use cross-validation (e.g., 5-fold) on the training set to optimize hyperparameters.

- Random Forest: n_estimators (number of trees), max_depth [37].

- Gradient Boosting: n_estimators, learning_rate, max_depth [37].

- Model Training: Train the model on the full training set with the optimal hyperparameters.

- Performance Evaluation: Evaluate the final model on the held-out test set using metrics such as Mean Absolute Error (MAE) for regression or Accuracy/Precision/Sensitivity for classification [31] [33].
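The preprocessing, tuning, and evaluation steps above can be sketched with scikit-learn. This is a minimal illustration under stated assumptions, not the pipeline used in the cited studies: the data are synthetic, the column meanings and grid values are hypothetical, Random Forest stands in for any of the listed algorithms, and outlier removal and feature selection are omitted (EC-QBA is not part of scikit-learn).

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import RandomForestRegressor
from sklearn.impute import SimpleImputer
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import MinMaxScaler, OneHotEncoder

# Synthetic stand-in data (assumption): three numeric predictors
# (e.g., a dietary index, BMI, age) and one coded categorical predictor.
rng = np.random.default_rng(0)
n = 300
X_num = rng.normal(size=(n, 3))
X_cat = rng.integers(0, 3, size=(n, 1)).astype(float)
y = 2.0 * X_num[:, 0] - X_num[:, 1] + 0.5 * X_cat[:, 0] + rng.normal(scale=0.3, size=n)
X_num[rng.random(X_num.shape) < 0.05] = np.nan  # simulate missing values
X = np.hstack([X_num, X_cat])

# Step 2: median imputation and 0-1 scaling for numeric columns,
# one-hot encoding for the categorical column.
preprocess = ColumnTransformer([
    ("num", Pipeline([("impute", SimpleImputer(strategy="median")),
                      ("scale", MinMaxScaler())]), [0, 1, 2]),
    ("cat", OneHotEncoder(handle_unknown="ignore"), [3]),
])
pipeline = Pipeline([("prep", preprocess),
                     ("model", RandomForestRegressor(random_state=0))])

# Step 4: 80/20 split, then 5-fold cross-validation on the training set only.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0)
search = GridSearchCV(
    pipeline,
    param_grid={"model__n_estimators": [50, 100],
                "model__max_depth": [3, None]},
    cv=5,
    scoring="neg_mean_absolute_error",
)
search.fit(X_train, y_train)  # refits the best setting on the full training set

# Final evaluation on the held-out test set.
test_mae = mean_absolute_error(y_test, search.predict(X_test))
print(search.best_params_, round(test_mae, 3))
```

Placing the imputer and scaler inside the pipeline means they are re-fitted within each cross-validation fold, so no information from validation or test rows leaks into preprocessing.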

Protocol: Predicting Food Consumption Using Contextual Factors

This protocol details the use of machine learning to predict food group consumption at the level of individual eating occasions (EOs) [14].

1. Data Collection via Ecological Momentary Assessment (EMA):

- Use smartphone apps to collect real-time data on food intake and contextual factors over multiple, non-consecutive days [14].

- EO-level Factors: Record location, social context, activity, time of day, and food source for each EO [14].

- Person-level Factors: Collect via survey: demographics, cooking confidence, self-efficacy, food availability at home [14].

2. Outcome Variable Engineering:

- Classify all consumed foods into specific groups (e.g., vegetables, fruits, discretionary foods) based on relevant dietary guidelines (e.g., Australian Dietary Guidelines) [14].

- Calculate the number of servings for each food group at every eating occasion.

3. Model Building with Gradient Boosted Decision Trees:

- Algorithm: Employ a Gradient Boosted Decision Tree algorithm (e.g., in scikit-learn).

- Hurdle Model Approach: For food groups often not consumed (e.g., vegetables), a two-step model can be used: first a classifier to predict consumption, then a regressor to predict serving size if consumed [14].

- Hyperparameter Tuning: Focus on max_depth, learning_rate, and n_estimators, using the lowest Mean Absolute Error (MAE) as the selection criterion [14] [37].

4. Model Interpretation:

- SHAP (SHapley Additive exPlanations) Values: Calculate mean absolute SHAP values to interpret the impact of each contextual factor (e.g., cooking confidence, self-efficacy) on the predictions for different food groups and overall diet quality [14].
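The two-step hurdle approach from step 3 can be sketched as follows. This is an illustrative example on synthetic data, not the implementation from the cited study: the four features stand in for encoded contextual factors, the data-generating rule is invented, and default hyperparameters are used instead of tuned ones.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier, GradientBoostingRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

# Synthetic eating occasions (assumption): many have zero servings of the food group.
rng = np.random.default_rng(1)
n = 600
X = rng.normal(size=(n, 4))  # hypothetical encoded contextual factors
consumed = (X[:, 0] + rng.normal(scale=0.5, size=n)) > 0.3
servings = np.where(
    consumed, np.exp(0.3 * X[:, 1] + rng.normal(scale=0.2, size=n)), 0.0)

X_tr, X_te, y_tr, y_te = train_test_split(
    X, servings, test_size=0.2, random_state=1)

# Step 1: classifier for whether the food group is consumed at all.
clf = GradientBoostingClassifier(random_state=1).fit(X_tr, y_tr > 0)

# Step 2: regressor for serving size, trained only on occasions with consumption.
positive = y_tr > 0
reg = GradientBoostingRegressor(random_state=1).fit(X_tr[positive], y_tr[positive])

# Combine: predict zero unless the classifier predicts consumption.
will_consume = clf.predict(X_te)
pred = np.where(will_consume, reg.predict(X_te), 0.0)
hurdle_mae = mean_absolute_error(y_te, pred)
print(round(hurdle_mae, 3))
```

Splitting the task this way keeps the many zero-serving occasions from pulling the serving-size regressor toward zero.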

Workflow Visualization

Diagram Title: ML Workflow for Dietary Research

Diagram Title: Gradient Boosting Sequential Learning
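The sequential learning idea named in the second diagram title can be illustrated with a toy implementation: each new weak learner is fitted to the residuals left by the ensemble so far, and its contribution is shrunk by the learning rate. The sketch below uses one-split regression stumps on a single feature with squared-error loss. The function names and the time-of-day data are hypothetical, and production libraries such as XGBoost or LightGBM are far more elaborate.

```python
import math

def fit_stump(x, residuals):
    """Find the single threshold split that minimizes squared error."""
    best = None
    for threshold in sorted(set(x))[:-1]:
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda xi: left_mean if xi <= threshold else right_mean

def boost(x, y, n_rounds=100, learning_rate=0.1):
    """Gradient boosting for squared error: fit each stump to current residuals."""
    base = sum(y) / len(y)  # initial prediction is the mean
    pred = [base] * len(y)
    stumps, mse_history = [], []
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        stump = fit_stump(x, residuals)
        stumps.append(stump)
        pred = [pi + learning_rate * stump(xi) for pi, xi in zip(pred, x)]
        mse_history.append(sum((yi - pi) ** 2 for yi, pi in zip(y, pred)) / len(y))

    def predict(xi):
        return base + learning_rate * sum(s(xi) for s in stumps)

    return predict, mse_history

# Hypothetical data: servings as a smooth function of time of day (hours).
hours = [h / 2 for h in range(12, 44)]
servings = [1.0 + math.sin(h / 3.0) for h in hours]
predict, mse_history = boost(hours, servings)
print(round(mse_history[0], 4), round(mse_history[-1], 4))
```

With squared-error loss and a learning rate between 0 and 1, the training error cannot increase from one round to the next, which is why the history falls steadily.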

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Dietary ML Research

| Tool / Resource | Type | Function in Research | Example Use Case |

|---|---|---|---|

| Healthy Eating Index (HEI) | Dietary Index / Metric | Quantifies adherence to dietary recommendations; used as a feature or target variable [31]. | Predicting cognitive performance; characterizing overall diet quality in a population [31]. |

| Dietary Guideline Index (DGI) | Dietary Index / Metric | Assesses adherence to national dietary guidelines on a 0-120 scale; a key outcome variable [14]. | Evaluating the overall daily diet quality of individuals based on their food intake records [14]. |

| FoodNow / Smartphone Diary App | Data Collection Tool | Enables Ecological Momentary Assessment (EMA) for real-time recording of food intake and contextual factors [14]. | Collecting high-frequency, low-recall-bias data on eating occasions and their contexts for predictive modeling [14]. |

| SHAP (SHapley Additive exPlanations) | Interpretation Library | Explains the output of any ML model by quantifying the contribution of each feature to a single prediction [14]. | Identifying which contextual factors (e.g., location, self-efficacy) most influence the prediction of fruit or vegetable consumption [14]. |

| XGBoost or LightGBM Libraries | Software Library | Provides highly optimized implementations of gradient boosting algorithms for efficient model training [37] [33]. | Building a high-accuracy model for predicting obesity susceptibility or food group consumption from lifestyle data [14] [33]. |

| NOVA Food Classification System | Food Categorization Framework | Manually classifies foods by degree of processing (NOVA 1-4); used as ground truth for model training [32]. | Training a Random Forest model (FoodProX) to predict the degree of food processing from nutrient profiles alone [32]. |

Precision nutrition represents a paradigm shift from generalized dietary advice to individualized recommendations that account for a person's unique biology, behavior, and environment [35]. Within this field, machine learning (ML) has emerged as a transformative tool for predicting individual food choices and overall diet quality by modeling complex, multi-dimensional data [38] [39]. This application note details how ML algorithms can characterize dietary patterns and generate personalized nutritional insights, with direct relevance for researchers, clinical scientists, and professionals in preventive medicine and drug development seeking to understand dietary influences on health outcomes.

The integration of artificial intelligence (AI) in nutrition science enables the analysis of complex datasets that capture the interplay between genetic profiles, metabolic markers, lifestyle behaviors, and environmental contexts [35]. By moving beyond one-size-fits-all dietary guidelines, ML-driven approaches can identify subtle patterns in food consumption behavior, predict responses to dietary interventions, and ultimately support the development of more effective, personalized nutrition strategies for health promotion and disease prevention [8] [40].

Key Research Findings and Quantitative Data

Recent studies have demonstrated the robust predictive capabilities of machine learning models across various nutritional outcomes, from food group consumption at individual eating occasions to overall daily diet quality assessment.

Table 1: Predictive Performance of ML Models for Food Group Consumption

| Food Group | ML Model | Performance (MAE in servings) | Key Predictive Factors |

|---|---|---|---|

| Vegetables | Gradient Boost Decision Tree | 0.30 | Location, time of day, social context [38] |

| Fruits | Gradient Boost Decision Tree | 0.75 | Food availability, time scarcity [38] |

| Dairy | Gradient Boost Decision Tree | 0.28 | Activity during consumption, self-efficacy [38] |

| Grains | Gradient Boost Decision Tree | 0.55 | Cooking confidence, perceived time scarcity [38] |

| Meat | Gradient Boost Decision Tree | 0.40 | Social context, location [38] |

| Discretionary Foods | Gradient Boost Decision Tree | 0.68 | Self-efficacy, activity during consumption [38] |

Table 2: Predictive Performance for Overall Diet Quality

| Outcome Metric | ML Model | Performance | Top Predictors |

|---|---|---|---|

| Dietary Guideline Index (0-120) | Gradient Boost Decision Tree | MAE: 11.86 points [38] | Cooking confidence, self-efficacy, food availability [38] |

| Diet Quality Index-International | Deep Neural Network | R²: 0.928, MAE: 0.048 [41] | BMI, sleep quality, work-family conflict [41] |

| Healthy Eating Index | Variational Autoencoder | High accuracy in personalized weekly meal plans [42] | Anthropometrics, medical conditions, energy requirements [42] |

Studies using gradient boosted decision tree and random forest algorithms have predicted food consumption across multiple food groups with mean absolute error (MAE) values between 0.28 and 0.75 servings (Table 1) [38]. For overall diet quality, one Deep Neural Network (DNN) application reported an R² of 0.928 and an MAE of 0.048 on the Diet Quality Index-International [41].
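For readers less familiar with the two metrics reported above, the following sketch computes them from their standard definitions: MAE is the average absolute difference between observed and predicted values, and R² is one minus the ratio of residual to total sum of squares. The observed and predicted servings are made-up numbers for illustration only.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between observed and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Hypothetical servings at four eating occasions.
observed = [1.0, 0.0, 2.0, 1.5]
predicted = [0.5, 0.0, 2.5, 1.0]

mae = mean_absolute_error(observed, predicted)  # 0.375 servings
r2 = r_squared(observed, predicted)             # about 0.657
print(mae, round(r2, 3))
```

MAE is expressed in the units of the outcome (servings or index points), so values are only comparable across outcomes measured on the same scale.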

Experimental Protocols

Protocol 1: Predictive Modeling of Food Choices at Eating Occasions

This protocol outlines the methodology for using machine learning to predict food consumption based on contextual factors at individual eating occasions, adapted from the MEALS study [38].

Materials and Methods

- Participants: Recruit adults aged 18-30 years (target n=675) without conditions affecting dietary intake (pregnancy, lactation, language barriers)

- Dietary Assessment: Implement 3-4 non-consecutive days of data collection using a smartphone food diary app (e.g., FoodNow) with image capture and text descriptions

- Contextual Data Collection:

- Record eating occasion-level factors: time, location, social context, activity, food source via ecological momentary assessment

- Collect person-level factors: demographics, socioeconomic status, cooking confidence, self-efficacy via online survey

- Food Coding and Serving Calculation:

- Code foods to national nutrient database (e.g., AUSNUT 2011-2013)

- Classify as discretionary or non-discretionary using national guidelines

- Calculate servings according to national dietary guideline food groups

- Machine Learning Pipeline:

- Preprocess data: log-transform serving sizes, handle missing data

- Train multiple algorithms: gradient boost decision tree, random forest

- Optimize hyperparameters using k-fold cross-validation

- Select best model based on lowest mean absolute error (MAE)

- Interpret results using SHapley Additive exPlanations (SHAP) values
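The model-comparison part of this pipeline can be sketched with scikit-learn as below. The data are synthetic and the feature matrix is hypothetical. Using `log1p`/`expm1` for the log-transform is an assumption made here so that zero servings are handled, and the source does not state which transform the study applied. Hyperparameter tuning is left out for brevity.

```python
import numpy as np
from sklearn.compose import TransformedTargetRegressor
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import KFold, cross_val_score

# Synthetic stand-in data (assumption): five encoded contextual factors
# and non-negative, right-skewed serving sizes.
rng = np.random.default_rng(2)
X = rng.normal(size=(300, 5))
servings = np.expm1(
    np.abs(0.6 * X[:, 0] + 0.3 * X[:, 1] + rng.normal(scale=0.2, size=300)))

candidates = {
    "gradient_boosting": GradientBoostingRegressor(random_state=2),
    "random_forest": RandomForestRegressor(n_estimators=100, random_state=2),
}

cv = KFold(n_splits=5, shuffle=True, random_state=2)
cv_mae = {}
for name, base_model in candidates.items():
    # Fit on log1p(servings); predictions are mapped back with expm1,
    # so the MAE below is reported in servings.
    model = TransformedTargetRegressor(
        regressor=base_model, func=np.log1p, inverse_func=np.expm1)
    scores = cross_val_score(
        model, X, servings, cv=cv, scoring="neg_mean_absolute_error")
    cv_mae[name] = -scores.mean()

# Select the algorithm with the lowest cross-validated MAE.
best_name = min(cv_mae, key=cv_mae.get)
print(cv_mae, best_name)
```

Wrapping the regressor in `TransformedTargetRegressor` keeps the transform and its inverse together, which avoids accidentally reporting errors on the log scale.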

Analysis and Interpretation

Calculate mean absolute SHAP values to determine variable importance for each food group. Validate model performance on held-out test data and report MAE for each food group.
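To show what a mean absolute SHAP value summarizes, the sketch below uses a linear model as a stand-in, because its SHAP values have a simple closed form when features are treated as independent: the contribution of feature j for one observation is the coefficient times the feature's deviation from its mean. The feature names, coefficients, and data are hypothetical. For tree ensembles such as those in this protocol, the values would normally come from a dedicated library such as `shap` rather than from this formula.

```python
from statistics import mean

# Hypothetical person-level predictors and fitted linear coefficients.
feature_names = ["cooking_confidence", "self_efficacy", "time_scarcity"]
weights = [0.8, 0.5, -0.2]
X = [
    [3.0, 2.0, 1.0],
    [1.0, 4.0, 3.0],
    [2.0, 3.0, 5.0],
    [4.0, 1.0, 3.0],
]

# Closed-form SHAP values for a linear model: w_j * (x_ij - mean_j).
col_means = [mean(col) for col in zip(*X)]
shap_values = [
    [w * (x - m) for w, x, m in zip(weights, row, col_means)]
    for row in X
]

# Global importance: mean absolute SHAP value per feature.
mean_abs_shap = {
    name: mean(abs(row[j]) for row in shap_values)
    for j, name in enumerate(feature_names)
}
ranking = sorted(mean_abs_shap, key=mean_abs_shap.get, reverse=True)

# Local accuracy: base value plus the SHAP values recovers each prediction.
base_value = sum(w * m for w, m in zip(weights, col_means))
predictions = [sum(w * x for w, x in zip(weights, row)) for row in X]
print(ranking)
```

Because the absolute value is taken before averaging, a feature that pushes predictions up for some people and down for others still ranks as important.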

Protocol 2: Deep Neural Network for Diet Quality Prediction

This protocol details the use of deep neural networks for predicting overall diet quality based on multi-dimensional predictors, adapted from research on healthcare professionals [41].

Materials and Methods

- Participants: Recruit target population (e.g., healthcare workers, n=5,013) from multiple sites to ensure diversity

- Data Collection Instruments:

- Food Frequency Questionnaire (FFQ) to assess dietary intake over past 12 months

- Diet Quality Index-International (DQI-I) to calculate variety, adequacy, moderation, and overall balance scores

- Pittsburgh Sleep Quality Index (PSQI) to assess sleep quality

- Emotional Eating Scale-Revised (EES-R) to measure emotional eating patterns

- Demographic and socioeconomic questionnaires

- Work-related conflict and burnout assessments

- Model Development:

- Architecture: Implement a 21-30-28-1 network (21 input features, hidden layers of 30 and 28 units, 1 output)

- Input features: Standardize all predictor variables (BMI, sleep quality, work-family conflict, etc.)