Multi-Sensor Fusion for Eating Activity Detection: A Researcher's Guide to Technologies, Validation, and Clinical Translation


Abstract

This article provides a comprehensive analysis of wearable multi-sensor systems for the objective detection and monitoring of eating activities. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles, core sensing modalities, and the multi-sensor fusion approaches that enhance detection accuracy. The scope extends to methodological implementations, the significant challenges in optimizing systems for real-world and diverse populations, and the rigorous validation frameworks required for clinical adoption. By synthesizing recent advancements and comparative evaluations, this review serves as a critical resource for developing reliable digital biomarkers of dietary behavior for use in nutritional science, clinical trials, and chronic disease management.

The Foundation of Dietary Monitoring: Core Principles and Sensing Modalities

Accurate dietary assessment is a foundational element in understanding the relationship between nutrition and human health, impacting research on conditions from obesity to cardiovascular disease. Traditional methods, which rely on self-reporting through food diaries, 24-hour recalls, and food frequency questionnaires (FFQs), are plagued by systematic errors and biases that distort diet-disease associations and impede scientific progress. The emergence of multi-sensor systems for eating activity detection represents a paradigm shift, offering a path toward passive, objective, and accurate dietary monitoring. This whitepaper details the critical limitations of self-reported data, synthesizes evidence of its inaccuracies, and presents a technical overview of next-generation sensor-based methodologies that are poised to transform nutritional science, clinical trials, and public health monitoring.

The Pervasive Problem of Self-Reporting in Dietary Data

The most common dietary assessment instruments—food records, 24-hour recalls, and FFQs—suffer from well-documented but often underestimated flaws. These are not random errors but systematic biases that fundamentally compromise data integrity [1].

- Misreporting and Energy Underreporting: A consistent body of evidence demonstrates strong, systematic underreporting of energy intake (EIn) in studies of both adults and children [1]. When compared with energy expenditure measured by the doubly labeled water (DLW) method, a criterion recovery biomarker, self-reported EIn is frequently and significantly lower. In one foundational study, food diaries underestimated energy intake by 34% in obese women [1]. Underreporting is not uniform across food types; between-meal snacks and socially undesirable foods are more likely to be omitted or underreported [2].

- The Influence of Participant Characteristics: The degree of underreporting is not random. It has been consistently found to increase with body mass index (BMI) and is linked to an individual's concern about their body weight, rather than weight status alone [1]. This systematic bias severely distorts investigations into the role of energy intake in obesity.

- Method-Specific Limitations: Each traditional method carries inherent weaknesses as shown in Table 1. Food records are burdensome and cause reactivity, where participants change their eating habits because they are being monitored [2]. FFQs only capture average food intake and cannot measure important temporal aspects of eating like meal timing or food combinations [2]. Furthermore, analyses of food diaries reveal that as much as 80% of food intake variation is within-person, a dimension FFQs are poorly equipped to capture [2].

- Attenuation of Diet-Disease Relationships: The between-individual variability in the underreporting of energy and nutrients leads to a systematic attenuation of diet-disease relationships in epidemiological studies, making it difficult to detect true associations [1].
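The attenuation effect can be illustrated with the classical measurement-error model. This is a simplifying assumption introduced here, not a result from the cited sources, and it covers only random error in the reported intake; the systematic, BMI-related bias described above can distort estimates in less predictable ways. The symbols $\beta$, $\lambda$, $\sigma_T^2$, and $\sigma_\varepsilon^2$ are likewise introduced for illustration.

```latex
% Reported intake W equals true intake T plus independent error \varepsilon
W = T + \varepsilon, \qquad
\hat{\beta}_{\text{observed}} \approx \lambda\,\beta_{\text{true}}, \qquad
\lambda = \frac{\sigma_T^{2}}{\sigma_T^{2} + \sigma_\varepsilon^{2}}

% Worked example: if the error variance equals the true between-person variance,
% \lambda = \frac{1}{1 + 1} = 0.5, so the estimated diet-disease slope is halved.
```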

Table 1: Limitations of Traditional Dietary Assessment Methods

| Method | Primary Limitation | Quantitative Evidence of Error | Impact on Research |
|---|---|---|---|
| Food Diary/Record | High participant burden and reactivity; expensive to code [3] | Underestimates DLW-measured energy by up to 34% [1] | Distorts short-term intake data; not feasible for long-term studies |
| 24-Hour Recall | Relies on accurate memory; within-person variation high [3] | Similar underreporting issues as diaries; multiple recalls needed [1] | Expensive for large studies; difficult to capture habitual intake |
| Food Frequency Questionnaire (FFQ) | Only captures average intake; poor for within-person variation [2] | Systematic underreporting, particularly for specific nutrients [1] | Attenuates diet-disease relationships; misses eating architecture |

The Paradigm Shift to Objective, Multi-Sensor Assessment

The limitations of self-report have catalyzed the development of objective methods that leverage digital and sensing technologies. The goal is to transition from active, burdensome reporting to passive, continuous data capture, enabling a more detailed and accurate understanding of eating behavior [2]. These approaches can be broadly categorized into image-based and sensor-based methods, with the most robust systems integrating both.

Image-Based Assessment Technologies

Image-based methods aim to objectively identify "what" and "how much" people eat, addressing the portion size estimation problem inherent in self-report.

- Active Image Capture: Smartphone-based applications like the Remote Food Photography Method (RFPM) and the mobile Food Record (mFR) require users to take photos before and after meals. These systems have been validated against DLW, with the RFPM showing a mean energy intake underestimate of 3.7% (152 kcal/day), a significant improvement over traditional methods [2]. However, they still require active user participation, which can be affected by memory and social desirability biases [2].

- Passive Image Capture with Wearable Cameras: Devices like the e-Button or "spy badge" cameras are worn on the chest and automatically capture images at regular intervals (e.g., every 15-30 seconds), making data capture largely passive [2] [4]. The primary challenge is the volume of data; a camera taking an image every 10 seconds over a 12-hour day generates about 4,300 images per day, or roughly 30,000 over a week of wear, only 5-10% of which contain eating events [2]. Artificial intelligence, specifically convolutional neural networks (CNNs), is used to automatically identify food images, though accuracy drops for snacks and drinks compared to full meals [2]. A significant hurdle for widespread use is privacy concerns, as the user is not in full control of image capture [4].

- Automated Food Recognition: Recent advances in deep learning have enabled the development of systems that can automatically identify and classify food items from images. For instance, a randomized controlled trial of an Automatic Image-based Reporting (AIR) app found it correctly identified 86% of dishes, significantly outperforming a voice-input control app and completing food reporting in less time [5]. However, performance can be hampered by complex meals with mixed dishes, occlusions, and poor lighting conditions [6].
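The data-volume problem for passive wearable cameras is easy to check with back-of-envelope arithmetic. The sketch below assumes a fixed 10-second capture interval, 12 hours of wear per day, and seven days of wear; the function names are illustrative only.

```python
def frames_captured(interval_s: float, wear_hours: float) -> int:
    """Number of images a fixed-interval camera records in one wear period."""
    return int(wear_hours * 3600 // interval_s)


def eating_frames(total_frames: int, eating_fraction: float) -> int:
    """Frames expected to show eating, given the fraction that contain food."""
    return round(total_frames * eating_fraction)


per_day = frames_captured(interval_s=10, wear_hours=12)
per_week = per_day * 7  # assumed seven days of wear

print(per_day, per_week)
# Range of eating-related frames if 5-10% of images contain eating events
print(eating_frames(per_week, 0.05), eating_frames(per_week, 0.10))
```

Under these assumptions, a week of wear yields tens of thousands of frames, of which only a few thousand are relevant, which is why automated image filtering is needed before any human review.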

Sensor-Based Intake Detection

Sensor-based methods focus on detecting the "when" and "how" of eating by measuring physiological proxies and behavioral patterns associated with food consumption. These methods are inherently passive and can be integrated into wearable form factors.

Table 2: Sensor Modalities for Objective Eating Detection

| Sensor Modality | Measured Proxy | Example Technology | Performance Notes |
|---|---|---|---|
| Accelerometer/Gyroscope | Jaw movement (chewing), head movement, hand-to-mouth gestures [4] | Automatic Ingestion Monitor (AIM-2) [4] | Convenient (no skin contact); can generate false positives from gum chewing [4] |
| Acoustic Sensor (Microphone) | Chewing and swallowing sounds [4] | Various wearable audio systems | Can be highly accurate for solid food; privacy concerns with audio recording |
| Strain Sensor | Jaw movement, throat movement [4] | Piezoelectric or flex sensors | Requires direct skin contact; can be inconvenient for users |
| Physiological Sensors (CGM, HR, EDA) | Metabolic response to food intake (glucose, heart rate variability) [7] | Dexcom G6 CGM, Empatica E4 wristband [7] | Provides indirect correlation with macronutrient intake; used for meal macronutrient estimation |

Integrated Multi-Sensor Systems: The Path to Maximum Accuracy

Relying on a single sensor modality often results in false positives. The integration of multiple data streams (sensor and image) is the most promising approach to achieving high precision and sensitivity in free-living conditions [4].

A 2024 study on the Automatic Ingestion Monitor v2 (AIM-2) exemplifies this integrated approach. The AIM-2, worn on eyeglasses, includes a camera and a 3D accelerometer. The study developed three detection methods:

- Image-Based: A deep learning model (e.g., a modified AlexNet like NutriNet) to recognize solid foods and beverages in images [4].

- Sensor-Based: A classifier to detect chewing from the accelerometer signal [4].

- Integrated: A hierarchical classifier to combine confidence scores from both the image and accelerometer classifiers [4].
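To make the idea of combining confidence scores concrete, here is a minimal score-level fusion sketch. It is a simple weighted-average stand-in, not the hierarchical classifier used in the AIM-2 study; the `EpochScores` type, the 0.5 weight, and the 0.5 threshold are all hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class EpochScores:
    image_conf: float  # food-in-image confidence in [0, 1] (hypothetical input)
    chew_conf: float   # accelerometer chewing confidence in [0, 1] (hypothetical input)


def fuse(epoch: EpochScores, weight_image: float = 0.5, threshold: float = 0.5) -> bool:
    """Flag an epoch as eating when the weighted mean confidence reaches the threshold."""
    combined = weight_image * epoch.image_conf + (1.0 - weight_image) * epoch.chew_conf
    return combined >= threshold


gum_chewing = EpochScores(image_conf=0.05, chew_conf=0.90)    # jaw motion, no food in view
food_on_table = EpochScores(image_conf=0.90, chew_conf=0.05)  # food in view, no chewing
eating = EpochScores(image_conf=0.80, chew_conf=0.70)         # both modalities agree

print(fuse(gum_chewing), fuse(food_on_table), fuse(eating))
```

The toy cases show why requiring agreement between modalities can suppress single-sensor false positives such as gum chewing or food that is merely visible.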

The results demonstrated the superiority of the integrated system. In free-living environments, the fusion of image and sensor data achieved a sensitivity of 94.59%, a precision of 70.47%, and an F1-score of 80.77%. This was a significant improvement, with 8% higher sensitivity than either method alone, successfully reducing false positives [4].
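The reported F1-score follows directly from the reported sensitivity and precision, since F1 is their harmonic mean. The short check below uses only the figures quoted above; the function name is illustrative.

```python
def f1_score(precision: float, sensitivity: float) -> float:
    """Harmonic mean of precision and sensitivity (recall)."""
    return 2 * precision * sensitivity / (precision + sensitivity)


f1 = f1_score(precision=0.7047, sensitivity=0.9459)
print(f"{f1:.4f}")
```

The result rounds to 0.8077, matching the 80.77% in the text. A precision near 70% also means that roughly three in ten detected episodes were still false positives, even after fusion.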

[Figure: Integrated Multi-Sensor Detection Workflow]

Experimental Protocols for Validating Objective Methods

The validation of novel dietary assessment tools requires rigorous protocols that compare the new method against ground-truth measures, often in controlled, pseudo-free-living, and fully free-living settings.

Protocol for Integrated Sensor-Image Validation (AIM-2 Study)

This protocol outlines the methodology used to validate the integrated AIM-2 food intake detection system described above [4].

- Participants & Setup: 30 participants (20M/10F, age 23.5±4.9 years) wore the AIM-2 device, attached to eyeglasses, for two days: one pseudo-free-living day and one free-living day.

- Ground Truth Annotation:

  - Pseudo-Free-Living: Participants used a foot pedal connected to a data logger, pressing and holding it for the duration of each bite (from food entering the mouth until swallowing). This provided precise ground truth for model training.

  - Free-Living: Continuous images captured by the device (one image every 15 seconds) were manually reviewed by annotators. The start and end times of eating episodes, as well as food/beverage objects in images, were annotated using a bounding box tool (e.g., MATLAB Image Labeler). Annotators avoided labeling food preparation scenes or foods clearly belonging to others during social eating.

- Data Analysis: The image-based and sensor-based classifiers were developed and validated using a leave-one-subject-out cross-validation approach. The hierarchical fusion model was then tested on the free-living data, with performance metrics (sensitivity, precision, F1-score) calculated against the manually annotated ground truth.
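Leave-one-subject-out cross-validation holds out all samples from one participant per fold, so a model is never tested on a person it was trained on. The sketch below shows only the splitting logic; the subject IDs and sample indices are toy values, and the function name is an assumption for illustration.

```python
from typing import Dict, Iterator, List, Tuple


def leave_one_subject_out(
    samples_by_subject: Dict[str, List[int]],
) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_subject, train_indices, test_indices) once per subject."""
    for held_out in samples_by_subject:
        test = list(samples_by_subject[held_out])
        train = [
            idx
            for subject, indices in samples_by_subject.items()
            if subject != held_out
            for idx in indices
        ]
        yield held_out, train, test


# Toy data: three subjects with sample indices into a shared feature matrix
toy = {"S01": [0, 1], "S02": [2, 3, 4], "S03": [5]}
folds = list(leave_one_subject_out(toy))
for held_out, train, test in folds:
    print(held_out, train, test)
```

With 30 participants, this scheme would produce 30 folds, each training on 29 people and testing on the remaining one.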

Protocol for Multimodal Physiological Sensing (MealMeter Study)

This protocol details a study designed to estimate macronutrient intake from physiological signals, representing a different approach to objective assessment [7].

- Participants & Setup: 12 healthy adults were equipped with two wearable devices: a Dexcom G6 Continuous Glucose Monitor (CGM) and an Empatica E4 wristband on the dominant arm. The CGM measured interstitial glucose at 5-minute intervals, while the E4 captured acceleration, electrodermal activity, heart rate, blood volume pulse, and skin temperature.

- Study Design: Participants attended three non-consecutive 10-hour laboratory sessions. They arrived after a 12-hour overnight fast and consumed customized meals (hyper-, eu-, or hypocaloric) tailored to their resting energy requirements. Meals were provided at 4-hour intervals, and participants remained sedentary to minimize confounding effects on glycemic response.

- Data Processing & Modeling:

  - Feature Extraction: A 90-minute window of physiological signals following meal intake was used. A comprehensive set of time-domain (min, max, mean, standard deviation, entropy, etc.) and frequency-domain (power spectral density, spectral entropy, etc.) features was extracted.

  - Model Development: Features were standardized, and Principal Component Analysis (PCA) was applied for dimensionality reduction. A linear regression model was then trained to map the PCA-transformed features to the grams of carbohydrates, protein, and fat in the consumed meals. The system, named MealMeter, achieved a mean absolute error for carbohydrates as low as 12 grams [7].
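The first two processing steps, windowed feature extraction and standardization, can be sketched with the standard library alone. This is a partial illustration under stated assumptions: the glucose trace is synthetic, the function names are hypothetical, only four time-domain features are computed, and the PCA and regression stages are omitted.

```python
import statistics
from typing import Dict, List, Sequence


def window_after_meal(samples: Sequence[float], meal_index: int,
                      window_min: int = 90, sample_period_min: int = 5) -> List[float]:
    """Return the samples covering `window_min` minutes starting at the meal."""
    n = window_min // sample_period_min
    return list(samples[meal_index:meal_index + n])


def time_domain_features(window: Sequence[float]) -> Dict[str, float]:
    """A few of the time-domain summary features named in the protocol."""
    return {
        "min": min(window),
        "max": max(window),
        "mean": statistics.fmean(window),
        "std": statistics.pstdev(window),
    }


def standardize(column: Sequence[float]) -> List[float]:
    """Z-score one feature column across meals (zero mean, unit variance)."""
    mu = statistics.fmean(column)
    sigma = statistics.pstdev(column)
    return [0.0 if sigma == 0 else (x - mu) / sigma for x in column]


# Synthetic CGM trace in mg/dL at 5-minute intervals; the meal starts at index 6
glucose = [90] * 6 + [95, 110, 130, 145, 150, 140, 130, 120, 112,
                      105, 100, 96, 94, 92, 91, 90, 90, 90] + [90] * 4
window = window_after_meal(glucose, meal_index=6)
features = time_domain_features(window)
print(len(window), features["min"], features["max"])
```

At a 5-minute sampling interval, a 90-minute window contains 18 CGM readings, which is a small sample and one reason summary features are used rather than raw traces.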

[Figure: Physiological Sensing Validation Protocol]

The Scientist's Toolkit: Key Research Reagents & Technologies

Table 3: Essential Research Tools for Multi-Sensor Dietary Assessment

| Tool / Technology | Type | Primary Function in Research |
|---|---|---|
| Doubly Labeled Water (DLW) | Recovery Biomarker | Serves as a criterion method for validating the accuracy of self-reported energy intake by measuring total energy expenditure [1]. |
| Automatic Ingestion Monitor (AIM-2) | Integrated Sensor System | A wearable device (on eyeglasses) combining a camera and accelerometer to passively detect eating episodes and identify food via integrated image-sensor fusion [4]. |
| e-Button / Wearable Cameras | Passive Image Capture | Chest-mounted cameras that automatically capture egocentric images for passive dietary assessment, reducing user burden [2]. |
| Dexcom G6 Continuous Glucose Monitor (CGM) | Physiological Sensor | Measures interstitial glucose levels to capture the glycemic response to meals, used as an input for macronutrient estimation models [7]. |
| Empatica E4 Wristband | Physiological Sensor | A research-grade wearable that captures heart rate, heart rate variability, electrodermal activity, and other signals correlated with metabolic response to food intake [7]. |
| Convolutional Neural Networks (CNN) | AI/Machine Learning | A class of deep learning models used for automatic food identification, classification, and portion size estimation from food images [2] [4]. |
| goFOOD, AIR App | Software/Application | Examples of AI-powered dietary assessment tools that use computer vision and/or automatic image recognition to identify foods and estimate nutrient content from smartphone photos [5] [6]. |

The evidence is unequivocal: self-reported dietary data are fundamentally flawed for precise scientific inquiry, particularly in studies of energy balance and disease etiology. The research community must actively transition to objective methods. Multi-sensor systems that integrate complementary data streams—images, motion sensors, and physiological monitors—represent the vanguard of this transformation. By adopting and refining these technologies, researchers can overcome the biases of the past, unlock deeper insights into eating architecture and within-person variation, and finally establish robust, causal associations between diet and health. The future of nutritional science, precision medicine, and effective public health intervention depends on this critical evolution in dietary assessment.

The accurate assessment of eating behavior is fundamental to advancing research in nutrition, obesity, and chronic disease prevention. Traditional methods, such as food diaries and 24-hour recalls, are plagued by significant limitations, including participant burden, recall bias, and systematic under-reporting of energy intake, which can distort diet and health associations [8]. The emergence of wearable sensor technology offers a paradigm shift, enabling objective, high-granularity measurement of eating behavior that moves beyond mere food type identification to capture the complex temporal architecture of eating episodes [9] [8].

This technical guide establishes a structured taxonomy of eating behavior metrics, framing them within the context of multi-sensor system research. It details the quantifiable aspects of eating—from micro-level actions like chewing and swallowing to macro-level measures like energy intake—and explores the state-of-the-art sensors and analytical methods used to measure them. By integrating data from multiple sensor modalities, researchers can achieve a more comprehensive and accurate understanding of dietary habits, paving the way for more effective health interventions and a deeper understanding of the factors influencing eating behavior and its health implications [9] [10].

A Taxonomy of Eating Behavior Metrics

Eating behavior is a dynamic process that can be decomposed into a hierarchy of quantifiable metrics. The table below provides a systematic taxonomy of these metrics, which can be broadly categorized into Action-Based Metrics, Temporal Metrics, and Consumption-Based Metrics.

Table 1: Taxonomy of Eating Behavior Metrics

| Metric Category | Specific Metric | Description | Example Measurement Units |
|---|---|---|---|
| Action-Based Metrics | Biting | The act of placing food into the mouth [10]. | Count, Rate (bites/min) |
| Action-Based Metrics | Chewing | The masticatory cycle involving grinding food with teeth [10]. | Count, Rate (chews/sec) [10] |
| Action-Based Metrics | Swallowing | The act of moving food from the mouth to the stomach. | Count, Rate (swallows/min) |
| Temporal Metrics | Eating Episode/Segment | A continuous period of food consumption without interruption [10]. | Start/End Time, Duration |
| Temporal Metrics | Eating Rate | The speed of food consumption. | Grams consumed per minute, Bites per minute |
| Temporal Metrics | Meal Duration | The total time taken to consume a meal. | Minutes |
| Consumption-Based Metrics | Food Item Identification | Recognizing the type of food being consumed [9]. | Food Category/Name |
| Consumption-Based Metrics | Portion Size / Consumed Mass | The amount of food consumed [9]. | Grams, Milliliters |
| Consumption-Based Metrics | Energy Intake (EI) | The energy content of consumed food. | Kilocalories (kcal) |
| | Eating Environment | The context in which eating occurs (e.g., social, location) [9]. | Categorical descriptor |
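Several of the action-based and temporal metrics in the taxonomy can be derived from a single stream of bite timestamps. The sketch below assumes that meal duration runs from the first to the last detected bite, which is one possible convention rather than a standard definition; the function name and the toy values are illustrative.

```python
from typing import Dict, Sequence


def episode_metrics(bite_times_s: Sequence[float], consumed_grams: float) -> Dict[str, float]:
    """Summarize one eating episode from bite timestamps (seconds) and consumed mass."""
    if len(bite_times_s) < 2:
        raise ValueError("need at least two bites to define a duration")
    # Assumption: the episode spans the first to the last detected bite
    duration_min = (max(bite_times_s) - min(bite_times_s)) / 60.0
    return {
        "bite_count": len(bite_times_s),
        "duration_min": duration_min,
        "bites_per_min": len(bite_times_s) / duration_min,
        "grams_per_min": consumed_grams / duration_min,
    }


# Toy episode: ten bites over ten minutes, 250 g consumed
m = episode_metrics([0, 30, 75, 120, 200, 260, 330, 420, 510, 600], consumed_grams=250)
print(m)
```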

Sensor Modalities and Measurement Approaches

A variety of wearable and non-invasive sensor modalities are employed to capture the metrics outlined in the taxonomy. Each modality offers distinct advantages and limitations, making them suitable for different aspects of eating behavior monitoring. The performance of these systems is often evaluated in both controlled laboratory and free-living settings.

Table 2: Sensor Modalities for Measuring Eating Behavior

| Sensor Modality | Measured Parameters | Target Eating Metrics | Advantages | Limitations |
|---|---|---|---|---|
| Inertial Measurement Units (IMUs) | Hand-to-mouth gestures, head movement [9] [11] | Bite count, eating episodes, meal duration [11] | Convenient (no direct skin contact needed); reliable for gesture detection [11] | Can generate false positives from non-eating gestures (9-30% false detection) [4] |
| Acoustic Sensors | Chewing and swallowing sounds [9] | Chewing count/swallowing count, eating episodes [9] | Directly captures mastication and swallowing acoustics | Sensitive to ambient noise; privacy concerns with audio recording |
| Optical Tracking Sensors (e.g., OCO) | 2D skin movement over facial muscles (e.g., temporalis, zygomaticus) [10] | Chewing segments, chewing rate [10] | Non-invasive; high granularity in distinguishing chewing from other facial activities [10] | Requires wearing specific glasses form-factor |
| Image Sensors (Cameras) | Food images (egocentric or user-captured) [9] | Food item identification, portion size, energy intake [9] [8] | Provides direct visual evidence of food; rich data source | Raises privacy concerns; requires complex image processing [11] |
| Physiological Sensors | Heart Rate (HR), Skin Temperature (Tsk), Oxygen Saturation (SpO2) [11] | Eating episode detection, correlation with energy intake [11] | Offers insights into metabolic response to food intake | Parameters are influenced by non-dietary factors (e.g., exercise) [11] |

Performance of Representative Systems

- Smart Glasses with Optical Sensors: A study using OCO optical sensors embedded in smart glasses demonstrated the ability to distinguish chewing from other facial activities like speaking and teeth clenching. A Convolutional Long Short-Term Memory model achieved an F1-score of 0.91 in controlled lab settings. In real-life scenarios, the system maintained high precision (0.95) and recall (0.82) for detecting eating segments [10].

- Integrated Image and Sensor System: Research using the Automatic Ingestion Monitor v2 (AIM-2), which combines an egocentric camera and a 3D accelerometer, showed that integrating image- and sensor-based methods significantly improves performance. The hybrid approach achieved a 94.59% sensitivity, 70.47% precision, and an 80.77% F1-score in free-living environments. This was significantly better than using either method alone, reducing false positives [4].

Experimental Protocols in Multi-Sensor Research

Robust experimental design is critical for developing and validating sensor-based eating detection systems. The following protocols are representative of current research practices.

Protocol 1: Laboratory and Real-Life Evaluation of Smart Glasses

This protocol outlines a cross-sectional study designed to evaluate smart glasses for chewing detection [10].

- Objective: To develop a non-invasive system for automatically monitoring eating and chewing activities, distinguishing them from other facial activities, and evaluating performance in both laboratory and real-life settings.

- Data Collection Setup: The study uses smart glasses (OCOsense) integrating six optical tracking (OCO) sensors, inertial measurement units, and other sensors. The OCO sensors monitor skin movement over the cheek and temple muscles.

- Methodology:

  - Laboratory Data Collection: Controlled sessions where participants perform predefined activities (eating, speaking, teeth clenching, etc.) to establish a foundational dataset.

  - Real-Life Data Collection: Participants wear the glasses during their daily activities to collect in-the-wild data, assessing the system's generalization capability.

  - Data Analysis: Deep learning models (e.g., Convolutional LSTM) are trained to classify sensor data. A hidden Markov model is integrated to account for temporal dependencies between chewing events in real-life data.

- Outcome Measures: Model performance is assessed using F1-scores, precision, and recall. Chewing rates and counts are evaluated for consistency with expected behaviors.
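One way a hidden Markov model can capture temporal dependencies is by smoothing per-window classifier outputs with Viterbi decoding. The sketch below is a generic two-state example, not the cited study's model: the 0.9 self-transition probability, the use of classifier probabilities as emission scores, and the toy probabilities are all assumptions for illustration.

```python
import math
from typing import List, Sequence


def viterbi_smooth(chew_probs: Sequence[float], stay_prob: float = 0.9) -> List[int]:
    """Most likely 0/1 (not chewing/chewing) sequence for per-window probabilities."""
    if not chew_probs:
        return []
    eps = 1e-9
    log_stay, log_switch = math.log(stay_prob), math.log(1.0 - stay_prob)

    def emit(p: float) -> List[float]:
        # Treat the classifier probability as the emission score for each state
        p = min(max(p, eps), 1.0 - eps)
        return [math.log(1.0 - p), math.log(p)]

    scores = emit(chew_probs[0])  # uniform prior over the two states
    backpointers: List[List[int]] = []
    for p in chew_probs[1:]:
        e = emit(p)
        new_scores, pointers = [], []
        for state in (0, 1):
            candidates = [
                scores[prev] + (log_stay if prev == state else log_switch)
                for prev in (0, 1)
            ]
            best_prev = 0 if candidates[0] >= candidates[1] else 1
            pointers.append(best_prev)
            new_scores.append(candidates[best_prev] + e[state])
        scores = new_scores
        backpointers.append(pointers)

    # Backtrack from the best final state
    state = 0 if scores[0] >= scores[1] else 1
    path = [state]
    for pointers in reversed(backpointers):
        state = pointers[state]
        path.append(state)
    return path[::-1]


probs = [0.05, 0.2, 0.9, 0.8, 0.4, 0.9, 0.85, 0.15, 0.05]
thresholded = [int(p >= 0.5) for p in probs]
smoothed = viterbi_smooth(probs)
print(thresholded)
print(smoothed)
```

In this toy sequence, simple thresholding splits one chewing bout in two at the 0.4 window, while the smoothed path keeps it as a single segment, which is the kind of temporal consistency a sequence model is meant to provide.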

Protocol 2: Multimodal Physiological and Behavioral Sensing

This study protocol describes an investigation of physiological responses to food intake using a customized wearable multi-sensor band [11].

- Objective: To investigate the relationship between food intake and multimodal physiological/motor responses for objective dietary monitoring.

- Study Design: A controlled trial where participants attend two study visits at a clinical research facility.

- Participants: Ten healthy volunteers consuming pre-defined high-calorie (1052 kcal) and low-calorie (301 kcal) meals in a randomized order.

- Data Collection:

  - Wearable Sensors: A custom multi-sensor wristband is worn to track:

    - Behavioral Data: Hand-to-mouth movements via an Inertial Measurement Unit (IMU).

    - Physiological Data: Heart Rate (HR), Oxygen Saturation (SpO2) via a pulse oximeter, and Skin Temperature (Tsk).

  - Validation Instruments: A bedside vital sign monitor validates blood pressure, HR, and SpO2.

  - Blood Sampling: An intravenous cannula is used to collect blood to measure glucose, insulin, and hormone levels at predefined intervals.

- Analysis: Correlates eating episodes (occurrence, duration) and energy load with changes in hand movement patterns, physiological parameters, and blood biomarkers.

The Researcher's Toolkit: Essential Materials and Reagents

The following table details key components and their functions in sensor-based eating behavior research.

Table 3: Research Reagent Solutions for Eating Behavior Studies

| Item Name | Type/Function | Research Application |
|---|---|---|
| OCOsense Smart Glasses | Wearable device with optical tracking (OCO) sensors [10]. | Monitors facial muscle activations (cheek, temple) for detecting chewing and eating segments. |
| Automatic Ingestion Monitor v2 (AIM-2) | Wearable device (on glasses frame) with camera and 3D accelerometer [4]. | Provides synchronized egocentric images and head movement data for integrated food intake detection. |
| Custom Multi-Sensor Wristband | Wearable band integrating multiple sensors [11]. | Tracks physiological (HR, SpO2, Tsk) and behavioral (hand gestures via IMU) responses to food intake. |
| Pulse Oximeter Module | Sensor for measuring Heart Rate (HR) and Oxygen Saturation (SpO2) [11]. | Integrated into wearable wristbands to capture cardiorespiratory physiological responses to meals. |
| Inertial Measurement Unit (IMU) | Sensor (accelerometer, gyroscope, magnetometer) for motion tracking [11]. | Detects hand-to-mouth eating gestures and analyzes eating-related motor behaviors. |
| Foot Pedal Data Logger | Input device for manual ground truth annotation [4]. | Participants press and hold to mark the precise start and end of bites during controlled lab studies. |
| Bedside Vital Sign Monitor | Clinical-grade validation equipment [11]. | Provides gold-standard measurements of HR, SpO2, and blood pressure to validate wearable sensor data. |

Integrated Workflow for Multi-Sensor Eating Behavior Analysis

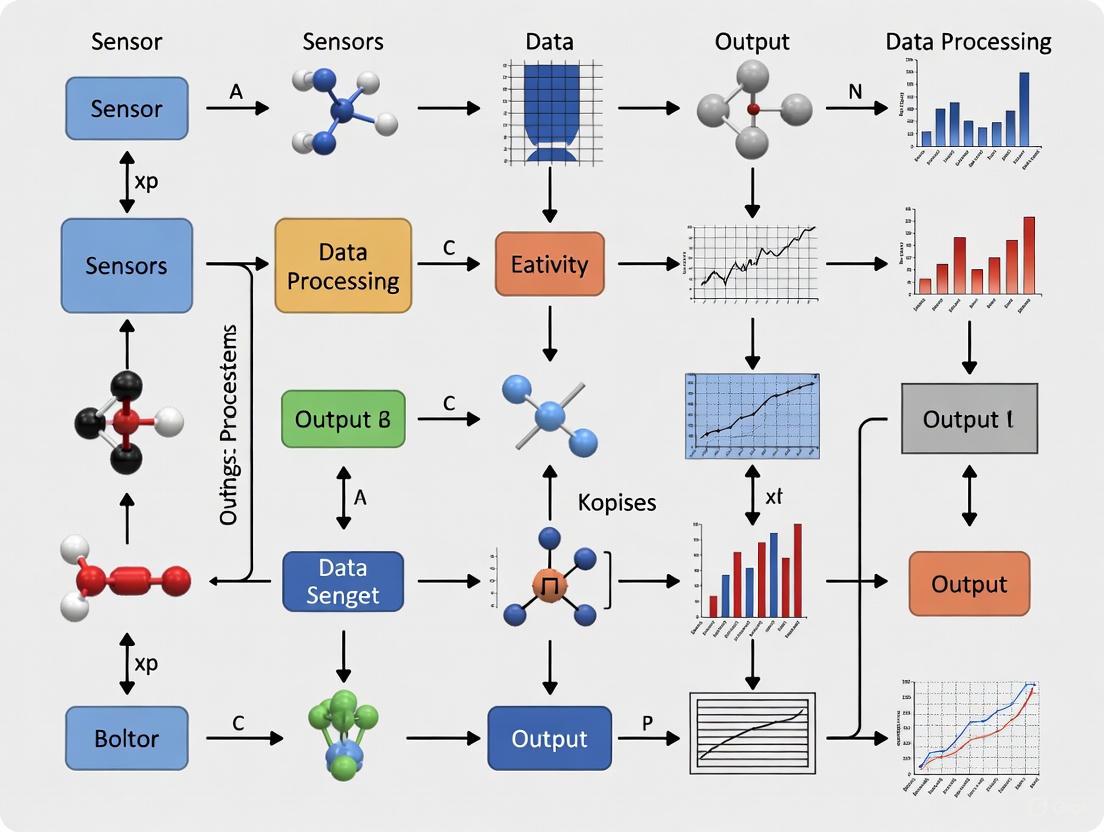

The following diagram illustrates a generalized workflow for detecting and analyzing eating behavior using a multi-sensor system, integrating concepts from the cited research.

This workflow begins with the Data Acquisition & Sensing Layer, where multiple wearable devices concurrently capture signals. The Raw Data Streams are then processed in parallel: sensor data undergoes Feature Extraction and analysis with Deep Learning/ML Models, while image data is processed by Computer Vision algorithms. Finally, the outputs from these pipelines are fused in the Analysis & Output layer to generate a comprehensive and validated profile of the user's dietary intake.

The development of a detailed taxonomy for eating behavior metrics provides a crucial framework for advancing research in multi-sensor dietary monitoring. As this guide illustrates, the integration of diverse sensor modalities—from optical and inertial sensors capturing micro-level actions to cameras and physiological sensors providing context on food type and metabolic impact—is key to overcoming the limitations of traditional assessment methods. Future research must focus on refining these technologies for real-world applicability, ensuring user privacy, and validating systems in diverse populations and free-living conditions. By systematically quantifying the complex architecture of eating, these integrated sensor systems promise to transform our understanding of diet and its relationship to health.

The objective monitoring of dietary behavior is critical for nutritional research, chronic disease management, and health promotion. Traditional self-report methods are plagued by inaccuracies and recall bias, creating an urgent need for automated, objective monitoring systems. This technical guide provides an in-depth analysis of four core sensing modalities—acoustic, inertial, strain, and physiological sensors—within the context of multi-sensor systems for eating activity detection. We examine the operating principles, implementation methodologies, performance characteristics, and experimental protocols for each modality, supported by quantitative data comparisons. The analysis demonstrates that while individual sensors show promise for specific eating metrics, their integration through multimodal fusion architectures achieves superior accuracy and robustness for comprehensive dietary monitoring in both laboratory and free-living environments.

Eating behavior encompasses a complex set of actions including chewing, biting, swallowing, and hand-to-mouth gestures, each producing distinct physiological and motion signatures [9]. Accurate detection and characterization of these activities is fundamental to understanding dietary patterns and their relationship to health outcomes. Sensor-based approaches have emerged as viable solutions for objective dietary monitoring, overcoming limitations of traditional self-report methods such as recall bias and participant burden [12].

Multi-sensor systems represent the cutting edge in eating activity detection research, leveraging complementary data streams to achieve robust performance across diverse eating scenarios and environmental conditions. This whitepaper analyzes four core sensing modalities that form the foundation of these systems: (1) acoustic sensors for capturing chewing and swallowing sounds; (2) inertial sensors for tracking eating-related gestures and motions; (3) strain sensors for detecting jaw movements and muscle activity; and (4) physiological sensors for monitoring metabolic responses to food intake. For each modality, we examine the underlying sensing principles, implementation considerations, signal processing techniques, and performance metrics relevant to researchers and professionals in nutrition science, biomedical engineering, and drug development.

Acoustic Sensing Modality

Principle and Implementation

Acoustic sensing utilizes miniature microphones to capture auditory signals generated during eating activities, particularly chewing and swallowing sounds. These sensors are typically positioned in locations that optimize signal capture while minimizing environmental noise, such as on the neck, behind the ear, or in the ear canal [13] [4]. The fundamental principle involves detecting sound waves produced by the mechanical breakdown of food between teeth (chewing) and the passage of food or liquid through the pharynx (swallowing).

In experimental implementations, acoustic sensors sample at rates ranging from 4 kHz to 44.1 kHz, which covers most of the relevant frequency content of eating sounds, typically below 3 kHz [13]; note that, by the Nyquist criterion, a 4 kHz sampling rate resolves content only up to 2 kHz. For example, in a multi-sensor approach to drinking activity identification, a condenser in-ear microphone with a sampling rate of 44.1 kHz effectively acquired swallowing acoustic signals [13]. Signal conditioning circuits typically include bandpass filters to remove low-frequency body movements and high-frequency environmental noise.

Experimental Protocols and Methodologies

Sensor Placement and Data Collection: Researchers typically position microphones in close proximity to the source of eating sounds. In a study investigating multi-sensor fusion for drinking detection, an in-ear microphone was placed in the right ear to acquire acoustic signals [13]. This placement takes advantage of the ear canal's natural acoustic conduction pathway while providing comfortable wearability.

Experimental Design: Controlled studies typically involve participants performing designated eating and drinking tasks alongside confounding activities that might produce similar acoustic signatures. For example, protocols may include drinking with different sip sizes, consuming various food textures, and performing non-eating activities like speaking or coughing to test classification specificity [13]. These protocols help build robust datasets for algorithm development.

Signal Processing Workflow: Raw acoustic signals undergo preprocessing including noise reduction, amplitude normalization, and segmentation. Feature extraction typically focuses on time-domain characteristics (amplitude, zero-crossing rate) and frequency-domain features (spectral centroids, Mel-frequency cepstral coefficients). These features then serve as input to machine learning classifiers such as support vector machines or neural networks for eating activity recognition [13].
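As a rough illustration of the time-domain and frequency-domain features named above, the following sketch computes zero-crossing rate, RMS amplitude, and spectral centroid for a single frame. It is a minimal example under stated assumptions, not the pipeline used in [13]: the 4 kHz rate, the 200-sample frame, the synthetic 500 Hz tone, and all function names are illustrative, and a naive DFT stands in for an FFT library.

```python
import math

def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(
        1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0)
    )
    return crossings / (len(frame) - 1)

def rms_amplitude(frame):
    """Root-mean-square amplitude of the frame."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def spectral_centroid(frame, sample_rate_hz):
    """Magnitude-weighted mean frequency, using a naive O(n^2) DFT."""
    n = len(frame)
    weighted = total = 0.0
    for k in range(n // 2 + 1):
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(frame))
        im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(frame))
        magnitude = math.hypot(re, im)
        weighted += (k * sample_rate_hz / n) * magnitude
        total += magnitude
    return weighted / total if total else 0.0

# Illustrative values only: a synthetic 500 Hz tone stands in for a chewing sound.
SAMPLE_RATE_HZ = 4000
FRAME_LEN = 200
frame = [math.sin(2 * math.pi * 500 * i / SAMPLE_RATE_HZ) for i in range(FRAME_LEN)]

features = {
    "zcr": zero_crossing_rate(frame),
    "rms": rms_amplitude(frame),
    "centroid_hz": spectral_centroid(frame, SAMPLE_RATE_HZ),
}
```

In practice the same functions would be applied to every segmented frame, and Mel-frequency cepstral coefficients would normally come from a dedicated audio library rather than hand-written code.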

Performance Analysis

Acoustic sensing demonstrates strong performance for detecting chewing and swallowing events, with several studies reporting accuracy metrics exceeding 85% for eating episode detection in controlled environments [4]. However, performance can degrade in noisy free-living conditions, necessitating fusion with other sensing modalities. Swallowing detection for liquid intake has shown particular promise, with one multi-sensor study achieving 96.5% F1-score for drinking activity identification when combined with inertial sensing [13].

Table 1: Performance Metrics of Acoustic Sensing for Eating Activity Detection

| Detection Task | Accuracy Range | Precision | Recall | F1-Score | Conditions |
|---|---|---|---|---|---|
| Chewing Detection | 84-92% | 86% | 82% | 84% | Laboratory |
| Swallowing Detection | 88-95% | 91% | 90% | 90.5% | Laboratory |
| Drinking Episode | 85-96.5% | 89% | 94% | 91.5% | Multi-sensor fusion |
| Free-living Eating | 75-86% | 78% | 80% | 79% | Passive acoustic |

Inertial Sensing Modality

Principle and Implementation

Inertial sensing uses accelerometers, gyroscopes, and magnetometers, which are commonly packaged together as inertial measurement units (IMUs), to capture motion signatures associated with eating activities. These sensors detect specific patterns of hand-to-mouth gestures, wrist rotations during utensil use, and head movements during chewing [11] [14]. IMUs are typically integrated into wearable devices worn on the wrist or head, or embedded in eyeglass frames.

The operating principle relies on Newton's laws of motion, with accelerometers measuring proper acceleration and gyroscopes tracking angular velocity. During eating, characteristic motion patterns emerge—cyclic hand-raising for food-to-mouth transport, specific wrist rotations when using utensils, and rhythmic jaw movements during mastication. These patterns create distinct temporal signatures that machine learning algorithms can learn to recognize amidst other daily activities.

Experimental Protocols and Methodologies

Sensor Configuration: Inertial sensors for eating detection typically sample at 15 to 128 Hz, sufficient to capture eating gestures without excessive power consumption [13] [14]. For example, in a personalized food consumption detection study, IMU data were sampled at 15 Hz [14], while another multi-sensor study used 128 Hz sampling for finer motion capture [13]. Sensor placement varies by application, with wrist-worn configurations being particularly common for capturing feeding gestures.

Activity Protocols: Comprehensive experiments typically include a wide range of eating scenarios and confounding activities. A representative protocol might include eating with different utensils (hand, fork, spoon), consuming various food types (solid, liquid, semi-solid), and drinking with different sip sizes [13]. Control activities often include similar-looking gestures like face-touching, hair-combing, or speaking that could potentially trigger false positives.

Data Processing Pipeline: Inertial data undergoes preprocessing including sensor orientation calibration, gravity compensation, and noise filtering. Feature extraction commonly includes time-domain features (mean, variance, peaks), frequency-domain features (spectral energy, dominant frequencies), and orientation-based features (quaternions, Euler angles). For gesture recognition, sliding window approaches segment continuous data streams before classification with algorithms ranging from random forests to deep learning models [14].
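The sliding-window step and the time-domain features mentioned above can be sketched as follows. This is a minimal illustration, not the method of [14]: the 15 Hz rate matches the figure quoted earlier, but the 2-second window, the 50% overlap, the peak threshold, the synthetic signal, and the function names are all assumptions.

```python
from statistics import mean, pvariance

def sliding_windows(samples, window_size, step):
    """Yield consecutive, possibly overlapping windows of fixed length."""
    for start in range(0, len(samples) - window_size + 1, step):
        yield samples[start:start + window_size]

def count_peaks(window, threshold):
    """Count local maxima that exceed the threshold."""
    return sum(
        1
        for prev, cur, nxt in zip(window, window[1:], window[2:])
        if cur > threshold and cur > prev and cur >= nxt
    )

def window_features(window, peak_threshold):
    """Time-domain features for one window of acceleration magnitude."""
    return {
        "mean": mean(window),
        "variance": pvariance(window),
        "peaks": count_peaks(window, peak_threshold),
    }

# Hypothetical acceleration magnitude (in g) at 15 Hz:
# 2 s of rest followed by 2 s of repeated hand-raising bursts.
SAMPLE_RATE_HZ = 15
signal = [1.0] * 30 + [1.0, 1.4, 1.9, 1.4, 1.0] * 6

WINDOW = 2 * SAMPLE_RATE_HZ   # 2 s window (assumed)
STEP = WINDOW // 2            # 50% overlap (assumed)
feature_rows = [
    window_features(w, peak_threshold=1.5)
    for w in sliding_windows(signal, WINDOW, STEP)
]
```

Each row in `feature_rows` would then be passed to a classifier. Window length and overlap are tuning choices that trade temporal resolution against the amount of context each window carries.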

Performance Analysis

Inertial sensing demonstrates excellent performance for detecting eating gestures, with several studies reporting accuracy metrics above 90% in controlled settings. A personalized food consumption detection system using IMU data achieved a median F1-score of 0.99 for carbohydrate intake detection in diabetic patients [14]. Wrist-worn IMUs specifically for drinking gesture recognition have demonstrated precision up to 97.4% and recall of 97.1% [13]. However, performance typically decreases in free-living environments where motion patterns are more variable, highlighting the need for multi-sensor approaches.

Table 2: Performance Metrics of Inertial Sensing for Eating Activity Detection

| Detection Task | Accuracy Range | Precision | Recall | F1-Score | Conditions |
|---|---|---|---|---|---|
| Hand-to-Mouth Gestures | 90-97% | 94% | 93% | 93.5% | Laboratory |
| Drinking Gestures | 92-97.4% | 95% | 95% | 95% | Controlled |
| Bite Counting | 85-90% | 87% | 86% | 86.5% | Semi-controlled |
| Free-living Eating Episodes | 75-85% | 80% | 78% | 79% | Free-living |

Strain Sensing Modality

Principle and Implementation

Strain sensing detects mechanical deformations associated with jaw movements during chewing and swallowing. These sensors, typically implemented as piezoelectric elements, strain gauges, or flex sensors, are positioned in close proximity to jaw muscles or temporomandibular joints—often integrated into head-mounted devices or eyeglass frames [4]. The fundamental principle involves measuring resistance or voltage changes that correlate with skin surface stretching during mandibular movement.

When integrated into devices like the Automatic Ingestion Monitor (AIM-2), strain sensors capture the characteristic rhythmic patterns of jaw motion during mastication [4]. Different food textures produce distinct strain signatures—hard foods generate higher amplitude signals with potentially different frequency components compared to soft foods. This modality provides direct measurement of chewing activity rather than inferring it from secondary signals like sound or motion.

Experimental Protocols and Methodologies

Sensor Configuration: Strain sensors for eating detection typically require direct skin contact at measurement sites such as the temporalis muscle, masseter muscle, or submental region. The AIM-2 system, for instance, incorporates a piezoelectric sensor that detects jaw movements during chewing when mounted on eyeglass frames [4]. Sampling rates typically range from 10 to 128 Hz, sufficient to capture chewing frequencies, which generally fall between 0.5 and 2.5 Hz.

Validation Methods: Ground truth annotation for strain sensing experiments often involves manual annotation of eating episodes or use of complementary sensors like foot pedals that participants press during actual food consumption. In one protocol, participants used a foot pedal connected to a USB data logger, pressing when food was placed in the mouth and holding until swallowing [4]. This provides precise timing information for algorithm training and validation.
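One way to turn foot-pedal press and release times into training labels is to mark each analysis window by how much of it the pedal was held. The sketch below is an assumption-laden illustration rather than the procedure in [4]: the 2-second windows, the 50% overlap rule, the interval values, and the function name `label_windows` are all hypothetical.

```python
def label_windows(pedal_intervals, duration_s, window_s, min_overlap=0.5):
    """Label each non-overlapping window 1 (intake) or 0 (no intake).

    pedal_intervals: list of (press_time_s, release_time_s) pairs.
    A window is labelled 1 when the pedal was held for at least
    min_overlap of its length.
    """
    labels = []
    n_windows = int(duration_s // window_s)
    for w in range(n_windows):
        w_start, w_end = w * window_s, (w + 1) * window_s
        held = sum(
            max(0.0, min(w_end, release) - max(w_start, press))
            for press, release in pedal_intervals
        )
        labels.append(1 if held / window_s >= min_overlap else 0)
    return labels

# Hypothetical log: one long intake (2.0 s to 7.5 s) and one short one.
pedal_intervals = [(2.0, 7.5), (12.0, 13.0)]
labels = label_windows(pedal_intervals, duration_s=16, window_s=2)
```

The overlap threshold controls how partially covered windows at the edges of an episode are treated, which matters when comparing results across studies that window their data differently.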

Signal Processing Approach: Strain signals typically undergo preprocessing including bandpass filtering (0.1-3 Hz) to isolate chewing rhythms, amplitude normalization, and segmentation. Feature extraction focuses on cyclical patterns, including chewing rate, burst duration, and amplitude statistics. Hidden Markov Models and random forest classifiers have shown particular effectiveness for detecting chewing sequences from strain sensor data [4].
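The band-limiting and chewing-rate steps can be sketched as below. This is a simplified stand-in, not the filter design of any cited study: first-order RC filters replace whatever bandpass a given system uses, chewing rate is estimated by counting rising zero crossings, and the 32 Hz rate, the synthetic 1.5 Hz signal with drift, and all function names are assumptions.

```python
import math

def high_pass(signal, cutoff_hz, sample_rate_hz):
    """First-order RC high-pass: suppresses offset and drift below cutoff_hz."""
    rc = 1.0 / (2 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate_hz
    alpha = rc / (rc + dt)
    out = [0.0]
    for prev, cur in zip(signal, signal[1:]):
        out.append(alpha * (out[-1] + cur - prev))
    return out

def low_pass(signal, cutoff_hz, sample_rate_hz):
    """First-order RC low-pass: attenuates content above cutoff_hz."""
    rc = 1.0 / (2 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate_hz
    alpha = dt / (rc + dt)
    out = [signal[0]]
    for cur in signal[1:]:
        out.append(out[-1] + alpha * (cur - out[-1]))
    return out

def band_pass(signal, low_hz, high_hz, sample_rate_hz):
    """Cascade the two filters to keep roughly low_hz..high_hz."""
    return low_pass(high_pass(signal, low_hz, sample_rate_hz), high_hz, sample_rate_hz)

def chewing_rate_hz(filtered, sample_rate_hz):
    """Estimate cycles per second from rising zero crossings."""
    rising = sum(1 for a, b in zip(filtered, filtered[1:]) if a < 0 <= b)
    return rising / (len(filtered) / sample_rate_hz)

# Synthetic strain signal: constant offset, slow drift, and 1.5 Hz chewing.
SAMPLE_RATE_HZ = 32
DURATION_S = 20
raw = [
    2.0 + 0.05 * (i / SAMPLE_RATE_HZ)
    + math.sin(2 * math.pi * 1.5 * i / SAMPLE_RATE_HZ)
    for i in range(SAMPLE_RATE_HZ * DURATION_S)
]
filtered = band_pass(raw, 0.1, 3.0, SAMPLE_RATE_HZ)
rate = chewing_rate_hz(filtered, SAMPLE_RATE_HZ)
```

A higher-order filter would give a sharper passband, and zero-crossing counts become unreliable on noisy signals, so a spectral or autocorrelation estimate may be preferable on real data.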

Performance Analysis

Strain sensing demonstrates strong performance for solid food intake detection, with studies reporting precision around 86% and recall of 82% in free-living conditions [4]. The technology is particularly effective for distinguishing chewing episodes from other head movements and speaking activities. However, performance can decrease for liquid intake or soft foods that require minimal mastication, and user comfort concerns may limit long-term wearability for some applications.

Physiological Sensing Modality

Principle and Implementation

Physiological sensing monitors autonomic nervous system responses and metabolic changes associated with food ingestion and digestion. This modality encompasses sensors for heart rate, heart rate variability, skin temperature, blood oxygen saturation, and electrodermal activity [11] [15]. Unlike motion-based or acoustic approaches, physiological sensing detects indirect correlates of eating through the body's metabolic and autonomic responses.

The operating principle leverages known physiological phenomena: food intake increases metabolic rate, leading to elevated heart rate and skin temperature; digestive processes can temporarily reduce blood oxygen saturation due to intestinal oxygen consumption; and sympathetic nervous system activation during eating may alter electrodermal activity [11]. These responses create temporal patterns that machine learning algorithms can learn to associate with eating episodes.

Experimental Protocols and Methodologies

Sensor Configuration: Physiological monitoring for eating detection typically employs multi-parameter wearable devices like the Empatica E4 wristband or custom sensor arrays [11] [15]. These systems integrate photoplethysmography (PPG) for heart rate and blood oxygen, temperature sensors, and electrodermal activity sensors. Sampling rates vary by parameter—PPG typically samples at 64-128 Hz, while temperature and EDA may sample at 4-32 Hz.

Controlled Feeding Studies: Experimental protocols typically involve controlled feeding sessions with predefined meal compositions. For example, one study protocol involves participants consuming high-calorie (1052 kcal) and low-calorie (301 kcal) meals in randomized order while wearing physiological sensors [11]. This design enables investigation of dose-response relationships between energy intake and physiological parameters.

Data Analysis Approach: Physiological data analysis focuses on temporal patterns before, during, and after eating episodes. Features include absolute parameter values, change scores from baseline, time-to-peak response, and area under the curve for postprandial periods. Statistical comparisons typically use paired t-tests or repeated measures ANOVA to detect significant pre-post meal differences and dose-dependent effects [11].
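The postprandial features listed above (change from baseline, time-to-peak, and area under the curve) can be computed along the following lines. The heart-rate values, the 10-minute sampling interval, and the function name are invented for illustration and are not data from [11].

```python
def postprandial_features(times_min, heart_rate_bpm, meal_start_min):
    """Summarise the heart-rate response relative to the pre-meal baseline."""
    baseline = [hr for t, hr in zip(times_min, heart_rate_bpm) if t < meal_start_min]
    baseline_mean = sum(baseline) / len(baseline)

    # Change scores from baseline for every sample at or after meal start.
    post = [
        (t, hr - baseline_mean)
        for t, hr in zip(times_min, heart_rate_bpm)
        if t >= meal_start_min
    ]
    peak_time, peak_change = max(post, key=lambda point: point[1])

    # Trapezoidal area under the change-from-baseline curve (bpm * min).
    auc = sum(
        (t1 - t0) * (c0 + c1) / 2
        for (t0, c0), (t1, c1) in zip(post, post[1:])
    )
    return {
        "baseline_bpm": baseline_mean,
        "peak_change_bpm": peak_change,
        "time_to_peak_min": peak_time - meal_start_min,
        "auc_bpm_min": auc,
    }

# Hypothetical recording: meal starts at minute 20.
times_min = [0, 10, 20, 30, 40, 50, 60]
heart_rate_bpm = [60, 60, 60, 66, 72, 68, 62]
response = postprandial_features(times_min, heart_rate_bpm, meal_start_min=20)
```

Computing these features per participant and per meal condition yields the paired values that the statistical comparisons described above operate on.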

Performance Analysis

Physiological sensing shows promise as a complementary approach to motion-based eating detection, with studies reporting significant heart rate increases following meal consumption [11]. However, as a standalone modality for eating detection, physiological sensing faces challenges including individual variability in responses, delayed onset of physiological changes relative to eating initiation, and confounding from physical activity and emotional states. Nevertheless, its value in multi-sensor systems lies in providing metabolic context and helping distinguish eating from similar-looking gestures.

Table 3: Comparative Analysis of Core Sensing Modalities for Eating Detection

| Sensor Modality | Primary Measured Parameters | Key Strengths | Principal Limitations | Ideal Deployment Context |
|---|---|---|---|---|
| Acoustic | Chewing/swallowing sounds | High specificity for actual consumption | Sensitive to ambient noise | Controlled environments with minimal background noise |
| Inertial (IMU) | Hand-to-mouth gestures, head motion | Excellent for gesture recognition, widely available | Cannot distinguish actual food consumption | Free-living tracking of eating episodes |
| Strain | Jaw movement, muscle activity | Direct measurement of chewing | Requires skin contact, limited to jaw movements | Laboratory studies of chewing dynamics |
| Physiological | HR, HRV, SpO₂, skin temperature | Provides metabolic context | Delayed response, individual variability | Meal verification and energy intake estimation |

Multi-Sensor Fusion Architectures

Fusion Methodologies

Multi-sensor fusion architectures integrate complementary data streams to overcome limitations of individual sensing modalities. Three primary fusion approaches dominate eating detection research:

Data-Level Fusion: This approach combines raw data from multiple sensors before feature extraction. For example, one technique transforms multi-sensor time-series data into 2D covariance representations that capture inter-modal correlation patterns [15]. These representations are then processed by deep learning models to recognize eating episodes based on joint variability across modalities.

Feature-Level Fusion: This method extracts features from each sensor modality independently, then concatenates them into a unified feature vector for classification. For instance, one study fused features from wrist-worn IMUs, smart containers, and in-ear microphones, achieving an F1-score of 96.5% for drinking activity identification—significantly outperforming single-modality approaches [13].

Decision-Level Fusion: This architecture employs separate classifiers for each modality and combines their outputs through voting schemes or meta-classifiers. The AIM-2 system uses hierarchical classification to combine confidence scores from both image-based and accelerometer-based eating detection, achieving 94.59% sensitivity and 70.47% precision in free-living environments [4].

Implementation Workflow

Figure: Multi-Sensor Fusion Architecture for Eating Detection, a representative workflow for multi-sensor fusion in eating activity detection.

Performance Advantages

Multi-sensor fusion consistently outperforms single-modality approaches across eating detection tasks. The integration of image-based and accelerometer-based detection in the AIM-2 system reduced false positives and achieved 8% higher sensitivity compared to either method alone [4]. Similarly, combining inertial and acoustic sensing for drinking identification improved F1-scores by approximately 10-15% over single-modality implementations [13]. These performance gains demonstrate the complementary nature of different sensing modalities and validate the multi-sensor approach as the path forward for robust dietary monitoring.

Experimental Framework and Research Toolkit

Standardized Experimental Protocol

A comprehensive experimental framework for validating eating detection systems should incorporate the following elements:

Participant Selection: Studies typically include 10-30 participants with diversity in age, gender, and BMI to ensure algorithm generalizability [13] [4]. For example, one drinking identification study recruited 20 participants (10 male, 10 female) with mean age 22.91±1.64 years [13].

Study Design: Protocols should include both controlled laboratory sessions and free-living validation. Laboratory sessions enable precise ground truth annotation through methods like foot pedals or video recording, while free-living segments assess real-world performance [4]. Typical protocols include multiple meals with varying food types and utensils.

Ground Truth Annotation: Precise timing of eating episodes is critical for algorithm training and validation. Methods include participant-activated foot pedals during bites [4], manual annotation from continuous video recording, and periodic self-reporting through mobile applications.

Research Reagent Solutions

Table 4: Essential Research Materials for Eating Detection Studies

| Research Tool | Function | Example Implementation |
|---|---|---|
| Automatic Ingestion Monitor (AIM-2) | Multi-sensor eating detection | Eyeglass-mounted device with camera and accelerometer [4] |
| Empatica E4 Wristband | Physiological parameter monitoring | Commercial device with PPG, EDA, temperature sensors [15] |
| Opal Movement Sensors | High-fidelity inertial measurement | Research-grade IMUs for precise motion capture [13] |
| Custom Bio-impedance Systems | Novel sensing approach | Wrist-worn electrodes measuring impedance variations during eating [16] |
| Foot Pedal Annotation System | Ground truth timestamping | USB data logger for precise eating event marking [4] |

Data Processing Pipeline

Figure: Data Processing Workflow for Eating Detection, a standard data processing workflow for multi-sensor eating detection systems.

This in-depth analysis demonstrates that acoustic, inertial, strain, and physiological sensors each provide unique and complementary capabilities for eating activity detection. Acoustic sensing offers high specificity for actual consumption events through chewing and swallowing sounds. Inertial sensing excels at detecting eating gestures and patterns. Strain sensing directly measures jaw movements during mastication. Physiological sensing provides metabolic context that can help verify intake and estimate energy content.

The integration of these modalities through multi-sensor fusion architectures represents the most promising path forward for robust dietary monitoring. Systems combining complementary sensing approaches consistently outperform single-modality solutions, with demonstrated improvements in sensitivity, specificity, and overall accuracy across both laboratory and free-living environments. Future research directions should focus on miniaturization, power optimization, privacy preservation, and enhanced algorithms capable of detecting finer-grained eating behaviors such as bite size and eating speed. As these technologies mature, they hold significant potential to transform nutritional science, clinical practice, and personal health monitoring.

Sensor fusion represents a paradigm shift in perceptual computing, strategically integrating data from multiple heterogeneous sensors to create unified information with less uncertainty than any single source could provide. Within the specific domain of eating activity detection, this approach is critical for overcoming the inherent limitations of individual sensing modalities, such as motion, acoustic, or visual sensors operating in isolation. By combining complementary data streams, fusion algorithms enable more accurate, robust, and comprehensive monitoring of dietary intake episodes. This technical guide examines the theoretical foundations, implementation methodologies, and experimental protocols underpinning modern multi-sensor fusion systems, with particular emphasis on their transformative potential for advancing research in automated dietary monitoring and eating behavior analysis.

Single-sensor systems for eating activity detection face fundamental limitations that constrain their reliability and real-world applicability. Acoustic sensors proficiently capture chewing and swallowing sounds but struggle to distinguish food intake from similar activities like talking or throat-clearing [9]. Inertial measurement units (IMUs) and accelerometers effectively detect characteristic hand-to-mouth gestures yet cannot differentiate eating from other activities with similar kinematic patterns such as drinking, smoking, or face-touching [17]. Camera-based systems provide rich visual context but raise privacy concerns and perform poorly in low-light conditions or when objects obscure the field of view [18].

Sensor fusion directly addresses these limitations through redundancy (multiple sensors serving the same purpose for reliability), complementarity (sensors capturing different aspects of the same phenomenon), and coordinated sensing (multiple sensors generating information impossible to obtain individually) [19]. In eating activity detection, this translates to systems that simultaneously monitor wrist kinematics, swallowing acoustics, and container movement patterns, creating a composite signal that overcomes the shortcomings of any individual modality.

The mathematical foundation of sensor fusion formalizes this process as a transformation of raw data from multiple sensors into a unified output. Let \( D = \{D_1, D_2, \dots, D_n\} \) represent raw data collected from \( n \) sensors, with \( Z \) denoting the final fused output. The fusion process is formulated as:

\[ Z = \Omega(D;\theta) \]

where \( \Omega(\cdot;\theta) \) is the overall fusion function parameterized by \( \theta \), responsible for integrating sensor data for specific tasks such as eating episode detection or food type classification [20].

Theoretical Framework: Levels of Sensor Fusion

Multi-sensor fusion strategies are systematically categorized into three distinct levels based on the stage at which integration occurs, each offering different trade-offs between information preservation, computational complexity, and flexibility.

Data-Level Fusion (Early Fusion)

Data-level fusion operates directly on raw sensor data before any significant preprocessing or feature extraction. This approach combines unprocessed or minimally processed data streams from multiple sensors into a unified representation before applying pattern recognition algorithms [19] [20].

The mathematical formulation for data-level fusion involves:

- Fusing raw data: \( O = G(D_1, D_2, \dots, D_n; \alpha) \)

- Extracting features: \( F = E(O; \psi) \)

- Producing output: \( Z = H(F; \phi) \)

where \( G(\cdot; \alpha) \) merges the raw inputs from the \( n \) sensors into an intermediate representation \( O \), \( E(\cdot; \psi) \) encodes \( O \) into feature space \( F \), and \( H(\cdot; \phi) \) decodes the features into the final output \( Z \) [20].

In eating detection research, Bahador et al. implemented data-level fusion by transforming multi-sensor time-series data into a unified 2D covariance representation, effectively capturing the statistical dependencies between different sensor modalities during eating episodes [17] [15]. This approach preserves the richest information but demands significant computational resources and precise sensor calibration [18].
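Before raw streams can be merged, they need a shared time base. The following sketch shows one simple way to realize the merge step of data-level fusion: linearly resample each stream to a common rate and stack the results sample by sample. It is an illustrative assumption, not the procedure of [17] or [15]; the 32 Hz and 4 Hz rates, the sample values, and the function names are hypothetical.

```python
def resample_linear(samples, source_hz, target_hz, duration_s):
    """Linearly interpolate a uniformly sampled stream onto a target rate."""
    n_out = int(duration_s * target_hz)
    out = []
    for k in range(n_out):
        pos = k * source_hz / target_hz      # fractional index into the source
        i = min(int(pos), len(samples) - 1)
        j = min(i + 1, len(samples) - 1)     # clamp at the last sample
        frac = pos - i
        out.append(samples[i] * (1 - frac) + samples[j] * frac)
    return out

def fuse_raw(streams, target_hz, duration_s):
    """Stack resampled streams into rows of time-aligned raw samples."""
    columns = [
        resample_linear(samples, hz, target_hz, duration_s)
        for samples, hz in streams
    ]
    return [list(row) for row in zip(*columns)]

# Hypothetical one-second recording: a 32 Hz channel and a 4 Hz channel.
accel_32hz = [float(i) for i in range(32)]
eda_4hz = [0.0, 1.0, 2.0, 3.0]
fused = fuse_raw([(accel_32hz, 32), (eda_4hz, 4)], target_hz=32, duration_s=1)
```

Each row of `fused` holds one time-aligned sample per sensor. Upsampling a slow channel adds no information, so the choice of target rate is a trade-off between preserving fast signals and inflating the data volume.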

Feature-Level Fusion (Intermediate Fusion)

Feature-level fusion first extracts distinctive features from each sensor stream independently, then merges these feature vectors into a combined representation before final classification [21] [19]. This approach balances information richness with computational efficiency by reducing dimensionality early in the processing pipeline.

The mathematical formulation for feature-level fusion involves:

- Encoding each sensor: \( F_i = E(D_i; \psi) \)

- Fusing features: \( R = G(F_1, F_2, \dots, F_n; \alpha) \)

- Producing output: \( Z = H(R; \phi) \)

where each \( F_i \) represents features extracted from sensor \( D_i \), and \( G(\cdot; \alpha) \) aggregates these feature vectors into the fused representation \( R \) [20].

A drinking activity identification study demonstrated this approach by extracting features from wrist-worn IMUs, smart containers with built-in sensors, and in-ear microphones separately, then combining these feature sets for classification [13]. This method achieved an F1-score of 96.5% using a Support Vector Machine, significantly outperforming single-modality approaches [13].
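A minimal sketch of feature-level fusion is shown below: features from each modality are standardized separately and then concatenated per window. The modality names, feature values, and the per-column z-scoring are illustrative assumptions and do not reproduce the feature set or scaling used in [13].

```python
from statistics import mean, pstdev

def zscore_columns(rows):
    """Standardize each feature column to zero mean and unit variance."""
    columns = list(zip(*rows))
    # Fall back to 1.0 when a column is constant to avoid dividing by zero.
    stats = [(mean(col), pstdev(col) or 1.0) for col in columns]
    return [[(v - m) / s for v, (m, s) in zip(row, stats)] for row in rows]

def fuse_features(modalities):
    """Concatenate per-window feature vectors across modalities.

    modalities: dict mapping a modality name to a list of feature
    vectors, one vector per time window, with windows aligned by index.
    """
    scaled = [zscore_columns(rows) for rows in modalities.values()]
    return [sum(parts, []) for parts in zip(*scaled)]

# Hypothetical features for three aligned windows.
modalities = {
    "wrist_imu": [[0.2, 1.1], [0.9, 2.3], [0.4, 1.6]],
    "container_imu": [[5.0], [7.5], [6.0]],
    "in_ear_audio": [[0.12, 410.0], [0.30, 650.0], [0.18, 480.0]],
}
fused = fuse_features(modalities)
```

Scaling before concatenation keeps modalities with large numeric ranges from dominating distance-based classifiers such as SVMs. In a real evaluation the scaling statistics would be estimated on training data only.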

Decision-Level Fusion (Late Fusion)

Decision-level fusion represents the highest abstraction level, where each sensor stream undergoes independent processing through complete classification pipelines, with final outputs combined using voting schemes, weighted averaging, or meta-classifiers [21] [19].

The mathematical formulation for decision-level fusion involves:

- Encoding sensor data: \( F_i = E(D_i; \psi) \)

- Sensor-specific output: \( z_i = H(F_i; \phi) \)

- Fusing decisions: \( Z = G(z_1, z_2, \dots, z_n; \alpha) \)

where each \( z_i \) represents the intermediate decision from sensor \( D_i \), and \( G(\cdot; \alpha) \) combines these decisions into the final output \( Z \) [20].

This approach offers maximum flexibility in handling heterogeneous sensors and is robust to partial sensor failures, though it may discard potentially useful cross-modal correlations [21]. Decision-level fusion particularly suits eating detection systems incorporating disparate sensor types like cameras, IMUs, and acoustic sensors with different characteristics and data formats [9].
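The robustness to partial sensor failure can be illustrated with a weighted-average combiner that simply renormalizes over whichever classifiers reported. This is a hypothetical sketch: the sensor names, weights, probabilities, and the 0.5 threshold are assumptions, not values from any cited system.

```python
def fuse_decisions(probabilities, weights, threshold=0.5):
    """Weighted average of per-sensor eating probabilities.

    probabilities: dict of sensor name -> probability, or None when
    that sensor's classifier produced no output (e.g. sensor failure).
    Returns (fused_score, is_eating).
    """
    available = {name: p for name, p in probabilities.items() if p is not None}
    if not available:
        raise ValueError("no sensor produced a decision")
    total_weight = sum(weights[name] for name in available)
    score = sum(weights[name] * p for name, p in available.items()) / total_weight
    return score, score >= threshold

# Hypothetical classifier weights, e.g. derived from validation performance.
weights = {"imu": 0.5, "acoustic": 0.3, "camera": 0.2}

all_sensors = fuse_decisions(
    {"imu": 0.8, "acoustic": 0.6, "camera": 0.2}, weights
)
camera_failed = fuse_decisions(
    {"imu": 0.8, "acoustic": 0.6, "camera": None}, weights
)
```

Because each modality is reduced to a single score before combination, the fused decision still works when one stream drops out, at the cost of ignoring correlations between the raw signals.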

Figure 1: The three primary levels of sensor fusion, showing the progression from raw data integration to decision combination, each with distinct advantages for eating activity detection.

Comparative Analysis of Fusion Levels

Table 1: Characteristics of different sensor fusion levels in eating activity detection

| Fusion Level | Information Preservation | Computational Load | Robustness to Sensor Failure | Implementation Complexity | Ideal Use Cases |
|---|---|---|---|---|---|
| Data-Level | High | High | Low | High | Laboratory settings with synchronized homogeneous sensors |
| Feature-Level | Medium | Medium | Medium | Medium | Systems with heterogeneous but temporally aligned sensors |
| Decision-Level | Low | Low | High | Low | Distributed sensor systems with communication constraints |

Experimental Protocols in Eating Activity Detection

Covariance-Based Fusion for Food Intake Detection

Bahador et al. developed a novel data-level fusion technique specifically designed for computationally constrained wearable environments [17] [15]. This method transforms multi-sensor time-series data into a unified 2D covariance representation under the hypothesis that data from various sensors exhibit statistically unique correlation patterns during specific activities like eating.

Experimental Protocol:

Sensor Configuration: Empatica E4 wristband equipped with 3-axis accelerometer (32 Hz), photoplethysmograph (64 Hz), electrodermal activity sensor (4 Hz), temperature sensor (4 Hz), and heart rate monitor [17] [15].

Data Collection: Single participant wore the device for three days during various activities including sleeping, computer work, and eating episodes [15].

Fusion Methodology:

- Step 1: Form observation matrix \( H \) derived from all sensors

- Step 2: Calculate pairwise covariance between sensor signals: \( C_{ij} = \text{cov}(H(:, i), H(:, j)) \)

- Step 3: Create filled contour plot from covariance matrix \( C \)

- Step 4: Feed contour representation to deep residual network with three 2D convolutional layers for classification [17]
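Steps 1 and 2 above can be sketched in a few lines. The example assumes the sensor streams have already been aligned to a common rate, uses the sample covariance with an n - 1 denominator, and works on invented values; the contour rendering and the residual network of Steps 3 and 4 are left out. None of this is taken from the implementation in [17].

```python
def covariance_matrix(observations):
    """Pairwise sample covariance between the columns of an observation matrix.

    observations: list of rows, one row per time sample and one column
    per sensor channel. Uses the unbiased (n - 1) denominator.
    """
    n = len(observations)
    columns = list(zip(*observations))
    means = [sum(col) / n for col in columns]
    return [
        [
            sum((a - mean_i) * (b - mean_j) for a, b in zip(col_i, col_j)) / (n - 1)
            for col_j, mean_j in zip(columns, means)
        ]
        for col_i, mean_i in zip(columns, means)
    ]

# Hypothetical observation matrix: 4 time samples x 3 sensor channels.
# Channel 2 rises with channel 1, while channel 3 falls as channel 1 rises.
H_obs = [
    [1.0, 2.0, 10.0],
    [2.0, 4.0, 8.0],
    [3.0, 6.0, 6.0],
    [4.0, 8.0, 4.0],
]
C = covariance_matrix(H_obs)
```

The resulting matrix is symmetric, with positive entries for channels that move together and negative entries for channels that move in opposition, which is the joint-variability pattern the contour image is meant to expose to the classifier.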

Validation: Five-fold cross-validation with mini-batch size of 100 over 10 epochs demonstrated the method's effectiveness in discriminating eating episodes from other activities [17].

This covariance-based approach effectively embedded joint variability information from multiple modalities into a single 2D representation, achieving precision of 0.803 in leave-one-subject-out cross-validation while reducing computational requirements [15].

Multi-Modal Drinking Activity Identification

A comprehensive 2024 study developed a multi-sensor fusion approach specifically for drinking activity identification, incorporating wrist and container movement signals alongside swallowing acoustics [13].

Experimental Protocol:

Participant Recruitment: 20 participants (10 male, 10 female) aged 22.91 ± 1.64 years [13].

Sensor Configuration:

- Three Opal inertial sensors (APDM) containing triaxial accelerometers (±16 g) and gyroscopes (±2000°/s) at 128 Hz

- Two sensors worn on wrists, one attached to container bottom

- Condenser in-ear microphone sampling at 44.1 kHz [13]

Activity Design:

- Drinking events: Eight scenarios varying by posture (standing/sitting), hand used (left/right), and sip size (small/large)

- Non-drinking events: Seventeen easily confusable activities including eating, pushing glasses, scratching neck, and talking [13]

Data Processing:

- Calculated Euclidean norm of acceleration (\( a_{norm} \)) and angular velocity (\( \omega_{norm} \))

- Applied sliding window approach for feature extraction

- Extracted time-domain and frequency-domain features from all sensor modalities [13]
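The Euclidean norm collapses each triaxial sample into a single orientation-independent magnitude. A minimal sketch follows; the sample values and function name are made up for illustration.

```python
import math

def euclidean_norm(sample):
    """Magnitude of one triaxial sample (x, y, z)."""
    return math.sqrt(sum(component * component for component in sample))

# Hypothetical triaxial readings: acceleration in g, angular velocity in deg/s.
accel = [(0.0, 0.0, 1.0), (3.0, 4.0, 0.0), (1.0, 2.0, 2.0)]
gyro = [(0.0, 0.0, 0.0), (6.0, 8.0, 0.0)]

a_norm = [euclidean_norm(s) for s in accel]
omega_norm = [euclidean_norm(s) for s in gyro]
```

Working with the norm makes features less sensitive to how the sensor is rotated on the wrist or container, although it discards directional information that some gestures may depend on.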

Classification: Compared single-modal versus multi-modal performance using Support Vector Machine (SVM) and Extreme Gradient Boosting (XGBoost) [13]

Table 2: Performance comparison of single-modal vs. multi-modal approaches for drinking activity detection [13]

| Sensor Modality | Classifier | Sample-Based F1-Score | Event-Based F1-Score |
|---|---|---|---|
| Wrist IMU Only | SVM | 74.2% | 88.3% |
| Container IMU Only | SVM | 70.8% | 85.6% |
| Acoustic Only | SVM | 68.5% | 82.1% |
| Multi-Sensor Fusion | SVM | 83.7% | 96.5% |
| Multi-Sensor Fusion | XGBoost | 83.9% | 95.2% |

The results demonstrated that the multi-sensor fusion approach significantly outperformed all single-modality configurations, with the SVM classifier achieving a 96.5% F1-score in event-based evaluation, highlighting the critical advantage of combining complementary sensing modalities [13].
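The gap between sample-based and event-based scores in Table 2 follows from how each metric counts errors. The sketch below contrasts the two on a toy label sequence. The event-matching rule used here (any overlap counts as a hit) is an assumption for illustration, and the evaluation in [13] may define matches differently; the labels and function names are invented.

```python
def f1_score(tp, fp, fn):
    """F1 from raw counts, returning 0.0 when nothing was detected or present."""
    denominator = 2 * tp + fp + fn
    return 2 * tp / denominator if denominator else 0.0

def sample_based_f1(y_true, y_pred):
    """Score every window (sample) independently."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return f1_score(tp, fp, fn)

def to_events(labels):
    """Collapse runs of 1s into (start, end) index pairs, end exclusive."""
    events, start = [], None
    for i, value in enumerate(labels):
        if value == 1 and start is None:
            start = i
        elif value != 1 and start is not None:
            events.append((start, i))
            start = None
    if start is not None:
        events.append((start, len(labels)))
    return events

def overlaps(a, b):
    """True when two half-open index intervals share at least one sample."""
    return a[0] < b[1] and b[0] < a[1]

def event_based_f1(y_true, y_pred):
    """Score whole events: a true event is detected if any prediction overlaps it."""
    true_events, pred_events = to_events(y_true), to_events(y_pred)
    tp = sum(1 for t in true_events if any(overlaps(t, p) for p in pred_events))
    fn = len(true_events) - tp
    fp = sum(1 for p in pred_events if not any(overlaps(p, t) for t in true_events))
    return f1_score(tp, fp, fn)

# Toy sequence: both drinking events are found, but their edges are imprecise.
y_true = [0, 1, 1, 1, 1, 0, 0, 1, 1, 1, 0, 0]
y_pred = [0, 0, 1, 1, 0, 0, 0, 0, 1, 1, 1, 0]

sample_f1 = sample_based_f1(y_true, y_pred)
event_f1 = event_based_f1(y_true, y_pred)
```

Here every event is detected, so the event-based score is perfect, while misaligned boundaries pull the sample-based score down. Which metric matters more depends on whether the application needs episode counts or precise durations.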

Figure 2: Experimental workflow for multi-modal drinking activity detection, showing the integration of wrist movement, container movement, and acoustic sensing modalities [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential components for multi-sensor eating activity detection research

| Component | Specification | Research Function | Exemplar Implementation |
|---|---|---|---|
| Inertial Measurement Units (IMUs) | Triaxial accelerometer (±16 g) and gyroscope (±2000°/s), 128 Hz sampling | Captures wrist and container kinematics during hand-to-mouth gestures | APDM Opal sensors on wrists and container bottom [13] |
| Acoustic Sensors | Condenser microphone, 44.1 kHz sampling rate | Detects swallowing sounds distinct from other throat activities | In-ear microphone placement [13] |
| Wearable Platform | Multi-sensor wristband (EDA, temperature, PPG, accelerometer) | Provides physiological context and continuous monitoring | Empatica E4 wristband [17] [15] |
| Deep Learning Framework | Residual networks with 2D convolutional layers | Learns patterns from fused sensor representations | 3-layer deep residual network for covariance contour classification [17] |
| Traditional ML Classifiers | Support Vector Machines, Extreme Gradient Boosting | Benchmarks performance against deep learning approaches | SVM and XGBoost for drinking activity classification [13] |
| Data Synchronization | Hardware triggers or software timestamps | Aligns temporal data streams from heterogeneous sensors | Simultaneous recording initiation across all sensors [13] |

Future Directions and Research Challenges

Despite significant advances, multi-sensor fusion for eating activity detection faces several persistent challenges that represent opportunities for future research.

Calibration and Synchronization: Precise temporal alignment of heterogeneous sensor streams remains technically challenging, particularly with sensors operating at different sampling rates. Even minor synchronization errors can significantly degrade fusion performance [18]. Future research should investigate automated calibration protocols and self-synchronizing sensor networks.

Computational Efficiency: Many sophisticated fusion algorithms demand substantial computational resources, limiting their deployment on resource-constrained wearable devices [17]. Research into lightweight neural architectures, edge computing implementations, and optimized covariance representations would enhance practical applicability.

Generalizability Across Populations: Most current systems are validated on limited participant cohorts with specific demographic characteristics [13] [12]. Future studies should assess performance variability across diverse populations, age groups, and cultural eating practices.

Emerging Methodologies: Promising research directions include deep learning-based fusion architectures like Bayesian CNN-LSTM hybrids [22], vision-language models for multi-modal reasoning [20], and end-to-end fusion frameworks that automatically learn optimal integration strategies from data rather than relying on fixed fusion levels.

Sensor fusion represents a fundamental enabling technology for robust eating activity detection systems, systematically overcoming the limitations inherent in single-sensor approaches. By strategically combining complementary modalities—including inertial sensing for movement kinematics, acoustic monitoring for swallowing sounds, and physiological sensing for contextual information—multi-sensor systems achieve significantly higher accuracy and reliability than any single modality can provide.

The theoretical framework of data-level, feature-level, and decision-level fusion offers researchers distinct trade-offs between information preservation, computational efficiency, and implementation complexity. Experimental implementations demonstrate that covariance-based fusion techniques and multi-modal drinking detection systems can achieve F1-scores exceeding 96%, providing robust foundations for future research.

As wearable sensing technology continues to evolve, sensor fusion methodologies will play an increasingly critical role in transforming fragmented data streams into a comprehensive understanding of eating behaviors, with profound implications for nutritional science, chronic disease management, and behavioral health research.

From Data to Detection: Methodologies and Real-World System Architectures

The objective and accurate monitoring of dietary habits is a critical challenge in nutritional science, behavioral medicine, and chronic disease management. Traditional methods such as food diaries and 24-hour recalls are plagued by recall bias and participant burden, limiting their effectiveness for large-scale studies and long-term interventions. This whitepaper provides an in-depth technical analysis of three primary wearable system architectures—necklaces, wristbands, and eyeglass-based sensors—for eating activity detection within the context of multi-sensor systems research. We examine the technical specifications, sensing modalities, detection methodologies, and performance metrics of each form factor, with particular emphasis on sensor fusion approaches that enhance detection accuracy in free-living environments. The content is framed within a broader thesis on multi-sensor systems for eating activity detection research, providing researchers, scientists, and drug development professionals with a comprehensive reference for selecting, designing, and validating wearable monitoring solutions.

Dietary habits are a crucial determinant of health outcomes, significantly influencing the onset and progression of chronic diseases such as type 2 diabetes, heart disease, and obesity [12]. Despite the clear connection between diet and health, accurately and objectively measuring food and energy intake remains a significant challenge in nutritional science. The rapid advancement of wearable sensing technology presents a promising solution for effective dietary monitoring by reducing recall bias and enhancing user convenience, with potential benefits for both clinical chronic disease management and nutritional research [12].

Wearable sensors for dietary monitoring are designed to be worn on the body and continuously monitor various aspects of dietary intake with minimal user input, facilitating seamless integration into everyday life [12]. These systems typically detect eating episodes through complementary approaches: motion sensors capture body movements such as hand-to-mouth gestures, acoustic sensors capture chewing and swallowing sounds, and in some cases, cameras gather contextual information about meal type and environment [12]. The integration of these sensing modalities into cohesive system architectures represents a frontier in nutritional monitoring research, with particular promise for developing personalized interventions for obesity and eating disorders [23].

This technical guide examines the three dominant form factors in wearable eating detection systems, presenting technical specifications and performance data, where reported, in a structure that enables direct comparison and informed selection for research applications.

Necklace-Based Sensing Systems

Necklace-based sensors occupy a strategic position on the upper body, enabling them to capture rich data related to jaw movement, neck articulation, and upper torso motion during eating activities. This positioning makes them particularly effective for detecting chewing sequences, swallowing events, and general head movement patterns associated with food consumption.

Technical Architecture and Sensing Modalities

The NeckSense system represents a sophisticated implementation of the necklace form factor, integrating multiple sensing modalities to achieve robust eating detection [24]. The system architecture incorporates:

- Proximity Sensor: Measures the distance between the necklace and the chin, detecting the rhythmic jaw movements characteristic of chewing. The application of a longest periodic subsequence algorithm to the proximity sensor signal enables identification of chewing periodicity [24].

- Inertial Measurement Unit (IMU): Captures the "Lean Forward Angle" and other upper body movements that often accompany eating episodes, providing contextual postural data [24].

- Ambient Light Sensor: Helps distinguish between true eating events and similar motions by detecting environmental lighting changes that occur when the hand approaches the mouth during feeding gestures [24].

This multi-sensor fusion approach demonstrates the core principle of complementary sensing, where the limitations of one modality are mitigated by the strengths of another, resulting in significantly improved detection accuracy compared to single-sensor implementations [24].
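To illustrate how rhythmic jaw movement can be picked out of a proximity signal, the sketch below uses plain autocorrelation as a simpler stand-in. It is not the longest periodic subsequence algorithm used by NeckSense, and the sampling rate, lag range, and synthetic signal are assumptions chosen only for the example.

```python
import math

def autocorr_period(signal, rate_hz, min_period_s, max_period_s):
    """Return (period_s, strength) for the strongest autocorrelation peak.

    strength is the normalized autocorrelation at the chosen lag; values
    close to 1 indicate a strongly periodic signal. Returns (None, 0.0)
    when no positive peak exists in the searched lag range.
    """
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]
    energy = sum(v * v for v in x)
    if energy == 0:
        return None, 0.0
    lo = max(1, int(min_period_s * rate_hz))
    hi = min(n - 1, int(max_period_s * rate_hz))
    best_lag, best_r = None, 0.0
    for lag in range(lo, hi + 1):
        r = sum(x[i] * x[i + lag] for i in range(n - lag)) / energy
        if r > best_r:
            best_lag, best_r = lag, r
    if best_lag is None:
        return None, 0.0
    return best_lag / rate_hz, best_r

# Synthetic proximity trace: 8 s at 20 Hz with a 0.8 s chewing-like cycle.
rate_hz = 20
trace = [math.sin(2 * math.pi * i / 16) for i in range(160)]
period_s, strength = autocorr_period(trace, rate_hz, 0.4, 2.0)
```

A detector built on this idea would flag a window as a chewing candidate only when the strength exceeds a tuned threshold, then pass it to the other sensors for confirmation.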

Detection Methodology and Performance

NeckSense employs a hierarchical detection framework that first identifies individual chewing sequences using periodicity analysis and then clusters these sequences into discrete eating episodes [24]. The system has been validated across diverse populations, including individuals with and without obesity, with performance maintained across BMI categories—a significant advancement over previous systems that showed demographic performance bias [24].
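The second stage of such a hierarchy, grouping chewing sequences into episodes, can be sketched with a simple gap rule. The gap threshold and minimum sequence count below are hypothetical parameters for illustration, not values reported for NeckSense.

```python
def cluster_into_episodes(sequences, max_gap_s=300.0, min_sequences=2):
    """Merge (start_s, end_s) chewing sequences into eating episodes.

    Consecutive sequences separated by at most max_gap_s are merged.
    Groups with fewer than min_sequences sequences are discarded as
    isolated detections.
    """
    episodes = []
    current = None
    for start, end in sorted(sequences):
        if current and start - current["end"] <= max_gap_s:
            current["end"] = max(current["end"], end)
            current["count"] += 1
        else:
            if current and current["count"] >= min_sequences:
                episodes.append((current["start"], current["end"]))
            current = {"start": start, "end": end, "count": 1}
    if current and current["count"] >= min_sequences:
        episodes.append((current["start"], current["end"]))
    return episodes

# Hypothetical chewing sequences in seconds since the start of recording.
chewing = [(0, 20), (40, 70), (100, 130), (5000, 5010), (9000, 9030), (9100, 9150)]
episodes = cluster_into_episodes(chewing)
```

In this example the isolated sequence at 5000 s is dropped, which mirrors the intent of episode-level detection: a single short burst of periodic motion is weak evidence of a meal.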

Table 1: Performance Metrics of Necklace-Based Eating Detection Systems

| System | Detection Level | Setting | Precision | Recall | F1-Score |
|---|---|---|---|---|---|
| NeckSense [24] | Per-episode | Semi-free-living | N/R | N/R | 81.6% |
| NeckSense [24] | Per-episode | Free-living | N/R | N/R | 77.1% |
| NeckSense [24] | Per-second | Semi-free-living | N/R | N/R | 76.2% |
| NeckSense [24] | Per-second | Free-living | N/R | N/R | 73.7% |

N/R: Not explicitly reported in the available literature

The system achieves a battery life of 15.8 hours, sufficient for continuous monitoring throughout waking hours, addressing a critical practical requirement for free-living studies [24].

Wristband-Based Sensing Systems

Wrist-worn sensors, typically implemented as smartwatches or research-grade wristbands, leverage the natural involvement of the hands and wrists in eating activities. These systems detect characteristic hand-to-mouth movements and gestural patterns associated with food consumption.

Technical Architecture and Sensing Modalities

Wristband systems primarily utilize inertial measurement units (IMUs) containing accelerometers and gyroscopes to capture the distinctive motion signatures of eating gestures [25]. One implemented system uses a commercial smartwatch with a three-axis accelerometer to capture dominant hand movements during eating [25]. The detection pipeline employs a 50% overlapping 6-second sliding window to extract statistical features including mean, variance, skewness, kurtosis, and root mean square values along each axis [25].
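The windowing and feature extraction described above can be sketched as follows. The 6-second window and 50% overlap come from the text; the 20 Hz sampling rate, the use of population variance, and non-excess kurtosis are assumptions, since the source does not specify them.

```python
import math

def window_features(samples):
    """Mean, variance, skewness, kurtosis, and RMS of one axis in one window."""
    n = len(samples)
    mean = sum(samples) / n
    var = sum((s - mean) ** 2 for s in samples) / n  # population variance
    std = math.sqrt(var)
    if std > 0:
        skew = sum(((s - mean) / std) ** 3 for s in samples) / n
        kurt = sum(((s - mean) / std) ** 4 for s in samples) / n  # non-excess
    else:
        skew, kurt = 0.0, 0.0
    rms = math.sqrt(sum(s * s for s in samples) / n)
    return {"mean": mean, "variance": var, "skewness": skew,
            "kurtosis": kurt, "rms": rms}

def sliding_windows(signal, rate_hz, window_s=6.0, overlap=0.5):
    """Yield fixed-length windows that overlap by the given fraction."""
    size = int(window_s * rate_hz)
    step = max(1, int(size * (1.0 - overlap)))
    for start in range(0, len(signal) - size + 1, step):
        yield signal[start:start + size]

# Hypothetical 18 s recording of one accelerometer axis at an assumed 20 Hz.
rate_hz = 20
x_axis = [1.0, -1.0] * 180
windows = list(sliding_windows(x_axis, rate_hz))
features = [window_features(w) for w in windows]
```

For a three-axis accelerometer the same two functions would be applied to each axis and the resulting values concatenated into one feature vector per window.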

These systems typically employ a threshold-based approach where detecting a specific number of eating gestures (e.g., 20 gestures) within a defined time window (e.g., 15 minutes) triggers the identification of an eating episode [25]. This method effectively distinguishes discrete meals from sporadic snacking behavior.
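A minimal sketch of this counting rule is shown below, using the example values from the text (20 gestures within 15 minutes) as defaults. The function name and the choice to report only the first trigger time of each episode are assumptions made for illustration.

```python
from collections import deque

def detect_episode_onsets(gesture_times_s, min_gestures=20, window_s=900.0):
    """Return the times at which an eating episode is first triggered.

    An episode is triggered when min_gestures eating gestures fall within
    a trailing window of window_s seconds. The trigger is reported once
    and re-arms after the gesture count drops below the threshold.
    """
    recent = deque()
    onsets = []
    in_episode = False
    for t in sorted(gesture_times_s):
        recent.append(t)
        while recent and t - recent[0] > window_s:
            recent.popleft()
        if len(recent) >= min_gestures:
            if not in_episode:
                onsets.append(t)
                in_episode = True
        else:
            in_episode = False
    return onsets

# Hypothetical gesture timestamps: a meal with a gesture every 20 s,
# followed much later by sparse snacking with a gesture every 120 s.
meal = [20.0 * k for k in range(25)]
snack = [10000.0 + 120.0 * k for k in range(10)]
onsets = detect_episode_onsets(meal + snack)
```

Here the meal triggers once, at its twentieth gesture, while the sparse snacking never accumulates enough gestures inside one window. This is also why a rule of this kind can miss short or slow eating occasions.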

Detection Methodology and Performance

Wristband systems have demonstrated particularly strong performance in detecting structured meal events. In one deployment with college students, a smartwatch-based system captured 89.8% of breakfast episodes, 99.0% of lunch episodes, and 98.0% of dinner episodes over a three-week period, with an overall meal detection rate of 96.48% [25]. The classifier achieved a precision of 80%, recall of 96%, and F1-score of 87.3% [25].
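As a consistency check, the reported F1-score follows from the reported precision and recall through the standard harmonic-mean definition:

```latex
F_1 = \frac{2PR}{P + R}
    = \frac{2 \times 0.80 \times 0.96}{0.80 + 0.96}
    = \frac{1.536}{1.76}
    \approx 0.873
```

The gap between precision and recall indicates that this classifier misses few true eating events but produces a noticeable share of false positives.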

Table 2: Performance Metrics of Wristband-Based Eating Detection Systems

| System | Meal Type | Detection Rate | Precision | Recall | F1-Score |
|---|---|---|---|---|---|
| Smartwatch [25] | Breakfast | 89.8% | N/R | N/R | N/R |
| Smartwatch [25] | Lunch | 99.0% | N/R | N/R | N/R |
| Smartwatch [25] | Dinner | 98.0% | N/R | N/R | N/R |
| Smartwatch [25] | Overall Meals | 96.48% | 80% | 96% | 87.3% |

A significant advantage of wristband systems is their integration with Ecological Momentary Assessment (EMA) methodologies, enabling the collection of rich contextual data about eating episodes [25]. When eating is detected, the system can prompt users with short questionnaires about meal context, including social environment, location, mood, and food type, creating comprehensive nutritional datasets [25].

Eyeglass-Based Sensing Systems

Eyeglass-based sensors represent a more specialized form factor in the eating detection landscape, leveraging their proximity to the jaw and temporal regions to capture chewing-related muscular activity and bone conduction sounds.

Technical Architecture and Sensing Modalities

While detailed technical specifications for current eyeglass-based implementations are limited in the reviewed literature, the foundational principle involves sensors mounted on eyeglass frames to detect chewing-related signals [24]. Based on related sensing approaches, these systems typically employ:

- Electromyography (EMG) Sensors: Positioned on eyeglass frames to detect masseter muscle activity during chewing [24]. These sensors capture the electrical potentials generated by muscular contractions, providing direct measurement of chewing activity.

- In-the-Ear Microphones: Placed in the auditory canal or on adjacent frames to capture chewing sounds through bone conduction [24]. This approach benefits from proximity to the sound source while offering some environmental noise rejection.

The technical implementation of eyeglass-based systems presents unique challenges in sensor placement stability and minimizing motion artifacts, as even slight shifts in frame position can significantly impact signal quality.

Detection Methodology and Performance

Eyeglass-based systems typically analyze the periodicity and spectral characteristics of chewing signals. Chewing produces rhythmic patterns in both muscular activity (EMG) and acoustic signatures that can be distinguished from speech and other orofacial movements through frequency domain analysis and pattern recognition algorithms.
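One way to express the frequency-domain idea is to measure how much of a signal's power falls inside a band where chewing rhythm is expected. The sketch below uses a naive discrete Fourier transform for self-containment; the 1 to 2 Hz band, the 20 Hz sampling rate, and the synthetic signals are illustrative assumptions rather than values from the cited work.

```python
import cmath
import math

def band_power_ratio(signal, rate_hz, band_hz=(1.0, 2.0)):
    """Fraction of non-DC spectral power inside band_hz, via a naive DFT.

    Runs in O(n^2) time, which is adequate for short windows; an FFT
    library would normally replace the inner sum.
    """
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]
    in_band = 0.0
    total = 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum(x[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n))
        power = abs(coeff) ** 2
        total += power
        if band_hz[0] <= k * rate_hz / n <= band_hz[1]:
            in_band += power
    return in_band / total if total > 0 else 0.0

# Synthetic 10 s windows at an assumed 20 Hz sampling rate.
rate_hz = 20
n = 200
chew_like = [math.sin(2 * math.pi * 1.5 * i / rate_hz) for i in range(n)]
out_of_band = [math.sin(2 * math.pi * 5.0 * i / rate_hz) for i in range(n)]
chew_ratio = band_power_ratio(chew_like, rate_hz)
other_ratio = band_power_ratio(out_of_band, rate_hz)
```

A ratio near 1 marks a window dominated by rhythm in the chosen band. On its own this feature cannot separate eating from other rhythmic jaw activity, so in practice it would be one input to a pattern recognition stage rather than a decision rule.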

Comprehensive performance metrics for dedicated eyeglass-based eating detection systems were not identified in the literature reviewed here. Related research indicates that audio-based approaches using microphones placed near the throat or ear can achieve a recall of 72.09% for fluid intake events [13]. EMG-based systems are expected to perform well for chewing detection in principle, but without supplementary sensing modalities they may struggle to distinguish eating from other jaw movements such as talking or gum chewing.

Multi-Sensor Fusion Architectures

The integration of multiple sensing modalities and form factors represents the most promising direction for advancing eating detection accuracy, particularly in free-living environments where single-sensor approaches face significant challenges with confounding activities.

Technical Implementation Approaches

Multi-sensor fusion architectures combine data from disparate sources to create a more robust and accurate eating detection system than any single modality can achieve. The Northwestern University research team exemplifies this approach with a system incorporating three synchronized sensors: a necklace (NeckSense), a wristband (similar to commercial activity trackers), and a specialized body camera (HabitSense) [23]. This system captures complementary data streams: chewing patterns from the necklace, hand-to-mouth gestures from the wristband, and visual confirmation of food type and portion size from the camera [23].

Another research initiative from The University of Texas at Austin and The University of Rhode Island employs a smartwatch coupled with a custom-made sensor on the participant's jawline to capture both hand movements and chewing motions [26]. This approach specifically targets the synchronization of upper limb kinematics with mandibular kinematics to distinguish eating from similar gestures.

Fusion Methodologies and Performance Gains

Sensor fusion can be implemented at multiple levels of abstraction, from raw data fusion to feature-level and decision-level integration. Multi-modal approaches to activity recognition have demonstrated significant performance improvements, with one study on drinking activity identification showing that a multi-sensor fusion approach achieved an F1-score of 96.5% using a Support Vector Machine classifier, substantially outperforming single-modality implementations [13].

Table 3: Performance Comparison of Single vs. Multi-Sensor Approaches

| System Architecture | Modalities | Best Performing Classifier | F1-Score |
|---|---|---|---|
| Single-modal (Motion) [13] | Wrist IMU | N/R | 83.9% |
| Single-modal (Acoustic) [13] | In-ear Microphone | N/R | Lower than multi-modal |
| Multi-modal Fusion [13] | Wrist IMU + Container IMU + Microphone | Support Vector Machine | 96.5% |
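The difference between feature-level and decision-level fusion can be made concrete with a short sketch. This is not the pipeline of the cited drinking study, which used a Support Vector Machine; the modality names, probabilities, weights, and threshold below are hypothetical.

```python
def feature_level_fusion(feature_vectors):
    """Concatenate per-modality feature vectors into one classifier input.

    Modalities are ordered by name so the layout of the fused vector is
    stable across windows.
    """
    fused = []
    for name in sorted(feature_vectors):
        fused.extend(feature_vectors[name])
    return fused

def decision_level_fusion(probabilities, weights=None, threshold=0.5):
    """Combine per-modality event probabilities by weighted averaging.

    Returns (score, is_event). Each probability is assumed to come from
    a separate classifier trained on a single modality.
    """
    names = sorted(probabilities)
    if weights is None:
        weights = {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    score = sum(weights[name] * probabilities[name] for name in names) / total
    return score, score >= threshold

# Feature-level: one vector goes to a single downstream classifier.
fused = feature_level_fusion({"wrist_imu": [0.1, 0.2], "microphone": [0.9]})

# Decision-level: each modality has already produced its own probability.
score, is_event = decision_level_fusion(
    {"wrist_imu": 0.9, "container_imu": 0.4, "microphone": 0.8}
)
```

Feature-level fusion lets one classifier learn interactions between modalities but requires all streams to be aligned per window. Decision-level fusion tolerates a missing or delayed modality more easily, at the cost of discarding cross-modal structure.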