Navigating the Complex Landscape of Biomarker Validation Across Diverse Study Populations


Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals on validating biomarkers across diverse populations. It explores the foundational scientific and ethical challenges, details advanced methodological frameworks and emerging technologies, addresses key troubleshooting and optimization strategies for real-world application, and outlines rigorous validation and comparative effectiveness research approaches. By synthesizing current guidelines, technological innovations, and ethical considerations, this work aims to enhance the reliability, generalizability, and clinical utility of biomarkers in precision medicine.

The Scientific and Ethical Bedrock: Establishing Foundational Principles for Cross-Population Biomarker Research

Defining the Unique Challenges of Endogenous Biomarkers vs. Traditional Drug Assays

In the evolving landscape of pharmaceutical research and development, biomarkers have become indispensable tools for decision-making from early discovery through clinical validation. Among these, endogenous biomarkers—naturally occurring molecules measured within the body—present unique challenges and opportunities that distinguish them fundamentally from traditional drug assays. While traditional drug assays quantify the pharmacokinetics of administered xenobiotics, endogenous biomarkers provide insights into physiological processes, disease states, and therapeutic responses by measuring internally produced analytes. The 2025 FDA Biomarker Guidance acknowledges this distinction, maintaining that although validation parameters of interest are similar between drug concentration and biomarker assays, the technical approaches must be adapted to demonstrate suitability for measuring endogenous analytes [1].

This comparison guide examines the fundamental distinctions between these two classes of analytical measurements, providing researchers with structured experimental data, methodological frameworks, and practical tools to navigate the complexities of endogenous biomarker validation. Understanding these differences is particularly crucial for applications in precision medicine, drug-transporter interactions, and therapeutic monitoring across diverse study populations [2] [3].

Fundamental Conceptual Differences

The core distinction between these analytical approaches lies in their fundamental nature: traditional drug assays measure exogenous compounds administered to the body, while endogenous biomarker assays quantify naturally occurring molecules that are integral to physiological processes.

Definition and Measurement Context

Traditional drug assays are designed to quantify pharmaceutical compounds and their metabolites that are introduced into the biological system. These xenobiotics are typically absent from the matrix prior to administration, allowing for straightforward standard curve preparation using the authentic compound spiked into blank matrix. The analyte is well-defined, with known chemical structure and properties, and sample processing focuses on extracting the drug from complex biological matrices while minimizing degradation [1] [4].

In contrast, endogenous biomarkers are naturally present in biological samples, creating significant analytical challenges. As noted in the 2025 FDA Biomarker Guidance, "biomarker assays must demonstrate suitability for measuring endogenous analytes - a fundamentally different challenge from the spike-recovery approaches used in drug concentration assays" [1]. The biomarker exists within a complex background of similar molecules, often at low concentrations, and may exhibit natural variability across individuals and populations. Furthermore, many endogenous biomarkers exist in multiple molecular forms or complexes, requiring careful characterization of the specific form being measured [2] [5].

Key Applications in Drug Development

The applications of these analytical approaches reflect their fundamental differences:

Table: Primary Applications of Traditional Drug Assays vs. Endogenous Biomarker Assays

| Application Area | Traditional Drug Assays | Endogenous Biomarkers |
|---|---|---|
| Pharmacokinetics | Quantify drug absorption, distribution, metabolism, excretion | Assess transporter activity (e.g., OATP1B via coproporphyrins) [2] |
| Dose Selection | Establish exposure-response relationships | Inform personalized dosing based on individual biomarker levels |
| Drug-Drug Interactions | Identify PK interactions between co-administered drugs | Assess transporter-mediated DDIs (e.g., using CP-I and CP-III for OATP1B inhibition) [2] |
| Therapeutic Monitoring | Ensure drug concentrations remain within therapeutic window | Monitor disease progression, treatment response, safety biomarkers [3] [4] |
| Patient Stratification | Limited application | Identify responder populations, define pathophysiological subsets [3] |

Analytical and Validation Challenges

The validation of endogenous biomarker assays presents distinct technical hurdles that differentiate them from traditional drug assays. While the 2025 FDA guidance indicates that biomarker validation should address the same parameters as drug assays—including accuracy, precision, sensitivity, selectivity, parallelism, range, reproducibility, and stability—the approaches to demonstrating these characteristics differ substantially [1].

Unique Validation Hurdles for Endogenous Biomarkers

Accuracy and Quantification Challenges: For traditional drug assays, accuracy is typically assessed by spiking known concentrations of the drug into blank biological matrix. This approach is impossible for endogenous biomarkers since they are naturally present in all biological samples. Alternative strategies include using surrogate matrices (stripped of the endogenous analyte), standard addition methods, or surrogate analyte approaches with stable isotope-labeled standards [1] [5].
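As a rough illustration of the standard addition strategy mentioned above, the sketch below spikes known amounts of analyte into aliquots of the same sample, fits a straight line to the response, and reads the endogenous concentration from the x-intercept (intercept divided by slope). The function name, the spike levels, and the response values are all hypothetical and chosen only to make the arithmetic easy to follow; a real assay would also need replicates and a linearity check.

```python
def standard_addition(spiked_conc, signal):
    """Estimate endogenous concentration by the method of standard additions.

    Fits signal = slope * spiked_conc + intercept by ordinary least squares.
    The endogenous concentration is the magnitude of the x-intercept,
    which equals intercept / slope.
    """
    n = len(spiked_conc)
    if n != len(signal) or n < 3:
        raise ValueError("need at least three paired (spike, signal) points")
    mean_x = sum(spiked_conc) / n
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in spiked_conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(spiked_conc, signal))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept / slope, slope, intercept


# Hypothetical data: aliquots of one sample spiked with 0, 5, 10, 20 ng/mL.
spiked = [0.0, 5.0, 10.0, 20.0]
response = [12.0, 22.0, 32.0, 52.0]  # made-up detector response (2 * x + 12)

endogenous, slope, intercept = standard_addition(spiked, response)
print(f"Estimated endogenous concentration: {endogenous:.2f} ng/mL")
```

Because the unspiked aliquot already produces a non-zero signal, the fitted line does not pass through the origin; the offset is what carries the information about the endogenous level.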

Selectivity in Complex Matrices: Endogenous biomarkers often exist in complex biological milieus with multiple similar interfering substances. For example, in the analysis of endogenous peptides for hepatocellular carcinoma detection, researchers must distinguish specific peptide sequences among thousands of similar peptides in serum samples. As one study noted, "Among 2568 endogenous peptides, 67 showed significant differential expression between the HCC vs CIRR," highlighting the substantial selectivity challenges [5].

Parallelism and Matrix Effects: Demonstrating parallelism—that the biomarker responds similarly in the actual sample matrix compared to the calibration curve—is particularly challenging. Natural biomatrix components can cause suppression or enhancement effects that differ from artificial matrices. The European Bioanalysis Forum emphasizes that biomarker assays benefit fundamentally from Context of Use (CoU) principles rather than a PK SOP-driven approach [1].
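One common way to examine parallelism is to dilute a sample with a high endogenous level serially, back-calculate each dilution against the calibration curve, and check that the dilution-corrected concentrations agree. The sketch below computes the percent coefficient of variation (%CV) across dilutions. The data are invented, and the 30% acceptance limit is only a placeholder assumption; the appropriate limit depends on the assay's context of use.

```python
from statistics import mean, stdev

ACCEPTANCE_CV_PERCENT = 30.0  # placeholder assumption, not a regulatory value


def parallelism_cv(dilution_factors, measured_conc):
    """Return dilution-corrected concentrations and their %CV."""
    corrected = [d * c for d, c in zip(dilution_factors, measured_conc)]
    cv_percent = 100.0 * stdev(corrected) / mean(corrected)
    return corrected, cv_percent


# Hypothetical serial dilution of one high-concentration sample.
dilutions = [2, 4, 8, 16]
measured = [50.0, 26.0, 12.0, 6.5]  # back-calculated from the calibration curve

corrected, cv = parallelism_cv(dilutions, measured)
print("Dilution-corrected concentrations:", corrected)
print(f"%CV across dilutions: {cv:.1f}")
print("Parallelism acceptable:", cv <= ACCEPTANCE_CV_PERCENT)
```

A systematic trend in the corrected values (for example, steadily rising with dilution) would suggest a matrix effect even when the overall %CV looks acceptable, so the individual values are worth inspecting alongside the summary statistic.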

Comparative Validation Parameters

Table: Comparison of Key Validation Parameters and Challenges

| Validation Parameter | Traditional Drug Assays | Endogenous Biomarker Assays | Key Challenges for Biomarkers |
|---|---|---|---|
| Accuracy/Recovery | Spiked samples in biological matrix | Surrogate matrix, standard addition, or surrogate analyte approaches | Lack of true blank matrix; natural variability [1] |
| Selectivity | Assess interference from matrix components | Distinguish target from structurally similar endogenous compounds | High background of similar molecules; isoform discrimination [5] |
| Reference Standards | Well-characterized drug substance | Often partially characterized natural compounds; recombinant proteins | Limited availability; structural heterogeneity; stability issues |
| Calibration | Linear curves in blank matrix | Non-linear in biological matrix; requires specialized approaches | Natural baseline levels; matrix effects [1] [5] |
| Sensitivity | Limited by instrumental detection | Limited by natural background levels | High background signals reduce practical sensitivity |
| Stability | Focus on drug stability in matrix | Must account for natural degradation pathways | Enzymatic degradation; ex vivo generation/decay [5] |

Experimental Approaches and Methodologies

Robust experimental design is crucial for addressing the unique challenges of endogenous biomarker analysis. The following sections outline proven methodologies and workflows for biomarker qualification and application.

Protocol for Endogenous Biomarker Validation: A Case Study in Hepatocellular Carcinoma

Research investigating endogenous peptides as biomarkers for hepatocellular carcinoma (HCC) provides an exemplary protocol for addressing endogenous assay challenges [5]:

Sample Preparation Workflow:

- Serum Processing: Collect blood using sterile vacuum tubes without anticoagulants. Centrifuge at 1,000 × g for 10 minutes followed by 2,500 × g for 10 minutes at room temperature. Aliquot serum with protease inhibitor and store at -80°C.

- Peptide Extraction: Mix 40 μL serum with 250 μL of 1% trifluoroacetic acid (TFA), vortex for 30 seconds, then heat at 98°C for 10 minutes to disrupt peptide-protein interactions.

- Fractionation: Transfer mixture to Amicon Ultra-0.5 centrifugal filter units (10 kDa MWCO) preconditioned with 150 μL of 70% ethanol with 1% TFA. Centrifuge at 14,000 × g for 20 minutes at 4°C.

- Desalting and Purification: Wash twice with 100 μL of 1% TFA followed by centrifugation for 10 minutes. Purify using C18 columns equilibrated with 50% ACN and conditioned with 2% TFA.

- Elution and Concentration: Elute peptides with 100 μL of 80% ACN, 1% TFA, then concentrate by freeze-drying.

Analytical Separation and Detection:

- LC-MS/MS Analysis: Reconstitute peptides in 0.1% formic acid. Separate using nano-LC system with C18 column (75 μm × 15 cm, 2 μm particle size) with 60-minute gradient from 5% to 35% acetonitrile in 0.1% formic acid at 300 nL/min.

- Mass Spectrometry: Analyze using Q-Exactive HF mass spectrometer in data-dependent acquisition mode. Full MS scans at 60,000 resolution, followed by MS/MS of top 15 ions at 15,000 resolution.

Data Analysis and Validation:

- Peptide Identification: Search data against human protein database using Sequest HT algorithm. Apply false discovery rate threshold of 1%.

- Statistical Analysis: Use ANOVA with multiple testing correction to identify peptides that are differentially expressed between the HCC and cirrhosis groups.

- Performance Validation: Evaluate diagnostic performance using receiver operating characteristic (ROC) analysis, comparing against existing biomarkers like alpha-fetoprotein (AFP).
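The protocol above does not say which multiple testing correction was applied to the ANOVA results. As one widely used option, the sketch below implements the Benjamini-Hochberg procedure, which controls the false discovery rate across many peptide-level tests. The p-values are invented, and the choice of Benjamini-Hochberg is an assumption rather than the method reported in [5]. It is also separate from the 1% identification-level false discovery rate applied during the database search.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that adjusted
    # values never increase as the raw p-value decreases.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical per-peptide ANOVA p-values.
p = [0.001, 0.008, 0.039, 0.041, 0.20]
q = benjamini_hochberg(p)
significant = [i for i, qi in enumerate(q) if qi <= 0.05]

print("Adjusted p-values:", [round(qi, 5) for qi in q])
print("Peptide indices passing a 5% FDR threshold:", significant)
```

In this toy example the third and fourth peptides have raw p-values below 0.05 but no longer pass after adjustment, which is the behaviour the correction is meant to provide when thousands of peptides are tested at once.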

This comprehensive approach identified three endogenous peptides that outperformed AFP in distinguishing HCC from cirrhosis, with one peptide (IAVEWESNGQPENNYKT) detected in 100% of HCC cases and completely absent in cirrhosis patients [5].
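To make the ROC comparison concrete, the following sketch computes the area under the ROC curve directly from its rank interpretation: the probability that a randomly chosen case scores higher than a randomly chosen control, with ties counted as one half. All intensity and AFP values here are fabricated for illustration and do not come from the study in [5].

```python
def roc_auc(case_scores, control_scores):
    """AUC as the fraction of (case, control) pairs ranked correctly.

    Ties contribute 0.5, which matches the Mann-Whitney U formulation.
    """
    wins = 0.0
    for case in case_scores:
        for control in control_scores:
            if case > control:
                wins += 1.0
            elif case == control:
                wins += 0.5
    return wins / (len(case_scores) * len(control_scores))


# Hypothetical candidate-peptide intensities (arbitrary units).
peptide_hcc = [8.1, 7.4, 6.9, 9.3, 5.2]
peptide_cirrhosis = [4.0, 5.5, 3.8, 6.0, 4.9]

# Hypothetical AFP concentrations (ng/mL) for the same subjects.
afp_hcc = [20.0, 400.0, 15.0, 35.0, 8.0]
afp_cirrhosis = [10.0, 25.0, 12.0, 9.0, 30.0]

auc_peptide = roc_auc(peptide_hcc, peptide_cirrhosis)
auc_afp = roc_auc(afp_hcc, afp_cirrhosis)
print(f"Peptide AUC: {auc_peptide:.2f}, AFP AUC: {auc_afp:.2f}")
```

The pairwise form is quadratic in sample size, which is fine for small validation cohorts; larger datasets would normally use a rank-based implementation, and a formal comparison of two correlated AUCs would need a paired test that this sketch does not attempt.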

Experimental Workflow for Transporter Activity Assessment Using Endogenous Biomarkers

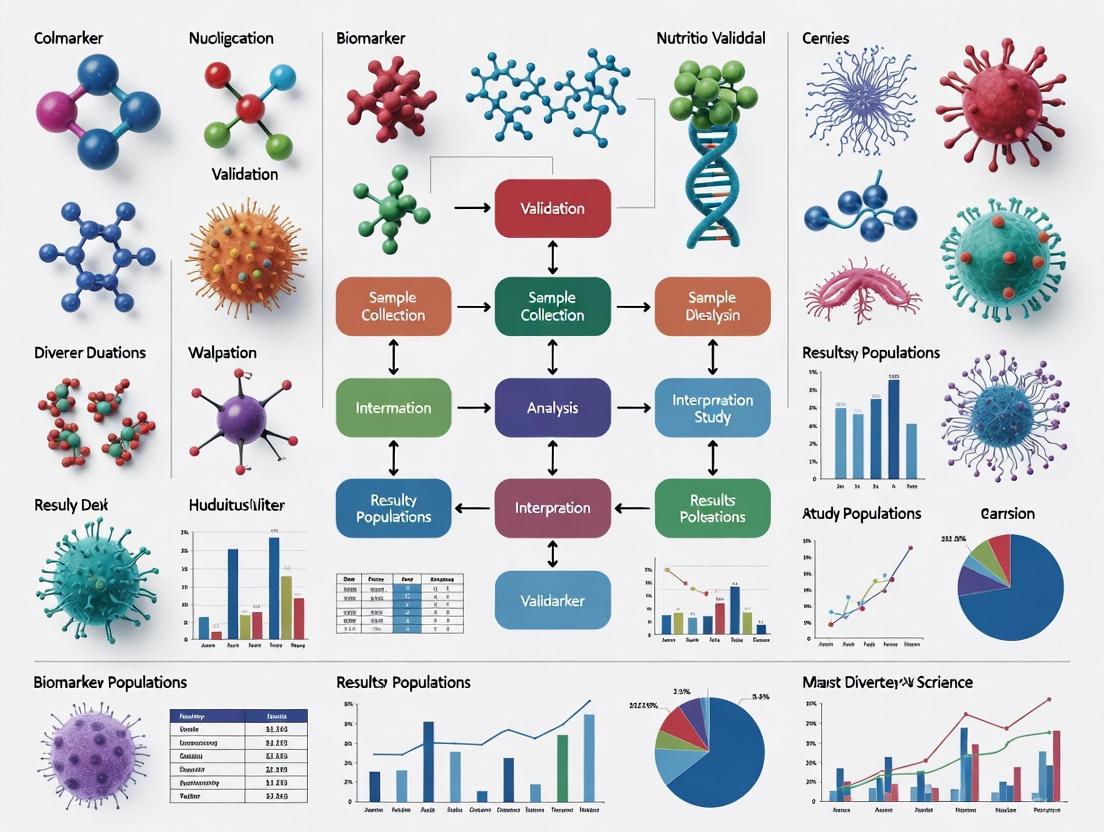

The following diagram illustrates a generalized workflow for assessing transporter activity using endogenous biomarkers like coproporphyrins (CP-I and CP-III) for OATP1B transporters:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful endogenous biomarker research requires specialized reagents and materials designed to address the unique challenges of quantifying naturally occurring analytes. The following table outlines essential solutions for this field:

Table: Essential Research Reagents for Endogenous Biomarker Analysis

| Reagent/Material | Function | Application Example | Key Considerations |
|---|---|---|---|
| Stable Isotope-Labeled Internal Standards | Account for extraction efficiency and matrix effects; enable accurate quantification | Quantification of coproporphyrin I and III for OATP1B activity [2] | Must be structurally identical to endogenous analyte; optimal labeling position |
| Surrogate Matrices | Create artificial matrix free of endogenous analyte for calibration | Bovine serum albumin solution or stripped serum for calibration curves | Must demonstrate parallelism with native biological matrix |
| Protease Inhibitor Cocktails | Prevent ex vivo degradation of protein/peptide biomarkers | Preservation of endogenous peptide signatures in serum samples [5] | Broad-spectrum inhibitors; compatibility with downstream analysis |
| Immunoaffinity Enrichment Materials | Concentrate low-abundance biomarkers from complex matrices | Antibody-coated magnetic beads for specific peptide capture | Specificity for target epitope; minimal non-specific binding |
| Solid-Phase Extraction Cartridges | Remove interfering matrix components; concentrate analytes | C18 cartridges for peptide cleanup prior to LC-MS/MS [5] | Selective retention of target analyte class; high recovery efficiency |
| Quality Control Materials | Monitor assay performance across multiple runs | Pooled human serum with characterized biomarker levels | Long-term stability; commutability with patient samples |

Regulatory and Contextual Considerations

The regulatory landscape for biomarker validation continues to evolve, with recent guidance emphasizing context-specific validation approaches rather than one-size-fits-all requirements.

Regulatory Framework and Guidelines

The 2025 FDA Biomarker Guidance builds upon the 2018 framework, maintaining remarkable consistency in fundamental principles while harmonizing with international standards through the adoption of ICH M10. This guidance explicitly recognizes that "although validation parameters of interest are similar between drug concentration and biomarker assays, attempting to apply M10 technical approaches to biomarker validation would be inappropriate" [1]. This distinction is critical, as M10 explicitly excludes biomarker assays from its scope, acknowledging that biomarker assays require adapted technical approaches to demonstrate suitability for measuring endogenous analytes.

The BEST (Biomarkers, EndpointS, and other Tools) glossary, developed by the FDA-NIH Biomarker Working Group, provides standardized definitions for various biomarker categories, including susceptibility/risk, diagnostic, prognostic, pharmacodynamic/response, predictive, monitoring, and safety biomarkers [3]. Understanding these categories is essential for appropriate validation, as the evidentiary requirements differ based on the intended context of use.

Context of Use in Different Populations

A critical consideration in endogenous biomarker validation is understanding how biomarker levels and interpretation may vary across different populations. For example, research on coproporphyrins as biomarkers for OATP1B transporter activity has revealed that genetic polymorphisms can significantly impact baseline levels. The functional SLCO1B1 c.521T>C variant was shown to affect plasma concentrations of CP-I but not CP-III, suggesting different transport mechanisms for these closely related biomarkers [2].

Similarly, disease states can dramatically alter endogenous biomarker levels and interpretation. Patients with organ impairment may exhibit altered biomarker baselines, requiring population-specific reference ranges. As noted in recent research, "endogenous biomarkers have also helped shed light on alterations in transporter activity in the setting of organ dysfunction and enabled the prediction of DDIs in specific populations such as patients with renal impairment" [2].

The comparison between endogenous biomarkers and traditional drug assays reveals fundamental distinctions that necessitate specialized approaches throughout the assay development and validation process. While both share common validation parameters—accuracy, precision, sensitivity, selectivity, and reproducibility—the technical strategies for demonstrating these characteristics differ substantially. Endogenous biomarkers require innovative solutions to challenges such as the absence of true blank matrix, natural biological variability, complex matrix effects, and context-dependent interpretation across diverse populations.

The scientific community's growing understanding of these distinctions, reflected in updated regulatory guidance and advancing methodological approaches, continues to enhance our ability to leverage endogenous biomarkers across the drug development spectrum. From assessing transporter-mediated drug-drug interactions to patient stratification and therapeutic monitoring, these analytical tools provide unique insights into physiological processes and disease states that cannot be obtained through traditional drug assays alone. By applying the specialized methodologies, reagents, and validation frameworks outlined in this guide, researchers can more effectively navigate the complexities of endogenous biomarker implementation, ultimately advancing drug development and personalized medicine.

The Critical Importance of Population Diversity in Genomic Studies and GWAS

Genome-wide association studies (GWAS) have revolutionized our understanding of the genetic architecture of complex traits and diseases. However, their transformative potential has been critically limited by a severe lack of population diversity in research participants. Historically, over 78% of participants in large-scale genomic studies have been of European ancestry, creating a substantial representation gap that undermines the equitable application of genomic medicine [6]. This bias persists despite evidence that expanding diversity accelerates scientific discovery and improves healthcare outcomes for all populations. The limited scope of genetic research creates a precision medicine gap where findings from well-represented populations may not translate effectively to underrepresented groups, potentially exacerbating existing health disparities [7]. This article examines the critical importance of population diversity in genomic studies, comparing analytical approaches and their performance across diverse populations while providing methodological guidance for researchers working to expand the inclusivity of genomic research.

Performance Comparison: Analytical Approaches for Diverse Genomic Studies

Methodological Comparisons in Multi-Population GWAS

Table 1: Performance comparison of GWAS methodologies across diverse populations

| Method | Study Design | Key Advantages | Limitations | Representative Findings |
|---|---|---|---|---|
| Quantile Regression (QR) | UK Biobank analysis of 39 quantitative traits [8] | Identifies variants with heterogeneous effects across phenotype distribution; robust to non-normal distributions; invariant to trait transformations | Slight power reduction under homogeneous linear models with normal errors | Identified variants with larger effects on high-risk subgroups missed by linear regression; powerful under location-scale and local effect models |
| Multi-Population GWAS (Univariate) | Barley breeding populations (6-rowed winter, 2-rowed spring, etc.) [9] | Increases detection power by combining datasets; identifies conserved QTLs | Assumes genetic effects are identical across populations (often unrealistic) | Detected 4-5 robust QTLs for heading date and lodging in nascent breeding program; three loci undetected in individual population analyses |
| Multi-Population GWAS (Multivariate) | Same barley breeding populations as above [9] | Allows for partial genetic correlations between populations; more realistic assumptions | Increased computational complexity; requires careful parameterization | Identified both conserved and population-specific loci; provided more accurate effect size estimates across populations |
| Stratified Multi-Population Analysis | INTEGRAL-ILCCO consortium (European, East Asian, African descent) [10] | Reveals novel variants specific to subgroups; captures genetic heterogeneity | Reduces sample size per stratum; requires large initial cohorts | Identified five novel loci (GABRA4, LRRC4C, etc.) in ever-smokers and never-smokers missed by main-effect analyses |

Empirical Evidence from Multi-Ethnic Studies

Recent multi-ethnic studies have demonstrated the tangible benefits of diversity in genomic research. In a landmark multi-population GWAS on lung cancer encompassing 64,897 individuals of European, East Asian, and African descent, researchers conducted analyses stratified by smoking status that revealed five novel independent loci (GABRA4, intergenic region 12q24.33, LRRC4C, LINC01088, and LCNL1) that had been missed in previous non-stratified analyses [10]. The study further demonstrated that genetic risk variants exhibited different risk patterns among never-smokers, light smokers, and moderate-to-heavy smokers, highlighting the genetic heterogeneity between lung cancer in ever-smokers and in never-smokers.

Similarly, research on the APOL1 gene revealed variants common among individuals with African ancestry that confer dramatically increased risk of kidney disease (with odds ratios up to 89 for HIV-associated nephropathy) while providing resistance against human African trypanosomiasis [7]. These variants are largely absent in those without African ancestry, illustrating how studies in diverse populations can uncover important genetic factors relevant to health disparities.

Experimental Protocols for Diverse Genomic Studies

Protocol 1: Multi-Population GWAS with Stratified Analysis

Objective: To identify genetic variants associated with complex traits across diverse populations while accounting for potential heterogeneity in genetic effects.

Step-by-Step Methodology:

Cohort Assembly and Genotyping: Collect genetic and phenotypic data from multiple ancestral populations. The INTEGRAL-ILCCO lung cancer consortium, for example, analyzed ~9 million high-quality imputed SNPs from 64,897 individuals of European, East Asian, and African ancestry [10].

Population Structure Assessment: Use ancestry-informative markers (approximately 2,000 in the INTEGRAL-ILCCO study) to infer ancestry and account for population stratification in analyses.

Stratified Association Testing: Conduct GWAS separately within each population group and smoking stratum (ever-smokers and never-smokers). Adjust for study sites and significant principal components to control for residual population structure.

Meta-Analysis: Combine results across populations using fixed-effects or random-effects meta-analysis. Select significant SNPs based on: (a) consistent direction of effect and P < 0.1 in at least two populations; and (b) joint P < 5 × 10⁻⁸ in meta-analysis.

Rare Variant Validation: For significant variants with minor allele frequency < 0.01, apply Firth logistic regression to reduce small-sample bias and validate associations.

Functional Annotation: Annotate significant variants using tools like CADD and RegulomeDB, and perform eQTL analysis to identify potential target genes. For lung cancer, DNA damage assays can further characterize candidate risk genes [10].

Figure 1: Workflow for multi-population GWAS with stratified analysis
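The meta-analysis step in Protocol 1 can be sketched with a fixed-effects, inverse-variance weighted estimator, followed by the two selection criteria listed there. The code below is a minimal illustration, not the consortium's pipeline: the effect sizes and standard errors are invented, and reading criterion (a) as "at least two populations with P < 0.1 whose effect has the same sign as the pooled estimate" is an interpretive assumption.

```python
import math


def two_sided_p(z):
    """Two-sided p-value for a standard normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def fixed_effects_meta(betas, ses):
    """Inverse-variance weighted pooled effect, standard error, and p-value."""
    weights = [1.0 / se ** 2 for se in ses]
    total = sum(weights)
    beta = sum(w * b for w, b in zip(weights, betas)) / total
    se = math.sqrt(1.0 / total)
    return beta, se, two_sided_p(beta / se)


def passes_selection(betas, ses, per_pop_alpha=0.1, genome_wide_alpha=5e-8):
    """Apply criteria (a) and (b) from the protocol to one SNP."""
    beta, _, p_joint = fixed_effects_meta(betas, ses)
    # Count populations that are nominally significant and agree in sign
    # with the pooled estimate.
    supporting = sum(
        1
        for b, s in zip(betas, ses)
        if two_sided_p(b / s) < per_pop_alpha and b * beta > 0
    )
    return supporting >= 2 and p_joint < genome_wide_alpha


# Hypothetical per-population log odds ratios and standard errors.
betas = [0.12, 0.10, 0.15]
ses = [0.02, 0.04, 0.06]

pooled_beta, pooled_se, pooled_p = fixed_effects_meta(betas, ses)
print(f"Pooled beta = {pooled_beta:.4f}, SE = {pooled_se:.4f}, P = {pooled_p:.2e}")
print("SNP selected:", passes_selection(betas, ses))
```

A fixed-effects model assumes one shared effect across populations. When effects are expected to differ by ancestry, a random-effects model or a heterogeneity statistic would be the natural complement, as the protocol itself allows.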

Protocol 2: Quantile Regression GWAS for Heterogeneous Genetic Effects

Objective: To detect genetic variants with effects that vary across the distribution of a quantitative trait, particularly in high-risk subgroups.

Step-by-Step Methodology:

Data Preparation: Obtain genotype, phenotype, and covariate data from biobank-scale resources (e.g., UK Biobank). Unlike linear regression, quantile regression does not require rank-based inverse normal transformation of traits [8].

Model Specification: For each genetic variant and specified quantile levels τ (typically τ = 0.1, 0.2, ..., 0.9), fit the conditional quantile regression model:

$$Q_Y(\tau \mid X_j, C) = X_j\,\beta_j(\tau) + C\,\alpha(\tau)$$

where $Y$ is the phenotype, $X_j$ is the genotype of variant $j$, and $C$ represents covariates.

Statistical Testing: For each variant and quantile level, test $H_0\colon \beta_j(\tau) = 0$ using the rank score test [8]. The test statistic is computed as:

$$S_{\mathrm{QRank},j,\tau} = n^{-1/2} \sum_{i=1}^{n} X^{*}_{ij}\,\phi_\tau\!\left(Y_i - C_i\,\hat{\alpha}(\tau)\right)$$

where $X^{*} = P_C X$, $P_C = I - C(C'C)^{-1}C'$, and $\phi_\tau(u) = \tau - I(u < 0)$.

P-value Combination: Combine quantile-specific p-values across the nine quantile levels using the Cauchy combination method to obtain an overall association test [8].

Heterogeneity Assessment: Examine patterns of βj(τ) estimates across quantiles to identify variants with non-constant effects, which may indicate presence of gene-environment interactions or other sources of heterogeneity.
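The testing and combination steps of Protocol 2 can be illustrated with a deliberately simplified sketch. It assumes the only covariate is an intercept, so that the null fit reduces to the sample τ-quantile of the phenotype and the projection of the genotype reduces to mean-centering. It also assumes the usual chi-square form of the rank score test with one degree of freedom, in which the score variance is τ(1 − τ) times the mean squared centered genotype; the source does not spell this out, so treat it as an assumption. The simulated data (a variant that changes only the spread of the trait) are hypothetical.

```python
import math
import random


def sample_quantile(values, tau):
    """Order statistic that minimizes the check loss for an intercept-only model."""
    ordered = sorted(values)
    k = max(0, math.ceil(tau * len(ordered)) - 1)
    return ordered[k]


def rank_score_pvalue(genotypes, phenotypes, tau):
    """Rank score test p-value at quantile tau, intercept-only covariates."""
    n = len(genotypes)
    q_hat = sample_quantile(phenotypes, tau)      # alpha-hat(tau) when C = 1
    x_bar = sum(genotypes) / n
    x_star = [x - x_bar for x in genotypes]       # P_C X reduces to centering
    phi = [tau - (1.0 if y < q_hat else 0.0) for y in phenotypes]
    score = sum(xs * ph for xs, ph in zip(x_star, phi)) / math.sqrt(n)
    variance = tau * (1.0 - tau) * sum(xs * xs for xs in x_star) / n
    if variance == 0.0:
        raise ValueError("monomorphic variant: genotype has no variance")
    # Upper tail of a chi-square distribution with 1 degree of freedom.
    return math.erfc(math.sqrt(score * score / (2.0 * variance)))


def cauchy_combination(p_values):
    """Combine p-values with equal weights using the Cauchy combination method."""
    t = sum(math.tan((0.5 - p) * math.pi) for p in p_values) / len(p_values)
    return 0.5 - math.atan(t) / math.pi


# Simulated example: genotype affects the spread of the trait, not its centre.
rng = random.Random(0)
genotypes = [rng.choice([0, 1, 2]) for _ in range(500)]
phenotypes = [rng.gauss(0.0, 1.0 + 0.5 * g) for g in genotypes]

taus = [k / 10 for k in range(1, 10)]
p_by_tau = [rank_score_pvalue(genotypes, phenotypes, tau) for tau in taus]
p_overall = cauchy_combination(p_by_tau)

for tau, p in zip(taus, p_by_tau):
    print(f"tau = {tau:.1f}: p = {p:.3g}")
print(f"Cauchy-combined p = {p_overall:.3g}")
```

With real covariates the null model would be fitted by quantile regression and the genotype projected onto the orthogonal complement of the covariate space, as in the formulas above; the QRank R package cited in [8] is the reference implementation for that case.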

Visualization of Genetic Architecture in Diverse Populations

Figure 2: Genetic architecture heterogeneity across populations

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key research reagents and resources for diverse genomic studies

| Resource Type | Specific Examples | Function and Application | Performance Considerations |
|---|---|---|---|
| Biobanks with Diverse Participants | UK Biobank, Multiethnic Cohort (MEC) [11] | Provides genotypic and phenotypic data from ethnically diverse populations; enables sufficiently powered stratified analyses | MEC includes >215,000 participants from five ethnic groups; biorepository contains >3.7 million biospecimen aliquots [11] |
| Genotyping Arrays | Illumina Infinium 15K/9K SNP arrays [9] | Standardized genome-wide variant detection; enables imputation to larger reference panels | Within-population imputation using Beagle v.5.4 with 150 cM sliding window effective for combining datasets [9] |
| Local Ancestry Inference Tools | RFMix [12], HAPMIX | Estimates ancestry-specific origins of chromosomal segments in admixed individuals; identifies regions with anomalous ancestry | Critical for detecting heterogeneity in population structure across the genome in admixed populations like Mexican-Americans and African-Americans [12] |
| Analysis Software for Diverse GWAS | QRank R package [8], METAL, CAnD [12] | Implements specialized association tests (quantile regression); combines results across diverse populations | CAnD test identifies chromosomes with significant ancestry differences without requiring strong population history assumptions [12] |
| Functional Validation Tools | DNA damage assays [10], eQTL analysis | Characterizes biological mechanisms underlying statistical associations; validates candidate genes | DNA damage assays confirmed CHEK2, ATM as lung cancer risk genes; eQTL colocalization supports regulatory mechanisms [10] |

Discussion: Implications for Biomarker Validation and Clinical Translation

The integration of population diversity into genomic studies presents both challenges and unprecedented opportunities for biomarker validation and drug development. Research consistently demonstrates that genetic associations discovered in one population often do not translate directly to others, complicating the development of broadly applicable biomarkers and therapeutics. For instance, variants in PCSK9 discovered in African American populations were associated with a 28-40% reduction in LDL cholesterol and an 88% reduction in coronary heart disease risk [7]. While these variants were present in European populations, their frequency was too low (0.006% vs. 2.6% in African ancestry individuals) for effective detection, highlighting how important therapeutic targets can be missed in non-diverse studies.

Furthermore, the clinical implementation of pharmacogenomics depends critically on diverse genomic research. The association between the HLA-B*57:01 allele and abacavir hypersensitivity syndrome (AHS) initially led to screening recommendations primarily for European populations. However, subsequent research revealed that the prevalence of this allele in the Kenyan Maasai group was 13.6% (more than double that in European samples) while being absent among the Yoruba in Nigeria [7]. This finding underscored the inadequacy of broad racial labels for describing genetic risk and led to genetically guided prescription becoming the standard of care.

Multi-ethnic cohorts also enhance the discovery of biomarkers with improved prognostic performance. In COVID-19 research, a multi-ethnic cohort study identified seven miRNAs (including miR-146b-3p, miR-154-5p, and miR-5010-3p) with strong prognostic potential through miRNA sequencing of nasal swab samples [13]. A panel of these miRNAs demonstrated significantly enhanced diagnostic accuracy (AUC 0.939-0.972), with performance further improving when combined with clinical parameters (AUC = 0.982) [13].

The critical importance of population diversity in genomic studies and GWAS extends far beyond equity concerns to the fundamental validity and utility of research findings. As this analysis demonstrates, diverse populations provide unique analytical advantages that enable the discovery of genetic effects heterogeneous across populations, subgroups, and phenotypic distributions. Methodological innovations such as quantile regression, multi-population GWAS, and stratified analyses represent powerful approaches for capturing this complexity, while growing biorepositories from diverse cohorts provide the essential substrate for these investigations.

For researchers and drug development professionals, prioritizing diversity requires both methodological sophistication and community engagement. As the scientific community moves forward, developing standardized protocols for diverse genomic studies, expanding ethical sample sharing frameworks, and implementing comprehensive functional validation pathways will be essential for translating diverse genomic discoveries into clinically actionable biomarkers and therapeutics that benefit all populations equitably.

Navigating Ethical, Legal, and Social Implications (ELSI) in Global Research

The validation of biomarkers across diverse global populations is a cornerstone of precision medicine, yet it presents a complex web of Ethical, Legal, and Social Implications (ELSI). As biomarker research rapidly advances, evidenced by the development of blood-based biomarkers for Alzheimer's disease and digital biomarkers for oncology and neurology, the ethical imperative to ensure these technologies are developed and applied equitably becomes paramount [14] [15]. This is especially critical in light of persistent health disparities and the historical underrepresentation of certain populations in biomedical research [16]. The ELSI Research Program, established in 1990 by the National Human Genome Research Institute (NHGRI), specifically fosters research on these implications for individuals, families, and communities, highlighting the long-recognized importance of this field [16].

A primary ELSI challenge is the limited generalizability of biomarkers validated in homogeneous populations. For example, a 2025 Brazilian cohort study demonstrated the excellent diagnostic performance of plasma pTau217 for Alzheimer's disease (ROC AUC = 0.98) [14]. This finding is a crucial step in local validation, addressing the sharp increase in Brazil's elderly population and high rates of underdiagnosed dementia [14]. Without such targeted studies in low- and middle-income countries (LMICs), biomarker-based predictive models risk exacerbating global health inequities through algorithmic bias and stratification injustices [17] [18]. This article provides a comparative guide to ELSI challenges and solutions, framing the discussion within the scientific necessity of validating biomarkers across different study populations.

Comparative Analysis of ELSI Challenges in Biomarker Research

The ethical landscape of global biomarker research is characterized by several interconnected challenges. These issues span the domains of data governance, clinical translation, and societal impact, each manifesting differently across global contexts.

Table 1: Comparative Analysis of Core ELSI Challenges in Global Biomarker Research

| ELSI Domain | Technical & Methodological Roots | Manifestations in High-Income Countries | Manifestations in Low- and Middle-Income Countries (LMICs) | Exemplary Data/Evidence |

|---|---|---|---|---|

| Data Equity & Bias | Limited training data from diverse populations; algorithmic bias [17]. | Potential reduction in diagnostic accuracy for underrepresented subgroups within the population [15]. | Lack of locally validated biomarkers; models trained on foreign populations have poor performance [14] [17]. | ~77% of adults with dementia in Brazil are undiagnosed, highlighting the urgent need for locally relevant biomarkers [14]. |

| Justice & Equity | High implementation costs; concentration of research infrastructure [19] [17]. | Barriers to access for socio-economically disadvantaged groups [18]. | Limited healthcare budgets; prioritization of basic care over advanced diagnostics [14] [17]. | Research infrastructures like SIMOA HD-X platforms are not equally available globally, hindering local validation [14]. |

| Privacy & Governance | Generation of large volumes of sensitive physiological and behavioral data [15]. | Concerns over data commercialization and use by insurers/employers [18]. | Lack of robust data protection laws and enforcement mechanisms; potential for exploitation [17]. | Digital biomarkers from wearables create vast, sensitive datasets requiring strong governance [15]. |

| Clinical Translation | Lack of universal frameworks for validating digital biomarkers as clinical endpoints [15]. | Uncertainty for sponsors and clinicians in adopting new biomarker technologies [15]. | Reliance on imported, unvalidated technologies; "one-size-fits-all" diagnostic approaches [14]. | Plasma pTau231 could not be reliably measured in a Brazilian cohort with a standard kit, indicating validation gaps [14]. |

| Communicating Uncertainty | Complex predictive nature of many biomarkers [18]. | Challenges in managing patient expectations and obtaining informed consent for probabilistic information [18]. | Communicating limitations of biomarkers validated in foreign populations; managing false hopes [18]. | Interviews with stakeholders reveal "multiple uncertainties" as a cross-cutting ethical theme [18]. |
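
The data equity row in the table above points to reduced diagnostic accuracy in underrepresented subgroups. One simple way to look for this is to report sensitivity and specificity separately for each subgroup at a fixed cutoff. The sketch below is illustrative only: the function name, the cohort labels, the cutoff, and the toy values are all assumptions, not data from the cited studies.

```python
from collections import defaultdict


def subgroup_performance(records, cutoff):
    """Return sensitivity and specificity per subgroup at a fixed cutoff.

    records: iterable of (subgroup, biomarker_value, has_disease) tuples.
    A value at or above the cutoff is treated as a positive test.
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, value, has_disease in records:
        positive = value >= cutoff
        if has_disease:
            counts[group]["tp" if positive else "fn"] += 1
        else:
            counts[group]["fp" if positive else "tn"] += 1

    report = {}
    for group, c in counts.items():
        diseased = c["tp"] + c["fn"]
        healthy = c["tn"] + c["fp"]
        report[group] = {
            "sensitivity": c["tp"] / diseased if diseased else None,
            "specificity": c["tn"] / healthy if healthy else None,
            "n": diseased + healthy,
        }
    return report


# Hypothetical toy data: (subgroup, biomarker value, disease status).
toy_records = [
    ("cohort_A", 0.9, True), ("cohort_A", 0.8, True),
    ("cohort_A", 0.2, False), ("cohort_A", 0.3, False),
    ("cohort_B", 0.9, True), ("cohort_B", 0.4, True),
    ("cohort_B", 0.6, False), ("cohort_B", 0.1, False),
]
report = subgroup_performance(toy_records, cutoff=0.5)
for group, metrics in sorted(report.items()):
    print(group, metrics)
```

In this toy example the same cutoff works perfectly in one cohort and poorly in the other. That is the pattern a subgroup audit is meant to surface before a cutoff derived in one population is applied elsewhere.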

Experimental Protocols and Methodologies for Equitable Biomarker Research

Addressing ELSI challenges requires methodologically rigorous and ethically informed study designs. The following section details key experimental approaches, with a focus on methodologies that enhance population diversity and ethical oversight.

Longitudinal Cohort Designs for Local Validation

The successful validation of plasma biomarkers for dementia in a Brazilian cohort exemplifies a robust methodology for local validation [14].

- Objective: To assess the diagnostic and predictive performance of plasma biomarkers for dementia in a Brazilian population, addressing the lack of local validation in Latin America [14].

- Population Recruitment: The study enrolled 145 elderly Brazilians, categorized into clinically distinct groups: cognitively unimpaired (n=49), amnestic mild cognitive impairment (aMCI, n=29), Alzheimer's disease (AD, n=38), Lewy body dementia (LBD, n=22), and vascular dementia (VaD, n=7) [14]. This design ensures representation across the disease spectrum.

- Biomarker Measurement: Plasma biomarkers (Tau, Aβ40, Aβ42, NfL, GFAP, pTau231, pTau181, pTau217) were measured using the SIMOA HD-X platform, a highly sensitive technology [14].

- Reference Standard: Clinical diagnosis was supplemented by cerebrospinal fluid (CSF) biomarker data, available for 36% of the sample, to establish biomarker positivity based on locally defined cutoffs [14].

- Longitudinal Follow-up: Participants were followed for up to 4.7 years to determine the performance of baseline plasma biomarkers in predicting diagnostic conversions from aMCI to dementia [14].

- Key Findings: Plasma pTau217 showed excellent performance in determining CSF biomarker status (ROC AUC = 0.94 alone, 0.98 as a ratio to Aβ42). Both pTau181 and pTau217 were elevated in participants who converted to dementia during follow-up [14].
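
The ROC AUC values reported above can be read as the probability that a randomly chosen biomarker-positive participant has a higher plasma value than a randomly chosen biomarker-negative participant. The following minimal sketch computes that quantity directly and compares a single marker with a ratio. The numbers are invented for illustration and are not taken from the Brazilian cohort, and the variable names are assumptions.

```python
def roc_auc(scores_pos, scores_neg):
    """AUC as the chance that a positive case outscores a negative case.

    Ties count as one half (the Mann-Whitney U formulation).
    """
    if not scores_pos or not scores_neg:
        raise ValueError("both groups need at least one observation")
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical plasma values, NOT data from the cited study.
ptau217_pos = [0.82, 0.95, 0.61, 1.10]  # CSF biomarker-positive
ptau217_neg = [0.30, 0.45, 0.65, 0.25]  # CSF biomarker-negative
ab42_pos = [5.0, 4.8, 5.5, 4.9]
ab42_neg = [6.5, 6.0, 6.8, 6.2]

auc_single = roc_auc(ptau217_pos, ptau217_neg)

# Ratio of pTau217 to Abeta42, computed per participant.
ratio_pos = [t / a for t, a in zip(ptau217_pos, ab42_pos)]
ratio_neg = [t / a for t, a in zip(ptau217_neg, ab42_neg)]
auc_ratio = roc_auc(ratio_pos, ratio_neg)

print(f"pTau217 alone: {auc_single:.4f}")
print(f"pTau217/Abeta42 ratio: {auc_ratio:.4f}")
```

The pairwise loop is quadratic in sample size, which is acceptable for cohorts of a few hundred participants; a rank-based implementation would be preferable for larger datasets.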

Qualitative, Multi-Stakeholder ELSI Inquiry

A 2025 qualitative study on biomarkers in dermatology provides a template for investigating ELSI challenges empirically [18].

- Objective: To conduct an in-depth analysis of the ethical challenges in research and application of data-driven biomarkers for chronic inflammatory skin diseases [18].

- Study Design and Population: A qualitative interview study was conducted with 28 members of a European research consortium (BIOMAP), including multiple stakeholder groups involved in biomarker research and application. The interviews were analyzed using a grounded theory approach [18].

- Data Analysis: The analysis identified two broad categories of ethical challenges—disease-related and biomarker-related issues—from which three cross-cutting themes emerged: multiple forms of harm, multiple injustices, and multiple uncertainties [18].

- Key Findings: The study revealed interconnected ethical challenges, including covert patient suffering, multiple biases in datasets, stratification of patients into subgroups, and various uncertainties. It highlighted epistemic injustice, where the harm and suffering caused by chronic skin diseases are not adequately recognized [18].

Table 2: Research Reagent Solutions for Biomarker Validation and ELSI Assessment

| Research Tool / Solution | Specific Example | Primary Function in Research | Role in Addressing ELSI |

|---|---|---|---|

| High-Sensitivity Immunoassay Platform | SIMOA HD-X Platform [14] | Quantifies ultra-low levels of protein biomarkers (e.g., pTau217, GFAP) in plasma. | Enables less invasive, more accessible testing; facilitates local validation in diverse settings. |

| Multiplex Biomarker Analysis | Luminex Platform [20] | Measures multiple cytokines, chemokines, and growth factors simultaneously in a single serum sample. | Provides a comprehensive, cost-effective immunological profile; useful for population-level studies such as ELSI-Brazil (the Brazilian Longitudinal Study of Aging, whose acronym is unrelated to Ethical, Legal, and Social Implications). |

| Digital Data Capture | Wearable devices, smartphone apps [15] | Continuously collects real-world data on physiology (e.g., heart rate) and behavior (e.g., sleep). | Shifts data collection to patients' environments; can reduce participation burden and increase diversity. |

| Qualitative Data Analysis Framework | Updated Grounded Theory Approach [18] | Systematically analyzes interview transcripts to identify themes and build theoretical understanding. | Elicits and centers the perspectives of patients and local stakeholders, identifying nuanced harms and injustices. |

| Multi-Omics Data Integration | Combined genomics, proteomics, metabolomics [17] | Develops comprehensive molecular maps of diseases by integrating data from different biological layers. | Moves beyond single markers, potentially identifying more robust and universally applicable biomarker signatures. |

Visualization of Workflows and Ethical Frameworks

The integration of ELSI considerations requires structured workflows. The diagram below outlines a proposed pathway for embedding ELSI assessment throughout the biomarker development and validation lifecycle.

A critical technical challenge is integrating diverse data types to build predictive models without perpetuating bias. The following diagram visualizes a multi-modal data fusion framework that can support more equitable biomarker discovery.

Navigating the ELSI landscape in global biomarker research is not an impediment to progress but a prerequisite for sustainable and equitable precision medicine. The quantitative data from the Brazilian cohort support the scientific case for local validation: pTau217 performed well, yet pTau231 could not be reliably measured with a standard kit, and biomarker performance can vary across populations with different genetic backgrounds, environmental exposures, and health profiles [14]. Simultaneously, the qualitative findings from dermatology research reveal that without careful attention to ELSI, even technically successful biomarkers can cause harm, perpetuate injustice, and fail to meet the needs of the communities they are intended to serve [18].

Future efforts must focus on strengthening multi-omics approaches integrated with ELSI frameworks, expanding longitudinal cohort studies in underrepresented populations, and leveraging edge computing solutions for low-resource settings [17]. Furthermore, as digital biomarkers and decentralized trial models become more common, new ethical frameworks for data governance and validation must be developed [15]. The growing recognition of these challenges is reflected in targeted funding initiatives, such as the NHGRI's Building Partnerships and Broadening Perspectives to Advance ELSI Research (BBAER) Program, which aims to include diverse perspectives in ELSI research [16]. By systematically integrating ELSI considerations from hypothesis formation through to clinical implementation, as outlined in the provided workflows, the scientific community can ensure that the promise of biomarker research is realized for all populations.

Ensuring Valid Informed Consent and Community Engagement in Low-Resource Settings

In the field of biomarker research, the scientific imperative to validate discoveries across diverse populations intersects directly with the ethical imperative to conduct research respectfully and equitably. The process of biomarker validation depends entirely on the availability of appropriate clinical specimens and data from well-characterized study populations [21]. Securing these resources in low-resource settings presents unique challenges that extend beyond technical considerations to fundamental questions of trust, understanding, and ethical practice. Informed consent and community engagement are two interdependent aspects of a single concern: ensuring research is conducted respectfully while maximizing social value [22]. When these elements fail, as in a Zambian pilot study where inadequate engagement led to guardian consent rates as low as 19%, the entire research enterprise is compromised [23]. This section compares approaches to these ethical requirements, examining their relative effectiveness in supporting the broader goal of validating biomarkers across diverse study populations.

Comparative Analysis of Community Engagement Frameworks

Defining the Spectrum of Engagement

Community engagement (CE) ranges from simple information sharing to authentic partnerships with shared power and decision-making [22]. The appropriate level on this spectrum depends on contextual factors, but deeper engagement generally correlates with improved ethical and scientific outcomes.

Table: Community Engagement Approaches and Outcomes

| Engagement Approach | Key Characteristics | Typical Outcomes | Suitability for Low-Resource Settings |

|---|---|---|---|

| Information Giving | One-way communication; basic transparency | Limited trust building; high risk of misunderstanding | Low resource requirements but often insufficient alone |

| Consultation | Seeks community input but retains researcher control | Moderate trust; identifies major concerns | Moderate resource needs; can be effective with key informants |

| Partnership & Collaboration | Shared decision-making; mutual respect | High trust; sustainable relationships; improved consent | Higher initial investment but superior long-term efficiency |

Quantitative Comparison of Engagement Impact

Research indicates that the quality of community engagement directly influences both participation rates and the quality of the resulting research. The contrasting outcomes from two studies highlight this relationship:

Table: Impact of Community Engagement on Research Participation

| Study Context | Engagement Approach | Participation/Consent Rate | Key Contributing Factors |

|---|---|---|---|

| Zambian SRH Pilot Study [23] | Inadequate use of local communication channels; limited understanding of local values | 19-57% (varied by site) | Mistrust; fears about intentions; suspicion of financial incentives; cultural misunderstandings |

| Productive Research Site [22] | Authentic partnerships; mutual respect; power sharing | Reported as higher (specific rates not provided) | Context-appropriate consent processes; community involvement in study design; ongoing dialogue |

The Zambian case study revealed that inadequate engagement created room for misinterpretation, including fears about loss of control over daughters, suspicion about unconditional cash transfers to girls, and even concerns about links to satanism [23]. These fears directly undermined the conditions necessary for valid informed consent.
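
When consent rates are compared across sites, it helps to report an interval alongside each percentage, because a rate such as 19% is far less certain when it comes from a small sample. The sketch below computes a Wilson score interval for a proportion. The source does not report the underlying counts, so the figures of 19 consents out of 100 approached guardians are purely hypothetical.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (default ~95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half_width, center + half_width


# Hypothetical counts: the cited study reports rates, not these numbers.
low, high = wilson_interval(successes=19, n=100)
print(f"consent rate 19% (n=100), interval {low:.3f} to {high:.3f}")
```

Overlapping intervals between sites would suggest that an apparent difference in consent rates may not reflect a real difference in how engagement was received.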

Informed Consent Models: Methodologies and Comparative Effectiveness

Core Components of Valid Informed Consent

Valid consent from competent adults requires that (1) researchers adequately explain the proposed study; (2) prospective participants understand what is being proposed; and (3) prospective participants can make a free choice about joining the study [22]. Meeting these requirements is harder in low-resource settings, where inequities in resources, power, and information among stakeholders are greater [22].

Experimental Protocols for Consent Process Evaluation

A 2019 Zambian study employed a rigorous qualitative methodology to evaluate why a pregnancy prevention pilot study achieved such low consent rates (19% at one site) [23]. The research team conducted:

- Data Collection: Four focus group discussions (with girls, boys, and parents) and eleven semi-structured interviews with teachers, peer educators, community health workers, and community leaders

- Analysis Method: Thematic analysis to identify recurring patterns and challenges

- Key Findings: Inadequate use of locally appropriate communication channels resulted in limited understanding of the pilot concept, creating space for damaging misinterpretations

This methodological approach provides a template for other researchers to evaluate and improve their consent processes through systematic qualitative assessment.

Comparison of Emerging Consent Models for Complex Settings

Table: Innovative Consent Models for Challenging Contexts

| Consent Model | Protocol Description | Advantages | Limitations | Evidence Base |

|---|---|---|---|---|

| Two-Step/"Just-in-Time" Consent [24] | First stage: general research procedures; Second stage (only for experimental arm): specific intervention details | Reduces anxiety and information overload; preserves doctor-patient relationship | Only suitable for trials with standard-of-care comparator | Used in point-of-care trials; improves comprehension |

| Collaborative Consent Process [23] | Involvement of community representatives in developing consent approach and materials | Enhances cultural appropriateness; builds trust through co-creation | Time-intensive; requires flexible research timeline | Demonstrated improved acceptance in Zambian context after initial failures |

| Waiver of Consent [24] | Regulatory approval to forego consent for minimal-risk research using EHR data | Increases efficiency; enables research impractical with full consent | Ethically complex; requires rigorous risk assessment | Used in ABATE trial for infection control; inappropriate for higher-risk interventions |

Integrated Workflow: Connecting Community Engagement to Informed Consent

The relationship between community engagement and informed consent can be visualized as a sequential workflow where each stage builds upon the previous one to establish conditions for valid consent.

Table: Research Reagent Solutions for Ethical Engagement and Consent

| Tool Category | Specific Resource | Function & Application | Implementation Considerations |

|---|---|---|---|

| Community Liaison Tools | Trusted Community Health Workers | Bridge cultural and linguistic gaps; facilitate dialogue | Invest in training and fair compensation; recognize added value |

| Communication Platforms | Local Radio, Community Meetings, Religious Gatherings | Disseminate information through trusted channels | Identify most respected platforms; partner with local institutions |

| Consent Enhancement Tools | Visual Aids, Simplified Documents, Oral Quizzes | Improve comprehension across literacy levels | Pre-test with small groups; use local metaphors and examples |

| Partnership Structures | Community Advisory Boards | Institutionalize community voice in research governance | Ensure representative membership; provide meaningful influence |

| Assessment Tools | Qualitative Interview Guides, Focus Group Protocols | Evaluate and improve engagement and consent processes | Use independent facilitators when possible; ensure confidentiality |

The validation of biomarkers across diverse populations depends as much on ethical rigor as on technical proficiency. Evidence demonstrates that community engagement and informed consent are not administrative hurdles but fundamental components of scientifically valid research [22] [23]. When conducted effectively, they establish the trust and understanding necessary to obtain high-quality specimens and data from representative populations. The comparative analysis presented here reveals that while context-specific adaptations are necessary, certain principles remain universal: early and authentic community partnership, culturally appropriate communication, and ongoing relationship building consistently outperform approaches that treat engagement and consent as mere regulatory requirements. As biomarker research continues to globalize, integrating these ethical practices becomes increasingly essential to both scientific progress and the equitable distribution of research benefits.

Addressing Data Ownership, Sharing Policies, and Post-Study Expectations

The validation of biomarkers across diverse study populations is a cornerstone of precision medicine, yet this critical endeavor is fraught with complex challenges in data governance. As biomarker technologies evolve from single-omics approaches to comprehensive multi-omics integrations, researchers face escalating difficulties with data heterogeneity, standardization protocols, and limited generalizability across populations [17]. The success of cross-population biomarker validation studies hinges on robust frameworks for data ownership, sharing policies, and post-study expectations—elements that form the foundation of collaborative science while protecting intellectual property and patient privacy. These governance considerations are particularly crucial when deploying advanced analytical methods like artificial intelligence and machine learning on biomarker data, where access to high-quality, well-curated datasets determines the validity and utility of research outcomes [25] [26].

Within the context of multi-center studies spanning different geographical regions and demographic groups, inconsistent data policies can significantly impede the reproducibility and clinical translation of biomarker research [17]. The emerging paradigm of "proactive health management" further amplifies these challenges, as it incorporates dynamic monitoring through digital biomarkers and wearable devices, generating unprecedented volumes of real-world data [17] [27]. This section systematically compares the governance frameworks, experimental methodologies, and practical implementation strategies that support effective biomarker validation across diverse populations, providing researchers with actionable guidance for navigating this complex landscape.

Comparative Analysis of Data Governance Frameworks

Data Ownership and Intellectual Property Models

The governance of biomarker data begins with establishing clear ownership structures, which vary significantly across research contexts. A comparative analysis reveals several predominant models with distinct implications for validation studies across populations.

Academic Institution-Led Model: Traditionally, biomarker discoveries originating from universities and research institutes follow institutional intellectual property policies, with ownership often vested in the institution itself. Researchers operating under this model must be particularly diligent about securing necessary data rights through appropriate data use agreements and collaboration agreements early in the research process [26]. The failure to establish these agreements upfront can create significant downstream obstacles, especially when seeking to validate biomarkers across diverse populations that may require additional data sharing.

Industry-Sponsored Research Model: In pharmaceutical and biotechnology contexts, sponsors typically retain ownership of biomarker data generated during drug development programs. This model increasingly emphasizes trade secret protection for valuable datasets, sometimes surpassing reliance on patent protection alone [26]. Companies are developing comprehensive trade secret programs that include access controls, employee training, and detailed documentation to protect biomarker data while enabling necessary research access [26].

Consortium and Collaborative Models: Multi-stakeholder consortia are emerging as powerful frameworks for cross-population biomarker validation, implementing shared ownership through carefully structured governance agreements. These models typically employ data licenses that specify terms of use and data policies that govern access and dissemination [28]. The most effective consortia establish clear principles for data attribution and secondary use at the outset, preventing conflicts as research scales across populations and institutions [17] [28].

Table 1: Comparative Analysis of Data Ownership Models in Biomarker Research

| Ownership Model | Key Characteristics | Advantages for Multi-Population Studies | Limitations and Challenges |

|---|---|---|---|

| Academic Institution-Led | Institutional IP policies; Bayh-Dole Act provisions; publication-focused | Supports fundamental discovery; facilitates public dissemination; often includes ethical oversight | Potential delays in commercialization; varied policies across institutions; may limit industry collaboration |

| Industry-Sponsored | Sponsor retains ownership; strong IP protection focus; trade secret strategies | Resources for large-scale validation; clear commercialization pathways; standardized data governance | May restrict data access; focus on proprietary positions; potential publication limitations |

| Consortium-Based | Shared governance; multi-party agreements; pre-competitive collaboration | Pooled diverse datasets; harmonized protocols across sites; shared resource burden | Complex negotiation processes; balancing contributor interests; managing exit strategies |

| Patient-Centric/Controlled | Patient-mediated access; dynamic consent models; portable data rights | Enhances participant trust; facilitates longitudinal engagement; aligns with privacy expectations | Emerging legal frameworks; implementation complexity; scalability considerations |

Data Sharing Policies and Implementation Frameworks

Effective data sharing policies are essential for validating biomarkers across diverse populations, requiring careful balance between accessibility and protection. Several structured approaches have emerged as best practices in the field.

The FAIR Guiding Principles (Findable, Accessible, Interoperable, and Reusable) provide a foundational framework for sharing biomarker data across research communities [28]. Implementation typically involves metadata standards that describe how biomarkers were generated, including sample origin, collection methods, processing protocols, and analysis methods [28]. For cross-population studies, additional demographic, clinical, and methodological context is crucial for proper interpretation and reuse of data [17].
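
A lightweight completeness check can catch missing metadata before a dataset is shared. The sketch below covers the descriptive elements named above. The field names are hypothetical labels chosen for illustration; the source lists the concepts but does not define a schema.

```python
# Field names are assumptions; the source names concepts, not a schema.
CORE_FIELDS = (
    "sample_origin",
    "collection_method",
    "processing_protocol",
    "analysis_method",
)
CROSS_POPULATION_FIELDS = (
    "demographic_context",
    "clinical_context",
    "methodological_context",
)


def missing_metadata(record, cross_population=True):
    """Return the names of required fields that are absent or blank."""
    required = CORE_FIELDS + (CROSS_POPULATION_FIELDS if cross_population else ())
    missing = []
    for field in required:
        value = record.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


example_record = {
    "sample_origin": "plasma",
    "collection_method": "venipuncture, EDTA tube",
    "processing_protocol": "",  # left blank on purpose
    "analysis_method": "immunoassay",
    "demographic_context": "adults aged 60 and over",
}
gaps = missing_metadata(example_record)
print("missing:", gaps)
```

A check like this only confirms that fields are present. Whether the values follow a shared terminology is a separate question that a common data dictionary would need to address.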

Structured data sharing platforms have become instrumental for collaborative biomarker validation. These platforms provide functions for data upload, download, visualization, annotation, analysis, and feedback [28]. When selecting such platforms, researchers should consider capabilities for version control, data validation, indexing, and secure access management to maintain data integrity across multiple research sites [28].

Data licensing agreements represent the legal implementation of sharing policies, specifying terms and conditions for data use [28]. These agreements are particularly important for biomarker data that may have multiple potential uses beyond the original research context. Progressive approaches include tiered access models that provide different levels of data granularity based on the researcher's needs and credentials, helping to balance open science objectives with privacy protection requirements [27] [28].
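
A tiered access model can be sketched as a mapping from data fields to the minimum access tier needed to see them. Everything in this example is an assumption made for illustration: the three tier names, the field names, and the rule that unlisted fields default to the most restrictive tier.

```python
from enum import IntEnum


class Tier(IntEnum):
    OPEN = 0        # aggregate summaries only
    REGISTERED = 1  # de-identified participant-level data
    CONTROLLED = 2  # sensitive fields under an approved data use agreement


# Hypothetical field-to-tier mapping.
FIELD_TIERS = {
    "site_summary_auc": Tier.OPEN,
    "age_band": Tier.REGISTERED,
    "ptau217_pg_ml": Tier.REGISTERED,
    "genotype": Tier.CONTROLLED,
}


def visible_fields(record, user_tier):
    """Return only the fields the user's tier may see.

    Fields missing from FIELD_TIERS are treated as CONTROLLED, so a
    newly added column is hidden until someone classifies it.
    """
    return {
        name: value
        for name, value in record.items()
        if FIELD_TIERS.get(name, Tier.CONTROLLED) <= user_tier
    }


record = {
    "site_summary_auc": 0.9,
    "age_band": "70-79",
    "genotype": "e3/e4",
    "free_text_note": "unclassified field",
}
print(visible_fields(record, Tier.OPEN))
print(visible_fields(record, Tier.REGISTERED))
```

Defaulting unknown fields to the strictest tier is a design choice that favors privacy over convenience; a project could reasonably choose otherwise if its governance documents say so.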

Table 2: Data Sharing Policy Components and Implementation Considerations

| Policy Component | Implementation Requirements | Multi-Population Considerations | Tools and Standards |

|---|---|---|---|

| Metadata Documentation | Common data elements; standardized terminologies; protocol descriptions | Cultural and linguistic adaptation; population-specific variables; geographic and environmental factors | CDISC standards; NIH Common Data Elements; data dictionaries |

| Access Governance | Tiered access controls; user authentication; data use agreements | Compliance with international regulations; ethical review diversity; Indigenous data sovereignty | Data use agreements; researcher passports; data safe havens |

| Data Licensing | Clear usage terms; attribution requirements; commercialization clauses | Cross-jurisdictional enforcement; varying IP protections; benefit-sharing considerations | Creative Commons licenses; Open Data Commons; custom license agreements |

| Security Protocols | Encryption standards; access logging; breach notification | Infrastructure variability; resource-appropriate solutions; cultural privacy norms | ISO 27001 standards; FIPS 140-2 validation; differential privacy tools |

Experimental Design for Multi-Population Biomarker Validation

Methodological Framework and Protocols

Validating biomarkers across diverse populations requires meticulous experimental design to ensure results are comparable, reproducible, and clinically meaningful. The following methodological framework provides a structured approach for researchers undertaking these complex studies.

The foundation of robust multi-population biomarker validation begins with context of use (COU) definition, which specifies how the biomarker will be applied and informs all subsequent validation requirements [29]. For studies spanning multiple populations, researchers must clearly articulate whether the biomarker is intended for risk stratification, diagnosis, prognosis, or predicting treatment response, as each application demands different levels of evidence [30]. This COU should explicitly address the populations being studied and the intended generalizability of results.

Fit-for-purpose validation represents the guiding principle for biomarker method development, with the level of validation rigor directly corresponding to the intended application [29]. For early-phase exploratory studies across populations, limited validation may suffice, while biomarkers intended for regulatory decision-making or clinical implementation require comprehensive validation. This approach acknowledges that validation is often iterative, with requirements evolving as the biomarker progresses through different stages of development and application in diverse groups [29].

Multi-omics integration methodologies are increasingly essential for comprehensive biomarker validation, combining data from genomics, proteomics, metabolomics, and transcriptomics to achieve a holistic understanding of biological variations across populations [17] [25]. These approaches enable the identification of comprehensive biomarker signatures that reflect the complexity of diseases across diverse genetic and environmental backgrounds, moving beyond single-marker analyses to integrated biomarker panels [17].

Diagram 1: Biomarker validation workflow for multi-population studies. This workflow emphasizes the iterative nature of validation across diverse groups.

Addressing Pre-Analytical Variables Across Populations

A critical challenge in multi-population biomarker validation involves managing pre-analytical variables that may systematically differ across collection sites or population groups. These variables can be categorized as controllable and uncontrollable factors [29].

Controllable pre-analytical variables include specimen collection methods, processing protocols, storage conditions, and transportation procedures [29]. For multi-center studies, standardizing these variables through detailed standard operating procedures (SOPs) is essential. For example, variations in sample processing time or temperature can significantly impact biomarker stability and measurements, potentially creating artifactual differences between populations [29]. Researchers should implement rigorous training programs and monitoring systems to ensure consistent procedures across all collection sites.
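
Monitoring of controllable variables can be partly automated by checking each sample log against the SOP limits and summarizing deviations by site. In the sketch below, the limits (120 minutes to processing, storage at or below -70 °C), the record fields, and the site names are hypothetical placeholders; real limits would come from the study's own SOPs.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical SOP limits, for illustration only.
MAX_MINUTES_TO_PROCESSING = 120.0
MAX_STORAGE_TEMP_C = -70.0


@dataclass(frozen=True)
class SampleLog:
    sample_id: str
    site: str
    minutes_to_processing: float
    storage_temp_c: float


def sop_deviations(log):
    """List the SOP limits that a single sample log violates."""
    issues = []
    if log.minutes_to_processing > MAX_MINUTES_TO_PROCESSING:
        issues.append("processing_delay")
    if log.storage_temp_c > MAX_STORAGE_TEMP_C:
        issues.append("storage_too_warm")
    return issues


def deviation_rate_by_site(logs):
    """Fraction of samples with at least one deviation, per site."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for log in logs:
        totals[log.site] += 1
        if sop_deviations(log):
            flagged[log.site] += 1
    return {site: flagged[site] / totals[site] for site in totals}


logs = [
    SampleLog("S1", "site_1", 45, -80),
    SampleLog("S2", "site_1", 150, -80),
    SampleLog("S3", "site_2", 60, -20),
    SampleLog("S4", "site_2", 200, -20),
]
rates = deviation_rate_by_site(logs)
print(rates)
```

A site with a markedly higher deviation rate is a candidate explanation for apparent between-population differences and should be investigated before any biological interpretation is offered.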

Uncontrollable pre-analytical variables encompass inherent patient characteristics such as age, sex, genetics, comorbidities, medications, and environmental exposures [29]. While these cannot be standardized, they must be carefully documented and accounted for in statistical analyses. When designing a multi-population validation study, researchers should prospectively collect comprehensive metadata on these factors to enable proper adjustment and subgroup analyses.
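
To illustrate what adjustment means here, the sketch below fits an ordinary least squares model with a population indicator and one covariate (age), so the population coefficient reflects the difference that remains after age is taken into account. The data are synthetic and constructed so that most of the raw difference is explained by age. The helper functions are written from scratch only to keep the example dependency-free; a real analysis would use an established statistics package and a richer covariate set.

```python
def solve_linear_system(a, b):
    """Solve a*x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("design matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(rows, y):
    """Least squares coefficients via the normal equations."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Synthetic data: biomarker = 1 + 0.1 * age + 0.5 * (population B).
ages = [60, 65, 70, 75, 80, 85]
is_pop_b = [0, 0, 0, 1, 1, 1]
biomarker = [1 + 0.1 * a + 0.5 * g for a, g in zip(ages, is_pop_b)]

mean_a = sum(v for v, g in zip(biomarker, is_pop_b) if g == 0) / 3
mean_b = sum(v for v, g in zip(biomarker, is_pop_b) if g == 1) / 3
raw_difference = mean_b - mean_a

design = [[1.0, float(a), float(g)] for a, g in zip(ages, is_pop_b)]
intercept, age_slope, adjusted_difference = ols(design, biomarker)

print(f"raw difference: {raw_difference:.2f}")
print(f"age-adjusted difference: {adjusted_difference:.2f}")
```

Here the unadjusted gap between populations is four times the adjusted one, because the second population in the toy data is simply older. Prospectively collected covariates make this kind of separation possible.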

Biological variability represents a particularly important consideration when validating biomarkers across diverse populations. The acceptable level of analytical imprecision depends on both the intended use of the biomarker and the degree of biological variability within and between populations [29]. Understanding population-specific biological ranges for biomarkers is essential for establishing appropriate reference intervals and interpreting results in different demographic and geographic contexts.
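
One common way to relate analytical imprecision to biological variability comes from the clinical chemistry literature on biological variation rather than from the sources cited here, so it is offered as an assumption: a "desirable" analytical CV is often taken as no more than half the within-subject biological CV, and the reference change value (RCV) estimates how large a change between two serial results must be before it exceeds combined analytical and biological noise. The input values below are hypothetical.

```python
import math


def desirable_analytical_cv(cv_within_subject):
    """Commonly used 'desirable' imprecision goal: CV_A <= 0.5 * CV_I."""
    return 0.5 * cv_within_subject


def reference_change_value(cv_analytical, cv_within_subject, z=1.96):
    """RCV (%) = sqrt(2) * z * sqrt(CV_A^2 + CV_I^2), two-sided ~95%."""
    return math.sqrt(2) * z * math.sqrt(cv_analytical ** 2 + cv_within_subject ** 2)


# Hypothetical within-subject CV of 10% for some biomarker.
cv_i = 10.0
cv_a_goal = desirable_analytical_cv(cv_i)
rcv = reference_change_value(cv_a_goal, cv_i)

print(f"analytical CV goal: {cv_a_goal:.1f}%")
print(f"reference change value: {rcv:.1f}%")
```

If within-subject variation differs between populations, both the imprecision goal and the RCV change with it, which is one reason population-specific estimates of biological variation matter.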

Table 3: Key Research Reagent Solutions for Multi-Population Biomarker Studies

| Reagent Category | Specific Examples | Function in Validation | Multi-Population Considerations |

|---|---|---|---|

| Reference Standards | Recombinant proteins; synthetic peptides; certified reference materials | Calibration normalization; assay performance tracking; cross-site harmonization | Genetic variant inclusion; population-specific isoforms; commutability assessment |

| Quality Control Materials | Pooled patient samples; commercial QC pools; cell line extracts | Monitoring assay performance; detecting reagent drift; longitudinal stability | Genetic diversity representation; population-relevant matrices; environmental factor reflection |

| Binding Reagents | Monoclonal antibodies; polyclonal antibodies; aptamers | Biomarker capture and detection; assay specificity determination; epitope mapping | Variant binding affinity; cross-reactivity profiling; population-specific epitopes |

| Assay Platforms | Immunoassays; mass spectrometry; sequencing platforms | Biomarker quantification; multiplexed analysis; analytical validation | Platform transferability; resource-appropriate solutions; technical variability assessment |

Visualization of Data Governance Relationships

The complex relationships between stakeholders in multi-population biomarker research can be visualized through a governance framework that balances various interests and responsibilities.

Diagram 2: Data governance relationships in multi-population biomarker research. This framework illustrates the reciprocal relationships and responsibilities between key stakeholders.

Post-Study Expectations and Implementation

Data and Sample Disposition Frameworks

Post-study expectations represent a frequently overlooked yet critical component of biomarker research, particularly for studies spanning multiple populations with varying cultural expectations regarding data and sample usage. Clear frameworks for data disposition, sample management, and result dissemination are essential for maintaining trust and enabling future research.

Data and sample retention policies should be explicitly defined in study protocols and consent forms, specifying duration, storage conditions, and future use permissions [28] [26]. For international studies, these policies must account for varying regulatory requirements across jurisdictions, including differences in how biomarkers are classified and governed [31]. Increasingly, researchers are implementing dynamic consent models that allow participants to make ongoing decisions about how their data and samples are used, particularly valuable in longitudinal studies across diverse populations [27].

Data publication and sharing expectations have evolved significantly, with many funders and journals now requiring data deposition in public repositories [28]. For biomarker researchers, this entails careful preparation of de-identified datasets with sufficient metadata to enable reuse while protecting participant privacy. Requiring data use agreements even for nominally de-identified data adds a further layer of protection and makes the permitted uses explicit [28] [26].

Ancillary study policies establish clear processes for researchers outside the original team to access data and samples for additional investigations [28]. These policies typically include scientific review mechanisms to evaluate proposed uses, prioritization criteria for scarce resources, and acknowledgment requirements that ensure proper attribution of the original contributors [17] [28]. For multi-population studies, these policies should specifically address how research benefits will be shared with participating communities, particularly when working with underrepresented or vulnerable groups.

Translational Pathways and Real-World Implementation

The ultimate validation of biomarkers across diverse populations occurs through their successful translation into clinical practice and public health benefit. Several key considerations emerge in the post-study phase as biomarkers move toward implementation.

Regulatory qualification pathways for biomarkers continue to evolve, with agencies like the FDA providing frameworks for biomarker qualification through drug development tool programs [32]. The level of evidence required depends on the proposed context of use, with biomarkers intended for regulatory decision-making requiring more extensive validation across diverse populations [31] [32]. Engaging regulatory agencies early in the development process can help align validation strategies with expectations and mitigate downstream delays [31].

Real-world performance monitoring represents an essential component of post-study evaluation, as biomarkers validated in controlled research settings may perform differently in routine clinical practice [25]. Establishing systems to track biomarker performance across diverse healthcare settings and patient populations provides critical feedback for refining interpretation guidelines and identifying implementation barriers [17] [25]. This is particularly important for biomarkers that may exhibit population-specific variations in performance or clinical utility.
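A minimal sketch of such tracking is shown below: it tallies a confusion matrix per subgroup (for example, per site or per population) and reports sensitivity and specificity for each. The record layout, the group labels, and the counts are hypothetical and serve only as illustration; a production system would also need confidence intervals and a reference standard appropriate to each setting.

```python
from collections import defaultdict


def stratified_performance(records):
    """Compute sensitivity and specificity per subgroup.

    records -- iterable of (group, test_positive, disease_present) tuples
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, test_positive, disease_present in records:
        if test_positive and disease_present:
            key = "tp"
        elif test_positive:
            key = "fp"
        elif disease_present:
            key = "fn"
        else:
            key = "tn"
        counts[group][key] += 1

    summary = {}
    for group, c in counts.items():
        diseased = c["tp"] + c["fn"]
        healthy = c["tn"] + c["fp"]
        summary[group] = {
            # None marks a metric that cannot be estimated for this group.
            "sensitivity": c["tp"] / diseased if diseased else None,
            "specificity": c["tn"] / healthy if healthy else None,
            "n": diseased + healthy,
        }
    return summary


# Hypothetical monitoring data from two sites.
records = (
    [("site_A", True, True)] * 8 + [("site_A", False, True)] * 2
    + [("site_A", False, False)] * 9 + [("site_A", True, False)] * 1
    + [("site_B", True, True)] * 6 + [("site_B", False, True)] * 4
    + [("site_B", False, False)] * 8 + [("site_B", True, False)] * 2
)
performance = stratified_performance(records)
for site, metrics in sorted(performance.items()):
    print(site, metrics)
```

In this made-up example, sensitivity is 0.8 at the first site and 0.6 at the second, the kind of gap that would prompt a closer look at case mix, pre-analytical handling, or population-specific cutoffs.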

Knowledge translation and implementation science approaches are increasingly recognized as essential for bridging the gap between biomarker discovery and clinical impact. Effective translation requires attention to how biomarker information will be communicated to healthcare providers and patients across different cultural contexts and health literacy levels [27]. Developing population-specific educational materials and decision support tools can facilitate appropriate adoption and use of validated biomarkers.

Addressing data ownership, sharing policies, and post-study expectations is not merely an administrative requirement in biomarker research; it is a scientific imperative that directly affects the validity, reproducibility, and utility of research findings across diverse populations. The frameworks and methodologies presented here provide a structured approach for researchers navigating this complex landscape, emphasizing the importance of proactive planning, stakeholder engagement, and adaptive governance throughout the research lifecycle.

As biomarker technologies continue to evolve, incorporating artificial intelligence, multi-omics integration, and digital biomarkers from wearable devices, the governance challenges will likely intensify [17] [27] [25]. Researchers who embrace comprehensive data governance as an enabler rather than a barrier will be best positioned to advance precision medicine through biomarkers that are not only scientifically valid but also ethically sound and equitable in their application across all populations. The future of biomarker research depends on creating governance frameworks that are as robust and sophisticated as the scientific methods they support, ensuring that breakthroughs in understanding biological mechanisms translate into meaningful improvements in human health for everyone, regardless of their geographic, genetic, or socioeconomic background.

From Bench to Bedside: Advanced Methodologies, Technologies, and Application Frameworks

Leveraging Multi-Omics Approaches for Comprehensive Biomarker Signatures

Multi-omics strategies, which integrate genomics, transcriptomics, proteomics, and metabolomics, have fundamentally transformed biomarker discovery and enabled novel applications in personalized oncology and disease management [33]. This integrated approach provides a comprehensive understanding of cellular dynamics by capturing multiple layers of biological information that collectively govern complex disease processes [33] [34]. The emergence of high-throughput technologies has catalyzed a paradigm shift in translational medicine projects toward collecting multi-omics patient samples, allowing researchers to move beyond fragmented single-omics analyses toward a holistic view of biological systems [35].

The fundamental premise of multi-omics biomarker discovery lies in its ability to characterize molecular signatures that drive disease initiation, progression, and therapeutic resistance through vertically integrated biological data [33]. Where traditional single-omics approaches provide limited insights, multi-omics integration reveals interconnected molecular networks, offering more robust results for biomarker identification [33]. This comprehensive framework has become indispensable for cancer diagnosis, prognosis, and therapeutic decision-making, with growing applications in metabolic diseases like prediabetes and other complex conditions [33] [34].

Technological advancements in single-cell multi-omics and spatial multi-omics technologies are further expanding the scope of biomarker discovery, enabling unprecedented resolution in characterizing cellular microenvironments and intercellular communications within tissues [33]. These developments, coupled with sophisticated computational integration methods, are deepening our understanding of disease heterogeneity and accelerating the development of clinically actionable biomarkers across diverse patient populations [33].

Multi-Omics Integration Strategies and Comparative Performance

Integration Methodologies and Computational Approaches

Multi-omics data integration employs sophisticated computational strategies to extract meaningful biological insights from complex, heterogeneous datasets. Current integration methods can be broadly categorized into network-based, statistics-based, and deep learning-based approaches, each with distinct strengths for specific research objectives [36]. Network-based methods like Similarity Network Fusion (SNF) construct patient similarity networks across different omics layers and fuse them to identify disease subtypes [36]. Statistics-based approaches including iClusterBayes use Bayesian models to infer latent variables that capture shared variation across omics datasets, while deep learning methods like Subtype-GAN employ generative adversarial networks to learn integrated representations [36].

The selection of an appropriate integration strategy depends heavily on research objectives, which typically include: (i) detecting disease-associated molecular patterns, (ii) subtype identification, (iii) diagnosis/prognosis, (iv) drug response prediction, and (v) understanding regulatory processes [35]. Horizontal integration combines the same type of omics data across different samples or studies, while vertical integration analyzes different omics layers from the same biological samples to understand causal relationships and regulatory mechanisms [33]. The increasing complexity and scale of multi-omics datasets, particularly from single-cell and spatial platforms, necessitate these sophisticated computational approaches for meaningful biological inference [33].
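The distinction between horizontal and vertical integration can be made concrete with a small sketch. It assumes each dataset is a mapping from sample ID to a feature dictionary, and it implements only the simplest form of each strategy: stacking samples on shared features, and concatenating layers on shared samples. The function names, sample IDs, and values are hypothetical, and the sketch performs none of the modeling done by methods such as SNF or iClusterBayes.

```python
def horizontal_integration(*cohorts):
    """Stack samples from cohorts measured on the SAME omics layer.

    Only features present in every sample are kept. Sample IDs are
    assumed to be unique across cohorts.
    """
    shared = None
    for cohort in cohorts:
        for features in cohort.values():
            keys = set(features)
            shared = keys if shared is None else shared & keys
    shared = sorted(shared or [])
    merged = {}
    for cohort in cohorts:
        for sample_id, features in cohort.items():
            merged[sample_id] = {name: features[name] for name in shared}
    return merged


def vertical_integration(**layers):
    """Join DIFFERENT omics layers measured on the same samples.

    Only samples present in every layer are kept; feature names are
    prefixed with the layer name to avoid collisions.
    """
    if not layers:
        return {}
    common = set.intersection(*(set(layer) for layer in layers.values()))
    return {
        sample_id: {
            f"{layer_name}:{feature}": value
            for layer_name, layer in layers.items()
            for feature, value in layer[sample_id].items()
        }
        for sample_id in sorted(common)
    }


# Hypothetical proteomics measurements from two cohorts (horizontal case).
cohort_1 = {"C1_01": {"IL6": 2.1, "CRP": 5.0, "TNF": 1.2}}
cohort_2 = {"C2_01": {"IL6": 1.8, "CRP": 4.1}}
stacked = horizontal_integration(cohort_1, cohort_2)

# Hypothetical layers from the same patients (vertical case).
proteomics = {"P1": {"IL6": 2.1}, "P2": {"IL6": 1.8}}
transcriptomics = {"P1": {"IL6": 7.5}, "P3": {"IL6": 6.9}}
joined = vertical_integration(proteomics=proteomics, transcriptomics=transcriptomics)
print(stacked)
print(joined)
```

Both functions silently drop whatever is not shared, which is worth watching in multi-population work: if a feature or an omics layer is missing mainly in one population, intersection-based integration can quietly remove that population's signal.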

Performance Benchmarking of Integration Methods

Comprehensive evaluation of multi-omics integration methods has revealed critical insights about their performance characteristics. Benchmarking studies assessing accuracy, robustness, and computational efficiency across multiple cancer types have demonstrated that method performance varies significantly based on disease context and data composition [36]. Contrary to the widespread assumption that incorporating more omics data types always improves results, the evidence shows that integrating additional omics data can, in some situations, degrade performance [36].

Table 1: Performance Comparison of Multi-Omics Integration Methods for Cancer Subtyping

| Integration Method | Integration Type | Key Strengths | Reported Limitations | Best-Suited Applications |
|---|---|---|---|---|
| SNF (Similarity Network Fusion) | Network-based | Effective for subtype identification; handles data heterogeneity | Limited scalability to very large datasets | Cancer subtyping with clinical data integration |
| iClusterBayes | Statistics-based | Models uncertainty; provides probabilistic clustering | Computationally intensive for high dimensions | Subtype discovery with uncertainty quantification |
| MOFA (Multi-Omics Factor Analysis) | Statistics-based | Identifies latent factors driving variation; handles missing data | Requires careful factor number selection | Decomposing sources of variation across omics |
| NEMO | Network-based | Robust to outliers; preserves sample relationships | Limited interpretability of features | Clustering with noisy data |
| Subtype-GAN | Deep Learning | Captures complex non-linear relationships; high accuracy | Requires large sample sizes; computationally intensive | Pattern recognition in large multi-omics cohorts |

Performance evaluations consistently show that no single method outperforms others across all scenarios, with optimal selection depending on specific research questions, data types, and sample sizes [36]. For disease subtyping applications, network-based methods often demonstrate superior performance in identifying clinically relevant subgroups, while statistical approaches provide more interpretable models of biological mechanisms [36]. The effectiveness of different omics combinations also varies by disease context, with certain data type pairings yielding more robust biomarkers than others [36].
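When subtype labels from a reference classification are available, agreement between a method's clusters and those labels is often summarized with the adjusted Rand index (ARI). The sketch below is a plain implementation of that index; using ARI here is an assumption for illustration, not a statement of which metrics the benchmarks in [36] relied on, and the label vectors are hypothetical.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a, labels_b):
    """Chance-corrected agreement between two clusterings of the same samples."""
    if len(labels_a) != len(labels_b):
        raise ValueError("label vectors must have the same length")
    n = len(labels_a)
    if n < 2:
        return 1.0  # agreement is trivially perfect with fewer than two samples

    # Pairs of samples placed together in both clusterings.
    together_both = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    # Pairs placed together within each clustering separately.
    together_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    together_b = sum(comb(c, 2) for c in Counter(labels_b).values())

    expected = together_a * together_b / comb(n, 2)
    maximum = 0.5 * (together_a + together_b)
    if maximum == expected:
        return 1.0  # degenerate case, e.g. both clusterings are a single cluster
    return (together_both - expected) / (maximum - expected)


# Hypothetical reference subtypes and two candidate clusterings.
reference = [0, 0, 1, 1]
relabeled = [1, 1, 0, 0]   # same partition, different label names
crossed = [0, 1, 0, 1]     # splits every reference subtype

ari_relabeled = adjusted_rand_index(reference, relabeled)
ari_crossed = adjusted_rand_index(reference, crossed)
print(ari_relabeled, ari_crossed)
```

The index is invariant to how clusters are named, so the relabeled clustering scores 1.0, while the crossed clustering scores below zero, meaning worse than chance. Computing it separately for each population or site is one way to check whether a method that looks strong overall is uniformly strong.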

Experimental Protocols and Workflows

Standardized Multi-Omics Workflow for Biomarker Discovery

A robust multi-omics biomarker discovery pipeline encompasses coordinated stages from sample collection through data integration and validation. The key stages are described below.

Sample Collection and Preparation: The initial stage involves collecting suitable biological specimens (tissue, blood, or other biofluids) from well-characterized patient cohorts with detailed clinical annotations. For circulating biomarker studies, peripheral blood mononuclear cells (PBMCs) and plasma are commonly used, with careful attention to sample processing protocols to preserve molecular integrity [37]. In oncology applications, liquid biopsy platforms like ApoStream enable capture of viable whole cells when traditional biopsies are not feasible, preserving cellular morphology for downstream multi-omic analysis [38].

Multi-Omics Data Generation: This stage involves parallel generation of data across multiple molecular layers. Genomics investigates DNA-level alterations using whole exome sequencing (WES) or whole genome sequencing (WGS) to identify copy number variations, mutations, and single nucleotide polymorphisms [33]. Transcriptomics profiles RNA expression using RNA sequencing, encompassing mRNAs, noncoding RNAs, and microRNAs [33]. Proteomics analyzes protein abundance, modifications, and interactions using liquid chromatography-mass spectrometry (LC-MS) and reverse-phase protein arrays [33] [34]. Metabolomics examines cellular metabolites through LC-MS and gas chromatography-mass spectrometry [33].

Quality Control and Preprocessing: Each omics dataset undergoes stringent quality control measures specific to the technology platform. For sequencing data, this includes adapter trimming, quality filtering, and removal of low-quality reads. For proteomics and metabolomics data, normalization, batch effect correction, and peak detection are critical steps [33]. Single-cell RNA sequencing data requires additional processing including cell filtering, normalization, and batch correction using methods like Harmony [37].
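Two of the steps named above, normalization and batch effect correction, can be sketched in their simplest forms: a log2 transform with per-sample median centering, and per-batch mean centering of a single feature. These are illustrative stand-ins under stated assumptions (strictly positive intensities, known batch labels). They are not the Harmony algorithm, which operates on low-dimensional embeddings, and all names and values are hypothetical.

```python
import math
from statistics import mean, median


def log2_median_normalize(sample):
    """Log2-transform one sample and center it on its own median.

    sample -- dict mapping feature name to a strictly positive raw intensity
    """
    logged = {feature: math.log2(value) for feature, value in sample.items()}
    center = median(logged.values())
    return {feature: value - center for feature, value in logged.items()}


def center_batches(values, batches):
    """Subtract each batch's mean from one feature's values.

    values  -- dict mapping sample ID to the (already normalized) value
    batches -- dict mapping sample ID to its batch label
    """
    grouped = {}
    for sample_id, value in values.items():
        grouped.setdefault(batches[sample_id], []).append(value)
    batch_mean = {batch: mean(vals) for batch, vals in grouped.items()}
    return {
        sample_id: value - batch_mean[batches[sample_id]]
        for sample_id, value in values.items()
    }


# Hypothetical raw intensities for one sample.
normalized = log2_median_normalize({"p1": 4.0, "p2": 8.0, "p3": 16.0})

# Hypothetical values of one feature measured in two batches.
feature_values = {"s1": 1.0, "s2": 3.0, "s3": 10.0, "s4": 12.0}
batch_labels = {"s1": "A", "s2": "A", "s3": "B", "s4": "B"}
corrected = center_batches(feature_values, batch_labels)
print(normalized)
print(corrected)
```

Mean centering removes any difference between batch averages, including real biological differences. If batches coincide with populations or collection sites, this kind of correction can erase exactly the between-population signal a multi-population study is designed to detect, so study designs that spread each population across batches are preferable.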