Optimizing Controlled Feeding Study Designs for Robust Dietary Biomarker Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on designing and executing controlled feeding studies to discover and validate novel dietary biomarkers. It covers the foundational principles of dietary biomarker discovery, details advanced methodological frameworks including multi-omics integration and AI-driven data analysis, and addresses key challenges in standardization and clinical translation. By outlining a systematic pathway from study conception to biomarker validation, this resource aims to enhance the precision, efficiency, and applicability of nutritional research, ultimately advancing the field of precision medicine and proactive health management.

Foundations of Dietary Biomarker Discovery: The Critical Role of Controlled Feeding Studies

Frequently Asked Questions (FAQs)

What is the primary limitation of using self-reported data like FFQs in nutrition research?

Self-reported dietary intake methods, such as Food Frequency Questionnaires (FFQs), are subjective and introduce significant measurement error. Individuals often struggle to recall foods consumed, determine accurate portion sizes, and tend to underreport intake, especially for unhealthy foods. This foundational data inaccuracy impedes our ability to establish valid links between diet and health [1] [2].

How do dietary biomarkers address this limitation?

Dietary biomarkers are measurable biological indicators obtained from biospecimens such as blood or urine. They provide an objective assessment of nutrient intake or exposure by measuring the nutrient itself or the compounds the body produces when it metabolizes that nutrient. Because they do not depend on memory or honest reporting, they avoid the reporting bias of self-reported data and offer a more proximal and accurate measure of actual intake [1] [2].

What are the main categories of dietary biomarkers?

Dietary biomarkers can be categorized based on their timeframe and purpose:

- Recovery Biomarkers: Rest on a known quantitative relationship between intake and the amount recovered or excreted over a fixed period (e.g., doubly labeled water for energy, urinary nitrogen for protein) [3] [2].

- Concentration Biomarkers: Reflect the concentration of a nutrient or its metabolite in blood or other tissues (e.g., serum carotenoids for fruit/vegetable intake) [2].

- Predictive Biomarkers: Developed through controlled feeding studies to calibrate self-reported intake, even without a perfect recovery biomarker [3].

What is "regression calibration" and how is it used with biomarkers?

Regression calibration is a statistical method that uses biomarker measurements from a sub-cohort (a calibration cohort) to correct for random and systematic measurement errors in the self-reported dietary data of the entire study population. This corrected intake value is then used in diet-disease association analyses, leading to more reliable risk estimates [3].
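The calibration step can be sketched in Python with simulated data. This is a minimal illustration, not the method of the cited studies: the names `W` (biomarker), `Q` (self-report), and `bmi` (a single participant characteristic), the linear form, and every numeric value are assumptions chosen for the example. The equation is fit in the calibration sub-cohort and then applied to self-reports from the whole cohort.

```python
import numpy as np

def design(Q, V):
    """Design matrix with intercept, self-reported intake Q, and covariate V."""
    return np.column_stack([np.ones(len(Q)), Q, V])

def fit_calibration(W, Q, V):
    """Least-squares fit of biomarker W on Q and V in the calibration sub-cohort."""
    coef, *_ = np.linalg.lstsq(design(Q, V), W, rcond=None)
    return coef  # [intercept, slope for Q, slope for V]

def calibrated_intake(coef, Q, V):
    """Apply the fitted calibration equation to self-reports."""
    return design(Q, V) @ coef

# Simulated cohort (all values hypothetical, log-intake scale)
rng = np.random.default_rng(0)
n_full, n_cal = 2000, 300
true_intake = rng.normal(8.0, 0.3, n_full)
bmi = rng.normal(27.0, 4.0, n_full)
# Self-report: attenuated, with BMI-related under-reporting and random error
Q = 2.0 + 0.6 * true_intake - 0.02 * (bmi - 27.0) + rng.normal(0, 0.2, n_full)
# Biomarker measured only in the sub-cohort: truth plus independent error
cal = np.arange(n_cal)
W = true_intake[cal] + rng.normal(0, 0.15, n_cal)

coef = fit_calibration(W, Q[cal], bmi[cal])
z_hat = calibrated_intake(coef, Q, bmi)  # calibrated intake for the full cohort
```

The calibrated values in `z_hat`, rather than the raw self-reports in `Q`, would then enter the diet-disease model.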

Are there biomarkers for specific food components?

Yes, novel biomarkers for specific foods and dietary components are being developed. For example, the carbon stable isotope abundance (δ13C) in blood can serve as a biomarker for estimating intake of cane sugar and high-fructose corn syrup, which are derived from C4 plants [2]. The field of metabolomics is accelerating the discovery of such food-specific biomarkers [1] [2].

Troubleshooting Common Experimental Challenges

Problem: High Variability in Biomarker Measurements

Issue: Biomarker measurements, such as those from a single 24-hour urine collection for sodium, show high within-individual, day-to-day variation, weakening their correlation with true long-term intake [3].

Solution:

- Repeated Measurements: Collect multiple biospecimen samples (e.g., urine on multiple non-consecutive days) from each participant to better estimate habitual intake.

- Utilize Controlled Feeding Studies: Conduct biomarker development studies, like the NPAAS-FS, where participants are fed a known diet. This allows researchers to directly model the relationship between consumed nutrients and biomarker levels, accounting for variability [3].

- Statistical Modeling: Use measurement error models that explicitly account for within-person variation when calibrating self-reported intake or assessing diet-disease associations [3].
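To see how much repeated collections help, within-person and between-person variance can be separated and used to compute the reliability of a k-day mean. The sketch below assumes a balanced design (the same number of collections per participant) and a one-way random-effects decomposition; the function names and simulated values are illustrative only.

```python
import numpy as np

def variance_components(x):
    """Between- and within-person variance from an (n_people, k_repeats) array."""
    x = np.asarray(x, dtype=float)
    n, k = x.shape
    person_means = x.mean(axis=1)
    # Pooled within-person variance
    within = ((x - person_means[:, None]) ** 2).sum() / (n * (k - 1))
    # Variance of person means overstates true between-person variance by within/k
    between = max(person_means.var(ddof=1) - within / k, 0.0)
    return between, within

def reliability(between, within, k):
    """Share of variance in a k-day mean that reflects between-person differences."""
    return between / (between + within / k)

# Hypothetical example: 100 participants, 3 urine collections each
rng = np.random.default_rng(1)
usual = rng.normal(3.5, 0.6, size=(100, 1))        # person-level usual excretion
daily = usual + rng.normal(0, 0.9, size=(100, 3))  # day-to-day variation
b, w = variance_components(daily)
for k in (1, 2, 3, 7):
    print(k, round(reliability(b, w, k), 2))
```

Reliability rises with k because day-to-day noise averages out, which is the rationale for collecting on multiple non-consecutive days.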

Problem: Lack of an "Objective" Recovery Biomarker

Issue: For most nutrients, a perfect "objective" recovery biomarker (one that equals true intake plus random, independent error) does not exist. Using an imperfect biomarker for calibration can lead to biased results in association studies [3].

Solution:

- Alternative Calibration Designs: Employ study designs that do not rely on pre-existing objective biomarkers.

  - Biomarker Development Cohort Approach: Use data from a controlled feeding study to develop a calibration equation that predicts true intake (Z) using the biomarker (W), self-reported intake (Q), and subject characteristics (V) [3].

  - Two-Stage Approach: Combine the feeding study (biomarker development cohort) with a larger calibration cohort that has both biomarker and self-report data to develop a more robust calibration equation [3].

- Direct Calibration: In the absence of a strong biomarker, the controlled feeding study can be used to calibrate self-reported intake directly, bypassing the need for a biospecimen-based biomarker altogether [3].

Experimental Protocols & Workflows

Protocol: Designing a Controlled Feeding Study for Biomarker Development

Objective: To establish a quantitative relationship between the intake of a specific nutrient and the level of a candidate biomarker in a biospecimen.

Methodology:

- Participant Recruitment: Recruit a representative sample (e.g., ~150 participants) from the target population [3].

- Diet Design: Provide participants with a diet that approximates their usual intake for a stabilization period (e.g., 2 weeks) to allow biomarker levels to stabilize while preserving intake variations across the sample [3].

- Dietary Control & Documentation: Weigh and record all food provided to participants. Use a precise dietary analysis system to document the actual consumed amounts of the target nutrient (X*).

- Biospecimen Collection: Collect relevant biospecimens (e.g., blood, urine) at designated times, such as a 24-hour urine collection on the penultimate day of the feeding period [3].

- Biomarker Assay: Analyze biospecimens using validated techniques (e.g., mass spectrometry, immunoassays) to quantify the candidate biomarker (W) [4].

- Data Analysis: Fit a measurement error model (e.g., W = β0 + βZ·Z + εW) to develop the algorithm that translates biomarker levels into estimated intake.
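The data analysis step can be illustrated with a short Python sketch that fits the linear model of biomarker level W on known intake Z from the feeding study, then inverts the fitted line to estimate intake from a new biomarker value. Inverting the line is only one possible choice (regressing Z on W and participant characteristics is another), and the function names and numbers are assumptions made for the example.

```python
import numpy as np

def fit_biomarker_model(Z, W):
    """Fit W = b0 + bZ * Z by least squares; return (b0, bZ, residual SD)."""
    Z = np.asarray(Z, dtype=float)
    W = np.asarray(W, dtype=float)
    bZ, b0 = np.polyfit(Z, W, 1)          # polyfit returns highest degree first
    resid = W - (b0 + bZ * Z)
    sigma = resid.std(ddof=2)             # two fitted parameters
    return b0, bZ, sigma

def estimate_intake(W_new, b0, bZ):
    """Invert the fitted line to translate biomarker levels into intake."""
    return (np.asarray(W_new, dtype=float) - b0) / bZ

# Hypothetical feeding-study data: known intake and measured biomarker
rng = np.random.default_rng(2)
Z = rng.uniform(1.5, 5.0, 150)                    # provided intake, g/day
W = 0.3 + 0.85 * Z + rng.normal(0, 0.25, 150)     # biomarker, g/day
b0, bZ, sigma = fit_biomarker_model(Z, W)
print(estimate_intake([2.0, 3.0, 4.0], b0, bZ))
```

The residual SD returned by `fit_biomarker_model` gives a rough sense of how much biomarker variation is not explained by intake.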

Workflow: Integrating Biomarker Data for Diet-Disease Analysis

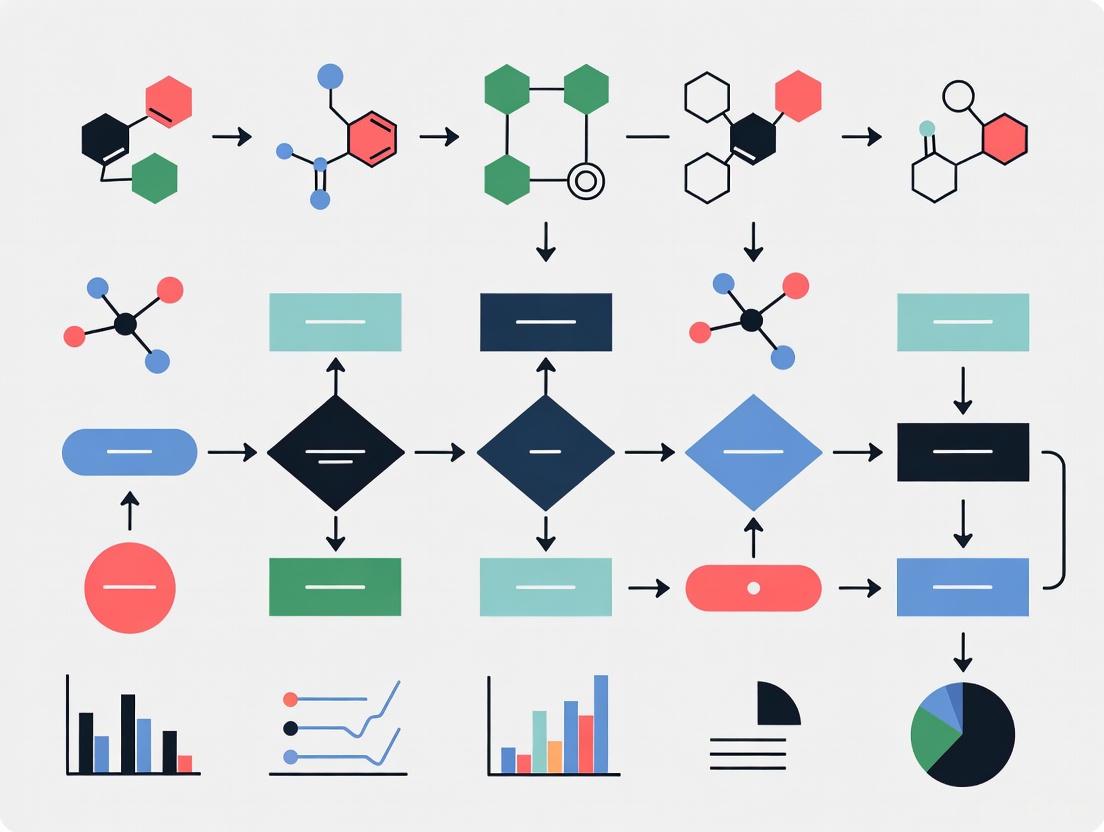

The following diagram illustrates the multi-stage process of using biomarkers to correct self-reported data in a large epidemiological study.

Key Research Reagent Solutions

The table below details essential materials and their functions in dietary biomarker research.

| Research Reagent / Material | Function & Application in Biomarker Research |
|---|---|
| Doubly Labeled Water | Gold-standard recovery biomarker for measuring total energy expenditure in free-living individuals [3] [2]. |
| 24-Hour Urine Collection Kits | Used for the non-invasive collection of urine to measure recovery biomarkers for protein (urinary nitrogen), sodium, and potassium [3] [2]. |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Analytical platform for identifying and quantifying a wide range of nutrient metabolites and novel biomarkers with high precision [4]. |
| Next-Generation Sequencing (NGS) | Used in molecular biomarker discovery (e.g., for cancer) to profile genetic changes. In nutrition, it can help understand genetic factors affecting nutrient metabolism [5] [6]. |
| Stable Isotopes (e.g., 13C) | Serve as tracers in controlled studies to track the metabolic fate of specific nutrients or as biomarkers themselves (e.g., for C4 plant-based sugars) [2]. |
| Validated Food Composition Databases | Critical for converting consumed foods into nutrient intakes (X*) in controlled feeding studies and for analyzing self-reported dietary data, despite their limitations [1] [3]. |
| Automated Self-Administered 24-h Recall (ASA24) | A web-based tool to reduce participant and researcher burden in dietary assessment, though it still relies on self-report [2]. |

Table 1: Characteristics of Major Dietary Biomarker Types

| Biomarker Type | Key Examples | Typical Biospecimen | Strengths | Limitations |
|---|---|---|---|---|
| Recovery | Doubly Labeled Water (Energy), Urinary Nitrogen (Protein) | Urine, Blood | Considered objective; validates other methods [3] | Very few exist; expensive; high participant burden [2] |
| Concentration | Serum Carotenoids, Fatty Acid Profiles | Blood, Adipose Tissue | Reflects medium/long-term status; less invasive | Influenced by homeostatic control & metabolism, not just intake [2] |
| Predictive / Calibration | Urinary Sodium/Potassium (from single 24-h urine), δ13C (for sugars) | Urine, Blood | Can be developed for nutrients lacking recovery biomarkers; corrects self-report error [3] | Requires complex modeling & feeding studies for development [3] |

Table 2: Comparison of Dietary Assessment Methods

| Method | Principle | Key Advantage | Key Limitation |
|---|---|---|---|
| Food Frequency Questionnaire (FFQ) | Self-reported frequency of food consumption over time | Captures habitual diet; feasible for large cohorts [2] | Prone to systematic measurement error and recall bias [1] [2] |
| 24-Hour Dietary Recall | Self-reported detailed intake over previous 24 hours | More precise for short-term intake than FFQ [2] | High day-to-day variability; does not represent habitual intake alone [2] |
| Biomarkers | Objective measurement in biological samples | Unbiased; not reliant on memory or food composition tables [1] | Costly; invasive; not yet available for most nutrients [1] [2] |

FAQs: Core Biomarker Concepts and Calculations

1. What is the difference between a biomarker and a clinical endpoint?

A biomarker is a defined characteristic measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention. It is not a direct measure of how an individual feels, functions, or survives. In contrast, a clinical endpoint is a precisely defined variable that reflects how an individual feels, functions, or survives, and is statistically analyzed to address a specific research question [7]. Biomarkers can sometimes serve as surrogate endpoints in clinical trials if they are validated to predict clinical benefit [7].

2. How are sensitivity and specificity defined for a diagnostic biomarker?

- Sensitivity refers to the test's ability to correctly identify individuals who have the disease (true positive rate).

- Specificity refers to the test's ability to correctly identify individuals who do not have the disease (true negative rate) [8].

These metrics are core components of a biomarker's clinical validity, which establishes how well the biomarker correctly identifies or predicts a clinical condition [8].
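Both metrics follow directly from counts of true and false classifications. The sketch below is a plain-Python illustration with made-up labels; the function name and the example data are not from the cited sources.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) from paired 0/1 labels.

    y_true: 1 if the condition is truly present, 0 otherwise.
    y_pred: 1 if the biomarker test is positive, 0 otherwise.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    sensitivity = tp / (tp + fn)  # true positive rate
    specificity = tn / (tn + fp)  # true negative rate
    return sensitivity, specificity

# Hypothetical results for five participants
sens, spec = sensitivity_specificity([1, 1, 1, 0, 0], [1, 1, 0, 0, 1])
```

The function assumes at least one truly positive and one truly negative case; otherwise the corresponding rate is undefined.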

3. What is the purpose of analytical validation for a biomarker assay?

Analytical validation is a process to establish that the performance characteristics of an assay or test are acceptable. This includes evaluating its:

- Sensitivity: The ability to detect the biomarker when it is present.

- Specificity: The ability to yield a negative result when the biomarker is absent.

- Accuracy: The closeness of agreement between the measured value and the true value.

- Precision: The agreement between a series of measurements taken from the same homogeneous sample under prescribed conditions [7].

This process validates the test's technical performance but does not validate its usefulness for a specific clinical purpose [7].

4. What common pharmacokinetic parameters are derived from DCE-MRI data?

Dynamic Contrast-Enhanced Magnetic Resonance Imaging (DCE-MRI) is used to quantify microvascular parameters. The analysis of the time-intensity curve can yield several semi-quantitative and quantitative parameters [9].

Table 1: Common Pharmacokinetic and Semi-Quantitative Parameters in DCE-MRI

| Parameter | Definition | Unit |
|---|---|---|
| Maximum Enhancement | The maximum signal difference divided by the baseline signal. | % |
| Time to Peak | Time elapsed between arterial peak enhancement and the maximum tissue enhancement. | sec |
| Rate of Enhancement | The speed of signal increase during the initial wash-in phase. | %/min |
| Initial Area Under the Curve (iAUC) | The area under the tissue concentration-time curve up to a stipulated initial time point. | - |
| Ktrans | The volume transfer constant between blood plasma and the extracellular extravascular space. | min⁻¹ |
| ve | The volume of the extracellular extravascular space per unit volume of tissue. | % |

The quantitative parameters like Ktrans and ve are derived from pharmacokinetic modeling, which requires measurement of the Arterial Input Function (AIF)—the concentration-time curve of contrast agent in a feeding artery [9].
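The two simplest semi-quantitative parameters in the table can be computed directly from a tissue signal curve. The Python sketch below follows the table's definitions; the baseline is taken as the mean of the first few pre-contrast points, which is an assumption, and the arterial peak time is supplied by the caller rather than derived from an arterial curve. It does not perform the pharmacokinetic modeling needed for Ktrans or ve.

```python
import numpy as np

def semi_quantitative(t, signal, n_baseline, t_arterial_peak):
    """Maximum enhancement (%) and time to peak (s) from a tissue signal curve.

    t, signal       : acquisition times (s) and tissue signal intensities
    n_baseline      : number of initial pre-contrast points used as baseline
    t_arterial_peak : time (s) of peak arterial enhancement
    """
    t = np.asarray(t, dtype=float)
    signal = np.asarray(signal, dtype=float)
    baseline = signal[:n_baseline].mean()
    enhancement = (signal - baseline) / baseline * 100.0  # relative enhancement, %
    i_peak = int(np.argmax(enhancement))
    return {
        "max_enhancement_pct": float(enhancement[i_peak]),
        "time_to_peak_s": float(t[i_peak] - t_arterial_peak),
    }

# Hypothetical curve sampled every 10 s
dce = semi_quantitative([0, 10, 20, 30, 40], [100, 100, 150, 200, 180],
                        n_baseline=2, t_arterial_peak=15)
```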

Troubleshooting Guides for Biomarker Development

Issue 1: High Measurement Error in Self-Reported Dietary Data

Problem: In nutritional studies, systematic measurement error in self-reported dietary data (like FFQs) can lead to biased associations in diet-disease risk studies. This error is often related to individual characteristics like BMI [10].

Solution: Regression Calibration with Controlled Feeding Studies

- Approach: Use a controlled feeding study to develop an objective biomarker that can correct for systematic error in self-reported data.

- Protocol:

  - Feeding Study (Biomarker Development): In a sub-cohort, provide participants with food that mimics their habitual diet (as described by a food record) but with precisely characterized nutrient content. Collect objective measurements (e.g., blood, urine) [10].

  - Biomarker Substudy (Calibration): In a larger sub-cohort, collect both self-reported data and the same objective measurements.

  - Full Cohort (Association Analysis): Use the established calibration equation to correct the self-reported data in the full cohort, leading to more accurate estimates of diet-disease associations [10].

- Consideration: Standard regression calibration assumes "classical" measurement error. Biomarkers developed via regression from feeding studies may introduce "Berkson-type" error, requiring specialized methods to avoid bias in final association estimates [10].

Issue 2: Poor Robustness and Reproducibility of a Biomarker Assay

Problem: An optimized biomarker protocol performs well under ideal conditions but is sensitive to small experimental variations, leading to failures and inconsistent results during routine use.

Solution: Robust Parameter Design (RPD) and Optimization

- Approach: Use statistical design of experiments (DOE) and response function modeling to develop a protocol that is both inexpensive and robust to noise factors.

- Protocol:

  - Experimental Design: Classify factors into control factors (adjustable during production) and noise factors (hard to control). Run a staged experiment (e.g., screening, fractional factorial, composite design) to explore the factor-response space [11].

  - Model Fitting: Fit a mixed-effects model to estimate both fixed factor effects and variance components from random noise factors. Use model selection criteria to derive a parsimonious model [11].

  - Robust Optimization: Formulate a risk-averse optimization problem to select control factor settings that minimize cost while ensuring protocol performance remains above a required threshold with high probability, even in the presence of noise factor variations [11].
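One simple way to express the robust optimization step is a chance-constrained search: among candidate control settings, keep those whose simulated performance stays above the threshold with the required probability, then pick the cheapest. The Python sketch below uses Monte Carlo draws of the noise factor. The performance function, cost function, and all numbers are hypothetical stand-ins for a fitted response model, and this is not necessarily the formulation used in [11].

```python
import numpy as np

def robust_choice(candidates, cost, performance, noise_draws, threshold, prob=0.95):
    """Cheapest candidate with P(performance >= threshold) >= prob, else None.

    candidates  : iterable of control-factor settings
    cost        : function mapping a setting to its cost
    performance : function (setting, noise_draws) -> array of simulated responses
    noise_draws : array of simulated noise-factor values
    """
    feasible = []
    for c in candidates:
        simulated = performance(c, noise_draws)
        if np.mean(simulated >= threshold) >= prob:
            feasible.append(c)
    return min(feasible, key=cost) if feasible else None

# Hypothetical example: reagent amount as the control factor
rng = np.random.default_rng(3)
noise = rng.normal(0.0, 1.0, 10_000)                  # e.g., ambient temperature deviation
perf_model = lambda amount, z: 5.0 * amount + z       # assumed response model
best = robust_choice([1.0, 1.5, 2.0, 2.5, 3.0], cost=lambda a: a,
                     performance=perf_model, noise_draws=noise, threshold=8.0)
```

In practice the assumed `perf_model` would be replaced by predictions from the fitted mixed-effects model, with noise draws taken from its estimated variance components.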

Issue 3: Biomarker Fails to Achieve Clinical Adoption

Problem: Many discovered biomarkers stall and never reach clinical practice, often due to deficiencies in validation and demonstration of utility.

Solution: Systematic Evaluation Using the Biomarker Toolkit

- Approach: Use an evidence-based checklist to guide development and evaluate the biomarker's potential for clinical success.

- Protocol: Systematically assess your biomarker against validated attributes in four key areas [8]:

  - Rationale: Is there a clear, unmet clinical need? Is the hypothesis pre-specified?

  - Analytical Validity: Is the assay validated for precision, reproducibility, and accuracy? Are biospecimen collection and handling procedures standardized?

  - Clinical Validity: Does the biomarker have demonstrated sensitivity and specificity, and is it backed by studies with appropriate design, blinding, and statistical power?

  - Clinical Utility: Does the biomarker lead to a net improvement in health outcome? Is it cost-effective, feasible to implement, and approved by relevant guidelines? [8]

- Scoring: Publications supporting the biomarker can be scored based on the reporting of these attributes. Higher scores are significantly associated with successful biomarker implementation [8].

Experimental Workflows and Signaling Pathways

Biomarker Development and Validation Workflow

DCE-MRI Data Acquisition and Analysis Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Biomarker Development and Analysis

| Item / Reagent | Function / Application |
|---|---|
| Validated Assay Kits | Provide standardized reagents and protocols for measuring specific biomarkers (e.g., proteins, metabolites) with defined analytical performance (sensitivity, specificity) [7] [8]. |
| Paramagnetic Contrast Agents (e.g., Gd-DTPA) | Used in DCE-MRI to alter tissue relaxation times (T1), allowing for the visualization and quantification of tissue perfusion and microvascular permeability [9]. |
| Standardized Reference Materials | Used for assay calibration, quality control, and ensuring reproducibility and accuracy of biomarker measurements across different laboratories and studies [8]. |
| Biospecimen Collection Kits | Standardized containers and preservatives for consistent collection, processing, and storage of biological samples (e.g., blood, urine, tissue), which is critical for analytical validity [8]. |
| Software for Pharmacokinetic Modeling | Analyzes dynamic imaging data (e.g., from DCE-MRI) to deconvolve tissue curves and the arterial input function, calculating quantitative parameters like Ktrans and ve [9]. |

The Dietary Biomarkers Development Consortium (DBDC) is leading a pioneering effort to improve dietary assessment through the discovery and validation of biomarkers for foods commonly consumed in the United States diet [12]. This initiative addresses a critical challenge in nutrition research: the accurate assessment of diet in free-living populations, which has traditionally relied on self-reported methodologies that are often distorted by various systematic and random measurement errors [12].

The DBDC represents the first major systematic effort to discover and validate food intake biomarkers specifically for United States populations, taking into account transatlantic differences in food preferences, governmental regulations, and dietary recommendations [12]. The consortium employs a structured three-phase approach to identify, evaluate, and validate food biomarkers using controlled feeding studies and advanced metabolomic technologies [12] [13].

The 3-Phase Biomarker Development Approach

The DBDC's systematic approach ensures rigorous biomarker identification and validation through sequential phases.

Table 1: DBDC's 3-Phase Biomarker Development Framework

| Phase | Primary Objective | Study Design | Key Outcomes |
|---|---|---|---|
| Phase 1: Discovery | Identify candidate biomarker compounds | Controlled feeding trials with test foods in prespecified amounts [12] | Characterization of pharmacokinetic parameters of candidate biomarkers [12] |
| Phase 2: Evaluation | Assess ability to identify individuals consuming biomarker-associated foods | Controlled feeding studies of various dietary patterns [12] | Determination of biomarker sensitivity and specificity [12] |
| Phase 3: Validation | Validate prediction of recent and habitual consumption | Evaluation in independent observational settings [12] | Validation of biomarkers for use in free-living populations [12] |

Phase 1: Biomarker Discovery

The initial discovery phase focuses on identifying potential biomarkers through tightly controlled feeding studies.

Experimental Protocols for Phase 1 Studies:

- Controlled Feeding Trials: Administer test foods in prespecified amounts to healthy participants [12]

- Specimen Collection: Collect blood and urine specimens during feeding trials for metabolomic profiling [12]

- Metabolomic Analysis: Employ liquid chromatography-mass spectrometry (LC-MS) and hydrophilic-interaction liquid chromatography (HILIC) protocols [12]

- Pharmacokinetic Characterization: Analyze time-response relationships and dose-response parameters [12]

UC Davis Implementation Example: Researchers at the UC Davis Dietary Biomarkers Development Center employ a randomized controlled dietary intervention where different servings of fruit and vegetable mixtures are provided in an inverse dosing gradient (high to low fruit/low to high vegetables) within a standard mixed meal setting [14]. They collect fasting blood samples followed by postprandial collections at 1, 2, 4, 6, and 8 hours after test meals, with urine pooled between 0-2, 2-4, 4-6, and 6-8 hours, plus 8-24 hour collections [14].
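Time-response data collected on a schedule like this are often summarized by peak change, time of peak, and area under the curve. The Python sketch below computes these from baseline-corrected concentrations using the trapezoidal rule. The sampling times match the postprandial schedule described above, but the concentrations, units, and function name are invented for illustration and do not come from the UC Davis protocol.

```python
import numpy as np

def kinetic_summary(times_h, conc):
    """Peak change from fasting, time of peak (h), and incremental AUC."""
    t = np.asarray(times_h, dtype=float)
    c = np.asarray(conc, dtype=float)
    delta = c - c[0]                      # change from the fasting (time 0) value
    i_max = int(np.argmax(delta))
    # Trapezoidal rule over unevenly spaced sampling times
    auc = float(np.sum((delta[1:] + delta[:-1]) / 2.0 * np.diff(t)))
    return {"cmax": float(delta[i_max]), "tmax_h": float(t[i_max]), "auc": auc}

times_h = [0, 1, 2, 4, 6, 8]              # fasting plus postprandial draws
plasma = [0.2, 1.1, 2.4, 1.9, 0.9, 0.4]   # hypothetical metabolite levels
summary = kinetic_summary(times_h, plasma)
```

Comparing these summaries across serving sizes gives a first look at whether a candidate metabolite shows a dose-response pattern.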

Phase 2: Biomarker Evaluation

The evaluation phase assesses how well candidate biomarkers perform in identifying individuals consuming specific foods across varied dietary patterns.

Methodological Approach:

- Utilize controlled feeding studies with various dietary patterns [12]

- Evaluate candidate biomarkers' ability to correctly identify consumption of biomarker-associated foods [12]

- Assess biomarker performance across diverse dietary backgrounds

UC Davis Implementation Example: Aim 2 of the UC Davis protocol recruits 40 volunteers randomized to either a typical American diet (TAD) or a high-quality Dietary Guidelines for Americans (DGA) diet in a parallel design [14]. Participants provide fasting blood samples and undergo meal challenges with the same test meal described in Phase 1, with identical sample collection protocols before and after the one-week feeding trial [14].

Phase 3: Biomarker Validation

The final validation phase tests biomarker performance in real-world settings.

Validation Protocols:

- Evaluate candidate biomarkers in independent observational settings [12]

- Assess ability to predict recent and habitual consumption of specific test foods [12]

- Compare biomarker performance against traditional dietary assessment methods [14]

UC Davis Implementation Example: Aim 3 of the UC Davis protocol evaluates the robustness and reliability of food exposure markers within the range of typical and recommended dietary intakes through a cross-sectional study in a diverse cohort, comparing biomarkers to traditional diet recall assessment tools [14].

Experimental Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials and Technologies for Dietary Biomarker Studies

| Category | Specific Solutions | Function/Application |
|---|---|---|
| Analytical Platforms | Liquid chromatography-mass spectrometry (LC-MS) [12] | Metabolite separation and identification |
| | Hydrophilic-interaction liquid chromatography (HILIC) [12] | Polar metabolite analysis |
| | LC-QTOF MS and LC-TripleTOF MS [14] | High-resolution MS/MS data collection |
| Biospecimen Types | Blood plasma/serum [12] [14] | Source of circulating metabolites |
| | Urine samples [12] [14] | Source of excreted metabolites |
| | Fecal samples [14] | Banked for future microbiome analysis |
| Study Designs | Controlled feeding trials [12] | Biomarker discovery under controlled conditions |
| | Randomized parallel diet studies [14] | Biomarker evaluation across dietary patterns |
| | Cross-sectional observational studies [14] | Biomarker validation in free-living populations |
| Data Analysis Tools | Generalized linear models (GLM) [14] | Statistical analysis of metabolite levels |
| | Bayesian regression [14] | Effect size estimation with credible intervals |
| | Multivariate statistical methods [14] | Pattern recognition in metabolomic data |

Troubleshooting Guides and FAQs

Experimental Design Challenges

Q: How can we address the high inter-individual variability in metabolite levels due to genetics, gut microbiome, and other factors?

A: The DBDC recommends employing advanced statistical models that account for this variability:

- Construct multiple generalized linear models (Gaussian, log-link Gaussian, log-normal, log-link inverse Gaussian, and log-link Gamma) and select the best model using Bayesian information criterion [14]

- Use Bayesian regression with credible intervals >95% for effect size estimation [14]

- Include subject random effects in models to account for individual variability [14]
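Model selection by Bayesian information criterion can be illustrated with a reduced example. The Python sketch below compares only two of the candidate families, a Gaussian model on the raw scale and a log-normal model, using closed-form maximum likelihood fits in NumPy. It omits the other link functions and the subject random effects mentioned above, and the data are simulated, so it shows the selection logic rather than the consortium's actual pipeline.

```python
import numpy as np

def gaussian_loglik(resid):
    """Gaussian log-likelihood evaluated at the MLE of the residual variance."""
    n = resid.size
    sigma2 = np.mean(resid ** 2)
    return -0.5 * n * (np.log(2 * np.pi * sigma2) + 1)

def bic(loglik, n_params, n):
    """Bayesian information criterion; lower is better."""
    return n_params * np.log(n) - 2 * loglik

def compare_models(x, y):
    """BIC for a Gaussian and a log-normal linear model of y on x (y > 0)."""
    X = np.column_stack([np.ones(len(x)), x])
    n = y.size
    k = 3  # intercept, slope, variance
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    ll_gauss = gaussian_loglik(y - X @ beta)
    beta_log, *_ = np.linalg.lstsq(X, np.log(y), rcond=None)
    # Log-normal likelihood on the original scale includes the Jacobian term
    ll_lognorm = gaussian_loglik(np.log(y) - X @ beta_log) - np.log(y).sum()
    return {"gaussian": bic(ll_gauss, k, n), "lognormal": bic(ll_lognorm, k, n)}

# Simulated right-skewed metabolite intensities versus servings consumed
rng = np.random.default_rng(4)
servings = rng.uniform(0.0, 2.0, 300)
intensity = np.exp(1.0 + 1.0 * servings + rng.normal(0, 0.5, 300))
scores = compare_models(servings, intensity)
best_model = min(scores, key=scores.get)
```

Because both likelihoods are evaluated on the original response scale, their BIC values are directly comparable.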

Q: What is the optimal sample collection timing for capturing food-specific metabolites?

A: Based on DBDC protocols:

- For blood: Collect fasting samples followed by postprandial collections at 1, 2, 4, 6, and 8 hours after test meals [14]

- For urine: Pool samples between 0-2, 2-4, 4-6, and 6-8 hours, plus 8-24 hour collections [14]

- These timeframes capture both acute and medium-term metabolite responses

Analytical Methodology Issues

Q: How do we handle unknown metabolites in biomarker discovery?

A: The DBDC Metabolomics Core employs:

- Exhaustive high-resolution MS/MS data collections with ramped collision energies using LC-QTOF MS [14]

- SWATH-based LC-TripleTOF MS for comprehensive metabolite coverage [14]

- Integration with food composition databases to ensure biomarker specificity to food groups [14]

Q: How do we ensure analytical precision and stability across multiple sites and studies?

A: The DBDC implements:

- Extensive QA/QC strategies to ensure analytical precision and stability [14]

- Harmonized LC-MS and HILIC protocols across study centers [12]

- Systems to enhance harmonization of metabolite identifications across platforms based on MS/MS ion patterns and retention times [12]

Biomarker Validation Challenges

Q: How do we establish that candidate biomarkers meet validity criteria for food intake?

A: Following established biomarker validation principles:

- Assess content validity: How well the biomarker measures the intended biological phenomenon [15]

- Evaluate construct validity: How well the biomarker aligns with other relevant characteristics of the dietary exposure [15]

- Determine criterion validity: How accurately the biomarker correlates with the specific food intake of interest [15]

Q: What performance metrics should we use for biomarker evaluation?

A: The DBDC approach includes assessment of:

- Sensitivity: Ability to identify true positive consumption [15]

- Specificity: Ability to identify true negative consumption (non-consumption) [15]

- Dose-response relationships: Correlation between biomarker levels and amount consumed [12]

- Time-response relationships: Kinetic parameters of biomarker appearance and clearance [12]

Integration with Broader Biomarker Development Frameworks

The DBDC's 3-phase approach aligns with established biomarker development pipelines while specifically addressing the unique challenges of dietary biomarkers.

The DBDC framework specifically addresses the challenge that "few metabolites have met the criteria for serving as valid biomarkers of food intake as proposed by Dragsted et al, including plausibility, dose-response, time-response, analytic detection performance, chemical stability, robustness, and temporal reliability in free-living populations consuming complex diets" [12].

The Dietary Biomarkers Development Consortium's systematic 3-phase approach provides a robust framework for discovering and validating dietary intake biomarkers. By implementing controlled feeding studies, advanced metabolomic technologies, and rigorous statistical analyses, this methodology addresses fundamental challenges in nutritional epidemiology. The structured troubleshooting guides and FAQs presented here offer practical solutions to common experimental challenges, supporting researchers in optimizing their controlled feeding study designs for biomarker development research.

The ongoing work of the DBDC promises to "significantly expand the list of validated biomarkers of intake for foods consumed in the United States diet, which can help advance understanding of how diet influences human health" [12]. At the time of writing, all three Phase 1 studies across the consortium centers were actively recruiting participants and generating data that will feed into the subsequent evaluation and validation phases [16].

Multi-omics integration is a transformative approach in the biological sciences that combines data from genomics, transcriptomics, proteomics, metabolomics, and other omics technologies to provide a comprehensive understanding of biological systems [17]. It is particularly powerful for biomarker discovery because it lets researchers uncover complex interactions and regulatory mechanisms that remain invisible when single omics layers are analyzed in isolation [18]. Integrating distinct molecular measurements can reveal relationships crucial for understanding complex phenotypes, including multifactorial diseases, by identifying concurrent transcriptomic, proteomic, and epigenomic alterations [18].

The fundamental principle underlying multi-omics integration lies in the complementary nature of different biological data layers. Proteins act as enzymes, structural elements, and signaling molecules, while metabolites represent the end products and intermediates of biochemical reactions [19]. Studying either layer in isolation provides only a partial picture: changes in protein expression don't necessarily indicate altered enzymatic activity, and shifts in metabolite concentrations may occur without clear knowledge of upstream regulatory proteins [19]. By integrating proteomics and metabolomics data with genomic information, researchers can establish direct links between molecular regulators and metabolic outcomes, enabling deeper understanding of biological mechanisms and more robust biomarker identification.

Experimental Protocols for Multi-Omics Biomarker Studies

Controlled Feeding Study Design for Biomarker Development

Controlled feeding studies represent a gold standard approach for developing and validating dietary biomarkers, which can be integrated into multi-omics frameworks [10]. These studies employ specialized designs where participants are provided with standardized food that mimics their habitual diet, with precise documentation of nutrient intake [10]. The Women's Health Initiative (WHI) feeding study implemented a novel design where rather than feeding all women the same standard diets, each participant received food that approximated her habitual diet as described by her 4-day food record with adjustments based on individual discussions with study dietitians [10].

Key Methodological Steps:

- Participant Recruitment and Baseline Assessment: Recruit participants representing target populations, collect comprehensive baseline data including medical history, anthropometrics, and habitual dietary patterns through food frequency questionnaires (FFQs) or food records [10].

- Dietary Intervention Design: Develop individualized meal plans that mirror participants' usual dietary patterns while using dietary components with well-characterized nutrient content. This preserves natural variation in intake across the study sample [10].

- Intervention Period: Implement a controlled feeding period (typically 2 weeks) during which all food is provided to participants. This allows blood and urine measures to stabilize and creates known intake conditions [10].

- Biospecimen Collection: Collect blood, urine, or other relevant biospecimens at strategic time points for multi-omics analyses. In the WHI feeding study, recovery biomarkers for sodium and potassium intakes were measured from 24-hour urine collections completed on the penultimate day of the feeding period [10].

- Multi-Omics Data Generation: Process biospecimens using appropriate technologies for genomic, proteomic, and metabolomic profiling, ensuring standardized protocols across all samples.

The Dietary Biomarkers Development Consortium (DBDC) has formalized a 3-phase approach for biomarker discovery and validation [20]:

- Phase 1: Controlled feeding trials where test foods are administered in prespecified amounts to healthy participants, followed by metabolomic profiling of blood and urine specimens to identify candidate compounds.

- Phase 2: Evaluation of candidate biomarkers' ability to identify individuals consuming biomarker-associated foods using controlled feeding studies of various dietary patterns.

- Phase 3: Validation of candidate biomarkers for predicting recent and habitual consumption of specific test foods in independent observational settings.

Sample Preparation Protocol for Multi-Omics Analysis

Proper sample preparation is critical for generating high-quality multi-omics data. The following workflow outlines key considerations for preparing samples that will undergo genomic, proteomic, and metabolomic analysis:

Goal: Obtain high-quality extracts suitable for multiple omics analyses from the same biological material [19].

Best Practices:

Joint Extraction Protocols: When possible, use protocols that enable simultaneous recovery of macromolecules (DNA, RNA, proteins) and metabolites from the same biological material to maintain biological context [19].

Sample Preservation: Keep samples on ice and process rapidly to minimize degradation. Use appropriate preservatives for specific analytes (e.g., RNase inhibitors for transcriptomics, protease inhibitors for proteomics) [19].

Internal Standards: Include isotope-labeled internal standards (e.g., labeled peptides for proteomics, labeled metabolites for metabolomics) to enable accurate quantification across analytical runs [19].

Quality Assessment: Implement rigorous quality control measures at each step, including assessment of DNA/RNA integrity, protein quality, and metabolite stability.

Challenge: Balancing extraction conditions that preserve proteins (which often require denaturants) with those that stabilize metabolites (which may be heat- or solvent-sensitive) [19].

Data Acquisition Methods for Multi-Omics Studies

Proteomics Workflow:

- Primary Technology: Liquid chromatography coupled with tandem mass spectrometry (LC-MS/MS) [19]

- Acquisition Strategies:

- Data-Dependent Acquisition (DDA): For comprehensive protein identification

- Data-Independent Acquisition (DIA): For high reproducibility and broad proteome coverage [19]

- Targeted Proteomics: Parallel reaction monitoring (PRM) or selected reaction monitoring (SRM) for specific proteins of interest [19]

- Quantification Methods: Tandem Mass Tags (TMT) for multiplexed quantification across samples [19]

Metabolomics Workflow:

- Untargeted Approaches: LC-MS or GC-MS for broad metabolite coverage [19]

- Targeted Approaches: LC-MS/MS with multiple reaction monitoring (MRM) for precise quantification of predefined metabolites [19]

- Additional Technologies: Nuclear magnetic resonance (NMR) spectroscopy for highly reproducible metabolite quantification [19]

Genomics/Transcriptomics Workflow:

- Next-Generation Sequencing (NGS): For comprehensive genomic and transcriptomic profiling

- Array-Based Technologies: For cost-effective analysis of predefined genetic variants or expression profiles

Research Reagent Solutions

Table 1: Essential Research Reagents for Multi-Omics Biomarker Studies

| Reagent Category | Specific Examples | Function and Application |

|---|---|---|

| Sample Collection & Stabilization | PAXgene Blood RNA Tubes, Streck Cell-Free DNA Tubes, RNAlater | Stabilize nucleic acids, proteins, and metabolites during sample collection and storage [19] |

| Nucleic Acid Extraction | QIAamp DNA/RNA Kits, MagMAX Total Nucleic Acid Isolation Kit | Isolate high-quality DNA and RNA from various biospecimens for genomic and transcriptomic analysis |

| Protein Digestion & Cleanup | Trypsin/Lys-C Mix, FASP Filter Aids, C18 Spin Columns | Digest proteins into peptides and remove contaminants prior to LC-MS/MS analysis [19] |

| Metabolite Extraction | Methanol:Water:Chloroform, Biocrates Kit | Extract polar and non-polar metabolites with high recovery and reproducibility [19] |

| Isotope-Labeled Standards | SILAC Amino Acids, Heavy Isotope-Labeled Peptides, ¹³C-Labeled Metabolites | Enable accurate quantification in mass spectrometry-based assays [19] |

| Chromatography Columns | C18 Reverse-Phase Columns, HILIC Columns | Separate complex mixtures of peptides or metabolites prior to mass spectrometry analysis [19] |

| Multiplexing Reagents | Tandem Mass Tags (TMT), Isobaric Tags for Relative and Absolute Quantitation (iTRAQ) | Allow simultaneous analysis of multiple samples in a single LC-MS run [19] |

Multi-Omics Integration Workflows

Data Preprocessing and Normalization

The critical first step in multi-omics integration involves proper preprocessing and normalization of diverse datasets. Each omics data type has unique statistical distributions, measurement errors, and noise profiles, requiring tailored preprocessing before integration [18].

Key Preprocessing Steps:

Data Cleaning: Remove low-quality measurements, handle missing values using appropriate imputation methods, and filter artifacts.

Normalization: Apply techniques such as log-transformation, quantile normalization, or variance stabilization to make datasets comparable [19]. Normalizing raw data ensures compatibility across omics technologies with different measurement units and characteristics [21].

Batch Effect Correction: Use tools like ComBat to mitigate technical variation introduced by different processing batches, dates, or operators [19]. This ensures biological signals dominate subsequent analyses.

Quality Assessment: Implement rigorous QC metrics specific to each data type, including sample-level and feature-level quality checks.
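The preprocessing steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a recommended pipeline: the function names are hypothetical, the matrix is assumed to be samples × features from a single omics block, and `center_by_batch` is a crude per-batch mean-centering stand-in for illustration only. It is not ComBat and does not reproduce ComBat's empirical Bayes adjustment.

```python
import numpy as np

def log_transform(x, pseudocount=1.0):
    """log2(x + pseudocount) to compress right-skewed intensities."""
    return np.log2(np.asarray(x, dtype=float) + pseudocount)

def quantile_normalize(x):
    """Give every sample (row) the same distribution of values.

    x is samples x features. Ties are broken by position (simple version).
    """
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, axis=1)
    ranks = np.argsort(order, axis=1)            # rank of each value within its row
    reference = np.sort(x, axis=1).mean(axis=0)  # mean value at each rank
    return reference[ranks]

def center_by_batch(x, batches):
    """Subtract per-batch feature means, then restore the overall mean.

    Assumption: batches are not confounded with diet groups; otherwise
    this step would also remove biological signal.
    """
    x = np.asarray(x, dtype=float)
    batches = np.asarray(batches)
    out = x.copy()
    grand_mean = x.mean(axis=0)
    for b in np.unique(batches):
        mask = batches == b
        out[mask] = x[mask] - x[mask].mean(axis=0) + grand_mean
    return out

# Simulated intensities: 6 samples x 5 features, second batch inflated 1.5x
rng = np.random.default_rng(0)
raw = rng.lognormal(mean=8, sigma=1, size=(6, 5))
batches = np.array([0, 0, 0, 1, 1, 1])
raw[batches == 1] *= 1.5

processed = center_by_batch(quantile_normalize(log_transform(raw)), batches)
```

In practice each omics layer would be preprocessed separately with methods suited to its noise profile, and the order of steps should be fixed in the analysis plan before data are unblinded.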

For small- and medium-scale studies, storing and providing access to raw data is important for ensuring full reproducibility, as processing steps may vary and different researchers may need to make preprocessing assumptions appropriate for their specific downstream analyses [21].

Computational Integration Methods

Multiple computational approaches exist for integrating preprocessed multi-omics data. The choice of method depends on the biological question, data characteristics, and study design.

Table 2: Multi-Omics Data Integration Methods

| Method | Type | Key Features | Applications |

|---|---|---|---|

| MOFA [18] | Unsupervised | Bayesian factor analysis; infers latent factors capturing variation across data types | Exploratory analysis, identifying co-variation patterns, data compression |

| DIABLO [18] | Supervised | Multiblock sPLS-DA; integration in relation to categorical outcomes | Biomarker discovery, classification, phenotype prediction |

| SNF [18] | Unsupervised | Similarity Network Fusion; constructs sample-similarity networks | Sample clustering, subgroup identification |

| MCIA [18] | Unsupervised | Multiple Co-Inertia Analysis; covariance optimization across datasets | Simultaneous analysis of multiple omics datasets, visualization |

| mixOmics [22] | Both | Multivariate statistics; includes PLS, rCCA, sPLS-DA | Correlation analysis, dimension reduction, classification |

| WGCNA [22] | Unsupervised | Weighted correlation network analysis; correlation and topology | Gene co-expression networks, module-trait relationships |

| Pathway-Based [22] | Knowledge-driven | IMPALA, iPEAP, MetaboAnalyst; pathway enrichment | Biological interpretation, functional analysis |

Multi-Omics Experimental Workflow

[Figure: complete workflow for a multi-omics study integrating controlled feeding design with biomarker development]

Technical Support: FAQs and Troubleshooting Guides

Frequently Asked Questions

Q1: What is the optimal order for processing different omics layers in integrated analyses?

A rational approach for disease state phenotyping typically follows this hierarchy: genome → epigenome → transcriptome → proteome → metabolome → microbiome [17]. The genome provides a foundational static snapshot, while subsequent layers offer increasingly dynamic information. However, the most responsive omics layer varies by research context. The transcriptome is often highly sensitive to interventions and may require more frequent assessment, while proteomics generally requires lower testing frequency due to protein stability [17].

Q2: How can we address the challenge of data heterogeneity in multi-omics integration?

Data heterogeneity arises from different technologies having unique noise profiles, detection limits, and measurement scales [18]. Address this through:

- Standardized preprocessing protocols for each data type [21]

- Appropriate normalization techniques (log-transformation, quantile normalization) [19]

- Batch effect correction using tools like ComBat [19]

- Data harmonization methods, such as style transfer based on conditional variational autoencoders [21]

Q3: What sample size is recommended for multi-omics biomarker studies?

When collecting multi-omics data, consider a sample size that provides sufficient statistical power [21]. For controlled feeding studies specifically, the WHI NPAAS-FS enrolled 153 participants, while the biomarker calibration cohort included 450 participants [10]. Larger samples are needed for biomarker validation phases, with the DBDC recommending independent observational cohorts for phase 3 validation [20].
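As a rough planning aid, the power consideration above can be made concrete with the standard normal-approximation formula for comparing two independent groups, n per group = 2(z₁₋α/₂ + z_power)² / d², where d is the standardized effect size. The sketch below is an assumption-laden illustration: the function name and the Bonferroni adjustment for the number of features tested are choices made here, not recommendations from the cited studies, and crossover feeding designs would need a paired calculation instead.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(effect_size, alpha=0.05, power=0.80, n_tests=1):
    """Participants per group for a two-sided, two-sample comparison.

    effect_size: standardized mean difference (Cohen's d).
    n_tests: number of features tested; alpha is Bonferroni-adjusted.
    Uses the normal approximation, so results are slightly optimistic
    for small samples.
    """
    z = NormalDist().inv_cdf
    alpha_adj = alpha / n_tests
    z_alpha = z(1 - alpha_adj / 2)
    z_power = z(power)
    return ceil(2 * (z_alpha + z_power) ** 2 / effect_size ** 2)

single_marker = n_per_group(0.5)                 # one prespecified biomarker
untargeted = n_per_group(0.5, n_tests=1000)      # 1000 metabolite features
```

The comparison between `single_marker` and `untargeted` shows why untargeted discovery phases typically need either larger samples or larger effects than the validation of a single prespecified marker.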

Q4: How do we validate biomarkers discovered through multi-omics integration?

Employ a multi-stage validation approach:

- Technical validation using targeted assays (e.g., PRM or SRM for proteins; MRM-based LC-MS/MS or NMR for metabolites) [19]

- Independent validation in separate cohorts [20]

- Biological validation through functional studies

- Clinical validation for diagnostic or prognostic utility

Troubleshooting Common Experimental Issues

Problem: Poor correlation between proteomic and metabolomic data

Potential Causes and Solutions:

- Sample timing mismatch: Metabolites change rapidly while proteins are more stable. Ensure synchronized sample collection [17].

- Incompatible extraction methods: Optimize joint extraction protocols that preserve both protein and metabolite integrity [19].

- Technical artifacts: Check batch effects and platform-specific variability. Apply appropriate normalization and batch correction [18].

- Biological disconnect: The relationship might not be direct; incorporate intermediate omics layers (e.g., transcriptomics) for better context.

Problem: High technical variation in multi-omics measurements

Potential Causes and Solutions:

- Inconsistent sample processing: Implement standardized SOPs across all samples and batches.

- Inadequate quality control: Introduce more rigorous QC checkpoints at each processing step.

- Platform drift: Use internal standards and reference materials to correct for analytical variability [19].

- Sample degradation: Optimize collection-to-preservation time and storage conditions.

Problem: Difficulty in biological interpretation of integrated multi-omics signatures

Potential Causes and Solutions:

- Insufficient pathway context: Use integrated pathway analysis tools like IMPALA, iPEAP, or MetaboAnalyst that combine multiple omics data types [22].

- Overlooking regulatory mechanisms: Incorporate epigenomic or transcriptomic data to connect genomic variants with functional outcomes.

- Complex interactions: Employ network-based approaches (MetaMapR, Metscape, Grinn) to visualize and interpret complex relationships [22].

Multi-Omics Data Integration Methods

[Figure: key computational approaches for integrating multi-omics datasets]

Multi-omics integration represents a powerful framework for advancing biomarker research, particularly when coupled with controlled feeding study designs. The synergistic analysis of genomic, proteomic, and metabolomic data provides unprecedented opportunities to uncover comprehensive biomarker profiles that reflect the complex interplay between different biological layers. By addressing key challenges in experimental design, data processing, computational integration, and biological interpretation, researchers can leverage these approaches to develop robust biomarkers with enhanced clinical utility. As technologies evolve and computational methods advance, multi-omics integration will continue to transform our understanding of health and disease, enabling more precise and personalized healthcare interventions.

Advanced Methodologies: Designing and Implementing High-Impact Feeding Trials

Technical Support Center: Troubleshooting Guides and FAQs

Common Experimental Challenges & Solutions

Q1: How can we mitigate participant dropout in long-term controlled feeding studies? A: Implement shorter, phased study designs. The Dietary Biomarkers Development Consortium (DBDC) uses a 3-phase approach where each phase has a specific, manageable goal, reducing long-term participant burden [12]. Maintain engagement through clear communication, flexible scheduling where possible, and regular feedback on study progress.

Q2: What is the best approach when a candidate biomarker shows high interpersonal variability? A: The DBDC strategy is to first characterize the biomarker's pharmacokinetic (PK) parameters in Phase 1 controlled feeding trials [12]. If high variability persists despite controlled intake, it may indicate strong influence of non-dietary factors (e.g., gut microbiota, genetics), and the biomarker may be unsuitable for quantitative intake assessment. Consider it for qualitative (presence/absence) assessment or focus discovery efforts on more stable compounds.

Q3: How should we handle discrepancies between self-reported dietary intake and biomarker levels in observational validation studies? A: This is an expected discovery step. In the DBDC Phase 3, candidate biomarkers are evaluated for their ability to predict habitual consumption in independent observational settings [12]. Discrepancies often reveal the limitations of self-report. Use biomarker data to calibrate self-reported intake measurements and develop error-correction models.
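One common way to build such an error-correction model is regression calibration: in a subsample with both measures, regress the log biomarker-based intake on the log self-reported intake plus participant characteristics, then apply the fitted equation to self-reports elsewhere. The sketch below assumes that approach; the function names, the use of BMI as a covariate, and the simulated numbers are illustrative assumptions rather than the DBDC's specified method.

```python
import numpy as np

def _design(log_self_report, covariates=None):
    """Intercept + log self-report + optional covariate columns."""
    q = np.asarray(log_self_report, dtype=float)
    cols = [np.ones_like(q), q]
    if covariates is not None:
        cols.extend(np.asarray(covariates, dtype=float).T)
    return np.column_stack(cols)

def fit_calibration(log_self_report, log_biomarker, covariates=None):
    """Least-squares coefficients of the calibration equation."""
    X = _design(log_self_report, covariates)
    y = np.asarray(log_biomarker, dtype=float)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

def calibrated_log_intake(beta, log_self_report, covariates=None):
    """Predicted (calibrated) log intake for new self-reports."""
    return _design(log_self_report, covariates) @ beta

# Simulated calibration subsample (values are made up for illustration)
log_ffq = np.array([7.0, 7.2, 7.5, 7.1, 7.8])
bmi = np.array([[22.0], [30.0], [25.0], [27.0], [24.0]])
log_marker = 1.0 + 0.5 * log_ffq + 0.02 * bmi[:, 0]

beta = fit_calibration(log_ffq, log_marker, covariates=bmi)
predicted = calibrated_log_intake(beta, log_ffq, covariates=bmi)
```

A slope on the self-report term well below 1 would indicate that self-reported differences between participants overstate true differences in intake.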

Q4: What is the recommended response when a biomarker is detected in participants who did not consume the target food? A: This indicates low specificity. Potential causes and actions include:

- Investigate dietary sources: The compound may be present in other unsuspected foods.

- Analyze metabolic pathways: The compound could be an endogenous metabolite influenced by other factors.

- Re-evaluate specificity: The biomarker may not be suitable as a standalone marker but could contribute to a composite biomarker panel.

Methodological Protocols

Protocol: Conducting a Phase 1 Single-Food Pharmacokinetic Study

Purpose: To identify candidate food biomarkers and characterize their pharmacokinetic profiles [12].

Methodology:

- Participant Administration: Administer a pre-specified amount of a single test food to healthy participants after a washout period.

- Biospecimen Collection: Collect serial blood (plasma/serum) and urine specimens at predetermined time points (e.g., 0, 30min, 1h, 2h, 4h, 8h, 24h) post-consumption.

- Metabolomic Profiling: Perform untargeted metabolomic profiling on biospecimens using Liquid Chromatography-Mass Spectrometry (LC-MS) and hydrophilic-interaction liquid chromatography (HILIC) protocols [12].

- Data Analysis: Identify metabolites that significantly increase post-consumption. Model their time-response curves to calculate PK parameters such as time to peak concentration (Tmax) and elimination half-life.
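The data-analysis step can be illustrated with a simple non-compartmental calculation. This is a minimal sketch under several assumptions: a single participant's concentration-time profile, strictly positive concentrations in the terminal phase, first-order elimination estimated from a log-linear fit to the last few points, and a hypothetical function name. The concentrations are simulated, not study data.

```python
import numpy as np

def pk_summary(times_h, conc, n_terminal=3):
    """Cmax, Tmax, terminal half-life and AUC from one time course."""
    t = np.asarray(times_h, dtype=float)
    c = np.asarray(conc, dtype=float)
    i_max = int(np.argmax(c))

    # Log-linear fit over the last n_terminal points: ln(C) = a - k_el * t
    slope, _ = np.polyfit(t[-n_terminal:], np.log(c[-n_terminal:]), 1)
    k_el = -slope

    # Linear trapezoidal area under the curve from first to last sample
    auc = float(np.sum(np.diff(t) * (c[1:] + c[:-1]) / 2))

    return {
        "c_max": float(c[i_max]),
        "t_max_h": float(t[i_max]),
        "half_life_h": float(np.log(2) / k_el),
        "auc_0_last": auc,
    }

# Sampling schedule from the protocol above; simulated concentrations
times_h = [0, 0.5, 1, 2, 4, 8, 24]
conc = [0.1, 2.0, 5.0, 6.0] + [8 * np.exp(-0.2 * t) for t in (4, 8, 24)]

summary = pk_summary(times_h, conc)
```

With sparse sampling around the peak, the observed Tmax is only as precise as the spacing of the collection time points, which is one reason for dense early sampling in Phase 1 designs.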

Protocol: Implementing a Phase 2 Complex Dietary Pattern Study

Purpose: To evaluate the ability of candidate biomarkers to identify consumption of the target food within the context of various complex diets [12].

Methodology:

- Diet Design: Develop controlled diets that represent different dietary patterns (e.g., Western, Mediterranean, Vegetarian), with and without the inclusion of the target food.

- Controlled Feeding: Provide all meals and snacks to participants for the study duration.

- Biospecimen Collection: Collect biospecimens (e.g., 24-hour urine, fasting blood) at baseline and during the intervention periods.

- Biomarker Evaluation: Measure levels of the candidate biomarkers. Use statistical models (e.g., ROC analysis) to determine how well each biomarker can distinguish between diets that contain versus exclude the target food, even amidst a complex background diet.
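The ROC analysis mentioned in the last step can be summarized by the area under the curve (AUC), which equals the probability that a randomly chosen sample from a diet containing the target food has a higher biomarker level than a randomly chosen sample from a diet without it. The sketch below computes that probability directly from all pairs; the function name and example values are illustrative assumptions, and it treats samples as independent, which would need adjusting for crossover designs with repeated measures per participant.

```python
import numpy as np

def roc_auc(biomarker, consumed):
    """AUC as the fraction of (consumer, non-consumer) pairs ranked correctly.

    Ties count as half a correct ranking.
    """
    biomarker = np.asarray(biomarker, dtype=float)
    consumed = np.asarray(consumed, dtype=bool)
    pos = biomarker[consumed]
    neg = biomarker[~consumed]
    diff = pos[:, None] - neg[None, :]   # every consumer vs every non-consumer
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size)

# Four samples: two from diets without the target food, two with it
auc = roc_auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1])
```

An AUC near 0.5 means the candidate marker does not discriminate between diets, while values approaching 1.0 indicate that it separates them almost perfectly against the background diet.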

Structured Data Tables

Table 1: DBDC Three-Phase Biomarker Validation Framework [12]

| Phase | Primary Goal | Study Design | Key Outputs |

|---|---|---|---|

| Phase 1: Discovery & PK | Identify candidate biomarkers and characterize pharmacokinetics. | Single-food administration with dense, serial biospecimen collection. | Candidate biomarkers with time-response and dose-response relationships. |

| Phase 2: Specificity | Evaluate biomarker performance within complex dietary patterns. | Controlled feeding of various dietary patterns with/without the target food. | Assessment of biomarker specificity and sensitivity in a complex matrix. |

| Phase 3: Observational Validation | Validate biomarkers for predicting habitual intake in free-living populations. | Independent observational studies with biomarker measurement and self-reported diet. | Validated biomarkers for recent and habitual intake in real-world settings. |

Table 2: Essential Reagent Solutions for Controlled Feeding Trials

| Research Reagent / Material | Function in Experiment |

|---|---|

| Standardized Test Foods | Provides a consistent and quantifiable dietary exposure for all participants, which is fundamental for dose-response assessment [12]. |

| Liquid Chromatography-Mass Spectrometry (LC-MS) Platforms | Enables high-throughput, untargeted metabolomic profiling of biospecimens to discover novel food-derived metabolites [12]. |

| Hydrophilic-Interaction Liquid Chromatography (HILIC) Columns | Enhances the separation and detection of polar metabolites in metabolomic analyses, expanding the range of detectable compounds [12]. |

| Stable Isotope-Labeled Compounds | Serves as internal standards for mass spectrometry to improve quantification accuracy and confirm metabolite identification. |

| Biospecimen Collection Kits | Standardizes the collection, processing, and storage of blood and urine samples to maintain analyte integrity and minimize pre-analytical variability [12]. |

Experimental Workflow and Structure Visualizations

[Figure: Three-Phase Biomarker Development]

[Figure: DBDC Organizational Governance]

Frequently Asked Questions (FAQs)

1. What is the most critical pre-analytical factor affecting metabolomic results? The entire pre-analytical phase is crucial, but sample collection and initial processing set the stage for data quality. Metabolites can be significantly influenced by the choice of collection tubes, timing of collection, and the delay before processing and stabilization [23]. Any variability introduced at these initial stages can alter the metabolic profile and compromise downstream analysis.

2. Should I collect serum or plasma for my blood-based metabolomics study? The choice depends on your specific analytical goals. Serum generally provides higher overall sensitivity and metabolite content, partly due to the volume displacement effect during clotting [23]. However, plasma offers quicker processing and potentially better reproducibility because it avoids the variable clotting process [23]. It is critical to maintain consistency in your clotting conditions if you choose serum and to be aware that the anticoagulant used in plasma collection (e.g., EDTA, heparin, citrate) can be a source of ionic interference in mass spectrometry [23].

3. How should urine samples be handled after collection to preserve metabolic integrity? Urine specimens should be centrifuged shortly after collection to remove cellular debris [24]. Subsequently, they must be stored on ice or refrigerated immediately [24]. The use of preservatives may be required for specific analyses, but this should be determined by your targeted metabolomic approach [24].

4. Why is the timing of biospecimen collection so important? Metabolite levels are dynamic and are significantly influenced by the circadian rhythm, nutritional status (fasting vs. non-fasting), and physical activity [23]. To minimize the impact of these factors and reduce inter-sample variability, all samples throughout a study should be collected within the same time window (e.g., early morning) and under similar conditions (e.g., after an overnight fast) [23].

5. What are the best practices for long-term storage of biospecimens? Storage must follow validated Standard Operating Procedures (SOPs). Key practices include using validated, monitored storage equipment like mechanical freezers or liquid nitrogen tanks and planning for backup systems and alarms to prevent losses from mechanical failures [24]. Furthermore, you should avoid unnecessary thawing and refreezing of samples, as this can degrade labile metabolites [24].

Troubleshooting Guides

Table 1: Common Pre-Analytical Issues and Solutions for Blood Collection

| Problem | Potential Consequence | Recommended Solution |

|---|---|---|

| Hemolysis during blood draw | Release of intracellular metabolites, altering plasma/serum metabolomic profile. | Ensure draw is performed by a trained phlebotomist; use proper needle size and gentle mixing of tubes [24]. |

| Prolonged processing time | Degradation of labile analytes (e.g., RNA, proteins) and continued glycolysis in blood cells, which alters metabolite levels. | Process and separate plasma/serum within 4 to 24 hours of the draw; shorten this window for highly labile analytes [24] [23]. |

| Inconsistent clotting for serum | Variable release of metabolites from cells, leading to inter-sample variability. | Standardize and tightly control clotting time and temperature according to your SOP [23]. |

| Use of inappropriate collection tube | Ion suppression/enhancement in MS; contamination from tube components (polymers, slip agents). | Select tubes validated for metabolomics; use the same manufacturer and type throughout the study; avoid gel separator tubes for metabolomics [23]. |

| Multiple freeze-thaw cycles | Degradation of metabolites, leading to inaccurate concentration measurements. | Aliquot samples upon initial freezing; plan analyses to minimize thawing cycles [24]. |

Table 2: Common Pre-Analytical Issues and Solutions for Urine Collection

| Problem | Potential Consequence | Recommended Solution |

|---|---|---|

| Bacterial overgrowth in urine | Altered metabolite levels due to bacterial metabolism. | Store urine on ice or refrigerated immediately after collection; consider using preservatives for specific analyses [24]. |

| Inconsistency in collection type (random, first-morning, timed) | High physiological variability, complicating data interpretation. | Define and document the collection method (e.g., first-morning void) in the study protocol and ensure all participants adhere to it [24]. |

| Presence of particulate matter | Interference in analytical instrumentation; inaccurate metabolite measurements. | Centrifuge urine samples after collection to remove debris before aliquoting and storage [24]. |

| Suboptimal sample preparation for GC-MS | Inefficient derivatization and poor metabolite coverage. | For a low-volume GC-MS protocol, use a 1:8 dilution with methanol which has been shown to provide exhaustive metabolic coverage and good reproducibility [25]. |

Experimental Protocols for Key Biospecimen Types

Protocol 1: Optimized Urine Sample Preparation for Multi-Platform Metabolomics

This protocol is adapted from a study that evaluated different preparation methods for wide metabolite coverage using NMR and LC-MS platforms [25].

1. Collection: Collect urine in a sterile, leak-proof container. Document the time and type of collection (e.g., first-morning).

2. Initial Processing: Centrifuge the sample (e.g., 2000-3000 x g for 10 minutes) to remove cellular debris.

3. Aliquoting and Storage: Immediately aliquot the supernatant into pre-labeled cryovials and freeze at -80°C.

4. Preparation for Analysis (GC-MS):

- Thaw samples on ice.

- For GC-MS analysis, the optimized protocol is a 1:8 (urine:methanol) monophasic extraction [25].

- Vortex the mixture vigorously and incubate on ice for a set time (e.g., 10-20 minutes).

- Centrifuge at high speed (e.g., 14,000 x g for 15 minutes) to pellet proteins.

- Transfer the clear supernatant to a new vial for subsequent derivatization (e.g., methoximation and silylation) and analysis [26] [25].

Justification: This method was found to provide a large number of metabolites (215+ compounds), excellent reproducibility (201 metabolites with CV < 30%), and coverage of numerous metabolic pathways [25].

Protocol 2: Blood Collection and Processing for Plasma and Serum

This protocol synthesizes best practices from biobanking and metabolomics literature [24] [23].

1. Collection:

- Venipuncture: A trained phlebotomist should perform the blood draw to minimize hemolysis.

- Tubes: Use the correct vacuum tubes for the desired biofluid. For plasma, choose tubes with the appropriate anticoagulant (e.g., EDTA, heparin), noting that heparin is often preferred for its richer metabolomic profile, while EDTA can interfere with polar metabolite analysis [23]. For serum, use plain tubes without clot activators or gels if possible [23].

2. Processing:

- Plasma: Gently invert tubes several times to mix the anticoagulant. Centrifuge at the recommended force and time (e.g., 2000 x g for 10-15 minutes at 4°C) as soon as possible, ideally within 4 hours of collection [24] [23].

- Serum: Allow blood to clot in a vertical position for a standardized time (typically 30-60 minutes) at room temperature. Then, centrifuge as for plasma.

3. Post-Processing: Carefully pipette the supernatant (plasma or serum) into pre-labeled cryovials, avoiding the buffy coat and any sediment. Flash-freeze aliquots and store at -80°C.
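Centrifugation settings in this protocol are given as relative centrifugal force (x g), but many benchtop centrifuges are set in rpm. The conversion depends on rotor radius through the standard relation RCF = 1.118 × 10⁻⁵ × r × rpm², with r in centimeters. The helper names and the 10 cm radius below are illustrative assumptions; the actual radius should be taken from the rotor's documentation.

```python
from math import sqrt

RCF_CONSTANT = 1.118e-5  # valid for radius in cm and speed in rpm

def rcf_from_rpm(rpm, radius_cm):
    """Relative centrifugal force (x g) at a given speed and rotor radius."""
    return RCF_CONSTANT * radius_cm * rpm ** 2

def rpm_from_rcf(rcf, radius_cm):
    """Speed in rpm needed to reach a target x g at a given rotor radius."""
    return sqrt(rcf / (RCF_CONSTANT * radius_cm))

# Speed required for 2000 x g on a rotor with an assumed 10 cm radius
rpm_needed = rpm_from_rcf(2000, radius_cm=10)
```

Recording the x g value rather than rpm in the SOP keeps processing comparable across sites that use different centrifuges.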

Workflow Visualization

[Figure: Sample Collection and Processing Workflow]

[Figure: Multi-Matrix Metabolomics Analysis Pathway]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Biospecimen Collection and Processing

| Item | Function | Application Notes |

|---|---|---|

| EDTA Blood Collection Tubes | Prevents coagulation by chelating calcium; yields plasma. | Can cause ion suppression in MS; not suitable for analysis of certain metabolites like sarcosine [23]. |

| Heparin Blood Collection Tubes | Prevents coagulation by activating antithrombin; yields plasma. | Often provides a richer metabolomic profile for lipids and amino acids; lithium heparin can enhance ionization of phospholipids [23]. |

| Serum Tubes (no additive) | Allows blood to clot; yields serum. | Clotting conditions must be standardized; avoid polymeric gel separator tubes for metabolomics work [23]. |

| Methanol (HPLC/MS Grade) | Protein precipitation and metabolite extraction. | A 1:8 (urine:MeOH) ratio is an optimized protocol for wide metabolite coverage in urine [25]. |

| Cryogenic Vials | Long-term storage of biospecimen aliquots. | Must be pre-labeled with unique, durable identifiers that can withstand ultra-low temperatures [24]. |

| Derivatization Reagents | Chemically modify metabolites for volatility and detection in GC-MS. | Typical two-step process involves methoximation (e.g., with methoxyamine) followed by silylation (e.g., with MSTFA) [26]. |

Leveraging Liquid Chromatography-Mass Spectrometry (LC-MS) and Hydrophilic-Interaction Liquid Chromatography (HILIC) for Metabolite Profiling

Technical Support Center

Troubleshooting Guides & FAQs

LC-MS System Performance

Q1: Why am I observing a significant drop in MS signal intensity during my HILIC-LC-MS run for polar metabolites? A: This is often due to buffer salt precipitation or contamination of the MS source. HILIC mobile phases pair volatile salts (e.g., ammonium acetate) with a high proportion of organic solvent, in which these salts are poorly soluble, so they can precipitate if the system is not properly flushed and stored. Contaminants from biological samples can also accumulate on the HILIC column and transfer to the MS source.

- Troubleshooting Steps:

- Flush System: Flush the entire LC system, including the column, with a high-water content mobile phase (e.g., 90:10 H₂O:ACN) to re-dissolve any salts.

- Inspect Source: Clean the ESI source, including the capillary, cone, and skimmer, according to the manufacturer's instructions.

- Check Column: Perform a column cleaning procedure as recommended by the manufacturer. If performance does not recover, the column may need replacement.

- Mobile Phase Preparation: Ensure ammonium acetate or formate is fully dissolved in the aqueous phase before mixing with the organic phase.

Q2: My chromatographic peaks are broad and tailing, leading to poor separation in HILIC mode. What could be the cause? A: Poor peak shape in HILIC is frequently a result of insufficient column equilibration or a mismatch between the sample solvent and the starting mobile phase.

- Troubleshooting Steps:

- Extend Equilibration: HILIC columns require extensive equilibration. Increase the equilibration time between runs; 10-15 column volumes of the starting mobile phase is a minimum.

- Match Solvent Strength: Reconstitute or inject your sample in a solvent whose eluting strength is equal to or weaker than that of the starting mobile phase (e.g., 90-95% ACN). In HILIC, water is the strong solvent, so injecting in a high-aqueous solvent will cause peak distortion.

- Check pH and Buffer: Ensure the buffer pH is correctly set and consistent. Verify that the buffer concentration is sufficient (typically 10-20 mM) to shield analytes from residual silanols on the stationary phase.
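The "10-15 column volumes" guideline above translates into a concrete equilibration time once the column dimensions and flow rate are known. The sketch below estimates the column's liquid volume as the geometric cylinder volume multiplied by a total porosity factor. The function names and the porosity of 0.7 are assumptions for illustration; the true value depends on the packing material, so the manufacturer's stated column volume should be preferred where available.

```python
from math import pi

def column_volume_ml(id_mm, length_mm, porosity=0.7):
    """Approximate liquid volume of a packed column in mL.

    porosity is an assumed total porosity of the packed bed.
    """
    geometric_ul = pi * (id_mm / 2) ** 2 * length_mm  # mm^3 equals microliters
    return geometric_ul * porosity / 1000

def equilibration_minutes(id_mm, length_mm, flow_ml_min, n_volumes=15, porosity=0.7):
    """Time needed to pass n_volumes column volumes at the given flow rate."""
    return n_volumes * column_volume_ml(id_mm, length_mm, porosity) / flow_ml_min

# Example: 2.1 x 100 mm column at 0.3 mL/min, 15 column volumes
volume = column_volume_ml(2.1, 100)
minutes = equilibration_minutes(2.1, 100, flow_ml_min=0.3)
```

For this example geometry the estimate comes to roughly 12 minutes per injection, which illustrates why HILIC re-equilibration often dominates the cycle time in large feeding-study batches.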

Sample Preparation & Data Quality

Q3: I am experiencing high background noise and ion suppression in my LC-MS data from plasma samples in a controlled feeding study. How can I mitigate this? A: Complex biological matrices like plasma contain salts, lipids, and proteins that cause ion suppression and background chemical noise.

- Troubleshooting Steps:

- Optimize Protein Precipitation: Use cold acetonitrile (2:1 ACN:plasma ratio) for protein precipitation. This effectively removes proteins and some lipids while keeping polar metabolites in solution.

- Implement SPE: Use Solid-Phase Extraction (SPE) cartridges designed for phospholipid removal to specifically reduce a major source of ion suppression.

- Dilute-and-Shoot: For targeted analysis, a simple "dilute-and-shoot" approach with a high organic solvent can be effective if the analyte is present at high enough concentrations.

Q4: How do I ensure my sample preparation is reproducible for biomarker discovery across a large cohort from a feeding study? A: Reproducibility is critical. Use an internal standard (IS) cocktail and a standardized, automated protocol.

- Troubleshooting Steps:

- Use Internal Standards: Add a cocktail of stable isotope-labeled internal standards (SIL-IS) before the first processing step. This corrects for losses during preparation and matrix effects during analysis.

- Automate: Use a liquid handling robot for protein precipitation and sample transfer to minimize human error and improve throughput.

- Quality Control (QC) Pool: Create a pooled QC sample by combining a small aliquot of every sample. Inject this QC repeatedly throughout the analytical sequence to monitor system stability and data quality.
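
One common way to use the repeated pooled-QC injections is to compute, for each metabolite feature, the coefficient of variation (CV) across those injections and flag features that drift too much. The sketch below illustrates this with made-up intensities; the feature names, the values, and the 30% cutoff are assumptions for illustration, not values taken from this guide.

```python
from statistics import mean, stdev

def qc_cv_percent(intensities):
    """Coefficient of variation (%) of one feature across pooled-QC injections."""
    m = mean(intensities)
    return float("inf") if m == 0 else 100 * stdev(intensities) / m

# Hypothetical peak intensities for two features in five pooled-QC injections.
qc_injections = {
    "feature_A": [1000, 1020, 980, 1010, 990],
    "feature_B": [500, 800, 300, 650, 420],
}

CV_THRESHOLD = 30.0  # assumed cutoff; choose one appropriate for your platform

cv_by_feature = {name: qc_cv_percent(vals) for name, vals in qc_injections.items()}
stable_features = [name for name, cv in cv_by_feature.items() if cv <= CV_THRESHOLD]

for name, cv in cv_by_feature.items():
    status = "keep" if cv <= CV_THRESHOLD else "flag"
    print(f"{name}: CV = {cv:.1f}% -> {status}")
```

Features flagged this way are usually reviewed or excluded before statistical analysis, since their variation in identical QC samples reflects analytical rather than biological variability.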

Quantitative Data Summary

Table 1: Common HILIC Mobile Phase Additives and Their Properties

| Additive | Concentration | Common Use Case | MS Compatibility |
|---|---|---|---|
| Ammonium Acetate | 5-20 mM | General polar metabolite profiling, positive/negative mode switching | Excellent |
| Ammonium Formate | 5-20 mM | Better solubility at high ACN%; often used for negative mode | Excellent |
| Formic Acid | 0.1% | Positive ion mode for acidic and basic compounds | Excellent |
| Ammonium Hydroxide | 0.1% | Negative ion mode for acidic compounds | Good (can cause corrosion) |

Table 2: Troubleshooting Guide for Common LC-MS/HILIC Issues

| Problem | Potential Cause | Solution |
|---|---|---|
| High Backpressure | Column blockage, buffer precipitation | Filter samples, flush system with high-water content mobile phase |
| Retention Time Drift | Insufficient column equilibration, temperature fluctuation | Increase equilibration time, use a column oven |
| No Peaks / Low Signal | MS source contamination, incorrect mobile phase | Clean ESI source, check MS tuning and mobile phase composition |
| Poor Peak Shape | Sample solvent mismatch, column degradation | Reconstitute sample in starting mobile phase, replace column |

Experimental Protocols

Protocol 1: HILIC-MS Metabolite Profiling of Human Plasma from a Controlled Feeding Study

Objective: To extract and profile polar metabolites from human plasma for biomarker discovery.

Materials:

- Methanol, LC-MS Grade

- Acetonitrile, LC-MS Grade

- Water, LC-MS Grade

- Ammonium Acetate, LC-MS Grade

- Stable Isotope-Labeled Internal Standard (SIL-IS) cocktail

- Microcentrifuge tubes

- Centrifuge

- Vortex mixer

- Liquid handling robot (recommended)

Methodology:

- Thawing: Thaw plasma samples on ice.

- Aliquoting: Aliquot 50 µL of plasma into a clean microcentrifuge tube.

- Internal Standard Addition: Add 10 µL of a SIL-IS cocktail.

- Protein Precipitation: Add 200 µL of cold methanol (-20°C). Vortex vigorously for 1 minute.

- Centrifugation: Centrifuge at 14,000 x g for 15 minutes at 4°C.

- Collection: Transfer 150 µL of the supernatant to a new LC-MS vial.

- Drying: Evaporate the solvent to dryness under a gentle stream of nitrogen.

- Reconstitution: Reconstitute the dried extract in 100 µL of 90% ACN / 10% water containing 10 mM ammonium acetate. Vortex for 1 minute.

- Analysis: Centrifuge at 14,000 x g for 5 minutes and transfer the supernatant to an LC-MS vial with insert for HILIC-MS analysis.
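
The volumes in Protocol 1 determine how much of the original plasma actually reaches the column, which is useful when comparing sensitivity across protocols. The short calculation below is a sketch of that bookkeeping. It assumes volumes are additive and ignores the pellet volume, and it takes the 5 µL injection volume from Protocol 2.

```python
# Volumes from Protocol 1 (µL)
plasma_ul = 50.0
internal_standard_ul = 10.0
methanol_ul = 200.0
supernatant_ul = 150.0
reconstitution_ul = 100.0
injection_ul = 5.0  # from Protocol 2

# Solvent-to-plasma ratio used for protein precipitation
solvent_to_plasma = methanol_ul / plasma_ul

# Assumption: total liquid volume is the simple sum of the added volumes.
total_extract_ul = plasma_ul + internal_standard_ul + methanol_ul
fraction_transferred = supernatant_ul / total_extract_ul

# Plasma-equivalent volume carried into the reconstituted extract
plasma_equiv_in_vial_ul = plasma_ul * fraction_transferred

# Plasma-equivalent volume loaded on column per injection
plasma_equiv_per_injection_ul = plasma_equiv_in_vial_ul * injection_ul / reconstitution_ul

print(f"Solvent:plasma ratio       = {solvent_to_plasma:.0f}:1")
print(f"Fraction of extract kept   = {fraction_transferred:.1%}")
print(f"Plasma equivalent in vial  = {plasma_equiv_in_vial_ul:.1f} µL")
print(f"Plasma equivalent injected = {plasma_equiv_per_injection_ul:.2f} µL")
```

Under these assumptions, each injection carries roughly 1.4 µL of plasma equivalent, so changing the reconstitution or injection volume scales the on-column load proportionally.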

Protocol 2: HILIC Chromatography Method for Polar Metabolite Separation

LC Conditions:

- Column: BEH Amide (2.1 x 100 mm, 1.7 µm)

- Mobile Phase A: 95% ACN / 5% Water with 10 mM Ammonium Acetate (pH ~6.8)

- Mobile Phase B: 50% ACN / 50% Water with 10 mM Ammonium Acetate (pH ~6.8)

- Flow Rate: 0.4 mL/min

- Column Temperature: 40°C

- Injection Volume: 5 µL

Gradient Program:

| Time (min) | %A | %B |
|---|---|---|
| 0.0 | 100 | 0 |
| 1.0 | 100 | 0 |
| 10.0 | 70 | 30 |
| 11.0 | 50 | 50 |
| 12.0 | 50 | 50 |
| 12.1 | 100 | 0 |
| 15.0 | 100 | 0 |
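
To check whether the re-equilibration hold at the end of this gradient is long enough, the hold time can be converted into column volumes. The sketch below does this for the 2.1 x 100 mm column at 0.4 mL/min. The total porosity of 0.65 is an assumed, typical value for a packed column and is not specified in the protocol; the function names are illustrative.

```python
import math

def column_volume_ml(id_mm, length_mm, porosity=0.65):
    """Approximate mobile-phase volume inside a packed column (mL).

    porosity is an assumed fraction of the empty-tube volume.
    """
    radius_cm = id_mm / 10 / 2
    length_cm = length_mm / 10
    return math.pi * radius_cm ** 2 * length_cm * porosity

def column_volumes_delivered(minutes, flow_ml_min, cv_ml):
    """Number of column volumes pumped during a hold of the given length."""
    return minutes * flow_ml_min / cv_ml

FLOW_ML_MIN = 0.4
cv_ml = column_volume_ml(id_mm=2.1, length_mm=100)

# Re-equilibration hold at 100% A runs from 12.1 to 15.0 min.
hold_min = 15.0 - 12.1
n_cv = column_volumes_delivered(hold_min, FLOW_ML_MIN, cv_ml)

# Hold time that would be needed to deliver 10 column volumes.
minutes_for_10_cv = 10 * cv_ml / FLOW_ML_MIN

print(f"Column volume        ~ {cv_ml:.3f} mL")
print(f"Re-equilibration     ~ {n_cv:.1f} column volumes")
print(f"Hold for 10 volumes  ~ {minutes_for_10_cv:.1f} min")
```

Under this porosity assumption, the 2.9-minute hold delivers roughly 5 column volumes, which is below the 10-15 column volumes suggested in the troubleshooting steps. If retention times drift, a longer hold may be worth testing.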

Figure: Plasma Metabolite Extraction Workflow

Figure: HILIC Elution Gradient

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for HILIC-MS Metabolomics

| Item | Function & Importance |
|---|---|
| LC-MS Grade Solvents (ACN, MeOH, H₂O) | Minimize background noise and ion suppression caused by impurities. Essential for reproducible retention times. |
| Ammonium Acetate/Formate | Volatile buffers for mobile phase pH control and ion-pairing. MS-compatible and prevent salt precipitation in the source. |
| Stable Isotope-Labeled Internal Standards (SIL-IS) | Correct for matrix effects, extraction efficiency, and instrument variability. Critical for accurate quantification. |
| BEH Amide HILIC Column | A robust, widely used stationary phase for retaining a broad range of highly polar metabolites. |
| Phospholipid Removal SPE Plates | High-throughput cleanup of plasma/serum to reduce ion suppression and source contamination. |
| Liquid Handling Robot | Automates sample preparation, ensuring high reproducibility and throughput for large cohort studies. |

Incorporating Artificial Intelligence and Machine Learning for Automated Data Interpretation and Predictive Modeling

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center addresses common challenges researchers face when incorporating Artificial Intelligence (AI) and Machine Learning (ML) into controlled feeding studies for biomarker development. The following guides and FAQs provide specific, actionable solutions to ensure robust and reliable predictive modeling.

Frequently Asked Questions

Q1: Our predictive model performed well on training data but generalizes poorly to our validation cohort. What are the primary causes and solutions?

This is a classic case of overfitting, where a model learns the noise in the training data rather than the underlying biological signal [27].

- Cause 1: Inadequate Training Data. The model may be trained on an insufficient number of samples or non-representative data.

- Solution: Ensure your dataset is large enough and that the training set reflects the diversity of the target population. Utilize techniques like data augmentation (creating modified copies of existing data) and collect more samples if possible [28].

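- Cause 2: Incorrect Algorithm Choice or Hyperparameters. Complex models like deep neural networks can overfit small datasets.

- Solution: For small cohorts, prefer simpler or regularized models (e.g., Lasso regression or Random Forest), and tune hyperparameters with cross-validation on the training data rather than against the validation cohort.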

- Cause 3: Data Preprocessing Inconsistencies. Differences in how training and validation data are normalized or cleaned can create performance gaps.

- Solution: Implement a standardized preprocessing pipeline applied consistently to all datasets. This includes handling missing values, normalization, and feature scaling [30].
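
A standardized preprocessing pipeline of the kind described above can be sketched with scikit-learn's `Pipeline`, which refits imputation and scaling inside each cross-validation fold so that the held-out data never influences the preprocessing. The data here are synthetic, and the choice of median imputation, standard scaling, and logistic regression is an illustrative assumption rather than a recommendation from the cited sources.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a metabolite matrix: 80 samples x 20 features.
rng = np.random.default_rng(0)
X = rng.normal(size=(80, 20))
y = (X[:, 0] + 0.5 * rng.normal(size=80) > 0).astype(int)
X[rng.random(X.shape) < 0.05] = np.nan  # simulate ~5% missing values

# All preprocessing lives inside the pipeline, so the same steps are
# learned on training folds only and then applied to held-out folds.
pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("model", LogisticRegression(C=0.1, max_iter=1000)),  # smaller C = stronger regularization
])

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipeline, X, y, cv=cv, scoring="roc_auc")
print(f"Cross-validated AUC: {scores.mean():.2f} +/- {scores.std():.2f}")
```

The same fitted pipeline object can then be applied unchanged to an external validation cohort, which avoids the preprocessing mismatch described in Cause 3.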

Q2: How can we assess the value of novel omics biomarkers compared to established clinical variables?

This requires a comparative evaluation to determine if new data types provide added value for decision-making [30].

- Solution: Use a baseline model built only on traditional clinical data. Train a second model that incorporates both clinical and omics data. Compare their performance using appropriate metrics (e.g., AUC, accuracy, F1-score). The omics data is only valuable if it significantly improves the model's predictive power beyond the clinical baseline [30]. Random Forest algorithms are particularly useful here as they can estimate which variables are important in the classification [29].
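
The baseline-versus-combined comparison can be sketched as follows. The data are simulated so that the outcome depends on one clinical variable and one omics feature; the variable names, effect sizes, and the use of Random Forest with 5-fold cross-validated AUC are assumptions made for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

rng = np.random.default_rng(42)
n = 200
clinical = rng.normal(size=(n, 3))   # hypothetical clinical variables
omics = rng.normal(size=(n, 10))     # hypothetical metabolite features

# Simulated outcome driven by one clinical and one omics variable.
signal = clinical[:, 0] + 1.5 * omics[:, 0]
y = (signal + rng.normal(scale=0.5, size=n) > 0).astype(int)

# Reuse the same folds for both models so the comparison is paired.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

def cv_auc(X):
    """Mean cross-validated AUC of a Random Forest on feature matrix X."""
    model = RandomForestClassifier(n_estimators=200, random_state=0)
    return cross_val_score(model, X, y, cv=cv, scoring="roc_auc").mean()

combined = np.hstack([clinical, omics])
auc_clinical = cv_auc(clinical)
auc_combined = cv_auc(combined)
print(f"Clinical only:    AUC = {auc_clinical:.2f}")
print(f"Clinical + omics: AUC = {auc_combined:.2f}")

# Feature importances from a model fit on all data (columns 0-2 clinical, 3-12 omics).
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(combined, y)
importances = forest.feature_importances_
```

A difference in mean AUC alone does not establish that the improvement is significant; in practice it would be paired with a resampling-based comparison or evaluation on an independent cohort.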

Q3: What strategies are recommended for integrating multiple data types, such as clinical records and metabolomics data?

Effective multimodal data integration is key for a comprehensive view. There are three primary strategies [30]:

- Early Integration: Combine all raw data from different modalities (e.g., clinical and omics) into a single feature set before model training. Use methods like canonical correlation analysis (CCA) to extract common features.

- Intermediate Integration: Use models that integrate data sources during the learning process. Examples include Support Vector Machines with multiple kernels or multimodal neural networks.

- Late Integration: Train separate models on each data type (e.g., one model on clinical data, another on omics). Then, use a meta-model (a technique called stacked generalization) to combine their predictions [30].
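
Late integration via stacked generalization can be sketched with scikit-learn's `StackingClassifier`: one base model sees only the clinical columns, another sees only the omics columns, and a logistic-regression meta-model combines their out-of-fold predictions. The data, column layout, and model choices below are illustrative assumptions.

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)
n = 150
clinical = rng.normal(size=(n, 4))    # hypothetical clinical block
omics = rng.normal(size=(n, 30))      # hypothetical metabolomics block
y = (clinical[:, 0] + omics[:, 0] + rng.normal(scale=0.5, size=n) > 0).astype(int)

X = np.hstack([clinical, omics])
clinical_cols = list(range(0, 4))
omics_cols = list(range(4, 34))

def select(cols):
    """Keep only the given columns; all other columns are dropped."""
    return ColumnTransformer([("keep", "passthrough", cols)])

# One base model per data modality.
clinical_model = make_pipeline(
    select(clinical_cols), StandardScaler(), LogisticRegression(max_iter=1000)
)
omics_model = make_pipeline(
    select(omics_cols), RandomForestClassifier(n_estimators=100, random_state=0)
)

# The meta-model is trained on cross-validated predictions of the base models.
stack = StackingClassifier(
    estimators=[("clinical", clinical_model), ("omics", omics_model)],
    final_estimator=LogisticRegression(),
    cv=5,
)

scores = cross_val_score(stack, X, y, cv=5, scoring="roc_auc")
print(f"Stacked model AUC: {scores.mean():.2f}")
```

Keeping each modality in its own base model lets each one use preprocessing and an algorithm suited to its data type, at the cost of not modeling interactions between modalities directly.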

Q4: Our dataset has a very high number of features (p) but a small sample size (n). How can we build a reliable model with this "p >> n" problem?

This high-dimensionality problem is common in omics studies and risks false discoveries [30] [27].

- Solution 1: Dimensionality Reduction. Apply unsupervised techniques like Principal Component Analysis (PCA) or UMAP to project the high-dimensional data into a lower-dimensional space while preserving its structure [27].

- Solution 2: Feature Selection. Prior to modeling, filter out uninformative features. Remove features with zero or near-zero variance. Use statistical methods or the built-in feature importance scores from algorithms like Random Forest to select the most predictive features [30] [29].

- Solution 3: Algorithm Selection. Employ algorithms designed for high-dimensional data. Regularized regression (like Lasso) performs feature selection as part of the model training, forcing the coefficients of non-informative features to zero [27].
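
The combination of variance filtering and Lasso regression can be sketched on a simulated "p >> n" dataset. Here 60 samples and 500 features are generated, and a continuous outcome (standing in for, say, an intake amount) depends on only the first two features. The dataset, the coefficients, and the use of `LassoCV` to pick the penalty strength are assumptions for illustration.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold
from sklearn.linear_model import LassoCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(7)
n, p = 60, 500                      # far more features than samples
X = rng.normal(size=(n, p))
X[:, -1] = 1.0                      # a constant (zero-variance) feature
y = 2.0 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(scale=0.3, size=n)

model = Pipeline([
    ("drop_constant", VarianceThreshold(threshold=0.0)),  # removes zero-variance features
    ("scale", StandardScaler()),
    ("lasso", LassoCV(cv=5, random_state=0)),             # penalty chosen by cross-validation
])
model.fit(X, y)

coefs = model.named_steps["lasso"].coef_
n_selected = int(np.count_nonzero(coefs))
print(f"Features after variance filter: {coefs.size}")
print(f"Features with non-zero Lasso coefficient: {n_selected}")
```

Because the L1 penalty sets most coefficients exactly to zero, the fitted model itself acts as the feature selector. The selected set can still include some noise features, so it should be confirmed on independent data.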

Troubleshooting Common Experimental and Data Workflow Issues

The following workflow diagram outlines a robust pipeline for AI-driven biomarker discovery, highlighting stages where common issues occur.

Predictive AI Models and Algorithms for Biomarker Research

The table below summarizes key predictive models and algorithms, their applications in biomarker research, and important considerations for their use.

| Model/Algorithm | Primary Use Case | Key Advantages | Common Pitfalls |
|---|---|---|---|
| Random Forest [29] | Classification (e.g., disease vs. healthy); Regression | Resistant to overfitting; Handles thousands of input variables; Estimates feature importance [29] | Can be computationally intensive for very large datasets |
| Generalized Linear Model (GLM) [29] | Regression with non-normal data distributions; Modeling dose-response | Fast training time; Straightforward to interpret; Handles categorical predictors [29] | Requires relatively large datasets; Susceptible to outliers [29] |
| Clustering Models [29] | Unsupervised discovery of disease endotypes or patient subgroups [27] | Identifies hidden patterns and subgroups without pre-defined labels | Results can be sensitive to initial parameters and distance metrics |
| Time Series Model [29] | Analyzing longitudinal data (e.g., biomarker levels over time in a feeding study) | Captures trends and seasonal patterns; Forecasts future values | Requires consistent, time-stamped data collection |
| Outliers Model [29] | Quality control; Detecting anomalous samples or potential fraud | Identifies unusual data points that may indicate errors or unique biological signals | Requires careful tuning to avoid flagging valid but rare biological events |

Essential Research Reagent Solutions and Materials

This table details key materials and computational tools essential for conducting AI-driven biomarker research in controlled feeding studies.

| Item / Reagent | Function / Application | Technical Notes |
|---|---|---|
| Liquid Chromatography-Mass Spectrometry (LC-MS) [12] [20] | Metabolomic profiling of blood and urine specimens to identify candidate food intake biomarkers. | Use HILIC (hydrophilic-interaction liquid chromatography) protocols for broad metabolite coverage [12]. |
| Controlled Diets | Administer test foods in prespecified amounts to establish a direct link between intake and biomarker levels [12] [20]. | Diets should be designed based on dietary guidelines (e.g., USDA MyPlate). Precise portion control (e.g., cup equivalents) is critical [12]. |
| Biospecimen Collection Kits | Standardized collection of blood, urine, and other samples (e.g., stool) for multi-omics analysis. | Implement protocols for 24-hour pharmacokinetic data collection points and consistent handling (e.g., freezing) to ensure sample integrity [12]. |
| Data Harmonization Frameworks | Standardizing data collection and variable definitions across multiple study sites. | Use common data elements (CDEs) and develop shared data dictionaries to ensure consistency and enable pooled analyses [12]. |
| Python with scikit-learn & Jupyter | Building, training, and documenting machine learning models for predictive analytics [27]. | Jupyter notebooks provide a flexible framework for analysis that is easily modified and shared, requiring little coding expertise [27]. |

Detailed Experimental Protocol: A 3-Phase Biomarker Validation Workflow

The following diagram and protocol detail a structured approach for the discovery and validation of dietary biomarkers using controlled feeding studies, a methodology employed by the Dietary Biomarkers Development Consortium (DBDC) [12] [20].

Phase 1: Discovery - Identify Candidate Biomarkers

- Objective: To identify novel compounds associated with specific food intake and characterize their pharmacokinetics [12] [20].

- Methodology: