Optimizing Sensor Placement for Advanced Food Intake Monitoring: Methods, Applications, and Clinical Translation

Abstract

This article provides a comprehensive analysis of sensor placement optimization strategies for objective food intake monitoring, a critical need in nutritional science, obesity research, and clinical drug development. We systematically explore the foundational principles of ingestive behavior monitoring, examining diverse sensor modalities including acoustic, motion, strain, and image-based systems. The review details methodological frameworks for optimal sensor placement adapted from structural health monitoring, addresses key challenges in real-world implementation, and evaluates validation protocols for assessing system performance. By synthesizing current research and emerging trends, this work aims to equip researchers and healthcare professionals with the knowledge to develop more accurate, reliable, and user-acceptable monitoring systems for both laboratory and free-living conditions.

Fundamentals of Eating Behavior Monitoring and Sensor Modalities

The Critical Need for Objective Food Intake Monitoring in Health and Disease

Frequently Asked Questions (FAQs)

Q1: What are the main limitations of self-reported methods for dietary assessment? Self-reported methods like 24-hour recalls and food diaries are subject to significant errors, including inaccurate recall, social desirability bias, and portion-size estimation errors. They lack the granularity to capture subconscious, repetitive eating actions and often fail to provide accurate data on eating behavior metrics such as eating speed and chewing rate [1] [2].

Q2: What sensor modalities are most commonly used for monitoring eating behavior? Researchers primarily use acoustic, motion, inertial, strain, and camera-based sensors [1] [3]. These can be deployed as wearable devices (e.g., on the head, neck, or wrist) or as non-wearable systems (e.g., ambient cameras or weight scales) [4] [3].

Q3: Why is sensor placement optimization critical in food intake monitoring research? Optimal sensor placement is crucial for data accuracy and user compliance. For example, sensors on the head or neck are best for detecting chewing and swallowing, while wrist-worn inertial sensors are effective for identifying hand-to-mouth gestures as a proxy for bites. Incorrect placement can lead to false positives or missed detection of eating episodes [1] [4].

Q4: What are the key challenges when moving from laboratory to free-living studies? The main challenges include ensuring sensor performance in uncontrolled environments, minimizing user burden to encourage long-term adherence, and addressing privacy concerns, especially with camera-based methods [1] [3]. Developing privacy-preserving algorithms that filter non-food-related data is an active area of research [1].

Q5: How can I improve the accuracy of my image-based dietary assessment data? Implement a two-stage data modification process: 1) manual data cleaning to correct wrong food code selections and portion size errors, and 2) reanalysis of food codes with missing micronutrient information, a problem common with prepackaged and restaurant foods [2].

Troubleshooting Common Experimental Issues

Issue 1: Low Accuracy in Detecting Eating Episodes

- Problem: The system fails to detect bites or chews, or generates false positives during non-eating activities.

- Solution:

- Sensor Validation: Verify the attachment and initial calibration of the sensor. For wearable motion sensors, ensure they are snug but comfortable.

- Algorithm Tuning: Retrain machine learning classifiers with data that represents the specific study population's eating patterns and demographics. The use of personalized models can significantly improve accuracy [1] [3].

- Multimodal Sensing: Combine data from multiple sensors (e.g., a wrist-worn inertial sensor for bite gestures and a piezoelectric sensor for chewing vibrations) to cross-validate events and reduce false positives [4].

Issue 2: High Participant Burden and Low Adherence

- Problem: Participants find the sensors cumbersome or forget to use them, leading to incomplete data.

- Solution:

- Sensor Selection: Choose the least obtrusive sensor that meets the study's primary objective. For long-term free-living studies, a single wrist-worn device may be preferable to multi-sensor setups [4].

- User Interface Simplification: For apps requiring active image capture, ensure the interface is intuitive and minimizes the number of steps required to log a meal [2].

- Automated Passive Monitoring: Where ethically and technically feasible, utilize passive sensing (e.g., wearable cameras that capture images at intervals) to reduce participant burden [1].

Issue 3: Inaccurate Food Identification and Portion Size Estimation

- Problem: Image-based methods consistently misidentify food items or provide incorrect volume/mass estimates.

- Solution:

- Reference Object: Include a reference object (e.g., a checkerboard pattern or a fiducial marker of known size) in the image frame to calibrate portion size estimation [1] [2].

- Database Enhancement: Continuously update the food image and nutrient database underlying the analysis tool, paying special attention to local and culturally specific foods [2].

- Manual Verification: Implement a protocol for trained analysts to review a subset of images to identify and correct systematic errors in automated food coding [2].
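The reference-object step reduces to a scale conversion. A minimal sketch, assuming a top-down photo, a square marker of known side length, and food lying roughly in the marker's plane: the marker fixes a centimetres-per-pixel ratio, which converts a segmented food region's pixel count into physical area. The function names are hypothetical, food segmentation is assumed to happen elsewhere, and estimating volume or mass would need additional depth or food-density information not shown here.

```python
def cm_per_pixel(marker_side_cm: float, marker_side_px: float) -> float:
    """Scale factor (cm per pixel) from a square marker of known side length."""
    if marker_side_px <= 0:
        raise ValueError("marker side length in pixels must be positive")
    return marker_side_cm / marker_side_px


def food_area_cm2(food_pixel_count: int, scale_cm_per_px: float) -> float:
    """Convert a segmented food region's pixel count to area in cm^2.

    Each pixel covers scale**2 cm^2, which only holds if the food lies
    roughly in the same plane as the marker and the photo is top-down.
    """
    return food_pixel_count * scale_cm_per_px ** 2


# Hypothetical example: a 5 cm checkerboard square spans 100 px (0.05 cm/px).
scale = cm_per_pixel(5.0, 100.0)
print(f"{food_area_cm2(40_000, scale):.1f} cm^2")  # prints 100.0 cm^2
```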

Issue 4: Data Integrity and Preprocessing Errors

- Problem: The collected sensor data is noisy, or the dataset contains gaps and inconsistencies.

- Solution:

- Preprocessing Pipeline: Establish a robust data preprocessing pipeline that includes filtering for signal noise, segmentation of data streams into potential eating episodes, and imputation methods for handling minor data loss [3].

- Data Cleaning Protocol: As demonstrated in the Formosa FoodApp study, perform manual data cleaning to address errors in food code selection and portion size entries before final analysis [2].
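A preprocessing pipeline of the kind described above can be sketched in plain Python. This is an illustrative sketch under stated assumptions, not a validated pipeline: short gaps (up to a hypothetical `max_gap` samples) are filled by linear interpolation, longer gaps are left missing, a centred moving average stands in for noise filtering, and candidate eating episodes are runs where the smoothed signal stays above a hypothetical threshold for at least `min_len` samples. Real studies would tune these parameters per sensor and would typically use properly designed digital filters.

```python
from typing import List, Optional, Tuple

Signal = List[Optional[float]]  # None marks a missing sample


def fill_short_gaps(x: Signal, max_gap: int) -> Signal:
    """Linearly interpolate interior runs of missing samples up to max_gap long."""
    out = list(x)
    n, i = len(out), 0
    while i < n:
        if out[i] is not None:
            i += 1
            continue
        start = i
        while i < n and out[i] is None:
            i += 1
        gap = i - start  # i is now the first valid sample after the gap, or n
        if start > 0 and i < n and gap <= max_gap:
            left, right = out[start - 1], out[i]
            for k in range(gap):
                out[start + k] = left + (k + 1) / (gap + 1) * (right - left)
    return out


def moving_average(x: Signal, window: int) -> Signal:
    """Centred moving average that skips missing samples; None if all are missing."""
    half = window // 2
    out: Signal = []
    for i in range(len(x)):
        vals = [v for v in x[max(0, i - half): i + half + 1] if v is not None]
        out.append(sum(vals) / len(vals) if vals else None)
    return out


def segment_episodes(x: Signal, threshold: float, min_len: int) -> List[Tuple[int, int]]:
    """(start, end) index pairs, end exclusive, where x > threshold for >= min_len samples."""
    episodes: List[Tuple[int, int]] = []
    start: Optional[int] = None
    for i, v in enumerate(x):
        active = v is not None and v > threshold
        if active and start is None:
            start = i
        elif not active and start is not None:
            if i - start >= min_len:
                episodes.append((start, i))
            start = None
    if start is not None and len(x) - start >= min_len:
        episodes.append((start, len(x)))
    return episodes


# Hypothetical activity signal with two single-sample dropouts.
raw = [0.1, 0.2, None, 0.2, 1.0, 1.2, None, 1.1, 1.0, 0.1, 0.0, 0.1]
smooth = moving_average(fill_short_gaps(raw, max_gap=2), window=3)
print(segment_episodes(smooth, threshold=0.5, min_len=3))  # prints [(4, 9)]
```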

Experimental Protocols for Key Methodologies

Protocol 1: Validating a Wrist-Worn Inertial Sensor for Bite Detection

Objective: To assess the accuracy of a wrist-worn inertial measurement unit (IMU) in detecting hand-to-mouth gestures during eating episodes.

Materials:

- Wrist-worn IMU sensor (e.g., containing an accelerometer and a gyroscope).

- Video recording system (as ground truth).

- Data processing unit (laptop/tablet) with time-synchronization software.

Methodology:

- Sensor Placement: Securely attach the IMU to the participant's dominant wrist.

- Calibration: Record a baseline in a neutral position, followed by a set of standardized gestures.

- Experimental Meal: Provide participants with a standardized meal in a controlled laboratory setting. Simultaneously record sensor data and video.

- Ground Truth Annotation: From the video, trained annotators mark the timestamps of each actual bite.

- Data Analysis: Extract features (e.g., signal magnitude, orientation) from the IMU data. Train a machine learning classifier (e.g., Support Vector Machine) to identify bite gestures. Compare algorithm-derived bite timestamps against video-annotated ground truth to calculate precision, recall, and F1-score [1] [3].
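Comparing algorithm-derived bite timestamps with video annotations requires a rule for when a detection counts as a hit. A minimal sketch, assuming a tolerance window (the ±1 s below is a hypothetical value) and greedy one-to-one matching: each detection claims the closest unclaimed annotation within the window; unmatched detections count as false positives and unmatched annotations as false negatives. Greedy matching is simple but not guaranteed optimal; an assignment algorithm such as the Hungarian method is a stricter alternative.

```python
from typing import List, Optional, Tuple


def match_events(detected: List[float], truth: List[float],
                 tol: float) -> Tuple[int, int, int]:
    """Greedy one-to-one matching of detected vs. annotated event times (s).

    Returns (true positives, false positives, false negatives).
    """
    unmatched_truth = sorted(truth)
    tp = 0
    for d in sorted(detected):
        # Closest still-unmatched annotation within the tolerance, if any.
        best_idx: Optional[int] = None
        best_dist = tol
        for i, t in enumerate(unmatched_truth):
            dist = abs(d - t)
            if dist <= best_dist:
                best_idx, best_dist = i, dist
        if best_idx is not None:
            unmatched_truth.pop(best_idx)
            tp += 1
    return tp, len(detected) - tp, len(truth) - tp


def prf1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall and F1-score from event counts (0.0 when undefined)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical video-annotated bites and classifier detections (seconds).
counts = match_events([10.4, 24.1, 33.0, 55.2], [10.0, 25.0, 40.0, 55.0], tol=1.0)
print(counts, prf1(*counts))  # prints (3, 1, 1) (0.75, 0.75, 0.75)
```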

Protocol 2: Implementing an Image-Assisted Dietary Assessment App

Objective: To validate the accuracy of a mobile nutrition app for estimating energy and nutrient intake in a free-living population.

Materials:

- Smartphone with the image-assisted dietary app (e.g., Formosa FoodApp).

- Platform for manual data review by dietitians.

Methodology:

- Participant Training: Train participants to capture clear, top-down images of their food before and after consumption, including a reference object for scale.

- Data Collection: Participants record all food and drink consumed over a set period (e.g., 3 days) using the app.

- Data Modification Process:

- Stage 1 (Manual Cleaning): A trained dietitian reviews all entries to correct for errors in food code selection, portion size estimation, and missing items/condiments [2].

- Stage 2 (Reanalysis): Identify food codes with missing micronutrient data and replace them with nutritionally complete alternatives from an expanded database [2].

- Validation: Compare the app's output for energy and key nutrients against a reference method, such as a 24-hour dietary recall conducted by an expert [2]. Use statistical methods (paired t-tests, Bland-Altman plots, correlation coefficients) to assess agreement.
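Bland-Altman analysis summarizes agreement as the mean difference between methods (bias) and 95% limits of agreement (bias ± 1.96 standard deviations of the differences). The sketch below uses only the Python standard library; the intake values are invented for illustration and the function name is hypothetical. In practice the differences would also be plotted against the pairwise means to check for proportional bias.

```python
import statistics
from typing import Sequence, Tuple


def bland_altman(method_a: Sequence[float],
                 method_b: Sequence[float]) -> Tuple[float, float, float]:
    """Return (bias, lower limit, upper limit) of agreement for paired data.

    bias = mean(a - b); limits = bias -/+ 1.96 * sample SD of the differences.
    """
    if len(method_a) != len(method_b) or len(method_a) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Invented daily energy intake (kcal): app vs. expert-administered 24-h recall.
app = [2100, 1850, 2400, 1950]
recall = [2000, 1900, 2300, 2000]
bias, lower, upper = bland_altman(app, recall)
print(f"bias={bias:.1f} kcal, LoA=({lower:.1f}, {upper:.1f})")
```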

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Materials for Food Intake Monitoring Research

| Item | Function in Research | Example/Note |
|---|---|---|
| Piezoelectric Sensor | Detects vibrations from chewing and swallowing. Often embedded in a neckband or eyeglasses [3]. | Can be used to count chews and estimate chewing rate [1]. |
| Inertial Measurement Unit (IMU) | Tracks hand and wrist movement to identify gestures like hand-to-mouth bites [1] [4]. | Typically includes an accelerometer and gyroscope. Worn on the wrist. |
| Wearable Camera (e.g., egocentric camera) | Passively captures images of the participant's field of view for dietary assessment [1]. | Raises privacy concerns; requires ethical consideration and privacy-preserving algorithms [1]. |
| Acoustic Sensor (Microphone) | Captures sounds associated with eating (biting, chewing, swallowing). Often used with noise-filtering algorithms [1]. | Can be susceptible to ambient noise in free-living conditions. |
| Reference Food Database | A comprehensive database of food items with associated nutrient information used to convert images or logs into energy and nutrient intake data [4] [2]. | Must be continually updated with new food products and regional dishes to maintain accuracy [2]. |
| Standardized Reference Object | An object of known dimensions (e.g., a checkerboard card) placed in food photos to calibrate and improve portion size estimation [2]. | Critical for reducing error in image-based volume and mass calculations. |

Experimental and Data Workflows

Figure: Research Methodology Workflow

Figure: Data Error Mitigation Process

Taxonomy of Sensor Modalities for Eating Behavior Metrics

Within the scope of sensor placement optimization for food intake monitoring research, selecting the appropriate sensor modality is a foundational step that directly influences data quality and experimental success. This guide provides a structured taxonomy of available sensor technologies, troubleshooting for common experimental challenges, and standardized protocols to assist researchers, scientists, and drug development professionals in designing robust and reliable studies.

Sensor Taxonomy and Selection Guide

The following table catalogs the primary sensor modalities used in eating behavior research, their detection principles, and key considerations for selection.

Table 1: Taxonomy of Sensor Modalities for Eating Behavior Monitoring

| Sensor Modality | Measured Eating Metrics | Common Placements | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Acoustic [5] [3] | Chewing, swallowing, bite identification [6] | Ear (ear-worn buds), neck (pendants) [6] [7] | High accuracy for oral activities | Sensitive to ambient noise; privacy concerns [5] |
| Motion/Inertial (Accelerometer, Gyroscope) [3] [8] | Hand-to-mouth gestures, biting, chewing [6] | Wrist (watch-style), head [5] [6] | Captures upper-body eating gestures; widely available in consumer devices [8] | Can mistake similar non-eating movements (e.g., talking, face-touching) for eating [8] |
| Strain/Pressure [5] | Jaw opening/closing, chewing [3] | Temple (eyeglass frames), neck [3] | Direct measurement of jaw movement | Device may be obtrusive, affecting natural behavior |
| Image/Vision (Cameras) [5] [9] | Food type, portion size, eating environment [5] [6] | Wearable (eyeglasses), overhead, personal devices [5] | Provides rich contextual data on food and environment | Raises significant privacy issues; requires manual review or complex algorithmic analysis [5] |
| Physiological | Heart rate, electrodermal activity | Chest, wrist | Provides data on the body's autonomic responses | Indirect measure of eating; can be confounded by other activities |

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Our wrist-worn motion sensor has a high false positive rate, detecting non-eating activities like face-touching as bites. How can we improve detection accuracy?

- A: This is a common challenge in free-living settings [8]. Implement a multi-sensor fusion approach. Consider combining the wrist-worn accelerometer with a secondary modality, such as a small acoustic sensor on the neck to verify the presence of chewing sounds associated with a detected gesture [5] [8]. Furthermore, review and refine your detection algorithm's event classification logic to include temporal patterns and signal characteristics that better distinguish bites from other arm movements [3].

Q2: In our field study, participant compliance with wearing the sensor is low. What can we do to improve adherence?

- A: User experience is critical for long-term compliance [6] [7]. Optimize for comfort and minimal obtrusiveness:

- Sensor Choice: Prioritize small, lightweight sensors that integrate into existing accessories (e.g., eyeglass frames, wristwatches, discreet ear buds) [9].

- User Feedback: Involve users in the design selection process to identify comfort and aesthetic concerns [7].

- Clear Communication: Explain the research purpose and data privacy measures to build trust and participant motivation [9].

Q3: Our camera-based system accurately identifies food items but raises significant privacy concerns among participants. How can we mitigate this?

- A: Privacy is a major challenge for visual monitoring [5] [9]. Develop and enforce a privacy-preserving protocol:

- Anonymization: Immediately blur or remove all non-food elements, such as faces and identifiable backgrounds, from captured images [5].

- On-Device Processing: Process images locally on the device to prevent transmission of raw visual data to external servers [9].

- Transparent Consent: Clearly inform participants about what data is collected, how it is processed, and who will have access to it [5].

Q4: Our sensor performs well in the lab but its accuracy drops significantly in real-world, free-living conditions. What steps should we take?

- A: This highlights the importance of in-field validation [8]. To bridge the performance gap:

- Field Calibration: Conduct calibration sessions in the target environment, not just the lab, to tune sensor parameters against real-world noise [8].

- Diverse Training Data: Ensure the machine learning algorithms are trained on data that includes a wide variety of real-world activities that could be confused with eating (e.g., driving, talking, working) [3] [8].

- Ground Truth: Use a reliable ground-truth method during field validation, such as annotated video recording or a simplified self-report prompt delivered via a smartphone app at sensed, opportune moments [9] [8].

Experimental Protocol: Validation of a Multi-Sensor Setup for Bite Detection

Objective

To validate the accuracy of a combined wrist-worn accelerometer and neck-placed microphone setup for automatic bite counting in a free-living environment.

Materials and Reagents

Table 2: Essential Research Reagents and Materials

| Item | Function/Application |
|---|---|
| Wrist-worn IMU Sensor [3] [8] | Captures inertial data from hand and arm movements to detect potential bite gestures. |
| Miniature Microphone [5] [6] | Captures acoustic signals from chewing and swallowing to verify eating activity. |
| Data Logger/Smartphone [3] | Synchronously records and stores timestamped data from all sensors. |
| Annotation Tool (e.g., video camera or software) [8] | Serves as a ground-truth source for manual annotation of actual bite events. |
| Sensor Attachment Kits (e.g., hypoallergenic adhesives, straps) | Secures sensors to the participant's body comfortably and reliably. |

Methodology

- Sensor Synchronization: Precisely time-synchronize all sensors (accelerometer, microphone) and the ground-truth annotation system (e.g., video camera) before deployment [8].

- Participant Briefing: Instruct participants on the correct placement of sensors. Define the study period and the types of meals/snacks to be consumed.

- Data Collection: Participants wear the sensor system during one or more eating episodes in their natural environment. Ground-truth data is collected concurrently (e.g., through first-person video or direct observation if ethically permissible) [9].

- Data Processing:

- Data Fusion and Validation: Fuse the motion and acoustic event streams using a decision logic (e.g., a bite is confirmed only if a hand gesture is temporally aligned with a chewing sound). Compare the system's detected bites against the manually annotated ground-truth bites to calculate performance metrics like accuracy, precision, recall, and F1-score [8].
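The decision logic described above can be expressed as an AND-rule over event timestamps. This sketch assumes that "temporally aligned" means a chewing event begins within a hypothetical window (2 s in the example) after the wrist gesture; the window length, the one-sided alignment, and the function name are illustrative assumptions, not parameters taken from the cited work.

```python
from bisect import bisect_left
from typing import List


def confirm_bites(gesture_times: List[float], chew_times: List[float],
                  window_s: float) -> List[float]:
    """Keep a wrist gesture only if a chewing event starts within window_s
    seconds at or after it (hypothetical AND-rule fusion)."""
    chews = sorted(chew_times)
    confirmed = []
    for g in sorted(gesture_times):
        i = bisect_left(chews, g)  # index of the first chew at or after the gesture
        if i < len(chews) and chews[i] - g <= window_s:
            confirmed.append(g)
    return confirmed


# Hypothetical event times (s); the gesture at 30.5 s is a face-touch with no chewing.
print(confirm_bites([12.0, 30.5, 47.0], [12.8, 13.5, 47.6], window_s=2.0))
# prints [12.0, 47.0]
```

Requiring both modalities trades some recall (a bite whose chewing sound is missed is dropped) for fewer false alarms from non-eating arm movements.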

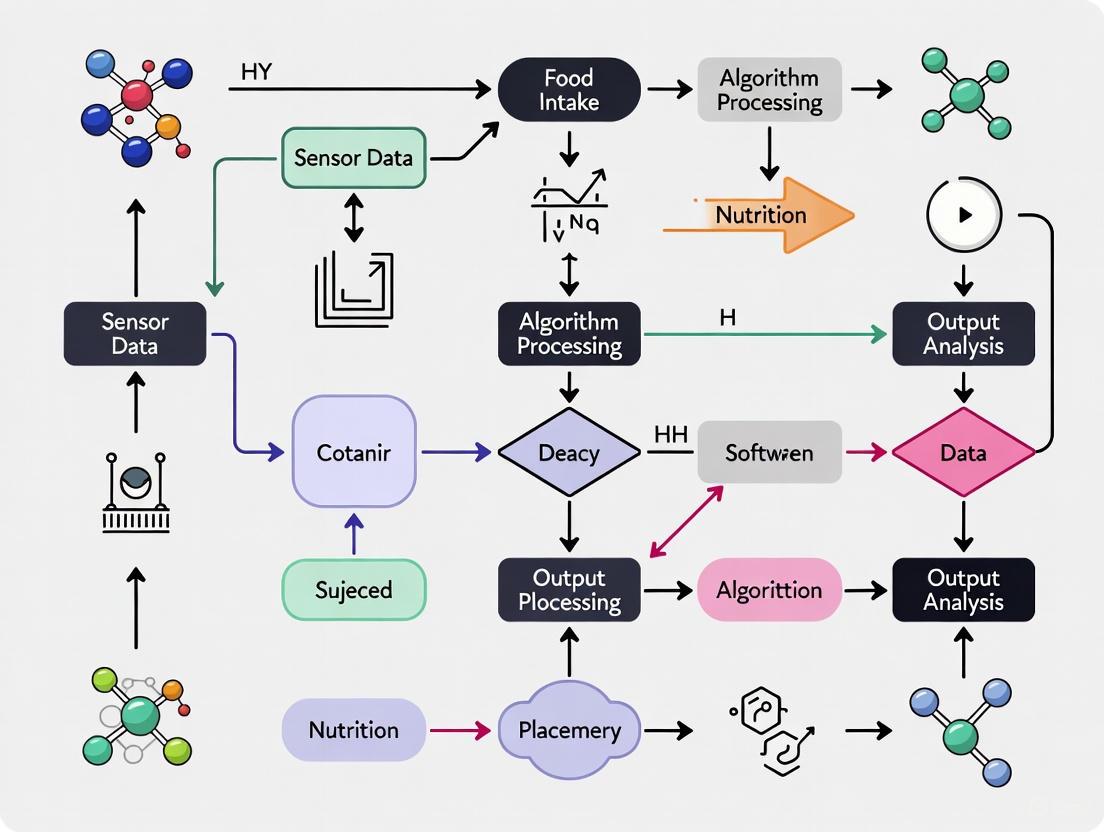

Workflow Diagram

The following diagram illustrates the logical flow and data integration points of the experimental validation protocol.

Performance Metrics and Benchmarking

When reporting results, it is crucial to use standardized performance metrics to allow for cross-study comparison.

Table 3: Key Performance Metrics for Eating Detection Systems

| Metric | Definition | Interpretation in Eating Detection |
|---|---|---|
| Accuracy [8] | (True Positives + True Negatives) / Total Predictions | How often the system is correct overall, across eating and non-eating periods. |
| Precision [8] | True Positives / (True Positives + False Positives) | When the system detects an eating event, how likely is it to be correct? (Low precision = high false alarms). |
| Recall (Sensitivity) [8] | True Positives / (True Positives + False Negatives) | What proportion of actual eating events does the system successfully detect? (Low recall = missed meals/bites). |
| F1-Score [8] | 2 * (Precision * Recall) / (Precision + Recall) | The harmonic mean of precision and recall; a single balanced metric that is more informative than accuracy when classes are imbalanced. |
| Specificity [7] | True Negatives / (True Negatives + False Positives) | How effectively does the system reject non-eating activities? |
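The formulas in Table 3 can be computed directly from confusion-matrix counts. In the hypothetical example below, non-eating windows greatly outnumber eating windows, so accuracy (0.96) looks much better than precision and recall (0.80 each); this is why F1-score is preferred when classes are imbalanced. The class and field names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """Confusion-matrix counts for an eating / non-eating detector."""
    tp: int
    fp: int
    tn: int
    fn: int

    @staticmethod
    def _ratio(num: int, den: int) -> float:
        return num / den if den else float("nan")  # NaN when undefined

    @property
    def accuracy(self) -> float:
        return self._ratio(self.tp + self.tn, self.tp + self.fp + self.tn + self.fn)

    @property
    def precision(self) -> float:
        return self._ratio(self.tp, self.tp + self.fp)

    @property
    def recall(self) -> float:
        return self._ratio(self.tp, self.tp + self.fn)

    @property
    def specificity(self) -> float:
        return self._ratio(self.tn, self.tn + self.fp)

    @property
    def f1(self) -> float:
        p, r = self.precision, self.recall
        return 2 * p * r / (p + r) if p + r else float("nan")


# Hypothetical window-level counts with many more non-eating windows.
c = Confusion(tp=80, fp=20, tn=880, fn=20)
print(f"acc={c.accuracy:.2f} prec={c.precision:.2f} rec={c.recall:.2f} "
      f"spec={c.specificity:.3f} f1={c.f1:.2f}")
# prints acc=0.96 prec=0.80 rec=0.80 spec=0.978 f1=0.80
```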

Troubleshooting Guide: Jaw Movement Sensors

Q1: What are common failure modes for intraoral jaw movement trackers and their solutions?

Intraoral sensors, while minimizing external hardware, face unique challenges. The following table outlines common issues and corrective actions.

| Failure Mode | Symptoms | Diagnostic Steps | Corrective Action |
|---|---|---|---|
| Signal Drift/Inaccurate Readings | Gradual deviation in jaw position data over time; inconsistent movement trajectories. | Check for power supply instability [10]; Verify sensor calibration [10]; Analyze effect of temperature/humidity fluctuations [10]. | Recalibrate sensor following manufacturer's protocol [11] [10]; Implement environmental shielding [10]. |
| Prolonged Response Time | Delayed detection of jaw movement initiation; data not matching observed motion. | Use an oscilloscope to analyze signal waveform for anomalies [10]. | Ensure power supply is stable and adequate [10]; Check for mechanical obstruction in jaw movement path. |
| Complete Signal Loss | No data output from the sensor. | Perform visual inspection for wire damage or loose connections [10]; Use a multimeter to test for short or open circuits [10]. | Replace damaged wiring or connectors [10]; Verify the sensor is correctly powered. |

Q2: My magnetic jaw tracker is providing erratic positional data. What should I check?

Magnetic sensors are susceptible to external interference. Follow this systematic protocol [11] [10]:

- Identify Electromagnetic Interference (EMI): Check the environment for potential EMI sources, such as large electric motors, unshielded power cables, or other electronic devices. Move the experimental setup away from these sources or power them down temporarily for testing [10].

- Inspect Sensor and Magnet Integrity: Perform a visual inspection of the magnet and sensor housing for any physical damage, such as cracks or deformations [10]. Ensure the magnet is securely fixed and has not shifted from its calibrated position [11].

- Re-run Calibration ("Fingerprint Method"): Recalibrate the system using the "fingerprint method." This involves experimentally collecting the distribution of the magnet’s three-dimensional magnetic flux density vectors in advance to create an accurate map for converting sensor readings to positions [11].
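The fingerprint method yields a lookup table that pairs measured flux-density vectors with known magnet positions. The conversion from a new reading to a position is not specified above, so this sketch assumes one simple option: inverse-distance-weighted k-nearest-neighbour interpolation in flux space. The data structure, units, `k`, and function name are hypothetical, and a real tracker would also need to recover orientation.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def estimate_position(reading: Vec3,
                      fingerprint: Sequence[Tuple[Vec3, Vec3]],
                      k: int = 3) -> Vec3:
    """Estimate magnet position from a flux reading via a calibration map.

    fingerprint holds (flux_vector, known_position) pairs recorded during
    calibration. The k entries whose flux vectors are closest to the reading
    are averaged, weighted by inverse distance in flux space.
    """
    nearest = sorted(fingerprint, key=lambda entry: math.dist(reading, entry[0]))[:k]
    weights = []
    for flux, pos in nearest:
        d = math.dist(reading, flux)
        if d == 0.0:
            return pos  # reading coincides with a calibration point
        weights.append(1.0 / d)
    total = sum(weights)
    x, y, z = (
        sum(w * pos[axis] for w, (_, pos) in zip(weights, nearest)) / total
        for axis in range(3)
    )
    return (x, y, z)


# Hypothetical calibration map: flux (arbitrary units) -> position (mm).
fp_map = [((10.0, 0.0, 0.0), (0.0, 0.0, 0.0)),
          ((0.0, 10.0, 0.0), (5.0, 0.0, 0.0)),
          ((0.0, 0.0, 10.0), (0.0, 5.0, 0.0))]
print(estimate_position((5.0, 5.0, 0.0), fp_map, k=2))  # roughly midway: x near 2.5 mm
```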

Troubleshooting Guide: Throat (Swallowing) and Head Movement Sensors

Q3: The detection of swallowing events (via throat microphones/IMUs) is inconsistent. How can I improve reliability?

Inconsistent swallowing detection often stems from sensor placement and environmental noise.

| Problem Area | Specific Issue | Troubleshooting Method | Solution |
|---|---|---|---|
| Sensor Placement | Variations in signal amplitude due to slight sensor shifting. | Reposition the sensor on the neck to find the location of maximum signal strength during a swallow. Use double-sided adhesive or a stable collar to minimize movement [1]. | Establish a standardized placement protocol using anatomical landmarks (e.g., superior to the thyroid cartilage) [1]. |
| Environmental Noise | Acoustic sensors picking up non-swallowing sounds like speech or ambient noise. | Analyze recorded signal for patterns not characteristic of swallows [1]. | Apply software filters (e.g., band-pass filters) to isolate the frequency profile of swallows. Develop machine learning algorithms trained to recognize and filter out non-food-related sounds [1]. |
| Subject Variability | Differences in swallow physiology between participants. | Check sensor data across multiple participants and swallowing types (dry vs. wet swallow) [1]. | Develop personalized models that are trained on individual user data to improve detection accuracy [12]. |
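As an illustration of the band-pass step in the table above, the sketch below applies a zero-phase "brick-wall" filter with NumPy by zeroing FFT bins outside a pass band. The band edges in the example (100 to 3000 Hz) are placeholders chosen to separate two synthetic tones, not a validated swallow frequency band. Brick-wall filtering can cause ringing on real signals, and a designed IIR or FIR filter (for example, a Butterworth filter from SciPy) is a common alternative.

```python
import numpy as np


def fft_bandpass(x: np.ndarray, fs: float, low_hz: float, high_hz: float) -> np.ndarray:
    """Zero-phase brick-wall band-pass: zero all FFT bins outside [low_hz, high_hz]."""
    spectrum = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    spectrum[(freqs < low_hz) | (freqs > high_hz)] = 0.0
    return np.fft.irfft(spectrum, n=len(x))


# Synthetic 1 s recording: an in-band 500 Hz tone plus 50 Hz mains hum.
fs = 8000.0
t = np.arange(8000) / fs
clean = np.sin(2 * np.pi * 500 * t)
noisy = clean + 0.5 * np.sin(2 * np.pi * 50 * t)
filtered = fft_bandpass(noisy, fs, low_hz=100.0, high_hz=3000.0)
print(np.max(np.abs(filtered - clean)))  # residual error, near zero for this signal
```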

Q4: Head-mounted sensors for eating context are causing user discomfort and affecting natural movement. What are the alternatives?

Large head-mounted devices can restrict movement and posture, preventing the tracking of natural behavior [11]. Consider these alternatives:

- Miniaturized Intraoral Devices: For jaw movement, explore emerging mouthpiece-type sensing devices that complete all measurements inside the oral cavity, eliminating the need for external head fixation [11].

- Wrist-Worn Inertial Measurement Units (IMUs): For detecting bites, use wrist-based inertial sensors to track hand-to-mouth gestures as a proxy for eating events. This is less obtrusive and can be integrated into a smartwatch form factor [1] [12].

- Wearable Cameras: For dietary intake context, passive (automatic) wearable cameras can capture images at pre-determined intervals without requiring user interaction, though privacy-preserving approaches are necessary [1].

Frequently Asked Questions (FAQs)

Q5: What is the most critical factor for ensuring accurate data across all sensor types? Regular calibration is paramount. Sensor drift over time is a common issue that can severely compromise data quality. A strict calibration schedule based on the manufacturer's guidelines and your specific experimental conditions is essential for reliable results [10].

Q6: How can I validate that my sensor setup is accurately detecting eating behavior? Use a multi-modal validation protocol. Correlate the sensor data (e.g., number of chews from a jaw sensor, bites from a wrist IMU) with video recordings of the eating episode, which serve as a ground truth [1]. This allows you to calculate the accuracy, precision, and recall of your detection method.

Q7: We are collecting data in free-living conditions. How do we handle the massive amount of sensor data generated? Implement an automated data processing pipeline. This typically involves:

- Preprocessing: Filtering noise and segmenting data into potential eating episodes.

- Machine Learning Classification: Using trained models (e.g., deep learning models like LSTMs) to automatically detect and classify eating events from sensor data [12].

- Cloud/Edge Computing: Leveraging cloud resources for heavy computation or edge computing on the device itself for real-time analysis [12].

Experimental Protocols for Sensor Validation

Protocol 1: Validating Jaw Movement Tracking Accuracy

Objective: To quantify the accuracy of an intraoral jaw movement tracker against a video-based motion capture system (ground truth).

Materials:

- Intraoral jaw movement sensor (e.g., magnetic Hall effect sensor with MEMS orientation sensor) [11].

- High-speed video camera (≥60 fps).

- Calibration jig with known distances.

- Data synchronization software.

Methodology:

- Calibration: Calibrate both the jaw sensor and the video system using the calibration jig.

- Task Procedure: The participant will perform a series of predefined mandibular movements:

- Open/close at slow, medium, and fast paces.

- Lateral excursions (left/right).

- Protrusion/retrusion.

- Data Collection: Simultaneously record jaw position/orientation from the intraoral sensor and 3D jaw marker positions from the video system.

- Data Analysis:

- Synchronize the two data streams temporally.

- Calculate the Euclidean distance between the jaw position derived from the intraoral sensor and the position from the video system for each time point.

- Report the mean error and standard deviation across all movements. The intraoral system described in [11] reported an accuracy of approximately 3 mm.
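The error computation in this protocol can be sketched as follows, assuming the two streams have already been synchronised and resampled to common timestamps, and that both report 3D positions in the same coordinate frame and units (for example, mm). The function name and sample values are hypothetical.

```python
import math
import statistics
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def position_errors(sensor: Sequence[Point],
                    reference: Sequence[Point]) -> Tuple[float, float]:
    """Mean and sample SD of per-sample Euclidean error between two 3D streams."""
    if len(sensor) != len(reference) or len(sensor) < 2:
        raise ValueError("need >= 2 synchronised samples per stream")
    errors = [math.dist(s, r) for s, r in zip(sensor, reference)]
    return statistics.mean(errors), statistics.stdev(errors)


# Hypothetical synchronised positions (mm): intraoral sensor vs. video system.
sensor = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0), (1.0, 2.0, 2.0)]
video = [(0.0, 0.0, 3.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
mean_err, sd_err = position_errors(sensor, video)
print(f"mean error = {mean_err:.2f} mm, SD = {sd_err:.2f} mm")
```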

Protocol 2: Establishing Wrist IMU Accuracy for Bite Detection

Objective: To determine the F1-score and latency of a wrist-worn IMU for detecting eating gestures (bites).

Materials:

- Wrist IMU (with accelerometer and gyroscope) [12].

- Video recording setup.

- Computing platform for deep learning model (e.g., LSTM network) [12].

Methodology:

- Data Collection: Participants wear the IMU on their dominant wrist while eating a standardized meal. Simultaneous video is recorded.

- Ground Truth Labeling: Annotate the exact timestamps of each hand-to-mouth bite gesture from the video.

- Model Training & Testing:

- Preprocess the IMU data (e.g., filter, segment).

- Train a personalized deep learning model, such as a Recurrent Neural Network with LSTM layers, on the IMU data using the video annotations as labels [12].

- Evaluate the model on a held-out test dataset.

- Performance Metrics:

- Calculate the F1-score (harmonic mean of precision and recall). Models reported in [12] achieved a median F1-score of 0.99.

- Measure the prediction latency, defined as the time difference between the actual bite and its detection. High-accuracy models have reported an average latency of around 5.5 seconds [12].
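Latency, as defined above, needs a rule for pairing each annotated bite with a detection. This sketch assumes that each bite is paired with the first unused detection at or after it, within a hypothetical `max_latency` limit; bites without such a detection are treated as misses and excluded from the latency average. The pairing rule, names, and times are illustrative assumptions.

```python
import statistics
from typing import List


def detection_latencies(truth: List[float], detected: List[float],
                        max_latency: float) -> List[float]:
    """Latency (s) from each annotated bite to the first unused detection at or
    after it, if that detection falls within max_latency seconds.

    Bites without such a detection are skipped; count them as misses separately.
    """
    dets = sorted(detected)
    latencies: List[float] = []
    j = 0
    for t in sorted(truth):
        while j < len(dets) and dets[j] < t:
            j += 1  # detections before this bite cannot belong to it
        if j < len(dets) and dets[j] - t <= max_latency:
            latencies.append(dets[j] - t)
            j += 1  # each detection is paired at most once
    return latencies


# Hypothetical annotated bite times and model detection times (s).
lat = detection_latencies([10.0, 20.0, 30.0], [15.5, 26.0, 50.0], max_latency=10.0)
print(lat, statistics.mean(lat))  # prints [5.5, 6.0] 5.75
```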

Signaling Pathway and Workflow Visualizations

Figure: Jaw-Neck Biomechanical Coupling

Figure: Sensor Data Processing Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Essential Material | Function in Food Intake Monitoring Research |
|---|---|
| Hall Effect Magnetic Sensor | Measures the magnetic flux density from a permanent magnet to estimate the relative position and orientation of the jaw in six degrees of freedom [11]. |
| MEMS Orientation Sensor | A microelectromechanical system (MEMS) sensor, often part of an Inertial Measurement Unit (IMU), that measures 3D orientation and is integrated into jaw trackers and wrist-worn devices [11] [12]. |
| Inertial Measurement Unit (IMU) | A sensor package containing an accelerometer and gyroscope, worn on the wrist to detect hand-to-mouth gestures that are proxies for bites [12] [1]. |
| Acoustic Sensor | A small microphone placed on the neck to capture the distinct audio signatures of chewing and swallowing events [1]. |
| Machine Learning Model (LSTM) | A type of Recurrent Neural Network (RNN) highly effective for time-series data, used for personalized detection of eating gestures from IMU or acoustic sensor data [12]. |

FAQs and Troubleshooting Guide

Sensor Selection and Data Quality

Q1: What are the primary sensor modalities for detecting chewing and swallowing, and how do I choose between them?

The choice of sensor depends on the specific eating metrics you aim to capture, the required accuracy, and the desired level of obtrusiveness. The main modalities are:

- Piezoelectric Strain Sensors: Ideal for detecting jaw motion during chewing. They are typically placed below the ear on the jawline and measure skin curvature changes [13] [14]. They provide a clear signal of mastication frequency and are less susceptible to acoustic noise than microphones [14].

- Acoustic Sensors (e.g., throat microphones, in-ear microphones): Best for capturing swallowing sounds and chewing sounds [13] [15]. A throat microphone placed over the laryngopharynx is effective for swallowing [16], while in-ear microphones can capture chewing acoustics [15].

- Inertial Measurement Units (IMUs): These accelerometers and gyroscopes are effective for detecting hand-to-mouth gestures as a proxy for bites when placed on the wrist [1] [12]. They can also be used on the head to capture jaw motion [17].

Table: Comparison of Sensor Modalities for Eating Behavior Monitoring

| Sensor Modality | Primary Measured Metric | Typical Sensor Location | Advantages | Limitations |
|---|---|---|---|---|
| Piezoelectric Strain Gauge [13] [14] | Jaw motion (Chewing count & rate) | Below the earlobe, on the jawline | High accuracy for chew count; Less sensitive to environmental noise [17] [14] | May not directly detect swallowing |
| Acoustic Sensor (Throat Microphone) [13] [16] | Swallowing sound | Over the laryngopharynx | Direct measurement of swallowing (deglutition) | Signal can be affected by head movements and obesity [13] |
| Acoustic Sensor (In-ear Microphone) [15] | Chewing sound | Ear canal | Captures internal chewing sounds | Can be sensitive to ambient noise without proper shielding |
| Inertial Sensor (IMU) [1] [12] | Hand-to-mouth gesture / Jaw motion | Wrist / Head | Good for bite detection via arm movement; wrist placement avoids head-worn hardware | When wrist-worn, does not directly measure intra-oral activity like chewing |

Q2: My sensor signals are noisy, making it difficult to identify clear chewing or swallowing events. What are the common causes and solutions?

Problem: Motion Artifacts

- Cause: Head movements, talking, or walking can generate signals that interfere with chewing or swallowing patterns [14].

- Solution: Use a multi-sensor fusion approach. For example, combine a jaw strain sensor with a wrist IMU. The IMU can detect periods of gross body movement, allowing the algorithm to discount signals from the jaw sensor during those times [18]. Ensure sensors are securely attached to minimize movement-induced noise.
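The motion-gating idea can be sketched as a per-sample mask. This assumes the wrist IMU stream has already been reduced to a per-sample activity magnitude and aligned with the jaw-sensor samples; the threshold value, sample values, and function name are hypothetical. Gated samples are set to NaN so that a downstream chew detector can skip them.

```python
from typing import List


def gate_by_motion(jaw_signal: List[float], imu_magnitude: List[float],
                   motion_threshold: float) -> List[float]:
    """Blank jaw-sensor samples recorded during gross body movement.

    imu_magnitude is a per-sample activity measure from the wrist IMU,
    aligned with jaw_signal. Samples whose activity exceeds the threshold
    are replaced with NaN.
    """
    if len(jaw_signal) != len(imu_magnitude):
        raise ValueError("streams must be aligned sample-by-sample")
    return [j if m <= motion_threshold else float("nan")
            for j, m in zip(jaw_signal, imu_magnitude)]


# Hypothetical aligned samples: the second one coincides with walking.
print(gate_by_motion([0.4, 0.9, 0.5], [0.1, 2.0, 0.2], motion_threshold=1.0))
# prints [0.4, nan, 0.5]
```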

Problem: Acoustic Interference for Swallowing Sensors

- Cause: Ambient noise, speech, or coughing can mask swallowing sounds captured by a throat microphone [13].

- Solution: Implement pattern recognition algorithms rather than simple thresholding. Swallowing has a characteristic sound signature and typically occurs when teeth are close together, not during speech [13]. Machine learning classifiers (e.g., SVMs, Neural Networks) can be trained to differentiate swallows from other sounds [1] [14]. Using a combination of acoustic and strain sensors can also improve reliability [18].

Problem: Low Signal Amplitude

- Cause: Incorrect sensor placement or poor skin contact. This is a particular issue for throat microphones in obese subjects, where an "under-chin fat pad" can inhibit reliable detection [13].

- Solution: Follow standardized placement protocols. For a jaw strain sensor, the optimal location is immediately below the outer ear, where jaw motion causes significant skin curvature [14]. For a throat microphone, ensure it is positioned firmly over the laryngopharynx. Test sensor output with a few deliberate chews or swallows before starting formal data collection.

Experimental Protocol and Validation

Q3: What is the best method for establishing ground truth during my experiments?

Video observation is widely considered the most robust method for establishing ground truth in controlled laboratory settings [18].

- Protocol:

- Multi-Camera Setup: Use multiple cameras to capture the participant from different angles, ensuring that hand-to-mouth gestures and food intake are always visible, even in pseudo-free-living environments [18].

- Synchronization: Precisely synchronize all sensor data streams with the video recording using a common timecode.

- Manual Annotation: Have trained human raters annotate the video footage for key events: start/end of eating episodes, individual bites, chewing sequences, and swallows [18] [16].

- Inter-Rater Reliability: Calculate inter-rater reliability statistics (e.g., Cohen's Kappa, Intra-class Correlation Coefficients) to ensure consistency and objectivity in the annotations. High agreement (e.g., Kappa > 0.8) is essential for a reliable gold standard [18].
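Cohen's kappa corrects the observed agreement p_o between two raters for the agreement p_e expected by chance from their label frequencies: κ = (p_o - p_e) / (1 - p_e). A minimal Python sketch for two raters labelling the same items (e.g., annotated epochs). The function name is illustrative. ICCs for continuous counts require a separate calculation, which is not shown here.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical labels of the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: sum over labels of the product of the raters' marginal proportions.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label for every item
    return (observed - expected) / (1 - expected)
```

For example, if two raters agree on 8 of 10 epochs and their label frequencies imply a chance agreement of 0.39, kappa is (0.8 - 0.39) / 0.61 ≈ 0.67. That falls below the 0.8 target, so the annotation guidelines would need refining.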

Q4: How can I estimate Energy Intake (EI) from chewing and swallowing signals?

Individually calibrated models based on Counts of Chews and Swallows (CCS) offer a promising objective method [16].

- Methodology:

- Data Collection: Collect data from participants consuming multiple training meals where ground truth EI is known (e.g., via weighed food records) [16].

- Feature Extraction: From the sensor signals, extract the total number of chews and swallows for each meal.

- Model Development: For each participant, develop a linear or non-linear regression model that maps their unique counts of chews and swallows to the known energy intake. Research has shown these individualized models can have lower reporting bias than traditional diet diaries [16].

- Validation: Validate the model on a separate test meal. Note that model performance may decrease if the physical properties (e.g., texture, hardness) of the validation meal differ significantly from the training meals [16].
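A minimal Python sketch of the model-development step. It assumes a linear model EI ≈ b0 + b1·chews + b2·swallows, fitted by ordinary least squares for a single participant. The linear form, the function names and the example numbers are illustrative assumptions; [16] allows either linear or non-linear models. At least three training meals with non-collinear counts are needed to fit three coefficients.

```python
def _solve(a, b):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: add meals with more varied counts")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_ccs_model(chews, swallows, energy_kcal):
    """Fit EI = b0 + b1*chews + b2*swallows by least squares; returns [b0, b1, b2]."""
    rows = [[1.0, float(c), float(s)] for c, s in zip(chews, swallows)]
    # Normal equations: (X^T X) b = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(rows, energy_kcal)) for i in range(3)]
    return _solve(xtx, xty)


def predict_ei(coef, chews, swallows):
    """Predicted energy intake (same units as the training targets) for one meal."""
    b0, b1, b2 = coef
    return b0 + b1 * chews + b2 * swallows
```

In use, the coefficients fitted on a participant's training meals are applied to the counts from that participant's held-out test meal. The prediction is then compared with the weighed-food reference.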

Key Experimental Protocols

Protocol for Manual Scoring of Chewing and Swallowing as Ground Truth

This protocol is essential for creating labeled datasets to train and validate automatic detection algorithms [13] [18].

- Equipment Setup: Simultaneously record data from chewing (jaw strain sensor) and swallowing (throat microphone) sensors alongside synchronized video footage of the participant [13] [16].

- Rater Training: Train multiple human raters to identify and annotate specific events using specialized software. Raters should be blinded to each other's scores.

- Annotation Procedure: Raters review the synchronized multimodal data, mark the timestamps of individual chews and swallows, and label the participant's activity over time.

- Reliability Assessment: Calculate inter-rater reliability using intra-class correlation coefficients (ICC) for continuous data (e.g., number of chews) or Cohen's Kappa for categorical data (e.g., activity classification). Target an average ICC > 0.98 for chews and swallows and a Kappa > 0.8 for activity annotation to ensure a high-quality gold standard [13] [18].

Protocol for Fully Automatic Food Intake Detection and Chew Counting

This protocol outlines a complete pipeline for objective monitoring of eating behavior using a wearable sensor system [17].

- Sensor Deployment: Fit the participant with a wearable sensor system, such as the Automatic Ingestion Monitor (AIM), which typically includes a jaw strain sensor and a wrist-worn gesture sensor [18].

- Data Segmentation: Divide the continuous sensor signal into short, non-overlapping epochs (e.g., 5-second or 30-second intervals) [17] [14].

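- Food Intake Detection: Extract features from each epoch and classify it as "food intake" or "no intake" using a trained classifier, such as an artificial neural network (ANN) [17].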

- Chew Counting within Intake Episodes:

- Apply a peak detection algorithm to the signal from epochs classified as "food intake" to identify and count individual chews [17].

- Calculate derived metrics like chewing rate (chews per minute) for the eating episode.

- Performance Validation: Compare the automatically detected eating episodes and chew counts against the video-annotated gold standard. Successful systems have achieved a Kappa agreement of 0.77 to 0.78 for food intake detection and a mean absolute error of about 15% in chew count relative to human raters [18] [17].
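The segmentation and chew-counting steps can be sketched in Python as below. The epoch labels are assumed to come from the intake classifier. The peak detector is a simple local-maximum rule with a minimum height and a minimum inter-peak spacing; it is a stand-in, not the specific algorithm in [17]. All thresholds are hypothetical.

```python
def segment_epochs(signal, fs, epoch_s):
    """Split a 1-D signal into non-overlapping epochs; a trailing partial epoch is dropped."""
    n = int(fs * epoch_s)
    return [signal[i:i + n] for i in range(0, len(signal) - n + 1, n)]


def count_peaks(x, min_height, min_distance):
    """Count local maxima >= min_height that are at least min_distance samples apart."""
    count, last = 0, -min_distance
    for i in range(1, len(x) - 1):
        if x[i] >= min_height and x[i] > x[i - 1] and x[i] >= x[i + 1]:
            if i - last >= min_distance:
                count += 1
                last = i
    return count


def chew_metrics(signal, fs, epoch_s, intake_labels, min_height, min_distance_s):
    """Total chews in epochs labelled as intake, and chewing rate in chews per minute.

    Peaks are counted per epoch, so a chew lying exactly on an epoch boundary
    can be missed; a production pipeline would handle boundaries explicitly.
    """
    epochs = segment_epochs(signal, fs, epoch_s)
    min_distance = max(1, int(min_distance_s * fs))
    labels = intake_labels[:len(epochs)]
    chews = sum(count_peaks(e, min_height, min_distance)
                for e, is_intake in zip(epochs, labels) if is_intake)
    intake_minutes = sum(labels) * epoch_s / 60.0
    rate = chews / intake_minutes if intake_minutes else 0.0
    return chews, rate
```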

The following diagram illustrates this automated workflow:

Protocol for Food Recognition from Eating Sounds

This protocol uses deep learning models to classify food types based on acoustic signals generated during chewing [15].

- Audio Data Collection: Record chewing sounds using a microphone placed in the ear canal or on a headset. Collect a large dataset of audio files for various food items [15].

- Pre-processing and Feature Extraction:

- Clean audio files and apply noise reduction algorithms if necessary [15].

- Extract relevant acoustic features such as:

- Mel-Frequency Cepstral Coefficients (MFCCs): To capture timbral and textural aspects of sound.

- Spectrograms: For a visual representation of signal strength over time and frequency.

- Spectral Roll-off and Bandwidth: To measure the shape and frequency range of the signal [15].

- Model Training: Train deep learning models on the extracted features. Models that have shown high performance include:

- Gated Recurrent Units (GRU) and Long Short-Term Memory (LSTM)

- Hybrid models (e.g., Bidirectional LSTM + GRU)

- Convolutional Neural Networks (CNNs) [15].

- Evaluation: Evaluate model performance using metrics like accuracy, precision, and recall. High-performing models can achieve classification accuracy above 95% for a limited set of food items [15].
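Spectral roll-off and bandwidth can be computed from a frame's power spectrum. The Python sketch below uses a direct DFT so it stays dependency-free; an FFT library would be used in practice. It assumes a roll-off fraction of 0.85, a common default, and computes bandwidth as the power-weighted spread around the spectral centroid. Toolkits differ in these weighting choices, so the values will not match every library exactly.

```python
import cmath
import math


def power_spectrum(frame, fs):
    """One-sided power spectrum via a direct DFT (O(N^2); fine for short frames)."""
    n = len(frame)
    freqs, power = [], []
    for k in range(n // 2 + 1):
        s = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        freqs.append(k * fs / n)
        power.append(abs(s) ** 2)
    return freqs, power


def spectral_rolloff(freqs, power, fraction=0.85):
    """Lowest frequency at which cumulative energy reaches `fraction` of the total."""
    target = fraction * sum(power)
    cumulative = 0.0
    for f, p in zip(freqs, power):
        cumulative += p
        if cumulative >= target:
            return f
    return freqs[-1]


def spectral_bandwidth(freqs, power):
    """Power-weighted standard deviation of frequency around the spectral centroid."""
    total = sum(power)
    centroid = sum(f * p for f, p in zip(freqs, power)) / total
    return math.sqrt(sum(p * (f - centroid) ** 2 for f, p in zip(freqs, power)) / total)
```

A pure tone yields a roll-off at the tone's frequency and a bandwidth near zero. Broadband crunching sounds push both values upward, which is why these features help separate textures.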

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Sensor-Based Eating Behavior Research

| Item Name | Specification / Example | Primary Function in Research |
|---|---|---|
| Piezoelectric Strain Sensor | LDT0-028K (Measurement Specialties) [18] [14] | Monitors jaw motion by detecting skin curvature changes during chewing. The core sensor for mastication quantification. |
| Throat Microphone | IASUS NT [16] | Captures acoustic signals of swallowing (deglutition) when placed over the laryngopharynx. |
| Inertial Measurement Unit (IMU) | Tri-axial accelerometer (e.g., ADXL335), optionally paired with a gyroscope [18] [12] | Detects hand-to-mouth gestures for bite identification or body movement for activity context and artifact detection. |
| Data Acquisition Module | USB-1608FS (Measurement Computing) [14] | Interfaces with analog sensors, sampling (e.g., 100-1000 Hz) and digitizing their signals for processing. |
| Medical Adhesive | Hollister 7730 [16] | Securely attaches skin-contact sensors (e.g., jaw strain sensor) to ensure consistent signal quality and placement. |
| Video Recording System | Multiple HD cameras (e.g., GW-2061IP) [18] | Provides ground truth for experiment validation. Allows for manual annotation of bites, chews, and swallows. |
| Annotation Software | Custom-designed software [16] | Enables trained raters to manually label sensor data and video, creating the gold-standard dataset for algorithm training. |

Performance Metrics of Selected Methods

Table: Reported Performance of Sensor-Based Eating Metric Methods

| Method / Sensor System | Primary Metric | Reported Performance | Key Findings / Limitations |
|---|---|---|---|
| Piezoelectric Sensor + ANN Classifier [17] | Chew Count (Fully Automatic) | Mean Absolute Error: 15.01% ± 11.06% vs. video annotation [17] | Provides objective quantification of chewing behavior; Performance is for a wide variety of foods. |
| Automatic Ingestion Monitor (AIM) [18] | Food Intake Detection | Kappa agreement with video: 0.77-0.78 [18] | Multisensor system (jaw, hand gesture) validated in a pseudo-free-living environment. |
| Counts of Chews & Swallows (CCS) Model [16] | Energy Intake Estimation | Reporting error comparable to diaries; lower bias for training meals [16] | Individually calibrated models show promise, but error may increase with unfamiliar food texture. |
| Acoustic Deep Learning (GRU Model) [15] | Food Item Recognition | Classification Accuracy: 99.28% (20 food items) [15] | Demonstrates high potential of audio-based food ID in controlled settings; real-world performance may be lower. |
| Piezoelectric Sensor + SVM Classifier [14] | Food Intake Detection (Epochs) | Per-epoch Classification Accuracy: 80.98% (30s epochs) [14] | A simpler system demonstrating the feasibility of jaw motion for intake detection. |

Comparison of Wearable vs. Environmental Sensor System Architectures

This technical support center provides guidance on selecting and troubleshooting sensor architectures for food intake monitoring research. The optimal choice between a wearable sensor system (worn on the body) and an environmental sensor system (deployed in the surroundings) depends heavily on your specific research objectives concerning data granularity, ecological validity, and participant burden.

The following guides and FAQs will help you configure your systems, diagnose common issues, and implement validated experimental protocols.

→ System Architecture Comparison & Selection Guide

The table below summarizes the core characteristics of each architecture to inform your selection.

Table 1: Wearable vs. Environmental Sensor System Architectures

| Feature | Wearable Sensor System | Environmental Sensor System |
|---|---|---|
| Primary Data Source | Individual's body (e.g., head, wrist, torso) [19] | Individual's surroundings (e.g., room, kitchen) [20] [21] |
| Typical Sensors | Accelerometer, gyroscope, camera, microphone [22] [19] | Depth cameras (e.g., Azure Kinect), pressure-sensitive walkways, fixed cameras [23] |
| Data Perspective | First-person (egocentric) [19] | Third-person (external observer) [23] |
| Monitoring Scope | Personal exposure and behavior, anywhere [24] [25] | Behavior within a specific, instrumented environment [21] [23] |
| Key Advantage | Captures individualized data in free-living conditions [24] [19] | High accuracy in controlled metrics; no user-worn gear required [23] |
| Key Limitation | Potential user burden, comfort, and privacy concerns [4] [25] | Limited to pre-deployed areas; cannot track behavior outside them [23] |

For a visual overview of how these systems can be integrated into a research workflow, see the following experimental pathway:

→ Frequently Asked Questions (FAQs)

System Selection & Design

Q1: My study aims to correlate food intake with individual gait patterns in elderly subjects. Which architecture is more suitable? A1: A Wearable Sensor System is strongly recommended. Gait is a personal biomechanical parameter that requires individual-level measurement. Research shows that foot-mounted Inertial Measurement Units (IMUs) provide high-accuracy gait data as subjects move freely, which is crucial for assessing fall risk or mobility changes related to nutrition [23].

Q2: I need to monitor long-term skin barrier health in relation to dietary factors. What should I consider? A2: For long-term physiological monitoring, a specialized Wearable Sensor is essential. Key considerations include:

- Breathability: To prevent sweat accumulation and data artifacts during prolonged wear [26].

- Form Factor: The device should be compact, lightweight, and cause minimal skin irritation to ensure adherence [26].

- Objective Metrics: Prioritize sensors that provide quantitative data (e.g., skin hydration) over subjective self-reports for greater accuracy [26].

Troubleshooting & Validation

Q3: My wearable sensor data is noisy, leading to false-positive food intake detection. How can I improve accuracy? A3: This is a common challenge. Implement a sensor fusion approach:

- Problem: Relying on a single sensor (e.g., an accelerometer for chewing) can be confused by activities like talking or gum chewing [19].

- Solution: Integrate data from multiple sensors. For example, combine the confidence scores from an accelerometer-based chewing detector with an egocentric camera-based food object recognizer. One study demonstrated that this hierarchical classification significantly increased sensitivity and reduced false positives compared to using either method alone [19].
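The study in [19] combined the two confidence scores with a hierarchical classifier whose exact form is not given here. As a simpler, swapped-in illustration, the Python sketch below fuses per-epoch confidences by a weighted average followed by a threshold. The class name, weights and threshold are hypothetical and would need to be tuned on labelled data.

```python
from dataclasses import dataclass


@dataclass
class EpochScores:
    chewing_conf: float  # accelerometer-based chewing detector output in [0, 1]
    food_conf: float     # image-based food recognizer output in [0, 1]


def fuse(scores, w_sensor=0.5, threshold=0.5):
    """Label each epoch as eating (True) when the weighted mean confidence reaches threshold."""
    if not 0.0 <= w_sensor <= 1.0:
        raise ValueError("w_sensor must lie in [0, 1]")
    decisions = []
    for s in scores:
        fused = w_sensor * s.chewing_conf + (1.0 - w_sensor) * s.food_conf
        decisions.append(fused >= threshold)
    return decisions
```

With equal weights, an epoch of talking (high chewing confidence, no food in view) and an epoch with someone else's plate in view (food visible, no chewing) both fall below the threshold. Only epochs with agreeing evidence are labelled as eating.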

Q4: How can I validate the accuracy of my environmental sensor system against a gold standard? A4: Conduct a validation study with precise synchronization:

- Protocol: In a controlled setting, have participants perform tasks while data is captured simultaneously by your environmental system (e.g., an Azure Kinect depth camera) and a gold-standard device (e.g., a pressure-sensitive Zeno walkway) [23].

- Synchronization: Use a custom hardware system to achieve millisecond-level temporal alignment between the devices [23].

- Analysis: Compare a rich set of gait markers (e.g., stride length, step time) using Mean Absolute Error (MAE) and Pearson correlation (r) to quantify your system's performance against the reference [23].
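Both agreement statistics are simple to compute once the test system and the walkway report the same gait marker for the same strides. A minimal Python sketch with hypothetical function names. It assumes the two sequences are already paired stride by stride.

```python
import math


def mean_absolute_error(test, reference):
    """Average absolute difference between paired test and reference values."""
    return sum(abs(t - r) for t, r in zip(test, reference)) / len(reference)


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def compare_gait_marker(test, reference):
    """MAE and Pearson r of one gait marker (e.g., stride length) against the reference."""
    if len(test) != len(reference) or len(reference) < 2:
        raise ValueError("need at least two paired measurements")
    return {"mae": mean_absolute_error(test, reference), "r": pearson_r(test, reference)}
```

The two statistics are complementary. A constant 2 cm offset leaves r at 1.0 but shows up in the MAE, so both are reported for each of the gait markers.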

→ Detailed Experimental Protocols

Protocol 1: Validating a Wearable Food Intake Monitor (AIM-2) in Free-Living Conditions

This protocol is designed to evaluate the performance of a multi-sensor wearable device for detecting eating episodes.

- Objective: To assess the sensitivity and precision of the Automatic Ingestion Monitor v2 (AIM-2) in detecting food intake during unrestricted daily activities [19].

- Equipment: AIM-2 device (wearable egocentric camera and 3D accelerometer) mounted on eyeglass frames [19].

- Procedure:

- Data Collection: Participants wear the AIM-2 for a 24-hour free-living period. The camera captures one image every 15 seconds, and the accelerometer records head movement at 128 Hz [19].

- Ground Truth Annotation:

- Image Annotation: Review all captured images. Manually draw bounding boxes around all food and beverage objects present. Do not label foods during preparation or those belonging to others during social eating [19].

- Episode Annotation: Manually log the start and end times of all eating episodes based on the image review [19].

- Algorithm Development & Testing:

- Train a deep learning model (e.g., CNN) to recognize solid foods and beverages in the images.

- Train a separate classifier to detect eating episodes from the accelerometer (chewing) data.

- Implement a hierarchical classifier that combines the confidence scores from both the image and sensor models to make a final detection decision [19].

- Validation Metrics: Calculate Sensitivity, Precision, and F1-Score for eating episode detection, comparing the algorithm's output to the manually annotated ground truth [19].
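Episode-level metrics depend on how detections are matched to annotations. This Python sketch assumes a simple overlap rule: a detection is correct if it overlaps any annotated episode, and an annotated episode is found if any detection overlaps it. The matching criterion used in [19] may differ, and the function names are hypothetical.

```python
def overlaps(a, b):
    """True when intervals a=(start, end) and b=(start, end) share any time."""
    return a[0] < b[1] and b[0] < a[1]


def episode_metrics(detected, annotated):
    """Sensitivity, precision and F1-score with overlap-based episode matching."""
    found = sum(any(overlaps(t, d) for d in detected) for t in annotated)
    correct = sum(any(overlaps(d, t) for t in annotated) for d in detected)
    sensitivity = found / len(annotated) if annotated else 0.0
    precision = correct / len(detected) if detected else 0.0
    denom = sensitivity + precision
    f1 = 2 * sensitivity * precision / denom if denom else 0.0
    return sensitivity, precision, f1
```

As a worked check of the F1 formula, the sensitivity (94.59%) and precision (70.47%) reported for the integrated approach in [19] correspond to an F1-score of roughly 0.81 (2 × 0.9459 × 0.7047 / (0.9459 + 0.7047)).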

Protocol 2: Comparative Gait Analysis for Nutritional Studies

This protocol is used to benchmark the accuracy of sensor systems against a clinical gold standard for gait measurement, a potential biomarker in nutritional intervention studies.

- Objective: To simultaneously evaluate the accuracy of wearable IMUs and a depth camera against an electronic walkway for gait analysis in a realistic clinical environment [23].

- Equipment:

- Gold Standard: ProtoKinetics Zeno Walkway (pressure-sensitive mat).

- Wearable System: APDM IMU sensors.

- Environmental System: Azure Kinect depth camera.

- Custom hardware for precise temporal synchronization [23].

- Procedure:

- Sensor Placement: Attach IMUs to the dorsal surface of both feet and the lower back (L5 vertebra) of participants [23].

- Task Execution: Participants perform two walking trials over the Zeno walkway:

- Single-Task: Straight, back-and-forth walking.

- Dual-Task: The same walking pattern while simultaneously counting backward from 80 in steps of seven (adds cognitive load) [23].

- Data Recording: All three systems (Walkway, IMUs, Kinect) record data synchronously throughout the trials [23].

- Data Analysis: Extract 11 gait markers (e.g., stride length, velocity, step time). Compute Mean Absolute Error (MAE) and Pearson correlation (r) for the IMU and Kinect data versus the Zeno walkway reference. Foot-mounted IMUs have been shown to demonstrate the highest accuracy [23].

The logical flow of this comparative validation is outlined below:

→ The Researcher's Toolkit: Essential Research Reagents & Materials

Table 2: Key Components for Sensor-Based Food Intake Research

| Item Name | Type | Primary Function in Research |
|---|---|---|
| Automatic Ingestion Monitor v2 (AIM-2) | Wearable Device | A multi-sensor platform (camera, accelerometer) worn on glasses for detecting eating episodes and capturing food images in free-living conditions [19]. |
| Inertial Measurement Unit (IMU) | Wearable Sensor | Typically contains accelerometers, gyroscopes, and magnetometers. Used to capture motion data for gait analysis, fall detection, and classification of physical activities like chewing [22] [23]. |
| APDM IMU System | Wearable System | A specific brand of wearable IMU system validated for high-accuracy gait analysis, often used as a benchmark in clinical research [23]. |
| Azure Kinect | Environmental Sensor | A depth-sensing camera that provides markerless motion capture. Used for gait analysis and activity recognition in instrumented spaces without requiring subjects to wear sensors [23]. |
| Zeno Walkway | Environmental System | An electronic walkway with integrated pressure sensors. Serves as a clinical gold standard for validating spatiotemporal gait parameters from other sensor systems [23]. |
| Breathable Skin Health Analyzer (BSA) | Specialized Wearable | A wearable device designed for long-term monitoring of skin health parameters (hydration, water loss), useful for studies on dietary impacts on skin barrier function [26]. |
| ESP32 Microcontroller | Hardware Component | A low-cost, Wi-Fi enabled microcontroller. Serves as the core for building custom, cost-effective IoT sensor systems, such as for human activity recognition [20]. |

Sensor Placement Optimization Frameworks and Implementation Strategies

Adapting Structural Health Monitoring OSP Principles for Biomedical Applications

This technical support guide explores the adaptation of Structural Health Monitoring (SHM) principles, specifically Optimal Sensor Placement (OSP), for biomedical applications, with a focus on sensor placement optimization for food intake monitoring research. SHM uses advanced sensing technologies to assess the condition and safety of structures like buildings and bridges [27]. Researchers are now leveraging these well-established principles to solve complex biomedical sensing challenges, such as accurately detecting and monitoring eating behaviors. This guide provides troubleshooting and methodological support for researchers embarking on this interdisciplinary work.

Research Reagent Solutions: Essential Materials for Food Intake Monitoring

The following table details key sensor types and materials used in the development of food intake monitoring systems.

Table 1: Key Sensor Technologies and Materials for Food Intake Monitoring

| Sensor/Material | Type | Primary Function in Food Intake Monitoring |
|---|---|---|
| Inertial Measurement Unit (IMU) [12] | Wearable Sensor | Captures motion data (via accelerometer and gyroscope) from the wrist or head to detect hand-to-mouth gestures and head movements associated with chewing and swallowing. |
| Acoustic Sensor [1] [3] | Wearable Sensor | Typically placed on the neck or head to capture sounds generated by chewing, biting, and swallowing activities. |
| Piezoelectric Sensor [3] | Wearable Sensor | Detects strains and vibrations on the skin surface resulting from jaw movements (mastication) and swallowing. |
| Electromyography (EMG) Sensor [1] | Wearable Sensor | Measures electrical activity generated by jaw and neck muscles during chewing and swallowing. |
| Camera / Image Sensor [1] | Non-Wearable Sensor | Used for food recognition and portion size estimation through computer vision algorithms, often analyzing images taken before and after an eating episode. |
| Gas Sensor [28] | Non-Wearable Sensor | Detects volatile organic compounds (VOCs) emitted by food, potentially useful for identifying food type or spoilage state in controlled environments. |

Experimental Protocols and Detailed Methodologies

Protocol 1: Detecting Eating Gestures with a Wrist-Worn IMU

This protocol is adapted from studies using Inertial Measurement Units for food consumption detection [12].

- Sensor Configuration: Attach a commercial IMU sensor to the participant's dominant wrist. Ensure the sensor is secure and comfortable for extended wear.

- Data Acquisition: Set the IMU to sample 3-axis accelerometer and 3-axis gyroscope data at a minimum frequency of 15 Hz. Record data continuously throughout the experiment.

- Experimental Procedure:

- Conduct sessions in a controlled laboratory setting.

- Participants perform a series of activities, including eating various foods (e.g., an apple, a sandwich, chips) and non-eating activities (e.g., talking, walking, gesturing).

- Precisely label the start and end times of all eating episodes in the data stream.

- Data Preprocessing:

- Apply noise filtering (e.g., a low-pass filter) to the raw sensor data.

- Segment the continuous data stream into fixed-length or variable-length windows for analysis.

- Model Training and Validation:

- Design a personalized deep learning model, such as a Recurrent Neural Network (RNN) with Long Short-Term Memory (LSTM) layers, to classify data segments as "eating" or "non-eating" [12].

- Train the model using a subset of the labeled data.

- Validate the model's performance on a separate, unseen test dataset using metrics like F1-score and accuracy. High accuracy (e.g., 98-99%) and a median F1-score of 0.99 have been reported in controlled studies [12].
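The preprocessing steps above can be sketched as follows. The sketch assumes each IMU axis is a list of samples and that the eating annotations have been converted to a per-sample boolean mask. A trailing moving average stands in for the low-pass filter. The window length, step size and majority-vote labelling rule are hypothetical choices, and the LSTM classifier itself is not shown.

```python
def moving_average(x, k):
    """Trailing k-sample moving average: a simple FIR low-pass filter for one axis."""
    out, running = [], 0.0
    for i, v in enumerate(x):
        running += v
        if i >= k:
            running -= x[i - k]
        out.append(running / min(i + 1, k))
    return out


def sliding_windows(samples, fs, window_s, step_s):
    """Fixed-length, possibly overlapping windows as (start_index, window) pairs."""
    size, step = int(window_s * fs), int(step_s * fs)
    return [(i, samples[i:i + size]) for i in range(0, len(samples) - size + 1, step)]


def label_windows(windows, eating_mask):
    """Label a window as eating when at least half of its samples are annotated as eating."""
    return [sum(eating_mask[i:i + len(w)]) * 2 >= len(w) for i, w in windows]
```

At 15 Hz, 2-second windows with a 1-second step give 50% overlap, so short gestures are less likely to be split across window boundaries.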

Protocol 2: Identifying Chewing and Swallowing with Acoustic Sensing

This methodology is based on research that uses acoustic signals to monitor eating behavior [1] [3].

- Sensor Configuration: Fit a contact microphone or an acoustic sensor in a wearable form factor, such as a necklace, positioned to reliably capture sounds from the jaw and throat area.

- Data Acquisition: Record acoustic data at a sampling rate sufficient to capture the frequencies of chewing and swallowing (typically 8-44.1 kHz).

- Experimental Procedure:

- Participants consume different food types with varying textures (e.g., crunchy, soft, chewy) while acoustic data is recorded.

- Audio recordings are manually annotated to mark individual chews and swallows.

- Signal Processing:

- Pre-process the audio signal to suppress background noise and discard non-food-related sounds (e.g., speech), which helps preserve user privacy and comfort [1].

- Extract relevant features from the audio signal in the time and frequency domains (e.g., Mel-Frequency Cepstral Coefficients - MFCCs).

- Event Detection:

- Use machine learning algorithms (e.g., support vector machines or convolutional neural networks) to identify and count chewing and swallowing events from the processed acoustic signal.

- Validate the algorithm's output against the manual annotations to determine detection accuracy.

Troubleshooting Guides and FAQs

Q1: Our model for detecting bites from wrist motion performs well in the lab but fails in real-world settings. What could be the issue?

A: This is a common challenge. The problem likely stems from overfitting to the controlled conditions of the lab and a lack of generalization.

- Solution: Increase the diversity of your training data. Collect data in various real-world scenarios (e.g., at a dinner table, in a cafeteria, while working) and include a wide range of non-eating gestures that mimic eating motions (e.g., brushing hair, talking on the phone). Techniques such as data augmentation, where you artificially create variations of your existing data, can also improve model robustness [3].

Q2: The acoustic signals from our neck-worn sensor are too noisy. How can we improve signal quality?

A: Background noise is a significant obstacle for acoustic monitoring.

- Solution:

- Hardware Improvement: Ensure the sensor has good skin contact to reduce ambient noise interference. Using a physical barrier or gel around the sensor can help.

- Signal Processing: Implement advanced filtering techniques, such as band-pass filters focused on the frequency range of chewing and swallowing (typically between 100-3000 Hz). Machine learning models, like deep neural networks, can also be trained to separate foreground (eating) sounds from background noise [1].
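A minimal band-pass sketch in Python that cascades a first-order high-pass filter at 100 Hz with a first-order low-pass filter at 3000 Hz. First-order sections roll off gently (about 6 dB per octave), so this illustrates the idea rather than a production design. A higher-order filter (e.g., Butterworth) from a signal-processing library would give sharper band edges. The function names and defaults are assumptions.

```python
import math


def high_pass(x, fs, cutoff_hz):
    """First-order IIR (RC) high-pass filter."""
    rc = 1.0 / (2 * math.pi * cutoff_hz)
    a = rc / (rc + 1.0 / fs)
    y, prev_x, prev_y = [], 0.0, 0.0
    for v in x:
        prev_y = a * (prev_y + v - prev_x)
        prev_x = v
        y.append(prev_y)
    return y


def low_pass(x, fs, cutoff_hz):
    """First-order IIR (RC) low-pass filter."""
    dt = 1.0 / fs
    alpha = dt / (1.0 / (2 * math.pi * cutoff_hz) + dt)
    y, prev = [], 0.0
    for v in x:
        prev += alpha * (v - prev)
        y.append(prev)
    return y


def band_pass(x, fs, low_hz=100.0, high_hz=3000.0):
    """High-pass then low-pass, keeping roughly the 100-3000 Hz band."""
    return low_pass(high_pass(x, fs, low_hz), fs, high_hz)


def rms(x):
    """Root-mean-square amplitude."""
    return math.sqrt(sum(v * v for v in x) / len(x))
```

At a 16 kHz sampling rate, a 1 kHz chewing component passes largely intact, while low-frequency body-motion rumble around 20 Hz is attenuated to roughly a fifth of its amplitude.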

Q3: How do we determine the optimal number and placement of sensors on the body for monitoring eating behavior?

A: This is the core challenge of adapting OSP principles.

- Solution: Frame this as an optimization problem, similar to how SHM determines sensor placement on large structures [29].

- Define an Objective Function: This function should represent your goal, such as maximizing the detection accuracy of chewing events or minimizing the number of sensors.

- Use Bio-Inspired Optimization Algorithms: Employ algorithms like Genetic Algorithms (GA) or Particle Swarm Optimization (PSO) [29]. These algorithms search very large spaces of candidate sensor configurations (location, type, number) in simulation, without evaluating every configuration, to find one that best satisfies your objective function. For example, you can use a GA to find the single sensor placement on the wrist or head that provides the highest accuracy for bite detection.
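A toy Python sketch of the GA approach. It assumes six hypothetical candidate sites with made-up per-signal capture probabilities, and a fitness function equal to expected signal coverage minus a per-sensor cost. Every number, site name and the fitness form are illustrative assumptions, not results from [29]. The GA itself uses standard binary tournament selection, one-point crossover, bit-flip mutation and elitism.

```python
import random

# Hypothetical probabilities that a sensor at each site captures each signal;
# a real study would estimate these from pilot data or simulation.
SITES = {
    "wrist":     {"gesture": 0.90, "chewing": 0.10, "swallowing": 0.00},
    "temple":    {"gesture": 0.20, "chewing": 0.80, "swallowing": 0.10},
    "below_ear": {"gesture": 0.05, "chewing": 0.90, "swallowing": 0.20},
    "throat":    {"gesture": 0.00, "chewing": 0.30, "swallowing": 0.85},
    "chest":     {"gesture": 0.30, "chewing": 0.10, "swallowing": 0.20},
    "upper_arm": {"gesture": 0.60, "chewing": 0.00, "swallowing": 0.00},
}
NAMES = list(SITES)
SIGNALS = ("gesture", "chewing", "swallowing")


def fitness(mask, cost_per_sensor=0.15):
    """Expected number of signals captured, minus a per-sensor cost penalty."""
    chosen = [NAMES[i] for i, bit in enumerate(mask) if bit]
    coverage = 0.0
    for sig in SIGNALS:
        miss = 1.0
        for site in chosen:
            miss *= 1.0 - SITES[site][sig]  # probability that every chosen sensor misses it
        coverage += 1.0 - miss
    return coverage - cost_per_sensor * len(chosen)


def genetic_search(pop_size=20, generations=40, p_mut=0.1, seed=0):
    """Small GA over binary placement masks (1 = place a sensor at that site)."""
    rng = random.Random(seed)
    n = len(NAMES)
    pop = [[rng.randint(0, 1) for _ in range(n)] for _ in range(pop_size)]
    best = max(pop, key=fitness)
    history = [fitness(best)]

    def pick():
        # Binary tournament selection from the current population.
        a, b = rng.sample(pop, 2)
        return a if fitness(a) >= fitness(b) else b

    for _ in range(generations):
        children = [best[:]]  # elitism: the best mask found so far always survives
        while len(children) < pop_size:
            p1, p2 = pick(), pick()
            cut = rng.randint(1, n - 1)  # one-point crossover
            child = p1[:cut] + p2[cut:]
            child = [1 - g if rng.random() < p_mut else g for g in child]  # bit-flip mutation
            children.append(child)
        pop = children
        candidate = max(pop, key=fitness)
        if fitness(candidate) > fitness(best):
            best = candidate
        history.append(fitness(best))
    return best, history
```

With only six candidate sites, an exhaustive search over all 64 masks is trivial and makes a useful check on the GA. The GA becomes worthwhile only when the candidate set (sites × sensor types) is too large to enumerate.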

Q4: Our food intake detection system has a high false positive rate. How can we improve its specificity?

A: A high false positive rate means the system is detecting eating when none is occurring.

- Solution: Implement a multi-sensor fusion approach. Instead of relying on a single sensing modality (e.g., just motion), combine data from multiple sensors [1] [3]. For instance, require that a "bite" event is only confirmed when the system detects:

- A hand-to-mouth gesture from the IMU, and

- A corresponding chewing sound from the acoustic sensor, and/or

- A specific jaw muscle activation from an EMG sensor. This logical combination of signals can substantially reduce false alarms caused by isolated non-eating activities.
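The confirmation rule above can be sketched as event matching on timestamps. In this hypothetical Python example, each sensor pipeline is assumed to emit event times in seconds. A gesture is confirmed when supporting evidence follows within a short window. The 3-second window and the function name are illustrative assumptions.

```python
def confirm_bites(gestures, chew_sounds, emg_bursts, window_s=3.0, require_emg=False):
    """Confirm IMU hand-to-mouth gestures that are followed by supporting evidence.

    Each argument is a list of event timestamps in seconds. A gesture at time t is
    confirmed when a chewing sound and/or an EMG burst occurs within [t, t + window_s]:
    require_emg=False implements "sound OR EMG", require_emg=True "sound AND EMG".
    """
    def near(t, events):
        return any(t <= e <= t + window_s for e in events)

    confirmed = []
    for t in gestures:
        sound, emg = near(t, chew_sounds), near(t, emg_bursts)
        if (sound and emg) if require_emg else (sound or emg):
            confirmed.append(t)
    return confirmed
```

The stricter `require_emg=True` setting trades sensitivity for specificity. It is useful when false alarms are the dominant problem.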

Workflow and Signaling Pathway Diagrams

Research and Optimization Workflow

The following diagram illustrates the high-level workflow for adapting SHM principles to food intake monitoring, from problem definition to system deployment.

Multi-Sensor Fusion Logic for Improved Detection

This diagram outlines the decision-making logic for a multi-sensor fusion system that reduces false positives by requiring concurrent signals from multiple sensors to confirm an eating event.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: What are the core components of an objective function for sensor placement in food intake monitoring?

Answer: Formulating an objective function is crucial for optimizing your sensor network. The core components typically involve balancing three competing objectives: coverage, sensitivity, and cost [30] [31]. The goal is to find a sensor configuration that maximizes information gain for detecting eating events while minimizing resource expenditure.

The table below summarizes these core components:

| Objective Component | Description | Consideration in Food Intake Monitoring |
|---|---|---|
| Coverage | The extent and reliability of the area or physiological processes monitored [30]. | Ensure sensors capture relevant data across all potential eating gestures and physiological signals (e.g., jaw movement, hand-to-mouth motion) [32] [33]. |
| Sensitivity | The ability to detect the phenomena of interest, such as chewing or swallowing, and distinguish them from non-eating activities [34]. | Maximize the detection of true eating episodes (true positives) while minimizing false positives from activities like talking or gum chewing [19]. |
| Cost | The financial and computational resources required, including sensor procurement, installation, data processing, and power consumption [30] [31]. | Balance the need for multiple or high-accuracy sensors against budget constraints and user comfort for wearable devices [30]. |
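One common way to write such a trade-off is as a weighted-sum objective over a set of selected locations. It is shown here as an illustrative formulation, not the one used in [30] [31]. Here \(\mathcal{L}\) is the set of candidate body locations and sensor types, \(S\) the selected subset, \(C(S)\) the fraction of target signals covered, \(\mathrm{Sens}(S)\) the eating-event detection sensitivity on validation data, \(K(S)\) a normalized cost (procurement, power, wearer burden), and \(k_{\max}\) an assumed comfort limit on the number of worn sensors.

```latex
\max_{S \subseteq \mathcal{L}} \; J(S) = w_{\mathrm{cov}}\, C(S) + w_{\mathrm{sens}}\, \mathrm{Sens}(S) - w_{\mathrm{cost}}\, K(S)
\quad \text{s.t.} \quad |S| \le k_{\max}, \qquad w_{\mathrm{cov}},\, w_{\mathrm{sens}},\, w_{\mathrm{cost}} \ge 0
```

Varying the weights shifts the optimum between cheap, sparse configurations and dense, high-coverage ones.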

FAQ 2: How can I reduce false positives in my eating event detection system?

Answer: False positives, where non-eating activities are misclassified as eating, are a common challenge. A highly effective strategy is sensor fusion, which integrates data from multiple, heterogeneous sensors [19].

- Problem: A system relying solely on an accelerometer to detect head or wrist movement might misinterpret talking or gesturing as an eating episode [19].

- Solution: Integrate a second sensing modality to provide complementary information. For instance, combine the accelerometer data with images from a wearable egocentric camera. A hierarchical classifier can then use confidence scores from both the motion sensor and the image-based food recognition system to make a final, more accurate decision [19].

- Result: One study demonstrated that this integrated approach significantly improved performance in free-living conditions, achieving a 94.59% sensitivity and 70.47% precision, which was over 8% higher in sensitivity than using either method alone [19].

The following workflow diagram illustrates this multi-sensor fusion process for robust food intake detection:

FAQ 3: What methodologies can I use to formally optimize the sensor placement and selection?

Answer: For a rigorous optimization process, you can employ mathematical programming models. Integer Linear Programming (ILP) is a powerful method used to find the optimal sensor configuration based on your defined objective function and constraints [30].

- Methodology: ILP models are designed to handle problems where decisions are binary (e.g., place a sensor at a location or not). You can formulate one model to minimize cost while ensuring a minimum level of coverage and another to maximize coverage under a fixed budget [30].

- Framework: A Leader-Follower (bi-level) approach can be used to integrate these models, simultaneously solving for both cost and coverage to find a balanced optimal solution [30].

- Application: While often used in building sensor networks, this framework is directly applicable to determining the optimal number and placement of wearable sensors on the body to monitor eating behavior, ensuring reliable data coverage at the lowest possible cost [30].
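The two ILP variants can be written down concretely for a toy problem. The sketch below uses hypothetical sites, costs and signal coverage. It solves both models by exhaustive enumeration of the binary decision vector, which finds the same optimum an ILP solver would, but is only practical for a handful of candidate sites. Larger instances would be handed to an ILP solver, such as those accessible through PuLP or Google OR-Tools. The Leader-Follower coupling is not implemented here.

```python
from itertools import product

# Hypothetical candidate sites: (name, cost in arbitrary units, signals captured).
SITES = [
    ("wrist_imu",       1.0, {"gesture"}),
    ("jaw_strain",      1.5, {"chewing"}),
    ("throat_mic",      2.0, {"swallowing", "chewing"}),
    ("eyeglass_imu",    1.2, {"chewing"}),
    ("eyeglass_camera", 3.0, {"food_image"}),
]


def covered(x):
    """Signals captured by the binary selection vector x."""
    return set().union(*(sigs for bit, (_, _, sigs) in zip(x, SITES) if bit))


def cost(x):
    """Total cost of the binary selection vector x."""
    return sum(c for bit, (_, c, _) in zip(x, SITES) if bit)


def min_cost_cover(required):
    """Model 1: cheapest selection that covers every required signal (None if infeasible)."""
    feasible = [x for x in product((0, 1), repeat=len(SITES)) if required <= covered(x)]
    return min(feasible, key=cost) if feasible else None


def max_coverage(budget):
    """Model 2: selection covering the most signals within budget; ties go to lower cost."""
    feasible = [x for x in product((0, 1), repeat=len(SITES)) if cost(x) <= budget]
    return max(feasible, key=lambda x: (len(covered(x)), -cost(x)))
```

For this toy data, covering gesture, chewing and swallowing at minimum cost selects the wrist IMU plus the throat microphone. With a budget of 2.5 units, the best achievable is two signals, and the cheapest way to get them is the throat microphone alone.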

The logical relationship between optimization objectives and methods can be visualized as follows:

FAQ 4: How do I validate that my wearable sensor system is accurately detecting eating episodes and estimating intake?

Answer: Validation requires a controlled study design where sensor data is compared against a reliable ground truth. The protocol below, adapted from a recent study, provides a robust methodology [33].

Experimental Validation Protocol for a Wearable Dietary Monitor

| Protocol Stage | Key Activities | Measured Parameters & Validation |
|---|---|---|
| 1. Participant Recruitment | Recruit healthy volunteers within specific age and BMI ranges. Obtain ethical approval and written informed consent [33]. | Ensures subject safety and adherence to ethical guidelines. |
| 2. Controlled Meal Trials | Conduct visits in a clinical research facility. Provide pre-defined high- and low-calorie meals in randomized order [33]. | Allows observation of physiological responses to different energy loads. Controls for food type and portion size. |
| 3. Ground Truth Data Collection | Blood Sampling: Collect via intravenous cannula to measure glucose, insulin, and appetite hormones. Bedside Monitor: Use clinical-grade devices to measure heart rate, blood pressure, and SpO2 for sensor validation. Manual Annotation: For image-based validation, manually review and annotate camera images for food presence and eating episodes [33] [19]. | Provides objective biochemical and physiological ground truth. Enables accuracy calculation for sensor-derived metrics (e.g., heart rate). Creates a labeled dataset for training and testing algorithms. |
| 4. Sensor Data Acquisition | Participants wear a custom multi-sensor band (e.g., on the wrist). Record data before, during, and after meal consumption [33]. | Inertial Measurement Unit (IMU): Captures hand-to-mouth movements. PPG/SpO2 Sensor: Monitors heart rate and oxygen saturation. Temperature Sensor: Tracks skin temperature changes. |
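The manual annotations from stage 3 can be turned into accuracy figures by matching detected eating episodes against annotated ones. A minimal sketch follows. It assumes episodes are (start, end) times in seconds, and it counts a detection as a true positive when it overlaps an annotated episode that has not already been matched. This overlap rule is an illustrative choice, not the scoring procedure of [33].

```python
def overlaps(a, b):
    """True if intervals a=(start, end) and b=(start, end) share any time."""
    return a[0] < b[1] and b[0] < a[1]

def episode_metrics(detected, annotated):
    """Greedy one-to-one matching of detected to annotated episodes."""
    matched = set()
    tp = 0
    for det in detected:
        for i, ref in enumerate(annotated):
            if i not in matched and overlaps(det, ref):
                matched.add(i)  # each annotated episode can be matched only once
                tp += 1
                break
    fp = len(detected) - tp
    fn = len(annotated) - tp
    precision = tp / (tp + fp) if detected else 0.0
    recall = tp / (tp + fn) if annotated else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": precision, "recall": recall, "f1": f1}

# Hypothetical example: two annotated meals, three detections (one spurious).
annotated = [(600, 1500), (4000, 4900)]
detected = [(650, 1400), (2500, 2600), (4100, 5000)]
print(episode_metrics(detected, annotated))
```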

The Scientist's Toolkit: Research Reagent Solutions

The table below lists essential materials and their functions for setting up experiments in sensor-based food intake monitoring.

| Item | Function in Research |
|---|---|
| Inertial Measurement Unit (IMU) | A sensor package (accelerometer, gyroscope) integrated into a wearable band to detect and analyze eating gestures and wrist motions characteristic of hand-to-mouth movements [33] [19]. |
| Automatic Ingestion Monitor (AIM-2) | A specific wearable device (typically on eyeglasses) that houses an egocentric camera and a 3D accelerometer for the passive capture of images and head movement data related to eating [19]. |
| Pulse Oximeter Module | A sensor integrated into a wearable wristband to automatically track physiological responses to food intake, such as Heart Rate (HR) and blood Oxygen Saturation (SpO2) [33]. |
| Bedside Vital Sign Monitor | A clinical-grade stationary device used as a gold-standard reference to validate the accuracy of physiological parameters (HR, SpO2, blood pressure) measured by wearable sensors during controlled experiments [33]. |
| Integer Linear Programming (ILP) Model | A mathematical optimization technique used to formally determine the optimal type, number, and placement of sensors by balancing competing objectives like cost and coverage [30]. |

Frequently Asked Questions (FAQs)

Q1: In my food intake monitoring research, the wireless sensor network performance degrades as the subject's environment changes (e.g., from laboratory to free-living conditions). How can Genetic Algorithms help optimize sensor placement to maintain data quality?

A1: Genetic Algorithms (GAs) can optimize sensor node deployment by treating placement as a multi-objective optimization problem. In food intake monitoring, this helps maintain reliable data capture as the environment changes.

- Problem: Initial sensor deployments often fail to account for dynamic signal attenuation caused by environmental factors like vegetation growth in agricultural settings or physical obstacles in free-living environments [35].

- GA Solution: A Non-dominated Sorting Genetic Algorithm (NSGA-II) can optimize placement by simultaneously maximizing coverage, minimizing over-coverage, and ensuring strong received signal strength [35].

- Implementation: The GA generates potential placement configurations, evaluates them against your objectives (e.g., coverage of eating areas, connectivity to base stations), and iteratively improves solutions through selection, crossover, and mutation operations.
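What distinguishes NSGA-II from a single-objective GA is that it ranks candidates by Pareto dominance rather than by one combined score. A minimal sketch of extracting the first non-dominated front follows. It assumes each placement has already been scored on three objectives, all expressed so that higher is better: coverage, negated over-coverage, and worst-case RSSI in dBm. The scores are hypothetical. A full NSGA-II would also rank the later fronts and use crowding distance to preserve diversity.

```python
def dominates(a, b):
    """a dominates b if it is no worse on every objective and better on at least one (all maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def first_front(scores):
    """Return the names of candidates not dominated by any other candidate."""
    return [
        name for name, s in scores.items()
        if not any(dominates(other, s) for o, other in scores.items() if o != name)
    ]

# Hypothetical placements: (coverage fraction, -over-coverage fraction, worst RSSI in dBm)
scores = {
    "A": (0.90, -0.30, -85.0),
    "B": (0.85, -0.10, -80.0),
    "C": (0.80, -0.20, -90.0),  # worse than B on every objective
    "D": (0.95, -0.40, -88.0),
}
print(first_front(scores))
```

Placements A, B, and D each win on at least one objective, so none of them dominates the others. C is dominated by B and drops out of the first front.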

Q2: When analyzing sensor data from dietary monitoring studies, my team gets conflicting results from traditional statistical tests. How can Bayesian methods provide more meaningful interpretations?

A2: Bayesian methods address key limitations of traditional frequentist statistics by providing direct probabilistic interpretations of results, which is particularly valuable for complex sensor data analysis.

- Key Advantage: Bayesian analysis provides direct probability statements about hypotheses (e.g., "There is a 95% probability that the true effect size lies between X and Y") rather than indirect p-values [36] [37].

- Practical Application: For sensor data, you can calculate Bayes Factors to quantify evidence for one sensor configuration over another, or create posterior distributions that incorporate prior knowledge from previous studies [37].

- Implementation Tools: Open-source software like JASP provides accessible Bayesian independent t-tests, while platforms like Stan enable more complex hierarchical modeling of sensor data [36] [37].
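The following sketch illustrates a Bayes factor comparing two sensor configurations. It assumes each configuration's detections are binomial (k correctly detected out of n annotated eating events), with uniform Beta(1, 1) priors. Under H0, both configurations share one detection rate; under H1, each has its own. Both marginal likelihoods have closed forms in terms of the Beta function, and the binomial coefficients cancel in the ratio, so no sampler is needed. The counts are hypothetical.

```python
from math import exp, lgamma

def log_beta(a, b):
    """Logarithm of the Beta function B(a, b)."""
    return lgamma(a) + lgamma(b) - lgamma(a + b)

def bayes_factor_10(k1, n1, k2, n2):
    """BF10 for 'different detection rates' (H1) vs 'one shared rate' (H0), Beta(1,1) priors."""
    # H1: independent rates, each integrated against a uniform prior (B(1, 1) = 1).
    log_m1 = log_beta(k1 + 1, n1 - k1 + 1) + log_beta(k2 + 1, n2 - k2 + 1)
    # H0: a single shared rate for the pooled counts.
    log_m0 = log_beta(k1 + k2 + 1, (n1 - k1) + (n2 - k2) + 1)
    return exp(log_m1 - log_m0)

print(bayes_factor_10(90, 100, 60, 100))  # large: strong evidence the configurations differ
print(bayes_factor_10(80, 100, 78, 100))  # below 1: the data favour a shared rate
```

Unlike a p-value, a BF10 below 1 quantifies evidence *for* the configurations performing alike, not just a failure to detect a difference.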

Q3: What are the most common sensor modalities for eating behavior monitoring, and how does their accuracy compare in real-world conditions?

A3: The table below summarizes primary sensor types and their performance characteristics based on current research:

Table 1: Sensor Modalities for Eating Behavior Monitoring

| Sensor Type | Measured Metrics | Accuracy/Performance | Limitations |
|---|---|---|---|
| Acoustic Sensors [1] | Chewing, swallowing events | High accuracy in lab settings | Privacy concerns, background noise interference |
| Inertial Measurement Units (Wrist) [1] | Hand-to-mouth gestures, bite counting | Moderate accuracy for bite detection (varies 60-85%) | False positives from similar gestures |
| Camera-Based Systems [1] | Food recognition, portion size estimation | Improving with deep learning; challenges with mixed foods | Privacy issues, lighting dependency |
| Wearable Sensors (Head/Neck) [4] | Chewing frequency, swallowing rate | Good for laboratory validation | User comfort and social acceptability in free-living |

Q4: How do I implement a Genetic Algorithm for sensor selection and placement in a heterogeneous monitoring environment?

A4: Implement the following workflow for sensor optimization using GAs:

Table 2: Genetic Algorithm Implementation Parameters

| Component | Configuration | Considerations for Food Monitoring |
|---|---|---|
| Chromosome Encoding | Binary string representing sensor locations | Each gene = potential sensor location in monitoring area |
| Fitness Function | Multi-objective: coverage, connectivity, energy efficiency [38] | Weight coverage of eating areas highest for dietary studies |
| Selection Method | Tournament selection or roulette wheel | Maintain diversity to avoid local optima |
| Crossover Rate | Adaptive (0.6-0.9) [39] | Higher rates promote exploration of new configurations |
| Mutation Rate | Adaptive (0.01-0.1) [39] | Prevents premature convergence to suboptimal layouts |
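The parameters in Table 2 map onto a short GA loop. A minimal sketch follows, with several assumptions. The grid of candidate locations is hypothetical, and each gene switches one sensor on or off. A hypothetical coverage map records which eating areas each location observes. Fitness is a weighted score: coverage minus a per-sensor cost. For brevity, the crossover and mutation rates are fixed rather than adaptive. In practice, a library such as DEAP or PyGAD would normally replace this hand-rolled loop.

```python
import random

# Hypothetical: which eating areas (0-5) each of 8 candidate locations can observe.
COVERS = [{0, 1}, {1, 2}, {2}, {3, 4}, {4}, {5}, {0, 5}, {3}]
N_AREAS = 6
SENSOR_COST = 0.1  # illustrative penalty per active sensor

def fitness(chrom):
    """Fraction of eating areas covered, minus a cost for each active sensor."""
    covered = set()
    for gene, areas in zip(chrom, COVERS):
        if gene:
            covered |= areas
    return len(covered) / N_AREAS - SENSOR_COST * sum(chrom)

def tournament(pop, k=3):
    """Pick the fittest of k randomly sampled individuals."""
    return max(random.sample(pop, k), key=fitness)

def crossover(a, b, rate):
    """One-point crossover applied with probability `rate`; otherwise copy the parents."""
    if random.random() < rate:
        point = random.randint(1, len(a) - 1)
        return a[:point] + b[point:], b[:point] + a[point:]
    return a[:], b[:]

def mutate(chrom, rate):
    """Flip each bit independently with probability `rate`."""
    return [1 - g if random.random() < rate else g for g in chrom]

def run_ga(pop_size=40, generations=60, cx_rate=0.8, mut_rate=0.05, seed=0):
    random.seed(seed)
    pop = [[random.randint(0, 1) for _ in COVERS] for _ in range(pop_size)]
    best = max(pop, key=fitness)
    for _ in range(generations):
        children = []
        while len(children) < pop_size:
            c1, c2 = crossover(tournament(pop), tournament(pop), cx_rate)
            children += [mutate(c1, mut_rate), mutate(c2, mut_rate)]
        pop = children[:pop_size]
        best = max(pop + [best], key=fitness)  # elitism: keep the best layout seen so far
    return best, fitness(best)

best, score = run_ga()
print(best, round(score, 3))
```

`COVERS`, `N_AREAS`, and `SENSOR_COST` are placeholders. In a real study, the coverage map would come from mapping the monitoring environment, and the cost weight would reflect hardware and energy budgets.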

Diagram 1: Genetic Algorithm Optimization Workflow

Q5: What computational challenges might I face with Bayesian analysis of continuous sensor data, and how can I address them?

A5: Bayesian methods for sensor data present specific computational challenges:

- High-Dimensional Data: Continuous sensor streams generate large parameter spaces. Solution: Use Hamiltonian Monte Carlo (HMC) or No-U-Turn Sampler (NUTS) for more efficient exploration of posterior distributions [36].

- Convergence Diagnosis: Run multiple chains and monitor the Gelman-Rubin statistic (R-hat < 1.01 indicates convergence) and the effective sample size [36].

- Model Specification: Choose appropriate priors based on pilot studies or literature, and conduct sensitivity analysis to ensure results aren't overly dependent on prior choice [37].
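The convergence check above relies on the Gelman-Rubin statistic, which compares between-chain variance with within-chain variance. A minimal sketch of the classic formula follows. It uses the original non-split, non-rank-normalized version and assumes equal-length chains of draws for a single parameter. Stan and ArviZ report an improved split, rank-normalized R-hat, so values from this sketch will not match theirs exactly.

```python
import random
from statistics import mean, variance

def gelman_rubin(chains):
    """Classic potential scale reduction factor (R-hat) for equal-length chains."""
    n = len(chains[0])
    chain_means = [mean(c) for c in chains]
    w = mean(variance(c) for c in chains)  # mean within-chain variance
    b = n * variance(chain_means)          # between-chain variance
    var_plus = (n - 1) / n * w + b / n     # pooled estimate of the posterior variance
    return (var_plus / w) ** 0.5

random.seed(1)
# Four chains sampling the same distribution versus one chain stuck in a different mode.
mixed = [[random.gauss(0, 1) for _ in range(1000)] for _ in range(4)]
stuck = [[random.gauss(mu, 1) for _ in range(1000)] for mu in (0, 0, 0, 3)]
print(round(gelman_rubin(mixed), 3), round(gelman_rubin(stuck), 3))
```

The well-mixed chains give a value very close to 1. The stuck chain pushes R-hat far above the 1.01 threshold.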

Troubleshooting Guides

Problem: Poor Sensor Coverage in Specific Eating Locations

Symptoms: Gaps in data collection during meal episodes, particularly in free-living environments.

Solution: Implement NSGA-II multi-objective optimization specifically for your monitoring environment [35].

Diagram 2: Sensor Coverage Optimization Process

Implementation Protocol:

- Environment Mapping: Create a detailed map of the monitoring area, identifying all potential eating locations and communication obstacles.

- Objective Definition: Formulate three key objectives:
  - Maximize coverage of eating areas
  - Minimize over-coverage (redundancy)
  - Maintain strong RSSI (Received Signal Strength Indicator) between nodes [35]

- NSGA-II Configuration:
  - Population size: 50-100 individuals
  - Generations: 100-200 iterations
  - Crossover probability: 0.8-0.9
  - Mutation probability: 0.1-0.2

- Validation: Deploy sensors according to the optimized placement and collect validation data for 2-3 days, adjusting based on performance gaps.
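The three objectives in the protocol can be scored for any candidate layout before the layouts are passed to an NSGA-II implementation. A minimal sketch follows, with several assumptions. Eating locations are points on a hypothetical 2D floor plan. Each sensor has a fixed circular sensing radius. RSSI to the base station follows a log-distance path-loss model. The reference RSSI, path-loss exponent, and radius are illustrative values that would need calibration in the actual environment.

```python
import math

def rssi_dbm(distance_m, rssi_at_1m=-40.0, path_loss_exp=2.7):
    """Log-distance path loss: RSSI(d) = RSSI(1 m) - 10 * n * log10(d / 1 m).

    Distances below 1 m are clamped to 1 m so the model stays at its reference value.
    """
    return rssi_at_1m - 10 * path_loss_exp * math.log10(max(distance_m, 1.0))

def layout_objectives(sensors, eating_points, base_station, radius=3.0):
    """Return (coverage, over_coverage, worst_rssi) for one candidate layout."""
    counts = [sum(math.dist(p, s) <= radius for s in sensors) for p in eating_points]
    coverage = sum(c >= 1 for c in counts) / len(eating_points)       # maximize
    over_coverage = sum(c >= 2 for c in counts) / len(eating_points)  # minimize
    # Weakest link to the base station (maximize).
    worst_rssi = min(rssi_dbm(math.dist(s, base_station)) for s in sensors)
    return coverage, over_coverage, worst_rssi

# Hypothetical room: dining table, sofa, and kitchen counter positions (metres).
eating_points = [(1.0, 1.0), (6.0, 2.0), (3.0, 7.0)]
base_station = (0.0, 0.0)
print(layout_objectives([(2.0, 2.0), (5.0, 3.0)], eating_points, base_station))
```

This example layout leaves the third eating location uncovered (coverage 2/3) with no redundancy. NSGA-II would trade such layouts off against others that add a sensor near that location at some cost in RSSI or over-coverage.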

Problem: Inconsistent Eating Detection Across Diverse Subject Populations

Symptoms: Variable accuracy in detecting eating events across different demographic groups or eating styles.

Solution: Implement Bayesian hierarchical models to account for population variability while incorporating prior knowledge.

Methodology:

- Data Collection: Gather labeled eating data from a representative sample of your target population.

- Model Specification:
  - Use weakly informative priors for population-level parameters
  - Include group-level effects for demographic factors
  - Specify likelihood functions appropriate for your sensor data type [36]

- Computational Implementation:
  - Use Stan or PyMC3 for model implementation
  - Run 4 parallel chains with 2000 iterations each (50% warm-up)
  - Monitor R-hat statistics and effective sample size [36]
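The practical benefit of group-level effects is partial pooling: estimates for small groups are pulled toward the population mean instead of being taken at face value. A minimal sketch of that shrinkage follows, for a normal-normal hierarchical model. It assumes the hyperparameters are fixed: the population mean `mu`, the between-group standard deviation `tau`, and the per-observation noise `sigma`. A Stan or PyMC model would instead estimate these jointly by sampling. The group data are hypothetical per-participant detection accuracies.

```python
from statistics import mean

def partial_pool(group_data, mu, tau, sigma):
    """Posterior mean of each group effect in a normal-normal model with known hyperparameters.

    Given the data, theta_j has precision n_j / sigma^2 + 1 / tau^2, and its mean is the
    precision-weighted average of the group sample mean and the population mean mu.
    """
    estimates = {}
    for group, values in group_data.items():
        data_precision = len(values) / sigma**2
        prior_precision = 1 / tau**2
        estimates[group] = (data_precision * mean(values) + prior_precision * mu) / (
            data_precision + prior_precision
        )
    return estimates

# Hypothetical per-participant eating-detection accuracies by age group.
groups = {
    "18-39": [0.86, 0.88, 0.84, 0.87, 0.85, 0.89],
    "40-64": [0.80, 0.83, 0.82],
    "65+":   [0.62],  # a single participant: its estimate is shrunk the most
}
print(partial_pool(groups, mu=0.83, tau=0.03, sigma=0.05))
```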

Table 3: Bayesian Model Checking Metrics

| Diagnostic | Target Value | Interpretation |
|---|---|---|
| R-hat | < 1.01 | Chains have converged |
| Effective Sample Size | > 400 in total (e.g., > 100 per chain with 4 chains) | Sufficient independent samples |
| Bayes Factor | > 3 or < 0.33 | Substantial evidence for H1 or H0 |
| 95% Credible Interval | Excludes zero | Effect is credibly non-zero; practical significance also depends on its magnitude |

Problem: Rapid Battery Depletion in Wearable Food Monitoring Sensors

Symptoms: Sensors require frequent recharging, leading to data gaps during extended monitoring periods.

Solution: Combine Genetic Algorithm-optimized sensor selection with an adaptive sampling strategy [38].

Optimization Protocol:

- Define Energy Optimization Objectives:
  - Minimize number of active sensors
  - Balance energy consumption across nodes
  - Maintain minimum coverage threshold [38]

- Chromosome Encoding: Represent sensor activation schedules as binary strings.

- Fitness Function: Combine energy usage metrics with coverage quality scores.

- Adaptive Sampling: Integrate with Extended Kalman Filters to dynamically adjust sampling rates based on detected activity levels [38].
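The adaptive-sampling step can be illustrated with a scalar Kalman filter. The filter smooths a noisy per-window activity measure, such as accelerometer magnitude variance, and maps the filtered estimate to a sampling rate. This is a simplified, assumed setup. With a linear random-walk state model, the extended Kalman filter reduces to the ordinary Kalman filter shown here. The noise variances, threshold, and the two sampling rates are hypothetical values.

```python
def kalman_adaptive_rates(measurements, q=0.01, r=0.25, threshold=1.0,
                          low_hz=5, high_hz=50):
    """Filter a noisy activity signal and choose a sampling rate per window.

    State model: activity_k = activity_{k-1} + w, w ~ N(0, q)   (random walk)
    Measurement: z_k = activity_k + v,           v ~ N(0, r)
    """
    x, p = measurements[0], 1.0  # initial estimate from the first window; assumed variance 1.0
    rates = []
    for z in measurements:
        p = p + q                 # predict: uncertainty grows under the random walk
        k = p / (p + r)           # Kalman gain
        x = x + k * (z - x)       # update the estimate with the new measurement
        p = (1 - k) * p           # updated estimate variance
        rates.append(high_hz if x > threshold else low_hz)
    return rates

# Hypothetical activity trace: quiet, then a meal with frequent hand-to-mouth motion.
trace = [0.2, 0.3, 0.1, 0.2, 1.8, 2.1, 1.9, 2.2, 2.0, 2.3]
print(kalman_adaptive_rates(trace))
```

The smoothing suppresses spurious switches from single noisy windows, but it also delays the switch to the high rate by a couple of windows after the meal starts. Raising `q` relative to `r` makes the filter react faster at the cost of more false switches.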

Research Reagent Solutions

Table 4: Essential Computational Tools for Optimization Research

| Tool/Category | Specific Examples | Research Application |
|---|---|---|
| Genetic Algorithm Frameworks | DEAP, PyGAD, MATLAB GA Toolbox | Custom implementation of sensor placement optimization |
| Bayesian Analysis Platforms | Stan (with RStan/PyStan), JASP, PyMC3 | Probabilistic modeling of sensor data and eating behavior |
| Sensor Hardware Platforms | Arduino, Raspberry Pi with custom sensors | Prototyping wearable food intake monitoring systems |
| Wireless Communication | IEEE 802.15.4, Bluetooth Low Energy, LoRaWAN | Reliable data transmission from wearable sensors |
| Data Processing Libraries | NumPy, Pandas, Scikit-learn | Preprocessing and feature extraction from sensor streams |
| Visualization Tools | Matplotlib, Seaborn, Graphviz | Results communication and algorithm workflow design |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common types of sensors used for jaw motion and chewing detection in research? Researchers primarily use motion sensors (like accelerometers), acoustic sensors (microphones), and strain sensors (such as piezoelectric or flex sensors) to detect chewing. These sensors can be integrated into wearable devices, often placed on the head (e.g., on eyeglass frames) or neck to capture jaw movement, head motion, and chewing sounds [1] [19] [8].

FAQ 2: My sensor system is producing a high number of false positives. How can I reduce this? A high false-positive rate is a common challenge. It can be mitigated by:

- Sensor Fusion: Combining data from multiple sensor types. For example, integrating an accelerometer (for chewing motion) with a camera (for visual food confirmation) can significantly reduce false detections from non-eating activities like gum chewing or talking [19].

- Algorithm Adjustment: Fine-tuning the classification algorithms to improve the distinction between eating and non-eating signals. Increasing the detection threshold or using more advanced machine learning models can help [19] [8].
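A minimal sketch of the sensor-fusion idea follows. It assumes each analysis window already has two hypothetical scores: a chewing probability from an accelerometer classifier and a food-presence probability from a camera classifier. A window is flagged as eating only when the average of the two scores clears a threshold. The code then counts false positives against ground-truth labels for the accelerometer-only rule and for the fused rule. Score averaging is one illustrative late-fusion choice, not the specific method of [19].

```python
def count_false_positives(flags, labels):
    """Number of windows flagged as eating that are labelled non-eating."""
    return sum(f and not y for f, y in zip(flags, labels))

def fuse(acc_scores, cam_scores, threshold=0.6):
    """Late fusion: average the two classifier scores, then apply the threshold."""
    return [(a + c) / 2 >= threshold for a, c in zip(acc_scores, cam_scores)]

# Hypothetical windows: 1 = eating, 0 = talking or gum chewing (jaw moves, no food in view).
labels     = [1, 1, 0, 0, 1, 0]
acc_scores = [0.9, 0.8, 0.85, 0.7, 0.75, 0.2]
cam_scores = [0.8, 0.9, 0.1, 0.2, 0.7, 0.1]

acc_only = [a >= 0.6 for a in acc_scores]
fused = fuse(acc_scores, cam_scores)
print(count_false_positives(acc_only, labels), count_false_positives(fused, labels))
```

In this toy data, the accelerometer alone mistakes the two jaw-movement windows without food for eating. The camera score pulls their fused values below the threshold, while all three true eating windows remain detected.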

FAQ 3: Where is the optimal placement for a jaw motion sensor to ensure accurate chewing detection? The optimal placement for a wearable jaw motion sensor is typically on the head, close to the jaw joints or muscles. A common and effective approach documented in research is to attach the sensor system (e.g., an accelerometer) to the temple of a pair of eyeglasses. This position reliably captures the vibrations and movements associated with chewing [19] [8]. For strain sensors, direct contact with the skin over the temporalis or masseter muscle is often required [19].
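To show how the chewing motion captured at the temple becomes a metric, the sketch below counts chews as local maxima in a jaw-motion signal. A peak counts only if it lies above a threshold and falls a minimum interval after the previous peak. The sampling rate, threshold, and minimum gap are assumed values. The input is a synthetic 1.5 Hz sine wave, not real accelerometer data, and simple peak counting is an illustration rather than the detection method used in [19] or [8].

```python
import math

def count_chews(signal, fs, threshold=0.5, min_gap_s=0.3):
    """Return indices of local maxima above `threshold` separated by at least `min_gap_s` seconds."""
    min_gap = int(min_gap_s * fs)
    peaks, last = [], -min_gap
    for i in range(1, len(signal) - 1):
        is_local_max = signal[i] >= signal[i - 1] and signal[i] > signal[i + 1]
        if signal[i] > threshold and is_local_max and i - last >= min_gap:
            peaks.append(i)
            last = i
    return peaks

fs = 50  # Hz, hypothetical accelerometer sampling rate
# Synthetic jaw-motion signal: 1.5 chews per second for 10 seconds.
signal = [math.sin(2 * math.pi * 1.5 * n / fs) for n in range(10 * fs)]
peaks = count_chews(signal, fs)
print(len(peaks), "chews,", len(peaks) / 10 * 60, "chews/min")
```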