Real-Time Eating Event Detection: Sensor Algorithms, Clinical Validation, and Future Directions for Biomedical Research


Abstract

This article provides a comprehensive review of real-time eating event detection algorithms, a critical emerging field at the intersection of wearable sensing, machine learning, and personalized health. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of eating behavior measurement, delves into diverse methodological approaches from inertial sensors to multi-modal systems, and analyzes performance optimization and validation strategies. By synthesizing the latest research, including recent 2024-2025 studies, this review aims to equip professionals with the knowledge to evaluate these technologies for applications in clinical trials, chronic disease management, and objective dietary assessment, ultimately bridging the gap between technological innovation and biomedical evidence generation.

The Science of Measuring Eating: From Behavior to Biomedical Data

Within the scope of research on real-time eating event detection algorithms, the precise definition and quantification of core eating behavior metrics are foundational. These micro-level behaviors—chewing, biting, swallowing, and hand-to-mouth gestures—constitute the "meal microstructure" and serve as critical objective biomarkers for understanding individual eating patterns, quantifying energy intake, and developing interventions for conditions ranging from obesity to eating disorders [1] [2]. The move beyond subjective self-reporting methods to automated, sensor-based detection relies on a robust framework for measuring these behaviors. This document provides detailed application notes and experimental protocols for defining and quantifying these key metrics, supporting the development of more accurate and reliable detection algorithms.

Defining Core Eating Behavior Metrics

The following section delineates the standard definitions and quantitative measures for each core eating behavior metric, which are essential for creating a common ground in algorithm development and validation.

Chewing (Mastication): The process of crushing and grinding food with the teeth in preparation for swallowing. It is a rhythmic jaw movement that mixes food with saliva.

- Primary Measures:

- Chew Count: The total number of chewing cycles within an eating episode.

- Chewing Rate/Frequency: The number of chews per minute (CPM).

- Chewing Duration: The total time spent chewing during a meal or per food bolus.


Biting: The action of cutting or ingesting a piece of food, typically involving the incisor teeth, which initiates a new eating sequence.

- Primary Measures:

- Bite Count: The total number of bites taken during an eating episode.

- Bite Rate: The number of bites per minute (BPM).

- Bite Size: The estimated mass or volume of food consumed per bite (often derived from total intake divided by bite count).


Swallowing (Deglutition): The complex neuromuscular act of transporting food from the mouth through the pharynx and into the esophagus.

- Primary Measures:

- Swallow Count: The total number of swallows during an eating episode.

- Swallowing Rate: The number of swallows per minute.

- Swallow Identification: The acoustic or kinematic signature of a swallow, distinct from other activities like talking or coughing [3].


Hand-to-Mouth Gestures: The movement of the hand (with or without utensils) from a location outside the personal space toward the mouth, typically preceding a bite.

- Primary Measures:

- Gesture Count: The number of hand-to-mouth movements.

- Gesture Rate: The frequency of these gestures per minute.

- Gesture Duration: The time taken to complete the movement from start to mouth contact.
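The count and rate measures defined above reduce to simple arithmetic on annotated event timestamps. The following is a minimal sketch, assuming events are given as timestamps in seconds and the episode boundaries are known; the names `EpisodeMetrics`, `summarize_events`, and `mean_bite_size` are illustrative and do not come from the cited studies.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class EpisodeMetrics:
    count: int           # number of events (e.g., chews, bites, swallows)
    rate_per_min: float  # events per minute over the whole episode


def summarize_events(timestamps_s: Sequence[float],
                     episode_start_s: float,
                     episode_end_s: float) -> EpisodeMetrics:
    """Count events inside an eating episode and convert to a per-minute rate."""
    duration_min = (episode_end_s - episode_start_s) / 60.0
    if duration_min <= 0:
        raise ValueError("episode end must be after episode start")
    # Keep only events that fall inside the episode boundaries.
    in_episode = [t for t in timestamps_s
                  if episode_start_s <= t <= episode_end_s]
    count = len(in_episode)
    return EpisodeMetrics(count=count, rate_per_min=count / duration_min)


def mean_bite_size(total_intake_g: float, bite_count: int) -> float:
    """Average bite size, derived as total intake divided by bite count."""
    if bite_count <= 0:
        raise ValueError("bite_count must be positive")
    return total_intake_g / bite_count
```

The same function serves chews, bites, swallows, and gestures, because each rate metric is a count divided by episode duration.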


Quantitative Performance of Sensing Modalities

The choice of sensor modality significantly impacts the accuracy with which eating behaviors can be detected. The following table summarizes the performance of various technologies as reported in recent literature. Performance is often reported as an F1-score, the harmonic mean of precision and recall, where a value of 1 (100%) represents perfect precision and recall.

Table 1: Performance Metrics of Sensor Modalities for Eating Behavior Detection

| Sensor Modality | Target Behavior | Reported Performance (F1-Score/Accuracy/Error) | Key Strengths | Key Limitations |

|---|---|---|---|---|

| Video (Computer Vision) | Bite Count | F1-Score: ~70.6% (ByteTrack model in children) [1] | Non-invasive, rich contextual data | Privacy concerns, sensitive to occlusion and lighting |

| | Mass & Energy Intake | Absolute Percentage Error: 25.2% (mass), 30.1% (energy) [4] | | |

| Piezoelectric Strain Sensor | Chewing & Swallowing | High inter-rater reliability (ICC >0.98) for manual annotation from sensor data [3] [4] | Direct measure of jaw movement, robust | Can be obtrusive, placement affects signal |

| Acoustic Sensor | Swallowing & Chewing | Effective for distinguishing swallowing sounds from other noises [3] | Can detect internal sounds of ingestion | Susceptible to ambient noise, privacy concerns |

| Inertial Measurement Unit (IMU/Wrist Sensor) | Hand-to-Mouth Gestures | Commonly used as a proxy for bite count [2] | Comfortable, widely available (e.g., smartwatches) | Prone to false positives from non-eating gestures |
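Because several entries in the table are reported as F1-scores, it may help to see how that figure is derived from detection counts. The sketch below assumes event-level counts of true positives, false positives, and false negatives are available; the function names are illustrative.

```python
from typing import Tuple


def precision_recall(tp: int, fp: int, fn: int) -> Tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)
```

The harmonic mean penalizes imbalance: a detector with perfect recall but 50% precision scores about 0.67, not 0.75.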

Detailed Experimental Protocols for Data Acquisition

A rigorous, multi-modal approach is recommended for collecting ground-truth data to train and validate detection algorithms. The protocols below outline standardized methodologies.

Protocol: Multi-Modal Laboratory Data Collection

Objective: To simultaneously capture high-fidelity data on chewing, biting, swallowing, and hand-to-mouth gestures in a controlled environment for algorithm development [3] [4].

Materials:

- See "The Scientist's Toolkit" below.

- A controlled laboratory setting with minimal auditory and visual distractions.

- Standardized test meals (e.g., solid foods with varying textures).

Procedure:

- Sensor Setup:

- Attach the piezoelectric strain sensor below the participant's right ear, on the mandible, to capture jaw movements.

- Place an acoustic sensor (e.g., a contact microphone) on the neck lateral to the laryngopharynx to capture swallowing sounds.

- Fit the participant with an inertial measurement unit (IMU) on the wrist of their dominant hand to track hand-to-mouth gestures.

- Video Recording:

- Data Synchronization:

- Ensure all sensor data streams and video are synchronized to a common time source at the beginning of the recording session.

- Meal Consumption:

- Provide the participant with the test meal. Instruct them to eat normally until they are comfortably full or until a time limit (e.g., 30 minutes) is reached.

- Data Collection:

- Start all sensors and the video recorder simultaneously before the participant begins eating. Stop all recording once the meal is concluded.
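The synchronization step can be illustrated with a small sketch. It assumes each stream carries its own timestamps, that a shared synchronization event (for example, a clap visible in both video and sensor data) gives the clock offset, and that timestamps are strictly increasing. The helper names are hypothetical, and linear interpolation is only one of several reasonable resampling choices.

```python
from bisect import bisect_right
from typing import List, Sequence


def apply_offset(times_s: Sequence[float], offset_s: float) -> List[float]:
    """Shift a stream's timestamps so the sync event falls at a common origin."""
    return [t - offset_s for t in times_s]


def resample_linear(times_s: Sequence[float],
                    values: Sequence[float],
                    target_times_s: Sequence[float]) -> List[float]:
    """Linearly interpolate a stream onto a common timeline.

    Values outside the recorded range are clamped to the first/last sample.
    Assumes times_s is strictly increasing.
    """
    out = []
    for t in target_times_s:
        if t <= times_s[0]:
            out.append(values[0])
        elif t >= times_s[-1]:
            out.append(values[-1])
        else:
            i = bisect_right(times_s, t)  # times_s[i-1] <= t < times_s[i]
            t0, t1 = times_s[i - 1], times_s[i]
            v0, v1 = values[i - 1], values[i]
            out.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
    return out
```

After every stream is resampled onto the same timeline, annotations made on the video can be applied directly to the sensor samples.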

Protocol: Manual Annotation of Eating Behaviors (Gold Standard)

Objective: To create a manually annotated "gold standard" dataset from the synchronized multi-modal recordings for training and evaluating automated algorithms [3] [4].

Materials:

- Synchronized multi-modal data (video, sensor signals).

- Video and signal annotation software (e.g., ELAN, ANVIL, or custom-designed software).

Procedure:

- Rater Training: Train multiple human raters to identify and label the start and end times of each target behavior based on the defined metrics.

- Annotation:

- Bites: Mark the timestamp when a hand brings food to the mouth and the food is ingested, as observed in the video.

- Chews: Mark each distinct jaw closure observed in the video or identified as a characteristic cyclic pattern in the strain sensor signal.

- Swallows: Mark the timestamp of a swallow, identified by a characteristic laryngeal elevation in the video, a specific sound in the acoustic signal, or a distinct pattern in the strain sensor signal [3].

- Hand-to-Mouth Gestures: Mark the start (initiation of hand movement toward mouth) and end (wrist reversal) of the gesture from the video and IMU data.

- Inter-Rater Reliability: Calculate Intra-class Correlation Coefficients (ICC) or Cohen's Kappa between raters to ensure consistency. High reliability (ICC > 0.9) is achievable for these metrics [3] [4].

- Ground Truth Generation: Resolve discrepancies between raters through discussion to create a single, consensus-based ground truth dataset.
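For categorical labels, the inter-rater reliability step can be sketched with Cohen's kappa for two raters. The example assumes both raters labeled the same sequence of time windows; the function name is illustrative, and ICC, which suits continuous measures such as counts, is not shown.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable],
                 rater_b: Sequence[Hashable]) -> float:
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance from each rater's label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    labels = set(freq_a) | set(freq_b)
    expected = sum(freq_a[l] * freq_b[l] for l in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)
```

A kappa of 1 indicates perfect agreement, while 0 indicates agreement no better than chance.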

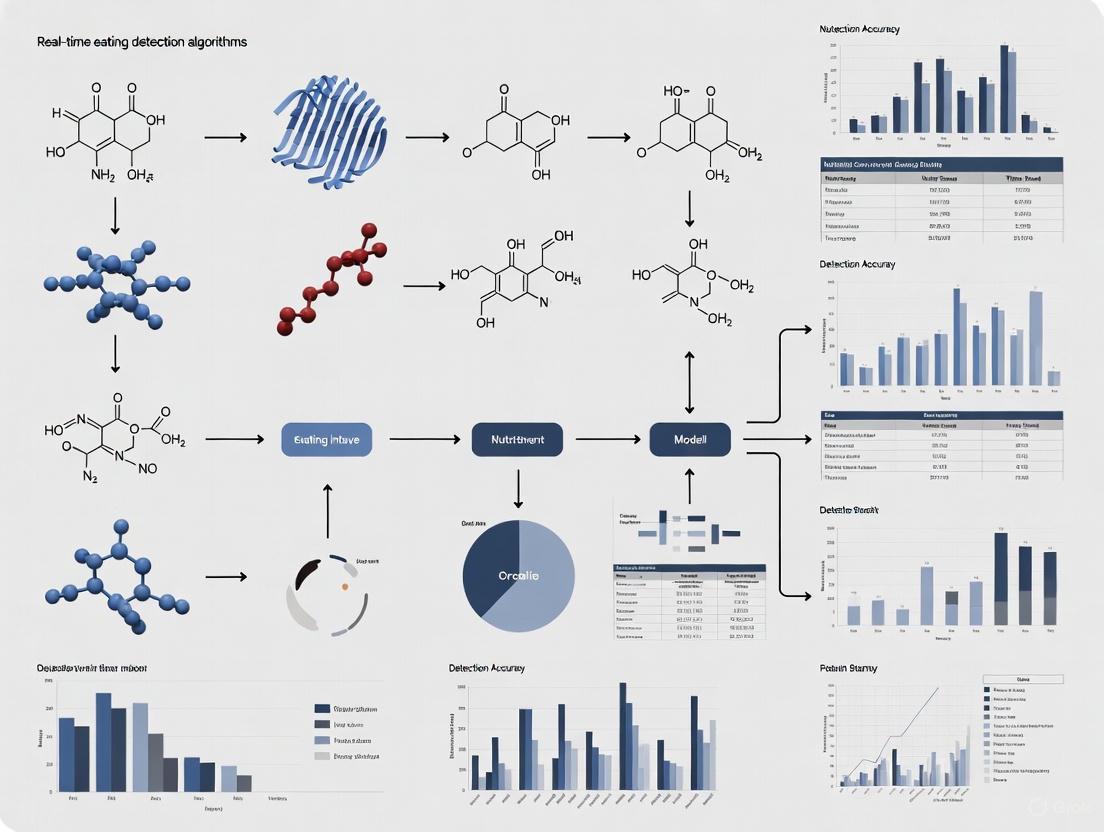

The following diagram illustrates the workflow for creating a gold-standard dataset for eating behavior analysis.

The Scientist's Toolkit: Research Reagent Solutions

The following table catalogs essential materials and sensors used in the featured experiments for quantifying eating behavior.

Table 2: Essential Research Materials and Sensors for Eating Behavior Analysis

| Item Name | Function/Application | Specification Notes |

|---|---|---|

| Piezoelectric Strain Sensor (e.g., LDT0-028K) | Detects jaw movements during chewing by measuring strain below the ear. | Highly sensitive to mechanical deformation; provides a clear signal for masticatory cycles [4]. |

| Contact Microphone / Acoustic Sensor | Captures swallowing and chewing sounds via skin contact near the larynx. | Effective for distinguishing swallowing acoustics from speech and noise; avoids ambient sound [3]. |

| Inertial Measurement Unit (IMU) | Tracks arm and wrist kinematics to detect hand-to-mouth gestures. | Typically includes accelerometer and gyroscope; can be integrated into a wrist-worn device [2]. |

| Network Camera (e.g., Axis M3004-V) | Provides high-quality video for manual annotation and computer vision. | Used at 30 fps for capturing detailed eating microstructure; serves as a primary validation source [1]. |

| Hard Viscoelastic Test Food | Standardized food for comminution tests to assess masticatory performance. | Cylindrical shape (e.g., 20mm diameter x 10mm height); allows for objective particle analysis post-chewing [5]. |

| 3D Jaw Tracking System | Precisely records jaw movements in three dimensions during chewing. | Uses a magnet attached to the lower incisors and a sensor array on a head-frame to track kinematics [5]. |

| Annotation Software | Software for manually labeling events in video and sensor signal data. | Critical for creating ground truth; requires multi-modal synchronization and export capabilities [4]. |

The accurate definition and measurement of chewing, biting, swallowing, and hand-to-mouth gestures are critical for advancing the field of real-time eating event detection. The protocols and metrics outlined herein provide a standardized framework for researchers to generate high-quality, multi-modal datasets. By leveraging a combination of sensor technologies and rigorous annotation practices, the development of robust algorithms that can operate in both controlled and free-living environments is significantly accelerated. This groundwork is essential for future research aimed at personalized nutritional interventions, clinical monitoring, and a deeper understanding of ingestive behavior.

The Critical Limitations of Self-Reported Dietary Assessment in Clinical Research

Accurate dietary assessment is a cornerstone of clinical nutrition research, forming the basis for investigating links between diet and health and for developing evidence-based public health guidance [6]. For decades, the field has predominantly relied on self-reported dietary instruments, including 24-hour recalls, food frequency questionnaires (FFQs), and food diaries [7] [2]. However, a substantial body of evidence now demonstrates that these methods are prone to significant error, thereby limiting the validity and translational potential of research findings [6] [7] [8]. This document outlines the critical limitations of self-reported dietary assessment, contextualized within a broader thesis on the development of real-time eating event detection algorithms. It further provides experimental protocols for key validation studies and introduces a toolkit of emerging technological solutions designed to mitigate these long-standing challenges.

Critical Limitations of Self-Reported Dietary Data

Self-reported dietary data are compromised by several systematic and random errors that introduce substantial bias into nutritional research.

Systematic Misreporting and Energy Underreporting

The best-documented issue is the systematic underreporting of energy intake, which has been confirmed repeatedly by comparison against objective biomarkers.

- Prevalence and Magnitude: Studies comparing self-reported energy intake to energy expenditure measured by the doubly labeled water (DLW) technique—considered the gold standard—consistently find significant underreporting. A comprehensive analysis using the IAEA DLW database revealed that approximately 33% of adult dietary reports in major national surveys were misreported, primarily through underreporting [9].

- Dependence on BMI: The degree of underreporting is not random; it increases with body mass index (BMI). Individuals with higher BMI, or those concerned about their body weight, are more likely to underreport intake [7]. This variable bias systematically skews diet-disease relationships in studies of obesity.

- Selective Misreporting: Macronutrients and food groups are not underreported equally. Protein intake is generally less underreported compared to fats and carbohydrates, and foods with a "negative health image" (e.g., sweets, fast food) are more likely to be omitted or underreported than "healthy" foods like fruits and vegetables [7] [10].

Table 1: Evidence of Systematic Misreporting in Self-Reported Dietary Intake

| Study Type | Comparison Method | Key Finding | Implication |

|---|---|---|---|

| Biomarker Validation [7] | Doubly Labeled Water (DLW) | Systematic underreporting of Energy Intake (EIn), worsening with higher BMI. | Self-reported EIn is invalid for energy balance studies. |

| Controlled Feeding [10] | Provided Menu Items | Energy-adjusted fat underreported in high-fat diet; carbohydrates underreported in high-carb diet. | Macronutrient-specific misreporting biases intervention outcomes. |

| Biomarker Comparison [8] | Urinary Nutritional Biomarkers | Ranking of individuals by intake (e.g., into quintiles) was highly unreliable when using self-report data. | Attenuates diet-disease relationships; obscures true effects. |

The Problem of Food Composition Variability and Data Processing

Even if self-reported food intake were perfectly accurate, translating this information into nutrient intake introduces another layer of significant error.

- Inherent Variability: The chemical composition of food is highly variable due to factors like cultivar, soil, growing conditions, storage, processing, and cooking methods [6] [8]. Relying on single point estimates (mean values) from food composition databases ignores this variability.

- Impact on Intake Estimation: Research on bioactive compounds (e.g., flavan-3-ols, nitrate) shows that when this variability is accounted for, the same reported diet could place an individual in either the bottom or top quintile of intake [8]. This makes it nearly impossible to reliably rank participants by intake, a common practice in nutritional epidemiology.

Limitations in Capturing Eating Behavior

Self-report methods are poorly suited to capturing the complex, dynamic behaviors associated with eating.

- Lack of Granularity: They fail to objectively measure key behavioral metrics such as eating rate, chewing frequency, meal duration, and eating environment [2]. The underlying behaviors are largely subconscious, which makes them hard to self-report, yet they are critically important for understanding conditions like obesity and diabetes.

- Recall and Respondent Burden: Reliance on memory leads to recall bias, while the burden of detailed logging leads to poor participant compliance and high dropout rates in long-term studies, compromising data quality [6] [11].

Experimental Protocols for Validating Dietary Assessment Methods

To advance the field, rigorous validation of new dietary assessment methods against objective criteria is essential. Below are detailed protocols for two key types of validation studies.

Protocol 1: Validation Against Doubly Labeled Water (DLW)

This protocol serves as the gold standard for validating total energy intake reporting.

1. Objective: To determine the accuracy and extent of misreporting in self-reported energy intake by comparison with total energy expenditure (TEE) measured by the DLW method.

2. Materials and Reagents:

- Doubly labeled water (²H₂¹⁸O)

- Vacutainers for urine or saliva collection

- Isotope ratio mass spectrometer (IRMS)

- Self-report tools (e.g., 24-hr recall forms, food diary app)

- Algorithm for calculating CO₂ production and TEE from elimination kinetics [7]

3. Experimental Workflow:

4. Procedure:

   1. Participant Preparation: Recruit participants meeting study criteria (e.g., stable weight, non-pregnant). Obtain informed consent.

   2. Baseline Sample Collection: Collect a baseline urine or saliva sample from each participant prior to dosing.

   3. DLW Administration: Administer an oral dose of DLW according to participant body weight.

   4. Post-Dose Sample Collection: Collect subsequent urine/saliva samples at predetermined intervals over 8-14 days to track the elimination kinetics of the isotopes.

   5. Isotope Analysis: Analyze all samples using IRMS to determine the differential elimination rates of deuterium and oxygen-18.

   6. Energy Expenditure Calculation: Calculate carbon dioxide production rate and subsequently TEE using established equations [7].

   7. Dietary Data Collection: During the same measurement period, collect self-reported dietary data using the method under investigation (e.g., multiple 24-hour recalls).

   8. Data Analysis: Compare self-reported energy intake to measured TEE. For weight-stable individuals, the two values should be approximately equal. Significant deviation indicates misreporting.
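The final data analysis step amounts to comparing reported intake with measured expenditure for each participant. The sketch below assumes both values are in kcal/day and that participants are weight-stable. The ±20% tolerance band is a hypothetical placeholder, not a cutoff taken from the cited literature, and should be replaced by the study's own criterion.

```python
def reporting_ratio(reported_ei_kcal: float, measured_tee_kcal: float) -> float:
    """Ratio of self-reported energy intake to DLW-measured expenditure."""
    if measured_tee_kcal <= 0:
        raise ValueError("measured TEE must be positive")
    return reported_ei_kcal / measured_tee_kcal


def classify_report(ratio: float, tolerance: float = 0.2) -> str:
    """Label a report relative to a tolerance band around EI/TEE = 1.

    `tolerance` is an illustrative assumption, not a published cutoff.
    """
    if ratio < 1.0 - tolerance:
        return "under-reported"
    if ratio > 1.0 + tolerance:
        return "over-reported"
    return "plausible"
```

For example, a participant reporting 1800 kcal/day against a measured TEE of 2400 kcal/day has a ratio of 0.75 and would be flagged as under-reporting under this assumed band.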

Protocol 2: Laboratory Validation of a Wearable Sensor for Eating Event Detection

This protocol validates the technical performance of a wearable eating detection sensor against video observation in a controlled laboratory setting.

1. Objective: To evaluate the accuracy of a wearable inertial sensor in detecting individual eating gestures (e.g., bites, chews) under controlled conditions.

2. Materials and Reagents:

- Commercial smartwatch or custom inertial measurement unit (IMU) sensor

- Data logging smartphone or device

- Video recording system for ground truth annotation

- Machine learning software platform (e.g., Python with scikit-learn/TensorFlow)

- Standardized test meals

3. Experimental Workflow:

4. Procedure:

   1. Sensor Configuration: Configure the inertial sensor (e.g., a smartwatch with accelerometer/gyroscope) to stream or record data at a sufficient frequency (e.g., ≥15 Hz [12]).

   2. Participant Instrumentation: Fit the sensor securely on the participant's dominant wrist.

   3. Laboratory Session: Participants are asked to perform a series of activities while being video-recorded. This includes:

      - Eating tasks: Consuming a standardized meal with various utensils.

      - Non-eating tasks: Activities that involve similar hand-to-head gestures (e.g., drinking water, talking on the phone, face touching).

   4. Data Synchronization: Ensure the sensor data and video recording are synchronized using a common time signal or a synchronization event.

   5. Ground Truth Annotation: Manually review the video recording to label the precise start and end times of each eating gesture (bite, chew) and non-eating activity.

   6. Data Processing and Feature Extraction: Segment the synchronized sensor data into windows (e.g., 6-second windows with 50% overlap [13]). Extract relevant features (e.g., mean, variance, skewness, kurtosis, temporal features) from each axis of the inertial data.

   7. Model Training and Validation: Use the extracted features and video-derived labels to train a machine learning classifier (e.g., a recurrent neural network with LSTM layers [12]) to distinguish eating from non-eating gestures. Perform validation using a hold-out test set or cross-validation.

   8. Performance Analysis: Calculate standard performance metrics including accuracy, precision, recall, F1-score, and create a confusion matrix to evaluate the classifier's performance [12] [13].
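The windowing and feature extraction step can be sketched as follows for a single sensor axis. The 6-second window and 50% overlap follow the example values in the procedure; the function names are illustrative, and the use of population variance and excess kurtosis is an assumption, since the cited studies' exact feature definitions are not given here.

```python
import math
from typing import Dict, List, Sequence


def sliding_windows(samples: Sequence[float], fs_hz: float,
                    window_s: float = 6.0,
                    overlap: float = 0.5) -> List[Sequence[float]]:
    """Split one axis of sensor data into fixed-length overlapping windows."""
    size = int(round(window_s * fs_hz))
    step = max(1, int(round(size * (1.0 - overlap))))
    # Only full windows are kept; a trailing partial window is dropped.
    return [samples[i:i + size]
            for i in range(0, len(samples) - size + 1, step)]


def window_features(window: Sequence[float]) -> Dict[str, float]:
    """Mean, population variance, skewness, and excess kurtosis of a window."""
    n = len(window)
    mean = sum(window) / n
    var = sum((x - mean) ** 2 for x in window) / n
    if var == 0:
        skew = kurt = 0.0  # constant signal: higher moments set to 0 by convention
    else:
        std = math.sqrt(var)
        skew = sum(((x - mean) / std) ** 3 for x in window) / n
        kurt = sum(((x - mean) / std) ** 4 for x in window) / n - 3.0
    return {"mean": mean, "variance": var, "skewness": skew, "kurtosis": kurt}
```

At 15 Hz, each 6-second window holds 90 samples and successive windows start 45 samples apart. Applying `window_features` to every axis of every window yields the feature vectors passed to the classifier.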

The Scientist's Toolkit: Research Reagent Solutions

The transition from subjective self-report to objective digital sensing requires a new toolkit for researchers. The following table details key components.

Table 2: Essential Materials and Tools for Modern Dietary Assessment Research

| Item Name | Function/Application | Key Characteristics |

|---|---|---|

| Inertial Measurement Unit (IMU) [2] [12] [13] | Captures hand-to-mouth gestures and wrist movements as a proxy for bite detection. | Typically contains accelerometer and gyroscope; can be embedded in a commercial smartwatch; sampling rate ≥15Hz. |

| Wearable Acoustic Sensor [11] [2] | Detects characteristic sounds of chewing and swallowing. | Placed on the neck or jaw; requires filtering of non-food noises for privacy and accuracy. |

| Doubly Labeled Water (DLW) [7] [9] | Gold standard method for validating total energy intake in free-living conditions. | Non-invasive; uses stable isotopes (²H, ¹⁸O) to measure CO₂ production and calculate energy expenditure. |

| Nutritional Biomarkers [8] | Objective measures of intake for specific nutrients/foods (e.g., urinary nitrogen for protein, (‑)-epicatechin for flavan-3-ol intake). | Validated against controlled intake; bypasses errors from self-report and food composition databases. |

| Ecological Momentary Assessment (EMA) [13] | Captures contextual data in real-time, triggered by passive detection. | Short questionnaires delivered via smartphone; minimizes recall bias for factors like mood, company, and location. |

| Automatic Ingestion Monitor (AIM-2) [11] | A multi-sensor device for comprehensive dietary monitoring. | Integrates camera, inertial, and other sensors; designed to reduce the burden of dietary logging. |

The critical limitations of self-reported dietary assessment—including systematic misreporting, food composition variability, and an inability to capture nuanced eating behaviors—pose a fundamental challenge to the credibility and translational potential of nutrition research [6] [7] [8]. While these traditional methods may continue to have a role in large-scale epidemiology, their shortcomings necessitate a paradigm shift towards more objective, sensor-based approaches. The experimental protocols and research tools detailed herein provide a framework for validating and deploying the next generation of dietary monitoring technologies. The integration of real-time eating event detection algorithms with objective biomarkers and contextual data capture represents the most promising path forward for obtaining reliable, granular, and actionable insights into the complex relationships between diet and health.

The first step in any automated dietary monitoring system is the automatic detection of eating episodes, a challenge that has garnered significant attention in ubiquitous computing and health informatics [14]. Research has demonstrated that dietary habits are critically important to overall human health, yet traditional assessment methods like food frequency questionnaires and 24-hour recalls suffer from well-documented limitations including recall bias and under-reporting [15] [16]. The emergence of wearable sensing technologies has created new opportunities for objective, continuous monitoring of eating behaviors in free-living conditions, forming a crucial component for applications ranging from obesity and diabetes management to eating disorder interventions [17] [12].

This review synthesizes current research on sensor modalities for eating detection, presenting a comprehensive taxonomy spanning acoustic, inertial, visual, and multimodal approaches. Within the broader context of real-time eating event detection algorithms, we examine the technical implementation, performance characteristics, and practical considerations of each sensing paradigm. For researchers and drug development professionals working in digital phenotyping or behavioral monitoring, understanding these modalities' comparative advantages and limitations is essential for selecting appropriate technologies for clinical trials and therapeutic interventions.

A Taxonomy of Sensor Modalities for Eating Detection

Eating detection systems can be categorized according to their underlying sensing modality, each with distinct mechanisms for capturing eating-related signals. The taxonomy below classifies these approaches based on the primary physical phenomena they measure and their corresponding implementation approaches.

Figure 1: Taxonomy of sensor modalities for eating detection, categorized by sensing principle and specific detection approaches.

Acoustic Sensing Modalities

Acoustic sensing approaches detect eating episodes by capturing sounds produced during chewing and swallowing activities. These systems typically utilize miniature microphones positioned in various locations to capture audio signatures of mastication and deglutition.

The iHearken system exemplifies this approach with a headphone-like wearable that captures chewing sounds for food intake recognition. Using a Bidirectional Long Short-Term Memory (Bi-LSTM) softmax network to analyze chewing sound signals, the system reported 97.4% accuracy, 96.8% precision, and 98.0% recall in classifying solid and liquid foods [18]. It operates through a four-stage pipeline: data acquisition, event detection using a pre-trained model, bottleneck feature extraction, and classification based on the Bi-LSTM softmax model.

Other acoustic implementations include neck-worn systems that detect swallowing sounds. One such system achieved a recall of 79.9% and precision of 67.7% for swallowing detection [17]. However, acoustic methods face challenges in noisy environments and may raise privacy concerns among users, potentially limiting their adoption for continuous monitoring.

Inertial Sensing Modalities

Inertial sensing approaches detect eating episodes through motion signatures associated with eating activities, primarily using accelerometers and gyroscopes embedded in wearable devices. These can be further categorized into three subtypes: wrist-worn sensors detecting hand-to-mouth gestures, head-worn sensors capturing jaw movement, and combination approaches.

Wrist-worn inertial sensors have gained popularity due to the widespread adoption of smartwatches. One smartwatch-based system using a 3-axis accelerometer demonstrated the practicality of this approach by detecting eating moments through food intake gesture spotting and temporal clustering of these gestures. When evaluated in free-living conditions, this system achieved F-scores of 76.1% (66.7% precision, 88.8% recall) in a one-day study with 7 participants and 71.3% (65.2% precision, 78.6% recall) in a longer 31-day study with one participant [16].
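The idea of grouping spotted intake gestures into eating moments can be sketched with a simple gap-based rule. This is a simplified stand-in, not the clustering method of the cited system; `max_gap_s` and `min_gestures` are hypothetical parameters chosen only for illustration.

```python
from typing import List, Sequence, Tuple


def cluster_gestures(timestamps_s: Sequence[float],
                     max_gap_s: float = 60.0,
                     min_gestures: int = 3) -> List[Tuple[float, float]]:
    """Group gesture timestamps into (start, end) eating episodes.

    Consecutive gestures separated by more than max_gap_s start a new group;
    groups with fewer than min_gestures gestures are discarded as spurious.
    """
    episodes: List[Tuple[float, float]] = []
    current: List[float] = []
    for t in sorted(timestamps_s):
        if current and t - current[-1] > max_gap_s:
            if len(current) >= min_gestures:
                episodes.append((current[0], current[-1]))
            current = []
        current.append(t)
    # Flush the final group once all timestamps are consumed.
    if len(current) >= min_gestures:
        episodes.append((current[0], current[-1]))
    return episodes
```

Requiring a minimum number of gestures per cluster is one way to suppress isolated false positives, such as a single face touch, at the cost of missing very short snacks.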

Head-worn inertial sensors typically offer higher accuracy for detecting chewing activities by capturing jaw movements more directly. The EarBit system, an experimental head-mounted wearable, utilized an inertial measurement unit (IMU) behind the ear to measure jaw motion and achieved 93% accuracy with an F1-score of 80.1% in detecting chewing instances in unconstrained environments [17]. Similarly, the OCOsense smart glasses, which detect chewing through jaw movement, demonstrated impressive performance with F1-scores of 0.89 in week two of validation (detecting 476 of 498 eating events) and 0.91 in week three (detecting 528 of 548 real-time events) [19].

Visual Sensing Modalities

Visual approaches to eating detection utilize cameras to capture feeding gestures, food presence, or object-in-hand interactions. These systems can provide rich contextual information but often raise privacy considerations that must be carefully addressed.

The When2Trigger system represents an advanced vision-based approach that uses both RGB and thermal imaging to detect eating episodes through hand-object interactions. This system employs a lightweight YOLOX object detection backbone with a custom loss function to simultaneously detect hands and objects-in-hand, then clusters these detections to form gestures and eating episodes. By incorporating thermal sensing, the system can distinguish smoking gestures from eating gestures, reducing false positives. In evaluation across 36 participants, this method achieved an F1-score of 89.0% using an average of 10 gestures and could detect eating episodes as short as 1.3 minutes [20].

Another visual approach utilized the Automatic Ingestion Monitor v2 (AIM-2), a wearable egocentric camera that captures images every 15 seconds. Through deep learning-based recognition of solid foods and beverages in these images, this system provided visual confirmation of eating episodes [14]. While visual methods can provide high confidence through direct observation, they typically face challenges related to power consumption and computational requirements for real-time operation.

Multimodal Fusion Approaches

Multimodal fusion approaches integrate complementary sensing modalities to overcome limitations of individual sensors and improve overall detection accuracy. These systems leverage the strengths of multiple sensing approaches to achieve more robust eating detection across varying conditions and individual differences.

One innovative fusion technique transformed multisensor data into 2D covariance representations that capture the statistical dependencies between different signals. This approach embedded joint variability information from multiple modalities into a single 2D image representation, which was then classified using deep learning models. When evaluated using leave-one-subject-out cross-validation, this method achieved a precision of 0.803, demonstrating the value of leveraging inter-modality correlation patterns for eating activity recognition [21].
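To make the covariance idea concrete, the sketch below computes a channel-by-channel sample covariance matrix for one window of multi-sensor data; such a matrix can be treated as a small 2D image. It assumes all channels are already resampled to the same length, and it does not reproduce the exact representation or preprocessing used in the cited work.

```python
from typing import List, Sequence


def covariance_matrix(channels: Sequence[Sequence[float]]) -> List[List[float]]:
    """Sample covariance between every pair of sensor channels in a window.

    `channels` holds one equal-length sequence per sensor signal; the result
    is a symmetric C x C matrix where entry [i][j] is cov(channel_i, channel_j).
    """
    n = len(channels[0])
    if n < 2 or any(len(c) != n for c in channels):
        raise ValueError("channels must share a common length of at least 2")
    means = [sum(c) / n for c in channels]
    centered = [[x - m for x in c] for c, m in zip(channels, means)]
    return [[sum(a * b for a, b in zip(ci, cj)) / (n - 1) for cj in centered]
            for ci in centered]
```

Off-diagonal entries capture how strongly two signals vary together within the window, which is the inter-modality information the fusion approach exploits.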

Another integrated approach combined image-based and sensor-based detection from the AIM-2 wearable device. By implementing hierarchical classification to combine confidence scores from both image and accelerometer classifiers, this fusion method achieved 94.59% sensitivity, 70.47% precision, and an 80.77% F1-score in free-living environments, significantly outperforming either method on its own [14].
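A very simple form of confidence-score fusion is sketched below. It is a weighted-average late fusion with a decision threshold, shown only to illustrate the general idea; it is not the hierarchical classifier used in the cited study, and the weights and threshold are hypothetical.

```python
from typing import Tuple


def fuse_confidences(image_conf: float, sensor_conf: float,
                     w_image: float = 0.5, w_sensor: float = 0.5,
                     threshold: float = 0.5) -> Tuple[float, bool]:
    """Combine two per-window eating confidences into one decision.

    Returns the fused confidence and whether it reaches the threshold.
    Weights and threshold are illustrative assumptions to be tuned on data.
    """
    for c in (image_conf, sensor_conf):
        if not 0.0 <= c <= 1.0:
            raise ValueError("confidences must lie in [0, 1]")
    fused = (w_image * image_conf + w_sensor * sensor_conf) / (w_image + w_sensor)
    return fused, fused >= threshold
```

In practice the second-stage combiner would be trained on held-out data rather than fixed by hand, so that the fusion can learn when to trust each modality.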

For drinking activity detection specifically, a multi-sensor fusion approach that combined wrist and container movement signals with acoustic swallowing signals demonstrated substantial improvements over single-modality methods. This multimodal system achieved an F1-score of 96.5% using a support vector machine classifier in event-based evaluation, highlighting the power of combining complementary sensing modalities [22].

Comparative Performance Analysis

Table 1: Performance comparison of different eating detection sensor modalities

| Sensing Modality | Representative System | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) | Key Advantages | Key Limitations |
|---|---|---|---|---|---|---|---|
| Acoustic | iHearken [18] | 97.42 | 96.81 | 98.00 | 97.51 | High accuracy for chewing detection | Sensitive to ambient noise |
| Wrist Inertial | Smartwatch System [16] | - | 66.70 | 88.80 | 76.10 | Practical, uses commercial devices | Lower precision |
| Head Inertial | OCOsense [19] | - | - | - | 89.00-91.00 | Direct jaw movement capture | Requires head-worn device |
| Ear-worn Inertial | EarBit [17] | 93.00 | - | - | 80.10-90.90 | Discreet form factor | Experimental device |
| Visual | When2Trigger [20] | - | - | - | 89.00 | Direct visual confirmation | Privacy concerns |
| Visual-Inertial Fusion | AIM-2 Fusion [14] | - | 70.47 | 94.59 | 80.77 | Reduced false positives | Complex implementation |
| Multimodal Covariance | Deep Fusion [21] | - | 80.30 | - | - | Efficient data representation | Complex signal processing |

Experimental Protocols and Methodologies

Protocol for Free-Living Validation Studies

Free-living validation studies represent the gold standard for evaluating eating detection systems in real-world conditions. The following protocol outlines a comprehensive approach for validating sensor-based eating detection systems:

Participant Recruitment: Recruit a diverse participant pool representing different demographics, including age, gender, and body mass index variations. For example, the OCOsense study recruited 23 volunteers (14 women, 7 men, and 2 non-binary individuals) to ensure diverse representation [19].

Device Configuration: Configure sensing devices for continuous data collection during waking hours. The AIM-2 study instructed participants to wear the device for two full days (one pseudo-free-living and one free-living day) [14].

Ground Truth Annotation: Implement robust ground truth collection methods. Options include:

- Foot pedal markers for lab-based studies where participants press a pedal when taking a bite [14]

- Ecological Momentary Assessment (EMA) triggered by detection systems for contextual information [15]

- Manual video annotation where researchers review recorded footage to identify eating episodes [17]

- Food diaries completed by participants throughout the study period

Data Collection Period: Conduct studies over sufficient duration to capture variability in eating patterns. The smartwatch-based eating detection system was deployed among 28 college students over 3 weeks, providing substantial data for validation [15].

Performance Metrics Calculation: Evaluate system performance using standardized metrics including precision, recall, F1-score, and timing accuracy for episode detection.
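The standard metrics named in this protocol can be computed from event counts as sketched below. The function names are illustrative. The last line checks the F1-score implied by the AIM-2 precision and recall figures quoted earlier in this section.

```python
def detection_metrics(tp, fp, fn):
    """Precision, recall, and F1 from true-positive, false-positive, false-negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, f1_score(precision, recall)

def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Hypothetical counts: 8 detected meals were real, 2 were spurious, 2 were missed.
p, r, f1 = detection_metrics(tp=8, fp=2, fn=2)

# Precision 70.47% and recall 94.59% give an F1 of about 0.8077.
aim2_f1 = f1_score(0.7047, 0.9459)
```

Because F1 is a harmonic mean, a system with very high recall but modest precision, as in the AIM-2 figures, still lands well below its recall.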

Protocol for Semi-Controlled Laboratory Studies

Semi-controlled laboratory studies provide a balanced approach for initial algorithm development and validation:

Laboratory Setup: Create a naturalistic environment that simulates real-world settings while maintaining some experimental control. The EarBit system used a "semi-controlled home environment" that acted as a living lab space to reduce the gap between controlled laboratory results and real-world performance [17].

Activity Protocol: Design a structured protocol that includes both target activities (eating) and confounding activities (similar non-eating gestures). One comprehensive approach included eight drinking events varying by posture, hand used, and sip size, plus seventeen non-drinking activities to ensure broad variability [22].

Sensor Synchronization: Implement precise time synchronization between all sensors and ground truth annotation systems.

Data Segmentation: Annotate data at appropriate temporal resolutions, typically using sliding windows ranging from 1-second to 30-second durations depending on the sensing modality [21].
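A minimal sketch of sliding-window segmentation follows. The 50% overlap default and the function name are assumptions; the protocol above only specifies window durations between 1 and 30 seconds.

```python
def sliding_windows(samples, rate_hz, window_s, overlap=0.5):
    """Split a sample list into fixed-length, possibly overlapping windows.

    Trailing samples that do not fill a whole window are dropped.
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    size = int(round(window_s * rate_hz))
    if size <= 0:
        raise ValueError("window must contain at least one sample")
    step = max(1, int(round(size * (1.0 - overlap))))
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, step)]

# 10 s of hypothetical data at 10 Hz, cut into 2 s windows with 50% overlap.
signal = list(range(100))
windows = sliding_windows(signal, rate_hz=10, window_s=2.0)
```

Shorter windows suit fast events such as individual chews, while longer windows capture episode-level context at the cost of temporal resolution.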

Protocol for Real-Time Eating Detection with EMAs

Systems that trigger Ecological Momentary Assessments require specialized protocols:

Detection Threshold Tuning: Optimize detection thresholds to balance sensitivity and specificity. The smartwatch-based system triggered EMAs upon detecting 20 eating gestures in a 15-minute span [15].

EMA Design: Develop concise, contextually relevant questions that capture essential eating context without burdening users. One implementation designed EMA questions after conducting a survey study with 162 students from the same campus to ensure relevance [15].

Timing Optimization: Balance detection delay with accuracy to ensure EMAs are delivered while eating episodes are still in progress or immediately afterward.

Compliance Monitoring: Track participant responses to EMAs to assess adherence and identify potential response biases.
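The detection threshold described above (20 eating gestures within a 15-minute span) can be sketched as a rolling counter. The class name and the choice to clear the buffer after firing are assumptions made for illustration; the cited system's re-trigger policy is not described here.

```python
from collections import deque

class EmaTrigger:
    """Fire an EMA prompt once enough gestures fall inside a rolling time span."""

    def __init__(self, min_gestures=20, span_s=15 * 60):
        self.min_gestures = min_gestures
        self.span_s = span_s
        self._recent = deque()

    def add_gesture(self, t):
        """Register a gesture at time t (seconds); return True if an EMA should fire."""
        self._recent.append(t)
        # Drop gestures that are older than the rolling span.
        while self._recent and t - self._recent[0] > self.span_s:
            self._recent.popleft()
        if len(self._recent) >= self.min_gestures:
            self._recent.clear()  # assumption: avoid re-triggering on the same gestures
            return True
        return False

# One gesture every 30 s: the 20th gesture arrives within 15 minutes.
dense = EmaTrigger()
fired = [dense.add_gesture(30 * i) for i in range(25)]

# One gesture every 60 s: never more than 16 gestures inside any 15-minute span.
sparse = EmaTrigger()
sparse_fired = [sparse.add_gesture(60 * i) for i in range(100)]
```

Lowering `min_gestures` shortens detection delay but raises the chance of prompting during non-eating activity, which is the sensitivity and specificity trade-off the protocol asks researchers to tune.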

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential research reagents and platforms for eating detection research

| Research Reagent | Type | Function | Example Implementation |
|---|---|---|---|
| OCOsense Smart Glasses | Commercial Platform | Detects chewing through jaw movement | F1-score of 0.89-0.91 in free-living [19] |
| Automatic Ingestion Monitor v2 (AIM-2) | Research Device | Combines egocentric camera and accelerometer | 94.59% sensitivity in free-living [14] |
| Empatica E4 Wristband | Commercial Platform | Provides accelerometer, PPG, EDA, temperature | Used in multimodal fusion research [21] |
| iHearken | Research Device | Headphone-like wearable for chewing sounds | 97.42% accuracy in food intake recognition [18] |
| Custom When2Trigger Device | Research Device | Combines RGB camera and thermal sensor | 89.0% F1-score with 10 gestures [20] |
| YOLOX-nano | Algorithm | Lightweight object detection for edge devices | 71% mAP in hand-object detection [20] |
| Bi-LSTM Softmax Network | Algorithm | Classifies chewing sounds from acoustic data | 97.51% F1-score for food recognition [18] |
| Random Forest Classifier | Algorithm | Detects eating from wrist-worn inertial sensors | Used in smartwatch-based eating detection [15] |
| DBSCAN Clustering | Algorithm | Clusters frames/gestures into eating episodes | eps=21s, min_points=3 for gesture clustering [20] |
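To make the DBSCAN entry concrete, here is a small dependency-free sketch of DBSCAN over one-dimensional gesture timestamps, using the eps=21 s and min_points=3 values listed in the table. The function name and the example timestamps are hypothetical, and a production pipeline would more likely rely on an established library implementation.

```python
def dbscan_1d(times, eps=21.0, min_points=3):
    """Label each timestamp with a cluster id (0, 1, ...) or -1 for noise."""
    n = len(times)
    # Neighborhoods include the point itself, as in the usual DBSCAN definition.
    neighbors = [[j for j in range(n) if abs(times[i] - times[j]) <= eps]
                 for i in range(n)]
    labels = [None] * n  # None means "not yet visited"
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_points:
            labels[i] = -1  # provisional noise; may later become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbors[j]) >= min_points:
                queue.extend(neighbors[j])  # j is a core point: keep expanding
    return labels

# Hypothetical gesture times in seconds: two bursts and one stray gesture.
gesture_times = [0, 10, 25, 40, 300, 600, 612, 620]
labels = dbscan_1d(gesture_times)
```

The stray gesture at 300 s is labeled as noise, while the two bursts form separate candidate eating episodes.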

Implementation Workflow for Eating Detection Systems

Figure 2: Generalized implementation workflow for eating detection systems, showing the pipeline from data acquisition to validation.

The field of automated eating detection has evolved substantially, with current systems demonstrating impressive performance across acoustic, inertial, visual, and multimodal approaches. For researchers and drug development professionals, selection of an appropriate sensing modality requires careful consideration of the specific application requirements, including accuracy needs, user burden, privacy constraints, and implementation complexity.

Wrist-worn inertial sensors offer a practical approach for long-term monitoring through commercially available devices, while head-worn sensors typically provide higher accuracy at the cost of specialized hardware. Acoustic methods can deliver exceptional performance for chewing detection but face challenges in noisy environments. Visual approaches provide direct confirmation but raise privacy considerations. Multimodal fusion approaches represent the most promising direction, leveraging complementary sensing modalities to achieve robust performance across diverse real-world conditions.

Future research directions should focus on improving real-time performance, enhancing generalization across diverse populations, reducing power consumption for extended monitoring, and developing more sophisticated fusion techniques that optimally combine complementary modalities. As these technologies mature, they hold significant potential to transform dietary monitoring in both clinical research and therapeutic applications.

Key Applications in Chronic Disease Management and Drug Development

The ability to objectively and automatically detect eating events is becoming a transformative capability in both chronic disease management and pharmaceutical development. Poor dietary habits are a crucial determinant of health outcomes, significantly influencing the onset and progression of chronic diseases such as type 2 diabetes, heart disease, and obesity [11]. Traditional dietary monitoring methods like food diaries and 24-hour recalls are prone to inaccuracies and impose substantial burdens on participants [11]. The emergence of sophisticated wearable sensing technologies now enables passive, real-time monitoring of dietary behaviors with minimal user intervention, offering new paradigms for clinical care and therapeutic development [23]. This article explores the key applications of these technologies through structured application notes and experimental protocols.

Technology Landscape: Sensor-Based Eating Detection

Sensor Modalities and Performance Metrics

Wearable sensors for eating detection leverage various physiological and motion signals to identify eating episodes and characterize eating behavior. The table below summarizes the primary sensor modalities and their documented performance characteristics.

Table 1: Wearable Sensor Modalities for Eating Event Detection

| Sensor Type | Detection Mechanism | Body Placement | Reported Performance | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Motion Sensors (Accelerometer/Gyroscope) | Hand-to-mouth gestures, head movement [14] | Wrist (Smartwatch) [24], Head [14] | Meal-level AUC: 0.951; F1-score: 87.7% [24] | High user comfort, widespread device availability | Prone to false positives from non-eating gestures |
| Acoustic Sensors | Chewing and swallowing sounds [11] | Neck, Ear | F1-score: 87.9% in free-living [24] | Direct capture of eating-related sounds | Sensitive to ambient noise, privacy concerns |
| Optical Tracking Sensors (OCO) | Facial muscle activations (cheeks, temple) [25] | Smart Glasses | F1-score: 0.91 (Lab); Precision: 0.95 (Real-life) [25] | Granular chewing detection, non-invasive | Requires wearing glasses, limited battery life |
| Strain Sensors | Jaw movement, throat movement [14] | Jaw, Temple, Neck | High accuracy for solid food detection [14] | Accurate for chewing detection | Requires direct skin contact, can be uncomfortable |
| Camera (Egocentric) | Direct food visualization [14] | Glasses, Lapel | Integrated system F1-score: 80.77% [14] | Provides contextual food data, enables nutrient estimation | Significant privacy concerns, high data processing needs |

Integrated Multi-Sensor Systems

Research demonstrates that combining multiple sensor modalities significantly enhances detection accuracy by compensating for the limitations of individual sensors. The Automatic Ingestion Monitor v2 (AIM-2) represents this integrated approach, combining a camera for image capture and an accelerometer to detect head movement as an eating proxy [14]. A hierarchical classification system that integrated confidence scores from both image and accelerometer classifiers achieved a sensitivity of 94.59%, precision of 70.47%, and an F1-score of 80.77% in free-living environments—significantly outperforming either method used in isolation [14]. This multi-modal approach effectively reduces false positives common in single-sensor systems.

Application Note 001: Diabetes Management

Clinical Rationale and Impact

Diabetes represents one of the most significant chronic disease applications for eating detection technology, with the global diabetes treatment market expected to grow at the highest CAGR among chronic disease segments [26]. Current diabetes management, particularly using basal and bolus insulin regimens, requires a high level of patient engagement and accurate meal timing data. Studies indicate that one-third of patients with type 1 or type 2 diabetes report insulin omission or nonadherence at least once in the past month, with "being too busy" cited as a primary reason [24]. Passively collected digital sensor data from consumer wearable devices provides an ideal approach for supplementing the data collected by specialized connected care diabetes devices, enabling more precise insulin timing and dosing recommendations.

Experimental Protocol for Diabetes Application

Objective: To validate the performance of a wrist-worn wearable device for detecting eating episodes in free-living conditions among individuals with type 2 diabetes.

Materials and Reagents:

- Apple Watch Series 4 or equivalent (equipped with accelerometer and gyroscope)

- Custom smartphone application for data streaming

- Cloud computing platform for data storage and analysis

- Secure password-protected Wi-Fi network

Participant Selection Criteria:

- Aged ≥18 years with clinically diagnosed type 2 diabetes

- No hand tremors or involuntary arm movements

- Non-smoker status

- Willingness to wear provided smartwatch for study duration

Procedure:

- Device Configuration: Program smartwatch to stream accelerometer and gyroscope data at 50 Hz to paired smartphone application.

- Data Collection Period: Conduct study over 14-day monitoring period in free-living conditions.

- Ground Truth Annotation: Implement two methods for ground truth collection:

  - Electronic Diary: Participants tap watch face to mark meal start and end times.

  - 24-Hour Recall: Conduct structured interviews every third day to verify meal timing and content.

- Data Processing: Apply deep learning models (e.g., convolutional neural networks) with spatial and time augmentation to motion sensor data.

- Model Validation: Use leave-one-subject-out cross-validation to assess generalizability across participants.

Performance Metrics: Report area under the curve (AUC), F1-score, sensitivity, and precision at both 5-minute window and full meal levels. Compare performance between general population models and personalized models fine-tuned to individual participants.
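The leave-one-subject-out scheme named in the procedure can be sketched as a simple fold generator. The participant identifiers and the function name are hypothetical; each fold trains on all but one participant and tests on the held-out one.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for each subject."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test

# One entry per 5-minute window, tagged with the participant who produced it.
subject_ids = ["P1", "P1", "P2", "P3", "P3", "P3"]
folds = list(leave_one_subject_out(subject_ids))
```

Splitting by participant rather than by window prevents data from the same person appearing in both training and test sets, which would otherwise inflate the reported performance of a general population model.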

Application Note 002: Obesity Clinical Trials

Clinical Rationale and Impact

Obesity represents a global health crisis with strong connections to numerous chronic diseases, including diabetes, cancer, and cardiovascular conditions [25]. The chronic disease treatment market is projected to reach USD 38.02 billion by 2034, with significant growth in digital therapeutics and remote monitoring segments [26]. Pharmaceutical development for obesity treatments has been accelerated by the emergence of GLP-1 receptor agonists, which now comprise 17% of all diabetes prescriptions, up from just 6% in 2019 [27]. The use of eating detection technology in obesity trials enables objective measurement of micro-level eating activities—such as meal duration, chewing frequency, and eating episodes—which provide crucial secondary endpoints beyond traditional weight-based metrics [25].

Experimental Protocol for Obesity Trials

Objective: To evaluate the effect of an investigational anti-obesity pharmaceutical agent on micro-level eating behaviors using sensor-equipped smart glasses.

Materials and Reagents:

- OCOsense smart glasses with optical tracking sensors (cheek and temple placement)

- Data annotation software for video recording analysis

- Hidden Markov Model processing framework

- Convolutional Long Short-Term Memory (ConvLSTM) neural network architecture

Participant Selection Criteria:

- Adults with BMI ≥30 kg/m²

- Otherwise healthy without contraindications to investigational product

- Willing to wear smart glasses during all eating episodes

Procedure:

- Baseline Assessment: Collect 7 days of free-living eating behavior data prior to treatment initiation.

- Randomization: Double-blind randomization to investigational product or placebo control.

- Treatment Period: Conduct 12-week intervention with continuous eating monitoring.

- Data Collection:

  - Laboratory meals: Standardized meals under controlled conditions at weeks 0, 4, and 12.

  - Free-living monitoring: Continuous wearing of smart glasses during waking hours.

- Sensor Data Processing: Analyze optical sensor data from cheek and temple positions to distinguish chewing from other facial activities (speaking, clenching).

- Activity Classification: Implement ConvLSTM model followed by Hidden Markov Model to account for temporal dependencies between chewing events.
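The HMM post-processing step can be illustrated with a two-state Viterbi decoder applied to per-frame chewing probabilities, such as those a ConvLSTM might output. This is a sketch under stated assumptions: the states (0 = not chewing, 1 = chewing), the symmetric `stay` probability of 0.9, and the use of classifier probabilities as emission scores are illustrative choices, not parameters from the cited work.

```python
import math

def viterbi_smooth(chew_probs, stay=0.9, eps=1e-9):
    """Most likely 0/1 state sequence given per-frame chewing probabilities."""
    if not chew_probs:
        return []
    log_trans = [[math.log(stay), math.log(1 - stay)],
                 [math.log(1 - stay), math.log(stay)]]

    def log_emit(p):
        p = min(max(p, eps), 1 - eps)  # guard against log(0)
        return [math.log(1 - p), math.log(p)]

    # Uniform prior over the two states for the first frame.
    score = [math.log(0.5) + e for e in log_emit(chew_probs[0])]
    back = []
    for p in chew_probs[1:]:
        emit = log_emit(p)
        prev, new = [], []
        for s in (0, 1):
            best = max((0, 1), key=lambda r: score[r] + log_trans[r][s])
            prev.append(best)
            new.append(score[best] + log_trans[best][s] + emit[s])
        back.append(prev)
        score = new
    # Backtrack from the best final state.
    state = 0 if score[0] >= score[1] else 1
    path = [state]
    for prev in reversed(back):
        state = prev[state]
        path.append(state)
    return path[::-1]

# Hypothetical frame probabilities with one spurious dip at index 2.
probs = [0.9, 0.8, 0.3, 0.9, 0.85, 0.1, 0.1, 0.2]
smoothed = viterbi_smooth(probs)
```

A plain 0.5 threshold would split the chewing bout at the dip; the transition penalty keeps it as a single continuous segment, which matters when counting chews per bite or measuring meal duration.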

Outcome Measures:

- Primary: Change in number of eating episodes per day

- Secondary: Changes in chewing rate, meal duration, number of chews per bite

- Exploratory: Correlation between micro-eating behaviors and weight loss

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Eating Detection Studies

| Reagent / Tool | Function | Example Implementation | Key Considerations |
|---|---|---|---|
| Automatic Ingestion Monitor v2 (AIM-2) | Integrated image and sensor data collection for dietary monitoring | Glasses-mounted device with camera and accelerometer [14] | Provides synchronized multi-modal data; enables ground truth establishment |
| OCO Optical Tracking Sensors | Monitoring facial muscle activations during eating | Smart glasses with cheek and temple sensors [25] | Non-contact method; measures skin movement in X-Y dimensions |
| Apple Watch with Custom Research App | Stream motion sensor data in free-living conditions | Accelerometer and gyroscope data collection [24] | Leverages consumer devices for scalability; requires custom data pipeline |
| Convolutional Long Short-Term Memory (ConvLSTM) Networks | Temporal pattern recognition in sensor data | Chewing detection from optical sensor sequences [25] | Captures both spatial and temporal dependencies in eating behaviors |
| Hierarchical Classification Framework | Fusion of multiple sensor modalities | Combining image and accelerometer confidence scores [14] | Reduces false positives by requiring multi-modal evidence |
| Hidden Markov Models (HMM) | Modeling temporal dependencies between eating events | Post-processing for sequence prediction [25] | Accounts for natural transitions between eating and non-eating states |
| Leave-One-Subject-Out Cross-Validation | Assessing model generalizability | Testing performance on unseen users [14] [25] | Provides robust estimate of real-world performance across diverse populations |

Visualizing Experimental Workflows

Multi-Sensor Eating Detection Workflow

Sensor Data Processing Pipeline

The field of real-time eating detection is rapidly evolving, with several key trends shaping its future application in chronic disease management and drug development. The integration of artificial intelligence is revolutionizing the market by improving disease diagnosis and screening, enabling healthcare professionals to provide more effective therapeutics [26]. Digital therapeutics and remote monitoring represent the fastest-growing segment in chronic disease treatment, expected to expand rapidly in the coming years [26]. Future research should focus on developing more privacy-preserving approaches, such as filtering out non-food-related sounds or images, to ensure user confidentiality and comfort [23]. Additionally, the development of standardized performance metrics and validation frameworks will be crucial for regulatory acceptance and clinical adoption.

The Alzheimer's disease drug development pipeline currently includes 138 drugs being assessed in 182 clinical trials, with biomarkers playing an important role in 27% of active trials [28]. While not directly focused on eating detection, this highlights the growing sophistication of clinical trial methodologies where digital monitoring technologies could play an increasingly important role. As eating detection technologies mature, their integration with other digital biomarkers will provide comprehensive insights into disease progression and treatment efficacy across multiple therapeutic areas.

In conclusion, sensor-based eating detection technologies have matured beyond proof-of-concept demonstrations to become viable tools for chronic disease management and drug development. The structured application notes and experimental protocols presented herein provide researchers and drug development professionals with practical frameworks for implementing these technologies in both clinical care and therapeutic development contexts.

Algorithmic Architectures: From Smartwatch Sensing to Deep Learning Models

The accurate detection of eating episodes is a critical component in automated dietary monitoring for nutritional research, chronic disease management, and behavioral health studies. Traditional self-reporting methods, such as food diaries and recall surveys, are prone to inaccuracies due to recall bias and substantial participant burden [11] [16]. Inertial sensing via commercially available smartwatches presents a practical, non-invasive solution for detecting eating episodes by monitoring characteristic hand-to-mouth gestures. This approach leverages widespread wearable technology to enable continuous, objective data collection in free-living conditions, thereby facilitating research into dietary patterns and their health impacts [16] [29]. These application notes detail the methodologies, performance metrics, and experimental protocols for implementing hand-to-mouth gesture detection within a broader research framework on real-time eating event detection algorithms.

Technical Background and Mechanism

Hand-to-mouth gesture detection utilizes the Inertial Measurement Unit (IMU) embedded in commercial smartwatches, which typically includes a 3-axis accelerometer and a 3-axis gyroscope [30]. The underlying principle posits that the act of eating involves a repetitive sequence of arm and wrist movements—transporting food from plate to mouth and returning—that generates a distinct kinematic signature. This signature is characterized by specific patterns in linear acceleration and angular velocity that can be discriminated from other activities of daily living through machine learning classification [16] [30].

The detection process typically follows a two-stage approach, as identified in research:

- Gesture Spotting: The continuous stream of IMU data is analyzed to identify discrete segments corresponding to individual food intake gestures, such as bites or sips [16].

- Temporal Clustering: These identified gestures are then clustered across the time dimension to infer distinct eating moments or meal episodes, effectively differentiating isolated gestures from actual meals based on temporal proximity and density [16].
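The second stage can be sketched with a simple gap-based grouping: consecutive gestures separated by no more than a maximum gap are merged, and groups with too few gestures are discarded as isolated movements. The 60-second gap, the minimum of three gestures, and the function name are assumptions for illustration; the cited studies may use a different clustering method.

```python
def cluster_gestures(times, max_gap_s=60.0, min_gestures=3):
    """Group gesture timestamps (seconds) into (start, end, count) eating episodes."""
    episodes, current = [], []
    for t in sorted(times):
        if current and t - current[-1] > max_gap_s:
            # Gap too long: close the running group and keep it only if dense enough.
            if len(current) >= min_gestures:
                episodes.append((current[0], current[-1], len(current)))
            current = []
        current.append(t)
    if len(current) >= min_gestures:
        episodes.append((current[0], current[-1], len(current)))
    return episodes

# Hypothetical output of the gesture-spotting stage.
spotted = [5, 20, 50, 90, 400, 1000, 1030, 1045, 1100]
episodes = cluster_gestures(spotted)
```

The lone gesture at 400 s is dropped, reflecting the distinction drawn above between isolated gestures and actual meals.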

Performance Metrics and Quantitative Data

The following tables summarize the performance outcomes of various studies that have implemented inertial sensing for dietary monitoring.

Table 1: Performance of Eating Moment Detection in Different Environments

| Study Context | Sensitivity (Recall) | Precision | F1-Score | Citation |
|---|---|---|---|---|
| Free-living (7 participants, 1 day) | 88.8% | 66.7% | 76.1% | [16] |
| Free-living (1 participant, 31 days) | 78.6% | 65.2% | 71.3% | [16] |
| Integrated Image & Sensor-Based Detection | 94.59% | 70.47% | 80.77% | [14] |

Table 2: Performance of Advanced Models for Specific Detection Tasks

| Detection Task | Model/Approach | Key Performance Metric | Result | Citation |
|---|---|---|---|---|
| Carbohydrate intake detection | Personalized Deep Learning (LSTM) | Median F1-Score | 0.99 | [12] |
| Bite weight estimation | Support Vector Regression (SVR) | Mean Absolute Error (MAE) | 3.99 grams/bite | [30] |

Experimental Protocols

Protocol for General Eating Moment Detection

This protocol is adapted from studies that validated smartwatch-based detection in free-living conditions [16].

- Objective: To train and evaluate a model for detecting eating moments based on hand-to-mouth gestures using a commercial smartwatch.

- Equipment:

  - Commercial smartwatch (e.g., running Android Wear or similar OS) with 3-axis accelerometer.

  - Smartphone application for data logging or custom software for direct sensor data collection.

- Data Collection:

  - Sensor Parameters: Collect 3-axis accelerometer data at a sampling rate ≥ 15 Hz [12] [16].

  - Ground Truth Annotation: In laboratory settings, use a foot pedal for participants to mark the precise start and end of each bite or sip [14]. In free-living studies, use Ecological Momentary Assessment (EMA) via smartphone prompts, where participants self-report eating episodes in real-time to establish ground truth [29].

  - Study Duration: Data should be collected over multiple sessions, including both controlled laboratory meals and unrestricted free-living periods.

- Data Preprocessing:

  - Resampling: Resample all sensor data to a consistent frequency (e.g., 100 Hz) using linear interpolation [30].

  - Gravitational Filtering: Apply a high-pass filter (e.g., cutoff frequency of 1 Hz) to remove the gravitational component from the accelerometer signals [30].

  - Noise Reduction: Apply a median filter (e.g., 5th-order) to attenuate transient signal noise [30].

- Model Training & Evaluation:

  - Feature Extraction: Extract features from the preprocessed IMU data. These can be:

  - Algorithm Selection: Implement a classification model such as a Hierarchical Support Vector Machine (SVM) combined with a Hidden Markov Model (HMM) for temporal modeling [31].

  - Validation: Perform leave-one-subject-out cross-validation (LOSO CV) to evaluate model generalizability across individuals [30].
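The three preprocessing steps in this protocol (linear-interpolation resampling, high-pass filtering to remove gravity, and median filtering) can be sketched without external libraries as follows. Two simplifications are assumed: the high-pass stage is a first-order recursive filter rather than whatever design the cited study used, and "5th-order" is read as a 5-sample median window. All function names are illustrative.

```python
import math
from statistics import median

def resample_linear(times, values, rate_hz):
    """Linearly interpolate irregular samples onto a uniform grid (needs >= 2 samples)."""
    step = 1.0 / rate_hz
    n = int((times[-1] - times[0]) / step + 1e-9) + 1
    out, j = [], 0
    for k in range(n):
        t = times[0] + k * step
        while j < len(times) - 2 and times[j + 1] < t:
            j += 1
        w = (t - times[j]) / (times[j + 1] - times[j])
        out.append(values[j] + w * (values[j + 1] - values[j]))
    return out

def highpass(x, rate_hz, cutoff_hz=1.0):
    """First-order high-pass filter; a constant offset such as gravity maps to zero."""
    if not x:
        return []
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = rc / (rc + 1.0 / rate_hz)
    y = [0.0]
    for i in range(1, len(x)):
        y.append(alpha * (y[-1] + x[i] - x[i - 1]))
    return y

def median_filter(x, size=5):
    """Sliding median with windows truncated at the signal edges."""
    half = size // 2
    return [median(x[max(0, i - half): i + half + 1]) for i in range(len(x))]

# Toy checks of each stage on hypothetical data.
uniform = resample_linear([0.0, 0.5, 1.0], [0.0, 1.0, 2.0], rate_hz=4)
gravity_removed = highpass([9.81] * 50, rate_hz=100)
despiked = median_filter([0, 0, 10, 0, 0, 0, 0])
```

In practice each accelerometer axis would pass through the three stages in the order listed in the protocol before feature extraction.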

Protocol for Personalized Carbohydrate Intake Detection

This protocol is tailored for specific populations, such as individuals with diabetes, requiring high detection accuracy [12].

- Objective: To develop a personalized deep learning model that detects carbohydrate consumption gestures with high precision.

- Equipment:

  - Smartwatch with IMU (accelerometer and gyroscope).

  - Data storage or transmission capability for centralized processing.

- Data Collection:

  - Record IMU data from participants during meal consumption.

  - Annotate the start and end of each carbohydrate intake event as ground truth.

- Data Preprocessing:

  - Resample gyroscope and accelerometer data to a uniform sampling rate.

  - Apply necessary filtering for signal clarity.

- Model Development:

  - Architecture: Utilize a Recurrent Neural Network (RNN) with Long Short-Term Memory (LSTM) layers, which are effective for learning temporal sequences of inertial data [12].

  - Personalization: Train a dedicated model for each individual user to account for personal variations in eating gestures.

- Performance Metrics: Evaluate the model using F1-score, precision, recall, and confusion matrix analysis, and report prediction latency [12].

Figure 1: Workflow for smartwatch-based eating episode detection, from data acquisition to classification.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Inertial Sensing-Based Eating Detection Research

| Item | Specification / Example | Primary Function in Research |
|---|---|---|
| IMU Sensor | 3-axis accelerometer, 3-axis gyroscope (commonly found in commercial smartwatches) [16] [30] | Captures raw kinematic data of wrist and arm movements. |
| Data Annotation Tool | Foot pedal switch [14] or Ecological Momentary Assessment (EMA) smartphone app [29] | Provides precise ground truth labels for model training and validation. |
| Public Datasets | CGMacros dataset (multimodal, includes CGM, IMU, macronutrients) [32]; FIC dataset (annotated accelerometer data) [32] | Provides benchmark data for algorithm development and comparative studies. |
| Deep Learning Models | LSTM networks [12], Hybrid RNNs (e.g., Bidirectional LSTM + GRU) [33] | Classifies temporal sequences of IMU data into eating/non-eating gestures. |
| Classical ML Algorithms | Support Vector Machines (SVM) [30] [31], Hidden Markov Models (HMM) [31] | Provides an alternative approach for gesture classification and temporal modeling. |
| Signal Processing Library | Python (SciPy, NumPy), MATLAB | Performs essential preprocessing: filtering, resampling, and feature extraction. |

Discussion and Integration

Integrating inertial sensing with other sensing modalities can significantly enhance detection accuracy and reduce false positives. For instance, combining accelerometer data with images from a wearable camera, as in the AIM-2 device, has been shown to improve sensitivity in eating episode detection by 8% compared to using either method alone [14]. Because AIM-2 senses head movement rather than wrist motion, this result illustrates the general benefit of pairing inertial and visual evidence rather than a smartwatch-specific gain. This multi-modal approach leverages the complementary strengths of gesture detection and visual confirmation.

Future research directions should focus on improving the robustness of algorithms in completely free-living environments, where unstructured activities and varied eating styles present significant challenges. Furthermore, the development of personalized models that adapt to an individual's unique eating gestures has demonstrated exceptionally high performance (a median F1-score of 0.99) and represents a promising path forward for clinical applications, such as diabetes management [12]. Standardizing validation protocols, including the use of multi-day datasets and consistent performance metrics, will be crucial for comparing advancements across the field [11] [34].

Figure 2: Structure of an LSTM cell used for temporal modeling of eating gestures.
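For reference, the cell structure that a figure of this kind typically depicts can be written as the standard LSTM update equations. The notation is the common textbook form and is an assumption here rather than something taken from the cited studies: $x_t$ is the IMU feature vector at time $t$, $h_t$ the hidden state, $c_t$ the cell state, $\sigma$ the logistic sigmoid, $\odot$ element-wise multiplication, and $W$, $U$, $b$ learned parameters.

```latex
\begin{aligned}
f_t &= \sigma\left(W_f x_t + U_f h_{t-1} + b_f\right) && \text{(forget gate)} \\
i_t &= \sigma\left(W_i x_t + U_i h_{t-1} + b_i\right) && \text{(input gate)} \\
o_t &= \sigma\left(W_o x_t + U_o h_{t-1} + b_o\right) && \text{(output gate)} \\
\tilde{c}_t &= \tanh\left(W_c x_t + U_c h_{t-1} + b_c\right) && \text{(candidate cell state)} \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh\left(c_t\right)
\end{aligned}
```

The forget and input gates let the cell state carry information across many time steps, which is why LSTM layers are suited to the repetitive, variable-length structure of eating gestures.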

Within the framework of real-time eating event detection algorithms, the accurate capture of chewing and swallowing signatures is a fundamental challenge. These micro-level behaviors provide the raw data necessary for analyzing dietary patterns, estimating energy intake, and developing interventions for conditions like obesity and dysphagia. While traditional methods rely on invasive techniques or error-prone self-reporting, sensor-based approaches offer a passive, objective means of data collection. This document details the application of acoustic and strain-based sensing methodologies, which have emerged as two of the most promising technologies for this task. The following sections provide a comparative analysis of these methods, detailed experimental protocols for their implementation, and visualizations of their underlying workflows, providing researchers with the practical tools needed to integrate these sensors into robust detection algorithms.

Comparative Analysis of Sensing Modalities

The table below summarizes the core performance characteristics, advantages, and limitations of the primary acoustic and strain-based methods used for capturing chewing and swallowing signatures.

Table 1: Comparison of Acoustic and Strain-Based Methods for Capturing Chewing and Swallowing

| Detection Target | Primary Sensor Type | Common Sensor Placement | Reported Performance | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Chewing (General) | Piezoelectric Strain Gauge [35] [36] | Below the ear, on the mandible [35] | F1-score: 0.90 to 0.96 [36] | Directly measures jaw movement; well-defined frequency range (1-2 Hz) [35] | Can be obtrusive; may be sensitive to talking [36] |
| Swallowing | Acoustic Sensor (Microphone) [37] [38] | Neck (Cervical Auscultation) [38] | Differentiates swallows in dysphagia with statistical significance (p<0.001) [38] | High information content; can qualify swallowing clinically [37] [38] | Vulnerable to ambient noise; poses privacy concerns [37] |
| Swallowing | Respiratory Inductance Plethysmography (RIP) [36] | Chest and Abdomen (with belts) [36] | F1-score: 0.58 to 0.78 [36] | Detects swallowing via related breathing patterns and lung volume changes [36] | Lower performance when used alone; requires multiple belts [36] |
| Eating Gestures | Wrist-Worn Inertial Sensors (Accelerometer/Gyroscope) [15] [36] | Wrist (Smartwatch) [15] | F1-score: 0.79 to 0.82 [36] | Non-invasive and socially acceptable (commercial smartwatches) [15] | Infers ingestion indirectly; can confuse with similar gestures (e.g., face-touching) [36] |

Experimental Protocols

Protocol for Acoustic Swallowing Detection via Cervical Auscultation

This protocol outlines the procedure for capturing and analyzing swallowing sounds using digital cervical auscultation, a method validated for differentiating normal and impaired swallows across adult and older adult populations [38].

Research Reagent Solutions

Table 2: Essential Materials for Acoustic Swallowing Detection

| Item | Function/Description |
|---|---|
| Digital Stethoscope (e.g., Eko CORE 500) [38] | Core sensor for capturing swallowing sounds with an integrated amplifier. |
| Data Acquisition System | A system (e.g., BIOPAC) to record acoustic signals at a high sampling rate (≥2000 Hz recommended). |
| Audio Processing Software (e.g., in Python [38]) | For segmenting swallowing events and extracting acoustic features (duration, magnitude, phase, recurrence). |
| Test Boluses | Standardized volumes of different textures (e.g., 5 mL water, 5 mL pureed banana) to elicit consistent swallows [38]. |

Methodology

- Sensor Placement: Place the diaphragm of the digital stethoscope on the participant's neck, lateral to the trachea and superior to the cricoid cartilage, as established in clinical practice for cervical auscultation [38]. Secure it with medical tape to minimize movement artifacts.

- Signal Recording: Instruct the participant to swallow the provided test boluses on command. Record the acoustic signal throughout the swallowing task. A minimum of 5 swallows per bolus type is recommended for a reliable dataset [38].

- Data Processing:

- Segmentation: Manually or algorithmically segment the recorded audio signal to isolate individual swallow events, using the distinct acoustic signature of the swallow as a marker.

- Feature Extraction: For each segmented swallow, compute key acoustic parameters in the time and frequency domains. Critical parameters include duration (total time of acoustic event), magnitude (signal amplitude), phase (spectral characteristics), and recurrence (patterns of repetition) [38].

- Data Analysis: Use statistical tests (e.g., t-tests, ANOVA) to compare the extracted acoustic parameters between different groups (e.g., healthy vs. dysphagic) or different bolus types. Machine learning classifiers (e.g., Support Vector Machines) can then be trained on these features to automatically identify and classify swallowing events [37].
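The segmentation and feature-extraction steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pipeline used in [38]: it assumes the recording is a plain list of samples, uses a short-time RMS envelope with a fixed threshold to find candidate swallow events, and computes only duration and magnitude features. The function names (`frame_rms`, `segment_events`, `event_features`), the 20 ms frame length, the minimum event duration, and the threshold are all hypothetical choices made for the example.

```python
import math


def frame_rms(signal, frame_len):
    """Short-time RMS envelope over consecutive, non-overlapping frames."""
    return [
        math.sqrt(sum(x * x for x in signal[i:i + frame_len]) / frame_len)
        for i in range(0, len(signal) - frame_len + 1, frame_len)
    ]


def segment_events(signal, fs, threshold, frame_s=0.02, min_duration_s=0.1):
    """Return (start_sample, end_sample) pairs where the envelope exceeds threshold.

    threshold must be positive; frame_s and min_duration_s are illustrative defaults.
    """
    frame_len = max(1, int(round(frame_s * fs)))
    envelope = frame_rms(signal, frame_len)
    events, start = [], None
    # A trailing 0.0 sentinel closes any event still open at the end of the recording.
    for k, level in enumerate(envelope + [0.0]):
        if level >= threshold and start is None:
            start = k
        elif level < threshold and start is not None:
            begin, end = start * frame_len, k * frame_len
            if (end - begin) / fs >= min_duration_s:
                events.append((begin, end))
            start = None
    return events


def event_features(signal, fs, event):
    """Duration and magnitude features for one segmented event."""
    begin, end = event
    segment = signal[begin:end]
    return {
        "duration_s": (end - begin) / fs,
        "peak_amplitude": max(abs(x) for x in segment),
        "rms_amplitude": math.sqrt(sum(x * x for x in segment) / len(segment)),
    }
```

In practice the threshold would be tuned per recording (for example, relative to the noise floor of a quiet baseline period), and the resulting feature dictionaries would feed the statistical tests or classifier described in the Data Analysis step.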

Protocol for Jaw Motion (Chewing) Detection Using a Piezoelectric Strain Gauge

This protocol describes the use of a piezoelectric film sensor to monitor characteristic jaw motion during chewing, a method shown to be effective for food intake detection [35] [36].

Research Reagent Solutions

Table 3: Essential Materials for Strain-Based Chewing Detection

| Item | Function/Description |
|---|---|
| Piezoelectric Film Sensor (e.g., LDT0-028K) [35] [36] | A flexible sensor that generates a voltage signal in response to curvature changes from jaw movement. |
| Signal Conditioning Circuit [35] | A circuit featuring a buffering op-amp (e.g., TLV-2452) and voltage divider to manage the sensor's high impedance and set a DC offset. |
| Data Acquisition Module (e.g., USB-1608FS) [35] | Hardware to sample the analog signal at 100 Hz and digitize it with 16-bit resolution. |
| Feature Extraction & ML Software (e.g., Python, MATLAB) | Software to process the signal, compute time/frequency features, and train a classifier (e.g., SVM). |

Methodology

- Sensor Attachment: Clean the skin area immediately below the participant's outer ear, over the mandible. Attach the piezoelectric sensor firmly using medical tape to ensure it bends with the skin's curvature during jaw movement [35].

- Data Collection: Connect the sensor to the signal conditioning circuit and data acquisition system. Record the signal while the participant engages in a series of activities, including quiet sitting, talking, and consuming foods of varying textures (e.g., a sandwich, an apple). This variety helps build a robust classification model [35] [36].

- Signal Processing and Feature Extraction:

- Segment the collected signal into fixed-length, non-overlapping epochs (e.g., 30 seconds) [35].

- For each epoch, compute a comprehensive set of time-domain and frequency-domain features. A forward selection procedure can then identify the most relevant features (e.g., 4 to 11 features) for distinguishing chewing from other activities [35].

- Model Training and Validation: Train a Support Vector Machine (SVM) classifier using the selected features. Validate the model's performance using cross-validation, reporting standard metrics such as accuracy, precision, recall, and F1-score to objectively quantify chewing detection performance [35] [36].
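The epoching and feature-extraction steps above can be illustrated with a short Python sketch. It is a simplified example, not the feature set of [35]: it assumes a 100 Hz signal stored as a list, splits it into 30-second non-overlapping epochs, and computes two illustrative features per epoch, the RMS amplitude and the fraction of signal energy in the 1-2 Hz chewing band (via a direct DFT over only the bins of interest). The function names and the choice of these two features are assumptions; classifier training is omitted.

```python
import cmath
import math


def split_epochs(signal, fs, epoch_s=30):
    """Split a signal into fixed-length, non-overlapping epochs (trailing remainder dropped)."""
    n = int(fs * epoch_s)
    return [signal[i:i + n] for i in range(0, len(signal) - n + 1, n)]


def band_energy_fraction(samples, fs, f_lo=1.0, f_hi=2.0):
    """Fraction of (mean-removed) signal energy between f_lo and f_hi, in Hz.

    Assumes f_hi is below the Nyquist frequency fs / 2.
    """
    n = len(samples)
    mean = sum(samples) / n
    x = [v - mean for v in samples]  # remove the DC offset set by the conditioning circuit
    total = sum(v * v for v in x)
    if total == 0:
        return 0.0
    k_lo = math.ceil(f_lo * n / fs)
    k_hi = math.floor(f_hi * n / fs)
    band = 0.0
    for k in range(k_lo, k_hi + 1):
        coeff = sum(v * cmath.exp(-2j * math.pi * k * i / n) for i, v in enumerate(x))
        band += abs(coeff) ** 2
    # Factor 2 accounts for the mirrored negative-frequency bins of a real signal.
    return 2 * band / (n * total)


def epoch_features(samples, fs):
    """Two illustrative features for one epoch."""
    n = len(samples)
    mean = sum(samples) / n
    rms = math.sqrt(sum((v - mean) ** 2 for v in samples) / n)
    return {"rms": rms, "chew_band_fraction": band_energy_fraction(samples, fs)}
```

An epoch dominated by rhythmic jaw motion should yield a `chew_band_fraction` close to 1, while quiet sitting should yield a value near 0; talking is expected to fall in between, which is why a trained classifier over several features is used rather than a single threshold.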

Workflow Visualization

The following diagrams illustrate the logical workflows for the acoustic and strain-based detection methodologies described in the protocols.

[Figure: Acoustic Swallowing Analysis Workflow]

[Figure: Strain-Based Chewing Detection Workflow]

Within the scope of research on real-time eating event detection algorithms, vision-based systems have emerged as a powerful tool for objectively monitoring dietary behavior. The fusion of RGB and thermal sensing modalities addresses significant challenges in the reliable detection of hand-object interactions, particularly those related to feeding gestures and food intake. Traditional RGB cameras, while informative, struggle with variable lighting conditions and motion blur. Thermal sensors, by capturing heat signatures, provide a complementary data stream that enhances robustness and protects user privacy by obscuring identifiable facial features. This application note details the implementation, performance, and experimental protocols for these multi-modal systems, providing a framework for their application in clinical and free-living research.

Multi-modal sensing systems for eating behavior analysis typically integrate a low-resolution RGB camera with a low-resolution thermal sensor, often configured as a wearable, activity-oriented device. The core function of this configuration is to leverage the strengths of each sensing modality: the rich visual context from RGB and the privacy-preserving, illumination-invariant thermal signatures from the IR sensor.

The integration of thermal data with RGB video has been empirically shown to significantly enhance the performance of automated detection models. The table below summarizes quantitative performance improvements from a real-world study involving 10 participants with obesity, comparing a video-only approach to a combined RGB+IR system [39].

Table 1: Performance Comparison of Eating and Social Presence Detection Modalities

| Detection Target | Sensing Modality | Reported Performance (F1-Score) | Key Advantage |
|---|---|---|---|
| Eating Gestures | RGB Video Only | ~65% (Baseline) | Provides visual confirmation of food and gesture [39] |
| Eating Gestures | RGB + Thermal Sensor | ~70% (~5 percentage points above baseline) | Enhances reliability in detecting feeding gestures [39] |
| Social Presence | RGB Video Only | ~30% (Baseline) | Can identify faces and other visual cues [39] |
| Social Presence | RGB + Thermal Sensor | ~74% (~44 percentage points above baseline) | Significantly improves detection of nearby individuals via body heat [39] |

The dramatic improvement in social presence detection underscores the thermal sensor's efficacy in identifying human silhouettes, because human body temperature is usually higher than that of the surrounding environment [39]. Furthermore, the physical configuration of the sensing system is critical. For capturing fine-grained hand-to-mouth gestures, an activity-oriented camera with a fish-eye lens oriented towards the wearer's mouth has been found optimal for visualizing the path from table to mouth [39].
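The intuition that body heat stands out against a cooler background can be shown with a deliberately simple heuristic. The sketch below is not the detection model of [39]; it assumes a low-resolution thermal frame given as a 2D list of temperatures in degrees Celsius, estimates the ambient temperature as the median pixel value, and flags presence when enough pixels exceed it by a margin. The function names, the 4 °C margin, and the 4-pixel minimum are hypothetical values chosen only for illustration.

```python
from statistics import median


def warm_pixel_count(frame, margin_c=4.0):
    """Count pixels at least margin_c degrees warmer than the median (ambient) pixel."""
    pixels = [t for row in frame for t in row]
    ambient = median(pixels)
    return sum(1 for t in pixels if t - ambient >= margin_c)


def person_present(frame, margin_c=4.0, min_pixels=4):
    """Heuristic presence flag: enough warm pixels to suggest a nearby body."""
    return warm_pixel_count(frame, margin_c) >= min_pixels
```

A heuristic like this breaks down when a person fills more than half of the field of view (the median then tracks body temperature) or when the environment is warm, which is one reason a learned model over fused RGB and thermal features is preferable in practice.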

Figure 1: Workflow of a multi-modal RGB-Thermal sensing system for activity recognition, from data acquisition to final classification.

Detailed Experimental Protocols

Protocol 1: Device Deployment for In-the-Wild Data Collection

This protocol outlines the procedure for deploying a wearable RGB-Thermal sensor system to collect data on eating behavior and social presence in free-living conditions.

1. Objectives: To collect a synchronized dataset of RGB video and thermal sensor data for the development and validation of models detecting eating gestures and social presence in real-world settings.

2. Materials and Reagents:

- Low-power, wearable device with synchronized low-resolution RGB camera and low-resolution IR sensor array [39].

- Secure data storage unit (e.g., high-capacity SD card).

- Charging equipment and spare batteries.

- Adjustable head-mounted or neck-worn harness for stable device positioning.

- Annotation software (e.g., ELAN, ANVIL) or custom logging tools.

3. Procedure:

   1. Device Preparation:
      - Fully charge all device batteries.
      - Configure sensors to record at specified low resolutions (e.g., 320x240) to conserve power and storage.
      - Synchronize the RGB and thermal sensor clocks to a common time source.
      - Securely mount the device in the harness, ensuring the RGB camera and thermal sensor have an unobstructed view oriented towards the mouth and upper body.
   2. Participant Briefing and Fitting:
      - Obtain informed consent, explaining the data collection purpose, types of data recorded, and privacy safeguards.
      - Fit the harness on the participant, adjusting for comfort and stability while verifying the sensor field of view.
      - Instruct the participant to wear the device during all waking hours for a target period (e.g., 3 days).
      - Train the participant on basic device operations (e.g., charging overnight) and how to temporarily pause recording if necessary.
   3. Data Collection:
      - Initiate recording at the start of each day.
      - Participants go about their normal daily routines, including all meals and snacks.
   4. Ground Truth Annotation:
      - Simultaneously, participants (or researchers via periodic prompts) log the start and end times of all eating episodes and note whether they were alone or with others.
      - Alternatively, subsequent manual video review can serve as the gold standard for annotation.
   5. Data Cessation and Retrieval:
      - After the deployment period, retrieve the device and data storage unit.
      - Download the synchronized RGB and thermal data streams and the corresponding ground truth logs.

4. Analysis and Notes:

- The collected dataset will comprise paired RGB and thermal video sequences.

- Data should be annotated frame-by-frame or event-by-event for eating gestures (bite, chew) and social presence (person present/not present).

- This in-the-wild data is essential for training models that are robust to real-world challenges like motion blur and lighting changes.
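Because the two sensors rarely sample at identical instants, building the paired RGB and thermal sequences usually requires aligning frames by timestamp. The following sketch shows one common approach, nearest-timestamp matching within a tolerance. It assumes both streams carry timestamps in seconds on the shared clock from the Device Preparation step and that the thermal timestamps are sorted; the function name `pair_frames` and the 50 ms tolerance are illustrative assumptions rather than details from [39].

```python
from bisect import bisect_left


def pair_frames(rgb_ts, thermal_ts, max_gap_s=0.05):
    """Pair each RGB frame with the nearest thermal frame within max_gap_s.

    rgb_ts, thermal_ts: timestamps in seconds; thermal_ts must be sorted ascending.
    Returns a list of (rgb_index, thermal_index) pairs; unmatched RGB frames are skipped.
    """
    pairs = []
    for i, t in enumerate(rgb_ts):
        j = bisect_left(thermal_ts, t)
        # The nearest thermal frame is either just before or just after t.
        candidates = [c for c in (j - 1, j) if 0 <= c < len(thermal_ts)]
        if not candidates:
            continue
        best = min(candidates, key=lambda c: abs(thermal_ts[c] - t))
        if abs(thermal_ts[best] - t) <= max_gap_s:
            pairs.append((i, best))
    return pairs
```

With this scheme, a single thermal frame may be paired with more than one RGB frame when the thermal array runs at a lower frame rate, and RGB frames without a sufficiently close thermal frame are dropped rather than paired with stale data.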

Protocol 2: Validation of Bite Count Using the ByteTrack Algorithm

This protocol describes a method for validating bite count and bite rate from meal videos in a controlled laboratory setting, which can be used to corroborate findings from the wearable sensor system.

1. Objectives: To automatically detect and count bites from video recordings of meals using the ByteTrack deep learning pipeline and validate the counts against manual observational coding.

2. Materials and Reagents:

- Fixed, wall-mounted network camera (e.g., Axis M3004-V) recording at 30 fps [1].

- Controlled laboratory eating environment.

- Video dataset of meal sessions.

- Computational resources (GPU workstation) with the ByteTrack implementation.

- Gold-standard manual bite annotations from trained human coders.

3. Procedure:

   1. Experimental Setup:
      - Position the camera discreetly outside the participant's direct line of sight to minimize the observer effect.
      - Ensure consistent and adequate lighting in the eating area.
   2. Video Recording:
      - Record the entire meal session from start to finish.
      - Use a standardized protocol (e.g., children are read a non-food story during the meal to minimize interaction) [1].
   3. ByteTrack Model Application:
      - Stage 1: Face Detection and Tracking. Process the video through a hybrid pipeline (e.g., Faster R-CNN and YOLOv7) to detect and track the participant's face throughout the meal, mitigating issues from occlusions and motion [1].
      - Stage 2: Bite Classification. Feed the tracked face regions to a convolutional neural network combined with a Long Short-Term Memory network (e.g., EfficientNet + LSTM) to classify movements as bites or non-bites (e.g., talking, gesturing) [1].
      - Apply a filtering process to refine the results and output timestamps for each detected bite.
   4. Validation:
      - Compare the bite timestamps and total bite count generated by ByteTrack against the gold-standard manual coding.
      - Calculate performance metrics including precision, recall, F1-score, and Intraclass Correlation Coefficient (ICC) for agreement.

4. Analysis and Notes:

- As reported, expect an F1-score of approximately 70.6% on a test set, with ICC agreement averaging 0.66 [1].

- Performance is typically lower in videos with extensive head movement or hand/utensil occlusions of the mouth.

- This protocol provides an automated, scalable alternative to labor-intensive manual video coding for validating micro-behaviors like bites.
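The validation step, comparing detected bite timestamps against manual coding, can be sketched as follows. This is an illustrative example, not the evaluation code of [1]: it assumes bites are represented as timestamps in seconds, counts a detection as correct when it falls within a tolerance of an unmatched reference bite, and uses a simple greedy one-to-one matching over sorted lists. The function names and the 1-second tolerance are assumptions; ICC computation is not shown.

```python
def match_bites(detected, reference, tolerance_s=1.0):
    """Greedy one-to-one matching of detected and reference bite timestamps.

    Returns (true_positives, false_positives, false_negatives).
    """
    detected, reference = sorted(detected), sorted(reference)
    tp = 0
    i = j = 0
    while i < len(detected) and j < len(reference):
        diff = detected[i] - reference[j]
        if abs(diff) <= tolerance_s:
            tp += 1          # detection matches this reference bite
            i += 1
            j += 1
        elif diff < 0:
            i += 1           # detection precedes any remaining reference bite: false positive
        else:
            j += 1           # reference bite has no detection nearby: false negative
    return tp, len(detected) - tp, len(reference) - tp


def precision_recall_f1(tp, fp, fn):
    """Standard detection metrics; each is defined as 0.0 when its denominator is zero."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```

The tolerance window strongly affects the reported scores, so it should be fixed before evaluation and reported alongside the metrics.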

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table catalogues key materials and their functions for setting up a research pipeline for vision-based eating behavior analysis.

Table 2: Essential Research Reagents and Solutions for RGB-Thermal Eating Behavior Analysis

| Item Name | Function/Application | Specification Notes |

|---|---|---|

| Low-Resolution RGB-Thermal Wearable | Core sensing unit for in-the-wild data capture; enables hand-object interaction and social presence confirmation. | Combine low-power RGB camera with low-resolution IR sensor array (e.g., Grid-EYE); fish-eye lens orientation towards the mouth is critical [39]. |

| Fixed Network Camera | High-quality video recording for laboratory validation of algorithms under controlled conditions. | Use a model like Axis M3004-V; 30 fps recording rate; positioned discreetly to reduce participant reactivity [1]. |