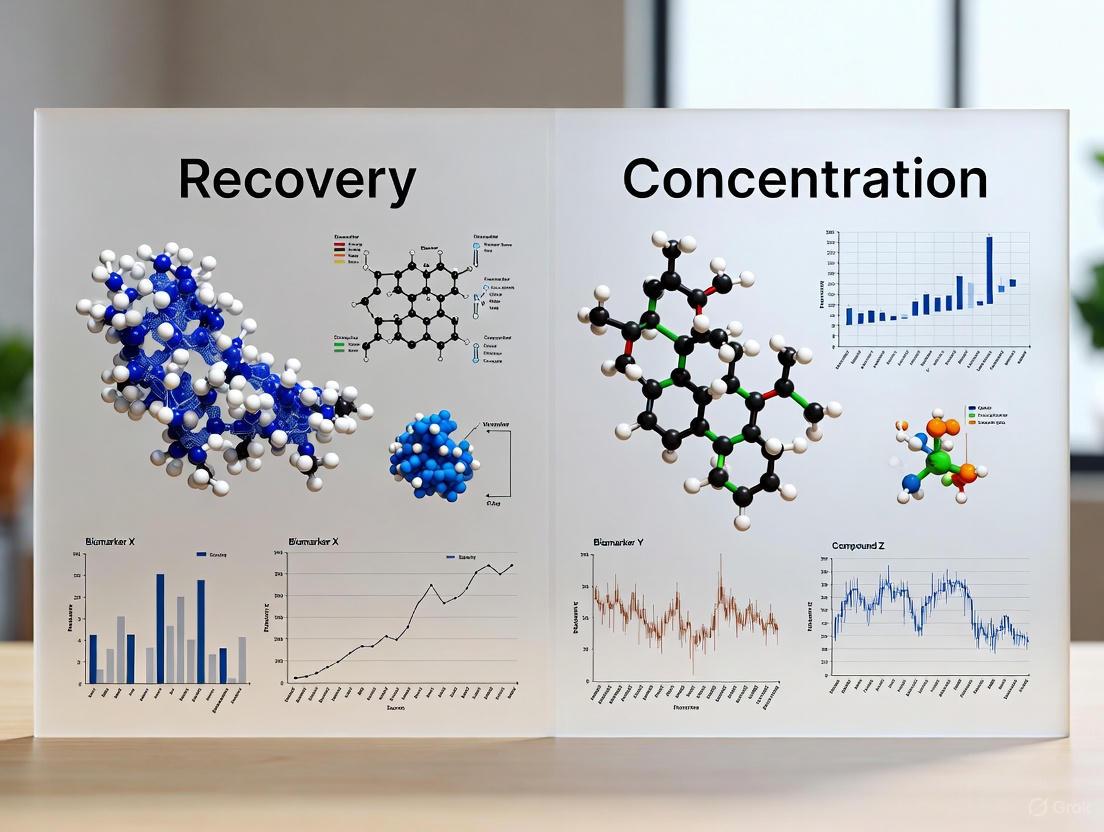

Recovery vs. Concentration Biomarkers: A Comparative Analysis for Objective Measurement in Drug Development and Clinical Research

Abstract

This article provides a comprehensive comparison of recovery and concentration biomarkers, two fundamental classes for objective measurement in biomedical research. Tailored for researchers and drug development professionals, it explores the foundational definitions, distinct applications, and methodological approaches for each biomarker type. The content delves into validation challenges, optimization strategies, and critical selection criteria based on Context of Use (COU). By synthesizing current standards and scientific advances, this guide aims to enhance the strategic implementation of these biomarkers to improve the efficiency of clinical trials, strengthen regulatory submissions, and advance precision medicine.

Defining the Landscape: Core Concepts and Classifications of Recovery and Concentration Biomarkers

In modern biomedical research and drug development, biomarkers are indispensable tools that provide an objective measure of biological processes, pathogenic processes, or pharmacological responses to therapeutic interventions [1]. According to the FDA-NIH Biomarker Working Group's BEST (Biomarkers, EndpointS, and other Tools) Resource, a biomarker is formally defined as "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or biological responses to an exposure or intervention, including therapeutic interventions" [2]. This comprehensive definition encompasses molecular, histologic, radiographic, or physiologic characteristics that can be quantified and evaluated.

The critical importance of biomarkers extends across the entire spectrum of medical research and clinical practice. They serve fundamental roles in diagnosing diseases, monitoring treatment efficacy, predicting health outcomes, and understanding pathological mechanisms. For researchers and drug development professionals, biomarkers provide essential tools for decision-making throughout the drug development pipeline, from early target identification to late-stage clinical trials [3]. The classification of biomarkers into specific categories—including diagnostic, monitoring, and predictive biomarkers—enables more precise application in both research and clinical settings, facilitating the advancement of personalized medicine approaches [2].

Core Biomarker Categories and Classifications

The BOND Nutritional Biomarker Framework

The Biomarkers of Nutrition and Development (BOND) program provides a sophisticated classification system that organizes nutritional biomarkers into three primary categories based on an assumed intake-response relationship. This framework, which can be broadly applied to biomarkers beyond nutrition, includes biomarkers of exposure, status, and function [4].

Biomarkers of Exposure: These biomarkers are designed to assess what has been consumed or encountered, taking into account bioavailability. They include traditional dietary assessment methods as well as more objective dietary biomarkers that provide indirect measures of nutrient exposure independent of self-reported food intake [4].

Biomarkers of Status: These measure the concentration of a nutrient in biological fluids (serum, erythrocytes, leucocytes, urine, breast milk) or tissues (hair, nails), or the urinary excretion rate of the nutrient or its metabolites. Ideally, status biomarkers reflect either total body nutrient content or the size of the tissue store most sensitive to nutrient depletion, helping to determine where an individual or population stands relative to an accepted cut-off (adequate, marginal, deficient) [4].

Biomarkers of Function: These biomarkers measure the functional consequences of a specific nutrient deficiency or excess, providing greater biological significance than static biomarkers. They are further subdivided into functional biochemical biomarkers (enzyme stimulation assays, abnormal metabolites, DNA damage) and functional physiological/behavioral biomarkers (vision, growth, immune function, cognition) [4].

Table 1: Core Biomarker Categories According to the BOND Classification Framework

| Category | Subcategory | Measurement Examples | Primary Application |
|---|---|---|---|
| Exposure | Traditional Assessment | Food records, recall surveys | Estimate intake of foods/nutrients |
| Exposure | Dietary Biomarkers | Objective biochemical measurements | Indirect assessment of nutrient exposure |
| Status | Tissue Concentration | Serum/plasma levels, tissue stores | Assess body reserves or tissue amounts |
| Status | Excretion Metrics | Urinary metabolites | Evaluate nutrient retention or loss |
| Function | Biochemical | Enzyme activity, metabolic products | Detect early subclinical deficiencies |
| Function | Physiological/Behavioral | Growth, vision, immune response, cognition | Assess clinical health outcomes |

Recovery vs. Concentration Biomarkers in Research

Recovery and concentration biomarkers differ in both application and interpretation. The BOND framework does not use the term "recovery biomarker"; in the sense used in this section, the category aligns most closely with functional biomarkers that measure the body's response to an intervention or its capacity to return to homeostasis after a challenge. This usage differs from the nutritional-epidemiology definition given later in this article, where a recovery biomarker is one whose excretion quantitatively reflects intake.

Concentration Biomarkers: These static measurements reflect the circulating or tissue levels of a specific analyte at a single point in time. Examples include serum vitamin D levels, hemoglobin A1c for glucose control, or cholesterol measurements. While valuable for assessing status, they provide limited information about metabolic flux, tissue utilization, or functional capacity [4].

Recovery Biomarkers: These dynamic measurements evaluate the body's functional response to a controlled intervention or its ability to recover from a physiological challenge. In nutritional research, this might include the return to baseline of inflammatory markers after an oxidative stress challenge, or the normalization of metabolic parameters after nutrient administration. In sports medicine, recovery biomarkers track an athlete's physiological restoration after exercise, including inflammation resolution, muscle repair, and metabolic homeostasis [5].

The distinction is particularly important in intervention studies and clinical trials, where understanding both the static levels (concentration) and dynamic responses (recovery) provides a more comprehensive picture of biological effect than either category alone.

Experimental Approaches and Methodologies

Technical Validation of Biomarker Assays

Ensuring the reliability of biomarker measurements begins with rigorous analytical validation, establishing that the performance characteristics of an assay are acceptable for its intended purpose [2]. The CLSI (Clinical and Laboratory Standards Institute) provides extensive evaluation protocols (EPs) that set consistent standards for assay validation. These protocols vary depending on the specific stage or aspect of the assay being examined [6].

For biomarker assays to be considered "fit-for-purpose," they must demonstrate adequate sensitivity, specificity, accuracy, precision, and other relevant performance characteristics using specified technical protocols. The level of validation required may vary depending on the application context—whether the assay is for research use only or requires regulatory approval for clinical use [6]. Unfortunately, studies have revealed significant problems with commercially available immunoassays, with one evaluation finding that almost 50% of more than 5,000 commercially available antibodies failed specificity testing [6].

Advanced Detection Technologies

Innovative detection platforms continue to push the boundaries of biomarker quantification. Digital immunoassays represent a significant advancement over traditional analog methods by enabling single-molecule counting, currently the most accurate and precise method for determining biomarker concentration in solution [7].

The fundamental principle behind digital detection involves converting the presence or absence of individual target molecules into a binary ("1" or "0") readout. In one innovative approach, researchers used easily-identifiable DNA nanostructures as proxies for the presence ("1") or absence ("0") of a target protein captured via a magnetic bead-based sandwich immunoassay. This method successfully quantified thyroid-stimulating hormone (TSH) from human serum samples down to the high femtomolar range, overcoming specificity, sensitivity, and consistency challenges associated with conventional solid-state nanopore sensors [7].
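The binary readout described above lends itself to simple counting statistics. The sketch below is a minimal illustration of the Poisson correction commonly applied in partition-based digital assays, where a partition reads "1" if it holds at least one target molecule. It is not the nanopore readout of the cited study [7]; the function names and the partition-volume parameter are hypothetical and chosen only for illustration.

```python
import math

AVOGADRO = 6.02214076e23  # molecules per mole


def mean_molecules_per_partition(n_positive: int, n_total: int) -> float:
    """Poisson-corrected mean number of target molecules per partition.

    A partition is negative only if it holds zero molecules, so
    P(negative) = exp(-lam) and lam = -ln(1 - fraction_positive).
    """
    if n_total <= 0 or not 0 <= n_positive < n_total:
        raise ValueError("need 0 <= n_positive < n_total and n_total > 0")
    fraction_positive = n_positive / n_total
    return -math.log(1.0 - fraction_positive)


def concentration_molar(n_positive: int, n_total: int,
                        partition_volume_l: float) -> float:
    """Convert the corrected count to mol/L (partition volume is an assumed input)."""
    lam = mean_molecules_per_partition(n_positive, n_total)
    return lam / (AVOGADRO * partition_volume_l)


if __name__ == "__main__":
    # 100 positive partitions out of 1000 gives slightly more than 0.1
    # molecules per partition, because some partitions hold two or more.
    print(mean_molecules_per_partition(100, 1000))
```

The correction matters most at high occupancy: at low positive fractions the corrected value is close to the raw fraction, and the two diverge as more partitions contain multiple molecules.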

Table 2: Comparison of Traditional Analog vs. Digital Immunoassay Approaches

| Parameter | Traditional Analog ELISA | Digital Immunoassay |
|---|---|---|
| Detection Principle | Intensity-based optical readout | Single-molecule counting |
| Sensitivity Range | pM-nM | fM-pM (high femtomolar) |
| Key Limitation | Limited by antibody affinity and analog error | Requires partitioning and precise detection |
| Dynamic Range | Limited | Broad |
| Applications | Standard clinical measurements | Low-abundance biomarkers, early disease detection |
| Readout Method | Colorimetric, chemiluminescent | Electrical, magnetic, or fluorescent |

Data Normalization Strategies in Biomarker Research

The accuracy of biomarker measurements depends significantly on appropriate data normalization, particularly when integrating data across multiple cohorts or experimental conditions. Biological variance among samples from different cohorts can pose substantial challenges for the long-term validation of developed models, necessitating robust data-driven normalization methods [8].

A comparative analysis of normalization approaches in metabolomic biomarker research evaluated seven different methods: normalization by total concentration, autoscaling, quantile normalization (QN), probabilistic quotient normalization (PQN), median ratio normalization (MRN), trimmed mean of M-values (TMM), and variance stabilizing normalization (VSN). The quality of normalization was assessed through the performance of Orthogonal Partial Least Squares (OPLS) models, with sensitivity and specificity calculated from validation datasets [8].

The findings demonstrated that PQN, MRN, and VSN yielded OPLS models of higher diagnostic quality than the other methods. The VSN-based OPLS model performed best, with 86% sensitivity and 77% specificity. After VSN normalization, the potential biomarkers identified by variable importance in projection (VIP) scores diverged from those identified using other normalization methods, uniquely highlighting pathways related to the oxidation of brain fatty acids and purine metabolism [8].
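To make one of the compared methods concrete, the following is a minimal sketch of probabilistic quotient normalization (PQN) in plain Python. It assumes a small samples-by-features table of positive intensities, uses the feature-wise median across samples as the reference profile, and omits the preliminary total-intensity scaling that PQN pipelines often apply first. The function name is illustrative and the code is not the implementation used in the cited study [8].

```python
from statistics import median


def pqn_normalize(samples):
    """Probabilistic quotient normalization of a samples-by-features table.

    Each sample is divided by the median of its feature-wise quotients
    against a reference profile (here, the median across all samples).
    """
    n_features = len(samples[0])
    # Reference profile: median intensity of each feature across samples.
    reference = [median(row[j] for row in samples) for j in range(n_features)]

    normalized = []
    for row in samples:
        # Quotients against the reference; features with a zero reference are skipped.
        quotients = [x / ref for x, ref in zip(row, reference) if ref > 0]
        dilution = median(quotients)  # most probable dilution factor
        normalized.append([x / dilution for x in row])
    return normalized


if __name__ == "__main__":
    # Three "dilutions" of the same underlying profile collapse onto one profile.
    table = [[1.0, 2.0, 4.0], [2.0, 4.0, 8.0], [4.0, 8.0, 16.0]]
    for row in pqn_normalize(table):
        print(row)
```

Using the median quotient rather than the total signal makes the estimated dilution factor robust to a minority of features that genuinely change between samples.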

Visualization of Biomarker Relationships and Workflows

Diagram: Biomarker Classification Framework

Diagram: Digital Immunoassay Workflow

Research Reagent Solutions for Biomarker Studies

Table 3: Essential Research Reagents for Biomarker Detection and Analysis

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Antibody Pairs | Capture and detect target proteins in sandwich immunoassays | TSH quantification, inflammatory markers |
| Magnetic Beads | Solid phase for efficient target capture and washing | Biomarker isolation from complex fluids |
| DNA Nanostructures | Signal amplification and digital detection proxies | Solid-state nanopore digital assays |
| Streptavidin-Biotin System | High-affinity conjugation for detection antibodies | Signal amplification in immunoassays |
| Photocleavable Linkers | Controlled release of reporter molecules | Digital immunoassay target quantification |
| Quality Control Samples | Monitoring assay performance and reproducibility | Inter-laboratory standardization |
| Stable Isotope Standards | Internal standards for mass spectrometry | Quantitative metabolomics |

Biomarker science continues to evolve with increasingly sophisticated classification frameworks, detection technologies, and analytical approaches. The distinction between concentration biomarkers (measuring static levels) and recovery biomarkers (assessing dynamic responses) provides researchers with complementary tools for understanding biological systems. While concentration biomarkers offer snapshot assessments of biological status, recovery biomarkers capture the functional capacity and adaptive responses of organisms to challenges or interventions.

The future of biomarker research will likely see increased integration of multi-omics approaches, advanced materials for detection, and artificial intelligence for data interpretation. As digital detection technologies mature and normalization methods become more sophisticated, the precision and accuracy of both concentration and recovery biomarker measurements will continue to improve, enabling more sensitive disease detection, better therapeutic monitoring, and more personalized medical interventions.

In the field of nutritional epidemiology, accurately measuring what people consume remains a fundamental challenge. Dietary assessment has long relied on self-reported methods such as food frequency questionnaires, food records, and 24-hour recalls, which are invariably subject to random and systematic errors including recall bias and misreporting [9]. To overcome these limitations, researchers have turned to objective biological measurements known as nutritional biomarkers. The Biomarkers of Nutrition and Development (BOND) program defines a nutritional biomarker as "a biological characteristic that can be objectively measured and evaluated as an indicator of normal biological or pathogenic processes, and/or as an indicator of responses to nutrition interventions" [4].

Nutritional biomarkers are typically classified into three primary categories based on their function: biomarkers of exposure (intake), biomarkers of status (body levels), and biomarkers of function (physiological consequences) [4]. Within biomarkers of exposure, a further critical distinction exists between recovery biomarkers and concentration biomarkers, and it determines how each can be used in research settings. Recovery biomarkers, the focus of this part of the article, possess unique properties that enable them to serve as objective reference measures for quantifying absolute intake of specific nutrients, thereby playing a crucial role in validating self-reported dietary data and strengthening diet-disease association studies [10] [11].

Table 1: Classification of Nutritional Biomarkers

| Biomarker Category | Definition | Key Characteristics | Examples |
|---|---|---|---|
| Recovery Biomarkers | Biomarkers with a direct, quantitative relationship between intake and excretion | Measure absolute intake; Minimal influence from metabolism; Used as reference standards | Doubly labeled water, Urinary nitrogen, Urinary sodium, Urinary potassium |
| Concentration Biomarkers | Biomarkers correlated with intake but influenced by other factors | Useful for ranking individuals; Cannot assess absolute intake; Affected by metabolism and personal characteristics | Plasma vitamin C, Serum carotenoids, Plasma phospholipid fatty acids |
| Predictive Biomarkers | Biomarkers that can predict intake but with incomplete recovery | Sensitive and time-dependent; Dose-response relationship with intake; Lower overall recovery | Urinary sucrose, Urinary fructose |
| Replacement Biomarkers | Biomarkers serving as proxies when nutrient database information is inadequate | Used when direct assessment is problematic; Fill specific assessment gaps | Phytoestrogens, Polyphenols, Aflatoxin |

Fundamental Principles of Recovery Biomarkers

Recovery biomarkers operate on the fundamental principle of metabolic balance, where the intake of specific nutrients is quantitatively reflected in their excretion or utilization products over a defined period. The core concept underlying these biomarkers is that for certain dietary components, the relationship between consumption and biological output is predictable and quantifiable following established physiological pathways [10] [11]. This quantitative relationship enables researchers to calculate absolute intake based on measurements taken from biological specimens, primarily urine.

The defining characteristic of recovery biomarkers is their ability to fulfill the "classical measurement model criterion" - meaning they measure the intake of interest with measurement error that is unrelated to the targeted intake or other participant characteristics [9]. This property is crucial because it makes recovery biomarkers particularly valuable for identifying and correcting for systematic biases inherent in self-reported dietary data, especially those related to participant characteristics such as age, sex, body mass index, and ethnicity [9] [12].

Several key principles govern the validity and application of recovery biomarkers in research settings. First, they must demonstrate a consistent and predictable relationship between intake and the measured biological output. Second, the recovery of the nutrient or its metabolites must be consistent across individuals with different characteristics. Third, the biomarker must be measurable using accurate and precise analytical methods. Fourth, the timing of specimen collection must align with the biological half-life and excretion patterns of the target nutrient [10] [11]. These principles collectively ensure that recovery biomarkers can serve as reference measures for assessing absolute intake in free-living populations.

Established Recovery Biomarkers and Their Applications

Doubly Labeled Water for Energy Intake

The doubly labeled water (DLW) method is widely regarded as the gold standard for measuring total energy expenditure in free-living individuals. When body weight is stable, total energy expenditure provides a precise measure of energy intake [10] [13]. The method involves administering a dose of water containing stable isotopes of hydrogen (deuterium) and oxygen (oxygen-18). Deuterium leaves the body as water (HDO), while oxygen-18 is eliminated as both water and carbon dioxide. The difference in elimination rates between these two isotopes allows for calculation of carbon dioxide production, from which total energy expenditure is derived using modified Weir equations [12].
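The chain from isotope elimination rates to energy expenditure can be sketched numerically. The example below is deliberately simplified: it ignores isotope fractionation and dilution-space corrections that full DLW equations include, takes the molar volume of CO2 as 22.4 L/mol, and uses rounded Weir coefficients (3.9 and 1.1 kcal per litre of O2 and CO2). The function names, the assumed food quotient of 0.85, and the input values are illustrative assumptions, not a protocol from the cited studies.

```python
def co2_production_mol_per_day(body_water_mol: float,
                               k_oxygen_per_day: float,
                               k_deuterium_per_day: float) -> float:
    """Simplified CO2 production rate from the two elimination rates.

    Oxygen-18 leaves as water and CO2, deuterium as water only, so the
    difference in rate constants reflects CO2 output. The factor 1/2
    accounts for two oxygen atoms per CO2 molecule.
    """
    return (body_water_mol / 2.0) * (k_oxygen_per_day - k_deuterium_per_day)


def total_energy_expenditure_kcal(r_co2_mol_per_day: float,
                                  food_quotient: float = 0.85) -> float:
    """Weir-type conversion of CO2 production to kcal/day (rounded coefficients)."""
    litres_per_mol = 22.4
    v_co2 = litres_per_mol * r_co2_mol_per_day  # litres of CO2 per day
    v_o2 = v_co2 / food_quotient                # litres of O2 per day, from assumed FQ
    return 3.9 * v_o2 + 1.1 * v_co2


if __name__ == "__main__":
    # Hypothetical adult: about 36 L body water (~2000 mol), kO = 0.12/d, kD = 0.10/d.
    r_co2 = co2_production_mol_per_day(2000.0, 0.12, 0.10)
    print(round(r_co2, 2), round(total_energy_expenditure_kcal(r_co2), 1))
```

Because the result depends on a small difference between two rate constants, modest analytical error in either isotope measurement propagates strongly, which is one reason the method demands high-precision mass spectrometry.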

The DLW method provides an objective measure of energy intake over a 1-2 week period and has been instrumental in revealing substantial underestimation of energy intake in self-reported dietary assessments, particularly among overweight and obese individuals [13]. For example, studies in the Women's Health Initiative (WHI) cohorts found that energy intake was underestimated by 30-40% among overweight and obese postmenopausal women when using food frequency questionnaires [13]. This method, while highly accurate, requires specialized laboratory equipment and expertise for isotope analysis, making it relatively expensive for large-scale epidemiological studies.

Urinary Nitrogen for Protein Intake

Urinary nitrogen serves as a validated recovery biomarker for dietary protein intake. The method is based on the principle that approximately 81% of ingested nitrogen is excreted in urine over 24 hours, with the remaining portion excreted in feces, sweat, and other losses [12]. Protein intake can be calculated from 24-hour urinary nitrogen using the formula: Protein intake = (24-hour urinary nitrogen ÷ 0.81) × 6.25, where 6.25 is the conversion factor from nitrogen to protein [12].
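The formula above translates directly into code. This minimal sketch uses the two constants stated in the text (0.81 urinary recovery and the 6.25 nitrogen-to-protein factor); the function name and the example input are illustrative.

```python
def protein_intake_g(urinary_nitrogen_g_per_24h: float,
                     urinary_fraction: float = 0.81,
                     nitrogen_to_protein: float = 6.25) -> float:
    """Estimate daily protein intake (g) from 24-hour urinary nitrogen (g).

    Divides by the fraction of ingested nitrogen assumed to appear in urine,
    then converts nitrogen to protein.
    """
    if urinary_nitrogen_g_per_24h < 0:
        raise ValueError("urinary nitrogen must be non-negative")
    return urinary_nitrogen_g_per_24h / urinary_fraction * nitrogen_to_protein


if __name__ == "__main__":
    # 12.96 g urinary nitrogen corresponds to about 16 g ingested nitrogen,
    # or roughly 100 g protein.
    print(round(protein_intake_g(12.96), 1))
```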

To verify that urine collections are complete, researchers often use para-aminobenzoic acid (PABA) as an internal marker. PABA is assumed to undergo complete urinary excretion within 24 hours, and recovery rates of 85-110% are typically considered indicative of complete collection [12] [11]. Comparisons against this biomarker expose the limits of self-reported protein intake. For instance, the Observing Protein and Energy Nutrition (OPEN) Study found that food records explained 22.6% of the variation in the urinary nitrogen biomarker for protein, compared to just 8.4% for food frequency questionnaires [12].

Urinary Sodium and Potassium

Twenty-four-hour urinary excretion is considered the gold standard for assessing sodium and potassium intake, as the majority of consumed amounts of these minerals are excreted in urine [14]. This method has been crucial for monitoring population-level sodium intake and evaluating public health interventions, such as the UK's program to gradually reduce sodium content in foods [11].

Recent controlled feeding studies have confirmed the superiority of 24-hour urine collections over alternative methods. Research from the Women's Health Initiative demonstrated that sodium and potassium excretions from 24-hour urine collections had "significantly higher correlations with the consumed and quantified intakes" compared to estimates derived from spot urine samples using various algorithms [14]. While spot urine samples have been investigated as less burdensome alternatives, they remain inadequate substitutes for measured 24-hour urine collections for quantitative intake assessment [14].

Table 2: Established Recovery Biomarkers and Their Applications

| Biomarker | Nutrient Assessed | Biological Specimen | Collection Protocol | Key Research Findings |
|---|---|---|---|---|
| Doubly Labeled Water | Total Energy Intake | Urine (spot samples) | Isotope administration with urine collection over 10-14 days | Revealed 30-40% energy underestimation in overweight/obese individuals using FFQs [13] |
| Urinary Nitrogen | Protein Intake | 24-hour urine collection | Complete 24-hour urine collection with PABA compliance check | Food records explain 22.6% of biomarker variation vs. 8.4% for FFQs [12] |
| Urinary Sodium | Sodium Intake | 24-hour urine collection | Complete 24-hour urine collection, ideally with PABA check | Gold standard for population sodium assessment; Superior to spot urine algorithms [11] [14] |
| Urinary Potassium | Potassium Intake | 24-hour urine collection | Complete 24-hour urine collection, ideally with PABA check | More reliable from 24-hour urine than spot samples in feeding studies [14] |

Methodological Protocols for Recovery Biomarker Assessment

Standardized Experimental Protocols

The accurate application of recovery biomarkers requires strict adherence to standardized protocols for specimen collection, processing, and analysis. For urinary biomarkers, complete 24-hour urine collections are essential. The standard protocol involves participants discarding the first void of the morning and then collecting all subsequent urine for exactly 24 hours, including the first void of the following morning [11]. To assess completeness of collection, researchers typically provide participants with PABA tablets to be taken at specific intervals during the collection period, with recovery rates of 85-110% considered acceptable [12].
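The completeness check described above can be expressed as a short helper. The 85-110% window comes from the text; the default total dose of 240 mg (three 80 mg tablets) is an assumption made for illustration, and the function names are hypothetical.

```python
def paba_recovery_percent(paba_excreted_mg: float,
                          paba_dose_mg: float = 240.0) -> float:
    """Percentage of the administered PABA dose recovered in the 24-hour urine.

    The 240 mg default assumes three 80 mg tablets; adjust to the actual protocol.
    """
    if paba_dose_mg <= 0:
        raise ValueError("dose must be positive")
    return 100.0 * paba_excreted_mg / paba_dose_mg


def collection_is_complete(recovery_percent: float,
                           lower: float = 85.0,
                           upper: float = 110.0) -> bool:
    """Apply the 85-110% acceptance window for a complete collection."""
    return lower <= recovery_percent <= upper


if __name__ == "__main__":
    recovery = paba_recovery_percent(216.0)
    print(recovery, collection_is_complete(recovery))
```

Values above the upper bound are flagged as well as those below it, since implausibly high recovery can indicate over-collection or an additional PABA source.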

For the doubly labeled water method, participants receive an oral dose of isotopically labeled water (²H₂O and H₂¹⁸O). Baseline urine samples are collected before dosing, followed by periodic spot urine samples over the subsequent 10-14 days. The analysis requires specialized equipment such as isotope ratio mass spectrometry to precisely measure the differential elimination rates of the two isotopes [12] [13]. Proper sample handling, storage at -80°C, and avoidance of repeated freeze-thaw cycles are critical for maintaining sample integrity across all recovery biomarker assessments [11].

Quality Control and Validation Procedures

Robust quality control measures are integral to recovery biomarker methodology. This includes the use of blind duplicates (approximately 5% of samples) in analytical runs to assess precision, and participation in external quality assurance programs where available [12]. For urinary nitrogen, sodium, and potassium assessments, laboratory methods with demonstrated accuracy and precision, such as the Kjeldahl method for nitrogen or flame photometry and ion-selective electrode methods for electrolytes, should be employed [11].
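Precision from blind duplicates is usually summarized as a coefficient of variation. The sketch below uses the standard duplicate-pair estimate of the within-run standard deviation, the square root of the summed squared differences divided by twice the number of pairs, and expresses it relative to the grand mean. The function name and example values are illustrative.

```python
import math


def duplicate_cv_percent(pairs):
    """Within-run coefficient of variation (%) from blind duplicate pairs.

    pairs: iterable of (first_result, second_result) for the same specimen.
    SD is estimated as sqrt(sum(d^2) / (2 * n)), with d the pair difference.
    """
    pairs = list(pairs)
    n = len(pairs)
    if n == 0:
        raise ValueError("at least one duplicate pair is required")
    sum_sq_diff = sum((a - b) ** 2 for a, b in pairs)
    sd = math.sqrt(sum_sq_diff / (2 * n))
    grand_mean = sum(a + b for a, b in pairs) / (2 * n)
    return 100.0 * sd / grand_mean


if __name__ == "__main__":
    # Hypothetical urinary nitrogen duplicates in g/24 h.
    print(round(duplicate_cv_percent([(9.0, 11.0), (9.0, 11.0)]), 2))
```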

The Women's Health Initiative Nutrition and Physical Activity Assessment Study (NPAAS) exemplifies comprehensive quality control in recovery biomarker research. This study implemented a rigorous protocol including doubly labeled water dosing, 24-hour urine collections with PABA checks, 4-day food records, three 24-hour dietary recalls, and food frequency questionnaires, all conducted with strict standardization and quality monitoring [12]. Such meticulous approaches are necessary to ensure the validity of recovery biomarker data.

Comparative Analysis: Recovery vs. Concentration Biomarkers

Fundamental Distinctions and Applications

The distinction between recovery and concentration biomarkers is fundamental to their appropriate application in nutritional research. Recovery biomarkers measure absolute intake through quantitative recovery of nutrients or their metabolites, while concentration biomarkers measure relative concentrations in biological fluids that correlate with intake but are influenced by various metabolic and physiological factors [9] [10]. This fundamental difference dictates their respective roles in nutritional research.

Recovery biomarkers, with their predictable relationship between intake and excretion, are uniquely suited for validation studies aimed at quantifying and correcting for measurement error in self-reported dietary assessments [10]. Their ability to provide objective measures of absolute intake makes them invaluable reference instruments. In contrast, concentration biomarkers are primarily useful for ranking individuals according to their intake of specific nutrients or food groups, but cannot provide estimates of absolute intake due to the influence of confounding factors such as age, sex, metabolism, health status, and lifestyle factors like smoking [9] [11].
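Correcting self-report against a recovery biomarker is often done by regression calibration: in a subsample with both measures, the biomarker value is regressed on the self-reported value, and the fitted equation is then applied to self-reports in the wider cohort. The sketch below shows only the simplest one-predictor form with ordinary least squares. Published calibration equations typically work on log-transformed values and include covariates such as body mass index and age, which are omitted here; all names and numbers are illustrative.

```python
def fit_calibration(self_report, biomarker):
    """Ordinary least squares fit of biomarker = intercept + slope * self_report."""
    n = len(self_report)
    if n != len(biomarker) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(self_report) / n
    mean_y = sum(biomarker) / n
    sxx = sum((x - mean_x) ** 2 for x in self_report)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(self_report, biomarker))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def calibrated_intake(self_report_value, intercept, slope):
    """Apply the fitted calibration equation to a new self-reported value."""
    return intercept + slope * self_report_value


if __name__ == "__main__":
    # Hypothetical subsample: self-reported vs. biomarker-based protein intake (g/day).
    reported = [50.0, 60.0, 70.0, 80.0]
    measured = [70.0, 76.0, 82.0, 88.0]
    a, b = fit_calibration(reported, measured)
    print(a, b, calibrated_intake(65.0, a, b))
```

A slope well below 1, as in this made-up subsample, is the typical signature of self-report compressing true differences between individuals.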

Comparative Performance in Research Settings

Empirical studies show that self-reported measures capture only a small share of the variation in intake measured by recovery biomarkers, which underlines the value of these biomarkers as reference instruments for absolute intake. The OPEN Study directly compared recovery biomarkers with self-reported data and found that food records explained 7.8% of biomarker variation for energy, compared to just 3.8% for food frequency questionnaires [12]. For protein, food records explained 22.6% of biomarker variation versus 8.4% for food frequency questionnaires [12].
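A figure such as "percent of biomarker variation explained" can be read, in its simplest form, as the squared Pearson correlation between the self-reported and biomarker values. The sketch below computes that quantity for a single predictor. The cited analyses may have used log-transformed data and additional covariates, so this is an illustration of the idea rather than a reproduction of their method; the function name and data are hypothetical.

```python
def percent_variation_explained(self_report, biomarker):
    """Squared Pearson correlation, expressed as a percentage (R^2 * 100)."""
    n = len(self_report)
    if n != len(biomarker) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(self_report) / n
    mean_y = sum(biomarker) / n
    sxx = sum((x - mean_x) ** 2 for x in self_report)
    syy = sum((y - mean_y) ** 2 for y in biomarker)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(self_report, biomarker))
    return 100.0 * (sxy * sxy) / (sxx * syy)


if __name__ == "__main__":
    # Hypothetical paired values; a perfect linear relationship would give 100.
    print(percent_variation_explained([1.0, 2.0, 3.0, 4.0], [1.0, 3.0, 2.0, 4.0]))
```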

The EPIC-Norfolk study provided a compelling example of how biomarkers can strengthen diet-disease associations. When examining the relationship between fruit and vegetable intake and type 2 diabetes, the inverse association was significantly stronger when using plasma vitamin C (a concentration biomarker) compared to self-reported fruit and vegetable intake from food frequency questionnaires [11]. This demonstrates how both types of biomarkers can play complementary roles in nutritional epidemiology, with recovery biomarkers serving as objective references for absolute intake and concentration biomarkers providing additional evidence for diet-disease relationships.

Diagram: Comparative Roles of Recovery and Concentration Biomarkers in Nutritional Research

Table 3: Comparative Characteristics of Recovery and Concentration Biomarkers

| Characteristic | Recovery Biomarkers | Concentration Biomarkers |
|---|---|---|
| Relationship to Intake | Direct, quantitative relationship | Correlational relationship |
| Absolute Intake Assessment | Yes | No |
| Influence of Metabolism | Minimal | Significant |
| Impact of Personal Characteristics | Limited | Substantial (age, sex, BMI, etc.) |
| Primary Research Application | Validation of self-report; Calibration | Ranking individuals; Diet-disease associations |
| Specimen Collection Burden | High (24-hour urine, multiple specimens) | Variable (single blood/urine spot often sufficient) |
| Number Available | Limited (only a few exist) | Numerous |
| Examples | Doubly labeled water, Urinary nitrogen | Plasma vitamin C, Serum carotenoids, Plasma phospholipid fatty acids |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Materials for Recovery Biomarker Studies

| Research Material | Specific Type/Example | Primary Function | Application Notes |
|---|---|---|---|
| Stable Isotopes | Deuterium oxide (²H₂O), Oxygen-18 water (H₂¹⁸O) | DLW method for energy expenditure measurement | Require specialized mass spectrometry for analysis; High purity standards essential |
| PABA Tablets | Para-aminobenzoic acid | Validation of complete 24-hour urine collections | Typically 80 mg doses; Recovery of 85-110% indicates complete collection |
| Urine Collection Containers | 24-hour urine collection jugs | Biological specimen collection for urinary biomarkers | Light-resistant containers; Pre-treated with preservatives for specific analytes |
| Laboratory Equipment | Isotope ratio mass spectrometer | Analysis of isotopic enrichment in DLW studies | High precision required; Specialized operator training needed |
| Analytical Kits/Reagents | Nitrogen analysis kits, Electrolyte assay kits | Quantification of target analytes in biological specimens | Methods: Kjeldahl for nitrogen; Flame photometry/ISE for electrolytes |
| Biological Specimen Storage | -80°C freezers | Preservation of sample integrity | Multiple aliquots recommended to avoid freeze-thaw cycles |

Recovery biomarkers represent a cornerstone of objective dietary assessment in nutritional research, providing unparalleled accuracy for quantifying absolute intake of specific nutrients. Their unique property of exhibiting a direct, quantitative relationship between intake and biological measurement makes them indispensable for validating self-reported dietary data, quantifying measurement error, and strengthening diet-disease association studies through calibration techniques [10] [15]. While the number of established recovery biomarkers remains limited—primarily including doubly labeled water for energy, urinary nitrogen for protein, and 24-hour urinary sodium and potassium—their role in advancing nutritional epidemiology is profound.

The future of recovery biomarkers lies in addressing current limitations, particularly the high participant burden and cost associated with their collection [14]. Research continues to explore less burdensome alternatives, such as spot urine samples for sodium and potassium, though these have yet to match the accuracy of 24-hour collections [14]. Emerging technologies in metabolomics hold promise for discovering new recovery biomarkers for additional nutrients and food components [16] [13]. Furthermore, innovative study designs and statistical approaches are being developed to maximize the utility of recovery biomarkers in diet-disease association studies, even when available only in subsamples of larger cohorts [15]. As these methodological advances continue, recovery biomarkers will maintain their critical role as objective reference measures that anchor nutritional epidemiology in rigorous biological measurement.

In the evolving landscape of biomedical research and drug development, biomarkers serve as critical tools for objectively measuring biological processes. The FDA-NIH BEST (Biomarkers, EndpointS, and other Tools) Resource defines a biomarker as "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or biological responses to an exposure or intervention, including therapeutic interventions" [17]. Within this broad field, biomarkers are categorized according to their specific applications, with concentration biomarkers representing a fundamentally important class for ranking individuals based on their exposure to dietary components or environmental factors [11].

Understanding concentration biomarkers requires placing them in context alongside other biomarker categories, particularly recovery biomarkers. While recovery biomarkers (such as doubly labeled water for energy expenditure or urinary nitrogen for protein intake) are based on metabolic balance and can assess absolute intake, concentration biomarkers are correlated with dietary intake but are influenced by additional factors including metabolism, personal characteristics, and lifestyle [11]. This distinction places concentration biomarkers as ideal tools for ranking individuals within a population rather than determining precise absolute intake values, making them invaluable for epidemiological research where relative comparisons are scientifically meaningful.

The principle behind concentration biomarkers lies in their ability to provide an objective measure of exposure that circumvents the limitations of self-reported data, which is often plagued by measurement error and recall bias [11] [18]. By measuring the concentration of specific compounds or their metabolites in biological samples, researchers can obtain a more reliable indicator of habitual exposure to various dietary components or environmental factors, thereby strengthening the foundation for evidence-based clinical guidance and public health recommendations [4].

Principles and Defining Characteristics of Concentration Biomarkers

Core Definition and Fundamental Properties

Concentration biomarkers are defined as biological measures that correlate with dietary intake or exposure to specific substances, but whose levels are influenced by factors beyond intake quantity alone [11]. Unlike recovery biomarkers, which exhibit a direct, quantitative relationship between intake and excretion, concentration biomarkers reflect a complex interplay of absorption, distribution, metabolism, and excretion processes within the body. As a result, they provide excellent data for comparing relative exposure between individuals or populations, but they do not readily translate to precise absolute intake amounts without additional calibration [11].

The scientific premise underlying concentration biomarkers centers on their dose-response relationship with exposure, wherein higher intake generally leads to higher biomarker concentrations, but this relationship is moderated by individual physiological factors. For example, plasma vitamin C concentration serves as a robust concentration biomarker for fruit and vegetable intake, demonstrating a stronger inverse association with type 2 diabetes risk than self-reported dietary assessments [11]. However, the same plasma vitamin C level in two individuals with identical dietary intake might differ due to factors such as genetic variations in absorption, smoking status, or body composition.

Key Distinguishing Features

Several characteristics distinguish concentration biomarkers from other biomarker categories. First, they are primarily used for ranking individuals within a population according to their exposure level rather than determining precise intake quantities [11]. This makes them particularly valuable for large-scale epidemiological studies where establishing dose-response relationships and comparing quartiles or quintiles of exposure is more relevant than absolute intake values.

Second, concentration biomarkers exhibit context-dependent variability influenced by numerous host factors. As outlined in nutritional biomarker research, these factors include age, sex, genetic predisposition, physiological state, lifestyle factors such as smoking and physical activity, and the presence of certain health conditions [4]. This multifactorial influence necessitates careful study design and statistical adjustment to ensure accurate interpretation.

Third, concentration biomarkers demonstrate temporal specificity based on the biological matrix in which they are measured. Short-term biomarkers reflect intake over hours to days and are typically measured in serum, plasma, or urine. Medium-term biomarkers reflect exposure over weeks to months and may be measured in erythrocytes, while long-term biomarkers reflect intake over months to years and can be assessed in tissues such as adipose or hair [18]. This temporal dimension allows researchers to select biomarkers appropriate for their specific research questions regarding exposure timing.
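The ranking use described in the first feature above can be illustrated with a minimal sketch. It assumes hypothetical plasma vitamin C values (in µmol/L) and a simple rank-based split into quintiles; the function name and the data are illustrative only and are not taken from any cited study.

```python
def assign_quantile_groups(values, n_groups=5):
    """Rank participants by biomarker concentration and assign groups 1..n_groups.

    Group 1 holds the lowest concentrations, group n_groups the highest.
    """
    order = sorted(range(len(values)), key=lambda i: values[i])
    groups = [0] * len(values)
    for rank, idx in enumerate(order):
        groups[idx] = rank * n_groups // len(values) + 1
    return groups


# Hypothetical plasma vitamin C concentrations for ten participants (µmol/L)
plasma_vitamin_c = [23, 61, 45, 78, 34, 52, 90, 15, 67, 40]
quintiles = assign_quantile_groups(plasma_vitamin_c)
print(quintiles)
```

Only the ordering of the values matters here, which is why a concentration biomarker can support quintile comparisons even when it cannot be converted to an absolute intake.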

Comparative Analysis: Concentration Biomarkers vs. Recovery Biomarkers

The distinction between concentration and recovery biomarkers represents a fundamental concept in biomarker science, with significant implications for research design and interpretation. The table below summarizes the key differences between these two biomarker categories:

Table 1: Comparative Characteristics of Concentration vs. Recovery Biomarkers

| Characteristic | Concentration Biomarkers | Recovery Biomarkers |
|---|---|---|
| Primary Function | Ranking individuals based on relative exposure [11] | Assessing absolute intake through metabolic balance [11] |
| Relationship to Intake | Correlated with intake but influenced by metabolism and individual factors [11] | Direct, quantitative relationship with intake over a specific period [11] |
| Key Applications | Epidemiological studies, population ranking, association studies [11] [4] | Validation of dietary assessment methods, calibration studies [11] |
| Examples | Plasma vitamin C, plasma carotenoids [11] | Doubly labeled water, urinary nitrogen, urinary potassium [11] |
| Strengths | Less burdensome to collect, suitable for large studies, reflects biological integration | High accuracy for absolute intake, minimal influence by host factors |
| Limitations | Cannot determine absolute intake, influenced by confounding factors | Expensive, burdensome for participants, limited to specific nutrients |

Practical Implications of the Distinction

The choice between concentration and recovery biomarkers depends fundamentally on the research question and available resources. Recovery biomarkers, while providing gold-standard measurements for absolute intake, are often prohibitively expensive or impractical for large-scale studies [11]. For instance, the doubly labeled water method for measuring energy expenditure requires specialized isotopes and sophisticated analytical equipment, while complete 24-hour urine collection for nitrogen assessment places a significant burden on participants and requires strict compliance monitoring.

In contrast, concentration biomarkers offer a practical alternative for large epidemiological studies where relative ranking provides sufficient scientific value. The EPIC-Norfolk study exemplifies this application, where plasma vitamin C concentration demonstrated a stronger inverse association with incident type 2 diabetes across population quintiles than self-reported fruit and vegetable intake [11]. This study highlights how concentration biomarkers can enhance statistical power in association studies by reducing measurement error inherent in self-reported dietary data.

Complementary Applications in Research

Rather than existing in opposition, concentration and recovery biomarkers often serve complementary roles in comprehensive research frameworks. Recovery biomarkers may be used in calibration substudies to correct for measurement error in larger studies utilizing concentration biomarkers or self-reported data [11]. This hybrid approach leverages the strengths of both methods while mitigating their individual limitations.
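One way such a calibration substudy is often used is regression calibration: the recovery biomarker is regressed on the self-reported value in the substudy, and the fitted line is then applied to self-reports in the full cohort. The sketch below is a simplified illustration under stated assumptions: a single linear relationship, no additional covariates, and hypothetical protein intakes in g/day. All names and numbers are invented for the example.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical calibration substudy: self-reported vs. biomarker-based protein intake (g/day)
substudy_self_report = [60.0, 70.0, 80.0, 90.0, 100.0]
substudy_biomarker = [70.0, 75.0, 80.0, 85.0, 90.0]
slope, intercept = fit_line(substudy_self_report, substudy_biomarker)

# Apply the fitted calibration to self-reports from the wider cohort
cohort_self_report = [50.0, 110.0]
calibrated = [intercept + slope * value for value in cohort_self_report]
print(slope, intercept, calibrated)
```

In practice the calibration model would usually include participant characteristics such as age, sex, and body mass index; this sketch omits them to keep the mechanism visible.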

In drug development, this complementary relationship extends to the use of biomarkers throughout the development pipeline. The FDA's Biomarker Qualification Program emphasizes a fit-for-purpose validation approach where the level of evidence needed depends on the specific context of use [19]. For some applications, concentration biomarkers provide sufficient validation, while others may require the more rigorous quantification offered by recovery biomarkers.

Experimental Protocols and Methodological Considerations

Standardized Measurement Approaches

The validity of concentration biomarkers depends critically on rigorous methodological protocols that account for potential confounding factors. The following experimental workflow outlines a standardized approach for concentration biomarker analysis:

Diagram 1: Experimental workflow for concentration biomarker analysis with key confounding factors that must be controlled at each stage.

Critical Protocol Elements

Successful implementation of concentration biomarkers in research requires careful attention to several methodological considerations. Timing of specimen collection represents a crucial factor, as biomarker levels can exhibit diurnal variation or be influenced by fasting status [11]. Standardizing collection times across participants and clearly documenting fasting status helps minimize these sources of variability.

The choice of biological matrix significantly influences the temporal window of exposure assessment. Short-term biomarkers measured in serum or plasma reflect intake over days, while erythrocyte-based biomarkers reflect longer-term exposure due to their approximately 120-day lifespan [11]. Adipose tissue provides an even longer-term assessment window for fat-soluble biomarkers. Each matrix offers distinct advantages and limitations that must align with research objectives.

Sample processing and storage conditions can profoundly impact biomarker stability. Proper aliquoting to avoid repeated freeze-thaw cycles, maintenance of ultra-low storage temperatures (-80°C), and use of appropriate stabilizers are essential practices [11]. For example, vitamin C requires stabilization with metaphosphoric acid to prevent oxidation, while trace mineral assays necessitate precautions against environmental contamination [11].

Accounting for Confounding Factors

The interpretation of concentration biomarker data requires careful consideration of numerous potential confounders. The BOND (Biomarkers of Nutrition and Development) program classifies these as technical, participant-related, biological, and health-related factors [4]. Technical factors include analytical precision and sample quality, while participant factors encompass age, sex, genetics, and lifestyle. Biological factors include homeostatic regulation and circadian rhythms, and health factors incorporate medication use, inflammation, and disease states.

Strategies to address these confounders include standardized collection protocols, classification of observations by life stage and sex, statistical adjustment for known covariates, and measurement of acute-phase proteins like C-reactive protein to account for inflammatory states [4]. In some cases, combining multiple biomarkers can enhance specificity and provide a more robust assessment of exposure or status.

Applications in Research and Drug Development

Nutritional Research and Public Health

Concentration biomarkers have revolutionized nutritional epidemiology by providing objective measures that complement and validate traditional dietary assessment methods. The table below highlights key applications of concentration biomarkers across research domains:

Table 2: Research Applications of Concentration Biomarkers with Representative Examples

| Research Domain | Application | Representative Biomarkers | Key Insights |
|---|---|---|---|
| Nutritional Epidemiology | Objective assessment of dietary exposure [11] | Plasma vitamin C, carotenoids [11] | Stronger diet-disease associations than self-reported data [11] |
| Public Health Monitoring | Population nutritional status assessment [4] | Iron status markers (ferritin, transferrin receptors) [4] | Identification of deficiency states and monitoring of intervention effectiveness |
| Diet-Disease Relationships | Investigating mechanisms linking diet to chronic disease [18] | Metabolomic profiles, specific food biomarkers [18] | Identification of novel pathways and intermediate endpoints |
| Drug Development | Patient stratification, dose selection [19] | Predictive and prognostic biomarkers [19] | Enhanced clinical trial efficiency and personalized treatment approaches |

In nutritional research, concentration biomarkers serve multiple functions at both population and individual levels. At the population level, they enable national nutrition surveillance, identification of at-risk groups, and evaluation of public health interventions [4]. At the individual level, they help assess nutrient reserves, determine response to clinical treatments, and predict future disease risk based on nutritional status [4].

Drug Development and Regulatory Science

In pharmaceutical development, concentration biomarkers play increasingly important roles across the development continuum. The FDA's Biomarker Qualification Program recognizes several biomarker categories relevant to concentration biomarkers, including susceptibility/risk, diagnostic, monitoring, prognostic, predictive, pharmacodynamic/response, and safety biomarkers [17]. Each category serves distinct purposes in enhancing drug development efficiency and patient safety.

Predictive biomarkers, a subset often measured as concentration biomarkers, have dominated the efficacy biomarker segment due to their critical role in guiding tailored treatment strategies, particularly in oncology, autoimmune disorders, and infectious diseases [20]. The growing importance of these biomarkers is evident in the increasing approvals of companion diagnostics, such as Roche's PATHWAY anti-HER2/neu test for HER2-low breast cancer [20].

The regulatory acceptance of biomarkers follows a structured pathway emphasizing fit-for-purpose validation [19]. This approach recognizes that the level of evidence required depends on the specific context of use, with different validation requirements for biomarkers used for early research decisions versus those supporting regulatory approvals. The Biomarker Qualification Program provides a framework for developing biomarkers for specific contexts of use, potentially benefiting multiple drug development programs [17].

Essential Research Tools and Reagent Solutions

The effective implementation of concentration biomarker research requires specialized reagents and analytical platforms. The following table outlines key solutions utilized in this field:

Table 3: Essential Research Reagent Solutions for Concentration Biomarker Analysis

| Research Solution | Primary Function | Specific Applications | Technical Considerations |
|---|---|---|---|
| Immunoassay Platforms | High-specificity detection of protein biomarkers [20] | Oncology, cardiology, metabolic diseases | High throughput capability, requires specific antibodies |
| Mass Spectrometry | Precise quantification of small molecules [18] | Metabolomics, nutrient biomarkers, pharmaceutical compounds | High sensitivity, requires technical expertise |
| Stabilization Reagents | Preservation of labile biomarkers during storage [11] | Vitamins (e.g., metaphosphoric acid for vitamin C), unstable metabolites | Matrix-specific formulations, critical for pre-analytical phase |
| LC-MS/MS Systems | Separation and quantification of complex biomarker panels [18] | Lipidomics, metabolomics, drug monitoring | High resolution, capable of multiplexing |
| Biomarker Panels | Comprehensive assessment of multiple biomarkers [4] | Nutritional status profiling, disease risk assessment | Provides systems biology perspective, computational challenges |

Technology Platforms and Emerging Capabilities

Immunoassays currently dominate the biomarker technologies market, commanding the largest share due to their precise detection capabilities across various disease areas [20]. Companies like Roche and Abbott have driven advances in immunoassay platforms, enhancing diagnostic capacities across diverse disease spectra. These platforms offer the sensitivity and specificity required for many protein-based concentration biomarkers while supporting scalable high-throughput testing.

The emergence of multi-omics approaches represents a significant advancement in concentration biomarker science. By integrating data from genomics, proteomics, metabolomics, and transcriptomics, researchers can develop comprehensive biomarker signatures that better reflect disease complexity [21]. This systems biology approach facilitates improved diagnostic accuracy and treatment personalization while identifying novel therapeutic targets.

Liquid biopsy technologies are expanding the applications of concentration biomarkers beyond traditional matrices. Advances in circulating tumor DNA analysis and exosome profiling are increasing the sensitivity and specificity of these approaches, enabling real-time monitoring of disease progression and treatment responses [21]. Originally developed for oncology, these applications are expanding into infectious diseases, autoimmune disorders, and other medical fields.

Future Directions and Emerging Trends

Technological Innovations

The field of concentration biomarkers is undergoing rapid transformation driven by technological advances. Artificial intelligence and machine learning are revolutionizing biomarker data analysis through sophisticated predictive models that forecast disease progression and treatment responses based on biomarker profiles [21]. These approaches enable automated interpretation of complex datasets, significantly reducing the time required for biomarker discovery and validation.

Single-cell analysis technologies are providing unprecedented resolution in biomarker science. By examining individual cells within complex tissues like tumors, researchers can uncover heterogeneity within cellular populations, identify rare cell populations that drive disease progression, and discover specific biomarkers that predict treatment responses [21]. When integrated with multi-omics data, single-cell analysis provides a comprehensive view of cellular mechanisms, paving the way for novel biomarker discovery.

Regulatory and Methodological Evolution

Regulatory frameworks are evolving to keep pace with biomarker innovations. By 2025, regulatory agencies are expected to implement more streamlined approval processes for biomarkers validated through large-scale studies and real-world evidence [21]. Collaborative efforts among industry stakeholders, academia, and regulatory bodies will promote standardized protocols for biomarker validation, enhancing reproducibility and reliability across studies.

There is growing emphasis on patient-centric approaches in biomarker research, with efforts to improve patient education regarding biomarker testing, incorporate patient-reported outcomes into biomarker studies, and engage diverse patient populations to ensure new biomarkers are relevant across different demographics [21]. This approach addresses health disparities and enhances the applicability of biomarker research to real-world populations.

The field continues to grapple with challenges related to biomarker quantification and validation, data integration complexities, and technical issues surrounding sample collection and storage [20]. Addressing these challenges requires continued methodological refinements and collaborative efforts across disciplines and sectors. As these advancements unfold, concentration biomarkers will play an increasingly central role in personalized medicine, public health monitoring, and pharmaceutical development, solidifying their position as indispensable tools in modern biomedical science.

In the fields of pharmaceutical development, medical device manufacturing, and healthcare sterilization, ensuring process efficacy is paramount for patient safety and regulatory compliance. This guide objectively compares two fundamental approaches to process monitoring: Objective Quantification, which refers to the precise physical measurement of process variables, and Biological Indicators (BIs), which provide a direct biological challenge to the sterilization process. The selection between these methods is not merely a technical choice but a strategic one, influencing the reliability, interpretability, and regulatory acceptance of validation data. This comparison is framed within a broader research context familiar to scientists: the distinction between "recovery biomarkers," which measure a biological response that returns to a baseline state, and "concentration biomarkers," which provide a precise quantitative measurement of a specific analyte. In sterilization, Biological Indicators function analogously to recovery biomarkers, demonstrating the process's ability to "recover" to a sterile state, while objective quantification with physical sensors acts as a concentration biomarker, providing continuous, numerical data on critical process parameters.

Quantitative Comparison of Key Metrics

The following tables summarize core performance and market data for Biological Indicators and the context in which objective quantification is used.

Table 1: Performance and Characteristic Comparison [22] [23] [24]

| Metric | Biological Indicators (BIs) | Objective Quantification (Physical Indicators) |
|---|---|---|
| Fundamental Principle | Direct biological challenge using resistant bacterial spores (e.g., G. stearothermophilus) | Physical measurement of process parameters (e.g., temperature, pressure, time) |
| Primary Output | Qualitative or semi-quantitative (Growth/No-Growth; D-value) | Quantitative, continuous numerical data |
| Response to Process Failure | Integrates effect of all process variables; can detect failures missed by other methods [23] | Measures specific parameters; may not detect complex failures like non-condensable gases (NCGs) on its own [23] |
| Result Time | 24-48 hours (Standard); Rapid-read variants: < 3 hours [25] | Real-time or near real-time |
| Regulatory Role | Considered the highest level of monitoring; often required for validation [22] | Required for cycle development and routine monitoring |
| Data Interpretation | Requires incubation and biological interpretation | Direct readout of physical parameters |

Table 2: Market Scope and Adoption Metrics [26] [27] [28]

| Market Aspect | Biological Indicators | Note on Objective Quantification |
|---|---|---|
| U.S. Demand (2025) | USD 59.6 Million [26] | (Market data often integrated with sterilizer equipment) |
| Global Market Forecast | USD 1,205.1 Million by 2032 (CAGR 5.1%) [28] | |
| Dominant Sterilization Method | Steam Sterilization (40.9% share of BI market) [28] | Steam sterilizers are the primary equipment physically monitored. |
| Fastest-Growing Region | Asia Pacific (24.3% market share in 2025) [28] | |
| Key Growth Driver | Stringent regulatory requirements and expansion of biopharmaceuticals [26] [28] | |

Experimental Protocols for Performance Verification

D-value Verification of Biological Indicators

The D-value, or decimal reduction time, is a critical quantitative measure of a BI's resistance, representing the time required to reduce the microbial population by 90% at a specific temperature. Its verification is a cornerstone of objective quantification in BI performance.
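The definition above can be expressed as a short calculation. The sketch below assumes log-linear (first-order) inactivation, so that each D-value of exposure removes 90% of the surviving population; the function names and the example numbers are hypothetical.

```python
import math


def survivors(n0, exposure_time, d_value):
    """Expected surviving population after exposure_time, assuming log-linear kill."""
    return n0 * 10 ** (-exposure_time / d_value)


def d_value_from_counts(n0, n_t, exposure_time):
    """D-value implied by an initial count n0 and a surviving count n_t."""
    return exposure_time / (math.log10(n0) - math.log10(n_t))


# Hypothetical BI with 10^6 spores and a D-value of 2.0 minutes at 121°C
remaining = survivors(1e6, exposure_time=2.0, d_value=2.0)    # one D-value -> 90% reduction
implied_d = d_value_from_counts(1e6, 1e4, exposure_time=4.0)  # 2-log drop in 4 minutes
print(remaining, implied_d)
```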

Protocol Overview: The Limited Spearman-Karber (LSK) method is a widely accepted fraction-negative technique for determining the D-value [22].

Equipment: A Biological Indicator Evaluator Resistometer (BIER) vessel is mandatory. It must meet stringent specifications per ANSI/AAMI ST44:2002 [22]:

- Time: Resolution of 0.01s, accuracy of ±0.02s.

- Temperature: Resolution of 0.1°C, accuracy of ±0.5°C.

- Come-up Time: Must reach setpoint temperature (e.g., 121°C) within 10 seconds.

Procedure:

- Sample Preparation: Multiple groups of BIs (e.g., 20 per group) are prepared.

- Cycle Exposure: Groups are exposed to a series of increasing sub-lethal exposure times at a constant temperature (e.g., 121°C). The time intervals are chosen to bracket the expected D-value.

- Fraction-Negative Data: After exposure, each BI is transferred aseptically to a growth medium and incubated at the optimal temperature for the species (e.g., 55-60°C for G. stearothermophilus). The number of BIs showing no growth (fraction-negative) at each exposure time is recorded.

- Calculation: The LSK formula is applied to the fraction-negative data to calculate the mean D-value and its confidence limits. Per USP requirements, the determined D-value must be within 20% of the labeled claim, and the confidence limits must be within 10% of the determined value [22].
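The calculation step can be sketched as follows. This is a minimal illustration, not a validated implementation: it uses the form of the Limited Spearman-Karber estimate commonly given for equally spaced exposure times and equal group sizes, where the mean time to sterility is divided by (log10 N0 + 0.2507). It assumes the first group shows growth in every BI and the last group shows none, and it omits the confidence-limit calculation. The fraction-negative data, the labeled D-value, and the function names are hypothetical.

```python
import math


def lsk_d_value(times, negatives, n_per_group, log10_n0):
    """Estimate a D-value from fraction-negative data (Limited Spearman-Karber).

    times       : exposure times, equally spaced, in ascending order
    negatives   : number of BIs with no growth at each exposure time
    n_per_group : number of BIs exposed at each time
    log10_n0    : log10 of the initial spore population per BI
    """
    if negatives[0] != 0 or negatives[-1] != n_per_group:
        raise ValueError("data must run from all-growth to all-sterile")
    steps = [b - a for a, b in zip(times, times[1:])]
    if any(not math.isclose(s, steps[0]) for s in steps):
        raise ValueError("LSK requires equally spaced exposure times")
    d = steps[0]
    mean_time_to_sterility = (
        times[-1] - d / 2 - (d / n_per_group) * sum(negatives[:-1])
    )
    return mean_time_to_sterility / (log10_n0 + 0.2507)


def within_fraction(value, reference, fraction):
    """True if value lies within +/- fraction of reference."""
    return abs(value - reference) <= fraction * reference


# Hypothetical run: 20 BIs per group, exposure times in minutes, 10^6 spores per BI
times = [4.0, 5.0, 6.0, 7.0, 8.0, 9.0]
negatives = [0, 2, 8, 15, 19, 20]
d_est = lsk_d_value(times, negatives, n_per_group=20, log10_n0=6.0)
meets_label_claim = within_fraction(d_est, reference=1.0, fraction=0.20)
print(round(d_est, 3), meets_label_claim)
```

The final check mirrors the 20% acceptance window against the labeled claim described above; the labeled value of 1.0 minute is an assumption made for the example.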

Comparative Performance Testing in a Simulated Failure Mode

This experiment evaluates the ability of BIs and physical/chemical indicators to detect a compromised sterilization cycle, specifically one with introduced non-condensable gases (NCGs).

Protocol Overview (based on [23]):

Simulated Failure Mode: A controlled failure is induced in a steam sterilizer through either a controlled chamber leakage or a door seal failure, introducing known quantities of air (0–30 L/min or 0–30% failure).

Indicator Placement:

- Test Articles: Self-contained BIs, Type 5 chemical indicators (CIs), and physical indicators (thermocouples) are placed within the chamber, including in proposed challenge locations.

- Control: A patented integrated air detector is used as a reference standard for NCG detection.

Execution and Analysis: Multiple sterilization cycles are run with varying levels of introduced air. The response of each indicator type is recorded and compared against the reference air detector.

Key Findings: The study demonstrated that individually placed BIs, CIs, and thermocouples were unable to detect small volumes of NCGs. In contrast, the integrated air detector (objective quantification) identified the failure from the first air injection [23]. This highlights a critical limitation of point-of-use biological and chemical monitors in certain failure scenarios.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Equipment for Sterilization Validation Research

| Item | Function & Description | Application in Research |
|---|---|---|
| BIER Vessel | A precision resistometer that delivers exact, rapid-cycle steam sterilization exposures for highly accurate D-value determination [22]. | Foundational for the objective quantification of BI resistance. |
| Self-Contained BI | A single-use vial containing bacterial spores, a growth medium, and a pH indicator. Simplifies use and reduces contamination risk [26] [28]. | The standard "recovery biomarker" for routine sterilization validation and cycle challenges. |
| Geobacillus stearothermophilus Spores | Highly resistant bacterial spores used as the biological challenge organism for steam sterilization processes. | The active biological component in steam BIs; the "analyte" whose inactivation is monitored. |
| Type 5 Chemical Indicator (Moving Front) | An integrator that reacts to all critical process variables (time, temperature, steam) and is designed to simulate the performance of a BI [24]. | Provides a rapid, quantitative-like visual assessment of cycle conditions at the point of use. |
| Rapid-Read BI | Utilizes fluorescence or colorimetric technology to detect spore enzyme activity, reducing readout time from days to hours (e.g., 1-3 hours) [25]. | Bridges the gap between the speed of objective quantification and the direct biological relevance of traditional BIs. |
| Non-Condensable Gas (NCG) Detector | An electronic device integrated into the sterilizer to objectively quantify the presence of air or other NCGs in the chamber during the cycle [23]. | Critical for diagnosing specific physical process failures that may not be detected by BIs placed inside a load. |

Visualizing the Verification Workflow and Performance Relationship

The following diagram illustrates the logical workflow for the experimental D-value verification protocol of a Biological Indicator, highlighting the integration of objective quantification with a biological endpoint.

Diagram 1: Experimental workflow for biological indicator D-value verification.

The relationship between the quantitative measurements from physical sensors and the qualitative result from a Biological Indicator is the basis of sterilization cycle validation. The following diagram conceptualizes this critical link.

Diagram 2: Logical relationship between objective quantification and biological indicators in process validation.

This guide provides a systematic comparison of diagnostic, predictive, and prognostic biomarkers, foundational to precision medicine and therapeutic development. For researchers and drug development professionals, understanding these distinct roles is critical for clinical trial design, patient stratification, and therapeutic decision-making. We objectively compare their clinical applications, validation methodologies, and performance characteristics using recent experimental data and emerging technologies, contextualized within the framework of recovery versus concentration biomarkers research.

Prognostic biomarkers inform about a disease's natural history, predictive biomarkers forecast response to a specific therapy, and diagnostic biomarkers confirm disease presence [29] [30]. The following sections detail their functional relationships, supported by quantitative data and experimental protocols.

Biomarker Classification and Functional Relationships

Biomarkers are objectively measurable indicators of biological processes, pathogenic states, or pharmacological responses [31]. Their clinical utility is defined by specific functional roles:

- Diagnostic Biomarkers: Confirm the presence or type of a disease. Example: Glial fibrillary acidic protein (GFAP) in mild traumatic brain injury (mTBI) shows moderate sensitivity (84.5%) and higher specificity (61.0%) than S100B (42.4%) for confirming injury [32].

- Prognostic Biomarkers: Provide information about a patient's clinical outcome, such as disease recurrence or progression, independent of therapeutic intervention. Example: Elevated Lactate Dehydrogenase (LDH) is incorporated into American Joint Committee on Cancer (AJCC) staging for melanoma, indicating poor overall survival [29].

- Predictive Biomarkers: Identify patients likely to respond to a specific treatment. Example: Programmed Death-Ligand 1 (PD-L1) expression ≥50% in non-small cell lung cancer (NSCLC) predicts improved overall survival with pembrolizumab (30.0 months vs. 14.2 months with chemotherapy) [29].

The relationship between these categories is illustrated below:

Comparative Performance Data

Table 1: Performance Characteristics of Key Biomarkers Across Categories

| Biomarker | Category | Clinical Context | Sensitivity | Specificity | Key Clinical Outcome |
|---|---|---|---|---|---|
| S100B | Diagnostic | Mild Traumatic Brain Injury | 91.6% | 42.4% | Effective rule-out to minimize unnecessary CT scans [32] |
| GFAP | Diagnostic | Mild Traumatic Brain Injury | 84.5% | 61.0% | Confirmatory marker for mTBI diagnosis [32] |
| PD-L1 | Predictive | NSCLC (Pembrolizumab) | N/A | N/A | Median OS: 30.0 mo vs 14.2 mo (chemotherapy); HR: 0.63 [29] |
| MSI-H/dMMR | Predictive | Pan-cancer (Pembrolizumab) | N/A | N/A | ORR: 39.6%; Durable responses in 78% [29] |
| TMB ≥10 mut/Mb | Predictive | Pan-cancer (Pembrolizumab) | N/A | N/A | ORR: 29% vs 6% (low-TMB); Tissue-agnostic approval [29] |
| LDH | Prognostic | Melanoma | N/A | N/A | Independent prognostic factor in AJCC staging [29] |
| IL-6 | Prognostic/Predictive | Malnutrition & Nutritional Therapy | N/A | N/A | High levels (≥11.2 pg/mL): 3.5x mortality increase (adj. HR); attenuated nutritional therapy benefit [30] |
| ctDNA Reduction | Predictive | Post-Immunotherapy (Multiple Cancers) | N/A | N/A | ≥50% reduction at 6-16 weeks correlates with better PFS/OS [29] |

Abbreviations: OS, Overall Survival; HR, Hazard Ratio; ORR, Objective Response Rate; PFS, Progression-Free Survival; N/A, Not Applicable.
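The sensitivity and specificity figures for the diagnostic markers can be related to their rule-out value with a short calculation. The sketch below computes the negative predictive value (NPV) from the S100B and GFAP figures in Table 1; the 10% injury prevalence is a hypothetical assumption chosen only for illustration and is not reported in [32].

```python
def sensitivity(true_pos, false_neg):
    """Fraction of diseased patients correctly flagged by the test."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg, false_pos):
    """Fraction of non-diseased patients correctly cleared by the test."""
    return true_neg / (true_neg + false_pos)


def negative_predictive_value(sens, spec, prevalence):
    """Probability that a patient with a negative test is truly disease-free."""
    true_neg = spec * (1 - prevalence)
    false_neg = (1 - sens) * prevalence
    return true_neg / (true_neg + false_neg)


ASSUMED_PREVALENCE = 0.10  # hypothetical; not taken from the cited study

npv_s100b = negative_predictive_value(0.916, 0.424, ASSUMED_PREVALENCE)
npv_gfap = negative_predictive_value(0.845, 0.610, ASSUMED_PREVALENCE)
print(round(npv_s100b, 3), round(npv_gfap, 3))
```

Under this assumed prevalence the higher-sensitivity marker yields the higher NPV, which is consistent with its description as a rule-out test, while the higher-specificity marker is better suited to confirmation.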

Table 2: Emerging Biomarkers in Early Cancer Detection

| Biomarker | Category | Technology | Clinical Utility | Key Challenges |
|---|---|---|---|---|
| ctDNA | Diagnostic/Predictive | Liquid Biopsy, NGS | Early cancer detection, monitoring treatment response | Low concentration, fragmentation, clearance [33] |
| Exosomes | Diagnostic | Liquid Biopsy, Isolation Kits | Cargo analysis (proteins, nucleic acids) for early detection | Complexity of isolation, standardization [33] |
| MicroRNAs (miRNAs) | Diagnostic/Prognostic | PCR, Microarrays | Disease subtyping, treatment response prediction | Inter-patient variability, lack of standardization [33] |
| Multi-omics Signatures | Predictive/Prognostic | AI/ML Integration | Improved patient stratification, ~15% predictive accuracy improvement [29] [31] | Data heterogeneity, integration complexity [31] [34] |

Experimental Protocols and Methodologies

Predictive Biomarker Discovery (MarkerPredict Framework)

The MarkerPredict framework exemplifies a modern, computational approach to identifying predictive biomarkers for targeted cancer therapies [35].

Detailed Protocol:

- Network and Motif Analysis: Three signaling networks (Human Cancer Signaling Network, SIGNOR, ReactomeFI) are analyzed using FANMOD software to identify three-nodal motifs. Triangles containing both intrinsically disordered proteins (IDPs) and known therapeutic targets are selected [35].

- Feature Extraction: Features include network topological properties and protein disorder scores from DisProt, AlphaFold (pLDDT < 50), and IUPred (score > 0.5) [35].

- Training Set Construction: The training set comprises 880 target-interacting protein pairs drawn from literature evidence. Positive controls (class 1) are proteins established as predictive biomarkers in the CIViCmine database [35].

- Machine Learning: Random Forest and XGBoost models are trained on network-specific and combined data. Hyperparameters are optimized via competitive random halving [35].

- Validation: Leave-one-out cross-validation (LOOCV) and k-fold cross-validation are performed. Across models, LOOCV accuracy ranges from 0.7 to 0.96 [35].

- Biomarker Probability Score (BPS): A normalized summative rank of the 32 different models is calculated to classify 3,670 target-neighbor pairs [35].
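
The source describes the BPS only as a normalized summative rank across models, so the exact formula is not given here. The sketch below is one plausible reading under stated assumptions: each model ranks the candidate pairs by predicted probability (ties share the average rank), ranks are summed per pair, and the sum is rescaled to [0, 1]. The function names, the tie handling, and the min-max rescaling are illustrative choices, not the published implementation.

```python
from typing import Dict, List


def rank_scores(probs: Dict[str, float]) -> Dict[str, float]:
    """Rank items by probability (1 = lowest); tied items share the average rank."""
    ordered = sorted(probs, key=probs.get)
    ranks: Dict[str, float] = {}
    i = 0
    while i < len(ordered):
        j = i
        # Extend j over the run of items tied with ordered[i].
        while j + 1 < len(ordered) and probs[ordered[j + 1]] == probs[ordered[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[ordered[k]] = average_rank
        i = j + 1
    return ranks


def biomarker_probability_score(model_outputs: List[Dict[str, float]]) -> Dict[str, float]:
    """Sum per-model ranks for each pair and rescale the sum to [0, 1].

    Assumes every model scores the same set of pairs.
    """
    pairs = list(model_outputs[0])
    n_pairs, n_models = len(pairs), len(model_outputs)
    totals = {pair: 0.0 for pair in pairs}
    for probs in model_outputs:
        for pair, rank in rank_scores(probs).items():
            totals[pair] += rank
    lowest, highest = n_models * 1, n_models * n_pairs
    if highest == lowest:  # a single pair cannot be ranked against anything
        return {pair: 0.0 for pair in pairs}
    return {pair: (totals[pair] - lowest) / (highest - lowest) for pair in pairs}


# Hypothetical outputs from two models for three target-neighbor pairs.
model_a = {"pair_1": 0.9, "pair_2": 0.5, "pair_3": 0.1}
model_b = {"pair_1": 0.8, "pair_2": 0.2, "pair_3": 0.4}
scores = biomarker_probability_score([model_a, model_b])
```

A rank-based aggregate of this kind is insensitive to differences in probability calibration between models, which is one reason to prefer it over averaging raw probabilities.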

Inflammatory Biomarker Assessment in Nutritional Therapy

This protocol assesses the prognostic value of inflammatory biomarkers and their ability to predict response to nutritional intervention [30].

Detailed Protocol:

- Study Design: Secondary analysis of the randomized controlled EFFORT trial.

- Patient Population: 996 medical inpatients at risk of malnutrition.

- Intervention: Individualized nutritional support to achieve energy and protein targets vs. usual care.

- Biomarker Measurement:

  - IL-6 and TNF-α: Measured from biobank samples using MSD Multi-Spot Assay System.

  - CRP: Data obtained from hospital's routine laboratory analysis.

- Endpoint: Primary endpoint was 30-day all-cause mortality.

- Statistical Analysis: Multivariate Cox regression adjusted for confounding factors. IL-6 high/low cutoff: 11.2 pg/mL.

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Platforms for Biomarker Research

| Reagent/Platform | Function | Application Example |
|---|---|---|
| U-PLEX Human Assay (MSD) | Multiplex cytokine quantification | Measured IL-6 and TNF-α in nutritional therapy study [30] |
| AlphaFold DB | Protein structure prediction (pLDDT score) | Identifying intrinsically disordered regions for biomarker potential [35] |
| IUPred2.0 | Intrinsic protein disorder prediction | Supplemental disorder analysis in MarkerPredict [35] |
| CIViCmine Database | Literature-mined biomarker evidence | Training set construction for predictive biomarker classification [35] |
| 10x Genomics Platform | Single-cell multi-omics (RNA, protein) | Uncovering clinically actionable tumor subgroups missed by RNA alone [34] |
| Element Biosciences AVITI24 | Integrated sequencing and cell profiling | Combined DNA, RNA, and protein analysis from single sample [34] |
| Sapient Biosciences Platform | Industrialized multi-omics profiling | High-throughput molecular profiling for biomarker discovery [34] |

Discussion and Clinical Perspectives

The biomarker landscape is rapidly evolving, with multi-omics and artificial intelligence driving discovery. MarkerPredict demonstrates how integrating network topology and protein disorder yields predictive biomarker classification with LOOCV accuracy of 0.7–0.96 [35]. Furthermore, inflammatory biomarkers like IL-6 show dual utility: they indicate mortality risk (adjusted HR 3.5) and predict response to nutritional therapy [30].

Critical challenges persist in clinical translation, including data heterogeneity, assay standardization, and regulatory hurdles like Europe's In Vitro Diagnostic Regulation (IVDR) [31] [34]. Multi-omics integration, facilitated by AI, improves predictive accuracy by approximately 15% and is reshaping biomarker development from a "one mutation, one target" model to comprehensive molecular profiling [29] [34].

For researchers comparing recovery versus concentration biomarkers, the distinction is contextual: a single biomarker like IL-6 can serve multiple roles, while emerging multi-omics signatures combine various biomarker types for superior stratification. Future directions include standardizing biomarker thresholds, validating in diverse populations, and integrating continuous monitoring through digital biomarkers and wearable devices.

Strategic Implementation: Methodological Pathways and Real-World Applications in Research

In the field of precision medicine, biomarkers serve as critical indicators of biological processes, pathogenic states, or pharmacological responses to therapeutic interventions [36]. Within this broad category, recovery biomarkers and concentration biomarkers represent two distinct classes with different applications and methodological requirements. Recovery biomarkers, often used in nutritional and metabolic studies, provide a quantitative measure to calibrate self-reported dietary intake and correct for measurement errors in exposure assessment [15]. In contrast, concentration biomarkers typically measure the presence and quantity of specific biological molecules, such as proteins, genetic mutations, or metabolic products, and are more commonly applied in disease detection, diagnosis, and prognosis [36].

The fundamental distinction between these biomarker types lies in their underlying purpose and measurement characteristics. Recovery biomarkers are designed to estimate the recovery of an administered substance or the accuracy of reported intake, thereby enabling the calibration of self-reported data. Concentration biomarkers, however, quantify the specific concentration of an analyte in a biological specimen, serving as direct indicators of biological state or pathological processes. This comparison guide examines the study designs, experimental methodologies, and validation approaches essential for identifying and validating these distinct biomarker classes within drug development and clinical research contexts.

Comparative Analysis: Recovery vs. Concentration Biomarkers

Table 1: Fundamental Characteristics of Recovery and Concentration Biomarkers

| Characteristic | Recovery Biomarkers | Concentration Biomarkers |
|---|---|---|
| Primary Function | Calibrate self-reported data; correct measurement error [15] | Disease detection, diagnosis, prognosis, prediction [36] |
| Measurement Focus | Accuracy of reported intake or recovery of administered substance [15] | Quantity of specific biological molecules [36] |
| Typical Applications | Nutritional studies, dietary assessment, exposure calibration [15] | Oncology, cardiovascular disease, neurological disorders [36] [37] |
| Key Study Designs | Controlled feeding studies, biomarker development cohorts [15] | Randomized clinical trials, case-control studies, prospective cohorts [36] |
| Validation Priorities | Ability to correct measurement error in self-reported data [15] | Analytical validity, clinical validity, clinical utility [36] |
| Regulatory Considerations | Fit-for-purpose validation for dietary assessment [15] | FDA biomarker categories (diagnostic, prognostic, predictive, etc.) [37] |

Study Designs for Biomarker Discovery and Validation

Study Designs for Recovery Biomarkers

The development of recovery biomarkers employs specialized study designs focused on quantifying and correcting measurement errors in self-reported data. As highlighted in nutritional research, three regression calibration approaches are particularly relevant [15]:

Traditional Calibration Approach: This method relies on a calibration cohort and assumes the existence of an objective biomarker with random independent measurement error.

Biomarker Development Cohort Approach: This innovative design obviates the need for pre-existing objective biomarkers by utilizing controlled feeding studies to develop new biomarkers specifically for calibration purposes.

Two-Stage Approach: This hybrid method leverages both calibration and biomarker development cohorts to enhance the precision of diet-disease association estimates.

These approaches were validated through simulation studies demonstrating that the traditional method can produce biased association estimates when its underlying assumptions are violated, while the proposed alternatives provide more robust error correction without requiring objective biomarkers [15]. Application of these methods to Women's Health Initiative cohorts supported significant findings about associations between sodium-potassium intake ratios and cardiovascular disease risk while improving statistical efficiency.
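
To make the traditional calibration approach concrete, the following minimal sketch fits a linear calibration equation in a calibration cohort (biomarker regressed on self-report) and applies it to self-reports from the main cohort. It assumes a single predictor and ordinary least squares; real applications typically add covariates such as age or body mass index. All names and numbers are hypothetical and serve only to illustrate the mechanics.

```python
from statistics import mean
from typing import List, Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = intercept + slope * x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return my - slope * mx, slope


def calibrate(self_report: Sequence[float], intercept: float, slope: float) -> List[float]:
    """Replace each self-reported value with its biomarker-calibrated estimate."""
    return [intercept + slope * q for q in self_report]


# Hypothetical calibration cohort: self-reported intake and biomarker-based intake.
reported = [1.0, 2.0, 3.0, 4.0]
biomarker = [2.0, 2.5, 3.0, 3.5]
intercept, slope = fit_line(reported, biomarker)

# Hypothetical main cohort: only self-reports are available.
calibrated = calibrate([2.0, 6.0], intercept, slope)
```

The calibrated values, rather than the raw self-reports, would then enter the disease model. The bias noted above arises when the biomarker's error is not random and independent, which this simple fit cannot detect.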

Study Designs for Concentration Biomarkers

Concentration biomarker development follows established pathways emphasizing rigorous statistical design and validation. The biomarker journey from discovery to clinical use involves multiple phases, with intended use and target population defined early in development [36]. Key considerations include:

Prognostic vs. Predictive Biomarker Identification:

- Prognostic biomarkers are identified through retrospective studies using biospecimens from cohorts representing target populations, with validation through main effect tests of association between biomarker and outcome [36].

- Predictive biomarkers require data from randomized clinical trials and are identified through interaction tests between treatment and biomarker in statistical models [36].
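
The interaction test for a predictive biomarker can be sketched with a binary outcome and a binary biomarker. Under these assumptions, the interaction coefficient of a saturated logistic model equals the difference between the treatment log odds ratios in the biomarker-positive and biomarker-negative strata, and a Wald test on that difference serves as the interaction test. The counts below are invented for illustration, and the function names are hypothetical.

```python
import math
from typing import Tuple

# (responders on treatment, n on treatment, responders on control, n on control)
Stratum = Tuple[int, int, int, int]


def log_odds_ratio(events_trt: int, n_trt: int, events_ctl: int, n_ctl: int) -> Tuple[float, float]:
    """Treatment log odds ratio and its variance; assumes no empty cells."""
    a, b = events_trt, n_trt - events_trt
    c, d = events_ctl, n_ctl - events_ctl
    return math.log((a * d) / (b * c)), 1 / a + 1 / b + 1 / c + 1 / d


def interaction_test(positive: Stratum, negative: Stratum) -> Tuple[float, float, float]:
    """Wald test that the treatment effect differs by biomarker status.

    Returns (difference in log odds ratios, z statistic, two-sided p-value).
    """
    log_or_pos, var_pos = log_odds_ratio(*positive)
    log_or_neg, var_neg = log_odds_ratio(*negative)
    diff = log_or_pos - log_or_neg
    z = diff / math.sqrt(var_pos + var_neg)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return diff, z, p_value


# Hypothetical trial: treatment helps biomarker-positive patients only.
diff, z, p_value = interaction_test(positive=(30, 50, 10, 50), negative=(15, 50, 15, 50))
```

A prognostic analysis, by contrast, would test the main effect of the biomarker on outcome without the treatment interaction, which is why it does not require randomized data.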

Bias Mitigation Strategies: Randomization and blinding represent crucial tools for avoiding bias in concentration biomarker studies. Randomization controls for non-biological experimental effects, while blinding prevents unequal assessment of biomarker results by keeping laboratory personnel unaware of clinical outcomes [36].

Table 2: Methodological Requirements for Different Concentration Biomarker Types

| Biomarker Type | Study Design Requirements | Statistical Analysis | Example |
|---|---|---|---|
| Prognostic | Retrospective studies with prospectively collected specimens; case-control studies; single-arm trials [36] | Main effect test of association between biomarker and outcome | STK11 mutation associated with poorer outcome in non-squamous NSCLC [36] |
| Predictive | Randomized clinical trials; retrospective analysis of trial data [36] | Interaction test between treatment and biomarker | EGFR mutation status predicting response to gefitinib in IPASS study [36] |
| Diagnostic | Cohort studies; case-control designs; prospective screening trials [37] | Sensitivity, specificity, ROC analysis, positive/negative predictive value [36] | Biomarkers for pain conditions or neurological disorders [37] |
| Pharmacodynamic/Response | Pre-post intervention studies; dose-response trials [37] | Change from baseline analysis; dose-response relationship | Target engagement biomarkers for pain therapeutics [37] |

Experimental Protocols and Methodologies

Analytical Methods and Validation Metrics

Robust analytical methods are essential for both recovery and concentration biomarker development. The analytical plan should be predefined and documented prior to data collection to avoid data-driven conclusions [36]. Key methodological considerations include:

Multiple Comparison Control: When evaluating multiple biomarkers, controlling false discovery rates (FDR) is especially important for genomic or high-dimensional data [36].
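
One widely used way to control the FDR is the Benjamini-Hochberg step-up procedure. The source does not name a specific method, so this choice is an assumption made for illustration. The sketch takes one p-value per candidate biomarker and returns which hypotheses are rejected at a target FDR level `q`.

```python
from typing import List, Sequence


def benjamini_hochberg(p_values: Sequence[float], q: float = 0.05) -> List[bool]:
    """Return a rejection flag per p-value using the Benjamini-Hochberg procedure."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])

    # Find the largest rank k whose p-value is at or below k * q / m.
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank * q / m:
            k_max = rank

    # Step-up rule: reject every hypothesis ranked at or below k_max.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= k_max:
            rejected[idx] = True
    return rejected


# Hypothetical p-values for four candidate biomarkers.
flags = benjamini_hochberg([0.01, 0.04, 0.03, 0.5], q=0.05)
```

Because the rule is step-up, a p-value that misses its own threshold is still rejected when a larger p-value further down the ranking meets its threshold.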

Performance Metrics: Different metrics apply depending on study goals and biomarker type [36]:

- Sensitivity and Specificity: Proportion of true cases testing positive and true controls testing negative, respectively

- Predictive Values: Function of disease prevalence, indicating the probability of actual disease given test results

- Discrimination: Ability to distinguish cases from controls, typically measured by area under the ROC curve

- Calibration: How well a biomarker estimates disease risk or event probability
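
The dependence of predictive values on prevalence follows directly from Bayes' theorem. The short sketch below uses the S100B sensitivity (91.6%) and specificity (42.4%) reported earlier for mild traumatic brain injury. The 10% prevalence is a hypothetical value chosen only to show the calculation, not a figure from the cited study.

```python
from typing import Tuple


def predictive_values(sensitivity: float, specificity: float, prevalence: float) -> Tuple[float, float]:
    """Return (PPV, NPV) for a test applied in a population with the given prevalence."""
    true_pos = sensitivity * prevalence
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    false_pos = (1 - specificity) * (1 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# S100B performance from the source; the prevalence is an assumed illustration.
ppv, npv = predictive_values(sensitivity=0.916, specificity=0.424, prevalence=0.10)
```

With high sensitivity and modest specificity, the negative predictive value is high while the positive predictive value stays low, which is consistent with using such a marker for rule-out rather than confirmation.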

Multi-Biomarker Panels: Combining multiple biomarkers often improves performance despite added measurement error. Using continuous rather than dichotomized measures retains maximal information for model development [36].

Advanced Computational Approaches

Emerging computational methods are enhancing biomarker discovery for both recovery and concentration applications: