Regression Calibration for Dietary Measurement Error: A Comprehensive Guide for Biomedical Researchers


Abstract

This article provides a comprehensive overview of regression calibration methods to address the pervasive challenge of dietary measurement error in biomedical research. Tailored for researchers, scientists, and drug development professionals, it explores the foundational concepts of systematic and random error in nutritional data, details methodological applications from standard to advanced survival and high-dimensional techniques, and offers practical strategies for troubleshooting and optimization. The content further covers critical validation study designs and comparative analyses of correction methods, synthesizing current evidence and best practices to strengthen the validity of diet-disease association studies and evidence generation from real-world data.

Understanding and Quantifying Dietary Measurement Error

Defining Systematic vs. Random Error in Dietary Assessment

Accurate dietary assessment is fundamental to nutrition research, enabling the investigation of diet-disease relationships and the formulation of public health policy [1]. However, self-reported dietary intake data are notoriously susceptible to measurement errors that can obscure true associations and compromise research validity [1] [2]. Understanding the fundamental distinction between systematic error (bias) and random error is therefore crucial for designing robust studies and applying appropriate statistical corrections [3]. This section defines these error types in the context of dietary assessment and outlines protocols for quantifying and adjusting for them, with particular emphasis on regression calibration.

Conceptual Foundations of Measurement Error

Measurement error in dietary assessment can be defined as the difference between the reported dietary intake and the true usual intake. These errors are broadly categorized into systematic error (bias) and within-person random error (day-to-day variation) [2].

Systematic Error (Bias)

Systematic error consistently distorts measurements in a specific direction and does not average out with repeated administrations [2]. Its components include:

- Intake-related bias: The systematic error that is correlated with true intake levels. A key manifestation is the "flattened-slope" phenomenon, where individuals with high true intake tend to under-report, and those with low true intake tend to over-report [2].

- Person-specific bias: The component of systematic error unique to an individual, which may be influenced by characteristics like social desirability or body image [2].

A primary challenge with systematic error is that it cannot be eliminated through averaging multiple measurements or standard statistical modeling without a reference instrument [2].

Within-Person Random Error

Within-person random error refers to the day-to-day variation in an individual's diet and reporting, which causes their reported intake on any single day to deviate from their true long-term usual intake [2]. Unlike systematic error, random error alone leaves the data unbiased on average but imprecise. Averaging multiple 24-hour recalls or food records can reduce the influence of this random variation, providing a better estimate of usual intake [1] [2]. When repeated measures are available, statistical modeling can adjust for day-to-day variation to estimate the usual intake distribution for a population [2].

Table 1: Characteristics of Systematic vs. Random Error in Dietary Assessment

| Feature | Systematic Error (Bias) | Within-Person Random Error |

|---|---|---|

| Definition | Consistent, directional deviation from true value | Day-to-day variation in intake and reporting |

| Impact on Data | Introduces bias | Introduces imprecision |

| Reduction via Averaging | No | Yes |

| Primary Components | Intake-related bias; person-specific bias | Biological day-to-day variation; measurement error on a given day |

| Correction Methods | Requires a reference instrument (e.g., recovery biomarker) | Statistical modeling of repeated measures |

Quantitative Characterization of Errors Across Assessment Methods

The magnitude and nature of measurement errors vary significantly across different dietary assessment tools. Table 2 summarizes the primary error profiles and key considerations for major methods.

Table 2: Error Profiles of Common Dietary Assessment Instruments

| Method | Primary Systematic Error | Primary Random Error | Key Considerations |

|---|---|---|---|

| 24-Hour Recall (24HR) | Least biased for energy intake; potential under-reporting influenced by interview mode [1] | High day-to-day variation; requires multiple (≥2) non-consecutive administrations to estimate usual intake [1] [2] | Relies on memory; interviewer-administered versions can be costly [1] |

| Food Record | High potential for reactivity (participants change diet when recording) [1] | Day-to-day variation; can be reduced by extending recording period (typically 3-4 days) [1] | Requires a literate, highly motivated population; burden increases with days recorded [1] |

| Food Frequency Questionnaire (FFQ) | Systematic error due to portion size estimation and limited food list; prone to energy under-reporting [1] | Lower random error for habitual intake assessment as it queries a long time period [1] | Designed to rank individuals by intake; not precise for absolute intake values [1] |

| Experience Sampling (ESM) | Potential for reduced reactivity and recall bias through real-time assessment [4] | Error depends on sampling intensity and recall period (e.g., 15 min to 3.5h) [4] | Emerging method; protocol design (duration, prompts) is critical to balance feasibility and accuracy [4] |

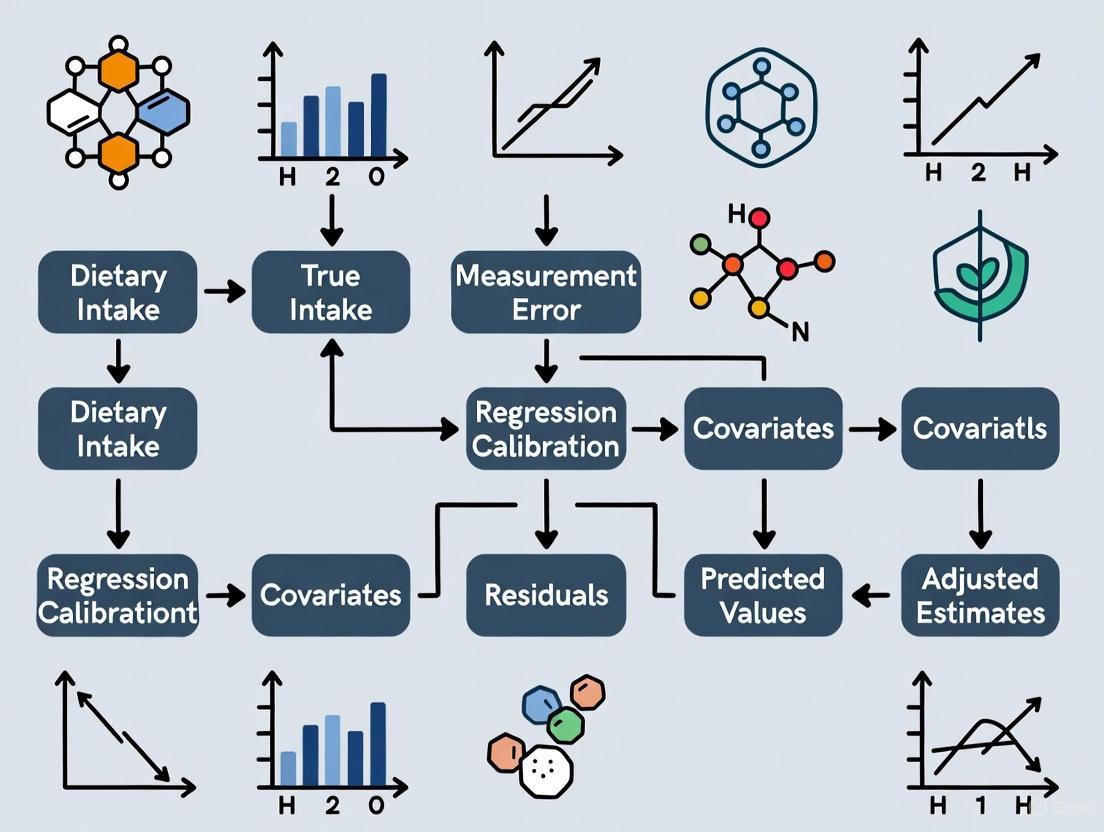

The following diagram illustrates the relationship between true intake, the different types of error, and the resulting reported intake across various assessment methods.

Experimental Protocols for Error Quantification

Protocol 1: Validation Study Using Recovery Biomarkers

Objective: To quantify systematic error (bias) in self-reported energy and protein intake. Background: Recovery biomarkers, such as doubly labeled water for energy and urinary nitrogen for protein, are considered unbiased references because they objectively measure metabolized intake, largely independent of self-report [1].

Materials:

- Participants from main study cohort

- Self-report instrument(s) under validation (e.g., 24HR, FFQ)

- Doubly labeled water kits and urine collection equipment

- Protocol for biomarker analysis (e.g., mass spectrometry)

Procedure:

- Recruitment: Recruit a subsample (e.g., 10-20%) from your main study population to form an internal validation study [3].

- Data Collection:

- Administer the self-report dietary instrument (e.g., multiple 24HRs) to participants.

- Concurrently, administer the recovery biomarker protocol (doubly labeled water for energy; urine collection for urinary nitrogen as the protein reference).

- Data Analysis:

- Calculate the reported intake from the dietary instrument.

- Calculate true intake from the biomarker measurements using established equations.

- For each participant ( i ), compute the measurement error ( \omega_i = X^*_i - X_i ), where ( X^* ) is self-reported intake and ( X ) is biomarker-based intake.

- Fit a linear measurement error model: ( X^* = \alpha_0 + \alpha_X X + e ) [3].

- The intercept ( \alpha_0 ) indicates location bias, and the slope ( \alpha_X ) indicates scale bias. A value of ( \alpha_X < 1 ) is indicative of the "flattened-slope" phenomenon [2] [3].
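As a minimal sketch of the data analysis steps above, the following Python snippet simulates a validation sample and fits the linear measurement error model by ordinary least squares. The sample size, the protein-like intake scale, and the simulated bias values (intercept 24, slope 0.6) are illustrative assumptions rather than values from the cited studies, and `ols_fit` is a hypothetical helper written for this example.

```python
import random

def ols_fit(x, y):
    """Return (intercept, slope) of the least-squares line y = a + b*x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

rng = random.Random(0)

# Assumed biomarker-based ("true") protein intake in g/day for 500 participants.
biomarker = [rng.gauss(80, 15) for _ in range(500)]

# Assumed self-report: location bias 24, scale bias 0.6 (flattened slope), plus noise.
self_report = [24 + 0.6 * x + rng.gauss(0, 10) for x in biomarker]

# Per-participant measurement error: omega_i = X*_i - X_i.
errors = [r - x for r, x in zip(self_report, biomarker)]
mean_error = sum(errors) / len(errors)

# Linear measurement error model: X* = alpha_0 + alpha_X * X + e.
alpha_0, alpha_x = ols_fit(biomarker, self_report)

print(f"mean error: {mean_error:.1f} g/day")
print(f"alpha_0 (location bias): {alpha_0:.1f}")
print(f"alpha_X (scale bias):    {alpha_x:.2f}")
```

With these simulated inputs the fitted slope should fall well below 1, which is the pattern the text describes as the flattened slope.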

Protocol 2: Reliability Study for Random Error Estimation

Objective: To estimate the magnitude of within-person random error (day-to-day variation) in a dietary assessment method. Background: Repeated short-term measurements (e.g., 24HRs) on the same individual allow for the decomposition of total variance into between-person and within-person components [2].

Materials:

- Study participants

- Standardized dietary interview protocol or food record application

- Nutrient database for analysis

Procedure:

- Study Design: Conduct repeated administrations of the dietary instrument (e.g., 24HR or food record). A minimum of two non-consecutive days per participant is required, but more repeats (e.g., 3-4) yield more precise estimates [1] [2].

- Data Collection: Collect dietary data on the predetermined, randomized days. If using 24HR, ensure interviews are blinded to previous responses.

- Data Analysis:

- Compute nutrient intakes for each participant and each day.

- Use a random effects analysis of variance (ANOVA) model to partition the total variance ( \sigma^2_{Total} ) into:

- Between-person variance (( \sigma^2_B )): Variance of the individuals' usual intakes.

- Within-person variance (( \sigma^2_W )): Variance of day-to-day deviations around each individual's usual intake.

- The ratio ( \sigma^2_W / \sigma^2_B ) informs the number of repeat days needed to estimate usual intake reliably. A high ratio indicates substantial day-to-day variation, necessitating more repeat measures [2].
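The variance partition above can be sketched for a balanced design (the same number of recall days per person) using the standard one-way random-effects ANOVA estimators. The simulated energy intakes and the function names are assumptions made for illustration. The `days_needed` helper uses the relation D = (σ²_W / σ²_B) · r² / (1 − r²), which follows from the reliability of a D-day mean being σ²_B / (σ²_B + σ²_W / D).

```python
import random

def variance_components(recalls):
    """Balanced one-way random-effects ANOVA.

    recalls: list of per-person lists, each holding the same number k of days.
    Returns (between_person_variance, within_person_variance).
    """
    n = len(recalls)
    k = len(recalls[0])
    person_means = [sum(days) / k for days in recalls]
    grand_mean = sum(person_means) / n
    ms_between = k * sum((m - grand_mean) ** 2 for m in person_means) / (n - 1)
    ms_within = sum(
        (d - m) ** 2 for days, m in zip(recalls, person_means) for d in days
    ) / (n * (k - 1))
    # E[MS_between] = k * var_between + var_within, so solve for var_between.
    var_between = max((ms_between - ms_within) / k, 0.0)
    return var_between, ms_within

def days_needed(variance_ratio, target_r):
    """Recall days per person so the D-day mean correlates target_r with usual intake."""
    return variance_ratio * target_r ** 2 / (1 - target_r ** 2)

rng = random.Random(1)
# Assumed energy intakes (kcal/day): between-person SD 300, within-person SD 400.
recalls = []
for _ in range(1000):
    usual = rng.gauss(2000, 300)
    recalls.append([usual + rng.gauss(0, 400) for _ in range(3)])

var_b, var_w = variance_components(recalls)
ratio = var_w / var_b
print(f"sigma2_B = {var_b:.0f}, sigma2_W = {var_w:.0f}, ratio = {ratio:.2f}")
print(f"days needed for r = 0.9: {days_needed(ratio, 0.9):.1f}")
```

Unbalanced designs (different numbers of recalls per person) would need a mixed-model fit instead of these closed-form estimators.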

Regression Calibration for Error Adjustment

Regression calibration is a primary statistical method to correct point and interval estimates in regression models for bias introduced by measurement error [5] [3]. The following workflow details the application of this method.

Procedure:

- Obtain Calibration Data: Perform an internal validation study (Protocol 1) where both the error-prone measurement ( X^* ) (e.g., FFQ intake) and a reference measurement ( X ) (e.g., biomarker or multiple 24HRs) are collected for a subset of participants [3].

- Estimate Calibration Equation: In the validation subset, fit a model predicting the true exposure ( X ) from the mismeasured exposure ( X^* ), often adjusting for covariates ( Z ): ( X = \lambda_0 + \lambda_1 X^* + \lambda_Z Z + \epsilon ) [5] [3]. This is the calibration equation.

- Compute Calibrated Values: For all individuals in the main study, compute a calibrated exposure value using the coefficients from Step 2: ( \hat{X} = \hat{\lambda}_0 + \hat{\lambda}_1 X^* + \hat{\lambda}_Z Z ) [3].

- Run Outcome Model: Use the calibrated exposure ( \hat{X} ) in place of ( X^* ) in the primary outcome model (e.g., logistic or Cox regression) relating diet to health outcome [5]. This mitigates bias in the estimated diet-disease association.
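The four steps above can be sketched end to end on simulated data. This is a simplified illustration under stated assumptions: a continuous outcome with a linear outcome model (instead of logistic or Cox regression), no covariates ( Z ), and an error-free reference measurement in the validation subset. All numeric settings and the helper `ols_fit` are hypothetical.

```python
import random

def ols_fit(x, y):
    """Return (intercept, slope) of the least-squares line y = a + b*x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

rng = random.Random(42)
n_main, n_validation = 5000, 500
true_effect = 0.2  # assumed true slope of outcome on true intake

true_x = [rng.gauss(50, 10) for _ in range(n_main)]            # true usual intake
ffq = [10 + 0.5 * x + rng.gauss(0, 8) for x in true_x]         # error-prone X*
outcome = [1 + true_effect * x + rng.gauss(0, 2) for x in true_x]

# Steps 1-2: the reference X is observed only in the validation subset;
# regress X on X* there to obtain the calibration equation.
lambda_0, lambda_1 = ols_fit(ffq[:n_validation], true_x[:n_validation])

# Step 3: calibrated exposure for every main-study participant.
calibrated = [lambda_0 + lambda_1 * q for q in ffq]

# Step 4: outcome model with the raw FFQ (naive) and with calibrated values.
_, naive_slope = ols_fit(ffq, outcome)
_, calibrated_slope = ols_fit(calibrated, outcome)

print(f"naive slope:      {naive_slope:.3f}")
print(f"calibrated slope: {calibrated_slope:.3f} (simulated truth {true_effect})")
```

The standard error reported by the final outcome model ignores the uncertainty in the estimated calibration coefficients, so interval estimates need a separate adjustment such as resampling.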

This method has been extended for complex outcomes, such as Survival Regression Calibration (SRC) for time-to-event data, which calibrates parameters of survival models rather than applying a simple linear correction to event times [6].

Table 3: Key Resources for Dietary Measurement Error Research

| Resource / Reagent | Function / Application |

|---|---|

| Recovery Biomarkers | Objective, unbiased reference measures for specific nutrients (energy, protein, potassium, sodium) to quantify systematic error in validation studies [1] [2]. |

| ASA-24 (Automated Self-Administered 24HR) | A freely available, web-based tool for collecting multiple 24-hour recalls, reducing interviewer burden and cost, useful for both main studies and as a reference in validation [1]. |

| Dietary Assessment Primer (NCI) | A comprehensive online resource covering dietary assessment concepts, measurement error, and best practices for researchers [2]. |

| Regression Calibration Software (SAS/R Macros) | Specialized statistical code (e.g., as referenced in [5]) to implement measurement error corrections in epidemiological analyses. |

| Validation Study Dataset | An internal or external dataset containing paired measurements of error-prone and reference instruments, essential for estimating calibration equations [3] [6]. |

| Nutrient Database | A detailed database of food composition, required to convert reported food consumption into estimated nutrient intakes for analysis [1]. |

In dietary measurement error research, identifying and mitigating systematic errors is fundamental to obtaining valid findings in nutritional epidemiology and chronic disease studies. The most pervasive challenges in self-reported dietary data are recall bias, social desirability bias, and portion misestimation [7] [8]. These errors distort true diet-disease relationships, leading to attenuated effect estimates, reduced statistical power, and potentially flawed scientific conclusions [7]. Regression calibration methods provide a powerful statistical framework for correcting these biases, using reference measurements to adjust for systematic measurement errors in self-reported data [9]. This paper details the quantitative impacts of these error sources and presents standardized protocols for implementing regression calibration in dietary research.

Quantitative Impacts of Dietary Measurement Errors

The following table summarizes the documented effects of key measurement errors on diet-disease association estimates, as demonstrated in empirical studies:

Table 1: Quantitative Impacts of Measurement Errors on Diet-Disease Associations

| Error Source | Nutrient/Food Example | Impact on Association | Empirical Evidence |

|---|---|---|---|

| Recall Bias & General Measurement Error | Protein & Potassium | Attenuation (AF ≠ 1) | AF of 1.14 (Protein) and 1.28 (Potassium) with standard RC versus uncorrected FFQ [7]. |

| Systematic Underreporting | High-Fat Foods (Bacon, Fried Chicken) | Distorted consumption estimates | Machine learning models classified underreported entries with 78-92% accuracy [8]. |

| Social Desirability Bias | Self-Reported FFQ Data | Systematic under/over-reporting | Associated with individual characteristics like BMI; leads to biases not automatically rectified in analysis [10]. |

Experimental Protocols for Regression Calibration

Core Regression Calibration Protocol

This protocol corrects for systematic measurement error in a Food Frequency Questionnaire (FFQ) using 24-hour dietary recalls (24hR) as a reference instrument [7] [11].

1. Study Design and Population:

- Conduct the study within a large prospective cohort.

- Administer the main instrument (FFQ) to all study participants.

- Select an internal validation sub-sample (e.g., n=150-250) from the cohort.

- Administer the reference instrument (multiple 24hR) to the validation sub-sample. The 24hR should be conducted on non-consecutive days to capture day-to-day variation [11].

2. Data Collection:

- FFQ: A self-administered, semi-quantitative questionnaire assessing habitual diet over the past month or year, capturing food items, frequencies, and portion sizes [7].

- 24hR: Multiple telephone-administered 24-hour recalls using a standardized protocol like the five-step multiple-pass method, conducted by trained dietitians. Foods are coded and nutrient intakes calculated using a standard food composition table [7].

3. Calibration Model Fitting:

- Using data only from the validation sub-sample, fit a linear regression calibration model for the nutrient of interest:

R_i,24hR = α + β * FFQ_i + ε_i [11]

where:

- R_i,24hR is the nutrient intake from the 24hR for participant i.

- FFQ_i is the nutrient intake from the FFQ for participant i.

- α is the intercept, representing systematic additive bias.

- β is the calibration coefficient, quantifying the scaling error.

- ε_i is the random error term.

4. Application in Main Study:

- For every participant in the full cohort, compute the calibrated intake estimate:

Calibrated_FFQ_i = α + β * FFQ_i [9]

- The calibrated values replace the raw FFQ values in subsequent diet-disease risk models.

5. Enhanced Regression Calibration (ERC) Variant:

- When 24hR data are available for the entire cohort, an enhanced method incorporates individual random effects to the calibration model, which accounts for within-person random error in the 24hR and can improve precision [7].

[Figure: workflow diagram of the regression calibration process]

Protocol for High-Dimensional Biomarker Development

This advanced protocol leverages high-dimensional metabolomic data to develop objective biomarkers for dietary components when traditional biomarkers are unavailable [10].

1. Study Design and Population (Three-Stage):

- Sample 1 (Feeding Study - Biomarker Development): A small subgroup undergoes a controlled feeding study where meals are provided with standardized, well-documented nutrient content. Blood and urine measurements (high-dimensional metabolites, W ∈ ℝ^p) are collected [10].

- Sample 2 (Biomarker Sub-Study - Calibration): A separate subgroup from the main cohort has both self-reported dietary data (Q) and high-dimensional objective measurements (W) collected [10].

- Sample 3 (Association Study): The full main cohort with self-reported dietary data for association analysis [10].

2. Data Collection:

- High-Dimensional Metabolites: Mass spectrometry or NMR-based profiling of blood/urine samples from Samples 1 and 2, yielding hundreds to thousands of metabolite features [10].

- Objective Covariates: Age, sex, BMI, and clinical biomarkers (e.g., LDL cholesterol, blood glucose) [10].

3. Biomarker Model Fitting (in Sample 1):

- Use penalized regression techniques like Lasso or SCAD to handle high-dimensionality (p >> n) and select metabolites predictive of the dietary component of interest.

- Train a random forest classifier as an alternative to capture non-linear relationships and rank predictor importance [8].

- Tune model hyperparameters via cross-validation to optimize predictive performance and avoid overfitting [10].
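To illustrate how a penalized regression selects a few predictive metabolites when p >> n, here is a minimal pure-Python Lasso fitted by cyclic coordinate descent. It is a teaching sketch, not the procedure used in [10]: the data are simulated, only two of the 200 hypothetical metabolite features truly predict intake, and the penalty `lam` is fixed by assumption rather than tuned by cross-validation. In practice an established implementation (for example the glmnet or ncvreg packages mentioned elsewhere in this article) would be used.

```python
import random

def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold, returning 0 inside the band."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0

def lasso_coordinate_descent(X, y, lam, n_sweeps=50):
    """Minimise (1/(2n)) * ||y - Xb||^2 + lam * ||b||_1.

    X is a list of n rows with p columns; columns and y are assumed centred,
    so no intercept is fitted.
    """
    n, p = len(X), len(X[0])
    columns = [[row[j] for row in X] for j in range(p)]
    col_scale = [sum(v * v for v in col) / n for col in columns]
    beta = [0.0] * p
    residual = list(y)
    for _ in range(n_sweeps):
        for j in range(p):
            col = columns[j]
            # Covariance of feature j with the partial residual that
            # excludes feature j's own current contribution.
            rho = sum(c * r for c, r in zip(col, residual)) / n + col_scale[j] * beta[j]
            new_beta = soft_threshold(rho, lam) / col_scale[j]
            if new_beta != beta[j]:
                delta = new_beta - beta[j]
                residual = [r - delta * c for r, c in zip(residual, col)]
                beta[j] = new_beta
    return beta

rng = random.Random(1)
n, p = 40, 200  # 40 feeding-study participants, 200 metabolite features (assumed)
X = [[rng.gauss(0, 1) for _ in range(p)] for _ in range(n)]
y = [3.0 * row[0] - 2.0 * row[1] + rng.gauss(0, 0.5) for row in X]

# Centre each column and the response so the intercept can be dropped.
col_means = [sum(row[j] for row in X) / n for j in range(p)]
X = [[row[j] - col_means[j] for j in range(p)] for row in X]
y_mean = sum(y) / n
y = [v - y_mean for v in y]

beta = lasso_coordinate_descent(X, y, lam=0.3)
selected = [j for j, b in enumerate(beta) if b != 0.0]
print("selected features:", selected)
```

The L1 penalty shrinks the retained coefficients toward zero, which is one reason the protocol treats variance estimation after selection as a separate step.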

4. Calibration and Variance Estimation:

- Apply the trained biomarker model to Sample 2 to predict the dietary intake.

- Fit a calibration equation relating the predicted biomarker value to the self-reported intake (Q), adjusting for covariates (V).

- Use refitted cross-validation (RCV) or degrees-of-freedom corrected estimators to address challenges in variance estimation introduced by high-dimensional modeling [10].

5. Association Analysis (in Sample 3):

- Use the calibration equation from Sample 2 to compute calibrated intake estimates for the full cohort (Sample 3).

- Use these calibrated estimates in Cox or logistic regression models to assess diet-disease associations [10].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Dietary Error Research

| Item | Function/Application | Key Features & Considerations |

|---|---|---|

| Semi-Quantitative FFQ | Main dietary assessment instrument in large cohorts; assesses habitual intake. | Should be validated for target population; cost-effective and low-burden [7] [11]. |

| 24-Hour Dietary Recall (24hR) | Reference instrument for regression calibration; assesses short-term actual intake. | Uses multiple-pass method; conducted by trained dietitians; requires multiple non-consecutive days [7]. |

| Urinary Recovery Biomarkers | Unbiased reference measurement for specific nutrients (e.g., protein, potassium). | Objective and free of self-report bias; available for only a limited number of nutrients [7] [9]. |

| High-Dimensional Metabolomics Data | Objective measurements for developing novel biomarkers for a wider range of dietary components. | Mass spectrometry/NMR-based; requires specialized variable selection and modeling techniques [10]. |

| Web-Based Dietary Assessment Platforms | Facilitate administration of 24hR, dietary records, and FFQs in large studies. | Reduces burden and cost; improves data entry standardization [7]. |

| Controlled Feeding Study Data | Provides ground truth data for developing and testing biomarker models. | Logistically complex and costly; provides highly accurate intake data for a short period [10]. |

Recall bias, social desirability bias, and portion misestimation introduce substantial error into nutritional research, but regression calibration provides a robust methodological correction. The successful application of these protocols requires careful study design, appropriate selection of reference instruments, and rigorous statistical modeling. By implementing these detailed protocols and leveraging advanced tools like high-dimensional biomarkers, researchers can significantly reduce measurement error bias, leading to more accurate and reliable estimates of diet-disease relationships.

In nutritional epidemiology and observational research, measurement error is a pervasive challenge that systematically distorts scientific findings. When investigating associations between dietary components and chronic disease risk, error-prone measurements—such as self-reported dietary intake from Food Frequency Questionnaires (FFQs)—introduce bias into effect estimates and diminish the ability to detect true associations. The two primary statistical consequences of measurement error are attenuation of risk estimates and reduced statistical power, both of which can lead to false conclusions about relationships between exposures and health outcomes [3].

Attenuation, also known as "regression dilution bias," describes the phenomenon where observed associations between variables are biased toward the null hypothesis of no association [12]. This occurs because imperfectly measured variables appear less strongly related to outcomes than they truly are, potentially causing researchers to underestimate or completely miss important risk relationships. Simultaneously, measurement error reduces statistical power, increasing the risk of Type II errors (failing to detect genuine effects) and necessitating substantially larger sample sizes to achieve adequate study precision [13].

Theoretical Foundations of Measurement Error

Types of Measurement Error

Measurement error in epidemiological research is formally characterized through specific mathematical models that describe the relationship between true exposure (X) and error-prone measured exposure (X*). Understanding these models is essential for selecting appropriate correction methods.

Table 1: Classification and Properties of Measurement Error Models

| Error Model | Mathematical Formulation | Key Properties | Common Applications |

|---|---|---|---|

| Classical | (X^* = X + e) | Error (e) has mean zero, independent of X; unbiased at individual level | Laboratory measurements, technical replicates [3] |

| Linear | (X^* = \alpha_0 + \alpha_X X + e) | Includes both random error and systematic bias; depends on true X value | Self-reported dietary data, biased measurements [3] |

| Berkson | (X = X^* + e) | Error (e) independent of X*; unbiased at population level | Assigned group exposures, prediction model scores [3] |

The distinction between differential and non-differential error is equally critical. Error is considered non-differential when the measurement error is independent of the outcome conditionally on the true exposure and other covariates [3]. This means the error provides no additional information about the outcome beyond what the true exposure provides. In prospective studies, non-differential error is often a reasonable assumption, whereas case-control studies with self-reported exposures may suffer from differential error through recall bias.
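The practical difference between the classical and Berkson models can be seen in a short simulation. For a linear outcome model with non-differential error, classical error attenuates the fitted slope by the factor σ²_X / (σ²_X + σ²_e), whereas Berkson error leaves the slope unbiased and only adds noise. The variances and sample size below are assumptions chosen so that the classical attenuation factor is 0.5.

```python
import random

def ols_slope(x, y):
    """Slope of the least-squares line of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx

rng = random.Random(3)
n = 20000
true_slope = 1.0

# Classical error: X* = X + e, with e independent of the true X.
x_true = [rng.gauss(0, 1) for _ in range(n)]
x_star_classical = [x + rng.gauss(0, 1) for x in x_true]
y_classical = [true_slope * x + rng.gauss(0, 0.5) for x in x_true]
classical_slope = ols_slope(x_star_classical, y_classical)

# Berkson error: X = X* + e, with e independent of the assigned X*.
x_star_berkson = [rng.gauss(0, 1) for _ in range(n)]
x_true_berkson = [xs + rng.gauss(0, 1) for xs in x_star_berkson]
y_berkson = [true_slope * x + rng.gauss(0, 0.5) for x in x_true_berkson]
berkson_slope = ols_slope(x_star_berkson, y_berkson)

print(f"classical error slope: {classical_slope:.2f} (expected about 0.5)")
print(f"Berkson error slope:   {berkson_slope:.2f} (expected about 1.0)")
```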

Mechanisms of Attenuation and Power Reduction

Attenuation occurs because measurement error in an exposure variable adds extraneous variability that obscures its true relationship with an outcome. The correlation between observed variables ( r_{xy} ) is always less than or equal to the correlation between the true variables ( r_{XY} ), with the degree of attenuation determined by the reliability of the measurements [12]. Mathematically, this relationship is expressed through the formula:

[ r_{xy} = r_{XY} \times \sqrt{r_{xx} \times r_{yy}} ]

where ( r_{xx} ) and ( r_{yy} ) represent the reliabilities of the X and Y variables, respectively. As these reliabilities decrease from the perfect value of 1.00, the observed correlation becomes increasingly attenuated [12].
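A short worked example makes the size of the effect concrete. The input values are illustrative assumptions, not figures from [12]: a true correlation of 0.50, an exposure reliability of 0.50 (plausible for a self-report instrument), and an outcome reliability of 0.90.

```latex
% Assumed inputs: r_{XY} = 0.50, r_{xx} = 0.50, r_{yy} = 0.90
\[
r_{xy} = r_{XY}\sqrt{r_{xx}\, r_{yy}}
       = 0.50 \times \sqrt{0.50 \times 0.90}
       = 0.50 \times 0.671
       \approx 0.34
\]
% Rearranging gives the disattenuated (corrected) correlation:
\[
r_{XY} = \frac{r_{xy}}{\sqrt{r_{xx}\, r_{yy}}}
       \approx \frac{0.335}{0.671}
       \approx 0.50
\]
```

Under these assumptions roughly one third of the true correlation is lost to measurement error, and the same formula run in reverse recovers it when the reliabilities are known.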

Reduced statistical power manifests most dramatically in studies investigating interaction effects. Khandis Blake et al. demonstrated that "even a programmatic series of six studies employing 2 × 2 designs, with samples exceeding N = 500, can be woefully underpowered to detect genuine effects" when measurement error is present [13]. This occurs because error-prone measures increase the variability in the data without adding meaningful signal, effectively diluting the apparent effect size and requiring larger samples to achieve statistical significance.

Table 2: Impact of Measurement Error on Statistical Conclusions

| Statistical Parameter | Impact of Measurement Error | Practical Consequence |

|---|---|---|

| Risk Estimate | Attenuated toward null | Underestimation of true effect size |

| Statistical Power | Reduced | Increased Type II error rate |

| Required Sample Size | Increased | Higher study costs and complexity |

| Confidence Intervals | Widened | Reduced precision in estimates |
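The sample size consequence in the table can be approximated with the Fisher z method for detecting a correlation. This is a rough sketch under assumptions: a two-sided test at α = 0.05 with 80% power, a true exposure-outcome correlation of 0.30, an exposure reliability of 0.50, and a perfectly measured outcome. The function name is hypothetical.

```python
import math
from statistics import NormalDist

def n_for_correlation(r, alpha=0.05, power=0.80):
    """Approximate sample size to detect correlation r (Fisher z approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(((z_alpha + z_beta) / math.atanh(r)) ** 2 + 3)

true_r = 0.30
exposure_reliability = 0.50  # assumed reliability of the error-prone exposure
outcome_reliability = 1.00   # outcome assumed to be measured without error

# Attenuated correlation actually available to the study.
observed_r = true_r * math.sqrt(exposure_reliability * outcome_reliability)

n_true = n_for_correlation(true_r)
n_observed = n_for_correlation(observed_r)
inflation = n_observed / n_true

print(f"n with error-free exposure:  {n_true}")
print(f"n with error-prone exposure: {n_observed}")
print(f"inflation factor:            {inflation:.2f}")
```

The inflation factor comes out close to 1 / reliability, so under these assumptions halving the reliability roughly doubles the required sample size.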

Regression Calibration Methods

Fundamental Principles

Regression calibration is a statistical method for adjusting point and interval estimates of effect obtained from regression models for bias due to measurement error [5]. The method involves replacing the error-prone measurements in analytical models with calibrated values that better approximate the true exposures. This approach requires data from a validation study where both the error-prone measurements and reference measurements (or biomarkers) are available for a subset of participants [3].

The method is particularly valuable in nutritional epidemiology for addressing systematic measurement errors in self-reported dietary data. Strong evidence suggests that misreporting of dietary energy intake is associated with individual characteristics such as body mass index (BMI), creating systematic errors that result in estimation biases that cannot be automatically rectified without statistical correction [14].

Implementation Framework

The implementation of regression calibration follows a structured workflow that incorporates data from multiple sources.

The calibration process begins with developing a calibration equation in the validation study by regressing the reference measurements (true values or biomarkers) on the error-prone measurements and other relevant covariates [3]. This equation is then applied to the entire study population to generate calibrated exposure values that replace the error-prone measurements in the final outcome model.

Advanced Applications

Recent methodological developments have extended regression calibration to address complex research scenarios:

Cox Proportional Hazards Models: Regression calibration has been adapted for estimating incidence rate ratios from time-to-event data, enabling correction of measurement error bias in survival analysis [5]. This approach has been applied to studies of associations between breast cancer incidence and dietary intakes of vitamin A, alcohol, and total energy.

High-Dimensional Biomarker Development: When traditional biomarkers are unavailable for specific dietary components, high-dimensional objective measurements (e.g., metabolomics data) can construct biomarkers for error correction [14]. This approach utilizes variable selection techniques like LASSO or random forests to handle the challenge of high-dimensional data where the number of potential biomarkers exceeds the sample size.

Survival Regression Calibration (SRC): For time-to-event outcomes with measurement error, SRC fits separate Weibull regression models using true and mismeasured outcomes in a validation sample, then calibrates parameter estimates according to the estimated bias in Weibull parameters [6]. This approach addresses limitations of standard regression calibration methods that assume additive error structures inappropriate for censored time-to-event data.

Practical Applications and Protocols

Dietary Assessment Calibration Protocol

The following protocol outlines the application of regression calibration for correcting measurement error in nutritional studies investigating diet-disease associations, based on methodologies employed in the Women's Health Initiative (WHI) and similar large cohorts [14]:

Study Design Requirements:

- Main Cohort: Primary study population with error-prone exposure measurements (e.g., FFQ data)

- Validation Subsample: Representative subset with both error-prone and reference measurements

- Feeding Study (Optional): For biomarker development, providing objective intake data

Data Collection Procedures:

- Collect self-reported dietary data (Q) from entire cohort using standardized FFQ

- Obtain objective biomarker measurements (W) in validation subsample (blood/urine biomarkers)

- In feeding study subsample, collect controlled dietary intake data (X~) with known nutrient composition

- Record relevant covariates (V) including age, BMI, sex, and other potential confounders

Statistical Analysis Workflow:

- Biomarker Development (if needed): Regress controlled intake (X~) on high-dimensional objective measurements (W) in feeding study sample using penalized regression methods

- Calibration Equation Development: Regress reference measurements (X or biomarker-predicted values) on error-prone measurements (Q) and covariates (V) in validation subsample

- Application to Main Study: Apply calibration equation to all participants to generate calibrated exposure values (Zcal)

- Outcome Analysis: Fit final disease model (e.g., Cox regression) using calibrated exposures (Zcal) and covariates (V)

Validation and Sensitivity Analysis:

- Assess transportability of calibration equation between populations

- Conduct bootstrap resampling to estimate standard errors accounting for calibration uncertainty

- Compare results with and without calibration to quantify impact of measurement error correction
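The bootstrap step listed above can be sketched as follows. Each replicate resamples participants with replacement, refits the calibration equation on the resampled participants who have a reference measurement, and then refits the outcome model, so the spread of the replicates reflects calibration uncertainty as well as outcome-model uncertainty. The simulated cohort, the linear outcome model (standing in for Cox regression), the internal validation size of 300, and the 200 replicates are all assumptions for illustration.

```python
import random
import statistics

def ols_fit(x, y):
    """Return (intercept, slope) of the least-squares line y = a + b*x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def calibrated_slope(records):
    """records: list of (ffq, outcome, reference_or_None) tuples."""
    validation = [(q, ref) for q, _, ref in records if ref is not None]
    lam0, lam1 = ols_fit([q for q, _ in validation], [ref for _, ref in validation])
    calibrated = [lam0 + lam1 * q for q, _, _ in records]
    _, slope = ols_fit(calibrated, [y for _, y, _ in records])
    return slope

rng = random.Random(7)
records = []
for i in range(2000):
    x = rng.gauss(50, 10)                    # true intake (unobserved in most)
    ffq = 10 + 0.5 * x + rng.gauss(0, 8)     # error-prone self-report
    y = 1 + 0.2 * x + rng.gauss(0, 2)        # continuous outcome
    records.append((ffq, y, x if i < 300 else None))  # first 300: validation

point_estimate = calibrated_slope(records)

n_boot = 200
boot = []
for _ in range(n_boot):
    resample = [records[rng.randrange(len(records))] for _ in range(len(records))]
    boot.append(calibrated_slope(resample))
boot.sort()

se_boot = statistics.stdev(boot)
# Approximate 95% percentile interval from the sorted replicates.
ci_low = boot[n_boot * 25 // 1000]
ci_high = boot[n_boot * 975 // 1000 - 1]

print(f"calibrated slope: {point_estimate:.3f}")
print(f"bootstrap SE:     {se_boot:.3f}")
print(f"95% interval:     ({ci_low:.3f}, {ci_high:.3f})")
```

Resampling whole participants keeps each person's FFQ, outcome, and reference value together, which preserves the dependence between the validation subsample and the main cohort.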

Experimental Validation Study Design

To empirically quantify measurement error structure and develop study-specific calibration equations, implement a validation study with the following design:

Sample Size Considerations:

- Minimum of 100-200 participants for reliable calibration equation estimation

- Balance between practical constraints and statistical precision needs

- Stratified sampling to ensure representation across key demographic and exposure ranges

Reference Measurement Selection:

- Biomarkers: Doubly labeled water for energy expenditure, urinary nitrogen for protein intake

- Recovery Biomarkers: Suitable for nutrients with stable urinary excretion

- Concentration Biomarkers: Serum carotenoids for fruit/vegetable intake

- Predictive Biomarkers: Developed from high-dimensional metabolomic data [14]

- Multiple 24-Hour Recalls: As approximation of usual intake in absence of biomarkers

Data Collection Timeline:

- Collect error-prone measurements (FFQ) at baseline

- Obtain reference measurements within temporally relevant window (1-6 months)

- For biomarkers with short-term variability, consider repeated measures

- For long-term exposure assessment, align reference measurements with exposure period of interest

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Measurement Error Correction

| Tool/Resource | Function | Application Context |

|---|---|---|

| SAS Regression Calibration Macros | Implements regression calibration for logistic, Cox, and linear models | Nutritional epidemiology studies [5] |

| High-Dimensional Variable Selection (LASSO, SCAD) | Selects relevant biomarkers from high-throughput metabolomic data | Biomarker development for dietary components [14] |

| Survival Regression Calibration (SRC) | Corrects measurement error in time-to-event outcomes | Oncology real-world evidence studies [6] |

| Weighted Regression Algorithms | Addresses heteroscedasticity in calibration data | Analytical chemistry calibration curves [15] |

| Refitted Cross-Validation (RCV) | Estimates error variance in high-dimensional regression | Prevents overfitting in biomarker models [14] |

| Validation Study Design Templates | Guides collection of appropriate reference measurements | Ensuring transportable calibration equations [3] |

Technical Implementation Considerations

Software and Computational Tools:

- Specialized SAS macros for regression calibration in epidemiological studies [5]

- R packages for high-dimensional regression (glmnet, ncvreg) for biomarker development [14]

- Custom weighted regression spreadsheets for analytical calibration curves [15]

- Bootstrap and resampling procedures for variance estimation of calibrated parameters

Weighting Strategies for Heteroscedastic Data: When analytical measurements exhibit concentration-dependent variance (heteroscedasticity), implement weighted least squares regression with the following weighting schemes:

Evaluation of weighting schemes should utilize both the sum of absolute percentage relative error (Σ%RE) and visual inspection of residual plots to identify the approach that produces the most uniform variance across the concentration range [15].
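As an illustration of this evaluation, the sketch below fits a straight-line calibration curve under several candidate weights and ranks them by the sum of absolute percentage relative error between back-calculated and nominal concentrations. The candidate weights (unweighted, 1/x, 1/x^2, 1/y, 1/y^2) are common choices assumed here for illustration and are not taken from the source; the calibration standards are invented, and the function names are hypothetical.

```python
def weighted_line(x, y, w):
    """Weighted least-squares fit of y = a + b*x; returns (a, b)."""
    sw = sum(w)
    xw = sum(wi * xi for wi, xi in zip(w, x)) / sw
    yw = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xw) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xw) * (yi - yw) for wi, xi, yi in zip(w, x, y))
    b = sxy / sxx
    return yw - b * xw, b


def sum_abs_pct_re(x, y, w):
    """Sum of |%RE| between back-calculated and nominal concentrations."""
    a, b = weighted_line(x, y, w)
    return sum(abs(100.0 * ((yi - a) / b - xi) / xi) for xi, yi in zip(x, y))


# Invented calibration standards whose scatter grows with concentration.
conc = [1, 2, 5, 10, 50, 100, 500, 1000]
resp = [2.16, 3.76, 10.5, 19.2, 106.0, 190.0, 1040.0, 1940.0]

# Candidate weights (illustrative assumptions, not taken from the source).
schemes = {
    "unweighted": [1.0 for _ in conc],
    "1/x": [1.0 / c for c in conc],
    "1/x^2": [1.0 / c ** 2 for c in conc],
    "1/y": [1.0 / r for r in resp],
    "1/y^2": [1.0 / r ** 2 for r in resp],
}

scores = {name: sum_abs_pct_re(conc, resp, w) for name, w in schemes.items()}
for name, score in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{name:>10}: sum |%RE| = {score:.1f}")
```

The numeric ranking should still be checked against residual plots, since a low total can hide a poor fit at one end of the concentration range.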

Measurement error presents a fundamental challenge to the validity of nutritional epidemiology and observational research, systematically attenuating risk estimates and reducing statistical power. Regression calibration methods provide a robust statistical framework for correcting these biases, utilizing validation data to recover estimates that more accurately reflect true exposure-disease relationships. The continued development and application of these methods—including extensions for survival outcomes, high-dimensional biomarker development, and heteroscedastic data—strengthens the evidentiary foundation for dietary recommendations and public health policies. As research increasingly leverages real-world data and complex exposure assessments, rigorous measurement error correction remains essential for generating reliable scientific evidence.

In dietary measurement error research, understanding the nature and structure of error is paramount for developing appropriate correction methods. Measurement error in nutritional epidemiology is ubiquitous due to the challenges in assessing habitual intake, which relies on self-reported instruments like Food Frequency Questionnaires (FFQs) and diet records [16]. These errors, if unaddressed, can severely bias the estimated associations between dietary exposures and health outcomes, leading to flawed scientific conclusions and public health recommendations. The framework for addressing measurement error consists of three core components: the outcome model linking the true exposure to the disease, the measurement error model relating the observed exposure to the true exposure, and the distribution model of the true exposure itself [17].

This article provides a comprehensive introduction to the three fundamental measurement error models—Classical, Berkson, and Linear—that form the theoretical foundation for error correction methodologies, including regression calibration. Accurate specification of the error model is a critical prerequisite for selecting and applying the appropriate statistical correction technique [18] [3]. We detail the mathematical formulations, assumptions, and consequences of each model, with specific applications in nutritional epidemiology, and provide structured protocols for their implementation in dietary research.

Core Error Models: Mathematical Formulation and Comparison

The table below summarizes the key characteristics, mathematical models, and main implications of the three primary error models.

Table 1: Comparison of Key Measurement Error Models

| Error Model | Mathematical Formulation | Bias in Measured Variable | Typical Effect on Regression Coefficient | Common Occurrence in Nutritional Epidemiology |

|---|---|---|---|---|

| Classical | ( X^* = X + U ), where ( E(U)=0 ), ( U \perp X ) [3] | Unbiased at individual level [3] | Attenuation (bias towards null) [16] | Random within-person day-to-day variation [16] |

| Berkson | ( X = X^* + U ), where ( E(U)=0 ), ( U \perp X^* ) [3] | Biased at individual level, unbiased at population level [3] | Little or no bias in coefficient; reduces study power [19] [18] | Assignment of group-level exposure (e.g., average air pollution) [3] |

| Linear | ( X^* = \alpha_0 + \alpha_X X + U ), where ( E(U)=0 ), ( U \perp X ) [3] | Biased at individual level (systematic error) [3] | Complex bias (can be away from or towards null) [18] | Self-reported dietary intake with systematic bias [3] |

The following diagram illustrates the fundamental structural differences and data flow for each error model, highlighting the distinct relationships between the true exposure (X), the measured exposure (X*), and the error term (U).
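The effects on the regression coefficient listed in Table 1 can be checked with a short simulation. This is a sketch under assumed parameter values (true slope of 1, unit variances, and for the linear model ( \alpha_0 = 0.5 ), ( \alpha_X = 0.5 ) with a small error variance); the function names are hypothetical.

```python
import random
import statistics


def slope(x, y):
    """Least-squares slope of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def simulate_slopes(n=5000, beta=1.0, seed=42):
    """Fit Y on the measured exposure X* under each error model."""
    rng = random.Random(seed)
    out = {}

    # Shared truth for the classical and linear models.
    x = [rng.gauss(0, 1) for _ in range(n)]
    y = [beta * xi + rng.gauss(0, 1) for xi in x]

    # Classical: X* = X + U, U independent of X.
    x_star = [xi + rng.gauss(0, 1) for xi in x]
    out["classical"] = slope(x_star, y)      # theory: beta * 1/(1+1) = 0.5

    # Linear: X* = alpha_0 + alpha_X * X + U.
    x_star = [0.5 + 0.5 * xi + rng.gauss(0, 0.25) for xi in x]
    out["linear"] = slope(x_star, y)         # theory: 0.5/0.3125 = 1.6

    # Berkson: X = X* + U, U independent of the assigned X*.
    x_star = [rng.gauss(0, 1) for _ in range(n)]
    x = [xs + rng.gauss(0, 1) for xs in x_star]
    y = [beta * xi + rng.gauss(0, 1) for xi in x]
    out["berkson"] = slope(x_star, y)        # theory: beta = 1.0
    return out


for model, b in simulate_slopes().items():
    print(f"{model:>9}: estimated slope = {b:.2f}")
```

Under these assumed values the classical model attenuates the slope, the Berkson model leaves it approximately unbiased, and the linear model inflates it. Other choices of ( \alpha_X ) and error variance would push the linear-model slope toward the null instead.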

Experimental Protocols for Error Model Application

Protocol 1: Handling Classical Measurement Error in Dietary Data

Purpose: To correct for random within-person variation in a dietary exposure (e.g., fruit and vegetable intake) measured by an FFQ, assuming a classical error structure.

Background: The classical error model is applicable when the measurement instrument is unbiased at the individual level but has random error that is independent of the true exposure. In nutrition, this often pertains to day-to-day variation around a person's usual intake [16].

Table 2: Reagent Solutions for Classical Error Protocol

| Item | Specification | Function |

|---|---|---|

| Main Study Data | Cohort with outcome (Y) and error-prone exposure (X*), e.g., from an FFQ. | Provides the primary data for diet-disease association analysis. |

| Replicate Measurements | At least two repeated administrations of the FFQ or multiple 24-hour recalls in a subset. | Quantifies the within-person random error variance. |

| Statistical Software | SAS, R, or Stata with measurement error packages (e.g., simex, rcme). | Executes regression calibration or SIMEX algorithms. |

Procedure:

- Study Design: Within the main cohort, select a random subsample (the calibration/reliability study). Ensure this subsample is representative of the main study population [18].

- Data Collection: For participants in the calibration study, collect at least two replicate measurements of the dietary exposure using the same instrument (e.g., FFQ) administered at different times. The time interval should be sufficient to capture representative variation but short enough that usual intake is stable [16].

- Error Variance Estimation: Calculate the within-person variance (( \sigma^2_u )) and the between-person variance (( \sigma^2_x )) of the exposure from the replicate measurements using a random-effects ANOVA model.

- Reliability Ratio: Compute the reliability ratio (( \lambda )) as ( \lambda = \sigma^2_x / (\sigma^2_x + \sigma^2_u/n) ), where ( n ) is the number of replicates per person. This ratio quantifies the attenuation factor [18].

- Correction Analysis: Apply a correction method such as Regression Calibration:

- Replace the naive exposure value ( X^* ) in the disease model with the expected value of the true exposure given the measured exposure and covariates, ( E(X|X^*) ) [20] [21].

- Alternatively, use the Simulation-Extrapolation (SIMEX) method, which simulates data with increasing error variance and extrapolates back to the case of no error [18].

- Validation: Compare the corrected effect estimate (e.g., odds ratio, hazard ratio) with the naive estimate to assess the impact of correction.
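The variance-component and reliability-ratio steps of this procedure can be sketched as follows. The example assumes a balanced design (the same number of replicates for every person), a linear outcome model, and simulated data; for simplicity the replicates are available for everyone rather than only a subsample. The function names are hypothetical.

```python
import random
import statistics


def variance_components(replicates):
    """One-way random-effects ANOVA estimates for balanced replicates.

    replicates: list of per-person lists, each holding n >= 2 measurements.
    Returns (sigma2_x, sigma2_u): between- and within-person variance.
    """
    n_people, n = len(replicates), len(replicates[0])
    means = [statistics.fmean(r) for r in replicates]
    grand = statistics.fmean(means)
    msw = sum((v - m) ** 2 for r, m in zip(replicates, means) for v in r)
    msw /= n_people * (n - 1)
    msb = n * sum((m - grand) ** 2 for m in means) / (n_people - 1)
    return max((msb - msw) / n, 0.0), msw


def reliability_ratio(sigma2_x, sigma2_u, n):
    """Attenuation factor for the mean of n replicates."""
    return sigma2_x / (sigma2_x + sigma2_u / n)


def slope(x, y):
    """Least-squares slope of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sum((a - mx) ** 2 for a in x)


def demo(n_people=2000, n_rep=2, beta=1.0, seed=7):
    """Simulate classical error, then correct the naive slope by 1/lambda."""
    rng = random.Random(seed)
    truth = [rng.gauss(0, 1) for _ in range(n_people)]
    reps = [[t + rng.gauss(0, 1) for _ in range(n_rep)] for t in truth]
    y = [beta * t + rng.gauss(0, 1) for t in truth]
    s2x, s2u = variance_components(reps)
    lam = reliability_ratio(s2x, s2u, n_rep)
    naive = slope([statistics.fmean(r) for r in reps], y)
    return lam, naive, naive / lam


lam, naive, corrected = demo()
print(f"lambda = {lam:.2f}, naive = {naive:.2f}, corrected = {corrected:.2f}")
```

Dividing the naive slope by the reliability ratio is equivalent to regression calibration in this single-exposure, classical-error setting. With covariates in the model, the calibration equation should condition on them as well.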

Protocol 2: Correcting for Systematic Bias with the Linear Error Model

Purpose: To adjust for systematic and random error in self-reported dietary data (e.g., protein intake), where the reporting bias may depend on subject characteristics.

Background: The linear error model extends the classical model to account for systematic bias, which is common in self-reported dietary data where individuals may consistently over- or under-report based on factors like body mass index (BMI) [3] [16].

Table 3: Reagent Solutions for Linear Error Protocol

| Item | Specification | Function |

|---|---|---|

| Main Study Data | Cohort with outcome (Y) and error-prone self-report (X*). | Primary data for analysis. |

| Validation Study Data | A subsample with both the self-report (X*) and a reference instrument. | Used to estimate the calibration equation parameters. |

| Reference Instrument | Biomarker (e.g., urinary nitrogen), or multiple diet records. | Serves as a superior measure to approximate true intake (X). |

| Covariate Data | Variables related to systematic error (e.g., BMI, age). | Included in the calibration equation to model systematic bias. |

Procedure:

- Study Design: Establish an internal validation study within the main cohort, where participants provide both the self-reported exposure (X*) and a measurement from a reference instrument considered an unbiased marker of true intake (X) [3] [16].

- Calibration Equation Estimation: In the validation study, fit a linear regression model with the reference instrument measurement as the dependent variable and the self-report (and other relevant covariates, ( Z )) as independent variables: ( X = \alpha_0 + \alpha_1 X^* + \alpha_2 Z + \epsilon ). The intercept (( \alpha_0 )) and slope (( \alpha_1 )) of this calibration equation capture the systematic location and scale bias of the self-report [3].

- Calibration Prediction: Use the estimated coefficients from the previous step to predict the calibrated (corrected) exposure for every individual in the main study: ( \hat{X} = \hat{\alpha}_0 + \hat{\alpha}_1 X^* + \hat{\alpha}_2 Z ).

- Outcome Analysis: Fit the disease model (e.g., logistic regression, Cox model) using the calibrated exposure ( \hat{X} ) instead of the mismeasured ( X^* ) [5] [21].

- Uncertainty Estimation: Use bootstrapping or sandwich variance estimators to obtain valid confidence intervals that account for the uncertainty in the calibration step [20].
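The calibration and outcome steps of this procedure can be sketched with a covariate in the calibration equation. The example is a simplified illustration under several assumptions: the data are simulated, a continuous outcome with a linear model stands in for the logistic or Cox model, the covariate ( Z ) is a standardized BMI-like variable, and the helper `ols` is a small hand-written solver rather than a library routine. All names are hypothetical.

```python
import random


def ols(rows, y):
    """Least-squares coefficients via the normal equations.

    rows: list of predictor lists (include 1.0 for the intercept).
    """
    p = len(rows[0])
    # Augmented matrix [X'X | X'y].
    a = [[sum(r[i] * r[j] for r in rows) for j in range(p)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(p)]
    for col in range(p):                       # Gaussian elimination
        pivot = max(range(col, p), key=lambda k: abs(a[k][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for k in range(col + 1, p):
            f = a[k][col] / a[col][col]
            for j in range(col, p + 1):
                a[k][j] -= f * a[col][j]
    coef = [0.0] * p
    for i in reversed(range(p)):               # back substitution
        rest = sum(a[i][j] * coef[j] for j in range(i + 1, p))
        coef[i] = (a[i][p] - rest) / a[i][i]
    return coef


def simulate(n_main=3000, n_val=300, beta_x=0.5, beta_z=0.2, seed=3):
    """Cohort in which the first n_val people also have a reference measure w."""
    rng = random.Random(seed)
    people = []
    for i in range(n_main):
        z = rng.gauss(0, 1)                               # covariate
        x = 0.3 * z + rng.gauss(0, 1)                     # true intake
        q = 0.2 + 0.6 * x - 0.4 * z + rng.gauss(0, 0.7)   # biased self-report
        y = beta_x * x + beta_z * z + rng.gauss(0, 1)     # outcome
        w = x + rng.gauss(0, 0.3) if i < n_val else None  # reference measure
        people.append({"z": z, "q": q, "y": y, "w": w})
    return people


def regression_calibration(people):
    """Return (naive, corrected) exposure coefficients."""
    val = [p for p in people if p["w"] is not None]
    a0, a1, a2 = ols([[1.0, p["q"], p["z"]] for p in val],
                     [p["w"] for p in val])
    y = [p["y"] for p in people]
    x_hat = [a0 + a1 * p["q"] + a2 * p["z"] for p in people]
    naive = ols([[1.0, p["q"], p["z"]] for p in people], y)[1]
    corrected = ols([[1.0, xh, p["z"]] for xh, p in zip(x_hat, people)], y)[1]
    return naive, corrected


naive, corrected = regression_calibration(simulate())
print(f"naive = {naive:.2f}, corrected = {corrected:.2f} (true value 0.5)")
```

The covariate appears in both the calibration equation and the outcome model; omitting it from the calibration equation while keeping it in the outcome model can leave residual bias.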

Protocol 3: Managing Exposure with Berkson-Type Error

Purpose: To analyze data where the assigned exposure is a group mean, but the true individual exposure varies around this mean, such as when using a predicted score from a calibration equation.

Background: Berkson error arises when individuals are assigned an exposure value that is an average for their group, or when a predicted value from a model is used. Notably, using a calibrated value from Protocol 2 as a substitute for true intake in a disease model introduces Berkson error [10].

Procedure:

- Error Structure Identification: Confirm the error structure. In Berkson error, the assigned value ( X^* ) is fixed, and the true exposure ( X ) varies around it with error independent of ( X^* ) [3].

- Disease Model Analysis: For linear models, Berkson error does not cause bias in the estimated regression coefficient, but it increases the variance of the estimate, reducing statistical power [19] [18].

- Power Considerations: Plan for a larger sample size to compensate for the loss of power due to the added uncertainty from the Berkson error structure.

- Advanced Correction: For non-linear models (e.g., logistic regression), Berkson error can cause slight bias. In such cases, more complex likelihood-based or simulation-based methods may be required for full correction [18].

The Scientist's Toolkit

Table 4: Essential Research Reagents and Resources for Measurement Error Correction

| Tool / Reagent | Description | Application in Error Correction |

|---|---|---|

| Recovery Biomarkers | Objective measures with a known quantitative relationship to intake (e.g., Doubly Labeled Water for energy, 24-h Urinary Nitrogen for protein) [16]. | Serve as unbiased reference measures (gold standards) in validation studies to estimate parameters of the linear error model. |

| Repeated 24-Hour Recalls | Multiple memory-based assessments of intake over the past 24 hours, collected by a trained interviewer. | Act as an "alloyed gold standard" in calibration studies to estimate within-person random error (classical model) [16]. |

| Food Diaries/Records | Prospective records of all foods and beverages consumed over a specific period (e.g., 7 days). | Used as a high-quality reference instrument in validation studies to model systematic error in FFQs [16]. |

| Regression Calibration Software | Statistical macros and packages (e.g., in SAS or R) specifically designed for measurement error correction [21]. | Implements the regression calibration algorithm to produce corrected effect estimates. |

| SIMEX Algorithm | A simulation-based method available in statistical software (e.g., simex package in R) [18]. | Corrects for attenuation bias due to classical measurement error without requiring a detailed model of the true exposure. |

| Internal Validation Study | A sub-study nested within the main cohort where both the error-prone measure and a superior reference measure are collected [3]. | Provides the crucial data needed to estimate the parameters of the measurement error model (classical or linear). |

The accurate identification and application of measurement error models—Classical, Berkson, and Linear—are foundational steps in producing valid results in dietary exposure research. Each model carries distinct assumptions and consequences for statistical inference. Regression calibration serves as a powerful and practical correction method, particularly for the classical and linear error models frequently encountered in nutritional epidemiology [20] [21]. The protocols outlined herein provide a structured approach for researchers to diagnose the error structure in their data and implement the appropriate corrective methodology, thereby strengthening the evidential basis for diet-disease relationships.

The Critical Distinction between Within-Person Random Error and Systematic Bias

In dietary measurement error research, understanding the distinct nature and effects of within-person random error and systematic bias is fundamental to selecting appropriate statistical methods and drawing valid scientific conclusions. These two types of error originate from different sources, manifest differently in data, and require fundamentally different correction approaches [2]. Within-person random error refers to the day-to-day variation in an individual's dietary intake and their reporting of it, while systematic bias represents a consistent directional departure from the true intake value [2] [22]. This distinction is particularly critical when applying regression calibration methods, as the effectiveness of these statistical corrections depends heavily on correctly characterizing the error structure present in the data [5] [3]. Misidentification of error types can lead to residual bias, incorrect effect estimates, and ultimately flawed inferences about diet-disease relationships.

Theoretical Foundations of Measurement Error

Defining Within-Person Random Error

Within-person random error, also known as day-to-day variation, represents the difference between an individual's reported intake on a specific occasion and their long-term usual intake [2]. This type of error arises from genuine biological variation in consumption patterns combined with random inaccuracies in reporting. In dietary research, this manifests as the natural fluctuation in what people eat from day to day, which persists even when intake is measured perfectly for each specific day [2] [23].

Data affected solely by within-person random error are unbiased but imprecise [2]. The key characteristic of this error type is that it averages toward zero with repeated measures, following the law of large numbers [22]. When multiple dietary assessments are collected from the same individual, the mean of these measurements provides a better approximation of true usual intake than any single measurement alone [2]. The primary consequence of unaddressed within-person random error in epidemiological studies is reduced statistical power to detect true associations, and in univariate models, attenuation (biasing toward the null) of effect estimates [22].

Defining Systematic Bias

Systematic bias represents consistent, directional departure from true intake values that does not average out with repeated measurements [2]. Unlike random error, systematic bias persists regardless of how many times intake is measured and introduces inaccuracy into dietary assessments. The main elements of systematic error in dietary assessment include:

- Intake-related bias: Systematic error that correlates with true intake level, exemplified by the "flattened-slope" phenomenon where individuals with high true intake tend to under-report, while those with low true intake tend to over-report [2].

- Person-specific bias: Error components related to individual characteristics that affect how a person reports dietary intake, such as social desirability or body image concerns [2].

Systematic bias can also be categorized by whether it operates primarily within individuals or between persons. Between-person systematic error can be additive (constant across all intake levels) or multiplicative (proportional to intake level), with the latter being particularly common in nutritional epidemiology [22] [24].

Comparative Analysis of Error Types

Table 1: Fundamental Characteristics of Within-Person Random Error and Systematic Bias

| Characteristic | Within-Person Random Error | Systematic Bias |

|---|---|---|

| Directional Pattern | Non-directional fluctuations around true value | Consistent directional departure from true value |

| Response to Repeated Measures | Averages toward zero with sufficient replicates | Persists regardless of number of replicates |

| Effect on Mean Estimate | Unbiased with sufficient replicates | Biased even with many replicates |

| Primary Effect on Statistical Power | Reduces power to detect associations | Can bias effects in either direction |

| Correctability via Averaging | Can be reduced by averaging multiple measures | Cannot be reduced by averaging |

| Dependence on True Intake | Independent of true intake level | May be correlated with true intake level |
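The contrast in Table 1 between the two error types under repeated measurement can be demonstrated numerically. The sketch below assumes a hypothetical population with usual energy intake around 2000 kcal/day, a 15% multiplicative under-report (systematic), and day-to-day noise with a standard deviation of 500 kcal (random). These values and the function name are illustrative assumptions only.

```python
import random
import statistics


def averaging_demo(n_people=1000, ks=(1, 4, 16), seed=11):
    """Mean and spread of (averaged report - true intake) for k replicates."""
    rng = random.Random(seed)
    truth = [rng.gauss(2000, 300) for _ in range(n_people)]  # kcal/day
    results = {}
    for k in ks:
        errors = []
        for t in truth:
            # Each report: 15% under-reporting plus independent daily noise.
            reports = [0.85 * t + rng.gauss(0, 500) for _ in range(k)]
            errors.append(statistics.fmean(reports) - t)
        results[k] = (statistics.fmean(errors), statistics.stdev(errors))
    return results


for k, (bias, spread) in averaging_demo().items():
    print(f"k = {k:>2}: mean error = {bias:7.1f}, SD of error = {spread:6.1f}")
```

As the number of replicates grows, the spread of the error shrinks, but the mean error stays near -300 kcal/day, which is the systematic component that averaging cannot remove.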

Implications for Dietary Research and Regression Calibration

Differential Impact on Research Outcomes

The distinct nature of within-person random error and systematic bias leads to different consequences in nutritional research:

- For surveillance and monitoring, within-person random error inflates variance estimates and reduces precision in population-level assessments, while systematic bias distorts the accuracy of prevalence estimates for inadequate or excessive intakes [23].

- In epidemiologic studies, within-person random error typically attenuates diet-disease associations toward the null, whereas systematic bias can distort observed associations in unpredictable directions, potentially creating spurious associations or masking real ones [23] [22].

- In intervention research, within-person random error can mask true intervention effects by adding noise, while systematic bias can introduce differential measurement error if the error structure differs between intervention and control groups [23].

Regression Calibration Approaches for Different Error Types

Regression calibration is a statistical method for adjusting point and interval estimates from regression models for bias due to measurement error [5]. Its application depends critically on correctly identifying the type of measurement error present:

For within-person random error, regression calibration can effectively correct attenuation bias when the error follows the classical measurement error model, where the measured exposure equals the true exposure plus random error independent of the true value [3] [22]. This approach requires replicate measurements on at least a subset of the study population to estimate the within-person variance component [5] [3].

For systematic bias, standard regression calibration approaches require additional information, typically from a validation study that includes a reference instrument providing unbiased intake measurements [2] [3]. When systematic bias follows the linear measurement error model, the calibration equation must account for both location bias (α₀) and scale bias (αₓ) parameters [3].

Table 2: Measurement Error Models and Appropriate Calibration Methods

| Error Model | Mathematical Formulation | Error Type Addressed | Calibration Requirements |

|---|---|---|---|

| Classical Measurement Error | X* = X + e | Within-person random error | Replicate measurements of X* |

| Linear Measurement Error | X* = α₀ + αₓX + e | Systematic bias + random error | Validation study with reference measure |

| Berkson Error | X = X* + e | Assignment error | Known group averages or prediction equations |

The following diagram illustrates the conceptual relationship between error types and the appropriate calibration pathways:

Experimental Protocols for Error Assessment

Protocol for Quantifying Within-Person Random Error

Objective: To estimate the magnitude of within-person random error in dietary assessments for application in regression calibration methods.

Materials and Methods:

- Study Design: Implement a reproducibility study with repeated administrations of the same dietary assessment instrument (e.g., 24-hour recalls) on multiple non-consecutive days [3] [22].

- Sample Size: Include a minimum of 100 participants with at least two replicate measurements per person, though more replicates (≥3) improve precision of variance component estimates [25].

- Data Collection:

- Administer 24-hour recalls on randomly selected days representing both weekdays and weekends to capture habitual variation [1].

- Maintain consistent administration protocols (mode, interviewer, reference period) across assessments [25].

- For food frequency questionnaires, administer the instrument twice with an appropriate interval (e.g., 1-6 months) to assess consistency [22].

- Statistical Analysis:

- Use variance components analysis (e.g., random effects models) to partition total variance into within-person and between-person components [22].

- Calculate the within-person variance (σ²ₐ) and between-person variance (σ²ᵦ).

- Compute the intraclass correlation coefficient (ICC = σ²ᵦ / [σ²ᵦ + σ²ₐ]) to quantify the proportion of total variance due to between-person differences [22].

Protocol for Assessing Systematic Bias Using Recovery Biomarkers

Objective: To quantify systematic bias in self-reported dietary intake through comparison with objective biomarkers.

Materials and Methods:

- Study Design: Conduct a validation study incorporating both self-report dietary assessments and recovery biomarkers in the same participants [2] [22].

- Participants: Recruit a representative subsample (n≥50-100) from the main study population to ensure transportability of results [3].

- Reference Instruments:

- Data Collection:

- Collect self-reported dietary data using the instrument of interest (e.g., FFQ, 24-hour recall).

- Simultaneously administer biomarker protocols according to established guidelines (e.g., DLW dose, 24-hour urine collection) [22].

- Measure potential modifiers of systematic bias (e.g., BMI, age, sex, social desirability) [2].

- Statistical Analysis:

- Apply the method of triads to calculate validity coefficients using the self-report instrument, biomarker, and additional reference method [22].

- Fit linear measurement error models to estimate calibration parameters (α₀ and αₓ) [3].

- Assess intake-related bias by testing correlations between reporting error and true intake levels [2].
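The method of triads in the first analysis step can be sketched as follows. The calculation assumes that the errors of the three methods are mutually independent and that all three pairwise correlations are positive; estimates above 1 (Heywood cases) can occur in small samples. The data are simulated and the function names are hypothetical.

```python
import math
import random
import statistics


def pearson(a, b):
    """Pearson correlation coefficient."""
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    sab = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    saa = sum((x - ma) ** 2 for x in a)
    sbb = sum((y - mb) ** 2 for y in b)
    return sab / math.sqrt(saa * sbb)


def triad_validity(q, r, m):
    """Validity coefficients (correlation with true intake) for three methods.

    q: questionnaire, r: reference method, m: biomarker.
    """
    r_qr, r_qm, r_rm = pearson(q, r), pearson(q, m), pearson(r, m)
    return {
        "questionnaire": math.sqrt(r_qr * r_qm / r_rm),
        "reference": math.sqrt(r_qr * r_rm / r_qm),
        "biomarker": math.sqrt(r_qm * r_rm / r_qr),
    }


def simulate(n=3000, seed=5):
    """Three measures of the same true intake with independent errors."""
    rng = random.Random(seed)
    t = [rng.gauss(0, 1) for _ in range(n)]
    q = [0.6 * ti + rng.gauss(0, 0.8) for ti in t]   # true validity 0.60
    r = [ti + rng.gauss(0, 0.5) for ti in t]         # true validity ~0.89
    m = [ti + rng.gauss(0, 0.4) for ti in t]         # true validity ~0.93
    return q, r, m


for method, rho in triad_validity(*simulate()).items():
    print(f"{method:>13}: validity coefficient = {rho:.2f}")
```

If the questionnaire and the reference method share correlated errors, as is common for two self-report instruments, the questionnaire's validity coefficient from this calculation is better read as an upper bound.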

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Dietary Measurement Error Research

| Tool Category | Specific Instrument/Method | Primary Function | Application Context |

|---|---|---|---|

| Reference Biomarkers | Doubly labeled water (DLW) | Validation of energy intake reporting | Gold standard for energy assessment [22] |

| Reference Biomarkers | 24-hour urinary nitrogen | Validation of protein intake | Recovery biomarker for protein [22] |

| Reference Biomarkers | 24-hour urinary potassium | Validation of potassium intake | Objective measure of potassium consumption [25] |

| Dietary Assessment Platforms | Automated Self-Administered 24-hour Recall (ASA24) | Self-administered 24-hour dietary recall | Reduces interviewer burden, standardized administration [1] |

| Dietary Assessment Platforms | USDA Automated Multiple-Pass Method (AMPM) | Interviewer-administered 24-hour recall | Enhances completeness of dietary reporting [23] |

| Dietary Assessment Platforms | GloboDiet (formerly EPIC-SOFT) | Standardized 24-hour recall interface | International standardization of dietary assessment [23] |

| Statistical Software Tools | SAS Regression Calibration Macros | Implementation of regression calibration | Correcting measurement error bias in epidemiological analyses [5] |

| Statistical Software Tools | Multiple Imputation Approaches | Handling differential measurement error | When measurement error depends on outcome or other variables [22] |

| Statistical Software Tools | Moment Reconstruction Method | Addressing differential measurement error | Alternative approach when regression calibration assumptions are violated [22] |

Advanced Methodological Considerations

Addressing Complex Error Structures in Nutritional Epidemiology

In practice, dietary measurement error often involves complex combinations of both within-person random error and systematic bias, requiring sophisticated modeling approaches [22]. The typical error structure in self-reported dietary data includes:

- Simultaneous presence of intake-related bias (systematic) and day-to-day variation (random) [2].

- Correlated errors between different dietary components, complicating multivariate analyses [22].

- Person-specific biases that vary across population subgroups defined by characteristics such as body mass index, age, or cultural background [2].

For these complex scenarios, regression calibration methods must be extended beyond the simple classical error model. The linear measurement error model provides a more flexible framework that accommodates both random and systematic components through the inclusion of calibration parameters α₀ (location bias) and αₓ (scale bias) [3].

Emerging Methods and Future Directions

Recent methodological advances address limitations of traditional regression calibration approaches:

- Multivariate measurement error models that account for correlated errors across multiple nutrients and foods [22].

- Extension to time-to-event outcomes through methods like survival regression calibration (SRC), which addresses measurement error in endpoints such as progression-free survival [6].

- Multiple imputation for measurement error (MIME) that can handle both non-differential and differential measurement error [22].

- Moment reconstruction techniques that transform mismeasured exposures to have the same distribution as true exposures [22].

These advanced methods enhance our capacity to address the critical distinction between within-person random error and systematic bias, ultimately strengthening the validity of nutritional epidemiology and its applications in drug development and public health policy.

Implementing Regression Calibration: From Theory to Practice

Core Principles of the Regression Calibration Approach

Regression calibration is a statistical bias-correction method widely used to address the pervasive challenge of measurement error in epidemiological and nutritional research [26]. When studying the relationship between an exposure (e.g., dietary intake) and a health outcome, researchers often rely on error-prone measurements, such as self-reported dietary data. Using these measurements directly in statistical models yields biased estimates of the association parameters [26]. Regression calibration corrects this bias by replacing the error-prone exposure measurement with a calibrated estimate that better approximates the true, unobserved exposure [5]. This approach is particularly vital in nutritional epidemiology, where systematic and random errors in self-reported dietary data can substantially distort findings [5] [3]. These notes detail the core principles, methodologies, and practical applications of regression calibration, providing a protocol for its proper implementation.

Theoretical Foundations and Error Models

The Measurement Error Problem

The core problem arises when the variable of interest, the true exposure (X), is not directly observed. Instead, an error-prone measurement (X^*) is available. The goal is to fit a health outcome model (e.g., linear, logistic, or Cox regression) that relates the outcome (Y) to the true (X) and other covariates (Z), but one can only fit the model using (X^*) [26] [3].

- Outcome Model: This is the model of scientific interest, relating the true exposure to the outcome: (g(E(Y)) = \beta_0 + \beta_X X + \beta_Z Z), where (g) is a link function.

- Non-Differential Measurement Error: A key assumption is that the error is non-differential, meaning the error-prone measurement (X^*) provides no additional information about the outcome (Y) beyond what is provided by the true exposure (X) and covariates (Z) [26] [3]. Formally, (Y) is conditionally independent of (X^*) given (X) and (Z).

Types of Measurement Error

Understanding the structure of the error is crucial for selecting the appropriate correction method. The following table summarizes the primary measurement error models.

Table 1: Common Measurement Error Models in Epidemiological Research

| Error Model | Mathematical Form | Description | Common Example |

|---|---|---|---|

| Classical | (X^* = X + e) | Random error with mean zero, independent of (X). Unbiased at the individual level. [3] | Laboratory measurements like serum cholesterol. [3] |

| Linear | (X^* = \alpha_0 + \alpha_X X + e) | Extends the classical model by allowing systematic bias (location (\alpha_0) and scale (\alpha_X)) in addition to random error (e). [3] | Self-reported exposures, such as dietary intake from questionnaires. [26] [3] |

| Berkson | (X = X^* + e) | The true value varies randomly around an assigned measured value. Error (e) is independent of (X^*). Unbiased at the population level. [26] [3] | Occupational studies where workers are assigned a group-level exposure. [3] |

Regression calibration is particularly effective when the error-prone measurement (X^*) follows a linear or classical error structure, and a validation study is available to estimate the relationship between (X) and (X^*) [5] [26].

The Regression Calibration Methodology

Core Principle and Procedure

The fundamental principle of regression calibration is to substitute the unobserved true exposure (X) in the outcome model with its conditional expectation (E(X|X^*, Z)), which is its best unbiased predictor given the available data [26]. This calibrated value, denoted (\tilde{X}), is then used in place of (X) in the outcome model.

The following workflow outlines the standard two-stage regression calibration process.

Key Statistical Insight: Induction of Berkson Error

A logical question is how using another error-prone estimate (\tilde{X}) improves the situation. The answer lies in the type of error in (\tilde{X}). While the original error in (X^*) is typically classical, the error in the calibrated value (\tilde{X}) is Berkson-type [26]. By definition, (\tilde{X} = E(X | X^*, Z)), so the residual error (X - \tilde{X}) is uncorrelated with (\tilde{X}) and the other covariates (Z) in the outcome model. This property is crucial as it means that using (\tilde{X}) in a linear model will not bias the coefficient estimates, which is the primary goal of the correction [26].
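For a linear outcome model (identity link), this argument can be written out in a few lines. The derivation below is a sketch that assumes non-differential error and uses the outcome model notation introduced earlier; for non-linear links such as the logit, the final equality holds only approximately.

```latex
\begin{aligned}
E(Y \mid X^*, Z)
  &= E\{\, E(Y \mid X, X^*, Z) \mid X^*, Z \,\} \\
  &= E\{\, \beta_0 + \beta_X X + \beta_Z Z \mid X^*, Z \,\}
     && \text{(non-differential error)} \\
  &= \beta_0 + \beta_X \, E(X \mid X^*, Z) + \beta_Z Z \\
  &= \beta_0 + \beta_X \tilde{X} + \beta_Z Z .
\end{aligned}
```

Regressing (Y) on (\tilde{X}) and (Z) therefore recovers the same coefficients (\beta_X) and (\beta_Z) as the model based on the true exposure, although with larger variance.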

Practical Implementation and Protocols

Successful implementation of regression calibration depends on specific data resources. The following table lists the key "research reagents" required.

Table 2: Essential Components for a Regression Calibration Analysis

| Component | Description | Function & Importance |
|---|---|---|
| Main Study | A large cohort with data on (Y), (X^*), and (Z) for all participants. | Provides the primary data for estimating the exposure-outcome association. |
| Internal Validation Study | A random subset of the main study where the true exposure (X) (or an unbiased biomarker (W)) is measured in addition to (X^*) and (Z) [26]. | Gold standard. Allows direct estimation of the calibration equation (E(X \mid X^*, Z)) that is transportable to the main study. |
| Recovery Biomarker | An objective measure (e.g., urinary nitrogen for protein intake) with classical measurement error relative to true intake [27]. | Serves as an unbiased reference measurement (W) in the calibration model when true (X) is unobservable. |
| Calibration Equation | A regression model (usually linear) predicting the true exposure (X) (or biomarker (W)) using (X^*) and (Z). | The engine for correction. Generates the calibrated exposure values (\tilde{X}) for the main study. |
| Software with Variance Estimation | Statistical software (e.g., SAS, R) capable of implementing calibration and accounting for the extra uncertainty in (\tilde{X}) (e.g., via bootstrap or multiple imputation) [27] [28]. | Correct standard errors are essential for valid confidence intervals and hypothesis tests. |

Protocol: Applying Regression Calibration in a Dietary Study

This protocol outlines the steps to correct for measurement error in the association between sodium-to-potassium intake ratio (Na/K) and cardiovascular disease (CVD) risk, using a hypothetical cohort with a biomarker substudy [10].

Objective: To estimate the corrected hazard ratio for the association between true Na/K intake and CVD incidence.

Materials: Main cohort data (CVD status, self-reported Na/K intake (Q), covariates (Z)), internal validation study data (urinary biomarkers (W) for Na/K, (Q), (Z)).

Procedure:

1. Develop the Calibration Equation (Validation Study):

   - In the validation study, regress the biomarker-measured Na/K ratio ((W)) on the self-reported Na/K ratio ((Q)) and all relevant covariates ((Z)) from the intended outcome model (e.g., age, sex, BMI).

   - Model: (E(W_i | Q_i, Z_i) = \hat{\alpha}_0 + \hat{\alpha}_Q Q_i + \hat{\alpha}_Z Z_i)

   - This step yields the estimated calibration coefficients (\hat{\alpha}_0, \hat{\alpha}_Q, \hat{\alpha}_Z).

2. Predict Calibrated Exposure (Main Study):

   - For every participant (i) in the main study, compute their calibrated Na/K intake using the equation from Step 1.

   - (\tilde{X}_i = \hat{\alpha}_0 + \hat{\alpha}_Q Q_i + \hat{\alpha}_Z Z_i)

   - This value (\tilde{X}_i) is the predicted value of their true usual Na/K intake given (Q_i) and (Z_i).

3. Fit the Calibrated Outcome Model:

   - Fit the Cox proportional hazards model for CVD incidence, using the calibrated exposure (\tilde{X}).

   - Model: (\lambda(t) = \lambda_0(t) \exp(\hat{\beta}_X \tilde{X} + \hat{\beta}_Z Z))

   - The coefficient (\hat{\beta}_X) is the corrected log-hazard ratio for the association between the true Na/K ratio and CVD risk.

4. Calculate Valid Standard Errors:

   - The standard errors for (\hat{\beta}_X) obtained directly from the model in Step 3 are generally too small because they ignore the uncertainty in estimating the calibration coefficients.

   - Use a resampling method like the bootstrap to obtain valid standard errors and confidence intervals [27] [28].

   - Repeatedly resample participants with replacement from the main and validation studies.

   - Re-estimate the calibration equation and the outcome model for each bootstrap sample.

   - The standard deviation of the (\hat{\beta}_X) estimates across all bootstrap samples is the valid standard error.
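The four steps of the protocol can be sketched in code. The example below is a simplified illustration rather than a full implementation: to stay self-contained it uses NumPy only and substitutes a linear outcome model for the Cox model, so the coefficient is a linear slope, not a log-hazard ratio. The data are simulated, the validation and main studies are drawn separately from the same hypothetical population, and all names (`calibrated_slope`, `bootstrap_se`, `simulate`) and parameter values are assumptions made for this sketch.

```python
import numpy as np

def ols(design, response):
    """Least-squares coefficients; `design` must already contain an intercept column."""
    coef, *_ = np.linalg.lstsq(design, response, rcond=None)
    return coef

def calibrated_slope(val, main):
    """Steps 1-3, with a linear outcome model standing in for the Cox model."""
    design_val = np.column_stack([np.ones_like(val["q"]), val["q"], val["z"]])
    alpha = ols(design_val, val["w"])                       # Step 1: W ~ Q + Z
    design_main = np.column_stack([np.ones_like(main["q"]), main["q"], main["z"]])
    x_tilde = design_main @ alpha                           # Step 2: calibrated exposure
    design_out = np.column_stack([np.ones_like(x_tilde), x_tilde, main["z"]])
    return ols(design_out, main["y"])[1]                    # Step 3: coefficient on X_tilde

def resample(data, rng):
    """Draw participants with replacement, keeping each person's variables together."""
    n = len(data["q"])
    idx = rng.integers(0, n, n)
    return {name: values[idx] for name, values in data.items()}

def bootstrap_se(val, main, n_boot=200, seed=0):
    """Step 4: repeat Steps 1-3 on resampled studies; SD of the slopes is the SE."""
    rng = np.random.default_rng(seed)
    slopes = [calibrated_slope(resample(val, rng), resample(main, rng))
              for _ in range(n_boot)]
    return float(np.std(slopes, ddof=1))

def simulate(n, rng, beta_x=0.4):
    """Hypothetical data: Q has systematic and random error, W has classical error."""
    z = rng.normal(size=n)                                  # covariate
    x = 0.5 * z + rng.normal(size=n)                        # true exposure
    q = 0.3 + 0.6 * x + 0.2 * z + rng.normal(0.0, 0.8, n)   # self-report
    w = x + rng.normal(0.0, 0.5, n)                         # biomarker
    y = 1.0 + beta_x * x + 0.3 * z + rng.normal(size=n)     # outcome
    return {"q": q, "z": z, "w": w, "y": y}

rng = np.random.default_rng(1)
val, main = simulate(500, rng), simulate(5000, rng)
beta_hat = calibrated_slope(val, main)
se_hat = bootstrap_se(val, main)
print(f"calibrated slope = {beta_hat:.3f}, bootstrap SE = {se_hat:.3f}")
```

For a survival outcome, Step 3 would be replaced by a Cox model fit from an established survival-analysis package, while the resampling loop stays the same. With an internal validation study nested in the main cohort, the resampling scheme should also respect that nesting.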

Critical Considerations and Advanced Applications

Common Implementation Pitfalls

- Covariate Selection in Calibration: The calibration model must include all covariates (Z) that will be included in the final health outcome model. Omitting a confounder from the calibration equation can reintroduce bias into the corrected estimate [26].

- Transportability of Equations: Calibration equations derived from an external validation study may not be applicable to the main study if the relationship between (X) and (X^*) differs between populations (e.g., due to different variances of (X)) [26] [3]. An internal validation study is strongly preferred.

- Variance Estimation: Failing to account for the uncertainty introduced by estimating the calibration equation will result in overly narrow confidence intervals and inflated type I error rates [26] [27]. Bootstrap or multiple imputation methods are recommended.

Extension to High-Dimensional Biomarkers

A modern challenge in nutritional epidemiology is developing biomarkers for complex dietary components. Traditional recovery biomarkers exist for only a few nutrients. A promising extension of regression calibration involves using high-dimensional metabolomic data (e.g., hundreds of metabolites from blood) to construct predictive biomarkers for a wider array of dietary exposures [10]. The protocol is similar, but the calibration step involves using penalized regression methods (e.g., Lasso) to regress a reference intake measurement from a feeding study onto the high-dimensional metabolite profile. The resulting biomarker prediction is then used in the subsequent calibration step in the main cohort [10]. Special care is required for variance estimation in this high-dimensional setting.
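The penalized-regression step can be illustrated with a small sketch. The code below assumes simulated feeding-study data in which only three of 300 hypothetical metabolites relate to intake, and it implements the Lasso with a plain coordinate-descent loop in NumPy purely to stay self-contained. In practice an established Lasso implementation with a cross-validated penalty would normally be used; the fixed penalty `lam=0.3` and every name here are assumptions for illustration.

```python
import numpy as np

def lasso_cd(features, target, lam, n_iter=200):
    """Coordinate-descent Lasso for (1/2n)||y - Xb||^2 + lam * ||b||_1.

    Expects standardized feature columns and a centered target.
    """
    n, p = features.shape
    coef = np.zeros(p)
    col_sq = (features ** 2).sum(axis=0) / n
    resid = target.astype(float).copy()
    for _ in range(n_iter):
        for j in range(p):
            resid += features[:, j] * coef[j]          # remove feature j's contribution
            rho = features[:, j] @ resid / n
            # Soft-thresholding update for coefficient j
            coef[j] = np.sign(rho) * max(abs(rho) - lam, 0.0) / col_sq[j]
            resid -= features[:, j] * coef[j]          # add the updated contribution back
    return coef

# Simulated feeding study: reference intake driven by the first three metabolites.
rng = np.random.default_rng(0)
n_train, n_test, p = 150, 150, 300
metabolites = rng.normal(size=(n_train + n_test, p))
true_coef = np.zeros(p)
true_coef[:3] = [2.0, 1.5, -1.0]
intake = 10.0 + metabolites @ true_coef + rng.normal(size=n_train + n_test)

train, test = slice(0, n_train), slice(n_train, None)
mu, sd = metabolites[train].mean(axis=0), metabolites[train].std(axis=0)
z_train = (metabolites[train] - mu) / sd               # standardize with training statistics
z_test = (metabolites[test] - mu) / sd
intercept = intake[train].mean()

coef = lasso_cd(z_train, intake[train] - intercept, lam=0.3)
biomarker_test = intercept + z_test @ coef             # predicted biomarker on held-out people
n_selected = int(np.count_nonzero(coef))
holdout_corr = np.corrcoef(biomarker_test, intake[test])[0, 1]
print(f"metabolites selected: {n_selected} of {p}")
print(f"hold-out correlation with reference intake: {holdout_corr:.2f}")
```

The predicted biomarker would then take the role of (W) in the calibration equation fitted in the main cohort's biomarker substudy.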

In nutritional epidemiology, establishing valid associations between dietary intake and chronic disease risk is fundamentally challenged by systematic measurement error in self-reported dietary data [10] [29]. Regression calibration has emerged as a predominant statistical method for correcting measurement-error bias in nutritional research [5] [9]. This method adjusts point and interval estimates from regression models to account for biases introduced by measurement error in assessing nutrients or other variables [5]. The successful application of regression calibration, however, is critically dependent on the careful design and implementation of validation and calibration studies that provide the necessary data to understand and correct for measurement error structures [20] [9]. Without these specialized studies, diet-disease association estimates remain vulnerable to distortion from both random and systematic errors inherent in self-reported dietary assessments [3] [30].

Core Concepts and Measurement Error Frameworks

Types of Measurement Error

Measurement error in nutritional epidemiology is typically categorized by its statistical properties and relationship to the true exposure:

- Classical Measurement Error: Describes a scenario where the measured value (X^*) equals the true value (X) plus random error (e): (X^* = X + e), where (e) has mean zero and is independent of (X) [3]. This model is often assumed for objective biological measurements.

- Linear Measurement Error: Extends the classical model to include systematic bias: (X^* = \alpha_0 + \alpha_X X + e), where (\alpha_0) represents location bias and (\alpha_X) represents scale bias [3]. This model better captures the error structure of self-reported dietary data.

- Berkson Measurement Error: Occurs when the true value (X) varies around the measured value (X^*): (X = X^* + e), where (e) has mean zero and is independent of (X^*) [3]. This often arises when subgroup averages are assigned to individuals.

The distinction between these error types is crucial as each requires different correction approaches [3].
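A short worked derivation shows why the three structures call for different corrections. It assumes a simple linear outcome model (Y = \beta_0 + \beta_X X + \varepsilon) with no other covariates, error (e) that is independent of the outcome given the true exposure, and, for the linear model, (e) independent of (X). The symbols (\beta^{*}) (the large-sample slope from regressing (Y) on (X^*)), (\sigma_X^2), and (\sigma_e^2) are notation introduced here for illustration.

```latex
\begin{align*}
\beta^{*} &= \frac{\operatorname{Cov}(Y, X^{*})}{\operatorname{Var}(X^{*})}
           = \beta_X \,\frac{\operatorname{Cov}(X, X^{*})}{\operatorname{Var}(X^{*})} \\[4pt]
\text{Classical:}\quad \beta^{*} &= \beta_X \,\frac{\sigma_X^{2}}{\sigma_X^{2} + \sigma_e^{2}} \\
\text{Linear:}\quad \beta^{*} &= \beta_X \,\frac{\alpha_X \sigma_X^{2}}{\alpha_X^{2}\sigma_X^{2} + \sigma_e^{2}} \\
\text{Berkson:}\quad \beta^{*} &= \beta_X \,\frac{\operatorname{Var}(X^{*})}{\operatorname{Var}(X^{*})} = \beta_X
\end{align*}
```

For example, with (\sigma_X^2 = \sigma_e^2 = 1), classical error halves the slope. Linear error with (\alpha_X = 0.5) multiplies it by (0.5 / 1.25 = 0.4). Berkson error leaves the slope unbiased in this linear setting, although it still reduces precision.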

Essential Study Designs for Error Correction

Validation and calibration studies provide the additional data required to characterize and correct measurement error:

- Validation Studies: Collect both the error-prone measurement and a reference measurement (the "gold standard" or unbiased measurement) for the same individuals [3]. These can be internal (conducted on a subgroup of the main study population) or external (conducted on a separate population) [9] [3].

- Calibration Studies: Collect a single unbiased measurement without repeated reference measurements [3]. While useful, they cannot estimate all parameters of the measurement error model.

- Reproducibility Studies: Collect repeated measurements of the error-prone measure (X^*) without reference measurements [3]. These are only sufficient for correction when classical measurement error can be assumed.

Table 1: Comparison of Study Designs for Measurement Error Correction

| Study Type | Measurements Collected | Key Assumptions | Limitations |
|---|---|---|---|
| Internal Validation | (X^*) and reference measurement on subgroup of main study | Transportability of error model within study population | Higher cost for reference data collection |
| External Validation | (X^*) and reference measurement on separate population | Transportability of error model between populations | Risk of model miscalibration if populations differ |
| Calibration Study | Single unbiased measurement for subgroup | Partial information on error structure | Cannot estimate all error model parameters |
| Reproducibility Study | Repeated (X^*) measurements | Classical measurement error structure | Cannot detect or correct systematic bias |

Data Requirements for Regression Calibration

Core Data Components

Implementing regression calibration requires specific data components that must be carefully collected through validation studies:

- Main Study Data: Typically includes the health outcome (Y), self-reported (error-prone) exposure (Q), and accurately measured covariates (V) for all participants [29].

- Reference Instrument Data: A superior exposure measurement collected in a validation subsample, which may include recovery biomarkers, 24-hour dietary recalls, food records, or feeding study data [9] [10].

- Replicate Measurements: Multiple assessments of the error-prone exposure (Q) or reference measurements to estimate within-person variation [20].

- Covariate Data: Accurately measured personal characteristics (e.g., body mass index, age, sex) that may relate to measurement error [10] [29].

Quantitative Requirements for Study Design

The statistical precision of regression calibration estimates depends on specific quantitative parameters that must be considered in study design:

Table 2: Key Quantitative Parameters for Regression Calibration Studies

| Parameter | Description | Impact on Calibration | Data Source |
|---|---|---|---|
| Validation Study Size | Number of participants with both error-prone and reference measurements | Affects precision of calibration equation coefficients | Study design |
| Number of Replicates | Repeated reference or self-report measurements per person | Reduces impact of random error in calibration | Study design |
| Correlation between (X) and (X^*) | Strength of relationship between true and measured exposure | Higher correlation improves calibration performance | Validation study data |
| Variance Components | Ratio of within-person to between-person variance in exposure | Affects degree of attenuation and correction needed | Replicate measurements |
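The variance components and the attenuation they imply can be estimated from replicate measurements. The sketch below assumes a balanced design (the same number of replicates per person), classical measurement error, and a one-way random-effects decomposition; the function name and the simulated values are illustrative assumptions.

```python
import numpy as np

def variance_components(replicates):
    """ANOVA-type variance components from an (n_people, k_replicates) array."""
    replicates = np.asarray(replicates, dtype=float)
    n, k = replicates.shape
    person_means = replicates.mean(axis=1)
    within = replicates.var(axis=1, ddof=1).mean()         # within-person variance
    # Var(person mean) = between + within / k, so subtract the within part.
    between = max(person_means.var(ddof=1) - within / k, 0.0)
    return {
        "within": within,
        "between": between,
        "ratio_within_to_between": within / between if between > 0 else float("inf"),
        # Attenuation factors under classical error:
        "attenuation_single": between / (between + within) if between > 0 else 0.0,
        "attenuation_mean_of_k": between / (between + within / k) if between > 0 else 0.0,
    }

# Simulated example: between-person variance 1, within-person variance 2, two replicates.
rng = np.random.default_rng(0)
usual_intake = rng.normal(0.0, 1.0, size=5000)
reps = usual_intake[:, None] + rng.normal(0.0, np.sqrt(2.0), size=(5000, 2))
vc = variance_components(reps)
for name, value in vc.items():
    print(f"{name}: {value:.3f}")
```

In this simulated setting a single measurement carries an attenuation factor near 1/3, and averaging two replicates raises it to about 1/2, which illustrates how additional replicates reduce the correction needed.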

The following diagram illustrates the fundamental relationship between true intake, measured intake, and the calibration process that is quantified in validation studies:

Figure 1: Fundamental Measurement Error and Calibration Relationship. Validation studies quantify the relationship between true and measured intake to develop calibration equations.

Experimental Protocols for Validation Studies

Internal Validation Study with Biomarkers

Objective: To develop calibration equations for correcting measurement error in self-reported dietary data using objective biomarker measurements [9] [10].

Population Requirements:

- Primary cohort: Entire study population with self-reported dietary data (Q) and outcome data [29]

- Validation subsample: 5-20% of cohort with additional biomarker measurements [10]

- Sampling: Random selection stratified by key covariates (e.g., BMI, age) to ensure representativeness
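The stratified selection of the validation subsample can be sketched as follows. The example assumes a hypothetical cohort of 2,000 people, strata formed by crossing three BMI categories with four age bands, and a 10% sampling fraction within each stratum; the cut-points, function name, and simulated data are assumptions for illustration only.

```python
import numpy as np

def stratified_subsample(strata, fraction=0.10, seed=0):
    """Return sorted row indices of a random subsample drawn within each stratum."""
    strata = np.asarray(strata)
    rng = np.random.default_rng(seed)
    chosen = []
    for label in np.unique(strata):
        members = np.flatnonzero(strata == label)
        n_take = max(1, int(round(fraction * members.size)))  # at least one per stratum
        chosen.append(rng.choice(members, size=n_take, replace=False))
    return np.sort(np.concatenate(chosen))

# Hypothetical cohort with BMI and age recorded at baseline.
rng = np.random.default_rng(42)
bmi = rng.normal(27.0, 5.0, 2000)
age = rng.integers(40, 80, 2000)
bmi_cat = np.digitize(bmi, [25, 30])       # 0: <25, 1: 25 to <30, 2: >=30
age_cat = np.digitize(age, [50, 60, 70])   # 0: 40-49, 1: 50-59, 2: 60-69, 3: 70-79
strata = bmi_cat * 10 + age_cat            # one code per BMI-by-age cell

idx = stratified_subsample(strata, fraction=0.10)
print(f"validation subsample size: {idx.size} of {strata.size}")
```

Sampling the same fraction in every stratum keeps the subsample proportional to the cohort. Oversampling sparse strata is also possible, but the calibration model would then need to account for the unequal sampling probabilities.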

Data Collection Protocol:

- Baseline Data Collection (All Participants):

- Validation Subsample Data Collection:

- Biomarker Analysis:

Statistical Analysis Plan:

- Calibration Equation Development:

  - Regress biomarker measurements on self-report data and covariates: (X = \beta_0 + \beta_1 Q + \beta_2 V + \epsilon) [9] [10]

  - Account for within-person variation in biomarkers using random effects models if replicates available [20]

  - Validate model assumptions using residual plots and influence statistics [20]

- Application in Main Study:

Feeding Study for Biomarker Development

Objective: To establish regression-based biomarkers for dietary components when direct biomarkers are unavailable [10] [29].

Population Requirements:

- Controlled feeding study: 50-200 participants [10] [29]

- Inclusion criteria: Willing to consume provided diets for 2-4 weeks [29]

- Consideration: Representativeness to main study population on key characteristics

Study Design Protocol:

- Dietary Assessment Phase (1-2 weeks pre-feeding):

- Diet Formulation Phase:

- Controlled Feeding Phase (2-4 weeks):

- Biospecimen Collection:

Biomarker Development Protocol:

- Data Integration:

- Model Building:

- Transportability Assessment:

Advanced Methodological Extensions