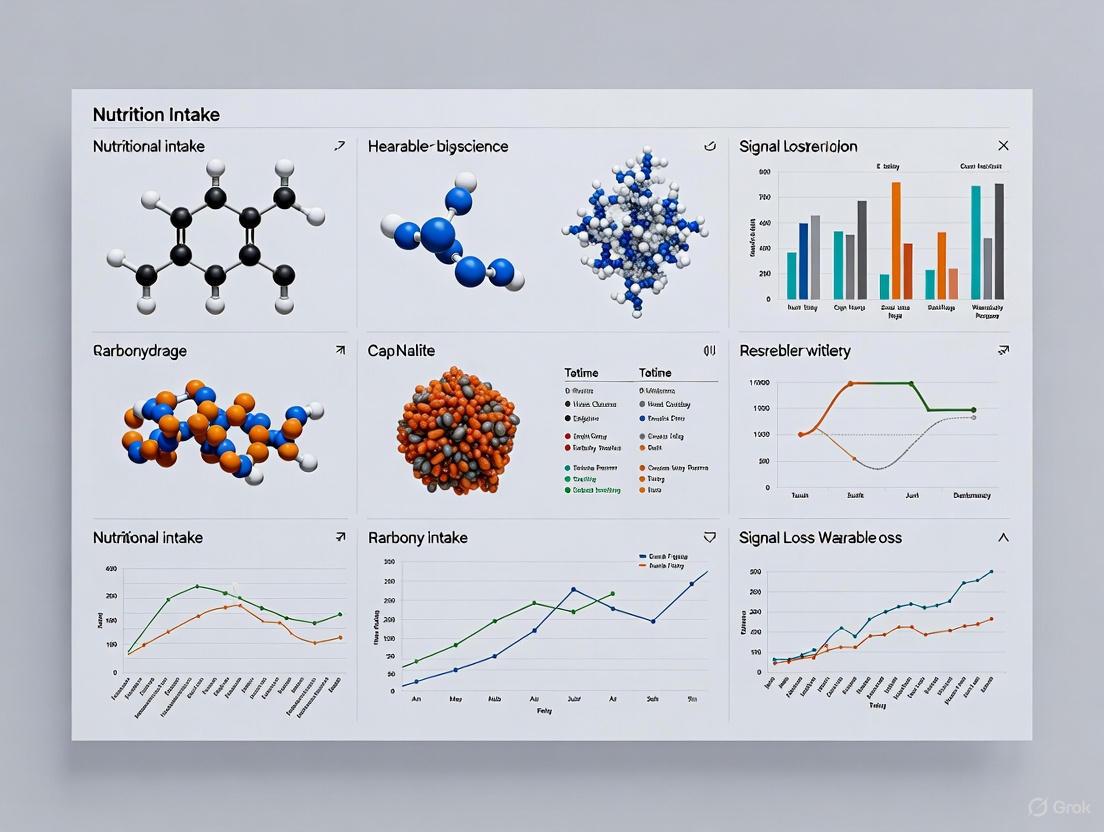

Signal Loss in Nutritional Intake Wearables: Challenges and Solutions for Biomedical Research

Abstract

This article comprehensively examines the critical challenge of signal loss in emerging nutritional intake wearables, a key concern for researchers and drug development professionals. As wearable technology expands beyond fitness tracking to include chemical sensing for metrics like glucose, hydration, and alcohol, maintaining data integrity becomes paramount for clinical and research applications. We explore the fundamental causes of signal disruption across different sensor technologies, methodological approaches for signal recovery and data gap management, optimization strategies to minimize data loss, and validation frameworks for assessing device reliability. This systematic analysis provides essential guidance for leveraging wearable nutritional data in biomedical research, drug development pipelines, and clinical trial design while addressing the unique data quality challenges in this rapidly advancing field.

Understanding Signal Loss: Fundamental Challenges in Nutritional Wearable Technology

Technical Support Center: Troubleshooting Signal Loss in Research Settings

Frequently Asked Questions (FAQs)

Q1: Our nutritional intake wristband shows high variability in kcal/day estimates compared to controlled meal data. What is the primary source of this error? A1: Transient signal loss from the sensor has been identified as a major source of error in computed dietary intake. A validation study of the GoBe2 wristband reported a mean bias of -105 kcal/day (SD 660) with 95% limits of agreement from -1400 to 1189 kcal/day. Limits this wide indicate poor reliability for precise measurement of an individual's nutritional intake [1].

Q2: How does sensor placement affect data quality for wearable hydration monitors? A2: Sensor placement is critical for signal reliability. Research on electrodermal activity (EDA) sensors for hydration shows performance varies significantly by body location. Breathable, water-permeable electrodes placed on optimal body sites prevent sweat accumulation and signal saturation, improving tracking of sweat rate and hydration level during both physical and mental tasks [2].

Q3: What environmental factors most significantly impact the accuracy of wearable dietary and hydration sensors? A3: Temperature, humidity, and individual skin characteristics significantly affect sensor signals. For optical and camera-based sensors, low lighting in real-world settings can compromise performance by obscuring the texture and other visual features needed for accurate measurement [3] [2].

Q4: Why do wearable nutrition sensors perform differently in laboratory versus free-living conditions? A4: Laboratory settings provide controlled conditions (stable lighting, minimal movement, standardized meals) that minimize signal artifacts. In free-living conditions, factors like motion artifacts, variable food types, diverse container shapes, and changing environmental conditions introduce noise and signal loss that current algorithms struggle to compensate for [1] [3].

Q5: What emerging technologies show promise for reducing signal loss in nutritional wearables? A5: Multimodal sensor systems that combine electrical, optical, and other sensors with AI-driven analysis represent the most promising direction. Additionally, egocentric vision-based pipelines (like the EgoDiet system using wearable cameras) and advanced electrode designs (micro-lace, spiral metal wire, and carbon fiber fabric electrodes) show potential for more reliable data capture with reduced signal loss [4] [3] [2].

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Signal Loss in Nutritional Intake Wearables

Problem: Erratic energy intake estimates with unexplained fluctuations.

Diagnosis Protocol:

- Check Sensor-Skin Contact: Verify continuous contact using the manufacturer's guidelines. Poor contact is the most common cause of transient signal loss [1].

- Monitor Environmental Conditions: Record ambient temperature and humidity levels during testing, as these significantly impact bioimpedance and optical sensor accuracy [2].

- Correlate with Ground Truth: Implement a reference method with calibrated study meals to quantify the specific bias and limits of agreement in your population [1].

Mitigation Strategies:

- Sensor Placement Optimization: Conduct pilot tests to identify optimal placement that minimizes motion artifacts while maintaining good skin contact [2].

- Multimodal Validation: Combine the primary sensor data with secondary validation methods (e.g., continuous glucose monitoring, periodic photographic records) to identify signal loss periods [1] [3].

- Algorithm Adjustment: Apply correction factors based on your validation study. The regression equation Y=-0.3401X+1963 (P<.001) from one study indicates devices may overestimate lower intake and underestimate higher intake, requiring population-specific calibration [1].

Guide 2: Resolving Signal Saturation in Hydration Monitoring Sensors

Problem: Signal saturation during high sweat rate conditions, particularly during physical activity.

Root Cause: Conventional non-permeable electrodes trap sweat under the sensor, causing saturation and signal degradation during heavy sweating [2].

Solution Implementation:

- Electrode Replacement: Replace standard electrodes with breathable, water-permeable variants:

  - Micro-lace electrodes

  - Spiral metal wire electrodes

  - Carbon fiber fabric electrodes

- Body Site Selection: Place sensors on body locations less prone to extreme sweat accumulation, as identified through pilot testing [2].

- Signal Processing: Implement algorithms that distinguish between signals caused by physical exertion versus mental stress, as they manifest differently in EDA data [2].

Quantitative Data Analysis

Table 1: Performance Metrics of Nutritional Monitoring Wearables

| Device Type | Primary Signal | Mean Bias or Error | Limits of Agreement | Key Limitation |
|---|---|---|---|---|
| Nutritional Intake Wristband (GoBe2) | Bioimpedance (fluid patterns) | -105 kcal/day | -1400 to 1189 kcal/day | Transient signal loss [1] |
| AI-Assisted Wearable Camera (EgoDiet) | Visual (food containers) | 28.0-31.9% MAPE* | N/A | Performance varies with lighting and container type [3] |
| 24-Hour Dietary Recall (Traditional Method) | Self-report | 32.5% MAPE* | N/A | Memory bias and misreporting [3] |
| Sweat Hydration Sensor (EDA-based) | Electrodermal activity | Under validation | N/A | Signal saturation during heavy sweating [2] |

*MAPE: Mean Absolute Percentage Error
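The MAPE figures in Table 1 can be reproduced for your own validation data with a few lines of code. The following is a minimal sketch, assuming paired reference and estimated values; the function name `mape` and the portion weights are hypothetical and are not data from the cited studies.

```python
def mape(reference, estimate):
    """Mean absolute percentage error (in percent), relative to the reference values."""
    if len(reference) != len(estimate) or not reference:
        raise ValueError("inputs must be non-empty and the same length")
    if any(r == 0 for r in reference):
        raise ValueError("reference values must be non-zero")
    total = sum(abs(e - r) / abs(r) for r, e in zip(reference, estimate))
    return 100.0 * total / len(reference)


# Hypothetical portion weights in grams (illustrative only)
weighed = [200.0, 150.0, 400.0]      # ground truth from a calibrated scale
estimated = [260.0, 120.0, 440.0]    # device or recall estimate

print(round(mape(weighed, estimated), 1))  # per-item errors are 30%, 20%, 10%
```

Because MAPE divides by the reference value, small reference portions can dominate the average, which is worth keeping in mind when comparing devices across studies with different meal sizes.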

Table 2: Sensor Technology Comparison for Hydration Monitoring

| Sensor Type | Key Advantage | Key Limitation | Signal Loss Risk |
|---|---|---|---|
| Electrical Sensors | Ease of use and integration | Signal saturation from sweat accumulation | High during physical activity [4] [2] |
| Optical Sensors | Higher precision, molecular-level insights | Sensitive to ambient light conditions | Moderate [4] |
| Thermal Sensors | Specialized niche applications | Limited population validation data | Variable [4] |
| Microwave-based Sensors | Deep tissue penetration | Limited commercial availability | Under investigation [4] |
| Multimodal Sensors | Improved accuracy through data fusion | Complex system integration | Low (redundancy) [4] |

Experimental Protocols for Signal Loss Investigation

Protocol 1: Reference Method Validation for Nutritional Intake Wearables

Purpose: To develop a reference method for validating wearable device estimation of daily nutritional intake and quantify signal loss impact [1].

Materials:

- Test wearable devices (e.g., GoBe2 wristband)

- Controlled dining facility with calibrated meal preparation

- Standardized weighing scales (e.g., Salter Brecknell)

- Continuous glucose monitoring system (optional)

- Food composition database (e.g., USDA)

Methodology:

- Recruit participants (n=25-30) meeting inclusion criteria (healthy adults, no chronic conditions)

- Conduct two 14-day test periods with consistent device use

- Prepare and serve calibrated study meals in controlled setting

- Record precise energy and macronutrient intake for each participant

- Collect continuous data from wearable devices

- Use Bland-Altman analysis to compare reference and test method outputs (kcal/day)

- Calculate mean bias, standard deviation, and 95% limits of agreement

- Perform regression analysis to identify systematic errors

Data Analysis:

- Bland-Altman plot analysis: Calculate mean difference (bias) and standard deviation

- Regression analysis: Develop correction equations for systematic patterns

- Signal loss quantification: Identify periods of transient signal loss and their impact on daily estimates
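The Bland-Altman and regression steps above can be sketched with the Python standard library. This is an illustrative outline rather than the analysis code used in [1]: the function names are hypothetical, the difference is assumed to be device minus reference, and the kcal/day values are invented for the example.

```python
import statistics


def bland_altman(reference, device):
    """Bias, SD of differences, and 95% limits of agreement (device - reference)."""
    diffs = [d - r for r, d in zip(reference, device)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample SD (n - 1 denominator)
    return {
        "bias": bias,
        "sd": sd,
        "loa_low": bias - 1.96 * sd,
        "loa_high": bias + 1.96 * sd,
    }


def proportional_bias_slope(reference, device):
    """Least-squares slope of the differences regressed on the pairwise means."""
    diffs = [d - r for r, d in zip(reference, device)]
    means = [(d + r) / 2 for r, d in zip(reference, device)]
    mx, my = statistics.mean(means), statistics.mean(diffs)
    sxx = sum((x - mx) ** 2 for x in means)
    sxy = sum((x - mx) * (y - my) for x, y in zip(means, diffs))
    return sxy / sxx


# Hypothetical daily intakes in kcal/day (illustrative only)
reference = [1800.0, 2200.0, 2500.0, 3000.0]  # calibrated study meals
device = [2000.0, 2100.0, 2300.0, 2600.0]     # wearable estimates

result = bland_altman(reference, device)
slope = proportional_bias_slope(reference, device)
print(result, slope)
```

A negative slope means the device error becomes more negative as intake rises, which is the kind of systematic pattern a correction equation would target. With real data, the slope should be tested for significance before it is used for calibration.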

Protocol 2: Hydration Sensor Performance Under Physical and Mental Stress

Purpose: To evaluate wearable sweat sensor performance in tracking hydration status across different activity types and identify signal loss conditions [2].

Materials:

- Wearable sweat sensors with water-permeable electrodes (micro-lace, spiral metal wire, carbon fiber fabric)

- Electrodermal activity (EDA) measurement system

- Body weight scale for fluid loss measurement

- Standardized physical and mental task protocols

Methodology:

- Place sensors on predetermined optimal body locations

- Measure EDA as participants perform:

  - Physical tasks (cycling at increasing intensity levels)

  - Mental tasks (IQ tests, stress-inducing activities)

- Compare EDA signals with:

  - Localized sweat measurements

  - Overall fluid loss from body weight changes

- Evaluate how well each electrode design tracks sweat production

- Identify effective sensor designs and body sites for hydration monitoring

- Distinguish between signals caused by physical exertion versus mental stress

Data Analysis:

- Correlation analysis between EDA signals and objective hydration measures

- Signal-to-noise ratio calculation under different activity conditions

- Identification of saturation points for different electrode types

- Development of classification algorithms for signal type identification
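One way to identify saturation points is to look for sustained runs where the EDA reading sits at or near the sensor ceiling. The sketch below assumes a known ceiling value and a minimum run length, both of which are hypothetical parameters to be chosen per device; the sample values are invented.

```python
def saturation_runs(samples, ceiling, min_len):
    """Return (start, end) index pairs, end exclusive, where samples stay
    at or above `ceiling` for at least `min_len` consecutive samples."""
    runs = []
    start = None
    for i, value in enumerate(samples):
        if value >= ceiling:
            if start is None:
                start = i  # a candidate run begins here
        elif start is not None:
            if i - start >= min_len:
                runs.append((start, i))
            start = None
    # Close a run that extends to the end of the recording
    if start is not None and len(samples) - start >= min_len:
        runs.append((start, len(samples)))
    return runs


# Hypothetical EDA trace in microsiemens (illustrative only)
eda = [2.1, 3.4, 9.9, 10.0, 10.0, 10.0, 6.2, 10.0, 5.0, 10.0, 10.0, 10.0]
runs = saturation_runs(eda, ceiling=9.9, min_len=3)
print(runs)  # [(2, 6), (9, 12)]
```

Comparing the number and duration of such runs across electrode types and body sites gives a simple quantitative basis for the electrode comparison described in this protocol.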

Research Reagent Solutions

Table 3: Essential Materials for Nutritional Wearable Research

| Item | Function | Application Notes |
|---|---|---|
| GoBe2 Wristband (Healbe Corp) | Automatic tracking of daily energy intake and macronutrients | Uses bioimpedance signals to track fluid patterns related to nutrient influx; prone to transient signal loss [1] |
| AIM (Automatic Ingestion Monitor v2) | Dietary data collection via camera, resistance and inertial sensors | Fusion sensor system for laboratory and real-life settings; reduces labour-intensive monitoring [5] |
| eButton | Chest-pin wearable camera for dietary assessment | Chest-level imaging; captures food-eating episodes continuously and automatically [3] |
| Water-Permeable Electrodes (Micro-lace, Spiral metal wire, Carbon fiber fabric) | Prevents sweat accumulation in hydration sensors | Enables reliable EDA measurement during physical activity by preventing signal saturation [2] |
| Continuous Glucose Monitoring (CGM) System | Measures interstitial glucose levels | Provides complementary data for nutritional intake validation; not a direct nutrient intake measure [1] [6] |
| Salter Brecknell Scales | Standardized weighing for food portion measurement | Provides ground truth data for validation studies; essential for calibrated meal preparation [3] |

Technical Diagrams

[Diagram: Signal Loss Pathway in Nutritional Wearables]

[Diagram: Device Validation Workflow]

Troubleshooting Guide & FAQs for Research Professionals

This guide addresses the predominant technical challenges in nutritional intake wearable research, as identified in recent scientific literature. The following sections provide targeted troubleshooting methodologies to mitigate data loss and improve the reliability of your experimental data.

Frequently Asked Questions

Q1: Our research team observes high variability in energy intake estimates (kcal/day) from a wrist-worn sensor. What are the primary technical root causes, and how can we quantify this error?

A: The primary technical root causes are often transient signal loss from the sensor and algorithmic errors in converting sensor data into caloric estimates. A recent validation study of a commercial wristband (GoBe2) found a mean bias of -105 kcal/day with a wide standard deviation of 660 kcal/day, and 95% limits of agreement spanning from -1400 to 1189 kcal/day [1]. The regression analysis (Y = -0.3401X + 1963) indicated a tendency for the device to overestimate at lower calorie intakes and underestimate at higher intakes [1].

- Recommended Protocol for Validation:

- Employ a Reference Method: Collaborate with a metabolic kitchen or university dining facility to prepare and serve calibrated study meals. Precisely record the energy and macronutrient intake of each participant [1].

- Concurrent Monitoring: Have participants use the wearable sensor consistently during the test period.

- Statistical Analysis: Use Bland-Altman analysis to compare the daily dietary intake (kcal/day) measured by the reference method against the sensor's estimates. This will quantify the bias and limits of agreement for your specific device and cohort [1].
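As a quick consistency check on the figures quoted in this answer, the 95% limits of agreement follow from the mean bias and the standard deviation of the differences, assuming the usual Bland-Altman definition with a 1.96 multiplier:

```latex
\begin{aligned}
\text{LoA} &= \bar{d} \pm 1.96\, s_d \\
           &= -105 \pm 1.96 \times 660 \\
           &= -105 \pm 1293.6 \\
\text{lower limit} &= -1398.6 \ \text{kcal/day}, \qquad
\text{upper limit} = 1188.6 \ \text{kcal/day}
\end{aligned}
```

These values agree with the reported limits of -1400 and 1189 kcal/day to within the rounding of the published bias and SD. The same calculation applied to your own cohort shows how far an individual daily estimate can plausibly deviate from the reference.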

Q2: We suspect motion artifacts are corrupting bio-impedance signals in our dietary monitoring study. How can we detect and mitigate this?

A: Motion can indeed create artifacts in bio-impedance signals, which are often discarded in physiological monitoring but are central to dietary activity recognition [7]. Mitigation requires a combination of hardware placement, signal processing, and model training.

- Recommended Protocol for Mitigation:

- Secure Sensor Placement: Ensure the wearable device has a snug, consistent fit on the wrist to minimize baseline signal drift caused by movement. The iEat study deployed one electrode on each wrist to form a stable measurement circuit [7].

- Signal Pattern Recognition: Leverage the fact that dietary activities create unique temporal signal patterns. For example, cutting food creates a repetitive impedance pattern as the food circuit branch repeatedly opens and closes, while eating with a utensil forms a distinct circuit through the hand, utensil, and mouth [7].

- Implement Robust Classification: Train a user-independent, lightweight neural network model to classify these dynamic patterns. The iEat system achieved an 86.4% macro F1 score for recognizing food intake activities by focusing on these variation patterns rather than absolute impedance values [7].
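The macro F1 score quoted for iEat averages the per-class F1 scores with equal weight, so rare activities count as much as common ones. A minimal sketch of the metric follows; the function name `macro_f1` and the activity labels are hypothetical and are not taken from the iEat dataset.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Hypothetical activity labels (illustrative only)
y_true = ["cut", "cut", "eat", "eat", "drink", "drink"]
y_pred = ["cut", "eat", "eat", "eat", "drink", "cut"]

score = macro_f1(y_true, y_pred)
print(round(score, 3))  # per-class F1: cut 0.5, drink 0.667, eat 0.8
```

Reporting macro F1 alongside per-class scores makes it easier to see whether a single poorly recognized activity is pulling the average down.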

Q3: Data loss from connectivity issues and insufficient synchronization is a major problem in our long-term, free-living studies. How can we characterize and reduce this data loss?

A: Data loss in wearable sensors is often "Missing Not at Random" (MNAR), meaning it is systematically related to time or user behavior, which can bias research outcomes [8]. An analysis of missing-data statistics from wearable sensors worn by people with type 2 diabetes found that gaps are not spread evenly: they cluster at particular times of day and on particular days of the monitoring period [8].

- Recommended Protocol for Analysis and Mitigation:

- Characterize the Missing Data: Analyze the gap size distribution and temporal dispersion of missing data in your dataset. Research shows that missing data in continuous glucose monitors (CGM) often cluster during the night (23:00–01:00), while activity tracker data loss can peak around specific days of the week due to infrequent synchronization [8].

- Fit a Distribution: Fit the gap size distribution with a Planck distribution to statistically test for the MNAR mechanism [8].

- Enforce Synchronization Protocols: Implement a strict, mandatory synchronization schedule for participants to prevent data loss when device memory buffers are full. For example, the Abbott FreeStyle Libre CGM stores a maximum of 8 hours of data, so it must be manually synced with a receiver at least every 8 hours to avoid losing readings [8].
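The first step of this protocol, characterizing gap sizes and when gaps begin, can be sketched as follows. The example assumes a fixed 15-minute sampling interval and a tolerance factor of 1.5 for deciding what counts as a gap; both are illustrative assumptions, as are the function names and timestamps. Fitting the resulting gap size distribution is not shown.

```python
from collections import Counter
from datetime import datetime, timedelta


def find_gaps(timestamps, expected_interval, tolerance=1.5):
    """Return (gap_start, gap_end) pairs where consecutive samples are more
    than tolerance * expected_interval apart."""
    ordered = sorted(timestamps)
    limit = expected_interval * tolerance
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > limit]


def gap_summary(gaps, expected_interval):
    """Missing-sample count per gap, and a tally of the hour each gap starts."""
    sizes = [round((b - a) / expected_interval) - 1 for a, b in gaps]
    start_hours = Counter(a.hour for a, _ in gaps)
    return sizes, start_hours


# Hypothetical CGM timestamps (illustrative only): readings every 15 minutes,
# with a dropout between 22:45 and 01:00
step = timedelta(minutes=15)
t0 = datetime(2024, 1, 1, 22, 0)
stamps = [t0 + step * k for k in range(4)]
stamps += [datetime(2024, 1, 2, 1, 0), datetime(2024, 1, 2, 1, 15)]

gaps = find_gaps(stamps, step)
sizes, start_hours = gap_summary(gaps, step)
print(sizes, dict(start_hours))  # [8] {22: 1}
```

Aggregating `start_hours` across participants shows whether gaps concentrate at particular times of day, and the list of gap sizes is the input for the distribution fit described in the second step.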

Q4: What are the essential materials and reagent solutions for building a foundational lab setup to investigate these technical root causes?

A: Establishing a lab for investigating signal issues in dietary wearables requires components for sensing, validation, and data analysis.

Table: Research Reagent Solutions & Essential Materials

| Item Name | Function/Explanation |
|---|---|
| Wrist-worn Bio-Impedance Sensor | Core device for capturing electrical impedance signals across the body; used to detect dietary activities via dynamic circuit variations formed by hand, mouth, utensils, and food [7]. |
| Continuous Glucose Monitor (CGM) | Research tool to measure physiological response to food intake and, concurrently, to study patterns of data loss in wearable sensors [8]. |
| Metabolic Kitchen | Gold-standard reference environment for preparing and serving calibrated study meals to validate the accuracy of wearable sensor nutrient intake estimates [1]. |
| Activity Tracker (e.g., Fitbit) | Provides complementary data on heart rate and step count; also serves as a model system for investigating missing data mechanisms in consumer-grade wearables [8]. |
| Data Analysis Software (e.g., Python/R) | For performing Bland-Altman analysis, gap size distribution fitting (e.g., Planck distribution), and training machine learning models for activity classification [1] [7] [8]. |

Visualizing Technical Root Causes and Experimental Workflow

The following diagrams map the signaling pathways of data loss and a standardized experimental workflow for technical validation, providing a clear framework for diagnosing issues in your research.

[Diagram: Signal Loss Pathways]

[Diagram: Technical Validation Workflow]

Physiological and Environmental Factors Affecting Signal Acquisition

Signal acquisition from wearable devices is a critical process in digital health research, particularly in the emerging field of nutritional intake monitoring. These signals form the foundation for deriving meaningful physiological insights, from continuous glucose readings to metabolic responses. However, the path from raw sensor data to reliable research findings is fraught with technical challenges. Physiological variations between individuals and fluctuating environmental conditions can introduce significant noise, artifacts, and inaccuracies into the acquired signals, potentially compromising research validity.

This technical support center addresses the specific signal acquisition challenges faced by researchers, scientists, and drug development professionals working with nutritional intake wearables. By providing evidence-based troubleshooting guidance, standardized experimental protocols, and clear methodological frameworks, we aim to enhance data quality and reliability in this rapidly evolving field, ultimately strengthening the scientific evidence base for personalized nutrition and metabolic health interventions.

Troubleshooting Guides

Physiological Interference Factors

Table 1: Troubleshooting Physiological Interference in Signal Acquisition

| Symptom | Potential Cause | Diagnostic Method | Corrective Action |
|---|---|---|---|
| Signal drift or gradual baseline wander during prolonged monitoring | Changes in skin perfusion due to thermoregulation, caffeine intake, or emotional state [9] [10] | Review participant activity logs for correlated events (e.g., coffee consumption, stress). | Standardize pre-measurement participant preparation (diet, activity, rest) [11]. |
| Motion artifacts causing sharp, irregular signal spikes | Participant movement; loose sensor contact [12] [13] | Inspect signal trace during known movement periods (e.g., walking, talking). | Use secure, form-fitting device form factors (e.g., smart rings, bands) and apply motion artifact removal algorithms during data processing [12] [13]. |
| Low signal-to-noise ratio or weak signal amplitude | Skin tone variability, hair density, or tattooed skin affecting optical sensor performance [13] [10] | Check signal quality across participants with different skin tones. | Consider alternative sensing modalities (e.g., ultrasound, acoustic) less affected by skin pigmentation for specific parameters [12] [10]. |
| Inconsistent readings between identical devices on the same participant | Sensor placement variation; individual anatomical differences (e.g., tissue composition, blood vessel depth) [9] [11] | Rotate devices between positions to see if the issue follows the device or the location. | Create detailed anatomical placement guides and use templates for consistent sensor positioning across study sessions. |
| Unexpected physiological response (e.g., heart rate increase without exertion) | Psychological stress or emotional state triggering autonomic nervous system response [11] [14] | Correlate with self-reported stress/emotion logs or other physiological markers like HRV. | Incorporate brief psychological state assessments into the study protocol to contextualize data. |

Environmental Interference Factors

Table 2: Troubleshooting Environmental Interference in Signal Acquisition

| Symptom | Potential Cause | Diagnostic Method | Corrective Action |
|---|---|---|---|
| Sudden signal dropout or persistent noise | Electromagnetic interference (EMI) from nearby electronic equipment (e.g., phones, Wi-Fi routers) [11] | Move the device to a different location or shield it temporarily to see if the signal improves. | Establish a controlled testing environment, specify minimum distances from EMI sources, and use shielded cables where applicable. |
| Inaccurate optical readings | Ambient light leakage under the sensor housing [13] | Check sensor housing integrity and ensure full skin contact in a dark environment. | Ensure proper device fit, use opaque covers or patches, and validate sensor contact via a signal quality index pre-recording. |
| Abnormal temperature-related drift in sensor readings | Extreme ambient temperatures affecting sensor electronics and participant physiology [15] | Correlate signal anomalies with environmental temperature logs. | Control and monitor ambient temperature in the lab. For field studies, use devices with internal temperature compensation and log environmental data. |
| Corrupted data packets during wireless transmission | Low signal strength in Bluetooth/ANT+ transmission due to distance or physical obstacles [13] | Check the received signal strength indicator (RSSI) in the data logging software. | Ensure the receiver is within the recommended line-of-sight distance, minimizing physical obstructions between the device and receiver. |

Frequently Asked Questions (FAQs)

Q1: What are the most common physiological factors that lead to inaccurate signal acquisition in nutritional wearables?

A: The primary physiological factors are motion artifacts from user activity, variations in skin properties (e.g., tone, temperature, perfusion, and hair density), and individual anatomical differences (e.g., tissue composition, blood vessel depth) [13] [10]. These factors are particularly problematic for optical sensors such as photoplethysmography (PPG), where they cause signal noise, drift, and complete dropouts. Furthermore, a user's psychological state, such as stress, can alter physiological signals like heart rate and HRV, which may be misinterpreted as a direct response to nutritional intake if not properly accounted for [11] [14].

Q2: How can researchers mitigate the impact of motion artifacts during free-living studies?

A: Mitigation requires a multi-pronged approach. On the hardware side, using secure, form-fitting devices like smart rings or well-designed bands can minimize movement [13]. On the data processing side, AI-driven algorithms are crucial. Models that integrate multi-scale convolutions (to capture local waveform details) and Long Short-Term Memory networks (to model temporal dependencies) have been shown to effectively separate motion artifacts from the underlying physiological signal, significantly improving waveform prediction accuracy [9] [12]. Additionally, having participants log their activities provides valuable context for identifying and filtering out corrupted data segments.

Q3: Why does skin tone affect some wearable sensors, and how can this bias be addressed in study design?

A: Optical sensors, particularly photoplethysmography (PPG), work by shining light into the skin and measuring the amount reflected. The higher melanin levels in darker skin absorb more of this light, reducing the signal strength and signal-to-noise ratio at the sensor [13] [10]. This can lead to systematically less accurate readings for individuals with darker skin tones. To address this, researchers should: a) validate device accuracy across the full spectrum of skin tones in their study population, b) consider using alternative sensing modalities like ultrasound or electrodes for specific parameters where feasible, as these are less susceptible to skin tone bias [12] [10], and c) report participant skin tone demographics in their methodology to promote transparency.

Q4: What environmental factors are most likely to corrupt signal acquisition in a lab or clinical setting?

A: Electromagnetic interference (EMI) from ubiquitous electronic equipment (computers, Wi-Fi, cell phones) is a major culprit, often causing sudden signal dropouts or high-frequency noise [11]. Ambient light can also severely interfere with optical sensors if it leaks under the sensor housing. Furthermore, extreme ambient temperatures can affect the performance of sensor electronics and simultaneously alter participant physiology (e.g., skin blood flow), leading to signal drift [15]. Controlling and monitoring the testing environment is essential for high-quality data collection.

Q5: What is the role of AI in improving signal acquisition and processing for wearable devices?

A: AI, particularly deep learning, is transformative for dealing with noisy, real-world data. AI can enhance data from low-cost sensors, making sophisticated diagnostics more accessible [12]. Specific applications include:

- Artifact Removal: AI models can learn to identify and remove motion artifacts and other noise sources [9].

- Signal Enhancement: Models can reconstruct clean physiological signals from noisy inputs. For example, CBAnet uses a combination of CNNs, LSTMs, and attention mechanisms to capture both local waveform details and long-range dependencies, achieving high-fidelity waveform prediction [9].

- Multimodal Data Fusion: AI excels at integrating data from multiple sensors (e.g., accelerometer, ECG, acoustic) to generate a more robust and accurate estimate of the underlying physiological parameter [12].

Experimental Protocols for Signal Quality Validation

Protocol for Validating Sensor Placement and Contact Quality

Objective: To establish a standardized procedure for ensuring consistent and reliable sensor placement across all study participants, thereby minimizing signal variability due to operator or participant error.

Materials:

- Wearable device(s) under investigation

- Isopropyl alcohol wipes

- Measuring tape or placement template

- Signal acquisition software with real-time display

- Marker pen (surgical skin marker)

Methodology:

- Site Selection and Preparation: Identify and mark the precise anatomical location for sensor placement according to the device manufacturer's guidelines. Clean the area with an isopropyl alcohol wipe and allow it to air dry completely.

- Baseline Signal Acquisition: Instruct the participant to remain seated and relaxed for a 5-minute baseline period. Initiate signal recording and observe the real-time output for stability, amplitude, and signal-to-noise ratio. A stable, strong signal with a clear physiological waveform (e.g., pulse wave for PPG) indicates good contact.

- Motion Challenge Test: Ask the participant to perform a series of standardized, low-intensity movements (e.g., tapping fingers, rotating wrist) for 30 seconds. Observe the signal for severe artifact intrusion. The signal should return to baseline promptly after movement ceases.

- Documentation: Document the exact placement location, any challenges encountered, and the initial signal quality metrics. Take a photograph of the sensor placement for future reference if the protocol allows.
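The motion challenge step asks whether the signal returns to baseline after movement stops. One simple way to make that check repeatable is to compare the post-movement mean against the baseline mean and spread. The sketch below is an assumption-laden illustration: the criterion of two baseline standard deviations (`k=2.0`), the function name, and the sample values are all hypothetical and should be tuned to the device under test.

```python
import statistics


def recovered_to_baseline(baseline, post_motion, k=2.0):
    """True if the post-motion mean lies within k baseline SDs of the baseline mean."""
    mu = statistics.mean(baseline)
    sd = statistics.stdev(baseline)  # sample SD of the resting segment
    return abs(statistics.mean(post_motion) - mu) <= k * sd


# Hypothetical normalized signal amplitudes (illustrative only)
baseline = [1.00, 1.02, 0.98, 1.01, 0.99]   # 5-minute seated baseline
post_ok = [1.01, 1.00, 1.02]                # shortly after the movement stops
post_drift = [1.10, 1.12, 1.11]             # contact shifted during the movement

print(recovered_to_baseline(baseline, post_ok))     # True
print(recovered_to_baseline(baseline, post_drift))  # False
```

Recording the outcome of this check with the placement documentation gives a per-participant contact quality record that can be revisited if the data later look suspect.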

Protocol for Quantifying Motion Artifact Susceptibility

Objective: To systematically evaluate and compare the resilience of different wearable devices or processing algorithms to motion artifacts.

Materials:

- Wearable device(s) under test

- Reference device (e.g., clinical-grade ECG for heart rate)

- Treadmill or stationary bicycle

- Data synchronization system (e.g., common trigger pulse)

Methodology:

- Setup and Synchronization: Fit the participant with all devices and the reference sensor. Start all data recording systems and send a synchronization pulse to align the data streams.

- Controlled Activity Protocol: Conduct a graded activity protocol:

  - Rest (5 mins): Seated, quiet rest.

  - Walking (3 mins): Slow walk (e.g., 2 km/h on a treadmill).

  - Jogging (3 mins): Light jog (e.g., 6 km/h).

  - Arm Movements (2 mins): Simulate eating and drinking motions while seated.

- Data Analysis: Calculate agreement metrics (e.g., RMSE, Pearson's r, MAE) between the test device and the reference device for each activity intensity level. This quantifies the degradation in performance with increasing motion.
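The agreement metrics named in the data analysis step can be computed per activity stage with the standard library alone. The sketch below uses hypothetical heart rate values in beats per minute; the function name `agreement` and the stage data are illustrative assumptions, not measurements from any cited study.

```python
import math


def agreement(reference, device):
    """MAE, RMSE, and Pearson's r between a reference and a test device.
    Pearson's r is undefined if either series is constant."""
    n = len(reference)
    errors = [d - r for r, d in zip(reference, device)]
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mean_r = sum(reference) / n
    mean_d = sum(device) / n
    cov = sum((r - mean_r) * (d - mean_d) for r, d in zip(reference, device))
    var_r = sum((r - mean_r) ** 2 for r in reference)
    var_d = sum((d - mean_d) ** 2 for d in device)
    return {"mae": mae, "rmse": rmse, "r": cov / math.sqrt(var_r * var_d)}


# Hypothetical time-aligned heart rate samples per stage: (reference, device)
stages = {
    "rest": ([60.0, 62.0, 61.0, 63.0], [60.0, 63.0, 61.0, 62.0]),
    "jogging": ([120.0, 125.0, 130.0, 128.0], [110.0, 131.0, 122.0, 140.0]),
}

results = {name: agreement(ref, dev) for name, (ref, dev) in stages.items()}
for name, metrics in results.items():
    print(name, {key: round(value, 2) for key, value in metrics.items()})
```

Tabulating these metrics by stage makes the degradation with increasing motion explicit, and the same table can be produced for each candidate device or artifact-removal algorithm to compare them on equal terms.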

Signaling Pathways and Workflows

[Diagram: Signal Acquisition Data Flow]

[Diagram: Signal Quality Diagnostic Logic]

Research Reagent Solutions

Table 3: Essential Materials for Wearable Signal Acquisition Research

| Item | Function & Specification | Example Use-Case in Research |
|---|---|---|
| Isopropyl Alcohol Wipes (70%) | Standardized skin preparation to remove oils and dead skin, ensuring consistent sensor-skin contact impedance [11]. | Pre-cleaning of electrode placement sites for bioimpedance spectroscopy or ECG to improve signal quality. |
| Electrode Gel/Hydrogel | Provides a stable, conductive medium between the skin and electrical sensors, reducing noise and baseline drift in biopotential measurements [12]. | Used with EMG sensors or wet electrodes to measure muscle activity or electrical properties of tissue. |
| Adhesive Patches/Tapes | Secures sensors firmly to the skin to minimize motion artifacts, available in various hypoallergenic materials for different study durations [13]. | Long-term continuous glucose monitoring (CGM) studies to ensure the sensor remains in place and functional for multiple days. |
| Optical Phantom Calibrators | Synthetic materials with controlled optical properties (scattering, absorption) that mimic human skin for validating and calibrating optical sensors like PPG [12]. | Benchmarking the performance of new PPG-based wearables across different "skin tones" in a controlled lab environment before human trials. |
| Reference Measurement Device | A clinical-grade, validated device (e.g., FDA-cleared ECG, BP monitor, lab-grade bioimpedance analyzer) used as a "gold standard" for ground-truth data [12] [10]. | Used in validation studies to calculate the accuracy (e.g., RMSE, MAE) of a new, investigational wearable device against an accepted reference. |

Data Integrity Implications for Longitudinal Nutritional Studies

This technical support center addresses the critical data integrity challenges in longitudinal nutritional studies that utilize wearable technology. As research shifts from population-level dietary guidelines to personalized nutrition interventions, maintaining data quality across extended monitoring periods becomes paramount. This resource provides researchers, scientists, and drug development professionals with practical troubleshooting guides and FAQs focused on specific data integrity issues, particularly signal loss, encountered during nutritional intake monitoring experiments.

Quantitative Evidence: Data Accuracy and Loss Patterns

Understanding the magnitude and patterns of data inaccuracy and loss is crucial for designing robust studies. The following tables summarize key quantitative findings from recent research.

Table 1: Wearable Sensor Accuracy in Nutritional Intake Monitoring

| Device Type / Study | Measurement Target | Reported Accuracy / Error | Key Limitation |
|---|---|---|---|
| GoBe2 Wristband [1] | Daily Energy Intake (kcal/day) | Mean bias: -105 kcal/day (SD 660); 95% limits of agreement: -1400 to 1189 kcal/day [1] | Tendency to overestimate lower intake and underestimate higher intake; transient signal loss [1] |
| iEat Wearable [7] | Food Intake Activity Recognition | Macro F1 Score: 86.4% (4 activities) [7] | Performance varies with food type and activity complexity |
| iEat Wearable [7] | Food Type Classification (7 types) | Macro F1 Score: 64.2% [7] | Lower performance on distinguishing similar food types |

Table 2: Patterns of Missing Data in Continuous Health Monitoring

| Sensor Type | Monitoring Context | Missing Data Pattern | Identified Cause [8] |
|---|---|---|---|
| Continuous Glucose Monitor (CGM) [8] | Type 2 Diabetes (2 weeks) | Higher frequency during night (23:00-01:00) [8] | Insufficient data synchronization frequency [8] |
| Fitbit (Step Count) [8] | Type 2 Diabetes (2 weeks) | Higher frequency on days 6 and 7 of monitoring [8] | Insufficient data synchronization frequency; behavioral drift [8] |
| Fitbit (Heart Rate) [8] | Type 2 Diabetes (2 weeks) | Missing Not at Random (MNAR) [8] | Device removal, synchronization issues [8] |

Experimental Protocols for Key Methodologies

Protocol 1: Validating Wearable Nutritional Intake Sensors

This protocol is adapted from a study assessing the ability of wearable technology to monitor nutritional intake in free-living adults [1].

Objective: To validate a wristband's estimation of daily nutritional intake against a controlled reference method.

Key Materials:

- Test Device: Wearable sensor wristband (e.g., GoBe2) and accompanying mobile application [1].

- Reference Method: Meals prepared, calibrated, and served by a metabolic kitchen or dining facility. Precise recording of individual energy and macronutrient intake is essential [1].

- Additional Sensors: Continuous Glucose Monitor (CGM) to measure adherence to dietary reporting protocols [1].

- Participants: Free-living adults meeting inclusion/exclusion criteria (e.g., no chronic diseases, specific dietary restrictions) [1].

Workflow:

- Pilot Testing: Conduct a pilot study to familiarize the research team with devices and procedures, and to inspect initial data exports [16].

- Participant Onboarding: Provide detailed, written protocols and onboarding instructions. Create support resources (e.g., videos) for participants unfamiliar with the technology [16].

- Data Collection:

- Participants use the nutrition tracking wristband and app consistently for the test period (e.g., two 14-day periods) [1].

- Participants consume calibrated study meals under direct observation of the research team to establish reference intake data [1].

- CGM data is collected concurrently to cross-validate adherence [1].

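- Data Analysis:

- Compare wristband-estimated daily intake with the reference intake using Bland-Altman analysis, reporting the mean bias and 95% limits of agreement [1].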

Protocol 2: Investigating Missing Data Mechanisms in Sensor Data

This protocol provides a methodology to determine why data is lost, which is critical for developing appropriate countermeasures [8].

Objective: To systematically investigate the statistical characteristics of missing data from wearable sensors to determine the underlying mechanism (MCAR, MAR, MNAR).

Key Materials:

- Wearable Sensors: Devices such as CGM and activity trackers (e.g., Fitbit) collecting data at high temporal resolution (e.g., every 15 minutes for CGM, every minute for activity) [8].

- Data Processing Tools: Software for time-series analysis and statistical modeling (e.g., R, Python).

Workflow:

- Data Pre-processing:

- Resampling: Convert data to a uniform sampling interval using linear interpolation [8].

- Define Missing Data: Establish rules for classifying data as missing. For example, CGM data points >18 minutes from an original measurement; Fitbit data with zero heart rate and step count for extended periods [8].

- Exclusion Criteria: Remove days or participants with insufficient wear time (e.g., <70% HR data in 24h, <1000 steps/day) or >50% overall data loss [8].

- Gap Analysis:

- Identify all gaps (consecutive missing data points) in the time series.

- Plot the gap size probability distribution.

- Fit the distribution to a Planck or exponential distribution. An exponential decline suggests Missing (Completely) at Random, while deviations indicate Missing Not at Random (MNAR) [8].

- Temporal Dispersion Analysis:

- Test for significant variations in missing data frequency across different times of day (e.g., 3-hour intervals) or across measurement days using statistical tests like Kruskal-Wallis [8].

- Mechanism Inference:

- Combine gap analysis and dispersion results to conclude the missing data mechanism (e.g., MNAR due to insufficient synchronization if gaps are clustered at specific times/days) [8].
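The pre-processing and gap-analysis steps of this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the procedure published in [8]: the function names (`missing_mask`, `gap_runs`, `missing_by_time_of_day`) and the example timestamps are hypothetical, and only the 18-minute rule and the 3-hour bins are taken from the protocol text.

```python
from bisect import bisect_left
from collections import Counter
from datetime import datetime, timedelta

def missing_mask(grid, originals, max_dist_min=18):
    """True where a grid point is farther than max_dist_min from every original reading.

    Assumes `originals` is sorted and non-empty.
    """
    mask = []
    for t in grid:
        i = bisect_left(originals, t)
        neighbours = originals[max(i - 1, 0):i + 1]  # closest reading on each side
        nearest = min(abs((t - o).total_seconds()) / 60 for o in neighbours)
        mask.append(nearest > max_dist_min)
    return mask

def gap_runs(mask):
    """Return (start_index, length) for each run of consecutive missing points."""
    runs, start = [], None
    for i, is_missing in enumerate(mask):
        if is_missing and start is None:
            start = i
        elif not is_missing and start is not None:
            runs.append((start, i - start))
            start = None
    if start is not None:
        runs.append((start, len(mask) - start))
    return runs

def missing_by_time_of_day(grid, mask, bin_hours=3):
    """Count missing grid points per time-of-day bin, keyed by the bin's first hour."""
    counts = Counter()
    for t, is_missing in zip(grid, mask):
        if is_missing:
            counts[(t.hour // bin_hours) * bin_hours] += 1
    return dict(counts)

# Hypothetical example: a 15-minute grid with readings lost from 23:00 to 00:45.
t0 = datetime(2024, 1, 1, 21, 0)
grid = [t0 + timedelta(minutes=15 * i) for i in range(24)]
originals = [t for i, t in enumerate(grid) if not 8 <= i <= 15]

mask = missing_mask(grid, originals)
runs = gap_runs(mask)
by_bin = missing_by_time_of_day(grid, mask)
```

The run lengths from `gap_runs` are the input for the gap-size distribution, and the per-bin counts (computed per participant or per day) are the kind of grouped values a Kruskal-Wallis test would compare.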

Workflow and Signaling Pathways

Data Integrity Management Workflow

The following diagram illustrates a systematic workflow for managing data integrity in a longitudinal nutritional study, from design to analysis.

Bio-Impedance Sensing Pathway for Dietary Monitoring

The following diagram outlines the sensing principle of a wearable bio-impedance device (e.g., iEat) used for automatic dietary monitoring, which leverages dynamic circuit variations [7].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Nutritional Wearable Research

| Item / Solution | Function in Research | Example / Specification |

|---|---|---|

| Wristband Sensor (Bio-impedance) [1] [7] | Automatically estimates energy intake and macronutrients via physiological response (fluid shifts). | GoBe2 device; iEat prototype with two-electrode configuration measuring impedance variation [1] [7]. |

| Continuous Glucose Monitor (CGM) [1] [8] | Provides high-frequency interstitial glucose measurements to correlate with intake and assess adherence. | Freestyle Libre; used as an adjunct sensor for validation [1] [8]. |

| Activity Tracker [8] | Monitors physical activity and heart rate to provide context for energy expenditure and detect non-wear periods. | Fitbit Charge HR/2; data used for wear time validation and contextual analysis [8]. |

| Metabolic Kitchen [1] | Prepares and serves calibrated study meals to provide the gold-standard reference for actual nutritional intake. | University dining facility with precise control over ingredients and portions [1]. |

| Data Dictionary & Metadata File [17] | Ensures interpretability by documenting all variables, coding, units, and collection context. | Separate file created before/during data collection; includes variable names, categories, and validation rules [17]. |

| Digital Data Collection Platform [18] | Streamlines remote data capture, manages participants, provides reminders, and enables real-time data validation. | Platforms like Zigpoll or Labfront; used for task management and adherence tracking [16] [18]. |

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: Our study is experiencing significant data loss from wearable devices. How can we determine if this loss is random or systematic?

Answer: Systematic investigation of missing data patterns is required.

- Step 1: Pre-process your data to define and classify missing points according to a strict protocol (e.g., zero values in heart rate, long intervals between glucose readings) [8].

- Step 2: Analyze the distribution of gap sizes (runs of consecutive missing data points). An exponential decline in gap-size frequency is consistent with data that are Missing (Completely) at Random. Deviations from this pattern (e.g., a better fit to a Planck distribution) suggest Missing Not at Random (MNAR) [8].

- Step 3: Check for unequal dispersion of missing data over time (e.g., time of day, day of week). Statistically significant clustering (e.g., more data loss at night or on specific days) confirms the missing data is not random and is likely related to participant behavior or device limitations [8].
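As a rough illustration of Step 2, the sketch below fits an exponential distribution to a list of gap sizes by maximum likelihood and reports how far the fitted curve sits from the empirical distribution (a Kolmogorov-Smirnov-style distance). It is an assumption-laden stand-in rather than the analysis in [8]: the function name `exponential_fit` and both example samples are hypothetical, no p-value is computed, and the Planck comparison is not implemented.

```python
import math

def exponential_fit(gap_sizes):
    """Return (rate, ks_distance) for an exponential fitted by maximum likelihood."""
    n = len(gap_sizes)
    rate = n / sum(gap_sizes)  # the MLE of the exponential rate is 1 / mean
    ks = 0.0
    for i, size in enumerate(sorted(gap_sizes), start=1):
        fitted_cdf = 1.0 - math.exp(-rate * size)
        # Compare the fitted CDF with the empirical CDF just before and at this point.
        ks = max(ks, abs(fitted_cdf - (i - 1) / n), abs(fitted_cdf - i / n))
    return rate, ks

n = 20
# Hypothetical gap sizes spaced at the quantiles of a unit exponential.
exponential_like = [-math.log(1 - (i - 0.5) / n) for i in range(1, n + 1)]
# Hypothetical gap sizes that all share one length, e.g. a fixed nightly sync outage.
clustered = [10.0] * n

_, d_exponential = exponential_fit(exponential_like)
_, d_clustered = exponential_fit(clustered)
```

A small distance is compatible with an exponential decline, while a large one (as for the clustered sample) flags a gap-size pattern that an exponential does not describe and that warrants the temporal checks in Step 3.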

FAQ 2: Participants in our longitudinal study are failing to charge and sync their devices regularly, leading to data loss. What strategies can improve adherence?

Answer: Proactive participant management is key to minimizing this type of data loss.

- Troubleshooting Guide:

- Pre-Study: During onboarding, provide extremely detailed, clear protocols and use videos or PowerPoints to demonstrate charging and syncing procedures. Run a pilot study to identify potential points of confusion [16].

- During Study: Implement automated reminder systems via your digital platform to notify participants to charge and sync their devices [18]. Maintain regular communication and provide remote support resources to troubleshoot technical issues quickly [16].

- Incentives: Use ethical incentive programs to motivate consistent participation and device maintenance throughout the study duration [18].

FAQ 3: How can we improve the general quality and reliability of data at the point of collection in a free-living study?

Answer: Implement robust data management practices from the very beginning.

- Define a Strategy: Plan your study, data requirements, and analysis methods together before collection begins [17].

- Create a Data Dictionary: Develop a comprehensive data dictionary that explains all variable names, coding, and units. This is crucial for interpretability and should be prepared before data collection starts [17].

- Standardize Protocols: Use validated and reliable measurement instruments. Develop and document uniform data collection protocols for all researchers and participants to follow, ensuring consistency across the study [18].

- Pilot Test: Always conduct a pilot test of your entire workflow. This allows you to check that the collected data is in the expected format and of sufficient quality, and to identify any procedural issues before the full-scale study launches [16] [18].

FAQ 4: We are overwhelmed by the volume of data generated from our wearable devices. What is the best approach for handling and analyzing this complex longitudinal data?

Answer: A streamlined and expert-supported approach is necessary.

- Focus: Only collect the metrics you explicitly need for your research objectives to avoid data overload [16].

- Expert Consultation: Consult with data analysts or statisticians who specialize in large, longitudinal datasets and complex methods like growth curve modeling or structural equation modeling [16] [18].

- Analytical Techniques: Employ advanced analytical techniques designed for longitudinal data, which can account for variable intervals, missing data, and complex relationships over time. Techniques include growth curve analysis, hierarchical linear modeling, and multiple imputation for missing data [18].

Current Limitations in Continuous Chemical Sensing Technologies

Continuous chemical sensing technologies represent a frontier in nutritional intake monitoring, enabling researchers to track dietary biomarkers and metabolic responses in real-time. However, these technologies face significant limitations that impact their reliability in research settings, particularly regarding signal stability, detection accuracy, and operational consistency. This technical support center addresses these challenges through targeted troubleshooting guidance and experimental protocols specifically framed within the context of nutritional intake wearables research.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q: What are the primary sources of signal loss in wearable chemical sensors for nutritional monitoring?

A: Signal loss primarily stems from transient sensor disconnections, physical motion artifacts, and biofouling of sensing surfaces. In wrist-worn nutrition trackers, researchers observed transient signal loss as a major source of error in computing dietary intake [1] [19]. Additionally, gradual dissociation of recognition elements from sensing surfaces creates slow signal drifts that compromise long-term measurements [20].

Q: How can I differentiate between true signal loss and an actual low analyte concentration?

A: Implement control experiments with known calibrants at regular intervals and monitor internal reference signals. Fast signal changes typically indicate multivalent interactions or motion artifacts, while slow signal changes suggest gradual dissociation of sensing elements [20]. Simultaneous monitoring of multiple parameters can help distinguish true signals from noise.

Q: What sampling frequency should I use to minimize data loss while maintaining battery life?

A: Balance your specific research needs with technical constraints. For dietary activity recognition, systems like iEat have effectively used sampling rates sufficient to capture eating gestures [7]. Note that higher sampling frequencies increase power consumption and may cause packet loss in wireless systems [21]. The maximum sampling frequency before packet loss occurs depends on how many sensors are enabled and your Bluetooth hardware capabilities.

Q: How can I synchronize data from multiple wearable sensors to correlate nutritional intake with metabolic response?

A: Use systems that synchronize to a common clock. Some platforms enable synchronization of multiple devices with the PC system clock when connected via Bluetooth [21]. For optimal synchronization without sacrificing battery life, set the real-time clock on each device to a common time reference rather than using continuous master/slave Bluetooth communication [21].
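Once every device reports timestamps on the same reference clock, the streams still need to be paired sample by sample. The sketch below shows one simple way to do that, assuming timestamps are already expressed as seconds on the shared clock. The function name `align_nearest`, the 30-second tolerance, and the example values are illustrative assumptions and are not taken from [21].

```python
from bisect import bisect_left

def align_nearest(ref_times, other, tolerance_s=30.0):
    """Pair each reference time with the nearest (time, value) sample within tolerance.

    `other` must be sorted by time. Reference times with no sample inside the
    tolerance are paired with None so that gaps stay visible.
    """
    other_times = [t for t, _ in other]
    paired = []
    for t in ref_times:
        i = bisect_left(other_times, t)
        candidates = other[max(i - 1, 0):i + 1]  # closest sample on each side
        best = min(candidates, key=lambda s: abs(s[0] - t), default=None)
        if best is not None and abs(best[0] - t) <= tolerance_s:
            paired.append((t, best[1]))
        else:
            paired.append((t, None))
    return paired

# Hypothetical streams: glucose readings every 900 s, heart-rate samples at odd times.
glucose_times = [0.0, 900.0, 1800.0]
heart_rate = [(5.0, 60), (890.0, 72), (2000.0, 80)]
aligned = align_nearest(glucose_times, heart_rate)
```

Returning `None` instead of the closest out-of-tolerance value keeps a synchronization gap from being silently filled with stale data.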

Troubleshooting Common Experimental Issues

| Problem | Possible Causes | Solutions |
|---|---|---|
| Gradual signal degradation | Dissociation of biological recognition elements; Biofouling; Electrode passivation | Implement single-sided aging tests; Use fresh calibration standards; Incorporate surface regeneration protocols [20] |
| High signal variability during eating | Motion artifacts; Changing contact impedance; Variable food composition | Apply motion-tolerant algorithms; Use physical stabilization; Implement food type classification to adjust baselines [7] |
| Complete signal dropouts | Wireless connectivity issues; Electrode dislodgement; Power interruptions | Check Bluetooth signal strength; Verify electrode contact quality; Implement data gap filling algorithms [1] [21] |
| Inconsistent nutritional estimates | Variable nutrient bioavailability; Individual metabolic differences; Sensor placement variance | Use controlled meal validation; Include individual calibration; Account for food matrix effects [1] [22] |

Experimental Protocols for Signal Stability Assessment

Protocol 1: Single-Sided Aging Test for Sensor Component Stability

Purpose: To identify which sensor components (particles or surfaces) contribute most to signal drift in affinity-based continuous sensors [20].

Materials:

- Freshly prepared sensor components (particles and surfaces)

- Appropriate buffer solutions (e.g., PBS with 0.5 M NaCl)

- Analytical setup for measuring bound fraction (e.g., microscopy system)

Method:

- Prepare sensing surfaces and particle suspensions following standard biofunctionalization protocols

- Age components individually for periods ranging from 4-92 hours at room temperature with rotation

- After aging, combine aged particles with fresh surfaces AND fresh particles with aged surfaces

- Measure bound fraction values using both direct and competition assay readouts

- Compare results against fresh components to identify degradation sources

Interpretation: Significant signal reduction with aged particles indicates antibody dissociation issues, while reduction with aged surfaces suggests analogue molecule dissociation [20].

Protocol 2: Validation Reference Method for Nutritional Intake Wearables

Purpose: To establish a reference method for validating wearable sensor estimates of nutritional intake against controlled meal consumption [1] [19].

Materials:

- Wearable nutrition sensors (e.g., wrist-worn devices)

- Controlled dining facility with standardized meal preparation

- Nutritional analysis software for meal calibration

- Continuous glucose monitors (optional, for adherence monitoring)

Method:

- Collaborate with metabolic kitchen to prepare calibrated study meals

- Precisely record energy and macronutrient content of all served foods

- Recruit participants without metabolic conditions that might affect measurements

- Conduct study over multiple test periods (e.g., two 14-day periods)

- Have participants consume all meals under direct observation in controlled setting

- Compare sensor-estimated intake with actual consumption using Bland-Altman analysis

Interpretation: Calculate mean bias and limits of agreement to quantify sensor accuracy. Regression analysis can identify systematic errors (e.g., overestimation at low intake, underestimation at high intake) [1] [19].
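The Bland-Altman quantities named here reduce to simple arithmetic on the paired differences (sensor estimate minus reference). The sketch below is a minimal illustration with hypothetical function names and made-up intake values. The only figures taken from the source are the reported mean bias of -105 kcal/day and SD of 660 kcal/day [1], which reproduce the reported limits of agreement to within rounding.

```python
import statistics

def limits_of_agreement(bias, sd, z=1.96):
    """95% limits of agreement: bias plus or minus 1.96 standard deviations."""
    return bias - z * sd, bias + z * sd

def bland_altman(estimates, references):
    """Return (mean bias, SD of differences, (lower limit, upper limit))."""
    diffs = [e - r for e, r in zip(estimates, references)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation of the differences
    return bias, sd, limits_of_agreement(bias, sd)

# Summary statistics reported for the wristband validation study [1].
reported_lower, reported_upper = limits_of_agreement(-105, 660)

# Hypothetical daily intakes (kcal/day): wristband estimate vs. metabolic-kitchen reference.
bias, sd, (lower, upper) = bland_altman([2000, 2100, 1900], [2100, 2000, 2100])
```

A negative bias means the sensor underestimates intake on average. The width of the interval between the two limits, rather than the bias alone, is what determines whether the device is precise enough for individual-level estimates.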

Performance Metrics of Representative Sensing Technologies

| Technology Platform | Measured Parameter | Accuracy/Limits of Agreement | Key Limitations |

|---|---|---|---|

| Wristband Nutrition Tracker [1] | Energy intake (kcal/day) | Mean bias: -105 kcal/day; 95% limits: -1400 to 1189 kcal/day | Signal loss artifacts; Underestimation at high intake |

| iEat Bioimpedance Wearable [7] | Food intake activity recognition | Macro F1 score: 86.4% (4 activities) | Dependent on food electrical properties |

| iEat Bioimpedance Wearable [7] | Food type classification | Macro F1 score: 64.2% (7 food types) | Limited to defined food categories |

| Particle Motion Biosensor [20] | Glycoalkaloid detection | Long-term signal drift over 20 hours | Gradual analogue dissociation from surface |

| Detection Sensitivity | Market Position (2024) | Primary Applications |

|---|---|---|

| Parts per billion (ppb) | Significant market share | Environmental monitoring; Industrial safety; Water quality |

| Parts per trillion (ppt) | Considerable growth anticipated | Early disease biomarkers; Trace contaminant detection |

| Micromolar to millimolar | Common in consumer wearables | Glucose monitoring; Basic nutritional assessment |

Signaling Pathways & Experimental Workflows

Bioimpedance Sensing Circuit Model

Sensor Signal Degradation Pathways

The Scientist's Toolkit: Research Reagent Solutions

Essential Materials for Continuous Chemical Sensing Research

| Research Reagent | Function in Experimental Protocol | Key Considerations |

|---|---|---|

| DBCO-ssDNA Capture Oligos [20] | Surface functionalization for biosensors | Enables covalent coupling via azide groups; Stable anchor for analogue molecules |

| Streptavidin-Coated Particles [20] | Mobile sensing elements in particle motion sensors | Consistent size distribution; High biotin binding capacity |

| Biotinylated PolyT Molecules [20] | Blocking agent for reducing nonspecific binding | Prevents multitethering in particle-based systems |

| PLL-g-PEG Polymer Coating [20] | Low-fouling surface preparation | Reduces nonspecific protein adsorption; Improves signal stability |

| ssDNA-Solanidine Analogue [20] | Analyte competitor in competitive assays | Enables reversible binding for continuous monitoring |

| Bioimpedance Electrodes [7] | Wrist-worn sensors for dietary monitoring | Medical-grade conductive materials; Consistent skin contact |

| Calibrated Meal Materials [1] | Reference method validation | Precisely measured macronutrients; Controlled preparation |

Advanced Methodologies for Signal Recovery and Data Gap Management

AI and Machine Learning Approaches for Signal Reconstruction and Gap Filling

Frequently Asked Questions (FAQs)

Q1: What are the primary causes of signal loss in nutritional intake wearables? Signal loss, or data gaps, in nutritional intake wearables is predominantly caused by technical and user-experience factors. The main culprits include:

- Battery Drain: Continuous sensor operation, particularly for power-intensive functions like GPS tracking or heart rate monitoring, rapidly depletes battery life, leading to data loss during recharging periods [23].

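- Occlusion in Imaging Data: The gap-filling methods cited here were developed for satellite imagery, where cloud cover obstructs the sensor's view and leaves missing or low-quality pixels [24]. The analogous problem for wearable imaging sensors (e.g., for food recognition) is a blocked or poorly lit field of view, which likewise produces missing or unusable frames.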

- Device Incompatibility & Sensor Duty-Cycling: Inconsistencies across operating systems and hardware can interrupt data collection. Furthermore, to save power, devices may use sensor duty-cycling, where high-power sensors are periodically deactivated, creating intentional gaps in the data stream [23].

- Low-Quality Observations: Sensor data can be corrupted by motion artifacts, poor sensor-skin contact, or environmental interference, rendering certain periods unusable [24].

Q2: How can AI models handle irregular time-series data from wearables? Sequence models such as Long Short-Term Memory (LSTM) networks and Bidirectional LSTM (Bi-LSTM) models learn temporal dependencies and use the surrounding time points to inform predictions at missing points [24] [25]. Standard LSTM layers assume evenly spaced inputs, so irregularly sampled data are typically resampled to a regular grid first or supplied with the elapsed time between samples as an extra input feature. With that preparation, these models are well suited to the sporadic data collection typical of free-living wearable studies [24].

Q3: What is the difference between temporal and spatiotemporal gap-filling methods? The key difference lies in the type of information used to reconstruct the missing signal.

- Temporal Methods rely solely on data from the same sensor or location across different time points. For example, a simple approach is to fill a gap using the average value from the previous and next day [24]. These methods are simple but can struggle with capturing sudden changes or complex patterns.

- Spatiotemporal Methods leverage both time and space. They fill a data gap by using information from not only the historical data at that location but also from data at other, similar sensor locations (e.g., other devices in a network or neighboring pixels in an image). Methods like CRYSTAL are designed for this purpose and generally achieve higher accuracy than temporal-only approaches [24].
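The simple temporal approach mentioned above (filling a gap with the average of the previous and next day) can be written out as follows. This is a minimal sketch under stated assumptions: the series is evenly sampled, missing values are marked with `None`, and the function name `fill_from_adjacent_days` and the example data are hypothetical.

```python
def fill_from_adjacent_days(series, samples_per_day):
    """Fill None entries with the mean of the same time slot on the previous and next day.

    Only original (unfilled) neighbours are used. A gap stays None when neither
    adjacent day has a value for that slot.
    """
    filled = list(series)
    for i, value in enumerate(series):
        if value is not None:
            continue
        neighbours = [
            series[j]
            for j in (i - samples_per_day, i + samples_per_day)
            if 0 <= j < len(series) and series[j] is not None
        ]
        if neighbours:
            filled[i] = sum(neighbours) / len(neighbours)
    return filled

# Hypothetical three-day record with four samples per day.
record = [10, 20, 30, 40,
          12, None, None, 44,
          14, 24, None, 48]
result = fill_from_adjacent_days(record, samples_per_day=4)
```

The last gap in the example stays unfilled because its only available neighbour is itself missing, which illustrates why purely temporal methods struggle with gaps that recur at the same time each day.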

Q4: We have multi-spectral data (e.g., RGB). Are there specialized gap-filling techniques? Yes. Standard gap-filling methods often treat data as a single channel (grayscale) and can miss the relationships between different spectral bands. Novel methods like the SpatioTemporal And spectRal gap-filling method (STARS) have been developed specifically for multiband data. STARS synergistically combines spatiotemporal information with RGB spectral information to reconstruct gaps, effectively accounting for variations in different light sources or sensor channels, which is crucial for accurate reconstruction [24].

Q5: How do I validate the performance of a gap-filling algorithm on my dataset? The standard protocol involves a simulation study where you artificially create gaps in a portion of your complete, high-quality data. You then apply your algorithm to fill these known gaps and compare the results to the actual values. Performance is quantified using metrics like [24]:

- R-squared (R²): Measures how much of the variance in the actual data is explained by the reconstructed data. Higher is better.

- Root-Mean-Square Error (RMSE): Measures the average magnitude of the error between the actual and reconstructed values. Lower is better.

Table 1: Key Performance Metrics for Gap-Filling Algorithm Validation

| Metric | Interpretation | Ideal Value |
|---|---|---|
| R-squared (R²) | Proportion of variance in the actual data that is predictable from the reconstructed data. | Closer to 1.0 |
| Root-Mean-Square Error (RMSE) | Average magnitude of the prediction errors; indicates the absolute fit of the model. | Closer to 0 |
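The simulation protocol described in Q5 (mask known values, fill them, score the reconstruction) can be sketched as below. Linear interpolation is used purely as a placeholder gap-filling algorithm, R² is computed as the coefficient of determination, and all function names and the example series are hypothetical rather than taken from [24].

```python
import math
import random

def linear_fill(values):
    """Fill None runs by linear interpolation between the nearest known neighbours."""
    filled = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    for left, right in zip(known, known[1:]):
        for i in range(left + 1, right):
            frac = (i - left) / (right - left)
            filled[i] = values[left] + frac * (values[right] - values[left])
    return filled

def rmse(actual, predicted):
    """Root-mean-square error between paired values."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

def r_squared(actual, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_a = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_a) ** 2 for a in actual)
    return 1 - ss_res / ss_tot

def evaluate(truth, fill=linear_fill, mask_fraction=0.1, seed=0):
    """Mask a random fraction of interior points, fill them, and score the result."""
    rng = random.Random(seed)
    # Endpoints are kept so that interpolation is always defined.
    masked = sorted(rng.sample(range(1, len(truth) - 1), int(mask_fraction * len(truth))))
    masked_set = set(masked)
    observed = [None if i in masked_set else v for i, v in enumerate(truth)]
    filled = fill(observed)
    actual = [truth[i] for i in masked]
    predicted = [filled[i] for i in masked]
    return rmse(actual, predicted), r_squared(actual, predicted)

# Hypothetical complete series: a straight line, which interpolation recovers exactly.
error, fit = evaluate([float(i) for i in range(50)])
```

Any other gap-filling function with the same signature can be passed as `fill`, so several candidate methods can be scored on identical masks.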

Troubleshooting Guides

Problem: Rapid Battery Drain Causing Data Gaps

Application Context: A study using consumer wearables to track physical activity and estimate energy expenditure in a free-living population finds that participants frequently forget to charge devices, leading to multi-hour data gaps each day.

Solution: Implement Adaptive Sampling and Low-Power Protocols

- Diagnosis: Confirm the issue is battery-related by cross-referencing data gap timestamps with device battery log APIs.

- Algorithm Selection: Propose a switch from continuous sensing to an adaptive sampling algorithm. This AI-driven method dynamically adjusts the frequency of data collection based on detected user activity [23].

- Protocol Implementation:

- During High Activity: When the accelerometer detects motion consistent with walking or running, maintain a high sampling rate (e.g., 1 Hz).

- During Sedentary Periods: When the user is stationary for a predefined time, the algorithm automatically lowers the sampling rate (e.g., to 0.1 Hz) to conserve power [23].

- Validation: Compare data completeness and battery life logs before and after implementing the adaptive protocol. Validate that the lower sampling rate during sedentary periods does not miss clinically significant activity events.
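The switching logic in this protocol can be illustrated with a simple rule-based stand-in. The source describes the adaptive sampler as AI-driven [23]; the sketch below replaces that with a fixed motion threshold to show the control flow only. The function name, the threshold, and the window length are assumptions, while the 1 Hz and 0.1 Hz rates are the example values from the protocol.

```python
def choose_sampling_rate(recent_motion, active_threshold=0.2, sedentary_window=5,
                         high_hz=1.0, low_hz=0.1):
    """Return low_hz only when the last `sedentary_window` motion readings are all quiet.

    `recent_motion` holds accelerometer magnitudes in arbitrary units. With too
    little history the function stays at the high rate to avoid missing activity.
    """
    window = recent_motion[-sedentary_window:]
    stationary = (len(window) == sedentary_window
                  and all(m < active_threshold for m in window))
    return low_hz if stationary else high_hz

# Hypothetical motion histories.
rate_resting = choose_sampling_rate([0.5, 0.1, 0.1, 0.1, 0.1, 0.1])
rate_walking = choose_sampling_rate([0.1, 0.1, 0.1, 0.1, 0.6])
rate_startup = choose_sampling_rate([0.1, 0.1])

# Samples per hour at each rate, the quantity that drives the power saving.
samples_per_hour = {hz: int(hz * 3600) for hz in (1.0, 0.1)}
```

Requiring a full window of quiet readings before dropping the rate means a single still moment during activity does not trigger the low-power mode.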

Problem: Gaps in Continuous Glucose Monitor (CGM) Data

Application Context: A clinical trial investigating the impact of personalized nutrition on glycemic control needs complete CGM data streams for model training, but data loss occurs due to sensor dislodgement or signal dropouts.

Solution: Apply a Spatiotemporal and Spectral Gap-Filling Model

- Diagnosis: Identify the distribution and size of gaps. This guide is suitable for gaps caused by short-term signal dropouts, not prolonged sensor failure.

- Algorithm Selection: Adapt a STARS-like methodology that uses spatiotemporal and "spectral" information. In this context, "spectral" can be extended to other concurrent physiological signals [24].

- Experimental Protocol:

- Inputs: Use the CGM time series and correlated data from other sensors (e.g., heart rate, accelerometer).

- Process: The model learns the personalized relationship between physical activity, heart rate, and glucose levels for each individual.

- Reconstruction: For a gap in the CGM data, the model uses the ongoing data from the other sensors (the "spectral" inputs) and the historical spatiotemporal patterns of CGM to reconstruct the most probable glucose values [24].

- Validation: Perform a simulation study by artificially masking 10% of known CGM values and comparing the model's output against the actual masked values. The STARS method achieved average R² values of 0.79, 0.78, and 0.70 for the red, green, and blue bands of satellite imagery [24]. These figures do not establish performance on physiological signals, which must be measured on your own data.

Table 2: Comparison of Common Gap-Filling Methods for Wearable Data

| Method | Principle | Best For | Advantages | Limitations |

|---|---|---|---|---|

| Temporal (e.g., Mean/Median Fill) | Fills gaps with a statistic (e.g., mean) from data at other time points. | Simple, quick fixes; large datasets where complex modeling is infeasible. | Computational simplicity, easy to implement. | Ignores spatial correlations; poor performance with complex temporal patterns [24]. |

| Spatiotemporal (e.g., CRYSTAL) | Uses data from similar nearby sensors or time points to interpolate missing values. | Data from multi-sensor setups or wearable networks. | Higher accuracy than temporal methods; leverages sensor correlations [24]. | May not account for multi-modal data (e.g., spectral bands) [24]. |

| Machine Learning (Bi-LSTM) | Uses recurrent neural networks to learn temporal dependencies and predict missing values. | Irregular time-series data with complex long-range dependencies [24]. | High accuracy; can model complex, non-linear patterns. | Requires substantial data for training; computationally intensive [24]. |

| Spectral-Spatiotemporal (e.g., STARS) | Combines spatiotemporal information with data from correlated spectral bands or sensor modalities. | Multi-modal data (e.g., RGB, CGM + heart rate). | High accuracy for multiband data; leverages richest information source [24]. | Complex to implement; requires multiple data streams. |

Experimental Protocols for Cited Key Experiments

Protocol 1: Validating the STARS Gap-Filling Method

This protocol is based on the methodology used to validate the STARS algorithm for multispectral nighttime light imagery, which can be conceptually adapted for multichannel physiological data [24].

1. Objective: To quantitatively evaluate the performance of the STARS method in reconstructing cloud-induced gaps in multispectral satellite imagery, demonstrating its applicability for multi-sensor data reconstruction.

2. Materials and Data Input:

- Input Data: SDGSAT-1 GLI multispectral nighttime light images (RGB bands) [24].

- Software: Python or MATLAB for implementing the STARS algorithm.

3. Methodology:

- Step 1 - Simulation of Gaps: Select a complete, high-quality image (reference image). Artificially generate cloud masks to simulate data gaps of various sizes and distributions [24].

- Step 2 - Image Reconstruction: Apply the STARS algorithm to the masked image. STARS works by combining:

- Spatiotemporal Information: Data from the same location at other times and from similar pixels in the surrounding space.

- Spectral Information: The correlation between the different RGB bands to inform the reconstruction of each individual band [24].

- Step 3 - Performance Validation: Compare the reconstructed image with the original, unmasked reference image. Calculate performance metrics pixel-by-pixel for the gap areas [24].

4. Key Performance Metrics:

- R-squared (R²): Reported average values for STARS: 0.79 (Red), 0.78 (Green), 0.70 (Blue) [24].

- Root-Mean-Square Error (RMSE): STARS demonstrated lower RMSE compared to traditional methods like temporal gap-filling, mean-weighted, CRYSTAL, and STARFM [24].

Protocol 2: Implementing a Bi-LSTM for Daily Activity Data Imputation

1. Objective: To fill gaps in daily activity traces (e.g., step count) from a wearable device using a Bidirectional LSTM model, which captures temporal dependencies from both past and future data points [24].

2. Materials and Data Input:

- Input Data: A long, continuous time series of step count data at a consistent interval (e.g., per minute).

- Software: Python with deep learning libraries like TensorFlow or PyTorch.

3. Methodology:

- Step 1 - Data Preparation: Normalize the step count data. Structure it into supervised learning samples with a defined look-back and look-forward window.

- Step 2 - Model Architecture:

- Define a Bi-LSTM layer(s) that processes the sequence of data forwards and backwards.

- Add fully connected layers to output the imputed values [24].

- Step 3 - Training with Artificial Gaps: Artificially mask random segments of the training data. Train the model to predict the central value of these masked segments using the surrounding context.

- Step 4 - Imputation: Use the trained model to predict values for real gaps in the dataset.

4. Key Performance Metrics:

- Mean Absolute Error (MAE) on a test set with simulated gaps.

- Prediction Accuracy for specific activity states (e.g., sedentary vs. active).
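Steps 1 and 3 of this protocol (normalization, windowing, and artificial masking) are independent of the deep learning framework and can be sketched in plain Python. The function names, window sizes, and example series below are hypothetical, and the Bi-LSTM model itself is not shown. Each sample pairs the context on both sides of a masked segment with the segment's central value as the training target.

```python
import random

def normalize(values):
    """Min-max scale values to the range [0, 1]."""
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero for a constant series
    return [(v - lo) / span for v in values]

def make_masked_samples(series, look_back=3, gap_len=1, look_forward=3,
                        n_samples=4, seed=0):
    """Build (context, target) pairs around randomly placed artificial gaps.

    context: look_back values before the gap followed by look_forward values after it.
    target:  the central value of the masked segment.
    """
    rng = random.Random(seed)
    window = look_back + gap_len + look_forward
    starts = rng.sample(range(len(series) - window + 1), n_samples)
    samples = []
    for s in sorted(starts):
        before = series[s:s + look_back]
        after = series[s + look_back + gap_len:s + window]
        target = series[s + look_back + gap_len // 2]
        samples.append((before + after, target))
    return samples

# Hypothetical per-minute step counts.
steps = normalize([0, 10, 20, 30, 40, 50, 60, 70, 80, 90])
samples = make_masked_samples(steps)
```

The forward and backward halves of each context map naturally onto the two directions a bidirectional model reads, which is why the window keeps values from both sides of the gap.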

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Tools and Datasets for Signal Reconstruction Research

| Item / Solution | Function / Application in Research |
|---|---|
| STARS Algorithm | A novel gap-filling method that uses spatiotemporal and spectral synergy; the benchmark for reconstructing multi-band or multi-modal sensor data [24]. |
| Bi-LSTM Model | A recurrent neural network architecture ideal for time-series imputation; captures long-range dependencies in both past and future directions for highly accurate reconstruction [24]. |
| Adaptive Sampling Algorithm | A power-saving protocol that dynamically adjusts sensor sampling rates based on user activity level; crucial for extending battery life and reducing data loss in free-living studies [23]. |
| Public Code Repository [26] | A curated collection of Python and MATLAB codes for signal processing and ML tasks; accelerates implementation and ensures reproducibility of methods [26]. |
| Polar H10 Chest Strap | A wearable device noted for high-fidelity heart rate variability (HRV) data collection and excellent battery life (up to 400 hours); serves as a reliable ground truth or primary data source [23]. |
| ActiGraph GT9X | A research-grade activity monitor providing reliable inertial measurement unit (IMU) data with long-term battery support; standard in clinical and public health research [23]. |

Signaling Pathways and Workflow Diagrams

[Diagram: AI Signal Reconstruction Workflow]

[Diagram: Troubleshooting Data Loss Guide]

Troubleshooting Guide & FAQs

This guide addresses common challenges researchers face when fusing chemical, optical, and inertial data in nutritional intake wearables, with a specific focus on mitigating signal loss.

FAQ 1: What are the primary causes of complete signal loss in a multi-modal dietary monitoring system, and how can they be resolved?

Complete signal loss often stems from connectivity disruptions or sensor hardware failure. The table below outlines common causes and solutions.

| Primary Cause | Underlying Issue | Recommended Solution |
|---|---|---|
| Connectivity Loss | Bluetooth pairing failures or sync errors in data transmission from wearable to receiver [27] [28]. | Update device firmware and app; ensure devices are within range; restart and re-pair devices; check for network instability [28]. |
| Sensor Hardware Failure | Physical damage, battery swelling/leakage, or faulty components from environmental exposure or wear and tear [28]. | Follow manufacturer guidelines for charging and storage; use protective cases; inspect for physical damage; contact manufacturer for repair if faulty [28]. |
| Power Depletion | Battery drain from continuous sensor operation or background processes, leading to shutdown [27]. | Test usage in light and heavy scenarios; track battery percentage frequently; identify and optimize power-intensive background processes [27]. |

FAQ 2: How can I differentiate between a sensor hardware failure and a data fusion algorithm error when encountering inconsistent nutritional data?

Inconsistent data requires a systematic approach to diagnose its origin. Follow the diagnostic workflow below to isolate the issue.

FAQ 3: Our inertial sensors (IMUs) suffer from significant gyroscopic drift during long-term monitoring of eating gestures. How can this be corrected using a multi-modal approach?

Gyroscopic drift is a key limitation of IMUs, but it can be mitigated by fusing data from optical systems [29].

- Problem: Gyroscopes measure angular velocity, and orientation is obtained by integrating that signal over time. Small bias and noise errors therefore compound with each integration step, producing drift that degrades orientation accuracy [29].

- Solution: Use Optical Motion Capture (OMC) as an intermittent reference to correct the drifting IMU orientation. An optimization-based sensor fusion algorithm can use the first and last frames of highly accurate OMC data to correct the gyroscope data that fills the gap in between [29]. This corrects for drift without being affected by magnetic disturbances or movement, which often plague magnetometer-based corrections [29].
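The idea of anchoring a drifting gyroscope trace to reference frames at both ends of a gap can be illustrated with a deliberately simplified, single-axis sketch. It assumes a constant gyroscope bias and spreads the end-point error linearly across the gap; this linear redistribution is a stand-in chosen for illustration, not the optimization-based algorithm described in [29], and real segment orientations are three-dimensional. All names and values below are hypothetical.

```python
import math

def integrate_gyro(omega, dt, theta0):
    """Integrate angular velocity (deg/s) into orientation (deg), starting at theta0."""
    theta = [theta0]
    for w in omega[:-1]:
        theta.append(theta[-1] + w * dt)
    return theta

def correct_drift(theta_gyro, theta_ref_end):
    """Spread the end-point error linearly over the gap.

    theta_gyro[0] is assumed to already equal the first reference frame;
    theta_ref_end is the reference orientation at the last frame.
    """
    n = len(theta_gyro) - 1
    end_error = theta_gyro[-1] - theta_ref_end
    return [th - end_error * i / n for i, th in enumerate(theta_gyro)]

# Synthetic 10 s recording at 100 Hz (illustrative values only).
dt, n = 0.01, 1000
true_omega = [40.0 * math.cos(math.pi * i * dt) for i in range(n)]
true_theta = integrate_gyro(true_omega, dt, 0.0)   # stands in for the optical reference

bias = 0.5                                          # assumed constant bias, deg/s
measured = [w + bias for w in true_omega]
drifting = integrate_gyro(measured, dt, true_theta[0])
corrected = correct_drift(drifting, true_theta[-1])

err_before = max(abs(a - b) for a, b in zip(drifting, true_theta))
err_after = max(abs(a - b) for a, b in zip(corrected, true_theta))
print(f"max error before: {err_before:.3f} deg, after: {err_after:.6f} deg")
```

With a constant bias the drift grows linearly, so the linear correction removes it almost entirely. Real gyroscope bias varies with time and temperature, which is one reason an optimization-based approach is used in practice.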

FAQ 4: When using optical sensors for food imaging, how can we maintain accuracy in low-light conditions (e.g., dimly lit restaurants) while preserving user privacy?

This is a common challenge for vision-based dietary monitoring. The solution involves leveraging multi-modal sensing to reduce reliance on images.

- Privacy-Centric Approach: Shift from pure image analysis to methods that use non-visual sensors. Bio-impedance sensors (like the iEat wristband) can detect food intake activities and classify food types by measuring changes in electrical circuits formed by the body, utensils, and food, eliminating the need for cameras [7].

- Low-Light Mitigation: If optical sensors are necessary, ensure your system design does not rely solely on them. Fusion with other modalities is key. Inertial sensors (IMUs) can accurately track wrist movements for eating gesture recognition regardless of lighting conditions [30]. Combining this behavioural data with chemical sensor readings can provide a robust estimate of intake without a clear image.

Experimental Protocols for Validation

Protocol 1: Validating a Multi-Modal Sensor Fusion Algorithm for Gap Filling

This protocol is designed to test the efficacy of using inertial and optical data to correct for signal loss, as inspired by research on motion capture [29].

1. Objective: To evaluate the performance of a sensor fusion algorithm in reconstructing missing optical data segments using inertial measurement unit (IMU) data.

2. Materials:

- Inertial Motion Capture (IMC) system with IMUs (containing gyroscopes).

- Optical Motion Capture (OMC) system (e.g., high-speed cameras).

- Data synchronization unit.

- Computing station with sensor fusion algorithm software.

3. Methodology:

- Sensor Placement: Securely attach IMUs and OMC reflective marker clusters to the body segments of interest (e.g., hand, forearm, upper arm).

- Data Collection: Have participants perform a dietary-related task (e.g., simulated hand-to-mouth eating gestures) while simultaneously recording data from both IMC and OMC systems.

- Simulate Gaps: In post-processing, artificially create gaps (e.g., 30-second to 5-minute durations) in the OMC data to simulate marker occlusion or signal loss [29].

- Apply Fusion Algorithm: Use an optimization-based fusion algorithm to fill the simulated gaps. The algorithm should use the first and last frames of OMC data from the gap period and the continuous gyroscope data from the IMU to reconstruct the missing orientation data [29].

- Validation: Compare the algorithm's reconstructed trajectory against the true OMC data that was artificially removed. Calculate performance metrics like Root-Mean-Square Error (RMSE) of segment orientation [29].

Quantitative Performance Metrics (Example)

The following table summarizes potential outcomes based on similar research, where OMC and IMU data were fused for upper-limb motion [29].

| Sensor Placement | Simulated Gap Duration | Total Orientation RMSE |
|---|---|---|
| Hand | 5 minutes | < 1.8° |
| Forearm | 5 minutes | < 1.8° |
| Upper Arm | 5 minutes | < 1.8° |
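The gap simulation and RMSE validation steps of this protocol can be expressed as a small test harness. The sketch below is illustrative only: the orientation trace is synthetic, the helper names are hypothetical, and `linear_fill` is a placeholder for whichever fusion algorithm is under evaluation. It also assumes the angles stay far from the ±180° wrap point during interpolation.

```python
import math

def simulate_gap(signal, start, length):
    """Return a copy of `signal` with `length` samples from `start` set to None."""
    gapped = list(signal)
    for i in range(start, start + length):
        gapped[i] = None
    return gapped

def angle_diff(a, b):
    """Smallest signed difference a - b in degrees, wrapped to [-180, 180)."""
    return (a - b + 180.0) % 360.0 - 180.0

def gap_rmse(truth, reconstructed, start, length):
    """RMSE in degrees between truth and reconstruction over the gap samples only."""
    sq = [angle_diff(reconstructed[i], truth[i]) ** 2 for i in range(start, start + length)]
    return math.sqrt(sum(sq) / length)

def linear_fill(gapped, start, length):
    """Placeholder reconstruction: interpolate between the frames bordering the gap."""
    a, b = gapped[start - 1], gapped[start + length]
    filled = list(gapped)
    for k in range(length):
        filled[start + k] = a + (b - a) * (k + 1) / (length + 1)
    return filled

# Synthetic single-axis orientation trace in degrees (illustrative values only).
omc_truth = [20.0 * math.sin(2 * math.pi * i / 200) for i in range(400)]
gap_start, gap_len = 100, 50

omc_gapped = simulate_gap(omc_truth, gap_start, gap_len)
reconstructed = linear_fill(omc_gapped, gap_start, gap_len)
rmse = gap_rmse(omc_truth, reconstructed, gap_start, gap_len)
print(f"RMSE over simulated gap: {rmse:.2f} deg")
```

Restricting the error to the gap samples keeps the metric from being diluted by the frames that were never removed.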

Protocol 2: Establishing a Ground Truth for Physiological Response to Food Intake

This protocol provides a method for correlating wearable sensor data with gold-standard physiological measures, crucial for validating chemical and optical sensor readings [30].

1. Objective: To investigate the relationship between physiological parameters (HR, SpO₂, Tsk) measured by wearables and blood biochemical markers following food intake.

2. Materials:

- Custom multi-sensor wearable wristband (PPG for HR/SpO₂, skin temperature sensor, IMU).

- Bedside vital sign monitor (for validation).

- Intravenous cannula for blood sampling.

- Automated blood glucose and insulin analyzer.

- Pre-defined high-calorie and low-calorie meals.

3. Methodology:

- Controlled Setting: Conduct the study in a clinical research facility. Recruit healthy participants meeting specific BMI and health criteria [30].

- Experimental Procedure:

  - Fit participants with the wearable sensor and bedside monitor.

  - Insert an intravenous cannula for repeated blood sampling.

  - After a baseline period, provide participants with a high- or low-calorie meal in a randomized order.

  - Continuously record physiological data from the wearable and bedside monitor throughout the eating and post-prandial period (e.g., up to 1 hour).

  - Collect blood samples at regular intervals to measure glucose, insulin, and hormone levels (e.g., GLP-1, Ghrelin) [30].

- Data Analysis: Use statistical models (e.g., linear regression) to explore correlations between features extracted from the wearable sensor data (e.g., change in HR, Tsk) and the blood biomarker levels.
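The data analysis step can be sketched as an ordinary least-squares fit plus a Pearson correlation between one wearable feature and one blood biomarker. The paired values below are invented purely to make the example runnable and do not come from [30]; the variable names are likewise hypothetical.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def linear_fit(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return slope, my - slope * mx

# Invented paired observations, one per participant-meal (illustrative only):
# post-prandial change in heart rate (bpm) and in blood glucose (mmol/L).
delta_hr = [2.0, 4.5, 3.1, 6.0, 5.2, 7.8]
delta_glucose = [0.8, 1.6, 1.1, 2.3, 1.9, 2.9]

r = pearson_r(delta_hr, delta_glucose)
slope, intercept = linear_fit(delta_hr, delta_glucose)
print(f"r = {r:.3f}, glucose change = {slope:.3f} * HR change + {intercept:.3f}")
```

Because each participant contributes repeated measurements in a design like this one, a mixed-effects model would usually be a better fit than pooled regression; the pooled version is shown only because it is the simplest instance of the analysis named above.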

The logical flow of this experiment and the relationships between its components are visualized below.

The Scientist's Toolkit: Research Reagent Solutions

This table details essential materials and their functions for setting up a multi-modal dietary sensing study.

| Item | Function & Application in Dietary Monitoring |
|---|---|
| Inertial Measurement Unit (IMU) | Contains a gyroscope, accelerometer, and magnetometer. Used to track eating gestures (via hand-to-mouth movements) [30] and body segment orientation. Prone to gyroscopic drift over time [29]. |
| Pulse Oximeter (PPG Sensor) | A photoplethysmography (PPG) sensor module tracks continuous heart rate (HR) and blood oxygen saturation (SpO₂). Used to detect physiological responses to food intake and digestion, as heart rate has been shown to increase post-meal [30]. |
| Bio-Impedance Sensor | Measures the electrical impedance of biological tissues. Can be used in an atypical manner to detect dietary activities by monitoring dynamic circuit changes formed by the body, metal utensils, and food (e.g., iEat system) [7]. |
| Optical Motion Capture (OMC) | A multi-camera system considered the gold standard for tracking the 3D position of reflective markers. Provides highly accurate orientation data to validate and correct for drift in IMU data [29]. |
| Continuous Glucose Monitor (CGM) | A chemical sensor that measures interstitial glucose levels in near-real-time. Provides a key biochemical correlate for validating intake estimates from other sensor modalities [31]. |
| Skin Temperature Sensor | A sensor that monitors skin surface temperature (Tsk). Used to track the post-prandial increase in metabolism and body temperature following food consumption [30]. |

Novel Algorithmic Strategies for Nutritional Pattern Recognition Amid Missing Data

Troubleshooting Guide: Missing Data in Nutritional Wearables Research

Frequently Asked Questions

Q1: Why does missing data in my wearable dataset bias nutritional pattern recognition, and how can I fix this?

Missing data, especially when using principal component analysis (PCA) for dietary pattern derivation, leads to biased eigenvalues that distort the true underlying patterns. The bias increases with the percentage of missing data and is independent of the correlation structure between variables [32].

- Solution: Implement the Expectation-Maximization (EM) algorithm for imputation before PCA. This technique has been shown to produce eigenvalues that overlap with those derived from the original, complete dataset, effectively correcting the bias. This is particularly crucial for studies with relatively small sample sizes [32].
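To make the mechanics of EM imputation concrete, the sketch below works through the smallest possible case: two variables following a bivariate normal model, with one fully observed and the other partly missing. This is an assumption-laden toy, not the procedure used in [32]; real dietary datasets involve many variables and arbitrary missingness patterns, and the values and names here are invented. The E-step fills in the expected value and expected square of each missing entry, and the M-step re-estimates the mean and covariance that a subsequent PCA would decompose.

```python
import math

def em_bivariate(x, y, n_iter=200):
    """EM estimates for a bivariate normal when some y values are None.

    x must be fully observed. Returns (mu_x, mu_y, s_xx, s_xy, s_yy, y_filled).
    """
    n = len(x)
    mu_x = sum(x) / n
    s_xx = sum((a - mu_x) ** 2 for a in x) / n
    observed = [b for b in y if b is not None]
    # Initialise the y parameters from the observed values only.
    mu_y = sum(observed) / len(observed)
    s_yy = sum((b - mu_y) ** 2 for b in observed) / len(observed)
    s_xy = 0.0
    ey = list(y)
    for _ in range(n_iter):
        # E-step: conditional mean and second moment of each missing y given x.
        beta = s_xy / s_xx
        resid_var = s_yy - beta * s_xy          # Var(y | x)
        ey, ey2 = [], []
        for a, b in zip(x, y):
            if b is None:
                m = mu_y + beta * (a - mu_x)
                ey.append(m)
                ey2.append(m * m + resid_var)
            else:
                ey.append(b)
                ey2.append(b * b)
        # M-step: update mean and covariance from the expected statistics.
        mu_y = sum(ey) / n
        s_xy = sum(a * e for a, e in zip(x, ey)) / n - mu_x * mu_y
        s_yy = sum(ey2) / n - mu_y ** 2
    return mu_x, mu_y, s_xx, s_xy, s_yy, ey

def eigenvalues_2x2(a, b, d):
    """Eigenvalues of the symmetric matrix [[a, b], [b, d]], largest first."""
    half_tr = (a + d) / 2
    root = math.sqrt(((a - d) / 2) ** 2 + b * b)
    return half_tr + root, half_tr - root

# Invented intake values for two food groups (illustrative only).
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [2.1, 3.9, None, 8.2, None, 12.1, 13.8, None]

mu_x, mu_y, s_xx, s_xy, s_yy, y_filled = em_bivariate(x, y)
lam1, lam2 = eigenvalues_2x2(s_xx, s_xy, s_yy)
print(f"EM mean of y: {mu_y:.3f}; eigenvalues: {lam1:.3f}, {lam2:.3f}")
```

The `resid_var` term in the E-step is what separates EM from simply plugging in regression predictions: without it, the estimated variance of `y` would be understated and the eigenvalues would be biased.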

Q2: My food composition database has over 30% missing values for certain nutrients. What is the most accurate method to impute them?

Traditional methods like filling with mean/median values or borrowing data from other databases introduce significant error. State-of-the-art statistical imputation methods yield superior results [33].

The table below summarizes the performance of various imputation methods evaluated on real food composition data. A lower error indicates better performance.

| Imputation Method | Description | Relative Performance (Lower Error is Better) |
|---|---|---|
| Mean/Median Imputation | Replaces missing values with the variable's mean or median. | Baseline (Highest Error) |
| K-Nearest Neighbors (KNN) | Imputes based on values from the 'k' most similar data points. | Better than Mean/Median |
| Multiple Imputation by Chained Equations (MICE) | Creates multiple plausible imputations using regression models. | Better than KNN |
| Non-negative Matrix Factorization (NMF) | Decomposes the data matrix to estimate missing values. | Better than KNN |
| MissForest (Nonparametric Random Forest) | Uses a random forest model to impute missing data. | Best Performance (Lowest Error) |

- Recommendation: For the highest accuracy, use the MissForest method, as it outperforms other techniques, including MICE and KNN, across various levels of missing data (from 1% to 40%) [33].
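Comparisons like the one above are typically made by hiding known values, imputing them, and scoring the error on the hidden cells. The sketch below shows that evaluation loop for the two simplest methods in the table, mean imputation and a basic KNN. It does not implement MissForest and does not reproduce the results of [33]; the small food composition table is invented, and the function names are hypothetical.

```python
import math

def mean_impute(rows):
    """Fill each None with the column mean of the observed values."""
    n_cols = len(rows[0])
    means = []
    for j in range(n_cols):
        col = [r[j] for r in rows if r[j] is not None]
        means.append(sum(col) / len(col))
    return [[means[j] if v is None else v for j, v in enumerate(r)] for r in rows]

def knn_impute(rows, k=2):
    """Fill each None with the mean of that column over the k nearest rows.

    Distance is the RMS difference over columns observed in both rows.
    """
    def dist(a, b):
        shared = [(u - v) ** 2 for u, v in zip(a, b) if u is not None and v is not None]
        return math.sqrt(sum(shared) / len(shared)) if shared else math.inf

    filled = [list(r) for r in rows]
    for i, r in enumerate(rows):
        for j, v in enumerate(r):
            if v is None:
                donors = [(dist(r, o), o[j]) for m, o in enumerate(rows)
                          if m != i and o[j] is not None]
                donors.sort(key=lambda t: t[0])
                nearest = [val for _, val in donors[:k]]
                filled[i][j] = sum(nearest) / len(nearest)
    return filled

def masked_rmse(truth, imputed, cells):
    """RMSE over the cells that were artificially hidden."""
    return math.sqrt(sum((imputed[i][j] - truth[i][j]) ** 2 for i, j in cells) / len(cells))

# Invented table: protein, fat, carbohydrate in g per 100 g (illustrative only).
truth = [
    [20.0, 5.0, 0.0],
    [22.0, 6.0, 1.0],
    [21.0, 4.0, 0.5],
    [3.0, 1.0, 25.0],
    [2.5, 0.5, 28.0],
    [3.5, 1.5, 26.0],
]
hidden = [(0, 2), (2, 0), (4, 2), (5, 0)]
masked = [[None if (i, j) in hidden else v for j, v in enumerate(r)]
          for i, r in enumerate(truth)]

mean_err = masked_rmse(truth, mean_impute(masked), hidden)
knn_err = masked_rmse(truth, knn_impute(masked, k=2), hidden)
print(f"mean imputation RMSE: {mean_err:.2f}, KNN RMSE: {knn_err:.2f}")
```

The same harness can score any other imputer that takes a table with `None` entries and returns a filled table, which makes it straightforward to add MICE or a random-forest method from an external library for a like-for-like comparison.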

Q3: The data from my wearable devices is often noisy and incomplete. How can I systematically assess its quality before analysis?

Data quality is a multi-faceted challenge in wearable monitoring. A robust assessment should go beyond simple data completeness [34] [35]. The following workflow outlines the key components of a wearable data quality evaluation toolkit:

- Data Completeness: The percentage of recorded vs. expected data samples. Loss can be high (up to 49%) in Bluetooth streaming mode compared to onboard storage (up to 9%) [35].

- On-Body Score: The estimated percentage of time the device was actually worn. Scores above 80% indicate good user compliance [35].