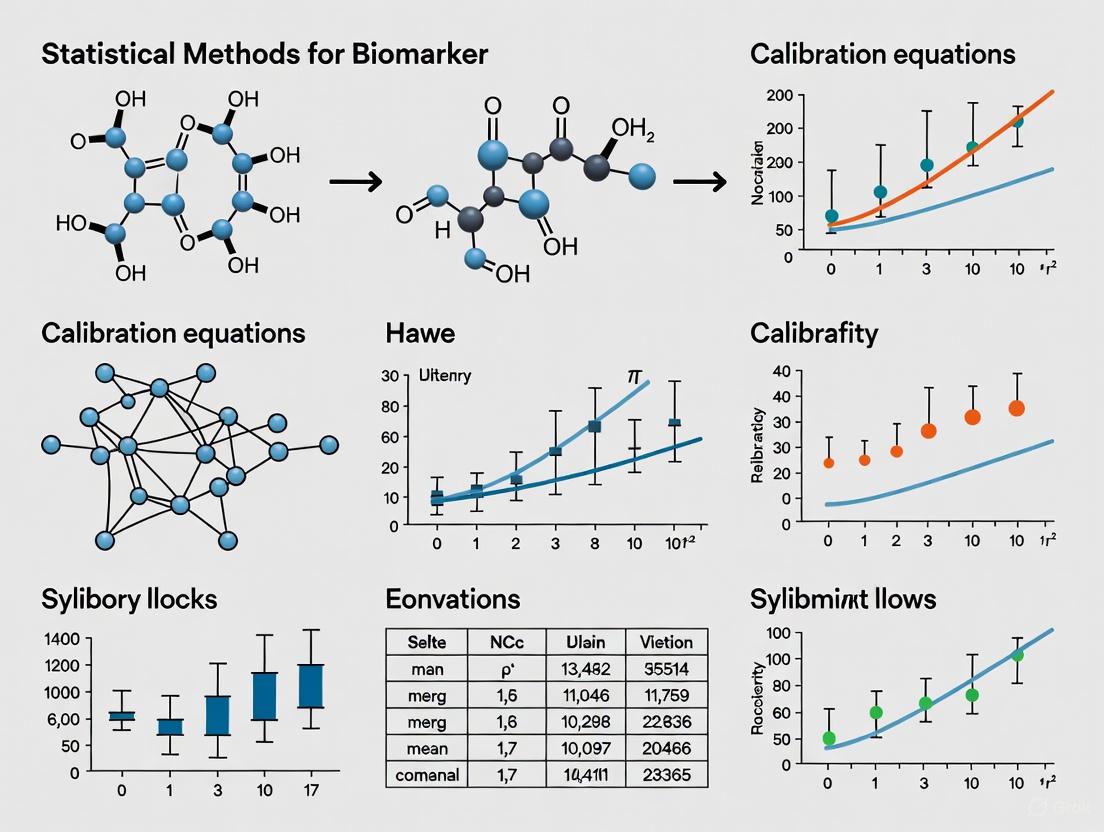

Statistical Methods for Biomarker Calibration Equations: From Foundational Concepts to Clinical Application

This article provides a comprehensive overview of statistical methods for developing and applying biomarker calibration equations, a critical process for ensuring data accuracy in biomedical research and drug development.

Abstract

This article provides a comprehensive overview of statistical methods for developing and applying biomarker calibration equations, a critical process for ensuring data accuracy in biomedical research and drug development. We explore the foundational principles of biomarker categories and contexts of use, detail methodological approaches including regression calibration and measurement error correction, address troubleshooting for common implementation challenges, and examine validation frameworks for regulatory acceptance. Tailored for researchers, scientists, and drug development professionals, this guide synthesizes current methodologies to enhance the reliability of biomarker data in studies ranging from nutritional epidemiology to clinical trials, ultimately supporting more robust scientific conclusions and regulatory decisions.

Understanding Biomarker Fundamentals: Categories, Context of Use, and Regulatory Definitions

A precise understanding of biomarker categories is fundamental to building calibration equations. Biomarkers, defined as objectively measurable indicators of biological processes, pathogenic processes, or pharmacological responses to therapeutic interventions, serve as critical tools across drug development and clinical practice [1]. Rigorous classification lets researchers choose appropriate statistical frameworks for calibration, validation, and application. Because each category has a distinct purpose and distinct validation requirements, recognizing these differences helps ensure that analytical models are robust and reflect the underlying biology.

The FDA-NIH Biomarker Working Group's BEST (Biomarkers, EndpointS, and other Tools) Resource provides standardized definitions that form the foundation for regulatory and research applications [2] [1]. These definitions create a common language for statisticians, clinicians, and researchers, facilitating clearer communication about performance characteristics and validation requirements. For statistical professionals working on calibration equations, understanding these categorical distinctions is crucial for selecting appropriate endpoints, designing validation studies, and interpreting results in context-specific frameworks.

Biomarker Categories: Definitions and Clinical Applications

Comparative Analysis of Biomarker Categories

Table 1: Core Biomarker Categories: Definitions, Applications, and Statistical Considerations

| Category | Definition | Primary Application | Key Statistical Considerations | Representative Examples |
|---|---|---|---|---|
| Diagnostic | Identifies or confirms the presence of a disease or specific condition [3] [4] [1]. | Differentiating disease states, identifying disease subtypes [3] [5]. | High sensitivity and specificity are critical; ROC analysis essential for threshold calibration [5]. | Prostate-Specific Antigen (PSA) for prostate cancer [6] [3]; C-Reactive Protein (CRP) for inflammation [3] [5]. |
| Prognostic | Identifies the likelihood of a clinical event, disease recurrence, or progression in patients diagnosed with a disease [7] [2]. | Informing disease management aggressiveness, patient stratification for trial enrichment [7] [2]. | Time-to-event analysis (e.g., Kaplan-Meier, Cox models); must be independent of specific treatments [7]. | Ki-67 for cancer aggressiveness [3]; Gleason score for prostate cancer progression [2]. |
| Predictive | Predicts the likelihood of a favorable or unfavorable response to a specific therapeutic intervention [3] [7]. | Guiding treatment selection for personalized medicine, avoiding ineffective therapies [6] [8]. | Analysis of treatment-by-biomarker interaction; clinical trial designs often require pre-specified biomarker stratification [7]. | HER2 status for trastuzumab response in breast cancer [3]; EGFR mutations for EGFR inhibitor response in lung cancer [3]. |
| Safety | Indicates the potential for, or occurrence of, toxicity or adverse effects resulting from an intervention [3]. | Monitoring patient safety during clinical trials and treatment, identifying organ-specific damage [3]. | Establishing reference ranges, determining thresholds for clinical action, monitoring longitudinal changes. | Liver function tests (ALT, AST) for hepatotoxicity [3]; Creatinine for kidney injury [3]. |

Distinguishing Prognostic and Predictive Biomarkers

A critical challenge in statistical calibration involves differentiating prognostic from predictive biomarkers, as this distinction fundamentally influences clinical trial design and analytical methodology.

Prognostic Biomarkers inform about the natural history of the disease regardless of therapy. They are measured before treatment and indicate long-term outcomes for patients receiving standard care or no treatment [7]. Statistically, a pure prognostic biomarker shows a main effect on outcome (e.g., progression-free survival, overall survival) but no significant interaction with treatment effect. For example, a high Ki-67 proliferation index indicates a more aggressive tumor biology and worse outcome across various treatment scenarios in breast cancer [3].

Predictive Biomarkers identify individuals who are more likely to respond to a specific drug. The statistical model must demonstrate a significant interaction between the biomarker and the treatment effect [7]. A biomarker can be purely predictive, both prognostic and predictive, or purely prognostic. For instance, BRAF mutations in colon cancer predict resistance to EGFR inhibitors but may not necessarily be prognostic across all treatment types [8].

Table 2: Statistical Framework for Differentiating Prognostic vs. Predictive Biomarkers

| Characteristic | Prognostic Biomarker | Predictive Biomarker |
|---|---|---|
| Clinical Question | What is the likely disease course? | Will this specific treatment work? |
| Measurement Timing | Pre-treatment (baseline) | Pre-treatment (baseline) |
| Statistical Analysis Focus | Main effect on clinical outcome | Treatment-by-biomarker interaction effect |
| Clinical Trial Design | Often used for stratification or enrichment | Often used for patient selection (e.g., biomarker-defined subgroups) |
| Impact on Treatment Decision | Informs on intensity of treatment (aggressive vs. conservative) | Informs on choice of specific therapeutic agent |

Experimental Protocols for Biomarker Validation

Protocol for Analytical Validation of Biomarker Assays

Objective: To establish and calibrate the performance characteristics of a biomarker assay for reliability and reproducibility in measuring the analyte of interest.

Materials:

- Research Reagent Solutions: Certified reference standards, assay-specific antibodies or probes, appropriate biological matrices (e.g., plasma, serum, tissue homogenates), calibration standards, and quality control samples.

Methodology:

- Precision Assessment: Conduct intra-day (repeatability) and inter-day (intermediate precision) testing using quality control samples at low, medium, and high concentrations. Calculate the coefficient of variation (CV) at each level; a CV below 15-20% is typically considered acceptable, depending on context [1].

- Accuracy and Calibration: Analyze certified reference standards across the assay's dynamic range. Perform linear regression analysis to establish the calibration curve. Accuracy should be within ±15% of the nominal value for bioanalytical methods.

- Specificity/Selectivity: Test potential interfering substances (e.g., hemolyzed blood, lipids, concomitant medications) to ensure they do not significantly affect the quantification.

- Lower Limit of Quantification (LLOQ): Determine the lowest concentration that can be measured with acceptable precision (CV <20%) and accuracy (80-120%). This requires replicate analysis (n≥5) of samples at progressively lower concentrations.

Statistical Analysis:

- Perform linear regression for calibration curves, reporting slope, intercept, and coefficient of determination (R²).

- For precision, calculate mean, standard deviation, and CV for each QC level.

- For LLOQ determination, compute LLOQ = 10σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve.
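The three calculations listed above can be sketched in a few lines of standard-library Python. This is a minimal illustration rather than a validated implementation: the concentrations, signals, QC replicates, and the response standard deviation `blank_sd` are hypothetical values chosen for the example, and the helper names (`fit_line`, `percent_cv`, `lloq_from_sd`) are not taken from any cited source.

```python
import statistics


def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x.

    Returns (slope, intercept, r_squared).
    """
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


def percent_cv(values):
    """Coefficient of variation in percent (sample SD divided by mean)."""
    return 100.0 * statistics.stdev(values) / statistics.fmean(values)


def lloq_from_sd(sigma, slope):
    """LLOQ = 10 * sigma / S, as stated in the protocol above."""
    return 10.0 * sigma / slope


# Hypothetical calibration standards: nominal concentration vs. instrument signal.
conc = [1.0, 2.0, 5.0, 10.0, 20.0, 50.0]
signal = [0.052, 0.101, 0.248, 0.507, 0.995, 2.510]
slope, intercept, r2 = fit_line(conc, signal)

# Hypothetical replicate measurements of the low QC sample.
qc_low = [2.9, 3.1, 3.0, 3.2, 2.8]
cv_low = percent_cv(qc_low)

# Hypothetical standard deviation of the response (signal units).
blank_sd = 0.004
lloq = lloq_from_sd(blank_sd, slope)

print(f"slope={slope:.4f} intercept={intercept:.4f} R^2={r2:.4f}")
print(f"low-QC CV={cv_low:.2f}%  LLOQ={lloq:.2f} concentration units")
```

In practice the same `percent_cv` call would be repeated for the medium and high QC levels, and weighted regression may be preferable when the signal variance grows with concentration.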

Protocol for Clinical Validation of a Predictive Biomarker

Objective: To demonstrate that a biomarker reliably predicts response to a specific therapeutic intervention in the target patient population.

Materials:

- Research Reagent Solutions: Validated assay kit for biomarker measurement, appropriate sample collection and storage materials, linked clinical dataset with treatment and outcome information.

Methodology:

- Study Design: Utilize a retrospective cohort from a randomized controlled trial or a prospectively designed biomarker-stratified study. The ideal design is a prospective-retrospective study using archived specimens from a completed RCT [7].

- Sample Collection and Processing: Collect and process biospecimens (e.g., tumor tissue, blood) using standardized protocols before treatment initiation. Ensure proper archiving with complete chain-of-custody documentation.

- Blinded Analysis: Perform biomarker testing in a CLIA-certified or equivalently accredited laboratory, blinded to the clinical outcomes and treatment assignment.

- Data Integration: Merge biomarker results with clinical outcome data (e.g., response rate, progression-free survival, overall survival) and treatment arm.

Statistical Analysis:

- Test for a significant interaction between the biomarker status and treatment effect in a statistical model (e.g., Cox proportional hazards model for time-to-event outcomes, logistic regression for binary outcomes).

- If a significant interaction is found, analyze outcomes within each biomarker subgroup to estimate the magnitude of benefit.

- Report hazard ratios or odds ratios with confidence intervals for the treatment effect within each biomarker-defined subgroup.

- Assess the clinical utility of the biomarker by calculating metrics like Negative Predictive Value (to identify patients unlikely to benefit) and Number Needed to Screen.
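For a binary outcome, the interaction test and subgroup estimates described above can be illustrated without a modelling library. The sketch below compares the treatment odds ratio in biomarker-positive and biomarker-negative patients using Woolf-type standard errors; with a binary biomarker and binary treatment this corresponds to a Wald test of the interaction term in a saturated logistic model. All counts are hypothetical, and a real analysis would normally use a fitted regression model with covariates.

```python
import math


def odds_ratio_ci(a, b, c, d, z=1.96):
    """Odds ratio for treatment effect with a Wald confidence interval.

    a, b: treated responders / treated non-responders
    c, d: control responders / control non-responders
    Returns (or, lower, upper, log_or, se_log_or).
    """
    log_or = math.log((a * d) / (b * c))
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    return (math.exp(log_or), math.exp(log_or - z * se),
            math.exp(log_or + z * se), log_or, se)


def interaction_z_test(pos, neg):
    """Test whether the treatment log odds ratio differs between subgroups.

    Returns (ratio_of_odds_ratios, z_statistic, two_sided_p_value).
    """
    _, _, _, log_or_pos, se_pos = odds_ratio_ci(*pos)
    _, _, _, log_or_neg, se_neg = odds_ratio_ci(*neg)
    diff = log_or_pos - log_or_neg
    se_diff = math.sqrt(se_pos ** 2 + se_neg ** 2)
    z = diff / se_diff
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    return math.exp(diff), z, p


# Hypothetical counts: (treated resp., treated non-resp., control resp., control non-resp.)
biomarker_pos = (40, 60, 20, 80)
biomarker_neg = (22, 78, 20, 80)

or_pos, lo_pos, hi_pos, _, _ = odds_ratio_ci(*biomarker_pos)
or_neg, lo_neg, hi_neg, _, _ = odds_ratio_ci(*biomarker_neg)
ratio, z_stat, p_value = interaction_z_test(biomarker_pos, biomarker_neg)

print(f"Biomarker+ OR={or_pos:.2f} ({lo_pos:.2f}, {hi_pos:.2f})")
print(f"Biomarker- OR={or_neg:.2f} ({lo_neg:.2f}, {hi_neg:.2f})")
print(f"Ratio of ORs={ratio:.2f}, z={z_stat:.2f}, p={p_value:.3f}")
```

With these illustrative counts the treatment effect looks larger in the biomarker-positive subgroup, yet the interaction test does not reach the conventional 0.05 level, which shows why an apparent subgroup difference alone is not evidence of a predictive biomarker.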

Diagram 1: Clinical validation workflow for a predictive biomarker. The critical step is testing for a statistically significant treatment-by-biomarker interaction. NPV: Negative Predictive Value; NNS: Number Needed to Screen.

Advanced Research Applications and Reagent Solutions

Emerging Technologies in Biomarker Research

Biomarker research is changing rapidly through technological innovation. Multi-omics approaches that integrate genomics, proteomics, and metabolomics are generating comprehensive molecular maps of diseases, enabling the discovery of complex biomarker signatures beyond single molecules [6] [9]. Liquid biopsy technology allows non-invasive biomarker detection, particularly in oncology, where circulating tumor DNA analysis supports real-time monitoring of disease progression and treatment response [6]. Artificial intelligence and machine learning algorithms are also being applied to complex, high-dimensional datasets to identify subtle patterns that signal disease onset, progression, or treatment response [9] [8]. These technologies are shifting the paradigm from univariate biomarkers to multivariate panels and dynamic monitoring systems.

Essential Research Reagent Solutions for Biomarker Investigation

Table 3: Key Research Reagent Solutions for Biomarker Discovery and Validation

| Reagent/Material | Function/Application | Considerations for Statistical Calibration |
|---|---|---|
| Certified Reference Standards | Calibrating analytical instruments and assays; establishing quantitative relationships. | Essential for creating standard curves. Purity and traceability are critical for assay reproducibility and cross-study comparisons. |
| Validated Antibodies & Probes | Specific detection of target proteins, genes, or metabolites in various assay formats. | Validation data (specificity, sensitivity, lot-to-lot consistency) must be reviewed. Poor reagent quality introduces unmeasured variability. |
| Stable Isotope-Labeled Internal Standards | Normalizing sample processing variability in mass spectrometry-based assays. | Corrects for recovery differences and ion suppression; crucial for achieving precise and accurate quantitative results. |
| Standardized Biological Matrices | Diluting calibration standards to mimic the sample environment (e.g., charcoal-stripped serum). | Ensures the calibration curve behaves similarly to real samples, improving the accuracy of extrapolated concentrations. |
| Multiplex Assay Panels | Simultaneous measurement of multiple biomarkers from a single sample (e.g., multiplex immunoassays, NGS panels). | Requires specialized normalization methods. Correlation between analytes must be considered in the statistical model. |

Diagram 2: Interaction between reagent solutions and the biomarker development workflow. High-quality reagents are foundational to generating reliable data for subsequent statistical calibration.

The precise categorization of biomarkers into diagnostic, prognostic, predictive, and safety types provides an essential framework for developing statistically rigorous calibration equations. Each category demands specific validation pathways and statistical considerations, particularly in distinguishing prognostic from predictive applications. As biomarker science evolves toward multi-analyte panels, dynamic monitoring, and AI-driven discovery, the complexity of statistical calibration will increase accordingly. Future methodologies will need to integrate multi-omics data, account for temporal changes in biomarker levels, and establish robust frameworks for validating complex digital biomarkers derived from wearable sensors. For researchers focused on statistical methods for biomarker calibration, these advancements present both challenges and opportunities to develop more sophisticated models that ultimately enhance the utility of biomarkers in personalized medicine and drug development.

Establishing Context of Use (COU) for Specific Drug Development Applications

The Context of Use (COU) is a foundational concept in modern drug development, providing a precise framework for how a biomarker or other drug development tool (DDT) should be employed within regulatory decision-making. According to the U.S. Food and Drug Administration (FDA), the COU is formally defined as "a concise description of the biomarker’s specified use in drug development" that includes both the BEST biomarker category and the biomarker’s intended application [10]. This structured approach ensures that biomarkers are validated and implemented under specific conditions that clearly delineate their purpose, limitations, and appropriate application. The development of a COU statement represents a critical first step in the biomarker qualification process, as it directly influences the level of evidence required for regulatory acceptance and determines the extent of analytical and clinical validation necessary [11] [12].

The COU framework is particularly vital for ensuring that biomarkers provide reliable and reproducible information across multiple drug development programs. When a biomarker receives qualification for a specific COU through the FDA's Biomarker Qualification Program (BQP), it becomes publicly available for use by any drug developer for that qualified context without requiring re-evaluation of the supporting data [12]. This regulatory pathway promotes consistency, reduces duplication of effort, and accelerates the drug development process by creating standardized tools that can be applied across multiple development programs for the same intended purpose [13]. The COU concept extends beyond biomarkers to other drug development tools, including clinical outcome assessments (COAs) and animal models, establishing a unified framework for regulatory evaluation [14] [13].

Core Components of a Context of Use Statement

Structural Framework and BEST Biomarker Categories

A properly constructed Context of Use statement follows a specific organizational framework consisting of two primary components: the Use Statement and the Conditions for Qualified Use [12]. The Use Statement provides a concise description that identifies the biomarker and explains its purpose in drug development, while the Conditions for Qualified Use offer a comprehensive description of the specific circumstances under which the biomarker can be appropriately employed [12]. This bifurcated structure ensures clarity regarding both the intended application and the boundaries of appropriate use.

The foundation of any COU statement is the BEST biomarker category, which classifies biomarkers according to their fundamental scientific purpose [10] [11]. The BEST Resource, developed through a collaborative FDA-NIH working group, defines seven primary biomarker categories that encompass the full spectrum of biomarker applications in drug development:

- Susceptibility/Risk Biomarkers: Identify individuals with increased likelihood of developing a disease

- Diagnostic Biomarkers: Detect or confirm the presence of a disease or condition

- Monitoring Biomarkers: Assess disease status or evidence of exposure to a medical product

- Prognostic Biomarkers: Identify likelihood of a clinical event or disease progression

- Predictive Biomarkers: Identify individuals more likely to experience a favorable or unfavorable effect from a specific medical product

- Pharmacodynamic/Response Biomarkers: Indicate biological response to a medical product

- Safety Biomarkers: Measure physiological parameters indicating potential adverse effects [11]

Table 1: BEST Biomarker Categories with Examples and Applications

| Biomarker Category | Primary Use | Example |
|---|---|---|
| Susceptibility/Risk | Identify individuals with increased risk of developing breast or ovarian cancer | BRCA1 and BRCA2 genetic mutations [11] |
| Diagnostic | Diagnose diabetes and pre-diabetes in adults | Hemoglobin A1c [11] |
| Prognostic | Define higher risk disease population | Total kidney volume for autosomal dominant polycystic kidney disease [11] |
| Monitoring | Monitor response to antiviral therapy in patients with chronic Hepatitis C | HCV RNA viral load [11] |
| Predictive | Predict response to EGFR tyrosine kinase inhibitors in patients with NSCLC | EGFR mutation status in non-small cell lung cancer [11] |
| Pharmacodynamic/Response | Surrogate for clinical benefit in HIV drug trials | HIV RNA (viral load) [11] |
| Safety | Monitor renal function and potential nephrotoxicity during drug treatment | Serum creatinine for acute kidney injury [11] |

Intended Use in Drug Development

The second critical component of a COU statement specifies the biomarker's intended use within the drug development process. This component delineates the specific application and decision-making context in which the biomarker will be employed [10]. Common intended uses in drug development include:

- Defining inclusion/exclusion criteria for clinical trials

- Allocating patients to specific treatment arms

- Determining when a patient should cease participation in a clinical trial

- Establishing proof of concept for a drug's mechanism of action

- Supporting clinical dose selection decisions

- Enriching clinical trials for specific events or populations of interest

- Evaluating treatment response [10]

The intended use component of the COU may also include descriptive information about the patient population, disease stage, model system, stage of drug development, or mechanism of action of the therapeutic intervention [10]. This specificity ensures that the biomarker is applied consistently with the evidence supporting its validation and prevents inappropriate extrapolation beyond the conditions under which it was qualified.

Complete COU Framework

The relationship between the BEST biomarker category and intended use creates the complete COU statement, which typically follows the structure: "[BEST biomarker category] to [drug development use]" [10]. The following diagram illustrates the complete structural framework of a Context of Use statement:

Diagram 1: Structural Framework of a Context of Use Statement. This diagram illustrates the two core components of a COU (BEST Biomarker Category and Intended Use) and their subcomponents that form a complete COU statement.
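The "[BEST biomarker category] to [drug development use]" pattern can also be expressed as a small data structure, which makes the two components explicit when cataloguing candidate biomarkers. The sketch below is only an illustration: the class and field names are hypothetical, and the example wording is invented for demonstration and is not the text of any qualified COU.

```python
from dataclasses import dataclass
from enum import Enum


class BestCategory(Enum):
    """The seven BEST biomarker categories listed above."""
    SUSCEPTIBILITY_RISK = "Susceptibility/risk"
    DIAGNOSTIC = "Diagnostic"
    MONITORING = "Monitoring"
    PROGNOSTIC = "Prognostic"
    PREDICTIVE = "Predictive"
    PHARMACODYNAMIC_RESPONSE = "Pharmacodynamic/response"
    SAFETY = "Safety"


@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical container for the two core COU components."""
    biomarker: str
    category: BestCategory
    intended_use: str      # the drug development use
    population: str = ""   # optional descriptive detail

    def statement(self) -> str:
        """Render '[BEST biomarker category] to [drug development use]'."""
        text = f"{self.category.value} biomarker to {self.intended_use}"
        if self.population:
            text += f" in {self.population}"
        return text


# Illustrative wording only, loosely based on the prognostic example in Table 1.
cou = ContextOfUse(
    biomarker="Total kidney volume",
    category=BestCategory.PROGNOSTIC,
    intended_use="enrich clinical trials for patients at higher risk of progression",
    population="patients with autosomal dominant polycystic kidney disease",
)
print(cou.statement())
```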

Methodological Framework for COU Development

Developing the Context of Use Statement

The development of a robust COU statement requires systematic consideration of multiple factors that collectively define the appropriate application of a biomarker in drug development. According to FDA recommendations, developers should evaluate several key elements when constructing a COU, including the identity of the biomarker, the specific aspect of the biomarker that is measured and the form in which it is used for biological interpretation, the species and characteristics of the animal or human subjects studied, the purpose of use in drug development, the specific drug development circumstances for applying the biomarker, and the interpretation and decision or action based on the biomarker results [12].

The process of COU development typically begins with identifying a significant challenge in drug development that could be addressed through biomarker application [11]. This involves determining whether the proposed biomarker has the potential to improve upon standard assessments used in drug development and what studies or data are needed to validate the biomarker for the proposed COU [11]. Practical considerations such as feasibility of measurement within a drug development program, frequency of assessment needed, and whether the biomarker will need to be assessed in routine clinical care if the drug is approved must also be evaluated during COU development [11].

Table 2: Key Considerations for COU Development

| Consideration Category | Specific Elements to Define | Impact on COU Specification |
|---|---|---|
| Biomarker Identity | Molecular characteristics, biological origin, stability | Determines appropriate measurement technology and sample handling requirements |
| Measurement Specifications | Aspect measured, units of measurement, biological interpretation | Defines the quantitative or qualitative nature of the biomarker data |
| Subject Characteristics | Species, disease status, demographic factors, concomitant treatments | Establishes the population for which the biomarker is validated |
| Drug Development Purpose | Specific decision to be informed, stage of development | Guides the level of evidence required for the intended use |
| Implementation Circumstances | Timing of assessment, frequency of measurement, clinical setting | Influences practical feasibility and integration into development plans |
| Interpretation Framework | Decision thresholds, actions based on results, risk of false positives/negatives | Defines the consequences of biomarker application on development decisions |

Statistical Considerations for Biomarker Calibration

Within the framework of biomarker calibration research, measurement error models provide the statistical foundation for understanding and compensating for variability in biomarker measurements [15]. These models are essential for ensuring that biomarkers perform reliably within their specified COU. Three primary measurement error models are commonly employed in biomarker research:

The classical measurement error model is defined by $X^* = X + e$, where $e$ is a random variable with mean zero that is independent of $X$ [15]. This model assumes the measurement has no systematic bias but is subject to random error, and it is commonly applied to laboratory and objective clinical measurements.

The linear measurement error model extends the classical model to accommodate systematic bias and is defined by $X^* = \alpha_0 + \alpha_X X + e$, where $e$ is a random variable with mean zero that is independent of $X$ [15]. This model is particularly suitable for self-reported measures or assays with known systematic biases, where $\alpha_0$ quantifies location bias and $\alpha_X$ quantifies scale bias.

The Berkson measurement error model represents an "inverse" scenario where the true value is envisioned as arising from the measured value plus error: $X = X^* + e$, where $e$ is a random variable with mean zero that is independent of $X^*$ [15]. This model is often applicable in occupational epidemiology or when using prediction equations.
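A short simulation can make the practical difference between these three models concrete. The sketch below generates data under each model and regresses an outcome on the error-prone measurement. The outcome model (a linear outcome with slope `beta`), the unit variances, and the bias parameters `alpha0` and `alpha_x` are assumptions chosen for illustration and do not come from the cited sources.

```python
import random
import statistics


def ols_slope(x, y):
    """Slope from simple least squares regression of y on x."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


rng = random.Random(2024)
n, beta = 20000, 2.0  # assumed sample size and true outcome slope

# True exposure X and outcome Y = beta * X + noise.
x_true = [rng.gauss(0, 1) for _ in range(n)]
y = [beta * x + rng.gauss(0, 1) for x in x_true]

# Classical model: X* = X + e. Theory predicts attenuation by
# var(X) / (var(X) + var(e)) = 0.5, so the slope should be near 1.0.
x_classical = [x + rng.gauss(0, 1) for x in x_true]
slope_classical = ols_slope(x_classical, y)

# Linear model: X* = alpha0 + alpha_x * X + e. Theory predicts a slope near
# beta * alpha_x * var(X) / (alpha_x**2 * var(X) + var(e)), about 0.98 here.
alpha0, alpha_x = 0.5, 0.8
x_linear = [alpha0 + alpha_x * x + rng.gauss(0, 1) for x in x_true]
slope_linear = ols_slope(x_linear, y)

# Berkson model: X = X* + e. Regressing Y on X* leaves the slope
# approximately unbiased for a linear outcome model (near 2.0).
x_assigned = [rng.gauss(0, 1) for _ in range(n)]
x_berkson_true = [w + rng.gauss(0, 1) for w in x_assigned]
y_berkson = [beta * x + rng.gauss(0, 1) for x in x_berkson_true]
slope_berkson = ols_slope(x_assigned, y_berkson)

print(f"classical: {slope_classical:.2f}, linear: {slope_linear:.2f}, "
      f"Berkson: {slope_berkson:.2f} (true slope {beta})")
```

Under these assumptions, classical and linear error bias the estimated association, whereas Berkson error mainly costs precision. This is one reason the choice of error model matters before a calibration equation is applied.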

In practice, regression calibration methods are frequently employed to address measurement error in biomarker data, particularly when pooling data from multiple studies [16]. These approaches involve developing study-specific calibration models that relate local laboratory measurements to reference laboratory measurements, then using these models to estimate reference values for all subjects within each study [16]. The calibrated measurements can then be combined across studies using either two-stage methods (study-specific analysis followed by meta-analysis) or aggregated methods (pooling all data followed by analysis) [16].
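The second stage of a two-stage analysis can be illustrated with fixed-effect inverse-variance pooling, which is one common way to combine study-specific estimates. The source does not specify a pooling method, so this choice is an assumption, as are the hypothetical log relative risks and standard errors. A random-effects model may be more appropriate when studies are heterogeneous.

```python
import math


def inverse_variance_pool(estimates, std_errors):
    """Fixed-effect inverse-variance weighted pooling.

    Returns (pooled_estimate, pooled_standard_error).
    """
    weights = [1.0 / se ** 2 for se in std_errors]
    total = sum(weights)
    pooled = sum(w * b for w, b in zip(weights, estimates)) / total
    return pooled, math.sqrt(1.0 / total)


# Hypothetical stage-one results: log relative risk per unit of the calibrated
# biomarker in each study, with standard errors that are assumed to already
# reflect uncertainty in the study-specific calibration model.
study_log_rr = [0.20, 0.35, 0.28]
study_se = [0.10, 0.15, 0.12]

pooled_log_rr, pooled_se = inverse_variance_pool(study_log_rr, study_se)
ci = (math.exp(pooled_log_rr - 1.96 * pooled_se),
      math.exp(pooled_log_rr + 1.96 * pooled_se))

print(f"pooled RR={math.exp(pooled_log_rr):.2f} "
      f"(95% CI {ci[0]:.2f} to {ci[1]:.2f})")
```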

Experimental Protocols for COU Implementation

Biomarker Validation Framework

The validation of biomarkers for a specific COU follows a fit-for-purpose approach in which the level and type of evidence required depends on the intended application [11]. The validation framework encompasses both analytical validation, which assesses the performance characteristics of the biomarker measurement tool, and clinical validation, which demonstrates that the biomarker accurately identifies or predicts the clinical outcome of interest [11].

Analytical validation involves rigorous assessment of the biomarker assay's performance characteristics, which may include accuracy, precision, analytical sensitivity, analytical specificity, reportable range, and reference range depending on the method of detection and the analyte of interest [11]. The specific parameters evaluated are tailored to the COU, with more stringent requirements for biomarkers that will inform critical regulatory decisions.

Clinical validation demonstrates that the biomarker accurately identifies or predicts the clinical outcome of interest within the specified context of use [11]. This typically involves assessing sensitivity and specificity, determining positive and negative predictive values, and evaluating the biomarker's performance in the intended population. The extent of clinical validation required varies significantly based on the COU - for example, a biomarker used for patient enrichment in early phase trials may require less extensive validation than one used as a surrogate endpoint to support regulatory approval [11].
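The clinical validation metrics named above follow directly from a 2x2 table of biomarker result against true clinical status. The sketch below uses hypothetical counts and adds a prevalence-adjusted positive predictive value, since predictive values observed in a validation sample do not carry over to a population with a different disease prevalence. The function names are illustrative.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Sensitivity, specificity, PPV, and NPV from 2x2 counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """Positive predictive value in a population with the given prevalence (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical validation sample: 100 patients with the outcome, 200 without.
metrics = diagnostic_metrics(tp=80, fp=30, fn=20, tn=170)

# The same assay applied where only 5% of patients have the outcome.
ppv_low_prev = ppv_at_prevalence(metrics["sensitivity"],
                                 metrics["specificity"], prevalence=0.05)

for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
print(f"PPV at 5% prevalence: {ppv_low_prev:.3f}")
```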

The following workflow diagram illustrates the complete biomarker validation process from COU definition through regulatory acceptance:

Diagram 2: Biomarker Validation and Qualification Workflow. This diagram outlines the key stages in validating a biomarker for a specific Context of Use, from initial definition through regulatory qualification.

Protocol for Biomarker Calibration Studies

The development of calibration equations for biomarkers requires carefully designed studies that account for sources of measurement variability. The following protocol provides a standardized approach for conducting biomarker calibration studies:

Study Design Options:

- Random Sample Calibration: Biospecimens are selected at random from the study cohort for reassay at a reference laboratory [16]

- Controls-Only Calibration: Only non-cases from the cohort or controls from a case-control subsample are reassayed [16]

- Nested Validation Study: A subgroup of participants in a cohort study provides both the error-prone measurement and a reference value [15]

Sample Size Considerations:

- For reliable estimation of calibration parameters, a minimum of 100 participants is generally recommended

- Larger sample sizes may be required when expecting substantial heterogeneity in calibration equations across subgroups

- Power calculations should be based on the precision requirements for the calibration slope estimate
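One way to base the sample size on the precision of the calibration slope is the large-sample approximation SE(b) ≈ σ_res / (σ_W √n), where σ_res is the residual standard deviation of the calibration model and σ_W is the standard deviation of the local laboratory measurement. The sketch below solves this for n given a target confidence interval half-width. The approximation and all input values are assumptions for illustration, not a recommendation from the cited sources.

```python
import math


def n_for_slope_precision(resid_sd, w_sd, half_width, z=1.96):
    """Approximate calibration sample size for a target CI half-width on the slope.

    Uses SE(b) ~ resid_sd / (w_sd * sqrt(n)) and solves z * SE(b) <= half_width.
    """
    return math.ceil((z * resid_sd / (w_sd * half_width)) ** 2)


# Hypothetical planning values, with the biomarker on a standardized scale.
n_required = n_for_slope_precision(resid_sd=0.5, w_sd=1.0, half_width=0.1)
print(f"approximate calibration sample size: {n_required}")
```

Halving the target half-width roughly quadruples the required sample, which is worth checking against specimen availability before the calibration study is fixed.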

Laboratory Procedures:

- All biospecimens for calibration should be processed using standardized protocols

- The order of assay should be randomized between original and reference laboratories to avoid batch effects

- Replicate measurements should be included to assess within-laboratory variability

Statistical Analysis:

- Develop study-specific calibration models relating local laboratory measurements (W) to reference laboratory measurements (X): E[X|W] = a + bW [16]

- Assess linearity assumptions through residual plots and goodness-of-fit tests

- Evaluate between-study heterogeneity in calibration parameters when pooling data from multiple studies

- Apply calibration equations to all subjects within each study to generate calibrated biomarker values
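The fit-then-apply steps above can be sketched for a single study as follows. The data are simulated: the assumed local laboratory reads about 10% high with a constant offset and random error, and 100 of 500 specimens are re-assayed at the reference laboratory under a random-sample calibration design. Function and variable names are illustrative.

```python
import random
import statistics


def fit_calibration(w_cal, x_cal):
    """Least squares fit of E[X|W] = a + b*W on the calibration subset."""
    mw, mx = statistics.fmean(w_cal), statistics.fmean(x_cal)
    b = (sum((w - mw) * (x - mx) for w, x in zip(w_cal, x_cal))
         / sum((w - mw) ** 2 for w in w_cal))
    a = mx - b * mw
    return a, b


def apply_calibration(a, b, w_all):
    """Calibrated (estimated reference-scale) values for every subject."""
    return [a + b * w for w in w_all]


rng = random.Random(7)

# Simulated study. Reference values are generated for all 500 subjects only so
# the result can be checked; a real study observes them in the subset alone.
x_ref_all = [rng.gauss(50, 10) for _ in range(500)]
w_local_all = [5.0 + 1.1 * x + rng.gauss(0, 3) for x in x_ref_all]

# Random-sample calibration design: 100 specimens re-assayed at the reference lab.
cal_idx = rng.sample(range(500), 100)
a, b = fit_calibration([w_local_all[i] for i in cal_idx],
                       [x_ref_all[i] for i in cal_idx])

x_calibrated = apply_calibration(a, b, w_local_all)

print(f"calibration equation: X_hat = {a:.2f} + {b:.3f} * W")
print(f"mean local={statistics.fmean(w_local_all):.1f}, "
      f"mean calibrated={statistics.fmean(x_calibrated):.1f}, "
      f"mean reference={statistics.fmean(x_ref_all):.1f}")
```

The model regresses the reference value on the local value, not the reverse, so the fitted slope is below 1/1.1 in this simulation. That shrinkage is expected for regression calibration and is not a fitting error.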

Validation of Calibration Models:

- Use cross-validation techniques to assess calibration model performance

- Evaluate transportability of calibration equations across different populations [15]

- Assess impact of calibration on biomarker-disease association estimates
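Cross-validation of a calibration model can be sketched as a k-fold estimate of out-of-sample prediction error. The example below uses simulated paired measurements and a simple linear calibration model. The data-generating values, the choice of k = 5, and RMSE as the performance metric are assumptions made for illustration.

```python
import math
import random
import statistics


def fit_line(w, x):
    """Least squares fit of x = a + b*w; returns (a, b)."""
    mw, mx = statistics.fmean(w), statistics.fmean(x)
    b = (sum((wi - mw) * (xi - mx) for wi, xi in zip(w, x))
         / sum((wi - mw) ** 2 for wi in w))
    return mx - b * mw, b


def kfold_rmse(w, x, k=5, seed=0):
    """Root mean squared prediction error of the calibration model under k-fold CV."""
    idx = list(range(len(w)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    sq_err = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in idx if i not in held]
        a, b = fit_line([w[i] for i in train], [x[i] for i in train])
        # Score only the held-out pairs, which were not used to fit a and b.
        sq_err.extend((x[i] - (a + b * w[i])) ** 2 for i in held_out)
    return math.sqrt(statistics.fmean(sq_err))


# Simulated calibration subset: reference values and local-lab readings.
rng = random.Random(11)
x_ref = [rng.gauss(50, 10) for _ in range(100)]
w_local = [5.0 + 1.1 * x + rng.gauss(0, 3) for x in x_ref]

cv_rmse = kfold_rmse(w_local, x_ref, k=5)
print(f"5-fold cross-validated RMSE: {cv_rmse:.2f} (reference-lab units)")
```

Comparing this value with the in-sample residual standard deviation gives a rough check on overfitting. Repeating the procedure within subgroups is one way to probe transportability.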

Regulatory Pathways for COU Qualification

FDA Qualification Programs

The FDA has established structured pathways for the qualification of drug development tools, including biomarkers, for specific contexts of use. The Biomarker Qualification Program (BQP) provides a framework for the development and regulatory acceptance of biomarkers for a specified COU [11] [12]. This program involves three distinct stages:

The Letter of Intent (LOI) stage involves submission of a concise document describing the biomarker, the relevant drug development need, and the proposed COU, along with supporting scientific rationale [13]. The FDA reviews the LOI within three months and issues a Determination Letter indicating whether the project is accepted along with recommendations for next steps.

The Qualification Plan (QP) stage requires submission of a detailed plan describing all relevant data, knowledge gaps, and the analysis plan, including full study protocols and analytic plans where appropriate [13]. The FDA reviews the QP within six months and issues a QP Determination Letter with requests for data and recommendations regarding data needs for the Full Qualification Package.

The Full Qualification Package (FQP) represents the final stage, culminating in the qualification determination [13]. The FQP includes detailed descriptions of all studies, analyses, and results related to the DDT and its COU. The FDA reviews the FQP within ten months and determines whether to qualify the proposed DDT for its proposed COU or for a modified COU.

Once qualified, a biomarker can be used by any drug developer in their drug development program without requiring FDA re-review of its suitability, provided it is used within the specified COU [12] [13]. This promotes consistency across the industry, reduces duplication of efforts, and helps streamline the development of safe and effective therapies.

Alternative Regulatory Pathways

Beyond the formal Biomarker Qualification Program, several alternative pathways exist for obtaining regulatory acceptance of biomarkers for specific contexts of use:

The IND Application Process allows drug developers to engage with the FDA through the Investigational New Drug application process to pursue clinical validation and regulatory acceptance of biomarkers within the context of specific drug development programs [11]. This pathway may be more efficient for well-established biomarkers with data available supporting their use within a specific drug development program.

Early Engagement Opportunities include mechanisms such as Critical Path Innovation Meetings (CPIM) and pre-IND meetings where drug developers and biomarker developers can engage with the FDA early in the drug development process to discuss biomarker validation plans [11]. These early discussions can help align biomarker development strategies with regulatory expectations before significant resources are invested.

The Innovative Science and Technology Approaches for New Drugs (ISTAND) Pilot Program accepts submissions for DDTs that fall outside the scope of the three existing qualification programs (biomarkers, clinical outcome assessments, and animal models) [13]. The pilot is designed to broaden the range of DDT types by encouraging novel tools that are ineligible for the existing qualification pathways but may still benefit drug development.

Research Reagent Solutions Toolkit

Table 3: Essential Research Reagents and Materials for Biomarker Validation Studies

| Reagent/Material | Specification Requirements | Application in COU Development |
|---|---|---|
| Reference Standard | Certified reference materials with documented purity and stability | Serves as gold standard for assay calibration and validation |
| Quality Control Materials | Pooled samples with low, medium, and high biomarker concentrations | Monitors assay performance across measurement range |
| Assay Kits | FDA-cleared/approved when available; otherwise analytically validated | Provides standardized measurement methodology |
| Biological Specimens | Well-characterized samples with associated clinical data | Enables clinical validation in intended use population |
| DNA/RNA Extraction Kits | High purity and yield requirements appropriate for downstream applications | Supports molecular biomarker development and validation |
| PCR/Sequencing Reagents | Demonstrated lot-to-lot consistency and minimal contamination | Ensures reproducibility of molecular biomarker measurements |
| Cell Lines | Authenticated and mycoplasma-free | Facilitates functional characterization of biomarker candidates |
| Animal Models | Well-characterized disease models where appropriate | Supports preclinical biomarker validation |
| Data Management System | 21 CFR Part 11 compliant electronic data capture system | Maintains data integrity and regulatory compliance |
| Statistical Software | Validated computational environment | Supports development of calibration equations and validation analyses |

The establishment of a precise Context of Use is a critical prerequisite for the successful development and application of biomarkers in drug development. The COU framework provides the necessary structure to ensure that biomarkers are appropriately validated for specific applications and that the evidence generated supports their intended use in regulatory decision-making. The fit-for-purpose validation approach, which tailors the level of evidence to the specific COU, creates an efficient pathway for biomarker qualification while maintaining scientific rigor.

The integration of statistical methods for biomarker calibration strengthens the COU framework by providing tools to address measurement variability and ensure consistency across different laboratories and studies. As drug development continues to evolve toward more targeted therapies and precision medicine approaches, the proper specification and validation of biomarkers within clearly defined contexts of use will become increasingly important for efficiently bringing new treatments to patients.

The BEST (Biomarkers, EndpointS, and other Tools) Resource Framework is an initiative designed to establish a unified language for biomarker research and application. In biomedicine, biomarkers serve as measurable indicators of biological processes, pathogenic states, or pharmacological responses to therapeutic intervention [17]. The lack of standardized terminology creates significant challenges in data integration, sharing, and knowledge management across research institutions and pharmaceutical development pipelines [18]. The BEST Framework addresses this need by providing a structured ontology that enables consistent coding, analysis, and data sharing across the broader research community.

The framework's development coincides with a period of remarkable transformation in the biomarker landscape. By 2025, advanced analytical methods including next-generation sequencing (NGS), proteomics, and metabolomics have become cornerstone technologies in research laboratories [6]. The integration of artificial intelligence and machine learning has emerged as a game-changing force, accelerating biomarker discovery and enhancing understanding of complex biological systems. Within this context, the BEST Framework provides the essential semantic infrastructure needed to maximize the value of these technological advancements through consistent and unambiguous biomarker annotation.

BEST Framework Core Components

Biomarker Classification and Definitions

The BEST Framework establishes precise, standardized definitions for biomarker categories based on their clinical application and temporal measurement characteristics. This classification system enables researchers and drug developers to communicate with unambiguous specificity about biomarker function and utility. The core biomarker types defined within the framework are summarized in Table 1.

Table 1: BEST Framework Biomarker Classification and Definitions

| Biomarker Type | Measurement Timing | Definition | Primary Application |
|---|---|---|---|
| Prognostic | Baseline | Identifies likelihood of clinical event, disease recurrence or progression in patients with the disease or condition of interest [17]. | Patient stratification, trial enrichment, understanding disease natural history |
| Predictive | Baseline | Identifies individuals more likely to experience favorable/unfavorable effect from exposure to a medical product or environmental agent [17]. | Treatment selection, personalized medicine, clinical trial enrichment |
| Pharmacodynamic | Baseline & On-treatment | Indicates biologic activity of a drug; may be linked to mechanism of action or independent of it [17]. | Proof of mechanism, dose optimization, understanding biological drug effects |
| Safety | Baseline & On-treatment | Related to likelihood, presence, or extent of toxicity as an adverse effect [17]. | Toxicity prediction/monitoring, risk mitigation, dose modification |

Foundational Principles and Ontology Structure

The BEST Framework is built upon principles established by successful biomedical ontology initiatives, particularly the Open Biomedical Ontologies (OBO) Foundry. The framework adheres to three key principles that ensure its logical consistency and practical utility: (1) terms and definitions are built up compositionally from component representations taken from the same ontology or more basic feeder ontologies; (2) for each domain, there is convergence upon exactly one Foundry ontology; and (3) the ontology uses upper-level categories drawn from Basic Formal Ontology (BFO) together with relations unambiguously defined according to the pattern set forth in the OBO Relation Ontology [19].

The framework incorporates a critical distinction between generic and specific portions of reality (GPRs and SPRs) to enable precise terminology mapping. Among generic portions of reality, the framework distinguishes between universals (denoted by general terms such as 'human being') and generic configurations (formed by generic portions of reality that stand in some relation to each other). This structured approach allows the BEST Framework to maintain semantic precision while accommodating the evolving nature of biomarker science [19].

BEST Framework Core Structure

Standardization Protocols and Implementation Workflow

Biomarker Terminology Mapping Procedure

The implementation of the BEST Framework begins with a systematic terminology mapping procedure that ensures legacy data and existing research artifacts can be integrated into the standardized system. This protocol is essential for addressing the silo effects that reduce the value of annotations created using disparate systems [19]. The mapping procedure consists of four critical steps that transform legacy terminology into BEST-compliant standardized expressions.

Step 1: Concept Identification - Researchers must first identify all biomarker-related terms and concepts within their dataset or research documentation. This includes both explicitly labeled biomarkers and implicit measurements that function as biomarkers. Each term should be documented with its current definition, source terminology system (e.g., SNOMED CT, LOINC, or local institutional terms), and contextual usage.

Step 2: Ontological Analysis - Each identified concept undergoes rigorous ontological analysis to determine the type of entity it represents. The analysis distinguishes between universals (e.g., 'human being'), particulars (e.g., 'Patient X'), and configurations (e.g., 'cell membrane part_of cell') [19]. This step ensures that terms referencing entities of different types are mapped separately, preserving ontological precision.

Step 3: BEST Alignment - Following ontological analysis, concepts are aligned with the appropriate BEST Framework categories using the classification system defined in Table 1 (BEST Framework Biomarker Classification and Definitions). During this alignment, researchers must verify that temporal characteristics (baseline vs. on-treatment measurement) and functional applications (prognostic, predictive, pharmacodynamic, or safety) are correctly specified.

Step 4: Semantic Integration - The final step involves integrating the mapped terminology into the broader BEST ontology structure, establishing appropriate relationships with existing terms, and ensuring logical consistency across the framework. This process may require creating new terms or relationships where gaps exist, following the compositional principles outlined under Foundational Principles and Ontology Structure.

Experimental Protocol for Biomarker Data Pooling and Calibration

For research involving biomarker data pooled from multiple studies, the BEST Framework provides a standardized protocol for calibration and harmonization. This protocol is particularly relevant for consortia projects where biomarkers are measured using different assays, kits, or laboratories across participating studies [20]. The procedure ensures that biomarker measurements can be validly compared and analyzed despite technical variability.

Table 2: Biomarker Data Pooling and Calibration Methods

| Method | Description | Application Context | Key Considerations |
|---|---|---|---|
| Two-Stage Calibration | Study-specific analyses completed in first stage followed by meta-analysis in second stage [20]. | When individual study data must remain separated or for validation of aggregated approaches. | Maintains study integrity but may reduce statistical power for subgroup analyses. |
| Internalized Calibration | Uses reference laboratory measurement when available and estimated value derived from calibration models otherwise [20]. | When a subset of samples from each study has been re-assayed at a reference laboratory. | More complex implementation but utilizes all available reference data directly. |
| Full Calibration | Uses calibrated biomarker measurements for all subjects, including those with reference laboratory measurements [20]. | Preferred aggregated approach to minimize bias in point estimates when pooling data. | Minimizes bias in point estimates; preferred aggregated approach. |

Materials and Reagents:

- Biospecimens from participating studies with local laboratory biomarker measurements

- Reference laboratory with standardized assay protocols

- Calibration subset samples (typically 100-200 controls per study)

- Statistical software capable of implementing conditional logistic regression models

Procedure:

- Designate Reference Laboratory: Select a single reference laboratory to perform all calibration assays using standardized protocols and quality control measures.

- Select Calibration Subset: Randomly select a subset of biospecimens from each study for re-assay at the reference laboratory. For nested case-control studies, selections are typically made from controls due to concerns about case specimen availability [20].

- Develop Study-Specific Calibration Models: For each study using a local laboratory, estimate a calibration model that quantifies the relationship between local measurements (Xlocal) and reference measurements (Xref). The basic model structure is: Xref = β₀ + β₁Xlocal + ε, where ε represents random error.

- Apply Calibration Models: Use the study-specific calibration equations to estimate reference laboratory biomarker values for all subjects in each study. For the full calibration method, apply calibrated values to all subjects, including those with direct reference measurements.

- Analyze Pooled Data: Perform statistical analysis on the harmonized biomarker measurements using either two-stage or aggregated approaches as outlined in Table 2.
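The calibration steps above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure from [20]: it assumes ordinary least squares for the model Xref = β₀ + β₁Xlocal + ε, and the function names (`fit_calibration`, `calibrate`) and the five-subject dataset are hypothetical. Missing reference measurements are coded as `NaN`.

```python
import numpy as np

def fit_calibration(x_local, x_ref):
    """Least-squares fit of x_ref = b0 + b1 * x_local; returns (b0, b1)."""
    b1, b0 = np.polyfit(np.asarray(x_local, float), np.asarray(x_ref, float), deg=1)
    return b0, b1

def calibrate(x_local, x_ref, b0, b1, method="full"):
    """Return harmonized values; x_ref is NaN where no reference assay exists."""
    x_local = np.asarray(x_local, float)
    x_ref = np.asarray(x_ref, float)
    predicted = b0 + b1 * x_local
    if method == "full":
        # Full calibration: use the model prediction for every subject.
        return predicted
    if method == "internalized":
        # Internalized calibration: keep the reference value when it exists.
        return np.where(np.isnan(x_ref), predicted, x_ref)
    raise ValueError("method must be 'full' or 'internalized'")

# Hypothetical study: the first three subjects form the calibration subset.
x_local = np.array([10.0, 20.0, 30.0, 40.0, 50.0])
x_ref = np.array([12.0, 23.0, 32.0, np.nan, np.nan])

in_subset = ~np.isnan(x_ref)
b0, b1 = fit_calibration(x_local[in_subset], x_ref[in_subset])

full = calibrate(x_local, x_ref, b0, b1, method="full")
internalized = calibrate(x_local, x_ref, b0, b1, method="internalized")
```

The two methods differ only for subjects in the calibration subset: `full` replaces their reference values with model predictions, while `internalized` keeps them. In a real analysis the model would be fitted separately for each study, and covariates could be added to the calibration equation.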

Quality Control Considerations:

- Document assay coefficients of variation for both local and reference laboratories

- Verify linearity assumptions in calibration models through residual analysis

- Assess potential batch effects across different processing times

- Validate calibration models with an independent sample subset when possible

Biomarker Data Pooling Workflow

Research Reagent Solutions and Materials

The successful implementation of the BEST Framework and associated biomarker research requires specific research reagents and materials that ensure reproducibility and standardization across laboratories. Table 3 details essential components of the biomarker research toolkit, with particular emphasis on resources that support terminology standardization and assay harmonization.

Table 3: Research Reagent Solutions for Biomarker Standardization

| Resource Category | Specific Examples | Function in Biomarker Research | Access Information |
|---|---|---|---|
| Reference Terminologies | NCI Thesaurus (NCIt), SNOMED CT, NCI Metathesaurus (NCIm) [18]. | Provides standardized definitions and relationships for biomarker concepts and related entities. | Publicly available through NCI Enterprise Vocabulary Services (EVS). |
| Biomarker Standards | USP Reference Standards, FNIH Biomarkers Consortium materials [21]. | Enables calibration across assay platforms and laboratories through physical reference materials. | Available through standards organizations and consortium repositories. |
| Data Standards | CDISC Terminology, FDA Terminology Value Sets [18]. | Supports regulatory compliance and data interoperability in clinical trials and biomarker studies. | Publicly available through NCI EVS and regulatory agency websites. |
| Ontology Tools | NCI Protégé, EVSRESTAPI, EVS Explore [18]. | Enables curation, mapping, and implementation of standardized biomarker terminology. | Open-source tools available through NCI and Stanford University. |

Integration with Statistical Methods for Biomarker Calibration

The BEST Framework provides the essential terminology foundation for applying advanced statistical methods to biomarker calibration research. Within the context of biomarker calibration equations, standardized terminology ensures that statistical models accurately represent biological reality and that results are interpretable across different research contexts. The framework enables researchers to implement sophisticated calibration approaches while maintaining semantic precision.

For nested case-control studies with pooled biomarker data, the conditional logistic regression model for the biomarker-disease association takes the form:

λ(t|X,Z) = λ₀(t)exp(βX + γZ)

where X represents the calibrated biomarker measurement, Z represents other covariates with coefficients γ, and β is the log relative risk describing the biomarker-disease association [20]. The BEST Framework ensures that X is unambiguously defined according to biomarker type (prognostic, predictive, etc.), enabling appropriate interpretation of the resulting risk estimates.

When evaluating biomarker-disease associations across multiple studies, the framework facilitates the implementation of either two-stage or aggregated calibration approaches. Under the two-stage method, study-specific analyses are completed first using BEST-standardized terminology, followed by meta-analysis. In the aggregated approach, data from all studies are combined into a single dataset before analysis using either internalized or full calibration methods [20]. The BEST Framework ensures that biomarker definitions remain consistent across both approaches, enabling valid comparison of results.
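As a rough illustration of the second stage of the two-stage method, the sketch below pools study-specific log relative risks with inverse-variance weights. The source only says "meta-analysis", so the fixed-effect weighting is an assumption here (a random-effects model is a common alternative), and the three estimates and standard errors are invented for the example.

```python
import numpy as np

def fixed_effect_pool(betas, ses):
    """Inverse-variance (fixed-effect) pooling of study-specific estimates."""
    betas = np.asarray(betas, dtype=float)
    ses = np.asarray(ses, dtype=float)
    weights = 1.0 / ses**2                       # precision of each study
    pooled = np.sum(weights * betas) / np.sum(weights)
    pooled_se = np.sqrt(1.0 / np.sum(weights))
    return pooled, pooled_se

# Hypothetical stage-one output: log relative risks and their standard errors.
study_betas = [0.20, 0.35, 0.10]
study_ses = [0.10, 0.20, 0.10]

pooled_beta, pooled_se = fixed_effect_pool(study_betas, study_ses)
ci_low = pooled_beta - 1.96 * pooled_se
ci_high = pooled_beta + 1.96 * pooled_se
```

Studies with smaller standard errors dominate the pooled estimate. Here the two studies with a standard error of 0.10 each carry four times the weight of the third.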

The framework also supports the development of biomarker calibration equations by providing standardized terminology for covariates that may influence the relationship between local and reference laboratory measurements. By clearly distinguishing between types of biomarkers and their temporal characteristics, the framework helps researchers identify appropriate adjustment variables and avoid omitted variable bias in calibration models.

The BEST Resource Framework establishes a comprehensive system for standardizing biomarker terminology that directly supports advances in biomarker calibration research. By providing precise definitions, logical structure, and implementation protocols, the framework addresses critical challenges in data integration, sharing, and knowledge management across the biomedical research continuum. The integration of this terminology framework with statistical methods for biomarker calibration enables more robust, reproducible, and clinically meaningful research outcomes.

As biomarker science continues to evolve with emerging technologies such as liquid biopsy, multi-omics approaches, and AI-driven discovery, the importance of standardized terminology will only increase [6]. The BEST Framework provides a foundation for this future progress by establishing a common language that transcends disciplinary boundaries and technical platforms. Through widespread adoption by researchers, drug developers, and regulatory agencies, the framework promises to accelerate the translation of biomarker discoveries into clinical applications that improve patient care and treatment outcomes.

In the evolving landscape of biomarker research, the fit-for-purpose (FFP) validation framework has emerged as a pragmatic and strategic approach to biomarker method development and qualification. This paradigm emphasizes that the level of validation evidence and analytical rigor must be directly proportional to the intended application and decision-making context in drug development and clinical research [22] [23]. The fundamental premise of FFP validation is that a biomarker method should demonstrate sufficient performance characteristics to reliably support its specific context of use, without imposing unnecessary or premature regulatory burdens during early research phases [24].

The FFP approach represents a significant shift from traditional one-size-fits-all validation standards, recognizing that biomarkers serve different purposes across the drug development continuum—from early discovery and pharmacodynamic monitoring to definitive diagnostic applications [23]. This framework enables researchers to allocate resources efficiently while maintaining scientific rigor, particularly important given the critical role biomarkers play in accelerating the development of new therapies, including cancer immunotherapies [25]. The position of a biomarker in the spectrum between research tool and clinical endpoint directly dictates the stringency of experimental proof required to achieve method validation [23].

Biomarker Assay Categories and Validation Requirements

Classification of Biomarker Assays

Biomarker methods can be categorized into five distinct classes based on their analytical technology and measurement capabilities, with each category requiring different validation approaches [23]. Understanding these classifications is essential for implementing appropriate FFP validation strategies.

Table 1: Biomarker Assay Categories and Definitions

| Assay Category | Description | Key Characteristics |
|---|---|---|
| Definitive Quantitative | Uses calibrators and regression models to calculate absolute quantitative values | Fully characterized reference standard representative of the biomarker [23] |
| Relative Quantitative | Uses response-concentration calibration with non-representative reference standards | Reference standards not fully representative of the biomarker [23] |
| Quasi-Quantitative | No calibration standard; continuous response expressed in terms of sample characteristics | Non-calibrated continuous response measurement [23] |
| Qualitative (Ordinal) | Relies on discrete scoring scales (e.g., immunohistochemistry) | Categorical results based on scoring systems [23] |
| Qualitative (Nominal) | Determines presence/absence of a biomarker (e.g., gene product) | Binary yes/no results [23] |

Validation Parameters by Assay Category

The FFP approach tailors validation requirements to the specific assay category, with increasing stringency as biomarkers progress toward clinical application.

Table 2: Recommended Performance Parameters for Biomarker Method Validation by Assay Category

| Performance Characteristic | Definitive Quantitative | Relative Quantitative | Quasi-Quantitative | Qualitative |
|---|---|---|---|---|
| Accuracy | ✓ | | | |
| Trueness (Bias) | ✓ | ✓ | | |
| Precision | ✓ | ✓ | ✓ | |
| Reproducibility | ✓ | | | |
| Sensitivity | ✓ | ✓ | ✓ | ✓ |
| Specificity | ✓ | ✓ | ✓ | ✓ |
| Dilution Linearity | ✓ | ✓ | | |
| Parallelism | ✓ | ✓ | | |
| Assay Range | ✓ | ✓ | ✓ | |
| LLOQ/ULOQ | ✓ | ✓ | | |

LLOQ = Lower Limit of Quantitation; ULOQ = Upper Limit of Quantitation [23]

The Fit-for-Purpose Validation Workflow

The FFP validation process proceeds through discrete, iterative stages that emphasize continuous improvement and appropriate resource allocation based on the biomarker's development stage and intended application [23].

Diagram 1: The Five-Stage Fit-for-Purpose Validation Workflow

Stage 1: Purpose Definition and Assay Selection

The initial and most critical phase involves precisely defining the biomarker's intended use and selecting an appropriate assay technology. During this stage, researchers must establish:

- Clear context of use: Specific application (e.g., pharmacodynamic marker, predictive biomarker, diagnostic) [24]

- Decision-making consequences: The impact of false positives and false negatives on research or clinical decisions [23]

- Technology platform selection: Appropriate analytical method based on required sensitivity, specificity, and throughput [26]

- Preliminary acceptance criteria: Initial performance targets based on intended use [23]

This stage requires collaborative input from clinicians, researchers, and statisticians to ensure the intended application aligns with clinical needs and analytical capabilities [26].

Stage 2: Method Validation Planning

In this planning phase, researchers assemble appropriate reagents and components while developing a comprehensive validation plan:

- Reagent qualification: Source and characterize critical reagents, including reference standards [23]

- Validation protocol development: Document predefined acceptance criteria and experimental designs [27]

- Statistical power considerations: Determine appropriate sample sizes for validation experiments [27]

- Risk assessment: Identify potential technical and operational challenges [26]

Stage 3: Performance Verification and SOP Development

The experimental phase focuses on generating robust performance data against predefined acceptance criteria:

- Analytical performance assessment: Evaluate parameters specific to the assay category (Table 2) [23]

- Stability studies: Assess sample and reagent integrity during collection, storage, and analysis [23]

- Specificity testing: Demonstrate assay selectivity in the presence of potential interferents [26]

- Standard Operating Procedure (SOP) development: Document the finalized method for consistent implementation [23]

For definitive quantitative assays, the Société Française des Sciences et Techniques Pharmaceutiques (SFSTP) recommends constructing an accuracy profile that accounts for total error (bias plus intermediate precision), using 3-5 concentrations of calibration standards and validation samples, each run in triplicate on 3 separate days [23].

Stage 4: In-Study Validation

This stage assesses assay performance in the actual clinical context and identifies practical challenges:

- Real-world performance monitoring: Evaluate assay robustness with clinical samples [23]

- Sample handling verification: Identify patient sampling issues, including collection and storage stability [23]

- Quality control implementation: Establish in-study QC procedures and acceptance criteria [23]

- Cross-site reproducibility: For multisite studies, verify consistent performance across locations [26]

Stage 5: Routine Use and Continuous Monitoring

The final stage focuses on maintaining assay performance during routine implementation:

- Quality control monitoring: Implement ongoing QC procedures with established rules (e.g., 4:6:15 rule for definitive quantitative assays) [23]

- Proficiency testing: Regular assessment of analyst competency and assay performance [23]

- Batch-to-batch QC: Monitor consistency across reagent lots and production batches [26]

- Continuous improvement: Iterative refinement based on performance data and evolving requirements [23]
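The 4:6:15 rule mentioned under quality control monitoring is not spelled out in the text. The sketch below assumes one common reading from bioanalytical practice: a run is accepted when at least four of six QC results fall within 15% of their nominal concentrations. Further conditions that laboratories often add (for example, a minimum pass rate at each QC level) are not implemented, and the function name and QC values are hypothetical.

```python
def passes_4_6_15(measured, nominal, min_pass=4, tolerance_pct=15.0):
    """Return (accepted, deviations) for one analytical run.

    accepted is True when at least `min_pass` QC results deviate from
    their nominal value by no more than `tolerance_pct` percent.
    """
    if len(measured) != len(nominal):
        raise ValueError("measured and nominal must have the same length")
    deviations = [abs(m - n) / n * 100.0 for m, n in zip(measured, nominal)]
    n_within = sum(d <= tolerance_pct for d in deviations)
    return n_within >= min_pass, deviations

# Hypothetical run: duplicate QCs at low, medium, and high concentration.
nominal = [5.0, 5.0, 50.0, 50.0, 400.0, 400.0]
measured = [5.4, 6.0, 48.0, 51.0, 380.0, 470.0]

run_ok, devs = passes_4_6_15(measured, nominal)

# A second run in which only three of six QCs are within tolerance.
bad_ok, _ = passes_4_6_15([5.4, 6.0, 48.0, 60.0, 380.0, 470.0], nominal)
```

In the first run two QCs miss the 15% window (one low, one high), so exactly four pass and the run is accepted. The second run fails because a third QC also falls outside the window.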

Statistical Framework for Biomarker Validation

Performance Metrics for Biomarker Evaluation

Appropriate statistical metrics are essential for evaluating biomarker performance across different applications. The choice of metric depends on the study goals and should be determined by a multidisciplinary team including clinicians, scientists, and statisticians [27].

Table 3: Statistical Metrics for Biomarker Evaluation

| Metric | Description | Application Context |
|---|---|---|
| Sensitivity | Proportion of true cases correctly identified | Diagnostic, screening biomarkers [27] |
| Specificity | Proportion of true controls correctly identified | Diagnostic, screening biomarkers [27] |
| Positive Predictive Value | Proportion of test-positive patients with the disease | Function of disease prevalence [27] |
| Negative Predictive Value | Proportion of test-negative patients without the disease | Function of disease prevalence [27] |
| ROC Curve | Plot of sensitivity vs. 1-specificity across thresholds | Overall discriminatory performance [28] |
| AUC | Area under ROC curve; measure of discrimination | Ranges from 0.5 (random) to 1 (perfect) [28] |
| Calibration | How well biomarker estimates actual risk | Risk prediction biomarkers [27] |
| NRI (Net Reclassification Index) | Improvement in reclassification with new biomarker | Incremental value assessment [28] |
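To make the first four metrics in Table 3 concrete, the following sketch computes them from a 2x2 table and then uses Bayes' rule to show how the positive predictive value depends on prevalence. The counts are hypothetical and the function names are illustrative only.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Sensitivity, specificity, PPV, and NPV from 2x2 table counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }

def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """PPV implied by Bayes' rule for a given disease prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)

# Hypothetical study: 100 cases and 400 controls (prevalence 20%).
metrics = diagnostic_metrics(tp=90, fp=40, fn=10, tn=360)

# Same assay applied in a screening population with 1% prevalence.
ppv_screening = ppv_at_prevalence(
    metrics["sensitivity"], metrics["specificity"], prevalence=0.01
)
```

With sensitivity and specificity both at 0.90, the PPV is about 0.69 in the study sample but drops to roughly 0.08 at 1% prevalence. This is why Table 3 lists predictive values as a function of disease prevalence.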

Assessing Incremental Value of Biomarkers

When adding novel biomarkers to existing clinical risk models, researchers must demonstrate incremental value beyond established factors. Statistical methods for this assessment include:

- Multivariable significance testing: Evaluates whether the biomarker remains associated with outcomes after adjusting for existing variables [28]

- Change in AUC (ΔAUC): Difference in area under ROC curve between models with and without the new biomarker [28]

- Category-free NRI: Measures reclassification improvement without predefined risk categories [28]

- Integrated Discrimination Improvement (IDI): Difference in discrimination slopes between models [28]

Before evaluating incremental value, the baseline clinical prediction model must demonstrate good calibration, meaning model-based event rates correspond to observed clinical rates [28].
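The incremental-value measures listed above can be computed directly from predicted risks. The sketch below is a minimal NumPy version under simplifying assumptions: the AUC is the Mann-Whitney estimate with ties counted as one half, the category-free NRI counts any upward or downward change in predicted risk, and no confidence intervals are produced. The six-subject dataset and both sets of predicted risks are invented for illustration.

```python
import numpy as np

def auc(y, p):
    """Mann-Whitney estimate of the area under the ROC curve."""
    pos, neg = p[y == 1], p[y == 0]
    diff = pos[:, None] - neg[None, :]          # all case-control pairs
    return (np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / (pos.size * neg.size)

def idi(y, p_old, p_new):
    """Difference in discrimination slopes (new model minus old model)."""
    slope_new = p_new[y == 1].mean() - p_new[y == 0].mean()
    slope_old = p_old[y == 1].mean() - p_old[y == 0].mean()
    return slope_new - slope_old

def category_free_nri(y, p_old, p_new):
    """Net proportion of events moved up plus non-events moved down."""
    up, down = p_new > p_old, p_new < p_old
    events, nonevents = y == 1, y == 0
    nri_events = up[events].mean() - down[events].mean()
    nri_nonevents = down[nonevents].mean() - up[nonevents].mean()
    return nri_events + nri_nonevents

# Hypothetical outcomes and predicted risks without / with the new biomarker.
y = np.array([1, 1, 1, 0, 0, 0])
p_old = np.array([0.6, 0.4, 0.5, 0.5, 0.3, 0.4])
p_new = np.array([0.7, 0.6, 0.4, 0.4, 0.2, 0.5])

delta_auc = auc(y, p_new) - auc(y, p_old)
idi_value = idi(y, p_old, p_new)
nri_value = category_free_nri(y, p_old, p_new)
```

All three quantities are positive for this toy dataset, which would indicate improved discrimination and reclassification. In practice they should be reported with confidence intervals (for example, from bootstrap resampling) and only after the calibration check described above.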

Experimental Protocols for Key Validation Experiments

Protocol 1: Definitive Quantitative Assay Validation

This protocol provides a framework for validating definitive quantitative biomarker methods, such as LC-MS/MS assays [23].

Materials and Reagents

Table 4: Research Reagent Solutions for Definitive Quantitative Assays

| Reagent/Resource | Function | Specifications |
|---|---|---|
| Fully Characterized Reference Standard | Calibrator preparation | Representative of endogenous biomarker [23] |
| Stable Isotope-Labeled Internal Standard | Correction for variability | Compensates for ion suppression/extraction variability [26] |
| Matrix Blank | Specificity assessment | Biomarker-free biological matrix [23] |
| Quality Control Materials | Performance monitoring | Low, medium, high concentration QCs [23] |
| Automated Sample Preparation System | Sample processing | Liquid handling robotics for consistency [26] |

Procedure

Calibration Curve Construction

- Prepare 6-8 non-zero calibration standards covering the expected physiological range

- Include blank and zero samples (blank matrix with internal standard)

- Analyze in triplicate across three separate runs [23]
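A calibration curve built from such standards might be fitted and checked as sketched below. The linear response model and the 1/x² weighting are assumptions, not requirements stated in the protocol. Weighting of this kind is common for LC-MS/MS data whose variance grows with concentration, but the appropriate model should be chosen from the assay's own data. The concentrations and responses are hypothetical and noise-free.

```python
import numpy as np

# Hypothetical non-zero calibration standards (concentration units arbitrary)
# and instrument responses (e.g., analyte / internal-standard peak-area ratio).
conc = np.array([1.0, 2.0, 5.0, 10.0, 20.0, 50.0, 100.0])
resp = 0.02 + 0.1 * conc

# np.polyfit multiplies residuals by w before squaring, so w = 1/x
# corresponds to 1/x^2 weighting of the squared residuals.
slope, intercept = np.polyfit(conc, resp, deg=1, w=1.0 / conc)

def back_calculate(response):
    """Convert an instrument response to concentration via the fitted curve."""
    return (response - intercept) / slope

# Back-calculated standards should lie close to their nominal values.
back = back_calculate(resp)
pct_dev = 100.0 * (back - conc) / conc
```

With real data, the percent deviation of each back-calculated standard from its nominal value is the usual diagnostic for deciding whether the chosen model and weighting are adequate across the range.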

Accuracy and Precision Assessment

- Prepare validation samples (VS) at three concentrations (low, medium, high)

- Analyze five replicates of each VS per run for three runs

- Calculate within-run and between-run precision (%CV)

- Determine accuracy as mean % deviation from nominal concentration [23]
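The precision and accuracy calculations in this step can be sketched as follows for one validation sample concentration. The sketch assumes a balanced design (the same number of replicates in every run) and estimates the between-run component by one-way ANOVA, which is one common choice rather than the method prescribed in [23]. The function name and the 3 x 5 result matrix are hypothetical.

```python
import numpy as np

def precision_and_accuracy(results, nominal):
    """results: 2-D array, one row per run and one column per replicate."""
    results = np.asarray(results, dtype=float)
    n_rep = results.shape[1]
    grand_mean = results.mean()
    # Within-run (repeatability) variance: average of the per-run variances.
    within_var = results.var(axis=1, ddof=1).mean()
    # Between-run variance component from one-way ANOVA, truncated at zero.
    run_means = results.mean(axis=1)
    between_var = max(0.0, run_means.var(ddof=1) - within_var / n_rep)
    return {
        "within_run_cv": 100.0 * np.sqrt(within_var) / grand_mean,
        "between_run_cv": 100.0 * np.sqrt(between_var) / grand_mean,
        "pct_deviation": 100.0 * (grand_mean - nominal) / nominal,
    }

# Hypothetical mid-level validation sample: 3 runs x 5 replicates, nominal 100.
vs_mid = [
    [98.0, 100.0, 102.0, 99.0, 101.0],
    [103.0, 105.0, 104.0, 106.0, 102.0],
    [97.0, 99.0, 98.0, 100.0, 96.0],
]
summary = precision_and_accuracy(vs_mid, nominal=100.0)
```

The same function would be applied to the low and high validation samples, and the results compared against the acceptance criteria defined in the validation plan.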

Stability Evaluation

- Conduct short-term stability under storage conditions

- Perform freeze-thaw stability through 3 cycles

- Assess processed sample stability in autosampler conditions [23]

Specificity and Selectivity

- Analyze individual blank matrix samples from at least 6 sources

- Assess potential interferents (hemolyzed, lipemic, icteric samples)

- Evaluate cross-reactivity with structurally similar compounds [26]

Data Analysis and Acceptance Criteria

Protocol 2: High-Throughput Screening for Biomarker Discovery

This protocol adapts high-throughput approaches for efficient biomarker screening while maintaining FFP principles [29].

Materials and Reagents

- Human PBMCs or relevant cell model [29]

- Small molecule libraries or experimental compounds [29]

- Multiplex cytokine detection kits (e.g., AlphaLISA, bead-based assays) [29]

- Flow cytometry antibodies for surface markers [29]

- Automated liquid handling systems [30]

- Multi-mode microplate readers [30]

Procedure

Experimental Setup

Multiplexed Readout Collection

Data Acquisition and Analysis

Validation Considerations for Discovery Phase

Applications in Drug Development and Regulatory Considerations

Biomarker Applications Across Drug Development Stages

The FFP approach aligns biomarker validation with specific applications throughout the drug development continuum [24] [25].

Diagram 2: Biomarker Applications Across Drug Development Stages

Regulatory Alignment and Clinical Implementation

Successful biomarker implementation requires careful attention to regulatory expectations and clinical utility:

- Context of use determination: Clearly define whether the biomarker serves prognostic, predictive, pharmacodynamic, or safety purposes [25]

- Evidence generation level: Match validation stringency to application context (exploratory vs. decision-making) [23]

- Clinical validity establishment: Demonstrate association between biomarker and clinical endpoints [27]

- Analytical validity verification: Ensure the test reliably measures the biomarker [27]

- Clinical utility demonstration: Prove the biomarker provides useful information for patient management [27]

For regulatory submissions, biomarkers intended as primary endpoints or companion diagnostics require the most rigorous validation, while exploratory biomarkers may utilize more flexible FFP approaches [23].

The fit-for-purpose validation framework provides a strategic, resource-efficient approach to biomarker qualification that aligns evidence generation with intended application and decision-making context. By implementing appropriate, tiered validation strategies based on assay category and application context, researchers can accelerate biomarker development while maintaining scientific rigor. The iterative nature of the FFP approach supports continuous improvement as biomarkers progress from discovery to clinical application, ultimately enhancing drug development efficiency and advancing personalized medicine. As biomarker technologies continue to evolve, maintaining this flexible yet rigorous validation paradigm will be essential for translating novel biomarkers into clinically useful tools.

Statistical Approaches for Calibration: Equations, Error Correction, and Implementation

Regression Calibration Methods for Measurement Error Correction

Regression calibration is a statistical method for correcting the bias in regression effect estimates that arises from measurement error in the assessed variables [31]. The approach is particularly valuable in nutritional epidemiology, drug development, and other fields where exposures are difficult to measure precisely and are subject to systematic error. The fundamental principle is to replace each error-prone measurement with the conditional expectation of the true exposure given the observed measurement and other covariates, thereby reducing bias in parameter estimates [32] [33].

In the context of biomarker calibration research, regression calibration addresses the critical challenge of systematic measurement errors that commonly affect self-reported data in association studies between dietary intake and chronic disease risk [34] [35]. These errors, if uncorrected, can lead to biased estimates of diet-disease associations, obscuring true relationships or creating spurious ones. The method has been extended beyond traditional applications to handle complex data structures including time-to-event outcomes, high-dimensional biomarkers, and functional data from wearable devices [36] [37] [33].

Theoretical Foundations and Methodological Variations

Core Principles and Assumptions

Regression calibration operates under several key assumptions. First, it requires a validation sample in which both the error-prone and reference measurements are observed [37] [33]. This validation sample can be internal (a subset of the main study) or external (a separate study population). Second, the method typically assumes a classical measurement error model in which the surrogate measure is linearly related to the true exposure with additive error, though extensions to more complex error structures have been developed [36] [33].

The fundamental approach involves estimating the calibration model in the validation sample where both true values (X) and error-prone values (W) are available: E[X|W] = α + βW. This model is then applied to the entire study population to generate calibrated values that replace the error-prone measurements in the primary analysis [32] [16].
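As a worked illustration of what the calibration slope represents, consider the simplest classical error model. The symbols U, μ_X, σ_X², and σ_U² are introduced here for the example and do not appear in the source; the linear form of E[X|W] is exact under joint normality of X and U and is otherwise the best linear predictor.

```latex
\begin{aligned}
W &= X + U, \qquad U \perp X,\quad \mathrm{E}[U] = 0,\quad \operatorname{Var}(U) = \sigma_U^2,\\
\beta &= \frac{\operatorname{Cov}(X, W)}{\operatorname{Var}(W)}
       = \frac{\sigma_X^2}{\sigma_X^2 + \sigma_U^2},
\qquad \alpha = \mu_X\,(1 - \beta),\\
\mathrm{E}[X \mid W] &= \alpha + \beta W = \mu_X + \beta\,(W - \mu_X).
\end{aligned}
```

In this setting β is the reliability ratio. When the error variance equals the exposure variance, β = 0.5, so a linear outcome model fitted to W recovers only half of the true slope, and fitting it to the calibrated values α + βW rescales the slope back by 1/β.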

Methodological Variations

Table 1: Regression Calibration Methods for Different Data Structures

| Method Variant | Application Context | Key Features | Data Requirements |
|---|---|---|---|
| Standard RC [31] [32] | Linear, logistic, Cox models with univariate error-prone exposure | Corrects for classical measurement error; simple implementation | Validation sample with gold standard measurements |
| Joint RC [35] | Multiple error-prone exposures studied simultaneously | Accounts for correlated measurement errors between exposures | Biomarkers or reference measures for all correlated exposures |
| Survival RC (SRC) [37] | Time-to-event outcomes with error-prone event times | Uses Weibull parameterization; handles right-censoring | Validation sample with both true and error-prone event times |
| High-Dimensional RC [34] | Exposure measured via high-dimensional biomarkers (e.g., metabolomics) | Incorporates variable selection methods (LASSO, SCAD); handles p>n scenarios | High-dimensional objective measures (e.g., metabolites) |
| Functional RC [36] | Longitudinal functional data from wearable devices | Corrects for heteroscedastic measurement errors in functional curves | Repeated functional measurements over time |
| Two-Stage RC [38] [16] | Pooled analyses across multiple studies with between-lab variation | Calibrates measurements to reference standard; accounts for study effects | Subsample with reference measurements from each study |

Experimental Protocols and Implementation

Protocol 1: Standard Regression Calibration for Univariate Exposure

Purpose: To correct for measurement error in a continuous independent variable in generalized linear models.

Materials and Software Requirements:

- R statistical software with the rcreg package [32]

- Validation dataset with gold standard measurements

- Primary dataset with error-prone measurements

Procedure:

- Validation Phase: In the validation sample, fit the calibration model relating the true exposure (X) to the error-prone measure (W): X = α + βW + ε, where ε ~ N(0, σ²)

- Parameter Estimation: Obtain estimates α̂, β̂, and σ̂² from the calibration model

- Calibration Phase: For each subject in the main study, compute the calibrated value: X̂ = α̂ + β̂W

- Primary Analysis: Replace W with X̂ in the primary regression model and estimate parameters of interest

- Variance Estimation: Apply bootstrap methods (typically nboot = 400) to obtain corrected standard errors that account for the calibration uncertainty [32]
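The five steps above can be sketched on simulated data. This is a minimal Python illustration using only NumPy, not the R package cited in the protocol; the helper names (`fit_line`, `regression_calibration`, `bootstrap_se`), the simulated sample sizes, and the linear outcome model are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

def fit_line(x, y):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    b, a = np.polyfit(x, y, 1)  # polyfit returns the highest-degree coefficient first
    return a, b

def regression_calibration(w_val, x_val, w_main, y_main):
    """Steps 1-4: fit X = alpha + beta*W in the validation sample, then refit the outcome model."""
    alpha_hat, beta_hat = fit_line(w_val, x_val)
    x_hat = alpha_hat + beta_hat * w_main      # calibrated exposure for the main study
    _, slope = fit_line(x_hat, y_main)         # outcome model with W replaced by X-hat
    return slope

def bootstrap_se(w_val, x_val, w_main, y_main, nboot=400):
    """Step 5: resample both samples so the SE reflects calibration uncertainty."""
    slopes = np.empty(nboot)
    for b in range(nboot):
        i = rng.integers(0, len(w_val), len(w_val))
        j = rng.integers(0, len(w_main), len(w_main))
        slopes[b] = regression_calibration(w_val[i], x_val[i], w_main[j], y_main[j])
    return slopes.std(ddof=1)

# Hypothetical simulated data: true slope 0.5, classical error W = X + U
n_main, n_val = 2000, 400
x = rng.normal(0, 1, n_main + n_val)
w = x + rng.normal(0, 1, n_main + n_val)
y = 1.0 + 0.5 * x + rng.normal(0, 1, n_main + n_val)
w_main, y_main = w[:n_main], y[:n_main]
w_val, x_val = w[n_main:], x[n_main:]          # external-style validation sample

_, naive_slope = fit_line(w_main, y_main)
rc_slope = regression_calibration(w_val, x_val, w_main, y_main)
rc_se = bootstrap_se(w_val, x_val, w_main, y_main)
print(f"naive={naive_slope:.3f}  calibrated={rc_slope:.3f}  bootstrap SE={rc_se:.3f}")
```

With equal exposure and error variances, the naive slope should land near half the true value while the calibrated slope should sit close to 0.5. Resampling the validation and main samples together is one simple way to propagate calibration uncertainty; other resampling schemes are possible.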

Implementation Code (R):

Protocol 2: Joint Regression Calibration for Multiple Dietary Components

Purpose: To correct for correlated measurement errors in multiple dietary exposures when studying their joint effects on disease risk [35].

Materials:

- Controlled feeding study data for biomarker development

- Biomarker sub-study data for calibration equation development

- Association study data with disease outcomes

Procedure:

- Biomarker Development: In the feeding study (Sample 1), develop multivariate biomarker models relating objective measures (e.g., metabolites) to true dietary intakes of multiple components

- Calibration Equation: In the biomarker sub-study (Sample 2), estimate calibration equations relating self-reported intakes to biomarker-predicted values

- Disease Association: In the main association study (Sample 3), use the calibration equations to obtain calibrated exposure values and estimate their joint associations with disease risk

- Variance Estimation: Apply robust variance estimators that account for uncertainty in both biomarker development and calibration steps

Key Considerations:

- Biomarkers developed for single dietary components cannot be directly used for joint calibration without additional methodological care [35]

- The method explicitly accounts for correlated measurement errors between different dietary components

- Asymptotic distribution theory should be used to derive appropriate confidence intervals
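The calibration and disease-association steps can be illustrated with a simplified simulation. The sketch below is not the estimator of [35]: it assumes biomarker-predicted intakes are already available (so the feeding-study step is skipped), uses a linear outcome instead of a disease risk model, and omits variance estimation. All variable names and simulated parameters are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)

def with_intercept(M):
    """Prepend a column of ones to a 2-D array."""
    return np.column_stack([np.ones(len(M)), M])

def fit_joint_calibration(Q, Z):
    """Least-squares fit of Z on [1, Q]; one column of coefficients per dietary component."""
    B, *_ = np.linalg.lstsq(with_intercept(Q), Z, rcond=None)
    return B

# Two correlated intakes whose self-report errors are also correlated
n_sub, n_main = 500, 5000
n = n_sub + n_main
true_intake = rng.multivariate_normal([0, 0], [[1.0, 0.4], [0.4, 1.0]], n)
report_err = rng.multivariate_normal([0, 0], [[1.0, 0.6], [0.6, 1.0]], n)
Q = true_intake + report_err                    # self-reported intakes
Z = true_intake + rng.normal(0, 0.3, (n, 2))    # biomarker-predicted intakes, independent error
y = 0.5 * true_intake[:, 0] - 0.3 * true_intake[:, 1] + rng.normal(0, 1, n)

# Sample 2: calibration equations for both components at once
B = fit_joint_calibration(Q[:n_sub], Z[:n_sub])

# Sample 3: calibrated exposures, then the joint association model
X_hat = with_intercept(Q[n_sub:]) @ B
coef, *_ = np.linalg.lstsq(with_intercept(X_hat), y[n_sub:], rcond=None)
naive, *_ = np.linalg.lstsq(with_intercept(Q[n_sub:]), y[n_sub:], rcond=None)

print("naive coefficients:     ", np.round(naive[1:], 3))
print("calibrated coefficients:", np.round(coef[1:], 3))
```

Each calibration equation uses both self-reports as predictors, so the correlation between the reporting errors enters the calibrated values. Fitting a separate univariate calibration for each component would ignore that cross-component information.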

Protocol 3: Survival Regression Calibration for Time-to-Event Outcomes

Purpose: To correct for measurement error in time-to-event outcomes when combining clinical trial and real-world data [37].

Materials:

- Validation sample with both gold-standard and error-prone event times

- Primary dataset with error-prone event times only

- Weibull regression modeling capability

Procedure:

- Validation Modeling: In the validation sample, fit separate Weibull regression models for the true (Y) and mismeasured (Y*) event times:

- True model: log(Y) = a₀ + (1/σ)ε

- Mismeasured model: log(Y*) = a₀* + (1/σ*)ε

- Bias Estimation: Estimate the bias parameters: δ₀ = a₀* - a₀ and δ₁ = (1/σ*) - (1/σ)

- Calibration: For each subject in the full sample, calibrate the mismeasured event time using the estimated bias parameters

- Primary Analysis: Analyze the calibrated time-to-event data using standard survival methods

Advantages over Standard RC:

- Avoids generating negative event times

- Better handles right-censored data

- Appropriately models the distributional characteristics of survival data
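The source does not spell out the calibration formula, so the following is only one plausible reading, offered as an illustration rather than as the published method. It standardizes each mismeasured log event time under the mismeasured-model parameters and re-expresses it under the bias-corrected parameters. The subject index i and the hats are notation added here, and censoring is ignored.

```latex
\begin{aligned}
\hat a_0 &= \hat a_0^{*} - \hat\delta_0,
\qquad
\frac{1}{\hat\sigma} = \frac{1}{\hat\sigma^{*}} - \hat\delta_1,\\
\hat\varepsilon_i &= \hat\sigma^{*}\,\bigl(\log Y_i^{*} - \hat a_0^{*}\bigr),\\
\hat Y_i &= \exp\!\Bigl(\hat a_0 + \tfrac{1}{\hat\sigma}\,\hat\varepsilon_i\Bigr).
\end{aligned}
```

Because the calibrated time is an exponential of a real number, it is always positive. This is the property listed above as an advantage over calibrating event times directly on the linear scale.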

Visualization of Methodological Approaches

Workflow for High-Dimensional Regression Calibration

Figure 1: High-Dimensional Regression Calibration Workflow for Biomarker Development

Three-Study Design for Biomarker Development and Application

Figure 2: Three-Study Design for Biomarker-Based Regression Calibration

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for Regression Calibration Studies

| Resource Type | Specific Examples | Function/Purpose | Implementation Considerations |
|---|---|---|---|
| Statistical Software | R with CMAverse package [32] | Implements regression calibration for various model types | Supports lm, glm, multinom, polr, coxph, survreg models |
| Biomarker Platforms | High-throughput metabolomics [34] | Provides objective measures for biomarker development | Handles high-dimensional data (p > n scenarios) |
| Variable Selection Methods | LASSO, SCAD, Random Forest [34] | Selects relevant biomarkers from high-dimensional data | Addresses collinearity and spurious correlations |
| Variance Estimation Techniques | Bootstrap, Refitted Cross-Validation (RCV) [34] | Accounts for uncertainty in calibration step | Required for valid confidence intervals |
| Calibration Study Designs | Controlled feeding studies (NPAAS-FS) [34] [38] | Provides gold-standard data for calibration | Expensive but necessary for biomarker development |
| Validation Samples | Internal or external validation subsets [37] [33] | Enables estimation of measurement error structure | Must be representative of main study population |

Applications in Nutritional Epidemiology and Drug Development

Case Study: Women's Health Initiative

In the Women's Health Initiative, regression calibration methods have been applied to examine associations between sodium/potassium intake ratio and cardiovascular disease risk [34] [38] [35]. The analysis utilized a three-stage design: (1) biomarker development from controlled feeding studies, (2) calibration equation estimation from biomarker sub-studies, and (3) disease association analysis in the full cohort. Application of joint regression calibration revealed significant positive associations between sodium intake and CVD risk, and inverse associations for potassium intake [35].

The methodology corrected for systematic measurement errors in self-reported dietary data that would have otherwise biased the estimated associations. The approach incorporated high-dimensional metabolite data to develop biomarkers for dietary components that previously lacked objective biomarkers, demonstrating the evolving capability of regression calibration methods to address complex measurement error challenges in nutritional epidemiology.

Case Study: Oncology Endpoints Calibration

In oncology research, survival regression calibration has been applied to address measurement error when combining clinical trial and real-world data for external comparator arms [37]. For newly diagnosed multiple myeloma, the method enabled calibration of real-world progression-free survival endpoints to align with trial standards, facilitating valid comparison between trial interventions and real-world standard of care.

The approach specifically addressed challenges of time-to-event outcome measurement error, including right-censoring and the presence of both systematic and random errors in event time ascertainment. By framing the measurement error problem in terms of Weibull distribution parameters, the method provided more appropriate calibration of survival endpoints compared to standard linear regression calibration approaches.

Limitations and Methodological Considerations

Despite its utility, regression calibration presents several important limitations. The method provides approximate rather than exact correction for measurement error in nonlinear models such as logistic and Cox regression [33]. The accuracy of the approximation depends on the strength of the association and the amount of measurement error, with poorer performance in settings with strong effects and substantial error.

Additionally, regression calibration requires correctly specified calibration models. Violations of the classical measurement error assumption, such as the presence of Berkson-type errors, can lead to biased estimates [34]. In high-dimensional settings, challenges in variance estimation persist due to collinearity among covariates and the presence of spurious correlations, necessitating specialized approaches such as refitted cross-validation or degrees-of-freedom corrected estimators [34].
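The idea behind refitted cross-validation can be sketched briefly: select variables on one half of the data, estimate the residual variance by refitting on the other half, swap the roles, and average. The Python example below is an assumption-laden illustration, not the procedure of [34]. It uses simple correlation screening in place of LASSO or SCAD, and the function names, the choice k = 5, and the simulated data are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)

def screen_top_k(X, y, k):
    """Indices of the k columns of X most correlated (in absolute value) with y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    corr = (Xc.T @ yc) / (np.linalg.norm(Xc, axis=0) * np.linalg.norm(yc))
    return np.argsort(-np.abs(corr))[:k]

def refit_variance(X, y, selected):
    """OLS on the selected columns; residual variance with a degrees-of-freedom correction."""
    design = np.column_stack([np.ones(len(y)), X[:, selected]])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ coef
    return resid @ resid / (len(y) - design.shape[1])

def rcv_variance(X, y, k=5):
    """Select on one half, refit on the other half, swap roles, and average."""
    idx = rng.permutation(len(y))
    half_a, half_b = idx[: len(y) // 2], idx[len(y) // 2 :]
    sel_a = screen_top_k(X[half_a], y[half_a], k)
    sel_b = screen_top_k(X[half_b], y[half_b], k)
    return 0.5 * (refit_variance(X[half_b], y[half_b], sel_a)
                  + refit_variance(X[half_a], y[half_a], sel_b))

# Hypothetical p > n data: 1000 candidate biomarkers, 2 true signals, noise variance 1
n, p = 300, 1000
X = rng.normal(size=(n, p))
y = 3.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(size=n)

sigma2_naive = refit_variance(X, y, screen_top_k(X, y, 5))  # selection and fit on the same data
sigma2_rcv = rcv_variance(X, y, k=5)
print(f"naive variance={sigma2_naive:.3f}  RCV variance={sigma2_rcv:.3f}  (true value 1.0)")
```

Selecting and refitting on the same data lets spuriously correlated columns absorb noise, which tends to pull the naive variance estimate below the truth. In the split version, columns chosen on one half are independent of the noise in the other half, so the refitted residual variance is not distorted in that way.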