The Convergence of Precision Nutrition and Wearable Technology: A New Paradigm for Biomedical Research and Therapeutic Development


Abstract

This article examines the integration of wearable sensor technology with precision nutrition, a field advancing rapidly through artificial intelligence and multi-omics data. Aimed at researchers, scientists, and drug development professionals, it explores the scientific foundations, methodological applications, current challenges, and validation frameworks for these tools. The scope runs from foundational concepts, such as the shift from population-based to individualized dietary guidance, through the technical mechanics of biosensors for monitoring metabolites and nutrients and the optimization of data integration and AI algorithms, to the critical evaluation of clinical and commercial evidence. This synthesis provides a roadmap for using these technologies to enhance clinical trials, develop targeted therapies, and build robust, evidence-based personalized health interventions.

From One-Size-Fits-All to Individualized Diets: The Scientific Basis of Precision Nutrition

In the evolving landscape of dietary science, the terms "precision nutrition" and "personalized nutrition" are often used interchangeably, creating conceptual ambiguity for researchers, scientists, and drug development professionals. However, distinct definitions are emerging that carry significant implications for clinical research design and therapeutic development. Precision nutrition focuses on identifying specific subgroups within populations and providing tailored dietary recommendations based on deep phenotyping approaches that utilize high-throughput -omics technologies and large-scale data integration [1]. In contrast, personalized nutrition operates at the individual level, tailoring dietary recommendations based on unique genetic, phenotypic, medical, and lifestyle information [1]. Despite these methodological differences, both approaches share the same fundamental goal: to provide targeted dietary advice to individuals to preserve or improve health and well-being by leveraging human variability [1] [2].

This distinction is particularly relevant in the context of chronic disease management and therapeutic development. The integration of digital health technologies with these nutritional approaches offers a transformative paradigm for managing conditions such as diabetes and obesity, extending beyond generic dietary recommendations by tailoring interventions based on genetic, epigenetic, microbiome, and real-time metabolic data [3]. Understanding this paradigm is essential for designing robust clinical trials, developing targeted therapies, and creating effective digital health solutions.

Conceptual Distinctions: Scope, Data Requirements, and Applications

The following table delineates the core conceptual and methodological differences between precision and personalized nutrition, providing researchers with a framework for experimental design and clinical application.

Table 1: Key Paradigmatic Distinctions Between Precision and Personalized Nutrition

| Feature | Precision Nutrition | Personalized Nutrition |
|---|---|---|
| Primary Focus | Subgroups within the general population [1] | Individual-level recommendations [1] |
| Core Data Sources | Deep phenotyping technologies, high-throughput -omics (genomics, metabolomics, proteomics) [1] | Genetic, phenotypic, medical, and lifestyle information [1] [4] |
| Technological Requirements | High-dimensional data integration at scale, artificial intelligence/machine learning models [1] | Wearables, mobile health applications, clinical parameters [3] |
| Definition in Practice | "Uses multi-omics data, digital biomarkers, and advanced analytics to inform interventions" [5] | "Uses individual-specific information to promote dietary behavior change" [4] |
| Clinical Applications | Population-level intervention strategies, subgroup identification for clinical trials [6] | Individualized patient care, behavioral coaching, real-time dietary adjustments [3] |

While these distinctions provide a conceptual framework, both approaches rely on a common foundation of advanced technologies and methodologies. The evidence base informing both is multidisciplinary—integrating nutrition, systems biology, and behavioral sciences—and rapidly evolving with technological advances [1]. New biomarkers continue to be discovered, innovations in wearables and other noninvasive devices increase the amount of real-time data, and advances in artificial intelligence and machine learning models refine the ability to generate personalized recommendations for lifestyle-behavior changes [1].

Methodological Frameworks: Experimental Designs for Clinical Research

Precision Nutrition: The NIH "Nutrition for Precision Health" Framework

The Nutrition for Precision Health (NPH) program, powered by the All of Us Research Program, represents a seminal framework for precision nutrition research [1]. Launched in 2023, this comprehensive study aims to use artificial intelligence to develop algorithms that predict individual responses to foods and dietary patterns, with tiered levels of data expected to be available to the public in 2027 [1].

The experimental protocol encompasses:

- Deep Phenotyping: Collection of multi-omics data (genomics, metabolomics, proteomics, metagenomics) from participants [1] [6].

- Standardized Challenge Tests: Implementation of controlled dietary challenges (e.g., oral glucose tolerance tests, mixed macronutrient challenges) to measure metabolic responses [6] [4].

- Environmental and Behavioral Assessment: Comprehensive capture of food environment, socioeconomic factors, and psychosocial characteristics [2] [6].

- AI/ML Integration: Application of machine learning algorithms to integrated datasets to identify response patterns and subgroup classifications [1] [7].

This framework is particularly valuable for identifying patient stratification biomarkers for drug development and creating targeted dietary interventions for specific genetic or metabolic profiles.

Personalized Nutrition: N-of-1 Methodologies for Individualized Care

For research focused on individual-level outcomes, N-of-1 study designs provide a robust methodological framework [8]. These designs involve repeated measurements of health outcomes or behaviors at the individual level and are particularly suited for capturing inter-individual variability in response to dietary interventions.

The experimental protocol includes:

- Observational Designs: Monitoring a participant's usual health or behavior in naturalistic settings using Ecological Momentary Assessment (EMA) for real-time data collection [8].

- Interventional Designs: Introducing dietary or behavioral interventions with predictors and outcomes measured repeatedly during one or more intervention and control periods [8].

- Real-time Monitoring: Integration of continuous glucose monitors (CGMs), wearable devices, and mobile health applications for dynamic data collection [3] [5].

- Statistical Modeling: Application of individual-level time series analyses and aggregation methods for sets of N-of-1 trials to test hypotheses across small numbers of heterogeneous individuals [8].

This methodology is particularly relevant for clinical trials of personalized nutrition interventions where the focus is on individual response variability rather than population-level effects.
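The aggregation step described above can be illustrated with a minimal sketch. It assumes the simplest possible per-participant estimate (difference in mean outcome between intervention and control periods) and pools participants by inverse-variance weighting; the participant IDs and glucose values are hypothetical, and a real analysis would use a time-series model that accounts for autocorrelation and carry-over.

```python
from math import sqrt
from statistics import mean, variance


def n_of_1_effect(intervention, control):
    """Return (effect, standard_error) for one participant.

    The effect is the difference in mean outcome between intervention and
    control periods. The standard error treats observations as independent,
    which ignores the autocorrelation a full time-series model would handle.
    """
    effect = mean(intervention) - mean(control)
    se = sqrt(variance(intervention) / len(intervention)
              + variance(control) / len(control))
    return effect, se


def pooled_effect(per_person):
    """Inverse-variance (fixed-effect) pooling of per-participant effects."""
    weights = [1.0 / se ** 2 for _, se in per_person]
    pooled = sum(w * e for w, (e, _) in zip(weights, per_person)) / sum(weights)
    pooled_se = sqrt(1.0 / sum(weights))
    return pooled, pooled_se


# Hypothetical post-meal glucose peaks (mmol/L) for two participants.
participants = {
    "p01": {"intervention": [7.1, 6.8, 7.0, 6.9], "control": [8.0, 8.3, 7.9, 8.2]},
    "p02": {"intervention": [6.5, 6.9, 6.6, 6.8], "control": [6.7, 6.6, 6.9, 6.8]},
}
effects = [n_of_1_effect(d["intervention"], d["control"])
           for d in participants.values()]
pooled, pooled_se = pooled_effect(effects)
print(effects, pooled, pooled_se)
```

Keeping the per-participant effects alongside the pooled estimate matters here: in this toy data one participant responds strongly and the other barely at all, which is exactly the heterogeneity N-of-1 designs are meant to expose.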

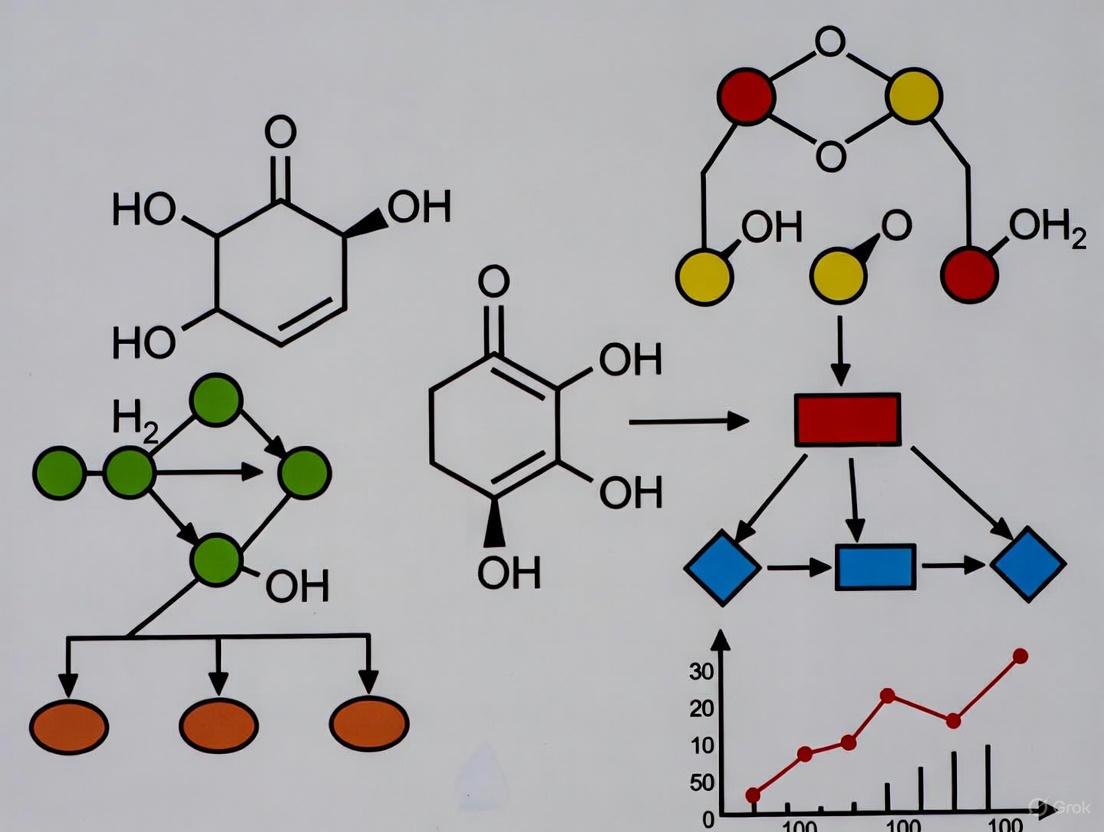

Figure 1: Experimental Design Pathways for Precision vs. Personalized Nutrition Research

The Scientist's Toolkit: Essential Reagents and Technologies

Implementation of precision and personalized nutrition research requires specialized reagents, technologies, and methodologies. The following table details essential components of the research toolkit for scientists designing studies in this domain.

Table 2: Research Reagent Solutions for Precision Nutrition Investigations

| Research Tool Category | Specific Examples | Research Function | Technical Considerations |
|---|---|---|---|
| Genomic Profiling Tools | GWAS arrays, Whole Genome Sequencing, APOE, FTO, MC4R genotyping [6] [5] | Identifies genetic susceptibility to obesity, diabetes; guides genotype-based dietary recommendations | Sample collection (saliva, blood), DNA extraction, sequencing depth, variant calling accuracy |
| Metabolomic Platforms | LC-MS, NMR spectroscopy, targeted assays for SCFAs, lipids [6] [5] | Quantifies metabolic phenotypes; reveals individual responses to dietary interventions | Sample stability (plasma, urine, fecal), normalization procedures, batch effect correction |
| Microbiome Analysis Kits | 16S rRNA sequencing, shotgun metagenomics, fecal sampling systems [6] [5] | Assesses gut microbiota composition and functional potential for personalized pre/probiotic advice | Sample preservation, DNA extraction efficiency, contamination controls |
| Continuous Monitoring Devices | CGMs, activity trackers, smart scales [3] [5] | Provides real-time physiological data (glucose, activity, weight) for dynamic feedback | Data integration protocols, sensor calibration, API access for data extraction |
| Dietary Assessment Technologies | Food image recognition AI, barcode scanners, mobile food records [2] [9] | Automates nutrient intake tracking with reduced user burden | Validation against weighed food records, food composition database accuracy |
| Challenge Test Materials | Oral Glucose Tolerance Test (OGTT), mixed macronutrient challenges [4] | Measures metabolic flexibility and phenotypic responsiveness to standardized stimuli | Protocol standardization, timing of samples, analyte stability |

Technological Integration: The Role of AI and Digital Health

Artificial intelligence and digital health technologies serve as critical enablers for both precision and personalized nutrition approaches, creating synergistic capabilities that enhance clinical applications.

AI and Machine Learning Applications

Advanced computational methods are revolutionizing both domains:

- Predictive Modeling: Machine learning algorithms (random forests, XGBoost, neural networks) predict postprandial glycemic responses to foods based on clinical, genetic, and microbiome data [7] [9].

- Pattern Recognition: Unsupervised learning methods (k-means clustering, PCA) identify metabotypes and nutritypes from high-dimensional data [9].

- Image-Based Dietary Assessment: Convolutional neural networks (CNNs) and computer vision systems automate food identification and portion size estimation with >85% accuracy [9].

- Adaptive Recommendation Systems: Reinforcement learning algorithms enable continuous personalization through feedback loops from behavioral and physiological data [9].
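As an illustration of the pattern-recognition item above, the following is a minimal sketch of k-means (Lloyd's algorithm) grouping participants into two candidate metabotypes. The two features (standardized fasting glucose and triglycerides) and all values are hypothetical, and the deterministic initialization from the first k points is a simplification chosen for reproducibility rather than a recommended practice.

```python
from math import dist


def kmeans(points, k, iterations=50):
    """Plain Lloyd's algorithm; initial centroids are the first k points."""
    centroids = [tuple(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest centroid by Euclidean distance.
        labels = [min(range(k), key=lambda c: dist(p, centroids[c]))
                  for p in points]
        # Update step: each centroid moves to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centroids.append(
                    tuple(sum(x) / len(members) for x in zip(*members)))
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged: assignments can no longer change
        centroids = new_centroids
    return labels, centroids


# Hypothetical z-scored features per participant:
# (fasting glucose, fasting triglycerides).
features = [(-1.0, -0.9), (1.1, 1.0), (-1.2, -1.1),
            (0.9, 1.2), (-0.8, -1.0), (1.0, 0.8)]
labels, centroids = kmeans(features, k=2)
print(labels, centroids)
```

In practice the number of clusters and the feature set would be chosen with validation metrics and domain knowledge; the sketch only shows the assignment and update steps that the clustering relies on.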

Digital Health Integration

Wearable devices and mobile platforms bridge the gap between precision insights and personalized delivery:

- Real-Time Monitoring: Continuous glucose monitors (CGMs) provide dynamic glucose data to inform personalized meal planning and timing [3] [5].

- Behavioral Tracking: Mobile health applications integrate dietary logging, physical activity, and medication adherence into personalized feedback systems [3] [5].

- Remote Patient Monitoring: Digital platforms enable researchers and clinicians to track intervention adherence and outcomes in real-world settings [3].
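One common way to turn a CGM trace into a single postprandial response value is the incremental area under the curve (iAUC) above the pre-meal baseline. The sketch below assumes trapezoidal integration with baseline-crossing correction, a 15-minute sampling interval, and hypothetical glucose values in mmol/L; the source does not prescribe a specific response metric, so this is one reasonable choice rather than the method used in the cited studies.

```python
def incremental_auc(times_min, glucose, baseline=None):
    """Incremental area under the curve above baseline (trapezoidal rule).

    Segments entirely below baseline contribute zero; a segment that crosses
    the baseline is only counted for the part that lies above it.
    """
    if baseline is None:
        baseline = glucose[0]  # default: first reading is the pre-meal value
    area = 0.0
    points = list(zip(times_min, glucose))
    for (t0, g0), (t1, g1) in zip(points, points[1:]):
        d0, d1 = g0 - baseline, g1 - baseline
        dt = t1 - t0
        if d0 >= 0 and d1 >= 0:
            area += (d0 + d1) / 2 * dt            # full trapezoid above baseline
        elif d0 > 0 > d1:
            area += d0 / 2 * dt * d0 / (d0 - d1)  # triangle before the crossing
        elif d0 < 0 < d1:
            area += d1 / 2 * dt * d1 / (d1 - d0)  # triangle after the crossing
    return area


# Hypothetical readings after a meal at t = 0 (minutes, mmol/L).
times = [0, 15, 30, 60, 90, 120]
glucose = [5.0, 6.5, 7.5, 6.0, 5.0, 4.5]
iauc = incremental_auc(times, glucose)
print(iauc)  # units: mmol/L x min
```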

Figure 2: AI-Driven Data Integration Framework for Precision and Personalized Nutrition

Clinical Implementation and Regulatory Considerations

The translation of precision and personalized nutrition from research to clinical practice requires careful attention to regulatory frameworks and implementation challenges. Regulatory guidance for personalized nutrition programs should focus on five key areas: (1) safety and accuracy of tests and devices; (2) credentials of experts developing advice; (3) responsible and clear communication of information and benefits; (4) substantiation of scientific claims; and (5) procedures to protect user privacy [1] [10].

For drug development professionals, understanding these frameworks is essential when designing clinical trials that incorporate nutritional components. The regulatory landscape is evolving to address the unique challenges posed by these approaches, including the combination of multiple components (food, supplements, diagnostics, devices) that may require differential regulation [10]. As the field advances with new devices, biomarkers, behavior-based tools, and AI/ML integration, adaptation of existing regulatory frameworks will be necessary to ensure safety and efficacy while promoting innovation [1] [10].

The distinction between precision and personalized nutrition represents more than semantic nuance—it reflects fundamental differences in research methodology, data requirements, and clinical applications. For researchers, scientists, and drug development professionals, understanding this paradigm is crucial for designing rigorous studies, developing targeted interventions, and navigating regulatory pathways. Precision nutrition offers powerful approaches for population subgroup identification and stratification, while personalized nutrition enables truly individualized dietary recommendations. Together, supported by advances in AI and digital health technologies, these approaches hold significant promise for advancing clinical nutrition science and improving patient outcomes in chronic disease prevention and management.

Interindividual variability in metabolic phenotypes presents a central challenge in nutritional science, disease prevention, and therapeutic development. Understanding the factors that determine why individuals respond differently to identical dietary interventions is critical for advancing precision nutrition. The integration of wearable technology with deep molecular profiling now enables researchers to move beyond population-level recommendations to individualized health strategies. This technical guide examines the key biological drivers—genetics, gut microbiome, and metabolic phenotypes—that underpin this variability, framing them within the context of modern precision nutrition research and emerging digital health technologies. We synthesize quantitative evidence from recent large-scale cohort studies, detail experimental methodologies for investigating these drivers, and visualize the complex relationships through pathway diagrams and workflow schematics to provide researchers with a comprehensive resource for advancing personalized health interventions.

Quantitative Assessment of Variability Drivers

Large-scale cohort studies have systematically quantified the relative contributions of genetics, microbiome, and diet to human metabolic variation. Research assessing 1,183 plasma metabolites in 1,368 individuals from the Lifelines DEEP and Genome of the Netherlands cohorts revealed distinct dominant factors for different metabolites [11]. The analysis quantified the proportion of inter-individual variation in the plasma metabolome explained by these different factors [11] [12].

Table 1: Dominant Factors Explaining Variance in Plasma Metabolites

| Dominant Factor | Number of Metabolites | Representative Examples | Variance Explained Range |
|---|---|---|---|
| Diet | 610 | Food components, dietary patterns | 0.4-35% |
| Gut Microbiome | 85 | Urolithins (from ellagitannins), equol (from isoflavones), hippuric acid, 15 uremic toxins | 0.7-25% |
| Genetics | 38 | Lipid species (10), amino acids (8) | 3-28% |

Table 2: Overall Variance Explained in Plasma Metabolome

| Factor | Variance Explained | Statistical Significance |
|---|---|---|
| Gut Microbiome | 12.8% | FDR < 0.05 |
| Diet | 9.3% | FDR < 0.05 |
| Genetics | 3.3% | FDR < 0.05 |
| Intrinsic Factors (age, sex, BMI) | 4.9% | FDR < 0.05 |
| Smoking | Included in overall model | FDR < 0.05 |
| Combined Total | 25.1% | FDR < 0.05 |

The gut microbiome explains the largest proportion of total plasma metabolome variance (12.8%), surpassing both diet (9.3%) and genetics (3.3%) [11]. This highlights the microbiota's crucial role as a metabolic interface between dietary intake and host physiology. Notably, 185 metabolites showed significant contributions from more than one factor, demonstrating the complex interplay between these biological systems [11]. For example, plasma 5′-carboxy-γ-chromanol showed 4% variance explained by genetics and 5% by microbiome, while hippuric acid—a uremic toxin produced by bacterial conversion of dietary proteins—showed 13% variance explained by both diet and microbiome [11].

Genetic Determinants of Metabolic Variation

Key Genetic Mechanisms

Genetic polymorphisms significantly contribute to inter-individual differences in nutrient metabolism and dietary responses [13]. These variations influence how individuals process specific nutrients, ultimately affecting metabolic phenotypes and disease risk. Several well-characterized gene-nutrient interactions demonstrate this principle:

- CYP1A2 and Caffeine Metabolism: A single-nucleotide polymorphism (SNP) in intron 1 of the cytochrome P450 enzyme CYP1A2 gene accounts for high inter-individual variability in caffeine metabolism and intrinsic concentrations [13].

- FTO and Dietary Fat Response: Individuals with the CC genotype of a specific SNP demonstrate approximately 10% higher BMI when consuming a high-saturated-fat diet, while those with the TT genotype show no such association [13].

- APOE and Lipid Metabolism: Genetic variations in the APOE gene significantly modify lipid responses to dietary fat intake, illustrating how genotype informs phenotypic expression in response to nutritional challenges [13].

Experimental Protocols for Genetic Association Studies

mQTL (metabolite Quantitative Trait Loci) Mapping Protocol:

- Cohort Design: Recruit extensively phenotyped cohorts with diverse genetic backgrounds (e.g., Lifelines DEEP, n=1,054; Genome of the Netherlands, n=77) [11].

- Genotyping: Perform genome-wide genotyping using DNA microarrays, followed by imputation to reference panels to obtain 5.3+ million genetic variants [11].

- Metabolite Profiling: Conduct untargeted metabolomics on fasting plasma samples using flow-injection time-of-flight mass spectrometry (FI-MS) to quantify 1,183 metabolites [11].

- Quality Control: Apply strict quality control filters to both genetic and metabolomic data, removing variants with low call rates and metabolites with high missingness.

- Association Testing: Perform metabolite genome-wide association studies (mGWAS) using linear mixed models adjusting for age, sex, population structure, and relatedness.

- Significance Thresholding: Apply false discovery rate (FDR) correction for multiple testing (typically FDR < 0.05) [11].

- Variance Estimation: Calculate proportion of metabolite variance explained by genetic variants using additive models with least absolute shrinkage and selection operator (lasso) method [11].
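The significance-thresholding step in the protocol above can be sketched as follows. The source states only that FDR correction is applied at 0.05; this example assumes the Benjamini-Hochberg step-up procedure, which is the most widely used FDR method, and the p-values are hypothetical.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return booleans marking which hypotheses are rejected at FDR alpha.

    Benjamini-Hochberg step-up rule: sort the p-values, find the largest
    rank k with p_(k) <= (k / m) * alpha, and reject the k smallest.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_rank = rank
    rejected = [False] * m
    for idx in order[:max_rank]:
        rejected[idx] = True
    return rejected


# Hypothetical p-values from five variant-metabolite association tests.
p_values = [0.039, 0.001, 0.205, 0.008, 0.06]
significant = benjamini_hochberg(p_values)
print(significant)
```

Note that 0.039 is below 0.05 but is not rejected: with five tests, the third-smallest p-value must fall at or below 0.03 to pass, which is the kind of nominal hit the correction is designed to filter out in a metabolome-wide scan.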

Gut Microbiome as a Metabolic Interface

Microbial Contributions to Metabolic Diversity

The gut microbiota generates remarkable inter-individual variation in metabolic phenotypes through its composition and functional capacity to transform dietary components and host metabolites [13] [14]. Systematic reviews of human studies indicate that gut microbiota plays a major role in inter-individual differences in the absorption, distribution, metabolism, and excretion (ADME) of most phenolic compounds [14]. Two major patterns of microbiota-driven variability emerge:

- Metabolite Gradients: Quantitative differences creating high and low excretors, observed for flavonoids, phenolic acids, prenylflavonoids, alkylresorcinols, and hydroxytyrosol [14].

- Distinct Metabotypes: Qualitative differences characterized by producer versus non-producer status for specific metabolites, such as the microbially derived urolithins (from ellagitannins) and equol (from isoflavones).

Methodologies for Microbiome-Metabolite Association Studies

Microbiome-Wide Association Study (MWAS) Protocol:

- Sample Collection: Collect fecal samples for microbiome analysis and plasma/serum for metabolomics from the same individuals under standardized conditions [11].

- DNA Sequencing: Perform shotgun metagenomic sequencing or 16S rRNA gene sequencing of fecal samples to characterize microbial taxonomy.

- Metabolic Pathway Profiling: Map metagenomic sequences to reference databases (e.g., MetaCyc) to quantify abundance of 343+ microbial metabolic pathways [11].

- Metabolite Profiling: Conduct untargeted metabolomics on plasma samples using FI-MS or LC-MS/MS platforms [11].

- Association Testing: Perform pairwise associations between microbial features (species, pathways) and plasma metabolites using linear models, adjusting for confounders (age, sex, BMI, diet) [11].

- Causal Inference: Apply Mendelian randomization and mediation analyses to infer putative causal relationships between microbiome features and metabolites [11].

- Validation: Replicate findings in independent cohorts (e.g., LLD2, n=237; GoNL, n=77) [11].

Integration with Wearable Technology and Digital Monitoring

Validating Wearable-Derived Metrics for Metabolic Monitoring

Recent advances in wearable technology enable continuous, real-world monitoring of physiological parameters that reflect metabolic states. Validation studies demonstrate the accuracy and limitations of these devices for precision nutrition research:

Table 3: Validation of Wearable-Derived Nocturnal HRV and RHR Metrics

| Device | Parameter | Concordance with ECG (CCC) | Mean Absolute Percentage Error | Best Use Case |
|---|---|---|---|---|
| Oura Gen 4 | Nocturnal HRV | 0.99 | 5.96 ± 5.12% | High-resolution sleep metabolism studies |
| Oura Gen 3 | Nocturnal HRV | 0.97 | 7.15 ± 5.48% | Longitudinal metabolic recovery tracking |
| WHOOP 4.0 | Nocturnal HRV | 0.94 | 8.17 ± 10.49% | Exercise-metabolism interaction studies |
| Oura Gen 4 | Nocturnal RHR | 0.98 | 1.94 ± 2.51% | Baseline metabolic rate assessment |
| Oura Gen 3 | Nocturnal RHR | 0.97 | 1.67 ± 1.54% | Long-term metabolic trend monitoring |
| WHOOP 4.0 | Nocturnal RHR | 0.91 | 3.00 ± 2.15% | Activity-related metabolic response |

Validation studies in pediatric populations with heart conditions further demonstrate the utility of wearables for metabolic monitoring, with the Corsano CardioWatch showing 84.8% accuracy and Hexoskin smart shirt showing 87.4% accuracy in heart rate monitoring compared to Holter ECG [15]. These technologies enable continuous monitoring in free-living conditions, capturing dynamic metabolic responses that traditional intermittent measurements miss.
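For readers reproducing the agreement metrics reported in Table 3, the sketch below computes a concordance correlation coefficient and mean absolute percentage error from paired readings. It assumes that "CCC" refers to Lin's concordance correlation coefficient and that errors are expressed relative to the ECG reference; the paired HRV values are hypothetical.

```python
from statistics import mean


def concordance_ccc(x, y):
    """Lin's concordance correlation coefficient using population moments.

    Unlike Pearson's r, CCC is penalized when the two methods differ in
    mean (bias) or scale, not only when they are poorly correlated.
    """
    mx, my = mean(x), mean(y)
    n = len(x)
    sxx = sum((a - mx) ** 2 for a in x) / n
    syy = sum((b - my) ** 2 for b in y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return 2 * sxy / (sxx + syy + (mx - my) ** 2)


def mape(reference, measured):
    """Mean absolute percentage error relative to the reference method."""
    return 100 * mean(abs(m - r) / r for r, m in zip(reference, measured))


# Hypothetical nightly HRV (ms): ECG reference vs. wearable estimate.
ecg_hrv = [40.0, 55.0, 62.0, 48.0, 70.0]
ring_hrv = [42.0, 53.0, 60.0, 50.0, 73.0]
ccc = concordance_ccc(ecg_hrv, ring_hrv)
error_pct = mape(ecg_hrv, ring_hrv)
print(ccc, error_pct)
```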

Emerging Integration Platforms

The NOURISH project exemplifies the integration of wearable sensors with digital twin technology for personalized nutrition [16]. This system combines:

- Multi-analyte Wearable Patches: FDA-approved continuous glucose monitors enhanced with sensors for lactate, amino acids, and other clinically relevant molecules [16].

- Computational Digital Twins: Physics-informed models that simulate whole-body metabolism using real-time sensor data [16].

- Probabilistic AI Algorithms: Generate personalized nutritional guidance with confidence estimates for each recommendation [16].

This integrated approach enables prediction of individual metabolic responses to meals, activity, and sleep, creating a feedback loop for optimizing dietary interventions based on individual variability [16].

Experimental Framework and Research Toolkit

Comprehensive Experimental Workflow

Research Reagent Solutions and Essential Materials

Table 4: Essential Research Tools for Investigating Metabolic Variability

| Tool Category | Specific Examples | Function/Application | Technical Specifications |
|---|---|---|---|
| Metabolomics Platforms | Flow-injection time-of-flight mass spectrometry (FI-MS) | Untargeted plasma metabolome profiling (1,183 metabolites) | Covers lipids, organic acids, phenylpropanoids, benzenoids; validation vs. LC-MS/MS (Spearman r > 0.62) [11] |
| Genotyping Arrays | Genome-wide SNP microarrays | Genotyping followed by imputation to 5.3M+ variants | Identifies metabolite quantitative trait loci (mQTLs); 40 unique genetic variants associated with 48 metabolite associations [11] |
| Microbiome Profiling | Shotgun metagenomic sequencing | Taxonomic and functional profiling (156 species, 343 MetaCyc pathways) | Reveals microbial contributions to metabolite variance; 1,373 associations with bacterial species [11] |
| Wearable Validation | Oura Ring (Gen 3/4), WHOOP 4.0, Corsano CardioWatch | Continuous physiological monitoring (nocturnal HRV, RHR) | PPG-based sensors (10 Hz sampling); validated against ECG (CCC = 0.97-0.99 for Oura) [17] [18] [15] |
| Dietary Assessment | Food Frequency Questionnaires (FFQ) | Quantification of 78 dietary habits | Correlates with metabolite-based diet quality scores; 2,854 diet-metabolite associations [11] |
| Statistical Packages | Lasso regression, Elastic Net, Mendelian randomization | Variance partitioning, causal inference | Quantifies variance explained (adjusted r²); identifies dominant factors (610 diet, 85 microbiome, 38 genetics dominant metabolites) [11] |

The systematic investigation of interindividual variability in metabolic phenotypes reveals a complex interplay between genetic predisposition, gut microbiome composition and function, and dietary exposures. Quantitative evidence demonstrates that while genetics provides the blueprint for metabolic capacity, the gut microbiome explains the largest proportion of variance in circulating metabolites, serving as a crucial modulator between diet and host physiology. The integration of wearable technology and digital monitoring platforms now enables continuous, real-time assessment of metabolic responses in free-living conditions, providing unprecedented resolution for understanding dynamic individual variation. As precision nutrition advances, the research frameworks and methodologies detailed in this technical guide provide scientists and drug development professionals with robust tools for investigating these complex relationships, ultimately enabling more targeted, effective, and personalized nutritional interventions that account for the fundamental biological diversity within human populations.

Wearable sensor technology is revolutionizing precision nutrition by enabling the continuous, objective monitoring of dietary intake and its subsequent physiological effects. This whitepaper details the technological foundations, methodological frameworks, and emerging applications of wearables for correlating eating behaviors with real-time metabolic responses. We present validated experimental protocols, analyze quantitative performance data, and introduce advanced computational models like digital twins that are pushing the frontier of personalized dietary guidance. The integration of these technologies promises to transform research and clinical practice in metabolic disease prevention and management.

Precision nutrition represents a fundamental shift from generic dietary recommendations toward interventions tailored to an individual's unique physiology, metabolism, and lifestyle [7]. The challenge has historically been the accurate, objective capture of two dynamic variables: dietary intake and the body's physiological response. Traditional methods like food frequency questionnaires and 24-hour recalls are plagued by inaccuracies due to human memory and reporting bias [19]. Wearable technology is emerging as a solution, bridging this gap by providing continuous, passive monitoring in free-living conditions.

These devices move beyond simple activity tracking to capture a rich dataset of behavioral and physiological parameters. By simultaneously monitoring hand-to-mouth movements and biomarkers like interstitial glucose, researchers can now establish direct, temporal relationships between specific eating events and their metabolic consequences [20] [21]. This capability is critical for understanding interindividual variability in response to diet and for developing truly personalized nutritional strategies to combat the global burden of metabolic diseases such as obesity, diabetes, and cardiovascular conditions [22].

Wearable Sensor Technologies for Dietary and Physiological Monitoring

A diverse ecosystem of wearable sensors is being deployed to capture different aspects of the nutrition-physiology loop. The table below summarizes the key technologies, their measured parameters, and their primary applications in nutrition research.

Table 1: Wearable Sensor Technologies for Precision Nutrition Research

| Technology Type | Measured Parameters | Research Application | Key Considerations |
|---|---|---|---|
| Continuous Glucose Monitors (CGM) | Interstitial glucose levels [23] | Monitoring postprandial glycemic responses [24]; linking food intake to glucose dynamics [21] | High clinical validation; strong correlation with blood glucose [23]. |
| Multi-Sensor Bands | Heart rate (HR), skin temperature (Tsk), oxygen saturation (SpO2), hand-to-mouth movements [20] | Identifying eating episodes and correlating with autonomic nervous system activity during digestion [20] | Fuses behavioral and physiological data; can validate against clinical gold standards [20]. |
| Image-Based Sensors (eButton) | Automated food imagery (every 3-6 seconds) [21] | Objective identification of food type, volume, and portion size [21] | Reduces manual logging burden; challenges with camera positioning and privacy [21]. |
| Bioimpedance Sensors | Extracellular/intracellular fluid shifts [19] | Estimating caloric intake based on fluid changes from nutrient absorption [19] | Method is indirect; accuracy can be variable, with one study showing a mean bias of -105 kcal/day [19]. |
| Sweat-Based Biosensors | Lactate, electrolytes, other biomarkers in sweat [23] | Non-invasive metabolic monitoring; performance nutrition | Challenged by correlation with blood levels and variable sweat production [23]. |

Experimental Protocols for Validation and Data Collection

Robust experimental design is essential for validating wearable technologies and generating high-quality datasets. The following protocols, drawn from recent research, provide a framework for rigorous investigation.

Controlled Clinical Facility Protocol

This protocol is designed for the precise validation of wearable sensor data against clinical gold standards in a controlled environment [20].

- Objective: To investigate physiological responses to energy intake and validate wearable sensor readings for dietary monitoring.

- Population: Recruit healthy volunteers (e.g., n=10), with informed consent. Exclusion criteria typically include chronic metabolic disease, medication affecting digestion/metabolism, and restrictive diets [20] [19].

- Study Visits: Participants attend two visits in a clinical research facility, consuming pre-defined high- and low-calorie meals in a randomized order [20].

- Data Collection:

  - Wearable Sensors: Participants wear a multi-sensor band to track hand-to-mouth movements, HR, Tsk, and SpO2 throughout the eating episode.

  - Gold-Standard Validation: Sensor readings are validated against a traditional bedside patient monitor and serial blood draws to measure glucose, insulin, and other hormones [20].

- Analysis: Correlate eating episode occurrence, duration, and calorie content with hand movement patterns, physiological signals, and blood biochemical responses [20].

Free-Living Validation Protocol

This protocol assesses the feasibility and accuracy of wearables for dietary management in a real-world setting, often in specific patient populations [21].

- Objective: To explore the experience, barriers, and facilitators of using wearables for dietary self-management in a free-living context.

- Population: Target a specific cohort (e.g., Chinese Americans with Type 2 Diabetes, n=11) recruited via clinical channels [21].

- Study Duration: A prospective cohort study over approximately two weeks [21].

- Data Collection:

  - Devices Deployed: Participants wear a CGM for 14 days and an image-based sensor (eButton) during meals for 10 days.

  - Supplementary Data: Participants keep a paper diary to track food intake, medication, and physical activity.

  - Qualitative Feedback: Post-study individual interviews are conducted and thematically analyzed to understand user experience [21].

- Analysis: Feasibility is assessed through device compliance and qualitative feedback. Data from CGM, eButton, and diaries are reviewed together to help participants visualize the food-glucose relationship [21].
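Two quantities from this protocol lend themselves to a small worked example: device compliance and the glucose response following a diary-logged meal. The sketch below is illustrative only. The 15-minute sampling interval, the two-hour post-meal window, the function names, and the data are assumptions, not details taken from the cited study.

```python
from datetime import datetime, timedelta


def wear_compliance(readings, start, end, interval_min=15):
    """Fraction of expected CGM samples actually recorded in [start, end)."""
    expected = int((end - start) / timedelta(minutes=interval_min))
    observed = sum(1 for t, _ in readings if start <= t < end)
    return observed / expected if expected else 0.0


def postmeal_peak(readings, meal_time, window_min=120):
    """Highest glucose value within window_min after a logged meal, or None."""
    window_end = meal_time + timedelta(minutes=window_min)
    values = [g for t, g in readings if meal_time <= t <= window_end]
    return max(values) if values else None


# Hypothetical CGM trace: one reading every 15 minutes for 3 hours
start = datetime(2024, 1, 1, 8, 0)
values = [100, 102, 110, 135, 150, 142, 130, 120, 112, 108, 104, 101]
readings = [(start + timedelta(minutes=15 * i), v) for i, v in enumerate(values)]
del readings[9]  # simulate one dropped sample

end = start + timedelta(hours=3)
meal_time = datetime(2024, 1, 1, 8, 30)  # taken from the paper diary

compliance = wear_compliance(readings, start, end)
peak = postmeal_peak(readings, meal_time)
```

Pairing each diary or eButton meal record with its post-meal glucose window in this way is one simple route to the food-glucose visualization the protocol describes.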

Figure: Workflow for integrating data from the controlled-facility and free-living protocols.

Quantitative Data and Performance Analysis

The accuracy of wearable sensors in quantifying nutritional intake is paramount. Validation studies provide critical performance metrics, as summarized below.

Table 2: Performance Metrics of Wearable Sensors in Dietary Tracking

| Sensor / Technology | Validation Method | Key Performance Metrics | Reported Challenges |

|---|---|---|---|

| GoBe2 Wristband (Bioimpedance) | Reference method with calibrated study meals [19] | Mean bias: -105 kcal/day (SD 660); 95% limits of agreement: -1400 to 1189 kcal/day [19] | Transient signal loss; tendency to overestimate lower intake and underestimate higher intake [19]. |

| Continuous Glucose Monitors (CGM) | Clinical blood glucose measurements [23] | High accuracy for interstitial glucose; dominant technology segment (45.1% market share) [23] | Well-validated for glucose, but provides a single metabolic parameter. |

| eButton (Image-Based) | Participant feedback and researcher analysis [21] | Feasible for dietary management; enables visualization of food-glucose relationship [21] | Privacy concerns, difficulty positioning camera, lack of integrated photo-glucose trend analysis [21]. |
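The bias and limits-of-agreement figures reported for the bioimpedance wristband follow the usual Bland-Altman construction, in which the 95% limits are the mean difference plus or minus 1.96 standard deviations of the differences. The sketch below shows that arithmetic. The function names and the three-day example data are hypothetical; only the summary values -105 and 660 kcal/day come from the table.

```python
from statistics import mean, stdev


def limits_of_agreement(bias, sd, z=1.96):
    """95% limits of agreement from a mean bias and SD of differences."""
    return bias - z * sd, bias + z * sd


def bland_altman(device, reference):
    """Mean bias, SD of differences, and limits of agreement for paired data."""
    diffs = [d - r for d, r in zip(device, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, sd, limits_of_agreement(bias, sd)


# Reproduce the limits from the reported summary statistics (kcal/day)
lower, upper = limits_of_agreement(-105, 660)

# Hypothetical paired daily intakes: wearable estimate vs. reference method
device_kcal = [2000, 1800, 2500]
reference_kcal = [2100, 2000, 2400]
bias, sd, (lo_limit, hi_limit) = bland_altman(device_kcal, reference_kcal)
```

Applied to the reported summary statistics, this yields limits of roughly -1399 to 1189 kcal/day, in line with the table. An interval of that width spans more than a typical day's intake, which is why the method is described as variable despite a modest mean bias.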

Advanced Frontiers: Digital Twins and AI-Driven Modeling

The next frontier in precision nutrition involves moving from retrospective monitoring to predictive, personalized simulation using artificial intelligence (AI) and digital twins.

The NOURISH Project: A Digital Twin Framework

The NOURISH project exemplifies this advanced approach, developing a real-time digital twin system for personalized nutrition [16]. The framework integrates three core components:

- Multi-Biomarker Wearable Sensors: A comfortable patch that tracks glucose, lactate, amino acids, and other clinically relevant molecules in real-time, building on FDA-approved CGM hardware [16].

- Computational Digital Twins: Models that simulate an individual's whole-body metabolism. These models are updated in real-time with sensor data to predict metabolic responses to meals, activity, and sleep [16].

- AI-Guided Recommendations: Probabilistic AI algorithms translate model predictions into personalized nutritional guidance, complete with a measure of confidence for each recommendation [16].

This integrated system allows researchers and clinicians to simulate the effects of dietary choices on a digital twin before implementation in real life, potentially de-risking interventions and accelerating discovery [16].
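The idea of a model that is updated by sensor data and returns predictions with a confidence measure can be made concrete with a deliberately small toy. The sketch below is not the NOURISH model: it assumes a single parameter (glucose rise per gram of carbohydrate), Gaussian noise, and a conjugate Bayesian update. The class name, priors, and numbers are all hypothetical.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class ToyGlucoseTwin:
    """One-parameter 'twin': rise (mg/dL) = beta * carbs (g) + Gaussian noise."""
    mean: float = 1.0         # prior mean of beta (assumed)
    var: float = 1.0          # prior variance of beta (assumed)
    noise_var: float = 100.0  # sensor/physiological noise variance (assumed)

    def update(self, carbs_g, observed_rise):
        """Conjugate normal update of beta from one observed meal response."""
        prior_precision = 1.0 / self.var
        post_precision = prior_precision + carbs_g ** 2 / self.noise_var
        self.mean = (
            prior_precision * self.mean + carbs_g * observed_rise / self.noise_var
        ) / post_precision
        self.var = 1.0 / post_precision

    def predict(self, carbs_g, z=1.96):
        """Predicted rise and an approximate 95% predictive interval."""
        mu = self.mean * carbs_g
        sd = sqrt(carbs_g ** 2 * self.var + self.noise_var)
        return mu, (mu - z * sd, mu + z * sd)


twin = ToyGlucoseTwin()
_, before = twin.predict(50)                 # interval before any sensor data
twin.update(carbs_g=50, observed_rise=40)    # one CGM-observed meal response
prediction, after = twin.predict(50)         # interval after the update
```

After a single observation the predictive interval narrows considerably, which is the behavior a confidence-annotated recommendation relies on. A whole-body metabolic model would involve many coupled states and parameters rather than one slope.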

Signaling Pathways and Computational Workflow

The process of creating and utilizing a digital twin for nutrition involves a sophisticated, multi-step workflow that integrates physical data with computational intelligence.

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers designing studies in this domain, the following table catalogues key materials and their functions as derived from the cited experimental protocols.

Table 3: Essential Research Reagents and Materials for Wearable Nutrition Studies

| Item | Function / Application | Example in Use |

|---|---|---|

| Continuous Glucose Monitor (CGM) | Tracks interstitial glucose levels to monitor postprandial glycemic responses and link food intake to metabolic outcomes [21] [23]. | Freestyle Libre Pro used to capture glucose patterns in free-living studies with diabetic populations [21]. |

| Multi-Sensor Wearable Band | Captures behavioral (hand-to-mouth movement) and physiological (HR, Tsk, SpO2) data to identify eating episodes and correlate with autonomic responses [20]. | Customized band used in controlled studies to validate sensor readings against bedside monitors [20]. |

| Image-Based Dietary Sensor (eButton) | Automatically records food images to objectively identify food type, volume, and portion size without relying on memory [21]. | eButton worn on the chest to record meal data over a 10-day period in a free-living cohort [21]. |

| Clinical-Grade Bedside Monitor | Serves as a gold-standard reference for validating the accuracy of wearable-derived physiological parameters (HR, SpO2, blood pressure) [20]. | Used in a clinical facility setting to validate data from a multi-sensor wearable band [20]. |

| Intravenous Cannula & Blood Sampling Kits | Enables serial blood collection for gold-standard measurement of blood glucose, insulin, and hormone levels in controlled clinical studies [20]. | Blood samples collected via IV cannula to measure biochemical responses to pre-defined meals [20]. |

Wearable technology is fundamentally transforming the landscape of nutritional science by providing an unprecedented window into the dynamic relationship between dietary intake and physiological response. The integration of diverse data streams—from CGM and movement sensors to image-based food capture—enables the development of robust, validated experimental protocols for both controlled and free-living studies. While challenges regarding accuracy, signal stability, and user compliance remain, the trajectory of innovation is clear. The emergence of AI-driven digital twin technology promises a future where personalized nutrition moves from reactive monitoring to predictive simulation, offering tailored dietary guidance that can effectively improve metabolic health and prevent disease on an individual level.

Market Evolution and Growth Trajectory of the Precision Nutrition Sensor Ecosystem

The convergence of biosensing, artificial intelligence, and digital health platforms is catalyzing a transformative shift in nutritional science and practice. The precision nutrition sensor ecosystem represents an advanced technological framework that moves beyond generic dietary advice to deliver highly personalized nutritional interventions based on individual physiological responses, genetic makeup, and lifestyle factors [25] [26]. This ecosystem integrates wearable sensors, multi-omics technologies, and AI-driven analytics to enable real-time monitoring of metabolic parameters and nutritional status [23] [27].

For researchers, scientists, and drug development professionals, this evolving landscape offers unprecedented opportunities to integrate continuous physiological data into clinical trials, refine therapeutic nutritional interventions, and develop novel digital biomarkers. The global precision nutrition market, valued at approximately $6.12 billion in 2024, is projected to grow at a compound annual growth rate (CAGR) of 16.3% through 2034, potentially reaching $27.70 billion [25]. Within this broader market, wearable sensors for precision nutrition represent a critical growth segment, with the market expected to expand from $2.8 billion in 2024 to $9.4 billion by 2034 at a CAGR of 12.5% [23].

Market Size and Growth Projections

Table 1: Global Precision Nutrition Market Size and Growth Projections

| Metric | 2024 Value | 2025 Value | 2034 Projection | CAGR (2025-2034) |

|---|---|---|---|---|

| Overall Precision Nutrition Market [25] | $6.12 billion | $7.12 billion | $27.70 billion | 16.3% |

| Precision Nutrition Wearable Sensors Market [23] | $2.8 billion | $3.3 billion | $9.4 billion | 12.5% |

| North America Market Share [25] | 50% | - | - | - |

| Asia Pacific Growth Rate [25] | - | - | - | Fastest CAGR |

The gap between the projected growth of the overall precision nutrition market (16.3% CAGR) and that of the specialized wearable sensor segment (12.5% CAGR) may reflect both the relative maturity of sensor technologies and the expanding integration of multiple data streams beyond wearable inputs alone [25] [23]. North America currently dominates the market with approximately 50% share in 2024, driven by technological advancements, significant research funding, and a shift toward preventive healthcare [25]. The "All of Us" Research Program by the National Institutes of Health exemplifies this support, funding initiatives to develop algorithms predicting individual responses to dietary patterns [25].
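The projections in Table 1 can be checked with the standard compound-growth formula, future value = present value × (1 + CAGR)^years. The short sketch below does that arithmetic. The function names are hypothetical, and because the source figures are rounded, the results match the table only approximately.

```python
def project(value, cagr, years):
    """Compound a starting value forward at a constant annual growth rate."""
    return value * (1 + cagr) ** years


def implied_cagr(start, end, years):
    """Constant annual growth rate that takes start to end over the period."""
    return (end / start) ** (1 / years) - 1


# Overall market: 2025 value compounded over 9 years to 2034 (USD billions)
overall_2034 = project(7.12, 0.163, 9)

# Wearable sensor segment: 2025 value compounded over 9 years to 2034
sensors_2034 = project(3.3, 0.125, 9)

# Rate implied by the reported 2024 and 2034 values for the overall market
overall_rate = implied_cagr(6.12, 27.70, 10)
```

The overall market figures are internally consistent at about 16.3%. For the sensor segment, compounding the 2025 value at 12.5% gives roughly $9.5 billion, slightly above the reported $9.4 billion, which suggests rounding in the source values.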

Market Segmentation Analysis

Table 2: Precision Nutrition Wearable Sensors Market Segmentation by Technology (2024)

| Technology Segment | Market Share or Status (2024) | Key Applications | Leading Companies |

|---|---|---|---|

| Continuous Glucose Monitors (CGM) [23] | 45.1% | Diabetes management, metabolic monitoring | Abbott Laboratories, Dexcom Inc. |

| Sweat-based Biosensors [23] [27] | Emerging segment | Nutrient monitoring, metabolic condition tracking | Biolinq Inc. |

| Bioimpedance Sensors [23] | Growing at 12.5% CAGR | Body composition analysis, metabolic monitoring | - |

| Optical Sensors [23] | Developing segment | Vital signs monitoring, blood oxygenation | - |

The continuous glucose monitoring segment dominates the wearable sensor market, accounting for 45.1% market share in 2024 [23]. This dominance reflects decades of technological development, clinical validation, and established regulatory pathways for diabetes management and nutritional monitoring. Beyond market leaders Abbott Laboratories and Dexcom, specialized players like Biolinq Inc. are innovating in minimally invasive biosensors, while research continues on non-invasive alternatives including sweat-based and optical sensors [23].

Table 3: Market Segmentation by Application and End-user (2024)

| Segment Category | Dominant Segment (Market Share) | Fastest-Growing Segment (CAGR) |

|---|---|---|

| Application [23] | Metabolic Health Management (50.2%) | Sports Nutrition & Performance (12.9%) |

| End-User [25] [23] | Individuals/Direct-to-Consumer (~45%) | Athletes & Sports Nutrition |

| Distribution Channel [25] | Online (~50%) | Offline |

Metabolic health management constitutes the largest application segment at 50.2%, reflecting the significant clinical need for managing conditions like diabetes, obesity, and metabolic syndrome [23]. The direct-to-consumer segment leads end-user adoption, driven by consumer demand for personalized health solutions and the expansion of digital health platforms [25]. The online distribution channel dominates with approximately 50% market share, benefiting from cost efficiency and expanded reach [25].

Technological Foundations and Experimental Approaches

Key Sensor Technologies and Research Reagents

Table 4: Research Reagent Solutions for Precision Nutrition Sensing

| Research Reagent | Function | Experimental Application |

|---|---|---|

| Molecularly Imprinted Polymers (MIPs) [27] | Serve as "artificial antibodies" for specific nutrient detection | Selective binding and sensing of target metabolites (e.g., amino acids, vitamins) in wearable sensors |

| Laser-Engraved Graphene (LEG) [27] | Provides flexible, mass-producible electrode material | Forms sensing platform for metabolites, temperature, and electrolytes in wearable patches |

| Carbachol-containing Hydrogel [27] | Muscarinic agent for localized sweat induction | Enables consistent sweat sampling for sedentary individuals and during rest |

| Redox-Active Nanoreporters [27] | Facilitate electrochemical signal transduction | Enable continuous, real-time monitoring of nutrient concentrations |

| Sheep Flock Optimization Algorithm (SFOA) [28] | Optimizes hyperparameters in deep learning models | Enhances performance of medication adherence monitoring systems |

The research reagents and materials detailed in Table 4 represent critical components advancing precision nutrition sensor capabilities. Molecularly Imprinted Polymers (MIPs) have emerged as particularly valuable alternatives to biological recognition elements due to their superior chemical and physical stability, high selectivity, and versatility in imprinting diverse targets including small molecules, peptides, and proteins [27]. Laser-Engraved Graphene (LEG) enables the development of flexible, durable sensor platforms suitable for wearable form factors, while specialized hydrogels facilitate consistent biofluid sampling across various activity states [27].

Experimental Protocols and Methodologies

Protocol: Development of a Wearable Nutrient Sensing Platform

Based on the NutriTrek platform described by Wang et al., the following protocol outlines the development process for a wearable electrochemical biosensor for metabolite and nutrient monitoring [27]:

Phase 1: Sensor Fabrication

- Fabricate laser-engraved graphene (LEG) electrodes using CO₂ laser engraving on polyimide sheets

- Synthesize molecularly imprinted polymers (MIPs) via electro-polymerization of monomer solutions containing target analyte templates (e.g., specific amino acids or vitamins)

- Functionalize LEG electrodes with MIP layers optimized for specific nutrient targets through systematic variation of polymerization parameters

- Integrate redox-active nanoreporters onto the LEG-MIP electrode structure to enable electrochemical signaling

Phase 2: System Integration

- Design flexible microfluidic module with multiple inlets for efficient sweat sampling and distribution

- Incorporate iontophoresis module with LEG electrodes and carbachol-containing hydrogel for on-demand sweat induction

- Assemble sensor array with multiplexed LEG-MIP sensors for simultaneous monitoring of multiple nutrients

- Integrate temperature and electrolyte sensors for real-time calibration of nutrient measurements

- Implement wireless communication module for data transmission to external devices

Phase 3: Validation and Testing

- Conduct benchtop validation using standard solutions with known analyte concentrations

- Perform in vivo studies with human participants across varied activities (exercise, rest)

- Correlate sensor readings with gold-standard laboratory measurements (e.g., blood tests)

- Assess sensor stability, selectivity, and reproducibility over extended monitoring periods

The resulting platform has been applied to real-time monitoring of dietary nutrient intake and to the assessment of central fatigue, metabolic syndrome risk, and COVID-19 severity [27].
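The benchtop validation step in Phase 3 typically produces a calibration curve relating sensor signal to known analyte concentration. The sketch below fits such a curve by ordinary least squares and inverts it to estimate an unknown concentration. A linear response is an assumption made for illustration (real sensors may need a logarithmic or other nonlinear fit), and the function names, units, and data are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares line y = slope * x + intercept, plus R^2."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((b - (slope * a + intercept)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - mean_y) ** 2 for b in y)
    return slope, intercept, 1 - ss_res / ss_tot


def concentration_from_signal(signal, slope, intercept):
    """Invert the calibration line to estimate concentration from a reading."""
    return (signal - intercept) / slope


# Hypothetical standard solutions and sensor responses
standards_um = [0, 50, 100, 200]     # known concentrations, micromolar
signal_ua = [0.1, 1.1, 2.1, 4.1]     # measured current, microamps

slope, intercept, r_squared = fit_line(standards_um, signal_ua)
estimate_um = concentration_from_signal(3.1, slope, intercept)
```

In a wearable setting, the temperature and electrolyte readings mentioned in Phase 2 would feed a correction applied before this inversion.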

Protocol: Deep Learning Model for Medication Adherence Monitoring

Based on research by Alatawi et al., the following protocol details the implementation of a smart wearable sensor-based system for monitoring medication adherence behaviors [28]:

Phase 1: Data Acquisition

- Equip participants with smart wearable devices containing accelerometer and gyroscope sensors

- Record hand gesture data during medication-taking behaviors and normal activities

- Transmit sensor data to mobile application via Bluetooth connectivity

- Store timestamped data in .csv format for further processing

Phase 2: Data Preprocessing

- Normalize sensor data using Z-score normalization to standardize feature scales

- Segment data streams into discrete time windows corresponding to specific gestures

- Augment dataset with synthetic samples to address class imbalance if necessary

- Partition data into training, validation, and test sets using five-fold cross-validation
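The preprocessing steps above can be sketched with the standard library alone. The example is a minimal illustration under stated assumptions, not the cited authors' code: the window size, step, fold assignment, and function names are hypothetical.

```python
from statistics import mean, pstdev


def zscore(values):
    """Z-score normalization: subtract the mean, divide by the population SD."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # constant signal carries no scale
    return [(v - mu) / sigma for v in values]


def sliding_windows(samples, size, step):
    """Split a sample stream into fixed-length, possibly overlapping windows."""
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, step)]


def kfold_indices(n, k=5):
    """Yield (train, test) index lists for k-fold cross-validation."""
    folds = [list(range(i, n, k)) for i in range(k)]
    for i in range(k):
        train = [j for f in folds[:i] + folds[i + 1:] for j in f]
        yield train, folds[i]


# Hypothetical single-axis accelerometer stream
accel_x = [0.1, 0.3, 0.2, 0.9, 1.4, 1.1, 0.4, 0.2, 0.1, 0.0]
normalized = zscore(accel_x)
windows = sliding_windows(normalized, size=4, step=2)
splits = list(kfold_indices(len(accel_x), k=5))
```

Two cautions apply in practice: normalization statistics are best computed on the training folds only, and overlapping windows from the same recording should be kept in the same fold, otherwise information leaks from test data into training.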

Phase 3: Model Development and Training

- Implement Attention-based Bidirectional Long Short-Term Memory (Bi-LSTM) architecture for temporal pattern recognition

- Initialize model hyperparameters using Sheep Flock Optimization Algorithm (SFOA)

- Train model to classify hand gestures associated with medication adherence

- Optimize hyperparameters using SFOA to maximize accuracy and minimize loss

Phase 4: Model Evaluation

- Assess model performance using accuracy, precision, recall, and F1-score metrics

- Validate model robustness with unseen test data

- Compare performance against conventional machine learning models

- Deploy optimized model for real-time medication adherence monitoring

This approach has demonstrated high performance, achieving 98.90% accuracy in predicting medication adherence behaviors [28].
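The evaluation metrics named in Phase 4 are straightforward to compute from predicted and true labels. The sketch below does so for a binary gesture classifier. The labels and the function name are hypothetical, and the example is intended only to show why accuracy is reported alongside precision, recall, and F1.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if precision + recall
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical labels: 1 = medication-taking gesture, 0 = other activity
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0, 0, 1]
metrics = binary_metrics(y_true, y_pred)
```

In this toy case accuracy is 0.8 while precision, recall, and F1 are each about 0.67. When medication-taking gestures are rare relative to other activity, accuracy alone can overstate how well those gestures are detected.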

Visualization of Key Workflows and Architectures

Wearable Biosensor Platform Architecture

Figure: Wearable Biosensor Data Flow. This architecture illustrates the integrated workflow of advanced wearable nutrient sensing platforms, showing how biofluid sampling, sensing, data processing, and feedback generation are connected in a continuous monitoring system.

Deep Learning Model for Adherence Monitoring

Figure: Medication Adherence Detection Workflow. This workflow details the deep learning approach for monitoring medication adherence, highlighting how sensor data progresses through processing stages, with the Sheep Flock Optimization Algorithm enhancing model performance through hyperparameter tuning.

Future Research Directions and Challenges

Despite rapid technological advancement, several challenges remain in the widespread adoption and validation of precision nutrition sensors. Key barriers include regulatory complexity, particularly FDA compliance requirements for novel sensor technologies; high device costs coupled with limited insurance coverage; and the need for robust clinical validation across diverse populations [23]. Technical challenges such as sensor stability, correlation between measured biomarkers and blood levels (particularly for sweat-based sensors), and individual physiological variability require continued research attention [23] [27].

Future research directions should focus on several critical areas. Multi-parameter sensors capable of simultaneously monitoring diverse nutritional biomarkers represent a significant opportunity, as does the development of increasingly non-invasive monitoring technologies [23] [27]. The integration of artificial intelligence and machine learning will continue to enhance data analytics, enabling more accurate predictions and personalized recommendations [23] [7]. Furthermore, expanding clinical validation across diverse populations and disease states will be essential for establishing evidence-based protocols and achieving widespread adoption in both clinical and consumer settings [23] [26].

The convergence of multiple technological trends—including the maturation of multi-omics integration, advancements in materials science for wearable sensors, and sophisticated AI-driven analytics—suggests that precision nutrition will increasingly become a foundational component of preventive healthcare, chronic disease management, and performance optimization [25] [26]. For researchers and drug development professionals, these advancements offer compelling opportunities to integrate continuous physiological monitoring into clinical trials, develop more personalized therapeutic approaches, and establish novel digital biomarkers for nutritional status and intervention efficacy.

Addressing Health Equity and Cultural Diversity in Precision Nutrition Research

Precision nutrition represents a transformative approach to dietary guidance that uses individual-level data to predict personal responses to specific foods or dietary patterns and tailors recommendations accordingly [2]. This approach stands in stark contrast to traditional one-size-fits-all dietary recommendations that assume individual nutritional requirements mimic the average response observed in study populations [2]. While precision nutrition has shown promise in improving health outcomes, significant concerns exist regarding health equity and cultural diversity within this emerging field. The growth of the precision nutrition market has been driven by increasing consumer interest in individualized products and services coupled with advances in technology, analytics, and omic sciences, yet important limitations persist regarding equitable access and cultural relevance [2]. Malnutrition continues to be a major threat to health, particularly maternal and child health in low-resource settings, resulting in impairments in cognitive function, growth, and development, and metabolic diseases later in life [29]. This technical guide examines the current challenges, methodological considerations, and potential frameworks for addressing health equity and cultural diversity in precision nutrition research, with particular emphasis on integrating these principles into studies involving wearable technology and advanced sensor systems.

Current Landscape of Health Equity Challenges in Precision Nutrition

Research in precision nutrition primarily focuses on comprehending individualized variations in response to dietary intake, with little attention being given to other crucial aspects of precision nutrition, including equitable access and cultural applicability [30]. The field faces several significant challenges that limit its applicability across diverse populations and resource settings.

Table 1: Key Health Equity Challenges in Precision Nutrition Research

| Challenge Category | Specific Limitations | Impact on Equity |

|---|---|---|

| Geographic and Economic Disparities | Most research from high-income settings [29] | Limited generalizability to low- and middle-income countries (LMICs) |

| Technological Access | High cost of diagnostic tests and wearable devices [2] | Exclusion of low-income populations from benefits |

| Digital Infrastructure | Technological infrastructure gaps in resource-limited settings [29] | Inability to implement AI and mobile health solutions |

| Data Representation | Underrepresentation of diverse populations in research cohorts [29] | Algorithms and models that don't reflect global diversity |

| Cultural Relevance | Lack of attention to traditional foods and eating patterns [2] | Recommendations with limited practical applicability |

The precision nutrition market is largely unregulated and dominated by small companies, with most commercial products and programs collecting data and refining algorithms as they are being used [2]. This progressive generation of data and knowledge could be at the expense of the consumer if the interpretations or recommendations being generated are incorrect or ineffective, particularly for populations not represented in the initial training datasets. This is especially concerning given that about a quarter of tweet authors presenting precision nutrition information position themselves as science or medicine experts, and nearly 15% of precision nutrition tweets contain untrue information, with nutrigenomics concepts being particularly prone to misinformation [31].

Methodological Framework for Equitable Precision Nutrition Research

Comprehensive Data Collection Framework

Achieving health equity in precision nutrition requires a multidimensional approach to data collection that captures the complex interplay of biological, environmental, social, and cultural factors that influence dietary responses and health outcomes. The precision nutrition approach should be systematic, collecting and analyzing data comprehensively while remaining grounded in scientific evidence and robust methodology [2].

Table 2: Essential Data Dimensions for Equitable Precision Nutrition Research

| Data Dimension | Specific Variables | Collection Methods |

|---|---|---|

| Biological Factors | Genetics, metabolic profiling, microbiome composition, proteomics | Biospecimen collection, wearable sensors, omic technologies [29] [22] |

| Anthropometric Measures | Body composition, growth patterns, metabolic parameters | 3D scanning, mobile phone-based technologies, machine learning approaches [29] |

| Socioeconomic Factors | Income, education, food access, transportation | Surveys, geographic information systems, community-based participatory research |

| Cultural Considerations | Traditional foods, eating patterns, food preparation methods, cultural beliefs | Ethnographic methods, focus groups, community engagement |

| Environmental Context | Food environment, built environment, social support networks | Environmental audits, GPS tracking, social network analysis |

Community-Engaged Research Protocols

Protocol 1: Community-Based Participatory Research (CBPR) for Precision Nutrition

Objective: To develop culturally appropriate precision nutrition interventions through equitable partnership with community stakeholders.

Methodology:

- Community Advisory Board Formation: Establish a diverse advisory board representing various demographic, socioeconomic, and cultural groups within the target population.

- Co-Learning Process: Researchers and community members engage in mutual education about precision nutrition science and community context.

- Joint Development of Research Questions: Community priorities inform the specific research questions and outcomes measured.

- Culturally Adapted Measurement: Develop and validate assessment tools that are culturally appropriate and linguistically accessible.

- Shared Interpretation of Findings: Community partners contribute to data interpretation and contextualization of results.

- Dissemination Planning: Co-create dissemination strategies that ensure findings reach community audiences in accessible formats.

Implementation Considerations: Budget adequate time and resources for relationship-building; acknowledge power dynamics; compensate community partners fairly for their expertise.

Technological Innovations for Equitable Precision Nutrition

Accessible Wearable and Mobile Sensors

Recent advances in wearable and mobile chemical sensors show promise for addressing equity challenges in precision nutrition monitoring. While wearable and mobile chemical sensors have experienced tremendous growth over the past decade, their potential for tracking and guiding nutrition has emerged only over the past three years [32]. Non-invasive wearable and mobile electrochemical sensors, capable of monitoring temporal chemical variations upon the intake of food and supplements, are excellent candidates to bridge the gap between digital and biochemical analyses for a successful personalized nutrition approach [32].

Protocol 2: Developing Low-Cost Sensor Solutions for Resource-Limited Settings

Objective: To create affordable, accessible monitoring technologies for diverse economic settings.

Methodology:

- Needs Assessment: Conduct focus groups and surveys in target communities to identify specific monitoring needs and technological accessibility.

- Adapt Existing Technologies: Modify current sensor technologies to reduce cost while maintaining essential functionality:

  - Utilize smartphone-based detection systems

  - Develop reusable sensor components

  - Implement simplified data processing algorithms

- Field Validation: Test sensor performance in real-world conditions across diverse settings.

- Usability Testing: Evaluate interface design with users of varying digital literacy levels.

- Implementation Strategy: Develop sustainable distribution and maintenance models.

Artificial Intelligence and Machine Learning Approaches

Machine learning approaches are well suited to estimating anthropometry and body composition from images captured by 3D scanners or camera-enabled mobile devices, because image analysis can be automated, reducing the personnel time required [29]. Coupling rapidly emerging wearable chemical sensing devices, which generate large volumes of dynamic analytical data, with efficient data-fusion and data-mining methods that identify patterns and make predictions is expected to revolutionize dietary decision-making toward effective precision nutrition [32].

Cultural Considerations in Precision Nutrition Implementation

Culturally Adapted Dietary Assessment Methods

Traditional dietary assessment methods, including food frequency questionnaires, diet records, and recalls, have limited resolution to provide a precise intake profile and can be burdensome to complete, particularly when they fail to account for cultural food practices [2]. The development of mobile apps offering image recognition to quantify meals and wearable sensors to detect and capture nutrient intake, along with barcode scanners to facilitate the recognition of packaged foods, may result in more precise, real-time, and user-friendly dietary assessments, but these must be adapted to diverse cultural contexts [2].

Protocol 3: Cultural Adaptation of Precision Nutrition Tools

Objective: To ensure precision nutrition assessment and intervention tools are culturally appropriate and relevant.

Methodology:

- Cultural Food Mapping: Document traditional foods, preparation methods, and eating patterns within specific cultural groups.

- Tool Translation and Adaptation: Conduct more than literal translation—adapt concepts, examples, and portion sizes to be culturally meaningful.

- Cultural Concept Validation: Ensure that constructs like "healthy eating" are interpreted similarly across cultural groups.

- Image Database Development: Create food image libraries that represent diverse cultural cuisines for dietary assessment apps.

- Community Review: Engage cultural community members in reviewing and refining all assessment tools and intervention materials.

Culturally Informed Intervention Strategies

Considering additional characteristics, including sensory responses, personal circumstances, values, attitudes, behaviors, and social determinants of health (SDOH), will facilitate the development of precision nutrition solutions that are adequately tailored to, accepted by, and adopted by the individual, resulting in improved lifestyles and lasting health [2]. Precision nutrition has the potential to complement program monitoring and efficacy evaluation and, ultimately, to inform the design of interventions to improve maternal and child health, particularly in low-resource settings where the burden of malnutrition is highest [29].

Table 3: Framework for Culturally Informed Precision Nutrition Interventions

| Intervention Component | Standard Approach | Culturally Informed Approach |

|---|---|---|

| Dietary Recommendations | Based on mainstream Western foods | Incorporates traditional foods and culturally appropriate substitutes |

| Behavior Change Strategies | Individual-focused counseling | Family and community-centered approaches that acknowledge collective decision-making |

| Communication Methods | Written materials, digital apps | Oral traditions, storytelling, community health workers |

| Goal Setting | Weight-centric targets | Holistic health outcomes aligned with cultural values |

| Implementation Setting | Clinical environments | Community centers, faith-based organizations, homes |

Research Reagent Solutions for Equity-Focused Precision Nutrition

The successful implementation of equitable precision nutrition research requires specific methodological tools and approaches designed to address diversity and inclusion challenges.

Table 4: Essential Research Reagents for Equity-Focused Precision Nutrition Studies

| Research Reagent | Function | Equity Considerations |
|---|---|---|
| Culturally Validated Food Frequency Questionnaires (FFQs) | Assess dietary intake patterns | Includes traditional foods and culturally specific portion sizes |
| Multi-Lingual Mobile Data Collection Platforms | Enable real-time dietary and health data collection | Available in multiple languages with culturally appropriate interface design |
| Low-Cost Wearable Sensors | Continuously monitor physiological responses | Affordable design suitable for resource-limited settings |
| Community Engagement Toolkit | Facilitate meaningful community involvement in research | Provides structured approaches for building trust and equitable partnerships |
| Bias Detection Algorithms | Identify and correct for algorithmic bias in AI models | Specifically tests for performance disparities across demographic groups |
| Culturally Diverse Biomarker Panels | Measure nutritional status and metabolic responses | Validated across diverse populations with varying genetic backgrounds |
| Food Environment Assessment Tools | Document availability of healthy food options | Captures both formal and informal food sources in diverse communities |
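
The "Bias Detection Algorithms" entry in the table above can be illustrated with a minimal sketch that compares prediction error across demographic groups. The group labels, the glucose values, and the choice of mean absolute error with a worst-to-best ratio are all assumptions made for illustration; they are not a method taken from the cited sources.

```python
from collections import defaultdict


def group_mae(records):
    """Mean absolute error per demographic group.

    records: iterable of (group, observed, predicted) tuples.
    """
    totals = defaultdict(lambda: [0.0, 0])  # group -> [sum of abs errors, count]
    for group, observed, predicted in records:
        totals[group][0] += abs(observed - predicted)
        totals[group][1] += 1
    return {g: err_sum / n for g, (err_sum, n) in totals.items()}


def disparity_ratio(mae_by_group):
    """Worst-group MAE divided by best-group MAE (1.0 means parity)."""
    best = min(mae_by_group.values())
    worst = max(mae_by_group.values())
    return float("inf") if best == 0 else worst / best


# Hypothetical postprandial glucose predictions (mg/dL) for two groups.
records = [
    ("group_a", 140, 135), ("group_a", 120, 126),
    ("group_b", 150, 130), ("group_b", 110, 128),
]
mae = group_mae(records)
ratio = disparity_ratio(mae)
print(mae, round(ratio, 2))
```

A ratio well above 1.0, as in this made-up data, would suggest the model performs noticeably worse for one group and may need retraining on more representative data.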

Implementation Framework and Future Directions

The translation of precision nutrition science into products and services can be enhanced by considering the balance of benefits and risks for both consumers and patients, with particular attention to equitable access and cultural relevance [2]. Several privately and publicly funded large-scale studies are underway to gather key data and develop the necessary knowledge and methods to elucidate which metrics are most important, what degree of granularity or resolution is necessary, and which signatures of health and disease should receive priority for testing [2].

Future research should further integrate minority and cultural perspectives to fully harness AI's potential in precision nutrition [7]. Accelerating advancement in equitable precision nutrition will require three things: investment in multidisciplinary collaborations to develop user-friendly tools that apply advances in omics, sensors, artificial intelligence, big data management, and analytics; engagement of healthcare professionals and payers to support broader and more equitable adoption as medicine shifts toward preventive and personalized approaches; and system-wide collaboration among stakeholders to advocate for continued support for evidence-based precision nutrition [2]. By addressing these challenges and implementing the frameworks outlined here, researchers can contribute to a future where the benefits of precision nutrition are accessible and effective for all populations, regardless of socioeconomic status, cultural background, or geographic location.

Biosensors in Action: Technical Mechanisms and Research Applications for Real-Time Monitoring

Continuous Glucose Monitoring (CGM) technology has undergone a revolutionary transformation, evolving from a specialized tool for diabetes management to a sophisticated biosensor platform with applications across digital health and precision medicine. The global CGM market is experiencing significant momentum, with its size projected to grow from USD 8.984 billion in 2025 to USD 17.119 billion in 2030, at a compound annual growth rate (CAGR) of 13.76% [33]. This expansion is driven by rapid advancements in biosensor technology, increasing demand for real-time metabolic insights, and a global push toward connected, personalized healthcare [34]. For researchers and drug development professionals, understanding the technical capabilities and emerging applications of CGM systems is crucial for leveraging this technology in precision nutrition studies and metabolic research beyond traditional diabetes care.
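
As a quick consistency check on the market figures quoted above, the compound annual growth rate implied by the two endpoint values can be recomputed. This is plain arithmetic on the numbers in the text, assuming five compounding years between 2025 and 2030; the function name is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text (USD billions); 2025 -> 2030 is assumed to be 5 periods.
implied = cagr(8.984, 17.119, 5)
projected_2030 = 8.984 * (1 + 0.1376) ** 5

print(f"implied CAGR: {implied:.2%}")
print(f"2030 value at 13.76% growth: {projected_2030:.3f}")
```

The implied rate agrees with the quoted 13.76% to within rounding, so the three figures are mutually consistent.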

The fundamental shift enabled by CGM technology is the move from isolated blood glucose snapshots to comprehensive, real-time data streams. As Dr. Rodolfo Galindo of the University of Miami Miller School of Medicine explains, "For years, the medical and patient community relied on single-point glucose checks... This approach provided a limited assessment of glucose regulation and changes in humans. Notably, we were not able to acknowledge that until the expansion of CGM use in research and clinical practice" [35]. Modern CGM systems now provide up to 288 glucose readings per day, revealing patterns, trends, and metabolic responses that would otherwise remain undetected [36]. This rich, continuous data stream provides researchers with unprecedented insights into human metabolism and its interaction with diet, lifestyle, and therapeutic interventions.

Dominant CGM Technologies: Technical Specifications and Performance

The current CGM landscape is characterized by progressive miniaturization, enhanced accuracy, and extended functionality. Major systems including Abbott's FreeStyle Libre series, Dexcom's G7, Medtronic's Guardian systems, and implantable options like Senseonics' Eversense dominate the market, each with distinct technical profiles optimized for different research and clinical applications [37] [38].

Technical Performance Metrics and Comparative Analysis

CGM system performance is quantitatively evaluated through several key parameters, with Mean Absolute Relative Difference (MARD) serving as the primary accuracy metric. MARD represents the average percentage difference between CGM readings and reference blood glucose values, with lower values indicating higher accuracy [37]. Modern systems have achieved remarkable accuracy improvements, with MARD values now ranging from 7.9% to 11.2% depending on the device and conditions [37].
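
The MARD definition above translates directly into a few lines of code. The following is a minimal sketch, assuming paired CGM and reference readings in mg/dL that have already been matched in time; the sample values are invented for illustration.

```python
def mard(cgm_values, reference_values):
    """Mean Absolute Relative Difference, in percent.

    Each CGM reading is compared with its paired reference blood glucose
    value; the absolute relative differences are then averaged.
    """
    if not cgm_values or len(cgm_values) != len(reference_values):
        raise ValueError("need equal-length, non-empty paired readings")
    relative_diffs = [
        abs(c - r) / r for c, r in zip(cgm_values, reference_values)
    ]
    return 100 * sum(relative_diffs) / len(relative_diffs)


# Hypothetical paired readings (mg/dL).
cgm = [95, 150, 210, 72]
reference = [100, 160, 200, 80]
print(f"MARD = {mard(cgm, reference):.2f}%")
```

Because each difference is divided by the reference value, the same absolute error weighs more heavily at low glucose concentrations, which is one reason MARD is often reported separately by glucose range.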

Table 1: Comparative Technical Specifications of Dominant CGM Systems in 2025

| CGM System (Manufacturer) | Dimensions | Sensor Duration (Days) | Warm-up Time (Minutes) | Glucose Range (mg/dL) | MARD (%) | Calibration Required |
|---|---|---|---|---|---|---|
| FreeStyle Libre 3 (Abbott) | 2.1 cm diameter × 0.28 cm thick | 14 | 60 | 40–500 | 7.9–9.4 | No |
| Dexcom G7 (Dexcom) | 2.7 × 2.4 × 0.46 cm | 10 (with 12-hr grace period) | 30 | 40–400 | 8.2–9.1 | No (optional) |
| Medtronic Guardian 4 (Medtronic) | 6.6 × 5.1 × 3.8 cm | 7 | 120 | 40–400 | 10.1–11.2 | No |
| Caresens Air/Barozen Fit (i-SENS/Handok) | 3.5 × 1.9 × 0.5 cm | 15 | 120 | 40–500 | 9.4–10.42 | Yes (every 24 hr) |
| Eversense (Senseonics) | Implantable | 180 (Eversense E3); 365 (Eversense 365) | 120 | 40–400 | 8.5–9.5 | Yes [38] |

Emerging Technological Innovations

The CGM landscape in 2025 is marked by several transformative technological developments. Non-invasive CGM technologies represent one of the most anticipated advancements, utilizing advanced biosensor technologies like Near-Infrared (NIR) Spectroscopy, Raman Spectroscopy, and Electromagnetic Sensing to eliminate the need for skin penetration [34]. These approaches potentially address key limitations of current systems, including sensor discomfort and skin irritation, thereby improving user adherence and expanding applications to preventive health and wellness markets.

Significant progress is also evident in sensor miniaturization and wearability. Abbott's FreeStyle Libre 3, at just 2.1 cm in diameter and 0.28 cm thick, represents the current pinnacle of discreet design, while implantable systems like Senseonics' Eversense 365 (with 365-day wear) place the sensor entirely beneath the skin, leaving only a removable transmitter worn over the insertion site [37] [38]. Integration capabilities have expanded substantially, with CGMs now functioning as core components in hybrid closed-loop systems that automatically adjust insulin delivery based on continuous glucose readings [36] [38]. Recent regulatory milestones include the first FDA-cleared over-the-counter CGM in 2024, which removes the prescription requirement and substantially broadens access for research populations outside medical supervision [36].

CGM Applications Beyond Diabetes: Expanding Research Frontiers

The application of CGM technology has expanded significantly beyond its original purpose in diabetes management, creating new research opportunities in precision nutrition, metabolic health assessment, and chronic disease management.

Precision Nutrition and Metabolic Research

CGM technology has become a foundational tool in precision nutrition research, enabling the move from population-level dietary recommendations to individualized nutritional interventions based on real-time metabolic responses [3]. Research by Zeevi et al. demonstrated that identical meals produce highly variable glycemic responses in different individuals, influenced by factors including genetics, gut microbiome composition, and metabolic baseline [39]. CGM provides the continuous data necessary to capture this inter-individual variability and develop personalized nutrition plans.

In practice, CGM enables researchers to identify specific food triggers for excessive glycemic excursions and determine optimal food combinations for glucose stability [36]. This approach has demonstrated efficacy in weight management, metabolic health optimization, and diabetes prevention [3]. The integration of CGM data with artificial intelligence (AI) and machine learning (ML) models further enhances predictive capabilities, allowing researchers to forecast individual glycemic responses to specific foods based on multi-parameter inputs including microbiome data, genetic markers, and meal composition [39].
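
One common way to quantify a postprandial glycemic excursion from CGM data is the incremental area under the curve (iAUC). The sketch below is an illustration under stated assumptions rather than the procedure used in the cited studies: the baseline is taken as the first reading, values below baseline are clipped to zero, and the area is integrated with the trapezoidal rule. The two participants and their readings are hypothetical.

```python
def incremental_auc(times_min, glucose_mg_dl, baseline=None):
    """Positive incremental area under the glucose curve (mg/dL x min).

    Assumptions: baseline defaults to the first reading, excursions below
    baseline are clipped to zero, and the trapezoidal rule is applied
    between consecutive readings.
    """
    if baseline is None:
        baseline = glucose_mg_dl[0]
    increments = [max(g - baseline, 0.0) for g in glucose_mg_dl]
    area = 0.0
    for i in range(1, len(increments)):
        dt = times_min[i] - times_min[i - 1]
        area += 0.5 * (increments[i] + increments[i - 1]) * dt
    return area


# Hypothetical responses of two participants to the same meal.
times = [0, 30, 60, 90, 120]
participant_a = [90, 140, 130, 100, 88]
participant_b = [90, 110, 105, 95, 90]

iauc_a = incremental_auc(times, participant_a)
iauc_b = incremental_auc(times, participant_b)
print(iauc_a, iauc_b)
```

In this invented example the same meal yields an iAUC of 3000 for one participant and 1200 for the other, the kind of inter-individual difference that personalized recommendations aim to account for.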

Specialized Clinical and Research Applications

CGMs are proving valuable across diverse clinical and research scenarios. In pregnancy and gestational metabolic research, CGM use has demonstrated significant benefits, with studies showing adjusted HbA1c reductions of 0.19% in pregnant patients with type 1 diabetes [37]. In sleep medicine research, CGMs have revealed previously undetectable glucose fluctuations associated with sleep apnea episodes, providing insights into the metabolic consequences of sleep disorders [35]. For gastrointestinal conditions like gastroparesis, CGM data helps tailor insulin regimens to unpredictable nutrient absorption patterns, reducing hypoglycemia risk [35].

Post-bariatric surgery patients represent another population benefiting from CGM monitoring, as these individuals frequently experience rapid, unpredictable glucose shifts that traditional monitoring misses [35]. In rare conditions like insulinoma, CGMs can identify hidden hypoglycemic episodes and facilitate treatment monitoring [35]. These diverse applications highlight CGM's versatility as a metabolic monitoring tool across numerous research and clinical domains.

Table 2: Emerging Non-Diabetes Applications of CGM Technology in Research Settings

| Research Application | Key Measured Parameters | Documented Benefits/Insights | Relevant Study Populations |
|---|---|---|---|
| Precision Nutrition | Postprandial glucose excursions, Glucose variability, Time-in-Range | Identifies individual glycemic responses to specific foods; Enables personalized meal planning | General population, Pre-diabetes, Metabolic syndrome |
| Pregnancy Metabolism | Nocturnal glucose patterns, Postprandial peaks, Glucose stability | Reveals pregnancy-specific glucose patterns; Optimizes gestational metabolic health | Pregnant individuals, Gestational diabetes |
| Sleep-Metabolism Interaction | Nocturnal hypoglycemia, Dawn phenomenon, Sleep-related glucose shifts | Correlates glucose fluctuations with sleep disturbances; Quantifies metabolic impact of sleep disorders | Sleep apnea patients, Shift workers |
| Post-Surgical Metabolism | Reactive hypoglycemia, Glucose trends after eating, Asymptomatic lows | Detects rapid glucose shifts after bariatric surgery; Predicts diabetes remission likelihood | Bariatric surgery patients |
| Rare Metabolic Disorders | Spontaneous hypoglycemia, Glucose patterns without external triggers | Identifies hidden hypoglycemic episodes; Monitors treatment efficacy | Insulinoma, Genetic metabolic disorders |
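
Two of the parameters listed in the table, Time-in-Range and glucose variability, can be derived from a list of CGM readings as sketched below. The 70 to 180 mg/dL target range and the use of the coefficient of variation as the variability measure are assumptions chosen for illustration; studies in non-diabetic populations may define different thresholds.

```python
from statistics import mean, pstdev


def time_in_range(glucose, low=70, high=180):
    """Percentage of readings within [low, high] mg/dL (bounds are assumed)."""
    in_range = sum(1 for g in glucose if low <= g <= high)
    return 100 * in_range / len(glucose)


def coefficient_of_variation(glucose):
    """Glucose variability as population SD divided by mean, in percent."""
    return 100 * pstdev(glucose) / mean(glucose)


# Hypothetical equally spaced CGM readings (mg/dL).
readings = [65, 80, 100, 120, 150, 185, 200, 110]
tir = time_in_range(readings)
cv = coefficient_of_variation(readings)
print(f"TIR = {tir:.1f}%, CV = {cv:.1f}%")
```

Counting readings is equivalent to measuring time only when readings are equally spaced; gaps in the trace would need to be handled before these summaries are computed.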

Experimental Protocols and Methodological Considerations

Standardized CGM Performance Assessment

Robust assessment of CGM performance requires standardized methodologies. The International Federation of Clinical Chemistry and Laboratory Medicine (IFCC) Working Group on CGM has developed comprehensive guidelines to address previous limitations in performance evaluation standardization [40]. These guidelines define specific requirements for study design, comparator measurement characteristics, and minimum accuracy standards to enable valid cross-system comparisons and reliable research outcomes.

Key methodological considerations include proper sensor placement according to manufacturer specifications, accounting for the inherent physiological lag (typically 5-15 minutes) between blood glucose and interstitial fluid glucose measurements, and understanding situations where traditional fingerstick verification remains necessary [36]. Research protocols should also incorporate appropriate run-in periods, as the first 24 hours after sensor insertion often show reduced accuracy while the sensor equilibrates with body tissues [36].
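
The two data-handling steps described above, excluding the run-in period and accounting for the interstitial lag when pairing CGM readings with reference measurements, can be sketched as follows. The 24-hour run-in comes from the text; the 10-minute lag (a value within the 5-15 minute range given above), the matching tolerance, and all function names are assumptions for illustration.

```python
from datetime import datetime, timedelta


def drop_run_in(readings, insertion_time, run_in_hours=24):
    """Keep only (timestamp, glucose) readings taken after the run-in period."""
    cutoff = insertion_time + timedelta(hours=run_in_hours)
    return [(t, g) for t, g in readings if t >= cutoff]


def pair_with_reference(cgm, reference, lag_minutes=10, tolerance_minutes=2.5):
    """Pair each reference value at time t with the CGM reading nearest t + lag.

    lag_minutes and tolerance_minutes are assumed values, not device constants.
    """
    pairs = []
    for ref_time, ref_value in reference:
        target = ref_time + timedelta(minutes=lag_minutes)
        nearest = min(cgm, key=lambda r: abs(r[0] - target), default=None)
        if nearest is not None and abs(nearest[0] - target) <= timedelta(
            minutes=tolerance_minutes
        ):
            pairs.append((ref_value, nearest[1]))
    return pairs


# Hypothetical sensor trace around the 24-hour mark (5-minute sampling).
insertion = datetime(2025, 1, 1, 8, 0)
day = timedelta(hours=24)
cgm = [
    (insertion + day - timedelta(minutes=5), 100),
    (insertion + day, 105),
    (insertion + day + timedelta(minutes=5), 110),
    (insertion + day + timedelta(minutes=10), 120),
    (insertion + day + timedelta(minutes=15), 125),
]
reference = [(insertion + day, 118)]  # fingerstick value in mg/dL

kept = drop_run_in(cgm, insertion)
pairs = pair_with_reference(kept, reference)
print(len(kept), pairs)
```

The resulting reference/CGM pairs are the input an accuracy metric such as MARD would need; the appropriate lag is device- and context-dependent and should be justified in the study protocol.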

Precision Nutrition Study Design

Implementing CGM technology in precision nutrition research requires careful methodological planning. The following workflow outlines a comprehensive approach for investigating individual glycemic responses to nutritional interventions:

[Figure: Precision Nutrition Research Workflow]

This methodology integrates CGM data with multi-omics approaches to develop comprehensive nutritional insights. Study protocols typically include standardized meal challenges, continuous dietary logging, and collection of complementary data streams including physical activity, sleep patterns, and stress indicators [3] [39]. The resulting datasets enable researchers to develop machine learning models that can predict individual glycemic responses to specific foods based on personal characteristics, creating opportunities for highly personalized nutritional interventions [39].
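
To make the idea of a personalized prediction model concrete, the sketch below fits a separate least-squares line per participant relating carbohydrate load to glycemic response (iAUC). It is a deliberately simplified stand-in: the models in the cited work draw on many more inputs, such as microbiome and clinical data, and the participants, meal data, and function names here are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical standardized meal challenges:
# (carbohydrate in grams, observed iAUC in mg/dL x min).
meals = {
    "participant_1": [(20, 1000), (40, 2000), (60, 3000)],
    "participant_2": [(20, 400), (40, 800), (60, 1200)],
}

# One model per participant, so each person gets an individual slope.
models = {
    pid: fit_line([carbs for carbs, _ in obs], [auc for _, auc in obs])
    for pid, obs in meals.items()
}


def predict_iauc(participant_id, carbs_g):
    """Predicted iAUC for a new meal from the participant's own model."""
    slope, intercept = models[participant_id]
    return slope * carbs_g + intercept


print(predict_iauc("participant_1", 50), predict_iauc("participant_2", 50))
```

Fitting per participant rather than pooling captures the central point of the study design: the same 50 g carbohydrate meal yields different predicted responses for different people. A realistic model would need far more meals per person and validation on held-out data.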

Research Reagent Solutions and Technical Materials

Table 3: Essential Research Materials for CGM-Based Metabolic Studies