Validating AI-Driven Nutrition: A Technical Framework for Precision Medicine & Clinical Research

Abstract

This article provides a comprehensive technical validation framework for AI-based nutrition recommendation systems, targeted at researchers and biomedical professionals. We explore the foundational principles of these systems, detailing the methodologies behind data integration, algorithm selection, and model training. The guide addresses common challenges in clinical deployment and data interoperability, and establishes rigorous protocols for performance benchmarking against traditional dietary assessment tools. Finally, we present a comparative analysis of validation metrics and discuss the implications for integrating AI nutrition into drug development and personalized healthcare interventions.

Demystifying AI Nutrition Systems: Core Principles and Scientific Basis for Researchers

The development of AI-based nutrition recommendation systems represents a continuum from explicit, human-coded logic to implicit, data-driven pattern recognition. This technical evolution is critical for a thesis focused on the systematic validation of such systems, where reproducibility, accuracy, and generalizability are paramount. The transition reflects broader shifts in computational nutrition science towards handling high-dimensional omics data, continuous biosensor streams, and heterogeneous patient phenotypes.

Categorization and Technical Specifications of Models

Table 1: Comparative Analysis of AI Nutrition Recommendation Architectures

| Model Category | Key Technical Principle | Typical Input Data Types | Output Form | Interpretability | Primary Validation Metrics |

|---|---|---|---|---|---|

| Rule-Based Systems | IF-THEN-ELSE logic trees based on dietary guidelines (e.g., USDA, EFSA). | Demographic data (age, sex), self-reported health conditions. | Static meal plans, food group servings. | High (fully transparent). | Rule adherence rate, Dietitian concordance score. |

| Classical Machine Learning (ML) | Feature engineering + algorithms (e.g., SVM, Random Forest, Bayesian Networks). | Demographic, anthropometric (BMI), lab values (fasting glucose), dietary logs. | Categorized recommendations (e.g., "low-glycemic"), macro/micro nutrient targets. | Medium to High (feature importance analyzable). | Precision/Recall (for classification), RMSE (for regression), AUC-ROC. |

| Deep Learning (DL) Models | Multi-layer neural networks for representation learning (CNNs, RNNs, Transformers). | Sequential meal data, images (food pics), genomic sequences, gut microbiome profiles, continuous glucose monitor (CGM) traces. | Personalized dynamic food items, real-time meal adjustments, predicted biomarker response. | Low (black-box, requires post-hoc XAI). | Personalization Index, Prediction AUC on held-out users, Reduction in biomarker variance (e.g., glucose spike). |

| Hybrid Systems | Combination of symbolic (rules) and sub-symbolic (DL) AI. | All of the above, often in a multi-modal setup. | Context-aware, explainable recommendations with deep personalization. | Configurable (by design). | Composite: Accuracy + Explainability Score (e.g., SHAP value consistency). |

Experimental Protocols for Model Validation

Validation within a thesis context must move beyond standard software metrics to incorporate nutritional and clinical relevance.

Protocol 3.1: In Silico Validation Using Public Nutritional Datasets

- Objective: To benchmark model performance on standardized data before clinical deployment.

- Materials: NHANES database, UK Biobank nutrition data, ASA24 response files.

- Procedure:

- Data Curation: Extract and clean dietary records, link with corresponding biomarker data (e.g., HbA1c, lipids). Annotate with food ontology (e.g., FoodOn).

- Benchmarking Split: Perform a user-wise temporal split (e.g., first 80% of a user's diary for training, last 20% for testing) to prevent data leakage and simulate real-world sequential use.

- Model Training & Tuning: Train candidate models (from Table 1). For DL models, use cross-validation on the training set for hyperparameter optimization (learning rate, network depth).

- Performance Assessment: Evaluate on the held-out test set using metrics from Table 1. Statistically compare models using paired t-tests or Wilcoxon signed-rank tests across users.

- Deliverable: A ranked model performance report with statistical significance.
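
The user-wise temporal split in the benchmarking step can be sketched as follows. This is a minimal illustration, assuming diary records arrive as `(user_id, timestamp, payload)` tuples; that layout and the function name `userwise_temporal_split` are hypothetical and not part of any of the listed datasets.

```python
from collections import defaultdict

def userwise_temporal_split(records, train_fraction=0.8):
    """Send each user's earliest entries to train and latest entries to test.

    `records` is an iterable of (user_id, timestamp, payload) tuples; this
    layout is an illustrative assumption, not a format defined by the protocol.
    """
    by_user = defaultdict(list)
    for user_id, timestamp, payload in records:
        by_user[user_id].append((timestamp, payload))

    train, test = [], []
    for user_id, entries in by_user.items():
        entries.sort(key=lambda e: e[0])          # chronological order per user
        cut = int(len(entries) * train_fraction)  # first 80% by default
        for i, (timestamp, payload) in enumerate(entries):
            target = train if i < cut else test
            target.append((user_id, timestamp, payload))
    return train, test

# Toy diary: two users with five and ten logged days respectively.
diary = [("u1", day, f"meal-{day}") for day in range(5)]
diary += [("u2", day, f"meal-{day}") for day in range(10)]
train_set, test_set = userwise_temporal_split(diary)
```

Because the cut is made inside each user's own timeline, no test record precedes a training record for the same user, which is the leakage the protocol is guarding against.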

Protocol 3.2: Controlled Feeding Study for Causal Validation

- Objective: To establish a causal link between model recommendations and biomarker changes under isocaloric conditions.

- Materials: Metabolic kitchen, clinical lab for assays, CGM devices, participant diaries.

- Procedure:

- Participant Stratification: Recruit participants stratified by genotype (e.g., FTO variant), phenotype (e.g., prediabetic), or microbiome enterotype.

- Study Design: Execute a randomized crossover trial. Each participant receives both a control diet (standard guidelines) and an AI-personalized diet, with a sufficient washout period.

- Intervention Delivery: Prepare meals per the AI model's output. Weigh and record all food. Collect biospecimens (fasting blood, stool) at baseline and endpoint.

- Endpoint Measurement: Primary: change in postprandial glucose AUC (from CGM). Secondary: changes in cholesterol, inflammation markers (CRP), short-chain fatty acids (from microbiome).

- Deliverable: Causal evidence of AI diet efficacy over standard care, with subgroup analysis.
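
As a rough illustration of the primary endpoint comparison, the sketch below computes the within-participant difference in postprandial glucose AUC between the two diet periods and the corresponding paired t statistic. The AUC values are invented for the example, and a full crossover analysis would normally also model period and carryover effects, which this sketch omits.

```python
import math
import statistics

def paired_t_statistic(control, personalized):
    """Return (mean difference, t statistic, degrees of freedom) for paired data."""
    if len(control) != len(personalized):
        raise ValueError("each participant needs one value per diet period")
    diffs = [p - c for c, p in zip(control, personalized)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)              # sample SD (n - 1 denominator)
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    return mean_diff, t_stat, n - 1

# Hypothetical postprandial glucose AUC values, one pair per participant.
control_auc = [120.0, 135.0, 110.0, 150.0, 128.0]
ai_diet_auc = [112.0, 130.0, 111.0, 138.0, 120.0]
mean_diff, t_stat, dof = paired_t_statistic(control_auc, ai_diet_auc)
```

A negative mean difference here means the AI-personalized period produced a lower AUC than the control period for the same participants.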

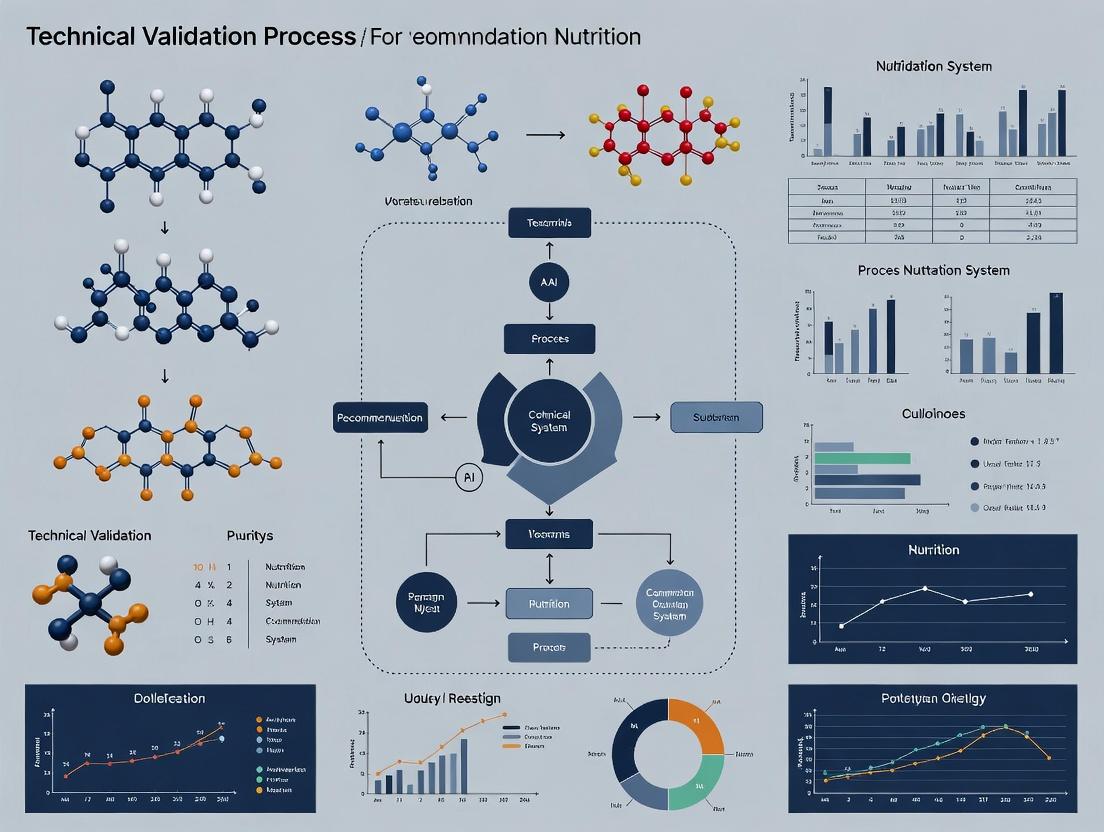

Visualizing System Architectures and Workflows

Title: Rule-Based System Logic Flow

Title: Deep Learning Personalization Feedback Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials for AI-Nutrition Validation Studies

| Item / Solution | Function in Research Context | Example Product / Specification |

|---|---|---|

| Standardized Dietary Assessment Tool | Provides structured, computable nutritional intake data for model training and testing. | Automated Self-Administered 24-hour Recall (ASA24), Food Frequency Questionnaire (FFQ) with linked food composition tables. |

| Continuous Glucose Monitor (CGM) | Delivers high-resolution, time-series glycemic response data for personalization and validation. | Dexcom G7, Abbott FreeStyle Libre 3. Data accessed via API for real-time model integration. |

| Food Ontology Database | Enables semantic reasoning and consistency by mapping foods to a standardized hierarchy. | FoodOn, USDA Food and Nutrient Database for Dietary Studies (FNDDS). |

| Metabolomics Assay Kit | Quantifies nutritional biomarkers (e.g., SCFAs, lipids, vitamins) for ground-truth validation of dietary impact. | Mass spectrometry-based targeted panels (e.g., Biocrates MxP Quant 500). |

| Bioinformatics Pipeline (Software) | Processes genomic, metagenomic, or metabolomic data for use as model input features. | QIIME 2 for microbiome analysis, PLINK for GWAS data. |

| eClinical / Nutrition Platform | Manages controlled feeding studies, randomizes diets, and collects electronic patient-reported outcomes (ePRO). | NutriAdmin, Romeo. |

| Explainable AI (XAI) Library | Provides post-hoc interpretability for black-box DL models to generate hypotheses and ensure safety. | SHAP (SHapley Additive exPlanations), LIME (Local Interpretable Model-agnostic Explanations). |

The validation of AI-based personalized nutrition systems requires the multi-modal integration of high-dimensional biological data. This document provides detailed application notes and experimental protocols for generating and integrating genomics, metabolomics, microbiomics, and clinical biomarker data streams, which serve as the foundational technical validation platform for nutritional intervention research.

Table 1: Core Multi-Omics Assays and Output Specifications

| Data Stream | Primary Assay | Key Measured Entities | Typical Throughput | Data Points/Sample | Primary Platform |

|---|---|---|---|---|---|

| Genomics | Whole Genome Sequencing (WGS) / SNP Array | Single Nucleotide Polymorphisms (SNPs), Insertions/Deletions | 48-96 samples/run | ~3 billion bases (WGS) / 0.5-5 million SNPs (Array) | Illumina NovaSeq, Illumina Global Screening Array |

| Metabolomics | Untargeted LC-MS/MS | Small molecule metabolites (<1500 Da) | 20-100 samples/day | 5,000 - 10,000 features | Thermo Q-Exactive, Sciex TripleTOF |

| Microbiomics | 16S rRNA Gene Sequencing / Shotgun Metagenomics | Bacterial 16S rRNA genes / All microbial genes | 96-384 samples/run | 10,000-100,000 sequences/sample (16S) / 20-80 million reads (Shotgun) | Illumina MiSeq, Illumina NovaSeq |

| Clinical Biomarkers | Immunoassays / Clinical Chemistry | Cytokines, Hormones, Metabolic Panel (e.g., HbA1c, Lipids) | 96-plex/sample (Luminex) / 384 samples/run (Chemistry) | 1-96 analytes (Luminex) / 20-50 analytes (Chemistry) | Luminex xMAP, Roche Cobas |

Table 2: Key Validation Metrics for AI-Nutrition Model Inputs

| Omics Layer | Pre-Analytical CV (%) | Analytical CV (%) | Recommended Sample Size for Model Training | Typical Batch Effect Correction Method |

|---|---|---|---|---|

| Genomics (SNPs) | <2% | <0.1% | >1,000 | Principal Component Analysis (PCA) |

| Plasma Metabolomics | 10-15% | 5-8% | >200 | ComBat, SVA |

| Fecal Microbiomics (16S) | 15-25% | 2-5% | >300 | Remove Batch Effect (RBE), MMUPHin |

| Serum Clinical Biomarkers | 5-10% | 3-7% | >150 | Median Polish, Linear Regression |
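
The coefficient of variation (CV) values in the table are the sample standard deviation expressed as a percentage of the mean. A minimal sketch, using made-up replicate measurements of a pooled QC sample:

```python
import statistics

def coefficient_of_variation(values):
    """Return the CV in percent: 100 * sample SD / mean."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined for a zero mean")
    return 100.0 * statistics.stdev(values) / mean

# Hypothetical replicate injections of one pooled QC plasma sample (arbitrary units).
qc_replicates = [98.0, 102.0, 100.0, 97.0, 103.0]
analytical_cv = coefficient_of_variation(qc_replicates)

# Compare against the upper analytical CV bound listed for plasma metabolomics (8%).
within_plasma_metabolomics_range = analytical_cv <= 8.0
```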

Detailed Experimental Protocols

Protocol 3.1: Integrated Sample Collection for a Nutritional Intervention Study

Objective: Standardized collection of biospecimens for multi-omics profiling pre- and post-nutritional intervention.

Materials:

- EDTA tubes (for plasma DNA & metabolites)

- Serum separator tubes

- Stool collection kit with DNA/RNA stabilizer (e.g., OMNIgene•GUT)

- Aliquoting tubes and cryo-labels

- -80°C freezer

Procedure:

- Fasting Blood Draw: Collect venous blood into EDTA and serum tubes after a 10-12 hour overnight fast.

- Plasma/Serum Processing: Centrifuge EDTA tubes at 2000 x g for 10 min at 4°C within 30 min of draw. Aliquot plasma into 500µL cryovials. Process serum tubes per manufacturer protocol. Flash freeze in liquid nitrogen.

- Stool Collection: Participant collects sample into OMNIgene•GUT tube, shakes vigorously for 30s to homogenize and stabilize microbial DNA. Store at room temperature until transfer to lab (up to 60 days).

- Biospecimen Archiving: Store all aliquots at -80°C in barcoded boxes. Maintain a LIMS record linking sample ID, collection timestamp, and storage location.

Protocol 3.2: DNA Extraction & Sequencing for Genomics & Shotgun Metagenomics

Objective: Co-isolation of human host and microbial DNA from a single stool aliquot for parallel WGS and metagenomic sequencing.

Materials:

- QIAamp PowerFecal Pro DNA Kit (Qiagen)

- Bead beater with 0.1mm glass beads

- Qubit 4 Fluorometer and dsDNA HS Assay Kit

- Illumina DNA Prep Kit

- IDT for Illumina DNA/RNA UD Indexes

Procedure:

- Lysis: Weigh 180-220 mg of stabilized stool into PowerBead Pro tube. Add CD1 solution and heat at 65°C for 10 min.

- Mechanical Disruption: Bead beat at 5 m/s for 2 x 45s, with a 5 min ice incubation between cycles.

- DNA Purification: Follow kit protocol. Elute DNA in 50µL of elution buffer.

- QC: Measure concentration (Qubit) and integrity (TapeStation Genomic DNA ScreenTape). Accept if >1 ng/µL and DNA Integrity Number (DIN) >6.

- Library Prep for Host WGS: For human DNA, use 100ng input with Illumina DNA Prep Kit. Fragment to 350bp, attach unique dual indexes (UDI).

- Library Prep for Shotgun Metagenomics: Use 10ng of total DNA. Perform identical library prep but increase PCR cycles to 12.

- Sequencing: Pool libraries at equimolar ratios. Sequence host WGS on NovaSeq 6000 (PE150, 30x coverage). Sequence metagenomic libraries on NovaSeq (PE150, 20M reads/sample).
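
The QC acceptance rule and the equimolar pooling step reduce to simple arithmetic. The sketch below assumes the common approximation of 660 g/mol per base pair for double-stranded DNA; the function names, example concentrations, and mean library size are illustrative and not taken from the kit documentation.

```python
def passes_dna_qc(conc_ng_per_ul, din, min_conc=1.0, min_din=6.0):
    """Apply the acceptance rule from the QC step: >1 ng/µL and DIN >6."""
    return conc_ng_per_ul > min_conc and din > min_din

def library_molarity_nm(conc_ng_per_ul, mean_fragment_bp):
    """Convert a dsDNA library concentration to nM (660 g/mol per base pair)."""
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)

def equimolar_volumes_ul(molarities_nm, fmol_per_library):
    """Volume of each library (µL) contributing the same molar amount.

    1 nM equals 1 fmol/µL, so volume = fmol / nM.
    """
    return [fmol_per_library / m for m in molarities_nm]

# Hypothetical libraries: (concentration in ng/µL, mean library size in bp).
libraries = [(3.3, 500), (6.6, 500)]
molarities = [library_molarity_nm(c, bp) for c, bp in libraries]
volumes = equimolar_volumes_ul(molarities, fmol_per_library=20.0)
```

With these numbers the more concentrated library contributes half the volume of the other, so both add the same number of molecules to the pool.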

Protocol 3.3: Untargeted Plasma Metabolomics via LC-HRMS

Objective: Profiling of polar and non-polar metabolites from human plasma.

Materials:

- Methanol (LC-MS grade), Acetonitrile (LC-MS grade), Water (LC-MS grade)

- Internal Standard Mix (e.g., MSK-IS1 from Cambridge Isotope Labs)

- C18 column (e.g., Waters ACQUITY UPLC BEH C18, 1.7µm, 2.1x100mm)

- HILIC column (e.g., Waters ACQUITY UPLC BEH Amide, 1.7µm, 2.1x100mm)

- Thermo Q-Exactive HF-X Mass Spectrometer coupled to Vanquish UPLC

Procedure (Polar Metabolites - HILIC):

- Protein Precipitation: Thaw plasma on ice. Add 300µL of ice-cold methanol:acetonitrile (1:1) containing IS to 50µL plasma. Vortex 30s, incubate at -20°C for 1h, centrifuge at 14,000 x g for 15 min at 4°C.

- LC Conditions: Column temperature 40°C. Mobile phase A: 10mM Ammonium Acetate in 95:5 Water:ACN (pH 9.0); B: 10mM Ammonium Acetate in 95:5 ACN:Water. Gradient: 0-2 min 100% B, 2-17 min to 0% B, hold 2 min, re-equilibrate.

- MS Conditions: ESI positive/negative switching. Full scan m/z 70-1050 at 120,000 resolution. Data-dependent MS/MS (top 10) at 15,000 resolution. NCE stepped 20, 40, 60.

- Data Processing: Use Compound Discoverer 3.3 or XCMS for peak picking, alignment, and annotation against mzCloud and HMDB.

Protocol 3.4: High-Plex Clinical Biomarker Assay (Luminex)

Objective: Quantify 48-plex cytokine/chemokine panel from human serum.

Materials:

- Human Cytokine 48-Plex Discovery Assay Array (Eve Technologies)

- Luminex 200 or MAGPIX system

- Plate shaker, microplate washer

- Biotinylated detection antibody cocktail, Streptavidin-PE

Procedure:

- Assay Setup: Thaw serum and filter (0.22µm). Dilute 1:2 in provided matrix.

- Incubation: Add 50µL of standards, controls, and samples to pre-mixed antibody bead plate. Seal, cover with foil, incubate on plate shaker (850 rpm) overnight at 4°C.

- Detection: Wash plate 3x. Add 25µL of biotinylated detection antibody cocktail. Incubate 1h on shaker (room temp). Wash 3x. Add 50µL Streptavidin-PE, incubate 30 min on shaker.

- Reading: Wash 3x, resuspend in 120µL drive fluid. Read on Luminex instrument, acquiring at least 50 beads per region.

- Analysis: Use xPONENT software. Calculate concentrations from 5-PL standard curve.
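
For readers unfamiliar with the 5-PL standard curve, the sketch below shows one common parameterization and its inverse, which is how a measured fluorescence intensity is converted back to a concentration. The parameter values are invented for illustration; in practice they come from fitting the standards, and this is not a reproduction of the instrument software's fitting routine.

```python
def five_pl(x, a, b, c, d, g):
    """5-parameter logistic response at concentration x.

    a = response at zero concentration, d = response at infinite concentration,
    c = mid-range concentration parameter, b = slope factor, g = asymmetry factor.
    """
    return d + (a - d) / (1.0 + (x / c) ** b) ** g

def inverse_five_pl(y, a, b, c, d, g):
    """Concentration producing response y (valid only for y strictly between a and d)."""
    ratio = (a - d) / (y - d)
    return c * (ratio ** (1.0 / g) - 1.0) ** (1.0 / b)

# Hypothetical fitted parameters for one analyte (fluorescence vs. pg/mL).
params = dict(a=50.0, b=1.2, c=300.0, d=20000.0, g=0.9)
signal = five_pl(125.0, **params)
recovered = inverse_five_pl(signal, **params)
```

Setting `g = 1` reduces the curve to the symmetric 4-PL form.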

Visualization of Workflows and Relationships

Title: Nutritional Intervention Multi-Omics Workflow

Title: Data Stream Convergence for AI-Nutrition

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents & Kits for Multi-Omics Nutritional Studies

| Item Name (Supplier) | Category | Brief Function in Protocol |

|---|---|---|

| OMNIgene•GUT (DNA Genotek) | Microbiomics Sample Collection | Stabilizes microbial community DNA in stool at room temperature for 60 days, critical for pre-analytical standardization. |

| QIAamp PowerFecal Pro DNA Kit (Qiagen) | DNA Extraction | Simultaneously lyses human and microbial cells in tough matrices (stool), removes PCR inhibitors, yields high-quality DNA for WGS & metagenomics. |

| Illumina DNA Prep with UD Indexes (Illumina) | Genomics Library Prep | Flexible, robust library construction for both human WGS and low-input metagenomic sequencing, featuring Unique Dual Indexes for sample multiplexing. |

| Human Cytokine 48-Plex Discovery Assay (Eve Technologies) | Clinical Biomarkers | Enables quantitative, high-throughput profiling of 48 inflammatory mediators from a single 50µL serum sample via Luminex xMAP technology. |

| MSK-IS1 Internal Standard Mix (Cambridge Isotope Labs) | Metabolomics | A curated mix of 23 stable isotope-labeled internal standards spanning key metabolic pathways, enabling QC and semi-quantitation in untargeted LC-MS. |

| PFP (Pentafluorophenyl) Propyl Phase Column (e.g., Restek Raptor) | Metabolomics LC Separation | Provides orthogonal retention mechanism to C18/HILIC, excellent for separating isomers in complex biological samples like plasma. |

| HiSeq SBS Kit v2 (500 cycles) (Illumina) | Sequencing Chemistry | Standardized reagent kit for high-output sequencing runs, ensuring consistent quality and yield for all genomic/metagenomic libraries. |

| PBS, pH 7.4 (Gibco) | General Reagent | Used as a universal diluent, wash buffer, and matrix for various assays (Luminex, sample dilution) to maintain physiological pH and ionic strength. |

Application Notes

The technical validation of AI-based nutrition recommendation systems relies on three core architectures, each addressing distinct facets of personalization, behavioral adaptation, and physiological modeling. The following notes detail their application within a research framework aimed at generating clinically actionable, evidence-based recommendations.

1. Neural Networks (NNs) for Predictive Biomarker Modeling. Deep Neural Networks (DNNs), particularly Multi-Layer Perceptrons (MLPs) and Temporal Convolutional Networks (TCNs), are employed to model complex, non-linear relationships between multimodal inputs (e.g., dietary logs, metabolomic profiles, gut microbiome data, continuous glucose monitoring (CGM) traces) and physiological outcomes (e.g., postprandial glycemic response, inflammatory markers). Convolutional Neural Networks (CNNs) process image-based dietary records. The primary validation challenges are the need for large, high-quality datasets and the "black box" nature of these models, which complicates mechanistic insight.

2. Reinforcement Learning (RL) for Longitudinal Behavioral Intervention. RL frames dietary recommendation as a sequential decision-making problem, typically solved with policy gradient methods (e.g., Proximal Policy Optimization, PPO) or value-based methods (e.g., Deep Q-Networks, DQN). The agent (the recommendation system) interacts with an environment (the patient) by issuing dietary suggestions (actions) and receives a reward signal based on short- and medium-term biomarker improvements and adherence metrics. This architecture is well suited to personalizing intervention strategies over time, navigating trade-offs between exploration (trying new foods) and exploitation (recommending known safe options).

3. Hybrid Systems for Integrated, Explainable Recommendations. Hybrid architectures combine the predictive power of NNs with the decision-making logic of RL, and often incorporate symbolic AI or knowledge graphs for explainability. A common pattern uses a DNN as a "world model" to predict patient-specific outcomes, whose outputs are used by an RL agent to optimize long-term strategies. Alternatively, neural networks process raw data into embeddings, which are then reasoned over by a rule-based system constrained by nutritional guidelines (e.g., FAO/WHO). This approach facilitates technical validation by providing more interpretable decision pathways.

Table 1: Comparative Performance of AI Architectures in Nutritional Studies (2022-2024)

| Architecture | Primary Task | Reported Accuracy / R² | Key Dataset & Size | Outcome Metric |

|---|---|---|---|---|

| CNN (ResNet-50) | Food Image Recognition | 92.4% (Top-1) | Food-101 (101k images) | Classification Accuracy |

| DNN (MLP) | PPGR Prediction | R² = 0.78 ± 0.05 | Cohort: n=327, ~42k meals | Mean Squared Error |

| RL (DQN) | Meal Sequence Optimization | 18.5% Improvement | Simulation: n=10,000 agents | Adherence vs. Glycemic Target |

| Hybrid (NN+KG) | Personalized Meal Planning | 88.7% Satisfaction | Trial: n=154, 12-week | User Satisfaction & Nutrient Adequacy |

Experimental Protocols

Protocol 1: Validating a Neural Network for Postprandial Glycemic Response (PPGR) Prediction

Objective: To develop and technically validate a DNN model for predicting individualized PPGR based on pre-meal context.

Materials & Subjects:

- Cohort: n=300 adults (prediabetic range), recruited for a 14-day monitoring study.

- Data Streams: Continuous Glucose Monitors (CGM), wearable activity trackers, standardized meal challenges with photographic dietary records, baseline metabolomic panel.

Procedure:

- Data Acquisition & Preprocessing:

- Align CGM data with meal timestamps. Calculate incremental Area Under the Curve (iAUC) for 2-hour PPGR as the primary label.

- Extract meal composition via automated image analysis (CNN) linked to a standardized food database (e.g., USDA FoodData Central).

- Engineer features: macronutrient ratios, fiber content, meal timing, physical activity level in the preceding 3 hours, fasting glucose.

- Normalize all features (Z-score) and segment data into meal events.

- Model Training & Validation:

- Architecture: Implement a 5-layer MLP with dropout (rate=0.3) and ReLU activation. Final output layer is linear regression for iAUC.

- Partitioning: 70/15/15 split for training, validation, and hold-out test sets, ensuring all meals from a single participant reside in only one set.

- Training: Use Adam optimizer (lr=0.001), Mean Squared Error (MSE) loss. Train for 500 epochs with early stopping based on validation loss.

- Validation Metrics: Report R², MSE, and Mean Absolute Error (MAE) on the hold-out test set. Perform SHAP (SHapley Additive exPlanations) analysis for feature importance.
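
Two calculations in this protocol are easy to get subtly wrong: the iAUC label and the hold-out regression metrics. The sketch below shows one way to compute both with the trapezoidal rule and standard definitions. It assumes the first CGM reading is the pre-meal baseline and clips readings below baseline to zero, which is one common simplification rather than the only accepted iAUC convention; all sample values are hypothetical.

```python
def incremental_auc(times_min, glucose, window_min=120):
    """Trapezoidal iAUC above the pre-meal baseline over the post-meal window.

    The first reading is treated as baseline (an assumption). Increments below
    baseline are clipped to zero, which slightly misestimates the area where
    the trace crosses the baseline between two samples.
    """
    baseline = glucose[0]
    points = [(t, max(g - baseline, 0.0))
              for t, g in zip(times_min, glucose) if t <= window_min]
    area = 0.0
    for (t0, y0), (t1, y1) in zip(points, points[1:]):
        area += 0.5 * (y0 + y1) * (t1 - t0)
    return area

def regression_metrics(y_true, y_pred):
    """Return R², MSE, and MAE for paired true and predicted values."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    mae = sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n
    return {"r2": 1.0 - ss_res / ss_tot, "mse": ss_res / n, "mae": mae}

# Hypothetical CGM trace sampled every 30 min after a meal (mg/dL).
iauc = incremental_auc([0, 30, 60, 90, 120], [90, 130, 150, 110, 85])

# Hypothetical hold-out iAUC labels and model predictions (mg/dL·min).
metrics = regression_metrics([3600.0, 2400.0, 1200.0], [3300.0, 2500.0, 1500.0])
```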

Protocol 2: Evaluating a Reinforcement Learning Agent for Personalized Meal Sequencing

Objective: To train and validate an RL agent in a simulated environment that optimizes weekly meal plans for glycemic stability.

Materials:

- Simulation Environment: Built using the OpenAI Gym framework. The environment state (S) includes: time of day, recent glycemic history, nutritional balance over past 48h, user preference profile. Action (A) is selecting from a database of 500 validated meal options. Reward (R) is a composite score:

R = w1*(Δ Glycemic Variability) + w2*(Adherence Score) + w3*(Nutritional Completeness), where the weights w1, w2, w3 are tuned.

- Agent: Implement a DQN with experience replay and a target network. The Q-network is a 4-layer fully connected network.
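
The composite reward can be written as a small function. This sketch makes two assumptions that the formula leaves open: Δ Glycemic Variability is taken as the reduction in variability (before minus after, so a fall is rewarded), and all three terms are already scaled to comparable ranges. The default weights are placeholders, not tuned values.

```python
def composite_reward(gv_before, gv_after, adherence, completeness,
                     weights=(0.5, 0.3, 0.2)):
    """Weighted sum of glycemic, adherence, and nutritional terms.

    gv_before / gv_after: glycemic variability (e.g., CGM coefficient of
    variation as a fraction) before and after the meal decision.
    adherence, completeness: scores assumed to lie in [0, 1].
    weights: (w1, w2, w3); placeholder values for illustration only.
    """
    w1, w2, w3 = weights
    delta_gv = gv_before - gv_after   # positive when variability falls
    return w1 * delta_gv + w2 * adherence + w3 * completeness

# Hypothetical step: variability falls from 0.30 to 0.25 with good adherence.
reward = composite_reward(0.30, 0.25, adherence=0.9, completeness=0.8)
```

If the glycemic term is left on a much smaller scale than the other two, as in this toy call, adherence and completeness dominate the reward, which is one reason the weights need tuning.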

Procedure:

- Environment Calibration: Populate the environment with biologically plausible transition dynamics derived from a separate cohort's CGM data (not used in final testing).

- Agent Training:

- Initialize agent. For each episode (simulated 30-day period for one virtual patient), the agent iteratively selects meals.

- Store experiences (S, A, R, S') in replay buffer. Sample mini-batches to update the Q-network via gradient descent to minimize Temporal Difference error.

- Train for 100,000 episodes, decaying the exploration rate (ε) from 1.0 to 0.05.

- Evaluation: Deploy the trained, frozen agent in a new test environment with 1000 unseen virtual patient profiles. Compare the agent's performance against a rule-based baseline (e.g., consistent carbohydrate diet) on cumulative reward, glycemic target time-in-range (TIR), and dietary variety.
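
The core mechanics of the training loop (exploration decay, epsilon-greedy action selection, the replay buffer, and the temporal-difference target) can be sketched without a neural network. The linear decay schedule and the discount factor below are assumptions, since the protocol specifies only the start and end values of ε; a real DQN would replace the hard-coded Q-value lists with the outputs of the online and target networks.

```python
import random
from collections import deque

def epsilon_at(episode, total_episodes, start=1.0, end=0.05):
    """Linearly decay the exploration rate from start to end (assumed schedule)."""
    frac = min(episode / max(total_episodes - 1, 1), 1.0)
    return start + frac * (end - start)

def select_action(q_values, epsilon, rng):
    """Epsilon-greedy choice over the Q-values of the current state."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))                      # explore
    return max(range(len(q_values)), key=q_values.__getitem__)   # exploit

def td_target(reward, next_q_values, gamma=0.99, terminal=False):
    """One-step target: r + gamma * max over next-state Q-values."""
    return reward if terminal else reward + gamma * max(next_q_values)

# Replay buffer of (state, action, reward, next_state, terminal) experiences.
replay = deque(maxlen=10_000)
rng = random.Random(0)
replay.append(("s0", 2, 0.4, "s1", False))

# Sample one stored experience and compute its learning target.
state, action, reward, next_state, terminal = rng.choice(list(replay))
target = td_target(reward, next_q_values=[0.1, 0.5, 0.2], gamma=0.9,
                   terminal=terminal)
greedy_meal = select_action([0.1, 0.5, 0.2], epsilon=0.0, rng=rng)
```

The Q-network update then minimizes the squared difference between `target` and the network's current estimate for the sampled state and action.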

Protocol 3: Testing a Hybrid Neural-Symbolic System for Contraindication-Aware Recommendations

Objective: To validate a hybrid system that combines NN-based preference prediction with a knowledge-graph-driven safety checker for patients with comorbidities (e.g., CKD).

Materials:

- Component 1: A Neural Collaborative Filtering (NCF) model trained on user-meal interaction matrices (implicit feedback).

- Component 2: A nutritional knowledge graph (KG) encoding relationships between foods, nutrients, and clinical guidelines (e.g., potassium, phosphate limits for CKD).

- Test Group: n=50 virtual patient profiles with defined CKD stages and synthetic dietary preferences.

Procedure:

- System Pipeline:

- For a given user, the NCF model generates a ranked list of top-50 meal candidates based on predicted preference score.

- Each candidate meal is queried against the KG. A symbolic reasoner checks estimated nutrient loads against the patient's stage-specific constraints.

- Any meal violating constraints is filtered out or penalized in the ranking.

- The final, filtered list is presented as recommendations.

- Validation Metrics:

- Safety: Percentage of recommended meals that comply with clinical guidelines (target: 100%).

- Personalization: Normalized Discounted Cumulative Gain (nDCG) comparing final list to a ground-truth of user preferences (simulated).

- Efficiency: System latency (end-to-end inference time).
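
The filter-then-score logic of this protocol can be illustrated with plain dictionaries standing in for the knowledge graph. Everything named here is hypothetical: the meal names, the potassium values, the per-meal limit, and the relevance scores are invented for the example and are not clinical guidance.

```python
import math

def filter_by_constraints(ranked_meals, nutrient_table, limits):
    """Keep meals whose nutrient loads stay within every per-meal limit."""
    safe = []
    for meal in ranked_meals:
        loads = nutrient_table[meal]
        if all(loads.get(name, 0.0) <= cap for name, cap in limits.items()):
            safe.append(meal)
    return safe

def ndcg(recommended, relevance):
    """nDCG of a ranked list given ground-truth relevance scores per meal."""
    k = len(recommended)
    gains = [relevance.get(m, 0.0) for m in recommended]
    dcg = sum(g / math.log2(i + 2) for i, g in enumerate(gains))
    ideal = sorted(relevance.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(i + 2) for i, g in enumerate(ideal))
    return dcg / idcg if idcg > 0 else 0.0

# Hypothetical preference-ranked candidates and their potassium content (mg).
ranked = ["banana_smoothie", "rice_bowl", "apple_oats"]
nutrients = {
    "banana_smoothie": {"potassium_mg": 900.0},
    "rice_bowl": {"potassium_mg": 200.0},
    "apple_oats": {"potassium_mg": 250.0},
}
limits = {"potassium_mg": 700.0}   # illustrative per-meal cap, not a guideline

safe_list = filter_by_constraints(ranked, nutrients, limits)
compliance = sum(m in safe_list for m in safe_list) / len(safe_list)
score = ndcg(safe_list, {"rice_bowl": 2.0, "apple_oats": 3.0})
```

Here the relevance dictionary covers only guideline-compliant meals, so nDCG measures ranking quality among safe options; scoring against all meals instead would penalize the system for correctly removing unsafe favorites.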

Diagrams

Diagram 1: Hybrid AI Nutrition System Workflow

Diagram 2: RL Agent Training Loop for Nutrition

Diagram 3: NN Model for Glycemic Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-Nutrition Research Validation

| Item / Solution | Function in Research | Example Product/Platform |

|---|---|---|

| Continuous Glucose Monitor (CGM) | Provides high-frequency, real-world glycemic response data for model training and validation. | Dexcom G7, Abbott FreeStyle Libre 3 |

| Standardized Food & Nutrient Database | Serves as the ground-truth source for converting dietary intake (text/image) into quantitative nutrient vectors. | USDA FoodData Central, McCance and Widdowson's (UK) |

| Metabolomics Assay Kit | Enables quantification of plasma/urine metabolites (e.g., SCFAs, lipids) as input features or validation biomarkers. | Nightingale Health NMR panel, Metabolon HD4 |

| Gut Microbiome Sequencing Service | Provides 16S rRNA or shotgun metagenomic data to incorporate microbiome features as predictors of nutritional response. | Services from Novogene, Microba Life Sciences |

| Behavioral Adherence Tracking Platform | Captures self-reported meal adherence, satiety, and symptoms, generating reward signals for RL and outcome data. | Custom REDCap surveys, Komodo Health (real-world evidence) |

| AI/ML Development Framework | Provides libraries for building, training, and deploying neural network and reinforcement learning models. | TensorFlow, PyTorch, Ray RLlib |

| Knowledge Graph Curation Tool | Assists in structuring nutritional knowledge, clinical guidelines, and ontologies for hybrid AI systems. | Neo4j, Apache Jena, Protégé |

Within the technical validation framework of an AI-based nutrition recommendation system (NRS), the opacity of complex algorithms presents a significant barrier to clinical adoption and regulatory approval. These "black box" models, while potentially accurate, lack inherent transparency regarding how specific dietary recommendations are generated for an individual. This document provides detailed Application Notes and Protocols for a series of experiments designed to probe, interpret, and explain the decision-making processes of dietary algorithms. The goal is to establish standardized methodologies for validating that algorithmic outputs are biologically plausible, clinically rational, and ethically sound, thereby moving from a black box to a "glass box" paradigm.

Foundational Concepts & Key Metrics

Interpretability refers to the ability to understand the mechanistic workings of a model (e.g., feature importance). Explainability refers to the ability to provide post-hoc, human-understandable reasons for a specific prediction or recommendation.

Table 1: Quantitative Metrics for Evaluating Interpretability & Explainability (XAI) in Dietary Algorithms

| Metric Category | Specific Metric | Definition & Calculation | Target Value (Benchmark) |

|---|---|---|---|

| Feature Importance | Permutation Feature Importance (PFI) | The decrease in model performance after randomly shuffling a single feature. For scores where higher is better (e.g., R², AUC), PFI = BaselineScore - ShuffledScore; for error metrics (e.g., RMSE for calorie prediction), PFI = ShuffledError - BaselineError. | PFI > 2*Std_Dev of PFI distribution across features indicates significant importance. |

| Model Fidelity | Local Explanation Fidelity | The agreement between the original model's prediction and a simple, interpretable model's (e.g., linear regression) prediction for a local neighborhood. Fidelity = 1 - (MAE between two predictions). | > 0.85 for high-stakes recommendations (e.g., renal diet). |

| Explanation Quality | SHAP (SHapley Additive exPlanations) Value Consistency | The standard deviation of SHAP values for a key feature (e.g., HbA1c) across multiple bootstrap samples of the training data. Lower SD indicates higher stability. | Coefficient of Variation (CV) < 15%. |

| Human Evaluation | Post-hoc Explanation Satisfaction (Clinician Survey) | Likert scale (1-5) assessment by domain experts on whether the provided explanation (e.g., LIME output) justifies the dietary recommendation. | Mean Score ≥ 4.0. |
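
To make the SHAP-based metric concrete, the sketch below computes exact Shapley values by enumerating every feature coalition for a toy three-feature model. This is an illustration of the underlying definition, not the `shap` library's API or its approximation algorithms; the model coefficients, feature ordering (HbA1c, fiber, activity), and baseline values are hypothetical.

```python
from itertools import combinations
from math import factorial

def exact_shapley(model, instance, baseline):
    """Exact Shapley values by enumerating all feature coalitions.

    Features outside a coalition are set to their baseline value. The cost
    grows as 2^n, so this is only practical for a handful of features.
    """
    n = len(instance)

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return model(x)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

# Toy linear risk score over (HbA1c %, fiber g/day, activity h/week).
def toy_model(x):
    return 2.0 * x[0] + 0.5 * x[1] - 1.0 * x[2]

patient = [8.5, 20.0, 3.0]
reference = [5.5, 25.0, 3.0]
phi = exact_shapley(toy_model, patient, reference)
```

For a linear model each value equals the coefficient times the feature's deviation from baseline, and the values sum to the difference between the patient's prediction and the baseline prediction. Repeating the computation across bootstrap-trained models and taking the CV of one feature's value gives the consistency metric in the table.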

Experimental Protocols

Protocol 3.1: Probing Feature Importance via Ablation Studies

Objective: To identify which input features (e.g., biomarkers, dietary logs, genetics) are most critical for a specific dietary output (e.g., macronutrient split).

Materials: Trained dietary algorithm, held-out validation dataset, high-performance computing cluster.

Procedure:

- Establish a baseline performance metric (e.g., R², AUC) on the validation set.

- For each feature i in the input vector X:

a. Create a perturbed dataset X' where values for feature i are replaced with Gaussian noise (μ=0, σ=σ_i) or shuffled.

b. Run the model on X' and record the new performance metric.

c. Calculate importance I_i = BaselineMetric - PerturbedMetric.

- Rank features by I_i. Perform statistical testing (e.g., paired t-test) to determine if the drop in performance is significant (p < 0.01, Bonferroni-corrected).

- Visualization: Generate a horizontal bar plot of ranked I_i values. Features with I_i significantly greater than zero are deemed critical drivers.
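
The shuffling variant of this procedure can be sketched in a few lines. The example uses mean squared error, so importance is reported as the increase in error after shuffling (the sign is flipped relative to I_i as defined for R² or AUC, where higher is better). The toy model and data are hypothetical; the third feature is deliberately ignored by the model so that its importance comes out as zero.

```python
import random

def mse(model, rows, targets):
    """Mean squared error of the model over the dataset."""
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(model, rows, targets, n_repeats=20, seed=0):
    """Mean increase in MSE when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mse(model, rows, targets)
    importances = []
    for i in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            column = [r[i] for r in rows]
            rng.shuffle(column)   # break the link between feature i and target
            shuffled = [r[:i] + [v] + r[i + 1:] for r, v in zip(rows, column)]
            increases.append(mse(model, shuffled, targets) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances

# Toy model: depends strongly on feature 0, weakly on feature 1, not on feature 2.
def toy_model(x):
    return 3.0 * x[0] + 1.0 * x[1]

rows = [[float(i), float((i * 3) % 8), float(i % 2)] for i in range(8)]
targets = [toy_model(r) for r in rows]
importances = permutation_importance(toy_model, rows, targets)
```

Averaging over several shuffles, as `n_repeats` does here, also yields the per-feature spread needed for the significance test in the ranking step.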

Protocol 3.2: Generating Local Explanations with LIME for a Specific Recommendation

Objective: To explain "why did the algorithm recommend a low-glycemic diet for Patient X?"

Materials: Instance (Patient X data), trained black-box model, LIME software package (or equivalent), interpretable surrogate model (e.g., ridge regression).

Procedure:

- Instance Selection: Choose a representative or edge-case instance from the validation set.

- Perturbation: Generate N (e.g., 5000) synthetic data points by randomly perturbing features of the selected instance within their observed distributions.

- Prediction: Obtain the black-box model's predictions (e.g., probability of "low-glycemic diet" class) for all N perturbed samples.

- Weighting: Calculate proximity weights for each synthetic sample based on its Euclidean distance to the original instance (using kernel function).

- Surrogate Fitting: Fit an interpretable model (e.g., linear regression with L2 regularization) to the weighted, perturbed dataset, where the target variable is the black-box model's prediction.

- Explanation Extraction: The coefficients of the fitted surrogate model constitute the local explanation. For example, a positive coefficient for "HbA1c = 8.5%" indicates this high value pushed the recommendation towards the "low-glycemic" class.

- Validation: Report the local fidelity score (see Table 1).
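
The procedure above can be sketched from scratch to show what a LIME-style explanation computes. This is not the `lime` package's API: it is a minimal stand-in that perturbs the instance with Gaussian noise, weights samples with an exponential kernel on Euclidean distance in standardized units, and fits a weighted ridge regression by solving the normal equations. The black-box function, feature scales, kernel width, and the use of a proximity-weighted MAE for fidelity are all assumptions made for the example, and fewer samples are drawn than the 5000 suggested above to keep it fast.

```python
import math
import random

def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def local_surrogate(black_box, instance, scales, n_samples=2000,
                    kernel_width=1.0, ridge=1e-3, seed=0):
    """Fit a weighted ridge surrogate; return (intercept, coefficients, fidelity)."""
    rng = random.Random(seed)
    d = len(instance)
    rows, preds, weights = [], [], []
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in range(d)]        # standardized offsets
        x = [xi + zi * s for xi, zi, s in zip(instance, z, scales)]
        dist2 = sum(zi * zi for zi in z)                   # squared Euclidean distance
        rows.append([1.0] + z)                             # intercept + offsets
        preds.append(black_box(x))
        weights.append(math.exp(-dist2 / kernel_width ** 2))
    p = d + 1
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(p)]
            for i in range(p)]
    xtwy = [sum(w * r[i] * y for r, w, y in zip(rows, weights, preds))
            for i in range(p)]
    for i in range(1, p):
        xtwx[i][i] += ridge                                # L2 penalty, intercept excluded
    beta = solve_linear_system(xtwx, xtwy)
    fitted = [sum(bi * ri for bi, ri in zip(beta, r)) for r in rows]
    mae = sum(w * abs(f - y) for f, y, w in zip(fitted, preds, weights)) / sum(weights)
    return beta[0], beta[1:], 1.0 - mae

# Hypothetical black box: probability of the "low-glycemic diet" class
# as a function of (HbA1c %, fiber g/day).
def black_box(x):
    logit = 1.5 * (x[0] - 6.5) - 0.1 * (x[1] - 25.0)
    return 1.0 / (1.0 + math.exp(-logit))

intercept, coefs, fidelity = local_surrogate(black_box, [8.5, 20.0], scales=[0.5, 5.0])
```

The coefficients are expressed per standard-deviation change in each feature around Patient X, so a positive HbA1c coefficient and a negative fiber coefficient indicate which direction each feature pushes the recommendation locally.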

Protocol 3.3: Validating Biological Plausibility via Signaling Pathway Mapping

Objective: To assess if a genotype-based nutrient recommendation aligns with known biochemical pathways.

Materials: Algorithm output (e.g., "Increase folate for genotype rs1801133 (TT)"), curated biological pathway databases (KEGG, Reactome), gene-nutrient interaction databases (NCBI, NutrigenomicsDB).

Procedure:

- Extraction: Parse the algorithm's recommendation to identify the key nutrient and associated genetic variant(s).

- Pathway Retrieval: Query KEGG/Reactome for all metabolic pathways involving the nutrient (e.g., folate) and its associated metabolites (e.g., 5-MTHF).

- Gene Mapping: Overlay the genetic variant(s) (e.g., MTHFR gene for rs1801133) onto the retrieved pathways.

- Impact Analysis: Using literature, determine the functional impact of the variant (e.g., reduced MTHFR enzyme activity). Trace the expected metabolic consequence (e.g., elevated homocysteine).

- Plausibility Check: Determine if the algorithmic recommendation (increase folate) directly addresses the predicted metabolic consequence (lowers homocysteine by substrate provision). A "Yes/No" assessment is recorded with supporting literature citations.

Visualizations

Diagram 1: XAI Validation Workflow for Dietary AI

Diagram 2: MTHFR Folate Pathway & Algorithm Plausibility Check

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for XAI in Nutrition Research

| Item / Solution | Provider / Example | Function in Validation Research |

|---|---|---|

| SHAP (SHapley Additive exPlanations) | Lundberg & Lee (GitHub: shap) | A game-theoretic approach to assign consistent importance values to each feature for any model output, providing both global and local interpretability. |

| LIME (Local Interpretable Model-agnostic Explanations) | Ribeiro et al. (GitHub: lime) | Creates a local, interpretable surrogate model to approximate the predictions of the black-box algorithm for a specific instance. |

| Ancestry-Specific Genotype Panels | Illumina Global Screening Array, ThermoFisher Axiom | Provides curated, high-quality genetic variant data essential for validating nutrigenomic components of dietary algorithms. |

| Targeted Metabolomics Kits | Biocrates p180, Nightingale Health | Quantifies a wide array of blood metabolites (lipids, sugars, amino acids) to biochemically validate algorithm predictions (e.g., "improved lipid profile"). |

| Structured Clinical Nutrition Datasets | NHANES, UK Biobank, All of Us | Provides large-scale, multi-modal (diet, lab, health outcome) data for training explainable models and benchmarking black-box algorithm performance. |

| Causal Discovery Toolkits | Microsoft DoWhy, CausalNex | Helps disentangle correlation from causation in observational nutrition data, strengthening the plausibility of algorithmic recommendations. |

| Containerized AI Environment | Docker, Kubernetes with MLflow | Ensures exact reproducibility of the AI model and its XAI analyses, a critical requirement for technical validation and peer review. |

Application Notes

For the technical validation of an AI-based nutrition recommendation system, rigorous application of ethical and regulatory principles is non-negotiable. This framework ensures that research and development activities not only yield scientifically valid outcomes but also protect human subjects and promote equitable health benefits.

1. Data Privacy in Multi-Omics Nutritional Studies Modern nutritional AI systems integrate sensitive data layers, including genomic (SNPs related to metabolism), proteomic, metabolomic, and continuous glucose monitoring (CGM) data. Current regulations, notably the EU's General Data Protection Regulation (GDPR) and the US Health Insurance Portability and Accountability Act (HIPAA), treat such data as special-category personal data and protected health information (PHI), respectively. A 2023 review in Nature Machine Intelligence indicated that 68% of AI health studies reported using de-identification, but only 32% implemented formal differential privacy mechanisms. Federated learning (FL) has emerged as a pivotal architecture, allowing model training across decentralized datasets without transferring raw data. Validation protocols must therefore assess both model performance and the resilience of privacy-preserving techniques against membership inference attacks.

2. Bias Mitigation Across the Development Lifecycle Bias in nutritional AI can stem from non-representative training cohorts, often skewed towards specific ethnicities, socioeconomic statuses, or age groups. A 2024 analysis of public nutrition datasets found that over 75% of genomic and dietary intake records were from populations of European descent. This can lead to recommendations that are ineffective or harmful for underrepresented groups. Mitigation is not a single-step correction but a continuous process requiring structured assessment at each phase: data curation, model training, and outcome validation.

3. Clinical Safety as a Primary Endpoint The transition from algorithm output to a nutritional intervention carries direct clinical risk. Adverse outcomes may include nutrient deficiencies, exacerbation of eating disorders, or inappropriate advice for chronic conditions (e.g., renal disease, diabetes). Safety validation must therefore extend beyond statistical accuracy to include clinical plausibility checks, monitoring for physiological harm, and establishing clear human-in-the-loop (HITL) escalation protocols.

Experimental Protocols

Protocol 1: Data Privacy Audit via Reconstruction Attack Simulation

Objective: To empirically validate the effectiveness of deployed privacy measures (e.g., differential privacy noise, k-anonymization) by attempting to reconstruct quasi-identifiers from the system's outputs or trained model weights.

Methodology:

- Setup: Within a secure, isolated test environment, create a synthetic dataset D_synth mimicking the structure of the real training data (containing fields like age bracket, postal code, gender, and rare dietary markers).

- Process: Train two instances of the target nutrition recommendation model:

  - Model_A: Trained on D_synth with standard protocols.

  - Model_B: Trained on D_synth with the organization's full privacy-enhancing technologies (PETs) applied.

- Attack Simulation: Employ a calibrated adversarial model to query both Model_A and Model_B with known subset data. The attacker's goal is to predict the value of a hidden quasi-identifier field (e.g., "presence of rare metabolic SNP XYZ").

- Metric & Validation: Calculate the reconstruction accuracy for both models. Success is defined as a statistically significant reduction (p < 0.01) in reconstruction accuracy for Model_B compared to Model_A.
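
One way to operationalize the success criterion is a one-sided two-proportion z-test on the attacker's hit counts. The sketch below is an assumption rather than a prescribed test: it treats every attack query as an independent trial, and the counts are hypothetical values corresponding to 89.2% and 52.1% reconstruction accuracy over 10,000 queries each.

```python
import math


def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """One-sided test that Model_B is reconstructed less often than Model_A.

    Returns (z statistic, p-value) for H1: accuracy_A > accuracy_B.
    """
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1.0 - pooled) * (1.0 / n_a + 1.0 / n_b))
    z = (p_a - p_b) / se
    # Upper-tail probability of the standard normal distribution.
    p_value = 0.5 * math.erfc(z / math.sqrt(2.0))
    return z, p_value


# Hypothetical counts: 8,920 and 5,210 correct reconstructions out of 10,000.
z, p = two_proportion_z_test(hits_a=8920, n_a=10_000, hits_b=5210, n_b=10_000)
audit_passed = p < 0.01
print(f"z = {z:.1f}, audit passed: {audit_passed}")
```

If attack queries are correlated (for example, repeated queries about the same synthetic record), a permutation or bootstrap test over records would be a safer choice than this closed-form approximation.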

Table 1: Privacy Audit Results from Simulation (Hypothetical Data)

| Privacy Measure Tested | Attack Query Volume | Reconstruction Accuracy (Control Model_A) | Reconstruction Accuracy (With PETs, Model_B) | p-value |

|---|---|---|---|---|

| Differential Privacy (ε=0.5) | 10,000 queries | 89.2% | 52.1% | <0.001 |

| k-anonymization (k=10) | 10,000 queries | 88.7% | 60.5% | 0.003 |

| Federated Learning + Secure Aggregation | 10,000 queries | 90.1% | 48.3% | <0.001 |

Protocol 2: Comprehensive Bias Assessment Across Demographic Strata

Objective: To quantify model performance disparities across predefined demographic subgroups to identify algorithmic bias.

Methodology:

- Stratification: Partition the hold-out test dataset into subgroups based on protected or relevant attributes: S1 (Genetic Ancestry: EUR), S2 (Genetic Ancestry: AFR), S3 (Genetic Ancestry: EAS), S4 (Age: 20-40), S5 (Age: 60+), S6 (Socioeconomic Status: High), S7 (Socioeconomic Status: Low).

- Performance Metrics: Evaluate the primary model on each subgroup using a suite of metrics: Accuracy, F1-Score, Positive Predictive Value (PPV), Area Under the Receiver Operating Characteristic Curve (AUROC).

- Disparity Calculation: Compute the maximum disparity gap (MDG) for each metric: MDG = max(|M_i - M_baseline|), where M_i is the metric for subgroup i and M_baseline is the metric for the largest or reference subgroup.

- Validation Threshold: A model is considered to have unacceptable bias if the MDG for AUROC exceeds 0.10 or the MDG for PPV exceeds 0.15, as per draft FDA guidelines on AI/ML in software as a medical device (SaMD).

Table 2: Bias Assessment Metrics by Genetic Ancestry Subgroup

| Subgroup | Sample Size (N) | Accuracy | F1-Score | PPV | AUROC |

|---|---|---|---|---|---|

| European (EUR) - Baseline | 12,500 | 0.89 | 0.87 | 0.88 | 0.94 |

| African (AFR) | 1,850 | 0.81 | 0.76 | 0.74 | 0.85 |

| East Asian (EAS) | 2,100 | 0.86 | 0.83 | 0.82 | 0.91 |

| Maximum Disparity Gap (MDG) | - | 0.08 | 0.11 | 0.14 | 0.09 |
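
The MDG row can be reproduced directly from the per-subgroup values in Table 2. The following sketch uses EUR as the reference subgroup and applies the AUROC and PPV thresholds stated in the protocol; the dictionary layout and function name are illustrative choices.

```python
# Per-subgroup metrics copied from Table 2; EUR is the reference subgroup.
METRICS = {
    "EUR": {"accuracy": 0.89, "f1": 0.87, "ppv": 0.88, "auroc": 0.94},
    "AFR": {"accuracy": 0.81, "f1": 0.76, "ppv": 0.74, "auroc": 0.85},
    "EAS": {"accuracy": 0.86, "f1": 0.83, "ppv": 0.82, "auroc": 0.91},
}
# Protocol thresholds above which bias is considered unacceptable.
THRESHOLDS = {"auroc": 0.10, "ppv": 0.15}


def max_disparity_gap(metrics, baseline, name):
    """MDG = max over subgroups i of |M_i - M_baseline| for one metric."""
    reference = metrics[baseline][name]
    return max(abs(values[name] - reference)
               for group, values in metrics.items() if group != baseline)


gaps = {name: round(max_disparity_gap(METRICS, "EUR", name), 2)
        for name in METRICS["EUR"]}
flagged = [name for name, limit in THRESHOLDS.items() if gaps[name] > limit]

print(gaps)
print("metrics exceeding threshold:", flagged)
```

With these values no metric crosses its threshold, although AUROC (0.09 against a limit of 0.10) and PPV (0.14 against 0.15) both sit close to it, and both gaps are driven by the AFR subgroup.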

Protocol 3: Clinical Safety Review via Sentinel Nutrient Tracking

Objective: To proactively identify risks of nutrient deficiency or toxicity arising from AI-generated meal plans over a simulated 90-day period.

Methodology:

- Simulation Engine: Develop a pharmacokinetic/pharmacodynamic (PK/PD)-inspired simulation that models body stores of sentinel nutrients (e.g., Iron, Vitamin D, Vitamin B12, Sodium, Potassium) based on AI-generated daily intake recommendations and simulated patient adherence (modeled as 80%).

- Cohort: Run the simulation for a virtual cohort of N=10,000 with heterogeneous starting baselines, gut absorption efficiency variables, and health conditions (20% with simulated CKD, 15% with HFE gene variants).

- Safety Thresholds: Program physiological thresholds for each nutrient (e.g., Serum Ferritin < 15 µg/L for iron deficiency; Serum 25(OH)D < 20 ng/mL for deficiency; Serum Sodium > 145 mmol/L for hypernatremia).

- Endpoint & Monitoring: The primary safety endpoint is the incidence rate of threshold violation per 1000 person-days. A real-time monitoring dashboard flags any cohort where the incidence rate for a severe outcome exceeds 0.1/1000 person-days, triggering an automatic HITL review.
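
To make the endpoint concrete, the sketch below simulates a single sentinel nutrient with a deliberately simple one-compartment store and counts first threshold violations per 1000 person-days at risk. Everything numeric here is an illustrative assumption (store sizes, absorption range, loss fraction, threshold); it is not a validated physiological model and the function name is hypothetical.

```python
import random


def incidence_per_1000_person_days(cohort_size, days, threshold, intake,
                                   daily_loss, adherence=0.8, seed=0):
    """First-violation incidence rate for one nutrient store (toy model)."""
    rng = random.Random(seed)
    events = 0
    person_days = 0
    for _ in range(cohort_size):
        # Heterogeneous baselines and absorption efficiency (illustrative).
        store = rng.uniform(200.0, 1000.0)
        absorption = rng.uniform(0.05, 0.20)
        for _day in range(days):
            # Adherence: the recommended intake is followed on ~80% of days.
            taken = intake if rng.random() < adherence else 0.0
            store = store * (1.0 - daily_loss) + taken * absorption
            person_days += 1
            if store < threshold:
                events += 1
                break  # participant leaves the at-risk pool after an event
    return 1000.0 * events / person_days


rate = incidence_per_1000_person_days(
    cohort_size=1000, days=90, threshold=150.0, intake=18.0, daily_loss=0.002)
needs_hitl_review = rate > 0.1  # escalation rule from the protocol
print(f"incidence: {rate:.3f} per 1000 person-days, HITL: {needs_hitl_review}")
```

Counting only the first violation per participant keeps the figure an incidence rate; counting every day spent below threshold would instead measure prevalence of violation-days.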

Diagrams

AI Nutrition System Data Privacy Workflow

Bias Mitigation Lifecycle for AI Nutrition Models

Clinical Safety Sentinel Monitoring Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Ethical AI Nutrition Validation Research

| Item / Solution | Function in Validation Research |

|---|---|

| Synthetic Data Generation Platform (e.g., Synthea, Gretel.ai) | Creates realistic, privacy-safe datasets for initial model prototyping and privacy attack simulations without using real PHI. |

| Federated Learning Framework (e.g., NVIDIA FLARE, Flower, PySyft) | Enables training machine learning models across multiple decentralized edge devices (or data silos) holding local data samples. |

| Fairness Assessment Library (e.g., AI Fairness 360, Fairlearn) | Provides a comprehensive set of metrics (like statistical parity, equalized odds) and algorithms to detect and mitigate bias in models. |

| Differential Privacy Library (e.g., TensorFlow Privacy, OpenDP) | Adds carefully calibrated noise to data or training processes to provide mathematically rigorous privacy guarantees. |

| Biochemical Simulation Software (e.g., PK-Sim, Berkeley Madonna) | Models the absorption, distribution, metabolism, and excretion (ADME) of nutrients to predict long-term body stores and identify toxicity/deficiency risks. |

| Secure, HIPAA/GDPR-Compliant Cloud Environment (e.g., AWS HealthLake, Google Cloud Healthcare API) | Provides the necessary infrastructure for handling real PHI, with built-in encryption, access logging, and audit controls for validation studies. |

Building a Robust AI Nutrition Engine: Data Pipelines, Model Development, and Clinical Integration

The development and technical validation of an AI-based nutrition recommendation system are fundamentally dependent on the quality, granularity, and standardization of its underlying training data. This document outlines the critical standards and protocols for curating high-fidelity dietary, biometric, and clinical outcome datasets, forming the core thesis that robust AI performance is a direct function of rigorous data curation.

Data Domain Standards & Specifications

Dietary Intake Data Standards

Dietary data must capture not only quantity and type but also temporal patterns, preparation methods, and source metadata to enable precise nutrient and bioactive compound estimation.

Table 1: Minimum Dietary Data Fields & Standards

| Data Field | Required Granularity | Measurement Unit | Validation Instrument | QC Tolerance |

|---|---|---|---|---|

| Food Item | USDA FoodData Central ID or equivalent ontology code | NA | Automated ontology matching + manual review | >99% coding accuracy |

| Portion Size | Weight in grams (pre-consumption) or household measures with weight conversion | grams | Calibrated digital scales (±1g) | <5% error vs. weighed record |

| Timing | ISO 8601 timestamp (start of consumption) | NA | Time-stamped mobile entry or wearable prompt | <15-minute entry delay |

| Preparation | Standardized cooking method code (e.g., grilling, boiling) | NA | Structured dropdown selection | 100% completion |

| Nutrient Estimate | Derived from validated database (e.g., USDA SR, FoodDB) | grams/mg/µg per day | Cross-reference with two independent DBs | <10% variance for core nutrients |

Biometric & Phenotypic Data Standards

Biometric data must be captured with devices and protocols that ensure research-grade precision, synchronized with dietary intake events.

Table 2: Core Biometric Data Collection Protocols

| Biometric | Primary Device/Assay | Collection Frequency | Pre-analytical Protocol | Reference Range Accuracy |

|---|---|---|---|---|

| Continuous Glucose | FDA-cleared CGM (e.g., Dexcom G7, Abbott Libre 3) | Every 5 minutes | Sensor placement per mfr., interstitial fluid calibration | MARD <10% vs. venous YSI |

| Resting Metabolic Rate | Indirect calorimetry (e.g., Cosmed Quark CPET) | Pre/post-intervention, fasted | 20-minute supine rest, 10-minute steady-state measurement | CV <5% across triplicate tests |

| Gut Microbiome | Fecal sample, 16S rRNA sequencing (V4 region) | Pre/post dietary intervention | Home collection kit (OMNIgene•GUT), -80°C storage within 4h | >10,000 reads/sample, negative controls included |

| Inflammatory Markers | hs-CRP via ELISA (e.g., R&D Systems Kit) | Baseline and 4-week intervals | Fasted venous blood, serum separation within 30 min, -80°C | Intra-assay CV <8%, inter-assay CV <12% |

Clinical & Patient-Reported Outcome Measures

Outcome data must utilize validated instruments with defined minimal clinically important differences (MCID) for algorithm training.

Table 4: Outcome Dataset Specifications

| Outcome Domain | Instrument (Validated) | Collection Schedule | Scoring & Transformation | MCID for AI Training |

|---|---|---|---|---|

| Gastrointestinal Symptoms | GSRS (Gastrointestinal Symptom Rating Scale) | Weekly | 7-point Likert, sum of 15 items | Δ ≥10 points |

| Energy/Fatigue | PROMIS Fatigue Short Form 8a | Daily (eDiary) | T-score metric (mean=50, SD=10) | Δ ≥3.5 T-score points |

| Body Composition | DXA (Lunar iDXA) | Baseline, 12 weeks | VAT mass (g), lean mass (g) | Δ ≥100g VAT mass |

| Medication Adjustment | Drug name & dose standardization (RxNorm) | Real-time via ePRO | Binary (adjusted/not) or dose change % | Any confirmed dose change |

Experimental Protocols for Dataset Validation

Protocol: Controlled Feeding Study for Ground Truth Dietary Data

Purpose: To generate a gold-standard dietary dataset with complete nutrient verification for AI model training.

Materials:

- Metabolic kitchen with calibrated scales (Mettler Toledo, ±0.1g).

- Double-portion methodology: one for participant, one for homogenization and chemical analysis.

- Recipe standardization software (Genesis R&D SQL).

- -80°C freezer for sample archiving.

Procedure:

- Meal Preparation: Prepare all meals and snacks per standardized recipes. Weigh each ingredient to 0.1g accuracy. Record exact weights.

- Duplicate Sampling: For each meal, prepare an identical duplicate portion. Immediately homogenize the duplicate portion using an industrial blender. Aliquot 100g into polypropylene tubes.

- Nutrient Analysis: Send aliquots to an accredited lab (e.g., Eurofins) for proximate analysis by the applicable AOAC Official Methods for fat and protein, with carbohydrate calculated by difference and dietary fiber measured by AOAC 2009.01 or 2011.25.

- Data Reconciliation: Reconcile kitchen ingredient weights with chemical analysis results. Discrepancies >10% for macronutrients trigger investigation.

- Participant Adherence: Utilize direct observation during feeding and return of all non-consumed items for weighing.
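
The Data Reconciliation step reduces to a relative-difference check per macronutrient. A minimal sketch follows, assuming the laboratory value is used as the reference denominator and using made-up gram values for a single meal.

```python
def reconcile(calculated_g, analyzed_g, tolerance=0.10):
    """Return macronutrients whose recipe value deviates from the lab assay.

    The result maps each flagged nutrient to its percent discrepancy,
    computed relative to the chemically analyzed value.
    """
    flagged = {}
    for nutrient, lab_value in analyzed_g.items():
        relative = abs(calculated_g[nutrient] - lab_value) / lab_value
        if relative > tolerance:
            flagged[nutrient] = round(100.0 * relative, 1)
    return flagged


# Hypothetical meal: grams per portion from recipe weights vs. lab analysis.
calculated = {"protein": 31.0, "fat": 22.5, "carbohydrate": 60.0}
analyzed = {"protein": 30.2, "fat": 19.8, "carbohydrate": 58.1}

to_investigate = reconcile(calculated, analyzed)
print(to_investigate)
```

Here only fat exceeds the 10% tolerance, so only that macronutrient would trigger an investigation of the recipe or the assay.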

Protocol: Multi-Omic Biometric Sampling Synchronized with Dietary Input

Purpose: To capture temporal phenotypic responses to nutritional interventions for causal pathway modeling.

Materials:

- Wearable CGM and activity tracker (ActiGraph GT9X).

- Venous blood collection kit (serum separator tubes, EDTA tubes).

- OMNIgene•GUT stool collection kit.

- Custom mobile app for timestamped dietary logging.

Procedure:

- Baseline Sampling: After 12-hour fast, collect blood (serum, plasma, PBMCs), stool sample, and perform DXA/RMR.

- Intervention & Continuous Monitoring: Initiate prescribed dietary intervention. Participants log all food via app (timestamped photo + description). CGM records continuously.

- Triggered Postprandial Sampling: For test meals (days 7, 21), collect venous blood at T=0 (pre-meal), 30min, 60min, 120min, and 240min for metabolomics (plasma) and inflammatory markers (serum).

- Stool Collection: Participants provide stool samples at days 0, 7, 14, and 28 using standardized kits, storing at home at -20°C until transport on ice to lab.

- Data Fusion: Align all data streams (diet, CGM, metabolomics) using ISO timestamps in a central SQL database.
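
The Data Fusion step is essentially a timestamp join. The sketch below, written against in-memory records rather than the SQL database named in the protocol, labels each CGM reading with the most recent logged meal inside a postprandial window. The 4-hour window mirrors the 240-minute sampling horizon; the record fields (`id`, `time`, `glucose_mg_dl`) are hypothetical.

```python
from bisect import bisect_right
from datetime import datetime, timedelta


def parse(ts):
    """Parse an ISO 8601 timestamp with an explicit UTC offset."""
    return datetime.fromisoformat(ts)


def attach_preceding_meal(cgm_readings, meals, window=timedelta(hours=4)):
    """Label each CGM reading with the latest meal within `window`."""
    meals = sorted(meals, key=lambda m: parse(m["time"]))
    meal_times = [parse(m["time"]) for m in meals]
    fused = []
    for reading in cgm_readings:
        t = parse(reading["time"])
        i = bisect_right(meal_times, t) - 1  # latest meal at or before t
        row = dict(reading, meal_id=None, minutes_since_meal=None)
        if i >= 0 and t - meal_times[i] <= window:
            row["meal_id"] = meals[i]["id"]
            row["minutes_since_meal"] = (t - meal_times[i]).total_seconds() / 60
        fused.append(row)
    return fused


meals = [{"id": "M1", "time": "2024-03-01T08:00:00+00:00"}]
readings = [
    {"time": "2024-03-01T07:55:00+00:00", "glucose_mg_dl": 88},
    {"time": "2024-03-01T08:30:00+00:00", "glucose_mg_dl": 131},
    {"time": "2024-03-01T13:00:00+00:00", "glucose_mg_dl": 92},
]
for row in attach_preceding_meal(readings, meals):
    print(row)
```

Storing every stream with an explicit offset avoids silent misalignment when devices and the logging app run in different time zones.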

Visualization of Data Curation & Integration Workflow

Diagram Title: Data Curation Pipeline for Nutrition AI

Signaling Pathway: Postprandial Biomarker Response to Nutrient Input

Diagram Title: Nutrient-Response Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Reagents & Materials for Nutrition AI Data Generation

| Item | Supplier/Example | Primary Function in Data Curation |

|---|---|---|

| Standardized Meal Kits | Metabolic Solutions, Inc. | Provides isocaloric, macronutrient-controlled meals for intervention studies, ensuring dietary input precision. |

| OMNIgene•GUT Stabilization Kit | DNA Genotek | Stabilizes microbial DNA in stool at room temp for up to 60 days, critical for longitudinal microbiome fidelity. |

| PROMIS Computer Adaptive Tests (CAT) | HealthMeasures | Delivers validated, precise patient-reported outcome measures with reduced participant burden via adaptive questioning. |

| Nutrition Data System for Research (NDSR) | University of Minnesota | Software for standardized multiple-pass 24hr dietary recall collection and automated nutrient calculation. |

| CGM Data Download Suite | Dexcom CLARITY, Abbott LibreView | Research portals for batch downloading continuous glucose data with timestamps for fusion with dietary logs. |

| Homogenization & Aliquoting System (CryoSamplePro) | Brooks Life Sciences | Automates precise aliquoting of biospecimens, ensuring sample integrity and traceability for omics assays. |

| Biobank Management Software (OpenSpecimen) | Krishagni | Tracks biospecimen lifecycle from collection to analysis, maintaining chain of custody and pre-analytical variables. |

| Nutrient Database API (FoodData Central) | USDA | Programmatic access to standardized nutrient profiles for automated mapping of dietary intake data. |

Within the scope of a thesis on the technical validation of an AI-based nutrition recommendation system, feature engineering represents the critical, hypothesis-driven process of transforming raw, heterogeneous nutritional and biological data into a structured, machine-readable format. This transformation is foundational for building predictive models that can accurately correlate dietary inputs with individual health outcomes, biomarker responses, and therapeutic efficacy—a core concern for researchers and drug development professionals exploring nutraceuticals and personalized nutrition.

Nutritional AI systems integrate multimodal data. The table below summarizes primary quantitative data sources.

Table 1: Primary Data Sources for Nutritional Feature Engineering

| Data Category | Example Raw Metrics | Typical Scale/Resolution | Key Challenges |

|---|---|---|---|

| Dietary Intake | Food weight (g), volume (mL), portion count | Per meal/day; ~10-1000g range | Self-report bias, nutrient database gaps |

| Biochemical Biomarkers | Plasma glucose (mg/dL), HDL cholesterol (mg/dL), CRP (mg/L) | Continuous; ng/mL to mg/dL | Inter-lab variability, temporal lag |

| Microbiome | 16S rRNA sequence counts, OTU abundance | Relative abundance (0-1), count data (≥0) | Compositionality, high dimensionality |

| Metabolomics | LC-MS peak intensities, NMR spectral bins | Semi-quantitative, log-normalized | Batch effects, missing values |

| Clinical & Phenotypic | BMI (kg/m²), age (years), medication dose (mg) | Continuous/Categorical | Privacy, confounding variables |

| Temporal & Behavioral | Meal timing (hh:mm), sleep duration (hours) | Time-series, irregular sampling | Asynchronicity, missing segments |

Core Feature Engineering Methodologies & Protocols

Protocol: Deriving Nutrient Density & Composite Scores

Objective: Transform absolute nutrient intake into relative, biologically meaningful features that account for energy intake and dietary patterns.

Materials & Workflow:

- Input: Raw daily totals for nutrients (e.g., protein, fiber, vitamin C) and energy (kcal) from a 7-day food diary or 24-hr recall.

- Calculation:

- Nutrient Density: Nutrient_i (mass) / Total Energy (kcal). (e.g., mg vitamin C per 1000 kcal).

- Dietary Quality Index (Simplified): Assign points based on thresholds (e.g., +1 if fiber intake ≥14g/1000kcal). Sum points across all considered nutrients.

- Output: Continuous density features and a composite integer score feature per subject per time period.
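
A minimal sketch of this calculation is shown below. The fiber threshold (14 g per 1000 kcal) comes from the example above; the vitamin C threshold, the field names, and the sample day are hypothetical and only illustrate the mechanics.

```python
def nutrient_density(amount, energy_kcal, per_kcal=1000.0):
    """Nutrient amount per `per_kcal` kilocalories of energy intake."""
    return amount * per_kcal / energy_kcal


def quality_score(day, rules):
    """Composite integer score: +1 for each density threshold that is met."""
    return sum(
        1 for nutrient, minimum in rules.items()
        if nutrient_density(day[nutrient], day["energy_kcal"]) >= minimum
    )


# Minimum densities per 1000 kcal; the vitamin C value is a placeholder.
RULES = {"fiber_g": 14.0, "vitamin_c_mg": 45.0}

day = {"energy_kcal": 2000.0, "fiber_g": 30.0, "vitamin_c_mg": 60.0}

fiber_density = nutrient_density(day["fiber_g"], day["energy_kcal"])
score = quality_score(day, RULES)
print(f"fiber: {fiber_density} g/1000 kcal, composite score: {score}")
```

Expressing intake per 1000 kcal lets subjects with very different energy needs be compared on the same feature scale.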

Protocol: Engineering Temporal & Sequential Dietary Features

Objective: Capture meal timing, eating windows, and nutrient sequencing for circadian biology and glycemic response modeling.

Materials & Workflow:

- Input: Timestamped eating events with macronutrient composition.

- Feature Extraction:

- Chrononutrition: Calculate eating window (hours from first to last calorie), midpoint of intake.

- Nutrient Rate of Appearance: For each 30-minute postprandial window, compute (grams of carbohydrate in meal) / (duration of eating in minutes).

- Sequential Variability: Day-to-day coefficient of variation (CV) in daily carbohydrate intake.

- Output: Time-based and variability features for integration into longitudinal models.
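
The three feature families above can be sketched as follows. The definitions are assumptions where the protocol leaves room: the intake midpoint is taken as the clock midpoint between the first and last eating event (not a calorie-weighted midpoint), and the CV uses the sample standard deviation.

```python
from datetime import datetime
from statistics import mean, stdev


def _sorted_times(event_times):
    return sorted(datetime.fromisoformat(t) for t in event_times)


def eating_window_hours(event_times):
    """Hours between the first and last eating event of the day."""
    times = _sorted_times(event_times)
    return (times[-1] - times[0]).total_seconds() / 3600.0


def intake_midpoint(event_times):
    """Clock time halfway between the first and last eating event."""
    times = _sorted_times(event_times)
    return times[0] + (times[-1] - times[0]) / 2


def carb_delivery_rate(carb_g, eating_minutes):
    """Grams of carbohydrate per minute of eating for one meal."""
    return carb_g / eating_minutes


def day_to_day_cv(daily_values):
    """Coefficient of variation (sample SD / mean) across days."""
    return stdev(daily_values) / mean(daily_values)


events = ["2024-03-01T07:30:00", "2024-03-01T12:30:00", "2024-03-01T19:30:00"]
print("eating window (h):", eating_window_hours(events))
print("midpoint:", intake_midpoint(events).time())
print("carb rate (g/min):", carb_delivery_rate(carb_g=60.0, eating_minutes=20.0))
print("carb CV:", day_to_day_cv([200.0, 250.0, 300.0]))
```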

Protocol: Microbiome Data Transformation for Predictive Modeling

Objective: Reduce dimensionality and handle compositionality of microbiome data to create features predictive of host response to dietary interventions.

Materials & Workflow:

- Input: OTU or ASV table (samples x taxa) with relative abundances.

- Processing Steps:

- Filtering: Remove taxa with prevalence <10% across samples.

- Transformation: Apply centered log-ratio (CLR) transformation to address compositionality.

- Aggregation: Create functional features by summing abundances of taxa associated with specific metabolic pathways (e.g., butyrate producers) via pre-defined databases like KEGG or MetaCyc.

- Output: CLR-transformed taxonomic features and pathway-based functional features.
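
The filtering and CLR steps can be sketched without external libraries. One detail is an assumption not stated in the protocol: zeros are handled by adding a small pseudocount before taking logarithms, since the log of zero is undefined. The toy table has three samples and three taxa.

```python
import math


def filter_by_prevalence(table, min_prevalence=0.10):
    """Keep taxa (columns) present in at least `min_prevalence` of samples."""
    n_samples = len(table)
    keep = [
        j for j in range(len(table[0]))
        if sum(1 for row in table if row[j] > 0) / n_samples >= min_prevalence
    ]
    return [[row[j] for j in keep] for row in table], keep


def clr(row, pseudocount=1e-6):
    """Centered log-ratio: log(x_j) minus the mean of log(x) per sample."""
    logs = [math.log(value + pseudocount) for value in row]
    centre = sum(logs) / len(logs)
    return [value - centre for value in logs]


# Rows are samples, columns are taxa (relative abundances); taxon 3 is absent.
abundance = [
    [0.5, 0.5, 0.0],
    [0.7, 0.3, 0.0],
    [0.2, 0.8, 0.0],
]

filtered, kept_columns = filter_by_prevalence(abundance)
features = [clr(row) for row in filtered]
print("kept taxa:", kept_columns)
for row in features:
    print([round(value, 3) for value in row])
```

Each CLR-transformed row sums to zero, which is the property that removes the unit-sum constraint of relative abundances before the features enter a standard model.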

Visualization of Workflows & Relationships

Diagram 1: Nutritional Feature Engineering Pipeline

Diagram 2: Interaction of Engineered Features in Predictive Model

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Nutritional Feature Engineering Research

| Item / Solution | Provider Examples | Primary Function in Feature Engineering |

|---|---|---|

| Automated 24-hr Dietary Assessment (ASA24) | National Cancer Institute (NCI) | Standardized, recall-based data collection for initial nutrient intake estimation. |

| Food & Nutrient Database (FNDDS, FoodData Central) | USDA | Authoritative lookup tables for converting food codes to nutrient profiles. |

| Biochemical Assay Kits (CRP, HbA1c, Insulin) | Roche, Abbott, ELISA vendors | Generate raw biomarker data for creating response trajectory features. |

| 16S rRNA Gene Sequencing Kits | Illumina (16S Metagenomic), Qiagen | Produce raw microbiome sequencing data for diversity and taxonomic feature creation. |

| Metabolomics LC-MS Platforms & Suites | Agilent, Thermo Fisher, Metabolon | Generate raw spectral data for nutrient metabolite and food compound feature extraction. |

| Bioinformatics Pipelines (QIIME 2, PICRUSt2) | Open-source | Process raw sequence data into OTU/ASV tables and infer functional pathway features. |

| Statistical Software (R, Python with pandas/scikit-learn) | R Foundation, Python Software Foundation | Environment for executing transformation, aggregation, and feature selection protocols. |

| Clinical Data Harmonization Tool (REDCap) | Vanderbilt University | Securely aggregate and manage multimodal raw data from human subjects. |

This application note details advanced methodologies for training and tuning machine learning models to achieve personalization at scale, specifically within the context of validating an AI-based nutrition recommendation system. The protocols are designed for researchers, scientists, and drug development professionals engaged in technical validation research, focusing on robust, reproducible, and clinically relevant outcomes.

The broader thesis investigates the technical validation of an AI-driven system that generates personalized nutritional interventions to modulate metabolic pathways, potentially serving as adjuncts to pharmaceutical treatments. This requires models that adapt to high-dimensional, heterogeneous data (genomic, metabolomic, microbiome, clinical biomarkers, continuous glucose monitoring) while maintaining generalizability and rigorous performance standards expected in life sciences research.

Core Personalization Strategies: Architectures & Workflows

Strategy Comparison Table

| Strategy | Key Mechanism | Best For | Scalability Challenge | Primary Validation Metric |

|---|---|---|---|---|

| Global Model + Post-Hoc Calibration | Single model trained on all data; user-specific adjustment via bias term or scaling. | Large cohorts with moderate heterogeneity; initial deployment. | Low; single model serving. | Cohort-averaged RMSE; per-user calibration error. |

| Multi-Task Learning (MTL) | Shared hidden layers learn common features; task-specific heads for each user/user group. | Populations with identifiable subgroups (e.g., by genotype, disease status). | Moderate; linear growth in output layer parameters. | Macro-averaged accuracy across all tasks. |

| Mixture of Experts (MoE) | Gating network routes inputs to specialized "expert" sub-models; only a subset activated per input. | Extremely heterogeneous populations with non-linear patterns. | High; requires dynamic, sparse computation. | Expert utilization balance; overall AUC-PR. |

| Federated Learning (FL) | Model trained across decentralized devices/servers holding local data; only model updates are shared. | Privacy-sensitive data (e.g., PHI), distributed data silos (hospitals, clinics). | Very High; network and synchronization overhead. | Global model accuracy vs. centralized benchmark; convergence time. |

| Hypernetwork | A secondary network generates the weights of the primary ("target") model conditioned on a user embedding. | Highly personalized architectures where the entire model must adapt. | High; training the hypernetwork is computationally intensive. | Target model performance on held-out users; hypernetwork stability. |

Experimental Protocol: Multi-Task Learning for Phenotype-Specific Nutrition Response

Objective: To train an MTL model that predicts postprandial glycemic response (primary task) while jointly learning related auxiliary tasks (e.g., insulin sensitivity index, lipid response) for different metabolic phenotype groups.

Materials & Workflow:

- Data Curation: Cohort data (n=5,000) with labeled metabolic phenotypes (e.g., insulin-resistant, prediabetic, normoglycemic). Features include OMICS profiles, baseline biomarkers, meal nutritional composition.

- Task Definition: Define one prediction task per phenotype group + shared auxiliary tasks.

- Model Architecture: Implement a neural network with shared dense layers (512, 256 units, ReLU), branching into phenotype-specific task heads (output layers).

- Training: Use a weighted sum of losses: L_total = Σ w_i * L_task_i. Employ gradient normalization to balance task learning.

- Validation: Use leave-one-phenotype-group-out cross-validation. Compare against a global single-task model (baseline).

Diagram: MTL Model Development Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Personalization Research | Example/Supplier |

|---|---|---|

| Simulated Heterogeneous Datasets | Benchmarks model performance across diverse virtual patient profiles under controlled conditions. | scikit-learn make_classification with clusters; PySynth synthetic patient generators. |

| Personalization Metrics Suite | Quantifies per-user performance and fairness beyond aggregate metrics. | PerUserRMSE, Calibration Error per Subgroup, Jain's Fairness Index. |

| Meta-Learning Libraries | Implements model-agnostic meta-learning (MAML) & related algorithms for few-shot personalization. | learn2learn (PyTorch), TensorFlow Meta-Learning. |

| Federated Learning Frameworks | Enables privacy-preserving, distributed model training across simulated or real data silos. | NVFlare (NVIDIA), Flower, TensorFlow Federated. |

| Hyperparameter Optimization (HPO) Orchestrator | Automates large-scale tuning of personalization strategy parameters (e.g., expert count, task weights). | Ray Tune, Weights & Biases Sweeps, Optuna. |

| Causal Inference Toolkits | Validates that personalized recommendations have a causal effect, not just correlation. | DoWhy (Microsoft), EconML, CausalML. |

Advanced Tuning Protocol: Federated Learning with Differential Privacy

Objective: To tune a global nutrition recommendation model using federated learning across multiple institutional data silos (e.g., research hospitals) while guaranteeing user-level differential privacy (DP).

Detailed Protocol:

- Client Simulation: Partition dataset to simulate 10 clients (hospitals), ensuring non-IID data distribution.

- Algorithm Selection: Implement FedAvg (Federated Averaging) with DP. Key tuning parameters: clipping norm (C), noise multiplier (σ), learning rate (η).

- DP Mechanism: Before aggregating model updates, clip each client's gradient to a max L2-norm C. Add Gaussian noise scaled by σ and C.

- Tuning Experiment: Perform a grid search over C ∈ [0.1, 1.0], σ ∈ [0.01, 0.5], η ∈ [0.001, 0.01]. Track global model accuracy on a held-out central test set versus privacy budget (ε, δ).

- Analysis: Calculate the privacy-utility trade-off curve. Select the parameter set yielding >85% of non-private baseline accuracy with ε < 1.0, δ = 10^-5.
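
The DP Mechanism step can be illustrated with a single aggregation round. This sketch assumes the common formulation in which each client update is clipped to L2-norm C, the clipped updates are summed, Gaussian noise with standard deviation σ·C is added to the sum, and the result is averaged. It covers only the clip-and-noise mechanics: computing the resulting (ε, δ) requires a separate privacy accountant, which is not shown, and the function names are hypothetical.

```python
import math
import random


def clip_update(update, clip_norm):
    """Scale an update vector down so its L2-norm is at most `clip_norm`."""
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [u * scale for u in update]


def dp_fedavg_round(client_updates, clip_norm, noise_multiplier, rng):
    """Aggregate one round of client updates with clipping and Gaussian noise."""
    clipped = [clip_update(u, clip_norm) for u in client_updates]
    n_clients = len(clipped)
    dim = len(clipped[0])
    summed = [sum(c[d] for c in clipped) for d in range(dim)]
    # Noise standard deviation is sigma * C, added to the summed update.
    noisy = [s + rng.gauss(0.0, noise_multiplier * clip_norm) for s in summed]
    return [value / n_clients for value in noisy]


# Two toy client updates; the first exceeds the clipping norm C = 1.0.
updates = [[3.0, 4.0], [0.3, 0.4]]

noise_free = dp_fedavg_round(updates, clip_norm=1.0, noise_multiplier=0.0,
                             rng=random.Random(0))
private = dp_fedavg_round(updates, clip_norm=1.0, noise_multiplier=0.05,
                          rng=random.Random(0))
print("clipped average without noise:", noise_free)
print("clipped average with noise:   ", private)
```

Clipping bounds how much any single client can move the global model, which is what allows the added noise to be calibrated against C.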

Quantitative Outcomes Table:

| DP Parameter Set (C, σ, η) | Final Global Model Accuracy (%) | Privacy Budget (ε) | Convergence Rounds |

|---|---|---|---|

| No DP (Baseline) | 92.7 | ∞ | 150 |

| (0.5, 0.05, 0.005) | 90.1 | 0.8 | 210 |

| (0.1, 0.1, 0.001) | 85.3 | 0.4 | 320 |

| (1.0, 0.01, 0.01) | 88.9 | 2.1 | 180 |

Diagram: Federated Learning with Differential Privacy Loop

Validation Framework for Nutritional AI

Core Protocol: Causal Impact Assessment of Personalized Recommendations

- Design: Conduct a simulated or pilot N-of-1 trial series. Each virtual/physical participant receives both model-personalized meals and standardized control meals in a randomized crossover sequence.

- Measurement: Primary endpoint: area under the curve (AUC) for postprandial glucose. Secondary: subjective satiety, relevant biomarkers.

- Analysis: Use a linear mixed-effects model to estimate the treatment effect (personalized vs. control), with participant ID as a random effect.

- Success Criterion: Personalized intervention shows a statistically significant (p < 0.01, adjusted for multiple comparisons) reduction in glucose AUC compared to control for >75% of the participant pool.

Achieving personalization at scale for AI-based nutrition systems necessitates a strategic selection of training architectures (MTL, MoE, FL) coupled with rigorous tuning protocols that incorporate privacy, causality, and robust validation. The methodologies outlined provide a reproducible framework for researchers aiming to technically validate such systems within the stringent context of biomedical and health applications.

This protocol details the technical pathways for integrating AI-based nutrition recommendation engines with existing clinical and digital infrastructure. The primary goal is to enable seamless data flow, ensuring that AI-generated, personalized nutritional interventions are actionable within clinical workflows and patient-facing platforms. This integration is a critical component of technical validation, moving from algorithm performance in isolation to demonstrated utility in real-world data ecosystems.

Key Integration Architectures and Data Flows

Table 1: Comparative Analysis of Primary Integration Architectures

| Architecture Type | Description | Data Flow Latency | Implementation Complexity | Best Suited For |

|---|---|---|---|---|

| HL7 FHIR API-Based | Real-time data exchange using standardized healthcare APIs (Fast Healthcare Interoperability Resources). | Low (< 2 sec) | High | EHR-integrated clinical decision support, real-time alerting. |

| Batch Export/Import | Scheduled extraction (e.g., nightly) of patient data from EHR to AI platform, with result files returned. | High (12-24 hrs) | Low | Retrospective population analysis, non-urgent recommendation batches. |

| Middleware/HL7 v2 | Use of integration engines (e.g., Rhapsody, Mirth Connect) to translate HL7 v2 messages to/from EHR. | Medium (< 5 min) | Medium | Legacy EHR systems with established ADT/ORU feeds. |

| Patient-App-Mediated | AI engine connects via patient-facing app APIs (e.g., Apple HealthKit, Google Fit), with clinician EHR view. | Variable | Medium | Digital therapeutics, direct-to-patient engagement programs. |

Diagram Title: AI-EHR Integration Data Flow Architecture

Detailed Experimental Protocol: End-to-End Integration Validation

Protocol ID: ANP-001-E2E

Objective: To validate the technical performance, data fidelity, and clinical workflow compatibility of an AI nutrition recommendation system integrated via FHIR APIs with a test EHR environment.

3.1. Materials & Pre-requisites

- Test Environment: Isolated EHR sandbox (e.g., Epic Hyperspace, Cerner Millennium TEST).

- AI System: Nutrition recommendation engine with a defined input/output schema.

- Integration Layer: FHIR server (e.g., HAPI FHIR) configured with relevant profiles (Patient, Observation, NutritionOrder).

- Data Set: Synthetic patient cohort (n=500) with demographic, laboratory (e.g., HbA1c, lipids), diagnostic, and medication data.

3.2. Methodology

Phase 1: Data Extraction & Mapping Validation

- Configure the AI system to request patient data via FHIR API calls (`GET [base]/Patient/[id]`, `GET [base]/Observation?patient=[id]&code=[loinc]`).

- Execute data calls for the synthetic cohort. Log all transactions.

- Manually verify a random subset (n=50) for data field accuracy and unit consistency between source EHR and received data.

- Metric: Calculate data transfer fidelity rate (% of fields mapped and transmitted correctly).
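
One way to compute the fidelity metric is a field-by-field comparison between the source EHR extract and the data received by the AI system. The sketch below assumes both sides have already been flattened into dictionaries keyed by patient ID, with each field stored as a `(value, unit)` pair so that unit mismatches count as errors. The record layout and field names are hypothetical.

```python
_MISSING = object()  # sentinel so an absent field never equals a real value


def fidelity_rate(source_records, received_records, fields):
    """Percent of (patient, field) pairs whose received value matches the source."""
    total = 0
    correct = 0
    for patient_id, source in source_records.items():
        received = received_records.get(patient_id, {})
        for field in fields:
            total += 1
            expected = source.get(field, _MISSING)
            if expected is not _MISSING and received.get(field, _MISSING) == expected:
                correct += 1
    return 100.0 * correct / total if total else 0.0


# Hypothetical two-patient sample; p2's LDL arrives with the wrong unit.
source = {
    "p1": {"hba1c": (7.2, "%"), "ldl": (130, "mg/dL")},
    "p2": {"hba1c": (6.1, "%"), "ldl": (3.1, "mmol/L")},
}
received = {
    "p1": {"hba1c": (7.2, "%"), "ldl": (130, "mg/dL")},
    "p2": {"hba1c": (6.1, "%"), "ldl": (3.1, "mg/dL")},
}
rate = fidelity_rate(source, received, ["hba1c", "ldl"])  # 3 of 4 fields match
```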

Phase 2: Recommendation Generation & Trigger Logic

- Define clinical triggers within the AI system (e.g., `IF HbA1c > 7.0% AND diagnosis=Type 2 Diabetes`).

- For triggered patients, execute the AI algorithm to generate a structured `NutritionOrder` FHIR resource.

- Metric: Record trigger accuracy and algorithm processing time per patient.
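
As a rough illustration of Phase 2, the example trigger and a minimal order payload might look like the following. The patient dictionary layout and both function names are hypothetical. The payload uses FHIR R4 element names for NutritionOrder (`status`, `intent`, `patient`, `dateTime`, `oralDiet`), but it has not been validated against any profile and omits coded values a real order would carry.

```python
from datetime import datetime, timezone


def is_triggered(patient):
    """Example trigger from the protocol: HbA1c > 7.0% AND Type 2 Diabetes."""
    return patient["hba1c_percent"] > 7.0 and "Type 2 Diabetes" in patient["diagnoses"]


def build_nutrition_order(patient_id, diet_text):
    """Return a minimal NutritionOrder-shaped dict (FHIR R4 element names assumed)."""
    return {
        "resourceType": "NutritionOrder",
        "status": "draft",        # kept as a draft pending clinician sign-off
        "intent": "proposal",     # a suggestion, not yet an authorized order
        "patient": {"reference": f"Patient/{patient_id}"},
        "dateTime": datetime.now(timezone.utc).isoformat(),
        "oralDiet": {"type": [{"text": diet_text}]},
    }


# Hypothetical synthetic patient.
patient = {"id": "123", "hba1c_percent": 7.8, "diagnoses": ["Type 2 Diabetes"]}
if is_triggered(patient):
    order = build_nutrition_order(patient["id"], "Carbohydrate-controlled diet")
```

Keeping the resource in `draft` status with a `proposal` intent matches the protocol's later step of posting the order for clinician review rather than activating it directly.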

Phase 3: Recommendation Injection into Workflow

- Configure the system to post the FHIR `NutritionOrder` to the EHR sandbox as a draft clinician order or a structured note.

- Simulate clinician review and "sign-off" in the sandbox.

- Metric: Measure time from trigger to order appearance in the EHR, and collect System Usability Scale (SUS) scores from test clinicians.
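
For the usability metric, the standard SUS scoring rule can be applied to each clinician's questionnaire: odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5 to give a 0-100 score. The function name below is illustrative.

```python
def sus_score(responses):
    """Score one 10-item SUS questionnaire (each response 1-5) on a 0-100 scale."""
    if len(responses) != 10 or any(r < 1 or r > 5 for r in responses):
        raise ValueError("SUS needs ten responses, each between 1 and 5")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item_number % 2 == 1 else (5 - r)
    return total * 2.5


neutral = sus_score([3] * 10)  # 50.0: every item answered at the midpoint
```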

Phase 4: Patient Platform Sync

- Upon simulated sign-off, push a patient-friendly version of the recommendation to a test digital platform via a secure REST API.

- Validate the receipt and display of the plan on the platform.

- Metric: Measure data synchronization latency and verify that end-to-end encryption is in place.

Table 2: Key Performance Indicators (KPIs) for Integration Validation

| KPI Category | Specific Metric | Target Threshold | Measurement Outcome |

|---|---|---|---|

| Data Integrity | FHIR Resource Mapping Accuracy | > 99.5% | [Result] |

| Technical Performance | 95th Percentile API Response Time | < 1000 ms | [Result] |

| Clinical Utility | End-to-End Latency (Trigger to EHR Inbox) | < 60 seconds | [Result] |

| Workflow Integration | Clinician Acceptance Rate (Simulated) | > 85% | [Result] |

| Security | OAuth 2.0 Token Validation Success Rate | 100% | [Result] |
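
The response-time KPI in Table 2 depends on how the 95th percentile is defined. A minimal sketch using the nearest-rank definition is shown below; other definitions interpolate between samples and can give slightly different values. The function names and the sample timings are hypothetical, and the default threshold is the 1000 ms target from the table.

```python
import math


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of data at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_latency_kpi(times_ms, threshold_ms=1000):
    """True when the 95th percentile response time is under the threshold."""
    return percentile_nearest_rank(times_ms, 95) < threshold_ms


# Hypothetical log of 100 API response times: 10 ms, 20 ms, ..., 1000 ms.
times_ms = list(range(10, 1010, 10))
p95 = percentile_nearest_rank(times_ms, 95)
passed = meets_latency_kpi(times_ms)
```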

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Integration Research & Development

| Item / Solution | Provider Examples | Primary Function in Validation Research |

|---|---|---|

| FHIR Test Servers | HAPI FHIR (Open Source), Microsoft Azure FHIR Server | Provides a standards-compliant sandbox for developing and testing healthcare data exchange. |

| Synthetic Patient Data Generators | Synthea, MDClone | Creates realistic, de-identified patient datasets for testing without privacy concerns. |

| Healthcare Integration Engines | InterSystems IRIS, NextGen Mirth Connect | Enables protocol translation and message routing between AI systems and legacy EHR interfaces. |

| API Testing & Monitoring Suites | Postman, Apache JMeter | Validates API endpoint reliability, performance under load, and security. |

| Clinical Terminology Servers | Ontoserver (SNOMED CT, LOINC), UMLS Metathesaurus | Ensures accurate mapping of nutritional concepts, lab codes, and diagnoses to standardized terminologies. |

| Digital Platform SDKs | Apple CareKit, ResearchKit, Google Health Connect | Facilitates secure development of patient-facing app modules for nutrition intervention delivery and data capture. |

Diagram Title: End-to-End Integration Validation Workflow

The validation of AI-based nutrition recommendation systems presents a transformative opportunity for clinical trial design. Precision nutritional support can mitigate drug-nutrient interactions, manage comorbidities that affect trial endpoints, and reduce adverse events (AEs), thereby improving data quality and patient retention. This document details application notes and protocols for integrating nutritional assessment and intervention within clinical trial frameworks, serving as a technical validation pillar for AI-driven systems.

Quantitative Landscape: Key Data on Nutrition, Comorbidities, and Trial Outcomes

Table 1: Impact of Nutritional Status & Comorbidities on Clinical Trial Metrics

| Metric | Malnourished Cohort | Well-Nourished Cohort | Common Comorbidity Influence (e.g., T2DM, CKD) | Data Source (Year) |

|---|---|---|---|---|

| Trial Dropout Rate | 35-40% | 12-18% | Increases dropout by 1.5-2.5x | Meta-Analysis (2023) |

| Grade 3+ AE Incidence | 65% | 32% | Increases severe AE risk by 50-80% | Oncology Trials Review (2024) |

| Protocol Deviation Rate | 22% | 9% | Increases deviation by 1.8x | FDA Audit Data Analysis (2023) |

| Hospitalization During Trial | 30% | 11% | 2-3x higher hospitalization risk | Pharmacoepidemiology Study (2024) |

| Immune Response Variability (CV%) | 45% | 20% | Can increase CV% by 15-25 points | Immunotherapy Trials (2023) |

Table 2: Efficacy of Targeted Nutritional Support in Trials

| Intervention | Target Population | Primary Outcome Result | Effect Size (Hedges' g) | Study Design |

|---|---|---|---|---|

| High-Protein, Leucine-Rich Formula | Sarcopenic Oncology Patients | Reduced CTCAE ≥Grade 2 muscle loss by 60% | 0.72 | RCT, N=220 (2024) |

| Prebiotic Fiber (GOS/FOS) Blend | Patients on Immunotherapy+Antibiotics | Restored objective response rate to baseline (32% vs. 18%) | 0.65 | Phase IIb, N=150 (2023) |

| Renal-Specific Oral Nutrition | CKD Patients in Cardiorenal Trial | 45% lower incidence of hyperkalemia events | 0.81 | RCT, N=180 (2024) |

| Medical Food for Mitochondrial Support | Patients with Fatigue-Dominant AEs | 2.5-point improvement in FACIT-Fatigue score* | 0.58 | Crossover RCT, N=95 (2023) |

| EAA + HMB Supplementation | Older Adults in Neurological Trial | Maintained cognitive battery scores vs. decline in placebo | 0.70 | RCT, N=200 (2024) |

*Clinically meaningful difference is 3-4 points.
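
For readers reproducing the effect-size column, Hedges' g is the pooled-SD standardized mean difference multiplied by a small-sample correction. The sketch below uses the common approximation J ≈ 1 − 3 / (4(n₁ + n₂) − 9). The input numbers are purely illustrative and are not taken from the studies in Table 2.

```python
from math import sqrt


def hedges_g(mean_1, sd_1, n_1, mean_2, sd_2, n_2):
    """Standardized mean difference with the approximate small-sample correction."""
    pooled_sd = sqrt(
        ((n_1 - 1) * sd_1 ** 2 + (n_2 - 1) * sd_2 ** 2) / (n_1 + n_2 - 2)
    )
    d = (mean_1 - mean_2) / pooled_sd            # Cohen's d
    correction = 1 - 3 / (4 * (n_1 + n_2) - 9)   # approximate J factor
    return d * correction


# Illustrative values only: two arms of 50, equal SDs, 1-unit mean difference.
g = hedges_g(10.0, 2.0, 50, 9.0, 2.0, 50)
```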

Detailed Experimental Protocols for Technical Validation

Protocol 3.1: Assessing AI-Generated Nutritional Plans for Drug-Nutrient Interaction Mitigation

- Objective: To validate an AI system's ability to generate dietary plans that minimize pharmacokinetic (PK) interactions with an investigational tyrosine kinase inhibitor (TKI).

- Materials: AI nutrition platform, simulated patient profiles (demographics, genetics [e.g., CYP3A4 status], PK data), drug interaction database (e.g., Lexicomp), nutrient analysis software.

- Methodology:

- Input: Feed the AI system 50 virtual patient profiles and the TKI's known interaction profile (CYP3A4/5 substrate, high-fat meal increases AUC).

- AI Task: Generate 7-day personalized meal plans with goals: maintain calorie/protein needs, limit vitamin K-rich foods (if on anticoagulants), schedule low-fat meals around dosing.

- Validation: Use PK/PD simulation software (e.g., GastroPlus) to model predicted TKI AUC and Cmax for the AI-generated plan vs. a standard diet.

- Endpoint: Percentage reduction in predicted PK variability (CV%) and interaction risk score compared to control.
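
The variability endpoint can be computed directly from the simulated exposures. The sketch below assumes the PK simulation has already produced one predicted AUC per virtual patient under each diet. The function names and the three-patient values are hypothetical placeholders, not simulator output.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (sample SD / mean) as a percentage."""
    return 100.0 * stdev(values) / mean(values)


def cv_reduction_percent(control_auc, plan_auc):
    """Relative reduction in CV% for the AI-generated plan versus the standard diet."""
    cv_control = cv_percent(control_auc)
    cv_plan = cv_percent(plan_auc)
    return 100.0 * (cv_control - cv_plan) / cv_control


# Hypothetical predicted TKI AUCs for three virtual patients.
standard_diet = [80.0, 100.0, 120.0]   # CV of 20%
ai_plan = [90.0, 100.0, 110.0]         # CV of 10%
reduction = cv_reduction_percent(standard_diet, ai_plan)
```

A positive result means exposure is less variable under the AI-generated plan; a negative result means the plan increased variability.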

Protocol 3.2: Nutritional Phenotyping for Comorbidity Stratification in Trial Populations

- Objective: To implement and validate a protocol for deep nutritional phenotyping to stratify patients with metabolic comorbidities.

- Materials: DEXA scanner, bioimpedance spectroscopy device, continuous glucose monitor (CGM), metabolomics kit (plasma/urine), food diary app, microbiome sequencing kit.

- Methodology:

- Baseline Assessment (Screening Visit):

- Body Composition: DEXA for visceral fat area and lean mass.

- Metabolic Flux: 14-day CGM deployment for glycemic variability (Mean Amplitude of Glycemic Excursions - MAGE).

- Omics Sampling: Fasting plasma for NMR metabolomics (branched-chain amino acids, ketones), stool for 16S rRNA sequencing.

- Dietary Intake: 3-day weighed food record via app.

- AI Integration: Input raw data into AI system to assign a "Nutritional Comorbidity Risk Score" (NCRS) from 1-10.

- Validation: Correlate NCRS with Week 8 trial outcomes (e.g., treatment-related AEs, functional capacity) using multivariate regression.

Protocol 3.3: Intervention Trial for AI-Optimized Support in Managing Cachexia

- Objective: To evaluate an AI-personalized nutrition/exercise regimen for mitigating cancer cachexia in a Phase III oncology trial.

- Design: Double-blind, randomized, controlled sub-study (embedded trial).

- Arm A (AI-Optimized): Daily shake (macronutrient-adjusted), resistance exercise plan (frequency/load-adjusted), and omega-3 dose all personalized weekly by AI based on patient-reported symptoms, weight, and blood biomarkers (CRP, albumin).

- Arm B (Standard Support): Fixed-dose, standard high-protein shake and general exercise advice.

- Primary Endpoint: Change in appendicular lean mass index (ALMI) at 12 weeks via DEXA.

- Key AI Validation Metric: Correlation between AI's predicted anabolic response (based on weekly data) and actual ALMI change.
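
The primary endpoint and the AI validation metric can be illustrated together. ALMI is appendicular lean mass divided by height squared (kg/m²), and the validation metric is shown here as a Pearson correlation between predicted and observed ALMI change. The protocol does not specify which correlation coefficient is used, so Pearson is an assumption, as are the function names and sample values.

```python
from math import sqrt


def almi(appendicular_lean_mass_kg, height_m):
    """Appendicular lean mass index in kg/m^2."""
    return appendicular_lean_mass_kg / height_m ** 2


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical 12-week ALMI changes (kg/m^2) for four participants.
predicted_change = [0.10, -0.05, 0.20, 0.00]
observed_change = [0.08, -0.10, 0.15, 0.02]
r = pearson_r(predicted_change, observed_change)
```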

Visualizations: Pathways, Workflows, and Systems

Title: AI Nutrition System in Clinical Trial Workflow

Title: Nutritional Block of Cachexia Signaling

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Nutritional Clinical Trial Research

| Item | Function & Application in Validation Research |

|---|---|

| Indirect Calorimetry System | Measures resting energy expenditure (REE) and respiratory quotient (RQ) to validate AI predictions of caloric needs and substrate utilization in patients. |

| Point-of-Care NMR Analyzer | Quantifies serum branched-chain amino acids, ketone bodies, and lipoprotein subfractions for rapid metabolomic phenotyping and AI algorithm training. |

| Stool DNA Stabilization Kit | Preserves microbial genomic material for 16S/ITS and shotgun metagenomic sequencing, linking AI dietary inputs to microbiome outputs. |

| Electronic Patient-Reported Outcome (ePRO) Platform | Captures real-time data on food intake, symptoms, and quality of life; essential for closed-loop AI system training and validation. |

| Standardized Medical Nutrition Products | Iso-caloric, macronutrient-modular formulas (protein, carbohydrate, lipid modules) used as controlled variables in AI-driven intervention protocols. |

| Bioimpedance Spectroscopy (BIS) Device | Assesses extracellular/intracellular water and phase angle, providing validated, rapid body composition data for AI models beyond BMI. |

| Continuous Glucose Monitoring (CGM) System | Generates high-resolution glycemic variability data (e.g., TIR, MAGE) to validate AI meal plans for patients with metabolic comorbidities. |