Validating Biomarkers of Food Intake: A Comprehensive Framework for Researchers and Clinicians

Abstract

This article provides a detailed guide to the criteria and methodologies for validating biomarkers of food intake (BFIs), a critical need in nutritional epidemiology and clinical research. Aimed at researchers, scientists, and drug development professionals, it explores the foundational concepts, including the limitations of self-reported dietary data and the role of metabolomics. The content outlines a structured, eight-criteria validation framework encompassing plausibility, dose-response, time-response, robustness, reliability, stability, analytical performance, and inter-laboratory reproducibility. It further covers practical application in study design, common challenges in biomarker development, and comparative analysis of validation approaches. The article concludes by synthesizing key takeaways and highlighting future directions, including the work of consortia like the Dietary Biomarkers Development Consortium, which aim to advance precision nutrition through robust biomarker discovery and validation.

The Critical Need for Biomarkers in Nutrition: Moving Beyond Self-Reporting

Accurate dietary assessment is a foundational pillar in understanding the relationships between diet, health, and disease, informing everything from public health policy to individualized clinical nutrition advice [1]. For decades, nutritional epidemiology has predominantly relied on self-reported dietary assessment instruments, including 24-hour dietary recalls, food frequency questionnaires (FFQs), and dietary records [2]. These tools aim to capture complex dietary behaviors but are inherently limited by their dependence on participant memory, motivation, and ability to accurately quantify intake. Within the context of validating biomarkers of food intake—a critical endeavor for advancing precision nutrition—understanding the specific limitations and error structures of self-reported data is not merely academic; it is a fundamental prerequisite for establishing objective, biologically grounded measures of dietary exposure [3] [4]. The systematic biases and measurement errors inherent in self-reporting directly impact the calibration and validation processes for novel biomarkers, potentially leading to attenuated diet-disease relationships and flawed scientific conclusions if not properly accounted for [5]. This document delineates the sources, magnitudes, and consequences of these errors, providing researchers with the necessary framework to critically evaluate dietary data and strengthen the validity of nutritional research.

Classifying and Quantifying Measurement Error in Self-Reported Diet

Measurement error in dietary self-report is not a monolithic issue but can be categorized into distinct types, each with different implications for data analysis and interpretation. A primary distinction is made between random and systematic errors, and further between differential and non-differential errors relative to an outcome of interest [6].

Types of Measurement Error

- Random Error: This error varies unpredictably between individuals and occasions, reducing the precision of intake estimates. It increases variability and attenuates (weakens) correlations between reported intake and true intake or health outcomes [7] [6].

- Systematic Error (Bias): This error consistently pushes measurements in a particular direction. In dietary assessment, the most documented systematic bias is energy underreporting, where individuals consistently report less energy than they truly consume [2].

- Non-Differential Error: This occurs when the error in reporting exposure (diet) is unrelated to the outcome (disease) status. This type typically biases associations toward the null, making true effects harder to detect [6].

- Differential Error: This occurs when the error in reporting is related to the outcome status or differs between study groups (e.g., cases vs. controls). It can cause either inflation or attenuation of observed associations and is a particular threat in case-control studies (recall bias) or in intervention studies where reporting behavior changes differentially between treatment and control arms [5] [6].

Statistical Models of Error

The statistical relationship between true intake (X) and reported intake (X*) is formalized through measurement error models [6]:

- Classical Measurement Error Model: \(X^* = X + e\), where \(e\) is random error with mean zero, independent of \(X\). This model presumes no systematic bias.

- Linear Measurement Error Model: \(X^* = \alpha_0 + \alpha_X X + e\). This more general model accounts for both systematic bias (via \(\alpha_0\) and \(\alpha_X\)) and random error (\(e\)). It is often more applicable to self-reported dietary data than the classical model.

- Berkson Error Model: \(X = X^* + e\), where the error is independent of the measured value \(X^*\). This can occur in studies where individuals are assigned a group mean exposure level.

Table 1: Common Measurement Error Models in Dietary Assessment

| Model Type | Mathematical Form | Key Characteristics | Common Scenario in Diet Research |
|---|---|---|---|
| Classical | \(X^* = X + e\) | No systematic bias; random error only. Attenuates correlations. | Less common; may apply to some objective measures. |
| Linear | \(X^* = \alpha_0 + \alpha_X X + e\) | Captures both systematic bias (location \(\alpha_0\), scale \(\alpha_X\)) and random error. | Highly relevant for self-report data, which often has proportional bias. |
| Berkson | \(X = X^* + e\) | Error is independent of the measured value \(X^*\). | Occupational studies where exposure is assigned by job title. |
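The attenuation described for the classical and linear models can be illustrated with a small simulation. This is a minimal sketch, not taken from the cited studies: the function names, the unit-variance true intake, the outcome slope `beta`, and the error standard deviation are all assumptions chosen for illustration.

```python
import random


def ols_slope(x, y):
    """Least-squares slope of y regressed on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def simulate_slopes(alpha0=0.0, alpha_x=1.0, sd_error=1.0,
                    beta=0.5, n=20000, seed=1):
    """Return (slope on true intake, slope on reported intake).

    Reported intake follows the linear model X* = alpha0 + alpha_x * X + e,
    which reduces to the classical model when alpha0 = 0 and alpha_x = 1.
    """
    rng = random.Random(seed)
    true_intake = [rng.gauss(0.0, 1.0) for _ in range(n)]
    reported = [alpha0 + alpha_x * x + rng.gauss(0.0, sd_error)
                for x in true_intake]
    # Hypothetical health outcome that depends on true intake only.
    outcome = [beta * x + rng.gauss(0.0, 1.0) for x in true_intake]
    return ols_slope(true_intake, outcome), ols_slope(reported, outcome)


def expected_attenuation(alpha_x=1.0, sd_true=1.0, sd_error=1.0):
    """Theoretical ratio of the observed slope to the true slope."""
    var_true = sd_true ** 2
    return alpha_x * var_true / (alpha_x ** 2 * var_true + sd_error ** 2)


true_slope, observed_slope = simulate_slopes()
print(round(true_slope, 2), round(observed_slope, 2))
```

With equal variances for true intake and error, the theoretical attenuation factor is 0.5, so the slope estimated from reported intake should sit at roughly half the slope estimated from true intake.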

The most robust evidence for systematic bias in self-reported dietary data comes from validation studies that compare reported intake against objective biomarkers of intake.

Energy Underreporting and the Doubly Labeled Water Method

The development of the doubly labeled water (DLW) method for measuring total energy expenditure (TEE) provided a gold-standard biomarker for validating reported energy intake. Under conditions of weight stability, energy intake is approximately equal to TEE, allowing for a direct comparison [2].

Studies using DLW have consistently revealed substantial underreporting of energy intake:

- A seminal study by Prentice et al. found that energy intake from 7-day food diaries was 34% lower than TEE measured by DLW in obese women, while no significant difference was found in lean women [2].

- A comprehensive review confirms that underreporting of energy is a consistent finding across studies and populations [2].

- Crucially, the magnitude of underreporting is not uniform. It has been consistently shown to increase with body mass index (BMI), indicating a systematic bias related to body weight and potentially social desirability [2]. This relationship invalidates the use of self-reported energy intake for studying energy balance in obesity research, as the error is differential.
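The DLW comparison above reduces to simple arithmetic once weight stability is assumed. The sketch below expresses reported energy intake as a percentage deviation from measured TEE; the function name and the example values (in kcal/day) are hypothetical and are not data from the cited studies.

```python
def percent_misreporting(reported_energy_intake, total_energy_expenditure):
    """Percentage by which reported intake deviates from DLW-measured TEE.

    Negative values indicate underreporting. Assumes weight stability,
    so that true energy intake is approximately equal to TEE.
    """
    if total_energy_expenditure <= 0:
        raise ValueError("total_energy_expenditure must be positive")
    difference = reported_energy_intake - total_energy_expenditure
    return 100.0 * difference / total_energy_expenditure


# Hypothetical participant: reports 1650 kcal/day, DLW gives 2500 kcal/day.
print(percent_misreporting(1650.0, 2500.0))
```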

Differential Misreporting by Macronutrient and Food Type

The underreporting is not uniform across all foods and nutrients. Analysis of macronutrient reporting compared to urinary nitrogen (a biomarker for protein intake) reveals that protein is the least underreported macronutrient [2]. This suggests that individuals do not omit foods randomly; instead, certain types of foods, particularly those perceived as socially undesirable (e.g., high-sugar, high-fat snacks), are more likely to be underreported or omitted entirely [2]. This selective misreporting distorts the apparent composition of the diet, not just its total energy value.

Table 2: Objective Biomarkers for Validating Self-Reported Dietary Intake

| Biomarker | What It Measures | Comparison to Self-Report | Key Findings from Validation Studies |
|---|---|---|---|
| Doubly Labeled Water (DLW) | Total Energy Expenditure (proxy for Energy Intake) | Self-reported Energy Intake | Significant underreporting of energy, increasing with BMI [2]. |
| Urinary Nitrogen | Protein Intake | Self-reported Protein Intake | Protein is underreported less than total energy; one study showed a 47% underestimate in women [2]. |
| Serum Carotenoids | Fruit & Vegetable Intake | Self-reported F&V Consumption | Used as a reference for validating new methods [1]. |
| Erythrocyte Fatty Acids | Fatty Acid Intake | Self-reported Fat & Oil Consumption | Used as a reference for validating new methods [1]. |
| Poly-Metabolite Scores | Intake of specific foods/diets (e.g., UPF) | Self-reported dietary patterns | Metabolomic profiles can objectively differentiate between controlled diets high and low in ultra-processed foods (UPF) [4]. |

The Pervasive Challenge in Intervention Studies: Differential Measurement Error

In longitudinal lifestyle intervention studies, a specific form of systematic error, known as differential measurement error, is a major concern [5]. This occurs when the nature or degree of measurement error differs between the intervention and control groups, or between baseline and follow-up assessments. For example:

- Participants in the intervention arm may become more motivated to appear compliant with the study's dietary goals, leading to increased underreporting of "forbidden" foods over time.

- Alternatively, they may become better at estimating portion sizes due to training, improving their reporting accuracy.

Simulation studies have demonstrated that such differential error can lead to biased estimates of the treatment effect and reduced statistical power, potentially causing researchers to incorrectly conclude an intervention is ineffective or to misjudge its true effect size [5]. Investigators are advised to account for this in study design by increasing sample sizes or incorporating internal validation studies using biomarkers.
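The effect of differential error on a trial estimate can be sketched with a toy simulation. Every number below is an assumption made for illustration (the true effect, the extra underreporting in the intervention arm, the standard deviations, the sample size); it is not a reproduction of the simulation studies cited above.

```python
import random


def estimated_effect(true_effect=-200.0, extra_underreporting=150.0,
                     n_per_arm=5000, seed=7):
    """Difference in mean reported intake change (intervention - control).

    Only the intervention arm is assumed to underreport by an additional
    `extra_underreporting` kcal/day at follow-up, which makes the
    measurement error differential between arms.
    """
    rng = random.Random(seed)

    def reported_change(is_intervention):
        mean_change = true_effect if is_intervention else 0.0
        true_change = rng.gauss(mean_change, 300.0)
        bias = -extra_underreporting if is_intervention else 0.0
        random_error = rng.gauss(0.0, 200.0)
        return true_change + bias + random_error

    control = [reported_change(False) for _ in range(n_per_arm)]
    intervention = [reported_change(True) for _ in range(n_per_arm)]
    return sum(intervention) / n_per_arm - sum(control) / n_per_arm


print(round(estimated_effect()))                          # differential error
print(round(estimated_effect(extra_underreporting=0.0)))  # no differential error
```

In this setup the random error alone leaves the estimated effect close to the true value, while the arm-specific bias shifts it by roughly the size of the extra underreporting.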

Consequences for Research and Public Health

The pervasive and non-random nature of error in self-reported dietary data has profound implications.

- Attenuation of Diet-Disease Relationships: Random and non-differential errors tend to weaken the observed associations between dietary factors and health outcomes, leading to false null findings and obscuring true risks or benefits [2] [5].

- Inability to Accurately Study Energy Balance: The systematic underreporting of energy, which is correlated with BMI, renders self-reported energy intake data unusable for investigating the causes of obesity [2].

- Compromised Policy and Guidelines: National nutrition policies, food fortification strategies, and dietary guidelines rely on accurate population-level intake data. Systematic errors can lead to misplaced priorities and ineffective public health interventions [7].

Toward a Solution: The Role of Biomarkers in Dietary Validation

The recognition of the severe limitations of self-reported data has catalyzed a major scientific shift toward the discovery and validation of objective biomarkers of food intake [3]. These biomarkers, which can be genetic, epigenetic, proteomic, or metabolomic in nature, provide a measure of dietary exposure that does not depend on participant recall or reporting behavior [8].

The Biomarker Discovery and Validation Pipeline

The development of robust dietary biomarkers follows a rigorous, multi-phase process, as exemplified by initiatives like the Dietary Biomarkers Development Consortium (DBDC) [3]:

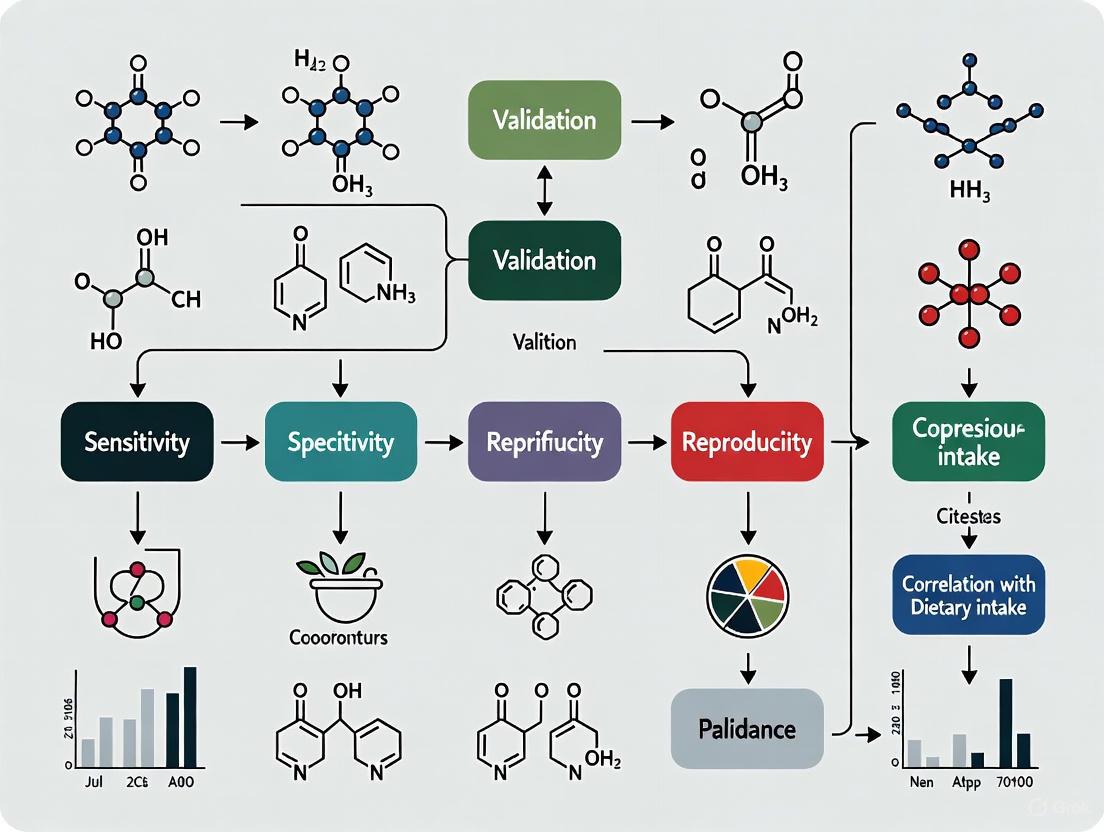

Diagram: Dietary Biomarker Validation Pipeline

This pipeline ensures that candidate biomarkers are not only statistically associated with food intake but are also sensitive, specific, and able to predict habitual consumption in free-living populations.

Experimental Protocols for Validating Dietary Assessment Methods

A modern validation study protocol, such as that for the Experience Sampling-based Dietary Assessment Method (ESDAM), illustrates the state-of-the-art approach, integrating both self-reported and objective biomarker comparisons [1]:

- Aim: To assess the validity of a novel dietary assessment method (ESDAM).

- Design: A prospective observational study over four weeks.

- Participants: Approximately 115 healthy adults with stable body weight.

- Reference Methods:

  - Three 24-hour dietary recalls (for convergent validity).

  - Doubly labeled water (for energy intake).

  - Urinary nitrogen (for protein intake).

  - Serum carotenoids and erythrocyte membrane fatty acids (for fruit/vegetable and fatty acid intake).

  - Continuous glucose monitoring (as an objective measure of eating episodes to assess compliance).

- Statistical Analysis: Mean differences, Spearman correlations, Bland-Altman plots, and the method of triads to quantify measurement error components.

This comprehensive protocol highlights the necessity of using multiple biomarkers, each reflecting different aspects of dietary intake and different timeframes, to fully characterize the performance of a new dietary tool [1].
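Two of the statistics named in the protocol, the method of triads and Bland-Altman limits of agreement, can be sketched briefly. The function names and example values are illustrative assumptions; the triads formula is the standard validity coefficient computed from the three pairwise correlations among the test method, a reference method, and a biomarker.

```python
import math
import statistics


def triad_validity_coefficient(r_test_reference, r_test_biomarker,
                               r_reference_biomarker):
    """Method of triads: estimated correlation of the test method with true intake.

    Estimates above 1 can occur with noisy correlations and need
    cautious interpretation.
    """
    if r_reference_biomarker == 0:
        raise ValueError("r_reference_biomarker must be non-zero")
    ratio = r_test_reference * r_test_biomarker / r_reference_biomarker
    if ratio < 0:
        raise ValueError("negative ratio: triad estimate is undefined")
    return math.sqrt(ratio)


def bland_altman_limits(method_a, method_b):
    """Return (lower limit, mean difference, upper limit) of agreement."""
    differences = [a - b for a, b in zip(method_a, method_b)]
    bias = statistics.mean(differences)
    spread = statistics.stdev(differences)
    return bias - 1.96 * spread, bias, bias + 1.96 * spread


# Hypothetical pairwise correlations and paired intake estimates.
print(round(triad_validity_coefficient(0.5, 0.4, 0.5), 3))
print(bland_altman_limits([10.0, 12.0, 14.0], [9.0, 12.0, 15.0]))
```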

The Scientist's Toolkit: Key Reagents for Dietary Biomarker Research

Table 3: Essential Research Reagents and Methods for Dietary Biomarker Validation

| Reagent / Method | Function / Role | Application in Validation |
|---|---|---|
| Doubly Labeled Water (DLW) | Gold-standard biomarker for total energy expenditure. | Serves as the objective reference for validating self-reported energy intake in weight-stable individuals [2] [1]. |
| Ultra-HPLC with Tandem Mass Spectrometry | High-throughput metabolomic profiling of blood and urine. | Discovers and quantifies hundreds to thousands of metabolites as candidate food intake biomarkers [4]. |
| Controlled Feeding Trials | Provides known, fixed dietary exposures to participants. | The foundational study design for establishing a direct causal link between food consumption and biomarker levels [3] [4]. |
| Stable Isotopes (Deuterium, ¹⁸O) | Core components of the DLW method. | Used to calculate CO₂ production and thus energy expenditure via isotope elimination kinetics [2]. |
| Urinary Nitrogen Analysis | Objective measure of total nitrogen excretion. | Used as a recovery biomarker to validate self-reported protein intake [2] [1]. |
| LASSO Regression | A machine learning variable selection method. | Used to build poly-metabolite scores from high-dimensional metabolomics data by selecting the most predictive biomarkers for a dietary component [4]. |
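To make the LASSO entry concrete, the sketch below shows how an L1 penalty selects a subset of metabolites and how the surviving coefficients form a poly-metabolite score. It is a plain coordinate-descent implementation written for illustration, not the pipeline used in the cited work; the synthetic data, penalty value, and function names are assumptions, and a real analysis would normally use an established library with cross-validated penalty selection.

```python
import random


def soft_threshold(value, penalty):
    """Shrink value toward zero by penalty; return 0.0 inside the dead zone."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def lasso_coordinate_descent(X, y, penalty, n_iter=100):
    """Minimise (1/2n)*||y - Xb||^2 + penalty*||b||_1 (no intercept term)."""
    n, p = len(X), len(X[0])
    coef = [0.0] * p
    residual = list(y)  # equals y - Xb, with b = 0 at the start
    for _ in range(n_iter):
        for j in range(p):
            column = [row[j] for row in X]
            scale = sum(v * v for v in column) / n
            if scale == 0.0:
                continue
            # Covariance of feature j with the residual that excludes feature j.
            rho = sum(v * (r + coef[j] * v)
                      for v, r in zip(column, residual)) / n
            new_coef = soft_threshold(rho, penalty) / scale
            delta = new_coef - coef[j]
            if delta != 0.0:
                residual = [r - delta * v for r, v in zip(residual, column)]
                coef[j] = new_coef
    return coef


def poly_metabolite_score(metabolite_levels, coef):
    """Weighted sum of metabolite levels using the fitted coefficients."""
    return sum(c * m for c, m in zip(coef, metabolite_levels))


# Synthetic example: only the first of three metabolites tracks intake.
rng = random.Random(0)
X = [[rng.gauss(0.0, 1.0) for _ in range(3)] for _ in range(200)]
y = [2.0 * row[0] + rng.gauss(0.0, 0.5) for row in X]
coef = lasso_coordinate_descent(X, y, penalty=0.3)
print([round(c, 2) for c in coef])
```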

The evidence is unequivocal: self-reported dietary data are plagued by significant and systematic measurement errors, most notably energy underreporting that is differential across BMI categories. These errors are not merely statistical noise but introduce substantive biases that distort diet-disease associations, compromise the findings of intervention studies, and undermine the evidence base for public health nutrition. Within the critical framework of biomarker validation, these limitations necessitate a paradigm shift. The future of robust nutritional epidemiology and precision nutrition lies in the continued development, refinement, and application of objective biomarkers of food intake. By moving beyond reliance on error-prone self-reports, researchers can build a more accurate and biologically grounded understanding of the complex role diet plays in human health.

Biomarkers of Food Intake (BFIs) represent a transformative approach in nutritional science, providing objective measures to complement or replace traditional self-reported dietary assessment methods such as food frequency questionnaires, diaries, and interviews [9] [10]. These biomarkers are defined as biological characteristics that can be objectively measured and evaluated as indicators of normal biological processes, pathogenic processes, or responses to nutritional interventions [11]. Unlike subjective reporting methods that are prone to systematic errors, recall bias, and misreporting—particularly for foods perceived as "unhealthy"—BFIs offer a reliable means to monitor dietary exposure, assess compliance with dietary interventions, and validate associations between diet and health outcomes [10] [12] [13].

The fundamental value of BFIs lies in their ability to provide objective quantification of food intake, thereby reducing classification errors that commonly plague nutritional epidemiology [9]. As the field moves toward precision nutrition, where dietary recommendations may be tailored to individual metabolic responses, the role of BFIs becomes increasingly critical [13]. These biomarkers can be classified into different categories based on their application: biomarkers of exposure (indicating what has been consumed), biomarkers of status (reflecting body stores of nutrients), and biomarkers of function (measuring functional consequences of nutrient intake) [11]. The development and validation of robust BFIs therefore represents a cornerstone for advancing evidence-based nutritional science and establishing trusted associations between dietary intake and health consequences.

Validation Framework for Biomarkers of Food Intake

The validation of BFIs requires a systematic approach that assesses both biological plausibility and analytical performance. A consensus-based procedure developed by nutritional experts has established eight key criteria for comprehensive BFI validation [9] [10]. These criteria provide a framework for evaluating candidate biomarkers and ensuring they meet rigorous scientific standards before deployment in research or clinical applications.

Table 1: Validation Criteria for Biomarkers of Food Intake

| Validation Criterion | Description | Key Considerations |
|---|---|---|
| Plausibility | Biological rationale connecting biomarker to food intake | Specificity to food; chemical relationship; metabolic pathway understanding [9] |
| Dose-Response | Relationship between intake amount and biomarker level | Sensitivity across intake range; detection limits; saturation effects [9] [14] |
| Time-Response | Temporal kinetics after food consumption | Half-life; optimal sampling window; bioavailability timing [9] |
| Robustness | Performance across diverse populations and conditions | Interactions with other foods; food matrix effects; genetic influences [9] |
| Reliability | Consistency compared to reference methods | Agreement with dietary assessment tools; confirmation with other biomarkers [9] |
| Stability | Integrity during sample processing and storage | Sample collection protocols; decomposition resistance; storage conditions [9] |
| Analytical Performance | Technical measurement characteristics | Precision; accuracy; detection limits; validation against references [9] |
| Inter-laboratory Reproducibility | Consistency across different laboratory settings | Standardized methodologies; cross-validation studies [9] |

This validation framework serves a dual purpose: to estimate the current validation level of candidate biomarkers and to identify additional studies needed for full validation [9]. The system does not employ a hierarchical scoring method, as different criteria may carry varying importance depending on the intended application of the biomarker. For instance, short-term kinetics may be irrelevant for biomarkers measured in hair samples, whereas this criterion is crucial for urinary biomarkers intended to capture recent intake [10].
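The dual purpose described above (summarizing the current validation level and listing the studies still needed, without a hierarchical score) maps naturally onto a small record structure. The sketch below is one possible representation; the class, the method names, and the "Y"/"N"/"U" (yes, no, unknown) answer codes are assumptions made for illustration, and the example biomarker is a placeholder rather than a real assessment.

```python
from dataclasses import dataclass, field

CRITERIA = (
    "plausibility", "dose_response", "time_response", "robustness",
    "reliability", "stability", "analytical_performance",
    "interlab_reproducibility",
)
ALLOWED_ANSWERS = {"Y", "N", "U"}  # yes / no / unknown (assumed coding)


@dataclass
class ValidationRecord:
    biomarker: str
    food: str
    answers: dict = field(default_factory=dict)

    def record(self, criterion, answer):
        """Store the assessment for one of the eight criteria."""
        if criterion not in CRITERIA:
            raise KeyError(criterion)
        if answer not in ALLOWED_ANSWERS:
            raise ValueError(answer)
        self.answers[criterion] = answer

    def fulfilled(self):
        """Criteria currently answered 'Y'."""
        return [c for c in CRITERIA if self.answers.get(c, "U") == "Y"]

    def studies_needed(self):
        """Criteria answered 'N' or still unknown, i.e. where work remains."""
        return [c for c in CRITERIA if self.answers.get(c, "U") != "Y"]


# Placeholder candidate; the answers here are invented for the example.
candidate = ValidationRecord("candidate_x", "food_x")
candidate.record("plausibility", "Y")
candidate.record("dose_response", "Y")
candidate.record("stability", "N")
print(candidate.fulfilled())
print(candidate.studies_needed())
```

The record deliberately returns lists rather than a single number, reflecting the point that the criteria are not combined into a hierarchical score.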

Application of Validation Criteria

The practical application of these validation criteria can be illustrated through specific examples. For instance, N-methylpyridinium (NMP) has been proposed as a biomarker for roasted coffee intake [14]. Its validation addressed the criteria in turn, including plausibility (NMP forms during coffee roasting), dose-response (higher coffee consumption leads to higher NMP levels), time-response (peak concentrations occur 0.5-2 hours post-consumption), and robustness (detectable across different populations) [14]. Similarly, trimethylamine N-oxide (TMAO) has been validated as a biomarker for fish intake, though with limitations related to geographical variations in the TMAO content of fish [10].

The validation process emphasizes that BFIs must be evaluated within the context of their intended use [10]. A biomarker suitable for distinguishing consumers from non-consumers may not necessarily be appropriate for quantitative intake assessment. Furthermore, the complexity of human diets means that few biomarkers are absolutely specific to a single food; many reflect intake of related food groups or may be influenced by overlapping metabolic pathways [10] [13].

Methodological Approaches for BFI Development

Analytical Techniques

The development of BFIs relies heavily on advanced analytical technologies, particularly mass spectrometry-based metabolomics. Recent methodological advances have enabled the simultaneous quantification of multiple biomarkers, significantly enhancing the efficiency of dietary assessment [15] [16]. One recently developed method allows for the simultaneous quantification of 80 BFIs in urine, reflecting 27 different foods commonly consumed in European diets [15] [16]. This method utilizes a simple sample preparation procedure followed by separation using both reversed-phase HPLC on a C18 column and hydrophilic interaction chromatography (HILIC), combined with tandem mass spectrometry in positive and negative modes [15]. The analytical workflow achieves individual runs of just 6 minutes, making it suitable for large-scale epidemiological studies [15].

The validation of such multi-biomarker methods follows rigorous analytical standards, assessing selectivity, linearity, robustness, matrix effects, recovery, accuracy, and precision [15]. In the case of the 80-BFI method, 44 biomarkers could be absolutely quantified without limitations or with limitations only at low concentrations, while 36 could only be measured semi-quantitatively [15]. This highlights both the progress and ongoing challenges in comprehensive BFI quantification, particularly for biomarkers with uncertain validation data or low concentration levels in biological samples.

Table 2: Essential Research Reagents and Analytical Tools for BFI Research

| Research Tool | Function in BFI Research | Application Examples |
|---|---|---|
| HPLC-MS/MS Systems | Separation and detection of biomarkers in biological samples | Quantification of 80 BFIs in urine; discovery of novel biomarkers [15] [14] |
| HILIC Columns | Retention of polar biomarkers | Analysis of N-methylpyridinium for coffee intake [14] |
| Stable Isotope-Labeled Internal Standards | Quantification accuracy and precision | d3-NMP for coffee biomarker quantification [14] |
| Metabolite Databases | Compound identification and annotation | MassBank, METLIN, HMDB, mzCloud for metabolite search [13] |
| Food Composition Databases | Linking biomarkers to food sources | FooDB (University of Alberta); Phenol-Explorer (INRA) [12] |

Study Designs for Biomarker Discovery and Validation

Robust BFI development requires carefully controlled study designs that balance experimental control with real-world applicability. The MAIN Study (Metabolomics at Aberystwyth, Imperial and Newcastle) exemplifies an innovative approach addressing this challenge [12]. This study employed a randomized controlled dietary intervention where free-living participants consumed meals designed to emulate typical UK eating patterns while preparing and consuming all foods in their own homes [12]. This design provided the controlled conditions necessary for biomarker discovery while maintaining the ecological validity of real-world eating behaviors.

Key design features of successful BFI studies include:

- Comprehensive Menu Design: Testing biomarkers across a wide range of commonly consumed foods within conventional meal patterns [12]

- Multiple Sampling Timepoints: Determining optimal sampling windows for different biomarkers [12]

- Generalizability Assessment: Examining biomarker performance across related food groups and different food preparation methods [12]

- Home-Based Sample Collection: Developing minimally invasive protocols acceptable for free-living participants [12]

Controlled feeding studies (CFS) represent another crucial design for BFI development, allowing researchers to test a variety of foods and dietary patterns across diverse populations [17]. These studies are particularly valuable for establishing dose-response relationships and understanding the kinetic parameters of candidate biomarkers [9] [17].
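A first check of a dose-response relationship in graded feeding data is whether biomarker levels rise monotonically with dose. The sketch below computes Spearman's rank correlation with average ranks for ties; the dose levels and biomarker values are hypothetical, and a rank correlation is only one of several reasonable ways to assess dose-response.

```python
import math


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while (end + 1 < len(order)
               and values[order[end + 1]] == values[order[start]]):
            end += 1
        shared_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = shared_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical graded doses (portions/day) and urinary biomarker levels.
doses = [0, 0, 1, 1, 2, 2]
biomarker = [1.0, 1.2, 2.0, 2.5, 4.1, 3.9]
print(round(spearman(doses, biomarker), 3))
```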

Biomarker Validation and Classification System

As the number of putative BFIs grows, systematic classification becomes essential for prioritizing research and guiding application. A recently proposed system ranks BFIs across four utility levels based on robustness, reliability, and plausibility [13]. At the highest level (Utility Level 1), biomarkers have undergone comprehensive validation and demonstrate consistent performance across multiple studies. These include urinary biomarkers for total meat, total fish, chicken, fatty fish, total fruit, citrus fruit, banana, whole-grain wheat or rye, alcohol, beer, wine, and coffee [13]. Blood biomarkers at this level exist for fatty fish, whole grain wheat and rye, citrus, and alcohol [13].

The classification system acknowledges that fewer biomarkers have been fully validated for quantitative assessment compared to qualitative applications [13]. This reflects the additional complexity of establishing precise dose-response relationships, which can be influenced by inter-individual variations in absorption, distribution, metabolism, and excretion (ADME) of food components [13]. The system also highlights the importance of the intraclass correlation coefficient (ICC), which expresses the share of total biomarker variance attributable to differences between individuals rather than to fluctuation within individuals. Low ICC values may indicate suboptimal sampling timing, low consumption frequency, or significant inter-individual variation in biomarker response [13].
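The ICC mentioned above can be computed from repeated biomarker measurements per participant. The sketch below uses the one-way random-effects form, ICC(1,1); this choice of ICC variant, the function name, and the toy data are assumptions, since the source does not specify which form is used.

```python
def icc_oneway(measurements):
    """ICC(1,1) from per-subject lists with equal numbers of repeats.

    ICC = (MSB - MSW) / (MSB + (k - 1) * MSW), where MSB and MSW are the
    between-subject and within-subject mean squares and k is the number
    of repeated measurements per subject.
    """
    n = len(measurements)
    k = len(measurements[0])
    if n < 2 or k < 2:
        raise ValueError("need at least 2 subjects and 2 repeats")
    if any(len(row) != k for row in measurements):
        raise ValueError("all subjects need the same number of repeats")
    grand_mean = sum(sum(row) for row in measurements) / (n * k)
    subject_means = [sum(row) / k for row in measurements]
    msb = k * sum((m - grand_mean) ** 2 for m in subject_means) / (n - 1)
    msw = sum((value - m) ** 2
              for row, m in zip(measurements, subject_means)
              for value in row) / (n * (k - 1))
    denominator = msb + (k - 1) * msw
    if denominator == 0:
        raise ValueError("no variance in the data")
    return (msb - msw) / denominator


# Toy data: three participants, two urine samples each.
print(icc_oneway([[1.0, 1.2], [2.1, 1.9], [3.0, 3.3]]))
```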

Workflow for Biomarker Development

The process of moving from biomarker discovery to validated application follows a structured pathway that integrates multiple study designs and validation steps.

BFI Development Pathway: This workflow illustrates the structured process from initial discovery to validated application, incorporating multiple study designs and validation steps.

Advanced Applications and Implementation Strategies

Applications in Nutritional Research and Public Health

The implementation of validated BFIs extends across multiple domains of nutritional research and public health. In epidemiological studies, BFIs can objectively assess dietary exposures, strengthening associations between diet and disease risk [13]. In clinical trials, they provide tools for monitoring compliance with dietary interventions, enabling more accurate per-protocol analyses [10] [13]. For public health monitoring, BFIs offer objective measures of population-level dietary patterns, complementing traditional survey methods [11] [12].

BFI applications can be tailored to different research needs based on the level of validation. Qualitative BFIs (sufficient for identifying consumers versus non-consumers) have broader applications than quantitative BFIs (required for estimating intake amounts) [13]. A stepwise approach has been proposed where less robust BFIs initially identify consumers of a specific food, followed by more robust BFIs to quantify intake in the identified consumer group [13]. This approach maximizes the utility of partially validated biomarkers while acknowledging their limitations.
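The stepwise approach can be written as a two-stage rule: a qualitative marker first decides whether someone is a consumer, and a quantitative marker is then converted to an intake estimate only for consumers. The sketch below is purely illustrative; the detection threshold and the linear calibration (biomarker = intercept + slope × intake) are hypothetical and would have to come from dose-response studies for the biomarker in question.

```python
def stepwise_intake_estimate(qualitative_level, quantitative_level,
                             detection_threshold, slope, intercept=0.0):
    """Two-step estimate: classify consumer status, then quantify intake.

    Returns None for non-consumers; otherwise inverts the assumed linear
    calibration and floors the estimate at zero.
    """
    if slope <= 0:
        raise ValueError("slope must be positive")
    # Step 1: qualitative marker separates consumers from non-consumers.
    if qualitative_level < detection_threshold:
        return None
    # Step 2: quantitative marker is converted to an intake estimate.
    return max(0.0, (quantitative_level - intercept) / slope)


# Hypothetical units and calibration values.
print(stepwise_intake_estimate(0.2, 5.0, detection_threshold=1.0, slope=0.5))
print(stepwise_intake_estimate(2.0, 5.0, detection_threshold=1.0,
                               slope=0.5, intercept=1.0))
```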

Sampling Methodologies and Practical Implementation

Practical implementation of BFIs in research settings requires careful consideration of sampling methodologies. Optimal sampling approaches identified in recent studies include:

- Spot urine samples (first morning void or overnight cumulative samples) [13]

- Dried urine spots and dried blood spots for simplified storage and transport [13] [14]

- Vacuum tube stored samples for conventional biobanking [13]

- Microsampling techniques to reduce participant burden [13]

The MAIN Study demonstrated that home-based urine collection protocols could be successfully implemented with high participant compliance and minimal impact on normal activities [12]. This is particularly important for large-scale studies where laboratory-based sample collection would be impractical. Remote sampling methods not only increase participant reach but also enable monitoring of dietary patterns and changes over time in free-living populations [13].

For many biomarkers, timing of sample collection relative to food consumption is critical, reflecting the time-response characteristics of the biomarker [9] [12]. The MAIN Study collected urine samples at multiple timepoints to determine optimal sampling windows for different biomarkers, recognizing that this may vary substantially between different food compounds and their metabolites [12].
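Where serial samples are available, the sampling window can be informed by an estimate of the biomarker's elimination half-life. The sketch below fits a log-linear decline to post-peak concentrations, assuming simple first-order (single-exponential) elimination; that kinetic model, the function name, and the example values are assumptions, and real excretion profiles may be more complex.

```python
import math


def elimination_half_life(times_h, concentrations):
    """Fit ln(C) = ln(C0) - k * t by least squares.

    Returns (k, half_life) with k in 1/h and half-life in hours.
    Only post-peak samples with positive concentrations should be passed in.
    """
    if len(times_h) != len(concentrations) or len(times_h) < 2:
        raise ValueError("need at least two matched time points")
    log_conc = [math.log(c) for c in concentrations]
    n = len(times_h)
    mean_t = sum(times_h) / n
    mean_log = sum(log_conc) / n
    slope = (sum((t - mean_t) * (lc - mean_log)
                 for t, lc in zip(times_h, log_conc))
             / sum((t - mean_t) ** 2 for t in times_h))
    k = -slope
    if k <= 0:
        raise ValueError("concentrations do not decline over time")
    return k, math.log(2) / k


# Hypothetical post-peak samples generated with k = 0.3 per hour.
times = [2.0, 4.0, 6.0, 8.0]
levels = [10.0 * math.exp(-0.3 * t) for t in times]
k, half_life = elimination_half_life(times, levels)
print(round(k, 3), round(half_life, 2))
```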

Future Directions and Research Needs

Despite significant advances in BFI research, important challenges remain. The number of comprehensively validated biomarkers is still limited, with particular gaps for plant-based foods, processed foods, and dietary patterns rather than single foods [15] [13]. Future research needs identified by experts in the field include:

- Validation of BFIs across diverse populations and dietary backgrounds [17] [13]

- Characterization of quantitative BFIs through dose-response studies [13]

- Development of BFI combinations to predict intake and classify dietary patterns [17] [13]

- Expansion of biomarker coverage to different food groups, cooking methods, and processed foods [13]

- Methodological improvements in statistical procedures for biomarker discovery [17]

- Standardization of reporting to support study replication and comparison [17]

The National Institutes of Health (NIH) has highlighted the need for larger controlled feeding studies, improved chemical standards covering a broader range of food constituents and human metabolites, standardized approaches for biomarker validation, comprehensive and accessible food composition databases, and a common ontology for dietary biomarker literature [17]. Multidisciplinary research teams with expertise in nutrition, metabolomics, bioinformatics, and statistics will be essential to address these challenges and advance the field of dietary biomarkers [17].

As precision nutrition evolves to address individual variations in response to diet, robust BFIs will play an increasingly critical role in establishing trusted associations between dietary intakes and health consequences [13]. The continued development and validation of these objective dietary assessment tools will ultimately enhance our ability to provide evidence-based nutritional guidance and improve public health outcomes.

The food metabolome represents the complete set of metabolites derived from the digestion and metabolism of foods and beverages, providing a comprehensive readout of dietary intake and its biochemical effects on the body [18]. These metabolites include both exogenous compounds originating directly from food and endogenous metabolites influenced by dietary intake through human metabolic pathways [18]. As the final product of gene-environment interactions, the food metabolome offers a unique window into how diet influences health and disease states, serving as a rich source for discovering candidate biomarkers of food intake.

In nutritional research, biomarkers of food intake have emerged as objective measures that can address significant limitations associated with traditional self-reported dietary assessment methods, such as recall errors, portion size misestimation, and systematic under-reporting [18]. Unlike subjective dietary recalls or food frequency questionnaires, food-derived biomarkers present in biological samples provide unbiased data that can reliably reflect intake of specific nutrients, foods, and dietary patterns [3] [19]. The field has advanced significantly through applications of metabolomic profiling, which enables simultaneous measurement of hundreds to thousands of small molecule metabolites in biological samples, accelerating the discovery of sensitive and specific biomarkers for dietary exposures [3] [19].

This technical guide examines the food metabolome as a complex source for candidate biomarkers, with particular focus on the rigorous validation criteria necessary for their application in nutrition research and drug development. We present experimental protocols, analytical frameworks, and emerging applications that are advancing the field of dietary biomarker research toward more precise and objective assessment of dietary intake.

Validation Criteria for Biomarkers of Food Intake

The transition from candidate biomarkers to validated measures requires rigorous assessment against established criteria. The validation framework for biomarkers of food intake encompasses multiple dimensions that collectively establish their reliability and utility for research applications.

Table 1: Key Validation Criteria for Biomarkers of Food Intake

| Validation Criterion | Description | Assessment Methods |
|---|---|---|
| Plausibility | Verification of specificity to the food and identification of food chemistry, processing, or experimental factors explaining increased concentration post-consumption. | Food composition analysis, metabolic pathway mapping, literature review of compound origins. |
| Dose-Response | Evaluation of biomarker response to varying food portions, considering intake range, habitual baseline, bioavailability, and saturation thresholds. | Controlled feeding studies with graded doses, correlation analysis between intake levels and biomarker concentrations. |
| Time-Response | Characterization of excretion kinetics and determination of biomarker half-life following food consumption. | Serial biological sampling after controlled intake, pharmacokinetic modeling. |
| Robustness | Performance consistency across diverse population groups with limited interactions from other foods or confounding factors. | Cross-population studies, mixed diet interventions, multivariate adjustment. |
| Reliability | Agreement with other biomarkers or assessment methods, with recognition of self-reported data limitations. | Method comparison studies, repeated measures analysis, correlation with reference biomarkers. |
| Stability | Chemical resilience in relevant biofluids during storage and processing. | Stability studies under varying conditions (time, temperature, freeze-thaw cycles). |
| Analytical Performance | Documentation of precision, accuracy, detection limits, and inter/intra-batch variation. | Quality control samples, replicate analyses, standard reference materials. |
| Reproducibility | Consistency of results across different laboratories and analytical platforms. | Inter-laboratory comparisons, standardized protocols, proficiency testing. |
| Variability | Assessment of intra- and inter-individual variation in biomarker levels. | Repeated measurements in same individuals, population studies. |

The validation process requires substantial evidence across these criteria before a biomarker can be considered fit-for-purpose. For example, proline betaine is a well-validated biomarker of citrus consumption: it has performed consistently across different analytical techniques, distinguished low, medium, and high consumers, and agreed well with dietary records in observational studies [18]. This level of validation remains the exception rather than the rule. Systematic reviews have found that many putative biomarkers lack sufficient validation, and many foods still have no well-validated biomarker despite a proliferation of candidate compounds [18].
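The time-response criterion in Table 1 refers to pharmacokinetic modeling of serial samples. As a minimal sketch, assuming simple first-order (mono-exponential) elimination after the concentration peak, a half-life can be estimated from a least-squares fit of log-concentration against time. The function name, sampling times, and concentrations are hypothetical and are not taken from any cited study.

```python
import math


def elimination_half_life(times_h, concentrations):
    """Estimate a first-order elimination half-life in hours.

    Fits ln(concentration) = intercept + slope * time by ordinary least
    squares and returns ln(2) / -slope. Assumes every sample comes from the
    post-peak elimination phase and every concentration is positive.
    """
    if len(times_h) != len(concentrations) or len(times_h) < 2:
        raise ValueError("need at least two paired time/concentration values")
    if any(c <= 0 for c in concentrations):
        raise ValueError("concentrations must be positive")
    log_conc = [math.log(c) for c in concentrations]
    n = len(times_h)
    mean_t = sum(times_h) / n
    mean_y = sum(log_conc) / n
    sxx = sum((t - mean_t) ** 2 for t in times_h)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_h, log_conc))
    if sxx == 0:
        raise ValueError("sampling times must not all be equal")
    slope = sxy / sxx
    if slope >= 0:
        raise ValueError("concentrations do not decline over time")
    return math.log(2) / -slope


# Simulated (hypothetical) urinary concentrations with a true half-life of 6 h.
sample_times_h = [2, 4, 8, 12, 24]
sample_conc = [100 * 0.5 ** (t / 6) for t in sample_times_h]
half_life_h = elimination_half_life(sample_times_h, sample_conc)
```

Real kinetic data rarely follow a single exponential, so a fit like this is best read as a first approximation that helps choose sampling windows rather than as a definitive kinetic model.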

Additional Considerations for Biomarker Application

Beyond the core validation criteria, several practical considerations influence the effective application of food metabolome biomarkers in research settings. The temporal dimension of biomarker measurement must align with research objectives, as many food intake biomarkers reflect short-term intake [18]. For assessment of habitual dietary intake, repeated measures of biomarker levels from multiple biological samples collected over time are essential, analogous to the multiple non-consecutive days of dietary recall recommended for capturing usual intake [18].
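How well a single sample represents habitual intake is often summarized with an intraclass correlation coefficient (ICC) computed from repeated samples. The sketch below assumes a balanced design (the same number of repeats per participant) and uses the one-way random-effects form, ICC(1,1). The function name and example values are illustrative assumptions, not a method prescribed by the cited sources.

```python
def icc_oneway(measurements):
    """One-way random-effects ICC(1,1) for a balanced design.

    `measurements` is a list of per-participant lists, each holding the same
    number k (>= 2) of repeated biomarker values.
    """
    n = len(measurements)
    if n < 2:
        raise ValueError("need at least two participants")
    k = len(measurements[0])
    if k < 2 or any(len(row) != k for row in measurements):
        raise ValueError("each participant needs the same number (>= 2) of repeats")
    grand_mean = sum(sum(row) for row in measurements) / (n * k)
    subject_means = [sum(row) / k for row in measurements]
    # Between-participant and within-participant mean squares.
    ms_between = k * sum((m - grand_mean) ** 2 for m in subject_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(measurements, subject_means) for x in row
    ) / (n * (k - 1))
    denominator = ms_between + (k - 1) * ms_within
    if denominator == 0:
        raise ValueError("no variation in the data")
    return (ms_between - ms_within) / denominator


# Hypothetical biomarker levels: three participants, two samples each.
stable_icc = icc_oneway([[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]])
```

A value near 1 suggests that one sample ranks participants almost as well as the average of several. Lower values indicate that more repeats per participant are needed.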

The choice of biological matrix significantly impacts biomarker utility and practical implementation. Blood and urine represent the most common matrices, with emerging evidence supporting the use of spot urine samples in lieu of more burdensome 24-hour collections for many biomarkers [18]. Each matrix offers distinct advantages: plasma/serum may provide integrated exposure measures, while urine often captures recent excretion patterns with less invasive collection.

The specificity of biomarkers varies considerably, with some compounds representing highly specific markers of single foods (e.g., proline betaine for citrus) while others reflect broader food groups or processing methods [20] [18]. This specificity continuum enables different research applications, from targeted food intake assessment to evaluation of overall dietary patterns.

Experimental Approaches for Biomarker Discovery and Validation

The discovery and validation of dietary biomarkers follows methodical experimental pathways that progress from controlled interventions to observational validation. The design of these studies significantly influences the quality and applicability of resulting biomarkers.

Discovery Study Designs

Controlled feeding trials represent the gold standard for biomarker discovery, allowing precise characterization of the relationship between dietary intake and subsequent metabolomic changes [3] [18]. These trials typically administer test foods in prespecified amounts to healthy participants under supervised conditions, followed by comprehensive metabolomic profiling of serial blood and urine specimens [3]. The inclusion of control arms with matched interventions without the target food is essential for establishing biomarker specificity [18].

Controlled trials may utilize various temporal designs depending on research questions:

- Acute postprandial studies collect biological samples in the postprandial period, sometimes extending to 24-48 hours to characterize kinetic parameters and half-lives of candidate biomarkers [18].

- Short-term interventions implement food consumption over days or weeks to assess biomarker accumulation, steady-state levels, and adaptive responses [18].

- Crossover designs expose participants to both intervention and control conditions in randomized sequence, allowing within-subject comparisons that control for inter-individual variability [20].

Alternative approaches include supplying the complete habitual diet to participants over extended periods (e.g., 2 weeks) to identify diet-metabolite associations across multiple foods simultaneously [18]. Observational studies with detailed dietary assessment can provide complementary discovery data, though they carry higher risks of confounding due to correlated food consumption patterns [18].

Analytical Methodologies

Liquid chromatography coupled with tandem mass spectrometry (LC-MS/MS) has emerged as the predominant analytical platform for dietary biomarker research, enabling both untargeted metabolomic profiling and targeted biomarker quantification [20] [21]. The technical capabilities of modern LC-MS/MS systems include:

- Ultra-high performance liquid chromatography (UHPLC) providing superior chromatographic resolution for complex biological samples [20].

- Multiple chromatographic methods including reversed-phase, hydrophilic interaction (HILIC), and ion-pairing chromatography to capture metabolites with diverse physicochemical properties [3].

- High-resolution mass spectrometry enabling precise mass measurement and structural characterization through fragmentation patterns [20].

- Multimodal ionization typically using electrospray ionization (ESI) in both positive and negative modes to broaden metabolite coverage [3].

The analytical workflow typically involves sample preparation (protein precipitation, extraction), chromatographic separation, mass spectrometric detection, data processing (peak picking, alignment, normalization), and statistical analysis [20] [21]. Quality control measures include use of internal standards, pooled quality control samples, and standardization across batches to ensure data quality and reproducibility [21].
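One common use of pooled quality control samples is to compute, for each metabolite, the coefficient of variation (CV) across repeated QC injections and to drop features that vary too much. The sketch below illustrates that idea. The 30% cutoff, the function names, and the intensities are assumptions chosen for illustration and are not values reported by the cited sources.

```python
import statistics


def qc_cv_percent(intensities):
    """Coefficient of variation (%) of one metabolite across pooled QC injections."""
    mean = statistics.mean(intensities)
    if mean == 0:
        raise ValueError("mean intensity is zero; CV is undefined")
    return 100 * statistics.stdev(intensities) / mean


def filter_by_qc_cv(qc_table, max_cv_percent=30.0):
    """Keep metabolites whose QC CV is at or below the cutoff.

    `qc_table` maps metabolite name -> list of intensities in pooled QC
    samples. The default cutoff is an illustrative assumption.
    """
    kept = {}
    for name, intensities in qc_table.items():
        cv = qc_cv_percent(intensities)
        if cv <= max_cv_percent:
            kept[name] = cv
    return kept


# Hypothetical pooled-QC intensities for two features.
qc_table = {
    "metabolite_a": [90, 100, 110],  # CV = 10%
    "metabolite_b": [50, 100, 150],  # CV = 50%
}
reliable = filter_by_qc_cv(qc_table)
```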

Statistical Approaches and Validation

Statistical analysis of metabolomic data incorporates both univariate and multivariate approaches:

- Partial Spearman correlations identify metabolite-intake relationships while adjusting for potential confounders such as age, sex, and body mass index [20].

- False discovery rate (FDR) correction addresses multiple testing concerns in high-dimensional metabolomic data [20].

- Least Absolute Shrinkage and Selection Operator (LASSO) regression and other machine learning techniques select parsimonious sets of predictive metabolites for poly-metabolite scores [20].

- Regression models evaluate dose-response relationships and assess biomarker performance across validation criteria.

Validation studies progress from internal cross-validation to external validation in independent populations, ultimately testing biomarker performance in entirely different settings from the discovery cohort [3] [18].
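The false discovery rate step listed above is commonly implemented with the Benjamini-Hochberg procedure. The source does not state which FDR method the cited studies used, so the following is a sketch of that one widely used option, written in plain Python with hypothetical p-values.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical p-values from four metabolite-intake correlation tests.
raw_p = [0.01, 0.04, 0.03, 0.005]
adjusted_p = benjamini_hochberg(raw_p)
```

Metabolites whose adjusted p-value falls below the chosen FDR level (for example 0.05) would then be carried forward as candidate biomarkers.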

Figure 1: Biomarker Discovery and Validation Workflow: This diagram outlines the key stages in the development and validation of dietary biomarkers, from initial study design through final validation.

Case Study: Poly-Metabolite Scores for Ultra-Processed Food Intake

A recent landmark study demonstrates the comprehensive application of food metabolome principles to develop poly-metabolite scores for ultra-processed food (UPF) intake, showcasing the integration of observational and experimental data for biomarker validation [20] [22] [4].

Study Design and Methods

The research employed a dual-phase approach combining observational data from the Interactive Diet and Activity Tracking in AARP (IDATA) Study with experimental data from a randomized controlled crossover feeding trial [20] [4]. The IDATA component included 718 participants aged 50-74 years who provided serial blood and urine samples alongside multiple 24-hour dietary recalls over 12 months [20] [4]. The feeding trial involved 20 subjects admitted to the NIH Clinical Center who consumed ad libitum diets containing either 80% or 0% energy from UPF for two weeks each in random order [20] [4].

Ultra-processed food intake was quantified according to the Nova classification system, which categorizes foods based on the extent and purpose of industrial processing [20]. Metabolomic profiling employed ultra-high performance liquid chromatography with tandem mass spectrometry (UHPLC-MS/MS) to measure >1,000 serum and urine metabolites [20]. Statistical analysis involved:

- Partial Spearman correlations to identify UPF-metabolite associations with false discovery rate correction [20].

- LASSO regression to select parsimonious sets of metabolites predictive of UPF intake [20].

- Poly-metabolite score calculation as a linear combination of selected metabolites [20].

- Paired t-tests to evaluate score differences between UPF diet phases in the feeding trial [20].
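The last two steps can be sketched as follows: a poly-metabolite score as a weighted sum of standardized metabolite levels, and a paired t statistic comparing each participant's score between two diet phases. The metabolite names, weights, and scores are hypothetical placeholders, not the published coefficients or data.

```python
import math
import statistics


def poly_metabolite_score(standardized_levels, weights):
    """Linear combination of standardized metabolite levels.

    `weights` maps metabolite name -> coefficient (e.g. from a fitted model);
    `standardized_levels` maps metabolite name -> z-scored level for one sample.
    """
    return sum(weights[name] * standardized_levels[name] for name in weights)


def paired_t_statistic(phase_a, phase_b):
    """Paired t statistic and degrees of freedom for within-person differences."""
    if len(phase_a) != len(phase_b) or len(phase_a) < 2:
        raise ValueError("need at least two paired observations")
    diffs = [a - b for a, b in zip(phase_a, phase_b)]
    sd = statistics.stdev(diffs)
    if sd == 0:
        raise ValueError("differences have zero variance")
    n = len(diffs)
    return statistics.mean(diffs) / (sd / math.sqrt(n)), n - 1


# Hypothetical weights and one participant's z-scored metabolite levels.
weights = {"m1": 0.5, "m2": -0.25}
score = poly_metabolite_score({"m1": 1.0, "m2": 2.0}, weights)

# Hypothetical scores for three participants in each diet phase.
t_stat, df = paired_t_statistic([3.0, 5.0, 7.0], [2.0, 3.0, 4.0])
```

The p-value is obtained by comparing the t statistic with a t distribution on `df` degrees of freedom, which a statistics library would normally handle.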

Key Findings and Biomarker Performance

The analysis identified hundreds of metabolites significantly correlated with UPF intake, including representatives from lipid, amino acid, carbohydrate, xenobiotic, cofactor and vitamin, peptide, and nucleotide metabolic pathways [20]. LASSO regression selected 28 serum and 33 urine metabolites as predictors of UPF intake, with overlapping metabolites including:

- (S)C(S)S-S-Methylcysteine sulfoxide (inverse correlation)

- N2,N5-diacetylornithine (inverse correlation)

- Pentoic acid (inverse correlation)

- N6-carboxymethyllysine (positive correlation) [20]

The resulting poly-metabolite scores demonstrated strong ability to differentiate between diet phases in the feeding trial, with significant differences (p<0.001) within individuals between the 80% and 0% UPF conditions [20] [4]. This validation in an independent experimental setting provided robust evidence for the scores' utility as objective measures of UPF intake.

Table 2: Key Metabolites Associated with Ultra-Processed Food Intake in the IDATA Study

| Metabolite | Serum Correlation (rs) | Urine Correlation (rs) | Metabolite Class | Direction of Association |
|---|---|---|---|---|
| (S)C(S)S-S-Methylcysteine sulfoxide | -0.23 | -0.19 | Sulfur-containing | Inverse |
| N2,N5-diacetylornithine | -0.27 | -0.26 | Amino acid derivative | Inverse |
| Pentoic acid | -0.30 | -0.32 | Organic acid | Inverse |
| N6-carboxymethyllysine | 0.15 | 0.20 | Advanced glycation end-product | Positive |

Implications and Applications

This case study illustrates several important principles in food metabolome research:

- Multi-metabolite signatures often provide superior predictive value compared to single biomarkers for complex dietary exposures like UPF [20] [23].

- Integration of observational and experimental data strengthens biomarker validation by combining real-world variability with controlled conditions [20].

- Machine learning approaches like LASSO regression enable development of parsimonious predictive models from high-dimensional metabolomic data [20].

- Poly-metabolite scores have potential applications in epidemiological research to complement or reduce reliance on self-reported dietary data [20] [22].

The successful development of UPF intake biomarkers demonstrates how food metabolome research can advance understanding of complex dietary patterns and their relationship to health outcomes.
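To make the idea of parsimonious selection concrete, the sketch below implements LASSO by cyclic coordinate descent with soft-thresholding. It assumes centered predictors and outcome (no intercept) and a fixed penalty. Published analyses would normally rely on an established library with a cross-validated penalty. The data and names here are hypothetical, and this is not the cited study's code.

```python
def soft_threshold(value, penalty):
    """Shrink `value` toward zero by `penalty`, returning 0.0 inside the band."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def lasso_coordinate_descent(X, y, penalty, n_iter=100):
    """Minimize (1/(2n)) * ||y - Xb||^2 + penalty * ||b||_1 without an intercept.

    X is a list of rows (samples) and y a list of outcomes, both assumed to
    be centered beforehand.
    """
    n, p = len(X), len(X[0])
    coefs = [0.0] * p
    residual = list(y)  # equals y - X @ coefs while coefs are all zero
    for _ in range(n_iter):
        for j in range(p):
            col = [row[j] for row in X]
            col_sq = sum(v * v for v in col) / n
            if col_sq == 0:
                continue
            # Covariance of column j with the residual that excludes j's own fit.
            rho = sum(v * r for v, r in zip(col, residual)) / n + col_sq * coefs[j]
            new_coef = soft_threshold(rho, penalty) / col_sq
            delta = new_coef - coefs[j]
            if delta != 0.0:
                residual = [r - delta * v for r, v in zip(residual, col)]
                coefs[j] = new_coef
    return coefs


# Hypothetical centered data: the outcome depends strongly on the first
# metabolite and only weakly on the second.
X = [[1, 1], [-1, 1], [1, -1], [-1, -1]]
y = [2.1, -1.9, 1.9, -2.1]
coefs = lasso_coordinate_descent(X, y, penalty=0.5)
```

With this penalty the weak predictor's coefficient is shrunk exactly to zero while the strong predictor is retained with a reduced coefficient, which is the behavior that yields a short list of metabolites for a poly-metabolite score.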

Consortium Efforts and Standardized Frameworks

Major consortium-led initiatives are addressing the challenges of dietary biomarker development through coordinated, systematic approaches. The Dietary Biomarkers Development Consortium (DBDC) represents the first major effort to comprehensively discover and validate biomarkers for foods commonly consumed in the United States diet [3] [24].

DBDC Methodological Framework

The DBDC has implemented a structured three-phase approach to biomarker development:

- Phase 1: Identification - Controlled feeding trials administer test foods in prespecified amounts to healthy participants, followed by metabolomic profiling of blood and urine specimens to identify candidate compounds and characterize their pharmacokinetic parameters [3] [24].

- Phase 2: Evaluation - Controlled feeding studies of various dietary patterns assess the ability of candidate biomarkers to identify individuals consuming biomarker-associated foods [3] [24].

- Phase 3: Validation - Independent observational settings evaluate the validity of candidate biomarkers for predicting recent and habitual consumption of specific test foods [3] [24].

This systematic approach aims to significantly expand the list of validated biomarkers for foods in the U.S. diet, creating a publicly accessible database as a resource for the research community [3] [24].

Validation Criteria and Standardization

Consortium efforts have helped formalize validation criteria for dietary biomarkers, building on frameworks established by earlier initiatives like the FoodBall consortium [18]. These criteria provide standardized benchmarks for assessing biomarker quality and suitability for research applications. The validation process addresses both analytical validation (evaluating the method's reliability and accuracy for measuring the analyte) and clinical/biological validation (assessing the biomarker's ability to reflect dietary exposure) [21].

Additional standardization efforts focus on pre-analytical factors that can introduce variability in biomarker measurements, including:

- Sample collection protocols (fasting status, time of day, collection materials) [21]

- Processing and storage conditions (temperature, time to processing, freeze-thaw cycles) [21]

- Biological variability factors (diurnal rhythms, menstrual cycle, exercise) [21]

Addressing these pre-analytical variables through standardized protocols is essential for generating reproducible and comparable biomarker data across studies and laboratories.

Figure 2: DBDC Three-Phase Biomarker Development: The Dietary Biomarkers Development Consortium's systematic approach to discovering and validating dietary biomarkers progresses through identification, evaluation, and validation phases.

The Research Toolkit: Essential Methods and Reagents

The experimental approaches described in this guide rely on specialized methodologies, instrumentation, and analytical techniques that constitute the essential toolkit for food metabolome research.

Table 3: Essential Research Toolkit for Food Metabolome Biomarker Studies

| Tool/Reagent Category | Specific Examples | Function/Application |
|---|---|---|
| Analytical Instrumentation | UHPLC-MS/MS systems with ESI sources, HILIC and reversed-phase columns | Separation and detection of metabolites in complex biological samples |
| Sample Collection Materials | EDTA tubes (blood), preservative-free containers (urine), immediate cooling systems | Standardized biological sample acquisition with minimal degradation |
| Internal Standards | Stable isotope-labeled compounds, chemical analogues | Quantification normalization, quality control, recovery assessment |
| Data Processing Software | XCMS, Progenesis QI, Compound Discoverer, custom computational pipelines | Peak detection, alignment, normalization, metabolite identification |
| Statistical Packages | R, Python with specialized packages (metabolomics, machine learning) | Data analysis, biomarker selection, model building, visualization |
| Metabolite Databases | HMDB, FooDB, Metlin, MassBank | Metabolite identification, pathway mapping, food origin determination |
| Reference Materials | Certified standard compounds, pooled quality control samples | Method validation, inter-batch calibration, quality assurance |

The integration of these tools enables comprehensive metabolomic profiling and biomarker development. Liquid chromatography-tandem mass spectrometry (LC-MS/MS) remains the cornerstone technology, providing the sensitivity, specificity, and dynamic range necessary for detecting food-derived metabolites across diverse chemical classes [20] [21]. The ongoing development of reference databases specifically focused on food-derived metabolites represents a critical need for advancing the field, as current resources remain limited and incomplete [19] [18].

Challenges and Future Directions

Despite significant advances, the field of food metabolome biomarker research faces several persistent challenges that guide future development directions.

Key Methodological Challenges

Biomarker specificity remains a substantial hurdle, as many candidate biomarkers reflect multiple dietary sources or are influenced by non-dietary factors [18]. The complex composition of most diets creates challenges in disentangling biomarker signatures for specific foods from background dietary patterns [18]. Inter-individual variability in metabolism, driven by factors including genetics, gut microbiota, age, sex, and health status, introduces additional complexity that can limit biomarker generalizability [21].

Analytical validation presents technical challenges, particularly for biomarkers discovered through untargeted approaches that may not have established reference standards or optimized quantification methods [21]. The transition from biomarker discovery to clinically or epidemiologically useful assays requires development of robust, reproducible methods suitable for large-scale applications [21].

Integration with self-reported data creates a methodological tension: biomarkers are often developed specifically to address the limitations of self-reported methods, yet their validation frequently relies on comparison with these same imperfect standards [18]. This circularity makes it difficult to establish true biomarker accuracy in free-living populations.

Emerging Opportunities and Innovations

Several promising directions are advancing the field of food metabolome research:

- Poly-metabolite scores that combine multiple metabolites into integrated signatures show superior performance for complex dietary exposures like ultra-processed foods [20] [23].

- Multi-omics integration combining metabolomic data with genomic, proteomic, and microbiomic information provides more comprehensive understanding of metabolic pathways and inter-individual variation [25].

- Standardized validation frameworks like those implemented by the DBDC create systematic pathways from biomarker discovery to application [3] [24].

- Advanced statistical approaches including machine learning and network analysis enable more sophisticated modeling of complex diet-metabolome relationships [20] [25].

- Temporal sampling designs that capture diurnal variation and kinetic profiles improve understanding of biomarker dynamics and appropriate sampling protocols [18].

The continued development of the food metabolome as a source for candidate biomarkers holds significant promise for advancing nutritional epidemiology, clinical nutrition research, and public health interventions. As the field addresses current challenges and leverages emerging opportunities, food-derived biomarkers are positioned to transform our ability to objectively measure dietary exposures and understand their relationships to health and disease.

Diet is a complex exposure that significantly affects health outcomes across the lifespan. Traditional methods for assessing dietary intake, primarily based on self-reported data from food frequency questionnaires (FFQs) or 24-hour recalls, are subject to well-documented limitations including recall bias, measurement error, and an inability to account for biological variability in metabolism [3] [22] [23]. These limitations have constrained the precision of nutritional epidemiology and our understanding of diet-disease relationships.

Metabolomics, the comprehensive analysis of small-molecule metabolites, has emerged as a powerful tool to address these challenges by providing an objective window into dietary intake [26] [27]. This approach measures the downstream products of food metabolism, thereby capturing both intake and individual metabolic responses [26]. The metabolome represents the final downstream product of the genome, transcriptome, and proteome, positioning metabolites as the closest reflection of an organism's phenotype at a specific time point [27]. Metabolic profiles provide unique insights into physiological states and are highly responsive to dietary perturbations, making them ideal candidates for biomarkers of food intake [28].

This technical guide explores the role of metabolomics in advancing dietary assessment, with particular emphasis on frameworks for validating biomarkers of food intake. We present current methodologies, experimental protocols, and data visualization approaches that are transforming nutritional research and paving the way for precision nutrition.

Metabolomics as a Tool for Objective Dietary Assessment

Scientific Basis and Advantages

Metabolomics offers several distinct advantages over traditional dietary assessment methods. As a high-throughput technique that quantifies small-molecule metabolites in biological samples, it provides a direct readout of metabolic activity influenced by dietary intake [26] [28]. These metabolites (typically <1500 Da) include amino acids, lipids, organic acids, carbohydrates, and various exogenous compounds derived directly from food [26]. They serve as functional signatures that can reflect both recent intake and habitual consumption patterns when measured appropriately.

The fundamental premise of dietary metabolomics is that specific foods or food patterns produce characteristic metabolic signatures that can be detected in various biofluids, including blood, urine, and saliva [26]. These signatures may derive from: (1) direct food components and their metabolites; (2) endogenous metabolic responses to dietary intake; or (3) interactions between diet and gut microbiota [3]. Unlike self-reported data, metabolomic biomarkers are not subject to recall bias or systematic misreporting, and they can capture biological variability in absorption and metabolism that traditional methods cannot [22].

Analytical Platforms and Approaches

Two primary analytical platforms dominate metabolomic studies: mass spectrometry (MS), often coupled with separation techniques like liquid chromatography (LC-MS) or gas chromatography (GC-MS), and nuclear magnetic resonance (NMR) spectroscopy [26] [27] [28]. Each platform offers distinct advantages—MS provides higher sensitivity and broader metabolite coverage, while NMR offers superior structural elucidation and quantitative reproducibility [26].

Metabolomic approaches can be categorized as either untargeted or targeted:

Untargeted metabolomics aims to comprehensively measure all detectable metabolites in a sample without bias, making it ideal for hypothesis generation and novel biomarker discovery [26] [28]. This approach can identify previously unknown metabolites related to dietary intake but faces challenges in metabolite identification and quantification.

Targeted metabolomics focuses on precise quantification of predefined metabolite panels, offering higher sensitivity, specificity, and reproducibility for known compounds [28]. This approach is typically used for hypothesis testing and validation of candidate biomarkers.

Table 1: Comparison of Major Analytical Platforms in Dietary Metabolomics

| Platform | Sensitivity | Coverage | Quantitation | Sample Throughput | Key Applications in Dietary Assessment |
|---|---|---|---|---|---|
| LC-MS | High (pM-nM) | Broad | Semi-quantitative | Moderate | Discovery of novel dietary biomarkers; complex metabolite profiling |
| GC-MS | High (pM-nM) | Moderate | Quantitative | High | Volatile compounds; fatty acids; organic acids |
| NMR | Low (μM-mM) | Limited | Highly quantitative | High | Metabolic phenotyping; validation of known biomarkers |
| CE-MS | High (pM-nM) | Polar metabolites | Semi-quantitative | Moderate | Ionic compounds; amino acids; nucleotides |

Recent technological advances have significantly enhanced metabolomic capabilities. Ultra-high performance liquid chromatography (UHPLC) coupled with tandem mass spectrometry (MS/MS) now enables measurement of >1,000 metabolites in a single run [4]. Fourier-Transform mass spectrometry provides exceptional mass accuracy for compound identification, while emerging techniques like mass spectrometry imaging (MSI) allow for spatial visualization of metabolite distributions in tissues [26].

Validation Frameworks for Dietary Biomarkers

The Dietary Biomarkers Development Consortium Approach

The Dietary Biomarkers Development Consortium (DBDC) has established a rigorous, multi-phase framework for the discovery and validation of dietary biomarkers [3]. This systematic approach addresses the critical need for standardized methodologies in the field and provides a model for validating biomarkers against specific criteria.

The DBDC implements a 3-phase validation process:

Phase 1: Discovery and Characterization - Controlled feeding trials administer test foods in prespecified amounts to healthy participants, followed by metabolomic profiling of blood and urine specimens to identify candidate compounds. These studies characterize pharmacokinetic parameters of candidate biomarkers, including dose-response relationships and temporal patterns [3].

Phase 2: Evaluation of Discriminatory Performance - Controlled feeding studies of various dietary patterns assess the ability of candidate biomarkers to identify individuals consuming biomarker-associated foods. This phase tests specificity and sensitivity across different dietary backgrounds [3].

Phase 3: Validation in Observational Settings - The validity of candidate biomarkers to predict recent and habitual consumption of specific test foods is evaluated in independent observational cohorts. This critical phase tests performance in free-living populations [3].

This structured approach ensures that candidate biomarkers undergo rigorous testing under controlled conditions before deployment in epidemiological studies, addressing common limitations of previously proposed biomarkers.
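Discriminatory performance of the kind assessed in Phase 2 is often summarized as the area under the ROC curve (AUC): the probability that a randomly chosen consumer has a higher biomarker level than a randomly chosen non-consumer. The source does not specify the DBDC's metric, so the following is a sketch of that general idea with hypothetical values.

```python
def discrimination_auc(consumer_values, non_consumer_values):
    """AUC as the fraction of consumer/non-consumer pairs ranked correctly.

    Ties count as half a correct ranking (equivalent to the Mann-Whitney U
    statistic divided by the number of pairs).
    """
    if not consumer_values or not non_consumer_values:
        raise ValueError("both groups must contain at least one value")
    wins = 0.0
    for c in consumer_values:
        for nc in non_consumer_values:
            if c > nc:
                wins += 1.0
            elif c == nc:
                wins += 0.5
    return wins / (len(consumer_values) * len(non_consumer_values))


# Hypothetical biomarker levels in people who did and did not eat the test food.
auc_separated = discrimination_auc([3.0, 4.0], [1.0, 2.0])
auc_uninformative = discrimination_auc([1.0, 3.0], [2.0, 2.0])
```

An AUC of 1.0 means perfect separation of the two groups, while 0.5 means the biomarker ranks consumers no better than chance.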

Key Validation Criteria

For a metabolite or metabolite pattern to serve as a valid dietary biomarker, it should meet several key criteria:

- Specificity: The biomarker should reliably distinguish the food or food pattern of interest from other dietary components.

- Dose-response relationship: Biomarker levels should correlate with the amount of food consumed.

- Time-response relationship: The biomarker should exhibit appropriate kinetic properties relative to intake timing.

- Robustness: The biomarker should perform consistently across different populations and study designs.

- Replicability: Findings should be reproducible in independent cohorts and laboratories.

The DBDC framework systematically addresses each of these criteria through its phased approach, significantly advancing the rigor of dietary biomarker development [3].
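The dose-response criterion can be checked, in its simplest form, by regressing biomarker concentration on the administered amount of food. The sketch below assumes a linear relationship over the tested range, which will not hold where absorption or excretion saturates. The function name, doses, and concentrations are hypothetical.

```python
def dose_response_fit(doses, responses):
    """Ordinary least-squares fit of response on dose.

    Returns (slope, intercept, r_squared).
    """
    if len(doses) != len(responses) or len(doses) < 2:
        raise ValueError("need at least two paired dose/response values")
    n = len(doses)
    mean_x = sum(doses) / n
    mean_y = sum(responses) / n
    sxx = sum((x - mean_x) ** 2 for x in doses)
    syy = sum((v - mean_y) ** 2 for v in responses)
    sxy = sum((x - mean_x) * (v - mean_y) for x, v in zip(doses, responses))
    if sxx == 0 or syy == 0:
        raise ValueError("doses and responses must both vary")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    return slope, intercept, r_squared


# Hypothetical graded portions (g) and biomarker concentrations (arbitrary units).
slope, intercept, r_squared = dose_response_fit([0, 50, 100, 200], [1.0, 2.0, 3.0, 5.0])
```

A clearly positive slope with a high R² supports a dose-response relationship. A plateau at higher doses would suggest fitting a saturating model instead.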

Current Biomarkers and Metabolomic Signatures

Biomarkers for Ultra-Processed Foods

Recent research has made significant strides in identifying metabolomic signatures for complex dietary patterns, particularly consumption of ultra-processed foods (UPFs). NIH researchers have developed poly-metabolite scores—based on patterns of multiple metabolites—that objectively measure an individual's consumption of energy from UPFs [22] [23].

In a landmark study combining observational data from 718 older adults and experimental data from a controlled feeding trial, researchers identified hundreds of metabolites correlated with the percentage of energy from UPFs in the diet [4]. Using machine learning approaches, specifically LASSO regression, they developed poly-metabolite scores from 28 serum and 33 urine metabolites that accurately differentiated between high and low UPF consumption [4].

Key metabolites associated with UPF intake included:

- (S)C(S)S-S-Methylcysteine sulfoxide (inverse correlation)

- N2,N5-diacetylornithine (inverse correlation)

- Pentoic acid (inverse correlation)

- N6-carboxymethyllysine (positive correlation)

These metabolite scores successfully differentiated individuals consuming diets with 80% energy from UPFs versus 0% UPFs in a randomized crossover feeding trial, demonstrating their potential as objective measures of UPF intake [4].

Table 2: Select Metabolites Associated with Ultra-Processed Food Consumption

| Metabolite | Biospecimen | Correlation with UPF | Putative Origin/Pathway | Strength of Evidence |
|---|---|---|---|---|
| N6-carboxymethyllysine | Serum, Urine | Positive (rs=0.15-0.20) | Maillard reaction product; heat-processed foods | Consistent across observational and feeding studies |
| Pentoic acid | Serum, Urine | Negative (rs=-0.30 to -0.32) | Fruit and vegetable intake | Strong inverse association |
| N2,N5-diacetylornithine | Serum, Urine | Negative (rs=-0.26 to -0.27) | Whole grains, legumes | Consistent inverse association |
| S-Methylcysteine sulfoxide | Serum, Urine | Negative (rs=-0.19 to -0.23) | Cruciferous vegetables | Inverse association with UPF |
| Glycoprotein acetyls | Serum | Positive | Inflammatory response | Associated in multiple studies |

Biomarkers of Diet Quality

Beyond specific foods, metabolomics has identified signatures associated with overall diet quality. A randomized crossover feeding trial comparing a Healthy Australian Diet (HAD) with a Typical Australian Diet (TAD) identified 65 discriminatory metabolites (31 plasma, 34 urine) that distinguished between these dietary patterns [29].

A composite diet quality biomarker score derived from these metabolites was significantly associated with improved cardiometabolic markers, including:

- Reductions in systolic and diastolic blood pressure

- Lower LDL-cholesterol and triglycerides

- Improved fasting glucose levels

This demonstrates that metabolomic signatures not only reflect dietary intake but also capture the physiological effects of diet quality on health outcomes [29].

Another study focusing on UPF consumption in individuals with type 2 diabetes identified a 14-metabolite signature that was associated with risks of diabetic microvascular complications [30]. This signature included alterations in:

- VLDL and HDL lipid components

- Monounsaturated fatty acids (MUFA)

- Albumin

- Glycoprotein acetyls

Notably, this metabolomic signature mediated approximately 26% of the association between UPF intake and composite microvascular complications, providing insight into potential biological mechanisms linking diet to disease outcomes [30].
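For readers unfamiliar with mediation estimates, one common way to express a "proportion mediated" is the difference method shown below. This is a general definition offered as a reading aid; the estimation approach actually used in [30] is not described here, and the symbols are introduced only for illustration.

```latex
\[
\mathrm{PM} \;=\; \frac{\beta_{\text{total}} - \beta_{\text{direct}}}{\beta_{\text{total}}}
\]
```

Here \(\beta_{\text{total}}\) denotes the coefficient for UPF intake in an outcome model without the metabolomic signature, and \(\beta_{\text{direct}}\) the coefficient after the signature is added. Under this definition, a value of about 0.26 means that adjusting for the signature reduces the UPF coefficient by roughly one quarter.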

Methodologies and Experimental Protocols

Analytical Workflows

A standardized metabolomic workflow for dietary biomarker discovery encompasses several critical stages:

[Figure: Metabolomics Workflow for Dietary Biomarker Discovery]

Key Experimental Protocols

Controlled Feeding Studies with Metabolomic Profiling

The recent NIH study on UPF biomarkers provides an exemplary protocol for dietary biomarker discovery [4]:

Study Design: Randomized, controlled, crossover feeding trial

- Participants: 20 adults admitted to the NIH Clinical Center

- Intervention: Two diet phases, each lasting 2 weeks, administered in random order with immediate crossover

  - Diet high in UPFs (80% of energy)

  - Diet with no UPFs (0% of energy)

- Sample Collection: Blood and urine specimens collected at multiple time points during each diet phase

- Metabolomic Profiling: Ultra-high performance liquid chromatography with tandem mass spectrometry (UHPLC-MS/MS) measuring >1,000 serum and urine metabolites
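Because each participant completes both diet phases in a crossover design, a natural analysis compares the two phases within each person. The sketch below computes the mean within-person difference and a paired t statistic for a biomarker score. The scores are hypothetical illustrative numbers, not data from the trial, and the paired t statistic is one reasonable choice rather than the method reported in [4].

```python
from math import sqrt
from statistics import mean, stdev

# Hypothetical biomarker scores for five participants, one value per diet phase.
score_upf_phase = [2.1, 1.8, 2.5, 1.9, 2.2]
score_no_upf_phase = [0.6, 0.9, 1.0, 0.4, 0.7]


def paired_t_statistic(first, second):
    """Return the mean within-person difference and its paired t statistic."""
    differences = [a - b for a, b in zip(first, second)]
    standard_error = stdev(differences) / sqrt(len(differences))
    return mean(differences), mean(differences) / standard_error


mean_diff, t_stat = paired_t_statistic(score_upf_phase, score_no_upf_phase)
print(f"mean within-person difference = {mean_diff:.2f}, t = {t_stat:.2f}")
```

Each participant serves as their own control, so stable between-person differences in baseline metabolite levels cancel out of the comparison.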

Analytical Considerations:

- Sample Preparation: Protein precipitation for serum samples; dilution and centrifugation for urine samples

- Quality Control: Inclusion of pooled quality control samples, internal standards, and blank samples in each batch

- Instrumentation: Reverse-phase chromatography with electrospray ionization (ESI) in both positive and negative modes

- Metabolite Identification: Comparison to authentic standards using retention time and mass fragmentation patterns
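One routine use of pooled quality control samples is to estimate each metabolite's analytical precision and discard features that vary too much across repeated QC injections. The sketch below assumes a 30% coefficient-of-variation cutoff, which is a common convention in untargeted work but is not stated in the source. The metabolite names and intensities are hypothetical.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """Return the percent CV (sample standard deviation / mean * 100)."""
    return 100.0 * stdev(values) / mean(values)


def filter_by_qc_cv(qc_intensities, max_cv_pct=30.0):
    """Keep metabolites whose CV across pooled QC injections is within the cutoff."""
    kept = {}
    for name, values in qc_intensities.items():
        cv = coefficient_of_variation(values)
        if cv <= max_cv_pct:
            kept[name] = round(cv, 1)
    return kept


# Hypothetical intensities from five injections of the same pooled QC sample.
qc_intensities = {
    "metabolite_A": [100, 102, 98, 101, 99],   # stable signal
    "metabolite_B": [50, 90, 20, 70, 120],     # erratic signal
}

kept = filter_by_qc_cv(qc_intensities)
print(kept)
```

Since every QC injection comes from the same pooled material, variation across injections reflects the measurement process rather than biology.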

Statistical Analysis and Biomarker Development

Robust statistical analysis is crucial for deriving meaningful biomarkers from complex metabolomic data:

Pre-processing Steps:

- Missing Value Imputation: Assessment of missingness patterns (MCAR, MAR, MNAR) followed by appropriate imputation methods

- Normalization: Probabilistic quotient normalization or internal standard normalization to correct for technical variation

- Transformation: Log-transformation to address heteroscedasticity and right-skewness
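The normalization and transformation steps above can be illustrated with a minimal sketch of probabilistic quotient normalization (PQN) followed by a log2 transform. The intensities are hypothetical, the reference profile is taken as the per-feature median across samples (one common choice), and missing values are assumed to have been handled already.

```python
import math
from statistics import median


def pqn_normalize(samples):
    """Probabilistic quotient normalization.

    samples: list of per-sample intensity lists (same feature order, all > 0).
    """
    n_features = len(samples[0])
    # Reference profile: median intensity of each feature across samples.
    reference = [median(sample[j] for sample in samples) for j in range(n_features)]
    normalized = []
    for sample in samples:
        quotients = [value / ref for value, ref in zip(sample, reference)]
        dilution = median(quotients)  # most probable dilution factor
        normalized.append([value / dilution for value in sample])
    return normalized


# Hypothetical urine intensities for three features in three samples.
# Sample 2 is twice as concentrated and has a genuinely higher third feature;
# sample 3 is twice as dilute.
samples = [
    [100.0, 200.0, 400.0],
    [200.0, 400.0, 1600.0],
    [50.0, 100.0, 200.0],
]

normalized = pqn_normalize(samples)
log_transformed = [[math.log2(value) for value in sample] for sample in normalized]
print(normalized)
```

In this toy case the dilution differences are removed while the genuinely elevated third feature in sample 2 stays twice as high as in the other samples, which is the behavior PQN is designed to give for urine of varying concentration.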

Biomarker Discovery Approaches:

- Univariate Analysis: Partial Spearman correlations with false discovery rate (FDR) correction for multiple testing

- Multivariate Analysis: Least Absolute Shrinkage and Selection Operator (LASSO) regression for feature selection and poly-metabolite score development

- Validation: Internal validation through cross-validation and external validation in independent cohorts
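The multiple-testing step in the univariate analysis can be sketched with the Benjamini-Hochberg procedure, the most widely used FDR correction. The p-values below are hypothetical stand-ins for the per-metabolite correlation tests, and the source does not specify which FDR procedure was applied.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotone adjusted values.
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


# Hypothetical p-values for five metabolite-diet correlations.
p_values = [0.001, 0.008, 0.039, 0.041, 0.60]
q_values = benjamini_hochberg(p_values)
significant = [q <= 0.05 for q in q_values]
print(q_values)
print(significant)
```

In this toy example the third and fourth metabolites have raw p-values below 0.05 but adjusted values just above it, showing how FDR control trims borderline findings when many metabolites are tested at once.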

Table 3: Essential Research Reagents and Platforms for Dietary Metabolomics

| Category | Specific Products/Platforms | Key Function in Dietary Metabolomics |
|---|---|---|
| LC-MS Systems | UHPLC-MS/MS (e.g., Thermo Q-Exactive, Sciex TripleTOF) | High-resolution separation and detection of complex metabolite mixtures |
| NMR Spectrometers | Bruker Avance, Jeol ECZ series | Structural elucidation and absolute quantification of metabolites |
| Chromatography Columns | HILIC, C18 reverse phase | Separation of polar (HILIC) and non-polar (C18) metabolites |
| Isotope-Labeled Internal Standards | Cambridge Isotopes, CDN Isotopes | Quantification and quality control |
| Metabolite Databases | HMDB, Metlin, MassBank | Metabolite identification and annotation |
| Sample Preparation Kits | Protein precipitation plates, solid-phase extraction | Rapid and reproducible metabolite extraction |
| Quality Control Materials | NIST SRM 1950 (human plasma), pooled QC samples | Inter-laboratory reproducibility and data quality assurance |

Applications and Implications

Research Applications

Metabolomic approaches to dietary assessment offer numerous applications in nutritional research:

Objective Exposure Assessment in Epidemiology: Metabolite biomarkers can complement or replace self-reported dietary data in large cohort studies, reducing measurement error and strengthening diet-disease associations [22] [23]. The poly-metabolite scores for UPF intake demonstrate how metabolomics can provide objective measures of complex dietary exposures that are difficult to assess via questionnaires.

Precision Nutrition: Metabolic phenotyping can identify individual variations in response to dietary interventions, enabling personalized dietary recommendations [30]. The inter-individual variability in metabolic responses to the same diet highlights the potential for metabolomics to guide personalized nutrition approaches.

Biomarker-Mediated Mechanisms: Metabolomic signatures can elucidate biological pathways linking dietary intake to health outcomes. For example, the identification of specific metabolites that mediate the relationship between UPF consumption and diabetic microvascular complications provides mechanistic insights into how diet influences disease risk [30].

Technical Considerations and Limitations

Despite its promise, several technical challenges must be addressed in dietary metabolomics:

Inter-individual Variability: Factors such as genetics, gut microbiota, lifestyle, and environmental exposures contribute to substantial inter-individual variation in metabolic responses to the same foods [31]. This variability can obscure diet-metabolite relationships if not properly accounted for in study design and analysis.

Analytical Standardization: The lack of standardized protocols across laboratories presents challenges for comparability and replication. Initiatives like the DBDC are addressing this through standardized operating procedures and data sharing [3].

Biomarker Specificity: Many metabolites are influenced by multiple dietary and non-dietary factors, complicating the identification of specific biomarkers for individual foods. Multi-metabolite patterns may offer greater specificity than single metabolites [4].

Temporal Dynamics: The kinetics of metabolite appearance and clearance vary substantially, requiring careful consideration of sampling timing relative to dietary intake [3].
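To make the kinetic considerations concrete, the sketch below summarizes a biomarker's time course after a single test meal using standard non-compartmental quantities: peak concentration, time to peak, area under the curve by the trapezoidal rule, and a terminal half-life from the last two sampling points. The sampling times and concentrations are hypothetical, and estimating the half-life from only two points is a simplification for illustration.

```python
import math


def kinetic_summary(times_h, concentrations):
    """Summarize a concentration-time curve (times in hours)."""
    c_max = max(concentrations)
    t_max = times_h[concentrations.index(c_max)]

    # Area under the curve by the linear trapezoidal rule.
    auc = 0.0
    for i in range(1, len(times_h)):
        width = times_h[i] - times_h[i - 1]
        auc += width * (concentrations[i] + concentrations[i - 1]) / 2.0

    # First-order elimination rate from the last two (non-zero) points.
    rate = math.log(concentrations[-2] / concentrations[-1]) / (times_h[-1] - times_h[-2])
    half_life_h = math.log(2.0) / rate

    return {"c_max": c_max, "t_max_h": t_max, "auc": auc, "half_life_h": half_life_h}


# Hypothetical urinary biomarker concentrations after a single test meal.
times_h = [0, 2, 4, 6, 8, 12, 24]
concentrations = [0.0, 8.0, 16.0, 12.0, 8.0, 4.0, 0.5]

summary = kinetic_summary(times_h, concentrations)
print(summary)
```

A biomarker with a half-life of a few hours, as in this toy curve, reflects only recent intake, so a fasting sample collected the next morning could miss it entirely. This is why sampling time has to be matched to each candidate's kinetics.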

Future Directions

The field of dietary metabolomics is rapidly evolving, with several promising directions for future research:

Integration with Other Omics Technologies: Combining metabolomics with genomics, transcriptomics, and proteomics will provide more comprehensive understanding of how diet influences health through multiple biological layers.

Advanced Computational Approaches: Machine learning and artificial intelligence will enhance our ability to extract meaningful patterns from complex metabolomic data and develop more accurate predictive models of dietary intake.

Standardization and Reproducibility: Continued efforts to standardize methodologies, data reporting, and biomarker validation criteria will strengthen the reliability and translation of findings.

Temporal Monitoring: Development of continuous monitoring approaches through wearable sensors or frequent sampling will capture dynamic metabolic responses to dietary intake.

As metabolomic technologies continue to advance and validation frameworks mature, metabolomics is poised to transform dietary assessment in research and ultimately in clinical practice, enabling more precise and personalized nutritional recommendations for health promotion and disease prevention.


The Eight Essential Criteria for Systematic Biomarker Validation

In the rigorous validation of biomarkers of food intake (BFIs), establishing biological plausibility is a foundational pillar. This concept assures that a candidate biomarker's association with its target food is not merely correlative but is grounded in a sound, mechanistic understanding of human physiology. Two inseparable components form the core of this assessment: specificity and metabolic origin. Specificity confirms that the biomarker is uniquely or predominantly derived from the food of interest, while metabolic origin delineates the biochemical pathways responsible for its generation. Within the context of a broader biomarker validation framework, demonstrating biological plausibility is not an ancillary exercise but a critical step that lends credibility to the biomarker and strengthens its utility for objective dietary assessment in research and clinical applications [3] [32].

This guide describes the strategies and methodologies used to establish the specificity and metabolic origin of BFIs, and is intended for researchers and drug development professionals working in the field.

Core Concepts: Specificity and Metabolic Origin

- Specificity: This refers to the biomarker's ability to accurately reflect the intake of a specific food or food component, distinguishing it from intake of other foods. A highly specific biomarker is less susceptible to confounding by other dietary or physiological factors. Specificity can be absolute (e.g., a unique phytochemical from a specific plant) or relative (e.g., a pattern of metabolites that is collectively characteristic of intake of a given food) [32].

- Metabolic Origin: This defines the biological pathway through which the biomarker is produced. It involves understanding whether the biomarker is a parent compound (i.e., the food component itself, absorbed and excreted with little or no modification) or a metabolite formed through human or gut microbial enzymatic activity [32] [26]. The metabolic origin provides the mechanistic storyline that links food consumption to the appearance of the biomarker in a biological fluid.

The relationship between these concepts and the broader validation process is hierarchical. Confidently asserting that a biomarker is specific to a food is contingent upon a clear understanding of its metabolic origin. Together, they form the evidentiary basis for biological plausibility.

Biomarker Specificity Classifications

Table 1: Classification and Characteristics of Biomarker Specificity

| Specificity Class | Description | Key Considerations | Representative Examples |
|---|---|---|---|
| Food-Specific | The biomarker is uniquely derived from a single food or a very limited group of foods. | Highest level of specificity; often parent compounds or their direct metabolites. | Alkylresorcinols from whole-grain wheat and rye [32]. |
| Food Group-Specific | The biomarker is derived from a broader category of related foods. | Useful for assessing dietary patterns but cannot pinpoint individual foods. | Proline betaine from citrus fruits [32]. |
| Component-Specific | The biomarker reflects the intake of a specific nutrient or compound found in multiple foods. | Useful for quantifying intake of a dietary component but lacks food source information. | Biomarkers for total dietary fiber intake, such as short-chain fatty acids from gut microbial fermentation [32]. |

Experimental Approaches for Establishing Specificity

Establishing specificity requires a multi-faceted experimental strategy designed to rule out confounding sources of the candidate biomarker.

Controlled Feeding Studies

The gold standard for establishing specificity involves controlled dietary interventions. In these studies, participants consume a standardized diet devoid of the target food, followed by the introduction of the test food in a precise quantity. The subsequent appearance and kinetic profile of the candidate biomarker in biofluids (e.g., blood, urine) provide direct evidence of its link to the food [3].

Protocol: Short-Term Controlled Feeding Trial

- Pre-Intervention Washout Period: Participants consume a fully controlled diet that excludes the target food and any known sources of the candidate biomarker for a sufficient period (e.g., 3-7 days) to allow for the clearance of any baseline levels.

- Baseline Biospecimen Collection: Collect 24-hour urine, fasting blood, or other relevant biofluids at the end of the washout period to establish a true baseline.

- Test Food Administration: Administer a single, defined dose of the test food. The dose should be physiologically relevant and accurately weighed.

- Intensive Biospecimen Sampling: Collect serial biospecimens over a defined period (e.g., at 0, 2, 4, 6, 8, 12, and 24 hours) to characterize the pharmacokinetic profile of the biomarker, including its appearance, peak concentration, and clearance.