Validating Eating Detection: A Comprehensive Guide to Ground Truth Methods for Biomedical Research

Abstract

This article provides researchers, scientists, and drug development professionals with a systematic framework for validating eating detection technologies. It explores foundational concepts of ground truth, details methodological applications from wearable sensors to AI-based video analysis, addresses common challenges in troubleshooting and optimization, and presents rigorous validation and comparative frameworks. The synthesis of current evidence and methodologies aims to standardize validation practices, enhance the reliability of dietary assessment data, and inform their application in clinical trials and chronic disease management.

Understanding Ground Truth: The Foundation of Eating Detection Validation

Defining Ground Truth in the Context of Dietary Monitoring

Accurate dietary monitoring is essential for understanding the relationship between nutrition and health, particularly in managing chronic diseases such as type 2 diabetes and obesity [1]. A foundational challenge in this field is the establishment of a robust ground truth against which automated monitoring systems can be validated. Ground truth refers to the objective, reliable reference data that represents the actual dietary intake or eating behaviors of an individual. This document outlines the primary methodologies for defining ground truth in dietary monitoring research, providing application notes and detailed protocols for researchers and scientists engaged in the validation of eating detection technologies.

Core Ground Truth Methodologies

Several methodologies are employed to establish ground truth, each with distinct advantages, limitations, and appropriate use cases. The table below summarizes the primary approaches.

Table 1: Comparison of Primary Ground Truth Methodologies for Dietary Monitoring

| Methodology | Primary Data Collected | Key Strengths | Key Limitations | Typical Validation Metrics |
|---|---|---|---|---|
| Multimodal Sensor Systems [1] | Continuous Glucose Monitor (CGM) readings, accelerometry, food images, macronutrient data. | Provides rich, multi-faceted data in free-living conditions; captures physiological responses. | Complex data integration; requires participant compliance with multiple devices. | Agreement with standardized meals; model performance for macronutrient estimation. |
| Video Observation [2] | Video recordings of eating episodes, annotated for start/end times, bites, and chewing bouts. | Considered a "gold standard"; provides highly detailed, objective behavioral data. | Can be intrusive; restricts participant movement; raises privacy concerns. | Inter-rater reliability (e.g., Kappa ≥ 0.74); agreement with sensor predictions (e.g., Kappa ~0.78) [2]. |
| Smartwatch-Based Detection with EMA [3] | Accelerometer-derived eating gestures, Ecological Momentary Assessment (EMA) responses on eating context. | Captures contextual data (e.g., company, location) in near real-time; leverages common wearable. | Relies on self-report for context; EMA can be intrusive. | Meal detection accuracy (e.g., ~96%), precision, recall, F1-score (e.g., 87.3%) [3]. |
| AI-Based Image Analysis [4] | Food photographs, estimated food type, volume, and nutrient content. | Reduces user burden compared to manual logging; potential for automation and scaling. | Accuracy challenges with mixed dishes, portion sizes, and occluded food. | Accuracy of food recognition and nutrient estimation vs. dietitian assessment. |

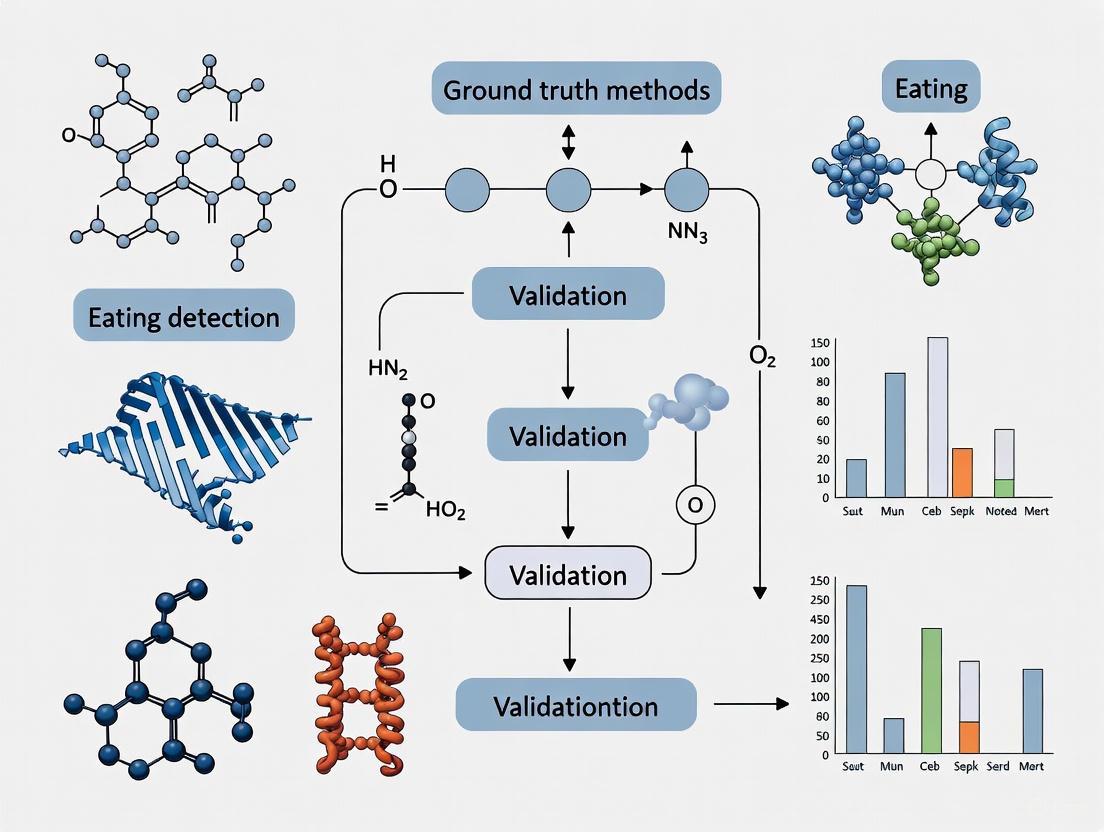

The following workflow diagram illustrates the logical relationship between these methodologies and their role in validating automated dietary monitoring systems.

Detailed Experimental Protocols

Protocol for Multicamera Video Observation in Unconstrained Environments

This protocol is designed to establish high-fidelity behavioral ground truth with minimal participant restriction [2].

Table 2: Research Reagent Solutions for Video Observation Protocol

| Item | Function/Description | Specification Example |
|---|---|---|
| Multicamera System | To capture participant activities from multiple angles in a shared space. | Six GW-2061IP cameras (1080p HD) positioned in common areas and kitchens [2]. |
| Annotation Software | For trained raters to review video footage and label activities and intake. | Software capable of playing synchronized multi-source video with annotation capabilities. |
| Wearable Sensor System (AIM) | To collect synchronized sensor data (jaw motion, hand gestures) for cross-validation. | Includes jaw motion sensor (piezoelectric strain sensor), hand gesture sensor, and data collection module [2]. |

Procedure:

- Facility Setup: Instrument a multi-room observational facility (e.g., a 4-bedroom apartment with common areas) with multiple motion-sensitive, high-definition cameras to cover all living spaces except bathrooms [2].

- Participant Recruitment: Recruit participants without conditions affecting normal chewing. Obtain informed consent and IRB approval.

- Data Collection: Simultaneously monitor multiple participants for several days (e.g., 3 days). Participants are free to move within the facility. They wear a multisensor system like the Automatic Ingestion Monitor (AIM) and have access to a fully stocked kitchen.

- Video Annotation:

  - Training: Train at least three human raters to annotate videos for major activities (e.g., eating, drinking, walking) and detailed food intake (start/end of eating bouts, individual bites, chewing bouts).

  - Annotation: Raters independently review the video recordings.

  - Reliability Assessment: Calculate inter-rater reliability using metrics like Light's kappa for food intake annotation (target >0.8) and average kappa for activity annotation (target >0.7) [2].

- Data Integration: Synchronize the finalized video annotations with the data stream from the wearable sensors to serve as ground truth for validating sensor-based intake detection algorithms.
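The reliability assessment step can be illustrated with a small calculation. The sketch below computes Light's kappa as the mean of pairwise Cohen's kappa values across raters; the per-second labels and the names `cohen_kappa` and `lights_kappa` are illustrative assumptions, not data or code from the cited study.

```python
from collections import Counter
from itertools import combinations


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters labelling the same segments."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("raters must label the same non-empty set of segments")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement from each rater's marginal label frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)


def lights_kappa(ratings):
    """Light's kappa: mean of Cohen's kappa over all rater pairs."""
    pairs = list(combinations(ratings, 2))
    return sum(cohen_kappa(a, b) for a, b in pairs) / len(pairs)


# Hypothetical per-second labels from three raters (1 = eating, 0 = not eating).
rater_1 = [0, 0, 1, 1, 1, 1, 0, 0, 1, 1]
rater_2 = [0, 0, 1, 1, 1, 0, 0, 0, 1, 1]
rater_3 = [0, 1, 1, 1, 1, 1, 0, 0, 1, 1]

kappa = lights_kappa([rater_1, rater_2, rater_3])
print(f"Light's kappa: {kappa:.3f}")
```

In practice the label sequences would come from the annotation software export, resampled to a common time base before comparison.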

Protocol for Multimodal Data Collection (CGMacros Dataset)

This protocol outlines the collection of a comprehensive dataset that integrates physiological, behavioral, and nutritional data to define ground truth for free-living studies [1].

Procedure:

- Participant Screening and Recruitment: Recruit a cohort that includes healthy individuals, those with pre-diabetes, and those with type 2 diabetes. Collect baseline demographics, anthropometrics (BMI), and blood analytics (HbA1c, fasting glucose, insulin, lipids).

- Sensor Deployment:

  - Apply two Continuous Glucose Monitors (e.g., Abbott FreeStyle Libre Pro and Dexcom G6 Pro) to each participant.

  - Provide a fitness tracker (e.g., Fitbit Sense) to log physical activity and metabolic equivalent of tasks (METs).

- Dietary Logging and Imaging:

  - Train participants to use a mobile application (e.g., MyFitnessPal) to log all meals, including the specific macronutrient composition.

  - Instruct participants to take photographs of their meals before and after consumption using a messaging app (e.g., WhatsApp) to extract timestamps and estimate consumption.

- Meal Protocol: For a standardized period (e.g., 10 days), provide participants with specific meals for breakfast and lunch (e.g., protein shakes, meals from a restaurant chain) with known and varied macronutrient compositions. Dinners can be self-selected.

- Data Processing:

  - Process CGM data to a uniform sampling rate (e.g., 1 minute) using linear interpolation.

  - Integrate data streams (CGM, activity, nutrient intake, meal timestamps, photos) into a unified dataset for each participant.
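The resampling step in the data processing stage can be sketched as follows. This is a minimal illustration of linear interpolation onto a 1-minute grid, assuming strictly increasing timestamps in minutes; the function name `resample_linear` and the glucose readings are hypothetical, not taken from the dataset.

```python
from bisect import bisect_right


def resample_linear(times_min, values, step_min=1.0):
    """Linearly interpolate irregular samples onto a uniform time grid."""
    if len(times_min) != len(values) or len(times_min) < 2:
        raise ValueError("need at least two (time, value) samples")
    n_steps = int((times_min[-1] - times_min[0]) / step_min + 1e-9)
    grid, resampled = [], []
    for k in range(n_steps + 1):
        t = times_min[0] + k * step_min
        # Last sample at or before t, clamped so that i + 1 stays in range.
        i = min(bisect_right(times_min, t) - 1, len(times_min) - 2)
        t0, t1 = times_min[i], times_min[i + 1]
        frac = (t - t0) / (t1 - t0)
        grid.append(t)
        resampled.append(values[i] + frac * (values[i + 1] - values[i]))
    return grid, resampled


# Hypothetical CGM readings (mg/dL) taken 15 minutes apart.
raw_times = [0, 15, 30]
raw_glucose = [100.0, 130.0, 115.0]

grid, glucose_1min = resample_linear(raw_times, raw_glucose)
print(len(grid), glucose_1min[5], glucose_1min[20])
```

Once every stream shares the same grid, the CGM, activity, and meal-timestamp data can be joined on the time index.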

The workflow for this integrated approach is depicted below.

Protocol for Smartwatch-Based Detection with Contextual EMA

This protocol leverages commercial smartwatches for passive detection and uses EMAs to capture the subjective context of eating [3].

Procedure:

- System Development:

  - Train a machine learning model (e.g., Random Forest) on an existing dataset of accelerometer data from a smartwatch (e.g., Pebble watch) annotated for eating and non-eating gestures [3].

  - Port the trained model to a smartphone application for real-time inference.

- Study Deployment:

  - Deploy the system to participants (e.g., college students) for an extended period (e.g., 3 weeks).

  - Participants wear a smartwatch on their dominant wrist to capture accelerometer data.

- Real-Time Detection and Triggering:

  - The smartphone application processes the accelerometer data in real-time to detect eating gestures.

  - Upon detecting a threshold of eating gestures within a specific time window (e.g., 20 gestures in 15 minutes), the system triggers an EMA.

- Contextual Data Capture:

  - The EMA prompts the user with short questions about the eating context, such as meal type, social context, location, and perceived healthfulness of the food.

- Validation: System performance is validated by the accuracy of meal detection against user self-reports or other benchmarks, and the richness of the contextual data captured is analyzed.
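The triggering rule in this protocol (a threshold count of gestures inside a time window) maps naturally onto a sliding-window counter. The sketch below uses the example values from the text (20 gestures in 15 minutes); the class name `EmaTrigger` and the one-hour cooldown between prompts are assumptions added for illustration and are not described in the source.

```python
from collections import deque


class EmaTrigger:
    """Fire an EMA prompt when enough eating gestures fall inside a time window."""

    def __init__(self, min_gestures=20, window_s=15 * 60, cooldown_s=60 * 60):
        self.min_gestures = min_gestures
        self.window_s = window_s
        self.cooldown_s = cooldown_s  # assumption: suppress repeat prompts
        self._recent = deque()
        self._last_prompt = None

    def on_gesture(self, timestamp_s):
        """Register one detected eating gesture; return True if an EMA should fire."""
        self._recent.append(timestamp_s)
        # Drop gestures that have fallen out of the sliding window.
        while self._recent and timestamp_s - self._recent[0] > self.window_s:
            self._recent.popleft()
        in_cooldown = (
            self._last_prompt is not None
            and timestamp_s - self._last_prompt < self.cooldown_s
        )
        if len(self._recent) >= self.min_gestures and not in_cooldown:
            self._last_prompt = timestamp_s
            return True
        return False


# Hypothetical stream: one detected eating gesture every 30 seconds.
trigger = EmaTrigger()
fired = [trigger.on_gesture(30 * i) for i in range(25)]
print("prompt fired at gesture index:", fired.index(True))
```

The threshold and window trade prompt burden against missed meals, so they are usually tuned on pilot data before deployment.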

The Critical Role of Validation in Nutritional Science and Chronic Disease Management

The accurate measurement of dietary intake constitutes the foundation of nutritional science, yet it remains a formidable challenge. Traditional methods, such as Food Frequency Questionnaires (FFQs) and self-reported diet records, are plagued by significant limitations including recall bias, misreporting, and an inability to capture the complex microstructure of eating behavior [5] [3]. These inaccuracies in the primary data, or "ground truth," directly compromise the validity of research linking diet to chronic diseases such as obesity, type 2 diabetes, and cardiovascular conditions [6] [7]. The management and prevention of these diseases, which affect six out of ten U.S. adults, are therefore critically dependent on reliable nutritional data [6].

The emergence of objective monitoring technologies promises to revolutionize dietary assessment. However, the performance of these novel tools is entirely contingent on the quality of the validation methods used to evaluate them. This article details advanced protocols and application notes for establishing a robust ground truth in eating detection research, providing a critical framework for researchers and drug development professionals to validate next-generation tools for nutritional science and chronic disease management.

Ground Truth Methodologies: Comparative Analysis

The selection of a ground truth methodology is a primary determinant of validation quality. The table below summarizes the key characteristics of prevalent approaches.

Table 1: Comparison of Ground Truth Methodologies for Eating Detection Validation

| Methodology | Key Principle | Data Output | Strengths | Limitations |
|---|---|---|---|---|
| Video Annotation [8] [9] | Manual behavioral coding from video recordings. | Precise timing of bites, chews, and eating episodes. | High temporal precision; rich behavioral context. | Labor-intensive; privacy concerns; may not be feasible in all free-living settings. |
| Sensor-Backed Annotation [10] | Use of integrated sensors (e.g., accelerometer, camera) on wearable devices. | Synchronized sensor data and images for automated classification. | Objective; captures complementary data streams (e.g., chewing, food images). | Complex data processing; requires specialized hardware. |
| Button Press/Event Marker [11] | Self-report via button press on a wrist-worn device to mark eating episode boundaries. | Start and end times of self-identified eating episodes. | Simple for the user; suitable for all-day data collection. | Highly prone to human error (forgetting to press); noisy labels. |
| Continuous Weight Measurement (Universal Eating Monitor, UEM) [9] | Direct measurement of food weight loss during a meal using a scale. | Second-by-second cumulative intake curve (grams). | Considered a "gold standard"; provides dynamic intake data. | Restricted to lab settings; not suitable for multi-item meals or free-living. |

Advanced Experimental Protocols for Validation

Protocol 1: Laboratory-Based Validation with OCOsense Glasses and Video Annotation

This protocol validates wearable sensor output against meticulously coded video recordings in a controlled laboratory setting, providing a high-fidelity benchmark.

- Aim: To validate the accuracy of OCOsense glasses in detecting and quantifying chewing behaviors.

- Materials:

  - OCOsense glasses (or similar device with facial EMG/accelerometer sensors).

  - High-definition video recording system.

  - ELAN behavioral coding software (or equivalent).

  - Standardized test foods (e.g., bagel, apple).

- Procedure:

  - Participant Setup: Fit the participant with OCOsense glasses and ensure data logging is active.

  - Video Recording: Position the camera to capture a clear view of the participant's face and upper body throughout the meal.

  - Calibration Meal: Provide a standardized meal. A 60-minute lab-based breakfast session has been successfully used in prior research [8].

  - Data Synchronization: Ensure the sensor data and video recording streams are synchronized using a common time signal.

  - Manual Video Annotation: Trained coders use software like ELAN to annotate the precise start and end of each chewing bout, generating a manual chew count and timing.

  - Algorithm Output: Process the sensor data through the device's proprietary algorithm to generate an automated chew count and timing.

  - Statistical Validation: Compare the manual (video) and automated (sensor) outputs. Strong agreement is indicated by:

    - Bland-Altman plots showing minimal bias.

    - High Pearson correlation coefficients (e.g., r = 0.955 as reported) [8].

    - Non-significant paired t-tests for mean chew counts and chewing rates between methods.
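The three agreement checks in the statistical validation step can be computed with the standard library alone. The sketch below is a minimal illustration on hypothetical per-participant chew counts; the function names are assumptions, and the p-value for the paired t statistic would still need a t distribution from a statistics package.

```python
import math
from statistics import mean, stdev


def bland_altman(manual, automated):
    """Return (bias, lower, upper): mean difference and 95% limits of agreement."""
    diffs = [a - m for m, a in zip(manual, automated)]
    bias = mean(diffs)
    half_width = 1.96 * stdev(diffs)
    return bias, bias - half_width, bias + half_width


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ss_x = sum((a - mx) ** 2 for a in x)
    ss_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)


def paired_t(manual, automated):
    """Paired t statistic and degrees of freedom for the mean difference."""
    diffs = [a - m for m, a in zip(manual, automated)]
    t_stat = mean(diffs) / (stdev(diffs) / math.sqrt(len(diffs)))
    return t_stat, len(diffs) - 1


# Hypothetical chew counts for six participants (video coding vs. sensor output).
manual_chews = [412, 388, 450, 397, 430, 405]
sensor_chews = [405, 395, 441, 402, 425, 410]

bias, lower, upper = bland_altman(manual_chews, sensor_chews)
r = pearson_r(manual_chews, sensor_chews)
t_stat, dof = paired_t(manual_chews, sensor_chews)
print(f"bias={bias:.2f}, limits=({lower:.2f}, {upper:.2f}), r={r:.3f}, t={t_stat:.2f}, df={dof}")
```

A high correlation alone does not establish agreement, which is why the bias and limits of agreement are reported alongside it.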

The following workflow diagram illustrates the key steps in this laboratory-based validation protocol:

Protocol 2: Free-Living Validation with AIM-2 and Integrated Detection

This protocol is designed for validating eating detection in unstructured, free-living environments, which is crucial for assessing real-world applicability.

- Aim: To validate the detection of eating episodes in free-living conditions using a multi-modal sensor system (AIM-2) that integrates image and accelerometer data.

- Materials:

  - Automatic Ingestion Monitor v2 (AIM-2) device, worn on eyeglasses [10].

  - Foot pedal (for pseudo-free-living lab calibration to train the sensor model).

- Procedure:

  - Sensor Deployment: Participants wear the AIM-2 device during both a pseudo-free-living day (in-lab meals with controlled activities) and a full free-living day.

  - Lab Calibration Ground Truth: During the pseudo-free-living session, participants press and hold a foot pedal from the moment a bite of food enters the mouth until the last swallow. This provides precise ground truth for training the accelerometer-based chewing detection model [10].

  - Free-Living Data Collection: The device continuously collects two data streams:

    - Egocentric Images: Captured at set intervals (e.g., every 15 seconds).

    - Accelerometer Data: Sampled at a high frequency (e.g., 128 Hz) to capture head movement and chewing motions.

  - Image-Based Ground Truth: For the free-living day, all captured images are manually reviewed. Annotators record the start and end times of eating episodes and draw bounding boxes around food/beverage objects to create a ground truth for image-based detection.

  - Hierarchical Classification: A machine learning classifier integrates confidence scores from both the image-based food recognition and the sensor-based chewing detection.

  - Performance Metrics: The integrated method's performance is evaluated against the manual image annotation ground truth using standard metrics:

    - Sensitivity/Recall: Proportion of actual eating episodes correctly detected (e.g., 94.59% reported).

    - Precision: Proportion of detected episodes that are true eating episodes (e.g., 70.47% reported).

    - F1-Score: Harmonic mean of precision and recall (e.g., 80.77% reported) [10].
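The three metrics are simple functions of true-positive, false-positive, and false-negative counts. The sketch below defines them and checks that the reported precision and recall are consistent with the reported F1-score; the function names are illustrative, and no episode counts from the study are assumed.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from episode-level counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, f1_score(precision, recall)


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Consistency check on the values quoted in the text.
reported_f1 = f1_score(precision=0.7047, recall=0.9459)
print(f"F1 from reported precision and recall: {reported_f1:.4f}")
```

The gap between recall and precision in the reported figures indicates that the integrated method misses few eating episodes but raises a noticeable number of false alarms.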

Addressing the Challenge of Noisy Ground Truth Labels

A critical, often overlooked aspect of validation is the quality of the ground truth itself. Research using the Clemson all-day (CAD) dataset, which relies on participant button presses, revealed a "strong likelihood that a significant portion of the button presses may contain errors" [11]. These "noisy labels" occur when participants forget to press the button or press it inaccurately, mislabeling the start and end times of meals.

- Mitigation Protocol:

  - Classifier-Assisted Review: Train a preliminary eating detector on the original, potentially noisy ground truth. This classifier produces a continuous probability of eating, P(E), throughout the day.

  - Visual Inspection & Adjustment: Raters visually compare the P(E) plot against the participant's reported button presses. Intervals where the classifier strongly disagrees with the ground truth are flagged for manual adjustment.

  - Retraining: The eating detector is retrained on the adjusted, higher-quality ground truth. This process has been shown to improve classifier accuracy and reduce false positive detections, underscoring the value of iterative label refinement [11].
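The flagging step can be partly automated before raters inspect the plots. The sketch below marks button-press intervals where the mean P(E) is low, and unlabelled runs where P(E) stays high. The thresholds (`high`, `low`, `min_run`), the per-minute sampling, and the function name are assumptions for illustration, not parameters from the cited work.

```python
def flag_disagreements(p_eating, meal_intervals, high=0.8, low=0.2, min_run=5):
    """Return (suspect_labels, suspect_gaps) as lists of half-open index pairs."""
    labelled = [False] * len(p_eating)
    for start, end in meal_intervals:
        for i in range(start, min(end, len(p_eating))):
            labelled[i] = True

    # Button-press intervals in which the classifier sees little evidence of eating.
    suspect_labels = [
        (s, e) for s, e in meal_intervals
        if sum(p_eating[s:e]) / max(e - s, 1) < low
    ]

    # Unlabelled runs in which the classifier is confident that eating occurred.
    suspect_gaps, run_start = [], None
    for i in range(len(p_eating) + 1):  # one extra step closes a trailing run
        confident = i < len(p_eating) and p_eating[i] >= high and not labelled[i]
        if confident and run_start is None:
            run_start = i
        elif not confident and run_start is not None:
            if i - run_start >= min_run:
                suspect_gaps.append((run_start, i))
            run_start = None
    return suspect_labels, suspect_gaps


# Hypothetical per-minute P(E) trace with one unmarked meal and one doubtful label.
p_trace = [0.05] * 10 + [0.9] * 8 + [0.05] * 10 + [0.1] * 6 + [0.05] * 6
button_presses = [(28, 34)]

labels_to_review, gaps_to_review = flag_disagreements(p_trace, button_presses)
print(labels_to_review, gaps_to_review)
```

Flagged intervals are candidates for rater review only; the adjustment itself remains a manual judgment, as in the protocol.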

The Scientist's Toolkit: Key Research Reagent Solutions

The successful execution of the aforementioned protocols relies on a suite of specialized tools and computational models.

Table 2: Essential Research Reagents and Tools for Eating Detection Validation

| Tool / Reagent | Type | Primary Function in Validation | Exemplar Use Case |
|---|---|---|---|
| OCOsense Glasses [8] | Wearable Sensor | Detects facial muscle movements associated with chewing; provides objective data stream for comparison with video. | Laboratory-based validation of chewing counts and rates. |
| AIM-2 Device [10] | Wearable Sensor System | Integrates an egocentric camera and accelerometer to passively capture images and chewing motion in free-living conditions. | Multi-modal eating detection and validation in unstructured environments. |
| ELAN Software [8] | Behavioral Annotation Tool | Enables frame-accurate manual coding of eating behaviors from video recordings to create a high-precision ground truth. | Generating the reference standard for validating sensor output in lab studies. |
| Logistic Ordinary Differential Equation (LODE) Model [9] | Computational Model | Characterizes dynamic cumulative intake curves from bite timing data, using average bite sizes when continuous weight is unavailable. | Modeling meal microstructure in children or free-living studies where Universal Eating Monitors are impractical. |
| Random Forest Classifier [3] | Machine Learning Algorithm | Classifies wrist motion data from smartwatches into "eating" or "non-eating" gestures in real-time. | Powering real-time eating detection systems that can trigger Ecological Momentary Assessments (EMAs). |

The relationship between the tools, data, and validation goals can be complex. The following diagram maps the logical pathway from data acquisition to a validated outcome, highlighting the role of key tools:

The rigorous validation of objective eating detection methods opens new frontiers in chronic disease research and management. Accurate, passive monitoring enables:

- Personalized Nutritional Interventions: Objectively linking specific eating patterns (e.g., rapid eating, distracted eating) to disease biomarkers allows for highly targeted counseling. For instance, a validated system found over 99% of meals were consumed with distractions, a behavior linked to overeating [3].

- Evaluation of Dietary Patterns: Validated tools can assess adherence to therapeutic diets like the DASH diet, which is proven to improve blood pressure, insulin metabolism, and inflammatory markers [7].

- "Food as Medicine" Strategies: Reliable data is the bedrock of produce prescription programs and other food-based interventions, which have been shown to significantly improve systolic and diastolic blood pressure and glycated hemoglobin levels [12].

In conclusion, the path to mitigating the global burden of chronic disease is inextricably linked to improving the science of dietary measurement. By adopting the detailed validation protocols, tools, and models outlined in these application notes, researchers can generate the high-quality, objective data necessary to build a more rigorous and impactful evidence base for nutritional science and chronic disease management.

In the field of dietary behavior research, establishing reliable ground truth is fundamental for validating innovative assessment technologies, including wearable sensors and automated eating detection systems. Traditional methods for capturing ground truth data encompass a spectrum of approaches, from highly controlled direct observation to various forms of self-reporting and biomarker validation. These methods serve as the critical reference point against which new assessment tools are measured, despite each carrying distinct limitations and advantages. Within eating detection validation research, the choice of ground truth method significantly influences study design, data accuracy, and the validity of conclusions drawn about dietary behaviors and intake. This overview details the primary traditional ground truth methodologies, their experimental protocols, and their application within contemporary research contexts.

Direct Observation Methods

Definition and Applications

Direct observation involves the systematic recording of dietary intake by a trained researcher who visually monitors participants during eating occasions. This method is considered a criterion standard in validation studies due to its objective nature, which minimizes errors associated with recall and social desirability bias that plague self-report methods [13] [14]. It is particularly valuable in structured settings such as school cafeterias, institutional feeding programs, and laboratory-based meal studies, where it provides accurate information on the social and physical context of dietary intake [13].

Table: Characteristics of Direct Observation

| Characteristic | Assessment |
|---|---|
| Number of Participants | Small |
| Cost of Development | Low |
| Cost of Use | High |
| Participant Burden | Low |
| Researcher Burden of Data Collection | High |
| Risk of Reactivity Bias | Yes |
| Risk of Recall Bias | No |
| Risk of Social Desirability Bias | Minimized |

Experimental Protocol for Direct Observation

Objective: To obtain an objective measure of foods and beverages consumed by a participant during a defined eating occasion through systematic observation and recording.

Materials and Reagents:

- Standardized recording forms (digital or paper)

- Weighed food samples (pre- and post-consumption)

- Digital food scale (precision ±1 g)

- Visual aids for portion size estimation (e.g., quarter, half, three-quarters consumed)

- Timing device

- Camera (optional, for image-based intake estimation)

Procedure:

- Pre-Observation Training: Observers undergo extensive training to recognize food items, estimate portion sizes, and use standardized recording protocols. Inter-observer reliability should be assessed and maintained above 80% agreement [13].

- Pre-Meal Preparation: Record all foods and beverages offered to the participant, including brand names, ingredients, and preparation methods. Weigh and record initial portion sizes using a digital scale.

- In-Situ Observation: During the meal, the observer discreetly notes:

  - All foods and amounts consumed.

  - Food items received, given away, or spilt.

  - Meal start and end times.

  - Contextual factors (e.g., social environment, location).

- Post-Meal Data Collection: Weigh or visually estimate all plate waste (remaining food). Calculate consumption using the formula: Amount Consumed = Initial Food Weight - Final Food Weight.

- Data Processing: Convert all consumed foods into gram amounts. Match food items with corresponding entries in a food composition database to calculate nutrient intakes [13].
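The consumption formula in the post-meal step is plain subtraction, but encoding it makes weighing errors visible early. The sketch below covers both the weighed and the visually estimated case; the tray contents and function names are hypothetical.

```python
def consumed_by_weight(initial_g, final_g):
    """Amount Consumed = Initial Food Weight - Final Food Weight (grams)."""
    if final_g > initial_g:
        raise ValueError("plate waste exceeds the served weight; re-check the weighing")
    return initial_g - final_g


def consumed_by_fraction(initial_g, fraction_consumed):
    """Visual estimate: served weight times the observed fraction consumed."""
    if not 0.0 <= fraction_consumed <= 1.0:
        raise ValueError("fraction consumed must lie between 0 and 1")
    return initial_g * fraction_consumed


# Hypothetical tray: item -> (served weight in g, plate waste in g).
tray = {"pasta": (250.0, 60.0), "apple slices": (120.0, 0.0)}
consumed = {item: consumed_by_weight(served, waste) for item, (served, waste) in tray.items()}
print(consumed)
```

The resulting gram amounts are what gets matched against the food composition database in the data processing step.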

Quality Control:

- Implement a pre-test period to reduce participant reactivity.

- Use multiple observers to assess inter-rater reliability.

- Provide regular feedback and retraining sessions for observers.

- Conduct random checks of recorded data for accuracy and consistency.

Figure: Direct Observation Workflow

Limitations and Reactivity Considerations

A significant challenge with direct observation is reactivity bias, where participants alter their natural eating behavior due to awareness of being observed. A systematic review and meta-analysis found that heightened awareness of observation in laboratory settings was associated with a significant reduction in energy intake (standardized mean difference: 0.45) compared to control conditions [15]. This effect necessitates strategies to minimize intrusion, such as covert positioning in controlled settings or habituation periods where participants become accustomed to the observer's presence before formal data collection begins [13].

Self-Report Dietary Assessment Methods

Primary Modalities and Characteristics

Self-report instruments constitute the most widespread approach for dietary assessment in epidemiological and clinical research. The three primary modalities include 24-hour dietary recalls, food records (or diaries), and food frequency questionnaires (FFQs). While these methods can provide comprehensive dietary data at the group level, they are prone to systematic misreporting errors, particularly underreporting of energy intake [16].

Table: Comparison of Self-Report Dietary Assessment Methods

| Method | Description | Temporal Framework | Key Limitations |
|---|---|---|---|
| 24-Hour Dietary Recall | Structured interview assessing all foods/beverages consumed in previous 24 hours | Short-term (previous day) | Relies on memory; prone to recall bias; interviewer training required |
| Food Record/Diary | Prospective recording of all foods/beverages as consumed | Real-time recording over multiple days | High participant burden; may alter usual intake; requires literacy |
| Food Frequency Questionnaire (FFQ) | Questionnaire on frequency of consumption of specific foods over a defined period | Long-term (past month, year) | Portion size estimation difficult; memory dependent; may not capture recent diet changes |

Diet History Method: A Specialized Clinical Protocol

Objective: To assess habitual dietary intake, patterns, and behaviors through a detailed, structured interview conducted by a trained clinician or dietitian.

Materials:

- Diet history questionnaire (e.g., Burke diet history format)

- Food models and portion size visual aids

- Food composition database

- Dietary analysis software

Procedure:

- Structured Interview: Conduct a comprehensive interview covering:

  - Typical intake pattern (meal timing, frequency)

  - Detailed description of foods and beverages consumed

  - Portion size estimation using standardized aids

  - Food preparation methods

  - Seasonal variations in diet

  - Supplement use

  - Disordered eating behaviors (e.g., binge eating, restriction, compensatory behaviors) [17]

- Data Quantification: Convert reported foods and portion sizes into quantitative nutrient data using food composition tables.

- Clinical Interpretation: Analyze dietary data in the context of the individual's nutritional requirements, disordered eating behaviors, and clinical status.

Quality Control:

- Interviewers should receive specialized training in diet history administration.

- Use standardized probing techniques to minimize under-reporting or over-reporting.

- Implement quality checks for data entry and nutrient analysis.

Validation Evidence: A 2025 pilot validation study in females with eating disorders found moderate to good agreement between diet history-derived nutrients and specific biomarkers: dietary cholesterol and serum triglycerides showed moderate agreement (kappa = 0.56), while dietary iron and serum total iron-binding capacity showed moderate-good agreement (kappa = 0.48-0.68) [17].

Biomarker Validation Approaches

Doubly Labeled Water for Energy Intake Validation

The doubly labeled water (DLW) method provides an objective biomarker for validating self-reported energy intake by measuring total energy expenditure. Under conditions of weight stability, energy intake approximately equals energy expenditure, allowing DLW to serve as a reference method for validating self-reported energy intake [16].

Principle: The method is based on the differential elimination kinetics of two stable isotopes (deuterium ²H and oxygen-18 ¹⁸O) from body water. The difference in elimination rates is proportional to carbon dioxide production, from which total energy expenditure can be calculated using indirect calorimetry equations [16].

Validation Findings: Studies comparing self-reported energy intake against DLW-measured energy expenditure consistently demonstrate systematic underreporting, and the degree of underreporting increases with body mass index. Macronutrients are not underreported equally: protein is the least underreported [16].
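Because energy intake approximately equals expenditure under weight stability, the reporting error can be expressed as a percentage of DLW-measured expenditure. The sketch below shows that arithmetic; the function name and the kilocalorie values are hypothetical, and the calculation is only meaningful for weight-stable participants.

```python
def reporting_error_pct(reported_ei_kcal, tee_kcal):
    """Percent difference between self-reported intake and DLW-measured expenditure.

    Negative values indicate underreporting (assumes weight stability).
    """
    if tee_kcal <= 0:
        raise ValueError("total energy expenditure must be positive")
    return 100.0 * (reported_ei_kcal - tee_kcal) / tee_kcal


# Hypothetical participant: 1900 kcal/day reported, 2500 kcal/day measured by DLW.
error = reporting_error_pct(reported_ei_kcal=1900.0, tee_kcal=2500.0)
print(f"reporting error: {error:.1f}%")
```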

Protocol: Biomarker Validation of Self-Reported Intake

Objective: To validate self-reported dietary intake against objective nutritional biomarkers.

Materials:

- Self-report dietary assessment tool (e.g., food record, 24-hour recall)

- Equipment for biological sample collection (blood, urine)

- Laboratory facilities for biomarker analysis

Procedure:

- Dietary Assessment: Administer the self-report dietary assessment method concurrently with biological sample collection.

- Biological Sample Collection: Collect appropriate samples for targeted nutritional biomarkers:

  - Urinary nitrogen for protein intake validation

  - Serum triglycerides, cholesterol for lipid intake

  - Serum iron, ferritin, total iron-binding capacity for iron intake

  - Red cell folate for folate intake [17]

- Laboratory Analysis: Process samples according to standardized laboratory protocols for each biomarker.

- Statistical Analysis: Compare self-reported nutrient intakes with biomarker levels using correlation analyses (e.g., Spearman's rank correlation), kappa statistics, and Bland-Altman methods to assess agreement and systematic bias [17].
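Of the statistics named in the analysis step, Spearman's rank correlation is the one most often applied first, because intake and biomarker values sit on different scales. The sketch below computes it with average ranks for ties; the paired values and function names are hypothetical, not study data.

```python
import math


def average_ranks(values):
    """Return 1-based ranks, assigning tied values the mean of their positions."""
    order = sorted(range(len(values)), key=values.__getitem__)
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks of x and y."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    ss_x = sum((a - mx) ** 2 for a in rx)
    ss_y = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(ss_x * ss_y)


# Hypothetical paired data: reported protein intake (g/day) and urinary nitrogen (g/day).
reported_protein = [55.0, 62.0, 70.0, 81.0, 95.0]
urinary_nitrogen = [8.1, 9.0, 9.4, 12.2, 13.5]
print(f"Spearman rho: {spearman_rho(reported_protein, urinary_nitrogen):.2f}")
```

Rank correlation captures whether participants are ordered similarly by both measures; kappa and Bland-Altman analyses are still needed to assess categorical agreement and systematic bias.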

Figure: Biomarker Validation Workflow

Emerging Technological Approaches and Validation Frameworks

Ecological Momentary Assessment (EMA)

Ecological Momentary Assessment (EMA) is a methodological approach that captures real-time data on behavior and context in naturalistic settings, reducing recall bias. In eating behavior research, EMA can be implemented through smartphone applications that prompt participants to report on recent eating episodes, contextual factors (e.g., location, social environment, mood), and dietary intake [3] [18].

Validation Application: In the Monitoring and Modeling Family Eating Dynamics (M2FED) study, EMA served as the ground truth method for validating a smartwatch-based eating detection system. The study demonstrated high compliance rates (89.26% overall), supporting EMA's feasibility for capturing in-situ eating validation data [18].

Integrated Validation Protocol for Wearable Eating Detection Systems

Objective: To validate the performance of automated eating detection systems (e.g., wrist-worn sensors) in free-living settings using a combination of ground truth methods.

Materials:

- Wearable eating detection device (e.g., smartwatch with accelerometer)

- Mobile device with EMA application

- Data processing and analysis software

Procedure:

- System Deployment: Participants wear the sensing device on their dominant wrist during the study period (typically 1-2 weeks).

- Ground Truth Data Collection:

- Time-Triggered EMAs: Prompt participants at random intervals within each day to report recent eating episodes.

- Event-Triggered EMAs: Automatically prompt participants when the detection system identifies a potential eating event to confirm whether eating occurred and capture contextual information [3] [18].

- Algorithm Performance Calculation:

- True Positives: Correctly detected eating events confirmed by EMA.

- Precision: Proportion of detected events that were true eating events (e.g., 77% in the M2FED study) [18].

- Recall: Proportion of actual eating events correctly detected by the system.

Performance Metrics: The M2FED study reported a precision of 0.77 (76.5% of detected events were confirmed as true eating events), demonstrating reasonable validity for in-field eating detection [18].
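
As a minimal sketch of the performance calculation described above, the function below derives precision, recall, and F1 from three counts: detections confirmed by an event-triggered EMA, detections rejected by the participant, and eating episodes reported through time-triggered EMAs that the system missed. The function name and the counts in the usage example are hypothetical; they are not the M2FED data.

```python
def detection_metrics(confirmed_detections, rejected_detections, missed_episodes):
    """Compute precision, recall, and F1 from EMA-derived event counts.

    confirmed_detections: detected events the participant confirmed (TP)
    rejected_detections:  detected events the participant denied (FP)
    missed_episodes:      reported eating episodes with no detection (FN)
    """
    tp, fp, fn = confirmed_detections, rejected_detections, missed_episodes
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts, for illustration only.
print(detection_metrics(confirmed_detections=30,
                        rejected_detections=10,
                        missed_episodes=20))
```

Recall estimated this way depends on how completely the time-triggered EMAs capture undetected eating episodes, so it is best read as an approximation.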

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Dietary Validation Research

| Item | Function/Application | Example Use Cases |

|---|---|---|

| Doubly Labeled Water (²H₂¹⁸O) | Objective measurement of total energy expenditure | Validation of self-reported energy intake in weight-stable adults [16] |

| Standardized Food Composition Database | Nutrient calculation from reported food intake | Conversion of food records to nutrient intakes across all self-report methods |

| Digital Food Scales (±1 g precision) | Accurate quantification of food portions | Direct observation studies, weighed food records |

| Portion Size Estimation Aids | Visual guides for amount consumed | 24-hour recalls, diet history interviews, direct observation recording |

| Ecological Momentary Assessment (EMA) Platform | Real-time behavioral data collection in natural environments | Ground truth for wearable sensor validation; contextual factor assessment [3] [18] |

| Nutritional Biomarker Assays | Objective measures of nutrient status | Validation of specific nutrient intake reports (e.g., urinary nitrogen for protein) [17] |

| Wearable Inertial Sensors (Accelerometer/Gyroscope) | Automated detection of eating gestures | Development of algorithm-based eating detection systems [19] [18] |

Traditional ground truth methods for eating detection validation encompass a diverse toolkit ranging from direct observation to self-reports and biomarker validation. Each approach carries distinct strengths and limitations, with direct observation providing objective assessment in controlled settings but risking reactivity bias, while self-report methods offer practical administration but suffer from systematic misreporting. Biomarker validation provides objective verification for specific nutrients but requires specialized resources. Emerging approaches like EMA offer promising alternatives for validating wearable sensors in free-living contexts. The selection of an appropriate ground truth method depends on the research question, population, setting, and resources, with multi-method approaches often providing the most comprehensive validation framework for eating detection technologies.

Key Metrics and Performance Indicators for Eating Detection Systems

Validating eating detection systems requires a robust set of metrics and performance indicators that quantify accuracy, reliability, and utility in both research and clinical applications. These metrics are the basis for comparing emerging technologies against established ground truth methods, and they form a critical component of methodological validation in nutritional science, behavioral research, and drug development. As eating detection technologies evolve from laboratory instruments to automated and AI-driven systems, comprehensive performance assessment becomes essential for scientific acceptance and clinical adoption. This document outlines the essential metrics, experimental protocols, and methodological considerations for rigorous validation of eating detection systems.

Core Performance Metrics for Eating Detection Systems

The performance of eating detection systems should be evaluated across multiple dimensions, including detection accuracy, temporal precision, and practical reliability. The following metrics provide a comprehensive framework for system validation.

Table 1: Core Performance Metrics for Eating Detection Systems

| Metric Category | Specific Metric | Definition/Calculation | Interpretation in Validation Context |

|---|---|---|---|

| Detection Accuracy | Precision (Positive Predictive Value) | \( \text{Precision} = \frac{TP}{TP + FP} \) | Proportion of detected eating episodes that are correct; high value indicates low false alarms [20]. |

| | Recall (Sensitivity) | \( \text{Recall} = \frac{TP}{TP + FN} \) | Proportion of actual eating episodes correctly identified; high value indicates minimal missed detections [20]. |

| | F1-Score | \( F1 = 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} \) | Harmonic mean of precision and recall; provides a single balanced metric [21] [20]. |

| Temporal & Microstructure Analysis | Bite Count Accuracy | ICC or correlation with manual counts | Agreement between automated and human-coded bite counts; essential for eating rate calculation [21]. |

| | Meal Duration Accuracy | Mean Absolute Error (MAE) in time units | Difference between detected and actual meal start/end times. |

| | Eating Rate Consistency | Intra-class Correlation Coefficient (ICC) | Reliability of eating rate measures across repeated sessions; indicates system stability [22]. |

| Overall System Reliability | Intra-class Correlation Coefficient (ICC) | Measures test-retest or inter-rater reliability | Quantifies measurement consistency; an ICC > 0.9 indicates excellent repeatability [22]. |

| | Macro F1-Score | Average F1 across all classes (e.g., food types) | Important for multi-food or multi-behavior classification tasks [23]. |
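
Two of the tabulated metrics are easy to misapply, so a short sketch may help: macro F1 averages the per-class F1 scores with equal weight regardless of class frequency, and MAE averages the absolute timing or duration errors. The function names, class labels, and values below are illustrative assumptions.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    classes = sorted(set(y_true) | set(y_pred))
    scores = []
    for c in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN), equivalent to the harmonic-mean form.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def mean_absolute_error(detected, actual):
    """MAE between paired measurements, e.g. meal durations in seconds."""
    return sum(abs(d - a) for d, a in zip(detected, actual)) / len(actual)


# Hypothetical food-type labels and meal durations, for illustration only.
truth = ["apple", "apple", "chips", "chips"]
predicted = ["apple", "chips", "chips", "chips"]
print(round(macro_f1(truth, predicted), 3))
print(mean_absolute_error([600, 900], [630, 840]))
```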

Detailed Experimental Protocols for Validation

Protocol 1: Laboratory-Based Validation Using Universal Eating Monitors

Objective: To validate automated eating detection systems against highly accurate, laboratory-based weight-scale systems like the Universal Eating Monitor (UEM) under controlled conditions.

Background: Traditional UEMs, such as the "Feeding Table," provide high-accuracy, real-time monitoring of food intake by integrating scales into a tabletop. They are considered a reference standard for validating new detection technologies, especially for multi-food meals [22].

Table 2: Key Parameters for UEM Validation Studies

| Parameter | Specification | Rationale |

|---|---|---|

| Sample Size | 31-49 participants (based on previous studies) | Provides sufficient statistical power for reliability analysis [22]. |

| Test-Retest Interval | 2 consecutive days | Assesses day-to-day repeatability under standardized conditions [22]. |

| Food Types | Up to 12 different foods simultaneously | Evaluates system performance with complex, multi-food meals [22]. |

| Data Collection Frequency | Every 2 seconds | Provides high-resolution data on eating microstructure [22]. |

| Key Outcome Measures | ICC for energy and macronutrient intake | Quantifies consistency of primary intake measurements [22]. |

Methodology:

- Setup: Utilize a UEM system (e.g., a table with multiple integrated balances) capable of monitoring several foods simultaneously. Data should be collected at a high frequency (e.g., every 2 seconds) and transmitted in real-time to a recording computer [22].

- Participant Preparation: Recruit healthy volunteers. Standardize pre-test meals to control for hunger levels.

- Testing Procedure: Over two consecutive days, serve participants standardized meals with a variety of foods. The UEM continuously records the weight of each food item throughout the meal.

- Data Analysis: Calculate the intra-class correlation coefficient (ICC) between the two days for total energy intake and macronutrient-specific intake (protein, fat, carbohydrates). High ICC values (e.g., 0.82 for energy intake) indicate good system repeatability and reliability [22].
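
The test-retest ICC in the data analysis step can be computed from a participants-by-days table. The source does not state which ICC form was used, so the sketch below assumes ICC(2,1) in the Shrout and Fleiss notation (two-way random effects, absolute agreement, single measurement); the function name and intake values are hypothetical.

```python
def icc_2_1(scores):
    """ICC(2,1) for a table with one row per participant, one column per day."""
    n = len(scores)       # participants
    k = len(scores[0])    # repeated sessions (e.g., 2 days)
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical energy intakes (kcal) on day 1 and day 2, for illustration only.
intake = [[650, 700], [820, 790], [540, 600], [910, 880], [700, 735]]
print(round(icc_2_1(intake), 3))
```

Because this form measures absolute agreement, a constant shift between days lowers the ICC even when participants keep the same rank order.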

Protocol 2: Video-Based Validation with Gold-Standard Manual Coding

Objective: To validate automated bite detection algorithms from video data against manual annotation by trained human coders, which is the current gold standard for microstructure analysis [21].

Background: Systems like ByteTrack use deep learning (e.g., CNNs and LSTM-RNNs) to automate bite detection from meal videos. This protocol outlines their validation against manual coding.

Diagram 1: Video Validation Workflow

Methodology:

- Data Collection: Record meal sessions in a controlled laboratory setting. For pediatric studies, use a wall-mounted camera (e.g., 30 fps) positioned outside the child's direct line of sight to minimize observer effects. Meals should consist of common foods, with the possibility of varying portion sizes across sessions [21].

- Gold Standard Annotation: Have trained coders manually review all videos to annotate the timestamps of each bite. This establishes the ground truth dataset.

- Automated Processing: Run the video data through the automated detection system (e.g., ByteTrack). The system typically involves a two-stage pipeline: first, detecting and tracking the participant's face, and second, classifying frames or sequences as containing a bite or not using a combination of CNNs and LSTM networks [21].

- Performance Calculation: Compare the system's output against the manual ground truth. Calculate standard metrics like precision, recall, and F1-score for bite detection. Furthermore, compute the Intra-class Correlation Coefficient (ICC) for derived measures like total bite count and eating rate to assess agreement beyond simple detection [21].
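
Comparing detected bites with manual annotations requires a rule for deciding when two timestamps refer to the same bite. The sketch below assumes a simple one-to-one matching within a fixed tolerance window; the 1-second default and the function name are illustrative assumptions and are not taken from the cited study.

```python
def match_events(predicted, reference, tolerance_s=1.0):
    """Match predicted to reference timestamps one-to-one within a tolerance.

    Returns (true_positives, false_positives, false_negatives).
    """
    pred = sorted(predicted)
    ref = sorted(reference)
    i = j = tp = 0
    while i < len(pred) and j < len(ref):
        diff = pred[i] - ref[j]
        if abs(diff) <= tolerance_s:
            tp += 1          # close enough: count as a matched bite
            i += 1
            j += 1
        elif diff < 0:
            i += 1           # prediction precedes any usable reference bite
        else:
            j += 1           # reference bite has no prediction nearby
    return tp, len(pred) - tp, len(ref) - tp


# Hypothetical timestamps in seconds, for illustration only.
tp, fp, fn = match_events([1.0, 5.2, 9.0, 20.0], [1.3, 5.0, 12.0])
print(tp, fp, fn)  # 2 2 1
```

The chosen tolerance directly affects the resulting precision and recall, so it should be reported alongside them.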

Protocol 3: Biomarker Validation for Energy and Macronutrient Intake

Objective: To validate dietary intake data from novel assessment methods (e.g., experience sampling apps) against objective biomarkers, which are not subject to self-reporting biases.

Background: The doubly labeled water (DLW) method for total energy expenditure and urinary nitrogen for protein intake are considered objective reference measures for validating self-reported energy and protein intake, respectively [24].

Methodology:

- Study Design: A prospective observational study over approximately four weeks is typical. The first two weeks establish baseline data, while the final two weeks are used for concurrent biomarker and method validation [24].

- Participant Recruitment: Aim for a sample size of at least 100-115 participants to achieve 80% power for detecting meaningful correlation coefficients (≥0.30) [24].

- Intervention/Assessment:

- Administer the tool being validated (e.g., the Experience Sampling-based Dietary Assessment Method - ESDAM) over a two-week period.

- Implement the biomarker protocols: DLW for total energy expenditure, 24-hour urine collection for nitrogen analysis, and blood sampling for serum carotenoids and erythrocyte membrane fatty acids [24].

- Data Analysis: Assess validity using Spearman's correlation coefficients between the intake values from the tool and the biomarker-derived values. Use Bland-Altman plots to visualize the limits of agreement between the two methods. The method of triads can be employed to quantify the measurement error of the tool, the 24-hour dietary recalls, and the biomarkers in relation to the unknown "true dietary intake" [24].
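
The method of triads mentioned in the data analysis step estimates a validity coefficient for each of the three measures from their pairwise correlations. The sketch below follows the standard formulation and assumes positive correlations and independent measurement errors; the function name, argument names, and example correlations are hypothetical.

```python
from math import sqrt


def method_of_triads(r_tool_recall, r_tool_biomarker, r_recall_biomarker):
    """Validity coefficients (correlation with true intake) for each measure.

    Each coefficient is the square root of the product of the two correlations
    involving that measure, divided by the remaining correlation.
    """
    correlations = (r_tool_recall, r_tool_biomarker, r_recall_biomarker)
    if any(r <= 0 for r in correlations):
        raise ValueError("method of triads requires positive correlations")
    return {
        "tool": sqrt(r_tool_recall * r_tool_biomarker / r_recall_biomarker),
        "recall": sqrt(r_tool_recall * r_recall_biomarker / r_tool_biomarker),
        "biomarker": sqrt(r_tool_biomarker * r_recall_biomarker / r_tool_recall),
    }


# Hypothetical pairwise correlations, for illustration only.
print(method_of_triads(r_tool_recall=0.5,
                       r_tool_biomarker=0.4,
                       r_recall_biomarker=0.5))
```

A coefficient above 1 can occur with sampling variation or correlated errors and signals that the assumptions do not hold for that dataset.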

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Solutions for Eating Detection Validation

| Reagent / Tool | Category | Primary Function in Validation | Example/Specifications |

|---|---|---|---|

| Universal Eating Monitor (UEM) | Laboratory Hardware | Provides high-resolution, real-time measurement of food weight loss during eating; the reference standard for intake amount and timing [22]. | "Feeding Table" with multiple integrated balances (e.g., 5 balances monitoring up to 12 foods), data collection every 2 seconds [22]. |

| Doubly Labeled Water (DLW) | Biochemical Biomarker | Serves as an objective reference for total energy expenditure, used to validate self-reported energy intake data against physiological consumption [24]. | Requires specialized preparation and analysis (e.g., isotope ratio mass spectrometry). |

| 24-Hour Urinary Nitrogen | Biochemical Biomarker | Provides an objective measure of protein intake, used to validate protein intake reported by dietary assessment tools [24]. | 24-hour urine collection from participants; analysis via Kjeldahl method or chemiluminescence. |

| Video Recording System | Data Acquisition | Captures visual data of eating episodes for subsequent manual coding or automated analysis of meal microstructure [21]. | Network camera (e.g., Axis M3004-V) recording at 30 fps, positioned discreetly [21]. |

| YOLO (You Only Look Once) Models | Computer Vision Algorithm | Enables real-time object detection and classification of food items within images for automated dietary assessment and portion estimation [20]. | YOLOv8 demonstrated superior performance (82.4% precision) for food component identification [20]. |

| Convolutional & Recurrent Neural Networks (CNNs/LSTMs) | AI/ML Architecture | Forms the core of advanced bite detection systems; CNNs extract spatial features from video frames, while LSTMs model temporal sequences of bites [21]. | Used in ByteTrack: EfficientNet (CNN) for frame classification + LSTM for temporal modeling [21]. |

| Standardized Food Database | Data Resource | Provides nutritional information for converting recorded food consumption into energy and macronutrient data. | Belgian Food Composition Database (NUBEL); U.S. Nutrition Facts Panel data [24] [21]. |

A Methodological Deep Dive: Sensor-Based and AI-Driven Ground Truth Techniques

Within the validation of ground truth methods for eating detection research, accurately capturing the microstructure of eating—specifically bites and chews—is paramount. Traditional self-report methods are inadequate for this purpose due to their subjective nature and lack of granularity [25]. Wearable sensor systems offer an objective, high-resolution alternative. This document provides detailed application notes and experimental protocols for three primary sensor modalities—inertial, acoustic, and strain sensors—used for detecting mastication events. The content is structured to enable researchers and drug development professionals to implement, validate, and cross-reference these methods in controlled and free-living settings.

Sensor Taxonomy and Performance Characteristics

Wearable sensors for bite and chew detection leverage different physiological signals and physical principles. The table below summarizes the core sensor modalities, their working mechanisms, and placement for detecting mastication events.

Table 1: Taxonomy of Wearable Sensors for Bite and Chew Detection

| Sensor Modality | Specific Sensor Types | Primary Measurable | Common Placement Locations | Key Measured Parameters |

|---|---|---|---|---|

| Acoustic | Microphones (air-conduction, throat) [26] [27] | Sound waves from jaw movement, food breakdown, and swallowing [26] | Ear canal, neck/throat, sternum [26] [27] | Chewing sounds, swallowing sounds, bite acoustics |

| Inertial | Accelerometers, Gyroscopes [27] | Motion and angular velocity of jaw and head | Wrist (for hand-to-mouth gestures), head, neck [25] | Jaw motion patterns, head movement, bite-related gestures |

| Strain | Piezoelectric Sensors, Bend Sensors, Strain Gauges [28] [8] | Deformation and muscle movement from mastication [28] | Temporalis muscle (via eyeglasses/headband), masseter muscle, neck [28] [8] | Temporalis muscle contraction, skin strain from jaw movement |

The performance of these sensors varies significantly based on the detection task and environmental conditions. The following table provides a comparative overview of their accuracy and key characteristics as reported in validation studies.

Table 2: Performance Comparison of Sensor Modalities for Eating Detection

| Sensor Modality | Reported Accuracy/Performance | Key Advantages | Key Limitations |

|---|---|---|---|

| Acoustic (Throat Microphone) | High; F-Measures of 91.3% and 88.5% for classifying different foods [26] | High classification accuracy for food types [26] | Higher computational overhead and power consumption; privacy concerns [26] |

| Strain (Piezoelectric on Temporalis) | High; strong agreement with video annotation (r=0.955 for chew count) [8] | Direct measurement of muscle activity; less intrusive than some acoustic methods [28] [8] | Sensor placement is critical; signal can be affected by individual anatomical differences [28] |

| Strain (Bend Sensor on Eyeglasses) | Effective; can detect differences in chewing strength for foods of varying hardness [28] | Integrates into common wearable (eyeglasses); non-invasive [28] | May be less sensitive to subtle chewing motions compared to other sensors [28] |

| Inertial (Piezoelectric on Neck) | Moderate; F-Measures of 75.3% and 79.4% for classifying foods [26] | Lower power consumption compared to audio [26] | Lower classification accuracy compared to audio [26] |

Experimental Protocols for Validation

A robust validation framework is essential for establishing any wearable system as a reliable ground truth method. The following protocols detail the procedures for data collection, annotation, and processing.

Protocol for Multi-Sensor Chewing Strength Estimation

This protocol is based on a study that compared four wearable sensors for estimating chewing strength related to food hardness [28].

1. Objective: To evaluate the feasibility of using multiple wearable sensors to estimate chewing strength and differentiate between foods of different hardness in a laboratory setting.

2. Materials and Reagents:

- Test Foods: Prepare samples with standardized hardness, measured by a penetrometer. Example: Carrot (hard), Apple (moderate), Banana (soft) [28].

- Wearable Sensors:

- Ear Canal Pressure Sensor (e.g., SM9541 with custom earbud) [28].

- Piezoresistive Bend Sensor (e.g., Spectra Symbol 2.2") attached to the temple of eyeglasses [28].

- Piezoelectric Strain Sensor (e.g., LDT0-028K) placed on the temporalis muscle [28].

- Surface Electromyography (EMG) sensor placed on the temporalis muscle [28].

- Data Acquisition System: Microprocessors (e.g., STM32L476, MSP430F2418) for data sampling and storage (SD cards) or transmission (Bluetooth) [28].

- Video Recording System: For ground truth annotation of chewing bouts.

3. Experimental Procedure:

- Participant Preparation: Recruit participants according to ethics board approval. For each participant, create a custom-molded earbud for the ear canal sensor. Attach the piezoelectric and EMG sensors to the left temporalis muscle using medical tape. Attach the bend sensor to the right temple of a pair of eyeglasses [28].

- Data Collection: Instruct the participant to consume the test foods in a randomized order. For each food type, the participant should take and consume 10 distinct bites. Data from all four sensors should be collected simultaneously during the entire eating session [28]. Synchronize the video recording with sensor data collection.

- Ground Truth Annotation: Manually annotate the video recording to mark the start and end of each chewing sequence for every bite. This serves as the primary validation for chew count and timing.

4. Data Analysis:

- Signal Processing: For each sensor, synchronize the data and segment it according to the annotated bites.

- Feature Extraction: For each bite segment, calculate the standard deviation of the sensor signal. This metric has been shown to be significantly affected by food hardness [28].

- Statistical Analysis: Perform a single-factor ANOVA to test for a significant effect of food hardness on the standard deviation of the signals. Use a post-hoc test (e.g., Tukey's test) to confirm significant differences between the mean standard deviations for each food type [28].
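
The feature extraction and ANOVA steps can be illustrated with a small sketch: compute the standard deviation of the signal within each annotated bite, group the values by food, and form the one-way ANOVA F statistic. The function names and data are hypothetical; the p-value and the Tukey post-hoc comparison are left to a statistics package (for example, `scipy.stats.f_oneway` returns the F statistic together with its p-value).

```python
from statistics import mean, stdev


def segment_std(signal, sample_rate_hz, bite_intervals_s):
    """Standard deviation of the signal within each (start, end) interval in seconds."""
    values = []
    for start_s, end_s in bite_intervals_s:
        first = round(start_s * sample_rate_hz)
        last = round(end_s * sample_rate_hz)
        values.append(stdev(signal[first:last]))
    return values


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a single-factor ANOVA."""
    all_values = [v for g in groups for v in g]
    grand = mean(all_values)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical per-bite standard deviations for three foods, illustration only.
banana, apple, carrot = [1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0]
f_stat, df_b, df_w = one_way_anova_f([banana, apple, carrot])
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")  # F(2, 6) = 13.0
```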

Protocol for Audio vs. Inertial Sensor Comparison

This protocol outlines an objective comparison between audio-based and piezoelectric inertial sensing for swallow classification [26].

1. Objective: To objectively compare the classification accuracy and power consumption of audio-based and piezoelectric inertial sensing for dietary intake monitoring.

2. Materials and Reagents:

- Sensors:

- Commercial throat microphone (e.g., Hypario HM-2000) placed loosely on the lower neck/collarbone.

- Piezoelectric sensor (e.g., LDT0-028K) placed on the lower part of the neck for detecting swallow motions.

- Data Acquisition System: A system capable of recording audio at a sufficiently high sample rate (e.g., 44.1 kHz) and inertial data at a lower rate (e.g., 100 Hz) [26].

- Test Foods: A variety of foods with different acoustic properties, such as sandwich, chips, nuts, chocolate, meat patty, and water [26].

3. Experimental Procedure:

- Participant Preparation: Equip participants with both sensors simultaneously. Ensure the piezoelectric sensor has good skin contact on the neck.

- Data Collection: In two separate experiments, have participants consume the provided test foods. Record data from both sensors throughout the consumption period. The data collection should be structured to capture distinct swallowing events for each food type [26].

- Ground Truth: Use video observation or manual event marking to log the timing of swallows and the type of food being consumed.

4. Data Analysis:

- Feature Extraction (Audio): Use a tool like openSMILE to extract a large set of audio features from the recorded signals, including Mel-Frequency Cepstral Coefficients (MFCCs), spectral features, and voice quality features [26].

- Classification: Train a classifier (e.g., Random Forest) to distinguish between different food types based on the extracted features from both sensor modalities.

- Performance Evaluation: Calculate precision, recall, and F-Measure for each food type and sensor system [26].

- Power Consumption Modeling: Model the power overhead of both systems based on sample rate, computational requirements, and data transmission needs [26].
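
One input to the power consumption model is the raw data volume each sensor produces, which follows directly from the sample rates given above. The sketch below is a first-order illustration only: it assumes 16-bit, single-channel samples (an assumption, not a detail from the cited study) and ignores computation and radio costs, which a full power model would also include.

```python
def raw_data_rate_bytes_per_s(sample_rate_hz, bytes_per_sample=2, channels=1):
    """Uncompressed data rate; 2 bytes per sample assumes 16-bit resolution."""
    return sample_rate_hz * bytes_per_sample * channels


audio_rate = raw_data_rate_bytes_per_s(44_100)  # throat microphone at 44.1 kHz
piezo_rate = raw_data_rate_bytes_per_s(100)     # piezoelectric sensor at 100 Hz

print(audio_rate, piezo_rate)       # 88200 200
print(audio_rate / piezo_rate)      # 441.0
print(audio_rate * 3600 / 1e6)      # megabytes of audio per hour: 317.52
```

Under these assumptions the audio stream carries 441 times more raw data than the piezoelectric stream, which is consistent with the higher computational overhead reported for audio-based sensing.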

The Researcher's Toolkit

Table 3: Essential Research Reagents and Materials

| Item | Function/Application | Example Models/Details |

|---|---|---|

| Piezoelectric Strain Sensor | Detects muscle movement and skin strain during chewing and swallowing [28] [26] | LDT0-028K (Measurement Specialties Inc.); placed on neck or temporalis muscle |

| Throat Microphone | Captures acoustic signals of chewing and swallowing directly from the throat, reducing ambient noise [26] | Hypario HM-2000; placed loosely on lower neck |

| Custom-Molded Earbud | Creates a seal in the ear canal to measure pressure changes from jaw movement [28] | Made from silicone rubber (e.g., Sharkfin Self-Molding Earbud) |

| Piezoresistive Bend Sensor | Measures contraction of the temporalis muscle by bending with eyeglass temples [28] | Spectra Symbol 2.2" sensor; attached to eyeglass frame |

| Penetrometer | Quantifies the hardness of test foods to standardize stimulus materials [28] | Used to confirm hardness levels of foods like carrot, apple, and banana |

| OpenSMILE Toolkit | Extracts audio features from microphone data for machine learning classification [26] | Munich open Speech and Music Interpretation by Large Space Extraction toolkit |

Data Processing and Analysis Workflows

The raw signals from wearable sensors require sophisticated processing to extract meaningful eating behavior metrics. Machine learning, particularly neural networks, is often employed for this purpose [27]. The following diagram illustrates a generalized signal processing and analysis workflow applicable to data from inertial, acoustic, and strain sensors.

Generalized Sensor Data Processing Workflow

Workflow Description:

- Raw Sensor Signal: The process begins with the acquisition of raw data, which could be voltage from a piezoelectric sensor, audio waveforms from a microphone, or acceleration values from an IMU [28] [26].

- Signal Preprocessing: The raw signal is cleaned and prepared. This involves filtering to remove noise (e.g., high-frequency noise from motion artifacts) and segmenting the continuous data stream into epochs of interest, such as individual chews or distinct bites, often using a sliding window approach [27].

- Feature Extraction: From each segmented window, discriminative features are extracted. These can be temporal (e.g., signal magnitude, zero-crossing rate), spectral (e.g., energy in different frequency bands, MFCCs for audio), or statistical (e.g., standard deviation, mean) [26] [27]. The standard deviation of a strain sensor signal, for instance, can correlate with chewing strength [28].

- Machine Learning: The extracted features are fed into a machine learning model. This can be a classifier (e.g., Support Vector Machine, Random Forest, Convolutional Neural Network) to detect eating activity or identify food type [26] [27], or a regression model to estimate continuous variables like chew count or eating duration.
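
The preprocessing and feature extraction stages of this workflow can be sketched as follows. This is a minimal illustration, assuming a fixed-length sliding window and three simple features (mean, standard deviation, zero-crossing rate); the window length, step size, and function names are hypothetical choices, and filtering is omitted for brevity.

```python
from statistics import mean, pstdev


def sliding_windows(signal, window_size, step):
    """Yield successive fixed-length windows; a trailing partial window is dropped."""
    for start in range(0, len(signal) - window_size + 1, step):
        yield signal[start:start + window_size]


def window_features(window):
    """Statistical and temporal features for one window of samples."""
    centre = mean(window)
    centered = [x - centre for x in window]
    # A zero crossing is a sign change between consecutive mean-centered samples.
    crossings = sum(1 for a, b in zip(centered, centered[1:]) if a * b < 0)
    return {
        "mean": centre,
        "std": pstdev(window),
        "zcr": crossings / (len(window) - 1),
    }


# Hypothetical sensor samples, for illustration only.
samples = [0.1, 0.4, -0.3, 0.5, -0.6, 0.2, 0.0, 0.1, 0.0, 0.1]
feature_rows = [window_features(w) for w in sliding_windows(samples, 4, 2)]
print(len(feature_rows), feature_rows[0])
```

Each resulting feature dictionary corresponds to one row of the input matrix for the classifier or regression model in the final stage.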

Inertial, acoustic, and strain sensors constitute a powerful toolkit for objective detection of bites and chews, a critical capability for establishing ground truth in eating behavior research. Each modality presents a unique trade-off between accuracy, obtrusiveness, power consumption, and robustness. Acoustic sensors offer high classification accuracy but at a higher computational and privacy cost. Strain sensors provide a direct measure of muscle activity and are increasingly integrated into wearable form-factors like eyeglasses. Inertial sensors offer a lower-power alternative but may trade off some classification performance.

The future of this field lies in the development of robust, multi-modal systems that fuse data from complementary sensors to overcome the limitations of any single modality. Furthermore, a critical focus must be placed on testing these systems in free-living conditions outside the laboratory, improving the interpretability of AI models, and developing strong privacy-preserving techniques to ensure user comfort and data confidentiality [25]. The experimental protocols and analyses detailed herein provide a foundation for researchers to rigorously validate these emerging technologies.

Meal microstructure, which encompasses eating behaviors such as bite count, bite rate, and chewing, provides critical insights into individual eating patterns, the effects of food properties, and mechanisms underlying conditions like obesity and disordered eating [21] [29]. In pediatric populations, faster eating rates and larger bites have been linked to greater food consumption and higher obesity risk [29]. The current gold standard for analyzing meal microstructure is manual observational coding, where trained annotators review meal videos and record bite timestamps. Although reliable, this method is prohibitively time-consuming, labor-intensive, and costly, limiting its scalability for large-scale research or clinical use [21] [30].

Automation using computer vision and deep learning offers a promising alternative. This document details the application notes and experimental protocols for "ByteTrack," a deep-learning system designed for automated bite count and bite rate detection from video-recorded child meals, framing it within the broader context of validating ground truth methods for eating detection research [21] [29].

Application Notes: The ByteTrack System

ByteTrack is a two-stage deep learning pipeline that automatically detects bites and calculates eating speed from video data. It was specifically developed and trained on videos of children aged 7-9 years to address challenges such as frequent movement, fidgeting, and occlusions (e.g., hands or utensils blocking the mouth) common in pediatric populations [21] [30].

System Architecture and Workflow

Diagram: Two-stage workflow of the ByteTrack system (Stage 1: face detection and tracking; Stage 2: bite classification).

Performance Evaluation

ByteTrack's performance was evaluated on a test set of 51 videos and compared against manual observational coding (the gold standard). The table below summarizes the quantitative performance data [21] [29].

Table 1: Quantitative Performance Metrics of ByteTrack on a Test Set of 51 Videos

| Metric | Value | Interpretation |

|---|---|---|

| Average Precision | 79.4% | Proportion of detected bites that were correct (low false positives) |

| Average Recall | 67.9% | Proportion of actual bites that were successfully detected |

| F1-Score | 70.6% | Harmonic mean of precision and recall |

| Intraclass Correlation (ICC) | 0.66 (Range: 0.16 - 0.99) | Degree of absolute agreement with human coders |

Performance was notably lower in videos with extensive child movement, high occlusion (e.g., hands or utensils frequently blocking the mouth), or during the later stages of meals when children often become more fidgety [21] [30]. This highlights a key challenge for ground truth validation in real-world, unstructured eating environments.

Experimental Protocols

This section provides a detailed methodology for replicating the ByteTrack study, from data collection to model evaluation. Adherence to this protocol is crucial for ensuring the consistency and validity of results, particularly for ground truth validation studies.

Data Collection and Participant Protocol

Table 2: Participant Demographics and Data Collection Summary

| Category | Details |

|---|---|

| Participants | 94 children (49 male, 45 female) aged 7-9 years (Mean: 7.9 ± 0.6 years) [29] |

| Study Design | Longitudinal; 4 laboratory meals spaced ~1 week apart [21] |

| Meal Context | Identical foods served in varying portion sizes; children ate ad libitum for up to 30 minutes while being read a non-food related story [21] [29] |

| Video Recording | Axis M3004-V network camera at 30 fps, positioned outside the child's direct line of sight to minimize observer effect [21] |

| Total Video Data | 242 videos (1,440 minutes) used for model development [21] |

Detailed Model Building Protocol

Stage 1: Face Detection and Tracking

- Objective: To locate and track the child's face throughout the meal, providing stabilized face crops for the subsequent stage.

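- Models: A hybrid pipeline was employed, pairing YOLOv7 for efficient face detection on standard frames with Faster R-CNN for more robust detection under challenging conditions such as occlusion and blur [21].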

- Implementation: The system switches between these models based on frame-level difficulty metrics to balance speed and accuracy.

Stage 2: Bite Classification

- Objective: To analyze the sequence of face crops and classify whether a bite is occurring in each frame.

- Model Architecture:

- Feature Extraction: An EfficientNet convolutional neural network (CNN) pre-trained on ImageNet is used to extract spatial features from each individual frame [21] [30].

- Temporal Modeling: The sequence of feature vectors from consecutive frames is fed into a Long Short-Term Memory (LSTM) recurrent network. The LSTM learns the temporal dynamics and motion patterns that characterize a biting action versus other movements like talking or gesturing [21].

- Training: The model was trained using frames annotated by human coders. Data augmentation techniques (e.g., simulating blur, low light, rotation) were applied to improve model robustness to real-world variations [30].
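
Frame-level classifications must be aggregated into discrete bites before bite count and bite rate can be compared with manual coding. The source does not describe how ByteTrack performs this step, so the sketch below is a hypothetical post-processing rule: consecutive positive frames are merged into one bite, and runs shorter than a minimum length are discarded as noise. The function names and the `min_frames` threshold are assumptions.

```python
def frames_to_bites(frame_flags, fps=30, min_frames=3):
    """Merge runs of positive frames into bite onset times (seconds)."""
    bites = []
    run_start = None
    # A trailing False closes any run that reaches the end of the video.
    for idx, flag in enumerate(list(frame_flags) + [False]):
        if flag and run_start is None:
            run_start = idx
        elif not flag and run_start is not None:
            if idx - run_start >= min_frames:
                bites.append(run_start / fps)
            run_start = None
    return bites


def bite_rate_per_min(bite_times_s, meal_duration_s):
    """Bites per minute over the recorded meal duration."""
    return len(bite_times_s) / (meal_duration_s / 60)


# Hypothetical per-frame outputs at 30 fps, for illustration only.
flags = ([False] * 30 + [True] * 5 + [False] * 25
         + [True] * 2 + [False] * 28 + [True] * 4)
bites = frames_to_bites(flags)
print(bites)                          # [1.0, 3.0]
print(bite_rate_per_min(bites, 60))   # 2.0
```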

Diagram: Architecture of the bite classification model (EfficientNet feature extraction followed by LSTM temporal modeling).

Validation and Ground Truth Protocol

- Gold Standard: Manual observational coding by trained human annotators. Each bite was timestamped by reviewing the video recordings [21].

- Performance Validation:

- Metrics Calculation: Precision, Recall, and F1-score were calculated by comparing ByteTrack's outputs against the manual annotations on the 51-video test set [21].

- Agreement Assessment: The Intraclass Correlation Coefficient (ICC) was used to measure the absolute agreement between ByteTrack's bite counts and the human-derived counts for each meal [21] [29].
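The two validation steps above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the study's evaluation code: the source does not say how predicted bites were matched to annotations, so the greedy nearest-neighbour matching and the `tolerance_s` window are assumptions, and the ICC form shown is the two-way, single-measure, absolute-agreement variant, often written ICC(A,1), which may differ from the exact form used in [21] [29].

```python
from statistics import mean
from typing import List, Sequence, Tuple


def match_bites(
    predicted: Sequence[float],
    annotated: Sequence[float],
    tolerance_s: float = 1.0,  # assumed matching window in seconds
) -> Tuple[int, int, int]:
    """Greedy one-to-one matching of predicted to annotated bite timestamps.

    Returns (true_positives, false_positives, false_negatives).
    """
    unmatched: List[float] = sorted(annotated)
    tp = 0
    for t in sorted(predicted):
        nearest = min(unmatched, key=lambda a: abs(a - t), default=None)
        if nearest is not None and abs(nearest - t) <= tolerance_s:
            unmatched.remove(nearest)  # each annotation can be matched once
            tp += 1
    return tp, len(predicted) - tp, len(unmatched)


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Standard detection metrics from event-level counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def icc_absolute_agreement(model: Sequence[float], human: Sequence[float]) -> float:
    """ICC(A,1) for per-meal bite counts from two 'raters' (model and human).

    Requires at least two meals with some variation in counts.
    """
    n, k = len(model), 2
    rows = list(zip(model, human))
    grand = mean(list(model) + list(human))
    ss_rows = k * sum((mean(r) - grand) ** 2 for r in rows)
    ss_cols = n * sum((m - grand) ** 2 for m in (mean(model), mean(human)))
    ss_total = sum((x - grand) ** 2 for r in rows for x in r)
    ss_err = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)              # between-meal mean square
    msc = ss_cols / (k - 1)              # between-rater mean square
    mse = ss_err / ((n - 1) * (k - 1))   # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)
```

Because the between-rater term enters the denominator, a constant over- or under-count by the model lowers this ICC even when the two sets of counts are perfectly correlated, which is the property that makes it an agreement measure rather than a consistency measure.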

The Scientist's Toolkit: Research Reagent Solutions

This table catalogs the key computational tools and data resources essential for developing a system like ByteTrack.

Table 3: Essential Research Reagents for Automated Bite Detection Research

| Reagent / Tool | Type | Function in the Protocol |
|---|---|---|
| Axis M3004-V Camera | Hardware | Standardized video acquisition at 30 fps in a laboratory setting [21]. |
| Faster R-CNN | Software Model | Provides robust face detection in video frames with challenging conditions (occlusions, blur) [21]. |
| YOLOv7 | Software Model | Enables efficient, real-time face detection for standard video frames [21]. |
| EfficientNet | Software Model | Convolutional neural network for extracting meaningful spatial features from face crops [21] [30]. |
| LSTM Network | Software Model | Models the temporal sequence of features to distinguish bites from other facial and head movements [21] [30]. |
| Annotated Video Dataset | Data | Ground truth data for model training and validation, comprising video meals with manually coded bite timestamps [21]. |

Accurate dietary intake assessment is a cornerstone of nutritional care in clinical and research settings, particularly for managing conditions like obesity, diabetes, and metabolic disorders [4] [31]. Traditional methods, which often rely on manual self-reporting, are prone to error and impose a significant burden on both patients and healthcare providers [4] [32]. The emergence of artificial intelligence (AI) offers a transformative opportunity to automate and enhance this process. Unimodal AI systems, which process a single type of data (e.g., only images or only motion), have shown promise but face limitations in complex, real-world scenarios [33] [31].

Multimodal AI, which integrates diverse data streams such as images, motion sensors, and audio, represents a significant leap forward [34] [33]. By mirroring human perception—which naturally combines sight, sound, and other senses—multimodal systems provide a richer, more contextual understanding, leading to improved accuracy and robustness [33]. This document presents application notes and detailed experimental protocols for implementing such multi-modal systems, specifically within the context of establishing ground truth methods for eating detection validation research.

Key Applications and Performance Data

Research demonstrates that multi-modal data fusion significantly enhances the performance of automated dietary monitoring systems. The table below summarizes quantitative findings from key studies in the field.

Table 1: Performance Metrics of Multi-Modal Approaches for Eating Detection

| Application Focus | Data Modalities Fused | Key Performance Findings | Citation |
|---|---|---|---|
| Food Intake Episode Detection | Accelerometer, Gyroscope, Audio (chewing sounds) | Accuracy of eating detection improved to 85% by combining motion data and audio, outperforming single-modality systems. [31] | Bahador et al. |
| Food Type & Portion Estimation | Image (Food photos) vs. Manual Weighing | High agreement with manual weighing (gold standard): CCC = 0.957 for cereals/starchy food, CCC = 0.845 for meat/fish. [32] | Journal of Clinical Nutrition ESPEN |
| General Multi-Modal AI | Text, Images, Audio, Video | Effective fusion strategies can improve AI accuracy by up to 40% compared to single-modality approaches. [33] | Shaip Blog |

Experimental Protocols

This section provides detailed methodologies for implementing multi-modal data fusion in eating detection research.

Protocol: Image-Based Food Recognition and Portion Estimation

This protocol outlines a method for using computer vision to automatically identify foods and estimate portion sizes from meal images, suitable for validation in controlled settings like hospital wards [32].

1. Research Reagent Solutions & Materials

Table 2: Essential Materials for Image-Based Protocol

| Item | Function/Description |
|---|---|
| AI-Based Image Recognition Prototype | The core software for automated food identification and weight estimation. Developed using machine learning algorithms. [32] |
| Digital Camera or Smartphone | High-resolution image capture device for photographing meals under standardized lighting and angle conditions. |
| Manual Weighing Scale | Reference method (ground truth) for obtaining accurate component weights. Precision of ±1 g is recommended. [32] |
| Standardized Background & Lighting | Minimizes environmental variables, ensuring consistent image quality for the AI model. |
| Annotation Software | For manually labeling food components in images to create training and testing datasets for the algorithm. [32] |

2. Procedure

- Meal Preparation and Ground Truth Establishment: Present a meal to the subject. Before consumption, manually weigh (MAN) each individual food component (e.g., mashed potatoes, green beans, chicken breast) and record the weights in grams [32].

- Image Acquisition: Capture a photograph of the entire meal from a top-down perspective, ensuring all components are visible and the image is in focus. The camera should be mounted at a fixed height for consistency.

- Data Annotation (Training Phase): In the algorithm development phase, use annotation software to delineate and label each food component in the image, linking it to the corresponding manually weighed mass [32].

- AI-Based Estimation (Testing Phase): Process the meal image through the trained AI prototype (PRO). The system will automatically identify the food components and output an estimated weight for each [32].

- Data Analysis: Compare the PRO weights to the MAN (ground truth) weights. Calculate statistical agreement metrics such as Lin's concordance correlation coefficient (CCC) and mean differences with 95% confidence intervals to validate the system's accuracy [32].
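The agreement statistics named in the data analysis step can be computed as follows. This is a minimal sketch, not the analysis code from [32]: it uses population (1/n) moments for Lin's CCC, and the confidence interval uses a normal approximation (z = 1.96) rather than a t quantile, which is an assumption that matters for small samples. The weights in the usage example are invented for illustration.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def lins_ccc(x: Sequence[float], y: Sequence[float]) -> float:
    """Lin's concordance correlation coefficient between two paired series.

    CCC = 2 * cov(x, y) / (var(x) + var(y) + (mean(x) - mean(y))^2)
    """
    n = len(x)
    mx, my = mean(x), mean(y)
    var_x = sum((a - mx) ** 2 for a in x) / n
    var_y = sum((b - my) ** 2 for b in y) / n
    cov_xy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    return 2 * cov_xy / (var_x + var_y + (mx - my) ** 2)


def mean_difference_ci(
    x: Sequence[float], y: Sequence[float], z: float = 1.96
) -> Tuple[float, float, float]:
    """Mean of (x - y) with an approximate 95% confidence interval."""
    diffs = [a - b for a, b in zip(x, y)]
    d = mean(diffs)
    half_width = z * stdev(diffs) / sqrt(len(diffs))
    return d, d - half_width, d + half_width


# Hypothetical component weights in grams: manual weighing (MAN) vs. prototype (PRO).
man = [100.0, 150.0, 200.0, 250.0]
pro = [110.0, 160.0, 210.0, 260.0]
ccc = lins_ccc(pro, man)
bias, lower, upper = mean_difference_ci(pro, man)
```

Unlike Pearson's correlation, the CCC penalizes systematic bias through the squared mean difference in the denominator, so a prototype that consistently overestimates weight scores below 1 even if its estimates track the reference perfectly.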

Protocol: Sensor Fusion for Food Intake Detection

This protocol describes a technique for fusing data from multiple wearable sensors to detect the act of eating itself, using a computationally efficient deep learning-based fusion method [35] [31].

1. Research Reagent Solutions & Materials

Table 3: Essential Materials for Sensor Fusion Protocol

| Item | Function/Description |
|---|---|
| Multi-Modal Wearable Sensor | A device such as the Empatica E4 wristband, capable of capturing data like 3-axis acceleration (ACC), photoplethysmography (BVP), electrodermal activity (EDA), and temperature (TEMP). [35] [31] |
| Data Fusion Algorithm | Custom software that transforms multi-sensor time-series data into a single 2D covariance representation (contour plot) for classification. [35] [31] |
| Deep Learning Model | A classifier (e.g., a Deep Residual Network with 2D convolutional layers) trained to identify eating episodes from the 2D contour plots. [35] [31] |
| Data Annotation Log | A tool for subjects or researchers to manually record the start and end times of eating episodes, serving as ground truth for model training and validation. |

2. Procedure

- Sensor Deployment and Data Collection: Fit participants with the wearable sensor. Collect data streams (e.g., ACC, BVP, EDA, TEMP) continuously during the monitoring period. Simultaneously, maintain a precise log of all eating episode timings [35] [31].

- Data Segmentation: Divide the continuous sensor data into temporal windows or segments. A window size of 500 samples (or epochs of 10-30 seconds) has been used effectively in prior research [35] [31].

- Covariance Matrix Calculation & 2D Representation: For each data window, form an observation matrix H, where columns represent different sensors and rows represent time samples. Calculate the pairwise covariance between all sensor signals to create a covariance matrix C. Transform this matrix into a filled 2D contour plot, where colors and isolines represent the strength of correlation between sensors [35] [31].

- Model Training and Classification: Use the collected 2D contour plots as input to a deep learning model. Train the model to classify each contour plot as either an "eating episode" or "other activity" using the annotated data log as ground truth [35] [31].

- Validation: Evaluate model performance using leave-one-subject-out cross-validation, reporting metrics such as precision, recall, and accuracy to ensure generalizability [35] [31].
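The segmentation, covariance, and cross-validation steps of this procedure can be sketched without any external libraries. This is an illustration under assumptions rather than the method from [35] [31]: it assumes the sensor streams have already been resampled to a common rate so each row of H holds one time sample across all sensors, it omits rendering C as a contour plot and training the classifier, and the function names are hypothetical.

```python
from statistics import mean
from typing import Iterator, List, Optional, Sequence, Tuple

Row = Sequence[float]  # one time sample: one value per sensor channel


def segment(samples: Sequence[Row], window: int = 500,
            step: Optional[int] = None) -> List[Sequence[Row]]:
    """Split a recording into fixed-length windows (non-overlapping by default)."""
    step = step or window
    return [samples[i:i + window] for i in range(0, len(samples) - window + 1, step)]


def covariance_matrix(h: Sequence[Row]) -> List[List[float]]:
    """Sample covariance matrix C between the columns (sensors) of observation matrix H."""
    n = len(h)
    cols = list(zip(*h))                # one tuple per sensor channel
    means = [mean(c) for c in cols]
    k = len(cols)
    return [
        [
            sum((cols[i][t] - means[i]) * (cols[j][t] - means[j]) for t in range(n)) / (n - 1)
            for j in range(k)
        ]
        for i in range(k)
    ]


def leave_one_subject_out(
    subject_ids: Sequence[str],
) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_subject, train_indices, test_indices) for each subject."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test
```

Splitting by subject rather than by window keeps every window from a held-out participant out of training, so the reported metrics reflect performance on people the model has not seen.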

System Architecture and Workflow Visualization

Figure 1 summarizes the logical flow and data transformation steps of the sensor fusion protocol described above.

Figure 1: Workflow for sensor fusion-based eating detection.

The Researcher's Toolkit

A successful multi-modal eating detection system relies on a stack of synergistic technologies. The table below details the core components and their functions within the research pipeline.

Table 4: Essential Research Toolkit for Multi-Modal Eating Detection

| Toolkit Component | Category | Specific Function in Research Context |
|---|---|---|
| Wearable Sensors (e.g., Empatica E4) | Hardware | Captures physiological and motion data (ACC, BVP, EDA, TEMP) correlated with eating activity for continuous, passive monitoring. [35] [31] |
| Computer Vision Models | Software/AI | Automates food identification and portion size estimation from meal images, reducing reliance on manual logging. [34] [32] |
| Deep Learning Frameworks (e.g., for CNNs, Residual Nets) | Software/AI | Provides the architecture for building classifiers that can identify complex patterns in fused sensor data or images. [35] [31] |
| Data Fusion Algorithm (Covariance-based) | Software/Methodology | Integrates disparate sensor data streams into a unified, lower-dimensional representation (2D contour plot) that preserves inter-modal relationships for efficient classification. [35] [31] |
| Annotation & Validation Software | Software | Enables researchers to create high-quality labeled datasets by marking food in images or timing eating episodes, which are crucial for training AI models and establishing ground truth. [33] [32] |

Accurate assessment of dietary intake is fundamental to nutrition research, yet it remains a significant challenge due to the inherent limitations of self-reported methods like dietary recalls and food frequency questionnaires (FFQs). These tools are susceptible to subjective errors related to memory, perception, and reporting bias, which can adversely affect the validity of research findings and their implications for disease risk [36]. The integration of biomarkers of dietary intake provides a more objective approach to validate these self-reported measures.

Biomarker-guided validation is particularly crucial within the broader context of establishing ground truth methods for eating detection validation research. Unlike subjective reports, biomarkers offer an independent, physiological measurement that can compensate for the biasing effects of reporting errors. This protocol details the application of biomarker correlation strategies to validate dietary recall and history data, thereby strengthening the evidence base for nutritional epidemiology and clinical diet assessment.

Core Concepts and Key Biomarkers

Dietary biomarkers are measurable biological indicators that reflect dietary intake or nutritional status. They can be broadly categorized as follows:

- Recovery Biomarkers: Provide a quantitative measure of intake over a specific period (e.g., urinary nitrogen for protein intake).

- Concentration Biomarkers: Reflect the concentration of a nutrient or compound in biological tissues or fluids (e.g., serum carotenoids for fruit and vegetable intake).

- Predictive Biomarkers: While not direct measures of intake, they have known correlations with specific food consumption (e.g., urinary 1-methylhistidine for meat intake) [36].

The underlying principle is that errors in biomarker measurements are reasonably assumed to be independent of errors in dietary questionnaires. This independence allows researchers to use biomarkers to estimate and correct for the measurement errors present in self-reported data, a process known as biomarker-guided regression calibration [36].
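The idea behind regression calibration can be illustrated with its simplest univariate form. This is a sketch under strong assumptions, not the procedure from [36]: it assumes a biomarker that is unbiased for true intake on the same scale as the self-report (as with a recovery biomarker), errors that are independent between the two instruments, and a linear relationship. The function names are hypothetical. Regressing the biomarker on the self-reported value in a validation subsample gives a calibration slope λ, and a naive diet-outcome coefficient estimated from self-report alone is divided by λ to correct for attenuation.

```python
from statistics import mean
from typing import Sequence, Tuple


def ols_slope(x: Sequence[float], y: Sequence[float]) -> float:
    """Ordinary least squares slope of y regressed on x."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sxx


def calibrated_coefficient(
    naive_beta: float,
    self_report: Sequence[float],
    biomarker: Sequence[float],
) -> Tuple[float, float]:
    """Return (corrected_beta, calibration_slope) for a single dietary exposure.

    The calibration slope is estimated in a validation subsample where both
    the self-report and the biomarker were measured on the same participants.
    """
    lam = ols_slope(self_report, biomarker)
    return naive_beta / lam, lam
```

For example, if the biomarker rises by only 0.5 units per unit of self-reported intake, a naive coefficient of 0.2 is corrected to 0.4. Full applications typically adjust for covariates and propagate the uncertainty in λ into the corrected estimate.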

Table 1: Key Biomarkers for Validating Dietary Intake

| Biomarker Class | Specific Biomarker | Biological Sample | Correlated Dietary Item | Reported Correlation Value (De-attenuated) |

|---|---|---|---|---|

| Fatty Acids | Adipose 18:2 ω-6 | Adipose Tissue | Linoleic Acid Intake | 0.72 (Black subjects) [36] |

| Fatty Acids | Very Long Chain ω-3 (n-3) FAs | Blood/Adipose | Fish/Fish Oil Intake | 0.30-0.49 [36] |

| Amino Acid Metabolite | Urinary 1-Methylhistidine | Urine | Meat Consumption | 0.69 (Non-black subjects) [36] |

| Carotenoids | β-Carotene, Lycopene, etc. | Serum | Fruit & Vegetable Intake | ≥0.50 for some (e.g., fruit intake in non-black subjects); 0.30-0.49 for others [36] |

| Vitamins | Vitamin B-12 | Serum | Animal Product Intake | ≥0.50 (Non-black subjects) [36] |