Validating Portion-Size Estimation Methods: A Comprehensive Guide for Dietary Assessment in Clinical and Biomedical Research


Abstract

Accurate dietary assessment is fundamental to understanding the links between nutrition, chronic diseases, and therapeutic outcomes. This article provides a comprehensive overview of the validation frameworks for portion-size estimation methods, crucial for researchers and drug development professionals. It explores the foundational importance of diet quality metrics, details traditional and cutting-edge methodological approaches—from physical aids to AI-powered image analysis—and addresses key challenges in implementation. Furthermore, it synthesizes evidence from recent validation studies, comparing the accuracy of various tools against criterion measures to guide the selection of robust dietary assessment methods for clinical trials and large-scale public health research.

The Critical Role of Accurate Portion Estimation in Health and Disease Research

Linking Dietary Intake to Chronic Disease and Public Health Burden

Accurate dietary intake assessment is a cornerstone of nutritional epidemiology, providing the essential data needed to understand and mitigate the global burden of chronic disease. Suboptimal nutrition is consistently ranked among the leading contributors to morbidity and mortality worldwide [1]. The Global Burden of Disease (GBD) Study 2021 identifies dietary risks as leading factors in deaths and disability-adjusted life years (DALYs) from non-communicable diseases (NCDs), including cardiovascular diseases, neoplasms, and diabetes [2]. These diseases contribute to approximately 1.73 billion deaths and DALYs globally, representing the most significant health challenge facing the adult population [2].

The precise quantification of dietary intake, particularly portion size estimation, remains a fundamental methodological challenge in establishing robust diet-disease relationships. Errors in estimating food intake volume directly impact the accuracy of energy and nutrient intake calculations, potentially obscuring critical associations between diet and chronic disease risk [3]. As public health strategies increasingly focus on dietary interventions to reduce NCD burden, validated portion size estimation methods become indispensable for research, monitoring, and evaluation. This guide compares current portion-size estimation methodologies, their experimental validation, and their application in chronic disease burden research.

The Global Burden of Diet-Related Chronic Diseases

Current Trends and Projections

Analysis of GBD 2021 data reveals that from 1990 to 2021, global age-standardized mortality rates (ASMR) and disability-adjusted life year (DALY) rates attributable to dietary factors decreased by approximately one-third for neoplasms and cardiovascular diseases (CVD) [2]. However, this progress is unevenly distributed across countries with different socioeconomic development levels, measured by the Sociodemographic Index (SDI).

Table 1: Leading Diet-Related Risk Factors by Chronic Disease and SDI Region

| Chronic Disease | High SDI Regions | Middle SDI Regions | Low SDI Regions |
|---|---|---|---|
| Neoplasms | High red meat intake [2] | - | Diets low in vegetables [2] |
| Cardiovascular Diseases | Diets low in whole grains [2] | High-sodium diets [2] | Diets low in fruits [2] |
| Diabetes | High processed meat intake [2] | - | Diets low in fruits [2] |

Projections through 2030 indicate a continued decline in mortality from neoplasms and CVDs, but with a concerning slight increase in mortality rates from diabetes [2]. This underscores the ongoing challenge of addressing diet-related chronic diseases despite overall improvements.

Economic and Regional Disparities

The burden of chronic diseases is no longer confined to high-income nations. Developing countries increasingly suffer from high levels of public health problems related to chronic diseases, with 79% of all deaths worldwide attributable to chronic diseases already occurring in developing countries [4]. This shift has been so rapid that many developing countries now face a double burden of disease, combating both communicable diseases and chronic diseases simultaneously [4].

Comparative Analysis of Portion-Size Estimation Methods

Accurate portion-size estimation is critical for quantifying exposure to dietary risks in chronic disease research. The following section compares the performance of major estimation methods based on recent validation studies.

Table 2: Performance Comparison of Portion-Size Estimation Methods

| Method | Validation Approach | Key Metrics | Relative Advantages | Key Limitations |
|---|---|---|---|---|
| GDQS App with 3D Cubes [5] [1] | Compared to Weighed Food Records (WFR) in 170 participants | Equivalent to WFR within 2.5-point margin (p=0.006); Moderate agreement (κ=0.5685) for poor diet quality risk [5] [1] | Standardized, portable, no preparation required | Requires production of physical cubes |
| GDQS App with Playdough [5] [1] | Compared to WFR in 170 participants | Equivalent to WFR within 2.5-point margin (p<0.001); Moderate agreement (κ=0.5843) for poor diet quality risk [5] [1] | Flexible for irregular shapes, low cost | Requires preparation and can be messy |
| Multi-Angle Photography [3] | 82 participants matching observed foods to photographs at different angles | Varies by food type: cooked rice (74.4% accuracy at 45°), beverages (73.2% at 70°); Combined angles improved accuracy [3] | Digital record, suitable for remote assessment | Accuracy depends on food type and angle |
| PortionSize Smartphone App [6] | 14 adults in free-living conditions compared to digital photography | Equivalent for gram intake (p<0.001); Overestimated energy (p=0.08); Error range 11-23% for food groups [6] | Passive data collection, real-time assessment | Overestimates energy intake |

Specialized Applications for Food Types

The performance of portion estimation methods varies significantly by food type and cultural context. Research on traditional Korean foods found that optimal photography angles differed substantially: 45° provided best accuracy for cooked rice (74.4%), while 70° was superior for beverages (73.2%) [3]. Liquid and amorphous foods like soups consistently show lower accuracy across methods, highlighting the need for food-specific approaches in dietary assessment [3].

Detailed Experimental Protocols

Validation Protocol for GDQS App with Cubes and Playdough

A 2025 study established a comprehensive validation protocol for portion size estimation methods used with the Global Diet Quality Score (GDQS) app [1]:

Study Design and Participants:

- Utilized a repeated measures design with 170 participants aged ≥18 years

- Employed a convenience sample approach with post-hoc power analysis confirming >80% statistical power

- Each participant completed all three assessment methods for the same 24-hour reference period

Experimental Timeline:

- Day 1: In-person training session (40-60 minutes) on using dietary scales and weighing procedures, conducted in groups of up to five participants

- Day 2: 24-hour weighed food record (WFR) period where participants weighed all foods, beverages, and mixed dishes using provided digital dietary scales (KD-7000, capacity 7kg, MyWeigh)

- Day 3: Participants returned to complete face-to-face GDQS app interviews using both cube and playdough methods, with order randomized by the app

Statistical Equivalence Testing:

- Utilized paired two one-sided t-tests (TOST) with pre-specified equivalence margin of 2.5 GDQS points

- Calculated Kappa coefficients to quantify agreement for risk classification and food group consumption

- Assessed agreement for 25 individual GDQS food groups
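The equivalence test listed above can be sketched in a few lines of Python. This is a minimal illustration, not the study's analysis code: it substitutes a normal approximation for the t distribution (reasonable only for large samples such as n = 170), and the function name `paired_tost_z` and the example scores are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def paired_tost_z(test_scores, reference_scores, margin=2.5):
    """Large-sample (normal-approximation) paired TOST.

    Returns (mean_difference, p_lower, p_upper, p_tost). Equivalence within
    +/- margin is supported when p_tost falls below the chosen alpha.
    """
    diffs = [t - r for t, r in zip(test_scores, reference_scores)]
    n = len(diffs)
    d_bar = mean(diffs)
    se = stdev(diffs) / sqrt(n)  # standard error of the mean difference
    if se == 0:
        raise ValueError("differences have zero variance; test is undefined")
    z = NormalDist()
    # One-sided test 1: H0 is mean difference <= -margin
    p_lower = 1 - z.cdf((d_bar + margin) / se)
    # One-sided test 2: H0 is mean difference >= +margin
    p_upper = z.cdf((d_bar - margin) / se)
    # Both null hypotheses must be rejected, so report the larger p-value
    return d_bar, p_lower, p_upper, max(p_lower, p_upper)


# Hypothetical GDQS scores for eight participants (illustration only)
app_scores = [20, 18.5, 22, 16, 19.5, 21, 17, 23]
wfr_scores = [19.5, 19, 21, 16.5, 20, 20.5, 17.5, 22]
d_bar, p_lower, p_upper, p_tost = paired_tost_z(app_scores, wfr_scores)
print(f"mean difference = {d_bar:.3f}, TOST p = {p_tost:.3g}")
```

For small samples, the same two statistics would be referred to a t distribution with n - 1 degrees of freedom rather than the standard normal.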

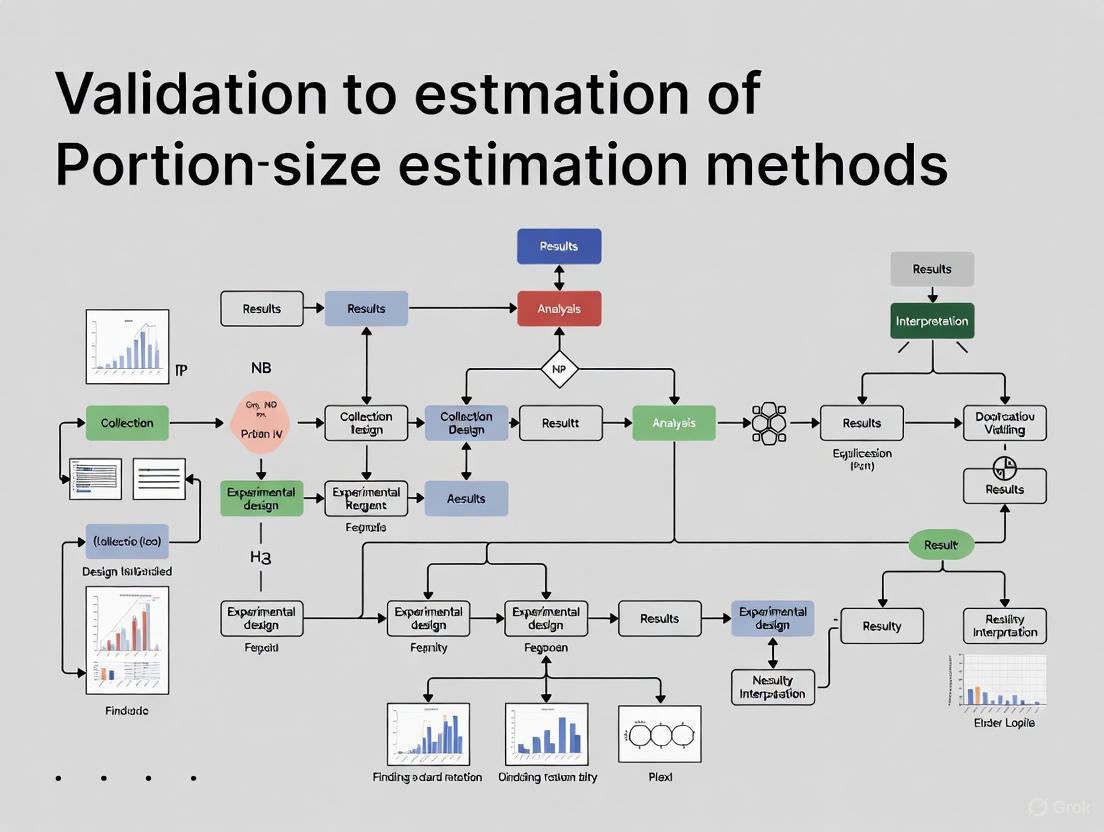

Diagram 1: GDQS Validation Workflow

Multi-Angle Photography Validation Protocol

A 2025 study developed a specialized protocol for validating food portion estimation using multi-angle photographs [3]:

Experimental Setting:

- 82 participants (41 male, 41 female) aged 20-50 years observed six food types: cooked rice, soup, grilled fish, vegetables, kimchi, and beverages

- Foods were selected based on consumption frequency from the Korea National Health and Nutrition Examination Survey

- Portion sizes were determined using percentiles (10th, 30th, 50th, 70th, and 90th) of food intake volume distribution

Procedure:

- Participants observed meals for 3 minutes approximately one hour after their last meal

- After observation, participants moved to a separate room and watched a non-food-related video for 2 minutes

- Participants then completed a computer-based survey matching observed portions to photographs taken from three different angles

- Angles were optimized by food type: 0°, 45°, 70° for solid foods and 45°, 60°, 70° for beverages

- Confidence levels were rated on a 5-point Likert scale for each selection

Data Analysis:

- Calculated accuracy rates for each food type and angle combination

- Assessed underestimation and overestimation patterns

- Evaluated the improvement in accuracy when combining multiple angles
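As a rough illustration of the first two analysis steps, the sketch below tallies exact matches, underestimates, and overestimates. It assumes each response is recorded as a pair of indices into the ordered portion photographs (0 for the 10th percentile up to 4 for the 90th); that encoding, the function name `summarize_matches`, and the sample data are hypothetical and not taken from the study.

```python
from collections import Counter


def summarize_matches(responses):
    """Summarize photo-matching responses.

    responses: iterable of (shown_index, chosen_index) pairs, where indices
    order the portion photographs from smallest to largest.
    Returns the proportion of accurate, under-, and over-estimates.
    """
    counts = Counter()
    for shown, chosen in responses:
        if chosen == shown:
            counts["accurate"] += 1
        elif chosen < shown:
            counts["under"] += 1  # picked a smaller portion than was shown
        else:
            counts["over"] += 1   # picked a larger portion than was shown
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no responses supplied")
    return {key: counts[key] / total for key in ("accurate", "under", "over")}


# Hypothetical responses for one food type at one camera angle
rice_at_45_degrees = [(2, 2), (2, 1), (2, 3), (2, 2)]
print(summarize_matches(rice_at_45_degrees))
```

Running the function once per food type and angle gives the grid of accuracy rates that the comparison between angles relies on.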

The Researcher's Toolkit: Essential Materials and Reagents

Table 3: Essential Research Reagents and Materials for Portion-Size Estimation Studies

| Item | Specification/Model | Primary Function in Research | Key Considerations |
|---|---|---|---|
| Digital Dietary Scales [1] | KD-7000, capacity 7kg, MyWeigh | Gold standard reference method for validation studies; measures actual food weight | Requires calibration; 7kg capacity accommodates most meal portions |
| 3D Printed Cubes [1] | Set of 10 predefined sizes | Standardized portion size estimation at food group level for GDQS app | Volume determined using gram cut-offs and food density data |
| Playdough [5] [1] | Standard modeling compound | Flexible portion size estimation for irregularly shaped foods | Provides interactive, intuitive estimation method |
| Food Photography System [3] | Multi-angle setup (0°, 45°, 70° for solids; 45°, 60°, 70° for liquids) | Standardized visual reference for portion estimation | Optimal angles vary by food type and culture |
| GDQS Mobile Application [1] [7] | Smartphone-based data collection platform | Standardizes collection and tabulation of diet quality metrics | Integrates with cubes or playdough for portion estimation |

Methodological Pathways in Portion Estimation Research

The conceptual and methodological framework for validating portion-size estimation methods follows a systematic pathway from study design to application in chronic disease research.

Diagram 2: Research Validation Pathway

Implications for Chronic Disease Research and Public Health

The validation of practical portion-size estimation methods has profound implications for chronic disease research and public health policy. Accurate dietary assessment enables:

Strengthened Diet-Disease Association Studies: Validated methods like the GDQS app with cubes or playdough provide researchers with standardized tools to quantify exposure to dietary risks identified in GBD studies, such as high red meat, low fruits and vegetables, and high sodium [2]. This strengthens the evidence base for dietary recommendations.

Enhanced Monitoring and Surveillance: Simplified yet accurate methods enable more frequent and widespread monitoring of diet quality, particularly in resource-limited settings. This is crucial for tracking progress toward the UN's 2030 Agenda for Sustainable Development and WHO's Global NCD Compact 2020-2030 [2].

Targeted Public Health Interventions: Understanding how dietary risks vary by socioeconomic status (as reflected in SDI regions) allows for targeted interventions. For example, the finding that diets low in fruits are significantly linked to CVD and diabetes burden in low-SDI regions suggests specific priorities for food system interventions in these areas [2].

Cultural and Regional Adaptation: Research demonstrating that estimation accuracy varies by food type and that optimal methods may differ across culinary traditions supports the development of culturally adapted dietary assessment tools [3]. This is essential for global chronic disease prevention efforts.

As the burden of chronic diseases continues to evolve, with projections indicating a decline in mortality from neoplasms and CVDs but a slight increase in diabetes mortality [2], the need for accurate, practical dietary assessment methods remains paramount. The ongoing validation and refinement of portion-size estimation techniques represents a critical contribution to this global public health effort.

Poor diet quality is a leading and preventable cause of adverse health outcomes globally, contributing significantly to both maternal and child health (MCH) challenges and non-communicable diseases (NCDs) [8]. As international organizations seek indicators to monitor dietary risks across countries, the development of simple, timely, and cost-effective tools to track nutritional deficiency and NCD risks simultaneously has become a critical research priority [9]. The Global Diet Quality Score (GDQS) emerged as a food-based metric designed specifically for this purpose, with the unique capability of assessing diet quality across diverse global settings without requiring food composition tables for analysis [10] [9]. This review examines the validation of GDQS and comparable metrics against clinical endpoints, with particular focus on the crucial role of portion-size estimation methods in ensuring data accuracy and reliability for research and clinical applications.

Comparative Analysis of Diet Quality Metrics

Various dietary metrics have been developed to summarize different components of diet, though significant gaps remain in their validation against health outcomes. A systematic assessment identified 19 dietary metrics, including 7 developed for MCH and 12 for NCDs, with none developed or applied for both purposes simultaneously [8]. The GDQS addresses this gap by comprising two sub-metrics: the GDQS-positive, which includes food groups that are key sources of nutrients, and the GDQS-negative, which comprises food groups known to have negative health effects [10].

Table 1: Comparison of Major Diet Quality Metrics

| Metric Name | Primary Focus | Components | Validation Status | Key Strengths |
|---|---|---|---|---|
| Global Diet Quality Score (GDQS) | Dual burden of malnutrition | 25 food groups | Validated against nutrient adequacy & NCD biomarkers [9] | No food composition tables needed; mobile app available |
| Minimum Dietary Diversity for Women (MDD-W) | Nutrient adequacy | 10 food groups | Proxy for micronutrient adequacy [9] | Simple to administer |
| Alternative Healthy Eating Index (AHEI) | NCD risk reduction | Foods and nutrients | Convincing evidence for NCD outcomes [8] | Comprehensive nutrient focus |
| Prime Diet Quality Score (PDQS) | NCD risk | Food groups | Associated with MAFLD risk [11] | Simple food-based approach |
| Mediterranean Diet Score | NCD risk reduction | Foods and nutrients | Convincing evidence for protective associations [8] | Extensive evidence base |

The GDQS differs from other metrics through its unique scoring system that uses quantity of consumption information at the food group level expressed as low, medium, high, and very high consumption to score 25 food groups [10]. Population-based cut-offs allow for reporting the percentage of the population at high (GDQS < 15), moderate (GDQS ≥ 15 and <23), and low risk (GDQS ≥ 23) for poor diet quality outcomes [10].
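The population cut-offs described above translate directly into a small classification helper. The sketch below uses only the thresholds stated in the text (below 15, 15 to below 23, and 23 or above); the function names and the sample scores are hypothetical.

```python
def gdqs_risk_category(score):
    """Map a GDQS total to its risk category for poor diet quality outcomes."""
    if score < 15:
        return "high"
    if score < 23:
        return "moderate"
    return "low"


def risk_distribution(scores):
    """Return the percentage of a population in each GDQS risk category."""
    if not scores:
        raise ValueError("no scores supplied")
    counts = {"high": 0, "moderate": 0, "low": 0}
    for score in scores:
        counts[gdqs_risk_category(score)] += 1
    return {category: 100 * count / len(scores) for category, count in counts.items()}


# Hypothetical GDQS totals for four respondents
print(risk_distribution([10, 15, 23, 30]))
```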

Validation of Portion-Size Estimation Methods for the GDQS App

Accurate portion-size estimation represents a fundamental challenge in dietary assessment. Recognizing this, researchers have developed and validated innovative methods to standardize portion estimation specifically for the GDQS mobile application.

Experimental Protocol for Method Validation

A 2025 validation study utilized a repeated measures design with 170 participants aged 18 years or older who estimated portion sizes using three methods: (1) weighed food records (WFRs), (2) GDQS app with 3D cubes of pre-defined sizes, and (3) GDQS app with playdough [5] [10]. The study occurred over three consecutive days: on day one, participants received training on weighing foods and using dietary scales; on day two, they weighed and recorded all consumed items during a 24-hour period; and on day three, they returned to complete face-to-face GDQS app interviews using both portion estimation methods [10].

The GDQS app randomized the order in which cubes or playdough were used as portion estimation methods to eliminate order bias [10]. The cubes consisted of ten 3D-printed objects of predefined sizes, with volumes determined using gram cut-offs associated with each food group in the GDQS metric along with data on the density of foods, beverages, and ingredients belonging to each food group [10]. Playdough served as a flexible, interactive alternative for estimating a wide range of foods, including oddly shaped and amorphous items [10].
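The text does not spell out the exact calculation behind the cube sizes. Assuming a simple mass-to-volume conversion (volume equals the gram cut-off divided by food density), the idea can be sketched as follows; the function names and the example cut-off and density are hypothetical and are not values from the GDQS documentation.

```python
def cube_volume_cm3(gram_cutoff, density_g_per_cm3):
    """Volume of food (cm^3) corresponding to a gram cut-off at a given density."""
    if density_g_per_cm3 <= 0:
        raise ValueError("density must be positive")
    return gram_cutoff / density_g_per_cm3


def cube_edge_cm(volume_cm3):
    """Edge length (cm) of a cube with the given volume."""
    return volume_cm3 ** (1 / 3)


# Hypothetical example: a 100 g cut-off for a food group with density 0.8 g/cm^3
volume = cube_volume_cm3(100, 0.8)
print(f"volume = {volume:.1f} cm^3, edge = {cube_edge_cm(volume):.2f} cm")
```

Because foods within a group differ in density, a real design would need a representative density per food group, which is presumably why the study drew on density data for the foods, beverages, and ingredients in each group.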

Table 2: Performance Comparison of Portion-Size Estimation Methods

| Method | Equivalence to WFR (2.5-point margin) | Agreement with WFR for Risk Classification | Food Group Agreement | Practical Considerations |
|---|---|---|---|---|
| 3D Cubes | Equivalent (p = 0.006) [5] | Moderate (κ = 0.5685, p < 0.0001) [10] | Substantial-almost perfect for 22/25 groups [10] | Requires 3D printing; portable |
| Playdough | Equivalent (p < 0.001) [5] | Moderate (κ = 0.5843, p < 0.0001) [10] | Substantial-almost perfect for 22/25 groups [10] | Flexible; suitable for irregular shapes |
| Weighed Food Records | Gold standard | Gold standard | Gold standard | Resource-intensive; burdensome |

Statistical analysis employed the paired two one-sided t-test (TOST) with 2.5 points pre-specified as the equivalence margin to assess equivalence between GDQS-WFR and GDQS-cubes or GDQS-playdough [5] [10]. Kappa coefficients quantified agreement between WFR and the alternative methods for classifying individuals at risk of poor diet quality outcomes and for food group consumption [10].
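For readers unfamiliar with the agreement statistic, the following Python sketch computes Cohen's kappa for two sets of categorical classifications. The qualitative labels follow the widely cited Landis and Koch bands, which are consistent with the wording used here ("moderate", "substantial", "almost perfect"); that the study applied exactly these bands is an assumption, and the function names and sample data are hypothetical.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two equal-length sequences of categorical ratings."""
    n = len(ratings_a)
    if n == 0 or n != len(ratings_b):
        raise ValueError("ratings must be non-empty and of equal length")
    # Observed proportion of exact agreement
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Agreement expected by chance from the marginal category frequencies
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    if expected == 1:
        raise ValueError("kappa is undefined when only one category is used")
    return (observed - expected) / (1 - expected)


def landis_koch_label(kappa):
    """Qualitative label for kappa using the Landis and Koch bands."""
    if kappa < 0:
        return "poor"
    if kappa <= 0.20:
        return "slight"
    if kappa <= 0.40:
        return "fair"
    if kappa <= 0.60:
        return "moderate"
    if kappa <= 0.80:
        return "substantial"
    return "almost perfect"


# Hypothetical risk classifications from WFR and from an app-based method
wfr = ["high", "high", "low", "low"]
app = ["high", "low", "low", "low"]
kappa = cohens_kappa(wfr, app)
print(f"kappa = {kappa:.2f} ({landis_koch_label(kappa)})")
```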

Diagram 1: Experimental workflow for validating portion-size estimation methods against weighed food records.

Key Findings from Validation Studies

The validation study demonstrated that both cube and playdough methods performed equivalently to weighed food records within the pre-specified 2.5-point margin (p = 0.006 for cubes and p < 0.001 for playdough) [5]. Both methods showed moderate agreement with WFR when classifying individuals at risk of poor diet quality outcomes (κ = 0.5685 for cubes and κ = 0.5843 for playdough, both p < 0.0001) [10]. For 22 out of the 25 GDQS food groups, researchers observed substantial to almost perfect agreement between both estimation methods and WFR [10]. Liquid oils exhibited the lowest agreement (κ = 0.059, 27.7% agreement, p = 0.009), highlighting a specific challenge in estimating certain food categories [10].

The Researcher's Toolkit: Essential Materials for Dietary Assessment

Table 3: Key Research Reagent Solutions for Dietary Assessment Validation

| Item | Specification/Description | Primary Function in Research |
|---|---|---|
| 3D Printed Cubes | Set of 10 cubes of predefined sizes | Standardized portion size estimation for GDQS food groups |
| Playdough | Flexible modeling material | Alternative portion estimation for irregular food shapes |
| Digital Dietary Scale | KD-7000, capacity 7kg, accuracy to 1g | Gold standard measurement for validation studies |
| GDQS Mobile App | Digital data collection platform | Standardized administration of GDQS metric |
| Food Composition Database | FNDDS or country-specific equivalents | Nutrient calculation for validation studies |
| 24-Hour Recall Forms | Paper or digital structured forms | Dietary data collection framework |

Linking Diet Quality Metrics to Clinical Endpoints

The ultimate value of diet quality metrics lies in their ability to predict meaningful health outcomes. Recent research has demonstrated significant associations between GDQS scores and clinical endpoints, reinforcing its utility in both research and clinical settings.

A 2025 case-control investigation conducted at Prince Sattam bin Abdulaziz University Hospital in Saudi Arabia examined the relationship between GDQS, Prime Diet Quality Score (PDQS), and metabolic-associated fatty liver disease (MAFLD) [11]. The study enrolled 225 cases and 225 controls matched by age (±3 years) and assessed dietary intake using a semi-quantitative food frequency questionnaire to calculate GDQS and PDQS [11]. The analysis revealed that cases had significantly lower GDQS and PDQS compared to controls (p < 0.001), with a higher consumption of refined grains and sugar-sweetened beverages and lower intake of fruits, vegetables, and legumes [11].

Each 1-standard deviation increase in GDQS and PDQS was associated with approximately 40% lower odds of MAFLD (OR = 0.61; 95% CI: 0.47, 0.79 and OR = 0.60; 95% CI: 0.46, 0.79, respectively) [11]. These findings suggest that improving diet quality, as measured by these metrics, could represent a key strategy for MAFLD prevention in clinical and public health settings [11].
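The "approximately 40%" figure follows directly from the reported odds ratios, since the percentage reduction in odds is one minus the odds ratio:

$$
(1 - \mathrm{OR}) \times 100\%: \qquad (1 - 0.61) \times 100\% = 39\% \ \text{(GDQS)}, \qquad (1 - 0.60) \times 100\% = 40\% \ \text{(PDQS)}
$$

These are reductions in odds per 1-standard deviation increase in score, not reductions in absolute risk.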

Additional validation studies conducted in diverse global contexts, including Brazil, have demonstrated the GDQS's effectiveness as an indicator of overall nutrient adequacy [9]. In a nationally representative Brazilian sample, only 1% of the population had a low-risk diet (GDQS ≥ 23), and having a low-risk GDQS lowered the odds for nutrient inadequacy by 74% (95% CI: 63%-81%) [9]. Furthermore, an inverse correlation was found between the GDQS and ultra-processed food consumption (rho = -0.20), supporting its validity as an indicator of unhealthy dietary patterns [9].

Diagram 2: Logical pathway from GDQS assessment to clinical health endpoints.

The validation of portion-size estimation methods for the GDQS application represents a significant advancement in the field of dietary assessment. The demonstrated equivalence of both 3D cube and playdough methods to weighed food records provides researchers with practical, validated tools for field-based data collection, particularly in resource-constrained settings [5] [10]. The growing evidence linking the GDQS to clinical endpoints, including MAFLD, strengthens its utility as a comprehensive metric capable of addressing the dual burdens of malnutrition [11] [9]. As global efforts to improve dietary quality intensify, these validated tools and metrics will play an increasingly vital role in monitoring progress, evaluating interventions, and ultimately connecting dietary patterns to meaningful health outcomes across diverse populations. Future research should continue to explore the relationship between GDQS and additional clinical endpoints while refining portion estimation methods for enhanced accuracy and usability.

In the scientific validation of dietary assessment methods, a criterion measure serves as the reference standard against which new or alternative tools are evaluated. In portion-size estimation research, the Weighed Food Record (WFR) is widely regarded as this gold standard for quantifying dietary intake at the individual level. Unlike methods that rely on memory or estimation, WFR involves the precise weighing of all foods and beverages consumed during a recording period, typically using a calibrated digital scale. This direct measurement approach minimizes recall bias and portion size estimation errors that plague other dietary assessment methods. The WFR provides a foundational benchmark for validating emerging technologies and simplified tools, ensuring that advancements in dietary monitoring rest upon a bedrock of methodological rigor.

Comparing Dietary Assessment Methods

Dietary assessment methods vary significantly in their approach, precision, and sources of error. The table below summarizes the key characteristics of major dietary assessment methods, highlighting the position of WFR as a criterion measure.

Table 1: Comparison of Key Dietary Assessment Methods

| Method | Principle of Operation | Time Frame | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Weighed Food Record (WFR) | Direct weighing of all foods and beverages before and after consumption [1]. | Short-term (usually 1-7 days) [12]. | High precision for actual intake; minimizes memory and portion-size bias [13]. | High participant and researcher burden; potential for reactivity (altering diet) [12]. |
| 24-Hour Dietary Recall | Interviewer-led recall of all foods/beverages consumed in the previous 24 hours [12]. | Short-term (single day). | Participant literacy not required; less prone to reactivity [12]. | Relies on memory; within-person variation requires multiple recalls [12]. |
| Food Frequency Questionnaire (FFQ) | Self-reported questionnaire on frequency of consuming a fixed list of foods over a long period [12]. | Long-term (months to a year). | Cost-effective for large studies; captures habitual intake [12]. | Limited food list; imprecise portion sizes; prone to systematic error [12]. |
| Dietary Assessment App (e.g., myfood24) | Digital self-reported food record, often with portion size assistance via images or descriptions [14]. | Configurable (short or long-term). | Automated analysis; reduced cost and researcher burden [14]. | Underestimation of energy and nutrients persists; requires user tech-literacy [15]. |

A systematic review of validation studies comparing dietary apps against traditional methods found that apps consistently underestimated energy intake, with a pooled mean difference of -202 kcal/day [15]. Furthermore, when compared to the objective gold standard for energy expenditure—the Doubly Labeled Water (DLW) method—most self-report dietary methods, including high-quality interviews, demonstrate significant under-reporting of energy intake [16]. This consistent finding underscores the inherent challenges in dietary assessment and reinforces the need for a reliable criterion like WFR for validation within the constraints of real-world feasibility.

Experimental Validation in Action: A Case Study on Portion-Size Methods

A critical application of WFR is validating simplified tools for large-scale dietary surveys. A 2025 validation study exemplifies this process, evaluating two portion-size estimation methods for the Global Diet Quality Score (GDQS) app against the WFR criterion [5] [1] [7].

Experimental Protocol

The study employed a repeated-measures design where 170 participants underwent assessment using three methods for the same 24-hour reference period [1]:

- Criterion Method: Weighed Food Record (WFR). Participants received training and a calibrated digital scale (KD-7000, MyWeigh) to weigh and record all foods, beverages, and mixed dish ingredients over 24 hours [1].

- Test Method 1: GDQS App with 3D Cubes. Researchers conducted a face-to-face interview using the GDQS app. Participants reported consumption for 25 food groups using a set of ten 3D-printed cubes of pre-defined sizes corresponding to gram cut-offs for each group [1].

- Test Method 2: GDQS App with Playdough. In the same session, participants also estimated portions using playdough to model the volume of food consumed per food group [1].

The primary statistical analysis used the paired two one-sided t-test (TOST) to assess the equivalence of the GDQS scores derived from the app methods compared to the WFR-derived score, with a pre-specified equivalence margin of 2.5 points [1].
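Written out, the paired TOST procedure takes the following standard form. Here $\bar{d}$ and $s_d$ are the mean and standard deviation of the within-participant differences (app-derived GDQS minus WFR-derived GDQS), $n$ is the number of participants, $\mu_d$ is the true mean difference, and $\delta = 2.5$ is the equivalence margin. The notation is introduced here for illustration, and the significance level $\alpha$ is not stated in this summary.

$$
H_{0}^{-}: \mu_d \le -\delta \quad \text{vs.} \quad H_{1}^{-}: \mu_d > -\delta, \qquad t_{-} = \frac{\bar{d} + \delta}{s_d / \sqrt{n}}
$$

$$
H_{0}^{+}: \mu_d \ge \delta \quad \text{vs.} \quad H_{1}^{+}: \mu_d < \delta, \qquad t_{+} = \frac{\bar{d} - \delta}{s_d / \sqrt{n}}
$$

Equivalence is concluded only when both null hypotheses are rejected, that is, when $t_{-} > t_{1-\alpha,\,n-1}$ and $t_{+} < -t_{1-\alpha,\,n-1}$. By convention, the single TOST p-value reported is the larger of the two one-sided p-values.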

Key Quantitative Findings

The study yielded the following results, which are summarized in the table below.

Table 2: Key Validation Findings for GDQS App Methods vs. Weighed Food Record (WFR) [5] [1]

| Validation Metric | GDQS with Cubes | GDQS with Playdough |
|---|---|---|
| Equivalence to WFR (TOST p-value) | p = 0.006 | p < 0.001 |
| Agreement for Risk Classification (Kappa, κ) | κ = 0.57 (p < 0.0001) | κ = 0.58 (p < 0.0001) |
| Interpretation | Equivalent to WFR; Moderate agreement | Equivalent to WFR; Moderate agreement |

The findings demonstrate that both simplified methods provided diet quality scores equivalent to the WFR criterion. The agreement for most of the 25 specific food groups was substantial to almost perfect, though liquid oils exhibited the lowest agreement (κ = 0.059, 27.7% agreement), highlighting that validation performance can vary by food type [1].

Workflow and Hierarchy of Dietary Assessment Methods

The following diagram illustrates the logical relationship and hierarchy between the criterion measure (WFR) and other dietary assessment methods in a validation context.

The diagram above shows the validation hierarchy, with WFR serving as a key criterion for common methods. The workflow for a typical validation study, like the one cited, is shown below.

The Scientist's Toolkit: Essential Research Reagents for WFR Validation

Table 3: Essential Materials for Weighed Food Record Validation Studies

| Reagent / Tool | Specification / Example | Critical Function in Research |
|---|---|---|
| Calibrated Digital Scale | e.g., KD-7000 (7 kg capacity) [1]. | Provides the fundamental objective measure of food weight; accuracy is paramount. |
| Standardized WFR Protocol | Detailed instructions for weighing items, including mixed dishes and leftovers [1]. | Ensures consistency and data quality across all participants and researchers. |
| Trained Research Dietitians | Professionals skilled in instructing participants and clarifying entries [1]. | Mitigates user error and improves the accuracy and completeness of records. |
| Validated Portion Estimation Aids | 3D cubes of defined volumes or standardized playdough [1]. | Serves as the test intervention against the WFR criterion in validation studies. |
| Dietary Analysis Software | Tool with a linked food composition database (FCDB) [14]. | Converts food consumption data from WFR or apps into nutrient intake values. |
| Statistical Analysis Plan | Pre-specified tests (e.g., TOST, Kappa) and equivalence margins [1]. | Provides the objective framework for determining whether a new method is equivalent to the criterion. |

The Weighed Food Record maintains its status as a critical criterion measure in dietary research due to its objectivity and precision. As the field evolves with digital tools and simplified metrics, the rigorous validation of these new methods against the WFR benchmark is essential for progress. The successful validation of portion-size aids like 3D cubes and playdough demonstrates that it is possible to develop less burdensome tools without sacrificing scientific validity, thereby paving the way for more frequent and widespread assessment of diet quality in diverse populations [1].

Accurate portion-size estimation is a cornerstone of reliable dietary assessment, directly influencing the quality of data in nutritional epidemiology, public health research, and clinical trials. However, three interconnected challenges consistently undermine measurement precision: memory reliance, cognitive burden, and portion distortion. Memory reliance refers to the dependency on a respondent's ability to accurately recall and quantify past food consumption. Cognitive burden encompasses the mental effort required to estimate and report portion sizes, which can be exacerbated by complex assessment tools. Portion distortion describes the phenomenon where consumers' perceptions of normal serving sizes become skewed by environmental and psychological factors, leading to systematic misestimation.

These challenges are not merely theoretical concerns but represent significant sources of measurement error that can compromise research validity and public health recommendations. This guide objectively compares current portion-size estimation methodologies by examining their experimental performance across these three critical dimensions, providing researchers with evidence-based insights for method selection and development.

Experimental Comparison of Portion-Size Estimation Methods

Quantitative Performance Comparison

Table 1: Comparative accuracy of portion-size estimation methods against weighed food records

| Estimation Method | Study Design | Sample Size | Agreement with Gold Standard | Key Strengths | Key Limitations |

|---|---|---|---|---|---|

| 3D Cubes with GDQS App [10] | Repeated measures vs. WFR | 170 participants | Equivalent to WFR (p=0.006); Moderate agreement (κ=0.57) | Standardized data collection; High equivalence margin | Requires 3D-printed cubes |

| Playdough with GDQS App [10] | Repeated measures vs. WFR | 170 participants | Equivalent to WFR (p<0.001); Moderate agreement (κ=0.58) | Flexible, interactive; No special printing needed | Potential variability in shaping |

| Computer-Based Assessment [17] | Comparison to known weights | 40 older adults, 41 younger adults | Wide variability in estimates | Suitable for all age groups | Less accurate than photographic assessment by nutritionists |

| Image-Series Questionnaire [18] | Online validation study | 295 participants | Validated against real foods | Captures normal vs. appropriate portions | Limited to predefined food items |

| 2D Food Portion Visual (FPV) [19] | Multicenter clinical trial | 43 participants | Similar proportions recalled vs. actual | Gender-dependent accuracy patterns | Accuracy varies by food category and gender |

Table 2: Demographic and cognitive factors affecting estimation accuracy

| Factor | Effect on Estimation | Supporting Evidence |

|---|---|---|

| Gender | Males more accurate with FPV for meats, mixed dishes; Females more accurate with household measures for meats, cereals [19] | Clinical feeding study (n=43) |

| Age | Older adults (65+) similar to younger adults in estimation ability [17] | Laboratory study with buffet-style foods |

| Professional Training | Nutritionists show less variability in estimates from photographs [17] | Comparison across age groups and professionals |

| Food Morphology | Significant differences for small pieces [17] | Morphology-specific analysis |

| Portion Distortion | Normal portions exceed perceived appropriate portions across all test foods [18] | Online image-series questionnaire (n=295) |

Detailed Experimental Protocols

The Global Diet Quality Score (GDQS) app validation study employed a rigorous repeated measures design to compare cube and playdough estimation methods against weighed food records (WFR) as the gold standard. The methodology encompassed:

Participant Recruitment: 170 adults recruited with eligibility criteria including age ≥18 years, COVID-19 vaccination status, and agreement to avoid mixed dishes prepared outside home during the 24-hour reference period.

Training Protocol: 40-60 minute in-person training sessions in groups of up to five participants, covering dietary scale use and weighing procedures for all foods, beverages, and mixed dish ingredients.

Equipment Standardization: Provision of calibrated digital dietary scales (KD-7000, capacity 7 kg, MyWeigh, Phoenix, AZ, USA) accurate to 1 g, with paper data collection forms and supplementary digital guides.

Data Collection Timeline: Three consecutive days comprising training (Day 1), WFR completion during 24-hour period (Day 2), and GDQS app interview with both cube and playdough methods (Day 3).

Statistical Equivalence Testing: Paired two one-sided t-tests (TOST) with a pre-specified 2.5-point equivalence margin for GDQS scores, with Kappa coefficients calculated for agreement on the classification of risk of poor diet quality.
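The equivalence and agreement analyses in this protocol can be sketched in a few lines of Python. This is a minimal illustration and not the study's analysis code: it substitutes a normal approximation for the t distribution (reasonable only for large samples such as the 170 participants here), and the function names `paired_tost` and `cohen_kappa`, the toy scores, and the binary risk labels are all assumptions introduced for the example.

```python
import math
from statistics import NormalDist, mean, stdev


def paired_tost(new_scores, wfr_scores, margin=2.5, alpha=0.05):
    """Paired TOST on score differences (normal approximation, an assumption)."""
    diffs = [a - b for a, b in zip(new_scores, wfr_scores)]
    d_bar = mean(diffs)
    se = stdev(diffs) / math.sqrt(len(diffs))
    # One-sided test 1: H0 is "mean difference <= -margin"
    p_lower = 1 - NormalDist().cdf((d_bar + margin) / se)
    # One-sided test 2: H0 is "mean difference >= +margin"
    p_upper = NormalDist().cdf((d_bar - margin) / se)
    p_value = max(p_lower, p_upper)  # both nulls must be rejected
    return d_bar, p_value, p_value < alpha


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two classifications of the same participants."""
    n = len(labels_a)
    categories = set(labels_a) | set(labels_b)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    expected = sum(
        (labels_a.count(c) / n) * (labels_b.count(c) / n) for c in categories
    )
    return (observed - expected) / (1 - expected)


# Toy GDQS scores (hypothetical, far too few for the approximation to be exact)
app_scores = [18.0, 21.5, 15.0, 23.0, 19.5, 17.0, 22.0, 20.0]
wfr_scores = [17.5, 22.0, 16.0, 22.5, 19.0, 18.0, 21.0, 20.5]
mean_diff, p_value, equivalent = paired_tost(app_scores, wfr_scores)
print(f"mean difference={mean_diff:.3f}, TOST p={p_value:.4f}, equivalent={equivalent}")

# Hypothetical risk labels (1 = at risk of poor diet quality, 0 = not at risk)
risk_app = [1, 1, 0, 0, 1, 0, 1, 0]
risk_wfr = [1, 0, 0, 0, 1, 0, 1, 1]
print(f"kappa={cohen_kappa(risk_app, risk_wfr):.2f}")
```

Equivalence is declared only when both one-sided null hypotheses are rejected, which is why the larger of the two p-values is reported. For a real analysis, an exact t-based routine from a statistics package would be preferable to the approximation used here.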

The investigation of normal versus perceived appropriate portion sizes utilized a validated online image-series questionnaire with the following methodological approach:

Participant Recruitment: 295 Australian consumers (51% female, mean age 39.5±14.1 years) recruited via social media and community flyers with quotas for age and sex subgroups.

Instrument Design: Eight successive portion size images for 15 discretionary foods across categories (sweet/savory snacks, cakes, fast foods, sugar-sweetened beverages) with randomized presentation order.

Study Design: Repeated cross-sectional assessment with two completions at least one week apart, incorporating demographic collection and hunger level assessment.

Statistical Analysis: Quantile regression models estimating ranges (17th to 83rd percentiles) for normal and perceived appropriate portion sizes, adjusted for sex, age, physical activity, cooking confidence, SES, BMI, and baseline hunger.
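The 17th-to-83rd percentile range used in this analysis covers the central two-thirds of responses. The sketch below shows only the unadjusted version of that idea using Python's standard library; it does not reproduce the covariate-adjusted quantile regression described above, and the function name `central_range` and the sample values are assumptions for illustration.

```python
from statistics import quantiles


def central_range(portion_sizes, lower_pct=17, upper_pct=83):
    """Unadjusted empirical percentile range of selected portion sizes."""
    # quantiles(n=100) returns the 99 percentile cut points (1st to 99th)
    cuts = quantiles(portion_sizes, n=100, method="inclusive")
    return cuts[lower_pct - 1], cuts[upper_pct - 1]


# Hypothetical selected portion sizes in grams for one food
normal_portions = [30, 45, 45, 60, 60, 60, 75, 75, 90, 120]
low, high = central_range(normal_portions)
print(f"central range: {low:.1f} g to {high:.1f} g")
```

Running the same function on the "normal" and the "perceived appropriate" selections for a food gives two ranges that can be compared directly, although without the adjustment for sex, age, and the other covariates listed in the protocol.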

Conceptual Framework and Methodological Workflows

Portion Estimation Cognitive Workflow

Diagram 1: Cognitive workflow of portion estimation

Method Selection Decision Pathway

Diagram 2: Method selection decision pathway

The Scientist's Toolkit: Essential Research Materials

Table 3: Key research reagents and materials for portion-size estimation studies

| Tool/Reagent | Primary Function | Research Application | Key Considerations |

|---|---|---|---|

| 3D Printed Cubes [10] | Standardized volume representation for food groups | GDQS app-based assessments | Requires access to 3D printing; Predefined sizes based on food density |

| Modeling Playdough [10] | Flexible portion size estimation | Alternative to cubes in GDQS app | More accessible than cubes; Enables shaping of irregular foods |

| Calibrated Dietary Scales [10] | Gold standard weight measurement | Weighed food record validation | Accuracy to 1g required; Training essential for participant use |

| Image-Series Questionnaires [18] | Visual portion size assessment | Online and in-person surveys | Requires validation against real foods; Must cover relevant food categories |

| Digital Photography Systems [17] | Meal image capture for later analysis | Laboratory and naturalistic studies | Standardized lighting and angles crucial; Reference objects in frame |

| Computer/Tablet Interfaces [17] | Digital assessment administration | All age group compatibility | Interface design affects usability; Touchscreen preferred for older adults |

Discussion: Research Gaps and Future Directions

The experimental data reveal significant methodological trade-offs in portion-size estimation. While the GDQS app with both cubes and playdough demonstrates statistical equivalence to weighed food records [10], this validation exists at the food group level rather than for individual foods. The cognitive advantages of playdough for irregularly shaped foods must be balanced against the standardization benefits of pre-defined cubes.

The consistent finding that normal portion sizes exceed perceived appropriate portions across all test foods [18] highlights the profound impact of portion distortion on self-report data. This discrepancy between consumption norms and appropriateness judgments represents a fundamental challenge for dietary assessment and public health messaging.

Future methodological development should address several critical research gaps. First, the interaction between cognitive load and estimation accuracy requires further investigation, particularly as assessment tools become increasingly digital. Second, the development of age-specific and culturally adapted tools should be prioritized: although current evidence suggests similar estimation capabilities across age groups [17], response patterns may still differ. Finally, integration of emerging technologies such as virtual reality [20] and artificial intelligence for automated food recognition may help mitigate current limitations in memory reliance and cognitive burden.

Researchers should select portion estimation methods based on specific study requirements, considering the balanced trade-offs between accuracy, participant burden, and implementation feasibility demonstrated in the experimental comparisons presented herein.

From Cubes to AI: A Toolkit of Portion-Size Estimation Methods

Accurate portion-size estimation is a cornerstone of reliable dietary assessment, which in turn is vital for nutritional research, clinical studies, and public health monitoring [21] [22]. Traditional physical aids—including 3D food models, geometric cubes, and malleable materials like playdough—have long been employed to help individuals visualize and estimate food portions, thereby improving the accuracy of dietary recall [23]. Within validation research for portion-size estimation methods, these tools serve as critical benchmarks or experimental proxies for real food. This guide provides an objective comparison of these traditional physical aids, detailing their performance, experimental applications, and protocols based on current scientific literature. It is structured to assist researchers in selecting appropriate aids for validating both traditional and emerging digital dietary assessment technologies.

Comparative Analysis of Traditional Physical Aids

The table below summarizes the core characteristics, performance, and applications of the three primary physical aids in portion-size estimation research.

Table 1: Comparison of Traditional Physical Aids for Portion-Size Estimation

| Feature | 3D Food Models | Geometric Cubes (Cuboids) | Playdough |

|---|---|---|---|

| Primary Research Function | Volume estimation benchmark via 3D model registration and scaling [21] [22]; Consumer perception studies [24]. | Investigation of visual cues (e.g., elongation) on portion perception [23]; Fundamental shape template for model-based volume estimation [22]. | Creative, hands-on modeling of amorphous or complex food volumes; fine motor skill assessment in developmental studies [25] [26]. |

| Typical Experimental Data | Average portion estimation error of 31.10 kcal (17.67%) when used as a scaling reference in 3D model-based frameworks [22]. | Adults selected a smaller ideal portion size for an elongated product (5.5 ± 0.4 rating) vs. a wider/thicker one (8.8 ± 0.3 rating) on a visual analog scale [23]. | Data are primarily qualitative, analyzed through thematic analysis of participant explanations and metaphors [25]. |

| Key Advantages | High accuracy for rigid foods; Provides an objective, digital 3D ground truth [21] [22]. | Isolates the effect of specific geometric attributes on perception; Simple, cheap, and standardized [23]. | Highly flexible and adaptable; excellent for engaging participants and exploring non-geometric food shapes [25]. |

| Inherent Limitations | Requires specialized equipment for creation (3D scanners/printers); Less effective for amorphous foods [21] [22]. | Oversimplifies most real-food shapes; Limited application in practical volume estimation for complex items. | Subjective and difficult to standardize; lacks precision for quantitative volume estimation [25]. |

| Data Output | Quantitative (Volume in mL, Energy in kcal) [22]. | Quantitative (Perception scores, selected portion sizes) [23]. | Qualitative (Themes, metaphors, self-reported understanding). |

Detailed Experimental Protocols

This section outlines the specific methodologies employed in research utilizing these physical aids, providing a blueprint for experimental replication.

Protocol for 3D Food Model-Based Volume Estimation

This protocol is adapted from model-based food portion estimation studies [21] [22]. Its primary goal is to estimate the volume and energy of a food item in a 2D image by leveraging a pre-existing 3D model.

1. 3D Model Generation (Training Phase):

- Image Acquisition: Capture 15-20 images or a video sequence of the target food item from multiple viewpoints surrounding it. A fiducial marker (e.g., a colored checkerboard) must be present in every frame for scale and calibration [21].

- Camera Calibration: Compute the intrinsic (focal length, optical center) and extrinsic (position, orientation) camera parameters for each image using the detected checkerboard [21].

- Silhouette Extraction: Convert each camera image to a binary mask, segmenting the food item (foreground as "1") from the background ("0"). Apply morphological operators to clean boundary noise and fill small holes [21].

- Volume Voxel Carving: Define a 3D bounding box in world coordinates and fill it with a dense grid of volume voxels (V). Project every voxel on the surface of V onto all camera images. Carve away any voxel that falls outside the object mask in any image. The remaining voxels constitute the 3D model, with volume estimated by the total count of retained voxels [21].
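The carving step can be illustrated with a deliberately simplified toy. The sketch below assumes three orthographic views along the coordinate axes instead of the calibrated perspective cameras described above, tests every voxel in the grid, and uses a synthetic disc as the silhouette in each view; all names (`disc_silhouette`, `project`, `carve`) and the 0.5 cm voxel edge are hypothetical.

```python
from itertools import product


def disc_silhouette(n, radius):
    """Binary n x n mask (True = food) of a centred disc, standing in for a segmented image."""
    c = (n - 1) / 2
    return [[(u - c) ** 2 + (v - c) ** 2 <= radius ** 2 for v in range(n)]
            for u in range(n)]


def project(voxel, axis):
    """Orthographic projection of voxel (i, j, k) along one axis onto a 2D pixel."""
    i, j, k = voxel
    return {"x": (j, k), "y": (i, k), "z": (i, j)}[axis]


def carve(masks, n):
    """Keep only voxels whose projection falls inside the mask in every view."""
    kept = []
    for voxel in product(range(n), repeat=3):
        inside_all = True
        for axis, mask in masks.items():
            u, v = project(voxel, axis)
            if not mask[u][v]:
                inside_all = False  # carved away by this view
                break
        if inside_all:
            kept.append(voxel)
    return kept


n, radius = 20, 8
masks = {axis: disc_silhouette(n, radius) for axis in ("x", "y", "z")}
kept = carve(masks, n)
voxel_volume_ml = 0.5 ** 3  # hypothetical 0.5 cm voxel edge, so 0.125 mL per voxel
print(len(kept), "voxels retained,", len(kept) * voxel_volume_ml, "mL")
```

With only three views the retained hull is larger than the sphere that would produce these silhouettes, which illustrates why the protocol calls for many viewpoints: each additional silhouette can only remove voxels, so the estimate tightens toward the true shape.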

2. Pose Estimation and Volume Calculation (Testing Phase):

- Input Processing: Take a single 2D test image containing the food and the fiducial marker. Use a segmentation model (e.g., Segment Anything Model - SAM) to obtain a precise mask of the food item [22].

- Pose Initialization: Estimate the camera's pose from the checkerboard. The food item is constrained to lie on the table plane (Zw = 0). Estimate its azimuth (ϕ) and elevation (θ) angles relative to the camera [21].

- 3D Model Registration & Scaling: Retrieve the pre-built 3D model of the identified food. Estimate the final pose by optimizing the alignment between the projected 3D model silhouette and the segmented food mask in the 2D image. Calculate a scaling factor as the ratio of the area in the segmented mask to the area of the projected 3D model. Apply this scaling factor to the known volume of the 3D model to estimate the food's volume in the test image [22].

- Energy Conversion: Convert the estimated volume to energy (kcal) using standard nutritional databases (e.g., USDA FNDDS) and known food densities [22].
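The scaling and energy-conversion arithmetic of the testing phase can be sketched as follows. The function names, the pixel areas, the density, and the energy value are placeholders introduced for illustration and are not taken from FNDDS or from the cited studies. How the area ratio maps onto volume is also treated as an assumption: the default applies the ratio directly, as the description above reads, while the `exponent` parameter allows the geometric alternative in which uniform scaling of all three dimensions makes volume grow with the area ratio raised to the power 1.5.

```python
def scaled_volume_ml(model_volume_ml, mask_area_px, projected_area_px, exponent=1.0):
    """Scale the known 3D-model volume by the mask-to-projection area ratio."""
    area_ratio = mask_area_px / projected_area_px
    # exponent=1.0 applies the ratio directly; exponent=1.5 assumes uniform 3D scaling
    return model_volume_ml * area_ratio ** exponent


def energy_kcal(volume_ml, density_g_per_ml, kcal_per_100g):
    """Convert volume to mass via density, then mass to energy."""
    mass_g = volume_ml * density_g_per_ml
    return mass_g * kcal_per_100g / 100


# Placeholder inputs: 200 mL model, mask 20% larger than the projected model
volume = scaled_volume_ml(200.0, mask_area_px=12000, projected_area_px=10000)
energy = energy_kcal(volume, density_g_per_ml=0.5, kcal_per_100g=250.0)
print(f"estimated volume={volume:.1f} mL, energy={energy:.1f} kcal")
```

Because the energy estimate is a product of volume, density, and energy density, a relative error in any one of the three carries through proportionally to the final figure.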

The following workflow diagram illustrates this multi-phase process:

Protocol for Studying Shape Perception with Geometric Cubes

This protocol is based on research investigating how geometric attributes influence portion size perception [23].

1. Stimulus Design:

- Using CAD software (e.g., SolidWorks), generate a series of geometric shapes, typically cuboids (e.g., "cube," "taller," "wider").

- Critical Control: Maintain a constant volume across all shape variations (e.g., 90 mL) to isolate the effect of shape on perception [23].

- Create high-quality, realistic images or physical prototypes of these shapes for presentation to participants.

2. Participant Task & Data Collection:

- Method of Adjustment: Present participants with images of the different shapes on a computer screen. Ask them to adjust the portion size of a reference product until it represents their "ideal portion" for each specific shape [23].

- Visual Analog Scale (VAS): Alternatively, present pairs of shapes and ask participants to rate them on a continuous scale (e.g., a 100 mm line) for attributes like "size perception," "appeal," or "ideal portion" [24] [23].

3. Data Analysis:

- Analyze the selected portion sizes or VAS ratings using Analysis of Variance (ANOVA) to determine if differences in shape lead to statistically significant differences in perception.

- The study by [23] found that elongation significantly influenced ideal portion selection, demonstrating the power of this method.
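A one-way ANOVA on ratings grouped by shape can be computed by hand, as in the sketch below. It is a simplified between-groups version: when every participant rates every shape, a repeated-measures model would normally be more appropriate. The function name `one_way_anova_f` and the ratings are hypothetical, and the p-value lookup against the F distribution is left to a statistics package.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return the F statistic and its degrees of freedom for a one-way ANOVA."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    # Variation of group means around the grand mean
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual ratings around their own group mean
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical VAS ratings of ideal portion size for three shapes of equal volume
ratings = {
    "cube": [6.1, 6.8, 7.0, 6.4],
    "taller": [5.2, 5.9, 5.5, 5.4],
    "wider": [8.3, 8.9, 9.1, 8.6],
}
f_stat, df1, df2 = one_way_anova_f(list(ratings.values()))
print(f"F({df1}, {df2}) = {f_stat:.2f}")
```

A large F indicates that differences between shape means are large relative to the spread of ratings within each shape, which is the pattern expected if shape alone shifts perceived portion size.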

Protocol for Creative Modeling with Playdough

This protocol leverages playdough as a qualitative tool to explore conceptual understanding of portions and shapes [25].

1. Research Setup:

- Provide participants with a standard amount and variety of colors of playdough.

- Pose a research prompt, for example: "Model your understanding of a healthy portion of pasta," or "Create a model that represents a challenging food to estimate."

2. Modeling and Elicitation:

- Allow participants time to create their models individually or in groups.

- The facilitator should take photographs of the models for later analysis.

- Conduct a one-on-one or group interview where participants explain their model, what it represents, and why they made certain design choices. This narrative is the primary data [25].

3. Data Analysis:

- Thematic Analysis: Transcribe the interviews and analyze the transcripts alongside photographs of the models. Code the data for emerging themes, metaphors, and insights related to portion size estimation, challenges, and personal strategies [25].

The Scientist's Toolkit: Key Research Reagents and Materials

Table 2: Essential Materials for Portion-Size Estimation Research

| Item | Function in Research |

|---|---|

| Fiducial Marker (Checkerboard) | A reference object of known size placed in a scene. It is critical for camera calibration, establishing world coordinate systems, and determining the scale of objects in images for volume estimation [21] [22]. |

| 3D Scanner / Printer | Used in the creation of high-precision 3D food models. Scanners digitize real food items, while printers can produce physical models for perception studies or create customized shapes for testing [24] [21]. |

| Food-Ink Formulations | Edible materials (e.g., chocolate, marzipan, protein gels) used in 3D food printing to create realistic food models for consumer acceptance and perception studies [24] [27]. |

| CAD Software | Enables the design and virtual manipulation of geometric shapes (cubes, cuboids) with precise control over dimensions and volume, which is essential for perception studies [23]. |

| Playdough / Modeling Clay | A low-cost, malleable material used in qualitative research to facilitate creative expression, metaphor, and deep discussion about abstract concepts like portion size and food shape [25]. |

Accurate dietary assessment is fundamental for public health research, nutritional epidemiology, and clinical care. Traditional methods for estimating food intake, such as weighed food records and interviewer-led 24-hour recalls, face significant challenges including high participant burden, reliance on memory, and resource-intensive data coding and processing [28] [29]. Digital and image-based tools have emerged as transformative solutions to these limitations, offering standardized, scalable, and less burdensome alternatives for dietary assessment. These tools primarily utilize food photography series and online platforms to assist participants in estimating portion sizes of consumed foods and beverages.

The core technological approaches in this field include online 24-hour dietary recall systems like Intake24, which employs portion-size images and standardized prompts [30], and prospective methods such as the Remote Food Photography Method (RFPM), which captures food selection and plate waste via smartphone cameras [31]. Recent advancements have incorporated artificial intelligence, with systems like DietAI24 leveraging multimodal large language models (MLLMs) combined with Retrieval-Augmented Generation (RAG) technology to automate food recognition and nutrient estimation from food images [32]. This guide provides a comprehensive comparison of these digital tools, focusing on their validation against traditional methods, performance metrics, and implementation requirements to inform researchers and professionals in selecting appropriate dietary assessment technologies.

Comparative Performance of Digital Dietary Assessment Tools

Table 1: Validation Studies of Digital Portion-Size Estimation Tools Against Reference Methods

| Tool/Method | Reference Method | Study Population | Key Performance Metrics | Results and Agreement |

|---|---|---|---|---|

| Intake24 [28] | 3D Food Models | 70 pupils (11-12 years) | Food weight, Energy, Macronutrients | Geometric mean ratio: 1.00 for food weight; Limits of agreement: -35% to +53%; Energy intake: 1% lower than food models |

| Food Photography 24-h Recall (FP 24-hR) [29] | Weighed Food Record (WFR) | 45 women (rural Bolivia) | Food weight, Energy, Nutrients | Most foods underestimated (-2.3% to -6.8%); Beverages overestimated (+1.6%); High Spearman correlations (r=0.75-0.98) for foods |

| Remote Food Photography Method (RFPM) [31] | Estimated Energy Requirement (EER) | 40 children (7-8 years) | Energy intake | No significant difference from EER (mean difference: -148 kcal, p=0.09); Significantly less burdensome than ASA24 |

| GDQS App with Cubes/Playdough [10] | Weighed Food Record | 170 adults (≥18 years) | Global Diet Quality Score (GDQS) | Equivalent to WFR within 2.5-point margin (cubes: p=0.006; playdough: p<0.001); Moderate agreement for poor diet quality risk (κ=0.57-0.58) |

| PortionSize App [6] | Digital Photography | 14 adults (free-living) | Food weight, Energy, Food Groups | Equivalent for food weight (P<0.001); Overestimated energy (P=0.08); Equivalent for vegetables (P=0.01); Overestimated fruits, grains, dairy, protein |

Table 2: Comparative Accuracy of Nutrient and Food Group Estimation Across Methods

| Assessment Tool | Energy Estimation Accuracy | Macronutrient Accuracy | Food Group-Specific Performance | Limitations and Error Patterns |

|---|---|---|---|---|

| Intake24 [28] | High (within 1% of reference) | High (all within 6% of reference) | Strong agreement for fruits/vegetables (tertile classification) | Limits of agreement relatively wide (-35% to +53%) |

| FP 24-hR [29] | Moderate (slight underestimation) | Moderate (fat underestimated -5.98%) | Variable by food type; Leafy vegetables overestimated (+8.7%) | Systematic negative bias for some food categories |

| RFPM [31] | High (no significant difference from EER) | Not specifically reported | Captures food selection and plate waste | Requires consistent smartphone use and photography |

| AI-Enabled Apps [33] | Variable (inaccurate in mixed dishes) | Variable across apps and diets | Struggles with culturally diverse foods and mixed dishes | MyFitnessPal: 97% accuracy; Fastic: 92% accuracy |

| DietAI24 [32] | High (63% reduction in MAE) | Comprehensive (65 nutrients) | Handles mixed dishes effectively | Requires further validation in real-world settings |

Experimental Protocols and Methodologies

Intake24 Validation Protocol

The validation of Intake24 against traditional 3D food models followed a structured protocol involving children aged 11-12 years from secondary schools. Participants first completed a two-day food diary, followed by an interview where they estimated food portion sizes using both 3D food models and Intake24 for the same recording days. The order of assessment was randomized to eliminate potential bias. The 3D food model method utilized physical models in various shapes and sizes including bread-shaped slices, sticks, chips, spheres, pie wedges, and standardized tableware. Food weights were calculated using conversion factors specific to each food and selected model [28].

Intake24 implementation involved participants entering all foods and drinks consumed the previous day, selecting the closest match from the system's food list, and estimating portion sizes using validated portion photographs. The system automatically assigned food codes and linked them to nutrient composition data. Statistical analysis employed Bland-Altman methods to assess agreement between the two methods, comparing mean intake for food weight, energy, and nutrients. The geometric mean ratio for food weight was 1.00, indicating no systematic bias between methods, with limits of agreement ranging from -35% to +53% [28].
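A geometric mean ratio with percentage limits of agreement is what a Bland-Altman analysis produces when it is carried out on log-transformed ratios. The sketch below shows that calculation under the assumption that this is the form of analysis behind the reported figures; the function name `ratio_agreement` and the food weights are hypothetical.

```python
import math
from statistics import mean, stdev


def ratio_agreement(test_values, reference_values):
    """Geometric mean ratio and 95% limits of agreement on the ratio scale."""
    log_ratios = [math.log(t / r) for t, r in zip(test_values, reference_values)]
    m, s = mean(log_ratios), stdev(log_ratios)
    gmr = math.exp(m)                 # 1.00 means no systematic bias
    lower = math.exp(m - 1.96 * s)    # e.g. 0.65 corresponds to -35%
    upper = math.exp(m + 1.96 * s)    # e.g. 1.53 corresponds to +53%
    return gmr, lower, upper


# Hypothetical food weights in grams: tool under test vs. reference method
tool_g = [120.0, 95.0, 210.0, 60.0, 150.0, 80.0]
reference_g = [110.0, 100.0, 180.0, 75.0, 155.0, 70.0]
gmr, lower, upper = ratio_agreement(tool_g, reference_g)
print(f"GMR={gmr:.2f}, limits of agreement: {100 * (lower - 1):+.0f}% to {100 * (upper - 1):+.0f}%")
```

Limits computed this way are symmetric on the log scale and therefore asymmetric in percentage terms. The reported limits of -35% and +53% correspond to ratios of 0.65 and 1.53, whose product is close to 1, which is consistent with a geometric mean ratio of 1.00.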

Food Photography 24-Hour Recall Methodology

The Food Photography 24-hour recall (FP 24-hR) method was validated in a rural Bolivian population using a two-step approach. On the first day, participants used a photo kit containing a digital camera and gridded table mat to photograph all foods consumed over a 24-hour period. The following day, researchers conducted a 24-hour recall interview where participants used their photographs as a memory aid and a photo atlas with standardized portion sizes to estimate quantities consumed [29].

The photo atlas development followed population-based approaches, with nutritionists visiting local families to identify commonly consumed foods, typical portion sizes, and local tableware. The atlas contained 334 color photographs of 78 common foods, depicting 3-7 portion sizes arranged in descending order on two plate types (flat and soup plates). Foods were weighed and photographed at 90° and 45° angles with reference objects and grid mats for scale. Validation against weighed food records used Spearman's correlation coefficients and Bland-Altman analysis, showing high correlations (r=0.75-0.98) for most food categories and random (non-systematic) differences between methods [29].
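Spearman's correlation is Pearson's correlation applied to ranks, which makes it robust to the skewed distributions typical of food weights. A minimal standard-library sketch follows; the function names and the paired weights are assumptions for illustration, and tied values receive their average rank.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg_rank = (pos + end) / 2 + 1
        for idx in order[pos:end + 1]:
            ranks[idx] = avg_rank
        pos = end + 1
    return ranks


def spearman_rho(x, y):
    """Pearson correlation of the rank-transformed data."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical paired weights in grams: photo-assisted recall vs. weighed record
recall_g = [150.0, 90.0, 200.0, 60.0, 120.0, 180.0]
weighed_g = [160.0, 85.0, 215.0, 70.0, 110.0, 190.0]
print(f"Spearman rho = {spearman_rho(recall_g, weighed_g):.2f}")
```

A high rank correlation shows that the method orders intakes correctly but says nothing about systematic over- or underestimation, which is why it is paired with a Bland-Altman analysis in the validation described above.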

Artificial Intelligence and Image Recognition Protocols

Recent advances in AI-based dietary assessment include the DietAI24 framework, which combines multimodal large language models (MLLMs) with Retrieval-Augmented Generation (RAG) technology. The system processes food images through three sequential steps: food recognition, portion size estimation, and nutrient content estimation. For food recognition, the model identifies all food items present in an image as a set of standardized food codes. Portion size estimation is framed as multiclass classification, selecting appropriate portion sizes from standardized options in the Food and Nutrient Database for Dietary Studies (FNDDS). Finally, nutrient content estimation integrates recognized food codes with their estimated portion sizes to compute comprehensive nutrient profiles [32].
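The final step of such a pipeline, combining food codes with classified portion sizes to produce a nutrient profile, reduces to a lookup and a weighted sum. The sketch below is a hypothetical illustration of that data flow only, not DietAI24's implementation: the food codes, portion options, and nutrient values are invented placeholders rather than FNDDS entries, and the recognition and portion-classification models are represented simply by their outputs.

```python
from dataclasses import dataclass


@dataclass
class RecognizedFood:
    food_code: str      # output of the food-recognition step
    portion_index: int  # class chosen by the portion-size classifier


# Placeholder lookup tables standing in for a food composition database
PORTION_OPTIONS_G = {
    "rice_cooked": [80.0, 160.0, 240.0],
    "chicken_grilled": [60.0, 120.0, 180.0],
}
NUTRIENTS_PER_100G = {
    "rice_cooked": {"energy_kcal": 130.0, "protein_g": 2.7},
    "chicken_grilled": {"energy_kcal": 165.0, "protein_g": 31.0},
}


def nutrient_profile(foods):
    """Sum nutrients over all recognized foods at their selected portion sizes."""
    totals = {}
    for food in foods:
        grams = PORTION_OPTIONS_G[food.food_code][food.portion_index]
        for nutrient, per_100g in NUTRIENTS_PER_100G[food.food_code].items():
            totals[nutrient] = totals.get(nutrient, 0.0) + per_100g * grams / 100
    return totals


meal = [RecognizedFood("rice_cooked", 1), RecognizedFood("chicken_grilled", 1)]
print(nutrient_profile(meal))
```

Framing portion size as a choice among predefined options means an estimate can never be more precise than the spacing between adjacent options, a design trade-off that exchanges resolution for standardization.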

The validation of commercial AI-enabled apps followed different protocols, with researchers creating standardized food records for Western, Asian, and Recommended dietary patterns. Foods were photographed according to strict protocols (45-degree angle, 30 cm distance, controlled lighting) and analyzed through the apps' automated image recognition systems. Performance was assessed by comparing app-generated nutritional outputs with known values from the standardized meals, revealing significant variability in accuracy, particularly for mixed dishes and culturally diverse foods [33].

Visualization of Methodological Workflows

Digital Dietary Assessment Workflow

Digital Dietary Assessment Workflow: This diagram illustrates the comparative workflows between traditional and digital dietary assessment methods, highlighting the divergent paths from data collection through processing to final output.

DietAI24 Framework Architecture

DietAI24 System Architecture: This visualization details the DietAI24 framework's components and data flow, from image input through food recognition and database retrieval to comprehensive nutrient profiling.

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for Digital Dietary Assessment Studies

| Material/Tool | Specifications | Research Function | Validation Considerations |

|---|---|---|---|

| 3D Food Models [28] | Various shapes/sizes: bread slices (7), sticks (5), chips, spheres (5), pie wedges (12), tableware | Reference standard for portion size estimation during interviews | Requires food-specific conversion factors for weight calculation |

| Digital Cameras/Smartphones [29] [31] | Standardized resolution, grid mats for scale, reference objects | Food photography for recall aids or prospective assessment | Consistency in angle (45°-90°), distance (30-50 cm), lighting conditions |

| Photo Atlases [29] | 334 photos of 78 foods, 3-7 portion sizes per food, multiple angles | Portion size estimation reference during interviews | Should reflect local foods, portion ranges, and tableware |

| 3D-Printed Cubes [10] | 10 3D-printed cubes of predefined sizes (volume-based) | Standardized portion estimation at food group level | Cube volumes determined by gram cut-offs and food density data |

| Playdough [10] | Moldable material for creating food shapes | Flexible portion estimation method | Effective for amorphous and mixed foods; requires participant training |

| Validated Food Composition Databases [28] [32] | FNDDS, NDNS nutrient databank, localized databases | Nutrient calculation from reported foods | Must be comprehensive, culturally appropriate, and regularly updated |

| Standardized Tableware [29] | Local plates, bowls, cups in common sizes | Context for portion size estimation in photographs | Should reflect what population typically uses |

| Dietary Assessment Software Platforms [30] [34] | Intake24, ASA24, GDQS app, custom solutions | Automated food coding, portion estimation, nutrient analysis | Require localization, usability testing, and validation in target population |

Digital and image-based dietary assessment tools demonstrate significant potential to transform portion-size estimation in research settings. The accumulating validation evidence indicates that tools like Intake24 perform comparably to traditional methods like 3D food models for estimating energy and nutrient intakes, while offering advantages in scalability, reduced participant burden, and automated data processing [28] [30]. Similarly, photograph-based methods including the RFPM and FP 24-hR show reasonable agreement with reference methods while addressing limitations of memory-based recall [29] [31].

The emerging generation of AI-enhanced tools represents a promising direction for the field, with systems like DietAI24 demonstrating substantially improved accuracy through innovative approaches that combine multimodal LLMs with authoritative nutrition databases [32]. However, current commercial AI applications show variable performance, particularly for mixed dishes and culturally diverse foods, highlighting the need for continued refinement of food recognition algorithms and expansion of food databases [33].

For researchers selecting dietary assessment methods, key considerations include population characteristics (age, literacy, technological access), study resources, specific nutrients or foods of interest, and required precision. Traditional methods may remain preferable in certain contexts, but digital tools increasingly offer viable alternatives that balance accuracy with practical implementation needs. Future development should focus on improving portion size estimation for challenging food categories, enhancing user experience across diverse populations, and validating tools in real-world settings beyond controlled studies.

Accurate dietary assessment is a cornerstone of nutritional epidemiology and clinical research, yet traditional methods for estimating food portion size are plagued by limitations including recall bias, participant burden, and systematic estimation errors [35] [36]. The emergence of artificial intelligence (AI), particularly multimodal large language models (MLLMs) and advanced depth imaging techniques, offers promising solutions for automating nutritional analysis from food images [35] [37]. This review objectively compares the performance of these emerging technologies within the critical context of validation research for portion-size estimation methods, providing researchers with experimental data and methodological frameworks for evaluating these systems.

Performance Comparison of Multimodal LLMs in Dietary Assessment

Quantitative Performance Metrics Across Leading Models

Recent comparative studies have evaluated the performance of general-purpose MLLMs on standardized dietary assessment tasks. The table below summarizes key performance metrics from a controlled evaluation of three leading models using 52 standardized food photographs across different portion sizes [35].

Table 1: Performance Comparison of Multimodal LLMs on Food Estimation Tasks

| Model | Weight Estimation MAPE | Energy Estimation MAPE | Correlation with Reference Values | Systematic Bias Trend |
|---|---|---|---|---|
| ChatGPT-4o | 36.3% | 35.8% | 0.65-0.81 | Underestimation increasing with portion size |
| Claude 3.5 Sonnet | 37.3% | 35.8% | 0.65-0.81 | Underestimation increasing with portion size |
| Gemini 1.5 Pro | 64.2%-109.9% | 64.2%-109.9% | 0.58-0.73 | Underestimation increasing with portion size |

MAPE: Mean Absolute Percentage Error

The data show that ChatGPT and Claude achieve similar accuracy, with MAPE values of approximately 36-37% for weight estimation and 35.8% for energy estimation, while Gemini shows substantially higher errors (64.2-109.9%) across all nutrients [35]. Correlation coefficients between model estimates and reference values ranged from 0.65 to 0.81 for ChatGPT and Claude, compared with 0.58-0.73 for Gemini [35]. All models exhibited systematic underestimation that increased with portion size, with bias slopes ranging from -0.23 to -0.50 [35].
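To make the two headline quantities concrete, the following minimal sketch computes MAPE and a proportional-bias slope for a set of paired estimates. It assumes the bias slope is the ordinary least-squares slope of the error (estimate minus reference) regressed on the reference value; the cited study may define it differently (for example, against the mean of the two measurements). The function names and the weights are hypothetical illustrations, not data from [35].

```python
from statistics import mean


def mape(estimates, references):
    """Mean absolute percentage error, in percent, relative to the reference."""
    return 100.0 * mean(abs(e - r) / r for e, r in zip(estimates, references))


def bias_slope(estimates, references):
    """OLS slope of the error (estimate - reference) on the reference value.

    Assumed definition: a negative slope means underestimation grows
    as the true portion gets larger.
    """
    errors = [e - r for e, r in zip(estimates, references)]
    r_bar, e_bar = mean(references), mean(errors)
    sxy = sum((r - r_bar) * (err - e_bar) for r, err in zip(references, errors))
    sxx = sum((r - r_bar) ** 2 for r in references)
    return sxy / sxx


# Hypothetical weighed portions (g) and model estimates (g)
reference = [100.0, 200.0, 300.0, 400.0]
estimate = [95.0, 170.0, 240.0, 300.0]

print(f"MAPE: {mape(estimate, reference):.2f}%")              # MAPE: 16.25%
print(f"Bias slope: {bias_slope(estimate, reference):.3f}")   # Bias slope: -0.315
```

In this toy example the error grows from -5 g on the smallest portion to -100 g on the largest, which is the pattern a negative slope summarizes.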

Performance Relative to Traditional Methods

When contextualized against traditional dietary assessment methods, the performance of leading MLLMs becomes particularly noteworthy. The accuracy levels achieved by ChatGPT and Claude (MAPE ~36%) are comparable with traditional self-reported dietary assessment methods but without the associated user burden [35]. This suggests potential utility as dietary monitoring tools, though the systematic underestimation of large portions and high variability in macronutrient estimation indicate these general-purpose LLMs are not yet suitable for precise dietary assessment in clinical or athletic populations where accurate quantification is critical [35].

Specialized AI systems have demonstrated further improved performance in specific contexts. The EgoDiet system, which employs a dedicated egocentric vision-based pipeline, achieved a MAPE of 28.0% for portion size estimation in field studies among African populations, outperforming the traditional 24-Hour Dietary Recall (24HR) which exhibited a MAPE of 32.5% [38]. In another study, the same system demonstrated a MAPE of 31.9% for portion size estimation compared to 40.1% for estimates made by dietitians [38].

Table 2: Comparison of AI Methods with Traditional Assessment Approaches

| Assessment Method | Weight/Portion Estimation MAPE | Key Advantages | Key Limitations |
|---|---|---|---|
| Multimodal LLMs (ChatGPT/Claude) | 36.3-37.3% | No user burden, automated analysis | Systematic underestimation of large portions |
| Specialized AI (EgoDiet) | 28.0-31.9% | Optimized for specific cuisines, passive capture | Requires specialized hardware |
| Traditional 24HR | 32.5% | Established methodology, widely validated | Recall bias, labor-intensive |
| Dietitian Estimation | 40.1% | Professional expertise | Costly, subjective variability |

Experimental Protocols for Validation Research

Standardized Benchmark Evaluation Methodology

The performance data presented in Table 1 were derived from a controlled experimental protocol designed specifically for validating AI-based dietary assessment methods [35]. The methodology can be summarized as follows:

- Image Dataset: 52 standardized food photographs including individual food components (n = 16) and complete meals (n = 36) across three portion sizes (small, medium, large) [35]

- Reference Standards: Direct weighing of food items with nutritional composition determined using Dietist NET nutritional database software [35]

- Model Prompting: Identical prompts provided to each model to identify food components and estimate nutritional content using visible cutlery and plates as size references [35]

- Evaluation Metrics: Mean absolute percentage error (MAPE), Pearson correlation coefficients, and systematic bias analysis using Bland-Altman plots [35]

This experimental framework provides a validated approach for researchers seeking to benchmark new portion-size estimation methods against established standards.
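The agreement metrics named in the protocol can be sketched as follows. This is an illustrative implementation under common textbook conventions (Bland-Altman limits of agreement as mean difference ± 1.96 standard deviations, sample Pearson correlation); it is not the analysis code used in [35], and the numbers are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def bland_altman(estimates, references):
    """Return (mean bias, lower limit, upper limit) of agreement."""
    diffs = [e - r for e, r in zip(estimates, references)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


def pearson_r(x, y):
    """Sample Pearson correlation coefficient between two sequences."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    syy = sum((b - y_bar) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical reference weights (g) and estimated weights (g)
reference = [100.0, 200.0, 300.0, 400.0]
estimate = [110.0, 180.0, 310.0, 360.0]

bias, lower, upper = bland_altman(estimate, reference)
print(f"Bias {bias:.1f} g, limits of agreement [{lower:.1f}, {upper:.1f}] g")
print(f"Pearson r = {pearson_r(estimate, reference):.3f}")
```

A high correlation can coexist with wide limits of agreement, which is why validation studies typically report both alongside MAPE.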

Specialized AI System Validation Protocol

The EgoDiet evaluation followed a different validation protocol tailored to real-world conditions [38]:

- Field Studies: Conducted in both London (Study A) and Ghana (Study B) among populations of Ghanaian and Kenyan origin [38]

- Hardware Configuration: Utilized two customized wearable cameras - the Automatic Ingestion Monitor (AIM, eye-level) and eButton (chest-level) - with images stored on SD cards with capacity for ≤3 weeks of data [38]

- Reference Method Comparison: In Study A, contrasted with dietitians' assessments; in Study B, compared to traditional 24-Hour Dietary Recall [38]

- Pipeline Architecture: Employed four specialized modules: SegNet for food item and container segmentation, 3DNet for depth estimation and 3D reconstruction, Feature for portion size-related feature extraction, and PortionNet for final weight estimation [38]

[Diagram: experimental workflow for validating portion-size estimation methods, from data collection through to performance evaluation]

Technical Architectures for Automated Estimation

Multimodal LLM Architecture for Dietary Analysis

General-purpose multimodal LLMs employ an integrated architecture for processing food images and generating nutritional estimates [39] [40]. These models:

- Utilize transformer-based architectures pre-trained on vast multimodal datasets [39] [40]

- Employ visual encoders to process food images and extract relevant features [40]

- Fuse visual representations with textual prompts to generate comprehensive food analyses [40]

- Leverage in-context learning capabilities to adapt to specific dietary assessment tasks without fine-tuning [40]

The performance of these models has been shown to be significantly influenced by prompt engineering strategies, with techniques like Chain-of-Thought prompting demonstrating improved performance in complex diagnostic tasks in other domains [41].

Specialized Depth Imaging Pipeline

The EgoDiet system implements a more specialized technical architecture specifically designed for portion size estimation [38]:

- SegNet Module: Utilizes a Mask Region-based Convolutional Neural Network (Mask R-CNN) backbone optimized for segmentation of food items and containers in African cuisine [38]

- 3DNet Module: A depth estimation network with encoder-decoder architecture that estimates camera-to-container distance and reconstructs 3D models of containers [38]

- Feature Module: Extracts portion size-related features from segmentation masks and 3D models, including Food Region Ratio (FRR) and Plate Aspect Ratio (PAR) [38]

- PortionNet Module: Estimates final portion size in weight using extracted features with relatively little labeled data (addressing the few-shot regression problem) [38]
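As a rough illustration of what the Feature module's outputs might look like, the sketch below derives two ratios from binary segmentation masks. The definitions are assumptions made for this example, not taken from [38]: Food Region Ratio is treated as food pixels divided by container pixels, and Plate Aspect Ratio as the height-to-width ratio of the container's bounding box in the image. The function names and the tiny masks are hypothetical.

```python
def mask_area(mask):
    """Number of foreground pixels in a binary mask (list of rows of 0/1)."""
    return sum(sum(row) for row in mask)


def bounding_box_size(mask):
    """Height and width, in pixels, of the tightest box around the foreground."""
    rows = [i for i, row in enumerate(mask) if any(row)]
    cols = [j for row in mask for j, value in enumerate(row) if value]
    return max(rows) - min(rows) + 1, max(cols) - min(cols) + 1


def food_region_ratio(food_mask, container_mask):
    """Assumed FRR: share of the container region covered by food."""
    return mask_area(food_mask) / mask_area(container_mask)


def plate_aspect_ratio(container_mask):
    """Assumed PAR: bounding-box height over width of the container."""
    height, width = bounding_box_size(container_mask)
    return height / width


# Hypothetical 4x6 masks: a container spanning 2x4 pixels holding 2 food pixels
container = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 0],
]
food = [
    [0, 0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
]

print(food_region_ratio(food, container))  # 0.25
print(plate_aspect_ratio(container))       # 0.5
```

Under these assumed definitions, a circular plate viewed at an angle appears as an ellipse, so the aspect ratio carries information about camera tilt, while the region ratio scales with how full the container is.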

[Diagram: technical architecture of a specialized depth imaging pipeline for portion size estimation]

The Researcher's Toolkit: Essential Materials and Methods

Table 3: Research Reagent Solutions for Portion-Size Estimation Validation

| Research Tool | Function | Example Implementation |
|---|---|---|
| Standardized Food Photographs | Controlled dataset for benchmarking | 52 photographs across multiple portion sizes and meal types [35] |
| Reference Nutritional Databases | Ground truth for nutrient composition | Dietist NET software [35] |
| Wearable Camera Systems | Passive capture of dietary intake | Automatic Ingestion Monitor (AIM) and eButton devices [38] |
| Depth Estimation Networks | 3D reconstruction from 2D images | Encoder-decoder architecture for camera-to-container distance [38] |
| Segmentation Algorithms | Food item and container identification | Mask R-CNN backbone optimized for specific cuisines [38] |
| Validation Metrics Suite | Performance quantification | MAPE, correlation coefficients, Bland-Altman analysis [35] |

The validation of portion-size estimation methods represents a critical frontier in nutritional research. Current evidence suggests that multimodal LLMs achieve accuracy levels comparable to traditional self-reported methods while significantly reducing user burden [35]. However, systematic underestimation, particularly with larger portions, remains a significant limitation [35]. Specialized AI systems employing depth imaging and computer vision techniques demonstrate improved performance in specific contexts but often require specialized hardware and optimization for particular cuisines [38].

For research applications where precise quantification is paramount, such as clinical trials or athletic nutrition, current general-purpose MLLMs show limitations but specialized systems may offer viable alternatives to traditional methods [35] [38]. Future research should focus on addressing systematic biases, expanding food databases, and developing hybrid approaches that leverage the strengths of both general-purpose MLLMs and specialized computer vision techniques.

The field shows particular promise for advancing dietary assessment in low- and middle-income countries and for long-term studies where participant burden and technical requirements present significant challenges to traditional methods [38]. As these technologies continue to evolve, rigorous validation against standardized benchmarks will remain essential for establishing their appropriate role in nutritional research and clinical practice.

Accurately quantifying food intake is a cornerstone of nutritional research, pivotal for understanding the links between diet and health outcomes such as obesity, diabetes, and cardiovascular diseases [42] [43]. Portion size estimation remains a significant source of measurement error in dietary assessment, making the choice of an appropriate estimation method a critical decision that can directly impact the validity and reliability of research findings [43]. The evolution of dietary assessment tools has introduced a diverse array of portion size estimation methods, ranging from traditional physical aids to sophisticated digital applications, each with distinct strengths, limitations, and contextual suitability.

The validation of these methods against criterion standards forms the essential evidence base for researchers to make informed decisions. This guide provides a systematic comparison of contemporary portion size estimation methods, synthesizing validation data from recent studies to assist researchers, scientists, and drug development professionals in selecting the most appropriate tool for specific research contexts and populations. By aligning methodological capabilities with research requirements, investigators can optimize the quality of dietary intake data collected in studies ranging from large-scale epidemiological surveys to clinical trials and behavioral interventions.

Comparative Analysis of Portion Size Estimation Methods

The table below summarizes the performance characteristics of major portion size estimation methods as validated in recent scientific literature.

Table 1: Comparison of Portion Size Estimation Methods and Their Validation

| Method | Research Context | Population | Key Validation Findings | Equivalence to Criterion | Limitations |
|---|---|---|---|---|---|
| 3D Cubes (GDQS App) [10] | Diet quality assessment | Adults (18+) | GDQS equivalent to WFR within 2.5-point margin (p=0.006); Moderate agreement (κ=0.57) for poor diet quality risk | Equivalent | Requires 3D printed cubes; Liquid oils had low agreement (κ=0.059) |
| Playdough (GDQS App) [10] | Diet quality assessment | Adults (18+) | GDQS equivalent to WFR within 2.5-point margin (p<0.001); Moderate agreement (κ=0.58) for poor diet quality risk | Equivalent | May not be suitable for all food types |
| PortionSize App [42] [6] | Real-time dietary feedback | Adults (18-65 years) | Overestimated energy by 83.5 kcal (12.7%); Equivalent for gram weight (p=0.01), fruits, dairy; Not equivalent for carbs, fat, vegetables, grains, protein | Mixed results | Overestimates energy intake; Requires smartphone proficiency |
| Text-Based PSE (TB-PSE) [43] | Controlled food intake studies | Adults (20-70 years) | 0% median relative error; 31% of estimates within 10% of true intake; 50% within 25% of true intake | Moderate to high accuracy | Relies on understanding of household measures |
| Image-Based PSE (IB-PSE) [43] | Controlled food intake studies | Adults (20-70 years) | 6% median relative error; 13% of estimates within 10% of true intake; 35% within 25% of true intake | Lower than TB-PSE | Influenced by perception, conceptualization, and memory |
| Food Atlas (Balkan Region) [44] | Population dietary surveys | Nutrition professionals & laypersons | 80-85% of items quantified within acceptable range; 60.2% selected correct portion on average | High for cultural-specific foods | Requires cultural adaptation; Limited to photographed foods |
| Intake24 (Online Tool) [45] | School-based dietary surveys | Children (11-12 years) | Good agreement with 3D models (mean ratio 1.00); Energy estimates 1% lower than food models | Equivalent to 3D models | Web-based requirement; Limited to database foods |
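The accuracy summaries reported for the text-based and image-based methods (median relative error and the share of estimates within 10% or 25% of true intake) can be computed as in the sketch below. It assumes relative error is defined as (estimate - true) / true; the function name and sample values are hypothetical and not drawn from [43].

```python
from statistics import median


def relative_error_summary(estimates, true_intakes):
    """Summarize signed relative errors of portion estimates.

    Assumes relative error = (estimate - true) / true for each item.
    """
    rel = [(e - t) / t for e, t in zip(estimates, true_intakes)]
    n = len(rel)
    return {
        "median_relative_error_pct": 100.0 * median(rel),
        "share_within_10_pct": sum(abs(r) <= 0.10 for r in rel) / n,
        "share_within_25_pct": sum(abs(r) <= 0.25 for r in rel) / n,
    }


# Hypothetical true intakes (g) and participant estimates (g)
true_intake = [100.0, 100.0, 100.0, 100.0]
estimated = [95.0, 120.0, 100.0, 160.0]

summary = relative_error_summary(estimated, true_intake)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

A median relative error near 0% can mask wide scatter, because over- and underestimates cancel out; the within-10% and within-25% shares show how tightly individual estimates cluster around the truth.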

Experimental Protocols and Methodologies

Validation Study Designs

Repeated Measures Design for GDQS App Validation [10]

A comprehensive validation study for the GDQS app with cubes and playdough employed a repeated measures design with 170 adult participants. The protocol spanned three consecutive days: Day 1 involved in-person training on weighing foods and using dietary scales; Day 2 consisted of participants weighing and recording all consumed foods using weighed food records (WFR); Day 3 included face-to-face GDQS app interviews using both cubes and playdough portion estimation methods. The study used paired two one-sided t-tests (TOST) with a pre-specified 2.5-point equivalence margin to compare GDQS scores derived from each method against the WFR criterion standard. This rigorous design allowed for direct comparison of methods under controlled conditions while simulating real-world application.
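The logic of a paired TOST equivalence test can be sketched as follows. To stay within the standard library, this example replaces the t distribution with a normal approximation, which is reasonable only for large samples (such as the 170 participants described) and is not how the study's analysis was necessarily implemented. The function name and the scores are hypothetical; the toy sample is far too small for the approximation and serves only to show the mechanics.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def paired_tost(method_scores, criterion_scores, margin):
    """Two one-sided tests for equivalence of paired scores.

    Returns (mean difference, TOST p-value). Equivalence within +/- margin
    is concluded when the p-value falls below the chosen alpha.
    Uses a normal approximation in place of the t distribution.
    """
    diffs = [m - c for m, c in zip(method_scores, criterion_scores)]
    d_bar = mean(diffs)
    se = stdev(diffs) / sqrt(len(diffs))
    std_normal = NormalDist()
    # Test 1: H0 says the mean difference is <= -margin
    p_lower = 1.0 - std_normal.cdf((d_bar + margin) / se)
    # Test 2: H0 says the mean difference is >= +margin
    p_upper = std_normal.cdf((d_bar - margin) / se)
    return d_bar, max(p_lower, p_upper)


# Hypothetical GDQS scores from the app and from weighed food records
app_scores = [20.0, 22.5, 18.0, 25.0, 21.0, 19.5, 23.0, 24.0]
wfr_scores = [19.5, 23.0, 18.5, 24.0, 21.5, 19.0, 22.5, 24.5]

mean_diff, p_value = paired_tost(app_scores, wfr_scores, margin=2.5)
print(f"Mean difference: {mean_diff:.4f}, TOST p-value: {p_value:.4g}")
```

Unlike a conventional paired t-test, where a small p-value indicates a difference, a small TOST p-value supports equivalence: both one-sided null hypotheses (difference beyond the lower or upper margin) must be rejected.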