Wearable Sensors for Dietary Intake Monitoring: A Research and Clinical Applications Review

Abstract

This article provides a comprehensive analysis of wearable sensor technology for objective dietary monitoring, addressing a critical need for researchers, scientists, and drug development professionals. It explores the foundational principles of sensor modalities—including acoustic, inertial, optical, and physiological sensors—and their application in detecting eating episodes and behaviors. The content delves into methodological approaches for data acquisition and analysis, examines current challenges related to accuracy and privacy, and evaluates validation protocols and comparative performance against traditional dietary assessment methods. By synthesizing recent advancements and identifying future trajectories, this review serves as a strategic resource for integrating these technologies into clinical trials, nutritional epidemiology, and precision medicine initiatives.

The Science Behind Dietary Monitoring: Core Principles and Sensor Modalities

Accurate dietary intake measurement is fundamental for nutrition research, chronic disease management, and public health monitoring, yet it remains notoriously challenging due to the limitations of self-report methods [1]. Traditional approaches including food records, 24-hour recalls, and food frequency questionnaires (FFQs) are susceptible to significant random and systematic measurement errors that compromise data quality [1] [2]. These methods rely heavily on participant memory, literacy, and motivation, often resulting in underreporting or overreporting, particularly for foods perceived as socially desirable or undesirable [1]. The rapid advancement of wearable sensing technologies and objective measurement tools presents a paradigm shift, offering solutions to overcome these fundamental limitations and usher in a new era of precision nutrition research [3] [2].

Within research on wearable sensors for dietary intake monitoring, the move toward objective data collection is driven by the need to capture accurate, reliable, and unbiased dietary behaviors in free-living conditions. This technical guide examines the critical need for objective dietary data, surveys the current technological landscape with a focus on wearable sensors, and provides detailed methodological frameworks for implementing these approaches in research settings aimed at clinical and drug development applications.

A Critical Examination of Traditional Dietary Assessment Methods

Traditional dietary assessment methods each carry distinct strengths and weaknesses that make them suitable for specific research contexts but problematic for others [1]. The table below provides a systematic comparison of the primary self-report methods used in research settings.

Table 1: Comparative Analysis of Traditional Dietary Assessment Methods

| Characteristic | 24-Hour Recall | Food Record | Food Frequency Questionnaire (FFQ) | Screening Tools |
|---|---|---|---|---|
| Scope of interest | Total diet | Total diet | Total diet or specific components | One or a few components |
| Time frame | Short term | Short term | Long term | Varies (often prior month/year) |
| Measurement error | Random | Random | Systematic | Systematic |
| Potential for reactivity | Low | High | Low | Low |
| Time required to complete | >20 minutes | >20 minutes | >20 minutes | <15 minutes |
| Memory requirements | Specific | None | Generic | Generic |
| Cognitive difficulty | High | High | Low | Low |
| Suitable study designs | Cross-sectional, prospective, intervention | Prospective, intervention | Cross-sectional, retrospective, prospective | Cross-sectional, intervention |

Fundamental Limitations and Measurement Errors

The accuracy of self-reported dietary data is fundamentally constrained by several factors. Reactivity represents a significant concern, particularly with food records, where participants may alter their usual dietary patterns for ease of recording or to report foods perceived as "healthy" [1]. Memory dependence affects 24-hour recalls and FFQs, with the latter relying on generic memory rather than specific recall of recent intake [1].

The most substantial limitation concerns systematic measurement errors, particularly the pervasive issue of energy underreporting [1]. Recovery biomarkers, which exist only for energy, protein, sodium, and potassium, have revealed that all self-report methods contain systematic errors, with 24-hour recalls representing the least biased estimator among traditional methods [1]. Furthermore, participant burden often leads to declining reporting quality over time, while literacy and physical ability requirements limit applicability across diverse populations [1].

The Emergence of Objective Measurement Technologies

The Paradigm Shift Toward Objective Data Collection

The rapid development of sensing technologies and artificial intelligence has inspired a fundamental shift toward objective data collection methods capable of overcoming the limitations of self-reports [2]. These technologies aim to capture dietary behaviors automatically, continuously, and unobtrusively in free-living environments, thereby reducing recall bias, social desirability bias, and participant burden [3] [2].

Objective measurement technologies span wearable and remote solutions that collect data directly from individuals or provide indirect information on food choices and intake [2]. These approaches cover the entire continuum from food-evoked emotions to food choice, eating action detection, food type identification, and quantification of consumed amounts [2]. For research on wearable sensors for dietary monitoring, this represents a critical advancement toward achieving comprehensive dietary assessment in real-world settings.

Categorization of Objective Measurement Approaches

Objective measurement technologies can be categorized into five primary domains based on their functionality and application in nutrition research:

- Detecting food-related emotions: Technologies capturing physiological responses correlated with emotional states during eating episodes

- Monitoring food choices: Systems tracking selection decisions before consumption

- Detecting eating actions: Sensors identifying the initiation, duration, and cessation of eating events

- Identifying type of food consumed: Platforms classifying specific foods and beverages ingested

- Estimating amount of food consumed: Tools quantifying volume or weight of intake [2]

These technologies encompass both wearable solutions (e.g., jaw-mounted sensors, smart glasses, wrist-worn devices) and remotely applied solutions (e.g., smartphone cameras, ambient sensors) that collect data directly from individuals or provide indirect information on consumers' food choices and dietary intake [2].

Wearable Sensing Technologies for Dietary Monitoring

Current State of Wearable Sensing Technology

Wearable sensors represent the cutting edge of objective dietary monitoring, offering the potential for continuous, unobtrusive measurement of eating behaviors in free-living conditions [3]. The systematic review protocol by Zhou et al. (2025) highlights the "rapid advancement of wearable sensing technology" that "presents a promising solution for effective dietary monitoring by reducing recall bias and enhancing user convenience" [3]. This technology shows particular promise for both clinical chronic disease management and nutritional research applications [3].

Recent research has demonstrated multiple technological approaches to wearable sensing for diet monitoring, including jawbone-mounted inertial sensing for eating episode detection [3], acoustic sensors for chewing sound analysis [3], and intelligent eyewear that can detect food consumption through physiological responses [2]. These approaches leverage various data modalities including motion, sound, and physiological signals to detect and characterize eating episodes without requiring active user input.

Methodological Framework for Wearable Sensor Implementation

Implementing wearable sensing technology in dietary monitoring research requires careful methodological planning across several dimensions:

Table 2: Key Methodological Considerations for Wearable Sensor Studies

| Dimension | Considerations | Technical Requirements |
|---|---|---|
| Sensor selection | Type of data (motion, acoustic, etc.), form factor, battery life | Sampling rate, memory storage, connectivity options |
| Study protocol | Duration, free-living vs. controlled, reference intake measures | Standardized procedures for sensor placement, calibration |
| Data processing | Signal preprocessing, feature extraction, event detection | Computational pipelines, artifact removal algorithms |
| Validation approach | Comparison with ground truth (weighed food, video), accuracy metrics | Standardized validation metrics (F1-score, precision, recall) |

The critical technical challenge lies in developing systems that balance accuracy with practical applicability in real-world settings while managing participant burden and privacy concerns [2]. Multi-sensor systems that combine complementary data modalities (e.g., inertial measurement units with acoustic sensors) often show improved performance but at the cost of increased complexity and participant burden [3].

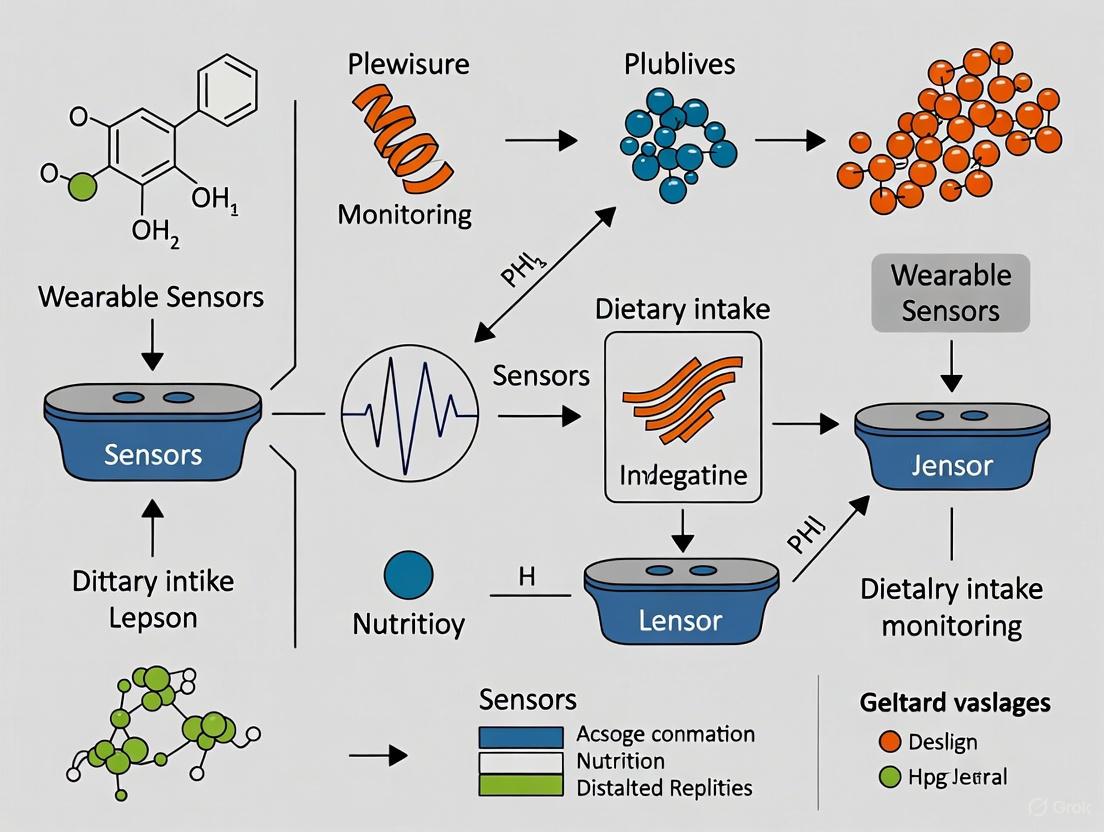

Figure 1: Wearable Sensor Data Processing Workflow

Image-Based Dietary Assessment Methods

Technological Foundations of Image-Based Analysis

Image-based food monitoring represents another major approach to objective dietary assessment, leveraging advances in computer vision and deep learning to automatically estimate nutritional intake from food images [4]. These systems typically operate through a structured pipeline involving food image segmentation, food recognition, volume estimation, and calorie calculation [4].

The core stages of image-based dietary assessment include:

- Food Segmentation: Isolating food items from the background or other objects in the image using convolutional neural networks (CNNs) and instance segmentation algorithms

- Food Classification: Identifying specific food types through deep learning models trained on large-scale food image datasets

- Volume Estimation: Calculating portion sizes through geometric modeling or reference object comparison

- Calorie Calculation: Integrating classification and volume data with nutritional databases to determine caloric and nutrient content [4]

These methodologies have shown particular promise for diabetes management and other weight-related chronic diseases where precise caloric monitoring is essential [4].
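
The final stage of the pipeline above can be sketched in a few lines. This is a minimal illustration, assuming the earlier stages have already produced a food label and a volume estimate in millilitres. The `FOOD_TABLE` lookup, its density and energy values, and the names `DetectedItem`, `estimate_kcal`, and `meal_kcal` are hypothetical placeholders, not values from USDA FoodData Central or from any system described in [4].

```python
from dataclasses import dataclass

# Hypothetical lookup table: density (g/mL) and energy (kcal per 100 g).
# The numbers are illustrative placeholders, not database values.
FOOD_TABLE = {
    "rice_cooked": {"density_g_per_ml": 0.80, "kcal_per_100g": 130.0},
    "apple": {"density_g_per_ml": 0.60, "kcal_per_100g": 52.0},
}


@dataclass
class DetectedItem:
    label: str        # output of the classification stage
    volume_ml: float  # output of the volume-estimation stage


def estimate_kcal(item, table=FOOD_TABLE):
    """Convert volume to mass via density, then mass to energy."""
    entry = table[item.label]
    mass_g = item.volume_ml * entry["density_g_per_ml"]
    return mass_g * entry["kcal_per_100g"] / 100.0


def meal_kcal(items, table=FOOD_TABLE):
    """Sum the energy estimates of all items detected in one image."""
    return sum(estimate_kcal(item, table) for item in items)


if __name__ == "__main__":
    meal = [DetectedItem("rice_cooked", 250.0), DetectedItem("apple", 100.0)]
    print(f"Estimated energy: {meal_kcal(meal):.1f} kcal")
```

Because the estimate is a product of volume, density, and energy density, a relative error in the volume stage carries through proportionally to the calorie figure, which is one reason volume estimation is singled out as a remaining challenge.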

Implementation Protocols for Image-Based Assessment

Implementing image-based dietary assessment requires careful protocol design across several dimensions:

Table 3: Image-Based Food Analysis Implementation Framework

| Component | Technical Requirements | Implementation Options |
|---|---|---|
| Image capture | Resolution, lighting, angle consistency | Smartphone cameras, specialized devices |
| Segmentation | Pixel-level accuracy, boundary detection | CNN architectures (U-Net, Mask R-CNN) |
| Classification | Multi-class accuracy, food taxonomy | Transfer learning, ensemble methods |
| Volume estimation | Depth perception, shape modeling | 3D reconstruction, reference objects |
| Calorie calculation | Nutrient database integration | USDA FoodData Central, custom databases |

Recent applications have demonstrated the feasibility of fully automated systems that operate entirely on smartphones without requiring data transmission to external servers, thereby addressing privacy concerns and improving accessibility [4]. However, challenges remain in achieving accurate volume estimation without user input or specialized devices, and in validating these systems across diverse food cultures and eating environments [4].

Experimental Protocols for Dietary Monitoring Research

Protocol Framework for Wearable Sensor Validation

Robust experimental protocols are essential for validating objective dietary monitoring technologies. The systematic review protocol by Zhou et al. offers a comprehensive framework for evaluating wearable sensing technologies, following the Preferred Reporting Items for Systematic Review and Meta-Analysis Protocols (PRISMA-P) guidelines [3]. Key elements include:

- Comprehensive literature search across multiple databases (MEDLINE, EMBASE, PubMed, IEEE Xplore, Web of Science)

- Strict inclusion criteria focusing on studies involving human participants using wearable sensors for dietary intake monitoring

- Systematic evaluation of sensor design, performance metrics, and user experience

- Exclusion of studies focusing solely on algorithm or application development without human testing [3]

For primary research studies, protocol design should include controlled feeding sessions to establish ground truth, followed by free-living validation to assess real-world performance. Studies should specifically report on sensor performance metrics including eating episode detection accuracy, food classification precision and recall, and energy intake estimation error compared to reference methods like doubly labeled water [3] [2].

Statistical Analysis and Dietary Pattern Analysis

Beyond data collection, advanced statistical methods are required to derive meaningful dietary patterns from complex intake data. Emerging approaches include:

- Finite Mixture Models (FMM): Model-based clustering methods that identify subpopulations with distinct dietary patterns

- Treelet Transform (TT): Combines principal component analysis and clustering algorithms in a one-step process

- Data Mining (DM): Discovers patterns in large dietary datasets while considering health outcomes

- Least Absolute Shrinkage and Selection Operator (LASSO): Selects relevant food items predictive of health outcomes

- Compositional Data Analysis (CODA): Transforms dietary intake into log-ratios to account for the compositional nature of diet data [5]

These methods enable researchers to move beyond simple nutrient analysis to capture the complex, multidimensional nature of dietary intake and its relationship to health outcomes [5].
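
To make the log-ratio idea behind CODA concrete, the sketch below applies a centered log-ratio (CLR) transform to one participant's intake vector. CLR is only one of several log-ratio transforms, and the source does not say which one is meant, so treat the choice as an assumption. The zero-replacement `pseudocount` is also an assumption, since zero handling varies between studies.

```python
import math


def clr(composition, pseudocount=1e-6):
    """Centered log-ratio transform of one compositional vector.

    composition: non-negative amounts (e.g., grams per food group).
    pseudocount: assumed replacement for zeros so the log is defined.
    """
    parts = [max(x, pseudocount) for x in composition]
    total = sum(parts)
    proportions = [x / total for x in parts]
    logs = [math.log(p) for p in proportions]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [value - mean_log for value in logs]


if __name__ == "__main__":
    # Hypothetical daily intake in grams: grains, vegetables, dairy
    print(clr([500.0, 300.0, 200.0]))
```

The transformed values sum to zero and depend only on the ratios between components, so rescaling the whole diet (for example, grams versus percent of total) leaves the result unchanged.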

Figure 2: Experimental Validation Protocol Framework

The Scientist's Toolkit: Key Research Reagents and Technologies

Core Technologies for Objective Dietary Monitoring

Table 4: Essential Research Technologies for Objective Dietary Assessment

| Technology Category | Specific Examples | Research Application | Key Function |
|---|---|---|---|
| Wearable Inertial Sensors | Jawbone-mounted sensors, wrist-worn accelerometers | Eating episode detection, chew count quantification | Captures motion patterns associated with eating gestures and jaw movement |
| Acoustic Sensors | Contact microphones, in-ear audio recorders | Food texture characterization, swallowing detection | Analyzes chewing and swallowing sounds to identify food properties |
| Computer Vision Systems | Smartphone cameras, specialized imaging devices | Food identification, portion size estimation | Automates food recognition and volume estimation through image analysis |
| Physiological Sensors | Electromyography (EMG), glucose monitors, intelligent eyewear | Metabolic response tracking, eating event detection | Monitors physiological correlates of food intake and metabolic processing |
| Integrated Sensor Platforms | Multi-sensor systems combining complementary modalities | Comprehensive dietary behavior capture | Provides complementary data streams to improve accuracy through sensor fusion |

Successful implementation of objective dietary monitoring requires not only sensing technologies but also robust validation methodologies and analytical frameworks:

- Reference Databases: Standardized food image datasets (e.g., Food-101, UEC-Food100), nutrient databases (e.g., USDA FoodData Central)

- Validation Protocols: Doubly labeled water for energy expenditure, weighed food records for intake validation, controlled feeding studies

- Analytical Tools: Compositional data analysis packages, dietary pattern analysis software, machine learning frameworks for sensor data processing [5] [4]

Future Directions and Implementation Challenges

Key Challenges in Objective Dietary Monitoring

Despite significant advances, objective dietary monitoring technologies face several implementation challenges that must be addressed for widespread adoption:

- Real-World Applicability: Balancing technical performance with practical usability in free-living environments remains challenging [2]

- Validation Across Diverse Populations: Ensuring accuracy across different age groups, ethnicities, food cultures, and health conditions [4]

- Participant Burden and Compliance: Designing systems that minimize interference with normal eating behaviors while collecting high-quality data [3]

- Data Privacy and Security: Protecting sensitive health and dietary information collected through continuous monitoring [4]

- Standardization and Interoperability: Establishing common metrics, protocols, and data formats to enable comparison across studies [2]

Emerging Opportunities and Research Priorities

The field of objective dietary monitoring presents numerous opportunities for future research and technological development:

- Multi-Modal Sensor Fusion: Combining complementary data streams (inertial, acoustic, visual, physiological) to improve accuracy and robustness [3] [2]

- Artificial Intelligence Advancements: Leveraging deep learning and transfer learning to improve food recognition and portion estimation across diverse food types [4]

- Integration with Health Monitoring Platforms: Connecting dietary assessment with physiological monitoring for comprehensive health tracking [2]

- Personalized Nutrition Applications: Enabling real-time dietary feedback and personalized recommendations based on objective intake data [4]

- Large-Scale Epidemiological Research: Deploying objective monitoring technologies in cohort studies to establish more precise diet-disease relationships [3]

For researchers and drug development professionals, the critical need for objective dietary data is no longer a theoretical concern but an imperative driven by the limitations of traditional methods and the growing availability of sophisticated sensing technologies. By adopting and refining these approaches, the research community can overcome fundamental measurement challenges and advance our understanding of diet-health relationships with unprecedented precision and reliability.

The emergence of sophisticated wearable sensor technology is revolutionizing dietary intake monitoring, moving the field beyond traditional, subjective methods like food diaries and toward objective, data-driven research. Accurate dietary assessment is critical for understanding the onset and progression of chronic diseases such as type 2 diabetes, heart disease, and obesity [6]. Wearable sensors offer a solution to the limitations of self-reporting by enabling continuous, objective data collection in naturalistic settings, thereby minimizing recall bias and enhancing user convenience [6]. This technical guide provides a taxonomy of wearable sensors, framing their functionality and application within the specific context of dietary intake monitoring research for scientists and drug development professionals. It explores how these sensors operate individually and synergistically to capture the complex physiological and behavioral signals associated with eating.

A Multi-Dimensional Taxonomy of Wearable Sensors

Wearable sensors for monitoring human health and behavior can be categorized into four primary dimensions based on the type of data they capture. The following table summarizes these core dimensions and their relevance to dietary monitoring.

Table 1: Core Dimensions of Wearable Sensing for Dietary Monitoring

| Monitoring Dimension | Key Sensor Types | Measured Parameters | Application in Dietary Intake Research |
|---|---|---|---|
| Physiological | Photoplethysmography (PPG), Electrocardiogram (ECG), Temperature, Electrodermal Activity (EDA) | Heart Rate (HR), Heart Rate Variability (HRV), Core Temperature, Stress Arousal [7] [8] | Captures autonomic nervous system responses to food intake; monitors stress and energy expenditure [9]. |
| Kinematic | Inertial Measurement Units (IMUs), Accelerometers, Gyroscopes | Body Movement, Velocity, Acceleration, Joint Angles, Hand/Wrist Gestures [7] | Detects eating-related gestures (e.g., hand-to-mouth movements) and characterizes chewing cycles [6]. |
| Biochemical | Electrochemical Sensors, Continuous Glucose Monitors (CGM), Sweat Biosensors | Glucose, Lactate, Cortisol, Electrolytes (Na+, K+) [7] [10] | Provides direct readouts of metabolic response to food intake (e.g., postprandial glucose levels) [10]. |
| Acoustic | Microphones, Acoustic Sensors | Chewing Sounds, Swallowing Sounds [6] | Identifies and characterizes ingestion events based on audio signatures of mastication and deglutition. |

Kinematic and Acoustic Sensing: Detecting Eating Behaviors

Kinematic monitoring focuses on the temporal and spatial characteristics of human movement. Inertial Measurement Units (IMUs), which often combine accelerometers and gyroscopes, are the primary sensors in this category [7]. In dietary research, their key application is the detection of eating-related gestures, specifically hand-to-mouth movements, which serve as a behavioral proxy for bite intake [6]. Furthermore, high-fidelity kinematic sensors can capture the distinct patterns of jaw movement during chewing, allowing for the estimation of chewing count and rate.

Acoustic sensing, using miniature microphones, complements kinematic data by capturing the sounds produced during chewing and swallowing [6]. The fusion of kinematic and acoustic data significantly improves the accuracy of eating event detection compared to using either modality alone, helping to distinguish actual eating from similar motions like face-touching or talking.

Physiological Sensing: Measuring Metabolic and Autonomic Responses

Physiological sensors provide insights into the body's internal state. For dietary monitoring, several parameters are key:

- Continuous Glucose Monitoring (CGM): CGM sensors measure glucose levels in interstitial fluid, providing a direct and continuous view of the glycemic response to food intake [10]. This allows researchers to move beyond crude carbohydrate estimates to understanding individual glycemic variability.

- Photoplethysmography (PPG): Typically found in wrist-worn devices, PPG can be used to derive heart rate (HR) and heart rate variability (HRV) [7]. HRV, a marker of autonomic nervous system balance, can reflect metabolic load and postprandial physiological stress [7] [9].

- Electrodermal Activity (EDA): EDA measures changes in the skin's electrical conductivity due to sweating, which is linked to sympathetic nervous system arousal [8]. It can be used to investigate stress-related eating or physiological responses to different food types.

Biochemical Sensing: Expanding the Molecular Window

While CGM is the most established biochemical wearable, research is exploring other non-invasive biomarkers. Wearable sweat biosensors are being developed to measure analytes like lactate and cortisol, which could provide further insights into energy metabolism and stress responses during nutritional studies [7]. However, challenges remain in calibration stability and the precise mapping of sweat analyte concentrations to blood levels [7].

The diagram below illustrates how data from these diverse sensors is integrated to form a comprehensive picture of dietary behavior and its metabolic consequences.

Experimental Protocols for Dietary Monitoring Research

Implementing wearable sensors in dietary research requires rigorous protocols to ensure data quality and validity. The following section details methodologies for key experiment types.

Protocol for Multi-Sensor Eating Event Detection

Objective: To validate the accuracy of a kinematic-acoustic sensor system for automatically detecting and characterizing eating episodes in a free-living environment.

Materials:

- Inertial Measurement Unit (IMU) with accelerometer and gyroscope.

- Miniature body-worn microphone (acoustic sensor).

- eButton or similar chest-worn camera for ground truth image capture [10].

- Data logger or smartphone for data storage/synchronization.

Procedure:

- Sensor Deployment: Fit participants with the sensor suite. The IMU is typically placed on the wrist of the dominant hand. The acoustic sensor is attached to the neck or sternum region. The eButton is worn on the chest.

- Calibration: Perform a brief sensor calibration sequence (e.g., prescribed arm movements) at the start of each recording day.

- Data Collection: Participants go about their daily activities for a minimum of 8 hours, encompassing at least one main meal. They are instructed not to remove the devices.

- Ground Truth Logging: Participants use the eButton to capture images of all food and beverages before and after consumption [10]. They may also keep a brief paper diary to note meal start and end times.

- Data Processing:

- Kinematic Feature Extraction: From the IMU data, extract features such as rotational velocity of the wrist and repetitive motion patterns indicative of hand-to-mouth movement.

- Acoustic Feature Extraction: From the audio data, extract features related to the frequency and amplitude of chewing and swallowing sounds.

- Sensor Fusion: Use a machine learning classifier (e.g., a support vector machine or random forest) trained on the extracted features to detect eating episodes. The video or image data from the eButton provides the ground truth for training and validating the model [10].
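
The data processing steps above can be sketched as windowed feature extraction followed by feature-level fusion. This is a minimal illustration under several assumptions: the chosen features (gyroscope magnitude statistics, audio RMS energy, zero-crossing rate) are common examples rather than the feature set of any cited study, and the function names are hypothetical. The fused vector would then be passed to a classifier such as a support vector machine or random forest, which is not shown.

```python
import math
from statistics import mean, pstdev


def windows(samples, size, step):
    """Yield fixed-length windows; a trailing partial window is dropped."""
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def kinematic_features(gyro_window):
    """gyro_window: list of (x, y, z) angular velocities for one window."""
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in gyro_window]
    return [mean(magnitudes), pstdev(magnitudes), max(magnitudes)]


def acoustic_features(audio_window):
    """audio_window: list of signed audio samples for the same time span."""
    rms = math.sqrt(mean(s * s for s in audio_window))
    crossings = sum(
        1 for a, b in zip(audio_window, audio_window[1:]) if (a < 0) != (b < 0)
    )
    zero_crossing_rate = crossings / (len(audio_window) - 1)
    return [rms, zero_crossing_rate]


def fused_feature_vector(gyro_window, audio_window):
    """Feature-level fusion: concatenate the per-modality features."""
    return kinematic_features(gyro_window) + acoustic_features(audio_window)
```

Because the IMU and the microphone usually sample at different rates, the two windows passed to `fused_feature_vector` should cover the same time span rather than the same number of samples.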

Validation: Algorithm performance is reported using standard metrics: accuracy, precision, recall, and F1-score, calculated by comparing detected eating events against the ground truth [6].
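
As a rough illustration of how these metrics might be computed at the level of eating episodes, the sketch below matches detected intervals to ground-truth intervals by temporal overlap. The greedy one-to-one matching rule and the function names are assumptions; published studies differ in how they define a match. Accuracy is omitted because it needs a count of true negatives, which is only defined once the recording is divided into fixed windows.

```python
def overlaps(a, b):
    """True if two (start, end) intervals share any time."""
    return a[0] < b[1] and b[0] < a[1]


def event_metrics(detected, truth):
    """Event-level precision, recall, and F1 with greedy one-to-one matching.

    detected, truth: lists of (start_s, end_s) tuples.
    """
    matched = set()
    true_positives = 0
    for det in detected:
        for index, ref in enumerate(truth):
            if index not in matched and overlaps(det, ref):
                matched.add(index)
                true_positives += 1
                break
    precision = true_positives / len(detected) if detected else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    detected = [(0, 10), (20, 30), (50, 60)]
    truth = [(5, 12), (25, 35), (80, 90), (100, 110)]
    print(event_metrics(detected, truth))
```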

Protocol for Investigating Glucose Response using CGM

Objective: To correlate continuous glucose measurements with food intake data to understand individual glycemic responses to different foods.

Materials:

- Continuous Glucose Monitor (e.g., FreeStyle Libre Pro) [10].

- Wearable food imaging device (e.g., eButton) or detailed food diary application.

- Data analysis platform for CGM and dietary data integration.

Procedure:

- Sensor Deployment: A research professional applies the CGM sensor to the participant's upper arm according to the manufacturer's instructions [10]. Participants are trained on the use of the eButton.

- Study Duration: The monitoring period typically lasts 10-14 days to capture a variety of meals and foods [10].

- Dietary Recording: For each eating episode, participants use the eButton to capture images of their food, which are later analyzed to determine food type and portion size, and consequently, nutrient composition (e.g., carbohydrate grams) [10].

- Data Integration: Time-synchronized CGM data and food intake records are merged. The glucose trace is analyzed for measures such as:

- Postprandial Glucose Excursion: The peak increase in glucose levels following a meal.

- Time-in-Range: The percentage of time glucose remains within a target range.

- Glycemic Variability: The degree of fluctuation in glucose levels throughout the day.

- Analysis: Statistical models (e.g., linear mixed-effects models) are used to associate specific foods, meal compositions, or eating behaviors (like meal timing) with the glycemic response metrics.
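
The three glucose measures listed in the data integration step can be sketched as follows. The defaults are assumptions rather than protocol requirements: a 120-minute postprandial window, a 70 to 180 mg/dL target range, the last reading at or before the meal as baseline, and the coefficient of variation as the variability measure. Time-in-range is computed as a fraction of readings, which approximates a fraction of time only when the CGM samples at regular intervals.

```python
from statistics import mean, pstdev


def postprandial_excursion(readings, meal_time, window_min=120):
    """Peak rise above the pre-meal baseline.

    readings: list of (minute, glucose_mg_dl), sorted by time.
    Baseline is the last reading at or before meal_time (an assumption).
    """
    baseline = [g for t, g in readings if t <= meal_time][-1]
    post = [g for t, g in readings if meal_time < t <= meal_time + window_min]
    return max(post) - baseline


def time_in_range(readings, low=70, high=180):
    """Percentage of readings inside [low, high] mg/dL."""
    values = [g for _, g in readings]
    return 100.0 * sum(low <= g <= high for g in values) / len(values)


def coefficient_of_variation(readings):
    """Glycemic variability as percent CV (SD divided by mean)."""
    values = [g for _, g in readings]
    return 100.0 * pstdev(values) / mean(values)


if __name__ == "__main__":
    # Hypothetical trace: one reading every 15 minutes, meal at minute 15
    trace = [(0, 100), (15, 100), (30, 140), (45, 190), (60, 160), (75, 120)]
    print(postprandial_excursion(trace, meal_time=15))
    print(round(time_in_range(trace), 1))
    print(round(coefficient_of_variation(trace), 1))
```

Per-meal values computed this way are the kind of response variable that a mixed-effects model, with participant as a random effect, would then relate to meal composition or timing.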

The Researcher's Toolkit for Wearable Dietary Monitoring

Successfully deploying wearable sensors in dietary research requires a suite of tools and a critical awareness of data quality. The table below lists essential "research reagent solutions" for building a robust dietary monitoring study.

Table 2: Essential Toolkit for Wearable Dietary Monitoring Research

| Tool Category | Specific Examples | Function & Importance |
|---|---|---|
| Sensor Platforms | Empatica E4, Hexoskin, ActiGraph, Custom eButton [8] [10] | Research-grade devices that provide raw data from multiple biosignals (ACC, EDA, PPG, TEMP, ECG) essential for algorithm development and validation. |
| Data Quality Toolkit | Data Completeness Score, On-Body Score, Signal Quality Indices (SQI) [8] | Metrics to quantify data loss, wear time, and signal fidelity. Critical for ensuring data reliability and interpreting study results, as all modalities are affected by artifacts [8]. |
| Ground Truth Tools | Chest-worn Camera (eButton), 24-hour Dietary Recall, Food Diaries [10] | Provides the objective reference standard against which the performance of automated dietary intake detection algorithms is measured. |
| Analysis & Fusion Software | OpenSense, Signal Processing Toolboxes (Python, MATLAB), Machine Learning Libraries (scikit-learn, TensorFlow) [7] | Software for processing raw sensor data, extracting relevant features, and implementing sensor fusion and classification models to translate signals into dietary insights. |

A crucial, often overlooked component is the Data Quality Toolkit. In real-world deployments, data is invariably corrupted by artifacts. A systematic evaluation should include [8]:

- Data Completeness: The percentage of recorded versus expected data samples, identifying periods when the device was off or not recording.

- On-Body Score: An estimate of the percentage of time the device was actually worn on the body, as opposed to being placed on a table, which is vital for interpreting data gaps.

- Modality-Specific Signal Quality: Quantitative scores (e.g., for PPG or EDA) that reflect the signal-to-noise ratio and the presence of motion artifacts. These scores are often higher at night than during the day [8].
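
The first two checks above can be approximated with very little code. The sketch below is illustrative only: the completeness formula follows the definition given here, but the on-body heuristic (a skin-temperature threshold of 30 °C) is a hypothetical stand-in, and the toolkit described in [8] may estimate wear differently.

```python
def data_completeness(n_recorded, duration_s, sampling_hz):
    """Percentage of recorded versus expected samples."""
    expected = duration_s * sampling_hz
    return 100.0 * min(n_recorded, expected) / expected


def on_body_score(skin_temp_c, min_temp_c=30.0):
    """Percentage of samples that look worn.

    Hypothetical heuristic: a sample counts as on-body when skin
    temperature is at or above min_temp_c. The threshold is an
    assumption, not a validated cut-off.
    """
    worn = sum(1 for t in skin_temp_c if t >= min_temp_c)
    return 100.0 * worn / len(skin_temp_c)


if __name__ == "__main__":
    # One hour at 10 Hz with 28,800 of 36,000 samples received
    print(data_completeness(28_800, duration_s=3600, sampling_hz=10))
    print(on_body_score([33.1, 33.0, 22.4, 22.1]))
```

Reporting both numbers together helps separate gaps caused by the device not recording from gaps caused by the participant not wearing it.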

The following diagram outlines a standard workflow for ensuring data quality and processing data from collection to analysis.

The taxonomy presented here—spanning kinematic, acoustic, physiological, and biochemical sensors—provides a structured framework for selecting and deploying wearable technologies in dietary intake monitoring. The future of this field lies not merely in multidimensional measurement, but in the development of a verifiable, reusable, and deployable precision-monitoring ecosystem [7]. For researchers and drug development professionals, this means moving from a "signal-available" to a "decision-ready" paradigm, where fused sensor data delivers actionable metrics on dietary behavior and its metabolic consequences. Overcoming challenges related to usability, data quality, and model generalizability will be key to unlocking the full potential of wearables in generating robust, objective evidence for nutritional science and therapeutic development.

The accurate assessment of dietary intake and eating behaviors represents a fundamental challenge in nutritional science, epidemiology, and chronic disease research. Traditional methods such as 24-hour recalls, food diaries, and food frequency questionnaires rely on self-reporting and are susceptible to significant limitations including recall bias, social desirability bias, and substantial participant burden [11] [12] [13]. These limitations have constrained our understanding of the complex, dynamic processes that characterize human eating behavior. The emergence of wearable sensor technologies has created new paradigms for objective dietary monitoring, enabling researchers to capture rich, high-resolution data on eating behaviors in free-living settings with minimal user interaction [11] [14]. This whitepaper delineates the key metrics of eating behavior—from micro-level movements like chewing and biting to macro-level meal patterns—that can be quantified using wearable sensors, framing them within the context of advanced dietary monitoring research for scientific and drug development applications.

Micro-Behavioral Metrics: The Building Blocks of Eating

The microstructure of eating encompasses the detailed components of eating episodes, including chewing, biting, and swallowing. These metrics provide insights into eating mechanics that are difficult to capture through self-report but have significant implications for energy intake and satiety.

Chewing and Swallowing Metrics

Chewing parameters serve as proxies for food texture, eating rate, and potentially, energy intake. Wearable sensors can detect and quantify:

- Chew Count: The total number of chewing cycles during an eating episode. In the free-living SenseWhy study, the number of chews was among the top predictive features for detecting overeating episodes [15].

- Chew Rate/Frequency: The number of chews per unit time, which can indicate eating pace.

- Chew Interval: The time between consecutive chews, which may reflect food properties or eating style.

- Chew-Bite Ratio: The number of chews per bite, which can vary based on food type and individual habits [15].

Swallowing detection, often captured through acoustic sensors or neck-mounted accelerometers, provides complementary data on ingestion timing and frequency [14].
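As a minimal sketch of how the chewing metrics listed above could be derived once a sensor pipeline has produced chew and bite timestamps, the following Python function computes them for a single eating episode. The function name, the input format (event times in seconds), and the example values are illustrative assumptions, not part of any cited system.

```python
from statistics import mean


def chewing_metrics(chew_times_s, bite_times_s):
    """Summarise chewing for one eating episode from event timestamps in seconds."""
    chews = sorted(chew_times_s)
    chew_count = len(chews)
    # Time between consecutive chews (the chew interval).
    intervals = [later - earlier for earlier, later in zip(chews, chews[1:])]
    chewing_span_s = chews[-1] - chews[0] if chew_count > 1 else 0.0
    return {
        "chew_count": chew_count,
        # Chew cycles per minute, counted over the span between first and last chew.
        "chew_rate_per_min": 60.0 * len(intervals) / chewing_span_s if chewing_span_s > 0 else None,
        "mean_chew_interval_s": mean(intervals) if intervals else None,
        # Chews per bite; undefined when no bites were detected.
        "chew_bite_ratio": chew_count / len(bite_times_s) if bite_times_s else None,
    }


# Hypothetical episode: two bites, each followed by four chews.
example = chewing_metrics(
    chew_times_s=[1.0, 1.5, 2.0, 2.5, 6.0, 6.5, 7.0, 7.5],
    bite_times_s=[0.5, 5.5],
)
```

Because the rate is computed over the whole chewing span, pauses between bites lower it; a study might instead compute the rate within each chewing bout.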

Biting and Hand-to-Mouth Gestures

Biting represents the initiation of food intake and can be monitored through several approaches:

- Bite Count: The total number of bites during an eating episode. Computer vision approaches like ByteTrack have achieved 79.4% average precision and 67.9% recall in automatically detecting bites from meal videos in pediatric populations [16].

- Bite Rate: The number of bites per unit time, typically expressed in bites per minute; faster bite rates have been linked to overconsumption.

- Bite Detection Latency: The time between food presentation and first bite.

Hand-to-mouth movements, detected via wrist-worn inertial sensors (accelerometers and gyroscopes), serve as behavioral proxies for bites, particularly when direct visual monitoring isn't feasible [12] [17].
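To illustrate the idea of using wrist motion as a proxy for bites, the sketch below flags candidate hand-to-mouth gestures as sustained periods in which a wrist pitch angle stays above a threshold. This is a deliberately simple rule-based stand-in, not the method of any cited study; the threshold, minimum duration, sampling rate, and the synthetic trace are all assumed values.

```python
def detect_hand_to_mouth(pitch_deg, fs_hz, raise_thresh_deg=45.0, min_dur_s=0.5):
    """Return (start_s, end_s) spans where wrist pitch stays above a threshold."""
    events, start = [], None
    for i, angle in enumerate(pitch_deg):
        if angle >= raise_thresh_deg and start is None:
            start = i  # wrist has just been raised
        elif angle < raise_thresh_deg and start is not None:
            # Keep the raise only if it lasted long enough to be a plausible bite.
            if (i - start) / fs_hz >= min_dur_s:
                events.append((start / fs_hz, i / fs_hz))
            start = None
    # Close a raise that is still in progress at the end of the trace.
    if start is not None and (len(pitch_deg) - start) / fs_hz >= min_dur_s:
        events.append((start / fs_hz, len(pitch_deg) / fs_hz))
    return events


# Synthetic 10 Hz trace: two sustained raises and one brief spike (ignored).
trace = [0] * 10 + [60] * 8 + [0] * 10 + [70] * 2 + [0] * 10 + [55] * 6 + [0] * 4
gestures = detect_hand_to_mouth(trace, fs_hz=10)
bite_rate_per_min = 60.0 * len(gestures) / (len(trace) / 10)
```

In practice such a rule would produce false positives from gestures like face touching or drinking, which is one reason learned classifiers and additional modalities are used.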

Table 1: Micro-Behavioral Eating Metrics and Monitoring Technologies

| Metric Category | Specific Metrics | Common Sensing Modalities | Research Applications |
|---|---|---|---|
| Chewing | Chew count, chew rate, chew interval, chew-bite ratio | Acoustic sensors, strain sensors, jaw motion sensors, piezoelectric sensors | Predicting overeating, characterizing food texture effects, eating pace interventions |
| Swallowing | Swallow count, swallow frequency, apnea detection | Acoustic sensors (microphones), neck-mounted accelerometers, piezoelectric sensors | Monitoring ingestion timing, detecting swallowing disorders, meal duration assessment |
| Biting | Bite count, bite rate, bite size estimation | Wrist-worn IMUs, computer vision, surface EMG | Eating speed interventions, portion size estimation, microstructure analysis |
| Hand Gestures | Hand-to-mouth movement frequency, duration, acceleration patterns | Wrist-worn accelerometers, gyroscopes, magnetometers | Free-living eating detection, distinguishing eating from other activities |

Meso-Scale Metrics: Integrating Micro-Behaviors into Eating Episodes

Meso-scale metrics describe the characteristics of complete eating episodes, synthesizing micro-behaviors into holistic patterns with clinical and research significance.

Temporal Patterns of Eating

The timing of eating episodes has emerged as a significant factor in metabolic health and energy regulation:

- Meal Duration: The total time from the first to the last bite of an eating episode. Longer meal durations have been associated with greater satiety and reduced total intake.

- Eating Rate: Overall speed of consumption, typically measured as grams or calories consumed per minute. This can be derived from bite rate and average bite size estimates.

- Meal Timing: The time of day when eating occurs, with evening and late-night eating being associated with distinct metabolic consequences [18] [15].

- Pause Patterns: The frequency and duration of pauses within meals, which may reflect satiety development.
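The temporal metrics above follow directly from bite timestamps once an intake estimate is available. The sketch below is one possible formulation; the 30-second pause threshold, the function name, and the example meal are assumptions chosen for illustration.

```python
def meal_summary(bite_times_s, grams_consumed, pause_thresh_s=30.0):
    """Derive meso-scale metrics for one meal from bite timestamps in seconds.

    Assumes at least one bite was recorded.
    """
    times = sorted(bite_times_s)
    duration_s = times[-1] - times[0]  # first bite to last bite
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    # A gap between bites counts as a pause once it reaches the threshold.
    pauses = [gap for gap in gaps if gap >= pause_thresh_s]
    duration_min = duration_s / 60.0
    return {
        "meal_duration_min": duration_min,
        "eating_rate_g_per_min": grams_consumed / duration_min if duration_min > 0 else None,
        "pause_count": len(pauses),
        "total_pause_s": sum(pauses),
    }


# Hypothetical meal: six bites over five minutes, 250 g consumed.
summary = meal_summary([0, 20, 45, 120, 140, 300], grams_consumed=250)
```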

Contextual and Behavioral Dimensions

The circumstances surrounding eating episodes significantly influence food choices and consumption amounts:

- Eating Location: Home, restaurant, workplace, etc., with restaurant eating being associated with different overeating patterns [18] [15].

- Social Context: Eating alone versus with others, which affects meal duration, food choices, and quantity consumed.

- Psychological State: Pre-meal emotional states including stress, happiness, or boredom that trigger eating.

- Cognitive Factors: Perceptions of control, distraction levels, and mindful eating practices during meals.

Macro-Scale Metrics: Patterns Across Eating Episodes

Macro-scale metrics encompass the broader patterns that emerge across multiple eating episodes, providing insights into habitual eating behaviors with long-term health implications.

Dietary Intake Patterns

Traditional nutritional epidemiology has focused on what and how much people consume:

- Energy Intake: Total caloric consumption, though accurate assessment remains challenging with wearable technologies alone [13].

- Macronutrient Distribution: Proportions of carbohydrates, fats, and proteins in the diet.

- Food Choice Patterns: Preferences for specific food categories or nutritional profiles.

- Meal Frequency: The number of discrete eating episodes per day.
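Meal frequency depends on how detected eating events are grouped into discrete episodes. A common and simple convention, assumed here rather than taken from the cited studies, is to merge events separated by less than a fixed gap. The 15-minute gap and the example timestamps are illustrative.

```python
from collections import Counter
from datetime import datetime, timedelta


def segment_episodes(event_times, max_gap=timedelta(minutes=15)):
    """Group eating-event timestamps into episodes separated by more than max_gap."""
    episodes = []
    for t in sorted(event_times):
        if episodes and t - episodes[-1][-1] <= max_gap:
            episodes[-1].append(t)  # continue the current episode
        else:
            episodes.append([t])  # start a new episode
    return episodes


def meals_per_day(episodes):
    """Count episodes per calendar day, keyed by the date of each episode's first event."""
    return Counter(episode[0].date() for episode in episodes)


# Hypothetical detections over two days (offsets in hours and minutes from midnight).
day1 = datetime(2024, 1, 1)
offsets = [(8, 0), (8, 5), (8, 12), (13, 0), (13, 10), (20, 30), (33, 0), (33, 4)]
events = [day1 + timedelta(hours=h, minutes=m) for h, m in offsets]
episodes = segment_episodes(events)
counts = meals_per_day(episodes)
```

The choice of gap changes the resulting frequency, so it should be reported alongside any meal-frequency figure.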

Identified Overeating Phenotypes

Groundbreaking research using semi-supervised learning on longitudinal sensor data has identified five distinct overeating phenotypes that demonstrate the complex interplay between behavioral, psychological, and contextual factors [18] [15]:

- Take-out Feasting: Characterized by indulging in restaurant-sourced meals (take-out), often in social settings.

- Evening Restaurant Reveling: Pleasure-driven indulgence in food, with a preference for restaurant-sourced meals (dine-in), typically consumed in the evening as part of social dining experiences.

- Evening Craving: Eating in the evening, often involving self-prepared meals and characterized by hunger, serving as a way to unwind.

- Uncontrolled Pleasure Eating: Focused on the hedonic aspect of food, involving eating for pleasure, often perceived as overeating with loss of control, and accompanied by task-oriented distractions.

- Stress-driven Evening Nibbling: Evening eating in response to stress and feelings of loneliness.

These phenotypes demonstrate that overeating is not a unitary behavior but manifests through distinct patterns requiring personalized intervention approaches.

Experimental Protocols for Eating Behavior Research

Robust experimental methodologies are essential for advancing the field of sensor-based eating behavior monitoring.

Multi-Sensor Data Collection Protocol

The Northwestern University SenseWhy study established a comprehensive protocol for capturing free-living eating behaviors [18] [15]:

- Participant Profile: 48 adults with obesity (77.1% female, mean age 41 years) providing 2,302 meal-level observations.

- Sensor Array:

  - HabitSense Bodycam: An activity-oriented wearable camera using thermal sensing to trigger recording only when food enters the camera's field of view, addressing privacy concerns.

  - NeckSense Necklace: A custom necklace detecting eating behaviors including chewing speed, bite count, and hand-to-mouth movements.

  - Wrist-worn Activity Tracker: Similar to commercial Fitbit or Apple Watch devices, capturing hand movements.

- Ecological Momentary Assessment (EMA): Smartphone-based surveys collecting pre- and post-meal psychological and contextual data, including mood, hunger, satiety, and social context.

- Dietitian-Administered 24-hour Recalls: Traditional dietary assessment for validation purposes.

- Data Annotation: Manual labeling of micromovements from 6,343 hours of video footage spanning 657 days.

This multi-modal approach achieved high performance in predicting overeating episodes (mean AUROC = 0.86; mean AUPRC = 0.84) when combining EMA-derived features with passive sensing data [15].
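For readers less familiar with the two reported metrics, the sketch below computes AUROC and AUPRC from labels and predicted scores using their standard definitions. The source does not state how the study computed them, so this is a generic illustration; the function names and toy data are assumptions.

```python
def auroc(labels, scores):
    """Probability that a random positive is scored above a random negative.

    Ties count as half a win. Requires at least one positive and one negative.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Step-wise area under the precision-recall curve (ties in score not merged).

    Requires at least one positive label.
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank  # precision at this recall step
    return total / hits


# Toy example: 1 = overeating episode, 0 = non-overeating episode.
labels = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.2]
roc = auroc(labels, scores)
ap = average_precision(labels, scores)
```

AUPRC is sensitive to class balance, so it is best interpreted against the prevalence of overeating episodes in the dataset.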

Protocol for Physiological Response Monitoring

Emerging research explores physiological correlates of eating beyond behavioral metrics [17]:

- Study Design: Controlled feeding study with randomized high- and low-calorie meals.

- Sensor Platform: Customized multi-sensor wristband integrating:

  - Pulse Oximeter: For heart rate (HR) and oxygen saturation (SpO2) tracking.

  - Photoplethysmography (PPG) Sensor: For continuous cardiovascular monitoring.

  - Skin Temperature Sensor: For monitoring peripheral temperature fluctuations.

  - Inertial Measurement Unit (IMU): For capturing eating gestures and hand movements.

  - Force Sensor: For ensuring proper wear fit and sensor-skin contact.

- Validation Measures:

  - Bedside vital sign monitors for concurrent physiological measurement.

  - Intravenous blood sampling for glucose, insulin, and appetite-related hormones.

  - Direct observation of food intake.

This protocol aims to establish relationships between eating events, hand movement patterns, and physiological responses, potentially enabling new approaches to dietary monitoring that don't rely on food imaging.

Visualization of Research Workflows

The following diagrams illustrate key experimental workflows and technological approaches in eating behavior research.

The Scientist's Toolkit: Essential Research Reagents and Technologies

Table 2: Essential Research Technologies for Eating Behavior Monitoring

| Technology/Reagent | Function | Example Applications | Performance Metrics |
|---|---|---|---|
| HabitSense Bodycam | Activity-oriented camera recording only when food is present using thermal sensing | Capturing eating context while preserving privacy | Privacy-preserving food activity detection [18] |
| NeckSense Necklace | Detects chewing rate, bite count, hand-to-mouth movements | Detailed microstructure analysis in free-living conditions | Precise eating behavior recording in real-world settings [18] |
| Wrist-worn IMU | Accelerometer, gyroscope, magnetometer for detecting eating gestures | Free-living eating episode detection and bite counting | 76.5% true positive rate in field studies [12] |
| ByteTrack Algorithm | Deep learning system (CNN + LSTM) for automated bite detection from video | Objective bite counting in laboratory meals | 79.4% precision, 67.9% recall in pediatric populations [16] |
| Multi-sensor Wristband | Integrated PPG, temperature, SpO2, IMU for physiological monitoring | Correlating physiological responses with food intake | Measures HR, SpO2, temperature changes post-meal [17] |
| EMA Platforms | Smartphone-based ecological momentary assessment for contextual data | Collecting real-time self-report on context, mood, hunger | 89.26% compliance rate in family studies [12] |
| XGBoost Algorithm | Machine learning for classifying overeating episodes | Predicting overeating from sensor and EMA features | AUROC: 0.86, AUPRC: 0.84 for overeating detection [15] |

The quantitative decoding of eating behavior through wearable sensors represents a transformative advancement in nutritional science and chronic disease research. The comprehensive framework of metrics—spanning micro-behaviors (chewing, biting), meso-scale patterns (meal duration, context), and macro-scale phenotypes (overeating patterns)—provides researchers with unprecedented analytical resolution. The experimental protocols and technologies detailed in this whitepaper establish rigorous methodologies for field-based eating behavior research, enabling more valid and reliable assessment than traditional self-report methods. As these technologies continue to evolve, they offer powerful tools for developing targeted interventions, understanding diet-disease relationships, and creating novel endpoints for clinical trials in nutrition and pharmaceutical development. The integration of multi-modal sensor data with advanced machine learning approaches will further enhance our ability to decode the complex architecture of human eating behavior in free-living populations.

The field of dietary intake monitoring is undergoing a profound transformation, driven by the rapid convergence of advanced biometric technologies and wearable sensors. For researchers and drug development professionals, this evolution presents unprecedented opportunities to move beyond traditional, subjective dietary assessment methods—such as food frequency questionnaires and 24-hour recalls—toward objective, continuous, and physiologically-rich data collection [6] [1]. The global wearable sensors market, valued at USD 2.14 billion in 2024, is projected to exceed USD 13.81 billion by 2034, expanding at a compound annual growth rate (CAGR) of over 20.5% [19]. This growth is paralleled by the emerging biometric technologies market, which is expected to grow from USD 5.5 billion in 2024 to USD 18.5 billion by 2033 at a CAGR of 14.5% [20]. This dual expansion signifies a fundamental shift in how researchers can quantify the physiological and biochemical responses to nutritional intake, enabling more precise clinical trials, personalized nutrition interventions, and robust biomarker discovery for drug development.

Market Analysis: Quantitative Growth Projections

Global Wearable Sensors Market Outlook

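The wearable sensors market demonstrates robust growth potential across multiple segments and geographic regions, fueled by technological advancements and increasing application in healthcare and research settings. The table below summarizes the key market projections and regional analysis. The global and precision-nutrition rows come from different market reports [19] [21] that appear to define their markets differently (the precision-nutrition figure for 2024 exceeds the global total), so the two rows should not be compared directly.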

Table 1: Wearable Sensors Market Size and Forecast (2024-2034)

| Parameter | 2024 Value | 2025 Value | 2034 Projection | CAGR |
|---|---|---|---|---|
| Global Market Size | USD 2.14 billion [19] | USD 2.51 billion [19] | USD 13.81 billion [19] | 20.5% [19] |
| Precision Nutrition Wearable Sensors | USD 2.8 billion [21] | USD 3.3 billion [21] | USD 9.4 billion [21] | 12.5% [21] |
| North America Share | - | - | 31% [19] | - |
| Asia-Pacific Growth | - | - | Strong growth with robust CAGR [19] | - |
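The growth rates in the table can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short sketch below applies it to the tabulated values; the choice of base year for each row is an assumption, since the cited reports may use different forecast windows.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years` years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Global wearable sensors market, 2024 to 2034 (USD billion).
global_cagr = cagr(2.14, 13.81, 10)

# Precision nutrition wearable sensors, using either 2024 or 2025 as the base year.
precision_from_2024 = cagr(2.8, 9.4, 10)
precision_from_2025 = cagr(3.3, 9.4, 9)
```

Under these assumptions the global figure reproduces the cited 20.5%, while the precision-nutrition values fall at roughly 12 to 13 percent depending on the base year, which brackets the cited 12.5%.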

Table 2: Regional Market Characteristics and Drivers

| Region | Market Characteristics | Key Growth Drivers |
|---|---|---|
| North America | Largest market share (31% by 2034) [19]; Precision nutrition segment: 42.2% share [21] | Advanced healthcare infrastructure, favorable regulatory environment, high consumer adoption, strong R&D investment [21] [19] |
| Europe | Second largest market; USD 777.6 million in 2024 for precision nutrition sensors [21] | Strong healthcare systems, comprehensive regulatory frameworks, focus on preventive medicine [21] |
| Asia Pacific | Fastest growing regional market [21] [19] | Expanding healthcare infrastructure, rising disposable incomes, increasing health awareness, government digital health initiatives [21] [19] |

Emerging Biometrics Technology Segmentation

The biometric technologies market is evolving beyond traditional fingerprint recognition toward multimodal systems capable of providing continuous physiological monitoring. The table below details the key technology segments and their applications relevant to nutritional research:

Table 3: Biometric Technology Segmentation and Applications

| Technology Type | Primary Applications | Relevance to Dietary Monitoring |
|---|---|---|
| AI-driven biometrics [22] [20] | Identity verification, real-time risk assessment [20] | Pattern recognition in eating behaviors, anomaly detection in metabolic responses |
| Behavioral biometrics | Gait analysis, movement patterns [20] | Detection of eating gestures (hand-to-mouth movements), physical activity correlation [6] |
| Physiological monitoring | Stress detection, vitality assessment [20] | Cortisol monitoring, metabolic stress response to nutritional interventions [23] |
| Contactless modalities | Facial recognition, vein pattern analysis [20] | Minimal intrusion monitoring in free-living conditions |

Technological Foundations: Sensor Types and Methodologies

Biochemical Sensing Modalities

Advanced wearable sensors for dietary monitoring employ multiple technological approaches to capture biochemical and physiological data non-invasively. The experimental protocols for these sensing modalities are detailed below:

Experimental Protocol 1: Sweat-Based Biomarker Analysis

- Objective: To continuously monitor biochemical markers in eccrine sweat for assessing metabolic response to nutritional interventions [23] [24].

- Sensor Platform: Flexible epidermal patches or temporary tattoos integrating electrochemical sensors [23].

- Methodology:

  - Sensor Calibration: Pre-use calibration in artificial sweat solutions with known biomarker concentrations [23].

  - Biomarker Detection: Electrochemical (amperometric or potentiometric) detection of specific biomarkers:

  - Signal Processing: On-board or transmitted data processing with compensation for temperature, pH, and sweat rate variations [23].

  - Data Validation: Correlation with blood samples or clinical analyzer measurements in controlled settings [23] [24].

- Applications in Nutrition Research: Real-time monitoring of metabolic stress, hydration status, and energy utilization during feeding studies or nutritional interventions [23] [24].
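The calibration step in this protocol can be illustrated with a linear calibration curve, a common first approximation for amperometric sensors within their linear range. The sketch below fits sensor current against known concentrations by ordinary least squares and inverts the fit to estimate an unknown concentration. All values are hypothetical, and the compensation for temperature, pH, and sweat rate mentioned in the protocol is not modeled here.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical calibration in artificial sweat: lactate (mM) vs. sensor current (uA).
conc_mM = [0.0, 5.0, 10.0, 20.0]
current_uA = [0.2, 1.2, 2.2, 4.2]
sensitivity_uA_per_mM, offset_uA = fit_line(conc_mM, current_uA)


def current_to_conc(i_uA):
    """Invert the calibration curve to estimate concentration from a measured current."""
    return (i_uA - offset_uA) / sensitivity_uA_per_mM


estimated_mM = current_to_conc(3.2)
```

A real deployment would also check linearity limits and sensor drift over the wear period before trusting the inverted estimate.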

Experimental Protocol 2: Dietary Event Detection via Multi-Modal Sensing

- Objective: To automatically detect eating episodes and characterize feeding behavior in free-living conditions [6].

- Sensor Platform: Multi-sensor systems (e.g., Automatic Ingestion Monitor V.2) incorporating inertial measurement units, acoustic sensors, and electromyography [6].

- Methodology:

  - Hand-to-Mouth Gesture Detection: Inertial sensors (accelerometers, gyroscopes) on wrist-worn devices to capture characteristic movements [6].

  - Mastication Monitoring:

  - Swallowing Detection: Hybrid sensing combining acoustic and mechanical modalities [6].

  - Sensor Fusion: Integration of multiple sensor inputs via machine learning algorithms (e.g., convolutional neural networks, recurrent neural networks) to improve detection accuracy and reduce false positives [6].

  - Contextual Information: Time-stamping of eating events, meal duration, and eating rate calculation [6].

- Validation: Comparison with video observation, self-reporting, or controlled feeding studies in laboratory settings [6].
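To show why combining modalities can reduce false positives, the sketch below uses a much simpler technique than the neural networks named in the protocol: decision-level fusion by weighted averaging of per-window eating probabilities, followed by grouping of consecutive flagged windows into episodes. The modality names, weights, threshold, window length, and probabilities are all assumed for illustration.

```python
def fuse_windows(prob_by_modality, weights, threshold=0.5):
    """Weighted average of per-window eating probabilities across modalities."""
    total_weight = sum(weights.values())
    n_windows = len(next(iter(prob_by_modality.values())))
    fused = [
        sum(weights[m] * prob_by_modality[m][i] for m in weights) / total_weight
        for i in range(n_windows)
    ]
    return fused, [p >= threshold for p in fused]


def flagged_episodes(flags, window_s, min_windows=2):
    """Group consecutive flagged windows into (start_s, end_s) episodes."""
    spans, start = [], None
    for i, flag in enumerate(list(flags) + [False]):  # sentinel closes a trailing run
        if flag and start is None:
            start = i
        elif not flag and start is not None:
            if i - start >= min_windows:
                spans.append((start * window_s, i * window_s))
            start = None
    return spans


# Hypothetical per-window probabilities from three single-modality detectors.
probs = {
    "imu": [0.9, 0.8, 0.2, 0.7, 0.1],       # window 4: gesture resembling eating
    "acoustic": [0.8, 0.9, 0.1, 0.2, 0.1],
    "emg": [0.7, 0.6, 0.3, 0.1, 0.2],
}
fused, flags = fuse_windows(probs, weights={"imu": 1.0, "acoustic": 1.0, "emg": 1.0})
detected = flagged_episodes(flags, window_s=10)
```

In this toy example the fourth window would be flagged by the IMU alone but is suppressed after fusion because the acoustic and EMG detectors disagree.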

The following diagram illustrates the integrated workflow for multi-modal dietary monitoring:

Multi-Modal Dietary Monitoring Workflow

Research Reagent Solutions and Materials

The development and deployment of advanced biometric sensors for dietary monitoring requires specialized research reagents and materials. The table below details essential components and their research applications:

Table 4: Research Reagent Solutions for Biometric Dietary Monitoring

| Reagent/Material | Function | Research Application |
|---|---|---|
| Lactate oxidase enzyme [24] | Biochemical recognition element for lactate sensing | Detection of lactate in sweat as indicator of metabolic stress and energy utilization [23] [24] |
| Glucose oxidase enzyme [24] | Biochemical recognition element for glucose sensing | Monitoring of glucose dynamics in response to carbohydrate intake [24] |
| Ion-selective membranes [23] | Selective detection of specific ions (Na+, K+, Cl-) | Assessment of electrolyte balance and hydration status during nutritional interventions [23] |
| Poly(o-phenylenediamine) film [24] | Electropolymeric entrapment matrix for enzymes | Stabilization of enzymatic biosensors on electrode surfaces [24] |
| Prussian blue-graphite ink [24] | Electrode material with electrocatalytic properties | Facilitation of electron transfer in electrochemical biosensors [24] |
| Antibiofouling membranes [24] | Prevention of nonspecific protein adsorption | Enhancement of sensor stability in biological fluids (saliva, sweat) [24] |
| Flexible polyethylene terephthalate (PET) substrates [24] | Conformable material for wearable sensors | Enable comfortable, continuous wear for real-time monitoring [24] |

Analytical Frameworks: From Data to Biomarkers

The integration of multiple data streams from wearable sensors requires sophisticated analytical frameworks to transform raw sensor data into meaningful nutritional and physiological insights. The following diagram illustrates the complete analytical pathway from data collection to biomarker interpretation:

From Sensor Data to Nutritional Biomarkers

Performance Metrics and Validation Protocols

For biometric dietary monitoring technologies to gain acceptance in research and clinical trials, rigorous validation against established reference methods is essential. Key performance metrics include:

- Eating Event Detection: Accuracy, precision, recall/sensitivity, and F1-score for identification of feeding episodes [6].

- Biomarker Sensing: Sensitivity, specificity, linearity, and stability for biochemical sensors; correlation with gold-standard laboratory measurements (e.g., blood assays) [23] [24].

- User Experience and Compliance: Device wear time, user comfort ratings, and qualitative feedback on usability in free-living settings [6].

Validation protocols should include both controlled laboratory studies with precise ground truth measurements (e.g., doubly labeled water for energy expenditure, weighed food records for intake) and free-living studies comparing sensor data with participant self-reports and other objective measures [6] [1].
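Episode-level precision, recall, and F1 require a rule for matching detected episodes to reference episodes. The sketch below assumes one simple rule, counting a reference episode as detected if any detection overlaps it in time; other matching criteria (for example, minimum overlap fractions) are equally plausible. The intervals are hypothetical and expressed in seconds.

```python
def overlaps(a, b):
    """True if half-open intervals a = (start, end) and b = (start, end) intersect."""
    return a[0] < b[1] and b[0] < a[1]


def episode_scores(detected, reference):
    """Episode-level precision, recall, and F1 under an any-overlap matching rule."""
    tp = sum(any(overlaps(d, r) for d in detected) for r in reference)
    fn = len(reference) - tp
    # Detections that touch no reference episode are false positives.
    fp = sum(not any(overlaps(d, r) for r in reference) for d in detected)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical day: three observed meals, three sensor detections.
reference = [(0, 600), (3600, 4200), (7200, 7500)]
detected = [(30, 580), (3700, 4100), (5000, 5100)]
scores = episode_scores(detected, reference)
```

Accuracy is omitted because true negatives are not well defined at the episode level without first fixing a window length.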

Future Directions and Research Opportunities

The convergence of wearable sensors and biometric technologies presents several promising research directions for advancing dietary intake monitoring:

- Multi-omics Integration: Combining continuous sensor data with genomics, proteomics, and metabolomics to develop comprehensive nutritional status assessments [21].

- Closed-Loop Intervention Systems: Developing real-time feedback systems that use sensor data to automatically adjust nutritional interventions or provide personalized recommendations [21].

- Advanced Biomarker Discovery: Identifying and validating novel biomarkers in easily accessible biofluids (sweat, saliva) that correlate with specific nutrient intake or metabolic responses [23] [24].

- AI-Driven Predictive Models: Leveraging machine learning and artificial intelligence to predict individual responses to nutritional interventions based on continuous monitoring data [21] [20].

- Miniaturization and Power Management: Developing increasingly miniaturized, energy-efficient sensors capable of extended continuous monitoring without compromising data quality [25] [19].

As these technologies continue to evolve, they will enable researchers and drug development professionals to capture increasingly rich, objective data on dietary behaviors and their physiological consequences, ultimately advancing our understanding of nutrition's role in health and disease.

Advancements in wearable sensor technology and machine learning are revolutionizing the study of human nutrition, enabling the objective identification of distinct overeating phenotypes. This technical guide details how multimodal data—passive sensing, Ecological Momentary Assessment (EMA), and physiological monitoring—can delineate behavioral patterns such as "Evening Craving" and "Stress-driven Evening Nibbling." We summarize quantitative findings from key studies, provide detailed experimental protocols for replication, and contextualize these findings within a broader thesis on wearable sensors for dietary monitoring. The precision offered by this data-driven approach provides a foundation for highly personalized interventions and pharmaceutical development targeting specific overeating behaviors.

Obesity remains a significant global public health challenge, with traditional behavioral weight loss interventions often failing to provide long-term results [26]. Overeating is a common target of obesity interventions, yet these efforts have been largely unsuccessful, potentially because they fail to account for the heterogeneous nature of eating behaviors and the dynamic interplay of psychological, contextual, and physiological factors [26]. The limitations of self-reported data—including recall bias and imprecise meal timing—have further constrained our understanding [26] [27].

Wearable sensors present a paradigm shift, enabling the passive and continuous collection of rich, objective datasets on eating behaviors [26] [17]. When analyzed with sophisticated machine learning approaches, these data allow researchers to move beyond one-size-fits-all interventions and identify clinically relevant overeating phenotypes. This whitepaper focuses on two such phenotypes, "Evening Craving" and "Stress-driven Evening Nibbling", delineating their unique characteristics and the technological frameworks required for their identification.

Experimental Frameworks for Phenotype Identification

The SenseWhy Study: A Model for Behavioral Phenotyping

The SenseWhy study (2018–2022) established a comprehensive protocol for identifying overeating phenotypes using semi-supervised learning [26].

Study Population & Design:

- Participants: 65 individuals with obesity were initially recruited, with 48 providing sufficient data for analysis (77.1% female, mean age 41).

- Data Collection: 2,302 meal-level observations (average 48 per participant) were collected in free-living settings.

- Technology Stack:

  - Wearable Camera: An activity-oriented wearable camera collected 6,343 hours of footage spanning 657 days for manual labeling of micromovements (bites, chews).

  - Mobile App & 24-hour Recall: A mobile app and dietitian-administered 24-hour dietary recalls were used for dietary assessment.

  - Ecological Momentary Assessment (EMA): Psychological and contextual data was gathered before and after meals.

Machine Learning & Clustering Methodology: The study employed a semi-supervised learning approach on EMA-derived features to identify distinct overeating clusters. XGBoost was selected as the best-performing model for supervised overeating detection, achieving a mean AUROC of 0.86 and AUPRC of 0.84 on the feature-complete dataset (combining EMA and passive sensing data) [26]. The top predictive features from the combined model were:

- Perceived overeating (positive association)

- Number of chews (positive association)

- Light refreshment (negative association)

- Loss of control (positive association)

- Chew interval (negative association)

This analysis revealed five distinct overeating phenotypes, including the "Evening Craving" and "Stress-driven Evening Nibbling" profiles that are the focus of this document [26].

A Protocol for Multimodal Physiological Monitoring

A 2025 study protocol outlines an alternative approach focusing on physiological and behavioral parameters using a customized wearable multi-sensor band [17]. This methodology is particularly relevant for objective detection without the privacy concerns of camera-based systems.

Study Design:

- Participants: 10 healthy volunteers (planned sample size).

- Intervention: Randomized consumption of high- (1052 kcal) and low-calorie (301 kcal) meals in a controlled clinical research facility.

- Monitoring: Sensors are worn from 5 minutes pre-meal to 1 hour post-prandial.

Sensor Suite and Measured Parameters:

Table: Wearable Sensor Specifications and Target Parameters [17]

| Sensor Type | Measurements | Relationship to Food Intake |
|---|---|---|
| Inertial Measurement Unit (IMU) | Accelerometer, Gyroscope, Magnetometer data | Captures eating gestures (hand-to-mouth movements), duration, and speed of eating. |
| Pulse Oximeter | Heart Rate (HR), Blood Oxygen Saturation (SpO₂) | Tracks metabolic increase post-meal; HR elevation correlates with meal size. |
| Photoplethysmography (PPG) | Continuous blood volume traces | Provides cardiorespiratory information linked to digestion. |
| Skin Temperature Sensor | Skin Temperature (Tsk) | Monitors post-prandial thermogenesis (increase in metabolic heat production). |
| Force Sensor | Band tightness variation | Ensures proper skin contact for consistent sensor readings. |

Validation Measures: The protocol includes intravenous blood sampling for glucose, insulin, and appetite hormones (e.g., ghrelin, PYY), and uses a traditional bedside monitor for validation of blood pressure, HR, and SpO₂ [17].
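One straightforward way to analyse recordings from such a protocol is to express post-meal values relative to the pre-meal baseline. The sketch below does this for a single signal such as heart rate; it is an assumed analysis approach rather than the protocol's stated method, and the sample values are hypothetical.

```python
from statistics import mean


def postprandial_delta(samples, meal_start_s):
    """Baseline-corrected change in a physiological signal after meal onset.

    `samples` is a list of (time_s, value) pairs; samples before `meal_start_s`
    form the baseline. Assumes at least one sample on each side of meal onset.
    """
    baseline_values = [value for t, value in samples if t < meal_start_s]
    post_values = [value for t, value in samples if t >= meal_start_s]
    baseline = mean(baseline_values)
    return {
        "baseline": baseline,
        "mean_delta": mean(post_values) - baseline,
        "peak_delta": max(post_values) - baseline,
    }


# Hypothetical heart rate (bpm): 5 minutes pre-meal, then samples up to 1 hour after onset.
hr_samples = [
    (0, 62), (60, 64), (120, 63), (180, 63), (240, 63),   # pre-meal baseline
    (300, 66), (900, 70), (1800, 72), (3600, 68),         # post-meal
]
hr_change = postprandial_delta(hr_samples, meal_start_s=300)
```

The same function could be applied to skin temperature or SpO₂ traces, and the resulting deltas compared between the high- and low-calorie meal conditions.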

Quantitative Results and Phenotype Comparison

The following tables synthesize key quantitative findings from the SenseWhy study, providing a clear comparison of the detection methodologies and the identified phenotypes.

Table: Machine Learning Performance for Overeating Detection (SenseWhy Study) [26]

| Model Input Features | Algorithm | AUROC (Mean) | AUPRC (Mean) | Brier Score Loss |
|---|---|---|---|---|
| EMA-only | XGBoost | 0.83 (0.02) | 0.81 (0.02) | 0.13 (0.01) |
| Passive Sensing-only | XGBoost | 0.69 (0.04) | 0.69 (0.05) | 0.18 (0.02) |
| Feature-complete (Combined) | XGBoost | 0.86 (0.04) | 0.84 (0.04) | 0.11 (0.02) |
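The Brier score in the last column measures the mean squared error of predicted probabilities, so lower values indicate better-calibrated and more accurate predictions. A minimal sketch of the standard definition follows, with toy labels and probabilities that are assumptions for illustration.

```python
def brier_score(labels, probs):
    """Mean squared difference between predicted probabilities and binary outcomes."""
    return sum((p - y) ** 2 for y, p in zip(labels, probs)) / len(labels)


# Toy example: 1 = overeating episode, 0 = non-overeating episode.
labels = [1, 0, 1, 0]
model_score = brier_score(labels, [0.9, 0.2, 0.6, 0.1])

# Reference point: an uninformative model that always predicts 0.5.
coin_flip_score = brier_score(labels, [0.5, 0.5, 0.5, 0.5])
```

An always-0.5 predictor scores 0.25, which gives a rough reference point for reading the tabulated values of 0.11 to 0.18.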

Table: Characteristics of Identified Evening Overeating Phenotypes [26]

| Phenotype | Key Defining Features | Contextual & Behavioral Cues | Psychological & Physiological Drivers |
|---|---|---|---|
| Evening Craving | Evening eating (positive predictor); High Pleasure-driven Desire for Food | Location: Likely at home; Food Source: Snacks, ready-to-eat foods | Hedonic eating motivations; Cravings not necessarily linked to stress |
| Stress-driven Evening Nibbling | Evening eating (positive predictor); High Pre-meal Stress | Location: Likely at home; Activity: May co-occur with TV watching or solitary activities | Negative affect as a primary trigger; Potential link to HPA-axis activation |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Tools for Wearable Dietary Monitoring Research

| Item Category | Specific Examples | Function in Research |
|---|---|---|
| Wearable Sensor Platforms | Custom multi-sensor wristband [17], Commercial IMU sensors [3], Bio-impedance wearables [17] | Captures core behavioral (movement) and physiological (HR, Tsk) data in free-living or lab settings. |
| Data Annotation Software | Video annotation tools for manual bite/chew labeling [26] | Creates ground-truth datasets for training and validating machine learning models on eating micro-behaviors. |
| Ecological Momentary Assessment (EMA) Tools | Mobile app-based surveys, Pre- and post-meal questionnaires [26] | Collects real-time self-reported data on context, emotion, stress, and perceived eating traits. |
| Biochemical Assay Kits | Enzymatic assay kits for glucose; ELISA kits for insulin, ghrelin, PYY, cortisol | Measures blood biomarkers related to glucose metabolism, appetite regulation, and stress response for validation [17]. |
| Machine Learning Frameworks | XGBoost (e.g., via the Python xgboost library); SVM, Naïve Bayes (e.g., via Python scikit-learn) [26] | Classifies overeating episodes and clusters distinct behavioral phenotypes from multimodal data. |

Visualizing the Research Workflow and Phenotype Logic

The following diagram illustrates the integrated workflow for identifying overeating phenotypes, from data acquisition to clinical application.

Research Workflow for Phenotype Identification

The logical relationship between the key predictive features and the two target phenotypes is shown below.

Feature Mapping to Overeating Phenotypes

Discussion and Future Directions

The delineation of "Evening Craving" and "Stress-driven Evening Nibbling" underscores that overeating is not a unitary behavior. The former is driven primarily by hedonic factors, while the latter is triggered by negative affect [26]. This distinction is crucial for targeted interventions; a drug aimed at dampening the stress response may be highly effective for the "Stress-driven" phenotype but less so for the "Evening Craving" phenotype.

Future research must address several challenges, including the standardization of terminology and tools [27], the validation of these phenotypes in larger and more diverse populations, and the refinement of non-invasive wearable sensors to reliably capture physiological markers like heart rate and skin temperature in real-world settings [17]. The integration of these multimodal data streams through advanced machine learning presents the most promising path forward for transforming the precision of dietary monitoring and obesity treatment.

From Data to Insights: Methodological Approaches and Real-World Applications

The accurate monitoring of dietary intake is a fundamental challenge in nutrition research, chronic disease management, and pharmaceutical development. Traditional methods, such as food diaries and 24-hour recalls, are plagued by inaccuracies due to reliance on memory and subjective reporting [28]. Wearable sensors offer a promising solution for objective, continuous monitoring of intake gestures and related physiological responses [17]. No single sensor modality can fully capture the complex process of eating: inertial measurement units (IMUs) detect hand-to-mouth gestures, acoustic sensors identify chewing and swallowing sounds, and photoplethysmography (PPG) sensors track physiological changes such as heart rate variations associated with food intake [17] [14]. Consequently, sensor fusion architectures that combine these complementary data streams are critical for developing robust and accurate dietary monitoring systems. This section explores the core architectures, methodologies, and experimental protocols for fusing IMU, acoustic, and PPG data within the specific context of dietary intake research.

Core Sensor Fusion Architectures

Sensor fusion integrates data from multiple sources to produce more consistent, accurate, and useful information than can be obtained from a single source. In dietary monitoring, three primary fusion architectures are employed, each with distinct advantages and implementation challenges.

Data-Level Fusion

Data-level fusion, also known as early fusion, involves the direct combination of raw or pre-processed data from multiple sensors before feature extraction.

- Process: Raw signals from IMU (accelerometer, gyroscope), acoustic microphone, and PPG sensor are synchronized and concatenated to form a unified data vector [29].

- Advantages: Preserves all original information, potentially allowing the model to discover complex, cross-modal patterns that are not apparent in processed features.

- Challenges: Highly susceptible to sensor-specific noise, requires precise time synchronization, and results in high-dimensional data, which increases computational cost [29]. For example, an acoustic sensor is often sampled in the kHz range while a PPG sensor is often sampled at tens of Hz, so the two rates can differ by orders of magnitude and the streams must be resampled onto a common time base before they can be concatenated.
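The synchronization and concatenation step described above can be sketched as follows. This is a minimal illustration, assuming each stream arrives as timestamped samples and that linear interpolation onto a shared grid is acceptable. The function names, the 50 Hz target rate, and the synthetic signals are hypothetical and are not taken from [29]. Raw audio would normally be low-pass filtered or reduced to an envelope before being brought down to such a rate; that step is omitted here.

```python
from bisect import bisect_right


def resample_linear(times, values, target_times):
    """Linearly interpolate (times, values) onto target_times; times must be ascending."""
    out = []
    for t in target_times:
        if t <= times[0]:
            out.append(values[0])
        elif t >= times[-1]:
            out.append(values[-1])
        else:
            j = bisect_right(times, t)  # times[j - 1] <= t < times[j]
            t0, t1 = times[j - 1], times[j]
            w = (t - t0) / (t1 - t0)
            out.append(values[j - 1] + w * (values[j] - values[j - 1]))
    return out


def fuse_raw(streams, rate_hz, duration_s):
    """Align every stream to one time grid and stack the samples per time step.

    streams: dict mapping a stream name to a (times, values) pair.
    Returns a list of rows, one per grid point, one column per stream.
    """
    n = int(duration_s * rate_hz)
    grid = [i / rate_hz for i in range(n)]
    aligned = [resample_linear(t, v, grid) for t, v in streams.values()]
    return [list(row) for row in zip(*aligned)]


# Synthetic example: a 100 Hz accelerometer axis and a 25 Hz PPG trace, 1 second each.
imu_t = [i / 100 for i in range(101)]
ppg_t = [i / 25 for i in range(26)]
fused = fuse_raw(
    {"imu": (imu_t, [t for t in imu_t]), "ppg": (ppg_t, [2 * t for t in ppg_t])},
    rate_hz=50,
    duration_s=1.0,
)
print(len(fused), "time steps x", len(fused[0]), "channels")
```

The choice of target rate is itself a design decision: a low rate discards high-frequency acoustic detail, while a high rate inflates the slow channels without adding information.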

Feature-Level Fusion

Feature-level fusion, or intermediate fusion, is the most common approach. It involves extracting discriminative features from each sensor modality independently and then combining them into a single feature vector for classification.

- Process:

  - IMU: Features like signal magnitude area, zero-crossing rate, and frequency-domain entropy are extracted from accelerometer and gyroscope data to capture wrist movement patterns characteristic of eating gestures [30] [14].

  - Acoustic: Features such as Mel-Frequency Cepstral Coefficients (MFCCs) or spectral roll-off point are extracted to characterize chewing and swallowing sounds [14].

  - PPG: Heart rate variability (HRV) features and time-domain statistics are extracted from the pulse wave signal [17].

  - The feature sets are concatenated and input into a machine learning classifier (e.g., Support Vector Machine, Random Forest) or a deep learning model.

- Advantages: Reduces data dimensionality compared to raw data fusion and allows for the selection of the most informative features from each modality.

- Implementation: A robust multimodal temporal convolutional network with cross-modal attention (MM-TCN-CMA) fused IMU and radar data in this way; because the framework is not tied to those two sensors, it could plausibly be adapted to other modalities such as acoustic and PPG [30].
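The per-modality extraction and concatenation described above can be sketched as follows. This is an illustrative example only: the window layout, the function names, and the choice of short-time energy and zero-crossing rate as simple stand-ins for MFCCs are assumptions made here, and the PPG input is assumed to have already been reduced to inter-beat intervals in milliseconds.

```python
import math


def signal_magnitude_area(ax, ay, az):
    """Mean of |x| + |y| + |z| over the window (accelerometer axes)."""
    return sum(abs(x) + abs(y) + abs(z) for x, y, z in zip(ax, ay, az)) / len(ax)


def zero_crossing_rate(signal):
    """Fraction of consecutive sample pairs whose signs differ."""
    crossings = sum(1 for a, b in zip(signal, signal[1:]) if (a < 0) != (b < 0))
    return crossings / (len(signal) - 1)


def rmssd(ibi_ms):
    """Root mean square of successive inter-beat-interval differences (an HRV feature)."""
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def fused_feature_vector(window):
    """Extract features per modality, then concatenate them into one vector."""
    ax, ay, az = window["accel"]
    imu = [signal_magnitude_area(ax, ay, az), zero_crossing_rate(ax)]

    audio = window["audio"]
    acoustic = [sum(s * s for s in audio) / len(audio), zero_crossing_rate(audio)]

    ibi = window["ibi_ms"]
    mean_hr_bpm = 60000.0 / (sum(ibi) / len(ibi))
    ppg = [mean_hr_bpm, rmssd(ibi)]

    return imu + acoustic + ppg  # feature-level fusion: simple concatenation


example_window = {
    "accel": ([0.2, -0.1, 0.3, -0.2], [0.0, 0.1, 0.0, -0.1], [1.0, 0.9, 1.1, 1.0]),
    "audio": [0.05, -0.04, 0.06, -0.03],
    "ibi_ms": [820, 810, 835, 800],
}
print(fused_feature_vector(example_window))
```

Because the resulting features live on very different scales (beats per minute next to unitless rates), they would typically be standardized before being passed to a classifier such as an SVM.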

Decision-Level Fusion

Decision-level fusion, or late fusion, involves processing each sensor modality through separate models and then combining their individual predictions.

- Process: A dedicated classifier is trained for each sensor type (e.g., an IMU model for gesture detection, an acoustic model for chew detection, a PPG model for heart rate change classification). The final intake decision is made by combining the outputs of these classifiers, often through weighted averaging, majority voting, or a meta-classifier [29].

- Advantages: Highly modular and resilient to missing modalities. If one sensor fails, the system can still function with reduced performance using the remaining sensors [30].

- Challenges: Requires training multiple models and may fail to capture lower-level correlations between modalities.
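A minimal sketch of the weighted-averaging variant described above, assuming each per-modality classifier outputs a probability that the current window contains eating. The function name, the weights, and the 0.5 threshold are hypothetical; a missing sensor is represented by `None` and its weight is redistributed over the remaining modalities.

```python
def fuse_decisions(probabilities, weights, threshold=0.5):
    """Weighted average of per-modality eating probabilities.

    Modalities whose probability is None (sensor unavailable) are skipped and
    the remaining weights are renormalized so they still sum to one.
    Returns (fused_score, is_eating).
    """
    available = {m: p for m, p in probabilities.items() if p is not None}
    if not available:
        raise ValueError("no modality produced a prediction")
    total_weight = sum(weights[m] for m in available)
    score = sum(weights[m] * p for m, p in available.items()) / total_weight
    return score, score >= threshold


weights = {"imu": 0.5, "acoustic": 0.3, "ppg": 0.2}

# All three classifiers report.
print(fuse_decisions({"imu": 0.8, "acoustic": 0.6, "ppg": 0.2}, weights))

# The microphone has dropped out; the decision falls back to IMU and PPG.
print(fuse_decisions({"imu": 0.8, "acoustic": None, "ppg": 0.2}, weights))
```

In practice the weights might be set from each classifier's validation performance, or replaced by a trained meta-classifier; both options are outside this sketch.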

Table 1: Comparison of Primary Sensor Fusion Architectures for Dietary Monitoring

| Fusion Architecture | Description | Advantages | Disadvantages |
|---|---|---|---|
| Data-Level Fusion | Raw data streams are concatenated and processed together. | Maximizes information preservation; can model complex cross-sensor interactions. | High computational load; requires precise time synchronization; sensitive to noise. |
| Feature-Level Fusion | Features are extracted from each modality and combined into a single vector for classification. | Balances information content and dimensionality; allows for feature selection. | Risk of information loss during feature extraction; feature scaling can be challenging. |
| Decision-Level Fusion | Each modality is classified independently, and predictions are fused. | Modular and robust to missing data/sensors; enables use of bespoke models per modality. | Cannot model low-level cross-modal interactions; requires multiple models. |

Advanced Fusion Frameworks and Handling Missing Data

A significant challenge in real-world multimodal systems is ensuring robustness when one or more sensor modalities are unavailable. The robust multimodal temporal convolutional network with cross-modal attention (MM-TCN-CMA) framework addresses this by integrating a missing modality handling mechanism [30]. This framework uses cross-modal attention to allow features from one modality (e.g., IMU) to inform and refine the features of another (e.g., PPG), creating a more cohesive representation. During training, the model can be exposed to modality-incomplete data, teaching it to maintain performance even when data is missing during inference. Experimental results showed that this framework maintained performance gains of 1.3% and 2.4% in missing-Radar and missing-IMU scenarios, respectively [30]. Because the evaluation covered IMU and radar only, these results suggest, but do not by themselves demonstrate, that the same mechanism would handle missing acoustic or PPG data.
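One common way to expose a model to modality-incomplete data during training is modality dropout, in which a sensor's features are randomly blanked for some training samples. The sketch below illustrates that general idea only; it is not the mechanism implemented in [30], and the function name, the zero-filling convention, and the 0.3 drop probability are assumptions made for illustration.

```python
import random


def drop_modalities(sample, drop_prob, rng):
    """Randomly blank modalities in one training sample.

    sample: dict mapping a modality name to its feature list.
    Each modality is dropped independently with probability drop_prob,
    but at least one modality is always kept.
    Returns (masked_sample, mask) where mask[m] is 1 if m was kept, else 0.
    """
    names = list(sample)
    dropped = [m for m in names if rng.random() < drop_prob]
    if len(dropped) == len(names):
        # Never hand the model an entirely empty sample.
        dropped.remove(rng.choice(names))
    masked = {
        m: ([0.0] * len(v) if m in dropped else list(v)) for m, v in sample.items()
    }
    mask = {m: 0 if m in dropped else 1 for m in names}
    return masked, mask


rng = random.Random(42)  # fixed seed so the example is repeatable
sample = {"imu": [0.4, 1.2], "acoustic": [0.03, 0.7], "ppg": [72.0, 18.5]}
masked, mask = drop_modalities(sample, drop_prob=0.3, rng=rng)
print(mask)
```

Passing the mask to the model alongside the zero-filled features lets it distinguish a missing sensor from a sensor that genuinely reads zero.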

An alternative, computationally efficient method involves transforming multisensory data into a 2D covariance representation [29]. This technique is based on the hypothesis that data from various sensors are statistically correlated, and the covariance matrix of these signals has a unique distribution for each activity (e.g., eating vs. non-eating). This 2D representation, which can be visualized as a contour plot, embeds the joint variability of different modalities into a single image that is then classified using a convolutional neural network (CNN). This approach effectively reduces high-dimensional, multimodal time-series data into a compact, information-rich 2D format suitable for resource-constrained environments [29].
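The core of this representation is the sample covariance matrix of a multi-channel window. The sketch below computes it in plain Python for a window of C channels of equal length; the function name and the toy data are hypothetical, and the rendering of the matrix as a contour image and the CNN classifier described in [29] are not reproduced here.

```python
def covariance_matrix(channels):
    """Sample covariance matrix of a multi-sensor window.

    channels: list of C equal-length lists, one per sensor channel,
              already resampled to a common rate.
    Returns a C x C nested list where entry [i][j] is cov(channel i, channel j).
    """
    n = len(channels[0])
    if n < 2 or any(len(ch) != n for ch in channels):
        raise ValueError("channels must have equal length of at least 2")
    means = [sum(ch) / n for ch in channels]
    centered = [[x - m for x in ch] for ch, m in zip(channels, means)]
    return [
        [sum(a * b for a, b in zip(ci, cj)) / (n - 1) for cj in centered]
        for ci in centered
    ]


# Toy window: accelerometer magnitude, heart rate, and skin temperature.
window = [
    [1.00, 1.20, 0.90, 1.40, 1.10],
    [70.0, 72.0, 71.0, 75.0, 73.0],
    [33.1, 33.1, 33.2, 33.2, 33.3],
]
for row in covariance_matrix(window):
    print([round(v, 4) for v in row])
```

Because the channels have different units, entries involving heart rate dominate the raw matrix; standardizing each channel first (which yields the correlation matrix) is one possible remedy, though whether [29] does so is not stated here.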

Experimental Protocols and Validation

Validating sensor fusion architectures requires rigorous experimental protocols conducted in both controlled laboratory and free-living settings.

Protocol for Multimodal Data Collection

A representative study protocol for investigating physiological and behavioural responses to food intake is described below [17]:

- Participants: Recruit healthy volunteers (e.g., n=10) meeting specific inclusion criteria (e.g., age 18-65, BMI 18-30 kg/m²). Sample size justification should be based on a power analysis of the primary outcome measure, such as heart rate change pre- and post-meal [17].

- Study Design: A randomized crossover design where participants attend two study visits, consuming a pre-defined high-calorie meal and a low-calorie meal in a randomized order.

- Sensor Configuration:

  - IMU: A custom multi-sensor wristband equipped with an accelerometer, gyroscope, and magnetometer to track hand-to-mouth movements and eating gestures [17].

  - PPG: An integrated pulse oximeter in the wristband to continuously monitor heart rate (HR) and blood oxygen saturation (SpO₂). A skin temperature sensor is also often included.

  - Acoustic: A body-worn microphone (e.g., on the neck or chest) to capture chewing and swallowing sounds. The eButton, a chest-worn device that captures images, can be integrated alongside it to document the food being eaten [10].

- Ground Truth Validation:

  - Biochemical: Blood samples are collected via intravenous cannula to measure blood glucose, insulin, and hormone levels, providing an objective measure of metabolic response [17].

  - Clinical: Bedside vital sign monitors can validate wearable HR, SpO₂, and blood pressure readings.

  - Behavioral: Video recording or self-reported food diaries can serve as a reference for intake timing and food type.

Performance Metrics and Quantitative Outcomes

The performance of fusion models is typically evaluated using standard classification metrics. The following table summarizes example outcomes from relevant studies employing multimodal fusion.

Table 2: Quantitative Performance of Multimodal Approaches in Dietary and Health Monitoring

| Study / Model Description | Sensors Fused | Key Performance Metric | Reported Outcome |
|---|---|---|---|
| Robust MM-TCN-CMA [30] | IMU & Radar (as a proxy for Acoustic/PPG) | Segmental F1-Score | 4.3% and 5.2% improvement over unimodal baselines. |
| Covariance Fusion + CNN [29] | Accelerometer, PPG, EDA, Temperature | Precision (for activity recognition) | Achieved a precision of 0.803 in leave-one-subject-out cross-validation. |
| Allied Data Disparity Technique [31] | Multimodal Wearable Sensors | Precision for Health Monitoring | Reported high precision levels in diagnosis-focused analysis. |
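The metrics and the leave-one-subject-out scheme named in the table can be sketched as follows. This is a minimal illustration with hypothetical function names and toy labels. It computes window-wise precision, recall, and F1, which is simpler than the segmental F1-score reported in [30]: that variant scores detected segments against ground-truth segments using an overlap criterion that is not reproduced here.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices), one fold per subject."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test


def precision_recall_f1(y_true, y_pred):
    """Binary metrics with 1 = eating and 0 = not eating."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Toy data: five windows from three participants.
subjects = ["p01", "p01", "p02", "p03", "p03"]
for held_out, train_idx, test_idx in leave_one_subject_out(subjects):
    print(held_out, "train:", train_idx, "test:", test_idx)

print(precision_recall_f1([1, 1, 0, 0, 1], [1, 0, 1, 0, 1]))
```

Holding out whole participants, rather than random windows, keeps data from the same person out of both the training and test sets, which would otherwise inflate the reported scores.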

The Scientist's Toolkit: Research Reagent Solutions

Implementing the described fusion architectures requires a suite of hardware and software components.

Table 3: Essential Research Materials and Tools for Sensor Fusion Development

| Item / Technique | Function in Dietary Monitoring Research |
|---|---|
| Multi-Sensor Wristband (Custom) [17] | A platform integrating IMU, PPG, and skin temperature sensors for synchronized data acquisition from the wrist. |
| Body-Worn Acoustic Sensor | A microphone placed on the neck or chest to capture chewing and swallowing sounds for acoustic analysis. |
| eButton [10] | A wearable, chest-mounted imaging device that automatically captures food images for ground truth validation of food type and volume. |
| Continuous Glucose Monitor (CGM) [10] | A subcutaneous sensor that provides interstitial glucose readings, used to validate the physiological response to food intake. |
| Temporal Convolutional Network (TCN) | A deep learning model architecture effective for modeling long-range dependencies in time-series sensor data [30]. |
| Cross-Modal Attention Mechanism | An algorithm that allows features from one sensor modality to interact with and refine features from another, improving fusion efficacy [30]. |
| Covariance Matrix Representation [29] | A technique to transform multi-sensor time-series data into a single 2D image that represents inter-sensor correlations, suitable for CNN-based classification. |

Visualizing a Generalized Sensor Fusion Workflow for Dietary Monitoring

The following diagram illustrates a logical workflow for a feature-level fusion architecture that incorporates robustness to missing data, as discussed in the previous sections.